Validating Socio-Cultural Ecosystem Service Data: A Methodological Framework for Researchers and Practitioners

Abstract

This article provides a comprehensive methodological framework for validating socio-cultural ecosystem service (CES) data, addressing a critical gap in environmental and conservation science. It explores the evolution of CES concepts from first-generation critiques to contemporary second-generation approaches like relational values and biocultural indicators. The content details robust validation techniques, including cross-cultural scale development, statistical tests for measurement invariance, and innovative computational methods. Aimed at researchers and scientists, it offers practical strategies for troubleshooting common methodological challenges and provides a comparative analysis of validation approaches to ensure data reliability, cultural competence, and effective integration into policy and decision-making.

From Critique to Rigor: Establishing Foundations for CES Data Validation

Cultural Ecosystem Services (CES) refer to the non-material benefits people obtain from ecosystems through spiritual enrichment, cognitive development, reflection, recreation, and aesthetic experiences [1]. The assessment and validation of CES data have evolved through distinct methodological generations. First-generation frameworks relied heavily on traditional, resource-intensive methods like questionnaires and surveys. Second-generation frameworks leverage emerging technologies and big data sources, such as social media data analysis, to provide more scalable and cost-effective assessment solutions [2].

This technical support center provides researchers and scientists with practical guidance for navigating this methodological evolution, offering troubleshooting guides and experimental protocols for validating socio-cultural ecosystem service data.

Troubleshooting Guides & FAQs

FAQ 1: How do I choose between a first-generation and second-generation CES assessment method?

Answer: The choice depends on your research objectives, resource constraints, and the study area's context.

Use a First-Generation approach (e.g., questionnaires, interviews) when:

- Your study area has a low population density or limited social media usage.

- You require deep, qualitative understanding of cultural values and personal experiences.

- Your research focuses on specific demographic groups not well-represented on social media platforms.

Use a Second-Generation approach (e.g., social media data analysis) when:

- You are conducting a broad-scale spatial assessment of CES.

- Your project requires a cost-effective method to analyze large volumes of data.

- The study area is a popular destination for tourists and visitors who actively use social media [2].

FAQ 2: The social media data for my study area is sparse. Are second-generation methods still reliable?

Answer: Yes, under certain conditions. Research in less-developed, remote regions indicates that social media analysis can yield results highly consistent with traditional questionnaire methods even when data are limited. In one study, 80% to 91% of the places identified as having CES via questionnaires were also identified using social media data, depending on the CES type, with intraclass correlation coefficients of 0.76 to 0.96 [2]. If your area has even a minimal amount of geotagged social media content, a second-generation framework can be a viable and efficient alternative.

FAQ 3: What are the key differences in workflow between first- and second-generation frameworks?

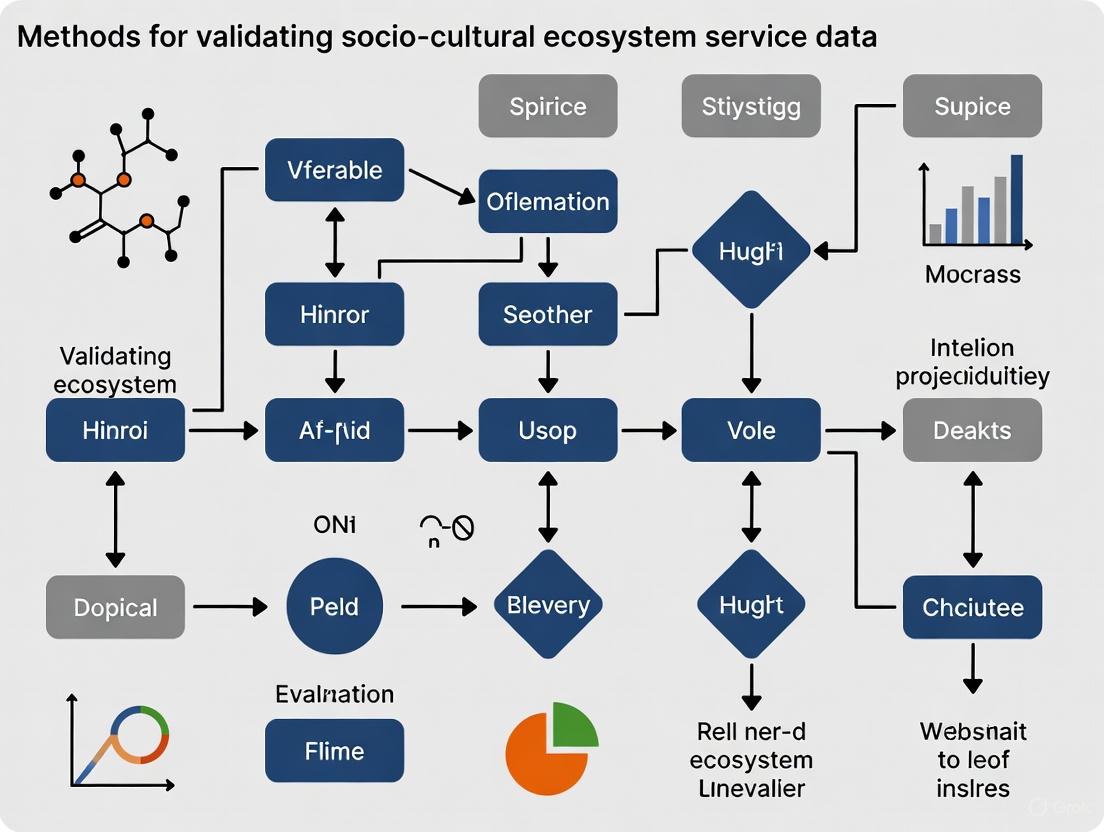

Answer: The core difference lies in data sourcing and processing. The diagram below illustrates the distinct workflows for each generation.

FAQ 4: How can I validate the results from a second-generation method?

Answer: The most robust validation involves triangulation with first-generation methods. As shown in the workflow diagram, the outputs from both frameworks should converge. You can validate your second-generation results by:

- Conducting a smaller-scale, targeted questionnaire survey in your study area and comparing the identified CES hotspots and values with those derived from social media data [2].

- Calculating consistency metrics, such as the intraclass correlation coefficient (ICC), to quantitatively assess the agreement between the two methods for different CES types (e.g., aesthetic, heritage, educational values) [2].
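The consistency check above can be sketched in a few lines. The source does not state which ICC form was used in [2], so this example assumes ICC(2,1) (two-way random effects, absolute agreement, single measurement); the function name `icc_2_1` and the scores are hypothetical.

```python
def icc_2_1(ratings):
    """ICC(2,1): two-way random effects, absolute agreement, single measurement.

    `ratings` is a list of rows, one per place; each row holds one score per
    method, e.g. [questionnaire_score, social_media_score].
    """
    n = len(ratings)       # number of places (subjects)
    k = len(ratings[0])    # number of methods (raters)
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    # Two-way ANOVA sums of squares
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical aesthetic-value scores for five places:
# [questionnaire, social media]. Not data from [2].
scores = [[4.5, 4.2], [3.0, 3.4], [2.0, 1.8], [4.8, 4.9], [1.5, 2.0]]
print(round(icc_2_1(scores), 3))
```

Run the function once per CES type so that agreement for aesthetic, heritage, and educational values can be compared separately.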

Experimental Protocols for CES Data Validation

Protocol 1: Traditional Questionnaire-Based CES Assessment (First-Generation)

This protocol is for establishing a ground-truth baseline to validate second-generation methods.

- Objective: To collect primary data on CES values directly from stakeholders via interviews and surveys.

- Materials: Digital or paper questionnaires, recording devices (if interviews), GIS mapping software, statistical analysis software (e.g., R, SPSS).

- Methodology:

- Questionnaire Design: Develop a survey that captures key CES indicators. These typically include:

- Aesthetic Value (AV): Rating the scenic beauty of landscapes.

- Cultural Heritage Value (CHV): Importance of historical or cultural sites.

- Recreation Value (RV): Use of the area for leisure activities.

- Spiritual or Educational Value (SEV): Significance for reflection or learning [1].

- Sampling: Identify and recruit a representative sample of local residents and visitors using random or stratified sampling techniques.

- Data Collection: Administer questionnaires through on-site interviews, mail, or online platforms.

- Data Processing: Code and digitize responses. Georeference mentioned locations.

- Data Analysis: Use statistical and spatial analysis to identify patterns and create maps of perceived CES values.

- Troubleshooting:

- Low Response Rate: Offer incentives, simplify the questionnaire, or use multiple contact methods.

- Spatial Bias in Responses: Ensure sampling covers the entire geographic area of interest, not just easily accessible locations.

Protocol 2: Social Media Data CES Assessment (Second-Generation)

This protocol uses publicly available geotagged data as a proxy for CES valuation.

- Objective: To assess and map CES values by analyzing the content and density of geotagged social media posts.

- Materials: Access to a social media API (e.g., Flickr, Instagram, Twitter), data parsing scripts (e.g., Python, R), GIS software, content analysis tools.

- Methodology:

- Data Harvesting: Use APIs to collect geotagged posts (photos and text) from within your study area and a specified timeframe.

- Content Analysis:

- Text Analysis: Parse post captions and comments for keywords related to CES (e.g., "beautiful," "historic," "peaceful," "hiking").

- Image Analysis: Use machine learning-based image recognition to classify photos by landscape type or activity (e.g., mountain, waterfall, temple, picnic).

- Spatial Density Mapping: Map the density of social media posts. High-density areas often correlate with high CES.

- CES Value Assignment: Classify data into CES types based on content analysis. A post with a mountain photo and #sunrise tag can be assigned "Aesthetic Value."

- Troubleshooting:

- Low Data Volume: Widen the study area or timeframe, or aggregate data from multiple platforms.

- User Bias: Acknowledge that data represents the views of active social media users, which may not be fully representative of the entire population.
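As a rough illustration of the text analysis, value assignment, and density mapping steps in this protocol, the sketch below matches caption keywords against small per-category word lists and counts classified posts per grid cell. Only "beautiful", "historic", "peaceful", "hiking", and "sunrise" come from the text above; the other keywords, the post records, and the 0.01-degree cell size are assumptions for illustration. A real study would likely use richer NLP and a projected grid.

```python
import math
import re
from collections import Counter

# Hypothetical keyword lists; extend and validate them for your study area.
CES_KEYWORDS = {
    "Aesthetic Value": {"beautiful", "sunrise", "scenic"},
    "Cultural Heritage Value": {"historic", "temple", "heritage"},
    "Recreation Value": {"hiking", "picnic", "camping"},
    "Spiritual or Educational Value": {"peaceful", "meditation", "learning"},
}


def classify_post(text):
    """Return the sorted CES types whose keywords appear in the caption."""
    tokens = set(re.findall(r"[a-z]+", text.lower()))  # "#sunrise" -> "sunrise"
    return sorted(label for label, words in CES_KEYWORDS.items() if tokens & words)


def grid_cell(lat, lon, cell_deg=0.01):
    """Map a coordinate to a square grid cell index (simple lat/lon binning)."""
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg))


def density_by_cell(posts, cell_deg=0.01):
    """Count classified posts per (grid cell, CES type)."""
    counts = Counter()
    for post in posts:
        cell = grid_cell(post["lat"], post["lon"], cell_deg)
        for label in classify_post(post["text"]):
            counts[(cell, label)] += 1
    return counts


# Hypothetical geotagged posts
posts = [
    {"text": "Beautiful #sunrise over the mountain", "lat": 35.1234, "lon": 136.9012},
    {"text": "Historic temple, so peaceful", "lat": 35.1239, "lon": 136.9018},
]
for key, n in density_by_cell(posts).items():
    print(key, n)
```

Posts that match no keyword are left unclassified rather than forced into a category, which keeps the density counts conservative.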

Quantitative Data Comparison

The table below summarizes a quantitative comparison of first- and second-generation methods based on a validation study.

Table 1: Comparison of CES Identification Consistency Between Methods

| CES Type | Consistency Rate (Questionnaire vs. Social Media) | Intraclass Correlation Coefficient (ICC) | Interpretation |
|---|---|---|---|
| Aesthetic Value (AV) | 90% of questionnaire-identified places were also found via social media [2]. | 0.96 [2] | Almost perfect agreement. |
| Cultural Heritage Value (CHV) | 90% of questionnaire-identified places were also found via social media [2]. | 0.84 [2] | Strong agreement. |
| Cultural Diversity Value (CDV) | 91% of questionnaire-identified places were also found via social media [2]. | 0.79 [2] | Strong agreement. |
| Scientific & Educational Value (SEV) | 80% of questionnaire-identified places were also found via social media [2]. | 0.76 [2] | Strong agreement. |

Table 2: Key Characteristics of CES Framework Generations

| Characteristic | First-Generation Framework | Second-Generation Framework |
|---|---|---|
| Primary Data Source | Questionnaires, interviews, surveys [1]. | Geotagged social media posts, online reviews [2]. |
| Typical Outputs | In-depth qualitative insights, perceived value maps. | Spatial density maps, content-based classification. |
| Relative Cost | High (labor-intensive). | Low (automated data harvesting). |
| Scalability | Limited by time and resources. | Highly scalable for large areas. |
| Spatial Resolution | Can be coarse due to sampling limits. | Can be very fine (exact GPS coordinates). |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for CES Validation Studies

| Item Name | Function / Application in CES Research |
|---|---|
| Structured Questionnaire | The core "reagent" for first-generation studies. Used to quantitatively and qualitatively measure perceived CES values from human participants [1]. |
| Social Media API Access | Enables the automated harvesting of geotagged text and image data, which serves as the raw material for second-generation CES analysis [2]. |
| GIS Software Suite | The essential platform for mapping and spatially analyzing both questionnaire responses and social media data points to visualize CES distribution. |
| Statistical Analysis Package | Software (e.g., R, Python with pandas, SPSS) used to calculate descriptive statistics, run significance tests, and compute consistency metrics like ICC [2]. |
| Content Analysis Toolkit | A set of methods and software (e.g., NLP libraries, image recognition APIs) for classifying raw social media data into distinct CES categories [2]. |

Troubleshooting Guides

Guide 1: Addressing Data Intangibility in CES Research

Problem: Intangible benefits such as spiritual enrichment or cultural identity are difficult to measure quantitatively.

Solution: Employ projective and participatory techniques to make intangible values tangible.

- Recommended Action: Implement participatory mapping and free listing to convert abstract concepts into mappable spatial data or enumerable items [3] [4]. For example, ask participants to mark areas of spiritual significance on a map or list cultural benefits they derive from an ecosystem.

- Validation Tip: Triangulate your findings by using multiple methods. Combine data from interviews, focus groups, and visual tools like LANDPREF to cross-verify results and strengthen validity [5].

Guide 2: Mitigating Subjectivity and Researcher Bias

Problem: Personal and cultural biases of the researcher can skew the design, execution, and interpretation of CES studies.

Solution: Adopt a reflexive research practice and structured methodologies.

- Recommended Action: Use the Q methodology to systematically study participant subjectivity. This approach helps identify shared perspectives within a community without aggregating them into a single, potentially biased, average view [3].

- Experimental Control: Clearly document the demographic profiles of participants and the specific cultural context of the study. This transparency allows for better assessment of the transferability of findings to other groups or settings [4].

Guide 3: Navigating Diverse Cultural Contexts

Problem: Standard CES frameworks, often developed in Western contexts, may be inappropriate or misinterpret values in other cultural settings, especially in the Global South.

Solution: Prioritize context-specific frameworks and ensure ethical engagement.

- Recommended Action: Before applying a CES classification like CICES, conduct preliminary fieldwork to understand local value systems. The concept of "services" may be incompatible with some worldviews, which might instead emphasize relational values or cultural obligations to nature [6].

- Ethical Imperative: For research involving Indigenous Peoples, move beyond a utilitarian framing of "ecosystem services." Engage communities as co-researchers to ensure their knowledge systems and worldviews are accurately and respectfully represented, rather than simply extracted [6].

Frequently Asked Questions (FAQs)

How can we ensure the reliability of CES data when it is inherently subjective?

Reliability in CES is not about eliminating subjectivity but about understanding and documenting it consistently. Employ inter-coder reliability checks during qualitative data analysis. When using surveys, apply test-retest methods to check for consistency in responses over time and use structured instruments like the LANDPREF visualisation tool to reduce ambiguity [5].

What is the most effective method for validating socio-cultural valuation data?

There is no single "best" method; effective validation comes from methodological triangulation. This involves combining different valuation techniques (e.g., rating, weighting, participatory mapping) and comparing the results. If multiple methods converge on similar findings, confidence in the data's validity increases [3] [5]. Prospective evaluation in real-world contexts, rather than just retrospective analysis, is also key for robust validation [7].

Why do CES valuation studies often fail to influence environmental policy?

A significant gap exists between research and policy due to several factors:

- Geographical Bias: CES literature is heavily skewed towards Europe and North America, limiting its relevance for policymakers in the Global South [6].

- Power and Inequality: Studies often fail to address who has access to CES and whose values are being counted, leading to equity issues that complicate policy implementation [6].

- Methodological Inconsistency: The lack of a unanimous reference framework and inconsistent definitions of forest ecosystem services make it difficult to compare studies and build a compelling evidence base for policymakers [3].

Experimental Protocols for Key CES Methods

Protocol for Qualitative Comparative Analysis (QCA) in CES

Purpose: To identify complex causal patterns of conditions (e.g., CES quality and availability) that lead to a specific outcome (e.g., high visitor preference) [4].

Workflow:

- Define Outcome: Select the phenomenon you want to explain (e.g., "high visitation by older females").

- Select Antecedent Conditions: Identify key factors that might influence the outcome, such as CES quality, CES availability, and transportation time [4].

- Calibrate Data: Convert all condition and outcome data into set-membership scores (e.g., 0 for non-membership, 1 for full membership).

- Construct Truth Table: Build a table listing all logically possible combinations of conditions.

- Analyze for Sufficiency: Use software to analyze which combinations of conditions are sufficient for the outcome to occur.
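The calibration, truth-table, and sufficiency steps can be sketched as a crisp-set QCA in plain Python. The condition names, the raw scores, the 3.0 crossover, and the 0.8 consistency cut-off are all hypothetical and are not taken from [4]. Dedicated QCA software additionally performs Boolean minimization, which is omitted here.

```python
from itertools import product

CONDITIONS = ["quality", "availability", "access"]  # hypothetical conditions
OUTCOME = "visits"
THRESHOLD = 3.0  # hypothetical crossover point on a 1-5 scale

# Hypothetical raw scores for six green spaces
raw_cases = [
    {"quality": 4.5, "availability": 4.0, "access": 4.2, "visits": 4.6},
    {"quality": 4.1, "availability": 3.8, "access": 2.0, "visits": 4.0},
    {"quality": 2.0, "availability": 4.2, "access": 4.5, "visits": 2.1},
    {"quality": 4.4, "availability": 1.5, "access": 4.0, "visits": 2.5},
    {"quality": 4.8, "availability": 4.5, "access": 3.9, "visits": 4.9},
    {"quality": 1.8, "availability": 2.0, "access": 1.5, "visits": 1.2},
]


def calibrate(case, threshold=THRESHOLD):
    """Crisp-set calibration: 1 = member of the set, 0 = non-member."""
    return {name: int(value >= threshold) for name, value in case.items()}


def truth_table(cases):
    """List every logically possible configuration and tally observed cases."""
    rows = {combo: {"n": 0, "with_outcome": 0}
            for combo in product((0, 1), repeat=len(CONDITIONS))}
    for case in cases:
        combo = tuple(case[c] for c in CONDITIONS)
        rows[combo]["n"] += 1
        rows[combo]["with_outcome"] += case[OUTCOME]
    return rows


def sufficient_configurations(rows, min_consistency=0.8):
    """Keep observed configurations whose cases (almost) always show the outcome."""
    result = []
    for combo, row in rows.items():
        if row["n"] == 0:
            continue  # logical remainder: no empirical case to judge
        if row["with_outcome"] / row["n"] >= min_consistency:
            result.append(combo)
    return result


cases = [calibrate(c) for c in raw_cases]
table = truth_table(cases)
for combo in sufficient_configurations(table):
    print(dict(zip(CONDITIONS, combo)), table[combo])
```

Configurations with no observed cases are skipped rather than treated as inconsistent, since the data say nothing about them.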

Protocol for Socio-Cultural Valuation Using Rating and Weighting

Purpose: To elicit and quantify the relative importance of different ecosystem services from a stakeholder perspective [5].

Workflow:

- Service Selection: In cooperation with local experts and stakeholders, derive a list of relevant ES based on a standard classification (e.g., CICES) [5].

- Stakeholder Engagement: Administer surveys (on-site or online) to a representative sample of users or residents.

- Rating Phase: Ask respondents to rate the importance of each ecosystem service on a scale (e.g., from 1, "not important," to 5, "very important") [5].

- Weighting Phase: Ask respondents to distribute a limited number of points (e.g., 100 points) across the same set of services to indicate their relative priority. This introduces a trade-off, revealing deeper preferences [5].

- Data Analysis: Analyze rating scores for absolute importance. Use weighting data to cluster respondents into groups with similar preference structures (e.g., "forest enthusiasts," "recreation seekers") [5].
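A minimal sketch of the analysis step follows, assuming four hypothetical services and four hypothetical respondents. It averages the ratings, checks that each respondent spent exactly the 100-point budget, and groups respondents by their top-weighted service. That grouping is a crude stand-in for the cluster analysis described in [5], not a reproduction of it.

```python
from collections import defaultdict
from statistics import mean

SERVICES = ["recreation", "aesthetics", "heritage", "habitat"]  # hypothetical list

# Hypothetical survey responses: ratings on a 1-5 scale, weights sum to 100
responses = [
    {"ratings": {"recreation": 5, "aesthetics": 4, "heritage": 3, "habitat": 4},
     "weights": {"recreation": 50, "aesthetics": 20, "heritage": 10, "habitat": 20}},
    {"ratings": {"recreation": 4, "aesthetics": 5, "heritage": 2, "habitat": 5},
     "weights": {"recreation": 10, "aesthetics": 30, "heritage": 10, "habitat": 50}},
    {"ratings": {"recreation": 5, "aesthetics": 3, "heritage": 4, "habitat": 3},
     "weights": {"recreation": 60, "aesthetics": 10, "heritage": 20, "habitat": 10}},
    {"ratings": {"recreation": 3, "aesthetics": 4, "heritage": 3, "habitat": 5},
     "weights": {"recreation": 15, "aesthetics": 25, "heritage": 5, "habitat": 55}},
]


def mean_ratings(responses):
    """Absolute importance: average rating per service."""
    return {s: mean(r["ratings"][s] for r in responses) for s in SERVICES}


def has_valid_weights(response, budget=100):
    """A weighting answer is usable only if the full point budget was spent."""
    return sum(response["weights"].values()) == budget


def group_by_top_weight(responses):
    """Group respondent indices by the service that received the most points.

    Ties go to the service listed first in SERVICES.
    """
    groups = defaultdict(list)
    for idx, response in enumerate(responses):
        top = max(SERVICES, key=lambda s: response["weights"][s])
        groups[top].append(idx)
    return dict(groups)


valid = [r for r in responses if has_valid_weights(r)]
print(mean_ratings(valid))
print(group_by_top_weight(valid))
```

Comparing the two outputs shows why both phases are useful: services can tie on mean rating yet split respondents into clearly different priority groups once points are scarce.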

Quantitative Data on CES Research

Table 1: Configurational Patterns Influencing Demographic-Destination Preferences for CES (Based on QCA of 22 Urban Green Spaces in Nagoya, Japan) [4]

| Demographic Group | Key Causal Conditions (Configuration) | Implied Visitor Preference |
|---|---|---|
| Young Adults & Males | High concern for transportation time | Quick and easy access is a primary driver. |
| Older People & Females | Multiple considerations for both CES quality and availability | A balanced combination of good facilities, diverse experiences, and convenient access. |

Table 2: Common Methodologies for Socio-Cultural Valuation of Forest Ecosystem Services [3]

| Methodological Approach | Primary Data Collection Method | Key Application in CES Research |
|---|---|---|
| Participatory Mapping | Focus Groups, Semi-structured Interviews | Identifies spatial distribution of CES values. |
| Social Media Analysis | Online Data Scraping | Assesses perceptions and preferences at a large scale. |
| Q Method | Sorting Exercises, Interviews | Identifies shared subjective viewpoints. |
| Free Listing | Surveys, Interviews | Elicits the most salient CES for a community. |

The Scientist's Toolkit: Key Research Reagents & Methods

Table 3: Essential Methodologies for CES Validation Research

| Tool/Method | Primary Function | Key Consideration |
|---|---|---|
| Qualitative Comparative Analysis (QCA) | Identifies complex, causal condition patterns for an outcome. | Moves beyond "net effects" to show how factors combine [4]. |
| LANDPREF / Visualisation Tools | Interactively assesses land use preferences via trade-off scenarios. | Reveals preferences that ES valuation alone cannot predict [5]. |
| Socio-Cultural Surveys (Rating/Weighting) | Elicits and quantifies the perceived importance of ES. | Weighting introduces trade-offs, providing deeper insight than rating alone [5]. |
| Participatory Mapping | Geographically locates and links intangible CES to landscapes. | Makes intangible values concrete and spatially explicit for planners [3]. |
| Complexity Theory Framework | Provides a lens for understanding dynamic, non-linear social-ecological systems. | Essential for interpreting QCA results and configurational causality [4]. |

Conceptual Foundation: What is Being Validated?

In the context of socio-cultural ecosystem services (CES) research, validation refers to the process of ensuring that the methods and data sources used to identify, classify, and measure non-material ecosystem benefits are accurate, reliable, and meaningful. CES represent the intangible benefits people obtain from ecosystems, including spiritual enrichment, recreational experiences, aesthetic appreciation, and cultural identity [8]. As research in this field increasingly shifts from traditional qualitative methods (like surveys and interviews) to automated approaches using crowdsourced social media data, the need for rigorous validation frameworks becomes paramount [8] [9]. This validation ensures that the digital footprints of human-nature interactions, such as geotagged photos and text reviews, are valid proxies for complex human experiences and perceptions.

The core challenge in CES validation lies in bridging the gap between digital traces and human experience. For instance, can the number of Instagram posts from a national park reliably measure its aesthetic value? Can the sentiment analysis of park reviews accurately capture cultural attachment? Validation is the systematic process of testing whether the answer to such questions is "yes", by demonstrating that your metrics truly represent the underlying socio-cultural concepts you intend to study [9].

Troubleshooting Guides and FAQs

Data Collection and Sourcing

Q1: Our data collection from social media APIs yields inconsistent or insufficient data volumes for analysis. What are the best practices?

- Problem: Incomplete or biased data sampling from social media platforms.

- Solution:

- Multi-Platform Sourcing: Do not rely on a single data source. Combine data from various platforms to mitigate platform-specific biases. Published studies have used text from Google Maps reviews alongside image data from Flickr and Instagram [8] [9].

- Longitudinal Collection: Collect data over extended periods (e.g., one full year) to account for seasonal variations in park usage and cultural activities [9].

- Robust Scraping: Use reliable programming libraries (e.g., Selenium in Python) for data collection, and always ensure you are only accessing publicly available information without breaching terms of service [8].

Q2: How can we validate that our automated CES identification method aligns with traditional survey-based results?

- Problem: Discrepancy between modern computational methods and established ground-truthing techniques.

- Solution:

- Convergent Validation: Conduct a parallel study where both a traditional survey and social media analysis are performed on the same geographic area. Statistically compare the results to see if they identify similar CES distributions and hotspots [8].

- Benchmarking: Use the survey results as a benchmark to calibrate your automated model. If the model identifies "aesthetic appreciation" in locations also highlighted by survey respondents, this provides strong evidence for its validity.

Data Processing and Classification

Q3: Our topic model for classifying CES from text data produces overlapping or incoherent categories.

- Problem: Poorly defined topics that do not cleanly map to established CES categories (e.g., recreation, aesthetics, culture).

- Solution:

- Advanced Topic Modeling: Employ state-of-the-art models like BERTopic, which leverages transformer-based embeddings for more context-aware topic identification [8].

- Human-in-the-Loop Validation: Implement a two-step process. First, the model suggests topics. Second, domain experts (researchers) review and label a sample of the topics and their associated keywords to ensure they align with CES definitions. This human-validation step is critical for metric integrity [8].

- Hyperparameter Tuning: Experiment with parameters in your model (e.g., the number of topics, minimum cluster size) to optimize the distinctness and interpretability of the resulting categories.

Q4: How do we handle and validate the sentiment analysis of user-generated text for CES studies?

- Problem: Automated sentiment scores may not accurately reflect the nuanced emotional context in CES-related text.

- Solution:

- CES-Specific Lexicons: Use or develop sentiment lexicons tailored to environmental and recreational contexts, as general-purpose lexicons may perform poorly.

- Manual Auditing: Randomly sample hundreds of comments and have human coders assign sentiment scores. Compare these human-coded scores with the algorithm's output to calculate an accuracy rate and adjust your model accordingly [9].
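The manual audit can be sketched as follows: draw a reproducible random sample of comment IDs, have human coders label them, then compare those labels with the algorithm's output. The function names, the three-class label set, and the example labels are hypothetical.

```python
import random
from collections import Counter


def draw_audit_sample(comment_ids, sample_size, seed=42):
    """Pick comments for human coding; a fixed seed makes the audit repeatable."""
    rng = random.Random(seed)
    return rng.sample(list(comment_ids), min(sample_size, len(comment_ids)))


def audit(human_labels, model_labels):
    """Compare human and model sentiment for the audited comments.

    Both arguments map comment id -> label. Returns (accuracy, confusion),
    where confusion counts (human_label, model_label) pairs.
    """
    confusion = Counter()
    for comment_id, human in human_labels.items():
        confusion[(human, model_labels[comment_id])] += 1
    correct = sum(n for (human, model), n in confusion.items() if human == model)
    return correct / len(human_labels), confusion


# Hypothetical audit of four comments
human = {1: "positive", 2: "negative", 3: "neutral", 4: "positive"}
model = {1: "positive", 2: "neutral", 3: "neutral", 4: "positive"}

accuracy, confusion = audit(human, model)
print(f"accuracy = {accuracy:.2f}")
for pair, n in confusion.items():
    print(pair, n)
```

The confusion counts matter as much as the accuracy rate: they show which kind of error dominates (here, a negative comment scored as neutral), which is what guides lexicon or model adjustments.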

Analysis and Interpretation

Q5: Our CES accessibility maps show counter-intuitive results. How can we validate their accuracy?

- Problem: Spatial models of CES accessibility may not reflect real-world human mobility and preferences.

- Solution:

- Incorporate Perceived Accessibility: Move beyond simple distance-based metrics. Use a modified two-step floating catchment area (M2SFCA) method that integrates the actual perceived service level of a park (derived from social media sentiment and topic prevalence) into the accessibility calculation [9].

- Ground-Truthing with Surveys: Validate your high-resolution accessibility maps with local knowledge. Conduct spot-check surveys in neighborhoods identified as high-access and low-access to see if residents' lived experiences match the model's predictions [9].
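To make the accessibility calculation concrete, here is a generic two-step floating catchment area sketch in which each park's supply is its perceived service level. The exact modification used in [9] is not described here, so this is not that formulation. The Gaussian-shaped decay, the 1000 m radius, and all input values are assumptions.

```python
import math


def gaussian_weight(distance, radius):
    """Decay from 1 at distance 0 to 0 at the catchment radius; 0 beyond it."""
    if distance > radius:
        return 0.0
    floor = math.exp(-0.5)
    return (math.exp(-0.5 * (distance / radius) ** 2) - floor) / (1 - floor)


def two_step_fca(supply, population, distance, radius):
    """Return one accessibility score per residential cell.

    supply[j]      perceived service level of park j (e.g. from sentiment)
    population[i]  residents in cell i
    distance[i][j] travel distance from cell i to park j
    """
    n_cells, n_parks = len(population), len(supply)

    # Step 1: supply-to-demand ratio of each park within its catchment
    ratios = []
    for j in range(n_parks):
        demand = sum(population[i] * gaussian_weight(distance[i][j], radius)
                     for i in range(n_cells))
        ratios.append(supply[j] / demand if demand > 0 else 0.0)

    # Step 2: for each cell, sum the decayed ratios of all reachable parks
    return [
        sum(ratios[j] * gaussian_weight(distance[i][j], radius)
            for j in range(n_parks))
        for i in range(n_cells)
    ]


# Hypothetical example: two parks, three cells, distances in metres
supply = [8.0, 5.0]
population = [1200, 800, 400]
distance = [[200.0, 1500.0], [600.0, 700.0], [1800.0, 300.0]]
for cell, score in enumerate(two_step_fca(supply, population, distance, 1000.0)):
    print(cell, round(score, 5))
```

Cells with surprising scores in such a model are natural candidates for the spot-check surveys described above.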

Experimental Protocols for Validation

Protocol 1: Validating a CES Classification Model

This protocol outlines steps to validate a topic model used to classify social media text into CES categories.

- Objective: To demonstrate that an automated topic model can classify CES from text with accuracy comparable to human coding.

- Methodology:

- Data Collection: Collect a corpus of text reviews (e.g., from Google Maps) for your study area [8].

- Pre-processing: Clean the text data by removing stop words, punctuation, and performing lemmatization.

- Model Training: Apply the BERTopic model to the cleaned corpus to generate a set of candidate topics [8].

- Expert Labeling: Have a panel of CES researchers independently label the generated topics by reviewing the top key terms and a random sample of associated texts. The experts assign each topic to a CES category (e.g., Recreation, Aesthetic, Cultural) or mark it as "non-CES."

- Calculation of Metrics:

- Precision: (Number of topics the model assigned to a CES category that experts confirmed) / (Total number of topics the model assigned to a CES category).

- Recall: (Number of topics the model assigned to a CES category that experts confirmed) / (Total number of CES topics present as defined by experts).

- Inter-Coder Reliability: Calculate Cohen's kappa for two human experts, or Fleiss' kappa for a larger panel, to ensure consensus.
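The metrics in this protocol can be sketched as below. The example assumes each model topic has already been given a provisional CES label (for instance from its top keywords) and that the experts' labels have been merged into one consensus label per topic. The topic IDs, labels, and function names are hypothetical.

```python
from collections import Counter


def precision_recall(model_labels, expert_labels, negative="non-CES"):
    """Both arguments map topic id -> category label."""
    true_pos = sum(
        1 for topic, label in model_labels.items()
        if label != negative and label == expert_labels[topic]
    )
    predicted_pos = sum(1 for label in model_labels.values() if label != negative)
    actual_pos = sum(1 for label in expert_labels.values() if label != negative)
    precision = true_pos / predicted_pos if predicted_pos else 0.0
    recall = true_pos / actual_pos if actual_pos else 0.0
    return precision, recall


def cohens_kappa(coder_a, coder_b):
    """Chance-corrected agreement between two coders labeling the same items."""
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    return (observed - expected) / (1 - expected)


# Hypothetical labels for five topics
model = {0: "Recreation", 1: "Aesthetic", 2: "Cultural", 3: "non-CES", 4: "Recreation"}
expert = {0: "Recreation", 1: "Aesthetic", 2: "non-CES", 3: "Cultural", 4: "Aesthetic"}
print(precision_recall(model, expert))

# Hypothetical independent codings of six topics by two experts
coder_a = ["Recreation", "Recreation", "Aesthetic", "Aesthetic", "Cultural", "non-CES"]
coder_b = ["Recreation", "Recreation", "Aesthetic", "Cultural", "Cultural", "non-CES"]
print(round(cohens_kappa(coder_a, coder_b), 3))
```

Check inter-coder agreement before building the consensus labels: precision and recall are only as trustworthy as the expert benchmark they are measured against.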

The workflow for this validation protocol is systematic and iterative, as shown in the following diagram:

Protocol 2: Cross-Method Validation for CES Perception

This protocol validates CES perception levels derived from social media against traditional survey methods.

- Objective: To establish convergent validity by comparing CES perception levels measured via social media data with those from a standardized questionnaire.

- Methodology:

- Define Study Area and CES: Select a set of urban parks and define the CES to be studied (e.g., Recreational Activities, Outdoor Workouts, Cultural Heritage) [9].

- Social Media Data Collection & Analysis: Use APIs to crawl user reviews for the parks. Analyze the text to compute a perception score for each CES in each park, for example, based on the frequency of topic mentions weighted by sentiment [9].

- Traditional Survey: Design and administer a questionnaire to park visitors, asking them to rate the importance and performance of each CES on a Likert scale.

- Statistical Testing: Use correlation analysis (e.g., Spearman's rank correlation) to compare the relative ranking of parks based on CES levels from social media with the ranking from survey results. A strong, significant correlation provides evidence for the validity of the social media method.
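The statistical testing step can be illustrated with a small, dependency-free Spearman calculation: rank both score lists (tied values share an average rank) and take the Pearson correlation of the ranks. The park scores are hypothetical, and no significance test is included.

```python
import math


def average_ranks(values):
    """Rank values from 1 (smallest); tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        shared = (pos + end) / 2 + 1  # mean of the 1-based ranks pos+1 .. end+1
        for i in order[pos:end + 1]:
            ranks[i] = shared
        pos = end + 1
    return ranks


def pearson(x, y):
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def spearman_rho(x, y):
    """Spearman's rank correlation: Pearson correlation of the rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical recreation scores for six parks
social_media_score = [0.82, 0.40, 0.65, 0.91, 0.30, 0.55]
survey_mean_rating = [4.5, 3.1, 3.0, 4.8, 2.2, 3.9]
print(round(spearman_rho(social_media_score, survey_mean_rating), 3))
```

For the p-value that the protocol also calls for, a statistics package is the practical choice; `scipy.stats.spearmanr`, for example, returns both the coefficient and its p-value.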

The following table summarizes key quantitative benchmarks from the field to guide your validation efforts:

Table 1: Quantitative Benchmarks for CES Validation Studies

| Metric | Description | Exemplary Value from Literature |
|---|---|---|
| Data Collection Scale | Number of social media reviews for a robust analysis. | 26,657 valid online comments for 115 urban parks [9]. |
| Validation Statistical Method | Method for comparing traditional and novel data sources. | Importance-Performance Analysis (IPA); Modified Two-Step Floating Catchment Area (M2SFCA) [9]. |
| Spatial Analysis Unit | Granularity for measuring accessibility equity. | Hexagonal grid with a side length of 100 m to reduce sampling bias [9]. |
| Primary CES Identified | Common CES categories identified via topic modeling. | Recreational activities, aesthetic enjoyment, cultural heritage, social interaction, and outdoor workouts [9]. |
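The table lists Importance-Performance Analysis (IPA) among the methods. A minimal sketch of the classic four-quadrant IPA follows, assuming mean Likert ratings per CES and using the grand means as quadrant cut points (one common convention; scale midpoints are another). The scores and service names are hypothetical and are not taken from [9].

```python
from statistics import mean


def ipa_quadrants(scores):
    """Assign each service to an IPA quadrant.

    scores maps service -> (mean importance, mean performance).
    Cut points are the grand means across services (an assumption).
    """
    imp_cut = mean(imp for imp, _ in scores.values())
    perf_cut = mean(perf for _, perf in scores.values())
    result = {}
    for service, (imp, perf) in scores.items():
        if imp >= imp_cut and perf >= perf_cut:
            result[service] = "keep up the good work"
        elif imp >= imp_cut:
            result[service] = "concentrate here"
        elif perf >= perf_cut:
            result[service] = "possible overkill"
        else:
            result[service] = "low priority"
    return result


# Hypothetical mean ratings on a 1-5 Likert scale: (importance, performance)
park_scores = {
    "recreational activities": (4.6, 4.2),
    "aesthetic enjoyment": (4.4, 3.1),
    "cultural heritage": (3.0, 4.0),
    "outdoor workouts": (2.8, 2.9),
}
for service, quadrant in ipa_quadrants(park_scores).items():
    print(f"{service}: {quadrant}")
```

Services that land in "concentrate here" (important but underperforming) are the ones where a mismatch between survey and social media results deserves the closest look.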

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" and data sources essential for conducting validated CES research.

Table 2: Essential Research Tools for CES Data Validation

| Tool / Solution | Function in CES Research |
|---|---|
| Python (with Selenium library) | A programming language and library used for creating custom web scraping programs to collect publicly available user reviews from platforms like Google Maps [8]. |
| Social Media APIs | Application Programming Interfaces (e.g., from Flickr, Google Maps, TripAdvisor) used to systematically access and collect geotagged user-generated content (images and text) [9]. |
| BERTopic Model | An advanced natural language processing (NLP) technique for topic modeling. It identifies latent themes (CES) within large text corpora by leveraging transformer-based embeddings [8]. |
| Sentiment Analysis Library | A software tool (e.g., VADER, TextBlob) that automatically determines the emotional tone (positive, negative, neutral) of text data, helping to gauge public perception of CES [9]. |
| Statistical Software (R, Python Pandas) | Environments for performing essential statistical tests (e.g., correlation, significance testing) to validate findings and ensure the robustness of the results [8]. |
| GIS (Geographic Information System) | Software (e.g., ArcGIS, QGIS) for mapping CES, analyzing spatial patterns, and calculating advanced metrics like perceived accessibility [9]. |

Researcher Support Center: FAQs & Troubleshooting

This support center provides practical guidance for researchers navigating the conceptual and methodological challenges of incorporating relational values and biocultural indicators into studies on socio-cultural ecosystem services.

Frequently Asked Questions (FAQs)

FAQ 1: What are relational values, and how do they differ from instrumental and intrinsic values in ecosystem service assessments?

Relational values are a distinct category of value assessment. They are not about what nature can do for people (instrumental value) or the value inherent in nature itself (intrinsic value). Instead, they express the importance of relationships that involve nature, such as the bonds between people and places, and the principles that guide how we interact with the non-human world, such as care, stewardship, and responsibility [10] [11]. They are anthropocentric but non-instrumental, filling a conceptual gap left by the traditional instrumental/intrinsic dichotomy [11].

FAQ 2: My quantitative data on land use preferences seems to conflict with my qualitative data on socio-cultural values. Why is this, and how should I proceed?

This is a known methodological challenge. A 2017 study on the Pentland Hills Regional Park found that while socio-cultural values of ecosystem services and user characteristics were associated with different clusters of land use preferences, they were not suitable predictors for those preferences [5]. This implies that while values inform general perceptions, they do not directly translate into specific land-use choices. Your research should, therefore, treat these as complementary but distinct data sets. Assess socio-cultural values and land use preferences separately rather than using one to replace the assessment of the other [5].

FAQ 3: How can I effectively identify and document relational values in my fieldwork?

Engage in transdisciplinary and participatory methods. Research in the Indigenous community of Capulálpam de Méndez successfully used fuzzy cognitive maps from conversations with community groups to identify central themes like "care" and "celo" (protective love and zeal) [10]. Strong intergenerational considerations—including traditions from the past and responsibilities to the future—were also found to infuse present-day management decisions [10]. The process of open discussion about the links between values and management can itself facilitate broader community awareness [10].

FAQ 4: What is "plural valuation" and why is it critical for my research on socio-cultural data?

Plural valuation is "an explicit, intentional process in which agreed-upon methods are applied to make visible the diverse values" associated with nature [10]. It is a direct response to critiques that relying solely on monetary valuation is insufficient and often problematic. It emphasizes using a diversity of methods and indicators beyond money to represent value, ensuring that often-marginalized values, common in Indigenous and local communities, are included in decision-making processes [10].

Troubleshooting Common Experimental & Methodological Issues

Issue 1: Relational values are being overshadowed by economic metrics in my integrated assessment.

- Root Cause: A common pitfall is the historical dominance and perceived objectivity of monetary valuation, which can marginalize non-material and relational values [10].

- Solution:

- Explicitly Frame Values: At the outset of your study, use a framework like the IPBES typology to deliberately categorize values as instrumental, intrinsic, or relational. This makes relational values a visible and formal part of the analysis [10].

- Use Participatory Deliberation: Employ methods like focus groups or community workshops that allow participants to articulate values in their own words, which often naturally reveals relational values such as care for future generations or cultural identity [10] [11].

- Present Values Alongside Metrics: In your final report, create a dedicated section that presents relational values with the same rigor and detail as economic or biophysical data, using direct quotes, cognitive maps, and narrative descriptions.

Issue 2: My survey results on ecosystem service importance are inconsistent and lack depth.

- Root Cause: Relying on a single technique, such as simple rating or weighting of ecosystem services without context, can produce abstract results that fail to capture nuanced values and trade-offs [5].

- Solution:

- Employ Mixed-Methods: Combine quantitative techniques (e.g., rating, weighting) with qualitative, in-depth approaches (e.g., interviews, participatory mapping) [5].

- Provide Context: Use visual aids like photographs or the LANDPREF visualisation tool to ground the valuation in realistic scenarios and trade-offs, which helps elicit more considered and meaningful responses [5].

- Clarify the "Why": Follow up survey questions with open-ended prompts that ask respondents to explain the reasons behind their ratings, uncovering the relational and instrumental motivations behind their choices.

Issue 3: I am struggling to identify meaningful biocultural indicators for my study site.

- Root Cause: Biocultural indicators—which link biological and cultural diversity—are often context-specific and cannot be effectively developed without deep local engagement.

- Solution:

- Collaborate with Local Knowledge Holders: Partner with Indigenous and local community members to co-produce indicators. The Capulálpam study demonstrates that concepts central to community life, like "celo," can form the foundation of relevant indicators [10].

- Focus on Practices and Governance: Look for indicators related to traditional land management practices, intergenerational knowledge transfer, and communal governance systems (e.g., the philosophy of comunalidad in southern Mexico) [10].

- Link to Landscape Features: Identify specific biological species, habitats, or landscape features that hold cultural significance, such as sacred groves or ancestral hunting grounds, and monitor their status.

The table below summarizes key data from case studies, highlighting the relationships between valuation methods, value types, and outcomes.

Table 1: Comparative Analysis of Socio-Cultural Valuation Approaches in Case Studies

| Case Study / Context | Primary Valuation Method(s) Used | Key Value Types Identified | Outcome / Finding Relevant to Validation |
|---|---|---|---|
| Capulálpam de Méndez, Mexico [10] | Transdisciplinary collaboration; Fuzzy cognitive maps from group conversations. | Relational values (care, celo, intergenerational responsibility); Wary of monetary value. | Relational values were pivotal in territorial management; discussion of value-management links raised community awareness. |
| Pentland Hills, Scotland [5] | Tablet-based & online surveys; Novel visualisation tool (LANDPREF) for land use preferences; Rating and weighting of ES. | Material and non-material nature's contributions to people (NCP); Land use preferences (5 distinct clusters). | Socio-cultural ES values and user characteristics were associated with but were not predictors of land use preferences. |
| Theoretical Framework [11] | Analysis of valuation typologies and processes. | Relational (non-instrumental, anthropocentric), Instrumental, Intrinsic. | Relational values provide a more adequate articulation of human-nature relationships than the intrinsic/instrumental dichotomy alone. |

Experimental Protocol: Operationalizing Relational Values

Protocol Title: Participatory Identification and Mapping of Relational Values for Ecosystem Service Validation.

1. Objective: To make visible the relational values associated with a specific territory through a structured, participatory process that yields data for validating socio-cultural ecosystem service assessments.

2. Background: Relational values, such as senses of place, stewardship principles, and cultural identity, are often overlooked in standard ES assessments. This protocol provides a methodology for their explicit documentation [10] [11].

3. Materials:

- Stimulus Materials: Maps (physical or digital) of the study area, photographs of key landscapes/ecosystems.

- Data Recording: Audio recorders, notebooks, fuzzy cognitive mapping software (e.g., Mental Modeler) or large sheets of paper and markers.

- Participant Recruitment: Pre-established partnerships with local communities; informed consent forms.

4. Step-by-Step Methodology:

   1. Co-Design Workshop: Prior to data collection, conduct a workshop with local authorities and community representatives to define the research questions and methods, ensuring cultural appropriateness and relevance [10].
   2. Participant Selection: Use purposive sampling to engage a diverse range of stakeholders (e.g., different ages, genders, livelihoods) within the community. Aim for small, homogeneous groups (e.g., 5-7 participants per group) to encourage open discussion [10].
   3. Value Elicitation Sessions:
      - Introduction: Explain the session's goal is to understand relationships with the territory.
      - Semi-Structured Interview: Use open-ended questions: "What relationships with this territory are most important to you and your community?" "What principles (e.g., care, responsibility) should guide how this territory is managed?" "What did your ancestors leave for you, and what do you want to leave for your children?"
      - Cognitive Mapping: As themes emerge (e.g., "water," "forest for timber," "sacred mountains," "responsibility to future"), guide participants in creating a fuzzy cognitive map. Have them draw connections between concepts and indicate the direction (positive/negative) of the influence.
   4. Data Consolidation & Feedback: Aggregate the maps and summaries from all groups. Present these consolidated results back to the broader community and local authorities for verification, reflection, and discussion [10].
   5. Analysis: Analyze the cognitive maps to identify central nodes (key values) and feedback loops. Thematically analyze the transcribed conversations to flesh out the meaning of these values (e.g., what "care" entails in practice).
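
The consolidation and analysis steps can be sketched in code. This is a minimal illustration, not the procedure used in the cited study: it assumes each group's map has been encoded as a signed adjacency matrix over a shared concept list, that group maps are combined by simple averaging, and that a concept's centrality is the sum of the absolute weights of its incoming and outgoing links. The concept names and weights are hypothetical.

```python
import numpy as np

# Hypothetical concepts and weights for illustration only.
concepts = ["water", "forest for timber", "sacred mountains", "responsibility to future"]

# weights[i, j]: signed influence of concept i on concept j, scaled to [-1, 1].
weights = np.array([
    [0.0, 0.5, 0.0, 0.0],
    [-0.5, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.75],
    [0.5, -0.25, 0.5, 0.0],
])


def aggregate_maps(maps):
    """Average group maps that share one concept order (an assumed aggregation rule)."""
    return np.mean(np.stack(maps), axis=0)


def fcm_centrality(w):
    """Return outdegree, indegree, and centrality from absolute edge weights."""
    strength = np.abs(w)
    outdegree = strength.sum(axis=1)  # influence a concept exerts
    indegree = strength.sum(axis=0)   # influence a concept receives
    return outdegree, indegree, outdegree + indegree


outdegree, indegree, centrality = fcm_centrality(weights)
for i in np.argsort(-centrality):
    print(f"{concepts[i]}: centrality={centrality[i]:.2f}")
```

Concepts with high centrality are candidates for the "central nodes" named in the analysis step. What they mean in practice still has to come from the thematic analysis of the conversations.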

Conceptual Workflow

The following diagram visualizes the integrated methodological workflow for validating socio-cultural data, from conceptual framing to data integration.

Socio-Cultural Data Validation Workflow

The Scientist's Toolkit: Key Conceptual Frameworks & Indicators

Table 2: Essential Conceptual Tools for Socio-Cultural Ecosystem Service Research

| Tool / Framework Name | Type | Brief Explanation of Function |
|---|---|---|
| IPBES Values Typology | Conceptual Framework | A nested framework categorizing worldviews, broad values, specific values, and indicators. It helps formalize the complexity of environmental values and how they interact [10]. |
| Instrumental Values | Value Category | Captures the worth of nature as a means to achieve a human end (e.g., timber, water provision) [10] [11]. |
| Relational Values | Value Category | Captures the importance of meaningful relationships between people and nature, and the principles (e.g., care, stewardship) that guide these relationships [10] [11]. |
| Plural Valuation | Methodological Approach | The process of applying diverse methods to make visible the multiple values of nature, moving beyond a reliance on any single metric, especially monetary [10]. |
| Fuzzy Cognitive Mapping (FCM) | Participatory Method | A semi-quantitative tool for modeling complex systems as concepts (e.g., values, ecosystem services) and their causal relationships, ideal for capturing community perceptions [10]. |
| Biocultural Indicators | Metric | Context-specific measures that track the state of linkages between biological diversity and cultural diversity (e.g., status of culturally significant species, practice of traditional rituals) [10]. |

A Toolbox for Robust Validation: Techniques and Practical Applications

In the field of socio-cultural ecosystem service research, valid and reliable measurement scales are indispensable for generating comparable, cross-cultural data. Scales measure latent constructs—behaviors, attitudes, and hypothetical scenarios we expect to exist but cannot assess directly [12]. The development of scales that maintain cross-cultural equivalence presents significant methodological challenges, as instruments developed in one context often perform poorly when translated or applied in different cultural settings due to cultural differences in conceptual definitions of behaviors and experiences [12]. This technical support guide presents a comprehensive 10-step framework for structured scale development and validation, specifically designed to ensure cross-cultural validity while addressing common implementation challenges researchers encounter throughout the process.

The 10-Step Framework for Cross-Cultural Scale Development

Based on a synthesis of current methodologies and a scoping review of 141 studies, the following 10-step framework provides a systematic approach to cross-cultural scale development [12] [13]. The complete process spans three primary phases: Item Development, Scale Development, and Scale Evaluation.

Table 1: The Comprehensive 10-Step Scale Development Framework

| Phase | Step | Key Activities | Cross-Cultural Considerations |
|---|---|---|---|
| Item Development | 1. Identification of Domain and Item Generation | Literature reviews; Deductive (existing scales) and inductive (focus groups, interviews) methods [14] [15] | Conduct focus groups/interviews with diverse target populations; ensure items are relevant across cultures [12] |
| Item Development | 2. Content Validity Assessment | Expert panels; Target population evaluation [15] | Involve measurement experts and linguists to ensure cross-cultural validity and translatability [12] |
| Scale Development | 3. Translation for Cross-language Equivalence | Back-translation; Collaborative team approach [12] [16] | Use back-and-forth translation, expert review, or collaborative iterative approaches [12] |
| Scale Development | 4. Pre-testing Questions | Cognitive interviews [12] | Conduct cognitive interviews across languages/cultures to understand interpretation [12] |
| Scale Development | 5. Survey Administration & Sampling | Administer to target population | Adapt recruitment strategies and incentives to local contexts; recommended: 10 respondents per item, 150-200 per subgroup [12] [15] |
| Scale Development | 6. Item Reduction | Item difficulty, discrimination tests; item-total correlations [14] | Conduct separate reliability tests in each sample [12] |
| Scale Development | 7. Extraction of Factors | Exploratory Factor Analysis (EFA); Parallel analysis [14] [15] | Perform separate factor analysis in each subgroup to understand factor structure patterns [12] |
| Scale Evaluation | 8. Tests of Dimensionality & Measurement Invariance | Confirmatory Factor Analysis (CFA); Multigroup CFA (MGCFA); Differential Item Functioning (DIF) [12] [17] | Test configural, metric, and scalar invariance using MGCFA (ΔCFI < 0.01, ΔRMSEA < 0.015) [12] [17] |
| Scale Evaluation | 9. Tests of Reliability | Internal consistency (Cronbach's alpha); Test-retest reliability [14] [15] | Conduct separate reliability analyses for each cultural/language group [12] |
| Scale Evaluation | 10. Tests of Validity | Criterion, convergent, discriminant validity; known-groups validation [14] | Validate against local criteria relevant to each cultural context [18] |
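
Steps 6 and 9 call for item-total correlations and separate reliability analyses in each cultural or language group. The sketch below shows one way to compute Cronbach's alpha and corrected item-total correlations per group with NumPy. The group labels and response data are hypothetical, and a real analysis would use far larger samples than these toy arrays.

```python
import numpy as np


def cronbach_alpha(items):
    """Cronbach's alpha for a respondents-by-items array of scores."""
    x = np.asarray(items, dtype=float)
    k = x.shape[1]
    sum_item_var = x.var(axis=0, ddof=1).sum()
    total_var = x.sum(axis=1).var(ddof=1)
    return k / (k - 1) * (1.0 - sum_item_var / total_var)


def corrected_item_total(items):
    """Correlation of each item with the sum of the remaining items."""
    x = np.asarray(items, dtype=float)
    total = x.sum(axis=1)
    return np.array([
        np.corrcoef(x[:, j], total - x[:, j])[0, 1] for j in range(x.shape[1])
    ])


# Hypothetical 5-point responses (rows = respondents) for two language groups.
responses = {
    "group_a": [[4, 5, 4], [2, 2, 3], [5, 5, 5], [3, 3, 2], [1, 2, 1]],
    "group_b": [[3, 3, 4], [4, 4, 4], [2, 1, 2], [5, 4, 5], [3, 3, 3]],
}

for group, data in responses.items():
    print(group, round(cronbach_alpha(data), 3), corrected_item_total(data).round(2))
```

Running the same functions on each group separately, rather than on the pooled sample, makes it easier to spot an item that is reliable in one language version but weak in another.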

Diagram 1: The 10-Step Scale Development and Validation Workflow

Essential Research Reagents and Methodological Solutions

Table 2: Key Research Reagents and Methodological Solutions for Cross-Cultural Scale Development

| Category | Tool/Solution | Primary Function | Application Context |
|---|---|---|---|
| Qualitative Data Collection | Focus Group Discussions | Explore shared perspectives; identify culturally-specific constructs [12] [19] | Initial item generation; content validation with target populations |
| Qualitative Data Collection | Semi-structured Interviews | Elicit individual experiences and concept understanding [19] [18] | Concept elicitation; cognitive interviewing during pretesting |
| Translation & Adaptation | Back-Translation Protocol | Identify conceptual and semantic discrepancies [12] [16] | Achieving cross-language equivalence (most common approach) |
| Translation & Adaptation | Collaborative Team Translation | Resolve cultural and linguistic nuances through consensus [12] | Contexts where simple back-translation proves insufficient |
| Psychometric Analysis | Multigroup Confirmatory Factor Analysis (MGCFA) | Test measurement invariance across groups [12] [17] | Establishing cross-cultural equivalence (configural, metric, scalar) |
| Psychometric Analysis | Differential Item Functioning (DIF) | Identify items functioning differently across subgroups [12] | Detecting cultural bias in individual scale items |
| Validation Tools | Cognitive Interview Protocols | Verify item interpretation matches intent [12] [18] | Pretesting stage to identify problematic items |
| Validation Tools | Known-Groups Validation | Test ability to differentiate between distinct groups [14] | Establishing criterion validity in cross-cultural contexts |

Troubleshooting Guide: Frequently Asked Questions

Implementation Challenges in Early Development Stages

Q: Our team is struggling with generating items that are relevant across different cultural contexts. What strategies can we employ?

A: Combine deductive and inductive approaches to ensure comprehensive coverage. Start with a thorough literature review of existing scales and theoretical frameworks (deductive), then supplement with qualitative research including focus groups and interviews with diverse representatives from your target populations (inductive) [14] [15]. This hybrid approach helped researchers developing a chronic kidney disease knowledge instrument in Tanzania to identify locally relevant content through focus groups with traditional healers and community members, leading to the addition of four crucial items not identified through literature review alone [18]. Ensure your expert panels include members with cross-cultural expertise and linguistic knowledge to evaluate potential translation challenges early in the process [12].

Q: We're encountering challenges with translation that go beyond simple linguistic equivalence. How can we address deeper conceptual differences?

A: When back-translation reveals persistent conceptual discrepancies, implement a collaborative team approach rather than relying solely on sequential translation. This method involves bilingual subject experts, measurement specialists, and linguists working together through parallel translation, pretesting, and revision cycles [12]. The Norwegian validation of the TeamSTEPPS questionnaire successfully employed this method, incorporating review by healthcare professionals to confirm cultural relevance of concepts in a Norwegian healthcare setting [16]. For socio-cultural ecosystem research, ensure your team includes members familiar with local environmental concepts and valuation frameworks.

Methodological Challenges During Psychometric Testing

Q: Our sample sizes vary significantly across cultural groups. What are the minimum sample requirements for robust cross-cultural validation?

A: The widely accepted rule of thumb is a minimum of 10 participants per scale item for the overall sample [15]. For multigroup analyses, aim for at least 150-200 participants per subgroup to ensure sufficient power for tests of measurement invariance [12]. If your samples are unavoidably unequal, consider using statistical techniques that accommodate unequal group sizes, and prioritize representative sampling over mere convenience samples. Nearly 50% of scale development studies fail to meet sample size requirements, limiting their psychometric robustness [15].
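
The two rules of thumb above can be turned into a quick planning check. The sketch below is only an illustration: the function name, the group labels, and the choice of 150 (the lower end of the 150-200 range) as the per-group floor are assumptions.

```python
def check_sample_sizes(n_items, group_sizes, per_item=10, min_per_group=150):
    """Compare planned or achieved sample sizes against the two rules of thumb."""
    required_total = per_item * n_items
    total = sum(group_sizes.values())
    return {
        "required_total": required_total,
        "total": total,
        "total_ok": total >= required_total,
        # Groups below the per-group floor for multigroup analyses.
        "small_groups": sorted(g for g, n in group_sizes.items() if n < min_per_group),
    }


# Hypothetical 24-item scale fielded in two communities.
report = check_sample_sizes(24, {"community_a": 180, "community_b": 120})
print(report)
```

In this example the overall sample meets the 10-per-item rule, but one subgroup falls below the floor for invariance testing, so recruitment there would need to continue or the multigroup analysis would need to be treated with caution.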

Q: We suspect some items function differently across cultural groups. How can we systematically identify and address these issues?

A: Implement both Multigroup Confirmatory Factor Analysis (MGCFA) and Differential Item Functioning (DIF) analyses to identify problematic items. MGCFA tests three levels of invariance: configural (same factor structure), metric (equivalent factor loadings), and scalar (equivalent item intercepts) [12] [17]. Commonly accepted thresholds for invariance include ΔCFI < 0.01, ΔRMSEA < 0.015, and ΔSRMR < 0.03 for metric invariance [12]. For individual item analysis, use DIF techniques, which test whether each item functions differently across sub-groups after controlling for the total score [12]. When problematic items are identified, return to qualitative methods (e.g., cognitive interviews) with representatives from each cultural group to understand the root causes of differential functioning.
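
Once the configural, metric, and scalar models have been fitted in SEM software, the threshold comparison can be automated. The following sketch assumes the fit indices have already been exported into a dictionary; the values shown are hypothetical. It applies the ΔCFI and ΔRMSEA cutoffs at each step and the ΔSRMR cutoff only at the metric step, as stated above, and it assumes the configural model fits acceptably on its own.

```python
def highest_invariance_level(fits, d_cfi=0.01, d_rmsea=0.015, d_srmr_metric=0.03):
    """Return the most constrained invariance level supported by the fit changes."""
    level = "configural"
    for previous, current in [("configural", "metric"), ("metric", "scalar")]:
        cfi_drop = fits[previous]["cfi"] - fits[current]["cfi"]
        rmsea_rise = fits[current]["rmsea"] - fits[previous]["rmsea"]
        supported = cfi_drop < d_cfi and rmsea_rise < d_rmsea
        if current == "metric":
            srmr_rise = fits[current]["srmr"] - fits[previous]["srmr"]
            supported = supported and srmr_rise < d_srmr_metric
        if not supported:
            break
        level = current
    return level


# Hypothetical fit indices from three nested multigroup models.
fits = {
    "configural": {"cfi": 0.960, "rmsea": 0.050, "srmr": 0.040},
    "metric": {"cfi": 0.955, "rmsea": 0.052, "srmr": 0.050},
    "scalar": {"cfi": 0.930, "rmsea": 0.070, "srmr": 0.060},
}
print(highest_invariance_level(fits))
```

Here the result is metric invariance: factor loadings can be treated as equivalent, but latent means should not be compared directly until the non-invariant intercepts have been investigated.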

Analytical Challenges in Scale Evaluation

Q: Our factor structure appears different across cultural groups. Does this invalidate cross-cultural comparisons?

A: Not necessarily, but it does complicate direct comparison. First, establish configural invariance (same pattern of factor loadings) through MGCFA. If metric or scalar invariance is not fully achieved, consider whether the constructs themselves might be culturally distinct or whether certain items need modification or removal [17]. In socio-cultural ecosystem service research, the same service might be valued through different dimensions across cultures. Document these differences thoroughly, as they may represent important cultural variation rather than measurement problems. Partial invariance approaches can sometimes be used, where a subset of items shows invariance and can anchor cross-cultural comparisons [17].

Q: How can we effectively demonstrate the validity of our scale across different cultural contexts?

A: Employ a comprehensive validation strategy that includes multiple approaches: (1) Content validity through expert panels representing all cultural contexts; (2) Construct validity through factor analyses within each group; (3) Criterion validity by correlating with established measures within each culture; (4) Known-groups validity by testing whether the scale differentiates between groups theoretically expected to differ [14] [18]. For cross-cultural research on socio-cultural ecosystem services, you might validate your scale by demonstrating that it differentiates between communities with different relationships to ecosystem services (e.g., Indigenous communities with deep ecological knowledge versus urban populations) [19] [3].

Diagram 2: Troubleshooting Common Cross-Cultural Validation Challenges

The 10-step framework presented here provides a systematic methodology for developing scales with cross-cultural validity, particularly valuable for socio-cultural ecosystem service research where contextual understanding is paramount. This approach emphasizes the iterative nature of scale development, the importance of mixed methods, and the necessity of testing measurement invariance before making cross-cultural comparisons [12] [13]. By implementing these structured procedures and addressing common challenges through the troubleshooting strategies outlined, researchers can enhance the methodological rigor of their instrumentation, ultimately contributing to more valid and comparable cross-cultural research in socio-cultural ecosystem services and related fields.

Frequently Asked Questions (FAQs)

Q1: What is the core definition of mixed-methods research in a socio-cultural context?

A1: Mixed-methods research strategically integrates or combines rigorous quantitative and qualitative research methods within a single project to draw on the strengths of each [20]. In the context of validating socio-cultural data, it involves intentionally integrating both methods before, during, and after data collection to provide a holistic understanding of human values and preferences, connecting measurable patterns with the underlying motivations and contexts [21].

Q2: Why should I use a mixed-methods approach to validate socio-cultural ecosystem service data?

A2: A mixed-methods approach is crucial for validation because:

- It Balances Strengths and Weaknesses: Quantitative methods reveal patterns across large groups but can't explain the "why," while qualitative methods uncover motivations and mental models from smaller samples [21]. Using both offsets the limitations of each.

- It Provides a Complete Picture: It delivers both scale and depth, helping you not only spot what's happening with ecosystem service valuations but also grasp why it is happening, leading to better-informed decisions [21].

- It Enhances Legitimacy: Socio-cultural valuation has great potential to improve the legitimacy of forest ecosystem management decisions and to promote consensus-building, which is strengthened by a robust methodological approach [3].

Q3: My quantitative and qualitative data seem to contradict each other. Is this a failure?

A3: Not necessarily. Contradictory findings are not a sign of failure but an opportunity for deeper insight. This situation may reveal a complex reality that neither method could capture alone. The process of reconciling these differences often leads to a more nuanced and valid understanding of the socio-cultural phenomenon you are studying [21].

Q4: What are some common experimental protocols in mixed-methods research for socio-cultural valuation?

A4: Common designs include:

- Explanatory Sequential Design (Quant, then Qual): You begin with a quantitative method (e.g., a survey) to identify trends, followed by a qualitative method (e.g., interviews) to explain or explore those findings in depth [21].

- Exploratory Sequential Design (Qual, then Quant): You start with qualitative research (e.g., focus groups) to explore a topic and generate hypotheses, then follow up with quantitative research (e.g., a survey) to test and validate these findings at scale [21] [22].

- Convergent Parallel Design (Qual and Quant Simultaneously): You conduct qualitative and quantitative research concurrently and independently, then merge the results to compare and contrast findings for a comprehensive view [21].

Troubleshooting Guides

Issue 1: Lack of Meaningful Integration Between Data Types

Problem: The quantitative and qualitative data are analyzed and presented in isolation, resulting in two separate reports rather than one cohesive insight.

Solution:

- Plan for Integration from the Start: During the research design phase, explicitly ask how the results from each method will connect. Will one explain the other? Are you looking to triangulate findings? [21].

- Think Integration, Not Just Addition: The value isn't in having more data, but in how the data work together. Actively look for points where the qualitative data explains the quantitative trends (e.g., interview quotes that reveal why a particular ecosystem service scored low in a survey) [21].

- Use a Framework: Employ a structured framework that specifies the points of integration, whether during data collection, analysis, or presentation of results [20].

Issue 2: Choosing the Wrong Mixed-Methods Design

Problem: The research design does not effectively address the research question, leading to inefficient use of resources and unclear findings.

Solution: Align your design with your primary research goal. The table below outlines the common designs and their applications.

Table 1: Selecting a Mixed-Methods Research Design

| Research Design | Sequence | Primary Goal | Example Application in Socio-Cultural Valuation |
|---|---|---|---|
| Explanatory Sequential | Quantitative, then Qualitative | To explain or explore quantitative results in greater depth [21]. | A survey shows users highly value 'biodiversity.' Follow-up interviews explore what 'biodiversity' means to them and how they experience it. |
| Exploratory Sequential | Qualitative, then Quantitative | To explore a topic and develop hypotheses, then test them with a larger sample [21] [22]. | Focus groups identify potential cultural ecosystem services. The findings are used to create a survey to quantify the preferences of a wider population. |
| Convergent Parallel | Quantitative and Qualitative concurrently | To compare and contrast different perspectives on the same phenomenon for a comprehensive view [21]. | A MaxDiff survey ranks features while simultaneous interviews ask participants about their feature preferences and reasoning. |

Issue 3: Managing Increased Resource Demands

Problem: Mixed-methods research requires more time, larger recruitment efforts, and closer coordination, which can strain project resources.

Solution:

- Realistic Scoping: Acknowledge from the outset that mixed-methods research requires more resources. Plan for longer timelines and larger recruitment efforts, for example, needing 40+ participants for a survey and 10+ for in-depth interviews [21].

- Align Methods with Goals: Avoid running both methods just for the sake of variety. Use them intentionally to answer different facets of the same research question to ensure resources are well-spent [21].

- Leverage Hybrid Techniques: Consider methods that have qualitative and quantitative elements built-in, such as the Deliberative Q-method, which combines focus groups (qualitative deliberation) with Q-sorting (quantitative ranking of statements) [22].

Experimental Protocols

Protocol 1: The Explanatory Sequential Design

Objective: To first measure and then understand the reasons behind user preferences for cultural ecosystem services.

Methodology:

- Quantitative Phase:

- Data Collection: Distribute a large-scale survey (e.g., n=563 as in a Pentland Hills study [5]) to measure perceptions and rankings of various ecosystem services.

- Data Analysis: Use statistical analysis (e.g., clustering) to identify measurable patterns, trends, and outliers. For example, you might identify a cluster of "recreation seekers" and a cluster of "nature enthusiasts" [5].

- Integration Point: Analyze quantitative results to identify specific patterns that need explanation (e.g., "Why do recreation seekers prioritize paths over biodiversity?").

- Qualitative Phase:

- Data Collection: Conduct in-depth interviews or focus groups with a sub-set of participants from the quantitative phase, focusing on the questions identified in the integration point.

- Data Analysis: Use thematic analysis to identify the motivations, frustrations, and mental models behind the quantitative trends [21].

Protocol 2: The Deliberative Q-Method

Objective: To understand shared and competing social values related to ecosystem services by integrating group deliberation with quantitative sorting.

Methodology:

- Statement Development (Concourse): Develop a set of statements (e.g., 30-50) that represent the full range of opinions and values about the ecosystem services in question [22] [23].

- Q-Sorting (Quantitative):

- Participants individually rank the statements on a quasi-normal forced distribution grid (a Q-grid) from "most how I think" (+4) to "least how I think" (-4) [22].

- This forces participants to make trade-offs, revealing their subjective perspective in a structured, quantifiable way.

- Focus Group Discussion (Qualitative): Facilitate a group discussion where participants are encouraged to explain their rankings, exchange anecdotes, and debate differing viewpoints. This deliberation helps uncover shared values and local ecological knowledge [22].

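- Data Analysis: Apply factor analysis to the ranked statements to identify a limited number of shared perspectives (factors), and draw on the focus group discussion to interpret them [22] [3].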
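
The Q-sorting and analysis steps above can be sketched as follows. This is an illustrative outline under stated assumptions, not a full Q-method analysis. The grid shape (33 statements across columns from -4 to +4) is hypothetical, and the eigenvalue decomposition is only a first look at how many shared perspectives may be present. Dedicated Q-method software would add factor extraction and rotation.

```python
import numpy as np
from collections import Counter

# Hypothetical forced distribution: column score -> number of statements (33 in total).
Q_GRID = {-4: 2, -3: 3, -2: 4, -1: 5, 0: 5, 1: 5, 2: 4, 3: 3, 4: 2}


def is_valid_q_sort(scores, grid=Q_GRID):
    """True if the scores fill every grid column exactly as the forced distribution requires."""
    return dict(Counter(scores)) == grid


def q_sort_correlations(sorts):
    """Participant-by-participant correlations; Q-method correlates people, not statements."""
    return np.corrcoef(np.asarray(sorts, dtype=float))


def variance_shares(corr):
    """Eigenvalues of the correlation matrix, largest first, as shares of total variance."""
    eigenvalues = np.linalg.eigvalsh(corr)[::-1]
    return eigenvalues / eigenvalues.sum()


# Toy data: two participants sort identically, a third holds the mirror-image view.
base = [score for score, count in Q_GRID.items() for _ in range(count)]
sorts = [base, base, [-s for s in base]]

print([is_valid_q_sort(s) for s in sorts])
corr = q_sort_correlations(sorts)
print(corr.round(2))
print(variance_shares(corr).round(2))
```

Checking each sort against the grid before analysis catches data-entry errors early. The correlation matrix then shows which participants share a viewpoint, which is the raw material for identifying factors.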

This diagram illustrates the structured workflow of the Deliberative Q-Method, showing how qualitative and quantitative components are integrated.

Research Reagent Solutions: Essential Methodologies for Socio-Cultural Valuation

The table below details key methodological "reagents" for designing a mixed-methods study in socio-cultural ecosystem service research.

Table 2: Key Research Reagents for Mixed-Methods Socio-Cultural Valuation

| Method/Technique | Function in Validation | Key Characteristics |
|---|---|---|
| Semi-Structured Interviews | To gather rich, detailed contextual data on individual perceptions, values, and experiences. | Flexible, open-ended questions allow for probing and exploration of unexpected topics [3]. |
| Focus Groups | To explore shared values and uncover how knowledge and attitudes are constructed through social interaction and deliberation [22]. | Facilitates group discussion, exchange of anecdotes, and debate [22]. |
| Q-Methodology | To systematically identify a limited number of shared perspectives or viewpoints (factors) within a group [22] [23]. | Uses factor analysis on subjectively ranked statements to reveal distinct attitude patterns [22] [3]. |
| Participatory Mapping | To spatially explicitly link socio-cultural values and preferences to specific locations in a landscape [3]. | Identifies and maps locations of key ecosystem services, like scenic areas or recreational spots. |
| Social Media Analysis | To assess cultural ecosystem services and visitation patterns using passively generated, large-scale data [3] [24]. | Analyzes geotagged photos and text (e.g., calculating Photo-User-Days) to understand usage and preferences [24]. |
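
As an illustration of the Photo-User-Days metric mentioned in the table, the sketch below counts, for each site, the number of unique user and date combinations with at least one photo. It assumes photos have already been assigned to sites (for example, by a spatial join on their geotags). The site names, user IDs, and dates are hypothetical.

```python
from collections import defaultdict
from datetime import date


def photo_user_days(photos):
    """photos: iterable of (site, user_id, day). Returns {site: photo-user-days}."""
    seen = defaultdict(set)
    for site, user_id, day in photos:
        # Several photos by one user on one day at one site count once.
        seen[site].add((user_id, day))
    return {site: len(pairs) for site, pairs in seen.items()}


# Hypothetical geotagged photo records already matched to sites.
photos = [
    ("ridge_path", "u1", date(2020, 5, 1)),
    ("ridge_path", "u1", date(2020, 5, 1)),  # duplicate user-day, not counted again
    ("ridge_path", "u1", date(2020, 5, 2)),
    ("ridge_path", "u2", date(2020, 5, 1)),
    ("reservoir", "u1", date(2020, 5, 1)),
]
pud = photo_user_days(photos)
print(pud)
```

Counting user-days rather than raw photos keeps a single prolific photographer from dominating the visitation estimate for a site.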

This diagram maps the common mixed-methods research designs to their core logic and application, providing a quick reference for selection.

Frequently Asked Questions (FAQs)

Q1: What is the core difference between measurement invariance and differential item functioning (DIF)?

Measurement invariance and DIF are two sides of the same coin, both addressing whether a construct is measured equivalently across different groups. Measurement invariance is typically assessed at the scale or factor level using a hierarchical testing process in Multi-Group Confirmatory Factor Analysis (MGCFA), examining the equivalence of the entire measurement model [25] [26]. DIF, more commonly used in Item Response Theory (IRT) frameworks, investigates bias at the individual item level, determining whether specific items function differently for distinct groups after matching on the underlying ability or trait [27] [28].
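
To make the item-level idea concrete, the sketch below computes the Mantel-Haenszel common odds ratio for a single dichotomous item, matching respondents on a total score. It is a simplified illustration: it assumes binary (endorsed or not endorsed) responses and two manifest groups, and it omits the significance test a full analysis would report. Rating-scale items would need dichotomizing or an ordinal DIF method.

```python
import numpy as np


def mantel_haenszel_odds_ratio(item, group, total):
    """Common odds ratio across score strata (group: 0 = reference, 1 = focal)."""
    item, group, total = (np.asarray(v) for v in (item, group, total))
    numerator = denominator = 0.0
    for score in np.unique(total):
        stratum = total == score
        a = np.sum((group[stratum] == 0) & (item[stratum] == 1))  # reference, endorsed
        b = np.sum((group[stratum] == 0) & (item[stratum] == 0))  # reference, not endorsed
        c = np.sum((group[stratum] == 1) & (item[stratum] == 1))  # focal, endorsed
        d = np.sum((group[stratum] == 1) & (item[stratum] == 0))  # focal, not endorsed
        n = a + b + c + d
        numerator += a * d / n
        denominator += b * c / n
    if denominator == 0:
        return float("nan")  # odds ratio undefined for these data
    return float(numerator / denominator)


# Toy data: two score strata, four reference and four focal respondents in each.
group = [0, 0, 0, 0, 1, 1, 1, 1] * 2
total = [1] * 8 + [2] * 8
item_fair = [1, 1, 0, 0, 1, 1, 0, 0, 1, 1, 1, 0, 1, 1, 1, 0]
item_biased = [1, 1, 1, 0, 1, 0, 0, 0] * 2

print(mantel_haenszel_odds_ratio(item_fair, group, total))
print(mantel_haenszel_odds_ratio(item_biased, group, total))
```

An odds ratio near 1 suggests that matched respondents in both groups endorse the item at similar rates. Values far from 1 flag the item for follow-up, for example through cognitive interviews.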

Q2: My scalar invariance model shows poor fit, but I need to compare latent means across countries. What are my options?

When scalar invariance (equal intercepts) is not achieved, you have several methodological options:

- Partial Invariance: If at least two indicators per factor show invariant loadings and intercepts, you can release constraints on non-invariant parameters while maintaining constraints on others. This approach is commonly used, though some researchers argue it may not always suffice for meaningful comparisons [25].

- Alignment Method: This modern approach, suitable for many groups, allows for approximate rather than exact invariance by minimizing the impact of non-invariant parameters. It's particularly useful when dealing with numerous groups where exact invariance is unlikely [29] [25].

- Bayesian SEM: Incorporates prior information and offers more flexibility in handling measurement noninvariance [25].

Q3: How do I handle DIF detection with multiple background variables (e.g., gender, age, education simultaneously)?

Traditional DIF methods typically examine one background variable at a time, which can be inadequate for complex real-world scenarios. Advanced approaches include:

- LASSO Regularization: A machine learning method that can simultaneously detect DIF across multiple continuous and categorical background variables while controlling false discovery rates [28].

- MIMIC Models with Multiple Covariates: Extends the standard MIMIC approach to include multiple grouping variables, allowing examination of direct effects on both the latent variable and individual items [30].

- Latent Class DIF Analysis: Identifies DIF across unobserved (latent) classes rather than pre-defined manifest groups, useful when the source of bias is unknown [31].

Q4: What are the minimum sample size requirements for measurement invariance testing?

While absolute rules are challenging, practical guidance suggests:

- MGCFA: Minimum of 100-200 cases per group for basic configural and metric invariance testing, with larger samples needed for scalar invariance and multi-group comparisons [26].

- DIF Detection: Methods perform differently; logistic regression and Mantel-Haenszel may have inflated Type I error with small samples, while IRT-based methods generally require larger samples for stable parameter estimation [27] [28].

- Small Sample Considerations: With limited samples, consider Bayesian approximate invariance or alignment optimization methods, which can be more robust with smaller group sizes [29] [25].

Troubleshooting Common Problems

Problem: Poor model fit at configural level, before any cross-group constraints

This indicates that the basic factor structure does not hold across groups, which suggests that the groups understand the construct in fundamentally different ways.

- Solution Steps:

- Reevaluate construct conceptualization: The construct may have different meanings or structures across groups [26].

- Check for differential item functioning: Use DIF detection methods like logistic regression or IRT-based approaches to identify problematic items [28].

- Consider exploratory methods: Exploratory SEM or factor mixture modeling may help identify differential construct structures [29].

- Theoretical reconsideration: The construct may not be equivalent across your groups, requiring theoretical reformulation [25].

Problem: Inconsistent DIF detection across methods (e.g., MH vs. IRT methods)

Different DIF detection methods have varying sensitivity and Type I error rates, particularly with complex data structures.

- Solution Steps:

- Understand method assumptions: Mantel-Haenszel is effective for uniform DIF, while IRT methods detect both uniform and non-uniform DIF [27].

- Account for data structure: With nested data (e.g., students within countries), use multilevel DIF detection methods (multilevel Wald or MH) rather than single-level approaches [27].

- Consider effect sizes: Beyond statistical significance, evaluate practical significance of DIF using measures like ΔR² in logistic regression or area measures in IRT [28].

- Implement purification: Use iterative purification processes to ensure matching is not contaminated by DIF items [27].

Problem: Noninvariance in socio-cultural valuation measures across communities

In socio-cultural ecosystem service research, measures often show noninvariance due to culturally specific relationships with nature [32] [33].

- Solution Steps:

- Mixed methods approach: Combine quantitative invariance testing with qualitative inquiry to understand sources of noninvariance [33].

- Community-specific calibration: Develop local reference points rather than assuming universal measurement scales [32].

- Multi-method triangulation: Use multiple assessment methods (e.g., Flickr geo-tags, Wikipedia pages, surveys) to capture different aspects of socio-cultural values [33].

- Partial invariance modeling: Identify and constrain only the invariant indicators while allowing culturally specific indicators to vary [25].
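
One simple way to check whether two assessment methods converge is a rank correlation of their site-level indicators. The sketch below implements Spearman's rho with average ranks for ties using only the standard library. The choice of Spearman's rho, the variable names, and the example values are assumptions for illustration, not part of the cited studies.

```python
import math


def average_ranks(values):
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole group of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho computed as the Pearson correlation of the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / math.sqrt(vx * vy)


# Invented site-level indicators for five sites
survey_score = [4.2, 3.1, 4.8, 2.5, 3.9]   # mean survey rating per site
photo_user_days = [120, 45, 300, 60, 150]  # social media indicator per site
rho = spearman_rho(survey_score, photo_user_days)
```

A high rho suggests the methods rank sites similarly. A low rho does not necessarily mean one method is wrong, since each may capture a different aspect of socio-cultural value.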

Experimental Protocols

Protocol 1: Establishing Measurement Invariance for Cross-Cultural Comparisons

This protocol provides a step-by-step approach for testing measurement invariance in socio-cultural valuation research.

Step 1: Configural Invariance

- Specify the same factor structure across all groups without equality constraints

- Ensure the same pattern of fixed and free parameters across groups

- Assess model fit using multiple indices: CFI > 0.90, RMSEA < 0.08, SRMR < 0.08 [26]

- Troubleshooting: If poor fit, consider exploratory analyses to identify group-specific factor structures

Step 2: Metric Invariance

- Constrain factor loadings to be equal across groups

- Compare to the configural model using a χ² difference test or ΔCFI (a decrease in CFI of more than 0.01 indicates meaningfully worse fit) [26]

- Interpretation: Metric invariance allows comparison of structural relationships (correlations, regressions)

Step 3: Scalar Invariance

- Constrain both factor loadings and item intercepts to be equal across groups

- Compare to metric model using χ² difference test

- Interpretation: Scalar invariance allows comparison of latent means across groups [25]

Step 4: Handling Noninvariance

- If scalar invariance rejected, test for partial invariance by freeing non-invariant parameters

- Ensure at least two invariant indicators per factor for meaningful comparisons [25]

- Consider approximate invariance methods (alignment optimization) if extensive noninvariance [29]
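
The nested-model comparisons in Steps 2 and 3 can be sketched in Python once the fit statistics have been exported from SEM software. The sketch assumes that each model's χ², degrees of freedom, and CFI are already available. The fit values are invented, and the p-value uses the Wilson-Hilferty normal approximation to avoid external dependencies, so it is approximate; the exact test reported by your SEM software should be preferred.

```python
import math
from dataclasses import dataclass


@dataclass
class Fit:
    chisq: float
    df: int
    cfi: float


def chi2_sf_approx(x, k):
    """Approximate P(X > x) for X ~ chi-square(k) (Wilson-Hilferty)."""
    if x <= 0:
        return 1.0
    z = ((x / k) ** (1 / 3) - (1 - 2 / (9 * k))) / math.sqrt(2 / (9 * k))
    return 0.5 * math.erfc(z / math.sqrt(2))


def compare_nested(less_constrained, more_constrained, alpha=0.05, cfi_drop=0.01):
    """Compare a constrained model against its less constrained parent."""
    d_chisq = more_constrained.chisq - less_constrained.chisq
    d_df = more_constrained.df - less_constrained.df
    if d_df <= 0:
        raise ValueError("the constrained model must have more degrees of freedom")
    p_value = chi2_sf_approx(d_chisq, d_df)
    d_cfi = more_constrained.cfi - less_constrained.cfi
    return {
        "d_chisq": d_chisq,
        "d_df": d_df,
        "p_value": p_value,
        "d_cfi": d_cfi,
        "chisq_ok": p_value > alpha,    # no significant loss of fit
        "cfi_ok": d_cfi >= -cfi_drop,   # CFI dropped by at most 0.01
    }


# Invented fit statistics for illustration only
configural = Fit(chisq=210.4, df=96, cfi=0.962)
metric = Fit(chisq=221.9, df=104, cfi=0.960)
result = compare_nested(configural, metric)
```

The same function can be reused for the scalar step by passing the metric model as the less constrained parent. The two criteria can disagree in large samples, where the χ² difference test tends to flag trivial differences.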

Protocol 2: Differential Item Functioning Analysis for Complex Sampling Designs

This protocol addresses DIF detection in complex research designs common in ecosystem service studies.

Step 1: Preparation and Assumption Checking

- Check the unidimensionality assumption using exploratory factor analysis or Loevinger's H coefficient from Mokken scale analysis

- Ensure sufficient sample size (minimum 200 per group for Mantel-Haenszel, larger for IRT methods)

- For multilevel data (e.g., respondents nested within regions), select appropriate multilevel DIF methods [27]

Step 2: Selection of DIF Detection Method

- For initial screening: Mantel-Haenszel or logistic regression for uniform DIF

- For comprehensive analysis: IRT-based methods (likelihood ratio or Wald tests) for both uniform and non-uniform DIF

- For complex DIF sources: LASSO regularization for multiple background variables [28]

Step 3: Implementation and Purification

- Use iterative purification process where anchor items are refined across iterations

- For IRT methods, ensure careful linking/calibration across groups

- Apply multiple comparison corrections when testing multiple items
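
The protocol does not name a specific correction, so the following Python sketch uses the Benjamini-Hochberg procedure as one common option (Holm or Bonferroni are alternatives). The per-item p-values are invented for illustration.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Return a list of booleans marking items flagged at FDR level q."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff = 0
    # Find the largest rank k whose p-value satisfies p_(k) <= (k / m) * q.
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            cutoff = rank
    flagged = [False] * m
    # Every item ranked at or below the cutoff is flagged.
    for idx in order[:cutoff]:
        flagged[idx] = True
    return flagged


# Invented DIF test p-values for eight items
item_p_values = [0.041, 0.001, 0.205, 0.008, 0.039, 0.060, 0.074, 0.042]
flagged = benjamini_hochberg(item_p_values, q=0.05)
```

Here only the second and fourth items remain flagged after correction, even though five items have unadjusted p-values below 0.05.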

Step 4: Effect Size Interpretation and Reporting

- Report both statistical significance and practical significance

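- For Mantel-Haenszel, report the MH D-DIF index (ΔMH) with the ETS classifications: Negligible (A: |ΔMH| < 1.0 or not significantly different from 0), Moderate (B: |ΔMH| ≥ 1.0 and significantly different from 0, but not meeting the criteria for C), Large (C: |ΔMH| ≥ 1.5 and significantly greater than 1.0)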

- For IRT-based methods, report area between curves or parameter difference effect sizes [28]
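
The Mantel-Haenszel effect size in Step 4 can be sketched in Python. The sketch assumes respondents have already been stratified by matching score, with one 2×2 table per stratum laid out as `(reference correct, reference incorrect, focal correct, focal incorrect)`. It uses the standard transformation ΔMH = -2.35 ln(α_MH). The classification helper is a simplification that takes a single significance flag supplied by the caller rather than running the significance tests itself, and the counts are invented.

```python
import math


def mh_common_odds_ratio(strata):
    """Mantel-Haenszel common odds ratio across score strata.

    Each stratum is (ref_correct, ref_incorrect, focal_correct, focal_incorrect).
    """
    num = den = 0.0
    for a, b, c, d in strata:
        n = a + b + c + d
        if n == 0:
            continue  # skip empty strata
        num += a * d / n
        den += b * c / n
    if den == 0:
        raise ValueError("odds ratio is undefined for these tables")
    return num / den


def mh_d_dif(alpha_mh):
    """ETS delta scale; negative values indicate the item favors the reference group."""
    return -2.35 * math.log(alpha_mh)


def ets_category(delta, significant):
    """Simplified A/B/C label from |delta| and a caller-supplied significance flag."""
    size = abs(delta)
    if size < 1.0 or not significant:
        return "A"
    if size >= 1.5:
        return "C"
    return "B"


# Invented counts for two score strata
strata = [(40, 10, 30, 20), (30, 20, 20, 30)]
alpha_mh = mh_common_odds_ratio(strata)
delta = mh_d_dif(alpha_mh)
label = ets_category(delta, significant=True)
```

Reporting the delta alongside the label lets readers judge practical significance themselves rather than relying on the category alone.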

Method Selection Tables

Table 1: Measurement Invariance Testing Methods Comparison

| Method | Best Use Cases | Sample Requirements | Software Implementation | Key Considerations |
|---|---|---|---|---|
| Multi-Group CFA | Comparing known groups (3-10 groups); confirmatory factor structures | 100-200 per group | Mplus, lavaan (R), JASP [34] | Becomes cumbersome with many groups; strict exact invariance |
| Alignment Optimization | Many groups (10+); approximate invariance sufficient | Flexible with group size | Mplus [29] | Optimizes to minimize impact of non-invariant parameters |
| Bayesian SEM | Small samples; incorporating prior knowledge | Can work with smaller samples | Mplus, blavaan (R) | Requires specification of priors; results sensitive to prior choice |
| MIMIC Models | Continuous or multiple covariates; DIF detection | Single group, larger total N | Mplus, lavaan (R) | Assumes same factor structure across groups; cannot detect non-uniform DIF without interactions [30] |

Table 2: DIF Detection Methods for Different Data Scenarios in Socio-Cultural Research

| Method | Data Type | DIF Types Detected | Background Variables | Key Strengths | Key Limitations |
|---|---|---|---|---|---|
| Mantel-Haenszel | Dichotomous | Uniform only | Single categorical | Simple implementation; robust | Cannot detect non-uniform DIF; limited to dichotomous items |
| Logistic Regression | Dichotomous, Polytomous | Uniform and non-uniform | Single continuous or categorical | Flexible; detects both DIF types | Inflated Type I error with small samples [28] |
| IRT Likelihood Ratio | Dichotomous, Polytomous | Uniform and non-uniform | Single categorical | Strong theoretical foundation; accurate | Requires large samples; complex implementation |
| Multilevel DIF Methods | Nested data | Uniform and non-uniform | Single categorical | Accounts for data clustering | Understudied; limited software options [27] |
| LASSO Regularization | Dichotomous, Polytomous | Uniform and non-uniform | Multiple continuous and/or categorical | Handles complex DIF sources; variable selection | Conservative Type I error; emerging method [28] |

Research Reagent Solutions: Methodological Tools

Table 3: Essential Statistical Tools for Validation Research

| Tool/Software | Primary Function | Key Features for Validation | Implementation Considerations |
|---|---|---|---|
| Mplus | General SEM and mixture modeling | Alignment optimization; Bayesian SEM; complex survey data | Commercial software; steep learning curve but comprehensive features [29] |
| lavaan (R package) | Structural equation modeling | Multi-group CFA; flexible constraint specification | Free; R environment; active development community [34] |
| JASP | Statistical analysis with GUI | User-friendly SEM module with measurement invariance testing | Free; graphical interface; good for beginners [34] |
| difR (R package) | DIF detection | Multiple DIF methods; purification processes | Free; focused specifically on DIF detection [27] |
| flexMIRT | Multidimensional IRT | Comprehensive DIF detection; complex models | Commercial; powerful for advanced IRT applications |

Workflow Diagrams

Measurement Invariance Testing Decision Workflow

DIF Analysis Selection Workflow

Leveraging Big Data and Machine Learning for Unstructured Data Analysis

Technical Support Center: FAQs & Troubleshooting Guides

This technical support center provides practical guidance for researchers conducting socio-cultural ecosystem services (CES) research, with a specific focus on validating data using big data and machine learning techniques. The following FAQs and troubleshooting guides address common challenges encountered during experimental workflows.

Frequently Asked Questions (FAQs)

Q1: What types of unstructured data are most relevant for validating socio-cultural ecosystem service data?

Geotagged social media photographs are a primary data source. They act as a proxy for human-nature interactions and cultural activities within protected areas [35]. The data is valuable due to its volume, geographic and temporal specificity, and its reflection of intangible CES, such as aesthetic appreciation and recreational experiences [36].

Q2: Which machine learning models are best suited for automating the analysis of image data for CES research?

Convolutional Neural Networks (CNNs) are the most effective deep learning models for this task [35] [36]. They are designed for image recognition and can automatically classify natural and human elements in photographs at a scale that would be impossible manually. Models are available through APIs like Microsoft's Azure Computer Vision or Google's Cloud Vision [35].

Q3: Our CNN model's classifications are inconsistent. How can we validate its accuracy for our specific research context?

It is essential to validate the automated results against a manually classified subset of your data [36]. Establish a ground-truth dataset by having domain experts manually tag a random sample of images. The accuracy of the CNN can then be assessed by comparing its classifications against this human-verified set. If accuracy is too low, adjusting the confidence score threshold for accepting a tag is one way to tune the results [35].
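
The comparison against a human-verified set can be sketched in Python as a per-tag precision and recall check at a chosen confidence threshold. The data layout (a dictionary of tag confidences per image and a set of expert tags per image), the tag names, and the confidence values are all assumptions for illustration; they do not reflect the output format of any particular vision API.

```python
def evaluate_tag(predictions, ground_truth, tag, threshold):
    """Precision and recall for one tag at a given confidence threshold.

    predictions:  {image_id: {tag: confidence}} from the CNN (assumed layout)
    ground_truth: {image_id: set of tags assigned by domain experts}
    """
    tp = fp = fn = 0
    for image_id, true_tags in ground_truth.items():
        confidence = predictions.get(image_id, {}).get(tag, 0.0)
        predicted = confidence >= threshold
        actual = tag in true_tags
        if predicted and actual:
            tp += 1
        elif predicted:
            fp += 1
        elif actual:
            fn += 1
    precision = tp / (tp + fp) if (tp + fp) else None
    recall = tp / (tp + fn) if (tp + fn) else None
    return {"tp": tp, "fp": fp, "fn": fn, "precision": precision, "recall": recall}


# Invented CNN outputs and expert labels for five images
predictions = {
    "img1": {"water": 0.95},
    "img2": {"water": 0.62},
    "img3": {"water": 0.55},
    "img4": {"water": 0.30},
    "img5": {},
}
ground_truth = {
    "img1": {"water"},
    "img2": {"water"},
    "img3": {"forest"},
    "img4": {"water"},
    "img5": {"forest"},
}
loose = evaluate_tag(predictions, ground_truth, "water", threshold=0.5)
strict = evaluate_tag(predictions, ground_truth, "water", threshold=0.6)
```

Running the check at several thresholds shows the trade-off directly: in this toy data, raising the threshold removes the false positive without losing any correctly tagged image.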

Q4: What are the key steps for processing social media images from raw data to analyzable insights?

The standard workflow involves four key stages [35]:

- Data Collection & Cleaning: Use APIs (e.g., Flickr API) to gather geotagged photos from your study area and time period, then remove invalid or irrelevant images.

- Pre-processing & Tagging: Submit the photos to a CNN API to generate descriptive content tags for objects, living beings, and actions present in each image.