Validating Population Viability Analysis: A Framework for Robust Model Assessment in Conservation and Biomedical Research


Abstract

This article provides a comprehensive framework for the validation of Population Viability Analysis (PVA) models, a critical tool for assessing extinction risk in conservation biology with emerging applications in biomedical fields. We explore the foundational principles of PVA, including key sources of stochasticity like demographic and environmental variation. The article details methodological approaches for model parameterization and application across diverse case studies, from marsh passerines to giant anteaters. It further addresses common challenges in model troubleshooting and optimization, emphasizing sensitivity analysis and uncertainty quantification. Finally, we present rigorous validation and comparative techniques, including the novel SAMSE framework, to evaluate model performance against empirical data and deterministic methods. This synthesis is tailored for researchers, scientists, and drug development professionals seeking to apply robust, predictive population models in their work.

The Core Principles of PVA: Understanding Stochasticity and Model Foundations

Population Viability represents the probability that a population will persist for a specified time period given its current size, structure, and environmental conditions [1]. In conservation biology, this concept is operationalized through quantitative assessments that evaluate extinction risk, often projecting population dynamics into the future to inform conservation decisions [2].

The Minimum Viable Population (MVP) is formally defined as the smallest population size required for a species to have a predetermined probability of persistence over a specific time frame [3] [4]. This metric serves as a critical benchmark in conservation planning, helping managers determine when populations require intervention to avoid extinction. Early estimates suggested MVPs of 50 individuals to prevent inbreeding depression and 500 individuals to maintain evolutionary potential, though recent analyses reveal much higher requirements—often thousands of individuals—for long-term persistence [3] [4].

Quantitative Measures of Population Viability

Population viability can be quantified using multiple metrics, each providing different insights into extinction risk. These measures generally fall into three primary categories: probabilistic measures, time-based measures, and population-size measures [5].

Table 1: Categories of Viability Measures Used in Population Viability Analysis

| Category | Key Metrics | Definition | Application Context |
|---|---|---|---|
| Probabilistic Measures | Probability of extinction (P₀(t)) | Proportion of simulation runs where extinction occurs within time t | Assessing necessity of conservation action |
| Probabilistic Measures | Risk of decline (P_N(t)) | Probability population falls to or below threshold N by time t | Evaluating population depletion risk |
| Probabilistic Measures | Probability of quasi-extinction (P_QE,N(t)) | Chance population drops below threshold N at least once during time t | Setting safety thresholds for management |
| Time Measures | Mean time to extinction (T_E) | Average time until population reaches extinction | Determining urgency of interventions |
| Time Measures | Intrinsic mean time to extinction (T_m) | Mean extinction time accounting for distribution skewness | Theoretical comparisons of viability |
| Population-Size Measures | Expected population size (N_E(t)) | Average number of individuals at time t | Measuring conservation success |
| Population-Size Measures | Expected minimum population size (N_min(t)) | Lowest expected population size over time t | Identifying bottleneck risks |

A 2023 comparative analysis of eight viability measures based on simulated population dynamics of over 4,500 virtual species found that while different measures generally ranked species viability similarly, direct correlations between measures were often weak and could not be generalized [5]. This highlights the importance of selecting appropriate viability metrics based on specific conservation questions rather than assuming interchangeability.
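To make the measures in Table 1 concrete, the sketch below computes several of them from a set of simulated trajectories. It is a minimal illustration, not the procedure used in the cited comparison: the growth model (lognormal noise on an unstructured population), the parameter values, and the function names `simulate_trajectory` and `viability_measures` are all assumptions chosen for brevity.

```python
import math
import random

def simulate_trajectory(n0, years, mean_r, sd_r, rng):
    """One stochastic trajectory of whole individuals; 0 is absorbing."""
    sizes = [n0]
    n = n0
    for _ in range(years):
        if n > 0:
            # Hypothetical model: lognormal year-to-year variation in growth.
            n = int(round(n * math.exp(rng.gauss(mean_r, sd_r))))
        sizes.append(n)
    return sizes

def viability_measures(runs, threshold):
    """Summarise a list of trajectories with measures from Table 1."""
    n_runs = len(runs)
    # Year of first extinction, only for runs that went extinct in the horizon.
    ext_times = [r.index(0) for r in runs if 0 in r]
    return {
        "p_extinction": len(ext_times) / n_runs,
        "p_quasi_extinction": sum(min(r) < threshold for r in runs) / n_runs,
        # Conditional on extinction within the horizon (censored otherwise).
        "mean_time_to_extinction": (
            sum(ext_times) / len(ext_times) if ext_times else None
        ),
        "expected_final_size": sum(r[-1] for r in runs) / n_runs,
        "expected_minimum_size": sum(min(r) for r in runs) / n_runs,
    }

rng = random.Random(1)
runs = [simulate_trajectory(50, 100, -0.01, 0.15, rng) for _ in range(500)]
measures = viability_measures(runs, threshold=10)
for name, value in measures.items():
    print(name, value)
```

Because the mean time to extinction here is averaged only over runs that went extinct within the horizon, it understates the true mean whenever many runs survive, which is one reason the measures need not be interchangeable.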

Methodological Approaches: Experimental Protocols in Population Viability Analysis

Fundamental Workflow of Population Viability Analysis

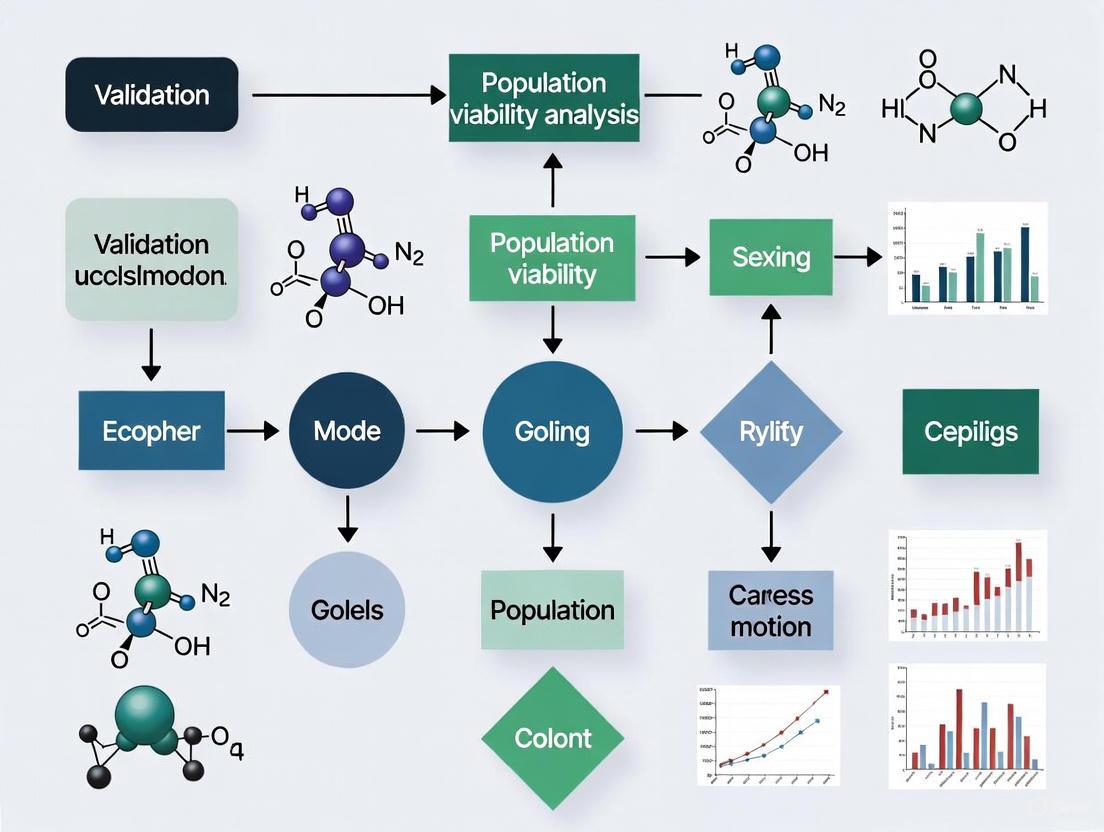

The diagram below illustrates the standard workflow for conducting a Population Viability Analysis (PVA), synthesizing methodologies from multiple studies [5] [2] [6]:

Data Requirements and Collection Protocols

Different PVA approaches require specific data types and involve distinct methodological protocols:

Time-Series PVA Protocol:

- Data Requirements: Annual population counts over multiple years [2]

- Statistical Analysis: Fitting population growth models with environmental and demographic stochasticity [1]

- Implementation: Using maximum likelihood methods to estimate intrinsic growth rate (r) and environmental variance (σ²) [2]

- Output: Probability of extinction curves over defined time horizons
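The estimation step of the time-series protocol can be sketched as follows. This assumes the simplest density-independent model, in which the annual log growth increments ln(N_{t+1}/N_t) are independent and normally distributed, so the maximum likelihood estimates are just their mean and variance. The function name `estimate_growth` and the count series are hypothetical, and real analyses would also need to handle observation error and unequal census intervals.

```python
import math

def estimate_growth(counts):
    """Estimate mean log growth rate and its variance from annual counts.

    Assumes strictly positive counts taken at equal (annual) intervals
    and at least three counts.
    """
    log_ratios = [math.log(b / a) for a, b in zip(counts, counts[1:])]
    q = len(log_ratios)
    r_hat = sum(log_ratios) / q
    # Maximum likelihood variance divides by q; the unbiased form by q - 1.
    sigma2_ml = sum((y - r_hat) ** 2 for y in log_ratios) / q
    sigma2_unbiased = sigma2_ml * q / (q - 1)
    return r_hat, sigma2_ml, sigma2_unbiased

# Hypothetical census series, for illustration only.
counts = [120, 131, 115, 98, 110, 102, 95, 104, 88, 91]
r_hat, sigma2_ml, sigma2_unbiased = estimate_growth(counts)
print(r_hat, sigma2_ml, sigma2_unbiased)
```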

Demographic PVA Protocol:

- Data Requirements: Age- or stage-specific survival and reproduction rates [2]

- Matrix Construction: Building Leslie or Lefkovitch matrices with variance-covariance structures [6]

- Simulation Approach: Individual-based modeling tracking each organism through life cycle stages

- Sensitivity Analysis: Identifying which vital rates most strongly influence population growth [2]
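As a minimal sketch of the matrix construction step, the code below builds a Leslie matrix from age-specific fecundities and survival rates and finds the asymptotic growth rate λ by power iteration. The vital rates are hypothetical, the helper names are illustrative, and the variance-covariance structure mentioned above is omitted for brevity.

```python
def leslie_matrix(fecundities, survivals):
    """Leslie matrix: fecundities on the first row, survival on the subdiagonal."""
    k = len(fecundities)
    if len(survivals) != k - 1:
        raise ValueError("need one survival rate per age-class transition")
    matrix = [[0.0] * k for _ in range(k)]
    matrix[0] = [float(f) for f in fecundities]
    for i, s in enumerate(survivals):
        matrix[i + 1][i] = float(s)
    return matrix

def dominant_growth_rate(matrix, iterations=1000):
    """Power iteration; returns (lambda, stable age distribution).

    Converges for primitive matrices, e.g. when two adjacent age
    classes both reproduce.
    """
    k = len(matrix)
    vec = [1.0 / k] * k
    lam = 1.0
    for _ in range(iterations):
        nxt = [sum(a * x for a, x in zip(row, vec)) for row in matrix]
        lam = sum(nxt)  # vec sums to 1, so the new total is the growth factor
        vec = [x / lam for x in nxt]
    return lam, vec

# Hypothetical three-age-class life history.
A = leslie_matrix(fecundities=[0.0, 1.2, 1.8], survivals=[0.4, 0.7])
lam, stable = dominant_growth_rate(A)
print(lam, stable)
```

A value of λ above 1 indicates deterministic growth and a value below 1 indicates decline; stochastic PVA then layers random variation in the matrix entries on top of this baseline.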

Metapopulation PVA Protocol:

- Data Requirements: Patch-specific habitat quality, connectivity matrices, colonization and extinction rates [6]

- Spatial Modeling: Incorporating dispersal kernels and landscape resistance [7]

- Dynamic Simulation: Modeling source-sink dynamics and rescue effects
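The dynamic simulation step can be illustrated with a very simple stochastic patch occupancy model. This is a sketch under strong assumptions: all patches are identical, each occupied patch goes locally extinct with probability `e` per year, and each empty patch is colonized with probability `c` times the fraction of occupied patches. Dispersal kernels, landscape resistance, source-sink structure, and rescue effects are not modelled, and the rates are hypothetical.

```python
import random

def simulate_occupancy(n_patches, n_occupied, years, e, c, rng):
    """Return the number of occupied patches in each year (year 0 included)."""
    occupied = [i < n_occupied for i in range(n_patches)]
    history = [sum(occupied)]
    for _ in range(years):
        frac = sum(occupied) / n_patches
        col_prob = min(1.0, c * frac)  # colonization scales with occupancy
        occupied = [
            (rng.random() >= e) if occ else (rng.random() < col_prob)
            for occ in occupied
        ]
        history.append(sum(occupied))
    return history

def extinction_probability(n_runs, seed, n_patches, n_occupied, years, e, c):
    """Fraction of runs in which every patch is empty at the end."""
    rng = random.Random(seed)
    extinct = sum(
        simulate_occupancy(n_patches, n_occupied, years, e, c, rng)[-1] == 0
        for _ in range(n_runs)
    )
    return extinct / n_runs

# Hypothetical rates for a 14-patch network over 100 years.
p_extinct = extinction_probability(
    500, seed=7, n_patches=14, n_occupied=14, years=100, e=0.15, c=0.3
)
print(p_extinct)
```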

Case Study: Eastern Iberian Reed Bunting PVA

A 2025 PVA for the critically endangered Eastern Iberian Reed Bunting (Emberiza schoeniclus witherbyi) illustrates how these protocols are applied in practice [6]:

- Base Model Parameterization: Used field data from 14 wetlands with 85% of the estimated 250 breeding pairs concentrated in three primary sites

- Simulation Protocol: 500 iterations over 100-year time horizon with stochastic environmental variation

- Management Scenarios Tested: Habitat restoration, predator control, translocations, and captive breeding reinforcements

- Key Finding: Population projected to halve within 20 years without intervention, with complete extinction predicted within 75 years

Stochastic Threats to Population Viability

The diagram below illustrates the four primary sources of extinction risk that influence population viability and MVP estimates [3]:

Comparative Analysis of MVP Estimates Across Taxa

Meta-analyses of MVP studies reveal substantial variation in population size requirements across species, influenced by life history characteristics, environmental conditions, and methodological approaches.

Table 2: Minimum Viable Population Size Estimates Across Studies

| Study Context | Taxonomic Group | MVP Estimate | Persistence Criteria | Key Influencing Factors |
|---|---|---|---|---|
| Meta-analysis of 102 vertebrate species [4] | Multiple vertebrates | Median: 4,169 individuals | 99% probability over 40 generations | Study duration, population growth rate |
| Literature review [3] | Terrestrial vertebrates | 500-1,000 individuals (inbreeding ignored) | Not specified | Life history strategy, ecological role |
| Literature review [3] | Terrestrial vertebrates | >1,000 individuals (inbreeding included) | Not specified | Genetic diversity requirements |
| Cross-species frequency distribution [3] | Vertebrates | Median: 4,169 individuals (95% CI: 3,577-5,129) | Long-term persistence | Body size, environmental variation |
| Reed et al. (2003) analysis [4] | Multiple species | Median: 1,377 individuals | 90% probability over 100 years | Local environmental variation |

A critical finding from research is that MVP estimates are highly sensitive to study duration. Analyses based on longer-term data consistently produce higher MVP estimates because they capture greater temporal variation in population size, providing more realistic extinction risk assessments [4]. This has important implications for methodological standardization in PVA.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Tools and Software for Population Viability Analysis

| Tool Category | Specific Solutions | Primary Function | Application Context |
|---|---|---|---|
| PVA Software Platforms | RAMAS [2] | Metapopulation viability analysis | Spatially structured populations |
| PVA Software Platforms | VORTEX [2] | Individual-based simulation | Species with complex life histories |
| PVA Software Platforms | ALEX [2] | Population and habitat modeling | Conservation planning and reserve design |
| Statistical Programming Environments | R with popbio/popdemo packages [5] | Matrix population model analysis | Demographic PVA with sensitivity analysis |
| Statistical Programming Environments | Bayesian statistical packages [2] | Uncertainty quantification in PVA | Data-deficient populations |
| Data Management Solutions | Global Population Dynamics Database [4] | Long-term population time series | Model parameterization and validation |
| Data Management Solutions | RangeShifter [5] | Simulating species' range dynamics | Climate change impact assessments |
| Genetic Analysis Tools | Inbreeding depression estimators [3] | Genetic viability assessment | Small population management |

Population viability analysis represents a critical methodological framework for quantifying extinction risk and establishing scientifically defensible conservation targets. The integration of MVP estimates with PVA methodologies provides conservation managers with powerful tools for prioritizing actions and allocating limited resources effectively [4].

While PVA cannot predict exactly when a population will go extinct, it offers robust assessments of relative risk and enables comparison of alternative management strategies [2] [8]. The continued refinement of PVA methodologies, particularly through Bayesian approaches that better quantify uncertainty and multiple population viability analysis (MPVA) that shares information across populations, promises enhanced utility for conserving biodiversity in an increasingly fragmented world [7]. When applied with appropriate caution regarding model assumptions and data limitations, PVA remains an essential component of the conservation biologist's toolkit for preventing species extinctions.

Population Viability Analysis (PVA) represents a cornerstone of modern conservation biology, providing quantitative methods to predict the likely future status of populations of conservation concern [9]. These analytical approaches yield probabilistic estimates of population persistence or extinction over specified time horizons, enabling managers to make informed decisions about endangered species protection [7]. At the heart of accurate PVA modeling lies the proper accounting of stochastic processes—the random demographic, environmental, and genetic events that collectively determine extinction risk, particularly for small populations. While deterministic models assume fixed parameter values, real populations experience substantial variability in birth rates, death rates, and environmental conditions that can dramatically alter population trajectories.

The recognition of stochasticity's critical role has evolved considerably over recent decades. Early conservation guidelines often focused on simple population thresholds, but research has demonstrated that "smallness alone is an insufficient predictor of risk" [7]. Instead, understanding the factors that correlate with population declines and stochasticity is essential for predicting extinction. Different types of stochasticity operate at varying spatial and temporal scales, with demographic stochasticity arising from individual chance events, environmental stochasticity reflecting temporal fluctuations affecting all individuals, and genetic stochasticity encompassing random changes in genetic composition. This review synthesizes current understanding of these stochastic processes, their interactions, and their implications for population viability assessment across diverse taxa and ecosystems.

Classifying Stochasticity in Ecological Models

Demographic Stochasticity

Demographic stochasticity refers to the random variations in individual fitness components—specifically, the independent chance events that determine individual survival and reproduction [10]. Even individuals with identical genetically-determined vital rates may differ in their actual reproductive output or longevity due to this inherent randomness in demographic processes. The implications of demographic stochasticity are particularly pronounced in small populations, where random deaths of a few individuals or failure of a few individuals to reproduce can significantly impact population growth or extinction risk.

Recent research has quantified the substantial contribution of demographic stochasticity to overall population dynamics. In an experimental population of Plantago lanceolata, demographic stochasticity explained the largest fraction of variance in survival and reproduction among individuals, far exceeding the effects of genetic differences or environmental fluctuations [10]. This dominance of demographic stochasticity has profound implications for both ecological and evolutionary dynamics, as large demographic stochastic variation can lower population growth and slow adaptive evolutionary change. The N = (5-10)T rule offers guidance on the data needed to cope with this uncertainty: to reliably estimate a non-zero extinction probability T years into the future, one should have N = (5-10)T observations, where N here counts observations rather than individuals [11].
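As a worked instance of the rule as stated above (the 20-year horizon and the 30-observation series are illustrative choices, not values from the cited study):

$$
T = 20 \;\Rightarrow\; 5T \le N \le 10T \;\Rightarrow\; 100 \le N \le 200
$$

Read in the other direction, a series of N = 30 observations would support projection horizons of only about

$$
\frac{N}{10} \le T \le \frac{N}{5} \;\Rightarrow\; 3 \le T \le 6 \text{ years.}
$$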

Environmental Stochasticity

Environmental stochasticity encompasses temporal fluctuations in environmental conditions that affect all individuals in a population simultaneously, such as variations in temperature, precipitation, or resource availability [10]. Unlike demographic stochasticity, which decreases in importance with increasing population size, environmental stochasticity affects populations regardless of their size and can drive correlated fates across individuals. This type of stochasticity is particularly important for species inhabiting highly variable environments or those facing climate change-induced shifts in environmental regimes.
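The contrast between the two kinds of stochasticity can be checked numerically. In the sketch below, demographic stochasticity is represented by independent survival draws for each individual, and environmental stochasticity by a survival probability that changes from year to year for everyone at once. The mean survival of 0.6, the environmental standard deviation of 0.1, and the function names are assumptions made for illustration.

```python
import random
import statistics

def realized_survival(n, p, rng):
    """Fraction of n individuals that survive, each with probability p."""
    return sum(rng.random() < p for _ in range(n)) / n

def variance_of_survival(n, years, rng, env_sd=0.0, mean_p=0.6):
    """Between-year variance in the realized survival fraction."""
    fractions = []
    for _ in range(years):
        # Environmental effect: one survival probability shared by all
        # individuals in a given year (clipped to the valid range).
        p_year = min(1.0, max(0.0, rng.gauss(mean_p, env_sd)))
        fractions.append(realized_survival(n, p_year, rng))
    return statistics.pvariance(fractions)

rng = random.Random(42)
sizes = (10, 100, 1000)
demographic_only = {n: variance_of_survival(n, 500, rng) for n in sizes}
with_environment = {
    n: variance_of_survival(n, 500, rng, env_sd=0.1) for n in sizes
}
for n in sizes:
    print(n, demographic_only[n], with_environment[n])
```

With demographic stochasticity alone, the variance shrinks roughly in proportion to 1/N (the binomial value is p(1 - p)/N), whereas with a shared environmental effect it levels off near the variance of the yearly survival probability no matter how large the population is.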

The impact of environmental stochasticity is vividly illustrated in PVA models for species like the Sonoran desert tortoise, where increases in drought frequency and intensity may significantly increase extinction risk [12]. Similarly, for Lahontan cutthroat trout, population viability was influenced by stream flow variations, with high flows in the preceding year positively affecting survival and recruitment [7]. The increased variance in population growth rates driven by environmental stochasticity directly elevates extinction risk, particularly when coupled with density-dependent mechanisms that reduce population resilience to environmental extremes.

Genetic Stochasticity

Genetic stochasticity encompasses random changes in genetic composition, including processes such as inbreeding depression, loss of genetic diversity, and accumulation of deleterious mutations, which are particularly consequential in small populations [13]. While often considered separately from demographic and environmental stochasticity, genetic processes interact strongly with population size and trajectory. Recent research has revealed that the classic models of evolution, which assume large and stable populations, fail to capture the dynamics of small populations where randomness can fundamentally alter evolutionary outcomes.

A groundbreaking study demonstrated that in finite populations, a novel force called "noise-induced biasing" can actually reverse the direction of evolution predicted by natural selection alone [13]. This occurs because randomness—or "demographic stochasticity"—plays a significant role in shaping eco-evolutionary dynamics in small populations, sometimes producing evolutionary outcomes opposite to what would be expected under traditional selection models. This has critical implications for conservation, as human-driven factors like climate change and habitat loss continue to reduce population sizes worldwide, potentially altering evolutionary trajectories in unpredictable ways.

Table 1: Comparative Influence of Different Stochasticity Types on Population Viability

| Stochasticity Type | Definition | Scale of Operation | Key Findings | Conservation Implications |
|---|---|---|---|---|
| Demographic | Independent chance events affecting individual survival and reproduction | Individual level | Explains largest fraction of variance in survival/reproduction [10] | Most critical for very small populations; informs minimum monitoring requirements [11] |
| Environmental | Temporal fluctuations affecting all individuals in a population | Population/regional level | Drought frequency increases extinction risk for Sonoran desert tortoise [12] | Requires broad-scale habitat protection and climate adaptation strategies |
| Genetic | Random changes in genetic composition | Population level | Can reverse evolutionary direction predicted by natural selection [13] | Necessitates genetic management in small populations despite neutral theory predictions |

Quantitative Assessment of Variance Components

Understanding the relative contributions of different stochasticity sources is essential for effective conservation planning. Research has made significant strides in quantifying these variance components, revealing surprising patterns that challenge conventional wisdom in population biology.

In a detailed variance decomposition analysis using Plantago lanceolata, researchers found that non-selective demographic stochasticity dominated the variability in both lifespan and reproduction among individuals [10]. Specifically, additive genetic effects explained only 0.5-1% of the total variance in these fitness components, while non-selective environmental variation among years accounted for just 2.5-4.6% of variance in reproduction and approximately 25% of variance in lifespan. Genotype-by-environment interactions explained 4.6-6.7% of the variation. The overwhelming majority of variance was attributed to demographic stochastic processes operating at the individual level.

These findings highlight the challenge of distinguishing between adaptive genetic variability and neutral variation in evolutionary ecology and demographic studies. The large demographic stochastic variation exhibited within genotypes not only lowers population growth but can also slow evolutionary adaptive dynamics, potentially complicating conservation efforts that rely on evolutionary potential for population resilience. This suggests that common expectations of population growth, based on expected lifetime reproduction and generation time, can be misleading when demographic stochastic variation is substantial.

Table 2: Variance Decomposition in Plantago lanceolata Fitness Components [10]

| Variance Component | Contribution to Lifespan Variance | Contribution to Reproduction Variance | Interpretation |
|---|---|---|---|
| Genetic (G) | ~0.5-1% | ~0.5-1% | Minimal selective pressure possible |
| Environmental (E) | ~25% | 2.5-4.6% | Year-to-year fluctuations significant for survival |
| G×E Interaction | 4.6-6.7% | 4.6-6.7% | Moderate genotype-environment interactions |
| Demographic Stochasticity | ~68-70% | ~87-92% | Dominant source of variation in fitness components |
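The demographic shares in the table are consistent with what remains after the other three components are subtracted from 100%. The short check below makes that arithmetic explicit. Treating demographic stochasticity as the residual is an assumption made here for illustration; the source does not state how the cited study derived its figures, and `residual_range` is a hypothetical helper.

```python
def residual_range(*components):
    """Range of the share left over out of 100%, given (low, high) ranges in %."""
    low = 100.0 - sum(high for _, high in components)
    high = 100.0 - sum(low_ for low_, _ in components)
    return round(low, 1), round(high, 1)

# Genetic, environmental, and G x E ranges as reported in the table above.
lifespan = residual_range((0.5, 1.0), (25.0, 25.0), (4.6, 6.7))
reproduction = residual_range((0.5, 1.0), (2.5, 4.6), (4.6, 6.7))

print(lifespan)      # (67.3, 69.9)
print(reproduction)  # (87.7, 92.4)
```

Both residual ranges fall within rounding of the tabulated ~68-70% and ~87-92%.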

Experimental Approaches and Protocols

Stage-Structured Matrix Models

Stage-structured matrix models represent a fundamental approach for incorporating stochasticity into PVA, particularly for species with complex life histories. These models track changes in the numbers of individuals in different life stages (e.g., age or size categories) and can incorporate demographic stochasticity, environmental stochasticity, catastrophes, density-dependence, and spatial structure [9]. The application of these models is exemplified by research on the endangered plant Jacquemontia reclinata, where a stage-size matrix model with five stages (seeds in seed bank, seedlings, small adults, medium adults, and large adults) was parameterized using 10 years of demographic data [14].

The experimental protocol for this research involved establishing permanent meter-square grids across four populations, with corners marked using PVC pipes embedded in the sand [14]. Within each grid, a subgrid of sixteen 25 × 25 cm quadrats was used to: (1) locate and tag plant roots, (2) estimate occupancy (presence/absence in each subgrid), (3) estimate cover using Braun-Blanquet categories, and (4) record fruit numbers. This detailed annual census allowed researchers to construct transition matrices and calculate annual growth rates (λ), which ranged from 0.86 to 1.25 for the average matrix, with greater interannual variability in individual populations than in the pooled average model [14]. The accompanying elasticity analysis identified adult survival and the seed-to-seedling transition as the most influential transitions, highlighting key targets for conservation management.
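For readers unfamiliar with elasticity analysis, the sketch below shows the standard calculation for a stage-structured matrix: the elasticity of λ to entry a_ij is (a_ij / λ) · v_i · w_j / ⟨v, w⟩, where w and v are the right and left dominant eigenvectors. The three-stage matrix is hypothetical and is not the five-stage J. reclinata matrix from the cited study; the eigenvectors are found by simple power iteration, which assumes a primitive matrix.

```python
def power_iteration(matrix, iterations=2000):
    """Dominant eigenvalue and right eigenvector (normalised to sum to 1)."""
    k = len(matrix)
    vec = [1.0 / k] * k
    lam = 1.0
    for _ in range(iterations):
        nxt = [sum(a * x for a, x in zip(row, vec)) for row in matrix]
        lam = sum(nxt)
        vec = [x / lam for x in nxt]
    return lam, vec

def elasticities(matrix):
    """Return (lambda, elasticity matrix); the elasticities sum to 1."""
    lam, w = power_iteration(matrix)            # stable stage distribution
    transposed = [list(col) for col in zip(*matrix)]
    _, v = power_iteration(transposed)          # reproductive values
    vw = sum(vi * wi for vi, wi in zip(v, w))
    elas = [
        [a * v[i] * w[j] / (lam * vw) for j, a in enumerate(row)]
        for i, row in enumerate(matrix)
    ]
    return lam, elas

# Hypothetical matrix: columns are the current stage, rows the next stage.
A = [
    [0.0, 1.5, 3.0],   # recruitment produced by stages 2 and 3
    [0.3, 0.5, 0.0],   # growth into, and stasis within, stage 2
    [0.0, 0.3, 0.8],   # growth into, and stasis within, stage 3
]
lam, elas = elasticities(A)
print(lam)
for row in elas:
    print([round(x, 3) for x in row])
```

Because elasticities are proportional contributions that sum to 1, the largest entries point to the transitions where a given proportional change in a vital rate would move λ the most.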

Multiple Population Viability Analysis (MPVA)

A significant methodological advancement for assessing the viability of multiple populations simultaneously is the Multiple Population Viability Analysis (MPVA) approach, which addresses the limitation of conventional PVA requiring extensive demographic data for each population of interest [7]. This innovative method borrows information from other populations through shared parameters, allowing estimation of viability for populations with insufficient data for conventional PVA. The approach can even make predictions for populations with few to no observations, making it particularly valuable for conservation of metapopulations in fragmented landscapes.

The MPVA protocol involves four defining characteristics: (1) some population parameters are shared among populations, with covariates varying in space and time; (2) parameters are estimated using Bayesian methods with explicit priors; (3) the approach uses a state-space model to account for observation error; and (4) it projects population trajectories under alternative scenarios [7]. When applied to Lahontan cutthroat trout, MPVA revealed a positive effect of high flows in the preceding year, suggesting that these flows increase survival and recruitment to the adult stage, possibly by stimulating increased productivity [7]. This approach demonstrates how spatial and temporal covariates can be leveraged to extrapolate viability estimates across multiple populations with varying data quality.
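The structure described above can be sketched as a forward simulation. The code below is only the generative side of such a model, under assumed forms: growth on the log scale depends on an intercept `b0` and a covariate effect `b1` that are shared by every population, with separate process noise and lognormal observation error. It does not perform the Bayesian estimation that defines MPVA, and all names and values are hypothetical.

```python
import math
import random

def simulate_population(n0, covariate, b0, b1, process_sd, obs_sd, rng):
    """Simulate true and observed abundance for one population.

    covariate holds one value per year, e.g. a standardised measure of
    the preceding year's stream flow.
    """
    log_n = math.log(n0)
    true_sizes, observed = [], []
    for x in covariate:
        # Process model: shared parameters b0 and b1 plus process noise.
        log_n += b0 + b1 * x + rng.gauss(0.0, process_sd)
        true_sizes.append(math.exp(log_n))
        # Observation model: counts are a noisy view of the true state.
        observed.append(math.exp(log_n + rng.gauss(0.0, obs_sd)))
    return true_sizes, observed

rng = random.Random(3)
shared = {"b0": -0.05, "b1": 0.2, "process_sd": 0.1, "obs_sd": 0.3}
# Hypothetical standardised flow covariates for two streams.
flows = {
    "stream_a": [0.5, -1.0, 1.2, 0.0, -0.3],
    "stream_b": [-0.8, 0.4, 0.9, -1.1, 0.2],
}
for name, flow in flows.items():
    true_sizes, observed = simulate_population(200.0, flow, rng=rng, **shared)
    print(name, [round(x) for x in true_sizes], [round(y) for y in observed])
```

Sharing `b0` and `b1` across populations is what would let a fitted model of this kind make predictions for a stream with few or no counts, provided its covariate values are known.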

Metapopulation Projection Frameworks

For species existing in fragmented landscapes, metapopulation projection frameworks provide critical tools for assessing viability across multiple subpopulations. These models follow the fates of multiple subpopulations and determine whether the rate of establishment of new subpopulations through colonization is sufficiently high to counter local extinctions [9]. The application of such frameworks is exemplified by research on the Eastern Iberian Reed Bunting, where metapopulation models were used to simulate alternative conservation measures, including habitat restoration, predator control, population reinforcements through translocations, and captive breeding program releases [6].

The experimental protocol involved parameterizing models with population data from 14 wetlands, then projecting extinction probabilities under different scenarios [6]. Simulations predicted that without intervention, the population would halve within 20 years and become completely extinct by the 2070s. The research then systematically evaluated conservation interventions, finding that population reinforcements and reintroductions from captive breeding programs, combined with in-situ actions, were the most effective measures for conservation [6]. This approach demonstrates how metapopulation models can compare management strategies by simulating their effects on connectivity and viability across fragmented landscapes.

PVA Workflow with Stochasticity Components: This diagram illustrates the standard workflow for conducting Population Viability Analysis, highlighting points where different stochasticity components influence the process.

Case Studies in Conservation Application

Iberian Reed Bunting: Evaluating Conservation Interventions

The application of PVA to the Eastern Iberian Reed Bunting (Emberiza schoeniclus witherbyi) provides a compelling case study in using viability models to evaluate alternative conservation strategies for a critically endangered species [6]. With only 250 breeding pairs confined to 14 wetlands, and 85% of the population concentrated in just three sites, this subspecies faces extreme extinction risk. The PVA models projected that without intervention, the population would decline by 50% within 20 years and face complete extinction by the 2070s [6].

Researchers systematically tested four categories of conservation interventions: (1) habitat restoration in current breeding wetlands, (2) predator control, (3) population reinforcements through translocations, and (4) reintroductions from captive breeding programs. The results demonstrated that while habitat restoration and predator control delayed estimated extinction times, they did not prevent the disappearance of many small localities [6]. Population reinforcements through translocations required careful balancing, with the optimal strategy being the translocation of 7 pairs each year for 3 years (5 from Delta de l'Ebre and 2 from S'Albufera) to avoid severely reducing donor populations' viability. The most effective measures combined population reinforcements with in-situ actions, particularly when focused on wetlands with 'good' and 'intermediate' viabilities and implemented after habitat restoration [6].

Jacquemontia reclinata: Pooling Data Bias in Small Populations

Research on the federally endangered plant Jacquemontia reclinata highlights critical methodological considerations for PVA of small populations, particularly the bias introduced by pooling data across populations [14]. This study compared population viability estimates using two approaches: one that pooled all individuals into a single matrix to decrease variation in transition rate estimation, and another that incorporated actual dynamics of the two largest populations individually.

The findings revealed stark differences in extinction risk estimates between these approaches. While the average matrix produced a stochastic growth rate of 1.018 with less than 1% quasi-extinction risk over 50 years, the individual population matrices showed substantially different trajectories [14]. Specifically, the Crandon population had a stochastic λ of 1.033 with 14% quasi-extinction risk, while the South Beach population had a stochastic λ of 0.933 with 87% quasi-extinction risk. The metapopulation model incorporating actual dynamics showed lower occupancy rates and higher extinction risk at an earlier time compared to the model using population averages [14]. This demonstrates how pooling data across populations can mask significant environmental variation, leading to underestimation of extinction risk for vulnerable subpopulations.
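One reason averaging can hide risk is that long-run growth in a variable environment follows the geometric mean of annual growth rates, not the arithmetic mean. The sketch below illustrates this for the simplest unstructured case. The two series of annual λ values are hypothetical and chosen to share the same arithmetic mean; the cited study worked with full stage-structured matrices, for which the stochastic growth rate is normally obtained by simulation.

```python
import math

def arithmetic_mean(lambdas):
    return sum(lambdas) / len(lambdas)

def stochastic_growth_rate(lambdas):
    """Geometric mean of annual growth rates, exp(mean(log(lambda_t)))."""
    return math.exp(sum(math.log(x) for x in lambdas) / len(lambdas))

# Hypothetical annual growth rates with the same arithmetic mean (1.025).
steady = [1.00, 1.05, 1.00, 1.05]
variable = [0.60, 1.45, 0.60, 1.45]

for name, series in (("steady", steady), ("variable", variable)):
    print(name, arithmetic_mean(series), stochastic_growth_rate(series))
```

The steady series grows in the long run, while the variable series declines despite the identical average, which is the kind of difference that pooling transition data can conceal.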

Lahontan Cutthroat Trout: MPVA for Data-Deficient Populations

The application of Multiple Population Viability Analysis (MPVA) to Lahontan cutthroat trout (Oncorhynchus clarkii henshawi) illustrates how innovative modeling approaches can leverage limited data across multiple populations to inform conservation [7]. This threatened fish species inhabits small, isolated streams in the Great Basin desert, with most populations having limited monitoring data. The MPVA approach allowed researchers to borrow information from better-studied populations to estimate viability for data-deficient populations, while accounting for environmental covariates like stream flow and temperature.

The analysis revealed a positive effect of high flows in the preceding year on population growth, suggesting that these flows increase survival and recruitment, possibly by stimulating increased productivity [7]. The model also enabled ranking populations by relative extinction risk, identifying the most vulnerable populations for conservation prioritization. This case study demonstrates how MPVA offers a data-driven alternative that can yield reasonable viability estimates for poorly sampled populations, as long as there are sufficient data from other populations to estimate shared parameters and their relationship to environmental covariates [7].

Table 3: Key Research Reagent Solutions for Population Viability Analysis

| Tool/Resource | Type | Primary Function | Application Examples |
|---|---|---|---|
| RAMAS Metapop | Software | Spatially-structured population modeling | Metapopulation dynamics for J. reclinata; integrates spatial landscape data [14] |
| VORTEX | Software | Individual-based simulation modeling | Incorporates genetic information and pedigree data; suitable for small populations [9] |
| Bayesian State-Space Models | Statistical Framework | Parameter estimation with uncertainty quantification | MPVA for Lahontan cutthroat trout; shares information across populations [7] |
| Stage-Structured Matrix Models | Modeling Framework | Project structured population dynamics | PVA for J. reclinata with seed, seedling, and adult stages [14] |
| Diffusion Approximation | Analytical Method | Estimating extinction probabilities from time series | Unstructured population models with environmental stochasticity [9] |
| Elasticity Analysis | Analytical Technique | Identifying critical vital rates for population growth | Determined adult survival most important for J. reclinata [14] |
| Permanent Plot Networks | Field Methodology | Long-term demographic monitoring | 10-year study of J. reclinata with marked individuals [14] |

The critical role of stochasticity in population viability analysis necessitates approaches that explicitly account for demographic, environmental, and genetic variance throughout the modeling process. The evidence consistently demonstrates that demographic stochasticity often dominates variance in fitness components, with profound implications for both ecological and evolutionary dynamics. The integration of these stochastic elements has been enhanced through methodological advances such as Multiple Population Viability Analysis, which enables viability assessment across multiple populations even with limited data.

Future directions in PVA research will likely focus on improving the integration of different stochasticity types, particularly as climate change increases environmental variability and habitat fragmentation reduces population sizes. The surprising finding that demographic noise can reverse evolutionary selection directions in small populations warrants particular attention, suggesting that conservation strategies may need to account for these non-intuitive evolutionary outcomes [13]. Furthermore, as monitoring technologies advance and longer-term datasets become available, the precision of variance decomposition should improve, enabling more targeted conservation interventions. What remains clear is that understanding and quantifying the critical role of stochasticity is not merely an academic exercise—it is fundamental to preventing extinction and promoting population persistence in an increasingly variable world.

Population Viability Analysis (PVA) serves as a critical tool in conservation biology, enabling researchers to assess extinction risks and evaluate potential management strategies for threatened species [6]. The reliability of PVA projections, however, depends on accurate parameter estimation and understanding of population processes. This guide explores a subtle yet impactful phenomenon in small population dynamics—the 'Penny Flipping' Effect—wherein minor demographic stochasticity can disproportionately influence population trajectories, analogous to the rounding tax observed in economic systems following the phase-out of low-denomination currency [15].

The 'Penny Flipping' metaphor illustrates how small, stochastic events in individual survival and reproduction can flip population outcomes between recovery and extinction, particularly in populations already constrained by habitat fragmentation and isolation. This effect becomes especially pronounced in species like the Eastern Iberian Reed Bunting (Emberiza schoeniclus witherbyi), where 85% of the estimated 250 breeding pairs are confined to just three wetlands [6]. Understanding and quantifying this effect is crucial for developing robust conservation strategies that account for demographic variation in vulnerable populations.
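The effect can be illustrated with a minimal simulation sketch. The life cycle and vital rates below are hypothetical, not taken from the Reed Bunting study [6]: each individual survives with a fixed probability and each survivor adds at most one recruit. Expected growth is close to replacement in every run, so any difference in extinction risk between starting sizes comes from chance events in individual fates alone.

```python
import random


def extinction_probability(n0, survival, fecundity, years, reps, seed=0):
    """Share of replicate runs in which the population reaches zero.

    Hypothetical life cycle: each individual survives the year with
    probability `survival`; each survivor adds one recruit with
    probability `fecundity`.
    """
    rng = random.Random(seed)
    extinct = 0
    for _ in range(reps):
        n = n0
        for _ in range(years):
            survivors = sum(rng.random() < survival for _ in range(n))
            recruits = sum(rng.random() < fecundity for _ in range(survivors))
            n = survivors + recruits
            if n == 0:
                extinct += 1
                break
    return extinct / reps


# Same vital rates (expected growth close to 1), different starting sizes.
for n0 in (5, 20, 100):
    risk = extinction_probability(n0, survival=0.7, fecundity=0.43, years=50, reps=200)
    print(f"N0 = {n0:3d}: extinction probability within 50 years = {risk:.2f}")
```

Under these assumptions the smallest population should be lost far more often than the largest, even though the per-capita rates are identical.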

Comparative Analysis of PVA Approaches and Their Handling of Demographic Variation

Table 1: Comparison of Population Viability Analysis Methodologies and Their Application

| Analysis Type | Key Parameters | Population Context | Handling of Demographic Variation | Projected Outcomes |

|---|---|---|---|---|

| Base PVA Model [6] | Apparent survival, reproduction, carrying capacity | Eastern Iberian Reed Bunting (250 pairs) | Implicit in extinction probability estimates | 50% population decline in 20 years; mean extinction time 51.6 years |

| PVA with True Survival Estimates [16] | True survival (accounting for emigration/immigration) | Bonelli's eagle | Explicitly incorporates dispersal processes | Significant improvement in census data fit compared to apparent survival |

| PVA with Habitat Restoration [6] | Enhanced carrying capacity, improved growth rates | Fragmented wetland bird populations | Mitigates variation through increased connectivity | Delayed extinction but did not prevent many local extinctions |

| PVA with Population Reinforcement [6] | Translocations, captive breeding releases | Critically endangered metapopulations | Augments small populations to reduce stochasticity | Most effective when combined with in-situ habitat measures |

Table 2: Quantifying the 'Penny Flipping' Effect: Small Changes with Major Consequences

| Parameter Variation | Effect Size | Impact on Extinction Risk | Evidence Source |

|---|---|---|---|

| Use of apparent vs. true survival | Not quantified but "potentially large differences" | Significant underestimation of population viability | Bonelli's eagle PVA [16] |

| Annual translocation of 4-7 pairs | 1.6-2.8% of total population | Stabilized critical populations when combined with habitat restoration | Reed Bunting reinforcement simulation [6] |

| Habitat restoration in main vs. secondary wetlands | Focus on 3 of 14 sites | Significantly increased national metapopulation MeanEXT | Reed Bunting conservation planning [6] |

| Cash transaction rounding | Skewed distribution affects 65% of transactions | $6.06M annual cost to U.S. consumers | Penny phase-out economic analysis [15] |

Experimental Protocols for Assessing Demographic Variation in PVA

Protocol 1: True versus Apparent Survival Estimation

Objective: To quantify the bias introduced by using apparent survival estimates instead of true survival in PVA projections.

Methodology:

- Implement PVAs structured by age, sex, and breeding status using long-term monitoring data (12+ years)

- Compare models using apparent survival data with models incorporating true survival estimates

- Integrate emigration and immigration rates into models to assess their influence on projection accuracy

- Validate models by evaluating their fit to observed census data

- Perform sensitivity analysis to determine specific levels of emigration and immigration at which each survival type delivers precise projections

Key Parameters:

- Apparent survival (local return rates)

- True survival (accounting for permanent emigration)

- Age-specific reproduction rates

- Dispersal probabilities between subpopulations

This protocol revealed that models using apparent survival projected population sizes below the observed census counts, while models using true survival fit the census data considerably better. Each survival type, however, may only deliver precise projections at very specific levels of emigration and immigration [16].
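The direction of this bias can be sketched with a deterministic projection. The sketch assumes the standard relationship in which apparent survival equals true survival multiplied by the probability of not emigrating permanently. All numbers and the function names are hypothetical and are not the Bonelli's eagle estimates from [16].

```python
def apparent_survival(true_survival, emigration):
    """Apparent (local) survival = true survival x probability of staying."""
    return true_survival * (1.0 - emigration)


def project(n0, true_survival, emigration, recruitment, immigrants, years):
    """Deterministic projection: local survivors + local recruits + immigrants."""
    sizes = [float(n0)]
    for _ in range(years):
        stayers = sizes[-1] * true_survival * (1.0 - emigration)
        sizes.append(stayers + sizes[-1] * recruitment + immigrants)
    return sizes


# Hypothetical values, chosen only to illustrate the direction of the bias.
true_s, emigration, recruitment = 0.90, 0.08, 0.12
phi = apparent_survival(true_s, emigration)

# Model 1: apparent survival only; emigrants count as dead, immigrants are ignored.
apparent_model = project(100, phi, 0.0, recruitment, immigrants=0, years=12)
# Model 2: true survival with explicit emigration and immigration.
dispersal_model = project(100, true_s, emigration, recruitment, immigrants=6, years=12)

print(f"apparent survival = {phi:.3f}")
print(f"year 12, apparent-survival model: {apparent_model[-1]:.1f}")
print(f"year 12, true survival + dispersal: {dispersal_model[-1]:.1f}")
```

In this sketch the two models differ only in whether immigration offsets the individuals lost to emigration, which is why the apparent-survival projection ends lower.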

Protocol 2: Metapopulation Reinforcement Strategies

Objective: To test the effectiveness of different population reinforcement strategies on preventing extinction in fragmented populations.

Methodology:

- Develop base PVA model for entire metapopulation using empirical data on current population size, distribution, and vital rates

- Simulate habitat restoration scenarios focused on different wetland classifications (main vs. secondary populations)

- Test translocation strategies with varying intensity and duration:

  - Scenario A: 7 pairs annually for 3 years (5 from Delta de l'Ebre + 2 from S'Albufera)

  - Scenario B: 5 pairs annually for 4 years (4+1)

  - Scenario C: 4 pairs annually for 5 years (3+1)

- Simulate captive breeding supplementation programs with different timing protocols (immediate, years 20-50, year 50)

- Compare outcomes using MeanEXT (mean time to extinction) and probability of extinction across 50-year and 100-year timeframes

This protocol demonstrated that population reinforcements combined with in-situ actions were the most effective measures, while habitat restoration alone succeeded in delaying extinction but did not prevent the disappearance of many small localities [6].
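A rough sketch of how the three release schedules could be compared is shown below. Only the schedules (pairs per year and number of years) come from the protocol. The growth model, its mean and standard deviation, the starting size, and the stochastic rounding used as a crude stand-in for demographic stochasticity are all assumptions made for illustration, so the printed risks say nothing about the real metapopulation.

```python
import random

# (pairs released per year, number of years), as listed in the protocol.
SCENARIOS = {"none": (0, 0), "A": (7, 3), "B": (5, 4), "C": (4, 5)}


def total_released(name):
    pairs, years = SCENARIOS[name]
    return pairs * years


def run_once(pairs0, name, mean_growth, sd_growth, horizon, rng):
    """Return the extinction year, or None if the population persists."""
    pairs_per_year, release_years = SCENARIOS[name]
    n = pairs0
    for year in range(1, horizon + 1):
        if year <= release_years:
            n += pairs_per_year
        growth = max(0.0, rng.gauss(mean_growth, sd_growth))  # environmental noise
        expected = n * growth
        whole = int(expected)
        n = whole + (rng.random() < expected - whole)  # stochastic rounding
        if n == 0:
            return year
    return None


def extinction_probability(pairs0, name, horizon=50, reps=2000, seed=1):
    rng = random.Random(seed)
    # Hypothetical rates: slightly declining, noisy growth.
    outcomes = [run_once(pairs0, name, 0.97, 0.15, horizon, rng) for _ in range(reps)]
    return sum(year is not None for year in outcomes) / reps


for name in SCENARIOS:
    risk = extinction_probability(10, name)
    print(f"scenario {name:>4}: {total_released(name):2d} pairs released, "
          f"50-year extinction probability = {risk:.3f}")
```

The three schedules release nearly the same total number of pairs (21, 20, and 20), so a comparison of this kind mainly tests whether concentrating releases early or spreading them over more years matters.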

Visualizing PVA Workflows and the 'Penny Flipping' Effect

Figure: PVA Implementation Workflow

Figure: The 'Penny Flipping' Effect

The Scientist's Toolkit: Essential Research Reagents for PVA

Table 3: Essential Research Tools for Population Viability Analysis

| Tool/Resource | Specification | Application in PVA |

|---|---|---|

| Long-term Monitoring Data | 12+ years of demographic data across age classes | Enables distinction between apparent and true survival estimates [16] |

| Spatially Explicit Population Models | GIS-integrated metapopulation structures | Models connectivity and dispersal in fragmented landscapes [6] |

| Genetic Analysis Tools | Microsatellite or SNP genotyping | Quantifies inbreeding depression and gene flow between subpopulations |

| Climate Projection Data | Downscaled regional climate models | Incorporates environmental stochasticity into population projections |

| Captive Breeding Programs | Assurance colonies for endangered species | Provides individuals for population reinforcement strategies [6] |

| Demographic Analysis Software | VORTEX, RAMAS, or custom PVA packages | Implements stochastic population simulations and extinction risk assessment [6] [16] |

The 'Penny Flipping' Effect represents a fundamental challenge in conservation biology, where minor demographic variations can determine population persistence or extinction. This comparative analysis demonstrates that reliable PVA outcomes require:

- True survival estimation that accounts for dispersal processes rather than relying on apparent survival [16]

- Strategic intervention targeting both main and secondary populations to maintain metapopulation connectivity [6]

- Combined approaches that pair population reinforcement with habitat restoration for maximum effectiveness [6]

The analogy to economic systems—where the removal of small-denomination coins creates a rounding tax that disproportionately affects certain transactions [15]—powerfully illustrates how losing buffering capacity against small variations can have significant consequences. In conservation practice, acknowledging and quantifying the 'Penny Flipping' Effect enables more targeted strategies that enhance population resilience to demographic stochasticity, potentially preventing the extinction of critically endangered species like the Eastern Iberian Reed Bunting within our lifetime.

Population Viability Analysis (PVA) serves as a critical tool in conservation biology, enabling researchers to assess extinction risks and evaluate the potential outcomes of management strategies for threatened species [17]. A core component of realistic PVA is the incorporation of environmental stochasticity—the random fluctuations in survival, reproduction, and dispersal rates driven by variations in climate, habitat conditions, and resource availability [17]. Ignoring this spatial and temporal variability can lead to significant biases in projections, potentially underestimating extinction risk and compromising conservation decisions [14]. This guide compares the performance of alternative PVA frameworks in quantifying and integrating these essential sources of variation, providing researchers with a basis for selecting appropriate models for their specific conservation challenges.

Core Concepts of Variation in PVA

Environmental stochasticity impacts population dynamics through two primary channels: temporal variation (fluctuations in vital rates over time) and spatial variation (differences in environmental conditions and demographic rates across a species' range) [18]. In metapopulation contexts, the interaction between these dimensions—spatial-temporal variation—simultaneously affects local survival, reproduction, and dispersal, collectively determining the overall metapopulation growth rate [18].

Theoretical decompositions of metapopulation growth rate have identified five distinct components: temporal, spatial, and spatial-temporal variation in fitness, coupled with spatial and spatial-temporal covariation in dispersal and fitness [18]. While temporal variation consistently reduces population growth, other sources can have positive or negative effects depending on context. For instance, positive autocorrelations in spatial-temporal variability can generate a positive fitness-density covariance where individuals concentrate in higher-quality habitats, thereby boosting metapopulation growth, particularly for less dispersive species [18].
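The claim that temporal variation reduces growth follows from the fact that long-run growth is governed by the geometric mean of annual growth rates, not the arithmetic mean. A minimal sketch for a single unstructured population, using made-up growth rates:

```python
import math


def stochastic_growth_rate(lambdas):
    """Long-run growth rate: the geometric mean of the annual growth rates."""
    return math.exp(sum(math.log(x) for x in lambdas) / len(lambdas))


constant = [1.0, 1.0, 1.0, 1.0]   # no temporal variation
variable = [1.3, 0.7, 1.3, 0.7]   # same arithmetic mean of 1.0

for label, series in (("constant", constant), ("variable", variable)):
    arithmetic = sum(series) / len(series)
    print(f"{label}: arithmetic mean = {arithmetic:.3f}, "
          f"long-run growth = {stochastic_growth_rate(series):.3f}")
```

Both series average 1.0 per year, yet the variable series declines in the long run because a good year followed by a bad year multiplies to 1.3 × 0.7 = 0.91.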

Comparative Analysis of PVA Approaches

The table below compares three primary PVA approaches based on their capacity to incorporate environmental stochasticity, data requirements, and appropriate applications.

Table 1: Comparison of PVA Modeling Approaches for Incorporating Environmental Stochasticity

| Model Approach | Spatial Capabilities | Temporal Stochasticity | Data Requirements | Ideal Applications |

|---|---|---|---|---|

| Time-Series PVA | Single population | Estimates variance from population count fluctuations | Low: Total population counts over time | Rapid risk assessment for data-limited species [17] |

| Demographic PVA | Can be extended to metapopulation | Models variation in age/stage-specific vital rates | High: Age/stage-specific survival and fecundity | Identifying vulnerable life stages; management scenario testing [17] |

| Metapopulation PVA | Explicitly models multiple patches | Incorporates spatial-temporal covariation in fitness and dispersal | High: Patch-specific demography and dispersal rates | Assessing population networks in fragmented landscapes [18] [6] |

Key Insights from Comparative Studies

Pooling vs. Individual Population Data: Using averaged demographic rates across populations can mask critical local variation. A study on Jacquemontia reclinata demonstrated that models using population-specific matrices revealed greater extinction risk and variation compared to models using pooled average matrices [14]. The pooled model had a stochastic growth rate of 1.018 and a quasi-extinction risk below 1%, whereas the individual populations had growth rates of 1.033 and 0.933 and quasi-extinction risks of 14% and 87%, respectively [14].

Apparent vs. True Survival Estimates: Using apparent survival (which does not account for emigration) rather than true survival can significantly bias PVA projections. In a Bonelli's eagle population, models using apparent survival projected population sizes below the observed census counts, while those using true survival showed considerably better fit [16]. This highlights the critical importance of accounting for dispersal processes in viability assessments [16].

Cyclical Populations Respond Differently: Contrary to the paradigm that environmental variability always increases population fluctuations, cycling populations may exhibit reduced long-run variance under increasing environmental variability due to interactions between stochasticity and deterministic cyclic dynamics [19]. This has been observed in flour beetles and Canadian lynx, suggesting previous predictions about extinction under environmental variability may be inadequate for some populations [19].

Experimental Protocols for Quantifying Variation

Metapopulation Growth Rate Decomposition

Objective: To partition the impacts of spatial-temporal variation in demography and dispersal on metapopulation growth rates [18].

Methodology:

- Collect longitudinal demographic data (survival, reproduction) across multiple subpopulations

- Track dispersal rates between patches using mark-recapture, telemetry, or genetic methods

- Apply stochastic demographic framework to decompose growth rate into:

  - Temporal variation in fitness

  - Spatial variation in fitness

  - Spatial-temporal variation in fitness

  - Spatial covariation in dispersal and fitness

  - Spatial-temporal covariation in dispersal and fitness

Key Metrics: Variance components, autocorrelation structures, fitness-density covariance [18]

Interpretation: Positive autocorrelations in spatial-temporal variability benefit less dispersive species, while negative autocorrelations benefit highly dispersive species. Positive covariances between movement and future fitness increase growth rates [18].

Demographic PVA with Stage Structure

Objective: To assess extinction risk while accounting for age- or stage-specific responses to environmental variation [14].

Methodology:

- Establish permanent plots or monitoring stations for long-term data collection

- Collect stage-specific data: seeds/seedlings, juveniles, subadults, adults

- Tag and track individuals annually to estimate transition probabilities between stages

- Parameterize a stage-structured matrix model incorporating environmental stochasticity:

- Construct annual matrices based on stage-specific transitions

- Calculate annual population growth rates (λ)

- Perform elasticity analysis to identify critical life stages

Key Metrics: Stochastic growth rate, quasi-extinction probability, elasticity values [14]

Case Example: For Jacquemontia reclinata, elasticity analysis revealed that adult survival and seed-to-seedling transitions had the greatest impact on population growth [14].
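The growth-rate and elasticity steps of this protocol can be sketched in plain Python. The three-stage matrix below is hypothetical and is not the Jacquemontia reclinata matrix from [14]. The sketch uses power iteration for the dominant eigenvalue and the standard elasticity formula, in which the elasticity of λ to entry a_ij is (a_ij / λ) · v_i · w_j / ⟨v, w⟩, with w the stable stage distribution and v the reproductive values.

```python
def dominant_eigen(matrix, iterations=1000):
    """Power iteration: dominant eigenvalue and eigenvector scaled to sum to 1."""
    n = len(matrix)
    vec = [1.0 / n] * n
    lam = 0.0
    for _ in range(iterations):
        nxt = [sum(matrix[i][j] * vec[j] for j in range(n)) for i in range(n)]
        lam = sum(nxt)  # valid because vec sums to 1
        vec = [x / lam for x in nxt]
    return lam, vec


def elasticities(matrix):
    """Return (lambda, elasticity matrix) for a projection matrix."""
    n = len(matrix)
    lam, w = dominant_eigen(matrix)                      # stable stage distribution
    transposed = [[matrix[j][i] for j in range(n)] for i in range(n)]
    _, v = dominant_eigen(transposed)                    # reproductive values
    vw = sum(v[i] * w[i] for i in range(n))
    table = [[matrix[i][j] * v[i] * w[j] / (lam * vw) for j in range(n)]
             for i in range(n)]
    return lam, table


# Hypothetical matrix; column = stage this year, row = stage next year.
# Stages: seedling, juvenile, adult.
A = [
    [0.0, 0.0, 1.5],   # seedlings produced per adult
    [0.3, 0.4, 0.0],   # seedling -> juvenile, juvenile stays juvenile
    [0.0, 0.3, 0.9],   # juvenile -> adult, adult survival
]

lam, table = elasticities(A)
print(f"lambda = {lam:.3f}")
for row in table:
    print("  ".join(f"{value:.3f}" for value in row))
```

Elasticities sum to 1 across the matrix, so each entry can be read as the proportional contribution of that transition to λ. Power iteration assumes a primitive matrix, which holds for this example but should be checked for other life cycles.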

The following diagram illustrates the workflow for implementing a demographic PVA with stage structure:

Figure 1: Workflow for demographic PVA with stage structure

Metapopulation Viability Assessment

Objective: To evaluate extinction risk and conservation strategies for spatially structured populations [6].

Methodology:

- Survey all potential habitat patches to determine occupancy patterns

- Estimate patch-specific carrying capacities and demographic rates

- Quantify dispersal probabilities between patches using mark-recapture, radio-tracking, or genetic methods

- Implement stochastic metapopulation model incorporating:

  - Environmental stochasticity within patches

  - Demographic stochasticity at low abundances

  - Dispersal limitation between patches

- Test alternative management scenarios:

  - Habitat restoration at key locations

  - Predator control interventions

  - Population reinforcements via translocations

  - Reintroductions to unoccupied patches

Key Metrics: Mean time to extinction, cumulative extinction probability, metapopulation occupancy [6]

Case Example: For the Eastern Iberian Reed Bunting, PVA revealed that without intervention, the population would halve within 20 years and face complete extinction by the 2070s. Combined interventions of habitat restoration and captive-bred reinforcements proved most effective [6].

Table 2: Essential Research Tools for Environmental Stochasticity Analysis

| Tool/Resource | Function | Application Context |

|---|---|---|

| RAMAS Metapop | Spatially explicit population modeling | Metapopulation PVA with environmental stochasticity [14] |

| VORTEX | Individual-based simulation | Demographic PVA with genetic and demographic stochasticity [17] |

| Mark-Recapture Analysis | Estimating true survival and dispersal rates | Quantifying apparent vs. true survival differences [16] |

| Stage-Structured Matrix Models | Projecting population growth with stage-specific rates | Demographic PVA with environmental stochasticity [14] |

| Bayesian Statistical Methods | Quantifying and incorporating parameter uncertainty | Decision-support PVA with multiple uncertainties [20] |

| Long-term Monitoring Data | Parameterizing temporal variation in vital rates | All PVA approaches requiring variance estimation [6] [14] |

Incorporating environmental stochasticity in PVA requires careful consideration of both temporal and spatial dimensions of variation. Demographic and metapopulation PVAs generally outperform time-series approaches in complex environments, as they explicitly account for spatial structure and stage-specific responses to variable conditions [17] [6]. The most reliable projections account for true rather than apparent survival, incorporate population-specific rather than pooled demographic rates, and recognize that cyclical populations may respond counterintuitively to increased environmental variation [16] [14] [19].

For conservation decision-making, researchers should select PVA approaches based on the specific population structure, data availability, and the forms of environmental variation most critical to the species of concern. Bayesian methods and scenario-based evaluations can help account for residual uncertainties, ensuring that PVAs provide robust guidance despite the inherent unpredictability of natural systems [20].

Population Viability Analysis (PVA) employs a range of models to assess extinction risk and inform conservation decisions. These models exist on a spectrum from simple deterministic formulations to complex stochastic frameworks, each with distinct strengths, data requirements, and appropriate applications [9]. Simple deterministic models provide foundational insights with minimal data, while complex stochastic models incorporate randomness and individual variation to capture more realistic population dynamics [21]. Understanding the structure, capabilities, and limitations of each model type is crucial for researchers, scientists, and drug development professionals who rely on robust quantitative assessments for species conservation and management planning. This guide objectively compares the performance of these alternative modelling approaches within the broader context of validating PVA models.

Model Typology and Structural Comparison

PVA models can be broadly categorized by their complexity and treatment of variability. The following sections detail the primary model types.

Unstructured Population Models

Unstructured models, the simplest class, use time-series data on overall population size to parameterize basic stochastic growth models [9]. They typically assume density independence and do not differentiate individuals by age or stage. A key strength is their foundation in stochastic exponential growth models, which can be approximated by a diffusion approximation (DA) model [9]. This approximation allows for analytical estimates of passage probabilities, such as the likelihood of crossing a quasi-extinction threshold within a specified time frame [9]. While variants can incorporate density dependence and environmental autocorrelation, they are generally best suited for initial risk screening or when data are limited to population counts over time [9].
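A minimal sketch of the diffusion approximation is given below, assuming equally spaced annual counts and density-independent growth. It estimates the mean (μ) and variance (σ²) of the log growth increments and then applies the standard first-passage formula for Brownian motion with drift, P(T) = Φ((−d − μT) / (σ√T)) + exp(−2μd / σ²) · Φ((−d + μT) / (σ√T)), where d is the log distance between the current size and the threshold. The counts and function names are hypothetical.

```python
import math


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def estimate_mu_sigma2(counts):
    """Mean and sample variance of log growth increments (annual counts)."""
    steps = [math.log(b / a) for a, b in zip(counts, counts[1:])]
    mu = sum(steps) / len(steps)
    sigma2 = sum((s - mu) ** 2 for s in steps) / (len(steps) - 1)
    return mu, sigma2


def quasi_extinction_probability(n_now, n_threshold, mu, sigma2, horizon):
    """Probability of falling to n_threshold within `horizon` years."""
    d = math.log(n_now / n_threshold)      # log distance to the threshold
    sd = math.sqrt(sigma2 * horizon)
    first = normal_cdf((-d - mu * horizon) / sd)
    second = math.exp(-2.0 * mu * d / sigma2) * normal_cdf((-d + mu * horizon) / sd)
    return first + second


# Hypothetical census series, for illustration only.
counts = [120, 112, 118, 101, 95, 99, 87, 80, 84, 73]
mu, sigma2 = estimate_mu_sigma2(counts)
for years in (10, 25, 50):
    risk = quasi_extinction_probability(counts[-1], 20, mu, sigma2, years)
    print(f"P(N <= 20 within {years} years) = {risk:.3f}")
```

The sketch ignores observation error and requires σ² > 0. Very small variance estimates combined with a strongly negative μ can overflow the exponential term, so a production implementation would need to handle that case.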

Structured Population Models

Structured models track changes in the numbers of individuals in different stages (e.g., age or size categories) within a population [9].

- Matrix Projection Models: These models are relatively easy to construct and use readily available demographic data on stage-specific survival, growth, and reproduction [21]. They are mathematically tractable, and equilibrium (eigenvalue) analysis yields useful metrics like the population growth rate, stable age distribution, and elasticities [21]. However, they simplify community interactions, and incorporating density dependence or stochasticity necessitates numerical solution and complicates analytical eigenvalue analysis [21].

- Individual-Based Models (IBMs): IBMs simulate thousands of individuals, tracking traits like size, age, and location [21]. Equations govern how these traits change over time based on individual state, interactions, and environment. Key advantages include the ability to model individual variation, local interactions, and spatially explicit movement. Density-dependent processes emerge from individual interactions rather than being pre-defined [21]. The primary disadvantages are high computational demand, large data requirements, and complex output that can be challenging to validate and interpret [21].

Metapopulation and Spatially Explicit Models

- Metapopulation Models: These models track the fates of multiple subpopulations, assessing whether the rate of new colonization counteracts the rate of subpopulation extinction [9]. They require data on the number of subpopulations, extinction rates, and colonization patterns.

- Spatially Explicit Population Models: This is the most complex and data-intensive type, simulating individual organisms on detailed landscapes with mapped habitat patches [9]. They require data on birth/death rates, movement patterns, and the spatial configuration of habitat.
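The balance between colonization and local extinction can be sketched with a discrete-time, Levins-type patch occupancy simulation. This is one simple way to represent a metapopulation and is not the method of any study cited here. The patch count and rates are hypothetical.

```python
import random


def levins_equilibrium(colonization, extinction):
    """Deterministic equilibrium fraction of occupied patches, 1 - e/c."""
    return max(0.0, 1.0 - extinction / colonization)


def simulate_occupancy(n_patches, occupied0, colonization, extinction, years, seed=0):
    """Stochastic patch occupancy; returns the number of occupied patches per year."""
    rng = random.Random(seed)
    occupied = occupied0
    history = [occupied]
    for _ in range(years):
        empty = n_patches - occupied
        # Colonization pressure scales with the fraction of occupied patches.
        p_colonize = min(1.0, colonization * occupied / n_patches)
        gained = sum(rng.random() < p_colonize for _ in range(empty))
        lost = sum(rng.random() < extinction for _ in range(occupied))
        occupied += gained - lost
        history.append(occupied)
        if occupied == 0:   # metapopulation extinct: no source of colonists
            break
    return history


# Hypothetical rates for a small network of 14 patches.
print(f"equilibrium occupancy: {levins_equilibrium(0.4, 0.1):.2f}")
history = simulate_occupancy(14, 7, colonization=0.4, extinction=0.1, years=100)
print(f"simulated {len(history) - 1} years, final occupied patches: {history[-1]}")
```

With few patches the simulated occupancy fluctuates around the deterministic equilibrium and can still reach zero by chance, which is why small networks carry extinction risk even when colonization exceeds extinction on average.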

Table 1: Comparative Overview of Primary PVA Model Structures

| Model Type | Core Structure | Key Input Parameters | Level of Complexity | Treatment of Stochasticity |

|---|---|---|---|---|

| Unstructured Models | Overall population size | Time-series of total population counts | Low | Environmental variation via stochastic growth rate |

| Matrix Models | Stage or age classes | Stage-specific fecundity, mortality, growth | Medium | Can incorporate environmental stochasticity into vital rates |

| Individual-Based Models (IBMs) | Simulated individuals | Individual traits (size, age, location), rules for behavior | High | Demographic & environmental stochasticity emerge from individual processes |

| Metapopulation Models | Network of subpopulations | Number of subpopulations, extinction/colonization rates | Medium-High | Stochasticity in patch occupancy dynamics |

| Spatially Explicit Models | Individuals on a landscape | Habitat map, individual movement rules | Very High | Integrated spatial and demographic stochasticity |

Experimental Protocols for Model Comparison and Validation

Validating PVA models requires rigorous protocols to compare their predictions. The following methodologies are drawn from key studies in the field.

Protocol 1: Comparative Performance Assessment Using Virtual Species

This protocol, based on a large-scale comparison of viability measures, tests how different models rank populations and scenarios [5].

- Parameterization: Utilize a published dataset of parameters for 4,574 virtual mammals, designed to cover the diversity of sizes and life histories of real animals while accounting for parameter collinearity [5].

- Simulation: Employ an agent-based model (e.g., RangeShifter) to simulate population dynamics for all virtual species. Conduct 100 repetitions for 100 years on multiple artificial habitat maps with varying suitability (e.g., 5%, 10%, and 20% habitat cover) [5].

- Output Calculation: From the simulated population time series, calculate a suite of standard viability measures (e.g., probability of extinction, mean time to extinction, expected population size) for each model run [5].

- Comparison and Analysis:

  - Compare the ranking of species and scenarios based on the different viability measures derived from the models.

  - Assess direct correlations between pairs of viability measures.

  - Test whether simulation model parameters (e.g., carrying capacity, dispersal distance) alter the relationship between different viability measures [5].
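The output-calculation and ranking steps can be sketched as follows. The trajectories are tiny hand-made examples, not RangeShifter output, and the function names are illustrative. Mean time to extinction is computed here only over replicates that go extinct within the simulated horizon, which is one of several possible conventions.

```python
def viability_measures(trajectories):
    """Summarise replicate population trajectories (lists of yearly sizes)."""
    reps = len(trajectories)
    extinction_years = []
    for sizes in trajectories:
        year = next((t for t, n in enumerate(sizes) if n == 0), None)
        if year is not None:
            extinction_years.append(year)
    return {
        "p_extinction": len(extinction_years) / reps,
        # Conditional on extinction inside the simulated horizon.
        "mean_time_to_extinction": (
            sum(extinction_years) / len(extinction_years) if extinction_years else None
        ),
        "expected_final_size": sum(sizes[-1] for sizes in trajectories) / reps,
    }


def rank_by(results, measure, reverse=False):
    """Order scenario names by one viability measure."""
    return sorted(results, key=lambda name: results[name][measure], reverse=reverse)


# Hypothetical trajectories, four replicates per scenario.
runs = {
    "low_cover": [[10, 5, 0, 0], [10, 8, 6, 4], [10, 0, 0, 0], [10, 12, 14, 16]],
    "high_cover": [[10, 9, 9, 8], [10, 11, 12, 12], [10, 4, 0, 0], [10, 12, 15, 18]],
}
results = {name: viability_measures(trajs) for name, trajs in runs.items()}
print(rank_by(results, "p_extinction"))                       # lowest risk first
print(rank_by(results, "expected_final_size", reverse=True))  # largest first
```

Comparing the orderings produced by different measures, as in the last two lines, is the ranking check described in the protocol. Correlating the raw values of two measures would be a separate step.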

Protocol 2: Head-to-Head Prediction of Population Response

This protocol directly tests the predictive capability of simpler models against a complex benchmark, as demonstrated in a comparison of matrix and IBMs for yellow perch [21].

- Benchmark Model Establishment: Use a previously developed and validated complex model (e.g., an IBM for yellow perch and walleye dynamics in Oneida Lake) as the basis for comparison. This IBM should explicitly model size-specific predator-prey interactions [21].

- Simplified Model Construction: Construct alternative, simpler models from the same system. For example:

  - Develop matrix projection models (e.g., annual, stage-within-age) using output from the IBM or field data.

  - Incorporate different forms of density-dependence (e.g., annual vs. daily) [21].

- Perturbation Experiment: Systematically change key survival rates (e.g., egg and adult survival) in all models (IBM and matrix models) [21].

- Response Metric Comparison: Compare the predicted responses of key output variables (e.g., spawner abundance, age-0 abundance, total consumption by walleye) among the different models. Evaluate both the magnitude and the direction of the predicted changes [21].

The workflow for a comparative model validation study is outlined below.

Quantitative Performance Data and Comparison

Empirical comparisons reveal how model structure influences predictions of population viability.

Scenario Ranking Consistency

A study simulating over 4,500 virtual species found that different viability measures, which are outputs of PVA models, generally ranked species and scenarios similarly [5]. This suggests that the choice of model output metric may not alter which population is deemed more viable or which management option is best, provided the scenarios are not too dissimilar. However, the same study found that direct correlations between different viability measures were often weak and could not be generalized, indicating a loss of information when raw population data is aggregated into a single metric [5].

Predictive Accuracy Against a Complex Benchmark

A direct comparison of an Individual-Based Model (IBM) and matrix models for yellow perch population dynamics yielded the following quantitative results:

Table 2: Performance Comparison of Matrix Models vs. Individual-Based Model for Yellow Perch

| Model Type | Agreement with IBM on Abundance Outputs | Agreement with IBM on Cause of Response | Key Strengths Demonstrated | Key Limitations Revealed |

|---|---|---|---|---|

| Stage-within-Age Matrix Model (Annual Density-Dependence) | Good (0.5 agreement score) | Best (0.625 agreement score) | Mimicked complex size-specific predator-prey interactions fairly well [21]. | — |

| Stage-within-Age Matrix Model (Daily Density-Dependence) | Underestimated spawner abundance (146 vs. IBM's 190) [21]. | — | — | Poorer performance in baseline abundance estimation [21]. |

| All Matrix Models | — | — | Predicted qualitatively similar responses to changes in adult survival [21]. | Failed to predict the correct direction of population response to changes in egg survival [21]. |

This study concluded that matrix models with annual density-dependence could reasonably mimic the population responses predicted by the more complex IBM for the yellow perch-walleye system, despite the presence of strong, size-specific predator-prey interactions [21].

Successful implementation and validation of PVA models require a suite of conceptual and software-based tools.

Table 3: Key Research Reagents and Resources for PVA Model Development and Validation

| Tool Name / Concept | Type | Primary Function in PVA | Relevant Model Type |

|---|---|---|---|

| RangeShifter | Software Platform | Agent-based modeling to simulate population dynamics and individual dispersal in complex landscapes [5]. | Individual-Based Models (IBMs), Spatially Explicit Models |

| RAMAS-GIS | Software Platform | Integrates metapopulation modeling with geographic information systems (GIS) for spatially structured population analysis [9]. | Metapopulation, Spatially Explicit Models |

| VORTEX | Software Platform | A widely used individual-based simulation tool for PVA that can incorporate genetic information and is also applicable to spatially explicit analysis [9]. | Individual-Based Models (IBMs) |

| Diffusion Approximation (DA) Model | Analytical Method | Provides a mathematical framework to estimate extinction risk parameters from time-series data of population size [9]. | Unstructured Population Models |

| Projection Matrix | Mathematical Framework | The core structure of matrix models, used to project stage-structured populations over time using linear algebra [21]. | Structured Population Models (Matrix) |

| Sensitivity / Elasticity Analysis | Analytical Technique | Measures how sensitive population growth rate or extinction risk is to changes in specific model parameters (e.g., vital rates), guiding targeted management [9]. | All Model Types, esp. Structured |

The logical relationships and data flow between these core components in a PVA study are visualized below.

The transition from simple deterministic to complex stochastic models represents a trade-off between data requirements, computational complexity, and biological realism. Unstructured models provide an accessible entry point for risk assessment, while structured matrix models offer greater insight into stage-specific dynamics without overwhelming computational demands. Individual-based and spatially explicit models offer the highest fidelity for complex systems but require significant data and resources. Validation studies demonstrate that simpler models can sometimes approximate the predictions of complex IBMs, particularly when appropriately parameterized with density-dependence. The choice of model should therefore be guided by the specific management question, data availability, and the need for mechanistic understanding versus general prediction. Ultimately, a multi-model approach, leveraging the strengths of each model type, often provides the most robust and defensible foundation for conservation decision-making.

Building and Applying PVA Models: From Parameterization to Real-World Scenarios

Population Viability Analysis (PVA) employs quantitative methods to predict a species' extinction risk and inform conservation decisions [22]. The reliability of these forecasts is entirely dependent on the quality of the data used to parameterize the models. This guide objectively compares the data requirements and parameter estimation methodologies for different PVA model types, providing researchers with a framework for selecting and validating appropriate models for their specific conservation challenges. The process of estimating vital rates, such as survival and fecundity, from often incomplete field data is a critical and common hurdle in ecological research [23].

PVA models vary in complexity, from simple unstructured models to intricate individual-based simulations. This variation directly corresponds to the type and volume of data required for their application. The choice of model is a trade-off between biological realism and data availability.

Table 1: Comparison of PVA Model Types, Data Requirements, and Common Software

| Model Type | Core Data Requirements | Parameter Estimation Challenges | Example Software/Tools |
|---|---|---|---|
| Unstructured Population Models [9] | Time-series data on overall population size; requires estimates of current population size and stochastic growth rate. | Sensitive to observation errors in population counts; assumes density independence, which may be biologically unrealistic. | Custom scripts in R or Python; diffusion approximation methods [9]. |
| Structured Population Models [9] | Age- or stage-specific vital rates (survival, fecundity); current population stage structure. | Data-intensive; requires estimates of variance in reproductive success (φ) across different ages or stages [9] [24]. | Vortex (individual-based) [25], RAMAS (stage-based) [9]. |
| Metapopulation Models [9] | Number of subpopulations; rates of local extinction and colonization; dispersal patterns. | Difficult to obtain empirical data on dispersal rates and connectivity between habitat patches. | ALEX [26], RAMAS Metapop [26], META-X [26]. |
| Spatially Explicit Models [9] | All data from structured models, plus spatially-referenced maps of habitat suitability, quality, and connectivity. | Extremely data-intensive; requires detailed GIS data and knowledge of species movement through landscapes. | RAMAS GIS [9], Vortex (with spatial functions) [25]. |
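
For the unstructured models in the first row, the diffusion approximation can be sketched in a few lines. The example below is a minimal illustration, assuming annual counts with no missing years and no observation error: it estimates the mean (`mu`) and variance (`sigma2`) of the log growth rate and then evaluates the standard first-passage probability of reaching a quasi-extinction threshold. The count series, the threshold of 20, and all function names are hypothetical.

```python
import math

def estimate_mu_sigma2(counts):
    """Mean and sample variance of annual log growth rates ln(N[t+1] / N[t])."""
    log_ratios = [math.log(b / a) for a, b in zip(counts, counts[1:])]
    n = len(log_ratios)
    mu = sum(log_ratios) / n
    sigma2 = sum((r - mu) ** 2 for r in log_ratios) / (n - 1)
    return mu, sigma2

def normal_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def quasi_extinction_probability(n0, threshold, mu, sigma2, years):
    """Probability that a population starting at n0 falls to `threshold`
    within `years`, under a diffusion (Brownian motion with drift) model
    of log abundance. Extreme mu/sigma2 ratios can overflow math.exp."""
    d = math.log(n0 / threshold)          # log distance to the threshold
    sd = math.sqrt(sigma2 * years)
    return (normal_cdf((-d - mu * years) / sd)
            + math.exp(-2.0 * mu * d / sigma2)
            * normal_cdf((-d + mu * years) / sd))

if __name__ == "__main__":
    counts = [120, 112, 118, 101, 96, 104, 90, 85]   # hypothetical annual counts
    mu, sigma2 = estimate_mu_sigma2(counts)
    for horizon in (10, 25, 50):
        p = quasi_extinction_probability(counts[-1], 20, mu, sigma2, horizon)
        print(f"{horizon} years: P(quasi-extinction) = {p:.3f}")
```

Because the estimates of `mu` and `sigma2` come from only a handful of transitions here, the resulting probabilities carry wide uncertainty and are best read as an illustration of the calculation rather than a forecast.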

Experimental Protocols for Parameter Estimation

Accurately estimating the parameters that feed into PVA models is a foundational research activity. The following protocols detail established methodologies for deriving key vital rates and dealing with common data limitations.

Protocol: Estimating Effective Population Size (Nₑ) in Species with Overlapping Generations

Objective: To calculate the genetically effective population size (Nₑ), a crucial parameter for assessing rates of inbreeding and genetic drift, using life-history traits [24].

Methodology:

- Life Table Construction: Compile a life table with age-specific data for each cohort (x):
  - lₓ: Cumulative survival to age x.
  - mₓ: Mean number of offspring produced at age x.
  - sₓ: Probability of survival from age x to x+1.
  - Vₓ: Variance in reproductive success at age x (used to calculate φₓ = Vₓ/mₓ) [24].

- Calculate Generation Length (T): Generation length is the average age of parents of a cohort.

- Compute Lifetime Variance in Reproductive Success (Vₖ•): This is the variance in the total number of offspring produced by individuals over their entire lifetimes. It is derived by integrating age-specific vital rates and variances across all age classes [24].

- Apply Hill's Equation: Use the formula Nₑ = 4N₁T / (Vₖ• + 2), where N₁ is the number of newborns in a cohort [24]. For adult census size (N), N₁ is replaced by the number of recruits surviving to age at maturity (Nα).
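
The arithmetic of this protocol can be sketched as follows. This is a minimal illustration, not the procedure of [24]: it builds lₓ from sₓ, computes generation length as the mean age of parents, and applies Hill's equation in its commonly cited form, Nₑ = 4N₁T / (Vₖ• + 2). The lifetime variance Vₖ• is taken as a user-supplied input rather than derived from age-specific variances, and the life-table values and function names are hypothetical.

```python
def cumulative_survival(s):
    """l_x from age-specific survival s_x, with l = 1.0 for the first age class."""
    l = [1.0]
    for sx in s[:-1]:
        l.append(l[-1] * sx)
    return l

def generation_length(l, m):
    """Mean age of parents: T = sum(x * l_x * m_x) / sum(l_x * m_x), ages from 1."""
    weighted = sum(x * lx * mx for x, (lx, mx) in enumerate(zip(l, m), start=1))
    total = sum(lx * mx for lx, mx in zip(l, m))
    return weighted / total

def hill_ne(n1, gen_length, vk_lifetime):
    """Hill's equation: Ne = 4 * N1 * T / (Vk + 2)."""
    return 4.0 * n1 * gen_length / (vk_lifetime + 2.0)

if __name__ == "__main__":
    s = [0.5, 0.6, 0.6, 0.0]        # hypothetical survival from age x to x+1
    m = [0.0, 1.0, 2.0, 3.0]        # hypothetical mean offspring at age x
    l = cumulative_survival(s)      # [1.0, 0.5, 0.3, 0.18]
    T = generation_length(l, m)
    ne = hill_ne(n1=100, gen_length=T, vk_lifetime=6.0)   # Vk assumed, not derived
    print(f"T = {T:.2f} years, Ne = {ne:.1f}")
```

With a lifetime variance of exactly 2 (the Poisson-like expectation in a stable diploid population), the equation reduces to Nₑ = N₁T, which is a useful sanity check on inputs.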

Workflow: Parameter Estimation for Effective Population Size (Nₑ)

This workflow outlines the key steps researchers follow to estimate the critical parameter of effective population size from raw demographic data [24].

Protocol: Handling Missing Demographic Data with Bayesian Modeling

Objective: To estimate vital rates and population growth from demographic studies with missing years of data, without discarding valuable information from multi-year transitions [23].

Methodology:

- Model Specification: Develop a Bayesian state-space model where the true, unobserved population state is modeled separately from the observation process.

- Prior Elicitation: Define prior distributions for all model parameters (e.g., vital rates, initial population size) based on existing literature or expert knowledge.

- Posterior Estimation: Use Markov Chain Monte Carlo (MCMC) sampling to estimate the joint posterior distribution of the model parameters. This method imputes likely values for the missing data points based on the available data and model structure.

- Model Validation: Test the model's performance on data subsets where some years are artificially removed, comparing predictions to known values. The approach can also be validated using simulated data with known parameters [23].
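
The steps above can be sketched with a deliberately small model. The example is an assumption-laden illustration rather than the method of [23]: log abundance follows a random walk with drift, counts are observed with Gaussian error, missing years are marked `None`, and a Kalman filter integrates over the unobserved states so that a random-walk Metropolis sampler only has to explore three parameters. The priors, step sizes, simulated data, and function names are all hypothetical choices.

```python
import math
import random

def kalman_loglik(y, mu, q, r, x0_mean, x0_var=1.0):
    """Log-likelihood of log-counts y (None marks a missing year) for a random
    walk with drift mu, process variance q, and observation variance r."""
    mean, var, loglik = x0_mean, x0_var, 0.0
    for t, obs in enumerate(y):
        if t > 0:                       # predict one year ahead
            mean, var = mean + mu, var + q
        if obs is None:                 # missing year: skip the update step
            continue
        s = var + r                     # innovation variance
        resid = obs - mean
        loglik -= 0.5 * (math.log(2.0 * math.pi * s) + resid ** 2 / s)
        gain = var / s
        mean, var = mean + gain * resid, (1.0 - gain) * var
    return loglik

def log_posterior(theta, y, x0_mean):
    """theta = (mu, log process SD, log observation SD); priors are assumed."""
    mu, log_sq, log_sr = theta
    log_prior = -0.5 * (mu / 0.5) ** 2               # mu ~ Normal(0, 0.5^2)
    log_prior -= 0.5 * (log_sq - math.log(0.1)) ** 2  # log SDs ~ Normal(log 0.1, 1)
    log_prior -= 0.5 * (log_sr - math.log(0.1)) ** 2
    q, r = math.exp(2.0 * log_sq), math.exp(2.0 * log_sr)
    return log_prior + kalman_loglik(y, mu, q, r, x0_mean)

def metropolis(y, n_iter=5000, steps=(0.03, 0.3, 0.3), seed=1):
    """Random-walk Metropolis; returns the second half of the chain."""
    rng = random.Random(seed)
    x0_mean = next(v for v in y if v is not None)    # simplification: centre on first count
    theta = [0.0, math.log(0.1), math.log(0.1)]
    current = log_posterior(theta, y, x0_mean)
    samples = []
    for _ in range(n_iter):
        proposal = [p + rng.gauss(0.0, s) for p, s in zip(theta, steps)]
        candidate = log_posterior(proposal, y, x0_mean)
        if rng.random() < math.exp(min(0.0, candidate - current)):
            theta, current = proposal, candidate
        samples.append(list(theta))
    return samples[n_iter // 2:]

def simulate_log_counts(years, mu, sd_proc, sd_obs, missing, seed=0):
    """Simulated data with known parameters, for validating the sampler."""
    rng = random.Random(seed)
    x, y = math.log(200.0), []
    for t in range(years):
        if t > 0:
            x += mu + rng.gauss(0.0, sd_proc)
        y.append(None if t in missing else x + rng.gauss(0.0, sd_obs))
    return y

if __name__ == "__main__":
    y = simulate_log_counts(30, -0.05, 0.1, 0.05, missing={5, 6, 12, 20}, seed=3)
    draws = metropolis(y)
    mu_hat = sum(d[0] for d in draws) / len(draws)
    print(f"posterior mean of mu: {mu_hat:.3f} (simulated with mu = -0.05)")
```

Comparing the posterior for `mu` against the value used in the simulation mirrors the validation step in the protocol; a full analysis would also check chain convergence and would usually rely on an established MCMC tool rather than a hand-written sampler.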

Successful PVA relies on a combination of software tools, statistical methods, and conceptual frameworks.

Table 2: Key Research Tools for Population Viability Analysis

| Tool Name | Type | Primary Function in PVA |
|---|---|---|
| Vortex [25] | Software | An individual-based simulation model for PVA, modeling demographic, environmental, and genetic stochasticity. |
| RAMAS GIS [9] | Software | Integrates metapopulation and stage-structured models with spatial data for spatially explicit PVA. |
| META-X [26] | Software | A generic package for metapopulation viability analysis, focused on occupancy dynamics and useful for teaching and risk assessment. |
| Bayesian State-Space Models [23] | Statistical Method | A framework for parameter estimation and forecasting that explicitly accounts for process noise and observation error, ideal for incomplete data. |
| Diffusion Approximation [9] | Analytical Method | Provides a mathematical approximation for population growth under stochasticity, allowing for calculation of extinction probabilities. |
| Sensitivity Analysis [9] | Analytical Process | Identifies which vital rates (e.g., juvenile vs. adult survival) have the greatest influence on population growth/extinction risk, guiding priority research. |
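
As an illustration of the sensitivity analysis entry, the sketch below computes sensitivities and elasticities of the asymptotic growth rate λ for a stage-structured projection matrix. It assumes a primitive (eventually all-positive) matrix so that simple power iteration converges, and the two-stage matrix is hypothetical. Elasticities are proportional sensitivities and sum to 1, which makes them convenient for ranking vital rates.

```python
def dominant_eigen(A, iters=1000):
    """Dominant eigenvalue and right eigenvector (scaled to sum to 1) by power iteration."""
    n = len(A)
    w = [1.0 / n] * n
    lam = 0.0
    for _ in range(iters):
        nxt = [sum(A[i][j] * w[j] for j in range(n)) for i in range(n)]
        lam = sum(nxt)                  # equals lambda once w has converged
        w = [x / lam for x in nxt]
    return lam, w

def sensitivity_elasticity(A):
    """Return (lambda, sensitivity matrix, elasticity matrix) for matrix A."""
    n = len(A)
    lam, w = dominant_eigen(A)                       # stable stage distribution
    A_t = [[A[j][i] for j in range(n)] for i in range(n)]
    _, v = dominant_eigen(A_t)                       # reproductive values
    vw = sum(vi * wi for vi, wi in zip(v, w))
    sens = [[v[i] * w[j] / vw for j in range(n)] for i in range(n)]
    elas = [[A[i][j] * sens[i][j] / lam for j in range(n)] for i in range(n)]
    return lam, sens, elas

if __name__ == "__main__":
    # Hypothetical matrix: row 0 = fecundities, row 1 = juvenile and adult survival
    A = [[0.0, 2.0],
         [0.5, 0.5]]
    lam, sens, elas = sensitivity_elasticity(A)
    print(f"lambda = {lam:.4f}")
    for row in elas:
        print(["%.3f" % e for e in row])
```

This is a local, one-parameter-at-a-time measure around the estimated matrix; it does not capture interactions among parameters or their uncertainty.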

Critical Considerations for Model Validation

Given that errors in PVA models can directly lead to flawed conservation policies, a rigorous validation process is essential [27].

- Independent Technical Review: Prior to policy implementation, models and their underlying code should undergo systematic review by independent experts to ensure replicability and test for coding errors or logical inconsistencies [27].

- Uncertainty Quantification: A recent advancement demonstrates that confidence intervals for extinction risk can be reliably calculated even with limited time-series data, which is critical for robust Red List evaluations [28].

- Addressing Error-Prone Assumptions: Common sources of error include over-optimistic uncertainty accounting, incorrect model specifications, and simple coding mistakes. For example, a flawed PVA model for the gopher tortoise contained an error that inadvertently created a positive feedback loop for immigration, drastically overestimating population resilience and leading to a denial of federal protections [27].

Population Viability Analysis (PVA) serves as a critical methodology in conservation biology, enabling researchers to estimate extinction risks and evaluate the potential impacts of various threats and management strategies on wildlife populations. These analytical tools combine population biology with stochastic modeling to project future population status under different scenarios. The development of specialized software has dramatically increased the accessibility and application of PVA in conservation decision-making, though recent assessments indicate concerning trends in the quality of published analyses. A 2020 review of 160 PVAs for bird and mammal species revealed that only 18.1% were considered high quality (scoring >75% on an evaluation framework), with studies using generic programs generally showing lower quality scores across all measures [29]. This comprehensive comparison guide examines three principal approaches to PVA: the individual-based simulator VORTEX, the metapopulation-focused RAMAS Metapop, and custom-built frameworks, providing researchers with the analytical context to select appropriate tools for their specific conservation challenges.

VORTEX: Individual-Based Simulation Model

VORTEX is an individual-based simulation model that tracks the fate of each organism in a population through discrete, sequential events that mirror an annual biological cycle. The software simulates deterministic forces alongside demographic, environmental, and genetic stochastic events that create "extinction vortices" threatening small populations [25]. Its event-based structure steps through mate selection, reproduction, mortality, aging, dispersal, removals, supplementation, and carrying capacity truncation [25]. As of July 2025, VORTEX 10.10.0 remains actively maintained with recent enhancements including clonal reproduction options, improved error bars on graphs, and fixes for carrying capacity implementation bugs [25]. The software is particularly valuable for modeling polygynous mating systems, genetic dynamics, and small population processes where individual variation significantly impacts population outcomes.
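
The event-based logic of an individual-based model can be illustrated with a heavily reduced sketch. The code below is not VORTEX and does not reproduce its algorithms: it tracks females only, omits mate selection, genetics, dispersal, removals, and supplementation, and uses hypothetical parameter values. It shows only how per-individual random draws (demographic stochasticity), a yearly shared survival rate (environmental stochasticity), and carrying-capacity truncation combine into an extinction-risk estimate.

```python
import random

def simulate_replicate(n0, years, survival, breed_prob, env_sd, capacity, rng):
    """Return True if the (female-only) population goes extinct within `years`."""
    ages = [1] * n0                                  # start with adults
    for _ in range(years):
        # Environmental stochasticity: one survival rate shared by everyone this year
        year_survival = min(1.0, max(0.0, rng.gauss(survival, env_sd)))
        # Reproduction: each adult produces one daughter with probability breed_prob
        newborns = sum(1 for a in ages if a >= 1 and rng.random() < breed_prob)
        # Mortality: each individual survives or dies independently
        survivors = [a for a in ages if rng.random() < year_survival]
        # Aging, then recruitment of newborns at age 0
        ages = [a + 1 for a in survivors] + [0] * newborns
        # Carrying-capacity truncation: remove random excess individuals
        if len(ages) > capacity:
            ages = rng.sample(ages, capacity)
        if not ages:
            return True
    return False

def extinction_risk(reps=500, seed=42, **overrides):
    """Fraction of replicates that go extinct; defaults are hypothetical."""
    rng = random.Random(seed)
    params = dict(n0=10, years=50, survival=0.75, breed_prob=0.3,
                  env_sd=0.1, capacity=50)
    params.update(overrides)
    extinct = sum(simulate_replicate(rng=rng, **params) for _ in range(reps))
    return extinct / reps

if __name__ == "__main__":
    print("baseline risk:", extinction_risk())
    print("lower adult survival:", extinction_risk(survival=0.65))
```

Running scenarios side by side with the same seed, as in the last two lines, is a simple way to compare management alternatives while holding the random-number stream fixed.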

RAMAS Metapop: Structured Population Modeling

RAMAS Metapop employs a structured population modeling approach, focusing on populations fragmented across heterogeneous landscapes. Unlike VORTEX, RAMAS Metapop tracks age and stage structures across multiple populations rather than individual fates [30]. The software incorporates spatial dynamics including dispersal patterns, correlation of environmental fluctuations, and recolonization of empty patches [30]. RAMAS Metapop can model up to 500 populations with 50 stages each, simulated over 500 time steps with 10,000 replications, making it suitable for complex metapopulation analyses [30]. The program is particularly effective for assessing reserve design, translocation strategies, and human impacts on fragmented populations where spatial configuration significantly influences persistence probability.

Custom-Built Frameworks: Flexibility with Complexity

Custom-built PVA frameworks developed in programming languages like R offer maximum flexibility but require significant technical expertise. These frameworks can be tailored to specific biological scenarios not adequately addressed by generic software. The 2020 review of PVA quality found that studies using custom-built programs generally demonstrated higher quality across all evaluation metrics compared to those using generic software [29]. Custom frameworks facilitate global sensitivity analysis that considers all parameters simultaneously rather than the one-at-a-time approach available in RAMAS GIS [30]. Recent developments include R packages like vortexR for post-Vortex simulation analysis and MAPS-to-Models for building fully-specified population models from mark-recapture data [30] [31].

Table 1: Core Feature Comparison of PVA Software

| Feature | VORTEX | RAMAS Metapop | Custom Frameworks |
|---|---|---|---|
| Modeling Approach | Individual-based | Age/Stage-structured | Flexible |
| Spatial Structure | Multiple populations (metapopulation) | Multiple populations (up to 500) | User-defined |
| Stochasticity Types | Demographic, environmental, genetic | Demographic, environmental, catastrophic | User-defined |
| Density Dependence | Yes, multiple functions | Yes, multiple types including Allee effects | Programmable |
| Genetic Features | Inbreeding depression, lethal equivalents | Limited | Fully programmable |
| Maximum Capacity | Limited by computer memory | 500 populations × 50 stages × 500 time steps | Hardware-dependent |
| Sensitivity Analysis | Basic | One-at-a-time | Global methods available |
| Data Integration | Field data, expert opinion, captive data | GIS integration, mark-recapture (with R tools) | Any data source |

Quantitative Performance Metrics

Computational Efficiency and Limitations

Computational requirements vary substantially between PVA approaches. RAMAS Metapop explicitly defines its technical limits, handling up to 500 populations with 50 stages each, simulated over 500 time steps with 10,000 replications [30]. This structured approach efficiently models complex age-structured metapopulations but may become computationally intensive when approaching these upper bounds. By comparison, VORTEX's individual-based approach tracks each organism separately, making it more computationally demanding for large populations but potentially more efficient for small populations where individual variation significantly impacts outcomes. Custom frameworks offer scalable performance dependent on programming optimization and hardware capabilities, though they require significant development effort to match the computational efficiency of specialized software.

Population Dynamics and Scenario Modeling

Both mainstream packages offer comprehensive population dynamics modeling but with different emphases. VORTEX includes specialized features for genetic management (inbreeding depression, with a default of 6.29 lethal equivalents), mating systems (polygyny, monogamy, etc.), and age-specific mortality [32]. RAMAS Metapop provides more sophisticated density dependence functions (logistic, Ricker, Beverton-Holt, ceiling) and spatial correlation of environmental fluctuations [30]. A comparative study found that model quality was significantly higher for custom-built programs than for generic software, though publications in high-impact-factor journals generally demonstrated higher PVA quality regardless of platform [29].
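
The density dependence functions named above differ mainly in how growth slows as abundance approaches carrying capacity. The sketch below gives common textbook forms for an unstructured population; these are assumptions for illustration and are not necessarily the parameterizations used internally by RAMAS Metapop. Here `r` is the intrinsic rate of increase per time step and `k` is carrying capacity.

```python
import math

def logistic(n, r, k):
    """Discrete logistic growth; floored at zero because it can overshoot."""
    return max(0.0, n + r * n * (1.0 - n / k))

def ricker(n, r, k):
    """Ricker (scramble competition): can overshoot and oscillate around k."""
    return n * math.exp(r * (1.0 - n / k))

def beverton_holt(n, r, k):
    """Beverton-Holt (contest competition): approaches k smoothly from below."""
    growth = math.exp(r)
    return growth * n / (1.0 + (growth - 1.0) * n / k)

def ceiling(n, r, k):
    """Ceiling: exponential growth truncated at k."""
    return min(n * math.exp(r), k)

if __name__ == "__main__":
    r, k = 0.4, 500.0                       # hypothetical values
    for name, step in [("logistic", logistic), ("Ricker", ricker),
                       ("Beverton-Holt", beverton_holt), ("ceiling", ceiling)]:
        n = 20.0
        for _ in range(15):
            n = step(n, r, k)
        print(f"{name:>14}: N after 15 steps = {n:.1f}")
```

All four forms share the same equilibrium at `k` when `r` is positive, so differences in projected extinction risk arise from how each behaves away from equilibrium, particularly at low abundance and under stochastic perturbation.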

Table 2: Application Performance in Case Studies

| Application Context | VORTEX Performance | RAMAS Performance | Custom Framework Performance |
|---|---|---|---|
| Gopher Frog Conservation | Not applied | Predicted persistence >0.89 with ≤3 drought years/decade; high sensitivity to reproductive success [33] | Not applied |
| Giant Anteater Viability | 5% stochastic growth rate for baseline model; most sensitive to mortality rates and female breeding percentage [32] | Not applied | Not applied |
| Generic PVA Quality (160 studies) | Not separately assessed | Not separately assessed | Significantly higher quality scores than generic programs [29] |
| Threatened Species Management | Effective for incorporating road mortality impacts | Suitable for habitat fragmentation assessment | Adaptable to specific threat combinations |

Experimental Protocols and Methodologies