Validating Citizen Science Data: Current Approaches, AI Innovations, and Best Practices for Research

Abstract

This article provides a comprehensive analysis of current methodologies for validating data generated through citizen science initiatives. It explores foundational concepts of data verification, details established and emerging methods including expert review, community consensus, and AI-driven automation, and addresses key challenges in data quality and bias. Synthesizing evidence from recent systematic reviews and case studies, it offers a comparative evaluation of verification approaches and practical optimization strategies. The content is tailored for researchers, scientists, and drug development professionals seeking to leverage large-scale, public-generated data while ensuring scientific rigor and reliability for potential applications in biomedical and clinical research.

The Critical Importance of Data Verification in Citizen Science

Defining Verification and Validation in Citizen Science Data Quality

In citizen science, where volunteers collaborate with professional scientists to generate scientific data, data quality is a paramount concern and often the most significant source of scepticism from the broader scientific community [1]. The terms verification and validation represent two distinct, critical processes within the data quality assurance framework. While sometimes used interchangeably in layman's terms, they address different stages and aspects of quality control. Establishing clear, rigorous protocols for both is essential for ensuring that citizen science data achieves the reliability and accuracy necessary for use in scientific research, environmental monitoring, and policy development [2] [3].

This guide objectively compares the current approaches and methodologies for verification and validation, providing a structured overview for researchers and professionals who need to assess, implement, or improve data quality protocols in participatory research.

Definitions: Distinguishing Verification from Validation

Within the data quality pipeline, verification and validation serve separate but complementary functions. The table below summarizes their core distinctions.

Table 1: Core Definitions and Differences between Validation and Verification

| Aspect | Data Validation | Data Verification |

|---|---|---|

| Primary Purpose | Checks if data falls within acceptable ranges and conforms to defined rules and formats at the point of entry [4]. | Checks the factual correctness and consistency of data after submission, confirming it reflects reality [2] [4]. |

| Typical Timing | Upon data creation or entry [4]. | After data submission, often before final acceptance into a database [2] [4]. |

| Common Example | Ensuring a "state" field contains a valid two-letter abbreviation or a "height" field contains a plausible numeric value [4]. | Confirming the species identification in an ecological record is correct, often via an expert or community consensus [2]. |

| Citizen Science Context | Implementing data entry forms with dropdown menus or range checks in a data collection app. | A hierarchical process in which an expert ornithologist reviews a submitted bird photograph to verify the species identification. |

In essence, validation asks, "Is this data point correctly formatted and structurally acceptable?" whereas verification asks, "Is this data point factually correct?" [4]. In ecological citizen science, verification most commonly involves confirming the correct identification of a reported species [2].
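The entry-time checks from Table 1 (a valid two-letter state code, a plausible numeric height) can be illustrated with a minimal sketch. The field names, the accepted state codes, and the height bounds below are assumptions chosen for illustration, not values taken from the cited sources.

```python
# Hypothetical subset of accepted codes; a real form would load the full list.
VALID_STATES = {"CA", "NY", "TX", "WA"}


def validate_entry(record: dict) -> list[str]:
    """Return a list of validation problems; an empty list means the record passes."""
    problems = []

    state = record.get("state", "")
    if state not in VALID_STATES:
        problems.append(f"state {state!r} is not an accepted two-letter code")

    height = record.get("height_cm")
    is_number = isinstance(height, (int, float)) and not isinstance(height, bool)
    # The 0-300 cm bound is an illustrative plausibility range, not a standard.
    if not is_number or not (0 < height <= 300):
        problems.append(f"height_cm {height!r} is not a plausible number")

    return problems


print(validate_entry({"state": "NY", "height_cm": 172}))
print(validate_entry({"state": "New York", "height_cm": -5}))
```

A record that passes these checks is only well formed. Whether the reported value is actually true remains a verification question.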

A systematic review of published ecological citizen science schemes reveals that verification is a critical process for ensuring data quality and building trust in the resulting datasets [2]. The approaches fall into three categories, of which expert review is the most traditional and the most widely used.

Table 2: Predominant Verification Approaches in Ecological Citizen Science

| Verification Approach | Description | Prevalence in Published Schemes | Key Characteristics |

|---|---|---|---|

| Expert Verification | Records are checked for correctness (e.g., species ID) by a professional scientist or designated expert [2]. | Most widely used, especially among longer-running schemes [2]. | High accuracy, considered the "gold standard"; resource-intensive and difficult to scale with large data volumes. |

| Community Consensus | The correctness of a record is determined by agreement among multiple participants or experienced community members [2]. | A recognized and used method, though less common than expert review [2]. | Leverages "wisdom of the crowd"; builds a strong, collaborative community; may require a large and active participant base. |

| Automated Verification | Records are checked using algorithms, artificial intelligence (AI), or predefined rules without direct human intervention [2]. | A growing area, often used in conjunction with other methods in a hierarchical system [2]. | Highly scalable for large data streams; efficiency is high, but accuracy depends on the algorithm's sophistication; ideal for clear-cut, rule-based checks. |

The Hierarchical Verification Model

As data volumes grow, many projects are adopting a hierarchical approach to improve efficiency without sacrificing quality [2]. This idealised system proposes that the bulk of records are first processed by automation or filtered through community consensus. Any records that are flagged by these systems—due to rarity, uncertainty, or complexity—are then escalated to additional levels of verification, ultimately reaching experts for a final decision [2]. This ensures that expert time is allocated to the records that need it most.

Experimental Protocols for Assessing Data Quality

To objectively compare data from different sources (e.g., citizen scientists vs. experts) or to validate the effectiveness of a new verification protocol, researchers employ controlled experimental designs. The following case study provides a detailed, replicable methodology.

Case Study: Comparative Analysis of Bioacoustic Recordings

A 2021 study directly compared the quality of nightingale song recordings made by citizen scientists using a smartphone app with those made by academic researchers using professional equipment [5]. The objective was to evaluate the opportunities and limitations of citizen science data for bioacoustic research.

1. Research Question: Can citizen science recordings, collected via a smartphone app without standardized protocols, produce data of sufficient quality for bioacoustic analysis compared to expert recordings with professional devices? [5]

2. Experimental Groups:

- Citizen Scientist (CS) Group: Participants used the "Forschungsfall Nachtigall" smartphone app to record nightingale songs without specific briefing or protocols [5].

- Expert (EX) Group: Researchers used professional recording equipment (e.g., Telinga Pro 6 parabola with a stereo microphone and Marantz PMD 661 MKII recorder) to make standardized recordings [5].

3. Data Analysis and Quality Parameters: The study analyzed the quantity (number of recordings, temporal patterns) and quality of the data. For quality, specific acoustic parameters were measured from the recordings [5]:

- Spectral Parameters: Minimum and maximum frequency (kHz).

- Temporal Parameters: Duration of specific song elements (seconds).

- Signal-to-Noise Ratio: Assessed through visual inspection of spectrograms.

4. Validation Protocol - Playback Test: A controlled playback experiment was conducted to isolate the impact of recording devices from the skill of the citizen scientists. A known, high-quality original nightingale song was played back and re-recorded using both the smartphone devices and the professional expert equipment. The deviation of the acoustic parameters (frequency, duration) in these re-recordings from the original song was then quantitatively measured [5].

5. Key Findings: The study found that citizen scientists provided a large volume of recordings, many of which were valid for further research. Differences in data quality were primarily attributed to the technical limitations of the smartphones (e.g., lower fidelity microphones) rather than to errors by the citizens themselves. The study concluded that for many research questions, CS recordings are a valuable resource, but that spectral analyses require caution due to device-based deviations [5].
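The playback comparison in step 4 amounts to measuring how far each acoustic parameter of a re-recording drifts from the known original. The sketch below shows one way to express that as a signed percentage. The parameter names and all numbers are illustrative assumptions, not measurements from the study [5].

```python
def percent_deviation(original: dict[str, float],
                      rerecorded: dict[str, float]) -> dict[str, float]:
    """Signed deviation of each re-recorded parameter from the original, in percent."""
    return {
        name: 100.0 * (rerecorded[name] - value) / value
        for name, value in original.items()
    }


# Illustrative values only; they are not taken from the nightingale study.
original = {"min_freq_khz": 2.0, "max_freq_khz": 8.0, "duration_s": 0.50}
smartphone = {"min_freq_khz": 2.1, "max_freq_khz": 7.6, "duration_s": 0.50}

deviation = percent_deviation(original, smartphone)
for name, pct in deviation.items():
    print(f"{name}: {pct:+.1f} %")
```

Running the same function on re-recordings from each device type gives per-device deviation profiles that can be compared directly.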

Table 3: Key Quantitative Findings from the Bioacoustics Case Study [5]

| Measured Aspect | Citizen Science (CS) Recordings | Expert (EX) Recordings | Interpretation for Data Quality |

|---|---|---|---|

| Data Quantity | High volume; broad spatial and temporal coverage. | Targeted, less numerous. | CS excels in scale, providing data at a scope often unattainable by experts alone. |

| Temporal Patterns | Reflected user engagement patterns (e.g., more recordings on weekends). | Consistent, following a standardized research protocol. | CS data may introduce temporal biases that must be accounted for in analysis. |

| Spectral Accuracy | Showed measurable deviation from the original signal in playback tests. | High accuracy and minimal deviation from the original signal. | EX data is superior for research questions relying on precise frequency measurements. |

| Overall Usability | High for research on song type occurrence and distribution. | High for all analyses, including detailed spectral and temporal measurement. | CS data quality is fit-for-purpose; it is sufficient for many, but not all, research questions. |

Implementing robust verification and validation requires a combination of technological tools and methodological frameworks. The following table details key "research reagents" for building a reliable citizen science data pipeline.

Table 4: Essential Reagents and Resources for Citizen Science Data Quality

| Tool/Resource | Type | Primary Function in Data Quality |

|---|---|---|

| Global Address Validation & Geocoding Data [4] | Data Source | Validates location data against authoritative external postal sources, ensuring spatial accuracy. |

| Citizen Science Motivation Scale (CSMS) [6] | Methodological Framework | A standardized, theory-based scale to assess volunteer motivations, helping to design projects that sustain engagement and, by extension, data quality. |

| Deduplication & Fuzzy Matching Tools [4] | Software/Algorithms | Identifies and flags duplicate or highly similar records (e.g., from the same participant), a key step in data verification and cleaning. |

| Logic Model of Evaluation [7] | Methodological Framework | A structured approach (Inputs -> Activities -> Outputs -> Outcomes -> Impacts) for project design and evaluation, including the assessment of data quality outcomes. |

| Standardized Acoustic Recording Equipment [5] | Hardware | Provides a baseline for high-fidelity data collection in fields like bioacoustics, used for calibrating or comparing with citizen-collected data. |

| Change of Address Databases [4] | Data Source | Allows for verification and updating of participant contact information, ensuring the timeliness and relevance of longitudinal data. |
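The deduplication and fuzzy matching entry in Table 4 can be sketched with Python's standard library. This is a minimal illustration, assuming hypothetical record fields (`observer`, `species`, `date`) and an arbitrary similarity threshold of 0.9. Dedicated tools typically use more refined matching.

```python
from difflib import SequenceMatcher
from itertools import combinations


def likely_duplicates(records: list[dict], threshold: float = 0.9) -> list[tuple[int, int]]:
    """Return index pairs of records whose key fields are nearly identical."""
    def key(rec: dict) -> str:
        # Concatenate the fields that together should identify one observation.
        return f"{rec['observer']}|{rec['species'].lower()}|{rec['date']}"

    pairs = []
    for (i, a), (j, b) in combinations(enumerate(records), 2):
        if SequenceMatcher(None, key(a), key(b)).ratio() >= threshold:
            pairs.append((i, j))
    return pairs


# Hypothetical submissions; the second contains a spelling slip in the species name.
records = [
    {"observer": "u17", "species": "Luscinia megarhynchos", "date": "2021-05-02"},
    {"observer": "u17", "species": "Luscinia megarhyncos", "date": "2021-05-02"},
    {"observer": "u42", "species": "Erithacus rubecula", "date": "2021-05-03"},
]
print(likely_duplicates(records))
```

Flagged pairs are candidates for review, not automatic deletions, since two genuine observations can look alike.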

Verification and validation are not merely bureaucratic steps but are foundational to the scientific credibility of citizen science. Validation acts as the first line of defense, ensuring data is clean and conformant at entry. Verification, particularly through a multi-tiered system that smartly leverages automation, community, and expert oversight, provides the necessary assurance of factual correctness [2] [4].

The experimental evidence shows that while differences exist between citizen and expert data, these are often predictable and manageable [5]. The key is a "fit-for-purpose" approach to data quality, where the level of rigor in verification and validation is aligned with the intended use of the data [1]. By transparently implementing and reporting these processes, citizen science can continue to strengthen its role as a powerful engine for generating reliable, high-impact scientific knowledge.

Why Data Trustworthiness is Paramount for Scientific and Policy Use

In an era of data-driven discovery and decision-making, trustworthy data is the bedrock of scientific advancement and sound public policy. This is especially critical in the participatory sciences, where data collected by volunteer non-scientists contributes to formal research and environmental monitoring [8]. Integrating such data into policy frameworks and scientific publications demands rigorous validation to ensure its reliability and accuracy. Data trustworthiness matters because it directly determines the integrity of research conclusions and the efficacy of the policies derived from them, in areas ranging from public health to conservation.

This guide objectively compares current approaches for validating citizen science data, providing a structured analysis of methodologies, their performance, and the essential tools required to ensure data quality.

Evaluating Citizen Science Data: A Comparative Framework

The skepticism surrounding data collected by non-experts makes the evaluation of its accuracy a frequent subject of research. Studies across various disciplines have employed different protocols to test the effectiveness of citizen scientists, often by comparing their data to benchmarks such as expert-collected data or technologically verified measurements [8].

The table below summarizes key experimental findings from peer-reviewed studies that evaluate the reliability of citizen science data:

Table 1: Comparative Analysis of Citizen Science Data Validation Studies

| Field of Study | Validation Methodology | Key Performance Metric | Result and Reliability Assessment |

|---|---|---|---|

| Marine Wildlife Monitoring [8] | Comparison of shark counts by dive guides vs. acoustic telemetry data from tagged sharks. | Accuracy of population counts over a five-year period. | Data was extremely useful; validation confirmed a high degree of accuracy in citizen science counts. |

| Invasive Species Detection [8] | Comparison of data from community scientist dog-handler teams against standardized detection criteria for spotted lanternfly egg masses. | Ability of dog teams to meet standardized detection criteria. | Demonstrated high accuracy in locating egg masses and differentiating them from environmental distractors. |

| Urban Biodiversity (Butterflies) [8] | Comparison of butterfly data collected over 10 years in two large cities (Chicago and New York). | Long-term consistency and comparative analysis of species data. | Effectiveness confirmed; citizen science was especially valuable in urban landscapes with large volunteer pools. |

| Environmental Pollution (Lichens) [8] | Testing of a citizen science survey (OPAL Air Survey) to detect trends in lichen communities as indicators of pollution. | Ability to detect ecological trends (lichen composition over transects away from roads). | Methodology was successful in detecting meaningful environmental trends. |

| Invasive Plant Mapping [8] | Comparison of invasive plant mapping by 119 volunteers over three years against expert assessments and collected samples. | Accuracy in mapping and estimating abundance of invasive plants. | Data quality was sufficiently accurate and reliable; volunteer participation enhanced data generated by scientists alone. |

| Pollinator Communities [8] | Comparison of floral visitor data from 13 trained citizen scientists (observational) with data from professionals (specimen-based). | Accuracy in classifying insects to the resolution of orders or super-families. | Protocol was effective; citizen scientists provided valuable data that extended the scope of professional monitoring. |

Experimental Protocols for Data Validation

To ensure the trustworthiness of data, particularly from participatory sources, researchers employ rigorous experimental protocols. The following section details the methodologies cited in the comparative analysis.

Protocol 1: Benchmarking Against Technological Gold Standards

Objective: To validate the accuracy of citizen-collected observational data by comparing it with data from an objective, technological system.

Methodology as Used in Marine Wildlife Monitoring [8]:

- Independent Data Collection: Two parallel datasets are collected over the same spatial and temporal scale.

- Citizen Scientist Data: Trained volunteer observers (e.g., dive guides) record counts of a target species (e.g., sharks) during their activities.

- Technology-Generated Data: An automated system (e.g., acoustic telemetry receivers) detects signals from tagged individuals of the target species, providing a benchmark for presence and absence.

- Data Correlation Analysis: The two datasets are statistically compared to assess the correlation between volunteer counts and telemetry detections.

- Accuracy Quantification: The rate of true positives, false positives, and false negatives in the citizen science data is calculated to quantify its accuracy and reliability.
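The accuracy quantification step can be sketched as a comparison of paired presence/absence observations. The pairing into survey occasions and the example values are assumptions for illustration. Note also that a benchmark such as telemetry only registers tagged animals, so a volunteer sighting of an untagged individual would be counted here as a false positive.

```python
def detection_accuracy(volunteer: list[bool], benchmark: list[bool]) -> dict[str, float]:
    """Compare volunteer presence/absence calls against a benchmark, occasion by occasion."""
    if len(volunteer) != len(benchmark):
        raise ValueError("both series must cover the same survey occasions")

    pairs = list(zip(volunteer, benchmark))
    tp = sum(v and b for v, b in pairs)        # both report presence
    fp = sum(v and not b for v, b in pairs)    # volunteer only
    fn = sum(b and not v for v, b in pairs)    # benchmark only

    precision = tp / (tp + fp) if tp + fp else float("nan")
    recall = tp / (tp + fn) if tp + fn else float("nan")
    return {"tp": tp, "fp": fp, "fn": fn, "precision": precision, "recall": recall}


# Hypothetical survey occasions: True means the species was recorded as present.
volunteer = [True, True, False, True, False, False]
telemetry = [True, False, False, True, True, False]
result = detection_accuracy(volunteer, telemetry)
print(result)
```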

Protocol 2: Comparative Analysis with Expert Data

Objective: To evaluate the performance of citizen scientists by directly comparing their data with that collected by professional experts or against verified physical samples.

Methodology as Used in Invasive Species and Pollinator Studies [8]:

- Structured Sampling: Volunteers and experts survey the same pre-defined areas or transects.

- Blinded Verification: In studies like invasive plant mapping, pressed plant samples collected by volunteers are later verified by botanical experts to confirm species identification. For pollinator studies, professional entomologists collect specimen-based data from the same sites for comparison.

- Statistical Comparison: The identification accuracy, spatial data precision, and abundance estimates from the two groups are compared using statistical tests (e.g., measures of agreement, ANOVA) to determine if there is a significant difference in data quality.
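One common measure of agreement for categorical identifications is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The source does not state which agreement statistic the cited studies used, so the sketch below is only an example of the general approach, with made-up labels.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Chance-corrected agreement between two raters labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both raters must label the same non-empty set of items")

    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Probability that both raters pick the same category by chance.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if expected == 1.0:  # both raters used a single identical category
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical identifications of the same six floral visitors.
volunteer_ids = ["bee", "bee", "fly", "fly", "bee", "wasp"]
expert_ids = ["bee", "bee", "fly", "bee", "bee", "wasp"]
kappa = cohens_kappa(volunteer_ids, expert_ids)
print(round(kappa, 3))
```

A kappa near 1 indicates agreement well beyond chance, while a value near 0 indicates agreement no better than chance.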

Foundational Principles of Data Trustworthiness

Beyond specific validation protocols, the trustworthiness of any data is formally assessed against established criteria, which differ for quantitative and qualitative research. The following diagram illustrates the core pillars of trustworthiness and their relationships.

Figure: Pillars of Trustworthy Data

The diagram above shows the parallel criteria for quantitative and qualitative research [9]. For quantitative data, including much of citizen science data, the four key criteria are:

- Internal Validity: The extent to which a study establishes a trustworthy causal relationship, free from the influence of confounding variables [9].

- External Validity: The degree to which the results of a study can be generalized to other contexts, people, places, and times [9].

- Reliability: The consistency and repeatability of the measurements over time [9].

- Objectivity: The requirement that findings are independent of the researcher's personal beliefs and values [9].

For qualitative data, the corresponding criteria are credibility, transferability, dependability, and confirmability, which emphasize the richness of data and the neutrality of interpretation [9].

The Researcher's Toolkit for Data Quality

Ensuring data trustworthiness requires a suite of methodological and practical tools. The following table details key solutions used in the field to maintain data quality, particularly in participatory science.

Table 2: Essential Research Reagent Solutions for Data Quality Assurance

| Tool or Solution | Primary Function | Application in Citizen Science |

|---|---|---|

| Structured Data Collection Protocols | Standardizes methods for data gathering across all participants. | Minimizes variability and error by providing clear, step-by-step instructions for volunteers [8]. |

| Automated Acoustic Telemetry | Provides objective, continuous monitoring of animal movement and presence. | Serves as a technological gold standard for validating visual observations made by citizens [8]. |

| Digital Data Submission Platforms (e.g., Naturblick App) | Enables real-time data entry using mobile devices, often with integrated guides. | Reduces transcription errors, allows for immediate geo-tagging, and can include visual aids for species identification [8]. |

| Statistical Analysis Software | Performs reliability tests, compares datasets, and quantifies agreement. | Used by researchers to statistically compare citizen data with expert data and measure accuracy [8]. |

| Triangulation Framework | Enhances credibility by using multiple methods or data sources to investigate a phenomenon. | Strengthens findings by combining citizen science data with other lines of evidence, such as existing research or expert analysis [9]. |

| Blinded Verification Samples | Provides a physical reference for objective validation of identifications. | Used to audit the accuracy of volunteer-collected samples, such as pressed plants or insect specimens [8]. |

The imperative for data trustworthiness is clear: it is the non-negotiable currency of credible science and effective policy. For participatory science to maintain its value and expand its influence, the approaches to validation outlined in this guide are not merely academic exercises but essential practices. The consistent application of rigorous benchmarking, structured protocols, and a clear understanding of the principles of trustworthiness allows researchers and policymakers to harness the power of citizen science with confidence, ensuring that this valuable source of data continues to enrich our understanding of the world in a meaningful and reliable way.

The Evolution from Ad-Hoc Checking to Structured Validation Frameworks

In the domain of citizen science, the shift from informal, ad-hoc data checking to structured validation frameworks is critical for ensuring data quality and bolstering scientific credibility. This guide objectively compares these approaches, detailing their methodologies, performance, and applications for researchers and drug development professionals. Supported by experimental data and protocols, we demonstrate how structured frameworks enhance data reliability for research use.

Citizen science enables ecological and environmental data collection over vast spatial and temporal scales, producing datasets invaluable for pure and applied research, including environmental monitoring and public health studies [2]. However, the accuracy of citizen science data is often questioned, which puts the focus on verification: the process of checking records for correctness after submission [2]. Verification is a cornerstone of data quality and of trust in these datasets, allowing them to be used confidently in environmental research, management, and policy development [2].

This evolution mirrors a broader trend in data-driven fields. Ad-hoc approaches, characterized by their unstructured, spontaneous nature, offer speed and flexibility but suffer from low repeatability and high dependency on individual skill [12] [13] [14]. In contrast, structured validation frameworks provide a systematic, evidence-based approach to assessment, ensuring consistency, reliability, and scalability [15] [16]. For researchers and drug development professionals, understanding this evolution and the relative performance of these approaches is fundamental to leveraging citizen science data effectively.

Defining the Approaches: Core Concepts and Characteristics

Ad-Hoc Checking

Ad-hoc checking is an informal, unstructured approach where testing or validation is performed without predefined test plans, cases, or documentation [12] [13]. The term "ad hoc" itself is Latin for "for this," indicating a situational and spontaneous activity [14].

- Core Philosophy: Relies on the intuition, creativity, and prior knowledge of the tester or validator to uncover defects or issues that structured methods might miss [13] [14].

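- Typical Characteristics: No formal planning or documentation [12] [14]; low repeatability, because steps are not recorded [14]; and heavy dependence on the validator's familiarity with the system [12].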

Structured Validation Frameworks

A structured validation framework is a formalized system of processes, rules, and tools designed to ensure that data or products meet predefined quality standards [16]. In citizen science, this translates to a systematic process for verifying the correctness of contributed records [2].

- Core Philosophy: Replaces guesswork with data-driven decision-making through a structured, repeatable approach for assessment [15] [16].

- Typical Characteristics:

- Structured Documentation: Procedures and findings are documented and can be reviewed [12] [16].

- Predefined Rules & Metrics: Uses specific rules and quantifiable measures to track quality [16].

- Collaborative & Scalable: Designed for cross-functional input and can be applied consistently across large projects [2] [15].

Comparative Analysis: Performance and Outcomes

A direct comparison reveals fundamental differences in how these approaches perform across key metrics relevant to scientific research.

Table 1: Direct comparison of ad-hoc checking versus structured validation frameworks.

| Evaluation Criteria | Ad-Hoc Checking | Structured Validation Framework |

|---|---|---|

| Formal Planning & Documentation | No formal planning or documentation required [12] [14] | Comprehensive documentation and a formalized template are used [12] [16] |

| Primary Goal | Quickly find obvious bugs or issues in a short time [13] | Ensure data quality for informed decision-making; uncover surface-level and deeper issues [13] [16] |

| Test Repeatability | Low, as steps are not documented [14] | High, due to documented processes and rules [16] |

| Skill Dependency | High; relies heavily on tester's familiarity with the system [12] | Moderate; the framework guides the user, reducing individual variance [15] |

| Bug/Error Handling | Bugs are often not handled properly [12] | Critical bugs and data issues can be handled efficiently and systematically [12] [16] |

| Efficiency & Scalability | Less efficient for large-scale efforts; scalability is limited [12] [14] | More efficient and scalable for large, complex projects [12] [15] |

| Practical Use in Research | Limited practical use for robust research datasets [12] | High practical use; enables datasets to be trusted for environmental research and policy [12] [2] |

Experimental Data on Verification in Citizen Science

A systematic review of 259 published citizen science schemes provides quantitative evidence on the adoption and effectiveness of different verification methods. The study, which located verification information for 142 schemes, offers a real-world performance comparison [2].

Table 2: Prevalence and application of different verification methods in published citizen science schemes, based on a systematic review [2].

| Verification Approach | Prevalence in Schemes (from review) | Common Application Context | Reported Effectiveness |

|---|---|---|---|

| Expert Verification | Most widely used; especially among longer-running schemes | Schemes requiring high accuracy for scientific or policy use | High; considered the traditional default for ensuring quality |

| Community Consensus | Used, but less common than expert verification | Online platforms with community features and voting systems | Moderate to High; effective for building community trust |

| Automated Approaches | Emerging use, but not yet widespread | Schemes with high data volume or where data can be checked against algorithms | High potential; enables efficient verification at scale |

The review concluded that while expert verification has been the default, the growing volume of data means many schemes could benefit from implementing more efficient approaches. It proposed an idealized hierarchical system where most records are verified by automation or community consensus, with only flagged records undergoing expert review [2].

Experimental Protocols for Validation

To ensure robust data collection and validation in citizen science projects, implementing standardized experimental protocols is essential.

Protocol for a Hierarchical Data Verification System

This protocol, derived from a proposed ideal system in citizen science research, ensures efficiency and accuracy [2].

- Objective: To implement a scalable, multi-layered data verification process that maximizes accuracy while managing resource constraints.

- Materials: Citizen-submitted data records, a data management platform, automated validation scripts, a community forum or voting system, and access to subject-matter experts.

- Procedure:

- Data Submission: Volunteers submit data records (e.g., species observations with photos, GPS coordinates, and timestamp) via a digital platform.

- Automated Validation (First Pass):

- Run scripts to check for technical feasibility and basic data quality dimensions [16].

- Checks include: Data format validity (e.g., correct coordinate format), value reasonableness (e.g., location within a possible range), and timestamp consistency.

- Records that pass proceed; records that fail are flagged for immediate review or rejection.

- Community Consensus (Second Pass):

- Records passing automated checks are made visible to a trusted community of volunteers.

- The community provides input, such as confirming species identification from a photo through voting or commenting.

- Records achieving a high-confidence threshold from the community are considered verified.

- Expert Verification (Third Pass):

- Records flagged by automation or those with low community consensus are escalated to domain experts.

- Experts conduct a final review using their specialized knowledge, and their decision is considered definitive.

- Outcome Measurement: Track the percentage of records verified at each stage, time-to-verification, and the accuracy rate of community versus expert verification.
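The three passes above can be sketched as a single routing function. The record fields, the vote thresholds, and the specific automated checks are assumptions made for illustration. The protocol itself does not prescribe them.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Record:
    species: str
    lat: float
    lon: float
    votes_for: int = 0       # community members confirming the identification
    votes_against: int = 0   # community members disputing it


def passes_automated_checks(rec: Record) -> bool:
    """First pass: basic format and range checks only."""
    return bool(rec.species.strip()) and -90 <= rec.lat <= 90 and -180 <= rec.lon <= 180


def route(rec: Record, min_votes: int = 3, min_agreement: float = 0.8) -> str:
    """Assign a record to the stage that settles it (thresholds are hypothetical)."""
    if not passes_automated_checks(rec):
        return "flagged_by_automation"
    total = rec.votes_for + rec.votes_against
    if total >= min_votes and rec.votes_for / total >= min_agreement:
        return "community_verified"
    return "expert_review"


def stage_shares(records: list[Record]) -> dict[str, float]:
    """Outcome measurement: share of records settled at each stage."""
    counts = Counter(route(r) for r in records)
    return {stage: n / len(records) for stage, n in counts.items()}


batch = [
    Record("Luscinia megarhynchos", 52.5, 13.4, votes_for=9, votes_against=1),
    Record("Luscinia megarhynchos", 95.0, 13.4),                # impossible latitude
    Record("Erithacus rubecula", 52.5, 13.4, votes_for=2, votes_against=2),
]
print(stage_shares(batch))
```

Keeping the routing decision in one function makes it straightforward to log which stage settled each record, which is what the outcome measurement step relies on.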

Protocol for a Data Quality Framework Implementation

This protocol provides a step-by-step methodology for establishing a structured validation framework, based on global best practices for data quality [16].

- Objective: To define and implement a structured data quality validation framework for a scientific dataset.

- Materials: Source dataset, data profiling tools (e.g., OpenRefine, Informatica Data Quality), a defined set of data quality rules, and a data governance policy.

- Procedure:

- Define Goals & Identify Critical Data: Align data quality goals with research objectives. Identify Critical Data Elements (CDEs) most vital to the study [16].

- Profile the Data: Use profiling tools to analyze the structure, content, and relationships within the raw data. Identify patterns, ranges, and existing quality issues [16].

- Define Data Quality Rules: For each CDE, define specific rules based on key dimensions [16].

Table 3: Examples of data quality rules for a citizen science dataset.

| Data Dimension | Example Rule for a Species Sighting Record |
|---|---|
| Completeness | The `species_name` and `geolocation` fields must not be null. |
| Validity | The `sighting_date` must be in the format YYYY-MM-DD and not a future date. |
| Accuracy | The `geolocation` coordinates must resolve to a terrestrial location within the study area. |
| Reasonableness | A sighting of a migratory species must fall within its known migratory season. |

- Implement Validation & Cleansing: Integrate validation rules into the data pipeline using ETL tools or custom scripts. Correct errors, fill missing values via imputation, and remove duplicates [16].

- Monitor & Improve: Continuously track data quality metrics (e.g., completeness rate, accuracy rate). Generate reports and refine rules and processes as needed [16].
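As an illustration of how the four example rules in Table 3 might be expressed as a custom script, the sketch below checks one sighting record per dimension. The bounding box `STUDY_AREA`, the `MIGRATORY_SEASON` lookup, and the record layout are hypothetical. A plain bounding box is also only a stand-in for the Accuracy rule, since it does not test whether a point is actually on land.

```python
import re
from datetime import date

# Hypothetical study area as (lat_min, lat_max, lon_min, lon_max).
STUDY_AREA = (49.0, 61.0, -8.0, 2.0)
# Hypothetical migratory season per species, as inclusive (first, last) months.
MIGRATORY_SEASON = {"Hirundo rustica": (4, 9)}
ISO_DATE = re.compile(r"\d{4}-\d{2}-\d{2}")


def check_record(rec: dict, today: date) -> list:
    """Return the data quality dimensions that a sighting record violates."""
    failures = []

    # Completeness: species_name and geolocation must be present.
    if not rec.get("species_name") or rec.get("geolocation") is None:
        failures.append("completeness")

    # Validity: sighting_date must be YYYY-MM-DD and not in the future.
    raw = rec.get("sighting_date") or ""
    sighted = None
    if ISO_DATE.fullmatch(raw):
        try:
            sighted = date.fromisoformat(raw)
        except ValueError:  # matches the pattern but is not a real date
            pass
    if sighted is None or sighted > today:
        failures.append("validity")

    # Accuracy (simplified): coordinates must fall inside the study area box.
    geo = rec.get("geolocation")
    if geo is not None:
        lat, lon = geo
        lat_min, lat_max, lon_min, lon_max = STUDY_AREA
        if not (lat_min <= lat <= lat_max and lon_min <= lon <= lon_max):
            failures.append("accuracy")

    # Reasonableness: migratory species must be seen within their season.
    season = MIGRATORY_SEASON.get(rec.get("species_name"))
    if season and sighted and not (season[0] <= sighted.month <= season[1]):
        failures.append("reasonableness")

    return failures


example = {
    "species_name": "Hirundo rustica",
    "geolocation": (10.0, -1.0),
    "sighting_date": "2024-12-10",
}
print(check_record(example, today=date(2025, 1, 1)))
```

Returning the list of failed dimensions, rather than a single pass/fail flag, makes it straightforward to compute the per-dimension completeness and accuracy rates tracked in the monitoring step.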

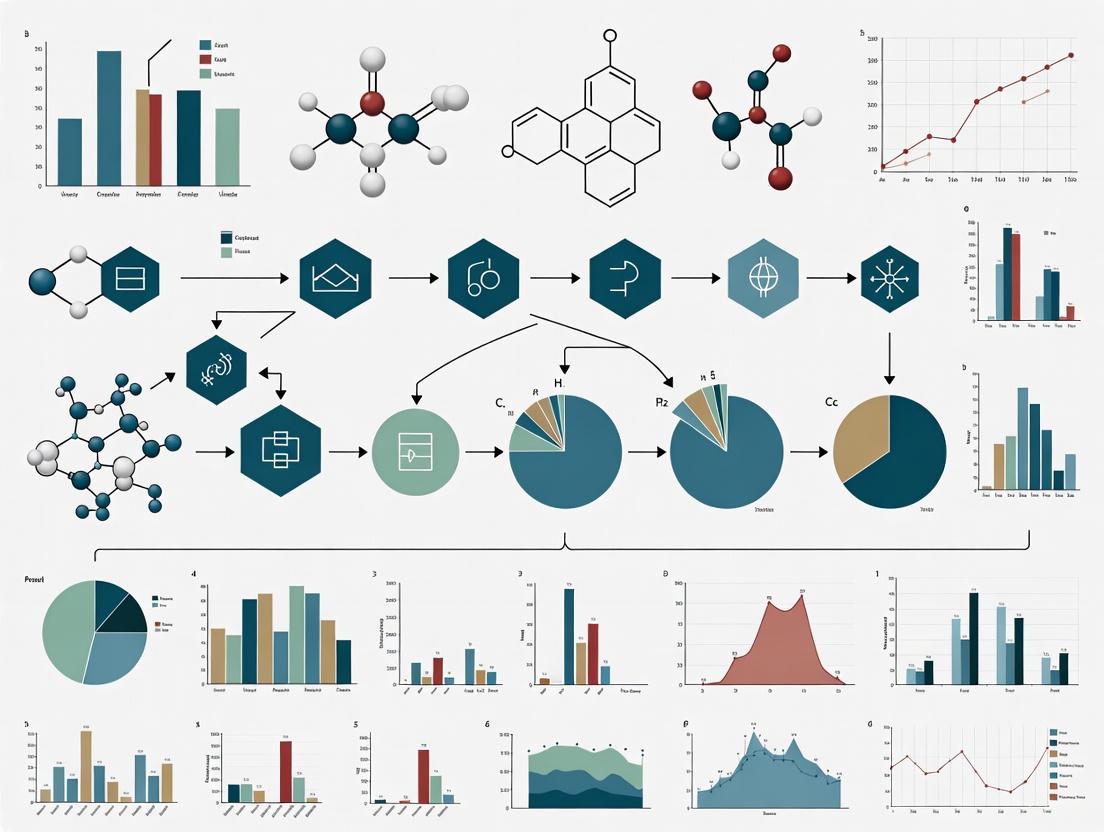

Visualization of Workflows

The following diagrams illustrate the logical relationships and workflows for the key processes described.

Evolution of Data Validation Approaches

Hierarchical Citizen Science Data Verification

The Scientist's Toolkit: Research Reagent Solutions

Implementing robust validation frameworks requires a suite of methodological and technological tools.

Table 4: Essential tools and solutions for implementing structured validation in research contexts.

| Tool or Solution | Primary Function | Application in Validation |

|---|---|---|

| Data Profiling Tools (e.g., OpenRefine, Informatica) | To analyze the structure, content, and quality of raw data [16]. | Identifies patterns, anomalies, and existing data quality issues during the initial assessment phase. |

| Idea Management Platforms (e.g., Qmarkets) | To provide a centralized system for evaluating ideas with scorecards and tournaments [15]. | Offers a structured process for assessing and prioritizing validation methodologies or research ideas based on predefined criteria. |

| Data Quality Software (e.g., Talend Data Quality, SAS Data Quality) | To provide comprehensive features for profiling, validation, cleansing, and monitoring [16]. | Automates the execution of data quality rules and supports ongoing data cleansing efforts. |

| Expert Review Committees | To provide domain-specific knowledge and final arbitration [2] [15]. | Serves as the highest tier in a hierarchical verification system, validating complex or ambiguous records. |

| Community Consensus Platforms | To leverage the collective intelligence of a volunteer community [2]. | Enables scalable peer-review for data verification, building trust and engagement. |

| Validated Competency Frameworks (e.g., Universal Competency Framework) | To provide a structured, evidence-based method for understanding and assessing competencies [18]. | Ensures that personnel involved in validation and analysis possess and apply the necessary skills consistently. |

The evolution from ad-hoc checking to structured validation frameworks represents a critical maturation process for fields reliant on distributed data collection, such as citizen science. While ad-hoc methods offer a quick means for initial exploration, the comparative data clearly demonstrates the superiority of structured frameworks in producing reliable, scalable, and research-ready data. The experimental protocols and hierarchical models presented provide a tangible pathway for implementation. For researchers and drug development professionals, adopting these structured approaches is not merely a technical improvement but a fundamental requirement for ensuring that citizen-sourced data meets the rigorous standards of scientific inquiry and application.

A Practical Guide to Citizen Science Data Verification Methods

In the landscape of citizen science and professional research, expert verification has long been regarded as the traditional gold standard for ensuring data quality and reliability [2]. This process involves subject matter specialists systematically examining collected data to confirm its correctness, a critical step for data intended for scientific research, environmental policy, and drug development [2] [1]. In citizen science specifically, verification is the process of checking records for correctness, which for ecological data often means confirming species identity [2]. While emerging technologies offer new validation approaches, expert verification remains a benchmark for credibility, particularly in high-stakes fields like pharmaceutical development where regulatory compliance and patient safety are paramount [19].

The fundamental principle underlying expert verification is that human expertise can identify nuances, context, and patterns that automated systems might miss [20]. This is especially valuable when dealing with complex, uncertain, or incomplete information where rigid algorithmic approaches may fail [21]. For researchers and drug development professionals, understanding the workflow, applications, and limitations of expert verification is essential for designing robust data collection and validation protocols, particularly when integrating citizen science data or other non-traditional data sources into research pipelines.

Expert Verification as the Gold Standard: A Comparative Analysis

Expert verification serves as a gold standard across multiple disciplines due to its ability to leverage human judgment and domain-specific knowledge. The table below compares how expert verification is applied across different fields, highlighting its universal principles and domain-specific implementations.

Table 1: Applications of Expert Verification Across Disciplines

| Field | Verification Focus | Key Characteristics | Primary Output |

|---|---|---|---|

| Citizen Science Ecology | Confirming species identification from volunteer observations [2] | Hierarchical approach; often used in longer-running schemes [2] | Verified biological records for distribution tracking [2] |

| Pharmaceutical Regulation | Assessing FDA compliance, manufacturing quality, and clinical trial integrity [19] | Conducted by former FDA professionals and senior industry experts [19] | Expert reports, courtroom testimony, regulatory submissions [19] |

| Expert Systems Development | Ensuring knowledge bases accurately represent domain expertise [20] [21] | Uses partitioning, incidence matrices, and knowledge models [21] | Verified and validated expert systems [21] |

| Construction Frameworks | Verifying compliance with "Gold Standard" procurement recommendations [22] | Independent verifiers assess processes against 24 recommendations [22] | Fully verified framework alliances [22] |

When compared to alternative verification methods, expert verification demonstrates distinct advantages and limitations. The table below provides a structured comparison of these approaches, highlighting why expert verification maintains its gold standard status despite the emergence of newer technologies.

Table 2: Expert Verification Compared to Alternative Validation Methods

| Verification Method | Key Advantages | Key Limitations | Best-Suited Applications |

|---|---|---|---|

| Expert Verification (Gold Standard) | High credibility with stakeholders [1]; Handles complex, ambiguous cases [20]; Provides nuanced interpretation [2] | Resource-intensive and time-consuming [2]; Subject to potential human error [20]; Scalability challenges with large datasets [2] | High-stakes validation (regulatory, research); Complex or novel cases; Final approval stages [19] |

| Community Consensus | Leverages collective knowledge [2]; More scalable than expert review [2]; Engages participant community [1] | Potential for groupthink [1]; Variable expertise levels [2]; Difficult to quality-control [1] | Peer-based review systems; Preliminary filtering; Projects with engaged communities [2] |

| Automated Verification | Highly scalable for large datasets [2]; Consistent application of rules [2]; Rapid processing times [2] | Limited to predefined parameters [2]; Poor handling of novel cases [20]; Requires extensive validation [21] | High-volume, standardized data; Initial quality screening; Well-defined domains [2] |

| Proof of Sampling | Efficient for decentralized systems [23]; Game-theoretical foundation promotes honesty [23]; Cost-effective for large networks [23] | Emerging technology with limited track record [23]; Requires strategic sampling rate calculation [23]; Less established in scientific literature [23] | Decentralized AI inference verification; Cryptographic applications; Systems where Nash Equilibrium can be maintained [23] |

The Expert Verification Workflow: A Systematic Process

The expert verification process follows a systematic workflow that ensures thoroughness and consistency. The diagram below illustrates this multi-stage process, highlighting the critical decision points and actions at each stage.

Diagram 1: Expert Verification Workflow. This diagram illustrates the systematic process of expert verification, from initial data screening through to final integration of validated data.

Workflow Stage Details and Protocols

Data Collection and Submission Protocol: The verification process begins with data collection following established protocols. In citizen science, this may involve standardized sampling methods to minimize bias, while in pharmaceutical contexts, this stage requires adherence to Good Clinical Practice (GCP) and Good Manufacturing Practice (GMP) guidelines [1] [19]. For ecological data, this includes documenting species observations with supporting evidence such as photographs, location data, and timestamps [2].

Initial Data Screening Methodology: Before expert review, data undergoes initial screening to eliminate obvious errors or incomplete submissions. This may involve automated checks for data format, geographic plausibility, and required field completion [2]. In regulatory contexts, this includes verifying that all required documentation is present and properly formatted before full expert assessment [19].

Expert Assignment and Assessment Criteria: Qualified experts are matched to data based on their specialized domain knowledge. In pharmaceutical expert witness services, this involves selecting professionals with 25+ years of experience in specific therapeutic areas or regulatory domains [24]. Assessment criteria must be established a priori; for species verification, this includes comparing observations against known distribution maps, morphological characteristics, and seasonal patterns [2].

Decision Point and Documentation Standards: The expert makes a determination on data validity based on established criteria. Documentation of this decision is critical, including the evidence considered, decision rationale, and level of certainty [20] [19]. For regulatory submissions, this documentation must withstand potential legal scrutiny and cross-examination [19].

Feedback Implementation and System Improvement: A key benefit of expert verification is the feedback loop to data collectors, which improves future data quality [1]. In citizen science, this may involve educating volunteers on identification challenges, while in pharmaceutical manufacturing, it translates to corrective and preventive actions (CAPAs) to address systematic quality issues [19].

Implementing robust expert verification requires specific resources and methodologies. The table below outlines key components of the verification toolkit across different applications.

Table 3: Essential Resources for Implementing Expert Verification

| Tool/Resource | Function in Verification Process | Application Examples |

|---|---|---|

| Reference Collections | Provide authoritative standards for comparison [2] | Herbarium specimens in botany; Drug compound libraries in pharma [2] |

| Digital Verification Platforms | Enable remote expert review and collaboration [2] | Online citizen science portals; Digital regulatory submission systems [2] [19] |

| Validation Methodologies | Structured approaches to assess system correctness [20] [21] | Partitioning techniques for knowledge bases; Statistical validation methods [21] |

| Expert Qualification Standards | Ensure verifiers possess necessary expertise [19] [24] | Former FDA officials for regulatory testimony; Taxonomists for species identification [19] [2] |

| Documentation Frameworks | Create auditable records of verification decisions [19] | Expert reports for litigation; Verification logs for citizen science data [19] [2] |

While expert verification remains the gold standard for data validation, its implementation is evolving. Current research indicates a shift toward hierarchical verification systems where the bulk of records are verified through automation or community consensus, with experts focusing on flagged records or complex cases [2]. This approach maintains quality while addressing scalability limitations.

In specialized fields like pharmaceutical development and regulatory compliance, expert verification continues to be indispensable due to the high-stakes nature of decisions based on the validated data [19] [24]. The credibility provided by verified experts—particularly those with regulatory agency experience—remains unmatched for legal and regulatory proceedings [19].

Future developments will likely focus on integrating expert knowledge with emerging technologies such as artificial intelligence, creating hybrid systems that leverage both human expertise and computational efficiency [2] [23]. However, the fundamental principles of expert verification—rigorous assessment by qualified professionals using systematic methodologies—will continue to underpin high-quality research and development across scientific disciplines.

In the evolving landscape of scientific research, particularly within drug development and environmental monitoring, the proliferation of data sources presents both unprecedented opportunities and significant validation challenges. The growing integration of citizen science data and the expanding use of synthetic datasets demand robust methodological frameworks to ensure analytical accuracy and reliability. Community consensus emerges as a critical paradigm, leveraging collective intelligence to distinguish robust signals from noise in complex datasets. This approach is particularly vital as the industry navigates the tension between rapidly generated synthetic data and traditional real-world evidence, a balance increasingly prioritized for creating clinically valid drug discovery processes [25].

The methodology is broadly applicable, extending from biomedicine to environmental science. In drug development, consensus clustering and data integration techniques are refining how researchers analyze gene expression patterns across multiple microarray studies, yielding more reliable results based on larger sample sizes [26]. Simultaneously, in ecological studies, structured citizen science initiatives are validating methods for community-driven environmental monitoring, demonstrating that non-specialist data collection can achieve scientific rigor when governed by appropriate consensus protocols [27]. This guide compares prominent community consensus approaches, evaluating their experimental performance and practical implementation to serve researchers and drug development professionals in their validation workflows.

Comparative Analysis of Consensus Methodologies

Quantitative Performance Comparison

The following table summarizes the core characteristics and performance metrics of three dominant consensus approaches, highlighting their respective advantages and optimal use cases.

Table 1: Comparative Analysis of Community Consensus Methodologies

| Methodology | Primary Domain | Core Consensus Mechanism | Key Performance Metrics | Relative Computational Load | Data Input Requirements |

|---|---|---|---|---|---|

| Iterative Co-occurrence (Louvain) [28] | Network Psychometrics, Complex Networks | Iterative reapplications of a community detection algorithm to derive a stable solution from a co-occurrence matrix. | Stability of the final partition across iterations; Modularity of the solution. | High (requires 100s-1000s of iterations) | A single network or correlation matrix. |

| FCA-Enhanced Clustering [26] | Genomic Data Integration, Multi-Experiment Analysis | Application of Formal Concept Analysis (FCA) to pool and analyze clustering solutions from multiple related datasets. | Biological Relevance (e.g., gene function enrichment); Cluster Consistency across datasets. | Medium (depends on initial clustering and lattice construction) | Multiple datasets or pre-computed clusterings addressing a similar biological question. |

| Citizen Science Validation [27] | Environmental Monitoring, Microplastic Pollution | Standardized data collection protocols using calibrated instruments, with statistical validation against known baselines. | Data Accuracy (vs. expert measurements); Statistical Power (p-values, variance); Policy Impact. | Low (focus on field data collection) | Physical samples and standardized trawl data from multiple sites. |

Experimental Outcome Comparison

The table below synthesizes key experimental findings from studies that implemented these consensus methods, providing a summary of their demonstrated efficacy.

Table 2: Summary of Experimental Outcomes from Consensus Approaches

| Methodology | Reported Outcome / Efficacy | Validation Approach | Identified Limitations |

|---|---|---|---|

| Iterative Co-occurrence (Louvain) [28] | Produces a stable, reproducible community structure from stochastic algorithms. | Internal stability and modularity metrics across many algorithm applications. | Can be computationally intensive; Performance can degrade with a very high number of nodes. |

| FCA-Enhanced Clustering [26] | Able to construct high-quality, representative consensus clustering from multiple experiments, preserving biological signals. | Evaluation of cluster consistency and biological relevance (e.g., gene function). | Quality is dependent on the initial grouping of experiments and the chosen clustering algorithm. |

| Citizen Science Validation [27] | Successfully quantified microplastic density (0.02 ± 0.03 particles/m³) across 58 samples, identifying significant inter-site variation (p=0.005). | Comparison with previous professional studies; statistical analysis of variance between sites. | Limited by sampling effort and instrument sensitivity; potential for contamination. |

Detailed Experimental Protocols

This section outlines the standard operating procedures for implementing the key consensus methodologies, providing a reproducible framework for researchers.

Protocol for Iterative Consensus Clustering (Louvain)

This protocol is based on the method described by Lancichinetti & Fortunato (2012) and implemented in the EGAnet R package [28].

- Objective: To derive a stable community structure from a complex network using a consensus-based approach that mitigates the inherent stochasticity of the Louvain algorithm.

- Materials: A network representation as a matrix or `igraph` object.

- Procedure:

- Algorithm Application: Apply the standard Louvain community detection algorithm to the target network a large number of times (N), typically N = 1000, to generate a set of partitions.

- Co-occurrence Matrix Construction: Create an n × n co-occurrence matrix, where n is the number of nodes in the network and each cell (i, j) holds the proportion of the N partitions in which nodes i and j were assigned to the same community.

- Thresholding and Sparsification: Set all values in the co-occurrence matrix below a predetermined threshold (e.g., 0.30) to zero, effectively creating a new, sparsified network.

- Iteration: Re-apply the Louvain algorithm to this new network and repeat steps 2 and 3. This process continues iteratively.

- Termination: The procedure terminates when a consensus is reached, typically when all nodes within a community co-occur with each other in 100% of the iterations, resulting in a final, stable partition.
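The co-occurrence, thresholding, and termination steps can be sketched in a few lines of Python. This is a simplified illustration, not the EGAnet implementation: the partitions are supplied by hand as stand-ins for repeated Louvain runs, and the function names are hypothetical.

```python
from itertools import combinations


def co_occurrence(partitions):
    """Proportion of partitions in which each node pair shares a community.

    Each partition is a dict mapping node -> community label.
    """
    nodes = sorted(partitions[0])
    counts = {pair: 0 for pair in combinations(nodes, 2)}
    for part in partitions:
        for i, j in counts:
            if part[i] == part[j]:
                counts[(i, j)] += 1
    return {pair: c / len(partitions) for pair, c in counts.items()}


def sparsify(matrix, threshold=0.30):
    """Set co-occurrence values below the threshold to zero."""
    return {pair: (w if w >= threshold else 0.0) for pair, w in matrix.items()}


def is_consensus(matrix):
    """True when every pair co-occurs in all partitions or in none."""
    return all(w in (0.0, 1.0) for w in matrix.values())


# Four hand-written partitions standing in for repeated Louvain runs.
# Community labels are arbitrary, so p4 encodes the same grouping as p1.
p1 = {"a": 0, "b": 0, "c": 1, "d": 1}
p2 = {"a": 0, "b": 0, "c": 1, "d": 1}
p3 = {"a": 0, "b": 0, "c": 0, "d": 1}
p4 = {"a": 1, "b": 1, "c": 0, "d": 0}

co = co_occurrence([p1, p2, p3, p4])
sparse = sparsify(co, threshold=0.30)
print(sparse)                # weights for the next round of community detection
print(is_consensus(sparse))  # False: c and d co-occur in only 75% of runs
```

In a full implementation the sparsified weights would be passed back to the community detection algorithm as a weighted network, and the loop would repeat until `is_consensus` returns true.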

Consensus Variants: The protocol allows for different methods to select the final consensus solution from the set of partitions, including:

- `"iterative"`: The original, recommended approach described above.

- `"most_common"`: Selects the single partition that appears most frequently across all N applications.

- `"highest_modularity"`: Selects the partition with the highest modularity score.

Protocol for FCA-Enhanced Consensus Clustering

This protocol is designed for integrating clustering results from multiple gene expression datasets, as validated in bioinformatics research [26].

- Objective: To generate a unified, biologically relevant clustering of genes (or other entities) from multiple related experimental datasets.

- Materials: Multiple gene expression matrices (e.g., from microarray studies) addressing a similar biological question.

- Procedure:

- Dataset Grouping: Partition the available microarray experiments into logically related groups based on a predefined criterion (e.g., experimental condition, tissue type).

- Initial Consensus Clustering: Apply a standard consensus clustering algorithm (e.g., Integrative or PSO-based) to each group of experiments separately, producing one clustering solution per group.

- Membership Pooling: Pool the cluster membership information from all the group-level consensus solutions into a single, combined dataset.

- Formal Concept Analysis (FCA):

- Input: The pooled membership data, where genes are objects and cluster assignments are attributes.

- Process: FCA constructs a concept lattice. Each formal concept in this lattice is a pair (A, B), where A is a set of genes (the extent) and B is the set of clusters that all genes in A belong to (the intent).

- Final Partition Generation: The formal concepts are used to derive the final disjoint clustering partition for the entire compendium of experiments. Genes that are frequently grouped together across the different group-level solutions will tend to form concepts together.
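To make the FCA step concrete, the sketch below enumerates the formal concepts of a tiny pooled-membership context. The gene names and cluster labels (`A:1` meaning cluster 1 in the solution for group A) are invented for illustration. The code stops at the concept list and does not attempt the final step of turning the lattice into a disjoint partition, because the selection rule used in [26] is not given here.

```python
def formal_concepts(context):
    """Enumerate the formal concepts (extent, intent) of a binary context.

    `context` maps each object (gene) to the set of attributes
    (cluster memberships) it carries.
    """
    all_attrs = frozenset().union(*context.values())
    intents = {all_attrs}
    for attrs in context.values():
        # Every intent is an intersection of object rows, so close the
        # family of intents under intersection with each row in turn.
        intents |= {frozenset(attrs) & intent for intent in intents}
    concepts = []
    for intent in intents:
        extent = frozenset(g for g, attrs in context.items() if intent <= attrs)
        concepts.append((extent, intent))
    # Largest extents first, for readability.
    return sorted(concepts, key=lambda c: (-len(c[0]), sorted(c[0])))


# Hypothetical pooled memberships from two group-level consensus solutions.
context = {
    "g1": {"A:1", "B:1"},
    "g2": {"A:1", "B:1"},
    "g3": {"A:1", "B:2"},
    "g4": {"A:2", "B:2"},
}

concepts = formal_concepts(context)
for extent, intent in concepts:
    print(sorted(extent), sorted(intent))
```

Here `g1` and `g2` share both cluster assignments and so appear together in the concept with intent `{A:1, B:1}`, which mirrors the observation that genes grouped together across solutions tend to form concepts together.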

Protocol for Validating Citizen Science Data

This protocol outlines the method for community-driven microplastic monitoring, which can be adapted for other citizen science validation projects [27].

- Objective: To collect and validate scientifically robust environmental monitoring data through structured citizen science initiatives.

- Materials:

- Trawls: Low-tech aquatic debris instrument (LADI) or high-speed AVANI trawl.

- Vessels: Whale-watching or expedition vessels for sample collection.

- Lab Equipment: Filters, microscopes, and Fourier-Transform Infrared (FTIR) or Raman spectroscopy for polymer identification.

- Procedure:

- Training: Equip citizen scientists with standardized protocols for operating trawling equipment and collecting samples.

- Systematic Sampling: Collect trawl samples from a pre-defined set of geographic locations over a sustained period (e.g., 58 samples over 4 years). The sampling design should ensure spatial and temporal coverage.

- Laboratory Analysis:

- Extraction: Meso- and microplastics (M/MP) are extracted from samples following standardized wet lab procedures.

- Identification and Quantification: Particles are counted, measured, and the polymer composition is identified using spectroscopic techniques.

- Data Validation:

- Statistical Analysis: Calculate average particle densities and perform analysis of variance (ANOVA) to test for significant differences between sampling sites.

- Benchmarking: Compare findings with those from previous professional studies to assess accuracy and consistency.

- Uncertainty Quantification: Report densities with standard deviations and statistical confidence measures.
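The statistical analysis step can be illustrated with a one-way ANOVA computed from first principles. The site names and density values below are made up for demonstration and are not the data from [27]. The sketch returns only the F statistic and its degrees of freedom; a p-value requires the F distribution, for which a statistics library such as SciPy (`scipy.stats.f_oneway`) would normally be used.

```python
from statistics import mean, stdev


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = mean(x for g in groups for x in g)
    # Variation of site means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of samples around their own site mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical particle densities (particles/m^3) from three sampling sites.
sites = {
    "site_a": [0.01, 0.02, 0.03],
    "site_b": [0.02, 0.03, 0.04],
    "site_c": [0.05, 0.06, 0.07],
}

for name, values in sites.items():
    print(f"{name}: {mean(values):.3f} ± {stdev(values):.3f} particles/m^3")

f_stat, df_b, df_w = one_way_anova_f(list(sites.values()))
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
```

Reporting each site as mean ± standard deviation alongside the test statistic follows the uncertainty quantification step of the protocol.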

Visualizing Workflows and Signaling Pathways

Workflow for Iterative Consensus Clustering

The following diagram illustrates the iterative process of the consensus clustering method, showing how multiple applications of an algorithm converge on a stable solution.

Figure 1: Iterative consensus clustering workflow for network data.

Workflow for FCA-Enhanced Data Integration

This diagram maps the flow of pooling multiple clustering results and applying Formal Concept Analysis to achieve a final integrated consensus.

Figure 2: FCA-enhanced workflow for multi-dataset consensus.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, software, and instruments crucial for implementing the consensus methodologies discussed in this guide.

Table 3: Essential Research Reagents and Solutions for Consensus Methodologies

| Item Name | Category | Primary Function in Consensus Protocols |

|---|---|---|

| EGAnet R Package [28] | Software | Provides the `community.consensus` function and related tools for implementing iterative consensus clustering with the Louvain algorithm. |

| Formal Concept Analysis (FCA) [26] | Analytical Framework | A mathematical framework for data analysis that enables the integration of multiple clustering solutions by deriving a concept lattice from pooled memberships. |

| Low-tech Aquatic Debris Instrument (LADI) [27] | Field Equipment | A standardized, accessible trawl used in citizen science for consistent collection of microplastic samples from surface waters, enabling data comparability. |

| AVANI Trawl [27] | Field Equipment | A high-speed trawl for collecting microplastic samples, offering an alternative to the LADI for specific marine monitoring conditions. |

| Polymer Identification Spectroscopy (FTIR/Raman) [27] | Laboratory Equipment | Used to identify the polymer composition (e.g., Polyethylene, Polypropylene) of collected plastic particles, providing critical data for source analysis. |

| Particle Swarm Optimization (PSO) [26] | Algorithm | An evolutionary computation method used in one consensus clustering approach to find an optimal solution by updating candidate solutions based on group information. |

The integration of artificial intelligence (AI) into high-stakes fields like healthcare and drug development necessitates robust validation frameworks to ensure reliability and safety. A significant barrier to clinical implementation is the traditional model output of a single "point prediction" without any associated measure of reliability. This is particularly critical when models encounter data that differs from their training set, leading to unreliable and potentially dangerous predictions [29]. Conformal Prediction (CP) has emerged as a powerful, model-agnostic framework for addressing this exact challenge. It provides a mathematically rigorous method for quantifying uncertainty, transforming single-point predictions into statistically valid prediction sets or intervals [30]. This guide objectively compares the performance of CP-enhanced deep learning models against traditional approaches, framing the discussion within the broader thesis of validation for citizen science and biomedical research, where data variability and quality are paramount concerns.

Core Principles of Conformal Prediction

Conformal Prediction is a distribution-free framework that assigns a measure of confidence to predictions from any machine learning model. Its core operation involves calculating a nonconformity score, which quantifies how "unusual" a new data point is compared to a set of previously seen calibration data [30]. The framework operates under the assumption that data is exchangeable, a slightly weaker assumption than the traditional independent and identically distributed (i.i.d.) requirement.

CP generates a prediction set for classification tasks or a prediction interval for regression tasks. The user specifies a desired confidence level (e.g., 95%), and the CP guarantee ensures that the true outcome will be contained within the generated set with at least that probability [31] [29]. This process acts as a quality control system; if the model is uncertain, the prediction set will contain multiple possible labels (or a wider interval), flagging the prediction for human review. Conversely, a single-label prediction indicates high model confidence [32].

Two primary frameworks exist:

- Transductive CP (TCP): Often considered the "full" version, it provides highly accurate predictions but is computationally intensive as it requires retraining the model for each new test instance [30].

- Inductive CP (ICP): Also known as split-conformal, this method splits the data into a proper training set and a calibration set. The model is trained only once, making ICP much more efficient and practical for large datasets, such as those in genomic medicine and deep learning [30] [33].
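A minimal sketch of the inductive (split) variant for classification is shown below. It assumes one common choice of nonconformity score, one minus the softmax probability of the true class, and uses a small invented calibration set. The function names are illustrative and do not come from any particular library.

```python
import math


def conformal_quantile(cal_probs, cal_labels, alpha):
    """Calibrate the nonconformity threshold on a held-out calibration set.

    Score used here (an assumption): 1 - softmax probability of the true class.
    """
    scores = sorted(1.0 - p[y] for p, y in zip(cal_probs, cal_labels))
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))  # finite-sample corrected rank
    if rank > n:
        return math.inf  # too few calibration points: include every class
    return scores[rank - 1]


def prediction_set(probs, qhat):
    """All classes whose nonconformity score does not exceed the threshold."""
    return [k for k, p in enumerate(probs) if 1.0 - p <= qhat]


# Invented two-class softmax outputs and true labels for calibration.
cal_probs = [
    [0.95, 0.05], [0.10, 0.90], [0.85, 0.15], [0.20, 0.80], [0.70, 0.30],
    [0.40, 0.60], [0.55, 0.45], [0.60, 0.40], [0.30, 0.70],
]
cal_labels = [0, 1, 0, 1, 0, 1, 0, 1, 0]

qhat = conformal_quantile(cal_probs, cal_labels, alpha=0.2)  # 80% confidence
print(prediction_set([0.90, 0.10], qhat))  # confident case: single label
print(prediction_set([0.55, 0.45], qhat))  # uncertain case: both labels
```

The model is trained once and never retrained here; only the threshold is calibrated, which is what makes the inductive variant cheap. A multi-label output is the signal to route a prediction to human review.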

Performance Comparison: Conformal Prediction vs. Traditional Methods

The following tables summarize experimental data from various domains, comparing the performance of models enhanced with conformal prediction against traditional point-prediction models.

Table 1: Performance in Medical Diagnostics and Genomics

| Application Domain | Model / Method | Key Performance Metric (Without CP) | Key Performance Metric (With CP) | Outcome and Implications |

|---|---|---|---|---|

| Prostate Cancer Pathology [29] | Deep Neural Network | 14 errors (2%) for cancer detection | 1 error (0.1%) for cancer detection; 22% of predictions flagged as unreliable | Drastic error reduction and clear flagging of uncertain cases significantly enhance patient safety. |

| Acute Lymphoblastic Leukemia (ALL) Subtyping [32] | ALLIUM RNA-seq Classifier | 8.95% False Negative Rate (FNR) | 3.5% FNR (with a specified tolerance) | ALLCoP (the CP implementation) provided statistically guaranteed FNR control, reducing missed diagnoses. |

| Sepsis Prediction in Non-ICU Patients [33] | Deep Learning Model (LSTM/Transformer) | High false alarm rates, lack of generalizability | 57% reduction in false alarms at the 6-hour prediction window | CP made the model more reliable and practical for clinical deployment in low-monitoring environments. |

| ISUP Grading of Prostate Biopsies [29] | Deep Neural Network | 117 errors (33%) | 70 errors (20%) using a mixed-confidence CP approach | CP managed uncertainty differently across disease grades, optimizing the trade-off between accuracy and informativeness. |

Table 2: Performance in Renewable Energy Forecasting and Robustness

| Application Domain | Model / Method | Key Performance Metric | Comparative Findings |

|---|---|---|---|

| Very-Short-Term Solar Power Forecasting [34] | Transformer + CP (ICP, J+aB, EnbPI) | Prediction Interval Coverage & Efficiency (Width) | Traditional models (Random Forest, XGBoost) with CP produced well-calibrated intervals. The LSTM model with CP produced overly narrow intervals with undercoverage. The Transformer model with CP demonstrated the most robust performance, balancing interval sharpness and reliability across all conditions. |

| Image Classification with Altered Data [31] | Standard CP vs. Adaptive PRCP (aPRCP) | Coverage under data perturbations | Standard CP struggled with altered data. The aPRCP algorithm, using a "quantile-of-quantile" design, achieved better trade-offs between nominal performance on clean data and robustness against noisy or adversarially altered inputs, outperforming worst-case robust methods. |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear understanding of the cited experiments, this section outlines their core methodologies.

Protocol 1: Anatomical Pathology Cancer Diagnosis

This experiment demonstrated how CP could safeguard an AI system for diagnosing and grading prostate cancer from biopsies [29].

- Objective: To validate an AI system for prostate biopsy analysis and ensure patient safety by quantifying diagnostic uncertainty.

- Dataset: 7,788 biopsies for training; 3,059 biopsies across six test sets evaluating different scanners, labs, and tissue types.

- Model: A convolutional deep neural network served as the underlying AI [29].

- Conformal Setup: An inductive conformal predictor was implemented. The nonconformity score measured the disagreement between the model's predicted class and the ground truth label assigned by an expert uropathologist.

- Validation: The CP framework was evaluated on Test Set 1 (idealized conditions) and Test Set 4 (atypical prostate tissue). Performance was measured by validity (whether the error rate was below the preset significance level) and efficiency (the proportion of predictions that were single, precise labels) [29].
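The inductive setup and the two evaluation criteria above can be sketched as follows. This is a minimal illustration, not the implementation from [29]: it assumes the nonconformity score is one minus the model's predicted probability for a class, and the function names are hypothetical.

```python
import numpy as np

def icp_prediction_sets(cal_probs, cal_labels, test_probs, significance):
    """Inductive conformal prediction sets from class probabilities."""
    cal_probs = np.asarray(cal_probs, float)
    cal_labels = np.asarray(cal_labels)
    test_probs = np.asarray(test_probs, float)
    n = len(cal_labels)
    # Calibration scores: 1 - probability assigned to the expert (ground truth) label.
    cal_scores = 1.0 - cal_probs[np.arange(n), cal_labels]
    # Candidate scores for every test example and every possible label.
    test_scores = 1.0 - test_probs
    # p-value: share of calibration scores at least as large, with +1 smoothing.
    at_least = (cal_scores[None, None, :] >= test_scores[:, :, None]).sum(axis=2)
    p_values = (at_least + 1) / (n + 1)
    # A label stays in the set when its p-value exceeds the significance level.
    return p_values > significance

def validity_and_efficiency(pred_sets, test_labels):
    """Error rate (for the validity check) and share of single-label predictions."""
    test_labels = np.asarray(test_labels)
    m = len(test_labels)
    covered = pred_sets[np.arange(m), test_labels]
    error_rate = 1.0 - covered.mean()
    single_label_share = (pred_sets.sum(axis=1) == 1).mean()
    return float(error_rate), float(single_label_share)
```

The predictor is valid at a given significance level when `error_rate` does not exceed it on exchangeable test data; `single_label_share` corresponds to the efficiency measure described above.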

Protocol 2: Molecular Leukemia Subtyping

This study applied CP to reduce errors in RNA-sequencing-based classification of Acute Lymphoblastic Leukemia (ALL) subtypes [32].

- Objective: To quantify uncertainty and control the False Negative Rate (FNR) in ALL subtype classifiers.

- Dataset: RNA-seq data from 1,227 pediatric and young adult ALL patient samples.

- Model: Three classifiers were used: ALLIUM, ALLSorts, and ALLCatchR.

- Conformal Setup: The researchers used split conformal prediction with risk control. The softmax outputs from the classifiers served as the input for calibration.

- A calibration set was used to find a threshold λ̂ on the softmax scores. For any new sample, all subtypes with a softmax score above λ̂ were included in the prediction set, guaranteeing the FNR would be at or below a user-specified tolerance α [32].

- Validation: ALLCoP was cross-validated on a subset of samples with known subtypes to demonstrate FNR reduction. It was then applied to samples with unknown subtypes to analyze the resulting prediction sets.
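The thresholding step in the conformal setup can be sketched as below. This is a simplified illustration in the spirit of conformal risk control for single-label samples, not the ALLCoP code from [32]. The function names are hypothetical, and the sketch keeps subtypes whose score is at or above the threshold (rather than strictly above) so that the threshold can be read directly off the calibration scores.

```python
import math
import numpy as np

def calibrate_fnr_threshold(cal_probs, cal_labels, alpha):
    """Largest softmax threshold whose corrected calibration miss rate stays <= alpha."""
    cal_probs = np.asarray(cal_probs, float)
    cal_labels = np.asarray(cal_labels)
    n = len(cal_labels)
    # Softmax score the classifier gave to each sample's known subtype, ascending.
    true_scores = np.sort(cal_probs[np.arange(n), cal_labels])
    # Allow m misses only if (m + 1) / (n + 1) <= alpha (finite-sample correction).
    max_misses = math.floor(alpha * (n + 1) - 1)
    if max_misses < 0:
        return -math.inf  # calibration set too small: keep every subtype
    k = min(max_misses, n - 1)
    # Thresholding at the (k + 1)-th smallest score misses at most k calibration samples.
    return float(true_scores[k])

def prediction_sets(probs, lamhat):
    """Boolean mask of subtypes kept for each sample."""
    return np.asarray(probs, float) >= lamhat
```

A lower tolerance `alpha` yields a lower threshold and therefore larger prediction sets, which is the trade-off between missed subtypes and informativeness that the protocol describes.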

Protocol 3: Robustness Against Data Perturbations

This research addressed a key limitation of standard CP: its vulnerability to perturbations in the input data [31].

- Objective: To develop a conformal prediction method that remains reliable even when input data is noisy or has been altered.

- Dataset: Standard image classification datasets including CIFAR-10, CIFAR-100, and ImageNet.

- Method: The proposed Adaptive Probabilistically Robust Conformal Prediction (aPRCP) algorithm uses a "quantile-of-quantile" design. It essentially sets two thresholds—one for the original data and another for the altered data—allowing it to adaptively balance performance between clean and corrupted inputs without requiring knowledge of the worst-case scenario [31].

- Validation: aPRCP was compared against standard CP and worst-case robust CP methods. The key metric was the achievement of "probabilistically robust coverage," showing better trade-offs between accuracy on clean data and robustness on altered data.
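One simplified reading of the "quantile-of-quantile" idea is sketched below. It is not the published aPRCP algorithm from [31]. It assumes that nonconformity scores for several randomly perturbed copies of each calibration example have already been computed and stored in a matrix `perturbed_scores` (rows are examples, columns are perturbations). The parameter names `alpha` (tolerance over data samples) and `alpha_tilde` (tolerance over perturbations) and the function names are hypothetical.

```python
import math
import numpy as np

def quantile_of_quantile_threshold(perturbed_scores, alpha, alpha_tilde):
    """Inner quantile over perturbations, then a conformal quantile over examples."""
    scores = np.sort(np.asarray(perturbed_scores, float), axis=1)
    n, m = scores.shape
    # Inner step: per-example (1 - alpha_tilde) quantile across its perturbed copies.
    k_inner = min(max(math.ceil(m * (1 - alpha_tilde)), 1), m)
    per_example = np.sort(scores[:, k_inner - 1])
    # Outer step: conformal (1 - alpha) quantile across calibration examples,
    # using the finite-sample (n + 1) correction.
    k_outer = max(math.ceil((n + 1) * (1 - alpha)), 1)
    if k_outer > n:
        return math.inf  # calibration set too small for the requested level
    return float(per_example[k_outer - 1])

def prediction_set(candidate_scores, threshold):
    """Keep every candidate label whose nonconformity score is within the threshold."""
    return np.asarray(candidate_scores, float) <= threshold
```

Setting `alpha_tilde` to 0 takes the worst perturbation seen for each example, which approximates a worst-case design; larger values relax the inner quantile and generally give smaller prediction sets.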

Visualization of Workflows and Relationships

The following diagrams illustrate the logical workflow of a standard inductive conformal prediction process and the core concept of the adaptive PRCP algorithm.

Inductive Conformal Prediction Workflow

Adaptive PRCP Core Concept

The Scientist's Toolkit: Essential Research Reagents and Solutions

This table details key computational tools, datasets, and methodological components essential for implementing conformal prediction in validation workflows.

Table 3: Key Research Reagents for Conformal Prediction Experiments

| Item Name | Type | Function in Research | Example Use Case |

|---|---|---|---|

| Calibration Dataset | Data | A held-out set of labeled data, independent from the training set, used to calculate the nonconformity score distribution and calibrate the prediction sets. | Critical for all CP applications; used to set the coverage threshold for sepsis prediction [33] and leukemia subtyping [32]. |

| Nonconformity Measure | Algorithm | A function that quantifies how atypical an example is relative to the calibration data. A common measure is one minus the predicted probability of the true class. | The core of any CP implementation; morphological dilation count was used as a novel measure for segmentation tasks [35]. |

| Structuring Element | Parameter (Image Analysis) | Defines the neighborhood and shape for morphological operations (like dilation) used to calculate nonconformity scores for image segmentation models. | Used in CONSEMA to define the "margin" around a segmentation mask to ensure coverage of the ground truth [35]. |

| ALLCoP | Software Tool | A specific implementation of a conformal predictor designed for risk control in RNA-seq ALL subtype classification. | Used to provide FNR guarantees for the ALLIUM, ALLSorts, and ALLCatchR classifiers [32]. |

| aPRCP Algorithm | Algorithm | An advanced conformal method that sets dual thresholds to maintain reliability against a probabilistic corruption of input data. | Provides robustness for image classifiers deployed in environments where input data may be noisy or altered [31]. |

| MIMIC-IV / eICU-CRD | Dataset | Publicly available critical care databases containing detailed, timestamped patient data, ideal for developing and validating time-series models. | Used to train and validate the deep learning model for sepsis prediction across different hospital settings [33]. |

The field of biodiversity conservation is undergoing a technological revolution, driven by the integration of artificial intelligence (AI) into the data collection and validation pipelines of citizen science. The core challenge in modern ecology, processing the exponentially growing volumes of data from camera traps, acoustic sensors, and citizen scientist observations, has found a potent solution in AI. This case study examines the pivotal role of AI-powered mobile applications in enabling real-time species identification, with a specific focus on their capacity to validate citizen science data. This technological advancement is not merely a convenience; it represents a fundamental shift in how ecological data is gathered, verified, and utilized, moving from slow, labor-intensive manual processes to rapid, scalable, and automated systems that empower both professional researchers and the public.

Traditionally, species identification and population tracking relied on manual fieldwork, which is often slow, expensive, and difficult to scale [36]. The emergence of AI tools addresses a critical bottleneck. As noted in research on AI for biodiversity monitoring, these systems "can achieve automated, intelligent, long-term real-time monitoring" (translated from the Chinese original), directly tackling issues of data inconsistency, high costs, and limited scope that have long plagued traditional methods [36]. This case study will objectively compare the performance of leading AI species identification tools, detail the experimental protocols that validate their efficacy, and frame these advancements within the broader research thesis of strengthening the scientific rigor of citizen-collected data.

Comparative Analysis of AI Species Identification Tools

The market for AI-powered wildlife monitoring tools has expanded significantly, offering researchers and conservationists a suite of options tailored to different needs, from camera trap image analysis to acoustic monitoring. The following analysis provides a data-driven comparison of leading platforms, evaluating their core capabilities, accuracy, and ideal use cases to inform selection for citizen science and professional research applications.

Table 1: Top AI Wildlife Monitoring Tools for Species Identification

| Tool Name | Primary Function | Key Supported Data Types | Reported Accuracy/Performance | Citizen Science Integration |

|---|---|---|---|---|

| SpeciesNet (Wildlife Insights) [37] | Multi-species image identification | Camera trap images | Animal detection: 99.4%; presence prediction correct: 98.7%; species-level prediction accurate: 94.5% | High (open-source model, global platform) |

| WildTrack AI [38] | Species ID from footprints & images | Footprint images, Camera trap images | Camera trap image processing with >95% accuracy | Medium (mobile app for field researchers) |

| Zooniverse Wildlife AI [38] | Citizen-science powered species classification | Camera trap images | Relies on human-AI loop; accuracy improves with crowdsourced validation | Very High (gamified interface for volunteers) |

| ConserVision AI [38] | Acoustic species recognition | Audio (bird calls, whale songs) | Real-time audio species recognition for 500+ bird species | Low (specialized for researchers) |

| WildMe (Wildbook) [38] | Individual animal identification | Images of patterned species (whales, cheetahs) | Uses stripe/spot recognition for individual ID; excellent for population studies | Medium (collaborative open-source platform) |

Table 2: Technical Features and Practical Considerations

| Tool Name | Standout Feature | Platform Access | Pricing Model | Best For |

|---|---|---|---|---|

| SpeciesNet (Wildlife Insights) [37] | Trained on >65 million images; open-source | Web platform, API | Free / Open Source | Large-scale, multi-species camera trap projects |

| WildTrack AI [38] | Footprint recognition technology | Web, Mobile | Starts at $199/month | Remote locations, elusive species tracking |

| Zooniverse Wildlife AI [38] | Human-in-the-loop training for AI accuracy | Web | Free | Community-driven projects with massive datasets |

| ConserVision AI [38] | Real-time sound analysis; works in no-light conditions | Web, IoT devices | Starts at $99/month | Ornithology, marine research, and nocturnal studies |

| WildMe (Wildbook) [38] | Individual-level identification via patterns | Web | Free / Custom | Precise population tracking of specific species |

The performance data indicate that AI tools like SpeciesNet achieve reported accuracy levels that meet or exceed the capabilities of many human experts in specific identification tasks, a fact crucial for their role in validating citizen science data [37]. By way of analogy from medical imaging, one study noted that "AI's sensitivity in identifying pulmonary nodules on CT scans is about 96.7%, higher than radiologists' 78.1%" (translated from the Chinese original), demonstrating the potential of AI to augment and verify human observation in biological contexts as well [39]. The choice of tool depends heavily on project parameters: governments and large parks may require the integrated data dashboard of EarthRanger AI, while NGOs on a budget can leverage the citizen-powered Zooniverse or open-source SpeciesNet for cost-effective data validation at scale [38].

Experimental Protocols for Validating AI Identification Tools

The deployment of AI for species identification, particularly in a citizen science context, necessitates rigorous and standardized experimental protocols to quantify performance, identify limitations, and establish scientific trust in the resulting data. The following section outlines the key methodological frameworks used to evaluate these AI systems.

Camera Trap Image Recognition Validation

Objective: To evaluate the accuracy, sensitivity, and specificity of an AI model in identifying and classifying animal species from camera trap images.

Workflow: The standard validation protocol follows a structured pipeline from data acquisition to model performance assessment, ensuring that the AI is tested on a robust and representative dataset.

Methodology Details:

- Data Acquisition: Models like SpeciesNet are trained on massive, diverse datasets. For example, the model powering the Wildlife Insights platform was trained on a dataset of over 65 million images contributed by global conservation organizations [37]. This scale is critical for teaching the AI to recognize species across different geographies, camera angles, and lighting conditions.

- Expert Annotation: A subset of images is meticulously labeled by expert ecologists to establish a "ground truth" benchmark. This curated dataset is then typically split, with a large portion (e.g., 80%) used for training the AI and the remainder (e.g., 20%) held back as a test set to evaluate its performance objectively [37].

- Performance Metrics: The AI's predictions on the test set are compared against the expert labels to calculate key metrics [37]:

- Animal Detection Rate (Sensitivity): The proportion of images containing animals that are correctly identified as such by the AI (e.g., 99.4%).

- Precision: When the AI predicts an animal is present, the probability that it is correct (e.g., 98.7%).

- Species-Level Accuracy: The proportion of species-level identifications that match the expert label (e.g., 94.5%).
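The three metrics above can be computed from paired expert and AI labels as in the following sketch. It is an illustration under stated assumptions rather than the evaluation code behind [37]: images without animals are assumed to carry the label `"blank"`, species-level accuracy is taken over the images for which the AI predicted a species, and the function name `detection_metrics` is hypothetical.

```python
def detection_metrics(expert_labels, ai_labels, blank="blank"):
    """Sensitivity, precision, and species-level accuracy against expert labels."""
    pairs = list(zip(expert_labels, ai_labels))
    # Images the experts say contain an animal, and images the AI says contain one.
    n_expert_animal = sum(1 for e, _ in pairs if e != blank)
    n_ai_animal = sum(1 for _, a in pairs if a != blank)
    # True positives: both the expert and the AI report an animal (any species).
    true_pos = sum(1 for e, a in pairs if e != blank and a != blank)
    # Species matches among the images where the AI named a species.
    species_match = sum(1 for e, a in pairs if a != blank and e == a)
    nan = float("nan")
    return {
        "sensitivity": true_pos / n_expert_animal if n_expert_animal else nan,
        "precision": true_pos / n_ai_animal if n_ai_animal else nan,
        "species_accuracy": species_match / n_ai_animal if n_ai_animal else nan,
    }

# Toy labels, purely illustrative.
expert = ["deer", "blank", "fox", "deer", "blank"]
ai = ["deer", "fox", "fox", "boar", "blank"]
metrics = detection_metrics(expert, ai)
```

Other denominators are possible for species-level accuracy (for example, only images where both the expert and the AI report an animal), so the definition should be stated alongside any reported figure.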

Citizen Science Data Integration and Quality Control

Objective: To establish a framework for integrating AI-powered mobile apps into citizen science projects while implementing automated and manual checks to ensure data quality and reliability.

Workflow: A robust citizen science platform leverages AI for real-time support but incorporates multiple validation layers to create a reliable and scalable system.

Methodology Details:

- Real-Time AI Assistance: As a citizen scientist uploads a photo via a mobile app, the AI provides a real-time species suggestion along with a confidence score. This immediate feedback improves the accuracy of initial submissions and provides a valuable educational benefit [38].