Top-Down vs. Bottom-Up Control: From Ecological Theory to Drug Discovery Applications

Abstract

This article synthesizes the foundational principles of top-down (predator-driven) and bottom-up (resource-driven) control in ecological food webs and explores their critical parallels in pharmaceutical research and development. We examine how these dual control mechanisms govern ecosystem stability and, analogously, influence modern drug discovery strategies—from target-based, bottom-up molecular design to phenotype-based, top-down screening. For an audience of researchers and drug development professionals, the article provides a comparative analysis of methodological applications, addresses key challenges in both fields, and discusses the emerging 'middle-out' paradigm that integrates both approaches for optimized outcomes in ecological management and therapeutic innovation.

Foundations of Trophic Control: Defining Top-Down and Bottom-Up Forces in Nature and Science

Core Definitions and Conceptual Framework

In ecological research, the regulation of population sizes and ecosystem structure is primarily governed by two contrasting mechanisms: predator-limitation (top-down control) and resource-limitation (bottom-up control). These fundamental concepts form the foundational framework for understanding trophic dynamics and energy flux in biological systems.

Predator-limitation, or top-down control, describes a regulatory mechanism where populations at lower trophic levels are primarily controlled by the consumption pressure from organisms at higher trophic levels [1]. In this model, the presence or absence of top predators cascades downward through the food web, ultimately influencing the density and distribution of primary producers. This approach is also termed the "predator-controlled food web" of an ecosystem [1].

Resource-limitation, or bottom-up control, represents the alternative mechanism where ecosystem dynamics are driven primarily by the availability of resources at the base of the food web [1]. In this model, changes in the population density or biomass of primary producers—through either absence of food or inaccessibility due to competition—propagate upward through successive trophic levels, affecting herbivores and then carnivores [1]. This approach is consequently described as the "resource-controlled" or "food-limited" food web of an ecosystem [1].

Modern ecological research recognizes that these control mechanisms are not mutually exclusive; rather, they represent endpoints on a continuum of regulatory forces [1]. The dominant controlling factor in any given ecosystem often depends on which component, predators or resources, places the greater constraint on population growth; the component present in lesser relative numbers or biomass acts as the limiting factor [1]. Emerging theoretical frameworks suggest that intra-trophic diversity creates effective "emergent competition" between species within a trophic level due to feedbacks mediated by other trophic levels, forcing a crossover from top-down to bottom-up control regimes [2].

Table 1: Fundamental Characteristics of Predator-Limitation and Resource-Limitation

| Characteristic | Predator-Limitation (Top-Down) | Resource-Limitation (Bottom-Up) |
|---|---|---|
| Primary Driver | Consumption by higher trophic levels | Availability of primary resources |
| Direction of Control | Downward through trophic cascade | Upward through resource availability |
| Limiting Factor | Predation pressure | Nutrient/energy availability |
| Population Response | Prey populations suppressed by predators | Consumer populations track resource abundance |
| Theoretical Basis | Predator-controlled food web | Resource-controlled food web |
| Ecosystem Stability | Dependent on predator-prey dynamics | Dependent on resource consistency |

Experimental Evidence and Case Studies

Terrestrial Ecosystem Evidence

The classic tri-trophic system of plants, deer, and tigers exemplifies predator-limitation dynamics [1]. In this model, tigers as top predators regulate deer populations through consumption pressure. The absence of tigers leads to deer population explosion, subsequent overgrazing of plants, and eventual ecosystem collapse due to resource depletion [1]. Conversely, resource-limitation is observed when plant populations dwindle, causing deer starvation and population decline, which then leads to reduced tiger numbers due to prey scarcity [1]. Competition intensifies resource-limitation even when total food appears plentiful; the introduction of competing herbivore species (e.g., blackbucks) creates a food-limited system where competition for plants can lead to competitive exclusion [1].

Aquatic and Marine Ecosystem Evidence

Marine systems provide compelling experimental evidence for both control mechanisms. The sea otter-urchin-kelp system demonstrates clear predator-limitation dynamics [3]. Sea otters as top predators control sea urchin populations, which in turn regulates kelp consumption. Otter removal triggers urchin population explosions that devastate kelp forests, while otter recovery restores the kelp beds through reduced grazing pressure [3].

Conversely, the Northern Gulf of Mexico presents a resource-limitation case study, where agricultural runoff increases nutrient levels, stimulating epiphyte growth on seagrass blades [3]. This artificially enriched resource base supports larger herbivore populations and longer trophic chains, demonstrating bottom-up control. The negative resource-limitation scenario appears in eutrophication events, where excessive nutrient input causes algal blooms that block sunlight and, as they decompose, deplete dissolved oxygen, creating dead zones that collapse higher trophic levels [3].

Table 2: Comparative Experimental Evidence Across Ecosystem Types

| Ecosystem | Predator-Limitation Evidence | Resource-Limitation Evidence |
|---|---|---|
| Terrestrial Forest | Tiger predation regulates deer populations, preventing overgrazing | Drought reduces plant growth, limiting entire food web |
| Marine Coastal | Sea otter predation controls urchins, protecting kelp forests | Nutrient runoff stimulates algal growth, altering food web structure |
| Freshwater | Pike predation regulates minnow populations, indirectly affecting zooplankton | Nutrient limitation controls phytoplankton biomass and productivity |
| Grassland | Wolf predation on elk prevents overgrazing of willow and aspen | Soil nitrogen availability limits plant production and herbivore carrying capacity |

Methodological Approaches and Analytical Frameworks

Mathematical Modeling Foundations

The theoretical underpinnings of predator-prey dynamics are often derived from Lotka-Volterra equations, which form the basis for analyzing multi-species interactions in food webs [4]. The generalized multi-species Lotka-Volterra model can be represented as:

\[ \frac{dX_i}{dt} = X_i\left(b_i + \sum_{j=1}^{S} a_{ij} X_j\right) \]

where \(S\) represents the number of species in the web, \(b_i\) is the intrinsic rate of increase of species \(i\), and \(a_{ij}\) is the per capita effect of species \(j\) on species \(i\) [4]. This framework allows researchers to quantify the strength and direction of species interactions, parameterizing the relative importance of top-down versus bottom-up forces.
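
As a rough illustration of how this model separates the two control directions, the sketch below integrates the generalized Lotka-Volterra equation for a three-species chain (plant, herbivore, predator) with and without the predator. The parameter values in `b` and `a`, the helper names, and the fixed-step Runge-Kutta integrator are all assumptions chosen for illustration; they do not come from the cited studies.

```python
def glv_rhs(x, b, a):
    """dX_i/dt = X_i * (b_i + sum_j a_ij * X_j) for every species i."""
    n = len(x)
    return [x[i] * (b[i] + sum(a[i][j] * x[j] for j in range(n)))
            for i in range(n)]


def simulate_glv(x0, b, a, dt=0.05, steps=8_000):
    """Integrate with a fixed-step fourth-order Runge-Kutta scheme."""
    x = list(x0)
    for _ in range(steps):
        k1 = glv_rhs(x, b, a)
        k2 = glv_rhs([xi + 0.5 * dt * k for xi, k in zip(x, k1)], b, a)
        k3 = glv_rhs([xi + 0.5 * dt * k for xi, k in zip(x, k2)], b, a)
        k4 = glv_rhs([xi + dt * k for xi, k in zip(x, k3)], b, a)
        # Clip at zero so numerical error never produces negative abundances.
        x = [max(xi + dt / 6.0 * (p + 2 * q + 2 * r + s), 0.0)
             for xi, p, q, r, s in zip(x, k1, k2, k3, k4)]
    return x


# Hypothetical parameters: species 0 = plant, 1 = herbivore, 2 = predator.
b = [1.0, -0.1, -0.1]          # plant grows alone; consumers decline alone
a = [
    [-1.0, -1.0, 0.0],         # plant: self-limitation, loss to herbivore
    [0.5, 0.0, -1.0],          # herbivore: gain from plant, loss to predator
    [0.0, 0.5, 0.0],           # predator: gain from herbivore
]

with_predator = simulate_glv([0.5, 0.5, 0.5], b, a)
without_predator = simulate_glv([0.5, 0.5, 0.0], b, a)

print("with predator   :", [round(v, 3) for v in with_predator])
print("without predator:", [round(v, 3) for v in without_predator])
```

Under these assumed parameters the analytic equilibria are (0.8, 0.2, 0.3) with the predator and (0.2, 0.8, 0) without it, so removing the predator lets the herbivore increase and the plant decline, which is the qualitative signature of a trophic cascade.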

Modern approaches have expanded these foundations through generalized Consumer Resource Models (CRMs) with multiple trophic levels [2]. The dynamics for a three-tier ecosystem can be described by:

\[
\begin{aligned}
\frac{dX_\alpha}{dt} &= X_\alpha\left(\eta_X \sum_j d_{\alpha j}N_j - u_\alpha\right) \\
\frac{dN_i}{dt} &= N_i\left(\eta_N \sum_Q c_{iQ}R_Q - m_i - \sum_\beta d_{\beta i}X_\beta\right) \\
\frac{dR_P}{dt} &= R_P\left(K_P - R_P - \sum_j c_{jP}N_j\right)
\end{aligned}
\]

where \(X_\alpha\), \(N_i\), and \(R_P\) represent top predators, intermediate consumers, and basal resources respectively, with parameters for consumption rates (\(d_{\alpha j}\), \(c_{iQ}\)), conversion efficiencies (\(\eta_X\), \(\eta_N\)), mortality rates (\(u_\alpha\), \(m_i\)), and resource carrying capacities (\(K_P\)) [2]. This framework enables researchers to simulate the crossover between top-down and bottom-up control regimes based on the ratio of surviving species at different trophic levels [2].
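
The three equations translate fairly directly into code. The following is a minimal sketch, assuming a plain forward-Euler integrator and a hypothetical one-species-per-level parameter set; the function names (`crm_rhs`, `integrate`, `surviving_ratio`), the parameter dictionary layout, and the survival tolerance are illustrative choices, not part of the cited model.

```python
def crm_rhs(X, N, R, p):
    """Right-hand sides of the three-tier consumer-resource equations."""
    d, c = p["d"], p["c"]          # d[alpha][j], c[i][Q] consumption rates
    dX = [X[al] * (p["eta_X"] * sum(d[al][j] * N[j] for j in range(len(N)))
                   - p["u"][al])
          for al in range(len(X))]
    dN = [N[i] * (p["eta_N"] * sum(c[i][q] * R[q] for q in range(len(R)))
                  - p["m"][i]
                  - sum(d[be][i] * X[be] for be in range(len(X))))
          for i in range(len(N))]
    dR = [R[q] * (p["K"][q] - R[q]
                  - sum(c[j][q] * N[j] for j in range(len(N))))
          for q in range(len(R))]
    return dX, dN, dR


def integrate(X, N, R, p, dt=0.01, steps=20_000):
    """Forward-Euler integration, clipping abundances at zero."""
    X, N, R = list(X), list(N), list(R)
    for _ in range(steps):
        dX, dN, dR = crm_rhs(X, N, R, p)
        X = [max(x + dt * g, 0.0) for x, g in zip(X, dX)]
        N = [max(n + dt * g, 0.0) for n, g in zip(N, dN)]
        R = [max(r + dt * g, 0.0) for r, g in zip(R, dR)]
    return X, N, R


def surviving_ratio(upper, lower, tol=1e-6):
    """Surviving species in one level divided by survivors in another."""
    alive_upper = sum(1 for v in upper if v > tol)
    alive_lower = sum(1 for v in lower if v > tol)
    return alive_upper / alive_lower if alive_lower else None


# Hypothetical single-chain parameters (one species per trophic level).
chain = {
    "d": [[1.0]], "c": [[1.0]],
    "eta_X": 0.5, "eta_N": 0.5,
    "u": [0.2], "m": [0.1], "K": [2.0],
}

X, N, R = integrate([0.5], [0.5], [0.5], chain)
print("predator, consumer, resource:", X, N, R)
print("surviving predators per surviving consumer:", surviving_ratio(X, N))
```

For this assumed chain the fixed point can be checked by hand: the predator equation gives N = u / (eta_X d) = 0.4, the resource equation gives R = K - cN = 1.6, and the consumer equation gives X = (eta_N c R - m) / d = 0.7. With more species per level, the same functions can be used to count survivors in each tier and form the ratio that the text describes as the crossover order parameter.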

Empirical Measurement and Food Web Reconstruction

Ecologists employ various quantitative descriptors to characterize food web structure and infer control mechanisms [4]:

- Connectance: The proportion of possible feeding links that are realized, indicating web complexity

- Trophic Level Calculation: Quantitative measures accounting for omnivory: \(T_i = 1 + \sum_{j=1}^{S} T_j p_{ij}\), where \(T_i\) is the trophic level of species \(i\), and \(p_{ij}\) is the proportion of predator \(i\)'s diet consisting of prey \(j\) [4]

- Characteristic Path Length: The average shortest path between any two nodes, reflecting ecosystem connectivity

- Modularity: The degree to which subsets of species are highly connected independently of other species
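
Two of the descriptors above can be computed from a diet-proportion matrix alone. The sketch below is illustrative: it assumes the directed convention of connectance (realized links divided by S squared, one of several conventions in use) and solves the trophic-level formula as the linear system (I - P)T = 1 with a small hand-written Gaussian elimination. The three-species diet matrix is hypothetical.

```python
def solve_linear(A, y):
    """Solve A t = y by Gaussian elimination with partial pivoting."""
    n = len(y)
    M = [row[:] + [y[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= f * M[col][k]
    t = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][k] * t[k] for k in range(i + 1, n))
        t[i] = (M[i][n] - tail) / M[i][i]
    return t


def trophic_levels(p):
    """Solve T_i = 1 + sum_j T_j * p_ij, rewritten as (I - P) T = 1."""
    n = len(p)
    A = [[(1.0 if i == j else 0.0) - p[i][j] for j in range(n)]
         for i in range(n)]
    return solve_linear(A, [1.0] * n)


def connectance(p):
    """Directed connectance: realized feeding links divided by S^2."""
    n = len(p)
    links = sum(1 for row in p for v in row if v > 0)
    return links / (n * n)


# Hypothetical web: row i holds the diet proportions of consumer i.
# Species 0 = plant, 1 = herbivore, 2 = omnivore (half plant, half herbivore).
diet = [
    [0.0, 0.0, 0.0],
    [1.0, 0.0, 0.0],
    [0.5, 0.5, 0.0],
]

print("trophic levels:", trophic_levels(diet))
print("connectance   :", connectance(diet))
```

In this example the omnivore sits at trophic level 2.5, halfway between a strict herbivore (2) and a strict carnivore feeding on herbivores (3), which is how the formula accounts for omnivory.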

For soil food webs specifically, the soilfoodwebs R package provides tools for analyzing nutrient fluxes through food webs, calculating effects of organisms on ecosystem processes, and addressing parameter uncertainty [5]. This approach uses ecostoichiometric principles to balance carbon and nitrogen fluxes simultaneously, incorporating uncertainty in biomass estimates and food web structure [5].

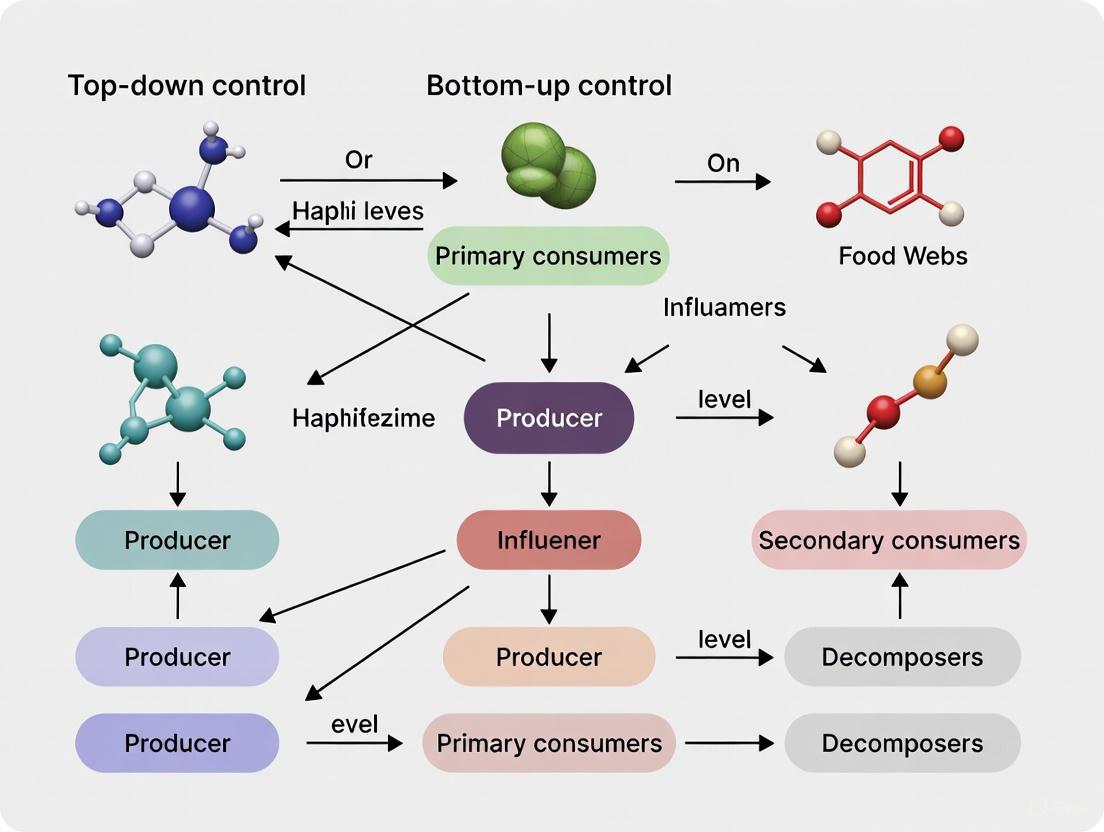

Figure 1: Conceptual diagram illustrating the directional control mechanisms in top-down versus bottom-up regulation of ecosystems.

Modern ecological research employs specialized software packages and analytical tools to investigate predator-limitation and resource-limitation dynamics:

Table 3: Essential Computational Tools for Trophic Control Research

| Tool/Package | Primary Function | Application Context |
|---|---|---|
| soilfoodwebs R package | Analyzes nutrient fluxes through food webs with carbon and nitrogen stoichiometry | Soil food web modeling, parameter uncertainty analysis [5] |
| Fluxweb | Calculates energy flux through food webs | Ecosystem energetics, stability analysis [5] |
| Cheddar | Food web analysis, visualization, and comparison | Trophic structure analysis, comparison across ecosystems [4] [5] |
| NetIndices Package | Calculates trophic levels using TrophInd() function | Food web topology, omnivory quantification [6] |
| igraph Package | Network visualization and analysis | Food web plotting, network property calculation [6] |

Figure 2: Generalized workflow for investigating predator-limitation and resource-limitation in ecological research.

Emerging Research Directions and Applications

Contemporary research has revealed that most natural ecosystems exhibit elements of both top-down and bottom-up control simultaneously, with the dominant mechanism often shifting across spatial and temporal scales [1]. In marine ecosystems initially thought to be purely bottom-up controlled, periods of top-down control emerge through extraction of large predators via fishing activities [1]. This dynamic interplay creates ecological crossovers where systems transition between control regimes based on the relative strength of different limiting factors.

Theoretical advances now enable quantification of the transition between control regimes using the zero-temperature cavity method, which identifies a simple order parameter for the crossover: the ratio of surviving species in different trophic levels [2]. This approach demonstrates that intra-trophic diversity generates effective "emergent competition" between species within a trophic level through feedbacks mediated by other trophic levels [2].

Human impacts add complex layers to these ecological dynamics. Overfishing has dramatically reduced predator populations in global oceans, with an estimated 300,000 small whales, dolphins, and porpoises killed annually in fishing gear, and approximately 12 million sharks and rays caught as bycatch annually during the 1990s [3]. These predator removals trigger trophic cascades through disrupted top-down control, emphasizing the conservation importance of understanding these regulatory mechanisms.

Conversely, restoration ecology demonstrates that reintroducing keystone species can reestablish healthy trophic function in degraded ecosystems [3]. Projects in the Netherlands that reintroduced eelgrass, salmon, and beavers have initiated habitat revitalization, showing how understanding both predator-limitation and resource-limitation dynamics informs effective ecosystem management.

A foundational question in ecology is what regulates the flow of energy and the structure of food webs: Is it control from the top, by predators, or from the bottom, by resource availability? Top-down control describes a "predator-limited" food web where populations of lower trophic levels are controlled by the consumption pressure from their predators [1] [3]. The removal of a top predator can trigger a trophic cascade, a series of indirect effects that ripple down through the food web, often altering the basal level and the entire ecosystem's state [7] [3]. In contrast, bottom-up control describes a "resource-limited" food web where the abundance of primary producers, and thus the entire community structure, is determined by the availability of nutrients and other resources [1] [8]. This guide objectively compares two classic case studies that exemplify these opposing forces, synthesizing experimental data and methodologies to illuminate their distinct mechanisms and outcomes.

Case Study 1: Top-Down Control via a Sea Otter-Urchin-Kelp Trophic Cascade

Experimental Findings and Quantitative Data

The sea otter (Enhydra lutris) is a classic keystone predator, whose presence or absence directly governs the state of North Pacific nearshore ecosystems [9] [10] [7]. The following table synthesizes key experimental data from multiple studies on this trophic cascade.

Table 1: Quantitative Data from Sea Otter Trophic Cascade Studies

| Metric | System State with Sea Otters | System State without Sea Otters | Location and Study Context |
|---|---|---|---|
| Sea Urchin Biomass Density | ~99% reduction [10] | High (Baseline) | Southeast Alaska, post-repatriation [10] |
| Kelp Density | >99% increase [10] | Low (Baseline) | Southeast Alaska, post-repatriation [10] |
| Local Otter Abundance | High (Baseline) | ~70% decline [10] | Sitka Sound, SE Alaska, post-harvest [10] |
| Sea Otter Urchin Consumption | Increased ~3x during urchin outbreak [9] | Pre-outbreak levels | Monterey Bay, CA, post-"Blob" heatwave [9] |
| Urchin Gonad Nutritional Value | High in kelp forest urchins [9] | Low ("starved," "empty") in urchin barrens [9] | Monterey Bay, CA [9] |
| Kelp Forest Cover | Remnant patches maintained [9] [11] | >80% loss, replaced by urchin barrens [9] | Northern California [9] |

Detailed Experimental Protocol

The understanding of this cascade is built upon decades of interdisciplinary research. A representative protocol, synthesizing methods from multiple studies, is outlined below.

Objective: To determine the effects of sea otter presence, absence, and foraging behavior on sea urchin populations and kelp forest ecosystem structure.

Methodology:

- Time-Series & Spatial Comparison: Researchers survey sites with contrasting otter occupancy histories. This includes:

  - Reintroduction Studies: Surveying sites before and after sea otters naturally recolonize or are deliberately reintroduced (e.g., Southeast Alaska) [10].

  - Harvest-Induced Absence: Surveying sites where a previously established otter population has been locally reduced by human harvest (e.g., Sitka Sound) [10].

  - "Unplanned Experiment" Monitoring: Monitoring ecosystem responses to large-scale perturbations, such as the sea star wasting disease and marine heatwave in Monterey Bay [9] [11].

- Sea Otter Population Monitoring:

  - Aerial & Boat-Based Surveys: Regular surveys are conducted to count otters and map their distribution [10].

  - Dietary Analysis: Foraging observations are conducted from shore or boats, recording prey items (e.g., urchins, crabs) brought to the surface [9] [11]. Scat or gut content analysis is also used.

- Subtidal Community Surveys:

  - Site Selection: Subtidal reef sites are selected randomly or systematically along a depth gradient (e.g., the 6-7 m isobath) [10].

  - Kelp and Urchin Quantification: Divers place quadrats (e.g., 0.25 m²) randomly on the seafloor along transect lines.

- Urchin Nutritional Analysis:

  - Sample Collection: Sea urchins are collected by divers from different habitats: kelp forests versus urchin barrens.

  - Laboratory Analysis: Urchins are dissected, and the gonad (the primary energy storage organ) is weighed and its condition (full, partially full, empty) is assessed to determine nutritional value [9] [11].
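
The quadrat step of this protocol reduces to simple arithmetic: counts per quadrat are averaged and scaled by quadrat area to give a density per square metre, and two site states are compared as a percent change. The sketch below assumes 0.25 m² quadrats as in the example above; the counts are invented for illustration and are not data from the cited studies.

```python
from statistics import mean


def density_per_m2(counts, quadrat_area_m2=0.25):
    """Mean individuals per quadrat, scaled to individuals per square metre."""
    return mean(counts) / quadrat_area_m2


def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return 100.0 * (after - before) / before


# Hypothetical urchin counts per 0.25 m^2 quadrat at two sites.
barren_counts = [12, 15, 9, 14]      # site without otters
forest_counts = [0, 1, 0, 0]         # site with otters

barren_density = density_per_m2(barren_counts)
forest_density = density_per_m2(forest_counts)

print("urchins per m^2 without otters:", barren_density)
print("urchins per m^2 with otters   :", forest_density)
print("percent change                :",
      percent_change(barren_density, forest_density))
```

Reporting a percent change relative to the otter-free state is the same form used for the urchin and kelp entries in the table above, which makes results from different sites directly comparable.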

Diagram: Sea Otter Trophic Cascade Logic Model

Case Study 2: Bottom-Up Control via Nutrient-Driven Production

Experimental Findings and Quantitative Data

In bottom-up control, the structure of the entire food web is governed by the availability of nutrients and resources for primary producers. The following table summarizes the effects of nutrient loading in marine ecosystems.

Table 2: Quantitative Data on Nutrient-Driven Bottom-Up Effects

| Metric | Oligotrophic (Low-Nutrient) Conditions | Eutrophic (High-Nutrient) Conditions | Study Context / Location |
|---|---|---|---|
| Primary Producer Biomass | Low (Baseline) | High / Dense algal blooms [3] | General eutrophication dynamics [3] |
| Epiphyte Load on Seagrass | Low (Baseline) | Increased growth [3] [8] | Northern Gulf of Mexico [3] |
| Water Column Oxygen | Normal (Baseline) | Hypoxic or Anoxic (Dead Zones) [3] | General eutrophication dynamics [3] |
| Trophic Chain Length | Shorter, energy-limited | Potentially longer, resource-driven [3] | Theoretical & observational [3] |
| Seagrass Health | Healthy | Degraded due to light-blocking epiphytes [8] | Elkhorn Slough, CA [8] |

Detailed Experimental Protocol

Studying bottom-up control involves manipulating or observing resource levels and tracking the subsequent effects through the food web.

Objective: To assess the impact of increased nutrient loading on primary producer biomass, community structure, and higher trophic levels.

Methodology:

- Nutrient Source & Measurement:

  - Anthropogenic Inputs: Studies are often conducted in ecosystems affected by agricultural runoff, which carries fertilizers (nitrogen, phosphorus) [3].

  - Water Sampling: Regular water samples are taken from the study site (e.g., an estuary) and analyzed in the lab for concentrations of dissolved inorganic nitrogen (DIN), phosphate, and other relevant nutrients.

- Primary Producer Response:

  - Algal Bloom Assessment: Satellite imagery or boat-based surveys can map the spatial extent and chlorophyll-a concentration of phytoplankton blooms.

  - Epiphyte Load Quantification: For seagrass ecosystems, shoots are collected, and the biomass of epiphytic algae growing on the seagrass blades is carefully scraped off, dried, and weighed [8].

- Higher Trophic Level & Ecosystem Response:

  - Seagrass Monitoring: The density, percent cover, and biomass of seagrass are measured in permanent plots or random quadrats. Declines are linked to light deprivation from epiphytes [8].

  - Water Quality Profiling: Dissolved oxygen (DO), temperature, and salinity are measured at various depths using a multi-parameter sonde. Hypoxic (low-oxygen) conditions are identified.

  - Faunal Surveys: Surveys of invertebrate (e.g., crab, isopod) and fish populations are conducted to correlate their abundance and diversity with changing habitat and oxygen conditions [8].
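
Two of the measurements in this protocol can be summarized with very little code. The sketch below normalizes epiphyte dry mass by seagrass dry mass and flags depths in a dissolved-oxygen profile that fall below a hypoxia threshold. The 2 mg/L threshold is a commonly used working definition and is an assumption here, as are the function names and all sample values.

```python
def epiphyte_load(epiphyte_dry_g, seagrass_dry_g):
    """Grams of dried epiphyte per gram of dried seagrass blade."""
    return epiphyte_dry_g / seagrass_dry_g


def hypoxic_depths(profile, threshold_mg_per_l=2.0):
    """Depths (m) whose dissolved oxygen falls below the threshold.

    `profile` is a list of (depth_m, dissolved_oxygen_mg_per_l) pairs,
    such as the readings from one sonde cast.
    """
    return [depth for depth, oxygen in profile if oxygen < threshold_mg_per_l]


# Hypothetical shoot sample and sonde cast.
load = epiphyte_load(epiphyte_dry_g=0.6, seagrass_dry_g=1.5)
cast = [(0.5, 7.8), (2.0, 5.1), (4.0, 2.4), (6.0, 1.3), (8.0, 0.4)]

print("epiphyte load (g/g):", load)
print("hypoxic depths (m) :", hypoxic_depths(cast))
```

Normalizing by seagrass mass lets shoots of different sizes be compared, and keeping the threshold as a parameter makes it easy to apply whichever hypoxia definition a given monitoring program uses.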

Diagram: Bottom-Up Control Logic Model

The Scientist's Toolkit: Key Research Reagents and Materials

Table 3: Essential Materials for Trophic Cascade and Bottom-Up Control Research

| Research Solution / Material | Primary Function | Application in Case Studies |
|---|---|---|
| GPS Units & Navigational Charts | Precise site location and relocation for long-term monitoring. | Mapping and returning to specific subtidal reef sites over decades in SE Alaska and Monterey Bay [10]. |
| SCUBA / Diving Transect Gear | Underwater access for direct observation and measurement. | Deploying quadrats and conducting visual surveys of kelp, urchins, and other biota [9] [10]. |
| Plankton Nets & Water Samplers | Collection of micronekton and water samples for analysis. | Studying prey availability for top predators (e.g., tuna food webs) and collecting water for nutrient analysis [12]. |
| Nitrogen & Carbon Stable Isotope Analysis | Determining trophic level and long-term dietary habits of consumers. | Analyzing muscle tissue from fish and invertebrates to confirm food web linkages and trophic positions [12]. |
| Stomach Content & Scat Analysis | Direct identification of recently consumed prey items. | Understanding the diet of sea otters, tuna, and other predators; assessing natural mortality [9] [12]. |
| Aerial & Vessel Survey Platforms | Large-scale population counts and distribution mapping. | Monitoring population trends and spatial distribution of sea otters and other marine mammals [10] [7]. |
| DNA Barcoding & Reference Libraries | Molecular identification of prey species from gut contents or feces. | Precisely identifying partially digested prey items to reconstruct food webs [12]. |

These case studies demonstrate that top-down and bottom-up forces are not mutually exclusive; they can operate simultaneously, and their relative strength determines ecosystem structure [1] [3] [2]. The sea otter cascade shows that even in a system strongly controlled from the top, bottom-up stressors like marine heatwaves can trigger widespread change by altering prey behavior and food quality [9]. Conversely, the nutrient-loading case study reveals that top-down forces can sometimes mitigate bottom-up effects; the introduction of a top predator (sea otters consuming crabs) mediated the negative impacts of eutrophication on seagrass beds [8]. Modern theoretical work confirms that ecosystems can exhibit a crossover from top-down to bottom-up control, often dictated by the ratio of surviving species at different trophic levels and the emergent competition within levels [2]. The choice of experimental protocols and reagents, as detailed in this guide, is therefore critical for elucidating the complex and context-dependent interplay of these fundamental ecological forces.

The classical understanding of control mechanisms in biological systems has undergone a fundamental transformation. Historically, scientific paradigms often framed regulatory controls as mutually exclusive alternatives—systems were thought to be governed either by top-down or bottom-up processes. This perspective mirrored Thomas Kuhn's description of normal science, where a dominant paradigm defines problems and methodologies until accumulating anomalies can no longer be reconciled with the existing framework [13]. In food web ecology, this manifested as a long-standing debate between proponents of top-down control (where predators regulate ecosystem structure) and bottom-up control (where resources and primary producers drive ecosystem dynamics) [14].

Contemporary research across multiple disciplines has revealed this binary classification to be insufficient. A paradigm shift is underway, recognizing that top-down and bottom-up controls frequently co-occur and interact within complex systems. This shift moves beyond simple dichotomies to embrace multidimensional understanding, where the interplay between different control mechanisms creates emergent properties not predictable from studying either mechanism in isolation [15] [2]. The transformation represents what Kuhn would identify as a scientific revolution, where the underlying assumptions of a field are fundamentally reorganized to accommodate new evidence [13].

This synthesis explores how evidence from diverse fields—including cancer genomics, ecosystem ecology, and molecular biology—has converged to challenge the traditional mutually exclusive paradigm in favor of an integrated framework that acknowledges the prevalence and functional significance of co-occurring controls.

Theoretical Framework: From Mutually Exclusive to Co-occurring Controls

The Classic Paradigm of Mutually Exclusive Control

The mutually exclusive paradigm dominated scientific thinking for decades across multiple disciplines. In food web ecology, the "green world" hypothesis proposed that terrestrial vegetation prevalence resulted primarily from top-down control of herbivores by predators [14]. This perspective was countered by bottom-up proponents who emphasized the fundamental role of nutrient availability and primary production in regulating ecosystem structure [14]. Similarly, in cancer genomics, research initially focused on identifying whether tumors were driven primarily by mutations in specific oncogenes (top-down) or tumor suppressor genes (bottom-up), with the assumption that these represented distinct and mutually exclusive pathways to tumorigenesis [16].

This either-or framework provided a simplified approach to studying complex systems but increasingly failed to account for observed complexities. In ecological modeling, theoretical approaches often ignored intra-trophic level diversity to focus on coarse-grained energy flows between trophic levels [2]. While this simplification yielded valuable insights, it obscured the nuanced interactions between competition, diversity, and trophic structure that shape ecosystem dynamics.

The Emerging Paradigm of Co-occurring Control

The emerging paradigm recognizes that control mechanisms operate simultaneously and interactively across biological scales. In diverse ecosystems with multiple trophic levels, species within a trophic level exhibit what has been termed "emergent competition"—competition that arises due to feedbacks mediated by other trophic levels [2]. This competition creates a continuum between top-down and bottom-up control rather than a strict dichotomy.

The shift has been driven by accumulating anomalies that the old paradigm could not adequately explain. For instance, in marine ecosystems, fear of predators (non-consumptive effects) rather than predation mortality itself drives many trophic cascades and massive vertical migrations [14]. Similarly, paradoxical and synergistic trophic interactions, along with positive feedback loops derived from biological nutrient cycling, complicate the conventional dichotomy between top-down and bottom-up control [14].

Table 1: Characteristics of Mutually Exclusive versus Co-occurring Control Paradigms

| Aspect | Mutually Exclusive Paradigm | Co-occurring Control Paradigm |
|---|---|---|
| Fundamental Premise | Systems are governed by either top-down OR bottom-up controls | Systems are regulated by BOTH top-down AND bottom-up controls |
| Interaction Model | Competitive exclusion between control types | Interactive, synergistic, and antagonistic relationships |
| System Behavior | Linear, predictable | Non-linear, emergent properties |
| Analytical Approach | Isolated factor analysis | Multidimensional, integrated assessment |
| Representation | Binary classification | Continuum or network representation |
| Ecological Focus | Trophic levels as uniform entities | Intra-trophic diversity and niche differentiation |

Evidence Across Biological Systems

Cancer Genomics and Molecular Pathways

In cancer research, analysis of mutation patterns across tumors has revealed fundamental insights about co-occurrence and mutual exclusivity. Co-occurring mutations in driver genes typically activate two collaborating oncogenic pathways that convey different hallmark features of cancer (e.g., apoptosis evasion, cell proliferation, cell invasion, and host immune evasion) [16]. For example, in melanoma, recurrent point mutations in the BRAF oncogene activate the pro-proliferative MAPK signaling pathway and frequently co-occur with deletions of the tumor suppressor gene PTEN, whose loss activates the PI3K/AKT pathway [16].

Conversely, mutually exclusive mutation patterns can reveal functionally redundant oncogenic processes. In the same melanoma example, genetic alterations in NRAS, BRAF/PTEN, or c-KIT/NF1 are mutually exclusive of one another as they engage the MAPK and PI3K/AKT pathways through different molecular mechanisms but toward similar oncogenic outcomes [16]. This mutual exclusivity suggests these alterations represent different routes to the same functional consequence, making it disadvantageous for a tumor to develop multiple alterations within the same pathway.
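
One simple way to test a gene pair for either pattern is a one-sided Fisher's exact test on the 2x2 table of mutation calls across tumors. The text does not say which test the cited studies used, so the choice of Fisher's test is an assumption, and the function names and the toy mutation vectors below are hypothetical.

```python
from math import comb


def hypergeom_pmf(k, n_a, n_b, n):
    """P(exactly k samples carry both alterations) under independence."""
    return comb(n_a, k) * comb(n - n_a, n_b - k) / comb(n, n_b)


def pair_pvalues(mut_a, mut_b):
    """One-sided Fisher p-values for a gene pair across the same samples.

    mut_a, mut_b: equal-length lists of 0/1 flags, one entry per tumor.
    Returns (p_cooccurrence, p_mutual_exclusivity).
    """
    n = len(mut_a)
    n_a, n_b = sum(mut_a), sum(mut_b)
    both = sum(1 for x, y in zip(mut_a, mut_b) if x and y)
    lowest = max(0, n_a + n_b - n)       # smallest possible overlap
    highest = min(n_a, n_b)              # largest possible overlap
    p_cooc = sum(hypergeom_pmf(k, n_a, n_b, n)
                 for k in range(both, highest + 1))
    p_excl = sum(hypergeom_pmf(k, n_a, n_b, n)
                 for k in range(lowest, both + 1))
    return p_cooc, p_excl


# Toy cohort of 10 tumors: the two alterations never appear together.
gene_a = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
gene_b = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]

p_cooc, p_excl = pair_pvalues(gene_a, gene_b)
print("co-occurrence p-value     :", p_cooc)
print("mutual exclusivity p-value:", p_excl)
```

A small mutual-exclusivity p-value means the observed overlap is lower than expected if the two alterations arose independently, which is the statistical pattern the paragraph above interprets as functional redundancy. In practice, many gene pairs are tested at once, so a multiple-testing correction would also be needed.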

Table 2: Co-occurrence and Mutual Exclusivity Patterns in Cancer Genomics

| Pattern Type | Molecular Relationship | Functional Interpretation | Therapeutic Implications |
|---|---|---|---|
| Co-occurrence | Positive epistatic relationship | Alterations trigger complementary oncogenic pathways conveying different cancer hallmarks | Combined targeted therapy may be effective |
| Mutual Exclusivity | Redundant oncogenic function | Alterations represent different routes to disrupting the same biological process | Single agent therapy may suffice for pathway inhibition |
| Mutual Exclusivity | Divergent, antagonistic functions | Alterations represent incompatible routes toward tumorigenesis from different cells of origin | Context-specific therapeutic strategies needed |

Beyond genetic mutations, co-occurrence and mutual exclusivity analysis has been extended to epigenetic modifications like DNA methylation. Studies have identified millions of co-occurrence and mutual exclusivity (COME) events of DNA methylation across different cancer types [17]. These COME events can classify patients into subtypes with significantly different clinical outcomes and show significant associations with clinical features such as age, gender, and pathological stage [17].

Ecosystem Dynamics and Food Webs

Ecological systems provide compelling evidence for the co-occurrence of top-down and bottom-up controls. Research in a highly diverse subtropical forest with 5,716 taxa across 25 trophic groups revealed strong interrelationships among plants, arthropods, and microorganisms, indicating complex multitrophic interactions [18]. The study found substantial support for top-down effects of microorganisms belowground, indicating important feedbacks of microbial symbionts, pathogens, and decomposers on plant communities [18]. In contrast, aboveground pathways were characterized by bottom-up control of plants on arthropods, including many non-trophic links [18].

This demonstrates that within a single ecosystem, different compartments can experience predominant but not exclusive control from different directions. The belowground compartment showed stronger statistical support for top-down control, while the aboveground compartment was clearly determined by bottom-up effects [18]. This challenges simplified models and highlights the context-dependency of control mechanisms.

In marine ecosystems, the debate between top-down and bottom-up control has been particularly contentious [14]. Current evidence suggests that top-down control is more widespread in neritic and pelagic ecosystems than species-level trophic cascades, which in turn are more frequent than community-level cascades [14]. The incidence of community-level trophic cascades among neritic and pelagic ecosystems appears to be inversely related to biodiversity and omnivory, which are in turn associated with temperature [14].

Diagram 1: Co-occurring controls in aboveground and belowground ecosystem compartments

Cross-Ecosystem Subsidies and Interaction Pathways

The integration of top-down and bottom-up controls is particularly evident in ecosystems connected by subsidies—flows of energy, materials, or organisms between ecosystems. A single subsidy can have direct effects on consumers and detritus in the recipient ecosystem through processes like direct consumption (a top-down effect) and recycling to the nutrient pool (contributing to bottom-up effects) [19].

For example, migratory salmon provide marine-derived subsidies to streams, where they are directly consumed by various organisms (direct consumption pathway) while their carcasses also enter the stream's nutrient pool (recycling pathway) to benefit primary producers [19]. Modeling approaches reveal that these direct consumption and recycling pathways interact antagonistically: feedbacks between the two pathways lead to lower stocks and functions in the recipient ecosystem than are predicted by models that omit these feedbacks [19].

This complexity is further amplified by the way subsidy effects play out across the trophic levels of the recipient ecosystem. The recycling pathway always leads to equal or higher stocks and functions at every trophic level, whereas the consumption pathway has alternating positive and negative effects depending on trophic level and the characteristics of the trophic cascade [19].

Methodological Approaches and Analytical Tools

Experimental Designs for Multidimensional Ecology

Modern experimental ecology faces the challenge of capturing the multidimensional nature of control mechanisms in biological systems. Ecological dynamics in natural systems are inherently multidimensional, with multi-species assemblages simultaneously experiencing spatial and temporal variation over different scales and in multiple environmental factors [15]. Historically, experimental studies have focused on testing single-stressor effects on individuals, single populations, or over limited spatial and temporal scales. There is, however, a growing appreciation of the need for multi-factorial ecological experiments [15].

Experimental approaches range from fully-controlled laboratory experiments to semi-controlled field manipulations, examining both intra- and inter-specific diversity [15]. These include studies manipulating a range of biotic and abiotic factors across different scales, from small-scale microcosms and field manipulations to larger-scale mesocosms and whole-system manipulations [15]. Each approach has its own challenges—such as lack of realism in microcosms and the logistical difficulty associated with replication in large-scale field experiments—but cumulatively they contribute to a fundamental understanding of ecological and evolutionary processes [15].

Diagram 2: Multidimensional experimental framework for studying co-occurring controls

Statistical and Modeling Frameworks

Advanced statistical approaches are essential for detecting and quantifying the interplay between different control mechanisms. The analysis of complex community webs with thousands of species requires methods that can identify patterns beyond simple diversity metrics. Research on highly diverse systems has demonstrated that species composition data reveal much stronger interrelationships across trophic levels than analyses based solely on diversity patterns [18].

Powerful approaches include Procrustes correlation analysis of principal components and structural equation modeling (SEM) to analyze below- and aboveground multitrophic community patterns [18]. These methods allow researchers to explore potential causal links between trophic levels by testing for direct and indirect relationships and the support for bottom-up and top-down control, while accounting for potential environmental covariation [18].

For theoretical exploration, generalized Consumer Resource Models with multiple trophic levels provide insights into how the interplay between trophic structure, diversity, and competition shapes ecosystem properties [2]. Using methods such as the zero-temperature cavity method and numerical simulations, these models can show how intra-trophic diversity gives rise to effective "emergent competition" between species within a trophic level due to feedbacks mediated by other trophic levels [2].
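To make the top-down and bottom-up signatures concrete, the following sketch computes closed-form coexistence equilibria for a textbook Lotka-Volterra-type food chain (logistic plants, herbivores, optional carnivores). It is far simpler than the generalized multi-trophic Consumer Resource Models of [2], and all parameter names and values are illustrative assumptions.

```python
def chain_equilibria(r, K, a, b, e, m_h, m_c, with_carnivore):
    """Coexistence equilibria (P, H, C) of a simple food chain.

    dP/dt = P * (r * (1 - P / K) - a * H)      plants, logistic growth
    dH/dt = H * (e * a * P - m_h - b * C)      herbivores
    dC/dt = C * (e * b * H - m_c)              carnivores
    """
    if with_carnivore:
        H = m_c / (e * b)            # carnivores pin herbivore density (top-down)
        P = K * (1 - a * H / r)      # plants follow carrying capacity minus grazing
        C = (e * a * P - m_h) / b    # carnivores absorb the herbivore surplus
    else:
        P = m_h / (e * a)            # herbivores pin plant density
        H = r * (1 - P / K) / a      # herbivores absorb extra plant production
        C = 0.0
    return P, H, C

# Illustrative parameter values (assumed, not taken from the cited studies)
params = dict(r=1.0, K=10.0, a=0.5, b=0.5, e=0.5, m_h=0.5, m_c=0.25)

two_level = chain_equilibria(**params, with_carnivore=False)
three_level = chain_equilibria(**params, with_carnivore=True)
enriched = chain_equilibria(**{**params, "K": 20.0}, with_carnivore=True)

print("no carnivore   (P, H, C):", two_level)
print("with carnivore (P, H, C):", three_level)
print("enriched chain (P, H, C):", enriched)
```

In this toy model, adding the carnivore lowers herbivore density and raises plant biomass (a trophic cascade), while enriching the base (larger K) raises plant and carnivore stocks but leaves herbivore density unchanged. Both forms of control are therefore visible in the same system.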

The Scientist's Toolkit: Essential Research Solutions

Table 3: Key Research Reagents and Solutions for Studying Co-occurring Controls

| Tool/Category | Specific Examples | Function/Application |
|---|---|---|
| Genomic Analysis Tools | DISCOVER algorithm | Statistical independence test for identifying significant co-occurrence and mutual exclusivity gene pairs [17] |
| Epigenetic Profiling | DNA methylation arrays | Genome-wide assessment of epigenetic modifications and co-methylation patterns [17] |
| Community Composition Analysis | Procrustes correlation with PCA | Analyzing multivariate community patterns and correlations across trophic groups [18] |
| Causal Modeling | Structural Equation Modeling (SEM) | Testing direct and indirect relationships in complex multitrophic systems [18] |
| Theoretical Ecology Models | Multi-trophic Consumer Resource Models | Exploring interplay between trophic structure, diversity, and competition [2] |
| Stable Isotope Analysis | δ¹³C, δ¹⁵N labeling | Tracing subsidy pathways and energy flows between ecosystems [19] |
| Experimental Ecosystems | Mesocosms and microcosms | Controlled manipulation of multiple factors across trophic levels [15] |

Implications and Future Directions

Theoretical and Conceptual Implications

The recognition of co-occurring controls represents what Kuhn would describe as a true paradigm shift—not merely an extension of existing knowledge but a fundamental transformation in how we conceptualize biological systems [13]. This shift requires moving beyond the traditional mutually exclusive framework to develop new models that explicitly account for the interactions between different control mechanisms.

In theoretical ecology, this has led to the development of models that incorporate emergent competition—competition that arises from feedbacks mediated by other trophic levels [2]. This emergent competition creates a continuum from top-down to bottom-up control, captured by a simple order parameter related to the ratio of surviving species in different trophic levels [2]. The theoretical approach predicts that whether a system exhibits top-down or bottom-up control depends solely on this ratio, providing a quantitative framework for understanding the relative strength of different control mechanisms.

Practical Applications and Future Research

The paradigm shift from mutually exclusive to co-occurring controls has profound implications for applied fields. In conservation biology and ecosystem management, recognizing the simultaneous operation of top-down and bottom-up controls suggests the need for integrated approaches that address multiple regulatory pathways simultaneously [14] [18]. For instance, marine protected areas and recovery plans for endangered species must consider both predator-prey relationships (top-down) and resource availability (bottom-up) to be effective [14].

In cancer research and drug development, understanding co-occurring mutation patterns may inform combination therapies that target multiple pathways simultaneously [16]. The recognition that certain mutations co-occur because they activate complementary oncogenic pathways suggests that joint targeting of these pathways could be more effective than single-agent approaches [16].

Future research directions should focus on:

- Multidimensional experiments that simultaneously manipulate multiple factors across different trophic levels or biological scales [15]

- Integrated modeling approaches that combine insights from different methodological traditions [19] [2]

- Cross-system comparisons to identify general principles governing the relative strength of different control mechanisms [14]

- Novel technologies that enable more comprehensive measurement of biological responses across multiple levels of organization [15]

The paradigm shift from mutually exclusive to co-occurring controls represents a maturation in our understanding of biological systems—from simplified, reductionist models toward integrated, holistic frameworks that embrace the complexity and multidimensionality of natural systems.

The dynamics of energy flow and population regulation within ecosystems are primarily governed by two contrasting mechanisms: top-down and bottom-up control. Top-down control describes a predator-driven system where populations of lower trophic levels (e.g., herbivores) are regulated by consumers at the top (e.g., carnivores) [1] [3]. Conversely, bottom-up control is a resource-driven system where the abundance and quality of primary producers (e.g., plants, algae) dictate the structure of higher trophic levels [1] [20]. The relative importance of these controls is not static; it is mediated by a suite of drivers including nutrient availability, predation pressure, and environmental stressors. Understanding the interplay of these drivers is critical for predicting ecosystem responses to anthropogenic changes, from agricultural runoff to climate warming, and for informing effective conservation and management strategies [21] [22]. This guide provides a comparative analysis of these key drivers, synthesizing experimental data and methodologies to inform researchers and applied scientists.

Comparative Analysis of Key Drivers

The following table synthesizes core experimental findings on how nutrient availability, predation pressure, and environmental stressors function as ecosystem drivers, and how they influence the balance between top-down and bottom-up control.

Table 1: Comparative Analysis of Key Drivers in Ecosystem Control

| Key Driver | Mechanism of Action | Impact on Trophic Dynamics | Supporting Experimental Evidence |
|---|---|---|---|
| Nutrient Availability | Acts as a bottom-up control by altering the quantity and quality of primary producers [1]. | Increased nutrients can lengthen trophic chains by supporting greater biomass at the base [3]. Overload can cause eutrophication, leading to hypoxia and ecosystem collapse [3] [21]. | Mar Menor Lagoon Study: Chronic nutrient input (N & P) from agriculture over 30 years led to eutrophication, phytoplankton blooms, and dystrophic crises, overcoming the system's resilience [21]. |
| Predation Pressure | Acts as a top-down control by directly consuming prey and inducing non-lethal effects (e.g., behavioral changes) in prey species [1] [22]. | Regulates herbivore populations, preventing overgrazing and enabling producer communities to thrive (e.g., the sea otter-urchin-kelp cascade) [3]. | Snowshoe Hare Experiment: A field experiment with controlled plots showed predator exclusion doubled hare density, while combined food addition and predator exclusion caused an 11-fold increase, demonstrating interactive top-down and bottom-up effects [20]. |
| Environmental Stressors (e.g., Temperature, Turbidity) | Abiotic factors that modulate the efficiency of biological interactions, particularly predation [22]. | Warmer, clearer waters can intensify top-down pressure by increasing predator activity and efficiency. High turbidity or extreme flow rates can weaken predation by providing prey refuge [22]. | Trinidadian Guppy Study: In situ filming revealed predators were more prevalent and attacked more frequently in warmer, less turbid, slower-flowing habitats, showing how environmental context shapes predation pressure [22]. |

Detailed Experimental Protocols

To equip researchers with methodologies for investigating these drivers, this section details key experimental approaches cited in the comparative analysis.

Protocol 1: Field Manipulation of Top-Down and Bottom-Up Factors

This protocol is derived from the seminal snowshoe hare (Lepus americanus) population study, which successfully disentangled the effects of resource limitation and predation [20].

- Objective: To quantify the separate and interactive effects of food resources and predation pressure on prey population dynamics.
- Methodology:
  - Site Selection: Establish multiple large (e.g., 1 km²) study plots in an undisturbed natural habitat.
  - Experimental Treatments:
    - Control: Plots with no manipulation.
    - Food Addition: Plots supplied with high-quality supplemental food to test bottom-up control.
    - Predator Exclusion: Plots enclosed with electric fencing to exclude mammalian predators (while allowing access to avian predators).
    - Combined Treatment: Plots that receive both food addition and predator exclusion.
  - Data Collection: Conduct population censuses (e.g., mark-recapture studies) at regular intervals (e.g., pre-breeding and post-winter) over multiple years to track population density, survival, and reproductive rates.
- Key Outcome Measures: Average population density over the study period, survival rates, and reproductive output across the different treatment groups.
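One way to read the outcome of such a two-factor design is to compare the combined-treatment density with what additive or multiplicative independence would predict. The sketch below does this with densities expressed relative to control. The predator-exclusion (2x) and combined (11x) ratios come from the comparison table above; the food-only ratio is not stated in this article, so the value used here is an assumed placeholder, and the function name is hypothetical.

```python
def interaction_summary(control, food, exclusion, combined):
    """Compare the combined treatment with additive and multiplicative expectations.

    All arguments are mean population densities in the same units.
    """
    food_ratio = food / control
    exclusion_ratio = exclusion / control
    combined_ratio = combined / control
    # Additive null: the two single-factor gains simply sum
    additive_expected = 1 + (food_ratio - 1) + (exclusion_ratio - 1)
    # Multiplicative null: the two single-factor ratios multiply
    multiplicative_expected = food_ratio * exclusion_ratio
    return {
        "combined_ratio": combined_ratio,
        "additive_expected": additive_expected,
        "multiplicative_expected": multiplicative_expected,
        "synergistic": combined_ratio > max(additive_expected, multiplicative_expected),
    }

# Densities relative to control; food=3.0 is an ASSUMED value for illustration
summary = interaction_summary(control=1.0, food=3.0, exclusion=2.0, combined=11.0)
print(summary)
```

Under these assumed inputs the combined effect exceeds both null expectations, which is the pattern meant by "interactive" top-down and bottom-up effects. A real analysis would use replicated plot-level data and a formal test of the interaction term.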

Protocol 2: In Situ Quantification of Predation Under Multiple Stressors

This protocol outlines the approach used in a recent study of Trinidadian guppies (Poecilia reticulata) to assess how co-occurring environmental stressors affect predator-prey interactions in the wild [22].

- Objective: To correlate predator distribution and behavior with a suite of simultaneously measured environmental variables.
- Methodology:
  - Site Characterization: Select multiple sampling sites across an environmental gradient (e.g., different rivers). At each site, quantitatively measure abiotic factors including water temperature, turbidity, flow velocity, dissolved oxygen, and canopy cover.
  - Predation Assay: Use standardized in situ video recording. A prey stimulus (e.g., live guppies in a transparent, perforated container) and an empty control apparatus are deployed.
  - Behavioral Quantification: From video footage, record for each predator species:
    - Presence/Absence.
    - Latency to first visit the prey stimulus.
    - Time spent near the stimulus.
    - Number of attacks on the stimulus.
  - Data Analysis: Use multivariate statistics (e.g., Principal Component Analysis) to reduce environmental variable dimensionality. Then, employ regression models to link environmental principal components to the quantified measures of predation pressure.
- Key Outcome Measures: Predator species composition, attack frequency, and visitation rates as a function of key environmental drivers like temperature and turbidity.
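The data-analysis step above (dimension reduction followed by regression) can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions: the site measurements and attack counts are invented, the first principal component is obtained by power iteration on the correlation matrix, and ordinary least squares stands in for whatever regression family the original study used (count data would usually call for a generalized linear model).

```python
from statistics import mean, pstdev

def zscore(values):
    """Standardize a variable to mean 0 and (population) standard deviation 1."""
    mu, sd = mean(values), pstdev(values)
    return [(v - mu) / sd for v in values]

def first_principal_component(columns, iterations=200):
    """Return (loadings, site scores) for PC1 of the z-scored columns."""
    z = [zscore(col) for col in columns]
    p, n = len(z), len(z[0])
    corr = [[sum(z[i][k] * z[j][k] for k in range(n)) / n for j in range(p)]
            for i in range(p)]
    vec = [1.0 / (i + 1) for i in range(p)]   # asymmetric start vector
    for _ in range(iterations):               # power iteration
        vec = [sum(corr[i][j] * vec[j] for j in range(p)) for i in range(p)]
        norm = sum(v * v for v in vec) ** 0.5
        vec = [v / norm for v in vec]
    if vec[0] < 0:                             # fix sign: first variable loads positively
        vec = [-v for v in vec]
    scores = [sum(vec[i] * z[i][k] for i in range(p)) for k in range(n)]
    return vec, scores

def ols_slope_intercept(x, y):
    """Simple least-squares fit of y on x."""
    mx, my = mean(x), mean(y)
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return slope, my - slope * mx

# Hypothetical measurements at six sites
temperature = [24.0, 25.0, 26.0, 27.0, 28.0, 29.0]   # degrees C
turbidity = [12.0, 10.0, 9.0, 6.0, 4.0, 3.0]          # NTU
flow = [0.60, 0.55, 0.40, 0.35, 0.20, 0.15]           # m/s
attacks = [1, 2, 4, 5, 8, 9]                          # attacks per recording

loadings, pc1 = first_principal_component([temperature, turbidity, flow])
slope, intercept = ols_slope_intercept(pc1, attacks)
print("PC1 loadings (temperature, turbidity, flow):", [round(v, 2) for v in loadings])
print("attacks per unit of PC1:", round(slope, 2))
```

With these made-up data, PC1 describes a gradient from cool, turbid, fast-flowing sites to warm, clear, slow-flowing ones, and the positive slope mirrors the direction of the finding reported for the guppy study.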

Visualizing Ecosystem Dynamics and Transitions

The following diagrams, generated using Graphviz, illustrate the core concepts and experimental workflows related to top-down and bottom-up controls.

[Diagram: Pathways of Ecosystem Control]

[Diagram: Experimental Workflow for Multi-Stressor Field Studies]

The Scientist's Toolkit: Essential Research Reagents and Solutions

This table catalogues key materials and tools required for conducting field and laboratory research on the drivers of ecosystem control.

Table 2: Essential Reagents and Materials for Ecosystem Driver Research

| Research Reagent / Tool | Function / Application | Example Use Case |
|---|---|---|
| Electric Exclusion Fencing | Creates controlled field plots to exclude mammalian predators and isolate top-down effects. | Studying the impact of predator removal on snowshoe hare population dynamics [20]. |
| Environmental Sensors (Multi-parameter Sondes) | Provides continuous, high-resolution in situ measurements of abiotic factors (temperature, dissolved O₂, turbidity, pH). | Characterizing the environmental context at each study site to correlate with biological observations [22]. |
| Underwater Video Cameras (Baited/Stimulus) | Enables non-invasive observation and quantification of predator presence, behavior, and attack rates in natural settings. | Assessing how water clarity and temperature influence predator visits and attacks on guppy prey [22]. |
| Stable Isotope Tracers (e.g., ¹⁵N, ¹³C) | Used to track nutrient pathways and energy flow through food webs, quantifying the strength of bottom-up linkages. | Determining the assimilation of agricultural nutrients into aquatic food webs following runoff events [21]. |
| DNA Extraction & Metagenomic Kits (e.g., Qiagen DNeasy PowerSoil) | Standardizes the extraction of high-quality genetic material from complex environmental samples like soil, sediment, or biofilms. | Enabling sequencing-based analysis of microbial community assembly in response to top-down and bottom-up controls [23]. |

The paradigm of top-down versus bottom-up control is not a binary choice but a dynamic continuum. The preponderance of evidence demonstrates that these forces act simultaneously, with their relative dominance shifting across ecosystems, time, and environmental conditions [1] [3]. A key finding from recent research is that environmental stressors like temperature and turbidity do not operate in isolation but interact to modulate the strength of top-down predation [22]. Furthermore, chronic anthropogenic pressures, such as nutrient pollution, can trigger critical transitions, pushing an ecosystem from a balanced or top-down regulated state to one dominated by bottom-up forces, with severe consequences for stability and biodiversity [21]. Therefore, effective ecosystem management and predictive ecological modeling require an integrated framework that accounts for the complex, non-additive interactions between nutrient availability, predation pressure, and the evolving portfolio of environmental stressors.

This comparison guide explores the innovative application of ecological trophic control principles to pharmaceutical research and development. Drawing direct parallels from food web dynamics, we examine how top-down control strategies, characterized by high-level biological system interventions, compare with bottom-up control approaches that target fundamental molecular pathways. The analysis synthesizes current research across therapeutic domains, providing a structured framework for understanding drug discovery paradigms through an ecological lens. We present quantitative efficacy data, detailed experimental protocols, and essential research tools to equip scientists with methodologies for evaluating these complementary approaches in their own drug development workflows.

In ecological science, trophic structure represents the partitioning of biomass between different feeding levels in a food chain, typically categorized as primary producers, herbivores, and carnivores [24]. The regulation of these structures occurs through two primary mechanisms: bottom-up control, where each trophic level is limited by resource availability from lower levels, and top-down control, where upper trophic levels exert predatory pressure on lower levels [25]. These concepts have provided fundamental insights into ecosystem dynamics, particularly how energy flows from basal resources (plants) through intermediate consumers (herbivores) to top predators (carnivores) [26].

The translation of these ecological principles to drug discovery offers a powerful conceptual framework for understanding therapeutic intervention strategies. In this analogous model, disease pathways function as trophic networks, with molecular initiating events representing basal resources, cellular signaling pathways as intermediate consumers, and system-level physiological effects as top predators [2]. This review systematically compares how these control paradigms manifest in pharmaceutical research, examining their relative efficacies across therapeutic domains, with particular emphasis on metabolic disorders, oncology, and neurological conditions where both approaches have been clinically validated.

Bottom-Up Control Strategies in Drug Discovery

Bottom-up control strategies in drug discovery operate on the fundamental principle that interventions at foundational molecular levels can produce cascading therapeutic effects throughout biological systems. This approach directly mirrors ecological bottom-up control, where primary producer abundance determines the carrying capacity of entire ecosystems [24].

Molecular-Targeted Therapies

Enzyme inhibitors represent a classic bottom-up approach, targeting rate-limiting steps in pathological biochemical pathways. For instance, HMG-CoA reductase inhibitors (statins) block the rate-limiting step of cholesterol biosynthesis, with downstream effects that ultimately reduce atherosclerotic cardiovascular risk. Similarly, kinase inhibitors in oncology target driver mutations in specific signaling pathways, interrupting the proliferative signals that fuel cancer growth at their source.

Receptor modulators constitute another bottom-up strategy, acting at the interface between extracellular stimuli and intracellular responses. GLP-2 analogs like teduglutide exemplify this approach by directly targeting intestinal mucosal growth and function [27]. By activating GLP-2 receptors on intestinal epithelial cells, these compounds stimulate crypt cell proliferation and inhibit enterocyte apoptosis, ultimately improving nutrient absorption in Short Bowel Syndrome (SBS) through a cascade of trophic effects [27].

Gene-Targeted Approaches

Antisense oligonucleotides and RNA interference technologies represent the most fundamental bottom-up strategies, intervening at the genetic level to modulate disease processes. By targeting mRNA molecules, these approaches reduce the production of pathogenic proteins before they can exert downstream effects. Gene replacement therapies operate similarly by introducing functional copies of genes to compensate for defective ones, addressing genetic disorders at their molecular origin.

Table 1: Efficacy Metrics for Bottom-Up Therapeutic Approaches

| Therapeutic Class | Molecular Target | Primary Indication | Clinical Efficacy Measure | Reported Outcome |
|---|---|---|---|---|
| GLP-2 Analogs [27] | GLP-2 Receptor | Short Bowel Syndrome | PN Volume Reduction | 63% of patients achieved ≥20% reduction vs. 30% with placebo |
| GLP-2 Analogs [27] | GLP-2 Receptor | Short Bowel Syndrome | PN Independence Rate | 13/88 patients completely weaned off parenteral nutrition |
| DPP-4 Inhibitors | Dipeptidyl Peptidase-4 | Type 2 Diabetes | HbA1c Reduction | 0.5-0.8% decrease from baseline |
| STAT3 Inhibitors | STAT3 Transcription Factor | Oncology | Tumor Response Rate | 15-30% across various cancers |

Experimental Protocols for Bottom-Up Therapeutic Evaluation

GLP-2 Analog Efficacy Assessment Protocol (Adapted from STEPS Trial Methodology [27]):

- Patient Selection: Enroll PN-dependent SBS patients with residual bowel length 10-200 cm
- Intervention: Subcutaneous teduglutide (0.05 mg/kg/day) or placebo for 24 weeks
- Primary Endpoint: Percentage of patients achieving ≥20% reduction in PN volume at week 20 and maintained at week 24
- Secondary Endpoints:
  - Absolute change in PN volume (L/week)
  - Number of days off PN per week
  - Plasma citrulline concentration (a biomarker of functional enterocyte mass)
- Statistical Analysis: Mixed-effects models with repeated measures accounting for baseline PN requirements
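The primary endpoint above is a per-patient responder rule, which can be sketched as a small helper. The function names and patient volumes below are hypothetical; only the threshold (≥20% reduction at week 20, maintained at week 24) is taken from the protocol as described.

```python
def percent_reduction(baseline, current):
    """Percentage reduction in weekly PN volume relative to baseline."""
    return 100.0 * (baseline - current) / baseline

def is_responder(baseline, week20, week24, threshold=20.0):
    """True if the reduction meets the threshold at week 20 AND at week 24."""
    return (percent_reduction(baseline, week20) >= threshold
            and percent_reduction(baseline, week24) >= threshold)

def responder_rate(patients):
    """Fraction of (baseline, week20, week24) tuples classified as responders."""
    return sum(is_responder(*p) for p in patients) / len(patients)

# Hypothetical PN volumes in L/week: (baseline, week 20, week 24)
patients = [
    (10.0, 7.5, 8.0),   # 25% then 20%: responder
    (12.0, 9.0, 9.0),   # 25% at both visits: responder
    (8.0, 7.0, 6.0),    # only 12.5% at week 20: non-responder
    (10.0, 7.5, 8.5),   # response not maintained at week 24: non-responder
]
rate = responder_rate(patients)
print(f"responder rate: {rate:.0%}")
```

The last two patients show why the rule requires both visits: a late or unsustained reduction does not count toward the primary endpoint.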

In Vitro Trophic Effect Assessment:

- Cell Culture: Intestinal epithelial cell lines (rat IEC-6, human Caco-2) in DMEM with 10% FBS
- Treatment Groups: Vehicle control, native GLP-2 (1-100 nM), GLP-2 analogs (teduglutide, glepaglutide, apraglutide)
- Proliferation Assay: MTT assay at 24, 48, and 72 hours
- Apoptosis Measurement: Annexin V/propidium iodide flow cytometry after 48-hour treatment
- Gene Expression: qRT-PCR for proliferation markers (Ki-67, PCNA) and anti-apoptotic factors (Bcl-2)
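For the qRT-PCR readout, relative expression is commonly summarized with the 2^-ΔΔCt method. The sketch below assumes that method, roughly 100% amplification efficiency, and normalization to a reference gene; none of these choices is specified in the protocol above, and the Ct values are invented.

```python
def fold_change_ddct(ct_target_treated, ct_ref_treated,
                     ct_target_control, ct_ref_control):
    """Relative expression (treated vs. control) by the 2^-ddCt method."""
    d_ct_treated = ct_target_treated - ct_ref_treated   # normalize to reference gene
    d_ct_control = ct_target_control - ct_ref_control
    dd_ct = d_ct_treated - d_ct_control
    return 2.0 ** (-dd_ct)

# Hypothetical Ct values for a proliferation marker and an assumed reference gene
fold = fold_change_ddct(
    ct_target_treated=22.0, ct_ref_treated=18.0,   # GLP-2 analog treated cells
    ct_target_control=24.0, ct_ref_control=18.0,   # vehicle control
)
print(f"fold change vs. vehicle: {fold:.1f}")
```

A fold change above 1 indicates higher expression in treated cells. In practice, technical and biological replicates would be averaged before this calculation.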

Top-Down Control Strategies in Drug Discovery

Top-down control strategies in pharmacology operate through high-level interventions that modulate system-wide regulatory mechanisms, analogous to how apex predators structure ecological communities through consumption pressure on herbivore populations [25]. These approaches typically target master regulators, endocrine systems, or neural circuits that exert broad influence over pathological processes.

System-Level Interventions

Immunomodulatory therapies represent a prime example of pharmacological top-down control. Checkpoint inhibitors in oncology (e.g., anti-PD-1, anti-CTLA-4 antibodies) do not directly target cancer cells but instead remove inhibitory signals on immune effector cells, enabling the immune system to mount anti-tumor responses through natural cytotoxic mechanisms. This approach mirrors the ecological concept where top predators regulate herbivore populations, indirectly benefiting primary producers through reduced consumption pressure [25].

Endocrine system modulators constitute another top-down strategy. Corticosteroids exert widespread anti-inflammatory and immunosuppressive effects by modulating gene expression in multiple cell types, effectively "rewiring" the immune response at a system level. Similarly, thyroid hormone replacements influence metabolic rate throughout the body by acting on nuclear receptors that regulate transcriptional programs in diverse tissues.

Neural Circuit-Targeted Approaches

Central nervous system (CNS) drugs frequently operate through top-down mechanisms. Antidepressants like SSRIs modulate serotonin signaling in key brain regions, producing downstream effects on mood, cognition, and neuroplasticity. Neurostimulation therapies (e.g., deep brain stimulation, vagus nerve stimulation) represent even higher-level interventions, modulating neural circuit activity to treat conditions ranging from Parkinson's disease to depression.

Table 2: Efficacy Metrics for Top-Down Therapeutic Approaches

| Therapeutic Class | Systemic Target | Primary Indication | Clinical Efficacy Measure | Reported Outcome |
|---|---|---|---|---|
| Immune Checkpoint Inhibitors | PD-1/PD-L1 Axis | Metastatic Melanoma | Objective Response Rate | 40-45% as monotherapy |
| Corticosteroids | Glucocorticoid Receptor | Inflammatory Disorders | Clinical Remission Rate | 60-80% in autoimmune conditions |
| SSRI Antidepressants | Serotonin Transporter | Major Depression | Response Rate (≥50% improvement) | 50-60% vs. 30-40% placebo |
| Deep Brain Stimulation | Basal Ganglia Circuits | Parkinson's Disease | Motor Function Improvement | 40-60% UPDRS reduction |

Experimental Protocols for Top-Down Therapeutic Evaluation

Immunomodulatory Therapy Assessment Protocol:

- Animal Model: Syngeneic tumor models (e.g., MC38, CT26) in immunocompetent mice
- Intervention Groups: Isotype control antibody, anti-PD-1 monotherapy, combination therapies
- Tumor Measurement: Caliper measurements 3x weekly, tumor volume calculation (0.5 × length × width²)
- Immune Monitoring:
  - Flow cytometry of tumor-infiltrating lymphocytes (CD8⁺, CD4⁺, Tregs)
  - Cytokine profiling (IFN-γ, TNF-α, IL-2) by Luminex
  - Immunohistochemistry for CD8⁺ T-cell density
- Endpoint Analysis: Tumor growth curves, survival analysis, correlation of immune parameters with response
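The caliper formula in the protocol (0.5 × length × width²) translates directly into code. The sketch below also adds a group-level tumor growth inhibition summary, which is one common definition but is not part of the protocol as written; the function names and measurements are hypothetical.

```python
from statistics import mean

def tumor_volume_mm3(length_mm, width_mm):
    """Caliper-based tumor volume: 0.5 x length x width^2."""
    return 0.5 * length_mm * width_mm ** 2

def growth_inhibition_percent(treated_volumes, control_volumes):
    """One common summary: 100 x (1 - mean treated volume / mean control volume)."""
    return 100.0 * (1.0 - mean(treated_volumes) / mean(control_volumes))

# Hypothetical (length, width) caliper readings in mm on one measurement day
control_mice = [(10.0, 8.0), (12.5, 8.0)]   # isotype control antibody
treated_mice = [(10.0, 6.0), (10.0, 6.0)]   # anti-PD-1 monotherapy

control_volumes = [tumor_volume_mm3(l, w) for l, w in control_mice]
treated_volumes = [tumor_volume_mm3(l, w) for l, w in treated_mice]
tgi = growth_inhibition_percent(treated_volumes, control_volumes)
print("control volumes (mm^3):", control_volumes)
print("treated volumes (mm^3):", treated_volumes)
print(f"tumor growth inhibition: {tgi:.0f}%")
```

By convention, length is the longest tumor diameter and width the perpendicular diameter. Repeating the calculation for each measurement day yields the growth curves named in the endpoint analysis.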

Neuroimmunological Top-Down Assessment:

- Stress Paradigm: Chronic unpredictable stress model in rodents (4 weeks)
- Intervention: SSRI administration (fluoxetine, 10 mg/kg/day) vs. vehicle control
- Behavioral Testing:
  - Sucrose preference test (anhedonia measure)
  - Forced swim test (behavioral despair)
  - Open field test (anxiety-like behavior)
- Neuroimmune Parameters:
  - Microglial activation status (Iba1 immunohistochemistry)
  - Pro-inflammatory cytokine levels in hippocampus and prefrontal cortex
  - Neurogenesis assessment (BrdU/DCX double labeling)
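As a small worked example for the behavioral readouts, sucrose preference is usually expressed as the share of total fluid intake taken from the sucrose bottle. The sketch below assumes that standard definition; the function name and intake values are hypothetical.

```python
def sucrose_preference_percent(sucrose_intake_g, water_intake_g):
    """Sucrose intake as a percentage of total fluid intake."""
    total = sucrose_intake_g + water_intake_g
    return 100.0 * sucrose_intake_g / total

# Hypothetical 24-hour intakes (grams) for one animal per group
vehicle_stressed = sucrose_preference_percent(sucrose_intake_g=6.0, water_intake_g=4.0)
fluoxetine_stressed = sucrose_preference_percent(sucrose_intake_g=15.0, water_intake_g=5.0)
print(f"stress + vehicle:    {vehicle_stressed:.0f}%")
print(f"stress + fluoxetine: {fluoxetine_stressed:.0f}%")
```

A lower preference in stressed animals is read as anhedonia-like behavior, so a treatment effect would appear as a shift back toward the unstressed baseline.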

Comparative Analysis of Control Strategies

The relative efficacy of top-down versus bottom-up therapeutic strategies varies significantly across disease contexts, mirroring ecological findings where the dominance of these control mechanisms depends on environmental conditions and system characteristics [25]. Understanding the determinants of success for each approach enables more rational therapeutic development.

Context-Dependent Effectiveness

Disease stage considerations significantly influence control strategy effectiveness. Early-stage pathologies with well-defined molecular drivers often respond optimally to bottom-up approaches that directly target the causative mechanisms. In contrast, advanced diseases with established feedback loops and system-wide dysregulation may require top-down interventions that reset overall system homeostasis.

Therapeutic window differences emerge between these approaches. Bottom-up therapies typically offer superior safety profiles due to their precise targeting but may succumb to compensatory resistance mechanisms. Top-down strategies often produce more profound efficacy but with increased risk of off-target effects and immune-related adverse events, particularly with immunomodulatory approaches.

Temporal response patterns distinguish these control strategies. Bottom-up interventions frequently produce rapid biomarker improvements but may not translate to long-term clinical benefits without addressing system-level adaptations. Top-down approaches may exhibit delayed onset of action as they require time to engage endogenous regulatory circuits but can produce more durable responses.

Table 3: Strategic Comparison of Control Approaches in Drug Discovery

| Parameter | Bottom-Up Control | Top-Down Control |
|---|---|---|
| Molecular Precision | High (single target focus) | Moderate (system-level modulation) |
| Therapeutic Window | Generally wider | Often narrower |
| Onset of Action | Typically rapid | Often delayed |
| Durability of Response | Limited by resistance | Potentially more durable |
| Applicable Disease Stage | Early, molecularly-defined | Advanced, systemically-disrupted |
| Resistance Mechanisms | Target mutations, bypass signaling | Compensatory pathway activation |
| Combination Potential | High with other targeted agents | High with complementary mechanisms |
| Biomarker Requirements | Essential for patient selection | Helpful but not always essential |

Hybrid Control Strategies

Vertical inhibition approaches combine bottom-up and top-down elements by targeting multiple nodes within the same signaling pathway. For example, in HER2-positive breast cancer, combining trastuzumab (extracellular domain antibody) with tucatinib (intracellular kinase inhibitor) provides complementary inhibition at different pathway levels.

Network pharmacology strategies represent another hybrid approach, using multi-targeted agents or rationally designed combinations to simultaneously engage both upstream drivers and downstream effectors. Kinase inhibitor polypharmacology exemplifies this paradigm, where single compounds with appropriate promiscuity can modulate entire signaling networks more effectively than highly selective agents.

The Scientist's Toolkit: Essential Research Reagents and Materials

Implementing trophic control principles in drug discovery requires specialized research tools for evaluating interventions at different biological levels. The following table catalogs essential reagents and their applications in studying therapeutic control mechanisms.

Table 4: Essential Research Reagents for Studying Trophic Control in Drug Discovery

| Research Tool Category | Specific Examples | Research Application | Control Paradigm |
|---|---|---|---|
| Recombinant Proteins | GLP-2 analogs (teduglutide, glepaglutide, apraglutide) [27] | Intestinal trophism studies | Bottom-Up |
| Monoclonal Antibodies | Anti-PD-1, anti-CTLA-4 checkpoint inhibitors | Immune activation assays | Top-Down |
| Cell Line Models | Caco-2 intestinal cells, primary T-cell cultures | Pathway mechanism studies | Both |
| Animal Disease Models | SBS rodent models, syngeneic tumor models | Efficacy and mechanism studies | Both |
| Signal Transduction Assays | Phospho-specific flow cytometry, Western blot | Pathway activation measurement | Bottom-Up |
| Immune Monitoring Tools | Multiplex cytokine arrays, IHC markers | System-level response assessment | Top-Down |
| Gene Expression Tools | qRT-PCR panels, RNA sequencing | Transcriptional regulation studies | Both |
| Metabolic Assays | Seahorse analyzers, stable isotope tracing | Metabolic pathway analysis | Bottom-Up |

The conceptual framework of trophic control provides valuable insights for strategic decision-making in drug discovery. Bottom-up approaches offer precision and favorable safety profiles in diseases with well-defined molecular drivers, exemplified by GLP-2 analogs in Short Bowel Syndrome [27]. Top-down strategies excel in complex, systemically dysregulated conditions where resetting homeostatic balance is paramount, as demonstrated by immunotherapies in oncology.

The future of therapeutic development lies in context-appropriate application of these paradigms and their rational combination. As with ecological systems where top-down and bottom-up forces interact along a continuum [25], successful drug discovery will increasingly require understanding how targeted interventions engage broader biological networks to achieve therapeutic efficacy while minimizing resistance. This integrative perspective enables more sophisticated therapeutic strategies that respect the complexity of biological systems while effectively treating human disease.

Methodological Applications: From Ecosystem Modeling to Drug Development Pipelines

Understanding the forces that shape ecosystems, specifically the debate between top-down (predator-driven) and bottom-up (resource-driven) control, is a central goal in ecology. Predictive ecological models are essential tools in this endeavor, allowing researchers to test hypotheses and simulate ecosystem dynamics under various conditions. Among these, Consumer-Resource Models (CRMs) provide a mechanistic framework for understanding how species interactions influence community structure and stability. This guide offers a comparative analysis of prominent ecological modeling approaches used to predict trophic interactions, evaluating their performance, data requirements, and applicability to the top-down versus bottom-up control paradigm.

Model Comparison: Approaches for Predicting Trophic Interactions

Different modeling approaches offer varying balances of mechanistic detail, parameter demand, and ease of application. The table below summarizes the core characteristics of several key model types used in food web research.

Table 1: Comparative Overview of Ecological Modeling Approaches for Trophic Interactions

| Model Type | Core Principle | Typical Data Requirements | Strengths | Key Limitations |

|---|---|---|---|---|

| Consumer-Resource Models (CRMs) | Mechanistically links species growth to resource consumption and conversion [2] [28]. | Resource requirements and consumption rates for each species; often from monoculture experiments [28]. | High predictive accuracy across environments [28]; Explicitly represents energy flow and niche competition [2]. | Parameter-intensive; Can be complex to scale to highly diverse food webs. |

| Generalized Lotka-Volterra (gLVM) | Describes population growth rates as a function of linear pair-wise species interactions [29]. | Intrinsic growth rates and a matrix of pair-wise interaction coefficients [29]. | Conceptual simplicity; Few parameters; Analytic tractability for stability analysis [29]. | Interactions are static and phenomenological; Sensitive to environmental context [28]. |

| Size-Spectrum Models (e.g., mizer) | Structures the community based on body size, governing metabolism, predation, and growth [30]. | Size spectra of communities; trait-based parameters (e.g., growth, reproduction) [30]. | Reduces parameter burden; Effective for exploring fisheries policies and climate impacts [30]. | Relies on equilibrium assumptions; Limited automated parameter optimization [30]. |

| Ecosystem-Scale Models (e.g., Ecopath with Ecosim) | Mass-balanced snapshot of energy flows through an entire ecosystem [31]. | Biomass and diet data for all functional groups; production and consumption rates [31]. | Holistic, whole-ecosystem approach; Extensive curated repository (EcoBase) exists [31]. | Complex model construction; High data demand for initial parameterization. |
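To make the gLVM row concrete, the sketch below integrates the generalized Lotka-Volterra equations, dN_i/dt = N_i (r_i + Σ_j A_ij N_j), with a simple forward Euler step. The function name, step size, and the two-species parameter values are illustrative assumptions and are not taken from [29].

```python
def simulate_glv(r, A, n0, dt=0.01, steps=5000):
    """Forward-Euler integration of a generalized Lotka-Volterra system.

    r  : intrinsic growth rates, one per species
    A  : matrix of pair-wise interaction coefficients (A[i][j] = effect of j on i)
    n0 : initial abundances
    All numerical settings here are hypothetical defaults for illustration.
    """
    n = list(n0)
    size = len(n)
    for _ in range(steps):
        # Per-species rate of change: N_i * (r_i + sum_j A_ij * N_j)
        growth = [
            n[i] * (r[i] + sum(A[i][j] * n[j] for j in range(size)))
            for i in range(size)
        ]
        # Euler update, clipped at zero so abundances never go negative
        n = [max(n[i] + dt * growth[i], 0.0) for i in range(size)]
    return n


# Two competitors whose self-limitation (-1.0) exceeds cross-competition (-0.5)
r = [1.0, 1.0]
A = [[-1.0, -0.5],
     [-0.5, -1.0]]
final = simulate_glv(r, A, n0=[0.1, 0.9])
print(final)
```

In this toy case both species approach the coexistence equilibrium N = 1 / 1.5 ≈ 0.667. Note that the interaction matrix is fixed, which is exactly the "static and phenomenological" limitation listed in the table.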

Experimental Validation of Consumer-Resource Models

A 2025 study experimentally tested a mechanistic CRM and showed that it can predict community composition across different resource conditions and levels of species richness [28].

Experimental Protocol and Workflow

The following diagram illustrates the integrated experimental and modeling workflow used to parameterize and validate the consumer-resource model.

Detailed Experimental Methodology

The experimental validation was conducted as follows [28]:

- Monoculture Parameterization: Twelve phytoplankton species were grown in monoculture under a gradient of concentrations of nitrate (NO₃⁻), ammonium (NH₄⁺), or phosphorus (P). All other nutrients were provided in non-limiting quantities.

- Data Collection: Daily growth rates were tracked over four days. These data were used with Bayesian modeling to quantify each species' resource requirement (the minimum resource level needed to support growth) and resource consumption rate.

- Community Competition Experiments: Polycultures of two, three, four, or six species were assembled and grown in semi-continuous cultures. These communities competed for either:

  - Essential Resources: Different ratios of nitrate and phosphorus.

  - Substitutable Resources: Different ratios of nitrate and ammonium.

- Composition Tracking: Community composition was tracked over 12 days using an automated pipeline integrating high-content microscopy, image analysis, and machine learning.

- Model Prediction & Validation: The CRM, parameterized solely with monoculture data, was used to predict the relative species abundances in the 960 polyculture communities. Predictions were compared against observed outcomes using Bray-Curtis similarity.
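The comparison step in the protocol above can be sketched as follows. Bray-Curtis similarity is one minus the Bray-Curtis dissimilarity, Σ|p_i − o_i| / Σ(p_i + o_i). The function name and the example abundance vectors are hypothetical and are not data from [28].

```python
def bray_curtis_similarity(predicted, observed):
    """Return 1 - Bray-Curtis dissimilarity between two abundance vectors."""
    if len(predicted) != len(observed):
        raise ValueError("abundance vectors must have the same length")
    total = sum(predicted) + sum(observed)
    if total == 0:
        raise ValueError("at least one abundance must be non-zero")
    # Sum of absolute differences across species, normalized by total abundance
    difference = sum(abs(p - o) for p, o in zip(predicted, observed))
    return 1.0 - difference / total


# Hypothetical relative abundances for a three-species polyculture
predicted = [0.5, 0.3, 0.2]
observed = [0.4, 0.4, 0.2]
print(bray_curtis_similarity(predicted, observed))
```

A value of 1 means the predicted and observed compositions are identical, and 0 means they share no species.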

Key Quantitative Findings

The study yielded critical quantitative results, summarized in the table below, which highlight the CRM's predictive power and the conditions for species coexistence.

Table 2: Key Experimental Results from CRM Validation Study [28]

| Metric | Competition for Essential Resources (NO₃ & P) | Competition for Substitutable Resources (NO₃ & NH₄) |

|---|---|---|

| Overall Predictive Accuracy (vs. observed data) | 83.4% (Mean Bray-Curtis similarity) | 83.4% (Mean Bray-Curtis similarity) |

| Accuracy in Novel Conditions (vs. null model) | No significant drop in predictive power | No significant drop in predictive power |

| Pairs Meeting Tilman's 1st Rule* (Different Limiting Resources) | 30.3% (20 of 66 pairs) | 37.9% (25 of 66 pairs) |

| Pairs Meeting Tilman's 2nd Rule* (Consume more of most limiting resource) | 40.0% (of the 20 pairs) | 60.0% (of the 25 pairs) |

| Final Pairs with Stable Coexistence | 12.1% (8 of 66 pairs) | 22.7% (15 of 66 pairs) |

*Tilman's rules provide a mechanistic basis for stable coexistence in CRMs [28].
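The percentages in Table 2 can be cross-checked with a few lines of arithmetic. The sketch below assumes that the 66 pairs are all pair-wise combinations of the twelve species and that the final coexistence counts are the pairs passing both rules in sequence; this reading is consistent with the reported figures but is not stated explicitly in the table.

```python
from math import comb

n_pairs = comb(12, 2)  # all unordered pairs of twelve species


def percent(count, total):
    """Percentage rounded to one decimal place, as reported in Table 2."""
    return round(100 * count / total, 1)


# Pair counts meeting the first rule, and the fraction of those meeting the second
rule1 = {"essential": 20, "substitutable": 25}
rule2_fraction = {"essential": 0.40, "substitutable": 0.60}

for resource_type in rule1:
    passing_both = round(rule1[resource_type] * rule2_fraction[resource_type])
    print(
        resource_type,
        percent(rule1[resource_type], n_pairs),  # share of pairs meeting rule 1
        passing_both,                            # pairs meeting both rules
        percent(passing_both, n_pairs),          # share with stable coexistence
    )
```

Under these assumptions the script reproduces the table: 30.3% and 12.1% (8 pairs) for essential resources, and 37.9% and 22.7% (15 pairs) for substitutable resources.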

Theoretical Advances: Emergent Competition and Control Regimes

Theoretical work using CRMs has clarified how top-down and bottom-up control emerge in complex ecosystems. Analysis of a three-tiered CRM (plants, herbivores, carnivores) with randomly distributed parameters showed that intra-trophic diversity generates "emergent competition" between species within the same level [2]. This competition arises from feedback loops mediated by species at other trophic levels.

The balance of this emergent competition dictates the ecosystem's control regime. The model demonstrates a crossover between two states [2]:

- Bottom-Up Control: Populations are limited by the availability of primary producers (resources). This occurs when emergent competition within the herbivore level is strong.

- Top-Down Control: Populations are limited by predators (secondary consumers). This occurs when top-down pressure from carnivores is the dominant limiting factor.

Strikingly, this complex crossover is captured by a simple order parameter: the ratio of surviving species in different trophic levels [2]. This provides a quantifiable metric from CRM outputs to classify an ecosystem's operational control regime.
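As a rough illustration of how such an order parameter could be read off simulation output, the sketch below counts the species in two trophic levels whose abundance exceeds a survival threshold and returns their ratio. The function name, the threshold value, the choice of levels, and the example abundances are all assumptions; the exact definition used in [2] is not reproduced here.

```python
def surviving_species_ratio(upper_level, lower_level, threshold=1e-6):
    """Ratio of surviving species counts between two trophic levels.

    upper_level, lower_level : final abundances of the species in each level
    threshold                : hypothetical abundance cutoff for "surviving"
    """
    survivors_upper = sum(1 for abundance in upper_level if abundance > threshold)
    survivors_lower = sum(1 for abundance in lower_level if abundance > threshold)
    if survivors_lower == 0:
        raise ValueError("no surviving species in the lower level")
    return survivors_upper / survivors_lower


# Hypothetical final abundances from a three-tiered CRM run
carnivores = [0.2, 0.0, 0.1]
herbivores = [0.5, 0.3, 0.0, 0.4]
print(surviving_species_ratio(carnivores, herbivores))
```

Which range of the ratio corresponds to which control regime depends on the model analysis in [2] and is deliberately left out of this sketch.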

Implementing and testing CRMs requires a combination of software tools, data repositories, and conceptual frameworks.

Table 3: Key Resources for Research on Consumer-Resource and Trophic Models

| Tool / Resource | Type | Primary Function & Application |

|---|---|---|

| tmm4py [32] | Software Package | Enables efficient, global-scale biogeochemical modeling in Python using the Transport Matrix Method. |

| mizer [30] | R Package | A specialized tool for multi-species size-spectrum modeling of marine ecosystems, useful for fisheries and climate projections. |

| EcoBase [31] | Model Repository | An open-access repository of published Ecopath with Ecosim (EwE) models, facilitating meta-analyses and model reuse. |

| Global Biotic Interactions (GloBI) [33] | Data Repository | An open infrastructure that provides access to a vast array of species interaction datasets (e.g., predator-prey, parasite-host). |

| "Eat-to-Live" (E2L) vs "Live-to-Eat" (L2E) [34] | Conceptual Framework | A critical consideration in CRM implementation: E2L models set max growth rate as input, modulating feeding; L2E models set max grazing rate as input. |

| Satiation Controlled Encounter Based (SCEB) [34] | Modeling Function | An alternative to the standard rectangular hyperbola (RHt2) for grazing; it explicitly separates prey encounter from satiation feedback. |
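For reference, the standard rectangular hyperbola mentioned in the last table row (the Holling type II response) can be written as g(P) = g_max · P / (K + P). The sketch below implements only this standard form; the SCEB function is not specified in the text, so it is not reproduced. Parameter names and values are illustrative assumptions.

```python
def holling_type_2(prey, g_max, half_saturation):
    """Grazing rate as a rectangular hyperbola of prey concentration.

    prey            : prey concentration (must be non-negative)
    g_max           : maximum grazing rate, approached at high prey levels
    half_saturation : prey concentration at which grazing is g_max / 2
    """
    if prey < 0 or half_saturation <= 0:
        raise ValueError("prey must be >= 0 and half_saturation must be > 0")
    return g_max * prey / (half_saturation + prey)


# Grazing saturates as prey becomes abundant (hypothetical units)
for prey in (0.0, 1.0, 2.0, 20.0):
    print(prey, holling_type_2(prey, g_max=1.5, half_saturation=2.0))
```

In the "Live-to-Eat" framing described above, g_max would be the model input; in an "Eat-to-Live" framing, the maximum growth rate is the input and feeding is modulated to match it.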

Consumer-Resource Models stand out for their high mechanistic accuracy and transferability across environmental contexts, making them powerful tools for investigating top-down and bottom-up control. While other models like gLVM offer simplicity and EwE provides a holistic view, the CRM's ability to accurately predict community composition from monoculture data, as demonstrated in recent empirical work, is a significant advantage [28]. The theoretical discovery that the ratio of surviving species across trophic levels can serve as an indicator for the dominant control regime further enhances the utility of CRMs in fundamental food web research [2]. Future work should focus on integrating these different modeling approaches and incorporating more dynamic physiological responses, such as the "eat-to-live" paradigm, to better capture the complex reality of ecosystem responses to environmental change [34].

In ecological research, bottom-up control describes how the foundational layers of a food web, such as nutrient availability and primary producers, dictate the structure and function of the entire ecosystem. A parallel paradigm exists in drug discovery. The bottom-up approach initiates the drug discovery process from the most fundamental, molecular level: the three-dimensional structure of a biological target, typically a protein involved in disease pathology [35]. This strategy assumes that a deep, mechanistic understanding of the target's structure and function enables the rational design of therapeutic molecules that can precisely interact with it to modulate its activity. This stands in stark contrast to the top-down approach, which begins at the level of complex biological systems—cells, tissues, or whole organisms—by observing the phenotypic effects of compounds without necessarily understanding their precise mechanism of action at the molecular level [35].

The transition towards bottom-up, structure-based methods began in earnest with Paul Ehrlich's systematic screening of chemical compounds in the early 20th century, but it truly accelerated decades later with concurrent advances in structural biology, synthetic chemistry, and computational power [35]. This review provides a comparative guide to modern bottom-up strategies, focusing on Structure-Based Drug Design (SBDD) and target-first approaches. We will objectively compare the performance of various computational frameworks and experimental protocols, supported by quantitative data, to offer researchers a clear perspective on the tools and techniques shaping rational drug design.

Core Principles and Methodologies of Bottom-Up Drug Design

The Workflow of Structure-Based Drug Design

At its core, SBDD is an iterative process that relies on the knowledge of the target protein's structure. The fundamental premise is that a drug candidate's binding affinity and selectivity are determined by complementary structural and chemical interactions with its target's binding site. The canonical SBDD workflow, as exemplified in a recent study targeting the human αβIII tubulin isotype, involves several key stages [36]:

- Target Selection and Structural Determination: The process begins with identifying a protein target that plays a critical role in a disease pathway. Its three-dimensional structure is elucidated experimentally through X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy, or predicted computationally using tools like AlphaFold [37].

- Binding Site Identification and Analysis: The structure is analyzed to locate key binding pockets or active sites. Tools like MDMix can identify interaction hotspots, such as regions favorable for hydrogen bonding or hydrophobic interactions [38].

- Virtual Screening and Molecular Docking: Large libraries of compounds are computationally screened against the target structure. Docking programs like AutoDock Vina simulate how each compound (ligand) fits into the binding pocket, predicting binding poses and scoring their affinity [36] [38].