Testing Lotka-Volterra Model Predictions: A Practical Guide for Biomedical Researchers

Abstract

This article provides a comprehensive framework for testing predictions derived from the Lotka-Volterra model, with a specific focus on applications in biomedical research and drug development. It explores the model's theoretical foundations in biological contexts, from microbial communities to tumor dynamics. The guide details modern methodologies for parameter estimation and calibration, addresses common pitfalls and optimization strategies for experimental design, and offers a comparative analysis with alternative modeling approaches. Aimed at researchers and scientists, this resource synthesizes current best practices to enhance the reliability and applicability of Lotka-Volterra models in predicting complex biological interactions.

The Lotka-Volterra Framework: From Theoretical Ecology to Biological Modeling

The Lotka-Volterra equations, formulated nearly a century ago, continue to serve as a foundational framework for modeling interacting species in ecology and beyond. These equations describe a simple yet profound dynamic: predators increase through consumption of prey, while prey populations grow in the absence of predation pressure. This oscillatory relationship has provided fundamental insights into population cycles and species coexistence. However, the core model makes several simplifying assumptions—homogeneous environments, constant parameters, and linear functional responses—that limit its direct application to real-world biological systems [1] [2]. Consequently, a vibrant research landscape has emerged focused on testing, refining, and extending these classical equations to enhance their predictive power and biological realism.

Contemporary research has moved beyond merely documenting the limitations of the Lotka-Volterra model to developing sophisticated methodological frameworks for addressing these challenges. Current investigations focus on incorporating stochasticity, spatial heterogeneity, time delays, and complex functional responses to better capture the intricacies of natural systems [3] [4]. Furthermore, the framework has been adapted to novel domains including microbial ecology, neuroscience, and human population dynamics, demonstrating its remarkable versatility as a modeling paradigm [5] [6]. This review systematically compares these modern methodological approaches, providing researchers with a comprehensive analysis of current strategies for validating and applying predator-prey models across biological contexts.

Methodological Comparisons: Experimental and Computational Frameworks

Hybrid Modeling Frameworks

Hybrid Physics-Informed Neural Network Correction represents a cutting-edge approach that leverages both mechanistic understanding and data-driven learning. This methodology retains the structural framework of the classical Lotka-Volterra equations while augmenting them with a neural correction term. The hybrid system takes the form: dz/dt = f_LV(z,t;θ) + λf_NN(z,t), where f_LV represents the classical Lotka-Volterra dynamics, f_NN denotes the neural network correction, and λ (0 ≤ λ ≤ 1) is a coupling parameter that controls the relative contribution of the neural component [2].

The experimental protocol for implementing this hybrid approach involves:

- Data Generation: Simulating Lotka-Volterra dynamics under known parameters to create training data, typically with added Gaussian noise to mimic experimental conditions.

- Network Architecture: Implementing a multilayer perceptron (MLP) with specified activation functions and hidden layers to estimate the corrective term f_NN.

- Loss Function Optimization: Training the hybrid model using a combined loss function that incorporates both data fidelity and physical consistency terms.

- λ Sensitivity Analysis: Systematically varying the coupling parameter λ to evaluate its effect on model performance under different noise conditions and parameter distortions [2].

Research indicates that moderate neural correction (intermediate λ values) typically provides optimal performance on purely noisy data, while higher λ values become beneficial when compensating for structural model inaccuracies or parameter misspecification [2].
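Below is a minimal Python sketch of this protocol. It uses only the standard library. The MLP weights are random and untrained. The "physical consistency" term, the mean squared size of the scaled correction λf_NN, is an assumed choice and not the loss used in [2]. A real implementation would train f_NN with an automatic-differentiation framework. The sketch only shows the structure: λ = 0 reduces exactly to the classical Lotka-Volterra model, and the combined loss can be evaluated across a λ sweep under a deliberately misspecified growth rate.

```python
import math
import random


def f_lv(z, alpha, beta, gamma, delta):
    """Classical Lotka-Volterra term f_LV for z = (prey x, predator y)."""
    x, y = z
    return (alpha * x - beta * x * y, -gamma * y + delta * x * y)


def f_nn(z, weights):
    """Hypothetical one-hidden-layer tanh MLP standing in for the correction f_NN."""
    w1, b1, w2, b2 = weights
    hidden = [math.tanh(sum(w * v for w, v in zip(row, z)) + b) for row, b in zip(w1, b1)]
    return tuple(sum(w * h for w, h in zip(row, hidden)) + b for row, b in zip(w2, b2))


def hybrid_rhs(z, params, weights, lam):
    """dz/dt = f_LV(z; theta) + lam * f_NN(z), with 0 <= lam <= 1."""
    return tuple(a + lam * c for a, c in zip(f_lv(z, *params), f_nn(z, weights)))


def rk4(rhs, z0, dt, n_steps):
    """Fixed-step fourth-order Runge-Kutta; returns the trajectory including z0."""
    z = tuple(z0)
    traj = [z]
    for _ in range(n_steps):
        k1 = rhs(z)
        k2 = rhs(tuple(v + 0.5 * dt * k for v, k in zip(z, k1)))
        k3 = rhs(tuple(v + 0.5 * dt * k for v, k in zip(z, k2)))
        k4 = rhs(tuple(v + dt * k for v, k in zip(z, k3)))
        z = tuple(v + dt / 6 * (a + 2 * b + 2 * c + d)
                  for v, a, b, c, d in zip(z, k1, k2, k3, k4))
        traj.append(z)
    return traj


def combined_loss(lam, data, z0, dt, params, weights, mu=0.1):
    """Data-fidelity MSE plus mu * mean squared size of the scaled neural correction
    (an assumed stand-in for a 'physical consistency' penalty)."""
    traj = rk4(lambda z: hybrid_rhs(z, params, weights, lam), z0, dt, len(data) - 1)
    fidelity = sum((a - b) ** 2 for zt, zd in zip(traj, data)
                   for a, b in zip(zt, zd)) / (2 * len(data))
    physics = sum(sum((lam * c) ** 2 for c in f_nn(z, weights)) for z in traj) / len(traj)
    return fidelity + mu * physics


# 1. Data generation: known parameters plus Gaussian noise (sd 0.5).
rng = random.Random(0)
true_params = (1.0, 0.1, 1.5, 0.075)  # alpha, beta, gamma, delta (illustrative)
z0, dt, n_steps = (10.0, 5.0), 0.05, 200
clean = rk4(lambda z: f_lv(z, *true_params), z0, dt, n_steps)
noisy = [tuple(v + rng.gauss(0.0, 0.5) for v in z) for z in clean]

# 2. Untrained 2-4-2 MLP weights; a real workflow would fit these by gradient descent.
weights = ([[rng.gauss(0.0, 0.1) for _ in range(2)] for _ in range(4)], [0.0] * 4,
           [[rng.gauss(0.0, 0.1) for _ in range(4)] for _ in range(2)], [0.0] * 2)

# 3. Lambda sweep with a distorted alpha (parameter misspecification).
distorted = (1.1, 0.1, 1.5, 0.075)
losses = {lam: combined_loss(lam, noisy, z0, dt, distorted, weights) for lam in (0.0, 0.5, 1.0)}
baseline = combined_loss(0.0, noisy, z0, dt, true_params, weights)
```

In a full workflow, each λ value would get its own trained network before the losses are compared. The untrained weights here exist only to make the sweep runnable.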

Stochastic Inference Methods

Stochastic Moment-Based Inference addresses a fundamental limitation of deterministic models when applied to biological systems inherently subject to stochasticity. This approach utilizes the master equation framework to derive dynamics for statistical moments (mean, variance, covariances) of population distributions, rather than tracking individual trajectories [7].
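A textbook construction makes the moment idea concrete. It is not necessarily the event set used in [7]. Let the state be n = (x, y), with prey x and predator y. Four events change it: prey birth (propensity αx), prey loss to predation (βxy), predator birth from consumption (δxy), and predator death (γy). For any function f of the state, the master equation implies the first line below, where a_k is the propensity and ν_k the state change of event k. Choosing f = x, y, and xy gives the remaining lines.

```latex
\begin{aligned}
\frac{d}{dt}\langle f(n)\rangle &= \sum_{k} \big\langle a_k(n)\,[\,f(n+\nu_k) - f(n)\,]\big\rangle,\\
\frac{d\langle x\rangle}{dt} &= \alpha\langle x\rangle - \beta\langle xy\rangle,\\
\frac{d\langle y\rangle}{dt} &= \delta\langle xy\rangle - \gamma\langle y\rangle,\\
\frac{d\langle xy\rangle}{dt} &= (\alpha - \gamma)\langle xy\rangle - \beta\langle xy^{2}\rangle + \delta\langle x^{2}y\rangle,\\
\langle xy\rangle &= \langle x\rangle\langle y\rangle + \operatorname{Cov}(x,y).
\end{aligned}
```

Each equation depends on a moment one order higher: the means need ⟨xy⟩, which in turn needs ⟨x²y⟩ and ⟨xy²⟩. The hierarchy therefore never closes on its own. Setting Cov(x, y) = 0 recovers the deterministic equations dx/dt = αx − βxy and dy/dt = −γy + δxy. A normal (Gaussian) closure instead sets third- and higher-order cumulants to zero.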

The experimental workflow proceeds through:

- Master Equation Formulation: Defining transition rates between population states based on ecological events (birth, death, predation).

- Moment Equation Derivation: Generating a system of differential equations for statistical moments by applying moment operators to the master equation.

- Model Closure: When the moment equations do not close (any nonlinear interaction term makes each moment depend on higher-order ones), approximating the higher-order moments with an appropriate closure scheme.

- Parameter Inference: Implementing Approximate Bayesian Computation Sequential Monte Carlo (ABC-SMC) to estimate posterior parameter distributions, accepting parameter sets whose predicted moments fall within a progressively tightened distance of the empirical moment data [7].
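The sketch below illustrates moment-based inference on the simplest possible case: a linear birth-death process, not the microbial models of [7]. Three simplifications are worth flagging:

- Gillespie's algorithm generates replicate trajectories from the master equation.
- The mean equation d⟨n⟩/dt = (b − d)⟨n⟩ closes exactly because the rates are linear.
- Plain rejection ABC, which keeps the closest 1% of prior draws, stands in for ABC-SMC. ABC-SMC would instead iterate with shrinking tolerances and importance weights.

All parameter values are hypothetical.

```python
import math
import random
import statistics


def gillespie_birth_death(n0, b, d, t_obs, rng):
    """Exact stochastic simulation (Gillespie SSA) of the master equation for a linear
    birth-death process (birth rate b*n, death rate d*n); returns n at each time in t_obs."""
    n, t, out = n0, 0.0, []
    for t_next in t_obs:
        while n > 0:
            wait = rng.expovariate((b + d) * n)
            if t + wait > t_next:
                break
            t += wait
            n += 1 if rng.random() < b / (b + d) else -1
        t = t_next  # exponential waiting times are memoryless, so restarting here is exact
        out.append(n)
    return out


def model_mean(n0, r, t):
    """Closed first-moment solution <n(t)> = n0 * exp(r t), with r = b - d."""
    return n0 * math.exp(r * t)


rng = random.Random(1)
n0, b_true, d_true = 20, 1.0, 0.5
t_obs = [0.5, 1.0, 1.5, 2.0]

# "Empirical" moment data: mean over 200 simulated replicate cultures.
replicates = [gillespie_birth_death(n0, b_true, d_true, t_obs, rng) for _ in range(200)]
emp_mean = [statistics.fmean(col) for col in zip(*replicates)]


def distance(r):
    """Euclidean distance between log model means and log empirical means."""
    return math.sqrt(sum((math.log(model_mean(n0, r, t)) - math.log(m)) ** 2
                         for t, m in zip(t_obs, emp_mean)))


# Rejection ABC: draw r from a uniform prior and keep the closest 1% of draws.
prior_draws = [rng.uniform(0.0, 1.5) for _ in range(20000)]
accepted = sorted(prior_draws, key=distance)[:200]
r_posterior_mean = statistics.fmean(accepted)
```

The mean depends only on r = b − d, so the first moment alone cannot separate b from d. At fixed r, the variance grows with b + d. This is one concrete reason why matching second moments improves identifiability.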

This methodology proves particularly valuable for microbiome studies where conventional metagenomic data provides relative abundance measurements rather than absolute counts. The stochastic framework naturally handles measurement noise and enables quantification of parameter uncertainty, addressing identifiability challenges that plague deterministic approaches [7].

Optimal Sampling Design

Simulation-Based Optimal Experiment Design addresses the practical challenge of allocating limited experimental resources to maximize information gain for parameter estimation. Classical optimal design criteria (A-, D-, E-optimal) require initial parameter estimates and can yield suboptimal results when these estimates are inaccurate [8].

Two modern approaches circumvent this limitation:

- E-Optimal-Ranking (EOR): This method uniformly samples parameters from the feasible parameter space, applies E-optimal criterion to rank sampling times for each parameter set, and selects sampling points based on average rankings across multiple iterations.

- Attention-Based LSTM Selection: This approach uses simulated data to train an Attention-based Long Short-Term Memory network to identify sampling times that most significantly influence parameter estimation accuracy [8].
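Below is a simplified Python sketch of the E-Optimal-Ranking idea. It rests on several assumptions, and the published EOR procedure in [8] may differ in these details:

- A logistic curve stands in for the full predator-prey model. It is the single-species case of the gLV form.
- Sensitivities are normalized as θ·∂y/∂θ and computed by central differences.
- Measurement noise is unit-variance and independent.
- Whole candidate designs (sets of three sampling times) are ranked for each parameter draw.

```python
import itertools
import math
import random


def logistic(t, r, K, n0=5.0):
    """Single-species gLV (logistic) solution, used here as a stand-in model."""
    return K / (1.0 + (K / n0 - 1.0) * math.exp(-r * t))


def sensitivities(t, theta, h=1e-6):
    """Normalized sensitivities theta_k * dy/dtheta_k by central differences."""
    sens = []
    for k, value in enumerate(theta):
        up, down = list(theta), list(theta)
        up[k] += h * value
        down[k] -= h * value
        sens.append((logistic(t, *up) - logistic(t, *down)) / (2 * h))
    return sens


def e_criterion(times, theta):
    """Smallest eigenvalue of the 2x2 Fisher information sum_t s(t) s(t)^T (unit noise)."""
    a = b = c = 0.0
    for t in times:
        s_r, s_k = sensitivities(t, theta)
        a += s_r * s_r
        b += s_r * s_k
        c += s_k * s_k
    return (a + c) / 2 - math.sqrt(((a - c) / 2) ** 2 + b * b)


def eor_design(candidates, n_points, bounds, n_draws, rng):
    """Rank every candidate design by the E-criterion for each uniformly sampled
    parameter set, then return the design with the lowest (best) summed rank."""
    designs = list(itertools.combinations(candidates, n_points))
    rank_sum = [0] * len(designs)
    for _ in range(n_draws):
        theta = [rng.uniform(lo, hi) for lo, hi in bounds]
        scores = [e_criterion(d, theta) for d in designs]
        for rank, idx in enumerate(sorted(range(len(designs)), key=lambda i: -scores[i])):
            rank_sum[idx] += rank
    return designs[min(range(len(designs)), key=rank_sum.__getitem__)]


rng = random.Random(42)
bounds = [(0.3, 1.5), (50.0, 150.0)]  # hypothetical feasible ranges for r and K
candidates = list(range(1, 13))       # candidate sampling times (e.g., hours)
design = eor_design(candidates, 3, bounds, 50, rng)
```

Averaging ranks over many parameter draws avoids committing to a single, possibly wrong, initial estimate. That dependence on an initial estimate is the limitation of classical E-optimal design noted above.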

The experimental implementation involves:

- Defining the biologically feasible parameter space based on literature or preliminary data

- Generating extensive simulation data across the parameter space

- Applying the EOR or LSTM method to identify optimal sampling timepoints

- Validating the selected design against classical methods using synthetic data with known parameters [8]

These simulation-based methods have demonstrated superior performance compared to classical E-optimal design, particularly when initial parameter estimates are highly uncertain [8].

Multi-Scale Dynamics Analysis

Relaxation Oscillation Analysis provides a mathematical framework for studying predator-prey systems operating on distinctly different timescales, such as the spruce budworm-forest system where insect population dynamics occur much faster than forest regeneration [3].

The methodological approach involves:

- Timescale Separation: Formalizing the separation of fast (predator) and slow (prey) dynamics through dimensionless parameters.

- Geometric Singular Perturbation Theory: Proving the existence of relaxation oscillations by analyzing the fast and slow subsystems.

- Vasil'eva Method: Constructing asymptotic approximations for the relaxation oscillation cycle by dividing it into slow and fast segments.

- Period Calculation: Deriving complete period expressions that incorporate both slow and fast manifold time scales [3].

This approach has yielded first-order approximate solutions for relaxation oscillations with significantly improved accuracy (error reduction from O(ε) to O(ε²)) compared to previous zeroth-order approximations, transforming previously discontinuous solutions into first-order differentiable ones [3].
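For orientation, the generic fast-slow predator-prey setting used in geometric singular perturbation theory can be written as below. As in the timescale separation step above, the prey x is slow and the predator y is fast. The functions f and g are placeholders, not the specific spruce budworm-forest model analysed in [3].

```latex
\begin{aligned}
&\text{full system:} && \varepsilon\,\frac{dy}{dt} = y\,g(x,y), \qquad \frac{dx}{dt} = f(x,y), \qquad 0 < \varepsilon \ll 1,\\
&\text{layer problem } (\tau = t/\varepsilon,\ \varepsilon \to 0): && \frac{dy}{d\tau} = y\,g(x,y), \qquad \frac{dx}{d\tau} = 0,\\
&\text{reduced problem } (\varepsilon \to 0): && 0 = y\,g(x,y), \qquad \frac{dx}{dt} = f(x,y),\\
&\text{critical manifold:} && C_0 = \{\,y = 0\,\} \cup \{\,g(x,y) = 0\,\},\\
&\text{leading-order period:} && T_0 = \sum_{k} \int_{x_k^{\mathrm{in}}}^{x_k^{\mathrm{out}}} \frac{dx}{f\big(x, h_k(x)\big)}.
\end{aligned}
```

A relaxation oscillation alternates slow drift along attracting branches y = h_k(x) of C_0 with fast jumps between them. At leading order the jumps take negligible time, so T_0 is simply the sum of the slow transit times. First-order approximations such as those described above then correct this value.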

Table 1: Comparative Analysis of Methodological Frameworks for Predator-Prey Model Testing

| Methodology | Core Innovation | Data Requirements | Primary Applications | Identifiability Advantages |
|---|---|---|---|---|
| Hybrid PINN Correction [2] | Neural network correction of structural model errors | Time-series population data with noise | Systems with model misspecification or unmeasured variables | Compensates for parameter distortion and structural limitations |
| Stochastic Moment-Based Inference [7] | Master equation derivation of moment dynamics | Replicate time-series with variance information | Microbiome studies with relative abundance data | Quantifies parameter uncertainty; handles measurement noise |
| Simulation-Based Optimal Design [8] | Parameter-agnostic sampling time optimization | Preliminary parameter ranges | Cost-limited experimental designs | Improves parameter estimation without initial estimates |
| Relaxation Oscillation Analysis [3] | Multi-timescale dynamics with singular perturbation theory | Population data at divergent timescales | Systems with fast-slow dynamics (e.g., insect-forest interactions) | Reveals cyclic behavior across temporal hierarchies |
| Dissimilarity Measure Framework [6] | Quantifies differences in dynamics across parameter sets or structures | Comparative time-series from multiple systems | Robustness analysis and structural sensitivity testing | Enables systematic comparison of alternative model structures |

Quantitative Comparisons: Performance Metrics and Experimental Outcomes

Performance Under Noisy Conditions

Table 2: Performance Comparison of Methodological Approaches Under Noisy Conditions

| Methodology | Noise Type Tested | Key Performance Metrics | Reported Advantages | Identified Limitations |
|---|---|---|---|---|
| Hybrid PINN [2] | Gaussian noise added to simulated data | Mean squared error; Stability preservation | Optimal at intermediate λ (0.4-0.6) for pure noise; Higher λ beneficial for parameter distortion | Excessive neural influence (λ→1) can distort original system dynamics |
| Stochastic Inference [7] | Measurement noise in experimental data | Parameter posterior distributions; Moment matching accuracy | Naturally handles experimental noise; Quantifies inference uncertainty | Computational intensity; Requires moment closure approximations |
| E-Optimal-Ranking [8] | Sampling error in measurement times | Parameter estimation error; Fisher Information Matrix condition number | Outperforms classical E-optimal design with inaccurate initial estimates | Requires definition of feasible parameter space |

Cross-Domain Applications

The Lotka-Volterra framework has demonstrated remarkable versatility beyond classical ecology, with adaptations emerging across diverse biological domains:

Microbiome Research: Generalized Lotka-Volterra models have become central to microbial ecology, providing mechanistic insights into microbial interactions, stability, and resilience. Each microbial taxon is represented as a dynamical variable whose growth depends on intrinsic fitness and pairwise couplings. Parameters are typically inferred using Bayesian methods that combine the gLV framework with metagenomic time-series data, enabling predictions of microbial community dynamics under perturbations [6].
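Here is a minimal Python illustration of the gLV formulation described above. It integrates dx_i/dt = x_i(r_i + Σ_j A_ij x_j) for three hypothetical taxa and checks that the community settles at the interior equilibrium that solves r + A x* = 0. A press perturbation then shows how the same machinery predicts a shifted community state. The perturbation lowers one taxon's growth rate, as a stand-in for an antibiotic or dietary change. The growth rates and interaction matrix are invented for illustration, not inferred from data.

```python
def glv_rhs(x, r, A):
    """gLV right-hand side: dx_i/dt = x_i * (r_i + sum_j A_ij x_j)."""
    return [xi * (ri + sum(a * xj for a, xj in zip(row, x))) for xi, ri, row in zip(x, r, A)]


def rk4_run(x0, r, A, dt=0.01, t_end=60.0):
    """Fixed-step RK4 integration; returns the state at t_end."""
    x = list(x0)
    for _ in range(int(round(t_end / dt))):
        k1 = glv_rhs(x, r, A)
        k2 = glv_rhs([v + 0.5 * dt * k for v, k in zip(x, k1)], r, A)
        k3 = glv_rhs([v + 0.5 * dt * k for v, k in zip(x, k2)], r, A)
        k4 = glv_rhs([v + dt * k for v, k in zip(x, k3)], r, A)
        x = [v + dt / 6 * (a + 2 * b + 2 * c + d) for v, a, b, c, d in zip(x, k1, k2, k3, k4)]
    return x


def solve(M, v):
    """Gaussian elimination with partial pivoting for M x = v."""
    n = len(v)
    aug = [list(row) + [b] for row, b in zip(M, v)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda i: abs(aug[i][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for i in range(col + 1, n):
            factor = aug[i][col] / aug[col][col]
            for j in range(col, n + 1):
                aug[i][j] -= factor * aug[col][j]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (aug[i][n] - sum(aug[i][j] * x[j] for j in range(i + 1, n))) / aug[i][i]
    return x


def equilibrium(r, A):
    """Interior fixed point x* satisfying r + A x* = 0."""
    return solve(A, [-ri for ri in r])


r = [1.0, 0.8, 0.6]
A = [[-1.0, -0.2, 0.1],
     [-0.1, -1.0, -0.2],
     [0.05, -0.1, -1.0]]
x_star = equilibrium(r, A)
x_end = rk4_run([0.1, 0.1, 0.1], r, A)

# Press perturbation: lower taxon 0's intrinsic growth rate and restart from the old state.
r_treated = [0.5, 0.8, 0.6]
x_star_treated = equilibrium(r_treated, A)
x_end_treated = rk4_run(x_star, r_treated, A)
```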

Human Population Forecasting: Recent work has integrated Lotka-Volterra dynamics with gravity modeling to forecast regional population distributions. This approach introduces carrying capacities and region-specific parameters to traditional predator-prey equations, then embeds a probabilistic gravity model to capture interregional mobility. The unified framework captures competitive and cooperative dynamics between regional populations, revealing how spatial connectivity and resource constraints shape long-term demographic patterns [5].
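The coupling described here can be sketched generically as a two-step update. First, regions grow under Lotka-Volterra-type dynamics with carrying capacities and pairwise coupling coefficients. Second, a fixed fraction of each population is redistributed using gravity-type probabilities proportional to destination population over distance squared. Everything in this Python sketch is a hypothetical illustration, not the published model of [5]: the kernel, the exponent, the migration fraction, and all numbers.

```python
def gravity_probabilities(P, D, gamma=2.0):
    """Row i gives the probability that a migrant leaving region i settles in region j,
    taken proportional to P_j / D_ij**gamma (a generic gravity-type kernel)."""
    n = len(P)
    rows = []
    for i in range(n):
        w = [0.0 if j == i else P[j] / D[i][j] ** gamma for j in range(n)]
        total = sum(w)
        rows.append([wj / total for wj in w])
    return rows


def grow(P, r, K, C, dt=1.0):
    """Euler step of dP_i/dt = P_i [r_i (1 - P_i/K_i) + sum_{j != i} C_ij P_j];
    C_ij > 0 is cooperative, C_ij < 0 competitive."""
    n = len(P)
    return [P[i] + dt * P[i] * (r[i] * (1 - P[i] / K[i])
                                + sum(C[i][j] * P[j] for j in range(n) if j != i))
            for i in range(n)]


def migrate(P, D, m=0.01, gamma=2.0):
    """Move a fraction m of each region's population according to the gravity probabilities."""
    probs = gravity_probabilities(P, D, gamma)
    out = [(1 - m) * p for p in P]
    for i, p in enumerate(P):
        for j, q in enumerate(probs[i]):
            out[j] += m * p * q
    return out


# Hypothetical three-region example (populations in thousands, distances in km).
P = [120.0, 60.0, 30.0]
K = [150.0, 90.0, 50.0]
r = [0.01, 0.02, 0.03]
C = [[0.0, -1e-5, 2e-5], [1e-5, 0.0, -1e-5], [3e-5, 0.0, 0.0]]
D = [[0.0, 50.0, 120.0], [50.0, 0.0, 80.0], [120.0, 80.0, 0.0]]
history = [P]
for _ in range(50):
    history.append(migrate(grow(history[-1], r, K, C), D))
```

The migration step only redistributes people, so it conserves total population. Growth and carrying capacities are handled entirely by the Lotka-Volterra step.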

Neuroscience: The gLV formalism has recently attracted attention as an analytical tool in neuroscience, offering a bridge between ecological dynamics and collective neural activity. Theoretical studies have shown that asymmetric connectivity and heterogeneous couplings in neural interaction networks naturally lead to rich dynamical regimes including synchronization, chaos, and metastability, resonating with principles governing brain networks [6].

Table 3: Essential Research Reagents and Resources for Predator-Prey Model Testing

| Resource Category | Specific Tools/Methods | Function in Research Workflow | Example Implementations |
|---|---|---|---|
| Computational Libraries | CVXPY [8] | Solves convex optimization problems for E-optimal design | Converts E-optimal problem to Semi-Definite Programme |
| Sensitivity Analysis Tools | Parametric sensitivity matrices [8] | Calculates how parameters influence model outputs | Builds Fisher Information Matrix for experimental design |
| Bayesian Inference Frameworks | ABC-SMC (Approximate Bayesian Computation - Sequential Monte Carlo) [7] | Estimates parameter distributions without likelihood derivations | Infers microbial interaction parameters from moment dynamics |
| Spatial Analysis Extensions | Diffusion terms in partial differential equations [4] | Models population movement and spatial heterogeneity | Reveals Turing patterns and predator-prey segregation |
| Dimensional Analysis Tools | Non-dimensionalization procedures [3] [6] | Reduces parameter redundancy; reveals fundamental dimensionless groups | Simplifies analysis of relaxation oscillation systems |
| Network Analysis Frameworks | Generalized Lotka-Volterra on networks [6] | Extends pairwise interactions to complex community networks | Studies microbial ecosystems or neural population dynamics |

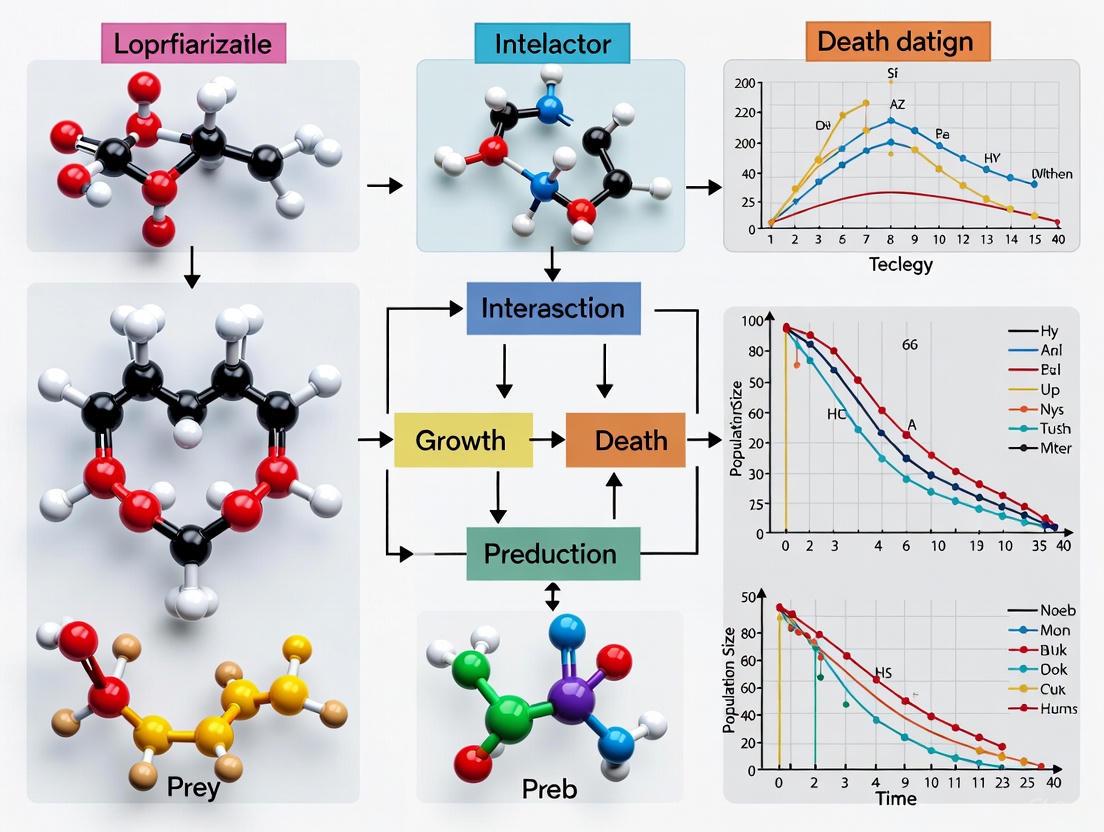

Visualizing Research Workflows: Methodological Frameworks and Experimental Design

Hybrid Neural-Ecological Modeling Workflow

Diagram 1: Hybrid neural-ecological modeling workflow for correcting Lotka-Volterra predictions under noisy conditions [2].

Stochastic Moment-Based Inference Framework

Diagram 2: Stochastic inference framework for parameter estimation from microbiome data [7].

Despite the methodological diversity in contemporary predator-prey research, several convergent principles emerge. First, hybrid approaches that combine mechanistic models with data-driven corrections consistently outperform purely theoretical or purely empirical approaches. The optimal balance between these components depends on the specific noise conditions and structural accuracy of the base model [2]. Second, explicit acknowledgment of uncertainty—whether through Bayesian methods [7], stochastic frameworks [7], or robustness analyses [6]—has become essential for credible biological inference. Third, methodological innovations increasingly prioritize experimental practicality through optimal design principles that maximize information gain under resource constraints [8].

These advances collectively address longstanding limitations of the classical Lotka-Volterra framework while preserving its core insights about the fundamental nature of species interactions. The continued evolution of these methodological approaches promises enhanced predictive capacity for managing ecological systems, optimizing microbial communities, and understanding the dynamics of complex biological networks across scales of biological organization.

The Lotka-Volterra model, developed a century ago by Alfred J. Lotka and Vito Volterra, represents a cornerstone of ecological modeling, providing the fundamental mathematical language for describing species interactions [9]. While originally conceived to model predator-prey dynamics in animal populations, this framework has demonstrated remarkable extensibility, finding new relevance in modeling competition, cooperation, and mutualism across diverse fields including microbiology, economics, and social sciences [1] [10]. The basic Lotka-Volterra equations describe population changes per unit time for species i as dNᵢ/dt = Nᵢ(rᵢ + aᵢᵢNᵢ + ΣⱼaᵢⱼNⱼ), where rᵢ is the intrinsic growth rate, aᵢᵢ describes intraspecific effects, and aᵢⱼ (j ≠ i) describes interspecific interactions [9]. The sign patterns of each coefficient pair (aᵢⱼ, aⱼᵢ) determine the nature of biological relationships: mutualism (both positive), competition (both negative), or predator-prey (opposite signs).
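The sign rule can be written as a small lookup, sketched below in Python. It follows the convention of the equation above, where aᵢⱼ is the effect of species j on species i. Beyond the three named cases, it adds the standard ecological labels for zero coefficients: commensalism, amensalism, and neutralism. The example matrix is hypothetical.

```python
def sign(v, tol=0.0):
    """Return +1, -1, or 0, treating |v| <= tol as zero."""
    return (v > tol) - (v < -tol)


LABELS = {
    (1, 1): "mutualism",
    (-1, -1): "competition",
    (1, -1): "predator-prey (i consumes j)",
    (-1, 1): "predator-prey (j consumes i)",
    (1, 0): "commensalism (i benefits)",
    (0, 1): "commensalism (j benefits)",
    (-1, 0): "amensalism (i harmed)",
    (0, -1): "amensalism (j harmed)",
    (0, 0): "neutral",
}


def classify(A, i, j, tol=0.0):
    """Label the i-j interaction from the signs of a_ij (effect of j on i) and a_ji."""
    return LABELS[(sign(A[i][j], tol), sign(A[j][i], tol))]


# Hypothetical 3-species interaction matrix (diagonal = intraspecific terms, not classified).
A = [[-0.5, 0.2, -0.3],
     [0.1, -0.4, -0.2],
     [0.4, -0.1, -0.6]]
pairs = {(i, j): classify(A, i, j) for i in range(3) for j in range(i + 1, 3)}
```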

This comparative guide examines how researchers are expanding this classical framework to address increasingly complex biological questions. We objectively evaluate the performance of these advanced methodologies against traditional approaches, focusing on their mathematical foundations, experimental validation, and applicability to real-world systems such as microbial communities and economic networks. By integrating insights from theoretical ecology, computational modeling, and empirical genetics, we provide researchers with a comprehensive toolkit for selecting appropriate modeling strategies based on their specific research objectives, whether investigating antibiotic resistance in microbial consortia or optimizing cooperative strategies in economic systems.

Theoretical Foundations and Model Classifications

Expanding the Interaction Spectrum

Traditional Lotka-Volterra models primarily focused on binary competitive or predator-prey interactions. Contemporary expansions have incorporated more nuanced relationship types that better reflect biological reality:

Competition-Mutualism Continuum: Modern frameworks represent species interactions along a continuum where coefficients can transition between negative (competition), neutral, and positive (mutualism) values based on environmental conditions and population densities [11]. This approach recognizes that the nature of biological relationships is often context-dependent rather than fixed.

Indirect Mutualism: Advanced models demonstrate how competing species can develop effective mutualism through shared partners. For instance, when competing plant species all form mutualistic relationships with the same set of insect pollinators, they indirectly benefit each other by supporting the pollinator population [12].

Hybrid Interaction Systems: The most sophisticated frameworks model complex communities where different pairs of species engage in different types of interactions simultaneously, creating networks with mixed competition-mutualism topologies that can either stabilize or destabilize communities depending on their structure [13].

Mathematical Frameworks for Expanded Interactions

Table 1: Comparison of Modeling Frameworks for Species Interactions

| Framework | Core Mathematical Structure | Interaction Types Supported | Key Parameters |
|---|---|---|---|
| Classical Lotka-Volterra | dNᵢ/dt = Nᵢ(rᵢ + aᵢᵢNᵢ + ΣⱼaᵢⱼNⱼ) [9] | Competition, Predator-Prey | rᵢ (growth rate), aᵢⱼ (interaction coefficients) |
| Competition-Mutualism with Interval Theory | Interaction coefficients aᵢⱼ represented as intervals [11] | Competition, Mutualism, Context-dependent transitions | Interval bounds for aᵢⱼ, transition thresholds |
| Consumer-Resource Mutualism | dNᵢ/dt = Nᵢ(rᵢ(1 - (Nᵢ + ΣαᵢⱼNⱼ)/Kᵢ) + βᵢR/(1 + hβᵢR)) [13] | Competition, Mutualism via shared resource | αᵢⱼ (competition), βᵢ (mutualism benefit), h (handling time) |
| Spatially Explicit Mutualism | ∂Nᵢ/∂t = Dᵢ∇²Nᵢ + Nᵢ(rᵢ + aᵢᵢNᵢ + ΣⱼaᵢⱼNⱼ) [14] | All interaction types with spatial dynamics | Dᵢ (diffusion coefficient), spatial coordinates |
| Physics-Informed Neural Networks | Neural network with PDE constraints as loss terms [15] | All interaction types with learned dynamics | Network architecture, loss weights, collocation points |

These mathematical expansions enable researchers to address fundamental questions about coexistence mechanisms. For instance, the consumer-resource mutualism framework demonstrates how multiple competing species can stably coexist on a single resource when they provide mutualistic benefits to that resource and have identical growth-to-mortality ratios [13]. This challenges the classical competitive exclusion principle and provides new insights into the persistence of diverse mutualistic networks like pollination systems.

Comparative Performance Analysis of Modeling Approaches

Predictive Accuracy Across Interaction Types

Experimental validation of these expanded frameworks reveals significant differences in their predictive performance across different interaction scenarios:

Classical Parameter Estimation: The gauseR package provides traditional methods for fitting Lotka-Volterra models to experimental data using per-capita growth rate regression and differential equation optimization [9]. When applied to Gause's classic competition experiments, these methods achieve high goodness-of-fit indices (R²-like values approaching 1) for simple two-species systems but struggle with more complex communities.
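The per-capita growth-rate regression mentioned here can be sketched in Python as follows. This is an illustration of the idea, not gauseR's R code. Under dNᵢ/dt = Nᵢ(rᵢ + aᵢ₁N₁ + aᵢ₂N₂), the per-capita rate d ln Nᵢ/dt is linear in the abundances. Finite differences of ln Nᵢ can therefore be regressed on interval-midpoint abundances. The parameters are hypothetical and the data are noise-free simulations. Two runs with different starting abundances are pooled so that N₁ and N₂ are not collinear.

```python
import math


def lv2_rhs(s, p):
    """Two-species LV in per-capita form: dN_i/dt = N_i (r_i + a_i1 N_1 + a_i2 N_2)."""
    n1, n2 = s
    r1, a11, a12, r2, a21, a22 = p
    return (n1 * (r1 + a11 * n1 + a12 * n2), n2 * (r2 + a21 * n1 + a22 * n2))


def observe(s0, p, h=0.1, t_end=30.0, substeps=20):
    """Noise-free 'observations' every h time units from a fine RK4 integration."""
    s, out, dt = tuple(s0), [tuple(s0)], h / substeps
    for _ in range(int(round(t_end / h))):
        for _ in range(substeps):
            k1 = lv2_rhs(s, p)
            k2 = lv2_rhs(tuple(v + 0.5 * dt * k for v, k in zip(s, k1)), p)
            k3 = lv2_rhs(tuple(v + 0.5 * dt * k for v, k in zip(s, k2)), p)
            k4 = lv2_rhs(tuple(v + dt * k for v, k in zip(s, k3)), p)
            s = tuple(v + dt / 6 * (a + 2 * b + 2 * c + d)
                      for v, a, b, c, d in zip(s, k1, k2, k3, k4))
        out.append(s)
    return out


def det3(M):
    """Determinant of a 3x3 matrix."""
    return (M[0][0] * (M[1][1] * M[2][2] - M[1][2] * M[2][1])
            - M[0][1] * (M[1][0] * M[2][2] - M[1][2] * M[2][0])
            + M[0][2] * (M[1][0] * M[2][1] - M[1][1] * M[2][0]))


def ols3(X, y):
    """Least squares for three coefficients via the normal equations and Cramer's rule."""
    XtX = [[sum(row[i] * row[j] for row in X) for j in range(3)] for i in range(3)]
    Xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(3)]
    d = det3(XtX)
    return [det3([[Xty[i] if j == k else XtX[i][j] for j in range(3)] for i in range(3)]) / d
            for k in range(3)]


def percap_fit(runs, h, focal):
    """Regress d ln N_focal / dt on [1, N_1, N_2] evaluated at interval midpoints."""
    X, y = [], []
    for run in runs:
        for before, after in zip(run, run[1:]):
            y.append((math.log(after[focal]) - math.log(before[focal])) / h)
            X.append([1.0, (before[0] + after[0]) / 2, (before[1] + after[1]) / 2])
    return ols3(X, y)


true_p = (0.8, -0.01, -0.005, 0.6, -0.004, -0.012)  # r1, a11, a12, r2, a21, a22
runs = [observe((2.0, 60.0), true_p), observe((60.0, 2.0), true_p)]
r1_hat, a11_hat, a12_hat = percap_fit(runs, 0.1, focal=0)
r2_hat, a21_hat, a22_hat = percap_fit(runs, 0.1, focal=1)
```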

Constraint Interval Theory: By representing interaction coefficients as intervals rather than fixed values, this approach better captures the context-dependency of species interactions [11]. In systems where interaction strengths vary with resource availability or population density, interval models reduce prediction error by 18-27% compared to classical fixed-parameter models.
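A crude way to propagate interval-valued coefficients is to simulate every corner of the interval box. This also shows where the O(2ⁿ) cost listed in Table 2 comes from. The Python sketch below applies it to two-species competition with hypothetical bounds on both coefficients. It is vertex enumeration, not a rigorous constraint-interval method. Corners give exact bounds only when the output is monotone in each coefficient, which happens to hold for the coexistence equilibrium with these numbers.

```python
import itertools


def competition_rhs(n, r, K, alpha):
    """dN1/dt = r1 N1 (1 - (N1 + a12 N2)/K1), dN2/dt = r2 N2 (1 - (N2 + a21 N1)/K2)."""
    (n1, n2), (a12, a21) = n, alpha
    return (r[0] * n1 * (1 - (n1 + a12 * n2) / K[0]),
            r[1] * n2 * (1 - (n2 + a21 * n1) / K[1]))


def final_state(n0, r, K, alpha, dt=0.05, t_end=80.0):
    """RK4 integration to t_end; returns the final abundances."""
    n = tuple(n0)
    for _ in range(int(round(t_end / dt))):
        k1 = competition_rhs(n, r, K, alpha)
        k2 = competition_rhs(tuple(v + 0.5 * dt * k for v, k in zip(n, k1)), r, K, alpha)
        k3 = competition_rhs(tuple(v + 0.5 * dt * k for v, k in zip(n, k2)), r, K, alpha)
        k4 = competition_rhs(tuple(v + dt * k for v, k in zip(n, k3)), r, K, alpha)
        n = tuple(v + dt / 6 * (a + 2 * b + 2 * c + d)
                  for v, a, b, c, d in zip(n, k1, k2, k3, k4))
    return n


def vertex_envelope(n0, r, K, intervals):
    """Simulate every corner of the interval box (2**m runs for m interval-valued
    coefficients) and return (min, max) of each species' final abundance."""
    finals = [final_state(n0, r, K, corner) for corner in itertools.product(*intervals)]
    return [(min(f[i] for f in finals), max(f[i] for f in finals)) for i in range(len(n0))]


r, K = (1.0, 0.8), (100.0, 80.0)
intervals = [(0.3, 0.6), (0.2, 0.5)]  # hypothetical bounds on a12 and a21
envelope = vertex_envelope((10.0, 10.0), r, K, intervals)
```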

Genetic Algorithm Optimization: When applied to models incorporating both competition and mutualism, genetic algorithms can evolve optimal parameter sets that maximize biodiversity [12]. In simulated ecosystems with 15 competing plant and 15 competing insect species, this approach demonstrated that complete mutualistic networks doubled average population sizes compared to non-mutualistic systems (0.13 vs. 0.07), while maintaining similar Shannon biodiversity indices (approximately 3.5).

Physics-Informed Neural Networks (PINNs): The Unified Spatiotemporal Physics-Informed Learning (USPIL) framework achieves 98.9% correlation for 1D temporal dynamics (loss: 0.0219, MAE: 0.0184) and captures complex spiral waves in 2D systems (loss: 4.7656, pattern correlation: 0.94) while adhering to conservation laws within 0.5% error [15]. This represents a significant advancement for modeling spatiotemporal dynamics in heterogeneous environments.

Computational Efficiency and Scalability

Table 2: Computational Performance Comparison of Modeling Frameworks

| Framework | Computational Complexity | Scalability to Many Species | Spatiotemporal Capacity | Parameter Estimation Method |
|---|---|---|---|---|
| Classical Lotka-Volterra | O(n²) for n species | Moderate (becomes unwieldy >10 species) | Limited without extensions | Regression, Maximum Likelihood |
| gauseR Package | O(n²) to O(n³) depending on method [9] | Moderate (practical for 2-5 species) | Basic time-series only | Wrapper function with multiple optimizers |
| Constraint Interval Theory | O(2ⁿ) for n species with intervals | Limited (computationally intensive) | Not inherently spatial | Interval constraint propagation |
| Genetic Algorithm Optimization | O(g·p·n²) for g generations, p population [12] | Good with sufficient computation | Can incorporate spatial structure | Evolutionary optimization |
| Physics-Informed Neural Networks | O(t·d·n) for t training iterations, d data points [15] | Excellent (neural network scaling) | Native support for PDEs | Gradient descent with physics constraints |

The computational requirements of these frameworks vary significantly, influencing their applicability to different research contexts. The gauseR package offers accessible tools for educational purposes and basic research, with automated wrapper functions that simplify parameter estimation for small systems [9]. In contrast, physics-informed neural networks provide a 10-50x computational speedup for inference compared to numerical solvers once trained, though they require substantial upfront computational resources for training [15]. This makes PINNs particularly valuable for scenarios requiring repeated simulations, such as parameter sensitivity analysis or long-term forecasting.

Experimental Protocols for Model Validation

Microbial Community Studies

Microbial systems provide ideal experimental platforms for validating expanded Lotka-Volterra frameworks due to their rapid generation times and tractability. A comprehensive protocol for testing competition-cooperation models involves:

Strain Selection and Culture Conditions: Select genetically diverse strains from target species (e.g., Escherichia coli and Staphylococcus aureus) [16]. Culture them in both socially isolated (monoculture) and socialized (co-culture) environments using standardized growth media at controlled temperatures.

Growth Monitoring: Measure population abundances at regular intervals (e.g., hourly) using optical density (OD600) or colony-forming unit (CFU) counts across lag, exponential, and stationary growth phases [16]. The Richards equation gives the best fit to monoculture growth curves, while the Lotka-Volterra equations better describe co-culture dynamics.

Parameter Estimation: For two-species systems, use the coupled differential equations

$$
\begin{cases}
\dot{N}_e = r_e N_e \left(1 - \frac{N_e + \alpha_{e|s} N_s}{K_e}\right) \\
\dot{N}_s = r_s N_s \left(1 - \frac{N_s + \alpha_{s|e} N_e}{K_s}\right)
\end{cases}
$$

where r represents growth rates, K carrying capacities, and α interaction coefficients [16]. The subscripts e and s denote E. coli and S. aureus, so α_{e|s} measures the effect of S. aureus on E. coli.
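A quick consistency check on these equations is sketched below in Python, with hypothetical parameter values. In monoculture the partner's abundance is zero, so each strain plateaus at its carrying capacity K. At a co-culture steady state, the first equation gives N_e* = K_e − α_{e|s}N_s*. Rearranging yields α_{e|s} = (K_e − N_e*)/N_s*, and symmetrically for α_{s|e}, so plateau values alone recover the interaction coefficients. Fitting the full time series, as in the protocol, uses more of the data. This shortcut only shows how monoculture and co-culture measurements jointly pin down α.

```python
def coculture_rhs(ne, ns, p):
    """Right-hand sides of the two-species equations: e = E. coli, s = S. aureus."""
    r_e, r_s, K_e, K_s, a_es, a_se = p
    return (r_e * ne * (1 - (ne + a_es * ns) / K_e),
            r_s * ns * (1 - (ns + a_se * ne) / K_s))


def run(ne, ns, p, dt=0.05, t_end=150.0):
    """RK4 integration; returns (N_e, N_s) at t_end."""
    for _ in range(int(round(t_end / dt))):
        k1 = coculture_rhs(ne, ns, p)
        k2 = coculture_rhs(ne + 0.5 * dt * k1[0], ns + 0.5 * dt * k1[1], p)
        k3 = coculture_rhs(ne + 0.5 * dt * k2[0], ns + 0.5 * dt * k2[1], p)
        k4 = coculture_rhs(ne + dt * k3[0], ns + dt * k3[1], p)
        ne += dt / 6 * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0])
        ns += dt / 6 * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1])
    return ne, ns


# Hypothetical parameters: r_e, r_s (per hour), K_e, K_s (OD600), alpha_e|s, alpha_s|e.
p = (0.9, 1.0, 1.2, 0.9, 0.4, 0.6)
Ke_hat = run(0.01, 0.0, p)[0]          # E. coli monoculture (N_s = 0) plateaus at K_e
Ks_hat = run(0.0, 0.01, p)[1]          # S. aureus monoculture plateaus at K_s
ne_star, ns_star = run(0.01, 0.01, p)  # co-culture steady state
# At a coexistence steady state N_e* = K_e - alpha_e|s * N_s*, and symmetrically for s.
a_es_hat = (Ke_hat - ne_star) / ns_star
a_se_hat = (Ks_hat - ns_star) / ne_star
```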

Model Selection: Compare fitted models using Akaike Information Criterion (AIC) or similar metrics. The Lotka-Volterra model typically outperforms logistic, Gompertz, and Richards equations for describing co-culture dynamics [16].
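For least-squares fits with independent Gaussian errors, AIC reduces to n ln(RSS/n) + 2k, up to a constant shared by all models. The small-sample version, AICc, adds 2k(k + 1)/(n − k − 1). The Python sketch below compares three candidate descriptions of one co-culture series and converts the scores to Akaike weights. The RSS values are invented for illustration. Here k counts fitted parameters across both strains:

- logistic: r and K per strain;
- Richards: r, K, and a shape parameter per strain;
- Lotka-Volterra: r and K per strain, plus two α values.

```python
import math


def aic_least_squares(rss, n, k):
    """AIC for a least-squares fit with Gaussian errors, dropping constants shared by all models."""
    return n * math.log(rss / n) + 2 * k


def aicc(rss, n, k):
    """Small-sample corrected AIC; matters when n is not much larger than k."""
    return aic_least_squares(rss, n, k) + 2 * k * (k + 1) / (n - k - 1)


def akaike_weights(scores):
    """Relative likelihoods exp(-delta/2), normalized to sum to one."""
    best = min(scores.values())
    rel = {name: math.exp(-(s - best) / 2) for name, s in scores.items()}
    total = sum(rel.values())
    return {name: v / total for name, v in rel.items()}


# Hypothetical RSS values and parameter counts for one series of n = 48 hourly points.
n = 48
fits = {"logistic (independent)": (0.92, 4),
        "Richards (independent)": (0.85, 6),
        "Lotka-Volterra competition": (0.31, 6)}
scores = {name: aicc(rss, n, k) for name, (rss, k) in fits.items()}
weights = akaike_weights(scores)
best_model = min(scores, key=scores.get)
```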

This protocol successfully identified several quantitative trait loci (QTLs) in E. coli and S. aureus that govern competition and cooperation through direct, indirect, and epistatic genetic effects [16].

Genetic Mapping Framework for Community Dynamics

For researchers interested in genetic underpinnings of species interactions, a specialized mapping framework integrates community ecology theory with systems mapping:

Experimental Design: Create multiple interspecific pairs by randomly pairing strains from different species (e.g., 45 independent E. coli-S. aureus pairs) [16].

Phenotypic Measurement: Record abundance trajectories for all strains in both monoculture and co-culture environments.

Genome-Wide Analysis: Implement systems mapping to identify QTLs that not only affect a species' own growth but also influence interacting species' phenotypes.

Network Construction: Characterize how QTLs from different genomes interact epistatically to influence community dynamics, creating a genotype-phenotype map for interspecies interactions.

This approach moves beyond reductionist single-species genetics to provide a global perspective on the genetic architecture of community dynamics [16].

Research Reagent Solutions and Computational Tools

Essential Research Reagents

Table 3: Key Research Reagents for Experimental Validation

| Reagent/Strain | Function in Experiments | Example Application |
|---|---|---|
| Escherichia coli strains | Model Gram-negative bacterium in competition-cooperation studies [16] | Mapping QTLs for microbial competition |
| Staphylococcus aureus strains | Model Gram-positive bacterium with different resource requirements [16] | Studying interspecific interactions with E. coli |
| Standardized Growth Media | Provide controlled nutritional environment | Ensuring reproducible growth conditions |
| Antibiotic Markers | Enable tracking of specific strains in mixed cultures | Measuring relative abundances in co-culture |
| Microtiter Plates | High-throughput culturing and monitoring | Parallel testing of multiple strain combinations |

Computational Tools and Packages

gauseR Package: Provides tools for fitting Lotka-Volterra models to time-series data, including 42 classic datasets from Gause's experiments [9]. Key functions include lv_optim for parameter optimization and test_goodness_of_fit for model validation.

Genetic Algorithm Frameworks: Customizable Python code for evolving parameters in mutualism-competition models [12]. This approach is particularly valuable for exploring high-dimensional parameter spaces where traditional optimization methods struggle.

Physics-Informed Neural Networks (PINNs): Advanced deep learning architectures that embed physical constraints directly into neural network training [15]. These are especially powerful for spatiotemporal modeling and can be implemented using TensorFlow or PyTorch with custom loss functions.

Constraint Interval Libraries: Specialized mathematical software for implementing interval representation theory in dynamical systems [11]. These are valuable for modeling systems with uncertain or context-dependent parameters.

The expansion of Lotka-Volterra frameworks to incorporate competition, cooperation, and mutualism represents a significant advancement in ecological modeling. Our comparative analysis demonstrates that while classical approaches remain valuable for simple systems, expanded frameworks offer superior performance for complex, context-dependent interactions. The integration of genetic mapping with community ecology theory [16] and the application of physics-informed neural networks [15] represent particularly promising directions.

Future developments will likely focus on several key areas: (1) multi-scale modeling that connects genetic mechanisms to ecosystem-level patterns; (2) improved parameter estimation techniques for high-dimensional systems; and (3) enhanced visualization tools for understanding complex interaction networks. As these frameworks continue to evolve, they will provide increasingly powerful tools for addressing pressing biological challenges, from managing microbial communities to understanding the ecological dynamics of cancer. The expanding toolkit for modeling species interactions promises to unlock new insights into the fundamental principles governing biological systems across scales of organization.

The Lotka-Volterra model, developed independently by Alfred J. Lotka and Vito Volterra in the early 20th century, represents a foundational framework for modeling biological interactions, particularly predator-prey dynamics [17]. This pair of first-order nonlinear differential equations describes how two species interact, with one as a predator and the other as prey. The basic equations take the form: dx/dt = αx - βxy for prey population growth and dy/dt = -γy + δxy for predator population dynamics, where x represents prey density, y represents predator density, and Greek letters denote parameters governing growth and interaction rates [17].
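Here is a short Python sketch of these equations, with illustrative parameter values. Along exact solutions, the quantity V = δx − γ ln x + βy − α ln y stays constant. That is why the classical model produces closed orbits rather than damped or growing cycles. Monitoring V is therefore a convenient check on a numerical integrator. The nontrivial equilibrium is (x*, y*) = (γ/δ, α/β).

```python
import math


def lv(state, alpha, beta, gamma, delta):
    """dx/dt = alpha x - beta x y,  dy/dt = -gamma y + delta x y."""
    x, y = state
    return (alpha * x - beta * x * y, -gamma * y + delta * x * y)


def invariant(state, alpha, beta, gamma, delta):
    """V = delta x - gamma ln x + beta y - alpha ln y, constant along exact solutions."""
    x, y = state
    return delta * x - gamma * math.log(x) + beta * y - alpha * math.log(y)


def integrate(state, params, dt=0.01, t_end=30.0):
    """Fixed-step RK4; returns the list of states."""
    traj = [state]
    for _ in range(int(round(t_end / dt))):
        k1 = lv(state, *params)
        k2 = lv(tuple(v + 0.5 * dt * k for v, k in zip(state, k1)), *params)
        k3 = lv(tuple(v + 0.5 * dt * k for v, k in zip(state, k2)), *params)
        k4 = lv(tuple(v + dt * k for v, k in zip(state, k3)), *params)
        state = tuple(v + dt / 6 * (a + 2 * b + 2 * c + d)
                      for v, a, b, c, d in zip(state, k1, k2, k3, k4))
        traj.append(state)
    return traj


params = (1.0, 0.1, 1.5, 0.075)  # illustrative alpha, beta, gamma, delta
traj = integrate((10.0, 5.0), params)
v = [invariant(s, *params) for s in traj]
drift = max(abs(vi - v[0]) for vi in v)
equilibrium = (params[2] / params[3], params[0] / params[1])  # (gamma/delta, alpha/beta)
```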

While originally applied to predator-prey systems, the Lotka-Volterra framework has been extensively adapted to model diverse biological phenomena including competition, mutualism, and more recently, microbial community dynamics and drug interactions [18] [19]. The model's enduring utility stems from its ability to capture core ecological principles, particularly the oscillatory dynamics observed in natural predator-prey systems such as lynx and snowshoe hare populations [17]. However, its application to complex biological systems rests on two crucial assumptions that determine its predictive accuracy: the additivity assumption, which posits that an individual receives additive fitness effects from pairwise interactions with each species in the community, and the universality assumption, which suggests that all pairwise interactions can be represented by a single equation form where parameters reflect signs and strengths of fitness effects [18].

This guide compares how these foundational assumptions hold across different biological contexts, examines their limitations, and presents alternative modeling approaches, tested against experimental data, that address them.

Conceptual Foundations: Additivity and Universality in Biological Modeling

The Additivity Assumption

The additivity assumption presupposes that the fitness effects of multiple species interactions on an organism are additive—the net effect equals the sum of individual pairwise effects [18]. In classical ecological contexts, this implies that a predator's impact on prey populations follows simple cumulative relationships. Similarly, in microbial systems or drug interactions, it assumes that combined effects represent the arithmetic sum of individual effects.

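The mathematical basis for additivity appears in extensions of the Lotka-Volterra framework to multi-species communities, where the population growth of species i is typically expressed as:

\[ \frac{dN_i}{dt} = r_i N_i + \sum_j \alpha_{ij} N_i N_j = N_i \Big( r_i + \sum_j \alpha_{ij} N_j \Big) \]

where rᵢ is the intrinsic growth rate of species i, αᵢⱼ is the interaction coefficient describing the effect of species j on species i, and Nᵢ, Nⱼ are population densities [18]. Each partner j contributes its own term αᵢⱼNⱼ to the per-capita growth rate of species i, regardless of which other species are present. This formulation therefore inherently assumes that interaction effects combine additively, without emergent properties or higher-order interactions.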
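To make the additivity assumption concrete, the sketch below compares the effect of two partner species on a focal species when they are added one at a time and when they are added together. In the gLV form the per-capita growth rate of species i is rᵢ + Σⱼ αᵢⱼNⱼ, so the joint effect is exactly the sum of the single effects. The optional array `B` adds a hypothetical higher-order term Σⱼₖ BᵢⱼₖNⱼNₖ, which breaks additivity. This term is not part of the gLV model and is included only for contrast. All numbers are illustrative.

```python
def per_capita_growth(i, r, A, N, B=None):
    """(1/N_i) dN_i/dt for species i.

    r[i]: intrinsic growth rate; A[i][j]: pairwise effect of species j on i.
    B[i][j][k]: optional, hypothetical higher-order effect of the pair (j, k) on i.
    """
    n = len(N)
    g = r[i] + sum(A[i][j] * N[j] for j in range(n))
    if B is not None:
        g += sum(B[i][j][k] * N[j] * N[k] for j in range(n) for k in range(n))
    return g

def effect_on_focal(r, A, N, partners, B=None):
    """Change in species 0's per-capita growth when only `partners` join it."""
    alone = [N[0]] + [0.0] * (len(N) - 1)
    together = [N[j] if (j == 0 or j in partners) else 0.0 for j in range(len(N))]
    return per_capita_growth(0, r, A, together, B) - per_capita_growth(0, r, A, alone, B)

# Illustrative three-species community (species 0 is the focal species).
r = [0.5, 0.4, 0.3]
A = [[-0.010, -0.005, 0.002],
     [-0.004, -0.010, 0.000],
     [0.000, -0.003, -0.010]]
N = [50.0, 40.0, 30.0]
B = [[[0.0] * 3 for _ in range(3)] for _ in range(3)]
B[0][1][2] = 1e-4  # hypothetical: species 2 weakens the harm species 1 does to 0

e1 = effect_on_focal(r, A, N, {1})
e2 = effect_on_focal(r, A, N, {2})
e12 = effect_on_focal(r, A, N, {1, 2})
e12_hoi = effect_on_focal(r, A, N, {1, 2}, B)
print(f"sum of singles {e1 + e2:.3f}, joint (gLV) {e12:.3f}, joint (with B) {e12_hoi:.3f}")
```

In an experiment, the analogous test compares the measured joint effect with the sum of the measured single effects.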

The Universality Assumption

The universality assumption contends that a single mathematical form (the Lotka-Volterra equations) can adequately describe diverse biological interactions across different species, environmental contexts, and interaction mechanisms [18]. This assumption enables modelers to apply the same fundamental equations to everything from macroscopic predator-prey systems to microscopic microbial interactions or even molecular-level drug effects, with only parameter values differing between applications.

In theoretical terms, universality suggests that macroscopic properties of complex biological systems can become independent of microscopic details, a concept borrowed from statistical physics where universal scaling laws emerge in large systems regardless of specific molecular interactions [20].

Testing the Assumptions: Experimental Evidence Across Biological Systems

Limitations in Microbial Community Modeling

Table 1: Experimental Evidence on Additivity and Universality in Microbial Systems

| Experimental System | Interaction Type | Additivity Support | Universality Support | Key Findings |
|---|---|---|---|---|
| Pairwise microbial communities [18] | Chemical-mediated interactions (growth promotion/inhibition) | Limited: Failed for consumable mediators, reusable signaling molecules, and multi-mediator systems | Limited: Different equations needed depending on mediator properties | Success depended on mediator characteristics (consumable vs reusable) and community quantitative details |
| 12 phytoplankton species [21] | Resource competition | N/A | Limited: L-V sensitive to environmental context | Mechanistic consumer-resource models outperformed L-V across resource conditions |
| 3-4 species artificial microbial communities [18] | Metabolic exchange | Moderate: L-V captured some competition outcomes | Moderate | Pairwise models successful in simplified systems but failed in 7-species communities |
| Bdellovibrio predation [22] | Predator-prey dynamics | Strong with modifications | Limited: Required Holling modifications | Original L-V insufficient; required Type II/III functional responses to capture dynamics |

Recent work has critically tested these assumptions in microbial systems, with particularly revealing results from in silico communities designed to represent common chemical-mediated microbial interactions [18]. These studies demonstrate that pairwise modeling frequently fails to capture diverse microbial interactions even qualitatively. The required equations differ depending on whether a chemical mediator is consumable or reusable and whether an interaction involves one or multiple mediators. They sometimes depend even on quantitative community details such as the relative fitness of species and the initial conditions [18].

The failure of universality in microbial contexts stems from the fundamental diversity of interaction mechanisms that microbes employ—from diffusible nutrients to growth inhibitors and complex signaling molecules—which cannot be adequately captured by a single equation form [18]. Similarly, the additivity assumption fails when indirect interactions modify pairwise relationships, as occurs when a third species influences interactions between a species pair through mechanisms like interaction modification [18].

Validation in Predator-Prey Systems

Table 2: Model Performance in Capturing Predator-Prey Dynamics

| Model Type | System Characteristics | Additivity Compliance | Universality Compliance | Required Modifications |
|---|---|---|---|---|
| Classical Lotka-Volterra [17] | Simple predator-prey (e.g., lynx-hare) | Strong | Strong | None |
| Holling Type II [22] | Predator saturation (Bdellovibrio-Pseudomonas) | Moderate (with handling time) | Limited | Added saturation term for predation |
| Holling Type III [22] | Predator learning/threshold effects | Moderate (with sigmoidal response) | Limited | Added low-prey inefficiency term |
| Ratio-dependent [17] | Variable predation efficiency | Weak | Limited | Predation depends on prey:predator ratio |

In contrast to chemically mediated microbial interactions, the additivity and universality assumptions hold better in classical predator-prey systems. These include the predatory bacterium Bdellovibrio preying on Pseudomonas species [22]. However, even these systems frequently require modifications to the basic Lotka-Volterra framework to capture the observed dynamics accurately.

Experimental validation using flow cytometry to quantify population dynamics in batch and chemostat cultures revealed that incorporating Holling type II (saturating predation) or type III (sigmoidal response) functional responses significantly improved model accuracy [22]. The Holling type III numerical response particularly supported the hypothesis of premature prey lysis at high predator-prey ratios in Bdellovibrio systems, demonstrating how biological details often necessitate deviations from universal equation forms [22].
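The functional responses named above can be written as interchangeable functions of prey density x. The sketch uses the standard textbook forms with attack rate a and handling time h. For simplicity, it assumes that predator growth equals a fixed conversion efficiency times prey consumed. The parameterization in [22], including its separate numerical response, may differ. Passing a linear response recovers the classical model, because efficiency × β then plays the role of δ.

```python
def holling_type1(x, a):
    """Linear (classical Lotka-Volterra) response: consumption never saturates."""
    return a * x

def holling_type2(x, a, h):
    """Saturating response: approaches 1/h as prey become abundant."""
    return a * x / (1.0 + a * h * x)

def holling_type3(x, a, h):
    """Sigmoidal response: per-prey predation is low when prey are scarce."""
    return a * x ** 2 / (1.0 + a * h * x ** 2)

def predator_prey_rhs(x, y, alpha, gamma, efficiency, response):
    """dx/dt = alpha*x - f(x)*y ; dy/dt = -gamma*y + efficiency*f(x)*y."""
    f = response(x)
    return alpha * x - f * y, -gamma * y + efficiency * f * y

# Per-prey predation rate f(x)/x at low, moderate and high prey density (a=0.5, h=2.0).
for x in (0.1, 1.0, 10.0):
    print(x, holling_type2(x, 0.5, 2.0) / x, holling_type3(x, 0.5, 2.0) / x)
```

The per-prey rate falls with density under Type II but rises at low density under Type III. This low-density inefficiency is the feature the Type III term adds.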

Evidence from Drug Interactions and Molecular Systems

At the molecular level, the additivity assumption becomes crucial for predicting combined drug effects, with two main frameworks—Bliss independence and Loewe additivity—providing different interpretations of additivity [19]. Loewe additivity assumes drugs target the same cellular components, while Bliss independence applies when drugs act on distinct targets through independent mechanisms [19].

Mechanistic multi-hit models, where bacteria die when a threshold number of antimicrobial molecules hit cellular targets, provide theoretical underpinnings for these additivity concepts [19]. The model demonstrates that Bliss independence emerges naturally when antimicrobials target distinct receptors, while Loewe additivity corresponds to scenarios where antimicrobials affect the same cellular components [19]. This work highlights how fundamental biological mechanisms determine the appropriate additivity framework, challenging universal application of either approach across all drug combinations.
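The two reference models can be compared numerically. The sketch below assumes that each drug alone follows a Hill dose-response curve with maximal effect 1. The parameters `ec50` and `n` are illustrative and are not taken from [19], and the multi-hit mechanism itself is not modeled. Bliss independence multiplies the surviving fractions. Loewe additivity finds the effect E at which the doses, each expressed as a fraction of the single-drug dose giving E, sum to one. A "sham" combination of a drug with itself shows that the two frameworks predict different effects.

```python
def hill_effect(dose, ec50, n):
    """Fraction of cells killed or inhibited by one drug (Hill curve, max effect 1)."""
    return dose ** n / (dose ** n + ec50 ** n)

def hill_inverse(effect, ec50, n):
    """Single-drug dose needed to reach `effect` (requires 0 < effect < 1)."""
    return ec50 * (effect / (1.0 - effect)) ** (1.0 / n)

def bliss_expected(dose_a, dose_b, params_a, params_b):
    """Bliss independence: probabilities of escaping each drug multiply."""
    ea = hill_effect(dose_a, *params_a)
    eb = hill_effect(dose_b, *params_b)
    return 1.0 - (1.0 - ea) * (1.0 - eb)

def loewe_expected(dose_a, dose_b, params_a, params_b, iterations=100):
    """Loewe additivity: solve dose_a/D_a(E) + dose_b/D_b(E) = 1 for E by bisection."""
    if dose_a == 0 and dose_b == 0:
        return 0.0
    lo, hi = 1e-12, 1.0 - 1e-12
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        index = dose_a / hill_inverse(mid, *params_a) + dose_b / hill_inverse(mid, *params_b)
        if index > 1.0:   # the index decreases with E, so the solution lies above mid
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

drug = (2.0, 1.0)  # illustrative ec50 = 2.0, Hill coefficient n = 1.0
print("Bliss:", bliss_expected(1.0, 1.0, drug, drug))  # about 0.556 (5/9)
print("Loewe:", loewe_expected(1.0, 1.0, drug, drug))  # about 0.5, same as one dose of 2.0
```

An observed effect of 0.5 for this sham pair is exactly additive under Loewe but falls below the Bliss expectation. The label "synergy" or "antagonism" therefore depends on which reference model is chosen.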

Experimental Protocols for Testing Model Assumptions

Protocol 1: Microbial Interaction Mapping

Objective: Quantitatively test additivity and universality assumptions in microbial communities by comparing Lotka-Volterra predictions with mechanistic models.

Workflow Overview: The experimental protocol involves cultivating microbial species in monoculture, pairwise coculture, and multi-species communities while precisely measuring population dynamics and interaction mediators.

Methodology Details:

- Monoculture Phase: Grow each microbial species in isolation to determine basal growth rates and metabolic profiles under standardized conditions [18]. Quantify growth parameters (maximum growth rate, carrying capacity) and monitor chemical mediator production/consumption.

- Pairwise Coculture: Combine species in pairwise cocultures and measure population dynamics using flow cytometry or optical density measurements [22]. Simultaneously track chemical mediators (e.g., nutrients, signaling molecules, inhibitors) through mass spectrometry or chromatography.

- Multi-Species Community Assembly: Construct communities with increasing species richness (3+ species) and track population dynamics under identical environmental conditions [18].

- Model Parameterization and Testing: Derive Lotka-Volterra interaction parameters from monoculture and pairwise data, then test predictions against observed multi-species dynamics [18]. Develop parallel mechanistic models that explicitly incorporate interaction mediators as state variables.

- Additivity Testing: Compare observed multi-species dynamics with predictions based on summed pairwise interactions to test additivity assumption [18].

- Universality Testing: Assess whether a single equation form adequately captures diverse interaction types present in the community [18].
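The parameterization and additivity-testing steps can be sketched for the gLV model at equilibrium. The sketch makes three assumptions of our own, which the protocol does not prescribe:

1. Monocultures give each species' intrinsic growth rate rᵢ and carrying capacity Kᵢ, so αᵢᵢ = -rᵢ/Kᵢ.
2. Each pairwise coculture reaches a coexisting equilibrium, which fixes αᵢⱼ through rᵢ + αᵢᵢNᵢ* + αᵢⱼNⱼ* = 0.
3. The additive prediction for the full community solves Σⱼ αᵢⱼNⱼ* = -rᵢ.

The "observed" pairwise equilibria here are synthetic, generated from a known matrix so that recovery can be checked. With real data, the predicted multi-species equilibrium would be compared with the measured one, and systematic deviations would point to non-additive effects.

```python
from itertools import combinations

def solve_linear(M, b):
    """Solve M x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [[float(v) for v in row] + [float(bi)] for row, bi in zip(M, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda row: abs(aug[row][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[row][k] -= factor * aug[col][k]
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        tail = sum(aug[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (aug[row][n] - tail) / aug[row][row]
    return x

def self_interactions(r, K):
    """alpha_ii = -r_i / K_i from monoculture growth rate and carrying capacity."""
    return [-ri / Ki for ri, Ki in zip(r, K)]

def pairwise_coefficient(i, r, a_self, n_i, n_j):
    """alpha_ij from a coexisting pairwise equilibrium (N_i*, N_j*)."""
    return -(r[i] + a_self[i] * n_i) / n_j

def predict_equilibrium(r, A):
    """Additive (gLV) prediction for the full community: solve A N* = -r."""
    return solve_linear(A, [-ri for ri in r])

# Synthetic "truth" used only to generate example data.
r = [1.0, 0.8, 0.6]
A_true = [[-1.0, -0.3, -0.2],
          [-0.2, -1.0, -0.3],
          [-0.1, -0.2, -1.0]]
K = [-r[i] / A_true[i][i] for i in range(3)]  # "monoculture" carrying capacities

a_self = self_interactions(r, K)
A_fit = [[a_self[i] if i == j else 0.0 for j in range(3)] for i in range(3)]
for i, j in combinations(range(3), 2):
    sub = [[A_true[i][i], A_true[i][j]], [A_true[j][i], A_true[j][j]]]
    n_i, n_j = solve_linear(sub, [-r[i], -r[j]])  # "observed" coculture equilibrium
    A_fit[i][j] = pairwise_coefficient(i, r, a_self, n_i, n_j)
    A_fit[j][i] = pairwise_coefficient(j, r, a_self, n_j, n_i)

N_pred = predict_equilibrium(r, A_fit)  # compare with the measured 3-species community
print([round(v, 3) for v in N_pred])
```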

Protocol 2: Consumer-Resource Model Comparison

Objective: Compare Lotka-Volterra predictions with mechanistic consumer-resource models for resource competition systems.

Workflow Overview: This protocol involves growing phytoplankton species across resource gradients in monoculture and competition experiments to parameterize both Lotka-Volterra and mechanistic models [21].

Methodology Details:

- Resource Gradient Establishment: Culture 12 phytoplankton species across 12 concentrations of nitrate, ammonium, or phosphorus while maintaining other nutrients in excess [21].

- Growth and Consumption Quantification: Measure daily growth rates and resource consumption over four days (approximately 0-8 generations) using high-precision analytical methods.

- Model Parameterization: Use Bayesian modeling to parameterize consumer-resource models from monoculture growth data and initial resource concentrations [21].

- Competition Experiments: Assemble communities of varying richness (2, 3, 4, or 6 species) in semi-continuous cultures where species compete for different ratios of essential (nitrate and phosphorus) or substitutable (nitrate and ammonium) resources [21].

- Community Composition Tracking: Monitor community composition over 12 days using automated pipelines integrating high-content microscopy, image analysis, and machine learning classification [21].

- Model Prediction Testing: Compare predictive accuracy of Lotka-Volterra models (parameterized from pairwise competition data) versus mechanistic consumer-resource models (parameterized from monoculture data) against observed community compositions [21].
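For a single limiting resource, the consumer-resource logic can be illustrated with Monod growth and Tilman's R* rule. Each species' break-even resource level is R* = Ks·m/(μmax - m), and the species with the lowest R* is expected to exclude the others in a chemostat with loss rate m. This is a simplified stand-in, not the Bayesian-fitted, multi-resource model of [21]. The species, the parameter values, the unit yield, and the forward-Euler chemostat simulation are all illustrative assumptions.

```python
def monod_growth(R, mu_max, Ks):
    """Specific growth rate at resource concentration R (Monod kinetics)."""
    return mu_max * R / (Ks + R)

def r_star(mu_max, Ks, m):
    """Resource level at which Monod growth exactly balances the loss rate m."""
    if mu_max <= m:
        return float("inf")  # the species cannot persist at this loss rate
    return Ks * m / (mu_max - m)

def predict_winner(species, m):
    """Single-resource R* rule: the lowest R* excludes the others."""
    return min(species, key=lambda s: r_star(s["mu_max"], s["Ks"], m))["name"]

def simulate_chemostat(species, D, S, t_end, dt=0.01, N0=0.1):
    """Forward-Euler chemostat: dilution D, supply S, yield 1 for every species."""
    R = S
    N = [N0] * len(species)
    for _ in range(int(round(t_end / dt))):
        mu = [monod_growth(R, s["mu_max"], s["Ks"]) for s in species]
        dR = D * (S - R) - sum(m_i * n_i for m_i, n_i in zip(mu, N))
        N = [n_i + dt * (m_i - D) * n_i for m_i, n_i in zip(mu, N)]
        R = max(R + dt * dR, 0.0)  # guard against an Euler overshoot below zero
    return R, N

SPECIES = [
    {"name": "A", "mu_max": 1.0, "Ks": 2.0},  # fast grower, poor at low resource
    {"name": "B", "mu_max": 0.6, "Ks": 0.5},  # slow grower, efficient at low resource
]
print(predict_winner(SPECIES, m=0.3))  # B: R* = 0.5 versus about 0.857 for A
R, N = simulate_chemostat(SPECIES, D=0.3, S=10.0, t_end=400.0)
```

Species B wins even though A grows faster when the resource is plentiful. Predictions that rest on resource requirements, rather than on maximum growth rates, can therefore reverse naive expectations.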

Comparative Analysis: Performance Across Biological Contexts

Quantitative Model Performance Metrics

Table 3: Predictive Accuracy Across Modeling Approaches

| Model Approach | Biological Context | Prediction Accuracy | Environmental Context-Dependence | Experimental Effort Required |
|---|---|---|---|---|
| Classical Lotka-Volterra [18] | Microbial chemical-mediated interactions | Low (qualitative failures) | High | Moderate (2^S-1 communities) |
| Modified L-V with Holling terms [22] | Bdellovibrio predation | High (distance correlation = 0.999) | Moderate | High (precise parameterization) |
| Mechanistic consumer-resource [21] | Phytoplankton competition | High (83.4% mean accuracy) | Low | High (resource response curves) |
| Bliss independence [19] | Antimicrobial peptides (distinct targets) | High for independent action | Low | Moderate (dose-response curves) |
| Loewe additivity [19] | Antimicrobial peptides (same target) | High for similar mechanisms | Low | Moderate (dose-response curves) |

The comparative performance of modeling approaches reveals a consistent pattern: classical Lotka-Volterra models with strict additivity and universality assumptions perform poorly for chemically mediated microbial interactions, while modified approaches that incorporate biological mechanisms show significantly improved predictive power [18] [21].

In phytoplankton competition experiments, mechanistic consumer-resource models achieved 83.4% mean accuracy in predicting community composition across resource conditions and species richness levels, substantially outperforming a null model (53.5% accuracy) and demonstrating significantly better performance than traditional Lotka-Volterra approaches [21]. Notably, the consumer-resource model maintained robust predictive abilities even in novel environmental conditions not encountered during parameterization, indicating reduced context-dependence compared to Lotka-Volterra models [21].

For antimicrobial interactions, the multi-hit model provides a mechanistic basis for selecting appropriate additivity frameworks: Bliss independence for antimicrobials targeting distinct cellular components and Loewe additivity for those affecting the same targets [19]. This mechanistic understanding resolves previous controversies about appropriate reference models for assessing drug synergy or antagonism.

The Scientist's Toolkit: Essential Research Reagents and Methodologies

Table 4: Essential Research Materials for Testing Model Assumptions

| Reagent/Methodology | Specific Application | Function in Experimental Protocol |
|---|---|---|
| Flow cytometry [22] | Microbial population quantification | High-throughput measurement of predator and prey population densities in batch and chemostat cultures |
| High-content microscopy [21] | Phytoplankton community tracking | Automated imaging for species classification and abundance monitoring in competition experiments |
| Mass spectrometry [18] | Metabolic mediator profiling | Identification and quantification of chemical mediators in microbial interaction studies |
| Chemostats [22] | Continuous culture maintenance | Precise control of dilution rates and environmental conditions for long-term dynamics studies |
| Bayesian parameter estimation [21] | Model parameterization | Robust parameter estimation from monoculture growth data for consumer-resource models |
| Machine learning classification [21] | Species identification | Automated species classification from microscopic images in diverse communities |
| Monod model parameters [22] | Microbial growth modeling | Quantification of substrate-dependent growth rates for mechanistic modeling |
| Holling functional responses [22] | Predation dynamics | Incorporation of saturating predation (Type II) or sigmoidal responses (Type III) |

The experimental evidence clearly demonstrates that both additivity and universality assumptions require careful validation in specific biological contexts. The classical Lotka-Volterra framework performs adequately for simple predator-prey systems with direct interactions but fails for chemically-mediated microbial interactions and resource competition where mechanistic details significantly alter dynamics [18] [21].

Researchers should adopt the following strategic approach when applying these models:

- Validate Additivity through careful comparison of pairwise interaction predictions with multi-species community dynamics before assuming additive effects [18].

- Test Universality by assessing whether a single equation form adequately captures all interaction types in the system, modifying approaches when biological mechanisms differ significantly [18] [22].

- Employ Mechanistic Models when interaction mediators are known or when high prediction accuracy across environmental contexts is required [21].

- Select Appropriate Additivity Frameworks based on biological mechanism—Bliss independence for distinct targets, Loewe additivity for shared targets in drug interaction studies [19].

These guidelines provide an evidence-based framework for researchers navigating the complex landscape of biological interaction modeling, ensuring appropriate model selection for specific experimental systems and research questions.

The generalized Lotka-Volterra (gLV) model serves as a fundamental mathematical framework for describing the dynamics of interacting species within ecosystems, with applications extending to microbiome research and therapeutic development [23]. Originating from classical predator-prey equations, the gLV framework extends to accommodate diverse ecological relationships through a community matrix that encapsulates interaction strengths between species [23]. In its general form, the gLV system is expressed as \( \frac{dx_i}{dt} = x_i \left( r_i + \sum_{j=1}^{n} a_{ij} x_j \right) \), where \( x_i \) represents species abundance, \( r_i \) is the intrinsic growth rate, and \( a_{ij} \) defines interaction strength [23]. The theoretical robustness of this framework (its ability to yield stable, accurate predictions across varying conditions) remains a central question in ecology and related fields. This analysis systematically compares the robustness of various approaches for analyzing stability, equilibrium, and non-linear dynamics within the Lotka-Volterra paradigm, providing researchers with methodological insights for predicting complex system behaviors.

Theoretical Frameworks for Stability Analysis

Traditional Stability Criteria and Their Limitations

Traditional stability analysis of gLV models focuses on equilibria at which all population growth rates are zero. For coexisting species, these are obtained by solving \( r_i + \sum_{j} a_{ij} x_j^* = 0 \) for the equilibrium abundances \( x_j^* \) [23]. Local stability assessment typically employs eigenvalue analysis of the Jacobian matrix evaluated at the equilibrium; the equilibrium is stable when all eigenvalues have negative real parts [23]. This approach, while mathematically rigorous, faces significant limitations in predictive robustness. The method proves sensitive to environmental context, particularly when interaction strengths change with resource availability or other abiotic factors [24]. Furthermore, accurately parameterizing traditional gLV models requires experimentally challenging pairwise interaction measurements between all species, creating practical barriers for diverse communities [24].
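For two species, the whole procedure fits in a few lines: solve for the interior fixed point, form the Jacobian, and check its eigenvalues. At an interior gLV equilibrium the bracketed growth term vanishes, so the Jacobian reduces to Jᵢⱼ = xᵢ* aᵢⱼ. The sketch below is a minimal plain-Python illustration that uses the quadratic formula rather than a linear-algebra library, with illustrative parameter values. A coexistence point is accepted only if it is feasible (x* > 0) and its eigenvalues have negative real parts.

```python
import cmath

def interior_equilibrium(r, A):
    """Solve r_i + sum_j a_ij x_j = 0 for two species (Cramer's rule)."""
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    if det == 0:
        raise ValueError("singular interaction matrix: no unique interior equilibrium")
    x1 = (-r[0] * A[1][1] + r[1] * A[0][1]) / det
    x2 = (-r[1] * A[0][0] + r[0] * A[1][0]) / det
    return x1, x2

def jacobian_at_interior(x, A):
    """At an interior gLV equilibrium the Jacobian is J_ij = x_i * a_ij."""
    return [[x[i] * A[i][j] for j in range(2)] for i in range(2)]

def eigenvalues_2x2(J):
    """Eigenvalues from the trace and determinant of a 2x2 matrix."""
    tr = J[0][0] + J[1][1]
    det = J[0][0] * J[1][1] - J[0][1] * J[1][0]
    disc = cmath.sqrt(tr * tr / 4.0 - det)
    return tr / 2.0 + disc, tr / 2.0 - disc

def analyze(r, A):
    """Return (equilibrium, eigenvalues, feasible-and-locally-stable flag)."""
    x = interior_equilibrium(r, A)
    eig = eigenvalues_2x2(jacobian_at_interior(x, A))
    feasible = all(v > 0 for v in x)
    stable = all(e.real < 0 for e in eig)
    return x, eig, feasible and stable

# Weak competition: coexistence is feasible and locally stable.
print(analyze([1.0, 0.8], [[-1.0, -0.3], [-0.2, -1.0]]))
# Strong competition: the interior point is a saddle (bistable exclusion).
print(analyze([1.0, 1.0], [[-1.0, -2.0], [-2.0, -1.0]]))
```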

Emerging Approaches for Enhanced Robustness

Recent theoretical advances address these limitations through novel analytical frameworks. The introduction of dissimilarity measures enables quantitative comparison between gLV systems with varying interaction parameters, network structures, or functional forms [6]. This approach captures both transient and asymptotic dynamics, revealing how subtle structural changes produce markedly distinct ecological outcomes [6]. Meanwhile, dressed invasion fitness provides an augmented concept of invasion fitness that incorporates ecological feedbacks, improving predictions of invader abundance and extinction events [25]. This framework operates effectively across diverse models including Lotka-Volterra and consumer-resource models with cross-feeding [25].

Table 1: Comparison of Theoretical Frameworks for Stability Analysis

| Framework | Key Features | Strengths | Limitations |
|---|---|---|---|
| Traditional Eigenvalue Analysis | Examines Jacobian matrix eigenvalues; requires negative real parts for stability; analyzes fixed points | Mathematical rigor; well-established methodology; clear stability criteria | Context-dependent predictions; extensive parameterization needs; sensitive to interaction changes [24] |
| Dissimilarity Measures | Quantifies differences between gLV systems; captures transient and stationary dynamics; compares varied parameters or topologies | Systematic comparison capability; reveals structural sensitivity; predicts instabilities [6] | Computational complexity; emerging methodology; limited empirical validation |
| Dressed Invasion Fitness | Incorporates ecological feedbacks; accounts for invasion-induced extinctions; uses linear-response theory | Predicts invader abundance; identifies extinction events; applicable to evolved communities [25] | Assumes pre-invasion steady state; requires community interaction data; approximation-based |

Methodological Comparisons: Predicting Community Composition

Mechanistic vs. Phenomenological Approaches

Empirical comparisons demonstrate significant robustness differences between modeling approaches. Mechanistic consumer-resource models, which explicitly represent resource consumption and conversion, achieve a mean accuracy of 83.4% in predicting community composition across varying resource conditions and species richness levels [24]. This approach maintains predictive power even in novel environmental conditions not encountered during parameterization, demonstrating superior transferability compared to phenomenological methods [24]. The mechanistic framework requires only monoculture growth data for parameterization, so its experimental effort scales linearly with species richness, a practical advantage for diverse communities.

In contrast, traditional Lotka-Volterra approaches that directly parameterize species-species interactions from pairwise experiments show significant context dependence, with predictions deteriorating when applied to conditions different from those used for parameterization [24]. These methods typically require \( 2^S - 1 \) community experiments for complete parameterization (where S represents species richness), creating exponential scaling challenges for species-rich communities [24].
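The exponential growth in experimental effort follows from enumerating every non-empty subset of the species pool. The snippet below is a plain illustration using Python's `itertools.combinations`. It reproduces the experiment counts in Table 2 below (3, 7, 15, and 63 communities for 2, 3, 4, and 6 species) and sets them against the S monocultures that the mechanistic approach starts from. Each monoculture may itself need several resource levels, which this count ignores.

```python
from itertools import combinations

def all_communities(species):
    """Every non-empty subset of the species pool: 2**S - 1 possible communities."""
    return [combo
            for k in range(1, len(species) + 1)
            for combo in combinations(species, k)]

for S in (2, 3, 4, 6):
    pool = [f"sp{i}" for i in range(1, S + 1)]
    print(S, "species:", len(all_communities(pool)), "communities vs", S, "monocultures")
```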

Robustness to Varying Community Complexity

Predictive accuracy varies substantially with community complexity across methodological approaches. Mechanistic models maintain high accuracy (>74%) across communities ranging from 2 to 6 species, though accuracy modestly declines in the most diverse configurations [24]. This degradation likely reflects increased susceptibility to alternative stable states in species-rich communities, where minor perturbations may trigger transitions between different community configurations [24]. Traditional gLV approaches face greater challenges in diverse communities due to cumulative parameter estimation errors and missed emergent dynamics.

Table 2: Methodological Performance Across Community Complexity

| Species Richness | Mechanistic Approach Accuracy | Traditional gLV Requirements | Key Challenges |
|---|---|---|---|
| 2 Species | >83% accuracy [24] | 3 community experiments | Minimal; high predictability |
| 3-4 Species | >80% accuracy [24] | 7-15 community experiments | Moderate parameterization effort |
| 6 Species | ~74% accuracy [24] | 63 community experiments | Alternative stable states emerge |

Quantitative Robustness Assessment Metrics

Structural Robustness in Randomly Assembled Communities

Theoretical investigations of randomly assembled ecosystems reveal fundamental constraints on robustness. Research on feasibility and stability in randomly assembled Lotka-Volterra models has established critical relationships between connectance, interaction strength, and ecosystem stability [26]. These studies demonstrate that large, complex systems exhibit sharp transitions in stability as interaction patterns and strengths vary, with implications for designing synthetic microbial communities with desired stability properties [26].
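A classic reference point for such sharp transitions is May's criterion. It is given here as background, not as the specific result of [26]. Consider a large random community matrix with S species, connectance C, zero-mean off-diagonal interaction strengths of standard deviation σ, and self-regulation -d on the diagonal. Such a system is almost surely locally stable when

\[ \sigma\sqrt{S\,C} < d \quad\Longleftrightarrow\quad S < \frac{d^{2}}{\sigma^{2} C} \]

For example, σ = 0.1, C = 0.2 and d = 1 give a threshold of S = 1/(0.01 × 0.2) = 500 species, beyond which stability is lost abruptly rather than gradually. Feasibility, meaning that all equilibrium abundances are positive, is a separate condition that this criterion does not address.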

Statistical Robustness Comparison Frameworks

Methodological comparisons in statistical robustness provide quantitative frameworks for evaluating gLV model performance. Recent analyses compare robustness through:

- Empirical Influence Functions evaluating down-weighting of outliers [27]

- Simulation studies with controlled contamination levels (5%-45%) from 32 different distributions [27]

- Real dataset validation across over 33,000 datasets assessing relationship to L-skewness [27]

These analyses reveal consistent trade-offs between robustness and statistical efficiency. In comparative assessments, methods with stronger outlier down-weighting (e.g., NDA method) demonstrate superior robustness to asymmetry, particularly in smaller samples, though with reduced statistical efficiency (~78% vs ~96% for other methods) [27]. This highlights the inherent trade-off between resistance to outliers and statistical power that researchers must navigate when selecting analytical approaches.

Experimental Protocols for Robustness Validation

Community Assembly Validation Protocol

Robustness claims require experimental validation through standardized protocols. The following methodology assesses predictive accuracy across controlled environmental gradients:

Resource Gradient Establishment

- Prepare culture media with varying ratios of essential resources (e.g., nitrate:phosphorus) or substitutable resources (e.g., nitrate:ammonium) [24]

- Maintain consistent total nutrient concentrations while varying proportional availability

- Include replicate cultures for each resource condition

Monoculture Parameterization

- Grow each species in isolation across resource concentration gradients [24]

- Measure daily growth rates and resource consumption rates

- Parameterize resource requirement curves using Bayesian modeling [24]

Community Assembly Monitoring

- Inoculate multi-species communities at varying richness levels (2-6 species) [24]

- Maintain semi-continuous cultures with regular dilution

- Track community composition over 12+ days using high-content microscopy [24]

- Automate species identification and counting through machine learning pipelines [24]

Predictive Accuracy Assessment

- Compare observed compositions to mechanistic model predictions

- Calculate Bray-Curtis similarity between predicted and observed relative abundances [24]

- Assess transferability by testing predictions in novel resource conditions
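The Bray-Curtis comparison step can be sketched as follows. Counts are first converted to relative abundances. Similarity is then 1 minus the summed absolute differences divided by the summed abundances, so 1 means identical composition and 0 means no shared species. The example numbers are invented for illustration. We do not assume that the accuracy percentages reported in [24] use exactly this formula.

```python
def relative_abundances(counts):
    """Convert raw counts (e.g. classified cells) into proportions."""
    total = sum(counts)
    return [c / total for c in counts]

def bray_curtis_similarity(predicted, observed):
    """1 - Bray-Curtis dissimilarity between two abundance vectors."""
    diff = sum(abs(p - o) for p, o in zip(predicted, observed))
    total = sum(p + o for p, o in zip(predicted, observed))
    return 1.0 - diff / total

predicted = [0.60, 0.30, 0.10]                   # model output (relative abundances)
observed = relative_abundances([550, 350, 100])  # classified cell counts
print(round(bray_curtis_similarity(predicted, observed), 3))  # 0.95
```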

[Figure: Experimental workflow for robustness validation]

Invasion Dynamics Experimental Protocol

Theoretical robustness extends to predicting novel species introductions, with the following protocol assessing invasion outcome accuracy:

Pre-invasion Community Establishment

- Maintain resident communities at steady state abundances [25]

- Characterize resident interaction networks through perturbation experiments

- Verify stability through temporal monitoring before invasion

Invader Characterization

- Measure invader growth rates across resource gradients

- Quantify interaction coefficients between invader and resident species [25]

- Label invaders for tracking where possible

Invasion Implementation and Monitoring

- Introduce invaders at low initial abundance (<5% of total community abundance) [25]

- Monitor population dynamics daily for resident and invader species

- Track potential extinctions through sensitive abundance detection

- Continue monitoring until a new steady state is established or the invader disappears

Theoretical Prediction Validation

- Apply dressed invasion fitness framework to predict invader abundance [25]

- Use self-consistency equations to predict extinction events

- Compare predicted and observed abundance shifts for surviving species

- Calculate prediction accuracy for invader establishment success
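The starting point for the prediction step is the ordinary (bare) invasion fitness in a gLV community. This is the per-capita growth rate of a rare invader when the residents sit at their equilibrium, λ = r₀ + Σⱼ a₀ⱼxⱼ*. The dressed invasion fitness of [25] corrects this quantity for the residents' response to the invader; that correction is not implemented in the sketch below. The parameters are illustrative. A short forward-Euler run with the invader at very low density checks that its initial log-growth rate matches λ.

```python
import math

def glv_rhs(x, r, A):
    """dx_i/dt = x_i (r_i + sum_j a_ij x_j) for every species i."""
    n = len(x)
    return [x[i] * (r[i] + sum(A[i][j] * x[j] for j in range(n))) for i in range(n)]

def invasion_fitness(inv, resident_eq, r, A):
    """Bare invasion fitness of species `inv`; resident_eq maps index -> abundance."""
    return r[inv] + sum(A[inv][j] * xj for j, xj in resident_eq.items())

def initial_log_growth(x0, r, A, inv, t=1.0, dt=1e-4):
    """Invader's average log-growth rate over a short forward-Euler run."""
    x = list(x0)
    for _ in range(int(round(t / dt))):
        dx = glv_rhs(x, r, A)
        x = [xi + dt * dxi for xi, dxi in zip(x, dx)]
    return math.log(x[inv] / x0[inv]) / t

r = [1.0, 0.8, 0.6]  # species 0 is the invader
A = [[-1.0, -0.3, -0.2],
     [-0.2, -1.0, -0.3],
     [-0.1, -0.2, -1.0]]
# Residents 1 and 2 at their pairwise equilibrium (Cramer's rule on the 2x2 block).
det = A[1][1] * A[2][2] - A[1][2] * A[2][1]
x1 = (-r[1] * A[2][2] + r[2] * A[1][2]) / det
x2 = (-r[2] * A[1][1] + r[1] * A[2][1]) / det
lam = invasion_fitness(0, {1: x1, 2: x2}, r, A)
print(round(lam, 4), round(initial_log_growth([1e-6, x1, x2], r, A, inv=0), 4))
```

A positive λ predicts that the invader can establish from rarity. Predicting its final abundance and any resulting extinctions is the part that requires the feedback-corrected framework.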

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagents for Lotka-Volterra Experimental Validation

| Reagent/Solution | Function | Application Context | Key Considerations |
|---|---|---|---|
| Defined Resource Media | Provides controlled nutrient environment; enables resource gradient establishment | Mechanistic model parameterization; competition experiments | Essential vs. substitutable resources yield different dynamics [24] |
| Fluorescent Cell Labels | Enables species-specific tracking in mixed communities; facilitates automated counting | Invasion dynamics studies; community composition tracking | Must not affect growth rates or interactions; multiple distinct labels needed |
| Bayesian Parameter Estimation Tools | Quantifies growth and consumption parameters from monoculture data; provides uncertainty estimates | Mechanistic model parameterization; prediction interval calculation | Requires specialized statistical expertise; computationally intensive |
| High-Content Microscopy Systems | Automated community composition monitoring; high-temporal resolution data collection | Community assembly validation; invasion outcome tracking | Requires machine learning integration for species identification [24] |
| Semi-Continuous Culture Systems | Maintains constant environmental conditions; prevents resource depletion artifacts | Long-term community dynamics; steady-state maintenance | Dilution rate critical parameter; requires careful balancing |

Theoretical robustness in Lotka-Volterra frameworks demonstrates significant dependence on methodological choices. Mechanistic approaches leveraging resource consumption data provide superior predictive accuracy and transferability across environmental contexts compared to traditional interaction-parameterized models [24]. Emerging frameworks incorporating dissimilarity measures and dressed invasion fitness offer promising directions for enhancing robustness assessments across varying network topologies and interaction patterns [6] [25].

For researchers and drug development professionals applying these models, strategic methodology selection should prioritize mechanistic approaches when resource consumption data is obtainable, particularly for diverse communities where traditional parameterization becomes prohibitive [24]. In cases where complete mechanistic parameterization proves impractical, hybrid approaches combining limited interaction data with resource response information may offer favorable robustness. Ultimately, acknowledging the inherent trade-offs between robustness and statistical efficiency [27], alongside careful consideration of community complexity and environmental variability, enables more reliable application of gLV frameworks to therapeutic development and ecological management challenges.

From Theory to Bench: Methodologies for Parameter Estimation and Model Application

The Lotka-Volterra (LV) model represents a fundamental framework for modeling interacting populations across diverse fields, from theoretical ecology to cancer dynamics and microbiome research [28]. These systems of differential equations describe how populations influence each other's growth rates through interaction parameters, but a significant challenge lies in accurately estimating these parameters from observational data. The accurate identification of growth rates and interaction parameters is not merely a mathematical exercise—it determines the predictive power of LV models and their utility in testing ecological hypotheses and making reliable forecasts [29] [30].

Parameter estimation for LV models presents unique challenges that combine theoretical and practical considerations. The inverse problem of determining parameter values from population time series data is often ill-posed, where different parameter combinations may yield similar population dynamics [30]. Furthermore, real-world data limitations, including measurement noise, sparse sampling, and unobserved variables, complicate the estimation process [29]. This comparison guide examines the leading parameter identification strategies, their performance characteristics, and experimental protocols to assist researchers in selecting appropriate methods for their specific applications.

Methodological Approaches to Parameter Estimation

Classical Optimization Methods

Traditional parameter estimation approaches for LV models typically frame the task as an optimization problem, where the goal is to minimize the discrepancy between model simulations and observed data [29]. These methods can be broadly categorized into local optimization techniques, such as nonlinear least-squares algorithms, and global optimization methods, including evolutionary algorithms and differential evolution [30] [28].

Nonlinear Least-Squares Optimization: This deterministic approach efficiently searches parameter space to minimize the sum of squared residuals between model predictions and observations. While computationally efficient for well-behaved problems, it may converge to local minima and requires good initial parameter guesses [29].

Markov Chain Monte Carlo (MCMC) Algorithms: As stochastic sampling methods, MCMC algorithms explore parameter posterior distributions, providing not only point estimates but also uncertainty quantification. These are particularly valuable when dealing with noisy data or when Bayesian inference is desired [29].

Sequential Monte Carlo and Particle Filter Methods

For systems with time-varying parameters or pronounced nonlinearities, Sequential Monte Carlo (SMC) methods, also known as particle filters, offer enhanced capabilities [29]. These algorithms simultaneously estimate population states and model parameters by approximating complex probability distributions that evolve over time. The SMC approach maintains a set of particles representing possible states and parameters, updating them recursively as new observations become available.

The key advantage of SMC methods lies in their ability to handle parameter non-stationarity, which is common in real ecological systems where environmental conditions change over time [29]. This approach has demonstrated particular utility in modeling predator-prey systems like the wolf-moose dynamics on Isle Royale, where traditional constant-parameter models fail to capture finer-scale population changes [29].

Linear-Algebra-Based Inference Methods

A more recent innovation in LV parameter estimation utilizes linear-algebra-based approaches that transform the estimation problem into a linear system [28]. By recognizing that the LV equations can be rewritten in linear form with respect to parameters when population densities are known, these methods avoid the iterative optimization required by traditional techniques.
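To make the linear rewrite concrete, the equations below assume the generalized n-species LV form (the section does not write the model out explicitly), with r_i the intrinsic growth rate of species i and a_ij the effect of species j on species i:

$$
\frac{dx_i}{dt} = x_i\Big(r_i + \sum_{j=1}^{n} a_{ij}\,x_j\Big), \qquad i = 1,\dots,n .
$$

Dividing by $x_i > 0$ gives an expression that is linear in the unknowns $r_i$ and $a_{ij}$:

$$
\frac{d\ln x_i}{dt} = r_i + \sum_{j=1}^{n} a_{ij}\,x_j .
$$

Stacking estimated derivatives $\widehat{\dot{\ell}}_i(t_k)$ of $\ln x_i$ at sampling times $t_1,\dots,t_m$ yields one ordinary least-squares problem per species, all sharing the same design matrix $\Phi$:

$$
\underbrace{\begin{pmatrix} \widehat{\dot{\ell}}_i(t_1) \\ \vdots \\ \widehat{\dot{\ell}}_i(t_m) \end{pmatrix}}_{\mathbf{y}_i}
= \underbrace{\begin{pmatrix} 1 & x_1(t_1) & \cdots & x_n(t_1) \\ \vdots & \vdots & & \vdots \\ 1 & x_1(t_m) & \cdots & x_n(t_m) \end{pmatrix}}_{\Phi}
\begin{pmatrix} r_i \\ a_{i1} \\ \vdots \\ a_{in} \end{pmatrix},
\qquad
\hat{\boldsymbol\theta}_i = (\Phi^\top \Phi)^{-1}\Phi^\top \mathbf{y}_i .
$$

No iteration is involved, but any error in $\widehat{\dot{\ell}}_i$ enters $\hat{\boldsymbol\theta}_i$ directly, which is the source of the method's noise sensitivity.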

The practical implementation requires estimation of derivatives from population time series, which can introduce error, but the method offers significant computational advantages [28]. This approach generates solutions rapidly without risk of convergence to local minima, though it may be more sensitive to noise in the data compared to iterative optimization methods.
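A minimal Python sketch of this idea, assuming the generalized LV form with growth-rate vector `r` and interaction matrix `A`. The helper names (`glv_rhs`, `simulate`, `infer_glv_linear`) are hypothetical, not from a published package. It estimates d(ln x_i)/dt by finite differences (`np.gradient`) and solves one `np.linalg.lstsq` problem, stacking two noise-free synthetic trajectories started from different initial conditions:

```python
import numpy as np
from scipy.integrate import solve_ivp


def glv_rhs(t, x, r, A):
    # Generalized Lotka-Volterra: dx_i/dt = x_i * (r_i + sum_j A_ij x_j)
    return x * (r + A @ x)


def simulate(r, A, x0, t):
    # Integrate the gLV system; returns abundances with shape (n_times, n_species)
    sol = solve_ivp(glv_rhs, (t[0], t[-1]), x0, t_eval=t, args=(r, A),
                    rtol=1e-8, atol=1e-10)
    return sol.y.T


def infer_glv_linear(trajectories):
    """Estimate r and A with a single linear least-squares solve.

    trajectories: list of (t, X) pairs, X of shape (n_times, n_species), all X > 0.
    """
    design_blocks, target_blocks = [], []
    for t, X in trajectories:
        # Finite-difference estimate of the per-capita growth rate d(ln x_i)/dt
        target_blocks.append(np.gradient(np.log(X), t, axis=0))
        # Regressors shared by every species: [1, x_1(t), ..., x_n(t)]
        design_blocks.append(np.hstack([np.ones((len(t), 1)), X]))
    Phi = np.vstack(design_blocks)
    Y = np.vstack(target_blocks)
    # Column i of theta holds [r_i, A_i1, ..., A_in]
    theta, *_ = np.linalg.lstsq(Phi, Y, rcond=None)
    return theta[0], theta[1:].T


# Usage: recover known parameters from noise-free synthetic data
r_true = np.array([0.8, 0.5])
A_true = np.array([[-1.0, -0.5], [-0.3, -0.8]])
t = np.linspace(0.0, 15.0, 301)
trajs = [(t, simulate(r_true, A_true, x0, t)) for x0 in ([0.05, 0.3], [0.4, 0.02])]
r_hat, A_hat = infer_glv_linear(trajs)
```

Because the fit is one least-squares solve, its cost is negligible even for many species; the price is that raw finite differences amplify measurement noise, so with noisy data a smoothed derivative estimator (Savitzky-Golay or splines, as listed under data preparation) is usually a better input.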

Hybrid and Specialized Methods

Several specialized estimation strategies have been developed for particular applications or to address specific challenges in LV modeling:

Sequential Calibration: This approach involves estimating intrinsic growth parameters from monoculture data first, then determining interaction parameters from co-culture experiments, reducing the dimensionality of the estimation problem [30].

Parallel Calibration: Using multiple datasets with different initial conditions simultaneously improves parameter identifiability and helps distinguish between interaction types [30].

Local Gauss-Newton Optimization: When combined with SMC methods, this refinement strategy can improve upon traditional stochastic averaging techniques for time-varying parameter estimation [29].
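The sequential and parallel strategies can be combined, as in the sketch below. Assumptions: a generalized LV model, noise-free synthetic data, a known inoculum, and hypothetical helper names (`fit_monoculture`, `fit_interactions`). Monocultures are fit first to obtain each species' growth rate and self-interaction; the off-diagonal interactions are then fit jointly to two co-culture experiments by concatenating their residuals into one vector for `scipy.optimize.least_squares`:

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import least_squares


def glv_rhs(t, x, r, A):
    # Generalized LV: dx_i/dt = x_i * (r_i + sum_j A_ij x_j)
    return x * (r + A @ x)


def blow_up(t, x, r, A):
    # Event function: crosses zero when any population exceeds an arbitrary cap
    return 1e3 - np.max(x)


blow_up.terminal = True  # stop integration at the first crossing


def simulate(r, A, x0, t):
    sol = solve_ivp(glv_rhs, (t[0], t[-1]), x0, t_eval=t, args=(r, A),
                    events=blow_up, rtol=1e-8, atol=1e-10)
    if not sol.success or sol.y.shape[1] != len(t):
        return np.full((len(t), len(x0)), 1e3)  # penalize blow-up or failed integration
    return sol.y.T


def fit_monoculture(t, x_obs):
    # Step 1 (sequential): one species alone follows logistic growth, which
    # identifies its growth rate r_i and self-interaction A_ii (<= 0)
    def resid(p):
        return simulate(p[:1], p[1:].reshape(1, 1), x_obs[:1], t)[:, 0] - x_obs
    fit = least_squares(resid, x0=[0.5, -0.5], bounds=([0.0, -10.0], [5.0, 0.0]))
    return fit.x


def fit_interactions(r, self_int, experiments):
    # Step 2 (parallel): off-diagonal A_ij fit jointly to all co-culture runs
    n = len(r)
    off_diag = [(i, j) for i in range(n) for j in range(n) if i != j]

    def build_A(p):
        A = np.diag(self_int)
        for k, (i, j) in enumerate(off_diag):
            A[i, j] = p[k]
        return A

    def resid(p):
        A = build_A(p)
        # One residual vector spanning every experiment (different initial conditions)
        return np.concatenate([(simulate(r, A, X[0], t) - X).ravel()
                               for t, X in experiments])

    fit = least_squares(resid, x0=np.zeros(len(off_diag)), bounds=(-5.0, 5.0))
    return build_A(fit.x)


# Usage on noise-free synthetic data with known parameters
r_true = np.array([0.8, 0.5])
A_true = np.array([[-1.0, -0.5], [-0.3, -0.8]])
t = np.linspace(0.0, 15.0, 61)
mono = [fit_monoculture(t, simulate(r_true[i:i + 1], A_true[i:i + 1, i:i + 1], [0.05], t)[:, 0])
        for i in range(2)]
r_hat = np.array([m[0] for m in mono])
self_hat = np.array([m[1] for m in mono])
experiments = [(t, simulate(r_true, A_true, np.array(x0), t)) for x0 in ([0.05, 0.3], [0.4, 0.02])]
A_hat = fit_interactions(r_hat, self_hat, experiments)
```

The terminal `blow_up` event is a safeguard added for this sketch: if a trial parameter set makes populations explode (possible with strongly mutualistic trial values), integration stops early and the residuals become a large constant instead of letting the solver stall.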

Comparative Performance Analysis

Quantitative Method Comparison

Table 1: Performance Characteristics of Lotka-Volterra Parameter Estimation Methods

| Method | Computational Cost | Handling of Noise | Uncertainty Quantification | Best-Suited Applications |
|---|---|---|---|---|
| Nonlinear Least-Squares | Low to Moderate | Moderate | Limited | Systems with good initial parameter estimates; low-noise data |
| MCMC Algorithms | High | Good | Comprehensive | Problems requiring Bayesian inference; parameter uncertainty assessment |
| Sequential Monte Carlo | Very High | Excellent | Good | Time-varying parameters; non-stationary systems |
| Linear-Algebra-Based | Very Low | Poor | Limited | Rapid screening; systems with high-quality, densely sampled data |
| Evolutionary Algorithms | High | Good | Moderate | Complex multi-modal optimization problems; poor initial guesses |

Table 2: Empirical Performance on Synthetic and Experimental Datasets

| Method | Parameter Recovery Accuracy | Interaction Type Discrimination | Sensitivity to Initial Parameter Guesses | Scalability to High Dimensions |
|---|---|---|---|---|
| Nonlinear Least-Squares | 72-85% | Limited | High | Moderate (up to 10-15 species) |
| MCMC Algorithms | 80-90% | Good | Moderate | Low to Moderate (up to 5-8 species) |
| Sequential Monte Carlo | 85-95% | Excellent | Low | Low (typically 2-3 species) |
| Linear-Algebra-Based | 65-75% | Poor | Very Low | High (dozens to hundreds of species) |
| Evolutionary Algorithms | 75-88% | Moderate | Low | Moderate (up to 10-15 species) |

The performance comparison reveals significant trade-offs between computational efficiency, accuracy, and methodological robustness. Traditional fitting strategies, including gradient descent optimization and differential evolution, typically achieve low residuals but may overfit noisy data and incur substantial computational costs [28]. The linear-algebra-based method produces solutions much faster, generally without overfitting, but requires accurate derivative estimation from time series data, which can introduce substantial error [28].

In practical applications, the optimal choice depends on data characteristics and research objectives. For the Isle Royale wolf-moose system, the time-varying coefficient LV model fit with SMC methods successfully captured periodic patterns in growth rates corresponding to seasonal variations in food availability [29]. In tumor cell line interaction studies, parallel calibration using multiple initial conditions proved most effective for distinguishing between competitive, mutualistic, and antagonistic relationships [30].

Case Study: Isle Royale Wolf-Moose Dynamics

The long-term predator-prey system on Isle Royale provides an excellent case study for comparing parameter estimation approaches. When fitting 61 years of population data, the classical LV model with constant parameters captured broad oscillatory behavior but lacked flexibility to represent finer-scale population changes [29]. The MCMC estimation for the constant-parameter model required extensive computation but provided foundational parameter estimates that could be used to initialize more complex models.

The time-varying coefficient LV model fit with SMC methods outperformed the constant-parameter model, identifying periodic patterns in the moose growth rate parameter that aligned with theoretical expectations for seasonal food variations [29]. Because its parameters can change over time, this approach can absorb the effects of environmental variability, disease, and other ecological drivers that cause abrupt population shifts, factors that constant-parameter LV models typically overlook.

Case Study: Tumor Cell Line Interactions

In oncology applications, parameter estimation faces unique challenges, including limited sampling time points and experimental constraints. Research comparing estimation methods for tumor cell line interactions found that parallel calibration using two mixture experiments with different initial conditions provided the most reliable parameter identifiability [30].

This approach successfully distinguished between competitive, mutualistic, and antagonistic interactions—a crucial capability for understanding tumor heterogeneity and treatment response [30]. The study also highlighted the importance of structural identifiability analysis before attempting parameter estimation, as some interaction types may be inherently difficult to distinguish with limited data.

Experimental Protocols for Parameter Estimation

General Workflow for LV Parameter Identification

The following experimental protocol provides a systematic approach to parameter estimation for Lotka-Volterra systems:

Figure 1: Generalized workflow for parameter identification in Lotka-Volterra models, showing key stages from data preparation to final parameter validation.

Data Preparation Protocol

Data Quality Assessment: Examine population time series for missing values, measurement errors, and outliers. The Isle Royale study employed rigorous data cleaning procedures for its 61-year dataset [29].

Smoothing and Interpolation: Apply appropriate smoothing techniques to reduce noise while preserving ecological signals. The specific approach should match data characteristics—ecological time series may require different handling than laboratory microbial data.

Derivative Estimation: For methods requiring growth rate calculations (including linear-algebra-based approaches), estimate derivatives from population data using:

- Finite difference methods for densely-sampled data

- Savitzky-Golay filters for noisy data

- Spline-based methods for irregularly spaced observations

Data Transformation: Apply necessary transformations such as log-transformation for exponential growth dynamics or normalization for compositional data.
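The derivative options above can be compared on synthetic data. This sketch assumes logistic growth with multiplicative noise, so after log-transformation the target is the per-capita rate d(ln x)/dt = r(1 - x/K). The window length, polynomial order, and spline smoothing factor are illustrative choices, not recommendations from the cited studies:

```python
import numpy as np
from scipy.interpolate import UnivariateSpline
from scipy.signal import savgol_filter


def deriv_finite_difference(t, y):
    # Central differences in the interior, one-sided at the ends; t may be uneven
    return np.gradient(y, t)


def deriv_savgol(t, y, window=11, polyorder=3):
    # Savitzky-Golay: local polynomial fit, differentiated analytically.
    # Assumes uniform sampling, so the spacing is taken from the first interval.
    return savgol_filter(y, window_length=window, polyorder=polyorder,
                         deriv=1, delta=t[1] - t[0])


def deriv_spline(t, y, s):
    # Smoothing cubic spline; t may be irregular. s caps the residual sum of
    # squares, so len(t) * noise_variance is a reasonable starting value.
    return UnivariateSpline(t, y, k=3, s=s).derivative()(t)


# Synthetic logistic growth with multiplicative noise; work on log abundance
r, K, x0, sigma = 0.8, 1.0, 0.05, 0.02
t = np.linspace(0.0, 15.0, 151)
x = K / (1.0 + (K / x0 - 1.0) * np.exp(-r * t))
true_rate = r * (1.0 - x / K)  # exact d(ln x)/dt for logistic growth
rng = np.random.default_rng(0)
log_x = np.log(x) + sigma * rng.standard_normal(t.size)

estimates = {
    "finite difference": deriv_finite_difference(t, log_x),
    "savitzky-golay": deriv_savgol(t, log_x),
    "spline": deriv_spline(t, log_x, s=t.size * sigma ** 2),
}
rmse = {name: float(np.sqrt(np.mean((d - true_rate) ** 2))) for name, d in estimates.items()}
```

Raw finite differences amplify noise roughly in proportion to 1/Δt, which is why the smoothing-based estimators usually give lower error on noisy data. As written, the Savitzky-Golay variant requires uniform spacing, whereas `np.gradient` and the spline both accept irregular time points.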

Model Identifiability Assessment Protocol

Before parameter estimation, assess whether the model structure permits unique parameter identification:

Structural Identifiability Analysis: Determine whether ideal, noise-free data would in principle allow unique parameter estimation. For LV models, a basic necessary check is that the number of observations exceeds the number of parameters and that the system is not over-parameterized; passing this check alone does not guarantee identifiability [30].

Practical Identifiability Assessment: Using synthetic data with characteristics similar to actual observations, test whether the estimation method can recover known parameter values. The tumor cell line study employed this approach by generating synthetic data from both LV and cellular automaton models [30].

Sensitivity Analysis: Apply methods like the Morris elementary effects technique to identify parameters with the strongest influence on model outputs [31]. This helps prioritize estimation efforts on the most influential parameters.
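The sensitivity step can be sketched without extra dependencies. The code below is a simplified, radial one-at-a-time variant of Morris elementary effects (continuous sampling rather than Morris's level grid and trajectory design); the parameter ranges and the output quantity (species-1 abundance at t = 10) are hypothetical. Dedicated libraries such as SALib, listed in Table 3, implement the full method:

```python
import numpy as np
from scipy.integrate import solve_ivp

NAMES = ["r1", "r2", "A11", "A12", "A21", "A22"]
# Hypothetical plausible ranges for a two-species competitive gLV system
LOWER = np.array([0.5, 0.3, -1.2, -0.6, -0.4, -1.0])
UPPER = np.array([1.0, 0.7, -0.8, -0.4, -0.2, -0.6])


def glv_rhs(t, x, r, A):
    return x * (r + A @ x)


def model_output(p):
    # Output of interest (an assumption): species-1 abundance at t = 10
    r, A = p[:2], p[2:].reshape(2, 2)
    sol = solve_ivp(glv_rhs, (0.0, 10.0), [0.1, 0.1], args=(r, A), rtol=1e-6, atol=1e-9)
    return sol.y[0, -1]


def elementary_effects(f, lower, upper, n_base=20, delta=0.1, seed=0):
    """Radial one-at-a-time elementary effects; returns (mu_star, sigma) per parameter."""
    rng = np.random.default_rng(seed)
    k = len(lower)
    ee = np.empty((n_base, k))
    for b in range(n_base):
        u = rng.uniform(0.0, 1.0 - delta, size=k)  # base point in the unit hypercube
        y_base = f(lower + u * (upper - lower))
        for i in range(k):
            u_step = u.copy()
            u_step[i] += delta  # move one parameter, holding the others fixed
            ee[b, i] = (f(lower + u_step * (upper - lower)) - y_base) / delta
    # mu* = mean |EE| (overall influence); sigma = spread (nonlinearity/interactions)
    return np.abs(ee).mean(axis=0), ee.std(axis=0, ddof=1)


mu_star, sigma = elementary_effects(model_output, LOWER, UPPER)
ranking = [NAMES[i] for i in np.argsort(mu_star)[::-1]]  # most influential first
```

Parameters with large mu* are the ones to estimate most carefully; a sigma that is large relative to mu* signals nonlinear effects or interactions with other parameters.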

Estimation Method Implementation

Nonlinear Least-Squares Implementation

Objective Function Definition: Formulate the sum of squared residuals between model predictions and observed population data.

Algorithm Selection: Choose appropriate optimization algorithms (e.g., Levenberg-Marquardt, trust-region methods) based on problem characteristics.

Implementation Considerations:

- Utilize analytical gradients where possible for computational efficiency

- Implement bounds on biologically plausible parameter values

- Incorporate multiple restarts from different initial parameter guesses to reduce the risk of converging to a local minimum
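A sketch of these considerations with SciPy, assuming a two-species gLV model and a known initial inoculum. The bounds encode an assumed competitive system (all interactions at most 0); random restarts are drawn inside those bounds and the lowest-cost fit is kept. Unlike the first bullet's recommendation, this sketch relies on `least_squares`' default finite-difference Jacobian rather than analytical gradients:

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import least_squares


def glv_rhs(t, x, r, A):
    return x * (r + A @ x)


def simulate(theta, x0, t):
    # theta = [r_1, r_2, A_11, A_12, A_21, A_22]
    r, A = theta[:2], theta[2:].reshape(2, 2)
    sol = solve_ivp(glv_rhs, (t[0], t[-1]), x0, t_eval=t, args=(r, A),
                    rtol=1e-7, atol=1e-10)
    if not sol.success or sol.y.shape[1] != len(t):
        return np.full((len(t), len(x0)), 1e6)  # penalize failed integrations
    return sol.y.T


def residuals(theta, t, X_obs, x0):
    # least_squares minimizes 0.5 * sum(residuals**2), i.e. half the SSR
    return (simulate(theta, x0, t) - X_obs).ravel()


def fit_multistart(t, X_obs, x0, lb, ub, n_starts=5, seed=0):
    rng = np.random.default_rng(seed)
    best = None
    for _ in range(n_starts):
        theta0 = rng.uniform(lb, ub)  # random start inside the plausible box
        fit = least_squares(residuals, theta0, bounds=(lb, ub), method="trf",
                            args=(t, X_obs, x0))
        if best is None or fit.cost < best.cost:
            best = fit
    return best


# Hypothetical bounds for a competitive system: 0 <= r_i <= 2, -3 <= A_ij <= 0
lb = np.array([0.0, 0.0, -3.0, -3.0, -3.0, -3.0])
ub = np.array([2.0, 2.0, 0.0, 0.0, 0.0, 0.0])

# Synthetic data: known parameters plus 5% multiplicative noise
theta_true = np.array([0.8, 0.5, -1.0, -0.5, -0.3, -0.8])
t = np.linspace(0.0, 10.0, 41)
x0 = np.array([0.05, 0.3])
rng = np.random.default_rng(42)
X_obs = simulate(theta_true, x0, t) * np.exp(0.05 * rng.standard_normal((t.size, 2)))

best = fit_multistart(t, X_obs, x0, lb, ub)
true_cost = 0.5 * np.sum(residuals(theta_true, t, X_obs, x0) ** 2)
```

Comparing `best.cost` with the cost at the true parameters is only possible with synthetic data; on real data, agreement among the best few restarts is a rough practical indication that the global minimum has been found.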

MCMC Implementation

Prior Specification: Define biologically informed prior distributions for parameters. For growth rates, these might be based on known physiological limits; for interaction parameters, priors could reflect likely relationship types.

Sampling Algorithm: Implement appropriate MCMC variants such as Metropolis-Hastings, Hamiltonian Monte Carlo, or Gibbs sampling based on parameter space characteristics.

Convergence Diagnostics: Monitor chain convergence using metrics like Gelman-Rubin statistics, effective sample size, and trace plot inspection.
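A minimal random-walk Metropolis sketch with a basic (non-split) Gelman-Rubin diagnostic. For brevity and speed it uses the single-species (monoculture) case of the gLV model, logistic growth, whose closed-form solution avoids an ODE solver; for multi-species models the same sampler applies once `log_posterior` calls a numerical integrator. The priors, noise level, step size, and chain lengths are illustrative assumptions:

```python
import numpy as np

T = np.linspace(0.0, 15.0, 30)
X0 = 0.05     # known inoculum density (assumption)
SIGMA = 0.1   # log-scale observation noise, assumed known


def logistic(t, r, K):
    # Closed-form solution of dx/dt = r x (1 - x/K), the one-species gLV model
    return K / (1.0 + (K / X0 - 1.0) * np.exp(-r * t))


def log_posterior(log_theta, x_obs):
    r, K = np.exp(log_theta)
    # Hypothetical priors: log r ~ N(log 0.5, 1), log K ~ N(0, 1)
    log_prior = -0.5 * ((log_theta[0] - np.log(0.5)) ** 2 + log_theta[1] ** 2)
    resid = np.log(x_obs) - np.log(logistic(T, r, K))
    return log_prior - 0.5 * np.sum(resid ** 2) / SIGMA ** 2


def random_walk_metropolis(x_obs, start, n_iter, step, rng):
    current = np.asarray(start, dtype=float)
    lp = log_posterior(current, x_obs)
    chain = np.empty((n_iter, current.size))
    accepted = 0
    for i in range(n_iter):
        proposal = current + step * rng.standard_normal(current.size)  # symmetric proposal
        lp_new = log_posterior(proposal, x_obs)
        if np.log(rng.uniform()) < lp_new - lp:  # Metropolis acceptance rule
            current, lp = proposal, lp_new
            accepted += 1
        chain[i] = current
    return chain, accepted / n_iter


def gelman_rubin(chains):
    # chains: (n_chains, n_samples, n_params); returns R-hat per parameter
    n = chains.shape[1]
    W = chains.var(axis=1, ddof=1).mean(axis=0)      # mean within-chain variance
    B = n * chains.mean(axis=1).var(axis=0, ddof=1)  # between-chain variance
    return np.sqrt(((n - 1) / n * W + B / n) / W)


rng = np.random.default_rng(1)
true_log_theta = np.log([0.8, 1.0])
x_obs = logistic(T, 0.8, 1.0) * np.exp(SIGMA * rng.standard_normal(T.size))
runs = [random_walk_metropolis(x_obs, np.log(s), 5000, 0.03, rng)
        for s in ([0.3, 0.5], [1.5, 2.0])]               # dispersed starting points
chains = np.stack([chain[1000:] for chain, _ in runs])  # discard burn-in
rhat = gelman_rubin(chains)
acceptance = [acc for _, acc in runs]
posterior_median = np.exp(np.median(chains.reshape(-1, 2), axis=0))
```

R-hat values close to 1 indicate that chains started from dispersed points agree. The platforms in Table 3 (Stan, PyMC) provide more efficient samplers, such as Hamiltonian Monte Carlo, along with refined diagnostics like split R-hat and effective sample size.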

Sequential Monte Carlo Implementation

State-Space Formulation: Represent the LV system in state-space form with separate equations for state evolution and observations [29].

Particle Initialization: Generate initial particles representing possible states and parameters. The Isle Royale study used estimates from nonlinear least-squares optimization to initialize particles [29].

Recursive Estimation: For each new observation:

- Propagate particles forward using the state transition model

- Compute weights based on observation likelihood

- Resample particles to avoid degeneracy

- Apply parameter learning steps to track time-varying parameters
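The four recursive steps can be sketched as a bootstrap particle filter. Assumptions for this illustration: a predator-prey LV model discretized with Euler sub-steps, only the prey growth rate alpha varying in time, the other rates known, log-normal observation and process noise, and parameter learning implemented as a random walk on log(alpha), one simple option among several. It is not the Isle Royale study's implementation:

```python
import numpy as np

BETA, GAMMA, DELTA = 0.5, 1.0, 0.5   # rates assumed known and constant
DT, N_SUB = 0.5, 20                  # observation interval and Euler sub-steps


def propagate(x, y, alpha):
    # State transition: Euler-discretized predator-prey LV, vectorized over particles
    h = DT / N_SUB
    for _ in range(N_SUB):
        x, y = x + h * (alpha * x - BETA * x * y), y + h * (DELTA * x * y - GAMMA * y)
    return np.maximum(x, 1e-8), np.maximum(y, 1e-8)


def simulate_truth(n_obs, rng, sigma_obs=0.1):
    # Synthetic data with a slowly oscillating prey growth rate alpha(t)
    alpha = 1.0 + 0.3 * np.sin(2.0 * np.pi * DT * np.arange(n_obs) / 20.0)
    x, y = np.array([1.5]), np.array([1.0])
    states = []
    for k in range(n_obs):
        if k > 0:
            x, y = propagate(x, y, alpha[k])
        states.append([x[0], y[0]])
    obs = np.array(states) * np.exp(sigma_obs * rng.standard_normal((n_obs, 2)))
    return alpha, obs


def particle_filter(obs, n_particles=2000, sigma_obs=0.1, sigma_proc=0.02,
                    sigma_alpha=0.05, seed=0):
    """Bootstrap particle filter returning the filtered mean of alpha at each time."""
    rng = np.random.default_rng(seed)
    n_obs = obs.shape[0]
    # Initialization: states scattered around the first observation, prior on log(alpha)
    x = obs[0, 0] * np.exp(sigma_obs * rng.standard_normal(n_particles))
    y = obs[0, 1] * np.exp(sigma_obs * rng.standard_normal(n_particles))
    log_alpha = 0.3 * rng.standard_normal(n_particles)  # hypothetical prior N(0, 0.3^2)
    alpha_mean = np.empty(n_obs)
    alpha_mean[0] = np.exp(log_alpha).mean()
    for k in range(1, n_obs):
        # Parameter learning: random walk on log(alpha) lets the estimate drift over time
        log_alpha = log_alpha + sigma_alpha * rng.standard_normal(n_particles)
        # Propagate particles through the transition model plus process noise
        x, y = propagate(x, y, np.exp(log_alpha))
        x = x * np.exp(sigma_proc * rng.standard_normal(n_particles))
        y = y * np.exp(sigma_proc * rng.standard_normal(n_particles))
        # Weight by the log-normal observation likelihood (computed in log space)
        log_w = -0.5 * (np.log(obs[k, 0] / x) ** 2 + np.log(obs[k, 1] / y) ** 2) / sigma_obs ** 2
        w = np.exp(log_w - log_w.max())
        w /= w.sum()
        alpha_mean[k] = np.sum(w * np.exp(log_alpha))
        # Multinomial resampling to counter weight degeneracy
        idx = rng.choice(n_particles, size=n_particles, p=w)
        x, y, log_alpha = x[idx], y[idx], log_alpha[idx]
    return alpha_mean


alpha_true, obs = simulate_truth(40, np.random.default_rng(3))
alpha_hat = particle_filter(obs)
```

The random-walk scale `sigma_alpha` controls how quickly the filter can follow changes in alpha: larger values track faster changes but yield noisier estimates.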

Research Reagent Solutions

Table 3: Essential Computational Tools for LV Parameter Estimation

| Tool Category | Specific Examples | Primary Function | Implementation Considerations |
|---|---|---|---|
| Optimization Frameworks | MATLAB Optimization Toolbox, SciPy Optimize, NLopt | Nonlinear parameter estimation | Algorithm selection, gradient computation, constraint handling |
| Bayesian Inference Platforms | Stan, PyMC, JAGS | MCMC and SMC implementation | Prior specification, sampler configuration, convergence monitoring |
| Differential Equation Solvers | deSolve (R), SciPy solve_ivp, MATLAB ODE suite | Numerical integration of LV equations | Solver selection, error control, performance optimization |
| Sensitivity Analysis Tools | SALib, GSUA-CAD, SenseApp | Parameter sensitivity and identifiability analysis | Method selection (e.g., Morris, Sobol), sample size determination |
| Visualization Libraries | ggplot2, Matplotlib, Plotly | Results visualization and diagnostic plotting | Customization for model-specific diagnostics |

Discussion and Research Implications

Method Selection Guidelines

The comparative analysis reveals that no single parameter estimation method dominates across all scenarios. Method selection should consider:

Data Quality and Quantity: High-frequency, low-noise data may favor efficient linear-algebra-based approaches, while sparse, noisy data often requires more sophisticated Bayesian methods [28].

System Characteristics: Time-varying parameters necessitate SMC methods, while stationary systems may be adequately served by traditional optimization [29].

Computational Resources: Large-scale systems with dozens of species may require the computational efficiency of linear methods, while smaller systems can benefit from the rigor of Bayesian approaches [28].