Supervised vs. Unsupervised Learning for Accelerometer Data: A Practical Guide for Biomedical Behavior Classification

This article provides a comprehensive comparison of supervised and unsupervised machine learning approaches for classifying behavior from accelerometer data, tailored for researchers and professionals in drug development and biomedical science.

Supervised vs. Unsupervised Learning for Accelerometer Data: A Practical Guide for Biomedical Behavior Classification

Abstract

This article provides a comprehensive comparison of supervised and unsupervised machine learning approaches for classifying behavior from accelerometer data, tailored for researchers and professionals in drug development and biomedical science. It covers foundational principles, methodological workflows, and common pitfalls like overfitting, supported by recent validation studies. The content synthesizes performance metrics, practical application guidelines, and future directions to inform robust study design in clinical and preclinical research, helping scientists select the optimal analytical path for their specific research questions and data constraints.

Core Principles: Defining Supervised and Unsupervised Learning for Behavioral Phenotyping

The analysis of complex biologging data, particularly from accelerometers, relies heavily on machine learning to classify animal behavior. These methods can be broadly categorized into three paradigms: supervised, unsupervised, and semi-supervised learning. Each approach offers distinct methodologies, advantages, and limitations for extracting behavioral information from multi-dimensional sensor data [1] [2]. As biologging datasets continue to grow in size and complexity, understanding the fundamental principles and practical applications of these learning techniques becomes crucial for researchers studying animal movement, behavior, and energy expenditure in natural environments [3] [1]. This guide provides a comprehensive comparison of these approaches, supported by experimental data and methodological details from recent biologging studies.

Core Definitions and Conceptual Frameworks

Supervised Learning

Supervised learning (SL) requires a fully labeled dataset where each input data point (e.g., accelerometer readings) is associated with a known output label (e.g., specific behavior). The algorithm learns to map inputs to outputs by training on these labeled examples, then applies this mapping to classify new, unseen data [3] [4]. Common supervised algorithms used in biologging include Random Forests, Discriminant Analysis, and Temporal Convolutional Networks (TCNs) [1] [4] [5].

Unsupervised Learning

Unsupervised learning (UL) operates without labeled data, instead identifying inherent patterns, clusters, or structures within the dataset [2]. This approach is particularly valuable when limited prior knowledge exists about the behaviors a species exhibits. Common techniques include k-means clustering and Expectation-Maximization (EM) algorithm using Gaussian Mixture Models, which group data points based on similarity metrics without human guidance [1] [2].

Semi-Supervised Learning

Semi-supervised learning (SSL) occupies a middle ground, utilizing both a small amount of labeled data alongside larger volumes of unlabeled data [6] [7]. This approach addresses the key challenge of biologging: the high cost of obtaining expert-labeled data while leveraging the abundant unlabeled data collected by modern sensors. Techniques like FixMatch and other consistency regularization methods combine pseudo-labeling with consistency regularization to improve model performance with limited annotations [6].

Performance Comparison: Experimental Data

Table 1: Comparative performance of machine learning approaches across biologging studies

| Study & Species | Learning Approach | Algorithm(s) Tested | Key Performance Metrics | Notable Findings |

|---|---|---|---|---|

| Red deer (Cervus elaphus) [4] | Supervised | Discriminant Analysis, others | Highest accuracy with minmax-normalized data | Discriminant analysis most accurate for classifying lying, feeding, standing, walking, running |

| Penguins (Adélie & Little) [1] | Unsupervised + Supervised | Expectation Maximization + Random Forest | >80% agreement between approaches | Consideration of behavioral variability resulted in high agreement; minimal differences in energy expenditure estimates |

| Aquatic species recognition [6] | Semi-supervised | FixMatch with Wavelet Fusion | 9.34% improvement in overall classification accuracy | Effective for long-tailed class imbalance common in aquatic species datasets |

| Medical image classification [8] | SSL vs SL | Various CNN architectures | SL outperformed SSL in small training sets | With limited labeled data, SL often outperformed SSL, contrary to expectations |

| Animal action segmentation [5] | Supervised vs Semi-supervised | TCN vs S3LDS | TCN superior with temporal features | Fully supervised TCN performed best across multiple species when including velocity features |

Table 2: Data requirements and computational characteristics

| Parameter | Supervised Learning | Unsupervised Learning | Semi-Supervised Learning |

|---|---|---|---|

| Labeled Data Requirement | High (extensive labeled datasets) | None | Low (small amount of labeled data) |

| Primary Strength | High accuracy for known behaviors | Discovers novel behaviors without bias | Balances annotation cost with performance |

| Primary Limitation | Dependent on quality/quantity of labels | Difficult to align clusters with biologically meaningful behaviors | Implementation complexity |

| Interpretability | High (direct behavior-label mapping) | Low (post-hoc interpretation needed) | Moderate to High |

| Computational Load | Moderate to High | Variable (often high for large datasets) | High (dual training processes) |

| Ideal Use Case | Well-defined behaviors with ample training data | Exploratory analysis of unknown behaviors | Large unlabeled datasets with limited annotation resources |

Experimental Protocols and Methodologies

Supervised Learning Protocol for Wild Red Deer

A recent study on wild red deer in the Swiss National Park established a comprehensive protocol for supervised behavioral classification [4]:

Data Collection: Researchers equipped wild red deer with GPS collars containing accelerometers measuring movement at 4Hz on multiple axes (x, y, z). Acceleration was averaged over 5-minute intervals per axis as unit-free numbers (0-255 scale).

Behavioral Observations: Simultaneous visual observations of collared individuals were conducted to create labeled data, identifying behaviors including lying, feeding, standing, walking, and running.

Data Preprocessing: Acceleration data underwent minmax normalization before model training.

Algorithm Training: Multiple machine learning algorithms were trained including Discriminant Analysis, Random Forests, and others.

Validation: Models were evaluated using a novel metric accounting for behavioral imbalance, with Discriminant Analysis achieving highest accuracy for multiclass classification [4].

Unsupervised Learning Protocol for Seabirds

A study on razorbills and common guillemots demonstrated a complete unsupervised learning workflow [2]:

Sensor Deployment: Three-axis accelerometer tags were deployed on seabirds in combination with GPS tags.

Data Processing: Raw acceleration data was processed without behavioral labels.

Expectation-Maximization Algorithm: The EM algorithm was applied to fit Gaussian Mixture Models to the multivariable accelerometry data:

- E-step: Calculated posterior probabilities of latent behavioral states given current parameter estimates.

- M-step: Re-estimated model parameters (means, covariances, mixing coefficients) using probabilities from E-step.

- Iteration: Repeated until convergence of log-likelihood function.

Behavioral State Identification: The approach automatically identified behavioral modes both above and below water, including flying, floating, descending, ascending, and prey capture [2].

Semi-Supervised Learning Protocol for Aquatic Species

Research on aquatic species recognition developed an advanced SSL approach to address class imbalance [6]:

Framework: Modified FixMatch algorithm combining consistency regularization and pseudo-labeling.

Wavelet Fusion Network: Implemented to handle complex collection environments by:

- Decomposing images into high-frequency and low-frequency components via wavelet transform

- Using dual-stream network to capture both fine details and high-level semantics

- Integrating streams through FusionBlock with attentive interactions

Consistency Equilibrium Loss: Designed new loss function to address long-tailed class distribution by:

- Refining pseudo-labels

- Adaptively adjusting margin for each aquatic species class

- Preventing model bias toward head classes with abundant samples

Training: Leveraged both limited labeled data and extensive unlabeled data from the FishNet dataset, improving classification accuracy by 9.34% over baseline methods [6].

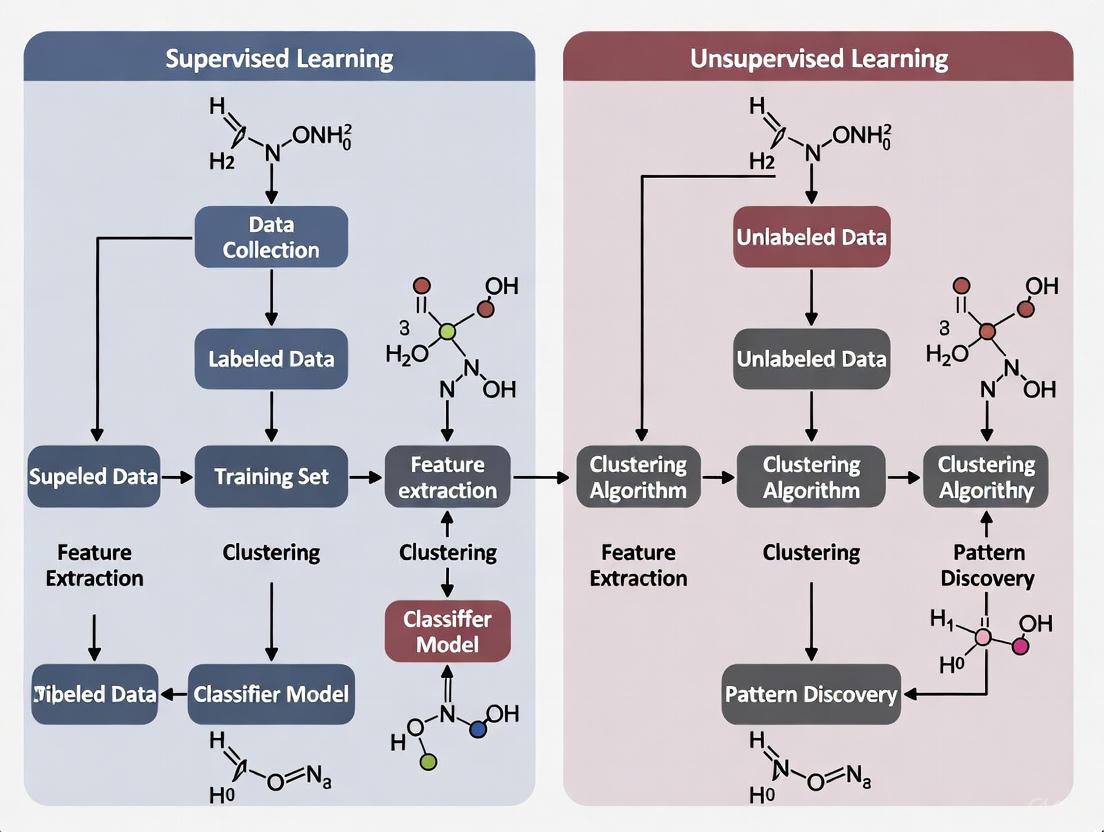

Technical Workflow Diagram

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential tools and methods for biologging machine learning research

| Tool/Category | Specific Examples | Function/Purpose | Considerations |

|---|---|---|---|

| Data Collection Hardware | Axy-Depth accelerometers, VECTRONIC GPS collars [4] [2] | Capture movement data in 2-3 axes at high frequency | Trade-offs between resolution, battery life, and storage capacity |

| Data Preprocessing Tools | Minmax normalization, wavelet transform [6] [4] | Standardize data, reduce noise, extract relevant features | Normalization critical for model performance; feature engineering impacts results |

| Supervised Algorithms | Random Forest, Discriminant Analysis, TCN [1] [4] [5] | Classify predefined behaviors with high accuracy | Require substantial labeled data; performance depends on label quality |

| Unsupervised Algorithms | Expectation-Maximization, k-means, SLDS [1] [2] [5] | Discover behavioral patterns without prior labeling | Output requires biological interpretation; may reveal novel behaviors |

| Semi-Supervised Algorithms | FixMatch, S3LDS, YATSI variants [6] [7] [5] | Leverage both labeled and unlabeled data | Complex implementation but addresses data scarcity |

| Validation Methods | Independent test sets, cross-validation, novel imbalance metrics [3] [4] | Assess model generalizability and detect overfitting | Critical for ecological relevance; 79% of studies insufficiently validate [3] |

| Domain-Specific Adaptations | Consistency Equilibrium Loss, Wavelet Fusion Networks [6] | Address challenges like class imbalance and environmental noise | Tailored solutions for ecological data characteristics |

The comparison of supervised, unsupervised, and semi-supervised learning approaches in biologging reveals a complex landscape where no single method dominates universally. Supervised learning maintains advantages for well-defined classification tasks with sufficient labeled data, particularly when incorporating temporal features [5]. Unsupervised approaches remain invaluable for exploratory analysis and novel behavior discovery [2]. Semi-supervised learning shows increasing promise for addressing the fundamental challenge of biologging: extracting meaningful behavioral information from increasingly large datasets with limited annotation resources [6] [7].

Future research directions should focus on developing more sophisticated hybrid approaches, improving model interpretability for ecological applications, and creating standardized validation frameworks specific to biologging data. As machine learning continues to evolve, biologists must maintain focus on the biological relevance and ecological validity of classification outputs rather than purely optimizing technical metrics. The choice among supervised, unsupervised, and semi-supervised approaches should be guided by specific research questions, data characteristics, and available resources rather than presumptions of technical superiority.

The Role of Accelerometers in Quantifying Animal and Human Behavior

Accelerometers have become a cornerstone technology in behavioral research, enabling the objective quantification of behavior in both humans and animals. These sensors, often integrated into wearable bio-loggers, capture high-resolution kinematic data that reveal intricate patterns of movement [9]. The core analytical challenge lies in interpreting these vast datasets to classify distinct behavioral states. The field primarily employs two machine learning paradigms for this task: supervised learning, which uses labeled data to predict known behaviors, and unsupervised learning, which identifies hidden patterns and structures without pre-defined labels [10]. The choice between these approaches significantly influences the research workflow, the types of questions that can be addressed, and the ultimate findings of a study. This guide provides a comparative analysis of supervised and unsupervised methods for accelerometer-based behavior classification, detailing their respective protocols, performance, and optimal applications for researchers and scientists.

Supervised vs. Unsupervised Learning: Core Methodologies

Supervised and unsupervised learning represent two fundamentally different philosophies for extracting meaning from accelerometer data.

Supervised Learning requires a pre-determined ethogram—a catalog of defined behaviors—and a set of training data where accelerometer recordings are manually matched to these behavioral labels [9] [11]. The model learns the unique acceleration signatures associated with each behavior, such as the specific body movements of a seal during grooming or the gait of a human during running [11]. This method is ideal for testing specific hypotheses about known behaviors. However, it is limited by the effort required for manual annotation and its inability to discover novel, unanticipated behaviors [12].

Unsupervised Learning, in contrast, requires no labeled data. It operates by identifying inherent structures or clusters within the accelerometer data itself [13] [10]. This data-driven approach is particularly valuable for exploratory research, such as discovering new behavioral phenotypes in human health or identifying consistent behavioral sequences across different animal species without prior assumptions [14] [13]. A key limitation is that the resulting clusters must be interpreted by the researcher to assign behavioral meaning.

The following diagram illustrates the typical workflows for both approaches, highlighting their distinct processes from data collection to final output.

Comparative Performance Analysis

Empirical studies across diverse species consistently benchmark the performance of these classification methods. The tables below summarize key quantitative findings, providing a reference for researchers to evaluate the expected accuracy and applicability of each technique.

Table 1: Performance of Supervised Learning Methods in Animal Behavior Classification

| Species | Behaviors Classified | Supervised Method | Key Predictor Variables | Reported Accuracy | Reference |

|---|---|---|---|---|---|

| Thick-billed murres & Black-legged kittiwakes | Standing, swimming, flying, diving | Multiple methods (e.g., threshold, k-means, random forests) | Depth, wing beat frequency, pitch, dynamic acceleration | >98% (murres); 89-93% (kittiwakes) | [12] |

| Otariids (fur seals & sea lions) | Resting, grooming, feeding, travelling | Support Vector Machine (SVM) with polynomial kernel | Tri-axial acceleration + animal feature statistics | >70% (overall); 52-81% (per-behavior, excluding travel) | [11] |

| Pre-weaned dairy calves | Lying, standing, walking, running, etc. | Machine learning models (validated on ActBeCalf dataset) | 3D-accelerometer data (25 Hz) synchronized with video | 92% (2-class model); 84% (4-class model) | [15] |

Table 2: Performance and Applications of Unsupervised & Data-Driven Methods

| Species / Population | Method | Purpose | Key Findings / Output | Reference |

|---|---|---|---|---|

| Spotted hyenas, meerkats, coatis | Unsupervised analysis of classified behaviors | Identify underlying patterns in behavioral sequences | Discovery of a common principle: longer engagement in a behavior makes a switch less likely ("decreasing hazard function") | [14] |

| Adult Humans | K-means Clustering, Latent Profile Analysis | Identify multidimensional physical activity behavior profiles from accelerometry | Discovery of data-driven subgroups (profiles) with distinct associations to health outcomes | [13] |

| Multiple Taxa (BEBE Benchmark) | Deep Neural Networks (DNNs) vs. Classical Methods | Compare classical ML vs. deep learning for behavior classification | DNNs consistently outperformed classical methods across all 9 tested datasets | [9] |

The data reveals that supervised methods are highly accurate for classifying specific, pre-defined behaviors, with performance influenced by the model and feature selection [12] [11]. Unsupervised methods excel at discovering novel patterns and profiles that are not defined a priori, revealing everything from common rules governing behavior transitions in mammals [14] to clinically relevant activity profiles in human populations [13]. Recent benchmarks also indicate that deep neural networks consistently outperform classical machine learning models like random forests, particularly when leveraging self-supervised learning on large datasets [9].

Detailed Experimental Protocols

To ensure reproducibility and provide a clear technical roadmap, this section outlines the standard protocols for implementing both supervised and unsupervised learning approaches with accelerometer data.

Protocol for Supervised Learning

The supervised learning pipeline involves a series of methodical steps from data collection to model validation.

Data Collection & Annotation:

- Sensor Deployment: Tri-axial accelerometers are deployed on subjects (e.g., via collars on calves [15] or harnesses on seals [11]) at a suitable sampling frequency (typically ≥25 Hz).

- Ground-Truthing: Simultaneous video recording is conducted to serve as a gold standard for behavior annotation [15] [11].

- Behavioral Labeling: Using software like the Behavioral Observation Research Interactive Software (BORIS), annotators meticulously label the video footage, synchronizing the timestamps with the accelerometer data to create a labeled dataset [15]. Inter-observer reliability (e.g., Cohen's Kappa) should be calculated to ensure consistency [15].

Data Preprocessing & Feature Engineering:

- The raw accelerometer data is segmented into epochs (e.g., 1-second windows) [12].

- Informative features are extracted from each epoch. Studies indicate that a small number of critical metrics—such as pitch, dynamic acceleration, and wing-beat frequency for birds [12]—can be sufficient for high accuracy, avoiding over-complexity.

Model Training & Validation:

- The labeled dataset is split into training and testing sets.

- A classification algorithm (e.g., SVM, Random Forest, Neural Network) is trained on the training set.

- Model performance is rigorously evaluated on the held-out test set using metrics like overall accuracy and per-behavior balanced accuracy [15] [12].

Protocol for Unsupervised Learning

The unsupervised learning workflow is more exploratory, focusing on letting the data reveal its own structure.

Data Collection & Preprocessing:

- Accelerometer data is collected as in the supervised approach, but without the need for exhaustive manual labeling.

- Standard preprocessing (filtering, segmentation) is applied, and general features (e.g., average acceleration, variance) may be extracted across the 24-hour cycle [13].

Model Application & Pattern Discovery:

Profile Interpretation & Validation:

- Researchers interpret the resulting clusters by examining their characteristic activity patterns (e.g., "highly active throughout the day" vs. "sedentary with evening activity") to define meaningful profiles or phenotypes [13].

- The validity and relevance of these data-driven profiles are often tested by examining their association with external health or ecological outcomes [14] [13].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of accelerometer-based behavior classification requires a suite of methodological "reagents." The following table details essential components and their functions in a typical research pipeline.

Table 3: Essential Research Reagents for Accelerometer-Based Behavior Classification

| Tool / Component | Category | Function / Application | Examples / Notes |

|---|---|---|---|

| Tri-axial Accelerometer | Hardware | Measures acceleration in three perpendicular axes (surge, sway, heave), capturing multi-directional movement. | ActiGraph models [16]; Axy-trek [12]; CEFAS G6a+ [11]. |

| Video Recording System | Hardware | Provides ground-truth data for annotating behaviors and synchronizing with sensor data. | High-up cameras for group pens [15]; handheld cameras for focal follows. |

| Behavioral Annotation Software | Software | Enables efficient and precise manual labeling of behaviors from video for supervised learning. | BORIS (Behavioral Observation Research Interactive Software) [15]. |

| Bio-logger Ethogram Benchmark (BEBE) | Software/Data | A public benchmark of diverse, annotated datasets for developing and comparing classification methods. | Facilitates cross-species method validation [9]. |

| Supervised Classifiers | Algorithm | Predicts pre-defined behavior labels from accelerometer features. | Support Vector Machine (SVM) [11]; Random Forests [12] [9]; Deep Neural Networks (DNNs) [9]. |

| Unsupervised Clustering Algorithms | Algorithm | Identifies hidden patterns, groups, or profiles within accelerometer data without labels. | K-means [13]; Latent Profile Analysis [13]. |

| Self-Supervised Learning Models | Algorithm | A hybrid approach; a model is pre-trained on a large unlabeled dataset, then fine-tuned with a small labeled set. | DNNs pre-trained on human accelerometer data can be fine-tuned for animal behavior classification [9]. |

In accelerometer-based behavioral classification, the choice between supervised and unsupervised machine learning is foundational. Supervised learning relies on labeled datasets to train models for predicting known, pre-defined behaviors, while unsupervised learning discovers hidden patterns and structures without labeled training data [17] [18]. This guide objectively compares their performance, with a focused analysis on scenarios where pre-defined behavioral categories make supervised learning the preferred methodology.

Performance and Accuracy Comparison

Empirical studies consistently demonstrate that supervised learning models achieve higher classification accuracy for pre-defined behaviors compared to unsupervised approaches.

The table below summarizes key performance metrics from controlled experiments:

| Study Context | Supervised Model & Accuracy | Unsupervised Model & Accuracy | Key Finding |

|---|---|---|---|

| California Condor Behavior [19] | Random Forest (RF): >0.81 overall accuracy, High Kappa [19] | K-means/EM Clustering: <0.8 accuracy, Very low Kappa (0.06 to -0.02) [19] | Supervised RF and kNN were most effective; unsupervised clustering performed poorly. |

| Classifying Aggressive Child-Toy Interactions [20] | AutoML (Supervised): 0.944 F1-Score, 0.945 AUC [20] | Not Tested | Automated supervised approach achieved high performance for specific behavior. |

| Female Wild Boar Behavior [21] | Random Forest: 94.8% overall accuracy [21] | Not Tested | Specific behaviors like foraging and lateral resting were identified with high accuracy (up to 97%). |

Experimental Protocols in Supervised Learning

Robust supervised learning requires meticulous protocol design. The workflow involves data collection, labeling, model training, and rigorous validation [19] [3]. The diagram below illustrates this multi-stage process for classifying pre-defined behaviors from accelerometer data.

Detailed Methodological Components

Data Collection and Sensor Placement: Researchers deploy tri-axial accelerometers on subjects, configuring sampling rates (e.g., 1 Hz to 20 Hz) based on battery life and behavior dynamics [19] [21]. Device placement is strategic; for example, ear tags for wild boar [21] or patagial tags for condors [19].

Ground Truth Labeling and Segmentation: Creating a labeled dataset is the most critical step. Continuous accelerometer data is divided into segments, often using change point detection algorithms for variable-time windows that group similar behavioral events [19]. Each segment is then labeled based on synchronized video observation according to a pre-defined ethogram—a catalog of target behaviors [19].

Feature Engineering and Model Training: Features are extracted from each labeled data segment. These can include static features (e.g., mean, variance) and dynamic properties [21]. The labeled features are used to train a classifier, such as Random Forest or k-Nearest Neighbor (kNN), which learns the mapping between acceleration patterns and specific behaviors [19].

Validation and Overfitting Prevention: A portion of the labeled data is held back as a test set. The model's performance on this unseen data is the true measure of its accuracy and generalizability [3]. A significant performance drop between training and test sets indicates overfitting, where the model memorizes training data instead of learning generalizable patterns. Robust validation, such as using independent test sets from different individuals, is essential for credible results [3].

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of supervised learning requires specific "research reagents"—tools and materials that enable the reproducible collection and analysis of behavioral data.

| Tool/Reagent | Function & Relevance in Supervised Learning |

|---|---|

| Tri-axial Accelerometer Tag | The primary data collection tool. It measures acceleration in three dimensions (X, Y, Z), providing the raw waveform data used for classification. [19] [21] |

| Video Recording System | Serves as the source of "ground truth." Synchronized video is essential for manually labeling accelerometer data segments with the correct pre-defined behaviors. [19] |

| Pre-defined Ethogram | A structured list of the behaviors of interest (e.g., "sitting," "walking," "foraging"). It standardizes the labeling process, ensuring consistency across observers and studies. [19] |

| Random Forest Algorithm | A powerful, ensemble supervised learning algorithm. It is frequently used for classification tasks due to its high accuracy and ability to handle complex feature relationships. [19] [21] |

| AutoML Frameworks | Tools like Auto-WEKA automate the process of algorithm selection and hyperparameter tuning, potentially optimizing model performance with less manual effort. [20] |

The experimental evidence clearly indicates that a supervised approach is the superior choice for accelerometer-based behavior classification when research objectives involve identifying a specific, pre-defined set of behaviors. Its strength lies in leveraging labeled ground truth data to build highly accurate and interpretable models, as validated by rigorous testing protocols. While unsupervised learning retains value for exploratory analysis, the demand for precise classification of known behavioral states in fields from wildlife ecology to human medicine solidifies the role of supervised learning as the definitive methodology in these scenarios.

The analysis of complex behavioral data, particularly from sources like accelerometers and video-based pose estimation, presents a significant challenge in research and drug development. Traditional supervised learning approaches rely on pre-defined labels and human annotation, which inherently limits their capacity for discovery. In contrast, unsupervised machine learning is revolutionizing this field by allowing subtle patterns and novel behaviors to emerge directly from the data itself without predetermined categories or labels. This paradigm shift is especially valuable for exploratory analysis where researchers may not know all relevant behavioral categories in advance, or when seeking to identify previously uncharacterized behavioral phenotypes that could inform therapeutic development.

This guide objectively compares the performance of unsupervised approaches against traditional methods, providing researchers with evidence-based insights for methodological selection. By examining experimental data across diverse applications—from wearable accelerometry to rodent behavioral analysis—we demonstrate how unsupervised methods uncover biologically meaningful patterns that might otherwise remain obscured by predefined analytical constraints.

Performance Comparison: Quantitative Evidence

Key Advantages of Unsupervised Approaches

Table 1: Comparative Performance of Unsupervised vs. Traditional Methods

| Application Domain | Unsupervised Method | Traditional Method | Performance Metric | Unsupervised Result | Traditional Result |

|---|---|---|---|---|---|

| Physical Activity Monitoring in Children [22] [23] | Hidden Semi-Markov Model | Cut-points Thresholding | Correlation with Mobility (R²) | 0.51 | 0.39 |

| Physical Activity Monitoring in Children [22] [23] | Hidden Semi-Markov Model | Cut-points Thresholding | Correlation with Social-Cognitive Capacity (R²) | 0.32 | 0.20 |

| Physical Activity Monitoring in Children [22] [23] | Hidden Semi-Markov Model | Cut-points Thresholding | Correlation with Responsibility (R²) | 0.21 | 0.13 |

| Physical Activity Monitoring in Children [22] [23] | Hidden Semi-Markov Model | Cut-points Thresholding | Correlation with Daily Activity (R²) | 0.35 | 0.24 |

| Human Activity Recognition [24] | Self-Supervised Learning (Pre-trained) | Random Forest | Median Relative F1 Improvement | 24.4% | Baseline |

| Human Activity Recognition [24] | Self-Supervised Learning (Pre-trained) | Deep Learning (From Scratch) | Median Relative F1 Improvement | 18.4% | Baseline |

| Behavior Change Detection [25] | U-BEHAVED Algorithm | N/A (Detection Rate) | Users with Low Variability | 80% (400 steps) | N/A |

| Behavior Change Detection [25] | U-BEHAVED Algorithm | N/A (Detection Rate) | Users with High Variability | 80% (1600 steps) | N/A |

Limitations and Considerations

While unsupervised approaches demonstrate superior performance in many scenarios, researchers must consider their limitations. Unsupervised models can develop "Clever Hans" effects, where accurate predictions arise from spurious correlations in the data rather than genuine behavioral signals [26]. For example, representation learning models have been shown to rely on text annotations in medical images or background features rather than clinically relevant patterns, which can lead to significant performance degradation under operational conditions [26]. This underscores the importance of applying explainable AI techniques to validate that identified features are biologically or clinically meaningful.

Experimental Protocols and Methodologies

Protocol 1: Unsupervised Physical Activity Analysis with Hidden Semi-Markov Models

Objective: To quantify physical activity intensity from accelerometer data in a diverse pediatric population without relying on population-specific calibration [22] [23].

Equipment: ActiGraph GT3X+ accelerometer, flexible waist-worn belt, Paediatric Evaluation of Disability Inventory-Computer Adaptive Test (PEDI-CAT).

Participant Preparation:

- Fit device snugly around the waist at the mid-axillary line

- Wear for 7 consecutive days during waking hours

- Remove only for bathing, showering, swimming, or sleeping

Data Collection Parameters:

- Sampling frequency: 100 Hz

- Record all movement lasting ≥1 second

- Set device to record two days after parent receives pack

Analytical Procedure:

- Data Segmentation: Divide continuous accelerometer data into discrete segments using a hidden semi-Markov model (HSMM)

- Clustering: Group segments based on movement intensity and patterns without predefined thresholds

- Validation: Compare resulting activity clusters with PEDI-CAT measures of mobility, social-cognitive capacity, responsibility, and daily activity

- Benchmarking: Compare results with traditional cut-points method using thresholds from literature

Key Advantage: This approach allows activity intensity categories to emerge from the data itself rather than imposing external thresholds, making it particularly suitable for diverse or rapidly changing populations where traditional calibration is challenging [22] [23].

Protocol 2: Self-Supervised Learning for Human Activity Recognition

Objective: To leverage large-scale unlabeled accelerometer data (700,000 person-days) to build foundation models that generalize across devices, populations, and environments [24].

Data Preprocessing:

- Utilize UK Biobank accelerometer dataset from ~100,000 participants

- Process 700,000 person-days of free-living, 24/7 human motion data

- Apply multi-task self-supervision with three pretext tasks: arrow of time, permutation, and time warping

Model Architecture:

- Implement deep convolutional neural network for pre-training

- Use weighted sampling to improve convergence across pretext tasks

Training Procedure:

- Pre-training: Train network on unlabeled data using multi-task self-supervision

- Fine-tuning: Adapt pre-trained model to specific downstream activity recognition tasks

- Evaluation: Test on eight benchmark datasets with varying sizes and characteristics

Validation Metrics:

- F1 score and Kappa score across multiple datasets

- Performance in transfer learning scenarios

- Cluster analysis using UMAP for visualization

Key Finding: Self-supervised pre-training consistently improved downstream human activity recognition, especially in small datasets, reducing the need for labeled data while maintaining strong generalization across external datasets [24].

Protocol 3: Early Detection of Physical Activity Behavior Changes

Objective: To detect significant changes in physical activity behavior as they emerge and determine if they become sustained habits [25].

Data Source: Wearable accelerometer step data from 79 users (N=12,798 records).

Algorithm Implementation (U-BEHAVED):

- Streaming Analysis: Periodically scan step data streamed from activity trackers

- Rolling Windows: Compare current behaviors with recent previous ones using rolling time windows

- Change Detection: Identify statistically significant changes in activity patterns

- Habit Classification: Flag new behaviors as potential habits if sustained over time

Validation Approach:

- Test detection rate for behavior changes of varying magnitudes (400-1600 steps)

- Evaluate in users with both low and high variability in physical activity

- Validate habit detection with minimum thresholds of 500-1600 steps per hour

Performance Outcome: The algorithm detected 80% of behavior changes, with step thresholds adapting to individual variability patterns [25].

Research Reagent Solutions

Table 2: Essential Tools for Unsupervised Behavioral Analysis

| Tool Category | Specific Solution | Function/Application | Key Features |

|---|---|---|---|

| Accelerometers | ActiGraph GT3X+ [22] [23] | Raw movement data collection | 100 Hz sampling, waist-worn, research-grade |

| Pose Estimation | DeepLabCut [27] [28] | Markerless body movement tracking | Deep learning-based, open-source |

| Pose Estimation | SLEAP [27] | Animal body part tracking | Multi-animal tracking capability |

| Behavior Classification | B-SOiD [27] | Unsupervised behavior identification | Open-source, Python-based |

| Behavior Classification | VAME [27] | Behavioral motif discovery | Variational autoencoder framework |

| Behavior Classification | Keypoint-MoSeq [27] [28] | Sequencing behavioral motifs | Hidden Markov model approach |

| Analysis Frameworks | U-BEHAVED [25] | Behavior change detection | Real-time monitoring, habit identification |

| Analysis Frameworks | Hidden Semi-Markov Models [22] [23] | Activity intensity clustering | Data-driven category emergence |

Decision Framework and Implementation

When to Choose an Unsupervised Approach

Optimal Scenarios for Unsupervised Learning:

- Exploratory Research: When investigating new behavioral phenotypes without predefined categories

- Diverse Populations: When studying populations where traditional calibration fails (e.g., children with diverse developmental abilities [22] [23])

- Novel Behavior Discovery: When seeking to identify previously uncharacterized behavioral patterns or sequences

- Large Unlabeled Datasets: When leveraging massive datasets where manual labeling is impractical (e.g., 700,000 person-days of accelerometer data [24])

- Individualized Assessment: When behavior change detection needs to be personalized to individual baseline patterns [25]

Scenarios Where Supervised Approaches May Be Preferable:

- Well-Established Behavioral Categories: When studying previously validated behavioral classifications with sufficient labeled data

- Specific Hypothesis Testing: When investigating predefined behavioral outcomes rather than exploring novel patterns

- Limited Computational Resources: When unsupervised model validation and interpretation is not feasible

Implementation Workflow

The following diagram illustrates a typical workflow for implementing unsupervised behavior analysis:

Figure 1: Unsupervised Behavior Discovery Workflow

U-BEHAVED Algorithm Process

The U-BEHAVED algorithm for detecting physical activity behavior changes follows this specific process:

Figure 2: U-BEHAVED Behavior Change Detection Process

Unsupervised approaches offer transformative potential for exploratory analysis and novel behavior discovery in accelerometer data and beyond. The experimental evidence demonstrates their superiority in diverse populations, their ability to detect subtle behavior changes, and their capacity to identify meaningful patterns without predefined labels. While requiring careful validation to avoid spurious correlations, these methods enable researchers to move beyond known behavioral categories and discover genuinely novel phenotypes—a crucial capability for advancing both basic research and therapeutic development.

Researchers should consider adopting unsupervised approaches when working with diverse populations where traditional methods fail, when exploring new behavioral domains without established categories, or when leveraging large-scale unlabeled datasets. The continued development of explainable AI techniques will further enhance our ability to validate and interpret discoveries made through these powerful unsupervised methods.

Comparative Strengths and Weaknesses at a Glance

The analysis of accelerometer data for behavior classification is a cornerstone of modern movement ecology, biomedical research, and drug development. The selection between supervised and unsupervised machine learning approaches represents a fundamental methodological decision that directly impacts research outcomes, interpretation, and validity. Supervised learning relies on labeled datasets where accelerometer data is paired with directly observed behaviors, enabling the training of models to predict known behavioral categories [3] [29]. In contrast, unsupervised learning identifies inherent patterns and structures within accelerometer data without pre-existing labels, potentially revealing previously unclassified behaviors [1] [29]. This guide provides a systematic comparison of these approaches, synthesizing experimental data and methodologies to inform researchers' analytical decisions.

Comparative Analysis of Classification Approaches

Table 1: High-level comparison of supervised and unsupervised classification approaches

| Feature | Supervised Learning | Unsupervised Learning |

|---|---|---|

| Data Requirements | Requires labeled training data with observed behaviors [3] | No labeled data needed; works with raw accelerometer data [1] [29] |

| Primary Output | Classification into predefined behavioral categories [3] [29] | Identification of behavioral clusters based on signal similarity [1] |

| Implementation Complexity | High (feature engineering, model training, validation) [3] [30] | Moderate (cluster identification and interpretation) [1] |

| Validation Approach | Performance metrics on test sets (accuracy, precision, recall) [3] [29] | Manual labeling of clusters for validation [1] |

| Key Strengths | Predicts known behaviors directly; higher agreement with ground truth [1] | Discovers novel behaviors; no need for extensive labeling [1] [29] |

| Key Limitations | Vulnerable to overfitting; dependent on labeled data quality [3] | Clusters may not align with biologically meaningful behaviors [1] |

Table 2: Experimental performance comparison across studies

| Study Context | Supervised Performance | Unsupervised Performance | Agreement Between Approaches |

|---|---|---|---|

| Penguin Behavior Classification [1] | Random Forest: >80% agreement with unsupervised | Expectation Maximization: 12 behavioral classes identified | >80% overall, with outliers <70% for behaviors with signal similarity |

| Animal Behavior Classification [29] | SVM, ANN, RF, XGBoost performed well with proper validation | k-means clustering applied but requires manual interpretation | Not directly quantified |

| Human Activity Recognition [31] | Hybrid DeepF-SVM: 93.57-98.48% accuracy on benchmark datasets | Not evaluated | Not applicable |

| Wild Red Deer Behavior [4] | Discriminant Analysis: Accurate multiclass classification | Not the focus of study | Not applicable |

Detailed Methodological Protocols

Supervised Learning Workflow

Supervised learning for accelerometer behavior classification follows a structured pipeline. First, researchers collect raw accelerometer data while simultaneously conducting behavioral observations to create labeled datasets [4]. The data is then segmented into windows, typically ranging from 6-second non-overlapping windows in human studies [30] to 5-minute intervals in wildlife research [4]. Feature extraction follows, calculating time-domain features (mean, standard deviation, skewness) and frequency-domain features (spectral entropy, frequency bands) from the raw signals [30] [31]. The labeled dataset is split into training (typically 70%) and testing (30%) subsets [30] [4]. Model selection and training proceed using algorithms such as Random Forest, Support Vector Machines, or Artificial Neural Networks [30] [29]. Critical validation through independent test sets assesses performance metrics including accuracy, precision, recall, and F1-score [3] [29]. Finally, the trained model deploys to classify new, unlabeled accelerometer data [29].

Unsupervised Learning Workflow

The unsupervised learning methodology begins with raw accelerometer data collection without behavioral labels [1]. Data undergoes similar preprocessing and segmentation as in supervised approaches. Feature calculation generates relevant input variables for clustering algorithms [1]. Cluster analysis applies algorithms such as Expectation Maximization or k-means to identify natural groupings within the data [1] [29]. Researchers then manually interpret these clusters by examining characteristic signal patterns and, when possible, correlating with limited behavioral observations [1]. The identified behavioral classes validate through comparison with independent datasets or expert assessment [1]. For enhanced utility, unsupervised outputs sometimes train supervised models, creating a hybrid approach that leverages the strengths of both methods [1].

Performance and Validation Considerations

Overfitting Risks in Supervised Learning

A critical challenge in supervised learning is overfitting, where models perform well on training data but fail to generalize to new datasets [3]. A systematic review of 119 accelerometer-based behavior classification studies revealed that 79% (94 papers) did not adequately validate their models to robustly identify potential overfitting [3]. Overfitting occurs when model complexity approaches or surpasses data complexity, causing the model to memorize training instances rather than learning generalizable patterns [3]. Detection requires rigorous validation using independent test sets completely unseen during training [3]. Common practices that mask overfitting include non-independent test sets, non-representative test set selection, failure to tune hyperparameters on validation sets, and optimization on inappropriate performance metrics [3].

Agreement Between Approaches

Research comparing supervised and unsupervised methods reveals generally high agreement. In penguin behavior classification, integrated unsupervised and supervised approaches demonstrated greater than 80% agreement in behavioral classifications, with minimal differences in energy expenditure estimates [1]. However, outliers with less than 70% agreement occurred for behaviors characterized by signal similarity, highlighting challenges in distinguishing mechanically similar activities [1]. This suggests that while both approaches generally converge, certain behaviors remain challenging regardless of methodology.

Computational Performance for On-Board Classification

For applications requiring real-time classification on resource-constrained devices, computational efficiency becomes critical. Studies evaluating machine learning classifiers for next-generation smart trackers identified Random Forest (RF), Artificial Neural Networks (ANN), and Extreme Gradient Boosting (XGBoost) as suitable for on-board classification due to favorable runtime and storage requirements [29]. These algorithms maintained performance even with reduced feature sets, minimizing computational demands while preserving classification accuracy [29].

Essential Research Reagents and Tools

Table 3: Essential research toolkit for accelerometer-based behavior classification

| Tool Category | Specific Examples | Function and Application |

|---|---|---|

| Accelerometer Sensors | Tri-axial accelerometers [4] [32]; 9-axis IMUs (accelerometer, gyroscope, magnetometer) [33] | Capture raw movement data on multiple axes; IMUs provide complementary orientation information |

| Data Processing Tools | SENS motion software [32]; ActiPASS [32]; Custom MATLAB/Python scripts | Preprocess raw data, extract features, and implement classification algorithms |

| Supervised Algorithms | Random Forest [4] [1] [29]; SVM [30] [29] [31]; CNN [34] [31]; Artificial Neural Networks [30] [29] | Classify behaviors from labeled training data; range from traditional ML to deep learning approaches |

| Unsupervised Algorithms | Expectation Maximization [1]; k-means clustering [29] | Identify natural groupings in unlabeled accelerometer data |

| Validation Methods | Independent test sets [3]; Cross-validation [3]; Manual cluster interpretation [1] | Assess model generalizability and prevent overfitting |

| Performance Metrics | Accuracy, Precision, Recall, F1-score [34] [31]; Cohen's Kappa [32]; Balanced Accuracy [32] | Quantify classification performance and model effectiveness |

The selection between supervised and unsupervised approaches for accelerometer-based behavior classification involves fundamental trade-offs between methodological rigor, data requirements, and interpretability. Supervised learning provides direct classification into predefined behavioral categories with higher agreement to ground truth but requires extensive labeled data and risks overfitting without proper validation [3] [1]. Unsupervised learning discovers novel behaviors without labeling effort but produces clusters that may not align with biologically meaningful categories [1] [29]. Emerging hybrid approaches that combine unsupervised cluster identification with subsequent supervised classification leverage the strengths of both methods [1]. The choice ultimately depends on research objectives, data availability, and computational resources, with both approaches offering distinct advantages for advancing behavioral research in ecological, biomedical, and pharmaceutical contexts.

From Data to Discovery: Methodological Workflows and Real-World Applications

The fundamental difference between supervised and unsupervised learning paradigms in accelerometer-based behavior classification is the reliance on labeled data. Supervised learning requires a ground-truthed dataset where acceleration signals are paired with corresponding behavior labels (e.g., foraging, resting, walking) [21] [4]. This labeled dataset serves as the foundational teacher, enabling models to learn patterns that distinguish different behaviors. In contrast, unsupervised approaches identify inherent patterns or clusters in accelerometer data without predefined labels, making them suitable for exploratory analysis but less effective for precise behavior identification [35] [36]. The quality, volume, and methodological rigor applied during data labeling and validation directly dictate the performance and reliability of the resulting classification models [37] [3].

This comparison guide examines the complete supervised learning pipeline for accelerometer data, focusing on experimental evidence that quantifies performance differences between methodological approaches. We present structured comparisons of annotation strategies, sensor configurations, algorithm performance, and validation protocols to equip researchers with evidence-based guidance for developing robust behavioral classification systems.

Data Labeling Strategies: Balancing Quality, Speed, and Cost

The initial phase of the supervised learning pipeline involves creating high-quality labeled datasets through various annotation strategies, each offering distinct trade-offs between quality, control, scalability, and cost [37] [35].

Table: Comparison of Data Labeling Approaches for Behavioral Research

| Approach | Key Advantages | Key Limitations | Best Suited For |

|---|---|---|---|

| In-House Labeling | High control, domain expertise utilization, data privacy [38] [36] | Expensive, time-consuming, management overhead [38] [36] | Projects with sensitive data or requiring specialized expertise [38] |

| Crowdsourcing | Cost-effective, rapid scaling, flexibility [36] | Questionable quality, inconsistent results, limited domain knowledge [38] [36] | Non-specialized tasks with limited budgets and flexible quality requirements [38] |

| Third-Party Partners | High quality, technical expertise, cost-efficient at scale [38] | Relinquished control, can be expensive [38] | Large-scale projects requiring high-quality labels and technical guidance [38] |

| Programmatic/Semi-Supervised | Rapid scaling, combines human expertise with automation [37] [35] | Potential quality issues, requires technical setup [35] | Large datasets where manual labeling is impractical [37] [35] |

Each strategy represents a different point on the spectrum of the data labeling trade-off. For specialized behavioral research, a hybrid approach often yields optimal results. For instance, subject matter experts can establish a ground-truth dataset and develop labeling guidelines, while automated methods or crowdsourced workers handle initial annotations, with expert review reserved for edge cases or quality assurance [37].

Experimental Comparisons: Sensor Configurations and Algorithm Performance

Sensor Fusion Enhances Classification Accuracy

Experimental evidence demonstrates that combining multiple sensor modalities significantly improves classification performance over single-sensor approaches. A comprehensive study on dairy cow behavior classification collected over 780,000 labeled observations to compare accelerometer-only, gyroscope-only, and combined sensor models [39].

Table: Performance Comparison of Sensor Configurations for Cattle Behavior Classification

| Behavior | Accelerometer-Only Model | Gyroscope-Only Model | Combined Sensor Model |

|---|---|---|---|

| Lying | High accuracy | High accuracy | Consistently superior performance |

| Standing | Moderate accuracy | Moderate accuracy | Consistently superior performance |

| Eating | High variability | High rotational activity capture | Improved robustness across individuals |

| Walking | Lower sensitivity | Better rotational detection | Improved classification robustness |

The integration of accelerometer and gyroscope data was particularly valuable for distinguishing behaviors with similar postures but different movement characteristics, such as standing versus eating. Gyroscope sensors (GyroY and GyroZ axes) captured the highest rotational activity during eating and walking behaviors, providing complementary information to the linear acceleration data [39].

Algorithm Selection Significantly Impacts Model Performance

Comparative studies across multiple species reveal substantial performance differences between machine learning algorithms, influenced by data characteristics, preprocessing methods, and behavioral complexity. Research on wild red deer compared multiple algorithms using minmax-normalized acceleration data from multiple axes and their ratios [4] [40].

Discriminant analysis generated the most accurate classification models, successfully differentiating between lying, feeding, standing, walking, and running behaviors in alpine environments [4]. The study highlighted that algorithm performance varied significantly depending on the transformation method and combination of input variables used.

In human movement studies comparing deep learning (DL) and classical machine learning approaches for classifying 24-hour movement behaviors from wrist-worn accelerometers, Long Short-Term Memory (LSTM) networks achieved approximately 85% overall accuracy when trained on raw acceleration signals [41]. Classical algorithms including Random Forest, when trained on handcrafted features, achieved overall accuracy ranging from 70% to 81%, with higher confusion observed between moderate-to-vigorous physical activity and light physical activity categories compared to sleep and sedentary behaviors [41].

Sampling Strategies and Data Resolution Trade-Offs

The trade-off between data resolution and practical constraints like battery life presents significant methodological considerations for long-term behavioral studies. Research on wild boar demonstrated that low-frequency accelerometers (1 Hz) can successfully classify behaviors including foraging, lateral resting, sternal resting, and lactating with accuracies ranging from 50% (walking) to 97% (lateral resting) using random forest models [21].

This approach addresses critical constraints in wildlife research where frequent recapture for battery replacement causes severe stress and potential mortality [21]. Low-frequency sampling enables extended monitoring periods essential for capturing seasonal and inter-annual behavioral trends, despite some limitation in classifying dynamic behaviors like walking.

Methodological Protocols: Experimental Workflows for Behavioral Classification

Standardized Data Collection and Annotation Protocols

Robust behavioral classification requires meticulous experimental design and data collection protocols. The red deer study implemented a comprehensive methodology where animals were fitted with GPS collars containing accelerometers measuring movement on multiple axes at 4 Hz, with data averaged over 5-minute intervals [4]. The key innovation was collecting simultaneous behavioral observations in wild environments, creating labeled datasets where acceleration data served as input variables and observed behaviors as output variables [4].

The dairy cow study employed even more rigorous annotation protocols, using two trained observers who independently annotated behaviors from synchronized video recordings across a 90-day period [39]. Inter-observer reliability was quantified using Cohen's Kappa (κ=0.84), with discrepancies resolved through discussion and consensus meetings. This approach ensured high-quality ground truth labels for model training and evaluation [39].

Validation Practices to Prevent Overfitting

A systematic review of 119 studies using accelerometer-based supervised learning revealed critical gaps in validation practices, with 79% of studies not adequately validating their models to detect overfitting [3]. Overfitting occurs when models memorize specific instances in training data rather than learning generalizable patterns, leading to poor performance on new data [3].

Recommended validation practices include:

- Independent test sets: Completely separate from training data

- Individual-level splitting: When classifying behavior across multiple subjects, splitting data by individual rather than randomly pooling all data points

- Appropriate performance metrics: Using metrics that account for class imbalance common in behavioral datasets

- Cross-validation: Employing k-fold or leave-one-subject-out cross-validation where appropriate

The red deer study addressed class imbalance by developing a novel performance metric that accounted for unequal behavior distribution, providing a more realistic assessment of model utility [4].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table: Essential Research Toolkit for Accelerometer-Based Behavioral Classification

| Tool/Resource | Function/Purpose | Examples/Alternatives |

|---|---|---|

| Tri-axial Accelerometers | Measures linear acceleration in three dimensions (X, Y, Z axes) | Commercial wildlife collars (VECTRONIC), research-grade sensors (Axivity AX3) [4] [41] |

| Gyroscope Sensors | Captures angular velocity and rotational movements | MPU-6050 sensors used in cattle study [39] |

| Data Annotation Platforms | Tools for creating labeled behavioral datasets | Label Studio, Prodigy, Amazon SageMaker Ground Truth [37] |

| Machine Learning Environments | Programming environments for model development | R with h2o package, Python with scikit-learn, TensorFlow, PyTorch [21] [39] |

| Validation Frameworks | Methods to assess model generalizability and detect overfitting | Cross-validation, independent test sets, performance metrics for imbalanced data [3] [4] |

Experimental evidence consistently demonstrates that supervised learning approaches using high-quality labeled datasets achieve superior precision in classifying specific behaviors compared to unsupervised methods. Key findings from comparative studies indicate:

- Sensor fusion of accelerometer and gyroscope data consistently outperforms single-sensor approaches, particularly for distinguishing behaviors with similar postures but different movement patterns [39]

- Algorithm selection significantly impacts performance, with discriminant analysis excelling in wild red deer classification [4] and Random Forest with sensor fusion achieving high accuracy in cattle monitoring [39]

- Low-frequency sampling (1 Hz) can successfully classify many behaviors while enabling long-term battery life essential for wildlife studies [21]

- Rigorous validation using independent test sets is critical but frequently overlooked, with 79% of reviewed studies insufficiently validating for overfitting [3]

For researchers designing behavioral classification studies, we recommend: investing in high-quality data labeling with expert annotation where possible; implementing sensor fusion approaches when monitoring complex behaviors; selecting algorithms based on empirical comparison rather than default preferences; and employing rigorous validation protocols with completely independent test sets. These practices ensure developed models will generalize effectively to new individuals and environmental conditions, advancing the reliability and applicability of accelerometer-based behavioral classification across research domains.

Unsupervised machine learning, particularly clustering, serves as a powerful approach for identifying inherent patterns in complex datasets without prior knowledge of outcomes. This capability is especially valuable in fields like behavioral analysis using accelerometer data, where labeled data is scarce and populations are diverse. This guide provides a comparative analysis of unsupervised clustering methodologies against supervised alternatives, detailing performance metrics, experimental protocols, and practical implementation workflows to inform researchers and development professionals in selecting appropriate techniques for their specific applications.

Machine learning classification strategies are broadly categorized into supervised and unsupervised paradigms. Supervised learning requires a labeled dataset to train models for predicting known outcomes, while unsupervised learning seeks to identify the inherent structure of unlabeled data to discover novel patterns or natural groupings [42]. Clustering, a cornerstone of unsupervised learning, is increasingly critical for analyzing complex data from sources like wearable accelerometers, where manual labeling is impractical and the underlying categories may not be fully known [19] [22]. The core strength of clustering lies in its data-driven approach, which can reveal meaningful subgroups within heterogeneous populations—such as distinct physical activity states in children [22] or patient phenotypes in heart failure cohorts [43]—without the constraints and potential biases of pre-defined labels. This guide systematically compares the performance of various clustering techniques against supervised and semi-supervised alternatives, providing a foundation for methodological selection in research and development.

Comparative Performance: Unsupervised vs. Supervised and Semi-Supervised Methods

The effectiveness of learning algorithms varies significantly depending on the data characteristics and analytical goals. The table below summarizes a comparative study on classifying behaviors from accelerometer data in California condors, illustrating a typical performance hierarchy.

Table 1: Classification Performance Across Machine Learning Approaches (California Condor Accelerometer Data) [19]

| Learning Type | Specific Algorithms | Overall Accuracy | Kappa Statistic | Notes |

|---|---|---|---|---|

| Unsupervised | K-means, EM Clustering | < 0.8 | -0.02 to 0.06 | Poor performance, very low Kappa |

| Semi-Supervised | Nearest Mean Classifier | 0.61 | N/A | Effective for only 2 of 4 behavior classes |

| Supervised | Random Forest (RF), k-Nearest Neighbor (kNN) | > 0.81 | Highest | Most effective across all behavior types |

This case study demonstrates a common finding: while unsupervised methods are valuable for exploration, supervised models often achieve higher accuracy for well-defined classification tasks where labeled training data is available [19] [42]. However, this performance gap narrows or reverses in scenarios where labels are unavailable, costly to produce, or when the objective is to discover new, previously undefined categories.

Experimental Protocols in Unsupervised Accelerometer Research

To ensure reproducible and valid results, studies employing unsupervised learning for accelerometer data follow rigorous experimental protocols. The following workflow generalizes the common steps, from data collection to cluster interpretation.

Diagram 1: Experimental Workflow for Unsupervised Accelerometer Analysis

Data Collection and Preprocessing

Data is typically collected from wearable, tri-axial accelerometers set to record at frequencies between 20-100 Hz [19] [22]. Preprocessing is critical and involves:

- Segmentation: Dividing continuous data into analyzable units. Variable-time segmentation, which uses change points in the data to define boundaries, often improves classification accuracy by grouping similar behaviors [19].

- Feature Extraction: Calculating summary metrics from raw acceleration signals. Common features in the literature include mean, standard deviation, skewness, kurtosis, and dominant frequency [44].

Feature Selection and Dimensionality Reduction

Given the high dimensionality of feature-extracted accelerometer data, feature selection and dimensionality reduction are essential to avoid the "curse of dimensionality" and prevent model overfitting [45] [44]. The most prevalent technique identified in a systematic review is Principal Component Analysis (PCA), which projects original features into a new, lower-dimensional space while retaining maximum information [44]. Correlation matrices are also frequently used to select a subset of features that are highly correlated with cluster membership but uncorrelated with each other [44].

Clustering Algorithm Application and Validation

The core of the pipeline is applying clustering algorithms to the processed data. A benchmark study on univariate data recommends testing multiple algorithms, as performance is highly dependent on the data type [45]. Key steps include:

- Algorithm Selection: Common choices include partitioning methods like K-means and Partitioning Around Medoids (PAM), density-based methods like DBSCAN, and neural models like Self-Organizing Maps [43] [44] [46].

- Cluster Number (NoC) Estimation: The optimal number of clusters is determined using internal validation indices. A robust approach involves using a histogram of predictions from multiple indices (e.g., silhouette width, Dunn Index) to estimate the final NoC [43] [45].

- Validation: Internal validation indices assess the compactness and separation of the resulting clusters. For example, the PAM algorithm was validated as superior in an HFpEF patient study because it produced six distinct, clinically meaningful clusters with statistically different outcomes, whereas hierarchical clustering yielded groups that were too small, and K-prototype showed significant overlap [43].

Performance Benchmarking of Clustering Algorithms

Direct benchmarking of algorithms on datasets with known classes provides the most reliable guidance for selection. The following table synthesizes findings from a large-scale benchmark study on univariate data and a clinical study on patient phenotyping.

Table 2: Benchmarking of Unsupervised Clustering Algorithms [43] [45]

| Clustering Algorithm | Classification | Key Findings & Performance Notes |

|---|---|---|

| Partitioning Around Medoids (PAM) | Partitioning | Superior group separation in clinical data; robust to noise. Identified 6 distinct HFpEF phenotypes with different mortality [43]. |

| K-means / K-prototype | Partitioning | Commonly used but may show significant overlap between clusters. Performance is highly dependent on feature space construction [43] [45]. |

| Hierarchical Clustering | Hierarchical | May produce too many small, clinically meaningless clusters. Generated clusters with only 2 and 7 members in a patient cohort [43]. |

| Fuzzy C-means (FCM) | Fuzzy | Included in top performers for univariate data benchmarking [45]. |

| Gustafson-Kessel (GK) | Fuzzy | Included in top performers for univariate data benchmarking [45]. |

| DBSCAN | Density-Based | Does not require pre-specification of cluster number; can identify noise points [44]. |

The benchmark study on simulated nanoelectronics data concluded that careful selection of both the feature space construction method and the clustering algorithm is critical, as their interaction can greatly impact classification accuracy [45].

Case Study: Hidden Semi-Markov Model (HSMM) vs. Cut-Points for Physical Activity

A compelling application of unsupervised learning is using accelerometer data to quantify physical activity in children, a rapidly changing and diverse population.

Experimental Protocol

In a study with 279 children aged 9-36 months, a Hidden Semi-Markov Model (HSMM) was applied to waist-worn ActiGraph accelerometer data [22]. The HSMM is a data-driven approach that segments and clusters the accelerometer trace without relying on pre-calibrated thresholds, allowing activity intensity states to emerge from the data itself [22]. This was compared directly to the traditional cut-points approach, which classifies activity intensity based on thresholds calibrated against energy expenditure in a lab setting [22] [47].

Performance and Clinical Relevance

The unsupervised HSMM approach demonstrated a stronger correlation with the children's developmental abilities, as measured by the Paediatric Evaluation of Disability Inventory (PEDI-CAT).

Table 3: Correlation with Developmental Abilities (R²): HSMM vs. Cut-Points [22]

| PEDI-CAT Domain | HSMM (Unsupervised) | Cut-Points (Traditional) |

|---|---|---|

| Mobility | 0.51 | 0.39 |

| Social-Cognitive | 0.32 | 0.20 |

| Responsibility | 0.21 | 0.13 |

| Daily Activities | 0.35 | 0.24 |

| Age | 0.15 | 0.10 |

The results show that the HSMM consistently explained more variance in developmental scores, establishing it as a more sensitive and appropriate method for quantifying physical activity in heterogeneous or rapidly changing populations [22]. This case highlights a key advantage of unsupervised methods: they do not require costly calibration studies and can generalize better across diverse populations.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table catalogues key computational tools and materials referenced in the featured experiments for replicating unsupervised clustering studies.

Table 4: Research Reagent Solutions for Unsupervised Accelerometer Analysis

| Reagent / Solution | Function / Purpose | Example Use Case |

|---|---|---|

| ActiGraph GT3X+ | A research-grade accelerometer for collecting raw tri-axial acceleration data. | Primary data collection device in the Hidden Semi-Markov Model (HSMM) study of children's physical activity [22]. |

| GENEActiv | A wrist-worn, raw-data accelerometer with a wide dynamic range (±8g). | Used to capture accelerometer data in the Millennium Cohort Study at age 14 [47]. |

| R Package 'GGIR' | An open-source software for processing raw accelerometer data, including calibration, non-wear detection, and metric extraction. | Used to preprocess raw acceleration data into vector magnitude (ENMO) and orientation angles [47]. |

| Gower Distance Metric | A similarity measure that handles mixed data types (numeric and categorical) by scaling results between 0 and 1. | Used by the PAM algorithm in the HFpEF patient clustering study, contributing to its superior performance [43]. |

| t-SNE (t-distributed SNE) | A non-linear dimensionality reduction technique ideal for visualizing high-dimensional data in 2D or 3D. | Employed for visualizing high-dimensional cluster outcomes in the HFpEF study [43] and benchmarked in [45]. |

| Silhouette Width Index | An internal cluster validation index that measures how similar an object is to its own cluster compared to other clusters. | Used to determine the optimal number of clusters by evaluating compactness and separation [43]. |

The choice between supervised and unsupervised learning for classification is context-dependent. Supervised methods like Random Forest excel in accuracy when classifying data into known, well-defined categories with sufficient labeled examples [19]. However, unsupervised clustering is an indispensable tool for exploratory data analysis, patient or behavior phenotyping, and studies of diverse populations where labeled data is a barrier. As evidenced by the superior clinical correlation of HSMM in quantifying children's physical activity, unsupervised methods can provide more sensitive and appropriate solutions for real-world, heterogeneous data [22]. A robust analytical strategy involves benchmarking multiple clustering algorithms and feature space constructions specific to the measurement type to achieve optimal performance [45].

Feature Engineering and Selection for Robust Classification

The expanding field of movement ecology, human health monitoring, and industrial predictive maintenance increasingly relies on data from accelerometers. A critical challenge in translating raw sensor data into meaningful classifications—whether of animal behavior, human activities, or machine faults—lies in the processes of feature engineering and selection. These steps are paramount for building robust, generalizable machine learning models, especially within a research paradigm that compares the efficacy of supervised versus unsupervised learning approaches. Supervised learning, which relies on labeled datasets to train models, remains the dominant method for behavior classification from accelerometer data [3] [9]. However, its performance is highly contingent on the features used to represent the underlying signal. This guide objectively compares the performance of different feature engineering and selection methodologies, providing researchers with the experimental data and protocols needed to inform their own analytical workflows.

Comparative Performance of Feature Engineering and Selection Methods

The choice of how to process, engineer, and select features from raw accelerometer data significantly impacts the performance and generalizability of classification models. The following tables summarize quantitative results from recent studies across biological and engineering domains.

Table 1: Performance Comparison of Feature Engineering and Selection Methods in Ecological Studies

| Study & Species | Feature Engineering Approach | Selection/Method | Classification Model | Key Performance Metric & Result |

|---|---|---|---|---|

| Wild Red Deer [4] | Min-max normalization; Ratios of multiple axes | Model-based optimization | Discriminant Analysis | High accuracy for lying, feeding, standing, walking, running |

| Javan Slow Loris [48] | Hand-crafted features from raw accelerometer data | Not Specified | Random Forest | Resting: 99.16%; Feeding: 94.88%; Locomotion: 85.54% |

| Multi-Species Benchmark (BEBE) [9] | Deep features from raw data (via CNN/RNN) | Embedded in architecture | Deep Neural Networks | Outperformed classical ML methods across all 9 tested datasets |

| Multi-Species Benchmark (BEBE) [9] | Hand-crafted summary statistics (features) | Not Specified | Random Forest (Classical ML) | Lower performance than deep neural networks across all datasets |

Table 2: Performance in Human Health and Industrial Applications

| Study & Application | Feature Engineering Approach | Selection/Method | Classification Model | Key Performance Metric & Result |

|---|---|---|---|---|

| Smartphone Fall Detection [49] [50] | 64 statistical features from 3s windows with two 50% overlapping sub-windows (3s2sub) |

Not Specified | K-Nearest Neighbors (KNN) | 99.89% accuracy (MobiAct dataset); 98.45% accuracy (UniMiB SHAR, LOSO) |

| Smartphone Fall Detection [49] [50] | 64 statistical features from 3s windows with two 50% overlapping sub-windows (3s2sub) |

Not Specified | Support Vector Machine (SVM) | 95.35% sensitivity, 98.12% specificity (FARSEEING dataset) |

| Gearbox Failure [51] | 64 time-domain statistical condition indicators (CIs) | Wrapper method with Random Forest | Random Forest (RF) | >98% accuracy and AUC |

| Gearbox Failure [51] | 7 most relevant CIs (selected from 64) | Wrapper method with Random Forest | K-Nearest Neighbors (K-NN) | >98% accuracy and AUC |

| Dairy Cattle Lameness [52] | Raw accelerometer data | Dimensionality Reduction (PCA/fPCA) | Multiple ML Models | fPCA with fCV gave most robust performance for independent farm data |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for future research, this section outlines the detailed methodologies from key cited studies that demonstrated high classification performance.

Wrapper-Based Feature Selection for Gearbox Failure Severity

This methodology [51] provides a structured, automated framework for selecting the most informative time-domain features.

- Data Acquisition: Vibration signals were acquired using six accelerometers (A1–A6) mounted at different positions and inclinations on a spur gearbox test bench. Data was sampled at 50 kHz over a 10-second period, generating 500,000 data points per sensor per run. Four failure types (breaking, cracking, pitting, scuffing) were simulated and tested across nine progressive severity levels.

- Feature Extraction: From the raw vibration signals, 64 statistical condition indicators (CIs) were calculated in the time domain. These included conventional metrics (e.g., root mean square, kurtosis, skewness) and non-conventional ones (e.g., waveform length, Wilson amplitude).

- Feature Selection - Wrapper Method:

- Phase 1 (Model Optimization and Ranking): A Random Forest (RF) classifier was trained using all 64 CIs from accelerometers A1-A3. The model's hyperparameters were optimized, and the mean influence (MI) of each CI on the model's accuracy was calculated, generating a ranked list.

- Phase 2 (Cross-Failure Analysis): The top 10 CIs from each accelerometer (A1-A3) were assigned a descending weight (10 for most important, 1 for least). These weights were then summed by failure type.