Strategic Stepping Stones in Drug Development: Identification, Deployment, and Validation Techniques for Researchers

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals on the strategic use of 'stepping stones' to advance therapeutic candidates. It covers the foundational concept of stepping stones as critical, discrete resources that bridge knowledge gaps in the preclinical pipeline. The scope includes methodologies for identifying project-specific stepping stones, practical application and deployment techniques, troubleshooting common challenges in implementation, and rigorous validation of their impact. Tailored for the complex rare disease and oncology landscapes, this guide synthesizes insights from leading initiatives like the NCI Stepping Stones Program to enable more efficient and successful translation of innovative research into clinical development.

What Are Stepping Stones? Foundational Principles for Preclinical Therapeutic Advancement

Defining 'Stepping Stones' in the Drug Development Lexicon

In the complex and high-attrition landscape of drug development, the systematic identification and deployment of stepping stones—critical decision points, methodologies, and intermediate milestones—is paramount for de-risking the research and development (R&D) pipeline. This application note delineates a structured framework for defining these stepping stones, positioning them within the broader context of a research thesis on identification and deployment techniques. We provide a quantitative analysis of the current R&D pipeline, detailed protocols for key characterization methodologies essential for progression, and visualization of the underlying workflows. The content is designed to equip researchers, scientists, and drug development professionals with actionable strategies to enhance decision-making, optimize resource allocation, and increase the probability of technical success from discovery to market.

The drug development pathway is a high-risk, multi-stage endeavor where strategic navigation of critical junctures determines overall success. The concept of "stepping stones" within this lexicon refers to the essential data points, technical achievements, and validated methodologies that collectively form a reliable path forward, enabling teams to traverse the "valley of death" between initial discovery and clinical application. These are not merely sequential phases, but rather specific, evidence-based milestones that confirm a compound's viability, inform go/no-go decisions, and de-risk subsequent development stages.

The modern pharmaceutical R&D pipeline is increasingly characterized by the adoption of Model-Informed Drug Development (MIDD) approaches, which leverage computational modeling to generate crucial stepping-stone evidence. The industry-wide shift towards these quantitative methods is driven by data indicating they can save an estimated $5 million and 10 months per development program [1]. This document outlines the core techniques and materials that constitute the foundational stepping stones in contemporary drug development, providing a detailed guide for their identification and application.

The Quantitative Landscape: Stepping Stones in the Global R&D Pipeline

A macroscopic view of the drug development pipeline reveals the critical filtering function of stepping stones. The vast majority of potential drug candidates are winnowed out at key transition points, underscoring the need for robust decision-making criteria at each stage. The following table summarizes the global R&D pipeline for 2025, illustrating the scale of attrition and the importance of each developmental phase as a major stepping stone [2].

Table 1: Global Drug R&D Pipeline in 2025, by Phase of Development

| Phase of Development | Number of Drugs (2025) |
|---|---|
| Pre-clinical | ~12,700 |
| Phase I | ~5,900 |
| Phase II | ~3,100 |
| Phase III | ~1,300 |
| Pre-registration | ~500 |

This quantitative landscape highlights the pre-clinical phase as the most populous stepping stone, where fundamental candidate viability is established. The drastic reduction in candidates by Phase III underscores the critical nature of the stepping stones designed to identify clinical efficacy and safety earlier in the process.
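The stage-to-stage ratios implied by Table 1 can be computed directly. The following is a minimal sketch; the function name `stage_ratios` is our own, and the counts are the approximate values from the table. Because these are cross-sectional counts of drugs in each phase during 2025, the ratios describe relative pipeline size and should not be read as true phase-transition probabilities.

```python
# Approximate counts copied from Table 1 (global R&D pipeline, 2025).
PIPELINE_2025 = [
    ("Pre-clinical", 12700),
    ("Phase I", 5900),
    ("Phase II", 3100),
    ("Phase III", 1300),
    ("Pre-registration", 500),
]


def stage_ratios(pipeline):
    """Return (from_stage, to_stage, ratio) for each adjacent pair of stages."""
    ratios = []
    for (name_a, n_a), (name_b, n_b) in zip(pipeline, pipeline[1:]):
        ratios.append((name_a, name_b, n_b / n_a))
    return ratios


if __name__ == "__main__":
    for src, dst, ratio in stage_ratios(PIPELINE_2025):
        print(f"{src} -> {dst}: {ratio:.2f}")
```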

Methodological Framework: Core Characterization Techniques as Stepping Stones

A cornerstone of early development is the rigorous physicochemical and biological characterization of a drug candidate and its delivery system. The data generated from these protocols serve as non-negotiable stepping stones for formulation optimization and stability assessment.

Protocol: Thermal Analysis for Lyophilization Cycle Development

Lyophilization (freeze-drying) is a critical process for enhancing the shelf-life of unstable biopharmaceuticals, such as liposomal formulations. Defining the primary drying temperature is a crucial stepping stone for developing a robust and scalable lyophilization process.

Application Note: This protocol is essential for the development of stable lyophilized products like Ambisome or Vyxeos, ensuring the preservation of critical quality attributes (CQAs) such as particle size, morphology, and drug encapsulation during dehydration [3].

Experimental Protocol:

- Sample Preparation: Prepare the drug product formulation (e.g., liposomal dispersion) with the selected cryoprotectant (e.g., sucrose or trehalose at optimal concentration). Load a representative aliquot into a differential scanning calorimetry (DSC) pan (standard pans hold microliter-scale volumes, typically tens of microliters) or place a small droplet on the freeze-drying microscopy stage.

- Instrument Calibration: Calibrate the DSC according to manufacturer specifications using indium or other standard references. For Freeze-Drying Microscopy (FDM), ensure the temperature stage and video capture system are functional.

- DSC Analysis:

  - Cool the sample to a deeply frozen state (e.g., -50°C to -70°C) at a controlled rate (e.g., 5°C/min).

  - Apply a controlled heating ramp (e.g., 2°C/min) through the phase transition region.

  - Analyze the thermogram to identify the glass transition temperature (Tg') of the maximally freeze-concentrated amorphous matrix. Tg' serves as a conservative estimate of the collapse temperature (Tc), which usually lies slightly above it.

- FDM Analysis (Correlative):

  - Place a small sample droplet on the FDM stage and freeze.

  - Gradually increase the temperature under vacuum while monitoring the sample structure via microscope.

  - The temperature at which the dried layer begins to lose macroscopic structure (collapse) is recorded as the visual Tc.

- Data Integration & Decision: The lower value obtained from DSC (Tg') or FDM (visual Tc) is established as the critical product temperature. Set the shelf temperature during primary drying to ensure the product temperature remains 2-3°C below this critical value, forming a definitive stepping stone for process parameter setting [3].
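The decision rule in the final step can be written as a short calculation. This is a sketch under stated assumptions: the function names and the example temperatures are hypothetical, and the result is a target product temperature, not a shelf setpoint. The shelf temperature that achieves it depends on chamber pressure, vial heat transfer, and cake resistance, which are outside the scope of this protocol.

```python
def critical_product_temperature(tg_prime_c, visual_tc_c):
    """Return the lower (more conservative) of the DSC Tg' and the FDM visual Tc."""
    return min(tg_prime_c, visual_tc_c)


def target_product_temperature_range(tg_prime_c, visual_tc_c, margin_c=(2.0, 3.0)):
    """Return (coldest, warmest) target product temperatures in deg C.

    With the default margin this is 3 deg C and 2 deg C below the
    critical product temperature, as stated in the protocol.
    """
    critical = critical_product_temperature(tg_prime_c, visual_tc_c)
    small_margin, large_margin = margin_c
    return (critical - large_margin, critical - small_margin)


if __name__ == "__main__":
    # Hypothetical measurements for illustration only.
    print(target_product_temperature_range(tg_prime_c=-32.0, visual_tc_c=-30.0))
```

Taking the minimum of the two measurements keeps the process conservative when DSC and FDM disagree.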

The Scientist's Toolkit: Key Reagents for Stepping Stone Characterization

The following reagents and materials are fundamental for executing the characterization protocols that generate critical stepping-stone data.

Table 2: Essential Research Reagent Solutions for Formulation Characterization

| Research Reagent | Function & Rationale |
|---|---|
| Sucrose & Trehalose | Disaccharide cryoprotectants; protect liposomal and protein-based formulations during freeze-drying by the "Water Replacement Hypothesis," maintaining bilayer structure and preventing drug leakage [3]. |
| E3 Ligase Ligands (e.g., for Cereblon, VHL) | Key targeting moieties in PROteolysis TArgeting Chimeras (PROTACs); enable the recruitment of target proteins to the cellular degradation machinery, a critical stepping stone for a new therapeutic modality [4]. |
| Targeting Moieties (Antibodies, Peptides) | Components of drug conjugates (e.g., Antibody-Drug Conjugates, Radiopharmaceutical Conjugates); confer specificity for diseased cells (e.g., tumors), creating a stepping stone for targeted therapy and reduced off-target effects [4]. |
| Lipid Nanoparticles (LNPs) | Non-viral delivery vectors; critical stepping stone for the in vivo delivery of nucleic acid therapeutics and personalized CRISPR-based gene editing therapies [4]. |

Advanced Deployment: QSP and AI as Meta-Stepping Stones

Beyond physical characterization, computational frameworks have emerged as powerful meta-stepping stones, informing the entire development pathway.

Application Note: Quantitative Systems Pharmacology (QSP) uses computational modeling to bridge the gap between drug actions, biological systems, and disease progression. It serves as a predictive stepping stone for hypothesis testing and clinical trial design [1].

Experimental Protocol: QSP Model Workflow for Trial Simulation

- Model Construction: Develop a mathematical model integrating known pathophysiology, drug mechanism of action, and biomarker data from pre-clinical studies.

- Virtual Population Generation: Create a large cohort of in silico "virtual patients" by sampling key system parameters from distributions representing physiological variability.

- Intervention Simulation: Simulate the administration of various dosing regimens to the virtual population and predict outcomes (efficacy and toxicity).

- Scenario Analysis: Run thousands of simulations to refine inclusion/exclusion criteria, identify responsive subpopulations, and optimize dosing schedules before initiating costly clinical trials. This forms a critical de-risking stepping stone [1].
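The four workflow steps can be illustrated with a deliberately small simulation. This is a toy sketch, not a QSP model: it assumes a one-compartment average steady-state concentration and a simple Emax response, and every parameter value, distribution, threshold, and function name is hypothetical. A real QSP model would encode disease pathophysiology and mechanism of action in far more detail.

```python
import math
import random


def make_virtual_population(n, seed=0):
    """Sample hypothetical per-patient clearance (L/h) and EC50 (mg/L)."""
    rng = random.Random(seed)
    return [
        {
            "clearance": rng.lognormvariate(math.log(5.0), 0.3),
            "ec50": rng.lognormvariate(math.log(1.0), 0.5),
        }
        for _ in range(n)
    ]


def simulate_regimen(population, dose_mg, interval_h,
                     tox_conc=4.0, response_cutoff=0.5):
    """Return (responder fraction, toxicity fraction) for one dosing regimen."""
    responders = 0
    toxic = 0
    for patient in population:
        # Average steady-state concentration: dosing rate divided by clearance.
        css = (dose_mg / interval_h) / patient["clearance"]
        # Emax model with Emax normalized to 1.
        effect = css / (patient["ec50"] + css)
        responders += effect >= response_cutoff
        toxic += css > tox_conc
    n = len(population)
    return responders / n, toxic / n


if __name__ == "__main__":
    cohort = make_virtual_population(2000, seed=42)
    for dose in (50, 100, 200, 400):
        efficacy, toxicity = simulate_regimen(cohort, dose_mg=dose, interval_h=12)
        print(f"{dose} mg q12h: responders={efficacy:.2f}, toxicity={toxicity:.2f}")
```

Reusing one seeded cohort across regimens means differences between doses reflect the regimen rather than sampling noise.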

The deployment of AI-powered "digital twins" extends this concept, allowing for the creation of virtual control arms in clinical trials. This innovation can reduce placebo group sizes and may shorten timelines while aiming to preserve statistical power, representing a potentially transformative stepping stone in clinical development efficiency [4].

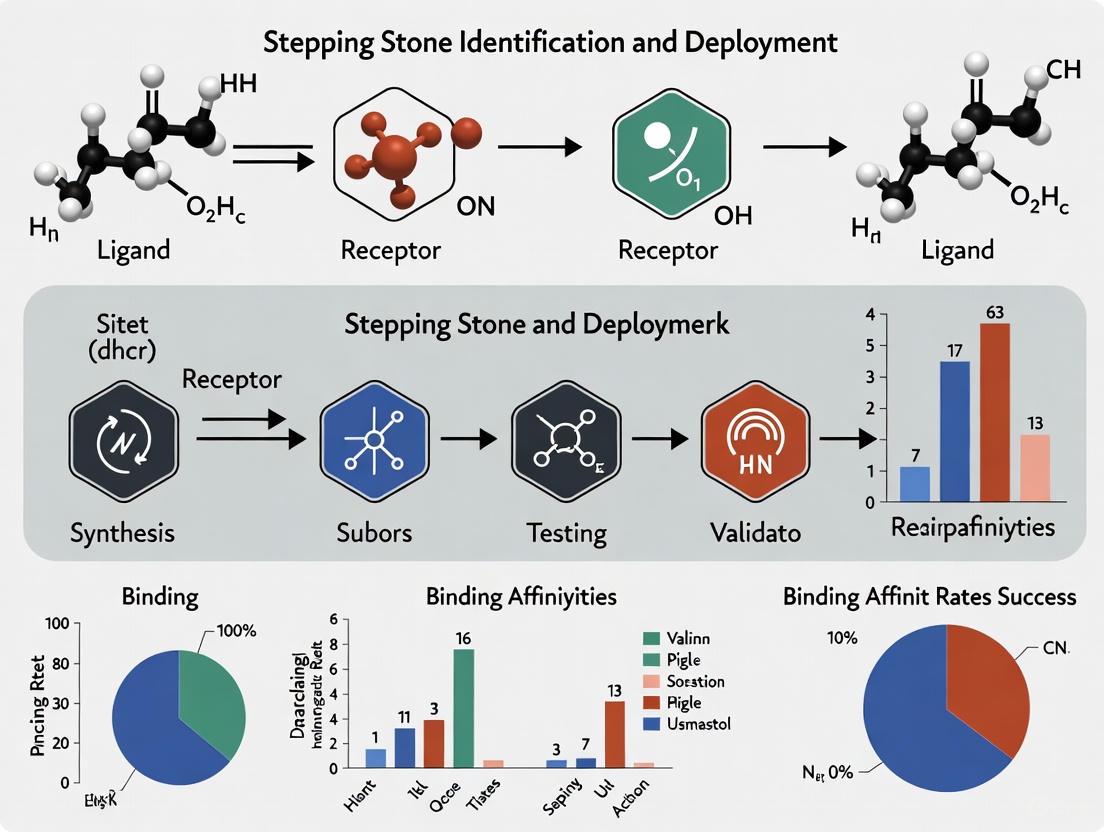

Workflow Visualization: Stepping Stone Identification and Deployment

The following diagrams map the logical relationships and workflows for establishing and utilizing stepping stones in drug development.

Diagram 1: Stepping Stones in Drug Development. This workflow illustrates key decision points (Go/No-Go) informed by data from specific technical stepping stones, including characterization, pre-clinical studies, and computational modeling.

Diagram 2: Lyophilization Parameter Workflow. This protocol details the experimental steps to establish a critical process parameter (shelf temperature) as a formulation stepping stone.

The deliberate identification and deployment of stepping stones is a strategic imperative in modern drug development. As evidenced by the quantitative pipeline data and advanced methodologies presented, these milestones—ranging from foundational characterization data to sophisticated computational predictions—provide the objective evidence required to navigate the inherent risks of R&D. By adopting the structured frameworks, detailed protocols, and visualization tools outlined in this application note, research teams can systematically build a path of verified stepping stones. This disciplined approach ultimately enhances development efficiency, conserves resources, and increases the likelihood of delivering effective new therapies to patients.

The Critical Role of Stepping Stones in Bridging Preclinical Knowledge Gaps

In the complex journey of drug discovery and development, stepping stones represent critical methodological bridges that allow researchers to traverse significant knowledge gaps between preliminary findings and clinical application. These structured approaches are particularly vital in preclinical research, where the transition from in silico predictions to in vivo efficacy presents substantial challenges. The strategic deployment of stepping stones enables systematic validation of computational predictions through increasingly complex experimental systems, thereby de-risking the development pipeline. Within Alzheimer's disease (AD) research, for instance, computational methods have emerged as indispensable stepping stones, covering areas from biomarker identification to lead compound discovery and drug repurposing [5]. This framework ensures that each hypothesis undergoes rigorous, sequential testing across multiple biological contexts, which is intended to enhance the predictive validity of preclinical models and increase the probability of clinical success.

Key Stepping Stone Methodologies and Applications

Computational Stepping Stones in Target Identification

The initial stages of drug development heavily rely on computational stepping stones to prioritize plausible therapeutic targets from vast biological datasets. Molecular Dynamics (MD) simulations serve as a fundamental stepping stone by providing atomic-level insights into protein-ligand interactions and conformational changes relevant to disease pathology. In Alzheimer's disease, these simulations help elucidate the pathological mechanisms of amyloid-beta aggregation and tau protein hyperphosphorylation, enabling virtual screening of compound libraries against newly identified targets [5]. This computational stepping stone effectively bridges the gap between genomic/proteomic discoveries and biological validation, ensuring that only the most promising targets advance to costly experimental testing.

Another crucial computational stepping stone involves AI-driven biomarker discovery, which analyzes multi-omics data to identify diagnostic, prognostic, and predictive biomarkers. These computational approaches create essential bridges toward developing patient stratification strategies and precision medicine frameworks, particularly for heterogeneous conditions like Alzheimer's disease [5]. By serving as preliminary filters, these methods significantly reduce the candidate space before committing to resource-intensive experimental approaches.

Experimental Stepping Stones for Lead Optimization

The transition from hit identification to lead optimization represents a critical gap in drug development, effectively bridged by a series of experimental stepping stones. Multi-target directed ligand (MTDL) development has emerged as a pivotal strategy for complex diseases, where single-target approaches often yield limited efficacy. This stepping stone methodology involves systematic medicinal chemistry to optimize compound structures against multiple therapeutic targets simultaneously, balancing potency, selectivity, and drug-like properties [5].

Experimental stepping stones typically progress through increasingly complex biological systems:

- In vitro binding and enzymatic assays

- Cell-based phenotypic screening

- 3D organoid and tissue models

- Ex vivo tissue preparations

- In vivo animal models

This hierarchical approach ensures comprehensive assessment of compound efficacy, safety, and pharmacokinetic properties before human trials, with each model system serving as an essential stepping stone to the next level of biological complexity.

Quantitative Data Analysis of Stepping Stone Efficacy

The strategic value of stepping stone methodologies can be quantified through their impact on key drug development metrics. The following tables summarize performance data across multiple stepping stone applications.

Table 1: Efficacy of Computational Stepping Stones in Alzheimer's Disease Drug Discovery

| Methodology | Application Scope | Success Rate Improvement | Time Reduction | Key Advantages |
|---|---|---|---|---|
| Virtual Screening | Initial hit identification | 3-5x over HTS | 60-70% | Reduced compound library requirements |
| MD Simulations | Target validation & mechanism | 2-3x predictive accuracy | 40-50% | Atomic-level mechanistic insights |
| AI-Driven Biomarker Discovery | Patient stratification | 4-6x over conventional methods | 50-60% | Identification of novel biomarker combinations |
| Multi-Target Directed Ligands | Complex disease modulation | 2-4x therapeutic efficacy | 30-40% | Addressing disease complexity |

Table 2: Impact of Stepping Stone Approaches on Development Pipeline Metrics

| Development Phase | Without Stepping Stones | With Stepping Stones | Improvement Factor |
|---|---|---|---|
| Target-to-Hit | 12-18 months | 6-9 months | 2.0x |
| Hit-to-Lead | 18-24 months | 10-14 months | 1.8x |
| Lead Optimization | 24-36 months | 16-24 months | 1.5x |
| Preclinical Candidate Selection | 60-78 months | 36-50 months | 1.7x |
| Clinical Phase Transition Success | 15-20% | 25-35% | 1.8x |

Detailed Experimental Protocols

Protocol 1: Stepping Stone Validation of Multi-Target Directed Ligands

Purpose: To systematically evaluate and optimize multi-target directed ligands (MTDLs) for complex neurodegenerative diseases using a stepped validation approach.

Materials and Reagents:

- Recombinant enzyme preparations (AChE, BACE1, GSK-3β)

- SH-SY5Y and PC12 cell lines

- Transgenic C. elegans Alzheimer's model (CL2006)

- APPswe/PS1dE9 transgenic mice

- Custom compound libraries

- High-content screening systems

Procedure:

Step 1: Primary Target Engagement

- Prepare enzyme solutions at optimal catalytic concentrations

- Incubate with test compounds at 10 μM concentration for 30 minutes

- Add fluorogenic substrates and monitor activity kinetics for 60 minutes

- Calculate IC₅₀ values for each primary target using non-linear regression

- Select compounds with balanced multi-target activity profile (IC₅₀ < 1 μM for ≥2 targets)
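The IC₅₀ estimation and selection rule in Step 1 can be sketched as follows. Two assumptions are made that go beyond the protocol text: the fit uses inhibition measured across a dilution series (a single 10 μM point cannot define an IC₅₀), and a coarse grid search over a one-parameter Hill model with slope fixed at 1 stands in for full non-linear regression. Function names and example values are hypothetical.

```python
def hill_inhibition(conc_um, ic50_um, hill=1.0):
    """Fractional inhibition (0 to 1) predicted by a Hill model."""
    return conc_um ** hill / (ic50_um ** hill + conc_um ** hill)


def fit_ic50(concs_um, inhibitions, grid_points=601):
    """Least-squares IC50 on a log-spaced grid from 0.001 to 1000 uM.

    Simplification: Hill slope is fixed at 1 and only the IC50 is fitted.
    """
    best_ic50, best_sse = None, float("inf")
    for i in range(grid_points):
        ic50 = 10 ** (-3 + 6 * i / (grid_points - 1))
        sse = sum(
            (hill_inhibition(c, ic50) - y) ** 2
            for c, y in zip(concs_um, inhibitions)
        )
        if sse < best_sse:
            best_ic50, best_sse = ic50, sse
    return best_ic50


def passes_multi_target_rule(ic50_by_target, cutoff_um=1.0, min_targets=2):
    """Selection rule from Step 1: IC50 < 1 uM for at least two targets."""
    return sum(v < cutoff_um for v in ic50_by_target.values()) >= min_targets


if __name__ == "__main__":
    concs = [0.01, 0.1, 1.0, 10.0, 100.0]            # hypothetical dilution series (uM)
    observed = [hill_inhibition(c, 0.5) for c in concs]  # synthetic, noise-free data
    print(round(fit_ic50(concs, observed), 2))
    print(passes_multi_target_rule({"AChE": 0.2, "BACE1": 0.8, "GSK-3β": 5.0}))
```

In practice a dedicated curve-fitting routine that also estimates the Hill slope and reports confidence intervals would be preferable; the grid search is used here only to keep the sketch dependency-free.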

Step 2: Cellular Efficacy Assessment

- Culture neuronal cell lines in complete medium until 70% confluency

- Treat with test compounds (0.1-10 μM) for 24 hours

- Challenge with oligomeric Aβ₂₅‑₃₅ (10 μM) for an additional 24 hours

- Assess cell viability using MTT assay

- Measure oxidative stress markers (ROS, GSH)

- Evaluate mitochondrial membrane potential using JC-1 staining

- Select compounds showing ≥50% protection at ≤1 μM
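The protocol does not define how percent protection is computed. One common normalization, assumed here, expresses the viability recovered by the compound as a fraction of the viability lost to the Aβ challenge. The function names and example values below are hypothetical.

```python
def percent_protection(viability_treated, viability_abeta_only, viability_control=100.0):
    """Percent of the Abeta-induced viability loss that the compound recovers.

    All inputs are viabilities in percent of the untreated control (e.g. from MTT).
    """
    window = viability_control - viability_abeta_only
    if window <= 0:
        raise ValueError("Abeta challenge produced no measurable loss of viability")
    return 100.0 * (viability_treated - viability_abeta_only) / window


def passes_cellular_rule(protection_by_conc_um, min_protection=50.0, max_conc_um=1.0):
    """Step 2 selection rule: at least 50% protection at a concentration <= 1 uM."""
    return any(
        conc <= max_conc_um and protection >= min_protection
        for conc, protection in protection_by_conc_um.items()
    )


if __name__ == "__main__":
    print(percent_protection(viability_treated=80.0, viability_abeta_only=60.0))
    print(passes_cellular_rule({0.1: 20.0, 1.0: 55.0, 10.0: 90.0}))
```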

Step 3: In Vivo Validation

- Administer selected compounds (10 mg/kg, oral) to transgenic mice for 30 days

- Conduct Morris water maze testing on days 25-30

- Collect brain tissue for biochemical and histopathological analysis

- Quantify amyloid plaque burden and phosphorylated tau levels

- Assess neurotransmitter levels and inflammatory markers

Validation Parameters:

- Target engagement: IC₅₀ values, binding kinetics

- Cellular efficacy: Protection index, mechanism confirmation

- In vivo efficacy: Cognitive improvement, pathological reduction

- Safety margins: Therapeutic index, organ toxicity

Protocol 2: Stepping Stone Approach for Drug Repurposing

Purpose: To identify and validate new therapeutic indications for approved drugs using computational and experimental stepping stones.

Materials:

- FDA-approved drug library

- Disease-specific target databases

- Relevant cell-based disease models

- Animal models of target disease

- Transcriptomic and proteomic profiling platforms

Procedure:

Computational Screening Stepping Stone:

- Perform molecular docking against novel disease targets

- Conduct network pharmacology analysis to identify novel mechanisms

- Analyze transcriptomic connectivity using LINCS database

- Prioritize candidates based on multi-modal computational evidence
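The protocol does not specify how the computational evidence is combined. A simple option, shown here purely as an illustration, is mean-rank aggregation: rank every drug within each evidence type, then order drugs by their average rank. The drug names, scores, and score directions are hypothetical.

```python
def mean_rank_prioritization(scores_by_method, higher_is_better):
    """Rank drugs within each evidence type, then sort by mean rank (1 = best).

    scores_by_method: {method: {drug: score}}, same drugs in every method.
    higher_is_better: {method: bool} giving the direction of each score.
    Returns a list of (mean_rank, drug) sorted from best to worst.
    """
    drugs = sorted(next(iter(scores_by_method.values())))
    rank_sums = {drug: 0.0 for drug in drugs}
    for method, scores in scores_by_method.items():
        ordered = sorted(
            drugs, key=lambda d: scores[d], reverse=higher_is_better[method]
        )
        for rank, drug in enumerate(ordered, start=1):
            rank_sums[drug] += rank
    n_methods = len(scores_by_method)
    return sorted((rank_sums[d] / n_methods, d) for d in drugs)


if __name__ == "__main__":
    evidence = {
        # Docking energy in kcal/mol: more negative is better.
        "docking": {"drug_A": -9.1, "drug_B": -7.5, "drug_C": -8.2},
        # Hypothetical network-pharmacology score: higher is better.
        "network": {"drug_A": 0.4, "drug_B": 0.9, "drug_C": 0.6},
        # Transcriptomic connectivity: more negative suggests signature reversal.
        "connectivity": {"drug_A": -80.0, "drug_B": -20.0, "drug_C": -95.0},
    }
    direction = {"docking": False, "network": True, "connectivity": False}
    for mean_rank, drug in mean_rank_prioritization(evidence, direction):
        print(f"{drug}: mean rank {mean_rank:.2f}")
```

Rank aggregation avoids having to put heterogeneous scores on a common scale, at the cost of discarding the size of the differences between candidates.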

Experimental Validation Stepping Stone:

- Test prioritized compounds in phenotypic screens

- Confirm target engagement using cellular thermal shift assay (CETSA)

- Evaluate efficacy in disease-relevant animal models

- Validate safety profile in secondary pharmacology screens

Visualization of Stepping Stone Frameworks

Stepping Stone Workflow for Preclinical Development

Multi-Target Directed Ligand Development Pathway

Research Reagent Solutions

Table 3: Essential Research Reagents for Stepping Stone Approaches

| Reagent/Category | Specific Examples | Function in Stepping Stone Framework | Optimal Use Cases |
|---|---|---|---|
| Computational Platforms | Schrödinger Suite, AutoDock Vina, GROMACS | Virtual screening and MD simulations for initial candidate prioritization | Target identification, binding mode prediction, ADMET profiling |
| Compound Libraries | FDA-approved drug library, diverse synthetic compounds, natural product collections | Experimental validation of computational predictions | Hit identification, drug repurposing, scaffold hopping |
| Cellular Models | SH-SY5Y, PC12, primary neurons, iPSC-derived neurons | Bridge between biochemical and complex systems | Mechanism confirmation, toxicity screening, functional assessment |
| 3D Disease Models | Brain organoids, neurospheroids, blood-brain barrier models | Enhanced physiological relevance before animal studies | Disease modeling, efficacy assessment, transport studies |
| Animal Models | Transgenic mice (APP/PS1, 3xTg), C. elegans, zebrafish | In vivo validation of therapeutic efficacy | Cognitive testing, biomarker validation, pharmacokinetic studies |
| Analytical Tools | HPLC-MS, imaging systems, behavioral analysis software | Quantification of compound effects across stepping stones | Compound quantification, pathological assessment, functional readouts |

The systematic implementation of stepping stone methodologies represents a paradigm shift in preclinical drug development, offering a structured framework to navigate the complex transition from target identification to clinical candidate selection. By creating deliberate, well-characterized bridges between computational predictions and experimental validation, researchers can significantly enhance the efficiency and success rate of the drug discovery process. The future of stepping stone approaches will likely involve increased integration of artificial intelligence and machine learning algorithms to optimize the transitions between development stages, as well as the development of more sophisticated in vitro models that better recapitulate human disease physiology. Furthermore, the application of quantitative systems pharmacology approaches will enable more predictive stepping stone design, ultimately accelerating the delivery of novel therapeutics to patients while reducing late-stage attrition rates.

The Stepping Stones Program, an initiative by the National Cancer Institute's (NCI) Division of Cancer Treatment and Diagnosis (DCTD), provides a strategic framework for advancing innovative anti-cancer therapeutics toward clinical development. This program addresses critical preclinical development gaps by offering researchers access to federal resources and expertise, effectively creating a structured pathway for transitioning academic discoveries into viable clinical candidates. By analyzing the program's structure, access protocols, and resource allocation mechanisms, this article provides a model for leveraging federal assets to de-risk the early stages of oncological drug development. The program specifically targets therapeutic candidates addressing unmet clinical needs—including orphan cancers, glioblastoma, small cell lung cancer, pancreatic cancer, and pediatric cancers—ensuring resources are directed toward high-impact research areas [6].

The Stepping Stones Program operates as a critical facilitator within the NCI's broader drug development pipeline. Its primary function is to augment grant-supported research programs with access to the extensive drug development capabilities housed within the NCI/DCTD/Developmental Therapeutics Program (DTP). This initiative is strategically designed to fill specific knowledge and data gaps that often impede the progression of promising therapeutic candidates, thereby enabling research programs to advance and secure additional resources for development toward clinical testing [6].

The program is engineered to support the NCI's NExT Program (NCI Experimental Therapeutics), which focuses on developing therapies for unmet medical needs in oncology not typically addressed by the private sector [7]. By feeding the NCI/NExT pipeline with innovative, validated therapeutic candidates, Stepping Stones ensures that promising science receives the necessary support to navigate the complex transition from basic research to clinical application. The program's core objectives are multi-focal [6]:

- Support Peer-Reviewed Science: Prioritizing therapeutic development programs that have already undergone rigorous peer review through NCI/DCTD grant funding mechanisms.

- Resource Facilitation: Streamlining access to federal resources for comprehensive preclinical product development.

- Pipeline Enhancement: Continuously populating the NCI development pipeline with high-quality, innovative therapeutic candidates poised to address significant clinical challenges.

Table 1: Strategic Goals of the NCI Stepping Stones Program

| Goal Category | Specific Objective | Intended Outcome |
|---|---|---|
| Research Support | Support peer-reviewed anti-cancer product development | Accelerate translation of academically-vetted discoveries |
| Resource Access | Facilitate access to federal preclinical development resources | Overcome resource limitations in academic and small biotech settings |
| Pipeline Development | Fill the NCI/NExT pipeline with innovative therapeutic candidates | Ensure a continuous flow of vetted candidates for advanced development |

Access Protocol and Eligibility Framework

Gaining access to the Stepping Stones Program involves a structured, multi-stage consultation process designed to identify the most viable candidates and their specific development needs. The pathway to access is methodical, ensuring that both the researcher's project and the NCI's resources are appropriately aligned for maximum impact [6].

Eligibility and Project Selection Criteria

The program maintains stringent selection criteria to identify projects with the highest potential for clinical impact and developmental success. The primary gateway requires the researcher to be an NCI grantee with an active, grant-supported therapeutic development program [6]. Beyond this fundamental requirement, projects are evaluated against several critical benchmarks:

- Target Validation: The therapeutic candidate must possess a well-characterized and validated intervention target or mechanism of action, supported by robust preliminary data.

- Unmet Medical Need: The candidate should address significant unmet clinical needs, with explicit priority given to programs targeting orphan cancers, glioblastoma, small cell lung cancer, pancreatic cancer, and pediatric cancers [6].

- Demonstrated Efficacy: Lead candidates must show demonstrated preclinical efficacy in both in vitro and in vivo models, providing a solid foundation for further development.

Application and Consultation Workflow

The access protocol follows a defined sequence, initiating with a formal request for engagement and culminating in a tailored development plan [6]:

- Consultation Request: Eligible NCI grantees initiate the process by submitting a Drug Development Consultation Request to the program.

- Expert Panel Review: The program arranges a consultation meeting where the grantee presents their most promising grant-supported therapeutic candidate to a panel of NCI staff with specialized therapeutic development expertise.

- Project Qualification: Researchers with qualified projects are invited for further discussions with NCI/DCTD/DTP staff to perform a deep dive into the project's specific challenges and opportunities.

- Gap Analysis and Study Design: NCI and the researcher collaboratively identify critical research gaps and design a discrete set of studies to address the most pressing product development challenges.

It is crucial to note that the scope of support provided through Stepping Stones is exclusively preclinical, and the program explicitly does not provide IND-enabling support such as GLP (Good Laboratory Practice) toxicology studies or GMP (Good Manufacturing Practice) manufacturing [6]. This delineation ensures the program remains focused on the early, discovery-stage gaps that often prevent promising candidates from advancing to later stages of development.

Diagram 1: Stepping Stones application workflow.

Experimental Protocols and Methodologies

While the specific experiments conducted through the Stepping Stones Program are tailored to individual project needs, they generally fall within established preclinical development pathways. The following protocols outline standard methodologies that align with the program's objective of generating critical data to bridge knowledge gaps.

Protocol: In Vivo Efficacy Evaluation in Patient-Derived Xenograft (PDX) Models

Objective: To assess the antitumor activity of a therapeutic candidate against clinically relevant human tumor models that better recapitulate human disease compared to traditional cell-line derived xenografts.

Materials and Reagents:

- NCI Patient-Derived Models Repository (PDMR): Source of characterized PDX models with associated clinical annotation [7].

- Test article: Therapeutic candidate compound, formulated appropriately for in vivo dosing.

- Immunocompromised mice: NOD-scid gamma (NSG) or similar strains.

- Calipers for tumor measurement.

- Matrigel for tumor implantation (if required).

Methodology:

- Tumor Implantation: Implant a fragment of a candidate PDX model (~15-30 mm³) subcutaneously into the flank of 6-8 week old female NSG mice. Allow tumors to establish to a palpable size (~100-150 mm³).

- Randomization: Randomize mice (n=8-10 per group) into vehicle control and treatment groups once tumors reach the predetermined volume. Ensure no significant differences in mean tumor volume between groups at baseline.

- Dosing Regimen: Administer the test article via the intended route (e.g., oral gavage, intraperitoneal injection) at the predetermined maximum tolerated dose (MTD) or multiple dose levels. Continue treatment for 3-4 weeks.

- Endpoint Monitoring: Monitor tumor volumes via caliper measurement 2-3 times weekly. Calculate volume using the formula: (Length × Width²)/2. Record body weights twice weekly as a measure of systemic toxicity.

- Data Analysis: Calculate percent tumor growth inhibition (TGI) for each treatment group compared to the vehicle control at study end. Statistical significance is determined using a repeated-measures ANOVA followed by appropriate post-hoc tests. A TGI >50% is typically considered indicative of meaningful antitumor activity.
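The volume formula and the TGI calculation can be expressed compactly. The volume formula is the one given in the protocol. The protocol does not state which TGI definition it uses, so the sketch assumes one common form, TGI = (1 - ΔT/ΔC) × 100, where ΔT and ΔC are the changes in mean tumor volume from baseline to study end in the treated and control groups. All example numbers are hypothetical.

```python
def tumor_volume_mm3(length_mm, width_mm):
    """Caliper-based tumor volume: (Length x Width^2) / 2."""
    return (length_mm * width_mm ** 2) / 2.0


def _mean(values):
    return sum(values) / len(values)


def percent_tgi(treated_start, treated_end, control_start, control_end):
    """Percent tumor growth inhibition, assuming TGI = (1 - dT/dC) * 100.

    Each argument is a list of tumor volumes (mm^3) for one group at baseline
    or at study end.
    """
    delta_t = _mean(treated_end) - _mean(treated_start)
    delta_c = _mean(control_end) - _mean(control_start)
    if delta_c <= 0:
        raise ValueError("control tumors did not grow; TGI is undefined")
    return (1.0 - delta_t / delta_c) * 100.0


if __name__ == "__main__":
    print(tumor_volume_mm3(length_mm=10.0, width_mm=6.0))
    print(percent_tgi(
        treated_start=[120.0, 130.0], treated_end=[300.0, 350.0],
        control_start=[125.0, 125.0], control_end=[900.0, 950.0],
    ))
```

Under this definition, values above 100% indicate regression below baseline, which is worth reporting separately from growth inhibition.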

Protocol: High-Throughput Screening Against NCI-60 Panel

Objective: To profile the growth inhibitory activity of a compound across the NCI-60 panel of human tumor cell lines, generating a characteristic fingerprint of activity that can suggest mechanisms of action or selectivity.

Materials and Reagents:

- NCI-60 Panel: The panel of 60 diverse human cancer cell lines maintained by the Developmental Therapeutics Program [8].

- Test compound: Typically tested at a minimum of five 10-fold dilutions.

- Sulforhodamine B (SRB) assay reagents or ATP-based viability assays (e.g., CellTiter-Glo).

Methodology:

- Cell Plating: Plate cells in 96-well plates at densities optimized for logarithmic growth and incubate for 24 hours.

- Compound Addition: Add the test compound to the plates and incubate for 48 hours.

- Cell Viability Assessment: Fix cells with trichloroacetic acid and stain with SRB, which binds to protein content. Alternatively, lyse cells and measure ATP content using CellTiter-Glo luminescent readout.

- Data Processing: Measure optical density (SRB) or luminescence (ATP). Calculate percent growth inhibition relative to untreated controls (100% growth) and a time-zero plate (0% growth).

- Data Analysis: The NCI's COMPARE algorithm is used to analyze the resulting pattern of growth inhibition across all 60 cell lines. The pattern is compared to a database of known compounds to generate hypotheses about the test compound's potential mechanism of action.
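The data-processing step above, scaling each well between the time-zero plate (0% growth) and the untreated control (100% growth), can be sketched as a small function. The variable names `ti`, `tz`, and `c` are illustrative. The branch for readings below time zero follows the commonly described NCI-60 convention of expressing net cell loss relative to the time-zero signal, which is an assumption about the exact formula rather than something stated in this protocol.

```python
def percent_growth(ti: float, tz: float, c: float) -> float:
    """Percent growth of a treated well.

    ti: signal of the treated well at the end of incubation
    tz: signal of the time-zero plate (defines 0% growth)
    c:  signal of the untreated control (defines 100% growth)
    """
    if tz <= 0 or c <= tz:
        raise ValueError("expected 0 < tz < c (control must outgrow time zero)")
    if ti >= tz:
        # Net growth: scale between time zero (0%) and control (100%).
        return 100.0 * (ti - tz) / (c - tz)
    # Net cell loss: express the drop relative to the time-zero signal.
    return 100.0 * (ti - tz) / tz


# Hypothetical optical densities for one cell line across five concentrations.
tz, c = 0.5, 1.5
for ti in (1.5, 1.2, 1.0, 0.5, 0.25):
    print(f"ti={ti:<5} percent growth = {percent_growth(ti, tz, c):.1f}")
```

A vector of such values across all 60 lines is the activity fingerprint that the pattern-comparison step then works with.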

Table 2: Key Research Reagent Solutions for Stepping Stones-Style Research

| Resource | Source | Function in Therapeutic Development |

|---|---|---|

| Patient-Derived Models Repository (PDMR) | NCI [7] | Provides clinically annotated PDX, PDC, and organoid models for efficacy testing in physiologically relevant systems. |

| Cooperative Human Tissue Network (CHTN) | NCI [7] | Supplies human tissues and fluids from routine procedures for target validation and biomarker studies. |

| NCI-60 Human Tumor Cell Lines | Developmental Therapeutics Program [8] | A standardized panel for high-throughput compound screening and mechanistic fingerprinting. |

| Genomic Data Commons (GDC) | NCI [8] | A unified data repository enabling molecular analysis of tumors to inform patient stratification strategies. |

| The Cancer Imaging Archive (TCIA) | NCI [7] [8] | A repository of medical images of cancer for developing non-invasive biomarkers of response. |

| DTP Repository | Developmental Therapeutics Program [7] | Supports distribution of chemical and biological samples for screening and profiling. |

Data Integration and Analytical Framework

A critical component of the Stepping Stones model is the strategic integration of data from multiple NCI resources to build a comprehensive preclinical package. This involves correlating experimental results with extensive publicly available datasets to strengthen the rationale for clinical development.

Integrating Experimental Data with Public Databases:

- Genomic Correlates: For candidates showing selective activity in specific PDX models or NCI-60 cell lines, researchers can cross-reference sensitivity data with genomic features (e.g., mutations, copy number alterations, gene expression) available through the Genomic Data Commons (GDC) and The Cancer Genome Atlas (TCGA) [8]. This can identify potential predictive biomarkers.

- Target Expression Profiling: The expression and prevalence of a drug target across cancer types can be assessed using RNA sequencing data from TCGA, available via the GDC portal [8]. This helps define the potential patient population and therapeutic landscape.

- Pathway Analysis: Data from the Cancer Complexity Knowledge Portal and Cancer Systems Biology Consortium (CSBC) can provide insights into the complex network biology surrounding a therapeutic target, suggesting potential combination strategies or resistance mechanisms [7].
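As a rough illustration of the genomic-correlates step, the sketch below joins per-model sensitivity values to a binary genomic feature by model identifier and compares the two groups. Everything in it is hypothetical: the model IDs, the sensitivity values, and the mutation calls are invented for illustration, and no GDC or TCGA API is called. In practice the feature table would be exported from the relevant portal.

```python
from statistics import median


def split_by_status(sensitivity, is_mutant):
    """Join sensitivity values to a binary genomic feature by model ID."""
    mutant, wild_type = [], []
    for model_id, value in sensitivity.items():
        status = is_mutant.get(model_id)
        if status is None:
            continue  # no genomic annotation for this model; skip it
        (mutant if status else wild_type).append(value)
    return mutant, wild_type


# Hypothetical log10(GI50, molar) values; lower means more sensitive.
log_gi50 = {"LINE_A": -7.2, "LINE_B": -5.1, "LINE_C": -6.9,
            "LINE_D": -5.4, "LINE_E": -6.0}
# Hypothetical mutation calls for one gene; LINE_E has no annotation.
gene_x_mutant = {"LINE_A": True, "LINE_B": False,
                 "LINE_C": True, "LINE_D": False}

mutant, wild_type = split_by_status(log_gi50, gene_x_mutant)
shift = median(mutant) - median(wild_type)
print(f"median log10 GI50 shift (mutant - wild type): {shift:.2f}")
```

A consistent shift of this kind is only a hypothesis-generating signal; confirming a predictive biomarker would require appropriate statistics and independent validation.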

Diagram 2: Data integration for development decisions.

This integrated analytical approach transforms discrete experimental outcomes into a compelling evidence package, significantly de-risking decisions about further investment in a therapeutic candidate's development pathway.

The NCI Stepping Stones Program provides a sophisticated, accessible model for leveraging federal resources to overcome specific, critical bottlenecks in the early-stage development of oncologic therapeutics. Its structured approach—combining rigorous eligibility criteria, a collaborative consultation process, and targeted experimental support—ensures that public resources are deployed efficiently to advance the most promising science. For researchers, a clear understanding of the program's access protocols, available resources, and standard experimental methodologies is essential for successfully navigating this valuable pathway. By framing development projects within this "stepping stone" paradigm, scientists can strategically address data gaps, mitigate project risks incrementally, and enhance the probability that their innovative discoveries will ultimately translate into new treatments for patients with cancer.

Identifying Unmet Clinical Needs as Primary Candidates for Stepping Stone Support

In the strategic development of therapeutics, the concept of a "stepping stone" is a powerful methodology for de-risking long-term, complex projects. A stepping stone is not merely an arbitrary milestone but a cohesive, concrete deliverable that provides a vantage point to re-situate and evaluate next steps, delivering real value and illuminating "unknown unknowns" that cannot be identified through planning alone [9]. In drug development, an unmet clinical need—a well-defined gap in patient care for which no adequate solution exists—serves as an ideal primary candidate for such a stepping stone. Successfully addressing a focused, unmet need creates a foundation of validated science, clinical proof-of-concept, and regulatory experience upon which more ambitious therapeutic programs can be built. This protocol details a systematic approach for identifying and validating these critical unmet needs to strategically advance drug development pipelines.

A Stepping Stone Framework for Clinical Development

Adopting a stepping stone approach transforms drug development from a high-risk, monolithic endeavor into a series of de-risked, value-generating steps. The core principle is to pursue simplicity and directionally consistent progress within a defined "cone of strategy" [9]. A well-articulated set of stepping stones delivers multiple strategic advantages:

- Risk Mitigation: Each stepping stone presents an opportunity to assess project viability and pivot resources if necessary, avoiding the scenario of wasted years of effort [9].

- Capital Efficiency: Delivering incremental value can secure ongoing investment and stakeholder buy-in by demonstrating concrete progress [9].

- Organizational Learning: Building a simplified version of a final system first drastically reduces the scope of unknown unknowns, turning open-ended problems into obvious next steps [9].

- Team Motivation: Shipping a real, albeit scoped-down, system or component is far more motivating for a team than working toward a distant, abstract goal [9].

Table 1: Core Characteristics of an Effective Clinical Stepping Stone

| Characteristic | Description | Application in Drug Development |

|---|---|---|

| Cohesive & Concrete | A simplified but functioning version of a final system or component [9]. | A drug candidate with a clear mechanism of action and a defined, reachable clinical endpoint. |

| Delivers Real Value | Provides utility even if the larger project is canceled [9]. | Addresses a true patient need, potentially serving a niche market or fulfilling a regulatory incentive (e.g., Orphan Drug Designation). |

| Directionally Consistent | Resides within the "cone of strategy" and enables future progress [9]. | The biological target, technology platform, or clinical development path is relevant to the long-term portfolio goal. |

| Enables Learning | Illuminates unknown unknowns and reduces future uncertainty [9]. | Generates critical human data on biology, pharmacokinetics, or safety that informs the next development step. |

A Protocol for Identifying Unmet Clinical Needs

This protocol leverages principles from implementation science, a discipline focused on integrating evidence-based interventions into clinical practice [10]. The framework is adapted to systematically scan, evaluate, and prioritize unmet clinical needs as potential stepping stones.

Phase 1: Landscape Analysis and Tool Selection

Objective: To conduct a broad, evidence-based scan of the clinical landscape to identify potential unmet needs.

Methodology:

- Define the Therapeutic Domain: Clearly bound the area of interest (e.g., oncology, neurodegenerative diseases, rare diseases).

- Identify Evidence-Based Assessment Tools: Utilize validated, multi-dimensional tools to quantitatively and qualitatively characterize the gaps in patient care. Several tools are recognized in clinical research for this purpose [10].

- Gather Quantitative and Qualitative Data:

- Literature Review: Systematic reviews, meta-analyses, and clinical practice guidelines are rich sources for identifying reported care gaps.

- Analysis of Patient-Reported Outcomes (PROs): Mine existing datasets from clinical trials or real-world evidence platforms for domains where patients report high symptom burden or low quality of life.

- Stakeholder Interviews: Conduct structured interviews with key opinion leaders, practicing clinicians, and patient advocacy groups to uncover challenges not fully captured in published literature.

Table 2: Multi-Dimensional Needs Assessment Tools for Clinical Landscape Analysis

| Tool Name | Domains of Assessment | Key Utility & Context |

|---|---|---|

| NCCN Distress Thermometer (DT) | Physical, emotional, social, practical, spiritual [10]. | Widely used in oncology to identify needs during active treatment; leads to actionable referrals [10]. |

| Supportive Care Needs Survey (SCNS) | Physical/daily living, psychological, sexual, support services, health system/information [10]. | A comprehensive validated tool for assessing unmet needs across the cancer care continuum [10]. |

| Short-Form Survivor Unmet Needs Survey (SF-SUNS) | Unmet needs in post-treatment survivorship [10]. | Specifically designed for the post-treatment survivorship phase [10]. |

| Cancer Survivors’ Unmet Needs (CaSUN) | Unmet needs in post-treatment survivorship [10]. | Measures the range of needs in cancer survivors [10]. |

| PhenX Toolkit | Various validated protocols for phenotypes and exposures [10]. | A catalog of standardized measurement protocols for use in research studies [10]. |

Phase 2: Contextual Analysis Using an Implementation Framework

Objective: To evaluate the shortlisted unmet needs through the lens of clinical implementation feasibility, ensuring they are not just scientifically interesting but also clinically actionable. The Consolidated Framework for Implementation Research (CFIR) provides a pragmatic structure for this analysis [10].

Methodology: For each candidate unmet need, assess the following domains:

- Inner Setting: Analyze the clinical context where a future therapeutic would be used. Key factors include clinic workflow, staffing roles, technical infrastructure, and organizational culture [10]. A detailed workflow diagram is recommended to visualize how a new therapeutic would integrate into existing processes [10].

- Outer Setting: Evaluate the broader environment, including the competitive landscape, payer and reimbursement policies, patient and community attitudes, and the network of available supportive services [10].

- Characteristics of Individuals: Identify potential clinical champions and assess the capability, knowledge, and motivation of clinicians who would be end-users of a new therapy [10].

- Implementation Process: Develop a preliminary plan for how the therapeutic would be introduced, including key steps, measures of success, and a strategy for obtaining stakeholder buy-in [10].

Phase 3: Prioritization and Stepping Stone Validation

Objective: To rank the vetted unmet needs and select the most promising candidate for initial development as a strategic stepping stone.

Methodology:

- Develop a Prioritization Matrix: Create a scoring system based on criteria critical to the stepping stone strategy.

- Score Each Candidate Need: Apply the matrix to the shortlisted needs from Phase 2.

- Select and Refine: Choose the highest-ranking need and further refine its definition to ensure it is specific, measurable, and aligned with the long-term "cone of strategy" [9].

Table 3: Prioritization Matrix for Unmet Clinical Needs

| Prioritization Criteria | Low Priority (1 pt) | Medium Priority (2 pts) | High Priority (3 pts) | Candidate A Score | Candidate B Score |

|---|---|---|---|---|---|

| Clinical Impact & Unmetness | Limited impact on QoL/mortality; several treatments exist. | Moderate impact; some treatments available but with limitations. | Severe impact on QoL/mortality; no or very poor treatment options. | ||

| Alignment with Core Capabilities | Divergent from existing R&D expertise/platform. | Partially aligned; requires some new capability development. | Directly leverages existing core capabilities and IP. | ||

| Feasibility (Technical/Regulatory) | High technical risk; unclear regulatory path. | Moderate technical risk; complex but known regulatory path. | Low technical risk; clear and straightforward regulatory path (e.g., Orphan Drug). | ||

| Commercial/Strategic Potential | Small, niche market; limited future options. | Moderate market; could enable 1-2 follow-on programs. | Significant market itself; enables multiple future pipeline programs. | ||

| Resource Efficiency | Requires large, long-term investment before any value demonstration. | Moderate investment; value demonstration in mid-term. | Lean investment; potential for early value demonstration (e.g., fast-to-clinic). | ||
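One way to apply the matrix is to sum the 1-3 point scores across the five criteria and rank candidates by total. The sketch below assumes equal weighting unless weights are supplied; the criterion keys, the optional weights, and the candidate scores are illustrative assumptions, not part of the source protocol.

```python
CRITERIA = (
    "clinical_impact",
    "capability_alignment",
    "feasibility",
    "strategic_potential",
    "resource_efficiency",
)


def total_score(scores, weights=None):
    """Weighted sum of 1-3 point scores over the five matrix criteria."""
    weights = weights or {c: 1.0 for c in CRITERIA}
    for criterion in CRITERIA:
        if scores[criterion] not in (1, 2, 3):
            raise ValueError(f"{criterion}: score must be 1, 2, or 3")
    return sum(weights[c] * scores[c] for c in CRITERIA)


def rank_candidates(candidates, weights=None):
    """Return candidate names ordered from highest to lowest total score."""
    return sorted(candidates,
                  key=lambda name: total_score(candidates[name], weights),
                  reverse=True)


# Hypothetical scores for two candidate unmet needs.
candidates = {
    "Candidate A": dict(zip(CRITERIA, (3, 2, 3, 2, 3))),
    "Candidate B": dict(zip(CRITERIA, (2, 3, 1, 3, 1))),
}
for name in rank_candidates(candidates):
    print(name, total_score(candidates[name]))
```

Unequal weights make sense when, for example, clinical impact should dominate the decision; keeping them explicit makes that judgment visible to reviewers.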

The Scientist's Toolkit: Key Reagents & Materials

Successfully executing this protocol requires both data and specialized tools.

Table 4: Essential Research Reagent Solutions for Needs Identification

| Item / Reagent | Function in the Protocol |

|---|---|

| Validated Needs Assessment Tools (e.g., NCCN DT, SCNS) [10] | Standardized instruments for quantitatively measuring the type and severity of unmet needs in a patient population. |

| Electronic Health Record (EHR) Data Access | Provides real-world data on patient demographics, treatment patterns, comorbidities, and outcomes to validate perceived needs. |

| Literature Mining & Database Subscription (e.g., PubMed, ClinicalTrials.gov) | Enables systematic landscape analysis of published literature and ongoing clinical research to identify gaps. |

| Data Visualization & Analysis Software (e.g., R, Python, Tableau) | Critical for analyzing large datasets, creating workflow diagrams [10], and generating prioritization matrices. |

| Implementation Strategy Catalog (e.g., ERIC compilation) [10] | A repository of strategies (e.g., "identify champions," "change record systems") to address barriers identified during the CFIR analysis [10]. |

Identifying unmet clinical needs through this structured, three-phase protocol allows research organizations to select targets that are not only scientifically meritorious but also strategically advantageous. By treating a precisely defined unmet need as a stepping stone, teams can build a foundation of knowledge and value, transforming the high-risk journey of drug development into a series of deliberate, learned, and de-risked steps toward a larger goal. This approach maximizes the return on R&D investment and increases the likelihood of delivering meaningful therapies to patients.

The transition from academic discovery to clinical candidate is a critical valley of death in anticancer therapeutic development. The Stepping Stones Program, administered by the National Cancer Institute's Division of Cancer Treatment and Diagnosis (NCI/DCTD), provides a formalized framework to address this gap by aligning grant funding with discrete development resources [6]. This programmatic initiative is designed to augment grant-supported research by providing access to federal drug development capabilities, thereby filling specific knowledge and data gaps that prevent promising therapeutic candidates from advancing toward clinical testing [6]. The deliberate identification and deployment of these "stepping stones"—specific, targeted resources that address critical path obstacles—enables research programs to generate the necessary data to procure additional development funding and ultimately progress to clinical trials.

The core objective of stepping stone identification is to pinpoint the most critical product development gaps in a research program and perform a discrete set of studies specifically designed to address these gaps. This methodology requires rigorous project selection based on specific criteria, including well-characterized therapeutic targets, demonstrated preclinical efficacy, and a focus on addressing unmet clinical needs in areas such as orphan cancers, glioblastoma, small cell lung cancer, pancreatic cancer, and pediatric cancers [6]. This document outlines application notes and experimental protocols to optimize researcher engagement with these structured development pathways.

Comparative Analysis of Stepping Stone Program Components

Table 1: Discrete Development Resources within the NCI Stepping Stones Program

| Resource Component | Function in Development Pathway | Eligibility Criteria | Technical Scope & Limitations |

|---|---|---|---|

| Drug Development Consultation | Initial advisory meeting with NCI development experts to assess candidate viability and identify critical gaps [6]. | NCI grantees with a grant-supported therapeutic candidate [6]. | Strategic assessment; does not include direct experimental work. |

| Preclinical Efficacy Studies | Provides in vitro and in vivo data to validate mechanism of action and demonstrate proof-of-concept [6]. | Well-characterized therapeutic candidate with preliminary efficacy data [6]. | Non-GLP studies; focuses on bridging efficacy gaps. |

| Discrete Gap-Filling Studies | Addresses the single most critical product development gap identified during consultation [6]. | Projects invited for further discussion post-consultation [6]. | Preclinical scope only; IND-enabling GLP/GMP support is not provided [6]. |

Funding Landscape for Evidence-Use Research

Table 2: Grant Structures for Research on Evidence Utilization in Youth-Serving Systems

| Grant Type | Funding Range & Duration | Ideal For | Eligibility Requirements |

|---|---|---|---|

| Major Research Grants | $100,000 to $1,000,000 over 2-4 years [11]. | Studies involving new data collection or randomized experiments in settings (e.g., schools, agencies) [11]. | Tax-exempt organizations; PIs must meet institutional criteria [11]. |

| Officers’ Research Grants | $25,000 to $50,000 over 1-2 years [11]. | Stand-alone projects or projects building off larger studies; secondary data analysis [11]. | Same as Major Grants; one application per PI per cycle [11]. |

Experimental Protocols for Stepping Stone Engagement

Protocol: Drug Development Consultation Request

Objective: To secure a strategic consultation with NCI/DCTD staff to evaluate a grant-supported therapeutic candidate and identify the most critical development gap for potential resource deployment [6].

Workflow Overview: The following diagram outlines the key stages a research program undergoes when engaging with the Stepping Stones Program, from initial application to potential project completion.

Materials and Reagents:

- Research Grant Award Documentation [6]

- Therapeutic Candidate Data Package (including chemical/biological characterization, preliminary efficacy data, and target validation data) [6]

- NCI Drug Development Consultation Request Form

Procedure:

- Eligibility Verification: Confirm that the therapeutic candidate and research program are supported by an active or recent NCI/DCTD grant [6].

- Data Package Compilation: Assemble a comprehensive data package for the candidate. This must include:

- Target Validation Data: Evidence supporting the target's role in the disease and its validation as a therapeutic intervention point [6].

- Preclinical Efficacy: In vitro and/or in vivo data demonstrating the candidate's biological activity [6].

- Candidate Characterization: Data on the candidate's chemical structure, purity, and preliminary stability (for small molecules) or sequence and expression data (for biologics).

- Consultation Submission: Complete and submit the official Drug Development Consultation Request to the NCI/DCTD.

- Strategic Presentation: Present the candidate's value proposition and current development status during the scheduled consultation meeting. Focus the discussion on specific, discrete knowledge gaps rather than broad resource needs.

- Gap Identification: Work with the NCI panel to reach a consensus on the single most critical product development gap that, if filled, would most significantly advance the project toward the next development milestone.

Protocol: Execution of Discrete In Vivo Efficacy Study

Objective: To generate robust in vivo efficacy data for a therapeutic candidate using NCI/DCTD resources, addressing a predefined development gap.

Materials and Reagents:

- Test Article: Therapeutic candidate with quality control data (e.g., purity, potency, stability).

- Animal Model: Validated preclinical model (e.g., patient-derived xenograft, genetically engineered mouse model) relevant to the proposed cancer indication [6].

- Vehicle Control: Appropriate formulation vehicle matching the test article's formulation.

- Reference Control: Standard-of-care therapeutic agent, if applicable.

- Data Collection System: Electronic system for recording tumor measurements, animal weights, and clinical observations.

Procedure:

- Study Finalization: Finalize the study protocol in coordination with NCI/DCTD staff, defining primary and secondary endpoints, statistical power, and inclusion/exclusion criteria.

- Model Allocation: Randomize animals into predefined treatment and control groups (e.g., Vehicle Control, Reference Control, Test Article at multiple doses) to ensure group equivalency at baseline.

- Dosing Regimen: Administer the test article, vehicle, and reference control according to the scheduled route and frequency (e.g., oral gavage, intraperitoneal injection, intravenous injection).

- Efficacy Monitoring: Measure tumor volumes and record animal body weights 2-3 times weekly. Calculate tumor growth inhibition (TGI) for each animal relative to the vehicle control group.

- Endpoint Analysis: Humanely euthanize animals at the study endpoint. Process and collect tumors and key tissues for potential subsequent biomarker analysis.

- Data Analysis and Reporting: Analyze the data to determine the statistical significance of the results. The final study report should include individual and mean tumor growth curves, TGI calculations, body weight curves, and any observations on toxicity or mortality.
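The model-allocation step asks for groups that are equivalent at baseline. One simple way to approximate this is blocked randomization on baseline tumor volume, sketched below. This is an assumed technique chosen for illustration, not a method prescribed by the program; the animal IDs, volumes, arm names, and fixed seed are all hypothetical.

```python
import random
from statistics import mean


def block_randomize(baseline_volumes, arms, seed=0):
    """Sort animals by baseline volume, then shuffle arm labels within
    consecutive blocks so each arm receives one animal per block."""
    rng = random.Random(seed)
    ordered = sorted(baseline_volumes, key=baseline_volumes.get)
    assignment = {}
    for start in range(0, len(ordered), len(arms)):
        block = ordered[start:start + len(arms)]
        labels = list(arms)
        rng.shuffle(labels)
        for animal, arm in zip(block, labels):
            assignment[animal] = arm
    return assignment


# Hypothetical baseline tumor volumes (mm^3) for 12 animals.
volumes = {f"M{i:02d}": v for i, v in enumerate(
    [110, 150, 95, 180, 130, 125, 160, 105, 140, 170, 115, 135], start=1)}
arms = ("vehicle", "reference", "test_article")

assignment = block_randomize(volumes, arms)
for arm in arms:
    members = [a for a, g in assignment.items() if g == arm]
    print(arm, len(members), round(mean(volumes[a] for a in members), 1))
```

Printing the per-arm mean baseline volume gives a quick check of group equivalency before dosing begins; a formal comparison can follow if the means look unbalanced.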

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Stepping Stone Development Projects

| Reagent/Material | Function in Development Pathway | Key Specifications |

|---|---|---|

| Validated Animal Models | In vivo assessment of preclinical efficacy and toxicity in a biologically relevant system [6]. | PDX, syngeneic, or genetically engineered models; well-characterized and validated. |

| Analytical Reference Standards | Quantification of drug substance and metabolite levels for pharmacokinetic (PK) and stability studies. | High purity (>95%); characterized structure; known stability profile. |

| Target-Specific Biomarker Assays | Demonstrate proof of mechanism and patient stratification potential [6]. | Validated assay (e.g., ELISA, IHC, PCR); established dynamic range and precision. |

| Formulation Vehicles | Enable in vivo dosing by ensuring candidate solubility and stability at administration. | Biocompatible; does not interact with the API; suitable for planned route of administration. |

Data Visualization and Analysis Framework

Effective data presentation is critical for demonstrating the impact of discrete development resources. When comparing quantitative data between groups—such as treated versus control groups in an efficacy study—the data should be summarized for each group, and the difference between the means or medians must be computed [12]. Appropriate graphical representations include boxplots, which visually summarize the distribution of data using quartiles and medians and are excellent for comparing groups, or dot charts for smaller datasets [12].
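The per-group summary and the difference between means or medians described above can be computed with the standard library alone. The sketch below is illustrative; the function name and the end-of-study values are hypothetical.

```python
from statistics import mean, median


def compare_groups(control, treated):
    """Summarize two groups and report treated-minus-control differences."""
    return {
        "control_mean": mean(control),
        "treated_mean": mean(treated),
        "control_median": median(control),
        "treated_median": median(treated),
        "mean_difference": mean(treated) - mean(control),
        "median_difference": median(treated) - median(control),
    }


# Hypothetical end-of-study tumor volumes (mm^3).
control = [900, 1100, 1000, 1200, 800]
treated = [400, 500, 450, 700, 350]

for key, value in compare_groups(control, treated).items():
    print(f"{key}: {value}")
```

Reporting the median alongside the mean is useful when a single outlying animal would otherwise dominate the group summary.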

Diagram: Conceptual Framework for Stepping Stone Impact Analysis. This diagram illustrates the logical relationship between grant funding, the deployment of discrete resources, and the resulting project outcomes and data generation that fuel further development.

A Methodological Framework for Identifying and Deploying Project-Specific Stepping Stones

Conducting a Strategic Gap Analysis for Your Development Program

A strategic gap analysis is an essential tool in drug development, serving as a proactive evaluation to identify missing, incomplete, or insufficient data in a development program before regulatory submission. This process helps prioritize actions to meet regulatory expectations, improve safety profiles, and enhance therapeutic effectiveness, ultimately positioning development programs for successful regulatory interactions and approvals. By systematically comparing current program status with target requirements, teams can identify critical gaps that could become decision-making hurdles during development or regulatory obstacles at the time of approval [13] [14].

The fundamental purpose of gap analysis lies in its ability to reduce the significant uncertainty inherent in drug development. Clinical pharmacology and quantitative frameworks can substantially improve development efficiency by addressing scientific challenges in predicting efficacy, safety, and characterizing sources of response variability at earlier, less expensive development stages. When properly executed, gap analysis provides a strategic roadmap that translates model-informed drug development (MIDD) approaches into the decision-making process, potentially replacing certain clinical studies with validated models and simulations [13].

For researchers and drug development professionals, understanding how to conduct a thorough gap analysis is particularly valuable within the context of stepping stone identification – the process of systematically recognizing and addressing sequential development milestones that build upon one another to advance a compound toward successful registration. This methodology ensures that each development phase adequately supports the next, creating a coherent path from discovery to market approval.

A Systematic Methodology for Gap Analysis

Foundational Principles and Process

The gap analysis process begins with a comprehensive evaluation of all available compound data and information, including the Target Product Profile (TPP), Investigator's Brochure, clinical study plans, regulatory meeting minutes, and all available pre-clinical and clinical technical data [13]. This systematic assessment should be conducted against established regulatory frameworks, such as the FDA's Question Based Review (QBR) process for clinical pharmacology, which focuses on critical areas including dose selection and optimization, therapeutic individualization, and benefit/risk balance for general and specific populations [13].

A robust gap analysis answers several key questions [13]:

- Will completed or planned studies support regulatory QBR and labeling requirements?

- Are collected data sufficient to support planned analyses?

- Does the quality of existing data, analyses, study designs, and overall clinical approach support the desired regulatory strategy?

- Are we leveraging the best available science and technology?

- Does existing data support the goals of the TPP?

- Is additional evidence needed, and if so, is it better obtained through standalone studies or quantitative analyses?

Cross-Functional Assessment Framework

Strategic gap analysis in drug development encompasses multiple specialized domains, each requiring specific evaluation criteria. The table below outlines the primary types of gap analyses conducted in life sciences development programs:

Table: Types of Gap Analyses in Drug Development Programs

| Analysis Type | Primary Focus | Key Evaluation Criteria | Development Stage |

|---|---|---|---|

| Regulatory [14] | Identify gaps in data or documentation supporting regulatory submissions | Compliance with FDA regulations/guidances; adequacy of safety information; meeting readiness | Pre-IND through NDA/BLA submission |

| Clinical [14] | Evaluate adequacy of clinical trial protocols, reports, and overall program | Trial design appropriateness; endpoint selection; patient population; GCP compliance | Phase 1 through Phase 3 |

| Nonclinical [14] | Assess gaps in nonclinical data package | Pharmacology, PK, and toxicology data adequacy; support for proposed clinical trials | Early development through approval |

| CMC [14] | Evaluate manufacturing processes and controls | Commercial-scale production capability; stability data; shelf-life support | Early development through commercial |

| Commercial/Market Access [14] | Identify gaps affecting successful product launch | Payer requirements; physician needs; patient access; cost-effectiveness | Throughout development, especially prior to Phase 3 |

The timing of gap analysis is strategic throughout the development lifecycle. While best conducted early, it provides value at multiple milestones including prior to IND submission, End of Phase 1 (EOP1), End of Phase 2 (EOP2), and pre-NDA/BLA [13]. At each stage, the analysis ensures the program contains all elements needed to support regulatory review and informative, actionable product labeling.

Quantitative Framework for Gap Analysis Assessment

Performance-Potential Assessment Matrix

A structured quantitative approach to gap analysis enables objective assessment of development program elements. The following table demonstrates a framework adapted from validated drug development policy research, which evaluates both current performance and potential situation across critical constructs [15]:

Table: Gap Analysis Assessment Framework for Drug Development Programs

| Construct | Key Indicators | Current Performance (1-5) | Potential Situation (1-5) | Gap Score | Priority Level |

|---|---|---|---|---|---|

| Regulation [15] | Drug development guidelines; Registration pathways; Pricing considerations; Regional harmonization | ||||

| Pharma Capacity [15] | Competent HR; GMP facilities; Quality testing; R&D capabilities; Partnership networks | ||||

| Drug Characteristics [15] | Non-clinical data; Clinical trials phases; Bioequivalence/bioavailability; Safety profile | ||||

| Market Opportunities [15] | Affordable pricing; Return on investment; Market size; Competitive landscape | ||||

| Push Strategies [15] | Research funding; Tax incentives; Public research support; Infrastructure development | ||||

| Pull Strategies [15] | Reimbursement policies; Procurement mechanisms; Market exclusivity | ||||

| Regulatory-Pull Strategies [15] | Accelerated approval; Adaptive pathways; Regulatory fee reductions | ||||

The gap score is calculated as the difference between the potential situation rating (importance) and current performance rating, with larger gaps indicating higher priority areas for intervention. This quantitative approach enables evidence-based prioritization during the policymaking and resource allocation process [15].
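The gap-score calculation can be sketched directly from that definition: potential situation rating minus current performance rating, with constructs then ordered from largest to smallest gap. The ratings below are hypothetical placeholders, and no thresholds for the "Priority Level" column are implied, since the source does not define them.

```python
def gap_scores(ratings):
    """Compute gap = potential - current for each construct.

    ratings maps construct name -> (current_performance, potential_situation),
    each on the 1-5 scale used in the assessment table.
    Returns a dict ordered from largest to smallest gap.
    """
    scores = {}
    for construct, (current, potential) in ratings.items():
        for rating in (current, potential):
            if not 1 <= rating <= 5:
                raise ValueError(f"{construct}: ratings must be between 1 and 5")
        scores[construct] = potential - current
    return dict(sorted(scores.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical ratings: (current performance, potential situation).
ratings = {
    "Regulation": (3, 5),
    "Pharma Capacity": (2, 5),
    "Drug Characteristics": (4, 5),
    "Market Opportunities": (3, 4),
}

for construct, gap in gap_scores(ratings).items():
    print(f"{construct}: gap = {gap}")
```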

Statistical Assessment of Group Differences

When comparing perspectives between stakeholders (e.g., pharmaceutical industry vs. government regulators), independent samples t-tests can determine the significance of differences in perceived challenges and opportunities. Research has demonstrated that while pharmaceutical industries and governments often show high consistency in perceived drug development challenges, statistically significant differences in specific areas can reveal critical policy-implementation gaps that must be addressed [15].
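For readers who want to see what the independent samples t-test computes, the sketch below derives the pooled-variance (Student's) t statistic and its degrees of freedom using only the standard library. The stakeholder ratings are hypothetical, and the equal-variance form is an assumption. Obtaining a p-value requires the t distribution, which is left to a statistics package.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_statistic(group_a, group_b):
    """Student's independent-samples t statistic (equal variances assumed).

    Returns (t, degrees_of_freedom).
    """
    n_a, n_b = len(group_a), len(group_b)
    df = n_a + n_b - 2
    # Pooled sample variance, weighting each group by its degrees of freedom.
    pooled_var = ((n_a - 1) * variance(group_a)
                  + (n_b - 1) * variance(group_b)) / df
    standard_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(group_a) - mean(group_b)) / standard_error, df


# Hypothetical 1-5 ratings of one challenge item from two stakeholder groups.
industry = [4, 5, 4, 3, 5, 4]
government = [3, 3, 4, 2, 3, 3]

t_stat, df = pooled_t_statistic(industry, government)
print(f"t = {t_stat:.3f}, df = {df}")
```

If the two groups have clearly different variances or sizes, Welch's unequal-variance version of the test is usually the safer choice.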

For quantitative data comparison between groups, appropriate statistical summaries and visualizations include [12]:

- Back-to-back stemplots: Effective for small datasets and two-group comparisons

- Boxplots: Ideal for summarizing distributions across multiple groups using five-number summaries

- 2-D dot charts: Suitable for small to moderate amounts of data, showing individual observations

These methodological approaches facilitate objective assessment of development gaps and stakeholder alignment, providing empirical evidence for strategic decision-making.
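The five-number summary behind a boxplot (minimum, first quartile, median, third quartile, maximum) can be computed as sketched below. The helper name and sample ratings are illustrative. Quartile conventions differ between tools; this sketch assumes the "inclusive" method of Python's `statistics.quantiles` (available from Python 3.8), so other software may report slightly different quartiles for the same data.

```python
from statistics import quantiles


def five_number_summary(values):
    """Return (min, Q1, median, Q3, max) using the inclusive quartile method."""
    q1, q2, q3 = quantiles(values, n=4, method="inclusive")
    return min(values), q1, q2, q3, max(values)


# Hypothetical gap scores reported by two stakeholder groups.
groups = {
    "industry": [1, 2, 2, 3, 3, 3, 4],
    "government": [0, 1, 1, 2, 2, 3, 3],
}

for name, values in groups.items():
    print(name, five_number_summary(values))
```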

Experimental Protocols for Gap Analysis Implementation

Protocol 1: Comprehensive Program Assessment

Objective: To systematically identify and prioritize gaps across all development domains for a compound entering Phase 2 development.

Materials:

- Complete Target Product Profile (TPP)

- Investigator's Brochure (current version)

- All available pre-clinical and clinical study reports

- Clinical study protocols (completed and planned)

- Regulatory correspondence and meeting minutes

- Competitive intelligence analysis

- Relevant regulatory guidelines (FDA, EMA, etc.)

Methodology:

- Document Collection and Organization: Assemble all program documents in a standardized digital repository with controlled access for the assessment team.

- Regulatory Framework Mapping: Create a matrix mapping current program data against specific regulatory requirements for the targeted indication, including:

  - FDA Question Based Review (QBR) requirements for clinical pharmacology [13]

  - ICH guideline requirements for nonclinical (M3[R2]), clinical (E8), and CMC (Q8-Q11)

  - Therapeutic-area specific guidance documents

- Cross-Functional Team Assembly: Convene a multidisciplinary team including expertise in:

  - Clinical pharmacology and pharmacometrics [13]

  - Nonclinical development (toxicology, pharmacology)

  - CMC (manufacturing, analytical, controls)

  - Clinical development (medical, operations, biostatistics)

  - Regulatory affairs

  - Commercial/market access

- Structured Assessment Sessions: Conduct facilitated sessions for each functional area to evaluate:

  - Completeness of existing data package

  - Adequacy of planned studies

  - Alignment with TPP goals

  - Identification of potential regulatory objections

- Gap Prioritization: Score identified gaps using a risk-based matrix considering:

  - Impact on program viability and regulatory approval

  - Feasibility of addressing the gap within development timelines

  - Resource requirements for gap closure

- Strategic Roadmap Development: Create a comprehensive plan to address prioritized gaps, including:

  - Recommended studies or analyses

  - Timeline implications

  - Resource requirements

  - Go/no-go decision points

Deliverables: Comprehensive gap analysis report, prioritized gap closure plan, updated development strategy, and regulatory engagement strategy.
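The gap prioritization step in Protocol 1 names three scoring dimensions (impact, feasibility, and resource requirements) but does not prescribe a formula. One possible way to turn them into a ranked list is a weighted score, sketched below. The weights, the 1 to 5 scales, the `Gap` class, and the example gaps are all assumptions made for illustration; an assessment team would agree on its own scheme before scoring.

```python
from dataclasses import dataclass

# Hypothetical weights; they are not specified by the protocol.
WEIGHTS = {"impact": 0.5, "feasibility": 0.3, "resources": 0.2}


@dataclass(frozen=True)
class Gap:
    name: str
    impact: int       # 1 (low) to 5 (high) impact on viability and approval
    feasibility: int  # 1 (hard) to 5 (easy) to close within timelines
    resources: int    # 1 (low) to 5 (high) resource requirement


def priority_score(gap):
    """Weighted score; higher means the gap should be addressed sooner."""
    # The resource rating is inverted so that cheaper closures score higher.
    return (WEIGHTS["impact"] * gap.impact
            + WEIGHTS["feasibility"] * gap.feasibility
            + WEIGHTS["resources"] * (6 - gap.resources))


# Illustrative gaps only.
gaps = [
    Gap("Hepatic impairment study not planned", impact=4, feasibility=3, resources=3),
    Gap("DDI potential uncharacterized", impact=5, feasibility=4, resources=2),
    Gap("Formulation bridging data missing", impact=3, feasibility=2, resources=4),
]

prioritized = sorted(gaps, key=priority_score, reverse=True)
for g in prioritized:
    print(f"{priority_score(g):.1f}  {g.name}")
```

A weighted sum is only one option. Some teams prefer a two-axis matrix (impact versus effort) so that no single number hides a trade-off, and the sketch can be adapted by returning the component ratings instead of a combined score.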

Protocol 2: Clinical Pharmacology-Specific Gap Analysis

Objective: To evaluate the adequacy of the clinical pharmacology package in supporting dose selection, therapeutic individualization, and key labeling claims.

Materials:

- Pharmacokinetic (PK) data from all completed studies

- Pharmacodynamic (PD) biomarker data (if available)

- Exposure-response analyses for efficacy and safety

- Population PK and covariate analysis results

- Drug-drug interaction (DDI) study data or plans

- Hepatic and renal impairment study data or plans

- QTc assessment data

- Physiologically-based pharmacokinetic (PBPK) models (if developed)

Methodology:

- Dose Justification Assessment: Evaluate evidence supporting the proposed dosing regimen, including:

  - Exposure-response relationships for efficacy and safety

  - Dose-response data from clinical trials

  - Modeling and simulation supporting dose selection [13]

- Intrinsic Factor Evaluation: Assess characterization of the impact of intrinsic factors (age, weight, renal/hepatic impairment, etc.) on PK/PD [13]

- Extrinsic Factor Assessment: Review evaluation of drug-drug interaction potential and food effects

- Formulation Assessment: Evaluate comparative bioavailability data supporting formulation changes

- Labeling Claim Support: Verify data adequacy for key clinical pharmacology labeling sections

- Model-Informed Drug Development (MIDD) Review: Assess utilization of pharmacometric approaches (population PK, PBPK, exposure-response, etc.) to support development decisions [13]

Deliverables: Clinical pharmacology gap assessment report, recommended studies and analyses, pharmacometrics strategy, and regulatory response plan.
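A simple way to start the Protocol 2 assessment is to record the status of each package component and list what remains open. The sketch below assumes a three-level status (complete, planned, missing) and an illustrative program; the component names follow the materials list above, while the statuses, the `Status` enum, and the `open_items` helper are hypothetical.

```python
from enum import Enum


class Status(Enum):
    COMPLETE = "complete"
    PLANNED = "planned"
    MISSING = "missing"


# Hypothetical status of an illustrative clinical pharmacology package.
package = {
    "Population PK and covariate analysis": Status.COMPLETE,
    "Exposure-response (efficacy and safety)": Status.COMPLETE,
    "Drug-drug interaction studies": Status.PLANNED,
    "Hepatic impairment study": Status.MISSING,
    "Renal impairment study": Status.PLANNED,
    "QTc assessment": Status.MISSING,
}


def open_items(components):
    """Return components that are not complete, missing ones first."""
    order = {Status.MISSING: 0, Status.PLANNED: 1}
    pending = [(name, status) for name, status in components.items()
               if status is not Status.COMPLETE]
    # Sort by urgency, then alphabetically for a stable report order.
    return sorted(pending, key=lambda item: (order[item[1]], item[0]))


pending_items = open_items(package)
for name, status in pending_items:
    print(f"{status.value:8s} {name}")
```

The resulting list is only an inventory. Judging whether a completed component is adequate for dose selection or labeling still requires the expert review described in the methodology.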

Workflow Visualization of Gap Analysis Process

[Figure: Strategic Gap Analysis Workflow]

[Figure: Stepping Stone Identification in Drug Development]

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Analytical Tools and Methods for Gap Analysis

| Tool/Method Category | Specific Solutions | Application in Gap Analysis | Regulatory Context |
|---|---|---|---|
| Pharmacometric Modeling [13] | Population PK, Exposure-response, Disease-state modeling | Predict clinical outcomes; Support dose recommendations; Inform go/no-go decisions | FDA QBR support; Labeling claims |
| PBPK Modeling [13] | Physiologically-based pharmacokinetic platforms | Inform clinical trial design; Predict DDIs; Special populations dosing | Regulatory acceptance for study waivers |
| Quantitative Systems Pharmacology [13] | QSP platforms and models | Identify biological pathways; Disease mechanism modeling | Internal decision-making; Early development |
| Model-based Meta-analysis [13] | Curated clinical trial databases | Competitive positioning; Trial optimization; Endpoint selection | Commercial strategy support |
| Clinical Trial Data Standards [14] | CDISC SDTM/ADaM; Controlled terminologies | Regulatory submission readiness; Data interoperability | Required for electronic submissions |
| Color Contrast Analyzers [16] [17] | axe DevTools; Color contrast analyzers | Ensure accessibility of data visualizations | WCAG 2.1 AA compliance |
| Data Visualization Tools [18] [19] | Scientific visualization software; Accessible color palettes | Create effective comparative charts; Accessible figures | Communication clarity; Regulatory documents |

These tools enable the quantitative assessment and visualization necessary for robust gap analysis. When selecting and implementing these solutions, consider regulatory acceptance, validation requirements, and fitness for purpose given the specific gap analysis objectives [13] [18].

Strategic gap analysis, when conducted systematically using these protocols and tools, provides an evidence-based approach to identifying and addressing development program weaknesses before they become regulatory objections. By implementing gap analysis at key development milestones, teams can optimize resource allocation, reduce late-stage attrition, and increase the likelihood of regulatory success [13] [14].

In the strategic landscape of drug development, navigating the regulatory pathway is not a single event but a sequential process of critical engagements. Each regulatory interaction functions as an essential stepping stone, where success in one stage creates the foundation for the next. This application note delineates protocols for engaging with regulatory agencies and expert panels, framing these interactions within a broader methodology for identifying and deploying these strategic stepping stones. We provide a structured approach for researchers and drug development professionals to plan, execute, and leverage these consultations to accelerate the development of novel therapies, particularly in complex areas like rare diseases and advanced therapeutic medicinal products (ATMPs) [20] [21].

The contemporary regulatory environment is characterized by both innovation and uncertainty. Recent staffing reductions at key agencies such as the FDA may lengthen review times for submissions including Investigational New Drug (IND) applications, New Drug Applications (NDAs), and Biologics License Applications (BLAs) [22]. In this context, a deliberate and well-defined strategy for regulatory consultation is not merely beneficial; it is critical for maintaining development momentum and securing timely approvals.

The Strategic Framework: Consultation as a Stepping-Stone Process

The process of drug development can be conceptualized as a series of validated stepping stones, where each formal regulatory interaction provides the necessary footing to advance confidently to the next development phase. A failed or poorly managed engagement can break the chain, resulting in significant delays and resource expenditure.

The diagram below illustrates this sequential, conditional process of regulatory engagement.

This framework underscores that regulatory success is built upon a sequence of preparatory steps. Each "stone" must be securely placed through meticulous preparation, data integrity, and strategic communication before progressing to the next.

Quantitative Landscape of Regulatory Tools and Pathways

A variety of formal programs exist to facilitate regulatory dialogue and qualify the tools used in development. Understanding the quantitative aspects of these programs is key to their strategic deployment.

Table 1: Key Regulatory Qualification and Guidance Programs

| Program / Tool | Regulatory Body | Primary Objective | Key Quantitative Metrics / Timelines |
|---|---|---|---|
| Drug Development Tool (DDT) Qualification [23] | U.S. FDA | To qualify biomarkers, clinical outcome assessments, and animal models for a specific Context of Use (COU) in drug development. | Publicly available for any drug development program within the qualified COU; reduces need for re-analysis in INDs, NDAs, BLAs. |
| Novel Methodologies Qualification [24] | EMA (CHMP) | Issue opinions on the acceptability of a novel methodology (e.g., biomarker, imaging method) in medicine development. | Leads to a CHMP Qualification Opinion; public consultation period included; based on submitted data. |
| Product-Specific Guidances (PSGs) [25] | U.S. FDA | Provide recommendations on bioequivalence studies for generic drug products. | Published quarterly; categorized by complexity (Complex/Non-Complex); revision types: Critical, Major (In Vivo/In Vitro), Minor, Editorial. |
| SPIRIT 2025 Statement [26] | International Consensus | Standardized protocol items for clinical trials (34 minimum items). | 317 participants in Delphi survey; 30 experts in consensus; improves protocol completeness and transparency. |

The strategic deployment of these tools can significantly alter the development trajectory. For instance, the DDT qualification process, established under the 21st Century Cures Act, creates a publicly available tool that can be used across multiple drug development programs, thereby increasing efficiency and reducing the resource burden on individual sponsors [23]. Similarly, the European Medicines Agency (EMA) encourages the formation of collaborative groups to pool resources and data for methodology qualification [24].

Application Note: Protocol for a Pre-IND Meeting

The Pre-IND meeting is a critical initial stepping stone, setting the stage for a successful IND application and subsequent clinical trials. The following protocol provides a detailed methodology for preparing for and executing this key engagement.

Experimental Protocol: Pre-IND Meeting Engagement

1. Objective: To obtain FDA alignment on initial non-clinical and CMC requirements, proposed clinical trial design, and overall development plan for a novel orphan drug product.

2. Background and Rationale: Early, proactive communication with regulatory authorities is a recognized best practice to mitigate risk and navigate an evolving regulatory landscape [22]. This is especially critical for novel modalities like cell and gene therapies [21]. This protocol standardizes the approach to secure targeted and actionable feedback.

3. Materials and Reagent Solutions:

Table 2: Essential Research Reagents for Regulatory Submissions

| Research Reagent / Document | Function / Explanation |
|---|---|
| Integrated Summary of Non-Clinical Data | Provides a comprehensive analysis of pharmacology, toxicology, and ADME studies to support the proposed clinical starting dose and schedule. |
| Proposed Clinical Protocol (v1.0) | Detailed study plan for the Phase 1 trial, including SPIRIT 2025 elements like eligibility, endpoints, and statistical analysis plan [26]. |
| CMC (Chemistry, Manufacturing, Controls) Briefing Document | Summarizes the manufacturing process, characterization, and controls for the drug substance and product to ensure quality and consistency. |
| Pre-IND Briefing Package | The core document submitted to the agency, containing all integrated data, questions, and the clinical protocol, forming the basis for discussion. |

4. Procedure/Methodology:

- Step 1: Internal Alignment and Question Development (Week 1-2). Convene an internal cross-functional team (clinical, non-clinical, CMC, regulatory) to draft a prioritized list of strategic questions. Questions should be focused, non-amendable, and critical to the program's success (e.g., "Does the Agency agree that the proposed non-clinical package is sufficient to support initiating a Phase 1 trial in patient population X?").

- Step 2: Briefing Package Submission (Week 3-6). Compile and submit a comprehensive briefing package to the FDA, typically 6-8 weeks prior to the scheduled meeting. This package should contain all relevant data, the proposed clinical protocol, and the formal list of questions.