Single vs. Double Precision in Ecological Simulations: A Practical Guide for Accuracy and Performance



Abstract

The choice between single and double floating-point precision is a critical, yet often overlooked, decision in ecological and environmental simulation modeling. This article provides a comprehensive analysis for researchers and scientists, exploring the fundamental trade-offs between computational speed and numerical accuracy. We examine the theoretical foundations of floating-point arithmetic, present methodological approaches for implementing different precision levels, and offer strategies for troubleshooting common numerical errors. Through validation case studies and performance comparisons, we deliver evidence-based guidance to help practitioners select the appropriate precision for their specific research questions, from large-scale climate forecasts to fine-scale ecosystem models, ensuring both reliable results and efficient resource utilization.

Understanding Floating-Point Precision: Core Concepts and Ecological Implications

In scientific computing, particularly in ecological simulation, the choice of floating-point precision is a critical determinant of both the accuracy of results and the computational efficiency of models. Floating-point numbers allow computers to represent real numbers across an extreme range of magnitudes, from the atomic to the galactic scale, making them indispensable for scientific applications [1]. The Institute of Electrical and Electronics Engineers (IEEE) 754 standard establishes consistent formats for these representations, with single-precision (32-bit) and double-precision (64-bit) being the most prevalent in scientific computing [2] [1] [3].

The tension between computational cost and numerical accuracy forms the core challenge in precision selection. As ecological models grow in complexity and spatial resolution, the computational demands can become prohibitive [4] [5]. This guide provides an objective comparison of single and double-precision floating-point formats, with specific attention to their implications for ecological simulation results, to empower researchers in making informed decisions for their computational experiments.

Technical Specifications: A Structural Comparison

The fundamental architectural differences between single and double-precision formats directly influence their computational characteristics and suitability for different applications.

Structural Composition

- Single-Precision (FP32): Utilizes 32 bits of computer memory: 1 bit for the sign, 8 bits for the exponent, and 23 bits for the significand (fraction/mantissa) [2] [1] [6]. The exponent employs a bias of 127 [2] [6].

- Double-Precision (FP64): Utilizes 64 bits: 1 bit for the sign, 11 bits for the exponent, and 52 bits for the significand [7] [1] [6]. The exponent bias is 1023 [1] [6].

The "hidden bit" convention in both formats adds an implicit leading 1 to the significand, effectively providing 24 bits of precision for single-precision and 53 bits for double-precision [2].
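To make the field layout concrete, the following minimal sketch unpacks a value stored in single precision into its sign, biased exponent, and fraction fields, then rebuilds the value from them. The helper names `decode_float32` and `rebuild_normal` are illustrative assumptions, and the rebuild formula covers normal numbers only (not zeros, subnormals, infinities, or NaNs).

```python
import struct


def decode_float32(x: float) -> tuple[int, int, int]:
    """Return (sign, biased_exponent, fraction) of x stored as IEEE 754 binary32."""
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    sign = bits >> 31                 # 1 bit
    exponent = (bits >> 23) & 0xFF    # 8 bits, stored with a bias of 127
    fraction = bits & 0x7FFFFF        # 23 explicitly stored significand bits
    return sign, exponent, fraction


def rebuild_normal(sign: int, exponent: int, fraction: int) -> float:
    """Rebuild a normal number: (-1)^s * (1 + f / 2^23) * 2^(e - 127)."""
    # The "1 +" term is the hidden bit that is not stored in memory.
    return (-1.0) ** sign * (1.0 + fraction / 2**23) * 2.0 ** (exponent - 127)


fields = decode_float32(-6.5)
print(fields)                   # (1, 129, 5242880)
print(rebuild_normal(*fields))  # -6.5
```

Here -6.5 is 1.625 × 2², so the stored exponent is 2 + 127 = 129 and the fraction field encodes 0.625 × 2²³.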

Quantitative Comparison

Table 1: Technical specification comparison between single and double-precision formats.

| Feature | Single-Precision (FP32) | Double-Precision (FP64) |
|---|---|---|
| Total Bits | 32 bits [1] [6] | 64 bits [1] [6] |
| Sign Bits | 1 [2] [1] | 1 [7] [1] |
| Exponent Bits | 8 [2] [1] [6] | 11 [7] [1] [6] |
| Significand Bits | 23 (effectively 24) [2] [6] | 52 (effectively 53) [7] [6] |
| Exponent Bias | 127 [2] [6] | 1023 [1] [6] |
| Approximate Decimal Precision | 7-8 significant digits [2] [6] | 15-16 significant digits [6] |
| Numerical Range | ±1.18×10⁻³⁸ to ±3.4×10³⁸ [2] | ±2.23×10⁻³⁰⁸ to ±1.80×10³⁰⁸ [1] |
| Memory Usage | 4 bytes [1] | 8 bytes [1] |

Performance and Accuracy in Scientific Applications

The choice between precision formats represents a fundamental trade-off between computational efficiency and numerical accuracy, with significant implications for ecological modeling.

Computational Performance and Resource Utilization

Single-precision operations generally provide superior computational performance due to several factors: they require less memory bandwidth, enable better cache utilization, and on certain hardware (particularly GPUs), can be executed at higher throughput [1] [3] [8]. In practice, this can translate to speed improvements of approximately 30-40% in scientific simulations [5]. One study on the Model for Prediction Across Scales – Atmosphere (MPAS-A) reported runtime reductions of 5.7% to 28.6% when using optimized single-precision approaches compared to pure double-precision [5].

The memory advantage is also substantial – single-precision requires exactly half the memory of double-precision for storing floating-point values [1] [6]. This difference becomes critically important when working with large ecological datasets or high-resolution models where memory bandwidth often represents a primary bottleneck.

Accuracy and Round-off Error Considerations

Double-precision's primary advantage lies in its superior accuracy and reduced susceptibility to round-off errors [5] [6]. The additional significand bits roughly double the number of significant decimal digits (about 15-16 versus 7-8), which becomes crucial when dealing with:

- Ill-conditioned problems where small errors propagate dramatically

- Long-time simulations where round-off errors accumulate over millions of operations

- Processes with widely varying scales, where adding a small number to a much larger one can absorb the small term entirely, and where subtracting nearly equal values can cause catastrophic cancellation [5]
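The accumulation and scale-disparity effects listed above can be reproduced in a few lines. The sketch below emulates single precision with the standard-library `struct` module by rounding every intermediate result to binary32; for a single addition of two binary32 values this gives the same result as native single-precision arithmetic. The helper names `f32` and `repeated_add` are assumptions made for illustration.

```python
import struct

_F32 = struct.Struct("f")


def f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return _F32.unpack(_F32.pack(x))[0]


def repeated_add(step: float, n: int, single: bool) -> float:
    """Add `step` to a running total n times, optionally in emulated binary32."""
    step = f32(step) if single else step
    total = 0.0
    for _ in range(n):
        total += step
        if single:
            total = f32(total)  # emulate storing the sum in single precision
    return total


n = 1_000_000
err32 = abs(repeated_add(0.1, n, single=True) - 100_000.0)
err64 = abs(repeated_add(0.1, n, single=False) - 100_000.0)
print(f"accumulated error, single: {err32:.3g}  double: {err64:.3g}")

# Scale disparity: binary32 values near 1e8 are spaced 8 apart,
# so adding 1.0 leaves the larger number unchanged.
print(f32(1.0e8 + 1.0) == 1.0e8)  # True
```

The single-precision error grows with the number of additions because the running total becomes large relative to the increment, which is the same mechanism that affects long time-stepping loops.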

Recent research in fluid dynamics turbulence simulations has demonstrated that "flow physics are remarkably robust with respect to reduction in lower floating-point precision" [9]. In many cases, other uncertainty sources, such as time averaging, had greater impact on results than precision reduction [9].

Table 2: Performance and accuracy trade-offs in precision selection.

| Characteristic | Single-Precision | Double-Precision |
|---|---|---|
| Computational Speed | Faster (ideal for real-time applications) [1] [6] | Slower due to increased processing requirements [1] [6] |
| Memory Efficiency | Higher (4 bytes per value) [1] | Lower (8 bytes per value) [1] |
| Numerical Accuracy | ~7-8 decimal digits [2] [6] | ~15-16 decimal digits [6] |
| Error Accumulation | Higher risk in long-running simulations [5] | Lower risk due to reduced round-off error [5] |
| Typical Applications | Graphics processing, machine learning, games [1] [6] | Scientific computing, financial calculations, high-fidelity simulation [1] [6] |

Experimental Protocols and Case Studies in Environmental Science

Climate and Oceanographic Modeling

Research into precision reduction for climate and ocean models provides valuable insights for ecological simulation. The NEMO (Nucleus for European Modelling of the Ocean) ocean model study found that 95.8% of its 962 variables could be computed using single precision without significant accuracy loss [4] [5]. Similarly, the Regional Ocean Modeling System (ROMS) demonstrated that all 1146 variables could use single precision, with 80.7% compatible with half-precision [5].

Methodology: Researchers typically employ a porting tool to automatically convert model code to mixed precision, then analyze the impact on key output variables across different test cases. The evaluation compares results against double-precision reference simulations using statistical metrics to identify precision-sensitive components [4].

Turbulence Simulation Studies

A multi-solver investigation published in 2025 examined effects of reduced precision on scale-resolving numerical simulations of turbulence across four computational fluid dynamics solvers [9]. The study employed test cases including turbulent channel flow and compressible flow over a wing section.

Experimental Protocol:

- Implement identical simulation setups across multiple CFD solvers

- Execute parallel simulations in single and double precision

- Compare results using statistical analysis of flow fields

- Evaluate differences against other uncertainty sources (e.g., time averaging)

Finding: "Standard IEEE single precision can be used effectively for the entirety of the simulation, showing no significant discrepancies from double-precision results across the solvers and cases considered" [9].

Precision Compensation Algorithms

When pure single precision proves insufficient, quasi-double-precision (QDP) algorithms offer a middle ground. Applied to the MPAS-A model, the QDP algorithm "reduces the surface pressure bias by 68%, 75%, 97%, and 96%" across different test cases while maintaining runtime reductions of 5.7% to 28.6% compared to pure double precision [5].
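As an illustration of how error compensation can recover accuracy while staying in single precision, the sketch below uses classic Kahan-style compensated summation. This is a swapped-in textbook technique chosen for illustration, not the QDP implementation applied to MPAS-A in [5]; single precision is emulated by rounding every intermediate result to binary32, and all function names are assumptions.

```python
import struct

_F32 = struct.Struct("f")


def f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return _F32.unpack(_F32.pack(x))[0]


def naive_sum32(values):
    """Plain running sum with every result rounded to binary32."""
    total = 0.0
    for v in values:
        total = f32(total + f32(v))
    return total


def compensated_sum32(values):
    """Kahan summation: carry the rounding error of each addition forward."""
    total = 0.0
    carry = 0.0  # running estimate of the low-order bits lost so far
    for v in values:
        y = f32(f32(v) - carry)          # apply the correction to the new term
        t = f32(total + y)               # this addition loses low-order bits of y
        carry = f32(f32(t - total) - y)  # recover what was lost
        total = t
    return total


data = [0.1] * 1_000_000
naive_err = abs(naive_sum32(data) - 100_000.0)
comp_err = abs(compensated_sum32(data) - 100_000.0)
print(f"naive: {naive_err:.3g}  compensated: {comp_err:.3g}")
```

The compensated version stores one extra single-precision value per accumulator, which is far cheaper than promoting the whole array to double precision.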

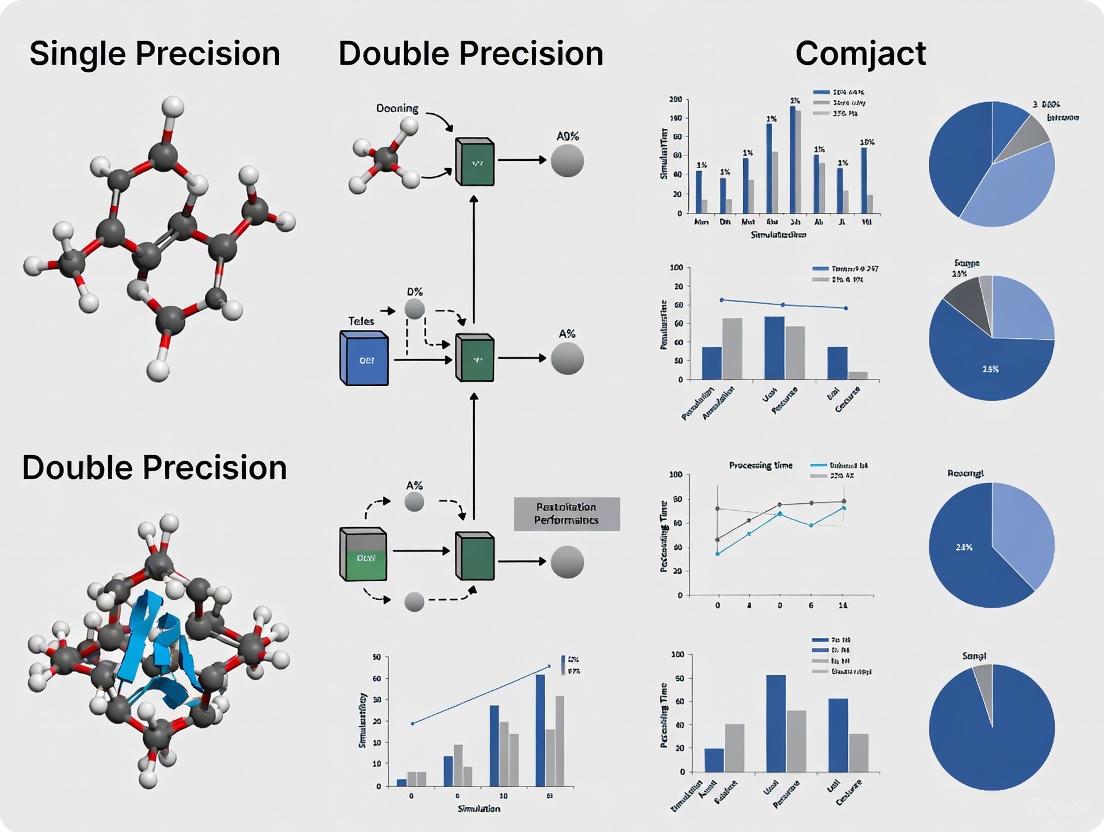

Figure 1: Decision workflow for selecting appropriate precision in ecological simulations.

Mixed-Precision Strategies and Implementation

Mixed-Precision Computing

Mixed-precision computing, sometimes called transprecision, strategically employs different precision formats within a single application [3]. This approach performs the majority of calculations in lower precision (typically single) while reserving double precision for critical operations that determine numerical stability [5]. In machine learning applications, this often involves starting with half-precision (16-bit) values for rapid matrix multiplication, then storing accumulated results at higher precision [3].
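The multiply-in-low-precision, accumulate-in-higher-precision pattern described above can be sketched with emulated formats. The example assumes inputs stored in half precision (the `"e"` format of Python's `struct` module) and compares a half-precision accumulator with a single-precision one. The function names and the all-ones test vectors are hypothetical and chosen so that the result is easy to check by hand.

```python
import struct

_F16 = struct.Struct("e")
_F32 = struct.Struct("f")


def f16(x: float) -> float:
    """Round to the nearest IEEE 754 binary16 (half precision) value."""
    return _F16.unpack(_F16.pack(x))[0]


def f32(x: float) -> float:
    """Round to the nearest IEEE 754 binary32 (single precision) value."""
    return _F32.unpack(_F32.pack(x))[0]


def dot(a, b, accumulate):
    """Dot product of half-precision inputs; `accumulate` rounds the running sum."""
    total = 0.0
    for x, y in zip(a, b):
        product = f16(x) * f16(y)  # product of two binary16 values is exact here
        total = accumulate(total + product)
    return total


a = [1.0] * 4096
b = [1.0] * 4096
print(dot(a, b, f16))  # 2048.0: the half-precision accumulator stalls
print(dot(a, b, f32))  # 4096.0: the single-precision accumulator is exact
```

Above 2048, adjacent half-precision values are 2 apart, so each further addition of 1 is rounded away. Keeping only the accumulator in a wider format removes the problem without widening the inputs.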

Implementation Framework:

- Precision Auditing: Profile the model to identify variables sensitive to precision reduction

- Selective Promotion: Assign double precision only to precision-critical variables

- Validation: Compare mixed-precision results against double-precision benchmarks

- Optimization: Iteratively adjust precision assignment to balance performance and accuracy
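The auditing and selective-promotion steps above can be sketched on a toy calculation. Everything in this example is hypothetical: the two "model variables", the tolerance of 1e-5, and the helper names are assumptions, and a real audit would instrument the actual model code rather than a stand-in function.

```python
import struct

_F32 = struct.Struct("f")


def f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return _F32.unpack(_F32.pack(x))[0]


def run_model(rnd):
    """Toy calculation; `rnd` is applied to every stored or computed value."""
    out = {}
    growth = rnd(0.1)
    # Well-conditioned product: insensitive to precision.
    out["biomass"] = rnd(rnd(1000.0) * rnd(1.0 + growth))
    # Difference of nearly equal numbers: sensitive to precision.
    out["net_flux"] = rnd(rnd(1.0000001) - rnd(1.0))
    return out


def audit(tolerance: float = 1e-5) -> dict:
    """Assign float64 only to variables whose relative error exceeds tolerance."""
    reference = run_model(lambda v: v)  # binary64 benchmark
    reduced = run_model(f32)            # emulated binary32 run
    plan = {}
    for name, ref in reference.items():
        rel_err = abs(reduced[name] - ref) / abs(ref)
        plan[name] = "float64" if rel_err > tolerance else "float32"
    return plan


print(audit())  # {'biomass': 'float32', 'net_flux': 'float64'}
```

In this toy case the flux difference loses about 19% of its value in single precision, so it is promoted, while the product stays in single precision.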

The Researcher's Toolkit: Precision Management Solutions

Table 3: Essential tools and techniques for precision management in ecological simulation.

| Tool/Technique | Function | Application Context |
|---|---|---|
| Auto-Porting Tools | Automatically converts code to mixed precision | Identifying precision-sensitive code sections [4] |
| Quasi-Double-Precision (QDP) | Compensates for round-off errors in single precision | Maintaining accuracy while reducing precision [5] |
| Precision Emulation | Allows higher precision on hardware with native lower precision | Testing precision effects without specialized hardware [10] |
| Error Metric Analysis | Quantifies impact of precision reduction on model outputs | Validation of mixed-precision implementations [9] |
| Selective Precision Promotion | Applies higher precision only to critical operations | Balancing performance and accuracy [5] |

The choice between single and double precision in ecological simulation involves contextual trade-offs rather than universal prescriptions. For many applications, particularly those constrained by memory bandwidth or computational throughput, single precision provides sufficient accuracy with significant performance gains [9] [6]. For simulations requiring extreme numerical fidelity, modeling widely disparate scales, or running over extended temporal horizons, double precision remains necessary [5] [8].

Future developments in precision-aware algorithms and specialized hardware will likely expand the viable applications of reduced precision in scientific computing [10]. The emerging paradigm of precision as a tunable parameter, rather than a fixed constraint, promises to enhance both the efficiency and capability of ecological simulations, enabling more complex models and higher resolutions within existing computational resources [4] [5].

Figure 2: Mixed-precision simulation workflow with error compensation.

In the realm of numerical computing, particularly within ecological simulations, researchers face an unavoidable challenge: balancing the inherent errors that arise from representing continuous natural phenomena with discrete computational methods. These errors represent a fundamental trade-off that directly impacts the reliability, accuracy, and computational feasibility of environmental forecasts. As ecological models grow increasingly complex—aiming to create digital replicas of Earth systems with unprecedented precision—understanding and managing these errors becomes paramount for supporting real-time decision-making and long-term adaptation strategies [4].

Numerical errors primarily manifest in two distinct forms: rounding errors that stem from how computers represent numbers with finite precision, and truncation errors that arise from mathematical approximations of infinite processes. For researchers working with single versus double precision ecological simulations, this trade-off presents critical decisions in model design. Reduced precision calculations can dramatically improve computational efficiency and reduce resource consumption—vital considerations for large-scale or real-time forecasting—but may introduce unacceptable errors that compromise predictive validity [11]. This guide systematically compares these error types, their behaviors in ecological contexts, and provides experimental frameworks for quantifying their impacts on simulation outcomes.

Defining Rounding and Truncation Errors

In numerical analysis, errors are categorized based on their origin and behavior. Rounding error, also called arithmetic error, is an unavoidable consequence of working in finite precision arithmetic [12]. Computers use a finite amount of memory (64 bits for double precision) to store floating-point numbers, which means they cannot exactly represent the infinite set of numbers on the number line [13]. This leads to approximations when storing values and during arithmetic operations. A classic example is the number 0.1, which cannot be exactly represented in binary floating-point format and is stored in double precision as approximately 0.10000000000000000555 [13].

Truncation error, also called discretization or approximation error, arises when infinite mathematical processes are approximated by finite ones [12]. Many standard numerical methods (for example, the trapezoidal rule for quadrature, Euler's method for differential equations, and Newton's method for nonlinear equations) can be derived by taking finitely many terms of a Taylor series. The terms omitted constitute the truncation error [12]. For instance, when approximating a derivative using the finite difference method $f'(x) \approx \frac{f(x+h) - f(x)}{h}$, the error introduced is proportional to $h$, a classic truncation error [13].
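The proportionality to the step size follows directly from a Taylor expansion. As a short derivation, assuming $f$ is twice continuously differentiable near $x$ and writing $\xi$ for an unknown intermediate point:

$$
f(x+h) = f(x) + h\,f'(x) + \frac{h^2}{2}\,f''(\xi), \qquad \xi \in (x,\, x+h)
$$

$$
\frac{f(x+h) - f(x)}{h} - f'(x) = \frac{h}{2}\,f''(\xi)
$$

The right-hand side is the truncation error: halving $h$ roughly halves it, as long as $f''$ varies little over the interval.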

Comparative Analysis of Error Properties

Table 1: Fundamental Characteristics of Rounding and Truncation Errors

| Property | Rounding Error | Truncation Error |
|---|---|---|
| Origin | Finite precision of computer arithmetic | Approximation of mathematical procedures |
| Dependence | Computer architecture, precision level (single/double) | Algorithm choice, step size, discretization method |
| Behavior | Generally unpredictable, can accumulate | Often quantifiable, typically reduces with refined approximation |
| Control Methods | Increased precision, algorithmic restructuring | Decreasing step size, higher-order methods |
| Impact in Chaotic Systems | Can trigger divergent solutions via butterfly effect | Affects convergence rate and stability |

The essential trade-off emerges from the relationship between these errors in practical computation. As one reduces truncation error by using smaller step sizes or higher-order methods, the number of computational operations increases, potentially amplifying rounding errors. Conversely, reducing operations to minimize rounding error may necessitate larger step sizes that increase truncation error. This fundamental tension necessitates careful balancing based on the specific requirements of each ecological simulation [13].
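A step-size sweep shows this tension numerically. The sketch below differentiates `math.sin` at x = 1 with a forward difference in ordinary double precision and records the error for step sizes from 10⁻¹ down to 10⁻¹⁵. The test function and evaluation point are arbitrary choices made for illustration.

```python
import math


def forward_difference(f, x, h):
    """First-order forward-difference estimate of f'(x)."""
    return (f(x + h) - f(x)) / h


x = 1.0
exact = math.cos(x)  # analytical derivative of sin at x

errors = {}
for k in range(1, 16):
    h = 10.0 ** -k
    errors[h] = abs(forward_difference(math.sin, x, h) - exact)

best_h = min(errors, key=errors.get)
for h, err in errors.items():
    marker = "  <- smallest error" if h == best_h else ""
    print(f"h = {h:.0e}   error = {err:.3e}{marker}")
```

For large steps the error shrinks in proportion to the step size (truncation dominates). For very small steps it grows again because the difference `f(x + h) - f(x)` retains only a few significant bits (rounding dominates). In single precision the same turning point would occur at a much larger step size.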

Quantitative Error Analysis in Ecological Simulations

Experimental Protocols for Error Quantification

To assess the impact of precision choices in ecological modeling, researchers can implement the following experimental methodologies:

Error Propagation Analysis Protocol:

- Baseline Establishment: Run simulations using high-precision benchmarks (float64) as reference values

- Precision Variation: Execute identical simulations with reduced precision formats (float32, float16)

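- Error Metrics Calculation: Compute quantitative error measures, such as the root-mean-square error (RMSE) and symmetric mean absolute percentage error (SMAPE) reported in the MASNUM case study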

- Statistical Analysis: Perform sensitivity analysis across multiple simulation runs with varying initial conditions
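Two error measures that fit this protocol are RMSE and SMAPE. The sketch below is one possible implementation; note that several SMAPE conventions exist, and the form used here (mean of 2|t − r| / (|t| + |r|), expressed in percent) is an assumption rather than the definition used in any study cited in this guide. The sample values are made up for illustration.

```python
import math


def rmse(reference, test):
    """Root-mean-square error between a reference run and a test run."""
    squared = [(t - r) ** 2 for r, t in zip(reference, test)]
    return math.sqrt(sum(squared) / len(squared))


def smape(reference, test):
    """Symmetric mean absolute percentage error, in percent.

    Pairs where both values are zero contribute zero to the mean.
    """
    total = 0.0
    for r, t in zip(reference, test):
        denom = abs(r) + abs(t)
        if denom > 0.0:
            total += 2.0 * abs(t - r) / denom
    return 100.0 * total / len(reference)


# Hypothetical outputs: a double-precision reference and a reduced-precision run.
reference = [1.20, 2.50, 3.10, 0.80]
reduced = [1.21, 2.49, 3.12, 0.80]
print(f"RMSE  = {rmse(reference, reduced):.4f}")
print(f"SMAPE = {smape(reference, reduced):.3f}%")
```

RMSE carries the units of the variable, while SMAPE is scale-free, so reporting both makes results comparable across variables of different magnitude.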

Mixed-Precision Implementation Protocol:

- Variable Sensitivity Classification: Categorize model variables based on their mathematical properties and physical sensitivities [11]

- Precision Allocation: Assign precision levels (float64, float32, float16) to variable types based on their sensitivity

- Performance Benchmarking: Compare computational efficiency (execution time, memory usage) against accuracy metrics

- Validation: Verify physical realism of results against observational data

Case Study: MASNUM Ocean Wave Model

The MArine Science and Numerical Modeling (MASNUM) ocean wave model provides an exemplary case study for precision-error trade-offs in ecological simulations. Researchers applied a mixed-precision framework to this model, strategically reducing precision for non-critical variables while maintaining higher precision for sensitive components [11].

Table 2: Performance Metrics of Mixed-Precision Implementation in MASNUM Model

| Precision Scheme | Computational Speedup | Significant Wave Height SMAPE | Significant Wave Height RMSE | Memory Efficiency |
|---|---|---|---|---|
| Double-Precision Baseline | 1.0× | Baseline | Baseline | Baseline |
| Single-Precision (float32) | 2.97–3.39× | 0.12%–0.43% | 0.01m–0.02m | ~50% reduction |
| Mixed-Precision Framework | 2.97–3.39× | 0.12%–0.43% | 0.01m–0.02m | ~50% reduction |

The experimental results demonstrated that strategic precision reduction yielded substantial computational benefits with minimal accuracy loss. The mixed-precision approach achieved 2.97–3.39× speedup over double-precision baselines while maintaining SMAPE values for significant wave height between 0.12% and 0.43%, with RMSE ranging from 0.01m to 0.02m [11]. This highlights the potential for optimizing the error trade-off in ecological simulations.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Precision Management in Ecological Modeling

| Tool/Technique | Function | Application Context |
|---|---|---|
| Reduced-Precision Emulator (RPE) | Analyzes and implements precision reduction in existing models | NEMO ocean model precision optimization [11] |
| Automatic Mixed-Precision Porting Tools | Automates conversion of code to mixed precision | Barcelona Supercomputing Center's tool for oceanographic code [4] |
| CMIP6 Climate Projections | Provides future climate scenarios under different precision schemes | Ecological quality prediction using geographic information systems [15] |
| PLUS Model | Predicts land use patterns for ecological forecasting | Simulation of future ecological environment quality [15] |
| Taylor Diagrams | Visualizes model performance across multiple statistics | Evaluation of regression models for ecological indices [15] |

Visualization of Error Dynamics in Ecological Simulations

The following diagram illustrates how rounding and truncation errors propagate through a typical ecological simulation workflow and interact with precision decisions:

Chaos and Sensitivity: The Special Challenge of Ecological Systems

Ecological and climate systems exhibit chaotic behavior where small errors can amplify dramatically over time, the famous "butterfly effect" [16]. This behavior was first documented by Edward Lorenz in the early 1960s, when he discovered that rounding input parameters to three decimal places instead of six led to dramatically different weather forecasts [16]. In such systems, the trade-off between rounding and truncation errors becomes particularly critical.
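The same sensitivity appears in very simple ecological models. The sketch below uses the discrete logistic map, a textbook population model, as a stand-in for Lorenz's weather model, which is not reproduced here. It starts two runs from a six-decimal value and its three-decimal rounding; the values 0.506127 and 0.506 follow the commonly cited account of Lorenz's experiment, and the growth parameter r = 3.9 is an assumption chosen to place the map in its chaotic regime.

```python
def logistic_trajectory(x0: float, r: float = 3.9, steps: int = 100) -> list:
    """Iterate the logistic map x -> r * x * (1 - x) starting from x0."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


full = logistic_trajectory(0.506127)  # six decimal places
rounded = logistic_trajectory(0.506)  # rounded to three decimal places
gap = [abs(a - b) for a, b in zip(full, rounded)]

print(f"initial difference: {gap[0]:.6f}")
print(f"largest difference over the run: {max(gap):.3f}")
first_large = next(i for i, g in enumerate(gap) if g > 0.1)
print(f"difference first exceeds 0.1 at step {first_large}")
```

A rounding error introduced by storing a state variable in lower precision acts like this perturbation of the initial condition, which is why precision comparisons for chaotic models are usually made on statistics rather than on individual trajectories.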

The chaotic nature of climate systems means that tiny rounding errors can potentially trigger divergent solutions, similar to the uncertainty introduced by inexact initial conditions. This has profound implications for precision selection in ecological forecasting. As evidenced in operational settings, minute rounding errors can accumulate to create significant forecast discrepancies. In one documented case, a round-off error in the Patriot missile defense system's timing calculation resulted in a 687-meter shift in target tracking, far exceeding the 137-meter tolerance for considering a target out of range [17].

The trade-off between rounding and truncation errors in numerical solvers represents a fundamental consideration for ecological modelers. Rather than seeking to eliminate either error type—an impossibility—successful implementation requires strategic balancing based on the specific application context. The experimental evidence from oceanographic and climate modeling demonstrates that mixed-precision approaches can optimize this balance, delivering substantial computational gains with acceptable accuracy loss.

For researchers engaged in single versus double precision ecological simulations, the key lies in classifying variables by sensitivity, applying rigorous error quantification protocols, and continuously validating against physical realities. As ecological models grow increasingly critical for addressing environmental challenges, sophisticated management of numerical errors will remain essential for producing reliable, timely, and computationally feasible forecasts.

Numerical climate models are fundamental tools for understanding and projecting the future of Earth's climate, particularly for sensitive systems like permafrost. These models solve complex differential equations using discretized numerical algorithms and finite precision arithmetic, introducing two unavoidable sources of error: truncation errors from finite increments in time and space, and rounding errors from representing real numbers with finite-sized computer words [18]. While decades of research have focused on minimizing truncation errors through improved numerical techniques, the effects of rounding errors from floating-point arithmetic have received comparatively little attention [18]. The choice between single precision (32-bit) and double precision (64-bit) arithmetic represents a critical trade-off between computational efficiency and numerical accuracy, with profound implications for simulating slowly evolving systems like deep soil temperatures and permafrost dynamics.

The precision selection determines both the dynamic range (±10³⁰⁸ for double precision vs. ±10³⁸ for single precision) and the accuracy (machine precision ~10⁻¹⁶ for double precision vs. ~10⁻⁷ for single precision) of numerical representations [19]. For temperature values, this means double precision can represent extremely fine details (e.g., 296.45678912345676 K), while single precision offers coarser resolution (e.g., 296.4568 K) [19]. This distinction becomes critically important when modeling processes occurring over decadal to centennial timescales, where models must capture deep soil temperature trends as small as 1–10 K per century, corresponding to instantaneous rates of change accurate to order 10⁻⁹ to 10⁻¹⁰ K s⁻¹ [18].
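The numbers in this paragraph can be checked directly. The sketch below converts a trend in kelvin per century to a rate in K s⁻¹, computes the gap between adjacent single-precision values near 296 K, and compares that gap with the temperature change over one time step. The 1800-second time step and the helper names are assumptions made for illustration.

```python
import math
import struct

_F32 = struct.Struct("f")


def f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return _F32.unpack(_F32.pack(x))[0]


def spacing32(x: float) -> float:
    """Gap between adjacent binary32 values around x (normal range only)."""
    _, e = math.frexp(x)  # x = m * 2**e with 0.5 <= |m| < 1
    return 2.0 ** (e - 24)


T = 296.45678912345676            # temperature in kelvin, as in the text
seconds_per_century = 100 * 365.25 * 86400
dt = 1800.0                       # hypothetical model time step in seconds

print(f"single-precision value: {f32(T)!r}")
print(f"single-precision spacing near T: {spacing32(T):.3e} K")

for trend in (1.0, 10.0):         # kelvin per century
    rate = trend / seconds_per_century
    ratio = rate * dt / spacing32(T)
    print(f"{trend:4.1f} K/century = {rate:.2e} K/s; "
          f"one step changes T by {ratio:.3f} spacings")
```

Under these assumptions, the change over one step is well below half the spacing between representable single-precision temperatures, so a single-precision update of the form `T = T + rate * dt` would leave `T` unchanged.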

Experimental Evidence: Precision-Dependent Accuracy in Soil Temperature Simulations

Deep Soil Temperature Simulations in the CLASS Model

A foundational study examining the Canadian LAnd Surface Scheme (CLASS) provides crucial experimental evidence of precision-dependent accuracy in deep soil temperature simulations [18]. This research systematically analyzed the theoretical and practical effects of using single versus double precision on simulated deep soil temperatures, revealing striking differences in model performance.

Table 1: Precision-Dependent Accuracy in CLASS Model Soil Temperature Simulations

| Precision Level | Reliable Simulation Depth | Key Limitations | Impact of Smaller Timesteps |
|---|---|---|---|
| Single Precision (32-bit) | Limited to ~20-25 meters | Complete loss of accuracy below critical depth | Further reduction in accuracy |
| Double Precision (64-bit) | No loss to several hundred meters | Maintains accuracy at all depths | Minimal impact on accuracy |

The research demonstrated that reliable single-precision temperatures were limited to depths of less than approximately 20-25 meters, while double precision showed no loss of accuracy to depths of at least several hundred meters [18]. This depth limitation poses a fundamental constraint for permafrost studies, as accurate representation of dynamics at depths of several tens of meters is essential for capturing deep permafrost behavior [18] [20].

Additionally, the study identified a counterintuitive relationship between temporal resolution and accuracy: for a given precision level, model accuracy deteriorated when using smaller time steps [18]. This further reduces the usefulness of single precision in applications requiring high temporal resolution, creating a fundamental constraint on how modelers can balance numerical accuracy with computational efficiency.
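One plausible mechanism behind this time-step dependence is that smaller steps produce smaller per-step increments, which are then more easily rounded away. The sketch below is a toy constant-trend accumulation, not the CLASS heat-conduction scheme, and the mechanism analysed in [18] may differ in detail. The starting temperature, the 10 K per century trend, and the two time steps are assumptions; single precision is emulated by rounding each result to binary32.

```python
import struct

_F32 = struct.Struct("f")


def f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return _F32.unpack(_F32.pack(x))[0]


def integrate(T0, rate, dt, total_seconds, single):
    """Accumulate T += rate * dt and return the simulated warming T - T0."""
    rnd = f32 if single else (lambda v: v)
    start = rnd(T0)
    T = start
    increment = rnd(rate * dt)
    for _ in range(int(total_seconds // dt)):
        T = rnd(T + increment)
    return T - start


year = 365 * 86400            # seconds in a 365-day year
rate = 10.0 / (100 * year)    # 10 K per century, so 0.1 K is expected per year

warming = {}
for dt in (86400, 1800):      # daily and half-hourly steps
    for single in (True, False):
        warming[(dt, single)] = integrate(272.15, rate, dt, year, single)
        label = "single" if single else "double"
        print(f"dt = {dt:5d} s, {label}: {warming[(dt, single)]:.6f} K")
```

With daily steps the emulated single-precision run stays close to the expected 0.1 K, while with half-hourly steps every increment is rounded away and the simulated temperature never changes. The double-precision runs agree with the expected value for both step sizes.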

Ensemble Verification in Regional Climate Models

Recent research has employed sophisticated ensemble-based statistical methodologies to evaluate precision effects in regional climate simulations. One comprehensive study conducted 10-year-long ensemble simulations over the European domain of the Coordinated Regional Climate Downscaling Experiment (EURO-CORDEX) with 100 ensemble members in both single and double precision [19].

Table 2: Ensemble-Based Statistical Verification of Precision Effects

| Evaluation Metric | Single vs. Double Precision Differences | Comparison to Model Uncertainty |
|---|---|---|
| Distribution differences at grid-cell level | Marginally increased rejection rate | Much smaller than variations from diffusion coefficient changes |
| Temporal detection | Mostly detectable in first hours/days | Negligible for climate timescales |
| Practical significance | Deemed negligible for regional climate | Masked by inherent model uncertainty |

The analysis applied statistical testing at a grid-cell level for 47 output variables every 12 or 24 hours, detecting only a marginally increased rejection rate for single-precision climate simulations compared to the double-precision reference [19]. Crucially, this increase was much smaller than that arising from minor variations of the horizontal diffusion coefficient in the model, suggesting it is negligible as it is masked by model uncertainty [19].
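The idea of a grid-cell rejection rate can be sketched as follows. This section does not state which statistical tests the study used, so the two-sample Kolmogorov-Smirnov test below is an illustrative choice, using the standard large-sample critical value for a 5% significance level. The ensemble data are synthetic random numbers, and all names and sizes are hypothetical.

```python
import math
import random


def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between the ECDFs."""
    a, b = sorted(a), sorted(b)
    i = j = 0
    d = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        d = max(d, abs(i / len(a) - j / len(b)))
    return d


def rejects(a, b, c_alpha=1.358):
    """Reject 'same distribution' at roughly the 5% level (asymptotic threshold)."""
    n, m = len(a), len(b)
    return ks_statistic(a, b) > c_alpha * math.sqrt((n + m) / (n * m))


def rejection_rate(ens_a, ens_b):
    """Fraction of grid cells where the two ensembles differ significantly."""
    hits = sum(rejects(a, b) for a, b in zip(ens_a, ens_b))
    return hits / len(ens_a)


rng = random.Random(0)
cells, members = 200, 50


def make_ensemble(mean):
    """Synthetic ensemble: one list of member values per grid cell."""
    return [[rng.gauss(mean, 1.0) for _ in range(members)] for _ in range(cells)]


reference = make_ensemble(0.0)  # stands in for the double-precision ensemble
similar = make_ensemble(0.0)    # same distribution as the reference
shifted = make_ensemble(3.0)    # clearly different distribution

rate_similar = rejection_rate(reference, similar)
rate_shifted = rejection_rate(reference, shifted)
print(f"rejection rate, same distribution:    {rate_similar:.3f}")
print(f"rejection rate, shifted distribution: {rate_shifted:.3f}")
```

Even two ensembles drawn from the same distribution produce a small non-zero rejection rate, so the quantity of interest is how far the single-precision rate exceeds that background level.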

This research highlights an important distinction: while single precision may be sufficient for atmospheric processes in weather forecasting and some climate applications, processes involving deep soil thermodynamics may require special consideration. In fact, some operational centers running mixed-precision models still maintain double precision for specific components like soil models [19].

Experimental Protocols and Methodologies

Permafrost Model Implementation

Research on permafrost processes in global land surface models has demonstrated the importance of accurately representing physical properties in frozen ground. Improved model formulations typically incorporate three key physical considerations:

Temperature-dependent thermophysical properties: Accounting for changes in heat capacity and thermal conductivity when soil moisture freezes, as frozen water has smaller heat capacity and greater thermal conductivity than liquid water [20].

Organic layer representation: Including the insulating effect of organic layers near the surface in high-latitude Taiga and Tundra regions [20].

Unfrozen water content: Modeling the presence of unfrozen water that decreases exponentially with subfreezing temperatures, with exponent coefficients predetermined by soil types (sand, silt, clay) [20].

These physical refinements significantly affect model projections. Using conventional formulations, one study predicted approximately 60% cumulative reduction in permafrost area by year 2100 under the RCP8.5 scenario, while the improved formulation projected only approximately 35% reduction [20]. This divergence underscores how structural model uncertainties interact with numerical precision considerations.

Precision Assessment Methodologies

The experimental protocols for evaluating precision effects typically follow these methodological steps:

Model configuration: Identical model setups are run in both single and double precision, often using the same compiler and computational architecture to isolate precision effects [18] [19].

Deep soil representation: Implementation of multi-layer soil models extending to significant depths (e.g., 6 vertical layers to 14 meters in MATSIRO [20] or even deeper in specialized permafrost models like GIPL 2.0, which can extend to 500-1000 meters [21]).

Statistical evaluation: Application of ensemble methods with multiple members (up to 100 in recent studies [19]) to distinguish precision-related differences from natural variability.

Validation against observations: Comparison of simulated permafrost distribution with observational datasets such as the International Permafrost Association maps [20] or the Map of the Snow, Ice and Frozen Ground in China [22].

Figure 1: Experimental workflow for assessing precision effects in permafrost models

Table 3: Research Reagent Solutions for Permafrost and Soil Temperature Modeling

| Tool/Model | Primary Application | Key Features | Precision Considerations |
|---|---|---|---|
| CLASS (Canadian LAnd Surface Scheme) | Land surface processes | Soil temperature and moisture profiles | Shows depth-dependent precision limitations [18] |
| GIPL 2.0/2.1 | Permafrost dynamics | Finite difference method for non-linear heat conduction; enthalpy formulation | MPI-enabled for HPC; no communication between nodes [21] |
| MATSIRO | Global land surface interactions | Permafrost distribution; organic layer representation | Improved physics reduce projected permafrost loss [20] |
| Surface Frost Number Model | Permafrost distribution mapping | Incorporates soil temperature at 20cm depth | Uses LPJ model for soil temperature computation [22] |
| FDTD Methods | Nanoplasmonic structures | Finite-Difference Time-Domain computational electromagnetics | Double precision needed to avoid round-off errors [23] |

Implications for Climate Projections and Policy

The precision-dependent accuracy in deep soil temperature simulations has profound implications for climate change projections, particularly regarding permafrost thaw and its associated carbon feedbacks. The representation of permafrost processes in models directly influences projections of greenhouse gas releases from thawing permafrost [20]. Different model formulations show substantial variation in both the distribution and magnitude of projected permafrost thaw, explaining part of the wide variation in permafrost degradation predictions across climate models [20].

Permafrost degradation rates are closely related to multiple factors in the climate system, including changes in surface air temperature, precipitation, and evaporation in high latitudes, all of which involve significant uncertainty [20]. The numerical precision of soil temperature calculations represents an additional dimension of uncertainty that interacts with these physical processes.

For engineering applications and infrastructure planning in permafrost regions, reliable projections are essential for risk assessment. The simulated responses of permafrost distribution to climate change on the Qinghai-Tibet Plateau, for instance, show varying degradation rates across scenarios: approximately 17% reduction in the near-term (2011-2040) increasing to 64% reduction in the long-term (2071-2099) under high-emission scenarios [22]. These projections directly inform "decision-making for engineering construction programs on the QTP, and support local units in their efforts to adapt climate change" [22].

The evidence from multiple studies indicates a nuanced picture of precision requirements in environmental simulations. For many atmospheric processes in weather forecasting and regional climate modeling, single precision may provide sufficient accuracy while offering significant computational savings of 30-40% in runtime [19]. However, for deep soil temperatures and permafrost simulations, the limitations of single precision become critically important, restricting reliable simulations to depths of less than 20-25 meters [18].

This precision-dependent accuracy has particular significance for long-term climate projections, where deep permafrost dynamics play crucial roles in carbon cycle feedbacks. As one study unequivocally states, "any scientifically meaningful study of deep soil permafrost must at least use double precision" [18]. The computational cost of double precision appears to be a necessary investment for this specific application, despite the trend toward reduced precision in other components of climate models.

Modeling teams must therefore consider adopting mixed-precision approaches, where critical components like soil models maintain double precision while other model elements use single precision. This strategy balances the conflicting needs of computational efficiency and numerical accuracy, ensuring reliable projections of permafrost dynamics under changing climate conditions.

The accurate identification of high-risk ecological and climate scenarios is paramount for proactive environmental management and policy development. This pursuit is increasingly reliant on sophisticated computational simulations that operate over long-term horizons, across multiple spatial scales, and through coupled model frameworks. A critical yet often overlooked aspect of these simulations is the role of numerical precision—the choice between single and double floating-point arithmetic—in shaping model performance, predictive accuracy, and ultimately, the reliability of risk assessments. Precision selection creates a fundamental trade-off: reduced precision lowers computational cost and energy consumption, enabling higher-resolution or longer-term simulations, while double precision ensures mathematical rigor and minimizes error accumulation in complex, nonlinear systems [4] [24].

This guide provides a comparative analysis of contemporary modeling approaches deployed for high-risk scenario identification. It objectively evaluates the performance of various models and precision strategies by synthesizing current experimental data, detailing methodological protocols, and contextualizing findings within the broader research theme of precision ecology and climate modeling. The analysis aims to equip researchers and scientists with the practical insights needed to select appropriate modeling tools and precision levels for their specific simulation challenges.

Comparative Analysis of Modeling Approaches and Precision Strategies

Table 1: Comparative Performance of Ecological and Climate Simulation Models

| Model / Approach | Primary Application | Key Performance Metrics | Reported Performance Data | Notable Strengths | Documented Limitations |
|---|---|---|---|---|---|
| Mixed-Precision NEMO (Ocean Model) | Climate & Weather (Destination Earth) | Computational efficiency, Operational speed | Significant speedup on HPC resources; Precision reduction in computationally intensive functions [4] | Makes faster operational results feasible; Optimizes communication | Potential impact on accuracy in chaotic systems requires careful analysis |
| Quasi-Double Precision MPAS-A (Atmosphere Model) | Climate Prediction | Accuracy vs. Precision balance | Achieves double-precision accuracy while running in enhanced single-precision mode [24] | Reduces runtime and energy consumption; Maintains high accuracy | Implementation complexity; May not be suitable for all model components |
| PLUS Model (Patch-generating Land Use Simulation) | Land Use Change | Simulation accuracy, Landscape realism | Overall accuracy of 0.74 for predicting 2020 land use from historical data [25] | Simulates realistic land-use patches; Superior landscape representation | Limited ability to reflect deep process mechanisms of land change |
| Biomod2 Ensemble Algorithm | Species Distribution | Predictive Accuracy (AUC) | AUC up to 0.965 (0.083° resolution, 10,462 data points) [26] | High predictive accuracy with sufficient data; Enhanced stability from ensemble methods | High computational memory demand; Complex data preprocessing |
| MaxEnt Model (Maximum Entropy) | Species Distribution | Predictive Accuracy (AUC) | AUC up to 0.949 (0.083° resolution, 10,462 data points) [26] | High accuracy with presence-only data; User-friendly interface; Faster processing | Lower accuracy compared to Biomod2 with large datasets; Less suitable for absence data |

Table 2: Impact of Data Parameters on Species Distribution Model (SDM) Accuracy

| Factor | Model | Tested Conditions | Impact on Accuracy (AUC) | Experimental Findings |
|---|---|---|---|---|
| Spatial Resolution | Biomod2 Ensemble | 1°, 0.5°, 0.25°, 0.083° | Significant improvement with higher resolution [26] | Highest AUC (0.965) achieved at 0.083° resolution using EMwmean method. |
| Spatial Resolution | MaxEnt | 1°, 0.5°, 0.25°, 0.083° | Improvement with higher resolution | Highest AUC (0.949) achieved at 0.083° resolution. |
| Data Volume | Biomod2 & MaxEnt | 122 to 10,462 presence points | Significant improvement with larger data volume [26] | Model accuracy increased substantially with larger numbers of species presence records. |

The data reveal a clear trade-off between predictive accuracy and computational cost in Species Distribution Models (SDMs). The Biomod2 ensemble algorithm achieves superior accuracy (AUC: 0.965) under optimal conditions of high-resolution data and large sample sizes, but this comes at the cost of significant computational memory and complex preprocessing [26]. In contrast, the MaxEnt model offers a more accessible and computationally efficient alternative, still achieving high accuracy (AUC: 0.949) under the same conditions, making it a robust choice for many research applications [26].

In climate modeling, strategies to manage precision demonstrate a direct trade-off between computational cost and numerical accuracy. The application of mixed-precision in the NEMO ocean model shows targeted precision reduction in specific functions can yield significant computational gains, crucial for operational forecasting and large-scale projects like Destination Earth [4]. Conversely, the "quasi-double precision" approach for the MPAS-A model seeks a balance, enhancing single-precision calculations to achieve double-precision accuracy, thereby reducing runtime and energy use without sacrificing the fidelity required for reliable climate projections [24].
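
One well-known way to recover near-double accuracy from single-precision arithmetic is compensated (Kahan) summation, sketched below. Whether the quasi-double precision scheme in [24] uses exactly this algorithm is an assumption. The helper `f32`, which emulates binary32 rounding with Python's standard `struct` module, and the test series of 100,000 copies of 0.1 are illustrative choices, not taken from the cited study.

```python
import struct


def f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def naive_sum_f32(values):
    """Plain running sum with every addition rounded to binary32."""
    total = 0.0
    for v in values:
        total = f32(total + f32(v))
    return total


def compensated_sum_f32(values):
    """Kahan summation with every operation rounded to binary32."""
    total = 0.0
    carry = 0.0  # running estimate of the low-order bits lost so far
    for v in values:
        y = f32(f32(v) - carry)          # re-inject the part lost earlier
        t = f32(total + y)               # low-order bits of y may be lost here
        carry = f32(f32(t - total) - y)  # measure what was just lost
        total = t
    return total


n = 100_000
values = [0.1] * n
reference = n * f32(0.1)  # binary64 product of the stored binary32 value

naive_error = abs(naive_sum_f32(values) - reference)
compensated_error = abs(compensated_sum_f32(values) - reference)
print(f"naive binary32 error:       {naive_error:.6f}")
print(f"compensated binary32 error: {compensated_error:.6f}")
```

The compensated version costs a few extra single-precision operations per term, which is the kind of trade such schemes make: more arithmetic in the cheap format instead of moving the whole calculation to double precision.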

Detailed Experimental Protocols

To ensure the reproducibility of the models and data discussed, this section outlines the standard experimental methodologies employed in the cited research.

Protocol for Multi-Scenario Land Use Simulation (PLUS Model)

The PLUS model is used to project future land use changes under different developmental scenarios, which serves as a critical input for assessing long-term landscape ecological risk [25] [27].

- Data Acquisition and Preparation: Collect historical land use/cover data for at least two past periods (e.g., 2000, 2010, 2020). Gather spatial driving factor data, which typically includes:

  - Topographic: Elevation, slope.

  - Socioeconomic: GDP, population density.

  - Accessibility: Distance to roads, railways, water bodies, and city centers.

  - Climatic: Annual precipitation, temperature.

- Land Use Demand Simulation: Use models like the Markov chain to predict the total quantitative demand for each land use type at a future date.

- Land Use Spatial Distribution Simulation:

  - Rule Mining: The PLUS model uses a land expansion analysis strategy (LEAS) to extract the contributions of various driving factors to the expansion of each land use type between two historical periods.

  - CA Based on Multi-type Random Patch Seeds (CARS): The model integrates a cellular automata algorithm that uses multi-type random patch seeds to simulate the evolution of land use patches, generating spatially explicit projections.

- Scenario Definition: Define distinct future development scenarios. Common scenarios include:

  - Natural Development (ND): Projects trends based on historical transitions.

  - Rapid Economic Development (RED): Prioritizes the expansion of construction land.

  - Ecological Protection (ELP): Restricts the conversion of ecological lands like forests and water bodies.

- Model Validation: Validate the model's accuracy by simulating a past year for which data is available (e.g., simulating 2020 using data from 2000 and 2010) and comparing the results to the actual map using metrics like overall accuracy.
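
The Markov-chain demand step in the protocol above can be sketched as follows. This is a minimal illustration, not the PLUS implementation: the three land-use classes, the cross-tabulated areas, and the function names `transition_matrix` and `project_demand` are all hypothetical.

```python
def transition_matrix(counts):
    """Row-normalise a cross-tabulation of two land-use maps.

    counts[i][j] is the area that was class i in the earlier map and
    class j in the later map.
    """
    matrix = []
    for row in counts:
        row_total = sum(row)
        matrix.append([c / row_total for c in row])
    return matrix


def project_demand(areas, matrix, steps=1):
    """Apply the transition matrix `steps` times to a vector of class areas."""
    current = list(areas)
    for _ in range(steps):
        current = [
            sum(current[i] * matrix[i][j] for i in range(len(current)))
            for j in range(len(current))
        ]
    return current


classes = ["cropland", "forest", "built-up"]
# Hypothetical cross-tabulation between two historical maps (km^2).
counts = [
    [450.0, 10.0, 40.0],   # cropland -> cropland, forest, built-up
    [9.0, 285.0, 6.0],     # forest   -> cropland, forest, built-up
    [0.0, 0.0, 200.0],     # built-up -> stays built-up
]
matrix = transition_matrix(counts)

# Areas in the later map are the column totals of the cross-tabulation.
later_areas = [sum(row[j] for row in counts) for j in range(len(classes))]

# Demand one further period ahead, assuming transitions stay stationary.
demand = project_demand(later_areas, matrix, steps=1)
for name, area in zip(classes, demand):
    print(f"{name:9s} {area:8.2f} km^2")
```

The projected totals only fix how much of each class is needed; allocating that demand in space is the job of the spatial simulation step.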

Protocol for Species Habitat Modeling under Climate Scenarios

This protocol involves using SDMs like Biomod2 and MaxEnt to project species habitats under different climate pathways [26].

- Species and Environmental Data Collection:

  - Species Occurrence: Obtain precise georeferenced records of species presence. Data can range from scientific surveys to commercial fishing logs.

  - Environmental Variables: Acquire current and future climate data (e.g., sea surface temperature, salinity, currents) for the study area. Future data is typically derived from Global Climate Models (GCMs) under various emission scenarios (e.g., SSP1-2.6, SSP5-8.5).

- Data Preprocessing:

  - Spatial Resolution: Re-sample all environmental raster data to a consistent, pre-defined resolution (e.g., 1°, 0.25°, 0.083°).

  - Correlation Analysis: Check for high correlation between environmental variables to avoid multicollinearity, removing or combining variables as needed.

- Model Training and Evaluation:

  - Data Partitioning: Split the species occurrence data into training (e.g., 70-80%) and testing (e.g., 20-30%) sets.

  - Model Fitting: Train the Biomod2 (using ensemble methods like EMwmean) and MaxEnt models with the training data and environmental layers.

  - Accuracy Assessment: Use the testing data to evaluate model performance. The Area Under the Receiver Operating Characteristic Curve (AUC) is a common metric, where a value of 1 indicates perfect prediction and 0.5 indicates no better than random.

- Habitat Projection: Apply the trained models to future climate layers to create maps of potential habitat distribution and shifts for each scenario and time period.
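
The AUC used in the accuracy assessment step can be computed directly from its rank interpretation: the probability that a randomly chosen presence site receives a higher suitability score than a randomly chosen absence (or background) site. The sketch below assumes that definition; the scores and the function name `auc` are hypothetical and not output from Biomod2 or MaxEnt.

```python
def auc(presence_scores, absence_scores):
    """AUC as the probability that a presence site outranks an absence site."""
    if not presence_scores or not absence_scores:
        raise ValueError("both score lists must be non-empty")
    wins = 0.0
    for p in presence_scores:
        for a in absence_scores:
            if p > a:
                wins += 1.0
            elif p == a:
                wins += 0.5  # ties count as half a win
    return wins / (len(presence_scores) * len(absence_scores))


# Hypothetical suitability scores on held-out test sites.
presence = [0.90, 0.80, 0.60, 0.55]
absence = [0.70, 0.40, 0.30, 0.20]
print(auc(presence, absence))  # 14 of 16 pairs are ranked correctly -> 0.875
```

The pairwise loop is quadratic in the number of sites, which is fine for illustration; for large test sets a sort-based rank formulation gives the same value more cheaply.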

Protocol for Precision Reduction in Climate Models

This methodology assesses the impact of reduced floating-point precision on climate model simulation accuracy [4] [24].

- Base Model Selection: Choose a well-established climate model, such as NEMO (ocean) or MPAS-A (atmosphere).

- Precision Porting: Use automated tools or manual code modification to create mixed-precision or reduced-precision versions of the model. This typically involves converting specific, computationally intensive model components from 64-bit (double) to 32-bit (single) precision.

- Experimental Simulation:

  - Run the double-precision model to establish a benchmark.

  - Run the reduced-precision version(s) under identical experimental setups (e.g., initial conditions, forcing data, simulation length).

- Ensemble Verification: For chaotic systems like climate, use ensemble-based statistical verification. Run multiple simulations with minor perturbations to initial conditions for both precision versions to statistically compare their outputs against observational data or the benchmark.

- Performance and Diagnostics:

  - Computational Performance: Measure runtime, energy consumption, and computational resource usage.

  - Diagnostic Accuracy: Quantify differences in key physical diagnostics (e.g., sea surface temperature patterns, ocean heat content, atmospheric pressure fields) between the standard and reduced-precision simulations.
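
The reason the protocol relies on ensemble statistics rather than run-to-run comparison can be seen with a toy chaotic system. The sketch below uses the logistic map as a stand-in for a climate model (an assumption made purely for illustration) and emulates single precision by rounding every operation to binary32 with the standard `struct` module. The parameter value, ensemble size, and perturbation size are hypothetical.

```python
import struct


def f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def identity(x: float) -> float:
    """Leave values in binary64 (the benchmark configuration)."""
    return x


def trajectory(x0, r, steps, rnd):
    """Iterate x -> r*x*(1-x), rounding every operation with `rnd`."""
    r = rnd(r)
    x = rnd(x0)
    path = []
    for _ in range(steps):
        x = rnd(rnd(r * x) * rnd(1.0 - x))
        path.append(x)
    return path


def ensemble_mean(r, steps, members, rnd):
    """Average of the per-member time means."""
    total = 0.0
    for x0 in members:
        path = trajectory(x0, r, steps, rnd)
        total += sum(path) / len(path)
    return total / len(members)


R = 3.9          # chaotic regime of the logistic map
STEPS = 500
members = [0.2 + 1e-4 * k for k in range(20)]  # perturbed initial conditions

# Single member: the two precisions decorrelate completely.
path64 = trajectory(members[0], R, STEPS, identity)
path32 = trajectory(members[0], R, STEPS, f32)
max_member_diff = max(abs(a - b) for a, b in zip(path64, path32))

# Ensemble statistics: the two precisions are compared as distributions.
mean64 = ensemble_mean(R, STEPS, members, identity)
mean32 = ensemble_mean(R, STEPS, members, f32)

print(f"largest single-member difference: {max_member_diff:.3f}")
print(f"ensemble mean, double precision:  {mean64:.4f}")
print(f"ensemble mean, single precision:  {mean32:.4f}")
```

Individual trajectories differ by order one after a few dozen steps, so a pointwise comparison would wrongly flag the single-precision run as a failure, while the ensemble means stay close.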

Visualizing Modeling Workflows

The following diagrams illustrate the core workflows for the key methodologies discussed, highlighting the role of precision and scenario planning.

Table 3: Key Computational Tools and Data Resources for Ecological and Climate Simulation

| Tool / Resource Name | Type | Primary Function in Research | Relevance to Precision/Sensitivity |
|---|---|---|---|
| Google Earth Engine (GEE) | Cloud Platform | Access and processing of satellite imagery and global geospatial datasets [28]. | Provides high-precision land use classification data (10m Sentinel-2) critical for model calibration. |
| InVEST Model | Software Model | Mapping and valuing ecosystem services, including carbon stock calculations [28]. | Outputs (e.g., carbon pools) serve as key inputs for assessing ecological risk and conflict. |
| R with Biomod2 Package | Software Library | Ensemble species distribution modeling using multiple algorithms [26]. | Allows precision tuning in data preprocessing and model fitting; sensitive to data volume and resolution. |
| MaxEnt Software | Standalone Software | Species distribution modeling using maximum entropy theory [26]. | Highly sensitive to the number of species presence points; efficient with presence-only data. |
| PLUS Model | Software Model | Simulating land use change by mining past transitions and generating patches [25] [27]. | Simulates scenarios with different spatial-planning priorities (economic vs. ecological). |
| Future Land Use Simulation (FLUS) | Software Model | Simulating future land use patterns under multiple scenarios [27]. | Similar to PLUS, used for projecting future spatial patterns that drive ecological risk assessments. |
| CMIP Data Portal | Data Archive | Access to coordinated climate model output from various institutions worldwide [29]. | Provides future climate projection data (various resolutions/uncertainties) essential for forcing ecological models. |
| Sentinel-2 MSI Data | Satellite Imagery | High-resolution (10m) multispectral imagery for land cover classification [28]. | Source of high-precision input data for land use maps, directly impacting model accuracy. |
| WorldPop Data | Population Dataset | High-resolution data on human population distributions [28] [25]. | A key socioeconomic driving factor in land use change models. |

The identification of high-risk scenarios through long-term, multi-scale simulations is a complex endeavor that requires careful consideration of model selection, data quality, and computational strategy. The comparative data presented in this guide demonstrates that no single model universally outperforms others; rather, the choice depends on the specific research question, data availability, and computational resources. The emergence of precision ecology underscores a paradigm shift towards leveraging big data and computational advances for site-specific, effective conservation interventions [30].

The trade-off between numerical precision and computational cost is a central theme. While reduced precision offers a viable path to greater computational efficiency and faster operational turnaround in projects like Destination Earth [4], it necessitates rigorous ensemble-based verification to ensure statistical reliability, particularly in chaotic climate systems. For ecological assessments, the sensitivity of model outputs to the precision of input data—such as spatial resolution and sample size—is often more critical than the numerical precision of the arithmetic itself [26]. Ultimately, robust risk identification hinges on a coupled-model philosophy: integrating diverse tools, validating across multiple scenarios, and transparently acknowledging the uncertainties inherent in each step of the simulation process.

Implementing Precision in Ecological Models: From Theory to Practice

In the computational realm of ecological and climate simulation, the choice between single and double precision is a critical design consideration that directly influences a model's accuracy, performance, and scientific validity. This guide provides an objective comparison of precision levels, drawing on current research to outline their respective advantages, limitations, and optimal applications. The drive for greater computational efficiency must be carefully balanced against the risk of introducing excessive rounding errors, which can corrupt long-term simulations and compromise the integrity of sensitive ecological forecasts [18]. By matching numerical precision to the specific scale and sensitivity of the physical process being modeled, researchers can make informed decisions that leverage the benefits of each precision standard without sacrificing reliability.

Comparative Analysis of Precision in Ecological Simulations

The table below summarizes key findings from recent studies on the application of single and double precision across various environmental modeling contexts.

Table 1: Comparison of Single and Double Precision in Environmental Models

| Model / Study | Precision Type | Key Findings on Accuracy | Key Findings on Performance | Recommended Use Case |
|---|---|---|---|---|
| CLASS Land Surface Model [18] | Single Precision (float32) | Reliable temperatures limited to depths <20–25 m; accuracy deteriorates with smaller time steps. | Not explicitly quantified, but offers inherent savings in memory and CPU usage. | Processes with limited dynamic range and shorter time scales. |
| CLASS Land Surface Model [18] | Double Precision (float64) | No loss of accuracy to depths of several hundred meters. | Higher computational and memory costs. | Deep soil processes (e.g., permafrost); long-term climate simulations. |
| MASNUM Ocean Wave Model [11] | Mixed Precision (Tailored) | Minimal accuracy loss: SMAPE for wave height 0.12%-0.43%, RMSE 0.01-0.02 m. | 2.97–3.39× speedup over double-precision baseline. | High-resolution, real-time forecasting applications. |
| ExaGeoStat Software [31] | Mixed Precision (Single/Double) | Maintains predictive accuracy for large-scale spatial statistics. | 1.9× speedup on average with V100 GPU; 10× speedup vs CPU for one iteration. | Large-scale environmental predictions (e.g., temperature, wind speed). |
| NEMO Ocean Model [4] | Mixed Precision (Automated) | Maintains stability and accuracy in chaotic climate applications when applied selectively. | Significant computational gains, better HPC resource utilization. | Large-scale oceanographic and climate models like Destination Earth. |

Experimental Protocols for Precision Analysis

Protocol 1: Assessing Precision in Deep Soil Heat Diffusion

This protocol is derived from a study that investigated the reliability of single precision for simulating deep soil temperatures, a process critical for permafrost thawing projections [18].

- Objective: To theoretically and experimentally analyze the effects of single and double precision on simulated deep soil temperature in a land surface model.

- Model Used: Canadian LAnd Surface Scheme (CLASS), a state-of-the-art land surface model [18].

- Methodology:

  - Theoretical Analysis: A formalism of finite arithmetic was applied to identify the potential for rounding errors. The analysis focused on the vulnerability of operations involving numbers with extreme dynamic ranges or the subtraction of nearly identical numbers [18].

  - Experimental Setup: The CLASS model was run in both single and double precision configurations. Simulations focused on deep soil temperature, tracking accuracy at various depths (from surface to several hundred meters) over long time scales [18].

  - Accuracy Threshold: The study defined required accuracies for resolving deep soil temperature gradients, demanding temperatures be accurate to within 10⁻⁶ to 10⁻⁷ K when using typical time steps of order 10³ seconds [18].

- Key Outcome Variables:

  - Maximum depth of reliable temperature simulation.

  - Rate of accuracy degradation with reduced time step size.

  - Comparison of simulated temperatures against the defined accuracy thresholds.
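
The accuracy threshold in this protocol can be compared with the resolution of single precision at soil-temperature magnitudes. The sketch below is not the CLASS code: the starting temperature of 270 K and the per-step increment of 10⁻⁶ K are hypothetical values chosen to sit inside the range quoted above, and single precision is emulated by rounding to binary32 with the standard `struct` module.

```python
import math
import struct


def f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def spacing_f32(x: float) -> float:
    """Gap between adjacent binary32 values near x (normal range only)."""
    _, exponent = math.frexp(abs(x))  # abs(x) = m * 2**exponent, 0.5 <= m < 1
    return math.ldexp(1.0, exponent - 24)


T0 = 270.0       # K, hypothetical deep-soil temperature
DELTA = 1.0e-6   # K per step, hypothetical temperature increment
STEPS = 1000

t32 = f32(T0)
t64 = T0
for _ in range(STEPS):
    t32 = f32(t32 + f32(DELTA))  # state and update both held in binary32
    t64 = t64 + DELTA            # same update in binary64

print(f"binary32 spacing near {T0} K: {spacing_f32(T0):.3e} K")
print(f"after {STEPS} steps, single precision: {t32!r} K")
print(f"after {STEPS} steps, double precision: {t64!r} K")
```

Near 270 K adjacent single-precision values are about 3 × 10⁻⁵ K apart, so each 10⁻⁶ K increment is rounded away and the single-precision temperature never moves. Because a shorter time step shrinks the per-step increment further, this also gives one plausible reading of why accuracy deteriorates with smaller time steps.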

Protocol 2: Implementing a Mixed-Precision Framework in an Ocean Wave Model

This protocol outlines the methodology for applying mixed precision to the MASNUM ocean wave model, considering physical sensitivities to balance efficiency and accuracy [11].

- Objective: To enhance the computational performance of the MASNUM wave model via a mixed-precision framework while maintaining simulation accuracy.

- Model Used: MArine Science and Numerical Modeling (MASNUM) ocean wave model [11].

- Methodology:

  - Variable Classification: Variables within the model were classified based on their mathematical properties and physical attributes. The sensitivity of different physical processes to reduced precision was analyzed [11].

  - Precision Allocation: A mixed-precision scheme was implemented, strategically reducing the precision of non-critical variables to single-precision (float32) or half-precision (float16) while keeping critical variables in double-precision (float64) [11].

- Hardware/Software Environment: NVIDIA A100 GPUs, with the model ported through CUDA interfaces and built with the NVIDIA HPC SDK [11].

- Key Outcome Variables:

  - Computational Performance: Measured speedup over the double-precision baseline.

  - Simulation Accuracy: Quantified using Symmetric Mean Absolute Percentage Error (SMAPE) and Root Mean Square Error (RMSE) for significant wave height against the double-precision benchmark [11].
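
The two accuracy metrics named in this protocol can be written down in a few lines. SMAPE has several variants in the literature; the form below (mean of 2|p − r| / (|p| + |r|), expressed in percent) is an assumption and may differ from the one used in [11]. The wave heights are hypothetical.

```python
import math


def smape(predicted, reference):
    """Symmetric mean absolute percentage error, in percent."""
    terms = [
        2.0 * abs(p - r) / (abs(p) + abs(r))
        for p, r in zip(predicted, reference)
        if abs(p) + abs(r) > 0.0  # skip pairs where both values are zero
    ]
    if not terms:
        raise ValueError("no non-zero pairs to compare")
    return 100.0 * sum(terms) / len(terms)


def rmse(predicted, reference):
    """Root mean square error, in the units of the inputs."""
    squared = [(p - r) ** 2 for p, r in zip(predicted, reference)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical significant wave heights (m): double-precision benchmark
# versus a mixed-precision run at the same grid points.
benchmark = [1.00, 2.00, 4.00]
mixed = [1.01, 1.98, 4.00]

print(f"SMAPE: {smape(mixed, benchmark):.3f} %")
print(f"RMSE:  {rmse(mixed, benchmark):.4f} m")
```

SMAPE is relative and so weights calm-sea errors more heavily, while RMSE is absolute and dominated by the largest waves, which is one reason to report both.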

Decision Framework for Precision Selection

The following diagram illustrates the logical workflow for selecting the appropriate numerical precision strategy based on the characteristics of the simulation.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Solutions for Precision Analysis in Environmental Modeling

| Tool / Solution | Function | Relevance to Precision Research |
|---|---|---|
| Automatic Code Porting Tools (e.g., from Barcelona Supercomputing Center) [4] | Automatically ports oceanographic code to mixed precision. | Facilitates the transition to mixed precision by identifying suitable parts of the codebase for precision reduction, saving development time. |
| Reduced-Precision Emulator (RPE) [11] | Emulates the behavior of reduced precision on standard hardware. | Allows researchers to test and analyze the impact of lower precision (e.g., single, half) without fully porting the code, enabling risk-free experimentation. |
| ExaGeoStatR Software Package [31] | An R package for large-scale spatial statistics. | Provides a GPU-accelerated, accessible environment for statisticians to run large-scale models with mixed precision directly from the R programming language. |
| GPU Architectures (e.g., NVIDIA A100, V100) [11] [31] | Hardware accelerators for parallel computation. | Crucial for exploiting the performance benefits of mixed precision, especially with dedicated Tensor Cores for accelerated half-precision math. |
| High-Performance Computing (HPC) Suites (e.g., NVIDIA HPC SDK) [11] | A collection of compilers and libraries for HPC. | Provides the necessary compilers (e.g., nvfortran) and libraries to compile, optimize, and run environmental models on GPU and CPU architectures. |
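
The idea behind a reduced-precision emulator, as listed in the table above, is to keep values in a wide hardware format but round them to fewer significand bits after each operation. The sketch below illustrates that idea only; it is not the RPE library or its interface, the function name `round_significand` is hypothetical, and it ignores the narrower exponent range of the emulated format (no overflow or underflow handling).

```python
import math
import struct


def round_significand(x: float, sbits: int) -> float:
    """Round x to `sbits` explicit significand bits, ties to even.

    sbits=52 leaves binary64 unchanged, sbits=23 mimics binary32 and
    sbits=10 mimics binary16, for values inside each format's normal range.
    """
    if x == 0.0 or math.isinf(x) or math.isnan(x):
        return x
    mantissa, exponent = math.frexp(x)         # x = mantissa * 2**exponent
    scaled = mantissa * (1 << (sbits + 1))     # exact power-of-two scaling
    return math.ldexp(round(scaled), exponent - (sbits + 1))


def f32(x: float) -> float:
    """Reference binary32 rounding via the struct module."""
    return struct.unpack("f", struct.pack("f", x))[0]


for bits in (52, 23, 10):
    print(f"{bits:2d} significand bits: 0.1 -> {round_significand(0.1, bits)!r}")
```

Wrapping selected variables or operations of a model in such a rounding step lets their sensitivity be probed on ordinary double-precision hardware before any code is actually ported.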

The choice between single, double, or mixed precision is a fundamental aspect of ecological model design that cannot be reduced to a one-size-fits-all rule. As evidenced by the comparative data, double precision remains indispensable for processes requiring high numerical stability over long time scales, such as deep soil permafrost simulation [18]. Conversely, the strategic application of mixed precision, which leverages the performance advantages of single or half-precision for non-critical components, offers a compelling path forward for high-resolution and real-time forecasting applications [4] [11] [31]. The decision framework and tools outlined in this guide provide researchers and modelers with a structured approach to selecting the appropriate precision level, ensuring that computational resources are used efficiently without compromising the scientific integrity of their simulations.

The computational demands of high-fidelity ecological and climate simulations present a significant bottleneck for researchers. Traditional models relying exclusively on double-precision (FP64) arithmetic offer high accuracy but at tremendous computational cost. Mixed-precision frameworks have emerged as a transformative solution, strategically allocating different numerical precisions within computational workflows to dramatically accelerate performance while maintaining scientific rigor. This approach recognizes that not all calculations require the same level of precision, enabling intelligent resource allocation that balances computational efficiency with simulation accuracy.

The core principle involves using lower-precision formats like single-precision (FP32) and half-precision (FP16) for calculations tolerant to reduced accuracy, while reserving double-precision for critical operations where numerical stability is paramount. This strategy is particularly valuable in ecological modeling where researchers must often choose between spatial resolution, temporal scope, and model complexity due to computational constraints. By leveraging hardware advancements like NVIDIA Tensor Cores that provide order-of-magnitude speedups for lower-precision operations, mixed-precision frameworks are enabling new scientific possibilities in environmental forecasting and climate research.

Performance Comparison: Quantitative Analysis of Mixed-Precision Benefits

Experimental Data from Environmental Modeling Applications

Table 1: Performance Comparison of Environmental Models Using Mixed-Precision Techniques

| Application Domain | Model/Framework | Precision Configuration | Speedup vs. FP64 | Accuracy Metrics | Hardware |
|---|---|---|---|---|---|
| Ocean Wave Modeling | MASNUM Wave Model | Mixed (FP64/FP32/FP16) | 2.97-3.39× | SMAPE: 0.12%-0.43%; RMSE: 0.01-0.02 m | NVIDIA A100 |
| Regional Environmental Statistics | ExaGeoStatR | Mixed (FP64/FP32) | 1.9× | Equivalent statistical accuracy | NVIDIA V100 |
| Climate Simulation | NEMO (Destination Earth) | Mixed (FP64/FP32) | Significant operational gains | Maintained forecast quality | HPC Systems |
| Deep Learning Training | Various CNN/RNN Models | Mixed (FP32/FP16) | Up to 3× (relative to FP32) | Maintained model accuracy | Volta/Turing GPUs |

The data demonstrate that mixed-precision approaches deliver substantial performance improvements across diverse environmental modeling applications. The MASNUM wave model achieved a speedup of up to 3.39×, among the highest reported gains, while maintaining high accuracy with SMAPE (Symmetric Mean Absolute Percentage Error) values below 0.5% and minimal increases in RMSE [11]. Similarly, the ExaGeoStat framework for spatial statistics showed nearly 2× acceleration using mixed precision on V100 GPUs, enabling analysis of datasets comprising millions of locations that would be computationally prohibitive in double-precision alone [31].

Table 2: Precision Formats and Their Computational Characteristics

| Precision Format | Bits | Memory Usage | Dynamic Range | Appropriate Use Cases |
|---|---|---|---|---|
| Half-precision (FP16) | 16 | 2 bytes | 2^(-14) to 2^15 normalized (~30 powers of 2; ~40 including subnormals down to 2^(-24)) | Non-critical calculations, image processing, tolerant matrix operations |
| Single-precision (FP32) | 32 | 4 bytes | 2^(-126) to 2^127 normalized (~254 powers of 2; ~277 including subnormals down to 2^(-149)) | Most forward propagation, intermediate calculations |
| Double-precision (FP64) | 64 | 8 bytes | 2^(-1022) to 2^1023 normalized (~2046 powers of 2) | Reductions, master weights, sensitive convergence checks |

The strategic allocation of these precision formats follows the principle of using the lowest acceptable precision for each computational task. As evidenced by the NEMO ocean model analysis, approximately 69.2% of variables (652 total) could be successfully represented in single-precision without compromising results [11]. This precision tailoring is well suited to chaotic systems like climate models: small perturbations grow regardless of their origin, so the minor rounding errors introduced by lower precision behave much like the initial-condition uncertainty that is already present, and accuracy is best judged on ensemble statistics rather than on individual trajectories [4].
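
The resolution of the three formats in Table 2 can be inspected with Python's standard `struct` module, whose format codes `e`, `f` and `d` pack IEEE 754 binary16, binary32 and binary64 values. The sketch below is only an illustration; the helper names and the sample temperature of 273.15 K are hypothetical.

```python
import struct

FORMATS = {"FP16": "e", "FP32": "f", "FP64": "d"}


def roundtrip(x: float, code: str) -> float:
    """Store x in the given IEEE 754 format and read it back."""
    return struct.unpack(code, struct.pack(code, x))[0]


def machine_epsilon(code: str) -> float:
    """Gap between 1.0 and the next larger representable value."""
    eps = 1.0
    while roundtrip(1.0 + eps / 2.0, code) > 1.0:
        eps /= 2.0
    return eps


for name, code in FORMATS.items():
    stored = roundtrip(273.15, code)
    print(f"{name}: epsilon = {machine_epsilon(code):.3e}, "
          f"273.15 K stored as {stored!r}")
```

In half precision a temperature near 273 K can only be stored in steps of 0.25 K, which is why FP16 is usually confined to quantities with a small dynamic range or to scaled, non-critical intermediate values.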

Experimental Protocols: Methodologies for Mixed-Precision Implementation

Precision Reduction in Oceanographic Models

The implementation of mixed-precision in the MASNUM wave model followed a systematic methodology that can serve as a template for ecological simulations [11]:

Variable Classification: Variables within the model were categorized based on their mathematical properties and physical attributes. This involved identifying which physical processes were sensitive to precision reduction through controlled experiments.

Precision Allocation Strategy:

- Critical variables (e.g., certain accumulation terms, sensitive physical parameters) remained in double-precision

- Intermediate calculations used single-precision

- Non-critical operations with high computational intensity utilized half-precision
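
The classification and allocation steps can be sketched as a one-variable-at-a-time sensitivity test: round a single input to a lower precision, rerun the model, and keep the lowest precision whose output error stays within a tolerance. Everything below is a toy under stated assumptions and not the MASNUM procedure: `toy_model`, its four parameters, and the tolerance of 10⁻³ are hypothetical, and a real study would test many model states rather than one.

```python
import struct

CODES = {"half": "e", "single": "f"}


def round_to(x: float, fmt: str) -> float:
    """Round x to the named format; 'double' leaves it unchanged."""
    if fmt == "double":
        return x
    code = CODES[fmt]
    return struct.unpack(code, struct.pack(code, x))[0]


def toy_model(p):
    """Hypothetical diagnostic: a small difference of large terms, rescaled."""
    return (p["a"] - p["b"]) * p["c"] / p["d"]


def allocate_precision(model, params, tolerance):
    """Pick the lowest precision per parameter that keeps the output error small."""
    reference = model(params)
    plan = {}
    for name in params:
        plan[name] = "double"  # fall back to double if nothing lower passes
        for fmt in ("half", "single"):
            trial = dict(params)
            trial[name] = round_to(params[name], fmt)
            rel_error = abs(model(trial) - reference) / abs(reference)
            if rel_error <= tolerance:
                plan[name] = fmt
                break
    return plan


params = {"a": 1000.001, "b": 999.9993, "c": 2.7, "d": 3.3e-6}
plan = allocate_precision(toy_model, params, tolerance=1e-3)
for name, fmt in plan.items():
    print(f"{name}: {fmt}")
```

In this toy case the two nearly equal terms `a` and `b` must stay in double precision because their difference amplifies rounding error, the very small `d` needs at least single precision, and the order-one factor `c` tolerates half precision.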

GPU Porting and Optimization: The model was ported to GPU architectures using CUDA interfaces, with special attention to memory transfers between precision domains. The researchers utilized NVIDIA A100 GPUs with 6,912 CUDA cores, taking advantage of their 19.5 TFLOPS single-precision and significantly higher half-precision performance.

Validation Protocol: Results were validated against double-precision benchmarks using multiple metrics including SMAPE and RMSE for significant wave height predictions across different oceanic conditions.

The experimental workflow for mixed-precision implementation involves several critical stages that ensure maintained accuracy while achieving performance gains:

Figure 1: Mixed-Precision Implementation Workflow for Ecological Models

Loss Scaling and Gradient Preservation

A critical technical challenge in mixed-precision training of neural networks is preserving small gradient values that may be lost when converted to lower precision. As shown in research on the Multibox SSD network, 31% of gradient values became zeros when directly converted to FP16, potentially causing training divergence [32]. The solution involves:

- Loss Scaling: Multiplying the loss value by a scaling factor (typically between 8 and 32,000) before backpropagation, which shifts gradient values into the FP16 representable range

- Weight Update Correction: Unscaling the gradients before weight updates to maintain correct magnitude

- Automatic Scaling Factor Determination: Modern frameworks like PyTorch's AMP (Automatic Mixed Precision) can algorithmically determine optimal scaling factors [33]

This approach ensures that relevant gradient information is preserved while still benefiting from the computational advantages of half-precision arithmetic. The methodology has been validated across diverse network architectures including convolutional neural networks, recurrent networks, and generative adversarial networks [33].
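
The underflow that loss scaling guards against can be reproduced without any deep learning framework. The sketch below rounds hypothetical gradient values to binary16 with the standard `struct` module, once directly and once after multiplying by a scale factor. Scaling the gradients themselves stands in for scaling the loss (by the chain rule the effect on the gradients is the same), and the factor of 1024 is an arbitrary illustrative choice.

```python
import struct


def f16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 binary16 value."""
    return struct.unpack("e", struct.pack("e", x))[0]


gradients = [1.0e-8, 3.0e-7, 2.0e-3]  # hypothetical gradient magnitudes
SCALE = 1024.0                         # hypothetical loss-scaling factor

direct = [f16(g) for g in gradients]            # stored in FP16 as-is
scaled = [f16(g * SCALE) for g in gradients]    # stored in FP16 after scaling
recovered = [s / SCALE for s in scaled]         # unscaled in higher precision

for g, d, r in zip(gradients, direct, recovered):
    print(f"true {g:.3e} | direct FP16 {d:.3e} | scaled then unscaled {r:.3e}")
```

The smallest gradient is flushed to zero when stored directly in FP16 but survives with sub-percent error once it is scaled into the representable range and unscaled before the weight update.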

Table 3: Research Reagent Solutions for Mixed-Precision Implementation

| Tool/Category | Specific Examples | Function/Role | Application Context |
|---|---|---|---|
| Hardware Platforms | NVIDIA A100/V100 GPUs, TPUs | Provide specialized matrix units (e.g., Tensor Cores on NVIDIA GPUs) for FP16/FP32 matrix operations | General mixed-precision computation, deep learning training |
| Software Frameworks | PyTorch AMP, TensorFlow Mixed Precision, ExaGeoStatR | Automated precision management, loss scaling, GPU acceleration | Accessible implementation for statisticians and researchers |
| Development Tools | NVIDIA HPC SDK, CUDA, cuDNN | Compiler support, library functions for reduced precision | Porting existing models to mixed-precision |
| Validation Tools | Custom validation scripts, SMAPE/RMSE metrics | Precision-emulation, result verification | Ensuring maintained accuracy in scientific applications |
| Precision Analysis Tools | Barcelona Supercomputing Center tools, RPE | Identify precision-sensitive components in existing code | Climate and oceanographic models |

The toolkit highlights the ecosystem of resources enabling mixed-precision research. The ExaGeoStatR package is particularly noteworthy for making GPU-accelerated mixed-precision statistics accessible to R users without requiring deep CUDA expertise [31]. Similarly, PyTorch's Automatic Mixed Precision (AMP) API simplifies implementation by automatically handling casting between precision formats and loss scaling [33].

For researchers working with legacy models, tools developed at the Barcelona Supercomputing Center enable automatic porting of oceanographic code to mixed precision, significantly reducing implementation effort [4]. These tools facilitate the identification of precision-tolerant sections in complex models like NEMO, which is crucial for the Destination Earth initiative creating digital replicas of our planet [4].

Mixed-precision frameworks represent a paradigm shift in computational science, moving from uniform high-precision computation to strategic precision allocation based on numerical sensitivity. The studies reviewed here report speedups of roughly 2-3× with only small accuracy losses in ocean and wave simulations, directly addressing the computational bottlenecks that have limited model resolution and scope.

The implications for ecological modeling are profound. As one research team noted, the efficiency gains enable "high-resolution, real-time ocean forecasting applications" that were previously computationally prohibitive [11]. Furthermore, the reduced energy consumption associated with faster computation represents an additional environmental benefit, making large-scale simulation research more sustainable.

For the research community, the rise of mixed-precision frameworks means that traditional trade-offs between model complexity and computational cost can be renegotiated. This enables more sophisticated ecological models that better represent complex biogeochemical processes, higher spatial resolutions that capture critical heterogeneity, and longer-term forecasts essential for understanding climate change impacts. As hardware continues to evolve with enhanced support for reduced-precision arithmetic, mixed-precision approaches will likely become the standard for large-scale ecological simulation, empowering researchers to tackle increasingly complex environmental challenges.

In ecological and oceanographic simulation, researchers face a persistent trade-off between computational efficiency and numerical accuracy. For complex models like the MArine Science and Numerical Modeling (MASNUM) ocean wave model, this challenge is particularly acute. The MASNUM model, a third-generation global ocean wave model developed in China, simulates wave dynamics by solving energy balance equations in spherical coordinates, incorporating complex physical processes including wind input, wave breaking dissipation, and nonlinear wave-wave interactions [11]. Such models are crucial for understanding marine environments, climate interactions, and maritime transportation safety.

Traditionally, scientific computing has relied heavily on double-precision (float64) arithmetic, which uses 64 bits to represent floating-point numbers, providing approximately 15-17 significant decimal digits of precision [34]. This high precision ensures numerical stability and accuracy but comes with substantial computational costs: higher memory usage, greater energy consumption, and slower execution times. Single-precision (float32) formats use 32 bits, offering a middle ground with about 7 decimal digits of precision, while half-precision (float16) uses only 16 bits, enabling maximum speed but with significantly reduced numerical range and accuracy [11] [34].
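The practical meaning of "about 7 decimal digits" shows up when a small change is added to a large state variable. The sketch below is illustrative only: the population size is a hypothetical value chosen to sit at the limit of float32's integer resolution, and float32 storage is emulated with the standard `struct` module.

```python
import struct

def to_fp32(x: float) -> float:
    """Round a Python float (binary64) to the nearest IEEE 754 binary32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

# Hypothetical population of 2**24 individuals; above this size, float32
# can no longer represent every integer.
population = 16_777_216.0

after_birth_fp64 = population + 1.0            # double precision keeps the new individual
after_birth_fp32 = to_fp32(population + 1.0)   # single precision rounds it away

# Relative error of storing a typical rate constant in single precision.
rate = 0.1
rel_err_fp32 = abs(to_fp32(rate) - rate) / rate

print("float64 result:", after_birth_fp64)
print("float32 result:", after_birth_fp32)
print("relative error of 0.1 in float32:", rel_err_fp32)
```

In a long-running simulation such absorbed increments can accumulate into a systematic bias, which is one reason state variables that span large magnitudes are often kept in double precision.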

Mixed-precision computing represents a paradigm shift, strategically employing different numerical precisions within a single application to optimize this efficiency-accuracy balance [11]. This article explores how researchers successfully applied mixed-precision techniques to the MASNUM model, achieving substantial performance gains while maintaining the accuracy required for reliable ecological and wave forecasting simulations.

Experimental Protocols: Implementing Mixed Precision in MASNUM

The MASNUM wave model is a numerical simulation approach based on the energy balance equation in wavenumber space, with the wave spectrum as its primary simulation target [11]. The core of the model solves the conservation equation of the wave energy spectrum in a spherical coordinate system, incorporating multiple source functions representing different physical mechanisms:

$SS = S_{in} + S_{ds} + S_{bo} + S_{nl} + S_{cu}$ [11]

Where $S_{in}$ represents wind input, $S_{ds}$ wave breaking dissipation, $S_{bo}$ bottom friction dissipation, $S_{nl}$ nonlinear wave-wave interactions, and $S_{cu}$ wave-current interactions. The numerical implementation involves several critical functions, including propagat for solving wave propagation equations and implsch for handling local changes from source functions [35].

The mixed-precision implementation followed a systematic methodology based on variable-specific precision allocation. Researchers analyzed the sensitivity of different physical processes and variable types within MASNUM to numerical precision, then strategically assigned precision levels accordingly [11]. Critical variables maintaining numerical stability retained double-precision, while non-critical variables were downgraded to single-precision or half-precision formats.
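The idea of sensitivity-driven allocation can be sketched on a toy problem. The code below is not the MASNUM procedure: the model equation, the variable names (`wind`, `damping`, `energy`), the tolerance, and the one-variable-at-a-time search are all assumptions made for illustration. It lowers the storage precision of one quantity at a time, compares the result with an all-double reference, and keeps the cheapest format that stays within tolerance.

```python
import struct

# Candidate storage formats, cheapest first: (label, struct format code).
FORMATS = [("half", "<e"), ("single", "<f"), ("double", "<d")]

def round_to(x, fmt):
    """Round a Python float to the given IEEE 754 storage format."""
    return struct.unpack(fmt, struct.pack(fmt, x))[0]

def run_toy_model(precision, steps=500, dt=0.1):
    """Integrate dE/dt = wind - damping * E with forward Euler, rounding each
    named quantity to the format given in `precision` (name -> format code)."""
    wind = round_to(0.8, precision["wind"])
    damping = round_to(0.05, precision["damping"])
    energy = round_to(1.0, precision["energy"])
    for _ in range(steps):
        update = energy + dt * (wind - damping * energy)
        energy = round_to(update, precision["energy"])
    return energy

def allocate_precision(names, tolerance):
    """Pick, per variable, the cheapest format whose relative error against
    the all-double reference stays within `tolerance`."""
    all_double = {name: "<d" for name in names}
    reference = run_toy_model(all_double)
    chosen = {}
    for name in names:
        for label, fmt in FORMATS:
            trial = dict(all_double, **{name: fmt})
            rel_error = abs(run_toy_model(trial) - reference) / abs(reference)
            if rel_error <= tolerance:
                chosen[name] = label
                break
    return chosen

allocation = allocate_precision(["wind", "damping", "energy"], tolerance=1e-3)
print(allocation)
```

Testing one variable at a time ignores interactions between simultaneous reductions, so a combined configuration would still need to be validated against the reference before use.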

Computational Environment and Experimental Setup

The experimental configuration was meticulously designed to enable fair comparison between traditional and mixed-precision approaches:

Table: Experimental Configuration for MASNUM Mixed-Precision Testing

| Component | Specification |
|---|---|
| CPU System | High-performance cluster with dual Intel Xeon Gold 6258R processors (56 cores in total), x86_64 architecture, 192GB memory |
| GPU System | NVIDIA A100 GPU cards (Ampere architecture), 6,912 CUDA cores, support for half-precision computation |
| Software Environment | NVIDIA HPC SDK suite (v22.2), CUDA v11.6, OpenMPI v3.1.5 |
| Optimization Level | Compiler flags set to -O2 for all configurations |
| Porting Method | CUDA interface for GPU implementation of mixed-precision operations |

The MASNUM program was ported to GPU architecture to leverage hardware-optimized half-precision operations, as CPU architectures typically lack efficient native support for float16 computations [11]. This porting process involved identifying computational bottlenecks and implementing GPU kernels with appropriate precision specifications.

Results and Performance Comparison

Quantitative Performance Metrics

The mixed-precision implementation yielded significant performance improvements across multiple metrics while maintaining acceptable accuracy levels:

Table: Performance Comparison of MASNUM Model Under Different Precision Schemes

| Precision Scheme | Speedup Factor | Significant Wave Height SMAPE | Significant Wave Height RMSE | Key Applications |
|---|---|---|---|---|
| Double-Precision (Baseline) | 1.00x | Baseline | Baseline | Reference standard for high-fidelity simulation |
| Single-Precision | ~2.0x (estimated) | 0.12%-0.43% | 0.01-0.02 m | Good balance for many operational scenarios |
| Mixed-Precision | 2.97x-3.39x | 0.12%-0.43% | 0.01-0.02 m | Optimal for high-resolution, real-time forecasting |

The accuracy metrics show that the mixed-precision approach introduced minimal error relative to the double-precision baseline. The Symmetric Mean Absolute Percentage Error (SMAPE) for significant wave height remained between 0.12% and 0.43%, while Root Mean Square Error (RMSE) values ranged from 0.01 m to 0.02 m, well within acceptable tolerances for operational wave forecasting [11].
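For readers who want to run the same kind of comparison on their own model output, the two metrics can be computed as follows. This is a minimal sketch: SMAPE has several definitions in the literature, and the variant below (absolute difference divided by the mean of the two absolute values) is an assumption rather than the formula confirmed for the MASNUM study. The wave height values are made up for illustration.

```python
import math

def smape(reference, candidate):
    """Symmetric mean absolute percentage error, in percent.
    Pairs where both values are zero contribute zero error."""
    terms = []
    for r, c in zip(reference, candidate):
        denom = (abs(r) + abs(c)) / 2.0
        terms.append(0.0 if denom == 0.0 else abs(c - r) / denom)
    return 100.0 * sum(terms) / len(terms)

def rmse(reference, candidate):
    """Root mean square error, in the units of the input series."""
    squared = [(c - r) ** 2 for r, c in zip(reference, candidate)]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical significant wave heights (m) from a double-precision run
# and a reduced-precision run at the same grid points.
hs_double = [1.20, 2.50, 3.10, 0.80]
hs_mixed = [1.21, 2.49, 3.12, 0.80]

print(f"SMAPE: {smape(hs_double, hs_mixed):.3f} %")
print(f"RMSE:  {rmse(hs_double, hs_mixed):.4f} m")
```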

Comparative Analysis with Alternative Approaches

When contextualized within broader scientific computing efforts, the MASNUM mixed-precision achievements align with successful implementations in other domains:

Table: Mixed-Precision Applications Across Scientific Domains

| Domain/Model | Precision Strategy | Performance Gain | Key Researcher/Institution |
|---|---|---|---|
| MASNUM Wave Model | Variable-specific precision allocation | 2.97x-3.39x speedup | Qilu University of Technology/OUC [11] |
| NEMO Ocean Model | RPE-based precision reduction | 69.2% of variables reduced to single-precision | Barcelona Supercomputing Center [4] |
| ExaGeoStat Statistics | FP16/FP32 combination | 1.9x speedup (V100 GPU) | KAUST [31] |
| Earthquake Simulation | Double-, single-, half-precision mix | 25x speedup | University of Tokyo/ORNL [34] |

The MASNUM implementation stands out for its systematic approach to variable classification based on physical sensitivities, which enabled more strategic precision allocation than one-size-fits-all reductions [11]. This methodology contrasts with earlier approaches that simply converted entire models to lower precision, often resulting in unacceptable accuracy degradation.

The Technical Foundation: Understanding Precision in Scientific Computing

Precision Formats and Their Characteristics

The effectiveness of mixed-precision strategies hinges on understanding the distinct properties of each floating-point format:

Figure: Floating-Point Precision Characteristics and Trade-offs

Half-precision (float16) utilizes 16 bits total: 1 sign bit, 5 exponent bits, and 10 significand bits. This compact representation enables maximum computational throughput and memory efficiency but has limited range and precision, making it susceptible to overflow and underflow [34]. Single-precision (float32) uses 32 bits: 1 sign bit, 8 exponent bits, and 23 significand bits, offering improved accuracy while maintaining better performance than double-precision [34]. Double-precision (float64) employs 64 bits: 1 sign bit, 11 exponent bits, and 52 significand bits, providing the highest numerical stability and accuracy at the cost of computational efficiency [11] [34].
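The range and resolution of each format follow directly from these bit counts. The sketch below derives them for IEEE 754 binary formats; the function name and the returned field names are illustrative choices, not part of any library.

```python
import math
import sys

def format_properties(exponent_bits, fraction_bits):
    """Derive basic properties of an IEEE 754 binary format from its bit layout."""
    bias = 2 ** (exponent_bits - 1) - 1           # also the largest unbiased exponent
    epsilon = 2.0 ** -fraction_bits               # gap between 1.0 and the next value
    largest = (2.0 - epsilon) * 2.0 ** bias       # largest finite value
    smallest_normal = 2.0 ** (1 - bias)           # smallest positive normal value
    # The implicit leading bit adds one bit of precision to the stored fraction.
    decimal_digits = (fraction_bits + 1) * math.log10(2)
    return {
        "epsilon": epsilon,
        "largest": largest,
        "smallest_normal": smallest_normal,
        "decimal_digits": decimal_digits,
    }

half = format_properties(5, 10)
single = format_properties(8, 23)
double = format_properties(11, 52)

for name, props in [("float16", half), ("float32", single), ("float64", double)]:
    print(
        f"{name}: eps={props['epsilon']:.3e}, max={props['largest']:.3e}, "
        f"min normal={props['smallest_normal']:.3e}, "
        f"digits={props['decimal_digits']:.2f}"
    )
```

The narrow exponent field of float16 is what makes overflow and underflow a practical concern: its largest finite value is only 65504, whereas float32 and float64 reach roughly 3.4e38 and 1.8e308.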

Mixed-Precision Computational Workflow

The successful implementation of mixed-precision computing follows a systematic workflow that maintains accuracy while optimizing performance:

Figure: Mixed-Precision Implementation Workflow for Scientific Models

This approach leverages the principle that many operations, particularly matrix multiplications, can be performed rapidly in lower precision, while critical operations (such as accumulation and certain physically sensitive calculations) benefit from higher precision [11] [34]. In the MASNUM implementation, this involved classifying variables based on their mathematical properties and physical attributes, then determining optimal precision formats for different variable types [11].
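Why accumulation is usually kept in higher precision can be seen in a small experiment. The sketch below is illustrative and not taken from the MASNUM code: it adds the same FP16-stored increment many times, once with an accumulator rounded to FP16 after every step and once with a double-precision accumulator. The increment value and step count are arbitrary choices.

```python
import struct

def to_fp16(x: float) -> float:
    """Round a Python float (binary64) to the nearest IEEE 754 binary16 value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

increment = to_fp16(0.01)   # the input itself is stored in half precision
n_steps = 10_000

acc_fp16 = 0.0   # accumulator rounded to FP16 after every addition
acc_fp64 = 0.0   # same FP16 inputs, accumulated in double precision

for _ in range(n_steps):
    acc_fp16 = to_fp16(acc_fp16 + increment)
    acc_fp64 += increment

print("FP16 accumulator:   ", acc_fp16)
print("float64 accumulator:", acc_fp64)
```

The FP16 accumulator stops growing once the increment falls below half the spacing between adjacent FP16 values (around 32 in this setup), so it ends far below the true sum. Keeping the inputs in low precision while accumulating in a wider format avoids this stagnation at little extra cost.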

Successfully implementing mixed-precision strategies requires both hardware and software components optimized for variable-precision computation:

Table: Essential Research Reagent Solutions for Mixed-Precision Implementation

| Tool/Category | Specific Examples | Function in Mixed-Precision Research |
|---|---|---|
| Hardware Platforms | NVIDIA A100 GPU, NVIDIA V100 Tensor Core GPU | Provide specialized cores for accelerated FP16/BF16/FP32 operations |
| Software Development Kits | NVIDIA HPC SDK, CUDA Toolkit | Enable programming of mixed-precision algorithms and GPU porting |
| Precision Emulation Tools | Reduced-Precision Emulator (RPE) | Allow precision reduction testing without hardware deployment |
| Deep Learning Frameworks | PyTorch AMP, TensorFlow Mixed Precision | Offer automatic mixed-precision features for AI-driven model components |
| Performance Profilers | NVIDIA Nsight Systems, Intel VTune Amplifier | Identify computational bottlenecks and precision-sensitive code sections |
| Mathematical Libraries | cuBLAS, cuSOLVER, MAGMA | Provide optimized mixed-precision implementations of linear algebra routines |

Modern GPU architectures, particularly those with Tensor Cores like NVIDIA's A100 and V100, are instrumental for efficient mixed-precision computation [11] [31]. These processors contain specialized hardware that dramatically accelerates reduced-precision operations while retaining double-precision capabilities for critical computations. The software ecosystem has similarly evolved, with frameworks like PyTorch Automatic Mixed Precision (AMP) and TensorFlow's mixed precision API simplifying implementation through automated precision management and loss scaling [36].

The successful implementation of mixed-precision techniques in the MASNUM ocean wave model demonstrates a viable path forward for ecological simulations facing computational constraints. By achieving a 2.97-3.39× speedup while maintaining accuracy within 0.43% SMAPE for significant wave height, this approach addresses the core challenge of balancing computational efficiency with numerical precision [11].