Robustness Analysis for Ecological Network Performance: Methods, Validation, and Optimization Strategies


Abstract

This article provides a comprehensive framework for assessing the robustness of ecological networks, a critical property for ensuring ecosystem stability and functionality amidst environmental disturbances. Targeting researchers and environmental scientists, we explore the foundational principles of ecological network robustness, detail advanced methodological approaches for its analysis, and present practical troubleshooting and optimization strategies. By integrating cutting-edge research on network inference validation, spatial analysis, and composite indices, this guide addresses common pitfalls in predictive modeling and offers validated techniques for enhancing network resilience. The synthesized knowledge aims to empower professionals in constructing more reliable ecological models and implementing effective conservation interventions.

Understanding Ecological Network Robustness: Core Concepts and Importance for Ecosystem Stability

Robustness has emerged as a critical concept in ecology, describing the capacity of ecological networks to maintain their fundamental properties amid disturbance. Ecological robustness has two complementary but distinct dimensions. The first is structural integrity, the persistence of network architecture and connectivity. The second is functional resilience, the maintenance of ecological processes and functions under stress. Understanding how these dimensions relate is essential for predicting ecosystem responses to anthropogenic pressures and environmental change. This guide compares approaches to quantifying robustness, summarizes supporting experimental data, and details the methodologies behind these advances for researchers working at the intersection of ecology and conservation.

Comparative Frameworks of Ecological Robustness

The concept of ecological robustness is applied and quantified differently across studies, depending on whether the focus is on structural or functional aspects of networks.

Table 1: Comparative Frameworks for Assessing Ecological Robustness

| Framework Focus | Key Metric | Network Type | Primary Application | Key Finding |
|---|---|---|---|---|
| Structural Integrity | Connectivity, Node Betweenness, Community Structure | Species Interaction Networks [1] | Predicting responses to missing data | Network properties show substantial variation in robustness to missing edges; community detection algorithms vary in sensitivity [1]. |
| Functional Resilience | Seed Dispersal Effectiveness (SDE) | Plant-Frugivore Networks [2] | Assessing defaunation impacts | Functional robustness declines more sharply than structural robustness following defaunation [2]. |
| Spatial Resilience | Cascading Failure Dynamics | Ecological Spatial Networks [3] | Landscape conservation planning | Attacking high-degree nodes causes more severe disruption; network stability depends on node capacity and load [3]. |
| Adaptive Capacity | Rewiring Capacity & Potential [4] | Plant-Pollinator Networks | Forecasting responses to global change | Quantifies the trait space for forming new interactions, offering a metric for network adaptability [4]. |

Structural Integrity: The Backbone of Networks

Structural robustness refers to a complex system's ability to maintain its functions or properties under perturbations [3]. In ecological terms, this often translates to a network's resistance to fragmentation when nodes (e.g., species, habitat patches) are removed. Research on species interaction networks has revealed that structural properties differ in their robustness to missing data, a scenario that mimics incomplete sampling or species loss. For instance, the number of connected components and the variance in node betweenness are two metrics used to assess this structural integrity [1].
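Both metrics named above can be computed with a few lines of standard-library Python. The sketch below is a minimal illustration and not the pipeline used in [1]. It rests on three assumptions: the toy network is hypothetical, betweenness uses the unnormalized Brandes formulation, and the variance is the population variance taken across all nodes. In practice, NetworkX offers `number_connected_components` and `betweenness_centrality` for the same purpose.

```python
from collections import deque
from statistics import pvariance


def build_adjacency(edges):
    """Undirected adjacency sets from (plant, animal) interaction pairs."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def count_components(adj):
    """Number of connected components, found by breadth-first search."""
    seen, count = set(), 0
    for start in adj:
        if start in seen:
            continue
        count += 1
        seen.add(start)
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for nbr in adj[node]:
                if nbr not in seen:
                    seen.add(nbr)
                    queue.append(nbr)
    return count


def betweenness(adj):
    """Unnormalized betweenness centrality (Brandes' algorithm), unweighted graph."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        stack, preds = [], {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)  # number of shortest paths from s
        dist = dict.fromkeys(adj, -1)  # BFS distance from s
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(adj, 0.0)  # dependency of s on each node
        while stack:
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Undirected graph: every pair was counted once from each endpoint.
    return {v: c / 2.0 for v, c in bc.items()}


# Hypothetical toy pollination network with two disconnected modules.
toy_edges = [("P1", "A1"), ("P1", "A2"), ("P2", "A2"), ("P3", "A3")]
toy_adj = build_adjacency(toy_edges)
n_components = count_components(toy_adj)
bc_variance = pvariance(list(betweenness(toy_adj).values()))
print(n_components, round(bc_variance, 3))
```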

Functional Resilience: The Persistence of Processes

Functional robustness, in contrast, measures the maintenance of specific ecological processes. A seminal study on hyperdiverse seed dispersal networks demonstrated that functional robustness declines faster than structural robustness following defaunation. This research measured functional robustness using Seed Dispersal Effectiveness (SDE), which integrates both the quantity and quality of seed dispersal, providing a more comprehensive picture of functional integrity than interaction counts alone [2]. This divergence highlights that a network can retain its abstract structure even while its core ecological functions are eroding.

Experimental Data and Performance Comparison

Quantitative data from recent studies provides critical insights into how different ecological networks respond to disturbances.

Table 2: Quantitative Comparison of Network Robustness Under Disturbance

| Study Focus | Disturbance Type | Structural Response | Functional Response | Key Experimental Data |
|---|---|---|---|---|
| Seed Dispersal Networks [2] | Size-Selective Defaunation | Moderate decline in plant species connected | Sharp decline in Seed Dispersal Effectiveness | Functional robustness more sensitive to loss of large frugivores; services not replaced by smaller species. |
| Species Interaction Networks [1] | Random Edge Removal (Missing Data) | Varies by topological property | Not Measured | Clauset-Newman-Moore & Louvain community detection more robust than Label Propagation & Girvan-Newman. |
| Ecological Spatial Networks [3] | Malicious vs. Random Node Attack | Faster cascading failure from targeted attack | Not Measured | Robustness index (global efficiency) drops below 0.1 after ~10% of high-degree nodes fail. |
| Regional Ecological Networks [5] | Urban Expansion & Climate Stress | Increased fragmentation, decreased connectivity | Decline in ecosystem services | "Structural damage → functional decline → resilience loss" chain reaction observed. |

Divergence Between Structural and Functional Robustness

The most striking finding from comparative studies is the frequent decoupling of structural and functional responses. In seed dispersal networks, simulations of defaunation scenarios (e.g., size-selected, specialization-selected, and random species loss) show that the number of persistent plant-frugivore interactions (structure) does not accurately reflect the loss of seed dispersal function. The functional robustness, measured via SDE, consistently exhibited steeper declines because the loss of large-bodied frugivores—which are often more efficient dispersers—creates a functional deficit that is not captured by simply counting remaining connections [2]. This underscores the necessity of measuring function directly rather than inferring it from structure.

Topological Features and Network Vulnerability

Analysis of 148 real-world bipartite networks revealed that robustness varies by both network property and interaction type. For example, the robustness of a network's community structure depends heavily on the algorithm used to detect it. Furthermore, certain topological metrics, such as those based on eigenvalues, may be more sensitive to the addition of new edges (simulating improved data collection or interaction rewiring) than others [1]. This implies that the choice of metric can significantly influence conclusions about a network's vulnerability.

Methodological Protocols for Robustness Assessment

Protocol 1: Assessing Structural Robustness to Missing Data

This protocol is designed to evaluate how inferences about a network's structure might be biased by incomplete sampling [1].

- Network Compilation: Gather a set of fully-constructed, empirical ecological interaction networks (e.g., pollination, host-parasite).

- Topological Metric Calculation: For each network, compute a suite of topological properties. Key metrics include:

  - Number of connected components

  - Variance in node betweenness centrality

  - Variance in node PageRank

  - Largest eigenvalue of the adjacency matrix

  - Number of non-zero eigenvalues

  - Community structure using multiple detection algorithms (e.g., Clauset-Newman-Moore, Louvain, Label Propagation, Girvan-Newman)

- Perturbation Simulation: Systematically add random edges to each network, simulating the process of discovering missed interactions.

- Robustness Quantification: Recalculate the topological metrics after each edge addition. The robustness of a specific metric is defined by the rate at which it changes as the network becomes more complete. A robust metric shows minimal or very gradual change.

- Comparative Analysis: Compare the robustness of different metrics and across different types of interaction networks.
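Protocol 1 can be prototyped by tracking a single metric, the largest adjacency eigenvalue, while hypothetical missed interactions are added one at a time. This is a sketch under stated assumptions and not the exact procedure of [1]. The toy network, the uniform random choice of which missing links to add, and the `mean_relative_change` summary are all illustrative. Power iteration runs on A + I rather than A because the spectrum of a bipartite adjacency matrix is symmetric about zero, which would otherwise stop plain power iteration from converging.

```python
import math
import random


def to_adjacency(edges, nodes):
    """Undirected adjacency sets; isolated nodes are kept."""
    adj = {v: set() for v in nodes}
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    return adj


def largest_eigenvalue(adj, iters=500):
    """Largest adjacency eigenvalue by power iteration on A + I.

    The identity shift avoids the oscillation caused by the symmetric
    (+lambda, -lambda) spectrum of bipartite graphs.
    """
    nodes = sorted(adj)
    x = {v: 1.0 for v in nodes}
    for _ in range(iters):
        y = {v: x[v] + sum(x[w] for w in adj[v]) for v in nodes}
        norm = math.sqrt(sum(val * val for val in y.values()))
        x = {v: y[v] / norm for v in nodes}
    # Rayleigh quotient of A itself at the converged vector.
    ax = {v: sum(x[w] for w in adj[v]) for v in nodes}
    return sum(x[v] * ax[v] for v in nodes) / sum(x[v] ** 2 for v in nodes)


def eigenvalue_trajectory(plants, animals, edges, n_added, seed=0):
    """Recompute the metric after each randomly 'discovered' plant-animal link."""
    rng = random.Random(seed)
    observed = set(edges)
    missing = [(p, a) for p in plants for a in animals if (p, a) not in observed]
    rng.shuffle(missing)
    current, nodes = list(edges), plants + animals
    values = [largest_eigenvalue(to_adjacency(current, nodes))]
    for edge in missing[:n_added]:
        current.append(edge)
        values.append(largest_eigenvalue(to_adjacency(current, nodes)))
    return values


def mean_relative_change(values):
    """Hypothetical summary: mean |change| per added edge, relative to the start."""
    steps = [abs(b - a) for a, b in zip(values, values[1:])]
    return sum(steps) / len(steps) / values[0]


plants, animals = ["P1", "P2", "P3"], ["A1", "A2", "A3", "A4"]
edges = [("P1", "A1"), ("P1", "A2"), ("P2", "A2"),
         ("P2", "A3"), ("P3", "A3"), ("P3", "A4")]
trajectory = eigenvalue_trajectory(plants, animals, edges, n_added=4)
print([round(v, 3) for v in trajectory], round(mean_relative_change(trajectory), 3))
```

A metric whose trajectory stays nearly flat as links are added would count as robust under this protocol. The largest eigenvalue can never decrease when links are added, so its sensitivity shows up only as the size of the upward steps.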

Protocol 2: Quantifying Functional Robustness via Defaunation Simulations

This protocol uses a combination of network data and functional traits to assess the impact of species loss on ecosystem function [2].

- Community Network and Trait Data Collection: Construct a detailed quantitative network of species interactions (e.g., frugivores and plants). Additionally, collect data on functional traits relevant to the ecological process (e.g., for seed dispersal: frugivore body size, gape width, plant seed size, germination success with/without gut passage).

- Calculate Seed Dispersal Effectiveness (SDE): For each plant-frugivore pair, calculate SDE as the product of a quantity component (e.g., number of seeds dispersed) and a quality component (e.g., probability of a dispersed seed surviving to recruitment).

- Define Defaunation Scenarios: Establish multiple, realistic defaunation scenarios:

  - Size-Selected: Remove larger frugivore species first.

  - Specialization-Selected: Remove specialist frugivores first.

  - Random: Remove species randomly as a control.

  - Abundance Decline: Simulate uniform population declines across all species.

- Simulate Extinctions and Calculate Losses: Simulate the sequential removal of frugivore species according to each scenario. After each removal, calculate:

  - Structural Robustness: The proportion of plant species that still have at least one disperser.

  - Functional Robustness: The proportion of total original SDE that remains in the community.

- Compare Robustness Trajectories: Plot the decline of structural versus functional robustness across the defaunation gradients to identify divergence points and critical thresholds.
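The SDE calculation and the removal scenarios above can be sketched in plain Python. Every species name, SDE component value, and body mass below is hypothetical. Following step 2, SDE is computed as quantity × quality. The abundance-decline scenario is left out because it rescales quantities instead of removing species.

```python
import random

# Hypothetical plant-frugivore data: (frugivore, plant) -> (quantity, quality),
# where quantity = seeds dispersed and quality = P(dispersed seed recruits).
INTERACTIONS = {
    ("guan", "Euterpe"): (100, 0.30),
    ("toucan", "Euterpe"): (80, 0.25),
    ("toucan", "Miconia"): (50, 0.20),
    ("thrush", "Miconia"): (200, 0.05),
    ("tanager", "Cecropia"): (300, 0.02),
    ("thrush", "Cecropia"): (100, 0.04),
}
BODY_MASS_KG = {"guan": 1.5, "toucan": 0.6, "thrush": 0.07, "tanager": 0.03}


def sde_by_pair(interactions):
    """SDE for each pair = quantity component x quality component."""
    return {pair: qty * qual for pair, (qty, qual) in interactions.items()}


def robustness(sde, removed):
    """(structural, functional) robustness given a set of lost frugivores."""
    plants = {plant for _, plant in sde}
    kept = {pair: v for pair, v in sde.items() if pair[0] not in removed}
    structural = len({plant for _, plant in kept}) / len(plants)
    functional = sum(kept.values()) / sum(sde.values())
    return structural, functional


def trajectory(sde, removal_order):
    """Robustness after each sequential extinction, starting from the intact network."""
    removed, points = set(), [robustness(sde, set())]
    for frugivore in removal_order:
        removed.add(frugivore)
        points.append(robustness(sde, removed))
    return points


def mean_random_trajectory(sde, frugivores, n_reps=1000, seed=1):
    """Random-removal control, averaged over n_reps shuffled orders."""
    rng = random.Random(seed)
    totals = [[0.0, 0.0] for _ in range(len(frugivores) + 1)]
    for _ in range(n_reps):
        order = list(frugivores)
        rng.shuffle(order)
        for i, (s, f) in enumerate(trajectory(sde, order)):
            totals[i][0] += s
            totals[i][1] += f
    return [(s / n_reps, f / n_reps) for s, f in totals]


sde = sde_by_pair(INTERACTIONS)
size_selected = trajectory(sde, sorted(BODY_MASS_KG, key=BODY_MASS_KG.get, reverse=True))
n_partners = {f: sum(1 for g, _ in sde if g == f) for f in BODY_MASS_KG}
specialist_first = trajectory(sde, sorted(BODY_MASS_KG, key=n_partners.get))
random_control = mean_random_trajectory(sde, list(BODY_MASS_KG))
for step, (s, f) in enumerate(size_selected):
    print(f"{step} frugivores lost: structural={s:.2f}, functional={f:.2f}")
```

In this toy example, losing the largest frugivore leaves every plant with a disperser (structural robustness 1.0), yet SDE has already dropped to 0.625. This is the kind of divergence the protocol is designed to expose.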

Protocol 3: Modeling Cascading Failures in Spatial Networks

This protocol assesses the resilience of landscape networks to dynamic, cascading disturbances [3].

- Spatial Network Construction: Model a landscape as a network where nodes represent habitat patches and edges represent corridors or potential dispersal pathways. Use data from field surveys and remote sensing. The load on a node (e.g., its ecological importance) can be proportional to its degree or betweenness centrality.

- Define Node Capacity: Assign a capacity to each node, representing its ability to handle additional load if neighboring nodes fail. Capacity is often defined as a multiple of the node's initial load.

- Apply Attack Strategies: Subject the network to different node-removal strategies:

  - Random Attack: Remove nodes randomly.

  - Malicious Attack: Sequentially remove nodes with the highest load or degree.

- Simulate Cascading Failure:

  - When a node fails, redistribute its load to neighboring, functional nodes.

  - If the redistributed load causes any neighbor's load to exceed its capacity, that neighbor also fails.

  - Iterate this process until no new nodes fail.

- Quantify Resilience: Calculate the robustness index (R) of the network based on the proportion of nodes that have not failed after the cascade, or the drop in global efficiency. Compare the outcomes of different attack strategies to identify network vulnerabilities.
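A minimal version of the cascade model in Protocol 3 is sketched below, with the robustness index taken as the fraction of surviving nodes. The sketch makes three assumptions:

- Load equals node degree.
- Capacity is (1 + alpha) times the initial load, where `alpha` is a hypothetical tolerance parameter.
- A failed node's current load is shared equally among its functioning neighbours.

The model in [3] may instead use betweenness-based loads or a different redistribution rule.

```python
import random


def cascade(adj, initial_failures, alpha):
    """Return the set of failed nodes after a load-redistribution cascade.

    Assumptions: initial load = degree; capacity = (1 + alpha) * initial load;
    a failed node's current load is split equally among functioning neighbours.
    """
    load = {v: float(len(adj[v])) for v in adj}
    capacity = {v: (1.0 + alpha) * load[v] for v in adj}
    failed, frontier = set(), set(initial_failures)
    while frontier:
        failed |= frontier
        for v in frontier:
            alive = [w for w in adj[v] if w not in failed]
            for w in alive:
                load[w] += load[v] / len(alive)
        # Any surviving node pushed over capacity fails in the next round.
        frontier = {v for v in adj if v not in failed and load[v] > capacity[v]}
    return failed


def robustness_index(adj, attacked, alpha):
    """R = fraction of nodes still functioning once the cascade stops."""
    return 1.0 - len(cascade(adj, attacked, alpha)) / len(adj)


def malicious_targets(adj, k):
    """Malicious attack: the k highest-degree nodes."""
    return sorted(adj, key=lambda v: len(adj[v]), reverse=True)[:k]


def random_targets(adj, k, rng):
    """Random attack: k nodes drawn uniformly."""
    return rng.sample(sorted(adj), k)


# Hypothetical habitat network: a central patch H with five peripheral patches.
habitat = {
    "H": {"P1", "P2", "P3", "P4", "P5"},
    "P1": {"H", "P2"}, "P2": {"H", "P1"},
    "P3": {"H"}, "P4": {"H"}, "P5": {"H"},
}
rng = random.Random(42)
r_malicious = robustness_index(habitat, malicious_targets(habitat, 1), alpha=0.6)
r_random = sum(robustness_index(habitat, random_targets(habitat, 1, rng), alpha=0.6)
               for _ in range(500)) / 500
print(round(r_malicious, 3), round(r_random, 3))
```

In this toy network, removing the hub pushes the three single-link patches past capacity, so the cascade ends with R = 1/3. Removing any peripheral patch causes no further failures.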

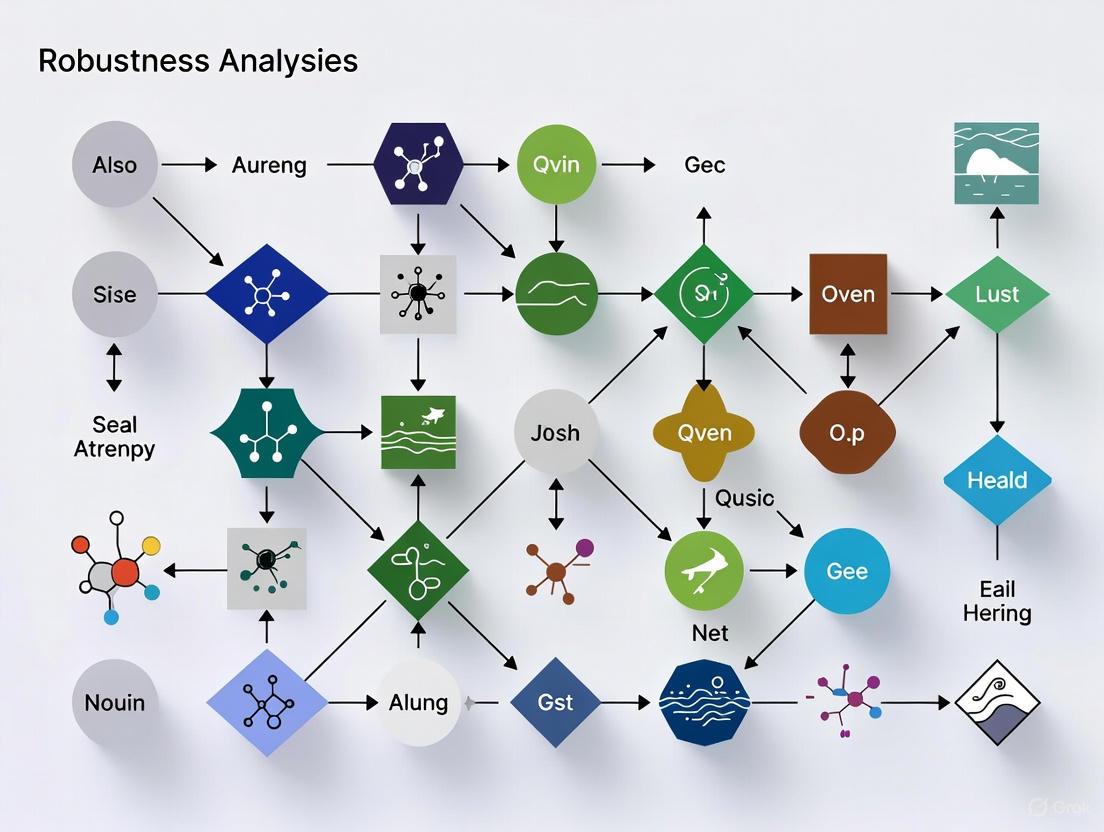

Conceptual Workflows and Relationships

The following diagrams illustrate the core conceptual relationships and experimental workflows in ecological robustness research.

Diagram 1: A generalized workflow for assessing ecological robustness, showing the key stages from data collection through perturbation simulation to final analysis.

Diagram 2: The conceptual relationship between structural integrity and functional resilience in response to disturbance, highlighting that these dimensions can respond independently and at different rates.

The Scientist's Toolkit: Essential Research Reagents and Solutions

This section details key computational tools, models, and data types essential for conducting robustness analysis in ecological networks.

Table 3: Research Reagent Solutions for Ecological Network Analysis

| Tool/Reagent | Primary Function | Application in Robustness Research |
|---|---|---|
| NetworkX Library [3] | Network analysis and metric calculation | Used for computing degree indices, centrality measures, and simulating network perturbations in Python. |
| Circuit Theory Models [6] | Predicting movement and connectivity in landscapes | Applied to identify ecological corridors and sources, forming the basis for constructing spatial ecological networks. |
| Cascading Failure Model [3] | Simulating dynamic, sequential node failures | Allows assessment of spatial network resilience under different attack strategies (random vs. malicious). |
| Seed Dispersal Effectiveness (SDE) Framework [2] | Quantifying the functional outcome of species interactions | Provides an integrated metric (quantity × quality) to measure functional robustness in plant-frugivore networks. |
| Morphological Spatial Pattern Analysis (MSPA) [7] | Classifying landscape spatial patterns | Used to identify core ecological sources and structural elements from land cover maps for network construction. |
| Rewiring Capacity/Potential Metrics [4] | Quantifying trait space for new interactions | Offers a functional measure of network adaptability and resilience to species loss or environmental change. |

Understanding the stability of complex systems in the face of disturbance is a cornerstone of ecological research. Robustness analysis has emerged as a critical framework for quantifying this stability, measuring a system's ability to maintain its structure and function despite species loss, habitat fragmentation, or other perturbations. Central to this analysis is network topology—the specific arrangement of connections between components within a system. This guide objectively compares how different topological configurations, particularly the presence of hub nodes and varying connectivity patterns, influence systemic vulnerability. Drawing upon current ecological research and experimental simulations, we provide a structured comparison of topological performance, detailed methodologies for key experiments, and essential tools for researchers in ecology and related fields.

Topology and Robustness: A Comparative Framework

The structure of an ecological network—often visualized as nodes (e.g., species, habitat patches) connected by edges (e.g., trophic interactions, dispersal corridors)—profoundly influences its robustness. The following table summarizes how key topological features impact system vulnerability, based on experimental data from network analysis.

Table 1: Comparative Influence of Network Topology on System Robustness

| Topological Feature | Impact on Robustness | Experimental Evidence | Key Vulnerability |
|---|---|---|---|
| Hub Nodes (Centralized) | High initial efficiency; low connectivity robustness under targeted attack. Rapid collapse if hubs are removed [8]. | Node removal experiments show networks with highly centralized hubs experience a sharp drop in connectivity after hub loss [9] [10]. | Targeted removal of hub nodes triggers cascading failures, threatening overall system stability [10]. |
| Distributed Connectivity | Higher redundancy; slower, more graceful degradation under stress. Maintains pathways after random failure [8]. | Simulation of weed spread showed modified square and hexagonal tessellations had similar, more robust propagation behavior compared to von Neumann neighborhoods [11]. | Potential for lower overall efficiency in resource or energy transfer under normal conditions. |
| Module Interdependence | Varies by system. Low interdependence can insulate modules from cascading effects [12]. | In tripartite networks, the correlation of robustness between animal species sets was often low, suggesting restoration may not automatically propagate [12]. | High interdependence between modules (e.g., pollination & herbivory) can lead to cross-system collapse. |
| Network Scale | Invariability (inverse of population fluctuations) often increases with network size due to statistical averaging of asynchronous dynamics [13]. | Analytical models show community-level invariability is greater than species-level invariability due to asynchrony [13]. | Smaller networks are more susceptible to stochastic fluctuations and localized disturbances. |

Quantitative data from a 2024 analysis of 44 tripartite ecological networks further illustrates the structural differences between network types. The study measured the proportion of shared species that act as connector nodes between different interaction layers (e.g., between herbivory and parasitism networks) [12].

Table 2: Structural Differences in Ecological Network Types [12]

| Network Type (by Interaction) | Connector Nodes (% of shared species) | Shared-Species Hubs That Are Connectors (%) | Link Distribution (Avg. Participation Coefficient of Connectors) |
|---|---|---|---|
| Antagonism-Antagonism (AA) | ~35% | ~96% | 0.89 (Evenly Split) |
| Mutualism-Mutualism (MM) | ~10% | ~32% | 0.59 (Less Evenly Split) |
| Mutualism-Antagonism (MA) | ~22% | ~56% | 0.59 (Less Evenly Split) |

Experimental Protocols for Topology and Vulnerability Analysis

To ensure reproducibility and objective comparison, researchers employ standardized protocols to quantify topology-driven vulnerability. Below are detailed methodologies for two key experimental approaches cited in this guide.

Node Removal Simulation for Robustness Quantification

This protocol measures the robustness of an ecological network to species loss or habitat patch removal and is central to findings in [12] [9] [10].

- Primary Objective: To simulate species extinction or habitat loss and quantify the resulting cascade of secondary extinctions or connectivity loss.

- Experimental Workflow:

  - Network Construction: Formally define the network, identifying all nodes (e.g., plant species, ecological source areas) and edges (e.g., pollinator interactions, dispersal corridors).

  - Define Extinction Threshold: Set a threshold for node failure (e.g., an animal species goes secondarily extinct if it loses all its plant partners; a habitat patch becomes isolated if all corridors to it are severed) [12].

  - Sequential Removal: Remove nodes from the network in a specific order. This involves two primary strategies:

    - Random removal: Remove nodes in random order, simulating stochastic species loss or habitat failure.

    - Targeted removal: Remove nodes in decreasing order of degree or centrality, simulating the loss of hubs.

  - Cascade Tracking: After each removal, identify any secondary extinctions or loss of connectivity based on the pre-defined threshold.

  - Robustness Metric Calculation: Calculate robustness as the area under the curve of the proportion of remaining species or persistent connectivity against the proportion of nodes removed [12].

- Key Output Metrics: Connectivity robustness, collapse threshold, rate of cascade failure [10].
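The node-removal workflow can be sketched for a bipartite plant-animal network, covering both removal strategies and the area-under-the-curve metric. The network is hypothetical. Secondary extinction uses the "loses all partners" threshold from the workflow above. The trapezoidal rule is one reasonable way to compute the area, and other integration choices are possible.

```python
import random


def attack_tolerance_curve(edges, removal_order):
    """Fraction of animals persisting as plants are removed.

    An animal goes secondarily extinct once all of its plant partners are gone.
    """
    partners = {}
    for plant, animal in edges:
        partners.setdefault(animal, set()).add(plant)
    removed, xs, ys = set(), [0.0], [1.0]
    for i, plant in enumerate(removal_order, start=1):
        removed.add(plant)
        alive = sum(1 for ps in partners.values() if ps - removed)
        xs.append(i / len(removal_order))
        ys.append(alive / len(partners))
    return xs, ys


def area_under_curve(xs, ys):
    """Trapezoidal area under the curve; 1 = fully robust, near 0 = fragile."""
    return sum((x1 - x0) * (y0 + y1) / 2
               for x0, x1, y0, y1 in zip(xs, xs[1:], ys, ys[1:]))


def targeted_order(edges):
    """Targeted strategy: plants sorted by decreasing degree (hubs first)."""
    degree = {}
    for plant, _ in edges:
        degree[plant] = degree.get(plant, 0) + 1
    return sorted(degree, key=lambda p: (-degree[p], p))


def mean_random_robustness(edges, n_reps=2000, seed=0):
    """Random strategy: robustness averaged over shuffled removal orders."""
    rng = random.Random(seed)
    plants = sorted({p for p, _ in edges})
    total = 0.0
    for _ in range(n_reps):
        order = plants[:]
        rng.shuffle(order)
        total += area_under_curve(*attack_tolerance_curve(edges, order))
    return total / n_reps


# Hypothetical network: generalist plant G plus three specialist plants.
edges = [("G", "a1"), ("G", "a2"), ("G", "a3"), ("G", "a4"),
         ("p1", "a1"), ("p2", "a2"), ("p3", "a5")]
r_targeted = area_under_curve(*attack_tolerance_curve(edges, targeted_order(edges)))
r_random = mean_random_robustness(edges)
print(round(r_targeted, 3), round(r_random, 3))
```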

Patch Stability and Network Connectivity Trade-off Analysis

This protocol, derived from [9], assesses the often-contrasting objectives of conserving stable individual patches versus maintaining a well-connected network.

- Primary Objective: To evaluate and balance the dual conservation goals of patch stability and network connectivity in landscape planning.

- Experimental Workflow:

  - Patch Stability Assessment:

    - Map land use and landscape pattern within each ecological source patch.

    - Calculate a land use conflict index based on landscape composition and spatial pattern to reflect a patch's susceptibility to disturbance [9].

  - Network Connectivity Assessment:

    - Use complex network analysis to calculate topology-based metrics for the Ecological Security Pattern (ESP). Key metrics include:

      - Connectivity Robustness: The network's ability to maintain connectivity as nodes are removed.

      - Global Efficiency: A measure of the efficiency of parallel species or information transfer across the network [9].

  - Correlation and Trade-off Analysis:

    - Spatially correlate the values of patch stability and node-based connectivity importance across the network.

    - Identify areas of synergy (high stability and high connectivity) and trade-off (high stability but low connectivity, or vice versa) [9].

  - Priority Ranking: Develop a comprehensive conservation priority for each patch by integrating its stability and connectivity scores.

- Key Output Metrics: Land use conflict index, global efficiency, equivalent connectivity, and priority restoration maps [9].
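Global efficiency, one of the connectivity metrics named above, can be computed with breadth-first search. The sketch also scores node-based connectivity importance as the relative efficiency loss when a patch is removed, which could feed the correlation step. The network and this importance definition are illustrative assumptions, not the exact procedure of [9]. NetworkX also provides a `global_efficiency` function.

```python
from collections import deque


def global_efficiency(adj):
    """Mean inverse shortest-path length over all ordered node pairs.

    Unreachable pairs contribute 0, so fragmentation lowers efficiency.
    """
    nodes = list(adj)
    n = len(nodes)
    if n < 2:
        return 0.0
    total = 0.0
    for source in nodes:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            v = queue.popleft()
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
        total += sum(1.0 / d for d in dist.values() if d > 0)
    return total / (n * (n - 1))


def node_importance(adj):
    """Relative drop in global efficiency when each patch is removed."""
    base = global_efficiency(adj)
    scores = {}
    for patch in adj:
        reduced = {v: adj[v] - {patch} for v in adj if v != patch}
        scores[patch] = (base - global_efficiency(reduced)) / base
    return scores


# Hypothetical ecological security pattern: source patches S1-S4,
# with S2 acting as the only link to S1.
esp = {"S1": {"S2"}, "S2": {"S1", "S3", "S4"}, "S3": {"S2", "S4"}, "S4": {"S2", "S3"}}
print(round(global_efficiency(esp), 3), node_importance(esp))
```

Efficiency is normalized by the number of remaining nodes. As a result, removing a peripheral patch can slightly raise it and yield a negative importance score.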

Visualizing Topological Relationships and Workflows

The following diagrams illustrate the core logical relationships in network topology and the sequence of experimental protocols.

Figure 1: A comparison of fundamental network topologies, highlighting their core characteristics, strengths, and inherent vulnerabilities. Hub-and-spoke systems are efficient but fragile, while distributed networks trade peak efficiency for greater resilience.

Figure 2: The standardized workflow for node removal experiments, a key protocol for quantifying network robustness. The critical step is the choice of removal strategy, which tests the network's resilience to different types of threats.

The Scientist's Toolkit: Essential Research Reagents and Solutions

This table details key computational tools, models, and data types essential for conducting research in ecological network robustness analysis.

Table 3: Key Research Reagent Solutions for Network Robustness Analysis

| Tool/Model/Data Type | Primary Function | Application Context | Relevance to Topology |
|---|---|---|---|
| Circuit Theory (Circuitscape) | Models ecological flows and connectivity as electrical current. | Identifying ecological corridors and pinch-points; random-walk dispersal simulation [6] [10]. | Quantifies functional connectivity and identifies critical, high-flow links that may act as hubs. |
| Graph Theory Software (Pajek) | Analyzes complex network topology and calculates metrics. | Computing node degree, centrality, and network-level indices (α, β, γ) for structural assessment [10]. | Directly measures topological properties like hub presence, connectivity, and modularity. |
| Linkage Mapper | A GIS toolbox to model ecological corridors. | Building ecological networks by connecting core habitat patches [10]. | Constructs the physical network structure for subsequent topological analysis. |
| MaxEnt Model | A species distribution model using presence-only data. | Identifying ecological source areas (nodes) based on habitat suitability [10]. | Defines the initial set of nodes in the network, the foundation of the topology. |
| Morphological Spatial Pattern Analysis (MSPA) | A pixel-based image-processing method for describing landscape morphology. | Classifying landscape structures to identify core areas, bridges, and branches [14] [6]. | Provides a fine-scale, structural understanding of the landscape matrix in which the network exists. |
| PARTNER CPRM | A platform for mapping and tracking inter-organizational networks using social network analysis [8]. | Measuring trust, alignment, and shared value in collaborative networks (e.g., conservation partnerships). | Quantifies relational topologies in human-centered ecosystems, identifying central coordinators. |

Robustness describes the ability of a system to maintain its core functions, structures, and identity despite external and internal disturbances. In ecology, this concept is crucial for understanding how biodiversity loss affects the reliable supply of ecosystem services upon which human societies depend. As global extinction rates accelerate, predicting and enhancing the robustness of ecological systems has become a central challenge for conservation science [15] [12].

The historical context of ecological robustness traces back to Robert May's 1972 work, which initially suggested that complex randomly constructed communities might be less stable. This sparked decades of research revealing that real ecological networks possess distinct non-random architectures that significantly enhance their stability and robustness beyond what random network models would predict [16]. Contemporary research has moved toward understanding how specific network properties—such as functional redundancy, modularity, and interaction diversity—contribute to ecosystem robustness in the face of species extinctions and environmental change [15] [12] [16].

This guide compares different methodological frameworks for assessing ecological robustness, presents quantitative findings on how network structure determines robustness, and provides practical experimental protocols for researchers evaluating conservation interventions.

Theoretical Frameworks for Assessing Ecological Robustness

The Network Fragility Framework

The Boolean network modeling framework provides a qualitative approach for identifying universal drivers of ecosystem service robustness to species loss. This model conceptualizes species as either present or absent and ecosystem services as either provided or not, defining a robust-vulnerable continuum [15].

At one extreme lies the logical AND function, where every species is essential and the loss of any single species results in service failure due to complete lack of functional redundancy. At the opposite extreme lies the logical OR function, where all species are fully substitutable and only a single species is needed to supply a service, creating high robustness through full redundancy [15].

The framework introduces the concept of network fragility (f~c~), a synthetic parameter that combines simple features of species-to-trait bipartite networks: the numbers of species (S), functional traits (N), and links between them (characterized by connectance p). For random networks, the cth percentile of the robustness distribution R~c~(E) is given by:

$$R_c(E) = 1 - f_c, \qquad f_c = \frac{\log\left(1 - \left(1 - q^{S}\right) e^{-c/N}\right)}{S \log q}$$

where q = 1 - p [15]. This relationship demonstrates that robustness statistics are driven entirely by network fragility, which can be quantified from basic network features.
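The fragility expression can be evaluated directly. The Boolean AND/OR extremes described above can also be checked with a small removal simulation. In this sketch the service is read as requiring every functional trait, with each trait supplied if at least one linked species persists. That reading, the toy networks, and the parameter values are assumptions for illustration. In this example, raising connectance from p = 0.2 to p = 0.9 lowers f_c and raises R_c.

```python
import math


def fragility(S, N, p, c):
    """Network fragility f_c for a random species-to-trait network (0 < p < 1)."""
    q = 1.0 - p
    return math.log(1.0 - (1.0 - q ** S) * math.exp(-c / N)) / (S * math.log(q))


def robustness_percentile(S, N, p, c):
    """R_c(E) = 1 - f_c."""
    return 1.0 - fragility(S, N, p, c)


def service_provided(trait_links, present):
    """Boolean service: every trait (AND) needs at least one present species (OR)."""
    return all(species & present for species in trait_links.values())


def removal_robustness(trait_links, order):
    """Fraction of species removed, in the given order, when the service first fails."""
    present = set(order)
    for k, species in enumerate(order, start=1):
        present.discard(species)
        if not service_provided(trait_links, present):
            return k / len(order)
    return 1.0


species = ["s1", "s2", "s3", "s4"]
and_like = {f"t{i}": {s} for i, s in enumerate(species)}  # no redundancy
or_like = {"t0": set(species)}                              # full redundancy
print(removal_robustness(and_like, species), removal_robustness(or_like, species))
print(round(robustness_percentile(S=20, N=5, p=0.2, c=1.0), 3),
      round(robustness_percentile(S=20, N=5, p=0.9, c=1.0), 3))
```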

Multilayer Network Robustness

Real ecosystems contain multiple interaction types (e.g., pollination, seed dispersal, herbivory) that form multilayer networks. Recent research has analyzed tripartite networks composed of two layers of interactions among three species sets, with one set shared between both interaction layers [12] [17].

In these multilayer networks, the interdependence of robustness between different animal species sets depends critically on how the interaction layers connect. Key structural properties affecting robustness include: the proportion of connector nodes (shared species that have links in both interaction layers), the proportion of shared species hubs that are connector nodes, and the participation coefficient of connector nodes (how evenly their links are split between interaction layers) [12] [17].

Research shows that antagonistic-antagonistic networks (e.g., herbivory-parasitism) have approximately 35% of shared species acting as connector nodes, with about 96% of shared species hubs connecting both layers. In contrast, mutualistic-mutualistic networks (e.g., pollination-seed dispersal) have only about 10% of shared species as connector nodes, with just 32% of shared species hubs connecting both layers [12].
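The three structural properties can be computed from the two link lists of a tripartite network. Two choices in this sketch are assumptions and may differ from [12] and [17]. First, the participation coefficient uses the multilayer form normalized by L/(L - 1), so that with two layers 0 means all links in one layer and 1 means an even split. Second, "hubs" are taken as the top 20% of shared species by total degree, a hypothetical threshold. The data are also hypothetical.

```python
from collections import Counter


def connector_stats(layer1, layer2, hub_fraction=0.2):
    """Connector-node statistics for a tripartite network.

    layer1, layer2: lists of (shared_species, partner) links in each layer.
    hub_fraction: hypothetical rule; the top 20% of shared species by total
    degree are treated as hubs.
    """
    k1 = Counter(s for s, _ in layer1)
    k2 = Counter(s for s, _ in layer2)
    shared = sorted(set(k1) | set(k2))
    connectors = [s for s in shared if k1[s] > 0 and k2[s] > 0]

    def participation(s):
        # Normalized for two layers: 0 = all links in one layer, 1 = evenly split.
        k = k1[s] + k2[s]
        return 2.0 * (1.0 - (k1[s] / k) ** 2 - (k2[s] / k) ** 2)

    n_hubs = max(1, round(hub_fraction * len(shared)))
    hubs = sorted(shared, key=lambda s: k1[s] + k2[s], reverse=True)[:n_hubs]
    return {
        "connector_fraction": len(connectors) / len(shared),
        "hub_connector_fraction": sum(s in connectors for s in hubs) / len(hubs),
        "mean_participation": (sum(map(participation, connectors)) / len(connectors)
                               if connectors else float("nan")),
    }


# Hypothetical plants shared between a pollination and an herbivory layer.
pollination = [("P1", "bee"), ("P1", "fly"), ("P2", "bee"), ("P3", "moth")]
herbivory = [("P1", "beetle"), ("P2", "caterpillar"), ("P2", "beetle"), ("P4", "aphid")]
stats = connector_stats(pollination, herbivory)
print(stats)
```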

Comparative Analysis of Robustness Assessment Frameworks

Table 1: Comparison of Ecological Robustness Assessment Frameworks

| Framework | Core Approach | Key Metrics | Applications | Strengths | Limitations |
|---|---|---|---|---|---|
| Network Fragility Framework [15] | Boolean modeling of species-to-trait networks | Network fragility (f~c~), robustness percentiles (R~c~), functional redundancy | Predicting ecosystem service collapse from species loss | Universal drivers identified; applicable to diverse services | Simplified binary representation of species presence/function |
| Multilayer Network Analysis [12] [17] | Tripartite network modeling with multiple interaction types | Connector node proportion, robustness interdependence, participation coefficient | Understanding cross-interaction extinction cascades | Captures real-world complexity of multiple interaction types | Computationally intensive; limited empirical data availability |
| Dynamical Systems Approach [16] | Coupled population dynamics models (e.g., Ricker equation) | Species persistence, asymptotic network size | Predicting biodiversity outcomes from ecosystem merging | Incorporates population dynamics | Limited to small networks due to computational constraints |

Experimental Protocols for Quantifying Robustness

Standard Robustness Assessment Protocol

The following protocol is adapted from methodologies used in analyzing 251 empirical ecological networks and 44 tripartite networks [15] [12]:

Objective: Quantify robustness of ecosystem service supply or community persistence to sequential species loss.

Materials:

- Interaction network data: Bipartite (species-trait) or multilayer networks

- Computational environment: R, Python, or specialized network analysis software

- Statistical packages: For replication and null model comparisons

Procedure:

- Network characterization: Quantify basic network properties (species richness, connectance, functional trait diversity)

- Extinction simulation: Generate multiple random sequences of species removal (typically 1,000-3,000 iterations)

- Function assessment: After each removal, evaluate whether ecosystem service persists (Boolean framework) or which secondary extinctions occur (multilayer framework)

- Robustness calculation: Compute the area under the curve of surviving species/function versus proportion of species lost

- Null model comparison: Compare observed robustness to appropriate null models to identify significant deviations

- Sensitivity analysis: Test robustness against targeted versus random removals
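The core of this procedure is sketched below for a species-to-trait network where "function" is the fraction of traits still supplied. It covers random extinction sequences, an area-under-the-curve robustness score, and a null-model comparison. Several pieces are assumptions chosen to keep the example fast: a null model that preserves the species, trait, and link counts, a z-score summary, and small iteration counts. In practice you would use the 1,000-3,000 iterations noted above.

```python
import random
from statistics import mean, stdev


def trait_auc(trait_links, order):
    """Area under the curve of the fraction of traits still supplied
    versus the fraction of species removed (trapezoidal rule)."""
    present = set(order)
    ys = [1.0]
    for species in order:
        present.discard(species)
        ys.append(sum(1 for s in trait_links.values() if s & present) / len(trait_links))
    step = 1.0 / len(order)
    return sum(step * (a + b) / 2 for a, b in zip(ys, ys[1:]))


def mean_random_auc(trait_links, species, n_iter, rng):
    """Average robustness over n_iter random extinction sequences."""
    values = []
    for _ in range(n_iter):
        order = list(species)
        rng.shuffle(order)
        values.append(trait_auc(trait_links, order))
    return mean(values)


def null_network(species, traits, n_links, rng):
    """Null model (assumed): same S, N and link count, links placed uniformly,
    resampled until every trait has at least one species."""
    pairs = [(s, t) for s in species for t in traits]
    while True:
        links = {t: set() for t in traits}
        for s, t in rng.sample(pairs, n_links):
            links[t].add(s)
        if all(links.values()):
            return links


def null_model_z(trait_links, species, n_iter=200, n_null=50, seed=0):
    """Observed mean robustness and its z-score against the null distribution."""
    rng = random.Random(seed)
    observed = mean_random_auc(trait_links, species, n_iter, rng)
    n_links = sum(len(s) for s in trait_links.values())
    null = [mean_random_auc(null_network(species, list(trait_links), n_links, rng),
                            species, n_iter, rng) for _ in range(n_null)]
    return observed, (observed - mean(null)) / stdev(null)


# Hypothetical species-to-trait network (6 species, 3 traits, 8 links).
species = ["s1", "s2", "s3", "s4", "s5", "s6"]
observed_links = {"t1": {"s1", "s2", "s3"}, "t2": {"s1", "s4"}, "t3": {"s5", "s6", "s2"}}
observed, z = null_model_z(observed_links, species)
print(round(observed, 3), round(z, 2))
```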

Validation: The protocol should be validated using datasets with known outcomes and tested for sensitivity to network sampling completeness [15] [12].

Multilayer Network Robustness Protocol

Specialized applications: For networks with multiple interaction types [12] [17]

Additional materials:

- Tripartite network data: Two interaction layers with one shared species set

- Structural metrics: Connector node identification, participation coefficients

Enhanced procedure:

- Layer-specific robustness: Calculate robustness separately for each interaction layer

- Interdependence quantification: Measure correlation between layer-specific robustness values across extinction sequences

- Connector node analysis: Identify shared species connecting both layers and quantify their structural properties

- Comparative analysis: Classify networks by interaction type combination (MM, MA, AA) and compare structural patterns
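The interdependence step can be sketched as follows. Each random sequence removes the shared species (here, plants). Robustness is then scored separately for each animal set, and the two score series are correlated. The tripartite data, the area-under-the-curve score, and the use of Pearson correlation are assumptions for illustration. [12] and [17] may use different robustness definitions or correlation statistics.

```python
import random


def animal_robustness(layer, removal_order):
    """AUC robustness of one animal set as shared plants are removed;
    animals go extinct when all their plant partners are gone."""
    partners = {}
    for plant, animal in layer:
        partners.setdefault(animal, set()).add(plant)
    removed, ys = set(), [1.0]
    for plant in removal_order:
        removed.add(plant)
        ys.append(sum(1 for ps in partners.values() if ps - removed) / len(partners))
    step = 1.0 / len(removal_order)
    return sum(step * (a + b) / 2 for a, b in zip(ys, ys[1:]))


def pearson(x, y):
    """Pearson correlation; NaN if either series has zero variance."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5 if sxx > 0 and syy > 0 else float("nan")


def robustness_interdependence(layer1, layer2, n_iter=1000, seed=0):
    """Correlation of layer-specific robustness across shared random extinction sequences."""
    plants = sorted({p for p, _ in layer1} | {p for p, _ in layer2})
    rng = random.Random(seed)
    r1, r2 = [], []
    for _ in range(n_iter):
        order = plants[:]
        rng.shuffle(order)
        r1.append(animal_robustness(layer1, order))
        r2.append(animal_robustness(layer2, order))
    return pearson(r1, r2)


# Hypothetical tripartite network: plants shared by pollinators and herbivores.
pollination = [("P1", "bee"), ("P2", "bee"), ("P3", "fly"), ("P4", "moth")]
herbivory = [("P1", "beetle"), ("P3", "aphid"), ("P4", "aphid"), ("P4", "weevil")]
print(round(robustness_interdependence(pollination, herbivory), 3))
```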

Diagram Title: Multilayer Robustness Assessment Workflow

Quantitative Comparison of Ecosystem Robustness

Empirical Robustness Across Network Types

Table 2: Robustness Values Across Empirical Ecological Networks

| Network Type | Number of Networks Studied | Robustness Pattern | Key Structural Drivers | Vulnerability to Species Loss |
|---|---|---|---|---|
| Pollination Services [15] | 251+ (combined) | Varies with network fragility | Functional redundancy, connectance | Highly sensitive to keystone pollinator loss |
| Mutualism-Mutualism Networks [12] [17] | 23 | Lower robustness interdependence | Low connector node proportion (∼10%) | Limited cascade effects between layers |
| Antagonism-Antagonism Networks [12] [17] | Not specified | Higher robustness interdependence | High connector node proportion (∼35%) | Cross-layer extinction cascades more likely |
| Mutualism-Antagonism Networks [12] [17] | Not specified | Intermediate interdependence | Moderate connector nodes (∼22%) | Intermediate cascade vulnerability |

Network Fragility and Robustness Relationship

Analysis of 251 empirical networks revealed that corrected network fragility ($f_c^*$) is strongly negatively correlated with ecosystem service robustness (Spearman ρ = -0.89), giving it considerable predictive power [15]. The correction accounts for deviation from randomness in species-to-trait networks:

$$f_c^* = f_c + \lambda_c f_c (1 - f_c) \log_{10}(d)$$

where d represents dispersion, measured as the ratio of the observed variance in species per trait to its null expectation [15], and λ_c is the coefficient that scales the correction.
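As a worked check of the correction, the helpers below evaluate f_c* for given inputs. The values of f_c, λ_c, and the null variance used here are hypothetical. The dispersion helper assumes the population variance of species-per-trait counts, and the null expectation itself must come from the null model used in [15].

```python
import math
import statistics

def dispersion(species_per_trait, null_variance):
    """d: observed (population) variance in species per trait divided by
    the null-model expectation supplied by the caller."""
    return statistics.pvariance(species_per_trait) / null_variance

def corrected_fragility(f_c, lambda_c, d):
    """f_c* = f_c + lambda_c * f_c * (1 - f_c) * log10(d)."""
    return f_c + lambda_c * f_c * (1.0 - f_c) * math.log10(d)

# Hypothetical inputs, for illustration only.
d = dispersion([1, 3, 1, 3, 7], null_variance=2.0)
print(d, corrected_fragility(0.4, 0.5, d))
```

With d = 1 (observed variance equal to the null expectation) the log term vanishes and f_c* = f_c. The sign of λ_c·log10(d) determines whether the correction raises or lowers fragility.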

The relationship between network fragility and robustness follows a predictable pattern where robustness is most predictable (has lowest variance) at both low and high fragility values and when services are underpinned by many functional traits [15].

The Researcher's Toolkit: Essential Methods and Reagents

Table 3: Research Toolkit for Ecological Robustness Analysis

| Tool/Method | Function | Application Context | Key References |
|---|---|---|---|
| Boolean Network Modeling | Simplifies complex systems to binary states | Predicting ecosystem service collapse from species loss | [15] |
| Tripartite Network Analysis | Quantifies multiple interaction types simultaneously | Understanding cross-interaction extinction cascades | [12] [17] |
| Null Model Comparisons | Tests significance of observed patterns against random expectations | Identifying non-random network structures enhancing robustness | [15] [12] |
| Robustness Interdependence Metric | Measures correlation between layer-specific robustness | Predicting cross-system vulnerability in multilayer networks | [12] [17] |
| Sequential Extinction Simulations | Models species loss scenarios | Quantifying tolerance to biodiversity loss | [15] [12] |

Implications for Conservation Priorities

Identifying Keystone Species for Conservation

The network fragility framework enables quantification of how individual species contribute to ecosystem service robustness. For a species-trait network with S species, L links, and connectance p, the loss of a species linked to L_i traits changes connectance, to first order, as:

$$p \to p - p(\frac{L_i}{L} - \frac{1}{S})$$

This allows identification of species with disproportionate contributions to robustness—typically those with many functional links—which become priority targets for conservation [15].
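The connectance expression can be checked numerically against the exact connectance after removal. This check assumes that connectance is defined as p = L/(S·T) for S species and T traits; T is a symbol introduced here, not taken from [15]. Under that definition, the displayed formula is the first-order expansion of the exact value (L - L_i)/((S - 1)T).

```python
def connectance_after_loss(L, S, T, L_i):
    """Connectance p = L/(S*T) of a species-trait network before and after
    removing one species with L_i links: exact value and the first-order
    approximation p - p*(L_i/L - 1/S)."""
    p = L / (S * T)
    exact = (L - L_i) / ((S - 1) * T)
    approx = p - p * (L_i / L - 1 / S)
    return p, exact, approx

# Hypothetical network: 20 species, 5 traits, 40 links (average 2 links per species).
for links in (1, 2, 4, 8):
    print(links, connectance_after_loss(40, 20, 5, links))
```

Losing a species with more than the average L/S links lowers connectance, while losing a poorly linked species raises it slightly.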

Designing Robust Ecological Communities

Conservation efforts should prioritize maintaining functional redundancy across critical ecosystem functions rather than simply maximizing species richness. From the multilayer network perspective, the low robustness interdependence between interaction layers observed in many systems suggests that restoration efforts may not automatically propagate through whole communities, so targeted interventions are required in each interaction type [12].

Diagram Title: Conservation Priority Framework Based on Network Robustness

Robustness analysis provides powerful frameworks for predicting how ecological systems respond to biodiversity loss. The network fragility approach demonstrates that simple network properties can successfully predict ecosystem service robustness across diverse systems [15]. Meanwhile, multilayer network analysis reveals that the interdependence between different interaction types strongly influences vulnerability patterns [12] [17].

These approaches enable conservationists to move beyond simplistic species-counting toward functional network preservation, identifying critical leverage points for maintaining ecosystem services despite ongoing environmental change. Future research should focus on integrating temporal dynamics and anthropogenic drivers into robustness models to enhance their predictive power for conservation decision-making.

Ecological networks represent a critical conservation strategy for mitigating biodiversity loss caused by habitat fragmentation and climate change [18]. These networks facilitate species mobility and population vitality by connecting habitat patches through traversable landscapes [18]. The core components of any ecological network include ecological sources (habitat patches), corridors (connectivity pathways), and resistance surfaces (landscape permeability maps) [19]. Analyzing these components systematically is essential for assessing ecological network robustness—the ability to maintain functionality under environmental change [18]. This review compares methodological approaches for identifying these key components, evaluates their performance under varying environmental conditions, and provides experimental protocols for network construction and analysis aimed at enhancing robustness in conservation planning.

Comparative Analysis of Ecological Network Components

Methodological Approaches for Component Identification

Ecological network construction follows an established research framework: "ecological source identification–resistance surface construction–corridor extraction–node identification" [19]. Approaches have evolved from simple identification of protected areas to sophisticated analytical techniques incorporating ecosystem functionality.

Table 1: Methods for Identifying Ecological Network Components

| Network Component | Identification Methods | Key Metrics/Models | Application Examples |
|---|---|---|---|
| Ecological Sources | Morphological Spatial Pattern Analysis (MSPA) [19] | Patch size, connectivity indices, ecosystem service value | Shenmu City, China (2000-2035) [19] |
| | Ecosystem Service Assessment [19] | InVEST model, habitat quality, ecological sensitivity | Resource-based regions [20] |
| Resistance Surfaces | Multi-Factor Assessment [19] | Land use type, elevation, human disturbance | Loess Plateau region [19] |
| | Nighttime Light Data Correction [19] | Light pollution intensity, anthropogenic pressure | Urbanizing regions [19] |
| Ecological Corridors | Circuit Theory [19] | Current density, pinch points, barrier points | Rare and endangered plants [21] |
| | Least-Cost Path Analysis [19] | Cumulative resistance value, corridor width | Typical resource-based regions [20] |

Quantitative Assessment of Network Performance

Robustness analysis evaluates how ecological networks maintain functionality under changing conditions. Research in Shenmu City demonstrated dynamic changes in ecological networks from 2000 to 2035 under different climate scenarios, with key metrics revealing varying network stability [19].

Table 2: Ecological Network Performance Under Different Scenarios

| Performance Metric | SSP119 Scenario (2035) | SSP585 Scenario (2035) | Interpretation |
|---|---|---|---|
| α Index (Network Closure) | Increases | Decreases | Degree of looping: observed circuits relative to the maximum possible |
| β Index (Complexity) | Increases | Decreases | Links per node; higher values mean more alternative routes |
| γ Index (Connectivity) | Increases | Decreases | Observed links relative to the maximum possible links |
| Ecological Source Area | Expands | Contracts | Habitat availability for species conservation |
| Network Stability | Stabilizes | Degrades | System robustness to environmental change |

Experimental data from Shenmu City showed that from 2000 to 2020, ecological sources continuously shrank while landscape fragmentation increased [19]. Under future scenarios, ecological networks demonstrated varying robustness, with the sustainable development scenario (SSP119) showing improved network metrics compared to the fossil-fueled development scenario (SSP585) [19].
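The α, β, and γ indices are usually computed from the number of nodes V (ecological sources) and links L (corridors). The sketch below uses the widely used planar-graph forms: α = (L - V + 1)/(2V - 5), β = L/V, and γ = L/(3(V - 2)). Whether [19] used exactly these variants is an assumption, and the α formula assumes a single connected network.

```python
def network_indices(n_nodes, n_links):
    """Planar-graph alpha, beta and gamma indices for a single connected
    network with n_nodes ecological sources and n_links corridors."""
    if n_nodes < 3:
        raise ValueError("indices need at least 3 nodes")
    alpha = (n_links - n_nodes + 1) / (2 * n_nodes - 5)  # observed vs maximum loops
    beta = n_links / n_nodes                               # links per node
    gamma = n_links / (3 * (n_nodes - 2))                  # observed vs maximum links
    return alpha, beta, gamma

# Hypothetical networks: the same 30 sources with 45 versus 55 corridors.
print(network_indices(30, 45))
print(network_indices(30, 55))
```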

Experimental Protocols for Network Construction

Integrated Workflow for Ecological Network Analysis

The workflow proceeds from ecological source identification through resistance surface construction to corridor extraction, integrating multiple methodological approaches. Each stage is detailed in the protocols below.

Detailed Experimental Methodology

Ecological Source Identification Protocol

Purpose: To identify core habitat patches serving as ecological sources in the network.

Materials: Land use/cover data, vegetation indices, species distribution data, GIS software.

Procedure:

- Conduct Morphological Spatial Pattern Analysis (MSPA) to identify core habitat areas, islets, bridges, and branches [19]

- Assess ecosystem service function using the InVEST model to quantify habitat quality, carbon storage, and soil conservation [19]

- Evaluate ecological sensitivity to environmental changes and anthropogenic pressures [19]

- Integrate results using spatial overlay analysis to delineate priority ecological sources

- Validate source selection with field surveys or species occurrence data

Data Interpretation: Larger, well-connected patches with high ecosystem service values represent optimal ecological sources. In Shenmu City, this approach revealed continuous shrinkage of ecological sources from 2000-2020, with fragmentation increasing over time [19].

Resistance Surface Construction Protocol

Purpose: To create a landscape resistance map representing permeability to species movement.

Materials: Land use data, digital elevation models, nighttime light data, road networks, human footprint data.

Procedure:

- Select disturbance factors affecting species migration (land use intensity, road density, human population density, topography) [19]

- Assign resistance values (1-100) to each factor based on known species responses to barriers

- Create individual resistance layers for each factor in GIS environment

- Correct resistance values using nighttime light data to account for anthropogenic pressure [19]

- Integrate multiple factors using weighted overlay analysis to create comprehensive resistance surface

- Validate resistance values with species movement data or expert knowledge
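The weighted overlay and night-time light correction steps can be sketched with NumPy arrays standing in for rasters. The correction form used here scales each cell's resistance by its light value relative to the mean light of its land-use class. That form is an assumption, not necessarily the one applied in [19]. The clipping to the 1-100 scale and all weights are also illustrative.

```python
import numpy as np

def composite_resistance(factor_layers, weights):
    """Weighted overlay of factor rasters already scored on a common
    1-100 resistance scale; weights are normalized to sum to 1."""
    layers = np.stack([np.asarray(a, dtype=float) for a in factor_layers])
    w = np.asarray(weights, dtype=float)
    w = w / w.sum()
    return np.tensordot(w, layers, axes=1)  # weighted sum over the factor axis

def ntl_corrected(resistance, ntl, land_use, floor=1.0, ceiling=100.0):
    """Scale each cell's resistance by its night-time light value relative
    to the mean light of its land-use class (assumed correction form),
    then clip back to the 1-100 scale."""
    resistance = np.asarray(resistance, dtype=float)
    ntl = np.asarray(ntl, dtype=float)
    land_use = np.asarray(land_use)
    corrected = resistance.copy()
    for cls in np.unique(land_use):
        mask = land_use == cls
        mean_light = ntl[mask].mean()
        if mean_light > 0:  # classes with no light are left unchanged
            corrected[mask] = resistance[mask] * ntl[mask] / mean_light
    return np.clip(corrected, floor, ceiling)

# Hypothetical 2x2 rasters: land-use and road-density scores, one land-use class.
base = composite_resistance([np.full((2, 2), 10.0), np.full((2, 2), 50.0)], [1, 1])
print(ntl_corrected(base, ntl=[[1.0, 3.0], [1.0, 3.0]], land_use=np.ones((2, 2), int)))
```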

Data Interpretation: Lower resistance values indicate higher landscape permeability. In the Loess Plateau, precipitation and temperature were identified as primary factors influencing ecological source distribution, followed by anthropogenic factors [19].

Ecological Corridor Extraction Protocol

Purpose: To identify optimal connectivity pathways between ecological sources.

Materials: Resistance surface, ecological source locations, GIS software with corridor analysis tools.

Procedure:

- Apply circuit theory using Linkage Mapper tool to model ecological flows between sources [19]

- Calculate least-cost paths between ecological sources using the cumulative resistance surface

- Identify pinch points (areas with concentrated flow) and barrier points (areas disrupting connectivity) using current density calculations [19]

- Determine corridor width based on target species requirements and landscape context

- Classify corridors by priority based on connectivity importance and threat level
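To make the least-cost path step concrete, the sketch below runs Dijkstra's algorithm on a small resistance grid. Each move between 4-neighboring cells costs the mean of the two cells' resistances. This illustrates the principle only and is not how Linkage Mapper computes corridors. The grid, the neighbor rule, and the step-cost convention are assumptions.

```python
import heapq

def least_cost_path(resistance, start, goal):
    """Dijkstra over a grid; moving between 4-neighboring cells costs the
    mean of their resistance values. Returns (cumulative cost, cell path)."""
    rows, cols = len(resistance), len(resistance[0])
    best = {start: 0.0}
    prev = {}
    heap = [(0.0, start)]
    while heap:
        cost, cell = heapq.heappop(heap)
        if cell == goal:
            break
        if cost > best[cell]:
            continue  # stale queue entry
        r, c = cell
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols:
                new_cost = cost + (resistance[r][c] + resistance[nr][nc]) / 2.0
                if new_cost < best.get((nr, nc), float("inf")):
                    best[(nr, nc)] = new_cost
                    prev[(nr, nc)] = cell
                    heapq.heappush(heap, (new_cost, (nr, nc)))
    if goal not in best:
        return float("inf"), []
    path = [goal]
    while path[-1] != start:  # walk predecessors back to the start cell
        path.append(prev[path[-1]])
    return best[goal], path[::-1]

# Hypothetical grid: a high-resistance barrier with a gap on the right.
grid = [[1, 1, 1],
        [100, 100, 1],
        [1, 1, 1]]
print(least_cost_path(grid, (0, 0), (2, 0)))
```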

Data Interpretation: Higher current density indicates more important connectivity areas. In Shenmu City, 27 ecological pinch points and 40 ecological barrier points were identified under the optimal SSP119 scenario as priority restoration areas [19].

Table 3: Research Tools for Ecological Network Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| Linkage Mapper | Corridor identification using circuit theory | Pinch point and barrier point analysis [19] |
| InVEST Model | Ecosystem service quantification | Habitat quality assessment for source identification [19] |
| MSPA Algorithms | Spatial pattern analysis of habitats | Structural connectivity assessment [19] |
| PLUS Model | Land use simulation under future scenarios | Projecting ecological network dynamics [19] |
| GeoDetector | Spatial heterogeneity analysis | Identifying drivers of ecological network changes [19] |
| Climate Projections | SSP-RCP scenario data | Assessing network robustness to climate change [19] |

Robust ecological networks require precise identification of three core components: ecological sources assessed through MSPA and ecosystem service evaluation, resistance surfaces constructed with multi-factor assessment and nighttime light correction, and corridors extracted using circuit theory and least-cost path analysis [19]. Experimental evidence demonstrates that network robustness varies significantly under different climate scenarios, with sustainable development pathways (SSP119) enhancing stability while fossil-fueled development (SSP585) accelerates degradation [19]. Integrating multidimensional assessment approaches that consider climate change impacts, land use dynamics, and species-specific requirements provides the most robust framework for ecological network conservation [22] [18]. Future research should prioritize empirical validation of model predictions, incorporation of eco-evolutionary dynamics, and development of multi-species network optimizations to enhance conservation outcomes in rapidly changing environments.

The study of ecological networks has been profoundly transformed by the application of complex network theory, which provides a mathematical framework for understanding the intricate web of species interactions. Robustness, defined as a network's ability to withstand failures and perturbations, represents a critical attribute for predicting ecosystem responses to disturbances such as species extinctions, habitat fragmentation, and climate change [23]. This analysis examines the fundamental theoretical frameworks connecting complex network theory to ecological applications, comparing the predictive capabilities and methodological approaches of three dominant paradigms in the field: multilayer robustness analysis, k-core decomposition, and percolation theory. Each framework offers distinct advantages for characterizing ecological stability, with significant implications for conservation prioritization, extinction risk assessment, and biodiversity management.

The theoretical foundation rests on representing ecological communities as networks where species constitute nodes and their interactions form links between these nodes [24]. This abstraction enables researchers to apply formal mathematical measures to quantify structural properties that confer stability. For ecologists, understanding these structural determinants of robustness provides crucial insights for designing effective conservation strategies, identifying keystone species, and predicting cascade effects following perturbations [12] [24]. The following sections provide a comparative analysis of major theoretical frameworks, experimental protocols for robustness assessment, and practical research tools for implementing these approaches in ecological research contexts.

Comparative Theoretical Frameworks for Ecological Network Robustness

Multilayer Network Robustness Analysis

Multilayer network analysis represents a paradigm shift from single-interaction studies to frameworks that incorporate the diversity of interaction types occurring simultaneously in ecological communities. This approach examines tripartite networks composed of two layers of interactions (e.g., pollination and herbivory), containing three different species sets, one of which is shared between the two interaction layers [12]. The structural properties of these networks are characterized through specific metrics: the proportion of connector nodes (shared species involved in both interaction types), hub connector percentage (proportion of highly-connected shared species acting as connectors), and participation coefficients (how evenly connector nodes split connections between layers) [12].

Research on 44 tripartite networks from empirical studies reveals fundamental architectural differences across network types. Antagonistic-antagonistic networks (e.g., herbivory-parasitism) display approximately 35% of shared species as connectors, with 96% of hub species connecting layers and high participation coefficients (0.89 average), indicating strong integration between layers [12]. Conversely, mutualistic-mutualistic networks (e.g., pollination-seed dispersal) show only 10% of shared species as connectors, with merely 32% of hubs connecting layers and lower participation coefficients (0.59 average), suggesting more modular architecture [12]. These structural differences directly impact robustness interdependence—the correlation between robustness measures of the two animal species sets when plants are removed [12]. The multilayer approach demonstrates that considering multiple interactions simultaneously provides a more accurate assessment of whole-community robustness and enables better identification of keystone species critical for conservation planning [12].
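The connector proportion and participation coefficient can be computed from the two layers' degree vectors. This sketch assumes both matrices share the same rows (the shared species set). It uses the participation coefficient normalized to the 0-1 range for two layers, P = 2[1 - (k_A/k)² - (k_B/k)²], which equals 1 when a connector splits its links evenly. Whether [12] uses exactly this normalization is an assumption, and hub identification is omitted.

```python
import numpy as np

def connector_stats(layer_a, layer_b):
    """Proportion of shared species linked in both layers, and the
    two-layer participation coefficient of each connector (0-1 scale)."""
    k_a = np.asarray(layer_a).sum(axis=1).astype(float)
    k_b = np.asarray(layer_b).sum(axis=1).astype(float)
    connectors = (k_a > 0) & (k_b > 0)
    k = k_a[connectors] + k_b[connectors]
    # 2 * (1 - sum of squared layer shares): 1 = even split, near 0 = one layer dominates
    part = 2.0 * (1.0 - (k_a[connectors] / k) ** 2 - (k_b[connectors] / k) ** 2)
    return float(connectors.mean()), part

# Hypothetical 3 plants: plant 0 links to both layers, the others to one layer each.
pollination = np.array([[1, 1], [1, 0], [0, 0]])
herbivory = np.array([[1, 1, 0], [0, 0, 0], [0, 0, 1]])
print(connector_stats(pollination, herbivory))
```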

K-Core Decomposition Framework

The k-core decomposition framework characterizes network robustness by partitioning species into hierarchically ordered substructures based on their connection patterns, revealing a network's core-periphery organization [24]. This method iteratively removes nodes with the fewest connections, assigning each node to a k-shell based on its remaining connections after each pruning cycle [24]. Species in the innermost k-shells constitute the network core, while those in outer shells form the periphery. Empirical analyses of mutualistic ecological networks and financial systems reveal a consistent U-shaped occupancy curve, with high occupancy in both inner core and outer shells, while intermediate shells remain sparsely populated [24].

This distinctive architecture provides dual resilience benefits: highly-connected core species (symbionts) confer resistance to global attacks or systemic perturbations, while numerous peripheral species (commensalists) absorb random local attacks, as their removal rarely triggers cascade effects [24]. The k-core robustness can be quantified through mathematical models that simulate species density dynamics, incorporating parameters for species die-off rates and interaction strengths [24]. Research confirms that networks with higher maximum k-core occupancy demonstrate enhanced resilience against both targeted and random removal of species [24]. This framework provides critical insights for identifying core species whose protection is essential for network integrity and predicting tipping points for community collapse.

Percolation Theory Approach

Percolation theory models network robustness as an inverse percolation process, analyzing how connectivity deteriorates as nodes or links are progressively removed [23]. This approach frames robustness in terms of a critical threshold (f_c), the fraction of nodes that must be removed to disintegrate the network's giant connected component [23]. The Molloy-Reed criterion (κ = ⟨k²⟩/⟨k⟩ > 2) provides the mathematical foundation for determining this threshold: a giant component exists when a node reached by following a randomly chosen link has, on average, at least one further link besides the one used to reach it [23].

The percolation framework reveals a fundamental distinction between random networks (f_c^ER = 1 - 1/⟨k⟩) and scale-free networks with power-law degree distributions [23]. Scale-free ecological networks exhibit exceptional resilience to random failures but pronounced vulnerability to targeted hub removal, creating a "robust-yet-fragile" dichotomy with significant conservation implications [23]. This approach also models cascading failures, where initial removals trigger propagation through the network, potentially causing disproportionate collapse [23]. The critical threshold for cascades depends on network degree distribution, with scale-free networks exhibiting unique propagation dynamics compared to random networks [23].
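The Molloy-Reed criterion and the random-removal threshold f_c = 1 - 1/(κ - 1) can be evaluated directly from a degree sequence. This sketch assumes an uncorrelated network with no degree correlations, an assumption that empirical ecological networks may violate. For a Poisson degree distribution it should recover the random-network result f_c = 1 - 1/⟨k⟩.

```python
import numpy as np

def molloy_reed_threshold(degrees):
    """Return kappa = <k^2>/<k> and the random-removal threshold
    f_c = 1 - 1/(kappa - 1) for an uncorrelated network."""
    k = np.asarray(degrees, dtype=float)
    mean_k = k.mean()
    if mean_k == 0:
        return 0.0, 0.0
    kappa = float(np.mean(k ** 2) / mean_k)
    if kappa <= 2.0:  # criterion fails: no giant component to destroy
        return kappa, 0.0
    return kappa, 1.0 - 1.0 / (kappa - 1.0)

rng = np.random.default_rng(42)
print(molloy_reed_threshold(rng.poisson(4.0, 100_000)))  # random-network degrees
print(molloy_reed_threshold(rng.zipf(2.5, 100_000)))     # heavy-tailed degrees
```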

Table 1: Comparative Analysis of Ecological Network Robustness Frameworks

| Framework | Core Metrics | Analytical Approach | Ecological Applications | Key Findings |
|---|---|---|---|---|
| Multilayer Robustness | Connector node proportion, Participation coefficient, Robustness interdependence | Sequential plant species removal measuring secondary extinctions in multiple interaction layers | Multi-interaction communities, Ecosystem service assessment | Robustness interdependence varies by network type; AA networks show stronger layer integration than MM networks [12] |
| K-Core Decomposition | k-Shell occupancy, Maximum k-core size, U-shape distribution | Iterative node removal by degree, k-shell classification, Stability simulation | Mutualistic networks, Core species identification, Tipping point prediction | U-shaped k-shell occupancy provides resilience to both random and targeted attacks [24] |
| Percolation Theory | Critical threshold (f_c), Giant component size, Cascade size distribution | Inverse percolation process, Molloy-Reed criterion application, Cascade modeling | Habitat fragmentation, Metapopulation dynamics, Extinction cascades | Scale-free networks robust to random failure but fragile to targeted attacks [23] |

Experimental Protocols for Robustness Assessment

Multilayer Robustness Experimental Protocol

The experimental protocol for assessing multilayer network robustness employs a standardized methodology for simulating species loss and measuring cascade effects. The procedure begins with compiling interaction matrices for both network layers, identifying shared species (typically plants), and classifying connector nodes (species participating in both layers) [12]. Researchers sequentially remove plant species according to specified removal orders—random, targeted by degree, or phylogenetic specificity—while tracking secondary extinctions in animal species sets for both interaction layers [12].

The key measurements include robustness curves (proportion of surviving animal species versus proportion of removed plants) and robustness correlation (Pearson correlation between the number of surviving species in both animal sets across removal sequences) [12]. The experimental design incorporates four null models with increasing constraints to distinguish structural effects from random expectations: (1) completely random networks, (2) networks preserving node degrees, (3) networks preserving degrees and shared set connections, and (4) networks preserving the exact sequence of removals [12]. This protocol reliably quantifies how the structural connectivity between interaction layers affects the interdependence of their robustness, providing insights for designing restoration interventions that leverage or disrupt these interdependencies.

K-Core Decomposition Experimental Protocol

The k-core decomposition protocol begins with constructing the adjacency matrix representing species interactions, followed by iterative pruning to assign k-shell indices [24]. The algorithm removes all nodes with degree k ≤ 1 together with their links. Because these removals can lower the degree of neighboring nodes, the pruning is repeated until no node with degree ≤ 1 remains, and all removed nodes are assigned to the k_s = 1 shell [24]. The process then iterates for increasing k until all nodes are classified, with the innermost shell constituting the maximal k-core [24].
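The pruning procedure can be written as a short function over an edge list. This is a plain-Python sketch of the standard k-shell algorithm, for illustration. For real networks, NetworkX's `core_number` function returns the same shell indices. The node names and the example graph are hypothetical.

```python
from collections import Counter, defaultdict

def k_shells(edges):
    """Assign each node its k-shell index by iterative pruning: at stage k,
    repeatedly remove nodes whose residual degree is <= k."""
    adj = defaultdict(set)
    for u, v in edges:
        if u != v:  # ignore self-loops
            adj[u].add(v)
            adj[v].add(u)
    degree = {node: len(nbrs) for node, nbrs in adj.items()}
    remaining = set(adj)
    shell = {}
    k = 1
    while remaining:
        peel = [n for n in remaining if degree[n] <= k]
        while peel:  # pruning can push neighbors down to degree <= k
            for n in peel:
                shell[n] = k
                remaining.discard(n)
                for m in adj[n]:
                    if m in remaining:
                        degree[m] -= 1
            peel = [n for n in remaining if degree[n] <= k]
        k += 1
    return shell

# Hypothetical interaction links: a triangle core plus one pendant species.
links = [("s1", "s2"), ("s2", "s3"), ("s1", "s3"), ("s1", "s4")]
shells = k_shells(links)
print(shells, Counter(shells.values()))  # shell index per species and shell occupancy
```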

To quantify robustness, researchers simulate two attack modes: random attacks (nodes removed in random order) and targeted attacks (nodes removed in decreasing k-shell order) [24]. The robustness measure R is the area under the curve of the proportion of surviving species versus removal fraction, with higher values indicating greater resilience [24]. For dynamical robustness assessment, researchers implement population dynamics models that incorporate k-core structure, such as:

$$\frac{dx_i}{dt} = -d\,x_i + \gamma \sum_j A_{ij} \frac{x_j^n}{\alpha^n + x_j^n}$$

where x_i represents the density of species i, d is the die-off rate, γ is the maximal interaction strength, and A_ij is the adjacency matrix [24]; n and α set the steepness and half-saturation point of the saturating (Hill-type) interaction term. This protocol enables prediction of how network core-periphery structure modulates stability against different perturbation types.
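The population dynamics model can be explored with a simple forward-Euler integration. This sketch is illustrative: the step size, the parameter values, and the two-species example are hypothetical. Here `gamma` denotes interaction strength rather than a degree exponent. With these values the mutualistic pair has two stable states, so the starting density decides whether the species persist or collapse. This is a minimal picture of a tipping point.

```python
import numpy as np

def simulate(A, d=1.0, gamma=1.0, alpha=1.0, n=2.0, x0=None, dt=0.01, steps=5000):
    """Forward-Euler integration of
    dx_i/dt = -d*x_i + gamma * sum_j A_ij * x_j**n / (alpha**n + x_j**n)."""
    A = np.asarray(A, dtype=float)
    x = np.full(A.shape[0], 1.0) if x0 is None else np.array(x0, dtype=float)
    for _ in range(steps):
        hill = x ** n / (alpha ** n + x ** n)  # saturating benefit from partners
        x = np.maximum(x + dt * (-d * x + gamma * A @ hill), 0.0)  # keep densities >= 0
    return x

pair = [[0, 1], [1, 0]]  # hypothetical mutualistic pair
print(simulate(pair, gamma=3.0, x0=[2.0, 2.0]))  # settles at the high-density state
print(simulate(pair, gamma=3.0, x0=[0.3, 0.3]))  # falls below the threshold and collapses
```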

Percolation Theory Experimental Protocol

The percolation theory protocol employs the Molloy-Reed criterion to calculate the critical threshold for random node removal,

$$f_c = 1 - \frac{1}{\langle k^2 \rangle / \langle k \rangle - 1}$$

where ⟨k⟩ and ⟨k²⟩ represent the first and second moments of the degree distribution [23]. Researchers begin by calculating the degree distribution for the empirical network, then compute this threshold [23]. For targeted attacks, the protocol uses a modified critical threshold that accounts for degree-based removal. For scale-free networks with degree exponent γ and minimum degree K_min, this threshold is the solution of [23]:

$$f_c^{\frac{2-\gamma}{1-\gamma}} = 2 + \frac{2-\gamma}{3-\gamma} K_{\min} \left( f_c^{\frac{3-\gamma}{1-\gamma}} - 1 \right)$$

The experimental procedure simulates node removal sequences while monitoring the relative size of the largest connected component (S) and the average size of the remaining finite clusters (⟨s⟩) [23]. Near the critical threshold, the average cluster size follows a power law,

$$\langle s \rangle \sim |p - p_c|^{-\gamma_p}$$

where p = 1 - f is the fraction of nodes retained, p_c = 1 - f_c, and γ_p is a universal critical exponent [23]. For cascade failure modeling, the protocol implements threshold models in which a node fails once a specified fraction of its neighbors has failed, propagating disruptions through the network [23]. This approach can be used to anticipate collapse patterns in mutualistic networks and to derive early warning indicators of community disintegration.
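The removal simulation can be sketched with a union-find pass. The pass reports the largest connected component as a fraction of the original number of nodes. The removal order is random by default, and passing an explicit order (e.g., nodes sorted by decreasing degree) gives a targeted attack. The function names and the example graph are illustrative assumptions, and cascade dynamics are not modeled.

```python
import random

def giant_fraction(n, edges, removed):
    """Size of the largest connected component among surviving nodes,
    as a fraction of the original n nodes (union-find)."""
    parent = list(range(n))

    def find(a):
        while parent[a] != a:
            parent[a] = parent[parent[a]]  # path halving
            a = parent[a]
        return a

    for u, v in edges:
        if u not in removed and v not in removed:
            ru, rv = find(u), find(v)
            if ru != rv:
                parent[ru] = rv
    sizes = {}
    for node in range(n):
        if node not in removed:
            root = find(node)
            sizes[root] = sizes.get(root, 0) + 1
    return max(sizes.values(), default=0) / n

def removal_curve(n, edges, fractions, order=None, seed=0):
    """Giant-component fraction after removing the first round(f*n) nodes
    of `order` (a random permutation by default) for each f in fractions."""
    if order is None:
        order = list(range(n))
        random.Random(seed).shuffle(order)
    return [giant_fraction(n, edges, set(order[:round(f * n)])) for f in fractions]

# Hypothetical 10-species star: one hub linked to nine peripheral species.
star = [(0, i) for i in range(1, 10)]
by_degree = sorted(range(10), key=lambda v: -sum(v in e for e in star))
print(removal_curve(10, star, [0.0, 0.1, 0.5]))                   # random order
print(removal_curve(10, star, [0.0, 0.1, 0.5], order=by_degree))  # hub removed first
```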

Table 2: Quantitative Robustness Metrics Across Theoretical Frameworks

| Metric | Theoretical Foundation | Calculation Method | Interpretation | Range |
|---|---|---|---|---|
| Robustness Interdependence | Multilayer Network Theory | Pearson correlation between surviving species in two interaction layers during sequential removals | High values indicate coordinated collapse across interaction types; low values suggest independent vulnerability [12] | -1 to 1 |
| k-Core Robustness (R) | k-Core Decomposition | Area under the curve of surviving species vs. removal fraction | Higher values indicate greater resistance to node removal; U-shaped shell occupancy predicts dual resilience [24] | 0 to 1 |
| Critical Threshold (f_c) | Percolation Theory | f_c = 1 - 1/(⟨k²⟩/⟨k⟩ - 1) | Fraction of nodes whose removal fragments the network; higher values indicate greater robustness to random failure [23] | 0 to 1 |
| Cascade Size Exponent | Cascade Failure Models | Power-law exponent of cascade size distribution | Lower (shallower) exponents mean a heavier tail and a higher chance of very large cascades; in scale-free networks the exponent depends on the degree exponent [23] | Network-dependent |

Visualization of Theoretical Framework Integration

Theoretical Framework Integration Pathway

Table 3: Research Tools for Ecological Network Analysis

| Research Tool | Function | Application Context | Implementation Considerations |
|---|---|---|---|
| Tripartite Network Models | Represents two interaction layers sharing a common species set | Multilayer robustness analysis; Studying pollination-herbivory, seed dispersal-parasitism systems | Requires detailed interaction data for multiple relationship types; Identifies connector species [12] |
| k-Core Decomposition Algorithm | Iterative node classification by residual degree | Core-periphery structure analysis; Resilience assessment for mutualistic networks | Reveals U-shaped occupancy pattern; Identifies critical core species and resilient peripherals [24] |
| Percolation Threshold Models | Calculates critical node removal fraction for network fragmentation | Assessing vulnerability to random vs. targeted attacks; Modeling cascade effects | Scale-free networks show robust-yet-fragile dichotomy; Different critical thresholds for attack types [23] |
| Cascade Failure Simulations | Models propagation of disruptions through network | Predicting extinction cascades; Identifying systemic risk indicators | Incorporates threshold behaviors; Reveals power-law distribution of cascade sizes [23] |
| Null Model Comparisons | Generates randomized networks for statistical testing | Distinguishing significant structural properties from random expectations | Multilayer analysis uses four null models with increasing constraints [12] |

Comparative Analysis and Research Implications

The comparative analysis of these theoretical frameworks reveals distinct strengths and applications for different ecological contexts. The multilayer approach excels in modeling real-world complexity by incorporating multiple interaction types, demonstrating that considering diverse relationships simultaneously provides more accurate robustness assessments than single-layer analyses [12]. The framework successfully identifies how the structural connectivity between layers affects robustness interdependence, with important implications for restoration planning—networks with low interdependence may require targeted interventions in specific interaction layers rather than whole-community approaches [12].

The k-core decomposition framework provides unique insights into core-periphery organization, revealing that the characteristic U-shaped shell occupancy pattern creates dual resilience against both random and targeted perturbations [24]. This approach identifies keystone species in the network core whose protection is paramount for stability, while also recognizing the importance of peripheral species in absorbing random disturbances. The mathematical formalism connecting k-core structure to population dynamics enables predictions about how topological features modulate species persistence under environmental stress [24].

Percolation theory offers a rigorous mathematical foundation for quantifying critical thresholds and understanding phase transitions in ecological networks [23]. Its analytical framework explains why scale-free architectures common in mutualistic networks confer resilience to random species loss while creating vulnerability to targeted hub removal. This is a critical consideration given that anthropogenic impacts frequently target specific species groups [23]. The cascade modeling components provide particularly valuable tools for predicting extinction sequences and identifying early warning indicators of community collapse.

Integration of these complementary frameworks provides the most comprehensive approach for ecological robustness analysis, leveraging the multilayer perspective on interaction diversity, the k-core insight into core-periphery architecture, and the percolation understanding of critical transitions. This theoretical synthesis empowers researchers to move beyond simplistic stability measures toward multidimensional assessments that better predict ecological responses to anthropogenic change and inform effective conservation strategies in the face of the biodiversity crisis.

Advanced Methodologies for Assessing Ecological Network Robustness

Circuit Theory Applications: Modeling Ecological Flows and Connectivity

Ecological connectivity is fundamental for preserving biodiversity, maintaining ecosystem functions, and supporting species adaptation in a changing world. Circuit theory has emerged as a powerful computational approach for modeling ecological flows, offering a distinct alternative to traditional connectivity models. This guide objectively compares circuit theory's performance against other modeling approaches, details its application in robustness analysis for ecological networks, and provides the experimental protocols and resources that underpin this methodology.

Model Comparison: Circuit Theory vs. Alternative Approaches

Circuit theory is one of several analytical frameworks used to model landscape connectivity. The table below compares its performance and characteristics against other common models.

Table 1: Performance and Characteristic Comparison of Connectivity Models

| Model Type | Key Principle | Strengths | Limitations | Best-Suited Applications |
|---|---|---|---|---|
| Circuit Theory [25] [26] | Models movement probability across all possible pathways using electrical circuit principles. | Accounts for multiple dispersal pathways; identifies pinch points and barriers; provides a theoretical link to random walk theory [25] [26]. | Can be computationally intensive for very large grids; may over-predict connectivity for species with highly directed movement [27]. | Predicting gene flow and genetic differentiation; modeling exploratory movement and dispersal; identifying critical corridors and barriers in complex landscapes [25] [27]. |
| Least-Cost Path (LCP) [25] | Identifies the single optimal (lowest-resistance) path between two points on a landscape resistance surface. | Intuitive and simple to implement and interpret; computationally efficient [28]. | Oversimplifies movement by ignoring multiple paths; assumes organisms have perfect landscape knowledge [25] [27]. | Modeling routine movements between known points (e.g., foraging); modeling species with high fidelity to established paths [27]. |
| Graph Theory [29] | Abstractly represents habitats as nodes and dispersal paths as edges to analyze network topology. | Computationally efficient for analyzing landscape-scale connectivity; provides many topological metrics (e.g., connectivity robustness) [29]. | Loss of spatial explicitness when corridors are represented as edges; can oversimplify the quality of connecting pathways [29]. | Macro-scale planning and prioritizing habitat patches; evaluating the structural robustness of ecological networks [29]. |

Comparative Performance Data: A study on wolverine dispersal found that circuit theory (implemented via Circuitscape) outperformed least-cost path models for predicting the movements of dispersing juveniles. Conversely, for elk, which follow established routes, least-cost path models slightly outperformed circuit theory [27]. This highlights that model performance is context-dependent and influenced by species-specific movement behavior.
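To make the least-cost path principle concrete, the sketch below runs Dijkstra's algorithm on a small resistance raster. It assumes 4-neighbour moves and a step cost equal to the mean resistance of the two cells involved; GIS tools differ in how they weight steps (diagonals, cell size), so treat both choices, and the function name `least_cost_path`, as hypothetical illustrations rather than any particular package's method.

```python
import heapq


def least_cost_path(resistance, start, goal):
    """Dijkstra least-cost path on a 4-neighbour resistance raster.

    Step cost between adjacent cells = mean of their resistances (assumed).
    Returns (total_cost, path as a list of (row, col) cells).
    """
    rows, cols = len(resistance), len(resistance[0])
    best = {start: 0.0}
    previous = {}
    heap = [(0.0, start)]
    while heap:
        cost, cell = heapq.heappop(heap)
        if cell == goal:
            break
        if cost > best[cell]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = cell
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols:
                new_cost = cost + (resistance[r][c] + resistance[nr][nc]) / 2.0
                if new_cost < best.get((nr, nc), float("inf")):
                    best[(nr, nc)] = new_cost
                    previous[(nr, nc)] = cell
                    heapq.heappush(heap, (new_cost, (nr, nc)))
    if goal not in best:
        return float("inf"), []
    path = [goal]
    while path[-1] != start:
        path.append(previous[path[-1]])
    return best[goal], path[::-1]


grid = [
    [1, 1, 1],
    [100, 100, 1],
    [1, 1, 1],
]
cost, path = least_cost_path(grid, (0, 0), (2, 0))
print(cost)  # 6.0 (the route detours around the high-resistance cells)
```

Note that the result is a single route: adding a second, equally cheap route elsewhere on the raster would not change the returned cost, which is exactly the "ignores multiple paths" limitation listed for LCP.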

Robustness Analysis in Ecological Networks

Robustness analysis evaluates an ecological network's ability to maintain its connectivity and function when habitat patches or corridors are lost due to disturbances like urbanization or climate change.

Circuit theory integrates with robustness modeling by providing a spatially explicit map of connectivity, which is then abstracted into a graph network of nodes and links for robustness simulation [30] [29]. Key circuit theory outputs like current density maps are used to identify critical corridors and "pinch points" that become the focus of robustness testing [25] [30].

Table 2: Circuit Theory Metrics for Robustness Analysis

| Circuit Theory Metric | Ecological Interpretation | Role in Robustness Analysis |
|---|---|---|
| Current Density [25] | The probability of movement or gene flow through a landscape cell. | Identifies high-use corridors and critical pinch points whose failure is simulated in targeted attack scenarios [30]. |
| Effective Resistance [25] | A pairwise measure of isolation between populations or habitat patches. | Serves as a baseline measure of connectivity between nodes; changes in effective resistance are tracked during robustness simulations to quantify disruption [25] [31]. |
| Resistance Distance | Effective resistance used as a pairwise distance; because it accounts for all pathways, it can capture isolation better than Euclidean or least-cost distance. | Used to weight the connections (edges) in the ecological network, making the robustness model more biologically realistic than simple binary connections [31]. |
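Effective resistance between two nodes of a resistor network can be computed from the Moore-Penrose pseudoinverse L+ of the weighted graph Laplacian as R_ij = L+_ii + L+_jj - 2 L+_ij. The sketch below applies this identity to a toy network with NumPy; it is an illustration of the quantity in the table, not Circuitscape's solver, and the function name `effective_resistance` is hypothetical. It assumes a connected graph with positive edge resistances.

```python
import numpy as np


def effective_resistance(n_nodes, edges):
    """All-pairs effective resistance for a connected network of resistors.

    edges: iterable of (i, j, resistance); each edge has conductance 1/resistance.
    Uses R_ij = L+_ii + L+_jj - 2 L+_ij with L+ the Laplacian pseudoinverse.
    """
    laplacian = np.zeros((n_nodes, n_nodes))
    for i, j, r in edges:
        g = 1.0 / r
        laplacian[i, i] += g
        laplacian[j, j] += g
        laplacian[i, j] -= g
        laplacian[j, i] -= g
    lp = np.linalg.pinv(laplacian)
    diag = np.diag(lp)
    return diag[:, None] + diag[None, :] - 2.0 * lp


# One corridor of two unit-resistance links between patches 0 and 2...
single = effective_resistance(3, [(0, 1, 1.0), (1, 2, 1.0)])
# ...versus the same corridor plus an equal parallel corridor through node 3.
double = effective_resistance(4, [(0, 1, 1.0), (1, 2, 1.0), (0, 3, 1.0), (3, 2, 1.0)])
print(round(float(single[0, 2]), 6), round(float(double[0, 2]), 6))  # 2.0 1.0
```

The least-cost distance between patches 0 and 2 is 2 in both cases, whereas the effective resistance halves once a redundant corridor exists. This is why resistance-weighted edges reward alternative pathways in robustness models.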

Experimental Findings: Research on the ecological network of Yantai City found that the network's integrity depended heavily on protecting the ecological pinch points and barriers identified by circuit theory. The study concluded that networks with high redundancy (multiple alternative pathways) were strongly resilient to random disturbances but remained vulnerable to targeted attacks on these critical nodes [30]. Similarly, a study in the Yellow River Basin used this combined approach to simulate land-use scenarios and evaluate their impact on network stability [29].

Experimental Protocol: Applying Circuit Theory

The following workflow details the standard methodology for applying circuit theory to model ecological connectivity, from data preparation to robustness assessment.

Phase 1: Data Preparation and Resistance Surface Creation

- Habitat Suitability Modeling: Use species presence data (e.g., from camera traps, transect surveys, or telemetry) with environmental variables (elevation, slope, land cover, water sources) in a Maximum Entropy (MaxEnt) model to create a habitat suitability map [28]. Model performance is typically validated using the Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC), with values above 0.75 indicating acceptable performance [28].

- Resistance Surface Creation: Transform the habitat suitability map into a resistance surface. High-suitability areas are assigned low resistance values, indicating easy movement, while low-suitability areas are assigned high resistance values [28].
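The two Phase 1 steps can be sketched in a few lines. The resistance mapping below rescales suitability s in [0, 1] to resistance in [1, 100], either by linear inversion or by an assumed negative-exponential shape, R = max - (max - 1)(1 - e^(-cs))/(1 - e^(-c)), which drops resistance quickly as suitability rises. The 1 to 100 range, the shape parameter `c`, and both function names are hypothetical choices for illustration; MaxEnt itself reports AUC, and the rank-based calculation here is only meant to show what that statistic measures.

```python
import math


def suitability_to_resistance(s, max_resistance=100.0, c=None):
    """Map habitat suitability s in [0, 1] to resistance in [1, max_resistance].

    c=None: linear inversion. c > 0: assumed negative-exponential shape.
    """
    if not 0.0 <= s <= 1.0:
        raise ValueError("suitability must lie in [0, 1]")
    if c is None:
        scaled = s
    else:
        if c <= 0:
            raise ValueError("shape parameter c must be positive")
        scaled = (1.0 - math.exp(-c * s)) / (1.0 - math.exp(-c))
    return max_resistance - (max_resistance - 1.0) * scaled


def roc_auc(presence_scores, background_scores):
    """Rank-based AUC: chance a presence site outscores a background site (ties count 0.5)."""
    if not presence_scores or not background_scores:
        raise ValueError("need at least one presence and one background score")
    wins = 0.0
    for p in presence_scores:
        for b in background_scores:
            if p > b:
                wins += 1.0
            elif p == b:
                wins += 0.5
    return wins / (len(presence_scores) * len(background_scores))


print(suitability_to_resistance(0.5))        # 50.5
print(suitability_to_resistance(0.5, c=4))   # much lower than the linear value
print(roc_auc([0.9, 0.7, 0.6], [0.2, 0.65, 0.1]))  # 8/9, about 0.89
```

Which transformation is appropriate depends on the species and is best treated as a modeling choice to test against independent movement or genetic data.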

Phase 2: Circuit Theory Analysis

- Software Application: Implement the analysis using the open-source software Circuitscape [25] [27]. Input the resistance surface and define focal habitat patches (e.g., wildlife refuges, natural reserves) as "nodes" in the circuit.

- Key Outputs: The primary outputs are current density maps and effective resistance values [25].

  - Current Density Maps: Visualize the probability of movement across the entire landscape, highlighting all potential pathways and critical pinch points.

  - Effective Resistance: Quantifies the functional isolation between each pair of focal habitat patches.
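Both outputs come from solving one linear system. The dense NumPy sketch below treats each raster cell as a node, links 4-neighbours with conductance 1/(mean resistance of the two cells), injects 1 A at a source cell, and holds a ground cell at 0 V. With unit current, the source voltage equals the effective resistance between the two cells. Circuitscape performs this kind of computation with sparse solvers on large rasters; the connection scheme, the definition of cell current (half the summed absolute branch currents, plus the injected current at source and ground), and the name `cell_current_map` are assumptions of this toy version and may differ from Circuitscape's defaults.

```python
import numpy as np


def cell_current_map(resistance, source, ground):
    """Toy circuit solve on a small raster. Returns (cell current map, effective resistance)."""
    if source == ground:
        raise ValueError("source and ground must be different cells")
    res = np.asarray(resistance, dtype=float)
    rows, cols = res.shape
    n = rows * cols

    def idx(r, c):
        return r * cols + c

    lap = np.zeros((n, n))
    branches = []
    for r in range(rows):
        for c in range(cols):
            for nr, nc in ((r + 1, c), (r, c + 1)):  # down and right neighbours
                if nr < rows and nc < cols:
                    g = 2.0 / (res[r, c] + res[nr, nc])  # 1 / mean resistance (assumed)
                    i, j = idx(r, c), idx(nr, nc)
                    lap[i, i] += g
                    lap[j, j] += g
                    lap[i, j] -= g
                    lap[j, i] -= g
                    branches.append((i, j, g))
    s, gnd = idx(*source), idx(*ground)
    injected = np.zeros(n)
    injected[s] = 1.0  # 1 A enters at the source cell
    keep = [k for k in range(n) if k != gnd]  # ground node fixed at 0 V
    voltage = np.zeros(n)
    voltage[keep] = np.linalg.solve(lap[np.ix_(keep, keep)], injected[keep])
    through = np.zeros(n)
    for i, j, g in branches:
        flow = abs(g * (voltage[i] - voltage[j]))
        through[i] += flow
        through[j] += flow
    through[s] += 1.0    # injected current at the source
    through[gnd] += 1.0  # current leaving at the ground
    return (through / 2.0).reshape(rows, cols), voltage[s]


current, r_eff = cell_current_map([[1, 1], [1, 1]], source=(0, 0), ground=(1, 1))
print(current)  # the two intermediate cells each carry half the current
print(r_eff)    # two equal parallel routes, so about 1.0
```

Cells where current concentrates in a narrow band of the map are the pinch points discussed above.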

Phase 3: Robustness Evaluation

- Network Construction: Abstract the landscape into an ecological network graph. Habitat patches become nodes, and the corridors identified by circuit theory become edges, which can be weighted by effective resistance or current density [30] [29].

- Failure Simulation: Evaluate network robustness using two scenarios [30] [29]:

  - Random Failure: Corridors are removed randomly in sequential steps.

  - Targeted Attack: Corridors are removed in order of importance (e.g., starting with the highest current density corridors).

- Robustness Metric: The connectivity robustness index is calculated as the area under the curve of the remaining network connectivity (e.g., the fraction of nodes that remain connected) plotted against the fraction of removed corridors [29]. A slower decline indicates a more robust network.
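The Phase 3 procedure can be sketched with the standard library alone. Here "remaining connectivity" is measured as the fraction of nodes in the largest connected component, one common choice among several, and the index is the trapezoid-rule area under that curve as the fraction of removed corridors goes from 0 to 1. The function names and the `importance` weights (standing in for, e.g., corridor current density) are hypothetical.

```python
import random


def largest_component_fraction(n_nodes, edges):
    """Fraction of nodes in the largest connected component (union-find)."""
    parent = list(range(n_nodes))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for u, v in edges:
        ru, rv = find(u), find(v)
        if ru != rv:
            parent[ru] = rv
    sizes = {}
    for x in range(n_nodes):
        root = find(x)
        sizes[root] = sizes.get(root, 0) + 1
    return max(sizes.values()) / n_nodes


def robustness_curve(n_nodes, edges, order):
    """Connectivity before and after each removal; `order` lists edge indices."""
    removed = set()
    curve = [largest_component_fraction(n_nodes, edges)]
    for k in order:
        removed.add(k)
        remaining = [e for i, e in enumerate(edges) if i not in removed]
        curve.append(largest_component_fraction(n_nodes, remaining))
    return curve


def robustness_index(curve):
    """Area under connectivity vs. fraction of corridors removed (trapezoid rule)."""
    steps = len(curve) - 1
    if steps < 1:
        raise ValueError("need at least one removal step")
    return sum((curve[k] + curve[k + 1]) / 2.0 for k in range(steps)) / steps


def compare_scenarios(n_nodes, edges, importance, trials=100, seed=0):
    """Return (targeted-attack index, mean random-failure index)."""
    m = len(edges)
    targeted = sorted(range(m), key=lambda k: importance[k], reverse=True)
    targeted_index = robustness_index(robustness_curve(n_nodes, edges, targeted))
    rng = random.Random(seed)
    random_indices = []
    for _ in range(trials):
        order = list(range(m))
        rng.shuffle(order)
        random_indices.append(robustness_index(robustness_curve(n_nodes, edges, order)))
    return targeted_index, sum(random_indices) / trials


# Four patches in a chain; the middle corridor carries the most current (hypothetical).
edges = [(0, 1), (1, 2), (2, 3)]
targeted, random_mean = compare_scenarios(4, edges, importance=[0.2, 0.9, 0.3])
print(targeted)     # 13/24, about 0.54
print(random_mean)  # higher: random removals usually spare the middle corridor at first
```

Recomputing components after every removal is quadratic in the number of corridors, which is acceptable for planning-scale networks of a few hundred links; larger networks would call for an incremental approach.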

The Scientist's Toolkit

The following software, data sources, and field equipment are essential for conducting circuit theory-based ecological connectivity research.

Table 3: Essential Research Tools for Circuit Theory Analysis

| Tool Name | Type | Primary Function | Key Features |
|---|---|---|---|
| Circuitscape [25] [27] | Software | The primary open-source platform for implementing circuit theory connectivity models. | Integrates with GIS; can solve large raster landscapes; offers pairwise, one-to-all, all-to-one, and advanced modes. |
| MaxEnt [28] | Software | Uses the maximum entropy method to create species distribution and habitat suitability models from presence-only data. | Robust performance with small sample sizes; widely used and cited in ecology. |
| GPS/GIS Data [28] | Data | Provides spatial data on species occurrences, land cover, topography, and human infrastructure. | Forms the foundational layers for creating accurate resistance surfaces. |
| Camera Traps [28] | Field Equipment | Non-invasively collects species presence and abundance data across a landscape. | Essential for gathering the occurrence data needed to build and validate species-specific models. |
| Graphab [29] [27] | Software | Builds landscape graphs from habitat maps and analyzes them, which supports the robustness analysis phase. | Allows for the computation of many connectivity metrics and includes robustness simulation features. |
| Genetic Sample Data [25] | Data | Provides measurements of genetic differentiation (e.g., FST) between sub-populations. | Used to validate circuit theory models by testing the correlation between effective resistance and genetic distance. |

Key Insights for Practitioners

- Hybrid Modeling Approach: For many applications, a hybrid approach that leverages the strengths of both circuit theory and least-cost path models yields the most insight. For instance, one can use circuit theory to identify broad zones of high movement probability and then use least-cost path analysis to pinpoint specific corridors within those zones [27].

- Pinch Points are Conservation Priorities: The "pinch points" identified by high current density in narrow corridors are often the most cost-effective targets for conservation action, as their protection or restoration can disproportionately benefit overall landscape connectivity [25] [30].

- Validate with Independent Data: Whenever possible, validate connectivity models with independent data. Genetic data is a powerful validator for gene flow models [25], while GPS telemetry or camera trap data can be used to validate movement models [28] [27].
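A common, though debated, way to test the correlation between effective resistance and genetic distance is a Mantel test: correlate the upper triangles of the two pairwise matrices, then build a null distribution by jointly permuting the rows and columns of one matrix. The NumPy sketch below is a minimal one-sided version under that assumption; the function name `mantel_test` and its defaults are hypothetical, and dedicated statistics packages offer more complete variants (e.g., partial Mantel tests).

```python
import numpy as np


def mantel_test(dist_a, dist_b, permutations=999, seed=0):
    """Pearson r between upper triangles of two distance matrices, with a
    one-sided permutation p-value (rows/columns of dist_b permuted jointly)."""
    a = np.asarray(dist_a, dtype=float)
    b = np.asarray(dist_b, dtype=float)
    n = a.shape[0]
    iu = np.triu_indices(n, k=1)  # each population pair counted once
    x = a[iu]
    r_obs = np.corrcoef(x, b[iu])[0, 1]
    rng = np.random.default_rng(seed)
    hits = 0
    for _ in range(permutations):
        perm = rng.permutation(n)
        b_perm = b[np.ix_(perm, perm)]
        if np.corrcoef(x, b_perm[iu])[0, 1] >= r_obs:
            hits += 1
    p_value = (hits + 1) / (permutations + 1)
    return r_obs, p_value


# Hypothetical example: genetic distance rising linearly with resistance distance.
positions = np.array([0.0, 1.0, 3.0, 7.0, 12.0, 20.0])
resistance_dist = np.abs(positions[:, None] - positions[None, :])
genetic_dist = 2.0 * resistance_dist + 1.0
print(mantel_test(resistance_dist, genetic_dist))  # r close to 1, small p-value
```

Because pairwise distances are not independent observations, the permutation step, rather than a standard correlation test, supplies the p-value.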

Morphological Spatial Pattern Analysis (MSPA) for Identifying Critical Structural Elements

The stability and functionality of ecological networks are fundamentally governed by the spatial arrangement of their constituent elements. Morphological Spatial Pattern Analysis (MSPA) has emerged as a critical computational methodology for systematically characterizing these spatial structures, enabling researchers to quantify how pattern geometry influences system robustness. As a specialized form of image processing, MSPA employs mathematical morphological operators to decompose landscape patterns into mutually exclusive and exhaustive classes, providing a standardized framework for structural assessment [32]. This analytical approach has become increasingly vital for ecological network research, particularly for identifying critical structural elements that enhance or diminish system resilience to species losses and environmental perturbations [33] [12].

The theoretical foundation of MSPA rests on the premise that spatial configuration significantly impacts ecological processes and functionality. Recent research on ecological networks with multiple interaction types has demonstrated that the robustness of entire communities is intrinsically linked to their structural architecture [12]. When applied to spatial ecological networks, MSPA provides empirical support for this link, revealing how specific spatial elements, such as corridors, core areas, and bridges, contribute disproportionately to maintaining connectivity and functionality despite disturbances [34]. By quantifying these structural relationships, MSPA helps researchers anticipate vulnerability to species losses and design more effective conservation strategies that optimize ecological robustness [33].

Methodological Framework: The MSPA Approach

Core Principles and Analytical Process

MSPA operates through a customized sequence of mathematical morphological operations specifically designed to describe the geometry and connectivity of image components. The methodology requires an initial binary segmentation of the landscape into foreground (the structural element of interest, such as forest habitat) and background (the complementary matrix) [32]. This binary mask is then processed through a series of erosion, dilation, and connectivity operations that classify each foreground pixel into one of seven distinct morphological classes [32] [34]. The analytical scale can be adjusted by the user through parameter modification, allowing for multi-scale assessments of structural patterns [32].
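The erosion, dilation, and connectivity steps described above can be illustrated with SciPy's morphology functions. The sketch below is a deliberately simplified stand-in, not the MSPA algorithm implemented in dedicated MSPA software. It identifies core (foreground surviving erosion by the edge width), edge and perforation (the non-core band around core, split by whether the adjacent background is the outer matrix or an internal hole), and islet (foreground components with no core). Bridge, loop, and branch, which real MSPA separates by analyzing which core areas each connector joins, are lumped into one hypothetical "connector" class. The class codes, the treatment of the image border as background, and the name `simplified_mspa` are all assumptions.

```python
import numpy as np
from scipy import ndimage

# Hypothetical class codes for this sketch (not official MSPA output codes).
BACKGROUND, CORE, ISLET, PERFORATION, EDGE, CONNECTOR = range(6)


def simplified_mspa(mask, edge_width=1):
    """Label foreground pixels of a binary habitat mask with simplified MSPA-like classes."""
    if edge_width < 1:
        raise ValueError("edge_width must be at least 1 pixel")
    fg = np.asarray(mask, dtype=bool)
    eight = np.ones((3, 3), dtype=bool)  # 8-connectivity for foreground operations

    # Core: foreground surviving erosion (outside the image counts as background).
    core = ndimage.binary_erosion(fg, structure=eight, iterations=edge_width)
    # Boundary band: non-core foreground within edge_width pixels of core.
    near_core = ndimage.binary_dilation(core, structure=eight, iterations=edge_width)
    boundary = fg & near_core & ~core

    # Background components that never touch the image border are internal holes.
    bg_labels, _ = ndimage.label(~fg)
    border = np.concatenate([bg_labels[0], bg_labels[-1], bg_labels[:, 0], bg_labels[:, -1]])
    holes = ~fg & ~np.isin(bg_labels, border)
    near_hole = ndimage.binary_dilation(holes, structure=eight, iterations=edge_width)

    # Islets: foreground components containing no core pixels.
    fg_labels, _ = ndimage.label(fg, structure=eight)
    islet = fg & ~np.isin(fg_labels, np.unique(fg_labels[core]))

    classes = np.full(fg.shape, BACKGROUND, dtype=np.uint8)
    classes[fg] = CONNECTOR  # default for foreground outside core zones
    classes[boundary & ~near_hole] = EDGE
    classes[boundary & near_hole] = PERFORATION
    classes[core] = CORE
    classes[islet] = ISLET
    return classes


# Two habitat blocks joined by a one-pixel corridor.
mask = np.zeros((7, 17), dtype=bool)
mask[1:6, 1:6] = True
mask[1:6, 11:16] = True
mask[3, 6:11] = True
print(simplified_mspa(mask))  # the corridor pixels come out as CONNECTOR (5)
```

Increasing `edge_width` widens the edge band and shrinks core, which mirrors the scale dependence the paragraph above attributes to MSPA's user-adjustable parameters.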

A key advantage of MSPA lies in its geometric basis, which enables application across diverse spatial scales and ecosystem types without requiring species-specific parameters. This geometric objectivity facilitates comparative studies across different regions and temporal periods. The method has been successfully implemented in various contexts, including forest ecology [35], urban planning [34], climate change studies [32], and even medical applications [32], demonstrating its remarkable methodological versatility.

MSPA Classification Schema

The MSPA algorithm categorizes landscape patterns into seven visually and functionally distinct classes:

Table 1: MSPA Pattern Classification and Ecological Interpretation

| MSPA Class | Structural Description | Ecological Function | Conservation Priority |
|---|---|---|---|
| Core | Interior foreground lying farther than the specified edge width from the background | Provides habitat sanctuary, supports sensitive species | High - critical for biodiversity |
| Islet | Small, isolated foreground patches too small to contain core | Limited habitat value, potential stepping stones | Low to Medium - context dependent |
| Perforation | Transition zone between core and internal background (holes within a patch) | Edge habitat, high species turnover | Medium - regulates core conditions |
| Edge | External boundary between core and background | Edge habitat, filter for ecological flows | Medium - buffer function |
| Loop | Connectors whose two ends join the same core area | Alternative pathways, network resilience | Medium - redundancy value |
| Bridge | Connectors joining different core areas | Facilitates movement, genetic exchange | Very High - connectivity maintenance |
| Branch | Connectors attached at only one end (to an edge, perforation, bridge, or loop) | Access to resources, potential dead-ends | Low to Medium - limited functionality |