Renewable Energy Storage Solutions 2025: A Comprehensive Performance Comparison for a Resilient Grid

Abstract

This article provides a systematic performance comparison of contemporary renewable energy storage solutions, tailored for energy researchers, system designers, and policy professionals. It establishes a foundational understanding of key storage technologies—from dominant lithium-ion batteries to mature pumped hydro and emerging long-duration solutions—by defining critical performance metrics like Levelized Cost of Storage (LCOS), cycle life, and round-trip efficiency. The analysis then explores advanced optimization methodologies and control strategies that enhance economic and operational outcomes, including AI-driven management and shared storage models. A detailed, data-driven comparative analysis validates technologies against application-specific criteria such as duration, response time, and scalability, offering actionable insights for selecting optimal storage configurations to improve grid stability, maximize renewable integration, and achieve decarbonization goals.

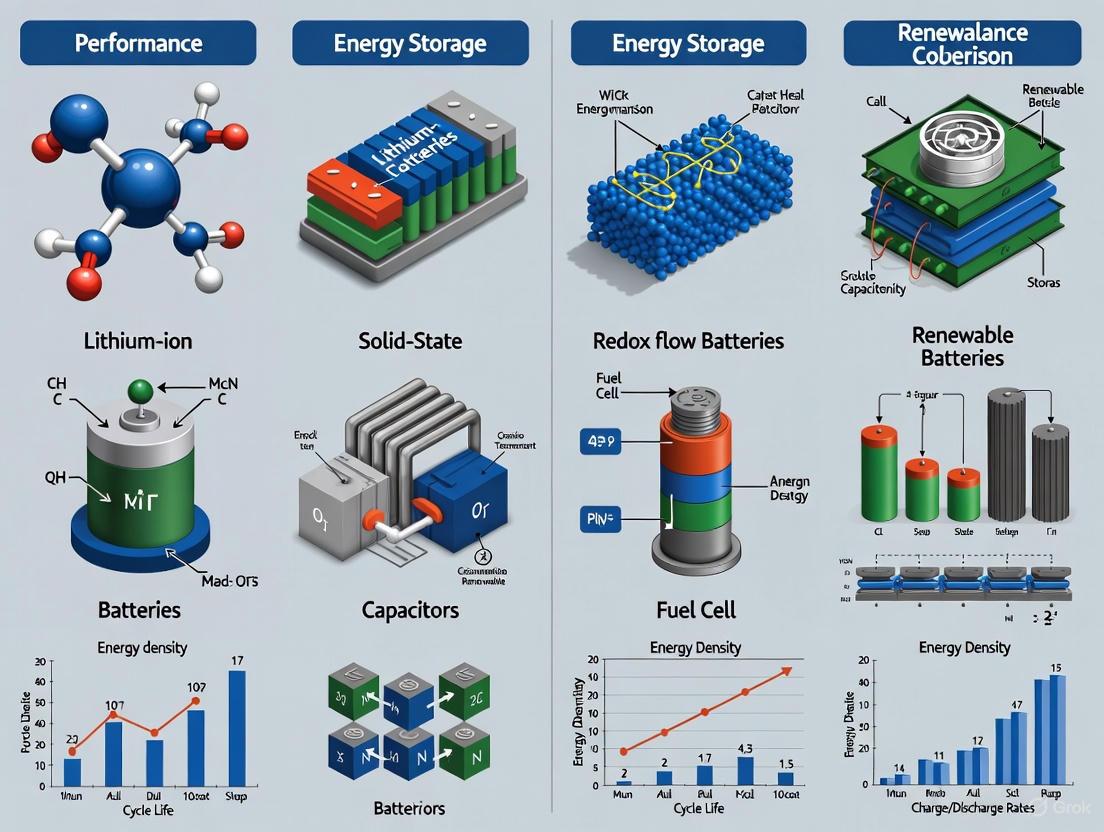

Understanding the Energy Storage Landscape: Core Technologies and Performance Metrics

The global transition to a sustainable energy future is inherently dependent on the ability to store energy effectively, bridging the gap between intermittent renewable energy supply and constant demand. Energy storage systems (ESS) have thus become a cornerstone technology, enabling grid stability, renewable energy integration, and backup power. The modern energy storage ecosystem encompasses a diverse portfolio of technologies, each with unique performance characteristics tailored for specific durations and applications, from milliseconds of grid stabilization to seasonal shifts in energy availability. This guide provides an objective, data-driven comparison of contemporary energy storage solutions, framing the analysis within the critical context of matching technology capabilities to application requirements for researchers and scientists driving innovation in this field.

Classification of Energy Storage Technologies

Energy storage systems are fundamentally classified by the form of energy they utilize, which dictates their inherent characteristics, optimal applications, and scalability. Understanding this classification framework is essential for appropriate technology selection.

The classification tree above illustrates the technological diversity within the modern energy storage ecosystem. Mechanical storage systems, including pumped hydro storage (PHS) and compressed air energy storage (CAES), dominate utility-scale applications due to their massive storage capacity and long duration capabilities [1]. Electrochemical storage, particularly lithium-ion and flow batteries, has revolutionized residential, commercial, and grid-scale applications with their versatility and declining costs [2]. Electrical storage technologies like supercapacitors provide ultra-fast response for power quality applications, while thermal and chemical storage offer solutions for long-duration and seasonal energy shifting challenges [1].

Performance Comparison of Energy Storage Technologies

Selecting an appropriate energy storage technology requires careful evaluation of multiple performance metrics against specific application requirements. The following comprehensive comparison synthesizes experimental data and operational characteristics across the major technology categories.

Quantitative Performance Metrics Comparison

Table 1: Comprehensive performance comparison of major energy storage technologies [2] [1]

| Technology | Efficiency (%) | Energy Density | Cycle Life / Lifetime | Discharge Duration | Response Time | Typical Capacity |
|---|---|---|---|---|---|---|
| Lithium-ion (Li-ion) | 85-95% | High (200-400 Wh/L) | 1,000-10,000 cycles | Minutes to 8 hours | Seconds to minutes | kWh to 100+ MWh |
| Flow Batteries | 70-85% | Medium (20-70 Wh/L) | 10,000+ cycles | 4-12+ hours | Seconds | MWh to GWh scale |
| Pumped Hydro (PHS) | 70-85% | Low | 30+ years | 6-20 hours | Minutes to hours | 500-3000+ MWh |
| Compressed Air (CAES) | 40-70% | Low | 20+ years | 2-20 hours | Minutes to hours | 100-500+ MWh |
| Supercapacitors | 90-95% | Very low | 1,000,000+ cycles | Seconds to minutes | Milliseconds | Wh to kWh |
| Hydrogen Fuel Cells | 30-50% (round trip) | High | Unlimited (depends on fuel) | Days to months | Minutes to hours | MWh to GWh scale |
| Lead-Acid | 70-85% | Low | 500-2,000 cycles | Minutes to hours | Seconds | kWh to MWh |
| Nickel-Metal Hydride | 70-80% | Medium | 300-500 cycles | Minutes to hours | Seconds | kWh scale |

Safety and Environmental Profile Comparison

Table 2: Safety, cost, and environmental characteristics of energy storage technologies [2]

| Technology | Fire Risk | Environmental Impact | Cost Trend | Material Constraints | Typical Application |
|---|---|---|---|---|---|
| Lithium-ion (Li-ion) | High (thermal runaway) | Moderate (mining impact) | Declining | Lithium, cobalt, nickel | EVs, grid storage, residential |
| Flow Batteries | Low (non-flammable electrolyte) | Low to moderate | Declining rapidly | Vanadium (for VRFB) | Long-duration grid storage |
| Pumped Hydro | Low | High (land use) | Stable high CAPEX | Geographical constraints | Utility-scale storage |
| Compressed Air | Low | Moderate (geological) | High CAPEX | Geological formations | Large-scale storage |
| Supercapacitors | Low | Low (no toxic waste) | Moderate | Specialty materials | Power quality, regeneration |
| Hydrogen Fuel Cells | Low (with protocols) | Low (if green H₂) | Very high | Platinum group metals | Seasonal storage, transportation |
| Lead-Acid | Low | High (lead contamination) | Stable low cost | Lead availability | Automotive, UPS |
| Nickel-Metal Hydride | Medium | Moderate (mining impact) | Stable | Rare earth elements | Hybrid vehicles, electronics |

Technology Selection Guidance by Application

Different energy storage technologies excel in specific applications based on their discharge duration, power rating, and cycle life characteristics. The following diagram illustrates the optimal application space for major technologies based on discharge duration and power requirements.

The technology selection workflow demonstrates that supercapacitors excel for sub-second to second duration applications requiring very high power, such as power quality management and frequency regulation [2] [1]. Lithium-ion batteries dominate the minutes to hours duration range with medium to high power capabilities, making them ideal for electric vehicles, residential storage, and partial grid support [2]. Flow batteries and pumped hydro storage cover the hours to days duration category, with flow batteries offering better scalability for medium power applications and PHS providing very high power for utility-scale needs [3] [1]. For seasonal storage requirements spanning days to months, hydrogen fuel cells represent the only commercially viable technology, despite efficiency challenges [2].
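The duration-based selection described above can be sketched as a simple lookup. This is a minimal illustration, not a sizing tool: the band boundaries (60 seconds, 8 hours, 2 days) and the names `DURATION_BANDS` and `candidate_technologies` are assumptions chosen to mirror the paragraph, and a real selection would also weigh power rating, cost, and siting.

```python
# Illustrative duration bands (upper bound in seconds -> candidate technologies).
# The numeric boundaries are assumptions for this sketch, not values from the cited sources.
DURATION_BANDS = [
    (60.0, ["Supercapacitors"]),                              # sub-second to seconds
    (8 * 3600.0, ["Lithium-ion"]),                            # minutes to hours
    (2 * 24 * 3600.0, ["Flow batteries", "Pumped hydro"]),    # hours to days
    (float("inf"), ["Hydrogen"]),                             # days to months
]


def candidate_technologies(duration_s: float) -> list[str]:
    """Return the technologies whose duration band contains duration_s."""
    if duration_s <= 0:
        raise ValueError("duration_s must be positive")
    for upper_bound_s, technologies in DURATION_BANDS:
        if duration_s <= upper_bound_s:
            return technologies
    return []  # unreachable because the last band is unbounded


if __name__ == "__main__":
    for label, seconds in [("0.5 s", 0.5), ("4 h", 4 * 3600), ("12 h", 12 * 3600), ("30 d", 30 * 86400)]:
        print(label, "->", candidate_technologies(seconds))
```

Keeping the bands in a plain list makes the assumed boundaries easy to inspect and adjust as project requirements change.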

Deep-Dive Analysis: Flow Batteries for Long-Duration Storage

Flow batteries represent a particularly promising technology for long-duration energy storage requirements, offering unique advantages for grid-scale applications. Their architecture fundamentally differs from conventional solid-electrode batteries by storing energy in liquid electrolytes contained in external tanks.

Flow Battery Chemistry Comparison and Commercial Landscape

Table 3: Major flow battery chemistries and key commercial players [3]

| Chemistry Type | Leading Companies | Core Advantages | Limitations | Commercial Status |
|---|---|---|---|---|
| All-Vanadium Redox (VRFB) | Dalian Rongke (China), VRB Energy (China), Invinity Energy Systems (UK), Sumitomo Electric (Japan) | Long cycle life (20,000+), proven commercial technology, high efficiency | Vanadium price volatility, lower energy density | Commercial with >1 GWh projects |
| Iron-Chromium | ESS Inc. (USA) | Abundant low-cost materials, avoid vanadium dependence | Lower efficiency, cross-contamination challenges | Early commercial deployment |
| Zinc-Bromine | Redflow (Australia) | Higher energy density, good temperature stability | Zinc dendrite formation, complex management | Niche commercial applications |
| Iron-Air | Form Energy (USA) | Ultra-low theoretical cost (~$20/kWh), abundant materials | Very low efficiency, early development stage | Pilot projects (2024) |

Regional Development Trends and Market Insights

The global flow battery market exhibits distinct regional characteristics and competitive advantages. Chinese companies, led by Dalian Rongke and VRB Energy, have achieved dominant market positioning through vertical integration strategies, controlling approximately 70% of global vanadium flow battery production capacity as of 2023 [3]. This dominance is reinforced by substantial government support, including tax exemptions and equipment purchase subsidies. European and American companies have pursued technological differentiation strategies, with companies like ESS Inc. focusing on iron-chromium chemistry to avoid vanadium supply dependencies, while Form Energy innovates with ultra-low-cost iron-air systems [3]. Japanese firms, particularly Sumitomo Electric, maintain strong intellectual property positions, holding 387 core flow battery patents and charging licensing fees to other manufacturers [3].

Experimental Protocols for Flow Battery Performance Validation

Standardized experimental protocols are essential for validating flow battery performance claims and enabling direct comparison between different systems. The following methodology outlines key testing procedures for assessing critical performance parameters.

Electrolyte Stability and Cycle Life Testing Protocol

Objective: Determine electrochemical stability, cycle life, and capacity retention of flow battery electrolytes under controlled conditions.

Materials and Equipment:

- Test Cell: Symmetrical flow cell with graphite bipolar plates, carbon felt electrodes, and Nafion membrane

- Electrolyte: 1.6 M VOSO₄ in 2 M H₂SO₄, used as the starting solution in both the positive and negative half-cells for VRFB systems

- Pumping System: Peristaltic pumps with precise flow rate control (50-200 mL/min)

- Potentiostat/Galvanostat: Biologic VMP-300 or equivalent with 5 A current booster

- Environmental Chamber: Temperature control capability (±0.5°C) from 15°C to 45°C

Methodology:

- Cell Assembly: Assemble flow cell with predetermined compression on carbon felt electrodes (typically 20-30%)

- Electrolyte Preparation: Prepare 500 mL each of positive and negative electrolytes, recording initial vanadium concentrations

- Initial Characterization:
  - Perform cyclic voltammetry at 5 mV/s between voltage limits specific to chemistry
  - Measure electrochemical impedance spectrum from 100 kHz to 100 mHz at 50% state of charge
  - Determine initial energy efficiency via charge-discharge cycling at 50 mA/cm²

- Cycle Life Testing:
  - Implement continuous charge-discharge cycling between 10-90% state of charge
  - Apply constant current density of 80 mA/cm² with voltage cutoffs
  - Record capacity, efficiency, and pressure drop measurements every 50 cycles
  - Maintain temperature at 25±2°C throughout testing

- Post-Test Analysis:
  - Measure final vanadium concentrations in both electrolytes
  - Inspect membrane for crossover or degradation
  - Analyze electrode morphology changes via SEM

Data Analysis: Calculate capacity decay rate per cycle, round-trip energy efficiency, voltage efficiency, and coulombic efficiency. Compare beginning-of-life (BOL) and end-of-life (EOL) performance parameters.
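The quantities named in the data analysis step can be computed from per-cycle charge and energy totals. The sketch below assumes the cycler exports charge (Ah) and energy (Wh) for each half-cycle; the `CycleRecord` structure and function names are hypothetical. Voltage efficiency is taken here as the ratio of mean discharge voltage to mean charge voltage, which equals energy efficiency divided by coulombic efficiency.

```python
from dataclasses import dataclass


@dataclass
class CycleRecord:
    """Totals for one charge-discharge cycle (hypothetical export format)."""
    charge_ah: float
    discharge_ah: float
    charge_wh: float
    discharge_wh: float


def coulombic_efficiency(c: CycleRecord) -> float:
    """Charge recovered on discharge divided by charge supplied."""
    return c.discharge_ah / c.charge_ah


def energy_efficiency(c: CycleRecord) -> float:
    """Round-trip energy efficiency: energy recovered divided by energy supplied."""
    return c.discharge_wh / c.charge_wh


def voltage_efficiency(c: CycleRecord) -> float:
    """Mean discharge voltage over mean charge voltage (equals EE / CE)."""
    return energy_efficiency(c) / coulombic_efficiency(c)


def capacity_decay_per_cycle(bol_ah: float, eol_ah: float, n_cycles: int) -> float:
    """Average fractional capacity loss per cycle between BOL and EOL."""
    if n_cycles <= 0:
        raise ValueError("n_cycles must be positive")
    return (bol_ah - eol_ah) / bol_ah / n_cycles


if __name__ == "__main__":
    # Made-up numbers purely to exercise the functions.
    cycle = CycleRecord(charge_ah=10.0, discharge_ah=9.5, charge_wh=15.0, discharge_wh=12.0)
    print(f"CE = {coulombic_efficiency(cycle):.3f}")
    print(f"VE = {voltage_efficiency(cycle):.3f}")
    print(f"EE = {energy_efficiency(cycle):.3f}")
    print(f"decay per cycle = {capacity_decay_per_cycle(10.0, 9.0, 1000):.2e}")
```

Applying the same functions to the first and last recorded cycles gives the BOL and EOL comparison directly.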

Research Reagent Solutions for Flow Battery Development

Table 4: Essential research materials for flow battery experimentation [4] [5]

| Research Reagent | Function | Technical Specifications | Application Notes |
|---|---|---|---|
| Vanadium Electrolyte | Active energy storage material | 1.5-2.0 M VOSO₄ in 2-3 M H₂SO₄ | Stability enhanced with phosphoric acid additives; concentration affects energy density |
| Nafion Membrane | Proton-selective separator | 50-180 μm thickness, 0.9-1.1 meq/g exchange capacity | Pretreatment required (boiling in H₂O₂, H₂SO₄, DI water); primary cost driver |
| Carbon Felt Electrodes | Reaction surface for redox reactions | 0.3-0.5 mm thickness, 95-99% porosity, 5-20 μm fiber diameter | Thermal activation (400°C air, 2h) enhances surface functionality |
| Graphite Bipolar Plates | Current collection and flow field structure | 2-5 mm thickness, 1.8-2.0 g/cm³ density, <50 μΩ·m resistivity | Machined flow patterns critical for electrolyte distribution |
| Perfluorinated Sulfonic Acid (PFSA) | Alternative membrane material | 50-150 μm thickness, 1.1-1.3 meq/g exchange capacity | Lower cost alternative to Nafion with comparable performance |
| Electrolyte Additives | Stability and performance enhancement | 1-3% w/w bismuth, 2-5% w/w phosphoric acid | Suppress gas evolution, improve thermal stability |

Emerging Trends and Future Research Directions

The energy storage landscape continues to evolve rapidly, with several emerging technologies and improvement pathways shaping the future ecosystem. Solid-state batteries represent a promising advancement in lithium-ion technology, offering enhanced safety through non-flammable solid electrolytes and potentially higher energy densities exceeding 500 Wh/L [2]. Although still at the research and early commercialization stage, solid-state batteries could achieve cycle lives exceeding 10,000 cycles with significantly lower fire risk than conventional lithium-ion chemistries [2].

Vanadium redox flow batteries are experiencing substantial cost reductions, with system costs declining from approximately $600/kWh in 2018 to $350/kWh in 2023, a 42% reduction driven by manufacturing scale and electrolyte optimization [3]. Research initiatives focused on novel electrolyte systems, including mixed acid supports and organic chelating agents, aim to enhance operating temperature ranges and energy density while maintaining the inherent safety advantages of aqueous systems [4] [5].
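The quoted reduction can be checked with a few lines of arithmetic. The sketch below reproduces the percentage from the two cost figures and adds an implied average annual rate; the annual rate is a derived illustration that assumes a smooth year-over-year decline across the five years, which the source does not claim.

```python
def percent_reduction(start_cost: float, end_cost: float) -> float:
    """Percentage drop from start_cost to end_cost."""
    return (start_cost - end_cost) / start_cost * 100.0


def implied_annual_rate(start_cost: float, end_cost: float, years: int) -> float:
    """Constant annual rate of change that would take start_cost to end_cost.

    Assumes a smooth compound decline, which is a simplification.
    """
    return (end_cost / start_cost) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    start, end = 600.0, 350.0   # $/kWh in 2018 and 2023, from the text
    print(f"Total reduction: {percent_reduction(start, end):.1f}%")
    print(f"Implied annual change: {implied_annual_rate(start, end, 2023 - 2018) * 100:.1f}%")
```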

Supply chain security and material sustainability represent critical research priorities. The concentration of vanadium production (over 60% from China) has prompted initiatives to develop alternative flow battery chemistries using more abundant materials, as well as resource leasing models and dynamic database development to improve market transparency [4]. Similar efforts focus on reducing lithium-ion dependence on cobalt through advanced cathode chemistries like lithium iron phosphate (LFP), which offers improved safety and sustainability profiles [2] [6].

The increasing diversification of energy storage technologies reflects a maturation of the industry, with different solutions finding optimal applications based on technical characteristics rather than one-size-fits-all approaches. This technology-specific optimization pathway promises enhanced overall system economics and reliability as the global energy transition accelerates.

The global transition to renewable energy has fundamentally increased the demand for efficient and reliable energy storage solutions. While lithium-ion batteries (LIBs) currently dominate the market, their suitability for every application is being re-evaluated. This guide provides a performance comparison of the established LIB technology against three emerging alternatives: Lithium Iron Phosphate (LFP), sodium-ion (Na-ion), and vanadium redox flow batteries (VRFBs). Framed within a broader thesis on renewable energy storage research, this analysis synthesizes technical data and experimental findings to offer researchers and scientists a clear, objective comparison of these technologies' characteristics, applications, and future potential.

The energy storage landscape is diversifying, with each technology offering a distinct profile of advantages and trade-offs. The following table provides a high-level comparison of the key technologies examined in this guide.

Table 1: Core Technology Overview and Primary Applications

| Technology | Key Characteristics | Primary Research & Application Focus |
|---|---|---|
| Lithium-ion (NMC/LCO) | High energy density, compact size, established supply chain [7] | Portable electronics, EVs where space/weight are critical [8] [7] |
| LFP (LiFePO₄) | Exceptional safety, long cycle life, cobalt-free chemistry [9] [8] | Stationary storage (solar, UPS), EVs prioritizing safety/lifespan [9] [10] |
| Sodium-ion (SIB) | Abundant raw materials, lower cost, safer operation [11] [12] | Cost-sensitive grid storage, backup power; emerging EV applications [11] |
| Vanadium Flow (VRFB) | Decoupled power/energy, extremely long cycle life, non-flammable [13] [14] [15] | Long-duration (4+ hours) utility-scale storage, renewable integration [13] [14] |

The Incumbent: Lithium-ion Batteries and the LFP Variant

Lithium-ion is an umbrella term for batteries with cathodes made from various lithium metal oxides, such as Lithium Cobalt Oxide (LCO) and Nickel Manganese Cobalt (NMC) [7]. These chemistries are valued for their high energy density, which is crucial for portable electronics and electric vehicles (EVs) [9] [7]. However, they carry safety risks like thermal runaway and use scarce materials like cobalt [9] [13].

Lithium Iron Phosphate (LFP), a subtype of LIB, has a different cathode chemistry that uses iron and phosphate. Its stable olivine structure with strong covalent bonds makes it inherently safer and virtually eliminates the risk of thermal runaway [9] [8]. LFP batteries also boast a much longer cycle life—typically 3,000 to 7,000 cycles, compared to 1,000 to 2,500 for conventional NMC batteries [8] [7]. The trade-off is a lower energy density, making LFP batteries larger and heavier for the same energy capacity [9] [7]. This makes LFP ideal for stationary storage where safety and longevity are more critical than compact size.

The Challengers: Sodium-ion and Flow Batteries

Sodium-ion batteries (SIBs) operate on the same "rocking-chair" principle as LIBs but use sodium ions, which are derived from far more abundant resources [11] [12]. The primary advantage of SIBs is lower cost, with raw material savings making them 20-30% cheaper than LFP cells [11] [10]. They also exhibit enhanced safety and better performance at extreme temperatures [11] [12]. Their main limitation is lower energy density (100-160 Wh/kg), though future advancements are expected to push this above 200 Wh/kg [11].

Vanadium Redox Flow Batteries (VRFBs) represent a fundamental architectural shift. Energy is stored in liquid electrolytes held in external tanks, which are pumped through a stack to charge or discharge [13] [15]. This decouples power (stack size) and energy (tank volume) [14]. VRFBs offer an exceptionally long cycle life of over 10,000 cycles with minimal degradation, non-flammable electrolytes, and excellent recyclability [13] [14] [15]. Their low energy density makes them unsuitable for mobility but ideal for long-duration, grid-scale storage [13].
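The decoupling of power and energy can be made concrete with a back-of-the-envelope model: theoretical energy follows from Faraday's law applied to the electrolyte in the tanks, while power follows from the stack's electrode area and current density. The sketch below is idealized. It assumes one electron per vanadium ion, full electrolyte utilization, and an average cell voltage of 1.4 V (an assumed value inside the 1.15-1.55 V cell range quoted later in this guide); the function names and example dimensions are hypothetical.

```python
FARADAY_C_PER_MOL = 96485.0  # Faraday constant


def tank_energy_wh(conc_mol_per_l: float, volume_l_per_tank: float,
                   avg_cell_voltage_v: float, utilization: float = 1.0) -> float:
    """Theoretical energy set by the tanks (one-electron vanadium couples).

    volume_l_per_tank is the volume of each of the two tanks; the charge that
    can be cycled is limited by the moles of vanadium in one tank.
    """
    charge_c = conc_mol_per_l * volume_l_per_tank * FARADAY_C_PER_MOL * utilization
    return charge_c * avg_cell_voltage_v / 3600.0


def stack_power_w(n_cells: int, electrode_area_cm2: float,
                  current_density_ma_per_cm2: float, avg_cell_voltage_v: float) -> float:
    """Power set by the stack: cells in series times current times cell voltage."""
    current_a = electrode_area_cm2 * current_density_ma_per_cm2 / 1000.0
    return n_cells * current_a * avg_cell_voltage_v


if __name__ == "__main__":
    v_cell = 1.4  # assumed average cell voltage
    power = stack_power_w(n_cells=10, electrode_area_cm2=100.0,
                          current_density_ma_per_cm2=80.0, avg_cell_voltage_v=v_cell)
    for litres in (0.5, 1.0, 2.0):
        energy = tank_energy_wh(1.6, litres, v_cell)
        print(f"{litres:4.1f} L per tank: {energy:6.1f} Wh, stack power {power:.0f} W, "
              f"duration {energy / power:.2f} h")
```

Doubling the tank volume doubles the energy and the discharge duration while the stack, and therefore the power rating, stays unchanged.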

Quantitative Performance Data

For research and development decisions, quantitative data is critical. The following tables summarize key performance metrics and economic indicators for the discussed battery technologies.

Table 2: Key Electrochemical and Performance Metrics for Energy Storage Technologies

| Parameter | Lithium-ion (NMC) | LFP | Sodium-ion | Vanadium Flow (VRFB) |
|---|---|---|---|---|
| Energy Density (Wh/kg) | 150-250 [7] | ~90-160 [7] | 100-160 [11] | Low (System-level) |
| Cycle Life (to 80% capacity) | 1,000 - 2,500 cycles [8] [7] | 3,000 - 7,000+ cycles [9] [8] | 2,000 - 6,000 cycles [11] [10] | 10,000 - 20,000+ cycles [13] [14] [10] |
| Round-Trip Efficiency | 85-95% [10] | 85-95% [10] | Comparable to LIB [12] | 70-85% [10] |
| Nominal Voltage | 3.6-3.7V [9] | 3.2V [9] | Lower than LIB [11] | Cell: 1.15-1.55V [13] |
| Operational Temp. Range | 32°F to 113°F (0°C to 45°C) [9] | -4°F to 140°F (-20°C to 60°C) [9] | Wider than LIB [12] | Ambient [15] |
| Self-Discharge Rate (per month) | Low | 1-3% [9] | Low | Negligible [13] |

Table 3: Cost, Safety, and Sustainability Comparison

| Aspect | Lithium-ion (NMC) | LFP | Sodium-ion | Vanadium Flow (VRFB) |
|---|---|---|---|---|
| Cost per kWh (System) | ~$115/kWh (pack) [10] | Slightly higher than NMC [9] | 20-30% lower than LFP [11] [10] | $130-$600/kWh [12] |
| Safety & Thermal Runaway Risk | Moderate to High [9] [13] | Very Low [9] [8] | Low, more stable [11] [12] | Very Low (non-flammable) [13] [15] |
| Key Materials & Abundance | Lithium, Cobalt, Nickel (Limited) [9] [13] | Lithium, Iron, Phosphate (Abundant) [9] | Sodium (Extremely Abundant) [11] [12] | Vanadium (Recyclable) [13] |
| Environmental Impact | Higher due to mining [13] | Lower, no cobalt/nickel [9] | Lower, abundant sodium [11] | Recyclable components, lower manufacturing impact [13] [15] |

Experimental Protocols for Performance Validation

Standardized testing protocols are essential for the objective comparison of battery technologies. The following experimental workflows and methodologies are critical for validating manufacturer claims and advancing research.

Cycle Life Testing and Degradation Analysis

The cycle life is a key metric for determining a battery's economic viability, especially for stationary storage. The standard protocol involves repeated charge and discharge cycles under controlled conditions.

Diagram 1: Battery Cycle Life Test Workflow

Key Experimental Parameters:

- Depth of Discharge (DoD): The percentage of the battery's capacity that is used. Testing at 100% DoD is more stressful than 80% DoD [8].

- C-rate: The rate of charge and discharge. A 1C rate means a full battery is discharged in one hour. Higher C-rates can accelerate aging [12].

- Temperature Control: Tests are conducted in thermal chambers, as temperature significantly impacts degradation [9].

Data Analysis: The State of Health (SoH) is tracked, typically defined as the ratio of current maximum capacity to initial capacity (C/C₀). The experiment concludes when SoH drops to 80% [8]. The total cycles achieved are the reported cycle life.
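The end-of-life criterion above can be expressed as a short routine over the measured capacity series. In this sketch the list `capacities_ah` is assumed to hold the maximum capacity measured on each consecutive cycle, with the first entry taken as the initial capacity C₀; the function names are hypothetical, and real test data would usually need smoothing before applying a threshold.

```python
from typing import Optional, Sequence


def state_of_health(capacity_ah: float, initial_capacity_ah: float) -> float:
    """SoH as the ratio of current maximum capacity to initial capacity (C/C0)."""
    return capacity_ah / initial_capacity_ah


def cycle_life(capacities_ah: Sequence[float], eol_soh: float = 0.80) -> Optional[int]:
    """Return the 1-based cycle number at which SoH first reaches eol_soh.

    Returns None if the series never drops to the threshold, meaning the
    test has not yet reached end of life.
    """
    if not capacities_ah:
        raise ValueError("capacities_ah must not be empty")
    initial = capacities_ah[0]
    for index, capacity in enumerate(capacities_ah):
        if state_of_health(capacity, initial) <= eol_soh:
            return index + 1
    return None


if __name__ == "__main__":
    # Made-up capacity fade series for illustration only.
    measured = [100.0, 95.0, 90.0, 85.0, 80.0, 75.0]
    print("Cycle life:", cycle_life(measured))
```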

Techno-Economic-Environmental Assessment (TEEA) for Grid Storage

For large-scale applications, a more holistic assessment framework is required. The TEEA model integrates technical performance, cost, and environmental impact over the system's lifetime.

Diagram 2: Techno-Economic-Environmental Assessment Framework

Core Methodologies:

- Iterative Sizing Framework: For a given application (e.g., a standalone PV system), the minimum battery size meeting daily demand is calculated [12].

- Techno-Economic Modeling: The key metric is the Levelized Cost of Energy Storage (LCOES, written LCOS elsewhere in this guide), which sums capital, operational, and replacement costs over the system's lifetime and divides by the total energy discharged [12].

- Life Cycle Assessment (LCA): This evaluates environmental impact from cradle to grave, including manufacturing, operation, and recycling. A key output is the Levelized Carbon Emission of Storage (LCEOS), measuring CO₂ emissions per kWh of stored electricity delivered [12].

- Economic-Ecological Efficiency: This combined metric identifies technologies that deliver the greatest environmental benefit (e.g., carbon reduction) at the lowest cost [12].
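The levelized metrics in this list reduce to a ratio of lifetime cost (or emissions) to lifetime energy discharged. The sketch below is one possible formulation, not the model used in [12]: the optional discount rate is a common convention added here as an assumption, and with a rate of zero the function matches the plain ratio described above. All function names, parameter names, and input values are hypothetical.

```python
from typing import Dict, Optional


def levelized_cost_of_storage(capex: float, annual_opex: float,
                              annual_energy_discharged_kwh: float,
                              lifetime_years: int,
                              replacement_costs: Optional[Dict[int, float]] = None,
                              discount_rate: float = 0.0) -> float:
    """Lifetime cost per kWh discharged.

    replacement_costs maps a year number (1-based) to a replacement cost
    incurred in that year. Discounting is an assumed convention.
    """
    replacement_costs = replacement_costs or {}
    total_cost = capex
    total_energy = 0.0
    for year in range(1, lifetime_years + 1):
        discount = (1.0 + discount_rate) ** year
        total_cost += (annual_opex + replacement_costs.get(year, 0.0)) / discount
        total_energy += annual_energy_discharged_kwh / discount
    return total_cost / total_energy


def levelized_carbon_emission(lifecycle_emissions_kg_co2: float,
                              lifetime_energy_discharged_kwh: float) -> float:
    """Life-cycle CO2 emissions per kWh of stored electricity delivered."""
    return lifecycle_emissions_kg_co2 / lifetime_energy_discharged_kwh


if __name__ == "__main__":
    # Made-up inputs for illustration only.
    lcos = levelized_cost_of_storage(capex=100_000.0, annual_opex=1_000.0,
                                     annual_energy_discharged_kwh=20_000.0,
                                     lifetime_years=10,
                                     replacement_costs={5: 10_000.0})
    print(f"LCOS (undiscounted): {lcos:.3f} per kWh")
    print(f"LCEOS: {levelized_carbon_emission(30_000.0, 200_000.0):.3f} kg CO2 per kWh")
```

Because both metrics share the same denominator, they can be reported side by side when ranking technologies on cost and carbon together.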

The Scientist's Toolkit: Research Reagents and Materials

The development and testing of these storage technologies rely on a suite of specialized materials and reagents.

Table 4: Key Research Reagents and Materials in Battery Development

| Category | Specific Material/Reagent | Primary Function in R&D |
|---|---|---|
| Cathode Materials | NMC (LiNiₓMnᵧCo₁₋ₓ₋ᵧO₂), LCO (LiCoO₂) [7] | Provides the source of lithium ions; key determinant of energy density and stability in conventional LIBs. |
| Cathode Materials | LiFePO₄ (Lithium Iron Phosphate) [9] [7] | Provides stable olivine structure for LFP cathodes; enables safety and long cycle life. |
| Cathode Materials | Prussian White (Sodium Ferrous Ferrocyanide) [12] | A leading cathode material for SIBs; symmetric structure enables fast charging and long life. |
| Anode Materials | Graphite (Carbon) [9] [11] | Standard anode material for LIBs; hosts lithium ions in its layered structure. |
| Anode Materials | Hard Carbon [11] | The preferred anode material for SIBs due to its larger interlayer spacing accommodating sodium ions. |
| Electrolytes & Solvents | Lithium Hexafluorophosphate (LiPF₆) in Organic Solvents [9] | Common lithium salt electrolyte for LIBs; conducts ions between cathode and anode. |
| Electrolytes & Solvents | Sodium Salts (e.g., NaClO₄) in Organic Solvents [11] | Electrolyte salts for SIBs; function similarly to LIB electrolytes but with sodium ions. |
| Electrolytes & Solvents | Vanadyl Sulfate / Vanadium in Sulfuric Acid [13] | The electroactive electrolyte for VRFBs; contains V⁴⁺/V⁵⁺ and V²⁺/V³⁺ redox couples. |
| Cell Components | Nafion Membrane [13] | A common proton-exchange membrane used in VRFBs; allows selective ion passage while preventing electrolyte mixing. |
| Cell Components | Carbon Felt/Paper [13] [15] | Used as electrodes in VRFBs; provides surface for redox reactions without participating in them. |
| Cell Components | Polypropylene (PP) / Polyethylene (PE) Separators [9] | Porous polymer film preventing electrical short circuits between anode and cathode in LIBs/SIBs. |

The era of lithium-ion dominance is evolving into a period of strategic diversification. No single battery technology is optimal for all applications. Lithium-ion NMC remains the leader for applications where high energy density is paramount. LFP has established itself as the superior choice for stationary storage and safety-critical applications due to its longevity and stability. Sodium-ion batteries present a compelling, cost-effective alternative for grid storage, with a rapidly growing manufacturing base. Vanadium Flow Batteries are unmatched for long-duration, utility-scale storage where a 25-year lifespan and absolute safety are required.

The future energy storage ecosystem will not be a winner-take-all market. Instead, it will be a heterogeneous landscape where the "best" battery is defined by the specific application—be it cost, longevity, energy density, or power scaling. As one industry expert succinctly stated, "It's not a matter of sodium versus lithium, we need both" [16]—a sentiment that extends to the entire portfolio of electrochemical storage technologies. Continued research, guided by rigorous experimental protocols and holistic assessment frameworks, is crucial to optimizing each technology and integrating them into a resilient, renewable-powered grid.

The global transition to a sustainable energy future is intrinsically linked to the efficient integration of variable renewable sources such as wind and solar power. The inherent intermittency of these resources creates critical challenges for grid stability and reliability, necessitating robust, large-scale, and long-duration energy storage solutions [17]. Among the available technologies, mechanical and thermal storage systems—particularly pumped hydro storage, compressed air energy storage, and emerging gravity-based systems—offer the capacity, longevity, and scale required to support this transition. This guide provides a performance comparison of these technologies, framing them within a broader thesis on renewable energy storage solutions. It is designed to equip researchers, scientists, and energy development professionals with objective, data-driven insights into the operational principles, performance metrics, and experimental validations of each system, thereby informing research directions and technology selection.

Pumped Hydro Storage (PHS)

Pumped Hydro Storage is the most mature and widely deployed grid-scale energy storage technology, representing over 90% of the world's installed storage capacity [18] [19]. Its operating principle involves using surplus electrical energy to pump water from a lower reservoir to an upper reservoir, thereby converting electrical energy into gravitational potential energy. When electricity is needed, water is released back to the lower reservoir, passing through turbines to generate power [20]. PHS systems are characterized by long lifetimes (50-60 years), high round-trip efficiencies (70-85%), and immense power and energy capacities, often reaching gigawatt-scale and multiple gigawatt-hours [19]. Recent developments focus on closed-loop systems, which do not connect to natural waterways and are therefore less environmentally intrusive; in the United States, over 95% of new PHS projects in the development pipeline are closed-loop configurations [18].
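The operating principle maps directly onto the gravitational potential energy relation E = ρ·g·h·V. The sketch below estimates the energy recoverable from an upper reservoir; the reservoir volume, head, and one-way generation efficiency used in the example are hypothetical inputs, and the single efficiency factor is a simplification because the round-trip figures quoted above cover both pumping and generation.

```python
WATER_DENSITY_KG_PER_M3 = 1000.0
GRAVITY_M_PER_S2 = 9.81
JOULES_PER_MWH = 3.6e9


def phs_energy_mwh(volume_m3: float, head_m: float,
                   generation_efficiency: float = 1.0) -> float:
    """Energy recoverable from water stored at a given head.

    generation_efficiency is an assumed one-way (turbine and generator)
    efficiency; 1.0 returns the ideal potential energy.
    """
    if not 0.0 < generation_efficiency <= 1.0:
        raise ValueError("generation_efficiency must be in (0, 1]")
    joules = WATER_DENSITY_KG_PER_M3 * GRAVITY_M_PER_S2 * volume_m3 * head_m
    return joules * generation_efficiency / JOULES_PER_MWH


if __name__ == "__main__":
    # Hypothetical reservoir: one million cubic metres at 300 m head.
    ideal = phs_energy_mwh(1.0e6, 300.0)
    delivered = phs_energy_mwh(1.0e6, 300.0, generation_efficiency=0.9)
    print(f"Ideal potential energy: {ideal:.1f} MWh")
    print(f"Delivered at 90% generation efficiency: {delivered:.1f} MWh")
```

The linear dependence on both head and volume is why site topography dominates the feasibility of a pumped hydro project.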

Compressed Air Energy Storage (CAES)

Compressed Air Energy Storage functions by using electrical energy to compress air, which is stored under high pressure in underground geological formations such as salt caverns, depleted gas fields, or aquifers. During discharge, the pressurized air is released, heated, and expanded through a turbine to generate electricity [21]. Two primary configurations exist:

- Diabatic CAES (D-CAES): The heat generated during compression is vented and not stored. Upon expansion, the air is reheated using natural gas, which lowers round-trip efficiency (42-55%) and produces carbon emissions [21] [19].

- Adiabatic CAES (A-CAES): The compression heat is captured and stored in a Thermal Energy Storage (TES) unit, then reused to heat the air during expansion. This eliminates the need for fossil fuels and can achieve higher round-trip efficiencies, with demonstration projects reaching over 70% [22] [21]. A-CAES represents the current research and development frontier for this technology.

Emerging Gravity Energy Storage (GES)

Gravity Energy Storage is an emerging technology that shares the fundamental principle of PHS—converting between electrical energy and gravitational potential energy—but uses solid masses instead of water [20]. Key configurations include:

- Tower-Based (T-SGES): A crane system stacks and lowers composite bricks or concrete blocks within a tall structure.

- Rail-Mounted (R-SGES): Heavy weights are transported on rail vehicles along an incline.

- Shaft-Type (S-SGES): A heavy piston is raised and lowered within a deep, sealed borehole, often leveraging abandoned mine shafts for infrastructure reuse [23] [20].

These systems aim to offer the large-scale, long-duration storage benefits of PHS with reduced geographical constraints and a smaller environmental footprint. Their projected lifespans are comparable to PHS, with round-trip efficiencies potentially exceeding 80% [20].

Table 1: Fundamental Principles and Characteristics of Mechanical Storage Technologies

| Technology | Storage Medium | Energy Conversion Process | Primary Configurations | Typical Project Scale |
|---|---|---|---|---|
| Pumped Hydro (PHS) | Water | Electrical ↔ Kinetic ↔ Gravitational Potential | Open-Loop, Closed-Loop [18] | 100 MW - 3,600 MW [20] |
| Compressed Air (CAES) | Air | Electrical ↔ Kinetic (Pressure) + Thermal | Diabatic (D-CAES), Adiabatic (A-CAES) [21] | 100 MW - 500 MW [17] [21] |
| Gravity Storage (GES) | Solid Masses (Concrete, Composite) | Electrical ↔ Kinetic ↔ Gravitational Potential | Tower, Rail, Shaft [20] | < 100 MWh (pilots) [24] |

Performance Comparison and Experimental Data

Quantitative Performance Metrics

A comprehensive performance assessment requires evaluating key techno-economic metrics, including efficiency, cost, lifespan, and energy density. The following table synthesizes data from operational facilities, pilot projects, and technical literature.

Table 2: Techno-Economic Performance Metrics for Mechanical Storage Systems

| Performance Parameter | Pumped Hydro Storage (PHS) | Compressed Air Energy Storage (CAES) | Gravity Energy Storage (GES) |

|---|---|---|---|

| Round-Trip Efficiency (RTE) | 70% - 85% [20] [19] | 42% - 55% (D-CAES); 60% - 70%+ (A-CAES) [22] [21] [19] | Projected: 80% - 90% [20] |

| Typical Lifespan (Years) | 50 - 60 years [19] | 20 - 40 years [21] | Projected: > 50 years [20] |

| Energy Density (Wh/m³) | Low (Site Dependent) | Low (Site Dependent) | Low [20] |

| Capital Cost (CAPEX) | High; Closed-loop: ~$3,000-4,500/kW [25] | Moderate-High [17] | Moderate-High (Projected) [23] |

| Levelized Cost of Storage (LCOS) | Low-Moderate [19] | Low (lowest among technologies) [19] | To be determined (Technology immature) |

| Technology Readiness Level (TRL) | 9 (Commercial) | 9 (D-CAES); 6-7 (A-CAES) [17] | 4-7 (Pilot/Demonstration) [20] |

Analysis of Comparative Performance

- Efficiency and Lifespan: PHS demonstrates the highest proven round-trip efficiency, alongside an exceptionally long operational lifespan, making it a benchmark for reliability and long-term performance. Advanced adiabatic CAES aims to narrow the efficiency gap with PHS, while GES projections of 80% - 90% are promising but require validation through commercial deployment [22] [20].

- Cost Considerations: While PHS has high upfront capital costs, its long life results in a competitive Levelized Cost of Storage (LCOS). CAES, particularly using existing geological formations, offers the lowest LCOS among major technologies, providing a significant economic advantage for large-scale, long-duration storage [19]. The economic viability of GES remains a key research question, pending further scale-up and learning curves [23].

- Geographical and Environmental Constraints: PHS requires specific topography and faces significant permitting hurdles. CAES is limited to regions with suitable geology for underground caverns [21]. In contrast, solid-mass GES can be deployed in a wider range of locations with a potentially smaller environmental footprint, representing its primary potential advantage [20].

Experimental Protocols and Validation

Protocol: Performance Testing of a Variable-Speed Contra-Rotating Pump-Turbine for Low-Head PHS

Objective: To experimentally determine the hydraulic efficiency and optimal operating range of a novel contra-rotating pump-turbine (CR RPT) for low-head pumped hydro storage applications [26].

- Methodology:

- Test Rig Configuration: A model-scale test rig is established using two open water surface tanks to simulate variable low heads, unlike conventional rigs that use recirculating pumps. The CR RPT is installed between them.

- Instrumentation: Sensors are deployed to measure:

- Head (m): Differential pressure sensors across the turbine.

- Flow Rate (m³/s): Electromagnetic or ultrasonic flow meters.

- Rotational Speed (RPM): Tachometers on both rotors.

- Torque (Nm): Shaft torque meters.

- Electrical Power (W): Power analyzers on the motor/generator terminals.

- Data Acquisition: For both pump and turbine modes, the rotational speed and flow rate are systematically varied. At each set point, all sensor readings are recorded after the system reaches steady state.

- Efficiency Calculation:

- Turbine Mode Efficiency (ηₜ): ηₜ = (Electrical Power Output) / (Hydraulic Power Input) = P_elec / (ρ ⋅ g ⋅ Q ⋅ H)

- Pump Mode Efficiency (ηₚ): ηₚ = (Hydraulic Power Output) / (Electrical Power Input) = (ρ ⋅ g ⋅ Q ⋅ H) / P_elec

where P_elec is the electrical power at the motor/generator terminals, ρ is water density, g is gravitational acceleration, Q is flow rate, and H is head.

- Key Findings from Cited Experiment: The CR RPT achieved peak efficiencies of 88.6% in pump mode and 86.1% in turbine mode, maintaining efficiencies over 80% across a wide operating range. This demonstrates the technology's potential for high-performance, low-head PHS applications [26].
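The two efficiency definitions in the protocol above can be evaluated directly from logged steady-state readings. The sketch below assumes fresh water at about 20 °C and uses made-up sensor values; the function names are hypothetical and not taken from the cited experiment.

```python
RHO_WATER = 998.0  # kg/m^3, assumed fresh water near 20 degrees C
G = 9.81           # m/s^2


def hydraulic_power_w(flow_m3_s, head_m, rho=RHO_WATER, g=G):
    """Hydraulic power rho * g * Q * H in watts."""
    return rho * g * flow_m3_s * head_m


def turbine_efficiency(p_elec_w, flow_m3_s, head_m):
    """Turbine mode: electrical output divided by hydraulic input."""
    p_hyd = hydraulic_power_w(flow_m3_s, head_m)
    if p_hyd <= 0:
        raise ValueError("hydraulic power must be positive")
    return p_elec_w / p_hyd


def pump_efficiency(p_elec_w, flow_m3_s, head_m):
    """Pump mode: hydraulic output divided by electrical input."""
    if p_elec_w <= 0:
        raise ValueError("electrical power must be positive")
    return hydraulic_power_w(flow_m3_s, head_m) / p_elec_w


# Hypothetical steady-state set points (not measured data).
eta_t = turbine_efficiency(p_elec_w=33_000, flow_m3_s=0.5, head_m=8.0)
eta_p = pump_efficiency(p_elec_w=45_000, flow_m3_s=0.5, head_m=8.0)
print(f"turbine: {eta_t:.1%}, pump: {eta_p:.1%}")
```

Evaluating these functions at every recorded set point yields the efficiency map from which peak values and the usable operating range are read.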

Protocol: Thermodynamic and Economic Performance Comparison of CAES vs. CCES

Objective: To conduct a comprehensive thermodynamic and economic comparison between Adiabatic Compressed Air Energy Storage (A-CAES) and Vapor-Liquid Compressed CO₂ Energy Storage (VL-CCES) under a given energy storage capacity [22].

- Methodology:

- System Modeling: Detailed dynamic models of both A-CAES and VL-CCES systems are developed, incorporating all key components (compressors, expanders, thermal stores, gas storage).

- Parameter Definition: Key performance parameters are defined for evaluation:

- Round-Trip Efficiency (RTE): (Total Electrical Energy Discharged) / (Total Electrical Energy Charged).

- Energy Density (kWh/m³): Stored energy per unit volume of the storage medium.

- Capital Cost: Estimated from major equipment costs.

- Transient Simulation: Unlike steady-state models, the systems are simulated over complete charge-discharge cycles to capture the impact of sliding pressures in storage tanks on performance.

- Techno-Economic Analysis: The models are used to calculate RTE, energy density, and estimate costs for a standardized storage capacity, enabling a direct comparison.

- Key Findings from Cited Study: The modeling study highlighted that while A-CAES benefits from mature component technology, VL-CCES can achieve higher round-trip efficiencies (exceeding 75% in some designs) and significantly higher energy density due to the ease of liquefying CO₂, which reduces storage volume and cost. The trade-off is the increased complexity of the CCES system and the need for both high- and low-pressure storage vessels [22].

Visualization of System Workflows

The following diagrams, generated using Graphviz DOT language, illustrate the core operational workflows and energy flows for each storage technology.

[Diagram: Pumped Hydro Storage (PHS) Operational Workflow, PHS Energy Flow]

[Diagram: Adiabatic Compressed Air (A-CAES) Workflow, A-CAES Energy Flow]

[Diagram: Solid Gravity Energy Storage (GES) Workflow, GES Energy Flow]

The Scientist's Toolkit: Key Research Reagents and Materials

Table 3: Essential Materials and Components for Experimental Research

| Component / Material | Primary Function in Research | Associated Technology |

|---|---|---|

| Variable-Speed Contra-Rotating Pump-Turbine | Enables high-efficiency energy conversion at variable low heads for PHS. Critical for testing operational flexibility and performance [26]. | PHS |

| Thermal Energy Storage (TES) Unit | Stores heat of compression for reuse. Core component for achieving high round-trip efficiency in A-CAES; research focuses on media (molten salts, ceramics) and design [22] [21]. | A-CAES |

| High-Pressure Vessel / Artificial Cavern | Stores the working fluid (air/CO₂) at high pressure. Used in CAES/CCES experiments to study containment, pressure dynamics, and energy density [22] [17]. | CAES, CCES |

| Composite Mass Blocks | Serve as the gravity medium in solid GES. Research focuses on optimizing mass-to-volume ratio, durability, and cost for commercial viability [20]. | GES |

| Motor/Generator System | The primary electromechanical interface. Used across all technologies to convert between electrical and mechanical energy; key for efficiency measurements [26] [20]. | PHS, CAES, GES |

| Programmable Logic Controller (PLC) & Sensors | Provides automated control and real-time data acquisition (e.g., pressure, temperature, flow, position, power). Essential for precise experimental control and performance validation [26]. | All |

Pumped Hydro Storage remains the undisputed cornerstone of grid-scale energy storage, offering unparalleled capacity, efficiency, and technological maturity. Its future growth lies in closed-loop systems that mitigate environmental concerns. Compressed Air Energy Storage, particularly the advancing Adiabatic CAES, presents a compelling alternative with a lower Levelized Cost of Storage and reduced geographical limitations, provided suitable geology is available. Emerging Gravity Energy Storage technologies offer a promising path to replicating the benefits of PHS with greater siting flexibility, though they must still overcome challenges related to capital costs and demonstration at full scale.

The optimal choice among these technologies is not universal but depends heavily on specific local factors: geography, geology, grid requirements, and cost constraints. For researchers, the frontier involves enhancing the round-trip efficiency and energy density of CAES, reducing the capital costs and proving the long-term reliability of GES, and developing advanced materials and controls for all systems. The continued development and integration of these mechanical storage systems are indispensable for building a resilient, renewable-powered grid.

The global transition to a renewable energy future is fundamentally dependent on the advancement of energy storage technologies. As power systems increasingly integrate variable renewable sources like solar and wind, the ability to store energy for later use has become essential for grid stability and reliability [27]. For researchers and industry professionals, evaluating the performance and economic viability of energy storage solutions requires a deep understanding of four critical performance indicators: Levelized Cost of Storage (LCOS), round-trip efficiency, cycle life, and degradation. These metrics provide a comprehensive framework for comparing diverse storage technologies across different applications and time horizons.

The unprecedented growth in energy storage deployment underscores the importance of these metrics. Global battery storage additions reached 42 GW in 2023 alone—more than double the previous year's installations—with projections of 80 GW of new additions in 2025, representing an eightfold increase from 2021 levels [28]. This rapid scaling, coupled with dramatic cost reductions of 97% since 1991 for battery technologies, makes rigorous performance comparison essential for guiding research priorities and investment decisions [28]. This article provides a systematic comparison of these critical performance indicators across major energy storage technologies, supported by experimental data and methodological frameworks for researchers.

Defining the Critical Performance Indicators

Levelized Cost of Storage (LCOS)

The Levelized Cost of Storage (LCOS) represents the average net present cost of storing and discharging one unit of electricity (typically kWh or MWh) over the entire lifetime of a storage system [29]. Unlike simple upfront capital cost metrics, LCOS provides a more comprehensive economic assessment by accounting for all lifetime costs and energy delivery. The calculation discounts all expenditure from project construction to retirement to its present value, then divides this total by the energy delivered over the system's lifetime of round trips, so that the time value of money is reflected in the resulting cost figure [30].

The standard formula for LCOS calculation is:

LCOS = (Total Lifetime Costs) / (Total Lifetime Electricity Discharged)

where total lifetime costs include capital expenditure (CAPEX), operational expenditure (OPEX), charging electricity cost, and any end-of-life costs, minus any residual value [30] [29]. This metric has become the primary benchmark for comparing the economic performance of different energy storage technologies and project designs, enabling investors to identify the true cost per kWh stored and delivered [29].
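As an illustration of how the LCOS definition can be evaluated, the sketch below discounts both annual costs and annual energy delivery, which is a common formulation but an assumption here, since the text gives only the ratio of lifetime costs to lifetime energy. The function name, parameter names, and example inputs are hypothetical.

```python
def lcos(capex, annual_opex, annual_charging_cost, annual_energy_mwh,
         lifetime_years, discount_rate,
         end_of_life_cost=0.0, residual_value=0.0):
    """Levelized cost of storage in USD/MWh (sketch, constant annual flows).

    CAPEX is paid in year 0; OPEX, charging cost, and discharged energy
    recur each year; end-of-life cost minus residual value falls in the
    final year. Both cost and energy streams are discounted.
    """
    costs = capex
    energy = 0.0
    for year in range(1, lifetime_years + 1):
        factor = (1 + discount_rate) ** year
        costs += (annual_opex + annual_charging_cost) / factor
        energy += annual_energy_mwh / factor
    costs += (end_of_life_cost - residual_value) / (1 + discount_rate) ** lifetime_years
    if energy <= 0:
        raise ValueError("lifetime energy must be positive")
    return costs / energy


# Hypothetical 100 MW / 400 MWh project; all inputs are illustrative.
value = lcos(capex=120e6, annual_opex=2e6, annual_charging_cost=4e6,
             annual_energy_mwh=130_000, lifetime_years=20, discount_rate=0.08)
print(f"LCOS: {value:.0f} USD/MWh")
```

With a zero discount rate the function reduces to the plain ratio given in the text, which is a quick way to sanity-check an implementation.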

Round-Trip Efficiency (RTE)

Round-trip efficiency (RTE) is the percentage of electricity put into a storage system that can be retrieved later for useful work [31]. It is calculated as:

RTE (%) = (Energy Discharged / Energy Charged) × 100

For example, if 10 kWh of electricity is stored and only 8 kWh can be retrieved, the round-trip efficiency is 80% [31]. The 20% loss arises from heat dissipated during conversion, standby power consumption, and auxiliary system loads.

RTE becomes increasingly critical at grid scale, where efficiency losses translate to massive infrastructure costs and environmental impacts [32]. As one analysis notes, "Losing 50% of the energy stored in a home battery system is inconvenient but manageable; a 50% loss of stored energy at the grid scale—amounting to gigawatt-hours of stored energy—is catastrophic" [32]. The U.S. Department of Energy analysis finds that for cost-effective grid decarbonization, long-duration energy storage must achieve a levelized cost of storage below $0.05/kWh, with 70% RTE emerging as the target for grid-scale applications [32].

Cycle Life and Degradation

Cycle life refers to the number of complete charge-discharge cycles a storage system can undergo before its capacity falls below a specified percentage of its original capacity (typically 80%) [10]. Different technologies exhibit substantially different cycle lives, from 3,000-5,000 cycles for lithium Nickel Manganese Cobalt (NMC) batteries to 10,000+ cycles for flow batteries and 20,000+ cycles for pumped hydro storage [10].

Degradation is the gradual loss of storage capacity or reduction in performance over time and use. The degradation rate determines how quickly a system loses its ability to store and deliver energy at its initial capacity. Both cycle life and degradation rate directly impact the lifetime energy delivery of a storage system, which in turn affects the LCOS—systems with longer cycle lives and slower degradation can deliver more total energy over their operational lifetimes, spreading the initial capital investment over more units of energy [29].
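To show how cycle life and degradation feed into lifetime energy delivery, the following sketch sums annual discharged energy under a constant yearly capacity fade and stops once retention drops below the 80% end-of-life threshold. The compounding fade model and all input values are simplifying assumptions for illustration.

```python
def lifetime_energy_mwh(rated_capacity_mwh, cycles_per_year, years,
                        annual_fade, depth_of_discharge=1.0,
                        end_of_life_retention=0.8):
    """Total energy discharged until end of life or the calendar limit.

    Assumes capacity shrinks by a fixed fraction each year (hypothetical
    model) and that the system retires once retention falls below
    end_of_life_retention.
    """
    total = 0.0
    retention = 1.0
    for _ in range(years):
        if retention < end_of_life_retention:
            break
        total += rated_capacity_mwh * retention * depth_of_discharge * cycles_per_year
        retention *= 1 - annual_fade
    return total


# Illustrative comparison: no fade versus 2.5% capacity fade per year.
ideal = lifetime_energy_mwh(1.0, 365, 10, annual_fade=0.0)
faded = lifetime_energy_mwh(1.0, 365, 10, annual_fade=0.025)
print(f"ideal: {ideal:.0f} MWh, with fade: {faded:.0f} MWh")
```

The gap between the two totals is energy that no longer shares the capital cost, which is the mechanism by which faster degradation raises LCOS.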

Comparative Performance Data Across Technologies

Table 1: Comparative Performance Indicators for Major Energy Storage Technologies

| Technology | LCOS Range (USD/MWh) | Round-Trip Efficiency (%) | Cycle Life (cycles) | Typical Degradation Rate |

|---|---|---|---|---|

| Lithium-ion (NMC) | $115 - $277 (utility-scale) [33] | 85-95% [10] | 3,000 - 5,000 [10] | ~2-3% per year [29] |

| LFP Batteries | RMB 0.3-0.4/kWh (~$40-55/MWh) [30] | 90-95% [31] | 4,000 - 8,000 [10] | Lower than NMC [10] |

| Vanadium Flow Battery | RMB 0.2/kWh (~$28/MWh) for some projects [30] | 60-80% [32] | 10,000+ [10] | Minimal capacity fade over 25+ years [10] |

| Pumped Hydro | RMB 0.213/kWh (~$30/MWh) [30] | 70-85% [10] | 20,000+ [10] | Very low; decades-long operation [10] |

| Sodium-ion | Projected 20% lower than LFP [10] | 85-90% (emerging) [10] | 2,000 - 4,000 (current) [10] | Similar to early lithium-ion [10] |
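Several LCOS entries in the table above are quoted in RMB/kWh with approximate USD/MWh equivalents. The conversion can be sketched as follows; the exchange rate of 7.1 RMB per USD is an assumption chosen because it roughly reproduces the parenthetical values, and the source does not state the rate it used.

```python
def rmb_per_kwh_to_usd_per_mwh(rmb_per_kwh, rmb_per_usd=7.1):
    """Convert a cost in RMB/kWh to USD/MWh.

    rmb_per_usd is an assumed exchange rate, not a value from the source.
    """
    if rmb_per_usd <= 0:
        raise ValueError("exchange rate must be positive")
    return rmb_per_kwh / rmb_per_usd * 1000.0  # 1 MWh = 1000 kWh


for label, rmb in [("LFP low", 0.3), ("LFP high", 0.4),
                   ("Vanadium flow", 0.2), ("Pumped hydro", 0.213)]:
    print(f"{label}: {rmb_per_kwh_to_usd_per_mwh(rmb):.0f} USD/MWh")
```

Because exchange rates move, cross-currency LCOS comparisons of this kind are only indicative.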

Table 2: U.S. LCOS Ranges for Battery Storage (Lazard 2025 Analysis)

| System Configuration | LCOS Range (USD/MWh) | Key Applications |

|---|---|---|

| 100MW/200MWh (2-hour) | $129 - $277 [33] | Peak shaving, frequency regulation |

| 100MW/400MWh (4-hour) | $115 - $254 [33] | Energy arbitrage, capacity firming |

| 1MW/2MWh (C&I) | $319 - $506 [33] | Demand charge reduction, backup power |

| With Investment Tax Credit | $83 - $192 (4-hour) [33] | All applications with policy support |

Table 3: Round-Trip Efficiency Breakdown by Technology and Loss Components

| Technology | Typical RTE Range | Primary Loss Sources |

|---|---|---|

| Lithium-ion (LFP) | 90-95% [31] | Internal resistance, inverter losses, thermal management |

| Flow Batteries | 60-80% [32] | Pumping losses, stack inefficiencies, power conversion |

| Pumped Hydro | 70-85% [10] | Turbine/generator losses, evaporation, seepage |

| Compressed Air | 60-80% [10] | Compression heat losses, storage losses, expansion |

The comparative data reveals several key insights. First, while lithium-ion batteries (particularly LFP chemistry) offer excellent round-trip efficiency, flow batteries and pumped hydro provide superior cycle life, making them potentially more economical for applications requiring frequent cycling over long durations [30] [10]. Second, the LCOS advantage of pumped hydro storage is evident, though this technology faces geographical constraints [30]. Third, emerging technologies like sodium-ion batteries promise lower costs but currently trail in cycle life performance [10].

The impact of the Investment Tax Credit (ITC) on LCOS is particularly noteworthy, reducing the levelized cost of 4-hour utility-scale storage to as low as $83/MWh—making storage highly competitive with conventional peaking power plants [33]. This highlights how policy support can accelerate the economic viability of emerging storage technologies.

Experimental Protocols for Performance Measurement

Standardized LCOS Calculation Methodology

For researchers comparing storage technologies, a standardized LCOS calculation protocol ensures comparable results:

Define System Boundaries: Clearly specify what components are included in the analysis (battery packs, power conversion system, balance of plant, etc.) [29].

Establish Key Parameters:

- Capital expenditures (CAPEX): Include equipment, installation, and grid connection costs

- Operational expenditures (OPEX): Include maintenance, monitoring, and replacement costs

- System lifetime: Define by years or throughput cycles

- Discount rate: Apply appropriate rate (typically 5-10%) for net present value calculation

- Charging electricity cost: Specify assumed electricity price for charging [29]

Calculate Total Lifetime Energy Delivery:

- Account for cycle life, depth of discharge, and degradation effects

- Include round-trip efficiency losses in net energy delivery

- Model capacity fade over time using established degradation models [29]

Compute LCOS: Apply standard formula: LCOS = (Total Lifetime Costs) / (Total Lifetime Electricity Discharged) [30]

Researchers should document all assumptions and conduct sensitivity analyses on key variables such as cycle life, degradation rate, and electricity prices to provide robust comparison across technologies.
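The sensitivity analysis recommended above can be sketched as a one-at-a-time parameter sweep. The model below combines discounting, capacity fade, and round-trip losses in a deliberately simple way; the parameter names and every base-case value are hypothetical placeholders rather than data from the cited sources.

```python
def lcos_usd_per_mwh(p):
    """Discounted LCOS for a parameter dict (simplified, illustrative)."""
    costs = p["capex_usd"]
    energy = 0.0
    for year in range(1, p["lifetime_years"] + 1):
        discount = (1 + p["discount_rate"]) ** year
        retention = (1 - p["annual_fade"]) ** (year - 1)
        discharged = (p["capacity_mwh"] * p["dod"] * retention
                      * p["cycles_per_year"])
        charged = discharged / p["rte"]  # grid energy needed for charging
        costs += (p["opex_usd_per_year"]
                  + charged * p["charge_price_usd_per_mwh"]) / discount
        energy += discharged / discount
    return costs / energy


def sensitivity(base, name, values):
    """Recompute LCOS while varying one parameter and holding the rest."""
    return {v: lcos_usd_per_mwh({**base, name: v}) for v in values}


# Hypothetical base case for a 100 MWh system.
BASE = {
    "capex_usd": 30_000_000, "opex_usd_per_year": 500_000,
    "charge_price_usd_per_mwh": 30.0, "rte": 0.85,
    "capacity_mwh": 100.0, "dod": 0.9, "cycles_per_year": 350,
    "lifetime_years": 15, "annual_fade": 0.02, "discount_rate": 0.08,
}

for name, values in [("rte", [0.70, 0.85, 0.95]),
                     ("annual_fade", [0.0, 0.02, 0.04]),
                     ("charge_price_usd_per_mwh", [10.0, 30.0, 60.0])]:
    sweep = sensitivity(BASE, name, values)
    print(name, {v: round(c, 1) for v, c in sweep.items()})
```

Reporting the sweep alongside the base case makes it clear which assumptions drive the result when technologies are compared.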

Round-Trip Efficiency Testing Protocol

Standardized experimental testing for round-trip efficiency should follow these procedures:

Test Conditions Establishment:

- Stabilize battery at 25°C (±2°C) in temperature chamber

- Set C-rate between C/3 and C/2 for representative conditions

- Define state of charge (SOC) window (e.g., 10-90% for lithium-ion)

Efficiency Measurement Cycle:

- Charge to upper SOC limit at specified C-rate

- Implement rest period of 30 minutes

- Discharge to lower SOC limit at same C-rate

- Implement second rest period of 30 minutes

- Record energy in (during charge) and energy out (during discharge)

Calculation: RTE = (Discharge Energy / Charge Energy) × 100 [31]

Multiple Cycle Testing: Repeat for multiple cycles (typically 100) to establish stabilized efficiency values, as initial cycles may show variation

This protocol ensures comparable RTE measurements across different technologies and research laboratories.
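The calculation step of this protocol can be sketched as follows. Averaging over the final few cycles to obtain a stabilized value is an assumption of this sketch, since the protocol does not specify how the repeated cycles are aggregated; the function names and sample readings are hypothetical.

```python
def round_trip_efficiency(charge_wh, discharge_wh):
    """RTE in percent from energy in (charge) and energy out (discharge)."""
    if charge_wh <= 0:
        raise ValueError("charge energy must be positive")
    return discharge_wh / charge_wh * 100.0


def stabilized_rte(cycles, tail=10):
    """Mean RTE over the last `tail` cycles.

    cycles is a list of (charge_wh, discharge_wh) tuples in test order.
    """
    if not cycles:
        raise ValueError("at least one cycle is required")
    recent = cycles[-tail:]
    values = [round_trip_efficiency(c, d) for c, d in recent]
    return sum(values) / len(values)


# The worked example from the text: 10 kWh charged, 8 kWh retrieved.
print(round_trip_efficiency(10_000, 8_000))

# Hypothetical log in which the first cycle has not yet settled.
log = [(1000, 880), (1000, 915), (1000, 920), (1000, 921)]
print(f"{stabilized_rte(log, tail=3):.1f} %")
```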

Cycle Life and Degradation Testing

Standardized cycle life testing requires controlled laboratory conditions:

Test Cell Preparation:

- Assemble cells under controlled environment (dry room for lithium-ion)

- Perform formation cycles according to manufacturer specifications

- Measure initial capacity and impedance as baseline

Cycling Protocol:

- Apply standardized depth of discharge (e.g., 80% DoD for comparable results)

- Use specified C-rates for charge and discharge (typically 1C)

- Maintain temperature control throughout testing (±2°C of setpoint)

- Implement periodic reference performance tests (e.g., every 100 cycles) to measure capacity retention and impedance growth

Endpoint Definition: Continue cycling until capacity fade reaches 20% of initial capacity (80% retention) or power capability falls below specification

Degradation Modeling: Fit capacity fade data to established models (linear, square-root of time, etc.) to extrapolate long-term performance

For flow batteries and other novel technologies, researchers should adapt these protocols to account for technology-specific degradation mechanisms, such as membrane fouling or electrolyte cross-contamination.
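The degradation modeling step can be illustrated with a square-root fade model fitted by least squares. Applying the square-root form to cycle count rather than time is an assumption that holds when cycling proceeds at a constant rate; the function names and the data points are synthetic and for illustration only.

```python
import math


def fit_sqrt_fade(cycles, retention):
    """Least-squares fit of retention = 1 - k * sqrt(n), fixed at (0, 1).

    Returns the fade coefficient k.
    """
    numerator = sum(math.sqrt(n) * (1.0 - q) for n, q in zip(cycles, retention))
    denominator = sum(cycles)  # equals the sum of sqrt(n) squared
    if denominator <= 0:
        raise ValueError("need at least one data point with n > 0")
    return numerator / denominator


def cycles_to_retention(k, target=0.8):
    """Cycle count at which the fitted model reaches the target retention."""
    if k <= 0:
        raise ValueError("fade coefficient must be positive")
    return ((1.0 - target) / k) ** 2


# Synthetic reference-performance-test results (not measured data).
cycle_counts = [100, 400, 900]
capacity_retention = [0.96, 0.92, 0.88]

k = fit_sqrt_fade(cycle_counts, capacity_retention)
print(f"k = {k:.4f}, projected cycle life = {cycles_to_retention(k):.0f} cycles")
```

Extrapolations of this kind are sensitive to the assumed model form, so comparing a linear fit against the square-root fit on the same data is a useful check before quoting a projected cycle life.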

Visualization of Performance Indicator Relationships

Essential Research Reagents and Materials for Energy Storage Testing

Table 4: Essential Research Materials and Equipment for Energy Storage Performance Testing

| Research Material/Equipment | Function in Performance Testing | Application Notes |

|---|---|---|

| Potentiostat/Galvanostat | Controls voltage/current during cycling tests; measures electrochemical response | Essential for half-cell and full-cell testing; enables precise charge/discharge profiling |

| Battery Cycler | Automates charge-discharge cycling for cycle life testing | Must accommodate various chemistry-specific voltage windows and current densities |

| Environmental Chamber | Maintains precise temperature control during testing | Critical for degradation studies at various temperatures; typically -20°C to +60°C range |

| Impedance Analyzer | Measures internal resistance and impedance spectroscopy | Detects degradation mechanisms; identifies interface changes |

| Reference Electrodes | Enables half-cell testing and potential measurement | Technology-specific (Li metal for lithium-ion, Hg/HgO for aqueous systems) |

| Electrolyte Solutions | Ion conduction medium specific to storage technology | Composition critically affects cycle life and efficiency; must be purity-controlled |

| Active Materials | Electrode materials for specific storage technologies | Include cathodes (NMC, LFP, vanadium oxides) and anodes (graphite, lithium titanium oxide) |

| Separators/Membranes | Prevent short circuits while enabling ion transport | Key component affecting safety and performance (polyolefin, ceramic-coated, ion-exchange) |

| Thermal Imaging Camera | Monitors temperature distribution during operation | Identifies hot spots and thermal management issues |

| Calorimeters | Measures heat generation during operation | Quantifies efficiency losses and thermal runaway risks |

The systematic comparison of LCOS, round-trip efficiency, cycle life, and degradation across energy storage technologies reveals a complex landscape with clear trade-offs. No single technology currently dominates all performance metrics, highlighting the need for application-specific technology selection. Lithium-ion batteries, particularly LFP chemistry, offer excellent round-trip efficiency and rapidly declining LCOS, making them suitable for daily cycling applications [30] [10] [31]. Flow batteries provide exceptional cycle life with minimal degradation, ideal for frequent deep-cycle applications [10]. Pumped hydro remains economically competitive for large-scale applications where geography permits [30].

For researchers, the standardized testing protocols and performance metrics outlined in this primer provide a framework for consistent technology evaluation. The interrelationships between these indicators—particularly how round-trip efficiency, cycle life, and degradation collectively determine the ultimate LCOS—underscore the importance of a systems-level approach to storage technology development [29]. As the global energy storage market continues its rapid expansion, with projections to reach $114 billion by 2030, these critical performance indicators will guide research priorities, investment decisions, and policy support toward the most promising technologies for a renewable energy future [10].

The global energy storage landscape is undergoing a fundamental transformation driven by a decisive milestone: lithium-ion battery pack prices falling to a record low of $115 per kilowatt-hour (kWh) in 2024. This represents the largest annual drop since 2017, a 20% decrease from 2023 levels [34]. This price threshold is not merely a statistical benchmark but represents a critical economic viability point that is actively reshaping deployment strategies across the renewable energy sector. For researchers and scientists developing next-generation energy storage solutions, understanding this new cost environment is paramount. The declining cost curve, which has seen an 85% reduction in pack prices from 2010 to 2018, is accelerating the transition of storage technologies from laboratory curiosities to commercially viable assets [35]. This analysis provides a performance comparison of contemporary storage solutions within this evolving economic context, detailing the experimental protocols and material considerations essential for rigorous research in the field.

Quantitative Analysis of Cost and Performance Metrics

The Evolving Cost Landscape

The historical and projected costs for lithium-ion batteries demonstrate a consistent downward trajectory, fundamentally altering the economic calculus for energy storage deployment.

Table 1: Historical and Projected Lithium-ion Battery Pack Prices (Global Average)

| Year | Average Price per kWh (USD) | Notes |

|---|---|---|

| 2010 | ~$1,000+ | Base year for tracking cost reduction [35] |

| 2013 | ~$668 | Significant improvement from 2010 [36] |

| 2018 | ~$176 | 85% reduction from 2010 [36] [35] |

| 2023 | ~$139 | Continuation of long-term trend [36] |

| 2024 | $115 | 20% year-over-year drop, largest since 2017 [34] |

| 2025 (Projected) | ~$100-$113 | Expected continued decline, though potentially at slower rate [36] [34] |

Regional variations in cost are significant, reflecting differing levels of market maturity, production costs, and manufacturing scale. In 2024, pack prices were lowest in China at $94/kWh, while packs in the U.S. and Europe were 31% and 48% higher, respectively [34]. These disparities highlight the impact of localized supply chains and production expertise on final cost.
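The regional figures can be turned into approximate pack prices with a short calculation. This sketch assumes the 31% and 48% premiums are measured relative to the China price of $94/kWh, which is how the sentence reads but is not stated explicitly.

```python
CHINA_PACK_PRICE_USD_PER_KWH = 94.0
PREMIUM_OVER_CHINA = {"United States": 0.31, "Europe": 0.48}


def regional_prices(base_price, premiums):
    """Apply fractional premiums to a base price, rounded to cents."""
    return {region: round(base_price * (1 + premium), 2)
            for region, premium in premiums.items()}


prices = regional_prices(CHINA_PACK_PRICE_USD_PER_KWH, PREMIUM_OVER_CHINA)
for region, price in prices.items():
    print(f"{region}: ~${price:.0f}/kWh")
```

Under that assumption, packs cost roughly $123/kWh in the U.S. and $139/kWh in Europe, both above the $115/kWh global average.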

Performance Comparison of Dominant Battery Chemistries

The economic viability of energy storage solutions cannot be evaluated on cost alone. Performance characteristics, particularly energy density and cycle life, directly influence the total cost of ownership and application suitability. The following table provides a comparative analysis of the two dominant lithium-ion battery chemistries.

Table 2: Performance and Cost Comparison of Key Lithium-ion Battery Chemistries

| Parameter | Lithium Iron Phosphate (LFP) | Nickel Manganese Cobalt (NMC 811) |

|---|---|---|

| Average Cell Price (2024) | Just under $60/kWh [37] | Higher than LFP; ~$103/kWh pack price in China [37] |

| Cathode Active Material Cost | 43% less expensive per kWh than NMC811 [38] | Higher due to nickel and cobalt content [38] |

| Energy Density | Lower (~65-70% of NMC811) [38] | Higher; enables greater range in less space [38] |

| Cycle Life | Long; ideal for applications requiring frequent cycling [36] | Shorter than LFP, but improving [36] |

| Thermal Stability & Safety | Excellent; more stable and safer chemistry [36] [39] | Good; but more prone to thermal issues than LFP [40] |

| Key Raw Materials | Iron, Phosphorus (Abundant, low-cost) [36] | Nickel, Manganese, Cobalt (Supply chain risks) [41] [37] |

| Primary Applications | Stationary storage, buses, cost-sensitive EVs [38] [39] | High-performance EVs, consumer electronics [38] |

The data reveals a clear trade-off between cost and performance. LFP chemistry sacrifices energy density for lower cost, enhanced safety, and longer cycle life, making it particularly suitable for stationary storage where space constraints are less critical than in electric vehicles (EVs) [38] [39]. The adoption of cell-to-pack (CTP) technology, which reduces the number of components and simplifies assembly, has further improved the volumetric efficiency and reduced the cost of LFP packs [38] [37].

Experimental Protocols for Battery Performance Evaluation

For researchers validating new energy storage materials and chemistries, standardized experimental protocols are critical for generating comparable and reproducible data. The following methodologies are foundational to performance evaluation.

Protocol for Cycle Life and Durability Testing

Objective: To determine the number of charge-discharge cycles a battery can undergo before its capacity falls below 80% of its initial rated capacity.

- Cell Formation: Subject fresh cells to 3-5 initial formation cycles at low C-rates (e.g., 0.1C) to stabilize the solid-electrolyte interphase (SEI) layer.

- Baseline Capacity Measurement: At 25°C, fully charge the cell to its upper voltage cutoff using a Constant Current-Constant Voltage (CC-CV) protocol. Then, discharge at a 1C rate to the lower voltage cutoff to measure the initial discharge capacity.

- Cycling Regimen: Place the cell in a temperature-controlled chamber (25°C ± 2°C). Continuously cycle the cell by:

- Charging at a specified C-rate (e.g., 1C) using a CC-CV method until the upper voltage limit is reached, with a current cutoff at C/20.

- Discharging at a specified C-rate (e.g., 1C) using a constant current method until the lower voltage limit is reached.

- Periodic Check-up Cycles: Every 100 cycles, interrupt the cycling regimen to perform a baseline capacity measurement (as in the Baseline Capacity Measurement step above) to track capacity fade.

- Endpoint Determination: The test concludes when the discharge capacity measured during the check-up cycle drops below 80% of the initial capacity. The total number of cycles completed is recorded as the cycle life.
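The endpoint rule in this protocol can be sketched as a scan over the check-up results. The function name, data layout, and capacities below are hypothetical, chosen only to show the logic.

```python
def cycle_life(checkups, initial_capacity_ah, threshold=0.80):
    """Return the cycle count at the first check-up below the threshold.

    checkups is a list of (cycle_number, discharge_capacity_ah) tuples in
    test order. Returns None if the cell never drops below the threshold.
    """
    limit = threshold * initial_capacity_ah
    for cycle, capacity in checkups:
        if capacity < limit:
            return cycle
    return None


# Hypothetical check-up log for a 3.0 Ah cell (limit is 2.4 Ah).
log = [(100, 2.90), (200, 2.70), (300, 2.52), (400, 2.39)]
print(cycle_life(log, initial_capacity_ah=3.0))  # prints 400
```

Because capacity is only measured at check-ups, the reported cycle life is resolved to the check-up interval (100 cycles in this protocol); interpolating between the last two check-ups is one way to refine it.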

Protocol for Thermal Abuse and Safety Testing

Objective: To evaluate the thermal stability, safety margins, and failure mechanisms of battery cells under abusive conditions, as guided by research from the National Renewable Energy Laboratory (NREL) [40].

- Accelerating Rate Calorimetry (ARC): Place instrumented cells in an ARC chamber. The test protocol follows a heat-wait-seek sequence to identify the cell's self-heating temperature and subsequently characterize its thermal runaway behavior under adiabatic conditions.

- State of Charge (SOC) Variation: Perform tests on cells at different states of charge (e.g., 0%, 50%, 100% SOC) to understand how energy content influences failure severity [40].

- Abuse Condition Application: Subject cells to defined abuse conditions, including:

- External Short Circuit: Apply a low-resistance connection across the cell terminals.

- Overcharge: Charge the cell beyond its specified voltage limit at a controlled rate.

- Nail Penetration: Use a standardized nail to internally short-circuit the cell.

- Data Collection: Monitor and record cell voltage, surface temperature, and internal pressure (if instrumented). High-speed video may be used to document the failure event.

- Post-Mortem Analysis: After the test, disassemble the cell in a controlled environment for visual inspection and material analysis to identify failure initiation points and propagation pathways [40].

Protocol for Round-Trip Efficiency Measurement

Objective: To measure the energy efficiency of a battery system by comparing the discharge energy to the charge energy over a full cycle.

- Initialization: Fully charge the battery, then allow it to rest for 1 hour.

- Discharge Phase: Discharge the battery at a constant power level (e.g., its rated power) to its minimum state of charge (SOC), recording the total energy output (in Wh).

- Rest Period: Allow the battery to rest for 1 hour.

- Charge Phase: Charge the battery back to 100% SOC using the same constant power level, recording the total energy input (in Wh).

- Calculation: Calculate the round-trip efficiency (η) as: η (%) = (Discharge Energy / Charge Energy) × 100.

This test should be repeated at different C-rates and temperatures to characterize efficiency across a range of operating conditions.

Visualization of Battery Technology Selection Logic

The decision-making process for selecting an appropriate battery technology involves weighing key performance and cost parameters against application requirements. The following diagram maps this logical pathway, providing a framework for researchers and developers.

Figure 1: Battery Chemistry Selection Logic. This decision tree outlines the primary technical and economic considerations for selecting between dominant lithium-ion battery chemistries, LFP and NMC, based on application requirements and priorities [36] [38] [39].
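A rule-based version of this selection logic can be sketched from the trade-offs in Table 2. This is a hypothetical simplification and not a reproduction of Figure 1: it treats energy density as the deciding criterion for NMC and reports which LFP strengths are given up in that case.

```python
# Strengths per chemistry, paraphrased from the comparison table.
LFP_STRENGTHS = {"low_cost", "cycle_life", "thermal_safety",
                 "raw_material_availability"}
NMC_STRENGTHS = {"energy_density"}


def suggest_chemistry(priorities):
    """Suggest LFP or NMC for a set of application priorities.

    Returns (chemistry, conceded), where conceded lists the requested
    priorities that the suggested chemistry serves less well.
    """
    unknown = set(priorities) - LFP_STRENGTHS - NMC_STRENGTHS
    if unknown:
        raise ValueError(f"unknown priorities: {sorted(unknown)}")
    if "energy_density" in priorities:
        return "NMC", sorted(set(priorities) & LFP_STRENGTHS)
    return "LFP", []


# Stationary storage: cost and longevity matter more than compactness.
print(suggest_chemistry({"low_cost", "cycle_life"}))
# High-performance EV: energy density dominates, cost is conceded.
print(suggest_chemistry({"energy_density", "low_cost"}))
```

A real selection would weight these criteria quantitatively; the sketch only shows how application priorities map onto the qualitative trade-offs discussed above.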

The Scientist's Toolkit: Key Research Reagents and Materials

Research into next-generation batteries requires a suite of specialized materials and analytical tools. The following table details essential components for a research laboratory focused on energy storage.

Table 3: Essential Research Materials and Reagents for Battery Development

| Material/Reagent | Function in Research & Development |
|---|---|
| Lithium Iron Phosphate (LiFePO₄) | Cathode active material for LFP chemistry; valued for its stable olivine structure, safety, and long cycle life in experimental cell testing [36] [38]. |
| High-Nickel NMC (e.g., NMC811, NMCA) | Cathode active material for high-energy-density cells; research focuses on stabilizing the structure and reducing cobalt dependency [38] [37]. |
| Silicon or Lithium Metal Anode Materials | Next-generation anode materials under investigation to significantly increase energy density compared to traditional graphite anodes [34] [35]. |
| Solid-State Electrolytes | Enabling material for solid-state batteries; research aims to overcome challenges related to ionic conductivity and interfacial stability [40] [34]. |
| Lithium Hexafluorophosphate (LiPF₆) | Common lithium salt used in the formulation of conventional liquid electrolytes for laboratory-scale cell testing. |
| Carbon Additives (e.g., Super P, Carbon Black) | Conductive agents mixed with active materials to enhance the electronic conductivity of electrodes in research cells. |
| Polyvinylidene Fluoride (PVDF) | Binder polymer used in the fabrication of electrodes for laboratory cells to hold active material particles together. |
| N-Methyl-2-pyrrolidone (NMP) | Solvent used in the slurry process for electrode coating during R&D cell manufacturing. |

The descent of lithium-ion battery pack prices to approximately $115/kWh represents a definitive crossing of an economic viability threshold, fundamentally reshaping the landscape for renewable energy deployment [34]. This analysis demonstrates that the choice between leading battery chemistries like LFP and NMC is not a matter of superiority but of application-specific optimization, balancing the competing demands of cost, energy density, safety, and longevity [36] [38]. For researchers and scientists, the path forward involves a dual focus: refining the performance and reducing the cost of existing technologies through advanced manufacturing and supply chain maturation, while simultaneously pioneering next-generation materials and architectures, such as solid-state electrolytes and silicon anodes [40] [34] [35]. The experimental frameworks and material toolkit detailed herein provide a foundation for the rigorous, comparable research required to drive this innovation. As the industry moves beyond this cost threshold, the focus of research and development will increasingly shift toward maximizing lifetime value, enhancing safety protocols, and integrating storage seamlessly into a decarbonized grid.

Optimization and Deployment: Methodologies for Maximizing Storage Value in Real-World Systems

Techno-economic modeling provides an analytical framework for evaluating the financial viability and technical performance of energy storage systems within modern power grids. These models allow diverse storage technologies, from lithium-ion batteries to pumped hydro storage, to be compared on the basis of lifecycle cost and operational value. As the global energy landscape shifts toward variable renewable sources like solar and wind, the role of storage in balancing supply and demand has become paramount [42]. Frameworks such as the Storage Futures Study (SFS) led by the National Renewable Energy Laboratory (NREL) offer a forward-looking structure for the storage industry's evolution, outlining a phased deployment from short-duration to seasonal storage solutions [43]. For researchers and engineers, these models deliver the quantitative foundation needed to determine the cost-optimal mix of storage technologies for a resilient, flexible, and low-carbon power system through 2050 and beyond.

Comparative Analysis of Energy Storage Technologies

Selecting an appropriate energy storage technology requires a multi-faceted comparison across performance metrics, financial parameters, and operational characteristics. The following tables summarize key quantitative data for major grid-scale storage options, providing a basis for techno-economic analysis.

Table 1: Performance and operational characteristics of energy storage technologies [1] [42]

| Technology | Efficiency (Round-trip) | Cycle Life | Energy Density | Typical Response Time | Discharge Duration |
|---|---|---|---|---|---|
| Lithium-ion Batteries | 85-95% | 1,000-10,000 cycles | High (200-400 Wh/L) | Seconds to minutes | Minutes to 8 hours |
| Pumped Hydro Storage | 70-85% | 40-60 year lifespan | Low | Minutes | 6-20 hours |
| Flow Batteries | 70-85% | 10,000+ cycles | Medium (20-70 Wh/L) | Seconds to minutes | 4-12 hours |
| Compressed Air (CAES) | 40-70% | 20-60 year lifespan | Low | Minutes | 2-20 hours |
| Supercapacitors | 90-95% | 1,000,000+ cycles | Very low | Milliseconds | Seconds to minutes |
| Hydrogen Storage | 30-40% | 20-30 year lifespan | Low (volumetric) | Minutes | 100+ hours (seasonal) |

Table 2: Cost characteristics and projected trends for energy storage systems [42]

| Technology | 2021 Capital Cost (100 MW, 10-hr system) | Projected 2030 Capital Cost | Key Cost Drivers |
|---|---|---|---|
| Lithium-ion (LFP) | $356/kWh | $291/kWh | Raw materials, manufacturing scale, cycle life limitations |
| Pumped Hydro | $263/kWh | $83/kWh (for 24-hour systems) | Geography, permitting, long construction timelines |
| Vanadium Flow Battery | ~$385/kWh | Not projected | Vanadium supply constraints, system complexity |
| Compressed Air (CAES) | $122/kWh | $18/kWh (for 100-hour systems) | Suitable geologic formations, system efficiency |
| Hydrogen Storage | Not specified | ~$15/kWh (100 MW, 100-hour system) | Electrolyzer costs, storage infrastructure, efficiency losses |
| Thermal Energy Storage | $295/kWh (8-hour) | Not projected | Tank assembly, insulation quality, temperature retention |

The data reveals distinctive techno-economic profiles across storage options. Lithium-ion batteries, particularly lithium iron phosphate (LFP), offer an excellent balance of efficiency and cost for short-duration applications (up to 8 hours), with prices declining from $800/kWh in 2013 to under $140/kWh in 2023 [42]. For long-duration storage, pumped hydro remains the most established technology with the lowest levelized costs at scale, though geographical constraints limit new development. Compressed air and hydrogen storage present compelling economics for very long durations (multi-day to seasonal), albeit with significant efficiency trade-offs [42].
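To make the per-kWh figures more concrete, the short sketch below converts a unit cost into a total project cost and derives the compound annual rate implied by the quoted lithium-ion price decline. The helper names are illustrative, and because the text gives the 2023 price only as "under $140/kWh", using $140 yields a conservative estimate of the decline.

```python
def total_capital_cost(cost_per_kwh: float, power_mw: float, duration_h: float) -> float:
    """Total installed cost in dollars for a system priced per kWh of energy capacity."""
    energy_kwh = power_mw * 1000.0 * duration_h  # MW -> kW, then kW * h = kWh
    return cost_per_kwh * energy_kwh


def annualized_change(start_value: float, end_value: float, years: int) -> float:
    """Compound annual rate of change between two values (negative means decline)."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# LFP, 100 MW / 10-hour system at the 2021 figure of $356/kWh (Table 2).
lfp_2021 = total_capital_cost(356.0, power_mw=100.0, duration_h=10.0)

# Price decline from $800/kWh (2013) to $140/kWh (2023); $140 is an upper bound.
rate = annualized_change(800.0, 140.0, years=10)

print(f"LFP 2021 capital cost: ${lfp_2021 / 1e6:.0f} million")
print(f"Implied annual price change: {rate:.1%}")
```

Under these inputs the 100 MW, 10-hour LFP system comes to $356 million, and the price series implies a decline of roughly 16% per year.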

NREL's REopt and Modeling Framework Methodology

The REopt platform is NREL's techno-economic decision support model that evaluates the economic viability of renewable energy, storage, and conventional generation technologies at a single site or across distributed systems. Integrated within NREL's broader Storage Futures Study (SFS) analysis framework, REopt employs a lifecycle cost optimization approach to determine optimal technology selection, sizing, and dispatch strategies [43]. The model evaluates storage technologies against multiple value streams—including energy time-shift, capacity deferral, ancillary services, and resilience benefits—to identify cost-optimal investment pathways.

The SFS outlines a conceptual framework for storage deployment organized into four sequential phases, each characterized by distinct primary services, duration requirements, and deployment triggers, and preceded by a historical period of pumped hydro deployment [43]:

- Historical Deployment (Prior to 2010): Dominated by pumped hydro storage providing peaking capacity and energy time-shifting with 8-12 hour duration

- Phase 1 (Present-Near Future): Focused on operating reserves with less than 1 hour duration and millisecond to second response requirements

- Phase 2 (Emerging): Peaking capacity applications with 2-6 hour duration, strongly linked to solar PV deployment

- Phase 3 (Development): Diurnal storage with 4-12 hour duration for capacity and energy time-shifting

- Phase 4 (Future): Multi-day to seasonal storage with greater than 12 hour duration requirements

This phased framework provides researchers with a structured approach to modeling storage deployment trajectories and understanding how technology requirements evolve with increasing renewable penetration.

Table 3: NREL's four-phase framework for energy storage deployment [43]

| Phase | Primary Services | National Deployment Potential | Duration | Response Speed |
|---|---|---|---|---|
| Phase 1 | Operating reserves | <30 GW | <1 hour | Milliseconds to seconds |
| Phase 2 | Peaking capacity | 30-100 GW | 2-6 hours | Minutes |
| Phase 3 | Diurnal capacity and energy time-shifting | 100+ GW | 4-12 hours | Minutes |
| Phase 4 | Multiday to seasonal capacity and energy time-shifting | 0 to >250 GW | >12 hours | Minutes |
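For modeling work it can help to encode the duration criteria from Table 3 as a lookup. The sketch below is one possible encoding, not part of the SFS itself: the `PHASES` structure and `matching_phases` function are hypothetical names, and the treatment of range boundaries as inclusive is an assumption.

```python
# Duration criteria transcribed from Table 3.
# Each entry is (phase, primary service, predicate on duration in hours).
PHASES = [
    ("Phase 1", "Operating reserves", lambda h: h < 1),
    ("Phase 2", "Peaking capacity", lambda h: 2 <= h <= 6),
    ("Phase 3", "Diurnal capacity and energy time-shifting", lambda h: 4 <= h <= 12),
    ("Phase 4", "Multiday to seasonal capacity and energy time-shifting", lambda h: h > 12),
]


def matching_phases(duration_h: float) -> list[str]:
    """Return every phase whose duration range contains the given duration."""
    if duration_h <= 0:
        raise ValueError("duration must be positive")
    return [name for name, _service, fits in PHASES if fits(duration_h)]


print(matching_phases(0.25))  # ['Phase 1']
print(matching_phases(5))     # ['Phase 2', 'Phase 3']
print(matching_phases(1.5))   # []
print(matching_phases(48))    # ['Phase 4']
```

Returning a list rather than a single phase reflects two features of the table: the Phase 2 and Phase 3 ranges overlap between 4 and 6 hours, and no phase is listed for durations between 1 and 2 hours.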

Experimental Protocols for Techno-Economic Analysis

A standardized methodology for conducting techno-economic assessments of energy storage systems ensures comparable results across research studies. The following protocol outlines key steps for modeling storage technologies using frameworks like NREL's REopt.

Input Definition Phase

- Scenario Horizon & Objectives: Define the analysis timeframe (typically 20-30 years for storage assets), regional focus, and specific research questions (e.g., technology comparison, policy impacts, or renewable integration studies) [43].

- Technology Selection: Identify storage technologies for evaluation based on application requirements—lithium-ion for short-duration (2-6 hours), flow batteries for medium-duration (6-12 hours), and pumped hydro/CAES/hydrogen for long-duration (>12 hours) applications [1] [42].

- Parameter Compilation: Gather technical performance data including round-trip efficiency, cycle life, degradation rates, energy and power density, and response times from laboratory tests and field demonstrations [42].

- Economic Assumptions: Establish capital costs, operation and maintenance expenses, discount rates, financing structures, and projected cost reductions through learning curves. Include policy inputs such as tax credits and carbon prices where applicable [42].
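The learning-curve assumption mentioned in the economic inputs is often expressed with a single-factor experience curve (Wright's law), in which unit cost falls by a fixed fraction for every doubling of cumulative deployment. The sketch below assumes that functional form; the source does not specify which learning model is used, and the numbers are purely illustrative.

```python
import math


def learning_curve_cost(
    initial_cost: float,
    initial_capacity: float,
    cumulative_capacity: float,
    learning_rate: float,
) -> float:
    """Project unit cost with a single-factor experience curve.

    learning_rate is the fractional cost reduction per doubling of cumulative
    capacity (e.g. 0.2 means cost falls 20% each time capacity doubles).
    """
    if not 0.0 <= learning_rate < 1.0:
        raise ValueError("learning_rate must be in [0, 1)")
    if initial_capacity <= 0 or cumulative_capacity <= 0:
        raise ValueError("capacities must be positive")
    b = -math.log2(1.0 - learning_rate)  # experience exponent
    return initial_cost * (cumulative_capacity / initial_capacity) ** (-b)


# Illustrative inputs only: $100/kWh today, 20% learning rate.
after_one_doubling = learning_curve_cost(100.0, 10.0, 20.0, 0.2)
after_two_doublings = learning_curve_cost(100.0, 10.0, 40.0, 0.2)
print(round(after_one_doubling, 2), round(after_two_doublings, 2))  # 80.0 64.0
```

Because the projected cost depends on cumulative deployment rather than calendar year, a deployment trajectory must be assumed separately before this curve can produce year-by-year cost inputs.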

Model Execution Phase

- Optimization Algorithm: Implement linear or mixed-integer programming to minimize total system costs while meeting technical and reliability constraints. The objective function typically minimizes net present value of total lifecycle costs [43].

- Lifecycle Cost Calculation: Compute the net present cost (NPC) using the formula: NPC = CAPEX + ∑ₜ (OPEXₜ - Revenueₜ)/(1+d)ᵗ, where CAPEX is the initial capital cost, OPEXₜ is the operating cost in year t, Revenueₜ is the value captured from all revenue streams in year t, d is the discount rate, and the sum runs over every year t of the analysis horizon.

- Performance Simulation: Model system operation over representative time periods (typically hourly for a full year) to capture seasonal variations and technology-specific performance characteristics including degradation over the project lifetime [43].
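The lifecycle cost formula in the steps above can be sketched directly in Python. This is a minimal illustration under stated assumptions: CAPEX is incurred in year 0, cash flows are indexed from year 1, and the function name and sample values are hypothetical rather than taken from REopt.

```python
def net_present_cost(
    capex: float,
    opex: list[float],
    revenue: list[float],
    discount_rate: float,
) -> float:
    """NPC = CAPEX + sum over t of (OPEX_t - Revenue_t) / (1 + d)^t, with t starting at 1."""
    if len(opex) != len(revenue):
        raise ValueError("opex and revenue must cover the same number of years")
    if discount_rate <= -1.0:
        raise ValueError("discount_rate must be greater than -1")
    discounted = sum(
        (o - r) / (1.0 + discount_rate) ** t
        for t, (o, r) in enumerate(zip(opex, revenue), start=1)
    )
    return capex + discounted


# Illustrative two-year project at a 10% discount rate.
npc = net_present_cost(
    capex=1000.0,
    opex=[100.0, 100.0],
    revenue=[210.0, 221.0],
    discount_rate=0.10,
)
print(round(npc, 2))  # 800.0
```

Each year's net revenue in this example discounts to exactly 100, so the net present cost falls from the 1000 capital outlay to 800. A negative NPC would indicate that discounted revenues exceed total lifecycle costs.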

Output Analysis Phase

- Sensitivity Analysis: Identify key cost and performance drivers through one-at-a-time variation or Monte Carlo simulation across uncertain parameters such as future cost reductions, policy changes, and fuel price volatility [42].

- Scenario Comparison: Evaluate storage deployment across multiple future scenarios—such as NREL's Standard Scenarios—varying renewable energy costs, load growth, and decarbonization policies [43].

- Policy Implications: Translate modeling results into actionable insights for storage deployment barriers, research priorities, and market design recommendations to capture full storage value streams [43].
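The one-at-a-time variation named in the sensitivity analysis step can be sketched as follows. The cost model here is a deliberately simplified stand-in with constant annual OPEX and revenue, and all parameter values, the ±20% swing, and the function names are assumptions chosen for illustration.

```python
def simple_npc(p: dict) -> float:
    """Net present cost with constant annual OPEX and revenue (simplifying assumption)."""
    annuity = sum(1.0 / (1.0 + p["discount_rate"]) ** t for t in range(1, p["years"] + 1))
    return p["capex"] + (p["opex"] - p["revenue"]) * annuity


def one_at_a_time(base: dict, model, vary: list[str], swing: float = 0.2) -> dict:
    """Vary each named parameter by +/- swing, holding the others at base values.

    Returns {name: (output_at_low, output_at_high)}.
    """
    results = {}
    for name in vary:
        low = {**base, name: base[name] * (1.0 - swing)}
        high = {**base, name: base[name] * (1.0 + swing)}
        results[name] = (model(low), model(high))
    return results


# Hypothetical base case (arbitrary monetary units).
base_case = {"capex": 1000.0, "opex": 50.0, "revenue": 150.0, "discount_rate": 0.07, "years": 20}

results = one_at_a_time(base_case, simple_npc, vary=["capex", "opex", "revenue", "discount_rate"])

# Rank parameters by the size of the output swing they cause (tornado-chart order).
ranked = sorted(results, key=lambda k: abs(results[k][1] - results[k][0]), reverse=True)
for name in ranked:
    low_out, high_out = results[name]
    print(f"{name:>14}: {low_out:10.1f} to {high_out:10.1f}")
```

In this illustrative base case, annual revenue produces the widest swing in net present cost. One-at-a-time variation ignores interactions between parameters, which is where Monte Carlo sampling across all uncertain inputs becomes the more appropriate tool.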

Table 4: Key data resources and computational tools for energy storage modeling

| Tool/Resource | Type | Primary Function | Application in Techno-Economic Analysis |
|---|---|---|---|
| NREL REopt | Optimization Model | Lifecycle cost minimization for energy systems | Determines optimal storage sizing and dispatch to meet cost and resilience goals [43] |
| NREL Storage Futures Study | Analytical Framework | Long-term storage deployment scenarios | Provides phased framework for storage adoption and capacity projections through 2050 [43] |
| Lithium-ion Cost Projections | Performance & Cost Data | Technology characterization | Inputs for modeling lithium-ion battery economics and deployment potential [42] |
| Pumped Hydro Cost Data | Performance & Cost Data | Technology characterization | Enables comparison of established long-duration storage with emerging technologies [42] |
| Long-Duration Storage Assessment | Methodology Framework | Evaluation of extended storage duration | Analyzes technologies for multi-day and seasonal storage applications [43] |
| Production Cost Models (e.g., PLEXOS) | Simulation Software | Grid operations modeling | Simulates hourly system operations with high storage penetration [43] |
| Capacity Expansion Models (e.g., ReEDS) | Optimization Software | Generation and transmission planning | Identifies least-cost storage portfolios under renewable energy scenarios [43] |