Preventing Data Aliasing in Animal Studies: A Researcher's Guide to Accurate Accelerometer Use in Preclinical and Drug Development

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on addressing the critical challenge of accelerometer data aliasing in animal studies. Data aliasing, a distortion that occurs when the sampling rate is too low to accurately capture high-frequency movements, can compromise the validity of behavioral data used to assess drug efficacy and animal welfare. We explore the technical foundations of aliasing, present methodologies for its prevention across various study designs, offer troubleshooting and optimization strategies for existing data, and review validation frameworks to ensure data integrity. By synthesizing current best practices and emerging analytical techniques, this resource aims to enhance the reliability of accelerometer-derived endpoints in preclinical research, thereby strengthening the pipeline for therapeutic development.

Understanding Accelerometer Data Aliasing: Foundational Concepts and Impact on Preclinical Data Integrity

Frequently Asked Questions (FAQs)

Q1: What is data aliasing and why is it a problem in animal movement studies? Data aliasing is a distortion that occurs when a signal is sampled at a rate that is too low to accurately capture its highest frequency components. Instead of disappearing, these high frequencies "fold back" and appear as lower, misleading frequencies in the recorded data [1]. In animal movement studies, this can cause rapid, fine-scale movements to be misrepresented as slower, non-existent behaviors, severely compromising the validity of your data and leading to incorrect biological interpretations [2] [1].

Q2: How can I identify aliasing in my collected accelerometer data? Aliasing can be tricky to spot, but some common signs include [1]:

- Unexplained Low-Frequency Patterns: Observing rhythmic movements or patterns in your data that do not align with any observed animal behavior.

- Inconsistencies with Video Evidence: When your accelerometer data shows a particular movement trend, but simultaneous video recording reveals completely different animal activities.

- Signal Clipping in Time-Domain Data: Visual inspection of the raw signal might show a "clipped" waveform where the peaks are flattened because the signal exceeded the sensor's measurement range, which can be associated with aliasing-related distortions [1].
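The clipping check in the list above can be automated before any visual inspection. The sketch below is a minimal illustration, not a validated tool: the function name, the ±8 g range, the 1% tolerance, and the sample trace are all hypothetical values chosen for the example.

```python
def clipped_fraction(samples, sensor_range_g, tolerance=0.01):
    """Return the fraction of samples at or beyond the sensor's range.

    sensor_range_g is the nominal full-scale range (e.g. 8.0 for a +/-8 g
    sensor). Samples within `tolerance` of that limit count as clipped.
    """
    limit = sensor_range_g * (1.0 - tolerance)
    n_clipped = sum(1 for x in samples if abs(x) >= limit)
    return n_clipped / len(samples)


# Hypothetical single-axis trace (in g) from a +/-8 g sensor.
trace = [0.1, 0.4, 2.5, 8.0, 8.0, 3.1, -0.2, -8.0, -1.0, 0.0]
fraction = clipped_fraction(trace, sensor_range_g=8.0)
print(f"{fraction:.0%} of samples sit at the range limit")
```

A non-trivial clipped fraction suggests the measurement range, rather than the sampling rate, is the first thing to revisit.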

Q3: My sampling rate should be sufficient based on the animal's expected movement. Why am I still seeing aliasing? The sampling rate must be high enough to capture not just the gross body movement, but also the high-frequency vibrations and shocks. For instance, in a study quantifying activity counts, the ActiGraph processing pipeline requires raw data to be sampled at rates between 30 Hz and 256 Hz before being down-sampled [3]. Furthermore, mechanical impacts (e.g., a foot striking the ground, a bird's wingbeat) can generate very high-frequency vibrations that will alias if not properly filtered before sampling [1].

Q4: What is the Nyquist-Shannon Sampling Theorem and how does it relate to my study design? The Nyquist-Shannon Sampling Theorem is a fundamental principle that states a signal must be sampled at a rate at least twice as high as its highest frequency component to be accurately represented [1] [3]. If your study animal exhibits rapid movements with a maximum frequency component of 10 Hz, your accelerometer must sample at a minimum of 20 Hz. However, in practice, researchers typically sample at 5 to 10 times the highest frequency of interest to ensure data quality [3].

Troubleshooting Guide: Preventing and Resolving Data Aliasing

Problem: Suspected Aliasing in Existing Data

| Symptom | Possible Cause | Diagnostic Check | Corrective Action |

|---|---|---|---|

| Appearance of slow, undulating patterns in data [1] | High-frequency movements undersampled | Compare data with video recordings or higher-frequency data logs. | Re-collect data with a higher sampling rate. Aliased data cannot be reliably "fixed" after collection. |

| Inconsistent results between different sensor models [3] | Different devices use different internal sampling rates and processing | Review device specifications for sampling rate and built-in anti-aliasing filters. | Standardize equipment across studies, or fully characterize the differences using the published algorithms for each device [3]. |

| Signal distortion/clipping in time-domain data [1] | Sensor range exceeded during high-impact movements | Plot raw signal and check for flattened peaks. | Use an accelerometer with a higher measurement range (e.g., 500 g-pk instead of 50 g-pk) [1]. |

Problem: Designing a New Study to Avoid Aliasing

| Design Phase | Common Pitfall | Best Practice |

|---|---|---|

| Sensor Selection | Choosing a sensor with a fixed, low sampling rate. | Select a sensor whose sampling rate can be configured and exceeds your Nyquist requirement. |

| Parameter Configuration | Setting a sampling rate based only on obvious, slow behaviors. | Sample at a high rate (e.g., ≥ 30 Hz for general movement, up to 256 Hz for fine details or impacts) [3]. |

| Data Acquisition | Failing to use an anti-aliasing filter before sampling. | Ensure your data acquisition system applies a proper anti-aliasing low-pass filter to remove high-frequency noise above the Nyquist frequency [3]. |

Experimental Protocol: A Framework for Anti-Aliasing Data Collection

This protocol provides a methodology for setting up an accelerometer study on animal movement that minimizes the risk of data aliasing, based on established practices in the field [2] [3].

1. Pre-Study Calibration and Setup

- Objective: Determine the appropriate sampling rate and filter settings.

- Procedure:

- Estimate Maximum Frequency: Based on the literature or pilot observations, estimate the maximum possible frequency (f_max) of the animal's movement of interest (e.g., wingbeat, footfall).

- Apply Nyquist Criterion: Calculate the minimum sampling rate: f_s_min = 2 * f_max. For safety, set your final sampling rate f_s to 5 * f_max or higher [3].

- Configure Anti-Aliasing Filter: Set a low-pass filter on your data acquisition system with a cutoff frequency f_c at or below the Nyquist frequency (f_s / 2). For example, ActiGraph devices apply adjustable low-pass filters with cutoff frequencies matched to their output data rates (e.g., 16 Hz cutoff for a 32 Hz output data rate) [3].

- Verify Sensor Range: Conduct a pilot test to ensure the accelerometer's measurement range (in g's) is not exceeded during high-intensity activities [1].
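The arithmetic in this setup step can be captured in a small helper. This is a sketch under stated assumptions: the function name and dictionary keys are hypothetical, and the default safety factor of 5 simply mirrors the 5 * f_max guideline above.

```python
def plan_sampling(f_max_hz, safety_factor=5.0):
    """Derive sampling parameters from the highest movement frequency.

    Returns the Nyquist minimum (2 * f_max), the chosen sampling rate
    (safety_factor * f_max), and the highest admissible anti-aliasing
    cutoff (f_s / 2).
    """
    if f_max_hz <= 0:
        raise ValueError("f_max_hz must be positive")
    if safety_factor < 2.0:
        raise ValueError("safety_factor below 2 violates the Nyquist criterion")
    f_s = safety_factor * f_max_hz
    return {
        "f_s_min_hz": 2.0 * f_max_hz,  # theoretical minimum sampling rate
        "f_s_hz": f_s,                 # sampling rate with safety margin
        "f_c_max_hz": f_s / 2.0,       # cutoff must be at or below this
    }


# Example: movement of interest with an estimated f_max of 10 Hz.
plan = plan_sampling(10.0)
print(plan)
```

For a 10 Hz movement this gives a 20 Hz minimum, a 50 Hz working rate, and a filter cutoff no higher than 25 Hz.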

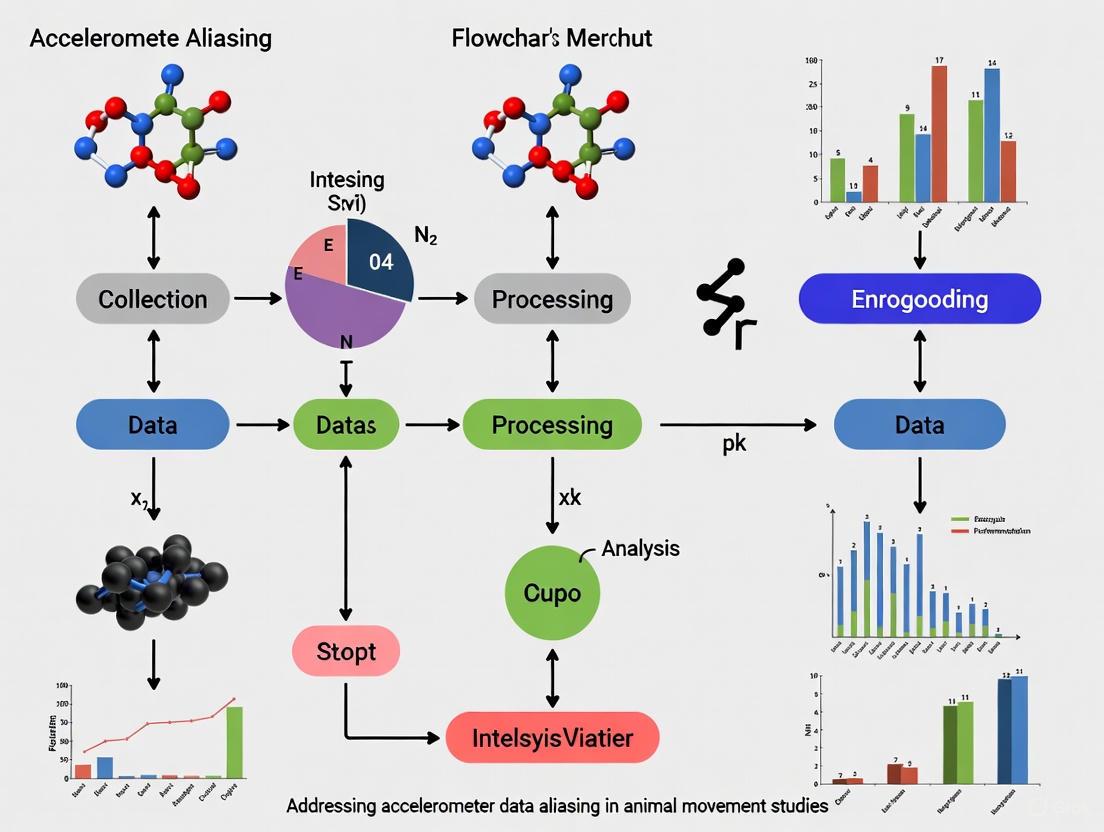

2. Data Collection Workflow The following diagram illustrates the critical steps for preventing aliasing during data collection.

3. Post-Collection Data Processing and Validation

- Objective: Confirm data quality and process data for analysis.

- Procedure:

- Visual Inspection: Plot the raw data to check for any obvious signs of clipping or unnatural periodicities [1].

- Down-sampling (if needed): If storage or analysis requires a lower frequency, properly apply a digital low-pass filter to the raw data before down-sampling to prevent introducing aliasing artifacts [3]. For example, the ActiGraph algorithm resamples raw data to 30 Hz after appropriate filtering [3].

- Generate Activity Metrics: Convert the processed raw data into relevant movement metrics, such as statistical movement elements (StaMEs) for path segmentation or traditional activity counts [2] [3].
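The down-sampling step above can be illustrated with a short, self-contained sketch. It uses a generic windowed-sinc FIR filter written with the standard library only; it is not the ActiGraph algorithm, and the function names, tap count, and 0.8 cutoff fraction are assumptions made for the example. In practice a signal-processing library would normally do this work.

```python
import math


def lowpass_fir(cutoff_hz, fs_hz, num_taps=101):
    """Windowed-sinc (Hamming) low-pass FIR taps with unity DC gain."""
    fc = cutoff_hz / fs_hz          # cutoff in cycles per sample
    mid = (num_taps - 1) / 2
    taps = []
    for n in range(num_taps):
        k = n - mid
        ideal = 2 * fc if k == 0 else math.sin(2 * math.pi * fc * k) / (math.pi * k)
        window = 0.54 - 0.46 * math.cos(2 * math.pi * n / (num_taps - 1))
        taps.append(ideal * window)
    total = sum(taps)
    return [t / total for t in taps]


def filter_signal(x, taps):
    """Centered convolution of x with taps (zero-padded at the edges)."""
    half = len(taps) // 2
    out = []
    for i in range(len(x)):
        acc = 0.0
        for j, t in enumerate(taps):
            idx = i + half - j
            if 0 <= idx < len(x):
                acc += t * x[idx]
        out.append(acc)
    return out


def downsample(x, factor, fs_hz, anti_alias=True):
    """Keep every `factor`-th sample, optionally low-pass filtering first."""
    if anti_alias:
        new_nyquist = fs_hz / factor / 2
        x = filter_signal(x, lowpass_fir(0.8 * new_nyquist, fs_hz))
    return x[::factor]


def peak(x):
    """Largest absolute value, ignoring filter edge effects."""
    return max(abs(v) for v in x[100:-100])


# A 40 Hz vibration recorded at 100 Hz, then reduced to 50 Hz.
fs = 100.0
tone = [math.sin(2 * math.pi * 40 * n / fs) for n in range(1000)]
naive = downsample(tone, 2, fs, anti_alias=False)  # 40 Hz folds to 10 Hz
safe = downsample(tone, 2, fs, anti_alias=True)    # 40 Hz is removed first
print(f"naive peak: {peak(naive):.3f}, filtered peak: {peak(safe):.3f}")
```

Without the filter, the 40 Hz tone survives at nearly full amplitude as a spurious 10 Hz oscillation; with the filter it is strongly attenuated before samples are discarded.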

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key materials and their functions for ensuring high-quality accelerometer data in animal movement studies.

| Item / Reagent | Function / Application in Research |

|---|---|

| Programmable Accelerometer (e.g., ActiGraph models) | Core sensor for capturing raw acceleration data. Programmability allows researchers to set a sufficiently high sampling rate and access raw data for transparent processing [3]. |

| Anti-Aliasing Low-Pass Filter | A hardware or software filter that removes frequency components above the Nyquist frequency before the signal is sampled. Critical for preventing aliasing at the data acquisition stage [3]. |

| Data Acquisition System with High Sampling Rate | System (e.g., Biopac MP150) capable of sampling at high frequencies (e.g., 2 kHz) to capture transient, high-impact movements without distortion, providing a clean raw signal for later analysis [4]. |

| Signal Processing Software (e.g., MATLAB, Python) | Used to implement custom processing pipelines, including proper filtering, down-sampling, and extraction of derived metrics like activity counts or StaMEs [2] [3]. |

| Published Counts Algorithm (e.g., ActiLife Python package) | An open-source algorithm that provides transparency into how raw acceleration data is converted into activity counts, enabling reproducibility and comparison across studies [3]. |

The table below consolidates critical numerical guidelines from the cited sources to inform your experimental design.

| Parameter | Guideline / Example | Research Context |

|---|---|---|

| Sampling Rate (General) | At least 2× the highest frequency of interest (Nyquist rate); 5-10× is recommended [1] [3]. | Fundamental signal processing rule. |

| Sampling Rate (Specific) | 30 Hz to 256 Hz [3]. | Processing pipeline for generating activity counts in ActiGraph devices. |

| Sampling Rate (High-Fidelity) | 2 kHz [4]. | Capturing sternum accelerometry for quantifying restlessness in opioid withdrawal studies. |

| Anti-Aliasing Filter Cutoff | Should be set at or below half the sampling rate (Nyquist frequency) [3]. | Prevents high-frequency noise from aliasing into the signal band. |

| Analog Band-Pass Filter Range | Max gain at ~0.76 Hz, -6 dB at 0.21-2.15 Hz [3]. | Used in ActiGraph devices to filter signals to the frequency range of human activity. |

Troubleshooting Guides

FAQ 1: What is the minimum sampling rate I should use for my animal-borne accelerometers?

The minimum sampling rate is determined by the Nyquist-Shannon sampling theorem. This theorem states that to accurately capture a signal without distortion (aliasing), the sampling frequency must be at least twice the highest frequency component present in the animal's behavior you wish to record [5]. For example, if the fastest behavior of interest has a frequency of 30 Hz, your minimum sampling rate should be 60 Hz [6] [5].

However, in practice, sampling at exactly the Nyquist rate (twice the behavior's frequency) is often insufficient for detailed analysis. Research on European pied flycatchers showed that for short-burst behaviors like swallowing food (mean frequency of 28 Hz), a sampling frequency higher than 100 Hz was needed for accurate classification. For longer-duration, rhythmic behaviors like flight, a lower sampling frequency of 12.5 Hz was adequate [6]. To accurately estimate signal amplitude, especially for short data segments, a sampling frequency of four times the signal frequency (twice the Nyquist rate) is recommended [6].

FAQ 2: Why does my accelerometer data show low-frequency patterns that don't match the animal's observed behavior? (Aliasing)

This is a classic sign of aliasing [7]. Aliasing occurs when a signal is sampled at a rate that is too low, causing high-frequency components in the signal to be misrepresented as lower frequencies in the recorded data [5] [8]. It can create the illusion of slow-motion behavior that doesn't actually exist.

- How it happens: If the sampling rate is fs, then any signal frequency above fs/2 (the Nyquist frequency) will be "folded back" into the lower frequency spectrum [9]. For instance, with a 50 Hz sampling rate (Nyquist frequency = 25 Hz), a true 40 Hz vibration would appear in your data as a 10 Hz signal [10] [7].

- How to prevent it:

- Increase Sampling Rate: The most direct method is to sample at a high enough rate to capture all relevant frequencies [7].

- Use an Anti-Aliasing Filter: Before the signal is digitized, it should pass through an analog low-pass filter. This filter removes frequency components above the Nyquist frequency, preventing them from being misrepresented [10] [9]. Many digital MEMS accelerometers have built-in filters, but their effectiveness varies [9].
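The folding described above follows a simple rule that can be computed directly. The helper below is a minimal sketch for a pure tone; the function name is hypothetical, and real accelerometer signals contain many frequency components that each fold independently.

```python
def alias_frequency(f_signal_hz, fs_hz):
    """Apparent frequency of a pure tone after sampling at fs_hz.

    Frequencies are first wrapped into [0, fs), then reflected about the
    Nyquist frequency (fs / 2) so the result lies in [0, fs / 2].
    """
    f_folded = f_signal_hz % fs_hz
    return min(f_folded, fs_hz - f_folded)


# The example from the text: a 40 Hz vibration sampled at 50 Hz.
print(alias_frequency(40.0, 50.0))  # → 10.0
# A tone below the Nyquist frequency is unchanged.
print(alias_frequency(10.0, 50.0))  # → 10.0
```

Both calls return 10 Hz, which is exactly the problem: once sampled, the aliased 40 Hz vibration and a genuine 10 Hz movement cannot be told apart.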

FAQ 3: I am sampling above the Nyquist rate, but my behavior classification is still inaccurate. What could be wrong?

Sampling rate is only one factor. Other critical considerations include:

- Behavioral Characteristics: The required sampling rate depends heavily on the nature of the behavior. Short-burst behaviors (e.g., prey capture, swallowing) that last only a few movement cycles require much higher sampling rates than sustained, rhythmic behaviors (e.g., flight, walking) [6].

- Sampling Duration: The length of your data recording windows also impacts accuracy. For short sampling durations, the combination of duration and sampling frequency significantly affects the estimation of signal frequency and amplitude. Accuracy, especially for amplitude, declines with shorter durations [6].

- Sensor Placement and Calibration: Inconsistent sensor attachment or lack of calibration before deployment can degrade data quality and lead to misclassification, regardless of sampling rate [6].

Quantitative Sampling Guidelines from Animal Research

The table below summarizes findings from an accelerometer study on European pied flycatchers, providing a concrete example of how sampling requirements differ by behavior [6].

| Behavior Type | Example Behavior | Mean Frequency | Recommended Minimum Sampling Rate | Key Consideration |

|---|---|---|---|---|

| Short-Burst | Swallowing food | 28 Hz | 100 Hz (≈ 1.8 × the 56 Hz Nyquist rate) | Captures rapid, transient movements accurately [6]. |

| Sustained Rhythmic | Flight | Lower than swallowing | 12.5 Hz (~Nyquist) | Adequate for characterizing longer-duration, rhythmic patterns [6]. |

| Mixed (with rapid maneuvers) | Flight with prey capture | N/A | 100 Hz | The sustained flight can be sampled at a lower rate, but to identify rapid maneuvers within the bout, a high rate is essential [6]. |

Experimental Protocol: Determining Sampling Rate for a New Species or Behavior

This methodology, adapted from a study on pied flycatchers, provides a systematic approach to establish sampling parameters for your specific research context [6].

Objective: To empirically determine the minimum accelerometer sampling frequency required for accurate classification of key behaviors and estimation of energy expenditure.

Materials:

- Miniaturized accelerometer loggers.

- Synchronized high-speed video camera system (e.g., >90 fps).

- Calibration equipment.

- Appropriate animal housing/aviaries.

Procedure:

- Logger Attachment: Calibrate accelerometers. Attach loggers securely to the animal (e.g., over the synsacrum in birds using a leg-loop harness) to minimize movement artifacts [6].

- High-Frequency Data Collection: Record tri-axial acceleration data from the freely moving animal at the highest feasible sampling rate (e.g., 100 Hz or more) concurrently with high-speed video [6].

- Behavioral Annotation: Use the synchronized video to identify and label the precise start and end times of specific behaviors of interest (e.g., flight, foraging, swallowing) in the high-frequency accelerometer data [6].

- Data Down-Sampling: Digitally down-sample the original high-frequency accelerometer data to progressively lower sampling rates (e.g., from 100 Hz down to 10 Hz).

- Performance Analysis: At each down-sampled rate:

- For Behavior Classification: Train a classification model and test its accuracy in identifying the annotated behaviors.

- For Energy Expenditure: Calculate proxies like ODBA (Overall Dynamic Body Acceleration) and compare their values to those from the original high-rate data.

- Determine Critical Frequency: Identify the sampling rate at which performance (classification accuracy or agreement in energy expenditure metrics) begins to significantly degrade. This is your empirically derived minimum sampling rate for your study system.
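The down-sampling and energy-expenditure comparison in this procedure might be sketched as follows. Everything here is illustrative: the function names, the 2-second smoothing window, and the synthetic tri-axial signal are assumptions, and ODBA is computed in its common form (sum over axes of absolute dynamic acceleration, with the static component estimated by a running mean).

```python
import math


def running_mean(x, window):
    """Centered running mean; the window shrinks at the edges."""
    half = window // 2
    out = []
    for i in range(len(x)):
        seg = x[max(0, i - half): i + half + 1]
        out.append(sum(seg) / len(seg))
    return out


def mean_odba(ax, ay, az, fs_hz, smooth_s=2.0):
    """Mean ODBA: per-sample sum of |raw - static| over the three axes."""
    window = max(1, int(round(smooth_s * fs_hz)))
    total = [0.0] * len(ax)
    for axis in (ax, ay, az):
        static = running_mean(axis, window)
        for i, (raw, s) in enumerate(zip(axis, static)):
            total[i] += abs(raw - s)
    return sum(total) / len(total)


def odba_vs_rate(ax, ay, az, fs_hz, factors):
    """Ratio of ODBA at each reduced rate to ODBA at the original rate."""
    reference = mean_odba(ax, ay, az, fs_hz)
    ratios = {}
    for k in factors:
        value = mean_odba(ax[::k], ay[::k], az[::k], fs_hz / k)
        ratios[fs_hz / k] = value / reference
    return ratios


# Synthetic 10 s recording at 100 Hz (hypothetical, for illustration only).
fs = 100.0
t = [i / fs for i in range(1000)]
ax = [0.5 * math.sin(2 * math.pi * 4 * ti) for ti in t]   # slow rhythmic axis
ay = [0.2 * math.sin(2 * math.pi * 28 * ti) for ti in t]  # fast component
az = [1.0 for _ in t]                                     # gravity only
ratios = odba_vs_rate(ax, ay, az, fs, factors=[1, 2, 4, 10])
for rate, ratio in sorted(ratios.items(), reverse=True):
    print(f"{rate:6.1f} Hz: ODBA ratio {ratio:.3f}")
```

With real data, the rate at which this ratio (or classification accuracy) departs from the full-rate value beyond a tolerance you choose marks the critical frequency described in the last step.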

The Scientist's Toolkit: Essential Research Reagents and Materials

| Item | Function in Experiment |

|---|---|

| Multi-sensor Biologger | A miniaturized device, often containing an accelerometer, to be attached to the animal for data collection in the field or lab [6]. |

| High-Speed Video Camera | Provides ground-truth data for behavioral annotation; crucial for validating accelerometer data and identifying behavioral signatures [6]. |

| Leg-Loop Harness | A common method for secure and safe attachment of loggers to birds and other animals [6]. |

| Anti-Aliasing Filter | An analog or digital filter used to remove high-frequency noise above the Nyquist frequency before sampling to prevent aliasing [10] [9]. |

| Signal Processing Software | Software (e.g., MATLAB, Python with SciPy) used for data analysis, including down-sampling, frequency analysis, and behavior classification [6]. |

Workflow Diagram: From Signal to Analysis

Aliasing Visualization Diagram

FAQs: Understanding and Troubleshooting Aliasing in Animal Behavior Research

Q1: What is aliasing in the context of accelerometer data, and how can it lead to misclassified animal behaviors? Aliasing is a data distortion phenomenon that occurs when an accelerometer is sampled at a frequency that is too slow to accurately capture rapid movements. High-frequency motions are misrepresented as lower-frequency signals [11]. In behavior classification, this means a rapid action (e.g., a quick head shake or a paw movement) might be misidentified as a slower, entirely different behavior (e.g., steady walking or grazing), compromising the validity of your results [12] [13].

Q2: I am setting up a study on grazing behavior in goats. What is the minimum sampling frequency I should use to avoid aliasing? While the optimal rate can depend on the specific behavior, a general guideline is to use a sampling frequency of 20–30 Hz as a baseline [14]. However, for finer-grained behaviors, studies have successfully used higher rates, such as 100 Hz for human ankle movements during agility tests [15] and 24 Hz for sheep activity monitoring [16]. Always err on the side of a higher sampling rate if your equipment and data storage allow.

Q3: My model, trained in a controlled setting, performs poorly when deployed on animals in a free-living environment. Could aliasing be a factor? Yes. This is a common challenge. Laboratory-calibrated models often fail to generalize to free-living settings because they encounter a wider variety of "transitive and unseen activities" and differences in acceleration signals that were not present in the training data [14]. This can include novel, high-frequency movements that, if undersampled, cause aliasing and misclassification.

Q4: Beyond sampling frequency, what is the most critical hardware setting to prevent aliasing? The most critical step is to enable an anti-aliasing filter. This is an analog low-pass filter applied to the sensor signal before it is digitized, designed to remove high-frequency components that the sampling rate cannot accurately capture. Without this filter, high-frequency energy will "fold" down into lower frequencies, irrevocably distorting your data [11].

Q5: Our classifiers struggle to distinguish between "eating" and "ruminating" in cattle. Are these behaviors particularly susceptible to misclassification? Yes. Studies show that while major behaviors like lying and standing are reliably predicted, other behaviors are more challenging. "Eating" often exhibits high variability in sensor signals, and "transitional behaviors" (like moving from lying to standing) are frequently misclassified [12] [13]. Ensuring proper data collection and processing is essential for these complex activities.

Troubleshooting Guide: Aliasing and Behavior Misclassification

| Problem | Possible Causes | Recommended Solutions |

|---|---|---|

| Poor model generalization from lab to field | Model trained on low-variability data; unseen high-frequency behaviors cause aliasing [12] [14]. | Maximize variability in training data; use large datasets with a wide range of animals and conditions [12]. |

| Low accuracy for specific behaviors (e.g., eating, walking) | Inadequate sampling rate for high-motion behaviors; incorrect pre-processing for target behavior [12] [13]. | Increase sampling frequency; tailor pre-processing methods (e.g., window size, feature selection) to the specific behavior [12] [17]. |

| Consistent misclassification of rapid, transitional movements | Transitional behaviors are inherently brief and complex; sampling rate may be too low to capture their dynamics [12]. | Focus pre-processing on capturing short-duration events; validate models specifically on transition data [12]. |

| Unexpected low-frequency signals in data | Aliasing is occurring due to a lack of an anti-aliasing filter or an incorrectly set one [11]. | Apply an analog anti-aliasing filter with a cut-off frequency set below the Nyquist frequency (e.g., f_c < 0.6 f_N) [11]. |

Quantitative Evidence: Impact of Model Generalization on Behavior Classification

The following table summarizes documented performance drops in behavior classification models when applied to new individuals, a problem exacerbated by data issues like aliasing.

Table 1: Performance Decrease in Models Applied to Unseen Animals

| Species | Behavior | Performance (AUC) on Known Animals | Performance (AUC) on New Animals | Source |

|---|---|---|---|---|

| Dairy Goats | Rumination | 0.800 | 0.644 | [17] |

| Dairy Goats | Head in Feeder | 0.819 | 0.733 | [17] |

| Dairy Goats | Lying | 0.829 | 0.741 | [17] |

| Dairy Goats | Standing | 0.823 | 0.749 | [17] |

Experimental Protocol: Validating an Accelerometer Setup to Minimize Aliasing

This protocol, adapted from validation studies on children and livestock, provides a methodology to ensure your accelerometer system is correctly configured before a full-scale experiment [18].

Objective: To confirm that the chosen sampling frequency and anti-aliasing filter settings accurately capture a range of species-specific behaviors without distortion.

Materials:

- Accelerometer sensors

- Video recording system (as gold standard for validation)

- Secure mounting equipment (collars, ear tags, etc.)

- Data processing software (e.g., Python, R, MATLAB)

Procedure:

- Sensor Configuration: Initialize the accelerometers with a target sampling frequency (e.g., ≥ 20 Hz [14]) and ensure any built-in anti-aliasing filters are enabled.

- Standardized Activity Protocol: Fit the sensors on the animal and guide it through a structured protocol of target behaviors. Example activities include:

- Lying down (stationary)

- Standing quietly (stationary)

- Walking at a normal pace

- Eating/foraging

- Any other high-frequency behavior of interest (e.g., head shaking, scratching)

- Video Recording: Simultaneously record all activities with a high-frame-rate video camera. Precisely synchronize the video timestamps with the accelerometer data.

- Data Analysis:

- Comparison: Extract the total time spent in each behavior from both the accelerometer data (processed by its algorithm) and the video observations (manual coding).

- Agreement Analysis: Use statistical tests like paired t-tests, Bland-Altman plots, and Intraclass Correlation Coefficient (ICC) to assess the agreement between the two methods [18].

- Interpretation: Strong agreement and high ICC values across all behaviors indicate a robust setup. If specific behaviors (especially rapid ones) show poor agreement, consider increasing the sampling rate or adjusting the filter settings and re-test.
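The Bland-Altman part of the agreement analysis can be sketched in a few lines. The data below are invented for illustration (minutes spent in one behavior for six hypothetical animals), the function name is an assumption, and the ICC and paired t-test mentioned in the protocol are not covered here.

```python
import statistics


def bland_altman(method_a, method_b):
    """Return (bias, lower limit, upper limit) of agreement.

    Bias is the mean difference (a - b); the limits are
    bias +/- 1.96 * SD of the differences.
    """
    diffs = [a - b for a, b in zip(method_a, method_b)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical minutes of "eating" per animal from each method.
accelerometer_min = [120, 95, 60, 30, 12, 48]
video_min = [118, 99, 58, 33, 10, 50]

bias, lower, upper = bland_altman(accelerometer_min, video_min)
print(f"bias = {bias:.2f} min, limits of agreement = [{lower:.2f}, {upper:.2f}]")
```

A bias near zero with narrow limits supports the setup; a bias that grows for rapid behaviors is one indication that the sampling rate or filter settings need another look.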

The Researcher's Toolkit: Essential Reagents and Materials

Table 2: Key Materials for Accelerometer-Based Behavior Studies

| Item | Function / Explanation | Example from Literature |

|---|---|---|

| Tri-axial Accelerometer | Measures acceleration in three perpendicular dimensions (X, Y, Z), providing a detailed picture of movement and posture. | Actigraph GT3X+ used on ankles in human agility tests [15]. |

| Integrated Sensor (Accelerometer + Gyroscope) | The gyroscope measures angular velocity, complementing the accelerometer's linear motion data. This fusion significantly improves classification of complex behaviors like eating and walking [13]. | MPU-6050 sensor used on dairy cow necks [13]. |

| Anti-aliasing Filter | An analog filter that removes high-frequency signal components before digitization to prevent aliasing. A critical, often overlooked, component [11]. | Recommended as a mandatory hardware feature for accurate data collection [11]. |

| Customizable Data Pipeline | Software that allows for tailored pre-processing (e.g., noise filtering, feature extraction, window segmentation) to optimize models for specific behaviors [17]. | ACT4Behav pipeline for dairy goats [17]. |

| Axivity AX3 Accelerometer | A specific model of research-grade accelerometer commonly used in long-term livestock studies due to its small size and configurable sampling. | Used on ear tags in a sheep health monitoring study [16]. |

Workflow Diagram: A Robust Framework for Behavior Classification

The diagram below outlines a recommended experimental workflow that incorporates checks against aliasing and poor generalization, synthesizing best practices from the literature.

Troubleshooting Guides and FAQs

This technical support center provides targeted guidance for researchers addressing the critical issue of accelerometer data aliasing in animal studies. Proper data acquisition is fundamental to ensuring the validity of behavioral findings in drug development and welfare assessment.

Frequently Asked Questions

Q1: What is accelerometer data aliasing and why is it a problem in animal behavior studies?

Aliasing is a distortion effect that occurs when an accelerometer is sampled at a rate too slow to accurately capture the true frequency of an animal's movement [19]. When the sampling rate is insufficient, high-frequency movements are misrepresented as lower-frequency, slower signals that are not actually occurring [20]. In animal studies, this means a rapid behavior like a head shake or a swallow could be misclassified as a slower, different behavior. This corrupts your dataset, leading to inaccurate activity budgets, misrepresentation of drug-induced behavioral changes, and ultimately, flawed conclusions about drug efficacy or animal welfare [6].

Q2: How can I determine the minimum sampling rate needed for my specific animal model and behaviors of interest?

The foundational rule is the Nyquist-Shannon theorem, which states that your sampling frequency (the sensor's output data rate, ODR) must be at least twice the highest frequency component of the behavior you wish to measure [19] [6]. However, recent research suggests this is a theoretical minimum and higher rates are often needed for real-world accuracy.

For classification of short-burst behaviors (e.g., food swallowing in birds, escape responses in fish), studies recommend a sampling frequency of at least 1.4 times the Nyquist frequency of the behavior for reliable classification [6]. For accurate estimation of movement amplitude (important for energy expenditure studies), a sampling frequency of four times the signal frequency (twice the Nyquist frequency) is necessary, especially when sampling durations are short [6].
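These two rules of thumb can be written as a small calculation. The sketch assumes, as this passage does, that "Nyquist frequency" means twice the behavior's frequency; the function name and dictionary keys are hypothetical.

```python
def recommended_rates(behavior_hz):
    """Sampling rates implied by the two guidelines quoted above.

    Assumes the Nyquist value is 2 * behavior_hz, then applies
    1.4x for classification and 4x the signal frequency for amplitude.
    """
    nyquist_hz = 2.0 * behavior_hz
    return {
        "nyquist_hz": nyquist_hz,
        "classification_hz": 1.4 * nyquist_hz,
        "amplitude_hz": 4.0 * behavior_hz,
    }


# Example: a short-burst behavior with a mean frequency of 28 Hz.
rates = recommended_rates(28.0)
for name, value in rates.items():
    print(f"{name}: {value:.1f} Hz")
```

For a 28 Hz behavior this yields 56 Hz as the theoretical minimum, roughly 78 Hz for classification, and 112 Hz when amplitude must be estimated accurately.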

Q3: My accelerometer data shows clear behavior patterns, but my machine learning model performs poorly when applied to new subjects. Could aliasing be a factor?

While poor model generalization can stem from several issues, aliasing is a potential contributor. If your training data contains aliased signals, the model learns to recognize these distorted patterns. When applied to new data—even if collected with the same hardware—slight variations in how individuals perform behaviors can interact with the sampling rate to produce differently aliased signals, which the model fails to recognize correctly [17]. This underscores the importance of ensuring clean, non-aliased data from the start of your project.

Troubleshooting Guide: Identifying and Resolving Aliasing

| Symptom | Possible Cause | Solution | Verification Method |

|---|---|---|---|

| Implausible low-frequency signals in the data when animals are known to be moving rapidly. | Sampling rate far below the Nyquist limit for the behavior. | Increase the sensor's Output Data Rate (ODR). | Compare data collected at a very high rate (e.g., 100+ Hz) with down-sampled data. |

| Machine learning models that perform well on training data but poorly on validation data or new subjects. | Model trained on aliased signals that are not consistent. | Implement an analog anti-aliasing filter before the ADC and retrain the model [19]. | Validate model predictions against video recordings of behavior. |

| Inconsistent amplitude measurements for rhythmic, high-frequency behaviors. | Sampling duration too short and/or sampling frequency too low [6]. | Increase sampling duration or increase sampling frequency to at least 4x the behavior's frequency. | Use simulated signals of known frequency and amplitude to test the sampling setup. |

| Inability to distinguish short-burst, high-intensity behaviors (e.g., sneezing, startle responses). | Sampling frequency is too low to capture the transient signal's detail [21] [6]. | Significantly increase the sampling frequency (e.g., 100 Hz+) and use a high-pass filter to isolate the burst. | Annotate high-speed video recordings and synchronize with accelerometer data. |

Experimental Protocol: Establishing a Validated Accelerometry Pipeline

This protocol provides a step-by-step methodology to prevent aliasing from the outset of your experiment, based on best practices from recent literature [17] [6].

Step 1: Pre-Study Sampling Frequency Determination

- Pilot Study: Conduct a pilot study using a subset of animals.

- High-Rate Recording: Record accelerometer data at the highest feasible sampling rate (e.g., 100-200 Hz) to capture the full range of behavioral signals without distortion.

- Frequency Analysis: Using synchronized video, identify epochs of the fastest behaviors of interest (e.g., wingbeats in birds, head shakes in ruminants). Perform a Fourier analysis (FFT) to determine the highest fundamental frequency present in these behaviors.

- Set Sampling Rate: Calculate the Nyquist rate (2 × the highest observed frequency). Set your final sampling rate to at least 2.5 times the highest observed frequency, and to about 4 times when accurate amplitude recording is needed, so that a safety margin remains [6].
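The frequency-analysis and rate-setting steps above can be sketched as follows. This is a simplified illustration: it uses a plain discrete Fourier transform from the standard library (an FFT routine would normally be used), it reports the strongest spectral peak of one epoch rather than a full spectrum, and the function name and synthetic pilot signal are assumptions.

```python
import cmath
import math


def dominant_frequency(samples, fs_hz):
    """Frequency (Hz) of the strongest non-DC component in an epoch."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove gravity/offset
    best_k, best_power = 0, 0.0
    for k in range(1, n // 2 + 1):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(centered)
        )
        power = abs(coeff) ** 2
        if power > best_power:
            best_k, best_power = k, power
    return best_k * fs_hz / n


# Hypothetical 2 s pilot epoch recorded at 200 Hz: a strong 28 Hz
# movement plus a weaker 6 Hz body motion.
fs = 200.0
epoch = [
    math.sin(2 * math.pi * 28 * i / fs) + 0.3 * math.sin(2 * math.pi * 6 * i / fs)
    for i in range(400)
]
f_peak = dominant_frequency(epoch, fs)
low, high = 2.5 * f_peak, 4.0 * f_peak
print(f"dominant frequency: {f_peak:.1f} Hz; candidate sampling rate: {low:.0f}-{high:.0f} Hz")
```

Running this on each annotated epoch and taking the largest result gives the highest observed frequency to carry into the sampling-rate calculation.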

Step 2: Data Collection with Anti-Aliasing Safeguards

- Sensor Selection: Where possible, select digital accelerometers with embedded analog anti-aliasing filters (AAF) in their signal chain, such as the LIS2DU12 family [19]. These filters remove high-frequency noise before it can be aliased.

- Configuration: If using a sensor with a configurable AAF, set its cutoff frequency slightly above your maximum analysis frequency but below the Nyquist frequency of your chosen ODR [19] [20].

Step 3: Model Training and Validation with Processing Tuning

- Feature Selection: When training machine learning models for behavior classification, explore features derived from a mixture of time window sizes, as this has been shown to improve classification accuracy for diverse behaviors [17] [21].

- Behavioral Balance: Ensure your training dataset has a standardized duration for each behavior to prevent the model from being biased toward more common behaviors and against rare but important ones [21].

- Cross-Validation: Always test your final model on data from animals that were not included in the training set to truly assess its generalizability and check for hidden issues like aliasing [17] [22].
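
One way to keep test animals out of the training set is to build the folds from animal identifiers rather than from individual windows. The sketch below assumes each feature window carries an animal ID; the function name and the goat labels are illustrative only.

```python
def leave_one_animal_out(animal_ids):
    """Yield (held_out_animal, train_indices, test_indices), one fold per animal."""
    for held_out in sorted(set(animal_ids)):
        train = [i for i, a in enumerate(animal_ids) if a != held_out]
        test = [i for i, a in enumerate(animal_ids) if a == held_out]
        yield held_out, train, test


# Hypothetical animal ID for each feature window in the dataset.
window_animal = ["goat1", "goat1", "goat2", "goat3", "goat3", "goat2"]
folds = list(leave_one_animal_out(window_animal))
for held_out, train_idx, test_idx in folds:
    print(held_out, train_idx, test_idx)
```

Because every window from the held-out animal lands in the test fold, no individual contributes to both training and evaluation.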

The Scientist's Toolkit: Essential Research Reagents and Solutions

| Item | Function in Research | Key Consideration |

|---|---|---|

| Digital MEMS Accelerometer with Embedded AAF (e.g., LIS2DU12) [19] | Measures acceleration; built-in analog filter prevents aliasing by removing high-frequency noise before digitization. | Preferable for most studies as it mitigates aliasing at the hardware level, conserving battery and storage. |

| High-Speed Video Camera | Provides ground-truth behavioral labels for accelerometer data validation; essential for identifying behavioral frequencies in a pilot study. | Frame rate should be significantly higher than the accelerometer's sampling rate to accurately observe fast movements. |

| Machine Learning Core (MLC) Embedded Sensors (e.g., LIS2DUX12) [19] | Allows on-device behavior classification, reducing data transmission and storage needs. | Ideal for long-term deployments, but models must be trained on non-aliased data. |

| Tri-axial Accelerometer Loggers | Capture movement and static acceleration (for posture) in three dimensions, providing a rich dataset for behavior classification [6] [23]. | Ensure sufficient bit-resolution (e.g., 8-bit or higher) and configurable sampling rates to fit study needs. |

| Leg-Loop or Collar Harness | Securely attaches the accelerometer to the animal in a consistent orientation [6]. | Attachment method and placement on the body significantly influence the signal and must be standardized. |

Sampling Requirements for Common Behavioral Objectives

The table below summarizes evidence-based sampling recommendations for different common research goals in animal studies.

| Research Objective | Behavioral Example | Recommended Minimum Sampling Frequency | Key Reference |

|---|---|---|---|

| Classification of short-burst behaviors | Swallowing in birds, escape responses in fish | 1.4 × Nyquist Frequency (e.g., ~80 Hz for a 28 Hz behavior) [6] | [6] |

| Estimation of energy expenditure (ODBA/VeDBA) | Sustained walking, swimming | Can be low (1-10 Hz), but amplitude accuracy requires 4× signal frequency for short windows [6] | [6] |

| Classification of long-endurance, rhythmic behaviors | Flight in birds, grazing in ruminants | Can be lower (e.g., 12.5 Hz for flight) [6] | [6] |

| General multiclass behavior identification | Lying, feeding, standing, walking in deer | 4 Hz (when using low-resolution, averaged data) [22] | [22] |

| High-accuracy classification of captive animal behaviors | Rumination, head-in-feeder in goats | Tuned per behavior; achieved AUC scores >0.8 [17] | [17] |

Data Acquisition Workflow for Alias-Free Research

The diagram below outlines the logical workflow for designing an accelerometer study that prevents data aliasing.

Methodological Frameworks: Designing Aliasing-Resistant Accelerometer Studies in Animal Models

Establishing Minimum Sampling Rates for Common Preclinical Behaviors (Grazing, Running, Grooming)

Troubleshooting Guide: Common Data Aliasing Issues

Q1: My accelerometer data shows animals engaging in "impossible" or jittery behaviors. What is happening and how can I fix it?

This is a classic sign of aliasing, which occurs when your sampling rate is too low to accurately capture the true frequency of the animal's movements [24]. The device misinterprets high-frequency movements as slower, unnatural ones.

- Solution: Increase your sampling rate. As a rule of thumb, the sampling rate should be at least twice the frequency of the fastest behavior you wish to capture [24]. For high-frequency behaviors like running or head movements during grazing, this often requires rates of 20 Hz or higher.

- Verification: Conduct a pilot study. Use a high sampling rate (e.g., 50-100 Hz) to record a range of behaviors. Visually inspect the raw acceleration signals to confirm they are smooth rather than jagged; a smooth trace indicates that the sampling rate is sufficient.
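
To see why an undersampled movement shows up as a slower one, the apparent frequency of a pure tone after sampling can be computed with the standard frequency-folding rule. The function names below are hypothetical, and real movements contain many frequency components, so this is only a single-frequency illustration.

```python
def alias_frequency(signal_hz, fs_hz):
    """Apparent frequency (Hz) of a pure tone after sampling at fs_hz."""
    folded = signal_hz % fs_hz
    return min(folded, fs_hz - folded)


def is_undersampled(signal_hz, fs_hz):
    """True when the tone lies above half the sampling rate."""
    return signal_hz > fs_hz / 2.0


# A 28 Hz movement recorded at three different sampling rates.
for fs in (20, 50, 100):
    print(fs, alias_frequency(28, fs), is_undersampled(28, fs))
```

At 20 Hz the 28 Hz movement is recorded as an 8 Hz oscillation, and at 50 Hz as a 22 Hz one; only the 100 Hz recording preserves the true frequency.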

Q2: My machine learning model confuses grazing and grooming behaviors. How can I improve classification accuracy?

This error often stems from inadequate feature selection due to low-resolution data or poorly chosen data processing parameters [17].

- Solution: Optimize your data processing pipeline for each specific behavior [17].

- Re-evaluate your epoch or window size. Shorter windows (e.g., 1-3 seconds) may better capture brief, distinct movements in grooming, while longer windows (e.g., 5-10 seconds) might be better for sustained grazing.

- Apply behavior-specific filtering techniques and feature selections during data pre-processing [17].

- Verification: Perform a sensitivity analysis on your pilot data to identify the optimal window segmentation and feature set for discriminating between grooming and grazing.
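
A window-size sensitivity analysis starts by segmenting the same trace at several window lengths and extracting features from each. The sketch below uses non-overlapping windows and three simple summary features; the function name, the feature set, and the synthetic trace are assumptions for illustration, not the parameters used in [17].

```python
import statistics


def window_features(samples, fs_hz, window_s):
    """Split a 1-D acceleration trace into non-overlapping windows and summarise each."""
    size = int(round(fs_hz * window_s))
    if size < 2:
        raise ValueError("window must contain at least two samples")
    features = []
    for start in range(0, len(samples) - size + 1, size):
        win = samples[start:start + size]
        features.append({
            "mean": statistics.fmean(win),
            "variance": statistics.pvariance(win),
            "range": max(win) - min(win),
        })
    return features


# Hypothetical 6 s trace at 10 Hz, segmented at three candidate window lengths.
trace = list(range(60))
for window_s in (1, 2, 3):
    print(window_s, len(window_features(trace, 10, window_s)))
```

Each candidate window length yields its own feature table, which can then be fed to the same classifier to compare grooming-versus-grazing performance.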

Q3: My dataset is dominated by resting behavior, and my classifier performs poorly on rarer, active behaviors. What should I do?

This is a class imbalance problem, which is common in free-living animal studies [25].

- Solution: Implement data resampling strategies. A combination of Synthetic Minority Over-sampling Technique (SMOTE) and Edited Nearest Neighbours (ENN) has been shown to effectively balance datasets and improve the prediction of minority behaviors like running or grooming [25].

- Verification: Compare the F1-scores of your model before and after applying resampling. A robust model should have high and balanced accuracy across all behavior classes.
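
Per-class F1-scores make the effect of imbalance visible in a way that overall accuracy does not. The sketch below computes them from scratch; the labels are made up for illustration and the helper names are hypothetical.

```python
def per_class_f1(y_true, y_pred):
    """F1-score for every label that appears in either list."""
    scores = {}
    for label in sorted(set(y_true) | set(y_pred)):
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        denom = 2 * tp + fp + fn
        scores[label] = 2 * tp / denom if denom else 0.0
    return scores


def macro_f1(y_true, y_pred):
    """Unweighted mean of the per-class F1-scores."""
    scores = per_class_f1(y_true, y_pred)
    return sum(scores.values()) / len(scores)


# Illustrative imbalanced case: the classifier predicts "rest" for everything.
y_true = ["rest"] * 8 + ["run"] * 2
y_pred = ["rest"] * 10
print(per_class_f1(y_true, y_pred), macro_f1(y_true, y_pred))
```

In this example overall accuracy is 80%, yet the F1-score for "run" is zero, which is the pattern resampling is meant to correct.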

Frequently Asked Questions (FAQs)

Q: What is the minimum sampling rate required to distinguish between walking and running in rodents?

While the exact rate can depend on the species and sensor placement, a sampling rate of 20 Hz is generally considered a reasonable minimum for distinguishing locomotor behaviors. However, to capture finer kinematic details or for very small, fast-moving animals, rates of 50 Hz or higher are recommended to prevent aliasing.

Q: How long should my data epoch be for analyzing grazing behavior?

Epoch length should be chosen based on the behavioral bout length you want to detect. For cattle, studies have successfully used 15-second epochs to characterize grazing patterns [25]. We recommend testing multiple epoch lengths (e.g., 1s, 5s, 15s) during pilot studies to determine which best captures the natural structure of the behavior without oversmoothing key elements.

Q: Can I use the same sampling rate for all species and all behaviors?

No. The optimal sampling rate is dependent on the kinematic properties of the specific behavior and the species' anatomy. The high-frequency head movements of a goat during rumination require a higher sampling rate than the slower, ambulatory movements of a grazing cow [17]. Always base your rate on the fastest, most dynamic component of the behavior you are studying.

Q: What is the relationship between sampling rate and battery life?

It is a direct trade-off. Higher sampling rates consume significantly more power and will deplete the battery faster, limiting study duration. To optimize, use the lowest sampling rate that still faithfully captures your behaviors of interest, as determined by pilot work.
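
The storage side of this trade-off can be estimated before deployment with simple arithmetic. The sketch below assumes three axes, 2 bytes per sample, and no compression or timestamp overhead; these defaults are assumptions, and battery drain is device-specific and is not modelled here.

```python
def raw_bytes(fs_hz, days, axes=3, bytes_per_sample=2):
    """Uncompressed storage (bytes) for continuous logging over a deployment."""
    seconds = days * 86_400
    return int(fs_hz * axes * bytes_per_sample * seconds)


# Compare a 30-day deployment at three candidate sampling rates.
for fs in (10, 25, 100):
    print(fs, round(raw_bytes(fs, 30) / 1e6, 1), "MB")
```

Storage scales linearly with sampling rate under these assumptions, so moving from 10 Hz to 100 Hz multiplies the memory requirement by ten.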

Minimum Sampling Rate Recommendations for Common Preclinical Behaviors

The following table summarizes evidence-based minimum sampling rates to prevent aliasing for core preclinical behaviors. These rates are derived from validated studies using accelerometers and machine learning classification.

| Behavior | Species | Recommended Minimum Sampling Rate | Key Rationale & Frequency Characteristics |

|---|---|---|---|

| Grazing | Cattle, Goats | 15 Hz [25] | Captures the characteristic slow, forward movement and head-down position. Lower frequency dominance. |

| Running | Cattle, Sheep | 20 Hz [25] | Necessary to capture the high-impact, high-frequency foot strikes and full gait cycle without aliasing. |

| Grooming | Cattle, Goats | 25 Hz [17] | Requires higher rates to accurately distinguish small, repetitive head, neck, and limb movements from grazing. |

Experimental Protocol: Validating Behavior Classification and Sampling Rates

This protocol outlines how to empirically determine the minimum sampling rate required to classify a specific behavior without aliasing.

1. Pilot Data Collection with High-Frequency Reference

- Fit animals with a validated accelerometer (e.g., tri-axial capacitive MEMS sensor) [24].

- Record synchronized video footage as your ground truth.

- Set the accelerometer to its maximum sampling rate (e.g., 50-100 Hz) to ensure all behavioral frequencies are captured [24].

2. Data Processing and Epoch Selection

- Manually annotate the video to create a ground truth dataset, labeling the start and end times of target behaviors.

- Synchronize the video labels with the high-frequency accelerometer data.

- Segment the accelerometer data into epochs of varying lengths (e.g., 1s, 5s, 10s) for analysis [17].

3. Machine Learning Model Training and Testing

- Extract features (e.g., mean, variance, FFT components) from the epoch-level accelerometer data.

- Train a machine learning classifier (e.g., K-Nearest Neighbours, Random Forest) using the features and video-based labels [25].

- To address class imbalance, apply resampling techniques like SMOTE-ENN [25].

4. Down-Sampling Analysis to Find Minimum Rate

- Down-sample your original high-frequency dataset to progressively lower rates (e.g., from 50 Hz to 40 Hz, 30 Hz, 20 Hz, 10 Hz).

- Re-run the classification model at each new, lower sampling rate.

- Plot the model's performance (e.g., F1-score) against the sampling rate. The minimum acceptable rate is the point just before a significant drop in classification accuracy occurs.
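
Reading the minimum rate off the performance curve can be automated once a tolerance for "significant drop" is chosen. In the sketch below the 0.02 tolerance, the function name, and the F1 values are all hypothetical; the tolerance in a real study should come from the variability of the classifier across folds. Note also that the down-sampling in Step 4 should low-pass filter the data before discarding samples, otherwise the down-sampling itself introduces aliasing.

```python
def minimum_acceptable_rate(f1_by_rate, max_drop=0.02):
    """Scan from the highest rate downward and return the last rate whose
    F1-score is still within max_drop of the full-rate score."""
    rates = sorted(f1_by_rate, reverse=True)
    reference = f1_by_rate[rates[0]]
    chosen = rates[0]
    for rate in rates[1:]:
        if reference - f1_by_rate[rate] > max_drop:
            break
        chosen = rate
    return chosen


# Made-up F1-scores per sampling rate (Hz), for illustration only.
example_scores = {50: 0.92, 40: 0.92, 30: 0.91, 20: 0.91, 10: 0.70}
print(minimum_acceptable_rate(example_scores))
```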

Workflow Diagram: Establishing Minimum Sampling Rates

The following diagram illustrates the logical workflow for establishing a minimum sampling rate, from data collection to validation.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Specification in Behavioral Research |

|---|---|

| Tri-axial Accelerometer | The core sensor for capturing movement in three planes (X, Y, Z). Capacitive MEMS type is suitable for low-frequency animal movement [24]. |

| GPS Collar | Provides spatiotemporal location data, essential for correlating accelerometer-derived behaviors like grazing with specific environmental contexts [25]. |

| K-Nearest Neighbours (KNN) Classifier | A machine learning algorithm effective for behavior classification, especially when combined with resampling techniques to handle imbalanced data [25]. |

| SMOTE-ENN Resampling | A combined data pre-processing technique that synthesizes new minority class instances (SMOTE) and cleans overlapping data (ENN) to improve model performance on rare behaviors [25]. |

| ACT4Behav Pipeline | A general-purpose accelerometer data processing pipeline that allows for behavior-specific optimization of filtering, window segmentation, and feature selection [17]. |

Foundational Concepts: Understanding Signal Fidelity and Aliasing

What is accelerometer signal aliasing and why is it a critical concern in animal studies?

Accelerometer signal aliasing is a distortion that occurs when a signal is sampled at an insufficient rate, causing high-frequency movements to be misrepresented as lower-frequency patterns in the data [24]. This is a fundamental concern in animal studies because it can lead to the misclassification of behaviors; for instance, a rapid head movement in a cow might be misinterpreted as a slower, ambling gait. This error compromises the validity of activity budgets and behavioral analyses, potentially leading to incorrect scientific conclusions and ineffective therapeutic interventions [24] [26]. Ensuring high signal fidelity is therefore paramount for producing reliable, interpretable data.

What technical specifications of a sensor most directly impact its susceptibility to aliasing?

The sampling rate, or sampling frequency (measured in Hertz, Hz), is the most direct technical specification affecting aliasing. It defines the number of data points collected per second [24]. According to the Nyquist theorem, to accurately represent a signal, the sampling rate must be at least twice the highest frequency component of the movement being measured. For very rapid animal movements (e.g., a mouse's whisker twitch or a bird's wingbeat), a higher sampling rate is required to avoid aliasing. Furthermore, the type of accelerometer technology—whether piezoelectric (AC-coupled, detecting only dynamic acceleration) or capacitive MEMS (DC-coupled, detecting both static and dynamic acceleration)—can influence the device's ability to capture certain movement qualities and postures, which indirectly relates to overall signal integrity [24].

Sensor Placement and Selection Guide

How does sensor placement on an animal's body influence the fidelity of the signal for different research applications?

The optimal sensor placement is entirely dependent on the specific behaviors of interest. A device mounted on a collar or ear tag captures gross head and neck movements, which is ideal for monitoring feeding or drinking behaviors in cattle [27]. A harness-mounted sensor on the torso (back or chest) is better suited for assessing overall gait, posture, and general locomotion [24]. Implantable sensors provide data on core physiological processes and are less susceptible to motion artifacts from the external environment but require surgical intervention [28]. The following table summarizes the primary considerations for different placement locations.

Table 1: Comparison of Animal Sensor Placement Locations

| Placement Location | Ideal Research Applications | Key Considerations |

|---|---|---|

| Collar | Monitoring feeding, rumination, and head movement patterns; long-term ecological studies [27]. | Signal can be influenced by collar rotation; may not accurately represent full-body movement. |

| Harness (Back/Chest) | Assessing overall activity levels, gait analysis, posture, and general locomotion patterns [24] [29]. | Provides a good representation of the body's center of mass; harness fit is critical to prevent chafing and signal noise. |

| Head-Mounted | Fine-scale behavioral classification, spatial tracking (when combined with GPS), and specific head movement analysis [30]. | Device miniaturization is critical to avoid impeding natural behavior; can be highly intrusive. |

| Ear Tag | Large-scale livestock monitoring for behaviors like rumination and estrus detection [27]. | Practical for farm use; signal is specific to head movement. |

| Implantable | Monitoring core body temperature, deep physiological processes, and in scenarios where external devices are not feasible [28]. | Provides the highest protection from environmental damage; requires surgery, raising ethical and welfare considerations. |

What are the key characteristics of different accelerometer technologies that researchers should consider?

The core technology inside an accelerometer dictates its performance characteristics. The two most common types used in biologging are piezoelectric and capacitive MEMS accelerometers [24]. Piezoelectric sensors are AC-coupled, meaning they are excellent at capturing dynamic, high-frequency motions but cannot measure static forces like gravity, making them less ideal for determining body orientation. Capacitive MEMS accelerometers are DC-coupled, allowing them to measure both dynamic movement and static gravitational force, which enables the distinction between different postures (e.g., sitting vs. standing) [24]. The number of measurement axes (uni-axial, bi-axial, or tri-axial) is also crucial, with tri-axial sensors providing the most comprehensive data on movement in three-dimensional space [24].

Table 2: Key Accelerometer Technologies for Animal-Borne Sensors

| Technology Type | Key Operating Principle | Strengths | Weaknesses |

|---|---|---|---|

| Piezoelectric | AC-coupled; measures dynamic acceleration via voltage generated from deformation of a crystal [24]. | Well-suited for capturing high-frequency, high-magnitude vibrations and movements. | Cannot measure static acceleration (gravity), thus cannot determine body orientation or posture. |

| Capacitive MEMS | DC-coupled; measures capacitance changes from the displacement of a seismic mass between plates [24]. | Measures both dynamic movement and static gravitational pull, enabling posture detection; widely used in consumer electronics. | May be less suited for extremely high-frequency vibrations compared to specialized piezoelectric sensors. |

Troubleshooting Common Data Fidelity Issues

We are observing unexpected, high-frequency noise in our data. What are the primary sources of such artifacts and how can they be mitigated?

High-frequency noise can originate from multiple sources. Technically, it can result from electromagnetic interference from other electronic equipment or poor connection integrity. Biologically, it can be caused by the sensor not being firmly attached to the animal, leading to independent movement of the device (e.g., a loose collar or harness) [24]. To mitigate this, ensure sensors are securely fitted according to the animal's size and morphology, use devices with protective casing to minimize external interference, and apply low-pass digital filters during data processing to remove frequencies above those biologically plausible for the study species [24].
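
As an illustration of the low-pass filtering step, the sketch below implements a first-order (single-pole) IIR filter using only the standard library. It is one simple option among many, the function name and cutoff are assumptions, and it only attenuates noise that was recorded correctly: content that was already aliased during sampling cannot be removed this way.

```python
import math


def low_pass(samples, fs_hz, cutoff_hz):
    """First-order (single-pole) IIR low-pass filter."""
    if not 0 < cutoff_hz < fs_hz / 2:
        raise ValueError("cutoff must lie between 0 and fs/2")
    dt = 1.0 / fs_hz
    rc = 1.0 / (2 * math.pi * cutoff_hz)
    alpha = dt / (rc + dt)  # smoothing factor between 0 and 1
    out = [samples[0]]
    for x in samples[1:]:
        out.append(out[-1] + alpha * (x - out[-1]))
    return out


# Synthetic 100 Hz recording: pure sample-to-sample jitter of +/- 1 g.
jitter = [1.0 if i % 2 == 0 else -1.0 for i in range(200)]
smoothed = low_pass(jitter, fs_hz=100, cutoff_hz=5)
print(max(abs(v) for v in smoothed[50:]))
```

A first-order filter has a gentle roll-off; sharper designs (for example a higher-order Butterworth filter) are commonly used when the noise lies close to the behavioral band.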

Our machine learning model performs well on training data but fails to generalize to new individuals. What validation error might this indicate?

This is a classic sign of overfitting, a prevalent challenge in machine learning where a model memorizes the training data, including its noise and specific individual characteristics, rather than learning the underlying generalizable patterns of the behavior [26]. A review found that 79% of animal accelerometer studies did not adequately validate their models to robustly identify this issue [26]. This can be caused by a lack of independence between training and test sets, often due to data leakage, where information from the test data inadvertently influences the training process [26]. To prevent this, it is essential to use rigorous validation techniques, such as training on data from one set of individuals and testing on a completely separate, unseen set of individuals (e.g., leave-one-subject-out cross-validation) [26] [29].

Experimental Protocols for Signal Validation

What is a robust protocol for validating a sensor placement and classification model for a new species or behavior?

A robust validation protocol ensures that your model can accurately identify behaviors in new, unseen individuals. The following workflow, utilized by benchmarks like the Bio-logger Ethogram Benchmark (BEBE), outlines a rigorous methodology [29].

- Data Collection & Annotation: Collect accelerometer data from multiple individuals simultaneously with video recordings. Annotate the video to create a ground-truthed dataset linking accelerometer signals to specific behaviors [29].

- Independent Data Partitioning: Split the data into three independent sets: a Training Set (e.g., 70%) for model learning, a Validation Set (e.g., 15%) for tuning model hyperparameters, and a Test Set (e.g., 15%) for the final, unbiased evaluation. The split should be performed by individual animal to prevent data leakage and ensure the model is tested on completely novel subjects [26] [29].

- Model Training & Tuning: Train your machine learning model (e.g., a Random Forest or Deep Neural Network) on the Training Set. Use the Validation Set to adjust model settings (hyperparameters) to optimize performance without overfitting [26] [29].

- Final Model Evaluation: Use the held-out Test Set, which has never been used during training or tuning, to obtain a final performance metric (e.g., accuracy, F1-score). This score reflects how well your model will generalize to new data [26] [29].
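
The partitioning step can be sketched by assigning whole animals, not individual windows, to each set. The function name, the fixed seed, and the 20-animal example are assumptions; with very few animals the rounding below can leave a set empty, in which case leave-one-subject-out cross-validation is the more practical choice.

```python
import random


def split_by_animal(animal_ids, fractions=(0.70, 0.15, 0.15), seed=0):
    """Assign every window of an animal to exactly one of train / val / test."""
    animals = sorted(set(animal_ids))
    random.Random(seed).shuffle(animals)
    n_train = round(fractions[0] * len(animals))
    n_val = round(fractions[1] * len(animals))
    groups = {
        "train": set(animals[:n_train]),
        "val": set(animals[n_train:n_train + n_val]),
        "test": set(animals[n_train + n_val:]),
    }
    return {name: [i for i, a in enumerate(animal_ids) if a in members]
            for name, members in groups.items()}


# Hypothetical dataset: 200 windows from 20 animals, 10 windows each.
ids = [f"animal{i % 20}" for i in range(200)]
split = split_by_animal(ids)
print({name: len(idx) for name, idx in split.items()})
```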

How can researchers control for variation between individual sensors and animals?

A study found that differences between individual accelerometer devices can be a significant source of error, with variations detected in 80% of calculated metrics [31]. Furthermore, individual animal variation and temporal effects (e.g., week of study) also introduce variability [31]. To control for this:

- Sensor Calibration: Calibrate all sensors before deployment according to manufacturer specifications.

- Randomized Rotation: In controlled studies, use a Latin-square design where sensors are systematically rotated among animals across different time periods. This helps to disentangle the variance caused by the animal from the variance inherent to the specific sensor [31].

- Statistical Control: Account for "individual" and "sensor" as random effects in statistical models to partition the variance appropriately [31].
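
A sensor rotation schedule for the Latin-square design can be generated with a cyclic shift, as sketched below. This is one simple construction (the function name and labels are hypothetical); it requires as many time periods and sensors as animals, and other Latin squares are equally valid.

```python
def latin_square_rotation(sensors, animals):
    """Cyclic Latin square: schedule[period][animal] gives the sensor to fit."""
    if len(sensors) != len(animals):
        raise ValueError("a Latin square needs equal numbers of sensors and animals")
    n = len(sensors)
    return [{animals[j]: sensors[(j + period) % n] for j in range(n)}
            for period in range(n)]


schedule = latin_square_rotation(["S1", "S2", "S3"], ["A", "B", "C"])
for period, assignment in enumerate(schedule, start=1):
    print(period, assignment)
```

Every animal wears every sensor exactly once, and every sensor is in use in every period, which is what allows the animal and sensor effects to be separated statistically.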

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Toolkit for Sensor-Based Animal Behavior Studies

| Tool / Reagent | Function & Purpose |

|---|---|

| Tri-axial Accelerometer | The core sensor that measures acceleration in three spatial planes (X, Y, Z), providing detailed movement data for behavior classification [24] [29]. |

| Bio-logger Ethogram Benchmark (BEBE) | A public benchmark comprising diverse, annotated datasets used to validate and compare the performance of different machine learning models for behavior classification [29]. |

| Random Forest / Deep Neural Networks | Machine learning algorithms used to classify raw or processed accelerometer data into specific behavioral states. Evidence suggests deep neural networks may outperform classical methods, especially with large datasets [29]. |

| Cross-Validation Framework | A statistical technique, particularly "leave-one-subject-out" cross-validation, used to assess how a model will generalize to an independent dataset and to guard against overfitting [26]. |

| Isolated & Non-isolated DC-DC Converters | Critical power management components in sensor design. Isolated converters protect sensitive sensor electronics from power surges, while non-isolated versions are more compact and efficient for space-constrained wearable devices [28]. |

Advanced Topics and Future Directions

What emerging technologies are shaping the future of sensor-based animal monitoring?

The field is rapidly evolving with several key trends:

- Miniaturization and Integration: Devices are becoming smaller, lighter, and capable of integrating multiple sensors (e.g., GPS, accelerometer, gyroscope, physiological sensors) into a single "multi-modal" platform, providing a more holistic view of an animal's state and environment [30].

- Artificial Intelligence and Self-Supervised Learning: AI is moving beyond simple classification. Self-supervised learning techniques, where models are pre-trained on vast amounts of unlabeled data (e.g., human accelerometer data), show promise for improving classification accuracy in new species, especially when labeled training data is scarce [29].

- Advanced Power Management: Research into AI-driven power management strategies is optimizing energy consumption in wearable and implantable sensors, which is critical for enabling long-term, remote monitoring studies [28].

How can I systematically diagnose a persistent signal fidelity problem?

A structured troubleshooting tree is the most effective way to isolate the root cause of a persistent problem, systematically checking from data collection through to analysis.

Frequently Asked Questions (FAQs)

Q1: What is the primary risk of using a low sampling rate for my accelerometer study? The primary risk is aliasing, a signal distortion that occurs when high-frequency movements are sampled at an insufficient rate, making them appear as lower-frequency movements that never actually occurred [32]. This can severely misrepresent rapid animal behaviors. Additionally, sampling below the required rate can cause a loss of critical information on signal amplitude, which is a key proxy for energy expenditure [6].

Q2: How do I determine the minimum sampling frequency needed for my research? The minimum sampling frequency is governed by the Nyquist-Shannon sampling theorem. It states that to accurately record a behavior, your sampling rate must be at least twice the frequency of the fastest movement you need to characterize [6]. For short-burst behaviors, an even higher rate may be necessary.

Table 1: Recommended Sampling Guidelines for Different Research Objectives

| Research Objective | Behavior Type | Recommended Minimum Sampling Frequency | Key Consideration |

|---|---|---|---|

| Behavior Classification | Short-burst (e.g., swallowing, prey capture) | 2x Nyquist Frequency (e.g., 100 Hz for a 28 Hz behavior) [6] | Essential for capturing brief, rapid events. |

| Behavior Classification | Rhythmic, long-duration (e.g., flight, walking) | Nyquist Frequency (e.g., 12.5 Hz for a 6 Hz wingbeat) [6] | Lower frequencies can suffice for sustained, periodic movements. |

| Energy Expenditure (Amplitude/ODBA) | General activity | 10-100 Hz [33] [6] | Accuracy depends on both sampling frequency and the duration of the analysis window. |

Q3: What are the practical trade-offs between raw high-resolution and processed low-resolution data? The choice involves a direct trade-off between data fidelity and operational constraints.

Table 2: Raw High-Resolution vs. Processed Low-Resolution Data Trade-Offs

| Factor | Raw High-Resolution Data | Processed Low-Resolution Data |

|---|---|---|

| File Size & Storage | Very large files (by analogy with digital imaging, e.g., 18+ MB for an 18-megapixel raw file) [34]. | Significantly smaller files (half to one-fifth the size) [34]. |

| Battery Life & Memory | Faster battery drain and memory fill, limiting deployment duration [6]. | Longer battery life and deployment periods; more data can be stored [6]. |

| Data Flexibility | High flexibility for post-processing, by analogy with raw image files, which allow correction of exposure and recovery of detail [34]. | Limited post-processing flexibility; information is permanently lost during compression/processing [34]. |

| Information Content | Records all sensor data, enabling discovery of unforeseen patterns [35]. | Contains only a pre-determined subset of information, which may omit biologically relevant signals [35]. |

| Usability | Requires significant post-processing effort before analysis [34]. | Ready for immediate analysis and use [34]. |

Q4: How does sensor placement affect my data, and can I combine datasets from different studies? Sensor placement critically affects signal amplitude. Studies show that identical behaviors can generate significantly different acceleration metrics depending on tag placement. For example:

- In pigeons, upper and lower back-mounted tags varied in Dynamic Body Acceleration (DBA) by 9% [35].

- In kittiwakes, tail- and back-mounted tags varied in DBA by 13% [35].

These differences mean that combining datasets from studies using different attachment protocols can generate trends with no biological meaning. Consistency in placement and calibration is essential for valid comparisons [35].

Q5: Why is accelerometer calibration critical, and how can I perform it in the field? Calibration is crucial because manufacturing processes can introduce sensor inaccuracies. An uncalibrated tag can lead to errors in DBA of up to 5% [35]. A simple "6-Orientation" (6-O) method can be performed in the field:

- Place the motionless tag in six defined orientations (like the faces of a die) so each accelerometer axis points up and down.

- Record data for about 10 seconds in each position.

- Use the recorded maxima to derive correction factors for each axis, ensuring the vectorial sum equals 1.0 g [35].

This calibration data should be archived with all subsequent data collections.

Troubleshooting Guides

Problem: Inability to Classify Short-Burst Behaviors

- Symptoms: Classifier fails to identify rapid events like swallowing or escape maneuvers; signal for fast behaviors appears distorted.

- Possible Causes:

- Insufficient Sampling Frequency: The sampling rate is below the Nyquist frequency for the target behavior.

- Incorrect Analysis Window: The window length used to classify behaviors is too long to capture brief events.

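- Solutions:

- Increase the Sampling Frequency: Record at a rate above the Nyquist frequency of the target behavior; short-burst behaviors may need an even higher rate [6].

- Shorten the Analysis Window: Use windows short enough to isolate brief events (e.g., 1-3 seconds).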

Problem: Poor Generalization of Energy Expenditure Models

- Symptoms: A model that works well in one study population or season performs poorly in another, with inconsistent ODBA or VeDBA values.

- Possible Causes:

- Uncalibrated Sensors: Systematic error from uncalibrated sensors creates biased data [35].

- Variable Tag Placement: Differences in tag attachment or position between studies alter signal amplitude [35].

- Confounding Covariables: Changes in signal amplitude are conflated with other factors, such as season or device type [35].

- Solutions:

- Implement Pre-Deployment Calibration: Always perform and archive a calibration (e.g., the 6-O method) before deployment [35].

- Standardize Attachment Protocols: Use a consistent, documented method for tag placement and attachment across all subjects and studies.

- Use Raw Data where Possible: Archiving raw data allows for re-processing with improved methods in the future [35].

Experimental Protocols for Key Studies

Protocol 1: Field Calibration of Accelerometers using the 6-O Method This protocol, derived from [35], ensures the absolute accuracy of your accelerometers before animal deployment.

- Equipment Setup: Secure the data logger to a flat, stable surface.

- Data Collection: Orient the logger so that each of its three primary axes points directly upward and then downward, mimicking the six faces of a cube. In each orientation, keep the logger perfectly still for approximately 10 seconds while recording data.

- Data Processing:

- For each static period, calculate the vectorial sum of the acceleration: ||a|| = √(x² + y² + z²).

- For a perfect sensor, this sum should be 1.0 g in all orientations. Deviations indicate error.

- Calculate axis-specific correction factors to ensure both the minimum and maximum for each axis are symmetrical, then apply a gain to normalize the vector sum to 1.0 g.

- Archiving: The derived correction factors must be saved and applied to all subsequent data collected with that tag. The raw calibration data should be archived with the study data.
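
The data-processing step of Protocol 1 can be sketched with a two-point correction per axis: an offset that centers the +1 g and -1 g readings, and a gain that scales them to unit magnitude. This is a common scheme and is offered here as an assumption; it is not necessarily the exact procedure in [35]. The function names and the readings are hypothetical.

```python
import math


def axis_correction(reading_up_g, reading_down_g):
    """Offset and gain that map the static +1 g / -1 g readings to exactly +1 / -1."""
    offset = (reading_up_g + reading_down_g) / 2.0
    gain = 2.0 / (reading_up_g - reading_down_g)
    return offset, gain


def apply_correction(raw_xyz, corrections):
    """Apply per-axis (offset, gain) pairs to one x, y, z sample."""
    return tuple((v - off) * gain for v, (off, gain) in zip(raw_xyz, corrections))


def vector_norm(xyz):
    """Vectorial sum ||a|| = sqrt(x^2 + y^2 + z^2)."""
    return math.sqrt(sum(v * v for v in xyz))


# Hypothetical mean readings (g) with each axis pointing up, then down.
corrections = [
    axis_correction(1.04, -0.98),  # x axis
    axis_correction(0.97, -1.01),  # y axis
    axis_correction(1.02, -1.02),  # z axis
]
raw_static = (1.04, -0.02, 0.0)  # tag at rest with the x axis pointing up
calibrated = apply_correction(raw_static, corrections)
print(vector_norm(raw_static), vector_norm(calibrated))
```

Storing the `corrections` list alongside the raw calibration recordings allows the same factors to be re-applied, or re-derived, for every later deployment of that tag.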

Protocol 2: Determining Behavior-Specific Sampling Frequencies This protocol, based on [6], uses a combination of high-speed videography and accelerometry to determine the minimum required sampling rate for your species and behaviors of interest.

- Experimental Setup:

- Fit animals with accelerometers logging at a high frequency (e.g., 100 Hz).

- Simultaneously record the animal's behavior with synchronized high-speed cameras (e.g., 90 fps).

- Data Annotation:

- Use the video footage to precisely annotate the start and end times of specific behaviors (e.g., flight, swallowing, foraging).

- Extract the corresponding accelerometer data segments.

- Frequency Analysis:

- For each behavior, perform a frequency analysis (e.g., using a Fast Fourier Transform) to identify the dominant and maximum frequencies present in the signal.

- The highest frequency of biological interest (f_max) dictates the Nyquist frequency (2 × f_max).

- Down-Sampling Test:

- Digitally down-sample the original high-frequency data to progressively lower rates.

- Test the performance of behavior classifiers or the accuracy of amplitude metrics (like VeDBA) at each lower rate.

- The minimum acceptable sampling frequency is the lowest rate that does not result in a statistically significant loss of performance or accuracy.
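
The down-sampling test for an amplitude metric can be sketched as follows. VeDBA is computed here by subtracting a running mean (the static component) from each axis and taking the vector norm of the remainder. The 2-second smoothing window, the function names, and the synthetic 5 Hz signal are assumptions, and the decimation deliberately skips low-pass filtering so that it mimics a logger that simply samples more slowly.

```python
import math


def running_mean(values, window):
    """Centered running mean with shrinking windows at the edges."""
    half = window // 2
    out = []
    for i in range(len(values)):
        seg = values[max(0, i - half): i + half + 1]
        out.append(sum(seg) / len(seg))
    return out


def mean_vedba(x, y, z, fs_hz, smooth_s=2.0):
    """Mean vectorial dynamic body acceleration over the whole recording."""
    window = max(1, int(fs_hz * smooth_s))
    dyn = [[v - s for v, s in zip(axis, running_mean(axis, window))]
           for axis in (x, y, z)]
    vedba = [math.sqrt(dx * dx + dy * dy + dz * dz) for dx, dy, dz in zip(*dyn)]
    return sum(vedba) / len(vedba)


def decimate(values, factor):
    """Keep every factor-th sample (no anti-aliasing filter applied)."""
    return values[::factor]


# Synthetic 10 s recording at 100 Hz: a 5 Hz movement on x, gravity on z.
fs = 100
x = [0.5 * math.sin(2 * math.pi * 5 * i / fs) for i in range(10 * fs)]
y = [0.0] * len(x)
z = [1.0] * len(x)

full_rate = mean_vedba(x, y, z, fs)
at_10_hz = mean_vedba(decimate(x, 10), decimate(y, 10), decimate(z, 10), fs // 10)
print(full_rate, at_10_hz)
```

In this constructed case, sampling at 10 Hz (exactly twice the 5 Hz movement) lands on the zero crossings, so the estimated amplitude collapses even though the Nyquist criterion is nominally met, which illustrates why amplitude metrics need a margin above it.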

Data Acquisition Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Materials for Accelerometer-Based Animal Behavior Research

| Item | Function & Explanation | Example Models / Types |
|---|---|---|
| Tri-Axial Accelerometer Logger | Measures acceleration in three spatial dimensions (vertical, lateral, anterior-posterior), enabling detailed reconstruction of posture and movement [35]. | Daily Diary tags [35], ActiGraph GT3X/+ [33]. |
| Data Acquisition (DAQ) System | Powers the sensor and acquires (digitizes) the analog signal from the accelerometer for recording and analysis [36]. | National Instruments CompactDAQ with NI 9234 module [36] [37]. |
| Leg-Loop Harness | A common attachment method for birds and some mammals that secures the logger to the animal's body with minimal impact on welfare and behavior [6]. | Custom-made from Teflon tubing or similar material [6]. |
| High-Speed Video Camera | Provides ground-truth behavioral data that is synchronized with accelerometer signals, essential for validating and annotating behaviors [6]. | GoPro Hero (90 fps) [6]. |
| Anti-Aliasing Filter | An analog low-pass filter that removes high-frequency signal components before digitization to prevent aliasing artifacts that cannot be fixed later [32] [38]. | Butterworth Filter (general vibration), Bessel Filter (shock/transient events) [38]. |
| Calibration Platform | A precisely leveled, stable surface used for the 6-O calibration method to establish the baseline accuracy of the accelerometers [35]. | Standard laboratory bench with leveling feet. |

Frequently Asked Questions (FAQs)

Q1: What are the primary advantages of using video data to validate accelerometer-based behavior classifications? Video annotation provides the ground truth needed to build reliable supervised machine learning models. By directly observing an animal's behavior on video and matching it to the corresponding accelerometer signal, researchers can create a labeled "training dataset." This dataset is used to teach a classification model, such as a Random Forest, to recognize patterns in the accelerometer data and automatically identify behaviors in future datasets where video is not available [21]. This process is crucial for identifying specific, often rare, behaviors like grooming or feeding [12] [39].

Q2: How can ECG signals complement accelerometer data in activity recognition? Accelerometer (ACC) and electrocardiogram (ECG) data offer complementary strengths. ACC signals excel at recognizing gross motor activities, while ECG signals, which reflect cardiac dynamics, are more sensitive to physiological states and are better suited to identifying the individual subject. The table below summarizes their performance in unsupervised recognition tasks [40]:

Table: Comparative Performance of ECG and Accelerometer Data in Unsupervised Recognition Tasks

| Modality | Primary Strength | Performance Metrics | Reported Values (NMI / Accuracy) |
|---|---|---|---|
| Accelerometer (ACC) | Human Activity Recognition | Normalized Mutual Information (NMI) / Accuracy | 0.728 / 0.817 [40] |
| Electrocardiogram (ECG) | Subject Identification | Normalized Mutual Information (NMI) / Accuracy | 0.641 / 0.500 [40] |

Q3: What are common data synchronization challenges when using multiple sensors, and how can they be resolved? A major challenge is the post-hoc fusion of data from different devices, which can introduce variability and reduce performance compared to using a single, optimal modality [40]. To ensure precise synchronization:

- Use Integrated Sensor Platforms: Whenever possible, use a single device that records multiple data streams (e.g., ACC and ECG) on a unified timeline.

- Implement Synchronization Protocols: At the start and end of data collection, introduce a clear, simultaneous event (e.g., a specific movement sequence like three jumps) that is visible in all data streams (ACC, ECG, and video). This event creates a shared timestamp to align all data during processing.
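Once a shared synchronization event has been recorded, the offset between two streams can be estimated by sliding one against the other and keeping the lag with the strongest match. The sketch below is a minimal cross-correlation search under the assumption that both streams have already been resampled to the same rate; the spike trains stand in for a three-jump event and, like the name `best_lag`, are hypothetical.

```python
def best_lag(reference, other, max_lag):
    """Lag (in samples) by which `other` trails `reference`,
    chosen as the lag with the highest cross-correlation score."""
    def score(lag):
        return sum(reference[i] * other[i + lag]
                   for i in range(len(reference))
                   if 0 <= i + lag < len(other))
    return max(range(-max_lag, max_lag + 1), key=score)

def spike_train(length, positions):
    """Toy signal: 1.0 at the sync-event samples, 0.0 elsewhere."""
    return [1.0 if i in positions else 0.0 for i in range(length)]

fs = 50  # assumed common sampling rate (Hz) after resampling
stream_a = spike_train(40, {10, 14, 18})   # sync event as seen by device A
stream_b = spike_train(40, {13, 17, 21})   # same event, recorded 3 samples later

lag = best_lag(stream_a, stream_b, max_lag=10)
print(f"stream_b trails stream_a by {lag} samples ({lag / fs:.2f} s)")
```

Repeating the estimate on the end-of-recording event gives a second offset; a difference between the two suggests clock drift that should be corrected before fusing the streams.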

Q4: Why is my model performing well on training data but poorly on new, unseen data from a different study population? This is typically a problem of model generalizability. Models often fail when the new data differs from the training data in key aspects [12]. To improve generalizability:

- Maximize Variability in Training Data: Collect training data from a diverse set of individuals, across different seasons, and under various environmental conditions [12] [35].

- Standardize Behavior Durations: Ensure your training dataset contains a balanced representation of all behaviors of interest, rather than being skewed toward common behaviors (e.g., resting). This prevents the model from being biased toward over-represented behaviors [21].

- Calibrate Your Sensors: Inaccurate accelerometer readings can introduce significant error. Perform a simple static calibration before deployment to correct for sensor-specific biases [35].
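The balancing recommendation in the list above can be implemented in several ways. The sketch below shows one simple option, random undersampling to the size of the rarest class; this is an assumed technique for illustration, not necessarily the approach taken in [21]. The segment data and the function name are hypothetical.

```python
import random
from collections import Counter, defaultdict

def balance_by_undersampling(segments, seed=0):
    """Return a subset of (features, label) segments with equal counts per label."""
    by_label = defaultdict(list)
    for segment in segments:
        by_label[segment[1]].append(segment)
    target = min(len(group) for group in by_label.values())
    rng = random.Random(seed)          # fixed seed keeps the subset reproducible
    balanced = []
    for group in by_label.values():
        balanced.extend(rng.sample(group, target))
    return balanced

# Hypothetical labeled segments: resting dominates, grooming is rare.
segments = (
    [([0.01 * i], "resting") for i in range(200)]
    + [([0.5 + 0.01 * i], "walking") for i in range(60)]
    + [([0.2 + 0.01 * i], "grooming") for i in range(15)]
)

balanced = balance_by_undersampling(segments)
print(Counter(label for _, label in balanced))
```

Undersampling discards data from common behaviors, so when rare behaviors have very few examples it may be preferable to collect more annotated footage of them instead.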

Troubleshooting Guides

Issue 1: Poor Performance in Classifying Specific Behaviors

Problem: Your machine learning model consistently fails to accurately identify certain behaviors, particularly rare or transitional ones (e.g., grooming in cats, scratching in goats).

Solution: Refine your model's training data and features.

- Step 1: Analyze the Training Data. Check the distribution of behaviors in your training dataset. If a behavior like "running" is rare, the model has fewer opportunities to learn it.

- Step 2: Standardize Behavior Durations. Balance your dataset by ensuring you have a similar number of examples for each target behavior. This prevents the model from favoring the most common behaviors [21].

- Step 3: Optimize Data Frequency. For fast-paced behaviors (e.g., running), use higher-frequency accelerometer data (e.g., 40 Hz). For slower, more periodic behaviors (e.g., grazing, grooming), data averaged over 1-2 seconds (1 Hz) can yield better accuracy [21].

- Step 4: Add Descriptive Variables. Calculate and include additional metrics from the raw accelerometer data beyond basic static and dynamic acceleration. Features like the dominant power spectrum frequency, amplitude, and the running standard error of the waveform can significantly improve the model's ability to discriminate between similar behaviors [21].
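As a rough illustration of Step 4, the sketch below derives a few descriptive variables from fixed-length windows of a single axis: the window mean (an estimate of static acceleration), the peak-to-peak amplitude, and the standard error. The window length, the toy signal, and the function name are assumptions made for this example; real feature sets are usually computed per axis and combined with spectral features.

```python
import math
import statistics

def window_features(samples, window):
    """Mean, peak-to-peak amplitude and standard error for each
    non-overlapping window of `window` samples."""
    features = []
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        features.append({
            "mean": statistics.fmean(chunk),
            "amplitude": max(chunk) - min(chunk),
            "std_error": statistics.stdev(chunk) / math.sqrt(window),
        })
    return features

# Hypothetical heave-axis trace (g): an active window followed by a still one.
heave = [0.0, 1.0, 0.0, 1.0, 2.0, 2.0, 2.0, 2.0]
for row in window_features(heave, window=4):
    print({name: round(value, 3) for name, value in row.items()})
```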

Issue 2: Inconsistent Results After Changing Sensor Placement or Type

Problem: A model developed with sensors on an animal's back performs poorly when deployed with sensors on the tail, or when using a different brand of accelerometer.

Solution: Implement sensor calibration and placement protocols.

- Step 1: Calibrate All Accelerometers. Before deployment, calibrate each sensor using a simple static method. Place the sensor motionless in six different orientations (like the faces of a die) and record the output. Use this data to correct for any biases in the sensor's measurements, ensuring the vector sum of the three axes reads 1 g in all orientations [35].

- Step 2: Document Placement Precisely. The position of the tag on the body (e.g., upper vs. lower back, tail mount) can change the amplitude of the acceleration signal. Document the exact placement and consider it a fixed variable in your study. Avoid comparing DBA (Dynamic Body Acceleration) values from tags placed in different locations, as amplitude differences may not reflect true differences in energy expenditure [35].

Table: Impact of Device Placement on Acceleration Metrics (VeDBA)

| Species | Comparison | Reported Variation in VeDBA |
|---|---|---|
| Pigeon | Upper vs. Lower Back Mount | ~9% [35] |
| Kittiwake | Back vs. Tail Mount | ~13% [35] |
| Human | Back vs. Waist Mount | ~0.25 g at intermediate running speeds [35] |
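For readers unfamiliar with how VeDBA is obtained, the sketch below shows one common formulation: static acceleration is estimated per axis with a running mean, subtracted from the raw signal, and the remaining dynamic components are combined as a vector magnitude. The smoothing window is a study-specific choice, and the value used here, the toy data, and the function names are assumptions for illustration only.

```python
import math

def running_mean(values, window):
    """Centred running mean; the window shrinks at the edges of the record."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - half): i + half + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed

def vedba(x, y, z, window):
    """Vectorial dynamic body acceleration (g) for each sample."""
    axes = (x, y, z)
    static = [running_mean(axis, window) for axis in axes]
    return [
        math.sqrt(sum((axis[i] - st[i]) ** 2 for axis, st in zip(axes, static)))
        for i in range(len(x))
    ]

# Hypothetical 10-sample record (g): still on x and y, oscillating on z.
x = [0.0] * 10
y = [0.0] * 10
z = [1.0, 1.2, 0.8, 1.2, 0.8, 1.2, 0.8, 1.2, 0.8, 1.0]

values = vedba(x, y, z, window=5)
print(round(sum(values) / len(values), 3))  # mean VeDBA over the record
```

Because the dynamic component depends on how much the tag itself moves, the same behavior recorded at two mounting positions can yield different VeDBA values, which is the effect summarized in the table above.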

Issue 3: Data Aliasing and Loss of Behavioral Detail

Problem: Your accelerometer is set to sample in short, intermittent bursts, and you suspect you are missing critical, short-duration behaviors.

Solution: Leverage continuous on-board processing or optimize burst sampling.

- Step 1: Implement On-Board Classification. Where technology allows, use trackers with integrated algorithms that process raw accelerometer data into behavior codes continuously on the device itself. This avoids the gaps that burst sampling leaves between recordings and provides a complete record of all behaviors [41].

- Step 2: Optimize Burst Sampling Intervals. If continuous sampling is not possible, the burst sampling interval must be carefully chosen. The table below shows how sampling interval affects the accuracy of time-activity budgets, especially for rare behaviors [41]:

Table: Error Ratios for Rare Behaviors at Different Sampling Intervals

| Sampling Interval | Impact on Rare Behavior Detection |
|---|---|
| Every 10 seconds | Minimal error |
| Every 5 minutes | Error ratios >1 become common for rare behaviors (e.g., flying, running) [41] |
| Every 10 minutes | Error ratios >1 are common, significantly distorting time-activity budgets [41] |
| Every 60 minutes | Severe inaccuracy for estimating daily distances and rare behaviors [41] |
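The effect of the sampling interval on rare behaviors can be explored with a simple simulation before deployment. The sketch below builds a hypothetical one-hour behavior record, subsamples it at two intervals, and compares the estimated share of a rare behavior with its true share. The error ratio is defined here as absolute error divided by the true share, which is an assumption for this example and may differ from the definition used in [41].

```python
def time_budget(labels):
    """Share of records assigned to each behavior."""
    total = len(labels)
    return {behavior: labels.count(behavior) / total for behavior in set(labels)}

def error_ratio(true_share, estimated_share):
    """Assumed definition: absolute error relative to the true share."""
    return abs(estimated_share - true_share) / true_share

# Hypothetical one-hour record at one label per second: mostly resting,
# with two short flight bouts (30 s and 20 s).
labels = ["rest"] * 3600
for start, length in ((100, 30), (2000, 20)):
    labels[start:start + length] = ["fly"] * length

true_fly = time_budget(labels)["fly"]
for interval in (10, 300):
    sampled = labels[::interval]               # one record every `interval` seconds
    est_fly = time_budget(sampled).get("fly", 0.0)
    print(f"every {interval} s: error ratio {error_ratio(true_fly, est_fly):.2f}")
```

In this toy record the 10-second schedule recovers the flight share, whereas the 5-minute schedule misses both bouts entirely, so flight disappears from the time-activity budget.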

Experimental Protocols for Data Validation

Protocol 1: Creating a Video-Annotated Training Dataset

This protocol is essential for developing a supervised machine learning model for behavior recognition [21] [39].

- Sensor Deployment: Equip study subjects with accelerometers. For terrestrial mammals, collar mounts are common; for birds, back or tail mounts are used. Ensure the sensor is securely attached to minimize movement artifacts [35].

- Video Recording: Simultaneously record high-quality video of the subjects for a representative period. The video should be clear enough for an expert to distinguish all behaviors of interest.

- Synchronization: Create a synchronization event at the start and end of recording (e.g., a specific series of taps on the sensor or a unique light flash visible to the camera).

- Behavior Annotation: A trained observer reviews the video and labels the occurrence and duration of specific behaviors (e.g., "grazing," "ruminating," "walking," "lying"). This can be done using software like BORIS or ELAN.

- Data Alignment: Precisely align the video annotations with the corresponding segments of the accelerometer data stream using the synchronization events. The result is a labeled dataset where each accelerometer data segment is paired with a behavior code.
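The alignment step can be sketched as a lookup from accelerometer timestamps into the annotated video intervals. The example below assumes annotations exported as (start, end, behavior) tuples in video time and a single clock offset obtained from the synchronization event; the function name, the offset value, and the data are hypothetical.

```python
def label_samples(sample_times, annotations, clock_offset=0.0, default="unlabeled"):
    """Assign a behavior label to each accelerometer timestamp.

    sample_times : accelerometer timestamps in seconds
    annotations  : (start, end, behavior) tuples in video time, in seconds
    clock_offset : seconds to add to video time to obtain accelerometer time
    """
    labels = []
    for t in sample_times:
        label = default
        for start, end, behavior in annotations:
            if start + clock_offset <= t < end + clock_offset:
                label = behavior
                break
        labels.append(label)
    return labels

# Hypothetical annotations and a 2 Hz accelerometer timeline.
annotations = [(0.0, 2.0, "lying"), (2.0, 3.5, "walking")]
sample_times = [i * 0.5 for i in range(10)]      # 0.0, 0.5, ..., 4.5 s

labels = label_samples(sample_times, annotations, clock_offset=0.5)
print(list(zip(sample_times, labels)))
```

Samples that fall outside every annotated interval keep the default label and can be excluded from the training dataset.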

Protocol 2: A Multi-Modal Workflow for Validating Cardiac and Behavioral States

This workflow integrates accelerometer and ECG data to provide a more holistic view of an animal's physiological and behavioral state [40] [42].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Materials for Multi-Modal Biologging Studies

| Item | Function & Application Notes |
|---|---|
| Tri-axial Accelerometer | Measures dynamic body acceleration in three dimensions (surge, heave, sway). The core sensor for quantifying movement and classifying behavior. Select based on size, weight, sampling frequency, and battery life [21] [35]. |
| Electrocardiogram (ECG) Sensor | Records the electrical activity of the heart. Used for subject identification and monitoring physiological response to activity. Can be integrated into wearable harnesses or implanted devices [40] [42]. |
| Video Recording System | Provides ground truth data for validating and labeling accelerometer signals. Critical for creating training datasets for supervised machine learning models [21] [39]. |
| Random Forest Classifier | A common and robust supervised machine learning algorithm. Used to train models that predict behavior from features extracted from accelerometer data [21]. |
| Dynamic Body Acceleration (DBA) Metrics | Metrics calculated from raw accelerometer data (ODBA: Overall DBA; VeDBA: Vectorial DBA). They serve as well-validated proxies for movement-based energy expenditure [41] [35]. |
| Synchronization Tool | A device or protocol (e.g., LED flash, NTP server, specific movement) to create a simultaneous mark in all data streams (video, ACC, ECG), enabling precise temporal alignment during analysis. |

Troubleshooting and Optimization: Correcting and Preventing Aliasing in Existing and Future Studies

Frequently Asked Questions (FAQs)

1. What is aliasing and why is it a problem in data collection? Aliasing is a phenomenon in signal processing where high-frequency components in a signal appear as false lower frequencies in sampled data [43] [44]. This occurs when a continuous signal is sampled at a rate that is too low to accurately represent the original signal [45]. In animal studies, aliasing can distort vibration data, leading to misinterpretation of an animal's movement patterns, gait, or physiological vibrations [9]. Once aliasing has occurred, it is difficult to detect and almost impossible to remove using software alone [46].
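The frequency at which an undersampled component reappears follows directly from the sampling rate. The short sketch below computes the apparent frequency of a pure tone after sampling, folding it into the range from 0 to half the sampling rate; the function name and example frequencies are illustrative.

```python
def aliased_frequency(f_signal, fs):
    """Apparent frequency (Hz) of a tone at f_signal when sampled at fs (Hz)."""
    folded = f_signal % fs
    return min(folded, fs - folded)

# A 30 Hz movement component recorded at different logger rates.
for fs in (100, 60, 40, 25):
    print(f"fs = {fs} Hz -> appears at {aliased_frequency(30, fs)} Hz")
```

At 100 Hz the component is recorded at its true 30 Hz, but at 40 Hz it appears at 10 Hz and at 25 Hz it appears at 5 Hz, where it could easily be mistaken for a slower behavior.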

2. How can I tell if my historical accelerometer data has aliasing? Diagnosing aliasing in historical data relies on identifying certain patterns, as the original high-frequency content is lost. Key indicators include:

- Unexplained Low-Frequency Components: Presence of low-frequency vibrations or tones that have no clear biological origin [9].

- Frequency Shifts: A noticeable shift in frequency content that correlates with changes in sampling rate rather than actual behavior.

- Non-Physical Signals: Frequency components that cannot be explained physically, such as periodic signals appearing at implausible times or at frequencies outside the animal's possible physiological range.