Performance Evaluation of Biomimetic Algorithms in Ecological Optimization: Advances and Applications in Biomedical Research

Abstract

This article provides a comprehensive performance evaluation of biomimetic optimization algorithms, exploring their foundational principles, methodological applications, and significant potential for solving complex ecological and biomedical problems. It systematically examines the inspiration drawn from natural systems—including evolutionary processes, swarm behaviors, and plant intelligence—to develop powerful metaheuristics. The scope encompasses a critical analysis of algorithmic robustness, scalability, and convergence properties, addressing common challenges like parameter sensitivity and premature convergence through innovative hybridization and adaptive strategies. By presenting rigorous validation frameworks and comparative case studies across drug discovery and clinical optimization domains, this work serves as an essential resource for researchers and drug development professionals seeking efficient, nature-inspired computational solutions.

The Biological Blueprint: Foundations of Nature-Inspired Metaheuristics

Historical Evolution and Chronological Emergence of Bio-Inspired Algorithms

Bio-inspired algorithms, also known as biomimetic algorithms, are metaheuristic methods modeled on biological and natural processes. They have emerged as compelling alternatives for complex computational problems characterized by high dimensionality, nonlinearity, and dynamic environments [1]. These algorithms emulate strategies from evolution, swarm behavior, foraging, and immune responses, offering robust and flexible problem-solving mechanisms where mathematical models are unavailable or too complex to derive [1]. Unlike classical optimization methods that rely on gradient information, bio-inspired algorithms are stochastic, population-based, and adaptive, which lets them search large, complex spaces without derivative information [2] [1].

The historical progression of these algorithms illustrates a continuous quest for robust, adaptive, and computationally efficient optimization techniques capable of addressing increasingly complex real-world problems across diverse domains including engineering, computational biology, renewable energy, and ecological planning [3] [1] [4]. This review systematically charts the chronological emergence of seminal bio-inspired algorithms, analyzes their comparative performance through experimental data, and examines their applications in ecological optimization research, providing researchers with a comprehensive reference for selecting and implementing appropriate algorithms for specific problem domains.

Historical Timeline of Major Bio-Inspired Algorithms

The development of bio-inspired algorithms spans nearly five decades, reflecting an expanding repertoire of biological metaphors applied to computational optimization. Table 1 summarizes the key milestones in this evolutionary journey, highlighting the year of introduction, algorithmic names, and their respective biological inspirations.

Table 1: Chronological Emergence of Major Bio-Inspired Algorithms

| Year | Algorithm | Type | Inspiration Source |
|---|---|---|---|
| 1975 | Genetic Algorithm (GA) [1] | Evolutionary | Natural selection & survival of the fittest |
| 1992 | Ant Colony Optimization (ACO) [1] | Swarm Intelligence | Ant foraging behavior & pheromone trails |
| 1995 | Particle Swarm Optimization (PSO) [5] [1] | Swarm Intelligence | Bird flocking & fish schooling |
| 1995 | Differential Evolution (DE) [2] | Evolutionary | Natural selection & vector differences |
| 2002 | Bacterial Foraging Optimization (BFO) [1] | Swarm/Foraging | E. coli foraging behavior |
| 2005 | Artificial Bee Colony (ABC) [6] [1] | Swarm Intelligence | Honeybee foraging behavior |
| 2009 | Cuckoo Search (CS) [3] [1] | Evolutionary | Brood parasitism of cuckoos |
| 2010 | Bat Algorithm (BA) [1] | Swarm Intelligence | Bat echolocation behavior |
| 2014 | Grey Wolf Optimizer (GWO) [3] [1] | Swarm Intelligence | Wolf pack hunting hierarchy |
| 2016 | Whale Optimization Algorithm (WOA) [1] | Swarm Intelligence | Bubble-net feeding of humpback whales |
| 2016 | Dragonfly Algorithm (DA) [1] | Swarm Intelligence | Static & dynamic swarming of dragonflies |
| 2017 | Salp Swarm Algorithm (SSA) [3] [1] | Swarm Intelligence | Chain foraging behavior of salps |
| 2023+ | Hybrid bio-inspired algorithms (BIAs) [1] | Hybrid | Multiple biological inspirations |

The evolution of bio-inspired algorithms has been largely driven by the need to overcome limitations of earlier models when applied to increasingly complex, high-dimensional problems [1]. Foundational techniques like Genetic Algorithm (GA) and Particle Swarm Optimization (PSO) introduced core principles of global search and population-based exploration but often suffered from premature convergence, poor exploitation near optima, and sensitivity to manually tuned parameters [1]. Newer paradigms emerged to address these issues, incorporating more sophisticated biological metaphors and adaptive mechanisms to better balance exploration and exploitation [1].

Algorithmic Mechanisms and Comparative Analysis

Fundamental Methodologies

Bio-inspired algorithms can be broadly categorized based on their underlying biological metaphors and operational mechanisms. The diagram below illustrates the taxonomic relationships and core inspirations of major algorithms.

Diagram 1: Taxonomy of Bio-Inspired Algorithms by Primary Inspiration Source

Evolutionary Algorithms, including Genetic Algorithms (GA) and Differential Evolution (DE), are inspired by biological evolution [7] [2] [8]. GA mimics Darwinian evolution by applying selection, crossover, and mutation to a population of candidate solutions [7] [8]. DE builds a trial vector for each population member by adding a scaled difference of two other members to a third and crossing the result with the current member; the trial replaces its parent only if its fitness is at least as good [2]. These algorithms are particularly effective for global optimization but may suffer from premature convergence [7] [1].
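The DE mechanism can be sketched as the classic DE/rand/1/bin scheme: mutation adds a scaled difference of two random members to a third, binomial crossover mixes the result with the target, and greedy selection keeps the better of target and trial. This is a minimal sketch for box-constrained minimization; F = 0.5, CR = 0.9, and the population size are common textbook defaults, not values taken from [2].

```python
import random

def differential_evolution(f, bounds, pop_size=20, F=0.5, CR=0.9, generations=200, seed=0):
    """Minimize f over a box using the DE/rand/1/bin scheme (illustrative sketch)."""
    rng = random.Random(seed)
    dim = len(bounds)
    # Random initial population inside the box constraints.
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    fit = [f(x) for x in pop]
    for _ in range(generations):
        for i in range(pop_size):
            # Pick three distinct members, all different from the target i.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(dim)  # guarantees at least one mutated coordinate
            trial = []
            for j in range(dim):
                if rng.random() < CR or j == j_rand:
                    v = pop[a][j] + F * (pop[b][j] - pop[c][j])  # differential mutation
                else:
                    v = pop[i][j]  # inherit coordinate from the target
                lo, hi = bounds[j]
                trial.append(min(max(v, lo), hi))  # clip to the box
            f_trial = f(trial)
            # Greedy one-to-one selection: the trial replaces its parent only if no worse.
            if f_trial <= fit[i]:
                pop[i], fit[i] = trial, f_trial
    best = min(range(pop_size), key=fit.__getitem__)
    return pop[best], fit[best]
```

For example, `differential_evolution(lambda x: sum(v * v for v in x), [(-5, 5)] * 3)` should return a point close to the origin.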

Swarm Intelligence algorithms simulate collective behavior of decentralized systems. Particle Swarm Optimization (PSO) is based on the social dynamics of bird flocking and fish schooling, where particles adjust their trajectories based on personal and collective experiences [5] [9]. Ant Colony Optimization (ACO) mimics ant foraging behavior using pheromone trails to guide search processes [1]. Newer approaches like Grey Wolf Optimizer (GWO) and Whale Optimization Algorithm (WOA) incorporate dynamic leadership and hunting-inspired behaviors to enhance convergence stability [3] [1].
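The PSO velocity update combines inertia with a cognitive term (a pull toward each particle's personal best) and a social term (a pull toward the swarm's best). The sketch below is a basic global-best PSO with an inertia weight; the coefficient values are common illustrative defaults, not settings reported in [5] or [9].

```python
import random

def pso(f, bounds, n_particles=30, iters=200, w=0.7, c1=1.5, c2=1.5, seed=0):
    """Global-best PSO minimizing f over a box (illustrative sketch)."""
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]  # personal best positions
    pbest_f = [f(p) for p in pos]
    g = min(range(n_particles), key=pbest_f.__getitem__)
    gbest, gbest_f = pbest[g][:], pbest_f[g]  # swarm (global) best
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Inertia + cognitive (personal experience) + social (collective experience).
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                lo, hi = bounds[d]
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            fi = f(pos[i])
            if fi < pbest_f[i]:
                pbest[i], pbest_f[i] = pos[i][:], fi
                if fi < gbest_f:
                    gbest, gbest_f = pos[i][:], fi
    return gbest, gbest_f
```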

Foraging Algorithms such as Artificial Bee Colony (ABC) and Bacterial Foraging Optimization (BFO) model food search strategies of biological organisms [6] [1]. ABC specifically simulates the foraging behavior of honeybee colonies with employed bees, onlooker bees, and scout bees performing different roles in the optimization process [6].
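The three bee roles can be sketched as follows: employed bees try one local modification per food source, onlooker bees pick sources in proportion to their quality and try further modifications, and a scout replaces a source that has failed to improve for more than `limit` attempts. This is a minimal sketch of basic ABC for minimization; the colony size, `limit`, and the cost-to-fitness transform follow common textbook descriptions and are assumptions rather than details from [6].

```python
import random

def abc(f, bounds, n_food=15, iters=200, limit=30, seed=0):
    """Basic Artificial Bee Colony minimizing f over a box (illustrative sketch)."""
    rng = random.Random(seed)
    dim = len(bounds)

    def random_source():
        return [rng.uniform(lo, hi) for lo, hi in bounds]

    def quality(cost):
        # Usual ABC transform of cost into a fitness where higher is better.
        return 1.0 / (1.0 + cost) if cost >= 0 else 1.0 + abs(cost)

    foods = [random_source() for _ in range(n_food)]
    costs = [f(x) for x in foods]
    trials = [0] * n_food  # failed improvement attempts per source
    b = min(range(n_food), key=costs.__getitem__)
    best_x, best_c = foods[b][:], costs[b]

    def try_neighbour(i):
        # Perturb one coordinate relative to a random partner source k != i.
        k = rng.choice([j for j in range(n_food) if j != i])
        d = rng.randrange(dim)
        cand = foods[i][:]
        lo, hi = bounds[d]
        cand[d] += rng.uniform(-1.0, 1.0) * (foods[i][d] - foods[k][d])
        cand[d] = min(max(cand[d], lo), hi)
        c = f(cand)
        if c < costs[i]:  # greedy selection
            foods[i], costs[i], trials[i] = cand, c, 0
        else:
            trials[i] += 1

    for _ in range(iters):
        for i in range(n_food):  # employed bees: one per food source
            try_neighbour(i)
        weights = [quality(c) for c in costs]
        for _ in range(n_food):  # onlooker bees: fitness-proportional choice
            try_neighbour(rng.choices(range(n_food), weights=weights)[0])
        b = min(range(n_food), key=costs.__getitem__)
        if costs[b] < best_c:  # remember the best source before scouting
            best_x, best_c = foods[b][:], costs[b]
        s = max(range(n_food), key=trials.__getitem__)
        if trials[s] > limit:  # scout bee: abandon an exhausted source
            foods[s] = random_source()
            costs[s] = f(foods[s])
            trials[s] = 0
    return best_x, best_c
```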

Performance Comparison in Optimization Tasks

Experimental comparisons across various domains provide insights into the relative strengths and limitations of different bio-inspired algorithms. Table 2 summarizes quantitative performance data from a study optimizing an artificial neural network (ANN) for Maximum Power Point Tracking (MPPT) in photovoltaic systems under partial shading conditions [3].

Table 2: Performance Comparison of Bio-Inspired Algorithms in ANN Optimization for MPPT [3]

| Algorithm | Neurons in Layer 1 | Neurons in Layer 2 | Mean Square Error (MSE) | Mean Absolute Error (MAE) | Execution Time (s) |
|---|---|---|---|---|---|
| Standard ANN | 64 | 32 | 159.9437 | 8.0781 | - |
| Grey Wolf Optimizer (GWO) | 66 | 100 | 11.9487 | 2.4552 | 1198.99 |
| Particle Swarm Optimization (PSO) | 98 | 100 | - | 2.1679 | 1417.80 |
| Squirrel Search Algorithm (SSA) | 66 | 100 | 12.1500 | 2.7003 | 987.45 |
| Cuckoo Search (CS) | 84 | 74 | 33.7767 | 3.8547 | 1904.01 |

The experimental protocol augmented the base dataset with perturbations that simulate partial shading; each algorithm then tuned the number of neurons in each of the two layers of the ANN to minimize the error metrics [3]. Among the optimized models, GWO achieved the lowest reported MSE with competitive execution time, while PSO achieved the lowest MAE but took longer to run (its MSE was not reported) [3]. The Squirrel Search Algorithm (SSA) was the fastest, with accuracy close to GWO's, whereas CS was the least reliable, with higher errors and the longest computation time [3].

These performance differences can be traced to the algorithms' mechanisms. GWO's hierarchical leadership model and its balance between exploration and exploitation contribute to its strong results [3] [1]. PSO's social learning mechanism transfers knowledge effectively across the swarm but can cause premature convergence on complex landscapes [5] [1]. More generally, although neither was part of this comparison, ABC maintains population diversity through its employed-onlooker-scout bee mechanism but may converge more slowly [6], and DE's differential mutation provides strong exploration but may need parameter tuning to perform well [2].
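GWO's hierarchical leadership can be made concrete with a sketch of its standard position update: each wolf moves to the average of three candidate points, each guided by one of the three best wolves (alpha, beta, delta), while a coefficient `a` decays linearly from 2 to 0 to shift the search from exploration to exploitation. This follows the commonly published form of GWO; the population size, iteration count, and bound clipping are illustrative choices, not the settings used in [3].

```python
import random

def gwo(f, bounds, n_wolves=20, iters=200, seed=0):
    """Grey Wolf Optimizer minimizing f over a box (illustrative sketch)."""
    rng = random.Random(seed)
    dim = len(bounds)
    wolves = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_wolves)]
    best_x, best_f = None, float("inf")
    for t in range(iters):
        ranked = sorted(wolves, key=f)
        alpha, beta, delta = ranked[0], ranked[1], ranked[2]  # leadership hierarchy
        fa = f(alpha)
        if fa < best_f:
            best_x, best_f = alpha[:], fa
        a = 2.0 * (1.0 - t / iters)  # decays 2 -> 0: exploration -> exploitation
        new_wolves = []
        for x in wolves:
            new_x = []
            for d in range(dim):
                guided = []
                for leader in (alpha, beta, delta):
                    A = 2.0 * a * rng.random() - a  # |A| > 1 pushes away, |A| < 1 closes in
                    C = 2.0 * rng.random()
                    D = abs(C * leader[d] - x[d])
                    guided.append(leader[d] - A * D)
                lo, hi = bounds[d]
                new_x.append(min(max(sum(guided) / 3.0, lo), hi))
            new_wolves.append(new_x)
        wolves = new_wolves
    for x in wolves:  # evaluate the final pack as well
        fx = f(x)
        if fx < best_f:
            best_x, best_f = x[:], fx
    return best_x, best_f
```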

Applications in Ecological Optimization Research

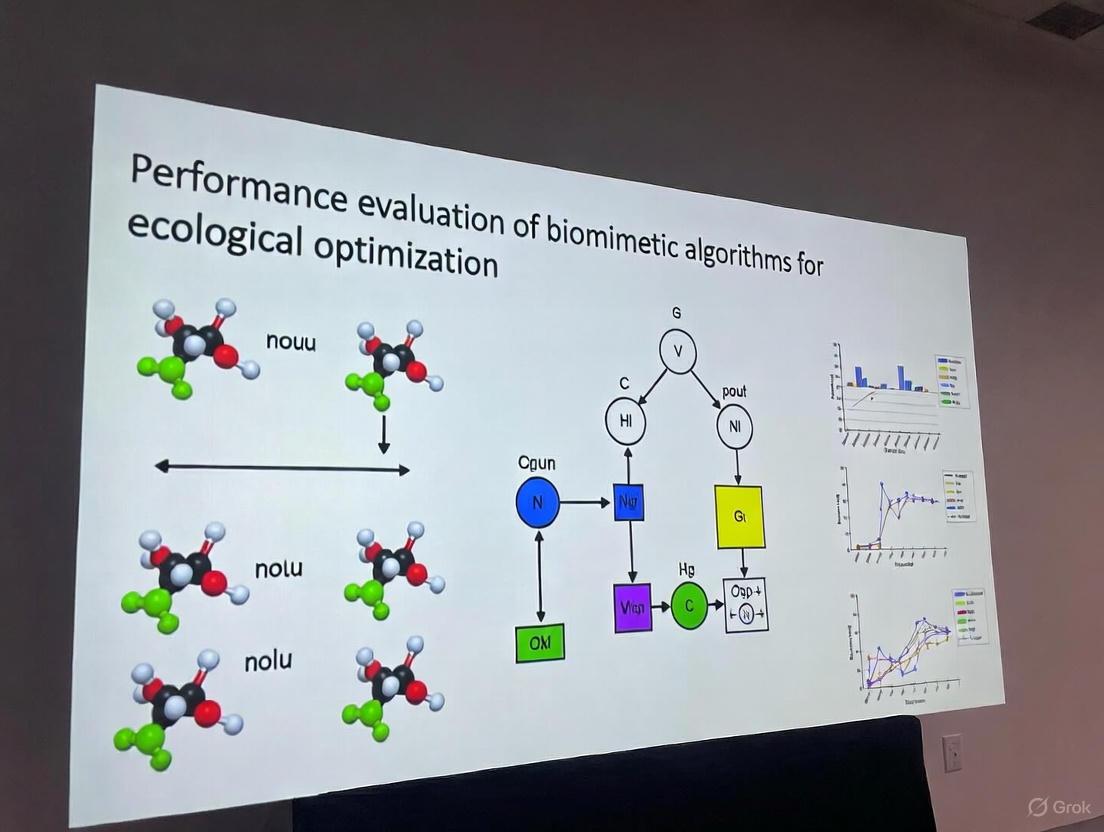

Bio-inspired algorithms have demonstrated significant utility in addressing complex ecological optimization challenges, particularly in spatial planning, resource management, and network design. The workflow below illustrates a typical application of biomimetic algorithms in ecological network optimization.

Diagram 2: Ecological Network Optimization Workflow Using Biomimetic Algorithms

A prominent application involves optimizing ecological network (EN) function and structure through spatial operators coupled with biomimetic intelligent algorithms [4]. Research has demonstrated that modified Ant Colony Optimization (MACO) models can successfully address the collaborative optimization of patch-level function and macro-structure of ecological networks [4]. These approaches incorporate both bottom-up functional optimization through micro spatial operators and top-down structural optimization through mechanisms for identifying potential ecological stepping stones [4].

The integration of GPU-based parallel computing techniques has significantly improved computational efficiency for city-level ecological network optimization using patch-level land use optimization models, making large-scale spatial optimization feasible [4]. This advancement addresses previous limitations in computational efficiency when performing complex optimization operations on large amounts of geospatial data [4].

Experimental implementations in regions like Yichun City, China, have demonstrated the effectiveness of biomimetic algorithms in enhancing ecological connectivity while maintaining landscape functionality [4]. The optimization process typically involves defining objective functions based on both structural metrics (e.g., connectivity indices, corridor efficiency) and functional metrics (e.g., habitat quality, ecosystem services), with constraint conditions representing real-world ecological and socioeconomic limitations [4].
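Combining structural and functional objectives with constraints can be illustrated by a weighted-sum score with a static penalty. The source does not specify the formulation used in the Yichun study [4]; every metric name, the equal weights, the converted-area cap, and the penalty coefficient below are hypothetical, and the metrics are assumed to be pre-normalized to [0, 1].

```python
from dataclasses import dataclass

@dataclass
class PlanMetrics:
    """Hypothetical metrics for one candidate ecological network plan."""
    connectivity: float         # structural, normalized to [0, 1]
    corridor_efficiency: float  # structural, normalized to [0, 1]
    habitat_quality: float      # functional, normalized to [0, 1]
    ecosystem_services: float   # functional, normalized to [0, 1]
    converted_area: float       # hectares of land converted (hypothetical constraint variable)

def plan_score(m, weights=(0.25, 0.25, 0.25, 0.25), max_converted_area=500.0, penalty=10.0):
    """Weighted-sum objective to maximize, minus a penalty for exceeding the area cap."""
    w1, w2, w3, w4 = weights
    structural = w1 * m.connectivity + w2 * m.corridor_efficiency
    functional = w3 * m.habitat_quality + w4 * m.ecosystem_services
    # Relative constraint violation; zero when the plan respects the cap.
    violation = max(0.0, m.converted_area - max_converted_area) / max_converted_area
    return structural + functional - penalty * violation
```

A feasible plan such as `PlanMetrics(0.8, 0.6, 0.7, 0.5, 400.0)` scores 0.65, while the same plan converting 600 ha scores 0.65 - 10 x 0.2 = -1.35, so an optimizer is steered back toward feasible plans.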

Practical Implementation Considerations

Research Reagent Solutions

Implementing bio-inspired algorithms for optimization tasks requires both computational frameworks and domain-specific evaluation metrics. Table 3 outlines essential components in the researcher's toolkit for developing and applying biomimetic algorithms in ecological and engineering contexts.

Table 3: Essential Research Reagents for Bio-Inspired Algorithm Implementation

| Component Category | Specific Elements | Function & Purpose |
|---|---|---|
| Algorithmic Parameters | Population size, iteration limits, cognitive/social coefficients (PSO), crossover/mutation rates (GA), differential weight (DE) | Control exploration-exploitation balance and convergence behavior |
| Performance Metrics | Mean Square Error (MSE), Mean Absolute Error (MAE), convergence speed, computational time, solution diversity | Quantify solution quality and algorithmic efficiency |
| Ecological Indices | Habitat suitability scores, connectivity indices, landscape metrics, ecosystem service valuations | Evaluate ecological effectiveness of optimization outcomes |
| Computational Frameworks | Parallel computing (GPU/CPU), spatial optimization libraries, geographic information systems (GIS) | Enable efficient processing of large-scale and spatial problems |
| Validation Protocols | Statistical testing, comparison with baseline methods, sensitivity analysis, field verification | Ensure methodological rigor and practical relevance |

Implementation Guidelines

Successful implementation of bio-inspired algorithms requires careful consideration of problem characteristics and algorithmic strengths. For ecological spatial optimization problems involving habitat network design or land use planning, swarm intelligence approaches like ACO and PSO have demonstrated particular effectiveness due to their ability to handle complex spatial constraints and multiple objectives [4]. The modified ACO (MACO) approach with specialized spatial operators has shown promise in simultaneously addressing functional and structural optimization of ecological networks [4].

For continuous optimization problems such as neural network parameter tuning, GWO and PSO performed well in the MPPT study summarized in Table 2 [3], and DE, with its strong exploration capability, is another common choice [2]. Recent hybrid approaches that combine multiple algorithmic strategies often outperform individual algorithms by leveraging complementary strengths [1] [9].

Parameter tuning remains critical for optimal performance across all algorithm classes. While default parameters provide reasonable starting points, problem-specific tuning significantly enhances performance [5] [2]. Recent trends toward self-adaptive parameter control mechanisms reduce the need for manual tuning and improve algorithmic robustness across diverse problem domains [1] [9].
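One well-known example of self-adaptive parameter control is the jDE scheme for Differential Evolution, in which every individual carries its own F and CR and occasionally resamples them. The sketch below shows only that resampling rule with the commonly cited settings (tau1 = tau2 = 0.1, F drawn from [0.1, 1.0]); it is offered as an illustration, not as the mechanism used in [1] or [9].

```python
import random

def jde_update(F, CR, rng, tau1=0.1, tau2=0.1, F_low=0.1, F_up=0.9):
    """jDE-style self-adaptation of one individual's DE parameters (illustrative sketch).

    With probability tau1, F is resampled uniformly from [F_low, F_low + F_up];
    with probability tau2, CR is resampled uniformly from [0, 1). Otherwise the
    individual keeps its current values. In jDE the new values build the trial
    vector and survive only if that trial wins selection.
    """
    new_F = F_low + rng.random() * F_up if rng.random() < tau1 else F
    new_CR = rng.random() if rng.random() < tau2 else CR
    return new_F, new_CR
```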

The historical evolution of bio-inspired algorithms demonstrates a continuous expansion of biological metaphors applied to computational optimization, from early evolutionary approaches to sophisticated swarm intelligence and foraging models. Performance comparisons reveal that no single algorithm dominates all problem domains, with different approaches excelling in specific contexts based on problem characteristics, computational constraints, and performance requirements.

In ecological optimization research, biomimetic algorithms have proven particularly valuable for addressing complex spatial planning challenges that integrate multiple objectives and constraints. The ongoing development of hybrid approaches, parallel computing implementations, and adaptive parameter control mechanisms continues to enhance the applicability and effectiveness of these algorithms for increasingly complex real-world problems across scientific and engineering domains.

The field of optimization has increasingly turned to nature for inspiration, yielding powerful algorithms that solve complex computational problems. This paradigm, known as biomimetic or bio-inspired optimization, encompasses approaches ranging from swarm intelligence to ecological processes. Swarm Intelligence Optimization Algorithms (SIOAs) draw inspiration from the collective behaviors of insects, animals, and other organisms and have proven capable of solving non-convex, nonlinearly constrained, and high-dimensional optimization tasks [10] [11]. Numerous studies report that they converge quickly toward high-quality solutions while escaping local optima, which makes them valuable for engineering applications and drug discovery research [10] [12].

Meanwhile, ecological processes have inspired another branch of optimization techniques that model predator-prey dynamics, foraging behaviors, and evolutionary adaptation. The key strength of these approaches lies in their population-based nature, which lets them explore vast search spaces without gradient information while maintaining the diversity needed to avoid premature convergence [13]. As engineering applications proliferate and their problems grow more intricate and diverse, these nature-inspired approaches are becoming increasingly valuable for researchers, scientists, and drug development professionals seeking robust optimization solutions [10].

This article presents a comprehensive performance evaluation of biomimetic algorithms, with a specific focus on comparing swarm intelligence with ecology-based optimization approaches. We provide structured experimental data, detailed methodologies, and analytical frameworks to guide algorithm selection for research and industrial applications, particularly in the demanding field of drug development where optimization challenges abound.

Algorithmic Taxonomy and Theoretical Foundations

Swarm Intelligence: Principles and Classification

Swarm Intelligence (SI) emerges from the collective behavior of decentralized, self-organized systems, both natural and artificial. Typical SI phenomena include fish schooling, ant foraging, and bird flocking [14]. Researchers have developed various models to characterize the mechanisms of SI, which can be classified into four primary categories:

- Self-driven particle models: These models, with the Boids model as a primary example, simulate the motion of individuals based on simple rules of attraction, alignment, and repulsion [14].

- Pheromone communication models: This category includes the ant colony pheromone model, which forms the foundation for Ant Colony Optimization (ACO) algorithms [14].

- Leadership decision models: These models utilize hierarchical dynamics, such as the pigeon flock model, where leadership emerges from individual interactions [14].

- Empirical research models: These employ observational data to build accurate models, such as the topological rule model of starling flocks [14].
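As an illustration of a self-driven particle model, the sketch below applies the three classic Boids steering rules (cohesion, alignment, separation) to 2-D agents for one time step. The neighborhood radius, rule weights, and minimum distance are hypothetical values chosen for readability, and this is a simplified sketch rather than Reynolds' original implementation.

```python
import math

def boids_step(positions, velocities, radius=5.0, min_dist=1.0,
               w_cohesion=0.01, w_alignment=0.1, w_separation=0.05, dt=1.0):
    """Advance 2-D boids one step using cohesion, alignment, and separation."""
    new_velocities = []
    for i, (p, v) in enumerate(zip(positions, velocities)):
        nbrs = [j for j, q in enumerate(positions) if j != i and math.dist(p, q) < radius]
        vx, vy = v
        if nbrs:
            n = len(nbrs)
            # Cohesion: steer toward the neighbors' center of mass.
            cx = sum(positions[j][0] for j in nbrs) / n
            cy = sum(positions[j][1] for j in nbrs) / n
            vx += w_cohesion * (cx - p[0])
            vy += w_cohesion * (cy - p[1])
            # Alignment: steer toward the neighbors' average velocity.
            ax = sum(velocities[j][0] for j in nbrs) / n
            ay = sum(velocities[j][1] for j in nbrs) / n
            vx += w_alignment * (ax - v[0])
            vy += w_alignment * (ay - v[1])
            # Separation: push away from neighbors that are too close.
            for j in nbrs:
                if math.dist(p, positions[j]) < min_dist:
                    vx -= w_separation * (positions[j][0] - p[0])
                    vy -= w_separation * (positions[j][1] - p[1])
        new_velocities.append((vx, vy))
    new_positions = [(p[0] + dt * v[0], p[1] + dt * v[1])
                     for p, v in zip(positions, new_velocities)]
    return new_positions, new_velocities
```

Two resting boids 2 units apart drift toward each other through cohesion; two boids 0.5 units apart move apart because separation outweighs cohesion.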

These theoretical foundations have been translated into powerful optimization algorithms that leverage decentralized control and self-organization to solve complex problems.

Ecological Optimization: From Populations to Ecosystems

Ecologically inspired algorithms constitute a distinct branch of biomimetic optimization that models biological processes beyond collective swarm behavior. These approaches can be further divided into several subgroups:

- Evolutionary-based approaches: Including Genetic Algorithms (GA), Evolution Strategies (ES), and Differential Evolution (DE), which model natural selection and genetic operations [13].

- Ecology and plant-based algorithms: Modeling ecological systems, plant growth, and resource distribution mechanisms [13].

- Predator-prey optimization: Simulating hunting behaviors and predator-prey dynamics to balance exploration and exploitation [13].

- Human-inspired algorithms: Drawing inspiration from social behaviors, cultural evolution, and other human activities [13].

Well-established algorithms including GA, ES, DE, PSO, and ACO have achieved the status of rigorously validated methods with strong theoretical foundations, while many newer approaches face criticism for offering primarily metaphorical novelty rather than substantive algorithmic innovations [13].

Conceptual Relationships in Biomimetic Optimization

The diagram below illustrates the taxonomic relationships and key characteristics of major biomimetic optimization approaches:

Performance Comparison: Experimental Data and Metrics

Numerical Optimization Performance on Benchmark Functions

Comprehensive evaluation using standardized benchmark functions provides critical insights into algorithm performance. The following table summarizes results from multiple studies comparing biomimetic algorithms on the CEC2017 benchmark suite:

Table 4: Performance Comparison on CEC2017 Benchmark Functions (average rank, lower is better; N/A = not reported)

| Algorithm | Category | Average Rank (10-D) | Average Rank (30-D) | Average Rank (50-D) | Average Rank (100-D) | Key Strengths |
|---|---|---|---|---|---|---|
| EECO [10] | Swarm Intelligence | 2.138 | 1.438 | 1.207 | 1.345 | Convergence accuracy, stability |
| ERDBO [10] | Swarm Intelligence | N/A | N/A | N/A | N/A | Global exploration, local exploitation |
| EKSSA [15] | Swarm Intelligence | N/A | N/A | N/A | N/A | Balance of exploration and exploitation; outperformed 8 state-of-the-art algorithms |
| DE [16] | Ecological/Evolutionary | N/A | N/A | N/A | N/A | Accuracy, local optima avoidance |
| HOA [16] | Ecological/Predator-Prey | N/A | N/A | N/A | N/A | Adaptive randomization, dynamic tuning |
| PSO [16] | Swarm Intelligence | N/A | N/A | N/A | N/A | Established performance, simplicity |

The Enhanced Educational Competition Optimizer (EECO) scales well with problem dimension, achieving average ranks between 1.207 and 2.138 from 10 to 100 dimensions [10]. Similarly, the Enhanced Knowledge-based Salp Swarm Algorithm (EKSSA) outperformed eight state-of-the-art algorithms, including the Randomized Particle Swarm Optimizer (RPSO), Grey Wolf Optimizer (GWO), and Archimedes Optimization Algorithm (AOA) [15].
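Average-rank figures like those above are usually obtained by ranking all algorithms on each benchmark function (1 = best) and averaging the ranks across functions, as in the Friedman test. The exact procedure behind the EECO numbers in [10] is not stated here, so this sketch assumes lower error is better and that tied algorithms share the mean of their rank positions.

```python
def average_ranks(results):
    """Mean rank per algorithm.

    results[f][a] is the (mean) error of algorithm a on function f; lower is better.
    Tied algorithms on a function share the average of the rank positions they occupy.
    """
    n_alg = len(results[0])
    totals = [0.0] * n_alg
    for row in results:
        order = sorted(range(n_alg), key=row.__getitem__)
        ranks = [0.0] * n_alg
        i = 0
        while i < n_alg:
            # Extend j over the run of algorithms tied with order[i].
            j = i
            while j + 1 < n_alg and row[order[j + 1]] == row[order[i]]:
                j += 1
            shared = (i + j) / 2 + 1  # mean of 1-based positions i..j
            for k in range(i, j + 1):
                ranks[order[k]] = shared
            i = j + 1
        for a in range(n_alg):
            totals[a] += ranks[a]
    return [t / len(results) for t in totals]
```

For three algorithms on three functions with errors `[[1.0, 2.0, 3.0], [2.0, 1.0, 3.0], [1.0, 1.0, 5.0]]`, this returns `[1.5, 1.5, 3.0]`.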

Engineering Application Performance

Real-world engineering applications provide another critical performance dimension. The following table compares algorithm performance across various engineering domains:

Table 5: Performance in Engineering Applications

| Algorithm | Application Domain | Performance Metrics | Comparative Results |
|---|---|---|---|
| DE [16] | Photovoltaic Parameter Estimation | RMSE: 0.0001 (DDM) | Outperformed PSO, AOA, HOA |
| HOA [16] | Photovoltaic Parameter Estimation | Competitive RMSE values | Effective parameter optimization |
| PSO [16] | Photovoltaic Parameter Estimation | Competitive RMSE values | Established effectiveness |
| ERDBO [10] | Engineering Design Problems | Successful application to tension/compression spring, three-bar truss, pressure vessel | Efficient and applicable framework |
| EKSSA-SVM [15] | Seed Classification | Higher classification accuracy | Effective hyperparameter optimization |

Differential Evolution (DE) demonstrated particular strength in photovoltaic parameter estimation, achieving the lowest root mean square error (RMSE) of 0.0001 for the double-diode model, highlighting its precision in complex engineering optimization tasks [16].
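In photovoltaic parameter estimation, the optimizer searches the seven double-diode model parameters (photocurrent I_ph, two diode saturation currents, series and shunt resistances R_s and R_sh, and two ideality factors) to minimize the RMSE between measured and modeled current. The sketch below shows that objective in the form commonly used in the PV literature, evaluating the implicit diode equation with the measured current on the right-hand side; whether [16] uses exactly this formulation is an assumption, as is the default cell temperature of 306.15 K.

```python
import math

K_B = 1.380649e-23     # Boltzmann constant, J/K
Q_E = 1.602176634e-19  # elementary charge, C

def ddm_current_residual(V, I, params, T=306.15):
    """Measured current minus the double-diode model current at one (V, I) point."""
    I_ph, I_sd1, I_sd2, R_s, R_sh, n1, n2 = params
    Vt = K_B * T / Q_E  # thermal voltage
    Vd = V + I * R_s    # uses the measured I, so no implicit solve is needed
    model = (I_ph
             - I_sd1 * (math.exp(Vd / (n1 * Vt)) - 1.0)
             - I_sd2 * (math.exp(Vd / (n2 * Vt)) - 1.0)
             - Vd / R_sh)
    return I - model

def ddm_rmse(measurements, params, T=306.15):
    """Objective an optimizer would minimize: RMSE over measured (V, I) pairs, in amperes."""
    sq = [ddm_current_residual(V, I, params, T) ** 2 for V, I in measurements]
    return math.sqrt(sum(sq) / len(sq))
```

A metaheuristic such as DE would call `ddm_rmse(measurements, candidate)` as its fitness function for each candidate parameter vector.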

Energy Efficiency and Computational Performance

With growing emphasis on sustainable computing, energy efficiency has become a crucial performance metric. The following table summarizes findings from a comprehensive study of optimizer energy efficiency:

Table 6: Energy Efficiency Analysis in Neural Network Training

| Optimizer | Category | Training Duration on MNIST (s) | CO2 Emissions (kg) | Accuracy (MNIST) | Performance Notes |
|---|---|---|---|---|---|
| AdamW [17] | Gradient-based | 14.3 ± 2.7 | 1.09e-06 ± 7.14e-07 | 0.9799 ± 0.0040 | Consistently efficient |
| NAdam [17] | Gradient-based | Similar to AdamW | Similar to AdamW | Similar to AdamW | Consistently efficient |
| SGD [17] | Gradient-based | Longer duration | Higher emissions | N/A | Better accuracy on complex datasets despite higher emissions |
| Adadelta [17] | Gradient-based | 15.3 ± 3.9 | 9.52e-07 ± 5.50e-07 | 0.9829 ± 0.0033 | Low emissions, highest listed MNIST accuracy |
| Adam [17] | Gradient-based | 15.6 ± 3.7 | 8.91e-07 ± 4.98e-07 | 0.9803 ± 0.0031 | Balanced performance, lowest listed emissions |

The study revealed substantial trade-offs between training speed, accuracy, and environmental impact that varied across datasets and model complexity, with AdamW and NAdam emerging as consistently efficient choices across multiple metrics [17].

Experimental Protocols and Methodologies

Standardized Benchmarking Methodology

Rigorous evaluation of biomimetic algorithms requires standardized experimental protocols. The CEC benchmark functions provide an established framework for comparing algorithmic performance across diverse problem landscapes, including unimodal, multimodal, hybrid, and composition functions [10] [15]. These functions are designed to test different algorithm capabilities: exploration, exploitation, local optima avoidance, and convergence behavior.
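To make the unimodal/multimodal distinction concrete, here are two classic test functions and a shift wrapper. These are textbook definitions, not the official CEC2017 code, which additionally applies shifts, rotations, and hybrid or composition constructions; rigorous comparisons should use the official suite implementations.

```python
import math

def sphere(x):
    """Unimodal: single global minimum f(0, ..., 0) = 0; mainly tests exploitation."""
    return sum(v * v for v in x)

def rastrigin(x):
    """Highly multimodal: a regular grid of local minima around the global minimum
    f(0, ..., 0) = 0; mainly tests exploration and local optima avoidance."""
    return 10.0 * len(x) + sum(v * v - 10.0 * math.cos(2 * math.pi * v) for v in x)

def shifted(f, shift):
    """Return g(x) = f(x - shift), moving the optimum from the origin to `shift`."""
    return lambda x: f([v - s for v, s in zip(x, shift)])
```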

Experimental protocols should include multiple dimensions of analysis:

- Convergence Behavior: Tracking solution quality improvement over iterations

- Computational Complexity: Measuring time and resource requirements

- Solution Quality: Assessing accuracy and precision of final solutions

- Robustness: Evaluating performance consistency across multiple runs

- Scalability: Testing performance across varying problem dimensions [10]

For statistically sound comparisons, studies should perform multiple independent runs (typically 15 to 30) with different random seeds and report both the mean and the standard deviation of the results [17].
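A minimal sketch of the multi-run protocol: run an optimizer once per seed, then summarize the final objective values with their mean and sample standard deviation. The `random_search` baseline is a hypothetical stand-in for any optimizer under test, and 30 runs is one point in the typical range.

```python
import random
import statistics

def random_search(f, bounds, evals, seed):
    """Hypothetical baseline optimizer, used only to illustrate the protocol."""
    rng = random.Random(seed)
    return min(f([rng.uniform(lo, hi) for lo, hi in bounds]) for _ in range(evals))

def repeated_runs(run_once, n_runs=30, base_seed=0):
    """Call run_once(seed) with n_runs distinct seeds; return mean, sample std dev, and all results."""
    finals = [run_once(base_seed + r) for r in range(n_runs)]
    return statistics.mean(finals), statistics.stdev(finals), finals

sphere = lambda x: sum(v * v for v in x)
mean, sd, finals = repeated_runs(lambda s: random_search(sphere, [(-5, 5)] * 2, 200, s))
print(f"{mean:.4f} ± {sd:.4f} over {len(finals)} runs")
```

Fixing the seeds makes the whole experiment reproducible: rerunning `repeated_runs` with the same `base_seed` yields identical statistics.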

Algorithm Enhancement and Modification Strategies

Recent advances in biomimetic algorithms frequently incorporate enhancement strategies to address limitations of basic versions:

- Adaptive Parameter Control: The Enhanced Knowledge Salp Swarm Algorithm (EKSSA) implements adaptive adjustment mechanisms for parameters c1 and α to better balance exploration and exploitation [15].

- Hybridization Approaches: Combining strengths of different algorithms, such as integrating PSO with local search techniques [10] [13].

- Learning Mechanisms: Incorporating experience-based learning, such as the larval growth phase in Enhanced Randomized Dung Beetle Optimizer (ERDBO) that uses experiential learning to enrich population diversity [10].

- Mutation Strategies: Implementing Gaussian walk-based position updates to enhance global search ability, as demonstrated in EKSSA [15].

- Dynamic Learning Strategies: Utilizing techniques such as dynamic mirror learning to expand search domains and strengthen local search capability [15].
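The exact EKSSA update rules are not reproduced in the text. For reference, the sketch below implements the basic Salp Swarm Algorithm whose coefficient c1 EKSSA adapts: in the original SSA, c1 = 2 exp(-(4t/T)^2) shrinks over iterations t = 1..T, the leader searches around the best solution found so far (the food source), and each follower moves to the midpoint between itself and the salp ahead of it. The single-leader chain and the 0.5 threshold on the random sign choice follow common implementations and are assumptions here; no Gaussian walk or mirror learning is included.

```python
import math
import random

def ssa(f, bounds, n_salps=30, iters=300, seed=0):
    """Basic Salp Swarm Algorithm minimizing f over a box (illustrative sketch)."""
    rng = random.Random(seed)
    dim = len(bounds)
    salps = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_salps)]
    fits = [f(s) for s in salps]
    b = min(range(n_salps), key=fits.__getitem__)
    food, food_f = salps[b][:], fits[b]  # best solution found so far
    for it in range(1, iters + 1):
        c1 = 2.0 * math.exp(-(4.0 * it / iters) ** 2)  # shrinks: exploration -> exploitation
        for i in range(n_salps):
            for j in range(dim):
                lo, hi = bounds[j]
                if i == 0:  # leader: random step around the food source
                    step = c1 * ((hi - lo) * rng.random() + lo)
                    salps[i][j] = food[j] + step if rng.random() >= 0.5 else food[j] - step
                else:  # follower: midpoint with the salp ahead in the chain
                    salps[i][j] = (salps[i][j] + salps[i - 1][j]) / 2.0
                salps[i][j] = min(max(salps[i][j], lo), hi)
        for s in salps:
            fs = f(s)
            if fs < food_f:
                food, food_f = s[:], fs
    return food, food_f
```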

The experimental workflow for evaluating these enhanced algorithms follows a systematic process illustrated below:

Domain-Specific Applications: Drug Discovery Case Study

Optimization Challenges in Pharmaceutical Development

Drug discovery poses particularly challenging optimization problems that biomimetic algorithms may help address. The path from discovery to clinic is long, complex, and costly: for cardiovascular disease, a new molecular entity entering clinical evaluation has only about a 7% chance of reaching the market [12]. This high attrition rate, combined with massive investment in drug discovery, creates compelling opportunities for optimization approaches that improve efficiency and success rates.

Key optimization challenges in drug discovery include:

- Target Identification: Prioritizing molecular targets with the highest therapeutic potential

- Compound Screening: Identifying promising drug candidates from vast chemical libraries

- Toxicity Prediction: Assessing potential adverse effects early in development

- Clinical Trial Design: Optimizing trial parameters for efficiency and statistical power

- Formulation Optimization: Designing optimal drug delivery systems [12]

Biomimetic Approaches in Preclinical Testing

Biomimetic algorithms show particular promise for optimizing preclinical testing methodologies. The limitations of traditional approaches have become increasingly apparent: animal models suffer from species differences, lack long-standing cardiac pathology, and rarely account for concomitant diseases [12].

Advanced biomimetic applications in this domain include:

- 3D Culture Optimization: Utilizing algorithm-driven approaches to enhance the structural, chemical, and biochemical control of 3D culture systems that better mimic native tissues [12].

- High-Throughput Screening: Applying optimization algorithms to design efficient screening protocols for rapid evaluation of potential drug candidates [12].

- Microfluidic System Design: Optimizing organ-on-a-chip technologies that require precise control of fluid dynamics, cell patterning, and environmental parameters [12].

The shift toward these advanced models is further supported by regulatory change: the FDA Modernization Act 2.0 encourages integrating alternatives to conventional animal testing, including cell-based assays that use human induced pluripotent stem cell (iPSC)-derived organoids and organ-on-a-chip technologies, in conjunction with sophisticated AI methods [12].

Implementing and evaluating biomimetic optimization algorithms requires specialized computational resources and benchmark materials. The following table outlines key components of the research toolkit for scientists working in this field:

Table 7: Essential Research Reagents and Computational Resources

| Resource Category | Specific Examples | Function/Purpose | Application Context |
|---|---|---|---|
| Benchmark Suites | CEC2017, CEC2022 | Standardized performance evaluation | Algorithm validation and comparison |
| Engineering Problem Sets | Tension/compression spring, Three-bar truss, Pressure vessel design | Real-world performance testing | Engineering applications [10] |
| Classification Datasets | Seed classification datasets, UCI repository datasets | Application-oriented algorithm testing | Hybrid classifier development [15] |
| Photovoltaic Models | Single-diode (SDM), Double-diode (DDM), Triple-diode (TDM) models | Parameter estimation challenges | Renewable energy optimization [16] |
| Energy Measurement Tools | CodeCarbon, powermetrics | Energy consumption monitoring | Sustainable computing assessment [17] |
| Simulation Frameworks | Custom MATLAB/Python implementations, TensorFlow/PyTorch | Algorithm development and testing | General optimization research |

These resources enable rigorous, reproducible evaluation of biomimetic optimization algorithms across multiple performance dimensions, from computational efficiency to practical application effectiveness.

The taxonomy of inspiration in optimization algorithms reveals a rich landscape of approaches derived from natural systems, ranging from swarm intelligence to ecological processes. Performance comparisons demonstrate that while well-established algorithms like DE, PSO, and GA continue to deliver robust performance, newer enhanced variants such as EECO, ERDBO, and EKSSA offer significant improvements in specific domains, particularly in balancing exploration and exploitation capabilities [10] [15] [16].

Future developments in biomimetic optimization will likely focus on several key areas:

- Hybrid Approaches: Combining strengths of different algorithm families to create more robust optimizers [10] [13]

- Adaptive Parameter Control: Developing self-tuning algorithms that automatically adjust to problem characteristics [15]

- Energy-Aware Optimization: Incorporating computational efficiency and environmental impact as explicit optimization objectives [17]

- Domain-Specialized Variants: Tailoring algorithms to specific application domains like drug discovery and renewable energy [12] [16]

- Theoretical Foundations: Strengthening mathematical understanding of why and how biomimetic algorithms work [13]

For drug development professionals and researchers, selecting appropriate biomimetic algorithms requires careful consideration of problem characteristics, performance requirements, and computational constraints. The experimental data and comparative analysis presented in this guide provide a foundation for making informed decisions in algorithm selection and implementation, potentially leading to more efficient and successful optimization outcomes in both research and industrial applications.

In the quest to solve complex optimization problems, researchers are increasingly turning to nature's playbook. Biomimetic algorithms, inspired by the core biological principles of exploration, exploitation, and adaptation, have emerged as powerful tools for navigating high-dimensional, non-linear search spaces where traditional gradient-based methods falter [18] [19]. These algorithms mimic processes ranging from the collective intelligence of social insects to the evolutionary pressure of natural selection, offering robust solutions for applications spanning from renewable energy systems to drug development [20] [21].

The fundamental exploration-exploitation dilemma represents one of nature's most basic trade-offs: organisms must balance the resource cost of seeking new information against the benefits of using existing knowledge [22]. This biological imperative finds direct parallels in computational optimization, where algorithms must balance searching new regions of the solution space (exploration) with refining known good solutions (exploitation) [23]. The dynamic interplay between these competing objectives, coupled with the capacity for adaptation in changing environments, forms the theoretical foundation for biomimetic optimization approaches that demonstrate remarkable efficiency and robustness across diverse problem domains [22] [24].

Core Biological Principles and Their Computational Analogues

The Exploration-Exploitation Paradigm

The exploration-exploitation paradigm is fundamental to both biological systems and their computational counterparts. In biological terms, exploration encompasses activities like foraging for new food sources or investigating unfamiliar territories, while exploitation involves efficiently utilizing known resources [22]. This trade-off is formally modeled in biological systems through energy allocation decisions, where organisms dynamically adjust their investment in knowledge acquisition versus energy acquisition throughout their lifecycle [22].

Computational implementations of this paradigm mirror these biological processes. In networked biological systems, exploration can be modeled as the stochastic mutation of network configurations, while exploitation exponentially increases the probability of retaining functional states according to a fitness metric [23]. The ratio between the exploitation and exploration rates, termed functional pressure (ρ), determines system behavior: low values produce random-walk-like dynamics, while high values drive the system toward optimal configurations [23]. This framework successfully models developmental processes such as the wiring of the C. elegans nervous system, demonstrating how stochastic exploration combined with functional constraints can produce robust biological structures [23].
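A toy sketch can make the role of functional pressure concrete. It assumes a hypothetical "network" encoded as a list of 50 binary links whose fitness is simply the number of functional links (value 1). Each step proposes one random flip (exploration) and keeps it with probability min(1, exp(ρ·Δf)) (exploitation). This Metropolis-style acceptance rule is an illustrative choice, not the exact formulation used in [23].

```python
import math
import random


def evolve(rho, n_links=50, steps=5000, seed=0):
    """Return the final fraction of functional links after mutation and selection.

    Hypothetical toy model: fitness = number of links set to 1.
    rho is the functional pressure (exploitation relative to exploration).
    """
    rng = random.Random(seed)
    state = [rng.randint(0, 1) for _ in range(n_links)]
    for _ in range(steps):
        i = rng.randrange(n_links)              # exploration: propose flipping one link
        delta = 1 if state[i] == 0 else -1      # fitness change the flip would cause
        # Exploitation: improvements are always kept; losses are kept with
        # probability exp(rho * delta), which shrinks exponentially as rho grows.
        if delta > 0 or rng.random() < math.exp(rho * delta):
            state[i] ^= 1
    return sum(state) / n_links


for rho in (0.0, 1.0, 5.0):
    print(rho, evolve(rho))
```

With ρ = 0 every flip is accepted, so the fraction of functional links wanders around 0.5 like a random walk; as ρ grows, the chain settles near e^ρ / (1 + e^ρ), close to the fully functional configuration.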

Adaptation in Dynamic Environments

Adaptation represents the third crucial component in this biological triad, enabling systems to maintain functionality amid changing conditions. Biological organisms exhibit remarkable adaptive capabilities through mechanisms like phenotypic plasticity, evolutionary change, and behavioral flexibility. These natural adaptation strategies have inspired computational techniques that allow algorithms to maintain performance despite shifting problem landscapes, noisy data, or evolving objectives [18].

In biomimetic optimization, adaptation manifests through dynamic parameter adjustment, memory mechanisms that retain information about previously successful strategies, and diversity preservation techniques that prevent premature convergence [24]. The Zeroing Neural Network (ZNN), for instance, represents a specialized approach for solving time-varying optimization problems, maintaining performance despite temporal changes that would degrade the effectiveness of static solvers [18]. Such adaptive capabilities are particularly valuable in real-world applications like ecological network optimization, where solutions must remain viable despite environmental changes and anthropogenic pressures [4].

Performance Comparison of Biomimetic Algorithms

Experimental Protocol for Algorithm Evaluation

Rigorous evaluation of biomimetic algorithms requires standardized experimental protocols that assess performance across diverse problem types. The methodology outlined below, derived from studies comparing bio-inspired algorithms for Maximum Power Point Tracking (MPPT) in photovoltaic systems, provides a template for objective algorithm comparison [20]:

Problem Selection: Algorithms are tested on standard benchmark suites with known optima (e.g., the CEC-2017 and CEC-2022 test functions) and on real-world applications of practical relevance [20] [24]. In the photovoltaic study, partial shading conditions were introduced to simulate real-world operating challenges [20].

Performance Metrics: Multiple quantitative metrics are employed, including:

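- Mean Squared Error (MSE): Average squared prediction error; penalizes large deviations more heavily

- Mean Absolute Error (MAE): Average absolute prediction error, expressed in the units of the predicted quantity

- Execution Time: Total run time in seconds, quantifying computational efficiency

- Convergence Speed: Number of iterations needed to reach a satisfactory solution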
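The four metrics can be computed with a few small helpers. This is a minimal sketch in plain Python; the helper names are hypothetical, and "satisfactory solution" is modeled as a user-chosen target value for a minimization run. The example also shows why the two error metrics differ: a single error of 2 over three predictions gives MSE = 4/3 but MAE = 2/3.

```python
import time


def mse(y_true, y_pred):
    """Mean squared error: squaring penalizes large deviations more heavily."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean absolute error, in the units of the predicted quantity."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def iterations_to_target(best_so_far, target):
    """Convergence speed: first (1-based) iteration whose best-so-far objective
    value reaches `target` in a minimization run, or None if it never does."""
    for iteration, value in enumerate(best_so_far, start=1):
        if value <= target:
            return iteration
    return None


def timed(fn, *args):
    """Execution time: run fn(*args) and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


print(mse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))   # 4/3
print(mae([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))   # 2/3
print(iterations_to_target([10.0, 5.0, 2.0, 1.0], target=2.0))  # 3
```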

Parameter Configuration: Each algorithm is tuned with parameter settings determined through preliminary experimentation to ensure a fair comparison.

Neural Network Optimization: For algorithms optimizing artificial neural networks (ANNs), the number of neurons in each layer is treated as an optimizable parameter, with algorithms proposing architectures that minimize error metrics [20].
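A minimal sketch of this encoding follows, assuming a global-best PSO whose two-dimensional particle positions are rounded and clamped to neuron counts between 1 and 100 (bounds assumed from the largest layer size in the configurations of Table 1, not stated in [20]). The objective `surrogate_error` is a hypothetical stand-in: in a real study it would train the ANN with the proposed architecture and return its validation MSE. The inertia and acceleration coefficients are common illustrative values.

```python
import random

LOW, HIGH = 1, 100  # assumed bounds on neurons per hidden layer


def decode(position):
    """Round and clamp a continuous particle position to integer neuron counts."""
    return tuple(min(HIGH, max(LOW, round(x))) for x in position)


def surrogate_error(config):
    """Hypothetical stand-in for 'train the ANN and return validation MSE'."""
    n1, n2 = config
    return (n1 - 40) ** 2 + (n2 - 20) ** 2


def pso_architecture(objective, n_particles=15, iters=100,
                     w=0.7, c1=1.5, c2=1.5, seed=1):
    """Global-best PSO over (layer-1, layer-2) neuron counts."""
    rng = random.Random(seed)
    dim = 2
    pos = [[rng.uniform(LOW, HIGH) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_val = [objective(decode(p)) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])   # cognitive pull
                             + c2 * r2 * (gbest[d] - pos[i][d]))     # social pull
                pos[i][d] = min(HIGH, max(LOW, pos[i][d] + vel[i][d]))
            val = objective(decode(pos[i]))
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return decode(gbest), gbest_val


print(pso_architecture(surrogate_error))
```

Searching in a continuous space and decoding only at evaluation time keeps the standard PSO velocity update intact; the price is that nearby positions can map to the same architecture.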

Quantitative Performance Analysis

Table 1: Performance Comparison of Bio-Inspired Optimization Algorithms for ANN-Based MPPT Forecasting

| Algorithm | Neuron Configuration (Layer 1, Layer 2) | Mean Squared Error (MSE) | Mean Absolute Error (MAE) | Execution Time (seconds) |
|---|---|---|---|---|
| Standard ANN | 64, 32 | 159.9437 | 8.0781 | - |
| Grey Wolf Optimizer (GWO) | 66, 100 | 11.9487 | 2.4552 | 1198.99 |
| Particle Swarm Optimization (PSO) | 98, 100 | - | 2.1679 | 1417.80 |
| Squirrel Search Algorithm (SSA) | 66, 100 | 12.1500 | 2.7003 | 987.45 |
| Cuckoo Search (CS) | 84, 74 | 33.7767 | 3.8547 | 1904.01 |

Source: Adapted from performance data in [20]

The comparative analysis reveals significant performance differences among algorithms. Grey Wolf Optimizer (GWO) demonstrated the best balance of prediction accuracy and computational efficiency, achieving the lowest reported MSE (11.9487; no MSE is reported for PSO) with a moderate execution time of 1198.99 seconds [20]. Particle Swarm Optimization (PSO) achieved the lowest MAE (2.1679) but required the second-longest computation time (1417.80 seconds), trading efficiency for precision [20]. The Squirrel Search Algorithm (SSA) was the fastest algorithm (987.45 seconds) while maintaining competitive accuracy, making it suitable for time-sensitive applications [20]. Cuckoo Search (CS) had the highest errors among the optimized networks and the longest execution time, indicating potential limitations for this specific application domain [20].
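The accuracy-efficiency trade-off in Table 1 can be made explicit with a Pareto-dominance check on the two metrics reported for every optimizer, MAE and execution time (MSE is omitted because no value is reported for PSO). The sketch simply reuses the published numbers; the dominance test is a standard multi-objective notion, not an analysis performed in [20].

```python
# (MAE, execution time in seconds) for each optimizer, copied from Table 1.
results = {
    "GWO": (2.4552, 1198.99),
    "PSO": (2.1679, 1417.80),
    "SSA": (2.7003, 987.45),
    "CS": (3.8547, 1904.01),
}


def dominates(a, b):
    """True if a is no worse than b on every metric and strictly better on one
    (both metrics are minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(table):
    """Names of the optimizers that no other optimizer dominates."""
    return sorted(name for name, m in table.items()
                  if not any(dominates(other, m)
                             for o, other in table.items() if o != name))


print(pareto_front(results))  # ['GWO', 'PSO', 'SSA']
```

No single optimizer dominates the others on both metrics: GWO, PSO, and SSA form the trade-off front, while CS is dominated (for example, by GWO, which is both more accurate and faster).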

Table 2: Algorithm Performance Across Problem Domains

| Algorithm | Computational Efficiency | Solution Quality | Implementation Complexity | Robustness to Noise | Best-Suited Applications |
|---|---|---|---|---|---|
| Genetic Algorithm (GA) | Medium | High | Medium | Medium | Feature selection, structural optimization |
| Particle Swarm Optimization (PSO) | Low to Medium | High | Low | Medium | Parameter tuning, neural network training |
| Ant Colony Optimization (ACO) | Low | High | High | High | Pathfinding, network routing |
| Grey Wolf Optimizer (GWO) | High | High | Low to Medium | Medium | Engineering design, power systems |
| Zeroing Neural Network (ZNN) | High (for time-varying) | High (for time-varying) | Medium to High | High | Time-varying matrix problems, control systems |

Source: Synthesized from [20] [18] [4]
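Because GWO rates highly on both efficiency and solution quality in Table 2 above, a minimal sketch of its canonical position update may help show what the algorithm actually does. The three best wolves (alpha, beta, delta) each pull every wolf toward themselves, and the coefficient a decreases linearly from 2 to 0 so that the search shifts from exploration to exploitation. The sphere test function, pack size, and iteration count are illustrative assumptions.

```python
import random


def sphere(x):
    """Unimodal test function with its minimum of 0 at the origin."""
    return sum(v * v for v in x)


def gwo(objective, dim, lower, upper, n_wolves=20, iters=200, seed=0):
    """Minimal Grey Wolf Optimizer for minimization within box bounds."""
    rng = random.Random(seed)
    wolves = [[rng.uniform(lower, upper) for _ in range(dim)] for _ in range(n_wolves)]
    best, best_val = None, float("inf")
    for t in range(iters):
        ranked = sorted(wolves, key=objective)
        leaders = [w[:] for w in ranked[:3]]       # alpha, beta, delta
        lead_val = objective(leaders[0])
        if lead_val < best_val:
            best, best_val = leaders[0][:], lead_val
        a = 2.0 - 2.0 * t / iters                  # decreases linearly from 2 to 0
        for w in wolves:
            for d in range(dim):
                pulls = []
                for leader in leaders:
                    A = 2.0 * a * rng.random() - a  # |A| > 1 favors exploration
                    C = 2.0 * rng.random()
                    D = abs(C * leader[d] - w[d])
                    pulls.append(leader[d] - A * D)
                w[d] = min(upper, max(lower, sum(pulls) / 3.0))
    final = min(wolves, key=objective)
    if objective(final) < best_val:
        best, best_val = final[:], objective(final)
    return best, best_val


print(gwo(sphere, dim=5, lower=-10.0, upper=10.0)[1])
```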

Specialized Biomimetic Approaches

Zeroing Neural Networks for Time-Varying Problems

Zeroing Neural Networks (ZNNs) represent a specialized class of biomimetic algorithms designed for time-varying optimization problems [18]. Unlike traditional gradient-based neural networks, whose residual error accumulates over time, ZNNs use a dedicated evolution formula for the error function that ensures convergence to the theoretical solution of time-varying problems [18]. This makes them particularly valuable for applications requiring real-time adaptation, such as robotic control systems, signal processing, and dynamic portfolio optimization.

ZNN variants can be classified into three categories based on their performance characteristics:

- Accelerated-Convergence ZNNs: Designed for applications requiring rapid solution times

- Noise-Tolerance ZNNs: Maintain performance despite noisy or imperfect data

- Discrete-Time ZNNs: Offer higher computational accuracy and easier hardware implementation [18]

The unique architecture of ZNNs enables them to solve time-varying matrix problems that challenge conventional solvers, demonstrating the value of specialized biomimetic approaches for particular problem classes.
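The ZNN design principle can be illustrated on a small time-varying linear system A(t)x(t) = b(t). A common ZNN construction defines the error e(t) = A(t)x(t) - b(t) and imposes de/dt = -γe(t), which yields the dynamics A(t)ẋ = ḃ(t) - Ȧ(t)x - γ(A(t)x - b(t)). The sketch integrates these dynamics with a plain forward-Euler step; the particular A(t), b(t), gain γ = 10, and step size are illustrative assumptions, and forward Euler is a simple stand-in for the specialized discrete-time ZNN formulas discussed in [18].

```python
import math


def A(t):
    """Hypothetical time-varying coefficient matrix (nonsingular for all t)."""
    return [[2 + math.sin(t), math.cos(t)], [-math.cos(t), 2 + math.sin(t)]]


def dA(t):
    """Time derivative of A(t)."""
    return [[math.cos(t), -math.sin(t)], [math.sin(t), math.cos(t)]]


def b(t):
    return [math.sin(t), math.cos(t)]


def db(t):
    return [math.cos(t), -math.sin(t)]


def matvec(M, v):
    return [M[0][0] * v[0] + M[0][1] * v[1], M[1][0] * v[0] + M[1][1] * v[1]]


def solve2(M, r):
    """Solve the 2x2 system M y = r by Cramer's rule."""
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    return [(r[0] * M[1][1] - M[0][1] * r[1]) / det,
            (M[0][0] * r[1] - r[0] * M[1][0]) / det]


def znn(gamma=10.0, h=1e-3, t_end=5.0):
    """Forward-Euler integration of A(t) x' = b'(t) - A'(t) x - gamma * (A(t) x - b(t))."""
    t, x = 0.0, [0.0, 0.0]
    while t < t_end:
        e = [ax - bx for ax, bx in zip(matvec(A(t), x), b(t))]      # residual error e(t)
        rhs = [dbx - dax - gamma * ex
               for dbx, dax, ex in zip(db(t), matvec(dA(t), x), e)]
        xdot = solve2(A(t), rhs)                                   # enforces de/dt = -gamma e
        x = [xi + h * vi for xi, vi in zip(x, xdot)]
        t += h
    residual = [ax - bx for ax, bx in zip(matvec(A(t), x), b(t))]
    return x, math.hypot(*residual)


x, res = znn()
print(x, res)
```

Starting from x = 0, the residual decays roughly like e^(-γt) and then stays small while the solver tracks the moving solution, which is the behavior a static solver re-run at fixed time instants would not provide.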

Ecological Network Optimization

Biomimetic algorithms have shown remarkable success in addressing complex spatial optimization problems, particularly in ecological network (EN) optimization [4]. These algorithms help resolve the conflict between functional optimization (improving specific ecological functions at the patch level) and structural optimization (enhancing macroscopic connectivity and layout) that often challenges conventional approaches [4].

The Modified Ant Colony Optimization (MACO) model exemplifies this approach, incorporating both micro-functional optimization operators and macro-structural optimization operators to simultaneously address local and global optimization objectives [4]. By combining bottom-up functional optimization with top-down structural optimization, these biomimetic approaches can identify potential ecological stepping stones and quantitatively guide land-use adjustments at the patch level, answering critical questions of "where to optimize, how to change, and how much to change" [4]. Implementation of GPU-based parallel computing has further enhanced the computational efficiency of these approaches, making city-level ecological optimization feasible at high resolution [4].

Biomimetic Algorithm Workflows and Signaling Pathways

The conceptual framework governing biomimetic algorithms can be visualized as a dynamic decision process that mirrors biological signaling pathways. The following diagram illustrates the core logical relationships and decision pathways:

Biomimetic Algorithm Decision Pathway

Tissue Regeneration Model for Data Imputation

Biomimicry extends to specialized computational models, such as the tissue regeneration model for handling incomplete data in pairwise comparison matrices [25]. This approach directly emulates biological regeneration processes through a three-phase algorithm:

Identification of Damaged Areas: Corresponding to detecting inconsistencies in pairwise comparison matrices using specialized inconsistency indices [25]

Cellular Proliferation: Mathematically transposed as iteratively computing missing values through geometric means and stabilization formulas [25]

Tissue Stabilization: Implemented as gradient-based minimization of global inconsistency until convergence thresholds are met [25]

This biomimetic approach demonstrates robust convergence to consistent solutions, outperforming traditional statistical imputation methods for this specific problem domain by leveraging principles derived from biological tissue repair mechanisms [25].
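The first two phases can be sketched for a reciprocal pairwise comparison matrix in which missing judgments are marked `None`. This is an assumption-laden illustration, not the model of [25]: it uses Koczkodaj's triad index as one possible inconsistency measure, estimates each missing entry as the geometric mean of the indirect comparisons a_ik·a_kj, and omits the gradient-based stabilization phase. The function names and the example matrix are hypothetical.

```python
import math
from itertools import permutations


def koczkodaj_index(A):
    """Worst triad inconsistency of a complete reciprocal matrix (0 = consistent)."""
    worst = 0.0
    for i, j, k in permutations(range(len(A)), 3):
        r = A[i][k] * A[k][j] / A[i][j]   # indirect vs. direct comparison of i and j
        worst = max(worst, min(abs(1 - r), abs(1 - 1 / r)))
    return worst


def fill_missing(M, sweeps=50, tol=1e-12):
    """Estimate missing (None) entries from indirect paths i -> k -> j."""
    n = len(M)
    A = [row[:] for row in M]
    for _ in range(sweeps):
        changed = False
        for i in range(n):
            for j in range(n):
                if i == j or M[i][j] is not None:
                    continue  # only originally missing cells are (re-)estimated
                paths = [A[i][k] * A[k][j] for k in range(n)
                         if k not in (i, j) and A[i][k] is not None and A[k][j] is not None]
                if not paths:
                    continue
                est = math.exp(sum(math.log(p) for p in paths) / len(paths))  # geometric mean
                if A[i][j] is None or abs(est - A[i][j]) > tol:
                    A[i][j], A[j][i] = est, 1.0 / est   # keep the matrix reciprocal
                    changed = True
        if not changed:
            break
    return A


# Hypothetical consistent matrix built from weights, with one judgment pair missing.
w = [1.0, 2.0, 4.0, 8.0]
M = [[w[i] / w[j] for j in range(4)] for i in range(4)]
M[0][3] = M[3][0] = None
A = fill_missing(M)
print(A[0][3], koczkodaj_index(A))
```

For a matrix that was consistent before entries were removed, the geometric-mean estimate recovers the missing ratio (here w0/w3 = 0.125) and the inconsistency index returns to essentially zero.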

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for Biomimetic Algorithm Research

| Tool Category | Specific Tools/Platforms | Primary Function | Application Context |
|---|---|---|---|
| Optimization Frameworks | MATLAB GPOPS, Python SciPy | Algorithm implementation and solving | General optimization problem solving [22] |
| Benchmark Suites | CEC-2017, CEC-2022 | Standardized performance testing | Algorithm comparison and validation [20] [24] |
| Neural Network Platforms | TensorFlow, PyTorch | Deep learning model development | ANN-based optimization systems [20] |
| Parallel Computing | GPU/CUDA, CPU/GPU heterogeneous architecture | Accelerating computationally intensive tasks | Large-scale spatial optimization [4] |
| Data Imputation Libraries | MICE (R), LightGBM | Handling missing data | Data preprocessing for real-world datasets [25] |
| Visualization Tools | Graphviz, Matplotlib | Algorithm workflow and result presentation | Research documentation and publication [4] |

The performance evaluation of biomimetic algorithms reveals a diverse landscape of specialized approaches, each with distinct strengths and optimal application domains. While Grey Wolf Optimizer and Particle Swarm Optimization demonstrate excellent performance for static optimization problems, Zeroing Neural Networks offer superior capabilities for time-varying problems [20] [18]. For spatial optimization challenges such as ecological network planning, Modified Ant Colony Optimization provides unique advantages in balancing functional and structural objectives [4].

Despite the proliferation of proposed algorithms, the field faces challenges related to methodological rigor and innovation substance. Critical analyses indicate that many newly proposed algorithms represent minor variations of established approaches rather than genuinely novel methodological contributions [24]. This "paradox of success" necessitates stricter adherence to benchmarking standards, comprehensive performance validation across diverse problem domains, and clearer demonstration of practical advantages over existing alternatives [24].

Future developments in biomimetic optimization should prioritize automated algorithm design, theoretical foundation strengthening, and specialized method development for emerging application domains such as renewable energy systems, drug development pipelines, and large-scale ecological planning [20] [4] [24]. By maintaining focus on biological fidelity while enforcing computational rigor, researchers can fully leverage nature's optimization strategies to address increasingly complex challenges across scientific and engineering disciplines.

The field of biomimetic optimization represents a cornerstone of modern computational intelligence, drawing inspiration from biological systems, ecological processes, and physical phenomena to solve complex problems. These algorithms provide powerful alternatives to traditional optimization methods, particularly for navigating high-dimensional, non-linear search spaces where gradient-based approaches struggle. The fundamental performance of these metaheuristics hinges on effectively balancing two competing search processes: exploration, which discovers diverse solutions across the search space, and exploitation, which refines promising solutions to accelerate convergence [26]. Excessive exploration slows convergence, while predominant exploitation risks entrapment in local optima, making this balance a critical research focus in algorithm design and evaluation [26]. This guide provides a structured performance comparison of prominent biomimetic algorithms, from established genetic algorithms to the emerging Red-crowned Crane Optimization, framing the analysis within rigorous experimental protocols and ecological inspiration principles that underpin this dynamic research domain.

Bio-inspired metaheuristics are broadly categorized by their source of inspiration, which directly influences their search behavior and application suitability. Table 1 outlines the primary algorithm categories and their representative members.

Table 1: Classification of Biomimetic Optimization Algorithms

| Category | Inspiration Source | Key Algorithms | Representative Mechanisms |
|---|---|---|---|
| Evolutionary | Natural evolution | Genetic Algorithm (GA), Differential Evolution (DE) | Selection, Crossover, Mutation [27] |
| Swarm Intelligence | Collective animal behavior | Particle Swarm Optimization (PSO), Ant Colony Optimization (ACO), Grey Wolf Optimizer (GWO) | Social hierarchy, Information sharing [27] |
| Human-Based | Social & decision-making behaviors | Harmony Search (HS), Teaching-Learning-Based Optimization (TLBO) | Collaboration, Imitation, Competition [27] |
| Physics-Based | Physical laws & principles | Archimedes Optimization Algorithm (AOA), Simulated Annealing (SA) | Buoyancy, Thermodynamics [27] |
| Ecological & Other Bio-Inspired | Specific species or ecological interactions | Red-crowned Crane Optimization (RCO), Whale Optimization Algorithm (WOA) | Mating rituals, Foraging behavior [27] |

The following diagram illustrates the taxonomic relationships and primary search emphasis (exploration vs. exploitation) of these major algorithm categories.

Figure 1: Taxonomy of bio-inspired algorithms and their typical exploration-exploitation balance.

Comparative Performance Analysis of Selected Algorithms

Objective performance evaluation against standardized benchmark functions and real-world problems is crucial for understanding algorithmic strengths and weaknesses. The following table synthesizes quantitative results from controlled experimental studies, particularly a comprehensive review of the Archimedes Optimization Algorithm (AOA) that performed head-to-head comparisons with multiple established algorithms [27].

Table 2: Performance Comparison of Biomimetic Optimization Algorithms

| Algorithm | Key Inspiration | Convergence Speed | Global Search (Exploration) | Local Search (Exploitation) | Reported Superiority vs. 9 Competitors | Typical Application Domains |
|---|---|---|---|---|---|---|
| Genetic Algorithm (GA) | Natural selection | Medium | High | Medium | Not Superior [27] | Feature selection, scheduling [27] |
| Particle Swarm Optimization (PSO) | Bird flocking | Fast | Medium | High | Not Superior [27] | Parameter tuning, neural networks [27] |
| Grey Wolf Optimizer (GWO) | Wolf social hierarchy | Fast | High | High | Not Superior [27] | Engineering design, clustering [27] |
| Whale Optimization Algorithm (WOA) | Bubble-net hunting | Medium | High | Medium | Not Superior [27] | Mechanical design, classification [27] |
| Archimedes Optimization (AOA) | Buoyancy force | Fast | High | High | 72.22% of cases [27] | Photovoltaic systems, clustering [27] |
| Red-crowned Crane (RCO) | Mating & territorial behavior | Under investigation | Under investigation | Under investigation | Benchmarking ongoing [27] | Emerging applications |

The Archimedes Optimization Algorithm (AOA), a physics-based metaheuristic, demonstrates notable performance, showing superiority in 72.22% of case studies against a pool of nine other algorithms including GA, PSO, GWO, and WOA, while also exhibiting stable dispersion in box-plot analyses [27], which suggests a robust balance between exploration and exploitation. The Red-crowned Crane Optimization (RCO) is a more recent entrant: while its inspiration is documented [27], comprehensive comparative performance data in the literature are still emerging, making it an active area of research.

Experimental Protocols for Algorithm Evaluation

A standardized methodology is essential for ensuring fair and reproducible comparison of optimization algorithms. The following workflow outlines the key stages in a rigorous performance evaluation study, drawing from established practices in the field [26] [27].

Figure 2: Standard workflow for experimental evaluation of optimization algorithms.

Detailed Methodological Components

Benchmark Selection: Studies typically employ a suite of benchmark functions, including unimodal functions (to test exploitation) and multimodal functions with many local optima (to test exploration capability) [27]. The CEC2017 benchmark test functions are a common standard for this purpose [28].
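For readers new to these suites, the classic sphere and Rastrigin functions illustrate the two kinds of landscape. They are textbook examples, not members of the CEC2017 suite itself, whose functions are shifted, rotated, and hybridized variants that are not reproduced here.

```python
import math


def sphere(x):
    """Unimodal: one basin, global minimum 0 at the origin (tests exploitation)."""
    return sum(v * v for v in x)


def rastrigin(x):
    """Multimodal: a grid of local minima near integer points, global minimum 0
    at the origin (tests the ability to escape local optima)."""
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)


print(sphere([1.0, 0.0]), rastrigin([1.0, 0.0]), rastrigin([0.0, 0.0]))
```

A gradient-following or purely exploitative search started near (1, 0) on Rastrigin tends to settle in the nearby local basin, whereas on the sphere any downhill path leads to the global minimum.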

Parameter Configuration: A critical step involves setting a fixed population size and maximum number of iterations across all compared algorithms to ensure fairness. Each algorithm's specific parameters (e.g., crossover and mutation rates for GA, social coefficients for PSO) must be carefully tuned to their recommended values, often through preliminary parametric studies [27].

Statistical Validation: Because these algorithms are stochastic, performance is evaluated over multiple independent runs (commonly 30 or more). Researchers collect statistical metrics such as the best, worst, mean, and standard deviation of the final fitness values, then apply non-parametric tests such as the Wilcoxon signed-rank test to confirm the significance of performance differences [27].
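A minimal, dependency-free sketch of this step follows. It computes the summary statistics and a two-sided Wilcoxon signed-rank test using the large-sample normal approximation, without tie or continuity corrections. The signed-rank test assumes paired samples (for example, runs sharing a random seed, or results matched by benchmark function); that pairing is an assumption here. For production work, SciPy's `scipy.stats.wilcoxon` is the usual choice.

```python
import math
import statistics


def summarize(values):
    """Best, worst, mean and sample standard deviation of final fitness (minimization)."""
    return {"best": min(values), "worst": max(values),
            "mean": statistics.mean(values), "std": statistics.stdev(values)}


def wilcoxon_signed_rank(x, y):
    """Two-sided Wilcoxon signed-rank test for paired samples x and y.

    Uses the large-sample normal approximation (no tie or continuity
    correction). Returns (W_plus, z, p_value)."""
    diffs = [a - b for a, b in zip(x, y) if a != b]    # zero differences are dropped
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:                                        # average ranks over ties
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1           # 1-based average rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    mean_w = n * (n + 1) / 4
    sd_w = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mean_w) / sd_w
    p = math.erfc(abs(z) / math.sqrt(2))                # equals 2 * (1 - Phi(|z|))
    return w_plus, z, p
```

With 30 runs per algorithm, the normal approximation is usually adequate; for small samples an exact test is preferable.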

The Scientist's Toolkit: Essential Research Reagents

The experimental evaluation and development of biomimetic algorithms rely on a suite of computational "reagents" and tools. The following table details these key components and their functions in performance analysis research.

Table 3: Essential Research Tools for Algorithm Development and Testing

| Tool/Reagent | Primary Function | Application Example |
|---|---|---|
| Standard Benchmark Suites (e.g., CEC2017) | Provides standardized, scalable test functions for controlled performance comparison [28]. | Quantifying convergence precision on unimodal functions and avoidance of local optima on multimodal functions. |
| Real-World Problem Datasets | Evaluates practical utility and scalability beyond synthetic benchmarks. | Testing algorithm performance on real engineering design or clinical data [29]. |
| Statistical Testing Frameworks | Provides mathematical rigor to confirm the significance of performance differences between algorithms [27]. | Using the Wilcoxon signed-rank test to validate that a new algorithm's performance is statistically better. |
| Visualization Libraries (e.g., for convergence plots, box plots) | Enables intuitive visual comparison of algorithm behavior and result dispersion [27]. | Generating convergence curves to show speed and box plots to display solution quality stability. |
| Bibliometric Analysis Tools (e.g., Bibliometrix, VOSviewer) | Maps the evolution, collaborative networks, and thematic trends in the research field [26]. | Systematically characterizing the conceptual evolution of exploration-exploitation balance studies [26]. |

This comparison guide objectively analyzes the performance landscape of biomimetic algorithms, from foundational genetic algorithms to nascent ecological inspirations like the Red-crowned Crane Optimization. The empirical data reveals that performance is highly contextual, with newer algorithms like the Archimedes Optimization Algorithm demonstrating strong overall performance in recent comparative studies [27]. The ongoing challenge for researchers lies in the principled balancing of exploration and exploitation [26], a task informed by both standardized experimental protocols and a deep understanding of the biological metaphors that inspire these powerful optimization tools. The field continues to evolve rapidly, driven by interdisciplinary research that connects computational intelligence with deeper ecological insights.

From Theory to Practice: Methodological Frameworks and Biomedical Applications

The field of biomimetic optimization involves developing computational algorithms inspired by natural processes and biological behaviors to solve complex problems. In ecological and biomechanical research, these algorithms have become indispensable tools for optimizing systems where traditional mathematical approaches fall short. Biomimetic algorithms such as Particle Swarm Optimization (PSO), Ant Colony Optimization (ACO), Grey Wolf Optimizer (GWO), Squirrel Search Algorithm (SSA), and Cuckoo Search (CS) leverage principles observed in biological systems—including swarm intelligence, foraging behavior, and social hierarchy—to navigate high-dimensional, nonlinear solution spaces effectively [3] [4].

The application of these algorithms spans multiple domains, from optimizing ecological network structures to enhancing the performance of renewable energy systems. In ecological research, these methods help address critical challenges such as habitat fragmentation by optimizing the spatial layout of ecological networks, balancing both functional and structural objectives [4]. Similarly, in biomechanics, computational modeling integrates finite element analysis (FEA) with response surface methodology (RSM) to optimize medical devices such as dental implants, demonstrating the cross-disciplinary utility of these approaches [30]. This guide provides a comprehensive comparison of leading biomimetic algorithms, detailing their performance characteristics, experimental protocols, and practical implementation requirements to assist researchers in selecting appropriate methodologies for their specific optimization challenges.

Comparative Performance Analysis of Biomimetic Algorithms

Performance Metrics and Benchmarking Framework

Evaluating the performance of optimization algorithms requires a structured benchmarking approach using standardized metrics. Key performance indicators include solution quality (measured by objective function value), computational efficiency (execution time), convergence speed, and consistency across problem variants. Statistical validation through non-parametric tests such as the Wilcoxon signed-rank test is recommended for reliable algorithm comparison because these tests do not assume normally distributed performance data [31]. Item Response Theory (IRT) models provide an advanced statistical framework for assessing benchmark difficulty and how well benchmarks discriminate between algorithms, enabling more nuanced performance comparisons [32].
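For orientation, the standard two-parameter logistic (2PL) IRT model is shown below. Treating benchmark problems as IRT items and algorithms as respondents is an assumption made here for illustration; [32] may parameterize the model differently.

```latex
P\left(X_{ij} = 1 \mid \theta_j\right) = \frac{1}{1 + \exp\left[-a_i\left(\theta_j - b_i\right)\right]}
```

Here X_ij = 1 if algorithm j solves benchmark problem i (for example, reaches a target accuracy), θ_j is the latent ability of algorithm j, b_i is the difficulty of problem i, and a_i is its discrimination, that is, how sharply problem i separates weaker from stronger algorithms.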

Algorithm Performance Comparison

The table below summarizes the quantitative performance of various biomimetic algorithms across different optimization domains, based on experimental data from published studies:

Table 1: Performance Comparison of Biomimetic Optimization Algorithms

| Algorithm | Application Domain | Key Performance Metrics | Comparative Advantages | Limitations |
|---|---|---|---|---|
| Particle Swarm Optimization (PSO) | Photovoltaic systems MPPT [3], Ecological network optimization [4] | MAE: 2.1679 [3]; Effective in land-use layout retrofits [4] | Reliable performance under partial shading conditions; Effective for global optimization [3] [4] | Hyperparameter tuning sensitive; Longer execution time (1417.80s) [3] |
| Grey Wolf Optimizer (GWO) | Photovoltaic systems MPPT [3] | MSE: 11.9487, MAE: 2.4552, Execution time: 1198.99s [3] | Balanced accuracy and computational efficiency [3] | Less effective with rapidly changing environmental conditions [3] |
| Squirrel Search Algorithm (SSA) | Photovoltaic systems MPPT [3] | MSE: 12.1500, MAE: 2.7003, Execution time: 987.45s [3] | Fastest execution among compared algorithms [3] | Slightly lower accuracy compared to GWO and PSO [3] |
| Cuckoo Search (CS) | Photovoltaic systems MPPT [3] | MSE: 33.7767, MAE: 3.8547, Execution time: 1904.01s [3] | Effective for some optimization problems [3] | Less reliable accuracy and slower computational speed [3] |
| Modified Ant Colony Optimization (MACO) | Ecological network optimization [4] | Improved connectivity and structural efficiency [4] | Effective for spatial optimization problems; Compatible with parallel computing [4] | Requires significant computational resources for large-scale problems [4] |

Domain-Specific Performance Considerations

Algorithm performance varies significantly across application domains. In ecological network optimization, PSO and specially modified ACO variants have demonstrated superior capability in balancing functional and structural optimization objectives. The spatial-operator-based MACO model addressed both micro-scale functional optimization and macro-scale structural optimization in Yichun City, China, improving ecological connectivity while keeping computation feasible through GPU acceleration [4]. For photovoltaic systems under partial shading conditions, GWO achieved the best balance between prediction accuracy (MSE: 11.9487) and computational time (1198.99 seconds) among PSO, SSA, and CS, although PSO attained a lower MAE (2.1679) [3].

Experimental Protocols and Methodologies

General Experimental Framework for Algorithm Validation

Rigorous experimental protocols are essential for meaningful algorithm comparison. The standard methodology involves:

- Problem Selection: Curating benchmark suites with diverse difficulty levels and characteristics representative of real-world optimization challenges [32].

- Parameter Configuration: Establishing fair parameter settings for all compared algorithms, often through preliminary tuning experiments.

- Multiple Runs: Executing numerous independent runs to account for stochastic variations in algorithm performance.

- Performance Measurement: Collecting data on solution quality, convergence speed, and computational resources using standardized metrics.

- Statistical Analysis: Applying appropriate statistical tests (e.g., Wilcoxon signed-rank, Friedman) to validate significance of performance differences [31].

Domain-Specific Methodologies

Ecological Network Optimization Protocol

The experimental protocol for ecological network optimization using MACO involves these methodical stages [4]:

- Data Collection and Preprocessing: Gathering land-use data, ecological sensitivity assessments, and habitat distribution information, then rasterizing to an appropriate resolution (e.g., 40 m).

- Ecological Network Construction: Identifying ecological sources through morphological spatial pattern analysis and establishing corridors using connectivity models.

- Algorithm Implementation: Applying spatial operators for micro-functional optimization and macro-structural enhancement through the MACO framework.

- GPU-Accelerated Computation: Leveraging parallel processing to handle large-scale spatial optimization efficiently.

- Validation and Analysis: Evaluating optimized networks using functional indicators (ecosystem service value, habitat quality) and structural metrics (connectivity, complexity).

Diagram 1: Ecological network optimization workflow
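For the structural side of the validation step, ecological network studies often report the graph-theoretic α (network closure), β (line-point ratio), and γ (connectivity) indices, computed from the number of nodes V (ecological sources) and links L (corridors). Whether [4] used exactly these indices is an assumption; the formulas below are the standard planar-graph versions, and the node and link counts in the example are hypothetical.

```python
def network_indices(n_nodes, n_links):
    """Alpha, beta and gamma indices of a planar network with V nodes and L links."""
    V, L = n_nodes, n_links
    if V < 3:
        raise ValueError("the indices are defined for networks with at least 3 nodes")
    alpha = (L - V + 1) / (2 * V - 5)   # share of the maximum possible circuits present
    beta = L / V                        # average links per node
    gamma = L / (3 * (V - 2))           # share of the maximum possible links present
    return alpha, beta, gamma


# Hypothetical counts of ecological sources (nodes) and corridors (links).
print(network_indices(10, 15))   # baseline network
print(network_indices(10, 18))   # after adding stepping-stone corridors
```

Adding corridors between existing sources raises all three indices, which is one simple way to quantify the "improved connectivity" that structural optimization aims for.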

Biomechanical Implant Optimization Protocol

For biomechanical applications such as dental implant design, researchers employ integrated computational approaches [30]:

- 3D Model Generation: Creating anatomical models from medical imaging data (e.g., CT scans) using software such as SolidWorks.

- Material Property Assignment: Defining mechanical properties (Young's modulus, Poisson's ratio) for all components.

- Finite Element Analysis (FEA): Simulating biomechanical behavior under various loading conditions.

- Response Surface Methodology (RSM): Developing predictive models through designed experiments (e.g., Central Composite Design).

- Optimization and Validation: Identifying optimal parameter combinations and validating predictions against experimental data.

Diagram 2: Biomechanical implant optimization workflow
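The RSM step can be sketched as a least-squares fit of the usual second-order model y = b0 + b1·x1 + b2·x2 + b11·x1² + b22·x2² + b12·x1·x2 to responses measured at the points of a two-factor, rotatable central composite design (α = √2) in coded units. In the protocol above each response would come from an FEA run (for example, peak peri-implant stress for a given implant diameter and length); here the responses are generated from a known quadratic purely to check the fit, and that factor interpretation is hypothetical.

```python
import math


def ccd_points(alpha=math.sqrt(2), n_center=3):
    """Coded points of a two-factor central composite design (rotatable for alpha = sqrt(2))."""
    factorial = [(-1.0, -1.0), (1.0, -1.0), (-1.0, 1.0), (1.0, 1.0)]
    axial = [(-alpha, 0.0), (alpha, 0.0), (0.0, -alpha), (0.0, alpha)]
    return factorial + axial + [(0.0, 0.0)] * n_center


def quad_terms(x1, x2):
    """Regressors of the second-order model: 1, x1, x2, x1^2, x2^2, x1*x2."""
    return [1.0, x1, x2, x1 * x1, x2 * x2, x1 * x2]


def solve(M, v):
    """Solve the square system M c = v by Gaussian elimination with partial pivoting."""
    n = len(v)
    aug = [list(row) + [v[i]] for i, row in enumerate(M)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[piv] = aug[piv], aug[col]
        for r in range(col + 1, n):
            f = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= f * aug[col][c]
    coef = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(aug[r][c] * coef[c] for c in range(r + 1, n))
        coef[r] = (aug[r][n] - s) / aug[r][r]
    return coef


def fit_response_surface(points, responses):
    """Least-squares fit of the quadratic model via the normal equations X^T X b = X^T y."""
    X = [quad_terms(*p) for p in points]
    k = len(X[0])
    XtX = [[sum(row[a] * row[b] for row in X) for b in range(k)] for a in range(k)]
    Xty = [sum(row[a] * y for row, y in zip(X, responses)) for a in range(k)]
    return solve(XtX, Xty)


# Synthetic check: responses generated from known (hypothetical) coefficients.
true_coef = [5.0, 1.0, -2.0, 0.5, 0.3, -0.4]
pts = ccd_points()
ys = [sum(c * term for c, term in zip(true_coef, quad_terms(*p))) for p in pts]
print([round(c, 6) for c in fit_response_surface(pts, ys)])
```

Once the surface is fitted, the optimization step searches it (analytically or with any of the metaheuristics above) instead of re-running FEA for every candidate design.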

Implementation and Computational Requirements

Successful implementation of biomimetic optimization algorithms requires specific computational tools and frameworks:

Table 2: Essential Research Toolkit for Biomimetic Algorithm Implementation

| Tool/Resource | Category | Primary Function | Application Examples |
|---|---|---|---|
| GPU Computing | Hardware | Parallel processing of large-scale spatial optimization | Ecological network optimization [4] |
| Finite Element Analysis Software | Software | Simulating biomechanical behavior | Implant design optimization [30] |
| Motion Capture Systems | Data Collection | Tracking movement for kinematic data | Musculoskeletal modeling [33] |
| OpenSim | Software | Creating and simulating musculoskeletal models | Movement simulation and muscle force estimation [33] |
| Statistical Analysis Tools | Analysis | Performance comparison and validation | Algorithm benchmarking [31] |
| Biomimetic Algorithm Frameworks | Software | Implementing optimization algorithms | PSO, GWO, ACO, SSA implementation [3] [4] |

Computational Considerations and Optimization Techniques

Computational efficiency represents a critical factor in algorithm selection, particularly for large-scale ecological or biomechanical problems. Several strategies can enhance performance:

- Parallelization: Implementing algorithms on GPU architectures can dramatically reduce computation time for spatial optimization problems [4].

- Hybrid Approaches: Combining multiple algorithms or integrating them with other optimization techniques (e.g., FEA with RSM) can improve both efficiency and solution quality [30].

- Adaptive Parameter Tuning: Dynamically adjusting algorithm parameters during execution based on performance feedback.

- Problem-Specific Operators: Developing custom spatial operators or mutation strategies tailored to specific problem domains [4].
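Adaptive parameter tuning can take many forms. One feedback rule used in the PSO literature, sketched below for a minimization problem, sets the inertia weight from the swarm's success rate: the fraction of particles whose personal best improved in the last iteration. A high success rate suggests the search is still productive, so a larger weight keeps particles exploring; a low rate shrinks the weight to favor exploitation. The bounds 0.4 and 0.9 mirror the typical PSO inertia range, and the function name is hypothetical.

```python
import numpy as np


def adaptive_inertia_weight(prev_pbest: np.ndarray, new_pbest: np.ndarray,
                            w_min: float = 0.4, w_max: float = 0.9) -> float:
    """Feedback-driven inertia weight for a minimization PSO.

    success_rate is the fraction of particles whose personal-best fitness
    decreased this iteration; the weight interpolates linearly between bounds.
    """
    success_rate = float(np.mean(new_pbest < prev_pbest))
    return w_min + (w_max - w_min) * success_rate
```

Calling this once per iteration, with the personal-best fitness arrays from before and after the update, replaces a fixed or time-scheduled inertia weight with one that reacts to search progress.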

The comparative analysis presented in this guide demonstrates that algorithm performance is highly context-dependent, with different biomimetic approaches excelling in specific application domains. PSO and its variants show particular promise for spatial optimization problems in ecological research, while GWO provides balanced performance for dynamic optimization challenges such as MPPT in photovoltaic systems. The integration of these algorithms with specialized computational techniques—including GPU acceleration, finite element analysis, and response surface methodology—significantly enhances their practical utility for complex biological modeling applications.

Future research directions should focus on developing more adaptive hybrid algorithms, improving computational efficiency for large-scale problems, and establishing standardized benchmarking protocols specific to biological and ecological optimization domains. As biomimetic algorithms continue to evolve, their capacity to address increasingly complex challenges in ecological modeling, biomechanics, and biomedical research will expand, offering powerful tools for understanding and optimizing biological systems.

Computational Implementations for High-Dimensional Problem Solving

High-dimensional problem solving represents one of the most significant challenges in computational science, particularly in fields requiring the optimization of complex systems with numerous interacting parameters. In ecological optimization research, where systems exhibit non-linear behaviors, multiple constraints, and vast solution spaces, traditional optimization techniques often prove inadequate. Biomimetic algorithms, inspired by natural processes and biological systems, have emerged as powerful tools for navigating these complex landscapes. These algorithms mimic successful strategies found in nature—such as swarm intelligence, evolutionary processes, and neural systems—to efficiently explore high-dimensional spaces and identify optimal or near-optimal solutions where classical methods struggle due to the curse of dimensionality [34].

The relevance of these computational approaches extends directly to critical applications in drug development, where researchers must navigate complex molecular spaces, predict multi-parameter interactions, and optimize for efficacy, safety, and manufacturability simultaneously. For ecological researchers and drug development professionals, understanding the comparative performance of these algorithms is essential for selecting appropriate methodologies that balance computational efficiency with solution quality. This guide provides an objective comparison of prominent biomimetic algorithms, supported by experimental data and detailed protocols, to inform research decisions in high-dimensional optimization contexts [34].

Performance Comparison of Biomimetic Algorithms

Quantitative Performance Metrics

Evaluating algorithm performance requires multiple metrics to capture different aspects of optimization effectiveness. Key metrics include convergence speed (time or iterations needed to reach the optimal solution), solution accuracy (proximity to the known optimum or best-found solution), computational efficiency (resource consumption), and robustness (consistency of performance across diverse problems). For high-dimensional ecological and pharmaceutical problems, stability in noisy environments and the ability to avoid local optima are particularly valuable [3] [34].

Table 1: Core Performance Metrics for Biomimetic Algorithm Evaluation

| Metric | Definition | Measurement Approach | Importance in Ecological/Drug Optimization |
|---|---|---|---|
| Mean Squared Error (MSE) | Average squared difference between predicted and actual values | Calculated during validation on test datasets | Quantifies prediction accuracy for ecological models and drug efficacy predictions |
| Mean Absolute Error (MAE) | Average absolute difference between predicted and actual values | Direct computation from result comparisons | Provides interpretable error magnitude for environmental impact assessments |
| Execution Time | Computational time required to reach stopping criterion | Measured in seconds/minutes under standardized conditions | Critical for time-sensitive applications like real-time ecological monitoring |
| Convergence Iterations | Number of iterations until solution stabilizes | Tracked during algorithm execution | Indicates efficiency in exploring high-dimensional solution spaces |
| Memory Utilization | Computational memory required during execution | Monitored via system resources | Important for large-scale ecological datasets and molecular libraries |
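The error and cost metrics in Table 1 can be computed as in the following minimal Python sketch (NumPy assumed). `profile_run` is a hypothetical helper: it measures wall-clock time with `time.perf_counter` and peak memory with `tracemalloc`, which only sees allocations made through Python's allocator (recent NumPy versions report their array buffers to it), so GPU memory or native-library buffers would need separate monitoring.

```python
import time
import tracemalloc

import numpy as np


def mse(y_true, y_pred) -> float:
    """Mean squared error: average of squared residuals."""
    r = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.mean(r ** 2))


def mae(y_true, y_pred) -> float:
    """Mean absolute error: average of absolute residuals."""
    r = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.mean(np.abs(r)))


def profile_run(fn, *args, **kwargs):
    """Return (result, wall-clock seconds, peak traced bytes) for one call of fn."""
    tracemalloc.start()
    start = time.perf_counter()
    try:
        result = fn(*args, **kwargs)
    finally:
        elapsed = time.perf_counter() - start
        _, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
    return result, elapsed, peak
```

Wrapping each optimizer call in `profile_run` under the same hardware and software conditions keeps execution time and memory comparable across algorithms.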

Algorithm Comparison and Experimental Data

Different biomimetic algorithms exhibit distinct performance characteristics based on their underlying mechanisms and problem structures. The following comparison draws from multiple experimental studies to provide a comprehensive overview of algorithmic strengths and limitations.

Table 2: Biomimetic Algorithm Performance Comparison for High-Dimensional Optimization

| Algorithm | Best Reported MSE | Best Reported MAE | Execution Time (seconds) | Key Strengths | Documented Limitations |
|---|---|---|---|---|---|
| Grey Wolf Optimizer (GWO) | 11.95 | 2.46 | 1198.99 | Excellent balance of accuracy and speed; effective exploration/exploitation balance | Moderate computational overhead; parameter sensitivity in some implementations |
| Particle Swarm Optimization (PSO) | ~12.0 (optimized; unoptimized ANN baseline: 159.94) | 2.17 | 1417.80 | Reliable performance across diverse problems; extensive research base | Slower convergence in high-dimensional spaces; tendency for premature convergence |
| Squirrel Search Algorithm (SSA) | 12.15 | 2.70 | 987.45 | Fastest execution in comparative studies; efficient for large-scale problems | Slightly reduced accuracy versus top performers; limited application history |
| Cuckoo Search (CS) | 33.78 | 3.85 | 1904.01 | Effective for global search; good for problems with multiple optima | Slowest execution time; inconsistent performance across problem types |
| Ant Colony Optimization (ACO) | Not reported in studies | Not reported in studies | Varies by implementation | Excellent for discrete optimization problems; robust to noise | Limited performance on continuous problems; complex parameter tuning |

Experimental data from a photovoltaic system optimization study illustrate these differences. In that study, a standard artificial neural network (ANN) achieved an MSE of 159.94 and an MAE of 8.08 for power output prediction. Enhancing the network with biomimetic algorithms improved accuracy substantially: GWO-optimized networks reduced MSE to 11.95 and MAE to 2.46, while PSO-optimized versions achieved the best MAE of 2.17. SSA was the most computationally efficient, finishing in 987.45 seconds, roughly half the 1904.01 seconds required by CS [3].
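The relative improvements implied by these figures follow from simple arithmetic. The sketch below uses only the values quoted above from [3]; `relative_reduction` is a hypothetical helper for any lower-is-better quantity.

```python
def relative_reduction(baseline: float, improved: float) -> float:
    """Fractional reduction of a lower-is-better quantity relative to a baseline."""
    return (baseline - improved) / baseline


ann_mse, ann_mae = 159.94, 8.08  # unoptimized ANN baseline reported in [3]
print(f"GWO MSE reduction: {relative_reduction(ann_mse, 11.95):.1%}")  # 92.5%
print(f"GWO MAE reduction: {relative_reduction(ann_mae, 2.46):.1%}")   # 69.6%
print(f"PSO MAE reduction: {relative_reduction(ann_mae, 2.17):.1%}")   # 73.1%
print(f"SSA runtime saving vs CS: {relative_reduction(1904.01, 987.45):.1%}")  # 48.1%
```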

Another study focusing on ecological network optimization implemented a modified ACO approach with spatial operators to simultaneously optimize ecological function and structure. This approach successfully addressed the "where to optimize, how to change, and how much to change" questions in habitat restoration, demonstrating the algorithm's capability to handle complex spatial optimization problems with multiple constraints and objectives [4].

Experimental Protocols and Methodologies

Standardized Testing Framework for Algorithm Evaluation

To ensure fair and reproducible comparisons between biomimetic algorithms, researchers should implement standardized testing protocols. The following methodology provides a framework for evaluating algorithm performance on high-dimensional optimization problems, particularly relevant to ecological and pharmaceutical applications.

Experimental Setup and Parameter Configuration

- Hardware/Software Environment: Conduct tests on standardized computing infrastructure with a consistent CPU (e.g., Intel Xeon Gold 6248R, 3.0 GHz), GPU (e.g., NVIDIA A100 40GB), and memory configuration (128GB DDR4). Use common programming environments (Python 3.8+, MATLAB R2023a) with algorithm-specific libraries.

- Parameter Tuning Phase: Implement a preliminary tuning phase in which each algorithm's intrinsic parameters are optimized on a subset of the test problems. For example, PSO requires tuning of the inertia weight (typically 0.4-0.9) and the acceleration coefficients (often 1.4-2.0), while GWO's convergence behavior is governed mainly by its control parameter a, conventionally decreased linearly from 2 to 0 over the run [3] [34].

- Stopping Criteria: Define consistent stopping conditions across all algorithms, including maximum iterations (500-2000, based on problem complexity), solution stability thresholds (e.g., <0.1% improvement over 50 iterations), and maximum computation time (aligned with practical application constraints).
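A minimal global-best PSO that follows this configuration is sketched below in Python with NumPy. The inertia weight decreases linearly from 0.9 to 0.4, both acceleration coefficients are set to 1.5 (inside the quoted 1.4-2.0 range), and the run stops at `max_iter` or when the best value improves by less than 0.1% over 50 iterations. The velocity clamp at 20% of the search range is an added assumption, a common safeguard rather than something the protocol prescribes; the function and parameter names are illustrative.

```python
import numpy as np


def pso(objective, dim, bounds, n_particles=30, max_iter=1000,
        w_start=0.9, w_end=0.4, c1=1.5, c2=1.5,
        stall_window=50, stall_tol=1e-3, seed=None):
    """Minimize `objective` over the box [lo, hi]^dim with global-best PSO.

    Stops after `max_iter` iterations, or earlier when the best value has improved
    by less than `stall_tol` (default 1e-3, i.e. 0.1%) over `stall_window` iterations.
    Returns (best position, best value, best-so-far history).
    """
    rng = np.random.default_rng(seed)
    lo, hi = bounds
    v_max = 0.2 * (hi - lo)  # assumed velocity clamp, not part of the protocol
    x = rng.uniform(lo, hi, size=(n_particles, dim))
    v = np.zeros_like(x)
    pbest_x = x.copy()
    pbest_f = np.apply_along_axis(objective, 1, x)
    g = int(np.argmin(pbest_f))
    gbest_x, gbest_f = pbest_x[g].copy(), float(pbest_f[g])
    history = [gbest_f]

    for t in range(max_iter):
        # Inertia weight decreases linearly from w_start to w_end.
        w = w_start - (w_start - w_end) * t / max(max_iter - 1, 1)
        r1 = rng.random((n_particles, dim))
        r2 = rng.random((n_particles, dim))
        v = w * v + c1 * r1 * (pbest_x - x) + c2 * r2 * (gbest_x - x)
        v = np.clip(v, -v_max, v_max)
        x = np.clip(x + v, lo, hi)

        # Update personal bests, then the global best.
        f = np.apply_along_axis(objective, 1, x)
        improved = f < pbest_f
        pbest_x[improved] = x[improved]
        pbest_f[improved] = f[improved]
        g = int(np.argmin(pbest_f))
        if pbest_f[g] < gbest_f:
            gbest_x, gbest_f = pbest_x[g].copy(), float(pbest_f[g])
        history.append(gbest_f)

        # Stagnation check: relative improvement over the last stall_window iterations.
        if len(history) > stall_window:
            old = history[-stall_window - 1]
            if (old - gbest_f) / max(abs(old), 1e-12) < stall_tol:
                break
    return gbest_x, gbest_f, history
```

For example, `pso(lambda z: float(np.sum(z ** 2)), dim=100, bounds=(-5.0, 5.0), seed=run_id)` with a different `seed` per run yields independent repetitions, and the returned `history` is the best-so-far curve used for convergence plots.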

Benchmark Problem Selection

- Diverse Problem Types: Select benchmark problems representing different challenge types: unimodal (single optimum) for convergence speed assessment, multimodal (multiple optima) for ability to avoid local minima, and composite functions with noise for real-world applicability.

- Dimension Scalability: Test performance across increasing dimensions (50, 100, 500, 1000+ variables) to evaluate scalability, crucial for high-dimensional applications in drug design (molecular optimization) and ecological modeling (landscape optimization).

- Domain-Specific Problems: Include real-world problems such as ecological network structure optimization [4] or photovoltaic system MPPT under partial shading conditions [3] to assess practical performance.
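Two standard benchmark functions cover the first two categories: the sphere function (unimodal) and the Rastrigin function (multimodal, with a regular lattice of local minima). Both have a global minimum of 0 at the origin and are defined for any dimension, which makes them convenient for the scalability ladder. The sketch assumes NumPy; the noise wrapper is a deliberately simple stand-in for noisy composite functions, not a reproduction of any specific benchmark suite.

```python
import numpy as np


def sphere(x: np.ndarray) -> float:
    """Unimodal: single global minimum f(0) = 0; useful for convergence-speed tests."""
    return float(np.sum(x ** 2))


def rastrigin(x: np.ndarray) -> float:
    """Multimodal: many regularly spaced local minima, global minimum f(0) = 0."""
    return float(10 * x.size + np.sum(x ** 2 - 10 * np.cos(2 * np.pi * x)))


def make_noisy(f, sigma: float, seed=None):
    """Wrap f with additive Gaussian noise to mimic stochastic real-world evaluations."""
    rng = np.random.default_rng(seed)
    return lambda x: f(x) + float(rng.normal(0.0, sigma))


# The same functions can be evaluated at every dimension in the ladder (50 ... 1000+).
noisy_rastrigin = make_noisy(rastrigin, sigma=0.1, seed=1)
```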

Performance Measurement Protocol

- Multiple Run Execution: Execute each algorithm 30+ times on each benchmark problem to account for stochastic variations, reporting mean and standard deviation for all performance metrics.

- Statistical Significance Testing: Apply appropriate statistical tests (e.g., Wilcoxon signed-rank test, ANOVA) to determine significant performance differences between algorithms.

- Data Collection Points: Record performance at fixed intervals (e.g., every 10% of maximum iterations) to generate convergence curves and compute area-under-curve metrics.
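The bookkeeping for this protocol might look like the Python sketch below (NumPy assumed; all function names are hypothetical). It summarizes final values across runs with the mean and sample standard deviation, derives checkpoints at iteration 0 and every 10% of the budget, and integrates the best-so-far curve at those checkpoints with the trapezoidal rule on a normalized x-axis, so a lower area means faster convergence for minimization.

```python
import numpy as np


def summarize_runs(final_values):
    """Mean and sample standard deviation of final best values across independent runs."""
    v = np.asarray(final_values, dtype=float)
    return float(v.mean()), float(v.std(ddof=1))


def checkpoint_indices(max_iter: int, n_points: int = 10):
    """Iteration indices at 0%, 10%, ..., 100% of the iteration budget."""
    return [round(max_iter * k / n_points) for k in range(n_points + 1)]


def convergence_auc(best_so_far, checkpoints) -> float:
    """Trapezoidal area under the best-so-far curve sampled at the checkpoints,
    with the x-axis normalized to [0, 1]; lower is better for minimization."""
    y = np.asarray([best_so_far[i] for i in checkpoints], dtype=float)
    x = np.asarray(checkpoints, dtype=float) / checkpoints[-1]
    return float(np.sum((y[1:] + y[:-1]) / 2.0 * np.diff(x)))
```

For significance testing, `scipy.stats.wilcoxon(a, b)` implements the signed-rank test, which assumes paired samples (for example, the two algorithms evaluated on the same benchmark problems or with matched seeds); for unpaired runs, `scipy.stats.mannwhitneyu(a, b)` is the rank-sum counterpart.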

Specialized Protocol for Ecological Network Optimization

For ecological applications specifically, the spatial-operator based Modified Ant Colony Optimization (MACO) model provides a specialized methodology for high-dimensional landscape optimization [4].

Data Preparation and Preprocessing

- Land Use Classification: Convert vector land use data from ecological surveys to raster format (e.g., 40 m resolution), creating a grid representation of the study area. For the Yichun City case study, this produced a grid of 4,326 × 5,566 cells [4].

- Ecological Factor Quantification: Compute key metrics including ecological function score (based on habitat quality, species support capacity), ecological sensitivity (resistance to disturbance), and landscape connectivity indices.

- Constraint Definition: Establish transformation constraints based on land use regulations, conservation priorities, and practical implementation feasibility.
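A quick calculation shows why this grid size pushes the workflow toward GPU parallelization. The sketch below takes the 4,326 × 5,566 grid and 40 m cell size from the Yichun City case [4]; treating the first figure as rows is an assumption, and the result describes the raster's bounding rectangle rather than the city's land area. `grid_summary` is a hypothetical helper.

```python
def grid_summary(rows: int, cols: int, cell_m: float) -> dict:
    """Cell count, bounding extent (km) and per-layer memory (MiB) of a raster grid."""
    n_cells = rows * cols
    return {
        "cells": n_cells,
        "extent_km": (rows * cell_m / 1000, cols * cell_m / 1000),
        # One float32 value per cell, e.g. a single ecological-function layer.
        "float32_layer_mib": n_cells * 4 / 2**20,
    }


summary = grid_summary(4326, 5566, 40)
print(summary["cells"])                        # 24078516
print(summary["extent_km"])                    # (173.04, 222.64)
print(round(summary["float32_layer_mib"], 1))  # 91.9
```

Every full pass over the grid therefore touches roughly 24 million cells, and each additional per-cell layer adds about 92 MiB in single precision; an iterative optimizer repeats such passes many times.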

Algorithm Implementation Workflow

- Spatial Operator Definition: Implement four micro-functional optimization operators (edge-edge, edge-core, core-edge, core-core transformations) and one macro-structural optimization operator to balance bottom-up and top-down optimization approaches.

- GPU Parallelization: Utilize GPU/CPU heterogeneous architecture with parallel computing techniques to manage computational demands of city-level optimization at high resolution, dramatically reducing processing time.

- Dynamic Probability Adjustment: Incorporate a global ecological node emergence mechanism using Fuzzy C-Means clustering probabilities to identify potential ecological stepping stones.
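The Fuzzy C-Means step can be sketched in Python with NumPy as below. This is the standard FCM update (fuzzifier m = 2 by default), not the specific MACO implementation from [4]. How memberships become node-emergence probabilities is an assumption here: one plausible reading is that a cell's membership in the cluster with the highest ecological-value centre serves as its probability of becoming a stepping stone.

```python
import numpy as np


def fuzzy_c_means(X, n_clusters, m=2.0, max_iter=300, tol=1e-6, seed=None):
    """Standard FCM. Returns (centres, U), where U[i, j] is the membership of
    sample i in cluster j and each row of U sums to 1."""
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    U = rng.random((len(X), n_clusters))
    U /= U.sum(axis=1, keepdims=True)  # random initial memberships, rows sum to 1
    for _ in range(max_iter):
        Um = U ** m
        centres = (Um.T @ X) / Um.sum(axis=0)[:, None]  # membership-weighted means
        # Distances from every sample to every centre, floored to avoid division by zero.
        d = np.linalg.norm(X[:, None, :] - centres[None, :, :], axis=2)
        d = np.fmax(d, 1e-12)
        # u_ij = 1 / sum_k (d_ij / d_ik)^(2 / (m - 1))
        ratio = (d[:, :, None] / d[:, None, :]) ** (2.0 / (m - 1.0))
        U_new = 1.0 / ratio.sum(axis=2)
        converged = np.max(np.abs(U_new - U)) < tol
        U = U_new
        if converged:
            break
    return centres, U
```

For instance, with a hypothetical `features` array of shape (n_cells, 2) holding each cell's ecological function score and sensitivity, `centres, U = fuzzy_c_means(features, 3)` followed by `p_node = U[:, centres[:, 0].argmax()]` would give each cell's membership in the highest-scoring cluster.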

Validation and Analysis Phase

- Multi-metric Evaluation: Assess optimization results using both functional indicators (ecological function value, habitat quality) and structural indicators (network connectivity, corridor integrity).

- Comparative Assessment: Compare optimized ecological networks against baseline conditions and alternative optimization approaches to quantify improvements.
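Structural indicators are often reported with the graph-theoretic α, β and γ indices of landscape ecology, computed from the number of nodes (ecological sources) and links (corridors). The text does not state which structural metrics [4] used, so the sketch below is one common choice rather than the study's method. The formulas assume a planar network, and the node and link counts in the example are hypothetical.

```python
def network_indices(nodes: int, links: int) -> dict:
    """Connectivity indices for a planar ecological network.

    alpha: circuitry, observed loops / maximum possible loops
    beta:  average number of links per node
    gamma: observed links / maximum possible links
    """
    if nodes < 3:
        raise ValueError("indices are defined for networks with at least 3 nodes")
    return {
        "alpha": (links - nodes + 1) / (2 * nodes - 5),
        "beta": links / nodes,
        "gamma": links / (3 * (nodes - 2)),
    }


baseline = network_indices(20, 25)   # hypothetical baseline network
optimized = network_indices(24, 38)  # hypothetical optimized network
# alpha rises from about 0.17 to about 0.35 in this made-up comparison
```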