Overcoming Multi-GPU Scaling Challenges in Scientific Computing: Strategies for Accelerating Biomedical Breakthroughs


Abstract

This article provides a comprehensive analysis of the multi-GPU scaling challenges faced by researchers, scientists, and drug development professionals in scientific computing. It explores the foundational shift towards accelerated computing in high-performance computing (HPC), detailing core parallelization strategies like data, model, and pipeline parallelism. The piece offers practical methodological guidance for implementing these strategies using modern frameworks, addresses critical troubleshooting and optimization techniques for performance bottlenecks, and presents validation case studies with comparative analyses of scaling efficiency. By synthesizing these intents, the article serves as an essential guide for overcoming computational barriers to enable faster drug discovery, more accurate climate modeling, and advanced AI-driven research.

The New Era of Accelerated Computing: Why Multi-GPU Systems are Redefining Scientific Research

FAQs and Troubleshooting for Multi-GPU Scientific Computing

This section addresses common challenges researchers face when scaling scientific applications across multiple GPUs.

FAQ: Our multi-GPU simulation shows low overall GPU utilization. What are the primary causes?

Low GPU utilization often stems from bottlenecks outside the GPU processors themselves. Key factors to investigate include:

- Slow Data Loading: If the data pipeline from storage cannot keep up, GPUs sit idle. This is caused by network latency, insufficient data preprocessing, or lack of prefetching mechanisms [1].

- CPU Bottlenecks: A slow or overloaded CPU can throttle the entire pipeline, especially if single-threaded data transformation code or an inadequate CPU-to-GPU ratio starves the GPUs of work [1].

- Inefficient Memory Access: GPU cores may spend more time waiting for data than processing it due to non-coalesced memory reads or excessive host-device memory transfers [1].

- Poor Parallelization: Small batch sizes that underutilize GPU cores or sequential operations that cannot be parallelized will lead to low utilization [1].
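As a rough illustration of the prefetching idea from the first bullet, the sketch below overlaps loading with consumption by filling a bounded queue from a background thread. It uses only the Python standard library; `prefetch` and its `depth` parameter are hypothetical names chosen for this example, not part of any framework named in this article, and error handling in the worker is omitted.

```python
import queue
import threading


def prefetch(iterable, depth=2):
    """Yield items from `iterable`, loading up to `depth` items ahead
    in a background thread so the consumer rarely waits on I/O."""
    buf = queue.Queue(maxsize=depth)  # bounded: limits memory held by prefetched items
    sentinel = object()               # unique marker for end of data

    def worker():
        for item in iterable:
            buf.put(item)             # blocks while the buffer is full
        buf.put(sentinel)

    threading.Thread(target=worker, daemon=True).start()
    while True:
        item = buf.get()              # blocks only if the loader has fallen behind
        if item is sentinel:
            return
        yield item


if __name__ == "__main__":
    for batch in prefetch(range(5), depth=2):
        print("consumed batch", batch)
```

Production data loaders provide the same overlap with multiple worker processes; the point here is only that the consumer should find the next batch already waiting.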

FAQ: When scaling to multiple nodes, our performance plateaus or gets worse. What is the likely culprit?

This typically indicates that inter-GPU communication has become the bottleneck. As you scale, the time spent transferring data between GPUs, especially across nodes, can dominate the total runtime [2]. This is particularly acute in applications like distributed state-vector quantum circuit simulation, where the bisection bandwidth of the inter-GPU interconnect is the primary performance concern [2].

FAQ: What are the most effective strategies to mitigate communication bottlenecks in multi-GPU setups?

- Leverage High-Performance Interconnects: Utilize specialized GPU interconnects like NVLink for intranode communication, which offers significantly higher bandwidth (e.g., 900 GB/s with NVLink 4) than PCIe [2].

- Use Asynchronous Communication APIs: Implement asynchronous multi-GPU programming with OpenMP Target Tasks (using `nowait` and `depend` clauses) or OpenACC Parallel (using `async(n)`). These allow computation and communication to overlap, hiding latency [3].

- Co-locate Compute and Storage: Deploy high-speed NVMe storage directly on GPU nodes and use high-speed interconnects like InfiniBand to minimize data access latency [1].

- Fuse Communication Operators: Apply system-level optimizations like communication-computation pipelining and communication operator fusion to reduce the total volume and overhead of data transfers [4].

FAQ: Our model requires high-precision (FP64) arithmetic. Do all GPUs support this effectively?

No. Consumer-grade and many professional RTX GPUs have weak double-precision (FP64) support. For scientific applications like climate modeling or medical research that require high FP64 accuracy, you should use NVIDIA's data-center compute GPUs such as the A100, H100, or H200, which are optimized for FP64 performance [5] [6]. Note that not every data-center GPU qualifies: the L40S, for example, has limited FP64 throughput.

Quantitative Performance Data from Multi-GPU Scaling Studies

The tables below summarize empirical data from scientific computing studies, illustrating the impact of optimization and scaling.

Table 1: Performance Gains from GPU Optimization in a Scientific Application (optiGAN)

| Optimization Metric | Before Optimization | After Optimization | Improvement |
|---|---|---|---|
| Training Runtime | Baseline (Naive GPU training) | Optimized | ~4.5x faster [7] |
| Hardware | NVIDIA Quadro RTX 4000 (8GB) | Same GPU | - |
| Profiling Tool | - | NVIDIA Nsight Systems | - |

Table 2: Multi-GPU Scaling Performance for a Plasma Simulation (BIT1)

| Implementation Method | Simulation Runtime Reduction | Hardware / Scale | Key Technique |
|---|---|---|---|
| MPI + OpenMP | 53% reduction [3] | Petascale Supercomputer (16 MPI ranks + OpenMP threads) | Hybrid parallelization |
| MPI + OpenACC | 58% reduction [3] | Compared to MPI-only version | `async(n)` clause |
| OpenACC Particle Mover | 24% improvement [3] | 64 MPI ranks | - |
| OpenMP (Async Multi-GPU) | 8.77x speedup (54.81% Parallel Efficiency) [3] | MareNostrum 5 supercomputer | Target Tasks with `nowait` & `depend` |
| OpenACC (Async Multi-GPU) | 8.14x speedup (50.87% Parallel Efficiency) [3] | MareNostrum 5 supercomputer | Parallel with `async(n)` clause |

Table 3: Peak Bandwidth of Modern GPU Interconnects

| Interconnect Technology | Peak Bidirectional Bandwidth | Typical Use Case |
|---|---|---|
| PCIe 5.0 | 128 GB/s [2] | Base-level GPU connection to host |
| NVLink 4 | 900 GB/s [2] | High-speed intranode GPU-GPU |
| NVLink-C2C | 900 GB/s [2] | Coherent interconnect between Grace CPU and GPU |
| ConnectX-7 NIC | 50 GB/s [2] | High-performance internode networking |
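To make the bandwidth gap in Table 3 concrete, the sketch below computes a lower bound on transfer time for a fixed payload over each link. The bandwidth figures are the peak bidirectional values from the table; the 4 GB payload and the function name `min_transfer_ms` are assumptions for illustration only. Real transfers are slower because of latency, protocol overhead, and the fact that a one-way copy cannot use the full bidirectional figure.

```python
# Peak bidirectional bandwidth in GB/s, copied from Table 3.
PEAK_GB_PER_S = {
    "PCIe 5.0": 128,
    "NVLink 4": 900,
    "NVLink-C2C": 900,
    "ConnectX-7 NIC": 50,
}


def min_transfer_ms(size_gb, bandwidth_gb_per_s):
    """Best-case transfer time in milliseconds at peak bandwidth."""
    return size_gb / bandwidth_gb_per_s * 1000.0


if __name__ == "__main__":
    payload_gb = 4  # hypothetical payload, e.g. one halo exchange or gradient buffer
    for link, bw in PEAK_GB_PER_S.items():
        print(f"{link:>15}: at least {min_transfer_ms(payload_gb, bw):6.2f} ms")
```

Even under these optimistic assumptions, the internode NIC is more than an order of magnitude slower than NVLink for the same payload, which is why scaling across nodes tends to expose communication costs first.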

Experimental Protocols for Multi-GPU Benchmarking

Protocol 1: Profiling and Optimizing a Single-GPU Workflow

This methodology is based on the optimization of the optiGAN model [7].

- Establish a Baseline: Run the initial, unoptimized code on the target GPU (e.g., NVIDIA Quadro RTX 4000) and record the execution time and memory consumption per epoch or iteration.

- Profile with NVIDIA Nsight Systems: Use this profiler to identify the initial performance bottlenecks, such as kernel execution times, memory transfer overhead, and CPU idle time.

- Implement GPU Optimizations:

- Memory Management: Optimize data transfers between CPU and GPU by batching and using pinned memory.

- Kernel Optimization: Ensure efficient parallelization of operations to fully utilize CUDA cores and streaming multiprocessors (SMs).

- Framework Tweaks: Leverage built-in GPU support in deep learning frameworks like TensorFlow and PyTorch, ensuring compatible library versions (e.g., CUDA, cuDNN).

- Validate Performance and Model Accuracy: Re-run the profiler to measure improvements in execution time and memory footprint. Crucially, verify that optimization has not compromised the model's output quality or accuracy [7].

Protocol 2: Benchmarking Multi-GPU Scalability and Communication

This protocol is derived from scaling studies in quantum simulation and plasma physics [3] [2].

- Select Scaling Configuration: Choose the number of GPUs and nodes, ensuring the software supports distributed training (e.g., via Horovod/MPI) [6].

- Choose Multi-GPU API: Select a programming API for inter-GPU communication:

- OpenMP Target Tasks: Use `#pragma omp target` with `nowait` and `depend` clauses for asynchronous, dependency-aware data transfers and kernel execution [3].

- OpenACC: Use the `async(n)` clause to create asynchronous computation queues for overlapping communication and computation [3].

- CUDA-Aware MPI: Use MPI implementations that support direct transfer of data between GPU memories across nodes.

- Run Weak and Strong Scaling Tests:

- Strong Scaling: Keep the total problem size fixed and increase the number of GPUs. The ideal outcome is a proportional decrease in runtime (linear speedup).

- Weak Scaling: Increase the problem size proportionally with the number of GPUs. The ideal outcome is a constant runtime.

- Measure Key Metrics: Record the total time-to-solution and calculate the parallel efficiency (PE). PE is calculated as (Speedup / Number of GPUs) * 100%. A decline in PE at higher node counts indicates growing communication bottlenecks [3].

- Analyze with Profiling Tools: Use system-level profilers (e.g., NVIDIA Nsight Systems) to identify specific communication bottlenecks and validate the efficiency of asynchronous operations [3].
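The metrics in this protocol can be computed with a few lines of code. The sketch below implements the parallel efficiency formula stated above and a matching weak-scaling efficiency (baseline runtime divided by scaled runtime). The function names are hypothetical. The example plugs in the speedups reported in Table 2; the GPU count of 16 is an inference from those figures (8.77 / 16 ≈ 54.81%), not a number stated in the text.

```python
def parallel_efficiency(speedup, num_gpus):
    """Strong-scaling parallel efficiency in percent: (speedup / GPUs) * 100."""
    return speedup / num_gpus * 100.0


def weak_scaling_efficiency(t_baseline, t_scaled):
    """Weak-scaling efficiency in percent: ideal is 100 (constant runtime)."""
    return t_baseline / t_scaled * 100.0


if __name__ == "__main__":
    gpus = 16  # assumption inferred from the reported efficiency figures
    for label, s in [("OpenMP async", 8.77), ("OpenACC async", 8.14)]:
        print(f"{label}: {parallel_efficiency(s, gpus):.2f}% parallel efficiency")
```

Tracking this value at each GPU count, rather than only at the largest scale, shows where efficiency starts to fall and therefore where communication begins to dominate.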

The Scientist's Toolkit: Essential Solutions for Multi-GPU Research

Table 4: Key Research Reagent Solutions for Multi-GPU Systems

| Item | Function / Explanation | Relevance to Multi-GPU Scaling |
|---|---|---|
| NVIDIA Nsight Systems | A system-level performance profiler that provides a holistic view of application performance across CPU and GPU. | Essential for identifying bottlenecks in kernel execution, memory transfers, and multi-GPU communication patterns [7] [3]. |
| NVIDIA H100/A100 GPU | Compute-class GPUs with high FP64 performance, large VRAM (e.g., 80GB), and high-speed NVLink interconnects. | Designed for scalable HPC and AI; NVLink enables fast intranode multi-GPU communication, reducing bottlenecks [5]. |
| OpenMP / OpenACC | APIs for multi-platform shared-memory parallel programming, with directives for offloading computation to GPUs. | Enable asynchronous multi-GPU programming, allowing computation and communication to overlap, which is critical for scalability [3]. |
| NVIDIA NVLink | A high-bandwidth, energy-efficient interconnect between the GPU and CPU or between multiple GPUs. | Provides significantly higher bandwidth than PCIe (e.g., 900 GB/s for NVLink 4), which is crucial for data-intensive multi-GPU applications [2]. |
| Kubernetes with GPU Device Plugins | An orchestration system for automating deployment and management of containerized applications, extended to support GPUs. | Enables efficient scheduling and management of multi-GPU workloads across a cluster, improving overall resource utilization [1]. |
| Mixed Precision Training | A technique using a combination of single (FP32) and half (FP16) precision to speed up training and reduce memory usage. | Leverages specialized Tensor Cores on modern GPUs, allowing for larger models or batch sizes, which improves throughput [1] [8]. |

Workflow and System Architecture Diagrams

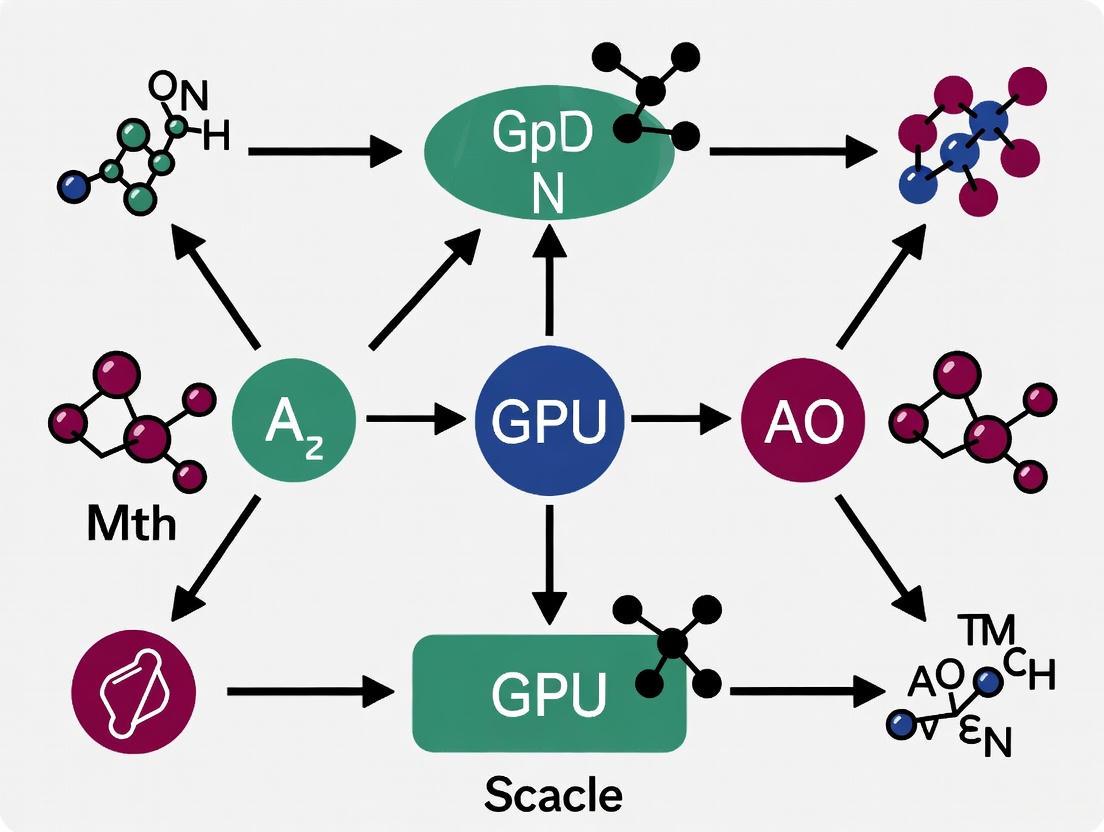

Diagram 1: Multi-GPU Scaling: Optimization and Troubleshooting Workflow

Diagram 2: System Architecture for Asynchronous Multi-GPU Execution

In scientific computing, particularly in data-intensive fields like drug development, the ability to train large, complex models is often gated by the available computational resources. Single GPUs frequently lack the memory and processing power to handle the vast models and datasets common in modern research. Multi-GPU parallelization is therefore not just an optimization but a necessity for scaling scientific experiments [9] [10]. This guide details the core paradigms—Data, Model, and Pipeline Parallelism—that enable researchers to overcome these scaling challenges.

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between data and model parallelism?

Data parallelism involves replicating the entire model on each GPU and distributing different subsets of the data to these replicas for simultaneous processing. After processing, the results (like gradients) are synchronized across all devices [11] [12]. In contrast, model parallelism splits a single model across multiple GPUs. Each GPU is responsible for computing a different part of the model, and the intermediate results (activations) are passed between devices during the forward and backward passes [11] [13].

2. When should I choose data parallelism over model/pipeline parallelism?

Data parallelism is the most straightforward choice when your model can fit within the memory of a single GPU. It is ideal for scaling training with large datasets and provides nearly linear speedups when communication overhead is low (e.g., with fast interconnects like NVLink) [11]. If your model is too large for a single GPU's memory, you must use model or pipeline parallelism [11] [13].

3. My model doesn't fit on one GPU. Should I use pipeline or tensor parallelism?

The choice depends on the model architecture and communication constraints.

- Pipeline Parallelism is well-suited for models with a sequential stack of layers (e.g., CNNs, Transformers). It splits the model into consecutive stages across GPUs. Its main challenge is the "pipeline bubble," where devices can sit idle waiting for others to finish [14].

- Tensor Parallelism splits individual layers (or tensors) across devices. It is particularly effective for models with large linear layers, like Transformers. However, it typically has higher communication overhead than pipeline parallelism because it requires synchronizing results at every layer [15] [14]. For very large models, a hybrid approach is often used [9] [16].

4. What are "pipeline bubbles" and how can I minimize them?

In pipeline parallelism, a "bubble" refers to the idle time experienced by GPUs when they are waiting for data from the previous or next stage in the pipeline. This is a key source of inefficiency [14]. A primary method to reduce bubbles is to split a mini-batch into smaller micro-batches. This allows the pipeline to be filled more completely, enabling different GPUs to process different micro-batches simultaneously and overlapping computation across devices [13] [14]. Finding the optimal micro-batch size is critical for balancing GPU utilization and memory usage [13].
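The effect of micro-batching on bubbles can be estimated with a simple model. The sketch below assumes a GPipe-style schedule in which every stage takes the same time per micro-batch; under that assumption the idle fraction is (p - 1) / (m + p - 1) for p stages and m micro-batches. This formula and the name `bubble_fraction` are brought in for illustration and are not taken from the sources cited above; other schedules behave differently.

```python
def bubble_fraction(num_stages, num_microbatches):
    """Idle fraction of a GPipe-style pipeline with equal-cost stages."""
    p, m = num_stages, num_microbatches
    return (p - 1) / (m + p - 1)


if __name__ == "__main__":
    stages = 4  # hypothetical pipeline depth
    for m in (1, 4, 16, 32):
        print(f"{m:>2} micro-batches: {bubble_fraction(stages, m):.1%} of time idle")
```

With a single micro-batch the four-stage pipeline is idle three quarters of the time; more micro-batches shrink the bubble, but each micro-batch also becomes smaller, which is why the size has to be tuned rather than simply minimized.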

5. How does the Zero Redundancy Optimizer (ZeRO) help with memory limitations?

ZeRO is a powerful memory optimization technique that works by sharding (partitioning) the model's states across all GPUs instead of replicating them.

- ZeRO-1 shards the optimizer states.

- ZeRO-2 additionally shards the gradients.

- ZeRO-3 shards the model parameters themselves [12].

This approach significantly reduces the memory footprint per GPU, allowing for the training of much larger models or the use of larger batch sizes. The trade-off is an increase in communication, which is most substantial with ZeRO-3 [12].
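A back-of-the-envelope estimate shows how much each ZeRO stage saves. The sketch below assumes mixed-precision training with Adam, using the commonly quoted accounting of 2 bytes per parameter for FP16 weights, 2 bytes for FP16 gradients, and 12 bytes for FP32 optimizer state (master weights, momentum, variance). Those byte counts, the 7.5-billion-parameter model, and the 64-GPU cluster are assumptions for illustration; activations and temporary buffers are ignored.

```python
def zero_bytes_per_gpu(num_params, num_gpus, stage):
    """Approximate model-state memory per GPU for ZeRO stage 0 (none) to 3."""
    params = 2 * num_params      # FP16 weights
    grads = 2 * num_params       # FP16 gradients
    optim = 12 * num_params      # FP32 master weights + Adam momentum + variance
    if stage >= 1:
        optim /= num_gpus        # ZeRO-1: shard optimizer states
    if stage >= 2:
        grads /= num_gpus        # ZeRO-2: additionally shard gradients
    if stage >= 3:
        params /= num_gpus       # ZeRO-3: additionally shard parameters
    return params + grads + optim


if __name__ == "__main__":
    n_params, n_gpus = 7_500_000_000, 64  # hypothetical model and cluster size
    for stage in range(4):
        gb = zero_bytes_per_gpu(n_params, n_gpus, stage) / 1e9
        print(f"ZeRO stage {stage}: ~{gb:.1f} GB of model state per GPU")
```

Under these assumptions the optimizer state dominates the unsharded footprint, which is why even ZeRO-1 alone gives a large reduction.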

Troubleshooting Guides

Problem 1: Out-of-Memory (OOM) Errors During Training

Symptoms: The training process fails with a CUDA "out of memory" error.

Possible Causes and Solutions:

- Cause A: The model is too large for a single GPU.

- Solution: Transition from data parallelism to model or pipeline parallelism. Split your model across multiple GPUs. A simple starting point is to manually place different model layers on different GPUs using `.to('cuda:X')` in PyTorch [11].

- Cause B: High memory consumption from replicated data in data parallelism.

- Solution: Utilize the ZeRO (Zero Redundancy Optimizer) optimization stages available in libraries like DeepSpeed or via PyTorch's Fully Sharded Data Parallel (FSDP). This eliminates memory redundancy by partitioning optimizer states, gradients, and parameters [12].

- Cause C: Large activation memory in pipeline parallelism.

- Solution: Employ activation checkpointing (also known as gradient checkpointing). This technique trades compute for memory by selectively recomputing activations during the backward pass instead of storing them all [14].

Problem 2: Slow Training Performance and Low GPU Utilization

Symptoms: Training time does not improve significantly with more GPUs, or GPU usage metrics show frequent dips and low percentages.

Possible Causes and Solutions:

- Cause A: Communication bottlenecks in data parallelism.

- Solution: Ensure you are using an optimized communication strategy. In PyTorch, prefer DistributedDataParallel (DDP) over the older `DataParallel` because it is more efficient and scales to multiple machines [11]. Also, verify that high-speed interconnects (like InfiniBand) are properly configured for multi-node training [17].

- Cause B: Severe pipeline bubbles in model/pipeline parallelism.

- Solution: Implement a micro-batching strategy and tune the micro-batch size. Using more, smaller micro-batches helps keep all stages of the pipeline busy. Research has shown that an optimal micro-batch size can increase throughput by over 10% [13].

- Cause C: Load imbalance in model parallelism.

- Solution: If partitioning the model manually, ensure that the computational load is as even as possible across GPUs. Some advanced frameworks offer automatic partitioning to achieve better load balance [13].

Problem 3: Training Instability or Accuracy Degradation

Symptoms: The training loss behaves erratically, fails to converge, or the final model accuracy is lower than expected when using multiple GPUs.

Possible Causes and Solutions:

- Cause A: Incorrect normalization layer statistics in distributed training.

- Solution: Normalization layers like Batch Normalization (BN) can be problematic with small per-GPU batch sizes. In data parallelism, use synchronized BN which calculates mean and variance across all GPUs [13]. For model/pipeline parallelism where micro-batches are small, consider switching to Group Normalization or Layer Normalization, which are not dependent on batch size and have been shown to minimize accuracy degradation [13].

- Cause B: Gradient synchronization issues.

- Solution: In data parallelism, verify that gradient all-reduce is functioning correctly. Using DDP in PyTorch automatically handles this. Also, check for gradient explosion/vanishing, which can be exacerbated in distributed settings, and consider using gradient clipping.
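To see why synchronized Batch Normalization matters (Cause A above), the sketch below compares per-GPU statistics with statistics aggregated across GPUs. Each "GPU" contributes only its count, sum, and sum of squares, which is one straightforward way to combine statistics; it is not claimed to be how any particular framework implements synchronized BN. The shard values and function names are hypothetical.

```python
import statistics


def local_sums(shard):
    """Per-GPU partial sums: (count, sum, sum of squares)."""
    return len(shard), sum(shard), sum(x * x for x in shard)


def synced_mean_var(shards):
    """Global mean and biased variance from all-reduced partial sums."""
    n = total = total_sq = 0
    for shard in shards:
        c, s, sq = local_sums(shard)
        n, total, total_sq = n + c, total + s, total_sq + sq
    mean = total / n
    return mean, total_sq / n - mean * mean


if __name__ == "__main__":
    shards = [[1.0, 2.0], [10.0, 11.0]]  # two GPUs, tiny per-GPU batches
    for rank, shard in enumerate(shards):
        print(f"GPU {rank} local mean/var:",
              statistics.fmean(shard), statistics.pvariance(shard))
    print("synchronized mean/var:", synced_mean_var(shards))
```

With small per-GPU batches the local statistics can differ sharply from the global ones, so each replica would normalize its activations differently unless the statistics are synchronized.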

Paradigm Comparison and Selection

The table below summarizes the key characteristics of the main parallelization paradigms to aid in selection.

Table 1: Comparison of Core Multi-GPU Parallelization Paradigms

| Aspect | Data Parallelism | Pipeline Parallelism | Tensor Parallelism |
|---|---|---|---|
| Basic Concept | Replicates model on each GPU; splits and processes data in parallel [11]. | Splits model into sequential stages; data flows through the pipeline [14]. | Splits individual layers (tensors) of the model across GPUs [15]. |
| Memory Efficiency | Low (each GPU holds a full model copy) [15]. | High (each GPU holds only a part of the model) [15]. | High (each GPU holds a portion of each layer) [15]. |
| Communication Overhead | Low to Moderate (synchronizing gradients) [11] [12]. | Low (point-to-point between adjacent stages) [15] [14]. | High (synchronization required at every layer) [15] [14]. |
| Ideal Use Case | Models that fit on one GPU; large-batch training [11]. | Large models with a sequential structure [13]. | Models with very large individual layers (e.g., Transformer FFN layers) [9]. |
| Key Challenge | Model must fit on one GPU; gradient sync overhead [11]. | Pipeline "bubbles" causing GPU idle time [14]. | High communication frequency and complexity [14]. |

Experimental Protocols for Multi-GPU Training

Protocol 1: Implementing Data Parallelism with PyTorch DDP

This protocol outlines the steps to set up Distributed Data Parallel (DDP) training, the recommended approach for data parallelism in PyTorch [11].

- Process Setup: Use `torch.multiprocessing.spawn` to launch a function for each training process (one per GPU).

- Process Group Initialization: In each process, initialize the distributed process group using `torch.distributed.init_process_group` with the "nccl" backend.

- Model Wrapping: Move your model to the current GPU and wrap it in `torch.nn.parallel.DistributedDataParallel`.

- Data Loading: Use a `torch.utils.data.distributed.DistributedSampler` to ensure each process loads a unique subset of the data.

- Training Loop: Run a standard training loop. DDP automatically synchronizes gradients across processes during `loss.backward()`.
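The data-loading step is the one most often misconfigured, so it helps to see what per-process sharding amounts to. The sketch below is a framework-free approximation of a strided, non-shuffled distributed sampler: the index list is padded by wrapping around so every rank gets the same number of samples, then each rank takes every `world_size`-th index. The function name `shard_indices` is hypothetical, and real samplers add shuffling and per-epoch seeding on top of this.

```python
import math


def shard_indices(dataset_len, world_size, rank):
    """Indices assigned to `rank` under strided sharding without shuffling."""
    per_rank = math.ceil(dataset_len / world_size)
    total = per_rank * world_size
    indices = list(range(dataset_len))
    indices += indices[: total - dataset_len]  # pad by repeating early samples
    return indices[rank:total:world_size]


if __name__ == "__main__":
    for rank in range(4):
        print(f"rank {rank}:", shard_indices(10, 4, rank))
```

Because of the padding, a few samples may be seen twice per epoch when the dataset size is not divisible by the number of processes; in exchange, all ranks run the same number of steps, which keeps gradient synchronization from stalling.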

Code Example: PyTorch DDP Setup

Protocol 2: Implementing Basic Model Parallelism via Manual Layer Placement

For models that slightly exceed single-GPU memory, a simple manual partition can be effective [11].

- Model Partitioning: Split your model's layers into logical groups.

- Device Placement: Move each group of layers to a different GPU using the `.to()` method.

- Forward Pass Logic: Explicitly move intermediate tensors between devices in the model's `forward` method.

Code Example: Manual Model Parallelism

Workflow Visualization

The following diagram illustrates the flow of data and model components in a hybrid parallel strategy, which is commonly used for training very large models.

Diagram 1: Data and Pipeline Hybrid Parallelism

The Scientist's Toolkit: Essential Research Reagents

This section lists key software "reagents" required for implementing multi-GPU training in scientific computing research.

Table 2: Essential Software Tools for Multi-GPU Research

| Tool / Library | Function and Purpose |
|---|---|
| PyTorch DDP | The standard for data parallelism in PyTorch, enabling efficient multi-process training on one or multiple machines [9] [11]. |
| DeepSpeed | A deep learning optimization library that provides advanced implementations of ZeRO for unprecedented memory savings and the ability to train trillion-parameter models [12]. |
| PyTorch FSDP | Fully Sharded Data Parallel (FSDP) is PyTorch's native implementation of ideas like ZeRO-3, seamlessly sharding model parameters, gradients, and optimizer states [12]. |
| NCCL | The NVIDIA Collective Communication Library is a highly optimized backend for GPU-to-GPU communication, essential for fast gradient synchronization in distributed training [11]. |
| TensorFlow MirroredStrategy | TensorFlow's API for synchronous data parallelism on a single machine with multiple GPUs, replicating the model and managing gradient aggregation [11] [17]. |

Frequently Asked Questions (FAQs)

Q1: What is a memory barrier, and why is it necessary in GPU computing?

A memory barrier (or fence) is an operation that enforces ordering constraints on memory operations. It ensures that memory accesses issued before the barrier are visible to other threads before any accesses issued after the barrier [18]. This is crucial for correct synchronization when threads communicate through shared memory, preventing subtle bugs arising from hardware and compiler reordering in relaxed memory models [18].

Q2: My single-GPU kernel hangs when I use a barrier. What could be wrong?

A common cause is that the barrier cannot be reached by all threads in the synchronization scope [19]. In a threadblock using __syncthreads() or workgroupBarrier(), if any thread diverges and does not execute the barrier (e.g., due to a conditional branch), it will cause a deadlock [19]. Ensure all threads in the block encounter the barrier uniformly. For grid-wide sync using cooperative groups, a hang might occur if your kernel launch is too large; the GPU must be able to run all blocks concurrently, so check for a "too many blocks in cooperative launch" error [20].

Q3: After synchronization, my threads are reading stale data. What is the issue?

This often indicates a missing or incorrect memory fence. In CUDA, `__syncthreads()` orders memory accesses only among the threads of one block; it gives no ordering or visibility guarantee between different blocks [19]. If threads in different blocks communicate through global memory, you likely need a memory fence such as `__threadfence()` in addition to your synchronization. Within a block, a write to shared memory must be separated by a barrier from any read of that data by another thread, so that the write is visible [19] [21].

Q4: What is the difference between `__threadfence()`, `__threadfence_block()`, and `__syncthreads()`?

The function and scope of these operations differ, as summarized in the table below.

| Function | Scope | Effect |
|---|---|---|
| `__syncthreads()` | Threadblock | Execution barrier: Waits until all threads in the block reach this point [19]. |
| `__threadfence_block()` | Threadblock | Memory barrier: Ensures all memory accesses by this thread before the fence are visible to all threads in the block after the fence [18]. |
| `__threadfence()` | Device (GPU-wide) | Memory barrier: Ensures memory accesses before the fence are visible to all threads on the GPU after the fence [18]. |

A __syncthreads() execution barrier often combines the effects of both execution and memory barriers for workgroup (shared) memory [19]. For global memory, you may need to use __threadfence() in conjunction with synchronization [21].

Q5: Can I synchronize across all thread blocks (a grid-wide sync) on a single GPU?

Yes, but this is an advanced operation with strict requirements. You must use Cooperative Groups and launch the kernel with cudaLaunchCooperativeKernel [20]. The primary challenge is that the entire grid (all thread blocks) must be resident on the GPU simultaneously to avoid deadlock. The number of blocks you launch must not exceed the maximum your GPU can support concurrently, which can be queried programmatically [20].
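The residency requirement reduces to simple arithmetic once the two device-specific numbers are known. In CUDA they are typically obtained from `cudaOccupancyMaxActiveBlocksPerMultiprocessor` and the `multiProcessorCount` device property; the sketch below takes them as plain inputs. The function names and the example values (132 SMs, 2 resident blocks per SM) are hypothetical.

```python
def max_cooperative_blocks(blocks_per_sm, num_sms):
    """Largest grid that can be fully resident on the GPU at once."""
    return blocks_per_sm * num_sms


def fits_cooperative_launch(grid_blocks, blocks_per_sm, num_sms):
    """True if every block of the grid can run concurrently."""
    return grid_blocks <= max_cooperative_blocks(blocks_per_sm, num_sms)


if __name__ == "__main__":
    blocks_per_sm, num_sms = 2, 132  # hypothetical occupancy result and SM count
    limit = max_cooperative_blocks(blocks_per_sm, num_sms)
    for grid in (128, limit, limit + 1):
        ok = fits_cooperative_launch(grid, blocks_per_sm, num_sms)
        print(f"grid of {grid} blocks: {'ok' if ok else 'too many blocks'}")
```

Kernels that need grid-wide sync are therefore usually written with a grid-stride loop, so that a grid sized to this limit can still cover an arbitrarily large problem.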

Troubleshooting Guides

Problem: Incorrect Results from Producer-Consumer Pattern

This pattern involves one set of threads (producers) writing data that another set (consumers) reads. Without proper fencing, consumers may read stale or uninitialized data [18] [21].

Diagnosis Steps:

- Reproduce the Error: Ensure the bug is reproducible. Inconsistent, rare failures are a hallmark of memory ordering issues [18].

- Check Message-Passing Logic: Isolate the code where data and its "ready" flag are written/read.

- Use Formal Tools: Run your code through a formal model checker like Dartagnan, which supports GPU memory models and can verify if your synchronization is sound or identify allowed but undesired behaviors [18].

Solution: Insert the appropriate memory fences to enforce ordering. The classic solution is shown in the diagram below.

Figure 1: Message-Passing Synchronization with Memory Fences

If the producer (Thread 0) and consumer (Thread 1) are in the same threadblock, a cheaper __threadfence_block() suffices. If they are in different blocks, a full device-wide __threadfence() is required [18].

Problem: Kernel Performance is Poor Due to Excessive Synchronization

Over-synchronization can serialize execution and negate the performance benefits of parallelization [18].

Diagnosis Steps:

- Profile Your Code: Use NVIDIA Nsight Systems or Nsight Compute to profile your kernel. Look for:

- Long stretches of idle time where warps are waiting at barriers.

- Low SM (Streaming Multiprocessor) utilization.

- Inspect Barrier Scope: Check if you are using a device-wide fence where a block-scoped fence would be sufficient. Device-wide fences are significantly slower [18].

Solution:

- Minimize Synchronization Frequency: Redesign your algorithm to have threads perform more independent work between synchronization points.

- Use the Narrowest Possible Fence Scope: If threads only communicate within a block, use `__threadfence_block()` instead of `__threadfence()` [18].

- Eliminate Redundant Barriers: A barrier just before the end of a kernel is often unnecessary, as the kernel exit provides an implicit synchronization point.

Experimental Protocols for Validating Synchronization

Methodology 1: Litmus Test for Message-Passing

This test verifies the correctness of the message-passing pattern shown in Figure 1 [18].

1. Hypothesis: If the memory fences are correctly placed, a consumer thread that sees the updated flag (flag == 1) must subsequently read the updated data value (data == 1). Any other outcome is a forbidden behavior.

2. Experimental Setup:

- Initialize two device variables: `data = 0` and `flag = 0`.

- Launch a kernel with at least two threads (can be in different blocks).

- Thread 0 executes: `data = 1; __threadfence(); flag = 1;`

- Thread 1 executes: `while (flag == 0); __threadfence(); result = data;`

3. Data Collection and Analysis:

- Run the kernel millions of times to stress-test the memory model.

- Check if the outcome `result == 0` ever occurs.

- Tools: Use testing frameworks like GPUHarbor to run this test automatically on your hardware and report weak behaviors [18].
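The hypothesis of this litmus test can be explored on paper before touching hardware. The sketch below is a deliberately tiny toy model, not a GPU memory model: it enumerates every interleaving of the producer and consumer operations and records what the consumer could observe. With fences, each thread's operations stay in program order; without them, the toy model allows the two operations of a thread to be swapped. All names are hypothetical, and the consumer's spin loop is simplified to a single read of the flag.

```python
from itertools import permutations

PRODUCER = [("W", "data"), ("W", "flag")]  # data = 1; flag = 1
CONSUMER = [("R", "flag"), ("R", "data")]  # read flag; read data


def interleavings(a, b):
    """Yield every merge of lists a and b that preserves each list's order."""
    if not a or not b:
        yield list(a) + list(b)
        return
    for rest in interleavings(a[1:], b):
        yield [a[0]] + rest
    for rest in interleavings(a, b[1:]):
        yield [b[0]] + rest


def thread_orders(ops, fenced):
    """Program order only if fenced; otherwise any order (toy relaxation)."""
    return [list(ops)] if fenced else [list(p) for p in permutations(ops)]


def outcomes(fenced):
    """Set of (flag_seen, data_seen) pairs reachable in the toy model."""
    seen = set()
    for prod in thread_orders(PRODUCER, fenced):
        for cons in thread_orders(CONSUMER, fenced):
            for trace in interleavings(prod, cons):
                mem, reads = {"data": 0, "flag": 0}, {}
                for kind, var in trace:
                    if kind == "W":
                        mem[var] = 1
                    else:
                        reads[var] = mem[var]
                seen.add((reads["flag"], reads["data"]))
    return seen


if __name__ == "__main__":
    print("with fences:   ", sorted(outcomes(fenced=True)))
    print("without fences:", sorted(outcomes(fenced=False)))
```

The forbidden outcome corresponds to the pair (1, 0): the flag was seen as set but the data was still stale. It is unreachable when program order is enforced and reachable once reordering is allowed, which is exactly the behavior the hardware test looks for.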

Methodology 2: Validating Grid-Wide Synchronization

This protocol tests the correctness of a cooperative grid sync for an in-place transpose operation [20].

1. Algorithm Workflow: The workflow for a kernel that reads from and writes to the same global memory array requires a grid-wide sync, as visualized below.

Figure 2: Workflow for In-Place Operation Requiring Grid Sync

2. Validation Protocol:

- Use an atomic counter in global memory. Before the sync, each threadblock atomically increments this counter.

- After the sync, but before writing, a single thread reads the counter's value.

- Expected Outcome: The read value must equal the total number of blocks in the grid, proving all blocks reached the sync point before any proceeded. Failure to achieve this indicates an incorrect cooperative launch setup [20].
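The logic of this validation can be rehearsed on the CPU before writing the kernel. The sketch below is only an analogy: Python threads stand in for threadblocks, a lock-protected integer for the atomic counter, and `threading.Barrier` for the grid-wide sync. The function name and the block count are hypothetical, and nothing here exercises a GPU.

```python
import threading


def run_grid_sync_check(num_blocks=8):
    """Return the counter value observed by 'block 0' right after the barrier."""
    counter = 0
    lock = threading.Lock()
    barrier = threading.Barrier(num_blocks)  # stands in for the grid-wide sync
    observed = []

    def block(block_id):
        nonlocal counter
        with lock:                 # stands in for an atomic increment
            counter += 1
        barrier.wait()             # no thread passes until all have arrived
        if block_id == 0:
            observed.append(counter)

    threads = [threading.Thread(target=block, args=(i,)) for i in range(num_blocks)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return observed[0]


if __name__ == "__main__":
    blocks = 8
    value = run_grid_sync_check(blocks)
    print("counter after sync:", value, "expected:", blocks)
```

If the barrier were removed, the observing thread could read a smaller value, which mirrors the failure the GPU protocol is designed to detect.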

The Scientist's Toolkit: Essential Research Reagents

This table details key tools and concepts for debugging GPU memory consistency issues.

| Tool / Concept | Function / Purpose | Relevance to Research |
|---|---|---|
| Nsight Compute (NVIDIA) | Detailed GPU kernel profiler. Metrics on memory throughput, shared memory bank conflicts, and stall reasons. | Identifies performance bottlenecks and verifies if memory access patterns are efficient [22]. |
| GPUHarbor | Browser-based testing platform for memory consistency models. | Empirically tests for allowed and forbidden memory behaviors on your specific hardware, revealing model complexities [18]. |
| Dartagnan | Formal verification tool based on model checking. | Rigorously proves the correctness (or finds bugs) in your synchronization scheme against a formal GPU memory model specification [18]. |
| CUDA Cooperative Groups | Programming model for managing thread groups, enabling grid-wide sync. | Essential for implementing advanced synchronization patterns that span an entire GPU, a stepping stone to multi-GPU algorithms [20]. |
| Litmus Test | A small, carefully crafted concurrent program used to test a specific memory ordering behavior. | The scientific method applied to memory models. Allows for isolated testing of hypotheses about synchronization [18]. |

Preparing for Multi-GPU Scaling

Understanding memory barriers on a single GPU is the foundational step for multi-GPU programming. The challenges are amplified in a multi-GPU system:

- Global Synchronization: A grid-wide sync on a single GPU becomes a system-wide sync across multiple GPUs, requiring inter-device communication and synchronization, which is even more complex and expensive [23].

- Unified Memory and Fences: When using Unified Memory or direct peer-to-peer memory access, you may need to use the strongest fence, `__threadfence_system()`, to ensure memory operations are visible to other GPUs and the CPU [20].

- Performance Overhead: The cost of synchronization scales with the number of devices. Inefficient synchronization that is merely a performance problem on one GPU can become a crippling bottleneck that prevents any scaling on multiple GPUs [18] [23]. The principles of using the narrowest possible scope and minimizing synchronization frequency become critically important.

FAQs

1. What is the fundamental difference between NVLink and InfiniBand?

NVLink and InfiniBand serve distinct but complementary roles in high-performance computing (HPC) infrastructure. NVLink is a proprietary NVIDIA technology designed for ultra-high-speed, direct communication within a single server or node, primarily between GPUs and between GPUs and CPUs. It creates a high-bandwidth fabric that allows processors to share memory and computations, effectively making multiple GPUs operate as a single, larger accelerator [24] [25].

In contrast, InfiniBand is an industry-standard networking protocol that connects multiple servers across clusters and data centers. It is designed for scalable, low-latency server-to-server communication, forming the backbone of large-scale supercomputing and AI clusters by enabling efficient data transfer between compute nodes, storage systems, and other devices [26] [27] [25].

2. When should I use NVLink versus InfiniBand in my research setup?

The choice depends on the scale and nature of your computational workload:

Use NVLink when your work is constrained by the communication bottlenecks between GPUs inside a single server. This is critical for:

- Training large AI models (e.g., LLMs like GPT) that require massive GPU memory and frequent data exchange between GPUs [25].

- Scientific simulations (e.g., CFD, elastodynamics) where GPUs within a node need tightly coupled, high-bandwidth communication [23] [28].

- Any multi-GPU workload where direct memory access between GPUs can eliminate copying overhead and accelerate time-to-solution [29].

Use InfiniBand when your computational problem requires scaling across multiple servers in a cluster. This is essential for:

- Distributed training of very large models that exceed the capacity of a single node [26] [25].

- Large-scale scientific research involving complex simulations and data-intensive tasks spread across a cluster [27].

- Building supercomputing environments that require low-latency, RDMA-enabled communication between thousands of compute nodes [26] [25].

3. My multi-node training job is experiencing slow performance. How can I determine if the interconnect is the bottleneck?

Slow scaling in distributed training often points to inter-node communication bottlenecks. Here is a systematic diagnostic protocol:

- Profile Network Utilization: Use profiling tools (e.g., NVIDIA Nsight Systems, dcgm) to monitor InfiniBand network utilization during the training job. If the bandwidth is consistently saturated during gradient synchronization phases (e.g., All-Reduce operations), the interconnect is likely a bottleneck [25].

- Check for Packet Loss: Use InfiniBand diagnostics (e.g., ibdiag, perfquery) to check for packet loss or errors. Packet loss triggers retransmissions, drastically increasing latency and reducing effective throughput [27].

- Verify Topology and Cable Health: Ensure the InfiniBand fabric is wired for an optimal topology (e.g., Fat Tree) to avoid hotspots. Inspect cables and transceivers for physical damage, as these can degrade signal integrity [27].

- Leverage In-Network Computing: Confirm that your InfiniBand network has NVIDIA Scalable Hierarchical Aggregation and Reduction Protocol (SHARP) enabled. SHARP offloads reduction operations to the switch network, significantly decreasing the volume of data transferred and accelerating collective operations [24] [29].
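To judge whether a measured All-Reduce time is close to what the fabric can deliver, a rough lower bound is useful. The sketch below assumes a ring All-Reduce, in which each rank sends and receives roughly 2(N-1)/N times the buffer size, and it ignores per-message latency. The function name and the example figures are illustrative assumptions, not values from the cited sources.

```python
def ring_allreduce_seconds(buffer_bytes: float, num_ranks: int,
                           link_gbytes_per_s: float) -> float:
    """Bandwidth-only lower bound on ring All-Reduce time (latency ignored)."""
    if num_ranks < 2:
        return 0.0  # a single rank has nothing to synchronize
    # Reduce-scatter plus all-gather: each rank moves 2 * (N - 1) / N of the buffer.
    traffic_bytes = 2.0 * (num_ranks - 1) / num_ranks * buffer_bytes
    return traffic_bytes / (link_gbytes_per_s * 1e9)


# Hypothetical example: 1 GB of gradients, 16 ranks, 50 GB/s (400 Gb/s) per port.
estimate = ring_allreduce_seconds(1e9, 16, 50.0)
print(f"bandwidth-bound All-Reduce time: {estimate * 1e3:.1f} ms")
```

If the profiled All-Reduce time is many times larger than this bound, latency, congestion, or misconfiguration is a more likely cause than raw link bandwidth.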

4. Can I use both NVLink and InfiniBand together in a single system?

Yes, modern large-scale data centers and supercomputing systems frequently deploy a hybrid interconnect architecture to leverage the strengths of both technologies [27] [25].

- Within a server node, NVLink is used to fully interconnect all local GPUs, maximizing the performance for compute-intensive tasks and deep learning on that node [24].

- Between server nodes, InfiniBand provides the high-speed, low-latency fabric, connecting these powerful NVLink-enabled nodes into a seamless, large-scale cluster [27].

This hybrid approach ensures that both intra-node (within server) and inter-node (between servers) communication are optimized, which is essential for solving exascale computing challenges and running complex, multi-node scientific applications [24] [25].

Troubleshooting Guides

Issue: Poor Multi-GPU Scaling Within a Single Server

Symptoms: Adding more GPUs to a server does not improve performance linearly; high latency is observed in GPU-to-GPU communication.

Diagnosis and Resolution Protocol:

| Step | Action | Tools & Commands | Expected Outcome |

|---|---|---|---|

| 1 | Verify NVLink Link Status | Check nvidia-smi topology (nvidia-smi topo -m) or use dcgmi diagnostics. | Confirms active NVLinks between GPUs. Identifies if links are falling back to PCIe. |

| 2 | Inspect Memory Usage | Use nvidia-smi to monitor GPU memory utilization. | Rules out GPU memory exhaustion. High NVLink traffic is indicated if memory copies are a bottleneck. |

| 3 | Profile Application | Use NVIDIA Nsight Systems to trace application execution. | Identifies specific kernels or communication phases where delays occur. |

| 4 | Check for Resource Contention | Ensure no other processes are consuming significant GPU resources. | Isolates the performance issue to the target application. |

Issue: High Latency or Low Throughput in Multi-Node Clusters

Symptoms: Slow data transfer between nodes; collective operations (All-Reduce) take excessively long; job completion time does not improve with added nodes.

Diagnosis and Resolution Protocol:

| Step | Action | Tools & Commands | Expected Outcome |

|---|---|---|---|

| 1 | Basic IB Fabric Check | Run ibstatus and ibdiag to verify link states and subnet health. | Confirms all links are active and ports are initialized correctly. |

| 2 | Performance Benchmark | Run point-to-point bandwidth tests with ib_write_bw and ib_read_bw. | Establishes baseline performance and compares it against theoretical max (e.g., HDR 200 Gb/s). |

| 3 | Switch & Cable Inspection | Use switch management software (NVOS) to check for port errors and ECC issues. | Identifies faulty cables, transceivers, or switch ports causing packet corruption [30]. |

| 4 | Enable SHARP | Verify SHARP is enabled on InfiniBand switches for in-network aggregation. | Reduces data volume during All-Reduce, lowering latency and network congestion [24] [29]. |

Technical Specifications and Data

Quantitative Comparison: NVLink vs. InfiniBand

Table 1: Comparative specifications of the latest generation NVLink and InfiniBand technologies.

| Feature | NVLink 5.0 (Blackwell) | InfiniBand NDR |

|---|---|---|

| Bandwidth | 1.8 TB/s per GPU | 400 Gb/s (50 GB/s) per port |

| Primary Scope | Intra-node (within a server) | Inter-node (between servers/cluster) |

| Typical Latency | Sub-microsecond | < 600 ns (with RDMA) |

| Physical Range | Short (within a server chassis) | Long (data center scale) |

| Maximum Devices | 576 GPUs (with NVLink Switch) | 64,000+ devices |

| Key Technology | Direct GPU memory sharing | Remote Direct Memory Access (RDMA) |

| Protocol Type | Proprietary (NVIDIA) | Industry Standard |

NVLink Generational Evolution

Table 2: Evolution of NVLink performance across NVIDIA GPU architectures [24] [31].

| Generation | NVIDIA Architecture | Bandwidth per GPU | Max Links per GPU |

|---|---|---|---|

| NVLink 3 | Ampere | 600 GB/s | 12 |

| NVLink 4 | Hopper | 900 GB/s | 18 |

| NVLink 5 | Blackwell | 1.8 TB/s | 18 |

Experimental Protocols

Protocol 1: Benchmarking Intra-Node Multi-GPU Communication

Objective: To quantify the performance advantage of NVLink over PCIe for GPU-to-GPU data transfers within a single server.

Methodology:

- System Setup: Use a server with at least two NVIDIA GPUs that support NVLink (e.g., H100, A100). Ensure NVLink is enabled and verified via nvidia-smi topo -m.

- Tooling: Employ standard benchmarking suites like NCCL Tests or custom CUDA applications that perform peer-to-peer (P2P) memory access.

- Procedure:

- Run a point-to-point bandwidth test between two GPUs.

- Conduct collective operation tests (e.g., All-Reduce) across all GPUs in the system.

- Repeat tests with NVLink disabled (forcing communication over PCIe) for a baseline comparison.

- Metrics: Measure bandwidth (GB/s) for data transfers and time to completion for collective operations.

Protocol 2: Evaluating Inter-Node Scaling Efficiency with InfiniBand

Objective: To measure the scaling efficiency of a distributed application across multiple InfiniBand-connected nodes and identify network bottlenecks.

Methodology:

- Cluster Setup: Configure a cluster with at least two nodes, each with one or more GPUs, interconnected via InfiniBand.

- Tooling: Use NCCL Tests for network performance and application-level profiling with NVIDIA Nsight Systems.

- Procedure:

- Run a distributed training job or a synchronized computational kernel, scaling from 2 to N nodes.

- Use ib_write_bw to validate the raw InfiniBand bandwidth between node pairs.

- Profile the application to track the time spent in communication (synchronization, gradient All-Reduce) versus computation.

- Metrics: Calculate scaling efficiency (performance increase per node added) and analyze the profile trace to pinpoint communication overhead.

System Architecture Diagrams

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key hardware and software components for multi-GPU scientific computing research.

| Item | Function & Purpose |

|---|---|

| NVLink-Enabled GPU Server (e.g., DGX/HGX) | Provides the foundational compute platform with high-bandwidth intra-node GPU interconnects for tackling problems requiring massive, tightly-coupled parallel processing [24] [29]. |

| InfiniBand Network Fabric | Creates the low-latency, high-throughput cluster-scale network essential for distributed computing, enabling scalable scientific simulations and multi-node AI training [26] [27]. |

| NVIDIA NCCL (Collective Comm. Library) | An optimized library of standard communication routines (All-Reduce, Broadcast) that is essential for achieving high bandwidth and low latency across multi-GPU and multi-node systems [29]. |

| Profiling Tools (NVIDIA Nsight) | Provides deep, system-level performance analysis to identify bottlenecks in computation, memory, and communication, which is critical for optimizing complex research applications [25]. |

| SHARP-Enabled InfiniBand Switches | Implements in-network computing by offloading aggregation operations (e.g., during All-Reduce) to the switch hardware, drastically reducing data volume and accelerating distributed workloads [24] [29]. |

Technical Troubleshooting Guides

Guide: Diagnosing Low GPU Utilization in Multi-GPU Setups

Problem: One or more GPUs in a multi-node cluster are showing consistently low compute utilization (<30%), leading to prolonged experiment runtimes and inefficient resource use. [1]

Investigation Methodology:

Step 1: Isolate the Bottleneck

- Action: Use the NVIDIA System Management Interface (nvidia-smi) to check GPU-Util and Volatile GPU-Util metrics. Concurrently, use a profiler like Nsight Systems to trace the application's execution. [32] [33]

- Interpretation: If GPU utilization spikes intermittently but has long idle periods, the bottleneck is likely upstream in the data pipeline or CPU. Consistently low utilization may indicate a problem with workload distribution or the GPU compute mode. [1] [33]

Step 2: Check Data Pipeline Performance

- Action: In your training script, profile the data loader. Measure the time the GPU spends waiting for the next batch of data.

- Interpretation: If data loading time is a significant fraction of the total batch processing time, the GPU is being starved of data. This is a classic CPU or I/O bottleneck. [1]
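One way to take the measurement described in Step 2 is to time the wait for each batch separately from the training step. The sketch below works with any Python iterable of batches; `measure_data_wait`, `slow_loader`, and the sleep durations are hypothetical stand-ins for a real data loader and training step. With a GPU framework, the step function would also need to synchronize the device before returning (for example with `torch.cuda.synchronize()` in PyTorch), since kernels are launched asynchronously.

```python
import time
from typing import Callable, Iterable, Tuple


def measure_data_wait(batches: Iterable,
                      train_step: Callable[[object], None]) -> Tuple[float, float]:
    """Return (seconds blocked waiting for data, seconds spent in train_step)."""
    wait_s = 0.0
    step_s = 0.0
    iterator = iter(batches)
    while True:
        t0 = time.perf_counter()
        try:
            batch = next(iterator)  # time blocked on the data pipeline
        except StopIteration:
            break
        t1 = time.perf_counter()
        train_step(batch)  # stand-in for forward/backward/optimizer step
        t2 = time.perf_counter()
        wait_s += t1 - t0
        step_s += t2 - t1
    return wait_s, step_s


def slow_loader(num_batches: int, delay_s: float):
    """Hypothetical loader that takes delay_s to produce each batch."""
    for i in range(num_batches):
        time.sleep(delay_s)
        yield i


wait_s, step_s = measure_data_wait(slow_loader(5, 0.02),
                                   lambda batch: time.sleep(0.005))
print(f"data wait fraction: {wait_s / (wait_s + step_s):.0%}")
```

A wait fraction that is a large share of the total suggests the GPU is being starved by the input pipeline rather than limited by compute.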

Step 3: Verify Multi-GPU Communication

- Action: When using data-parallel training, profile the All-Reduce operation (synchronizing gradients across GPUs). Look for excessive time spent in communication.

- Interpretation: If communication time is high relative to computation, the interconnect (e.g., PCIe) may be saturated, or the model may be too small for effective parallelization. [23] [34]

Resolution Actions:

- For CPU/Data Bottlenecks: Implement asynchronous data loading and prefetching. Increase the number of CPU workers in your data loader. Consider using a faster storage solution co-located with compute nodes. [1]

- For Workload Distribution Issues: Ensure your software framework (e.g., PyTorch DDP, Horovod) is correctly configured for the number of GPUs. For small models, consider switching to a larger batch size or a different parallelism strategy (e.g., model parallelism). [34]

- For Communication Bottlenecks: If available, enable high-speed interconnects like NVIDIA NVLink. For network-based clusters, ensure InfiniBand or a high-throughput network is configured correctly. [34]

Guide: Resolving System Instabilities and Failures in Multi-GPU Rigs

Problem: A system with multiple GPUs, especially those using riser cards, experiences Blue Screen of Death (BSOD) errors, driver crashes, or failure to boot with all GPUs recognized. [35]

Investigation Methodology:

Step 1: Isolate Faulty Hardware

- Action: Power down the system and disconnect all GPUs. Reconnect GPUs one by one, booting the system after each addition. [35]

- Interpretation: This helps identify if a specific GPU or riser card is causing the failure. Note that systems have a maximum number of GPUs they can boot with, even with "Above 4G Decoding" enabled. [35]

Step 2: Diagnose Power and Riser Issues

- Action: Visually inspect all power connections to the GPUs and riser cards. Ensure riser cards are firmly seated.

- Interpretation: A common point of failure is using SATA power connectors for risers, which are not designed to handle the 75W a PCIe slot can draw. Always use VGA power cables for risers. [35] Flaky USB cables in USB-based risers can also cause instability. [35]

Step 3: Check Thermal and Power Load

- Action: Use monitoring tools (e.g., nvidia-smi dmon or DCGM) to log GPU temperatures and power draw under load.

- Interpretation: Overheating GPUs will throttle performance and can cause crashes. The combined peak power draw of all GPUs must not exceed the capacity of the power supply unit (PSU). [32]
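The thermal and power check can be scripted. The sketch below parses CSV text of the kind produced by `nvidia-smi --query-gpu=index,temperature.gpu,power.draw --format=csv,noheader,nounits` and flags GPUs above a temperature threshold, as well as a combined draw above a power budget. The thresholds, the sample text, and the function name are illustrative assumptions; a real budget should also leave room for the CPU and other components.

```python
import csv
import io


def check_thermal_power(csv_text, max_temp_c=85.0, psu_budget_w=1600.0):
    """Parse 'index, temperature.gpu, power.draw' rows; return (total watts, warnings)."""
    warnings = []
    total_power_w = 0.0
    for row in csv.reader(io.StringIO(csv_text)):
        if len(row) < 3:
            continue  # skip blank or malformed lines
        index, temp, power = (field.strip() for field in row[:3])
        try:
            temp_c, power_w = float(temp), float(power)
        except ValueError:
            continue  # some GPUs report unsupported fields as text
        total_power_w += power_w
        if temp_c > max_temp_c:
            warnings.append(f"GPU {index}: {temp_c:.0f} C exceeds {max_temp_c:.0f} C")
    if total_power_w > psu_budget_w:
        warnings.append(
            f"total draw {total_power_w:.0f} W exceeds budget {psu_budget_w:.0f} W")
    return total_power_w, warnings


# Hypothetical sample in the noheader,nounits CSV layout.
sample = "0, 71, 310.25\n1, 88, 342.10\n"
total, warns = check_thermal_power(sample, max_temp_c=85.0, psu_budget_w=600.0)
for message in warns:
    print(message)
```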

Resolution Actions:

- Update and Configure Firmware: Ensure the motherboard BIOS is updated and that "Above 4G Decoding" is enabled. Set the PCIe link speed to a stable generation (e.g., Gen3) instead of "Auto" in the BIOS. [35]

- Replace Inadequate Components: Replace any SATA-powered risers with those using VGA power connectors. Use high-quality, shielded PCIe ribbon cables for better reliability than USB-based risers. [35]

- Address Power and Cooling: Upgrade the PSU to one with sufficient wattage and high-quality power rails. Improve case airflow or use an open mining frame to ensure GPUs are adequately cooled. [35] [32]

Frequently Asked Questions (FAQs)

Q1: Our multi-GPU training job is running, but we are not seeing a linear speedup. Why is this happening?

A: Perfect linear scaling (an N-fold speedup with N GPUs) is rarely achieved because of overhead. Key bottlenecks include:

- Communication Overhead: Time spent synchronizing gradients and data between GPUs. [23]

- Imbalanced Workloads: The slowest GPU in the cluster determines the pace for each training step. [34]

- Software Framework Inefficiencies: Incorrect configuration of the distributed training library can introduce significant latency. Use profiling tools to identify if the bottleneck is in computation or communication. [23] [36]

Q2: Should we use a single node with 4 GPUs or 4 nodes with 1 GPU each for our research?

A: A single node with multiple GPUs is generally preferable for most research workloads. The table below summarizes the key differences:

| Factor | Single Node, Multi-GPU | Multi-Node, Multi-GPU |

|---|---|---|

| Communication Speed | Very High (NVLink/PCIe) | Network Dependent (InfiniBand/Ethernet) [34] |

| Setup & Management | Simpler | More Complex (requires Kubernetes/Slurm) [37] [33] |

| Maximum Scale | Limited by motherboard/PSU | Virtually Unlimited [34] |

| Best For | Most single-lab research, model development | Extremely large models (LLMs), massive datasets [34] |

Q3: How can we improve the power efficiency of our multi-GPU cluster?

A: Power efficiency is critical for both cost and sustainability. [1] Key strategies include:

- Increase Utilization: The primary driver of efficiency. An idle GPU still consumes a large fraction of peak power. Consolidate workloads to achieve higher average utilization. [1]

- Adopt Energy-Aware Scheduling: Use scheduling tools that dynamically adjust GPU power states based on workload demands. [32]

- Use Modern Hardware: Newer GPU architectures (e.g., NVIDIA H100) deliver significantly more performance per watt than older generations. [33]

- Optimize Model and Code: Use techniques like mixed-precision training, which allows GPUs to use their more efficient tensor cores. [1]

Experimental Protocols & Visualization

Protocol: Benchmarking Multi-GPU Scaling Efficiency

Objective: To quantitatively measure the performance and efficiency of a research application when scaled across multiple GPUs.

Materials:

- GPU Cluster: A node with 2 or more GPUs, preferably connected with a high-speed link like NVLink. [33]

- Software: Kubernetes with GPU device plugins or a job scheduler like Slurm. [37] [33] Your application configured for data-parallel execution (e.g., with PyTorch DDP).

Methodology:

- Baseline Measurement: Run the application on a single GPU and record the average time per training step (T₁) and the total time to completion.

- Multi-GPU Execution: Run the identical workload, distributing it across N GPUs (e.g., 2, 4). Record the new average time per step (T_N).

- Data Collection: For each run, log GPU utilization (via nvidia-smi), power draw, and total runtime.

- Analysis: Calculate the speedup and efficiency.

- Speedup: S = T₁ / T_N

- Efficiency: E = (S / N) * 100%
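The two formulas above can be applied directly to the recorded step times. The following is a minimal sketch; the function name and the example timings are hypothetical and only illustrate the arithmetic.

```python
def scaling_metrics(t1: float, tn: float, n_gpus: int):
    """Return (speedup S, efficiency E in percent) from average step times."""
    speedup = t1 / tn                        # S = T1 / T_N
    efficiency = speedup / n_gpus * 100.0    # E = (S / N) * 100%
    return speedup, efficiency


# Hypothetical timings: 0.80 s/step on 1 GPU, 0.25 s/step on 4 GPUs.
speedup, efficiency = scaling_metrics(0.80, 0.25, 4)
print(f"speedup: {speedup:.2f}x, efficiency: {efficiency:.0f}%")
```

With these example numbers the speedup is 3.2x on 4 GPUs, which corresponds to 80% efficiency.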

Diagnostic Workflow Visualization

The following diagram outlines the logical flow for diagnosing common multi-GPU performance issues.

The Scientist's Toolkit: Key Research Reagent Solutions

The table below lists essential software and hardware "reagents" required for effective multi-GPU scientific computing.

| Item Name | Function / Purpose | Key Considerations |

|---|---|---|

| NVIDIA CUDA Toolkit | Core programming model and library for GPU-accelerated computing. Provides compilers (NVCC) and debuggers. [33] | Required for any custom GPU code. Different versions have varying support for GPU architectures. |

| Kubernetes GPU Device Plugin | Allows Kubernetes to schedule pods on GPU nodes and exposes GPU resources. [37] | Essential for containerized workloads in a cluster. Must match the GPU driver version. |

| NVIDIA DCGM (Data Center GPU Manager) | A suite of tools for monitoring, management, and health checks of GPUs in cluster environments. [37] | Critical for tracking utilization, temperature, and power in production research clusters. |

| NVIDIA NVLink | A high-bandwidth, energy-efficient GPU-to-GPU interconnect that enables memory pooling. [34] | Drastically reduces communication overhead compared to PCIe. Available on high-end GPUs (V100, A100, H100). |

| Slurm Workload Manager | An open-source, highly scalable job scheduler for HPC clusters. [33] | The de facto standard for managing multi-node, multi-GPU research jobs in academic HPC centers. |

| PyTorch DDP / Horovod | Libraries for distributed data-parallel training, enabling a single training job to run across multiple GPUs/nodes. [34] | PyTorch DDP is easier to integrate for PyTorch users. Horovod is framework-agnostic (PyTorch, TensorFlow). |

Implementing Multi-GPU Strategies: A Practical Framework for Scientific Workloads

Frequently Asked Questions

1. What are the most common signs that my multi-GPU setup has a communication bottleneck? The most common signs include low GPU utilization (compute cores are idle) despite the model running, a significant drop in performance scaling as you add more GPUs, and high values for communication-related metrics (e.g., high AllReduce time) in profiling tools like NVIDIA Nsight Systems. When the number of devices grows too large relative to the model, communication begins to dominate the computation, leading to these inefficiencies [38].

2. My training job is running out of memory on a single GPU. What is the best strategy to try first? For models that don't fit on a single GPU, Fully Sharded Data Parallelism (FSDP) is often the most effective first strategy. FSDP shards the model parameters, gradients, and optimizer states across all GPUs, gathering them only when needed for computation. This can significantly reduce the memory footprint per GPU and is generally easier to implement than more complex strategies like pipeline or tensor parallelism [39].

3. Why does my training throughput not improve linearly when I add more GPUs? This is a classic case of diminishing returns from scaling. As you add more GPUs, the global batch size often increases, but so does the communication overhead required to synchronize gradients and model states. After a certain point, the cost of this communication can outweigh the benefits of added compute resources, leading to sub-linear scaling. This is especially true if the hardware interconnect (e.g., network) is not high-bandwidth [38] [1].

4. How can I determine if my workload is suitable for GPU acceleration? GPUs are ideal for workloads that can be massively parallelized, such as large matrix multiplications common in deep learning. If your application involves performing the same operation on thousands or millions of data elements simultaneously, it will likely benefit from a GPU. Conversely, tasks with sequential operations or minimal parallelism may not see significant improvements and could even run slower due to data transfer overheads [40].

Troubleshooting Guides

Issue: Out-of-Memory Errors During Model Training

Problem Description: Your training job fails with a CUDA out-of-memory (OOM) error, even when using a GPU with substantial memory.

Diagnostic Steps

- Profile Memory Usage: Use tools like nvidia-smi or the NVIDIA DCGM Exporter to track memory consumption over time. Identify which tensors (parameters, gradients, activations) are consuming the most memory [1].

- Check Batch Size: A batch size that is too large is a common cause of OOM errors. Try reducing the batch size as a first step.

- Analyze Model Architecture: Large models with hundreds of billions of parameters naturally demand more memory during training [38].

Resolution Actions

- Implement Mixed Precision Training: Use a combination of FP16/BF16 and FP32 precision. This can reduce memory usage by nearly 50% for parameters and activations, often without sacrificing model accuracy [39].

- Enable Gradient Checkpointing: Also known as activation recomputation, this technique trades compute for memory by re-computing activations during the backward pass instead of storing them. It can reduce activation memory by up to 80% [39].

- Adopt a Sharded Strategy: Move from basic Data Parallelism to Fully Sharded Data Parallelism (FSDP). FSDP shards model parameters, optimizer states, and gradients across GPUs, dramatically lowering the memory footprint per device [39].

- Consider Model Parallelism: For extremely large models, use Pipeline Parallelism (PP) or Tensor Parallelism (TP) to split the model itself across multiple GPUs [38] [39].
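A back-of-the-envelope estimate shows why sharding helps. The sketch below assumes mixed-precision training with Adam and the commonly cited figure of 16 bytes of model state per parameter (2 for FP16 weights, 2 for FP16 gradients, 12 for FP32 master weights and the two Adam moments). It excludes activations and temporary buffers, and it assumes full sharding divides the state evenly across GPUs. The function name and the 7-billion-parameter example are illustrative.

```python
# Assumed bytes of model state per parameter for mixed-precision Adam:
# FP16 weights (2) + FP16 gradients (2) + FP32 master weights and Adam moments (12).
BYTES_PER_PARAM = 2 + 2 + 12


def model_state_gb(num_params: float, num_gpus: int = 1, sharded: bool = False) -> float:
    """Approximate model-state memory per GPU in GB (activations excluded)."""
    total_bytes = num_params * BYTES_PER_PARAM
    per_gpu_bytes = total_bytes / num_gpus if sharded else total_bytes
    return per_gpu_bytes / 1e9


replicated_gb = model_state_gb(7e9)                    # every GPU holds full state
sharded_gb = model_state_gb(7e9, num_gpus=8, sharded=True)
print(f"replicated: {replicated_gb:.0f} GB per GPU")
print(f"fully sharded over 8 GPUs: {sharded_gb:.0f} GB per GPU")
```

Under these assumptions a 7-billion-parameter model needs about 112 GB of model state when replicated, but about 14 GB per GPU when fully sharded across 8 devices.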

Issue: Poor Multi-GPU Scaling Efficiency

Problem Description: After adding more GPUs, the training speed (throughput) does not increase as expected, or it even gets worse.

Diagnostic Steps

- Measure Scaling Efficiency: Calculate the throughput (samples/second) for different world sizes (e.g., 1, 2, 4, 8 GPUs). Plot the relative speedup to visualize the scaling efficiency.

- Profile Communication Overhead: Use a profiler to measure the time spent on collective communication operations like AllReduce and AllGather. High communication time indicates a bottleneck [38].

- Check Hardware Topology: Ensure that GPUs are connected via high-speed links (e.g., NVLink within a node) and that nodes are connected with a low-latency, high-bandwidth network like InfiniBand [38].

Resolution Actions

- Optimize Communication Strategy: If using FSDP, experiment with different sharding strategies (e.g., SHARD_GRAD_OP instead of FULL_SHARD) to reduce communication frequency [39].

- Increase Batch Size and Use Micro-Batching: With more GPUs, increase the global batch size to ensure each GPU has enough work to do. For Pipeline Parallelism, using smaller micro-batches can help reduce "pipeline bubbles" where GPUs are idle [39].

- Overlap Computation and Communication: Leverage frameworks that allow for overlapping gradient synchronization with backward pass computation, which can hide some of the communication latency.

- Re-evaluate Strategy: For large models, a pure Data Parallelism approach may not be optimal. Consider a hybrid strategy like combining FSDP with Tensor Parallelism to balance memory savings and communication overhead [39].
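The effect of micro-batching on pipeline bubbles can be estimated with a simple formula. The sketch below assumes a GPipe-style synchronous schedule in which every stage takes the same time per micro-batch; under that assumption the idle fraction with p stages and m micro-batches is (p - 1) / (m + p - 1). The function name and the 4-stage example are illustrative.

```python
def pipeline_bubble_fraction(num_stages: int, num_microbatches: int) -> float:
    """Idle fraction of a GPipe-style schedule with equal stage times."""
    return (num_stages - 1) / (num_microbatches + num_stages - 1)


# Hypothetical 4-stage pipeline with an increasing number of micro-batches.
for m in (1, 4, 16, 64):
    print(f"{m:>3} micro-batches: {pipeline_bubble_fraction(4, m):.1%} idle")
```

Splitting each batch into more, smaller micro-batches shrinks the bubble, although very small micro-batches can reduce per-kernel efficiency, so the best setting is usually found by measurement.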

Experimental Protocols & Methodologies

Protocol 1: Establishing a Performance Baseline

Objective: To measure the baseline performance and memory consumption of a model on a single GPU, which will serve as a reference for evaluating different multi-GPU strategies.

Materials

- Research Reagent Solutions:

| Item | Function |

|---|---|

| NVIDIA DCGM Exporter | Monitors GPU utilization, memory usage, and power metrics. |

| PyTorch Profiler / NVIDIA Nsight Systems | Traces operations and identifies performance bottlenecks. |

| Custom Benchmarking Script | A script to run a fixed number of training steps and record throughput. |

Methodology

- Environment Setup: Configure your environment with the necessary deep learning framework (e.g., PyTorch), CUDA drivers, and profiling tools.

- Model and Data Preparation: Load your target model (e.g., Llama-2 7B) and a representative dataset [38].

- Execution:

- Run the profiling tool (e.g., torch.profiler) alongside your training script for a fixed number of steps (e.g., 100).

- Use the benchmark script to measure the average throughput (samples/second or tokens/second).

- Use nvidia-smi or DCGM to log peak GPU memory consumption.

- Data Collection: Record the throughput, peak memory usage, and profiler output highlighting the most time-consuming operations.

Protocol 2: Evaluating Parallelization Strategies

Objective: To systematically compare the performance, memory efficiency, and scaling of different parallelization strategies across multiple GPUs.

Materials

- Research Reagent Solutions:

| Item | Function |

|---|---|

| NVIDIA GPU Operator (Kubernetes) | Automates the management of GPU software components in a cluster [41]. |

| FSDP (PyTorch) | Enables memory savings via sharding [39]. |

| Tensor Parallelism (e.g., Megatron-LM) | Splits individual model layers across GPUs [39]. |

| Pipeline Parallelism (e.g., PyTorch) | Splits model layers across GPUs in a sequential manner [39]. |

Methodology

- Strategy Implementation: Implement training for the same model using different strategies: Data Parallelism (DP), FSDP, and a hybrid strategy (e.g., FSDP + TP).

- Hardware Configuration: Perform experiments on a consistent hardware setup, such as a node with 8 GPUs. Use high-speed interconnects like NVLink if available [38].

- Controlled Experiment:

- For each strategy, train the model for a set number of steps (e.g., 500) with a consistent global batch size.

- Use the same profiling and benchmarking tools from Protocol 1.

- Data Collection: For each run, record:

- Throughput: Samples/second.

- Memory Usage: Peak memory per GPU.

- GPU Utilization: Average compute and memory copy utilization.

- Communication Time: Time spent on inter-GPU communication, obtained from the profiler.

The experimental setup for validating parallelization strategies involves a multi-node Kubernetes cluster with automated GPU provisioning, as outlined below.

Data Presentation

Table 1: Parallelization Strategy Comparison

This table summarizes the key characteristics of common parallelization strategies to aid in selection.

| Strategy | Core Principle | Ideal Model Size | Key Advantage | Primary Limitation | Typical Use Case |

|---|---|---|---|---|---|

| Data Parallelism (DP/DDP) [39] | Replicates model on each GPU; splits data. | Fits on a single GPU. | Simple to implement; no model changes. | Entire model must fit on each GPU; high communication. | Gemma-2B-it on 2-8 GPUs [39]. |

| Fully Sharded DP (FSDP) [39] | Shards model states (params, gradients, optimizer) across GPUs. | Large (exceeds single GPU memory). | Drastically reduces memory per GPU. | Higher communication overhead than DP. | Llama3.1-8B on 8+ GPUs [39]. |

| Pipeline Parallelism (PP) [39] | Splits model layers (stages) across GPUs. | Very Large (many layers). | Enables training of extremely deep models. | "Pipeline bubbles" cause GPU idle time. | Models with hundreds of layers (e.g., GPT-3). |

| Tensor Parallelism (TP) [39] | Splits individual tensor operations across GPUs. | Models with large layers. | Efficient for large matrix multiplications. | Requires very high-speed interconnect (NVLink). | Transformer models with wide FFN layers. |

| Hybrid (e.g., FSDP+TP) [39] | Combines two or more strategies. | Extremely Large (e.g., 100B+ params). | Optimal balance of memory and compute use. | High implementation and tuning complexity. | State-of-the-art foundational model training. |

Table 2: Quantitative Scaling Results for Llama-7B Model

The following table, based on a large-scale study, shows how performance scales with the number of GPUs using FSDP, highlighting the point of diminishing returns [38].

| Number of GPUs | Relative Throughput | Power Consumption (Relative) | Estimated Scaling Efficiency |

|---|---|---|---|

| 8 | 1.0x (Baseline) | 1.0x | 100% |

| 64 | ~6.5x | ~8.0x | ~81% |

| 512 | ~28x | ~64x | ~55% |

| 2048 | ~55x | ~256x | ~27% |

Decision Framework

Use the following workflow to select an appropriate parallelization strategy based on your model size and hardware constraints.

FAQs: Core Concepts and Decision-Making

Q1: What is the fundamental difference between synchronous and asynchronous data parallelism?

The core difference lies in how and when worker nodes synchronize their computed gradients. In synchronous data parallelism, all workers process their data subsets and compute gradients simultaneously. The system then waits for every worker to finish before aggregating all gradients (typically via an All-Reduce operation) and updating the model. This ensures all model copies stay identical after each update [42]. In asynchronous data parallelism, workers operate independently without waiting for others. A worker reads the current model parameters, processes its data, computes gradients, and immediately sends updates to a central parameter server. This means model copies can be based on slightly outdated parameter versions and may diverge [42].

Q2: When should I choose synchronous over asynchronous updates for my research project?

The choice involves a trade-off between stability and hardware utilization [42].

| Aspect | Synchronous Updates | Asynchronous Updates |

|---|---|---|

| Stability & Convergence | More stable and predictable convergence [42]. | Can be less stable; requires careful hyperparameter tuning [42]. |

| Hardware Compatibility | Best for homogeneous clusters (similar GPU models) [42]. | Tolerates heterogeneous, mixed-speed, or unreliable hardware [43]. |

| System Complexity | Uses direct worker-to-worker "All-Reduce" [42]. | Requires a "Parameter Server" architecture [42]. |

| Ideal Use Case | Most deep learning frameworks; applications requiring accuracy [42]. | Edge devices or when maximum hardware utilization is critical [42]. |

For most scientific computing research, especially with stable, homogeneous GPU clusters, synchronous updates are the standard and recommended choice due to their training stability and simpler debugging [42] [43].

Q3: Does gradient accumulation provide a performance (throughput) benefit?

No, gradient accumulation does not increase training throughput. It simulates a larger effective batch size by running several forward/backward passes (accumulating gradients) before performing a single optimizer step [44]. This process takes more time than processing a single large batch that fits in memory. Its primary purpose is to overcome memory limitations, allowing you to use a larger batch size than your hardware can physically hold, which can sometimes help stabilize training [43] [44].
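The equivalence that gradient accumulation relies on can be checked with plain arithmetic. The sketch below assumes a mean-reduced squared-error loss on one scalar weight and equally sized micro-batches; `batch_grad` and `accumulated_grad` are illustrative names, not framework APIs.

```python
def batch_grad(w, batch):
    """Gradient of the mean of 0.5 * (w*x - y)**2 over a batch."""
    return sum((w * x - y) * x for x, y in batch) / len(batch)

def accumulated_grad(w, batch, micro_batch_size):
    """Sum micro-batch gradients, each scaled by 1/accum_steps."""
    micro_batches = [batch[i:i + micro_batch_size]
                     for i in range(0, len(batch), micro_batch_size)]
    accum_steps = len(micro_batches)
    total = 0.0
    for mb in micro_batches:          # one forward/backward pass per micro-batch
        total += batch_grad(w, mb) / accum_steps
    return total                      # a single optimizer step would use this

batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
g_full = batch_grad(0.5, batch)
g_accum = accumulated_grad(0.5, batch, micro_batch_size=2)
print(g_full, g_accum)
```

Both paths give the same gradient, but the accumulated version needs two passes instead of one, which is why it saves memory rather than time.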

Q4: My multi-GPU training is slower than expected. What are the common bottlenecks?

Performance issues in multi-GPU setups often stem from:

- Communication Overhead: The time spent synchronizing gradients between GPUs can become a bottleneck, especially with smaller models or slower interconnects [42] [43].

- Stragglers: In synchronous training, every GPU must wait for the slowest one before the update can proceed. Performance variability across GPUs can significantly slow down the entire process [42].

- I/O Bottlenecks: If the data loading pipeline cannot keep up with the GPUs, they will sit idle waiting for the next batch of data [43].

- Network Configuration: Incorrect network settings, like a misconfigured MTU, can severely degrade performance in multi-node setups [45].
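For a rough sense of when communication overhead matters, a bandwidth-only estimate of one gradient All-Reduce can help. The sketch assumes a ring algorithm, in which each GPU sends and receives about 2(N-1)/N times the gradient buffer, and it ignores latency and any overlap with computation. The parameter count and link bandwidth are hypothetical.

```python
def ring_allreduce_seconds(n_params, n_gpus, bandwidth_gb_per_s, bytes_per_param=4):
    """Bandwidth-only estimate for a ring All-Reduce of the gradient buffer."""
    buffer_bytes = n_params * bytes_per_param
    traffic_per_gpu = 2 * (n_gpus - 1) / n_gpus * buffer_bytes
    return traffic_per_gpu / (bandwidth_gb_per_s * 1e9)

# Hypothetical case: 25M fp32 parameters, 8 GPUs, 25 GB/s per link.
t = ring_allreduce_seconds(n_params=25_000_000, n_gpus=8, bandwidth_gb_per_s=25)
print(f"{t * 1000:.1f} ms per All-Reduce")
```

Comparing this figure with the measured compute time per step indicates whether the interconnect or the model is the more likely limit.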

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Poor Multi-GPU Scaling Efficiency

Symptoms: Training speed does not improve linearly when adding more GPUs; low GPU utilization; GPUs are intermittently idle.

Methodology:

- Profile Communication vs. Computation: Use profiling tools like PyTorch Profiler or NVIDIA Nsight Systems to measure the time spent in All-Reduce communication versus actual computation. If communication time dominates, it indicates a bottleneck [43].

- Check for I/O Waits: Monitor your processes. If they frequently show a state of waiting for I/O (e.g., `D` state in `top`), your data loading pipeline is likely the issue. Consider using FUSE or other optimized data loaders [46].

- Validate Hardware Utilization: Use `nvidia-smi` to observe GPU utilization (GPU-Util). Consistently low or fluctuating utilization suggests a systemic bottleneck like slow data loading or synchronization waits [46].
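The utilization check can be scripted. The sketch below parses the CSV output of `nvidia-smi --query-gpu=index,utilization.gpu --format=csv,noheader,nounits` and flags GPUs below a cutoff. The 50% threshold, the helper names, and the sample output are assumptions; `sample_once` needs an NVIDIA driver on the host, so the demonstration uses a hard-coded string instead.

```python
import subprocess

QUERY = ["nvidia-smi", "--query-gpu=index,utilization.gpu",
         "--format=csv,noheader,nounits"]

def parse_utilization(csv_text):
    """Turn lines like '0, 97' into {gpu_index: utilization_percent}."""
    util = {}
    for line in csv_text.strip().splitlines():
        index, percent = (field.strip() for field in line.split(","))
        util[int(index)] = int(percent)
    return util

def underused_gpus(util, threshold=50):
    """Return GPU indices below the threshold (an assumed cutoff)."""
    return sorted(i for i, u in util.items() if u < threshold)

def sample_once():
    """Run nvidia-smi once; only works where the NVIDIA driver is installed."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_utilization(out)

sample = "0, 97\n1, 12\n2, 95\n3, 8\n"     # hypothetical output for a 4-GPU node
low = underused_gpus(parse_utilization(sample))
print(low)
```

A single sample can mislead, so in practice the query would be repeated over a training interval before drawing conclusions.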

Resolution:

- For Communication Bottlenecks:

- For I/O Bottlenecks:

- Use Multiple Data Workers: Pre-load data asynchronously in multiple subprocesses.

- Switch to Memory-Mapped Files: For large datasets, use formats that allow for efficient random access.
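As a minimal illustration of memory-mapped access, the sketch below stores fixed-size records in a binary file and reads one by index without loading the rest. The record layout (four float32 values), the file name, and the helper functions are assumptions made for the example; real datasets would use their own format.

```python
import mmap
import os
import struct
import tempfile

RECORD = struct.Struct("<4f")      # assumed layout: four little-endian float32

def write_records(path, records):
    """Write each record as a fixed-size binary row."""
    with open(path, "wb") as f:
        for rec in records:
            f.write(RECORD.pack(*rec))

def read_record(mm, index):
    """Random access: unpack record `index` directly from the mapped file."""
    return RECORD.unpack_from(mm, index * RECORD.size)

path = os.path.join(tempfile.mkdtemp(), "samples.bin")
write_records(path, [(i, i + 0.5, i * 2.0, -i) for i in range(1000)])

with open(path, "rb") as f:
    with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
        rec = read_record(mm, 750)  # only the touched pages are read from disk
print(rec)
```

Because the operating system pages data in on demand, several loader processes can share the same mapping without each holding a full copy in memory.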

Guide 2: Troubleshooting Cluster Networking and Synchronization Errors

Symptoms: Training jobs hang during startup or synchronization; NCCL or RCCL errors about connectivity; low bandwidth in multi-node setups.

Methodology:

- Test Basic RDMA Connectivity: Use the `ib_write_bw` benchmark to test the raw RDMA bandwidth between nodes. Failure or low performance here points to a network hardware or driver issue [45].

- Verify Interface Configuration: Ensure the network interface (e.g., `eth0`) specified via `NCCL_SOCKET_IFNAME` exists and is consistent across all nodes in the cluster. Mismatches can cause hangs [45].

- Check System Settings:

- Firewall: Disable firewalls that may be blocking necessary ports for inter-node communication [45].

- NUMA Balancing: Disable NUMA auto-balancing as it can cause performance variability. Run `sudo sh -c 'echo 0 > /proc/sys/kernel/numa_balancing'` and confirm the value is set to 0 [45].

- MTU Size: For RoCE, ensure the Maximum Transmission Unit (MTU) is set to a jumbo frame size (e.g., 9000) on both hosts and switches to support large message sizes without fragmentation [45].
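Two of these checks are easy to automate on each node before launching a job. The sketch below is a simplification: it treats `NCCL_SOCKET_IFNAME` as a single exact interface name, although NCCL also accepts prefixes and exclusion lists, and the function names are illustrative.

```python
import os
import socket

def check_nccl_interface(env=os.environ, interfaces=None):
    """Return (name, exists) for the interface named in NCCL_SOCKET_IFNAME."""
    name = env.get("NCCL_SOCKET_IFNAME")
    if interfaces is None:
        interfaces = [ifname for _, ifname in socket.if_nameindex()]
    return name, name in interfaces

def numa_balancing_disabled(path="/proc/sys/kernel/numa_balancing"):
    """True if the kernel setting reads 0; None if the file is not present."""
    try:
        with open(path) as f:
            return f.read().strip() == "0"
    except OSError:
        return None

# Demonstration with explicit inputs so it does not depend on the host.
result = check_nccl_interface({"NCCL_SOCKET_IFNAME": "eth0"}, ["lo", "eth0"])
print(result, numa_balancing_disabled())
```

Running the same script on every node and comparing the results is one way to catch the cross-node mismatches mentioned above.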

Resolution:

- Resource Limits: Check and increase system resource limits (`ulimit`) for the number of open files and processes [45].

- MPI Parameters: When using MPI, explicitly exclude non-physical network interfaces (like Docker or loopback) using flags: `-mca oob_tcp_if_exclude=docker,lo -mca btl_tcp_if_exclude=docker,lo` [45].

- GPU Subsystem Health: For persistent low performance, use vendor-specific tools (like AMD's AGFHC) to check the health of the GPU subsystem and PCIe links [45].
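The resource limits can also be inspected from inside the training process with Python's `resource` module (Unix only). The minimum of 65536 open files used below is an assumed value for illustration, not a figure from the source.

```python
import resource

def is_at_least(limit, minimum):
    """RLIM_INFINITY means unlimited, which satisfies any minimum."""
    return limit == resource.RLIM_INFINITY or limit >= minimum

def limits_report(min_open_files=65536):
    """Collect soft/hard limits for open files and processes."""
    soft_files, hard_files = resource.getrlimit(resource.RLIMIT_NOFILE)
    soft_procs, hard_procs = resource.getrlimit(resource.RLIMIT_NPROC)
    return {
        "open_files": {"soft": soft_files, "hard": hard_files,
                       "ok": is_at_least(soft_files, min_open_files)},
        "processes": {"soft": soft_procs, "hard": hard_procs},
    }

limits = limits_report()
print(limits)
```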

Experimental Protocol: Comparing Update Strategies

Objective: To empirically evaluate the impact of synchronous and asynchronous data parallelism on the training speed (throughput), convergence stability, and final accuracy of a benchmark model.

Materials & Setup:

- Hardware: A cluster of 4 nodes, each equipped with 8 GPUs (e.g., NVIDIA A100 or AMD MI250).

- Software Stack: PyTorch 2.1 with DistributedDataParallel (synchronous) and PyTorch with a parameter server (asynchronous); CUDA/ROCm; NCCL/RCCL.

- Benchmark Model & Dataset: Use a standard model like ResNet-50 and the ImageNet dataset to ensure reproducibility.

Procedure:

- Baseline Measurement: Train the model on a single GPU to establish a baseline for time-per-epoch and final validation accuracy.

- Synchronous Training: Configure the cluster for synchronous training using `DistributedDataParallel`. Train for 50 epochs, recording the time-per-epoch and validation accuracy at the end of each epoch.

- Asynchronous Training: Configure the cluster for asynchronous training using a parameter server architecture. Use the same number of workers. Train for 50 epochs, recording the same metrics.

- Data Analysis: For both experiments, calculate:

- Training Throughput: Average images processed per second over all epochs.

- Convergence Speed: Number of epochs required to reach a target validation accuracy (e.g., 70%).

- Final Accuracy: The highest validation accuracy achieved.
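The three metrics can be computed from per-epoch logs with a few lines. The sketch below assumes each run produces a list of epoch durations in seconds and a list of validation accuracies. The function name and the sample numbers are hypothetical, not measured results.

```python
def summarize_run(epoch_seconds, val_accuracy, images_per_epoch, target=0.70):
    """Compute throughput, convergence speed, and final accuracy for one run."""
    throughput = images_per_epoch * len(epoch_seconds) / sum(epoch_seconds)
    epochs_to_target = next(
        (i + 1 for i, acc in enumerate(val_accuracy) if acc >= target), None)
    return {
        "images_per_second": throughput,
        "epochs_to_target": epochs_to_target,   # None if the target is never reached
        "final_accuracy": max(val_accuracy),
    }

# Hypothetical four-epoch log, for illustration only.
summary = summarize_run(
    epoch_seconds=[100.0, 100.0, 100.0, 100.0],
    val_accuracy=[0.40, 0.62, 0.71, 0.74],
    images_per_epoch=1_000_000,
)
print(summary)
```

Applying the same function to the synchronous and asynchronous logs keeps the comparison consistent.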

Expected Outcome: Synchronous training is expected to show more stable convergence and likely a higher final accuracy, while asynchronous training may show higher throughput but potentially unstable convergence and lower accuracy, especially in a homogeneous cluster [42].

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Multi-GPU Research |

|---|---|

| PyTorch DistributedDataParallel (DDP) | The primary API for synchronous data parallel training on multiple GPUs and multiple nodes. It uses an All-Reduce algorithm for efficient gradient synchronization [42] [43]. |

| NCCL (NVIDIA) / RCCL (AMD) | Optimized communication libraries for GPU-to-GPU transfers. They are the backbone for fast collective operations like All-Reduce in frameworks like DDP [45]. |

| Horovod | A distributed training framework that uses All-Reduce for synchronous training and is compatible with multiple ML libraries (TensorFlow, PyTorch) [42]. |

| Parameter Server Framework | A software architecture required for implementing asynchronous data parallelism, where a central server holds model parameters and workers push/pull updates [42] [47]. |

| DeepSpeed / FSDP | Advanced frameworks (by Microsoft & Meta) that support data and model parallelism, with features like the Zero Redundancy Optimizer (ZeRO) to shard optimizer states and models for massive model training [42]. |

Workflow Diagrams

Synchronous Data Parallelism (All-Reduce)

Asynchronous Data Parallelism (Parameter Server)

# FAQs on Parallelism Strategies and Implementation

What is the fundamental difference between Data, Model, and Pipeline Parallelism?

Data Parallelism involves replicating the entire model on each GPU and distributing different portions of the data batch across them. Model Parallelism splits the model itself across multiple GPUs, with each device hosting a different part of the model. Pipeline Parallelism is a more efficient form of model parallelism that splits the model into stages and uses micro-batching to keep all devices busy, reducing idle time [48].

When should I choose Pipeline Parallelism over other methods?

Pipeline Parallelism is the recommended strategy when your model is too large to fit onto a single GPU [49] [48]. It is particularly effective for homogeneous architectures like Transformers, where layers are often of similar size, making it easier to create balanced stages [50].

How do I handle the "pipeline bubbles" or idle time in Pipeline Parallelism?

Pipeline bubbles, periods where GPUs are waiting for data from other stages, can be mitigated by using micro-batching [49] [48]. Breaking a single batch into smaller micro-batches allows for overlapping computation and communication between stages, improving overall GPU utilization [49] [50].
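The effect of micro-batching on bubbles can be estimated with a simple formula. For a GPipe-style schedule with p equally expensive stages and m micro-batches, the idle share of the timeline is (p - 1) / (m + p - 1). The sketch assumes that idealized schedule, and real pipelines with uneven stages or interleaved schedules will differ.

```python
def bubble_fraction(n_stages, n_micro_batches):
    """Idle share of a GPipe-style schedule with equal-cost stages."""
    return (n_stages - 1) / (n_micro_batches + n_stages - 1)

# Four stages with an increasing number of micro-batches.
fractions = {m: bubble_fraction(4, m) for m in (1, 4, 16, 64)}
for m, frac in fractions.items():
    print(f"{m:>3} micro-batches: {frac:.1%} idle")
```

With a single micro-batch, three quarters of the timeline is idle on four stages. The share shrinks steadily as more micro-batches are added.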

What are the common signs of an unbalanced model partition in Pipeline Parallelism?

The primary sign is poor GPU utilization, where one or more stages consistently take longer to compute than others. This creates a bottleneck, forcing all other stages to wait. For optimal performance, the time to execute each stage should be as balanced as possible [50].
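A quick way to quantify imbalance is to compare the mean stage time with the slowest stage, since the slowest stage sets the pace for the whole pipeline. The metric and the sample timings below are illustrative assumptions, not a standard defined by the cited sources.

```python
def pipeline_balance(stage_ms):
    """Ratio of mean to max stage time; 1.0 means perfectly balanced stages."""
    slowest = max(stage_ms)
    return {
        "bottleneck_stage": stage_ms.index(slowest),
        "balance": sum(stage_ms) / len(stage_ms) / slowest,
    }

# Hypothetical per-stage forward+backward times in milliseconds.
balance_report = pipeline_balance([10.0, 10.0, 20.0, 10.0])
print(balance_report)
```

In this example stage 2 takes twice as long as the others, so moving layers out of it would be the first thing to try.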

Can I combine different parallelism strategies?

Yes, combining strategies is essential for training extremely large models. A common and powerful combination is 3D parallelism, which integrates Data, Pipeline, and Tensor Parallelism. This approach simultaneously optimizes for both memory and compute efficiency, making it scalable to models with trillions of parameters [48].

# Troubleshooting Guides

# Problem: GPU Out-of-Memory (OOM) Errors When Scaling Model Size

Description: The program fails with a CUDA out-of-memory error when attempting to train a large model, even with a small batch size.

Diagnosis Steps

- Verify Model Size: Calculate the total memory required to store model parameters, gradients, and optimizer states. For example, the Adam optimizer requires enough memory to store two states for each parameter [50].

- Check Activation Memory: Use profiling tools (e.g., `torch.profiler`) to determine if the memory is being consumed by intermediate activations, especially during the backward pass.

- Identify Largest Layer: Check if a single layer of the model (e.g., a large linear layer) is too large to fit on one GPU [48].
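The first diagnosis step is simple arithmetic. The sketch below assumes everything is stored in fp32 (4 bytes per value) and that Adam keeps two states per parameter, so each parameter costs 4 values in total. Activations are excluded, and the 7-billion-parameter model is a hypothetical example.

```python
def training_state_gib(n_params, bytes_per_value=4, optimizer_states=2):
    """Memory for weights + gradients + optimizer states, excluding activations."""
    values_per_param = 1 + 1 + optimizer_states   # weight, gradient, Adam states
    return n_params * values_per_param * bytes_per_value / 2**30

need = training_state_gib(7_000_000_000)
print(f"{need:.0f} GiB before activations")
```

If this number alone exceeds the memory of one device, reducing the batch size cannot help and a sharding or model-parallel approach is needed.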

Solutions

- Implement Pipeline Parallelism: Split the model sequentially across multiple GPUs. This is the primary solution for models that don't fit on a single device [49] [48].

- Enable Activation Checkpointing: Also known as gradient checkpointing, this technique trades extra computation for memory by selectively recomputing activations during the backward pass instead of storing them all [49].

- Use Tensor Parallelism: If a single model layer is too large, use tensor parallelism to split the large tensor operations (e.g., within a linear layer) across multiple GPUs [48].

- Leverage ZeRO Data Parallelism: If using data parallelism, implement the ZeRO optimizer (e.g., via DeepSpeed) to partition optimizer states, gradients, and parameters across processes instead of replicating them on every GPU [48].
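To see what ZeRO's partitioning buys, the per-GPU cost per parameter can be tallied by stage: stage 1 shards optimizer states, stage 2 also shards gradients, and stage 3 also shards the parameters. The byte counts below assume fp32 weights and gradients with Adam, and the function is an illustrative estimate rather than DeepSpeed's own accounting.

```python
def zero_bytes_per_param(stage, n_gpus, weight=4, grad=4, optim=8):
    """Per-GPU bytes per parameter under ZeRO stages 0-3 (fp32 + Adam assumed)."""
    if stage >= 1:
        optim /= n_gpus       # stage 1: shard optimizer states
    if stage >= 2:
        grad /= n_gpus        # stage 2: also shard gradients
    if stage >= 3:
        weight /= n_gpus      # stage 3: also shard parameters
    return weight + grad + optim

per_stage = [zero_bytes_per_param(s, n_gpus=8) for s in range(4)]
print(per_stage)
```

On 8 GPUs the estimate falls from 16 bytes per parameter with plain replication to 2 bytes at stage 3, at the cost of extra communication to gather shards when they are needed.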

# Problem: Poor Multi-GPU Utilization and Slow Training

Description: Training runs without errors, but the overall throughput is low. GPU usage metrics show significant periods of idle time.

Diagnosis Steps

- Profile Communication Overhead: Use distributed training profilers to check if the bottleneck is in the synchronization of gradients (in Data Parallelism) or the passing of activations (in Pipeline/Tensor Parallelism).

- Check for Pipeline Bubbles: In Pipeline Parallelism, visualize the execution timeline to identify the ramp-up and ramp-down phases where GPU utilization is not at 100% [50].

- Identify Load Imbalance: In Pipeline Parallelism, check the time taken by each stage. A significant variance indicates an unbalanced model partition [50].

Solutions

- Optimize Micro-Batch Size: For Pipeline Parallelism, experiment with the micro-batch size. A larger number of micro-batches can help fill the pipeline and reduce bubbles, but must be balanced with per-micro-batch overhead [49] [48].

- Balance the Pipeline: Re-partition your model so that the computational load is approximately equal across all stages. Tools like DeepSpeed can help automate this [49] [50].

- Switch to DistributedDataParallel (DDP): If using the older `DataParallel` in PyTorch, switch to `DistributedDataParallel`, which is more efficient and reduces communication overhead [48].

- Use a Faster Interconnect: Ensure you are using a high-speed backend like NCCL for GPU-to-GPU communication. Direct GPU links (NVLink/NVSwitch) can drastically improve performance [49] [48].

# Problem: Instability or Divergence in Molecular Dynamics Simulations

Description: When using a machine-learned force field (MLFF) for molecular dynamics (MD) simulations, the simulation becomes unstable, exhibiting runaway energy increases or non-physical behavior.