Optimizing Shared Memory on GPUs for Accelerated Ecological Algorithm Performance


Abstract

This article provides a comprehensive guide for researchers and scientists on optimizing shared memory usage in GPUs to significantly accelerate ecological and evolutionary computations. It covers foundational concepts of GPU architecture and its relevance to ecological modeling, practical methodologies for implementing shared memory strategies, solutions for common performance bottlenecks, and frameworks for validating and comparing computational gains. By addressing critical challenges like thread divergence and memory hierarchy management, this resource enables professionals to tackle more complex models, from large-scale population simulations to high-resolution environmental analyses, thereby pushing the boundaries of computational ecology and biomedical research.

GPU Architecture and Ecological Computing: Foundations for Speed

Why GPUs? The Parallel Processing Power for Ecological Simulations

Ecological simulations are fundamental to understanding complex environmental processes, from climate change and sea-ice dynamics to storm surge forecasting and ecosystem management. The computational demand for high-resolution, cross-scale models has traditionally required massive, expensive central processing unit (CPU) clusters. The emergence of graphics processing units (GPUs) as a powerful parallel computing architecture has fundamentally shifted this paradigm, offering researchers the ability to run larger, more complex simulations in a fraction of the time and with greater energy efficiency [1]. The core advantage of GPUs lies in their massively parallel architecture. Unlike CPUs, which consist of a few cores optimized for sequential tasks, GPUs contain thousands of smaller, efficient cores designed to execute many mathematical operations simultaneously [1]. This architecture is exceptionally well-suited to the data-parallel nature of many ecological algorithms, where identical operations—such as calculating physical forces on a grid or updating the state of millions of agents—can be performed concurrently across vast datasets [2]. Harnessing this power is particularly critical for processing the high-resolution spatial and temporal data essential for accurate ecological forecasting and understanding the effects of climate change [2] [3].

Within this landscape, shared memory optimization is one of the most direct routes to higher performance. Many ecological models operate on structured or unstructured grids where data locality is key. A GPU's shared memory is a small, fast, software-managed on-chip memory that all threads of a block can access. Using it efficiently can dramatically reduce access latency to frequently reused data, such as the state of an agent and its immediate neighbors in an agent-based model, or the values of adjacent cells in a fluid dynamics simulation. Optimizing for this memory hierarchy is essential for overcoming bandwidth limitations and achieving high throughput, and it can turn memory-bound algorithms into compute-bound ones, as demonstrated in recent algorithmic advances for linear algebra operations on GPUs [4].
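To make the data-reuse argument concrete, the following C++ sketch emulates on the CPU what a shared-memory tile does for a 5-point averaging stencil. It assumes an N×N grid with periodic boundaries, and the function names are illustrative rather than taken from any of the cited codes. Each T×T tile first copies its (T+2)×(T+2) neighborhood (the tile plus a one-cell halo) into a small local buffer standing in for shared memory, then computes every output from that buffer. Both versions count reads from the input array, which stands in for global memory.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Output of one stencil sweep and the number of reads from the input array,
// which stands in for GPU global memory.
struct StencilResult {
    std::vector<float> out;
    long global_reads = 0;
};

// Read element (y, x) of an n x n row-major grid with periodic wrap-around,
// counting it as one global-memory read.
static float load_global(const std::vector<float>& in, long n, long y, long x, long& reads) {
    ++reads;
    const long wy = ((y % n) + n) % n;
    const long wx = ((x % n) + n) % n;
    return in[static_cast<std::size_t>(wy * n + wx)];
}

// Naive sweep: every output cell fetches all 5 stencil points from global memory.
StencilResult stencil_naive(const std::vector<float>& in, long n) {
    StencilResult r;
    r.out.assign(in.size(), 0.0f);
    for (long y = 0; y < n; ++y)
        for (long x = 0; x < n; ++x) {
            const float sum = load_global(in, n, y, x, r.global_reads) +
                              load_global(in, n, y - 1, x, r.global_reads) +
                              load_global(in, n, y + 1, x, r.global_reads) +
                              load_global(in, n, y, x - 1, r.global_reads) +
                              load_global(in, n, y, x + 1, r.global_reads);
            r.out[static_cast<std::size_t>(y * n + x)] = sum / 5.0f;
        }
    return r;
}

// Tiled sweep: each t x t tile stages its (t+2) x (t+2) neighborhood into a
// local buffer (the shared-memory stand-in) once, then reads only from it.
StencilResult stencil_tiled(const std::vector<float>& in, long n, long t) {
    assert(t > 0 && n % t == 0);  // assumption: tiles divide the grid evenly
    StencilResult r;
    r.out.assign(in.size(), 0.0f);
    const long w = t + 2;  // tile width including a one-cell halo on each side
    std::vector<float> tile(static_cast<std::size_t>(w * w));
    auto at = [&](long ly, long lx) { return tile[static_cast<std::size_t>(ly * w + lx)]; };
    for (long ty = 0; ty < n; ty += t)
        for (long tx = 0; tx < n; tx += t) {
            // Load phase: each tile element (halo included) is read from global memory once.
            for (long ly = 0; ly < w; ++ly)
                for (long lx = 0; lx < w; ++lx)
                    tile[static_cast<std::size_t>(ly * w + lx)] =
                        load_global(in, n, ty + ly - 1, tx + lx - 1, r.global_reads);
            // Compute phase: all 5 stencil points come from the local tile,
            // summed in the same order as the naive version.
            for (long ly = 1; ly <= t; ++ly)
                for (long lx = 1; lx <= t; ++lx) {
                    const float sum = at(ly, lx) + at(ly - 1, lx) + at(ly + 1, lx) +
                                      at(ly, lx - 1) + at(ly, lx + 1);
                    r.out[static_cast<std::size_t>((ty + ly - 1) * n + (tx + lx - 1))] = sum / 5.0f;
                }
        }
    return r;
}
```

For a 64×64 grid with 16×16 tiles, the naive sweep issues 20,480 input reads and the tiled sweep 5,184, roughly 4× fewer. The ratio approaches 5× as tiles grow because the halo overhead (T+2)²/T² shrinks. In a CUDA kernel the load phase would be performed cooperatively by the threads of a block, followed by a `__syncthreads()` barrier before the compute phase. The counts are requests, not DRAM traffic: on real hardware the L1 and L2 caches absorb part of the naive version's redundancy.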

GPU Applications in Ecological Simulation

The application of GPU acceleration has led to breakthroughs in several key areas of ecological and environmental science. The following table summarizes the performance gains observed in various real-world simulations.

Table 1: Performance Gains in GPU-Accelerated Ecological Simulations

| Application Domain | Specific Model/Code | Reported Speedup | Key Notes |
|---|---|---|---|
| Sea-Ice Dynamics | neXtSIM-DG (Kokkos implementation) | 6x faster than OpenMP-based CPU code [2] | Same code remains competitive when run on CPUs; single precision offers further acceleration [2] |
| Ocean Modeling & Storm Surges | SCHISM (CUDA Fortran) | 35.13x speedup for large-scale case (2.56M grid points) [3] | GPU advantages grow with higher-resolution calculations [3] |
| Computational Fluid Dynamics | CPFD Barracuda Virtual Reactor | 400x faster and 140x more energy efficient [5] | Demonstrates massive energy savings alongside performance gains [5] |
| General Scientific Computing | Various GPU-accelerated libraries (NVIDIA data) | 10x to 180x speedups over CPUs [5] | Across data processing, computer vision, and other technical workloads [5] |

Sea-Ice and Climate Modeling

The cryosphere is a crucial component of the Earth's climate system, and accurately simulating sea-ice dynamics is essential for improving climate projections [2]. The neXtSIM-DG model, a novel sea-ice code, has been successfully ported to GPUs. Researchers evaluated multiple programming frameworks, including CUDA, SYCL, and Kokkos, for parallelizing its finite-element-based dynamical core [2]. The implementation using Kokkos demonstrated a six-fold speedup on the GPU compared to an OpenMP-based CPU code, while maintaining competitive performance when run on the CPU itself [2]. This "performance portability" is a significant advantage for research groups using heterogeneous computing environments. Furthermore, the study explored the use of mixed-precision arithmetic, finding that switching to single precision could further accelerate sea-ice codes without degrading results, a finding consistent with trends in numerical weather prediction [2].

Coastal Oceanography and Storm Surge Forecasting

High-resolution forecasting of storm surges is critical for mitigating coastal disasters, but operational deployment at local forecasting stations is often hampered by limited hardware [3]. A recent study developed GPU–SCHISM, a GPU-accelerated version of the unstructured-grid SCHISM ocean model using CUDA Fortran [3]. The research demonstrated that a single GPU could achieve a speedup ratio of 35.13 for a large-scale experiment with 2.56 million grid points [3]. This highlights the potential for "lightweight" operational deployment, where powerful simulations can be run on individual workstations or servers rather than large CPU clusters. The study also identified that GPUs are particularly effective for higher-resolution calculations, while CPUs retain advantages for smaller-scale problems [3]. The Jacobi iterative solver, a computational hotspot, was accelerated by 3.06 times on a single GPU [3].

Experimental Protocols for GPU Implementation

Protocol 1: Porting a Model Dynamical Core to GPU

Objective: To accelerate the computational core of an ecological model (e.g., a momentum or advection solver) by leveraging GPU parallelism and shared memory.

Methodology:

- Performance Profiling: Begin by profiling the existing CPU code to identify computationally intensive kernels (hotspots). In the SCHISM model, for example, the Jacobi solver was identified as a key target [3].

- Framework Selection: Choose a GPU programming model based on performance and usability requirements.

  - CUDA: Offers the best performance and low-level control for NVIDIA hardware but is vendor-specific [2].

  - Kokkos: A robust framework for performance portability across different GPU vendors (NVIDIA, AMD, Intel) and CPUs [2].

  - SYCL/PyTorch: Emerging alternatives; SYCL's toolchain was noted as less mature, while PyTorch is not yet ideal for traditional C++ model code [2].

- Algorithm Refactoring: Redesign the identified kernel for massive parallelism. This involves:

  - Data Decomposition: Partition the computational domain (e.g., a grid) so that each GPU thread works on a small portion of the data.

  - Shared Memory Utilization: For data with locality, stage tiles of the global data into the GPU's fast shared memory. Threads within a block can collaboratively load and reuse this data, drastically reducing global memory accesses.

  - Precision Adjustment: Evaluate the numerical stability of the kernel in single (`float`) or mixed precision. As demonstrated with neXtSIM-DG and weather models, this can provide significant additional speedups with acceptable accuracy loss [2].

- Kernel Implementation & Optimization: Write the GPU kernel code, focusing on:

  - Optimizing memory access patterns for coalescing.

  - Minimizing thread divergence.

  - Tuning launch parameters (block and grid sizes) for the specific problem and hardware.

- Validation & Benchmarking: Ensure the GPU results match the validated CPU results within an acceptable tolerance. Benchmark the performance against the original CPU implementation using metrics like speedup and energy efficiency [5].
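The tolerance check in the validation step can be made explicit. The sketch below shows one reasonable choice, not a prescribed method. It assumes the GPU result has been copied back in single precision and the CPU reference is in double precision, and it applies a mixed absolute/relative test to each element. The `rtol` and `atol` values are assumptions that must be chosen per model and per variable.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Summary of a GPU-vs-CPU comparison.
struct ValidationReport {
    double max_abs_err = 0.0;
    std::size_t first_bad_index = static_cast<std::size_t>(-1);  // SIZE_MAX if every element passes
    bool passed = true;
};

// Compare a candidate result (e.g. copied back from the GPU) against a trusted
// CPU reference element by element. An element passes when
//   |gpu - ref| <= atol + rtol * |ref|,
// a mixed absolute/relative test that stays meaningful for values near zero.
ValidationReport validate(const std::vector<float>& gpu, const std::vector<double>& ref,
                          double rtol, double atol) {
    assert(gpu.size() == ref.size());
    ValidationReport rep;
    for (std::size_t i = 0; i < ref.size(); ++i) {
        const double err = std::fabs(static_cast<double>(gpu[i]) - ref[i]);
        if (err > rep.max_abs_err) rep.max_abs_err = err;
        const double tol = atol + rtol * std::fabs(ref[i]);
        // Written as !(err <= tol) so that a NaN in the GPU output fails.
        if (!(err <= tol) && rep.passed) {
            rep.passed = false;
            rep.first_bad_index = i;
        }
    }
    return rep;
}
```

Writing the test as `!(err <= tol)` rather than `err > tol` matters: a NaN produced on the GPU fails the comparison instead of silently passing.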

Protocol 2: Multi-GPU Scaling for Large-Scale Simulation

Objective: To distribute a simulation that is too large for a single GPU's memory across multiple GPUs, managing inter-GPU communication efficiently.

Methodology:

- Domain Decomposition: Split the model's spatial domain into subdomains, each assigned to a different GPU. For unstructured grids, use graph partitioning libraries to minimize boundary cells.

- Communicator Initialization: Use a communication library like NCCL (NVIDIA Collective Communication Library) to initialize a communicator across the GPUs participating in the simulation [6]. Each GPU is assigned a unique rank.

- Halo Exchange Implementation: Implement a "halo exchange" or "boundary update" routine. Before computing on its subdomain, each GPU must receive the boundary data (the "halo") from its neighboring subdomains.

- Overlap Communication and Computation: To hide communication latency, use asynchronous memory copies and NCCL calls. This allows the GPU to begin computation on the inner part of the subdomain while the boundary data is still being transferred.

- Scaling Analysis: Run strong and weak scaling tests to evaluate the efficiency of the multi-GPU implementation. Note that, as seen in SCHISM, increasing the number of GPUs can reduce workload per GPU and expose communication overhead, which can hinder further acceleration [3].
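The halo exchange and the overlap idea can be illustrated without GPUs. The C++ sketch below assumes a periodic 1-D field split evenly across a hypothetical number of ranks. Each subdomain stores its owned cells plus one halo cell on each side. The exchange is a plain copy between vectors, where a real multi-GPU code would use point-to-point transfers (for example NCCL's `ncclSend`/`ncclRecv`, or MPI). A 3-point averaging step on the decomposed field is checked against the same step on the undivided field.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// One subdomain ("rank"): owned cells are u[1..n]; u[0] and u[n+1] are halo cells.
struct Subdomain {
    std::vector<double> u;
    explicit Subdomain(std::size_t n) : u(n + 2, 0.0) {}
    std::size_t owned() const { return u.size() - 2; }
};

// Split a periodic 1-D field evenly across `ranks` subdomains.
std::vector<Subdomain> decompose(const std::vector<double>& field, std::size_t ranks) {
    assert(ranks > 0 && !field.empty() && field.size() % ranks == 0);
    const std::size_t n = field.size() / ranks;
    std::vector<Subdomain> subs(ranks, Subdomain(n));
    for (std::size_t r = 0; r < ranks; ++r)
        for (std::size_t i = 0; i < n; ++i) subs[r].u[i + 1] = field[r * n + i];
    return subs;
}

// Halo exchange: copy each neighbor's edge cell into this rank's halo slots.
// In a multi-GPU code these copies would be point-to-point transfers.
void halo_exchange(std::vector<Subdomain>& subs) {
    const std::size_t p = subs.size();
    for (std::size_t r = 0; r < p; ++r) {
        const Subdomain& left = subs[(r + p - 1) % p];
        const Subdomain& right = subs[(r + 1) % p];
        subs[r].u.front() = left.u[left.owned()];  // left neighbor's last owned cell
        subs[r].u.back() = right.u[1];             // right neighbor's first owned cell
    }
}

// One 3-point averaging step on the owned cells; halos must be current.
void stencil_step(Subdomain& s) {
    std::vector<double> next(s.u);
    for (std::size_t i = 1; i <= s.owned(); ++i)
        next[i] = (s.u[i - 1] + s.u[i] + s.u[i + 1]) / 3.0;
    s.u.swap(next);
}

// Reference: the same step applied to the undivided periodic field.
std::vector<double> global_step(const std::vector<double>& g) {
    const std::size_t n = g.size();
    std::vector<double> next(n);
    for (std::size_t i = 0; i < n; ++i)
        next[i] = (g[(i + n - 1) % n] + g[i] + g[(i + 1) % n]) / 3.0;
    return next;
}

// Collect the owned cells of all subdomains back into one field.
std::vector<double> gather(const std::vector<Subdomain>& subs) {
    std::vector<double> g;
    for (const Subdomain& s : subs) g.insert(g.end(), s.u.begin() + 1, s.u.end() - 1);
    return g;
}
```

Only the first and last owned cells of each subdomain read halo values. Cells 2 through n−1 depend on owned data alone, which is what allows the interior to be computed while the halo transfer is still in flight. With a wider stencil, the halo (and the communication volume per step) grows accordingly.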

The Scientist's Toolkit: Essential GPU Research Reagents

Table 2: Key Hardware and Software Solutions for GPU-Accelerated Ecological Research

| Tool / Reagent | Category | Function & Application in Ecological Simulation |
|---|---|---|
| NVIDIA H200/A100 GPU | Hardware | Data-center GPUs with high memory bandwidth; strong for double-precision (FP64) codes like some climate models [7]. |
| AMD Instinct MI300X | Hardware | High-performance AI accelerator, a competitive alternative in the GPU market [8]. |
| Kokkos | Software Framework | A C++ library for performance-portable programming, allowing a single codebase to run efficiently on multiple GPU architectures [2]. |
| NVIDIA NCCL | Software Library | Optimizes communication primitives (e.g., AllReduce) across multi-GPU/multi-node systems, crucial for scaling simulations [6]. |
| NVIDIA CUDA Fortran | Software Framework | Enables direct GPU programming in Fortran, commonly used by legacy scientific models like SCHISM [3]. |
| NVIDIA Warp | Software Library | A high-performance framework for writing differentiable physics simulations, useful for AI-physics hybrid models [5]. |
| Julia GPUArrays.jl | Software Framework | Allows hardware-agnostic GPU programming in the Julia language, facilitating cross-vendor implementation [4]. |

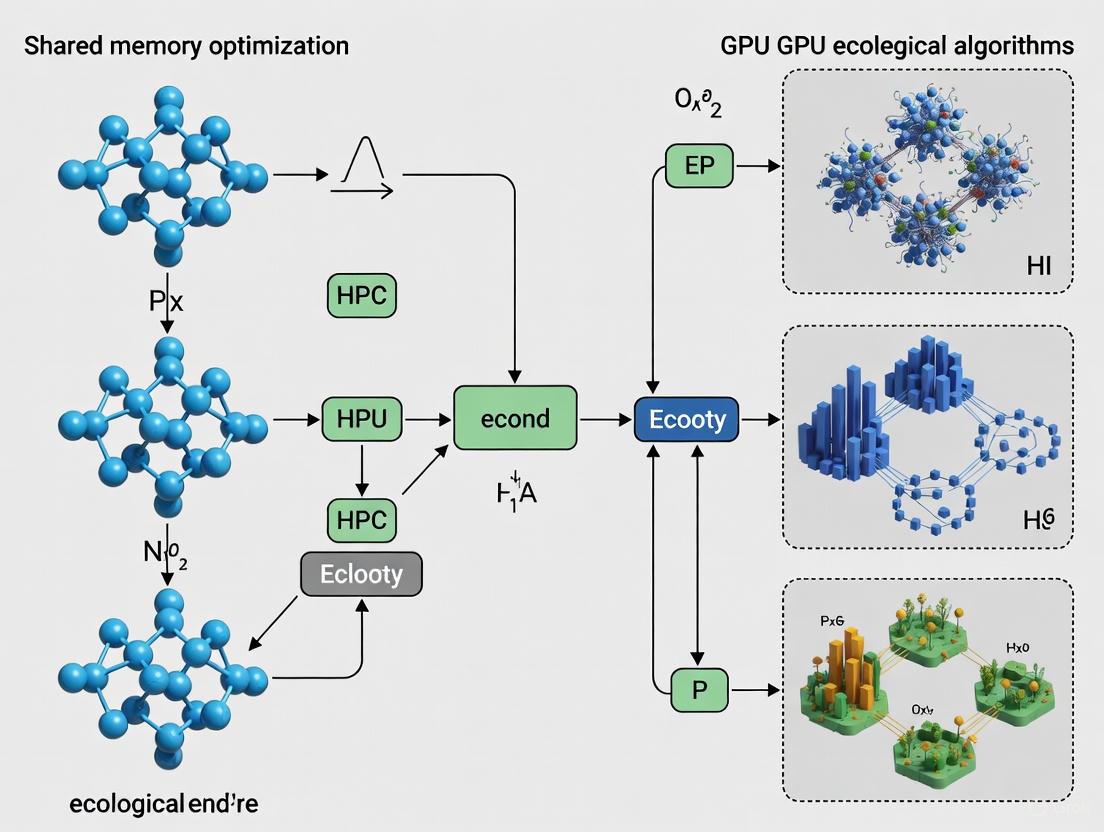

Visualizing GPU Parallelization and Memory Optimization

The diagram below illustrates the logical workflow and key optimization strategies for implementing an ecological simulation on a GPU, with a focus on memory hierarchy.

Diagram: GPU Implementation and Memory Optimization Workflow. This chart outlines the key steps in porting an ecological simulation to a GPU, highlighting the critical pathway of optimizing data placement across the GPU's memory hierarchy to maximize performance.

The integration of GPU acceleration into ecological simulations represents a fundamental shift in computational environmental science. By leveraging the massive parallelism, superior memory bandwidth, and evolving software ecosystems of GPUs, researchers can overcome previous barriers of resolution, scale, and time-to-solution. The experimental protocols and toolkit outlined here provide a foundation for developing and optimizing these high-performance applications. As GPU hardware continues to advance, with increasing focus on energy efficiency and specialized cores for AI and scientific computing, their role in enabling timely, high-fidelity insights into our planet's complex ecological systems will only become more pronounced.

The pursuit of computational efficiency in ecological algorithms research extends beyond raw performance; it is fundamentally an exercise in energy-aware computing. The graphics processing unit (GPU) stands as a powerful engine for parallel processing, yet its performance and power consumption are profoundly dictated by how algorithms manage data across the complex GPU memory hierarchy. Missteps in this management can lead to significant energy waste, conflicting with the ecological principles underpinning the research. This application note demystifies the three most critical tiers of GPU memory for algorithm optimization: registers, shared memory, and global memory. We provide a quantitative framework and practical experimental protocols to guide researchers in drug development and scientific computing toward implementing memory-aware algorithms that maximize computational throughput while minimizing environmental impact.

Modern GPU architectures feature a multi-tiered memory system designed to balance speed, capacity, and scope. Each tier possesses distinct characteristics that make it suitable for specific roles within a parallel algorithm. The effective use of this hierarchy is the cornerstone of high-performance, energy-efficient GPU computing. The following table provides a detailed comparison of the three primary memory types.

Table 1: Characteristics of Key GPU Memory Types

| Characteristic | Registers | Shared Memory | Global Memory |
|---|---|---|---|
| Location & Hardware | On-chip register file within each SM [9] | On-chip, physically resides in the same memory as L1 cache [9] | Off-chip DRAM (e.g., HBM2, HBM3) [10] |
| Scope | Private to a single thread [10] | Shared across all threads in a thread block [11] | Accessible by all threads in a GPU (entire grid) [11] |
| Size (Per SM/GPU) | ~65,536 x 32-bit (256 KB) per SM [10] [12] | 48-228 KB, configurable with L1 [10] (e.g., H100: 256 KB combined [12]) | Tens of GB per GPU (e.g., H100: 96 GB [12]) |
| Approx. Latency | 0 cycles (immediate) [10] | ~20-30 cycles [10] | ~400-600 cycles [10] |
| Primary Use Case | Storing thread-local variables and intermediate results [11] | Inter-thread communication, data reuse, and cache-blocking (tiling) [11] | Primary storage for input/output data and large datasets [11] |

The logical and physical relationships between these memory types, as well as their placement relative to the GPU's compute units, are visualized in the following architecture diagram.

Memory-Specific Optimization Strategies and Protocols

Register File Optimization

Registers offer the fastest possible memory access but are a scarce resource managed by the compiler. Excessive register usage can limit the number of concurrent threads on a Streaming Multiprocessor (SM), a state known as low occupancy, which reduces the GPU's ability to hide memory latency.

Key Optimization Strategies:

- Limit Register Pressure: Analyze compiler output to monitor register usage per thread. Restructuring code to reduce the scope of variables or using compiler flags to limit register count can increase occupancy [10].

- Avoid Register Spilling: When a kernel requires more registers than are available, the compiler "spills" excess variables to local memory (which resides in global memory), incurring a severe performance penalty [13] [9]. The `--ptxas-options=-v` compiler flag reveals spill statistics.

- Exploit Shared Memory Spilling (CUDA 13.0+): For register-heavy kernels, CUDA 13.0 introduced an opt-in feature to spill registers into shared memory instead of local memory. This can dramatically reduce spill latency and L2 pressure. It is enabled by placing `asm volatile (".pragma \"enable_smem_spilling\";");` inside the kernel [13].
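The link between register usage and occupancy described above is simple arithmetic. The sketch below is a deliberately simplified, register-only estimate. It assumes 65,536 32-bit registers per SM (the per-SM register file listed earlier), a maximum of 64 resident warps per SM, and 32 threads per warp. It ignores register allocation granularity, shared memory, and per-SM block limits. The CUDA runtime's `cudaOccupancyMaxActiveBlocksPerMultiprocessor()` and Nsight Compute report the real figures.

```cpp
#include <algorithm>
#include <cassert>

// Assumed per-SM limits, typical of recent data-center NVIDIA GPUs; check
// cudaDeviceProp::regsPerMultiprocessor and maxThreadsPerMultiProcessor for
// your device. Allocation granularity, shared-memory usage and the per-SM
// block limit are ignored.
constexpr int kRegistersPerSM = 65536;
constexpr int kMaxWarpsPerSM = 64;
constexpr int kWarpSize = 32;

// Resident warps per SM for a kernel using regs_per_thread registers per thread
// with blocks of threads_per_block threads (a multiple of the warp size).
int resident_warps(int regs_per_thread, int threads_per_block) {
    assert(regs_per_thread > 0 && threads_per_block > 0 && threads_per_block % kWarpSize == 0);
    const int warps_per_block = threads_per_block / kWarpSize;
    const int blocks_by_regs = kRegistersPerSM / (regs_per_thread * threads_per_block);
    const int blocks_by_warps = kMaxWarpsPerSM / warps_per_block;
    return std::min(blocks_by_regs, blocks_by_warps) * warps_per_block;  // only whole blocks fit
}

// Theoretical occupancy: resident warps as a fraction of the hardware maximum.
double occupancy(int regs_per_thread, int threads_per_block) {
    return static_cast<double>(resident_warps(regs_per_thread, threads_per_block)) / kMaxWarpsPerSM;
}
```

With 256-thread blocks, 32 registers per thread allow 64 resident warps (full occupancy), 64 registers halve that to 32 warps, and 128 registers leave 16. This is why trimming a few registers, or capping them with `__launch_bounds__` or `-maxrregcount`, can raise occupancy, at the risk of the spilling described above.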

Experimental Protocol 1: Profiling Register Pressure and Spilling

- Baseline Compilation: Compile your kernel using `nvcc -c -Xptxas -v -o kernel.o kernel.cu`. Note the reported number of registers used per thread and the bytes of spill stores and loads.

- Occupancy Analysis: Use the NVIDIA Nsight Compute profiler to determine the achieved occupancy of your kernel. Correlate low occupancy with high register usage.

- Mitigation: Refactor the kernel to break up large loops or reduce the lifetime of temporary variables. If necessary, use the `__launch_bounds__` qualifier or the `-maxrregcount` compiler flag to explicitly limit register usage.

- Advanced Mitigation (CUDA 13.0+): For kernels showing significant spilling, enable shared memory register spilling using the pragma. Re-compile and profile, comparing the spill metrics and kernel duration against the baseline.

Shared Memory Optimization

Shared memory enables cooperation and communication within a thread block. Its effective use is critical for algorithms with data reuse, such as stencils, matrix multiplication, and spectral methods common in ecological simulations.

Key Optimization Strategies:

- Tiling (Blocking): Decompose large datasets into smaller tiles that fit into shared memory. Threads collaboratively load a tile from slow global memory into fast shared memory, perform computations on it, and then write results back [10].

- Bank Conflict Avoidance: Shared memory is divided into 32 banks (matching warp size). When multiple threads in a warp access different addresses within the same bank, the accesses are serialized, causing conflicts. This is avoided by padding arrays (e.g., using `TILE_DIM+1` columns in a matrix transpose) so that concurrent accesses map to different banks [10].

- L1/Shared Memory Configuration: The on-chip memory can be partitioned to favor either L1 cache or shared memory. On older architectures such as Fermi and Kepler, `cudaFuncSetCacheConfig()` selects splits such as 48 KB L1 / 16 KB shared or 16 KB L1 / 48 KB shared; on Volta and later, where the combined L1/shared capacity is larger, the preferred split is set with `cudaFuncSetAttribute()` and `cudaFuncAttributePreferredSharedMemoryCarveout`. For shared memory-heavy kernels, preferring shared memory allocation is beneficial [10].
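The effect of the `TILE_DIM+1` padding can be checked by computing bank indices directly. The sketch assumes the model described above: 32 banks, a 4-byte bank width, and 32 threads per warp. It also assumes a 32-row `float` tile in which thread t of a warp reads element `[t][col]`, as in the column-read phase of a tiled transpose.

```cpp
#include <algorithm>
#include <array>
#include <cassert>

// Assumed shared-memory model: 32 banks, each 4 bytes wide, so consecutive
// 32-bit words fall into consecutive banks.
constexpr int kBanks = 32;

// A warp of 32 threads reads one column of a row-major float tile declared as
// tile[32][row_stride]: thread t reads tile[t][col]. Returns the conflict
// degree, i.e. the largest number of distinct words that land in one bank
// (1 means conflict-free). Every thread reads a distinct word here.
int column_read_conflict_degree(int row_stride, int col) {
    assert(row_stride >= 32 && col >= 0 && col < 32);
    std::array<int, kBanks> hits{};
    for (int t = 0; t < 32; ++t) {
        const int word_index = t * row_stride + col;  // 32-bit word offset within the tile
        ++hits[word_index % kBanks];
    }
    return *std::max_element(hits.begin(), hits.end());
}
```

With a row stride of 32 floats, every element of a column maps to the same bank, producing a 32-way conflict. Padding the row to 33 floats shifts each row by one bank, so the column read becomes conflict-free, while a stride of 34 still produces 2-way conflicts. In CUDA the padded declaration would look like `__shared__ float tile[TILE_DIM][TILE_DIM + 1];`, with the extra column never read.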

Experimental Protocol 2: Benchmarking Shared Memory Tiling

- Setup: Implement two versions of a compute kernel (e.g., for a spatial correlation filter in landscape ecology): a naive version reading directly from global memory and an optimized version using shared memory tiling.

- Kernel Execution: Execute both kernels on a representative dataset (e.g., a large raster map). Use Nsight Compute to profile key metrics: `dram__bytes_read.sum`, `dram__bytes_write.sum`, and `l1tex__data_bank_conflicts.sum`.

- Performance Analysis: Compare the execution time and memory bandwidth utilization of the two kernels. The tiled version should show a significant reduction in global memory transactions. Check the profiler output for bank conflicts and, if present, apply padding to the shared memory array to eliminate them.

- Energy Assessment: Using system power sensors or profiler metrics, compare the energy consumption (Joules) of both kernel runs. The more efficient tiled kernel should demonstrate lower energy use per computation.
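For the energy assessment, power readings must be converted into energy. The minimal sketch below assumes that timestamped power samples have already been collected during each kernel run, for example through NVML's `nvmlDeviceGetPowerUsage()`, which reports milliwatts that would need converting to watts. The trapezoidal rule and the normalization by work units are choices made for this sketch, not requirements of the protocol.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// One power reading: time in seconds since the run started, power in watts.
struct PowerSample {
    double t_s;
    double watts;
};

// Energy in joules by trapezoidal integration of power over time.
// Samples must be in non-decreasing time order; collecting them is outside
// this sketch.
double energy_joules(const std::vector<PowerSample>& s) {
    double e = 0.0;
    for (std::size_t i = 1; i < s.size(); ++i) {
        assert(s[i].t_s >= s[i - 1].t_s);
        e += 0.5 * (s[i].watts + s[i - 1].watts) * (s[i].t_s - s[i - 1].t_s);
    }
    return e;
}

// Energy per unit of useful work (e.g. joules per updated grid cell), so that
// the naive and tiled kernels can be compared on equal terms.
double joules_per_unit(const std::vector<PowerSample>& s, double work_units) {
    assert(work_units > 0.0);
    return energy_joules(s) / work_units;
}
```

Normalizing by work done keeps the comparison fair when the two kernels process different problem sizes or run for different durations. The sampling interval should be short relative to the kernel duration; otherwise, short kernels need to be repeated in a loop to accumulate enough samples.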

Global Memory Optimization

Global memory is the highest-latency memory tier, making its access patterns the most critical for overall performance. Optimizations here yield the greatest gains in reducing wasteful data movement and its associated energy cost.

Key Optimization Strategies:

- Memory Coalescing: This is the most crucial optimization. Threads in a warp should access contiguous, aligned segments of global memory. This allows the GPU to combine multiple memory accesses into a single transaction [10].

- Utilizing Vector Loads/Stores: Using data types like `float2` or `float4` can help maximize the bandwidth of each memory transaction [10].

- L2 Cache Persistence: Newer architectures (Ampere/Hopper) allow data to be marked as "persistent" in the L2 cache, which is beneficial for data reused across multiple kernels [10].
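Coalescing can be reasoned about by counting the 32-byte sectors that a warp's load touches. The sketch below assumes 32 threads per warp, 4-byte `float` elements, and 32-byte sectors; thread t reads the element at `base + t × stride`. The returned efficiency (useful bytes divided by fetched bytes) is the idea behind the "average data bytes per sector" profiler metric used in Protocol 3, although the sketch ignores caching and replay effects.

```cpp
#include <cassert>
#include <cstdint>
#include <set>

// Assumed model: a warp of 32 threads, each loading one 4-byte float at
//   base_byte + t * stride_elems * 4,
// serviced in 32-byte sectors. Returns the number of distinct sectors touched.
int sectors_touched(std::uint64_t base_byte, int stride_elems) {
    assert(stride_elems >= 1 && base_byte % 4 == 0);
    std::set<std::uint64_t> sectors;
    for (int t = 0; t < 32; ++t) {
        const std::uint64_t addr =
            base_byte + static_cast<std::uint64_t>(t) * static_cast<std::uint64_t>(stride_elems) * 4u;
        sectors.insert(addr / 32);  // a 4-byte-aligned float never straddles a sector
    }
    return static_cast<int>(sectors.size());
}

// Useful bytes (32 threads x 4 bytes) divided by bytes fetched in whole sectors.
double sector_efficiency(std::uint64_t base_byte, int stride_elems) {
    return (32.0 * 4.0) / (32.0 * sectors_touched(base_byte, stride_elems));
}
```

A unit-stride, sector-aligned warp touches 4 sectors (100% efficiency). Starting 16 bytes off a sector boundary touches 5 (80%), and a stride of 2 elements touches 8 (50%). A stride of 8 or more elements touches 32 sectors, so only 12.5% of the fetched bytes are used.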

Experimental Protocol 3: Analyzing Global Memory Access Patterns

- Profiling: Run your kernel under Nsight Compute and collect metrics related to global memory efficiency, specifically `l1tex__t_bytes_pipe_lsu_mem_global_op_ld.sum` and `smsp__sass_average_data_bytes_per_sector_mem_global_op_ld.pct`. A low average-data-bytes-per-sector percentage indicates uncoalesced access.

- Pattern Identification: Based on profiler data, identify the type of inefficient access in your kernel:

  - Strided Access: Threads access memory with a constant stride greater than 1.

  - Misaligned Access: The starting address of a memory transaction is not aligned to a cache line.

  - Random Access: Threads access memory via irregular, data-dependent indices.

- Remediation: Restructure the kernel or data layout in device memory to achieve coalesced access. This often involves transposing data structures in memory or rearranging thread indices to ensure consecutive threads access consecutive addresses. For random access patterns, consider restructuring the algorithm or using on-chip memory (shared, L1) as a programmer-managed cache.
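A common remediation is converting an array-of-structures (AoS) layout into a structure-of-arrays (SoA) layout. The sketch below uses a hypothetical agent record whose field names are illustrative. In the AoS layout, threads that each read one agent's `energy` are 16 bytes apart (assuming four 4-byte fields and no padding), so with 32-byte sectors only 8 of every 32 fetched bytes are useful. In the SoA layout, consecutive threads read consecutive 4-byte words.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical agent record for an agent-based model (illustrative fields).
struct AgentAoS {
    float x, y;    // position
    float energy;  // internal state
    int species;   // categorical trait
};

// Structure-of-arrays layout: one contiguous array per field.
struct AgentsSoA {
    std::vector<float> x, y, energy;
    std::vector<int> species;
};

// Transpose AoS -> SoA. Afterwards, if thread i reads energy[i], consecutive
// threads read consecutive 4-byte words (coalesced); in the AoS layout the
// same reads are sizeof(AgentAoS) bytes apart.
AgentsSoA to_soa(const std::vector<AgentAoS>& agents) {
    AgentsSoA s;
    s.x.reserve(agents.size());
    s.y.reserve(agents.size());
    s.energy.reserve(agents.size());
    s.species.reserve(agents.size());
    for (const AgentAoS& a : agents) {
        s.x.push_back(a.x);
        s.y.push_back(a.y);
        s.energy.push_back(a.energy);
        s.species.push_back(a.species);
    }
    return s;
}

// Inverse transform, e.g. for writing results back in the original layout.
std::vector<AgentAoS> to_aos(const AgentsSoA& s) {
    assert(s.y.size() == s.x.size() && s.energy.size() == s.x.size() &&
           s.species.size() == s.x.size());
    std::vector<AgentAoS> out;
    out.reserve(s.x.size());
    for (std::size_t i = 0; i < s.x.size(); ++i)
        out.push_back({s.x[i], s.y[i], s.energy[i], s.species[i]});
    return out;
}
```

In practice, the conversion is usually done once, before the time loop, so that every subsequent kernel benefits from the coalesced layout.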

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Software and Profiling Tools for GPU Memory Optimization

| Tool / "Reagent" | Function & Purpose | Key Commands / Metrics |
|---|---|---|
| NVIDIA Nsight Compute | A kernel-level profiler for detailed performance analysis. Essential for identifying memory bottlenecks [13]. | `ncu --metrics l1tex__t_bytes_pipe_lsu_mem_global_op_ld.sum,smsp__sass_average_data_bytes_per_sector_mem_global_op_ld.pct,l1tex__data_bank_conflicts.sum ./app` |
| NVIDIA Nsight Systems | A system-wide performance analysis tool for visualizing application activity over time, including kernel execution and memory transfers. | `nsys profile --stats=true ./application` |
| nvcc Compiler | The NVIDIA CUDA compiler. Provides vital initial information on register usage and spilling [13]. | `nvcc -c -Xptxas -v -o kernel.o kernel.cu` |
| CUDA Device Query | A CUDA runtime API call that queries GPU device properties, such as shared memory per SM and global memory size. | `cudaGetDeviceProperties()` |
| KernelAbstractions.jl (Julia) | A package for writing hardware-agnostic GPU kernels, enabling performance portability across NVIDIA, AMD, and Intel GPUs [4]. | `using KernelAbstractions; @kernel function my_kernel(...)` |

Understanding and optimizing for the GPU memory hierarchy is not merely a performance exercise—it is a fundamental requirement for sustainable high-performance computing. By meticulously applying the protocols and strategies outlined in this note, researchers can transform their ecological algorithms from power-hungry workloads into models of computational efficiency. The reduction in wasteful data movement directly translates to lower energy consumption and a smaller carbon footprint for large-scale simulations in drug discovery and climate modeling. Mastering registers, shared memory, and global memory is the key to unlocking both the speed and the ecological integrity of GPU-accelerated research.

In the field of GPU-accelerated ecological algorithms research, efficient data movement is as critical as computational power. While modern GPUs offer immense parallel processing capabilities, their performance in large-scale simulations is often gated not by floating-point operations, but by the bandwidth limitations of the Peripheral Component Interconnect Express (PCIe) interface that connects them to host systems. This bottleneck is particularly acute in memory-intensive applications such as population genetics modeling, landscape ecology simulations, and drug discovery workflows, where massive datasets must shuttle between CPU and GPU memory spaces. The computational demands of these domains are exemplified by applications like molecular dynamics, where the evaluation of a single drug candidate can require screening large ligand databases against target proteins across extensive surface areas [14].

This application note analyzes the PCIe bandwidth bottleneck within the context of shared memory optimization for GPU-based ecological algorithms. We examine the progression of PCIe standards, provide methodologies for quantifying data transfer overhead, and present optimization strategies to mitigate this critical performance constraint.

PCIe Generations: Bandwidth Evolution

The PCIe standard has evolved significantly to address growing bandwidth demands, with each generation doubling the data transfer rate of its predecessor. This progression is crucial for data-intensive research, as it directly impacts how quickly data can move between host memory and GPU accelerators.

Table 1: PCIe Generation Bandwidth Specifications

| PCIe Version | Release Year | Raw Bit Rate (GT/s per lane) | x16 Bi-directional Throughput (GB/s) | Encoding / Signaling |
|---|---|---|---|---|
| PCIe 3.0 | 2010 | 8 | 31.5 | 128b/130b, NRZ |
| PCIe 4.0 | 2017 | 16 | 63.0 | 128b/130b, NRZ |
| PCIe 5.0 | 2019 | 32 | 126.0 | 128b/130b, NRZ |
| PCIe 6.0 | 2022 | 64 | 242.0 | FLIT mode, PAM4 |
| PCIe 7.0 | 2025 | 128 | 484.0 | FLIT mode, PAM4 [15] |

PCIe 7.0, officially released in June 2025, is the latest standard, with a raw bit rate of 128 GT/s per lane. In a x16 configuration this corresponds to 512 GB/s of raw bi-directional throughput [15]; the 484 GB/s in Table 1 corresponds to that raw rate after FLIT-mode framing overhead (242 of every 256 bytes). It maintains backward compatibility with previous generations while using PAM4 (Pulse Amplitude Modulation with 4 levels) signaling, which encodes two bits per symbol to achieve higher data density [15]. This bandwidth increase is particularly relevant for ecological algorithms involving large spatial datasets or complex molecular simulations, where data transfer requirements can easily exceed hundreds of gigabytes.
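The throughput figures in Table 1 follow from the per-lane rate, the lane count, and the encoding overhead. The following small sketch shows that arithmetic. The 242/256 efficiency for FLIT mode is an assumption, chosen because it reproduces the table's PCIe 6.0 and 7.0 values. The transfer-time estimate is a lower bound that ignores latency and protocol costs.

```cpp
#include <cassert>

// Theoretical one-direction throughput of a PCIe link in GB/s (10^9 bytes/s):
//   GT/s per lane x lanes x encoding efficiency / 8 bits per byte.
// encoding_efficiency: 128.0/130.0 for PCIe 3.0-5.0 (128b/130b); for
// PCIe 6.0/7.0 FLIT mode, 242.0/256.0 reproduces Table 1 (an assumption).
double pcie_gbps_per_direction(double gt_per_s, int lanes, double encoding_efficiency) {
    assert(gt_per_s > 0.0 && lanes > 0 && encoding_efficiency > 0.0 && encoding_efficiency <= 1.0);
    return gt_per_s * lanes * encoding_efficiency / 8.0;
}

// Bi-directional figure as quoted in Table 1: both directions added together.
double pcie_gbps_bidirectional(double gt_per_s, int lanes, double encoding_efficiency) {
    return 2.0 * pcie_gbps_per_direction(gt_per_s, lanes, encoding_efficiency);
}

// Lower bound on the time to move `bytes` one way at `gbps` GB/s, ignoring
// latency, protocol and DMA setup costs (real transfers are slower).
double min_transfer_seconds(double bytes, double gbps) {
    assert(gbps > 0.0);
    return bytes / (gbps * 1e9);
}
```

For PCIe 3.0 x16, this gives about 15.75 GB/s per direction (31.5 GB/s in both directions combined). For PCIe 7.0 x16, it gives 484 GB/s bi-directional with FLIT overhead, or 512 GB/s raw. Moving 100 GB one way over a PCIe 4.0 x16 link (about 31.5 GB/s per direction) therefore takes roughly 3.2 seconds at minimum, before any protocol overhead.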

Experimental Protocols for Bandwidth Analysis

PCIe Bandwidth Benchmarking

Objective: Quantify effective data transfer rates between host and device memory for different PCIe generations.

Materials:

- Test system with target PCIe generation

- NVIDIA GPU with CUDA support

- CUDA Toolkit with sample code utilities

- Precision timing mechanism (e.g., `std::chrono::high_resolution_clock`)

Methodology:

- Buffer Allocation: Allocate pinned host memory (`cudaMallocHost`) and device memory (`cudaMalloc`) for data transfer tests.

- Transfer Timing:

  - Execute multiple host-to-device (H2D) and device-to-host (D2H) transfers with varying payload sizes (1 MB to 1 GB).

  - Record the elapsed time from issuing the first copy to synchronization after the last one.

  - Calculate bandwidth as: Bandwidth (GB/s) = (Data Size in GB × Number of Transfers) / Total Transfer Time in seconds.

- Statistical Analysis: Perform multiple iterations (minimum 10) to account for system variability and calculate mean bandwidth with standard deviation.
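The bandwidth formula and the statistical summary can be coded as small helpers. This sketch rests on a few stated assumptions: GB means 10^9 bytes (matching Table 1), each sample is one timed iteration, and the timing itself is done elsewhere (for instance with CUDA events via `cudaEventRecord` and `cudaEventElapsedTime`, the latter reporting milliseconds).

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Bandwidth in GB/s (10^9 bytes/s) for one timed iteration that moved
// `transfers` copies of `bytes_per_transfer` bytes in `seconds`.
double bandwidth_gbps(double bytes_per_transfer, int transfers, double seconds) {
    assert(seconds > 0.0 && transfers > 0);
    return bytes_per_transfer * transfers / seconds / 1e9;
}

struct Stats {
    double mean;
    double stddev;  // sample standard deviation (n - 1 denominator)
};

// Mean and sample standard deviation over repeated iterations
// (the protocol asks for at least 10).
Stats summarize(const std::vector<double>& samples) {
    assert(samples.size() >= 2);
    double sum = 0.0;
    for (double v : samples) sum += v;
    const double mean = sum / static_cast<double>(samples.size());
    double sq = 0.0;
    for (double v : samples) sq += (v - mean) * (v - mean);
    return {mean, std::sqrt(sq / static_cast<double>(samples.size() - 1))};
}
```

Reporting the sample standard deviation alongside the mean makes run-to-run variability visible. A first warm-up iteration is often excluded because it can include one-time driver and allocation costs.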

Collective Communication Overhead Assessment

Objective: Measure the impact of PCIe bandwidth on multi-GPU collective operations.

Materials:

- Multi-GPU system with NCCL support

- NVIDIA Collective Communication Library (NCCL)

- Application profiling tools (e.g., NVIDIA Nsight Systems)

Methodology:

- Topology Setup: Configure ring and tree topologies using NCCL's communication channels [6].

- Protocol Selection: Test NCCL's communication protocols (Simple, LL, LL128) with different message sizes [6].

- Performance Profiling:

  - Execute collective operations (AllReduce, Broadcast) with varying payloads.

  - Profile time spent in data transfer versus computation.

  - Calculate the communication fraction: Transfer Time / Total Operation Time (lower values indicate less time lost to communication).
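Before profiling, a back-of-the-envelope model helps set expectations. The sketch below assumes a bandwidth-only model of a ring AllReduce, in which each rank sends and receives 2(n−1)/n of the message; this is the same factor the nccl-tests benchmarks use to convert algorithm bandwidth into bus bandwidth. Per-step latency and the differences among the Simple, LL, and LL128 protocols are ignored, and communication is assumed not to overlap with computation.

```cpp
#include <cassert>

// Bandwidth-only model of a ring AllReduce over n_gpus ranks: each rank sends
// and receives 2 * (n - 1) / n of the message, so
//   t_comm ~= 2 * (n - 1) / n * bytes / link_bytes_per_s.
// Per-step latency and protocol overhead (Simple / LL / LL128) are ignored.
double ring_allreduce_seconds(double bytes, int n_gpus, double link_bytes_per_s) {
    assert(n_gpus >= 1 && link_bytes_per_s > 0.0);
    if (n_gpus == 1) return 0.0;  // nothing to exchange
    return 2.0 * (n_gpus - 1) / n_gpus * bytes / link_bytes_per_s;
}

// Communication fraction from the protocol: transfer time / total time,
// assuming communication and computation do not overlap.
double communication_fraction(double t_comm, double t_compute) {
    assert(t_comm >= 0.0 && t_compute >= 0.0 && t_comm + t_compute > 0.0);
    return t_comm / (t_comm + t_compute);
}
```

For example, reducing 1 GB across 4 GPUs over links sustaining 25 GB/s takes at least about 0.06 s. If each step also computes for 0.18 s, communication accounts for 25% of the step.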

Signaling Pathways and Workflows

The data pathway between CPU and GPU involves multiple stages where bottlenecks can occur. Understanding this pathway is essential for identifying optimization opportunities.

Diagram 1: PCIe Data Transfer Pathway

The diagram illustrates the data pathway from CPU memory to GPU execution, highlighting potential bottleneck points where optimization efforts should be focused. The pathway shows how data moves through the PCIe bus via DMA transfers, with protocol encoding and lane utilization representing critical optimization points.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for PCIe Bandwidth Optimization Research

| Tool/Category | Specific Examples | Function in Research |
|---|---|---|
| Communication Libraries | NVIDIA NCCL [6] | Optimized multi-GPU collective operations |
| Profiling Tools | NVIDIA Nsight Systems, nvprof (legacy) | Pinpoint data transfer bottlenecks |
| Memory Management | CUDA Pinned Memory, Unified Memory | Reduce transfer overhead |
| Benchmarking Suites | SHOC, Rodinia | Standardized performance measurement |
| Hardware Interfaces | PCIe 7.0, NVLink, CXL | High-speed interconnect technologies |

Optimization Strategies for Ecological Algorithms

Data Management Techniques

Effective data management can significantly reduce PCIe bandwidth pressure in ecological algorithms:

- Data Layout Optimization: Transform array-of-structures to structure-of-arrays to enable coalesced memory access patterns.

- Transfer Aggregation: Batch small data transfers into larger contiguous operations to amortize protocol overhead.

- Asynchronous Overlap: Overlap data transfers with computation using CUDA streams and events.

- Memory Hierarchy Awareness: Implement cache-aware algorithms to maximize data reuse once transferred.
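Transfer aggregation can be as simple as packing many small arrays into one contiguous buffer with an offset table. The sketch below is a host-side illustration with hypothetical names. The `data` buffer would be copied with a single host-to-device transfer (ideally from pinned memory) instead of one transfer per array, and `offsets` would be either copied alongside it or recomputed on the device.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Many small host arrays packed into one contiguous buffer plus an offset table.
struct PackedBatch {
    std::vector<float> data;
    std::vector<std::size_t> offsets;  // offsets[i] = start of array i; offsets.back() = total size
};

// Pack: one contiguous buffer means one large copy instead of many small ones.
PackedBatch pack(const std::vector<std::vector<float>>& arrays) {
    PackedBatch b;
    b.offsets.reserve(arrays.size() + 1);
    b.offsets.push_back(0);
    for (const std::vector<float>& a : arrays) {
        b.data.insert(b.data.end(), a.begin(), a.end());
        b.offsets.push_back(b.data.size());
    }
    return b;
}

// Recover array i, mirroring how a kernel would index the packed buffer.
std::vector<float> unpack(const PackedBatch& b, std::size_t i) {
    assert(i + 1 < b.offsets.size());
    const auto first = b.data.begin() + static_cast<std::ptrdiff_t>(b.offsets[i]);
    const auto last = b.data.begin() + static_cast<std::ptrdiff_t>(b.offsets[i + 1]);
    return std::vector<float>(first, last);
}
```

A kernel then finds array i at `data[offsets[i]]` through `data[offsets[i + 1] - 1]`, so the per-copy overhead is paid once per batch rather than once per array.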

Multi-GPU Communication Strategies

For ecological simulations distributed across multiple GPUs, NCCL provides several communication protocols with distinct performance characteristics:

- Simple Protocol: Optimized for large messages with high bandwidth utilization [6].

- LL (Low Latency) Protocol: Designed for small messages, sacrificing bandwidth (25-50% of peak) for reduced latency [6].

- LL128 Protocol: Balances latency and bandwidth, achieving approximately 95% of peak bandwidth for medium-sized messages [6].

The selection of communication topology (ring vs. tree) further influences bandwidth utilization, with tree structures often providing better performance for reduction operations in large-scale simulations [6].

Future Directions

The release of PCIe 7.0 and the ongoing development of PCIe 8.0 (targeting 2028) promise continued bandwidth improvements, with PCIe 8.0 expected to double the per-lane rate again to 256 GT/s [16]. These advances will particularly benefit ecological algorithms of unprecedented scale and complexity, such as continent-scale ecosystem modeling and atomic-resolution environmental simulations. However, realizing these benefits requires algorithmic co-design that minimizes data movement through techniques such as computation migration, near-memory processing, and innovative data reduction strategies.

Common Ecological Algorithms and Their Computational Demands (e.g., Agent-Based Models, Population Simulations)

Computational ecology leverages mathematical modeling and computer simulations to understand the complex dynamics of ecological systems. This approach has become indispensable, as large-scale replicated field experiments are often logistically infeasible, costly, or ethically problematic [17]. The field employs a range of algorithms, from individual-based mechanistic models to statistical approaches, to study systems from population dynamics to entire ecosystems [18] [17]. The core challenge lies in balancing model complexity and biological realism with computational tractability, especially as ecological problems often lack mathematically unambiguous descriptions and generate noisy field data that complicates validation [17].

Ecological research utilizes a spectrum of computational algorithms, each with distinct strengths, limitations, and application domains. The table below summarizes the primary algorithm classes used in modern computational ecology.

Table 1: Common Algorithm Classes in Computational Ecology

| Algorithm Class | Key Characteristics | Primary Ecological Applications | Inherent Computational Demand |
|---|---|---|---|
| Agent-Based Models (ABMs) | Bottom-up, stochastic simulations of autonomous agents; captures emergence and complex interactions [19] [20]. | Modeling terrestrial ecological dynamics, ecosystem management, behavior recognition, and conservation planning [18] [19]. | Very high (scales with agent population size, complexity of behavioral rules, and interaction topology) [21]. |
| Equation-Based Predictive Models | Top-down systems of differential equations (ODEs/PDEs) describing population or ecosystem states [17]. | Food-web modeling, nutrient cycling, and climate change impacts on ecosystems [17]. | High for large, non-linear systems (scales with number of equations and numerical solver complexity) [17]. |
| Network & Food-Web Models | Represents species as nodes and trophic interactions as edges in a graph; often uses ODE systems [17]. | Understanding community structure, stability, and the impact of species loss in ecological networks [17]. | High (scales with the number of species/nodes and the complexity of their interaction functions) [17]. |
| Community Detection Algorithms (e.g., LPA) | Graph-based clustering to identify groups of nodes with dense internal connections [22]. | Analyzing structure in ecological networks, such as mutualistic or trophic interactions [22]. | Moderate to High (scales with graph size and density; efficient parallel implementations exist) [22]. |

Computational Demands and Performance Characteristics

The execution of ecological algorithms consumes significant computational resources, primarily measured in processing time, memory usage, and energy.

Quantitative Demands of Key Algorithms

Table 2: Computational Demand Characteristics of Ecological Algorithms

| Algorithm / Model Type | Processing Time Scale | Memory & Storage Demand | Key Performance Factors |
|---|---|---|---|
| Large-Scale ABM | Hours to days for a single simulation run [21]. | Can be massive, requiring efficient data structures to track agent states and histories [21]. | Number of agents, agent rule complexity, interaction radius, simulation duration, required Monte Carlo repetitions [19] [21]. |
| Complex Food-Web ODEs | Minutes to hours for a single parameter-set simulation [17]. | Scales with the number of species (equations); memory for numerical solvers is typically manageable. | Number of equations (species/compartments), non-linearity of interactions, stiffness of the system, solver type [17]. |
| GPU-Accelerated LPA | Seconds to minutes for large graphs (billions of edges) [22]. | High; e.g., a naive GPU implementation required ~64GB for a 4-billion-edge graph [22]. | Graph size (numbers of vertices and edges), graph structure, convergence threshold, GPU memory bandwidth and compute [22]. |

Energy and Environmental Impact

The computational intensity of these algorithms translates directly into energy consumption and environmental footprint.

- Operational Energy: Training a single large AI model, such as OpenAI's GPT-3, was estimated to consume 1,287 megawatt-hours of electricity, generating about 552 tons of carbon dioxide [23]. While not all ecological models are this large, the trend toward more complex AI-driven models in ecology points to increasing energy use [18].

- Hardware Embodied Carbon: The production of computing hardware itself has a carbon footprint. For instance, the embodied carbon footprint of a single NVIDIA H100 GPU is approximately 164 kg CO₂e [24].

- Inference Costs: For deployed models, the "inference" phase (using the trained model) can dominate long-term energy use. A single query to a model like ChatGPT can consume about five times more electricity than a standard web search [23].

Optimization Strategies for Shared Memory Systems

Optimizing ecological algorithms for GPUs and other shared-memory architectures is crucial for performance and feasibility. The following workflow outlines a structured approach to this optimization process.

Key Optimization Techniques

- Memory Access Optimization: GPU performance is often limited by memory bandwidth, not compute. Techniques include ensuring coalesced memory access and leveraging shared memory (user-managed cache) for frequently accessed data to reduce global memory latency [22].

- Advanced Data Structures: Replacing high-overhead data structures is critical. For example, in Label Propagation Algorithms (LPA), replacing per-thread hash tables with Misra-Gries (MG) sketches reduced memory usage by 98x on a multicore CPU and 44x on a GPU, with only a minor performance penalty [22].

- Efficient Parallelization Paradigms: Designing algorithms to exploit massive parallelism is key. This involves using warp-level primitives for efficient communication between threads in a warp and implementing fine-grained parallelism where thousands of lightweight threads (e.g., agents, graph nodes) execute concurrently [22].

- Algorithmic Selection and Calibration: Choosing the right model complexity is a form of optimization. Using a conceptual model instead of a highly detailed predictive model can reduce computational demands when the goal is qualitative insight rather than quantitative prediction [17]. For ABMs, robust frameworks like krABMaga facilitate efficient model exploration and calibration over parallel and cloud architectures [21].
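The Misra-Gries idea can be illustrated with a small fixed-size sketch. The C++ below is a minimal host-side version of the textbook weighted Misra-Gries update, written to show why memory stays constant per vertex (K slots regardless of degree). It is not claimed to be the exact variant used in [22], and the struct and method names are hypothetical. In LPA, one sketch would accumulate the edge weights of each neighbouring label, and top() would then give the candidate label for the vertex.

```cpp
#include <array>
#include <cassert>
#include <cstdint>

// Fixed-size weighted Misra-Gries sketch with K slots. Memory is K labels plus
// K weights, independent of how many neighbours (edges) are fed into it.
// Textbook weighted update; not necessarily the exact variant used in [22].
template <int K>
struct MGSketch {
    std::array<std::int64_t, K> label{};
    std::array<double, K> weight{};  // 0.0 marks an empty slot

    void add(std::int64_t lbl, double w) {
        assert(w > 0.0);
        int empty = -1;
        for (int i = 0; i < K; ++i) {
            if (weight[i] > 0.0 && label[i] == lbl) { weight[i] += w; return; }
            if (weight[i] == 0.0 && empty < 0) empty = i;
        }
        if (empty >= 0) { label[empty] = lbl; weight[empty] = w; return; }
        // Full: subtract m = min(w, smallest weight) from every slot and from w.
        double m = w;
        for (int i = 0; i < K; ++i) if (weight[i] < m) m = weight[i];
        for (int i = 0; i < K; ++i) {
            weight[i] -= m;
            if (weight[i] == 0.0) empty = i;  // slot freed
        }
        w -= m;
        if (w > 0.0) {  // m was some slot's weight, so that slot is now free
            assert(empty >= 0);
            label[empty] = lbl;
            weight[empty] = w;
        }
    }

    // Label with the largest retained weight, or -1 if the sketch is empty.
    std::int64_t top() const {
        int best = -1;
        for (int i = 0; i < K; ++i)
            if (weight[i] > 0.0 && (best < 0 || weight[i] > weight[best])) best = i;
        return best < 0 ? -1 : label[best];
    }
};
```

With K = 8 this mirrors the 8-slot configuration mentioned for νMG8-LPA. Any label holding more than a 1/(K+1) share of the total weight is guaranteed to survive, while lighter labels may be evicted or undercounted, so the chosen label can occasionally differ from the exact one.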

Experimental Protocols for Algorithm Implementation and Benchmarking

Protocol: Implementing a GPU-Accelerated Agent-Based Model

This protocol outlines the steps for developing a high-performance, reliable ABM simulation using modern frameworks.

Table 3: Research Reagent Solutions for ABM Development

| Reagent / Tool | Function / Purpose | Exemplary Options |
|---|---|---|
| ABM Simulation Framework | Provides the core engine for scheduling, agent management, and environment simulation. | krABMaga (Rust) [21], MASON (Java) [21], NetLogo [20]. |
| High-Performance Programming Language | Offers control over memory and performance, crucial for compute-intensive models. | Rust (for reliability and speed) [21], C++, CUDA/C++ (for GPU kernels) [22]. |
| Model Exploration & Optimization Library | Automates parameter calibration, sensitivity analysis, and Monte Carlo runs. | krABMaga's model exploration module [21], Custom scripts with HPC job schedulers. |
| Visualization Tool | Enables real-time or post-hoc analysis of emergent spatial and temporal patterns. | krABMaga's native/web visualization [21], NetLogo's GUI, Custom plotting in Python/R. |

Procedure:

- Model Formulation: Define the agent attributes (e.g., size, age, energy), behavioral rules (e.g., movement, reproduction, interaction), and the environment (e.g., a 2D grid or network) [19] [20].

- Framework Selection and Setup: Choose a framework aligned with performance needs. For efficiency and reliability in long-running simulations, initialize a project using the krABMaga framework in Rust [21].

- Agent and Environment Implementation: Code the agent behaviors as discrete rules. In krABMaga, this involves implementing the Agent trait's step function. The environment (e.g., a grid) is implemented using the Field type [21].

- GPU Offloading Analysis: Identify computationally intensive, parallelizable segments (e.g., force calculations in movement, sensory updates). Isolate these kernels for GPU implementation using a language like CUDA C++ [22].

- Simulation Execution and Monitoring: Run the model with multiple Monte Carlo repetitions. Use krABMaga's dynamic monitoring system to track key metrics (e.g., population size, spatial clustering) in real-time [21].

- Validation and Analysis: Compare the model's emergent outcomes with real-world data or theoretical expectations. Use the framework's built-in tools for data collection and statistical analysis [21].
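As an illustration of the GPU Offloading Analysis step, the sketch below shows the shape of an update that is a good offloading candidate: agent state is stored as a structure of arrays, and each loop iteration touches only one agent's data, so the loop body could map to one GPU thread per agent with coalesced reads. It is plain C++ (not krABMaga or CUDA code), and the struct, its fields and the torus-movement rule are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Structure-of-arrays agent state: one array per attribute, so consecutive
// agents (future GPU threads) read consecutive addresses.
struct Agents {
    std::vector<int> x, y;      // grid cell
    std::vector<int> dx, dy;    // heading chosen earlier in the step
    std::vector<float> energy;  // remaining energy
};

// Hypothetical movement + metabolism rule on a width x height torus.
// Iteration i reads and writes only agent i, so the loop body is independent
// across agents and could run as one GPU thread per agent.
void step_agents(Agents& a, int width, int height, float cost) {
    const std::size_t n = a.x.size();
    assert(a.y.size() == n && a.dx.size() == n && a.dy.size() == n && a.energy.size() == n);
    assert(width > 0 && height > 0);
    for (std::size_t i = 0; i < n; ++i) {
        a.x[i] = ((a.x[i] + a.dx[i]) % width + width) % width;     // wrap horizontally
        a.y[i] = ((a.y[i] + a.dy[i]) % height + height) % height;  // wrap vertically
        a.energy[i] -= cost;
    }
}
```

Rules that read other agents' state (for example, sensing neighbours) break this independence; those are the segments where the shared memory tiling discussed later becomes relevant.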

Protocol: Benchmarking a Memory-Efficient Graph Algorithm on GPU

This protocol details the process of benchmarking and optimizing a graph-based ecological algorithm, such as LPA for community detection in networks.

Procedure:

- Baseline Implementation: Establish a performance baseline using a standard, non-optimized GPU implementation of the algorithm (e.g., ν-LPA, which uses per-vertex hash tables) [22].

- Profiling: Use profiling tools (e.g., NVIDIA Nsight Compute) to analyze the baseline. Identify performance limiters, which for graph algorithms are typically divergent warps and non-coalesced global memory access patterns [22].

- Memory Optimization Implementation: Integrate memory-efficient data structures. For LPA, replace the hash tables with a weighted Misra-Gries (MG) sketch (e.g., with 8 slots). This structure tracks frequent labels with minimal memory [22].

- Kernel Optimization: Employ warp-level primitives (e.g., __shfl_sync() in CUDA) for fast sketch updates within a warp. For high-degree vertices, use multiple sketches and merge them to avoid write contention [22].

- Benchmarking and Validation: Execute the optimized algorithm (e.g., νMG8-LPA) and the baseline on the same GPU hardware.

- Metrics: Measure execution time, memory consumption (using nvidia-smi), and the quality of the result (e.g., modularity for community detection) [22].

- Validation: Ensure the output of the optimized algorithm remains ecologically valid, even if slightly different from the baseline. A small quality drop (e.g., 2.9-4.7% for νMG8-LPA) may be acceptable for massive memory savings [22].
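The semantics of the shuffle primitive can be emulated on the host to make the data flow explicit. The sketch below assumes a full 32-lane warp and models __shfl_xor_sync(0xffffffff, v, mask), in which every lane reads, in the same step, the value held by lane (lane XOR mask). Five such butterfly steps leave the warp total in every lane without touching shared memory. It is a generic reduction for illustration, not the sketch-update kernel of [22], and the function names are hypothetical.

```cpp
#include <array>
#include <cassert>

constexpr int kWarpSize = 32;
using Warp = std::array<int, kWarpSize>;  // one register value per lane

// Emulates __shfl_xor_sync(0xffffffff, v, lane_mask) for a full warp: every
// lane receives the value that lane (lane ^ lane_mask) held before the exchange.
Warp shfl_xor(const Warp& v, int lane_mask) {
    assert(lane_mask > 0 && lane_mask < kWarpSize);
    Warp out{};
    for (int lane = 0; lane < kWarpSize; ++lane) out[lane] = v[lane ^ lane_mask];
    return out;
}

// Butterfly all-reduce: after log2(32) = 5 exchange-and-add steps, every lane
// holds the sum over the whole warp.
Warp warp_allreduce_sum(Warp v) {
    for (int mask = kWarpSize / 2; mask > 0; mask /= 2) {
        const Warp other = shfl_xor(v, mask);
        for (int lane = 0; lane < kWarpSize; ++lane) v[lane] += other[lane];
    }
    return v;
}
```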

The architecture of a high-performance ABM system, from core logic to distributed execution, is visualized below.

In scientific computing, particularly in data-intensive fields like ecological modeling and drug development, the quality of research code directly dictates the scale and reliability of the scientific questions that can be investigated. Inefficient code acts as a silent constraint, artificially limiting the complexity of models, the size of datasets, and the pace of discovery. This case study examines how specific code inefficiencies can cripple research progress within the context of developing GPU-accelerated ecological algorithms. We synthesize empirical data on code quality issues, present structured protocols for their identification and remediation, and provide a practical toolkit for researchers to enhance the performance and scope of their computational work.

Quantitative Evidence of Code Inefficiencies in Research

The first step in addressing inefficiency is to understand its prevalence and nature. A recent large-scale empirical study manually analyzed 492 code snippets generated by state-of-the-art Large Language Models (LLMs) like CodeLlama and DeepSeek-Coder, which are increasingly used in research prototyping. The study established a comprehensive taxonomy of inefficiencies, finding that a significant portion of code suffers from multiple, co-occurring issues [25]. The table below summarizes the identified categories and their frequency.

Table 1: Taxonomy and Prevalence of Inefficiencies in LLM-Generated Code (based on [25])

| Inefficiency Category | Description | Example Subcategories | Prevalence & Impact |
|---|---|---|---|
| General Logic | Issues affecting functional correctness and algorithmic soundness. | Incorrect logic, requirement misinterpretation, poor corner case handling [25]. | Most frequent category; directly leads to incorrect research results and invalid conclusions. |
| Performance | Suboptimal implementations causing slow execution and high resource use. | Redundancies, unnecessary computations, memory inefficiencies [25]. | Highly frequent; limits experiment scale (e.g., smaller datasets, fewer parameters) and increases computational costs. |
| Maintainability | Code that is difficult to understand, modify, or extend. | Poor structure, lack of modularity [25]. | Often co-occurs with logic and performance issues; hinders collaboration and long-term project sustainability. |
| Readability | Code that is hard for researchers (or their future selves) to decipher. | Unclear naming, poor documentation [25]. | Increases the time required to debug, verify, and build upon existing work. |
| Errors | Presence of bugs and security vulnerabilities. | Runtime errors, import errors [25]. | Causes runtime failures, crashes, and potential data corruption. |

Furthermore, evidence from high-performance computing demonstrates the dramatic performance gap between inefficient and optimized code. In one striking example, an AI-generated optimization effort for a fundamental Conv2D operation on a GPU achieved a final performance of 179.9% of the baseline PyTorch implementation [26]. The optimization trajectory, summarized below, shows how successive fixes to memory access and parallelism transformed a kernel that was initially only 20.1% of the baseline performance into one that was significantly faster [26]. This highlights the immense performance potential that is often untapped in research code.

Table 2: Optimization Trajectory for a Conv2D Kernel on GPU (Adapted from [26])

| Optimization Round | Kernel Performance (% of Baseline) | Key Optimization Idea |
|---|---|---|
| 0 | 20.1% | Initial, naive CUDA implementation. |
| 2 | 41.0% | Algorithmic change: Conversion to FP16 Tensor-Core GEMM. |
| 6 | 103.6% | Memory optimization: Precomputation and caching of indices in shared memory. |
| 9 | 105.1% | Latency hiding: Software pipelining to overlap data loading with computation. |
| 13 | 179.9% | Advanced memory access: Vectorized shared memory writes using half2 data type. |

Experimental Protocols for Identifying and Remediating Code Inefficiencies

To systematically address code inefficiencies, researchers should adopt structured experimental protocols. The following methodologies are adapted from software engineering best practices and recent research.

Protocol 1: Code Quality Assessment and Profiling

This protocol is designed to establish a baseline of code health and identify performance bottlenecks.

- Static Analysis: Use automated tools (e.g., linters, static analyzers) to calculate quantitative code-quality metrics such as complexity and duplication [27].

- Dynamic Profiling: Execute the code on a representative dataset and model.

- GPU Profiling: Use Nsight Systems for an application-wide timeline and Nsight Compute for per-kernel hardware metrics (the older nvprof is not supported on GPUs of compute capability 8.0 and higher). Critical metrics include:

- GPU Utilization: Low utilization can indicate that kernels are stalled waiting on memory (memory-bound) rather than limited by arithmetic throughput (compute-bound) [4].

- Memory Throughput: Compare achieved bandwidth with the hardware's peak bandwidth.

- Divergent Branching: Identify warps whose threads take different execution paths, which forces those paths to be serialized.

- Correctness Verification: Implement a testing harness that compares the output of the optimized code against a known-good, albeit slower, reference implementation for a range of inputs, ensuring numerical correctness within a defined tolerance (e.g., 1e-5) [26].
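The correctness check in the last step can be as simple as the following C++ sketch, which compares optimized output against the reference element by element using the mixed tolerance |got - ref| <= atol + rtol * |ref| (the same form NumPy's allclose uses). The default rtol and atol of 1e-5 follow the example tolerance above but are otherwise an assumption, and the names are illustrative.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct CheckResult {
    bool ok;                // every element within tolerance
    std::size_t first_bad;  // first failing index, or size() if none
    double max_abs_err;     // largest absolute difference seen (NaN ignored)
};

// Element-wise check of optimized output against a trusted reference:
// passes when |got - ref| <= atol + rtol * |ref| for every element.
CheckResult compare_to_reference(const std::vector<float>& got,
                                 const std::vector<float>& ref,
                                 double rtol = 1e-5, double atol = 1e-5) {
    assert(got.size() == ref.size());
    CheckResult r{true, ref.size(), 0.0};
    for (std::size_t i = 0; i < ref.size(); ++i) {
        const double err = std::fabs(double(got[i]) - double(ref[i]));
        if (err > r.max_abs_err) r.max_abs_err = err;
        // Written as !(err <= tol) so that a NaN in the output counts as a failure.
        if (!(err <= atol + rtol * std::fabs(double(ref[i]))) && r.ok) {
            r.ok = false;
            r.first_bad = i;
        }
    }
    return r;
}
```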

Protocol 2: Structured Optimization of GPU Kernels

This protocol outlines a systematic, parallel search strategy for performance optimization, moving beyond sequential, local edits.

- Hypothesis Generation: For a given kernel, prompt an LLM to reason in natural language and generate a diverse set of optimization ideas. Condition these ideas on past attempts to avoid local minima. Example ideas include: "Convert the convolution to an FP16 Tensor-Core GEMM," or "Implement double-buffering to overlap memory transfers with computation" [26].

- Parallel Implementation and Evaluation:

- Branching: For each optimization idea, generate multiple code variants or parameterizations.

- Massive Parallel Evaluation: Leverage GPU resources to compile and benchmark all variants in parallel. Retain the highest-performing, correct kernels [26].

- Iterative Refinement: Use the best-performing kernels from the previous round to seed the next round of hypothesis generation. Continue for a set number of rounds (e.g., 5-10), allowing the optimization search to explore radically different algorithmic approaches [26].

Diagram 1: Experimental protocol for code optimization.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key software "reagents" and their functions in the development and optimization of high-performance research code.

Table 3: Essential Tools and Libraries for GPU-Accelerated Research Code

| Item Name | Function / Purpose | Application Notes |
|---|---|---|
| NVIDIA Nsight Systems | System-level performance profiler. | Identifies the most significant bottlenecks (kernel execution, memory transfers) in the entire application [26]. |
| CUDA/CUTLASS | Low-level and template-based GPU programming libraries. | Essential for writing custom, high-performance kernels. CUTLASS provides reusable modular components for linear algebra [26]. |
| Static Analysis Tools (e.g., SonarQube, Pylint) | Automated code quality scanners. | Quantifies technical debt and maintainability issues like complexity and duplication, providing an initial assessment "triage" [27]. |
| Julia Language with KernelAbstractions.jl | High-performance, high-productivity programming language. | Enables writing hardware- and precision-agnostic code that runs efficiently across NVIDIA, AMD, and Intel GPUs from a single codebase [4]. |
| Version Control (e.g., Git) | Change tracking and collaboration platform. | Critical for reproducibility, collaboration, and managing experimental code branches. A foundational practice for reliable research [28]. |

Discussion and Recommended Practices

Transitioning from a "prototyping mode," where the sole focus is on achieving a functional result, to a "development mode," which emphasizes code quality, is critical for sustainable research [28]. The empirical data shows that inefficiencies are not merely stylistic concerns but fundamental limitations that co-occur and compound, affecting correctness, speed, and long-term viability [25].

Based on the evidence presented, the following practices are recommended for research teams:

- Adopt Sensible Standards: Establish a standardized directory structure and configuration for the programming environment to ensure consistency and reproducibility across the team [28].

- Profile Before Optimizing: Use profiling tools to identify the true performance bottleneck (e.g., memory bandwidth vs. compute) before investing effort in optimization, as this dictates the most effective strategy [4] [26].

- Embrace Parallel Exploration for Optimization: Move beyond sequential code editing. Use structured, parallel search strategies to explore a wider range of optimization ideas and escape local performance minima [26].

- Write "Good" Code: Prioritize readability, modularity, and documentation. This reduces the time required for others (and your future self) to understand, debug, and extend the code, thereby accelerating the research cycle [28].

- Track Technical Debt: Quantify technical debt and code quality metrics to make informed decisions about when refactoring is necessary to support future research goals, rather than allowing code to deteriorate until it becomes unusable [27].

Diagram 2: Impact of inefficient code on research scope.

Implementing Shared Memory Strategies in Ecological Models

In the context of GPU-accelerated ecological algorithms research, efficient memory management is paramount for achieving high performance. Shared memory is a critical, on-chip memory resource in CUDA-capable GPUs, allocated per thread block and accessible by all threads within that block. Its significance stems from a latency that is roughly 100 times lower than uncached global memory, provided accesses are structured to avoid bank conflicts [29]. This makes it an indispensable tool for facilitating global memory coalescing and enabling high-performance cooperative parallel algorithms.

Memory coalescing describes the efficient grouping of memory accesses from threads in a warp into a minimal number of transactions. When consecutive threads in a warp access consecutive memory locations, their requests can be combined, or coalesced, into as few wide transactions as possible: 32 threads reading consecutive 4-byte words, for example, touch a single aligned 128-byte span (four 32-byte sectors). Conversely, non-coalesced access occurs when threads access disparate memory locations, resulting in multiple, smaller transactions and significantly underutilizing the GPU's available memory bandwidth [29] [30]. For researchers developing large-scale ecological models, mastering these techniques is essential for exploiting the full computational capacity of modern heterogeneous systems and improving overall device utilization [31].
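The effect of coalescing can be quantified by counting how many 32-byte memory sectors a single warp-wide load touches. The C++ sketch below assumes 32 threads, 4-byte float elements, a sector-aligned base address and 32-byte sectors as the transfer granularity. It models addresses only and says nothing about caching; the function names are illustrative.

```cpp
#include <cassert>
#include <cstdint>
#include <set>
#include <vector>

// Byte addresses read by the 32 threads of one warp when thread t loads
// float element t * stride (stride 1 = the coalesced case).
std::vector<std::uint64_t> warp_addresses(int stride) {
    assert(stride >= 1);
    std::vector<std::uint64_t> addrs;
    for (std::uint64_t t = 0; t < 32; ++t) addrs.push_back(t * stride * sizeof(float));
    return addrs;
}

// Distinct 32-byte sectors touched by those addresses: a proxy for the number
// of memory transactions the load needs (assumes a sector-aligned base).
int sectors_touched(const std::vector<std::uint64_t>& byte_addrs) {
    std::set<std::uint64_t> sectors;
    for (std::uint64_t a : byte_addrs) sectors.insert(a / 32);
    return static_cast<int>(sectors.size());
}
```

For stride 1 the warp touches 4 sectors and every fetched byte is used; for stride 2 it touches 8 sectors and half of the fetched bytes are wasted; from stride 8 upward each thread needs its own sector, so 1,024 bytes are moved to deliver 128 useful bytes.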

Core Principles and Data-Driven Analysis

Fundamental Concepts

Understanding the hardware organization of shared memory is the first step in designing optimized algorithms. Shared memory is divided into equally sized modules called banks, which can be accessed simultaneously; on current NVIDIA GPUs there are 32 banks, each 4 bytes wide, and successive 32-bit words map to successive banks. A memory request that spans n distinct banks can therefore be serviced in parallel, delivering n times the bandwidth of a single bank. However, if two or more threads within a warp request different addresses that map to the same bank, these accesses are serialized, drastically reducing effective bandwidth. This is known as a bank conflict [29]. Threads that read the same address do not conflict; the value is broadcast to all of them.
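Bank conflicts can be predicted from addresses alone. The sketch below assumes the 32-bank, 4-byte-wide layout described above and reports the conflict degree of one warp-wide access: the largest number of distinct 32-bit words that fall in a single bank. Threads that touch the same word are counted once, reflecting the broadcast. The function names are hypothetical.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <set>
#include <vector>

constexpr int kBanks = 32;  // assumption: 32 banks, 4 bytes wide

// Conflict degree of one warp-wide shared-memory access, given the 32-bit word
// index each thread touches: 1 = conflict-free, n = n-way conflict. Threads on
// the same word are served by a broadcast, so only distinct words are counted.
int bank_conflict_degree(const std::vector<std::uint32_t>& word_idx) {
    assert(word_idx.size() <= 32);
    std::array<std::set<std::uint32_t>, kBanks> words_in_bank;
    for (std::uint32_t w : word_idx) words_in_bank[w % kBanks].insert(w);
    std::size_t worst = 1;
    for (const auto& s : words_in_bank) worst = std::max(worst, s.size());
    return static_cast<int>(worst);
}

// Word indices when thread t of a warp accesses float array s[t * stride].
std::vector<std::uint32_t> strided_words(std::uint32_t stride) {
    std::vector<std::uint32_t> idx;
    for (std::uint32_t t = 0; t < 32; ++t) idx.push_back(t * stride);
    return idx;
}
```

Stride 1, or any odd stride such as 33, is conflict-free; stride 2 gives a 2-way conflict, and stride 32 serializes the whole warp. This is why padding a 32-wide tile by one element is such a common fix.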

A key programming primitive for correct shared memory usage is __syncthreads(). This barrier synchronization function ensures that all threads in a thread block have reached a specific point in the execution before any thread is allowed to proceed. It is crucial for preventing race conditions when threads write data to shared memory that other threads within the same block will subsequently read [29].

Quantitative Performance Impact

The following table summarizes the performance outcomes of various shared memory optimization strategies as demonstrated in empirical studies:

Table 1: Performance Impact of Shared Memory Optimizations

| Optimization Technique | Performance Metric | Improvement | Context / Application |
|---|---|---|---|
| Shared Memory Register Spilling [13] | Kernel Duration | 7.76% reduction | Register-heavy kernel |
| Shared Memory Register Spilling [13] | Elapsed Cycles | 7.8% reduction | Register-heavy kernel |
| Shared Memory Register Spilling [13] | SM Active Cycles | 9.03% reduction | Register-heavy kernel |
| Coalesced Global Access via Shared Memory [29] | Access latency (shared vs. uncached global memory) | ~100x lower | General memory-bound kernels |
| Tiling for Coalescing & Bank Conflict Avoidance [31] | Execution Time & Device Utilization | Up to 29.6% and 5.4% improvement, respectively | Matrix Multiplication & Scientific Proxy Apps |

A data-driven analysis methodology reveals how optimizations interact with hardware resources. By treating hardware performance counters as features in a machine learning model, researchers can calculate a Resource Significance Measure (RSM). This metric quantifies the importance of specific hardware resources (e.g., L1 cache, shared memory banks) in explaining a target performance metric like execution time or utilization. This approach moves beyond simple runtime measurement to understand the why behind performance gains [31].

Experimental Protocols for Coalescing

Protocol 1: Basic Coalescing via Shared Memory Tiling

This protocol details the canonical method for achieving coalesced memory access in operations like matrix transposition or matrix multiplication, commonly encountered in ecological simulation data.

A. Research Reagent Solutions

Table 2: Essential Components for Coalescing Experiments

| Component | Function |
|---|---|
| CUDA-Enabled GPU (Compute Capability 3.0+) | Hardware platform for kernel execution and profiling. |
| NVIDIA Nsight Compute | Primary profiler for analyzing kernel performance, memory transactions, and bank conflicts. |
| __shared__ Variable Specifier | Used to statically or dynamically declare shared memory within a kernel. |
| __syncthreads() | Barrier synchronization primitive to ensure correct data sharing between threads. |
| PTXAS (Parallel Thread Execution Assembler) | The CUDA assembler used at compile time; the -v flag provides verbose output on register and shared memory usage. |

B. Step-by-Step Procedure

- Kernel Design and Declaration: Define the kernel function. Within the kernel, declare a shared memory array (__shared__) large enough to hold a tile of the input data. The tile is typically a 2D square of size TILE_WIDTH x TILE_WIDTH.

- Thread Indexing: Calculate the global input and output indices for each thread based on blockIdx, blockDim, and threadIdx.

- Coalesced Data Load: Have each thread load a single element from global memory into the shared memory tile. Arrange the load indexing so that consecutive threads in a warp access consecutive global memory addresses. This is the coalesced read from global memory.

- Synchronize: Execute __syncthreads() to ensure all threads in the block have finished loading the tile before any thread begins reading from it.

- Uncoalesced but Fast Shared Memory Access: Allow threads to read the required data from the shared memory tile. This access may be non-sequential (e.g., for a transpose, reading across rows becomes reading down columns) but is still fast because of shared memory's low latency, provided it avoids bank conflicts; for column-wise reads, padding the tile to TILE_WIDTH x (TILE_WIDTH + 1) is the usual remedy.

- Coalesced Data Write: Have each thread write its result to global memory. The output indices should be arranged so that consecutive threads in a warp write to consecutive memory addresses, ensuring a coalesced write to global memory.
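The six steps above can be traced in a host-side C++ emulation of a tiled transpose. The two outer loops stand in for thread blocks and (ty, tx) for threadIdx; the local tile stands in for the __shared__ array, and the point where __syncthreads() would be called is marked. TILE = 32 and the one-column padding are assumptions matching common practice. The code runs sequentially, so it demonstrates the indexing rather than the speed.

```cpp
#include <cassert>
#include <vector>

constexpr int TILE = 32;

// Host-side emulation of a tiled transpose: `in` is rows x cols (row-major),
// the result is cols x rows. (bx, by) play blockIdx; (tx, ty) play threadIdx.
std::vector<float> tiled_transpose(const std::vector<float>& in, int rows, int cols) {
    assert(static_cast<int>(in.size()) == rows * cols);
    std::vector<float> out(in.size());
    for (int by = 0; by < rows; by += TILE)
        for (int bx = 0; bx < cols; bx += TILE) {
            // Stands in for __shared__ float tile[TILE][TILE + 1]; the extra
            // column shifts each row by one bank so column reads don't conflict.
            float tile[TILE][TILE + 1];
            // Coalesced load: consecutive tx read consecutive columns of a row of `in`.
            for (int ty = 0; ty < TILE; ++ty)
                for (int tx = 0; tx < TILE; ++tx)
                    if (by + ty < rows && bx + tx < cols)
                        tile[ty][tx] = in[(by + ty) * cols + (bx + tx)];
            // __syncthreads() goes here in the real kernel.
            // Coalesced store: consecutive tx write consecutive columns of a row of
            // `out`, reading the tile column-wise (the non-sequential shared access).
            for (int ty = 0; ty < TILE; ++ty)
                for (int tx = 0; tx < TILE; ++tx)
                    if (bx + ty < cols && by + tx < rows)
                        out[(bx + ty) * rows + (by + tx)] = tile[tx][ty];
        }
    return out;
}
```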

The logical workflow of this protocol is visualized below.

C. Example Code Snippet: Tiled Matrix Multiplication
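A sketch of the tiled multiplication follows. To keep it runnable without a GPU it is written as host-side C++ that mirrors the kernel structure: (bx, by) play blockIdx, (tx, ty) play threadIdx, As and Bs are the tiles that would be declared __shared__, and comments mark where the __syncthreads() barriers sit. TILE_WIDTH = 16, square row-major N x N matrices and zero-padding of partial tiles are assumptions of this sketch.

```cpp
#include <cassert>
#include <vector>

constexpr int TILE_WIDTH = 16;

// Host-side emulation of the shared-memory tiled kernel for C = A * B with
// square N x N row-major matrices. Each (by, bx) iteration is one thread block.
std::vector<float> tiled_matmul(const std::vector<float>& A,
                                const std::vector<float>& B, int N) {
    assert(static_cast<int>(A.size()) == N * N && B.size() == A.size());
    std::vector<float> C(A.size(), 0.0f);
    for (int by = 0; by * TILE_WIDTH < N; ++by)
        for (int bx = 0; bx * TILE_WIDTH < N; ++bx) {
            float acc[TILE_WIDTH][TILE_WIDTH] = {};  // one register per thread
            for (int t = 0; t * TILE_WIDTH < N; ++t) {
                float As[TILE_WIDTH][TILE_WIDTH], Bs[TILE_WIDTH][TILE_WIDTH];
                // Load phase: consecutive tx read consecutive addresses of A and B.
                for (int ty = 0; ty < TILE_WIDTH; ++ty)
                    for (int tx = 0; tx < TILE_WIDTH; ++tx) {
                        int row = by * TILE_WIDTH + ty, col = bx * TILE_WIDTH + tx;
                        int a_col = t * TILE_WIDTH + tx, b_row = t * TILE_WIDTH + ty;
                        As[ty][tx] = (row < N && a_col < N) ? A[row * N + a_col] : 0.0f;
                        Bs[ty][tx] = (b_row < N && col < N) ? B[b_row * N + col] : 0.0f;
                    }
                // __syncthreads(): every load finishes before any thread computes.
                for (int ty = 0; ty < TILE_WIDTH; ++ty)
                    for (int tx = 0; tx < TILE_WIDTH; ++tx)
                        for (int k = 0; k < TILE_WIDTH; ++k)
                            acc[ty][tx] += As[ty][k] * Bs[k][tx];
                // __syncthreads(): nobody overwrites As/Bs while others still read.
            }
            for (int ty = 0; ty < TILE_WIDTH; ++ty)
                for (int tx = 0; tx < TILE_WIDTH; ++tx) {
                    int row = by * TILE_WIDTH + ty, col = bx * TILE_WIDTH + tx;
                    if (row < N && col < N) C[row * N + col] = acc[ty][tx];
                }
        }
    return C;
}
```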

In this example, the accesses to A and B in global memory are coalesced because consecutive threads (with consecutive tx values) access consecutive memory locations. The subsequent access pattern within As and Bs may cause bank conflicts, but these are far less costly than non-coalesced global access [30].

Protocol 2: Diagnosing and Eliminating Bank Conflicts

This protocol focuses on identifying and resolving performance bottlenecks arising from shared memory bank conflicts.

A. Profiling and Diagnosis

- Use NVIDIA Nsight Compute to profile the kernel.

- Analyze the profiler output for metrics related to shared memory bank conflicts. A high number of conflicts indicates serialized access.

- Identify the section of code and the specific memory access pattern causing the conflicts.

B. Step-by-Step Resolution: The Consecutive Powers Example

Consider a problem where each thread i in a warp must compute 32 consecutive powers of an input value x_i, storing all results in shared memory. In a naive approach, each thread writes all powers of its x_i consecutively, so the first writes of adjacent threads are 32 elements apart. Because there are 32 banks, all 32 threads then hit the same bank at every step, causing a 32-way bank conflict [30].

Solution via Access Pattern Transformation:

- Restructure the Output Data Layout: Instead of storing all powers for a single x_i contiguously, store the same power for all x_i values contiguously.

- Conflict-Free Write: In the first step, all 32 threads compute the square of their respective x_i value. They then write this value, x_i^2, to shared memory such that thread i writes to location i. Consecutive threads therefore write to consecutive 32-bit words, which fall in different banks, so the store has no bank conflicts.

- Repeat: This pattern is repeated for each subsequent power (x_i^3, x_i^4, etc.), with step s (s = 0 for the square) writing to location 32s + i, so every power calculation is also bank-conflict-free.
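The two layouts can be compared directly by computing, for every store step, which bank each thread's word falls into. The C++ sketch below assumes 32 threads, 32 four-byte banks and 32 powers per thread, as in the example. naive_index and transposed_index are hypothetical names for the two layouts, and store_powers performs the transposed, conflict-free variant starting from the square.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

constexpr int kWarp = 32;    // threads per warp
constexpr int kBanks = 32;   // assumption: 32 banks of 4-byte words
constexpr int kPowers = 32;  // powers stored per thread

// Word offset where thread i stores the result of store step k.
int naive_index(int i, int k) { return i * kPowers + k; }     // powers of one x_i contiguous
int transposed_index(int i, int k) { return k * kWarp + i; }  // one power of all x_i contiguous

// Each thread writes a distinct word in both layouts, so the conflict degree of
// a store step is the largest number of threads whose word lands in one bank.
template <typename Layout>
int worst_conflict(Layout index) {
    int worst = 0;
    for (int k = 0; k < kPowers; ++k) {
        std::array<int, kBanks> hits{};
        for (int i = 0; i < kWarp; ++i) ++hits[index(i, k) % kBanks];
        worst = std::max(worst, *std::max_element(hits.begin(), hits.end()));
    }
    return worst;
}

// Transposed, conflict-free variant: step k stores x_i^(k + 2) for all threads,
// starting with the square.
void store_powers(const std::vector<float>& x, std::vector<float>& smem) {
    assert(x.size() == std::size_t(kWarp) && smem.size() == std::size_t(kWarp * kPowers));
    std::vector<float> p(x);           // running power held by each thread
    for (int k = 0; k < kPowers; ++k)  // one warp-wide store per step
        for (int i = 0; i < kWarp; ++i) {
            p[i] *= x[i];
            smem[transposed_index(i, k)] = p[i];
        }
}
```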

The difference between the problematic and optimized memory layout is illustrated below.

Advanced Optimization Technique

Shared Memory Register Spilling

A recent advanced optimization introduced in CUDA 13.0 is shared memory register spilling. When a kernel needs more registers per thread than are available, the compiler "spills" the excess to local memory, which resides in off-chip device memory and is slow. This feature allows developers to opt in to spilling these registers into much faster on-chip shared memory instead [13].

Adoption Protocol:

- Identification: Compile your kernel with nvcc -Xptxas -v. Non-zero "spill stores" and "spill loads" in the output indicate register spilling.

- Implementation: To enable the optimization, add the pragma asm volatile (".pragma \"enable_smem_spilling\";"); at the very beginning of the kernel function.

- Verification: Recompile with the same flags. The output should now show "0 bytes spill stores" and "0 bytes spill loads," and an increase in "bytes smem" used [13].

Table 3: Impact of Enabling Shared Memory Register Spilling

| Performance Metric | Without Optimization | With Optimization | Improvement |
|---|---|---|---|
| Spill Stores/Loads | 176 bytes | 0 bytes | 100% reduction |
| Kernel Duration | 8.35 µs | 7.71 µs | 7.76% reduction |
| Elapsed Cycles | 12477 | 11503 | 7.8% reduction |
| Spill Destination | Local Memory (off-chip device memory) | Shared Memory (on-chip) | Lower access latency |

For researchers in GPU ecological algorithms, using shared memory to coalesce global memory access is not a minor optimization but a fundamental design principle. The step-by-step protocols outlined here, ranging from basic tiling and bank conflict resolution to advanced techniques like shared memory register spilling, provide a structured methodology for significantly enhancing application performance and hardware utilization. By systematically applying these techniques and using robust profiling and data-driven analysis to guide optimization efforts, scientists can ensure their complex models run efficiently, unlocking the full potential of GPU-accelerated research.

In the context of GPU-accelerated ecological algorithms research, efficient memory management is paramount for achieving high performance. Modern GPU architectures feature a complex memory hierarchy that includes both hardware-managed caches (L1/L2) and programmer-managed shared memory. While hardware caches operate automatically, shared memory provides researchers with direct control over data placement, enabling strategic optimization for specific computational patterns common in ecological modeling and drug discovery pipelines.

The key distinction lies in management: L1/L2 cache behavior is hardware-controlled and largely transparent to the programmer, whereas shared memory is explicitly user-managed [32]. This manual control allows for predictable, low-latency access to frequently used data such as species interaction matrices, molecular structure fragments, or spatial environmental data, making it particularly valuable for algorithms with regular, predictable data access patterns.

Comparative Analysis of GPU Memory Types

Table 1: Characteristics of GPU Memory Types Relevant to Ecological Algorithms

| Memory Type | Scope | Management | Access Latency | Ideal Use Case in Ecological Research |
|---|---|---|---|---|
| Global Memory | Device-wide | Explicit (allocated and copied by the programmer) | High | Storing large ecological datasets, genome sequences, molecular libraries |
| L1/L2 Cache | SM-specific/Device-wide | Hardware-automated | Medium | Automatic caching of recently accessed environmental variables, drug compounds |
| Shared Memory | Thread Block | Programmer-managed | Very Low | Frequently accessed data tiles: distance matrices, local particle interactions, molecular docking templates |
| Registers | Individual Thread | Compiler-assisted | Lowest | Loop counters, temporary variables in simulation calculations |

Table 2: Performance Considerations for Shared Memory vs. Cache

| Factor | Shared Memory | Hardware Cache |
|---|---|---|
| Control Level | Explicit programmer control | Hardware-controlled, transparent |
| Access Predictability | Deterministic | Dependent on access patterns |
| Best For | Regular, predictable data reuse | Irregular access patterns with locality |
| Optimization Method | Data tiling, bank conflict avoidance | Access pattern restructuring |
| Latency | Low and predictable (on-chip, no cache misses) [32] | Low on an L1 hit; considerably higher on an L2 hit or a miss to device memory |

Experimental Protocols for Shared Memory Optimization

Protocol: Assessing Shared Memory Applicability in Ecological Algorithms

Purpose: To determine whether a specific ecological algorithm component would benefit from shared memory caching.

Materials:

- NVIDIA GPU with CUDA compute capability 3.0 or higher

- NVIDIA Nsight Systems performance analysis tool [33]

- CUDA application with target algorithm

Methodology:

Baseline Profiling:

- Execute the target algorithm using only global memory and hardware caching

Data Access Pattern Analysis:

- Identify data structures with high access frequency within thread blocks

- Map data reuse patterns within algorithmic phases (e.g., spatial proximity calculations in landscape models)

- Quantify the scope of data reuse: within thread, thread block, or device-wide

Shared Memory Suitability Evaluation:

- Calculate the ratio of memory operations to compute operations

- Assess whether data access patterns are regular and predictable

- Verify that frequently accessed data fits within shared memory constraints

Interpretation: Algorithms demonstrating high memory-compute ratios with regular, block-local data reuse patterns are strong candidates for shared memory optimization.
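The suitability checks can be roughed out before profiling. The helper below is a hypothetical back-of-the-envelope estimator (all names are illustrative) for a square tile with a halo: it reports the shared memory needed per block and a reuse factor, the number of shared memory reads each global load serves, for a stencil that reads `pointsPerUpdate` values per cell. The default budget of 48 KB is the static shared memory limit per block on current NVIDIA GPUs; larger tiles require opting in to dynamic shared memory.

```cpp
#include <cassert>
#include <cstddef>

struct TileEstimate {
    std::size_t sharedBytes;  // shared memory needed per thread block
    double reuseFactor;       // shared memory reads served per global load
    bool fits;                // sharedBytes within the assumed budget
};

// Hypothetical estimator for a tile x tile block of cells with a halo of
// `halo` cells on every side, storing `fields` values of `bytesPerValue`
// bytes per cell. Halo corners are counted, which slightly overestimates
// the footprint of a 5-point stencil.
TileEstimate estimateTile(int tile, int halo, int fields, int bytesPerValue,
                          int pointsPerUpdate,
                          std::size_t budgetBytes = 48 * 1024) {
    assert(tile > 0 && halo >= 0 && fields > 0 && bytesPerValue > 0 &&
           pointsPerUpdate > 0);
    const long long side = tile + 2LL * halo;
    const long long loads = side * side * fields;  // values staged from global memory
    const long long reads = 1LL * tile * tile * fields * pointsPerUpdate;
    TileEstimate e;
    e.sharedBytes = static_cast<std::size_t>(loads) * bytesPerValue;
    e.reuseFactor = static_cast<double>(reads) / static_cast<double>(loads);
    e.fits = e.sharedBytes <= budgetBytes;
    return e;
}
```

For a 32 x 32 tile with a one-cell halo and two 4-byte fields (say, prey and predator densities), a 5-point stencil gives roughly 4.4 reads per load in about 9 KB, a reasonable candidate; a reuse factor near 1 would suggest the hardware cache is already sufficient.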

Protocol: Implementing Shared Memory Caching for Population Dynamics Simulation

Purpose: To optimize a predator-prey population dynamics model using shared memory as programmer-managed cache.

Materials:

- CUDA C++ development environment

- Population grid data (species counts, environmental factors)

- GPU with at least 64KB shared memory per SM

Methodology:

Data Tiling Strategy:

- Divide the spatial grid into tiles matching thread block dimensions

- Design shared memory buffers for current population states and environmental variables

- Include halo regions for boundary conditions between tiles

Implementation Workflow:

- Load tile data from global memory to shared memory

- Synchronize threads to ensure complete tile loading

- Perform population calculations using shared memory data

- Write results back to global memory

Performance Validation:

- Compare execution time with baseline global memory implementation

- Verify numerical equivalence with reference implementation

- Measure speedup factor and reduced global memory traffic
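The workflow above can be sketched as a CUDA kernel plus a host reference for the equivalence check. This is a minimal sketch under stated assumptions: a single population field stands in for the predator-prey state, the update rule (`updateCell`, an explicit Euler step of diffusion with logistic growth) is hypothetical, blocks are `TILE` x `TILE` threads with a one-cell halo, and clamped indexing gives zero-flux boundaries. The macro shim at the top is a convenience so the host reference also builds with an ordinary C++ compiler; the kernel itself is compiled only by `nvcc`.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

#ifndef __CUDACC__  // shim so the host reference also builds with a plain C++ compiler
#define __host__
#define __device__
#endif

constexpr int TILE = 16;  // assumption: blocks of TILE x TILE threads

// Hypothetical cell rule: explicit Euler step of diffusion (coefficient D)
// plus logistic growth (rate r, carrying capacity K).
__host__ __device__ inline float updateCell(float c, float w, float e, float n,
                                            float s, float D, float r, float K,
                                            float dt) {
    return c + dt * (D * (w + e + n + s - 4.0f * c) + r * c * (1.0f - c / K));
}

__host__ __device__ inline int clampIndex(int v, int hi) {
    return v < 0 ? 0 : (v > hi ? hi : v);
}

#ifdef __CUDACC__
// Launch with dim3 block(TILE, TILE) and enough blocks to cover nx x ny.
__global__ void populationStep(const float* in, float* out, int nx, int ny,
                               float D, float r, float K, float dt) {
    __shared__ float tile[TILE + 2][TILE + 2];  // interior plus one-cell halo
    const int gx = blockIdx.x * TILE + threadIdx.x;
    const int gy = blockIdx.y * TILE + threadIdx.y;
    const int lx = threadIdx.x + 1, ly = threadIdx.y + 1;
    // Clamped reads keep every load in bounds and give zero-flux edges.
    auto at = [&](int x, int y) {
        return in[clampIndex(y, ny - 1) * nx + clampIndex(x, nx - 1)];
    };
    tile[ly][lx] = at(gx, gy);                                  // 1. load tile
    if (threadIdx.x == 0)        tile[ly][0]        = at(gx - 1, gy);
    if (threadIdx.x == TILE - 1) tile[ly][TILE + 1] = at(gx + 1, gy);
    if (threadIdx.y == 0)        tile[0][lx]        = at(gx, gy - 1);
    if (threadIdx.y == TILE - 1) tile[TILE + 1][lx] = at(gx, gy + 1);
    __syncthreads();                                            // 2. synchronize
    if (gx < nx && gy < ny) {                                   // 3. compute, 4. write back
        out[gy * nx + gx] = updateCell(tile[ly][lx], tile[ly][lx - 1],
                                       tile[ly][lx + 1], tile[ly - 1][lx],
                                       tile[ly + 1][lx], D, r, K, dt);
    }
}
#endif

// Host reference for the numerical-equivalence check.
std::vector<float> populationStepReference(const std::vector<float>& in,
                                           int nx, int ny, float D, float r,
                                           float K, float dt) {
    assert(nx > 0 && ny > 0 && in.size() == static_cast<std::size_t>(nx) * ny);
    auto at = [&](int x, int y) {
        return in[clampIndex(y, ny - 1) * nx + clampIndex(x, nx - 1)];
    };
    std::vector<float> out(in.size());
    for (int y = 0; y < ny; ++y)
        for (int x = 0; x < nx; ++x)
            out[y * nx + x] = updateCell(at(x, y), at(x - 1, y), at(x + 1, y),
                                         at(x, y - 1), at(x, y + 1), D, r, K, dt);
    return out;
}
```

Because the kernel and `populationStepReference` call the same `updateCell` with the same clamped neighbors, their outputs should agree up to floating-point rounding (device code may fuse multiply-adds), which is what the numerical-equivalence step checks.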

Figure 1: Shared Memory Workflow for Ecological Simulation

Technical Implementation Framework

Signaling Pathway for Memory Operations in GPU Ecological Algorithms

Figure 2: GPU Memory Hierarchy Signaling Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for GPU Memory Optimization Research

| Tool/Reagent | Function | Application Context |
|---|---|---|
| NVIDIA Nsight Systems | System-wide performance analysis | Identifying memory bottlenecks in ecological simulation pipelines [33] |
| CuTe (CUTLASS C++ template library) | Layout and tensor abstractions | Optimizing data organization for molecular structure analysis [33] |
| nvidia-smi command-line tool | GPU monitoring and management | Real-time monitoring of device memory usage during drug screening algorithms [33] |
| Triton Python framework | High-level GPU programming | Rapid prototyping of tiled kernels, with shared memory staging handled by the compiler [33] |
| FP16/FP8 precision models | Reduced-precision computation | Accelerating large-scale ecological models with minimal accuracy loss [33] |

Advanced Optimization Strategies for Research Applications

Memory Access Pattern Optimization for Molecular Docking

Background: Molecular docking simulations in drug discovery involve calculating interaction energies between ligand and receptor molecules, requiring frequent access to atomic coordinate data and force field parameters.

Shared Memory Strategy:

- Cache ligand atom coordinates in shared memory for simultaneous access by multiple threads

- Store frequently accessed force field parameters (van der Waals radii, charge distributions) in shared memory

- Implement tiling approaches for large receptor structures that exceed shared memory capacity

Implementation Protocol:

Data Structure Design:

- Partition ligand and receptor data into memory tiles

- Design shared memory buffers with padding to avoid bank conflicts

- Implement layered caching for hierarchical molecular data

Performance Metrics:

- Measure cache hit rates using NVIDIA profiler counters

- Quantify reduction in global memory transactions

- Calculate speedup in conformational sampling rate
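The ligand-caching strategy follows the classic tiled pairwise pattern. The sketch below is illustrative only: the scoring term (`pairEnergy`, a softened Coulomb-like q_i q_j / r in arbitrary units) is a hypothetical stand-in for a real force field, each thread owns one receptor atom, and ligand atoms are streamed through a shared memory tile of `kLigandTile` atoms, which must equal `blockDim.x`. Every global load of a ligand atom is then reused by all threads in the block. The macro shim lets the host reference build without `nvcc`.

```cpp
#include <cassert>
#include <math.h>
#include <vector>

#ifndef __CUDACC__  // shim so the host reference also builds with a plain C++ compiler
#define __host__
#define __device__
#endif

struct Atom { float x, y, z, q; };  // position and partial charge

// Hypothetical pair term: softened Coulomb-like q_i * q_j / r in arbitrary
// units; a real docking score adds van der Waals and other terms.
__host__ __device__ inline float pairEnergy(const Atom& a, const Atom& b) {
    const float dx = a.x - b.x, dy = a.y - b.y, dz = a.z - b.z;
    return a.q * b.q / sqrtf(dx * dx + dy * dy + dz * dz + 1e-6f);
}

#ifdef __CUDACC__
constexpr int kLigandTile = 128;  // assumption: launch with blockDim.x == kLigandTile

// One thread per receptor atom; ligand atoms pass through shared memory one
// tile at a time, so each global load is reused by every thread in the block.
__global__ void interactionEnergy(const Atom* receptor, int nRec,
                                  const Atom* ligand, int nLig, float* total) {
    __shared__ Atom ligTile[kLigandTile];
    const int i = blockIdx.x * blockDim.x + threadIdx.x;
    const Atom mine = i < nRec ? receptor[i] : Atom{0.0f, 0.0f, 0.0f, 0.0f};
    float e = 0.0f;
    for (int base = 0; base < nLig; base += kLigandTile) {
        const int j = base + static_cast<int>(threadIdx.x);
        if (j < nLig) ligTile[threadIdx.x] = ligand[j];
        __syncthreads();  // tile fully loaded before anyone reads it
        const int count = min(kLigandTile, nLig - base);
        for (int k = 0; k < count; ++k) e += pairEnergy(mine, ligTile[k]);
        __syncthreads();  // everyone done before the tile is overwritten
    }
    if (i < nRec) atomicAdd(total, e);
}
#endif

// Host reference (double accumulator) for validating the kernel.
double interactionEnergyReference(const std::vector<Atom>& receptor,
                                  const std::vector<Atom>& ligand) {
    double e = 0.0;
    for (const Atom& r : receptor)
        for (const Atom& l : ligand) e += pairEnergy(r, l);
    return e;
}
```

At each step of the inner loop all threads read the same `ligTile[k]`, so the accesses are broadcasts and need no padding; padding matters for layouts where lanes read different words in the same bank. Accumulating with `atomicAdd` on a single float is the simplest option, though a block-level reduction would cut atomic traffic.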

Multi-Instance GPU Applications in Ecological Research

Modern GPU architectures like NVIDIA A100 and H100 support Multi-Instance GPU (MIG) technology, which enables hardware-level partitioning of GPUs into smaller, isolated instances [34]. This capability is particularly valuable for research environments running multiple simultaneous experiments.

Research Deployment Strategy:

- Allocate dedicated GPU instances for different algorithm components

- Use shared memory caching within each instance for localized data reuse

- Enable secure collaboration by isolating sensitive research data between instances

Validation and Performance Assessment Framework

Benchmarking Protocol for Shared Memory Optimizations

Purpose: To quantitatively evaluate the effectiveness of shared memory caching implementations in ecological algorithms.

Experimental Setup:

- Control: Algorithm implementation using only global memory and hardware caches

- Experimental: Algorithm implementation with strategic shared memory caching

- Fixed Variables: GPU hardware, input dataset, CUDA version, compiler optimization flags

Performance Metrics:

- Execution time reduction percentage

- Global memory traffic reduction

- GPU utilization efficiency [34]

- Computational throughput (operations/second)

Statistical Validation:

- Multiple runs to account for system variability

- Statistical significance testing (t-tests for performance differences)

- Sensitivity analysis for parameter variations
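The statistical validation step can be sketched with Welch's t-test, which does not assume equal variances between control and experimental timings. The helpers below are illustrative (names are assumptions): they compute the t statistic and the Welch-Satterthwaite degrees of freedom, which are then compared against a t distribution from a statistics table or library to obtain a p-value, along with the execution time reduction percentage.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Summary {
    double mean;
    double variance;  // unbiased sample variance
    std::size_t n;
};

// Mean and sample variance of repeated timings (e.g., kernel durations in ms).
Summary summarize(const std::vector<double>& samples) {
    assert(samples.size() >= 2);
    double mean = 0.0;
    for (double s : samples) mean += s;
    mean /= static_cast<double>(samples.size());
    double ss = 0.0;
    for (double s : samples) ss += (s - mean) * (s - mean);
    return {mean, ss / static_cast<double>(samples.size() - 1), samples.size()};
}

// Welch's t statistic: positive when the experimental runs are faster.
double welchT(const Summary& control, const Summary& experimental) {
    const double se = std::sqrt(control.variance / control.n +
                                experimental.variance / experimental.n);
    return (control.mean - experimental.mean) / se;
}

// Welch-Satterthwaite degrees of freedom for looking up the p-value.
double welchDf(const Summary& a, const Summary& b) {
    const double va = a.variance / a.n, vb = b.variance / b.n;
    return (va + vb) * (va + vb) /
           (va * va / (a.n - 1) + vb * vb / (b.n - 1));
}

// Execution time reduction in percent relative to the control.
double percentReduction(double control, double experimental) {
    return 100.0 * (control - experimental) / control;
}
```

Discarding warm-up launches, which include one-time initialization costs, before collecting samples keeps the variance estimates representative of steady-state behavior.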

The strategic use of shared memory as a programmer-managed cache represents a critical optimization technique for GPU-accelerated ecological algorithms and drug discovery research. By providing deterministic low-latency access to frequently used data structures, researchers can achieve significant performance improvements in computational models, molecular simulations, and large-scale ecological analyses. The experimental protocols and implementation frameworks presented here provide a foundation for researchers to systematically apply these techniques across diverse computational biology applications, ultimately accelerating the pace of scientific discovery in ecological and pharmaceutical domains.

Ecological simulations, such as individual-based models (IBMs) and ecosystem process modeling, are increasingly leveraging GPU parallelism to manage vast computational workloads. A significant performance bottleneck in these simulations is thread divergence, which occurs when threads within the same warp follow different execution paths due to data-dependent conditional logic. In ecological contexts, this often manifests as branching code based on traits like organism behavior, species type, or environmental responses [35].

This application note details a methodology for refactoring such branching ecological logic into state machine architectures, thereby minimizing thread divergence and enhancing computational efficiency on GPU hardware. This approach is framed within a broader research thesis focused on shared memory optimization for GPU-accelerated ecological algorithms.

Quantitative Analysis of Thread Divergence Impact

The performance penalty from thread divergence stems from the serialization of execution paths within a warp. The following table summarizes key performance metrics associated with divergent code, based on profiling common ecological simulation kernels.

Table 1: Performance Impact of Thread Divergence in a Model Ecological Kernel

| Metric | Baseline Kernel | Optimized Kernel | Improvement |
|---|---|---|---|
| Kernel Duration (μs) | 8.35 | 7.71 | 7.76% [13] |
| Elapsed Cycles | 12,477 | 11,503 | 7.8% [13] |
| SM Active Cycles | 218.43 | 198.71 | 9.03% [13] |
| Spill Loads/Stores (bytes) | 176 | 0 | 100% [13] |
| Shared Memory Usage (bytes) | 0 | 46,080 | N/A [13] |

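Furthermore, inefficient memory access patterns can compound performance issues. Shared memory bank conflicts occur when two or more threads in the same warp access different addresses that map to the same memory bank; the hardware serializes those accesses, which can significantly reduce throughput. Threads reading the same address are served by a broadcast and do not conflict.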

Table 2: Runtime Improvement from Resolving Shared Memory Bank Conflicts

| Benchmark Suite | Kernels with Conflicts | Runtime Improvement |
|---|---|---|
| Rodinia & CUDA SDK | 13 | 5% to 35% [36] |

Methodology: State Machine Transformation for Ecological Logic

This protocol outlines the process of transforming a divergent, condition-driven ecological kernel into a non-divergent kernel using a state-based paradigm.

Problem Identification: Divergent Kernel Example

Consider a kernel in which each thread updates the movement of one organism, and the movement rule branches on that organism's behavioral state.
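A hypothetical sketch of such a kernel follows; the type names, the environment struct, and the gains (0.5 toward the resource, 1.0 away from the predator) are illustrative assumptions. The per-organism logic is a `__host__ __device__` function so it can also be tested on the CPU, with a macro shim for non-`nvcc` builds. With branches this short the compiler may predicate them instead of diverging; the pattern becomes costly when each branch is long.

```cpp
#include <cassert>

#ifndef __CUDACC__  // shim so the logic also builds with a plain C++ compiler
#define __host__
#define __device__
#endif

// Hypothetical types: behavioral states, one organism, and the environment.
enum State : int { FORAGING = 0, PREDATOR_AVOIDANCE = 1, DEFAULT = 2 };
struct Organism { float x, y, vx, vy; int state; };
struct Env { float resourceX, resourceY, predatorX, predatorY; };

// Divergent version: each state has its own code path, so a warp holding
// organisms in several states executes those paths one after another.
__host__ __device__ inline void moveBranching(Organism& o, const Env& env,
                                              float dt) {
    if (o.state == FORAGING) {                   // steer toward the resource
        o.vx = 0.5f * (env.resourceX - o.x);
        o.vy = 0.5f * (env.resourceY - o.y);
    } else if (o.state == PREDATOR_AVOIDANCE) {  // flee from the predator
        o.vx = -1.0f * (env.predatorX - o.x);
        o.vy = -1.0f * (env.predatorY - o.y);
    }                                            // DEFAULT: keep current velocity
    o.x += o.vx * dt;
    o.y += o.vy * dt;
}

#ifdef __CUDACC__
__global__ void moveKernelBranching(Organism* pop, int n, Env env, float dt) {
    const int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) moveBranching(pop[i], env, dt);
}
#endif
```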

In this pattern, threads in the same warp that hold organisms in different states (or of different species) must execute their branches one after another, which leads to severe thread divergence [35].

State Machine Design and Implementation

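The solution involves replacing branching logic with a state machine in which each organism's state selects its behavior from a lookup table. When the table stores the parameters of a single shared update rule, all threads in a warp execute the same instructions, only with different operands. Dispatching through a table of function pointers is weaker: an indirect call whose target differs across the lanes of a warp is still serialized, much like a branch.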

Experimental Protocol: State Machine Refactoring

- State Enumeration: Identify and enumerate all possible behavioral states from the conditional branches. In the example, the states are `FORAGING`, `PREDATOR_AVOIDANCE`, and `DEFAULT`.
- Function Table Creation: Create a lookup table (in constant or shared memory) that maps states to the corresponding function pointers or function indices.
- Kernel Refactoring: Rewrite the kernel to use the organism's state to index into the function table and execute a uniform instruction sequence.

The optimized, non-divergent kernel replaces the if-else chain with a lookup into a per-state table.
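A minimal sketch of a table-driven kernel follows, assuming hypothetical `Organism` and `Env` types and gains of 0.5 toward a resource and 1.0 away from a predator. Every state is written as one update rule, `v = keep * v + gain * (target - position)`, and the state only selects a row of parameters, so the table holds per-state parameters rather than function pointers. Passing the table by value as a kernel argument is an assumption made for brevity; a `__constant__` array would serve the same purpose.

```cpp
#include <cassert>

#ifndef __CUDACC__  // shim so the logic also builds with a plain C++ compiler
#define __host__
#define __device__
#endif

// Hypothetical types mirroring the three behavioral states.
enum State : int { FORAGING = 0, PREDATOR_AVOIDANCE = 1, DEFAULT = 2, NUM_STATES = 3 };
struct Organism { float x, y, vx, vy; int state; };
struct Env { float resourceX, resourceY, predatorX, predatorY; };

// Every state uses one rule: v = keep * v + gain * (target - position),
// where target is the resource (usePredator = 0) or the predator (usePredator = 1).
struct StateParams { float keep, gain, usePredator; };
struct StateTable { StateParams rows[NUM_STATES]; };

// Rows reproduce the if-else rules: steer toward the resource, flee the
// predator, or keep the current velocity.
inline StateTable makeStateTable() {
    return StateTable{{{0.0f, 0.5f, 0.0f},    // FORAGING
                       {0.0f, -1.0f, 1.0f},   // PREDATOR_AVOIDANCE
                       {1.0f, 0.0f, 0.0f}}};  // DEFAULT
}

// Uniform control flow: the state only selects operands, so all lanes of a
// warp execute the same instruction sequence.
__host__ __device__ inline void moveTableDriven(Organism& o, const Env& env,
                                                const StateTable& table, float dt) {
    const StateParams p = table.rows[o.state];
    const float tx = p.usePredator * env.predatorX + (1.0f - p.usePredator) * env.resourceX;
    const float ty = p.usePredator * env.predatorY + (1.0f - p.usePredator) * env.resourceY;
    o.vx = p.keep * o.vx + p.gain * (tx - o.x);
    o.vy = p.keep * o.vy + p.gain * (ty - o.y);
    o.x += o.vx * dt;
    o.y += o.vy * dt;
}

#ifdef __CUDACC__
// The table travels by value as a kernel argument.
__global__ void moveKernelTable(Organism* pop, int n, Env env, StateTable table,
                                float dt) {
    const int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) moveTableDriven(pop[i], env, table, dt);
}
#endif
```

Because the default row has `keep = 1` and `gain = 0`, a default-state organism keeps its velocity exactly, matching a branch that does nothing; the three rows reproduce the three branches of an if-else version with the same gains.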

This approach eliminates the divergent if-else chain. Although each thread operates on different values, the control flow is uniform across the warp, allowing full parallel execution [35]. The compiler may also use predication to avoid divergence in simpler cases, but explicit state machines offer more predictable and robust performance gains for complex logic [35].

Experimental Protocol for Performance Validation

To validate the performance improvements from the state machine transformation, researchers should employ the following profiling protocol.

Baseline Profiling:

- Compile the original divergent kernel for the correct target architecture (e.g., `-arch=sm_90`).
- Use `-Xptxas -v` to output compiler information, and note register usage and spill loads/stores.
- Execute the kernel on a representative dataset and profile it using NVIDIA Nsight Compute to establish baseline metrics for duration, elapsed cycles, and warp execution efficiency.

Optimized Kernel Profiling:

- Implement the state machine-based kernel as described under State Machine Design and Implementation.

- Compile with the same flags and note the changes in register usage and spilling.

- Profile the optimized kernel using the same dataset and Nsight Compute metrics.

Advanced Optimization (CUDA 13.0+):

- For kernels exhibiting high register pressure and spilling, enable the shared memory register spilling feature introduced in CUDA 13.0.
- Insert the pragma `asm volatile (".pragma \"enable_smem_spilling\";");` at the beginning of the kernel body.
- Recompile and profile. The compiler will now prioritize on-chip shared memory for register spills, which reduces access latency and L2 pressure and can yield further performance gains [13].

Data Analysis:

- Compare the key performance metrics (as listed in Table 1) between the baseline and optimized kernels.

- Quantify the reduction in thread divergence and the improvement in overall kernel execution time.
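To put a number on the reduction in divergence, a simple model of SIMT execution helps interpret the profiler output. The sketch below is a simplification, not a hardware simulator, and its names are illustrative: it assumes every branch path has the same instruction count and that a warp runs each distinct path once with only that path's lanes active. Under those assumptions, a warp whose lanes hold three different states needs three passes and uses one third of its lane slots, while a table-driven kernel needs one pass.

```cpp
#include <cassert>
#include <set>
#include <vector>

constexpr int kWarpSize = 32;

// Simplified SIMT model (an assumption, not a hardware simulator): the warp
// runs each distinct path once, with only that path's lanes active, and every
// path has the same instruction count. Returns the number of serialized passes.
int serializedPasses(const std::vector<int>& laneStates) {
    assert(static_cast<int>(laneStates.size()) == kWarpSize);
    return static_cast<int>(
        std::set<int>(laneStates.begin(), laneStates.end()).size());
}

// Fraction of lane slots doing useful work: 32 useful lane-executions spread
// over passes * 32 slots, i.e. 1 / passes. 1.0 means no divergence.
double laneEfficiency(const std::vector<int>& laneStates) {
    return 1.0 / serializedPasses(laneStates);
}
```

For the table-driven kernel every lane follows the same path regardless of state, which corresponds to a uniform input and an efficiency of 1.0. Nsight Compute's warp execution efficiency metrics (average active threads per executed instruction) measure the same effect on real hardware, including the predication this model ignores.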

Visualizing the Architectural Transformation

The following diagram illustrates the logical transformation from a branching code structure to a unified state machine architecture, highlighting the change from serialized to parallel execution within a warp.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Profiling Tools for GPU Ecological Algorithm Research

| Tool / Resource | Function | Use Case in Ecological Research |
|---|---|---|
| CUDA Toolkit (v13.0+) | Provides compiler (nvcc), libraries, and development headers for GPU programming. | Essential for building and optimizing ecological simulation kernels. Enables shared memory register spilling optimization [13]. |