Optimizing Sampling in Clinical Trials and Ecological Studies: Strategies for Plot Size and Number to Maximize Precision and Efficiency

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing sampling strategies, focusing on the critical balance between plot size and number. Drawing parallels from established methodologies in forest inventory and recent innovations in oncology trials, we explore foundational principles, advanced methodological applications, and practical optimization frameworks. The content covers the limitations of traditional approaches like the 3+3 design, introduces modern alternatives such as adaptive and model-assisted designs, and provides a comparative analysis of validation techniques. This synthesis aims to equip scientists with the knowledge to design more efficient, accurate, and cost-effective studies in both clinical and ecological research contexts.

The Foundational Principles of Sampling: Why Plot Size and Number Matter

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between accuracy and precision in sampling?

Answer: In sampling, accuracy and precision are distinct but equally important statistical indicators.

- Accuracy refers to how close a sample-based estimate is to the true population value. It is often expressed as a percentage, with 100% indicating a perfect match with the true value [1].

- Precision relates to the variability of the sample estimates. It is measured inversely by the coefficient of variation (CV): the lower the CV, the higher the precision. Precision also determines the width of the confidence limits of the estimates. An estimate can be precise (with low variability and narrow confidence limits) but inaccurate if the samples are not representative of the population [1].
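To make the distinction concrete, here is a minimal sketch. It assumes accuracy is expressed as 100 × (1 − |estimate − true value| / true value) and precision as the CV of repeated estimates. The exact definitions in [1] may differ, and the estimates below are hypothetical. The first scheme is precise but biased, and the second is unbiased but noisy.

```python
from statistics import mean, stdev


def accuracy_pct(estimate, true_value):
    # Assumed convention: 100% when the estimate equals the true population value
    return 100.0 * (1.0 - abs(estimate - true_value) / true_value)


def cv_pct(estimates):
    # Coefficient of variation of repeated estimates; a lower CV means higher precision
    return 100.0 * stdev(estimates) / mean(estimates)


true_total = 1000.0
precise_but_biased = [1195.0, 1200.0, 1205.0, 1198.0, 1202.0]   # hypothetical
accurate_but_noisy = [880.0, 1120.0, 950.0, 1060.0, 990.0]      # hypothetical

for name, est in [("precise but biased", precise_but_biased),
                  ("accurate but noisy", accurate_but_noisy)]:
    print(f"{name}: accuracy {accuracy_pct(mean(est), true_total):.1f}%, "
          f"CV {cv_pct(est):.1f}%")
```

Here the biased scheme scores 80% accuracy with a CV of about 0.3%. The noisy scheme scores 100% accuracy with a CV of about 9.4%.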

FAQ 2: How does sample size affect the accuracy and precision of my estimates?

Answer: Sample size has a direct, though non-linear, relationship with accuracy and precision [1].

- Accuracy Growth: Increasing the sample size improves accuracy, but the gains are most significant when moving from very small samples. The rate of growth slows considerably beyond a certain point, meaning that doubling a very large sample size yields negligible accuracy improvements [1].

- Precision Improvement: Larger sample sizes generally lead to higher precision (lower variability). However, as with accuracy, the most substantial improvements in precision occur in the small-sample region [1]. The table below shows the sample sizes required for different accuracy levels in two fishery survey contexts, boat activity surveys and landing surveys [1]:

Table: Safe Sample Sizes for Different Accuracy Levels

| Accuracy Level | Sample Size for Boat Activities | Sample Size for Landing Surveys |
|---|---|---|
| 90% | 96 | 32 |
| 92% | 150 | 50 |
| 95% | 384 | 128 |
| 98% | 2,401 | 800 |
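The boat-activity column is consistent with the standard sample-size formula for a proportion, n = z²·p(1 − p)/e². This uses z = 1.96 (95% confidence), worst-case p = 0.5, and e = 1 − accuracy level, rounded to the nearest integer. The landing-survey column matches one third of those values. Both readings are our reconstruction from the numbers, not a rule stated in [1].

```python
def safe_sample_size(accuracy, z=1.96, p=0.5):
    """n = z^2 * p(1 - p) / e^2 with e = 1 - accuracy (assumed reconstruction)."""
    e = 1.0 - accuracy                  # tolerated error, e.g. 0.05 for 95% accuracy
    return z ** 2 * p * (1 - p) / e ** 2


for acc in (0.90, 0.92, 0.95, 0.98):
    n_boat = safe_sample_size(acc)
    # Landing-survey column assumed to be one third of the boat-activity value
    print(f"{acc:.0%}: boat activities {round(n_boat)}, landing surveys {round(n_boat / 3)}")
```

Rounding to the nearest integer reproduces the table. When planning a survey, it is safer to round up.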

FAQ 3: What are the most common types of sampling errors that can undermine my research?

Answer: Several common sampling errors can introduce bias and reduce the validity of your findings [2]:

- Sample Frame Error: Occurs when the list used to select the sample (the sampling frame) does not adequately represent the entire population (e.g., using a phone directory that excludes people without landlines) [2].

- Selection Error: Often results from using only volunteer participants, which can over-represent individuals with strong opinions on the topic [2].

- Non-Response Error: Happens when a significant portion of the selected sample does not respond, and their opinions may systematically differ from those who did respond [2].

- Convenience Sampling Error: Arises from surveying only individuals who are easily accessible, which may not represent the broader population [2].

FAQ 4: What is the advantage of a stratified sampling design?

Answer: Stratification is the process of dividing a target population into more homogeneous subgroups (strata) based on specific characteristics [1]. Its primary advantages are:

- Reduced Variability: By ensuring representation from each key subgroup, stratification decreases the overall variability of the estimates, leading to greater precision [1].

- Targeted Analysis: It allows researchers to analyze each stratum separately and compare them, providing more insightful results [1].

- Cost-Effectiveness: It can lead to more efficient survey designs by allowing for different sampling strategies in different strata, potentially reducing overall costs [1].

Troubleshooting Guides

Problem 1: Underpowered Study with Insufficient Sample Size

Symptoms: Inability to detect significant effects or differences, even when they exist (high Type II error rate); unreliable or exaggerated effect size estimates; limited generalizability of findings [3].

Solution:

- Conduct an A Priori Power Analysis: Before data collection, determine the minimum sample size required to detect your assumed effect size with a sufficient probability (typically 80% power) [4]. This analysis should account for your desired statistical significance level (α, usually 5%), the expected variability in the response, and the statistical test you plan to use [4].

- Utilize Model-Based Approaches: In drug development, model-based drug development (MBDD) approaches using exposure-response analyses can sometimes achieve higher power with smaller sample sizes compared to conventional methods. This leverages prior knowledge of pharmacokinetics to inform the design [4].

- Consult a Statistician: Seek expert guidance during the planning phase to optimize your sample size for your specific research question and design, balancing statistical needs with resource constraints [3].
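For the simplest case, comparing two group means with a two-sided test, the a priori calculation can be sketched with the normal approximation n per group = 2σ²(z₁₋α/₂ + z₁₋β)²/δ². Here δ is the assumed effect and σ is the outcome standard deviation. The function names and the effect of half a standard deviation are illustrative assumptions. Dedicated tools (e.g., G*Power or R's power.t.test) use the exact t distribution and give a slightly larger n.

```python
import math
from statistics import NormalDist


def n_per_group(delta, sigma, alpha=0.05, power=0.80):
    """Per-group n for a two-sided, two-sample comparison of means (normal approximation)."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)     # about 1.96 for alpha = 0.05
    z_beta = z.inv_cdf(power)              # about 0.84 for 80% power
    return math.ceil(2 * (sigma * (z_alpha + z_beta) / delta) ** 2)


def achieved_power(n, delta, sigma, alpha=0.05):
    """Approximate power with n per group (ignores rejections in the wrong direction)."""
    z = NormalDist()
    se = sigma * math.sqrt(2 / n)
    return z.cdf(abs(delta) / se - z.inv_cdf(1 - alpha / 2))


n = n_per_group(delta=0.5, sigma=1.0)      # assumed effect of half a standard deviation
print(n, round(achieved_power(n, 0.5, 1.0), 3))
```

With δ = 0.5σ this gives 63 per group and a power of about 0.80. The exact t-test calculation gives 64.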

Problem 2: Biased Samples Due to Flawed Selection

Symptoms: Sample is not representative of the population; some groups or characteristics are over- or under-represented; conclusions about the population are inaccurate or misleading [5].

Solution:

- Implement Randomization: Use random or probability sampling techniques to ensure every member of the population has a known, non-zero chance of being selected. This is the most effective way to avoid selection bias [5] [6].

- Avoid Convenience Sampling: Do not base your sample solely on who is easiest to access unless for preliminary, non-inferential research [2].

- Define Clear Eligibility Criteria: Precisely define who is included in your target population to ensure your sample consists of the intended subjects [5].

- Use Stratified Random Sampling: If your population contains important subgroups, divide it into strata and then randomly sample from within each stratum to guarantee that every subgroup is represented (in proportion to its size, if proportional allocation is used) [7].

Problem 3: Uncontrolled Confounding Variables

Symptoms: Observed associations between variables may be spurious; difficult to isolate the true effect of the independent variable on the dependent variable [3].

Solution:

- Identification: Conduct a thorough literature review and consult with domain experts to identify potential confounding variables before the study begins [3].

- Control through Design:

- Control through Analysis: Use statistical methods like multiple regression analysis, propensity score matching, or analysis of covariance (ANCOVA) to account for the influence of confounders after data collection [3].

Experimental Protocols

Protocol 1: Implementing a Stratified Random Sampling Design

Objective: To obtain a sample that is representative of key subgroups within a population, thereby increasing precision and reducing sampling bias.

Materials:

- A complete and accurate sampling frame of the population.

- Clear definition of the stratification variables (e.g., gear type, vessel size, geographical area).

- Random number generator or equivalent tool.

Methodology:

- Define Strata: Identify the distinct, non-overlapping subgroups (strata) within your population based on the chosen variable(s) known or suspected to influence the outcome of interest [1].

- List Population Elements: Obtain a list of all elements in the population and classify each element into its appropriate stratum [1].

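- Determine Sample Allocation: Decide on the sample size for each stratum. This can be:

  - Proportional: The sample size from each stratum is proportional to the stratum's size in the overall population.

  - Disproportional (Optimal Allocation): Larger samples are taken from strata with greater variability to minimize overall variance [1]. Under Neyman allocation, the standard form of optimal allocation, each stratum's sample size is proportional to N_h × S_h (stratum size times stratum standard deviation).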

- Randomly Sample within Strata: Within each stratum, use a simple random sampling method to select the predetermined number of elements. This ensures every element within the stratum has an equal chance of selection [7].

- Combine Sub-samples: The final sample is the aggregate of all elements selected from each stratum.
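Steps 3 to 5 can be sketched in a few lines. Proportional allocation uses the stratum sizes N_h, while Neyman allocation uses N_h × S_h. Largest-remainder rounding keeps the total at n. The frame, gear-type labels, and function names are hypothetical.

```python
import random


def allocate(n, sizes, sds=None):
    """Split a total sample n across strata with population sizes N_h.
    Proportional allocation if sds is None, otherwise Neyman allocation (n_h ~ N_h * S_h)."""
    weights = list(sizes) if sds is None else [N * s for N, s in zip(sizes, sds)]
    total = sum(weights)
    raw = [n * w / total for w in weights]
    alloc = [int(r) for r in raw]
    # Largest-remainder rounding keeps the total equal to n
    by_remainder = sorted(range(len(raw)), key=lambda h: raw[h] - alloc[h], reverse=True)
    for h in by_remainder[: n - sum(alloc)]:
        alloc[h] += 1
    # A stratum cannot supply more units than it contains (may reduce the total)
    return [min(a, N) for a, N in zip(alloc, sizes)]


def stratified_sample(frame, stratum_of, n, sds=None, seed=42):
    """Simple random sampling within each stratum of `frame`.
    stratum_of maps a unit to its stratum label; sds (optional) maps label -> SD."""
    strata = {}
    for unit in frame:
        strata.setdefault(stratum_of(unit), []).append(unit)
    labels = sorted(strata)
    sizes = [len(strata[h]) for h in labels]
    sd_list = None if sds is None else [sds[h] for h in labels]
    rng = random.Random(seed)
    return {h: rng.sample(strata[h], k)
            for h, k in zip(labels, allocate(n, sizes, sd_list))}


# Hypothetical frame of 1,000 vessels stratified by gear type
frame = ([(i, "gillnet") for i in range(600)] + [(i, "trawl") for i in range(600, 900)]
         + [(i, "longline") for i in range(900, 1000)])
sample = stratified_sample(frame, lambda unit: unit[1], n=50)
print({h: len(units) for h, units in sample.items()})
```

With proportional allocation this draws 30 gillnet, 5 longline, and 15 trawl vessels. Passing `sds` shifts effort toward the more variable strata.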

Protocol 2: Exposure-Response Power Analysis for Dose-Ranging Studies

Objective: To determine the statistical power and required sample size for a dose-ranging study by leveraging prior exposure-response knowledge and pharmacokinetic data [4].

Materials:

- Prior estimates of the exposure-response relationship (e.g., from a Phase I trial).

- A developed population pharmacokinetic (PK) model for the drug.

- Statistical software (e.g., R) capable of running simulations.

Methodology:

- Define Hypotheses: The null hypothesis (H₀) is that the slope of the exposure-response relationship is zero; the alternative (Hₐ) is that it is not zero [4].

- Simulate Exposures: For a given sample size n and set of m doses, simulate the drug exposure (e.g., AUC) for each virtual subject using the population PK model, which describes the variability in drug clearance (CL/F) [4].

- Simulate Responses: For each simulated exposure, calculate the probability of response using the predefined exposure-response model (e.g., a logistic regression equation). Then, simulate a binary response (e.g., yes/no) based on this probability [4].

- Perform Statistical Test: For each of the l simulated study replicates, conduct an exposure-response analysis (e.g., test whether the slope β₁ is statistically significant) [4].

- Calculate Power: The power for the given sample size n is the proportion of the l study replicates in which the null hypothesis was correctly rejected. This process is repeated for a range of sample sizes to generate a power curve [4].
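The simulation loop can be sketched in pure Python. It illustrates the idea under stated assumptions and is not the method of [4]. Exposure is AUC = dose / (CL/F), with log-normal between-subject variability in CL/F. The exposure-response model is logistic, and the slope is tested with a Wald z-test from a Newton-Raphson fit. All doses and parameter values are hypothetical. In practice, the population PK model and a validated fitting routine (e.g., glm in R) would replace these stand-ins.

```python
import math
import random
from statistics import NormalDist


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def slope_wald_z(x, y, max_iter=25):
    """Fit logit P(y=1) = b0 + b1*x by Newton-Raphson; return the Wald z for b1."""
    b0 = b1 = 0.0
    for _ in range(max_iter):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, yi in zip(x, y):
            p = sigmoid(b0 + b1 * xi)
            w = p * (1.0 - p)
            g0 += yi - p                 # score (gradient of the log-likelihood)
            g1 += (yi - p) * xi
            h00 += w                     # entries of the information matrix X'WX
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        if det <= 1e-12:                 # (quasi-)separation: treat as not significant
            return 0.0
        d0 = (h11 * g0 - h01 * g1) / det
        d1 = (h00 * g1 - h01 * g0) / det
        b0, b1 = b0 + d0, b1 + d1
        if abs(d0) + abs(d1) < 1e-8:
            break
    return b1 / math.sqrt(h00 / det)     # Var(b1) is the b1 diagonal element of (X'WX)^-1


def simulate_power(n_per_dose, doses=(50.0, 100.0, 200.0), cl_pop=10.0,
                   omega=0.3, beta0=-2.0, beta1=0.1, n_rep=200, alpha=0.05, seed=1):
    """Proportion of simulated trials rejecting H0: beta1 = 0.
    Every default value here is a hypothetical placeholder."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = 0
    for _ in range(n_rep):
        auc, resp = [], []
        for dose in doses:
            for _ in range(n_per_dose):
                cl = cl_pop * math.exp(rng.gauss(0.0, omega))  # log-normal CL/F
                exposure = dose / cl                          # AUC = dose / (CL/F)
                p = sigmoid(beta0 + beta1 * exposure)         # exposure-response model
                auc.append(exposure)
                resp.append(1 if rng.random() < p else 0)     # binary response
        if abs(slope_wald_z(auc, resp)) > z_crit:
            rejections += 1
    return rejections / n_rep


for n in (10, 20, 40):                   # a small power curve over n per dose
    print(n, simulate_power(n))
```

Setting `beta1=0.0` turns the same loop into a check of the type I error rate, which should sit near α.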

The workflow for this simulation-based power analysis is outlined below:

The Scientist's Toolkit: Key Reagent Solutions

Table: Essential Methodological Components for Robust Sampling Design

| Item / Solution | Function / Explanation |
|---|---|
| Sampling Frame | A list or other source from which the population elements are selected. A complete and accurate frame is critical to avoid "erroneous exclusions" that bias the sample [2]. |
| Stratification Variables | Characteristics (e.g., age, gear type, disease severity) used to partition a population into homogeneous subgroups before sampling, which increases precision and ensures subgroup representation [1]. |
| Random Number Generator | A tool (software or hardware-based) used to ensure every element in the sampling frame has an equal chance of selection, forming the basis for unbiased, probability sampling methods [5]. |
| Power Analysis Software | Tools (e.g., R packages, G*Power, online calculators) used before a study to calculate the minimum sample size required to detect an effect, given desired power, effect size, and significance level [3] [4]. |
| Coefficient of Variation (CV) | A key statistical indicator (standard deviation/mean) used to measure the relative variability and thus the precision of sample estimates. Lower CV values indicate higher precision [1]. |

Troubleshooting Guide: Common Plot Design Challenges

FAQ 1: My species richness estimates are not comparable between studies that used different plot sizes. How can I resolve this?

- Problem: Species richness (SR) is highly sensitive to the area sampled, making comparisons across inventories with different plot sizes unreliable [8].

- Solution: Implement a rarefaction curve adjustment method to correct for environmental heterogeneity.

- Protocol:

- Randomly aggregate plots from your inventory data by incrementally combining plot data [8].

- Build a sample-based rarefaction curve representing the relationship between cumulative area and cumulative species richness [8].

- Quantify environmental heterogeneity (EH) introduced during aggregation using climate, topography, and soil data from the aggregated plots [8].

- Statistically correct the rarefaction curve for the introduced EH, creating an adjusted curve that mimics sampling a single, large, environmentally homogeneous area [8]. Models based on the Conway–Maxwell–Poisson distribution can account for underdispersed species richness data [8].

- Expected Outcome: This method produces comparable species richness estimates independent of original plot size, enabling reliable cross-inventory comparisons [8].
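The first two steps of the protocol, random aggregation and the sample-based rarefaction curve, can be sketched as follows. The environmental-heterogeneity correction and the Conway–Maxwell–Poisson model are not implemented here. The plot data structure and function name are hypothetical.

```python
import random
from statistics import mean


def rarefaction_curve(plots, n_perm=200, seed=0):
    """Sample-based rarefaction by random aggregation of plots.

    plots: list of (area_m2, set_of_species) tuples (hypothetical structure).
    Returns [(mean cumulative area, mean cumulative species richness)] for 1..len(plots) plots.
    """
    rng = random.Random(seed)
    k_max = len(plots)
    areas = [[] for _ in range(k_max)]
    richness = [[] for _ in range(k_max)]
    for _ in range(n_perm):
        order = list(plots)
        rng.shuffle(order)                  # one random aggregation sequence
        seen, area = set(), 0.0
        for k, (plot_area, species) in enumerate(order):
            area += plot_area
            seen |= species                 # cumulative species list
            areas[k].append(area)
            richness[k].append(len(seen))
    return [(mean(areas[k]), mean(richness[k])) for k in range(k_max)]


# Hypothetical inventory: four 400 m^2 plots with their species sets
inventory = [(400.0, {"sp1", "sp2", "sp3"}), (400.0, {"sp2", "sp4"}),
             (400.0, {"sp1", "sp5"}), (400.0, {"sp3", "sp4", "sp6"})]
for area, sr in rarefaction_curve(inventory):
    print(f"{area:.0f} m^2: {sr:.2f} species")
```

Averaging over many random orders smooths out the dependence on which plots happen to be combined first. The EH correction would then be applied to this curve.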

FAQ 2: How do I choose the optimal plot size and shape to balance accuracy and time efficiency?

- Problem: The choice of plot size and shape directly impacts the accuracy of estimates and the time required for data collection [9].

- Solution: The optimal design depends on your primary variables of interest and local forest conditions. There is no universal "best" size, but the following table synthesizes findings from various forest types to guide your design.

Table 1: Optimal Plot Size and Shape Findings from Different Forest Types

| Forest Type / Focus | Recommended Optimal Plot Size & Shape | Key Findings and Trade-offs |
|---|---|---|
| Tropical Hill Forest (Bangladesh) [9] | Large Circular Plot (1134 m²) | • Highest accuracy for above-ground biomass carbon (AGBC), stand volume, basal area, and tree density. • Most time-efficient per unit of accuracy. |
| Multipurpose Inventory (North Lapland) [10] | Concentric Plots | • A good compromise between the efficiency of relascope plots for volume/basal area and fixed-radius plots for stem count. • Optimal design is sensitive to measurement times and the relative importance of different target variables. |
| Urban Forest Assessment (New York, U.S.) [11] | 0.04 ha (1/10 acre) Circular Plots | • A balance between data collection time and precision. • Increasing plot size roughly fourfold, from 0.017 ha to 0.067 ha, nearly halved the relative standard error but also nearly doubled the time needed per plot [11]. |
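The trade-off in the New York row can be made explicit with a fixed time budget. Under simple random sampling, the relative standard error of the mean is CV/√n, and n is the budget divided by the time per plot. The design that minimizes CV² × time per plot therefore wins. The sketch below assumes that the "nearly halved" error applies to the between-plot CV. The CVs, times, and budget are hypothetical, and travel time between plots is ignored.

```python
import math


def fixed_budget_rse(cv_pct, minutes_per_plot, budget_minutes):
    """Relative SE (%) of the mean when the whole budget is spent measuring plots."""
    n_plots = budget_minutes // minutes_per_plot
    return cv_pct / math.sqrt(n_plots)


# Hypothetical: the larger plot halves the between-plot CV but doubles the time
designs = {"small (0.017 ha)": (60.0, 20), "large (0.067 ha)": (30.0, 40)}
budget = 2400  # minutes of field time

for name, (cv, minutes) in designs.items():
    print(f"{name}: RSE = {fixed_budget_rse(cv, minutes, budget):.2f}%, "
          f"CV^2 x time = {cv ** 2 * minutes:.0f}")
```

Halving the CV more than compensates for measuring half as many plots. This is because the variance scales with CV² but only with 1/n.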

FAQ 3: How does sample tree selection within a plot affect the accuracy of inventory results?

- Problem: Errors in field plot data, such as those from how sample trees are selected for detailed measurements (e.g., height), are often overlooked and can reduce the accuracy of final inventory predictions [12].

- Solution: Optimize the number and selection method of sample trees.

- Protocol:

- Number of Sample Trees: A higher number of sample trees consistently improves the accuracy of plot-level values like volume and mean height [12].

- Selection Method: For the most accurate plot values, select sample trees with a probability proportional to their basal area (e.g., using a relascope) [12]. This means trees with a larger diameter have a higher chance of being selected, which improves the representation of the stand's structure.

- Calculation Method: Retain field-measured heights for sample trees and use height-diameter models to predict heights for the remaining non-sample trees [12].
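In software, selection with probability proportional to basal area can be mimicked by systematic PPS sampling over the tally list, and the height-diameter step needs some model. The sketch below assumes Näslund's model h = 1.3 + d²/(a + b·d)², a common choice in Nordic inventories that may differ from the models used in [12]. The model is fitted by least squares on its linearised form. Tree data and function names are hypothetical, and note that systematic selection depends on the order of the tally list.

```python
import math
import random


def systematic_pps(weights, k, rng):
    """Systematic sample of k indices with probability proportional to weight.
    A unit whose weight exceeds the sampling interval can be drawn more than once."""
    step = sum(weights) / k
    start = rng.uniform(0, step)
    chosen, cum, i = [], 0.0, 0
    for j in range(k):
        point = start + j * step
        # advance to the unit whose cumulative-weight interval contains `point`
        while i < len(weights) - 1 and cum + weights[i] < point:
            cum += weights[i]
            i += 1
        chosen.append(i)
    return chosen


def fit_naslund(d, h):
    """Fit h = 1.3 + d^2 / (a + b*d)^2 by OLS on d / sqrt(h - 1.3) = a + b*d."""
    y = [di / math.sqrt(hi - 1.3) for di, hi in zip(d, h)]
    d_bar, y_bar = sum(d) / len(d), sum(y) / len(y)
    sxy = sum((di - d_bar) * (yi - y_bar) for di, yi in zip(d, y))
    sxx = sum((di - d_bar) ** 2 for di in d)
    b = sxy / sxx
    return y_bar - b * d_bar, b


def predict_height(d, a, b):
    return 1.3 + (d / (a + b * d)) ** 2


def plot_heights(dbh_cm, measure_height, k=6, seed=3):
    """Field heights for PPS sample trees, model predictions for all other tally trees.
    measure_height(i) is a hypothetical stand-in for the field measurement of tree i."""
    rng = random.Random(seed)
    basal_area = [math.pi * (d / 200.0) ** 2 for d in dbh_cm]   # m^2, DBH in cm
    sample = sorted(set(systematic_pps(basal_area, k, rng)))
    measured = {i: measure_height(i) for i in sample}
    a, b = fit_naslund([dbh_cm[i] for i in sample], [measured[i] for i in sample])
    return [measured[i] if i in measured else predict_height(d, a, b)
            for i, d in enumerate(dbh_cm)]


dbh = [8.0 + 2.0 * i for i in range(20)]                 # hypothetical tally (cm)


def field_height(i):
    return predict_height(dbh[i], 1.2, 0.22)             # hypothetical "true" heights


print([round(h, 1) for h in plot_heights(dbh, field_height)])
```

Because selection is proportional to d², the larger trees, which dominate volume, are measured more often. This matches the rationale given in [12].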

Experimental Protocols for Key Studies

Protocol 1: Methodology for Determining Optimal Plot Size in a Tropical Forest [9]

- Objective: To estimate growing stocks and tree species diversity and identify the optimal plot size and shape based on accuracy and time efficiency.

- Site Description: Conducted in the Hazarikhil Wildlife Sanctuary, a semi-evergreen tropical forest in Bangladesh.

- Complete Enumeration: A full census (100 x 100 m area) was performed to establish baseline values for tree density, basal area, stand volume, and above-ground biomass carbon (AGBC) [9].

- Experimental Design:

- Plot Shapes Tested: Circular, square, and rectangular.

- Plot Sizes Tested: Small (~314 m²), medium (~628 m²), and large (~1134 m²).

- Data Collection: Within each plot, researchers measured tree density, seedling density, diameter at breast height (DBH), and tree height. They also recorded the time taken for each assessment.

- Data Analysis: Calculated basal area, stand volume, AGBC, and species diversity indices (Shannon-Wiener, Jaccard). The coefficient of variation (CV%) was used as the accuracy measure, although, strictly, the CV quantifies precision rather than accuracy. The optimal plot was selected by comparing accuracy and time efficiency across all designs.
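The per-plot quantities in this protocol can be sketched as follows, assuming DBH in centimetres and plot area in square metres. Stand volume and AGBC also require species- or site-specific allometric equations, which are not reproduced here. The example plot and species labels are hypothetical.

```python
import math
from collections import Counter
from statistics import mean, stdev


def basal_area_per_ha(dbh_cm, plot_area_m2):
    """Stand basal area (m^2/ha) from the DBHs (cm) of all trees in one plot."""
    ba_m2 = sum(math.pi * (d / 200.0) ** 2 for d in dbh_cm)
    return ba_m2 * 10_000 / plot_area_m2


def shannon_wiener(species):
    """H' = -sum(p_i * ln p_i) over the species proportions in a list of tree records."""
    counts = Counter(species)
    n = sum(counts.values())
    return -sum((c / n) * math.log(c / n) for c in counts.values())


def jaccard(species_a, species_b):
    """Jaccard similarity |A ∩ B| / |A ∪ B| of two species lists."""
    a, b = set(species_a), set(species_b)
    return len(a & b) / len(a | b)


def cv_percent(values):
    """CV% of a variable across plots (lower = more precise)."""
    return 100 * stdev(values) / mean(values)


# Hypothetical 10 m radius plot (about 314 m^2)
plot_dbh = [12.5, 18.0, 22.3, 35.1, 41.0]
plot_species = ["sp_A", "sp_A", "sp_B", "sp_C", "sp_A"]
print(round(basal_area_per_ha(plot_dbh, math.pi * 10 ** 2), 2))
print(round(shannon_wiener(plot_species), 3))
print(jaccard(plot_species, ["sp_C", "sp_D"]))
```

Applying `cv_percent` to the per-plot estimates of each design gives the CV% comparison used to rank plot sizes and shapes.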

Protocol 2: Simulation Workflow for Multipurpose Forest Inventory Optimization [10]

- Objective: To explore factors affecting optimal plot design (size, type, sub-sample tree selection) in a multipurpose inventory.

- Data Foundation: The study used accurately measured and mapped 50 m x 50 m test areas from North Lapland, including planar coordinates, DBH, height, and upper diameter for all trees [10].

- Simulation Procedure:

- Plot Simulation: Different plot types (fixed-radius, concentric, relascope) with varying parameters (radii, relascope factors) were simulated over the test areas.

- Cost & Loss Modeling: A cost-plus-loss approach was used. "Cost" included time for plot establishment and tree measurements. "Loss" represented the economic impact of poor estimates due to uncertainty.

- Optimization Criterion: The optimal plot design was identified by minimizing the total cost-plus-loss or by minimizing the weighted standard error for a fixed budget, considering multiple target variables like volume, basal area, and stems per hectare [10].
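A cost-plus-loss comparison can be sketched once costs and losses are expressed on one scale. Purely for illustration, the version below assumes that the loss is proportional to the weighted sum of squared relative standard errors of the target variables, so it falls as 1/n. It also assumes that cost is proportional to field time. The per-plot CVs, times, weights, and loss coefficient λ are all made-up inputs, not values from [10]. For each design, the best number of plots is found by brute force. The result agrees with the closed form n* = √(λV / (c·t)), where V is the weighted relative variance per plot, c the cost per minute, and t the minutes per plot.

```python
import math


def weighted_rel_var(cvs, weights):
    """Weighted sum of squared per-plot CVs (as fractions) over the target variables."""
    return sum(weights[v] * (cvs[v] / 100.0) ** 2 for v in cvs)


def cost_plus_loss(n, minutes_per_plot, cvs, weights, cost_per_min, loss_coef):
    cost = n * minutes_per_plot * cost_per_min
    # Assumed quadratic loss: proportional to the weighted relative variance of the means
    loss = loss_coef * weighted_rel_var(cvs, weights) / n
    return cost + loss


def optimise(designs, weights, cost_per_min=1.0, loss_coef=1e6, n_max=2000):
    """Return {design: (best n, minimum cost-plus-loss)} by brute-force search over n."""
    out = {}
    for name, (minutes, cvs) in designs.items():
        total = lambda n: cost_plus_loss(n, minutes, cvs, weights, cost_per_min, loss_coef)
        best_n = min(range(1, n_max + 1), key=total)
        out[name] = (best_n, total(best_n))
    return out


# Hypothetical per-plot CVs (%) and measurement times (minutes)
designs = {
    "fixed-radius": (25, {"volume": 55, "basal_area": 50, "stems": 35}),
    "relascope":    (15, {"volume": 45, "basal_area": 40, "stems": 70}),
    "concentric":   (20, {"volume": 48, "basal_area": 44, "stems": 42}),
}
weights = {"volume": 0.5, "basal_area": 0.2, "stems": 0.3}   # relative importance

for name, (n, value) in optimise(designs, weights).items():
    print(f"{name}: n = {n}, cost-plus-loss = {value:.0f}")
```

With these made-up inputs the concentric design has the lowest total. However, the ranking depends entirely on the assumed times, CVs, and weights, which is the sensitivity reported in [10].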

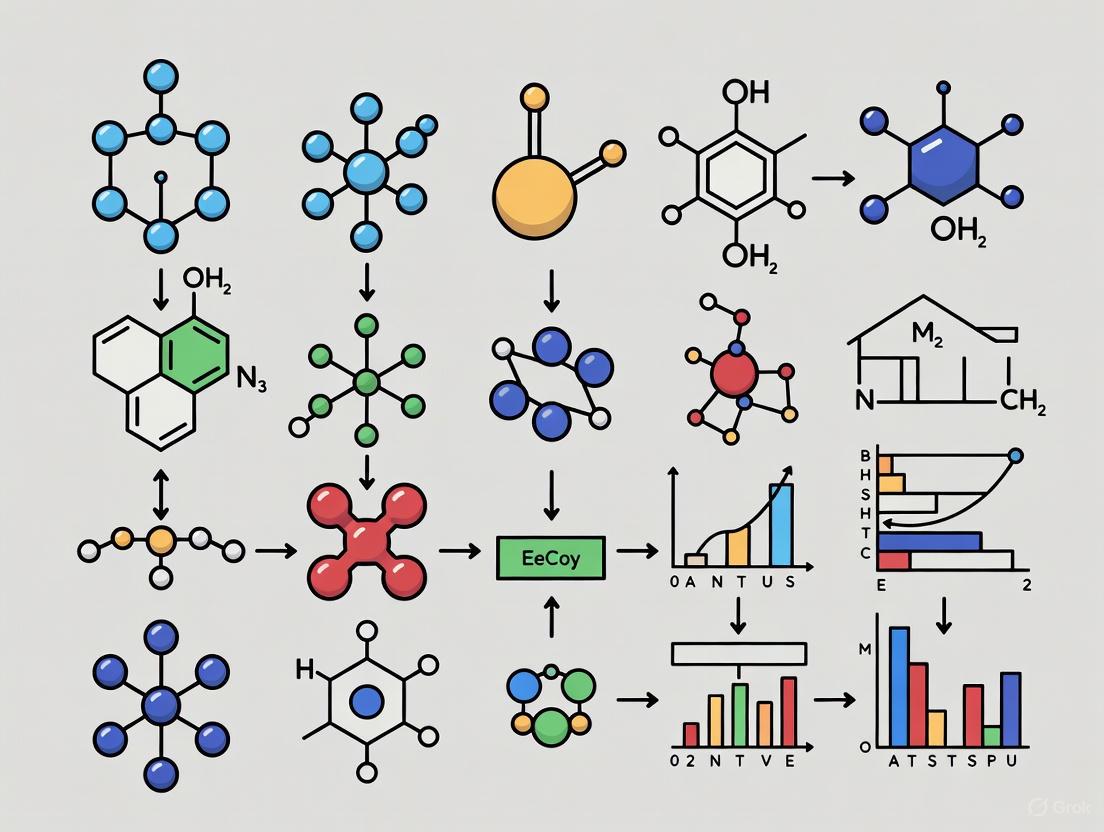

Visual Workflow: Decision Framework for Plot Design

The following diagram illustrates the logical process for selecting a plot design based on your inventory goals and constraints.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Tools for Forest Inventory Fieldwork

| Item / Tool | Function in Forest Inventory |
|---|---|
| Relascope | An optical tool used for rapid estimation of stand basal area and for selecting sample trees with a probability proportional to their basal area [12]. |
| Electronic Distance Measurer | Precisely measures the distance from plot center to trees to determine if they are within a fixed-radius plot boundary [11]. |
| Hypsometer | Measures tree height. Essential for gathering data from sample trees to build height-diameter models [12]. |
| Diameter Tape (D-tape) | Measures a tree's diameter at breast height (DBH). A fundamental measurement for all tally trees in a plot [12]. |
| Tachymeter | A precision surveying instrument that measures horizontal and vertical angles and distances. Used for creating highly accurate, fully mapped reference plots for simulation studies [10]. |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental premise of the 3+3 design in dose-finding studies? The 3+3 design is a traditional rule-based algorithm for Phase I clinical trials in oncology. Its primary goal is to identify the Maximum Tolerated Dose (MTD) of a new drug by exposing small cohorts of patients (typically three) to escalating dose levels based on the observation of Dose-Limiting Toxicities (DLTs). The decision to escalate, repeat, or de-escalate a dose is governed by a fixed set of rules depending on how many patients in a cohort experience a DLT [13].

Q2: Our trial using a 3+3 design is stagnating at a dose level due to inconsistent DLT observations. How can we troubleshoot this? A common issue is the inherent discreteness of the 3+3 rules, which can lead to prolonged enrollment at intermediate dose levels without clear escalation or de-escalation. Troubleshooting steps include:

- Review Protocol Definitions: Ensure the criteria for Dose-Limiting Toxicities (DLTs) are precisely defined and consistently applied across all patients and trial sites. Ambiguity in what constitutes a DLT can cause inconsistent data.

- Sample Size Adequacy: The 3+3 design uses very small sample sizes at each dose level, leading to high variability in MTD estimation. Confirm that the trial's overall sample size is still reasonable given the delays.

- Pre-plan Interim Analyses: Pre-specify rules for handling scenarios where the trial seems to "stall." Consult with the study's statistician to review the accumulated data and determine if a protocol amendment is warranted.

Q3: What are the primary limitations of the 3+3 design that modern methods address? The 3+3 design has several key limitations that have prompted the development of model-based approaches [13]:

- Poor Target Accuracy: It has a low probability of correctly selecting the true MTD, often converging to a dose that is lower than the actual MTD.

- Sample Size Inefficiency: It requires a relatively large number of patients treated at sub-therapeutic doses and provides imprecise estimates of the toxicity curve.

- Lack of Flexibility: It cannot easily incorporate finer gradations of toxicity severity, patient characteristics, or combinations with other drugs.

- Slow Dose Escalation: The conservative nature of the design can lead to slow escalation, delaying the trial's completion.

Q4: How do model-assisted designs like the Bayesian Optimal Interval (BOIN) design improve upon the 3+3 paradigm? Model-assisted designs represent a significant reform, bridging the simplicity of rule-based designs with the efficiency of model-based designs. The BOIN design, for instance, uses pre-calculated decision boundaries for dose escalation and de-escalation, making it as easy to implement as the 3+3 design. However, its boundaries are derived from a statistical model, which gives it superior performance: higher accuracy in identifying the MTD, greater safety for patients, and reduced sample size requirements compared to the 3+3 design.
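The BOIN boundaries themselves are a closed-form function of the target DLT rate φ. The sketch below assumes the default settings φ₁ = 0.6φ and φ₂ = 1.4φ commonly recommended for BOIN. For φ = 0.30 it gives λe ≈ 0.236 and λd ≈ 0.359. The sketch omits BOIN's dose-elimination rule and its isotonic-regression MTD selection, so it is a teaching aid rather than a trial implementation. Dedicated software, such as the BOIN package for R, should be used for real studies.

```python
import math


def boin_boundaries(target, phi1=None, phi2=None):
    """Escalation (lambda_e) and de-escalation (lambda_d) boundaries of the BOIN design."""
    phi1 = 0.6 * target if phi1 is None else phi1      # highest "too low" toxicity rate
    phi2 = 1.4 * target if phi2 is None else phi2      # lowest "too high" toxicity rate
    lam_e = math.log((1 - phi1) / (1 - target)) / math.log(
        target * (1 - phi1) / (phi1 * (1 - target)))
    lam_d = math.log((1 - target) / (1 - phi2)) / math.log(
        phi2 * (1 - target) / (target * (1 - phi2)))
    return lam_e, lam_d


def boin_decision(n_dlt, n_treated, lam_e, lam_d):
    """Compare the observed DLT rate at the current dose with the two boundaries."""
    rate = n_dlt / n_treated
    if rate <= lam_e:
        return "escalate"
    if rate >= lam_d:
        return "de-escalate"
    return "stay"


lam_e, lam_d = boin_boundaries(0.30)
print(round(lam_e, 3), round(lam_d, 3))
for dlt, n in [(0, 3), (1, 3), (2, 3), (1, 6), (2, 6)]:
    print(f"{dlt}/{n}:", boin_decision(dlt, n, lam_e, lam_d))
```

Because the boundaries are fixed before the trial, they can be printed as a lookup table for clinicians. This is what makes BOIN as easy to run as the 3+3 design.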

Q5: What key reagents and materials are essential for implementing a robust dose-finding study? The following table details essential components for a modern dose-finding trial, moving beyond the basic 3+3 framework.

Table 1: Research Reagent Solutions for Dose-Finding Studies

| Item | Function in Dose-Finding |
|---|---|
| Biomarker Assay Kits | Used to identify and validate predictive biomarkers of response or toxicity, enabling enrichment of patient populations or pharmacodynamic endpoint analysis. |
| Pharmacokinetic (PK) Assay Reagents | Critical for measuring drug exposure (e.g., AUC, Cmax) in patients. PK data is essential for understanding the relationship between the administered dose and the circulating drug levels. |
| Validated Toxicity Grading Scales | Standardized tools (e.g., NCI CTCAE) are mandatory for the consistent, objective, and reliable assessment of Dose-Limiting Toxicities (DLTs) across all trial sites. |
| Statistical Software Packages | Specialized software (e.g., R, SAS with clinical trial modules) is required for the complex simulations and statistical modeling needed for designs like CRM or BOIN. |

Troubleshooting Common Experimental & Methodological Issues

Problem: Inaccurate estimation of the true Maximum Tolerated Dose (MTD).

- Potential Cause: The 3+3 design's algorithmic nature and small cohort sizes lead to high statistical variability and a well-documented tendency to select doses below the true MTD.

- Solution Protocol:

- Pre-trial Simulation: Before initiating the trial, conduct extensive computer simulations to evaluate the operating characteristics (e.g., probability of correct MTD selection, average sample size) of the 3+3 design versus model-based designs (like the Continual Reassessment Method - CRM) for your specific toxicity scenario.

- Adopt a Model-Based Design: If resources and expertise allow, transition to a model-based design like the CRM or a model-assisted design like the BOIN design. These methods use all accumulated data from the trial to make more informed dose-escalation decisions.

- Implement a Sensitivity Analysis: After trial completion, perform a sensitivity analysis on the final MTD estimate using alternative statistical models to assess the robustness of the conclusion.
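Pre-trial simulation of the 3+3 rules is short enough to sketch directly. This version encodes the standard 3/6 rules: escalate after 0/3, expand to six after 1/3 and escalate only if 1/6, and otherwise stop and declare the next-lower dose the MTD. It omits the back-fill to six patients at the declared MTD that many protocols require. The toxicity scenario, the "closest to target" definition of the true MTD, and the function names are assumptions for illustration.

```python
import random
from collections import Counter


def run_3plus3(true_tox, rng):
    """Simulate one 3+3 trial over doses with the given true DLT probabilities.
    Returns (index of the declared MTD, patients treated); -1 means no dose was tolerable."""
    treated = 0
    for dose, p in enumerate(true_tox):
        dlts = sum(rng.random() < p for _ in range(3))    # first cohort of 3
        treated += 3
        if dlts == 1:                                     # 1/3: treat 3 more at this dose
            dlts += sum(rng.random() < p for _ in range(3))
            treated += 3
        if dlts >= 2:                                     # >=2/3 or >=2/6: stop escalation
            return dose - 1, treated
    return len(true_tox) - 1, treated                     # highest dose tolerated


def operating_characteristics(true_tox, target=0.30, n_sim=5000, seed=11):
    rng = random.Random(seed)
    # Assumed definition: the true MTD is the dose whose DLT rate is closest to the target
    true_mtd = min(range(len(true_tox)), key=lambda d: abs(true_tox[d] - target))
    picks, total_treated = Counter(), 0
    for _ in range(n_sim):
        mtd, treated = run_3plus3(true_tox, rng)
        picks[mtd] += 1
        total_treated += treated
    return {"p_correct": picks[true_mtd] / n_sim,
            "mean_n": total_treated / n_sim,
            "selection": {d: picks[d] / n_sim for d in sorted(picks)}}


print(operating_characteristics([0.05, 0.10, 0.20, 0.30, 0.45]))   # hypothetical scenario
```

Running a CRM or BOIN implementation over the same scenarios gives the side-by-side comparison of correct-selection probability and average sample size described in the first step.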

Problem: The trial is enrolling too slowly due to stringent eligibility criteria and the sequential nature of the 3+3 design.

- Potential Cause: The 3+3 design requires the full DLT observation period for one cohort to complete before the next cohort can be enrolled and dosed, creating inherent delays.

- Solution Protocol:

- Accelerated Titration: Implement an accelerated titration design for the initial dose levels, where single patients are dosed until a pre-specified toxicity is observed, before switching to the standard 3+3 cohort structure.

- Multi-Center Collaboration: Expand the trial to multiple research centers to accelerate patient recruitment and parallelize the enrollment of different cohorts.

- Continuous Reassessment: Utilize a model-based design that allows for more flexible and continuous enrollment, as decisions are based on a model updated with each new patient's data rather than fixed cohort boundaries.

The following diagram illustrates the logical workflow and decision points of the classic 3+3 design, highlighting where delays or stagnation can occur.

3+3 Dose Escalation Decision Logic

Problem: Inconsistent classification of Dose-Limiting Toxicities (DLTs) across investigative sites.

- Potential Cause: Lack of rigorous training and standardized procedures for identifying and grading adverse events according to protocols like the NCI's Common Terminology Criteria for Adverse Events (CTCAE).

- Solution Protocol:

- Centralized Training: Implement mandatory, standardized training for all investigators and site staff on DLT definitions and CTCAE grading before the trial begins.

- Blinded Adjudication Committee: Establish an independent committee of experts to blindly review and adjudicate all potential DLT events to ensure consistency and objectivity.

- Ongoing Quality Checks: Conduct periodic audits of case report forms and source documents to verify the accuracy and consistency of toxicity reporting.

Visualizing the Shift in Clinical Trial Paradigms

The evolution from the 3+3 design to more advanced methods represents a significant paradigm shift in oncology drug development. The diagram below contrasts the characteristics of these different eras.

Oncology Trial Design Paradigm Shift

Frequently Asked Questions

What is the relationship between sample size and statistical power? Statistical power is the probability that your test will detect an effect if one truly exists. A larger sample size increases power by reducing the margin of error and making your results more robust to outliers, thereby lowering the chance of a Type II error (failing to detect a real effect) [14] [15]. However, the relationship is one of diminishing returns; each additional subject provides less and less increase in power [16].

How do I estimate the sample size needed for my study? You can determine the necessary sample size by defining key parameters [14]:

- Significance (α): Typically set at 0.05.

- Power (1-κ): Typically set at 0.80.

- Minimum Detectable Effect (MDE): The smallest effect size you want to be able to detect.

- Variance of the outcome variable (σ²): Estimated from prior literature or pilot studies.

With these parameters, you can use standard power calculation formulas or software to compute the required sample size [14].

My budget is limited. How can I maximize power without increasing sample size? You can maximize power for a fixed sample size and budget by [14]:

- Improving measurement precision to reduce outcome variance.

- Optimizing treatment allocation. An equal split between treatment and control typically maximizes power, though a different allocation may be better if costs differ significantly between groups or if the treatment affects the outcome variance [14].

- Using more efficient experimental designs, for example adjusting for covariates or stratifying, to account for variation [10].
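The cost-driven case has a simple closed form. Minimizing the variance of the difference in means, σt²/nt + σc²/nc, subject to a budget ct·nt + cc·nc = B gives nt/nc = (σt/σc)·√(cc/ct). This reduces to an equal split when costs and variances are equal. The sketch and its numbers are illustrative and are not taken from [14].

```python
import math


def optimal_allocation(budget, cost_t, cost_c, sd_t=1.0, sd_c=1.0):
    """Split a fixed budget to minimise Var(mean_t - mean_c) = sd_t^2/n_t + sd_c^2/n_c
    subject to cost_t*n_t + cost_c*n_c = budget (continuous n)."""
    ratio = (sd_t / sd_c) * math.sqrt(cost_c / cost_t)   # optimal n_t / n_c
    n_c = budget / (cost_t * ratio + cost_c)
    return ratio * n_c, n_c


def var_diff(n_t, n_c, sd_t=1.0, sd_c=1.0):
    return sd_t ** 2 / n_t + sd_c ** 2 / n_c


# Hypothetical costs: a treated unit costs four times as much as a control unit
n_t, n_c = optimal_allocation(10_000, cost_t=400, cost_c=100)
equal_n = 10_000 / (400 + 100)                      # same budget, equal group sizes
print(round(n_t, 1), round(n_c, 1), round(var_diff(n_t, n_c), 3),
      round(var_diff(equal_n, equal_n), 3))
```

Here the cost-optimal split (about 16.7 treated and 33.3 controls) gives a variance of 0.09, compared with 0.10 for an equal split on the same budget.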

What logistical challenges most commonly impact feasibility, and how can I mitigate them? Common challenges include patient or participant non-compliance, staff workload, and technical issues [17]. Mitigation strategies include [17]:

- Simplifying procedures for participants and staff.

- Integrating the intervention seamlessly into the existing clinical or operational routine.

- Providing comprehensive training and ensuring strong IT support.

- Securing buy-in from all staff members, who must view the new procedure as a standard part of their workflow.

How does plot size and number affect data collection in field studies like forest inventories? In field ecology and forestry, the choice of plot size and number involves a direct trade-off between statistical accuracy and resource expenditure [9] [10].

- Larger plots typically capture more variation within a plot, leading to lower between-plot variation and more precise population estimates. However, they take more time to measure, reducing the number of plots you can establish with a fixed budget [10].

- Smaller plots are quicker to measure, allowing for a larger sample size and better geographic coverage, but they may miss rare elements or be more sensitive to local clustering, potentially increasing overall variance [9].

What are the ethical considerations in determining sample size? Ethical research practice requires that a study is both scientifically sound and respectful of participants' well-being [15].

- An overly large sample size exposes more participants than necessary to potential risks or inconvenience.

- An underpowered study with a sample size that is too small is likely to yield inconclusive results, which wastes resources and renders the participants' contribution meaningless [15]. The goal is to find the smallest sample size that has a high probability of yielding a reliable answer.

The following tables summarize key quantitative relationships and findings from research on optimizing study design.

Table 1: How Factors Influence Statistical Power and Minimum Detectable Effect (MDE) [14]

| Component | Relationship to Power | Relationship to MDE |
|---|---|---|
| Sample Size (N) Increase | Increases power | Decreases the MDE |
| Outcome Variance (σ²) Decrease | Increases power | Decreases the MDE |
| True Effect Size Increase | Increases power | n/a |
| Equal Treatment Allocation (P) | Increases power | Decreases the MDE |
| Intra-cluster Correlation (ICC) Increase | Decreases power | Increases the MDE |
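The rows of Table 1 can be checked against the commonly used MDE expression (normal approximation): MDE = (z₁₋α/₂ + z_power) · √(1/(P(1−P))) · √(σ²/N) · √(1 + (m − 1)ρ). Here P is the treated fraction, m the number of units per cluster, and ρ the ICC. A minimal sketch with illustrative inputs:

```python
import math
from statistics import NormalDist


def mde(n_total, sigma, p_treat=0.5, alpha=0.05, power=0.80, m=1, icc=0.0):
    """Minimum detectable effect, normal approximation.
    m = units per cluster, icc = intra-cluster correlation (m = 1: individual randomisation)."""
    z = NormalDist()
    multiplier = z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)
    design_effect = 1 + (m - 1) * icc
    return (multiplier * math.sqrt(1 / (p_treat * (1 - p_treat)))
            * math.sqrt(sigma ** 2 / n_total) * math.sqrt(design_effect))


base = mde(400, 1.0)
print(round(base, 3))
print(mde(800, 1.0) < base)                  # larger N -> smaller MDE
print(mde(400, 0.5) < base)                  # smaller variance -> smaller MDE
print(mde(400, 1.0, p_treat=0.2) > base)     # unequal allocation -> larger MDE
print(mde(400, 1.0, m=20, icc=0.05) > base)  # clustering (ICC > 0) -> larger MDE
```

The true effect size does not appear in the formula, which is why its MDE entry is n/a. Power rises when the true effect exceeds the MDE.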

Table 2: Comparison of Plot Designs in a Forest Inventory Study (North Lapland) [10]

| Plot Type | Relative Efficiency for Volume/Basal Area | Relative Efficiency for Stems per Hectare | Key Characteristics & Compromise |
|---|---|---|---|
| Fixed-Radius Plot | Less Efficient | Most Efficient | Simple layout; efficient for counting trees. |
| Relascope Plot | Most Efficient | Less Efficient | Very time-efficient for measuring basal area and volume. |
| Concentric Plot | Efficient | Efficient | A good compromise; allows for different measurement intensities in different radii. |

Table 3: Impact of Plot Size on Estimation Accuracy in a Tropical Forest Inventory [9]

| Plot Size (Shape) | Above-Ground Biomass Carbon (AGBC) vs. True Value | Coefficient of Variation (CV%) | Key Finding |
|---|---|---|---|
| Large Circular (1134 m²) | Closest to true value | Lower | Recommended as most efficient for accuracy and time. |
| Small Plots | Farther from true value | Higher | Less accurate but faster to measure. |
| Large Square Plots | - | - | Less time-efficient due to longer perimeter and more borderline trees. |

Detailed Experimental Protocols

Protocol 1: Optimizing Sampling Plot Design for a Forest Inventory

This protocol is designed to determine the most efficient plot design for estimating forest variables like biomass and tree density within a fixed budget [9] [10].

- Define Objectives and Variables: Identify the key variables of interest (e.g., stem density, basal area, above-ground biomass carbon, species diversity) and assign priorities [10].

- Select Test Area: Identify a large, fully enumerated area (e.g., 1 hectare) that represents the forest population of interest. This "gold standard" data will serve as a benchmark [9].

- Choose Plot Designs and Sizes: Select the plot types and sizes to be tested. Common designs include [9] [10]:

- Fixed-radius circular plots of varying radii.

- Concentric circular plots (e.g., a small radius for high tree density and a larger radius for mature trees).

- Relascope plots (angle-count sampling) with varying factors.

- Simulate Sampling: Using software and the mapped tree data from the test area, simulate the process of placing plots according to a randomized design. For each simulated plot, record [10]:

- The estimated value for each variable of interest.

- The time required to "measure" the plot (based on established time models for layout, tallying, and sub-sample tree measurements).

- Analyze Efficiency: For each plot design and size, calculate [9] [10]:

- Bias: The difference between the mean estimated value and the true value from the fully enumerated area.

- Precision: The coefficient of variation (CV%) of the estimates.

- Time Cost: The average time required per plot.

- Select Optimal Design: Use a cost-plus-loss approach or minimize the standard error for a fixed total time budget. The optimal design balances the highest accuracy (low bias and high precision) with the lowest time cost for your priority variables [10].
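The simulation and efficiency steps above can be sketched for one variable (basal area per hectare) and fixed-radius circular plots. Everything in the sketch is hypothetical:

- The stand is randomly generated rather than field-mapped.
- The time model (5 min layout plus 0.5 min per tallied tree) is an illustrative assumption.
- Distances wrap around the stand edges, which avoids the need for an edge-correction method.

The sketch reports bias, CV%, mean time per plot, and the standard error achievable within a fixed time budget.

```python
import math
import random
import statistics

SIDE_M = 100.0  # hypothetical fully mapped 1-ha test stand (100 m x 100 m)

def make_stand(n_trees=600, seed=1):
    """Hypothetical stand map: (x, y, basal_area_m2) per tree, random positions."""
    rng = random.Random(seed)
    return [(rng.uniform(0, SIDE_M), rng.uniform(0, SIDE_M), rng.lognormvariate(-3.5, 0.6))
            for _ in range(n_trees)]

def plot_estimate(stand, cx, cy, radius):
    """Basal area (m2/ha) from one circular plot, plus the number of trees tallied.

    Distances wrap around the stand edges (torus) so plots never fall off the map.
    """
    tallied, ba = 0, 0.0
    for x, y, b in stand:
        dx = abs(x - cx)
        dx = min(dx, SIDE_M - dx)
        dy = abs(y - cy)
        dy = min(dy, SIDE_M - dy)
        if dx * dx + dy * dy <= radius * radius:
            tallied += 1
            ba += b
    return ba * 10_000 / (math.pi * radius * radius), tallied

def evaluate_design(stand, radius, sims=500, budget_min=480.0, seed=2,
                    layout_min=5.0, per_tree_min=0.5):
    """Bias %, CV %, mean minutes per plot, and SE for a fixed time budget.

    The time model (layout_min + per_tree_min * trees) is an illustrative assumption.
    """
    rng = random.Random(seed)
    true_ba = sum(b for _, _, b in stand) * 10_000 / SIDE_M ** 2
    ests, times = [], []
    for _ in range(sims):
        est, k = plot_estimate(stand, rng.uniform(0, SIDE_M), rng.uniform(0, SIDE_M), radius)
        ests.append(est)
        times.append(layout_min + per_tree_min * k)
    mean_est, sd = statistics.fmean(ests), statistics.stdev(ests)
    min_per_plot = statistics.fmean(times)
    plots_in_budget = max(1, int(budget_min // min_per_plot))
    return {
        "radius_m": radius,
        "bias_pct": 100 * (mean_est - true_ba) / true_ba,
        "cv_pct": 100 * sd / mean_est,
        "min_per_plot": min_per_plot,
        "plots_in_budget": plots_in_budget,
        "se_for_budget": sd / math.sqrt(plots_in_budget),
    }

stand = make_stand()
results = [evaluate_design(stand, r) for r in (5.64, 7.98, 11.28, 19.0)]
best = min(results, key=lambda res: res["se_for_budget"])
print(best)
```

Ranking designs by `se_for_budget` mirrors the "minimize the standard error for a fixed total time budget" criterion. A 19 m radius gives roughly the 1134 m² plot in Table 3. A cost-plus-loss ranking would replace that key with a monetary objective.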

Protocol 2: Implementing Routine Computerized Data Collection in a Clinical Setting

This protocol outlines steps for integrating routine computerized Health-Related Quality of Life (HRQoL) measurements into a busy outpatient clinic, based on a feasibility study [17].

- Develop a Tailor-Made Computer Program: Work with an IT professional to develop a user-friendly program. Key specifications include [17]:

- Instant scoring and graphical output of data for clinicians.

- Instant availability of data on the clinic's system.

- Secure data transmission and guaranteed patient privacy.

- Questionnaires available in multiple languages.

- Pilot Testing: Run an intensive pilot phase (e.g., 3 months) to identify and resolve technical and logistical problems. Actively solicit feedback from patients on usability [17].

- Staff Training and Engagement: Secure buy-in from all staff, including physicians and receptionists.

- Physicians: Train them on how to interpret and use the HRQoL data in consultations.

- Reception Employees: Train them to actively direct participating patients to the computers. Their role is critical for high patient compliance [17].

- Integration into Clinical Routine: Make the system a standard part of the patient visit pathway. Use eye-catching posters in waiting rooms as reminders. The goal is for the process to become an automatic part of the clinical routine [17].

- Monitor and Evaluate Compliance: Continuously track the percentage of occasions where patients complete the questionnaires. Investigate reasons for non-compliance and adjust the procedure accordingly [17].

Workflow and Relationship Diagrams

The following diagram illustrates the logical process of optimizing your study design by balancing the core trade-offs.

Diagram 1: Study Design Optimization Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Tools for Sampling and Feasibility Research

| Item / Solution | Function in Research |
|---|---|
| Power Analysis Software (e.g., G*Power, SPSS SamplePower) | Calculates necessary sample size or achievable power based on statistical goals (α, power, MDE, variance), providing a quantitative foundation for study design [14] [16]. |
| Pilot Study Data | Provides crucial estimates for outcome variance, intra-cluster correlation (ICC), and feasibility of procedures, making main study sample size calculations more accurate and realistic [14]. |
| Computerized Data Collection System | A custom-built system for instant data capture, scoring, and reporting. Essential for integrating patient-reported outcomes (e.g., HRQoL) into clinical workflow without disrupting routine [17]. |
| Time Consumption Models | Mathematical models that predict the time required for various field tasks (e.g., plot layout, tree measurement). Critical for optimizing the trade-off between statistical precision and operational cost in field surveys [10]. |
| Cost-Plus-Loss (CPL) Framework | An analytical approach that balances the monetary cost of data collection against the projected financial loss due to estimation error. Helps justify the budget for a specific sample size or plot design [10]. |
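A cost-plus-loss comparison can be sketched as total cost = survey cost + expected loss from estimation error. The sketch makes these illustrative assumptions:

- The loss is proportional to the absolute error of the mean.
- That error is normally distributed with standard error CV·mean/√n, so the expected absolute error is SE·√(2/π).
- The cost figures and loss rate are hypothetical.

```python
import math

def cost_plus_loss(n, cv_pct, mean_value, cost_fixed, cost_per_plot, loss_per_unit_error):
    """Survey cost plus expected loss for n plots (illustrative loss model)."""
    se = (cv_pct / 100) * mean_value / math.sqrt(n)
    expected_abs_error = se * math.sqrt(2 / math.pi)  # E|error| for a normal error with this SE
    return cost_fixed + cost_per_plot * n + loss_per_unit_error * expected_abs_error

def optimal_plots(max_n=2000, **kw):
    """Number of plots (1..max_n) that minimizes cost plus expected loss."""
    return min(range(1, max_n + 1), key=lambda n: cost_plus_loss(n, **kw))

# Hypothetical inputs: CV 40%, mean volume 200 m3/ha, 50 currency units per plot,
# and a loss of 20,000 units per m3/ha of estimation error.
inputs = dict(cv_pct=40, mean_value=200, cost_fixed=2000, cost_per_plot=50,
              loss_per_unit_error=20_000)
n_best = optimal_plots(**inputs)
print(n_best, round(cost_plus_loss(n_best, **inputs)))
```

The expected loss falls only as 1/√n while cost grows linearly, so the total has a single minimum. It lies near n* = (K / 2c)^(2/3), where c is the per-plot cost and K = loss rate × CV × mean × √(2/π).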

Frequently Asked Questions (FAQs)

Q1: During our dose-ranging studies for a new oncology drug, we observed severe toxicity at a specific dose level. How do we formally determine if this is the Maximum Tolerated Dose (MTD)?

A1: The MTD is formally determined through a structured clinical trial design, most commonly a 3+3 design. If you observe a Dose-Limiting Toxicity (DLT) in your cohort, follow the protocol's pre-specified escalation rules rather than judging the dose level in isolation. Under the 3+3 design, the MTD is the highest dose at which no more than one of six patients experiences a DLT during the first cycle, provided that the dose immediately above it causes DLTs in at least two patients (of three or six).

Q2: We are comparing two different radiation therapy fractionation schedules. How can we calculate the Biologically Effective Dose (BED) to equate their biological impact?

A2: The BED allows for this direct comparison. You will need the total physical dose (D), the dose per fraction (d), and the alpha/beta ratio (α/β) for the relevant tissue (e.g., typically 10 for tumor control, 3 for late-responding tissues). Use the standard Linear-Quadratic model formula: BED = D * [1 + d / (α/β)]. Calculate the BED for both schedules using the appropriate α/β values to compare their biological effectiveness.
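The comparison in A2 can be scripted so that both schedules are evaluated against the same α/β values. The second schedule (55 Gy in 20 fractions of 2.75 Gy) is a hypothetical comparator, chosen only to illustrate the calculation.

```python
def bed(total_dose_gy, dose_per_fraction_gy, alpha_beta_gy):
    """Linear-quadratic BED = D * [1 + d / (alpha/beta)], in Gy."""
    return total_dose_gy * (1 + dose_per_fraction_gy / alpha_beta_gy)

schedules = {
    "60 Gy in 30 x 2 Gy": (60.0, 2.0),
    "55 Gy in 20 x 2.75 Gy": (55.0, 2.75),  # hypothetical comparator schedule
}
for name, (D, d) in schedules.items():
    # alpha/beta = 10 Gy (tumor control) and 3 Gy (late-responding tissue)
    print(f"{name}: BED10 = {bed(D, d, 10):.1f} Gy, BED3 = {bed(D, d, 3):.1f} Gy")
```

With these inputs the two schedules give similar tumor BEDs (72.0 vs. about 70.1 Gy₁₀). The hypofractionated schedule, however, gives a higher late-tissue BED (100.0 vs. about 105.4 Gy₃). This is why each tissue needs its own appropriate α/β value.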

Q3: In our assay validation for measuring drug concentration, we calculated a CV% of 25%. Is this acceptable for bioanalytical method validation?

A3: A CV% of 25% is generally considered high and may not be acceptable for a validated bioanalytical method. According to FDA and EMA guidelines, the precision (CV%) for assay validation should typically be within 15% for the majority of measurements and within 20% at the Lower Limit of Quantification (LLOQ). You should investigate sources of variability, such as pipetting error, instrument instability, or sample processing inconsistency, and work to optimize your assay to achieve a lower CV%.

Q4: How does the concept of Coefficient of Variation (CV%) relate to optimizing the number of sampling plots in ecological or pharmacological research?

A4: The CV% is critical for statistical power analysis when determining the required sample size (or number of sampling plots). A higher CV% indicates greater variability in your data. The standard error of a mean scales with CV/√n, so the required sample size grows roughly with the square of the CV%. Doubling the CV% therefore roughly quadruples the number of plots needed to reach a given precision, or to detect a given effect size with a given statistical power (e.g., 80%). Estimating the CV% from a pilot study is thus a fundamental step in designing an efficient and powerful experiment.
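Two textbook normal-approximation formulas make the CV² scaling in A4 concrete:

- The number of plots needed to estimate a mean within ±E% at a given confidence: n = (z·CV/E)².
- The per-group n needed to detect a relative difference δ% between two means: n = 2·[(z₁₋α/₂ + z₁₋β)·CV/δ]².

This is only a sketch. For small samples the z-value is usually replaced by a Student t-value and the calculation iterated, and a finite-population correction may apply.

```python
import math
from statistics import NormalDist

def plots_for_precision(cv_pct, allowable_error_pct, confidence=0.95):
    """Plots needed so the CI half-width is within +/-E% of the mean: n = (z * CV / E)^2."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return math.ceil((z * cv_pct / allowable_error_pct) ** 2)

def n_per_group_for_difference(cv_pct, difference_pct, alpha=0.05, power=0.80):
    """Per-group n to detect a relative difference between two means (equal CVs assumed)."""
    nd = NormalDist()
    z_sum = nd.inv_cdf(1 - alpha / 2) + nd.inv_cdf(power)
    return math.ceil(2 * (z_sum * cv_pct / difference_pct) ** 2)

for cv in (20, 40):
    print(f"CV {cv}%: {plots_for_precision(cv, 10)} plots for +/-10%, "
          f"{n_per_group_for_difference(cv, 20)} per group for a 20% difference")
```

For example, a pilot CV of 40% with a ±10% allowable error at 95% confidence gives 62 plots. Halving the CV to 20% reduces that to 16.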

Troubleshooting Guides

Problem: Inconsistent CV% values across experimental replicates.

- Potential Cause 1: Technical Pipetting Error.

- Solution: Calibrate pipettes regularly. Use reverse pipetting for viscous reagents. Train all personnel on proper pipetting technique.

- Potential Cause 2: Reagent Instability.

- Solution: Prepare fresh reagent aliquots. Avoid multiple freeze-thaw cycles. Store reagents according to the manufacturer's specifications.

- Potential Cause 3: Instrument Drift or Calibration.

- Solution: Perform routine maintenance and calibration as per the instrument manual. Include quality control samples in every run to monitor performance.

Problem: Difficulty in distinguishing the MTD from sub-therapeutic doses in animal studies.

- Potential Cause 1: Insufficient monitoring period for toxicity.

- Solution: Extend the observation period post-dosing as some toxicities have a delayed onset. Ensure you are monitoring all relevant clinical parameters (weight, behavior, clinical chemistry).

- Potential Cause 2: Poorly defined Dose-Limiting Toxicity (DLT) criteria.

- Solution: Pre-define objective and measurable DLT criteria in your study protocol before initiating the experiment. This removes subjectivity from the determination.

Problem: BED calculation yields unexpected or unrealistic values.

- Potential Cause 1: Incorrect Alpha/Beta (α/β) Ratio.

- Solution: Double-check that you are using the appropriate α/β ratio for the specific tissue type (tumor vs. normal tissue) and endpoint you are studying. Consult the latest literature for accepted values.

- Potential Cause 2: Unit Inconsistency.

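- Solution: Ensure that the total dose (D) and dose per fraction (d) are in the same units (e.g., both in Gy). The α/β ratio is also expressed in Gy, so the ratio d/(α/β) is dimensionless and the BED carries the units of D (conventionally written with the α/β value as a subscript, e.g., Gy₁₀).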

Data Presentation

Table 1: Key Parameter Comparison in Dose Optimization Research

| Parameter | Acronym | Definition | Key Formula / Calculation | Primary Application |
|---|---|---|---|---|
| Maximum Tolerated Dose | MTD | The highest dose of a drug that does not cause unacceptable dose-limiting toxicities. | Determined empirically via trial designs (e.g., 3+3). | Phase I clinical trials; preclinical toxicology. |
| Biologically Effective Dose | BED | A measure of the true biological dose delivered by a radiation therapy regimen, accounting for dose per fraction and tissue sensitivity. | BED = D * [1 + d / (α/β)], where D = total dose, d = dose per fraction, α/β = tissue-specific ratio. | Radiation oncology; comparing radiotherapy schedules. |
| Coefficient of Variation | CV% | A standardized measure of data dispersion relative to the mean, expressed as a percentage. | CV% = (Standard Deviation / Mean) * 100% | Assay validation; sample size calculation; quality control. |

Table 2: Acceptable Precision (CV%) Ranges in Bioanalytical Method Validation

| Analytical Level | Acceptable CV% | Context |
|---|---|---|
| Lower Limit of Quantification (LLOQ) | ≤ 20% | The lowest concentration that can be measured with acceptable accuracy and precision. |
| Quality Control (QC) Samples (Low, Mid, High) | ≤ 15% | Demonstrates precision and accuracy across the calibration range of the assay. |
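The limits in Table 2 can be applied directly to replicate QC measurements. The sketch below uses the sample standard deviation. The replicate values are invented for illustration. A full validation also assesses accuracy, which this sketch does not check.

```python
import statistics

def cv_pct(replicates):
    """CV% = sample standard deviation / mean * 100."""
    return statistics.stdev(replicates) / statistics.fmean(replicates) * 100

def precision_acceptable(replicates, at_lloq=False):
    """Apply the Table 2 limits: <= 20% at the LLOQ, <= 15% for QC samples."""
    limit = 20.0 if at_lloq else 15.0
    return cv_pct(replicates) <= limit

low_qc = [4.8, 5.1, 5.3, 4.9, 5.0]  # hypothetical replicate concentrations
lloq = [0.9, 1.3, 1.0, 1.4, 0.8]    # hypothetical replicates at the LLOQ
print(f"Low QC: CV = {cv_pct(low_qc):.1f}%, acceptable = {precision_acceptable(low_qc)}")
print(f"LLOQ:   CV = {cv_pct(lloq):.1f}%, acceptable = {precision_acceptable(lloq, at_lloq=True)}")
```

Here the low QC replicates give a CV of about 3.8%, which passes the ≤15% limit. The LLOQ replicates give about 24%, which fails the ≤20% limit.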

Experimental Protocols

Protocol 1: Determining MTD in a Preclinical Murine Model (3+3 Design Adaptation)

- Dose Selection: Select a starting dose based on prior toxicology data (e.g., 1/10th of the Severely Toxic Dose in rodents).

- Cohort Dosing: Administer the test compound to a cohort of 3 animals.

- Observation: Observe animals for a predefined period (e.g., 21 days) for signs of Dose-Limiting Toxicity (DLT). Pre-defined DLTs may include >20% body weight loss, severe morbidity, or death.

- Dose Escalation/De-escalation:

- If 0/3 experience DLT: Escalate dose for the next cohort of 3 animals.

- If 1/3 experiences DLT: Expand this cohort to 6 animals.

- If 1/6 experience DLT (after expansion): Escalate dose.

- If ≥2/6 experience DLT (after expansion): MTD has been exceeded.

- If ≥2/3 experience DLT: MTD has been exceeded.

- MTD Definition: The MTD is the highest dose level at which no more than 1 out of 6 animals experiences a DLT.
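A small Monte Carlo sketch can exercise the escalation rules above. It also shows quickly how often a 3+3 scheme lands on each dose. The true DLT probabilities are hypothetical. One convention is an assumption the protocol does not spell out: when a dose proves too toxic and the dose below it has only three subjects, three more are treated at that lower dose before it is declared the MTD.

```python
import random
from collections import Counter

def three_plus_three(p_dlt, seed=None):
    """Simulate one 3+3 escalation.

    p_dlt: hypothetical true DLT probability at each dose level (lowest first).
    Returns the index of the declared MTD, or -1 if even the lowest dose is too toxic.
    """
    rng = random.Random(seed)
    levels = len(p_dlt)
    n = [0] * levels  # subjects treated per dose
    y = [0] * levels  # DLTs observed per dose

    def treat(j):
        # Dose a cohort of 3; each subject has a DLT with probability p_dlt[j].
        n[j] += 3
        y[j] += sum(rng.random() < p_dlt[j] for _ in range(3))

    def declare_below(j):
        # MTD exceeded at dose j: the MTD is the highest lower dose with <= 1/6 DLTs.
        for k in range(j - 1, -1, -1):
            if n[k] == 3:
                treat(k)  # assumption: confirm the candidate MTD on six subjects
            if y[k] <= 1:
                return k
        return -1

    j = 0
    treat(j)
    while True:
        if n[j] == 3 and y[j] == 1:
            treat(j)                 # 1/3 DLT: expand the cohort to 6
        elif y[j] >= 2:
            return declare_below(j)  # >=2/3 or >=2/6: MTD exceeded
        elif j < levels - 1:
            j += 1                   # 0/3 or 1/6: escalate
            treat(j)
        elif n[j] == 3:
            treat(j)                 # top dose tolerated: confirm on six subjects
        else:
            return j

# Selection frequencies over 10,000 simulated escalations (hypothetical truth)
counts = Counter(three_plus_three([0.05, 0.10, 0.25, 0.45], seed=s) for s in range(10_000))
print({dose: f"{100 * c / 10_000:.1f}%" for dose, c in sorted(counts.items())})
```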

Protocol 2: Calculating BED for a Radiotherapy Schedule

- Gather Parameters:

- Total Physical Dose (D): e.g., 60 Gray (Gy).

- Dose Per Fraction (d): e.g., 2 Gy.

- Alpha/Beta Ratio (α/β): Select based on tissue type (e.g., 10 Gy for tumor control, 3 Gy for late-responding normal tissue).

- Apply Linear-Quadratic Formula:

BED = D * [1 + d / (α/β)]

- Example Calculation: For a regimen of 60 Gy in 2 Gy fractions (α/β=10):

BED = 60 * [1 + 2 / 10] = 60 * [1 + 0.2] = 60 * 1.2 = 72 Gy₁₀

Workflow Diagrams

Diagram: Research Design Flow: Sampling to Dose

Diagram: MTD Determination via 3+3 Design

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function |
|---|---|
| Calibrated Micropipettes | Precisely dispense minute volumes of drug solutions or reagents, critical for accurate dose preparation and minimizing technical CV%. |
| In Vivo Imaging System (e.g., IVIS) | Non-invasively monitors tumor burden or biodistribution in animal models, providing longitudinal data for dose-response studies. |
| Liquid Chromatography-Mass Spectrometry (LC-MS/MS) | The gold standard for quantifying drug concentrations in biological matrices (plasma, tissue) with high sensitivity and specificity for PK/PD analysis. |
| Cell Viability Assay Kits (e.g., MTT, CellTiter-Glo) | Quantify the cytotoxic or cytostatic effects of drug candidates on cultured cells to establish initial dose-response curves. |
| Clinical Chemistry Analyzer | Measures biomarkers in serum/plasma (e.g., liver enzymes, creatinine) to objectively assess organ toxicity and define DLTs in preclinical studies. |
| Dimethyl Sulfoxide (DMSO) | A common solvent for reconstituting water-insoluble compounds for in vitro and in vivo dosing. Concentration must be controlled to avoid solvent toxicity. |

Modern Methodologies and Their Application in Sampling Design

Troubleshooting Guide: Common Issues in Modern Dose-Finding Trials

FAQ 1: How does BOIN design improve upon the traditional 3+3 method, and what are its key parameters?

Issue: The traditional 3+3 design has poor accuracy in identifying the true maximum tolerated dose (MTD) and tends to underdose patients [18]. Researchers need a clearer understanding of how BOIN provides a superior, yet accessible alternative.

Solution: The Bayesian Optimal Interval (BOIN) design is a model-assisted approach that uses predefined toxicity probability intervals to guide dose escalation decisions. It outperforms the 3+3 design by using accumulating patient response data to make more nuanced decisions, actively minimizing risks of over- or under-dosing [19]. The key parameters to implement BOIN are:

- Target Toxicity Rate (φ): The desired probability of dose-limiting toxicity (DLT) at the MTD (e.g., 0.25, 0.33) [20].

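- DLT Rates for Decision Boundaries (φ1 and φ2): φ1 is the highest DLT rate regarded as underdosing, at which escalation is warranted (typically 0.6φ). φ2 is the lowest DLT rate regarded as overdosing, at which de-escalation is warranted (typically 1.4φ) [20].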

- Escalation/De-escalation Boundaries (λe and λd): Calculated values against which the observed DLT rate (p̂j) is compared to make dosing decisions [19] [20].

The decision framework is simple to operationalize:

- If p̂j ≤ λe → Escalate dose

- If p̂j ≥ λd → De-escalate dose

- If λe < p̂j < λd → Stay at current dose [19] [18]

Preventative Tips:

- Use available software (e.g., from MD Anderson's trialdesign.org) to correctly calculate boundaries and simulate trial scenarios before beginning [19] [18].

- Engage a statistician early in the design process to specify these parameters appropriately [21].

FAQ 2: What steps should we take to transition from a rule-based to a model-based design like CRM?

Issue: Teams familiar with algorithm-based designs like 3+3 find model-based methods like the Continual Reassessment Method (CRM) complex and difficult to implement, often perceiving them as a "black box" [22] [18].

Solution: A phased approach focusing on education, preparation, and validation ensures a smooth transition.

- Team Education and Communication: Hold dedicated meetings with the chief investigator, trial manager, and statisticians to align on the design's principles, including the statistical model and its assumptions [22].

- Specify Design Parameters: Collaboratively define the skeleton (prior estimates of toxicity probability for each dose) and the target toxicity level (TTL) [22].

- Develop and Validate Statistical Programs: Given the complexity, have statisticians independently develop and cross-validate software (e.g., in R) for executing and simulating the trial. This is crucial for debugging and compliance with standard operating procedures [22].

- Conduct Comprehensive Simulations: Use a range of simulation scenarios to decide on critical design characteristics like escalation rules, stopping rules, and sample size [22] [21].

- Establish a Governance Plan: Define the role of the Trial Management Group (TMG) and an Independent Data Safety and Monitoring Committee (DSMC) in reviewing the model's recommendations [22].

Preventative Tips:

- Allocate additional time and resources for the initial set-up phase, as the first model-based trial requires a new skill set for the entire team [22].

- Leverage guiding publications and document templates to make the process more efficient [22].

FAQ 3: When should we consider model-assisted designs over model-based designs?

Issue: Choosing between design types involves balancing statistical performance with operational feasibility.

Solution: Model-assisted designs like BOIN or the keyboard design offer a favorable balance for many scenarios. The following table compares the three main classes of phase I trial designs.

Table: Comparison of Phase I Clinical Trial Design Characteristics

| Feature | Algorithm-Based (e.g., 3+3) | Model-Based (e.g., CRM) | Model-Assisted (e.g., BOIN) |
|---|---|---|---|
| Statistical Foundation | Simple, pre-specified rules [18] | Statistical model of dose-toxicity curve [18] | Statistical model to derive pre-tabulated rules [18] |
| Implementation | Very simple, no statistical software needed [18] | Complex; requires real-time statistical computation [18] | Simple; decision rules can be pre-tabulated in the protocol [18] |
| MTD Identification Accuracy | Poor [18] | High [18] | High, comparable to model-based [18] |
| Patient Allocation to MTD | Low [18] | High [18] | High [18] |
| Overdosing Risk | Low (but high risk of underdosing) [19] [18] | Can be controlled with careful design [23] | Low, with built-in overdose control [19] [23] |
| Best Use Cases | Preliminary studies with limited resources | Trials requiring high precision, with dedicated statistical support [23] | Most trials seeking a balance of performance and simplicity [19] [18] |

Preventative Tips:

- For most teams moving beyond 3+3, a model-assisted design like BOIN is recommended. It identifies the MTD about as accurately as model-based designs while remaining nearly as simple to run as 3+3 [18].

- If your trial involves complex scenarios like combination therapies or late-onset toxicities, consider the specific extensions available within the BOIN framework [19] [20].

Experimental Protocols for Design Implementation

Protocol 1: Implementing a Standard BOIN Design

Objective: To accurately determine the Maximum Tolerated Dose (MTD) of a single agent using the BOIN design.

Materials:

- Statistical software (e.g., R with the BOIN package, or software from trialdesign.org).

- Pre-specified protocol with escalation/de-escalation rules.

Methodology:

- Design Phase:

- Specify the target toxicity rate, φ (e.g., 0.33) [20].

- Define the lowest DLT rate for escalation, φ1 (default 0.6φ), and the highest DLT rate for de-escalation, φ2 (default 1.4φ) [20].

- Calculate the escalation (λe) and de-escalation (λd) boundaries using the formulae:

λe = log[(1 - φ1)/(1 - φ)] / log[φ(1 - φ1) / (φ1(1 - φ))]

λd = log[(1 - φ)/(1 - φ2)] / log[φ2(1 - φ) / (φ(1 - φ2))] [20]

- Pre-tabulate these boundaries and the corresponding decisions for all possible outcomes in the protocol.
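The design-phase calculations and the pre-tabulation step can be sketched together. The code below:

- Computes λe and λd from the formulae above with the default φ1 = 0.6φ and φ2 = 1.4φ.
- Converts them into integer DLT-count cut-offs for each number of treated patients.
- Adds the dose elimination rule used during trial conduct. This assumes a Beta(1, 1) prior on the DLT rate; for integer Beta parameters the posterior tail probability reduces to a binomial sum.

For φ = 0.30 the sketch yields λe ≈ 0.236 and λd ≈ 0.358. Treat it as a cross-check, not a replacement for validated software such as the BOIN package or trialdesign.org.

```python
import math

def boin_boundaries(phi, phi1=None, phi2=None):
    """Escalation (lambda_e) and de-escalation (lambda_d) boundaries; defaults 0.6*phi, 1.4*phi."""
    phi1 = 0.6 * phi if phi1 is None else phi1
    phi2 = 1.4 * phi if phi2 is None else phi2
    lam_e = math.log((1 - phi1) / (1 - phi)) / math.log(phi * (1 - phi1) / (phi1 * (1 - phi)))
    lam_d = math.log((1 - phi) / (1 - phi2)) / math.log(phi2 * (1 - phi) / (phi * (1 - phi2)))
    return lam_e, lam_d

def prob_dlt_rate_exceeds(y, n, phi):
    """Pr(p > phi | y DLTs in n patients) under an assumed Beta(1, 1) prior.

    For integer Beta parameters this equals P(Binomial(n + 1, phi) <= y).
    """
    return sum(math.comb(n + 1, k) * phi ** k * (1 - phi) ** (n + 1 - k) for k in range(y + 1))

def decision_table(phi, max_n=12, cutoff=0.95):
    """Rows of (n, escalate if y <= a, de-escalate if y >= b, eliminate if y >= c or None)."""
    lam_e, lam_d = boin_boundaries(phi)
    rows = []
    for n in range(1, max_n + 1):
        esc = math.floor(n * lam_e)    # y / n <= lambda_e  <=>  y <= floor(n * lambda_e)
        deesc = math.ceil(n * lam_d)   # y / n >= lambda_d  <=>  y >= ceil(n * lambda_d)
        elim = None
        if n >= 3:  # elimination is commonly applied only once >= 3 patients are treated
            elim = next((y for y in range(n + 1)
                         if prob_dlt_rate_exceeds(y, n, phi) > cutoff), None)
        rows.append((n, esc, deesc, elim))
    return rows

for row in decision_table(0.30, max_n=9):
    print(row)
```

Each printed row reads (patients treated, escalate if DLTs ≤ a, de-escalate if DLTs ≥ b, eliminate if DLTs ≥ c). For example, with 3 patients the rules are: escalate on 0 DLTs, de-escalate on 2 or more, and eliminate on 3.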

Trial Conduct Phase:

- Treat the first cohort of patients at the pre-specified starting dose (often the lowest dose) [20].

- For each subsequent cohort at dose level j:

- Calculate the observed DLT rate, p̂j = yj / nj, where yj is the number of DLTs and nj is the number of patients treated at that dose.

- Apply the pre-tabulated rule: escalate if p̂j ≤ λe, de-escalate if p̂j ≥ λd, and otherwise stay at the current dose.

- Incorporate a dose elimination rule for safety: if the posterior probability that the DLT rate exceeds the target is greater than 0.95, eliminate the current and higher doses [19] [20].

Analysis Phase:

The following workflow summarizes the BOIN design process:

Protocol 2: Simulation Study for Design Evaluation

Objective: To evaluate the operating characteristics (e.g., MTD selection accuracy, patient safety) of a proposed model-based or model-assisted design under various realistic scenarios.

Materials:

- Statistical computing environment (e.g., R, SAS).

- Validated simulation code for the chosen design.

Methodology:

- Define Simulation Scenarios:

- Collaboratively define 4-6 different true dose-toxicity scenarios with the clinical team. These should include:

- A scenario where the assumed skeleton is correct.

- Scenarios where the true MTD is higher or lower than anticipated.

- A scenario where all doses are overly toxic.

- A scenario where all doses are safe [22].


Set Trial Parameters:

- For each scenario, fix the trial parameters such as the target toxicity rate, starting dose, cohort size, maximum sample size, and stopping rules [21].

Run Simulations:

- Simulate the trial thousands of times (e.g., 10,000 runs) for each scenario using the defined parameters.

Analyze Operating Characteristics:

- For each scenario, calculate the following metrics across all simulation runs:

- Percentage of correct MTD selection: The proportion of simulated trials that correctly identify the pre-defined true MTD.

- Patient allocation: The average percentage of patients treated at each dose level, especially at the true MTD.

- Overdose risk: The average percentage of patients treated at doses above the true MTD.

- Trial duration: The average number of cohorts or time to complete the trial.

- Early stopping probability: The proportion of trials that stop early for safety or futility [22] [21].


Compare and Select Design:

- Compare these metrics across different design options (e.g., BOIN vs. CRM) or different parameters within the same design (e.g., different skeletons for CRM). Select the design with the most robust and desirable operating characteristics across all scenarios [22].
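The simulation steps above can be sketched for a BOIN design. This is a simplified illustration under these assumptions:

- Cohorts of 3 and the default φ1/φ2.
- A Beta(1, 1) prior for the elimination rule.
- For final MTD selection, a simpler rule than BOIN's isotonic-regression step: pick the tried dose whose observed DLT rate is closest to the target.

The scenario probabilities are hypothetical, and the sketch does not track trial duration.

```python
import math
import random

def boin_boundaries(phi):
    phi1, phi2 = 0.6 * phi, 1.4 * phi  # default phi1 / phi2
    lam_e = math.log((1 - phi1) / (1 - phi)) / math.log(phi * (1 - phi1) / (phi1 * (1 - phi)))
    lam_d = math.log((1 - phi) / (1 - phi2)) / math.log(phi2 * (1 - phi) / (phi * (1 - phi2)))
    return lam_e, lam_d

def prob_over(y, n, phi):
    # Pr(p > phi | data) with a Beta(1, 1) prior = P(Binomial(n + 1, phi) <= y)
    return sum(math.comb(n + 1, k) * phi ** k * (1 - phi) ** (n + 1 - k) for k in range(y + 1))

def simulate_trial(p_true, phi, cohort, max_n, rng):
    """One simulated BOIN-style trial; returns (selected dose or -1, patients per dose)."""
    lam_e, lam_d = boin_boundaries(phi)
    n, y = [0] * len(p_true), [0] * len(p_true)
    ceiling = len(p_true)  # doses >= ceiling have been eliminated
    j = 0
    while sum(n) < max_n:
        n[j] += cohort
        y[j] += sum(rng.random() < p_true[j] for _ in range(cohort))
        if n[j] >= 3 and prob_over(y[j], n[j], phi) > 0.95:
            ceiling = min(ceiling, j)  # eliminate dose j and all higher doses
            if j == 0:
                return -1, n           # early stop: lowest dose too toxic
            j -= 1
        elif y[j] / n[j] <= lam_e and j + 1 < ceiling:
            j += 1
        elif y[j] / n[j] >= lam_d and j > 0:
            j -= 1
    # Simplified selection (not BOIN's isotonic regression): closest observed rate to
    # phi; on ties prefer the higher dose when rates are below target.
    tried = [k for k in range(ceiling) if n[k] > 0]

    def key(k):
        rate = y[k] / n[k]
        return (abs(rate - phi), -k if rate < phi else k)
    return min(tried, key=key), n

def operating_characteristics(p_true, true_mtd, phi=0.30, cohort=3, max_n=30,
                              runs=10_000, seed=2024):
    rng = random.Random(seed)
    correct = stopped = 0
    patients = [0] * len(p_true)
    for _ in range(runs):
        mtd, n = simulate_trial(p_true, phi, cohort, max_n, rng)
        correct += (mtd == true_mtd)
        stopped += (mtd == -1)
        patients = [a + b for a, b in zip(patients, n)]
    total = sum(patients)
    return {
        "pct_correct_mtd": 100 * correct / runs,
        "pct_patients_per_dose": [100 * p / total for p in patients],
        "pct_patients_overdosed": 100 * sum(patients[true_mtd + 1:]) / total,
        "pct_stopped_early": 100 * stopped / runs,
    }

scenario = [0.05, 0.12, 0.30, 0.45, 0.60]  # hypothetical truth: MTD is dose index 2
print(operating_characteristics(scenario, true_mtd=2))
```

Running the same function over each scenario agreed with the clinical team gives side-by-side operating characteristics. Running it over alternative designs or parameter settings does the same for the design comparison.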

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table: Key Tools for Implementing Innovative Trial Designs

| Tool / Solution | Function | Examples & Notes |
|---|---|---|
| Statistical Software | Executes design logic, performs simulations, and calculates dose assignments. | R (with BOIN, dfcrm packages), SAS, Stata. User-friendly web interfaces are available from trialdesign.org [18]. |
| Pre-Tabulated Decision Tables | Allows simple, rule-based implementation of model-assisted designs without real-time computation. | Created during the design phase and included directly in the study protocol [18]. |
| Dose-Escalation Guidelines | A predefined flowchart or table that maps observed patient outcomes to specific dosing decisions. | Critical for ensuring consistent trial conduct and clear communication with the clinical team [19]. |
| Validated Simulation Code | Independently developed and cross-validated programs to test the trial design under various scenarios. | Essential for debugging and is a requirement of many trial units' standard operating procedures for model-based trials [22]. |
| Regulatory Guidance Documents | Provide insight into agency perspectives on adaptive and novel trial designs. | FDA/EMA guidelines support the use of adaptive designs like BOIN, which has received a "fit-for-purpose" designation [19] [18]. |

Adaptive sampling methods represent a class of computational procedures that iteratively tailor sampling distributions or patterns based on information obtained during the sampling process itself. These methods are designed to reduce computational complexity, accelerate convergence, and improve estimate accuracy in high-dimensional or data-intensive settings by concentrating sampling effort where it is most valuable [24]. Within the context of optimizing sampling plot size and number for research, these methods provide a principled framework for dynamically adjusting sample sizes and allocations to maximize information gain while minimizing resource expenditure. This technical support guide addresses the specific implementation challenges and troubleshooting needs that researchers may encounter when applying these methods to stochastic optimization and experimental design.

Frequently Asked Questions (FAQs)

1. What is the core principle behind adaptive sampling for optimization? Adaptive sampling departs from static approaches by actively modifying sampling distributions based on accruing data. The core principle is to leverage online updates of proposal distributions, variance estimators, or local error diagnostics to concentrate computational effort on critical regions of the parameter space, thereby minimizing metrics such as estimator variance or regret under resource constraints [24]. In practice, this creates an exploration-exploitation dilemma where the algorithm must balance learning more about the system (exploration) with using current knowledge to obtain good performance (exploitation) [25].

2. How do I determine the appropriate sample size for each iteration in my adaptive sampling algorithm? Sample size determination follows two main paradigms. In methods like Adaptive Sampling Trust-Region Optimization (ASTRO), sample sizes are adaptively chosen before each iterate update, ensuring the objective function and gradient are sampled only to the extent needed [26]. Alternatively, Retrospective Approximation (RA) uses a fixed sample size for multiple updates until progress is deemed statistically insignificant, at which point the sample size is increased [26]. The appropriate approach depends on whether your priority is granular control (ASTRO) or computational simplicity (RA).
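A retrospective-approximation loop can be sketched on a toy problem: minimizing E[(x - ξ)²]/2 with noisy samples ξ, whose true minimizer is the mean of ξ. Each stage proceeds as follows:

- Fix one sample and run gradient steps on that sample-average problem.
- Stop when the sample-average gradient is no longer distinguishable from zero. Here that means within two standard errors, an assumed test.
- Double the sample and start the next stage from the current x.

The function and its parameters are hypothetical. ASTRO would instead re-choose the sample size before every iterate update.

```python
import random
import statistics

def retrospective_approximation(sample, grad, x0, m0=16, growth=2.0, stages=8,
                                step=0.1, max_inner=500, seed=0):
    """Toy RA loop for a scalar decision variable (hypothetical helper).

    Each stage draws a fixed sample of size m, takes gradient steps on that
    sample-average problem until the averaged gradient is within two standard
    errors of zero, then grows the sample and warm-starts from the current x.
    """
    rng = random.Random(seed)
    x, m = x0, m0
    history = []
    for _ in range(stages):
        xi = [sample(rng) for _ in range(m)]  # fixed sample for this stage
        for _ in range(max_inner):
            g_i = [grad(x, s) for s in xi]
            g = statistics.fmean(g_i)
            se = statistics.stdev(g_i) / len(g_i) ** 0.5
            if abs(g) <= 2 * se:  # further progress not statistically significant
                break
            x -= step * g
        history.append((m, x))
        m = int(m * growth)
    return x, history

x_hat, history = retrospective_approximation(
    sample=lambda rng: rng.gauss(3.0, 1.0),  # hypothetical noise model; true optimum is 3.0
    grad=lambda x, s: x - s,                 # gradient of 0.5 * (x - s) ** 2
    x0=0.0,
)
for m, x in history:
    print(f"sample size {m:5d}: x = {x:.4f}")
```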

3. My adaptive sampling algorithm seems to be converging slowly. What could be wrong? Slow convergence often stems from insufficient exploration or improper initialization. If the algorithm is over-exploiting, it may become trapped in local optima. Consider adjusting your scoring function to favor less explored regions [25]. Additionally, the initialization of proposal distributions heavily influences convergence; poor initialization may require heuristic adjustments such as uniformization or small probability boosting [24].

4. What are the computational overhead concerns with adaptive sampling methods? While adaptive sampling improves sample efficiency, it introduces overhead from tracking dynamic proposals and updating adaptive statistics. In very high-dimensional regimes, this can become expensive [24]. However, modern implementations like sketch-based adaptive sampling have achieved wall-clock speedups of 1.5–1.9× over static baselines by reducing these costs [24].

5. How do I validate that my adaptive sampling implementation is working correctly? Validation should assess both statistical efficiency and computational performance. Compare your results against theoretical guarantees where available; for instance, information-directed sampling (IDS) achieves sublinear Bayesian regret with bounds scaling as O(√(dT)) in generalized linear models [24]. Additionally, monitor whether the algorithm successfully identifies multiple potential solutions or binding sites more efficiently than traditional methods, which is a key indicator of effective exploration [25].

Troubleshooting Guides

Problem 1: Poor Exploration-Exploitation Balance

Symptoms: Algorithm consistently converges to suboptimal solutions; fails to discover known optima; samples too uniformly without focusing on promising regions.

Diagnosis and Solutions:

- Root Cause: The scoring function for selecting clusters for resampling may be improperly weighted.

- Solution: Implement and test different scoring functions. The "hub scores" metric has shown promise for improving exploration [25]. Additionally, consider framing the problem explicitly as an exploration-exploitation dilemma and employing dedicated algorithms like Information-Directed Sampling (IDS) [27].

- Verification: The algorithm should efficiently identify multiple potential binding sites or optimal regions, not just a single one [25].
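A toy "least counts" scheme illustrates steering effort toward less explored regions. Under this simple scoring rule, new walkers restart from the least-visited states discovered so far. It is not the hub-score metric cited above. The 1-D random walk, state space, and function names are hypothetical stand-ins for short simulations and clusters.

```python
import random
from collections import Counter

def random_walk(start, steps, n_states, rng):
    """Hypothetical short 'simulation': a 1-D random walk with reflecting ends."""
    path, s = [], start
    for _ in range(steps):
        s = min(n_states - 1, max(0, s + rng.choice((-1, 1))))
        path.append(s)
    return path

def adaptive_sampling(rounds=10, walkers=5, steps=20, n_states=200, adaptive=True, seed=0):
    """Return visit counts per state after `rounds` of short walks."""
    rng = random.Random(seed)
    counts = Counter({0: 1})  # all exploration starts from state 0
    seeds = [0] * walkers
    for _ in range(rounds):
        for start in seeds:
            counts.update(random_walk(start, steps, n_states, rng))
        if adaptive:
            # Least-counts scoring: restart from the least-visited states seen so far
            # (random tie-breaking).
            seeds = sorted(counts, key=lambda s: (counts[s], rng.random()))[:walkers]
        # Non-adaptive baseline: keep restarting every walker from state 0.
    return counts

adaptive = adaptive_sampling()
baseline = adaptive_sampling(adaptive=False)
print(f"distinct states visited: adaptive={len(adaptive)}, baseline={len(baseline)}")
```

Both runs simulate the same number of steps. Comparing the two printed counts shows how many more distinct states the adaptive restarts reach.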

Problem 2: High Computational Overhead per Iteration

Symptoms: Each iteration of the adaptive loop takes prohibitively long; overall wall-clock time exceeds that of simpler methods.

Diagnosis and Solutions:

- Root Cause: The statistical model (e.g., clustering, tICA) is being rebuilt from scratch each round, or the sample size selection is too computationally intensive.

- Solution:

- For model building, consider incremental learning techniques that update the existing model instead of recomputing it entirely.

- For sample size selection, the ASTRO algorithm provides a framework for adaptive choice with proven consistency and complexity results, ensuring samples are not wasted [26].

- Leverage reduced-order models to design biasing distributions, which can dramatically reduce sample size growth [24].

- Verification: Profile your code to identify bottlenecks. The cost of the adaptive logic should be small compared to the simulation or evaluation cost.

Problem 3: Algorithm Instability and Parameter Sensitivity

Symptoms: Significant performance variation with different random seeds; high sensitivity to hyperparameters like initial sample size or clustering resolution.

Diagnosis and Solutions:

- Root Cause: Inadequate stabilization of statistical properties and insufficient repetitions for each chosen sample size.

- Solution: Implement a framework like Algorithm 1 from [28], which automatically determines a sufficient number of repetitions for each sample size to reduce sampling deviations below a predefined threshold. This ensures reliable conclusions that do not depend heavily on a single run.

- Verification: Run your algorithm multiple times with different seeds. The variance in the final result should be within an acceptable tolerance for your application.
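A simple automatic repetition rule reruns the stochastic procedure with fresh seeds until the standard error of the mean outcome drops below a tolerance. This is a stand-in, not a reproduction of Algorithm 1 in [28]. The function name, defaults, and the toy `run` callable are hypothetical.

```python
import random
import statistics

def repeat_until_stable(run, tol, min_reps=5, max_reps=1000, seed=0):
    """Rerun `run(rng)` with fresh seeds until the standard error of the mean result < tol.

    Returns (mean result, standard error, number of repetitions used).
    """
    results = []
    se = float("inf")
    for rep in range(max_reps):
        results.append(run(random.Random(seed + rep)))
        if len(results) >= max(2, min_reps):
            se = statistics.stdev(results) / len(results) ** 0.5
            if se < tol:
                break
    return statistics.fmean(results), se, len(results)

# Toy example: a "run" whose outcome is noisy around 10 (hypothetical)
mean, se, reps = repeat_until_stable(lambda rng: rng.gauss(10.0, 2.0), tol=0.1)
print(f"mean={mean:.3f}, se={se:.3f}, repetitions={reps}")
```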

Experimental Protocols & Data

Key Experimental Workflow

The following diagram illustrates the standard iterative workflow for a typical adaptive sampling protocol, as applied in molecular dynamics and other fields [25].

Performance Comparison of Adaptive Sampling Tools

The following table summarizes a benchmarking study of adaptive sampling tools in nanopore sequencing, illustrating the performance variation that can occur between different algorithmic strategies [29]. While domain-specific, this highlights the importance of tool selection.

Table 1: Tool Performance in Intraspecies Enrichment (48-hour run)

| Tool Name | Classification Strategy | Absolute Enrichment Factor (AEF) | Key Characteristic |
|---|---|---|---|
| MinKNOW | Nucleotide Alignment | 3.45 | Optimal for most scenarios, excellent balance [29] |
| BOSS-RUNS | Nucleotide Alignment | 3.31 | Top-class performance, similar to MinKNOW [29] |
| Readfish | Nucleotide Alignment | 2.80 | Generally excellent enrichment [29] |
| UNCALLED | Signal (k-mer) | 1.60 | Faster drop in active channels, lower output [29] |
| ReadBouncer | Nucleotide Alignment | 1.50 | Optimal channel activity maintenance [29] |

Quantitative Analysis of Convergence and Complexity

The table below summarizes theoretical guarantees for different adaptive sampling methodologies, providing benchmarks for what can be achieved in well-implemented algorithms.

Table 2: Theoretical Performance of Adaptive Sampling Methods

| Methodology | Theoretical Guarantee | Application Context | Reference |
|---|---|---|---|
| Information-Directed Sampling (IDS) | Sublinear Bayesian regret, $\mathcal{O}(\sqrt{dT})$ | Generalized Linear Models, Discovery | [24] [27] |
| Adaptive Grid Refinement | Exponential convergence for finitely many singularities | hp-adaptive schemes, PDEs | [24] |
| Adaptive Importance Sampling (AIS) | Orders-of-magnitude MSE reduction | Bayesian Inference with rare evidence | [24] |
| ASTRO | $\mathcal{O}(\epsilon^{-1})$ iteration complexity | Stochastic unconstrained optimization in $\mathbb{R}^d$ | [26] |
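To show why adaptive importance sampling helps with rare events, here is a toy problem. The sketch estimates $P(X > 4)$ for $X \sim N(0,1)$, which is about $3.2 \times 10^{-5}$. It uses the cross-entropy method, which is one simple AIS variant. The method adapts the mean of a Gaussian proposal $N(\mu, 1)$ toward the rare region. This is an illustrative choice, not the specific AIS scheme benchmarked in [24]. With 10,000 plain Monte Carlo draws, only about 0.3 hits are expected, so the naive estimate is usually exactly zero.

```python
import math
import random


def likelihood_ratio(x, mu):
    """Density ratio N(0,1) / N(mu,1) evaluated at x."""
    return math.exp(-mu * x + 0.5 * mu * mu)


def cross_entropy_is(threshold=4.0, n=10_000, rho=0.1, max_iter=20, seed=0):
    """Estimate P(X > threshold) for X ~ N(0,1) with a mean-shifted Gaussian
    proposal N(mu,1) whose mean is adapted by the cross-entropy method."""
    rng = random.Random(seed)
    mu = 0.0
    for _ in range(max_iter):
        xs = sorted(rng.gauss(mu, 1.0) for _ in range(n))
        level = min(threshold, xs[int((1 - rho) * n)])  # elite level, capped at target
        elite = [x for x in xs if x >= level]
        weights = [likelihood_ratio(x, mu) for x in elite]
        # CE update: likelihood-ratio-weighted mean of the elite samples.
        mu = sum(w * x for w, x in zip(weights, elite)) / sum(weights)
        if level >= threshold:
            break
    # Final importance-sampling estimate with the adapted proposal.
    xs = [rng.gauss(mu, 1.0) for _ in range(n)]
    estimate = sum(likelihood_ratio(x, mu) for x in xs if x > threshold) / n
    return estimate, mu


exact = 0.5 * math.erfc(4.0 / math.sqrt(2.0))
estimate, mu = cross_entropy_is()
print(f"adapted mean={mu:.2f}, IS estimate={estimate:.3e}, exact={exact:.3e}")
```

The proposal mean moves into the tail within a few iterations. After that, the weighted estimate has a small relative error using the same number of draws that plain sampling would waste.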

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Adaptive Sampling Experiments

| Tool / Resource | Function in Experiment | Application Note |
|---|---|---|
| ASTRO Algorithm | Derivative-based stochastic trust-region algorithm for smooth unconstrained problems. | Adaptively chooses sample sizes for function/gradient estimates. Efficient due to quasi-Newton Hessian updates [26]. |
| tICA Clustering | Reduces high-dimensional trajectory data to slowest-evolving components for clustering. | Critical for featurizing data without prior knowledge of the target state (e.g., binding site) [25]. |
| Information-Directed Sampling (IDS) | Algorithm for adaptive sampling for discovery problems. | Balances exploration and exploitation by quantifying information gain. Applicable to linear, graph, and low-rank models [27]. |
| Retrospective Approximation (RA) | An alternative adaptive paradigm using fixed sample sizes until progress stalls. | Useful for constrained problems in infinite-dimensional Hilbert spaces, often combined with progressive subspace expansion [26]. |
| MinKNOW / Readfish | Software tools for implementing real-time, decision-theoretic adaptive sampling. | While from nanopore sequencing, they exemplify the full integration of adaptive decision-making into a data collection pipeline [30] [29]. |
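tICA finds linear combinations of features with maximal autocorrelation at a chosen lag $\tau$. It does this by solving the generalized eigenproblem $C(\tau)v = \lambda C(0)v$, where $C(0)$ is the instantaneous covariance and $C(\tau)$ is the time-lagged covariance. The minimal NumPy sketch below uses symmetrized covariance estimates and a toy two-feature trajectory. The data, mixing matrix, and function name are illustrative assumptions. Production MD tools add regularization and handle many trajectories.

```python
import numpy as np


def tica(X, lag=1):
    """Solve C(lag) v = lambda C(0) v for a trajectory X (n_frames x n_features).

    Returns eigenvalues in descending order and eigenvectors as columns."""
    X = X - X.mean(axis=0)
    A, B = X[:-lag], X[lag:]
    n = len(A)
    c0 = (A.T @ A + B.T @ B) / (2 * n)   # symmetrized instantaneous covariance
    ct = (A.T @ B + B.T @ A) / (2 * n)   # symmetrized time-lagged covariance
    L_inv = np.linalg.inv(np.linalg.cholesky(c0))   # whitening: c0 = L L^T
    eigvals, U = np.linalg.eigh(L_inv @ ct @ L_inv.T)
    order = np.argsort(eigvals)[::-1]
    return eigvals[order], L_inv.T @ U[:, order]    # back-transform to original features


# Toy data: a slow AR(1) signal hidden under a faster, higher-variance one.
rng = np.random.default_rng(0)
n_frames = 20_000
slow, fast = np.zeros(n_frames), np.zeros(n_frames)
for t in range(1, n_frames):
    slow[t] = 0.99 * slow[t - 1] + rng.normal()
    fast[t] = 0.1 * fast[t - 1] + 10 * rng.normal()
X = np.column_stack([slow, fast]) @ np.array([[1.0, 0.5], [0.5, 1.0]])

eigvals, eigvecs = tica(X, lag=1)
slowest = X @ eigvecs[:, 0]
print("tICA eigenvalues:", np.round(eigvals, 3))
print("|corr(IC1, slow signal)|:", abs(np.corrcoef(slowest, slow)[0, 1]).round(3))
```

In this toy data, the fast component has the larger variance, so a variance-based method such as PCA would favor it. tICA instead recovers the slowly decorrelating signal, which is what makes it useful for defining states in adaptive sampling.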

The Role of Biomarkers and ctDNA in Establishing Biologically Effective Dose Ranges

Frequently Asked Questions (FAQs)

FAQ 1: What is ctDNA and why is it crucial for defining biologically effective doses? Circulating tumor DNA (ctDNA) is the fraction of cell-free DNA (cfDNA) in the bloodstream that tumor cells shed through processes such as apoptosis, necrosis, and active release [31]. It carries tumor-specific genetic alterations. In dose-finding studies, ctDNA levels therefore provide a minimally invasive, real-time quantitative measure of tumor burden and molecular response [32] [33]. A decline in ctDNA after therapy starts suggests that the drug is engaging its biological target and killing tumor cells. This helps identify a dose that produces the desired pharmacological effect.

FAQ 2: My ctDNA assay results are inconsistent. What are the key pre-analytical factors to check? Inconsistent results most commonly stem from pre-analytical variability. Key parameters to verify are listed in the table below [34]:

| Factor | Recommendation | Rationale |
|---|---|---|
| Sample Type | Use plasma rather than serum. | Serum contains more background DNA released by leukocyte lysis during clotting, which reduces assay sensitivity [34]. |
| Blood Collection Tube | Use K2/K3-EDTA tubes and process within 4-6 hours, or use dedicated cell preservation tubes. | EDTA inhibits DNases, but processing delays lead to white blood cell lysis and contamination with genomic DNA [34]. |
| Centrifugation Protocol | Two-step centrifugation: (1) 800-1,600 × g for 10 min, then (2) 14,000-16,000 × g for 10 min (4°C). | Removes cells and debris to obtain truly cell-free plasma [34]. |
| Plasma Storage | Store at -80°C long term; avoid repeated freeze-thaw cycles. | Preserves ctDNA integrity by minimizing nuclease activity [34]. |
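Centrifuges are often set in rpm, while the protocol above specifies relative centrifugal force (RCF, × g). The standard conversion is RCF = 1.118 × 10⁻⁵ × r × rpm², where r is the rotor radius in cm, measured from the axis of rotation to the sample. The rotor radii in this sketch are hypothetical. Use the value from your own rotor's documentation.

```python
import math

RCF_CONSTANT = 1.118e-5  # RCF = 1.118e-5 * r_cm * rpm^2


def rpm_for_rcf(rcf_g, radius_cm):
    """Rotor speed (rpm) giving the requested relative centrifugal force (x g)."""
    return math.sqrt(rcf_g / (RCF_CONSTANT * radius_cm))


def rcf_for_rpm(rpm, radius_cm):
    """Relative centrifugal force (x g) produced at a given rotor speed."""
    return RCF_CONSTANT * radius_cm * rpm ** 2


# Hypothetical rotor radii: 15 cm swing-bucket (spin 1), 8.4 cm microcentrifuge (spin 2).
for label, rcf, radius in [("spin 1", 1_600, 15.0), ("spin 2", 16_000, 8.4)]:
    print(f"{label}: {rcf} x g at r = {radius} cm -> {rpm_for_rcf(rcf, radius):,.0f} rpm")
```

Because RCF depends on radius, the same rpm setting gives different forces on different rotors. Protocols are therefore usually written in × g.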

FAQ 3: How can I use ctDNA to distinguish between true disease progression and pseudoprogression on immunotherapy? This is a critical application of longitudinal ctDNA monitoring. In pseudoprogression, lesions may appear larger on imaging because of immune cell infiltration, but ctDNA levels would be expected to decrease or remain undetectable. True progression, in contrast, is characterized by a consistent rise in ctDNA levels [33]. Tracking ctDNA dynamics over time can therefore provide molecular data that complements radiographic imaging.

FAQ 4: What does "ctDNA negativity" signify in clinical trials for drug development? Achieving ctDNA negativity (where tumor-informed assays can no longer detect ctDNA in plasma) is a powerful prognostic biomarker. It is strongly associated with improved clinical outcomes, including longer Progression-Free Survival (PFS) and Overall Survival (OS) [32]. In the context of dose-finding, a dose that leads to a higher rate of ctDNA negativity is likely to be more biologically effective. Regulatory guidance also supports its investigation for detecting Molecular Residual Disease (MRD) to identify patients at high risk of recurrence who may benefit from adjuvant therapy [35] [33].
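Rates of ctDNA negativity are often compared across dose levels using small cohorts, so the uncertainty around each rate matters. The sketch below uses the Wilson score interval, a standard interval for binomial proportions that behaves well with small n. The cohort counts and the landmark time point are hypothetical.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (approx. 95% for z = 1.96)."""
    if n == 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z / denom * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    return max(0.0, center - half), min(1.0, center + half)


# Hypothetical cohorts: (patients ctDNA-negative at a landmark time point, evaluable patients).
cohorts = {"dose level 1": (1, 6), "dose level 2": (3, 7), "dose level 3": (5, 8)}
for dose, (neg, n) in cohorts.items():
    lo, hi = wilson_interval(neg, n)
    print(f"{dose}: {neg}/{n} ctDNA-negative = {neg / n:.0%} (95% CI {lo:.0%} to {hi:.0%})")
```

With cohorts this small, the intervals overlap heavily. An apparent trend across doses is better treated as hypothesis-generating than as proof of a more biologically effective dose.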

FAQ 5: What is the difference between a prognostic and a predictive biomarker in dose-response studies?

- Prognostic Biomarker: Provides information about the patient's overall cancer outcome, regardless of therapy. For example, the presence of ctDNA after curative-intent surgery is a strong prognostic indicator of higher relapse risk [36] [33].

- Predictive Biomarker: Helps identify patients who are more or less likely to benefit from a specific therapeutic intervention. For example, an EGFR mutation is a predictive biomarker for response to EGFR tyrosine kinase inhibitors [37]. A biomarker used for dose selection should ideally be predictive of the drug's pharmacological effect.

Troubleshooting Guides

Issue 1: Undetectable ctDNA in a patient with visible tumor burden

| Possible Cause | Investigation Actions | Solution |
|---|---|---|
| Pre-analytical errors [34] | Check plasma preparation protocol and inspect plasma for hemolysis (red/orange tint). | Re-draw blood using correct tubes and adhere strictly to centrifugation protocols. |
| Assay sensitivity too low | Verify the Limit of Detection (LoD) of your assay. Is it appropriate for the expected low ctDNA fraction? | Switch to a more sensitive technology (e.g., dPCR or tumor-informed NGS) or increase plasma input volume [32] [34]. |
| Tumor type with low shedding [38] | Research ctDNA shedding characteristics for the specific cancer type (e.g., CNS tumors). | Consider alternative liquid biopsy sources (e.g., CSF for brain tumors) [31] [38]. |

Issue 2: High levels of background noise in NGS data

| Possible Cause | Investigation Actions | Solution |
|---|---|---|
| Low ctDNA fraction | Calculate the variant allele frequency (VAF); very low VAFs (<0.5%) are challenging. | Use error-corrected NGS methods (e.g., unique molecular identifiers) to suppress PCR and sequencing errors [31]. |
| DNA from lysed white blood cells [34] | Review time-to-processing and tube type. Check for high levels of wild-type DNA. | Ensure rapid plasma separation (<6 hrs for EDTA tubes) or use cell preservation tubes. |
| Non-optimal bioinformatic filtering | Interrogate the raw data and filtering parameters for sequencing artifacts. | Apply filters based on ctDNA biological features (e.g., fragment size analysis) to enrich for tumor-derived signals [31]. |
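A toy example can illustrate the error-correction idea in the first row. Reads that share a unique molecular identifier (UMI) come from one original molecule. Collapsing each UMI family to a majority consensus therefore removes isolated PCR or sequencing errors. The family-size and agreement thresholds, the function names, and the reads below are hypothetical. Production consensus-calling pipelines are considerably more involved.

```python
from collections import Counter, defaultdict


def raw_vaf(reads, alt_base):
    """Naive VAF: fraction of individual reads carrying the alternate base."""
    return sum(base == alt_base for _, base in reads) / len(reads)


def consensus_vaf(reads, alt_base, min_family_size=3, min_agreement=0.7):
    """Collapse reads sharing a UMI into one consensus base, then compute VAF
    over consensus molecules. `reads` holds (umi, base) pairs at one position."""
    families = defaultdict(list)
    for umi, base in reads:
        families[umi].append(base)
    consensus = []
    for bases in families.values():
        if len(bases) < min_family_size:
            continue  # too few reads for a reliable consensus
        base, count = Counter(bases).most_common(1)[0]
        if count / len(bases) >= min_agreement:
            consensus.append(base)  # isolated PCR/sequencing errors are outvoted
    if not consensus:
        return None
    return sum(base == alt_base for base in consensus) / len(consensus)


# Hypothetical data: 100 molecules x 5 reads; one true mutant molecule ("T"),
# and 20 wild-type molecules that each carry a single erroneous "T" read.
reads = []
for i in range(100):
    umi = f"umi{i:03d}"
    if i == 0:
        bases = ["T"] * 5            # true mutant molecule
    elif i <= 20:
        bases = ["C"] * 4 + ["T"]    # wild-type molecule with one error read
    else:
        bases = ["C"] * 5            # clean wild-type molecule
    reads += [(umi, b) for b in bases]
reads.append(("umi100", "T"))        # singleton family, dropped by min_family_size

print(f"raw VAF: {raw_vaf(reads, 'T'):.2%}, consensus VAF: {consensus_vaf(reads, 'T'):.2%}")
```

In this example, the read-level VAF is inflated about fivefold by scattered errors. The consensus VAF recovers the single true mutant molecule out of 100.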

Experimental Protocols & Workflows

Protocol 1: Longitudinal ctDNA Monitoring for Dose-Response

Objective: To correlate changes in ctDNA levels with different drug dose levels to establish the biologically effective dose range.

Materials:

- Blood Collection Tubes: Cell preservation tubes (e.g., Streck, PAXgene).

- DNA Extraction Kit: Silica-membrane or magnetic bead-based kit optimized for low-concentration cfDNA.

- Quantification Kit: Fluorometric assay (e.g., Qubit dsDNA HS Assay).

- Assay Platform: Tumor-informed NGS assay (e.g., RaDaR, Signatera) or dPCR for specific mutations [32].

Methodology:

- Baseline Sampling: Collect a blood sample and a tumor tissue biopsy (if available) prior to treatment initiation.

- Dosing and Serial Sampling: Administer the investigational drug at a specific dose level. Collect longitudinal blood samples at predefined time points (e.g., pre-dose Cycle 2 Day 1, Cycle 3 Day 1, etc.) [32].

- Sample Processing: Isolate plasma from all blood samples using a standardized two-step centrifugation protocol [34].

- ctDNA Analysis:

  - For tumor-informed NGS: Sequence the baseline tumor sample to identify patient-specific mutations. Design a custom panel to track these mutations in serial plasma samples [32].

  - For dPCR: Test plasma samples for a known mutation using mutation-specific assays.

- Data Analysis: Quantify ctDNA levels (e.g., as variant allele frequency or mean tumor molecules per mL) at each time point. Plot ctDNA dynamics over time for each patient and dose cohort.
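The Data Analysis step can be sketched as follows, assuming that cfDNA concentration (ng per mL of plasma) and a VAF are available for each time point. VAF is converted to approximate mutant haploid genome equivalents per mL using the common approximation of about 3.3 pg per haploid human genome. Each patient's change from baseline is then summarized per dose cohort. The cohort values, the C3D1 time point, and the floor used when ctDNA becomes undetectable are hypothetical.

```python
import math
import statistics

PG_PER_HAPLOID_GENOME = 3.3  # approximate mass of one haploid human genome (pg)


def mutant_copies_per_ml(cfdna_ng_per_ml, vaf):
    """Approximate mutant haploid genome equivalents per mL of plasma."""
    genome_equivalents = cfdna_ng_per_ml * 1000 / PG_PER_HAPLOID_GENOME
    return genome_equivalents * vaf


def log2_change(baseline, on_treatment, floor=0.1):
    """log2 fold change from baseline; `floor` (an assumed pseudo-level) avoids
    log(0) when ctDNA becomes undetectable."""
    return math.log2(max(on_treatment, floor) / max(baseline, floor))


# Hypothetical cohorts: per patient, (cfDNA ng/mL, VAF) at baseline and at C3D1.
cohorts = {
    "dose A": [((12.0, 0.020), (10.0, 0.015)), ((8.0, 0.050), (9.0, 0.040))],
    "dose B": [((15.0, 0.030), (7.0, 0.002)), ((9.0, 0.010), (6.0, 0.0))],
}
medians = {}
for dose, patients in cohorts.items():
    changes = [log2_change(mutant_copies_per_ml(*base), mutant_copies_per_ml(*c3))
               for base, c3 in patients]
    medians[dose] = statistics.median(changes)
    print(f"{dose}: median log2 change in mutant copies/mL = {medians[dose]:.2f}")
```

Normalizing to copies per mL, rather than using VAF alone, keeps changes in total cfDNA (for example, from inflammation) from masking or exaggerating the tumor-derived signal.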

Protocol 2: Assessing Molecular Residual Disease (MRD) Post-Treatment

Objective: To determine if a dose level is sufficient to eradicate micrometastatic disease after definitive therapy (e.g., surgery).

Key Consideration: Timing is critical. Blood should not be collected immediately after surgery due to background cfDNA from tissue injury. A minimum wait of 1-2 weeks is recommended [34] [33].

Methodology:

- Pre-operative Baseline: Collect a pre-surgery blood sample.

- Tumor Tissue Analysis: Sequence the resected tumor to define a patient-specific mutation signature.

- Post-operative MRD Sampling: Collect the first post-operative blood sample 2-4 weeks after surgery, followed by serial samples every 3-6 months.

- Analysis: Use a highly sensitive tumor-informed NGS assay (LoD ~0.001% VAF) to detect any residual mutations [32] [33].

- Correlation with Outcome: ctDNA negativity (undetectable MRD) is associated with significantly improved Relapse-Free Survival (RFS) [36] [33], so a dose that achieves it is likely to be biologically effective.

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in ctDNA Research | Key Considerations |
|---|---|---|
| Cell-Free DNA BCT Tubes (Streck) | Preserves blood samples by stabilizing nucleated blood cells, preventing lysis and background DNA release. Allows for delayed processing (up to 5-7 days at room temperature) [34]. | Essential for multi-center trials where immediate processing is not feasible. |
| K2/K3 EDTA Tubes | Standard anticoagulant blood collection tubes. | Require plasma separation within 4-6 hours of draw to prevent white cell lysis [34]. |
| Digital PCR (dPCR) Systems | Provides absolute quantification of rare mutations with high sensitivity and precision. Ideal for tracking known mutations in longitudinal studies [38]. | Excellent for targeted analysis of 1-5 mutations. Lower multiplexing capability than NGS. |
| Tumor-Informed NGS Assays (e.g., RaDaR, Signatera) | Custom, ultra-sensitive assays designed around the unique mutation profile of a patient's tumor. | Highest sensitivity for MRD detection (~0.001%). Requires a tumor tissue sample for sequencing [32]. |
| Qubit Fluorometer & HS DNA Kit | Accurately quantifies low concentrations of extracted cfDNA. | More accurate for short-fragment cfDNA than spectrophotometric methods (e.g., NanoDrop). |

Table 1: Prognostic Value of ctDNA Status in Solid Tumors [36] [33]

| Clinical Scenario | Metric | Hazard Ratio (HR) for Recurrence/Death | 95% Confidence Interval |
|---|---|---|---|
| Post-Definitive Therapy (MRD) | Relapse-Free Survival (RFS) | HR = 8.92 | 6.02-13.22 |
| Post-Definitive Therapy (MRD) | Overall Survival (OS) | HR = 3.05 | 1.72-5.41 |
| During ICB Therapy (R/M HNSCC) [32] | Overall Survival (OS) | HR = 0.04 | 0.00-0.47 |
| During ICB Therapy (R/M HNSCC) [32] | Progression-Free Survival (PFS) | HR = 0.03 | 0.00-0.37 |
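The standard error of log(HR) can be back-calculated from a reported 95% CI, assuming the interval was computed symmetrically on the log scale. This gives a quick consistency check on the table and an approximate Wald p-value. The ICB rows cannot be processed this way, because their lower bounds were rounded to 0.00.

```python
import math

Z_95 = 1.959963984540054  # two-sided 95% standard normal quantile


def log_hr_se(lower, upper, z=Z_95):
    """Standard error of log(HR) back-calculated from a 95% CI."""
    if lower <= 0:
        raise ValueError("CI bound rounded to 0 cannot be log-transformed")
    return (math.log(upper) - math.log(lower)) / (2 * z)


def wald_p_value(hr, lower, upper):
    """Two-sided Wald p-value for H0: HR = 1, assuming a symmetric log-scale CI."""
    z = math.log(hr) / log_hr_se(lower, upper)
    return math.erfc(abs(z) / math.sqrt(2))


rows = {"MRD, RFS": (8.92, 6.02, 13.22), "MRD, OS": (3.05, 1.72, 5.41)}
for label, (hr, lo, hi) in rows.items():
    print(f"{label}: SE(log HR) = {log_hr_se(lo, hi):.3f}, p = {wald_p_value(hr, lo, hi):.1e}")
```

For both MRD rows, log(HR) lies at the midpoint of the log-transformed interval, which is consistent with a standard Cox-model CI.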

Table 2: Key Performance Metrics of ctDNA Assays [32] [33]

| Assay Type | Approximate Limit of Detection (LoD) | Key Strengths | Key Limitations |
|---|---|---|---|
| Tumor-Informed NGS | 0.001% VAF (e.g., LoD95: 0.0011%) [32] | Ultra-high sensitivity; personalized for low background noise. | Requires tumor tissue; longer turnaround time; higher cost. |
| Tumor-Naive NGS Panels | 0.1%-0.5% VAF | Broad profiling without need for tissue; faster turnaround. | Lower sensitivity for MRD; higher background noise. |
| Digital PCR (dPCR) | 0.01%-0.05% VAF | High sensitivity and precision; rapid; cost-effective for known targets. | Limited to a small number of pre-defined mutations. |
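Part of what limits any LoD is simple molecular sampling. At 0.001% VAF, a sample must contain on the order of 100,000 genome equivalents (GE) before even one mutant fragment is expected. This is one reason increasing plasma input volume can help. The minimal Poisson sketch below assumes a single tracked locus and that every mutant molecule in the tube is detected. Real assays have imperfect conversion efficiency, so these numbers are lower bounds. Tracking many patient-specific variants, as tumor-informed panels do, effectively samples more informative molecules per GE, which is one way such assays push the LoD lower.

```python
import math

PG_PER_HAPLOID_GENOME = 3.3  # approximate mass of one haploid human genome (pg)


def detection_probability(vaf, genome_equivalents, min_mutant_molecules=1):
    """P(sample contains >= k mutant molecules) under a Poisson sampling model."""
    lam = vaf * genome_equivalents
    p_below = sum(math.exp(-lam) * lam ** i / math.factorial(i)
                  for i in range(min_mutant_molecules))
    return 1.0 - p_below


def genome_equivalents_needed(vaf, target=0.95):
    """Smallest input (GE) giving P(>= 1 mutant molecule) >= target."""
    return math.ceil(-math.log(1.0 - target) / vaf)


for vaf in (1e-3, 1e-4, 1e-5):  # 0.1%, 0.01%, 0.001% VAF
    ge = genome_equivalents_needed(vaf)
    ng = ge * PG_PER_HAPLOID_GENOME / 1000
    print(f"VAF {vaf:.3%}: ~{ge:,} GE (~{ng:,.1f} ng cfDNA) for 95% sampling probability")
```

At 0.001% VAF, the required input approaches a microgram of cfDNA for a single locus. That is far more than a typical plasma draw yields, which illustrates why single-target assays struggle at this level.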

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary goal of integrating PK/PD modeling in first-in-human study design? The primary goal is to support the rational selection of a first-in-human starting dose and subsequent dose escalations by integrating diverse sets of preclinical information. This approach aims to maximize the potential for detecting a therapeutic signal while safeguarding human subjects by minimizing the risk of adverse effects. It represents a key component of the translational research strategy to de-risk projects early in development [39].
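One quantitative input often used when choosing a conservative starting dose for high-risk biologics is predicted receptor occupancy, as in a minimum anticipated biological effect level (MABEL) approach. The sketch below assumes 1:1 equilibrium binding, an IV bolus into a single compartment (Cmax = dose / Vd), and hypothetical parameter values. A real PK/PD model would add clearance, target-mediated disposition, and cross-species scaling.

```python
def receptor_occupancy(conc_nM, kd_nM):
    """Fractional receptor occupancy for simple 1:1 binding at equilibrium."""
    return conc_nM / (conc_nM + kd_nM)


def dose_for_target_occupancy(target_ro, kd_nM, vd_L, mw_g_per_mol):
    """Dose (mg) whose predicted Cmax gives the target occupancy, assuming an
    IV bolus into one compartment (Cmax = dose / Vd)."""
    conc_nM = kd_nM * target_ro / (1.0 - target_ro)      # invert RO = C / (C + Kd)
    conc_mg_per_L = conc_nM * 1e-9 * mw_g_per_mol * 1e3  # nmol/L -> mg/L
    return conc_mg_per_L * vd_L


# Hypothetical antibody: Kd = 1 nM, MW = 150 kDa, Vd = 3 L, 10% target occupancy.
dose_mg = dose_for_target_occupancy(0.10, kd_nM=1.0, vd_L=3.0, mw_g_per_mol=150_000)
print(f"predicted starting dose: {dose_mg:.3f} mg")
```

Inverting the occupancy relationship makes the link between the preclinical binding data (Kd) and the proposed first dose explicit, so the assumption behind each escalation step can be reviewed.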