Optimizing Memory Hierarchy for Large-Scale Ecological Simulations: Techniques for Enhanced Performance and Sustainability


Abstract

This article explores the critical intersection of advanced memory hierarchy optimization and large-scale ecological simulation. As ecological models grow in complexity, encompassing spatial cellular automata, ecosystem service trade-offs, and AI-driven pattern recognition, they place unprecedented demands on computational resources. We detail methodologies for coordinating multi-level memory systems—from cache prefetching to dynamic dataflow management—to accelerate simulations of habitat connectivity, biodiversity, and climate impact. Targeting researchers and scientists, this guide provides a foundational understanding of memory bottlenecks, applies optimization techniques like the Dynamic Hierarchy Coordination Mechanism (DHCM) and carbon-aware computing, troubleshoots common performance issues, and validates approaches through comparative analysis. The goal is to enable more frequent, higher-resolution, and environmentally sustainable ecological forecasting.

Understanding the Memory Bottleneck in Modern Ecological Modeling

The Computational Demands of High-Resolution Spatial Simulations

Performance Analysis: Quantitative Demands of High-Resolution Modeling

High-resolution spatial simulations impose significant computational burdens across scientific domains. The relationship between increased resolution and computational cost is often non-linear, creating substantial challenges for research infrastructure.

Table 1: Computational Performance Metrics Across Simulation Domains

| Domain | Resolution Enhancement | Compute Time Increase | Key Impact Findings |
|---|---|---|---|
| Energy Systems Modeling [1] | ~134 to >3000 regions (county-level) | Order of magnitude increase | Lower-cost solutions; shifted capacity toward regions with better resource adequacy |
| Climate Modeling (HighResMIP1) [2] | ~100km to ~25km grid spacing | Significantly increased (multi-model) | Improved extreme weather representation; reduced large-scale model biases |
| Neuroscience (tDCS) [3] | Concentric spheres to gyri-specific models | Increased complexity for anatomical precision | Current "hotspots" in sulci; profound impact from individual skull defects |

The computational burden stems from fundamental modeling requirements. In energy systems, higher spatial resolution captures critical heterogeneity in renewable resources and transmission constraints, enabling more optimal capacity placement but drastically increasing solve times [1]. Climate modeling reveals that resolutions of 25km or finer are necessary to adequately represent extreme processes like tropical cyclones and atmospheric rivers [2].
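As a rough illustration of why cost grows faster than resolution, consider refining a horizontal grid from 100 km to 25 km. The estimate below is a back-of-the-envelope sketch, not a figure from [2]. It assumes a fixed number of vertical levels and an explicit time step that must shrink in proportion to the grid spacing (a CFL-type stability limit). Here $N$ is the number of horizontal grid columns, $\Delta t$ the time step, and $C$ the cost of simulating a fixed period.

```latex
\[
\frac{N_{25}}{N_{100}} = \left(\frac{100\ \text{km}}{25\ \text{km}}\right)^{2} = 16,
\qquad
\frac{\Delta t_{100}}{\Delta t_{25}} \approx \frac{100}{25} = 4,
\qquad
\frac{C_{25}}{C_{100}} \approx 16 \times 4 = 64 .
\]
```

Under these assumptions a fourfold refinement costs roughly 64 times more compute, before accounting for larger output volumes or additional ensemble members.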

Experimental Protocols for High-Resolution Spatial Simulation

Protocol: Finite Element Method for Transcranial Direct Current Stimulation

This protocol outlines the workflow for generating high-resolution computational forward models of non-invasive neuromodulation [3].

- Application: Predicting brain current flow for transcranial direct current stimulation (tDCS).

- Purpose: Relate externally controllable dose parameters with resulting brain current flow to optimize clinical electrotherapy.

- Workflow:

  - Tissue Demarcation: Demarcate individual tissue types from high-resolution anatomical data (e.g., 1mm MRI slices) using automated and manual segmentation tools. Distinguish tissues by resistivity.

  - Electrode Modeling: Model the physical properties of the electrodes (shape, size) and place them precisely within the segmented image data along the skin mask surface.

  - Mesh Generation: Generate meshes with a high quality factor from the tissue/electrode masks while preserving anatomical resolution. This divides each mask into small contiguous elements for finite element method simulations.

  - Solver Setup: Import the volumetric meshes into a commercial finite element solver. Assign a resistivity to each mask element and impose boundary conditions, including the current applied to the electrodes.

  - Numerical Solution: Solve the Laplace equation for the electric potential using an appropriate numerical solver and tolerance settings.

  - Data Visualization: Plot the results as induced cortical electric field or current density maps.

Diagram 1: FEM workflow for tDCS modeling.
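The numerical solution step can be illustrated with a much simpler stand-in. The sketch below is not the finite element workflow of [3]: it uses a finite-difference Jacobi iteration on a small 2D grid with uniform conductivity, and the function name, grid size, and electrode voltages are all hypothetical. The left edge acts as an anode held at a fixed potential, the right edge as a cathode, and the top and bottom edges are insulating.

```python
def solve_laplace(nx=9, ny=5, v_anode=1.0, v_cathode=0.0, tol=1e-8, max_iter=20000):
    """Jacobi iteration for the Laplace equation on an ny-by-nx grid (ny >= 2)."""
    # Potential grid, v[row][col]; left and right columns are fixed electrodes.
    v = [[0.0] * nx for _ in range(ny)]
    for r in range(ny):
        v[r][0] = v_anode
        v[r][nx - 1] = v_cathode

    for _ in range(max_iter):
        new = [row[:] for row in v]
        max_change = 0.0
        for r in range(ny):
            # Mirror the neighbour index at the top and bottom edges,
            # which enforces zero current flow through those edges.
            up = r - 1 if r > 0 else r + 1
            down = r + 1 if r < ny - 1 else r - 1
            for c in range(1, nx - 1):
                new[r][c] = 0.25 * (v[up][c] + v[down][c] + v[r][c - 1] + v[r][c + 1])
                max_change = max(max_change, abs(new[r][c] - v[r][c]))
        v = new
        if max_change < tol:
            break
    return v


potential = solve_laplace()
```

With uniform conductivity the potential should fall linearly from anode to cathode, which gives a simple sanity check. A realistic model replaces the uniform grid with the segmented tissue mesh and per-tissue resistivities.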

Protocol: Cellular Mapping of Attributes with Position Algorithm

This protocol details the CMAP method for mapping single cells to precise spatial locations by integrating single-cell and spatial transcriptomic data [4].

- Application: Spatial transcriptomics and cellular mapping in complex tissues.

- Purpose: Reconstruct genome-wide spatial gene expression profiles at single-cell resolution to explore tissue microenvironments.

- Workflow:

  - CMAP-DomainDivision (Level 1):

    - Identify spatially specific genes and cluster spatial domains using a hidden Markov random field.

    - Evaluate the Silhouette score to determine the optimal number of domains.

    - Train a classification model (e.g., Support Vector Machine) to assign spatial domain labels to individual cells.

  - CMAP-OptimalSpot (Level 2):

    - Identify spatially variable genes within each spatial domain.

    - Generate a random alignment matrix between cells and spots.

    - Aggregate the cells linked to each spot and construct a cost function measuring the discrepancy between actual and aggregated spatial expression.

    - Apply the Structural Similarity Index for pattern comparison and information entropy for cell distribution density.

    - Perform iterative refinement via deep learning-based optimization to find the optimal mapping matrix.

  - CMAP-PreciseLocation (Level 3):

    - Build a nearest neighbor graph representing relationships among spots.

    - Calculate associations between cells and their neighboring optimal spots.

    - Employ a Spring Steady-State Model learned from the physical field to assign exact (x, y) coordinates to each cell.

Diagram 2: CMAP algorithm for single-cell mapping.
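The information entropy term used in Level 2 to characterise cell distribution density can be illustrated in a few lines. This is only a sketch of Shannon entropy over a cell-to-spot assignment; the exact formulation used in CMAP [4] is not given here, and the function name, the use of the natural logarithm, and the example assignments are assumptions.

```python
import math
from collections import Counter


def assignment_entropy(spot_of_cell):
    """Shannon entropy (in nats) of how cells are distributed over spots."""
    counts = Counter(spot_of_cell)
    n = len(spot_of_cell)
    return -sum((c / n) * math.log(c / n) for c in counts.values())


# Hypothetical assignments: each entry is the spot index chosen for one cell.
evenly_spread = [0, 1, 2, 3, 0, 1, 2, 3]
all_in_one_spot = [2, 2, 2, 2, 2, 2, 2, 2]

print(assignment_entropy(evenly_spread))    # maximal for four spots: ln(4)
print(assignment_entropy(all_in_one_spot))  # zero: no spread at all
```

Higher entropy means cells are spread more evenly across spots, so a term like this can discourage mappings that pile every cell onto a few spots.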

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for Spatial Simulations

| Item | Function/Purpose | Application Context |
|---|---|---|
| High-Resolution Anatomical Data (e.g., MRI at 1mm thickness) | Provides physical geometry and tissue boundaries for modeling electrical properties [3]. | Computational models of neuromodulation |
| Spatial Transcriptomics Platforms (10x Genomics Visium, Xenium, Slide-seq) | Generates spatial expression data for mapping and validation [4]. | Cellular mapping in complex tissues |
| Finite Element Solver Software | Numerically solves partial differential equations governing physical phenomena [3]. | All spatial simulation domains |
| CARD Framework | Generates simulated spatial data with predefined domains for benchmarking [4]. | Method validation and performance testing |
| Hidden Markov Random Field (HMRF) | Identifies spatially specific genes and clusters spatial domains [4]. | Initial spatial domain identification |
| Structural Similarity Index (SSIM) | Image-based metric for capturing spatial dependencies in expression patterns [4]. | Pattern comparison in spatial mapping |

Technical Specifications for Simulation Infrastructure

Resolution Specifications Across Modeling Domains

Table 3: Spatial and Temporal Resolution Requirements

| Modeling Domain | Spatial Resolution | Temporal Resolution | Key Computational Constraints |
|---|---|---|---|
| Climate Modeling (HighResMIP2) [2] | Atmosphere: 25-50km; Ocean: 10-25km (bridging to global cloud-resolving <10km) | Sub-daily to centennial scales | Ensemble sizes, scenario complexity, model spinup, coupling between components |
| Energy Systems (ReEDS Model) [1] | County-level (3000+ regions) vs Balancing Areas (134 regions) | Multi-year to decadal planning | Transmission representation, resource variability, combinatorial optimization |
| Neuroscience (tDCS Modeling) [3] | Gyri-specific resolution (~1mm MRI slices) | Static (steady-state) current flow | Anatomical precision, tissue resistivity values, segmentation accuracy |
| Cellular Mapping (CMAP) [4] | Sub-spot cellular coordinates | Single time point (snapshot) | Integration of disparate data types, optimization cost functions |

The resolution requirements directly impact computational resource needs. High-resolution climate models require significant high-performance computing resources for coupled atmosphere-ocean simulations with multiple ensemble members [2]. Energy system models face combinatorial challenges with higher spatial resolution, where an order of magnitude increase in model regions leads to at least an order of magnitude increase in runtime [1].

Data Management and Integration Protocols

Standardized experimental protocols are crucial for generating reproducible, quantitative data for mathematical modeling [5]. Key considerations include:

- Data Standardization: Implementing standardized procedures for data acquisition, processing, and annotation to enable comparison and integration across laboratories [5].

- Systems Biology Markup Language: Using SBML as a software-independent format for model representation and exchange [5].

- Spatial Data Management: Developing systematic approaches for ingesting, organizing, and storing diverse spatial data types (drone, LIDAR, image, video, 3D data) that are often scattered across silos [6].

The effectiveness of large-scale ecological simulations is fundamentally constrained by the efficiency of the underlying computer memory system. Modern research in fields such as microbial community dynamics and drug interaction modeling requires processing vast, complex datasets that push the limits of conventional computing architectures. The memory hierarchy—a structured organization of memory storage from small, fast cache memories to larger, slower main memory—serves as a critical bridge between processor speed and data availability. This architecture directly influences the performance and feasibility of computational experiments in ecological research [7].

Optimizing this hierarchy is particularly crucial for ecological simulations, which often exhibit irregular memory access patterns and must track the state of numerous interacting components over extended timeframes. The growing disparity between processor speed and memory access times, known as the "memory wall" and closely related to the "von Neumann bottleneck," presents a significant challenge. Historically, processor performance increased by approximately 60% annually while main memory performance improved by only about 9% per year, creating a substantial performance gap that hierarchy optimization aims to address [7].
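Taking the growth rates quoted above at face value, the processor-memory gap compounds year over year. The short derivation below is illustrative arithmetic only; $G(n)$ is a symbol introduced here for the relative gap after $n$ years.

```latex
\[
G(n) = \left(\frac{1.60}{1.09}\right)^{n} \approx 1.47^{\,n},
\qquad
n_{\text{double}} = \frac{\ln 2}{\ln(1.60/1.09)} \approx 1.8\ \text{years},
\qquad
G(10) \approx 46 .
\]
```

In other words, at these rates the gap doubles roughly every two years and grows by more than an order of magnitude per decade, which is why the hierarchy between processor and DRAM carries so much of the performance burden.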

Fundamental Components of the Memory Hierarchy

Cache Memory: The First Line of Defense

Cache memory comprises the fastest and closest memory elements to the processor cores, designed to hold frequently accessed data and instructions. Modern systems typically implement a multi-level cache structure [7]:

- L1 Cache: Split into instruction and data caches, provides the lowest latency access (typically 1-4 clock cycles), but smallest capacity (8-64 KB per core).

- L2 Cache: Serves as a secondary buffer between L1 and shared L3, with moderate latency (8-25 cycles) and size (256-512 KB per core).

- L3 Cache (Last-Level Cache - LLC): Shared among multiple cores, featuring higher latency (20-50 cycles) but larger capacity (2-64 MB) to facilitate core-to-core data sharing.

Cache operation leverages two fundamental principles of locality. Temporal locality exploits the tendency of recently accessed data to be reused soon, while spatial locality capitalizes on the likelihood that data adjacent to accessed locations will be needed subsequently. These principles enable caches to achieve high hit rates despite their limited size relative to main memory [7].
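The effect of locality on hit rate can be made concrete with a toy model. The sketch below simulates a direct-mapped cache; real caches are set-associative with replacement policies, so this is an illustration rather than a model of any specific processor. The function name, the 32 KB capacity, and the three access traces are assumptions chosen for the example.

```python
def hit_rate(addresses, cache_bytes=32 * 1024, line_bytes=64):
    """Hit rate of a direct-mapped cache for a sequence of byte addresses."""
    num_lines = cache_bytes // line_bytes
    tags = [None] * num_lines            # which memory block each slot holds
    hits = 0
    for addr in addresses:
        block = addr // line_bytes       # memory block containing this byte
        slot = block % num_lines         # direct-mapped: one possible slot
        if tags[slot] == block:
            hits += 1
        else:
            tags[slot] = block           # miss: load the whole line
    return hits / len(addresses)


n = 4096
sequential = [8 * i for i in range(n)]          # consecutive 8-byte values
strided = [64 * i for i in range(n)]            # one value per cache line
repeated = [8 * (i % 512) for i in range(n)]    # 4 KB working set, reused

print(hit_rate(sequential))   # 0.875: spatial locality, 7 of 8 values hit
print(hit_rate(strided))      # 0.0: every access touches a new line
print(hit_rate(repeated))     # 0.984375: temporal locality after first pass
```

The sequential trace benefits from spatial locality because each 64-byte line serves eight neighbouring values, while the repeated trace benefits from temporal locality because its working set fits in the cache.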

Caches are predominantly built using Static Random-Access Memory (SRAM), which provides fast access times but lower density and higher power consumption compared to technologies used for main memory. The limited size stems from this tradeoff between speed and density, making efficient cache management algorithms crucial for performance [7].

Main Memory: The Working Dataset

Main memory, primarily implemented with Dynamic Random-Access Memory (DRAM), serves as the substantial working storage for active applications and data. While significantly slower than cache memories (access latencies of 200-300 clock cycles), DRAM offers much larger capacities (typically 8-128 GB in modern systems) at substantially lower cost per bit [7].

Unlike SRAM used in caches, DRAM requires periodic refresh operations to maintain data integrity, as it stores bits as electrical charges in capacitors that leak over time. This refresh requirement introduces additional complexity and slight performance overhead but enables much higher storage densities [7] [8].

The performance relationship between cache and main memory follows a critical dependency: even the fastest processor will remain idle if the memory system cannot supply data at an adequate rate. This interdependence makes hierarchy optimization essential for maintaining computational efficiency in data-intensive ecological simulations [7].

Table 1: Key Characteristics of Memory Hierarchy Components

| Component | Technology | Typical Size | Access Latency | Primary Function |
|---|---|---|---|---|
| L1 Cache | SRAM | 8-64 KB per core | 1-4 cycles | Hold currently executing instructions and data |
| L2 Cache | SRAM | 256-512 KB per core | 8-25 cycles | Buffer between L1 and shared L3 |
| L3 Cache (LLC) | SRAM | 2-64 MB shared | 20-50 cycles | Shared cache for multi-core coordination |
| Main Memory | DRAM | 8-128 GB system | 200-300 cycles | Hold working set of active applications |

Quantitative Performance Metrics and Energy Considerations

Efficiency Metrics for Memory Systems

Evaluating memory hierarchy performance requires specific quantitative metrics that reflect both speed and energy efficiency. For ecological researchers selecting computational infrastructure or optimizing simulation code, understanding these metrics is essential for making informed decisions [8].

The Energy per Bit Access (measured in picojoules per bit, pJ/bit) quantifies how much energy is required to read or write a single bit of data. Lower values indicate higher efficiency, which is particularly important for large-scale or long-running simulations where energy consumption becomes a significant operational cost and sustainability concern [8].

Bandwidth per Watt (measured in gigabytes per second per watt, GB/s/W) indicates how much data can be transferred per unit of energy consumed. Higher values signify better energy efficiency in data movement, which benefits both mobile field research applications and large data center deployments [8].
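Both metrics reduce to simple arithmetic, sketched below. The function names are introduced here for illustration, and the numeric values (20 pJ/bit for an off-chip access, 1 pJ/bit for an on-chip access, 50 GB/s at 5 W) are hypothetical placeholders rather than measurements from [8].

```python
def transfer_energy_joules(num_bytes, pj_per_bit):
    """Energy needed to move num_bytes at a given energy per bit access."""
    return num_bytes * 8 * pj_per_bit * 1e-12


def bandwidth_per_watt(gb_per_s, watts):
    """Bandwidth per Watt in GB/s/W."""
    return gb_per_s / watts


terabyte = 10**12  # bytes moved over the course of a simulation (assumed)

# Hypothetical per-bit costs: off-chip access vs on-chip access.
print(transfer_energy_joules(terabyte, pj_per_bit=20.0))  # about 160 J
print(transfer_energy_joules(terabyte, pj_per_bit=1.0))   # about 8 J
print(bandwidth_per_watt(gb_per_s=50.0, watts=5.0))       # 10.0 GB/s/W
```

Because energy scales linearly with the bytes moved, any optimization that keeps data in a cheaper level of the hierarchy reduces energy in proportion to the traffic it avoids.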

Cache effectiveness is commonly measured through hit rate (the percentage of accesses found in cache) and miss penalty (the additional time required to fetch data from lower hierarchy levels after a cache miss). Even modest improvements in cache hit rate can yield substantial performance gains by reducing costly main memory accesses [8].
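Hit rate and miss penalty combine into the average memory access time (AMAT), a standard textbook quantity. The sketch below computes it for a three-level hierarchy. The latencies are picked from within the ranges listed earlier, while the hit rates are hypothetical values chosen only to show the sensitivity.

```python
def amat(levels, memory_latency):
    """Average memory access time in cycles.

    levels: list of (hit_latency_cycles, hit_rate) ordered from L1 outward.
    """
    total = 0.0
    reach = 1.0                      # fraction of accesses reaching this level
    for latency, rate in levels:
        total += reach * latency     # every access that gets here pays the lookup
        reach *= (1.0 - rate)        # only misses continue outward
    return total + reach * memory_latency


# (latency, hit rate) for L1, L2, L3; main memory costs 250 cycles.
good_l1 = amat([(4, 0.95), (12, 0.80), (40, 0.50)], memory_latency=250)
poor_l1 = amat([(4, 0.90), (12, 0.80), (40, 0.50)], memory_latency=250)

print(good_l1)   # about 6.25 cycles
print(poor_l1)   # about 8.5 cycles
```

In this example a five-point drop in L1 hit rate raises the average access time from about 6.25 to about 8.5 cycles, an increase of more than a third, which is why modest hit-rate changes matter so much.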

Sustainability Implications for Large-Scale Research

The energy consumption of memory systems extends beyond immediate operational costs to encompass broader environmental impacts. Manufacturing integrated circuits for memory subsystems contributes significantly to the total environmental footprint of computing hardware. Research indicates that in many cases, the energy invested in manufacturing modern processors and memory systems can equal the operational energy consumption over typical product lifetimes [9].

This life-cycle perspective is particularly relevant for ecological researchers who increasingly consider the environmental impact of their computational work. Optimization decisions that reduce memory traffic not only improve simulation performance but also contribute to more sustainable research practices by extending hardware lifespan and reducing total energy consumption [9].

Table 2: Memory Hierarchy Performance and Energy Metrics

| Metric | Definition | Importance for Ecological Simulations |
|---|---|---|
| Hit Rate | Percentage of memory accesses found in cache | Higher rates dramatically reduce simulation time by avoiding main memory accesses |
| Access Latency | Time required to retrieve data from a memory level | Directly impacts time-to-solution for iterative calculations |
| Energy per Bit Access | Energy consumed per bit read/written (pJ/bit) | Critical for energy-efficient high-performance computing and battery-powered field operation |
| Bandwidth per Watt | Data transfer rate per energy unit (GB/s/W) | Determines computational throughput within power budgets |
| Memory Footprint | Total memory capacity required by application | Influences hardware selection and parallelization strategy |

Advanced Memory Architecture Models

Uniform and Non-Uniform Memory Access

Multicore systems implement different architectural approaches to memory organization that significantly impact software performance. In Uniform Memory Access (UMA) architectures, all memory is equidistant from all processor cores, providing consistent access latency. This simplicity comes at the cost of scalability, because memory bandwidth becomes a contended resource as core counts increase [7].

Non-Uniform Memory Access (NUMA) architectures provide each processor cluster with local memory segments, resulting in varying access times depending on whether data resides in local or remote memory. While more complex to program, NUMA systems offer better scalability for memory-intensive workloads, making them relevant for large ecological simulations running on high-performance computing systems [7].

Emerging Architectures and Processing-in-Memory

Recent architectural innovations aim to address the fundamental limitations of traditional memory hierarchies. Processing-in-Memory (PIM) architectures integrate computational units directly within memory chips, performing operations where data resides rather than transferring it to separate processors. This approach shows particular promise for neural network computations used in ecological pattern recognition and for reducing data movement energy costs [7].

Hybrid memory systems that combine different memory technologies (such as DRAM with Phase Change Memory) offer potential pathways to optimize both performance and cost. These systems typically use DRAM for caching and buffering while leveraging emerging non-volatile memories for larger capacity storage, creating new tradeoff opportunities for different simulation workloads [7].

Experimental Protocols for Memory Hierarchy Optimization

Protocol: Cache-Conscious Data Structure Transformation

Objective: Optimize data layout to improve cache utilization and reduce memory access latency in ecological population tracking simulations.

Materials and Methods:

- Simulation environment with performance monitoring capabilities (e.g., hardware performance counters)

- Profiling tools (e.g., perf, VTune)

- Custom data structures for organism population tracking

Procedure:

- Baseline Profile: Execute representative simulation while monitoring LLC miss rate and memory bandwidth utilization using performance counters.

- Data Layout Analysis: Identify frequently accessed data fields within population structures that are accessed together temporally.

- Structure Splitting: Separate frequently and infrequently accessed fields into distinct structures to reduce cache pollution.

- Array of Structures to Structure of Arrays Transformation: Convert from AoS (Array of Structures) to SoA (Structure of Arrays) layout to enable vectorized access patterns.

- Padding and Alignment: Add strategic padding to ensure critical data structures align with cache line boundaries (typically 64-byte boundaries).

- Validation Profile: Re-execute simulation with transformed data structures and compare memory performance metrics against baseline.

Expected Outcome: 15-30% reduction in last-level cache misses and corresponding improvement in simulation throughput due to reduced memory stall cycles.
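The structure splitting and alignment steps can be explored without running the full simulation. The sketch below uses Python's `ctypes` only to reproduce C-style record layouts; the record fields (`x`, `y`, `energy`, `age`, `species_id`, `lineage_tag`) and helper names are hypothetical, and the line count assumes a contiguous, cache-line-aligned array in which only the position and energy fields are read.

```python
import ctypes

CACHE_LINE = 64  # bytes, the typical line size noted in the procedure


class Organism(ctypes.Structure):
    """Original record: hot and cold fields stored together."""
    _fields_ = [
        ("x", ctypes.c_double),
        ("y", ctypes.c_double),
        ("energy", ctypes.c_double),
        ("age", ctypes.c_int32),
        ("species_id", ctypes.c_int32),
        ("lineage_tag", ctypes.c_char * 96),   # rarely read metadata
    ]


class OrganismHot(ctypes.Structure):
    """Fields read on every time step."""
    _fields_ = [
        ("x", ctypes.c_double),
        ("y", ctypes.c_double),
        ("energy", ctypes.c_double),
    ]


def lines_touched(record_bytes, hot_bytes, count):
    """Distinct cache lines read when touching the first hot_bytes of each record."""
    lines = set()
    for i in range(count):
        start = i * record_bytes
        end = start + hot_bytes - 1
        lines.update(range(start // CACHE_LINE, end // CACHE_LINE + 1))
    return len(lines)


def padded_size(num_bytes):
    """Round a size up to a whole number of cache lines."""
    return -(-num_bytes // CACHE_LINE) * CACHE_LINE


hot = ctypes.sizeof(OrganismHot)
before = lines_touched(ctypes.sizeof(Organism), hot, count=1000)
after = lines_touched(hot, hot, count=1000)
print(ctypes.sizeof(Organism), hot, before, after)
```

For this layout the combined record occupies 128 bytes, so a pass over 1000 organisms touches 1000 cache lines even though only 24 bytes per record are used. After splitting, the same pass touches 375 lines. Whether that translates into the expected miss reduction still has to be confirmed with the validation profile.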

Protocol: Dynamic Hierarchy Coordination Mechanism (DHCM) Implementation

Objective: Implement and evaluate adaptive memory prefetching and prediction to accelerate ecological memory-influenced simulations.

Background: The DHCM approach intelligently schedules prediction hierarchies and dynamically optimizes memory access processes to enhance system performance. It simultaneously manages both off-chip load requests and on-chip cache accesses based on real-time system feedback [10].

Materials and Methods:

- ChampSim simulator or equivalent memory hierarchy simulator

- Benchmark traces from ecological simulations

- DHCM implementation modules (hierarchy coordination, state trigger mechanism)

Procedure:

- Workload Characterization: Collect memory access traces from target ecological simulations exhibiting both regular (spatial/temporal) and irregular access patterns.

- Baseline Establishment: Evaluate baseline performance using conventional prefetchers (spatial, temporal) measuring Instructions Per Cycle (IPC), miss coverage, and DRAM load operations.

- DHCM Integration: Implement hierarchy coordination mechanism that includes:

  - State Trigger for dynamic strategy selection

  - Parallel management of on-chip cache accesses and off-chip memory requests

  - Real-time adaptation based on access pattern detection

- Parameter Tuning: Optimize threshold values for state transitions based on specific ecological workload characteristics.

- Comparative Analysis: Execute simulations with DHCM active and compare against baseline prefetching strategies across performance metrics.

Expected Outcome: Based on published research, DHCM implementation can achieve approximately 34% IPC improvement in single-core systems and 24% in multi-core systems, with 64% miss coverage and 89% reduction in DRAM loads [10].
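To make the idea of a feedback-driven state trigger concrete, the toy sketch below switches a simple stride prefetcher on and off according to its recent prediction accuracy. It is not the published DHCM design [10]: the class name, the two states, the window length, and the thresholds are all assumptions, and a real evaluation would implement the mechanism inside a simulator such as ChampSim.

```python
from collections import deque


class AdaptiveStridePrefetcher:
    """Toy state trigger: prefetch only while recent predictions are accurate."""

    def __init__(self, window=16, enable_at=0.75, disable_at=0.25):
        self.history = deque(maxlen=window)  # 1 = prediction matched next access
        self.enable_at = enable_at           # accuracy needed to start prefetching
        self.disable_at = disable_at         # accuracy at which prefetching stops
        self.state = "idle"
        self.last_addr = None
        self.stride = 0
        self.predicted = None
        self.was_issued = False
        self.issued = 0                      # prefetches actually sent
        self.useful = 0                      # issued prefetches that were used

    def access(self, addr):
        # Score the previous prediction (made even while idle, in shadow mode).
        if self.predicted is not None:
            correct = addr == self.predicted
            self.history.append(1 if correct else 0)
            if self.was_issued and correct:
                self.useful += 1
        if self.last_addr is not None:
            self.stride = addr - self.last_addr
        self.last_addr = addr
        # State transitions use two thresholds to avoid rapid flip-flopping.
        if len(self.history) == self.history.maxlen:
            accuracy = sum(self.history) / len(self.history)
            if accuracy >= self.enable_at:
                self.state = "prefetch"
            elif accuracy <= self.disable_at:
                self.state = "idle"
        # Predict the next address; issue it only in the prefetch state.
        self.predicted = addr + self.stride if self.stride != 0 else None
        self.was_issued = self.state == "prefetch" and self.predicted is not None
        if self.was_issued:
            self.issued += 1
        return self.state


def run(prefetcher, addresses):
    """Feed a trace to the prefetcher and return its final state."""
    for addr in addresses:
        prefetcher.access(addr)
    return prefetcher.state


regular = [64 * i for i in range(64)]                            # grid sweep
irregular = [((i * i * 7919) % 100003) * 64 for i in range(64)]  # no repeated stride
```

Feeding the regular trace moves the prefetcher into the prefetch state once the window fills, and following it with the irregular trace drops it back to idle. The two thresholds play the role of the state-transition parameters tuned in the procedure.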

Protocol: Electromigration-Aware Memory Reliability Assessment

Objective: Evaluate and mitigate reliability risks from uneven write distributions in long-running ecological simulations.

Background: Electromigration (EM) refers to the degradation process of integrated circuit metal nets, exacerbated by uneven distributions of write activities that create signal toggling hotspots. Mission-critical ecological simulations running for extended durations require special attention to these hardware reliability concerns [11].

Materials and Methods:

- EM simulation and analysis tools

- Memory access trace analyzers

- Write distribution monitoring modules

Procedure:

- Write Distribution Profiling: Instrument simulation code to track write patterns across memory elements during extended execution.

- Hotspot Identification: Analyze write distributions to identify memory elements with disproportionately high write activity.

- Wear-Leveling Implementation: Implement architectural techniques to distribute write operations uniformly across all memory elements, such as:

  - Cache line reindexing with multiple mapping functions

  - Swap-shift methods that rotate cache set assignments when write thresholds are reached

- EM Impact Simulation: Use EM analysis tools to estimate median-time-to-failure improvements from write distribution optimization.

- Overhead Assessment: Quantify performance, power, and area impacts of EM mitigation techniques to ensure favorable tradeoffs.

Expected Outcome: Significant extension of memory hierarchy lifetime with minimal performance overhead (typically <2%), ensuring reliability for long-duration ecological simulations [11].
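The rotation idea behind swap-shift style wear leveling can be sketched as a software model. This is a simplified illustration, not the scheme evaluated in [11]: the class name, the single global write counter, and the one-step rotation are assumptions, and the model ignores the cost of migrating or flushing lines when the mapping changes.

```python
class RotatingSetIndex:
    """Maps logical cache sets to physical sets, rotating after many writes."""

    def __init__(self, num_sets, write_threshold):
        self.num_sets = num_sets
        self.write_threshold = write_threshold
        self.offset = 0                      # current rotation of the mapping
        self.writes_since_shift = 0
        self.writes_per_set = [0] * num_sets # wear counter per physical set

    def physical_set(self, logical_set):
        return (logical_set + self.offset) % self.num_sets

    def write(self, logical_set):
        self.writes_per_set[self.physical_set(logical_set)] += 1
        self.writes_since_shift += 1
        if self.writes_since_shift >= self.write_threshold:
            # Rotate the mapping by one set. Real hardware would also need
            # to move or invalidate the lines affected by the remapping.
            self.offset = (self.offset + 1) % self.num_sets
            self.writes_since_shift = 0


# A write hotspot: the simulation keeps updating the same logical set.
leveled = RotatingSetIndex(num_sets=8, write_threshold=100)
unleveled = RotatingSetIndex(num_sets=8, write_threshold=10**9)  # never rotates
for _ in range(800):
    leveled.write(3)
    unleveled.write(3)

print(unleveled.writes_per_set)  # all 800 writes land on one physical set
print(leveled.writes_per_set)    # 100 writes on each of the 8 sets
```

The peak write count per physical set drops from 800 to 100 in this example. The overhead assessment step is where the cost of the extra remapping work has to be weighed against that benefit.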

Table 3: Essential Research Reagents and Computational Resources for Memory Hierarchy Optimization

| Resource | Function | Application Context |
|---|---|---|
| Hardware Performance Counters | CPU-level monitoring of cache hits/misses, branch prediction, memory accesses | Performance profiling and bottleneck identification in simulation code |
| ChampSim Simulator | Configurable memory hierarchy simulator for architecture research | Evaluating cache policies, prefetchers, and memory controllers without hardware fabrication |
| Electromigration Analysis Tools | Simulate circuit degradation under various workload patterns | Reliability assessment for long-running ecological simulations |
| Low-Power DDR (LPDDR) Memory | Energy-optimized memory technology for mobile and embedded systems | Field deployment of ecological monitoring and simulation systems |
| Non-Volatile Memory (PCM, ReRAM) | Persistent memory technologies with unique energy characteristics | Exploring memory architecture tradeoffs for different ecological workload patterns |
| Structure of Arrays (SoA) Data Layout | Memory layout that optimizes spatial locality for vectorized operations | Improving cache efficiency in population dynamics and environmental factor simulations |

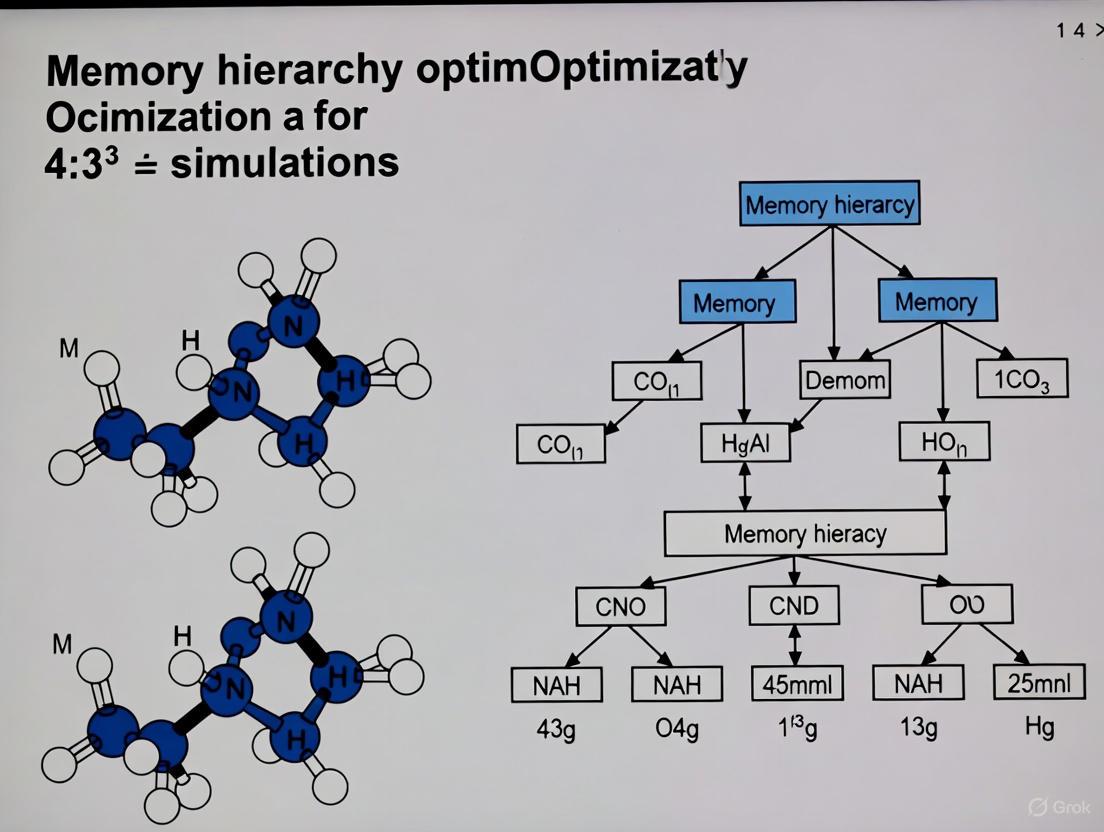

Visualization of Memory Hierarchy and Optimization Workflows

Diagram 1: Memory Hierarchy Structure and Access Latencies. This diagram illustrates the typical multi-level cache hierarchy with increasing access latencies at each level from registers to secondary storage. Optimization techniques target specific hierarchy levels to reduce effective access time.

Diagram 2: Memory Hierarchy Optimization Workflow. This workflow outlines the systematic approach to identifying and addressing memory performance bottlenecks in ecological simulations, with multiple optimization techniques available based on specific workload characteristics.

Common Memory Access Patterns in Ecological Models (e.g., Cellular Automata, Agent-Based Models)

Ecological models are fundamental tools for understanding complex biological systems, from tumour evolution to microbial community dynamics. The computational performance of these models, particularly spatial agent-based models (SABMs) and cellular automata, is intrinsically linked to their memory access patterns. Efficient memory usage is not merely a technical concern but a prerequisite for enabling larger, more realistic simulations and accelerating scientific discovery. These models, which simulate autonomous, interacting agents such as individual cells or organisms, generate memory access patterns that directly reflect the spatial structure and interaction rules of the biological system being studied [12] [13].

A memory access pattern refers to the sequence and frequency with which a program accesses memory locations during execution. Spatial locality exists when a program accessing a memory location is likely to also access nearby locations. Temporal locality occurs when a recently accessed memory location is likely to be accessed again in the near future [14]. In ecological modelling, inefficient memory access patterns are frequently identified as a primary bottleneck in performance optimization, especially in code not yet modernized for vector Single Instruction Multiple Data (SIMD) parallelism [14]. Understanding these patterns is thus essential for optimizing simulation performance within modern memory hierarchies.

Fundamental Memory Access Patterns in Core Ecological Modeling Paradigms

Cellular Automata and Regular Grid Models

Cellular automata represent one of the simplest yet most powerful approaches to spatial ecological modeling. They typically operate on a regular grid of sites, where each site has a state (e.g., unoccupied or occupied by a specific cell type) and update rules that depend on the states of neighboring sites [13]. The Eden growth model, for instance, is a classic stochastic cellular automaton used to simulate tumour growth, where new cells are added to the surface of a growing cluster [13].

The memory access pattern for basic cellular automata is characterized by structured, predictable strides. When updating a cell, the simulation must access the state of that cell and the states of all cells in its defined neighborhood (e.g., von Neumann or Moore neighborhoods). This results in a pattern with excellent spatial locality, as the processor accesses contiguous or regularly spaced memory locations corresponding to adjacent grid cells.
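The strided pattern is easy to see in code. The sketch below stores a grid in a flat, row-major byte array and applies a deterministic spread rule with a von Neumann neighbourhood. It is a simplified stand-in, not the stochastic Eden model itself, and the function name and rule are assumptions made for illustration.

```python
def step(grid, width, height):
    """One synchronous update: an empty site becomes occupied if any
    von Neumann neighbour is occupied."""
    new = bytearray(grid)                 # read the old grid, write the new one
    for row in range(height):
        base = row * width
        for col in range(width):
            i = base + col
            if grid[i]:
                continue
            # In row-major storage the four neighbours sit at fixed offsets:
            # -1 and +1 within the row, -width and +width across rows.
            if ((col > 0 and grid[i - 1])
                    or (col < width - 1 and grid[i + 1])
                    or (row > 0 and grid[i - width])
                    or (row < height - 1 and grid[i + width])):
                new[i] = 1
    return new


width = height = 5
grid = bytearray(width * height)
grid[2 * width + 2] = 1                   # seed a single occupied site

after_one = step(grid, width, height)
after_two = step(after_one, width, height)
print(sum(after_one), sum(after_two))     # 5 13
```

Because the inner loop walks `i` through consecutive addresses and every neighbour lies at a constant offset, hardware prefetchers and vectorizing compilers can anticipate the accesses.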

Table 1: Memory Access Characteristics of Ecological Modeling Approaches

| Model Type | Primary Access Pattern | Locality | Performance Considerations |
|---|---|---|---|
| Cellular Automata (e.g., Eden model) | Sequential/Strided | High spatial locality | Amenable to vectorization and prefetching [14] |
| Agent-Based Models (Simple) | Random/Irregular | Poor spatial and temporal locality | Pointer-chasing, cache-inefficient [14] |
| SABMs with Grid Prox. | Mixed (Grid: Regular, Agent: Irregular) | Moderate spatial locality | Performance depends on efficient grid traversal |

Agent-Based Models and Irregular Access

Agent-based models (ABMs) simulate a system as a collection of autonomous, decision-making entities (agents). In ecology and oncology, these agents often represent individual organisms, such as tumour cells or members of a microbial community [12] [13]. Each agent typically has its own state and set of behaviors, and the model evolves through agent-agent and agent-environment interactions.

The memory access pattern for a naive implementation of an ABM is often random or irregular. If agents are stored as separately allocated objects referenced from a list or array of pointers, then even when the simulation processes each agent in sequence, the data accessed for one agent (e.g., its position, genotype, internal state) may be scattered widely in memory. This is especially true if the agent data structure is large and complex. Such irregular access patterns exhibit poor spatial and temporal locality, leading to high rates of cache misses and page faults [14]. These "pointer-chasing" codes serialize memory operations and limit the effectiveness of hardware prefetchers, as the address of the next required data cannot be easily predicted [14].

Experimental Protocols for Profiling and Analysis

Protocol 1: Profiling Memory Access Patterns in Existing Simulations

Objective: To identify inefficient memory access patterns in an existing ecological simulation codebase, establishing a performance baseline before optimization.

Materials and Software:

- The target ecological simulation executable (e.g., a C++ or Fortran-based SABM).

- A supported Linux operating system.

- Intel VTune Profiler or Intel Advisor with the Memory Access Patterns (MAP) analysis feature.

Procedure:

- Build the Application: Compile the target simulation code with debugging symbols enabled (`-g` flag) and moderate optimization (`-O2`). Avoid aggressive optimizations that may obscure the source of memory operations.

- Run Survey Analysis: Execute the VTune Profiler and run a General Exploration or Hotspots analysis on a representative workload (e.g., 1000 time steps of a tumour growth simulation). This identifies code regions (loops, functions) where the application spends most of its time.

- Configure MAP Analysis: From the identified hotspots, select key loops for deeper memory analysis. In the Intel Advisor GUI, initiate a new Memory Access Patterns (MAP) analysis targeting these loops.

- Execute and Collect Data: Run the MAP analysis. This process is relatively expensive, with typical runtimes ranging from 30 seconds to 10 minutes. The tool dynamically tracks memory access instructions in the selected code regions [14].

- Analyze the MAP Report: Examine the generated report for the following key metrics:

  - Stride Distribution: Visually identifies inefficient patterns (e.g., random stride in the Strides Distribution column) [14].

  - Memory Footprint Characteristics: Estimates the number of cache lines accessed. A large footprint indicates a high probability of cache misses.

  - Source Report: Maps access pattern data back to specific source code lines and variables, pinpointing the exact data structures causing bottlenecks [14].

Deliverable: A profiling report highlighting the top 3-5 code regions with inefficient memory access patterns (e.g., random access in an agent loop), along with the specific data structures involved and the observed stride distributions.
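To make the stride-distribution metric concrete, the following minimal sketch classifies the strides found in a recorded address trace. It is not Intel Advisor's algorithm: the function name `stride_profile`, the assumed 8-byte element size, and the three categories (unit, constant, irregular) are illustrative assumptions chosen to mirror the kind of breakdown described above.

```python
from collections import Counter


def stride_profile(addresses, element_size=8):
    """Return the fraction of unit, constant, and irregular strides in a trace.

    addresses: byte addresses touched by one load instruction, in program order.
    element_size: assumed size in bytes of one element (8 for a double).
    """
    strides = [b - a for a, b in zip(addresses, addresses[1:])]
    if not strides:
        return {}
    # The most frequent stride counts as "constant" only if it repeats.
    mode, mode_count = Counter(strides).most_common(1)[0]
    counts = Counter()
    for s in strides:
        if abs(s) == element_size:
            counts["unit"] += 1
        elif s == mode and mode_count > 1:
            counts["constant"] += 1
        else:
            counts["irregular"] += 1
    return {kind: n / len(strides) for kind, n in counts.items()}
```

A trace such as `[0, 8, 16, 24, 32]` is reported as entirely unit stride, `[0, 32, 64, 96]` as a constant non-unit stride (typical of reading one field from an array of 32-byte records), and a scattered trace as irregular.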

Protocol 2: Evaluating Optimized Data Layouts for Agent-Based Models

Objective: To quantitatively compare the performance and cache efficiency of two common data layouts for storing agent data in a SABM.

Materials and Software:

- A SABM benchmark that simulates a 2D tumour with at least 10^5 cells.

- Implementation of the two data layouts: Array-of-Structs (AoS) and Struct-of-Arrays (SoA).

- Intel Advisor (for MAP analysis) and a performance timer.

- CPU performance counter tools (e.g., perf on Linux).

Procedure:

- Implement Data Layouts:

- AoS Layout: Define a Cell struct containing all agent data (e.g., position_x, position_y, genotype, metabolism) and create a std::vector<Cell>.

- SoA Layout: Create separate arrays for each property: std::vector<double> position_x, std::vector<double> position_y, std::vector<int> genotype, etc.

- Design Workload: Define a benchmark kernel that performs a common operation, such as updating the position of every agent based on a simple rule or calculating interactions between nearby agents.

- Execute and Measure: Run the benchmark kernel for 1000 iterations for both data layouts.

- Measure total execution time.

- Use CPU performance counters to record L1 and L3 cache miss rates.

- Use Intel Advisor's MAP analysis on the kernel loop to document the stride distribution and memory footprint for each layout.

- Data Analysis: Compare the results. The SoA layout is expected to yield better performance for operations that process a single attribute across all agents (e.g., updating all X positions) due to contiguous, unit-stride access patterns that exploit spatial locality and enable vectorization [14].

Deliverable: A table comparing execution time, cache miss rates, and dominant stride patterns for the AoS and SoA implementations, providing quantitative evidence for selecting an optimal data layout.
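The two layouts can be sketched in Python as a stand-in for the C++ containers named in the procedure. This is an illustration under stated assumptions, not the benchmark itself: the field order, the packed record format `"<ddid"`, and the helper names are hypothetical, and timing results in Python will not reflect the cache behaviour of compiled code. What the sketch does show is the difference in byte stride when a kernel touches only `position_x`.

```python
import struct
from array import array

# AoS: one packed record per agent, fields interleaved in a single buffer.
# Assumed fields: position_x, position_y (double), genotype (int32), metabolism (double).
# Packed size is 28 bytes; a C++ compiler would typically pad such a struct to 32.
CELL = struct.Struct("<ddid")


def make_aos(n):
    buf = bytearray(CELL.size * n)
    for i in range(n):
        CELL.pack_into(buf, i * CELL.size, float(i), 0.0, i % 4, 1.0)
    return buf


def make_soa(n):
    # SoA: one contiguous array per attribute.
    return {
        "position_x": array("d", (float(i) for i in range(n))),
        "position_y": array("d", [0.0] * n),
        "genotype": array("i", (i % 4 for i in range(n))),
        "metabolism": array("d", [1.0] * n),
    }


def shift_x_aos(buf, dx):
    # Updating x alone still walks the buffer in steps of CELL.size bytes.
    for off in range(0, len(buf), CELL.size):
        x, y, g, m = CELL.unpack_from(buf, off)
        CELL.pack_into(buf, off, x + dx, y, g, m)


def shift_x_soa(soa, dx):
    # Only the position_x array is touched, with a stride of one element.
    xs = soa["position_x"]
    for i in range(len(xs)):
        xs[i] += dx
```

In this sketch successive `position_x` values sit `CELL.size` bytes apart in the AoS buffer but only `itemsize` (8) bytes apart in the SoA array, which is the unit-stride pattern the Data Analysis step expects to favour SoA for single-attribute kernels.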

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Analyzing and Optimizing Memory Access in Ecological Models

| Tool / Reagent | Category | Primary Function | Application Example in Ecology |

|---|---|---|---|

| Intel Advisor (MAP) | Profiling Software | Dynamically tracks memory access instructions, provides stride distribution and locality analysis. | Identifying random access in an agent loop of a tumour growth SABM [14]. |

| USIMM / DRAMSim2 | Memory Simulator | Enables performance evaluation by modeling diverse memory systems and analyzing access patterns. | Projecting the performance of a new grid model on future hardware with different cache hierarchies. |

| Struct-of-Arrays (SoA) | Data Layout | An optimization technique that stores each attribute of a data entity in a separate, contiguous array. | Improving cache locality when processing a single attribute (e.g., metabolic rate) across all agents in a community model [14]. |

| Spatial Grid Index | Computational Algorithm | A data structure that partitions space to quickly locate agents in a specific region. | Accelerating neighbor-finding in a SABM by reducing search space, thus improving access locality [13]. |

| Cache-Blocking (Tiling) | Loop Transformation | Restructures loops to operate on data subsets that fit into cache, reusing loaded data. | Optimizing the update step in a 2D diffusion model for soil nutrients or chemical signals [14]. |
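As an illustration of the cache-blocking entry in the table above, the sketch below applies one explicit diffusion step to a 2D grid while traversing it tile by tile. The function name, the five-point stencil, and the parameters `alpha` and `tile` are assumptions made for the example. In pure Python the tiling brings no measurable cache benefit; the point is only the loop structure, which carries over directly to C++ or Fortran.

```python
def diffuse_tiled(src, alpha=0.1, tile=32):
    """One explicit diffusion step on a 2D grid, visiting interior cells in tiles.

    src: list of equal-length rows of floats; boundary cells are left unchanged.
    tile: edge length of the square block processed before moving on.
    """
    rows, cols = len(src), len(src[0])
    dst = [row[:] for row in src]
    for i0 in range(1, rows - 1, tile):          # tile origin, row direction
        for j0 in range(1, cols - 1, tile):      # tile origin, column direction
            for i in range(i0, min(i0 + tile, rows - 1)):
                for j in range(j0, min(j0 + tile, cols - 1)):
                    laplacian = (src[i - 1][j] + src[i + 1][j]
                                 + src[i][j - 1] + src[i][j + 1]
                                 - 4.0 * src[i][j])
                    dst[i][j] = src[i][j] + alpha * laplacian
    return dst
```

Because every cell reads only from `src` and writes only to `dst`, the result does not depend on the tile size, so the tile can be tuned to the cache capacity without changing the model output.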

Visualization of Memory Access Patterns and Optimization Strategies

Understanding the abstract concept of memory access patterns is greatly aided by visualization. The following diagrams illustrate common patterns and optimization workflows encountered in ecological modeling.

Linking Ecosystem Service Trade-offs and Synergies to Computational Workloads

Understanding the complex trade-offs and synergies between ecosystem services—the benefits humans obtain from ecosystems—is critical for effective environmental management and policy development. Concurrently, advances in computational modeling have enabled the simulation of these relationships at unprecedented scales and resolutions. This application note explores the intrinsic connection between these two domains, framing the analysis within the pressing need for memory hierarchy optimization in ecological simulations. As models grow in complexity to capture non-linear ecological interactions, their computational footprints expand significantly, making efficient memory system design not merely a performance concern but a fundamental enabler of research accuracy and scope [8] [15].

The analysis of ecosystem service relationships often involves quantifying how the enhancement of one service (e.g., carbon sequestration) leads to the reduction (trade-off) or co-enhancement (synergy) of others (e.g., water yield or agricultural production) [16] [17]. Similarly, computational workloads managing these analyses must navigate trade-offs between simulation fidelity, spatial resolution, temporal scale, and resource consumption. This note provides a detailed framework for quantifying these relationships, outlines protocols for associated computational tasks, and proposes optimization strategies to enhance the efficiency of ecological simulation research.

Quantitative Foundations of Ecosystem Service Relationships

Ecosystem services are commonly categorized into provisioning (e.g., food, water), regulating (e.g., climate regulation, flood control), and cultural services. Their interrelationships are quantified through biophysical measurements and economic valuation, which subsequently inform computational modeling parameters.

Table 1: Key Ecosystem Services and Example Valuation Metrics

| Service Category | Specific Service | Example Biophysical Metric | Example Valuation Method |

|---|---|---|---|

| Provisioning | Food Production | Crop yield (tons/ha) | Market price [16] |

| Provisioning | Water Supply | Water usage volume (m³) | Market value [16] |

| Regulating | Carbon Sequestration | CO₂ quantity sequestered (tons) | Replacement cost (social cost of carbon) [16] |

| Regulating | Soil Retention | Soil quantity retained (tons) | Replacement cost (sedimentation reduction) [16] |

| Regulating | Flood Regulation | Water area of reservoir (km²) | Replacement cost [16] |

| Cultural | Recreation | Number of visitors | Travel cost method [16] |

Global assessments reveal the immense scale and interconnectedness of these services. One study estimated the global Gross Ecosystem Product (GEP) to average USD 155 trillion, approximately 1.85 times the global GDP, highlighting the economic significance of these natural assets [16].

Table 2: Documented Ecosystem Service Trade-offs and Synergies

| Continent/Group | Synergistic Relationship | Trade-off Relationship |

|---|---|---|

| Global | Oxygen release, climate regulation, and carbon sequestration [16] | - |

| Low-income countries | - | Flood regulation vs. Water conservation & Soil retention [16] |

| China (Loess Plateau) | - | Carbon sequestration vs. Water production [16] |

| Xizang | Water production & Net Primary Productivity (NPP) [16] | - |

These relationships are driven by shared biophysical processes and anthropogenic drivers. For instance, afforestation can create synergies between carbon sequestration and soil retention but may cause trade-offs with water yield due to increased evapotranspiration [16] [17]. Understanding these drivers is essential for structuring accurate computational models.

Computational Workloads in Ecological Simulation

Ecological simulations, particularly those modeling spatial dynamics and ecosystem services, impose specific and demanding computational workloads. Key modeling approaches include:

- Process-Based Models (e.g., InVEST, CICE): These models simulate underlying biophysical processes. The CICE sea ice model, for example, uses an Elastic-Viscous-Plastic (EVP) rheology to simulate sea ice dynamics, which involves solving coupled stress and velocity equations critical for climate regulation services [18].

- Spatial Simulation Models (e.g., CA with LSTM): Cellular Automata (CA) coupled with deep learning models like Long Short-Term Memory (LSTM) networks are used to simulate land-use change under ecological constraints. These models predict urban expansion by learning long-term temporal dependencies from historical data [15].

- Hybrid AI Frameworks: Edge-cloud synergistic frameworks deploy lightweight AI models (e.g., for anomaly detection) on edge devices and more complex models (e.g., LSTM for predictive failure analysis) in the cloud. This approach optimizes latency, bandwidth, and energy consumption for real-time monitoring applications [19].

These workloads are characterized by data-intensive operations, complex spatial and temporal dependencies, and often, the need for high-resolution, large-scale simulations. The core computational challenge lies in efficiently managing the memory access patterns, data transfer, and hierarchical data storage required by these tasks.

Experimental Protocols for Analysis and Simulation

Protocol 1: Quantifying Ecosystem Service Trade-offs/Synergies

Objective: To statistically identify and quantify trade-offs and synergies among key ecosystem services within a study region.

- Data Collection:

- Gather spatial datasets on ecosystem services (see Table 1). For a regional study, this may include land use/cover maps, soil data, precipitation, and temperature data. Primary data can be sourced from remote sensing products (e.g., 1 km resolution remote sensing data as used in global studies [16]) and national statistics.

- Biophysical Modeling:

- Statistical Analysis:

- At a predefined spatial unit (e.g., county, watershed), extract the calculated values for each ecosystem service.

- Perform a correlation analysis (e.g., Pearson or Spearman correlation) on the paired ecosystem service values.

- Interpretation: A statistically significant positive correlation indicates a synergistic relationship, while a significant negative correlation indicates a trade-off [16] [17].
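The statistical step can be sketched as follows. This is a minimal illustration, not the procedure used in [16] or [17]: the function names are hypothetical, the data in the usage note are invented, and the critical value of Student's t must be supplied by the caller (for example from a statistics table) because the standard library does not provide it.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def classify_relationship(xs, ys, t_critical):
    """Label a pair of services as synergy, trade-off, or not significant.

    t_critical: two-sided critical value of Student's t for n - 2 degrees
    of freedom at the chosen significance level (caller-supplied).
    """
    r = pearson_r(xs, ys)
    n = len(xs)
    if abs(r) >= 1.0:
        t = math.inf
    else:
        t = abs(r) * math.sqrt((n - 2) / (1.0 - r * r))
    if t < t_critical:
        return r, "no significant relationship"
    return r, "synergy" if r > 0 else "trade-off"
```

For six hypothetical spatial units with carbon sequestration `[1, 2, 3, 4, 5, 6]` and water yield `[10, 9, 7, 6, 4, 3]`, calling `classify_relationship` with `t_critical=2.776` (4 degrees of freedom, 5% level) labels the pair a trade-off. Spearman correlation would apply the same logic to ranks.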

Protocol 2: Simulating Urban Expansion Under Ecological Constraints

Objective: To project future urban expansion dynamics under different ecological protection scenarios using a memory-optimized LSTM-CA model.

- Model Coupling:

- Couple a Cellular Automata (CA) model with a Long Short-Term Memory (LSTM) network. The LSTM is used to automatically learn and predict the temporal transition rules of land use based on historical data and driving factors (e.g., topography, infrastructure), which are then fed into the spatially explicit CA model [15].

- Ecological Scenario Definition:

- Natural Expansion (NE) Scenario: Project growth based solely on historical trends and socio-economic drivers.

- Ecological Constraint (EC) Scenario: Incorporate ecological "red lines." Identify Ecological Sources (ES) based on habitat quality and landscape connectivity, and construct a Resistance Surface (RS) using environmental and socio-economic factors. Use these to delineate ecologically protected zones where urban development is restricted [15].

- Simulation and Validation:

- Train the LSTM-CA model on historical land-use data (e.g., 2000-2020).

- Validate the model by simulating a known year and comparing the result to the actual map using metrics like overall accuracy. Research has shown LSTM-CA can achieve an accuracy of 91.01%, outperforming traditional models like ANN-CA [15].

- Run simulations to a future target year (e.g., 2030) under both NE and EC scenarios to compare outcomes.
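A single constrained CA update and the overall-accuracy metric can be sketched as below. The sketch makes several assumptions that are not taken from [15]: the transition probabilities are passed in as a plain grid standing in for the LSTM output, the neighbourhood rule (at least one urban Moore neighbour) and the 0.5 threshold are illustrative, and the function names are hypothetical.

```python
def ca_step(land, transition_prob, protected, threshold=0.5):
    """One CA update: 1 = urban, 0 = non-urban.

    transition_prob: per-cell conversion probability (stand-in for LSTM output).
    protected: per-cell booleans marking ecologically protected zones.
    """
    rows, cols = len(land), len(land[0])
    new = [row[:] for row in land]
    for i in range(rows):
        for j in range(cols):
            if land[i][j] == 1 or protected[i][j]:
                continue  # already urban, or development is restricted here
            urban_neighbours = sum(
                land[a][b]
                for a in range(max(0, i - 1), min(rows, i + 2))
                for b in range(max(0, j - 1), min(cols, j + 2))
                if (a, b) != (i, j)
            )
            if urban_neighbours > 0 and transition_prob[i][j] >= threshold:
                new[i][j] = 1
    return new


def overall_accuracy(simulated, observed):
    """Fraction of cells whose simulated class matches the observed class."""
    pairs = [(s, o) for rs, ro in zip(simulated, observed) for s, o in zip(rs, ro)]
    return sum(s == o for s, o in pairs) / len(pairs)
```

Running `ca_step` with an all-`False` mask corresponds to the NE scenario, and with a mask derived from the protected zones to the EC scenario; the two outputs can then be compared cell by cell.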

Protocol 3: Optimizing Sea Ice Dynamics Simulation

Objective: To optimize the memory access and parallel efficiency of the EVP dynamics model within the CICE sea ice model on a heterogeneous many-core processor (e.g., SW39000).

- Baseline Implementation:

- Port the standard EVP model, which involves stress and velocity component calculations over a finite-difference grid, to the target processor. This model is critical for simulating sea ice's role in climate regulation [18].

- Memory Access Optimization:

- Data Differentiation: Classify model arrays based on access patterns and sparsity.

- DMA Compressed Transfer: For sparse arrays, use a Data Probability Density Estimation to compress data before Direct Memory Access (DMA) transfer, improving effective bandwidth.

- Inter-Operator Data Caching: Use Remote Memory Access (RMA) communication to cache intermediate data that is shared between computational operators, reducing master-slave core interactions [18].

- Performance Evaluation:

- Measure performance against the baseline in terms of speedup (e.g., slave core speedup) and parallel efficiency. For example, optimized implementations have achieved a speedup of 27.54x within a single core group and 70% parallel efficiency using 10 core groups [18].
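The two evaluation metrics can be computed as in the following sketch, which assumes the usual strong-scaling definitions (speedup as a ratio of runtimes, parallel efficiency as speedup divided by the number of processing units). Whether [18] uses exactly these definitions is an assumption, and the timings in the usage note are invented for illustration.

```python
def speedup(t_baseline, t_optimized):
    """Ratio of baseline runtime to optimized runtime."""
    return t_baseline / t_optimized


def parallel_efficiency(t_one_group, t_n_groups, n_groups):
    """Speedup over a single core group divided by the number of core groups."""
    return speedup(t_one_group, t_n_groups) / n_groups
```

For example, a hypothetical run taking 70 s on one core group and 10 s on ten core groups has a speedup of 7 and a parallel efficiency of 0.7, the same efficiency level as the figure quoted above.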

Visualizing Logical and Workflow Relationships

The following diagrams illustrate the core conceptual and procedural frameworks discussed in this note.

[Diagram: Eco-Computational Feedback Loop]

[Diagram: Ecosystem Service Analysis Workflow]

The Scientist's Toolkit: Essential Research Reagents & Computational Solutions

Table 3: Key Tools and Technologies for Advanced Ecological Simulation Research

| Tool/Solution Category | Specific Example | Function & Application |

|---|---|---|

| Ecosystem Service Modeling | InVEST Model [20] | A suite of models for mapping and valuing ecosystem services, used to quantify biophysical and monetary values. |

| Spatial Simulation & AI | LSTM-CA Model [15] | A deep learning-coupled cellular automata framework for simulating complex spatiotemporal processes like urban expansion under ecological constraints. |

| Climate System Model | CICE Sea Ice Model [18] | A widely used model for simulating sea ice dynamics and thermodynamics, employing the EVP rheology model for calculating ice stresses and velocities. |

| High-Performance Computing Optimization | DMA Compressed Transfer [18] | A technique to improve memory bandwidth utilization by compressing sparse data arrays before transfer, crucial for models with sparse data patterns. |

| Hybrid Computing Framework | Edge-Cloud Synergy [19] | A deployment strategy that uses edge devices for low-latency, lightweight processing and cloud servers for heavy computations, optimizing latency and bandwidth. |

| Feature Selection | OOA-PSO Algorithm [21] | A hybrid bio-inspired optimization technique for selecting the most relevant features in a dataset, reducing computational complexity in predictive modeling. |

The intricate analysis of ecosystem service trade-offs and synergies is inextricably linked to the computational workloads required to model them. Effectively managing these workloads through advanced memory hierarchy optimization—such as data differentiation, compressed data transfer, and intelligent caching—is paramount for scaling ecological simulations to address global environmental challenges. The protocols and tools outlined herein provide a foundation for researchers to enhance the fidelity, scale, and efficiency of their work, ultimately leading to more informed and sustainable environmental decision-making. Future progress in this field hinges on the continued synergy between ecological science and computational innovation.

The Impact of Memory Latency on Simulation Throughput and Model Scalability

Memory latency, the delay in accessing data from main memory, represents a critical bottleneck in high-performance computing (HPC) environments, particularly for memory-intensive ecological simulations. As models increase in complexity, from individual organism interactions to entire ecosystem dynamics, the computational workload grows steeply, often faster than linearly with resolution or agent count, placing immense pressure on memory subsystems. The growing disparity between processor speeds and memory access times, known as the "Memory Wall," severely limits simulation throughput and restricts model scalability [10]. Efficient memory hierarchy optimization is therefore essential for ecological researchers seeking to conduct larger, more detailed, and more accurate simulations within feasible timeframes and resource constraints.

This application note examines the fundamental relationship between memory latency, simulation throughput, and model scalability within the context of ecological research. We present quantitative performance data, detailed experimental protocols for benchmarking memory performance, and visualization of memory hierarchy architectures. Additionally, we provide a comprehensive toolkit to assist researchers in optimizing their computational workflows for ecological simulation tasks, enabling more ambitious modeling of complex ecosystems from microbial communities to global biogeochemical cycles.

Quantitative Impact of Memory Latency on Simulation Performance

The performance degradation caused by memory latency can be measured through several key metrics. The following table summarizes empirical data on how memory latency affects simulation throughput and the potential performance gains achievable through optimization techniques.

Table 1: Performance impact of memory latency and optimization gains

| Performance Metric | Baseline Performance | With Memory Optimization | Improvement | Source/Context |

|---|---|---|---|---|

| Instructions Per Cycle (IPC) | Baseline | - | +34.08% (single-core); +24.09% (multi-core) | Dynamic Hierarchy Coordination [10] |

| Cache Miss Coverage | Baseline | - | 64.17% improvement | Reduced memory access delays [10] |

| DRAM Load Reduction | Baseline | - | 89.33% decrease | Less off-chip memory access [10] |

| Simulation Runtime | Weeks (CPU-only) | Hours (GPU-accelerated) | - | HPC system transformation [22] |

| Mesh Generation Time | Not specified | 11 minutes (172M elements) | - | Advanced hardware utilization [22] |

Analysis of these performance metrics reveals that memory latency optimization directly enhances simulation throughput by reducing processor stall times. Ecological simulations, which often involve complex agent-based models or spatial analyses with irregular memory access patterns, particularly benefit from these improvements. The significant reduction in DRAM loads indicates more efficient cache utilization, which is crucial for iterative algorithms common in ecological modeling, such as population dynamics simulations or phylogenetic analyses [10].
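The link between miss rates and stall time can be made explicit with the standard average memory access time (AMAT) recurrence, in which each cache level contributes its hit time plus its local miss rate multiplied by the cost of going one level further out. The sketch below is a generic textbook calculation, not a model from [10]; the function name and all latencies and miss rates in the usage note are hypothetical.

```python
def amat(levels, memory_latency):
    """Average memory access time in cycles.

    levels: list of (hit_time_cycles, local_miss_rate) ordered from L1 outward.
    memory_latency: cycles to service a request from main memory.
    """
    total = memory_latency
    # Work inward from the last-level cache to L1.
    for hit_time, miss_rate in reversed(levels):
        total = hit_time + miss_rate * total
    return total
```

With assumed levels `[(4, 0.10), (12, 0.50), (40, 0.50)]` and a 200-cycle memory latency, the result is about 12.2 cycles; halving the L1 miss rate to 0.05 lowers it to about 8.1 cycles, which illustrates why fewer DRAM loads translate into less processor stall time.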

Experimental Protocols for Assessing Memory Performance

Protocol 1: Benchmarking Memory Latency Impact on Ecological Simulations

Objective: Quantify how memory latency affects specific ecological simulation workloads and identify performance bottlenecks.

Materials:

- Computing system with configurable memory settings

- Ecological simulation code (e.g., population dynamics, ecosystem models)

- Performance monitoring tools (perf, VTune, or custom metrics)

- Memory latency profiling tools (e.g., LMbench's lat_mem_rd or Intel Memory Latency Checker)

Methodology:

- Baseline Establishment: Run the ecological simulation on a controlled system configuration, collecting performance metrics including execution time, memory access patterns, and cache hit/miss ratios.

- Memory Stress Testing: Configure system BIOS to introduce artificial memory latency where possible, or utilize memory throttling tools to emulate constrained memory subsystems.

- Workload Variation: Execute simulations with varying model complexities:

- Population models with different agent counts

- Spatial models with increasing resolution

- Metabolic network models with growing reaction networks

- Data Collection: Record execution times, memory bandwidth utilization, and cache performance statistics for each run.

- Analysis: Correlate memory latency metrics with simulation throughput degradation across different model types and sizes.

Expected Outcomes: This protocol helps researchers identify the memory sensitivity of their specific ecological models and determine the most beneficial optimization targets, whether in algorithm design, data structures, or hardware configuration.

Protocol 2: Evaluating Memory Hierarchy Optimization Techniques

Objective: Test the effectiveness of various memory optimization strategies for ecological simulation workloads.

Materials:

- gem5 simulator with SALAM integration [23]

- ChampSim simulator [10]

- Benchmark ecological datasets (e.g., species distribution data, genomic sequences)

- Custom acceleration algorithms relevant to ecological research

Methodology:

- Infrastructure Setup: Configure the gem5 simulator for full-system heterogeneous simulation, incorporating CPUs, GPUs, and custom accelerators using the integrated SALAM framework [23].

- Optimization Implementation: Apply memory optimization techniques:

- Implement Dynamic Hierarchy Coordination Mechanism (DHCM) for coordinated cache prefetching and off-chip prediction [10]

- Configure cache hierarchy prediction to bypass unnecessary levels

- Implement hardware prefetchers targeting ecological data patterns

- Workload Execution: Run representative ecological simulations:

- Individual-based models with varying population sizes

- Phylogenetic tree construction with growing taxon sets

- Spatial ecosystem models with increasing grid resolutions

- Metric Collection: Record Instructions Per Cycle (IPC), cache miss rates, DRAM access frequency, and total simulation completion time.

- Validation: Compare optimized performance against baseline configurations using statistical significance testing.

Expected Outcomes: Researchers can identify which memory optimization techniques provide the greatest benefit for their specific simulation types, enabling informed decisions about both algorithmic improvements and hardware investments.

Visualization of Memory Hierarchy Architecture

The following diagram illustrates a coordinated memory hierarchy architecture, showing how different optimization techniques interact across cache levels to reduce effective memory latency.

Diagram 1: Memory hierarchy with optimization components

This visualization shows how a Dynamic Hierarchy Coordination Mechanism (DHCM) manages both on-chip cache prefetching and off-chip access prediction strategies. The prefetch engine proactively loads data into cache hierarchies based on observed access patterns, while the hierarchy predictor enables bypassing certain cache levels when beneficial. This coordinated approach significantly reduces memory access latency for ecological simulations with predictable data access patterns, such as spatial grid traversals or sequential processing of individual organisms in population models.
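The effect of a prefetch engine on a predictable access pattern can be illustrated with a toy cache model. The sketch below is not DHCM and does not reproduce the mechanism in [10]: it models only a fully associative LRU cache with a simple next-line prefetcher that triggers on demand misses, and the function name, cache size, and line size are assumptions.

```python
from collections import OrderedDict


def count_misses(addresses, cache_lines=64, line_size=64, prefetch_degree=0):
    """Count demand misses for a byte-address trace.

    prefetch_degree: number of sequential next lines fetched on each demand
    miss (0 disables prefetching).
    """
    cache = OrderedDict()  # line number -> None, ordered from LRU to MRU
    misses = 0

    def touch(line):
        if line in cache:
            cache.move_to_end(line)
        else:
            cache[line] = None
            if len(cache) > cache_lines:
                cache.popitem(last=False)  # evict the least recently used line

    for addr in addresses:
        line = addr // line_size
        if line in cache:
            touch(line)
            continue
        misses += 1
        touch(line)
        for k in range(1, prefetch_degree + 1):
            touch(line + k)
    return misses
```

For a sequential sweep over 1024 doubles (`range(0, 8192, 8)`), the model reports 128 misses without prefetching, 64 with `prefetch_degree=1`, and 32 with `prefetch_degree=3`. For a trace that jumps 4096 bytes per access, prefetching next lines leaves the miss count unchanged, which matches the observation that irregular patterns gain little from simple prefetchers.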

The Ecological Modeler's Toolkit for Memory Optimization

Table 2: Essential tools and techniques for memory-efficient ecological simulations

| Tool/Technique | Function | Application in Ecological Research |

|---|---|---|

| gem5-SALAM Simulator | Full-system heterogeneous simulation with accelerator modeling [23] | Evaluate new algorithms before hardware implementation; study system-level performance of ecological models |

| Dynamic Hierarchy Coordination (DHCM) | Coordinates cache prefetching and memory prediction [10] | Optimize memory access patterns in population dynamics and spatial ecosystem simulations |

| Hardware Prefetchers | Proactively load data into caches based on access patterns | Accelerate spatial data processing in landscape ecology models |

| Cache Hierarchy Prediction | Predict appropriate cache level for data access | Reduce latency in phylogenetic tree searches and community analyses |

| ChampSim Evaluator | Memory system simulation and benchmarking [10] | Test optimization strategies for specific ecological workload patterns |

| LLVM-based Accelerator Modeling | Create custom hardware accelerators for specific computations [23] | Develop domain-specific accelerators for frequently used ecological algorithms |

Memory latency stands as a fundamental constraint on simulation throughput and model scalability in ecological research. Through structured assessment using the provided experimental protocols and implementation of appropriate optimization strategies from the research toolkit, computational ecologists can significantly enhance their simulation capabilities. The visualized memory hierarchy architecture demonstrates how coordinated optimization mechanisms can alleviate memory bottlenecks. As ecological models grow in complexity and scale to address pressing environmental challenges, attention to memory hierarchy optimization will become increasingly essential for enabling next-generation simulations that fully leverage advancing computational infrastructure.

Applying Advanced Memory Optimization Techniques to Ecological Workloads

Leveraging Dynamic Hierarchy Coordination Mechanisms (DHCM) for Multi-Level Management

Application Notes

Dynamic Hierarchy Coordination Mechanisms (DHCM) provide a structured approach to managing the complex, multi-level data flows inherent in large-scale ecological simulations. These mechanisms address the core challenge of runtime composition—the on-the-fly discovery, integration, and coordination of constituent systems—which is crucial for adaptability in dynamic environments [24]. Within ecological modeling, DHCM facilitates the interaction between disparate model components, such as climate models, species population dynamics, and habitat suitability maps, enabling a more holistic and accurate simulation of ecological systems [24].

The efficacy of DHCM is underpinned by several key functional areas identified in modern Systems of Systems (SoSs). The table below summarizes these core solution strategies and their specific applications in ecological simulation research.

Table 1: Core DHCM Solution Strategies for Ecological Simulations

| Solution Strategy | Application in Ecological Simulation Research |

|---|---|

| Co-simulation & Digital Twins | Creating live, synchronized virtual representations of an ecosystem (e.g., a forest or watershed) for scenario testing and prediction without real-world interference [24]. |

| Semantic Ontologies | Defining a common vocabulary and relationship rules for data exchange between different ecological models (e.g., ensuring "canopy cover" means the same for a forestry model and a climate model) [24]. |

| Adaptive Architectures | Designing system structures that can automatically re-prioritize data processing resources in response to simulated events, such as a wildfire or a sudden population decline [24]. |

| AI-Driven Resilience | Using machine learning to detect anomalous patterns within simulation data that may indicate model drift or unexpected ecological feedback loops, thereby maintaining the reliability of long-running simulations [24]. |

A critical aspect of implementing DHCM is the proper structuring of data to reflect the different levels of hierarchy and granularity. In the context of ecological simulations, this means clearly defining what a single row of data represents across different model components [25]. Furthermore, presenting the resulting data effectively is key to comprehension. Tables are particularly advantageous for this purpose, as they provide a precise representation of numerical values and facilitate detailed comparisons between different data points or categories, which is essential for analyzing simulation outputs [26].

Experimental Protocols

Protocol: Evaluating DHCM Performance in a Multi-Scale Ecological Model

This protocol outlines a methodology for assessing the impact of a Dynamic Hierarchy Coordination Mechanism on the performance and accuracy of a simulated ecosystem.

I. Hypothesis

Implementing a DHCM based on an adaptive service-oriented framework will significantly improve data throughput and reduce latency between model components compared to a static coupling approach, without sacrificing simulation accuracy.

II. Key Reagent Solutions & Computational Materials

Table 2: Essential Research Reagents and Materials

| Item Name | Function / Explanation |

|---|---|

| Co-Simulation Platform | Software (e.g., based on the Functional Mock-up Interface standard) that acts as the master coordinator, managing the time synchronization and data exchange between the constituent models [24]. |

| Semantic Ontology | A machine-readable file (e.g., an OWL file) defining key ecological entities (Species, Habitat, ClimateVariable) and their properties. This ensures all models interpret data consistently [24]. |

| Constituent Models (CSs) | The individual, self-contained simulation models that represent different hierarchical levels (e.g., a soil chemistry model, a plant growth model, and a herbivore population model) [24]. |

| Metrics Logging Library | A software library integrated into the co-simulation platform to automatically record performance metrics (e.g., data exchange latency, resource utilization) at runtime. |

III. Procedure

- Model Integration: Integrate three distinct ecological models (e.g., Soil Model, Vegetation Model, Fauna Model) into the co-simulation platform. Define all input and output variables for each model.

- DHCM Configuration: Implement the coordination logic within the platform. This includes:

- Setting up the semantic ontology to map output from the Soil Model (e.g., nitrogen levels) to the required input for the Vegetation Model.

- Configuring the adaptive architecture rules to increase the update frequency of the Fauna Model if the Vegetation Model detects a biomass drop below a defined threshold.

- Baseline Run (Static Coupling): Execute the simulation with a fixed, low-frequency data exchange rate between all models. Log the final state of key variables (e.g., total biomass, predator population) and performance metrics.

- Experimental Run (Dynamic DHCM): Execute the same simulation scenario with the DHCM enabled. The system should dynamically adjust data flow based on the predefined adaptive rules.

- Data Collection: For both runs, record:

- Performance Data: Average and peak latency of inter-model data exchanges, total simulation completion time.

- Output Data: The final values of key ecological metrics from the simulation.

- Validation: Compare the output data of the baseline and experimental runs against a validated benchmark dataset (if available) to ensure the DHCM did not introduce computational artifacts that reduce accuracy.
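The adaptive rule from the DHCM Configuration step can be sketched as a small scheduling function. Everything here is a hypothetical illustration: the function name, the intervals of 10 and 1 steps, and the biomass series in the usage note are assumptions, and a real co-simulation platform would drive the exchange through its own master algorithm.

```python
def fauna_update_steps(biomass_series, threshold, normal_interval=10, fast_interval=1):
    """Return the simulation steps at which the Fauna Model is updated.

    The exchange interval shrinks from normal_interval to fast_interval
    whenever the Vegetation Model's biomass is below the threshold.
    """
    updates = []
    last = None
    for step, biomass in enumerate(biomass_series):
        interval = fast_interval if biomass < threshold else normal_interval
        if last is None or step - last >= interval:
            updates.append(step)
            last = step
    return updates
```

With a biomass series of twelve steps at 100, three steps at 40, and ten steps at 100, a threshold of 50 yields updates at steps 0, 10, 12, 13, 14 and 24, whereas a threshold that is never crossed reproduces the static schedule 0, 10, 20.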

IV. Analysis

- Calculate the percentage improvement in data throughput and reduction in latency.

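- Perform a statistical comparison (e.g., a t-test across replicated runs) of the key ecological output metrics between the baseline and experimental runs. A non-significant difference is consistent with the DHCM preserving accuracy, but it does not by itself demonstrate equivalence; where a tolerance can be defined in advance, an equivalence test (e.g., two one-sided tests, TOST) gives stronger evidence.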
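The analysis calculations can be sketched with the standard library alone. The helper names are hypothetical, and the Welch statistic shown is only one possible choice of t-test; obtaining a p-value additionally requires the t distribution, which is left to a statistics package.

```python
import math
from statistics import mean, variance


def percent_reduction(baseline, optimized):
    """Improvement for metrics where lower is better (latency, runtime)."""
    return 100.0 * (baseline - optimized) / baseline


def percent_increase(baseline, optimized):
    """Improvement for metrics where higher is better (throughput)."""
    return 100.0 * (optimized - baseline) / baseline


def welch_t(a, b):
    """Welch's t statistic for two samples of replicated run outputs."""
    se = math.sqrt(variance(a) / len(a) + variance(b) / len(b))
    return (mean(a) - mean(b)) / se
```

Applied to the values reported in Table 3, `percent_reduction(450, 150)` gives 66.7%, `percent_reduction(1850, 1220)` gives 34.1%, and `percent_increase(55, 125)` gives 127.3% after rounding to one decimal place.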

Protocol: Testing AI-Driven Anomaly Detection within a DHCM

This protocol tests the integration of an AI-based component to enhance the resilience of a coordinated simulation.

I. Hypothesis

An AI-driven anomaly detection module, integrated as part of the DHCM, can identify and flag anomalous simulation states resulting from faulty model interactions earlier than traditional threshold-based methods.

II. Procedure

- Establish Baseline: Run a validated, stable ecological simulation with the DHCM and record the data streams between models as a "normal" baseline.

- Introduce Anomaly: Manually inject a systematic error into one model's output (e.g., gradually skewing the temperature output from a climate model).

- AI Module Operation: The AI component continuously monitors the data streams, comparing them to the baseline pattern. It is tasked with generating an alert when it detects a statistically significant deviation in the pattern of data flow or content.

- Detection Comparison: Record the time (or simulation step) at which the AI module flags the anomaly. Compare this to the time at which the anomaly becomes large enough to trigger a simple, threshold-based alert on the raw data.

- Evaluation: The performance is measured by the "early warning" lead time provided by the AI module over the traditional method.
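The lead-time measurement in the procedure above can be illustrated as follows. This is a sketch under stated assumptions: a simple z-score check against the recorded baseline stands in for the AI module (the protocol does not specify its internals), and the baseline values, drift rate, and alert limit are hypothetical.

```python
from statistics import mean, stdev


def first_threshold_alert(stream, limit):
    """Step at which the raw value first exceeds a fixed limit, or None."""
    for step, value in enumerate(stream):
        if value > limit:
            return step
    return None


def first_deviation_alert(stream, baseline, z_limit=3.0):
    """Step at which a value first lies more than z_limit baseline
    standard deviations away from the baseline mean, or None."""
    mu, sigma = mean(baseline), stdev(baseline)
    for step, value in enumerate(stream):
        if abs(value - mu) / sigma > z_limit:
            return step
    return None


# Hypothetical "normal" temperature stream recorded during the baseline run.
baseline = [15.0, 15.2, 14.9, 15.1, 14.8, 15.0, 15.1, 14.9]

# Injected anomaly: the climate model's output drifts upward by 0.25 per step.
faulty = [15.0 + 0.25 * step for step in range(40)]

threshold_step = first_threshold_alert(faulty, limit=18.0)
detector_step = first_deviation_alert(faulty, baseline)
lead_time = threshold_step - detector_step  # "early warning" in simulation steps

print(f"deviation alert at step {detector_step}, "
      f"threshold alert at step {threshold_step}, lead time {lead_time} steps")
```

The same bookkeeping applies whatever detector is used: only `first_deviation_alert` would be replaced by the module under test.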

Data Presentation

The following tables summarize quantitative data from hypothetical experiments designed to evaluate DHCM performance, arranged for side-by-side comparison.

Table 3: Performance Metrics Comparison: Static vs. Dynamic DHCM Coupling

| Metric | Static Coupling | Dynamic DHCM | % Improvement |
|---|---|---|---|
| Avg. Inter-Model Latency (ms) | 450 | 150 | 66.7% |
| Total Simulation Time (s) | 1,850 | 1,220 | 34.1% |
| Max Data Throughput (MB/s) | 55 | 125 | 127.3% |
| CPU Idle Time (%) | 35 | 18 | - |

Table 4: Final Ecological Output Metrics for Model Validation

| Output Metric | Benchmark Value | Static Coupling Result | Dynamic DHCM Result |
|---|---|---|---|
| Total Ecosystem Biomass (kg/ha) | 15,200 | 14,950 | 15,180 |
| Top Predator Population | 550 | 545 | 552 |
| Soil Nitrogen (ppm) | 25.5 | 25.8 | 25.4 |
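One way to read Table 4 is as a relative deviation of each run from the benchmark. The sketch below uses the hypothetical table values; the dictionary keys and the `pct_deviation` helper are names chosen for this illustration only.

```python
def pct_deviation(result, reference):
    """Signed deviation of a result from its reference value, in percent."""
    return (result - reference) / reference * 100.0


# Values copied from Table 4 (hypothetical experiment).
benchmark = {"biomass_kg_ha": 15200.0, "top_predators": 550.0, "soil_n_ppm": 25.5}
static = {"biomass_kg_ha": 14950.0, "top_predators": 545.0, "soil_n_ppm": 25.8}
dhcm = {"biomass_kg_ha": 15180.0, "top_predators": 552.0, "soil_n_ppm": 25.4}

static_dev = {k: pct_deviation(static[k], benchmark[k]) for k in benchmark}
dhcm_dev = {k: pct_deviation(dhcm[k], benchmark[k]) for k in benchmark}

for metric in benchmark:
    print(f"{metric}: static {static_dev[metric]:+.2f}%, "
          f"DHCM {dhcm_dev[metric]:+.2f}%")

# Largest absolute deviation per configuration, a simple summary figure.
worst_static = max(abs(v) for v in static_dev.values())
worst_dhcm = max(abs(v) for v in dhcm_dev.values())
```

With these values, every DHCM metric stays within half a percent of the benchmark, while the static run's biomass deviates by more than one and a half percent.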

Visualizations

[Figure: DHCM Logical Workflow]

[Figure: Multi-Level Management Hierarchy]

Implementing Hardware Prefetching for Spatial and Temporal Data Locality

In ecological simulation, researchers face the formidable challenge of the "Memory Wall": the growing performance disparity between processor speeds and memory access times [10] [27]. Tasks such as simulating population dynamics, nutrient cycles, or climate impacts involve processing massive, multi-dimensional datasets with complex, pointer-rich data structures (e.g., ecological networks, spatial grids, and evolutionary trees). These workloads exhibit diverse and often irregular memory access patterns that strain conventional memory systems, making memory latency a critical bottleneck [28].

Hardware prefetching stands as a crucial technique to mitigate this latency by proactively loading data into caches before the processor explicitly demands it. Its effectiveness hinges on accurately predicting future memory accesses by exploiting spatial and temporal locality principles [10]. This application note, framed within a broader thesis on memory hierarchy optimization, provides a detailed guide for implementing advanced hardware prefetchers. It aims to equip researchers and engineers with the protocols and knowledge necessary to enhance the performance of data-intensive ecological simulations.

Theoretical Foundation and Key Prefetching Concepts

Spatial vs. Temporal Locality

- Spatial Locality refers to the tendency of a processor to access data items that are stored at addresses close to recently accessed addresses. Spatial prefetchers excel with contiguous or structured data patterns. For instance, when a simulation accesses an element from a spatial grid, it is likely to soon access its neighboring cells [10] [29].

- Temporal Locality refers to the tendency of a processor to re-access the same data items over time. Temporal prefetchers track sequences of memory accesses to predict recurrences. This is beneficial for data that is frequently reused, such as a shared environmental parameter in an ecological model [10].
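The effect of both kinds of locality can be made concrete with a toy cache model. The sketch below is an illustration under simplifying assumptions (a direct-mapped cache of 64 lines of 64 bytes, a 256 x 256 grid of 8-byte cells stored row by row); none of these parameters come from the cited work. It counts hits for a row-by-row sweep, a column-by-column sweep, and repeated reads of a single shared parameter.

```python
def hit_rate(addresses, num_lines=64, line_bytes=64):
    """Hit rate of a direct-mapped cache for a sequence of byte addresses."""
    tags = [None] * num_lines  # block number currently held by each line
    hits = 0
    total = 0
    for addr in addresses:
        block = addr // line_bytes   # which memory block the address falls in
        index = block % num_lines    # the single line that block may occupy
        if tags[index] == block:
            hits += 1
        else:
            tags[index] = block      # miss: fetch the block, evicting the old one
        total += 1
    return hits / total


ROWS, COLS, CELL_BYTES = 256, 256, 8


def row_major():
    """Visit grid cells in storage order (neighbors in a row are adjacent)."""
    for r in range(ROWS):
        for c in range(COLS):
            yield (r * COLS + c) * CELL_BYTES


def column_major():
    """Visit grid cells column by column (consecutive accesses are a full row apart)."""
    for c in range(COLS):
        for r in range(ROWS):
            yield (r * COLS + c) * CELL_BYTES


row_rate = hit_rate(row_major())        # spatial locality exploited
col_rate = hit_rate(column_major())     # spatial locality defeated
reuse_rate = hit_rate([0] * 100)        # temporal locality: one value re-read

print(f"row-major: {row_rate:.3f}, column-major: {col_rate:.3f}, "
      f"repeated read: {reuse_rate:.3f}")
```

In this model each 64-byte line holds eight cells, so the row-major sweep misses once per eight accesses, while the column-major sweep evicts every line before it can be reused.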

Advanced Prefetching Paradigms

Modern research has moved beyond simple stride or next-line prefetchers to address complex, irregular patterns found in real-world applications.

- Context-Based vs. Pattern-Based Prefetching: Traditional spatial prefetchers often use environmental features like the trigger instruction or data address (the "context") to find and replicate the spatial footprint of a previously accessed memory region. However, this approach can lack flexibility and accuracy. A novel approach, exemplified by the Gaze prefetcher, instead characterizes a spatial pattern by its internal temporal correlations—specifically, the order of the first few accesses within a region. This method more effectively captures the essential characteristics of access patterns, leading to superior prediction for complex workloads [30] [31].

- Hierarchical and Coordinated Prefetching: Modern systems employ multiple prefetchers at different levels of the memory hierarchy (e.g., L1, L2, Last-Level Cache). The Dynamic Hierarchy Coordination Mechanism (DHCM) intelligently schedules and coordinates these prediction hierarchies. By leveraging real-time system feedback, DHCM dynamically prioritizes memory operations, simultaneously managing on-chip cache accesses and off-chip memory requests to maximize overall system performance [10].

- Machine Learning-Enhanced Prefetching: ML techniques are increasingly applied to prefetching. Perceptron-based filters can meta-predict the utility of prefetch candidates from other prefetchers, significantly reducing unnecessary memory traffic. Furthermore, online reinforcement learning frameworks, like CHROME, can dynamically adapt cache management and prefetching policies based on fluctuating workload demands, offering robust performance across diverse scenarios [10] [27].
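As a reference point for the paradigms above, the simple stride prefetcher that they improve upon can be sketched in a few lines. This is a software model of the general textbook scheme, not of Gaze, DHCM, or CHROME; the class name, the `degree` parameter, and the example addresses are assumptions made for illustration.

```python
class StridePrefetcher:
    """Per-instruction stride detector that prefetches once a stride repeats."""

    def __init__(self, degree=2):
        self.degree = degree  # number of blocks to prefetch ahead
        self.table = {}       # pc -> (last address, last observed stride)

    def access(self, pc, addr):
        """Record a demand access and return the addresses to prefetch."""
        prefetches = []
        if pc in self.table:
            last_addr, last_stride = self.table[pc]
            stride = addr - last_addr
            # Issue prefetches only when the same non-zero stride is seen twice.
            if stride != 0 and stride == last_stride:
                prefetches = [addr + stride * k
                              for k in range(1, self.degree + 1)]
            self.table[pc] = (addr, stride)
        else:
            self.table[pc] = (addr, 0)
        return prefetches


prefetcher = StridePrefetcher(degree=2)
load_pc = 0x400  # hypothetical address of a load instruction in a grid loop

first = prefetcher.access(load_pc, 0x1000)   # no history yet
second = prefetcher.access(load_pc, 0x1040)  # stride seen once, not confirmed
third = prefetcher.access(load_pc, 0x1080)   # stride confirmed, prefetch ahead

print([hex(a) for a in third])
```

A detector of this kind handles regular grid sweeps well but learns nothing from pointer-chasing structures, which is the gap the pattern-based and learning-based designs aim to close.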

Quantitative Performance Comparison of Prefetching Techniques

The table below summarizes the performance characteristics of various modern prefetching techniques as reported in recent literature, providing a basis for comparison and selection.

Table 1: Performance Comparison of Hardware Prefetching Techniques

| Prefetcher / Mechanism | Key Technique | Reported Performance Improvement | Hardware/Cost Notes |
|---|---|---|---|
| Gaze [30] [31] | Spatial patterns with internal temporal correlations | 5.7% (single-core), 11.4% (eight-core) speedup over baselines | 81% accuracy, 30x less metadata than context-based predictors |
| DHCM [10] | Dynamic hierarchy coordination for on-chip/off-chip requests | 34.08% IPC (single-core), 24.09% IPC (multi-core) | Lightweight hardware implementation |
| Memory-Side GOP [29] | Delta-based algorithm in memory controller | 10.5% performance gain, 61% memory latency reduction | Complements core-side prefetchers |
| GPGPU Prefetch Engine [32] | Parallel prefetching engines with stride detection | Up to 82% latency reduction, 1.24-1.79x speedup | Modular design for DDR/HBM memory |
| APAC Framework [27] | Adaptive prefetch based on concurrent access patterns | 17.3% average IPC gain | Part of concurrency-aware memory optimization |
| CHROME [27] | Online reinforcement learning for cache management | 13.7% performance gain (16-core systems) | Adapts to dynamic environments |

Experimental Protocols for Prefetching Evaluation

To ensure reproducible and meaningful results when evaluating hardware prefetchers, a standardized experimental protocol is essential. The following methodology is synthesized from the evaluated literature.

Simulation Environment Setup

- Simulation Platform: Use a widely accepted architectural simulator that models timing at the cycle level. gem5 and ChampSim are the most common choices in recent research [10] [29].

- Baseline Configuration: Model a modern multi-core processor with a deep memory hierarchy. A typical setup includes:

- Cores: 1 to 8 Out-of-Order (OoO) CPU cores.

- Cache Hierarchy: Private L1 instruction and data caches, a shared L2 cache, and a large, shared Last-Level Cache (LLC).

- Main Memory: DDRx DRAM model with detailed memory controller timing.

- Prefetcher Integration: Implement the candidate prefetcher(s) at the designated level(s) of the cache hierarchy (e.g., L2, LLC, or memory controller). Studies often compare against baseline prefetchers (e.g., stride, stream) and state-of-the-art designs like PMP and vBerti [30] [29].

Workload Selection and Preparation

- Benchmark Suites: Employ a diverse set of standard benchmark suites to capture a wide range of access patterns, such as SPEC CPU2006/2017, PARSEC, CloudSuite, and Ligra [31] [10].

- Data Collection: For each workload run, collect the following key metrics:

- Instructions Per Cycle (IPC): The primary measure of overall performance improvement.

- Cache Miss Rates: At all levels (L1, L2, LLC) to measure prefetcher effectiveness.

- Prefetcher Accuracy & Coverage:

- Accuracy: (Useful Prefetches / Total Prefetches Issued) * 100. Measures correctness.

- Coverage: (Misses Eliminated by Prefetching / Total Baseline Misses) * 100. Measures comprehensiveness.

- Memory Access Latency: The average time to service a memory request [31] [10] [29].
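The metric definitions above translate directly into code. The sketch below is a minimal illustration: the function names and the counter values are hypothetical, and in practice the counts would be read from the simulator's statistics output.

```python
def ipc(instructions, cycles):
    """Instructions per cycle."""
    return instructions / cycles


def prefetch_accuracy(useful_prefetches, prefetches_issued):
    """Share of issued prefetches that were later used, in percent."""
    if prefetches_issued == 0:
        return 0.0
    return 100.0 * useful_prefetches / prefetches_issued


def prefetch_coverage(misses_eliminated, baseline_misses):
    """Share of baseline misses removed by prefetching, in percent."""
    if baseline_misses == 0:
        return 0.0
    return 100.0 * misses_eliminated / baseline_misses


# Hypothetical counters from one workload run, for illustration only.
stats = {
    "instructions": 2_000_000,
    "cycles": 1_600_000,
    "prefetches_issued": 1_000,
    "useful_prefetches": 810,
    "baseline_misses": 1_500,
    "misses_eliminated": 600,
}

run_ipc = ipc(stats["instructions"], stats["cycles"])
accuracy = prefetch_accuracy(stats["useful_prefetches"], stats["prefetches_issued"])
coverage = prefetch_coverage(stats["misses_eliminated"], stats["baseline_misses"])

print(f"IPC {run_ipc:.2f}, accuracy {accuracy:.1f}%, coverage {coverage:.1f}%")
```

Accuracy and coverage are reported together because either one alone can be misleading: a prefetcher that issues very few requests can be highly accurate while covering almost none of the misses.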

Data Analysis and Validation

- Comparative Analysis: Calculate the performance improvement of the proposed prefetcher against the baseline (no prefetching) and other state-of-the-art prefetchers using the collected metrics.

- Sensitivity Studies: Analyze the impact of key prefetcher parameters (e.g., prefetch degree, distance, table sizes) on performance and overhead. This is crucial for optimizing the design [32].

- Statistical Reporting: Summarize results across benchmarks with the geometric mean (for normalized speedups) or the harmonic mean (for rates such as IPC) rather than the arithmetic mean, which over-weights high-performing outliers. Provide detailed results for key individual workloads to highlight strengths and weaknesses.
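The difference between the summary statistics is easy to see on a small example. The speedup values and workload names below are hypothetical and chosen so that one workload is an outlier; the functions come from Python's standard `statistics` module.

```python
from statistics import geometric_mean, harmonic_mean, mean

# Hypothetical per-workload speedups over the no-prefetching baseline.
speedups = {
    "grid_sweep": 1.05,
    "agent_update": 1.10,
    "streaming_kernel": 2.40,  # outlier that benefits unusually from prefetching
}

values = list(speedups.values())
arithmetic = mean(values)
geometric = geometric_mean(values)
harmonic = harmonic_mean(values)

print(f"arithmetic {arithmetic:.3f}, geometric {geometric:.3f}, "
      f"harmonic {harmonic:.3f}")
```

For positive values that are not all equal, the harmonic mean is below the geometric mean, which is below the arithmetic mean, so the single outlier inflates the arithmetic figure the most.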

Implementation Diagram for a Gaze-like Spatial Prefetcher

[Figure: core components and dataflow of a spatial prefetcher, like Gaze, that leverages internal temporal correlations.]

The Researcher's Toolkit

The table below details essential tools and components required for implementing and evaluating hardware prefetchers in a research setting.

Table 2: Essential Tools and Components for Prefetching Research

| Item | Function / Description | Exemplars / Specifications |
|---|---|---|
| Architectural Simulators | Cycle-level software models of processor and memory system behavior, used without hardware fabrication. | gem5, ChampSim [10] [29] |
| Benchmark Suites | Standardized collections of applications and kernels used to stress-test the memory system under diverse workloads. | SPEC CPU2006/2017, PARSEC, CloudSuite, Ligra [31] [10] |
| Performance Counters | Hardware registers in CPUs/GPUs that count low-level events such as cache misses and instructions retired. | Core-level and memory controller-level stats [10] [28] |
| Pattern History Metadata | On-chip storage for recording learned memory access patterns and their correlations. | Pattern History Module (PHM), Accumulation Table (AT) [31] |
| Prefetch Buffers | Dedicated storage for holding prefetched data, preventing pollution of the main cache. | Prefetch Buffer (PB), located near L2 cache or memory controller [31] [29] |
| Coordination Logic | Lightweight hardware unit to manage multiple prefetchers and coordinate with the memory controller. | State Trigger mechanism (as in DHCM) [10] |

Optimizing hardware prefetching for spatial and temporal locality is a powerful strategy for mitigating the memory wall in ecological simulations. Moving beyond simple heuristics to exploit temporal correlations within spatial patterns, dynamic multi-level coordination, and machine learning-driven adaptation offers significant performance gains. By following the application notes and experimental protocols outlined in this document, researchers and engineers can implement and validate advanced prefetching mechanisms. This can accelerate discovery in data-intensive fields like ecology and drug development by reducing the degree to which computation is bottlenecked by memory latency.

Memory access latency and bandwidth are critical bottlenecks in high-performance computing (HPC) systems running complex ecological simulations [10] [33]. Cache hierarchy prediction has emerged as a pivotal technique to optimize memory performance by intelligently managing data placement and movement across different cache levels. For ecological researchers dealing with massive spatiotemporal datasets, effective cache management can dramatically accelerate simulation runtimes, enabling more complex models and detailed analyses [34].

Modern processors employ sophisticated prediction mechanisms to determine whether data should be placed in a particular cache level or bypassed directly to the next level, optimizing for temporal and spatial locality patterns [35] [10]. These techniques are particularly relevant for ecological simulations characterized by diverse data access patterns—from regular grid-based computations to irregular agent-based interactions. This application note explores cutting-edge bypassing and placement strategies within the context of ecological modeling, providing structured protocols and quantitative frameworks for researchers seeking to optimize their computational workflows.

Background and Key Concepts

Cache Hierarchy Fundamentals

Modern memory systems employ multiple cache levels (L1, L2, and L3, the last typically serving as the last-level cache, or LLC) to bridge the growing performance gap between processor speeds and main memory access times [10]. The fundamental principle behind cache hierarchy design is exploiting program locality, both temporal (recently accessed data is likely to be accessed again) and spatial (data near recently accessed locations is likely to be accessed) [35]. However, ecological simulation data often exhibits complex, mixed access patterns that challenge conventional caching strategies.

Cache Bypassing Principles