Optimizing Host-Device Data Transfer in Biomedical Research: Strategies to Reduce Overhead and Accelerate Discovery

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on reducing host-device data transfer overhead, a critical bottleneck in data-intensive fields like bioinformatics, medical imaging, and AI-driven drug discovery. It explores the foundational causes of transfer inefficiency, presents practical methodological solutions from edge computing and high-performance computing (HPC), offers advanced troubleshooting and optimization techniques for real-world scenarios, and establishes a framework for validating and comparing strategy effectiveness. By synthesizing current research and emerging trends, this resource aims to equip biomedical teams with the knowledge to significantly accelerate computational workflows, reduce operational costs, and expedite the path from data to discovery.

Understanding the Data Transfer Bottleneck: Why Overhead Slows Down Biomedical Research

Defining Host-Device Data Transfer Overhead and Its Impact on Computational Pipelines

In heterogeneous computing systems, host-device data transfer overhead refers to the performance cost incurred when moving data between the CPU (host) and an accelerator like a GPU (device). This overhead is a critical bottleneck that can severely impact the overall performance and efficiency of computational pipelines, particularly in data-intensive fields such as scientific research and drug development [1] [2]. This guide provides troubleshooting and FAQs to help researchers identify, understand, and mitigate this overhead.

Frequently Asked Questions (FAQs)

1. What exactly is host-device data transfer overhead? This overhead encompasses the time and computational resources required to copy data from the host's memory to the device's memory and back. It includes latency from kernel launches, signaling between host and device, and the physical transfer of data across the PCIe bus [1] [3]. During this transfer, the computational units on the device often sit idle, leading to underutilization.

2. Why does transferring small data chunks result in lower throughput? PCIe is a packet-based transport with fixed overhead per transfer, including packet headers. With small data chunks, this fixed overhead constitutes a larger proportion of the total transfer time, reducing efficiency. Full throughput is typically achieved only with larger transfers (e.g., over 8 MB on PCIe gen3 x16) [3].

3. What is the difference between pageable and page-locked (pinned) host memory?

- Pageable memory is standard memory managed by the operating system, which can be paged out to disk. Transferring data from pageable memory to a device requires the driver to first copy it to a temporary page-locked buffer, adding significant overhead [4] [5].

- Page-locked memory is locked to physical RAM, preventing the OS from paging it out. This allows for direct memory access (DMA) by the device, leading to higher and more consistent transfer bandwidth [4] [5].

4. How can I overlap data transfers with computation on the device? Using asynchronous operations and streams, you can pipeline your workflow. While one stream is executing a kernel on the device, a different stream can be simultaneously transferring data for the next operation, effectively hiding the transfer latency behind useful computation [2] [6].

5. My application processes data in chunks. How can I minimize latency? Instead of offloading one large batch, break the data into smaller chunks and process them with multiple shorter-running kernels. This "streaming" design makes the first pieces of processed data available to the host much earlier, significantly reducing latency [1].
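The effect described in FAQ 2 can be sketched with a simple fixed-cost model: every transfer pays a constant overhead, then moves bytes at the peak rate. The peak bandwidth and per-transfer overhead below are illustrative assumptions, not measurements; real curves should come from profiling your own system.

```python
def effective_throughput_gbps(size_bytes, peak_gbps=12.0, fixed_overhead_us=10.0):
    """Effective throughput (GB/s) of a single transfer under a fixed-cost model.

    transfer time = fixed_overhead + size / peak_bandwidth
    Both default parameters are hypothetical values chosen for illustration.
    """
    transfer_s = fixed_overhead_us * 1e-6 + size_bytes / (peak_gbps * 1e9)
    return size_bytes / transfer_s / 1e9


if __name__ == "__main__":
    # Small transfers are dominated by the fixed cost; large ones approach the peak.
    for size in (4 * 1024, 64 * 1024, 1024 * 1024, 16 * 1024 * 1024):
        print(f"{size // 1024:>6} KB -> {effective_throughput_gbps(size):6.2f} GB/s")
```

Under this model, aggregating many small transfers into one large transfer pays the fixed cost once instead of many times, which is why throughput climbs toward the peak as transfer size grows.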

Troubleshooting Guide

Identifying Data Transfer Overhead

Use profiling tools like Intel VTune Profiler or NVIDIA Nsight Systems to:

- Measure the time spent on data transfers (`cudaMemcpy` calls) versus kernel execution.

- Identify whether the application is bound by data transfer operations.

- Visualize the timeline to see if there are gaps between kernels and transfers, indicating a lack of overlap.

Common Symptoms and Solutions

| Symptom | Possible Cause | Recommended Solution |

|---|---|---|

| Low overall throughput; GPU is often idle. | Data transfers are blocking kernel execution; transfers and computation are sequential. | Use asynchronous streams to overlap data transfers and kernel execution [2] [6]. |

| High latency for receiving first result. | Processing data in one large batch (offload model). | Switch to a streaming model with smaller, more frequent kernel launches [1]. |

| Low PCIe transfer bandwidth, especially with small data sizes. | Using pageable memory; small transfer sizes magnifying PCIe packet overhead. | Allocate critical buffers in page-locked host memory; aggregate small transfers into larger chunks [3] [4] [5]. |

| Performance degrades when multiple tasks are launched. | Suboptimal scheduling of tasks leads to poor overlap of transfers and kernels. | Reorder tasks using a scheduling model to maximize concurrent execution of transfers and compute from different tasks [2]. |

Quantitative Data and Performance Comparison

| Transfer Size | Pageable Memory (GB/s) | Page-Locked Memory (GB/s) |

|---|---|---|

| 16 KB | 6.9 | 11.9 |

| 64 KB | 5.4 | 12.0 |

| 256 KB | 5.4 | 12.4 |

| 1 MB + | ~5.5 | ~12.4 |

| Data Transfer Method | Total Processing Time (seconds) |

|---|---|

| H2D from Pageable Memory | 7.90 |

| H2D from Page-Locked Memory | 7.92 |

| H2D from Page-Locked Memory (with multi-threading) | 4.92 |

Experimental Protocols

Protocol 1: Measuring the Impact of Page-Locked Memory

Objective: To quantify the performance benefit of using page-locked host memory for data transfers.

Methodology:

- Allocation: Create two sets of host buffers: one allocated with standard `malloc` (pageable) and another with `cudaMallocHost` or `cudaHostAlloc` (page-locked).

- Timing: For a range of data sizes (e.g., 16 KB to 16 MB), use `cudaEvent` timers to measure the duration of `cudaMemcpy` operations from host to device.

- Calculation: Compute the effective bandwidth for each transfer: `Bandwidth = Data Size / Transfer Time`.

- Analysis: Plot bandwidth against transfer size for both memory types and compare the results with the pageable versus page-locked bandwidth table under Quantitative Data and Performance Comparison.
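The calculation step of Protocol 1 can be sketched as follows. This is a host-only illustration: `time_copy` and its host-to-host copy are hypothetical stand-ins for the `cudaEvent`-timed `cudaMemcpy` calls in the protocol, so the printed numbers say nothing about PCIe performance. Only the bandwidth formula carries over.

```python
import time


def effective_bandwidth_gbps(size_bytes, elapsed_s):
    """Bandwidth = Data Size / Transfer Time, in GB/s (1 GB = 1e9 bytes)."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return size_bytes / elapsed_s / 1e9


def time_copy(copy_fn, size_bytes, inner=20, repeats=5):
    """Best-of-`repeats` average seconds for one call of copy_fn on a buffer."""
    src = bytearray(size_bytes)
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        for _ in range(inner):
            copy_fn(src)
        best = min(best, (time.perf_counter() - start) / inner)
    return best


if __name__ == "__main__":
    # `bytes` performs a host-to-host copy; it only stands in for the real transfer.
    for size in (16 * 1024, 256 * 1024, 1024 * 1024, 16 * 1024 * 1024):
        elapsed = time_copy(bytes, size)
        print(f"{size // 1024:>6} KB  {effective_bandwidth_gbps(size, elapsed):8.2f} GB/s")
```

Taking the best of several repeats reduces noise from unrelated system activity, which matters most at the small transfer sizes where a single measurement lasts only microseconds.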

Protocol 2: Implementing a Pipelined Workflow with Streams

Objective: To hide data transfer latency by overlapping it with kernel execution.

Methodology:

- Setup: Create multiple CUDA streams. Allocate page-locked memory for input and output buffers.

- Division: Split the workload into N chunks.

- Execution: For each chunk

i:- In a dedicated stream, initiate an asynchronous transfer (H2D) for chunk

i's input data. - Launch the processing kernel in the same stream, which will wait for the transfer to complete.

- Initiate an asynchronous transfer (D2H) for chunk

i's output data.

- In a dedicated stream, initiate an asynchronous transfer (H2D) for chunk

- Overlap: While the kernel for chunk

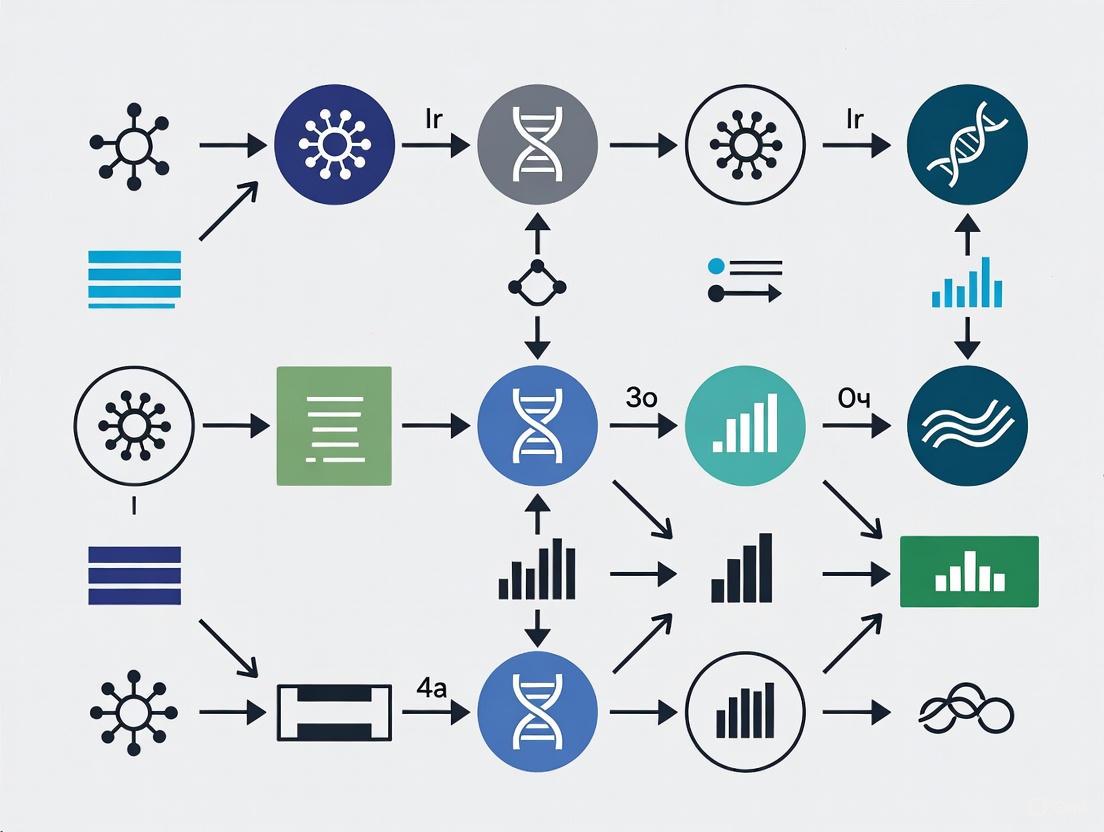

iis running in one stream, the data transfers for chunki+1can occur concurrently in another stream. The following diagram illustrates this pipelined workflow.

Diagram Title: Stream Pipeline Overlap
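To see why the pipelined protocol helps, the sketch below models its timing on the host. It assumes an idealized device with one engine each for H2D copies, kernels, and D2H copies, and identical stage durations for every chunk. The function names and example durations are hypothetical, and the model ignores launch latency and synchronization costs.

```python
def sequential_time(n_chunks, h2d, kernel, d2h):
    """Total time when every copy and kernel runs back to back."""
    return n_chunks * (h2d + kernel + d2h)


def pipelined_time(n_chunks, h2d, kernel, d2h):
    """Makespan when H2D, kernel, and D2H stages of different chunks overlap.

    A stage starts once the same chunk's previous stage has finished and the
    engine for that stage is free (idealized three-engine assumption).
    """
    stage_times = (h2d, kernel, d2h)
    engine_free = [0.0, 0.0, 0.0]
    for _ in range(n_chunks):
        ready = 0.0  # time at which this chunk's previous stage finished
        for stage, duration in enumerate(stage_times):
            start = max(ready, engine_free[stage])
            ready = start + duration
            engine_free[stage] = ready
    return engine_free[-1]


if __name__ == "__main__":
    # Four chunks, each needing 1 ms H2D, 2 ms compute, 1 ms D2H (made-up values).
    print(sequential_time(4, 1.0, 2.0, 1.0), pipelined_time(4, 1.0, 2.0, 1.0))
```

In this model the longest stage sets the steady-state rate, so once the pipeline is full the transfers are hidden whenever they are shorter than the kernel.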

The Scientist's Toolkit: Key Research Reagents & Solutions

| Item | Function in Experiment |

|---|---|

| SYCL Unified Shared Memory (USM) | A memory management model that simplifies data access across host and device, facilitating zero-copy access and host-device streaming designs [1]. |

| CUDA Streams / OpenCL Command Queues | Software constructs used to queue operations (transfers, kernels) for concurrent execution, enabling overlap of data transfer and computation [2]. |

| Page-Locked Memory Allocator (e.g., `cudaMallocHost`) | Allocates non-pageable host memory, enabling high-bandwidth, direct transfers to and from the device [4] [5]. |

| nvCOMP Library | A GPU-accelerated compression library that can reduce the volume of data transferred. On NVIDIA Blackwell architectures, it can offload decompression to a dedicated hardware engine [7]. |

| Profiling Tools (e.g., Intel VTune, NVIDIA Nsight) | Essential for identifying performance bottlenecks, measuring transfer times, and verifying the effectiveness of overlap strategies [6] [8]. |

To visualize the fundamental trade-off between latency and throughput that guides the choice of data processing models, refer to the diagram below.

Diagram Title: Processing Model Trade Offs

For researchers, scientists, and drug development professionals, high-performance computing (HPC) and artificial intelligence (AI) have become indispensable tools. The efficiency of moving data between hosts and devices (e.g., CPUs and GPUs) is a critical, yet often overlooked, factor that can make or break an experiment's feasibility, cost, and timeline. Inefficient data transfers create a cascade of negative effects, directly increasing latency, energy consumption, and operational expenses. This guide, framed within the broader thesis of reducing host-device data transfer overhead, provides a technical support center to help you diagnose, understand, and mitigate these inefficiencies in your experimental workflows.

FAQs: Understanding Data Transfer Inefficiency

1. What are the primary technical causes of data transfer inefficiency? Data transfer inefficiency arises from a combination of suboptimal application-layer configurations, hardware limitations, and dynamic network conditions. Key technical causes include:

- Improper Concurrency and Parallelism: The parameters for task-level parallelism (`concurrency`) and file-level parallel streams (`parallelism`) are often set statically. If these values are too low, they underutilize available network bandwidth and I/O capacity. If set too high, they can oversaturate the network, triggering TCP congestion control mechanisms and drastically reducing throughput [9].

- Unoptimized Data Payloads: The format and size of the data being transferred significantly impact performance. Using uncompressed or inefficiently compressed data increases transfer volume and time. Furthermore, transfers involving numerous small files can be hampered by protocol overhead, as opposed to larger, consolidated files [7].

- Hardware and Network Bottlenecks: A chain is only as strong as its weakest link. Slow storage (e.g., HDDs instead of NVMe drives), limited network interface card (NIC) bandwidth, or lack of high-speed interconnects like InfiniBand for multi-node workloads can create severe bottlenecks, leaving powerful accelerators like GPUs idle while waiting for data [10].

2. How does transfer inefficiency directly increase our research costs? The financial impact spans immediate operational expenditure (OPEX), energy use, and long-term capital outlays.

- Cloud Computing Costs: In cloud environments, you pay for GPU/CPU time by the hour. Inefficient data transfers extend the total runtime of your training or analysis jobs. A model that takes 100 GPU hours to train with efficient transfers could take 120 or more hours with poor transfers, increasing compute costs by 20% or more [11] [10].

- Energy Consumption: Data transfers consume significant energy at the end systems (sender and receiver). Research shows that on a nationwide network, end systems can account for 60% of the total energy consumed during an end-to-end transfer. Transfers that run longer or overload resources waste part of that energy: adaptive tuning has been reported to cut end-system energy use by up to 40% relative to baseline methods [9].

- Infrastructure Investment: To compensate for poor transfer performance, organizations may feel pressured to over-provision hardware (e.g., purchasing more or faster GPUs) or buy more network bandwidth, leading to unnecessary capital expenditure (CAPEX) [12].
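The cloud cost arithmetic in the first bullet can be made explicit with a small helper. The hourly rate used in the example is a hypothetical placeholder, not a quoted price.

```python
def added_compute_cost(baseline_gpu_hours, actual_gpu_hours, hourly_rate_usd):
    """Return (extra cost in USD, percentage increase) caused by a longer runtime."""
    extra_hours = actual_gpu_hours - baseline_gpu_hours
    pct_increase = 100.0 * extra_hours / baseline_gpu_hours
    return extra_hours * hourly_rate_usd, pct_increase


if __name__ == "__main__":
    # 100 vs. 120 GPU hours from the text; the 3.00 USD/hour rate is an assumption.
    cost, pct = added_compute_cost(100, 120, 3.00)
    print(f"extra cost: ${cost:.2f} ({pct:.0f}% more compute)")
```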

3. What is the connection between data transfer performance and energy usage? The relationship is direct and proportional. Prolonged data transfers keep CPUs, NICs, and storage systems under high load for extended periods, consuming more electricity. Actively transferring data also prevents systems from entering low-power idle states. A study on adaptive data transfer optimization demonstrated that intelligent parameter tuning can achieve up to a 40% reduction in energy usage at the end systems compared to baseline methods, highlighting the significant energy waste caused by inefficiency [9].

4. Are there hardware solutions to accelerate data transfer and decompression? Yes, new hardware innovations are specifically designed to offload and accelerate these costly operations. NVIDIA's Blackwell architecture, for example, introduces a dedicated Decompression Engine (DE), a fixed-function hardware block that offloads the task of decompressing common formats like Snappy, LZ4, and Deflate from the general-purpose GPU cores. This not only speeds up decompression but also frees up valuable Streaming Multiprocessor (SM) resources to focus on core computation tasks, thereby reducing overall job completion time and latency [7].

Troubleshooting Guides

Guide 1: Diagnosing Data Transfer Bottlenecks

Use this workflow to systematically identify the source of transfer slowdowns in your experimental pipeline.

Diagnostic Steps:

- Check Network Utilization: Use tools like `nload` or `iftop` to monitor the network interface during a transfer. If the bandwidth is consistently maxed out (e.g., at 10 Gbps on a 10 Gbps link), the network itself is the bottleneck.

- Check CPU Utilization: Use `top` or `htop`. If the CPU cores on the sending and/or receiving nodes are at or near 100% utilization during the transfer, the data transfer process itself is CPU-bound, likely due to protocol processing or software-based compression/decompression.

- Check I/O Wait Times: Use `iostat -x 1`. High `%util` and `await` values for your storage devices (e.g., `/dev/sda`) indicate that the storage system cannot keep up with the read/write requests, creating an I/O bottleneck.
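Once utilization figures have been read off these tools, the three checks reduce to a simple decision rule. The sketch below is one way to encode it; the function name and the 0.9 saturation threshold are assumptions made for illustration, and the inputs are fractions you would record by hand or from your own monitoring.

```python
def classify_bottleneck(net_util, cpu_util, disk_util, threshold=0.9):
    """Return the saturated resources, in the order the guide checks them.

    Each *_util argument is a fraction in [0, 1] sampled during a transfer.
    The 0.9 threshold is an illustrative assumption, not a recommended value.
    """
    readings = (("network", net_util), ("cpu", cpu_util), ("storage", disk_util))
    return [name for name, util in readings if util >= threshold]


if __name__ == "__main__":
    # Example readings: link nearly saturated, CPU and disk with headroom.
    print(classify_bottleneck(net_util=0.98, cpu_util=0.35, disk_util=0.40))
```

An empty result means none of the three resources looked saturated, which points to causes outside this checklist, such as transfer parameters set too conservatively.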

Guide 2: Implementing an Adaptive Transfer Tuning Strategy

For environments with dynamic network conditions (e.g., shared research clusters), static tuning is insufficient. This guide outlines a methodology for adaptive optimization based on state-of-the-art research.

Experimental Protocol: Reinforcement Learning for Parameter Tuning

- Objective: Dynamically adjust application-layer parameters (`concurrency` and `parallelism`) to maximize throughput and minimize energy consumption under changing network traffic.

- Background: The relationship between transfer parameters (`cc`, `p`), throughput, and energy is non-linear. Research shows optimal settings can improve performance by up to 10x compared to baseline (`cc=1`, `p=1`), but these optima shift with background traffic [9].

- Methodology (Based on SPARTA DRL Framework):

  - Define State Space: The state should include real-time metrics such as current throughput, round-trip time (RTT), and CPU idle time on the end systems.

  - Define Action Space: The actions are discrete changes to the `concurrency` and `parallelism` parameters (e.g., increment, decrement, or hold).

  - Define Reward Function: Design a reward function that balances multiple objectives. For example: `Reward = α * Throughput - β * Energy_Consumption`. This encourages the system to find a Pareto-optimal solution between speed and efficiency.

  - Training: Train a Deep Reinforcement Learning (DRL) agent in an emulation environment that replicates your network conditions. Using logged state transitions from initial real-world episodes can significantly accelerate training and reduce the associated energy costs [9].

- Expected Outcome: Studies have shown this approach can yield up to a 25% increase in throughput and up to a 40% reduction in energy consumption at the end systems compared to static configuration or heuristic-based methods [9].
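The action space and reward function described in the protocol can be sketched as plain functions. This is not the SPARTA implementation: the action encoding, the parameter bounds, and the default weights `alpha` and `beta` are all hypothetical choices made to illustrate the idea.

```python
from itertools import product

# Each action is (delta_cc, delta_p): decrement, hold, or increment each parameter.
ACTIONS = list(product((-1, 0, 1), repeat=2))


def apply_action(cc, p, action, lo=1, hi=32):
    """Apply a discrete action and keep both parameters inside [lo, hi].

    The bounds are assumptions; pick limits that suit your end systems.
    """
    def clamp(value):
        return max(lo, min(hi, value))

    delta_cc, delta_p = action
    return clamp(cc + delta_cc), clamp(p + delta_p)


def reward(throughput_gbps, energy_joules, alpha=1.0, beta=0.01):
    """Reward = alpha * Throughput - beta * Energy_Consumption."""
    return alpha * throughput_gbps - beta * energy_joules


if __name__ == "__main__":
    cc, p = apply_action(1, 1, (-1, 1))  # cc is clamped at the lower bound
    print(cc, p, reward(throughput_gbps=10.0, energy_joules=200.0))
```

The ratio of `alpha` to `beta` decides how much throughput the agent will give up to save energy, so those weights need tuning against the units and ranges of your own measurements.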

Quantitative Data on Transfer Inefficiency

Table 1: Documented Impacts of Data Transfer Inefficiency

| Metric | Impact of Inefficiency | Source / Context |

|---|---|---|

| Big Data Project Failure Rate | 85% of projects fail | Gartner analysis of large-scale data projects [12] |

| System Integration Failure Rate | 84% fail or partially fail | Integration research across industries [12] |

| Annual Revenue Loss | 25% of revenue lost | Due to poor data quality and related inefficiencies [12] |

| Productivity Cost of Data Silos | $7.8 million annually | Lost productivity from fragmented data [12] |

| Energy Overconsumption | Up to 40% higher at end systems | Compared to optimized adaptive transfer methods [9] |

| Cloud AI Data Transfer Fees | Up to 30% of total cloud AI spend | For data-intensive applications [11] |

Table 2: Cost Comparison: Cloud vs. Edge AI Processing

This table summarizes the financial trade-offs, which are heavily influenced by data transfer volume and cost [11].

| Cost Factor | Cloud-Based AI Processing | Edge-Based AI Processing |

|---|---|---|

| Cost Model | Operational Expenditure (OPEX) | Capital Expenditure (CAPEX) |

| Primary Costs | GPU instance time, data egress fees, API calls | Upfront hardware investment, power, maintenance |

| Example: Video Analytics (200 stores) | ~$1.92M annually (streaming + processing) | ~$2.8M over 3 years (hardware + maintenance) |

| Example: NLP (1M calls/month) | ~$48,000 annually | ~$111,000 over 3 years |

| Best For | Variable workloads, less data-heavy inference | Predictable, data-heavy workloads, low-latency scenarios |
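Because Table 2 quotes cloud costs per year and edge costs over three years, the two columns are easier to compare on a common horizon. The sketch below does that arithmetic using the figures from the table, and it assumes cloud spending stays flat from year to year with no discounting.

```python
def three_year_totals(cloud_annual_usd, edge_three_year_usd, years=3):
    """Return (cloud total, edge total, cloud minus edge) over the horizon.

    Assumes a constant annual cloud cost, which is a simplification.
    """
    cloud_total = cloud_annual_usd * years
    return cloud_total, edge_three_year_usd, cloud_total - edge_three_year_usd


if __name__ == "__main__":
    examples = {
        "Video analytics (200 stores)": (1_920_000, 2_800_000),
        "NLP (1M calls/month)": (48_000, 111_000),
    }
    for name, (cloud_annual, edge_total) in examples.items():
        cloud, edge, diff = three_year_totals(cloud_annual, edge_total)
        print(f"{name}: cloud ${cloud:,} vs edge ${edge:,} (difference ${diff:,})")
```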

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Optimizing Data Transfers

| Tool / Technology | Function | Relevance to Research |

|---|---|---|

| NVIDIA nvCOMP with Blackwell DE [7] | Hardware-accelerated decompression library. Offloads decompression from GPU SMs to a dedicated engine. | Crucial for accelerating data-loading pipelines in AI-driven research (e.g., drug discovery, genomics). Reduces GPU idle time and overall experiment latency. |

| High-Performance Interconnects (InfiniBand) [10] | Low-latency, high-throughput networking for multi-node systems. | Essential for distributed training of large models across multiple GPU nodes. Prevents communication from becoming the bottleneck. |

| SPARTA DRL Framework [9] | A Deep Reinforcement Learning framework for dynamic parameter tuning of data transfers. | Provides a methodology for researchers to autonomously optimize their data transfers for performance and energy efficiency in shared, dynamic network environments. |

| DataOps Platforms [12] | Platforms that bring rigor and orchestration to data management and flow. | Ensures high data quality and efficient pipeline operations, which is foundational for reliable and reproducible experimental results. The market is growing at a 22.5% CAGR. |

| Hybrid AI Architecture [11] | A strategy that splits AI workloads between cloud and edge computing. | Enables researchers to train models in the cloud but run inference locally (at the edge), minimizing ongoing data transfer costs and latency for real-time analysis. |

Quantifying the cost of transfer inefficiency is the first step toward building more robust, cost-effective, and sustainable research computing environments. The latency, energy waste, and operational expenses are not merely theoretical but are quantifiable drains on research budgets and timelines. By leveraging the diagnostic guides, experimental protocols, and tools outlined in this technical support center, researchers and scientists can systematically attack the problem of data transfer overhead. Integrating these optimization strategies directly into your experimental design is no longer a niche advanced technique but a core competency for leading-edge research in 2025 and beyond.

Technical Support Center

Troubleshooting Guides

Q1: My GPU inference efficiency is lower than expected. Profiling shows the GPU is often idle. What is the cause and how can I resolve this?

A: This is a classic symptom of host overhead, where the GPU (device) is blocked waiting for the CPU (host) to prepare work [13]. The root cause often lies in the disjoint address spaces between the host and device, which necessitates explicit data transfers that can stall the GPU [6].

Diagnosis and Resolution Protocol:

- Profile Your Application: Use the PyTorch Profiler or NVIDIA Nsight Systems to collect a trace of your application's execution. Visually inspect the trace for "gaps" in the CUDA streams where no kernels are running [13].

- Identify Synchronization Points: In the trace, look for these common culprits:

  - Unnecessary data transfers between CPU and GPU within critical loops.

  - A high number of small, individual CUDA kernel launches.

  - CPU-based operations that must complete before the GPU can proceed.

- Apply Corrective Optimizations:

  - Eliminate Redundant Transfers: Construct tensors directly on the GPU instead of creating them on the CPU and then transferring them. Use `cudaMallocManaged` for Unified Memory to let the system manage data movement [14].

  - Fuse Kernels: Use the Torch compiler to merge multiple small kernel launches into a single, larger kernel, reducing launch overhead [13].

  - Use CUDA Graphs: For a fixed sequence of operations, capture the entire graph of kernels into a single launchable unit using CUDA Graphs. This amortizes the launch overhead and is widely used in production inference servers [13].
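The "gaps" mentioned in the profiling step can be quantified once kernel start and end times have been exported from a trace. The sketch below assumes a hypothetical input format, a list of `(start, end)` pairs in any consistent time unit, and reports the fraction of the traced span during which no kernel was running.

```python
def gpu_idle_fraction(kernel_intervals):
    """Fraction of the span from first kernel start to last kernel end with no kernel running.

    kernel_intervals: iterable of (start, end) pairs; overlapping kernels
    (e.g., from different streams) are merged before measuring busy time.
    """
    intervals = sorted(kernel_intervals)
    if not intervals:
        raise ValueError("need at least one kernel interval")
    busy = 0.0
    cur_start, cur_end = intervals[0]
    for start, end in intervals[1:]:
        if start <= cur_end:          # overlaps or touches the current busy block
            cur_end = max(cur_end, end)
        else:                         # a gap: close the current busy block
            busy += cur_end - cur_start
            cur_start, cur_end = start, end
    busy += cur_end - cur_start
    span = cur_end - intervals[0][0]
    return 0.0 if span <= 0 else 1.0 - busy / span


if __name__ == "__main__":
    # Two 1 ms kernels separated by a 2 ms gap (made-up trace).
    print(gpu_idle_fraction([(0.0, 1.0), (3.0, 4.0)]))
```

Tracking this number before and after each optimization gives a simple check on whether kernel fusion or CUDA Graphs actually closed the gaps.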

The following workflow diagram illustrates the diagnostic process for identifying and resolving host overhead:

Q2: The data transfer time between my host and device is a major bottleneck. How can I improve the transfer performance?

A: Data transfer overhead is a fundamental challenge in systems with disjoint address spaces [6]. Optimizing it involves both hardware awareness and software techniques.

Experimental Protocol for Data Transfer Optimization:

- Verify Hardware Configuration:

  - Run `nvidia-smi` during an active transfer to ensure your GPU is using a PCIe gen3 x16 slot (or higher). Slots configured as x4 or x8 will have lower bandwidth [15].

  - In multi-socket CPU systems, set CPU and memory affinity so each GPU communicates with its "near" CPU to avoid inter-socket traffic [15].

- Use Pinned Host Memory: Allocate page-locked ("pinned") memory on the host using `cudaHostAlloc()`. This enables higher bandwidth transfers compared to standard pageable memory [15].

- Maximize Transfer Size: PCIe transfer rates increase with block size. Aim to transfer large, contiguous blocks (e.g., up to 16 MB) to achieve full interface throughput [15].

- Overlap Transfers and Computation: Use CUDA streams to perform asynchronous data transfers concurrently with kernel execution. On GPUs with dual copy engines, this also allows simultaneous copies to and from the device [15].

The table below summarizes key quantitative considerations for data transfer optimization:

| Optimization Factor | Target / Best Practice | Quantitative Impact / Rationale |

|---|---|---|

| PCIe Interface | PCIe gen3 x16 (or higher) | Enables >= 10 GB/s throughput for large transfers [15]. |

| Host Memory Type | Pinned (Page-locked) Memory | Can provide ~12 GB/s vs. ~5 GB/s for pageable memory [15]. |

| Transfer Size | Large, contiguous blocks (e.g., 16 MB) | Larger transfers are needed to achieve full PCIe throughput [15]. |

| Execution Overlap | CUDA Streams for Async Transfer | Hides transfer latency by executing kernels concurrently [15]. |

Frequently Asked Questions (FAQs)

Q1: From a research perspective, what is the core architectural reason for host-device data transfer overhead?

A: The fundamental reason is physically separate memories [6]. In conventional heterogeneous systems like CPU-GPU setups, the host (CPU) and device (GPU) have their own distinct, attached physical memories. This design creates disjoint address spaces. Therefore, any data needed for a GPU computation must be explicitly transferred from host memory to device memory, an operation that incurs significant latency and bandwidth costs over the PCIe bus [6]. The staging of data in a temporary area is a direct consequence of this architectural separation.

Q2: What are the trade-offs between using a staging environment for testing versus directly deploying to production?

A: Using a staging environment (a near-exact replica of production) for testing provides significant benefits but also has limitations, leading to alternative strategies like "staging in production."

| Strategy | Benefits | Limitations & Risks |

|---|---|---|

| Staging Environment | - Catches performance and integration issues before production [16]. - Reduces liability and improves regulatory compliance for critical apps [16]. - Enables final User Acceptance Testing (UAT) [16]. | - Cannot perfectly simulate real-world traffic and user behavior [16]. - Configuration mismatches with production can yield inaccurate test results [16]. - Adds management overhead and cost [16]. |

| Direct Production Deployment (e.g., with Feature Flags) | - Tests with real user traffic and data volumes [17]. - Faster iteration by skipping staging setup [16]. - Enables gradual rollouts and instant rollbacks [17]. | - Higher risk of exposing users to bugs [16]. - Requires robust feature flagging and monitoring systems [17]. - Less suitable for highly regulated or mission-critical applications [16]. |

Q3: How can our research team build an efficient and manageable HPC environment for GPU-accelerated drug discovery?

A: Modern managed services can significantly reduce operational overhead. The architecture below, inspired by a real-world implementation, provides a robust foundation [18].

Methodology for a Managed HPC Environment:

- Use a Managed HPC Service: Leverage services like AWS Parallel Computing Service (PCS), which uses Slurm as a job scheduler. This automates cluster management tasks like Slurm version upgrades [18].

- Automate Custom Image Creation: Use a service like EC2 Image Builder to create custom Amazon Machine Images (AMIs). Define a recipe that installs necessary PCS agents, Slurm packages, and your team's specific application software (e.g., molecular modeling tools) [18].

- Streamline User Management: Implement an automated workflow using AWS Step Functions and Systems Manager (SSM) Documents. When compute nodes start, they execute scripts that pull user information from a JSON file and automatically create user accounts, providing immediate access to the HPC environment [18].

The following diagram visualizes this automated HPC environment architecture:

The Scientist's Toolkit: Key Research Reagent Solutions

This table details essential software and hardware "reagents" for conducting high-performance computing experiments focused on reducing host-device overhead.

| Tool / Solution | Function in Experimentation |

|---|---|

| NVIDIA Nsight Systems | A system-wide performance analysis tool used to visualize application execution, identify GPU idle periods ("gaps"), and pinpoint the root cause of host overhead [13]. |

| PyTorch Profiler | A profiling tool integrated with PyTorch that helps diagnose performance issues in ML models, including data transfer bottlenecks and kernel execution times [13]. |

| CUDA Unified Memory | A memory management technology that creates a single address space for CPU and GPU, simplifying programming by reducing the need for explicit data transfers (though it may not eliminate all overhead) [14]. |

| CUDA Graphs | A technique to capture a sequence of kernel launches and dependencies into a single, reusable unit. This dramatically reduces kernel launch overhead and is critical for low-latency inference [13]. |

| Pinned (Page-Locked) Memory | A type of host memory allocation that enables the highest possible data transfer speeds between the host and GPU device [15]. |

| AWS Parallel Computing Service (PCS) | A managed HPC service that reduces operational overhead by automating job scheduling (Slurm) and cluster management, allowing researchers to focus on their experiments [18]. |

In the context of research aimed at reducing host-device data transfer overhead, understanding the "hidden data tax" imposed by security and communication protocols is paramount. For scientists and drug development professionals transmitting sensitive experimental data, Transport Layer Security (TLS) is the essential cryptographic protocol that ensures privacy and integrity. However, this security comes at a cost: significant overhead that can impact data transfer efficiency. This overhead manifests as additional data traffic, increased computational processing, and communication latency, primarily introduced during the initial TLS handshake and through the record layer headers for each data packet [19].

This guide provides troubleshooting and methodological support for researchers measuring and mitigating these overheads in experimental data transfer setups, directly supporting the broader thesis of optimizing data efficiency in research environments.

Quantitative Analysis of TLS Overhead

The overhead caused by TLS can be broken down into two main phases: the connection-establishing handshake and the ongoing data encapsulation in the record layer. The following tables summarize the typical overhead encountered in practice.

TLS Handshake Overhead

The TLS handshake establishes a secure connection by negotiating cryptographic parameters and authenticating the server. The following table quantifies the traffic overhead for different handshake types, based on average message sizes [20].

Table: TLS Handshake Traffic Overhead

| Handshake Type | Description | Approximate Traffic Overhead | Round Trips (TLS 1.2) |

|---|---|---|---|

| Full Handshake | Establishes a new secure session. | ~6.5 KB [20] | 2 |

| Session Resumption | Resumes a previously established session. | ~330 bytes [20] | 1 |

TLS Full Handshake Flow

TLS Record Layer Overhead

After the handshake, application data is transmitted in protected packets. The per-packet overhead depends on the cryptographic cipher suite used [21].

Table: Per-Packet Data Overhead in TLS Record Layer

| Cipher Suite Type | TLS Header | IV/Nonce | MAC (Message Auth.) | Padding | Total Approx. Overhead |

|---|---|---|---|---|---|

| AES-CBC (e.g., `TLS_RSA_WITH_AES_128_CBC_SHA`) | 5 bytes | 16 bytes [21] | 20 bytes [20] | 0-15 bytes [20] | ~40-55 bytes |

| AEAD (e.g., AES-GCM, ChaCha20-Poly1305) | 5 bytes | 8 bytes [21] | 16 bytes (integrated) | 0 bytes | ~29 bytes |

Experimental Protocols for Measuring Overhead

To accurately characterize protocol overhead in a research data transfer environment, follow these experimental methodologies.

Experiment 1: Measuring Handshake Traffic Volume

Objective: To quantify the total bytes transferred solely for establishing a TLS connection.

Methodology:

- Setup: Use a packet capture tool (e.g., Wireshark) on either the client or server host device.

- Configuration: Ensure the research application is configured to force a new TLS connection (disable session resumption).

- Execution: Initiate a connection from the client to the server. Filter captures to show only TLS traffic between the two hosts.

- Measurement: In the packet capture tool, select all packets from the initial `ClientHello` to the final `Finished` message. The tool's statistics function will report the total bytes captured. This value is the handshake overhead.

- Variation: Repeat the experiment with session resumption enabled and compare the total bytes transferred.
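Once the handshake bytes have been measured, it helps to put them in proportion to the payload carried over the connection. The sketch below uses the approximate figures from the handshake table (about 6.5 KB for a full handshake, about 330 bytes for resumption, 2 versus 1 round trips in TLS 1.2). The helper names are hypothetical, and the latency estimate excludes the TCP handshake and any processing time.

```python
FULL_HANDSHAKE_BYTES = 6500      # ~6.5 KB, approximate value from the table
RESUMED_HANDSHAKE_BYTES = 330    # approximate value from the table


def handshake_share_pct(payload_bytes, handshake_bytes):
    """Percentage of all bytes on the connection that were handshake traffic."""
    return 100.0 * handshake_bytes / (handshake_bytes + payload_bytes)


def setup_latency_ms(round_trips, rtt_ms):
    """Lower bound on TLS setup time: round trips multiplied by the RTT."""
    return round_trips * rtt_ms


if __name__ == "__main__":
    for payload in (10_000, 1_000_000, 100_000_000):
        full = handshake_share_pct(payload, FULL_HANDSHAKE_BYTES)
        resumed = handshake_share_pct(payload, RESUMED_HANDSHAKE_BYTES)
        print(f"{payload:>11} B payload: full {full:.3f}%  resumed {resumed:.3f}%")
    # A 40 ms RTT is an assumed example value.
    print(setup_latency_ms(2, 40.0), setup_latency_ms(1, 40.0))
```

The share shrinks quickly as payload grows, so handshake cost matters most for workloads that open many short-lived connections.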

Experiment 2: Measuring Record Layer Efficiency

Objective: To determine the efficiency loss due to TLS per-packet encapsulation.

Methodology:

- Setup: Configure a test setup where a research host device generates a known, consistent payload size (e.g., 100 bytes, 500 bytes, 1500 bytes).

- Execution: Transmit this payload securely to another device.

- Measurement: Use a packet capture tool to examine the resulting TLS records. Compare the original payload size with the actual wire size of the TLS record(s). Calculate the efficiency as Payload Size / Wire Size * 100.

- Analysis: Repeat with different payload sizes and cipher suites (e.g., AES-CBC vs. AES-GCM) to build a model of overhead impact.
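The efficiency formula from Experiment 2 can be sketched in a few lines of Python before any packets are captured, which gives a rough expectation to compare measurements against. This is only a model: the overhead values are the upper bounds of the ranges in the record-layer table above, each payload is assumed to fit in a single TLS record, and TCP/IP headers are ignored. The function name and the `OVERHEADS` dictionary are illustrative, not part of any tool.

```python
def record_efficiency(payload_bytes: int, overhead_bytes: int) -> float:
    """Return payload size / wire size as a percentage for one TLS record."""
    if payload_bytes <= 0 or overhead_bytes < 0:
        raise ValueError("payload must be positive and overhead non-negative")
    wire_bytes = payload_bytes + overhead_bytes
    return payload_bytes / wire_bytes * 100


# Assumed per-record overheads: upper bounds from the table in this section.
OVERHEADS = {"AES-CBC": 55, "AEAD": 21}

for payload in (100, 500, 1500):  # payload sizes suggested in the setup step
    for name, overhead in OVERHEADS.items():
        eff = record_efficiency(payload, overhead)
        print(f"{payload:>5} B payload, {name:<7}: {eff:.1f}% efficient")
```

Measured values that fall well below the modelled ones usually point to payloads being split across more records than expected.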

The Scientist's Toolkit: Research Reagent Solutions

This table details key technical solutions and their role in mitigating data transfer overhead.

Table: Key Reagents for Overhead Mitigation

| Reagent / Solution | Primary Function | Role in Reducing Overhead |

|---|---|---|

| TLS 1.3 | The latest TLS protocol version. | Reduces handshake latency from 2 round-trips to 1, significantly cutting connection setup time [19]. |

| Session Resumption | A mechanism to reuse previously negotiated session parameters. | Avoids the full handshake, reducing subsequent connection overhead to a fraction of the original [22]. |

| AEAD Cipher Suites | Cryptographic algorithms like AES-GCM and ChaCha20-Poly1305. | Combine encryption and authentication, eliminating the need for separate MAC and padding, which reduces per-packet overhead [21]. |

| Packet Capture Software | Tools like Wireshark for network analysis. | Enables precise measurement of protocol overhead by inspecting raw traffic between host devices. |

| HTTP/2 | A major revision of the HTTP network protocol. | Allows multiple requests/responses to be multiplexed over a single TLS connection, amortizing handshake overhead across many data transfers [19]. |

Troubleshooting Guide: FAQs

Q1: Our data transfer rates for sensitive experimental data are slower than expected. Could TLS be the cause?

A: Yes. Investigate the following:

- Handshake Frequency: Are you opening a new TLS connection for every transaction? This is highly inefficient. Solution: Modify your application code or configuration to reuse connections (HTTP keep-alive) and leverage TLS session resumption.

- Cipher Suite: Are you using an older cipher suite? Solution: Prioritize modern, more efficient AEAD cipher suites like TLS_AES_128_GCM_SHA256. This reduces per-packet CPU and traffic load [21] [19].

- Payload Size: Are you sending a vast number of very small packets? Solution: If possible, batch small data points into larger payloads to reduce the relative impact of the fixed record layer overhead.
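For Python-based clients, the cipher suite advice can be applied through the standard library `ssl` module. The sketch below is one possible configuration, not a recommended policy: the helper name `make_client_context` and the specific OpenSSL cipher string are assumptions chosen for illustration, and the suites actually available depend on the local OpenSSL build.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client context that only offers AEAD cipher suites."""
    ctx = ssl.create_default_context()
    # Refuse protocol versions older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Restrict TLS 1.2 to ECDHE key exchange with AES-GCM or ChaCha20-Poly1305.
    # TLS 1.3 suites are not controlled by this string and are AEAD-only.
    ctx.set_ciphers("ECDHE+AESGCM:ECDHE+CHACHA20")
    return ctx


ctx = make_client_context()
for name in sorted({c["name"] for c in ctx.get_ciphers()}):
    print(name)
```

Passing one such context to a single long-lived connection object (for example `http.client.HTTPSConnection(host, context=ctx)`) and issuing several requests on it addresses the handshake-frequency point as well, provided the server keeps the connection open.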

Q2: We are seeing high CPU usage on our data acquisition server during encrypted transfers. Is this normal?

A: Cryptographic operations are computationally expensive, so some increase is expected. However, high usage can be mitigated.

- Check Cipher Suite: Ensure you are using cipher suites with hardware acceleration, such as AES-NI for AES-GCM, which is common on modern processors [21].

- Consider Offloading: For very high-traffic scenarios, investigate TLS termination at a load balancer or dedicated security appliance, which offloads the CPU cost from your application server [19].

Q3: What is the single most effective change to reduce TLS overhead for a long-lived data stream?

A: Ensure TLS session resumption is working. A full 6.5 KB handshake occurs only for the first connection. All subsequent resumptions on that session use a much lighter ~330-byte exchange, saving substantial bandwidth and latency [20]. Verify this is enabled in your client and server configurations.
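A rough calculation shows how quickly the two handshake sizes diverge when a client reconnects often. The sketch assumes the approximate figures quoted above, treats 6.5 KB as 6,500 bytes, and assumes every reconnection after the first successfully resumes the session; the function name is illustrative.

```python
FULL_HANDSHAKE_BYTES = 6_500     # ~6.5 KB full handshake (assumed 1 KB = 1,000 bytes)
RESUMED_HANDSHAKE_BYTES = 330    # ~330-byte resumed handshake


def handshake_bytes(connections: int, resumption: bool) -> int:
    """Total handshake traffic for a number of sequential connections."""
    if connections <= 0:
        return 0
    if not resumption:
        return connections * FULL_HANDSHAKE_BYTES
    # The first connection is always a full handshake; the rest are resumed.
    return FULL_HANDSHAKE_BYTES + (connections - 1) * RESUMED_HANDSHAKE_BYTES


without = handshake_bytes(1_000, resumption=False)
with_resumption = handshake_bytes(1_000, resumption=True)
print(f"without resumption: {without:,} bytes")
print(f"with resumption:    {with_resumption:,} bytes")
print(f"saved:              {without - with_resumption:,} bytes")
```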

Q4: How does the choice of cipher suite directly impact our data usage costs?

A: The cipher suite dictates the per-packet overhead. For a continuous stream of small data packets (e.g., sensor telemetry), the difference between a 55-byte overhead (AES-CBC-SHA) and a 15-byte overhead (AES-GCM) compounds rapidly. Over millions of packets, this can result in a significant increase in transmitted bytes, directly impacting costs if you are paying for bandwidth [21].
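To put a number on "compounds rapidly", the per-packet figures from the answer above can be scaled to a day of telemetry. The packet rate of 1,000 packets per second is a hypothetical value chosen for illustration, and the function name is an assumption.

```python
SECONDS_PER_DAY = 86_400


def daily_overhead_bytes(packets_per_second: int, overhead_per_packet: int) -> int:
    """Record-layer overhead accumulated over one day at a constant packet rate."""
    return packets_per_second * SECONDS_PER_DAY * overhead_per_packet


rate = 1_000  # hypothetical sensor stream: 1,000 small packets per second
cbc = daily_overhead_bytes(rate, 55)   # AES-CBC-SHA figure quoted above
gcm = daily_overhead_bytes(rate, 15)   # AES-GCM figure quoted above
print(f"AES-CBC overhead per day: {cbc / 1e9:.3f} GB")
print(f"AES-GCM overhead per day: {gcm / 1e9:.3f} GB")
print(f"difference per day:       {(cbc - gcm) / 1e9:.3f} GB")
```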

TLS Overhead Symptom and Solution Map

Technical Support Center: Troubleshooting High-Throughput Data Workflows

This technical support center provides targeted guidance for researchers facing computational bottlenecks in genomics, medical imaging, and molecular dynamics. The following troubleshooting guides and FAQs address common issues, with a specific focus on methodologies to reduce host-device data transfer overhead, a critical bottleneck in high-performance biomedical computing.

Genomics Data Analysis Support

Frequently Asked Questions

What are the primary data management challenges in genomic research? Genomic research, particularly with Next-Generation Sequencing (NGS), faces several key challenges [23]:

- Volume: A single human genome requires up to 100 GB of storage. NGS workflows generate terabytes of data, straining traditional storage systems [23].

- Variety and Complexity: Workflows involve diverse data types (sequences, ligated variants, linkages) and change frequently with new reagents, protocols, and instruments [23].

- Data Transfer Bottlenecks: Processing and analytics have become the new bottleneck, as moving massive datasets between storage, host (CPU), and device (GPU/accelerator) memory is slow [23] [24].

How can we securely manage genomic data from external collaborators? For collaborations with CROs or academic partners, implement a cloud-based Laboratory Information Management System (LIMS). This provides controlled, role-based data access, ensures data security across locations, and offers the scalability needed for massive genomic datasets. The solution must have robust controls for compliance with regulations like CLIA, GDPR, and HIPAA [23].

Troubleshooting Guide: Slow Genomic Variant Calling Pipeline

- Problem: AI-powered variant calling (e.g., with DeepVariant) is unacceptably slow, delaying analysis [25].

- Investigation Steps:

- Profile the Workflow: Measure the time spent on data loading, host-to-device (H2D) transfer, GPU computation, and result output.

- Check GPU Utilization: Use tools like nvidia-smi to confirm the GPU is being used and is not memory-bound.

- Verify Data Locality: Check if data is being read from a remote or slow network-attached storage source.

- Solution: Implement a high-performance, portable data reduction framework like HPDR [24].

- Methodology: HPDR minimizes costly data transfers by executing state-of-the-art reduction algorithms directly on the GPU. It uses an optimized pipeline that adaptively overlaps data reduction with transfer operations.

- Expected Outcome: HPDR can reduce data transfer overhead to just 2.3% of the original and accelerate end-to-end reduction throughput by up to 3.5x [24].
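The "Profile the Workflow" step above can be approximated with a small wall-clock timer wrapped around each pipeline stage. This is a generic sketch and not part of DeepVariant or HPDR: the `StageTimer` class, the stage names, and the `time.sleep` stand-ins are all placeholders. With asynchronous GPU APIs, the device generally has to be synchronized before a stage is closed, otherwise transfer and compute time are attributed to the wrong stage.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class StageTimer:
    """Accumulate wall-clock time per named pipeline stage."""

    def __init__(self):
        self.totals = defaultdict(float)

    @contextmanager
    def stage(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            self.totals[name] += time.perf_counter() - start

    def shares(self):
        """Return each stage's fraction of the total measured time."""
        total = sum(self.totals.values())
        return {n: t / total for n, t in self.totals.items()} if total else {}


timer = StageTimer()
# Placeholder workloads; replace each sleep with the real pipeline call.
with timer.stage("load"):
    time.sleep(0.02)
with timer.stage("h2d_transfer"):
    time.sleep(0.03)
with timer.stage("gpu_compute"):
    time.sleep(0.01)
with timer.stage("output"):
    time.sleep(0.01)

for name, share in timer.shares().items():
    print(f"{name:<13} {share:6.1%}")
```

If the transfer stage dominates, reducing or overlapping transfers is likely to pay off more than tuning the compute kernel.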

Quantitative Performance Metrics for Data Reduction Frameworks

| Framework/Metric | Data Transfer Overhead | End-to-End Throughput Gain | Multi-GPU Speedup Efficiency | Key Feature |

|---|---|---|---|---|

| HPDR Framework [24] | 2.3% of original | Up to 3.5x faster | 96% of theoretical maximum | Portable across CPU/GPU architectures |

| Standard GPU Compression [24] | 34-89% of total time | Baseline (1x) | As low as 74% | Typically optimized for NVIDIA only |

Medical Imaging & Cloud PACS Support

Frequently Asked Questions

Our hospital's on-premise PACS is running out of storage. What are our options? You can implement a Cloud Tiering strategy or migrate to a full Cloud PACS [26] [27].

- Cloud Tiering: Automatically moves older, less frequently accessed "cold" DICOM images to cost-effective cloud storage tiers while keeping current "hot" data on high-performance on-premise storage. This optimizes costs while meeting data retention requirements [26].

- Cloud PACS: A full cloud-based Picture Archiving and Communication System offers scalability, reduced upfront costs, and robust disaster recovery. It also enhances accessibility for remote diagnostics and teleradiology [26] [27].

Is it safe to store patient scans in the cloud? Yes, with proper safeguards. Leading cloud providers implement advanced security measures for healthcare data, including encryption for data at rest and in transit (e.g., TLS for DICOM transfers), role-based access controls, and regular security audits. These measures often exceed the security of on-premise systems and are designed for compliance with HIPAA and other regulations [26] [27].

Troubleshooting Guide: Slow Retrieval of Medical Images for AI Analysis

- Problem: Researchers experience long delays when retrieving large sets of DICOM images from a biobank to train AI models [28] [27].

- Investigation Steps:

- Check Network and Storage Tier: Confirm the images are not stored in a deep, slow "cold" storage archive. Verify network bandwidth sufficiency.

- Analyze Data Access Patterns: Determine if the AI training is accessing the entire dataset or small, random batches.

- Inspect Data Pre-processing: Check if the bottleneck is in decoding or normalizing the DICOM files after transfer.

- Solution: Optimize the storage architecture and data pipeline.

- Methodology: For the biobank, implement a scalable cloud storage solution with a "warm" cache tier for active research projects [26]. Integrate a dedicated DICOM file-sharing solution that uses compression and the standard DICOM network services (C-STORE, C-MOVE) to speed up transfer [27].

- Expected Outcome: Faster, secure access to imaging data, enabling efficient training of multimodal AI models that integrate imaging with clinical metadata [29].

Molecular Dynamics & High-Performance Computing Support

Frequently Asked Questions

Our molecular dynamics simulation slows down when visualizing results in real-time. Why? This is a classic host-device data transfer bottleneck. The simulation running on the GPU generates massive amounts of particle data (coordinates, velocities). To visualize it, this data must be transferred back to the host CPU and then to the GPU again for rendering. The PCIe bus linking the CPU and GPU becomes saturated, causing low frame rates and poor interactivity [30].

How can we achieve real-time, interactive visualization of massive MD simulation data? The solution requires a combination of in-situ visualization and advanced scheduling [30].

- In-situ Visualization: Process and render the data as it is generated on the GPU, avoiding the costly transfer back to the CPU host [30].

- GPU Hyper-tasking & Scheduling: A specialized scheduling scheme keeps both the CPU and GPU fully utilized. The GPU is "hyper-tasked" to perform local data compression and participate in rendering, while the CPU handles simulation tasks. An activity-aware technique minimizes redundant data copies [30].

- Expected Outcome: This methodology has been shown to enable interactive visualization of over 1.7 billion protein data points with an average of 42.8 frames per second [30].
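A back-of-the-envelope estimate illustrates why the round trip through host memory rules out interactive frame rates at this scale. The sketch assumes each of the 1.7 billion data points is stored as three 32-bit floats and that the PCIe link sustains 16 GB/s in each direction; both figures are assumptions for illustration, not values from the cited study.

```python
def transfer_seconds(num_points: int, bytes_per_point: int, bandwidth_bytes_per_s: float) -> float:
    """Time to move a point set across the bus once, ignoring latency."""
    return num_points * bytes_per_point / bandwidth_bytes_per_s


points = 1_700_000_000          # data points cited above
bytes_per_point = 3 * 4         # assumption: x, y, z as float32
bandwidth = 16e9                # assumption: 16 GB/s effective PCIe throughput

one_way_s = transfer_seconds(points, bytes_per_point, bandwidth)
round_trip_s = 2 * one_way_s    # device-to-host, then host-to-device for rendering
print(f"one-way transfer: {one_way_s:.3f} s")
print(f"round trip:       {round_trip_s:.3f} s")
print(f"upper bound on frame rate: {1 / round_trip_s:.2f} fps")
```

Under these assumptions a naive copy of every frame caps the frame rate far below interactive levels, which is the gap that in-situ rendering and on-GPU compression are meant to close.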

Troubleshooting Guide: Low Frame Rate in Real-Time MD Visualization

- Problem: In-situ visualization of a large-scale molecular dynamics trajectory is non-interactive, with a very low frame rate [30].

- Investigation Steps:

- Profile Data Transfers: Use profilers (e.g., NVIDIA Nsight) to quantify H2D and D2H transfer times versus computation time.

- Check Memory Usage: Check whether the dataset exceeds the GPU's global memory, which forces continuous on-demand data transfers.

- Review Visualization Code: Determine if the rendering is being done on the CPU or if the GPU is used inefficiently.

- Solution: Implement the optimized scheduling and data transfer minimization technique described above [30].

- Methodology:

- Reconstruct the scheduling scheme to hyper-task the GPUs for rendering.

- Use an activity-aware data-transfer minimization algorithm to reduce redundant copies of structural data.

- Leverage all available GPUs in a system by having each compress its local data for rendering.

- Experimental Protocol:

- Software: C++, OpenMP for CPU multi-threading, CUDA for GPU operations, OpenGL for rendering.

- Hardware: A single node with a multi-core CPU (e.g., 44-core Xeon) and multiple GPUs (e.g., NVIDIA Tesla M40).

- Benchmarking: Compare frame rates and interactivity before and after implementing the optimized scheduler.


Diagram 1: MD Visualization Bottleneck & Optimization.

The Scientist's Toolkit: Essential Research Reagent Solutions

Key Computational Tools & Frameworks for High-Performance Biomedical Computing

| Tool/Framework | Primary Function | Application Context |

|---|---|---|

| HPDR [24] | High-performance, portable data reduction framework. | Minimizes data transfer overhead in genomics and general scientific computing on GPUs. |

| Cloud PACS [26] [27] | Cloud-based Picture Archiving and Communication System. | Securely stores, manages, and provides scalable access to DICOM medical images. |

| In-situ Scheduler [30] | CPU-GPU scheduling for real-time visualization. | Enables interactive exploration of massive molecular dynamics and agent-based simulation data. |

| Modern LIMS [23] | Laboratory Information Management System. | Tracks complex genomic workflows, manages sample lineage, and ensures data integrity. |

| Multimodal AI (Transformers, GNNs) [29] | Integrates imaging, clinical, and genomic data. | Provides comprehensive diagnostic and prognostic models for precision medicine. |

Practical Strategies for Efficient Data Movement: From Compression to Unified Memory

FAQ: Core Concepts and Techniques

What is data reduction and why is it critical in scientific research? Data reduction involves reducing the size or complexity of data while preserving its essential characteristics and minimizing information loss. In scientific research, this is crucial due to the "Big Data" phenomenon, where massive datasets from instruments, sensors, and simulations can lead to inefficient energy consumption, suboptimal bandwidth utilization, and rapidly increasing storage costs in cloud environments. Strategically applying data reduction techniques is fundamental to managing this information overload and streamlining data analysis processes in a resource-efficient way [31].

What is the main difference between lossy and lossless reduction techniques? The primary difference lies in whether the original data can be perfectly reconstructed after the reduction process.

- Lossless Techniques: These methods allow for the exact original data to be reconstructed from the compressed data. They are typically used when absolute data fidelity is required, such as in the storage of raw genomic sequences or final clinical trial data.

- Lossy Techniques: These methods achieve higher reduction rates by permanently discarding some data deemed less critical, accepting a controlled loss of information. They are suitable for scenarios like preliminary data analysis from high-frequency sensors or image data where minor inaccuracies are tolerable [31].
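The difference can be seen on a small synthetic signal. In this sketch, `zlib` stands in for any lossless codec and fixed-step quantization to 16-bit integers stands in for any lossy scheme; the readings and the 0.01 step are made up for illustration.

```python
import struct
import zlib

# Synthetic sensor readings (illustrative data, not from any cited study).
readings = [20.0 + 0.001 * i for i in range(1000)]
raw = struct.pack(f"<{len(readings)}d", *readings)  # 8 bytes per float64

# Lossless: the decompressed bytes are identical to the original.
lossless = zlib.compress(raw)
restored = list(struct.unpack(f"<{len(readings)}d", zlib.decompress(lossless)))

# Lossy: quantize to a 0.01 step and store each value as a 16-bit integer.
step = 0.01
quantized = [round(x / step) for x in readings]
lossy = struct.pack(f"<{len(quantized)}h", *quantized)  # 2 bytes per value
approx = [q * step for q in struct.unpack(f"<{len(quantized)}h", lossy)]
max_error = max(abs(a - b) for a, b in zip(readings, approx))

print(f"raw: {len(raw)} B, lossless: {len(lossless)} B, lossy: {len(lossy)} B")
print(f"lossless exact: {restored == readings}, lossy max error: {max_error:.4f}")
```

The lossy path fixes its size up front but can never recover the discarded precision, which is why it is a poor fit for data that must be preserved exactly.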

How do I choose the right data reduction technique for my dataset? The choice depends on your data type, the required fidelity, and your specific goal (e.g., reducing storage vs. speeding up transfer). The table below summarizes the purpose and common applications of core techniques [31] [32].

| Technique | Primary Function | Common Scientific Applications |

|---|---|---|

| Compression | Reduces data size by encoding information more efficiently. | Storing large genomic files (FASTQ, BAM), medical images, and historical sensor data [31] [33]. |

| Aggregation | Summarizes detailed data into a concise format (e.g., averages, sums). | Generating daily summary statistics from continuous environmental sensors or high-throughput screening results [31]. |

| Dimensionality Reduction | Reduces the number of random variables or features under consideration. | Preprocessing high-dimensional data (e.g., from transcriptomics or proteomics) for machine learning models [31]. |

| Pruning | Removes less important components from a model. | Compressing large AI models (e.g., BERT) used in drug discovery to reduce computational load and energy consumption [32]. |

| Knowledge Distillation | Transfers knowledge from a large, complex model to a smaller, faster one. | Creating compact, efficient models for real-time analysis of scientific data without significant performance loss [32]. |

| Quantization | Reduces the numerical precision of a model's parameters. | Accelerating inference of AI models on specialized hardware, enabling faster analysis in clinical trial data pipelines [32]. |

Our research involves AI models for drug discovery. Can data reduction help with sustainability? Yes, significantly. Model compression techniques directly address the environmental impact of large AI models. A 2025 study demonstrated that applying pruning and knowledge distillation to a BERT model reduced its energy consumption by 32.1% while maintaining 95.9% accuracy on a sentiment analysis task. Similarly, compression applied to other transformer models like ELECTRA achieved a 23.9% reduction in energy use. This makes AI-driven research more carbon-efficient without compromising critical performance metrics [32].

Troubleshooting Guides

Problem: High Data Transfer Overhead Slowing Down Analysis

Symptoms:

- Delays in moving data from instruments to analysis servers.

- Inability to perform real-time or near-real-time processing.

- High cloud egress costs and inefficient bandwidth utilization.

Solution: Implement a Cloud-Edge Collaborative Framework. This approach processes data closer to its source (the "edge") before transferring it to the central cloud, drastically reducing the volume of data that needs to be transferred [34].

- Deploy Edge Nodes: Install small-scale computing devices at the data source (e.g., lab instrument, clinical site).

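- Apply Initial Reduction: On the edge node, run a lightweight reduction algorithm. For heterogeneous IoT-style data from sensors, a two-stage approach is effective: a wavelet-transform stage that aggregates and denoises the signals, followed by tensor decomposition (e.g., Tucker decomposition) for multi-dimensional compression [34].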

- Transfer Only Reduced Data: Send the significantly smaller, reduced dataset to the cloud for long-term storage or complex analysis.

This framework has been shown to achieve compression ratios below 40%, meaning over 60% of data volume is eliminated before transfer [34].

Problem: Loss of Critical Information After Aggregation

Symptoms: Important outliers or subtle patterns in the raw data are lost after applying aggregation (e.g., averaging), leading to incorrect conclusions.

Solution: Adopt a Tiered Fidelity Data Strategy

- Define Data Criticality: Categorize data based on its potential information value. For example, data from a final clinical trial is "high-criticality," while initial exploratory assay data may be "lower-criticality."

- Apply Lossless Reduction to High-Criticality Data: Use lossless compression algorithms (e.g., LZW, GZIP) for all final, validated datasets where every data point must be preserved [31].

- Apply Lossy Reduction for Exploration: For initial data exploration and analysis, use aggressive lossy techniques (e.g., Symbolic Aggregate Approximation - SAX) to quickly identify high-level trends and patterns [31].

- Retain Raw Data Temporarily: Store raw, high-fidelity data in a low-cost storage tier for a predefined period. This allows researchers to revert to the original data if anomalies are detected in the reduced dataset.
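Symbolic Aggregate Approximation, named in the exploration step above, can be sketched with the standard library alone. This minimal version assumes a four-letter alphabet (whose breakpoints are the quartiles of the standard normal distribution) and a series length divisible by the number of segments; the function name and example series are illustrative.

```python
from bisect import bisect_right
from statistics import mean, pstdev

# Quartile breakpoints of the standard normal for a 4-symbol alphabet.
BREAKPOINTS = (-0.6745, 0.0, 0.6745)
ALPHABET = "abcd"


def sax(series, segments):
    """Reduce a numeric series to a short string of symbols."""
    if segments <= 0 or len(series) % segments:
        raise ValueError("series length must be a positive multiple of segments")
    mu, sigma = mean(series), pstdev(series)
    # Step 1: z-normalize (a constant series maps to all zeros).
    z = [(x - mu) / sigma for x in series] if sigma else [0.0] * len(series)
    # Step 2: piecewise aggregate approximation, one mean per segment.
    size = len(series) // segments
    paa = [mean(z[i * size:(i + 1) * size]) for i in range(segments)]
    # Step 3: map each segment mean to a symbol via the breakpoints.
    return "".join(ALPHABET[bisect_right(BREAKPOINTS, v)] for v in paa)


series = [1, 1, 1, 1, 5, 5, 5, 5, 9, 9, 9, 9, 5, 5, 5, 5]
print(sax(series, segments=4))
```

Sixteen values collapse to four symbols, which is enough to see the rise and fall but not enough to recover any individual reading, hence the advice to retain the raw data for a period.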

Problem: Compressed AI Models Show Significant Performance Degradation

Symptoms: After applying model compression to reduce computational load, the model's accuracy, precision, or other performance metrics drop unacceptably.

Solution: Follow a Structured Compression and Fine-Tuning Protocol

This methodology is based on experimental protocols used for compressing transformer models like BERT and ELECTRA [32].

- Establish a Performance Baseline: Fully train your model on the target dataset (e.g., Amazon Polarity for sentiment) and measure its baseline performance (Accuracy, F1-Score, ROC AUC) and resource consumption.

- Apply Compression Methodically:

- Pruning: Iteratively remove the least important weights (e.g., those with the smallest magnitudes) from the model. Prune in small increments (e.g., 10% of weights at a time).

- Knowledge Distillation: Train a smaller "student" model to mimic the output and intermediate representations of the larger, accurate "teacher" model.

- Quantization: Convert the model's parameters from 32-bit floating-point numbers to lower-precision formats like 16-bit floats or 8-bit integers.

- Fine-Tune the Compressed Model: This is a critical step. After compression, retrain the model for a small number of epochs on the original training data. This allows the model to recover from the performance loss induced by compression.

- Evaluate and Iterate: Compare the performance and energy efficiency of the compressed-and-fine-tuned model against your baseline. If performance is insufficient, adjust compression parameters and repeat.
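The pruning and quantization steps can be illustrated on a plain list of weights. This is a toy sketch of the two ideas, not the procedure used in the cited study: real models would rely on the pruning and quantization utilities of their deep learning framework, the weights below are made up, and the fine-tuning step is not shown.

```python
def prune_by_magnitude(weights, fraction):
    """Zero out the given fraction of weights with the smallest magnitudes."""
    k = int(len(weights) * fraction)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric quantization to integers in [-127, 127] plus a scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(q_weights, scale):
    return [q * scale for q in q_weights]


weights = [0.02, -0.5, 0.8, -0.01, 0.3, 0.05, -0.9, 0.1, 0.004, -0.2]
pruned = prune_by_magnitude(weights, fraction=0.10)   # one 10% pruning increment
q_weights, scale = quantize_int8(pruned)
recovered = dequantize(q_weights, scale)
worst = max(abs(a - b) for a, b in zip(pruned, recovered))
print("pruned:   ", pruned)
print("quantized:", q_weights)
print(f"largest quantization error: {worst:.5f}")
```

Each round of pruning or quantization introduces a small error like the one printed here, which is what the fine-tuning step is meant to compensate for.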

The table below quantifies the performance and energy savings achieved in a controlled study applying these techniques [32].

| Model & Compression Technique | Performance (Accuracy) | Performance (ROC AUC) | Reduction in Energy Consumption |

|---|---|---|---|

| BERT (Baseline) | (Reference) | (Reference) | (Reference) |

| BERT + Pruning + Distillation | 95.90% | 98.87% | 32.10% |

| DistilBERT + Pruning | 95.87% | 99.06% | 6.71% |

| ELECTRA + Pruning + Distillation | 95.92% | 99.30% | 23.93% |

| ALBERT + Quantization | 65.44% | 72.31% | 7.12% |

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Solution | Function in Data Reduction Research |

|---|---|

| CodeCarbon | An open-source Python package that estimates the amount of carbon dioxide (CO₂) produced by the computing resources used to run machine learning models. It is essential for quantifying the environmental benefits of model compression [32]. |

| Wavelet Transform Toolkits (e.g., PyWavelets) | Software libraries used for the first stage of data aggregation in edge computing, particularly effective for denoising and compressing signal and time-series data from scientific sensors [34]. |

| Tensor Decomposition Libraries (e.g., TensorLy) | Provide implementations of tensor decomposition methods like Tucker decomposition, used for advanced multi-dimensional data compression after initial aggregation [34]. |

| Pruning & Distillation Frameworks | Libraries integrated with deep learning frameworks (e.g., TensorFlow Model Optimization Toolkit, PyTorch) that provide algorithms for pruning model weights and performing knowledge distillation [32]. |

| Electronic Health Record (EHR) APIs (e.g., FHIR) | Standardized application programming interfaces that enable automated, systematic capture of clinical trial data (labs, medications) directly from source systems, reducing manual entry errors and the need for subsequent data verification [35]. |

| Cloud-Native Container Orchestration (e.g., Kubernetes) | Technology used to manage and scale the microservices that perform data reduction in cloud-edge frameworks, ensuring portable, reproducible, and efficient processing pipelines [33] [34]. |

Edge computing is a distributed computing paradigm that processes data near its source, at the "edge" of the network, rather than sending it to distant, centralized cloud servers. [36] [37] For researchers, scientists, and drug development professionals, this approach is transformative. It directly addresses the critical bottleneck of data transfer overhead in research workflows, enabling real-time analytics, reducing bandwidth costs and latency, and enhancing data security and privacy—a paramount concern when handling sensitive experimental or patient data. [38] [39] [37] By filtering and pre-processing data locally, edge computing allows you to transmit only valuable, aggregated insights, minimizing the massive data transfers that can slow down research and increase costs.

Core Architecture: How Edge Computing Works

Understanding the core components of an edge architecture is the first step to successful implementation. The following diagram illustrates how these components interact to process data efficiently.

Edge Computing Data Flow

The architecture consists of several key layers [37]:

- Edge Devices: These are the instruments generating raw data, such as lab sensors, high-throughput screeners, or patient monitoring devices. They perform minimal processing, like initial data filtering.

- Edge Gateways: This layer aggregates data from multiple devices. It handles basic analytics and preprocessing, such as data format conversion and aggregation.

- Edge Servers: Located close to the data source (e.g., in a lab or clinic), these servers perform heavy-duty local processing. This is where critical tasks like running AI inference models or real-time analytics occur. Data is temporarily stored here before only essential information is synced to the cloud.

- Cloud/Data Center: The central cloud provides long-term storage, in-depth analytics, and centralized management, such as training complex AI models that are then deployed back to the edge.

The Researcher's Toolkit: Protocols & Platforms

IoT Protocol Comparison for Research Environments

Choosing the right communication protocol is crucial for optimizing the performance of your edge computing setup. The table below compares the key characteristics of common protocols to guide your selection.

| Protocol | Energy Use | Latency | Bandwidth Use | Security Features | Best For (Research Context) |

|---|---|---|---|---|---|

| MQTT | Moderate | Low | Moderate | Basic encryption | Resource-limited setups; lightweight sensor data collection. [40] |

| AMQP | High | Low | High | Built-in security | Mission-critical systems requiring reliable, secure message delivery. [40] |

| CoAP | Lowest | Lowest | Lowest | Basic security (DTLS) | Battery-powered, low-bandwidth devices; constrained lab environments. [40] |

| HTTP/REST | Highest | High | High | Mature security (HTTPS) | Scenarios prioritizing broad compatibility over efficiency. [40] |

| DDS | Low | Low | Low | Advanced security | Complex, real-time systems requiring high scalability and robustness. [40] |

Leading Edge Computing Platforms for 2025

Selecting a platform that fits your research infrastructure is key. Here are some leading platforms for 2025 [39]:

- Scale Computing Platform: A hyper-converged infrastructure (HCI) that combines virtualization, storage, and computing. It is known for its simplicity and automated self-healing technology, ideal for remote or distributed research sites with minimal IT support. [39]

- Azure IoT Edge (Microsoft): Allows deployment of AI-driven analytics and cloud workloads directly at the edge. It integrates seamlessly with Microsoft's cloud ecosystem, making it strong for projects already using Azure services. [39]

- Eclipse ioFog: An open-source framework designed for scalable, containerized workloads. It integrates with Kubernetes, offering flexibility for managing complex edge computing infrastructure. [39]

- Google Distributed Cloud Edge: Delivers AI-powered analytics and real-time data processing at the edge, with strong support for third-party integrations. [39]

Experimental Setup & Methodology

Workflow for Data Transfer Overhead Experiment

To quantitatively assess the impact of edge computing on data transfer overhead, you can implement the following experimental workflow.

Data Overhead Experiment Workflow

Objective: To measure the reduction in data transfer volume, latency, and bandwidth consumption achieved by implementing edge-based data pre-processing compared to a raw data transfer model.

Methodology [37]:

- Define Data Source and Baseline: Select a high-data-volume source relevant to your research (e.g., a live-cell imaging system, DNA sequencer, or distributed environmental sensor network). Establish a baseline by measuring the total data volume generated over a set period and the time taken to transfer this raw data to a central cloud server.

- Configure Edge Node Hardware: Select and set up an edge server or gateway with adequate CPU, memory, and storage for your processing needs. Ensure it is physically located near the data source. [37]

- Implement Pre-processing Logic: Deploy containerized applications on the edge node to perform data filtering. Examples include:

- Anomaly Detection: Transmit only data points that deviate significantly from a defined baseline.

- Data Compression: Use algorithms to reduce file sizes before transmission.

- AI Inference: Run a pre-trained machine learning model (e.g., for image segmentation or feature extraction) and send only the model's results (e.g., "cell count: 145") instead of the raw image or video feed. [38] [39]

- Execute Experiment and Collect Metrics: Run the data source with edge processing enabled. Collect the following quantitative data for the same duration as the baseline:

- Data Volume Transferred: Total size of data sent to the cloud.

- End-to-End Latency: Time from data generation to the availability of insights in the cloud.

- Bandwidth Utilization: Network bandwidth used during transmission.

- Analyze Results: Compare the metrics from the edge-enabled run against the baseline. Calculate the percentage reduction in data volume and latency.
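The anomaly-detection option and the final analysis step can be sketched together. The temperature readings, baseline, and threshold below are hypothetical values for illustration, and counting records is used as a simple proxy for data volume.

```python
def filter_anomalies(readings, baseline, threshold):
    """Keep only (index, value) pairs that deviate from the baseline by more than the threshold."""
    return [(i, x) for i, x in enumerate(readings) if abs(x - baseline) > threshold]


def percent_reduction(baseline_value, edge_value):
    """Percentage reduction of a metric relative to the raw-transfer baseline."""
    return (baseline_value - edge_value) / baseline_value * 100


# Hypothetical incubator temperature stream: mostly nominal, a few excursions.
readings = [37.0] * 95 + [39.5] * 5
to_send = filter_anomalies(readings, baseline=37.0, threshold=1.0)

reduction = percent_reduction(len(readings), len(to_send))
print(f"records sent: {len(to_send)} of {len(readings)}")
print(f"reduction in transferred records: {reduction:.1f}%")
```

The same `percent_reduction` helper applies to the latency and bandwidth metrics collected in the experiment.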

Frequently Asked Questions (FAQ) & Troubleshooting

General Concepts

Q1: Why can't I just use the cloud for all my data processing? Traditional cloud computing centralizes processing in remote data centers. For large, continuous data streams, this creates a bottleneck due to latency (the delay in sending data and receiving a response), high bandwidth costs, and potential security risks from constantly transmitting sensitive data. Edge computing processes data locally, providing near-instant results and mitigating these issues. [39] [37]

Q2: How does edge computing relate to Federated Learning in drug discovery? They are highly complementary. Edge computing provides the infrastructure to process data locally on devices or within hospital firewalls. Federated Learning is a technique that leverages this infrastructure: it sends an AI model to the edge nodes where data resides, the model trains locally, and only the model updates (not the raw data) are sent back to a central server. This is a powerful paradigm for collaborating on AI model training without sharing sensitive patient or proprietary research data. [38]
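The central aggregation step can be sketched as a sample-weighted average of the updates received from each site. Weighted averaging of this kind (often called federated averaging) is one common aggregation rule; the text above does not specify which rule is used, so this is an assumption, and the site data is made up.

```python
def federated_average(updates):
    """Combine (weights, num_samples) pairs into one sample-weighted model."""
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("at least one site must contribute samples")
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


# Hypothetical updates: only model parameters and sample counts leave each site.
site_updates = [
    ([1.0, 2.0], 100),   # hospital A, trained on 100 local records
    ([3.0, 4.0], 300),   # hospital B, trained on 300 local records
]
global_weights = federated_average(site_updates)
print(global_weights)
```

The payload sent by each site is the size of the model, not the size of its dataset, which is where the transfer saving comes from.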

Technical Implementation

Q3: My edge device has limited computing power. Which protocol should I use? For resource-constrained devices, CoAP (Constrained Application Protocol) is often the best choice. It is specifically designed for low-power, low-bandwidth devices and has the lowest energy consumption of the major protocols. [40] MQTT is another strong, lightweight candidate for simple messaging.

Q4: I am experiencing high latency even with an edge server. What could be wrong?

- Check the Protocol: Ensure you are not using a protocol like HTTP/REST, which has high latency. Switch to a more efficient protocol like MQTT or CoAP. [40]

- Network Configuration: Investigate local network congestion or interference.

- Server Load: The edge server itself may be overloaded. Monitor its CPU and memory usage to see if it requires more powerful hardware or if workloads need to be optimized. [37]

Q5: How can I ensure my edge node is secure?

- Encrypt Data: Ensure data is encrypted both at rest on the edge device and in transit to the cloud. [37]

- Secure Access: Implement multi-factor authentication and the principle of least privilege for accessing the edge node. [37]

- Regular Updates: Establish a process for regularly updating and patching the software running on your edge devices to fix vulnerabilities. [37]

Data Management

Q6: What kind of data pre-processing is most effective for reducing transfer volume?

- Filtering: Discard irrelevant data (e.g., removing empty or control images from a high-throughput screen).

- Compression: Apply lossless or lossy compression to images and videos.

- Feature Extraction: Run AI models at the edge to extract only the relevant features (e.g., protein binding affinity scores) instead of sending the entire raw dataset. [38]
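
The three pre-processing steps can be combined in a short pipeline. The sketch below is illustrative only: it treats each image as a flat sequence of 8-bit pixel values, uses a mean-intensity threshold as a stand-in for a real emptiness test, and reduces feature extraction to two summary statistics. The function names and the threshold are assumptions, and `zlib` stands in for whichever lossless codec suits the data.

```python
import zlib
from typing import Dict, List


def is_informative(frame: bytes, min_mean: float = 1.0) -> bool:
    """Filtering: treat frames whose mean pixel value is below min_mean as empty."""
    return len(frame) > 0 and sum(frame) / len(frame) >= min_mean


def extract_features(frame: bytes) -> Dict[str, float]:
    """Feature extraction: a stand-in that reduces a frame to two summary numbers."""
    return {"mean": sum(frame) / len(frame), "max": float(max(frame))}


def prepare_for_upload(frames: List[bytes]) -> List[bytes]:
    """Drop empty frames, then losslessly compress the ones that remain."""
    return [zlib.compress(f, level=6) for f in frames if is_informative(f)]


if __name__ == "__main__":
    # Hypothetical 8-bit frames: one blank, one with a repetitive signal.
    frames = [bytes(4096), bytes([0, 50, 100, 150] * 1024)]
    payloads = prepare_for_upload(frames)
    raw = sum(len(f) for f in frames)
    sent = sum(len(p) for p in payloads)
    print(f"raw bytes: {raw}, bytes to send: {sent}")
    print("features:", extract_features(frames[1]))
```

Filtering and feature extraction usually save far more than compression alone, because they remove data before it is ever encoded. How much compression helps depends heavily on the content, so the ratio seen with this synthetic frame says nothing about real microscopy images.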

Q7: How do I handle data synchronization between the edge and the cloud if the connection is unstable? This is a core strength of edge architecture. Use local buffering or storage on the edge server to temporarily hold data. Employ messaging protocols like MQTT or AMQP with Quality of Service (QoS) levels that ensure messages are delivered once the connection is restored. The system can continue local operations independently during an outage. [40] [37]
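
A minimal sketch of the local buffering idea is shown below, assuming a hypothetical `send` callable that returns True when the upstream side accepts a message. The class name, the queue size limit, and the callable's contract are illustrative assumptions; in practice the callable would wrap an MQTT or AMQP client, and the queue would usually be persisted to disk.

```python
from collections import deque
from typing import Callable, Deque


class StoreAndForwardBuffer:
    """Hold messages locally while the uplink is down and flush them in order later."""

    def __init__(self, send: Callable[[bytes], bool], max_items: int = 10_000) -> None:
        self._send = send  # returns True when the message was accepted upstream
        # Bounded queue: when full, the oldest entries are dropped first.
        self._queue: Deque[bytes] = deque(maxlen=max_items)

    def publish(self, message: bytes) -> None:
        """Queue the message, then try to drain the queue."""
        self._queue.append(message)
        self.flush()

    def flush(self) -> int:
        """Send queued messages oldest-first; stop at the first failure. Returns count sent."""
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break  # link still down: keep the message for the next attempt
            self._queue.popleft()
            sent += 1
        return sent

    def pending(self) -> int:
        """Number of messages still waiting for delivery."""
        return len(self._queue)


if __name__ == "__main__":
    link = {"up": False}
    delivered = []

    def fake_send(msg: bytes) -> bool:
        # Hypothetical transport: succeeds only while the simulated link is up.
        if link["up"]:
            delivered.append(msg)
        return link["up"]

    buf = StoreAndForwardBuffer(fake_send)
    buf.publish(b"reading-1")
    buf.publish(b"reading-2")
    link["up"] = True
    buf.flush()
    print(delivered, buf.pending())
```

A message is removed from the queue only after the send succeeds, so a failure between sending and removal can produce a duplicate upstream. That matches at-least-once delivery, and consumers should therefore tolerate repeated messages.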

This guide provides a technical framework for researchers, scientists, and drug development professionals to select the optimal data transfer protocol for scientific instrumentation and data acquisition systems. The recommendations are framed within the broader research objective of minimizing host-device data transfer overhead, a critical factor in accelerating experimental throughput and improving the efficiency of data-intensive research workflows.

Protocol Comparison and Selection Criteria

The following table summarizes the core characteristics of MQTT, gRPC, and Custom UDP to aid in initial protocol selection.

Table 1: Quantitative Protocol Comparison for Research Data Transfer

| Feature | MQTT | gRPC | Custom UDP |

|---|---|---|---|

| Architecture/Model | Publish/Subscribe [41] | Request/Response, Streaming [42] | Connectionless Datagrams [43] |

| Underlying Transport | TCP [41] [44] | HTTP/2 (over TCP) [42] | UDP [43] |

| Header Overhead | Very Low (2-byte minimum fixed header) [41] | Moderate (HTTP/2 headers + Protobuf) | Minimal (8-byte UDP header only) |

| Data Serialization | Data-agnostic (Binary, JSON, etc.) [41] | Protocol Buffers (Binary) [42] | Any custom binary format |

| Reliability & Delivery Guarantees | Selectable QoS (0, 1, 2) [44] | Inherent via TCP/HTTP/2 | Unreliable; must be implemented in application [43] |

| Typical Latency | Low [43] | Low [42] | Very Low [43] |

| Ideal Research Scenario | Many devices/sensors streaming to multiple consumers [41] | Microservices, high-performance computing, complex data structures [42] | High-frequency, loss-tolerant real-time data (e.g., video streams) [43] |

Experimental Setup and Validation Methodologies

To validate protocol performance within a research context, the following experimental methodologies are recommended.

Experiment 1: Baseline Bandwidth and Latency Profiling

Objective: To establish quantitative performance baselines for each protocol under controlled network conditions.

Research Reagent Solutions:

- Protocol Implementations: Mosquitto MQTT Broker, gRPC with Protobuf, and a custom UDP socket application.

- Network Emulator: A tool like tc (Linux traffic control) or WANem to simulate network constraints.

- Data Generator: A script to generate standardized, reproducible data payloads of varying sizes.

- Monitoring Tool: Wireshark for precise packet-level analysis of header overhead and transmission behavior.

Methodology:

- Setup: Configure the network emulator to a pristine, low-latency setting.

- Execution: For each protocol, transmit the standardized data payloads from a client to a server, repeating the process to ensure statistical significance.

- Measurement: Record key metrics for each transmission, including round-trip time (for request/response models), end-to-end latency (for streaming), and total bandwidth consumed.

- Constraint Introduction: Re-run the experiment while systematically introducing network constraints via the emulator, such as limited bandwidth (e.g., 1 Mbps), high latency (e.g., 100 ms), and packet loss (e.g., 1%).

- Analysis: Compare the performance degradation of each protocol to identify its operational tolerance for unstable network conditions, a common challenge in lab environments.
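
The execution and measurement steps above can be driven by a small protocol-agnostic harness. The sketch below assumes a hypothetical `transmit` callable that blocks until one transmission (or round trip) has completed; the function name, the default repeat count, and the choice of summary statistics are illustrative assumptions rather than a prescribed benchmark design.

```python
import statistics
import time
from typing import Callable, Dict, List


def profile_transport(transmit: Callable[[bytes], None],
                      payload_sizes: List[int],
                      repeats: int = 30) -> Dict[int, Dict[str, float]]:
    """Time transmit() for each payload size and summarise the latencies in milliseconds."""
    if repeats < 2:
        raise ValueError("repeats must be at least 2 to compute a standard deviation")
    results: Dict[int, Dict[str, float]] = {}
    for size in payload_sizes:
        payload = bytes(size)  # reproducible all-zero payload of the requested size
        samples_ms = []
        for _ in range(repeats):
            start = time.perf_counter()
            transmit(payload)  # expected to block until the transfer completes
            samples_ms.append((time.perf_counter() - start) * 1000.0)
        results[size] = {
            "mean_ms": statistics.mean(samples_ms),
            "median_ms": statistics.median(samples_ms),
            "stdev_ms": statistics.stdev(samples_ms),
            "max_ms": max(samples_ms),
        }
    return results


if __name__ == "__main__":
    def loopback_stub(payload: bytes) -> None:
        # Stand-in for a real client call: replace with an MQTT publish that waits
        # for acknowledgement, a gRPC unary call, or a UDP echo round trip.
        bytearray(payload)

    for size, stats in profile_transport(loopback_stub, [64, 4096, 65536]).items():
        print(size, {k: round(v, 4) for k, v in stats.items()})
```

Running the same harness once under pristine conditions and again under each emulated constraint gives directly comparable tables per protocol. All-zero payloads are reproducible but compress unusually well, so a fixed-seed random payload may be a better choice when any layer of the stack applies compression.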

Experiment 2: Data Reliability and Integrity Verification

Objective: To stress-test the delivery guarantees of each protocol and verify data integrity under duress.

Methodology:

- Controlled Packet Loss: Use the network emulator to introduce a known percentage of packet loss.

- MQTT QoS Validation: For MQTT, publish a known sequence of messages at QoS 0, 1, and 2. On the subscriber side, verify the receipt of messages, checking for duplicates (QoS 1) and guaranteed, single delivery (QoS 2) [44].

- gRPC Stream Integrity: For gRPC, initiate a long-lived bidirectional stream. Intentionally drop the connection at the network level and monitor the time for the client and server to detect the failure and re-establish the stream, noting any data loss during the interruption [45].

- Custom UDP Application-Level Checks: For the custom UDP implementation, transmit data with sequence numbers and a checksum in the payload. On the receiver, measure the actual packet loss rate and validate the integrity of received packets. Implement and test a simple application-level retransmission mechanism for critical data.
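
For the custom UDP check, one possible datagram layout is a fixed header carrying a sequence number and a CRC32 of the payload. The sketch below is a minimal illustration under that assumption; the 8-byte header layout, the function names, and the simulated drop pattern are hypothetical choices, not a standard format.

```python
import struct
import zlib
from typing import Iterable, Optional, Tuple

# Network byte order: 32-bit sequence number followed by 32-bit CRC32 of the payload.
HEADER = struct.Struct("!II")


def pack_datagram(seq: int, payload: bytes) -> bytes:
    """Prefix the payload with its sequence number and a CRC32 of the payload."""
    return HEADER.pack(seq, zlib.crc32(payload)) + payload


def unpack_datagram(datagram: bytes) -> Optional[Tuple[int, bytes]]:
    """Return (seq, payload), or None when the datagram is truncated or corrupted."""
    if len(datagram) < HEADER.size:
        return None
    seq, checksum = HEADER.unpack_from(datagram)
    payload = datagram[HEADER.size:]
    return (seq, payload) if zlib.crc32(payload) == checksum else None


def loss_rate(received_seqs: Iterable[int], sent_count: int) -> float:
    """Fraction of the sent_count datagrams (numbered 0..sent_count-1) that never arrived."""
    unique = {s for s in received_seqs if 0 <= s < sent_count}
    return 1.0 - len(unique) / sent_count


if __name__ == "__main__":
    sent = 100
    # Simulate a lossy link by dropping every tenth datagram (illustrative only).
    arrived = [pack_datagram(i, b"sample") for i in range(sent) if i % 10 != 0]
    seqs = [parsed[0] for parsed in map(unpack_datagram, arrived) if parsed is not None]
    print(f"measured loss rate: {loss_rate(seqs, sent):.2%}")
```

The same sequence numbers that measure loss also tell the receiver which datagrams to request again, so a simple retransmission scheme can be layered on top by sending the missing numbers back to the sender. Duplicates are counted once because the loss calculation works on the set of unique sequence numbers.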

Diagram 1: High-Level Experimental Workflow for comparing MQTT, gRPC, and Custom UDP under various network conditions.

Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

Q1: For a high-throughput sensor network in a lab with an unreliable wireless network, which protocol is most suitable? A1: MQTT is often the optimal choice. Its publish/subscribe model efficiently distributes data from many sensors to multiple consumers [41]. Most importantly, its Quality of Service (QoS) levels allow you to guarantee message delivery for critical data even over unstable links, and its lightweight nature conserves bandwidth and power on constrained devices [44].

Q2: We are building a distributed analysis application where services need to request complex, structured data from each other with low latency. What should we use? A2: gRPC is designed for this scenario. Its use of HTTP/2 provides multiplexing for efficient concurrent requests, and Protocol Buffers offer a fast, compact, and strongly-typed serialization format for complex data structures, reducing parsing overhead and bandwidth compared to JSON [42].

Q3: Our experiment involves streaming high-frequency video data where losing an occasional frame is acceptable, but latency must be kept to an absolute minimum. What is the best approach? A3: A custom protocol built on UDP suits this use case. UDP is connectionless and never retransmits, so it avoids the connection setup and retransmission delays of TCP-based protocols and offers the lowest latency of the three options [43]. You can build a custom application on top of UDP that sends video frames as datagrams, accepting some frame loss as a trade-off for real-time speed.

Q4: Our gRPC client frequently experiences long delays after a server pod is restarted in our Kubernetes cluster. What is happening? A4: This is a classic "zombie connection" issue. The gRPC client maintains a long-lived connection, and if the server disappears ungracefully (e.g., hardware failure), the client's TCP stack may not immediately detect the failure [45]. To resolve this, enable gRPC Keepalive settings on both client and server. This forces periodic pings to proactively verify the health of the connection, reducing failure detection time from minutes to seconds [45].
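
As a hedged illustration of the keepalive settings mentioned in A4, the sketch below builds channel option lists using gRPC core's keepalive argument names. The helper function names, the interval and timeout values, and the service address in the comments are assumptions chosen for illustration; suitable values depend on the network and on any proxies or load balancers in the path.

```python
from typing import List, Tuple

ChannelOptions = List[Tuple[str, int]]


def client_keepalive_options(ping_interval_s: int = 10, ping_timeout_s: int = 5) -> ChannelOptions:
    """Client-side options: ping periodically and fail fast if no acknowledgement arrives."""
    return [
        ("grpc.keepalive_time_ms", ping_interval_s * 1000),      # idle time before a ping
        ("grpc.keepalive_timeout_ms", ping_timeout_s * 1000),    # wait for the ping ack
        ("grpc.keepalive_permit_without_calls", 1),              # ping even with no active RPC
        ("grpc.http2.max_pings_without_data", 0),                # 0 = no limit on such pings
    ]


def server_keepalive_options(min_client_ping_interval_s: int = 5) -> ChannelOptions:
    """Server-side options: tolerate client pings at least this far apart."""
    return [
        ("grpc.keepalive_permit_without_calls", 1),
        ("grpc.http2.min_ping_interval_without_data_ms", min_client_ping_interval_s * 1000),
    ]


# Usage with the grpcio package (not imported here so the sketch runs without it;
# the address below is a hypothetical service name):
#   import grpc
#   from concurrent import futures
#   channel = grpc.insecure_channel("analysis-service:50051",
#                                   options=client_keepalive_options())
#   server = grpc.server(futures.ThreadPoolExecutor(max_workers=4),
#                        options=server_keepalive_options())

if __name__ == "__main__":
    print(client_keepalive_options())
    print(server_keepalive_options())
```

The server's minimum accepted ping interval should be no longer than the client's ping interval. Otherwise the server may treat the pings as abusive and close the connection, which would reintroduce the delays the settings are meant to remove.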

Q5: MQTT clients are unable to connect to the broker with an "identifier rejected" error. How can we fix this? A5: This error means the broker will not accept the client ID that the client presented. Check the broker's configuration file (e.g., mosquitto.conf) for settings that restrict client IDs; in Mosquitto, for example, the clientid_prefixes and allow_zero_length_clientid options control which IDs are permitted. Also confirm that each client sends a non-empty, valid client ID [46].

Troubleshooting Guide: Common Connection Issues

Problem: MQTT Broker Connection Refused

- Step 1: Verify Broker Configuration: Check the broker's configuration file (e.g., /etc/mosquitto/mosquitto.conf). Ensure it is listening on the correct IP address (bind_address) and port (default 1883) [46].

- Step 2: Check Authentication: If authentication is enabled, verify the client is providing the correct username and password. Ensure the password file path in the broker config is correct and the file has been created using mosquitto_passwd [46].

- Step 3: Inspect Firewall Rules: Confirm that firewalls (host-based or network) are not blocking traffic on the MQTT port (1883 or 8883 for SSL).
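
Before working through the broker configuration, it can help to confirm that the port is reachable at all. The sketch below is a minimal TCP reachability probe using only the standard library; the function name and the default timeout are assumptions. It covers the listener and firewall checks only and says nothing about authentication.

```python
import socket


def broker_port_reachable(host: str, port: int = 1883, timeout_s: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened within timeout_s."""
    try:
        with socket.create_connection((host, port), timeout=timeout_s):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


if __name__ == "__main__":
    # "localhost" is a placeholder; substitute the broker's address.
    for port in (1883, 8883):
        state = "reachable" if broker_port_reachable("localhost", port, 1.0) else "not reachable"
        print(f"port {port}: {state}")
```

If the probe succeeds from the broker host itself but fails from the client machine, the cause is more likely the bind address or a firewall than the broker process. If it succeeds from both, attention can shift to authentication.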

Problem: gRPC Requests Hanging or Timing Out (DEADLINE_EXCEEDED)

- Step 1: Adjust Client Deadlines: Increase the timeout setting in your gRPC client configuration. However, this may only mask an underlying performance issue [47].

- Step 2: Profile Server Performance: Use profiling tools to identify and optimize expensive or slow server-side methods that are causing the bottlenecks [47].

- Step 3: Implement Keepalive: As detailed in FAQ A4, configure gRPC Keepalive to prevent zombie connections from causing long delays [45].

Diagram 2: Troubleshooting workflow for MQTT broker connection issues.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Software and Hardware Solutions for Protocol Implementation

| Item | Function in Research Context |

|---|---|

| Mosquitto MQTT Broker | An open-source broker that acts as the central nervous system for MQTT-based data acquisition, routing messages from publishers (sensors) to subscribers (data analysis services) [46]. |

| gRPC Protocol Buffers (.proto files) | The interface definition language for gRPC. Used to strictly define the methods and data structures of your services, ensuring type-safe and efficient communication between different parts of your analysis pipeline [42]. |

| Network Emulator (e.g., tc) | A critical tool for simulating real-world network imperfections (latency, packet loss, bandwidth limits) in a controlled lab environment to validate protocol robustness. |

| Wireshark | A network protocol analyzer used for deep packet inspection. It is indispensable for debugging protocol behavior, verifying handshakes, and accurately measuring header overhead. |

| TLS/SSL Certificates | The foundational reagent for securing data in transit. Essential for encrypting MQTT (via MQTT over SSL/TLS) and gRPC communications to protect sensitive research data [41] [48]. |

FAQs: Core Concepts and Architecture

Q1: What is the fundamental architectural difference between traditional PIM and CXL-PIM that affects data transfer?

A1: The core difference lies in their memory addressing models. Traditional Processing-in-Memory (PIM) uses disjoint host-device address spaces, requiring explicit data staging—copying inputs to PIM memory before computation and results back to host memory afterward. In contrast, CXL-PIM leverages the Compute Express Link (CXL) standard to create a unified, cache-coherent address space. This allows the host CPU to access device memory directly using standard load/store instructions, eliminating the need for explicit copying and enabling a zero-copy programming model [49] [50].

Q2: What are the three types of Unified Shared Memory (USM) allocations, and when should I use each?

A2: USM provides three allocation types, each with distinct performance characteristics [51]:

| Allocation Type | Host Accessible | Device Accessible | Data Location | Ideal Use Case |

|---|---|---|---|---|

| malloc_device | No | Yes | Device | Kernel-only data; fastest device execution. |

| malloc_host | Yes | Yes (remotely) | Host | Rarely accessed or large datasets not fitting in device memory. |

| malloc_shared | Yes | Yes | Migrates between Host & Device | Data frequently accessed by both host and device; enables zero-copy. |

Q3: Under what workload conditions does CXL-PIM outperform traditional PIM?

A3: CXL-PIM excels with workloads characterized by large dataset sizes, high input/output volumes, and irregular access patterns, where explicit staging overhead becomes prohibitive. Research shows that when traditional PIM handles large datasets (e.g., 128 GB), host-PIM data transfer can account for 60-90% of total runtime, causing the system to underperform a CPU baseline. CXL-PIM's unified memory avoids this staging penalty. Conversely, traditional PIM can be better for small, tightly coupled workloads where its lower access latency is beneficial [49].
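
The staging penalty can be reasoned about with a simple back-of-the-envelope model: the share of runtime spent on explicit copies is the transfer time divided by the sum of transfer and compute time. The sketch below encodes that arithmetic. The function name and every input value in the example are hypothetical assumptions chosen to show the calculation, not measurements from the cited work.

```python
def staging_fraction(input_bytes: float, output_bytes: float,
                     link_bandwidth_bytes_per_s: float, compute_time_s: float) -> float:
    """Share of end-to-end time spent copying data to and from the device."""
    transfer_s = (input_bytes + output_bytes) / link_bandwidth_bytes_per_s
    total_s = transfer_s + compute_time_s
    return transfer_s / total_s if total_s > 0 else 0.0


if __name__ == "__main__":
    GB = 1e9
    # Hypothetical inputs: 128 GB staged in, 1 GB copied back, 20 GB/s effective
    # host-device bandwidth, 2 s of on-device compute.
    share = staging_fraction(128 * GB, 1 * GB, 20 * GB, 2.0)
    print(f"staging share of runtime: {share:.0%}")
```

The model ignores overlap between transfer and compute, so it gives an upper bound for pipelines that can stream data while kernels run. Its main use is as a quick screen: when the estimated share is high, a unified-memory design such as CXL-PIM is worth evaluating.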

Troubleshooting Guides

Problem 1: Poor End-to-End Performance with Traditional PIM

Symptoms: Overall application runtime is slower than a CPU-only baseline, especially as dataset sizes or the number of Processing Units (PUs) increase. Performance profiling shows minimal time spent in actual computation.

Diagnosis: The application is likely bottlenecked by explicit data staging overhead between the host and PIM memory. This is a known structural limitation of conventional DIMM-based PIM architectures [49].

Solution:

- Profile Data Transfers: Use profiling tools (e.g., NVIDIA Nsight Systems) to quantify the time spent in Host-to-PIM and PIM-to-Host transfers [52].