Optimizing Ecological Algorithms on GPU: Advanced Load Balancing Strategies for Biomedical Research


Abstract

This article provides a comprehensive exploration of load-balancing strategies essential for accelerating ecological algorithms on GPU architectures, with a specific focus on applications in drug discovery and bioinformatics. It establishes the foundational principles of GPU computing and the unique challenges posed by irregular, data-intensive ecological models. The content delves into advanced methodological approaches, including hybrid metaheuristic-reinforcement learning techniques and dynamic scheduling frameworks, detailing their implementation for real-world biomedical problems like virtual screening and genome analysis. Further, it offers practical troubleshooting and optimization guidance to overcome common performance bottlenecks and energy efficiency concerns. Finally, the article presents a comparative analysis of modern scheduling paradigms, validating their performance and cost-effectiveness to equip researchers and drug development professionals with the knowledge to build more efficient and scalable computational pipelines.

GPU Computing and Ecological Algorithms: Foundations for Biomedical Simulation

Ecological models, especially those simulating individual-based interactions or spatial dynamics, are inherently complex and computationally demanding. The shift from Central Processing Units (CPUs) to Graphics Processing Units (GPUs) represents a fundamental change in computational architecture, moving from sequential to parallel processing. This guide explains the technical reasons behind this shift and provides practical support for researchers implementing GPU-accelerated ecological models.

Core Concepts: CPU vs. GPU Architectural Differences

What are the fundamental architectural differences between CPUs and GPUs?

The primary distinction lies in their design philosophy and core architecture, which dictates their suitability for different types of computational tasks [1] [2].

- CPU (Central Processing Unit): Designed as a "brain" for general-purpose computing, a CPU excels at processing instructions sequentially and solving complex problems one after another. It typically features a smaller number of powerful, versatile cores (often between 2 and 16 in consumer-grade hardware) that operate at high clock speeds. This makes it ideal for managing a wide variety of tasks on a computer, from running the operating system to handling logic-based operations [1].

- GPU (Graphics Processing Unit): Originally designed for rendering graphics, a GPU is a specialized processor built for parallel processing. It contains thousands of smaller, more efficient cores that work together to perform many similar calculations simultaneously. This architecture is exceptionally well-suited for breaking down large, complex problems into thousands of smaller tasks that can be processed at the same time [1] [2].

Table: Architectural Comparison of CPU vs. GPU

| Feature | CPU (Central Processing Unit) | GPU (Graphics Processing Unit) |
|---|---|---|
| Core Design Philosophy | Fast, sequential task execution | Massive parallel task execution |
| Processing Approach | Sequential | Parallel |
| Typical Core Count | Fewer (1-64+ in servers), powerful cores | Thousands of smaller, efficient cores |
| Ideal Workload | Diverse, complex tasks; system management | Repetitive, similar calculations on large datasets |
| Memory Bandwidth | Lower (e.g., ~50 GB/s) [2] | Very High (e.g., up to 4.8 TB/s in HBM3) [2] |

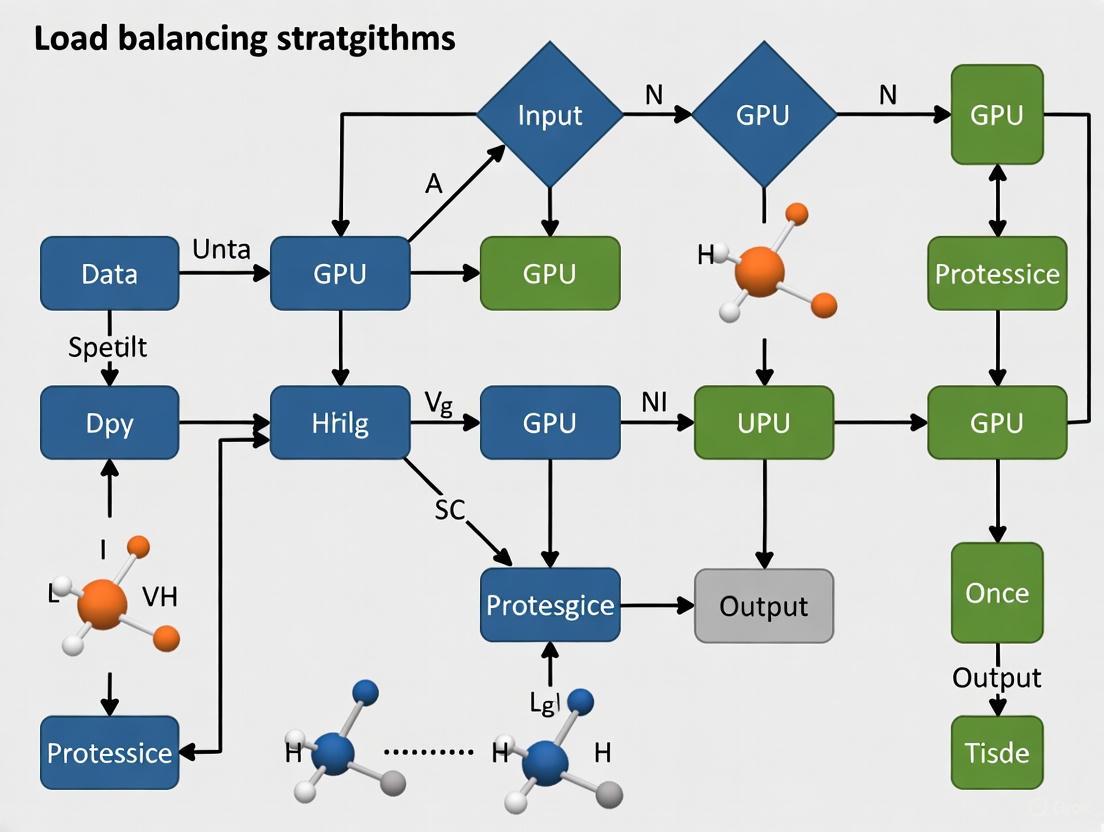

The following diagram illustrates how these architectural differences translate to processing workflows:

Quantitative Evidence: GPU Performance in Ecological Research

Empirical studies across various ecological domains demonstrate the significant performance gains offered by GPU acceleration. The table below summarizes key findings.

Table: Documented Speedups from GPU-Accelerated Ecological Models

| Ecological Model / Application | Reported Speedup Factor | Key Research Context |
|---|---|---|
| Bayesian Population Dynamics (Grey Seal) | Over 100x [3] | Particle Markov chain Monte Carlo (MCMC) parameter inference [3] |
| Spatial Capture-Recapture (Bottlenose Dolphin) | 20x [3] | Animal abundance estimation from photo-ID data [3] |
| Topographic Anisotropy Analysis (Earth Sciences) | ~42x [4] | Every-direction Variogram Analysis (EVA) for directional dependency [4] |
| Agent-Based Bird Migration Model | ~1.5x [4] | Simulating flight patterns based on weather and endogenous factors [4] |

Experimental Protocols & Methodologies

Protocol for Porting a Sequential Ecological Model to GPU

This protocol outlines a general methodology for accelerating an existing model, as demonstrated in research on topographic analysis and bird migration [4].

1. Problem Identification and Suitability Assessment:

- Objective: Determine if the model's computational bottleneck is suitable for parallelization.

- Procedure: Profile the existing CPU code to identify the most time-consuming functions. Ideal candidates are "embarrassingly parallel" problems where calculations for one element (e.g., a grid cell, an individual animal) are independent of others [4].

- Expected Outcome: A decision on whether GPU acceleration is feasible and which parts of the model will yield the highest returns.

2. Algorithm Refactoring for Parallelization:

- Objective: Redesign the core algorithm from a sequential to a parallel paradigm.

- Procedure:

  - Decomposition: Break the main problem into smaller, independent work units (e.g., processing one individual in an agent-based model, or calculating anisotropy for one grid point) [4].

  - Decoupling: Ensure that each work unit can be processed with minimal communication or synchronization with others during the computation phase. This may require duplicating some data to avoid dependencies [5].

- Expected Outcome: A theoretical parallel design for the algorithm, defining the independent work units (threads) and the data they require.

3. GPU Implementation and Coding:

- Objective: Translate the refactored algorithm into code that executes on the GPU.

- Procedure:

  - Language/Framework Selection: Choose a GPU programming platform. The Compute Unified Device Architecture (CUDA) API for NVIDIA GPUs is a common choice, supporting languages like C, C++, and Python [4].

  - Kernel Development: Write the computational kernel(s), the functions that will be executed by thousands of GPU threads in parallel.

  - Memory Management: Explicitly manage data transfer between the CPU's host memory and the GPU's device memory to minimize latency.

- Expected Outcome: A functioning GPU-accelerated version of the model.

4. Validation and Performance Benchmarking:

- Objective: Ensure the GPU model produces correct results and measure its performance gain.

- Procedure:

  - Run the original CPU model and the new GPU model with identical inputs and parameters.

  - Verify that the outputs are numerically equivalent within an acceptable tolerance.

  - Measure the execution time for both versions and calculate the speedup factor (speedup = T_CPU / T_GPU).

- Expected Outcome: A validated, benchmarked GPU model ready for production use.
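
The validation and benchmarking step can be sketched in a few lines of Python. This is a minimal illustration, not the method used in the cited studies: the function names (`validate_and_benchmark`, `growth_loop`, `growth_fast`) and the tolerance values are hypothetical, and two plain-Python functions stand in for the CPU model and the GPU model.

```python
import math
import time


def validate_and_benchmark(reference_fn, accelerated_fn, inputs,
                           rel_tol=1e-6, abs_tol=1e-9):
    """Run both implementations on identical inputs, compare outputs, time both."""
    start = time.perf_counter()
    expected = reference_fn(inputs)
    time_ref = time.perf_counter() - start

    start = time.perf_counter()
    actual = accelerated_fn(inputs)
    time_acc = time.perf_counter() - start

    # Compare element-wise within a tolerance: parallel code often reorders
    # floating-point operations, so bit-for-bit equality is not expected.
    matches = len(expected) == len(actual) and all(
        math.isclose(e, a, rel_tol=rel_tol, abs_tol=abs_tol)
        for e, a in zip(expected, actual)
    )
    speedup = time_ref / time_acc if time_acc > 0 else float("inf")
    return matches, speedup


# Hypothetical stand-ins for the original model and its accelerated port.
def growth_loop(populations):
    out = []
    for n in populations:
        out.append(n * 1.05 * (1.0 - n / 1000.0))
    return out


def growth_fast(populations):
    return [n * 1.05 * (1.0 - n / 1000.0) for n in populations]


ok, speedup = validate_and_benchmark(
    growth_loop, growth_fast, [float(i) for i in range(10_000)]
)
print(f"outputs match: {ok}, speedup: {speedup:.2f}x")
```

When the accelerated function launches real GPU kernels, the timer should only be stopped after the device has been synchronized, because kernel launches return to the host before the work finishes.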

The workflow for this protocol is summarized in the following diagram:

The Scientist's Toolkit: Essential Hardware & Software

Implementing GPU-accelerated ecological models requires access to specific hardware and software resources.

Table: Essential Resources for GPU-Accelerated Ecological Research

| Category | Item / Technology | Function / Purpose |
|---|---|---|
| Hardware | NVIDIA GPU (Compute Capability > 3.0) [6] | The physical processor that performs parallel computations. Modern data center GPUs (e.g., H100, A100) feature Tensor Cores that further accelerate matrix math common in ML/DL [2]. |
| Hardware | Sufficient System RAM | The computer's main memory. It should be at least equal to the combined memory of all GPUs in the system [6]. |
| Hardware | High-Speed Interconnect (e.g., InfiniBand) [6] | Enables fast communication between multiple compute nodes in a cluster, crucial for scaling models beyond a single machine. |
| Software | CUDA (Compute Unified Device Architecture) [4] | A parallel computing platform and programming model created by NVIDIA that allows developers to use GPUs for general-purpose processing. |
| Software | GPU-Accelerated Libraries | Libraries like cuBLAS (linear algebra) and cuRAND (random number generation) provide optimized functions for common operations. |
| Software | Programming Languages (C, C++, Python) [4] | Languages with support for CUDA or other GPU programming interfaces, allowing for the development of custom model kernels. |

Frequently Asked Questions (FAQs) & Troubleshooting

General GPU Concepts

Q: Can my ecological model run on a CPU-only machine? A: It can, but this may be impractical for large, complex models. Some software, like certain fluid dynamics simulators, requires an NVIDIA GPU to run at all [6]. Models you develop yourself will run on a CPU, but performance for parallelizable tasks will be significantly lower than on a GPU [1].

Q: When should I consider using a CPU over a GPU for my research? A: CPUs are more effective for tasks that involve complex, sequential decision-making, or for smaller-scale models where the overhead of transferring data to the GPU outweighs the computational benefits [1] [7]. They are also suitable for initial prototyping and development before scaling up with GPUs [2].

Hardware & Performance

Q: Does a more powerful CPU speed up my GPU-accelerated simulation? A: Only to a very limited extent. Because the GPU performs the heavy computations, a large investment in CPU power typically does not bring significant acceleration. The primary role of the CPU becomes managing the GPU's tasks and handling non-intensive system operations [6].

Q: How many GPUs do I need to get started? A: This is highly case-dependent. For simpler models or coarse-resolution studies, one or two GPUs may suffice. For complex, multi-phase, or high-resolution simulations, four GPUs are a recommended starting point, with eight or more for cutting-edge research or high workloads [6].

Q: What are the energy and environmental impacts of using GPUs? A: GPU use significantly increases the energy consumption of a server. AI servers can have idle power draw equal to ~20% of their maximum rated power [8]. The manufacturing of GPUs also carries a substantial "embodied" carbon footprint, with modern GPUs estimated to embody over 160 kg of CO2e per card [8]. This highlights the importance of maximizing computational efficiency to justify the environmental cost.

Implementation & Optimization

Q: My GPU model isn't producing the expected speedup. What could be wrong? A: This is a common challenge in parallel computing. Potential bottlenecks include:

- Insufficient Parallelism: The problem may not be decomposed into enough independent tasks to fully utilize all GPU cores. Aim for at least one to two million computational elements (e.g., particles, agents) per GPU for optimal efficiency [6].

- Memory Transfer Overhead: Excessive data transfer between CPU and GPU memory can slow down the overall process. Structure your algorithm to minimize these transfers.

- Non-Parallelizable Sections: Amdahl's Law states that the sequential part of your code that cannot be parallelized will ultimately limit the maximum possible speedup.
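
To make the Amdahl's Law bullet concrete: if a fraction p of the runtime can be parallelized across N processors, the achievable speedup is 1 / ((1 - p) + p / N), which can never exceed 1 / (1 - p). The short sketch below evaluates this for a few illustrative values of p; the function name and the chosen fractions are assumptions made for the example.

```python
def amdahl_speedup(parallel_fraction, n_processors):
    """Speedup predicted by Amdahl's Law for a given parallelizable fraction."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / n_processors)


for p in (0.50, 0.90, 0.99):
    on_1000_cores = amdahl_speedup(p, 1000)
    upper_bound = 1.0 / (1.0 - p)  # limit as the processor count grows without bound
    print(f"p={p:.2f}: {on_1000_cores:6.1f}x on 1000 cores, limit {upper_bound:6.1f}x")
```

Even with 99% of the runtime parallelized, the remaining 1% of sequential code caps the speedup at 100x, which is why profiling the sequential portions matters as much as tuning the kernels.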

Q: What are the "tenets of parallel computational ecology" I should follow? A: Based on extensive research, three key principles have been identified [5]:

- Identify the Correct Unit of Work: Determine the fundamental, independent element of your simulation (e.g., an individual organism, a grid cell).

- Decouple Work Units for Distribution: Structure these units so they can be processed across multiple compute nodes with minimal interdependency, which may involve adding redundant information to each unit.

- Balance the Computational Load: Ensure work is distributed evenly across all available GPU cores to prevent some cores from sitting idle while others are still working.
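
The third tenet can be illustrated with a small CPU-side sketch. It compares a naive split that gives each worker the same number of work units with a greedy longest-processing-time-first (LPT) assignment that takes the cost of each unit into account. The cost values and function names are hypothetical, and LPT is only one simple heuristic, not the method prescribed in [5].

```python
import heapq


def block_assignment(costs, n_workers):
    """Contiguous equal-count chunks: balances the number of units, not their cost."""
    chunk = -(-len(costs) // n_workers)  # ceiling division
    loads = [0.0] * n_workers
    for idx, cost in enumerate(costs):
        loads[idx // chunk] += cost
    return loads


def lpt_assignment(costs, n_workers):
    """Greedy LPT: hand the largest remaining unit to the least-loaded worker."""
    heap = [(0.0, w) for w in range(n_workers)]
    heapq.heapify(heap)
    for cost in sorted(costs, reverse=True):
        load, w = heapq.heappop(heap)
        heapq.heappush(heap, (load + cost, w))
    return [load for load, _ in sorted(heap, key=lambda item: item[1])]


def idle_fraction(loads):
    """Share of total worker time spent waiting for the slowest worker."""
    makespan = max(loads)
    return 1.0 - sum(loads) / (len(loads) * makespan)


# Hypothetical per-agent costs: a few expensive agents and many cheap ones.
costs = [9, 8, 7, 6, 1, 1, 1, 1, 1, 1, 1, 1]
for name, loads in (("block", block_assignment(costs, 4)),
                    ("LPT", lpt_assignment(costs, 4))):
    print(f"{name}: loads={loads}, idle={idle_fraction(loads):.0%}")
```

In this example the equal-count split leaves one worker with most of the expensive agents, while the cost-aware assignment keeps all four workers busy for nearly the whole run.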

Core Concepts: Ecological Algorithms and GPU Load Balancing

This section addresses fundamental questions about the core principles and setup of nature-inspired metaheuristic algorithms and their relationship with GPU computing.

FAQ 1: What are nature-inspired metaheuristic algorithms, and why are they used in biomedical research? Nature-inspired metaheuristic algorithms are a class of optimization algorithms within artificial intelligence that are inspired by natural phenomena, such as animal swarm behavior, evolution, or physical processes [9]. They are important components for tackling various types of challenging optimization problems across disciplines [9]. In biomedical and biostatistical research, these algorithms provide flexible and robust strategies for solving complex optimization problems that traditional statistical methods cannot handle [10]. Their utility has been demonstrated in areas such as improving accuracy in single-cell RNA sequencing data analysis, parametric and non-parametric statistical estimation, and finding more efficient experimental designs in toxicology [10]. They are particularly valuable because they are fast, assumption-free, and serve as general-purpose optimization algorithms, often finding optimal or near-optimal solutions for problems involving complex, high-dimensional parameter spaces [9] [11].

FAQ 2: What is the relationship between GPU load balancing and ecological algorithm performance? GPU load balancing is crucial for achieving high performance when running ecological algorithms because it ensures that the massive parallel computations are evenly distributed across the GPU's thousands of processing cores [12]. Fine-grained workload and resource balancing is the key to high performance for both regular and irregular computations on GPUs [12]. Irregular computations, which are common in nature-inspired algorithms where particles or agents may have varying amounts of work, can suffer from significant performance degradation if not properly load-balanced [12]. Effective load balancing helps to avoid situations where some GPU cores are idle while others are overburdened, thereby maximizing the utilization of computing resources and accelerating the time to solution for optimization problems in ecological and biomedical research [12].

FAQ 3: What are some common nature-inspired algorithms used in this field? Several nature-inspired metaheuristic algorithms are commonly employed, each with different strengths. Key algorithms and their applications include:

Table: Common Nature-Inspired Metaheuristic Algorithms

| Algorithm Name | Nature Inspiration | Common Applications in Research |
|---|---|---|
| Particle Swarm Optimization (PSO) [11] | Social behavior of bird flocking or fish schooling | Dose-finding designs in clinical trials [11] |
| Competitive Swarm Optimizer (CSO) [9] | Competitive and learning behavior in swarms | Single-cell generalized trend models, Rasch model estimation [9] |
| Competitive Swarm Optimizer with Mutated Agents (CSO-MA) [9] | Enhanced CSO with mutation for diversity | Parameter estimation in Markov renewal models, matrix completion [9] |
| Genetic Algorithm (GA) [11] | Process of natural selection and evolution | General-purpose complex optimization |

FAQ 4: What are the essential components of a research computing environment for these algorithms? A well-configured computing environment is essential for productive research. The key components, often referred to as the "research reagent solutions," include both hardware and software elements.

Table: Essential Research Reagent Solutions for GPU-Accelerated Ecological Algorithms

| Item / Tool | Function / Purpose | Implementation Notes |
|---|---|---|
| Discrete GPU (e.g., NVIDIA A100, RTX series) [13] [14] | Provides massive parallel processing for algorithm computation. | High memory (>=8-11GB) is critical for large models [14]. Blower-style fans are recommended for multi-GPU setups [14]. |
| GPU Programming Framework (CUDA) [12] | Allows developers to write software for GPU processors. | Ensure driver compatibility with the OS and other software stacks [15]. |
| GPU Load Balancing Framework (e.g., Stream-K) [12] | Abstracts load balancing from work processing to improve utilization. | Crucial for irregular computations; enables quick experimentation with scheduling techniques [12]. |
| Software Libraries (e.g., PySwarms in Python) [9] | Provides pre-built tools for implementing metaheuristic algorithms. | Reduces development time; ensures reliable implementation [9]. |
| High-Speed RAM [14] | Stores active data and facilitates smooth prototyping. | Size should at least match the largest GPU's memory; clock rate is less important [14]. |
| Multi-core CPU [14] | Handles data preprocessing, GPU initiation, and general computation. | More cores (e.g., 2 per GPU) are needed for real-time preprocessing [14]. |

The diagram below illustrates the typical workflow of a nature-inspired metaheuristic algorithm like CSO-MA, highlighting the iterative process of solution generation and refinement.

Troubleshooting Common Experimental Issues

This section provides practical solutions to frequently encountered problems when running ecological algorithms on GPU systems.

FAQ 5: My algorithm appears to be stuck in a local optimum. How can I escape it? Premature convergence to a local optimum is a common challenge. Several strategies based on the algorithm's mechanics can help:

- Enable or Increase Mutation Rates: If using an algorithm like CSO-MA, the mutation step is specifically designed to help the swarm escape local optima. This is done by randomly changing the value of a variable in a "loser" particle to a boundary value (either `xmax_q` or `xmin_q`), which increases swarm diversity and allows exploration of distant regions in the search space [9].

- Adjust Social Parameters: In PSO, the parameters `c1` and `c2` control the influence of a particle's own best position and the swarm's global best position, respectively. Tuning these can balance exploration and exploitation [11]. Furthermore, using a large value for the social factor `φ` in CSO can enhance swarm diversity, though it may impact the convergence rate [9].

- Increase Swarm Size: A larger swarm size allows for a broader exploration of the search space, increasing the likelihood of particles discovering a more promising region that leads to the global optimum [11].

- Hybridization: Consider using a hybridized algorithm that creatively combines two or more metaheuristics. This strategy can markedly increase performance and help avoid pitfalls inherent in a single algorithm [9] [11].
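
The mutation step described in the first bullet can be sketched as follows. This is an illustrative reading of that description rather than the reference implementation from [9]: the function name, the data layout (a list of position lists), and the equal probability of choosing the upper or lower bound are all assumptions.

```python
import random


def mutate_loser(loser_positions, lower_bounds, upper_bounds, rng):
    """Set one randomly chosen variable of one randomly chosen loser to a bound.

    loser_positions: list of position vectors (lists) for the loser particles.
    Returns the indices (p, q) of the particle and variable that were changed.
    """
    p = rng.randrange(len(loser_positions))   # which loser particle to mutate
    q = rng.randrange(len(lower_bounds))      # which variable of that particle
    # Assumption: upper and lower bound are picked with equal probability.
    use_upper = rng.random() < 0.5
    loser_positions[p][q] = upper_bounds[q] if use_upper else lower_bounds[q]
    return p, q


rng = random.Random(42)
losers = [[0.1, -0.3, 0.7], [0.0, 0.2, -0.5]]
xmin = [-1.0, -1.0, -1.0]
xmax = [1.0, 1.0, 1.0]
p, q = mutate_loser(losers, xmin, xmax, rng)
print(f"particle {p}, variable {q} moved to {losers[p][q]}")
```

Because only one coordinate of one particle is pushed to a boundary per call, the rest of the swarm keeps its current structure while the mutated particle probes a distant region.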

FAQ 6: I am experiencing unexpectedly low GPU utilization during runs. What could be the cause? Low GPU utilization often points to bottlenecks elsewhere in the system. Follow this diagnostic flowchart to identify the cause.

FAQ 7: My GPU code runs slowly when using multiple GPUs. Could PCIe lanes be the issue? For most multi-GPU research setups, the number of PCIe lanes is unlikely to be the primary performance bottleneck. As a rule of thumb, you should not spend extra money to get more PCIe lanes per GPU [14]. The performance impact is often minimal:

- With 4 PCIe lanes per GPU, data transfer for a typical mini-batch might take about 9 milliseconds.

- With 16 PCIe lanes per GPU, this transfer time is reduced to about 2 milliseconds.

- Since the forward and backward pass of a deep neural network on the same batch often takes over 200 milliseconds, the performance gain from more PCIe lanes is marginal (around 3.2%) [14].

- Solution: Focus instead on ensuring your software implementation (e.g., using PyTorch's data loader with pinned memory) is optimized, as this can reduce the data transfer overhead to nearly zero [14]. Only when running systems with a large number of GPUs (e.g., more than 4) do PCIe lanes become a critical concern [14].
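
The arithmetic behind the "around 3.2%" figure can be reproduced with a short calculation. The text only states that a forward and backward pass takes "over 200 milliseconds"; the value of 216 ms used below is an assumption chosen for illustration, and the model assumes the transfer is not overlapped with computation.

```python
def relative_gain(compute_ms, slow_transfer_ms, fast_transfer_ms):
    """Fractional speedup from a faster transfer when transfer and compute run back to back."""
    slow_total = compute_ms + slow_transfer_ms
    fast_total = compute_ms + fast_transfer_ms
    return slow_total / fast_total - 1.0


# 9 ms (4 lanes) vs 2 ms (16 lanes), with an assumed 216 ms compute pass.
gain = relative_gain(compute_ms=216.0, slow_transfer_ms=9.0, fast_transfer_ms=2.0)
print(f"gain from 4 to 16 PCIe lanes: {gain:.1%}")  # prints 3.2%
```

If transfers are overlapped with computation, for example through pinned memory and asynchronous copies, the transfer term largely disappears from the total and the gain from extra lanes shrinks further.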

FAQ 8: How do I choose the right hardware for my research phase? The ideal hardware configuration depends heavily on the stage of your research and your budget. The following table provides general recommendations.

Table: Hardware Configuration Guide by Research Phase

| Research Phase | Recommended GPU Memory | Key Hardware Considerations | Cloud vs. Local |
|---|---|---|---|
| Ideation & Early Validation [16] | 4 - 8 GB | Cost-effectiveness is key. Used GTX 10-series cards can be viable [14]. | Cloud platforms (e.g., AWS, GCP) are ideal for flexibility and avoiding upfront costs [16]. |
| Formal Validation & Prototyping [14] [16] | >= 8 GB | A single powerful discrete GPU (e.g., RTX 2070/2080 Ti). Ensure adequate RAM and a capable CPU [14]. | A local workstation offers convenience for frequent, medium-scale experiments. |
| Production & State-of-the-Art Research [14] [16] | >= 11 GB | Multiple high-end GPUs with blower-style coolers. Requires robust cooling and power supply [14]. | A mixed strategy: local cluster for daily work, cloud bursting for peak demand [16]. |

Advanced Optimization and Performance Tuning

This section covers protocols for advanced optimization and strategies to enhance the performance and security of your research computations.

FAQ 9: What is a standard protocol for optimizing a dose-finding problem using PSO? The following methodology outlines the steps for applying PSO to find an optimal design for a phase I/II dose-finding trial that jointly considers toxicity and efficacy [11].

Problem Definition:

- Objective: Find the Optimal Biological Dose (OBD) using a continuation-ratio model with four parameters.

- Constraints: The design must protect patients from doses higher than the unknown Maximum Tolerated Dose (MTD) and ensure the OBD is estimated with high accuracy [11].

PSO Setup and Hyperparameters:

- Swarm Size (`S`): This is a critical choice. A larger swarm size allows for broader exploration of the search space. The exact number is user-specified [11].

- Iterations / Evaluations: Define the stopping condition by setting a maximum number of function evaluations or iterations [11].

- Hyperparameters: Use established defaults as a starting point. The inertia weight (`w`) can be constant or gradually reduced. The cognitive and social parameters (`c1` and `c2`) are often set to 2 [11].

Algorithm Execution:

- Initialization: Randomly generate the initial positions `X_i(0)` and velocities `V_i(0)` for all particles in the swarm [11].

- Iteration Loop: For each iteration `k`, update every particle `i` using the core PSO equations:

  $$V_i(k) = w\,V_i(k-1) + c_1 R_1 \otimes [L_i(k-1) - X_i(k-1)] + c_2 R_2 \otimes [G(k-1) - X_i(k-1)]$$

  $$X_i(k) = X_i(k-1) + V_i(k)$$

  Here, `L_i` is the particle's personal best, `G` is the swarm's global best, and `R1`, `R2` are random vectors [11].

Output: The algorithm terminates when the stopping criteria are met, and the global best position `G` is returned as the optimal design [11].
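
The PSO loop described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the implementation from [11]: the dose-finding design criterion is replaced by a simple sphere function, the inertia weight is reduced linearly from 0.9 to 0.4 (a common choice), and velocity and position clamping are added as safeguards that the protocol does not mention.

```python
import random


def pso(objective, lower, upper, swarm_size=30, iterations=200,
        w_start=0.9, w_end=0.4, c1=2.0, c2=2.0, seed=0):
    """Minimise `objective` over a box with the standard PSO updates."""
    rng = random.Random(seed)
    dim = len(lower)
    v_max = [0.2 * (upper[d] - lower[d]) for d in range(dim)]  # added safeguard

    # Initialization: random positions X_i(0) and velocities V_i(0).
    X = [[rng.uniform(lower[d], upper[d]) for d in range(dim)]
         for _ in range(swarm_size)]
    V = [[rng.uniform(-v_max[d], v_max[d]) for d in range(dim)]
         for _ in range(swarm_size)]
    L = [x[:] for x in X]                       # personal bests L_i
    L_val = [objective(x) for x in X]
    best = min(range(swarm_size), key=L_val.__getitem__)
    G, G_val = L[best][:], L_val[best]          # global best G

    for k in range(1, iterations + 1):
        w = w_start + (w_end - w_start) * k / iterations  # linearly reduced inertia
        for i in range(swarm_size):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()       # components of R1, R2
                v = (w * V[i][d]
                     + c1 * r1 * (L[i][d] - X[i][d])
                     + c2 * r2 * (G[d] - X[i][d]))
                V[i][d] = max(-v_max[d], min(v_max[d], v))
                X[i][d] = max(lower[d], min(upper[d], X[i][d] + V[i][d]))
            value = objective(X[i])
            if value < L_val[i]:
                L[i], L_val[i] = X[i][:], value
        # G is refreshed once per iteration, so every particle used G(k-1).
        best = min(range(swarm_size), key=L_val.__getitem__)
        if L_val[best] < G_val:
            G, G_val = L[best][:], L_val[best]
    return G, G_val


def sphere(x):
    """Stand-in objective with its minimum of 0 at the origin."""
    return sum(t * t for t in x)


G, G_val = pso(sphere, lower=[-5.0, -5.0], upper=[5.0, 5.0])
print("best position:", G, "best value:", G_val)
```

For a real design problem, `sphere` would be replaced by the design criterion to be minimised, and each particle position would encode the candidate design.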

FAQ 10: What are the key security considerations for GPU clusters running sensitive biomedical data? As GPUs become central to research, their security cannot be an afterthought. Key vulnerabilities and mitigation strategies include:

- Memory Exploits: A lack of robust memory isolation in GPUs means that data from one computational task might not be reliably cleared before the next task uses the same memory. This can lead to data leakage. In multi-tenant environments (like shared university clusters), a flaw in isolation could allow one user's VM to snoop on another's data [13].

- Mitigation Strategies:

- Hardware-Level: Use GPUs with Error Correction Code (ECC) memory to help fend off attacks like Rowhammer. Employ driver and workload isolation to contain any potential exploit to a single zone [13].

- Software and Practice: Regularly update GPU drivers and firmware to patch known vulnerabilities. Implement strict Role-Based Access Control (RBAC) and use monitoring tools to detect anomalous GPU usage [13].

FAQ 11: How can I benchmark the performance of different metaheuristic algorithms for my problem? To fairly compare algorithms like PSO, CSO, and CSO-MA, follow this structured protocol:

- Define Benchmark Functions: Select a set of functions with known global optima and different geometric properties (e.g., separable vs. non-separable). Examples from the literature include the Weierstrass, Quartic, and Ackley functions [9].

- Standardize Experimental Conditions:

  - Run all algorithms on the same hardware and software environment.

  - Use identical swarm sizes and a fixed maximum number of function evaluations for all algorithms.

  - For each algorithm, use the default or recommended hyperparameters (e.g., for CSO-MA, set `φ = 0.3`) [9].

- Measure Performance Metrics: Record the following over multiple independent runs to ensure statistical significance:

  - Best Objective Value Found: How close does the algorithm get to the known global optimum?

  - Convergence Speed: How many iterations or function evaluations are required to reach a solution of a certain quality?

  - Consistency: The standard deviation of the final objective value across runs [9].

- Analyze and Report: Summarize the quantitative results in a table for clear comparison. The superior algorithm is typically the one that is consistently the fastest with the best quality results [9].

Table: Sample Benchmark Results for Metaheuristic Algorithms

| Algorithm | Average Best Value (Ackley) | Std. Dev. | Avg. Iterations to Converge | Remarks |
|---|---|---|---|---|
| PSO | 0.05 | 0.02 | 12,500 | Good performance, but can get stuck in local optima. |
| CSO | 0.02 | 0.01 | 9,800 | Frequently faster than PSO with competitive quality [9]. |
| CSO-MA | 0.01 | 0.005 | 9,500 | Enhanced diversity via mutation prevents premature convergence [9]. |
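
A benchmarking harness that follows the protocol above might look like the sketch below. It defines the Ackley function and runs an optimizer over several seeded, independent runs with a fixed evaluation budget. The `random_search` optimizer is only a placeholder with a hypothetical interface; a PSO, CSO, or CSO-MA implementation with the same signature would be passed in its place. The search bound of 32.768 is a conventional choice for Ackley and is an assumption here.

```python
import math
import random
import statistics


def ackley(x):
    """Ackley function; the global minimum is 0 at the origin."""
    n = len(x)
    sum_sq = sum(t * t for t in x)
    sum_cos = sum(math.cos(2.0 * math.pi * t) for t in x)
    return (-20.0 * math.exp(-0.2 * math.sqrt(sum_sq / n))
            - math.exp(sum_cos / n) + 20.0 + math.e)


def random_search(objective, dim, max_evals, rng, bound=32.768):
    """Placeholder optimizer: uniform random sampling under a fixed budget."""
    best = float("inf")
    for _ in range(max_evals):
        candidate = [rng.uniform(-bound, bound) for _ in range(dim)]
        best = min(best, objective(candidate))
    return best


def benchmark(optimizer, objective, dim=5, max_evals=2000, runs=10):
    """Mean and standard deviation of the best value over independent seeded runs."""
    results = [optimizer(objective, dim, max_evals, random.Random(seed))
               for seed in range(runs)]
    return statistics.mean(results), statistics.stdev(results)


mean_best, std_best = benchmark(random_search, ackley)
print(f"random search on Ackley: mean={mean_best:.3f}, std={std_best:.3f}")
```

Seeding each run separately keeps the comparison reproducible, and giving every algorithm the same `max_evals` enforces the fixed evaluation budget the protocol calls for.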

FAQs: Load Balancing in Computational Drug Discovery

What is load balancing and why is it critical in heterogeneous GPU systems? Load balancing involves efficiently distributing computational workloads across multiple GPUs to maximize resource utilization and minimize overall processing time. In heterogeneous systems containing GPUs of different architectures and capabilities, effective load balancing is essential because an uneven distribution can cause slower GPUs to become bottlenecks, drastically reducing system efficiency. Research shows that improper workload distribution in heterogeneous GPU setups can lead to performance penalties exceeding 30% compared to optimal balancing strategies [17].

How does data irregularity complicate load balancing in drug discovery pipelines? Data irregularity refers to variations in data size, structure, and computational requirements commonly found in drug discovery datasets such as molecular structures of different complexities or varying image modalities from high-throughput screening. These irregularities create unpredictable computational demands that challenge static load distribution approaches. Additionally, pharmaceutical companies often manage petabytes of disorganized, siloed medical imaging data from diverse sources and modalities, further complicating automated workload distribution and requiring sophisticated data curation before effective load balancing can be implemented [18].

What are the main load balancing strategies for heterogeneous GPU environments? The two primary approaches are static and dynamic load balancing. Static methods (like the MINLP-based approach) perform offline analysis to determine optimal workload distribution before execution, requiring minimal runtime overhead but needing accurate performance modeling [17]. Dynamic methods continuously monitor performance and redistribute workloads during execution, adapting to changing conditions but introducing runtime management overhead. Recent hybrid approaches combining algorithms like Ant Colony Optimization (for local search) and Water Wave Optimization (for global exploration) have demonstrated improvements in task scheduling efficiency (11%), operational cost reduction (8%), and energy consumption reduction (12%) [19].

Which computational methods in drug discovery benefit most from GPU load balancing? Molecular docking and molecular dynamics simulations are particularly dependent on effective GPU load balancing due to their computationally intensive nature and ability to be parallelized [20]. These methods involve predicting how drug molecules interact with target proteins and simulating their behavior over time—processes that require testing numerous molecular orientations and configurations. Virtual screening of compound libraries and machine learning algorithms for predicting drug properties also significantly benefit from balanced GPU workloads, especially when processing large, diverse chemical datasets [21] [20].

Troubleshooting Guides

Poor Performance Scaling on Multiple GPUs

Symptoms: System with multiple GPUs shows minimal performance improvement compared to single GPU execution; some GPUs remain idle while others are overloaded.

Diagnosis and Resolution:

- Profile individual GPU utilization during typical workloads using monitoring tools like `nvidia-smi` to identify imbalance patterns.

- Implement a performance modeling approach using Mixed-Integer Non-Linear Programming (MINLP), as researched by Lin et al. [17]:

  - Collect execution time samples for your application across different workload sizes on each GPU

  - Build linear regression models predicting execution time based on problem size for each GPU

  - Use MINLP to calculate optimal workload distributions that equalize execution times across GPUs

- Consider hybrid optimization algorithms combining ACO and WWO for dynamic cloud environments, which have demonstrated 11% improvement in task scheduling efficiency [19].

Table: Performance Improvement from Advanced Load Balancing Strategies

| Balancing Method | Performance Gain | Key Advantage | Implementation Complexity |
|---|---|---|---|
| MINLP-based Approach | Up to 33% improvement [17] | Optimal static distribution | High (requires mathematical modeling) |
| Hybrid WWO-ACO | 11% task scheduling efficiency [19] | Multi-objective optimization | Medium (algorithm implementation) |
| Static Waterfall Model | Limited data | Power efficiency focus | Low (simple partitioning) |
| Dynamic Redistribution | Varies with workload | Adapts to runtime conditions | Medium (requires monitoring infrastructure) |

Handling Data Irregularity in High-Throughput Screening

Symptoms: Inconsistent processing times for different data batches; difficulty predicting overall completion time; some GPUs finish early while others process complex datasets.

Diagnosis and Resolution:

- Implement data categorization by computational complexity before distribution:

  - Pre-analyze molecular complexity or image characteristics

  - Categorize data into complexity tiers based on historical processing time

  - Distribute categories evenly across GPUs rather than simply balancing data volume

- Use dynamic workload queuing with work-stealing capabilities:

  - Implement a central queue system where GPUs request new tasks upon completion

  - Allow faster GPUs to steal pending tasks from slower GPUs' queues

  - Incorporate data transfer time considerations in task sizing

- Adopt collaborative platforms like CDD Vault with advanced visualization capabilities that help researchers identify data patterns and irregularities before initiating large-scale computations [21].
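
The central-queue idea from the list above can be explored with a small simulation before touching any GPU code. The sketch compares a static round-robin split with a queue from which each GPU pulls the next task as soon as it is free. Task costs and relative GPU speeds are hypothetical, data transfer time is ignored, and per-GPU work stealing is not modeled.

```python
import heapq


def static_split(task_costs, gpu_speeds):
    """Round-robin by task count, ignoring task cost and GPU speed."""
    n = len(gpu_speeds)
    finish = [0.0] * n
    for idx, cost in enumerate(task_costs):
        gpu = idx % n
        finish[gpu] += cost / gpu_speeds[gpu]
    return max(finish)


def central_queue(task_costs, gpu_speeds):
    """Each GPU requests the next pending task as soon as it becomes free."""
    free_at = [(0.0, gpu) for gpu in range(len(gpu_speeds))]
    heapq.heapify(free_at)
    for cost in task_costs:
        t, gpu = heapq.heappop(free_at)          # GPU that becomes free first
        heapq.heappush(free_at, (t + cost / gpu_speeds[gpu], gpu))
    return max(t for t, _ in free_at)


# Hypothetical batch: alternating complex and simple molecules,
# processed on one fast GPU (speed 2.0) and one slower GPU (speed 1.0).
task_costs = [8, 1, 8, 1, 8, 1, 8, 1]
gpu_speeds = [2.0, 1.0]
print("static round-robin makespan:", static_split(task_costs, gpu_speeds))
print("central queue makespan:     ", central_queue(task_costs, gpu_speeds))
```

A pull-based queue is not guaranteed to win for every task ordering, since a slow GPU can still pick up a large task late in the run; sorting tasks by estimated cost or adding work stealing are common ways to reduce that risk.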

Memory Capacity Imbalances in Heterogeneous Systems

Symptoms: Memory errors on lower-capacity GPUs; inefficient utilization of available GPU memory; need to process datasets separately that could theoretically fit in aggregate memory.

Diagnosis and Resolution:

- Profile memory usage patterns across different drug discovery applications:

- Molecular docking typically requires less memory than molecular dynamics

- Deep learning models vary significantly in memory requirements based on model size and batch size

- Implement memory-aware workload distribution:

- Modify MINLP approaches to include memory constraints alongside performance considerations [17]

- Implement dynamic workload splitting, where single large tasks are divided among multiple GPUs and the results are combined in post-processing

- Utilize unified memory architectures where available to enable memory over-subscription with automatic data migration.

Experimental Protocols for Load Balancing Research

Protocol 1: MINLP-Based Workload Distribution

Objective: Establish optimal static workload distribution for specific application/GPU combinations using Mixed-Integer Non-Linear Programming [17].

Materials:

- Heterogeneous GPU system with at least two different GPU models

- Target application (molecular docking, dynamics, or ML inference)

- Performance profiling tools (NVIDIA Nsight, custom timers)

- MINLP solver software (e.g., a MINLP-capable solver callable from MATLAB or Python)

Methodology:

- Training Phase:

- Execute application with varying problem sizes (25%, 50%, 75%, 100% of typical workload) on each GPU

- Record execution times for each problem size/GPU combination

- Perform linear regression to establish performance models: TG1(s) = a1 × s + b1, TG2(s) = a2 × s + b2, etc.

- Modeling Phase:

- Formulate MINLP problem with objective function minimizing variance between GPU completion times

- Add constraint that sum of distributed workloads equals total problem size

- Solve for optimal workload fractions (s1, s2, ..., sn) for n GPUs

- Validation Phase:

- Execute application with calculated workload distribution

- Measure actual execution times and load balance efficiency

- Compare with naive distribution approaches (equal splitting, capacity-proportional splitting)
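
The training and modeling phases can be sketched for the simplest case. The code below is an illustration under stated assumptions, not the MINLP formulation of [17]: it fits the linear models T(s) = a × s + b by ordinary least squares and then solves the continuous relaxation in which every GPU finishes at the same time T, with the shares summing to the total problem size. Substituting s_i = (T - b_i) / a_i into the sum constraint gives T = (S + Σ b_i/a_i) / Σ (1/a_i). The timing data and the function names are hypothetical; integer work units, memory limits, or non-linear performance models would require an actual MINLP solver.

```python
def fit_line(sizes, times):
    """Ordinary least-squares fit of time = a * size + b."""
    n = len(sizes)
    mean_s = sum(sizes) / n
    mean_t = sum(times) / n
    sxx = sum((s - mean_s) ** 2 for s in sizes)
    sxt = sum((s - mean_s) * (t - mean_t) for s, t in zip(sizes, times))
    a = sxt / sxx
    b = mean_t - a * mean_s
    return a, b

def equal_time_split(models, total):
    """Solve a_i * s_i + b_i = T for every GPU i, subject to sum(s_i) = total."""
    inv = sum(1.0 / a for a, _ in models)
    offset = sum(b / a for a, b in models)
    t_common = (total + offset) / inv
    shares = [(t_common - b) / a for a, b in models]
    return shares, t_common

# Hypothetical profiling runs at 25%, 50%, 75%, 100% of a 1000-unit workload.
sizes = [250, 500, 750, 1000]
gpu1_times = [3.0, 5.5, 8.0, 10.5]    # assumed measurements, faster GPU
gpu2_times = [5.2, 10.2, 15.2, 20.2]  # assumed measurements, slower GPU

models = [fit_line(sizes, gpu1_times), fit_line(sizes, gpu2_times)]
shares, t_common = equal_time_split(models, 1000.0)
print("shares:", shares)
print("predicted common finish time:", t_common)
```

A negative share would indicate that a GPU's fixed overhead b already exceeds the common finish time, in which case that GPU should be excluded and the split recomputed.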

Table: Research Reagent Solutions for Load Balancing Experiments

| Item | Function | Example Specifications |

|---|---|---|

| Heterogeneous GPU Cluster | Execution environment for load balancing tests | Mix of NVIDIA Tesla K20c, GTS250, GTX690 [17] |

| CUDA CUBLAS Library | GPU-accelerated mathematical operations | Enables matrix multiplication and other linear algebra operations [17] |

| Molecular Docking Software | Target application for benchmarking | AutoDock, Schrödinger, or custom docking simulations [20] |

| CDD Vault Platform | Data management and visualization | Web-based tools for HTS data analysis and collaboration [21] |

| Cloud GPU Infrastructure | Scalable computational resources | Paperspace, AWS EC2 with NVIDIA GPU instances [20] |

Protocol 2: Hybrid ACO-WWO Algorithm Implementation

Objective: Implement and validate hybrid Ant Colony Optimization-Water Wave Optimization algorithm for dynamic load balancing in cloud GPU environments [19].

Materials:

- Cloud computing platform with GPU instances

- Workload trace files from previous drug discovery simulations

- CloudSim simulator or similar simulation environment

- Implementation of ACO and WWO algorithms

Methodology:

- Algorithm Implementation:

- Develop ACO component for local search and task scheduling

- Implement WWO component for global exploration and resource allocation

- Create hybrid coordination mechanism switching between approaches based on convergence metrics

- Simulation Setup:

- Configure cloud simulation environment with heterogeneous virtual GPU resources

- Load historical workload traces from molecular dynamics or virtual screening experiments

- Define performance metrics: response time, operational cost, energy consumption, resource utilization

- Validation and Comparison:

- Execute simulations with hybrid ACO-WWO against baseline algorithms (GA, SMO, pure ACO)

- Collect performance metrics across multiple workload scenarios

- Perform statistical analysis of improvements in task scheduling efficiency, cost reduction, and energy savings
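
As a starting point for the algorithm implementation step, the ACO component alone can be sketched as follows. This is a generic ant colony heuristic for assigning tasks to machines, written under assumptions: it does not reproduce the hybrid ACO-WWO algorithm of [19], the WWO component and the coordination mechanism are omitted, and the `exec_time` matrix, parameter defaults, and function names are hypothetical.

```python
import random

def makespan(exec_time, plan):
    """Completion time of the most heavily loaded machine under a plan."""
    loads = [0.0] * len(exec_time[0])
    for task, machine in enumerate(plan):
        loads[machine] += exec_time[task][machine]
    return max(loads)

def aco_assign(exec_time, n_ants=20, n_iter=50, alpha=1.0, beta=2.0,
               rho=0.1, seed=0):
    """exec_time[t][m] is the estimated run time of task t on machine m."""
    rng = random.Random(seed)
    n_tasks, n_mach = len(exec_time), len(exec_time[0])
    tau = [[1.0] * n_mach for _ in range(n_tasks)]  # pheromone levels
    best_plan, best_span = None, float("inf")
    for _ in range(n_iter):
        for _ in range(n_ants):
            plan = []
            for t in range(n_tasks):
                # Attractiveness = pheromone^alpha * (1 / run time)^beta.
                weights = [tau[t][m] ** alpha * (1.0 / exec_time[t][m]) ** beta
                           for m in range(n_mach)]
                plan.append(rng.choices(range(n_mach), weights=weights)[0])
            span = makespan(exec_time, plan)
            if span < best_span:
                best_plan, best_span = plan, span
        # Evaporate all pheromone, then reinforce the best plan found so far.
        for t in range(n_tasks):
            for m in range(n_mach):
                tau[t][m] *= (1.0 - rho)
            tau[t][best_plan[t]] += 1.0 / best_span
    return best_plan, best_span

# Hypothetical run times (seconds) of six tasks on two virtual GPU instances.
exec_time = [[4.0, 8.0], [3.0, 6.0], [5.0, 10.0],
             [2.0, 4.0], [6.0, 12.0], [1.0, 2.0]]
plan, span = aco_assign(exec_time)
print("assignment:", plan, "makespan:", span)
```

Fixing the seed keeps runs repeatable, which helps when comparing against the baseline algorithms listed above.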

Diagram: MINLP-Based Workload Distribution Workflow

Diagram: Load Balancing Method Classification

Troubleshooting Guide: Common GPU Performance Bottlenecks

This guide helps researchers identify and resolve common performance bottlenecks in GPU-accelerated drug discovery applications such as BINDSURF.

1. Problem: Low GPU Utilization During Molecular Dynamics Simulations

- Symptoms: Simulation runs slower than expected; GPU usage percentage is low (e.g., below 50%); high CPU usage instead.

- Possible Causes:

- Kernel Launch Overhead: Frequent launches of small kernels cause significant overhead [22].

- Serial Bottlenecks on CPU: The CPU is busy with serial tasks (e.g., file I/O, preparing data for the next step), leaving the GPU idle [22].

- Incorrect Workload Distribution: The computational grid and block structure in CUDA is not optimally configured for the problem size [23] [24].

- Solutions:

- Use CUDA Graphs to group multiple kernel launches together, reducing launch overhead and improving execution efficiency [22].

- Employ GPU Throughput Optimization: Schedule multiple independent simulations on the same GPU to keep it busy and mask serial bottlenecks on the host CPU [22].

- Optimize CUDA grid and block dimensions based on your GPU's compute capability and the specific workload [24].
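
The arithmetic behind grid and block configuration can be sketched on the host side. This is a minimal illustration, assuming one thread per data item and block sizes that are multiples of the 32-thread warp; the function names and the default block sizes are hypothetical choices, and the best values for a given kernel should be confirmed by profiling.

```python
def launch_dims_1d(n_items, threads_per_block=256):
    """Blocks needed so that blocks * threads_per_block >= n_items."""
    if threads_per_block % 32 != 0:
        raise ValueError("block size should be a multiple of the 32-thread warp")
    blocks = (n_items + threads_per_block - 1) // threads_per_block
    return blocks, threads_per_block

def launch_dims_2d(width, height, block=(16, 16)):
    """Grid of 2D blocks covering a width x height domain."""
    bx, by = block
    grid = ((width + bx - 1) // bx, (height + by - 1) // by)
    return grid, block

def idle_threads(n_items, blocks, threads_per_block):
    """Threads launched beyond n_items; the kernel must skip these with a bounds check."""
    return blocks * threads_per_block - n_items

blocks, threads = launch_dims_1d(1000)
print(blocks, threads, idle_threads(1000, blocks, threads))
print(launch_dims_2d(1000, 1000))
```

Rounding up guarantees coverage of the whole problem, at the cost of a few surplus threads in the last block.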

2. Problem: Slow Data Transfer Between CPU and GPU

- Symptoms: Significant pauses in simulation; high latency; low overall throughput.

- Possible Causes:

- Frequent Data Transfers: The application copies small chunks of data between host and device too often [22].

- PCIe Bus Contention: Other devices are competing for bandwidth on the PCIe bus.

- Solutions:

- Use Mapped (Zero-Copy) Memory to allow direct access between host and device, eliminating explicit data transfer delays [22].

- Batch data transfers into larger, less frequent operations to minimize overhead.

3. Problem: Inefficient Load Balancing in Heterogeneous Clusters

- Symptoms: Some GPU nodes in a cluster finish tasks quickly and sit idle, while others are still processing; overall job completion time is long [25].

- Possible Causes:

- Static Work Assignment: Tasks are assigned to nodes statically and cannot be redistributed if some nodes are faster or have a lighter initial load [23].

- Lack of Dynamic Redistribution: The system lacks a dynamic load balancing strategy to handle the spatially heterogeneous and unpredictable computational load of CA-based models [23].

- Solutions:

- Implement a Dynamic Load Balancing (DLB) strategy that can redistribute workloads from slower or busier nodes to idle nodes during runtime [23].

- For large-scale, non-real-time jobs, consider volunteer computing paradigms (e.g., BOINC) to leverage underutilized GPU resources across a global network [26].

4. Problem: Memory Bottlenecks on the GPU

- Symptoms: Kernel execution stalls; low arithmetic intensity (low FLOPS); possible "out of memory" errors.

- Possible Causes:

- Solutions:

Frequently Asked Questions (FAQs)

Q1: Our BINDSURF simulations are taking too long. What is the first thing I should check? A1: First, profile your application using tools like NVIDIA Nsight Systems to measure GPU utilization. If utilization is low, investigate kernel launch overhead and CPU-side serial bottlenecks. Implementing CUDA Graphs and throughput optimization are high-impact first steps [22].

Q2: What are the trade-offs between dynamic and static load balancing for CA-based tumor growth simulations? A2: Static Load Balancing is simpler but can lead to significant idle time if the computational load is uneven across the simulation grid [23]. Dynamic Load Balancing improves resource utilization by redistributing work during runtime but introduces overhead from synchronization and communication, which can sometimes offset the performance gains [23]. The choice depends on the predictability and heterogeneity of your specific model.

Q3: How can we reduce the energy consumption of our GPU cluster running long-term drug discovery jobs? A3: Maximizing GPU utilization is key to energy efficiency [26] [27]. Techniques include:

- Dynamic Load Balancing: Ensures all GPUs are busy, preventing energy waste from idling [23] [27].

- Volunteer Computing: Using distributed, donated GPU resources can be more energy-efficient than maintaining a large, power-hungry local infrastructure [26].

- High Utilization Rates: A GPU operating at high utilization delivers more computation per watt consumed [27].

Q4: Can we use integrated GPUs (iGPUs) for applications like BINDSURF? A4: While possible, iGPUs are not recommended for compute-intensive tasks like virtual screening. A consumer-grade dedicated GPU can deliver 4 to 23 times the single-precision floating-point throughput of an integrated GPU from the same generation [25]. The limited memory bandwidth and VRAM capacity of iGPUs are major bottlenecks for large-scale biomolecular simulations.

Quantitative Performance Data

The following tables summarize key performance metrics and comparisons relevant to optimizing GPU-accelerated drug discovery applications.

Table 1: GPU vs. CPU Performance Comparison for HPC Workloads [25]

| Metric | High-End Server CPU | NVIDIA A100 GPU | Performance Gap |

|---|---|---|---|

| Number of Cores | ~192 cores | 6,912 CUDA cores | 36x more cores |

| Memory Bandwidth | Baseline (e.g., ~100-200 GB/s) | Up to 2 TB/s (54x CPU) | Up to 54x higher bandwidth |

| Typical Speedup | Baseline | 55x to over 100x | For highly parallelizable workloads (e.g., deep learning, scientific simulation) |

Table 2: Impact of Optimization Techniques on Performance [23] [22]

| Optimization Technique | Application Context | Reported Performance Gain |

|---|---|---|

| Dynamic Load Balancing | GPU-accelerated Tumor Growth Simulation (1024x1024 grid) | Up to 54% reduction in execution time [23] |

| CUDA Graphs & Coroutines | Molecular Dynamics (Schrödinger's FEP+/Desmond) | Up to 2.02x speedup in key workloads [22] |

Experimental Protocols

Protocol 1: Implementing a Dynamic Load Balancing Strategy for a Cellular Automata Model

This methodology is derived from a GPU-accelerated tumor growth simulation [23].

- Initial Domain Decomposition: Divide the computational domain (e.g., a 2D grid) into subregions and assign each to a thread block on the GPU.

- Independent State Update Rule: Design the CA update rule so that each cell's next state can be calculated using only its current state and that of its neighbors. This eliminates the need for mutual exclusion (locks) and allows fully parallel execution [23].

- Workload Monitoring: During simulation, monitor the computational load of each thread block. In a tumor model, load can be inferred from the density of active (e.g., proliferating) cells.

- Work Redistribution: If a significant load imbalance is detected, redistribute the cell processing workload among GPU threads to ensure all multiprocessors are utilized efficiently. The goal is to avoid costly synchronization and maximize concurrency [23].

- Performance Profiling: Compare execution time against a static load balancing implementation to quantify the improvement.
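
The workload monitoring step can be sketched as a simple imbalance metric. The code below is an illustrative host-side version, not the method of [23]: it treats any non-zero cell as active, counts active cells per square tile (standing in for a thread block's subregion), and reports the ratio of the busiest tile to the mean. The tile size, the metric, and the function names are assumptions.

```python
def active_counts(grid, tile):
    """Count active (non-zero) cells in each tile x tile subregion."""
    rows, cols = len(grid), len(grid[0])
    counts = {}
    for r0 in range(0, rows, tile):
        for c0 in range(0, cols, tile):
            counts[(r0 // tile, c0 // tile)] = sum(
                1
                for r in range(r0, min(r0 + tile, rows))
                for c in range(c0, min(c0 + tile, cols))
                if grid[r][c] != 0
            )
    return counts

def imbalance(counts):
    """Busiest tile load divided by mean tile load (1.0 means balanced)."""
    loads = list(counts.values())
    mean = sum(loads) / len(loads)
    return max(loads) / mean if mean > 0 else 1.0

# Hypothetical 4x4 grid with all active cells in the top-left tile.
grid = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
counts = active_counts(grid, tile=2)
print(counts, imbalance(counts))
```

A redistribution step would be triggered only when this ratio exceeds a chosen threshold, so that the cost of rebalancing is paid only when the imbalance is significant.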

Protocol 2: BINDSURF's Virtual Screening Workflow

This protocol outlines the core steps of the BINDSURF methodology for blind virtual screening [24].

- Input Preparation: Read the main configuration file (bindsurf_conf.inp). This file defines parameters like the target protein, ligand database, and simulation settings.

- Grid Generation: Generate electrostatic (ES), Van der Waals (VDW), and hydrogen bond (HBOND) potential energy grids for the entire protein surface using the GEN_GRID function.

- Ligand Conformation Generation: Use GEN_CONF to precompute possible 3D conformations for each ligand in the database.

- Protein Surface Spot Definition: Use GEN_SPOTS to divide the protein surface into numerous independent regions (spots) for screening.

- GPU-Accelerated Surface Screening: For each ligand conformation, perform the following on the GPU using SURF_SCREEN:

- Calculate the initial system configuration.

- Perform a Monte Carlo energy minimization simulation in all surface spots simultaneously.

- The scoring function (ES, VDW, HBOND) is evaluated using the precomputed grids for speed.

- Result Analysis: Process the results to identify new protein hotspots by examining the distribution of scoring function values across the entire protein surface.
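
The combination of precomputed grids and Monte Carlo minimization can be illustrated with a toy example. This sketch is not BINDSURF code: a single scalar `toy_energy` stands in for the ES, VDW, and HBOND terms, the "ligand" is a point that moves between integer grid positions, lookups use the nearest grid point, and the starting positions play the role of surface spots. All names and parameters are hypothetical. On the GPU the spots would be processed simultaneously; here they are looped over sequentially.

```python
import math
import random

def build_grid(n, energy_fn):
    """Precompute energy_fn at every integer grid point of an n^3 box."""
    return [[[energy_fn(x, y, z) for z in range(n)]
             for y in range(n)] for x in range(n)]

def mc_minimize(grid, start, steps=2000, temperature=1.0, seed=0):
    """Metropolis search over grid points; returns the best point seen."""
    rng = random.Random(seed)
    n = len(grid)
    pos = start
    energy = grid[pos[0]][pos[1]][pos[2]]
    best_pos, best_energy = pos, energy
    for _ in range(steps):
        # Propose a one-step move along a random axis, clamped to the box.
        axis = rng.randrange(3)
        cand = list(pos)
        cand[axis] = min(n - 1, max(0, cand[axis] + rng.choice((-1, 1))))
        cand = tuple(cand)
        e_new = grid[cand[0]][cand[1]][cand[2]]  # grid lookup, no recomputation
        # Accept downhill moves always, uphill moves with Boltzmann probability.
        if e_new <= energy or rng.random() < math.exp((energy - e_new) / temperature):
            pos, energy = cand, e_new
            if energy < best_energy:
                best_pos, best_energy = pos, energy
    return best_pos, best_energy

def toy_energy(x, y, z):
    """Hypothetical scoring landscape with a single minimum at (5, 5, 5)."""
    return float((x - 5) ** 2 + (y - 5) ** 2 + (z - 5) ** 2)

grid = build_grid(11, toy_energy)
starts = [(0, 0, 0), (10, 10, 10), (0, 10, 5)]  # stand-ins for surface spots
results = [mc_minimize(grid, s, seed=i) for i, s in enumerate(starts)]
for s, (p, e) in zip(starts, results):
    print("start", s, "-> best", p, "energy", e)
```

Because the energy is read from the grid rather than recomputed, each Monte Carlo step costs a constant-time lookup, which is the property that makes the scoring function fast.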

Workflow and Strategy Diagrams

Diagram 1: BINDSURF Virtual Screening Workflow

Diagram 2: Dynamic Load Balancing Strategy

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Components for GPU-Accelerated Drug Discovery

| Item / Software | Function / Description | Relevance to Field |

|---|---|---|

| NVIDIA CUDA Toolkit | A parallel computing platform and programming model for leveraging NVIDIA GPUs for general-purpose processing. | The foundational environment for developing and running high-performance applications like BINDSURF [24]. |

| Precomputed Energy Grids | 3D grids storing pre-calculated electrostatic, Van der Waals, and hydrogen bond potentials for a target protein. | Drastically accelerates the scoring function calculation in molecular docking by replacing complex sums with fast grid lookups [24]. |

| Molecular Dynamics Engines (e.g., Desmond, GROMACS) | Software that simulates the physical movements of atoms and molecules over time. | Used for detailed study of drug-target interactions and free energy calculations, now optimized with GPUs [28] [22]. |

| BOINC (Berkeley Open Infrastructure for Network Computing) | Open-source middleware for volunteer computing, enabling projects to utilize idle processing power of personal computers worldwide. | Provides a scalable, cost-effective alternative to local HPC clusters for non-real-time bioinformatics applications [26]. |

| Monte Carlo Minimization Scheme | A stochastic algorithm that uses random sampling to find the global minimum of a function, such as a binding energy scoring function. | Core to the conformational search in BINDSURF's docking simulations; its computational intensity is well-suited to GPU parallelism [24]. |

Advanced Load Balancing Methodologies for GPU-Accelerated Bio-Simulations

The integration of the Whale Optimization Algorithm (WOA) and Double Deep Q-Networks (DDQN), exemplified by the WORL-RTGS (Whale Optimization Algorithm and Reinforcement Learning with Running Time Gap Strategy) scheduler, addresses the complex challenge of scheduling Directed Acyclic Graph (DAG)-structured machine learning workloads on heterogeneous GPU clusters. This hybrid approach is designed to solve NP-complete Nonlinear Integer Programming (NIP) problems inherent in this domain by leveraging the global search capabilities of WOA and the adaptive decision-making of DDQN [29].

The core innovation enabling this synergy is the established positive correlation between Scheduling Plan Distance (SPD) and Finish Time Gap (FTG). This relationship allows the algorithm to use FTG as a proxy for distance, transforming it into SPD to guide the WOA's search process effectively within complex DAG dependencies [29].

Experimental Protocol: Implementing WORL-RTGS

Environment Setup and Configuration

GPU Cluster Specifications: The experimental setup requires a heterogeneous GPU environment to properly evaluate the scheduler's adaptability. A combination of high-performance (e.g., NVIDIA A100) and mainstream (e.g., NVIDIA V100, RTX 3090) GPUs should be utilized to simulate real-world conditions [29].

Software Dependencies:

- PyTorch 2.5.1+ with CUDA 12.1 support

- OpenAI Gym for environment simulation

- NVIDIA CUDA Toolkit 12.1+

- Custom WORL-RTGS implementation [29]

Detailed Experimental Procedure

Phase 1: Workload Characterization

- Collect DAG-structured ML workload traces from real-world sources (e.g., Alibaba cluster data)

- Characterize workload patterns including task dependencies, computational requirements, and memory constraints

- Define performance metrics: makespan, resource utilization, scheduling overhead [29]

Phase 2: Algorithm Initialization

Phase 3: Training and Validation

- Train DDQN component using historical scheduling decisions

- Integrate WOA for global exploration of scheduling space

- Validate using k-fold cross-validation on workload traces

- Compare against baseline schedulers (Chronus, DRAS, MORL-WS) [29]

Table 1: Key Performance Metrics for WORL-RTGS Validation

| Metric | Definition | Target Value | Measurement Method |

|---|---|---|---|

| Makespan Improvement | Reduction in workflow completion time | Up to 66.56% [29] | Comparison against baselines |

| Resource Utilization | Percentage of GPU resources actively used | >85% | Cluster monitoring tools |

| Scheduling Overhead | Time to generate scheduling decisions | <100ms | Profiling during runtime |

| Solution Stability | Consistency of WOA performance | >90% of iterations | Statistical analysis of outputs |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What are the primary indicators that my WORL-RTGS implementation is suffering from premature convergence?

A1: The key indicators include:

- Consistently identical scheduling plans across multiple iterations

- Failure to improve makespan beyond initial quick gains

- Limited diversity in the WOA population's positions

- DDQN showing minimal exploration (epsilon stuck at low values)

Solution: Increase the exploration component by adjusting the WOA "a" parameter to decrease more slowly and raise the DDQN exploration rate. Also consider increasing population size [29].

Q2: How can I handle extremely large DAGs (1000+ tasks) without excessive memory consumption?

A2: Implement the following optimizations:

- Use incremental SPD calculation rather than storing full distance matrices

- Implement hierarchical DAG partitioning to break problems into sub-DAGs

- Reduce the DDQN replay buffer size and use prioritized experience replay

- Enable gradient checkpointing in the DDQN network [29]

Q3: What is the recommended approach for balancing the influence between WOA and DDQN components?

A3: The balance should be dynamically adjusted based on:

- Current iteration (early: favor WOA exploration, late: favor DDQN exploitation)

- Population diversity metrics

- Recent improvement trends

Implement an adaptive weighting mechanism that monitors performance and adjusts influence ratios accordingly [29].

Common Implementation Issues and Solutions

Table 2: Troubleshooting Common Implementation Problems

| Problem | Symptoms | Root Cause | Solution |

|---|---|---|---|

| Unstable Training | Oscillating makespan, divergent loss values | Learning rate too high, insufficient replay buffer sampling | Reduce DDQN learning rate to 0.0001, implement prioritized experience replay [29] |

| Poor WOA Diversity | Similar scheduling plans, trapped in local optima | Excessive exploitation bias in WOA parameters | Increase "C" coefficient variation, implement opposition-based learning [30] |

| Long Scheduling Times | Decision latency >500ms, unable to keep pace with workload arrival | Complex SPD-FTG calculations, large action space | Optimize distance computation, implement action space pruning, use neural network for SPD approximation [29] |

| GPU Memory Exhaustion | Out-of-memory errors during training, unable to process large DAGs | Large replay buffer, excessive network size, unoptimized tensor operations | Implement gradient checkpointing, reduce batch size, use memory-efficient attention mechanisms [31] |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Components and Their Functions

| Component | Function | Implementation Notes |

|---|---|---|

| SPD-FTG Correlation Module | Maps scheduling plan differences to performance gaps | Core innovation enabling WOA-DDQN communication [29] |

| Dynamic Opposition Learning | Enhances WOA exploration capability | Uses quasi-opposition and partial opposition strategies [30] |

| Hybrid Reward Function | Balances multiple objectives: makespan, utilization, load balance | Critical for guiding both WOA and DDQN learning [29] |

| Adaptive Parameter Control | Dynamically adjusts exploration-exploitation balance | Monitors population diversity and performance trends [29] [30] |

| Distributed Training Framework | Enables multi-GPU implementation for large-scale problems | Leverages DDP (Distributed Data Parallelism) [31] |

Advanced Configuration Parameters

Critical Algorithm Hyperparameters

WOA Component Tuning:

- Population size: 20-50 individuals (balance diversity vs. computation)

- Convergence parameter (a): Linear decrease from 2 to 0

- SPD-FTG correlation weight: 0.5-0.9 (higher for more complex DAGs)

- Opposition learning probability: 0.3-0.7 [30]

DDQN Component Tuning:

- Learning rate: 0.0001-0.001 (lower for stable training)

- Target network update frequency: 100-1000 steps

- Replay buffer size: 50,000-200,000 experiences

- Exploration decay: 1.0 to 0.01 over training [29]
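
The two schedules listed above can be written down directly. This is a minimal sketch: the linear decrease of the WOA parameter a from 2 to 0 follows the setting given here, while the exponential shape of the exploration decay is an assumption, since only the endpoints (1.0 and 0.01) are specified. The function names are hypothetical.

```python
def woa_a(iteration, max_iter):
    """Linear decrease of the WOA convergence parameter a from 2 to 0."""
    return 2.0 * (1.0 - iteration / max_iter)

def epsilon(step, total_steps, eps_start=1.0, eps_end=0.01):
    """Exponential decay from eps_start to eps_end (decay shape is assumed)."""
    frac = min(step / total_steps, 1.0)
    return eps_start * (eps_end / eps_start) ** frac

for it in (0, 50, 100):
    print("iteration", it, "a =", woa_a(it, 100))
for st in (0, 500, 1000):
    print("step", st, "epsilon =", epsilon(st, 1000))
```

Slowing the decrease of a, or stretching `total_steps`, keeps the search exploratory for longer, which is the adjustment suggested for premature convergence.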

Performance Optimization Guidelines

For optimal performance with ecological algorithms research workloads:

- Implement sequence parallelism for long-context learning tasks [31]

- Use mixed-precision training (BF16/FP16) on supported GPUs

- Enable FlashAttention for memory-efficient attention computation

- Implement gradient accumulation for larger effective batch sizes

- Utilize tensor parallelism for very large models [31]

Frequently Asked Questions

What is dynamic load balancing and why is it needed in tumor growth simulations? Traditional sequential simulations of tumor growth are computationally inefficient and fail to utilize the parallel processing power of modern multi-core CPUs and GPUs. Dynamic load balancing distributes the computational workload of simulating millions of cells across available processors and automatically adjusts this distribution during runtime. This prevents situations where some processors are idle while others are overloaded, leading to a significant reduction in execution time and improved scalability for large-scale, biologically realistic models [32] [23].

What are the common performance issues when running CA-based simulations on GPUs? Performance bottlenecks in Cellular Automata (CA) tumor simulations often stem from:

- Synchronization Overhead: Threads may need to wait for each other to update shared data, causing delays [23].

- Memory Bottlenecks: Inefficient memory access patterns can slow down computation [23].

- Load Imbalance: Without dynamic balancing, the computational load from simulating active tumor cells may be unevenly distributed across GPU cores [32] [23].

- Race Conditions: Concurrent threads attempting to write to the same memory location can lead to errors and require complex handling [23].

How can I verify that my simulation's results are biologically accurate? Validation is a multi-step process. Begin by comparing your simulation's output, such as the overall tumor growth curve and spatial morphology, to established in vitro or in vivo data. For agent-based models, ensure that emergent behaviors, like heterogeneous cell distribution and nutrient-driven growth patterns, align with known biology. Using high-quality, longitudinal patient data for calibration and benchmarking is crucial for improving predictive accuracy [33] [34].

What is the difference between MTD and Metronomic scheduling in therapy simulations? These are two distinct dosing regimens simulated in treatment models:

- Maximum Tolerated Dose (MTD): Involves administering high doses of chemotherapy with long rest periods to allow patient recovery. This can lead to high toxicity in normal tissues [35].

- Metronomic Scheduling: Involves frequent, low-dose administration of drugs. Computational models have shown that this approach can help normalize tumor vasculature, improve drug delivery to the tumor, and reduce accumulated toxicity in healthy tissues [35].

Troubleshooting Guides

Unexpectedly Slow Simulation Performance

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Inefficient Load Balancing | Profile code to identify processors with high idle time. Check if load balancing frequency is too high or too low [32]. | Implement a dynamic load balancing strategy that redistributes cells among threads based on computational load, adjusting the frequency of rebalancing to minimize overhead [32] [23]. |

| GPU Memory Bandwidth Saturation | Use profiling tools (e.g., NVIDIA Nsight) to analyze global memory access patterns. | Optimize memory usage by leveraging shared memory for intermediate cell state updates and structuring data access to be contiguous [23]. |

| High Synchronization Overhead | Check for excessive use of atomic operations or locks that cause threads to wait [23]. | Redesign the update algorithm to ensure each thread processes its own cell without requiring mutual exclusion, eliminating contention [23]. |

| Suboptimal GPU Utilization | Verify the configuration of the CUDA grid and thread blocks. | Ensure the computational domain is divided efficiently across GPU cores, with thread block sizes optimized for the specific GPU architecture [23]. |

GPU-Related Errors and Application Crashes

| Symptom | Possible Interpretation | Resolution Protocol |

|---|---|---|

| Application crashes or displays "GPU has fallen off the bus" (Xid 79) | This indicates a serious hardware or driver communication error [36]. | 1. Drain all active workloads from the node [36]. 2. Follow the process for reporting a GPU issue, collecting system configuration and logs [36]. 3. A GPU reset may be required [36]. |

| "Graphics Engine Exception" (Xid 13) | Often points to an issue in the application code running on the GPU [36]. | 1. Run diagnostics to rule out hardware failure [36]. 2. Debug the user application for potential memory access violations or other code errors [36]. |

| Display artifacts or no display output | Artifacting can signal a failing GPU, while no display often indicates connection or power issues [37]. | 1. Reseat the GPU and check power connections [37]. 2. Perform a clean reinstall of the GPU drivers [37]. 3. Test with a different monitor or cable to isolate the fault [37]. |

| Driver error (e.g., Code 43) | Windows has detected a problem with the hardware or drivers [37]. | 1. Perform a clean reinstall of the latest GPU drivers [37]. 2. If the error persists, the GPU may be damaged or defective and require replacement [37]. |

Handling Simulation Instability and Non-Reproducibility

| Problem Area | Checklist | Corrective Action |

|---|---|---|

| Model Initialization | Are initial conditions (e.g., number of stem cells, nutrient gradients) consistent across runs? | Implement a robust parameter initialization file and use a fixed random seed for stochastic elements to ensure reproducibility. |

| Stochasticity | Are random number generators (RNGs) used and parallelized correctly? | Use parallel-safe RNGs with independent streams for each computational thread to avoid correlations. |

| Numerical Solvers | Are appropriate ODE solvers and time-step sizes selected for your model's stiffness? | For Neural-ODE frameworks, ensure the numerical integrator is suitable for the problem. Adjust tolerances and step sizes to balance accuracy and performance [34]. |
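
For the stochasticity row above, independent and reproducible random streams can be created on the host with NumPy's `SeedSequence`. This is a minimal CPU-side sketch, assuming NumPy is available; the worker count, seed, and function name are hypothetical, and a GPU kernel would need a device-side generator instead.

```python
import numpy as np

def make_streams(seed, n_workers):
    """One independent generator per worker, all derived from a single seed."""
    root = np.random.SeedSequence(seed)
    return [np.random.default_rng(child) for child in root.spawn(n_workers)]

# Two runs with the same seed reproduce the same per-worker draws.
run_a = [rng.random(4) for rng in make_streams(seed=42, n_workers=3)]
run_b = [rng.random(4) for rng in make_streams(seed=42, n_workers=3)]

for worker, draws in enumerate(run_a):
    print("worker", worker, draws)
```

Recording the root seed alongside the simulation parameters is enough to regenerate every worker's stream later.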

Experimental Protocols & Workflows

Protocol 1: Implementing a Dynamic Load Balancing Strategy for a CA Tumor Model

This protocol outlines the methodology for parallelizing a cellular automaton tumor growth simulation, based on strategies that have demonstrated up to 54% reduction in execution time [23].

1. Objective

To design and implement a GPU-accelerated tumor growth simulation using a dynamic load balancing strategy to optimize computational efficiency and scalability.

2. Materials and Reagent Solutions

| Item | Function/Specification |

|---|---|

| Compute Node | CPU: Multi-core processor (e.g., Intel Xeon, AMD EPYC). GPU: NVIDIA architecture (e.g., Ampere, Hopper) with CUDA support [23]. |

| Programming Model | CUDA C/C++ for kernel development [23]. |

| Memory | GPU Global Memory: Sufficient for the cell grid state (e.g., 4+ GB for 1024x1024 grids). GPU Shared Memory: For caching cell states within a thread block [23]. |

| Simulation Framework | Custom CA model incorporating probabilistic rules for cell proliferation, migration, and death [32] [23]. |

3. Methodology

Step 1: Computational Domain Decomposition

- Represent the tumor tissue as a discrete 2D grid.

- Divide the grid into rectangular sub-regions, with each region assigned to a thread block on the GPU.

- Assign individual cells within a sub-region to parallel threads for processing [23].

Step 2: Define Cell Behavioral Rules

- Program the following stochastic rules for each cell based on its state and neighborhood (e.g., Moore neighborhood):

- Define distinct rules for tumor stem cells (immortal, unlimited proliferation) and tumor daughter cells (limited proliferation, non-zero death probability) [23].

Step 3: Implement Dynamic Load Balancing

- Key Principle: Structure the algorithm so each thread updates its assigned cell's next state independently, avoiding the need for atomic operations or locks that cause synchronization overhead [23].

- Use a dual-state buffer (current and next) to allow all threads to read from the consistent current state while writing updates independently.

- After each iteration, a lightweight balancing step can assess the active cell count per region and subtly adjust sub-region boundaries if a significant imbalance is detected.
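
The dual-state buffer can be sketched in plain Python. This is an illustration of the principle only: the deterministic growth rule, the grid size, and the function names are hypothetical stand-ins for the stochastic rules of Step 2, and the nested loops take the place of the one-thread-per-cell kernel. Every cell reads only from `current` and writes only to its own entry in the new grid, so no locks or atomic operations are needed.

```python
def step(current, rule):
    """Compute the next grid from `current` without modifying it."""
    rows, cols = len(current), len(current[0])
    nxt = [[0] * cols for _ in range(rows)]
    for r in range(rows):          # on a GPU, each (r, c) pair is one thread
        for c in range(cols):
            # Sum over the Moore neighborhood, clipped at the grid edges.
            neighbors = sum(
                current[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
                if (rr, cc) != (r, c)
            )
            nxt[r][c] = rule(current[r][c], neighbors)
    return nxt

def growth_rule(state, neighbors):
    """Toy rule: occupied cells persist; empty cells fill if any neighbor is occupied."""
    return 1 if state == 1 or neighbors >= 1 else 0

current = [[0] * 5 for _ in range(5)]
current[2][2] = 1                   # single seed cell in the center
nxt = step(current, growth_rule)
for row in nxt:
    print(row)
# The two buffers swap roles for the following iteration.
current, nxt = nxt, current
```

Swapping references rather than copying data keeps the per-iteration overhead of the second buffer small.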

Step 4: Memory Optimization

- Utilize shared memory within thread blocks to cache the cell states of the current sub-region and its halo (boundary cells from neighboring regions). This drastically reduces access to the slower global memory [23].

Step 5: Execution and Profiling

- Launch the CUDA kernel and use profiling tools like NVIDIA Nsight Systems to identify any remaining bottlenecks in kernel execution, memory access, or load distribution.

The following workflow diagram illustrates the parallel computation process.

Protocol 2: Simulating Metronomic Therapy Using a Hybrid Multiscale Model

This protocol describes how to build a 3D multiscale model to simulate and compare different chemotherapeutic treatment schedules [35].

1. Objective

To simulate tumor growth and angiogenesis and use the model to evaluate the efficacy of metronomic therapy versus maximum tolerated dose (MTD) scheduling, both alone and in combination with anti-angiogenic drugs.

2. Materials and Reagent Solutions

| Item | Function/Specification |

|---|---|

| Model Domain | A 10x10x8 mm region of virtual tissue [35]. |

| Computational Framework | Hybrid continuous-discrete model: Agent-based for cells, Continuum PDEs for diffusible factors [35]. |

| Simulated Factors | Oxygen, Glucose, VEGF, ECM, MMPs, Angiopoietins, Cytotoxic drug, Anti-angiogenic drug [35]. |

| Simulated Cells & Vessels | Cancer cells (proliferation, migration), Blood vessels (angiogenic sprouting from a 'mother vessel') [35]. |

3. Methodology

Step 1: Model Initialization

- Set up the 3D computational domain.

- Seed a small population of cancer cells at the center.

- Define an idealized circular "mother vessel" surrounding the tumor at the mid-plane to serve as the source for angiogenic sprouts [35].

Step 2: Simulate Avascular Growth and Angiogenesis

- Allow the tumor to grow initially without a blood supply, consuming ambient nutrients.

- As the tumor expands, hypoxic cells will secrete VEGF, triggering the angiogenic process from the mother vessel [35].

- Simulate the migration of endothelial tip cells and the formation of new capillary sprouts towards the VEGF gradient.

Step 3: Apply Therapeutic Interventions

- Once a vascularized tumor is established, apply one of four treatment regimens in separate simulations:

  - MTD: High-dose cytotoxic drug with long rest periods.

  - Metronomic (M): Frequent, low-dose cytotoxic drug.

  - MTD + Anti-angiogenic: The MTD schedule co-administered with the anti-angiogenic drug.

  - M + Anti-angiogenic: The metronomic schedule co-administered with the anti-angiogenic drug [35].

- Model the pharmacokinetics/pharmacodynamics of the drugs, including their effect on killing cancer cells (cytotoxic) and normalizing vessel structure (anti-angiogenic).
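To make the difference between the two cytotoxic schedules concrete, here is a minimal sketch that tracks drug concentration under a one-compartment model with first-order elimination. The model form, the one-day half-life, the dose sizes, and the dosing intervals are all illustrative assumptions; the cited study uses its own pharmacokinetic description.

```python
import math


def simulate_concentration(dose, interval_days, total_days, half_life_days=1.0):
    """Daily drug concentration (arbitrary units) for a repeated bolus dose
    with first-order elimination."""
    decay_per_day = math.exp(-math.log(2) / half_life_days)
    concentration, trace = 0.0, []
    for day in range(total_days):
        concentration *= decay_per_day      # elimination since the previous day
        if day % interval_days == 0:
            concentration += dose           # bolus administration
        trace.append(concentration)
    return trace


# Hypothetical regimens delivering the same cumulative dose over 42 days.
mtd = simulate_concentration(dose=210.0, interval_days=21, total_days=42)
metronomic = simulate_concentration(dose=10.0, interval_days=1, total_days=42)

print(f"MTD peak: {max(mtd):.1f}, trough: {min(mtd):.4f}")
print(f"Metronomic peak: {max(metronomic):.1f}, trough: {min(metronomic):.1f}")
```

In this toy setting the MTD schedule produces high peaks followed by long drug-free intervals, while the metronomic schedule holds a lower, sustained level. A full model would feed these concentrations into the cell-kill and vessel-normalization terms.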

Step 4: Analyze Output Metrics

- Compare the simulation results across regimens by measuring:

  - Tumor Killing: Final tumor cell count.

  - Vascular Normalization: Changes in vessel permeability, density, and interstitial fluid pressure (IFP).

  - Normal Tissue Toxicity: Estimated drug accumulation in healthy tissue.

  - Cancer Cell Invasiveness [35].

The diagram below outlines the key components and interactions in this multiscale model.

The Scientist's Toolkit: Essential Computational Research Reagents

| Tool/Solution | Function in Research |

|---|---|

| Cellular Automata (CA) Framework | A discrete model that uses local rules to simulate individual cell behavior (proliferation, migration, death), capturing emergent tumor morphology and heterogeneity [32] [23]. |

| Hybrid Multiscale Model | Combines agent-based modeling of cells with continuum models of diffusible factors (oxygen, drugs) to simulate complex interactions between tumors and their microenvironment in 3D [35]. |

| Neural-ODE (TDNODE) | A deep learning framework that combines neural networks with ordinary differential equations to discover dynamical laws from longitudinal tumor size data and generate predictive kinetic parameters [34]. |

| CUDA & GPU Acceleration | A parallel computing platform and programming model for NVIDIA GPUs that enables massive parallelism, drastically speeding up computationally intensive tasks like CA updates and ODE solving [23]. |

| Dynamic Load Balancing (DLB) | A runtime strategy that redistributes computational workload among processors to maximize resource utilization and minimize simulation time in spatially heterogeneous models [32] [23]. |

| Anti-angiogenic Agent (in-silico) | A simulated drug that targets tumor blood vessels. In models, it can "normalize" vasculature, reducing permeability and improving drug delivery, unlike high doses that cause vessel pruning [35]. |

FAQs: Core Concepts and Troubleshooting

Q1: What is the fundamental principle behind the CB-HRV scheduling strategy? A1: The CB-HRV (Coefficient of Balance - History Ratio Value) strategy is a dynamic GPU task scheduling algorithm designed to reduce energy consumption. Its core principle is to minimize task migration between the GPU's Streaming Multiprocessors (SMs) by achieving a balanced task assignment. It combines two factors: the task balance impact factor (CB), which is derived from task characteristics, and the SM historical utilization value (HRV), which reflects the past workload of each SM. By assigning tasks to SMs based on these factors, it reduces the energy lost to imbalanced workloads and frequent task migrations [38].
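The sketch below shows one way a scheduler could combine a per-task weight with a per-SM history value at placement time. It is not the published CB-HRV formula: the additive score, the `hrv_weight` parameter, and the heaviest-first ordering are assumptions chosen only to illustrate the idea of balanced initial placement.

```python
def assign_tasks(task_cb, sm_hrv, hrv_weight=1.0):
    """Greedy placement: each task goes to the SM with the lowest combined
    score of currently assigned load and historical utilization.

    task_cb -- hypothetical balance-impact weight per task
    sm_hrv  -- hypothetical historical utilization value per SM
    """
    loads = [0.0] * len(sm_hrv)
    placement = []
    # Place the heaviest tasks first so lighter ones can fill the gaps.
    for task_id in sorted(range(len(task_cb)), key=lambda t: -task_cb[t]):
        sm = min(range(len(sm_hrv)),
                 key=lambda s: loads[s] + hrv_weight * sm_hrv[s])
        loads[sm] += task_cb[task_id]
        placement.append((task_id, sm))
    return placement, loads


placement, loads = assign_tasks(task_cb=[4, 3, 2, 1], sm_hrv=[0.0, 0.0])
print(placement, loads)
```

Because every task is placed once on an SM that is expected to stay lightly loaded, fewer tasks should need to move later, which is the effect the strategy relies on for its energy savings.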

Q2: During our experiments, we are not observing the expected reduction in energy consumption. What could be the cause? A2: Several implementation or configuration issues could be responsible:

- Incorrect CB Factor Calculation: Verify that the task balance impact factor (CB) is accurately capturing the resource demands and migration characteristics of your specific tasks. An inaccurate calculation will lead to poor scheduling decisions [38].

- Uncalibrated HRV Metric: Ensure the History Ratio Value (HRV) correctly reflects the long-term utilization trend of each SM. If the HRV is too sensitive to short-term fluctuations, it may not effectively guide tasks away from overloaded SMs [38].

- Ignored Pre-Measurement Guidelines: GPU power consumption is sensitive to the system's state. Adherence to pre-measurement guidelines is crucial for consistent results. This includes ensuring no other high-power applications are running on the GPU and that the GPU is in a stable thermal state before testing [39].
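One common way to keep a history metric from overreacting to short spikes is an exponential moving average. The sketch below assumes that form for illustration; the source does not specify how HRV is computed, and the `alpha` values are hypothetical.

```python
def update_hrv(previous_hrv, current_utilization, alpha=0.1):
    """Blend the newest utilization sample into the running history value.
    A small alpha favors the long-term trend; a large alpha tracks spikes."""
    return (1 - alpha) * previous_hrv + alpha * current_utilization


def hrv_trace(samples, alpha, initial=0.5):
    """Apply update_hrv over a sequence of utilization samples."""
    hrv, trace = initial, []
    for sample in samples:
        hrv = update_hrv(hrv, sample, alpha)
        trace.append(hrv)
    return trace


# Hypothetical SM utilization with a single one-sample spike to 100%.
samples = [0.5] * 5 + [1.0] + [0.5] * 5
print(max(hrv_trace(samples, alpha=0.1)))  # barely moves
print(max(hrv_trace(samples, alpha=0.9)))  # follows the spike closely
```

If the HRV in your implementation behaves like the second trace, tasks may be steered away from an SM that was only briefly busy.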

Q3: How does task migration lead to increased energy consumption in a GPU? A3: When a task is moved from one SM to another, the process involves overhead operations such as saving and loading context, transferring intermediate data, and potentially stalling other tasks. These operations consume additional computational cycles and memory bandwidth without performing useful work, leading to a direct increase in the GPU's power draw and a loss of overall power efficiency [38].

Q4: Our simulation results show high variance when comparing the CB-HRV strategy to other methods. How can we improve the reliability of our measurements? A4: High variance often stems from uncontrolled environmental or methodological factors. To improve reliability:

- Standardize the Measurement Environment: Conduct experiments in a controlled, air-conditioned lab space to minimize the impact of ambient temperature on GPU power and performance [39].

- Control for Position and Time: Perform your benchmark comparisons at the same time of day and with the GPU in a consistent physical orientation to reduce variability [39].

- Ensure Sufficient Data: Use long-enough measurement periods and multiple experimental runs to average out short-term fluctuations and obtain statistically significant results [38].

Experimental Protocols & Data Presentation

Protocol: Empirical Validation of CB-HRV Performance

This protocol outlines the methodology for comparing the CB-HRV scheduler against other common scheduling algorithms, as described in the foundational research [38].

1. Objective: To validate the feasibility and effectiveness of the CB-HRV method by comparing its energy consumption and execution efficiency against three existing scheduling methods: RAD, DFB, and PHB.

2. Experimental Setup:

- Hardware: A simulated GPU with a multi-SM (Streaming Multiprocessor) architecture. The SMs are the core computing components and account for approximately 40% of the GPU's total power [38].

- Software: A task scheduling simulation framework that can implement the CB-HRV, RAD, DFB, and PHB algorithms.

3. Procedure:

- Step 1: Generate a set of benchmark tasks with varying computational loads and resource requirements.

- Step 2: For each scheduling algorithm (CB-HRV, RAD, DFB, PHB), execute the same set of benchmark tasks on the simulated GPU environment.

- Step 3: During execution, collect key performance metrics, including:

  - Total energy consumed by the GPU.

  - Task execution efficiency (e.g., makespan, throughput).

  - Number of task migrations between SMs.

- Step 4: Repeat the experiment multiple times to ensure statistical significance.

4. Data Analysis:

- Compare the average energy consumption across all scheduling strategies.

- Analyze the relationship between the reduction in task migrations and the achieved energy savings for the CB-HRV method.

- Perform a statistical test (e.g., t-test) to check whether the performance improvements of CB-HRV are statistically significant.
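As a sketch of the final analysis step, the code below computes Welch's t statistic and its approximate degrees of freedom using only the Python standard library. The energy readings are made-up placeholders, not results from [38].

```python
import math
from statistics import mean, variance


def welch_t(sample_a, sample_b):
    """Welch's unequal-variance t statistic and the Welch-Satterthwaite
    degrees of freedom for two independent samples."""
    var_a, var_b = variance(sample_a), variance(sample_b)
    n_a, n_b = len(sample_a), len(sample_b)
    se_sq = var_a / n_a + var_b / n_b
    t_stat = (mean(sample_a) - mean(sample_b)) / math.sqrt(se_sq)
    dof = se_sq ** 2 / ((var_a / n_a) ** 2 / (n_a - 1)
                        + (var_b / n_b) ** 2 / (n_b - 1))
    return t_stat, dof


# Hypothetical energy per run (joules) for two schedulers, five runs each.
energy_cb_hrv = [10, 12, 11, 13, 14]
energy_baseline = [15, 17, 16, 18, 19]

t_stat, dof = welch_t(energy_cb_hrv, energy_baseline)
print(f"t = {t_stat:.2f}, degrees of freedom = {dof:.1f}")
```

Converting the statistic to a p-value requires the t distribution. If SciPy is available, `scipy.stats.ttest_ind(a, b, equal_var=False)` performs the same test and returns the p-value directly.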

Quantitative Performance Data

The following table summarizes the typical comparative results from an empirical evaluation of the CB-HRV strategy against other schedulers [38].

Table 1: Comparative Performance of GPU Task Scheduling Algorithms

| Algorithm | Primary Focus | Key Metric: Energy Consumption | Key Metric: Task Migration | Key Metric: SM Utilization Balance |

|---|---|---|---|---|

| CB-HRV (Proposed) | Task Balance & History | Lowest | Significantly Reduced | High |

| RAD | Dynamic Resource Allocation | Higher than CB-HRV | High | Low |

| DFB | Data-Flow Based Scheduling | Higher than CB-HRV | Moderate | Moderate |

| PHB | Partitioned Harmonic Scheduling | Higher than CB-HRV | High | Low |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for GPU Load-Balancing Research

| Item / Concept | Function in the Research Context |

|---|---|

| Streaming Multiprocessor (SM) | The core computational unit of the GPU. The scheduling algorithm's goal is to distribute tasks evenly across these SMs to maximize efficiency [38]. |

| Task Balance Impact Factor (CB) | A quantitative value abstracted from task characteristics. It is used by the scheduler to predict a task's resource needs and its potential to cause imbalance, guiding its initial placement [38]. |

| History Ratio Value (HRV) | A value representing the historical utilization of an SM. It helps the scheduler identify which SMs are consistently overloaded or under-utilized, informing future task assignments [38]. |

| Task Migration | The process of moving a task from one SM to another. This is a key source of energy overhead that the CB-HRV strategy aims to minimize [38]. |

| Energy Consumption Model | A mathematical model that relates SM activity, task migration events, and other factors to the total power drawn by the GPU. It is used to quantify the performance of different scheduling strategies [38]. |

Strategy Visualization

The diagram below illustrates the logical workflow and decision-making process of the CB-HRV task scheduling strategy.

Performance Profiling and Core Computational Phases of BLASTN

What are the primary computational bottlenecks in BLASTN that load balancing addresses?

Performance profiling reveals that BLASTN execution time is dominated by a small number of critical functions. Through systematic profiling with the Unix time command, built-in profiler modules, and gprof, researchers found that a single function, RunMTBySplitDB, consumes 99.12% of the total runtime [40] [41]. Within this function, five core child functions account for 92.12% of the overall BLASTN execution time [40] [42]. This concentration of computational demand makes these functions prime targets for optimization through parallelization and load balancing.

Table: BLASTN Runtime Distribution by Function

| Function Name | Runtime Percentage | Description |

|---|---|---|

| RunMTBySplitDB | 99.12% | Main driver function for multi-threaded processing |

| Core Child Function 1 | ~38% | Key alignment computation |

| Core Child Function 2 | ~25% | Sequence comparison operations |

| Core Child Function 3 | ~15% | Database scanning |

| Core Child Function 4 | ~9% | Results scoring |

| Core Child Function 5 | ~5% | Output generation |

The computational intensity of BLASTN stems from its core algorithm, which processes millions of biological sequences by identifying short words common between query and database sequences (seeding), extending these seeds to find longer common subsequences (extension), and evaluating the statistical significance of matches [43]. For nucleotide alignment using BLASTN, this process becomes computationally challenging due to the exponential growth of molecular databases [44].

Load Balancing Implementation Strategies for HPC Environments

What load balancing strategies effectively distribute BLASTN workloads across high-performance computing clusters?

The "dual segmentation" method represents one effective approach, where both the database and query are partitioned into subsets [40] [41]. If the database is divided into m pieces and the query into n pieces, then m × n unique database-query pairs are processed in parallel across the computing cluster [40]. This method has demonstrated remarkable performance improvements, reducing runtime from 27 days to less than one day on a homogeneous HPC cluster with 500+ nodes [40].
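The pairing logic can be sketched in a few lines. The helper names and the toy inputs below are illustrative assumptions; a real pipeline would split FASTA files and formatted BLAST databases rather than Python lists.

```python
from itertools import product


def split_evenly(items, parts):
    """Split a list into `parts` contiguous chunks whose sizes differ by at most one."""
    size, extra = divmod(len(items), parts)
    chunks, start = [], 0
    for p in range(parts):
        end = start + size + (1 if p < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def dual_segmentation(database, queries, m, n):
    """Return the m x n (database chunk, query chunk) sub-jobs."""
    return list(product(split_evenly(database, m), split_evenly(queries, n)))


# Toy stand-ins for database sequences and query sequences.
jobs = dual_segmentation(list(range(10)), list("abcdef"), m=3, n=2)
print(len(jobs))   # number of independent sub-jobs
print(jobs[0])     # first (database chunk, query chunk) pair
```

Each pair is independent of the others, so the sub-jobs can be dispatched to cluster nodes in any order and their hits merged afterwards.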

More sophisticated approaches utilize performance modeling to guide data partitioning. The execution time for each sub-job on node type k can be represented as e_{i,j} = T_k(D_i, Q_j), where T_k is the estimated runtime on node type k for a sub-job of size (D_i, Q_j) [40] [41]. The optimal load balancing configuration minimizes the cost function max_{i,j} {e_{i,j}}, ensuring that no single node disproportionately delays the overall job completion [40].
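The min-max criterion can be illustrated by scoring candidate partitions and keeping the one with the smallest worst-case sub-job time. The runtime model below, a product of database and query size divided by a node speed factor, is a stand-in chosen for simplicity; the fitted models in the cited work are not reproduced here, and all sizes and speeds are hypothetical.

```python
def estimated_runtime(db_size, query_size, speed, overhead=0.0, rate=1.0):
    """Hypothetical T_k(D_i, Q_j): work grows with db_size * query_size and
    is divided by the relative speed of the node type running it."""
    return (overhead + rate * db_size * query_size) / speed


def makespan(sub_jobs):
    """Cost function max_{i,j} e_{i,j} over (db_size, query_size, speed) sub-jobs."""
    return max(estimated_runtime(d, q, s) for d, q, s in sub_jobs)


def best_partition(candidates):
    """Name of the candidate partition with the smallest makespan."""
    return min(candidates, key=lambda name: makespan(candidates[name]))


# Two nodes with relative speeds 1.0 and 3.0 sharing a database of size 100.
candidates = {
    "even": [(50, 1, 1.0), (50, 1, 3.0)],
    "proportional": [(25, 1, 1.0), (75, 1, 3.0)],
}
for name, sub_jobs in candidates.items():
    print(name, makespan(sub_jobs))
print("selected:", best_partition(candidates))
```

An even split leaves the fast node idle while the slow one finishes. Sizing each piece to the node's speed equalizes the finish times, which is what minimizing the maximum sub-job time achieves.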

Table: Load Balancing Performance Improvements

| Strategy | Cluster Type | Performance Improvement | Key Innovation |

|---|---|---|---|

| Dual Segmentation | Homogeneous (500+ nodes) | More than 27× faster (27 days → under 1 day) | Database and query partitioning |

| Performance Model-Guided | Homogeneous | 81% runtime reduction | Quadratic performance models |