Optimizing Data Access Patterns for GPU-Accelerated Ecological and Biomedical Codes: A 2025 Guide for Researchers

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on optimizing data access patterns for computational ecology and biomedical codes running on GPUs. It covers foundational principles of GPU memory hierarchy, methodological approaches like efficient data batching and leveraging GPU-accelerated libraries, troubleshooting for common bottlenecks like thread divergence and memory oversubscription, and validation strategies using real-world case studies from drug discovery. The goal is to equip practitioners with the knowledge to significantly reduce computational runtime and cost, thereby accelerating critical research pipelines.

GPU Computing Fundamentals: Why Data Access is the Key to Performance

Technical Support Center

Troubleshooting Guides

Guide 1: Resolving Low GPU Utilization in Drug Discovery Workloads

Problem: Your GPU utilization is consistently low (e.g., below 50%) during model training or molecular dynamics simulations, indicating the GPU is idle and not performing computations efficiently.

Primary Symptoms:

- Low percentage values in `nvidia-smi` output.

- Training times are significantly longer than expected.

- The CPU usage is high while GPU usage remains low.

Diagnosis Steps:

- Identify the Bottleneck: Use profiling tools to determine where the pipeline is stalling.

- Conduct a Simple Timing Test:

- Measure the time per training step with your normal data loading.

- Measure it again using synthetic data generated directly in GPU memory.

- A significant speedup with synthetic data confirms an I/O or Data Preprocessing Bottleneck [1].
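
The timing test above can be sketched in plain Python. This is only an illustration under stated assumptions: `time_per_step`, `slow_loader`, `synthetic_loader`, and `train_step` are hypothetical names, and the `time.sleep` calls stand in for real data loading and a real training step. In an actual GPU workload the step function would need to wait for the device to finish before the clock is read.

```python
import time

def time_per_step(batches, step_fn, n_steps):
    """Average wall-clock seconds per step over the first n_steps batches."""
    start = time.perf_counter()
    done = 0
    for batch in batches:
        step_fn(batch)
        done += 1
        if done == n_steps:
            break
    return (time.perf_counter() - start) / done

def slow_loader(n, load_seconds):
    """Hypothetical stand-in for a pipeline with costly I/O or preprocessing."""
    for i in range(n):
        time.sleep(load_seconds)
        yield i

def synthetic_loader(n):
    """Stand-in for synthetic data that needs no loading work."""
    for i in range(n):
        yield i

def train_step(batch):
    time.sleep(0.001)  # placeholder for the real compute

real = time_per_step(slow_loader(20, 0.005), train_step, 20)
synthetic = time_per_step(synthetic_loader(20), train_step, 20)
speedup = real / synthetic
print(f"real: {real * 1e3:.2f} ms/step, synthetic: {synthetic * 1e3:.2f} ms/step, "
      f"speedup with synthetic data: {speedup:.1f}x")
```

A speedup well above 1 in this comparison points at the input pipeline rather than the compute step.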

Solutions:

| Bottleneck Type | Solution | Implementation Example |

|---|---|---|

| Data Loading & I/O | Implement parallel data loading and prefetching. | In PyTorch, increase num_workers in DataLoader. In TensorFlow, use tf.data with parallel interleaving and .prefetch() [1]. |

| CPU Preprocessing | Offload preprocessing to the GPU. | Use libraries like NVIDIA DALI for image decoding, augmentation, and other transforms directly on the GPU [1]. |

| Memory Transfer | Use "pinned" (page-locked) memory for faster CPU-to-GPU transfers [1]. | In PyTorch, set `pin_memory=True` in `DataLoader`. |

| Small Batch Size | Increase the batch size to more fully utilize GPU cores, taking care to stay within memory limits. | |

Guide 2: Fixing GPU Memory Errors in Large-Scale Molecular Simulations

Problem: Your job fails with "out-of-memory" (OOM) errors, especially when working with large molecular structures, complex models, or large batch sizes.

Diagnosis Steps:

- Monitor memory usage using `nvidia-smi` or `gpudash` [2] to track the peak memory consumption.

- Use PyTorch's `torch.cuda.memory_summary()` or the TensorFlow profiler to analyze memory allocation.

Solutions:

- Reduce Batch Size: The most straightforward action to lower memory consumption.

- Use Mixed Precision Training: Utilize FP16 or BF16 precision instead of FP32. This roughly halves the memory needed for tensors stored in the lower precision and accelerates computation on modern GPUs with Tensor Cores [1]. This can be implemented with PyTorch's `torch.cuda.amp` or TensorFlow's mixed precision API [1].

- Implement Gradient Checkpointing: Also known as activation recomputation. This trades compute for memory by recalculating activations during the backward pass instead of storing them all [1].

- Apply Model Parallelism: Split a large model across multiple GPUs. For example, different layers of a neural network can reside on different GPUs [1].

Frequently Asked Questions (FAQs)

Q1: Our team is new to GPU computing. What is the most important conceptual shift we need to understand when moving from CPU to GPU? The fundamental shift is from sequential to massively parallel processing. A CPU has a few powerful cores optimized for executing a single thread of work quickly. A GPU has thousands of smaller, efficient cores designed to execute many threads concurrently [3] [4]. Successful GPU programming requires refactoring problems so that thousands of threads apply the same operation to different elements of the data simultaneously (data parallelism) [5].

Q2: When we submit a job to a cluster, how can we check if our GPU is being used effectively? You can use command-line tools for real-time monitoring.

- Find the node your job is running on using `squeue --me` [2].

- SSH to that node: `ssh della-iXXgYY` [2].

- Run `watch -n 1 nvidia-smi` [2]. This will update every second, showing you:

  - GPU Utilization: A percentage indicating how busy the GPU cores are.

  - GPU Memory Usage: How much of the GPU's dedicated memory is being used.

For a more detailed summary of a completed job, use the `jobstats <JobID>` command [2].

Q3: What are the most common performance pitfalls in multi-GPU training for generative AI in drug discovery? The most common pitfall is communication bottlenecks [1]. When multiple GPUs are training a model, they must synchronize their gradients regularly. If the network connection between GPUs (e.g., over InfiniBand or Ethernet) is slow or congested, the GPUs will spend much of their time waiting instead of computing.

- Solutions: Use techniques like gradient accumulation to synchronize less frequently, or employ gradient compression to reduce the amount of data that needs to be sent [1]. Ensuring your cluster has high-speed interconnects like NVIDIA NVLink or InfiniBand is also critical [1].

Q4: We are using a large dataset of molecular structures. How can we speed up our data loading? The key is to ensure the GPU is never waiting for data. This is achieved by:

- Parallel Data Loading: Use multiple worker processes to load and preprocess data in parallel [1].

- Data Prefetching: Overlap data loading with computation by pre-loading the next batch of data while the GPU is processing the current one [1].

- Local Caching: For datasets used repeatedly, cache them on fast local NVMe SSDs to avoid slow reads from remote storage systems [1].
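
Prefetching can be illustrated with the Python standard library alone. The sketch below is not how any particular framework implements it. `prefetch` and `load_batches` are hypothetical names, and a background thread with a bounded queue stands in for the worker processes a real data loader would use.

```python
import queue
import threading

def prefetch(batches, depth=2):
    """Yield items from `batches` while a background thread loads ahead."""
    buffer = queue.Queue(maxsize=depth)
    sentinel = object()

    def producer():
        for batch in batches:
            buffer.put(batch)      # blocks while the buffer is full
        buffer.put(sentinel)       # signal that the source is exhausted

    threading.Thread(target=producer, daemon=True).start()
    while True:
        batch = buffer.get()
        if batch is sentinel:
            return
        yield batch

def load_batches(n):
    """Hypothetical loader; a real one would read and preprocess files."""
    for i in range(n):
        yield [i] * 4

consumed = [batch[0] for batch in prefetch(load_batches(5))]
print(consumed)
```

The `depth` parameter bounds how many batches are held in memory at once. The sketch does not propagate exceptions raised inside the loader, which a production pipeline would need to handle.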

Protocol: Diagnosing a GPU Bottleneck

Objective: To systematically identify the primary bottleneck in a GPU-accelerated research workflow.

Methodology:

- Baseline Measurement: Run your standard training or simulation script for a fixed number of steps (e.g., 100). Record the average time per step and the average GPU utilization (from `nvidia-smi`).

- Eliminate the I/O Variable: Modify your script to use a synthetic dataset, generated on-the-fly directly in GPU memory. Run for the same number of steps and record the time and utilization.

- Analyze the Difference:

  - If the time per step decreases dramatically and GPU utilization increases with synthetic data, your bottleneck is in the data loading and preprocessing pipeline.

  - If the time per step stays about the same and utilization remains low, the bottleneck is likely in the computational kernel itself or in memory transfers. Profiling with Nsight Systems is the required next step [1].

Quantitative Data: GPU Specifications for Research Computing

The table below summarizes key specifications of modern GPUs used in high-performance research environments, such as those for drug discovery [2] [6].

| GPU Model | Architecture | FP64 Performance (TFLOPS) | Memory per GPU (GB) | Key Feature for Research |

|---|---|---|---|---|

| NVIDIA V100 | Volta | 7.0 [2] | 16 or 32 [2] | First generation with Tensor Cores [6] |

| NVIDIA A100 | Ampere | 9.7 [2] | 40 or 80 [2] | Improved Tensor Cores, more memory [6] |

| NVIDIA H100 | Hopper | 34 [2] | 80 or 94 [2] [6] | Advanced Tensor Cores for transformative AI performance [7] [8] |

| AMD MI210 | CDNA 2 | 11.5 [2] | 64 [2] | Competitive alternative for FP64 performance |

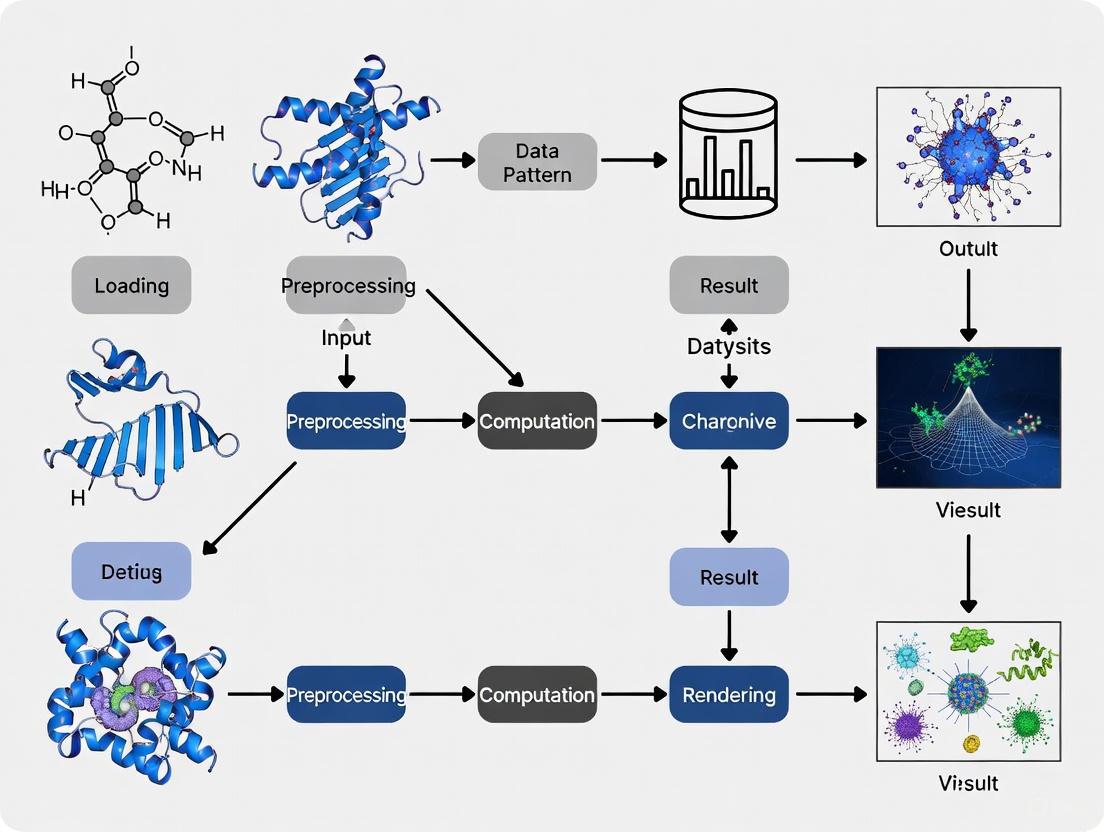

Workflow Visualization

Diagram 1: GPU Bottleneck Identification Workflow

Diagram 2: Optimized GPU Data Pipeline

The Scientist's Toolkit: Key GPU Research Reagents

| Item | Function in Research |

|---|---|

| NVIDIA CUDA Toolkit | The core software development environment for creating GPU-accelerated applications. It includes compilers, libraries, and debugging tools [3]. |

| NVIDIA cuDNN | A highly tuned library for deep learning primitives, accelerating standard routines used in neural networks. Essential for frameworks like PyTorch and TensorFlow [6]. |

| NVIDIA BioNeMo | A comprehensive platform for developing and deploying AI models in biology and chemistry. Includes pre-trained models for tasks like protein structure prediction and molecular generation [7] [8]. |

| NVIDIA Nsight Tools | A suite of performance profiling tools (Nsight Systems, Nsight Compute) that provides deep insights into GPU kernel performance, memory usage, and pipeline bottlenecks [1]. |

| NVIDIA DALI | (Data Loading Library) A library for data loading and preprocessing to accelerate deep learning applications. It executes augmentation pipelines on the GPU, alleviating CPU bottlenecks [1]. |

| Slurm Workload Manager | The job scheduler used on many high-performance computing (HPC) clusters to manage and submit GPU jobs (e.g., sbatch --gpus-per-node=1) [6]. |

The memory hierarchy in a GPU is a critical architectural feature designed to balance the competing needs of high bandwidth, low latency, and large capacity for parallel computing workloads. For researchers working on data-intensive ecology codes, understanding this hierarchy is the first step toward optimizing data access patterns and achieving significant performance improvements. GPU memory is structured in multiple tiers, each with distinct characteristics in terms of speed, size, and scope of access [9] [10].

This guide provides a practical framework for understanding and troubleshooting memory usage within the context of GPU-accelerated ecological research. By focusing on the three most crucial memory types—registers, shared memory, and global memory—you will learn to diagnose performance bottlenecks and apply targeted optimizations to your scientific code.

Register File

- Function and Scope: Registers are the fastest and most private memory in the GPU hierarchy. They are allocated to individual threads and used to store local variables, pointers, and intermediate results for immediate computation [9] [11]. Each thread can only access its own private set of registers.

- Performance Characteristics: Register access has the highest bandwidth and lowest latency, with operations occurring at the full speed of the CUDA core. Their proximity to the compute units makes them ideal for holding frequently used data [11].

- Key Management Consideration: The number of registers available per thread is limited. If a kernel's requirements exceed this limit, the compiler will spill excess data to the much slower local memory (which resides in global memory), causing a significant performance penalty [9] [10].

Shared Memory

- Function and Scope: Shared memory is a software-managed cache that is shared by all threads within the same thread block [9]. It enables threads within a block to communicate and collaborate efficiently by sharing data.

- Performance Characteristics: It offers high bandwidth and low latency, comparable to the L1 cache [11]. Its effective use is paramount for algorithms where data reuse is possible within a thread block.

- Key Management Consideration: Unlike a hardware-managed cache, its management is explicit. The programmer must manually place data into shared memory and use synchronization primitives like `__syncthreads()` to coordinate access among threads and prevent race conditions [12].

Global Memory

- Function and Scope: Global memory (or VRAM) is the largest memory pool on the GPU, typically ranging from several to tens of gigabytes. It is accessible by all threads across all blocks and persists for the lifetime of the application [9] [13].

- Performance Characteristics: It is the slowest major memory type due to its high latency and location off the Streaming Multiprocessor (SM) chip [9] [11]. However, its vast size is necessary for holding input datasets and output results.

- Key Management Consideration: Performance is highly dependent on access patterns. Coalesced accesses, where consecutive threads in a warp access consecutive memory locations, are crucial for achieving high effective bandwidth [12].

Table 1: Quantitative Comparison of Key GPU Memory Types

| Feature | Register File | Shared Memory | Global Memory |

|---|---|---|---|

| Scope | Per-thread | Per thread block | All threads (entire grid) |

| Lifetime | Thread lifetime | Thread block lifetime | Application lifetime |

| Size | ~256 KB/SM [13] | 128-256 KB/SM [13] | GBs (e.g., 40-96 GB/GPU [13]) |

| Speed | Fastest | Very Fast | Slow (high latency) |

| Management | Compiler | Programmer | Programmer |

| Primary Use | Local variables, intermediates | Inter-thread communication, data reuse | Large datasets, input/output |

Troubleshooting Common Memory Issues

This section addresses specific problems researchers may encounter during experiments.

Problem 1: Kernel Runs Slower Than Expected

- Possible Cause: The kernel is memory-bound, spending most of its time waiting for data from global memory rather than computing [14].

- Diagnosis:

- Calculate your kernel's Arithmetic Intensity (AI): AI = Total FLOPs / Total Bytes Accessed (from Global Memory) [14].

- Compare it to your GPU's ops:byte ratio (e.g., ~13 for an A100 [11]). If AI is lower, the kernel is memory-bound.

- Use Nsight Compute (or the legacy `nvprof` on GPUs that still support it) to profile global memory throughput and cache hit rates [12].

- Solution:

- Restructure your algorithm to reuse data. Use shared memory tiling to load blocks of data that can be used multiple times, reducing global memory traffic [12].

- Ensure global memory accesses are coalesced so that threads in a warp read contiguous blocks of memory.

Problem 2: "Out of Memory" Error During Kernel Launch

- Possible Cause: The GPU's global memory is insufficient for the requested allocation [12].

- Diagnosis:

- Use `cudaMemGetInfo(&freeMem, &totalMem)` in your host code to check available memory before allocation [12].

- Check for memory leaks by ensuring all `cudaMalloc` calls are paired with `cudaFree`.

- Solution:

- Process data in smaller batches or chunks.

- Use unified memory as a fallback, but be aware of potential performance overheads from data migration [12].

- Optimize data types (e.g., use `fp16` instead of `fp32` where precision allows).
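
Batching and data-type choices can be combined in a small planning calculation. The sketch below is illustrative: `plan_chunks` and its `safety` margin are hypothetical, and the free-memory figure would in practice come from a query such as `cudaMemGetInfo`.

```python
import math

def plan_chunks(n_items, bytes_per_item, free_bytes, safety=0.8):
    """Split n_items into chunks that fit in a fraction of free device memory."""
    budget = int(free_bytes * safety)
    per_chunk = budget // bytes_per_item
    if per_chunk < 1:
        raise ValueError("a single item does not fit in the memory budget")
    n_chunks = math.ceil(n_items / per_chunk)
    return per_chunk, n_chunks

# Example: 10 million records of 1,000 values each, with 8 GiB reported free.
free_bytes = 8 * 1024**3
for name, value_bytes in [("fp32", 4), ("fp16", 2)]:
    per_chunk, n_chunks = plan_chunks(10_000_000, 1000 * value_bytes, free_bytes)
    print(f"{name}: {per_chunk} records per chunk, {n_chunks} chunks")
```

Halving the element size doubles the number of records per chunk, which halves the number of host-to-device round trips in this example.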

Problem 3: Incorrect Results from Shared Memory

- Possible Cause: Race conditions or incorrect synchronization between threads in a block.

- Diagnosis:

- Carefully review all points where threads write to shared memory.

- Check if a thread reads data that another thread is supposed to write.

- Solution:

- Use `__syncthreads()` as a barrier to ensure all threads have finished writing to shared memory before any thread begins reading from it [12].

- Implement a clear data ownership and communication pattern within the thread block.

Problem 4: Low GPU Utilization and Occupancy

- Possible Cause: Register usage per thread is too high, limiting the number of active warps on an SM [3].

- Diagnosis:

- Use the NVIDIA Nsight Compute profiler to examine register usage and occupancy.

- Look for compiler warnings about register spillage to local memory.

- Solution:

- Restructure the kernel to use fewer temporary variables, or launch it with a smaller number of threads per block.

- Use the `__launch_bounds__` qualifier to guide the compiler on register usage.
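
The link between register usage and occupancy can be estimated with simple arithmetic. The sketch below is a deliberately simplified model, not a replacement for the profiler. `register_limited_occupancy` is a hypothetical helper, and the defaults (65,536 32-bit registers and 64 resident warps per SM) are assumptions that match an A100-class GPU and should be replaced with your device's limits.

```python
def register_limited_occupancy(regs_per_thread, threads_per_block,
                               regs_per_sm=65536, max_warps_per_sm=64,
                               warp_size=32):
    """Estimate occupancy when registers are the only limiting resource.

    Simplified model: ignores register allocation granularity, shared memory
    limits, and the per-SM block limit, all of which real hardware applies.
    """
    warps_per_block = -(-threads_per_block // warp_size)  # ceiling division
    regs_per_block = regs_per_thread * warp_size * warps_per_block
    blocks = min(regs_per_sm // regs_per_block,
                 max_warps_per_sm // warps_per_block)
    active_warps = blocks * warps_per_block
    return active_warps / max_warps_per_sm

for regs in (32, 64, 128, 255):
    occ = register_limited_occupancy(regs, threads_per_block=256)
    print(f"{regs:3d} registers/thread -> estimated occupancy {occ:.0%}")
```

In this model, each doubling of registers per thread halves the number of warps that can be resident on the SM at the same time.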

Frequently Asked Questions (FAQs)

Q1: How do I choose between using shared memory and relying on the L1 cache?

Use shared memory when you know the exact data access pattern and can explicitly manage data reuse within a thread block (e.g., tiling in matrix multiplication). Rely on the L1 cache for less predictable, read-only access patterns. Shared memory is a guaranteed on-chip resource, while cache behavior is hardware-controlled [10].

Q2: What is a "bank conflict" in shared memory and how do I avoid it?

Shared memory is divided into banks. A bank conflict occurs when multiple threads in the same warp access different addresses within the same bank, serializing the accesses. To avoid this, structure your memory accesses so that threads in a warp access different banks (e.g., via padding or modifying the access pattern) [15].
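
The effect of padding can be checked with a small model. This sketch assumes 32 banks that are each 4 bytes wide, which is the common configuration but should be confirmed for your GPU. `conflict_degree` and `column_addresses` are hypothetical helper names.

```python
from collections import defaultdict

NUM_BANKS = 32   # assumption: 32 banks, each 4 bytes wide
WORD_BYTES = 4

def conflict_degree(byte_addresses):
    """Worst-case number of distinct words one bank must serve for a warp."""
    words_per_bank = defaultdict(set)
    for addr in byte_addresses:
        word = addr // WORD_BYTES
        words_per_bank[word % NUM_BANKS].add(word)
    return max(len(words) for words in words_per_bank.values())

def column_addresses(row_width, column=0, lanes=32):
    """Addresses touched when lane i reads tile[i][column] of a float tile."""
    return [(lane * row_width + column) * WORD_BYTES for lane in range(lanes)]

unpadded = conflict_degree(column_addresses(row_width=32))  # tile[32][32]
padded = conflict_degree(column_addresses(row_width=33))    # tile[32][33]
print(unpadded, padded)
```

A column read from a 32-wide float tile maps every lane to the same bank. Adding one padding element per row shifts each row by one bank, so the 32 lanes land on 32 different banks.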

Q3: My ecology model has many conditional branches. How does this affect performance?

GPUs excel at running many threads in parallel. When threads within a single warp take different execution paths (branch divergence), the warp serially executes each path, disabling threads that are not on the current path. This can significantly reduce performance. Try to restructure algorithms to minimize warp-level branch divergence [3].
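
A toy cost model makes the divergence penalty concrete. The cycle counts below are invented for illustration and `warp_cost` is a hypothetical helper. The model captures only that a warp executes every path taken by at least one of its threads.

```python
def warp_cost(takes_branch, cost_if, cost_else):
    """Cycles for one warp under a simple model: each path that any lane
    takes is executed once, with the other lanes masked off."""
    cost = 0
    if any(takes_branch):
        cost += cost_if
    if not all(takes_branch):
        cost += cost_else
    return cost

WARP = 32
uniform = [True] * WARP                              # every lane takes the same path
divergent = [lane % 2 == 0 for lane in range(WARP)]  # lanes alternate paths

uniform_cost = warp_cost(uniform, cost_if=100, cost_else=80)
divergent_cost = warp_cost(divergent, cost_if=100, cost_else=80)
print(uniform_cost, divergent_cost)
```

One common mitigation is to group work items by state before launch (for example, sorting individuals in an agent-based model by the branch they will take) so that most warps are uniform.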

Q4: What is the "roofline model" and how can it help my optimization efforts?

The roofline model is a visual performance model that plots attainable performance (FLOPS) against arithmetic intensity. It shows the two fundamental performance limits of a GPU: the memory bandwidth roof (for low-AI kernels) and the compute roof (for high-AI kernels). It helps you identify what type of bottleneck your kernel has and how much headroom for improvement exists [11] [14].

Experimental Protocols for Memory Optimization

Protocol 1: Assessing Arithmetic Intensity and Performance Regime

Objective: Determine if your kernel is memory-bound or compute-bound [14].

- Instrument Your Kernel: Modify your kernel or use profiling tools to estimate:

  - `FLOPS`: The total number of floating-point operations performed.

  - `Bytes_Accessed`: The total volume of data read from and written to global memory.

- Calculate Arithmetic Intensity (AI): `AI = FLOPS / Bytes_Accessed`.

- Plot on Roofline Model: Compare your kernel's AI to your GPU's ridge point (e.g., ~13 FLOPs/byte for A100). Kernels with AI below this point are memory-bound; those above are compute-bound [11].

- Analysis: This diagnosis directs your optimization strategy. If memory-bound, focus on improving data reuse and access patterns. If compute-bound, focus on improving instruction throughput.
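
The protocol can be worked through for a simple kernel. The sketch below assumes A100-like figures (19.5 TFLOPS FP32 peak and 1.555 TB/s memory bandwidth), so substitute the numbers for your own GPU. The helper names are hypothetical.

```python
def arithmetic_intensity(flops, bytes_accessed):
    """FLOPs performed per byte moved to or from global memory."""
    return flops / bytes_accessed

def attainable_tflops(ai, peak_tflops, bandwidth_tb_s):
    """Roofline bound: the lower of the compute roof and the memory roof."""
    return min(peak_tflops, ai * bandwidth_tb_s)

# Assumed A100-like figures: 19.5 TFLOPS FP32 peak, 1.555 TB/s bandwidth.
PEAK, BW = 19.5, 1.555
ridge = PEAK / BW  # about 12.5 FLOPs/byte

# Element-wise scaling, y[i] = a * x[i], on n floats:
# 1 FLOP per element; 4 bytes read + 4 bytes written per element.
n = 1_000_000
ai = arithmetic_intensity(flops=n, bytes_accessed=8 * n)
regime = "memory-bound" if ai < ridge else "compute-bound"
attainable = attainable_tflops(ai, PEAK, BW)
print(f"AI = {ai:.3f} FLOPs/byte, ridge = {ridge:.1f}, {regime}")
print(f"attainable: {attainable:.3f} TFLOPS")
```

An element-wise kernel like this sits far to the left of the ridge point, so only changes that reduce memory traffic or increase reuse can raise its ceiling.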

Protocol 2: Implementing and Profiling Shared Memory Tiling

Objective: Optimize a matrix multiplication kernel by reducing global memory traffic [12].

- Establish Baseline: Implement a naive matrix multiplication kernel that reads directly from global memory. Profile its performance using Nsight Systems.

- Design the Tile: Define a 2D tile size (`TILE_DIM x TILE_DIM`) that fits within your GPU's shared memory capacity per block.

- Implement Tiled Kernel:

  - Declare a shared memory array for each input tile, for example `__shared__ float tile_A[TILE_DIM][TILE_DIM];`

  - Have cooperating threads within a block load a contiguous sub-matrix (tile) of `A` and `B` from global into shared memory.

  - Use `__syncthreads()` after loading to ensure all tiles are available.

  - Perform the partial matrix multiplication using data from shared memory.

  - Call `__syncthreads()` again before loading the next tiles, so that no thread overwrites data another thread is still reading.

  - Accumulate the result in a thread-local register before writing the final value back to global memory.

- Profile and Compare: Run the optimized kernel and compare performance metrics (e.g., execution time, global memory throughput) against the baseline.
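
The traffic reduction that tiling is meant to deliver can be estimated before any CUDA code is written. The sketch below is a host-side Python model, not a GPU kernel. It counts simulated global-memory loads for a naive and a tiled multiplication, assumes the matrix size is a multiple of the tile size, and uses hypothetical function names.

```python
def matmul_naive(A, B, n):
    """Every multiply-add reads both operands from 'global memory'."""
    loads = 0
    C = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            acc = 0.0
            for k in range(n):
                acc += A[i][k] * B[k][j]
                loads += 2
            C[i][j] = acc
    return C, loads

def matmul_tiled(A, B, n, tile):
    """Each block stages one tile of A and B, then reuses it `tile` times."""
    loads = 0
    C = [[0.0] * n for _ in range(n)]
    for bi in range(0, n, tile):          # one (bi, bj) pair per thread block
        for bj in range(0, n, tile):
            for bk in range(0, n, tile):
                # Cooperative load into 'shared memory'.
                tA = [[A[bi + r][bk + c] for c in range(tile)] for r in range(tile)]
                tB = [[B[bk + r][bj + c] for c in range(tile)] for r in range(tile)]
                loads += 2 * tile * tile
                for r in range(tile):
                    for c in range(tile):
                        C[bi + r][bj + c] += sum(tA[r][k] * tB[k][c]
                                                 for k in range(tile))
    return C, loads

n, tile = 8, 4
A = [[float(i + j) for j in range(n)] for i in range(n)]
B = [[float(i - j) for j in range(n)] for i in range(n)]
C_naive, naive_loads = matmul_naive(A, B, n)
C_tiled, tiled_loads = matmul_tiled(A, B, n, tile)
print(naive_loads, tiled_loads, naive_loads // tiled_loads)
```

In this model the number of global loads falls by a factor equal to the tile dimension, which is the reuse each staged element gets from shared memory.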

Essential Diagrams for GPU Memory Hierarchy

Diagram 1: Simplified GPU Memory Hierarchy. Data flows from slow, large off-chip memory to fast, small on-chip memory.

The Scientist's Toolkit: Key Research Reagents

Table 2: Essential Software and Profiling Tools for GPU Code Optimization

| Tool / "Reagent" | Function / Purpose | Use Case in Ecology Code Research |

|---|---|---|

| NVIDIA Nsight Systems | System-wide performance profiler | Identify the most time-consuming (hotspot) kernels in your simulation for prioritization. |

| NVIDIA Nsight Compute | Detailed kernel profiler | Dive deep into a specific kernel to analyze memory bandwidth, occupancy, and stall reasons. |

| `nvcc` Compiler | NVIDIA CUDA C++ compiler | Compile code and use flags like `-maxrregcount` to control register usage and investigate spilling. |

| CUDA-MEMCHECK (`cuda-memcheck`) | Memory error checking tool | Detect memory access violations (e.g., out-of-bounds errors) in your kernel code. Recent CUDA Toolkits replace it with `compute-sanitizer`. |

| Arithmetic Intensity Analyzer | (Custom Scripting) | Calculate the AI of your kernels to apply the roofline model and determine the optimization regime. |

How Data Access Patterns Directly Impact Computational Throughput

In GPU-accelerated research, particularly in ecology codes and drug development, computational throughput is not just about raw processing power. Data access patterns—the order and manner in which your GPU threads request data from memory—are a primary determinant of performance. An inefficient pattern can leave powerful Streaming Multiprocessors (SMs) idle, waiting for data, thereby crippling overall throughput. Understanding and optimizing these patterns is essential for accelerating simulations and data analysis. This guide provides troubleshooting and methodologies to identify and resolve these critical bottlenecks.

Frequently Asked Questions

Q1: My GPU utilization is high, but the computation is slow. Why?

You are likely experiencing a memory bottleneck. High GPU utilization only indicates that the GPU is busy, not that it is working efficiently [16]. The issue is often non-coalesced memory access, where threads within a warp (a group of 32 threads) read data from scattered, unpredictable memory locations instead of consecutive ones [17]. This forces the memory subsystem to fetch more data than needed, drastically reducing the effective memory bandwidth and causing the SMs to stall, waiting for data [17] [16].

Q2: What is the difference between "coalesced" and "strided" access?

- Coalesced Access: Consecutive threads in a warp access consecutive memory locations. This is the optimal pattern, as it bundles all memory requests from a warp into a minimal number of transactions (e.g., as few as four 32-byte transactions for 4-byte elements) [17].

- Strided Access: Threads access memory locations with a large stride (e.g., every 128 bytes). This is highly inefficient, as each thread's access may trigger a separate memory transaction, fetching a full 32-byte sector while utilizing only a small portion of it [17].
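
The transaction counts above can be reproduced with a short calculation. This sketch assumes a 32-thread warp and 32-byte sectors, as described in the answer. `sectors_per_warp` is a hypothetical helper that counts the distinct sectors a single warp-wide load touches.

```python
SECTOR_BYTES = 32
WARP_SIZE = 32

def sectors_per_warp(stride_elems, elem_bytes=4, base=0):
    """Distinct 32-byte sectors touched when lane i reads element i * stride."""
    sectors = set()
    for lane in range(WARP_SIZE):
        first = base + lane * stride_elems * elem_bytes
        last = first + elem_bytes - 1
        sectors.update(range(first // SECTOR_BYTES, last // SECTOR_BYTES + 1))
    return len(sectors)

for stride in (1, 2, 8, 32):
    n = sectors_per_warp(stride)
    useful = WARP_SIZE * 4 / (n * SECTOR_BYTES)
    print(f"stride {stride:2d}: {n:2d} sectors, {useful:.0%} of fetched bytes used")
```

With unit stride, the 128 bytes requested by the warp fit in four sectors. Once the stride reaches eight 4-byte elements, every lane lands in its own sector and only 4 of each 32 fetched bytes are used.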

Q3: How do data access patterns affect different vector search methods in data analysis?

Memory access patterns fundamentally differentiate search algorithms [18].

- Flat Indexes: Use a sequential, contiguous access pattern. This is cache-friendly and allows for high throughput, especially when combined with SIMD parallelism, but latency grows linearly with dataset size [18].

- Graph-Based Indexes (e.g., HNSW): Require random access to traverse pointers between nodes scattered in memory. This causes frequent cache misses and higher latency per query, though they can examine fewer total data points [18].

Q4: What tools can I use to diagnose poor data access patterns?

- NVIDIA Nsight Compute: This is the primary tool for profiling kernels on NVIDIA GPUs. Use its `MemoryWorkloadAnalysis_Tables` section to get detailed metrics on memory transactions. It can directly flag uncoalesced accesses and show how many bytes are utilized per transaction [17].

- Intel Advisor: Its Memory Access Patterns (MAP) analysis can identify if your code is bound by non-unit strides (constant stride) or a lack of locality, and it can recommend specific code transformations [19].

Troubleshooting Guides

Problem: Suspected Non-Coalesced Memory Access

Symptoms:

- High `dram__sectors_read.sum` but low `dram__bytes_read.sum.per_second` in profiler metrics [17].

- The profiler reports a low ratio of bytes utilized to bytes fetched (e.g., only 4 out of 32 bytes used per sector) [17].

- Low SM Activity despite high GPU Utilization [16].

Diagnostic Protocol:

- Profile with Nsight Compute: Run a detailed profile of your kernel, focusing on memory metrics.

- Analyze DRAM Metrics: Check the `dram__sectors_read.sum` metric. Compare it between an efficient and inefficient kernel; a significant increase points to a poor access pattern [17].

- Identify the Source: The profiler output will point to the specific kernel and, if using a tool like Intel Advisor's MAP, even the specific source code line and variable causing the inefficient access [19].

Resolution Strategies:

- Restructure Your Kernel: Ensure that consecutive threads access consecutive elements. The thread with index `threadIdx.x` should access `data[threadIdx.x]`, not `data[threadIdx.x * large_stride]` [17].

- Data Layout Transformation: Change an Array-of-Structures (AoS) to a Structure-of-Arrays (SoA). This can transform a strided access pattern into a contiguous one [19].

  - AoS (Inefficient): `struct Particle { float x, y, z; } particles[N];`

  - SoA (Efficient): `struct Data { float x[N], y[N], z[N]; } data;`
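
The difference between the two layouts shows up in the byte offsets a warp touches when every thread reads only the `x` field. The sketch below models that case in Python, assuming 4-byte floats, 32-byte sectors, and no padding inside the structure. All helper names are hypothetical.

```python
FLOAT_BYTES = 4
SECTOR_BYTES = 32

def aos_offset(i, field, n_fields=3):
    """Byte offset of field `field` of particle i in an array of structures."""
    return (i * n_fields + field) * FLOAT_BYTES

def soa_offset(i, field, n):
    """Byte offset of the same value when each field is its own array."""
    return (field * n + i) * FLOAT_BYTES

def sectors(offsets):
    """Number of distinct 32-byte sectors covering the given offsets."""
    return len({off // SECTOR_BYTES for off in offsets})

def aos_to_soa(particles):
    """Convert [(x, y, z), ...] into {'x': [...], 'y': [...], 'z': [...]}."""
    xs, ys, zs = zip(*particles)
    return {"x": list(xs), "y": list(ys), "z": list(zs)}

N = 1024
warp = range(32)  # lanes 0..31 each read the x field of particle `lane`
aos_sectors = sectors(aos_offset(i, 0) for i in warp)
soa_sectors = sectors(soa_offset(i, 0, N) for i in warp)
print(aos_sectors, soa_sectors)
print(aos_to_soa([(1.0, 2.0, 3.0), (4.0, 5.0, 6.0)]))
```

The gap narrows when a kernel reads all three fields of each particle, because the extra bytes fetched for the AoS layout may then be served from cache by the following loads.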

Problem: Low GPU Throughput Due to Memory Latency

Symptoms: The GPU is not reaching its peak computational throughput, and profiling shows high memory latency.

Diagnostic Protocol:

- Check SM Activity: Use your profiler to monitor Streaming Multiprocessor (SM) activity. High utilization but low activity suggests the SMs are stalled [16].

- Analyze Locality: Use a profiler to determine the memory footprint and reuse distance of your data arrays. A large footprint with little reuse indicates poor temporal locality, leading to constant cache misses [19].

Resolution Strategies:

- Increase Arithmetic Intensity: Restructure your algorithm to perform more operations per byte of data fetched from memory.

- Use Tiling/Blocking: Break down the dataset into smaller tiles (blocks) that fit into the GPU's shared memory or L1 cache. This promotes data reuse and reduces trips to global memory [20].

- Leverage Shared Memory: Manually cache frequently used data in shared memory, which has much lower latency than global memory.

Experimental Protocols & Quantitative Data

Protocol 1: Quantifying the Impact of Coalescing with NVIDIA Nsight Compute

This experiment measures the direct performance difference between coalesced and uncoalesced memory access.

1. Hypothesis: A coalesced memory access pattern will result in fewer DRAM sectors read, higher memory bandwidth, and faster kernel execution compared to an uncoalesced pattern.

2. Experimental Setup:

- Code: Implement two kernels that process a large array (`float* input, float* output`).

  - Coalesced Kernel: `output[tid] = input[tid] * 2.0f;`

  - Uncoalesced Kernel: `int scattered_index = (tid * 32) % n; output[tid] = input[scattered_index] * 2.0f;` [17]

- Tools: NVIDIA Nsight Compute command-line interface (CLI).

- Metrics: `dram__sectors_read.sum`, `dram__bytes_read.sum.per_second`, kernel duration.

3. Procedure:

   1. Compile the code for a specific NVIDIA GPU architecture.

   2. Profile the coalesced kernel with the Nsight Compute CLI, collecting the metrics listed above.

   3. Record the results.

   4. Profile the uncoalesced kernel using the same settings.

   5. Record the results.

4. Data Analysis and Interpretation: The results should show the penalty of uncoalesced access. Expect a large increase in the number of DRAM sectors read for the same amount of useful data, leading to a much lower effective bandwidth and longer runtime.

Table 1: Sample Results from Memory Coalescing Experiment

| Kernel Type | DRAM Sectors Read | Bandwidth (GB/s) | Estimated Speedup |

|---|---|---|---|

| Coalesced | ~8.3 million | ~160 | Baseline |

| Uncoalesced | ~67.1 million | ~290 (but inefficient) | 83% improvement possible with optimization [17] |

Protocol 2: Analyzing Data Locality with Reuse Distance Histograms

This methodology helps you understand the cache behavior of your application and decide on data placement.

1. Objective: To characterize the data locality of a kernel by building a reuse distance histogram for its arrays [19].

2. Methodology:

- Concept: Reuse distance is the number of distinct data items accessed between two consecutive accesses to the same item. A short reuse distance indicates strong temporal locality.

- Implementation: Use a profiling tool (like Intel Advisor) or a custom runtime method (like PORPLE's shadow kernels) to capture the memory access trace or pattern of your kernel [19].

- Analysis: The tool processes the trace to build a histogram showing the distribution of reuse distances for each array.

3. Interpretation:

- A histogram skewed towards short distances indicates good locality; the data likely benefits from being cached.

- A histogram with mostly long distances indicates poor locality; caching may not be effective, and optimizing access patterns or data layout is more critical [19].
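
Reuse distance can be computed directly from a short access trace. The sketch below is a straightforward, quadratic-time illustration for small traces. It is not how Intel Advisor or PORPLE implement their analyses, and the function names and example traces are hypothetical.

```python
from collections import Counter

def reuse_distances(trace):
    """Reuse distance of each access: distinct items seen since the previous
    access to the same item, or None for a first access."""
    last_seen = {}
    distances = []
    for pos, item in enumerate(trace):
        if item in last_seen:
            between = trace[last_seen[item] + 1:pos]
            distances.append(len(set(between)))
        else:
            distances.append(None)
        last_seen[item] = pos
    return distances

def histogram(distances):
    """Count how often each reuse distance occurs, ignoring first accesses."""
    return Counter(d for d in distances if d is not None)

tiled = ["a", "b", "a", "b", "c", "d", "c", "d"]      # small working set reused
streaming = ["a", "b", "c", "d", "a", "b", "c", "d"]  # reuse only after a full pass
tiled_hist = histogram(reuse_distances(tiled))
streaming_hist = histogram(reuse_distances(streaming))
print(tiled_hist)
print(streaming_hist)
```

The tiled trace concentrates at distance 1, while the streaming trace concentrates at distance 3. A cache that holds only two items would serve every reuse in the first trace and none in the second.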

Diagram 1: Data Locality Optimization Workflow

The Scientist's Toolkit: Key Research Reagents & Software

Table 2: Essential Tools for GPU Data Access Pattern Optimization

| Tool / Solution | Function | Vendor |

|---|---|---|

| NVIDIA Nsight Compute | Kernel-level profiler for detailed performance analysis of CUDA kernels, including memory workload. | NVIDIA |

| NVIDIA Nsight Systems | System-wide performance profiler for identifying large-scale bottlenecks across CPU and GPU. | NVIDIA |

| ROCm Profiler (rocprof) | Performance analysis tool for AMD GPUs. | AMD |

| Intel Advisor | Design and analysis tool with a dedicated Memory Access Patterns (MAP) analysis. | Intel |

| CUDA Toolkit | Compiler (nvcc) and debugger (cuda-gdb, compute-sanitizer) for developing CUDA applications [20]. | NVIDIA |

| RenderDoc | Graphics debugger that supports compute shader inspection and a shader replacement workflow for debugging [21]. | Open source |

Diagram 2: Simplified GPU Memory Hierarchy - The path to global memory is the slowest, underscoring why efficient access is critical.

Common Bottlenecks in Ecological and Biomedical Simulations

Frequently Asked Questions

1. Why does my simulation's performance drop significantly when processing smaller, non-consecutive chunks of data? This is typically due to inefficient global memory access patterns on the GPU [22]. When your application reads 64-byte chunks from various places in an array instead of larger 128-byte consecutive blocks, it fails to utilize the GPU's memory architecture optimally. The L2 cache is often organized in 128-byte lines, and accessing smaller, strided chunks can lead to overfetch (loading data that won't be used) and reduced throughput [22].

2. How can I improve the interoperability of models written in different programming languages? Adopt a simulation environment designed for this purpose, such as the one developed within the Synergy-COPD project [23]. This environment uses a web-based graphical interface and a central knowledge base that maps variables and units between different models, allowing deterministic (e.g., differential equations) and probabilistic models to communicate parameters and run cohesively [23].

3. My GPU kernel is highly optimized but still underperforms. What can I do? Beyond manual optimization, consider automated tools like GEVO, which uses evolutionary computation [24]. It can find non-obvious code edits that improve runtime. For example, it has reduced runtimes for bioinformatics applications like multiple sequence alignment by nearly 29% by discovering complex, application-specific optimizations whose individual edits interact strongly with one another (epistasis, by analogy with gene interaction) [24].

4. What are the key considerations when choosing a modelling and simulation platform for integrative physiology? The platform should support your model's level of detail, timescale, and facilitate community collaboration [25]. While many specialized tools exist (like JSim, PhysioDesigner, HumMod), they often lack the ability to run models from different programming languages. Platforms like Simulink offer graphical environments, but the absence of a universally adopted standard remains a challenge [25] [23].

Troubleshooting Guides

Problem 1: Suboptimal GPU Memory Access Patterns

Symptoms: Performance degrades when processing smaller (e.g., 64B) or non-consecutive data chunks compared to larger (e.g., 128B) consecutive chunks [22].

| Troubleshooting Step | Description & Action |
|---|---|
| Check Access Pattern | Ensure threads access consecutive memory addresses. Strided or random access can drastically reduce throughput [22]. |
| Tune L2 Fetch Granularity | Call `cudaDeviceSetLimit` with `cudaLimitMaxL2FetchGranularity`. For random access, a smaller value (32 bytes) hints to the hardware that it should reduce overfetch [22]. |
| Use the Profiler | Run Nsight Compute to identify the exact bottleneck. Check metrics related to L1TEX and L2 cache utilization and global load efficiency [22]. |
| Ensure Proper Alignment | Make sure memory accesses are aligned (e.g., 64B accesses should be 64B aligned). Unaligned accesses can force reads from multiple cache lines, hurting performance [22]. |

Problem 2: Inefficient Integration of Multi-Language Models

Symptoms: Inability to execute models written in different programming languages (C++, Fortran, etc.) within a single workflow, hindering comprehensive simulation [23].

| Troubleshooting Step | Description & Action |
|---|---|
| Implement a Control Module | Develop a central controller to manage execution flow and data exchange between disparate models [23]. |
| Establish a Knowledge Base | Create a semantic knowledge base to store and map parameters across models, resolving differences in variable names and units [23]. |
| Deploy a Data Warehouse Manager | Use this component to manage all data requests and flow, ensuring consistent information is delivered to and from each model and the visualization interface [23]. |

Experimental Protocols & Methodologies

Protocol 1: Analyzing GPU Memory Access Performance

Objective: To quantify the performance impact of different data access patterns on GPU global memory.

Materials:

- Compute-capable GPU (e.g., NVIDIA Ampere architecture) [22].

- CUDA toolkit with profiler (e.g., Nsight Compute) [22].

- Code for a kernel that can be configured for different chunk sizes (e.g., 64B vs. 128B) and access patterns (consecutive vs. strided).

Methodology:

- Kernel Configuration: Prepare two versions of your kernel. One should be optimized for 128-byte consecutive accesses, and another for 64-byte accesses from various locations [22].

- Set L2 Granularity: Use `cudaDeviceSetLimit(cudaLimitMaxL2FetchGranularity, ...)` to test performance under different settings (32, 64, 128 bytes) [22].

- Profile Execution: Run the kernels under Nsight Compute. Pass `--clock-control none` so that the profiler does not lock the GPU clocks to their base frequency and the kernels run at the boost clocks they would see in normal operation [22].

- Analyze Metrics: Key metrics to examine in the profiler report are [22]:

  - `l2tex__sectors.sum` (number of 32B sectors requested)

  - `l2tex__throughput.avg.pct_of_peak_sustained_elapsed` (L2 throughput utilization)

  - `sm__throughput.avg.pct_of_peak_sustained_elapsed` (overall SM throughput)

  Metric names are given as reported in [22]. Nsight Compute prefixes L2 metrics with `lts__` and L1/texture metrics with `l1tex__`, so confirm the exact names available on your GPU with `ncu --query-metrics`.

Expected Outcome: The kernel with 128-byte consecutive accesses will show higher memory throughput and better utilization of the L1TEX and L2 caches, leading to lower elapsed time [22].
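The overfetch effect that this protocol measures can be estimated before anything runs on hardware. The Python sketch below is a simplified model rather than a simulation of any particular GPU: it assumes every touched block of the fetch granularity is transferred in full, and the function names and example offsets are illustrative assumptions.

```python
def blocks_touched(offsets, width, block):
    """Set of block indices covered by accesses of `width` bytes at `offsets`."""
    touched = set()
    for off in offsets:
        first = off // block
        last = (off + width - 1) // block
        touched.update(range(first, last + 1))
    return touched


def fetch_efficiency(offsets, width, fetch_bytes=128):
    """Requested bytes divided by fetched bytes (1.0 means no overfetch).

    Assumes the accesses do not overlap and that each touched block of
    `fetch_bytes` is transferred in full.
    """
    requested = len(offsets) * width
    fetched = len(blocks_touched(offsets, width, fetch_bytes)) * fetch_bytes
    return requested / fetched


# Four consecutive, aligned 128-byte chunks: every fetched byte is used.
consecutive_128 = fetch_efficiency([0, 128, 256, 384], width=128)

# Four scattered, aligned 64-byte chunks with 128-byte fetches: half is wasted.
scattered_64 = fetch_efficiency([0, 1024, 2048, 3072], width=64)

# The same scattered chunks with a 32-byte fetch granularity: no overfetch.
scattered_64_fine = fetch_efficiency([0, 1024, 2048, 3072], width=64, fetch_bytes=32)

# A 64-byte chunk that is not 64-byte aligned straddles two 128-byte blocks.
misaligned_64 = fetch_efficiency([96], width=64)

print(consecutive_128, scattered_64, scattered_64_fine, misaligned_64)
```

Under these assumptions the model reproduces the qualitative pattern described above: scattered 64-byte reads waste half of each 128-byte fetch, a smaller fetch granularity removes that waste, and misalignment makes it worse. Measured results will differ because real hardware also caches, prefetches, and merges requests.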

Protocol 2: Establishing an Interoperable Simulation Environment

Objective: To integrate and execute physiological models written in different compiled programming languages.

Materials:

- A set of models (e.g., deterministic ODE-based, probabilistic).

- A central server to host the simulation environment.

- A defined knowledge base schema (e.g., using semantic web technologies) [23].

Methodology:

- Model Encapsulation: Wrap each model in a standardized interface that can be called by the control module, handling data I/O.

- Knowledge Base Population: For each model, define its input and output parameters in the knowledge base. Establish explicit "mapping" relationships between equivalent parameters in different models, including unit conversions [23].

- Workflow Execution:

- The user selects models and initial parameters via a Graphical Visualization Environment (GVE), which is a web interface [23].

- The Control Module receives the request and queries the Data Warehouse Manager for the necessary model and mapping information [23].

- The Control Module executes the models in sequence, using the knowledge base to translate and pass output parameters from one model as inputs to the next [23].

- Final results are aggregated and sent back to the GVE for the user [23].

Expected Outcome: Successful execution of a multi-model simulation where output from one model (e.g., a cardiovascular model) is accurately used as input for another (e.g., a pulmonary model), despite being originally written in different languages [23].
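The control-module and knowledge-base roles in this protocol can be sketched in a few lines of Python. This is an illustrative sketch only, not the Synergy-COPD implementation: the two toy models, the parameter names, and the `KNOWLEDGE_BASE` structure are all hypothetical stand-ins chosen to show how a mapping with a unit conversion lets one model's output feed another.

```python
# Hypothetical knowledge base: each entry maps one model's output to another
# model's input and carries the unit conversion between them.
KNOWLEDGE_BASE = {
    ("cardiovascular", "cardiac_output_l_per_min"): {
        "target": ("pulmonary", "perfusion_ml_per_s"),
        "convert": lambda litres_per_min: litres_per_min * 1000.0 / 60.0,
    },
}


def cardiovascular_model(inputs):
    """Toy stand-in for a model wrapped behind a standard interface."""
    output = inputs["heart_rate_per_min"] * inputs["stroke_volume_l"]
    return {"cardiac_output_l_per_min": output}


def pulmonary_model(inputs):
    """Toy stand-in: ventilation/perfusion ratio from two flows in mL/s."""
    return {"vq_ratio": inputs["ventilation_ml_per_s"] / inputs["perfusion_ml_per_s"]}


def translate(source_model, outputs, target_model):
    """Use the knowledge base to rename and convert outputs for the next model."""
    translated = {}
    for name, value in outputs.items():
        entry = KNOWLEDGE_BASE.get((source_model, name))
        if entry and entry["target"][0] == target_model:
            translated[entry["target"][1]] = entry["convert"](value)
    return translated


def run_workflow(user_inputs):
    """Control-module sketch: run the models in sequence, translating between them."""
    cardio_out = cardiovascular_model(user_inputs["cardiovascular"])
    pulmonary_in = dict(user_inputs["pulmonary"])
    pulmonary_in.update(translate("cardiovascular", cardio_out, "pulmonary"))
    return pulmonary_model(pulmonary_in)


result = run_workflow({
    "cardiovascular": {"heart_rate_per_min": 60, "stroke_volume_l": 0.1},
    "pulmonary": {"ventilation_ml_per_s": 80.0},
})
print(result)
```

Keeping the conversions in the knowledge base rather than inside each model means a model never needs to know the names or units used by its neighbours, which is the property the protocol relies on.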

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details computational tools and their functions for advanced simulation research.

| Item/Reagent | Function & Explanation |
|---|---|
| CUDA Profiler (Nsight Compute) | An essential tool for identifying performance bottlenecks in GPU code. It provides detailed metrics on memory access, cache efficiency, and compute throughput [22]. |
| GEVO (GPU Evolutionary Optimizer) | An automated program optimization tool that uses evolutionary computation to find non-intuitive code edits that improve runtime, often missed by human experts [24]. |
| Simulation Workflow Management System (SWoMS) | A software architecture that controls the execution of multiple, heterogeneous models and manages data flow between them, enabling integrative physiological simulations [23]. |
| Semantic Knowledge Base | A structured database that stores the meaning and relationships of model parameters. It is crucial for solving interoperability problems by mapping analogous variables across different models [23]. |
| cudaLimitMaxL2FetchGranularity | A CUDA device limit, set through `cudaDeviceSetLimit`, that hints at the L2 cache fetch granularity. Lowering it (e.g., to 32 bytes) can improve performance for applications with "random" memory access patterns by reducing unnecessary data transfer [22]. |

Workflow and Signaling Diagrams

Simulation Environment for Multi-Model Interoperability

GPU L2 Cache Inefficiency with 64B Access

Troubleshooting Guides and FAQs

This technical support center addresses common inefficiencies in GPU-accelerated research, specifically for computational ecology and drug development. The guides below focus on optimizing data access patterns to reduce costs and prevent project delays.

Frequently Asked Questions

Why is my large-scale genomic data visualization or protein structure analysis running slowly and consuming excessive cloud credits? Slow performance and high costs in data-intensive visualization, such as with genomic data or protein structures, are frequently caused by inefficient data access patterns that starve the GPU. When data is not fed efficiently from storage to the GPU, the processors sit idle, wasting compute resources you are paying for. Key reasons include:

- Data Bottlenecks: The CPU cannot prepare and transfer data to the GPU fast enough, causing the GPU to remain underutilized. This is a primary cause of low GPU utilization rates, which in centralized data centers can be as low as 15-30% [26].

- Excessive Data Movement: Transferring large datasets repeatedly from cloud storage to computing instances incurs high network fees and delays.

- Incorrect Resource Allocation: Using a high-performance GPU for tasks that are not sufficiently parallelized or are memory-bound will not improve speed and will drastically increase costs [27].

How can I confirm that my GPU resources are being underutilized? Most cloud platforms and on-premise clusters provide profiling tools. For containers in a Kubernetes environment, you can use tools like AI Profiling, an eBPF-based tool that detects running GPU tasks and dynamically starts and stops performance data collection without modifying application code [28]. Key metrics to monitor are:

- GPU Utilization: The percentage of time the GPU is actively processing data.

- Memory Bandwidth Usage: How efficiently the GPU's high-speed memory is being used.

- CPU-GPU Data Transfer Rates: Identifying if data transfer is the limiting factor.
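The first two of these metrics can be sampled from a script with `nvidia-smi`. The sketch below is a minimal example, assuming an NVIDIA driver is installed wherever `query_gpus()` is called; the 70% threshold and the helper names are illustrative assumptions. Note that `utilization.memory` reports how busy the memory controller is, which is only a coarse proxy for bandwidth usage, and that `nvidia-smi` does not report CPU-GPU transfer rates, so those still need a timeline profiler.

```python
import csv
import io
import subprocess

# Query fields accepted by `nvidia-smi --query-gpu`.
FIELDS = ["utilization.gpu", "utilization.memory", "memory.used", "memory.total"]


def parse_gpu_csv(text):
    """Parse `--format=csv,noheader,nounits` output into one dict per GPU.

    Assumes every field is numeric; devices that report "[N/A]" for a
    field would need extra handling.
    """
    rows = []
    for record in csv.reader(io.StringIO(text)):
        if not record:
            continue
        values = [float(cell.strip()) for cell in record]
        rows.append(dict(zip(FIELDS, values)))
    return rows


def query_gpus():
    """Run nvidia-smi once and return the parsed metrics (needs an NVIDIA driver)."""
    command = [
        "nvidia-smi",
        "--query-gpu=" + ",".join(FIELDS),
        "--format=csv,noheader,nounits",
    ]
    output = subprocess.run(command, capture_output=True, text=True, check=True).stdout
    return parse_gpu_csv(output)


def underutilized(rows, threshold_pct=70.0):
    """Indices of GPUs whose utilization is below the threshold."""
    return [i for i, row in enumerate(rows) if row["utilization.gpu"] < threshold_pct]


# Example with captured text, so the sketch runs without a GPU.
sample = "35, 12, 4096, 40960\n92, 61, 30000, 40960\n"
sample_rows = parse_gpu_csv(sample)
print(underutilized(sample_rows))
```

A single sample says little, so in practice the query would be repeated over the length of a job and the readings averaged or plotted.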

What is the most effective way to reduce cloud egress fees for my large dataset iterations? To minimize egress fees, which are charges for moving data out of the cloud provider's network, architect your workflows to minimize data movement.

- Colocate Data and Compute: Ensure your data storage and GPU instances are in the same cloud region and availability zone.

- Use Efficient Data Formats: Adopt columnar formats like Apache Parquet that allow you to read only the necessary data chunks.

- Leverage Caching: Implement a caching layer, such as using the EFC client to mount NAS (Network Attached Storage), which provides distributed cache to accelerate file access for data-intensive applications like AI training [28].

My distributed model training is slow due to node-to-node communication latency. How can I optimize this? Network latency between nodes in a multi-node GPU training cluster is a major performance bottleneck. To address this:

- Utilize cloud services that offer eRDMA (elastic Remote Direct Memory Access) networks. eRDMA provides high-throughput, low-latency communication, which can significantly speed up distributed training jobs. For example, you can use `Arena` to submit PyTorch distributed jobs configured with eRDMA acceleration [28].

- Ensure your cluster is using a high-performance network backend like InfiniBand, which is virtualized in some advanced AI cloud platforms to ensure data security and resource efficiency [29].

Troubleshooting Guide: Diagnosing Data Access Inefficiencies

Follow this systematic guide to identify and resolve common data access issues that lead to GPU underutilization.

| Step | Action | Tool/Metric to Use | Expected Outcome |
|---|---|---|---|
| 1 | Profile GPU Utilization | `nvidia-smi` (command line), Cloud Monitoring Dashboards, AI Profiling [28] | Identify periods of low GPU activity (e.g., utilization below 70-80% during compute-heavy tasks). |
| 2 | Analyze CPU-GPU Workflow | Profiler traces (e.g., NVIDIA Nsight Systems) | Visualize the timeline to see large gaps where the GPU is idle, waiting for data from the CPU. |
| 3 | Check Storage I/O | System monitoring tools (e.g., `iostat` on Linux) | Identify if the storage system's read/write speed is the bottleneck. |
| 4 | Verify Network Throughput | Cluster network monitoring | For multi-node jobs, confirm that the network is not saturated and latency is low. |
| 5 | Implement Optimizations | Apply fixes from the table below. | A significant increase in GPU utilization and a reduction in job completion time. |

Optimization Strategies for Data Access Patterns

Once you've identified a bottleneck, apply these targeted optimizations.

| Bottleneck | Optimization Strategy | Implementation Example | Potential Impact |
|---|---|---|---|
| Data Loading (I/O Bound) | Use a high-performance parallel file system. | Use CPFS Intelligent Computing Edition (CPFS智算版), a cloud parallel file system that offers ultra-high throughput and IOPS, is end-to-end RDMA-accelerated, and is suited to AI training and inference scenarios [28]. | Can raise data read/write throughput enough that storage no longer limits the GPU. |
| CPU-GPU Transfer | Overlap data copying with computation (pipelining). | Use CUDA streams to concurrently execute memory transfers and kernel executions. | Can hide data transfer latency, leading to near-continuous GPU utilization. |
| Memory Bandwidth | Optimize data structures for contiguous memory access. | Ensure your data arrays are aligned in memory to enable coalesced memory accesses by the GPU. | Can significantly increase effective memory bandwidth, speeding up kernel execution. |
| Multi-Node Communication | Use advanced network protocols. | Configure training scripts to use eRDMA or InfiniBand for inter-node communication [29] [28]. | Can reduce communication latency, directly speeding up distributed training cycles. |
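The benefit of overlapping copies with computation can be estimated with a simple two-stage pipeline model. The sketch below is an idealized calculation, not a measurement: it assumes the data is split into equal chunks, each chunk has a fixed host-to-device copy time and a fixed kernel time, and the copy of one chunk overlaps perfectly with the kernel of the previous one. The function names and timings are illustrative assumptions.

```python
def serial_time(chunks, t_copy, t_kernel):
    """Total time when each chunk is copied and then processed, with no overlap."""
    return chunks * (t_copy + t_kernel)


def pipelined_time(chunks, t_copy, t_kernel):
    """Total time when the copy of chunk i+1 overlaps the kernel of chunk i.

    The first copy and the last kernel cannot be hidden; in between, each
    step takes as long as the slower of the two stages.
    """
    if chunks == 0:
        return 0.0
    return t_copy + (chunks - 1) * max(t_copy, t_kernel) + t_kernel


# Example: 10 chunks, 2 ms per copy, 3 ms per kernel.
t_serial = serial_time(10, 2.0, 3.0)
t_pipelined = pipelined_time(10, 2.0, 3.0)
print(t_serial, t_pipelined, t_serial / t_pipelined)
```

The model shows why overlap helps most when copy and kernel times are similar: the best case approaches a 2x reduction, and if one stage dominates, the total time converges on that stage alone.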

Experimental Protocols for Optimization

Protocol 1: Benchmarking Data Access Patterns for GPU Ecology Codes

This methodology assesses the efficiency of different data access strategies in a controlled environment.

1. Objective: To quantify the impact of data access patterns on the runtime performance and cost of a standard ecological or biomedical workload (e.g., a population genetics simulation, or protein structure prediction with AlphaFold2 [30]) on a GPU-enabled cloud instance.

2. Materials:

- Compute Instance: A cloud VM with a high-performance GPU (e.g., NVIDIA A100 or H100).

- Software Environment: A containerized environment managed by Kubernetes (e.g., using ACK) [28].

- Dataset: A standard public dataset relevant to the model (e.g., genomic sequences, protein databases).

3. Procedure:

- Step 1: Baseline Measurement.

- Run the model with the data stored on a standard cloud block storage volume.

- Record the total job completion time and profile the GPU utilization using `nvidia-smi` and timeline profilers.

- Step 2: Optimized Data Layout.

- Convert the dataset into a chunked, columnar format (e.g., Parquet) and run the same model, reading only the required columns.

- Record the performance metrics.

- Step 3: High-Performance Storage.

- Place the dataset on a high-throughput parallel file system (e.g., CPFS Intelligent Computing Edition, CPFS智算版 [28]).

- Run the model and record the metrics.

- Step 4: Analysis.

- Compare the total runtime, GPU utilization percentage, and cloud cost (based on instance runtime) for all three scenarios.
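The comparison in Step 4 reduces to a small calculation once the three runs have been recorded. The sketch below assumes cost is simply instance runtime multiplied by an hourly rate; the function name is hypothetical, and the runtimes, utilization figures, and hourly rate are placeholders to be replaced with your own measurements, not results.

```python
def compare_runs(runs, hourly_rate):
    """Summarize runs given as (label, runtime_seconds, mean_gpu_util_pct).

    The first run is treated as the baseline for the speedup column.
    """
    baseline_runtime = runs[0][1]
    report = []
    for label, runtime_s, gpu_util_pct in runs:
        report.append({
            "label": label,
            "speedup": baseline_runtime / runtime_s,
            "cost": runtime_s / 3600.0 * hourly_rate,
            "gpu_util_pct": gpu_util_pct,
        })
    return report


# Placeholder values only; substitute the metrics recorded in Steps 1 to 3.
report = compare_runs(
    [
        ("block storage (baseline)", 7200.0, 40.0),
        ("columnar layout", 3600.0, 70.0),
        ("parallel file system", 2400.0, 90.0),
    ],
    hourly_rate=4.0,
)
for row in report:
    print(row)
```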

Protocol 2: Implementing and Validating a GPU-Accelerated Optimization Framework

This protocol uses an LLM-driven framework to automatically generate optimized GPU kernels, addressing inefficiencies at the most fundamental level.

1. Objective: To apply the "GPU Kernel Scientist" framework [31] to iteratively optimize a computational kernel central to an ecology simulation code, thereby reducing its runtime.

2. Materials:

- Hardware: A GPU server (e.g., equipped with AMD MI300 or NVIDIA H100).

- Software: The GPU Kernel Scientist framework, which uses a three-stage LLM-driven process [31].

- Code Base: The target GPU kernel (e.g., a matrix multiplication or custom spatial analysis function) written in a language like HIP or CUDA.

3. Procedure:

- Step 1: Selection. The LLM Evolution Selector analyzes a population of historical code versions and selects the most promising "base code" and a "reference code" for the next iteration [31].

- Step 2: Experiment Design. The LLM Experiment Designer brainstorms 10 potential optimization directions (e.g., "mitigate LDS bank conflicts," "optimize matrix core layout") and then generates 5 detailed experimental plans with predicted performance gains [31].

- Step 3: Code Writing. The LLM Kernel Writer generates new, compilable HIP or CUDA code based on the best experimental plans, incorporating techniques like shared memory double buffering and mixed-precision computation [31].

- Step 4: Validation.

- The newly generated kernel is compiled and run on the target hardware.

- Its performance is benchmarked against the original kernel.

- The process repeats, creating an automated optimization loop. This framework has been shown to generate code that achieves a 6x speedup over the original version on an AMD MI300 GPU [31].

The iterative workflow cycles through four stages: selection, experiment design, code writing, and validation.

The Scientist's Toolkit: Key Research Reagent Solutions

The following tools and platforms are essential for building an efficient, cost-effective GPU research environment.

| Item / Solution | Function / Explanation | Relevance to Research |
|---|---|---|
| AI-Native Cloud (e.g., GMI Cloud) | Provides specialized, high-performance GPU instances with stable supply (e.g., H200, GB200) and optimized AI software stacks [29]. | Avoids queue times and procurement delays; offers inference-optimized engines for rapid deployment of models. |
| Decentralized GPU Networks (e.g., Aethir) | A DePIN (Decentralized Physical Infrastructure Network) that aggregates idle GPU power into a cloud service, often at competitive rates [26]. | Provides an alternative sourcing model for compute power, potentially lowering costs and increasing resource availability. |
| Container Orchestration (e.g., ACK) | Managed Kubernetes service that supports advanced GPU scheduling, like Dynamic Resource Allocation (DRA), for sharing GPUs among multiple research jobs [28]. | Maximizes utilization of expensive GPU resources in a shared lab environment, directly controlling costs. |
| High-Performance File System (e.g., CPFS Intelligent Computing Edition, CPFS智算版) | A parallel file system designed for AI workloads, offering massive throughput and RDMA acceleration [28]. | Eliminates I/O bottlenecks for data-intensive tasks like genome analysis or molecular dynamics simulations. |
| LLM-Driven Optimization (GPU Kernel Scientist) | A framework that uses Large Language Models to automatically redesign and optimize low-level GPU code [31]. | Directly attacks a root cause of inefficiency (poorly written kernels) to speed up core research algorithms. |
| Profiling Tools (e.g., AI Profiling) | eBPF-based, non-intrusive performance analysis tools that can profile running GPU tasks in containers without code changes [28]. | Essential for diagnosing the exact stage of a workflow that is causing delays or inefficiencies. |

Strategic Methodologies for Efficient Data Access on GPUs

Frequently Asked Questions (FAQs)

Q1: What is memory coalescing and why is it critical for GPU performance? Memory coalescing occurs when all threads in a warp (a group of 32 threads) access consecutive global memory locations in a single instruction. This allows the GPU hardware to combine these accesses into a single, consolidated memory transaction. Coalescing is critical because it maximizes global memory bandwidth utilization; uncoalesced access can be more than twice as slow, significantly impacting kernel performance [32] [33].

Q2: My kernel performance is poor. How can I check for uncoalesced memory access? Use NVIDIA Nsight Compute to analyze per-kernel global memory load/store efficiency metrics; Nsight Systems shows the overall timeline but not these per-kernel memory metrics. Look for kernels whose achieved DRAM throughput is low relative to peak bandwidth. Uncoalesced patterns typically arise when threads in a warp read or write data separated by large strides (e.g., accessing matrix columns in row-major storage) [33].

Q3: What are shared memory bank conflicts and how do I resolve them?

Shared memory is divided into 32 banks. A conflict occurs when two or more threads in the same warp access different addresses within the same bank, forcing serialized access. To resolve conflicts, use padding by adding an extra column to shared memory arrays (e.g., tile[32][33] instead of tile[32][32]), which shifts data into different banks for consecutive threads [32] [34].

Q4: When should I use tiling strategies in my CUDA kernels? Implement tiling when your application exhibits data reuse or when global memory access patterns cannot be efficiently coalesced. This is particularly beneficial in stencil computations, matrix operations, and molecular dynamics simulations where the same data elements are accessed multiple times by different threads [35] [36].

Q5: How does the TiledCopy abstraction in CuTe improve memory transfers?

TiledCopy is a CuTe library abstraction that efficiently copies data tiles between global and shared memory. It is highly configurable via thread and value layouts, making it adaptable to various tensor shapes and memory layouts, and can leverage hardware instructions like cp.async on SM80+ GPUs for asynchronous, coalesced transfers [37].

Troubleshooting Guides

Issue 1: Poor Kernel Performance Due to Non-Coalesced Memory Access

Symptoms: Low memory throughput, high kernel execution time, poor DRAM utilization in profiler.

Diagnosis and Resolution:

- Identify Access Pattern: Check if consecutive threads in a warp access consecutive memory addresses. For array processing, ensure `threadIdx.x` corresponds to the most rapidly changing index in memory [32].

- Row-Major vs Column-Major: For matrices stored in row-major order, ensure threads with consecutive `threadIdx.x` access elements in the same row, not the same column. The latter creates a strided access pattern [33].

- Use Shared Memory for Transposes: If your algorithm requires access to both rows and columns, use shared memory as an intermediate buffer.

  - Load data from global memory to shared memory in a coalesced manner.

  - Then, read from shared memory in the required (possibly non-coalesced) pattern for computation. This avoids non-coalesced global memory accesses [33].
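The difference between row-wise and column-wise access can be made concrete by computing the byte offsets that one warp touches. The Python sketch below is an address-arithmetic model only, assuming a row-major matrix, a 32-thread warp, and 128-byte memory segments; the function names are illustrative.

```python
WARP_SIZE = 32


def warp_offsets(width, elem_bytes, along_row, fixed_index=0):
    """Byte offsets read by the threads of one warp from a row-major matrix.

    along_row=True:  thread t reads element (fixed_index, t).
    along_row=False: thread t reads element (t, fixed_index).
    """
    if along_row:
        return [(fixed_index * width + t) * elem_bytes for t in range(WARP_SIZE)]
    return [(t * width + fixed_index) * elem_bytes for t in range(WARP_SIZE)]


def segments_touched(offsets, segment_bytes=128):
    """Number of distinct memory segments the warp has to fetch."""
    return len({offset // segment_bytes for offset in offsets})


# 1024-column matrix of 4-byte floats.
row_segments = segments_touched(warp_offsets(1024, 4, along_row=True))
col_segments = segments_touched(warp_offsets(1024, 4, along_row=False))
print(row_segments, col_segments)
```

Reading along a row places all 32 threads in a single 128-byte segment, whereas reading down a column puts each thread 4096 bytes apart, so every thread needs its own segment. That 32-fold difference in segments fetched is what the shared memory staging step above avoids.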

Issue 2: Shared Memory Bank Conflicts

Symptoms: Performance degradation after introducing shared memory tiling, despite reduced global memory access.

Diagnosis and Resolution:

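- Identify Conflict Pattern: Use the profiler to detect bank conflicts. Conflicts occur when multiple threads in a warp access different addresses that map to the same bank; threads that read the same address are served by a broadcast and do not conflict.

- Apply Memory Padding: Add one extra element to each row of the shared memory array (its innermost dimension). This changes the mapping of data elements to banks [32].

  - Before: `__shared__ float tile[TILE_DIM][TILE_DIM];`

  - After: `__shared__ float tile[TILE_DIM][TILE_DIM + 1]; // Padding added`

- Adjust Access Patterns: For 64-bit data types, configure the shared memory bank size to 8 bytes using `cudaDeviceSetSharedMemConfig()` to reduce conflicts [38]. This setting only takes effect on GPUs with a configurable bank width (Kepler-class devices); on later architectures the bank width is fixed at 4 bytes and the call has no effect.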
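Why one extra column removes the conflicts follows from the bank arithmetic, which can be checked without a GPU. The sketch below assumes 32 banks of 4-byte words and a warp in which thread `t` reads `tile[t][col]` (a column read, as in a transpose); the function names are illustrative.

```python
from collections import Counter

NUM_BANKS = 32  # assumes 4-byte-wide banks and 4-byte elements


def bank_of(row, col, row_length):
    """Bank holding tile[row][col] when each row has `row_length` elements."""
    return (row * row_length + col) % NUM_BANKS


def conflict_degree(row_length, col=0, warp_size=32):
    """Largest number of threads hitting one bank when thread t reads tile[t][col]."""
    banks = Counter(bank_of(t, col, row_length) for t in range(warp_size))
    return max(banks.values())


unpadded = conflict_degree(row_length=32)  # tile[32][32]
padded = conflict_degree(row_length=33)    # tile[32][33]
print(unpadded, padded)
```

With 32 elements per row, every element of a column lands in the same bank, so the column read is serialized 32 ways. With 33 elements per row, consecutive rows shift by one bank and the 32 threads hit 32 different banks.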

Issue 3: Suboptimal Tile Size Selection

Symptoms: Limited performance improvement from tiling, or "out of shared memory" errors.

Diagnosis and Resolution:

- Analyze Resource Constraints: Each GPU multiprocessor has limited shared memory (e.g., 64KB or 128KB). The tile size must allow multiple active thread blocks to occupy each multiprocessor for latency hiding.

- Consider Data Type Size: Tile dimensions must account for the data type (e.g., `float`, `double`, `half2`). A `TILE_DIM` of 32 for `float` elements uses `32 * 32 * 4 bytes = 4KB` of shared memory per tile.

- Experimental Tuning: Sweep over different tile sizes (e.g., 16x16, 32x32, 64x64) and profile performance. The optimal size balances data reuse with available parallelism.
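The shared memory budget for each candidate tile size can be tabulated before the sweep. The sketch below considers shared memory only and assumes 64KB per multiprocessor and two tiles per block (as in a tiled matrix multiplication that stages one tile of each input); registers, thread limits, and per-block shared memory limits also constrain occupancy and are not modeled. Function names are illustrative.

```python
def tile_bytes(tile_dim, elem_bytes, pad=0):
    """Shared memory used by one tile, with `pad` extra elements per row."""
    return tile_dim * (tile_dim + pad) * elem_bytes


def blocks_by_shared_memory(tile_dim, elem_bytes, smem_per_sm, tiles_per_block=1, pad=0):
    """Upper bound on resident blocks per multiprocessor from shared memory alone."""
    per_block = tiles_per_block * tile_bytes(tile_dim, elem_bytes, pad)
    return smem_per_sm // per_block


SMEM_PER_SM = 64 * 1024  # assumed value; check your GPU's specification

budget = {
    dim: (tile_bytes(dim, 4), blocks_by_shared_memory(dim, 4, SMEM_PER_SM, tiles_per_block=2))
    for dim in (16, 32, 64)
}
for dim, (size, blocks) in budget.items():
    print(f"{dim}x{dim} float tile: {size} bytes, at most {blocks} blocks per SM")
```

Under these assumptions a 64x64 `float` tile leaves room for only two resident blocks, which illustrates the trade-off described above: larger tiles increase data reuse but reduce the parallelism available for hiding latency.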

Performance Data and Experimental Protocols

Table 1: Impact of Memory Access Patterns on Kernel Performance

| Access Pattern | Kernel Example | Execution Time | Relative Slowdown | Conditions |
|---|---|---|---|---|
| Coalesced | Vector addition with aligned access | 232 microseconds | 1.0x | NVIDIA GPU, 32-thread warp [32] |
| Uncoalesced | Vector addition with offset=1 | 540 microseconds | ~2.3x | Same conditions as above [32] |
| Strided (stride=2) | Strided memory access | Not reported as an absolute time | ~10x | RTX 4050 GPU [38] |

Table 2: Optimization Impact in Practical Applications

| Application Domain | Baseline Implementation | Optimized Implementation | Speedup | Key Optimization |
|---|---|---|---|---|
| Matrix Multiplication (with transpose) | Naive kernel without tiling | Tiled shared memory kernel | ~1.5x (1100 ms → 750 ms) | Shared memory tiling for coalesced access [38] |
| Molecular Docking (Amber Scoring) | CPU-based (AMD dual-core) | GPU-accelerated with CUDA | 6.5x | Porting MD simulation to GPU, memory pattern optimization [36] |
| Matrix Transpose | Naive kernel (uncoalesced writes) | Shared memory with padding | ~2.0x (1.61 ms → 0.79 ms) | Coalesced reads/writes via shared memory, bank conflict resolution [32] |

Experimental Protocol 1: Evaluating Coalescing in Matrix Multiplication

Objective: Compare the performance of coalesced versus uncoalesced memory access patterns in a matrix multiplication kernel.

Methodology:

- Kernel Implementation: Implement two kernels for multiplying matrices A and B to produce C.

  - Coalesced Access: `C[row][col] = sum(A[row][k] * B[k][col])`, where consecutive threads access consecutive `col` values for matrix B, resulting in coalesced access [33].

  - Uncoalesced Access: Modify the kernel to access the transpose of B: `C[row][col] = sum(A[row][k] * B[col][k])`. This causes consecutive threads to access non-consecutive memory locations in B if stored in row-major order [38].

- Data Setup: Use square matrices (e.g., 2048x2048) of single-precision floats.

- Execution and Profiling: Execute both kernels on the same GPU (e.g., RTX 4050). Use `cudaEventRecord()` to measure precise kernel execution time. Profile with NVIDIA Nsight Compute to examine memory throughput.

Expected Outcome: The coalesced kernel should demonstrate significantly higher memory bandwidth and lower execution time, in line with the coalesced versus uncoalesced gap reported in Table 1.

Experimental Protocol 2: Tiling and Bank Conflict Resolution

Objective: Demonstrate the performance benefit of shared memory tiling and resolving bank conflicts in matrix transpose.

Methodology:

- Kernel Implementation: Implement three transpose kernels.

  - Naive: Direct assignment `out[col][row] = in[row][col]`, leading to uncoalesced writes [32].

  - Tiled with Conflicts: Use shared memory tiling but without padding, potentially causing bank conflicts when writing to or reading from shared memory [34].

  - Tiled without Conflicts: Use shared memory tiling with padding (e.g., `tile[TILE_DIM][TILE_DIM+1]`) to eliminate bank conflicts [32].

- Data Setup: Use a large matrix (e.g., 4096x4096) to ensure measurable timing differences.

- Performance Metrics: Measure kernel execution time and use the profiler to count shared memory bank conflicts.

Expected Outcome: The padded tiled kernel should achieve the fastest execution time, demonstrating the importance of resolving bank conflicts after implementing tiling.

Workflow and Relationship Diagrams

Diagram 1: GPU memory access pattern optimization workflow for "GPU ecology codes".

Diagram 2: Memory access patterns showing coalesced vs uncoalesced memory transactions by a warp of 32 threads.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for GPU Memory Optimization Research

| Tool / Resource | Function | Application Context |
|---|---|---|
| NVIDIA Nsight Systems | System-wide performance profiler for CUDA applications. Identifies optimization opportunities such as idle gaps, transfer overheads, and load imbalance; per-kernel issues like uncoalesced memory access are analyzed in Nsight Compute. | Performance analysis of molecular docking pipelines and custom simulation kernels [3]. |
| CuTe C++ Template Library | Abstraction for efficiently copying and partitioning data tiles between GPU memory hierarchies. Simplifies implementation of coalesced data transfers. | Accelerating tensor operations in deep learning workloads for drug discovery [37]. |
| CUDA Compute Sanitizer | Runtime checking tool for memory access errors, shared memory data races, and uninitialized memory use. It does not report bank conflicts; use Nsight Compute for those. | Debugging GPU-accelerated ecological modeling code during development. |
| ROCm for AMD GPUs | Open software platform for GPU computing on AMD hardware. Provides profiling tools and libraries analogous to CUDA. | Cross-platform deployment of virtual screening applications [39]. |
| Shared Memory Padding Templates | Preprocessor macros or template functions for declaring padded shared memory arrays. | Standardizing bank conflict resolution across multiple kernels in a codebase. |
| Tiled Matrix Multiplication Kernels | Reference-optimized kernels demonstrating coalesced access and shared memory usage. | Benchmarking and integration into molecular dynamics force calculations [35] [34]. |

FAQs and Troubleshooting Guides

This section addresses common challenges researchers face when integrating GPU-accelerated libraries into their computational ecology workflows.

Q1: My GPU utilization is low during deep learning model training. What could be causing this bottleneck?

A low GPU utilization often indicates that your GPU is waiting for data, making the data pipeline a primary suspect [40]. To diagnose and fix this:

- Verify the Data Loader: Check if your CPU cores are maxed out while the GPU is idle. This is a clear sign your data loaders cannot keep up with the GPU's processing speed. Use frameworks like PyTorch's `DataLoader` with multiple workers to parallelize data loading and augmentation tasks [40].

- Optimize File Access: Accessing millions of small files can cripple I/O performance. Consider preprocessing your dataset into a more efficient, contiguous format. Research indicates that transforming many small JPEG files into a single HDF5 file can significantly improve read performance on parallel file systems, reducing I/O overhead during training [41].

- Check Storage Speed: Ensure your data resides on a high-throughput storage system. For large-scale workloads, a local NVMe SSD can be a substantial upgrade from slower network-attached storage [40].

Q2: After updating my CUDA Toolkit, my existing code fails to find GPU libraries like cuDNN. How can I resolve this?

This is typically a version compatibility or path configuration issue.

- Confirm Version Alignment: First, verify that your versions of CUDA, cuDNN, and your deep learning framework (like TensorFlow) are compatible. You can check your CUDA version with `nvcc --version` and cross-reference it with your framework's build information [42].

- Inspect Environment Variables: The error `Could not load dynamic library 'libcudnn.so.8'` often occurs when the system cannot locate the shared library. Ensure your `LD_LIBRARY_PATH` environment variable includes the path to your CUDA libraries (e.g., `/usr/local/cuda/lib64`). You might need to add this to your `~/.bashrc` file [42].

- Reinstall and Link cuDNN: If the files are missing, manually reinstall cuDNN. After downloading the archive from the NVIDIA developer site, copy the header and library files to your CUDA directory. You may need to create a symbolic link to ensure the correct version is linked [42].
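The environment check can be scripted so that it is easy to rerun after each change. The sketch below is a minimal example that only searches the directories listed in `LD_LIBRARY_PATH`; the helper name is hypothetical, and the dynamic loader also consults other locations (such as the system linker cache) that this check does not cover.

```python
import glob
import os


def find_library(pattern, search_path):
    """Return files matching `pattern` in each directory of a PATH-style string."""
    matches = []
    for directory in search_path.split(os.pathsep):
        if directory:
            matches.extend(sorted(glob.glob(os.path.join(directory, pattern))))
    return matches


# Look for any cuDNN shared library on the current LD_LIBRARY_PATH.
cudnn_hits = find_library("libcudnn.so*", os.environ.get("LD_LIBRARY_PATH", ""))
if cudnn_hits:
    print("Found:", *cudnn_hits, sep="\n  ")
else:
    print("No libcudnn.so* found in LD_LIBRARY_PATH directories")
```

If the list is empty but the library exists elsewhere on disk, adding its directory to `LD_LIBRARY_PATH` as described above is the likely fix; if the list shows a different major version than the one named in the error, the versions are mismatched.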

Q3: How can I profile my application to understand CPU-GPU interaction and identify kernel performance issues?

NVIDIA's Nsight tools are designed for this exact purpose.

- For a System-Wide View: Use Nsight Systems to visualize your application's entire performance timeline. It helps you see how CPU tasks correlate with GPU kernel execution, data transfers, and library calls (like cuBLAS and cuDNN). This is ideal for identifying large-scale bottlenecks, such as excessive synchronization or CPU-side delays that leave the GPU idle [43].

- For Detailed Kernel Analysis: Use Nsight Compute to drill down into the performance of individual CUDA kernels. It provides detailed metrics on GPU hardware utilization, including memory bandwidth, compute throughput, and pipeline stall reasons. This helps you optimize kernel code for specific architectures [44] [45].

Q4: In distributed training, my GPUs exhibit poor scaling efficiency. What should I investigate?

This problem usually points to communication bottlenecks between GPUs.

- Profile Inter-Node Communication: Use the `nccl-tests` suite, specifically the `all_reduce_perf` benchmark, to measure the performance of gradient synchronization across your nodes. This can quickly expose issues with your network fabric (InfiniBand or RoCE) configuration [40].

- Check Intra-Node Connectivity: Run a single-node NCCL test to ensure that GPUs within the same server are communicating at high speeds. Modern servers should leverage high-bandwidth interconnects like NVLink for optimal multi-GPU performance [40].

- Ensure Driver Configuration: Confirm that your NVIDIA drivers, Fabric Manager, and InfiniBand drivers (like MLNX_OFED) are up-to-date and configured with features like GPUDirect RDMA enabled, which allows for direct data exchange between GPUs and network devices [40].
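Two quantities help put numbers on "poor scaling": the scaling efficiency of the training job and the bus bandwidth of the all-reduce. The sketch below computes both. The efficiency definition (measured throughput divided by ideal linear throughput) is a common convention assumed here, the bus bandwidth formula follows the all-reduce convention documented by `nccl-tests`, and the function names and example numbers are illustrative.

```python
def scaling_efficiency(throughput_single, throughput_multi, num_gpus):
    """Measured multi-GPU throughput as a fraction of ideal linear scaling."""
    return throughput_multi / (num_gpus * throughput_single)


def allreduce_bus_bandwidth(message_bytes, seconds, num_ranks):
    """Bus bandwidth in bytes/s for an all-reduce.

    Algorithm bandwidth is size / time; all-reduce scales it by
    2 * (n - 1) / n to reflect the data each rank sends and receives.
    """
    algorithm_bandwidth = message_bytes / seconds
    return algorithm_bandwidth * 2 * (num_ranks - 1) / num_ranks


# Illustrative numbers: 1,000 samples/s on 1 GPU, 6,000 samples/s on 8 GPUs.
efficiency = scaling_efficiency(1000.0, 6000.0, 8)

# Illustrative numbers: 1 GB all-reduce across 8 ranks completing in 10 ms.
bus_bw = allreduce_bus_bandwidth(1e9, 0.01, 8)

print(f"scaling efficiency: {efficiency:.0%}, bus bandwidth: {bus_bw / 1e9:.0f} GB/s")
```

Comparing the computed bus bandwidth with the nominal bandwidth of the interconnect indicates whether the fabric is the limiting factor or whether the loss in efficiency comes from elsewhere, such as the data pipeline.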

Experimental Protocols for Performance Analysis

This section provides a reproducible methodology for evaluating data access patterns and computational efficiency in GPU-accelerated ecology codes.

Protocol 1: Quantifying the Impact of File Format on I/O-Bound Workloads

Objective: To measure how different file formats affect data loading throughput and overall training time in a deep learning pipeline.

Materials:

- Dataset: Image dataset (e.g., Tiny ImageNet-200) originally stored as numerous small JPEG files [41].

- Computing Environment: HPC system with a parallel file system (e.g., Lustre).

- Software: Python, PyTorch or TensorFlow, h5py library.

Methodology:

- Preprocessing: Convert the original JPEG dataset into a single HDF5 file. The HDF5 file should store images as structured datasets within a hierarchical group, preserving labels and metadata [41].

- Data Loader Implementation: Create two separate data loader implementations:

- Loader A: Designed to read from the original directory structure of JPEG files.

- Loader B: Designed to read chunks of data from the single HDF5 file.

- Benchmarking: Execute a controlled training script that uses each data loader. The model can be a standard CNN (e.g., ResNet-50). For each run, record:

- Average samples loaded per second (I/O throughput).

- Total epoch time.

- GPU utilization (using nvidia-smi or DCGM).

Expected Outcome: The HDF5-based data loader (Loader B) is expected to demonstrate higher I/O throughput and reduced epoch time by minimizing the filesystem metadata overhead associated with opening a very large number of small files [41].
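The benchmarking step can be prototyped without a GPU or any third-party library. The sketch below is a stand-in for the protocol, not the protocol itself: fixed-size random byte blobs replace the JPEG images, a single packed binary file replaces the HDF5 container, and all function names are hypothetical. It shows only how to measure samples per second for two loaders under identical conditions.

```python
import os
import tempfile
import time


def write_small_files(directory, samples):
    """Store each sample as its own file (stand-in for one JPEG per image)."""
    paths = []
    for i, blob in enumerate(samples):
        path = os.path.join(directory, f"sample_{i:05d}.bin")
        with open(path, "wb") as fh:
            fh.write(blob)
        paths.append(path)
    return paths


def write_packed_file(path, samples):
    """Store all fixed-size samples back to back in one file (stand-in for HDF5)."""
    with open(path, "wb") as fh:
        for blob in samples:
            fh.write(blob)


def load_small_files(paths):
    """Loader A: one open/read/close per sample."""
    for path in paths:
        with open(path, "rb") as fh:
            yield fh.read()


def load_packed_file(path, sample_size, chunk_samples=64):
    """Loader B: read chunks holding several samples, then split them."""
    with open(path, "rb") as fh:
        while True:
            chunk = fh.read(sample_size * chunk_samples)
            if not chunk:
                break
            for offset in range(0, len(chunk), sample_size):
                yield chunk[offset:offset + sample_size]


def samples_per_second(loader):
    """Drain a loader and return (sample_count, samples_per_second)."""
    start = time.perf_counter()
    count = sum(1 for _ in loader)
    elapsed = time.perf_counter() - start
    return count, (count / elapsed if elapsed > 0 else float("inf"))


def run_benchmark(n_samples=2000, sample_size=4096):
    samples = [os.urandom(sample_size) for _ in range(n_samples)]
    with tempfile.TemporaryDirectory() as tmp:
        paths = write_small_files(tmp, samples)
        packed = os.path.join(tmp, "packed.bin")
        write_packed_file(packed, samples)
        return {
            "loader_a_small_files": samples_per_second(load_small_files(paths)),
            "loader_b_packed_file": samples_per_second(
                load_packed_file(packed, sample_size)
            ),
        }


if __name__ == "__main__":
    for name, (count, rate) in run_benchmark().items():
        print(f"{name}: {count} samples, {rate:,.0f} samples/s")
```

On a local disk with a warm page cache this mostly measures per-file open and close cost. The absolute numbers will differ on a parallel file system such as Lustre, so the real protocol should be run on the target system with the actual dataset.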

Protocol 2: Profiling Computational Kernels in cuBLAS and cuDNN

Objective: To identify performance bottlenecks within GPU-accelerated library calls and understand low-level hardware utilization.

Materials:

Methodology:

- System Profiling: Use Nsight Systems to collect an application-level trace.

  - Command: nsys profile --trace=cuda,osrt,nvtx,cublas,cudnn -o my_report ./my_application

  - Analysis: Open the report and inspect the timeline for long-running kernels or gaps between kernel launches that suggest CPU-side bottlenecks. Check the trace for cuBLAS and cuDNN API calls to see their duration and concurrency (the cublas and cudnn trace options are required for these calls to appear) [43].

- Kernel Profiling: For key kernels identified in step 1, use Nsight Compute for detailed profiling.

  - Command: ncu -o kernel_details -k "my_kernel_name" ./my_application

  - Analysis: Import the report into the Nsight Compute GUI. Examine key metrics such as:

    - Streaming Multiprocessor (SM) Utilization: Is the kernel compute-bound or memory-bound?

    - Tensor Core Utilization: Is the kernel leveraging specialized units for mixed-precision math?

    - DRAM Bandwidth: How effectively is the kernel using memory bandwidth? [44]

- Iteration: Use the insights to optimize your code or library function calls and re-profile to measure improvement.
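The compute-bound versus memory-bound question in the kernel analysis can be reasoned about with a simple roofline estimate before opening the profiler. The sketch below is a back-of-the-envelope aid under stated assumptions: the peak throughput and bandwidth values are hypothetical placeholders to be replaced with the specifications of your GPU, and the matrix-multiply byte count assumes each matrix crosses DRAM exactly once.

```python
def attainable_gflops(arithmetic_intensity, peak_gflops, peak_bandwidth_gbs):
    """Roofline bound in GFLOP/s: the lower of the compute roof and the memory roof."""
    return min(peak_gflops, arithmetic_intensity * peak_bandwidth_gbs)


def classify_kernel(flops, dram_bytes, peak_gflops, peak_bandwidth_gbs):
    """Return (arithmetic intensity in FLOP/byte, 'memory-bound' or 'compute-bound')."""
    intensity = flops / dram_bytes
    ridge_point = peak_gflops / peak_bandwidth_gbs  # intensity where the two roofs meet
    label = "memory-bound" if intensity < ridge_point else "compute-bound"
    return intensity, label


# Hypothetical device: replace with the figures for your own GPU.
PEAK_GFLOPS = 20_000.0
PEAK_BANDWIDTH_GBS = 1_000.0

# float32 vector add: 1 FLOP per element, 12 bytes moved (two reads, one write).
n = 1_000_000
vec_add = classify_kernel(n, 12 * n, PEAK_GFLOPS, PEAK_BANDWIDTH_GBS)

# float32 square matrix multiply: 2*m^3 FLOPs, three m*m matrices of 4-byte values.
m = 4096
matmul = classify_kernel(2 * m**3, 3 * 4 * m * m, PEAK_GFLOPS, PEAK_BANDWIDTH_GBS)

for name, (intensity, label) in (("vector add", vec_add), ("matmul", matmul)):
    bound = attainable_gflops(intensity, PEAK_GFLOPS, PEAK_BANDWIDTH_GBS)
    print(f"{name}: {intensity:.2f} FLOP/byte, {label}, at most {bound:,.0f} GFLOP/s")
```

If the profiler reports a kernel far below its estimated bound, data access patterns (uncoalesced loads, poor cache reuse) are a likely place to look.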

The Scientist's Toolkit: Research Reagent Solutions

The table below catalogues essential software and tools for developing and optimizing GPU-accelerated research codes.

| Tool/Solution Name | Function & Purpose |
|---|---|
| NVIDIA Nsight Systems | A system-wide performance analysis tool that visualizes CPU-GPU interactions, API calls, and data movement to identify high-level bottlenecks [43]. |
| NVIDIA Nsight Compute | An interactive kernel profiler for CUDA applications, providing detailed low-level performance metrics to optimize individual GPU kernels [44] [45]. |
| NCCL Tests | A suite of benchmarks to test and verify the performance and correctness of multi-GPU and multi-node communication primitives, crucial for distributed training [40]. |
| HDF5 Library | A data model and file format for storing and managing large, complex data. It enables efficient parallel I/O access in HPC environments, reducing overhead from numerous small files [41]. |
| CUDA Toolkit | A development environment for creating high-performance GPU-accelerated applications. It includes compilers, libraries (cuBLAS, cuSOLVER), and debugging tools [46]. |
| cuDNN Library | A GPU-accelerated library of primitives for deep neural networks, providing highly tuned implementations for standard routines like convolutions and pooling [42]. |

Workflow and Data Access Diagnostics

The following diagram illustrates a structured workflow for diagnosing and optimizing performance in GPU-accelerated research applications, with a focus on data access patterns.

GPU Performance Diagnosis Workflow

The diagram below contrasts suboptimal serial file access with optimized parallel data access, a key consideration for I/O-bound workflows.

Data Access Pattern Impact

Data Preprocessing and Batching Techniques to Minimize Transfer Overhead

Frequently Asked Questions (FAQs)

Q1: What are the most common signs of a data transfer bottleneck in my GPU-accelerated drug discovery pipeline?

A data transfer bottleneck is typically indicated by low GPU utilization despite a high workload. Key signs include [47] [48]:

- The GPU utilization metric (via tools like nvidia-smi) shows long periods of idle time or utilization consistently well below 100%.

- The CPU utilization is high, with one or more cores maxed out.

- Profiling tools (like TensorFlow Profiler or NVIDIA Nsight Systems) show that the GPU is actively waiting for data from the CPU, creating a "stair-step" pattern in the execution trace.
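The first sign can be checked with a short polling script. The sketch below assumes nvidia-smi is on the PATH and uses its CSV query mode. The helper names, the 10% idle threshold, and the sample trace are illustrative assumptions rather than recommended values.

```python
import subprocess
import time


def parse_utilization(csv_text):
    """Parse the output of
    nvidia-smi --query-gpu=utilization.gpu --format=csv,noheader,nounits
    into a list of integer percentages (one per line)."""
    return [int(line.strip()) for line in csv_text.splitlines() if line.strip()]


def summarize(samples, idle_threshold=10):
    """Return (mean utilization, fraction of samples at or below idle_threshold)."""
    if not samples:
        raise ValueError("no utilization samples")
    mean = sum(samples) / len(samples)
    idle_fraction = sum(1 for s in samples if s <= idle_threshold) / len(samples)
    return mean, idle_fraction


def sample_gpu_utilization(n_samples=30, interval_s=1.0, gpu_index=0):
    """Poll one GPU while training runs in another process."""
    samples = []
    for _ in range(n_samples):
        out = subprocess.run(
            ["nvidia-smi", f"--id={gpu_index}",
             "--query-gpu=utilization.gpu", "--format=csv,noheader,nounits"],
            capture_output=True, text=True, check=True,
        ).stdout
        samples.extend(parse_utilization(out))
        time.sleep(interval_s)
    return samples


# Hypothetical recorded trace: bursts of work separated by idle gaps.
recorded = "95\n0\n3\n97\n0\n2\n96\n1\n"
mean, idle_fraction = summarize(parse_utilization(recorded))
print(f"mean utilization: {mean:.1f}%, idle samples: {idle_fraction:.0%}")
```

A trace that alternates between near-full and near-zero utilization, as in the hypothetical sample, is consistent with the GPU repeatedly waiting for input. A profiler trace is still needed to confirm the cause.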

Q2: How can I reduce the overhead of transferring numerous small, scattered data elements (e.g., molecular features) to the GPU?

For scattered data, the most efficient method is often to gather data into a contiguous buffer in CPU-pinned (page-locked) memory before performing a single, large transfer to the GPU via cudaMemcpy [49]. This approach is more efficient than many small transfers or relying on GPU threads to gather scattered data, as it makes better use of high system memory bandwidth and the PCIe bus.
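A simple latency-plus-bandwidth cost model illustrates why one large transfer tends to beat many small ones. This is a rough sketch, not a measurement: the per-transfer latency and the bus bandwidth below are assumed placeholder values, and the model ignores the CPU time spent gathering data into the staging buffer.

```python
def transfer_time(total_bytes, n_transfers, latency_s, bandwidth_bytes_per_s):
    """Estimated time to move total_bytes split across n_transfers copies.

    Each copy pays a fixed latency; the payload moves at the given bandwidth.
    """
    return n_transfers * latency_s + total_bytes / bandwidth_bytes_per_s


# Assumed placeholder values; measure these on your own system.
LATENCY_S = 10e-6                 # fixed cost per host-to-device copy
BANDWIDTH_BYTES_PER_S = 25e9      # effective bus bandwidth

n_features = 100_000              # hypothetical number of scattered feature vectors
bytes_each = 256
total_bytes = n_features * bytes_each

scattered = transfer_time(total_bytes, n_features, LATENCY_S, BANDWIDTH_BYTES_PER_S)
batched = transfer_time(total_bytes, 1, LATENCY_S, BANDWIDTH_BYTES_PER_S)

print(f"{n_features} small copies: {scattered * 1e3:.1f} ms")
print(f"one gathered copy:     {batched * 1e3:.3f} ms")
```

Under these assumptions the fixed per-copy cost dominates the scattered case, which is why gathering into a single pinned buffer first pays off even though it adds a CPU-side copy.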

Q3: My data preprocessing steps, like molecular structure normalization, are causing a bottleneck. What are my options?

You have several options to alleviate this [50] [47]:

- Optimize the CPU pipeline: Use parallel processing with num_workers in DataLoader to process multiple batches concurrently.

- Move preprocessing to the GPU: Perform operations like tokenization or normalization directly on the GPU, so that only raw inputs are transferred and the CPU no longer limits throughput [50].

- Use specialized libraries: Leverage GPU-accelerated libraries like NVIDIA DALI, which are designed to build efficient, high-speed data preprocessing pipelines.

- Preprocess offline: Perform the most expensive preprocessing steps once and cache the results for faster loading in subsequent training runs.
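The idea behind parallel loading and prefetching is to overlap preprocessing with computation. The sketch below shows the mechanism with a background thread and a bounded queue. It is a simplified illustration, not how any particular framework implements it, and all names are hypothetical. The sleeps stand in for preprocessing and for the training step.

```python
import queue
import threading
import time


def prefetch(batches, buffer_size=2):
    """Yield items from `batches` while a background thread prepares the next ones.

    If the consumer stops early, the daemon producer thread stays blocked
    until the process exits; a production version would add a stop signal.
    """
    q = queue.Queue(maxsize=buffer_size)
    sentinel = object()
    errors = []

    def producer():
        try:
            for batch in batches:
                q.put(batch)
        except Exception as exc:      # forward producer failures to the consumer
            errors.append(exc)
        finally:
            q.put(sentinel)

    threading.Thread(target=producer, daemon=True).start()
    while True:
        item = q.get()
        if item is sentinel:
            if errors:
                raise errors[0]
            return
        yield item


def slow_batches(n, delay_s):
    """Stand-in for CPU-side preprocessing that takes delay_s per batch."""
    for i in range(n):
        time.sleep(delay_s)
        yield i


def consume(batches, step_s):
    """Stand-in for the training step; returns elapsed wall time."""
    start = time.perf_counter()
    for _ in batches:
        time.sleep(step_s)
    return time.perf_counter() - start


if __name__ == "__main__":
    serial = consume(slow_batches(20, 0.01), 0.01)
    overlapped = consume(prefetch(slow_batches(20, 0.01)), 0.01)
    print(f"serial: {serial:.2f}s, with prefetch: {overlapped:.2f}s")
```

A thread is enough here because sleeping releases the interpreter lock. CPU-bound Python preprocessing generally needs separate worker processes, which is what the num_workers setting of the PyTorch DataLoader provides.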

Q4: Does increasing the batch size always improve performance and reduce transfer overhead?

Larger batch sizes can improve performance by amortizing the cost of data transfer and kernel launches over more samples. However, there is a point of diminishing returns. An excessively large batch size can exceed GPU memory capacity, lead to poor model convergence, or provide minimal further reduction in per-sample overhead [48]. It is crucial to profile performance with different batch sizes to find the optimal value for your specific model and hardware.
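The diminishing returns follow from a simple model in which every step pays a fixed overhead (kernel launches, transfer setup) plus a cost proportional to the batch size. The sketch below uses assumed, purely illustrative timings. Real step times are rarely this linear, so treat it as an aid to intuition rather than a predictor.

```python
def throughput(batch_size, fixed_overhead_s, per_sample_s):
    """Samples per second when one step costs fixed_overhead_s + batch_size * per_sample_s."""
    return batch_size / (fixed_overhead_s + batch_size * per_sample_s)


# Assumed illustrative timings, not measurements.
FIXED_OVERHEAD_S = 0.010      # per-step cost that does not depend on batch size
PER_SAMPLE_S = 0.00025        # incremental cost of each additional sample

ceiling = 1.0 / PER_SAMPLE_S  # throughput limit as the batch size grows without bound

for b in (8, 16, 32, 64, 128, 256, 512):
    rate = throughput(b, FIXED_OVERHEAD_S, PER_SAMPLE_S)
    print(f"batch {b:>3}: {rate:7.0f} samples/s ({rate / ceiling:.0%} of ceiling)")
```

In this model throughput approaches 1 / per_sample_s asymptotically: once the fixed overhead is a small share of the step time, further doubling of the batch size buys very little.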

Troubleshooting Guides

Issue: Low GPU Utilization Due to Data Preprocessing Bottleneck

Symptoms:

- GPU utilization is low (e.g., below 50%) with frequent dips to 0% [47].

- CPU utilization is high, especially on one or more cores.

- Training time does not decrease significantly when upgrading to a more powerful GPU.

Diagnosis and Resolution Protocol:

| Step | Action | Tool / Command Example | Expected Outcome |
|---|---|---|---|
| 1. Confirm Bottleneck | Profile training to identify GPU idle time. | tf.profiler.experimental.Profile('logdir') or PyTorch Profiler [47]. | Profiler trace confirms GPU is waiting for data input. |
| 2. Measure Ideal Time | Cache a single batch to bypass preprocessing. | Add ds = ds.take(1).cache().repeat() to data pipeline [47]. | A significant reduction in epoch runtime confirms a CPU bottleneck. |
| 3. Optimize Data Loading | Use parallel data loading and prefetching. | DataLoader(..., num_workers=4, prefetch_factor=2) [51]. | Increased GPU utilization and decreased step time. |
| 4. Reduce Transfer Volume | Adopt mixed precision training. | torch.cuda.amp.autocast() [51]. | Lower memory usage and faster compute; transfers shrink only if the input tensors are also stored in reduced precision. |
| 5. Offload Preprocessing | Use the tf.data service or NVIDIA DALI. | tf.data.experimental.service.distribute() [47]. | Distributed preprocessing load, freeing the main CPU. |

Issue: Finding the Optimal Batch Size for Molecular Dynamics Simulations

Symptoms:

- Training runs out of GPU memory when batch size is increased.

- No significant training speed improvement after a certain batch size.

- Model accuracy degrades with larger batches.

Diagnosis and Resolution Protocol:

| Step | Action | Tool / Command Example | Expected Outcome |
|---|---|---|---|
| 1. Baseline Measurement | Start with a small batch size (e.g., 8 or 16) and profile the training step time and memory usage. | torch.profiler.profile(profile_memory=True) [48]. | Establishes a baseline for performance and memory consumption. |
| 2. Gradual Increase | Systematically double the batch size, monitoring GPU memory usage until it is near full capacity. | Monitor via nvidia-smi. | Identifies the maximum batch size that fits in GPU memory. |
| 3. Performance Profiling | For each viable batch size, run a short training epoch and record the average samples processed per second. | Custom logging or framework profiler. | A table of throughput vs. batch size is generated. |
| 4. Analyze Convergence | For the top 2-3 batch sizes, run a longer training session to monitor loss and validation accuracy. | Training logs and validation metrics. | Selection of a batch size that offers a good trade-off between speed and model quality. |
| 5. Use Gradient Accumulation | If the maximum batch size is still too small, simulate a larger batch size. | For K steps: loss.backward(); on step K: optimizer.step() and optimizer.zero_grad() [51]. | Effectively trains with a larger batch size without increasing memory footprint. |
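Gradient accumulation (step 5) works because gradients add: summing the gradients of K micro-batches, each scaled by 1/K, reproduces the gradient of the mean loss over the combined batch. The framework-free sketch below checks this for a one-parameter linear model with a mean-squared-error loss. The model, data, and function names are illustrative assumptions; in PyTorch the scaling corresponds to calling backward on loss / K.

```python
def mse_grad(w, xs, ys):
    """Gradient with respect to w of the mean squared error for the model y_hat = w * x."""
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Accumulate scaled micro-batch gradients, as K backward passes would."""
    n = len(xs)
    if n % micro_batch_size:
        raise ValueError("micro_batch_size must divide the number of samples")
    k = n // micro_batch_size          # number of accumulation steps
    grad = 0.0                         # plays the role of the gradient buffer after zero_grad()
    for start in range(0, n, micro_batch_size):
        stop = start + micro_batch_size
        micro = mse_grad(w, xs[start:stop], ys[start:stop])
        grad += micro / k              # same effect as (loss / K).backward()
    return grad                        # an optimizer step would use this value


# Illustrative data: eight samples, processed as four micro-batches of two.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1]
w = 1.5

full = mse_grad(w, xs, ys)
accumulated = accumulated_grad(w, xs, ys, micro_batch_size=2)
print(f"full-batch gradient:  {full:.6f}")
print(f"accumulated gradient: {accumulated:.6f}")
```

Only one micro-batch of activations is held in memory at a time, which is why the memory footprint stays at that of the small batch. Without the 1/K scaling the accumulated gradient would be K times larger, which acts like an unintended learning-rate increase.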

Experimental Protocols & Data

Protocol: Quantifying Data Transfer Bottleneck with Cached Batch Profiling

Objective: To measure the potential training speedup achievable by eliminating data preprocessing and transfer overhead.

Methodology:

- Standard Training Baseline: Train your model for a fixed number of steps (e.g., 100) using the standard data pipeline. Record the total time, T_standard [47].

- Cached Batch Profiling: Modify the dataset to cache the first batch and repeatedly use it. Train the model for the same number of steps and record the time, T_cached.

- Calculation: The potential speedup factor is T_standard / T_cached. This reveals the maximum performance gain if the data bottleneck were completely removed.

Expected Data:

| Model | Dataset | T_standard (sec) | T_cached (sec) | Potential Speedup |
|---|---|---|---|---|
| ResNet50 | CIFAR-10 | 122 | 58 | 2.10x [47] |
| Custom CNN | Molecular Structures | To be measured | To be measured | To be calculated |
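The calculation in this protocol is small enough to script. The sketch below provides a timing helper and the speedup arithmetic, applied to the ResNet50 row of the table. The helper names are hypothetical, and the share of time attributed to the input pipeline (1 - T_cached / T_standard) is a derived quantity added here for convenience.

```python
import time


def time_steps(step_fn, n_steps=100):
    """Wall-clock time for n_steps calls of step_fn.

    For GPU work, step_fn should wait for the device to finish
    (for example with torch.cuda.synchronize()) so that asynchronous
    kernel launches are included in the measurement.
    """
    start = time.perf_counter()
    for _ in range(n_steps):
        step_fn()
    return time.perf_counter() - start


def data_pipeline_report(t_standard, t_cached):
    """Return (potential speedup, fraction of time attributable to the input pipeline)."""
    if t_standard <= 0 or t_cached <= 0:
        raise ValueError("timings must be positive")
    speedup = t_standard / t_cached
    input_fraction = 1.0 - t_cached / t_standard
    return speedup, input_fraction


# Values from the ResNet50 / CIFAR-10 row of the table.
speedup, input_fraction = data_pipeline_report(122.0, 58.0)
print(f"potential speedup: {speedup:.2f}x")
print(f"time attributable to input pipeline: {input_fraction:.0%}")
```

For the ResNet50 row this gives a 2.10x potential speedup, which means roughly half of the measured step time is spent waiting on the input pipeline.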

Protocol: Systematic Sweep for Optimal Batch Size

Objective: To empirically determine the batch size that maximizes training throughput without causing an out-of-memory (OOM) error or significant accuracy loss.

Methodology:

- Memory Capacity Check: Start with a very small batch size and double it until the training process runs out of GPU memory. The largest batch size that still fits is the upper limit, B_max.

- Throughput Profiling: For a set of batch sizes [8, 16, 32, ..., B_max], run a short training profile with a fixed number of steps for each.

- Data Collection: For each batch size B, record:

  - Samples/Second: The training throughput.

  - GPU Memory Used: Peak memory utilization.

  - Time per Step: The average time to process one batch.

- Convergence Check: Perform a mini-convergence test for the most promising batch sizes (e.g., top 3 by throughput) over several epochs to check for stability in loss and accuracy.

Expected Data:

| Batch Size | Samples/Second | GPU Memory Used (GB) | Avg. Step Time (ms) | Final Validation Loss |
|---|---|---|---|---|
| 16 | 1250 | 4.2 | 12.8 | 0.45 |
| 32 | 2105 | 6.1 | 15.2 | 0.43 |
| 64 | 2850 | 9.8 | 22.5 | 0.44 |
| 128 | 3120 | 17.1 | 41.0 | 0.46 |
| 256 | 3350 | 23.9 (Near Max) | 76.4 | 0.48 |
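One way to turn a sweep like the one above into a decision is to look at the relative throughput gain of each doubling and stop once it falls below a chosen threshold. The sketch below applies this to the throughput column of the table. The 10% threshold and the function names are assumptions for illustration; the convergence check remains a separate step.

```python
def marginal_gains(sweep):
    """Relative throughput gain for each step up in batch size.

    `sweep` is a list of (batch_size, samples_per_second) sorted by batch size.
    Returns a list of (batch_size, gain_over_previous).
    """
    return [(b2, t2 / t1 - 1.0) for (_, t1), (b2, t2) in zip(sweep, sweep[1:])]


def pick_batch_size(sweep, min_gain=0.10):
    """Largest batch size reached while every step up still gains at least min_gain."""
    chosen = sweep[0][0]
    for batch_size, gain in marginal_gains(sweep):
        if gain < min_gain:
            break
        chosen = batch_size
    return chosen


# Throughput column from the table above.
sweep = [(16, 1250), (32, 2105), (64, 2850), (128, 3120), (256, 3350)]

for batch_size, gain in marginal_gains(sweep):
    print(f"up to batch {batch_size:>3}: {gain:+.1%} throughput")
print("suggested batch size:", pick_batch_size(sweep))
```

With these figures the gain drops below 10% at the step from 64 to 128, so the rule suggests 64. That choice also uses well under half of the GPU memory shown in the table and sits at a validation loss close to the best value in the sweep.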

Data Visualization

Data Preprocessing and Transfer Optimization Workflow

Diagram Title: Optimized Data Preprocessing and Transfer Pipeline

Relationship Between Batch Size and Performance