Next-Generation Remote Sensing Technologies: Revolutionizing Conservation and Ecosystem Monitoring

Abstract

This article explores the transformative role of advanced remote sensing technologies in modern conservation science. It provides a comprehensive overview of foundational technologies such as LiDAR, hyperspectral imaging, and drones, and details their methodological applications in forest monitoring, coral reef health assessment, and habitat preservation. The content addresses key challenges, including the cost of high-resolution data, ethical considerations, and algorithmic limitations, and offers validation frameworks that integrate ground truthing with AI-driven analysis. Aimed at researchers and environmental professionals, this synthesis of current innovations and practical troubleshooting is intended as a resource for implementing effective, data-driven conservation strategies in an era of rapid environmental change.

The Core Technologies: Understanding Satellites, LiDAR, and Hyperspectral Imaging

Defining Remote Sensing and Earth Observation for Conservation

Remote Sensing (RS) is a method of collecting information about the Earth's surface without making physical contact, utilizing sensors mounted on satellites, aircraft, or drones to detect and measure reflected or emitted electromagnetic radiation [1]. Earth Observation (EO) refers to the gathering of this information about Earth's physical, chemical, and biological systems via remote sensing technologies, often with the specific purpose of monitoring environmental conditions and changes over time [2] [3]. In the context of conservation science, these technologies enable the systematic monitoring of ecosystems and biodiversity at spatial and temporal scales unattainable through ground-based methods alone [1] [3].

The fundamental principle underpinning remote sensing is that materials reflect, absorb, and emit electromagnetic radiation in characteristic, wavelength-dependent ways, producing distinctive spectral signatures. Conservation researchers leverage these signatures to identify and characterize landscape features, for example distinguishing healthy vegetation from stressed vegetation, mapping wetland boundaries, or detecting deforestation fronts [1] [4]. The integration of EO data with in-situ biological observations, such as species counts from camera traps or environmental DNA (eDNA) samples, creates a powerful framework for generating predictive models of biodiversity distribution and ecosystem function across vast, often inaccessible, geographical areas [5].

Key Technological Platforms and Data Acquisition Methods

Remote sensing for conservation relies on a suite of platforms, each offering distinct advantages in terms of spatial resolution, temporal frequency, and spectral characteristics. The following table summarizes the primary platforms and their conservation applications.

Table 1: Remote Sensing Platforms and Their Conservation Applications

| Platform Type | Spatial Resolution | Key Conservation Applications | Examples/Programs |
|---|---|---|---|
| Satellites (Optical) | Medium (10 m-1 km) to High (<1 m) | Habitat mapping, deforestation monitoring, land-use change detection, vegetation health assessment [1] [4] | Landsat, Sentinel-2, ESA's Living Planet Programme [6] |
| Satellites (Radar) | Medium (10 m-100 m) | Forest structure and biomass estimation, mapping surface water under cloud cover, monitoring ground deformation | NASA NISAR, ESA's Living Planet Programme [6] [2] |
| Aircraft (Manned) | Very High (<1 m) | High-resolution habitat classification, targeted species mapping, validation of satellite data | NASA's Airborne Science Program [2] |
| Drones (UAVs) | Ultra-High (cm-level) | Surveying remote wildlife habitats, assessing individual plant health, monitoring hard-to-reach areas [1] | Thermal and multispectral drones for wildlife and vegetation surveys [1] |

The data acquired by these platforms can be categorized as either passive or active. Passive sensors measure reflected solar radiation or emitted thermal radiation, forming the basis for most multispectral and hyperspectral imaging used in vegetation and land cover studies [1]. Active sensors, such as LiDAR (Light Detection and Ranging) and radar, emit their own energy and measure the returned signal, enabling detailed measurements of three-dimensional vegetation structure, which is critical for assessing habitat quality [2].

Recent and upcoming satellite missions are specifically designed to address conservation and Earth science challenges. These include PACE (Plankton, Aerosol, Cloud, ocean Ecosystem) for ocean color, NISAR (NASA-ISRO Synthetic Aperture Radar) for ecosystem disturbance, and SBG (Surface Biology and Geology) for functional diversity and plant traits, all of which were highlighted in the agenda of the 2025 NASA Biodiversity Meeting [2].

Experimental Protocols and Methodologies

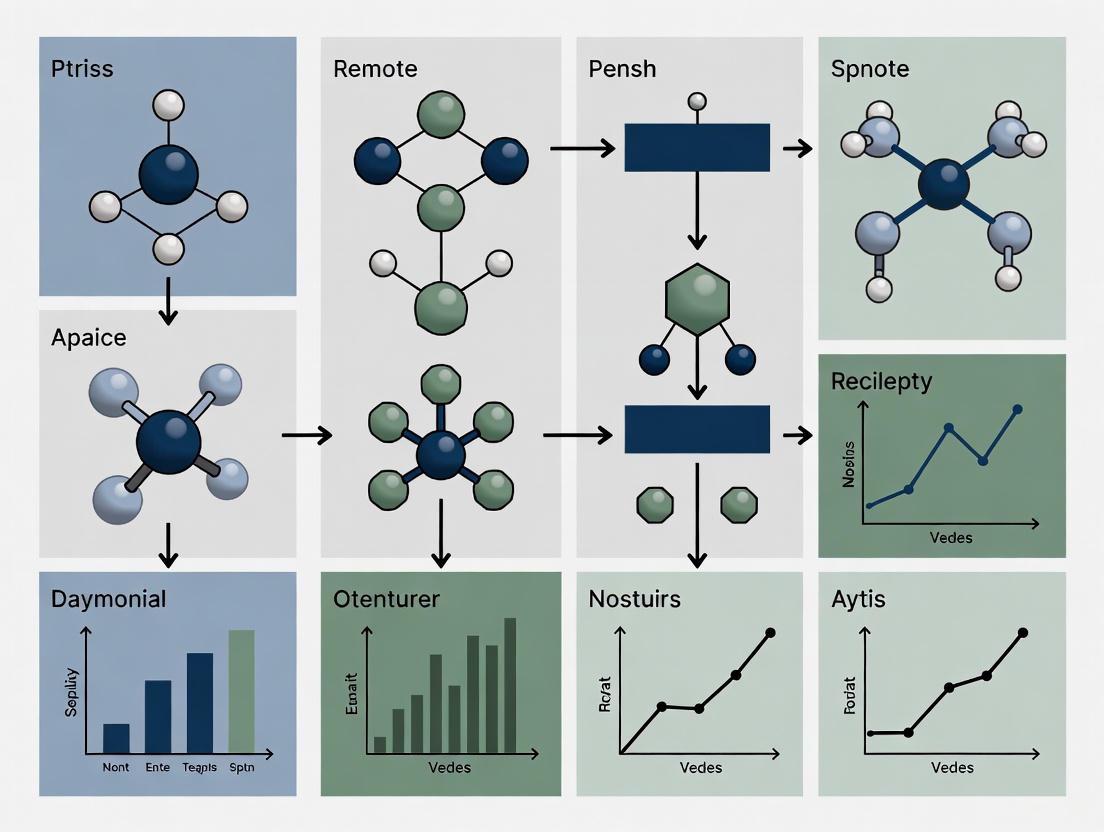

Integrating remote sensing data into conservation science requires structured methodologies. The following workflow diagram and accompanying explanation outline a standard protocol for a conservation-focused remote sensing project.

Figure 1: A standard workflow for conservation remote sensing projects, from problem definition to actionable outcomes.

Detailed Protocol Steps

Define Conservation Objective: Precisely formulate the research or management question. Example: "Map the extent and health of mangrove forests in a protected area to identify zones degraded by illegal logging." [4]

Data Acquisition Plan: Select appropriate satellite imagery or other RS data based on the objective's required spatial detail, revisit frequency, and historical archive needs. For mangrove monitoring, multi-temporal Sentinel-2 (10m resolution) or Landsat (30m resolution) imagery would be suitable. [1] [2]

Pre-processing: Correct the raw imagery to ensure data quality and geometric accuracy. This involves:

- Atmospheric Correction: Removing the scattering and absorption effects of the atmosphere to retrieve true surface reflectance values. This is a prerequisite for comparing images from different dates.

- Geometric Correction: Aligning the image to a known coordinate system so it can be integrated with other spatial datasets like protected area boundaries.
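Operational atmospheric correction relies on radiative transfer models, but the core idea can be illustrated with dark object subtraction (DOS), a much cruder image-based method swapped in here purely for simplicity. The sketch below assumes that the darkest pixels in a band (deep water, dense shadow) should have near-zero reflectance, so their observed value approximates the additive haze signal; it ignores atmospheric transmittance and adjacency effects. The band values and the `quantile` parameter are illustrative.

```python
def dark_object_value(band, quantile=0.01):
    """Estimate the haze offset as a low quantile of the band's pixel values."""
    values = sorted(band)
    return values[int(quantile * (len(values) - 1))]


def dark_object_subtraction(band, quantile=0.01):
    """Subtract the dark-object offset from every pixel and clip negatives to zero."""
    offset = dark_object_value(band, quantile)
    return [max(value - offset, 0.0) for value in band]


# Hypothetical top-of-atmosphere reflectance for one band, flattened to a list of pixels.
red_toa = [0.08, 0.09, 0.15, 0.22, 0.31, 0.08]
red_corrected = dark_object_subtraction(red_toa, quantile=0.0)
print(red_corrected)
```

Because DOS only removes an additive offset, it is at best a stopgap where radiative-transfer-corrected surface reflectance products are unavailable.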

Analysis and Information Extraction: Apply algorithms to derive biologically meaningful information.

- Classification: Using supervised machine learning algorithms (e.g., Random Forest) to categorize each pixel in the image into land cover classes (e.g., "Healthy Mangrove", "Degraded Mangrove", "Water", "Sand"). [1]

- Index Calculation: Deriving vegetation indices like the Normalized Difference Vegetation Index (NDVI) to assess plant health and density. [1]

- Change Detection: Comparing classified images from two different dates to identify areas of loss, gain, or stability.
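Two of these steps can be sketched in a few lines, assuming co-registered, atmospherically corrected reflectance values already flattened into per-pixel lists: NDVI is computed as (NIR - Red) / (NIR + Red), and post-classification change detection cross-tabulates each pixel's class at two dates. The pixel values and class maps are hypothetical; a real workflow would operate on raster arrays.

```python
from collections import Counter


def ndvi(nir, red):
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red); None where NIR + Red is zero."""
    values = []
    for n, r in zip(nir, red):
        total = n + r
        values.append((n - r) / total if total != 0 else None)
    return values


def transition_counts(classes_before, classes_after):
    """Post-classification comparison: count pixels for each (before, after) class pair."""
    return Counter(zip(classes_before, classes_after))


# Hypothetical surface reflectance for four pixels.
nir = [0.45, 0.40, 0.30, 0.05]
red = [0.05, 0.08, 0.12, 0.04]
print(ndvi(nir, red))

# Hypothetical classified maps of the same four pixels at two dates.
date1 = ["Healthy Mangrove", "Healthy Mangrove", "Degraded Mangrove", "Water"]
date2 = ["Healthy Mangrove", "Degraded Mangrove", "Degraded Mangrove", "Water"]
for (before, after), count in sorted(transition_counts(date1, date2).items()):
    status = "stable" if before == after else "change"
    print(f"{before} -> {after}: {count} pixel(s), {status}")
```

Off-diagonal pairs in the transition table (here "Healthy Mangrove" to "Degraded Mangrove") are the loss or gain areas the change-detection step is looking for.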

Ground Validation and Integration: Correlate remote sensing findings with ground-truthed biological data. This is a critical step for model accuracy. Methods include:

- Field Surveys: Recording species presence and habitat condition at specific GPS locations corresponding to image pixels. [5]

- High-throughput Biodiversity Data: Using automated recorders for animal sounds or images, and environmental DNA (eDNA) sampling to determine species presence. These "point data" are essential for building models that connect spectral signals to species assemblages. [5]
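Linking a field observation to the pixel it falls in requires inverting the image's georeferencing. The sketch below assumes a north-up raster described by a six-element affine geotransform in the GDAL ordering `(x_origin, pixel_width, row_rotation, y_origin, column_rotation, pixel_height)`, and plot coordinates already projected into the raster's coordinate reference system (for example UTM metres); raw GPS latitude/longitude would need reprojecting first. The grid and plot coordinates are hypothetical.

```python
def world_to_pixel(x, y, geotransform):
    """Return (row, col) of the pixel containing map point (x, y) on a north-up raster."""
    x_origin, pixel_width, row_rot, y_origin, col_rot, pixel_height = geotransform
    if row_rot != 0 or col_rot != 0:
        raise ValueError("rotated rasters need the full inverse affine transform")
    col = int((x - x_origin) // pixel_width)
    # pixel_height is negative for north-up images, so rows increase southward
    row = int((y - y_origin) // pixel_height)
    return row, col


def inside(row, col, n_rows, n_cols):
    """True if the pixel index falls within the raster extent."""
    return 0 <= row < n_rows and 0 <= col < n_cols


# Hypothetical 10 m grid whose upper-left corner is at (500000 E, 4000000 N).
GEOTRANSFORM = (500000.0, 10.0, 0.0, 4000000.0, 0.0, -10.0)
N_ROWS, N_COLS = 1000, 1000

# Hypothetical field plots: (plot id, easting, northing).
plots = [("plot-01", 500125.0, 3999955.0), ("plot-02", 512000.0, 3990000.0)]
for plot_id, easting, northing in plots:
    row, col = world_to_pixel(easting, northing, GEOTRANSFORM)
    print(plot_id, row, col, "inside" if inside(row, col, N_ROWS, N_COLS) else "outside")
```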

Modeling and Prediction: Use statistical models to extrapolate biodiversity understanding from field sample points to the entire landscape. Joint Species Distribution Models and Generalized Dissimilarity Models are powerful tools that connect in-situ species observations with the continuous environmental layers provided by remote sensing to create predictive maps of species richness or composition. [5]
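Generalized Dissimilarity Models take as their response the compositional dissimilarity between pairs of sites, commonly the Bray-Curtis index, and relate it to environmental differences between those sites. The sketch below only builds that site-pair table from hypothetical abundance counts and NDVI values; it does not fit the GDM itself, which applies monotonic I-spline transformations to each predictor.

```python
from itertools import combinations


def bray_curtis(a, b):
    """Bray-Curtis dissimilarity between two abundance vectors (0 = identical, 1 = no shared species)."""
    shared = sum(min(x, y) for x, y in zip(a, b))
    total = sum(a) + sum(b)
    return 1.0 - 2.0 * shared / total if total > 0 else 0.0


# Hypothetical abundances of three species per site (e.g. camera-trap detections).
sites = {"A": [10, 5, 0], "B": [8, 6, 1], "C": [0, 2, 12]}
# Hypothetical remotely sensed predictor extracted at each site.
site_ndvi = {"A": 0.82, "B": 0.78, "C": 0.31}

# One row per site pair: the GDM response (dissimilarity) and an environmental distance.
pairs = []
for s1, s2 in combinations(sorted(sites), 2):
    dissimilarity = bray_curtis(sites[s1], sites[s2])
    env_distance = abs(site_ndvi[s1] - site_ndvi[s2])
    pairs.append((s1, s2, dissimilarity, env_distance))
    print(f"{s1}-{s2}: Bray-Curtis={dissimilarity:.3f}, |dNDVI|={env_distance:.2f}")
```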

Conservation Action and Decision Support: The final outputs, such as maps of habitat loss, biodiversity hotspots, or restoration priority zones, are provided to conservation managers and policymakers to guide targeted interventions, monitoring, and resource allocation. [2] [4]

The Scientist's Toolkit: Essential Research Reagents and Materials

For researchers embarking on projects that integrate remote sensing with conservation, the following "toolkit" comprises essential data, software, and analytical resources.

Table 2: Essential Research Toolkit for Conservation Remote Sensing

| Tool Category | Specific Examples | Function and Relevance |
|---|---|---|
| Satellite Data Portals | USGS EarthExplorer, Copernicus Data Space Ecosystem (successor to ESA's Copernicus Open Access Hub), NASA Worldview | Centralized platforms to search and download free, archived, and near-real-time satellite imagery (e.g., Landsat, Sentinel). [2] |
| Biodiversity Data Platforms | Global Biodiversity Information Facility (GBIF), Movebank | Repositories for species occurrence data and animal tracking data, used for ground validation and model training. [3] |
| Specialized Conservation Software | IUCN STAR metric, Google Earth Engine | Google Earth Engine is a cloud-based platform for processing large geospatial datasets and conducting large-scale analyses without local computing constraints; the IUCN STAR (Species Threat Abatement and Restoration) metric estimates how much threat abatement and restoration at a site could reduce species extinction risk. [2] [3] |
| Statistical Modeling Frameworks | R packages (e.g., sdmpredictors, MODIStsp), Joint Species Distribution Models | Software environments and specific algorithms for linking remote sensing data with field observations to create predictive maps of biodiversity. [5] |
| In-Situ Biosensors | Automated acoustic recorders, eDNA sampling kits, camera traps | Technologies for collecting high-throughput, geographically referenced biodiversity data that serve as the biological ground truth for remote sensing signals. [5] |
| Training Resources | NASA's Applied Remote Sensing Training Program (ARSET) | Provides free online courses to build capacity in using Earth observations for environmental decision-making, including conservation. [2] |

Conceptual Framework for an Integrated Biodiversity Monitoring System

The most advanced applications of remote sensing for conservation do not use it in isolation, but rather integrate it with other data streams within a statistical modeling framework. The following diagram illustrates this integrative concept, which connects Earth observation to high-throughput biodiversity data.

Figure 2: A conceptual framework showing how Earth observation and field-based biodiversity data are integrated via statistical models to produce predictive maps for conservation.

This framework resolves a fundamental scaling problem: EO provides continuous spatial and temporal coverage but cannot directly observe all aspects of biodiversity, while field-based methods provide precise species-level data but only at discrete points [5] [3]. The statistical model acts as a "bridge," learning the relationship between the field-based species data and the environmental conditions measured by RS at those same points. This trained model can then predict biodiversity across the entire landscape, even in unsampled locations, by leveraging the continuous EO data layers [5]. This approach is at the heart of initiatives like the Biodiversity Survey of the Cape (BioSCape), a major NASA-funded campaign that integrates airborne hyperspectral imagery, satellite data, and intensive field sampling to map taxonomic, phylogenetic, and functional diversity [2].
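The "bridge" can be illustrated with the simplest possible case: a single-species logistic regression, swapped in here for the joint species distribution models named above. It is trained on presence/absence at surveyed points using the NDVI value extracted at each point, then applied to every cell of a continuous NDVI layer. All values are hypothetical; a real model would use several predictors, account for spatial autocorrelation, and be validated on held-out plots.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(x, y, learning_rate=0.5, epochs=5000):
    """Fit p(presence) = sigmoid(b0 + b1 * x) by batch gradient descent on the log-loss."""
    b0, b1 = 0.0, 0.0
    n = len(x)
    for _ in range(epochs):
        grad0 = grad1 = 0.0
        for xi, yi in zip(x, y):
            error = sigmoid(b0 + b1 * xi) - yi
            grad0 += error
            grad1 += error * xi
        b0 -= learning_rate * grad0 / n
        b1 -= learning_rate * grad1 / n
    return b0, b1


# Training data: NDVI extracted at surveyed plots and observed presence (1) / absence (0).
plot_ndvi = [0.15, 0.22, 0.30, 0.41, 0.55, 0.62, 0.70, 0.81]
presence = [0, 0, 0, 1, 0, 1, 1, 1]
b0, b1 = fit_logistic(plot_ndvi, presence)

# Prediction: apply the fitted relationship to every cell of a (tiny) NDVI layer.
landscape_ndvi = [[0.10, 0.35], [0.60, 0.90]]
suitability = [[sigmoid(b0 + b1 * v) for v in row] for row in landscape_ndvi]
print(suitability)
```

The key design point is the separation of the two phases: the model only ever sees the sampled points during fitting, but because its predictor is a wall-to-wall EO layer, it can be evaluated at every unsampled cell.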

Remote sensing and Earth observation have fundamentally transformed the scale and efficacy of conservation science. By providing synoptic, repeatable views of the planet, these technologies enable researchers to move from isolated case studies to systematic, global-scale monitoring of biodiversity threats and ecosystem changes. The integration of spectral data from satellites, drones, and aircraft with emerging ground-based biodiversity sensing technologies like automated recorders and eDNA, linked through sophisticated statistical models, represents the state of the art. This integrated approach, as exemplified by ongoing research initiatives and detailed in NASA's 2025 biodiversity agenda, provides an unprecedented decision-support toolkit for scientists, policymakers, and land managers to target conservation efforts, monitor their effectiveness, and ultimately work towards a more sustainable future for Earth's biological systems. [1] [2] [5]

The field of Earth observation is undergoing a revolutionary transformation, moving from periodic snapshots to a dynamic, continuous monitoring paradigm. This shift is powered by the advent of sophisticated satellite constellations—networks of coordinated satellites working in concert—that are redefining remote sensing capabilities for conservation research. Where once researchers relied on occasional imagery from single satellites, they now access daily global coverage through coordinated constellations that provide multispectral, hyperspectral, and synthetic aperture radar (SAR) data streams [7]. This technological evolution addresses a critical need in conservation science: the ability to monitor environmental changes at temporal scales relevant to ecological processes and anthropogenic impacts.

The significance of this transition extends beyond mere data collection frequency. Modern satellite constellations represent a fundamental shift toward integrated monitoring systems capable of capturing complex environmental interactions across spectral domains and spatial scales. For conservation researchers, this means unprecedented capacity to track deforestation, biodiversity loss, ecosystem changes, and illegal activities in near-real-time [8] [9]. The emergence of what scholars term the "Giant Constellation Era" marks a pivotal moment where satellite technology has progressed from isolated observation platforms to networked sensing infrastructures that can support the sophisticated monitoring requirements of contemporary conservation science [7].

Constellation Architectures and Technical Specifications

Chinese Satellite Constellation Infrastructure

China has established a comprehensive satellite constellation infrastructure comprising 100 registered constellations in six functional types: communication, navigation, remote sensing, meteorological, hybrid, and special-purpose systems [10]. This diversified architecture supports multi-faceted Earth observation for environmental monitoring. The scale of development is substantial: 11 constellations have completed deployment, while 60 are actively expanding their orbital networks [10]. This staged approach allows completed constellations to operate while others continue to add capacity.

The remote sensing segment specifically has demonstrated remarkable growth, evolving from China's first returnable Earth observation satellite launched in 1975 to a sophisticated network of over 200 remote sensing satellites currently operational in orbit [7]. This technological progression has occurred through distinct developmental phases: the initial single-satellite stage, subsequent multi-satellite cooperation stage, and the current constellation stage characterized by loose coupling (satellites operating independently but with coordinated tasking) and tight coupling (satellites with inter-satellite links and autonomous coordination) architectures [7]. This evolutionary pathway has positioned China's Earth observation capabilities at the international forefront, achieving 16-meter resolution global daily coverage, 2-meter optical resolution with daily revisits, and 1-meter SAR resolution with 5-hour revisit times [7].

Table 1: Major Chinese Satellite Constellations for Environmental Monitoring

| Constellation Name | Satellite Count | Primary Capabilities | Status | Conservation Applications |
|---|---|---|---|---|
| 环天星座 (Huantian) | 86 (planned) | Optical + SAR, AI-based analysis | Phase 1: 10 satellites; Phase 2: 20 satellites; Phase 3: 86 satellites [11] | All-weather monitoring, disaster prevention, ecological assessment |
| 吉林一号 (Jilin-1) | 117 (current) | High-resolution optical, multispectral, video | 138 planned [10] | Agricultural monitoring, illegal activity detection |
| 女娲星座 (Nuwa) | 12 (current) | X-SAR radar imaging | 114 planned [10] | All-weather earth observation, surface deformation monitoring |
| 环境减灾系列 (Environment & Disaster Reduction) | Multiple | Environmental monitoring, disaster assessment | Fully operational [10] | Pollution tracking, ecological damage assessment, climate impact |
| 天启星座 (Tianqi) | 37 | IoT communications, narrowband data collection | Fully operational [12] | Wildlife tracking, sensor data relay, equipment monitoring |
| 陆地探测一号 (Land Exploration-1) | 2 | Land observation, stereoscopic mapping | Fully operational [10] | Topographic mapping, habitat assessment, geological monitoring |
| 资源三号 (ZY-3) | Multiple | Stereo mapping, high-resolution imaging | Fully operational [10] | 3D modeling, watershed analysis, coastal monitoring |

Multi-Scale Resolution and Coverage Capabilities

The operational capabilities of modern satellite constellations span a comprehensive range of spatial, temporal, and spectral resolutions essential for diverse conservation applications. Current Chinese remote sensing systems achieve sub-0.5-meter spatial resolution in optical domains, comparable to international commercial systems like WorldView, while specialized missions such as Gaofen-4 provide 50-meter resolution from geostationary orbit—the highest resolution available in such orbits [7]. The Gaofen-3 SAR satellite series further exemplifies this technical advancement, implementing 1-meter resolution C-band SAR with the most diverse imaging modes of any SAR satellite globally [7].

Temporal resolution has improved particularly dramatically through constellation configurations. Where an individual satellite might need weeks to revisit a specific location, coordinated constellations can now provide revisit times of hours, or even minutes, for critical areas. The Jilin-1 constellation is expected to achieve a 10-minute global revisit capability once all 138 planned satellites are deployed [7]. Similarly, the 环天星座 (Huantian Constellation) is being built in phases with progressively more demanding monitoring targets: Phase 1 delivers a 4.9-hour revisit through combined optical-SAR observation, Phase 2 aims for 45-minute access to any point on the globe, and Phase 3 adds on-board AI analysis together with complete global coverage every two days [11].
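A constellation's revisit time is ultimately set by how the individual satellites' passes over a site interleave. Given pass times over one site within a repeating cycle (in practice these would come from an orbit propagator and sensor-swath model, not shown), the worst-case revisit is the largest gap between consecutive passes, including the gap that wraps into the next cycle. The sketch below assumes one pass per satellite per 24-hour cycle; the pass times are hypothetical.

```python
def max_revisit_gap(pass_times_by_satellite, cycle_length):
    """Worst-case revisit: the largest gap between consecutive passes over one site.

    Pass times are given per satellite within one repeating cycle of length
    `cycle_length` (same units); the gap that wraps into the next cycle is included.
    """
    passes = sorted(t % cycle_length for times in pass_times_by_satellite for t in times)
    if not passes:
        raise ValueError("at least one pass is required")
    gaps = [later - earlier for earlier, later in zip(passes, passes[1:])]
    gaps.append(passes[0] + cycle_length - passes[-1])  # wrap-around gap
    return max(gaps)


# Hypothetical pass times (hours) over one protected area in a 24-hour cycle.
single = [[10.0]]
evenly_phased = [[10.0], [16.0], [22.0], [4.0]]
clustered = [[10.0], [11.0]]
for name, passes in [("single", single), ("evenly phased", evenly_phased), ("clustered", clustered)]:
    print(name, max_revisit_gap(passes, 24.0))
```

Evenly phased satellites shrink the largest gap in proportion to their number, whereas clustered passes leave long blind intervals, so orbital phasing matters as much as satellite count.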

Table 2: Technical Specifications of Major Chinese Remote Sensing Constellations

| Constellation | Spatial Resolution | Revisit Time | Spectral Bands | Key Sensor Technologies |
|---|---|---|---|---|
| 环天星座 (Huantian) | <1m (SAR), <0.5m (optical) | 4.9 hours (Phase 1), 45 minutes (Phase 2) [11] | Multispectral, X-band SAR | Phased array SAR, high-resolution optics, on-board AI |
| 吉林一号 (Jilin-1) | 0.5m-0.7m (video), 0.75m (full-color) | 10 minutes (when complete) [10] | Full-color, multispectral, infrared | Ultra-high-definition video, push-broom imaging, night light remote sensing |
| 女娲星座 (Nuwa) | <1m (SAR) | Daily (when complete) [10] | X-SAR | Multi-mode SAR, interferometric capability |
| 高分辨率系列 (Gaofen) | 0.5m (optical), 1m (SAR) | Daily to weekly [7] | Multispectral, hyperspectral, C-SAR | Large-field combined cameras, laser communication |
| 环境二号 (Environment-2) | 16m-300m | Daily [9] | Multispectral, infrared, hyperspectral | Wide-swath imaging, atmospheric correction |

Advanced Monitoring Methodologies for Conservation Research

Multispectral and Hyperspectral Analysis Techniques

Multispectral and hyperspectral imaging form the cornerstone of modern satellite-based conservation monitoring, enabling researchers to identify and quantify environmental parameters through spectral signature analysis. The foundational principle is that different materials exhibit unique "spectral fingerprints" across the electromagnetic spectrum [8]. Vegetation, for instance, shows strong absorption in red wavelengths, driven by chlorophyll, and high reflectance in the near-infrared, driven by the internal cell structure of leaves; vegetation indices such as NDVI quantify this contrast.

Advanced hyperspectral sensors aboard constellations such as 西光壹号 (Xiguang-1) capture continuous spectral profiles across hundreds of narrow bands, enabling the precise material discrimination that conservation applications require [10]. In an operational setting, Chinese anti-drug authorities have used spectral analysis of high-resolution satellite imagery to identify illegal opium poppy cultivation from its distinctive spectral signature, demonstrating that the method is precise enough to support targeted enforcement [8]. The experimental protocol for such analysis involves:

- Spectral Library Development: Compiling reference spectral signatures for target materials (e.g., vegetation species, mineral types, water quality parameters) through field spectroscopy and laboratory measurements.

- Atmospheric Correction: Applying radiative transfer models to remove atmospheric effects from satellite radiance data, converting to surface reflectance.

- Spectral Matching: Implementing algorithms like Spectral Angle Mapper (SAM) or Mixture Tuned Matched Filtering (MTMF) to identify materials within each image pixel.

- Temporal Tracking: Monitoring spectral changes over time to detect phenological shifts, stress events, or land cover conversions.
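The Spectral Angle Mapper step can be sketched in a few lines. SAM treats each spectrum as a vector and assigns a pixel to the library entry with the smallest angle between them, which makes it insensitive to overall brightness (for example, illumination differences from topographic shading). The four-band library values and the 0.10-radian threshold below are hypothetical, and MTMF is not shown.

```python
import math


def spectral_angle(pixel, reference):
    """Angle in radians between a pixel spectrum and a reference spectrum."""
    dot = sum(p * r for p, r in zip(pixel, reference))
    norm_pixel = math.sqrt(sum(p * p for p in pixel))
    norm_reference = math.sqrt(sum(r * r for r in reference))
    cos_theta = dot / (norm_pixel * norm_reference)
    return math.acos(max(-1.0, min(1.0, cos_theta)))  # clamp rounding error


def sam_classify(pixel, library, max_angle=0.10):
    """Return (label, angle) for the closest reference; label is None if no match is close enough."""
    best_label, best_angle = None, math.inf
    for label, reference in library.items():
        angle = spectral_angle(pixel, reference)
        if angle < best_angle:
            best_label, best_angle = label, angle
    return (best_label if best_angle <= max_angle else None), best_angle


# Hypothetical 4-band library (blue, green, red, NIR surface reflectance).
library = {
    "vegetation": [0.04, 0.08, 0.05, 0.45],
    "bare soil": [0.12, 0.16, 0.20, 0.28],
    "water": [0.06, 0.05, 0.03, 0.01],
}
# A shaded vegetation pixel: same spectral shape, 30% darker overall.
shaded_pixel = [0.7 * v for v in library["vegetation"]]
print(sam_classify(shaded_pixel, library))
```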

For conservation researchers, this methodology enables precise mapping of invasive species distribution, forest health assessment, coral reef degradation, and wetland delineation at landscape scales. China's 高分辨率卫星 (Gaofen high-resolution satellites) further enhance these capabilities through specialized hyperspectral missions capable of detecting subtle spectral variations that indicate ecosystem change before it becomes visually apparent [8] [7].

Synthetic Aperture Radar (SAR) Monitoring Protocols

Synthetic Aperture Radar represents a transformative monitoring technology for conservation research, providing all-weather, day-and-night observation that is particularly valuable over persistently cloud-covered tropical regions. Unlike optical systems that rely on reflected sunlight, SAR satellites actively illuminate targets with microwave radiation and analyze the returned signals; different wavelengths (X-, C-, and L-band) penetrate vegetation canopies and soil surfaces to different degrees, with longer wavelengths such as L-band penetrating deepest.

The 环天星座 (Huantian Constellation) employs X-band phased array SAR technology achieving sub-meter resolution capable of distinguishing small vehicles and infrastructure elements—a critical capability for monitoring illegal activities in protected areas [11]. The experimental methodology for SAR-based conservation monitoring involves:

- Polarimetric Analysis: Utilizing different polarization combinations (HH, HV, VH, VV) to extract information about target structure and orientation.

- Interferometric Processing (InSAR): Measuring phase differences between multiple SAR acquisitions to detect millimeter-scale surface deformation caused by groundwater extraction, seismic activity, or permafrost thaw.

- Coherence Tracking: Analyzing signal correlation between acquisitions to detect subtle landscape changes from vegetation growth, soil moisture variation, or human disturbance.

- Backscatter Analysis: Quantifying signal return intensity to differentiate surface types (e.g., open water vs. flooded vegetation) and monitor moisture conditions.
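Several of these steps reduce to short formulas, sketched below under stated assumptions. Backscatter is converted from linear sigma-nought to decibels and thresholded to flag likely open water; the -18 dB threshold is a hypothetical placeholder, since real thresholds depend on polarization, incidence angle, and wind roughening. Coherence is estimated as the magnitude of the normalized complex correlation between two co-registered single-look complex (SLC) windows. Unwrapped interferometric phase is converted to line-of-sight displacement with d = wavelength x delta_phase / (4 pi); sign conventions differ between processors, and all sample values are hypothetical.

```python
import cmath
import math


def to_db(sigma0_linear):
    """Convert linear backscatter (sigma-nought) to decibels."""
    return 10.0 * math.log10(sigma0_linear)


def water_mask(sigma0_db, threshold_db=-18.0):
    """Flag dark pixels: smooth open water reflects the radar pulse away from the sensor."""
    return [value < threshold_db for value in sigma0_db]


def coherence(s1, s2):
    """|sum(s1 * conj(s2))| / sqrt(sum|s1|^2 * sum|s2|^2) over one estimation window."""
    cross = sum(a * b.conjugate() for a, b in zip(s1, s2))
    power1 = sum(abs(a) ** 2 for a in s1)
    power2 = sum(abs(b) ** 2 for b in s2)
    return abs(cross) / math.sqrt(power1 * power2)


def phase_to_los_displacement(delta_phase_rad, wavelength_m):
    """Line-of-sight displacement d = wavelength * delta_phase / (4 * pi)."""
    return wavelength_m * delta_phase_rad / (4.0 * math.pi)


# Backscatter: linear sigma-nought for three pixels -> dB -> water flag.
sigma0_db = [to_db(v) for v in (0.005, 0.10, 0.02)]
print(water_mask(sigma0_db))

# Coherence: a constant phase offset between acquisitions keeps coherence at 1,
# while unrelated phases (e.g. after vegetation regrowth or disturbance) lower it.
window1 = [cmath.rect(1.0, phi) for phi in (0.1, 0.5, 1.2, 2.0)]
window2_stable = [z * cmath.rect(1.0, 0.3) for z in window1]
window2_changed = [cmath.rect(1.0, phi) for phi in (2.5, -1.0, 0.4, 3.0)]
print(coherence(window1, window2_stable), coherence(window1, window2_changed))

# InSAR: one full fringe (2*pi) at an approximate C-band wavelength of 5.6 cm.
print(phase_to_los_displacement(2 * math.pi, 0.056))
```

At a C-band wavelength of roughly 5.6 cm, one full phase cycle corresponds to about 2.8 cm of line-of-sight motion, which is why fractions of a fringe can resolve millimeter-scale deformation.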

For conservation applications, these techniques enable deforestation detection beneath cloud cover, wetland hydrology monitoring, illegal mining identification, and wildlife habitat mapping in regions with persistent cloud cover. The integration of SAR constellations like 女娲星座 (Nuwa) with optical systems creates complementary monitoring regimes where optical provides high-resolution spectral information during clear conditions, while SAR ensures continuous observation during inclement weather [10] [11].

Real-Time and Daily Monitoring Systems

The transition from retrospective analysis to near-real-time monitoring represents one of the most significant advances for time-sensitive conservation applications like natural disaster response, illegal activity detection, and wildlife protection. This capability emerges from integrated constellation architectures that combine rapid revisit times with streamlined data processing and delivery systems.

The operational methodology for daily monitoring involves:

- Constellation Tasking Optimization: Implementing sophisticated scheduling algorithms that dynamically prioritize image acquisition based on conservation priorities, cloud cover predictions, and satellite availability.

- On-Board Processing: Utilizing increasingly capable satellite-based computing to perform initial analysis and change detection before data transmission, reducing latency in alert generation.

- Automated Change Detection: Applying machine learning algorithms to identify alterations in imagery, such as new deforestation patches, water body changes, or construction activities.

- Data Fusion: Integrating multiple data sources (optical, SAR, meteorological) to improve detection accuracy and provide comprehensive situational awareness.
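To make the tasking step concrete, the sketch below ranks acquisition requests by a simple expected value (priority multiplied by the chance of a usable image), treating SAR as unaffected by cloud and discounting optical requests by forecast cloud probability, then greedily fills a hypothetical per-pass capacity. This is a toy heuristic with invented fields and values, not the scheduler of any constellation named in this article; operational planners solve constrained optimization problems that also account for slew agility, power, onboard storage, and downlink windows.

```python
from dataclasses import dataclass


@dataclass
class Request:
    site: str
    priority: float    # analyst-assigned importance (hypothetical 1-10 scale)
    cloud_prob: float  # forecast probability that the site is cloud-covered (0-1)
    sensor: str        # "optical" or "sar"


def expected_value(request):
    """Priority times the chance of a usable image; SAR is treated as cloud-independent."""
    usable = 1.0 if request.sensor == "sar" else 1.0 - request.cloud_prob
    return request.priority * usable


def schedule(requests, capacity):
    """Greedily keep the `capacity` requests with the highest expected value."""
    ranked = sorted(requests, key=expected_value, reverse=True)
    return [(r.site, r.sensor) for r in ranked[:capacity]]


requests = [
    Request("Logging front A", 9.0, 0.8, "optical"),
    Request("Logging front A", 8.0, 0.8, "sar"),
    Request("Wetland B", 5.0, 0.1, "optical"),
    Request("Mine site C", 6.0, 0.5, "optical"),
]
print(schedule(requests, capacity=2))
```

Under heavy forecast cloud, the high-priority logging front is served by SAR rather than optical imaging, which mirrors the complementary optical-SAR regime described above.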

Chinese constellations have demonstrated this capability during environmental crises. Data from the 风云 (Fengyun) meteorological satellite constellation supported forecasts of Super Typhoon Ragasa (桦加沙) five days in advance in September 2025, including an accurate landfall prediction with a 24-hour track error of only 62 kilometers, which [8] reports as the best performance on record. Similarly, the 国家环境保护卫星遥感重点实验室 (National Environmental Protection Satellite Remote Sensing Key Laboratory) produced 1,592 specialized environmental monitoring reports over three years, 50 of which received ministerial-level recognition for their contribution to environmental protection [9].

Data Access Platforms and Analytical Tools

Conservation researchers leveraging satellite constellations require specialized platforms and tools to transform raw satellite data into actionable ecological insights. The 星瞰河山·视界 (Xingkan Heshan Shijie) platform exemplifies such systems, integrating 40 core spatiotemporal algorithms that automate the entire analytical workflow from data selection through final report generation [11]. These platforms typically provide:

- Automated Data Retrieval: Streamlined access to multi-satellite datasets with preprocessing to mitigate format inconsistencies

- Algorithm Libraries: Prepackaged analytical routines for vegetation analysis, change detection, water body extraction, and classification

- Custom Workflow Development: Tools for researchers to construct domain-specific analytical sequences without programming

- Visualization Capabilities: Interactive 2D/3D representation of spatiotemporal data for exploratory analysis and communication

Complementing these integrated platforms, the National Environmental Protection Satellite Remote Sensing Key Laboratory maintains specialized capabilities including 22 industry standards for ecological remote sensing and 13 dedicated environmental monitoring satellites with access to 28 additional satellite systems [9]. This infrastructure supports comprehensive conservation assessment through standardized methodologies that ensure scientific rigor and comparability across studies and temporal scales.

Research Reagent Solutions for Satellite-Based Conservation

Table 3: Essential "Research Reagents" for Satellite-Based Conservation Studies

| Research Solution | Technical Function | Conservation Application Examples | Example Sources |
|---|---|---|---|
| Hyperspectral satellite data | Provides continuous spectral profiles across hundreds of narrow bands for precise material identification [8] | Invasive species mapping, vegetation stress detection, mineral exposure identification, water quality assessment | 高分辨率卫星 (Gaofen high-resolution satellites), 西光壹号 (Xiguang-1) constellation |
| SAR data | Enables all-weather, day-night observation through active microwave imaging [11] | Deforestation monitoring under cloud cover, wetland inundation tracking, surface deformation measurement, illegal activity detection | 女娲星座 (Nuwa) and 环天星座 (Huantian) SAR satellites |
| Multispectral time series | Delivers regular surface reflectance measurements across specific spectral bands | Vegetation phenology tracking, land cover change detection, agricultural monitoring, burn scar assessment | 吉林一号 (Jilin-1), 环境减灾系列 (Environment & Disaster Reduction series), 资源三号 (ZY-3) |
| Star trackers | Provides precise satellite attitude determination for accurate image geolocation [13] | High-precision image registration for change detection, multi-sensor data fusion, accurate habitat boundary delineation | 天银星际 (Tianyin Xingji) products |
| Inter-satellite links | Enables direct satellite-to-satellite communication for rapid data relay [7] | Reduced data latency for time-sensitive applications, improved constellation coordination, enhanced global coverage | 天链一号 (Tianlian-1), advanced communication constellations |
| On-board AI systems | Performs preliminary data analysis aboard satellites before downlinking [11] | Real-time change detection, automated alert generation, data compression to prioritize relevant imagery | 环天星座 (Huantian) Phase 3, 星时代星座 (Xingshidai constellation) |
| Satellite IoT connectivity | Provides global connectivity for field sensors and tracking devices [12] | Wildlife tracking, remote camera trap data retrieval, environmental sensor networking, equipment monitoring | 天启星座 (Tianqi), 吉利未来出行星座 (Geely Future Mobility constellation) |

Future Directions in Satellite Constellation Technology

The evolution of satellite constellations for conservation monitoring continues to accelerate, with several transformative technologies emerging that will further enhance research capabilities. On-board artificial intelligence represents perhaps the most significant advancement, enabling satellites to perform preliminary analysis while still in orbit, identifying significant changes and prioritizing data transmission for time-sensitive applications [11]. The 环天星座 (Huantian Constellation) plans to implement such "smart sensing" capabilities in its third development phase, creating an intelligent "space neural network" that can autonomously recognize conservation-relevant patterns and anomalies [11].

The integration of satellite IoT connectivity through constellations like 天启星座 (Tianqi) creates novel opportunities for ground-truthing and integrated monitoring systems [12]. This technology enables seamless data relay from field sensors, camera traps, and animal tracking collars, effectively bridging the gap between satellite observations and ground-based measurements. For conservation researchers, this means truly integrated monitoring systems where satellite detections can automatically trigger higher-resolution imaging or prompt field verification.

Advances in constellation manufacturing techniques are simultaneously driving down costs while increasing deployment pace. The adoption of automotive-inspired "final assembly pull" manufacturing approaches has transformed satellite production from craft-based to industrial-scale operations [8]. This industrialization, exemplified by 银河航天 (Galaxy Space) manufacturing facilities that reduce production cycles by 80% while achieving annual capacities of hundreds of satellites, ensures the continued expansion and enhancement of monitoring capabilities available to conservation science [13]. These advancements collectively signal a future where comprehensive, daily monitoring of Earth's ecosystems becomes not just technologically feasible but operationally routine, providing conservation researchers with unprecedented tools to understand and protect global biodiversity.

LiDAR (Light Detection and Ranging) is an active remote sensing technology that has revolutionized the measurement and monitoring of three-dimensional ecosystem structures. As a conservation tool, it provides an unparalleled capacity to measure vegetation height, density, and vertical distribution across wide geographic areas, enabling researchers to address critical questions about habitat quality, carbon sequestration, and ecosystem dynamics [14]. Unlike passive optical sensors, LiDAR systems generate their own energy in the form of laser light, allowing them to make precise, three-dimensional measurements of physical surfaces independent of solar illumination [14]. This capability is particularly valuable for conservation research, where understanding the structural complexity of habitats is essential for biodiversity assessment, ecosystem service valuation, and monitoring conservation outcomes.

The fundamental principle of LiDAR operation is to measure the round-trip travel time of each emitted laser pulse from the sensor to a target and back [14]. Because the pulse travels at the speed of light and covers the distance twice, the range equals half the product of the speed of light and the measured interval, so precise timing yields distances with remarkable accuracy. Each laser pulse can generate multiple returns as photons interact with various elements within the vegetation structure, such as leaves and branches at different heights, before finally reaching the ground [14]. These interactions create a detailed vertical profile of the vegetation, represented as a waveform that captures the distribution of intercepted surfaces at different heights [14]. When combined with precise positioning data from the Global Positioning System (GPS) and orientation information from an Inertial Measurement Unit (IMU), these distance measurements generate dense point clouds: collections of millions of XYZ coordinates that digitally represent the scanned environment [14].
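The time-of-flight relationship can be illustrated with a short Python sketch. It assumes a hypothetical nadir-pointing airborne sensor at a known altitude above a flat datum, so each return's height is simply altitude minus range; real systems instead combine the range with GNSS position and IMU attitude. The function names and pulse timings are illustrative, not part of any LiDAR library.

```python
# Speed of light in vacuum (m/s); atmospheric refraction is ignored in this sketch.
C = 299_792_458.0


def range_from_round_trip(t_seconds: float) -> float:
    """Convert a two-way (round-trip) travel time into a one-way range in metres."""
    # The pulse covers the sensor-target distance twice, hence the division by 2.
    return C * t_seconds / 2.0


def return_heights(sensor_altitude_m: float, return_times_s: list[float]) -> list[float]:
    """Heights above the datum of each return from one vertical pulse.

    Hypothetical simplification: the pulse points straight down from a known
    altitude over flat ground, so height = altitude - range.
    """
    return [sensor_altitude_m - range_from_round_trip(t) for t in return_times_s]


# One pulse fired from 1,000 m with three returns: canopy top, a mid-canopy
# branch, and the ground (times are made-up illustrative values).
times = [6.5e-6, 6.6e-6, 6.671e-6]
print([round(h, 1) for h in return_heights(1000.0, times)])  # [25.7, 10.7, 0.0]
```

The division by two also explains why timing precision matters so much: a 1 ns timing error corresponds to roughly 15 cm of range error.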

LiDAR Data Collection Platforms

The application of LiDAR in conservation research is implemented through various platforms, each offering distinct advantages depending on the spatial scale, level of structural detail required, and environmental context. The major platform types include airborne, terrestrial, spaceborne, and unmanned aerial vehicle (UAV) systems, which can be strategically deployed to address specific conservation questions.

Table 1: Comparison of LiDAR Platform Characteristics for Ecosystem Mapping

| Platform Type | Spatial Coverage | Spatial Resolution | Key Applications in Conservation | Limitations |
|---|---|---|---|---|
| Airborne (ALS) | Regional (10s-1000s km²) | 0.5-20 points/m² | Forest carbon mapping, watershed management, habitat connectivity | Limited understory detail, higher cost for small areas |
| Terrestrial (TLS) | Local (single plots) | 1,000-1,000,000 points/m² | Individual tree architecture, understory characterization, habitat structure | Limited coverage, occlusion effects |
| Spaceborne | Continental to global | Sampled footprints along orbital tracks (about 25 m footprints for GEDI) | Global forest height, aboveground biomass, carbon stock assessment | Coarse spatial resolution, limited sampling |
| UAV | Landscape (1-10 km²) | 50-500 points/m² | Wetland mapping, restoration monitoring, precision conservation | Regulatory constraints, limited payload capacity |

Airborne Laser Scanning (ALS) involves mounting LiDAR sensors on fixed-wing aircraft or helicopters to collect data over extensive areas [15]. This platform is particularly valuable for regional conservation planning, forest carbon mapping, and watershed management. ALS systems can rapidly collect highly accurate 3D data over large areas, even in regions with rugged terrain or dense vegetation cover [15]. The resulting data products, including Digital Terrain Models (DTMs) and Canopy Height Models (CHMs), provide foundational information for habitat suitability modeling and ecosystem service assessment.

Terrestrial Laser Scanning (TLS) uses ground-based instruments to capture extremely detailed, millimeter-level data on forest understory, stem structure, and fine-scale habitat complexity [16]. Because TLS instruments are positioned at ground level, they capture detailed measurements of both the forest understory and the upper canopy [16]. Compared to other ground-based methods, TLS offers superior geometric accuracy and structural completeness, particularly for detailed modeling of individual trees and stand structure [16]. This technology enables the creation of quantitative structure models (QSMs), which enclose a tree's point cloud in topologically connected, closed volumes (typically fitted cylinders) and thereby allow precise estimation of biomass, carbon storage, and habitat structural diversity [16].
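Once a QSM has been fitted, biomass follows from simple geometry. The sketch below assumes a cylinder-based QSM given as hypothetical radius and length pairs, and converts woody volume to biomass with a user-supplied basic wood density; the 600 kg/m³ density and the 0.5 carbon fraction are illustrative defaults, not values from this article.

```python
import math
from dataclasses import dataclass


@dataclass
class Cylinder:
    """One fitted cylinder from a hypothetical cylinder-based QSM."""
    radius_m: float
    length_m: float


def qsm_volume_m3(cylinders: list[Cylinder]) -> float:
    # Woody volume is the sum of pi * r^2 * L over all fitted cylinders.
    return sum(math.pi * c.radius_m ** 2 * c.length_m for c in cylinders)


def biomass_kg(cylinders: list[Cylinder], wood_density_kg_m3: float) -> float:
    # Dry biomass = woody volume x basic wood density (dry mass per green volume).
    return qsm_volume_m3(cylinders) * wood_density_kg_m3


def carbon_kg(biomass: float, carbon_fraction: float = 0.5) -> float:
    # Assumed default carbon fraction; species- and biome-specific values vary.
    return biomass * carbon_fraction


# Toy tree: one stem cylinder and one branch cylinder (dimensions are made up).
tree = [Cylinder(radius_m=0.2, length_m=10.0), Cylinder(radius_m=0.05, length_m=4.0)]
agb = biomass_kg(tree, wood_density_kg_m3=600.0)
print(f"volume={qsm_volume_m3(tree):.3f} m3, biomass={agb:.1f} kg, carbon={carbon_kg(agb):.1f} kg")
```

In practice most of the uncertainty sits in the cylinder fitting of thin branches and in the wood density value, not in this arithmetic.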

Spaceborne LiDAR systems, such as the Global Ecosystem Dynamics Investigation (GEDI) instrument on the International Space Station, provide global sampling of ecosystem structure [17]. GEDI's three lasers precisely measure forest canopy height, canopy vertical structure, and surface elevation, and these measurements are central to estimating how much biomass and carbon forests store and how much they lose when disturbed [17]. This global perspective is essential for tracking progress toward international conservation targets, such as the Aichi Biodiversity Targets and the Sustainable Development Goals.

UAV LiDAR represents an emerging platform that bridges the gap between ground-based and airborne systems, offering flexibility for monitoring hard-to-reach or dangerous areas [15]. By mounting LiDAR sensors on drones, conservation researchers can collect high-resolution 3D data at the landscape scale with greater temporal flexibility than traditional airborne campaigns [15]. This platform is particularly valuable for monitoring restoration projects, mapping sensitive habitats, and tracking fine-scale disturbance impacts over time.

LiDAR Data Processing and Analysis Workflow

The transformation of raw LiDAR data into ecologically meaningful information involves a multi-stage processing workflow that includes data preparation, point cloud classification, and derivation of ecosystem structural metrics. Advances in computational power and algorithms have significantly accelerated these processes, enabling researchers to extract increasingly sophisticated ecological variables from point cloud data.


Data Preprocessing and Cleaning

Raw LiDAR point clouds require substantial preprocessing before ecological analysis can begin. Filtering and cleaning algorithms remove noise, outliers, and unwanted points from the point cloud [18] [19]. Common techniques include statistical outlier removal, which eliminates points whose distances to their neighbors are statistically unusual; radius outlier removal, which removes points with too few neighbors within a specified radius; and voxel grid filtering, which subdivides the point cloud into 3D grid cells (voxels) and replaces the points in each voxel with their average [19]. For multi-scan terrestrial LiDAR campaigns, point cloud registration aligns individual scans into a unified coordinate system using algorithms such as the Iterative Closest Point (ICP) method [18]. When point density is excessively high, downsampling techniques, including voxel-grid downsampling, uniform subsampling, and random subsampling, reduce data volume while preserving structural information [19].
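Two of these cleaning steps can be sketched in NumPy. The statistical outlier filter below uses brute-force pairwise distances, which is only practical for small demonstration clouds (production tools use spatial indexes such as KD-trees); the defaults `k=8` and `std_ratio=2.0` and the synthetic cloud are illustrative assumptions.

```python
import numpy as np


def statistical_outlier_removal(points: np.ndarray, k: int = 8, std_ratio: float = 2.0) -> np.ndarray:
    """Drop points whose mean distance to their k nearest neighbours is unusually large."""
    # Brute-force (n, n) distance matrix: fine for a demo, too slow for real clouds.
    diff = points[:, None, :] - points[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    # After sorting each row, column 0 is the zero self-distance, so skip it.
    mean_knn = np.sort(dist, axis=1)[:, 1 : k + 1].mean(axis=1)
    threshold = mean_knn.mean() + std_ratio * mean_knn.std()
    return points[mean_knn <= threshold]


def voxel_downsample(points: np.ndarray, voxel_size: float) -> np.ndarray:
    """Replace all points that fall in the same voxel with their centroid."""
    keys = np.floor(points / voxel_size).astype(np.int64)
    # `inverse` maps every point to the index of its (unique) voxel.
    _, inverse = np.unique(keys, axis=0, return_inverse=True)
    inverse = inverse.ravel()  # guard against shape differences across NumPy versions
    n_voxels = inverse.max() + 1
    sums = np.zeros((n_voxels, points.shape[1]))
    np.add.at(sums, inverse, points)
    counts = np.bincount(inverse, minlength=n_voxels)
    return sums / counts[:, None]


rng = np.random.default_rng(0)
cloud = rng.uniform(0.0, 10.0, size=(500, 3))
cloud = np.vstack([cloud, [[100.0, 100.0, 100.0]]])  # one obvious noise point
clean = statistical_outlier_removal(cloud)
thinned = voxel_downsample(clean, voxel_size=2.0)
print(len(cloud), len(clean), len(thinned))
```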

Point Cloud Classification and Digital Elevation Modeling

A critical step in LiDAR processing is the classification of points based on the objects they represent. Classification algorithms assign semantic labels (e.g., ground, vegetation, building) to the points in the point cloud data [18]. Ground point classification is particularly important for conservation applications as it enables the creation of a Digital Terrain Model (DTM) representing the bare earth surface without vegetation or structures [14]. With ground points identified, normalization calculates height above ground for each non-ground point by subtracting the DTM elevation, enabling the generation of a Canopy Height Model (CHM) that represents the height of vegetation across the landscape [14]. These foundational data products serve as the basis for deriving a wide range of structural metrics relevant to conservation science, including canopy height, canopy cover, and vertical complexity.
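Height normalization and CHM rasterization reduce to a lookup and a per-cell maximum. This NumPy sketch assumes ground classification has already produced a gridded DTM whose lower-left corner is at `origin` (row 0 is the southern edge), and uses nearest-cell lookup rather than the interpolation that production tools apply; all names and values are illustrative.

```python
import numpy as np


def cell_indices(points, origin, cell):
    """Row/column of the grid cell containing each point (row 0 = southern edge)."""
    col = np.floor((points[:, 0] - origin[0]) / cell).astype(int)
    row = np.floor((points[:, 1] - origin[1]) / cell).astype(int)
    return row, col


def normalize_heights(points, dtm, origin, cell):
    """Height above ground = point elevation minus the DTM elevation beneath it."""
    row, col = cell_indices(points, origin, cell)
    return points[:, 2] - dtm[row, col]


def canopy_height_model(points, heights, origin, cell, shape):
    """CHM: maximum normalized height per cell; cells without returns stay at 0."""
    chm = np.zeros(shape)
    row, col = cell_indices(points, origin, cell)
    np.maximum.at(chm, (row, col), heights)
    return chm


dtm = np.array([[100.0, 101.0], [102.0, 103.0]])  # 2 x 2 cells, 1 m spacing
pts = np.array([
    [0.5, 0.5, 120.0],   # tall tree crown
    [1.5, 0.5, 110.0],
    [1.2, 0.8, 105.0],   # lower return in the same cell as the previous point
    [0.5, 1.5, 102.2],   # near-ground return
])
h = normalize_heights(pts, dtm, origin=(0.0, 0.0), cell=1.0)
chm = canopy_height_model(pts, h, origin=(0.0, 0.0), cell=1.0, shape=dtm.shape)
print(chm)
```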

Structural Metric Extraction for Conservation

Once point clouds are classified and normalized, researchers can extract quantitative metrics that describe ecosystem structure. For forest ecosystems, these include canopy height metrics (e.g., mean height, maximum height), density metrics (e.g., canopy cover, leaf area index), and vertical distribution metrics (e.g., relative height percentiles, vertical complexity index) [14]. Different LiDAR systems provide varying capabilities for metric extraction. Discrete-return LiDAR systems record individual points at peaks in the returned energy waveform, capturing anywhere from 1 to 11 or more returns per pulse, while full-waveform LiDAR systems record the complete distribution of returned energy, capturing more structural information, particularly in dense vegetation [14]. The GEDI mission, for example, produces full-waveform data from which specialized algorithms extract detailed canopy structure profiles, including relative height (RH) metrics, which give the height above the ground at which a given percentage of the cumulative returned energy is reached [17].
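The metric families above can be sketched in a few NumPy functions: point-based height statistics and canopy cover, a Shannon-entropy vertical complexity index (foliage height diversity), and RH metrics computed from a waveform by accumulating energy upward from the ground. The 2 m cover threshold, the height bins, and the assumption that waveform bin heights are already referenced to the ground are illustrative choices; this is not GEDI's processing algorithm.

```python
import numpy as np


def point_metrics(heights, cover_threshold=2.0):
    """Plot-level structure metrics from normalized return heights (m)."""
    h = np.asarray(heights, dtype=float)
    return {
        "h_mean": float(h.mean()),
        "h_max": float(h.max()),
        "h_p95": float(np.percentile(h, 95)),
        # Share of returns above the threshold: a simple return-based cover proxy.
        "cover": float((h > cover_threshold).mean()),
    }


def foliage_height_diversity(heights, bin_edges):
    """Shannon entropy of the share of returns in each height layer."""
    counts, _ = np.histogram(heights, bins=bin_edges)
    p = counts[counts > 0] / counts.sum()
    return float(-(p * np.log(p)).sum())


def relative_heights(waveform, bin_heights, percents=(25, 50, 75, 98)):
    """Height at which each percentage of cumulative returned energy is reached.

    Energy is accumulated from the lowest bin upward; `bin_heights` must be
    sorted ascending and already expressed relative to the ground.
    """
    w = np.asarray(waveform, dtype=float)
    cum = np.cumsum(w) / w.sum()
    out = {}
    for p in percents:
        i = min(int(np.searchsorted(cum, p / 100.0)), len(cum) - 1)
        out[f"RH{p}"] = float(bin_heights[i])
    return out


heights = [0.0, 1.0, 3.0, 5.0, 10.0, 20.0]
print(point_metrics(heights))
print(foliage_height_diversity(heights, bin_edges=[0, 2, 5, 10, 30]))
# Toy waveform: a strong ground return at 0 m and weaker canopy returns at 3-4 m.
print(relative_heights([0, 10, 0, 0, 5, 5], bin_heights=[-1.0, 0.0, 1.0, 2.0, 3.0, 4.0]))
```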

Accuracy Assessment and Validation Protocols

Ensuring the accuracy of LiDAR-derived structural measurements is essential for their application in conservation research and policy. LiDAR accuracy is formally defined as the closeness of measurements to true values and is typically expressed either as a range (e.g., ±2 cm) or as a standard deviation (e.g., 3 cm at 1σ) [20]. The LiDAR domain recognizes two primary accuracy classifications: relative accuracy (the internal consistency of measurements within the same dataset) and absolute accuracy (how closely measurements match true geographic locations) [20]. Understanding and validating both forms of accuracy is critical for multi-temporal studies of ecosystem change and for integrating LiDAR data with other geospatial information in conservation planning.

Table 2: LiDAR Accuracy Standards and Validation Protocols

| Standard Type | Governing Body | Key Metrics | Validation Methodology | Conservation Application Context |
|---|---|---|---|---|
| ASPRS Positional Accuracy Standards | American Society for Photogrammetry and Remote Sensing | RMSEH (horizontal), RMSEZ (vertical), NVA, VVA | Minimum 30 checkpoints evenly distributed across project areas | Required for US Federal agencies, foundation for FIA integration |
| ISO 19159 Series | International Organization for Standardization | Geometric, radiometric, and characteristic calibration | Standardized calibration processes for various applications | International research collaborations, global carbon accounting |
| NSSDA | Federal Geographic Data Committee | Root-mean-square error (RMSE) | 20+ checkpoints from independent higher-accuracy source | Data sharing across agencies, national-level conservation assessments |
| Voronoi Density Method | ASPRS | Point density distribution | Partitions map into cells around each point to identify sparse areas | Ensuring uniform coverage in complex terrain and vegetation |

The ASPRS Positional Accuracy Standards for Digital Geospatial Data provide comprehensive frameworks for assessing LiDAR data quality [20]. The most recent edition incorporates several key improvements, including expressing horizontal accuracy as RMSEH (combined linear error in the radial direction) rather than separate RMSEx and RMSEy values, requiring at least 30 checkpoints spread evenly across project areas, and updating target accuracy requirements for ground control points [20]. These standards differentiate between Non-vegetated Vertical Accuracy (NVA), measured on open hard surfaces, and Vegetated Vertical Accuracy (VVA), which measures the 95th percentile error in vegetated areas [21]. Recent updates have shifted VVA from a pass/fail requirement to an informational metric, acknowledging that factors beyond sensor performance influence accuracy measurements under vegetation [21].
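The checkpoint statistics described above can be computed directly from LiDAR-minus-survey errors. This sketch computes RMSEH as the radial combination of the x and y RMSE, the NSSDA-style 95% vertical accuracy (1.96 × RMSEZ), and a 95th-percentile vegetated error using the nearest-rank method on absolute errors. The percentile method, function names, and error values are assumptions, so the current ASPRS edition should be consulted for the exact reporting rules.

```python
import math
import warnings


def rmse(errors):
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def accuracy_report(dx, dy, dz_open, dz_veg, min_checkpoints=30):
    """Checkpoint accuracy statistics; errors are LiDAR minus survey values (m)."""
    if len(dz_open) < min_checkpoints:
        warnings.warn(f"only {len(dz_open)} non-vegetated checkpoints (< {min_checkpoints})")
    rmse_x, rmse_y, rmse_z = rmse(dx), rmse(dy), rmse(dz_open)
    # Nearest-rank 95th percentile of absolute vegetated errors (assumed method).
    abs_veg = sorted(abs(e) for e in dz_veg)
    rank = math.ceil(0.95 * len(abs_veg))
    return {
        "RMSE_H": math.sqrt(rmse_x ** 2 + rmse_y ** 2),  # radial horizontal error
        "RMSE_Z": rmse_z,                                 # basis of NVA
        "NSSDA_Z_95": 1.96 * rmse_z,                      # 95% confidence, NSSDA convention
        "VVA_p95": abs_veg[rank - 1],
    }


report = accuracy_report(
    dx=[0.03, -0.04] * 15,
    dy=[0.03, -0.04] * 15,
    dz_open=[0.05, -0.05] * 15,
    dz_veg=[0.01 * i for i in range(1, 11)],
)
print(report)
```

Keeping the checkpoints that feed this report separate from the ground control used to adjust the data preserves the independence of the validation.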

LiDAR Accuracy Assessment Framework

Ground control point (GCP) verification serves as the primary method for assessing absolute accuracy [20]. GCPs are reference markers with known coordinates that function as tie points in processing software, providing the point cloud with information about scale, orientation, and overall data quality [20]. These points should be located on flat or uniformly-sloped open terrain with slopes of 10% or less, avoiding vertical artifacts or sudden elevation changes [20]. Real-Time Kinematic (RTK) surveying offers the most efficient approach for collecting GCPs, typically achieving centimeter-level accuracy (1-3 cm) when establishing GCP locations [20]. It is essential to distinguish between ground control points (GCPs), used for data adjustments, and survey checkpoints (SCPs), reserved exclusively for accuracy reporting to maintain validation independence [20].

For assessing relative accuracy, also known as "swath-to-swath accuracy" or "interswath consistency," analysts examine how well overlapping areas from different data collection passes align with each other [20]. This internal geometric quality check focuses primarily on vertical differences between overlapping flight paths. It typically relies on surface-based comparisons: ground surfaces derived for each flightline with progressive TIN densification (PTD) algorithms are compared with the ground-classified points from all overlapping flightlines [20]. The differences are recorded and summarized statistically, with the analysis focused on non-vegetated areas that have only single returns and slopes of less than 10 degrees [20].
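A gridded version of the interswath comparison can be sketched in NumPy. It assumes two co-registered per-flightline ground DEMs covering the overlap (NaN outside coverage), derives slope from the first surface, and keeps only cells below 10 degrees; the single-return filter mentioned above is omitted for brevity, and all names and values are illustrative.

```python
import numpy as np


def interswath_stats(dem_a, dem_b, cell, max_slope_deg=10.0):
    """Summarize vertical differences between two overlapping flightline surfaces."""
    # np.gradient returns derivatives along rows (y) first, then columns (x).
    dzdy, dzdx = np.gradient(dem_a, cell)
    slope_deg = np.degrees(np.arctan(np.hypot(dzdx, dzdy)))
    diff = dem_a - dem_b
    # NaN differences or slopes (no overlap) fail both tests and are excluded.
    keep = np.isfinite(diff) & (slope_deg < max_slope_deg)
    d = diff[keep]
    return {
        "n": int(d.size),
        "mean_dz": float(d.mean()),
        "std_dz": float(d.std()),
        "rms_dz": float(np.sqrt(np.mean(d ** 2))),
    }


x = np.arange(5, dtype=float)
dem_a = np.tile(0.05 * x, (5, 1))      # gentle 5% slope, about 2.9 degrees
dem_b = dem_a + 0.02                   # second flightline sits 2 cm higher
dem_b[0, 0] = np.nan                   # one cell outside the second swath
print(interswath_stats(dem_a, dem_b, cell=1.0))
```

A non-zero mean difference points to a systematic vertical offset between passes, while a large spread around the mean suggests attitude or calibration problems.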

A recent innovation in quality assessment is the Voronoi-based density validation method approved by ASPRS [21]. Unlike traditional point density calculations that provide only an average points-per-area value, the Voronoi method partitions the map into cells around each lidar point and calculates the area of those cells [21]. This approach identifies uneven point distributions that might leave gaps in coverage, even when average density requirements are met [21]. This method is particularly valuable for conservation applications in heterogeneous environments where structural complexity demands consistent point density for accurate characterization.
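The idea behind Voronoi-based density checks can be approximated without computational-geometry libraries by assigning every cell of a fine raster to its nearest lidar point (a discrete Voronoi diagram) and summing the cells each point owns. This is an illustrative sketch, not the ASPRS-approved procedure; the grid resolution, extent, point layout, and area threshold are assumptions, and the brute-force nearest-point search only suits small examples.

```python
import numpy as np


def discrete_voronoi_areas(xy, extent, res):
    """Approximate each point's Voronoi cell area (m^2) on a raster of spacing `res`.

    extent = (xmin, xmax, ymin, ymax). Each raster cell is owned by its nearest point.
    """
    xmin, xmax, ymin, ymax = extent
    gx = np.arange(xmin + res / 2, xmax, res)
    gy = np.arange(ymin + res / 2, ymax, res)
    gxx, gyy = np.meshgrid(gx, gy)
    centres = np.column_stack([gxx.ravel(), gyy.ravel()])
    # Squared distance from every raster cell centre to every point (brute force).
    d2 = ((centres[:, None, :] - xy[None, :, :]) ** 2).sum(axis=-1)
    owner = d2.argmin(axis=1)
    return np.bincount(owner, minlength=len(xy)) * res * res


def sparse_points(areas, max_area):
    """Points whose cell is larger than max_area, i.e. local density < 1 / max_area."""
    return np.flatnonzero(areas > max_area)


# Three points clustered on the left, one isolated point on the right.
pts = np.array([[0.5, 1.0], [1.0, 1.5], [1.0, 0.5], [3.5, 1.0]])
areas = discrete_voronoi_areas(pts, extent=(0.0, 4.0, 0.0, 2.0), res=0.05)
print(areas, 1.0 / areas)        # cell areas and implied point densities (pts/m^2)
print(sparse_points(areas, max_area=2.0))
```

Here the average density (4 points over 8 m²) looks uniform, yet the isolated point's cell is roughly twice the others, which is exactly the kind of gap an average hides.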

Experimental Protocols for Ecosystem Monitoring

Implementing LiDAR technology in conservation research requires carefully designed protocols to ensure scientific rigor, reproducibility, and relevance to management questions. The following experimental frameworks provide structured approaches for common conservation applications, incorporating the latest advancements in LiDAR science while addressing practical considerations for implementation across different ecosystem types.

Multi-temporal Forest Growth and Disturbance Monitoring

Objective: Quantify patterns of forest recovery, growth, and adaptation over time in response to logging, storms, fire, or climate gradients [22].

Field Protocol:

- Establish permanent forest inventory plots following Forest Inventory and Analysis (FIA) network protocols to provide ground-truthing data for LiDAR measurements [22].

- Collect complementary field measurements of tree diameter, height, species composition, and crown dimensions coincident with LiDAR acquisitions.

- Precisely geolocate plot corners and individual trees using RTK GPS with centimeter-level accuracy to enable direct linkage with LiDAR point clouds.

LiDAR Data Acquisition:

- Schedule repeat LiDAR collections using consistent parameters (e.g., pulse density, scan angle, sensor type) to ensure comparability across time periods.

- Combine repeat collections of airborne LiDAR with photogrammetric point clouds from the National Agriculture Imagery Program (NAIP) and spectral data to measure forest growth and change over time [22].

- Maintain detailed flight planning documentation, including sensor calibration records, flight altitude, speed, and alignment between multi-temporal datasets.

Data Processing and Analysis:

- Align multi-temporal point clouds using registration algorithms to create a unified representation of the scene across time periods [18].

- Compare point clouds acquired at different times with change detection algorithms to identify changes in the scene, such as new growth or disturbance impacts [18].

- Refine methods to distinguish between stand-replacing disturbances and gradual regrowth for more accurate forest condition assessments [22].

- Generate high-resolution models and maps of forest structure dynamics to inform both scientific understanding and practical forest management decisions [22].
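Raster differencing of canopy height models from two dates is one simple form of the change detection step above. This NumPy sketch labels each cell as canopy loss, growth, or little change; the -5 m and +0.5 m thresholds and the toy CHMs are illustrative assumptions that real studies would tune to sensor noise, registration error, and the interval between acquisitions.

```python
import numpy as np


def classify_chm_change(chm_t1, chm_t2, loss_threshold=-5.0, gain_threshold=0.5):
    """Return height change (m) and labels: -1 = canopy loss, 1 = growth, 0 = stable."""
    dh = np.asarray(chm_t2, dtype=float) - np.asarray(chm_t1, dtype=float)
    labels = np.zeros(dh.shape, dtype=int)
    labels[dh <= loss_threshold] = -1
    labels[dh >= gain_threshold] = 1
    return dh, labels


def stand_loss_fraction(labels):
    """Share of cells flagged as loss; a high share hints at stand-replacing disturbance."""
    return float((labels == -1).mean())


chm_2015 = np.array([[20.0, 20.0], [10.0, 5.0]])
chm_2020 = np.array([[2.0, 21.0], [10.2, 6.0]])
dh, labels = classify_chm_change(chm_2015, chm_2020)
print(labels, stand_loss_fraction(labels))
```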

Individual Tree Architecture and Habitat Complexity Assessment

Objective: Characterize the three-dimensional arrangement of plant components within and among individual trees to understand environmental influences on forest structure and habitat quality [16].

Field Protocol:

- Deploy terrestrial laser scanning (TLS) systems at multiple positions within forest plots to minimize occlusion and capture comprehensive structural data [16].

- Use modern scanning protocols that eliminate fixed calibration targets for registration, reducing setup time and enabling faster fieldwork workflows [16].

- Collect supplementary measurements of microclimate conditions (light availability, soil moisture) and biotic interactions to correlate with structural metrics.

LiDAR Data Processing:

- Apply co-registration methods to align multiple scan positions into a unified coordinate system [16].

- Implement deep learning approaches for crown delineation and automated pipelines for large-scale tree extraction from TLS point clouds [16].

- Develop quantitative structure models (QSMs) - algorithmic enclosures of point clouds in topologically-connected, closed volumes - to represent tree architecture [16].

- Extract structural economics spectrum parameters that embed tree size and structural diversity in the wider framework of plant resource use [16].

Analysis and Interpretation:

- Analyze the role of 3D structure in regulating light regimes, forest productivity, and physiological and biophysical processes [16].

- Parameterize functional structural plant models (FSPM) with TLS data to simulate interactions between structure, environment, and physiology [16].

- Test and calibrate dynamic FSPM predictions against TLS-derived structural measurements to explore ecological and environmental hypotheses [16].

- Correlate structural complexity metrics with independent biodiversity measurements (e.g., avian point counts, arthropod sampling) to quantify habitat quality.
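Rank correlation is one common, distribution-free way to relate a structural metric to a biodiversity response. This pure-Python Spearman sketch (tied values receive average ranks) is one option among several; the per-plot values in the usage example are hypothetical.

```python
def average_ranks(values):
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman's rho, computed as the Pearson correlation of the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)


# Hypothetical plots: foliage height diversity vs. bird species richness.
fhd_per_plot = [0.8, 1.1, 1.3, 0.6, 1.5]
bird_richness = [7, 9, 12, 5, 11]
print(round(spearman(fhd_per_plot, bird_richness), 3))
```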

Implementing LiDAR technology in conservation research requires access to specialized data sources, software tools, and analytical frameworks. The following table summarizes key resources that constitute the essential toolkit for researchers working with LiDAR data for ecosystem mapping applications.

Table 3: Research Reagent Solutions for LiDAR Ecosystem Mapping

| Resource Category | Specific Tools/Products | Function in Research | Conservation Application Example |
|---|---|---|---|
| Data Sources | GEDI Level 2-4 Products [17] | Global canopy structure, biomass, and height metrics | Continental-scale carbon stock assessment, deforestation monitoring |
| | USGS 3D Elevation Program | High-resolution topographic and surface models | Watershed management, habitat connectivity modeling |
| | NEON Airborne Observation Platform | Ecosystem-specific LiDAR collections | Cross-site comparative ecology, climate change impact studies |
| Software Libraries | LAStools [21] | LiDAR data compression, format conversion, and visualization | Processing large-area collections, data standardization |
| | CloudCompare [20] | Point cloud comparison and analysis | Validation against field measurements, change detection |
| | R lidR package | Statistical analysis of LiDAR data for forestry | Custom metric development, scalable processing workflows |
| Analytical Frameworks | Quantitative Structure Models (QSMs) [16] | Algorithmic reconstruction of tree architecture | Biomass estimation, growth modeling, allometric development |
| | Voronoi Density Method [21] | Point density distribution assessment | Data quality assurance, acquisition planning |
| | Functional Structural Plant Models (FSPMs) [16] | Coupling 3D structure with physiological processes | Climate impact forecasting, silvicultural optimization |

LiDAR technology has fundamentally transformed our capacity to map, monitor, and understand three-dimensional ecosystem structure at scales ranging from individual plants to global biomes. By providing precise measurements of vegetation height, density, and vertical distribution, LiDAR addresses critical information needs in conservation science, from quantifying carbon storage to assessing habitat quality and tracking ecosystem responses to environmental change. The ongoing evolution of LiDAR platforms—from terrestrial to airborne, UAV, and spaceborne systems—creates unprecedented opportunities for multi-scale assessment of conservation priorities. Furthermore, advancements in data processing algorithms, accuracy assessment protocols, and analytical frameworks continue to enhance the utility of LiDAR data for addressing pressing conservation challenges. As these technologies become increasingly accessible and integrated with other remote sensing modalities and field observations, they will play an essential role in informing evidence-based conservation decisions and tracking progress toward regional, national, and international biodiversity conservation targets.

Remote sensing technologies have revolutionized the monitoring and conservation of global ecosystems, enabling researchers to non-destructively assess vegetation health at multiple scales. For conservation researchers and scientists, understanding the capabilities and limitations of multispectral and hyperspectral sensors is fundamental to designing effective monitoring protocols. These technologies serve as critical diagnostic tools, translating invisible spectral information into actionable data about plant physiology, stress status, and ecosystem function.

Multispectral imaging captures reflected energy in several defined, broad wavelength bands, providing essential information about vegetation cover and basic health indicators. In contrast, hyperspectral imaging decomposes the reflected light into hundreds of narrow, contiguous bands, creating a continuous spectral signature that can identify specific biochemical compounds and subtle physiological changes [23] [24]. This technical distinction fundamentally influences their application in conservation research, with implications for detection sensitivity, analytical complexity, and operational cost.

Core Technical Principles: Beyond Human Vision

Fundamental Differences in Spectral Resolution

The primary distinction between multispectral and hyperspectral sensors lies in their spectral resolution—the number and narrowness of the wavelength bands they capture.

Multispectral sensors typically collect data in 3 to 20 discrete, broad bands within the visible and infrared regions of the electromagnetic spectrum. Common bands include red, green, blue, near-infrared, and sometimes red-edge wavelengths [23] [25]. This structure provides generalized spectral information sufficient for calculating standard vegetation indices but lacks the detail to identify specific materials based on their unique spectral fingerprints.

Hyperspectral sensors capture hundreds of narrow, contiguous spectral bands (typically 100-250+), generating an almost continuous spectrum for each pixel in an image [26] [24]. This detailed data enables the identification of unique spectral signatures tied to specific molecular interactions, allowing researchers to detect subtle changes in plant biochemistry that precede visible symptoms [24].

Table 1: Technical Comparison of Multispectral and Hyperspectral Imaging

| Parameter | Multispectral Imaging | Hyperspectral Imaging |
|---|---|---|
| Number of Bands | 5-20 broad bands [23] [25] | 100+ narrow, contiguous bands [26] [24] |
| Spectral Resolution | Low (broad bandwidth: 50-100 nm) [23] | High (narrow bandwidth: 5-20 nm) [24] |
| Spectral Range | Often limited to 400-1000 nm, especially on UAV sensors [24]; some satellite sensors (e.g., Sentinel-2) add SWIR bands | Can extend to 400-2500 nm, covering VNIR and SWIR [24] |
| Data Output | Separate, discrete band images | Continuous spectrum for each pixel (image cube) [25] |
| Data Volume | Lower, manageable | Very high, requires significant processing [23] |
| Primary Strength | General land cover classification, vegetation health monitoring [23] | Material identification, detection of subtle biochemical changes [26] |

The Scientific Basis: Spectral Signatures of Vegetation

Plants interact with light in wavelength-specific ways based on their biochemical and structural properties. Chlorophyll in healthy leaves strongly absorbs red and blue light for photosynthesis and reflects green light, while the internal mesophyll structure of the leaf strongly reflects near-infrared (NIR) radiation [27]. Stressors like disease, nutrient deficiency, or water scarcity alter a plant's biochemistry and cellular structure, consequently changing its spectral signature in predictable ways [26].

Hyperspectral sensors detect these subtle alterations because they cover absorption features related to specific biochemicals. For instance, the spectral range of 1100-1700 nm is sensitive to water content and lignin, while the 700-2500 nm range contains critical overtone bands for compounds like cellulose, lignin, nitrogen, and starch [24]. Multispectral sensors, with their broader bands, average these fine features together, making it impossible to pinpoint specific biochemical drivers.
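The averaging effect of broad bands can be shown with a synthetic spectrum. The sketch assumes a flat reflectance of 0.40 with a hypothetical narrow absorption feature (about 10 nm wide) centred at 1200 nm, and models each band as a simple boxcar, whereas real sensors have measured spectral response functions. A narrow, hyperspectral-like band recovers the feature's full depth, while a 100 nm band dilutes it.

```python
import numpy as np


def band_average(wavelengths, reflectance, center_nm, width_nm):
    """Mean reflectance inside a rectangular (boxcar) band of the given full width."""
    w = np.asarray(wavelengths, dtype=float)
    r = np.asarray(reflectance, dtype=float)
    inside = np.abs(w - center_nm) <= width_nm / 2
    return float(r[inside].mean())


# Synthetic 1 nm spectrum from 1100 to 1700 nm.
wl = np.arange(1100, 1701)
refl = np.full(wl.shape, 0.40)
refl[np.abs(wl - 1200) <= 5] = 0.30   # hypothetical 11-sample absorption feature

narrow = band_average(wl, refl, center_nm=1200, width_nm=10)
broad = band_average(wl, refl, center_nm=1200, width_nm=100)
print(f"10 nm band: {narrow:.3f}, 100 nm band: {broad:.3f}")
```

The broad band still registers a small dip, but it can no longer distinguish a shallow wide feature from a deep narrow one, which is why specific biochemical drivers become hard to pinpoint.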

Applications in Vegetation Health Monitoring

The choice between multispectral and hyperspectral technology depends heavily on the specific research question and required diagnostic precision. The following table summarizes their application-specific performances.

Table 2: Application-Based Performance Comparison for Vegetation Monitoring

| Application | Multispectral Performance | Hyperspectral Performance | Research Context |
|---|---|---|---|
| General Plant Health & Biomass | Effective using NDVI/EVI [27]. Achieved R²=0.53 for grassland AGB [28]. | Slightly superior but may not justify cost for basic mapping. | Grassland monitoring across biomes [28]. |
| Early Disease & Stress Detection | Limited to observing visible symptoms. | High accuracy for pre-visual detection [26]. Identifies spectral shifts from biochemical changes. | Wheat crown rot detection [25]. |
| Nutrient Deficiency | Moderate, using indices like NDRE for nitrogen [27]. | High precision. Can differentiate specific nutrient shortages. | Winter wheat nitrogen monitoring [29]. |
| Species Identification | Limited to broad classifications. | High accuracy. Can map specific species/invasive weeds [30]. | Spartina alterniflora invasion mapping [30]. |
| Water Stress Detection | Effective using NDMI with SWIR bands [27]. | Superior for early warning via subtle water absorption feature changes. | Precision agriculture irrigation scheduling [26]. |

Advanced Insights from Hyperspectral Data

The rich data from hyperspectral sensors enable sophisticated analytical approaches critical for advanced conservation research. A study on grassland monitoring across diverse global biomes demonstrated that machine learning models (Random Forest Regression) applied to hyperspectral data could successfully predict forage quality (metabolizable energy) with high accuracy (nRMSE=0.108, R²=0.68), outperforming predictions for physical biomass [28]. This highlights that biochemical properties are often more directly linked to spectral signatures than structural ones.
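For reference, the two validation statistics quoted above can be computed as follows. nRMSE conventions differ (normalizing by the observed mean or by the observed range are both common), and the normalization used in the cited study is not stated here, so the sketch exposes it as a parameter; the example values are made up.

```python
import math


def r2_score(obs, pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(obs) / len(obs)
    ss_res = sum((o - p) ** 2 for o, p in zip(obs, pred))
    ss_tot = sum((o - mean_obs) ** 2 for o in obs)
    return 1.0 - ss_res / ss_tot


def nrmse(obs, pred, norm="mean"):
    """RMSE divided by the observed mean or by the observed range (assumed options)."""
    rmse = math.sqrt(sum((o - p) ** 2 for o, p in zip(obs, pred)) / len(obs))
    if norm == "mean":
        return rmse / (sum(obs) / len(obs))
    if norm == "range":
        return rmse / (max(obs) - min(obs))
    raise ValueError("norm must be 'mean' or 'range'")


observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.0, 2.0, 3.0, 5.0]
print(r2_score(observed, predicted), nrmse(observed, predicted), nrmse(observed, predicted, "range"))
```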

Furthermore, research on invasive species monitoring has demonstrated the superiority of multitemporal hyperspectral analysis. A study mapping the invasive Spartina alterniflora achieved identification accuracies exceeding 91.6% by leveraging red-edge bands from the Zhuhai-1 hyperspectral satellite across multiple seasons, outperforming traditional multispectral indices like NDVI [30]. This capability to identify specific species is transformative for managing biodiversity and ecosystem health.

Experimental Protocols and Methodologies

A Representative Workflow: Winter Wheat Nitrogen Monitoring

A typical experimental workflow for monitoring crop nitrogen status using hyperspectral data, as detailed in recent research [29], integrates several key methodological stages:

Experimental Design & Data Acquisition: Controlled field plots with varying nitrogen treatments are established. Hyperspectral data is collected via UAV (e.g., Cubert S185 sensor) or satellite platforms across multiple growth stages, synchronized with destructive field sampling for plant nitrogen concentration (PNC) analysis [29].

Data Pre-processing & Feature Engineering: Raw imagery undergoes radiometric calibration and geometric correction. Subsequently, three variable selection strategies are employed:

- 1D Spectral Reflectance: Using all or selected narrow bands.

- Optimized 2D Spectral Indices: Systematic combination of two bands (e.g., enhanced NDVI derivatives).

- Optimized 3D Spectral Indices: Combining three bands to capture more complex spectral interactions [29].

Model Development & Validation: Machine learning algorithms—Partial Least Squares Regression (PLSR), Random Forest Regression (RFR), and Support Vector Machine Regression (SVMR)—are trained to establish the relationship between spectral features and measured PNC. Model performance is rigorously validated against independent ground-truth data [29].
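The optimized two-band index search used in the feature-engineering stage can be sketched as an exhaustive loop over band pairs, scoring each normalized-difference index by its squared Pearson correlation with the measured trait. This is a minimal NumPy sketch under stated assumptions (a reflectance matrix of shape samples × bands, squared correlation as the score, synthetic data); the cited study may use a different index form or selection criterion, and three-band searches extend the same loop to band triples.

```python
import numpy as np


def best_normalized_difference(spectra, target, wavelengths):
    """Exhaustively score ND(i, j) = (R_i - R_j) / (R_i + R_j) against a target trait.

    Returns (r_squared, wavelength_i, wavelength_j) for the best pair. Because
    ND(j, i) = -ND(i, j) has the same r^2, only pairs with j > i are tested.
    """
    best = (-1.0, None, None)
    n_bands = spectra.shape[1]
    for i in range(n_bands):
        for j in range(i + 1, n_bands):
            a, b = spectra[:, i], spectra[:, j]
            nd = (a - b) / (a + b)
            if np.std(nd) == 0:          # constant index: correlation undefined
                continue
            r = np.corrcoef(nd, target)[0, 1]
            if r * r > best[0]:
                best = (float(r * r), wavelengths[i], wavelengths[j])
    return best


# Synthetic data: bands 0 and 1 are built so that their ND equals the target exactly.
rng = np.random.default_rng(1)
pnc = rng.uniform(0.0, 0.5, 20)            # hypothetical plant N concentration values
spectra = np.column_stack([1 + pnc, 1 - pnc, rng.uniform(0.2, 0.8, 20)])
print(best_normalized_difference(spectra, pnc, wavelengths=[720, 800, 950]))
```

With hundreds of bands this search tests tens of thousands of pairs, so the selected index should always be checked on independent validation data to guard against chance correlations.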

Key Vegetation Indices for Health Assessment

Vegetation indices are mathematical transformations of original spectral bands designed to highlight specific vegetation properties.

Table 3: Essential Vegetation Indices for Health Assessment [27]

| Index Name | Abbreviation | Formula | Sensitivity & Application | Optimal Sensor Type |
|---|---|---|---|---|
| Normalized Difference Vegetation Index | NDVI | (NIR - Red) / (NIR + Red) | General plant health, biomass. Saturates in dense canopies. | Multispectral, Hyperspectral |
| Enhanced Vegetation Index | EVI | 2.5 * (NIR - Red) / (NIR + 6 * Red - 7.5 * Blue + 1) | Improved sensitivity in high biomass regions, corrects for atmospheric effects. | Multispectral, Hyperspectral |
| Normalized Difference Red Edge Index | NDRE | (NIR - Red Edge) / (NIR + Red Edge) | Mid-to-late season nitrogen status, chlorophyll content. | Multispectral (with Red Edge band), Hyperspectral |
| Normalized Difference Moisture Index | NDMI | (NIR - SWIR) / (NIR + SWIR) | Canopy water content, drought stress monitoring. | Multispectral (with SWIR band), Hyperspectral |
| Chlorophyll Content Index | CCI | (NIR / Red Edge) - 1 | Nitrogen, magnesium, and iron deficiency. | Handheld Sensors, UAV, Hyperspectral |
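The index formulas in Table 3 translate directly into code. These plain-Python functions work on scalars or NumPy arrays alike. They assume inputs are surface reflectance scaled from 0 to 1 (the EVI constants, notably the +1 term, are defined for that scale), and the reflectance values in the example are illustrative.

```python
def ndvi(nir, red):
    return (nir - red) / (nir + red)


def evi(nir, red, blue):
    # Gain 2.5, aerosol coefficients 6 and 7.5, canopy background term 1.
    return 2.5 * (nir - red) / (nir + 6 * red - 7.5 * blue + 1)


def ndre(nir, red_edge):
    return (nir - red_edge) / (nir + red_edge)


def ndmi(nir, swir):
    return (nir - swir) / (nir + swir)


def cci(nir, red_edge):
    # Ratio form listed in Table 3: (NIR / Red Edge) - 1.
    return nir / red_edge - 1


# Illustrative reflectances for a healthy canopy pixel.
px = dict(nir=0.45, red=0.05, blue=0.03, red_edge=0.25, swir=0.20)
print(round(ndvi(px["nir"], px["red"]), 3))              # 0.8
print(round(evi(px["nir"], px["red"], px["blue"]), 3))   # 0.656
print(round(ndre(px["nir"], px["red_edge"]), 3))         # 0.286
print(round(ndmi(px["nir"], px["swir"]), 3))             # 0.385
print(round(cci(px["nir"], px["red_edge"]), 3))          # 0.8
```

Note that NDVI already sits at 0.8 for this pixel; this is where its saturation in dense canopies begins to matter and where EVI or red-edge indices add information.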

The Scientist's Toolkit: Research Reagent Solutions

Implementing a spectral analysis project requires a suite of technical tools and "reagents"—both physical and computational.

Table 4: Essential Research Toolkit for Spectral Vegetation Analysis

| Tool / 'Reagent' | Category | Function & Utility in Research |
|---|---|---|
| Cubert S185 Hyperspectral Imager | Sensor Hardware | UAV-mounted; captures 125 bands (450-950 nm); provides core hyperspectral data for detailed biochemical analysis [29]. |
| DJI P4 Multispectral (P4M) | Sensor Hardware | Integrated UAV system with six cameras (one RGB plus five narrowband: blue, green, red, red edge, NIR); cost-effective for standard indices (NDVI, NDRE); well suited to large-area farm monitoring [29]. |
| Sentinel-2 Satellite Imagery | Data Source | Provides free, global multispectral data (13 bands); excellent for large-scale and time-series analysis in conservation [29]. |
| Random Forest Regression (RFR) | Algorithm | Machine learning model; robust for predicting biophysical parameters (e.g., N, biomass) from high-dimensional spectral data [28] [29]. |
| Partial Least Squares Regression (PLSR) | Algorithm | Statistical method effective for modeling relationships between spectral bands and response variables, especially with collinear data [28] [29]. |
| Savitzky-Golay Filter | Pre-processing | Smooths hyperspectral spectra to reduce noise while maintaining signal shape, a crucial step before derivative analysis [24]. |
| Calibration Targets | Field Equipment | Panels with known reflectance (e.g., white, gray); essential for converting raw sensor digital numbers (DN) to absolute reflectance values. |
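One common way to use calibration panels is the empirical line method. For each band, fit a straight line between the panels' recorded DN and their known reflectance, then apply that line to every pixel. The sketch assumes a linear sensor response and panels imaged under the same illumination as the scene. The panel values are hypothetical, and vendor workflows for specific sensors may differ.

```python
import numpy as np

def empirical_line(panel_dn, panel_reflectance):
    """Least-squares fit of reflectance = gain * DN + offset for one band."""
    gain, offset = np.polyfit(
        np.asarray(panel_dn, dtype=float), np.asarray(panel_reflectance, dtype=float), deg=1
    )
    return gain, offset

def dn_to_reflectance(dn, gain, offset):
    """Apply the fitted line to raw digital numbers."""
    return gain * np.asarray(dn, dtype=float) + offset

# Hypothetical gray (20%) and white (90%) panels for a single band.
gain, offset = empirical_line(panel_dn=[1200, 5100], panel_reflectance=[0.20, 0.90])
print(dn_to_reflectance([1200, 3150, 5100], gain, offset))
```

With more than two panels, the same `polyfit` call gives a least-squares line. This makes the calibration less sensitive to error in any single panel.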

Future Directions and Research Gaps

The field of spectral sensing is rapidly evolving. Sensor miniaturization and the launch of new satellite constellations (e.g., Zhuhai-1) are making hyperspectral data more accessible [26] [30]. A powerful frontier for comprehensive ecosystem assessment, including below-ground biomass estimation, is the integration of hyperspectral data with other sensing modalities [31]. One such modality is LiDAR, whose pulses pass through gaps in the canopy to record vegetation structure and the underlying terrain.

A significant challenge remains in achieving fully reliable global transferability of spectral models, as those trained in one region often falter when applied to different environmental and vegetation conditions [28]. Future research must focus on developing models that incorporate local variation as a meaningful component rather than treating it as noise. Advances in deep learning for automated feature extraction and the creation of expanded, globally representative spectral libraries will be decisive next steps toward robust, universal models for conservation science [28] [32].

Synthetic Aperture Radar (SAR) is a pivotal remote sensing technology that uses an active sensor to emit microwave pulses and processes the returning backscatter into high-resolution imagery of the Earth's surface. Optical sensors rely on reflected sunlight. SAR systems instead supply their own illumination, so data acquisition is independent of solar illumination and largely unaffected by cloud and atmospheric conditions, although heavy rain can attenuate shorter wavelengths such as X-band [33]. This all-weather, day-and-night capability makes SAR particularly valuable for continuous monitoring in cloud-prone regions, addressing a significant limitation of traditional optical remote sensing for conservation research [34] [35].

The fundamental principle underlying SAR involves creating a synthetic antenna aperture by leveraging the platform's motion along the flight path (azimuth direction). Rather than deploying a physically large antenna—which would be impractically long (kilometers) for satellite missions to achieve fine spatial resolution—SAR processes sequential radar returns to simulate a much larger antenna [33]. This synthetic aperture approach enables satellites to consistently capture detailed imagery at spatial resolutions of meters or better, providing the necessary detail for precise environmental monitoring across seasons and weather conditions [33].
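Some back-of-the-envelope arithmetic shows why a real antenna would need to be kilometers long. A real-aperture radar's along-track (azimuth) resolution is roughly wavelength × range / antenna length. An idealized stripmap SAR achieves about half its physical antenna length, independent of range. The sketch uses hypothetical values: a C-band frequency of 5.405 GHz, an 850 km slant range, a 5 m target resolution and a 12.3 m antenna. These are first-order approximations that ignore processing details.

```python
C = 299_792_458.0  # speed of light, m/s

def wavelength_m(freq_hz):
    return C / freq_hz

def real_aperture_length_m(wavelength, slant_range, azimuth_res):
    """Antenna length needed when azimuth resolution ~ wavelength * range / length."""
    return wavelength * slant_range / azimuth_res

def sar_azimuth_resolution_m(antenna_length):
    """Idealized stripmap SAR azimuth resolution: about half the antenna length."""
    return antenna_length / 2.0

lam = wavelength_m(5.405e9)                       # C-band, about 5.5 cm
print(real_aperture_length_m(lam, 850e3, 5.0))    # about 9.4 km of real antenna
print(sar_azimuth_resolution_m(12.3))             # about 6 m from a 12.3 m antenna
```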

For conservation science in frequently cloud-obscured regions such as tropical forests and wetlands, SAR offers a capacity for consistent Earth observation that optical sensors cannot match. SAR can image through clouds, light rain, smoke, and darkness, and at longer wavelengths it can also penetrate vegetation canopies. These capabilities make it an indispensable tool for ecological management and monitoring programs that require uninterrupted data streams [36] [34].

How SAR Works: Core Principles and Technical Specifications

Signal Interaction with Surface Features

SAR imagery is created through the interaction of emitted microwave pulses with the Earth's physical structures. When the radar signal reaches the surface, it undergoes backscattering—the portion of energy reflected directly back toward the sensor. The strength and properties of this backscattered signal carry information about the surface's characteristics, including structure, moisture content, and roughness [33]. The interpretation of SAR data relies heavily on understanding three primary scattering mechanisms:

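- Surface Scattering occurs when the radar signal scatters off the surface itself with minimal penetration. It is dominant over bare soil, rough water surfaces, and other non-vegetated areas, and is most sensitive to VV (vertical-vertical) polarization [33]. Rougher surfaces return more energy to the sensor. Very smooth surfaces such as calm water reflect the signal specularly away from the sensor and appear dark.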

- Volume Scattering happens when the radar signal penetrates into a medium and scatters multiple times within it before returning to the sensor. This is characteristic of vegetation canopies with leaves and branches, and is most sensitive to cross-polarized data like VH or HV [33].

- Double-Bounce Scattering occurs when the signal reflects off two surfaces before returning to the sensor, such as from ground-trunk interactions in forests or ground-wall structures in urban areas. This mechanism is most sensitive to HH (horizontal-horizontal) polarization [33].
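With fully polarimetric (quad-pol) data, these three mechanisms are often separated using the Pauli decomposition, a standard technique not described in the cited source. It works as follows:

- |HH + VV|² tracks odd-bounce (surface) scattering.
- |HH - VV|² tracks even-bounce (double-bounce) scattering.
- The cross-polarized HV term tracks volume scattering.

The sketch assumes calibrated complex scattering amplitudes and reciprocity (HV = VH). The example amplitudes are hypothetical, idealized targets.

```python
import numpy as np

def pauli_powers(s_hh, s_hv, s_vv):
    """Pauli component powers of a reciprocal scattering matrix [[HH, HV], [HV, VV]]."""
    s_hh, s_hv, s_vv = (np.asarray(s, dtype=complex) for s in (s_hh, s_hv, s_vv))
    return {
        "surface": np.abs(s_hh + s_vv) ** 2 / 2.0,        # odd-bounce scattering
        "double_bounce": np.abs(s_hh - s_vv) ** 2 / 2.0,  # even-bounce scattering
        "volume": 2.0 * np.abs(s_hv) ** 2,                # depolarizing (cross-pol) scattering
    }

def dominant_mechanism(s_hh, s_hv, s_vv):
    """Name of the Pauli component with the largest power for a single pixel."""
    powers = pauli_powers(s_hh, s_hv, s_vv)
    return max(powers, key=lambda name: float(powers[name]))

print(dominant_mechanism(1.0, 0.0, 1.0))    # surface-like target
print(dominant_mechanism(1.0, 0.0, -1.0))   # dihedral (ground-trunk) target
print(dominant_mechanism(0.3, 0.6, 0.3))    # canopy-like target
```

The three powers sum to the total backscattered power (the span, |HH|² + 2|HV|² + |VV|²). This is why Pauli components are commonly displayed together as an RGB composite.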

Key SAR Bands and Their Applications

The wavelength (or frequency) of the radar signal fundamentally determines how it interacts with surface features. Longer wavelengths generally penetrate deeper into vegetation canopies and soils, while shorter wavelengths provide finer spatial resolution but less penetration. SAR systems operate across several designated bands, each with distinct characteristics and applications relevant to conservation research [33].

Table 1: SAR Frequency Bands and Their Conservation Applications

| Band | Frequency | Wavelength | Penetration Depth | Typical Conservation Applications |
|---|---|---|---|---|
| X-band | 8-12 GHz | 2.4-3.8 cm | Very low (leaves/canopy top) | Urban monitoring, snow and ice mapping; little vegetation penetration |
| C-band | 4-8 GHz | 3.8-7.5 cm | Low to moderate | Global change detection, ocean and ice monitoring, agricultural monitoring |
| S-band | 2-4 GHz | 7.5-15 cm | Moderate | Vegetation monitoring (used by the NASA-ISRO NISAR mission) |
| L-band | 1-2 GHz | 15-30 cm | High | Biomass estimation, vegetation mapping, geophysical monitoring, deformation |
| P-band | 0.3-1 GHz | 30-100 cm | Very high | Experimental biomass and vegetation assessment, deep penetration |
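Each band's wavelength in the table follows from λ = c / f. The small helper below converts a sensor frequency to a wavelength and looks up its band using the nominal limits from Table 1. It treats the lower bound of each range as inclusive. The example frequencies are illustrative values in the X-, C- and L-bands.

```python
C = 299_792_458.0  # speed of light, m/s

# Nominal frequency limits (Hz) from Table 1; lower bound inclusive.
SAR_BANDS = [
    ("P", 0.3e9, 1e9),
    ("L", 1e9, 2e9),
    ("S", 2e9, 4e9),
    ("C", 4e9, 8e9),
    ("X", 8e9, 12e9),
]

def band_for(freq_hz):
    """Band letter for a radar frequency, or None if outside Table 1."""
    for name, lo, hi in SAR_BANDS:
        if lo <= freq_hz < hi:
            return name
    return None

def wavelength_cm(freq_hz):
    return 100.0 * C / freq_hz

for f in (9.65e9, 5.405e9, 1.2575e9):
    print(band_for(f), round(wavelength_cm(f), 1))  # X 3.1, C 5.5, L 23.8
```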

The selection of appropriate SAR bands is crucial for conservation applications. For instance, an X-band radar (wavelength ~3 cm) interacts primarily with leaves at the top of tree canopies, while L-band signals (wavelength ~23 cm) penetrate more deeply to interact with branches and trunks, making L-band particularly valuable for forest structure analysis and biomass estimation [33]. This penetration capability enables archaeologists to use SAR data to uncover structures hidden beneath desert sands or dense vegetation, demonstrating its value for cultural conservation as well [33].

Advanced SAR Techniques: InSAR and Polarimetry

Beyond basic backscatter imaging, SAR offers sophisticated analysis techniques that expand its utility for conservation science:

Interferometric SAR (InSAR) utilizes the phase information contained in SAR signals to measure precise distance changes between the sensor and target. When at least two observations of the same target are made at different times, InSAR can detect surface deformation with centimeter-to-millimeter accuracy [33]. This capability has proven invaluable for monitoring seismic activity, volcanic deformation, landslide movement, and ground subsidence—critical applications for disaster risk reduction in conservation contexts [34] [37].

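The technical principle of InSAR involves combining two or more co-registered SAR images of the same area into an interferogram. For repeat-pass observations, the interferometric phase φ is related to the change in sensor-to-target distance by φ = (4π/λ)Δr, where λ is the radar wavelength and Δr is the line-of-sight range change [37]. The factor is 4π rather than 2π because the signal travels the path twice. Phase is measured modulo 2π, so one full fringe in the interferogram corresponds to a line-of-sight displacement of λ/2, about 2.8 cm at C-band. This relationship enables precise measurement of surface displacement over time, with applications ranging from glacier dynamics to infrastructure stability monitoring [37].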
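Inverting φ = (4π/λ)Δr gives the line-of-sight change directly from the phase. This minimal sketch assumes the phase has already been unwrapped and that topographic, atmospheric and orbital phase contributions have been removed. Sign conventions (whether motion toward the sensor is positive) vary between processors. The wavelength used is an approximate C-band value.

```python
import math

def los_displacement_m(delta_phase_rad, wavelength_m):
    """Line-of-sight range change from unwrapped phase: delta_r = phi * lambda / (4 * pi)."""
    return delta_phase_rad * wavelength_m / (4.0 * math.pi)

lam_c = 0.0555  # approximate C-band wavelength, m
print(los_displacement_m(2 * math.pi, lam_c))  # one full fringe: lambda / 2, about 2.8 cm
```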

Polarimetric SAR (PolSAR) exploits the polarization properties of radar signals to characterize surface features. By transmitting and receiving signals in different polarizations (HH, VV, HV, VH), PolSAR provides additional information about target structure and orientation [36]. This capability enhances the discrimination of different land cover types—crucial for habitat mapping and monitoring changes in ecosystem extent and quality [36].

SAR for Conservation and Ecological Monitoring

The unique capabilities of SAR have enabled significant advances in conservation research and ecological monitoring. A systematic review of 11,201 peer-reviewed publications from 2000–2024 documented SAR's dramatically expanded applications in hazard assessment, urban development, and ecological management [36]. While urban applications have shown the fastest growth, ecological applications present critical opportunities for further research and implementation [36].

Ecosystem Structure and Change Detection

SAR technology provides a strong capacity for monitoring forest structure and detecting changes in forest cover. The penetration capability of longer wavelengths (L- and P-bands) supports canopy height retrieval, biomass estimation, and detection of subsurface features [33]. This is particularly valuable for monitoring deforestation in tropical regions, where cloud cover often obstructs optical observations. SAR data supports consistent monitoring of forest extent and structure regardless of seasonal weather patterns, providing reliable baselines for carbon stock assessment and illegal logging detection [38].

Wetland monitoring similarly benefits from SAR's all-weather capability and sensitivity to water presence. The double-bounce scattering between water surfaces and vertical tree trunks creates a distinctive signature in flooded forests and mangroves, enabling precise mapping of inundation patterns and wetland extent [33]. These applications are critical for conserving vulnerable ecosystems that provide essential services including water filtration, flood control, and carbon sequestration [38].

Disaster Monitoring and Response

Conservation efforts increasingly recognize the interconnectedness of natural disasters and ecosystem integrity. SAR technology provides critical capabilities for disaster prevention, response, and recovery monitoring [38]. InSAR techniques enable detection of pre-failure slope movements in landslide-prone areas, potentially providing early warning signs that can save lives and protect sensitive habitats [38] [37].

Following natural disasters such as earthquakes, floods, and hurricanes, SAR facilitates rapid damage assessment through change detection analysis between pre- and post-event imagery [35]. This supports efficient resource allocation for recovery efforts and helps identify impacts on protected areas and critical habitats. The ability to image through smoke, clouds, and darkness ensures timely information when optical systems may be hampered by ongoing adverse conditions [35].
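A common starting point for pre/post-event change detection is the log-ratio of calibrated backscatter, a standard technique chosen here for illustration. Expressed in dB, it treats multiplicative speckle as additive and makes increases and decreases symmetric. The sketch assumes co-registered, calibrated and ideally speckle-filtered sigma nought images. The ±3 dB threshold and the pixel values are hypothetical; operational workflows typically derive the threshold statistically from the image histogram. As an example of the direction of change, open floodwater usually lowers backscatter, while flooded vegetation can raise it through double-bounce.

```python
import numpy as np

def log_ratio_db(pre_sigma0, post_sigma0, floor=1e-10):
    """Backscatter change between two co-registered linear sigma0 images, in dB."""
    pre = np.maximum(np.asarray(pre_sigma0, dtype=float), floor)
    post = np.maximum(np.asarray(post_sigma0, dtype=float), floor)
    return 10.0 * np.log10(post / pre)

def change_mask(pre_sigma0, post_sigma0, threshold_db=3.0):
    """True where backscatter changed by more than threshold_db in either direction."""
    return np.abs(log_ratio_db(pre_sigma0, post_sigma0)) > threshold_db

pre = np.array([[0.10, 0.10], [0.05, 0.02]])
post = np.array([[0.01, 0.11], [0.05, 0.08]])
print(change_mask(pre, post))  # -10 dB and +6 dB pixels flagged
```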

Agricultural Monitoring and Soil Management

Sustainable agriculture represents a crucial intersection of conservation and human needs. SAR data has substantially advanced agricultural monitoring by providing insights into soil moisture content, crop growth stages, and land management practices [38]. Radar backscatter is sensitive to the dielectric properties of the surface, which are strongly influenced by water content. This sensitivity enables soil moisture mapping over large areas without relying solely on direct ground measurements [38].
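One widely used way to exploit this sensitivity is a change-detection index. It rescales each pixel's backscatter between that pixel's driest and wettest reference values, taken from a long time series. The sketch below assumes that vegetation and surface roughness change little between acquisitions. The dry and wet references are hypothetical values (in practice, for example, low and high percentiles of the pixel's historical record). The output is relative wetness on a 0-1 scale, not volumetric water content.

```python
import numpy as np

def relative_soil_moisture(sigma0_db, dry_ref_db, wet_ref_db):
    """Scale backscatter (dB) between per-pixel dry and wet references to a 0-1 index."""
    s = np.asarray(sigma0_db, dtype=float)
    dry = np.asarray(dry_ref_db, dtype=float)
    wet = np.asarray(wet_ref_db, dtype=float)
    return np.clip((s - dry) / (wet - dry), 0.0, 1.0)

# Hypothetical pixel with dry reference -18 dB and wet reference -8 dB.
print(relative_soil_moisture([-20.0, -13.0, -5.0], dry_ref_db=-18.0, wet_ref_db=-8.0))
```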

This capability supports precision agriculture practices that optimize resource use while minimizing environmental impacts. SAR can identify areas of drought stress or waterlogging, enabling targeted interventions that reduce water consumption and agricultural runoff [38]. Multi-temporal SAR analysis tracks crop development patterns, supporting yield prediction and detection of unsustainable farming practices in conservation buffer zones [38].

Experimental Protocols and Methodologies

Protocol for Generating Analysis-Ready SAR Data

The value of SAR data for conservation research depends on appropriate processing to create Analysis-Ready Data (ARD). According to the Committee on Earth Observation Satellites (CEOS), ARD are "satellite data that have been processed to a minimum set of requirements and organized into a form that allows immediate analysis with a minimum of additional user effort and interoperability both through time and with other datasets" [39]. The following protocol outlines the key steps for generating terrain-corrected SAR ARD:

Data Acquisition and Selection: Select Single Look Complex (SLC) or Ground Range Detected (GRD) Level-1 products from satellite missions such as Sentinel-1, ALOS, or RADARSAT. Choose the spatial resolution, wavelength, and polarization appropriate to the target application [39].

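Radiometric Calibration: Convert digital pixel values to normalized radar cross-section (backscatter coefficient) values. These are typically sigma nought (σ°), or beta nought (β°) when radiometric terrain flattening will be applied later. Calibration ensures consistent radiometric measurements across different images and sensors and enables quantitative comparison of backscatter values over time [39].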

Speckle Filtering: Reduce the granular speckle noise inherent in SAR imagery. Multi-looking suppresses speckle at the cost of spatial resolution. Adaptive filters (e.g., Lee, Refined Lee, Gamma MAP) aim to suppress speckle while better preserving feature edges and point targets [33] [39].

Geometric Terrain Correction: Correct geometric distortions caused by topography using a Digital Elevation Model (DEM). This step is usually paired with radiometric terrain flattening, which normalizes backscatter values across varying slopes and aspects and produces terrain-flattened gamma nought (γ°) values [39].

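Geocoding and Projection: Convert the data from radar geometry (slant range and azimuth) to a standard map projection (e.g., UTM or geographic latitude/longitude) to facilitate integration with other geospatial datasets in GIS environments. In practice, this is often performed together with terrain correction [39].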

Quality Assessment: Validate the processed data through visual inspection, comparison with reference data, and verification of metadata completeness before use in analysis [39].
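The calibration and speckle-filtering steps can be sketched in a few lines of NumPy. Calibration follows the Sentinel-1 convention σ° = |DN|² / A², where A is the per-pixel value interpolated from the product's sigma nought calibration lookup table. Speckle filtering uses a basic Lee filter, a simpler relative of the Refined Lee filter named above. Both the synthetic single-look data and the constant calibration value are hypothetical. Terrain correction, terrain flattening and geocoding need a DEM and orbit data and are not shown; full toolchains such as ESA's SNAP implement the complete sequence.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def calibrate_sigma0(dn, a_sigma):
    """Linear sigma0 from Level-1 pixel values and a per-pixel calibration LUT: |DN|^2 / A^2."""
    return np.abs(np.asarray(dn)) ** 2 / np.asarray(a_sigma, dtype=float) ** 2

def _local_mean_var(img, size):
    """Mean and variance in a size x size window (size odd), reflecting at image edges."""
    pad = size // 2
    windows = sliding_window_view(np.pad(img, pad, mode="reflect"), (size, size))
    return windows.mean(axis=(-2, -1)), windows.var(axis=(-2, -1))

def lee_filter(intensity, size=5, looks=1.0):
    """Basic Lee filter: shrink each pixel toward its local mean by a variance-based weight."""
    img = np.asarray(intensity, dtype=float)
    mean, var = _local_mean_var(img, size)
    cu2 = 1.0 / looks  # squared coefficient of variation of fully developed speckle
    ci2 = np.divide(var, mean ** 2, out=np.zeros_like(var), where=mean > 0)
    ratio = np.divide(cu2, ci2, out=np.ones_like(ci2), where=ci2 > 0)
    weight = np.clip(1.0 - ratio, 0.0, 1.0)  # 0 in homogeneous areas, toward 1 at edges
    return mean + weight * (img - mean)

def to_db(linear, floor=1e-10):
    """Convert linear backscatter to decibels, flooring non-positive values."""
    return 10.0 * np.log10(np.maximum(linear, floor))

# Hypothetical single-look amplitude DNs with a constant calibration LUT value.
rng = np.random.default_rng(1)
dn = np.sqrt(rng.exponential(scale=1.0, size=(64, 64))) * 500.0
a_sigma = np.full_like(dn, 500.0)
sigma0 = calibrate_sigma0(dn, a_sigma)      # speckled sigma0 around 1.0 (0 dB)
filtered = lee_filter(sigma0, size=5, looks=1.0)
sigma0_db = to_db(filtered)
```

Filtering is applied to linear intensities before conversion to dB. This is because the speckle model behind the Lee weight (coefficient of variation 1/√L) holds in the linear domain.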