Nature's Blueprint: Harnessing Biomimetic Algorithms for Advanced Ecological Optimization in Drug Discovery



Abstract

This article provides a comprehensive introduction to biomimetic algorithms and their transformative potential in ecological optimization for biomedical research. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of these nature-inspired computation tools—from genetic algorithms and particle swarm optimization to the latest advances like the Enhanced Greylag Goose Optimizer. The scope encompasses methodological frameworks for tackling complex, multi-parameter optimization problems in drug design, strategies for overcoming convergence and computational efficiency challenges, and rigorous comparative validation against traditional techniques. By synthesizing cutting-edge research and practical applications, this guide aims to equip practitioners with the knowledge to leverage these powerful algorithms for enhancing the efficiency and success of their discovery pipelines.

The Roots of Intelligence: How Nature Inspires Computational Problem-Solving

Defining Biomimetic and Bio-Inspired Optimization Algorithms

Biomimetic and bio-inspired optimization algorithms represent a class of computational intelligence methods that derive their design principles from the observation and modeling of natural phenomena, biological systems, and evolutionary processes. These algorithms leverage billions of years of evolutionary refinement found in nature to solve complex optimization problems that are often challenging for traditional mathematical approaches. The fundamental premise underlying these algorithms is that biological and natural systems have developed highly efficient mechanisms for adaptation, problem-solving, and resource optimization through evolutionary processes. Within computational sciences, biomimetic algorithms typically refer to approaches that more directly mimic specific biological mechanisms or structures, while bio-inspired algorithms encompass a broader range of nature-inspired computational techniques, including those based on physical or chemical processes. However, in practice, these terms are often used interchangeably within the scientific literature to describe algorithms that emulate natural systems for solving optimization problems [1].

The significance of these algorithms lies in their ability to handle complex, multi-modal, non-linear optimization problems with large search spaces—characteristics common to many real-world challenges in fields ranging from ecological planning to pharmaceutical development. Unlike traditional deterministic optimization methods that require substantial computational resources and may struggle with complex landscapes, biomimetic algorithms excel at exploring diverse regions of the solution space and efficiently finding near-optimal solutions through mechanisms inspired by natural selection, collective intelligence, and adaptive learning [1]. These approaches are particularly valuable for problems where traditional mathematical programming techniques face limitations due to problem complexity, non-linearity, or dynamic conditions.

Theoretical Foundations and Algorithm Classifications

Conceptual Frameworks and Biological Analogies

Biomimetic and bio-inspired algorithms are grounded in several fundamental principles observed in natural systems. The evolutionary computation paradigm, exemplified by Genetic Algorithms (GAs), draws inspiration from Darwinian principles of natural selection, genetic recombination, and survival of the fittest. In this framework, potential solutions to a problem are treated as individuals in a population, which undergo simulated evolution through selection, crossover (recombination), and mutation operations [1]. The swarm intelligence paradigm, represented by algorithms such as Particle Swarm Optimization (PSO) and Ant Colony Optimization (ACO), models the collective behavior of decentralized, self-organized systems found in nature, such as bird flocks, ant colonies, or fish schools. In these algorithms, individuals follow simple rules that collectively produce sophisticated problem-solving behavior; ACO additionally relies on stigmergy, an indirect communication mechanism mediated by the environment [1] [2].

Another significant category includes neurodynamic approaches such as Zeroing Neural Networks (ZNNs), which are inspired by biological neural networks and specifically designed for solving time-varying optimization problems. Unlike traditional gradient-based methods, whose residual error can accumulate over time, ZNNs are particularly effective for systems that evolve over time [1]. More recent hybrid frameworks integrate multiple biological metaphors. Quantum-inspired biomimetic frameworks, for instance, combine quantum computing principles such as superposition and entanglement with biological adaptation mechanisms; one such framework reports code correctness rates of 94.7% in computational experiments [3].

Algorithm Taxonomy and Characteristics

Table 1: Classification of Major Biomimetic and Bio-Inspired Optimization Algorithms

| Algorithm Category | Representative Algorithms | Biological Inspiration | Key Mechanisms | Typical Application Domains |
|---|---|---|---|---|
| Evolutionary Algorithms | Genetic Algorithm (GA), Genetic Programming | Darwinian evolution | Selection, crossover, mutation | Parameter optimization, feature selection |
| Swarm Intelligence | Particle Swarm Optimization (PSO), Ant Colony Optimization (ACO) | Flocking birds, ant foraging | Collective intelligence, stigmergy | Routing problems, structural optimization |
| Bio-inspired Neural Networks | Zeroing Neural Network (ZNN), Recurrent Neural Networks | Biological neurons | Neurodynamics, parallel processing | Time-varying problems, control systems |
| Ecology-Inspired | Invasive Weed Optimization, Artificial Bee Colony | Plant growth, bee foraging | Colonization, competitive exclusion | Ecological modeling, scheduling |
| Immuno-inspired | Artificial Immune Systems | Biological immune response | Antigen recognition, immune memory | Anomaly detection, cybersecurity |
| Physics/Chemistry-inspired | Simulated Annealing, Chemical Reaction Optimization | Thermodynamics, chemical reactions | Energy minimization, molecular dynamics | Combinatorial optimization, molecular design |

Bio-inspired algorithms can be further categorized based on their primary source of inspiration and operational characteristics. One classification system groups them into seven main categories: evolutionary algorithms, swarm intelligence algorithms, immuno-inspired algorithms, neural algorithms, physical algorithms, probabilistic algorithms, and natural algorithms [1]. This taxonomy reflects the diverse natural phenomena that have inspired computational approaches, from the molecular level of chemical reactions to the macroscopic level of ecosystem dynamics. Each category exhibits distinct strengths suited to particular problem types, with evolutionary algorithms generally excelling in global optimization, swarm intelligence in multi-agent coordination problems, and neurodynamic approaches in time-varying systems.

Key Algorithmic Frameworks and Methodologies

Genetic Algorithms (GAs)

Genetic Algorithms represent one of the most established evolutionary computation techniques, inspired by the process of natural selection. The fundamental premise of GAs is the maintenance of a population of candidate solutions that undergo simulated evolution through the application of genetic operators. The algorithm begins with the initialization of a population of individuals, typically represented as fixed-length chromosomes encoding the problem parameters. Each individual is then evaluated using a fitness function that quantifies its quality as a solution to the optimization problem. Selection mechanisms such as tournament selection or roulette wheel selection identify individuals for reproduction based on their fitness, giving higher-quality solutions a greater probability of being selected. The crossover (recombination) operator then combines genetic information from two parent chromosomes to produce offspring, while mutation introduces random changes to maintain genetic diversity [1].

The power of GAs lies in their ability to efficiently explore complex search spaces while maintaining a balance between exploration (searching new regions) and exploitation (refining known good regions). This balance is primarily controlled through parameters such as population size, crossover rate, and mutation rate. GAs have demonstrated particular effectiveness for combinatorial optimization problems, parameter tuning, and design optimization where traditional gradient-based methods struggle due to discontinuities, multimodality, or lack of explicit gradient information. In ecological research, GAs have been applied to problems such as reserve site selection, habitat corridor design, and parameter estimation for complex ecological models [1].
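The GA loop described above (initialization, fitness evaluation, tournament selection, crossover, mutation) can be sketched in a few lines. This is a minimal illustration only, assuming a toy bit-string fitness (`one_max`) and hypothetical parameter values; none of these names or settings come from the cited work.

```python
import random

def one_max(chromosome):
    """Toy fitness (assumption): number of 1-bits, higher is better."""
    return sum(chromosome)

def tournament(population, fitness, k, rng):
    """Return the fittest of k individuals drawn at random."""
    return max(rng.sample(population, k), key=fitness)

def crossover(a, b, rng):
    """Single-point crossover producing one child."""
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]

def mutate(chromosome, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return [1 - g if rng.random() < rate else g for g in chromosome]

def run_ga(fitness=one_max, length=30, pop_size=40, generations=60,
           crossover_rate=0.9, mutation_rate=0.02, seed=0):
    rng = random.Random(seed)
    # Initialization: random fixed-length chromosomes.
    population = [[rng.randint(0, 1) for _ in range(length)]
                  for _ in range(pop_size)]
    best = max(population, key=fitness)
    for _ in range(generations):
        offspring = []
        while len(offspring) < pop_size:
            p1 = tournament(population, fitness, 3, rng)
            p2 = tournament(population, fitness, 3, rng)
            if rng.random() < crossover_rate:
                child = crossover(p1, p2, rng)
            else:
                child = p1[:]
            offspring.append(mutate(child, mutation_rate, rng))
        population = offspring
        # Track the best individual seen across all generations.
        best = max(population + [best], key=fitness)
    return best
```

Raising `mutation_rate` or lowering the tournament size shifts the balance toward exploration; the opposite favors exploitation.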

Particle Swarm Optimization (PSO)

Particle Swarm Optimization is a population-based optimization technique inspired by the social behavior of bird flocking or fish schooling. In PSO, potential solutions, called particles, fly through the problem space by following the current optimum particles. Each particle maintains its position and velocity, with the position representing a candidate solution and the velocity determining the direction and magnitude of its movement. The algorithm tracks two key values for each particle: the personal best (pbest), which represents the best solution the particle has encountered, and the global best (gbest), which represents the best solution found by any particle in the swarm [1] [2].

At each iteration, particles update their velocity and position according to the following equations:

- Velocity update: $v_i(t+1) = w\,v_i(t) + c_1 r_1 \left(\mathrm{pbest}_i - x_i(t)\right) + c_2 r_2 \left(\mathrm{gbest} - x_i(t)\right)$

- Position update: $x_i(t+1) = x_i(t) + v_i(t+1)$

Here $w$ is the inertia weight, $c_1$ and $c_2$ are acceleration coefficients, and $r_1$ and $r_2$ are random values between 0 and 1. The inertia weight controls the influence of the previous velocity, while the acceleration coefficients determine the pull toward the personal and global best positions. PSO has been successfully applied to numerous optimization problems in ecological research, including the optimization of ecological network structures, parameter estimation for ecological models, and land use planning [2].
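As a rough sketch of how the velocity and position updates fit together, the following minimizes a toy objective. The `sphere` function, the parameter values (`w=0.7`, `c1=c2=1.5`), and the search bounds are illustrative assumptions, not settings taken from the cited studies.

```python
import random

def sphere(x):
    """Toy objective (assumption): sum of squares, minimum 0 at the origin."""
    return sum(v * v for v in x)

def pso(objective=sphere, dim=2, n_particles=20, iterations=100,
        w=0.7, c1=1.5, c2=1.5, bounds=(-5.0, 5.0), seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    x = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    v = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in x]
    pbest_val = [objective(p) for p in x]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]
    for _ in range(iterations):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Velocity update: inertia + cognitive pull + social pull.
                v[i][d] = (w * v[i][d]
                           + c1 * r1 * (pbest[i][d] - x[i][d])
                           + c2 * r2 * (gbest[d] - x[i][d]))
                # Position update.
                x[i][d] += v[i][d]
            val = objective(x[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = x[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = x[i][:], val
    return gbest, gbest_val
```

Calling `pso()` returns the best position found and its objective value; swapping in a different `objective` is the only change needed for another continuous problem.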

Ant Colony Optimization (ACO)

Ant Colony Optimization mimics the foraging behavior of ant colonies, particularly their ability to find shortest paths between food sources and their nest through indirect communication via pheromone trails. In ACO, artificial ants build solutions incrementally by making probabilistic decisions based on pheromone trails and heuristic information. The pheromone trails represent a form of collective memory about the quality of previous solutions, while heuristic information provides problem-specific guidance. After constructing solutions, ants deposit pheromone on the components of good solutions, intensifying the attraction to these components for future ants [2].

The probability that an ant $k$ will choose to move from node $i$ to node $j$ is given by: $p_{ij}^k = \frac{[\tau_{ij}]^\alpha [\eta_{ij}]^\beta}{\sum_{l \in N_i^k} [\tau_{il}]^\alpha [\eta_{il}]^\beta}$ if $j \in N_i^k$, and $p_{ij}^k = 0$ otherwise.

Here $\tau_{ij}$ is the pheromone value, $\eta_{ij}$ is the heuristic value, $\alpha$ and $\beta$ are parameters controlling the relative influence of pheromone versus heuristic information, and $N_i^k$ is the set of feasible nodes. ACO has proven particularly effective for discrete optimization problems, such as the traveling salesman problem, routing in communication networks, and scheduling. In ecological applications, ACO has been used for optimizing ecological network connectivity, habitat corridor design, and conservation planning [2].
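The transition rule can be illustrated with a small helper that computes the choice probabilities for one ant at one node. The dictionaries `tau` and `eta` (pheromone and heuristic values keyed by candidate node) and the default exponents are assumptions made for this sketch.

```python
import random

def transition_probabilities(tau, eta, feasible, alpha=1.0, beta=2.0):
    """Probability of moving to each feasible node j from the current node.

    tau[j] and eta[j] hold the pheromone and heuristic values of the edge
    from the current node to j (hypothetical data layout).
    """
    weights = {j: (tau[j] ** alpha) * (eta[j] ** beta) for j in feasible}
    total = sum(weights.values())
    return {j: weight / total for j, weight in weights.items()}

def choose_next(probabilities, rng):
    """Roulette-wheel draw of the next node."""
    threshold = rng.random()
    cumulative = 0.0
    for node, p in probabilities.items():
        cumulative += p
        if threshold <= cumulative:
            return node
    return node  # guards against floating-point round-off

# Example: equal pheromone, heuristic = 1 / distance for distances 1 and 2.
probs = transition_probabilities(
    tau={1: 1.0, 2: 1.0, 3: 1.0},
    eta={1: 1.0, 2: 0.5, 3: 0.25},
    feasible=[1, 2],
)
next_node = choose_next(probs, random.Random(0))
```

With equal pheromone and $\beta = 2$, the nearer node receives weight 1 against 0.25, so it is chosen with probability 0.8; node 3 is infeasible and never appears.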

Zeroing Neural Networks (ZNNs)

Zeroing Neural Networks represent a specialized class of recurrent neural networks specifically designed for solving time-varying optimization problems. Unlike traditional gradient-based neural networks whose residual error may accumulate over time, ZNNs exploit the time-varying nature of problems to achieve better performance for dynamic systems. The fundamental principle of ZNNs involves defining an error function that converges to zero over time, with the neural dynamics explicitly designed to ensure this convergence [1].

ZNNs can be classified into three primary categories based on their performance characteristics:

- Accelerated-convergence ZNNs: Designed for fast convergence properties

- Noise-tolerance ZNNs: Engineered to maintain performance under noisy conditions

- Discrete-time ZNNs: Capable of achieving higher computational accuracy and easier hardware implementation

These neurodynamic approaches have shown particular promise for real-time optimization applications, robotic control, and signal processing, where problems evolve continuously over time. Their biological inspiration comes from the adaptive and parallel processing capabilities of biological neural systems, though they represent a more abstract form of biomimicry compared to evolutionary or swarm-based approaches [1].
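To make the zeroing idea concrete, consider a scalar time-varying equation $a(t)\,x(t) = b(t)$. Defining the error $e(t) = a(t)\,x(t) - b(t)$ and imposing the commonly used design rule $\dot{e} = -\gamma e$ gives $\dot{x} = (\dot{b} - \dot{a}\,x - \gamma e)/a$. The sketch below integrates this with forward Euler steps; the specific $a(t)$, $b(t)$, gain $\gamma$, and step size are assumptions chosen for illustration, not values from the cited work.

```python
import math

# Hypothetical time-varying coefficients; a(t) >= 1, so division is safe.
def a(t): return 2.0 + math.sin(t)
def a_dot(t): return math.cos(t)
def b(t): return math.cos(t)
def b_dot(t): return -math.sin(t)

def znn_solve(gamma=10.0, dt=1e-3, t_end=5.0, x0=0.0):
    """Track the solution of a(t) x = b(t) with zeroing dynamics."""
    steps = int(round(t_end / dt))
    x = x0
    for k in range(steps):
        t = k * dt
        error = a(t) * x - b(t)
        # Derived from d(error)/dt = -gamma * error.
        x_dot = (b_dot(t) - a_dot(t) * x - gamma * error) / a(t)
        x += dt * x_dot  # forward Euler step
    t_final = steps * dt
    residual = a(t_final) * x - b(t_final)
    return x, residual
```

Because the dynamics use the time derivatives of $a$ and $b$, the state follows the moving solution instead of lagging behind it, which is the property the text attributes to ZNNs.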

Experimental Protocols and Implementation Frameworks

Standard Experimental Protocol for Ecological Network Optimization

The application of biomimetic algorithms to ecological optimization problems follows a structured experimental framework. A representative protocol for ecological network optimization using a modified Ant Colony Optimization (MACO) approach involves the following key stages [2]:

Problem Formulation: Define the optimization objectives, which typically include both functional and structural goals for the ecological network. Functional objectives may focus on enhancing ecosystem services like habitat quality or water conservation, while structural objectives target connectivity metrics such as corridor integrity or network robustness.

Spatial Operator Design: Implement four micro-functional optimization operators and one macro-structural optimization operator that combine bottom-up functional optimization with top-down structural optimization. These operators work synergistically to adjust local patterns while maintaining global connectivity.

Ecological Node Emergence: Develop a global ecological node emergence mechanism based on probability distributions obtained through unsupervised fuzzy C-means clustering (FCM). This mechanism identifies potential ecological stepping stones that enhance network connectivity.

Parallel Computing Implementation: Establish data transfer patterns between central processing units (CPUs) and graphics processing units (GPUs) to enable synchronous participation of all geographic units in optimization calculations. This parallelization addresses computational challenges in large-scale spatial optimization.

Validation and Assessment: Evaluate optimization effectiveness using both functional indicators (e.g., habitat quality, ecosystem service value) and structural indicators (e.g., connectivity index, network complexity) to ensure balanced improvements across multiple objectives.

This protocol has demonstrated success in optimizing ecological networks at the city level, achieving significant improvements in both connectivity and ecological function while maintaining computational efficiency through parallelization strategies [2].
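The node-emergence stage of this protocol relies on fuzzy C-means clustering. The following is a minimal one-dimensional sketch of standard FCM, assuming evenly spaced initial centers and a fuzzifier of `m=2`; how the resulting memberships are turned into emergence probabilities in the MACO study is not specified here and is not reproduced.

```python
def fuzzy_c_means(points, n_clusters=2, m=2.0, iterations=50, eps=1e-9):
    """1-D fuzzy C-means (n_clusters >= 2).

    Returns (centers, memberships), where memberships[p][c] is the degree
    to which point p belongs to cluster c, computed in the last iteration.
    """
    lo, hi = min(points), max(points)
    # Assumption: deterministic, evenly spaced initial centers.
    centers = [lo + (hi - lo) * k / (n_clusters - 1)
               for k in range(n_clusters)]
    exponent = 2.0 / (m - 1.0)
    memberships = []
    for _ in range(iterations):
        memberships = []
        for x in points:
            # eps avoids division by zero when a point sits on a center.
            dists = [abs(x - c) + eps for c in centers]
            row = [1.0 / sum((d_i / d_k) ** exponent for d_k in dists)
                   for d_i in dists]
            memberships.append(row)
        # Center update: membership-weighted mean of the points.
        centers = [
            sum((u[i] ** m) * x for u, x in zip(memberships, points))
            / sum(u[i] ** m for u in memberships)
            for i in range(n_clusters)
        ]
    return centers, memberships

# Two well-separated groups of hypothetical 1-D values.
data = [0.0, 0.2, 0.4, 9.6, 9.8, 10.0]
centers, memberships = fuzzy_c_means(data)
```

Each membership row sums to 1, so it can be read as a soft assignment of a location to candidate clusters.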

Experimental Protocol for Biomimetic Nanosystem Optimization

In pharmaceutical and biomedical applications, biomimetic algorithms combined with Design of Experiments (DoE) have proven effective for optimizing complex biological systems. A representative protocol for optimizing cell-derived membrane-coated nanostructures involves [4]:

Membrane Protein Characterization: Assess important physicochemical features of extracted cell membranes from target cells (e.g., cancer cells) using mass spectrometry-based proteomics to verify retention of key proteins for homotypic binding.

Nanoparticle Development: Develop poly(D,L-lactide-co-glycolide) (PLGA)-based nanoparticles encapsulating therapeutic agents using a double emulsion solvent evaporation technique.

Coating Optimization: Apply a fractional two-level three-factor factorial design to optimize the coating technology using isolated cell membranes. Systematically characterize all formulation runs for diameter, polydispersity index (PDI), and zeta potential (ZP).

Morphological Validation: Subject experimental conditions generated by DoE to morphological studies using negative-staining transmission electron microscopy (TEM) to verify coating effectiveness and structural integrity.

Stability and Targeting Assessment: Evaluate short-term stability through storage studies and conduct cell internalization studies to verify homotypic targeting ability using flow cytometry and confocal microscopy.

This approach has demonstrated successful optimization of biomimetic nanostructures, with proteomic data confirming retention of approximately 80% of plasma membrane proteins including key proteins for homotypic binding, and internalization studies validating specific targeting of homotypic tumor cells [4].
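The coating-optimization step uses a fractional two-level, three-factor design. One common reading of that phrase is a half-fraction $2^{3-1}$ design in which the third factor is aliased with the interaction of the first two ($C = AB$). The sketch below generates such a run table; the generator choice and the generic factor labels A, B, C are assumptions, since the actual factors and generator are not given here.

```python
from itertools import product

def half_fraction_design():
    """Runs of a 2^(3-1) design with generator C = A*B.

    Levels are coded as -1 (low) and +1 (high).
    """
    runs = []
    for level_a, level_b in product((-1, 1), repeat=2):
        runs.append((level_a, level_b, level_a * level_b))
    return runs

design = half_fraction_design()
for run_number, (level_a, level_b, level_c) in enumerate(design, start=1):
    print(f"run {run_number}: A={level_a:+d} B={level_b:+d} C={level_c:+d}")
```

The half-fraction needs four runs instead of the eight of a full factorial, at the cost of confounding each main effect with a two-factor interaction.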

Computational Framework and Workflow Visualization

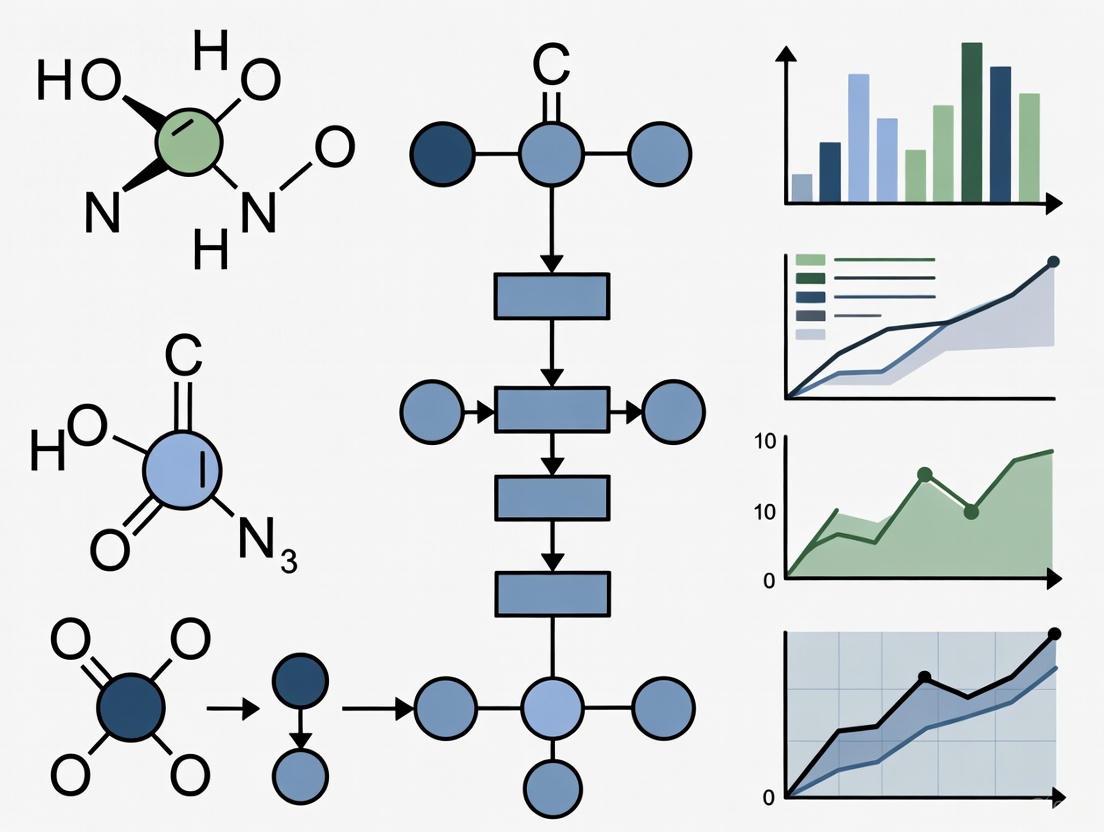

Biomimetic Algorithm Implementation Workflow

[Figure: generalized computational workflow for implementing biomimetic optimization algorithms in ecological and pharmaceutical research.]

Ecological Network Optimization Framework

[Figure: spatial optimization framework used for ecological applications.]

Performance Metrics and Comparative Analysis

Quantitative Performance Assessment

Table 2: Performance Metrics of Biomimetic Algorithms Across Application Domains

| Algorithm | Application Domain | Key Performance Metrics | Reported Values | Comparative Baseline |
|---|---|---|---|---|
| Modified ACO (MACO) | Ecological network optimization | Connectivity improvement, Computational efficiency | 24% slower degradation under targeted attacks, 21% increased redundancy | Traditional corridor design methods |
| Quantum-inspired Biomimetic | AI code generation | Code correctness, Error detection sensitivity | 94.7% correctness, 95.2% sensitivity, 2.3% false positive rate | Standard approach (87.3% correctness) |
| Particle Swarm Optimization | Land use optimization | Solution quality, Convergence speed | 89.4% success rate in cross-architectural propagation | Mathematical programming |
| Genetic Algorithm | Vehicle routing | Service cost reduction, Heterogeneity handling | Significant cost savings in mixed-load problems | Conventional routing algorithms |
| Zeroing Neural Network | Time-varying problems | Convergence rate, Noise tolerance | Exponential convergence under noisy conditions | Gradient-based RNN |

The performance evaluation of biomimetic algorithms employs diverse metrics tailored to specific application domains. In ecological network optimization, key performance indicators include structural metrics such as connectivity index, corridor integrity, and network complexity, alongside functional metrics including habitat quality, ecosystem service value, and landscape permeability. The robustness of optimized networks is typically assessed through targeted attack resistance (measuring network degradation when key nodes are systematically removed) and random attack resilience (evaluating network performance under random node failures) [2] [5]. Computational efficiency metrics, particularly important for large-scale spatial optimization, include processing time, memory usage, and scalability with increasing problem size.
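The targeted-attack and random-attack assessments described above can be sketched as node-removal experiments on a graph. In this illustration the network is a plain adjacency dictionary, "key nodes" are approximated by highest degree, and degradation is measured by the size of the largest connected component; all three choices are assumptions, and the cited studies may use other centrality and connectivity measures.

```python
import random

def largest_component(adj, removed=frozenset()):
    """Size of the largest connected component after removing nodes."""
    seen, best = set(removed), 0
    for start in adj:
        if start in seen:
            continue
        stack, size = [start], 0
        seen.add(start)
        while stack:
            node = stack.pop()
            size += 1
            for neighbor in adj[node]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    stack.append(neighbor)
        best = max(best, size)
    return best

def attack_curve(adj, order):
    """Largest-component size after each successive removal in `order`."""
    removed = set()
    curve = [largest_component(adj)]
    for node in order:
        removed.add(node)
        curve.append(largest_component(adj, removed))
    return curve

def targeted_order(adj):
    """Nodes ranked by degree in the intact network, highest first."""
    return sorted(adj, key=lambda n: len(adj[n]), reverse=True)

def random_order(adj, seed=0):
    """Nodes in a seeded random order."""
    nodes = list(adj)
    random.Random(seed).shuffle(nodes)
    return nodes

# Hypothetical star-shaped network: hub 0 linked to five peripheral patches.
star = {0: {1, 2, 3, 4, 5}, 1: {0}, 2: {0}, 3: {0}, 4: {0}, 5: {0}}
targeted = attack_curve(star, targeted_order(star)[:1])
peripheral = attack_curve(star, [3])
```

In the star example, removing the hub collapses the network to isolated patches, whereas removing one peripheral patch barely changes it; a more robust network shows a flatter curve under the targeted ordering.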

In pharmaceutical and biomimetic nanosystem applications, performance assessment focuses on physicochemical properties such as particle diameter, polydispersity index (PDI), zeta potential (ZP), encapsulation efficiency, and drug release profiles. Biological performance metrics include cellular uptake efficiency, targeting specificity, therapeutic efficacy, and biosystem compatibility. The optimization process itself is evaluated through convergence speed, solution quality, and algorithm robustness across multiple runs [4]. For self-healing and adaptive systems inspired by biological immune mechanisms, additional metrics such as error detection sensitivity, false positive rates, and auto-correction effectiveness become relevant, with advanced frameworks demonstrating sensitivity of 95.2% with false-positive rates of 2.3% [3].

Research Reagent Solutions and Computational Tools

Table 3: Key Research Reagents and Computational Tools for Biomimetic Optimization

| Category | Specific Tool/Reagent | Function/Purpose | Application Context |
|---|---|---|---|
| Computational Platforms | Google Earth Engine | Geospatial data processing and analysis | Ecological network identification |
| Modeling Software | Circuit Theory (Circuitscape) | Ecological corridor identification | Landscape connectivity modeling |
| Spatial Analysis | Morphological Spatial Pattern Analysis (MSPA) | Ecological source identification | Landscape pattern analysis |
| Bio-inspired Toolkits | Quantum-inspired Solution Space Manager | Maintaining solution superposition | Quantum-inspired biomimetic optimization |
| Experimental Validation | Transmission Electron Microscopy (TEM) | Nanostructure morphological characterization | Biomimetic nanosystem verification |
| Proteomic Analysis | Mass Spectrometry-based Proteomics | Cell membrane protein characterization | Biomimetic coating verification |
| Parallel Computing | GPU/CPU Heterogeneous Architecture | Accelerating computational efficiency | Large-scale spatial optimization |
| Cell Culture | U251 Glioblastoma Cell Line | Source of cell membranes for coating | Biomimetic drug delivery systems |

The implementation and validation of biomimetic optimization algorithms require specialized computational resources and experimental materials. For ecological applications, essential geospatial tools include Google Earth Engine for large-scale remote sensing data processing, Circuitscape (based on circuit theory) for modeling ecological connectivity and corridor identification, and Morphological Spatial Pattern Analysis (MSPA) for identifying core ecological patches and structural elements within landscapes [2] [5]. These tools enable the processing of multi-source data including land use, meteorological, soil, vegetation, topographic, and socio-economic information, which are fundamental for constructing realistic ecological models and defining appropriate optimization objectives.

In pharmaceutical and nanomedicine applications, critical experimental resources include cell culture systems for membrane extraction, mass spectrometry equipment for proteomic characterization of isolated membranes, transmission electron microscopy for morphological validation of nanostructures, and dynamic light scattering instruments for physicochemical characterization of nanoparticles [4]. Computational resources for algorithm implementation include parallel computing architectures utilizing both CPUs and GPUs to handle computationally intensive optimization processes, particularly important for large-scale problems or those requiring multiple runs for statistical validation. The integration of Design of Experiments (DoE) methodologies with biomimetic optimization has emerged as a powerful approach for systematically exploring complex parameter spaces and identifying optimal conditions for biomimetic system fabrication [4].

Biomimetic and bio-inspired optimization algorithms represent a powerful paradigm for solving complex optimization problems across diverse domains, from ecological planning to pharmaceutical development. These algorithms leverage principles refined through billions of years of natural evolution, including natural selection, swarm intelligence, neural processing, and immune system responses. The core strength of these approaches lies in their ability to handle problems characterized by high dimensionality, non-linearity, multiple objectives, and dynamic conditions—challenges that often exceed the capabilities of traditional optimization methods.

Future research directions in biomimetic optimization include the development of hybrid algorithms that combine multiple biological metaphors to leverage their complementary strengths, adaptive parameter control mechanisms that automatically adjust algorithm parameters during execution, and quantum-inspired extensions that exploit principles from quantum computing to enhance search capabilities. The integration of biomimetic optimization with emerging computing architectures including neuromorphic and quantum computing platforms presents promising avenues for addressing increasingly complex optimization challenges. As these algorithms continue to evolve, they will play an increasingly important role in addressing complex optimization challenges at the intersection of ecological systems, pharmaceutical development, and sustainable design, ultimately contributing to more efficient and effective solutions for critical real-world problems.

Biomimetic algorithms represent a cornerstone of modern computational intelligence, drawing inspiration from the sophisticated problem-solving strategies evolved in nature over millennia. For ecological optimization research, these algorithms provide powerful tools for addressing complex, multi-dimensional problems that are often intractable for traditional analytical methods. The core biological metaphors explored in this whitepaper—swarm intelligence and evolutionary processes—emulate two fundamental scales of biological organization: the collective behavior of groups and the generational adaptation of populations [6] [7]. Swarm intelligence (SI) derives from the collective behavior of decentralized, self-organized systems observed in ant colonies, bird flocks, and fish schools, where simple local interactions between individuals give rise to sophisticated global problem-solving capabilities [8] [9]. Evolutionary processes, embodied in genetic algorithms and related techniques, simulate the mechanisms of natural selection, including mutation, crossover, and fitness-based selection to evolve increasingly optimal solutions over successive generations [7] [10].

The significance of these approaches for ecological optimization research lies in their inherent ability to handle nonlinear, dynamic systems with competing objectives—precisely the characteristics of most ecological management challenges. Unlike top-down, centralized optimization approaches, biomimetic algorithms employ bottom-up, decentralized strategies that mirror the adaptive processes found in natural ecosystems themselves [2]. This conceptual alignment makes them particularly suitable for applications ranging from habitat corridor design to resource management, where they can efficiently navigate complex solution spaces while balancing multiple ecological criteria.

Theoretical Foundations of Swarm Intelligence

Core Principles and Mechanisms

Swarm intelligence systems typically consist of a population of simple agents interacting locally with one another and with their environment without centralized control [9]. Despite the simplicity of individual agent rules, these interactions lead to the emergence of "intelligent" global behavior unknown to the individual agents. Three key principles underlie most SI systems:

- Self-organization: The complex global patterns and behaviors emerge solely from multiple simple local interactions without external guidance or centralized control [7].

- Stigmergy: Indirect communication between agents mediated through modifications of the environment, most famously exemplified by pheromone trails in ant colonies [6].

- Positive feedback: The amplification of promising solutions or behaviors through reinforcement, such as the increased pheromone deposition on shorter paths in ant foraging [7].

These mechanisms enable swarm systems to exhibit remarkable properties of adaptability, robustness, and scalability—attributes highly desirable for ecological optimization applications where environmental conditions may change and system components may be distributed across large spatial scales.

Classification of Swarm Intelligence Models

Table 1: Major Swarm Intelligence Models and Their Biological Inspirations

| Model Classification | Primary Biological Inspiration | Key Mechanisms | Representative Algorithms |
|---|---|---|---|
| Pheromone Communication Models | Ant foraging behavior | Stigmergy, positive feedback, path reinforcement | Ant Colony Optimization (ACO) [6] [9] |
| Self-Driven Particle Models | Bird flocking, fish schooling | Local alignment, separation, cohesion | Boids model, Particle Swarm Optimization (PSO) [6] [9] |
| Leadership Decision Models | Pigeon flock hierarchical dynamics | Leader-follower relationships, hierarchical decision-making | Pigeon-inspired optimization [6] |
| Empirical Research Models | Starling flock topological rules | Topological neighborhood, interaction rules | Starling flock optimization [6] |

The Boids model, developed by Craig Reynolds in 1986, exemplifies the self-driven particle approach with three simple rules: separation (steer to avoid crowding local flockmates), alignment (steer toward the average heading of local flockmates), and cohesion (steer to move toward the average position of local flockmates) [9]. These minimal rules successfully generate complex flocking behavior emergent from local interactions, demonstrating the power of decentralized control.
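The three Boids rules translate into a short update step. The sketch below is a simplified 2-D version; the neighborhood radius, separation distance, and rule weights are hypothetical values picked for illustration and are not Reynolds' original parameters.

```python
import math

def boids_step(boids, radius=10.0, sep_dist=1.0,
               w_sep=0.05, w_ali=0.05, w_coh=0.01):
    """Advance all boids by one synchronous step.

    Each boid is a tuple (x, y, vx, vy). New states are computed from the
    old states only, so update order does not matter.
    """
    updated = []
    for i, (x, y, vx, vy) in enumerate(boids):
        sep = [0.0, 0.0]
        ali = [0.0, 0.0]
        coh = [0.0, 0.0]
        n = 0
        for j, (ox, oy, ovx, ovy) in enumerate(boids):
            if i == j:
                continue
            dist = math.hypot(ox - x, oy - y)
            if dist > radius:
                continue
            n += 1
            ali[0] += ovx
            ali[1] += ovy
            coh[0] += ox
            coh[1] += oy
            if dist < sep_dist:
                # Separation: steer away from flockmates that are too close.
                sep[0] += x - ox
                sep[1] += y - oy
        if n:
            # Alignment: steer toward the average heading of neighbors.
            ali = [ali[0] / n - vx, ali[1] / n - vy]
            # Cohesion: steer toward the average position of neighbors.
            coh = [coh[0] / n - x, coh[1] / n - y]
        vx += w_sep * sep[0] + w_ali * ali[0] + w_coh * coh[0]
        vy += w_sep * sep[1] + w_ali * ali[1] + w_coh * coh[1]
        updated.append((x + vx, y + vy, vx, vy))
    return updated

# Two boids flying in parallel, four units apart.
flock = boids_step([(0.0, 0.0, 1.0, 0.0), (4.0, 0.0, 1.0, 0.0)])
```

In this two-boid example only cohesion is active, so the pair drifts slightly closer while continuing in the same direction.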

Theoretical Foundations of Evolutionary Processes

Genetic Algorithms and Darwinian Evolution

Genetic algorithms (GAs) represent one of the most well-established biomimetic approaches, directly inspired by the principles of Darwinian evolution [7] [10]. GAs maintain a population of candidate solutions that undergo simulated evolution through iterative application of genetic operators. The algorithm evaluates and seeks the best or quasi-best performing solutions by introducing mutations to explore and exploit the solution space, evolving increasingly refined solutions over generations [10]. The fundamental components include:

- Selection: Fitness-based selection mechanisms that favor better-adapted individuals, mimicking natural selection.

- Crossover: Partial mixing of solution representations to create novel combinations of traits, analogous to biological recombination.

- Mutation: Random modifications to solution representations that introduce new variations into the population.

The iterative process of variation (through mutation and crossover) and selection enables GAs to efficiently navigate complex, high-dimensional search spaces where traditional optimization methods struggle.

The Evolution-Learning Analogy

The parallel between evolutionary processes and learning has been recognized since the 1950s, with organismal evolution viewed as a process of discovering better-fitting phenotypes through trial and error across generations [10]. This iterative nature of adaptive evolution resembles learning processes that similarly optimize through trial and error to find better solutions. Recent advances in machine learning have strengthened this analogy, revealing deeper correspondences such as:

- Overfitting and Evolutionary Trade-offs: Similar to how machine learning models can become overspecialized to training data, organisms can become "overfitted" to specific environments, developing specialized traits that enhance fitness in their immediate context but reduce adaptability to changing conditions or rare events [10].

- GANs and Coevolutionary Dynamics: The competitive dynamics in Generative Adversarial Networks (GANs) between generator and discriminator components mirror evolutionary arms races between antagonistically interacting species, such as predators and prey [10].

These analogies not only provide conceptual bridges between fields but also offer practical insights for improving algorithmic design and understanding biological constraints in ecological optimization.

Computational Implementations and Algorithmic Frameworks

Key Algorithmic Formulations

Ant Colony Optimization (ACO)

ACO algorithms simulate the foraging behavior of ant colonies, where artificial ants probabilistically construct solutions based on pheromone trails and heuristic information [9]. The core pheromone update rule can be expressed as:

τᵢⱼ(t+1) = (1 - ρ) · τᵢⱼ(t) + Σₖ Δτᵢⱼᵏ

where τᵢⱼ is the pheromone value on edge (i, j), ρ is the evaporation rate (0 < ρ ≤ 1), and Δτᵢⱼᵏ is the amount of pheromone that ant k deposits on the edge, summed over all ants k. The deposit is typically inversely proportional to the cost of the ant's solution (for example, its path length), so higher-quality solutions lay more pheromone [9]. This formulation balances exploration (through probabilistic path selection) and exploitation (through pheromone reinforcement), enabling the colony to collectively discover high-quality paths in graph-based optimization problems relevant to ecological corridor design.
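As a minimal sketch of the update rule above, the following Python function evaporates pheromone on every edge and then adds each ant's deposit along its path. The dictionary-based edge representation, the function name `update_pheromone`, and the deposit constant `q` (deposit = q / cost) are illustrative assumptions, not part of the cited formulation.

```python
def update_pheromone(tau, ant_paths, ant_costs, rho=0.5, q=1.0):
    """Apply tau_ij <- (1 - rho) * tau_ij + sum_k delta_tau_ij^k.

    tau       : dict mapping a directed edge (i, j) to its pheromone value
    ant_paths : one node sequence per ant, e.g. [0, 2, 1]
    ant_costs : cost (e.g. path length) of each ant's solution; lower is better
    rho       : evaporation rate, 0 < rho <= 1
    q         : deposit constant (assumed here; deposit = q / cost)
    """
    # Evaporation: every edge loses a fraction rho of its pheromone.
    new_tau = {edge: (1.0 - rho) * value for edge, value in tau.items()}
    # Deposit: each ant reinforces the edges it used, cheaper paths more strongly.
    for path, cost in zip(ant_paths, ant_costs):
        deposit = q / cost
        for i, j in zip(path, path[1:]):
            new_tau[(i, j)] = new_tau.get((i, j), 0.0) + deposit
    return new_tau


tau = {(0, 1): 1.0, (1, 2): 1.0, (0, 2): 1.0}
tau = update_pheromone(tau, ant_paths=[[0, 1, 2], [0, 2]], ant_costs=[4.0, 2.0])
print(tau)  # {(0, 1): 0.75, (1, 2): 0.75, (0, 2): 1.0}
```

In this toy case the shorter path 0 → 2 ends the update with more pheromone than either edge of the longer path, which is the positive feedback the rule is meant to produce.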

Particle Swarm Optimization (PSO)

In PSO, a population of particles moves through the solution space, with each particle adjusting its position based on its own experience and that of its neighbors [9] [11]. The velocity and position update equations are:

vᵢ(t+1) = w · vᵢ(t) + c₁ · r₁ · (pbestᵢ - xᵢ(t)) + c₂ · r₂ · (gbest - xᵢ(t))

xᵢ(t+1) = xᵢ(t) + vᵢ(t+1)

where xᵢ and vᵢ are the position and velocity of particle i, w is the inertia weight, c₁ and c₂ are acceleration coefficients, r₁ and r₂ are random values drawn uniformly from [0, 1], pbestᵢ is the particle's best position so far, and gbest is the swarm's global best position [9]. This approach efficiently handles continuous optimization problems in ecological modeling, such as parameter calibration for ecosystem models.
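The two update equations can be sketched in a few lines of Python. This is a minimal illustration, not a tuned implementation: the sphere test function, the parameter values (w = 0.7, c1 = c2 = 1.5), the search bounds, and the function name `pso` are all assumptions chosen for the example.

```python
import random


def sphere(x):
    """Toy objective with its minimum of 0 at the origin."""
    return sum(v * v for v in x)


def pso(objective, dim=2, n_particles=20, iters=200,
        w=0.7, c1=1.5, c2=1.5, bounds=(-5.0, 5.0), seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    x = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    v = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in x]
    pbest_val = [objective(p) for p in x]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Velocity update: inertia + cognitive pull + social pull.
                v[i][d] = (w * v[i][d]
                           + c1 * r1 * (pbest[i][d] - x[i][d])
                           + c2 * r2 * (gbest[d] - x[i][d]))
                # Position update.
                x[i][d] += v[i][d]
            val = objective(x[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = x[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = x[i][:], val
    return gbest, gbest_val


best, val = pso(sphere)
print(best, val)
```

For a real calibration task, `sphere` would be replaced by the model's error function and `dim` by the number of parameters being calibrated.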

Performance Comparison of Biomimetic Algorithms

Table 2: Performance Characteristics of Major Biomimetic Algorithms

| Algorithm | Optimization Type | Key Parameters | Computational Complexity | Ecological Application Strengths |
|---|---|---|---|---|
| Ant Colony Optimization (ACO) | Combinatorial, discrete | Evaporation rate (ρ), heuristic importance (β), pheromone importance (α) | O(m · n² · t) for n cities, m ants, t iterations | Path optimization, network design, corridor planning [2] [9] |
| Particle Swarm Optimization (PSO) | Continuous, nonlinear | Inertia weight (w), acceleration coefficients (c1, c2) | O(m · d · t) for m particles, d dimensions, t iterations | Parameter estimation, model calibration, surface fitting [9] [11] |
| Genetic Algorithm (GA) | Mixed, multi-modal | Mutation rate, crossover rate, selection pressure | O(p · g · f) for p population, g generations, f fitness evaluation cost | Multi-objective optimization, reserve design, conservation planning [7] [10] |
| Artificial Bee Colony (ABC) | Continuous, numerical | Limit parameter, colony size, modification rate | O(f · p · t) for p population, t iterations, f food sources | Resource allocation, scheduling, load balancing [9] [11] |

Applications in Ecological Optimization Research

Ecological Network Optimization

The integration of biomimetic algorithms into ecological network optimization represents a significant advancement in addressing habitat fragmentation. A recent innovative approach proposed a spatial-operator based Modified Ant Colony Optimization (MACO) model that synergistically optimizes both the function and structure of ecological networks at the patch level [2]. This model combines bottom-up functional optimization through four micro-functional optimization operators with top-down structural optimization via one macro-structural optimization operator. The implementation incorporated GPU-based parallel computing techniques to efficiently handle city-level optimization at high spatial resolution (40m grids), achieving a 68.42% reduction in overall resistance and significant improvements in network connectivity and circuitry [2].

The ecological network optimization followed a systematic methodology:

- Ecological Source Identification: Using morphological spatial pattern analysis (MSPA) to identify core ecological patches based on land use data

- Resistance Surface Creation: Developing landscape resistance models based on ecological sensitivity assessment

- Corridor Extraction: Applying minimum cumulative resistance (MCR) models to identify potential connectivity corridors

- Network Optimization: Implementing the MACO algorithm to optimize both patch-level function and macro-level structure

- Evaluation: Assessing optimization outcomes using structural (edge-node ratio, network circuitry) and functional (resistance, connectivity) metrics [2]
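To make the corridor-extraction step more concrete, the sketch below computes a cumulative resistance surface from a set of source cells on a small raster using Dijkstra's algorithm. It is an illustrative stand-in for an MCR model rather than the procedure used in [2]: the 4-neighbour moves, the step cost (mean resistance of the two cells times cell size), and the function name `cumulative_resistance` are assumptions.

```python
import heapq


def cumulative_resistance(resistance, sources, cell_size=1.0):
    """Least accumulated resistance from any source cell to every grid cell."""
    rows, cols = len(resistance), len(resistance[0])
    cost = [[float("inf")] * cols for _ in range(rows)]
    heap = []
    for r, c in sources:
        cost[r][c] = 0.0
        heapq.heappush(heap, (0.0, r, c))
    while heap:
        acc, r, c = heapq.heappop(heap)
        if acc > cost[r][c]:
            continue  # stale queue entry
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols:
                # Assumed step cost: distance times mean resistance of both cells.
                step = cell_size * (resistance[r][c] + resistance[nr][nc]) / 2.0
                if acc + step < cost[nr][nc]:
                    cost[nr][nc] = acc + step
                    heapq.heappush(heap, (acc + step, nr, nc))
    return cost


# Hypothetical 3 x 3 resistance raster with a high-resistance barrier (value 9).
grid = [
    [1, 1, 1],
    [1, 9, 1],
    [1, 9, 1],
]
surface = cumulative_resistance(grid, sources=[(2, 0)])
print(surface[2][2])  # 6.0: going around the barrier is cheaper than crossing it (10.0)
```

Tracing back from a target patch along decreasing values of `surface` would give the least-cost corridor between the two patches.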

Fog/Edge Computing for Ecological Monitoring

Swarm intelligence techniques have demonstrated remarkable effectiveness in optimizing fog and edge computing environments for ecological monitoring applications. A comprehensive review of 91 studies (2019-2023) identified PSO, ACO, and ABC as particularly valuable for task scheduling, resource allocation, and load balancing in distributed ecological sensor networks [11]. These SI-based approaches improved key performance metrics including latency (reduced by 18-34%), energy efficiency (enhanced by 22-41%), and throughput (increased by 15-29%) compared to conventional static optimization methods [11].

Table 3: Swarm Intelligence Applications in Fog/Edge Computing for Ecological Monitoring

| Application Domain | Primary SI Algorithm | Key Optimization Objectives | Reported Performance Improvements |
|---|---|---|---|
| Task Scheduling in Sensor Networks | Particle Swarm Optimization (PSO) | Minimize latency, balance computational load | 26% reduction in latency, 31% improvement in energy efficiency [11] |
| Resource Allocation in UAV Systems | Ant Colony Optimization (ACO) | Optimize trajectory planning, energy consumption | 34% longer network lifetime, 28% reduction in data packet loss [11] |
| Load Balancing in Edge Nodes | Artificial Bee Colony (ABC) | Distribute processing tasks, prevent node overload | 41% improvement in energy efficiency, 22% faster task completion [11] |
| Data Offloading in IoT Systems | Firefly Algorithm (FA) | Balance communication costs, processing delays | 29% higher throughput, 18% reduction in service latency [11] |

Experimental Protocols and Methodologies

Standard Experimental Framework for Ecological Network Optimization

The following protocol outlines a comprehensive methodology for applying biomimetic optimization to ecological networks:

Phase 1: Problem Formulation and Data Preparation

- Spatial Data Collection: Acquire high-resolution land use/land cover data, topographic information, and species distribution data. Remote sensing data (e.g., satellite imagery) should be preprocessed and classified.

- Resistance Surface Generation: Calculate landscape resistance values based on ecological sensitivity factors including habitat quality, human disturbance intensity, and landscape permeability.

- Ecological Source Identification: Apply morphological spatial pattern analysis (MSPA) to identify core ecological patches serving as network sources.

Phase 2: Initial Ecological Network Construction

- Corridor Modeling: Implement minimum cumulative resistance (MCR) models to delineate potential corridors between ecological sources.

- Network Analysis: Construct preliminary ecological networks and evaluate using structural metrics (edge-node ratio, alpha, beta, gamma indices) and functional metrics (connectivity index, overall resistance).
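The structural indices named in the network-analysis step can be computed directly from node and link counts. The source does not spell out the formulas, so the sketch below assumes the standard planar-graph definitions widely used in landscape ecology (α for circuitry, β for the link-node ratio, γ for connectivity); the function name is hypothetical.

```python
def network_indices(n_nodes, n_links):
    """Alpha, beta and gamma indices for a planar network.

    alpha = (L - V + 1) / (2V - 5)   observed loops / maximum possible loops
    beta  = L / V                    links per node
    gamma = L / (3 * (V - 2))        observed links / maximum possible links
    """
    if n_nodes < 3:
        raise ValueError("the indices are defined for at least 3 nodes")
    alpha = (n_links - n_nodes + 1) / (2 * n_nodes - 5)
    beta = n_links / n_nodes
    gamma = n_links / (3 * (n_nodes - 2))
    return {"alpha": alpha, "beta": beta, "gamma": gamma}


# Hypothetical network: 10 ecological sources joined by 15 corridors.
indices = network_indices(n_nodes=10, n_links=15)
print(indices)  # {'alpha': 0.4, 'beta': 1.5, 'gamma': 0.625}
```

Comparing these values before and after optimization gives a quick structural check to set alongside the functional metrics.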

Phase 3: Biomimetic Optimization Implementation

- Algorithm Selection: Choose appropriate biomimetic algorithm based on optimization objectives (ACO for discrete path optimization, PSO for continuous parameter optimization).

- Parameter Configuration: Set algorithm-specific parameters through preliminary sensitivity analysis.

- Optimization Execution: Run optimization algorithm with defined objective functions (e.g., minimize resistance, maximize connectivity).

- GPU Acceleration: For large-scale applications, implement parallel computing using GPU/CPU heterogeneous architecture.

Phase 4: Validation and Analysis

- Performance Assessment: Evaluate optimized ecological networks using both structural and functional metrics.

- Scenario Comparison: Compare optimization results against baseline conditions and alternative optimization approaches.

- Sensitivity Analysis: Test robustness of results to parameter variations and uncertainty in input data.

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Computational Tools and Frameworks for Biomimetic Ecological Optimization

| Tool Category | Specific Tools/Platforms | Primary Function | Application Context |
|---|---|---|---|
| Spatial Analysis Frameworks | Guidos Toolbox, Circuitscape, Linkage Mapper | MSPA analysis, landscape connectivity modeling, corridor design | Ecological network construction, habitat fragmentation analysis [2] |
| SI Algorithm Libraries | MEALPY, SwarmPackagePy, Nature-Inspired-Algorithms | Pre-implemented SI algorithms, performance metrics, comparison tools | Rapid prototyping and testing of different SI approaches [11] |
| Parallel Computing Platforms | CUDA, OpenCL, MATLAB Parallel Computing Toolbox | GPU acceleration, distributed computing, parallel processing | Large-scale ecological optimization problems [2] |
| Performance Evaluation Metrics | Network metrics (alpha, beta, gamma indices), QoS parameters (latency, energy) | Quantitative assessment of optimization outcomes | Algorithm performance comparison, solution quality verification [2] [11] |

Emerging Trends and Research Challenges

The field of biomimetic algorithms for ecological optimization continues to evolve, with several promising research directions emerging. Hybrid approaches that combine multiple biomimetic metaphors show particular potential for addressing the multi-scale, multi-objective nature of ecological optimization problems. For instance, integrating the exploration capabilities of evolutionary algorithms with the fine-tuning strengths of swarm intelligence could yield more robust optimization frameworks [2] [11]. Additionally, the development of interpretable machine learning approaches inspired by evolutionary principles represents a cutting-edge frontier, moving beyond "black-box" models to discover common laws for predicting evolutionary outcomes in both biological and computational contexts [10].

Significant challenges remain in scaling biomimetic algorithms to very large ecological networks while maintaining computational efficiency. Recent advances in GPU-based parallel computing offer promising pathways: a GPU-accelerated implementation made city-level ecological network optimization feasible at high spatial resolution and reported a 68.42% reduction in overall resistance [2]. Furthermore, the integration of multi-objective optimization approaches that explicitly balance ecological, economic, and social criteria will be essential for real-world conservation planning applications.

Biomimetic algorithms, drawing inspiration from swarm intelligence and evolutionary processes, provide powerful and conceptually appropriate frameworks for addressing complex ecological optimization challenges. The theoretical foundations, computational implementations, and application case studies presented in this article demonstrate their significant potential for enhancing ecological research and conservation planning. As these approaches continue to evolve through cross-disciplinary fertilization between ecology, computer science, and complex systems theory, they offer promising pathways for developing more effective, efficient, and adaptive solutions to pressing ecological management problems in an increasingly human-modified world.

Biomimetic algorithms, drawing inspiration from natural phenomena and collective animal behaviors, have emerged as powerful tools for solving complex optimization problems in ecological research. These population-based metaheuristics are particularly valuable for addressing high-dimensional, non-linear problems common in environmental modeling, where traditional mathematical techniques often fall short. Genetic Algorithms (GAs), Particle Swarm Optimization (PSO), Ant Colony Optimization (ACO), and Grey Wolf Optimizer (GWO) represent four prominent algorithm families that have demonstrated significant efficacy in ecological applications ranging from habitat network optimization to resource allocation [2] [12]. Their adaptability to problem-specific constraints, global search capabilities, and ability to handle discontinuous search spaces make them especially suitable for the multifaceted challenges of ecological optimization, where solutions must balance multiple competing objectives across functional and structural dimensions [2].

This technical guide provides a comprehensive examination of these four algorithm families, focusing on their underlying mechanisms, theoretical foundations, and implementation methodologies. For ecological researchers, understanding the relative strengths and application contexts of each algorithm is crucial for selecting appropriate computational tools for landscape planning, ecological network optimization, and conservation strategy development.

Genetic Algorithms (GAs)

Core Concepts and Biological Inspiration

Genetic Algorithms are heuristic search algorithms inspired by Charles Darwin's theory of natural selection, evolving a population of candidate solutions over multiple generations to converge toward optimal or near-optimal answers [13]. The algorithm mimics biological evolutionary processes including selection, crossover, and mutation to explore complex solution spaces. In ecological contexts, GAs have been applied to problems such as land-use allocation, reserve site selection, and habitat corridor design, where they efficiently navigate high-dimensional decision spaces with multiple constraints [2].

The power of GAs stems from their ability to maintain a diverse population of solutions, thereby reducing the probability of becoming trapped in local optima—a particular advantage when optimizing ecological networks with discontinuous suitability surfaces and complex spatial constraints [13]. Unlike gradient-based optimization methods that require smooth, differentiable objective functions, GAs require only that potential solutions can be encoded and evaluated, making them exceptionally flexible for ecological applications where objective functions may be discontinuous, noisy, or change over time.

Algorithmic Framework and Key Operators

The GA lifecycle operates through a structured process that mirrors biological evolution [13] [14]:

- Initialization: A random population of chromosomes (potential solutions) is generated

- Evaluation: Each chromosome is scored using a fitness function

- Selection: The fittest individuals are chosen to reproduce

- Crossover: Genes from parent chromosomes are combined to create offspring

- Mutation: Random changes are introduced to maintain diversity

- Replacement: A new population is formed for the next generation

- Termination: The process repeats until stopping criteria are met
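The lifecycle steps above can be sketched as a compact Python loop. The choices below (binary chromosomes, binary tournament selection, one-point crossover, bit-flip mutation, a single elite carried over, and a fixed generation budget) are common defaults assumed for illustration; the toy fitness simply counts the ones in the chromosome.

```python
import random


def tournament(pop, scores, rng):
    """Selection: return the fitter of two randomly drawn individuals."""
    a, b = rng.randrange(len(pop)), rng.randrange(len(pop))
    return pop[a] if scores[a] >= scores[b] else pop[b]


def run_ga(fitness, n_genes=20, pop_size=30, generations=60,
           crossover_rate=0.9, mutation_rate=0.02, seed=1):
    rng = random.Random(seed)
    # Initialization: random binary chromosomes.
    pop = [[rng.randint(0, 1) for _ in range(n_genes)] for _ in range(pop_size)]
    best = max(pop, key=fitness)
    for _ in range(generations):  # Termination: fixed generation budget.
        scores = [fitness(ind) for ind in pop]  # Evaluation.
        children = [best[:]]  # Elitism: keep the best solution found so far.
        while len(children) < pop_size:
            p1 = tournament(pop, scores, rng)
            p2 = tournament(pop, scores, rng)
            if rng.random() < crossover_rate:
                cut = rng.randrange(1, n_genes)  # One-point crossover.
                child = p1[:cut] + p2[cut:]
            else:
                child = p1[:]
            # Mutation: flip each gene with a small probability.
            child = [1 - g if rng.random() < mutation_rate else g for g in child]
            children.append(child)
        pop = children  # Replacement.
        best = max(pop, key=fitness)
    return best


best = run_ga(fitness=sum)  # toy objective: maximise the number of 1-genes
print(sum(best), best)
```

Swapping `sum` for a problem-specific fitness function is the only change needed to apply the same loop to another binary-encoded problem.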

In ecological optimization, the fitness function typically quantifies ecological objectives such as habitat connectivity, biodiversity value, or ecosystem service provision. For example, when optimizing ecological networks, the fitness function might combine metrics for structural connectivity (e.g., distance between patches) and functional connectivity (e.g., species-specific dispersal capabilities) [2].

[Figure: GA workflow]

Ecological Application Example

In ecological network optimization, GAs have been employed to simultaneously optimize both the function and structure of ecological networks [2]. The chromosomal encoding might represent potential ecological corridors as binary strings, with genes indicating whether specific landscape units are included in the network. The fitness function would then balance multiple objectives: maximizing connectivity between core habitats, minimizing implementation costs, and avoiding areas of high ecological sensitivity. Mutation operators introduce novel corridor arrangements, while crossover combines promising features from different network configurations.
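A fitness function for such a binary corridor encoding might look like the sketch below. It is a deliberately simplified weighted sum: the per-unit connectivity gains, costs, sensitivity scores, and weights are hypothetical inputs, and treating connectivity as additive across landscape units is an assumption that a real study would replace with a graph-based or resistance-based measure.

```python
def corridor_fitness(genes, connectivity_gain, cost, sensitivity,
                     weights=(1.0, 0.5, 0.5)):
    """Weighted-sum fitness for a binary corridor encoding.

    genes[i] == 1 means landscape unit i is included in the network.
    Higher connectivity raises fitness; cost and sensitivity lower it.
    """
    w_conn, w_cost, w_sens = weights
    selected = [i for i, g in enumerate(genes) if g == 1]
    total_conn = sum(connectivity_gain[i] for i in selected)
    total_cost = sum(cost[i] for i in selected)
    total_sens = sum(sensitivity[i] for i in selected)
    return w_conn * total_conn - w_cost * total_cost - w_sens * total_sens


# Hypothetical values for four candidate landscape units.
connectivity_gain = [5.0, 2.0, 4.0, 1.0]
cost = [2.0, 1.0, 6.0, 1.0]
sensitivity = [0.0, 2.0, 1.0, 4.0]

print(corridor_fitness([1, 0, 0, 0], connectivity_gain, cost, sensitivity))  # 4.0
print(corridor_fitness([1, 1, 1, 1], connectivity_gain, cost, sensitivity))  # 3.5
```

Here including every unit scores lower than including only the first, because the extra connectivity does not offset the added cost and sensitivity penalties.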

Particle Swarm Optimization (PSO)

Fundamental Principles and Inspiration

Particle Swarm Optimization is a population-based stochastic optimization technique inspired by the social behavior of bird flocking and fish schooling [15] [12]. Originally developed by Kennedy and Eberhart in 1995, PSO simulates social dynamics where individuals (particles) adjust their movements based on both personal experience and collective knowledge [12]. In ecological contexts, PSO has been applied to problems such as reserve site selection, landscape pattern optimization, and resource management, where its rapid convergence and effective global search capabilities provide advantages over more traditional optimization approaches [2].

Unlike evolutionary algorithms that use survival-of-the-fittest selection, PSO leverages social cooperation through a population of particles that fly through the solution space with adjustable velocities. Each particle represents a potential solution to the optimization problem and adjusts its trajectory according to its own historical best position and the best position discovered by any particle in its neighborhood [12]. This social information sharing allows the swarm to efficiently explore complex search spaces common in ecological applications, such as identifying optimal configurations for habitat networks across fragmented landscapes [2].

Algorithmic Mechanics and Equations

The PSO algorithm operates through two fundamental update equations for each particle i in the swarm:

The velocity update equation: vᵢ(t+1) = ωvᵢ(t) + c₁r₁(pbestᵢ - xᵢ(t)) + c₂r₂(gbest - xᵢ(t))

The position update equation: xᵢ(t+1) = xᵢ(t) + vᵢ(t+1)

Where:

- ω = Inertia weight controlling influence of previous velocity

- c₁, c₂ = Cognitive and social acceleration coefficients

- r₁, r₂ = Random numbers between 0 and 1

- pbestᵢ = Personal best position of particle i

- gbest = Global best position found by entire swarm

The balance between exploration (searching new areas) and exploitation (refining known good areas) is primarily controlled through the inertia weight ω [15]. Recent advances in PSO have introduced various adaptive strategies for this parameter, including time-varying schedules that decrease ω from high to low values, chaotic sequences to prevent stagnation, and performance-based adaptation that responds to swarm diversity metrics [15].
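One of the simplest time-varying schedules is a linear decrease of the inertia weight over the run. The sketch below assumes start and end values of 0.9 and 0.4, which are commonly used defaults rather than values taken from the source; the function name is hypothetical.

```python
def linear_inertia(t, t_max, w_start=0.9, w_end=0.4):
    """Inertia weight that decreases linearly from w_start to w_end."""
    frac = min(max(t / t_max, 0.0), 1.0)  # clamp progress to [0, 1]
    return w_start - (w_start - w_end) * frac


for t in (0, 50, 100):
    print(t, round(linear_inertia(t, t_max=100), 3))
# 0 0.9
# 50 0.65
# 100 0.4
```

Early iterations therefore favour exploration (large ω keeps particles moving), while later iterations favour exploitation around the best positions found.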

[Figure: PSO motion mechanics]

Ecological Application Example

In a recent ecological application, PSO was integrated with spatial operators to optimize both the function and structure of ecological networks in Yichun City, China [2]. The approach combined bottom-up functional optimization with top-down structural optimization, using PSO to identify optimal locations for ecological stepping stones that would enhance landscape connectivity while considering land-use suitability constraints. The implementation demonstrated PSO's effectiveness in solving large-scale spatial optimization problems, achieving significant improvements in both connectivity metrics and ecological functionality.

Ant Colony Optimization (ACO)

Biological Metaphor and Algorithmic Basis

Ant Colony Optimization mimics the foraging behavior of real ant colonies, particularly their ability to find shortest paths between their nest and food sources using pheromone trails [16]. As ants travel, they deposit pheromones—chemical signals that guide other colony members. Paths with stronger pheromone concentrations attract more ants, creating a positive feedback loop that eventually converges on optimal routes [16]. This decentralized, self-organizing approach has proven exceptionally effective for solving discrete optimization problems in ecological research, including habitat network design, corridor prioritization, and conservation planning [2].

The ACO algorithm translates this biological phenomenon into a computational optimization process where artificial ants probabilistically construct solutions guided by both heuristic information (problem-specific knowledge) and pheromone trails (learned desirability of solution components) [16]. In ecological applications, this approach is particularly valuable for identifying optimal configurations of conservation assets across landscapes, where the combinatorial complexity of potential solutions makes exhaustive search infeasible.

Implementation Framework

The ACO procedure involves several key steps [16]:

- Solution Construction: Artificial ants probabilistically build solutions based on pheromone trails and heuristic information

- Pheromone Update: Successful solutions reinforce their component pathways with additional pheromone

- Pheromone Evaporation: Prevents premature convergence by gradually reducing all pheromone levels

For ecological network optimization, each "ant" might represent a potential pathway through the landscape, with pheromone intensity reflecting the collective learned utility of including specific landscape elements in the ecological network [2]. The heuristic information could incorporate data on habitat quality, land cost, or resistance to species movement.
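The solution-construction step can be illustrated with the classic random-proportional rule, in which the probability of moving to a neighbouring node is proportional to τ^α · η^β. The sketch assumes that rule, a heuristic η equal to the inverse of landscape resistance, and hypothetical function names and data; the model in [2] may use different operators.

```python
import random


def transition_probabilities(current, candidates, tau, eta, alpha=1.0, beta=2.0):
    """Probability of moving from `current` to each candidate node."""
    weights = [(tau[(current, j)] ** alpha) * (eta[(current, j)] ** beta)
               for j in candidates]
    total = sum(weights)
    return [w / total for w in weights]


def choose_next(current, candidates, tau, eta, rng, alpha=1.0, beta=2.0):
    """Sample the next node according to the transition probabilities."""
    probs = transition_probabilities(current, candidates, tau, eta, alpha, beta)
    return rng.choices(candidates, weights=probs, k=1)[0]


# Hypothetical data: equal pheromone, but edge (0, 2) crosses twice the resistance.
tau = {(0, 1): 1.0, (0, 2): 1.0}
eta = {(0, 1): 1.0, (0, 2): 0.5}  # eta = 1 / resistance
probs = transition_probabilities(0, [1, 2], tau, eta)
print([round(p, 3) for p in probs])  # [0.8, 0.2]
print(choose_next(0, [1, 2], tau, eta, random.Random(0)))
```

Raising β makes ants follow the heuristic more closely, while raising α makes them follow accumulated pheromone more closely.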

Ecological Application Example

Outside ecology, a recent study used ACO to construct a psychometrically valid short version of the German Alcohol Decisional Balance Scale, showing its utility for optimization problems that require several statistical criteria to be satisfied simultaneously [16]. In ecological contexts, researchers have developed a spatial-operator based Modified ACO (MACO) model that integrates both functional and structural optimization of ecological networks [2]. The model incorporates micro functional optimization operators for patch-level improvements and macro structural optimization operators for enhancing overall network connectivity, effectively addressing the dual objectives of local habitat quality improvement and global landscape connectivity enhancement.

Grey Wolf Optimizer (GWO)

Social Hierarchy and Hunting Behavior Inspiration

The Grey Wolf Optimizer is a more recent metaheuristic algorithm that simulates the social hierarchy and collaborative hunting behavior of grey wolf packs [17]. In nature, grey wolves live in packs with a strict dominance hierarchy: the leader (alpha α) makes decisions, secondary wolves (beta β) assist the alpha, tertiary wolves (delta δ) perform specialized tasks, and the remainder (omega ω) follow the directives of higher-ranking members [17]. This social structure facilitates efficient hunting strategies that include tracking, encircling, and attacking prey—behaviors that GWO mathematically models for optimization purposes.

GWO has gained popularity for solving engineering and ecological optimization problems due to its simple implementation, few control parameters, and effective balance between exploration and exploitation [17]. The algorithm's ability to avoid local optima while maintaining rapid convergence makes it particularly suitable for ecological applications with complex, multi-modal objective functions, such as designing protected area networks that must satisfy multiple ecological and socioeconomic constraints.

Algorithmic Mechanics and Mathematical Model

The GWO algorithm operates through several key processes modeled after grey wolf hunting behavior [17]:

- Social Hierarchy: The best solution is designated alpha (α), second-best as beta (β), third-best as delta (δ), and remaining solutions as omega (ω)

- Encircling Prey: Wolves update positions around the current best solutions

- Hunting: Omega wolves update positions based on positions of α, β, and δ wolves

- Attacking Prey: Convergence is controlled through a control parameter a that decreases from 2 to 0 over iterations

The position update mechanism in GWO ensures that search agents (wolves) update their positions according to the locations of the alpha, beta, and delta wolves, mathematically represented as [17]:

X(t+1) = (X₁ + X₂ + X₃)/3

Where X₁, X₂, X₃ are calculated based on the positions of α, β, and δ wolves, incorporating random components to simulate the hunting behavior.
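The position update can be sketched as follows, assuming the commonly published form of the standard GWO equations: A = 2a·r₁ - a, C = 2·r₂, D = |C·X_leader - X|, and X₁, X₂, X₃ = X_leader - A·D for the α, β, and δ wolves respectively. The sphere objective, bounds, population size, and function name are illustrative assumptions.

```python
import random


def sphere(x):
    """Toy objective with its minimum of 0 at the origin."""
    return sum(v * v for v in x)


def gwo(objective, dim=2, n_wolves=15, iters=200, bounds=(-5.0, 5.0), seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    wolves = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_wolves)]
    best, best_val = None, float("inf")
    for t in range(iters):
        # Social hierarchy: the three best wolves become alpha, beta, delta.
        ranked = sorted(wolves, key=objective)
        leaders = [w[:] for w in ranked[:3]]
        leader_val = objective(leaders[0])
        if leader_val < best_val:
            best, best_val = leaders[0][:], leader_val
        a = 2.0 * (1.0 - t / iters)  # decreases linearly from 2 towards 0
        for w in wolves:
            for d in range(dim):
                estimates = []
                for leader in leaders:
                    A = 2.0 * a * rng.random() - a
                    C = 2.0 * rng.random()
                    D = abs(C * leader[d] - w[d])
                    estimates.append(leader[d] - A * D)  # X1, X2, X3
                # X(t+1) = (X1 + X2 + X3) / 3, clipped to the search bounds.
                w[d] = min(max(sum(estimates) / 3.0, lo), hi)
    final = min(wolves, key=objective)
    if objective(final) < best_val:
        best, best_val = final[:], objective(final)
    return best, best_val


best, val = gwo(sphere)
print(best, val)
```

While |A| > 1 the wolves tend to move away from the leaders (exploration); as a shrinks, |A| falls below 1 and the pack closes in on the current best solutions (exploitation).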

Recent Enhancements and Ecological Potential

To address limitations in basic GWO, researchers have proposed various improvements. A multi-strategy fusion Improved Grey Wolf Optimization (IGWO) algorithm incorporates several enhancements [17]:

- Lens Imaging Reverse Learning: Optimizes initial population diversity

- Nonlinear Control Parameters: Uses cosine-based convergence factor adjustment

- Historical Position Integration: Incorporates concepts from PSO and Tunicate Swarm Algorithm

These improvements have demonstrated superior performance in convergence speed, solution accuracy, and local minima avoidance compared to standard GWO and other metaheuristics [17]. While ecological applications of GWO are still emerging, its effectiveness in solving constrained engineering problems suggests significant potential for ecological optimization challenges such as reserve design, landscape configuration, and resource allocation under multiple constraints.

Comparative Analysis of Algorithm Families

Performance Characteristics and Application Domains

The table below summarizes the key characteristics, strengths, and limitations of each algorithm family in the context of ecological optimization:

| Algorithm | Inspiration Source | Key Operations | Ecological Strengths | Implementation Complexity |
|---|---|---|---|---|
| Genetic Algorithm (GA) | Natural evolution | Selection, Crossover, Mutation | Excellent for multi-objective problems; Handles discontinuous spaces [13] | Moderate [13] |
| Particle Swarm Optimization (PSO) | Bird flocking/Fish schooling | Velocity & Position updates | Fast convergence; Good for continuous variables [15] [12] | Low [12] |
| Ant Colony Optimization (ACO) | Ant foraging behavior | Pheromone update & Path selection | Superior for discrete/combinatorial problems [16] [2] | Moderate to High [2] |
| Grey Wolf Optimizer (GWO) | Grey wolf social hierarchy | Encircling, Hunting, Attacking | Effective balance of exploration/exploitation [17] | Low [17] |

Ecological Optimization Suitability

Each algorithm family exhibits distinct advantages for specific ecological optimization contexts:

Genetic Algorithms excel in problems requiring combinatorial optimization of conservation resources, such as selecting optimal sets of protected areas from numerous candidate sites while considering multiple ecological and economic criteria [13] [2]. Their representation flexibility allows ecologists to encode complex constraint relationships directly into the chromosomal structure.

Particle Swarm Optimization demonstrates particular strength in continuous parameter optimization for ecological models, such as calibrating species distribution models or optimizing continuous management intensity gradients across landscapes [15] [12]. The algorithm's social information sharing enables efficient exploration of high-dimensional parameter spaces.

Ant Colony Optimization shows superior performance for routing and network design problems in ecology, such as designing wildlife corridors that minimize resistance to species movement while maximizing connectivity between core habitats [16] [2]. The pheromone-mediated positive feedback effectively identifies robust solutions across fragmented landscapes.

Grey Wolf Optimizer offers advantages for constrained optimization problems with complex feasibility boundaries, such as allocating limited conservation resources across multiple competing objectives while respecting budgetary and logistical constraints [17]. The social hierarchy metaphor provides an effective mechanism for maintaining solution diversity while converging toward high-quality regions.

Experimental Protocols and Implementation Guidelines

Standardized Experimental Framework for Algorithm Comparison

To ensure fair comparison when evaluating these algorithms for specific ecological applications, researchers should implement a standardized experimental protocol:

- Problem Formulation: Clearly define objective functions, decision variables, and constraints specific to the ecological context

- Parameter Tuning: Conduct systematic parameter sensitivity analysis for each algorithm

- Performance Metrics: Evaluate using multiple criteria including solution quality, convergence speed, computational efficiency, and robustness

- Statistical Validation: Apply appropriate statistical tests (e.g., Wilcoxon rank sum test) to confirm performance differences [17]
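As an illustration of the statistical-validation step, the sketch below implements a two-sided Wilcoxon rank sum test with the normal approximation, using only the Python standard library. It omits tie and continuity corrections, so it is a teaching sketch; in practice a library routine such as SciPy's `scipy.stats.ranksums` would normally be used. The two samples are hypothetical final objective values from ten independent runs of two algorithms.

```python
import math
from statistics import NormalDist


def rank_sum_test(sample_a, sample_b):
    """Two-sided Wilcoxon rank sum test (normal approximation, no tie correction)."""
    values = list(sample_a) + list(sample_b)
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Group tied values and give each the average of their ranks.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    n1, n2 = len(sample_a), len(sample_b)
    w = sum(ranks[:n1])  # rank sum of the first sample
    mean = n1 * (n1 + n2 + 1) / 2.0
    sd = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12.0)
    z = (w - mean) / sd
    p = 2.0 * (1.0 - NormalDist().cdf(abs(z)))
    return z, p


# Hypothetical final resistance values (lower is better) from 10 runs each.
algo_a = [0.12, 0.15, 0.11, 0.14, 0.13, 0.16, 0.10, 0.12, 0.14, 0.13]
algo_b = [0.21, 0.19, 0.23, 0.20, 0.22, 0.18, 0.24, 0.21, 0.20, 0.19]
z, p = rank_sum_test(algo_a, algo_b)
print(round(z, 2), p < 0.01)  # -3.78 True
```

A small p-value indicates that the difference between the two sets of runs is unlikely to be due to chance alone, which is the evidence needed before claiming that one algorithm outperforms another.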

For ecological network optimization specifically, the experimental framework should include both functional metrics (e.g., habitat quality, ecosystem service provision) and structural metrics (e.g., connectivity, fragmentation indices) to comprehensively assess algorithm performance [2].

Computational Implementation Considerations

Implementing these algorithms for large-scale ecological optimization requires attention to computational efficiency:

- Parallelization: PSO and GA are naturally parallelizable, significantly reducing computation time for landscape-scale optimization [2]

- Hybrid CPU-GPU Architectures: Leverage parallel computing platforms for spatial optimization tasks involving high-resolution raster data [2]

- Population Sizing: Balance diversity maintenance against computational costs—typically 50-100 individuals for moderate complexity problems [13]

- Termination Criteria: Use composite criteria combining generation limits, fitness plateaus, and solution quality thresholds
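A composite termination check of the kind described in the last item can be sketched as a small helper that is called once per generation. The thresholds (generation limit, patience window, tolerance) and the function name are hypothetical defaults, and the sketch assumes a maximization problem.

```python
def should_stop(history, max_generations=500, patience=25, tol=1e-6, target=None):
    """Composite stopping rule for a maximization run.

    history : best fitness recorded at the end of each generation so far
    Stops when the generation limit is reached, when the target quality is met,
    or when fitness has improved by less than `tol` over `patience` generations.
    """
    if len(history) >= max_generations:
        return True  # generation limit
    if target is not None and history and history[-1] >= target:
        return True  # solution quality threshold
    if len(history) > patience and history[-1] - history[-1 - patience] < tol:
        return True  # fitness plateau
    return False


print(should_stop([1.0] * 30))                       # True: plateau
print(should_stop([0.1 * i for i in range(30)]))     # False: still improving
print(should_stop([0.5, 0.95], target=0.9))          # True: target reached
```

Combining the three criteria avoids both wasting computation on a stalled search and stopping a slowly improving run too early.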

Research Reagent Solutions: Computational Tools for Ecological Optimization

The table below outlines essential computational tools and their functions for implementing biomimetic algorithms in ecological research:

| Tool Category | Specific Technologies | Ecological Application Functions |
|---|---|---|
| Programming Frameworks | Python/R/Matlab | Algorithm implementation, fitness function coding [16] |
| Spatial Analysis Libraries | GDAL, ArcGIS API, GRASS GIS | Landscape resistance calculation, habitat connectivity analysis [2] |
| Parallel Computing Platforms | CUDA, OpenCL, MPI | Large-scale landscape optimization [2] |
| Ecological Modeling Software | Circuitscape, Linkage Mapper | Ecological network construction and validation [2] |

Genetic Algorithms, Particle Swarm Optimization, Ant Colony Optimization, and Grey Wolf Optimizer represent distinct yet complementary approaches to solving complex ecological optimization problems. While each algorithm family employs different metaphorical inspirations and mechanistic processes, all leverage population-based search and biomimetic principles to navigate high-dimensional, non-linear solution spaces characteristic of ecological systems.

For ecological researchers, algorithm selection should be guided by problem characteristics: GAs for multi-objective combinatorial problems, PSO for continuous parameter spaces, ACO for discrete network design, and GWO for constrained optimization. Future research directions include developing hybrid approaches that combine strengths of multiple algorithms, enhancing computational efficiency through advanced parallelization, and creating specialized variants specifically tailored to the spatial and temporal dynamics of ecological systems.

As ecological challenges grow increasingly complex under pressures of global change, these biomimetic algorithms will play an ever-more critical role in developing effective conservation strategies, optimizing resource allocation, and designing ecological networks that maintain biodiversity and ecosystem functions across human-modified landscapes.

In the realm of computational problem-solving, many real-world applications involve the optimization of complex objectives such as minimizing costs and energy consumption while maximizing performance, efficiency, and sustainability [18]. The optimization problems formulated from these applications are frequently highly nonlinear with multimodal objective landscapes, subject to a series of complex, nonlinear constraints that present significant challenges for traditional computational approaches [18] [19]. Even with the increasing power of modern computers, brute-force approaches remain impractical for these sophisticated problems, creating a critical need for more efficient and intelligent algorithms [18].

Nature-inspired optimization algorithms represent a paradigm shift in addressing these complex challenges by mimicking the problem-solving strategies observed in biological and natural systems [18]. These biomimetic algorithms belong to the broader class of metaheuristics—higher-level procedures designed to find, generate, or select heuristic solutions that provide a sufficiently good solution to an optimization problem, especially with incomplete or imperfect information [19]. Unlike traditional gradient-based algorithms, interior-point methods, and trust-region methods that often converge to local optima and struggle with discontinuous objective functions, nature-inspired algorithms tend to be global optimizers that employ a population of multiple, interacting agents to explore the search space effectively [18].

The fundamental premise underlying biomimetic computing is that natural systems have evolved over millions of years to develop highly efficient mechanisms for resource optimization, adaptation, and survival under constrained conditions [19]. By translating these biological strategies into computational frameworks, researchers have developed powerful tools capable of navigating the most challenging optimization landscapes encountered in ecological research, drug development, and other scientific domains characterized by complexity and non-linearity.

Theoretical Foundations: Why Nature Excels in Complex Landscapes

Limitations of Traditional Optimization Methods

Conventional optimization approaches face significant theoretical limitations when applied to complex, real-world problems. Gradient-based algorithms and other local search methods depend heavily on their starting points and often become trapped in local optima when the objective function is multimodal [18]. Evaluating derivatives, which these methods require, can be expensive, and many practical optimization problems contain discontinuities or regions where derivatives cannot be calculated at all [18]. These limitations are particularly problematic in ecological optimization research, where researchers must model highly complex, adaptive systems with numerous interacting components and stochastic elements.
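The dependence on the starting point can be seen in a small sketch. The one-dimensional objective below, the learning rate, and the two starting points are illustrative assumptions, not taken from the cited studies; the function simply has two minima of different depth.

```python
def f(x):
    """Illustrative multimodal objective with two minima of different depth."""
    return x**4 - 3 * x**2 + x


def grad_f(x):
    """Analytical derivative of f."""
    return 4 * x**3 - 6 * x + 1


def gradient_descent(x0, lr=0.01, steps=2000):
    """Plain fixed-step gradient descent starting from x0."""
    x = x0
    for _ in range(steps):
        x -= lr * grad_f(x)
    return x


# Same algorithm, same objective, two different starting points.
x_from_right = gradient_descent(2.0)
x_from_left = gradient_descent(-2.0)

print(f"start  2.0 -> x = {x_from_right:.4f}, f(x) = {f(x_from_right):.4f}")
print(f"start -2.0 -> x = {x_from_left:.4f}, f(x) = {f(x_from_left):.4f}")
```

The run that starts on the right settles in the shallower minimum and never reaches the deeper one on the left, which is the trapping behavior described above.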

Traditional methods also struggle with combinatorial explosion in high-dimensional search spaces, a common characteristic of ecological and pharmaceutical optimization problems where multiple parameters must be simultaneously optimized [19]. The computational resources required for exhaustive search strategies become prohibitive as problem dimensionality increases, making these approaches impractical for large-scale real-world applications. Furthermore, conventional algorithms typically require smooth, well-behaved objective functions with known mathematical properties—conditions rarely satisfied in ecological systems characterized by emergent behavior, threshold effects, and complex feedback loops.
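A back-of-the-envelope calculation makes the scale of this growth concrete. The sketch assumes a full grid search with 10 candidate levels per parameter and a cost of 1 ms per objective evaluation; both figures are hypothetical and chosen only for illustration.

```python
def grid_evaluations(levels_per_parameter, n_parameters):
    """Number of objective evaluations needed for a full grid search."""
    return levels_per_parameter ** n_parameters


def runtime_days(n_evaluations, seconds_per_evaluation=1e-3):
    """Wall-clock days needed at an assumed fixed cost per evaluation."""
    return n_evaluations * seconds_per_evaluation / 86_400


for n_parameters in (2, 5, 10, 20):
    n = grid_evaluations(10, n_parameters)
    print(f"{n_parameters:>2} parameters: {n:.0e} evaluations, "
          f"{runtime_days(n):.3g} days")
```

Under these assumptions, ten parameters already require 10^10 evaluations, or roughly 116 days of sequential computation, and each additional parameter multiplies the cost by ten.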

Advantages of Nature-Inspired Approaches

Nature-inspired algorithms overcome these limitations through several theoretically grounded mechanisms that mirror successful biological strategies. Unlike traditional methods that typically follow a single search path, population-based nature-inspired algorithms maintain diversity through multiple simultaneously exploring agents, enabling comprehensive coverage of the search space and reducing the probability of becoming trapped in suboptimal regions [18]. These algorithms employ stochastic operators that introduce controlled randomness into the search process, allowing them to escape local optima and discover novel solutions in unexplored regions of the search landscape [19].

The advantage of nature-inspired approaches in complex, nonlinear landscapes is generally attributed to their inherent parallelism, adaptation mechanisms, and balance between exploration and exploitation [18]. These algorithms allocate computational effort by dynamically adjusting search intensity across regions of the solution space, concentrating on promising areas while retaining the ability to discover better solutions in regions that currently look less favorable. This balance matters for real-world ecological optimization problems, where the global optimum is often surrounded by numerous local optima with similar fitness values.

Table 1: Comparative Analysis of Optimization Approaches

| Feature | Traditional Algorithms | Nature-Inspired Algorithms |
|---|---|---|
| Search Strategy | Single-point, deterministic | Population-based, stochastic |
| Derivative Requirement | Often requires gradient information | Derivative-free |
| Handling of Multimodal Functions | Prone to local optima convergence | Effective at avoiding local optima |
| Exploration-Exploitation Balance | Typically fixed | Dynamically adaptive |
| Problem Formulation Flexibility | Requires smooth, well-defined functions | Handles discontinuous, noisy objectives |
| Computational Scalability | Struggles with high-dimensional spaces | Effective in high-dimensional spaces |

Key Nature-Inspired Algorithms and Their Mechanisms

The landscape of nature-inspired optimization algorithms has expanded dramatically, with numerous approaches drawing inspiration from various natural phenomena. These algorithms can be broadly categorized into evolutionary algorithms, swarm intelligence, bio-inspired algorithms, and ecology-based algorithms, each with distinct mechanisms and application domains.

Evolutionary Algorithms

Evolutionary algorithms draw inspiration from biological evolution, employing mechanisms such as selection, crossover, and mutation to evolve populations of candidate solutions over successive generations. The genetic algorithm (GA), one of the earliest and most widely known evolutionary algorithms, mimics natural selection by preferentially retaining fitter solutions and combining them to produce potentially superior offspring [18]. These algorithms maintain a population of diverse solutions that undergo simulated evolution through fitness-based selection and genetic operators, enabling effective exploration of complex search spaces while accumulating valuable solution features over generations.
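The selection, crossover, and mutation loop can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the formulation used in [18]: it uses a toy bit-counting fitness function (OneMax), tournament selection, one-point crossover, bit-flip mutation, and single-individual elitism, and every parameter value is an arbitrary choice.

```python
import random


def onemax(bits):
    """Toy fitness: the number of 1-bits (to be maximized)."""
    return sum(bits)


def tournament(population, fitness, rng, k=3):
    """Selection: return the fittest of k randomly drawn individuals."""
    return max(rng.sample(population, k), key=fitness)


def crossover(parent_a, parent_b, rng):
    """One-point crossover producing a single child."""
    point = rng.randint(1, len(parent_a) - 1)
    return parent_a[:point] + parent_b[point:]


def mutate(bits, rng, rate):
    """Flip each bit independently with probability `rate`."""
    return [1 - b if rng.random() < rate else b for b in bits]


def genetic_algorithm(fitness, n_bits=20, pop_size=30, generations=60,
                      mutation_rate=0.02, seed=0):
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(n_bits)]
                  for _ in range(pop_size)]
    history = []  # best fitness seen in each generation
    for _ in range(generations):
        best = max(population, key=fitness)
        history.append(fitness(best))
        children = [best[:]]  # elitism: carry the best individual over unchanged
        while len(children) < pop_size:
            a = tournament(population, fitness, rng)
            b = tournament(population, fitness, rng)
            children.append(mutate(crossover(a, b, rng), rng, mutation_rate))
        population = children
    best = max(population, key=fitness)
    history.append(fitness(best))
    return best, history


best, history = genetic_algorithm(onemax)
print("best fitness per generation:", history)
```

Because the best individual is copied into the next generation unchanged, the recorded best fitness never decreases. A real application would replace `onemax` with a domain-specific fitness function and an encoding suited to the problem.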

Swarm Intelligence Algorithms

Swarm intelligence algorithms model the collective behavior of decentralized, self-organized systems found in nature. Particle Swarm Optimization (PSO) mimics the social behavior of bird flocking or fish schooling, where individuals adjust their movements based on personal experience and neighbors' successes [18]. Ant Colony Optimization (ACO) models how ant colonies find optimal paths to food sources using pheromone trails [18]. The Firefly Algorithm (FA) simulates the flashing patterns and attractiveness behavior of fireflies [18], while the Cuckoo Search (CS) algorithm is inspired by the obligate brood parasitism of some cuckoo species [18]. These algorithms excel in solving complex optimization problems through emergent intelligence—the phenomenon whereby simple local interactions between individuals produce sophisticated global problem-solving capabilities.
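As an illustration of how personal experience and shared success combine in PSO, the sketch below implements a common textbook form of the velocity and position update. It assumes a global-best topology (every particle sees the best position found by the whole swarm, rather than only its neighbors), a simple sphere test function, and conventional but arbitrary coefficient values; none of these choices come from the cited sources.

```python
import random


def sphere(x):
    """Illustrative test objective: minimum value 0 at the origin."""
    return sum(v * v for v in x)


def pso(objective, dim=2, n_particles=20, iterations=200,
        bounds=(-5.0, 5.0), w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]               # each particle's best position
    pbest_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]  # swarm-wide best

    for _ in range(iterations):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]                            # inertia
                             + c1 * r1 * (pbest[i][d] - pos[i][d])    # personal experience
                             + c2 * r2 * (gbest[d] - pos[i][d]))      # swarm's success
                # Move and clamp to the search bounds.
                pos[i][d] = min(hi, max(lo, pos[i][d] + vel[i][d]))
            val = objective(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val


gbest, gbest_val = pso(sphere)
print("best position:", gbest, "objective:", gbest_val)
```

The inertia weight `w` and the coefficients `c1` and `c2` control how strongly a particle keeps its current heading versus being pulled toward its own best and the swarm's best position, which is one concrete handle on the exploration-exploitation balance.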

Ecology-Based Optimization Algorithms

Recent advances have produced algorithms inspired by broader ecological phenomena and species interactions. The African Vultures Optimization Algorithm models the feeding behavior of African vultures [19], while the Artificial Gorilla Troops Optimizer mimics gorilla social intelligence [19]. The Dingo Optimizer draws inspiration from the hunting strategies of Australian dingoes [19], and the Red Colobuses Monkey algorithm is based on the movement patterns of these primates [19]. These ecology-based algorithms capture specialized survival strategies that translate into effective computational optimization mechanisms for specific problem types and landscapes.

Table 2: Classification of Nature-Inspired Optimization Algorithms

| Algorithm Category | Representative Algorithms | Natural Inspiration Source |
|---|---|---|
| Evolutionary Algorithms | Genetic Algorithm (GA) | Biological evolution |
| Swarm Intelligence | Particle Swarm Optimization (PSO) | Bird flocking, fish schooling |
| Swarm Intelligence | Ant Colony Optimization (ACO) | Ant foraging behavior |
| Swarm Intelligence | Firefly Algorithm (FA) | Firefly flashing behavior |
| Swarm Intelligence | Cuckoo Search (CS) | Cuckoo brood parasitism |
| Bio-inspired | Bat Algorithm (BA) | Echolocation behavior of bats |
| Ecology-Based | African Vultures Optimization Algorithm | Vulture feeding behavior |
| Ecology-Based | Artificial Gorilla Troops Optimizer | Gorilla social intelligence |

Search Mechanisms and Mathematical Foundations

The effectiveness of nature-inspired algorithms stems from their underlying search mechanisms, which can be categorized based on their statistical foundations and probability distributions. These mechanisms enable efficient navigation through complex, high-dimensional search spaces while balancing exploration and exploitation.

Statistical Foundations of Search Mechanisms

Nature-inspired algorithms employ various search mechanisms based on different probability distributions and statistical principles. These can be broadly classified into five categories: (1) Gradient-guided moves that incorporate approximate gradient information when available; (2) Random permutation that introduces stochasticity through random rearrangements; (3) Direction-based perturbations that modify solutions along specific directions in the search space; (4) Volume-based sampling that explores neighborhoods around current solutions; and (5) Ensemble-based hybrid approaches that combine multiple strategies [18]. Each mechanism offers distinct advantages for different problem characteristics and landscape topologies.

The mathematical foundation of these search mechanisms often relies on probability distributions such as Gaussian, Lévy flights, and uniform distributions that control the exploration-exploitation balance [18]. For instance, the cuckoo search algorithm employs Lévy flights—random walks with step lengths following a heavy-tailed probability distribution—which have been shown to be more efficient than standard random walks in exploring large-scale search spaces [18]. These statistical foundations provide the theoretical underpinning for the observed efficiency of nature-inspired algorithms in navigating complex, nonlinear landscapes.
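The difference between Gaussian and heavy-tailed steps can be made concrete with a short sketch. It draws Lévy-like step lengths using Mantegna's algorithm, a commonly used approximation in cuckoo search implementations; the stability index `beta = 1.5`, the sample size, and the helper names are assumptions made for illustration.

```python
import math
import random


def mantegna_sigma(beta):
    """Scale of the numerator Gaussian in Mantegna's algorithm."""
    numerator = math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
    denominator = math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2)
    return (numerator / denominator) ** (1 / beta)


def levy_step(rng, beta=1.5):
    """Draw one heavy-tailed step length (approximately Levy-stable)."""
    u = rng.gauss(0.0, mantegna_sigma(beta))
    v = rng.gauss(0.0, 1.0)
    while v == 0.0:  # guard against division by zero (vanishingly unlikely)
        v = rng.gauss(0.0, 1.0)
    return u / abs(v) ** (1 / beta)


rng = random.Random(0)
n_samples = 10_000
levy_steps = [abs(levy_step(rng)) for _ in range(n_samples)]
gauss_steps = [abs(rng.gauss(0.0, 1.0)) for _ in range(n_samples)]

print("largest Levy step:    ", max(levy_steps))
print("largest Gaussian step:", max(gauss_steps))
```

Most Lévy steps are small, but occasional very long jumps occur, which is what allows a search agent to leave its current neighborhood and sample distant regions. Gaussian steps of comparable scale almost never produce such jumps.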

Theoretical Framework and Convergence Analysis

Despite their empirical success, nature-inspired algorithms still lack a unified mathematical framework for theoretical analysis [18]. This is a significant challenge for the field, because researchers cannot yet establish in general how these algorithms converge or estimate their convergence rates for broad problem classes. Some progress has been made using Markov chain analysis and dynamical systems theory to analyze specific algorithms such as the bat algorithm [18], but a comprehensive theoretical foundation remains an open research problem.

The No Free Lunch (NFL) theorem provides important theoretical insight: averaged over all possible optimization problems, every algorithm performs equally well, so no single algorithm can be universally superior [19]. This result helps explain the proliferation of specialized nature-inspired algorithms tailored to specific problem characteristics and highlights the importance of selecting algorithms based on problem domain knowledge. For ecological optimization problems, this means that researchers must match algorithm characteristics to the specific features of their optimization landscape to obtain good performance.

Application to Ecological Optimization: A Case Study

Ecological Network Optimization Challenge

The application of biomimetic algorithms to ecological optimization is exemplified by recent research on Ecological Networks (ENs) in Yichun City, China [2]. Rapid urbanization has caused significant degradation and fragmentation of natural landscapes and habitats, decreasing ecological connectivity and hindering species movement [2]. Ecological networks composed of ecological patches serve as bridges between habitats, improving ecosystem resilience and adaptability by mitigating human disturbance impacts [2]. However, optimizing these networks presents substantial challenges due to the need to simultaneously consider both functional and structural objectives across multiple spatial scales.