Multisensor Approaches in Ecology Research: Integrating Technologies for a Deeper Understanding of Complex Systems

Abstract

This article explores the paradigm-shifting role of multisensor approaches in modern ecological research. It covers the foundational principles driving the integration of diverse sensors—from drone-borne LiDAR and hyperspectral imagers to animal-borne biologgers and automated field stations. The scope extends to methodological applications across terrestrial, aquatic, and marine ecosystems, detailing how sensor fusion is used to map forest fuels, classify animal behavior, and monitor biodiversity. The article also addresses key challenges in data management, sensor optimization, and attachment, and provides a framework for validating and comparing these sophisticated technologies. Aimed at researchers and scientists, this review synthesizes how multisensor systems are revolutionizing data collection, enhancing spatial and temporal resolution, and providing unprecedented insights into ecological processes, with profound implications for conservation and policy.

The Foundations of Multisensor Ecology: From Technological Convergence to New Scientific Frontiers

Defining the Multisensor Paradigm in Ecological Research

Ecological research is undergoing a fundamental transformation driven by technological advancement, shifting from isolated, single-sensor measurements to integrated multisensor networks that capture the complexity of natural systems. This paradigm represents a holistic framework for ecological investigation, combining multiple sensing modalities, cross-platform data integration, and advanced computational analytics to create comprehensive digital representations of ecosystems. The multisensor paradigm addresses critical limitations of traditional approaches, which often provide fragmented views of ecological processes due to technological constraints and methodological simplifications [1] [2]. By simultaneously capturing complementary data streams across spatial and temporal scales, researchers can now investigate ecosystem dynamics with unprecedented resolution, enabling more accurate modeling, prediction, and management of ecological systems in an era of rapid environmental change.

This technical guide establishes the conceptual foundation, methodological framework, and practical implementation of the multisensor paradigm within ecological research. The approach emphasizes data fusion, sensor synergy, and ecological validity, moving beyond simple co-location of sensors toward truly integrated observational systems. We examine how this paradigm enhances our capacity to monitor biodiversity, quantify ecosystem functions, and address pressing conservation challenges through technological integration [2] [3]. The following sections provide researchers with both the theoretical underpinnings and practical methodologies for implementing this approach across diverse ecological contexts.

Core Principles of the Multisensor Paradigm

Theoretical Foundation and Key Concepts

The multisensor paradigm is built upon several interconnected theoretical principles that distinguish it from conventional ecological monitoring approaches. First, the principle of complementary sensing recognizes that individual sensor technologies have inherent limitations and strengths, but when strategically combined, they provide a more complete representation of ecological reality [4] [5]. For instance, optical sensors capture detailed spectral information but cannot penetrate vegetation canopies, whereas LiDAR provides structural data but limited spectral resolution. Second, the principle of spatial-temporal congruence emphasizes the importance of synchronized data collection across modalities to enable meaningful correlation and fusion of disparate data streams [3] [6]. Third, the principle of ecological validity prioritizes measurement approaches that capture organisms and processes within their natural contexts with minimal disruption, acknowledging that highly controlled experimental setups may produce artifacts that limit real-world applicability [7].

The paradigm further incorporates the concept of scalable observation, which enables monitoring across relevant ecological scales from individual organisms to landscapes through nested sensor deployments [3] [4]. This is complemented by the concept of networked intelligence, where distributed sensing nodes communicate and coordinate to adapt monitoring efforts in response to detected events or environmental changes [2] [8]. Underlying all these principles is a commitment to open science frameworks that ensure data interoperability, transparent methodologies, and reproducible analytical workflows across the research community [3] [4].

Comparative Advantages Over Traditional Approaches

Traditional ecological monitoring has relied heavily on manual field surveys, single-sensor approaches, and periodic sampling, creating significant gaps in our understanding of dynamic ecological processes. The multisensor paradigm addresses these limitations through several distinct advantages, quantified in the table below based on recent implementations across studies.

Table 1: Comparative analysis of monitoring approaches across key ecological research dimensions

| Research Dimension | Traditional Single-Sensor | Multisensor Paradigm | Documented Improvement |
|---|---|---|---|
| Spatial Coverage | Limited by sensor range and access | Extended through sensor networks and fusion | 3-5x increase in effective monitoring area [3] [8] |
| Temporal Resolution | Periodic/snapshot measurements | Continuous, real-time monitoring | From daily/weekly to minute/second intervals [3] [8] |
| Taxonomic Detection | Limited to visible/focal species | Multi-taxa detection through complementary signatures | 2.3x more species detected [2] [3] |
| Data Completeness | Gapped records due to technical limitations | Continuous data streams with cross-validation | 47% reduction in data gaps [3] [6] |
| Behavioral Detail | Limited to direct observation periods | Extended monitoring of natural behaviors | Enables quantification of rare behaviors (<1% occurrence) [3] [7] |
| Scalability | Labor-intensive, limited expansion potential | Modular, expandable sensor networks | 10x more efficient spatial scaling [2] [4] |

The multisensor approach demonstrates particular strength in capturing complex ecological interactions that emerge across spatial and temporal scales. For example, in a wetland ecosystem, traditional approaches might separately monitor water quality, vegetation health, and bird populations, potentially missing critical connections between seasonal water chemistry changes, plant community shifts, and avian foraging patterns. A multisensor network simultaneously tracking hydrological parameters, vegetation spectral signatures, and bird movements can detect these cross-system linkages, providing insights essential for ecosystem-based management [8].

Technological Framework and Sensor Typology

Sensor Modalities and Their Ecological Applications

The multisensor paradigm incorporates a diverse suite of sensing technologies, each contributing unique information about ecological structures, processes, and conditions. These modalities can be categorized based on their underlying detection principles and the specific ecological properties they measure.

Table 2: Ecological sensor typology with specifications and applications

| Sensor Category | Key Parameters Measured | Spatial Resolution | Temporal Resolution | Primary Ecological Applications |
|---|---|---|---|---|
| Hyperspectral Imaging | Spectral reflectance (400-2500 nm) | Sub-meter to meters | Minutes to days | Species identification, plant stress detection, biochemical traits [4] [5] |
| LiDAR | Canopy height, structure, biomass | Sub-meter | Minutes to seasonal | Forest structure, habitat complexity, carbon storage [4] [5] |
| Bioacoustics | Animal vocalizations, soundscapes | 10-100 m radius | Continuous | Biodiversity monitoring, behavior studies, population density [2] [3] |
| Thermal Imaging | Surface temperature, animal presence | Sub-meter to meters | Seconds to days | Wildlife detection, thermal ecology, water stress [6] [9] |
| Multispectral (Satellite) | Spectral bands (VIS, NIR, SWIR) | 10-30 meters | Days | Vegetation monitoring, land cover change, phenology [4] |
| SAR (Synthetic Aperture Radar) | Surface structure, moisture | 10-100 meters | Days | Biomass estimation, flood mapping, deforestation [4] |
| In-situ Environmental Sensors | Temperature, humidity, pH, nutrients | Point measurements | Minutes | Microclimate, water quality, soil conditions [6] [8] |

Integrated Sensor Platforms

In practice, these sensor modalities are deployed through integrated platforms designed to maximize synergistic data collection. Automated Multisensor stations for Monitoring of species Diversity (AMMOD) represent one advanced implementation, combining autonomous samplers for insects, pollen and spores with audio recorders for vocalizing animals, sensors for volatile organic compounds emitted by plants, and camera traps for mammals and small invertebrates [2]. These stations operate as self-contained units capable of pre-processing data prior to transmission, enabling deployment in remote locations with limited connectivity.

Similarly, the SmartWilds platform demonstrates the power of synchronized multimodal data collection through coordinated deployment of drone imagery, camera traps, and bioacoustic recorders [3]. This approach captures complementary perspectives on wildlife activity, with camera traps providing high-resolution imagery at key locations, drones offering aerial views of habitat use and animal movements, and acoustic monitors detecting vocal species that might avoid visual sensors. The integration of these synchronized data streams creates a comprehensive record of ecosystem activity that exceeds the capabilities of any single sensor type.

Another innovative implementation is the PARSA-360 + Air system, which captures environmental parameters within a 360-degree field of view using panoramic high dynamic range imagery combined with arrays of sensors measuring illuminance, thermal conditions, air quality, and sound levels [6]. This approach is particularly valuable for understanding how organisms experience their environments from a situated perspective, bridging the gap between point measurements and organismal perception.

Methodological Implementation: Experimental Protocols and Workflows

Experimental Design Framework

Implementing a successful multisensor research program requires meticulous experimental design to ensure data quality, interoperability, and ecological relevance. The following workflow outlines a standardized methodology for multisensor ecological studies, synthesizing best practices from recent implementations [3] [5] [8].

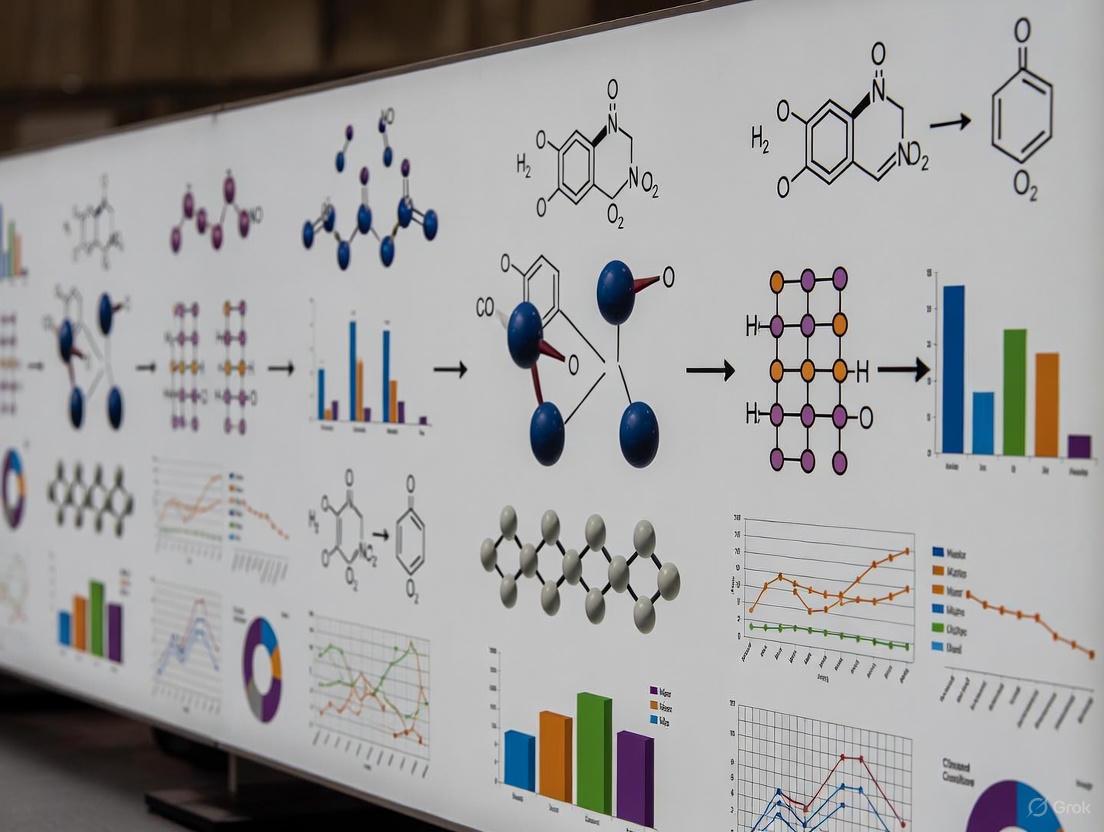

Diagram 1: Multisensor research workflow

Sensor Deployment and Calibration Protocol

The deployment phase begins with strategic sensor placement based on preliminary ecological assessment. For wildlife monitoring, this involves positioning camera traps and acoustic sensors in areas of high animal activity identified through preliminary surveys or existing knowledge [3]. Sensors should be deployed to maximize coverage while minimizing interference, with careful consideration of detection distances and potential obstructions. For example, in the SmartWilds deployment, camera traps were strategically positioned around lakes and wildlife congregation areas, while bioacoustic monitors targeted diverse acoustic environments from open grasslands to woodland edges [3].

Synchronization is critical for multimodal data fusion. This involves both temporal alignment using GPS timestamps or network time protocols and spatial registration through precise geolocation of sensor positions [3] [6]. The PARSA-360 system achieves this through integrated 360-degree sensing, while distributed deployments like AMMOD stations require precise inter-calibration [2] [6]. For the SmartWilds deployment, dedicated synchronization flights were conducted with drones within view of camera traps to enable precise cross-modal timestamp calibration [3].
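
The temporal-alignment step can be sketched in a few lines. This is a minimal illustration, assuming each sensor logs events against its own clock and that one shared reference event (for example, a drone pass recorded by a camera trap) is visible to both devices. The function names, timestamps, and the 5-second matching tolerance are hypothetical and are not taken from the cited deployments.

```python
from datetime import datetime, timedelta

def clock_offset(reference_time, sensor_time):
    """Offset to add to a sensor's timestamps so the shared event matches the reference clock."""
    return reference_time - sensor_time

def align(timestamps, offset):
    """Shift all timestamps from one sensor onto the reference clock."""
    return [t + offset for t in timestamps]

def match_events(a, b, tolerance):
    """Pair each timestamp in a with the nearest timestamp in b, if within tolerance."""
    pairs = []
    for ta in a:
        nearest = min(b, key=lambda tb: abs(tb - ta), default=None)
        if nearest is not None and abs(nearest - ta) <= tolerance:
            pairs.append((ta, nearest))
    return pairs

# Hypothetical synchronization pass: the drone (GPS time) is seen by a camera
# trap whose internal clock runs 12 seconds slow.
gps_time = datetime(2024, 6, 1, 10, 0, 0)
camera_time = datetime(2024, 6, 1, 9, 59, 48)
offset = clock_offset(gps_time, camera_time)

# Hypothetical detections from the camera trap and an acoustic recorder
# (the recorder is assumed to be on GPS time already).
camera_events = [datetime(2024, 6, 1, 10, 14, 50), datetime(2024, 6, 1, 11, 2, 3)]
acoustic_events = [datetime(2024, 6, 1, 10, 15, 3), datetime(2024, 6, 1, 12, 0, 0)]

aligned = align(camera_events, offset)
pairs = match_events(aligned, acoustic_events, timedelta(seconds=5))
```

With these made-up values, only the first camera detection finds an acoustic counterpart within the tolerance. A single constant offset ignores clock drift over long deployments, so repeated synchronization events would be needed in practice.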

Calibration procedures must be implemented for each sensor type, including:

- Radiometric calibration of optical sensors using standardized reflectance targets

- Geometric calibration to correct lens distortion and ensure accurate spatial measurement

- Acoustic calibration using reference sound sources at known frequencies and amplitudes

- Cross-sensor validation through coordinated measurement of reference features or events
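
As one possible illustration of radiometric calibration against reflectance targets, the sketch below uses a two-point empirical line: a dark and a bright target of known reflectance define a linear mapping from raw digital numbers (DN) to reflectance. The source does not specify which calibration method the cited studies used, so the method choice, function names, and all numeric values here are assumptions.

```python
def empirical_line(dn_dark, refl_dark, dn_bright, refl_bright):
    """Fit reflectance = gain * DN + offset through two reference targets."""
    gain = (refl_bright - refl_dark) / (dn_bright - dn_dark)
    offset = refl_dark - gain * dn_dark
    return gain, offset

def to_reflectance(dn, gain, offset):
    """Convert a raw digital number to reflectance with the fitted line."""
    return gain * dn + offset

# Hypothetical targets: a 5% dark panel read as DN 500, a 50% bright panel as DN 3500.
gain, offset = empirical_line(dn_dark=500, refl_dark=0.05,
                              dn_bright=3500, refl_bright=0.50)

# Convert a hypothetical vegetation pixel.
reflectance = to_reflectance(2000, gain, offset)
```

The fit would be repeated per spectral band, since gain and offset generally differ between bands.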

Data Fusion and Analytical Methodology

The core analytical challenge in multisensor ecology is meaningful integration of disparate data streams. This typically follows a hierarchical approach progressing from data-level to decision-level fusion:

Data-level fusion combines raw data from multiple sensors before feature extraction, requiring precise spatial and temporal registration. This approach preserves maximum information but demands significant computational resources and careful handling of data heterogeneity [4] [5]. For example, combining LiDAR-derived canopy height models with hyperspectral imagery enables detailed characterization of forest structure and composition.

Feature-level fusion extracts relevant features from each sensor stream before combination, reducing dimensionality while preserving salient information. The urban vegetation classification framework demonstrates this approach, where LiDAR-derived structural features (canopy height, texture) are combined with hyperspectral vegetation indices (NDVI, EVI) to classify tree species with high accuracy [5]. The partial least squares-discriminant analysis (PLS-DA) model achieved 100% accuracy in discriminating species by integrating these complementary feature sets [5].
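
A minimal sketch of feature-level fusion follows, assuming one record per tree crown with band reflectances from the hyperspectral sensor and height metrics from LiDAR. NDVI and EVI use their standard formulas. The dictionary keys, the sample values, and the choice of four features are hypothetical, and the classifier itself (PLS-DA in the cited work) is not reproduced here.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index."""
    return (nir - red) / (nir + red)

def evi(nir, red, blue):
    """Enhanced Vegetation Index with the standard coefficients."""
    return 2.5 * (nir - red) / (nir + 6.0 * red - 7.5 * blue + 1.0)

def fuse_features(spectral, structural):
    """Concatenate spectral indices and LiDAR structural metrics for one crown."""
    return [
        ndvi(spectral["nir"], spectral["red"]),
        evi(spectral["nir"], spectral["red"], spectral["blue"]),
        structural["canopy_height_m"],
        structural["height_std_m"],   # simple stand-in for a canopy texture metric
    ]

# Hypothetical crown: reflectances are unitless (0-1), heights are in meters.
crown_spectral = {"nir": 0.45, "red": 0.05, "blue": 0.03}
crown_structural = {"canopy_height_m": 18.2, "height_std_m": 2.1}

features = fuse_features(crown_spectral, crown_structural)
```

The fused vector is what a downstream classifier would consume. Because the features have different units and ranges, scaling them before classification is usually advisable.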

Decision-level fusion combines outputs from separate analyses of each data stream, offering flexibility and robustness to missing data. In the VALISENS system, object detections from thermal cameras, RGB cameras, and LiDAR are combined using late fusion to improve reliability for vulnerable road user protection [9]. This approach is particularly valuable when sensors have different coverage areas or operating constraints.
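
The robustness of late fusion to missing data can be illustrated with a weighted average of per-sensor detection confidences that simply skips sensors with no output. This is a generic sketch, not the VALISENS implementation: the weights, threshold, and sensor names are assumptions chosen for the example.

```python
def late_fusion(confidences, weights, threshold=0.5):
    """Combine per-sensor confidences (None = sensor unavailable) into one decision."""
    available = {s: c for s, c in confidences.items() if c is not None}
    if not available:
        return None, False
    total_weight = sum(weights[s] for s in available)
    # Renormalize by the weights of the sensors that actually reported.
    score = sum(weights[s] * c for s, c in available.items()) / total_weight
    return score, score >= threshold

# Hypothetical reliability weights for each sensor.
weights = {"thermal": 0.4, "rgb": 0.4, "lidar": 0.2}

# Daytime: all three sensors report a confidence for the same candidate object.
score_all, detected_all = late_fusion(
    {"thermal": 0.9, "rgb": 0.6, "lidar": 0.7}, weights)

# Night: the RGB camera yields nothing, but a decision is still produced.
score_night, detected_night = late_fusion(
    {"thermal": 0.9, "rgb": None, "lidar": 0.7}, weights)
```

Renormalizing over the available sensors keeps the score on the same 0 to 1 scale whether or not every sensor contributed, which is the property that makes this style of fusion tolerant of gaps.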

Case Studies and Validation

Forest Aboveground Biomass Estimation

One comprehensive implementation of the multisensor paradigm estimated forest aboveground biomass (AGB) in the Ozark and Ouachita forests using a machine learning approach that combined data from Sentinel-2 (optical), Sentinel-1 (SAR), and GEDI (LiDAR) on the Google Earth Engine platform [4]. The random forest regression model used 34 of 154 candidate variables representing topographical, spectral, and textural factors. It achieved R-squared and RMSE values of 0.95 and 18.46 on the training dataset and 0.75 and 34.52 on the validation dataset, respectively [4].
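
For readers less familiar with the two reported metrics, the sketch below computes R-squared and RMSE from paired observed and predicted biomass values. The four data points are invented for illustration and have no connection to the cited study.

```python
import math

def rmse(observed, predicted):
    """Root mean squared error, in the same units as the observations."""
    squared = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared) / len(squared))

def r_squared(observed, predicted):
    """Coefficient of determination: 1 - residual sum of squares / total sum of squares."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

# Hypothetical plot-level biomass values (Mg/ha).
observed = [100.0, 150.0, 200.0, 250.0]
predicted = [110.0, 140.0, 210.0, 240.0]

model_rmse = rmse(observed, predicted)
model_r2 = r_squared(observed, predicted)
```

Computing both metrics separately on training and held-out data, as the study reports, shows how much performance drops on unseen plots. The validation figures are the more informative guide to real-world accuracy.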

Table 3: Sensor contributions to aboveground biomass estimation model

| Sensor Platform | Data Type | Key Predictor Variables | Contribution to Model Accuracy |
|---|---|---|---|
| GEDI | Waveform LiDAR | RH100, RH98, RH95 (height metrics) | Primary predictor (~40% variance explained) |
| Sentinel-2 | Multispectral optical | NDVI, EVI, LAI, textural features | ~35% variance explained |
| Sentinel-1 | C-band SAR | Backscatter coefficients, polarization | ~15% variance explained |
| Auxiliary Data | Topographic | Elevation, slope, aspect | ~10% variance explained |

This multisensor approach effectively addressed the saturation problem common in single-sensor AGB estimation, particularly the limitation of SAR and optical sensors in high-biomass forests. The integration of structural information from GEDI with spectral indices from Sentinel-2 and penetration capabilities from Sentinel-1 created a robust model that captured the diverse characteristics of forest ecosystems [4]. The study further demonstrated historical extrapolation using Landsat 8 data, with mean biomass values ranging from approximately 100 Mg/ha to 200 Mg/ha from 2015 to 2023, providing critical information for carbon sequestration monitoring and sustainable forest management [4].

Urban Vegetation Classification for Ecological Services

Another validated implementation focused on urban vegetation classification using a multi-sensor framework combining high-resolution aerial photography, LiDAR-derived products, and hyperspectral data [5]. This research addressed the critical need for detailed vegetation mapping in heterogeneous urban environments, where traditional approaches struggle with complex land cover patterns. Two classification methods were employed: object-based image analysis (OBIA) achieved 95.30% overall accuracy, while the partial least squares-discriminant analysis (PLS-DA) model achieved 100% accuracy in discriminating tree species [5].

The methodological protocol involved:

- Data acquisition using airborne platforms collecting synchronized hyperspectral imagery (400-2500nm range) and LiDAR point clouds

- Preprocessing including radiometric correction, geometric registration, and noise filtering

- Feature extraction including structural metrics from LiDAR (canopy height, volume, texture) and spectral indices from hyperspectral data (continuum removal, absorption features)

- Classification using both OBIA (segmenting images into objects based on texture, shape, and spectral properties) and PLS-DA (maximizing separation between predefined classes)

This urban vegetation classification framework directly supports improved management of ecological services by enabling precise mapping of species-specific contributions to air purification, urban heat island mitigation, and carbon sequestration. The identification of high-performing species for specific ecosystem services allows urban planners to optimize green infrastructure investments for maximum environmental benefit [5].

River System Monitoring Through Multi-Agent Sensing

A distributed multisensor approach for environmental monitoring of the Ystwyth River in Wales demonstrated the power of combining in-situ sensor networks with mobile application technology for real-time water quality assessment [8]. The system deployed AquaSonde sensors to measure key parameters including pH, electrical conductivity, temperature, dissolved oxygen, total dissolved solids, and nitrate levels at 15-minute intervals, capturing short-term fluctuations linked to rainfall and agricultural activity [8].

The implementation followed a multi-agent architecture with the following components:

- In-situ sensor nodes continuously monitoring water quality parameters

- Data transmission system using LoRaWAN technology for efficient communication from remote locations

- Cloud-based data processing for quality control and aggregation

- Interactive web and mobile application built on the Mapbox framework for real-time data visualization

- Stakeholder notification system alerting farmers, environmental agencies, and the public to water quality changes

This system identified improved grassland and livestock farming as major influences on water-quality variability, providing actionable information for targeted catchment management. The high-frequency monitoring captured event-driven pollution episodes that would be missed through traditional periodic sampling, demonstrating the critical importance of temporal resolution in understanding ecological dynamics [8].
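
To make the value of 15-minute sampling concrete, the sketch below flags readings that jump well above a rolling baseline, which is one simple way a cloud-side quality-control step could trigger stakeholder alerts. The detection rule, window length, multiplier, and nitrate values are all hypothetical and are not described in the cited system.

```python
from statistics import median

def flag_events(readings, window=8, factor=1.5):
    """Return indices where a reading exceeds factor x the median of the preceding window."""
    flagged = []
    for i in range(window, len(readings)):
        baseline = median(readings[i - window:i])
        if readings[i] > factor * baseline:
            flagged.append(i)
    return flagged

# Hypothetical nitrate readings (mg/L) at 15-minute intervals; window=8 is two hours.
nitrate = [2.0, 2.1, 1.9, 2.0, 2.2, 2.0, 2.1, 2.0, 2.1, 4.8, 5.2, 2.3, 2.1]

events = flag_events(nitrate)

# The spike lasts two readings, i.e. roughly 30 minutes.
spike_duration_minutes = len(events) * 15
```

In this invented series the pulse is over within about half an hour, so a weekly or even daily grab sample would almost certainly miss it. A median baseline is used because it is less distorted by the spike itself than a mean would be.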

The Researcher's Toolkit: Essential Technologies and Reagents

Successful implementation of the multisensor paradigm requires familiarity with a suite of technologies and analytical tools. The following table summarizes essential components for establishing a multisensor research program.

Table 4: Essential research toolkit for multisensor ecology

| Tool Category | Specific Technologies/Platforms | Function | Implementation Considerations |
|---|---|---|---|
| Sensor Platforms | Camera traps, bioacoustic recorders, airborne LiDAR, hyperspectral imagers, environmental sensors | Data acquisition across ecological domains | Compatibility, power requirements, environmental robustness [3] [6] |
| Positioning & Sync | GPS, GNSS receivers, NTP servers, V2X communication | Spatio-temporal registration and synchronization | Precision requirements, deployment environment, connectivity [6] [9] |
| Computing Infrastructure | Google Earth Engine, NVIDIA Jetson, Raspberry Pi, cloud computing platforms | Data processing, storage, and analysis | Computational demands, scalability, cost [3] [4] [6] |
| Analytical Frameworks | Random Forest, PLS-DA, OBIA, neural networks | Data fusion, pattern recognition, classification | Data requirements, interpretability needs, computational efficiency [4] [5] |
| Data Standards | OGC standards, Darwin Core, Ecological Metadata Language | Interoperability, reproducibility, data sharing | Domain-specific requirements, existing infrastructure [2] [3] |

The multisensor paradigm represents a fundamental shift in ecological research methodology, enabling comprehensive ecosystem monitoring through synergistic integration of complementary sensing technologies. This approach moves beyond the limitations of single-sensor studies to capture the multidimensional nature of ecological systems, providing unprecedented insights into patterns and processes across spatial and temporal scales. The technical framework outlined in this guide provides researchers with a structured methodology for designing, implementing, and analyzing multisensor research programs across diverse ecological contexts.

Future developments in this field will likely focus on several key areas: increased automation through artificial intelligence and edge computing, enhanced sensor miniaturization and energy efficiency, improved interoperability through standardized data protocols, and greater integration of citizen science and participatory monitoring approaches [2] [8]. The emerging capability to create conservation digital twins (dynamic virtual replicas of ecosystems updated in near real-time by multisensor networks) represents a particularly promising direction for predictive ecology and evidence-based environmental management [3]. As these technologies mature, the multisensor paradigm will continue to transform our understanding of ecological systems and enhance our capacity to address pressing environmental challenges in an increasingly human-modified world.

Ecology is undergoing a profound transformation driven by the convergence of three technological developments: the reduced cost of environmental sensors, advances in sensor technology, and the accessibility of sophisticated data analytics, particularly artificial intelligence (AI). This section details how these drivers are enabling a new era of multisensor approaches in ecological research. By integrating autonomous, multimodal data streams, researchers can now generate high-resolution, multidimensional data on biodiversity and ecosystem dynamics at unprecedented spatial and temporal scales, moving ecological monitoring from fragmented, labor-intensive surveys to integrated, automated, and operational pipelines.

Traditional ecological monitoring has been constrained by the high cost of equipment, logistical challenges of data collection in remote areas, and the labor-intensive nature of data processing. This often resulted in data that was spatially and temporally limited, hindering the ability to understand complex, dynamic ecosystems [2]. The confluence of three key technological drivers is dismantling these barriers:

- Reduced Costs: The development of low-cost sensors (LCS) has democratized access to monitoring tools, enabling dense sensor deployments that were previously financially prohibitive [10] [11].

- Advanced Sensors: Innovations in sensor technology, including miniaturized optical, acoustic, and chemical sensors, allow for the collection of rich, multimodal data (e.g., images, sounds, volatile organic compounds) [2] [12].

- Accessible Analytics: The advent of user-friendly AI and machine learning (ML) tools has automated the processing of massive datasets, extracting ecological insights from terabytes of raw sensor data with speed and accuracy that surpass manual methods [13] [12].

These drivers are synergistic. The data deluge from affordable, advanced sensors necessitates AI for analysis, while the insights from AI validate and enhance the value of sensor networks, creating a positive feedback loop that is revolutionizing ecological research.

The Core Technological Drivers

Driver 1: Proliferation of Low-Cost Sensors (LCS)

Low-cost sensors are defined not by a specific price point but by being significantly more affordable than traditional reference-grade instrumentation, making dense spatial monitoring networks economically viable [11].

Quantitative Cost-Benefit Analysis: The cost-effectiveness of these technologies is demonstrated through comparative studies. The transition from traditional to AI-powered methods yields dramatic improvements in efficiency and capability, as summarized in Table 1.

Table 1: Comparative Analysis of Traditional vs. AI-Powered Ecological Monitoring

| Survey/Monitoring Aspect | Traditional Method (Estimated Outcome) | AI-Powered Method (Estimated Outcome) | Estimated Improvement (%) |
|---|---|---|---|
| Vegetation Analysis Accuracy | 72% (manual identification) | 92%+ (AI automated classification) | +28% |
| Species Detected per Hectare | Up to 400 species (sampled) | Up to 10,000 species (exhaustive) | +2400% |
| Time Required per Survey | Several days to weeks | Real-time or within hours | -99% |
| Resource Savings (Manpower & Cost) | High labor and operational costs | Minimal manual intervention | Up to 80% |
| Data Update Frequency | Monthly or less | Daily to Real-time | +3000% |
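
The improvement column appears to report relative change from the traditional baseline. The short sketch below reproduces that arithmetic for three rows. Treating "monthly" as one update per 30 days is an assumption made here for the calculation.

```python
def percent_change(before, after):
    """Relative change from a baseline, expressed in percent."""
    return (after - before) / before * 100.0

# Vegetation analysis accuracy: 72% -> 92% (a relative gain, not percentage points).
accuracy_gain = percent_change(72, 92)

# Species detected per hectare: 400 -> 10,000.
species_gain = percent_change(400, 10_000)

# Update frequency: monthly -> daily, assuming 1 vs 30 updates per 30-day month.
update_gain = percent_change(1, 30)
```

The accuracy row works out to roughly +28% in relative terms, which corresponds to 20 percentage points in absolute terms. The update-frequency row gives +2900% under the 30-day assumption, so the table's +3000% is probably a rounded figure or the 30x ratio expressed as a percentage.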

A specific example from tiger and prey monitoring in Sumatra shows that integrating unstructured patrol data with systematic surveys improved the precision of species occupancy estimates by 14–42% while reducing operational costs 51-fold [14]. This demonstrates that LCS, when combined with intelligent data integration, can achieve high-quality results at a fraction of the traditional cost.

Driver 2: Advancements in Multisensor Technologies

Modern ecological research leverages a suite of automated sensors that operate across different electromagnetic and chemical spectra. These sensors form the hardware backbone of the multisensor approach.

Table 2: Key Sensor Technologies for Automated Ecological Monitoring

| Sensor Category | Example Technologies | Ecological Data Collected | Primary Applications |
|---|---|---|---|
| Electromagnetic Wave Recorders | Camera traps, multispectral/hyperspectral imagers (satellite, UAV), LiDAR | Images, 3D vegetation structure, habitat maps | Species identification and counting, vegetation health assessment, canopy structure modeling, land cover change detection [2] [15] [12] |
| Acoustic Wave Recorders | Microphones, hydrophones | Animal vocalizations, soundscapes | Detection of vocalizing species (birds, amphibians, mammals), behavioral studies, biodiversity acoustic indices [2] [12] |
| Chemical Recorders | Sensors for plant volatile organic compounds (pVOCs), environmental DNA (eDNA) samplers | Chemical signatures of plants/species, genetic material | Plant health and phenology monitoring, species presence/absence detection [2] [12] |

A prime example of integration is the AMMOD (Automated Multisensor stations for Monitoring of species Diversity) concept. Each AMMOD station combines autonomous samplers for insects, pollen, and spores with audio recorders, pVOC sensors, and camera traps. These stations are largely self-contained, capable of pre-processing data before transmission, and represent a fully realized multisensor platform for biodiversity assessment [2].

Driver 3: Accessible AI and Data Analytics

The massive, multimodal datasets generated by sensor networks are intractable for manual analysis. AI, particularly deep learning, has become the critical tool for turning data into knowledge.

Experimental Protocol: AI-Powered Sensor Calibration and Data Processing

A key application of AI is in calibrating low-cost sensors to improve their accuracy. The following protocol, derived from research on low-cost ozone sensors, details this process [16]:

- Objective: To improve the accuracy of raw readings from a low-cost multisensor module (e.g., ZPHS01B) for ground-level ozone (O3) measurement using machine learning models.

- Materials:

  - Low-cost multisensor module (e.g., ZPHS01B with nine sensors for O3, NO2, CO, CO2, PM, Temp, RH, etc.).

  - Reference-grade ozone monitoring station for ground-truth data.

  - Data logging infrastructure and computational resources (e.g., cloud platform).

- Methodology:

  - Data Collection: Co-locate the low-cost sensor module with a reference station for a significant period (e.g., 165-239 days) to collect paired data [16].

  - Feature Engineering: Perform exploratory data analysis. Use readings from all cross-sensitive sensors (e.g., NO2, temperature, relative humidity) as input features for the model, not just the primary O3 sensor reading. This accounts for interfering factors.

  - Model Training: Train ensemble machine learning models (e.g., Gradient Boosting, Random Forest) to predict the reference O3 values based on the raw low-cost sensor inputs.

  - Hyperparameter Tuning: Optimize model parameters using techniques like grid search or random search to maximize performance.

  - Validation: Validate the model on a held-out portion of the data not used during training.

- Result: The cited study achieved a 94.05% reduction in estimation error, attaining a Mean Absolute Error (MAE) of 4.022 µg/m³ and a Mean Relative Error (MRE) of 7.21% for O3 in a low-concentration scenario, outperforming traditional calibration methods [16].
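
The shape of this protocol (co-located paired data, cross-sensitive inputs as features, hold-out validation) can be sketched without any external libraries. To stay dependency-free, the example swaps the ensemble models named above for ordinary least squares, so it illustrates the workflow rather than the cited study's method. The data are synthetic and noise-free, and the "true" relationship between raw readings and reference ozone is invented.

```python
def fit_linear(X, y):
    """Least-squares fit y ~ b0 + b1*x1 + ... via normal equations and Gauss-Jordan elimination."""
    rows = [[1.0] + [float(v) for v in r] for r in X]   # prepend intercept column
    n = len(rows[0])
    # Augmented matrix [X^T X | X^T y].
    A = []
    for i in range(n):
        row = [sum(r[i] * r[j] for r in rows) for j in range(n)]
        row.append(sum(r[i] * t for r, t in zip(rows, y)))
        A.append(row)
    for col in range(n):
        pivot = max(range(col, n), key=lambda k: abs(A[k][col]))
        A[col], A[pivot] = A[pivot], A[col]
        scale = A[col][col]
        A[col] = [v / scale for v in A[col]]
        for k in range(n):
            if k != col:
                factor = A[k][col]
                A[k] = [vk - factor * vc for vk, vc in zip(A[k], A[col])]
    return [A[i][n] for i in range(n)]

def predict(coefs, features):
    """Apply fitted coefficients (intercept first) to one feature tuple."""
    return coefs[0] + sum(c * f for c, f in zip(coefs[1:], features))

def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)

def true_o3(raw_o3, no2, temp):
    """Invented relationship standing in for the reference station (not from the study)."""
    return 5.0 + 0.8 * raw_o3 - 0.3 * no2 + 0.2 * temp

# Synthetic co-location samples: (raw O3 reading, NO2 reading, temperature).
samples = [(40, 10, 15), (55, 20, 18), (60, 5, 25), (35, 30, 10),
           (70, 15, 30), (50, 25, 12), (45, 8, 22), (65, 18, 28)]
reference = [true_o3(*s) for s in samples]

# Hold out the last two samples for validation.
train_X, test_X = samples[:6], samples[6:]
train_y, test_y = reference[:6], reference[6:]

coefs = fit_linear(train_X, train_y)
calibrated_mae = mae(test_y, [predict(coefs, s) for s in test_X])
raw_mae = mae(test_y, [s[0] for s in test_X])   # error if the raw O3 reading is used as-is
```

Because the synthetic data contain no noise, the calibrated error is essentially zero. Real co-location data would leave a residual error, and nonlinear interference effects are the reason the cited work turns to ensemble models instead of a linear fit.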

Beyond calibration, AI is directly used for ecological inference. Deep learning models, specifically Convolutional Neural Networks (CNNs), automate the detection, classification, and counting of species in images from camera traps or drones [13] [12]. Similarly, computer audition models analyze audio recordings to identify species by their calls [12]. These tools are increasingly accessible through cloud-based platforms and open-source software libraries, making them available to researchers without deep expertise in computer science.

Integrated Workflows: The Multisensor Approach in Action

The true power of these drivers is realized when they are combined into end-to-end automated monitoring pipelines. The following diagram illustrates the logical workflow and data flow from collection to ecological insight.

Diagram: Logical Workflow of an Automated Multisensor Monitoring Pipeline. Data flows from multiple advanced sensors to a centralized AI processing unit, which synthesizes the raw data into actionable ecological knowledge.

Case Study: Targeted Policy Assessment with Low-Cost Sensor Networks

A practical application of this integrated workflow is the targeted assessment of environmental policies, such as evaluating the impact of urban traffic-calming measures on local air quality.

Experimental Protocol: Assessing Mobility Policy Impacts on Air Quality This protocol follows a general rubric for using low-cost sensors for targeted policy assessment [10]:

- Objective: To quantify the change in local air pollutant concentrations (e.g., NO2, PM2.5) resulting from a specific policy intervention (e.g., pedestrianization, new bike lanes).

- Materials:

- A cohort of calibrated low-cost air quality sensor units (e.g., Alphasense electrochemical cells for NO2, Plantower optical sensors for PM2.5, integrated in platforms like EarthSense Zephyr) [10].

- Power supply and data transmission infrastructure (e.g., LTE/Wi-Fi).

- Calibration equipment and reference data.

- Methodology:

- Baseline Monitoring (Before Intervention): Deploy a small number of sensors (e.g., a dozen or fewer) in the area targeted for policy change and in control locations. Collect data for long enough to establish a robust baseline [10].

- Policy Implementation: The traffic-calming measure (e.g., street pedestrianization) is enacted.

- Post-Intervention Monitoring: Continue monitoring with the same sensor deployment to capture the new conditions.

- Data Analysis: Compare pre- and post-intervention data, normalizing for background concentrations. Use statistical tests to identify significant changes. For example, studies in Berlin showed that traffic restriction reduced local NO2 to urban background levels without increasing pollution on neighboring streets, while no significant changes in PM2.5 were detected [10].

- Result: The study provides high-resolution, real-world quantification of the policy's effect, creating an evidence base for urban planning and public health decisions. The portability and lower cost of the sensors make this a reusable and cost-effective method for evaluating multiple policies over time [10].
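The data-analysis step can be illustrated with a small sketch that subtracts a control-site (background) reading from each intervention-site reading and then compares the before and after periods. All numbers are hypothetical, the function names are illustrative, and Welch's t statistic stands in for whichever statistical test a real study would justify; a p-value would additionally need a t-distribution routine.

```python
from math import sqrt
from statistics import mean, variance

def background_excess(site, control):
    """Concentration above background: paired site minus control readings."""
    return [s - c for s, c in zip(site, control)]

def welch_t(before, after):
    """Welch's t statistic for the change in mean from `before` to `after`."""
    standard_error = sqrt(variance(before) / len(before) + variance(after) / len(after))
    return (mean(after) - mean(before)) / standard_error

# Hypothetical daily mean NO2 in µg/m³ (illustrative values only).
site_before, control_before = [40.0, 42.0, 38.0, 40.0], [25.0, 26.0, 24.0, 25.0]
site_after, control_after = [30.0, 28.0, 32.0, 30.0], [24.0, 23.0, 25.0, 24.0]

excess_before = background_excess(site_before, control_before)
excess_after = background_excess(site_after, control_after)

effect = mean(excess_after) - mean(excess_before)  # change net of background
t_stat = welch_t(excess_before, excess_after)
print(f"Background-normalized change: {effect:.1f} µg/m³ (t = {t_stat:.2f})")
```

Normalizing against control locations keeps a city-wide change, such as a windy week, from being mistaken for a policy effect.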

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key components and tools essential for establishing modern, automated ecological monitoring systems.

Table 3: Essential Research Toolkit for Multisensor Ecology

| Item / Solution | Function / Application |

|---|---|

| Low-Cost Multisensor Module (e.g., ZPHS01B) | A single unit integrating multiple sensors (e.g., for O3, NO2, PM, temperature, humidity) for comprehensive, co-located environmental data collection [16]. |

| Calibrated Air Quality Sensor Units (e.g., EarthSense Zephyr with Alphasense/PMS5003 sensors) | Mobile, robust sensor packages used for targeted deployments, such as policy impact assessments, providing calibrated data for specific pollutants like NO2 and PM2.5 [10]. |

| Reference-Grade Monitoring Station | Provides ground-truth data essential for the calibration and validation of low-cost sensor networks, ensuring data accuracy and reliability [16] [11]. |

| Machine Learning Models (e.g., Gradient Boosting, Random Forest, CNNs) | The core analytical tool for calibrating sensor data, classifying species in images and audio, and extracting ecological metrics from complex datasets [16] [12]. |

| Database of Training Data (e.g., DNA barcodes, animal sounds, image libraries) | Critical reference libraries used to train and validate AI models for automated species identification and classification [2]. |

| Acoustic Recorders (e.g., microphones, hydrophones) | Autonomous devices for recording soundscapes, used to monitor vocalizing species and assess acoustic biodiversity [2] [12]. |

| Camera Traps | Motion-activated cameras for remotely capturing images of wildlife, enabling population studies and behavioral observation without human presence [2] [14]. |

| Satellite & UAV-based Remote Sensing Platforms | Provide large-scale and high-resolution data on vegetation structure, health, and land cover change, which can be fused with in-situ sensor data [15] [13]. |

The convergence of reduced costs, advanced sensors, and accessible analytics is not merely an incremental improvement but a fundamental shift in ecological methodology. The multisensor approach, powered by this technological triad, enables the collection and analysis of high-resolution, multidimensional data at scales previously unimaginable. This allows researchers to move from snapshots of ecological communities to dynamic, continuous films, dramatically improving our ability to understand complex ecosystem processes, forecast responses to global change, and inform effective conservation strategies. As these technologies continue to evolve and become even more accessible, they promise to fully operationalize large-scale, automated ecological monitoring, providing the critical data needed to safeguard global biodiversity.

Modern ecological research is undergoing a transformative shift from single-technology assessments to integrated multisensor approaches that capture complementary aspects of complex ecosystems. This paradigm recognizes that no single sensor can fully characterize the multidimensional nature of ecological systems, where structure, composition, function, and behavior interact across spatial and temporal scales. The integration of LiDAR, hyperspectral imaging, thermal sensing, and biologging technologies enables researchers to overcome the limitations inherent in individual methodologies, providing a more holistic understanding of ecosystem dynamics [2] [17].

The fundamental strength of multisensor integration lies in the synergistic combination of structural, spectral, and behavioral data. For instance, while LiDAR captures the three-dimensional architecture of forests, hyperspectral sensors reveal their chemical and physiological properties through spectral signatures. When combined, these technologies enable researchers to link forest structure with species composition and functional traits—a connection nearly impossible to establish with either technology alone [18] [17]. This whitepaper provides an in-depth technical examination of core sensor technologies, their individual capabilities, integration methodologies, and implementation frameworks for advancing ecological research.

Technology-Specific Technical Specifications

LiDAR (Light Detection and Ranging)

Operating Principle: LiDAR is an active remote sensing technology that measures the time delay between emitted laser pulses and their returns to calculate precise distances to objects or surfaces. Terrestrial Laser Scanning (TLS) represents the tripod-mounted ground-based implementation that captures extremely detailed structural measurements from below the canopy [19].
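The ranging principle reduces to one line of arithmetic: the pulse travels to the target and back, so the range is half the round-trip time multiplied by the speed of light. The sketch below assumes a vertically pointing beam and uses hypothetical return times; it also ignores the small correction for the refractive index of air.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # vacuum value; air correction ignored

def range_from_time_of_flight(round_trip_s):
    """One-way distance in metres for a measured round-trip time in seconds."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0

# Hypothetical return times (nanoseconds) for one pulse with three returns:
# canopy top, a mid-canopy branch, and the ground.
return_times_ns = [200.0, 233.4, 266.8]
ranges_m = [range_from_time_of_flight(t * 1e-9) for t in return_times_ns]

# For a vertical beam, last-return range minus first-return range
# approximates the height of the canopy top above the ground.
canopy_height_m = ranges_m[-1] - ranges_m[0]
print([round(r, 2) for r in ranges_m], round(canopy_height_m, 2))
```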

Key Technical Specifications:

- Pulse Rates: Modern systems capture millions of points per second

- Accuracy: Centimeter-level vertical accuracy for topographic modeling

- Wavelengths: Typically near-infrared (e.g., 905nm, 1064nm) or shortwave infrared (e.g., 1550nm); pulses reach the sub-canopy and ground through gaps in the foliage

- Platforms: Terrestrial (TLS), airborne (ALS), UAV-mounted, and spaceborne (GEDI) [19] [20]

Ecological Applications: LiDAR excels at quantifying three-dimensional forest structure, including canopy height, canopy cover, vertical foliage distribution, and gap dynamics. The technology can penetrate vegetation layers to characterize sub-canopy topography and ground surface elevation, enabling the development of detailed digital elevation models (DEMs) even in densely forested areas [21] [19]. In forest degradation and regeneration studies, LiDAR has proven particularly effective for discriminating second-growth from old-growth forests based on structural differences [17].

Table 1: LiDAR Systems and Their Ecological Applications

| Platform Type | Spatial Resolution / Point Density | Key Measurement Capabilities | Primary Ecological Applications |

|---|---|---|---|

| Terrestrial (TLS) | Sub-centimeter to centimeter | Trunk diameter, leaf angle distribution, 3D canopy architecture | Individual tree modeling, biomass estimation, radiative transfer studies |

| Airborne (ALS) | 0.5-5 points/m² | Canopy height models, topographic mapping, vegetation density | Landscape-scale forest inventory, habitat mapping, carbon stock assessment |

| UAV-mounted | 100-500 points/m² | High-resolution 3D structure of small areas | Precision forestry, research plot monitoring, restoration assessment |

| Spaceborne (GEDI) | 25m diameter footprints | Global canopy height, vertical structure metrics | Global biomass estimation, forest monitoring across biomes |

Hyperspectral Imaging

Operating Principle: Hyperspectral imaging is a passive optical technology that captures reflected light across hundreds of narrow, contiguous spectral bands (typically 10nm bandwidth or narrower), generating a continuous spectral signature for each pixel in the image. Unlike multispectral imaging, which captures a few discrete broad bands, hyperspectral sensors produce a near-continuous reflectance spectrum from visible to shortwave infrared wavelengths [21] [18].

Key Technical Specifications:

- Spectral Resolution: 3-10nm bandwidth across hundreds of channels

- Spectral Range: 400-2500nm (VNIR, SWIR)

- Spatial Resolution: Sub-meter to several meters depending on platform

- Data Structure: Three-dimensional data cube (x, y, λ) [18]

Ecological Applications: Hyperspectral data enables species identification through spectral fingerprinting, detection of vegetation stress and disease before visible symptoms appear, and assessment of biochemical properties including chlorophyll, nitrogen, and water content. The technology has demonstrated superior performance for discriminating degraded from undisturbed forests where structural changes may be subtle but physiological alterations are detectable spectrally [17]. In complex environments like multi-layered mangrove forests and mixed-species woodlands, hyperspectral data provides the spectral discrimination necessary for accurate species classification [18].

Table 2: Hyperspectral Regions and Their Ecological Significance

| Spectral Region | Wavelength Range | Key Absorption Features | Ecological Applications |

|---|---|---|---|

| Visible (VIS) | 400-700nm | Chlorophyll absorption (450nm, 670nm), carotenoids | Photosynthetic pigment estimation, plant health assessment |

| Near-Infrared (NIR) | 700-1300nm | Leaf water content, leaf internal structure | Vegetation vigor, biomass estimation, species discrimination |

| Shortwave Infrared (SWIR) | 1300-2500nm | Water, lignin, cellulose, nitrogen content | Water stress detection, leaf chemistry, fuel moisture content |
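The spectral regions in Table 2 are commonly exploited through narrow-band indices. The sketch below picks the bands nearest to chosen wavelengths and computes normalized differences; the band centres and reflectance values are hypothetical, and the specific band pairs are common illustrative choices rather than ones prescribed by the cited studies.

```python
def nearest_band(wavelengths_nm, target_nm):
    """Index of the band whose centre wavelength is closest to target_nm."""
    return min(range(len(wavelengths_nm)),
               key=lambda i: abs(wavelengths_nm[i] - target_nm))

def normalized_difference(spectrum, wavelengths_nm, band_a_nm, band_b_nm):
    """(a - b) / (a + b) for the bands nearest to the two target wavelengths."""
    a = spectrum[nearest_band(wavelengths_nm, band_a_nm)]
    b = spectrum[nearest_band(wavelengths_nm, band_b_nm)]
    return (a - b) / (a + b)

# Hypothetical subset of band centres (nm) and reflectance of a healthy leaf.
wavelengths_nm = [450, 550, 670, 800, 1240, 1650]
healthy_leaf = [0.04, 0.10, 0.05, 0.45, 0.40, 0.25]

# Red (chlorophyll absorption) versus NIR (leaf structure): a vigor index.
ndvi = normalized_difference(healthy_leaf, wavelengths_nm, 800, 670)
# NIR versus SWIR (water absorption): sensitive to canopy water content.
nir_swir_index = normalized_difference(healthy_leaf, wavelengths_nm, 800, 1650)
print(round(ndvi, 3), round(nir_swir_index, 3))
```

With hundreds of contiguous bands available, the same function can target any absorption feature simply by changing the two wavelengths.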

Thermal Sensing

Operating Principle: Thermal sensors detect emitted radiation in the thermal infrared portion of the electromagnetic spectrum (approximately 3-14μm), which is directly related to the surface temperature of objects. Unlike LiDAR and hyperspectral systems that primarily rely on reflected energy, thermal sensors measure the inherent radiation emitted by all objects above absolute zero.
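The link between emitted radiance and temperature is given by Planck's law, which can be inverted to obtain a brightness temperature from a measured spectral radiance. The sketch below assumes a perfect blackbody (emissivity of 1) and a single wavelength; real retrievals must also correct for surface emissivity and atmospheric effects.

```python
from math import exp, log

H = 6.62607015e-34   # Planck constant, J·s
C = 2.99792458e8     # speed of light, m/s
K_B = 1.380649e-23   # Boltzmann constant, J/K

def planck_radiance(wavelength_m, temperature_k):
    """Blackbody spectral radiance in W·m⁻²·sr⁻¹·m⁻¹."""
    return (2.0 * H * C**2 / wavelength_m**5) / (
        exp(H * C / (wavelength_m * K_B * temperature_k)) - 1.0)

def brightness_temperature(wavelength_m, radiance):
    """Invert Planck's law: temperature (K) of a blackbody emitting `radiance`."""
    return (H * C / (wavelength_m * K_B)) / log(
        1.0 + 2.0 * H * C**2 / (wavelength_m**5 * radiance))

wavelength = 10e-6  # 10 µm, inside the long-wave IR window
radiance_300k = planck_radiance(wavelength, 300.0)
recovered_k = brightness_temperature(wavelength, radiance_300k)
print(f"{radiance_300k:.3e} W·m⁻²·sr⁻¹·m⁻¹ -> {recovered_k:.2f} K")
```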

Key Technical Specifications:

- Spectral Range: 3-5μm (mid-wave IR) or 8-14μm (long-wave IR)

- Measurement Units: Surface temperature (°C or K) or radiance (W·m⁻²·sr⁻¹)

- Platforms: Satellite, airborne, UAV, and ground-based systems

Ecological Applications: Thermal data provides critical information on plant water stress through canopy temperature measurements, with stressed vegetation typically exhibiting elevated temperatures due to reduced evaporative cooling. Thermal sensors also enable wildlife population monitoring through detection of endothermic animals, particularly in nocturnal surveys, and identification of microclimatic variations across landscapes that influence species distributions and ecosystem processes.

Biologging Suites

Operating Principle: Biologging involves attaching miniaturized sensor packages to individual animals to record their behavior, physiology, and environmental encounters. Modern biologging systems integrate multiple sensors in compact, animal-borne packages that can record and sometimes transmit data throughout animal movements [22].

Key Technical Specifications:

- Core Sensors: Inertial Measurement Units (IMU: accelerometer, gyroscope, magnetometer), depth, temperature, light

- Additional Sensors: Video cameras, hydrophones, GPS, environmental sensors

- Data Logging: Archival (recovery required) or transmission-based (satellite, RF)

- Attachment Methods: Suction cups, harnesses, direct attachment [22]

Ecological Applications: Biologging enables the investigation of fine-scale behavioral ecology, including foraging strategies, social interactions, and energy expenditure. Multi-sensor tags have been successfully deployed on elusive species like durophagous stingrays, capturing feeding events through integrated video and acoustic sensors that record shell fracture sounds during predation [22]. These technologies are particularly valuable for studying species that are difficult to observe directly due to their size, habitat, or elusive nature.

Table 3: Biologging Sensor Capabilities and Behavioral Metrics

| Sensor Type | Measurement Parameters | Sampling Frequency | Derived Behavioral Metrics |

|---|---|---|---|

| Accelerometer | Tri-axial acceleration | 10-100Hz | Activity patterns, energy expenditure, gait, feeding events |

| Gyroscope | Angular velocity (rotation rate) | 10-100Hz | Body pitch, roll, yaw, turning angles |

| Magnetometer | Magnetic field strength | 1-10Hz | Heading direction, migratory pathways |

| Depth Sensor | Pressure | 0.1-1Hz | Diving profiles, depth preferences, tidal movements |

| Video Camera | Visual record of behavior | 30fps | Context-specific behavior, prey identification, social interactions |

| Hydrophone/Acoustic | Sound recordings | 44.1kHz | Predator-prey interactions, vocalizations, environmental acoustics |
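As one example of deriving an activity or energy-expenditure proxy from tri-axial acceleration, the sketch below computes vectorial dynamic body acceleration (VeDBA). This is a widely used proxy but is an assumption here, since the cited work does not name a specific metric. The static (gravity and posture) component is estimated with a running mean whose window length is a hypothetical choice.

```python
from math import sqrt

def running_mean(values, window):
    """Centred running mean; the window shrinks near the ends of the series."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        segment = values[max(0, i - half): i + half + 1]
        smoothed.append(sum(segment) / len(segment))
    return smoothed

def vedba(ax, ay, az, window):
    """Per-sample VeDBA: magnitude of acceleration after removing the static part."""
    sx, sy, sz = (running_mean(axis, window) for axis in (ax, ay, az))
    return [sqrt((x - mx) ** 2 + (y - my) ** 2 + (z - mz) ** 2)
            for x, mx, y, my, z, mz in zip(ax, sx, ay, sy, az, sz)]

# Hypothetical 10-sample records in units of g.
resting = vedba([0.0] * 10, [0.0] * 10, [1.0] * 10, window=5)
swimming = vedba([0.0] * 10, [0.0] * 10, [0.5, 1.5] * 5, window=5)
print(max(resting), round(sum(swimming) / len(swimming), 3))
```

A motionless tag yields zero dynamic acceleration, while rhythmic movement produces a sustained positive signal that can be averaged per time bin.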

Integrated Multisensor Methodologies

Data Fusion Approaches

Pixel-Level Fusion: This approach involves the direct combination of raw data from multiple sensors before feature extraction. For example, hyperspectral and LiDAR data can be fused at the pixel level through co-registration techniques that align the spectral and structural information into a unified data structure. The Trento dataset demonstrates this approach, integrating 63-band hyperspectral data with LiDAR-derived Digital Surface Models at 1m spatial resolution [18]. While computationally demanding, pixel-level fusion preserves the original information content from both sensors, enabling joint analysis of spectral and structural features at their native resolutions.

Feature-Level Fusion: In this methodology, features are first extracted from each sensor's data independently, then combined for subsequent analysis. For vegetation classification, this might involve deriving structural metrics from LiDAR (canopy height, cover, complexity) and spectral indices from hyperspectral data (red edge position, water absorption indices), which are then concatenated into a unified feature vector for machine learning classification [18] [17]. Feature-level fusion has demonstrated significant improvements in classification accuracy, with one Amazon study reporting up to 12% improvement in distinguishing forest degradation and regeneration stages compared to single-data sources [17].
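A minimal sketch of feature-level fusion follows. Structural and spectral features are extracted separately and concatenated into one vector per plot before classification. The feature values and class labels are hypothetical, all features are assumed to be pre-scaled to the range 0 to 1, and a simple nearest-centroid classifier stands in for the Random Forest or similar models used in the cited studies.

```python
from math import dist  # Python 3.8+

def fuse_features(lidar_metrics, spectral_indices):
    """Concatenate per-sensor feature tuples into one feature vector."""
    return list(lidar_metrics) + list(spectral_indices)

def fit_centroids(vectors, labels):
    """Mean feature vector per class (stand-in for a real classifier)."""
    grouped = {}
    for vector, label in zip(vectors, labels):
        grouped.setdefault(label, []).append(vector)
    return {label: [sum(col) / len(col) for col in zip(*rows)]
            for label, rows in grouped.items()}

def predict(centroids, vector):
    """Label of the class centroid closest to the fused vector."""
    return min(centroids, key=lambda label: dist(centroids[label], vector))

# Hypothetical plots: (relative canopy height, canopy cover), (NDVI,), label.
training = [
    ((0.90, 0.95), (0.85,), "undisturbed"),
    ((0.85, 0.92), (0.83,), "undisturbed"),
    ((0.50, 0.90), (0.88,), "second_growth"),
    ((0.45, 0.88), (0.90,), "second_growth"),
    ((0.60, 0.50), (0.60,), "degraded"),
    ((0.65, 0.55), (0.62,), "degraded"),
]
vectors = [fuse_features(lidar, hsi) for lidar, hsi, _ in training]
labels = [label for _, _, label in training]
centroids = fit_centroids(vectors, labels)

predicted = predict(centroids, fuse_features((0.55, 0.87), (0.86,)))
print(predicted)
```

In this toy example, second-growth and undisturbed plots have similar NDVI but differ in canopy height, so the structural features carry the separation that spectra alone would miss.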

Decision-Level Fusion: This approach processes each data stream independently through separate classification or modeling pipelines, then combines the results through voting schemes, probability averaging, or other meta-learning techniques. Decision-level fusion allows for domain-specific preprocessing and modeling optimized for each data type before final integration.
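The two combination rules mentioned above, probability averaging and voting, can be sketched as follows. The per-sensor class probabilities are hypothetical outputs of independent classifiers, and the function names are illustrative.

```python
from collections import Counter

def average_probabilities(per_sensor_probs, weights=None):
    """Weighted average of class-probability dicts, renormalized to sum to 1."""
    n = len(per_sensor_probs)
    weights = weights or [1.0 / n] * n
    fused = {cls: sum(w * probs[cls] for w, probs in zip(weights, per_sensor_probs))
             for cls in per_sensor_probs[0]}
    total = sum(fused.values())
    return {cls: value / total for cls, value in fused.items()}

def majority_vote(predicted_labels):
    """Most frequent label among the individual classifier decisions."""
    return Counter(predicted_labels).most_common(1)[0][0]

# Hypothetical outputs of separate LiDAR-only and hyperspectral-only models.
lidar_probs = {"undisturbed": 0.5, "degraded": 0.2, "second_growth": 0.3}
hsi_probs = {"undisturbed": 0.2, "degraded": 0.6, "second_growth": 0.2}

fused = average_probabilities([lidar_probs, hsi_probs])
fused_label = max(fused, key=fused.get)
voted_label = majority_vote(["degraded", "undisturbed", "degraded"])
print(fused_label, voted_label)
```

The optional weights allow a sensor known to be more reliable for a given task to contribute more to the final decision.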

Experimental Protocols for Multisensor Ecology Studies

Protocol 1: Forest Degradation and Regeneration Assessment

Site Selection: Identify study areas representing a gradient of disturbance (undisturbed, degraded, and second-growth forests) using historical Landsat time series (1984-2017) and auxiliary data [17].

Data Acquisition:

- LiDAR Collection: Acquire airborne LiDAR data with multiple returns per pulse, with point density ≥5 points/m²

- Hyperspectral Imaging: Collect hyperspectral data across 400-2500nm range with spatial resolution matching LiDAR

- Field Validation: Conduct ground-truth surveys for forest structure and composition within geolocated plots

Data Preprocessing:

- LiDAR Processing: Generate Digital Terrain Models (DTM), Canopy Height Models (CHM), and calculate structural metrics (upper canopy cover, vertical complexity)

- Hyperspectral Processing: Apply radiometric calibration and atmospheric correction, then geometrically align the imagery to the LiDAR data

- Data Integration: Co-register LiDAR and hyperspectral datasets to precise spatial alignment
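The LiDAR preprocessing step can be illustrated with a deliberately simplified canopy height model: within each grid cell, the highest return minus the lowest ground-classified return. The point format `(x, y, z, is_ground)` and the values are hypothetical. Production workflows usually interpolate a continuous DTM rather than requiring a ground return in every cell.

```python
from math import floor

def canopy_height_model(points, cell_size=1.0):
    """Map (col, row) grid cells to canopy height in metres.

    points: iterable of (x, y, z, is_ground) tuples; cells without any
    ground-classified return are omitted from the result.
    """
    top, ground = {}, {}
    for x, y, z, is_ground in points:
        cell = (floor(x / cell_size), floor(y / cell_size))
        top[cell] = max(z, top.get(cell, z))
        if is_ground:
            ground[cell] = min(z, ground.get(cell, z))
    return {cell: top[cell] - ground[cell] for cell in top if cell in ground}

# Hypothetical classified returns (coordinates and elevations in metres).
points = [
    (0.2, 0.3, 100.0, True),    # ground under a tree
    (0.5, 0.6, 118.5, False),   # canopy top
    (0.7, 0.1, 110.0, False),   # mid-canopy return
    (1.4, 0.2, 101.0, True),    # bare ground in a gap
    (2.5, 0.5, 115.0, False),   # canopy with no ground return in this cell
]
chm = canopy_height_model(points, cell_size=1.0)
print(chm)
```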

Feature Extraction:

- Extract LiDAR-derived structural metrics: canopy height distributions, cover, gap fraction

- Extract hyperspectral features: narrow-band indices, absorption band characteristics, spectral derivatives

- Compute combined feature set incorporating both structural and spectral descriptors

Classification and Analysis:

- Implement multiple machine learning classifiers (Random Forest, SVM, Gradient Boosting)

- Compare performance across data sources (LiDAR-only, HSI-only, integrated)

- Validate with independent test sets and calculate accuracy metrics (F1 scores, overall accuracy) [17]

Protocol 2: Automated Biodiversity Monitoring Station (AMMOD)

Station Design: Deploy autonomous multisensor station integrating:

- Audio Recorders: For vocalizing animals (birds, amphibians, insects)

- Camera Traps: For mammals and small invertebrates

- Insect Samplers: Autonomous traps for arthropod collection

- Pollen and Spore Samplers: For aerobiological monitoring

- Environmental Sensors: Temperature, humidity, precipitation

- pVOC Sensors: For volatile organic compounds emitted by plants [2]

Data Collection Regime:

- Program continuous monitoring with trigger-based activation for motion or sound

- Implement diurnal and seasonal sampling strategies

- Include self-calibration sequences for sensor validation

Data Processing Pipeline:

- On-site preprocessing for noise filtering and data compression

- Automated species identification using reference databases (DNA barcodes, audio libraries, image collections)

- Wireless transmission of processed data to central repositories

- Integration of multiple data streams into biodiversity indices [2]

Maintenance Protocol:

- Regular sensor calibration and cleaning

- Power system monitoring (solar panels, batteries)

- Data storage management and backup procedures

Diagram 1: Multisensor Data Integration Workflow for Ecological Studies

Implementation Framework

The Researcher's Toolkit: Essential Research Reagent Solutions

Table 4: Core Research Reagents for Multisensor Ecology

| Solution Category | Specific Tools & Platforms | Technical Function | Ecological Application Examples |

|---|---|---|---|

| Data Acquisition Platforms | UAV/drone systems (e.g., DJI Matrice 300), Terrestrial Laser Scanners (e.g., Leica RTC360), Animal-borne tags (e.g., CATS Cam) | Physical deployment of sensors for data collection across spatial scales | High-resolution mapping of research plots, individual animal behavior monitoring |

| Reference Libraries & Databases | DNA barcode libraries, animal sound repositories, spectral signature databases, species image collections | Training data for automated species identification algorithms | Validation of remote sensing classifications, bioacoustic species identification |

| Algorithmic Toolkits | Random Forest, SVM, CNN, Vision Transformers (ViT), Point cloud processing libraries | Machine learning and deep learning for pattern recognition in complex sensor data | Species classification from hyperspectral imagery, individual tree detection from LiDAR |

| Data Fusion Frameworks | PlantViT, custom Python/R pipelines, cloud-based processing platforms (e.g., Google Earth Engine) | Integration of multimodal data streams into unified analytical framework | Combined LiDAR-hyperspectral vegetation mapping, sensor data fusion for behavioral studies |

| Validation & Ground-Truthing Tools | Field spectroradiometers, hemispherical photography, vegetation survey protocols, GPS equipment | Collection of reference data for model training and validation | Spectral signature validation, structural parameter measurement, species composition assessment |

Performance Metrics and Validation

Accuracy Assessment Protocols: Integrated multisensor systems require rigorous validation against ground reference data. Standard metrics include Overall Accuracy (OA), F1 scores for individual classes, Kappa coefficients for agreement assessment, and Root Mean Square Error (RMSE) for continuous variable estimation [18] [17]. For biodiversity monitoring, additional metrics such as species detection rates, false positive ratios, and temporal detection probability should be employed.
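The standard metrics named above can be computed from paired reference and predicted values as in the sketch below. The labels and numbers are hypothetical, and the kappa function assumes chance agreement is below 1 so the denominator is non-zero.

```python
from math import sqrt

def overall_accuracy(y_true, y_pred):
    """Fraction of samples whose predicted class matches the reference."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def f1_per_class(y_true, y_pred):
    """F1 = 2TP / (2TP + FP + FN) for every class present."""
    scores = {}
    for cls in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
        fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
        fn = sum(t == cls and p != cls for t, p in zip(y_true, y_pred))
        denominator = 2 * tp + fp + fn
        scores[cls] = 2 * tp / denominator if denominator else 0.0
    return scores

def cohen_kappa(y_true, y_pred):
    """Agreement corrected for the agreement expected by chance."""
    n = len(y_true)
    observed = overall_accuracy(y_true, y_pred)
    expected = sum((y_true.count(c) / n) * (y_pred.count(c) / n)
                   for c in set(y_true) | set(y_pred))
    return (observed - expected) / (1.0 - expected)

def rmse(reference, estimate):
    """Root mean square error for continuous variables."""
    return sqrt(sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference))

# Hypothetical labels: U = undisturbed, D = degraded, S = second growth.
y_true = ["U", "U", "U", "U", "D", "D", "D", "S", "S", "S"]
y_pred = ["U", "U", "U", "D", "D", "D", "S", "S", "S", "U"]

oa = overall_accuracy(y_true, y_pred)
f1 = f1_per_class(y_true, y_pred)
kappa = cohen_kappa(y_true, y_pred)
height_rmse = rmse([10.0, 20.0, 30.0], [12.0, 18.0, 33.0])
print(oa, f1, round(kappa, 3), round(height_rmse, 3))
```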

Comparative Performance: Studies consistently demonstrate the superiority of integrated approaches over single-sensor methodologies. The PlantViT framework, which integrates hyperspectral and LiDAR data using Vision Transformers, achieved 97.4% overall accuracy on the Trento dataset, significantly outperforming conventional CNN-based models [18]. Similarly, research in Amazon forests showed that combining LiDAR and hyperspectral data improved classification accuracy by up to 12% compared to single-data sources for distinguishing forest degradation and regeneration stages [17].

Diagram 2: Complementary Nature of Core Sensor Technologies in Ecological Research

The integration of LiDAR, hyperspectral, thermal, and biologging technologies represents a paradigm shift in ecological research, enabling unprecedented insights into ecosystem structure, function, and behavior. Rather than operating in isolation, these core sensor technologies achieve their full potential when combined through purposeful fusion methodologies that leverage their complementary strengths. Technical frameworks such as the PlantViT model for hyperspectral-LiDAR integration [18] and AMMOD stations for automated biodiversity monitoring [2] provide scalable blueprints for implementing multisensor approaches across diverse ecosystems.

As these technologies continue to advance, key developments in computational power, miniaturization, and analytical algorithms will further enhance their accessibility and capabilities. The emerging era of spaceborne hyperspectral missions (e.g., EMIT, CHIME) combined with global LiDAR data (e.g., GEDI) promises to extend detailed multisensor monitoring to continental and global scales [20]. For researchers and conservation practitioners, this technological convergence offers powerful tools to address pressing ecological challenges, from biodiversity loss to climate change impacts, through integrated, data-driven approaches that capture the complexity of natural systems in ways previously impossible.

The Rise of Automated Multisensor Stations for Biodiversity Monitoring (AMMOD)

The progressive loss of biological diversity represents a critical challenge, with studies documenting sharp declines in insect and bird populations across Central Europe since 1990 [23]. Unlike climate research, which benefits from decades of standardized meteorological data, biodiversity science lacks a national, large-scale, and standardized monitoring program for tracking species populations [23] [24]. The Automated Multisensor Stations for Monitoring of species Diversity (AMMOD) project addresses this fundamental data gap by establishing a network of automated observation stations designed to deliver continuous, standardized biodiversity data across Germany [25] [23].

Modelled after conventional weather stations, AMMOD stations represent a technological paradigm shift, combining cutting-edge sensors with advanced data analytics to compile complementary information on flora and fauna [23]. This multisensor approach enables the monitoring of a broad spectrum of species through automated image recognition, acoustic recordings, chemical sensors, and DNA analysis, paving the way for a new generation of biodiversity assessment [2].

The AMMOD Technological Framework

Core Sensor Technologies and Data Acquisition

Each AMMOD station integrates multiple complementary sensing modalities to create a comprehensive picture of local biodiversity [2]. These technologies function synergistically to overcome the limitations of individual methods.

Table 1: Core Sensor Technologies in AMMOD Stations

| Sensor Technology | Target Organisms | Data Type | Primary Function |

|---|---|---|---|

| Automated Insect Cameras [25] | Nocturnal insects (e.g., moths) | High-resolution images | Visual monitoring and species identification |

| Wildlife Camera Traps [25] [2] | Birds, mammals, small invertebrates | Image sequences / videos | Species identification, behavior, and abundance estimation |

| Acoustic Recorders [23] [24] | Birds, bats, grasshoppers, frogs | Audio recordings | Species identification via vocalizations |

| Autonomous Samplers [2] [23] | Insects, pollen, spores | Physical samples (for DNA barcoding) | Genetic identification and analysis |

| Chemical Sensors (pVOCs) [2] | Plants | Volatile organic compound data | Identification of plant species via emissions |

Data Processing and Analytical Methodologies

The raw data from AMMOD sensors undergoes sophisticated processing to generate meaningful biodiversity metrics. A core innovation lies in the application of artificial intelligence and probabilistic sensor data fusion to interpret complex ecological data [24].

Visual Data Analysis Pipeline

For visual monitoring, the project employs a two-stage deep learning pipeline. First, a detection algorithm localizes individual organisms within images, after which a classification network determines the species [25]. This pipeline has demonstrated significant performance improvements, raising species identification accuracy in moth scanner images from 79.62% to 88.05% [25]. For wildlife camera traps, active learning approaches minimize the human annotation effort required to train models that distinguish animal-containing images from empty background scenes [25].

Probabilistic Abundance Estimation

Determining species abundance (population size) presents a significant challenge. AMMOD investigates advanced methods that model various interpretations of sensor detections stochastically [24]. This approach accounts for potential errors, such as incorrect measurements or misclassification, and uses Bayesian statistical methods to integrate background knowledge about species behavior and habitats [24]. The system employs algorithms derived from object tracking theory, using stochastic motion models and sensor models to estimate populations even when individual trajectories cannot be precisely determined [24].
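To make the idea of treating detections stochastically more concrete, the sketch below applies Bayes' rule to the probability that a species is present, given detections or non-detections from two sensors with imperfect detection and false-alarm rates. This is far simpler than the tracking-derived abundance algorithms described for AMMOD: it estimates presence rather than abundance, assumes the sensors err independently, and uses hypothetical rates and a hypothetical prior.

```python
def update_presence(prior, detected, p_detect, p_false_alarm):
    """Posterior probability of presence after one binary sensor outcome.

    p_detect: probability the sensor reports the species when it is present.
    p_false_alarm: probability it reports the species when it is absent.
    """
    likelihood_present = p_detect if detected else 1.0 - p_detect
    likelihood_absent = p_false_alarm if detected else 1.0 - p_false_alarm
    evidence = likelihood_present * prior + likelihood_absent * (1.0 - prior)
    return likelihood_present * prior / evidence

# Prior from background knowledge (e.g., habitat suitability): hypothetical.
prior = 0.2

# The acoustic recorder reports a call; the camera trap records nothing.
after_audio = update_presence(prior, detected=True, p_detect=0.7, p_false_alarm=0.1)
after_camera = update_presence(after_audio, detected=False, p_detect=0.3, p_false_alarm=0.02)
print(round(after_audio, 3), round(after_camera, 3))
```

A missed detection from a sensor with a low detection probability lowers the estimate only slightly, which is the kind of ambiguity that formal fusion is meant to handle.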

Diagram 1: AMMOD System Workflow illustrating the flow from multi-sensor data acquisition through AI analysis to biodiversity metrics output.

Experimental Protocols and Implementation

Station Deployment and Operational Design

AMMOD stations are engineered for autonomous operation in remote and often inaccessible locations, requiring a sophisticated system design that optimizes power consumption, data transmission bandwidth, and service requirements [25] [2]. The stations are largely self-contained, with the ability to pre-process data to reduce transmission volume before sending it to central servers for storage and integration [2]. A key operational challenge involves achieving reliable year-round functionality across all environmental conditions with minimal maintenance [25].

Methodological Approach to Sensor Data Fusion

The methodological core of AMMOD involves probabilistic sensor data fusion to resolve ambiguities in species identification and abundance estimation [24]. This process formally integrates detections from multiple sensors with contextual knowledge.

Table 2: Research Reagents and Essential Materials for AMMOD Implementation

| Category | Specific Component | Function / Application |

|---|---|---|

| Sensing Hardware | Moth Scanner [25] | Automated imaging of nocturnal insects attracted to illuminated screen |

| | Wildlife Camera Traps [25] | Still image and video capture of mammals and birds |

| | Acoustic Array [24] | Recording vocalizations; array processing enables sound source localization |

| | Autonomous Sampler [2] | Collection of physical specimens (insects, pollen) for DNA analysis |

| Data Processing | Deep Learning Models [25] | Two-stage pipeline for insect detection and species classification |

| | Probabilistic Fusion Algorithms [24] | Bayesian methods for integrating sensor data and estimating abundance |

| | Active Learning Frameworks [25] | Minimizing human annotation effort for model training |

| Contextual Data | Geographic Information Systems (GIS) [24] | Providing georeferenced background on terrain, vegetation, and water resources |

| | Species Reference Databases [2] | DNA barcodes, animal sounds, images, and pVOC profiles for automated identification |

Diagram 2: Probabilistic Data Fusion Logic showing how raw detections are interpreted within the context of sensor and behavioral models to estimate species abundance.

Discussion and Future Outlook

As a technically sophisticated and interdisciplinary initiative, AMMOD represents a significant advancement in ecological monitoring technology [23]. The project's distinctive innovativeness stems from its multisensor integration, which enables a mostly automated, standardized, and continuous accounting of plant and animal species across multiple taxonomic groups [23]. In the coming years, the permanently installed AMMOD stations are expected to provide the first long-term overview of species diversity status and development at selected sites in Germany [24].

Within the broader context of multisensor approaches in ecological research, AMMOD demonstrates how complementary data streams can overcome limitations inherent to single-modality monitoring. By formally integrating detections across visual, acoustic, chemical, and genetic sensors, the system generates a more robust and comprehensive understanding of ecosystem dynamics than any single technology could provide alone. This network, envisioned to ultimately cover all of Germany, is designed to identify trends and fluctuations in the biosphere, contributing vital information for developing concrete strategies for biodiversity conservation [24].

Addressing Ecological Complexity through Data Fusion and Interdisciplinary Collaboration

Contemporary ecological research grapples with systems defined by immense complexity, non-linear dynamics, and interconnected social and ecological components. Traditional, single-discipline approaches are often inadequate for addressing challenges such as biodiversity loss, ecosystem degradation, and climate change. This whitepaper details a modern methodological framework that integrates multisensor data fusion and structured interdisciplinary collaboration to advance ecological understanding. By synthesizing cutting-edge technological approaches with proven collaborative protocols, this guide provides researchers with the practical tools and conceptual foundations needed to design and implement robust studies capable of capturing the true complexity of social-ecological systems.

Environmental challenges like biodiversity loss and climate change are not isolated phenomena but are caused by multiple interacting factors within Social-Ecological Systems (SES) [26]. These are integrated complex systems where people interact with natural components, and the dynamic interconnections between social and ecological elements often give rise to the most pressing problems [26]. Traditional research methods that simplify or isolate system components frequently fail because they overlook this fundamental complexity [26].

To overcome these limitations, a dual-pronged approach is necessary. First, technologically, we must move beyond single-source data, which provides a fragmented view. Multisensor data fusion combines diverse data streams—from in-situ sensors, satellite imagery, and audio recorders—to create a richer, more continuous picture of ecological phenomena [2] [8]. Second, methodologically, we must transcend disciplinary silos. Effective interdisciplinary collaboration is required to integrate diverse knowledge types and perspectives, though it is often hindered by difficulties in integrating disparate theories, terminologies, and values [26].

Theoretical Foundations

The Interdisciplinary Collaboration Framework

Successful interdisciplinary work requires frameworks that facilitate integration. A powerful approach involves the development of Conceptual Frameworks (CFs) that act as "boundary objects" [26]. According to Mollinga (2010), boundary work comprises three key elements [26]:

- Boundary Concepts: Concepts or terms that help researchers think about and communicate complex issues across disciplines (e.g., "resilience" or "water control").

- Boundary Objects: Practical tools, such as a shared Conceptual Framework, that are adaptable to the needs of different actors but robust enough to maintain a common identity.

- Boundary Settings: The institutional arrangements and conditions that enable effective collaboration.

A structured, iterative process for developing a CF as a boundary object is essential for bridging disciplinary divides; it is detailed below under "Interdisciplinary Protocol: Developing a Conceptual Framework" [26].

Graph Theory for Ecological Network Analysis

Graph Theory (GT) provides a mathematical foundation for representing and analyzing complex ecological networks [27]. It simplifies real-world systems into a set of components [27]:

- Vertices (V): Represent discrete ecological habitats or patches.

- Edges (E): Represent functional connections or environmental flows between vertices (nodes), such as species movement or nutrient transfer.

GT is particularly advantageous in Ecological Network Analysis (ENA) for identifying, protecting, and improving ecological networks, as well as for analyzing the impact of environmental deterioration over time [27]. It allows ecologists to visualize, describe, and analyze ecological connections across different spatial scales, making it invaluable for landscape planning and biodiversity conservation [27].
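The vertex and edge representation above can be sketched in a few lines. This is a minimal illustration, assuming a hypothetical landscape of five habitat patches with undirected links; the patch names and links are invented for the example and do not come from the cited studies. It finds groups of mutually reachable patches (connected components) and counts the links per patch (degree), two basic quantities often used when discussing connectivity.

```python
from collections import deque

# Hypothetical habitat patches (vertices) and functional links (edges).
patches = ["A", "B", "C", "D", "E"]
links = [("A", "B"), ("B", "C"), ("D", "E")]

def build_adjacency(vertices, edges):
    """Return an undirected adjacency map {vertex: set of neighbours}."""
    adjacency = {v: set() for v in vertices}
    for u, v in edges:
        adjacency[u].add(v)
        adjacency[v].add(u)
    return adjacency

def connected_components(adjacency):
    """Group vertices into components using breadth-first search."""
    seen, components = set(), []
    for start in adjacency:
        if start in seen:
            continue
        queue, component = deque([start]), []
        seen.add(start)
        while queue:
            node = queue.popleft()
            component.append(node)
            for neighbour in sorted(adjacency[node]):
                if neighbour not in seen:
                    seen.add(neighbour)
                    queue.append(neighbour)
        components.append(sorted(component))
    return components

adjacency = build_adjacency(patches, links)
components = connected_components(adjacency)          # isolated sub-networks
degree = {v: len(n) for v, n in adjacency.items()}    # links per patch
```

In this toy landscape the patches split into two separate sub-networks, which is the kind of fragmentation signal a connectivity analysis would flag for closer inspection.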

Methodological Guide: Integrating Data Fusion and Collaboration

This section provides a practical, two-part methodology for implementing the proposed framework.

Technical Protocol: A Multi-Sensor Data Fusion Workflow

The following workflow, derived from river monitoring and biodiversity assessment case studies, outlines the steps for effective multi-sensor data fusion [2] [8].

Phase 1: Data Acquisition & Preprocessing

- Sensor Deployment: Deploy a network of autonomous, complementary sensors. Examples include in-situ water quality sensors (measuring pH, electrical conductivity, turbidity, nutrients) [8], audio recorders for vocalizing species [2], camera traps for mammals and invertebrates [2], and sensors for plant-emitted volatile organic compounds (pVOCs) [2].

- Satellite & Aerial Data: Integrate submeter-resolution optical and Synthetic Aperture Radar (SAR) imagery. SAR provides all-weather, day-and-night capability, complementing the fine detail of optical images [28].

- Autonomous Preprocessing: Perform initial data preprocessing (e.g., noise filtering, calibration) at the sensor or edge node to optimize bandwidth and power usage, especially in remote areas [2].
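As a rough illustration of the edge preprocessing step, the sketch below applies a sliding median filter to suppress isolated spikes and then a linear calibration. The readings, window size, gain, and offset are all hypothetical; a real deployment would take calibration constants from the sensor's documentation and might use a different filter entirely.

```python
from statistics import median

def median_filter(values, window=3):
    """Replace each reading with the median of its neighbourhood (odd window).
    Windows are truncated at the start and end of the series."""
    half = window // 2
    filtered = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        filtered.append(median(values[lo:hi]))
    return filtered

def calibrate(values, gain, offset):
    """Apply a linear calibration: corrected = gain * raw + offset."""
    return [gain * v + offset for v in values]

# Hypothetical turbidity readings with one spurious spike (40.0).
raw_turbidity = [4.0, 4.2, 40.0, 4.1, 4.3]
filtered = median_filter(raw_turbidity)
clean = calibrate(filtered, gain=1.0, offset=-0.1)
```

A median filter is used here because it removes single-sample outliers without smearing them into neighbouring readings, which a moving average would do. Note that it would also remove a genuine single-sample event, so the window should be chosen with the sampling rate in mind.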

Phase 2: Data Fusion

Ecological data fusion occurs at multiple levels, each with distinct advantages [29]:

Table 1: Levels of Data Fusion in Ecological Research

| Fusion Level | Description | Advantages | Common Applications |
|---|---|---|---|
| Data-Level | Direct merging of raw data from multiple sources. | Retains maximum data integrity and detail. | Fusing multi-spectral satellite bands for land cover classification [28]. |
| Feature-Level | Extraction of features from raw data, followed by fusion of feature vectors. | Provides a comprehensive, consistent description; flexible and widely applicable. | Combining extracted land cover features from optical and SAR imagery [28] [29]. |
| Decision-Level | Fusion of final outputs or decisions from models processing different data streams. | High fault tolerance; allows for use of disparate data types. | Combining species identification results from an audio analysis algorithm and a camera trap image classifier [2]. |
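Decision-level fusion, the last row of the table, is the simplest of the three to sketch. The example below assumes two hypothetical classifiers (one audio, one camera) that each output per-species probabilities, and combines them with a weighted average. The species names, probabilities, and weights are invented for illustration, and weighted averaging is only one of several possible combination rules.

```python
def fuse_decisions(predictions, weights):
    """Weighted average of per-species probabilities from several classifiers.
    A species missing from one classifier's output counts as probability 0."""
    total_weight = sum(weights.values())
    species = set()
    for probs in predictions.values():
        species.update(probs)
    fused = {}
    for name in species:
        weighted_sum = sum(
            weights[source] * probs.get(name, 0.0)
            for source, probs in predictions.items()
        )
        fused[name] = weighted_sum / total_weight
    return fused

# Hypothetical outputs for the same detection event.
predictions = {
    "audio": {"red_fox": 0.2, "roe_deer": 0.8},
    "camera": {"red_fox": 0.7, "roe_deer": 0.3},
}
# Assumed trust in each source, e.g. from validation accuracy.
weights = {"audio": 1.0, "camera": 3.0}

fused = fuse_decisions(predictions, weights)
best = max(fused, key=fused.get)
```

Because each classifier only contributes its final output, one source can fail or be absent without breaking the pipeline, which is the fault tolerance noted in the table.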

Phase 3: Model & Analysis

- Graph Neural Networks (GNNs): Model the ecosystem as a graph where nodes represent entities (e.g., habitat patches, water sampling points) and edges represent relationships (e.g., species dispersal, water flow). GNNs update node feature representations by aggregating information from neighbors, capturing high-order spatial associations and complex dependencies within the ecosystem [29].

- Temporal Modeling: Integrate Long Short-Term Memory (LSTM) networks with self-attention mechanisms to capture time-dependent patterns and focus on critical events in continuous data streams, such as pollutant spikes after rainfall [29].
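The neighbour aggregation at the core of a GNN can be illustrated without a deep learning library. The sketch below performs one simplified message-passing step in which each node's feature vector is replaced by the mean of its own and its neighbours' features. It omits the learned weight matrices and nonlinearities of a real GNN layer, and the three sampling points and their feature values are hypothetical.

```python
def aggregate_step(features, neighbours):
    """One mean-aggregation step: each node averages its own feature
    vector with those of its neighbours (no learned weights)."""
    updated = {}
    for node, own in features.items():
        group = [own] + [features[n] for n in neighbours[node]]
        updated[node] = [sum(column) / len(group) for column in zip(*group)]
    return updated

# Hypothetical water sampling points along a river, each with two
# features: [nitrate concentration, pH].
features = {
    "up": [2.0, 7.0],
    "mid": [4.0, 7.4],
    "down": [9.0, 6.8],
}
# Edges follow water flow; treated as undirected for this sketch.
neighbours = {"up": ["mid"], "mid": ["up", "down"], "down": ["mid"]}

h1 = aggregate_step(features, neighbours)
```

Repeating the step lets information travel further: after two steps the representation of "up" also reflects conditions at "down", which is how stacked layers capture higher-order spatial associations.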

Phase 4: Visualization & Decision Support

- Develop interactive web and mobile applications with real-time mapping interfaces (e.g., using Mapbox) to provide stakeholders with accessible data visualizations [8].

- Present specific quantitative data points using clearly structured data tables with conditional formatting to highlight key distinctions and benchmarks [30].

Interdisciplinary Protocol: Developing a Conceptual Framework

The following protocol, adapted from the TREBRIDGE project, outlines a collaborative process for creating a shared Conceptual Framework (CF) [26].

Phase 1: Define Boundary Concepts

- Activity: Researchers from each discipline (e.g., geomorphology, forest ecology, hydrology, environmental economics) individually create concept maps defining key terms and their relationships from their field's perspective.

- Activity: In a plenary workshop, teams present their maps. The goal is to identify and agree upon a set of shared "boundary concepts" (e.g., "resilience," "ecosystem service"). This process clarifies differing interpretations and builds a common vocabulary [26].

Phase 2: Develop the CF as a Boundary Object

- Activity: A small integration team (or "integration leaders") drafts an initial CF structure that incorporates the boundary concepts and maps the hypothesized relationships between social and ecological variables.

- Activity: The draft CF undergoes iterative refinement cycles where all project members provide feedback. This collaborative and iterative process is crucial for ensuring the CF is both scientifically robust and usable across disciplines. The final CF should be a visual and/or descriptive model that guides the entire project [26].

Phase 3: Use the CF as a Boundary Object

- Activity: The CF is used to guide unified study design, ensuring that data collection efforts across disciplines are coherent and address the integrated model of the system.

- Activity: The CF acts as a scaffold for knowledge integration. Research findings from different disciplines are continually related back to the framework, facilitating the synthesis of insights into a holistic understanding. Procedures like "common group learning" (synthesizing insights within the whole group) and "negotiation among experts" (combining insights through bilateral interactions) are employed [26].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Multi-Sensor Ecological Research

| Tool / Solution | Type | Primary Function | Example in Use |
|---|---|---|---|
| In-Situ Sensor Networks (e.g., AquaSonde) | Hardware | Provides continuous, high-frequency measurements of physicochemical water parameters (pH, EC, DO, NO₃, turbidity) [8]. | Real-time detection of nutrient fluctuations linked to agricultural runoff in the Ystwyth River [8]. |
| Synthetic Aperture Radar (SAR) | Remote Sensing | Provides all-weather, day-and-night submeter-resolution imagery, penetrating cloud cover for consistent monitoring [28]. | All-weather land cover mapping and post-disaster building damage assessment in the IEEE GRSS Data Fusion Contest [28]. |
| Automated Biodiversity Samplers (e.g., AMMOD) | Hardware System | Autonomous collection of physical samples (insects, pollen, spores) and acoustic data for taxonomic identification [2]. | Large-scale, fine-grained biodiversity monitoring across a network of remote stations [2]. |
| Graph Neural Networks (GNNs) | Software / Model | Processes graph-structured data to uncover hidden relationships and spatial dependencies within ecological networks [29]. | Assessing tourism ecological efficiency by modeling spatial relationships between destinations, resources, and environmental impacts [29]. |
| Conceptual Framework (CF) | Methodological Tool | Serves as a boundary object to facilitate communication, collaboration, and knowledge integration across diverse disciplines [26]. | Bridging natural and social sciences in the TREBRIDGE project to enhance resilience in Swiss Alpine ecosystems [26]. |

Addressing the complex challenges facing modern ecosystems requires a fundamental shift in research methodology. This whitepaper has argued that this shift must be twofold: a technological leap towards integrated multi-sensor data fusion and a methodological leap towards structured interdisciplinary collaboration. Neither is sufficient alone. Advanced sensors and AI models like GNNs provide the data and computational power to represent complex systems, while conceptual frameworks and collaborative protocols provide the shared language and integrative capacity to make sense of them. By adopting the technical and collaborative protocols outlined in this guide, researchers can move beyond siloed perspectives and generate the holistic, actionable knowledge necessary to steward social-ecological systems toward a more resilient and sustainable future.

Multisensor Applications in Action: From Canopy Mapping to Deep-Sea Foraging

Forest ecosystems play a critical role in the global carbon cycle but are increasingly threatened by wildfires. Accurate assessment of forest fuel distribution is essential for effective wildfire management and mitigation. This technical guide explores the integration of NASA's Global Ecosystem Dynamics Investigation (GEDI) spaceborne LiDAR with complementary satellite imagery to advance forest fuel assessment. The GEDI mission, operational on the International Space Station since December 2018, provides the first spaceborne LiDAR designed specifically to measure three-dimensional vegetation structure [31]. Following a hibernation period from March 2023 to April 2024, the GEDI instrument was successfully reinstalled and has been collecting data nominally, with its latest data products (Version 2.1) incorporating post-storage measurements [32]. This guide details how these unique vertical structure measurements, when combined with multispectral and synthetic aperture radar (SAR) data, enable physically based quantification of fuel properties across landscapes, overcoming limitations of traditional optical remote sensing approaches.

GEDI Mission and Data Fundamentals

GEDI Instrument Status and Data Products

The GEDI instrument operates three lasers that emit pulses along eight parallel tracks, collecting footprints approximately 25 m in diameter, spaced 60 m along-track and 600 m across-track [31]. As of the 2025 Science Team Meeting, each laser had logged over 22,000 hours of operation and collected more than 20 billion shots, with 72% of acquisition time over land surfaces [32]. The mission recently reached 33 billion Level-2A land surface returns, of which approximately 12.1 billion pass quality filters.

Table 1: GEDI Data Products for Fuel Assessment

| Product Level | Key Metrics | Application in Fuel Assessment |
|---|---|---|
| L1B | Waveform energy distribution | Raw waveform data for custom fuel parameter derivation |
| L2A | Relative height (RH) metrics (rh0, rh10, ..., rh100), elevation | Canopy height profile and vertical structure analysis |
| L2B | Canopy cover, Plant Area Index (PAI), Foliage Height Diversity (FHD) | Horizontal continuity and complexity metrics |
| L4A | Aboveground Biomass Density (AGBD) | Fuel load estimation at footprint level |
| L4C | Waveform Structural Complexity Index (WSCI) | Canopy heterogeneity and fuel arrangement |

The upcoming V3.0 data product release will incorporate both pre- and post-storage data with improvements to quality filtering, geolocation accuracy, and algorithm performance [32]. For regional-scale applications, researchers should note that the GEDI L4A gridded biomass product may require local calibration, particularly in heterogeneous Mediterranean ecosystems where significant underestimation has been observed (RMSE = 40.756 Mg/ha, bias = -30.075 Mg/ha) [31].
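For readers checking a biomass product against local plots, RMSE and bias are computed as sketched below. The three predicted and observed values are hypothetical and are not the data behind the figures quoted above. Bias is taken here as mean (predicted minus observed), so a negative value indicates underestimation.

```python
from math import sqrt

def bias(predicted, observed):
    """Mean error (predicted - observed); negative means underestimation."""
    errors = [p - o for p, o in zip(predicted, observed)]
    return sum(errors) / len(errors)

def rmse(predicted, observed):
    """Root mean square error between predictions and field observations."""
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return sqrt(sum(squared) / len(squared))

# Hypothetical aboveground biomass density values in Mg/ha.
predicted_agbd = [50.0, 80.0, 120.0]   # satellite-derived estimates
observed_agbd = [70.0, 110.0, 160.0]   # field plot measurements

model_bias = bias(predicted_agbd, observed_agbd)
model_rmse = rmse(predicted_agbd, observed_agbd)
```

When the bias is large relative to the RMSE, as in this toy case, most of the error is systematic, which is the situation where a local calibration is most likely to help.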

Physical Principles of LiDAR for Fuel Structure Assessment

GEDI's full-waveform LiDAR technology measures the vertical distribution of canopy elements by transmitting laser pulses and recording the returned energy as a function of height. The waveform's shape and energy distribution provide direct measurements of:

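- Canopy Height Metrics: Derived from relative height (RH) values, the heights above ground at which given percentiles of cumulative returned energy are reached (e.g., RH100 for top of canopy, RH50 for the height below which half of the returned energy lies)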

- Vertical Fuel Distribution: The waveform's continuous energy profile indicates density of canopy elements at different heights

- Subcanopy Structure: Energy returned from lower strata quantifies understory fuels and vertical fuel continuity

- Canopy Complexity: Waveform Structural Complexity Index (WSCI) and Foliage Height Diversity metrics describe the three-dimensional arrangement of fuels

The physically-based nature of these measurements avoids the saturation limitations common to optical vegetation indices when assessing dense canopies, making LiDAR particularly valuable for fuel assessment in closed-canopy forests [31].
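The relationship between a waveform and its relative height metrics can be shown with a simplified sketch. It assumes a hypothetical waveform already binned by height above ground, and defines RHp as the lowest bin height at which cumulative energy, summed from the ground upward, reaches p percent of the total. The operational GEDI processing involves ground detection, noise handling, and signal start and end identification that are not reproduced here.

```python
def relative_height(heights, energy, percentile):
    """Height at which cumulative returned energy, summed from the lowest
    bin upward, first reaches the given percentile of total energy."""
    target = sum(energy) * percentile / 100.0
    cumulative = 0.0
    for height, value in zip(heights, energy):
        cumulative += value
        if cumulative >= target:
            return height
    return heights[-1]

# Hypothetical waveform: bin heights above ground (m) and returned energy
# per bin (arbitrary units). The strong first bin is the ground return.
heights = [0, 5, 10, 15, 20, 25]
energy = [3.0, 1.0, 1.0, 2.0, 2.0, 1.0]

rh50 = relative_height(heights, energy, 50)
rh100 = relative_height(heights, energy, 100)

# Share of total energy returned from below 10 m, a crude indicator of
# understory and ladder fuels in this toy profile (ground return included).
low_strata_share = sum(e for h, e in zip(heights, energy) if h < 10) / sum(energy)
```

In this toy profile the canopy top is at 25 m while half of the energy is returned from 10 m or below, a pattern that would suggest substantial material in the lower strata.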