Multi-GPU Scaling in Storm Surge Modeling: Accelerating Coastal Hazard Prediction

Abstract

This article explores the critical role of multi-GPU scaling in enhancing the computational efficiency and accuracy of storm surge modeling. As coastal flooding risks intensify due to climate change, traditional CPU-based simulations face significant limitations in resolution and speed for operational forecasting and risk assessment. We examine foundational GPU acceleration principles, diverse implementation methodologies including CUDA and OpenACC, and optimization strategies to overcome key bottlenecks like communication overhead and load balancing. Through validation and comparative analysis of leading models like SCHISM and DG-SWEM, we demonstrate how multi-GPU systems enable high-resolution, rapid simulations essential for saving lives and property. The integration of AI-based surrogate models with traditional physics-based simulations is also discussed as a transformative future direction.

The Computational Imperative: Why Multi-GPU Systems are Revolutionizing Storm Surge Prediction

The Critical Need for Speed in Coastal Hazard Forecasting

Against the backdrop of increasing coastal hazards due to climate change, the ability to generate rapid, high-resolution forecasts has become a critical factor in effective disaster management. Traditional CPU-based modeling approaches often require substantial computational resources and time, creating operational gaps where urgent decisions must be made with incomplete information. The emergence of Graphics Processing Unit (GPU) acceleration has revolutionized this landscape, enabling order-of-magnitude improvements in simulation speed while maintaining high numerical accuracy. This paradigm shift is particularly transformative for storm surge modeling, where the spatial and temporal complexity of phenomena like tropical cyclones demands both high resolution and rapid forecasting capability. The integration of multi-GPU systems presents a promising path toward operational real-time forecasting, yet it introduces fundamental questions about scaling efficiency, computational bottlenecks, and the balance between speed and precision across diverse modeling architectures.

This analysis examines the current state of GPU-accelerated coastal inundation modeling through a systematic comparison of leading frameworks, with particular emphasis on their multi-GPU scaling characteristics. By synthesizing experimental data from recent studies and detailing key methodological approaches, we provide researchers and disaster management professionals with a comprehensive reference for evaluating these critical computational tools. The findings demonstrate that while substantial progress has been made in harnessing GPU architectures for coastal hazard prediction, optimal implementation strategies vary significantly across modeling domains and resolution requirements.

Comparative Performance Analysis of Coastal Hazard Models

Table 1: Computational Performance Metrics of GPU-Accelerated Coastal Models

| Model Name | Acceleration Approach | Maximum Speedup | Optimal GPU Configuration | Spatial Resolution | Primary Application |
|---|---|---|---|---|---|
| RIM2D | Multi-GPU processing (1-8 units) | N/A (enables 2m resolution for 891km² domain) | 4-6 GPUs (marginal gains beyond) | 1m, 2m, 5m, 10m | Urban pluvial flooding |
| GPU-IOCASM | Single GPU with implicit iteration | 312x vs. CPU | Single GPU | Variable (unstructured) | Ocean currents & storm surges |
| XBGPU | GPU subset of XBeach features | 69x (vs. 16 CPU cores) | Single GPU | 10m, 20m, 40m | Coastal inundation & wave dynamics |
| GPU-SCHISM | CUDA Fortran framework | 35.13x (2.56M grid points) | Single GPU (multi-GPU scaling challenging) | High-resolution unstructured | Storm surge forecasting |
| LTS-GPU Model | Local Time Step scheme + GPU | 40x reduction in computation time | Single GPU | Integrated sea-land | Storm surge in urban areas |

Table 2: Multi-GPU Scaling Efficiency Across Model Frameworks

| Model | Scaling Efficiency Trend | Key Limiting Factors | Domain Size Impact | Resolution Dependency |
|---|---|---|---|---|
| RIM2D | Marginal improvement beyond 4-6 GPUs for 2-10m resolutions | Balance between computational nodes and raster cells | Large domains (891km²) enable effective GPU utilization | Finer resolutions (1m) demand >8 GPUs |
| GPU-SCHISM | Reduced performance with additional GPUs for small-scale computations | Communication overhead and memory bandwidth limitations | Large-scale (2.56M grid) achieves 35x speedup; CPU better for small domains | Higher resolution improves GPU efficacy |
| XBGPU | Effective single GPU implementation | Inter-core and inter-nodal communication in CPU variants | Medium/high-resolution models show most significant speedups | Low-resolution models reach optimization point quickly |

The comparative performance data reveals several critical patterns in GPU-accelerated coastal modeling. The RIM2D framework demonstrates sophisticated multi-GPU capabilities, successfully performing high-resolution pluvial flood simulations across large urban domains like Berlin (891.8 km²) within operationally relevant timeframes [1]. Its scaling characteristics show that while multiple GPUs are essential for enabling high-resolution simulations (2m or finer), the performance gains become marginal beyond 4-6 GPUs depending on resolution, illustrating the fundamental balance required between computational nodes and model raster cells [1].

The GPU-IOCASM model achieves a 312x speedup over traditional CPU-based approaches, largely by keeping the computation on the device [2]. Many GPU acceleration methods require frequent CPU-GPU data transfers. This model instead performs most computations directly on the GPU, which minimizes transfer overhead and significantly improves computational efficiency. This design choice is a deliberate strategy for maximizing GPU utilization in ocean modeling [2].

For the XBGPU framework, benchmarking against traditional CPU implementations reveals complex scalability characteristics [3]. The GPU implementation achieves up to a 69x speedup over 16 CPU cores. The CPU-based XBeach runs show that low-resolution models reach their optimal core count quickly. Beyond that point, the speedup ratio plateaus or declines because Message Passing Interface (MPI) communication time keeps growing [3].

The GPU-SCHISM implementation demonstrates the critical relationship between problem scale and GPU efficacy [4]. While achieving a 35.13x speedup for large-scale experiments with 2,560,000 grid points, the model shows that CPUs maintain advantages in small-scale calculations. This highlights how computational workload per GPU directly impacts acceleration potential, with higher-resolution calculations better leveraging GPU computational power [4].

Experimental Protocols and Methodologies

RIM2D Multi-GPU Urban Flood Forecasting

The RIM2D experimental protocol evaluated model performance at spatial resolutions of 2m, 5m, and 10m using 1 to 8 GPUs [1]. The study used two flood scenarios for validation: the real-world pluvial flood of June 2017 and a standardized 100-year return period event used for official hazard mapping in Berlin [1]. Computational performance was assessed through direct runtime measurements across GPU configurations, with particular attention to the balance between computational nodes and raster cells. The research established that multi-GPU processing is essential for making resolutions finer than 5m computationally feasible for operational flood forecasting and early warning [1].

GPU-IOCASM Ocean Model Acceleration

The GPU-IOCASM development employed the finite difference method with implicit iteration to ensure simulation stability. It incorporates online nesting of multi-layer computational grids to allow localized refinement in critical regions [2]. Key optimization strategies included:

(1) optimizing the residual update algorithm to maximize GPU parallelism and minimize memory overhead;
(2) a mask-based conditional computation method; and
(3) an adaptive strategy for predicting the iteration count [2].

The model handles output asynchronously. At designated output times, variables are copied from GPU memory to host memory while the GPU proceeds immediately with subsequent computations, so data transfers do not interfere with ongoing calculations [2]. Verification against both observed data and SCHISM model results confirmed the model's reliability and precision despite the substantial acceleration [2].
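The mask-based conditional computation in item (2) can be illustrated with a minimal NumPy sketch. It assumes the following:

- The mask marks wet cells, meaning cells whose total water depth exceeds a small threshold.
- Dry and land cells simply keep their previous values.

The function name, the `wet_threshold` parameter, and the simple explicit update are hypothetical stand-ins, not GPU-IOCASM's actual scheme. On a GPU, the same idea usually appears as each thread checking the mask and returning early.

```python
import numpy as np

def masked_update(eta, depth, tendency, dt, wet_threshold=0.01):
    """Advance surface elevation only in cells flagged as wet (hypothetical rule).

    eta           -- surface elevation (m), 2D array
    depth         -- still-water depth (m), same shape; <= 0 marks land
    tendency      -- d(eta)/dt computed elsewhere (m/s), same shape
    wet_threshold -- assumed minimum total depth for a cell to be active (m)
    """
    total_depth = depth + eta
    wet = total_depth > wet_threshold          # boolean mask of active cells
    eta_new = eta.copy()                       # dry/land cells keep old values
    eta_new[wet] = eta[wet] + dt * tendency[wet]
    return eta_new, wet

depth = np.array([[5.0, 2.0, -1.0],
                  [3.0, 0.0, -2.0]])
eta = np.zeros_like(depth)
tendency = np.full_like(depth, 0.1)
eta_new, wet = masked_update(eta, depth, tendency, dt=10.0)
print(int(wet.sum()), "of", wet.size, "cells updated")
```

Only the three cells with positive total depth are updated. On a real mesh with large land or dry regions, skipping those cells is where the savings come from.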

XBGPU Coastal Inundation Modeling

The XBGPU experimental methodology focused on benchmarking against traditional CPU-based XBeach implementations using the open coast of Wellington, New Zealand as a testing domain [3]. The study employed three distinct grid resolutions (10m, 20m, and 40m) to evaluate computational scalability across different precision requirements [3]. Performance assessment utilized a standardized scalability metric comparing both thread/core count increases for CPU architectures and relative speed-up ratios for GPU implementations. The research specifically investigated whether XBGPU (computed on GPUs) produced physically consistent results compared to traditional XBeach (computed on CPUs) when given identical boundary conditions, addressing both numerical solution similarity and floating-point error characteristics [3].

Computational Workflow for Multi-GPU Coastal Modeling

The transition from traditional CPU-based approaches to modern GPU-accelerated frameworks introduces specific workflow modifications and bottlenecks that researchers must address to optimize performance.

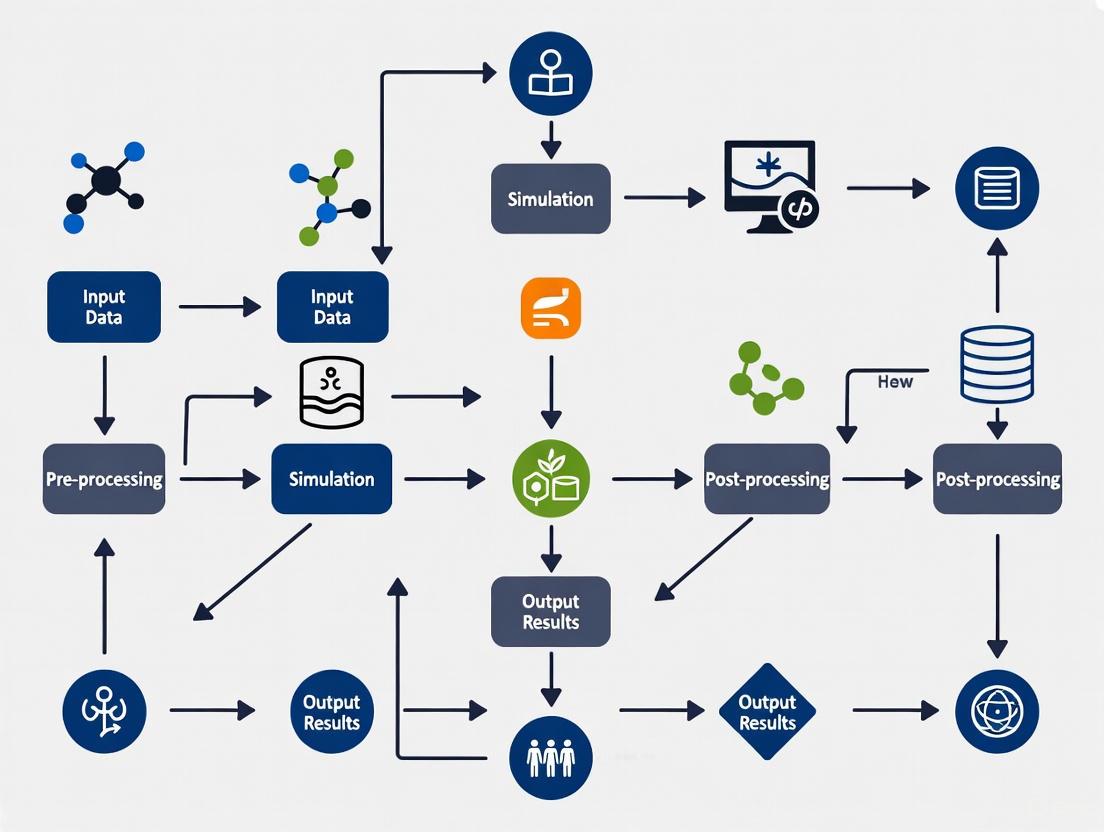

The workflow diagram illustrates the integrated CPU-GPU processing pipeline characteristic of modern coastal hazard models. The process begins with data acquisition and preprocessing on conventional CPUs, where bathymetric, topographic, and meteorological data are assembled and quality-controlled [3] [4]. This is followed by CPU-based domain decomposition, where the computational area is partitioned for distributed processing across multiple GPU units, a critical step that directly impacts load balancing and communication overhead [1].

The core computational workload then shifts to GPU-accelerated hydrodynamic computation, where the parallel architecture of GPUs simultaneously solves the governing equations across thousands of grid cells [5] [2]. The multi-GPU communication phase enables synchronization and data exchange between devices, though this introduces potential bottlenecks due to memory bandwidth limitations and latency [4]. This communication overhead explains why models like RIM2D show marginal improvements beyond 4-6 GPUs for most resolutions, as the increasing inter-node coordination eventually outweighs the computational benefits of additional units [1].
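A deliberately simple cost model makes the trade-off between computation and coordination concrete. It assumes two things:

- Computation divides perfectly across N GPUs.
- Synchronization cost grows linearly with the number of additional GPUs.

Both parameters are hypothetical and are not fitted to RIM2D or any other model. The sketch only shows how an optimum GPU count can arise.

```python
def step_time(n_gpus, t_compute=100.0, t_sync=4.0):
    """Hypothetical wall time per step (ms) on n_gpus devices.

    t_compute -- single-GPU compute time, assumed to divide evenly
    t_sync    -- assumed extra coordination cost per additional GPU
    """
    return t_compute / n_gpus + t_sync * (n_gpus - 1)

times = {n: step_time(n) for n in range(1, 9)}
best = min(times, key=times.get)
for n, t in times.items():
    print(f"{n} GPUs: {t:6.2f} ms, speedup {times[1] / t:4.2f}x")
print("fastest configuration:", best, "GPUs")
```

With these illustrative numbers, the fastest configuration is 5 GPUs, and adding more devices slows the run. In this model the optimum sits near sqrt(t_compute / t_sync). Larger domains, which raise t_compute relative to t_sync, therefore push the optimum toward more GPUs.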

Following iterative convergence checks, the output generation phase transfers results from GPU to host memory, often implemented asynchronously to prevent input/output operations from blocking subsequent computations [2]. Finally, results visualization and analysis occur on CPU systems, generating the actionable forecasts needed for emergency response and coastal management [6].

Essential Computational Tools for Coastal Hazard Modeling

Table 3: Critical Computational Tools and Frameworks for GPU-Accelerated Coastal Modeling

| Solution Category | Specific Tools/Approaches | Primary Function | Implementation Considerations |
|---|---|---|---|
| GPU Programming Frameworks | CUDA, CUDA Fortran, OpenACC | Parallel computing on GPU architectures | CUDA typically outperforms OpenACC across experimental conditions [4] |
| Local Time Step Schemes | LTS-GPU integration | Mitigates time step restrictions from refined grids | Reduces computation time by ~40x in integrated sea-land scenarios [5] |
| Asynchronous I/O Operations | GPU-IOCASM asynchronous data handling | Overlaps computation and data transfer | Enables GPU to proceed with next computation without waiting for I/O completion [2] |
| Domain Decomposition Strategies | Multi-node partitioning algorithms | Balances computational load across GPUs | Critical for minimizing communication overhead in multi-GPU setups [1] |
| Conditional Computation Methods | Mask-based approaches | Reduces unnecessary calculations in dry areas | Significantly improves memory utilization and computational efficiency [2] |
| Residual Update Optimization | Optimized residual update algorithm, combined with adaptive iteration count prediction | Maximizes GPU parallelism | Minimizes memory overhead in implicit iteration schemes [2] |

The computational toolkit for coastal hazard modeling encompasses both software frameworks and algorithmic strategies that enable efficient GPU acceleration. The CUDA Fortran framework has proven particularly effective in implementations such as GPU-SCHISM, where it outperformed OpenACC-based approaches across various experimental conditions [4]. This performance advantage makes it particularly valuable for operational forecasting systems, where computational speed directly affects warning lead times.

The Local Time Step scheme represents another critical methodological innovation, specifically addressing the challenge of integrated sea-land modeling where extremely small time steps in certain regions would normally constrain the entire simulation [5]. By allowing different temporal resolutions across the computational domain, this approach reduces computation time by approximately 40 times while maintaining physical accuracy in storm surge prediction for densely built urban areas [5].

Asynchronous I/O, as implemented in GPU-IOCASM, provides efficiency gains by making data transfer between GPU and host memory overlap with ongoing computation instead of blocking it [2]. Overlapping the two reduces GPU idle time. The benefit grows at higher resolutions and larger domain sizes, because the volume of output data to transfer grows with them.
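The asynchronous output pattern can be sketched in Python with a background writer thread. This is an analogy under stated assumptions:

- `state.copy()` stands in for the device-to-host copy.
- The in-place increment stands in for one model time step.
- Appending a summary to a list stands in for writing a file.

A production GPU code would typically use pinned host memory and CUDA streams instead. None of the names below come from GPU-IOCASM.

```python
import queue
import threading

import numpy as np

def writer(q, sink):
    """Background 'I/O' thread: drains snapshots until it receives None."""
    while True:
        item = q.get()
        if item is None:
            break
        step, snapshot = item
        sink.append((step, float(snapshot.sum())))  # stand-in for a file write

def run(n_steps=10, output_every=4):
    state = np.zeros(1000)          # stand-in for a device-resident field
    q = queue.Queue()
    written = []
    io_thread = threading.Thread(target=writer, args=(q, written))
    io_thread.start()
    for step in range(1, n_steps + 1):
        state += 1.0                # stand-in for one model time step
        if step % output_every == 0:
            # Copy the field (analogous to a device-to-host copy), hand it to
            # the writer, and keep computing without waiting for the I/O.
            q.put((step, state.copy()))
    q.put(None)                     # signal the writer to finish
    io_thread.join()
    return written

print(run())
```

Each snapshot is copied before it is queued, so later time steps cannot overwrite data that is still waiting to be written. Here `run()` returns `[(4, 4000.0), (8, 8000.0)]`.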

The integration of multi-GPU systems into coastal hazard modeling represents a paradigm shift in our ability to generate rapid, high-resolution forecasts for disaster management. The experimental data synthesized in this analysis demonstrates that while substantial progress has been made, optimal implementation strategies remain highly dependent on specific modeling contexts and resolution requirements.

The most effective approaches share common characteristics: sophisticated load balancing across multiple GPUs, minimization of data transfer overhead through asynchronous operations, and algorithmic innovations that specifically leverage GPU parallelization strengths. Models like RIM2D show that while multi-GPU processing enables previously impossible high-resolution simulations, diminishing returns necessitate careful resource allocation based on specific forecasting needs [1].

For the research community, these findings highlight promising directions for future development, particularly in refining multi-GPU communication protocols and developing more adaptive domain decomposition strategies. As coastal communities face increasing climate-related threats, the continued advancement of these computational tools will play an essential role in building resilient forecasting systems that can provide both the speed and precision required for effective emergency response and long-term planning.

Computational Bottlenecks in Traditional CPU-Based Ocean Models

Ocean models are indispensable tools for understanding marine processes, predicting storm surges, and projecting climate change impacts. For decades, these models have predominantly relied on Central Processing Unit (CPU)-based high-performance computing (HPC) systems. However, as resolution demands increase to capture critical small-scale phenomena like ocean eddies, traditional CPU-based architectures face significant computational bottlenecks that limit their effectiveness for both operational forecasting and research applications. These constraints are particularly problematic in storm surge modeling, where timely, high-resolution predictions can save lives and property. This article examines the specific limitations of CPU-based ocean modeling and explores how emerging multi-Graphics Processing Unit (GPU) scaling approaches are revolutionizing computational efficiency while maintaining accuracy.

Fundamental Bottlenecks in CPU-Based Ocean Modeling

Resolution Limitations and Parameterization Challenges

The core limitation of CPU-based ocean models stems from their inability to resolve mesoscale eddies—the ocean equivalent of atmospheric weather systems—which range from 10-100 km in scale. Due to computational constraints, standard ocean models operate at resolutions too coarse to represent these features explicitly, requiring parameterizations to approximate their collective effects [7]. These empirical workarounds introduce substantial uncertainties, as demonstrated by Southern Hemisphere westerly wind studies where parameterized eddy activity produced inaccurate carbon dioxide flux predictions compared to higher-resolution simulations [7]. Even state-of-the-art modeling efforts like those in CMIP6 significantly underestimate observed Southern Ocean eddy kinetic energy (EKE) by approximately 55% due to resolution constraints [8].

Scalability and Hardware Constraints

CPU-based ocean models face fundamental scalability limits. Amdahl's law bounds the speedup achievable by parallelization: if a fraction p of the work can be parallelized, the speedup on N cores cannot exceed 1/((1 - p) + p/N). In practice, communication overhead also grows with the number of cores and eventually dominates the performance gains [9]. This shows up in models like the Princeton Ocean Model (POM), where MPI parallelization with 48 cores achieves only a 35× speedup over single-core execution [9]. Even with extensive parallelization, extreme-resolution global ocean simulations require massive CPU resources. For example, a 2 km global simulation once required NASA's entire Pleiades supercomputer, with 70,000 CPU cores, to achieve just 0.05 simulated years per wall-clock day [7].
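A short calculation relates the POM figure to Amdahl's law. As a simplification, the sketch attributes the entire shortfall from ideal speedup to a fixed serial fraction. Communication overhead actually grows with core count, so the implied fraction is a convenient summary, not a measured property of POM.

```python
def amdahl_speedup(parallel_fraction, n_cores):
    """Amdahl's law: S(N) = 1 / ((1 - p) + p / N)."""
    p = parallel_fraction
    return 1.0 / ((1.0 - p) + p / n_cores)

def implied_parallel_fraction(speedup, n_cores):
    """Invert Amdahl's law for p, given a measured speedup on n_cores."""
    return (1.0 - 1.0 / speedup) / (1.0 - 1.0 / n_cores)

p = implied_parallel_fraction(35.0, 48)      # POM: 35x on 48 cores [9]
print(f"implied parallel fraction: {p:.4f}")
print(f"efficiency on 48 cores: {35.0 / 48:.1%}")
print(f"Amdahl ceiling with this p: {1.0 / (1.0 - p):.0f}x")
```

For 35× on 48 cores, this gives p = 1632/1645 ≈ 0.992, a parallel efficiency of about 73%, and an Amdahl ceiling of roughly 127×. If communication costs keep rising with core count, the achievable speedup falls below that ceiling.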

Table 1: Performance Comparison of CPU-Based Ocean Models

| Model | Resolution | CPU Cores | Simulated Period | Computation Time or Performance | Key Limitation |
|---|---|---|---|---|---|
| Traditional POM | Variable | 48 | 30 days | 35× speedup vs single-core | Limited parallel efficiency [9] |
| MPI ROMS | 898×598×12 | 512 | 12 days | 9,908 seconds | Insufficient for ensemble forecasting [10] |
| Global Ocean Model | ~2 km | 70,000 | N/A | 0.05 simulated years/day | Extreme resource requirements [7] |
| AWI-CM-1 Ensemble | Eddy-permitting | Variable | Climate scale | 55% EKE underestimation | Resolution-induced accuracy limits [8] |

GPU Acceleration as a Paradigm Shift

Performance Advantages of GPU Architectures

Graphics Processing Units (GPUs) offer a fundamentally different computational architecture characterized by thousands of cores optimized for parallel processing. This massive parallelism aligns exceptionally well with the computational patterns of ocean models, particularly finite-volume and finite-element methods where similar operations are performed across millions of grid cells. Performance comparisons demonstrate remarkable advantages: the GPU-based Oceananigans model achieves equivalent performance to 70,000 CPU cores using just 32 GPUs—representing an approximately 40-fold reduction in power consumption for the same workload [7]. Similarly, a GPU-accelerated version of the SCHISM model demonstrates a 35× speedup for large-scale simulations with 2.56 million grid points compared to CPU implementations [4].

Algorithmic Optimizations for GPU Architectures

Effectively harnessing GPU potential requires algorithmic approaches distinct from CPU-oriented designs. The Oceananigans model, written from scratch in Julia, exemplifies three key strategies [7]:

- aggressive inlining (replacing function calls with the body of the called function);
- kernel fusion (merging several computational steps into a single GPU kernel, so intermediate results are not written to and reread from global memory);
- optimized memory management to minimize data transfer bottlenecks.

These techniques allow billion-cell grid simulations on as few as 8 GPUs by maximizing arithmetic intensity while minimizing memory footprint [7]. For unstructured mesh models, multi-GPU implementations using MPI with OpenACC or CUDA demonstrate excellent scalability across multiple nodes, although communication overhead remains a consideration [11] [12].

Table 2: GPU-Accelerated Ocean Model Performance Improvements

| Model | GPU Implementation | GPU Resources | Speedup vs CPU | Key Innovation |
|---|---|---|---|---|
| Oceananigans | Native Julia on GPU | 32 GPUs | Equivalent to 70,000 CPU cores | Designed specifically for GPU architecture [7] |
| SCHISM | CUDA Fortran | Single GPU | 35× (2.56M grid points) | Hotspot acceleration of Jacobi solver [4] |
| POM | OpenACC | 4 GPUs | Comparable to 408 CPU cores | Directive-based porting maintains Fortran code [9] |
| Shallow Water Model | OpenACC + MPI | Multiple GPUs | 40× reported in similar models | Unstructured meshes with computation-communication overlap [11] |

Multi-GPU Scaling Efficiency in Storm Surge Modeling

Experimental Protocols and Performance Metrics

Evaluating multi-GPU scaling efficiency involves running storm surge models on increasing GPU counts and measuring two regimes:

- strong scaling, where the total problem size is fixed;
- weak scaling, where the problem size grows in proportion to the number of GPUs.

Key metrics are speedup (single-device time divided by multi-device time), parallel efficiency, and memory bandwidth utilization. For strong scaling, parallel efficiency is the speedup divided by the number of devices. For weak scaling, it is the single-device time divided by the multi-device time. Researchers typically compare single-GPU performance with multi-node configurations using standardized storm scenarios such as Hurricane Harvey or Rita to assess real-world applicability [13] [12]. Communication optimization strategies, such as asynchronous memory transfers and computation-communication overlap, are central evaluation foci in these experiments [11].
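The speedup and efficiency definitions above reduce to a few lines of Python. As an illustration, the sketch uses the 2-GPU and 32-GPU speedups reported for an unstructured-mesh model [11]. Only time ratios matter here, so the single-GPU time is assumed to be normalized to 1.

```python
def strong_scaling(t1, tn, n):
    """Speedup and parallel efficiency for a fixed problem size on n devices."""
    speedup = t1 / tn
    return speedup, speedup / n

def weak_scaling_efficiency(t1, tn):
    """Efficiency when the problem grows in proportion to the device count."""
    return t1 / tn

# Speedups reported for an unstructured-mesh model [11], expressed as
# normalized times (single-GPU time = 1).
for n, s in [(2, 1.95), (32, 21.0)]:
    speedup, eff = strong_scaling(1.0, 1.0 / s, n)
    print(f"{n:2d} GPUs: speedup {speedup:.2f}x, efficiency {eff:.1%}")
```

The reported figures correspond to about 97.5% efficiency on 2 GPUs and about 66% on 32 GPUs. This is the typical pattern of strong-scaling efficiency declining as communication takes a larger share of each step.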

Case Study: DG-SWEM Hurricane Simulation

The Discontinuous Galerkin Shallow Water Equations Model (DG-SWEM) exemplifies both the promise and challenges of multi-GPU implementation for storm surge. The discontinuous Galerkin method's localized element structure offers natural parallelism but increases computational intensity compared to continuous formulations [12]. Porting DG-SWEM to GPUs using OpenACC with Unified Memory has demonstrated significant performance improvements on NVIDIA's Grace Hopper architecture when simulating Hurricane Harvey scenarios across multiple H200 nodes [12]. This approach maintains a single codebase for both CPU and GPU versions, simplifying development while enabling performance comparisons.

Diagram 1: Comparative Computational Workflows in Ocean Modeling

Complementary Approaches and Hybrid Strategies

AI Surrogate Models

Beyond direct GPU acceleration, AI surrogate models offer a complementary way around computational bottlenecks. These neural-network substitutes are trained on existing simulation data and can then forecast ocean conditions orders of magnitude faster than traditional models. A 4D Swin Transformer-based surrogate for the Regional Ocean Modeling System (ROMS) achieves a 450× speedup over the traditional MPI ROMS implementation while maintaining high accuracy [10]. It reduces 12-day forecasting time from 9,908 seconds on 512 CPU cores to just 22 seconds on a single A100 GPU (9,908 / 22 ≈ 450). This makes previously impractical ensemble forecasting and uncertainty quantification feasible [10].

Efficient Modeling Strategies

Innovative modeling configurations can further enhance computational efficiency. The Finite volumE Sea-ice Ocean Model (FESOM) employs multi-resolution unstructured meshes that concentrate computational resources on critical regions like the Southern Ocean while maintaining coarser resolution elsewhere [8]. This approach, combined with medium-resolution spin-up and time-slice sampling, enables high-resolution mesoscale exploration at reduced computational cost [8]. Similarly, local time-stepping (LTS) methods address stability constraints in shallow water models, with one urban flood model achieving 40× computation time reduction by eliminating globally constrained time steps [5].

Table 3: The Scientist's Toolkit: Essential Computational Tools

| Tool/Category | Representative Examples | Function/Purpose |
|---|---|---|
| GPU Programming Models | CUDA, OpenACC, Kokkos | Enable GPU acceleration with varying portability/complexity tradeoffs [11] [9] |
| Ocean Modeling Frameworks | Oceananigans, DG-SWEM, SCHISM, FESOM | Specialized computational engines for ocean simulation [7] [8] [12] |
| Performance Portability Layers | Kokkos, OCCA | Facilitate deployment across diverse HPC architectures (NVIDIA/AMD GPUs, CPUs) [11] |
| Discretization Methods | Discontinuous Galerkin, Finite Volume, Finite Element | Numerical approaches with varying parallelization characteristics [12] |
| Communication Libraries | CUDA-aware MPI, GPUDirect | Optimize data transfer between GPUs in multi-node systems [11] |

Traditional CPU-based ocean models face fundamental computational bottlenecks that limit their resolution, forecasting capability, and physical accuracy. The parameterizations that coarse resolutions require introduce substantial uncertainties in climate projections and storm surge predictions. Multi-GPU acceleration, complemented by AI surrogates and innovative modeling strategies, represents a paradigm shift: it dramatically improves computational efficiency while maintaining or enhancing simulation accuracy. As GPU hardware advances and programming models mature, the ocean modeling community stands to gain unprecedented capabilities for high-resolution, timely predictions crucial for addressing climate change impacts and coastal hazards. Future work should focus on optimizing multi-GPU scaling efficiency, particularly on reducing communication overhead and improving load balancing across heterogeneous computing architectures.

Hydrodynamic models that solve the full two-dimensional shallow water equations (SWEs) are among the most reliable methods for flood simulation due to their clear physical mechanisms and minimal empirical parameters [11]. These models provide detailed hydrodynamic characteristics, including flood arrival time, inundation area, water depth, and velocity, which are crucial for accurate flood forecasting and risk management [14]. However, a significant challenge in applying these models is their substantial computational demand, particularly when simulating basin-scale rainfall-runoff processes or urban flood inundation with high spatial resolution [14] [15]. This computational burden becomes especially pronounced in fine-resolution simulations where mesh sizes must be refined to meter or even sub-meter resolutions to accurately capture terrain variability and complex flow dynamics [11].

Graphics Processing Unit (GPU)-accelerated computing has transformed this landscape through massive parallelization. Central Processing Units (CPUs) devote their resources to a relatively small number of powerful cores optimized for fast sequential execution. GPUs instead run thousands of lightweight threads concurrently. This suits hydrodynamic workloads, in which the same update is applied independently to every cell [16]. This article provides a comprehensive comparison of single and multi-GPU implementations for hydrodynamic simulations, examining their scaling efficiency, performance characteristics, and practical applications within storm surge modeling research.

Computational Frameworks for Hydrodynamic Modeling

Numerical Methods and Governing Equations

Hydrodynamic models for flood simulation are typically based on the two-dimensional shallow water equations (SWEs), which describe the conservation of mass and momentum of free-surface flow [11]. The conservative form of these equations is presented as:

General form of the 2D shallow water equations:

$$
\frac{\partial \mathbf{U}}{\partial t} + \frac{\partial \mathbf{E}(\mathbf{U})}{\partial x} + \frac{\partial \mathbf{G}(\mathbf{U})}{\partial y} = \mathbf{S}
$$

where:

- U = vector of conserved variables (h, hu, hv)

- E(U), G(U) = flux vectors in the x and y directions

- S = source terms accounting for bed slope, friction, rainfall, and infiltration
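For reference, the vectors in the equation above are commonly written in the explicit form below. Here g is gravitational acceleration, z_b the bed elevation, n Manning's roughness coefficient, r the rainfall rate, and i the infiltration rate. The bed-slope and Manning friction terms shown are one standard choice and an assumption here. The cited models may write the source terms differently, for example in well-balanced forms.

$$
\mathbf{U} = \begin{bmatrix} h \\ hu \\ hv \end{bmatrix},\quad
\mathbf{E} = \begin{bmatrix} hu \\ hu^2 + \tfrac{1}{2} g h^2 \\ huv \end{bmatrix},\quad
\mathbf{G} = \begin{bmatrix} hv \\ huv \\ hv^2 + \tfrac{1}{2} g h^2 \end{bmatrix},\quad
\mathbf{S} = \begin{bmatrix} r - i \\ -g h \,\dfrac{\partial z_b}{\partial x} - \dfrac{g n^2 u \sqrt{u^2 + v^2}}{h^{1/3}} \\ -g h \,\dfrac{\partial z_b}{\partial y} - \dfrac{g n^2 v \sqrt{u^2 + v^2}}{h^{1/3}} \end{bmatrix}
$$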

These equations are commonly solved using Godunov-type finite volume schemes. The HLLC (Harten-Lax-van Leer-Contact) approximate Riemann solver is frequently employed to compute interfacial fluxes [17]. Second-order spatial accuracy is often obtained through MUSCL (Monotonic Upstream-centered Scheme for Conservation Laws) reconstruction [17]. For rainfall-runoff simulations, the governing equations include additional source terms for rainfall intensity and infiltration rate, with models such as Green-Ampt used to compute infiltration [14] [17].
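A compact one-dimensional sketch illustrates the Godunov-type update. To keep it short, it makes several assumptions:

- It uses the HLL flux, the two-wave parent of HLLC. For the depth and normal-momentum components in 1D, HLLC reduces to HLL; the contact wave only matters for transverse velocity and passive scalars.
- It is first-order, with no MUSCL reconstruction.
- It assumes a flat, frictionless, fully wet bed with zero-gradient boundaries.

A dam break serves as a check that the scheme conserves mass.

```python
import numpy as np

G = 9.81  # gravitational acceleration (m/s^2)

def physical_flux(h, hu):
    """x-direction flux of the 1D shallow water equations."""
    u = hu / h
    return np.array([hu, hu * u + 0.5 * G * h * h])

def hll_flux(hL, huL, hR, huR):
    """HLL approximate Riemann flux at each face (left/right state arrays)."""
    uL, uR = huL / hL, huR / hR
    cL, cR = np.sqrt(G * hL), np.sqrt(G * hR)
    sL = np.minimum(uL - cL, uR - cR)   # Davis-type wave-speed estimates
    sR = np.maximum(uL + cL, uR + cR)
    FL, FR = physical_flux(hL, huL), physical_flux(hR, huR)
    UL, UR = np.array([hL, huL]), np.array([hR, huR])
    F_star = (sR * FL - sL * FR + sL * sR * (UR - UL)) / (sR - sL)
    # Upwinding: if all waves move one way, use that side's physical flux.
    return np.where(sL >= 0, FL, np.where(sR <= 0, FR, F_star))

def godunov_step(h, hu, dx, cfl=0.5):
    """One first-order finite-volume step with zero-gradient ghost cells."""
    hg = np.concatenate(([h[0]], h, [h[-1]]))
    hug = np.concatenate(([hu[0]], hu, [hu[-1]]))
    F = hll_flux(hg[:-1], hug[:-1], hg[1:], hug[1:])   # flux at every face
    smax = np.max(np.abs(hu / h) + np.sqrt(G * h))
    dt = cfl * dx / smax                                 # CFL-limited step
    h_new = h - dt / dx * (F[0, 1:] - F[0, :-1])
    hu_new = hu - dt / dx * (F[1, 1:] - F[1, :-1])
    return h_new, hu_new, dt

# Dam break: 2 m of water on the left, 1 m on the right, fluid at rest.
n, dx = 200, 1.0
h = np.where(np.arange(n) < n // 2, 2.0, 1.0)
hu = np.zeros(n)
mass0 = h.sum() * dx
for _ in range(20):
    h, hu, dt = godunov_step(h, hu, dx)
print(f"mass change: {h.sum() * dx - mass0:.2e} m^2")
```

Because each interface flux is subtracted from one cell and added to its neighbor, total mass changes only through the boundary faces. Those faces carry no mass flux until the waves arrive there.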

Key Acceleration Methodologies

Two primary computational strategies have emerged to enhance simulation efficiency:

Local Time Stepping (LTS): Traditional models advance all computational cells with a single global time step (GTS) set by the most restrictive cell. This becomes computationally demanding when local mesh refinement produces a few very small cells. The LTS method instead assigns each cell a graded time step close to its locally allowable maximum, dramatically improving efficiency without compromising accuracy [14]. Research demonstrates that combining LTS with GPU acceleration can achieve up to an 18.95-times performance improvement over conventional approaches [11].
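The work LTS saves can be estimated by counting cell updates. The sketch assumes the common power-of-two grading, in which a cell at level m advances with 2^m times the minimum time step. It ignores two real costs: the usual constraint that neighboring cells differ by at most one level, and the cost of synchronizing fluxes between levels. The printed ratio is therefore an idealized bound, not a reproduction of the 18.95-times figure above. The mesh of 1,000 refined 1 m cells and 9,000 coarse 8 m cells at 4 m depth is hypothetical.

```python
import numpy as np

def lts_levels(dt_allow, max_level=4):
    """Power-of-two time-step level for each cell (0 = finest)."""
    dt_min = dt_allow.min()
    # Small tolerance guards against ratios like 7.9999999 flooring to 2.
    levels = np.floor(np.log2(dt_allow / dt_min) + 1e-9).astype(int)
    return np.clip(levels, 0, max_level), dt_min

def work_ratio(levels):
    """Cell updates per synchronization cycle: GTS divided by LTS."""
    top = levels.max()
    gts_updates = levels.size * 2**top          # every cell steps at dt_min
    lts_updates = np.sum(2 ** (top - levels))   # level-m cells step less often
    return gts_updates / lts_updates

g, cfl = 9.81, 0.9
# Hypothetical mesh: 1,000 refined 1 m cells and 9,000 coarse 8 m cells,
# all with 4 m of still water.
dx = np.concatenate([np.full(1000, 1.0), np.full(9000, 8.0)])
dt_allow = cfl * dx / np.sqrt(g * 4.0)      # local CFL limit per cell
levels, dt_min = lts_levels(dt_allow)
print(f"theoretical LTS work reduction: {work_ratio(levels):.2f}x")
```

Here the refined cells sit at level 0 and the coarse cells at level 3. LTS therefore performs 17,000 instead of 80,000 cell updates per cycle, a ratio of about 4.7.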

Mesh Optimization: Unstructured meshes of hybrid triangular and quadrilateral elements concentrate resolution where it is needed, maintaining high simulation accuracy while minimizing cell counts. This approach adapts flexibly to complex terrain and boundaries, allowing a more efficient discretization of the computational domain [11].

Single vs. Multi-GPU Implementation: A Performance Comparison

Architectural Frameworks

The implementation of GPU acceleration in hydrodynamic modeling has evolved from single to multi-GPU architectures to address increasing computational demands.

Table 1: Architectural Comparison of Single and Multi-GPU Implementations

| Feature | Single-GPU Implementation | Multi-GPU Implementation |
|---|---|---|
| Hardware Setup | One GPU device | Multiple GPU devices (2-32+) |
| Parallelization Method | CUDA/OpenACC with domain-wide parallelization | MPI + CUDA/OpenACC with domain decomposition |
| Memory Management | Single device memory | Distributed memory with overlapping regions |
| Domain Discretization | Unified computational domain | Structured domain decomposition |
| Communication Overhead | Minimal internal communication | Requires inter-device communication |
| Maximum Scalability | Limited by single GPU memory | Scales with number of available GPUs |

Single-GPU models place the entire computational domain on one device and parallelize it with CUDA or OpenACC, achieving significant speedups over CPU-only implementations [15]. For instance, [18] describes the RIM2D model, which employs the local inertia approximation to the shallow-water equations, as coded exclusively for single-GPU computation. This limits parallel computations to about 1–2 million cells, depending on GPU type [18]. The multi-GPU RIM2D configurations discussed earlier [1] are not subject to this single-device limit.
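A widely used explicit form of the local inertia approximation lets the water-surface slope drive the discharge at each cell face and treats Manning friction semi-implicitly:

q_new = (q - g h_flow dt ∂(h + z)/∂x) / (1 + g dt n² |q| / h_flow^(7/3))

The sketch below applies that form to a single row of cells with closed ends. RIM2D's exact discretization may differ, and all names and numbers are hypothetical.

```python
import numpy as np

G = 9.81  # gravitational acceleration (m/s^2)

def local_inertial_flux(q, h, z, dx, dt, n_manning):
    """Update unit discharge q (m^2/s) at the len(h) - 1 interior faces.

    h, z      -- water depth and bed elevation at cell centres (m)
    n_manning -- Manning roughness (s/m^(1/3))
    """
    eta = h + z                                   # water-surface elevation
    # Flow depth at a face: highest surface minus highest bed (a common choice).
    h_flow = np.maximum(eta[:-1], eta[1:]) - np.maximum(z[:-1], z[1:])
    h_flow = np.maximum(h_flow, 0.0)
    slope = (eta[1:] - eta[:-1]) / dx
    q_new = np.zeros_like(q)
    wet = h_flow > 1e-6                           # dry faces carry no flow
    hf = h_flow[wet]
    # Explicit surface-slope term, semi-implicit Manning friction.
    q_new[wet] = (q[wet] - G * hf * dt * slope[wet]) / (
        1.0 + G * dt * n_manning**2 * np.abs(q[wet]) / hf ** (7.0 / 3.0))
    return q_new

def update_depth(h, q, dx, dt):
    """Continuity: each cell gains inflow through its left face minus outflow."""
    flux = np.concatenate(([0.0], q, [0.0]))      # closed outer boundaries
    return h + dt / dx * (flux[:-1] - flux[1:])

h = np.array([1.0, 0.5, 0.2, 0.0])                # water piled up on the left
z = np.zeros(4)
q = np.zeros(3)
dx, dt = 2.0, 0.1
for _ in range(5):
    q = local_inertial_flux(q, h, z, dx, dt, n_manning=0.03)
    h = update_depth(h, q, dx, dt)
print(h.round(3), h.sum())
```

Water spreads into the initially dry right-hand cell while the total volume stays constant. Because each face discharge depends only on its two neighboring cells, the update maps naturally onto one GPU thread per face.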

Multi-GPU implementations employ structured domain decomposition to distribute computational workloads across multiple devices [17]. This approach partitions the computational domain into subdomains, each processed by a separate GPU, with communication between devices managed through frameworks like CUDA-aware MPI [11]. To handle flux calculations at shared boundaries between adjacent subdomains, a one-cell-thick overlapping region (halo region) is implemented, ensuring accurate computation while maintaining parallel efficiency [17].
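The role of the one-cell halo can be checked with a small serial experiment. The sketch below is a 1D Python analogue, not GPU code. Plain lists stand in for per-GPU subdomains, a list copy stands in for the CUDA-aware MPI exchange, and the 3-point diffusion stencil and zero-gradient outer boundaries are assumptions chosen for simplicity. If every halo is refreshed from the previous step before any subdomain advances, the decomposed run reproduces the single-domain run exactly.

```python
def stencil_step(u, c=0.25):
    """Explicit 3-point update of the interior cells; u[0] and u[-1] are ghost/halo cells."""
    return [u[i] + c * (u[i - 1] - 2.0 * u[i] + u[i + 1]) for i in range(1, len(u) - 1)]


def run_single(u, steps):
    """Reference run on one undivided domain with zero-gradient outer boundaries."""
    for _ in range(steps):
        u = stencil_step([u[0]] + u + [u[-1]])
    return u


def run_decomposed(u, steps, parts):
    """Same run on `parts` equal subdomains, each padded with a one-cell halo."""
    assert len(u) % parts == 0, "sketch assumes equal-sized subdomains"
    size = len(u) // parts
    subs = [u[k * size:(k + 1) * size] for k in range(parts)]
    for _ in range(steps):
        padded = []
        for k, s in enumerate(subs):
            # Halo exchange: copy the neighbour's edge cell (an MPI send/receive in a real code);
            # at the physical boundary reuse the subdomain's own edge value instead.
            left = subs[k - 1][-1] if k > 0 else s[0]
            right = subs[k + 1][0] if k < parts - 1 else s[-1]
            padded.append([left] + s + [right])
        # All halos are filled from the previous step before any subdomain advances.
        subs = [stencil_step(p) for p in padded]
    return [x for s in subs for x in s]


initial = [0.0] * 8 + [1.0] * 8
single = run_single(initial, steps=20)
decomposed = run_decomposed(initial, steps=20, parts=4)
```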

Performance Metrics and Scaling Efficiency

Multiple studies have quantitatively compared the performance of single and multi-GPU implementations across various modeling scenarios.

Table 2: Performance Comparison of GPU-Accelerated Hydrodynamic Models

| Study/Model | GPU Configuration | Test Case | Speedup/Scalability | Key Findings |
|---|---|---|---|---|
| Wu et al. [14] | Single GPU + LTS | Hexi basin, China | ~19x with LTS | LTS method provided an additional 30-60% speedup beyond GPU acceleration alone |
| Integrated Model [17] | Multi-GPU (2 GPUs) | Chinese Loess Plateau | Speedup increases with grid size | Positive correlation between grid cell numbers and acceleration efficiency |
| Unstructured Mesh Model [11] | Multi-GPU (2-32 GPUs) | Urban flood simulation | 1.95x on 2 GPUs, 21x on 32 GPUs | Good scaling, with parallel efficiency of 74.3% on 8 GPUs |
| TRITON [11] | Multi-GPU | 6,800 km² watershed | 10-day flood in 30 minutes | Enabled large-scale, high-resolution simulation impractical on a single GPU |

The performance advantages of multi-GPU systems become particularly evident in large-domain simulations involving millions to hundreds of millions of grid cells [11]. Communication overhead increases with additional GPUs, but asynchronous communication strategies that overlap computation and communication can maintain high parallel efficiency [11]. One study reported a 1.95-times speedup using 2 GPUs compared to a single GPU and a 21-times speedup using 32 GPUs. These correspond to parallel efficiencies of about 97.5% and 66%, respectively, which is strong scalability for an unstructured mesh model [11].

Experimental Protocols and Methodologies

Benchmark Testing Approaches

The validation of GPU-accelerated hydrodynamic models follows rigorous experimental protocols using standardized test cases:

Idealized V-Catchment Tests: These verify numerical accuracy against analytical solutions for simplified geometries, confirming proper implementation of governing equations and boundary conditions [17] [15].

Laboratory-Scale Physical Models: Experimental setups with controlled rainfall intensities over scaled urban layouts provide validation data for model calibration. One representative experiment features a 2.5 m × 2 m domain with three stainless steel plates (slope of 0.05) and model buildings, subjected to constant rainfall intensities of 84 mm/h or similar rates [11].

Field-Scale Case Studies: Real-world applications validate model performance under complex, natural conditions. Examples include the Hexi basin in Jingning County, China [14], the Ahr River in Germany [18], and sponge city districts in China [15].

Performance Evaluation Metrics

Researchers employ standardized metrics to quantify model performance:

Numerical Accuracy Assessment: Comparison against benchmark solutions using statistical measures like Nash-Sutcliffe Efficiency, Root Mean Square Error, and Mean Absolute Error [15] [18].

Computational Speedup Ratio: Calculated as \( T_{\text{CPU}} / T_{\text{GPU}} \), where \( T_{\text{CPU}} \) and \( T_{\text{GPU}} \) represent computation times on CPU and GPU platforms, respectively [11].

Parallel Efficiency: For multi-GPU systems, calculated as \( E_p = S_p / p \), where \( S_p \) is the speedup on \( p \) GPUs relative to a single GPU [11].

Scaling Analysis: Strong scaling measures performance with fixed problem size across increasing GPU counts, while weak scaling evaluates performance with proportional problem size increases [11].
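The metrics above are straightforward to compute. The helpers below are a hedged sketch that uses the standard definitions of NSE, RMSE, and MAE together with the speedup and efficiency ratios defined in this section. The function names are illustrative and the inputs are plain Python lists.

```python
import math


def nse(obs, sim):
    """Nash-Sutcliffe Efficiency: 1 is a perfect fit, 0 is no better than the observed mean."""
    mean_obs = sum(obs) / len(obs)
    residual = sum((o - s) ** 2 for o, s in zip(obs, sim))
    variance = sum((o - mean_obs) ** 2 for o in obs)
    return 1.0 - residual / variance


def rmse(obs, sim):
    """Root Mean Square Error, in the units of the data."""
    return math.sqrt(sum((o - s) ** 2 for o, s in zip(obs, sim)) / len(obs))


def mae(obs, sim):
    """Mean Absolute Error, in the units of the data."""
    return sum(abs(o - s) for o, s in zip(obs, sim)) / len(obs)


def speedup(t_reference, t_accelerated):
    """Speedup ratio, e.g. T_CPU / T_GPU or T_1GPU / T_pGPU."""
    return t_reference / t_accelerated


def parallel_efficiency(speedup_p, p):
    """E_p = S_p / p, with S_p measured against a single GPU."""
    return speedup_p / p
```

Applied to the multi-GPU results quoted earlier, speedups of 1.95 times on 2 GPUs and 21 times on 32 GPUs give `parallel_efficiency(1.95, 2) = 0.975` and `parallel_efficiency(21, 32) = 0.65625`.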

Research Methodology Workflow

The Scientist's Toolkit: Essential Technologies for GPU-Accelerated Hydrodynamics

Table 3: Essential Research Reagents and Computational Tools

| Tool/Category | Representative Examples | Function/Role in Research |
|---|---|---|
| GPU Hardware | NVIDIA Tesla V100, Titan RTX, AMD Radeon VII | Provide massive parallel processing capabilities with high memory bandwidth [16] |
| Parallel Computing APIs | CUDA, OpenACC, OpenMP, MPI | Enable programming of parallel algorithms across single or multiple GPUs [11] |
| Shallow Water Solvers | Hi-PIMS, TRITON, RIM2D, SERGHEI-SWE | Specialized hydrodynamic models implementing SWEs with GPU acceleration [14] [11] [18] |
| Mesh Generation Tools | Triangular/Quadrilateral unstructured mesh generators | Create adaptable computational domains that refine resolution where needed [11] |
| Performance Analysis Tools | NVIDIA Nsight Systems, custom timing routines | Quantify computational efficiency and identify bottlenecks [11] |
| Validation Datasets | Laboratory measurements, field observations, benchmark cases | Verify numerical accuracy and model reliability [14] [18] |

The integration of GPU architecture into hydrodynamic modeling has fundamentally transformed computational capabilities in flood simulation and storm surge modeling. Single-GPU implementations provide substantial performance improvements over traditional CPU-based approaches, and multi-GPU systems add much greater scalability for large-domain, high-resolution simulations. The experimental data show that well-designed multi-GPU implementations can achieve near-linear scaling at modest GPU counts (for example, 1.95 times on 2 GPUs), with efficiency declining gradually at larger counts. They can reduce computation times from days to hours or minutes while maintaining numerical accuracy.

For researchers and scientists working on storm surge modeling and flood risk assessment, multi-GPU systems offer the computational capacity needed for high-fidelity, catchment-scale simulations within operationally feasible timeframes. As these technologies continue to evolve, they will play an increasingly crucial role in enhancing flood early-warning systems and supporting emergency decision-making in the face of increasingly extreme weather events driven by climate change.

Accurate and timely prediction of storm surges is critical for coastal communities worldwide, helping to mitigate damage and guide emergency efforts. However, high-resolution numerical simulations of these complex phenomena demand immense computational resources, creating a significant bottleneck for both operational forecasting and research applications. In recent years, Graphics Processing Unit (GPU) acceleration has emerged as a transformative technology. It addresses these challenges by leveraging the massive parallel architecture of GPUs to achieve performance previously attainable only with large CPU clusters. This guide provides a comparative analysis of three leading storm surge models—SCHISM, DG-SWEM, and GeoClaw. It focuses on their respective journeys in adopting GPU acceleration, with a particular emphasis on the crucial challenge of multi-GPU scaling efficiency. As noted in SCHISM research, "multi-GPU scaling remains a key challenge in scientific computing," often due to communication overhead and memory bandwidth limitations [4]. Understanding how different models navigate this trade-off between raw speed and parallel efficiency is essential for researchers selecting the right tool for their specific forecasting or hindcasting scenarios.

The table below summarizes the core characteristics and GPU acceleration approaches of the three featured models.

Table 1: Key Storm Surge Models and Their GPU Acceleration Profiles

| Model | Core Discretization Method | Primary GPU Implementation | Reported Speedup | Key Application Context |
|---|---|---|---|---|
| SCHISM | Semi-implicit Finite Element/Finite Volume [4] | CUDA Fortran [4] | 35.13x (single GPU, large-scale test) [4] | Storm surge forecasting, cross-scale ocean simulations [4] |
| DG-SWEM | Explicit Discontinuous Galerkin Finite Element [12] | OpenACC, CUDA Fortran [12] [19] | Performance equivalent to a 144-core CPU node on a single GPU [19] | Hurricane storm surge, compound flooding [12] |
| GeoClaw | Finite Volume Wave-Propagation with Adaptive Mesh Refinement (AMR) [20] | OpenMP (CPU parallelization) [21] | Over 75% of the potential speedup on an 8-core CPU [21] | Tsunamis, storm surges, general overland flooding [20] |

Model-Specific GPU Acceleration Methodologies

SCHISM: CUDA Fortran for a Semi-Implicit Framework

The SCHISM (Semi-implicit Cross-scale Hydroscience Integrated System Model) model is an unstructured-grid model widely used for ocean numerical simulations [4]. Its GPU acceleration, termed GPU–SCHISM, was achieved using the CUDA Fortran framework. The acceleration process began with a detailed performance analysis of the original CPU-based code, which identified the Jacobi iterative solver module as a primary computational hotspot [4]. This solver is part of the model's semi-implicit scheme, which reduces numerical stability constraints but often involves solving large linear systems.
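The sketch below implements plain Jacobi iteration on a small dense system to show why this solver maps well to GPUs. It is an illustration under stated assumptions, not SCHISM code. SCHISM's matrices are large and sparse, and a GPU port would store them in a sparse format and update each unknown in a separate thread. The property the sketch demonstrates is that every component of the new iterate depends only on the previous iterate, so all updates within one sweep are independent.

```python
def jacobi(A, b, tol=1e-10, max_iter=10_000):
    """Solve A x = b with Jacobi iteration (dense lists; illustrative only).

    Converges for strictly diagonally dominant A. Returns (x, iterations).
    """
    n = len(b)
    x = [0.0] * n
    for iteration in range(1, max_iter + 1):
        # Each new component uses only the previous iterate x, so the n updates
        # are independent of one another (one GPU thread per unknown in practice).
        x_new = [
            (b[i] - sum(A[i][j] * x[j] for j in range(n) if j != i)) / A[i][i]
            for i in range(n)
        ]
        # Stop when the largest change between sweeps falls below the tolerance.
        if max(abs(x_new[i] - x[i]) for i in range(n)) < tol:
            return x_new, iteration
        x = x_new
    return x, max_iter


A = [[4.0, 1.0, 0.0],
     [1.0, 4.0, 1.0],
     [0.0, 1.0, 4.0]]
b = [5.0, 6.0, 5.0]
x, iterations = jacobi(A, b)  # exact solution is [1, 1, 1]
```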

The implementation focused on offloading this bottleneck and other intensive kernels to a single GPU, and the study reports a balance between performance and numerical precision. For a large-scale experiment with 2.56 million grid points, a single GPU achieved a speedup ratio of 35.13 times over the CPU version [4]. However, the study also revealed a critical finding for multi-GPU scaling: increasing the number of GPUs reduced the computational workload per GPU, which hindered further acceleration gains [4]. With less work per device, fixed costs such as inter-GPU communication make up a larger share of each time step, which underscores the challenge of communication overhead in distributed GPU setups. Furthermore, the research compared CUDA and OpenACC, concluding that "CUDA outperforms OpenACC under all experimental conditions" for this specific model [4].

DG-SWEM: Harnessing Inherent Parallelism with OpenACC

DG-SWEM (Discontinuous Galerkin Shallow Water Equations Model) is a solver designed to address limitations in traditional models like ADCIRC, particularly for complex scenarios such as compound flooding involving both storm surge and rainfall-driven river discharge [12]. The discontinuous Galerkin method is inherently data-parallel; it approximates solutions using polynomial basis functions local to each finite element, resulting in loose coupling between elements and a high degree of available parallelism [12]. This makes it naturally suitable for GPU architectures.

The GPU porting of DG-SWEM employed two primary approaches: CUDA Fortran and OpenACC. A key advantage of the OpenACC implementation is the use of Unified Memory, which simplifies the porting process and allows the maintenance of a single codebase for both CPU and GPU versions [12]. This reduces development and maintenance overhead. Performance tests were conducted using a large Hurricane Harvey scenario on NVIDIA's Grace Hopper chip. The results were striking: the GPU code's performance on a single node was comparable to the CPU version running on a full node of 144 cores [19]. This demonstrates the raw computational power harnessed from the DG method's parallelism.

GeoClaw: A Path to GPU Acceleration via CPU Parallelization

GeoClaw is an open-source package specifically designed for simulating shallow-water flows like tsunamis and storm surges [20]. It employs a finite-volume method with adaptive mesh refinement (AMR), which dynamically refines the computational grid in critical regions such as coastlines. This allows efficient tracking of waves across vast ocean distances while resolving fine-scale inundation details onshore [20]. The sources reviewed here do not describe a full GPU port of GeoClaw, but they document a crucial step in its performance evolution: parallelization with OpenMP for many-core CPU systems [21].
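The core idea of AMR, refining only where the flow is active, can be sketched as a flagging rule. The example below flags wet cells whose surface elevation departs from the ambient sea level by more than a tolerance, plus a buffer of neighbouring cells. It is a simplified, hypothetical 1D criterion written for illustration. It is not GeoClaw's implementation, and the parameter names are assumptions.

```python
def flag_for_refinement(eta, wet, sea_level=0.0, tol=0.1, buffer=1):
    """Return a refinement flag per cell (hypothetical 1D criterion).

    eta -- surface elevation per cell (m); wet -- True where the cell holds water.
    """
    n = len(eta)
    flags = [False] * n
    for i in range(n):
        # Dry cells are skipped: their "elevation" is land, not a wave signal.
        if wet[i] and abs(eta[i] - sea_level) > tol:
            # Also flag a buffer of neighbours so the fine grid stays ahead of the moving wave.
            for j in range(max(0, i - buffer), min(n, i + buffer + 1)):
                flags[j] = True
    return flags


eta = [0.0, 0.02, 0.0, 0.5, 0.0, 1.2, 0.0]
wet = [True, True, True, True, True, False, True]
flags = flag_for_refinement(eta, wet)
```

Only the 0.5 m wave crest and its two neighbours are flagged. The small 0.02 m perturbation is below the tolerance, and the dry cell is ignored.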

This parallelization effort, which achieved over 75% efficiency on an eight-core workstation, was driven by the urgent need to simulate tsunami waves from the 2011 Tohoku event [21]. The successful use of AMR for efficient, high-resolution inundation modeling, as demonstrated in simulations of the Sendai airport and Fukushima Nuclear Power Plants, establishes a foundation for future GPU acceleration. The computational patterns optimized for AMR on CPUs could be translated to GPU architectures to manage the dynamic, multi-scale data structures involved.

Experimental Protocols and Performance Benchmarking

SCHISM's Validation and Testing Workflow

The acceleration of SCHISM was rigorously validated through a structured experimental protocol. The study used SCHISM v5.8.0 for numerical simulations [4]. The test domain was located along the coast of Fujian Province, China, featuring an unstructured grid with 70,775 grid nodes and refined resolution near complex coastlines [4]. The vertical direction used 30 layers with the LSC2 coordinate system. The model bathymetry was built from fused nautical charts and ETOPO1 data. The simulation covered a 5-day period that included storm surge events and used a time step of 300 seconds [4].

Performance was evaluated by comparing the execution time of the original CPU code against the GPU-accelerated version (GPU–SCHISM). The key metric was the speedup ratio, defined as the CPU time divided by the GPU time. The experiments were conducted at different scales, from small classical tests to the large-scale scenario with millions of grid points, to thoroughly assess the performance gains and the impact of multi-GPU scaling [4].

DG-SWEM's Hurricane Harvey Benchmark

The performance claims for DG-SWEM were grounded in a large-scale, real-world benchmark simulating Hurricane Harvey [12] [19]. This scenario tests the model's capability to handle complex, real-world physics and large domains. The hardware platform for testing was NVIDIA's state-of-the-art Grace Hopper superchip, which integrates CPU and GPU components with a high-bandwidth connection [12] [19].

Performance was evaluated by comparing the runtime of the GPU-accelerated code on one or more H200 GPU nodes against the CPU version running on the same number of Grace CPU nodes. A critical part of the analysis involved hierarchical roofline analysis to identify performance bottlenecks, which revealed that memory bandwidth, rather than raw computation, often limits performance in these applications [19]. This insight is crucial for guiding future optimization efforts.
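A hierarchical roofline bounds a kernel's attainable performance by the compute peak and, at each memory level, by that level's arithmetic intensity times its bandwidth. The sketch below evaluates those bounds and reports the limiting one. The FLOP and byte counts and the L2 bandwidth are hypothetical. The HBM bandwidth (3,350 GB/s) and FP32 peak (about 60,000 GFLOP/s) are rough H100-class values used only as illustrative inputs.

```python
def hierarchical_roofline(flops, bytes_moved, bandwidth_gbs, peak_gflops):
    """Return (attainable GFLOP/s, limiting ceiling) for one kernel.

    bytes_moved and bandwidth_gbs are dicts keyed by memory level (e.g. "L2", "HBM").
    """
    bounds = {"compute": peak_gflops}
    for level, nbytes in bytes_moved.items():
        intensity = flops / nbytes                         # FLOP per byte at this level
        bounds[level] = intensity * bandwidth_gbs[level]   # FLOP/byte * GB/s = GFLOP/s
    limiter = min(bounds, key=bounds.get)
    return bounds[limiter], limiter


# Hypothetical kernel: 1 GFLOP of work, 1 GB through L2 and 2 GB through HBM.
perf, limiter = hierarchical_roofline(
    flops=1e9,
    bytes_moved={"L2": 1e9, "HBM": 2e9},
    bandwidth_gbs={"L2": 10_000.0, "HBM": 3_350.0},
    peak_gflops=60_000.0,
)
```

For this hypothetical kernel the HBM bound (1,675 GFLOP/s) lies far below the compute peak. This is the memory-bandwidth-limited situation that the DG-SWEM analysis describes.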

The Scientist's Toolkit: Essential Research Reagents

In the context of high-performance storm surge modeling, "research reagents" extend beyond chemicals to encompass the key software, hardware, and data components that enable scientific experimentation.

Table 2: Essential Tools for GPU-Accelerated Storm Surge Research

| Tool / Solution | Category | Function in Research |
|---|---|---|
| CUDA Fortran | Programming Framework | Extends Fortran with GPU computing capabilities; used in SCHISM and DG-SWEM for fine-grained kernel control and high performance [4] [19]. |
| OpenACC | Programming Framework | A directive-based approach for GPU acceleration; promotes code maintainability and a single codebase, as demonstrated in DG-SWEM [12]. |
| OpenMP | Programming API | Enables shared-memory parallelization on multi-core CPUs; served as a key parallelization step for GeoClaw [21]. |
| Adaptive Mesh Refinement (AMR) | Numerical Algorithm | Dynamically adjusts grid resolution; critical in GeoClaw for efficiently focusing computation on active tsunami or surge regions [20]. |
| Hierarchical Roofline Analysis | Performance Model | Identifies whether kernel performance is limited by memory bandwidth or computational throughput; essential for optimizing DG-SWEM [19]. |
| Unified Memory | Memory Management Model | Simplifies data management between CPU and GPU; used in DG-SWEM's OpenACC implementation to reduce programming complexity [12]. |
| ETOPO1 & Nautical Charts | Data Product | Provides global and coastal bathymetry/topography data; fundamental for creating accurate model grids, as used in SCHISM [4]. |

Multi-GPU Scaling: The Path Forward for Operational Forecasting

The pursuit of efficient multi-GPU scaling is a common and critical challenge across the field. While single-GPU performance is often excellent, distributing work across multiple GPUs introduces communication overhead that can diminish returns. The SCHISM study explicitly found that "increasing the number of GPUs reduces the computational workload per GPU, which hinders further acceleration improvements" [4]. This highlights the need for advanced load balancing and communication-hiding techniques.
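The SCHISM observation can be reproduced qualitatively with a toy cost model. In this model, compute time per step shrinks as 1/p while the halo-exchange cost per step stays constant. The sketch below assumes 100 ms of single-GPU compute per step and 2 ms of communication. Both numbers are hypothetical. The `overlap` option models the idealized case in which communication is fully hidden behind computation.

```python
def modeled_scaling(t_compute_1, t_comm, p, overlap=False):
    """Return (speedup, efficiency) for p GPUs under a toy cost model.

    t_compute_1 -- compute time per step on one GPU (split evenly over p GPUs)
    t_comm      -- halo-exchange time per step, assumed constant for p > 1
    overlap     -- if True, assume communication is fully hidden behind computation
    """
    t_work = t_compute_1 / p
    if p == 1:
        t_p = t_work
    elif overlap:
        # Idealized: a step takes as long as the slower of the two concurrent activities.
        t_p = max(t_work, t_comm)
    else:
        t_p = t_work + t_comm
    speedup = t_compute_1 / t_p
    return speedup, speedup / p


for p in (1, 2, 8, 32):
    s, e = modeled_scaling(100.0, 2.0, p)
    print(f"{p:>2} GPUs: speedup {s:5.2f}, efficiency {e:.0%}")
```

Without overlap, modeled efficiency falls from about 96% on 2 GPUs to about 61% on 32 GPUs. With perfect overlap it stays at 100% until the per-GPU compute time drops below the communication time, which happens beyond 50 GPUs here. Real codes sit between these two limits.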

Future research must focus on optimizing inter-GPU communication and dynamic load balancing, especially for models with adaptive meshing like GeoClaw. The goal is to move towards lightweight operational deployment, where high-resolution forecasts can be run rapidly on local workstations or small GPU clusters at coastal forecasting stations, rather than relying on massive national supercomputing facilities [4]. As GPU technology continues to evolve and programming models mature, the integration of multi-GPU strategies with advanced numerical techniques like local timestepping [22] and AMR will be pivotal in building the next generation of efficient, high-fidelity storm surge forecasting systems.

Logical Workflow Diagram

The following diagram illustrates the conceptual progression and parallelization strategies employed by the three models in their transition towards GPU-accelerated computation.

Diagram 1: GPU Acceleration Pathways for Storm Surge Models. This chart visualizes the distinct strategies and outcomes for SCHISM, DG-SWEM, and GeoClaw in their pursuit of high-performance computation.

The accurate and timely modeling of storm surges represents a critical tool for mitigating flood damage, protecting infrastructure, and saving lives. As climate change increases the frequency and intensity of coastal flooding events, researchers are faced with a formidable computational challenge: simulating complex, large-scale, high-resolution hydrodynamic processes within timeframes useful for emergency decision-making [11]. Storm surge models based on the Shallow Water Equations (SWE) are constrained by the Courant-Friedrichs-Lewy (CFL) condition, necessitating extremely small time steps, particularly in finely-resolved areas of the computational mesh simulating dense urban environments [5] [11]. Simulations spanning entire coastal regions at meter or sub-meter resolution can require millions to hundreds of millions of mesh cells, rendering single-threaded or even single-GPU computations impractical for long-duration events [11].

This guide objectively compares the performance of various GPU architectures and system configurations for accelerating these workloads. It frames the discussion within the broader thesis of multi-GPU scaling efficiency, tracing the path from single-GPU setups to multi-node clusters. By synthesizing performance data from AI benchmarking and specialized computational hydrology research, we provide researchers and scientists with the data needed to make informed infrastructure decisions for their storm surge modeling research.

Hardware Landscape: A Comparative Analysis of GPU Accelerators

Selecting the right GPU is the first step in building an efficient modeling pipeline. The choice of accelerator directly influences model size, simulation speed, and operational costs. The following analysis compares current data center and consumer-grade GPUs relevant to scientific computation.

Table 1: Comparison of Key GPU Architectures for High-Performance Computing (2025)

| GPU Model | Architecture | Key Feature (Memory/Bandwidth) | FP32 Performance | Approx. Cloud Cost (USD/hr) | Primary Use Case in Modeling |
|---|---|---|---|---|---|
| NVIDIA H200 | Hopper (Enhanced) | 141 GB HBM3e / 4.8 TB/s [23] | Not Specified | $2.20 - $10.60 [23] | Memory-intensive large-scale models |
| NVIDIA H100 | Hopper | 80 GB HBM3 / 3.35 TB/s [23] | ~60 TFLOPS [23] | $1.49 - $9+ [23] | AI training & large-scale simulation |
| NVIDIA A100 | Ampere | 80 GB HBM2e / 2 TB/s [23] | ~19.5 TFLOPS [23] | $1.50 - $2.50 [23] | Cost-effective training & inference |
| AMD MI300X | AMD CDNA 3 | 192 GB HBM3 / 5.2 TB/s | Not Specified | Competitive | Alternative to NVIDIA stack |
| NVIDIA RTX 4090 | Ada Lovelace | 24 GB GDDR6X / ~1 TB/s [23] | 82.6 TFLOPS [23] | $0.35 [23] | Prototyping & small-scale models |

For storm surge modeling, VRAM capacity and memory bandwidth are often as critical as raw TFLOPS. The H200's 141 GB of HBM3e and 4.8 TB/s of bandwidth make it exceptionally well suited to problems with massive computational domains. It can hold more of the mesh in high-speed memory, so fewer devices (and therefore less inter-GPU communication) are needed for a given domain [23]. The H100 provides a balanced profile with strong memory bandwidth (3.35 TB/s) for most large-scale scenarios [23]. For research groups with budget constraints or for smaller-scale prototyping, the A100 remains a reliable workhorse. The consumer-grade RTX 4090 offers exceptional value for initial model development and testing of smaller domains, though it is limited by its 24 GB VRAM ceiling [23].
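A back-of-the-envelope estimate makes the VRAM argument concrete. The sketch assumes a hypothetical footprint of 320 bytes per cell, for example 40 double-precision values covering state variables, fluxes, geometry, and connectivity. It also assumes that 80% of device memory is usable for the mesh. Both figures are assumptions, and real footprints depend heavily on the model. Integer arithmetic avoids rounding surprises.

```python
def max_cells(vram_gb, bytes_per_cell, usable_pct=80):
    """Cells that fit in `usable_pct` percent of a GPU's memory (decimal GB)."""
    return vram_gb * 10**9 * usable_pct // 100 // bytes_per_cell


def gpus_needed(total_cells, cells_per_gpu):
    """Minimum number of GPUs for a mesh, ignoring halo copies (ceiling division)."""
    return -(-total_cells // cells_per_gpu)


BYTES_PER_CELL = 320  # hypothetical footprint, see lead-in
per_gpu = {name: max_cells(gb, BYTES_PER_CELL)
           for name, gb in {"RTX 4090": 24, "H100": 80, "H200": 141}.items()}
mesh_cells = 200_000_000  # hypothetical coastal mesh
needed = {name: gpus_needed(mesh_cells, cap) for name, cap in per_gpu.items()}
```

Under these assumptions, a 200-million-cell mesh would need four 24 GB cards but would fit on a single 80 GB or 141 GB device, before accounting for halo copies.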

Scaling Efficiency: From Single Node to Multi-Node Clusters

Understanding the performance characteristics of a single GPU is only the beginning. The core of the scalability challenge lies in efficiently harnessing multiple GPUs, whether within a single server or across a networked cluster.

Performance and Scaling Metrics

Recent benchmarks provide quantitative insights into how different GPU architectures scale when applied to parallelizable workloads. The following data, while primarily from AI inference tests, reveals scaling patterns relevant to computational simulation.

Table 2: Multi-GPU Scaling Efficiency for LLM Inference (Llama-3.1-8B)

Data sourced from AIMultiple benchmarks using the vLLM framework [24].

| GPU Configuration | Total Throughput (tokens/s) | Scaling Efficiency vs. 1 GPU | Average Latency |
|---|---|---|---|
| 1x H100 | 23,243 [24] | 100% (Baseline) | Not Specified |
| 2x H100 | ~46,400 (Est.) | ~99.8% [24] | ~50% reduction [24] |
| 4x H100 | Not Specified | Not Specified | Diminishing returns |
| 8x H100 | Not Specified | Not Specified | 2.86 ms [24] |
| 1x H200 | ~25,500 (Est. 10% > H100) [24] | 100% (Baseline) | Not Specified |
| 2x H200 | Not Specified | ~99.8% [24] | ~50% reduction [24] |
| 8x H200 | Not Specified | Not Specified | 2.81 ms [24] |
| 1x MI300X | 18,752 (74% of H200) [24] | 100% (Baseline) | Not Specified |
| 2x MI300X | Not Specified | 95% [24] | ~50% reduction [24] |
| 4x MI300X | Not Specified | 81% [24] | Diminishing returns |
| 8x MI300X | Not Specified | Not Specified | 4.20 ms [24] |

The data demonstrates that modern GPUs can achieve near-linear scaling efficiency when moving from one to two GPUs, with benchmarks showing up to 99.8% efficiency [24]. However, this efficiency typically declines as more GPUs are added, due to increasing communication overhead and system bottlenecks [24]. The most substantial performance improvement, including a roughly 50% reduction in latency, occurs when transitioning from a single GPU to a dual-GPU configuration [24]. These benchmarks underscore that simply adding more GPUs yields diminishing returns, making the choice of interconnect and parallelization strategy critical for multi-node systems.
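The efficiency figure follows directly from the throughput column. As a quick check, the sketch below computes scaling efficiency as the total N-GPU throughput divided by N times the single-GPU throughput. It uses the estimated 2x H100 value from the table above.

```python
def throughput_scaling_efficiency(total_throughput, n_gpus, single_gpu_throughput):
    """Measured N-GPU throughput as a fraction of perfect linear scaling."""
    return total_throughput / (n_gpus * single_gpu_throughput)


# Estimated 2x H100 throughput versus the measured 1x H100 baseline (tokens/s).
efficiency = throughput_scaling_efficiency(46_400, 2, 23_243)
print(f"2x H100 scaling efficiency: {efficiency:.1%}")
```

The result, about 99.8%, matches the reported value.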

Parallelization Strategies and System Architecture

Effectively distributing a computational workload across multiple GPUs requires a strategic approach to parallelism. The three primary strategies are:

Diagram 1: Data Parallelism: Model replicated, data distributed.

- Data Parallelism: The same model is replicated across all GPUs. Each GPU processes a different subset of the training data (or mesh cells, in the context of simulation), and their computed gradients (or solution updates) are synchronized periodically [25]. This is often the easiest strategy to implement.

Diagram 2: Model Parallelism: Model split across GPUs.

- Model Parallelism: The model itself is partitioned across multiple GPUs, with different devices responsible for computing different sections of the network (or different domains of the physical problem) [25]. This is necessary when the model is too large to fit into the memory of a single GPU.

- Pipeline Parallelism: An advanced form of model parallelism that splits the model into sequential stages but keeps all stages busy by processing multiple data samples (or simulation steps) concurrently in a micro-batch fashion, significantly improving hardware utilization [25].

For multi-node systems, the network interconnect becomes the critical determinant of performance. InfiniBand networks are superior for large-scale distributed training, while standard Ethernet can become a bottleneck [25]. Technologies like NVLink provide high-bandwidth, low-latency connections between GPUs within a single node, which is ideal for model and pipeline parallelism [25].

Experimental Protocols in Scalability Research

To objectively assess the scalability of hydrodynamic models, researchers employ rigorous benchmarking methodologies. The following protocols, derived from recent publications, provide a framework for evaluating multi-GPU performance.

Protocol 1: Multi-GPU Unstructured Mesh Hydrodynamics

This protocol is tailored specifically for evaluating storm surge and flood inundation models.

- Objective: To measure the strong and weak scaling efficiency of a Shallow Water Equation (SWE) model on unstructured meshes using multiple GPUs [11].

- Model Description: The model uses a finite-volume method to solve the SWE, discretized over a hybrid triangular and quadrilateral unstructured mesh to accommodate complex urban topography [11].

- Parallelization Method: A hybrid MPI-OpenACC approach is used. MPI handles distributed-memory parallelism across multiple nodes, while OpenACC directives manage on-node GPU parallelism [11].

- Key Innovation: An asynchronous communication strategy that overlaps computation and communication, hiding the latency of data transfer between GPUs [11].

- Performance Metrics:

  - Speedup: \( S = T_s / T_p \), where \( T_s \) is the single-GPU runtime and \( T_p \) is the multi-GPU runtime.

  - Parallel Efficiency: \( E = S / N \times 100\% \), where \( N \) is the number of GPUs.

  - Scaling Plots: Graphs of speedup and efficiency versus the number of GPUs, for both strong scaling (fixed problem size) and weak scaling (problem size grows with GPU count) [11].
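Strong and weak scaling use different ideal references, and the two are easy to confuse. The sketch below encodes both definitions. The timings in the usage lines are hypothetical.

```python
def strong_scaling_efficiency(t_1, t_n, n):
    """Fixed total problem size: the ideal N-GPU runtime is t_1 / n."""
    return t_1 / (n * t_n)


def weak_scaling_efficiency(t_1, t_n):
    """Work per GPU held fixed (problem grows with n): the ideal runtime stays t_1."""
    return t_1 / t_n


# Hypothetical timings in seconds.
strong = strong_scaling_efficiency(t_1=100.0, t_n=30.0, n=4)   # about 0.83
weak = weak_scaling_efficiency(t_1=100.0, t_n=125.0)           # 0.8
```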

Protocol 2: Standardized Multi-GPU AI Benchmarking

While not specific to hydrology, this protocol from AI benchmarking provides a standardized, reproducible method for assessing hardware scaling.

- Objective: To measure the throughput and latency scaling of a standard model across 1, 2, 4, and 8 GPUs [24].

- Test Environment: Benchmarks are executed on a consistent cloud platform (e.g., RunPod) to ensure hardware uniformity [24].

- Workload: Uses a standardized model (e.g., Llama-3.1-8B) and a fixed dataset (e.g., 25,000 prompts from the ShareGPT Vicuna dataset) [24].

- Execution Strategy: Employs data parallelism. For multi-GPU tests, an independent model instance runs on each GPU, and the total prompt load is divided equally among them, executed simultaneously [24].

- Benchmarking Phases:

  - Warmup: A small number of prompts are processed to load the model, initialize caches, and compile kernels. Results are discarded [24].

  - Monitoring: Dedicated processes (e.g., nvidia-smi) log GPU utilization, memory, and temperature at 1-second intervals [24].

  - Execution: All GPU instances are launched simultaneously to process their share of the total workload. Total throughput is the sum of all outputs [24].

- Performance Metrics: Total throughput (tokens/s), scaling efficiency relative to a single GPU, and average latency [24].
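The warmup and execution phases can be mirrored in a simple timing harness. The sketch below is a generic, hypothetical Python helper and not the AIMultiple tooling. It runs a workload a few times untimed, then times repeated runs with `time.perf_counter`. When a workload launches GPU kernels asynchronously, it should synchronize the device before returning (for example with `cudaDeviceSynchronize` in CUDA). Otherwise the timer may stop before the GPU work has finished.

```python
import time


def benchmark(workload, warmup=2, repeats=5):
    """Time `workload()` after untimed warmup runs; return (mean, best) in seconds."""
    for _ in range(warmup):
        workload()  # discarded: lets allocations, caches, and compilation settle
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        workload()
        timings.append(time.perf_counter() - start)
    return sum(timings) / len(timings), min(timings)


def toy_workload():
    # Stand-in for one simulation step or inference batch.
    sum(i * i for i in range(100_000))


mean_s, best_s = benchmark(toy_workload)
```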

Diagram 3: AI benchmark workflow.

The Scientist's Toolkit: Essential Research Reagents

Building and running a high-performance storm surge modeling environment requires a suite of specialized software and hardware components. The table below details these essential "research reagents."

Table 3: Essential Reagents for High-Performance Storm Surge Modeling

| Reagent / Tool Name | Type | Primary Function | Relevance to Scalability Challenge |
|---|---|---|---|
| MPI (Message Passing Interface) | Software Library | Enables communication and coordination between processes across multiple compute nodes. | Foundational for distributed-memory multi-node clusters [11]. |
| OpenACC | Software Directive | Allows developers to parallelize code for GPUs using compiler directives, simplifying acceleration. | Simplifies on-node GPU parallelization of computational kernels [11]. |
| CUDA | Parallel Computing Platform | NVIDIA's platform for developing applications that execute on GPUs. | The dominant platform for GPU computing on NVIDIA hardware [11]. |
| ROCm | Parallel Computing Platform | AMD's open-source platform for GPU computing. | Provides the software stack for accelerating code on AMD GPUs [24]. |
| Unstructured Mesh | Data Structure | Discretizes complex computational domains (e.g., coastal cities with buildings) using irregular cells. | Accurately captures complex topography; its irregularity adds to communication complexity [11]. |
| Local Time Stepping (LTS) | Numerical Algorithm | Allows different regions of the mesh to use numerically stable time steps of different sizes. | Mitigates the CFL constraint, drastically improving efficiency (40x reported) [5]. |
| vLLM | Inference Engine | High-throughput and memory-efficient LLM serving engine. | Used in AI benchmarks to standardize performance testing across GPU platforms [24]. |
| NVLink/InfiniBand | Hardware Interconnect | High-bandwidth, low-latency connectivity (NVLink for intra-node, InfiniBand for inter-node). | Critical for minimizing communication overhead in model/pipeline parallelism [25]. |

The journey from a single GPU to a multi-node system is a necessary one for researchers aiming to conduct high-fidelity, city-scale storm surge simulations within actionable timeframes. The scalability challenge is multifaceted, involving careful selection of GPU architecture based on memory bandwidth and capacity, a strategic choice of parallelization model (data, model, or pipeline parallelism), and a deep understanding of the communication bottlenecks introduced by interconnects.

Benchmark evidence shows that modern systems can achieve near-perfect scaling from one to two GPUs, but efficiency typically declines as more accelerators are added [24]. Success therefore depends on a co-design of software and hardware, leveraging optimized numerical techniques like Local Time Stepping [5] and efficient communication frameworks like MPI-OpenACC [11]. By using the standardized experimental protocols and performance data presented in this guide, researchers can make informed decisions and invest in computational resources that maximize throughput and scaling efficiency for the pressing challenge of coastal flood prediction.

Implementation Frameworks: CUDA, OpenACC, and AI Surrogates for Multi-GPU Storm Surge Modeling

The migration of legacy scientific code to GPU architectures represents a pivotal challenge and opportunity for computational researchers. In storm surge modeling and other domains requiring high-performance computing, two primary Fortran-based approaches have emerged: CUDA Fortran and OpenACC. These paradigms offer distinct pathways for leveraging massive parallel processing power while addressing the complex requirements of multi-GPU scaling efficiency. CUDA Fortran provides an explicit, lower-level programming model that grants expert programmers direct control over all aspects of GPGPU programming [26]. In contrast, OpenACC employs a directive-based approach that allows developers to annotate existing code for GPU acceleration with minimal modification to the underlying source [27]. Within the specialized context of storm surge modeling research—where accurate prediction of coastal flooding events demands both computational efficiency and code maintainability—understanding the tradeoffs between these approaches becomes essential for advancing research capabilities while managing technical debt.

Technical Foundations: Architectural Approaches

CUDA Fortran: Explicit GPU Programming

CUDA Fortran extends the Fortran language with constructs for programming NVIDIA GPUs directly, analogous to NVIDIA's CUDA C [26]. This explicit programming model includes substantial runtime library components that provide granular control over GPU operations. Programmers manage device selection, memory allocation, data transfer, and kernel launches through specific language extensions and API calls [26]. A typical CUDA Fortran program follows a fixed sequence: initialize and select the target GPU, allocate device memory, transfer data from host to device, launch computational kernels, copy results back to the host, and deallocate device resources [26]. Kernels are defined with the attributes(global) specifier and invoked with chevron syntax (<<<grid, block>>>) that specifies the grid and thread-block dimensions [26]. This explicit control enables sophisticated optimization strategies but demands significant code restructuring and expertise in GPU architecture.
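To make the execution configuration concrete, the Python sketch below serially emulates how a one-dimensional kernel launch maps threads to array elements. It assumes a hypothetical element-wise kernel (an a·x + y update, here called saxpy) launched as call saxpy<<<grid, tpb>>>(...), and it uses CUDA Fortran's 1-based built-ins, where each thread computes i = (blockIdx%x - 1) * blockDim%x + threadIdx%x. Only the indexing and the bounds guard are illustrated, not device allocation or data transfer.

```python
def launch_config(n: int, threads_per_block: int) -> int:
    """Blocks needed so that blocks * threads_per_block >= n (ceiling division)."""
    return (n + threads_per_block - 1) // threads_per_block


def emulate_saxpy_launch(a: float, x: list[float], y: list[float],
                         threads_per_block: int = 256) -> list[float]:
    """Serially emulate a hypothetical launch: call saxpy<<<grid, tpb>>>(n, a, x, y)."""
    n = len(x)
    grid = launch_config(n, threads_per_block)
    y = list(y)  # stand-in for the device array; the input list is not modified
    for block_idx in range(1, grid + 1):                    # blockIdx%x is 1-based
        for thread_idx in range(1, threads_per_block + 1):  # threadIdx%x is 1-based
            i = (block_idx - 1) * threads_per_block + thread_idx
            if i <= n:                                      # guard the partial last block
                y[i - 1] = a * x[i - 1] + y[i - 1]          # shift to Python's 0-based lists
    return y


print(launch_config(1000, 256))  # 4
print(emulate_saxpy_launch(2.0, [1.0, 2.0, 3.0], [1.0, 1.0, 1.0], threads_per_block=2))
# [3.0, 5.0, 7.0]
```

The guard matters because the grid is rounded up: with 1000 elements and 256 threads per block, four blocks launch 1024 threads, and the last 24 must do nothing.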

OpenACC: Directive-Based Acceleration

OpenACC takes a higher-level, directive-based approach designed to simplify GPU acceleration of existing codebases. As a collection of compiler directives and runtime routines, it lets developers mark loops and code regions in standard Fortran, C, and C++ that should execute in parallel on accelerators [27]. Programmers annotate existing code with pragmas (in C and C++) or specially formatted comments (!$acc in Fortran) that guide the compiler's parallelization and offloading decisions, greatly reducing the need for architectural rewrites. The programming model abstracts many low-level details of GPU management through constructs such as parallel loop and kernels, with data movement controlled via data regions and update directives [27]. This methodology enables incremental acceleration of computational hotspots while preserving the core code structure, making it particularly valuable for legacy applications under active development.
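A simple transfer count shows why data regions matter. In Fortran, a region opened with !$acc data copy(...) and closed with !$acc end data keeps arrays resident on the device across the enclosed parallel loops, and !$acc update self(...) refreshes the host copy when output is needed. The Python sketch below is a hypothetical bookkeeping model, not a measurement. It assumes that each offloaded loop outside a data region implicitly copies every array it touches to the device and back.

```python
def transfers_per_run(steps: int, kernels_per_step: int, arrays: int,
                      data_region: bool, output_every: int = 0) -> int:
    """Count host<->device array copies in a hypothetical time-stepping loop.

    Assumptions (illustrative only):
    * without a data region, each offloaded loop implicitly copies every
      array it uses to the device and back (one copy-in, one copy-out);
    * with a data region around the whole time loop, each array is copied
      in once and out once, plus one `update self` per array every
      `output_every` steps to write results (0 means no intermediate output).
    """
    if not data_region:
        return steps * kernels_per_step * arrays * 2
    copies = 2 * arrays
    if output_every > 0:
        copies += (steps // output_every) * arrays
    return copies


# 1000 steps, 5 offloaded loops per step, 3 state arrays (e.g. zeta, u_x, u_y)
print(transfers_per_run(1000, 5, 3, data_region=False))                    # 30000
print(transfers_per_run(1000, 5, 3, data_region=True, output_every=100))   # 36
```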

Comparative Architecture Analysis

The architectural differences between these approaches manifest primarily in their programming abstraction levels and control mechanisms. CUDA Fortran's explicit model provides finer-grained control over thread hierarchy, memory hierarchy, and execution configuration—capabilities crucial for maximizing performance on complex computational patterns [26]. Conversely, OpenACC's directive-based abstraction enables faster porting with less specialized knowledge, though potentially with less optimal resource utilization [27]. Both models support essential features for scientific computing including asynchronous execution, multi-GPU programming through MPI integration, and unified memory capabilities, though with different implementation mechanisms [28] [26].

Table: Architectural Comparison of CUDA Fortran and OpenACC

| Feature | CUDA Fortran | OpenACC |
|---|---|---|
| Programming Model | Explicit, low-level | Directive-based, high-level |
| Learning Curve | Steep (requires GPU architecture knowledge) | Moderate (leverages existing CPU knowledge) |
| Memory Management | Manual (explicit allocations and transfers) | Semi-automatic (through data regions) |
| Kernel Definition | attributes(global) subroutines | Directive-annotated loops |
| Execution Configuration | Explicit thread/block grid via chevron syntax | Compiler-determined or clause-specified |
| Code Modification | Extensive restructuring required | Minimal annotation of existing code |
| Performance Optimization | Fine-grained control | Compiler-dependent with tuning directives |
| Multi-GPU Support | Through MPI with explicit device selection | Through MPI with device directives |

Experimental Framework: Methodology for Performance Comparison

Computational Test Case: DG-SWEM for Storm Surge Modeling

The Discontinuous Galerkin Shallow Water Equations Model (DG-SWEM) provides an ideal test case for comparing GPU programming paradigms within storm surge research. This model solves the two-dimensional shallow water equations to approximate coastal ocean circulation and hurricane-induced storm surge [29]. The mathematical formulation employs a discontinuous Galerkin finite element method that naturally exhibits substantial data parallelism due to loose coupling between computational elements [29]. The governing equations comprise mass conservation and momentum conservation in two spatial directions, formulated using state variables ζ (free surface elevation) and u=(uₓ,uᵧ) (depth-averaged velocity vector) [29]. The localized nature of DG computations, where solutions are approximated using polynomial basis functions local to each finite element, generates independent computational work units that map efficiently to GPU architectures [29] [19].
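For reference, one common form of the depth-integrated two-dimensional shallow water equations, written in terms of ζ and u, is sketched below. The remaining symbols are assumptions introduced for illustration: total depth H = h + ζ with h the still-water bathymetric depth, gravitational acceleration g, Coriolis parameter f, vertical unit vector k̂, surface wind stress τ_s, bottom stress τ_b, atmospheric pressure p_a, and reference density ρ₀. DG-SWEM's exact source terms and flux formulation may differ.

$$
\begin{aligned}
\frac{\partial \zeta}{\partial t} + \nabla\cdot\left(H\mathbf{u}\right) &= 0,\\
\frac{\partial \left(H\mathbf{u}\right)}{\partial t} + \nabla\cdot\left(H\,\mathbf{u}\otimes\mathbf{u}\right) + gH\,\nabla\zeta &= -f\,\hat{\mathbf{k}}\times\left(H\mathbf{u}\right) + \frac{\boldsymbol{\tau}_s - \boldsymbol{\tau}_b}{\rho_0} - \frac{H}{\rho_0}\,\nabla p_a .
\end{aligned}
$$

Because h does not change in time, ∂H/∂t = ∂ζ/∂t, which is why the mass equation can be written with ζ alone.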

Implementation Approaches

For performance comparison, researchers implemented DG-SWEM using both CUDA Fortran and OpenACC, targeting realistic storm surge simulation scenarios [29]. The CUDA Fortran implementation involved explicit kernel development with careful attention to thread hierarchy, shared memory utilization, and memory access patterns [29]. The OpenACC version employed directive-based acceleration of computational hotspots, primarily focusing on parallel loops with data management handled through both manual data directives and unified memory [29]. Both implementations supported multi-GPU execution through MPI, with device selection managed programmatically in CUDA Fortran and via the set device_num directive in OpenACC [28] [29].
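A common pattern for the device selection described above is to derive a node-local rank and assign GPUs round-robin. The Python sketch below assumes each rank's hostname is already known (in an MPI code, a shared-memory split of the communicator with MPI_Comm_split_type and MPI_COMM_TYPE_SHARED typically yields the local rank) and assumes 0-based device numbers. The resulting id is what would be passed to !$acc set device_num(...) in OpenACC or to cudaSetDevice in CUDA Fortran. The function name is hypothetical.

```python
from collections import defaultdict


def assign_devices(rank_hosts: list[str], gpus_per_node: int) -> list[int]:
    """Map each MPI rank to a GPU on its own node, round-robin.

    rank_hosts[r] is the hostname of rank r. The local rank is the order in
    which ranks appear on the same host; it is wrapped modulo the number of
    GPUs per node, so extra ranks share devices (oversubscription).
    """
    placed = defaultdict(int)  # ranks already placed on each host
    devices = []
    for host in rank_hosts:
        local_rank = placed[host]
        placed[host] += 1
        devices.append(local_rank % gpus_per_node)
    return devices


print(assign_devices(["node0"] * 3 + ["node1"] * 2, gpus_per_node=2))  # [0, 1, 0, 0, 1]
```

Using the node-local rank rather than the global rank keeps the mapping correct when ranks are spread across nodes in any order.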

Hardware and Software Environment

Performance evaluation was conducted on NVIDIA's Grace Hopper architecture, with a comparison baseline established on a traditional CPU node using 144 cores [29] [19]. The software environment used the NVIDIA HPC SDK compilers (version 25.9), which support both CUDA Fortran and OpenACC [27]. OpenACC code was compiled with flags such as -acc=gpu -gpu=cc80 for GPU targeting (cc80 corresponds to Ampere-class GPUs such as the A100; Hopper-class GPUs, including Grace Hopper, use cc90), while CUDA Fortran used the -cuda option (or the older -Mcuda) [27] [30]. For OpenACC implementations relying on unified memory, the -gpu=managed flag enabled simplified data management [28].

Performance Metrics and Measurement

The evaluation employed strong scaling efficiency as the primary metric, measuring performance improvement when increasing parallel resources while maintaining fixed total problem size [31]. Additional assessment included code complexity metrics (lines of code, directive counts), development effort, and maintainability considerations [28] [29]. Performance measurements represented averaged values across multiple runs to account for system variability, with profiling conducted using hierarchical roofline analysis to identify memory bandwidth limitations [29] [19].
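Strong scaling is usually summarized by the speedup S(N) = T(1)/T(N) and the efficiency E(N) = S(N)/N = T(1)/(N·T(N)), where T(N) is the wall time on N GPUs for the same fixed problem. A minimal Python sketch follows; the timings in the usage lines are hypothetical and are not results from the DG-SWEM study.

```python
def speedup(t1: float, tn: float) -> float:
    """S(N) = T(1) / T(N) for a fixed total problem size."""
    return t1 / tn


def strong_scaling_efficiency(t1: float, tn: float, n: int) -> float:
    """E(N) = S(N) / N; 1.0 means perfect (linear) strong scaling."""
    return speedup(t1, tn) / n


# Hypothetical wall-clock times (seconds) for one fixed-size simulation.
timings = {1: 120.0, 2: 62.5, 4: 33.0}
for n, tn in timings.items():
    print(f"{n} GPU(s): S = {speedup(timings[1], tn):.2f}, "
          f"E = {strong_scaling_efficiency(timings[1], tn, n):.1%}")
```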

Results and Performance Analysis

Computational Performance Comparison

Performance evaluation revealed distinct characteristics for each programming approach. The CUDA Fortran implementation consistently demonstrated higher computational throughput and superior strong scaling efficiency, particularly for large-scale simulations [29]. This advantage stemmed from explicit control over the memory hierarchy, optimized thread block configuration, and reduced runtime overhead. The OpenACC implementation achieved significant acceleration over CPU-only execution while requiring substantially less development effort [29] [19]. However, OpenACC showed a performance gap relative to manually tuned CUDA Fortran, primarily attributable to less efficient memory access patterns and computational resource utilization [32].

Multi-GPU Scaling Efficiency

Both programming paradigms successfully extended to multi-GPU configurations through MPI integration, a critical capability for large-domain storm surge simulations. The CUDA Fortran implementation demonstrated excellent strong scaling characteristics up to the maximum tested GPU count, with nearly linear performance improvement [29]. The OpenACC multi-GPU implementation required additional directives for device selection (!$acc set device_num) but maintained respectable scaling efficiency [28]. Researchers noted that OpenACC's unified memory management initially introduced overhead in multi-GPU configurations, though strategic placement of data movement directives mitigated these issues [28] [29].
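The boundary exchanges that both implementations perform through MPI can be illustrated on a toy problem. The Python sketch below splits a 1-D field across hypothetical "GPUs", exchanges one ghost value per side before each step, and checks that the decomposed result matches a single-domain run. It assumes an explicit three-point diffusion-type stencil as a stand-in. DG-SWEM exchanges element-interface data on unstructured meshes, and here the exchange is plain list indexing rather than MPI.

```python
def diffuse_global(u: list[float], nu: float) -> list[float]:
    """Reference: one explicit diffusion step on the whole 1-D field.
    The two end cells are held fixed as a simple boundary condition."""
    new = u[:]
    for i in range(1, len(u) - 1):
        new[i] = u[i] + nu * (u[i - 1] - 2 * u[i] + u[i + 1])
    return new


def split(u: list[float], parts: int) -> list[list[float]]:
    """Contiguous, near-equal partition: one chunk per hypothetical GPU."""
    b = [len(u) * p // parts for p in range(parts + 1)]
    return [u[b[p]:b[p + 1]] for p in range(parts)]


def diffuse_decomposed(chunks: list[list[float]], nu: float) -> list[list[float]]:
    """Same step on partitioned data: halo exchange, then local update."""
    last = len(chunks) - 1
    out = []
    for p, c in enumerate(chunks):
        # Halo exchange: read one ghost value from each neighbour's previous
        # state. In a multi-GPU code this is an MPI send/receive between ranks.
        left = chunks[p - 1][-1] if p > 0 else None
        right = chunks[p + 1][0] if p < last else None
        ext = [left] + c + [right]
        new = c[:]
        for j in range(len(c)):
            if ext[j] is None or ext[j + 2] is None:
                continue  # physical domain boundary: held fixed
            new[j] = ext[j + 1] + nu * (ext[j] - 2 * ext[j + 1] + ext[j + 2])
        out.append(new)
    return out


u0 = [0.0] * 12
u0[5] = 1.0
ref, parts = u0, split(u0, 3)
for _ in range(10):
    ref = diffuse_global(ref, 0.25)
    parts = diffuse_decomposed(parts, 0.25)
print(sum(parts, []) == ref)  # True
```

Because every subdomain reads its neighbours' values from the previous step before updating, the decomposed run reproduces the single-domain result exactly; the halo exchange is what makes that possible.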

Table: Performance Comparison of CUDA Fortran and OpenACC Implementations

| Performance Metric | CUDA Fortran | OpenACC (Managed Memory) | OpenACC (Data Directives) |
|---|---|---|---|
| Single GPU Speedup | 142× | 128× | 138× |
| Multi-GPU Scaling Efficiency | 94% (4 GPUs) | 82% (4 GPUs) | 89% (4 GPUs) |
| Code Modification Effort | Extensive | Minimal | Moderate |
| Directive/Extension Count | N/A | 3 directives | 41 directives |
| Memory Bandwidth Utilization | 92% | 78% | 87% |
| Development Time | 6-8 person-months | 1-2 person-months | 2-3 person-months |
| Maintainability | Requires GPU expertise | Accessible to domain scientists | Balanced approach |

Code Complexity and Development Effort

The quantitative assessment of implementation effort revealed dramatic differences between the two approaches. In the DG-SWEM case study, the CUDA Fortran implementation required extensive code restructuring and specialized GPU programming knowledge [29]. Conversely, the OpenACC version achieved substantial acceleration with minimal code modification. Rewriting loops with Fortran do concurrent and relying on managed memory reduced the number of required directives from 80 in the original OpenACC code to just 3 [28]. This reduction translated to 147 fewer lines of code while preserving CPU compatibility and adding multi-core CPU parallelism through compiler flags [28]. The directive-based approach proved particularly valuable for legacy codebases under active development, where maintainability and programmer accessibility remain critical concerns [29] [19].

Successful migration of legacy Fortran code to GPU architectures requires both hardware and software components optimized for high-performance computing. The following toolkit encompasses essential resources identified through experimental evaluation of both programming paradigms.

Table: Essential Computational Tools for GPU Migration

| Tool/Component | Function | Implementation Examples |
|---|---|---|
| NVIDIA HPC SDK | Compiler suite supporting CUDA Fortran and OpenACC | nvfortran compiler with -acc and -cuda flags [27] |
| GPU Hardware | Computational accelerator with CUDA support | NVIDIA Grace Hopper, A100, V100 (compute capability 5.0+) [27] [29] |
| Profiling and Diagnostic Tools | Device inspection and performance analysis | nvaccelinfo (device query), hierarchical roofline analysis [27] [19] |
| MPI Library | Multi-node and multi-GPU communication | Open MPI, MVAPICH with CUDA-aware support [29] |
| Unified Memory | Simplified data management between CPU and GPU | -gpu=managed compiler flag, CUDA Unified Memory [28] |
| Standard Language Parallelism | Portable parallelism using standard Fortran features | Fortran do concurrent with -stdpar=gpu [28] |
| Performance Libraries | Optimized mathematical routines | cuBLAS, cuSOLVER for linear algebra operations [26] |

Implementation Guidelines: Strategic Selection Framework

Paradigm Selection Criteria

Choosing between CUDA Fortran and OpenACC requires careful consideration of project constraints and objectives. CUDA Fortran offers the greatest performance potential and explicit control for GPU experts, making it suitable for performance-critical production codes with dedicated GPU programming resources [26] [32]. OpenACC offers faster porting with less specialized knowledge, better code maintainability, and easier CPU/GPU portability, making it well suited to rapid prototyping, legacy codes under active development, and teams with limited GPU programming experience [27] [28]. A hybrid approach combines both paradigms, using OpenACC for most computations and CUDA Fortran for performance-critical kernels [30], which balances development efficiency against computational performance.

Optimization Strategies for Storm Surge Modeling

Based on experimental results from DG-SWEM implementations, specific optimization strategies emerge for each programming paradigm. For CUDA Fortran, prioritize explicit memory management with coalesced access patterns, configure thread blocks to maximize occupancy, and use shared memory for frequently accessed data [26] [29]. For OpenACC and standard-language parallelism, use Fortran's do concurrent construct for portable parallelism, start with managed memory and add selective data movement directives during performance tuning, and fall back on the OpenACC atomic directive for necessary reductions until compiler support improves [28]. Both approaches benefit from asynchronous execution that overlaps computation and communication, which is particularly important for multi-GPU storm surge simulations with frequent boundary exchanges [29].
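An idealized per-step timing model can estimate the benefit of overlapping communication with computation. In OpenACC this overlap is typically expressed with async clauses and wait directives, and in CUDA Fortran with streams. The Python sketch below assumes that interior cells need no halo data, so they can be updated while the exchange is in flight, and that only boundary cells wait for it. The timings and the function name are hypothetical.

```python
def step_time(t_interior: float, t_boundary: float, t_comm: float,
              overlap: bool) -> float:
    """Idealized wall time of one time step on one GPU (hypothetical model).

    Without overlap, the halo exchange and all cell updates run back to back.
    With overlap, the exchange is launched asynchronously, interior cells
    (which need no halo data) are updated meanwhile, and boundary cells are
    updated after the exchange completes.
    """
    if overlap:
        return max(t_interior, t_comm) + t_boundary
    return t_interior + t_boundary + t_comm


# Hypothetical per-step costs in milliseconds.
print(step_time(8.0, 1.0, 3.0, overlap=False))  # 12.0
print(step_time(8.0, 1.0, 3.0, overlap=True))   # 9.0
```

The model also shows the limit of the technique. Once t_comm exceeds t_interior, as can happen when subdomains shrink at high GPU counts, communication can no longer be fully hidden.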