Multidimensional Visualization of Biologging Data: Transforming Animal-Borne Sensors into Biomedical Insights

Abstract

This article explores multidimensional visualization for biologging data, a rapidly advancing discipline in which animal-borne sensors capture complex behavioral, physiological, and environmental data. Written for researchers, scientists, and drug development professionals, it examines how platforms like the Biologging intelligent Platform (BiP) standardize and visualize diverse data streams, from dive profiles to acceleration metrics. It covers methodological applications, including Online Analytical Processing (OLAP) for estimating environmental parameters, and addresses critical challenges in data integration, uncertainty visualization, and computational bottlenecks. By comparing tools and validation frameworks, this guide provides a roadmap for using biologging visualization to accelerate discovery in ecology, oceanography, and biomedical research, bridging the gap between complex data and actionable insight.

The Foundations of Biologging Data: From Animal Sensors to Complex Multidimensional Datasets

Biologging uses animal-borne sensors to collect high-resolution data on animal movement, behavior, physiology, and the surrounding environment. This technical guide examines biologging through a Lagrangian framework, in which the observation platforms (the animals) move freely through the environment they inhabit, so every measurement carries intrinsic spatial and temporal context. The core advantage of this approach is that it captures multidimensional data streams from organisms in their natural habitats, revealing ecological phenomena that are otherwise unobservable. Appropriate visualization then lets researchers identify patterns in animal behavior, environmental interactions, and ecological processes that are hard to see in the raw data.

Before the term "biologging" was formally coined, researchers began attaching small recorders to marine animals to monitor behavior and physiological conditions in the wild [1]. The field has since evolved from basic tracking to sophisticated multi-sensor platforms that capture a vast array of parameters including depth, speed, acceleration, body temperature, and environmental conditions [1]. The Lagrangian perspective is fundamental to the value of biologging: instead of relying on fixed-point (Eulerian) observations, the sensors move with the animal and provide a dynamic, animal's-eye view of its environment and internal state.

This approach has expanded beyond biology to contribute significantly to diverse fields such as meteorology and oceanography [1]. For instance, instrumented animals have provided crucial physical oceanographic data from regions with floating sea ice that are difficult to measure with ships or Argo floats [1]. The growth of biologging has created new challenges and opportunities in data management, analysis, and particularly visualization, as researchers seek to interpret increasingly complex, high-frequency, multivariate data streams.

Core Principles of the Lagrangian Approach

Fundamental Framework

In a Lagrangian biologging system, the observing platform (the animal) moves through the environment, collecting data that is intrinsically referenced to its own trajectory. This framework consists of several interconnected components:

- Mobile Sensors: Miniaturized devices attached to animals that record data across multiple dimensions

- Animal Agency: The animal's natural behavior determines spatial and temporal sampling patterns

- Environmental Context: Measurements are automatically contextualized within the animal's habitat

- High-Resolution Data: Collection at frequencies relevant to the animal's behavior and physiology

Comparative Advantage Over Eulerian Methods

The Lagrangian approach of biologging provides distinct advantages over traditional fixed-point observation systems, particularly for understanding animal-environment interactions.

Table 1: Lagrangian vs. Eulerian Observation Approaches

| Characteristic | Lagrangian (Biologging) | Eulerian (Fixed-Point) |
|---|---|---|
| Spatial Coverage | Animal-determined, potentially vast and targeted | Fixed, limited to instrument location |
| Temporal Resolution | High-frequency, behavior-dependent | Fixed sampling intervals |
| Data Context | Intrinsically linked to animal behavior | Requires external correlation |
| Environmental Sampling | Biased toward biologically relevant conditions | Systematic but potentially ecologically irrelevant |
| Platform Limitations | Subject to animal behavior and device retrieval | Limited by infrastructure and maintenance |

This approach helps researchers work around the limitations of other observing systems: meteorological satellites have constrained temporal resolution and cannot observe below the ocean surface, and Argo floats cannot operate effectively in shallow waters [1]. By using seals and sea turtles as oceanographic platforms, scientists can obtain water temperature and salinity data of quality comparable to Argo floats, but from previously inaccessible regions [1].

The Biologging Toolkit: Sensors and Platforms

Sensor Types and Applications

Modern biologging employs a diverse array of sensors, each capturing different dimensions of information about the animal and its environment. The appropriate selection and combination of these sensors is critical for addressing specific biological questions.

Table 2: Essential Biologging Sensors and Their Functions

| Sensor Category | Specific Sensors | Measured Parameters | Primary Applications |
|---|---|---|---|
| Location | GPS, ARGOS, Geolocators | Position coordinates | Movement trajectories, space use, migration patterns |
| Intrinsic State | Accelerometer, Magnetometer, Gyroscope | Body posture, dynamic movement, orientation | Behavior identification, energy expenditure, biomechanics |
| Environmental | Temperature, Salinity, Depth sensors | Ambient conditions | Habitat characterization, oceanographic data collection |
| Physiological | Heart rate loggers, Temperature sensors, Neurological sensors | Internal body states | Metabolic rates, physiological responses, stress indicators |
| Acoustic/Optical | Microphones, Video loggers, Hall sensors | Vocalizations, visual environment | Behavior documentation, social interactions, foraging success |

Multi-sensor approaches represent the new frontier in biologging, enabling a more comprehensive understanding of animal ecology [2]. For example, combining accelerometers with magnetometers and depth sensors allows for detailed 3D movement reconstruction through dead-reckoning procedures, which is particularly valuable when transmission conditions limit GPS functionality [2].
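As a rough illustration of the dead-reckoning idea, the sketch below integrates heading and speed into a local 3D track. It assumes that heading (degrees clockwise from north) has already been derived from the magnetometer and accelerometer, that speed is horizontal speed in m/s, and that depth comes from the pressure sensor. The function name and inputs are hypothetical, and the sketch ignores currents, sensor drift, and Earth curvature.

```python
import math

def dead_reckon(headings_deg, speeds_m_s, depths_m, dt_s, start_xy=(0.0, 0.0)):
    """Integrate heading and speed into a 3D track in local metres.

    Returns a list of (east_m, north_m, depth_m) tuples, one per sample.
    Assumes heading is degrees clockwise from north and that each speed
    applies over the time step that follows its sample.
    """
    if not (len(headings_deg) == len(speeds_m_s) == len(depths_m)):
        raise ValueError("all input series must have the same length")
    east, north = start_xy
    track = []
    for heading, speed, depth in zip(headings_deg, speeds_m_s, depths_m):
        track.append((east, north, depth))
        theta = math.radians(heading)
        # North is the cosine component and east the sine component,
        # because heading is measured clockwise from north.
        east += speed * math.sin(theta) * dt_s
        north += speed * math.cos(theta) * dt_s
    return track

# Hypothetical 1 Hz record: two steps north, then a turn to the east.
track = dead_reckon(
    headings_deg=[0.0, 0.0, 90.0, 90.0],
    speeds_m_s=[1.0, 1.0, 2.0, 2.0],
    depths_m=[0.0, 5.0, 10.0, 5.0],
    dt_s=1.0,
)
```

Because integration errors accumulate over time, a track of this kind is normally anchored to whatever GPS fixes are available rather than used on its own.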

Integrated Bio-logging Framework

The complexity of modern biologging requires a structured approach to study design. The Integrated Bio-logging Framework (IBF) connects four critical areas (questions, sensors, data, and analysis) through a cycle of feedback loops linked by multi-disciplinary collaboration [2]. This framework helps researchers match appropriate sensors and analytical techniques to specific biological questions while acknowledging technological limitations and opportunities.

Multidimensional Data Visualization for Biologging Research

Visualization Challenges in Biologging Data

Biologging datasets present unique visualization challenges due to their multivariate, high-frequency, and spatiotemporally complex nature. Effective visualization must address:

- Temporal Scaling Issues: Data collected at different frequencies (e.g., GPS every hour vs. acceleration at 25 Hz)

- Spatial Complexity: Movement paths in 2D and 3D space, often with associated environmental data

- Multivariate Relationships: Interconnections between behavioral, physiological, and environmental variables

- Big Data Volume: Modern biologging studies can generate billions of data points across multiple sensors [1]
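One common way to handle the temporal scaling problem is to aggregate the high-frequency stream onto the grid of the low-frequency one before plotting. The sketch below is a minimal example of that idea, assuming timestamps in seconds; the function name and the synthetic activity values are hypothetical.

```python
from collections import defaultdict
from statistics import fmean

def bin_mean(timestamps_s, values, bin_width_s):
    """Average samples into fixed-width time bins.

    Returns a dict mapping each bin's start time (seconds) to the mean of
    the samples whose timestamps fall in [start, start + bin_width_s).
    """
    bins = defaultdict(list)
    for t, v in zip(timestamps_s, values):
        start = (t // bin_width_s) * bin_width_s
        bins[start].append(v)
    return {start: fmean(vs) for start, vs in sorted(bins.items())}

# Hypothetical example: two hours of 25 Hz activity values reduced to the
# hourly grid of the GPS fixes.
rate_hz = 25
times = [i / rate_hz for i in range(2 * 3600 * rate_hz)]
activity = [0.1 if t < 3600 else 0.3 for t in times]
hourly = bin_mean(times, activity, 3600)
```

The mean is only one possible summary; a maximum, variance, or dominant behavioral state per bin may suit a given figure better.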

Foundational Visualization Principles

Creating clear and engaging scientific figures is crucial for communicating complex biologging data [3]. Effective visualizations follow key design principles:

- Clarity and Accessibility: Use color palettes that are colorblind-friendly and ensure sufficient contrast between visual elements [4]

- Appropriate Chart Selection: Match visualization techniques to data types and research questions [5]

- Context and Narrative: Provide sufficient background to guide interpretation while highlighting key findings [4]

- Consistency: Maintain uniform design elements across multiple plots to facilitate comparison [4]

Advanced Visualization Techniques

For the complex, multi-dimensional data generated by biologging studies, standard visualization approaches often prove insufficient. Advanced techniques include:

- Multi-panel Temporal Alignment: Simultaneous visualization of different data streams (e.g., depth, acceleration, temperature) aligned along a common time axis

- 3D Path Reconstruction: Visualization of animal movements in three dimensions using dead-reckoning approaches [2]

- Interactive Exploration: Linked dashboards that allow researchers to explore time-series and multi-dimensional data dynamically [5]

- Behavioral State Visualization: Using colors or symbols to represent different behavioral states classified from accelerometry or movement data
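For behavioral state visualization, per-sample labels usually need to be collapsed into contiguous segments before they can be drawn as colored bands under a time series. The sketch below shows one way to do that. The state names and the palette (hues taken from the Okabe-Ito colorblind-safe set) are illustrative assumptions, not part of any cited workflow.

```python
from itertools import groupby

# Hypothetical state-to-color mapping using Okabe-Ito hues.
STATE_COLORS = {
    "resting": "#0072B2",
    "traveling": "#E69F00",
    "foraging": "#009E73",
}

def state_segments(timestamps, states):
    """Collapse per-sample state labels into contiguous segments.

    Returns a list of (state, start_time, end_time, color) tuples, where
    end_time is the timestamp of the last sample in the run. States that
    are missing from STATE_COLORS fall back to a neutral gray.
    """
    segments = []
    index = 0
    for state, run in groupby(states):
        length = sum(1 for _ in run)
        start = timestamps[index]
        end = timestamps[index + length - 1]
        segments.append((state, start, end, STATE_COLORS.get(state, "#999999")))
        index += length
    return segments

segments = state_segments(
    [0, 1, 2, 3, 4, 5],
    ["resting", "resting", "foraging", "foraging", "foraging", "traveling"],
)
```

Each tuple can then be passed to the plotting library of choice as a shaded span along the shared time axis.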

Experimental Protocols and Methodologies

Sensor Deployment Protocol

Proper sensor deployment is critical for collecting valid biologging data while minimizing impact on the study animals. The following protocol outlines key methodological considerations:

- Animal Selection Criteria: Choose subjects based on species, size, life history stage, and representative behavior

- Sensor Attachment Methods: Select appropriate attachment techniques (e.g., harnesses, adhesives, direct attachment) based on species and study duration

- Device Configuration: Program sampling regimes balanced against battery life and data storage limitations

- Field Deployment: Execute deployment with minimal stress to the animal, documenting precise timing and location

- Data Retrieval: Plan for instrument recovery through recapture, remote transmission, or automated release mechanisms
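The trade-off in the device configuration step can be estimated with simple arithmetic before deployment. The sketch below computes how many days of continuous logging fit in a given memory size. All numbers are hypothetical, and the calculation ignores battery capacity, file-system overhead, and compression.

```python
SECONDS_PER_DAY = 86_400

def bytes_per_second(sensors):
    """sensors: iterable of (channels, rate_hz, bytes_per_sample) tuples."""
    return sum(channels * rate_hz * size for channels, rate_hz, size in sensors)

def max_deployment_days(memory_bytes, sensors):
    """Days of continuous logging that fit in memory, ignoring overhead."""
    return memory_bytes / bytes_per_second(sensors) / SECONDS_PER_DAY

# Hypothetical configuration: tri-axial accelerometer at 25 Hz plus a
# 1 Hz depth channel, 16-bit samples, 1 GB of usable memory.
config = [(3, 25, 2), (1, 1, 2)]
days = max_deployment_days(1_000_000_000, config)
```

With these assumed values the tag writes 152 bytes per second, so memory lasts roughly 76 days; in practice the battery is often the tighter constraint.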

Standardized metadata collection is essential throughout this process, including information about animal traits (sex, body size, breeding status), instrument specifications, and deployment details [1]. Platforms like the Biologging intelligent Platform (BiP) facilitate this process by conforming to international standard formats for sensor data and metadata storage [1].

Data Processing Pipeline

Raw biologging data requires substantial processing before analysis. The essential steps include:

- Data Validation: Identify and flag sensor malfunctions or biologically impossible values

- Sensor Fusion: Integrate data from multiple sensors into a unified timeline

- Behavioral Classification: Apply machine learning algorithms or statistical models to classify behaviors from sensor data

- Movement Reconstruction: Use dead-reckoning approaches to create detailed movement paths where GPS coverage is limited

- Environmental Extraction: Derive relevant environmental parameters from animal-borne sensor data

This processing pipeline transforms raw sensor outputs into biologically meaningful variables ready for visualization and analysis.
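The first two pipeline steps can be sketched in a few lines. The example below flags values outside a plausible range and then aligns a slow sensor to a faster timeline by carrying the most recent reading forward. The function names, the depth limits, and the sample values are assumptions chosen for illustration.

```python
from bisect import bisect_right

def flag_invalid(values, lower, upper):
    """Return booleans, True where a value lies outside [lower, upper].

    NaN readings are also flagged, because every comparison with NaN is False.
    """
    return [not (lower <= v <= upper) for v in values]

def align_latest(target_times, source_times, source_values):
    """For each target time, take the most recent source value at or before it.

    source_times must be sorted ascending. Target times that precede the
    first source sample get None.
    """
    aligned = []
    for t in target_times:
        i = bisect_right(source_times, t)
        aligned.append(source_values[i - 1] if i > 0 else None)
    return aligned

# Hypothetical depth readings in metres with an assumed plausible range.
flags = flag_invalid([5.0, -3.0, 2500.0], lower=0.0, upper=2000.0)

# Hypothetical fusion: temperature logged at t = 1 s and t = 3 s, mapped
# onto a 1 Hz depth timeline.
temperature_on_depth_grid = align_latest([0, 1, 2, 3, 4], [1, 3], [10.5, 11.0])
```

Carrying values forward is the simplest fusion rule; interpolation or a maximum allowed gap may be more appropriate when the slow sensor samples sparsely.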

Visualization-Focused Data Standards

Color and Contrast Guidelines

Effective visualization of biologging data requires careful attention to color use and contrast ratios to ensure accessibility and clarity.

Table 3: WCAG Contrast Requirements for Data Visualization

| Element Type | Minimum Ratio (AA) | Enhanced Ratio (AAA) | Use Case Examples |
|---|---|---|---|
| Standard Text | 4.5:1 | 7:1 | Axis labels, legends, annotations |
| Large Text | 3:1 | 4.5:1 | Chart titles, section headings |
| Graphical Elements | 3:1 | N/A | Data points, lines, chart elements |
| User Interface | 3:1 | N/A | Interactive controls, focus indicators |

These requirements ensure that visualizations are accessible to users with low vision or color blindness [6] [7]. Note that large text is defined as 18pt (24 CSS pixels) or larger, or 14pt (approximately 19 CSS pixels) and larger if bold [8].
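The ratios in Table 3 can be checked programmatically. The sketch below follows the WCAG definitions of relative luminance and contrast ratio for sRGB colors given as six-digit hex strings. The helper names and the threshold dictionary are hypothetical. The linearization cutoff is written as 0.04045; some older WCAG texts give 0.03928, which makes no difference for 8-bit color values.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to its linear-light value."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color):
    """Relative luminance of a color written as '#RRGGBB'."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)

def contrast_ratio(color_a, color_b):
    """Contrast ratio between two colors, from 1.0 up to 21.0."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)

# Level AA minimums from Table 3.
AA_THRESHOLDS = {"text": 4.5, "large_text": 3.0, "graphic": 3.0}

def meets_aa(foreground, background, element="text"):
    """True if the color pair reaches the AA minimum for the element type."""
    return contrast_ratio(foreground, background) >= AA_THRESHOLDS[element]
```

For example, `meets_aa("#777777", "#FFFFFF")` fails for axis labels, while the same gray passes as a line color with `element="graphic"`.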

Standardized Data Formats

To facilitate collaborative research and secondary use of biologging data across disciplines, standardized data formats are essential. Inconsistencies in column names, date-time formats, and file structures have historically limited data integration [1]. The Biologging intelligent Platform (BiP) addresses this challenge by adhering to internationally recognized standards including:

- Integrated Taxonomic Information System (ITIS)

- Climate and Forecast Metadata Conventions (CF)

- Attribute Conventions for Data Discovery (ACDD)

- International Organization for Standardization (ISO) standards [1]

Standardization enables more effective visualization by ensuring consistent interpretation of data across research groups and disciplines.
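To make the column-name and date-time problem concrete, the sketch below normalizes records from differently formatted files into one convention. The alias table, the accepted timestamp formats, and the assumption that zone-less timestamps are already in UTC are all hypothetical; they do not reproduce the BiP schema or any of the standards listed above.

```python
from datetime import datetime, timezone

# Hypothetical alias table; a real project would derive it from the target standard.
COLUMN_ALIASES = {
    "lat": "latitude", "latitude": "latitude",
    "lon": "longitude", "long": "longitude", "longitude": "longitude",
    "temp": "temperature", "temperature": "temperature",
    "time": "time", "datetime": "time", "timestamp": "time",
}
# Formats are tried in order; the day-first pattern is an assumption that
# must match how the source files were actually written.
TIME_FORMATS = ("%Y-%m-%dT%H:%M:%S", "%Y-%m-%d %H:%M:%S", "%d/%m/%Y %H:%M:%S")

def normalize_column(name):
    """Map a raw column name to its canonical form (unknown names pass through)."""
    key = name.strip().lower()
    return COLUMN_ALIASES.get(key, key)

def normalize_time(text):
    """Parse a timestamp in one of TIME_FORMATS and return ISO 8601 in UTC."""
    for fmt in TIME_FORMATS:
        try:
            parsed = datetime.strptime(text.strip(), fmt)
        except ValueError:
            continue
        return parsed.replace(tzinfo=timezone.utc).isoformat()
    raise ValueError(f"unrecognized timestamp format: {text!r}")

def normalize_record(record):
    """Return a copy of the record with canonical keys and an ISO 8601 time."""
    out = {normalize_column(k): v for k, v in record.items()}
    if "time" in out:
        out["time"] = normalize_time(out["time"])
    return out

row = normalize_record({" Lat ": -66.5, "LON": 140.0, "DateTime": "03/02/2021 14:05:00"})
```

Raising an error on unrecognized timestamps, rather than guessing, keeps ambiguous dates from silently entering a shared dataset.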

Case Study: Multi-Sensor Marine Mammal Tracking

Experimental Design

A comprehensive marine mammal biologging study demonstrates the integration of multiple data dimensions:

- Species: Southern elephant seals (Mirounga leonina)

- Sensors Deployed: GPS, CTD (conductivity, temperature, depth), accelerometers, time-depth recorders

- Deployment Location: Antarctic coastal regions

- Primary Objectives: Collect oceanographic data from ice-covered regions while monitoring seal behavior and energetics

Data Integration and Workflow

The complex data streams from such studies require sophisticated integration approaches to reveal relationships between animal behavior and environmental conditions.

Research Reagent Solutions

Table 4: Essential Research Materials for Biologging Studies

| Material/Equipment | Technical Function | Application Context |
|---|---|---|
| Satellite Relay Data Loggers (SRDL) | Compresses and transmits essential data (dive profiles, depth-temperature) via satellite | Long-term marine mammal tracking in remote regions |
| Accelerometer Tags | Measures dynamic body acceleration and posture at high frequency | Behavioral classification, energy expenditure estimation |
| CTD Sensors | Measures conductivity, temperature, and depth of surrounding water | Oceanographic data collection, habitat characterization |
| Time-Depth Recorders | Logs depth at predetermined intervals | Dive behavior analysis, foraging ecology |
| Animal Attachment Systems | Secures sensors to animals with minimal impact | Species-specific deployment (harnesses, adhesives, etc.) |

Emerging Technologies

The future of biologging research will be shaped by several technological developments:

- Smaller, More Powerful Sensors: Continuing miniaturization enabling deployment on smaller species

- Extended Battery Life: New power sources and energy harvesting technologies for longer deployments

- On-board Processing: Edge computing for real-time data processing and selective transmission

- Enhanced Connectivity: Improved satellite and wireless networks for more efficient data retrieval

- Multi-sensor Fusion: Advanced integration of complementary sensor types for richer data context

Visualization Innovations

As biologging datasets grow in size and complexity, visualization methodologies must evolve accordingly:

- Interactive Exploration Tools: Web-based platforms allowing researchers to explore complex biologging datasets dynamically [1]

- Machine Learning Integration: Automated pattern recognition and anomaly detection in high-dimensional data

- Virtual and Augmented Reality: Immersive visualization of animal movements and behaviors in environmental context

- Standardized Visualization Libraries: Open-source tools specifically designed for biologging data types

Biologging, framed as a Lagrangian approach to mobile observation, has fundamentally transformed our ability to study animals in their natural environments. The integration of multi-sensor platforms with advanced visualization techniques creates unprecedented opportunities to understand animal behavior, physiology, and ecology within environmental context. The challenges of managing, analyzing, and interpreting these complex, multi-dimensional datasets require continued development of visualization methodologies and analytical frameworks.

By adopting standardized data formats [1], following visualization best practices [3] [4], and leveraging emerging technologies, researchers can fully exploit the potential of biologging data. This approach will continue to advance not only biological research but also contribute valuable environmental data to complementary fields such as oceanography, climatology, and conservation science. The Lagrangian perspective provided by animal-borne sensors offers a unique and powerful window into the natural world, revealing patterns and processes that would otherwise remain hidden.

Biologging, the practice of attaching data recorders to wild animals, has revolutionized the study of animal physiology, behavior, and ecology by providing unprecedented access to in-situ data from free-ranging individuals [1]. The core value of biologging lies in its ability to capture multidimensional data streams that reflect the complex interactions between an animal's internal state, its external actions, and the environment it inhabits [9]. Modern biologging devices have evolved from simple depth recorders to sophisticated multi-sensor platforms capable of measuring a diverse array of parameters including depth, speed, acceleration, body temperature, and environmental conditions like water temperature and salinity [1].

The analysis of these complex datasets presents significant challenges due to their multivariate, correlated, and time-series nature [9] [10]. This technical guide establishes a standardized framework for categorizing and analyzing the core data dimensions in biologging research, with particular emphasis on applications within drug discovery and development where understanding animal models in ecological contexts can inform therapeutic strategies.

Core Data Dimensions Framework

Biologging data can be conceptualized through three primary dimensions: behavioral, physiological, and environmental parameters. These dimensions are not independent but exist in continuous interaction, collectively defining an animal's life history strategy and responses to environmental challenges [9].

Table 1: Core Data Dimensions in Biologging Research

| Dimension | Data Category | Specific Parameters | Measurement Sensors |
|---|---|---|---|
| Behavioral | Kinematics | Acceleration (tri-axial), angular velocity, body orientation, swim speed/pace | Accelerometer, gyroscope, magnetometer |
| Behavioral | Movement Patterns | Dive depth, flight altitude, horizontal movement paths, stroke frequency | Pressure sensor, GPS, dead-reckoning |
| Behavioral | Activity Budgets | Resting, foraging, traveling, social behaviors | Multi-sensor integration, animal-borne video |
| Physiological | Energetics | Heart rate, metabolic rate, oxygen consumption | ECG loggers, accelerometer-derived metrics |
| Physiological | Thermal Biology | Core body temperature, peripheral temperature | Thermistor, thermocouple |
| Physiological | Neural Activity | Brain activity, sleep patterns | Electroencephalogram (EEG) |
| Environmental | Physical Conditions | Water temperature, salinity, atmospheric pressure | CTD sensors, pressure sensors |
| Environmental | Habitat Structure | Light intensity, chlorophyll levels, seabed topography | Light sensors, fluorometers, depth sensors |

Behavioral Parameters

Behavioral data in biologging captures the external manifestations of animal activity through movement and spatial patterns. Unlike traditional observational methods, biologging provides continuous, high-resolution datasets that reveal the full complexity of animal behavior in natural contexts [9].

The kinematic aspect of behavior is predominantly captured through motion sensors including tri-axial accelerometers, gyroscopes, and magnetometers sampled at high frequencies (20-50 Hz) [9]. These sensors allow researchers to quantify specific behavioral states such as foraging, resting, and traveling based on characteristic movement signatures. For example, tri-axial accelerometers can distinguish between different gait patterns in terrestrial animals or stroke frequencies in swimming and flying species [1].

Movement patterns represent the spatial component of behavior, documenting how animals navigate their environments. Dive profiles for marine species represent a classic example, with time-depth recorders capturing the vertical movement patterns of air-breathing divers [9]. Modern biologging devices combine pressure sensors with GPS and dead-reckoning algorithms to reconstruct detailed three-dimensional movement paths both horizontally and vertically [9]. These movement datasets reveal how animals partition their time between different activities, creating comprehensive activity budgets that quantify behavioral trade-offs and strategies [1].

Physiological Parameters

Physiological parameters in biologging capture the internal state and functional processes of animals, providing critical insights into how organisms manage energy budgets, respond to environmental stressors, and maintain homeostasis [10].

Energetic parameters represent a fundamental physiological dimension, with heart rate monitoring serving as a primary tool for estimating metabolic rate in free-ranging animals [10]. These data are particularly valuable for understanding the metabolic costs of different behaviors and environmental challenges. Additional energetic metrics can be derived from accelerometry through the relationship between body movement and energy expenditure, providing complementary approaches to metabolic monitoring.
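Accelerometry-derived energetic proxies are typically computed by separating the slowly varying static (gravitational) component from the raw signal and summarizing what remains. The sketch below is a minimal version, assuming tri-axial acceleration in g and a centered running mean as the static estimate. The window length is an assumption that depends on species and tag, and the function names are hypothetical.

```python
import math

def running_mean(values, window):
    """Centered running mean; the window shrinks at the edges of the record."""
    half = window // 2
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - half): i + half + 1]
        out.append(sum(chunk) / len(chunk))
    return out

def dynamic_body_acceleration(ax, ay, az, window):
    """Return (odba, vedba) lists from tri-axial acceleration in g.

    ODBA sums the absolute dynamic acceleration of the three axes;
    VeDBA takes the length of the dynamic acceleration vector.
    """
    dynamic = []
    for axis in (ax, ay, az):
        static = running_mean(axis, window)
        dynamic.append([raw - s for raw, s in zip(axis, static)])
    odba = [abs(x) + abs(y) + abs(z) for x, y, z in zip(*dynamic)]
    vedba = [math.sqrt(x * x + y * y + z * z) for x, y, z in zip(*dynamic)]
    return odba, vedba

# Hypothetical short record: an animal at rest (gravity on the z axis only)
# followed by a burst of movement.
ax = [0.0, 0.0, 0.0, 0.3, -0.3, 0.3, -0.3, 0.0]
ay = [0.0, 0.0, 0.0, 0.1, -0.1, 0.1, -0.1, 0.0]
az = [1.0, 1.0, 1.0, 1.2, 0.8, 1.2, 0.8, 1.0]
odba, vedba = dynamic_body_acceleration(ax, ay, az, window=3)
```

Both metrics are proxies only: converting them to energy expenditure requires a species-specific calibration against an independent metabolic measurement.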

Thermal biology parameters document how animals manage heat balance in challenging environments. Core body temperature measurements reveal patterns of thermoregulation, with data loggers capturing both circadian rhythms and responses to extreme environmental conditions [10]. For example, flatback turtles have been shown to alter their diving behavior in response to water temperature extremes as a thermoregulatory strategy [9]. Neural activity parameters, though less commonly measured due to technical challenges, provide insights into brain states and sleep patterns in wild animals through electroencephalogram (EEG) recording [10].

Environmental Parameters

Environmental parameters contextualize animal behavior and physiology by characterizing the abiotic conditions that animals experience. These data are increasingly recognized as vital for understanding the selective pressures and constraints shaping animal performance [11].

Physical condition parameters include water temperature and salinity profiles collected by animal-borne sensors [1] [11]. These measurements are particularly valuable in oceanographic research where data from marine animals complement traditional observation systems like Argo floats, especially in remote or inaccessible regions [1]. For instance, satellite relay data loggers (SRDLs) deployed on marine mammals have provided critical oceanographic data from Arctic and Antarctic regions with sea ice cover that impedes conventional measurement approaches [1].

Habitat structure parameters document the spatial heterogeneity of environments through measurements of light intensity, chlorophyll levels (as an indicator of productivity), and substrate topography [1]. These parameters help researchers understand habitat selection patterns and the distribution of resources that influence animal movement decisions and foraging strategies.

Methodologies for Data Acquisition and Analysis

Experimental Protocols and Deployment Standards

The acquisition of high-quality biologging data requires standardized protocols for device deployment, data collection, and sensor calibration. The following methodology outlines best practices derived from current biologging research:

Animal Capture and Handling: Researchers capture study animals using minimally invasive techniques appropriate to the species and environment. For marine turtles, this may involve capture by hand from research vessels using hoop nets or the "rodeo" technique [9]. Terrestrial species may require remote capture methods. Processing time should be minimized (typically <30 minutes) to reduce stress effects.

Device Attachment: Biologging devices are secured to animals using species-appropriate attachment methods. For marine turtles, options include direct attachment to the carapace using rubber suction cups or custom-made self-detaching harnesses with padded baseplates [9]. Attachment geometry should be standardized to ensure consistent sensor orientation across individuals.

Sensor Programming and Configuration: Multi-sensor tags (e.g., CATS Camera or Diary tags) should be programmed to record tri-axial acceleration, magnetometer, and gyroscope data at 20-50 Hz, while pressure and temperature sensors typically sample at 10 Hz [9]. GPS systems should be duty-cycled (e.g., recording during alternate daytime hours and every third hour overnight) to conserve power while maintaining adequate positional coverage.

Field Deployment and Recovery: Tagged animals are released at their capture locations. Deployment durations range from 24 hours to several days, depending on research objectives. Recovery mechanisms include galvanic timed releases (GTR) that detach the tag after a predetermined interval, combined with satellite transmitters (SPOT tags) for location of floating packages [9].

Data Retrieval and Validation: Upon recovery, data are downloaded and subjected to quality control procedures including checks for sensor drift, calibration validation, and identification of artifacts. Synchronization with environmental data sets (e.g., tidal cycles, remote sensing data) contextualizes animal-borne measurements [9].

Analytical Approaches for Multidimensional Data

The analysis of biologging data requires specialized statistical approaches to address the challenges of multivariate, autocorrelated time-series data [10]. The following analytical framework has proven effective for extracting biological insights from complex biologging datasets:

Data Preprocessing and Variable Extraction: Raw sensor data are calibrated and converted to biologically relevant units. For diving animals, dive variables are extracted including maximum depth, duration, bottom time, ascent/descent rates, and post-dive surface interval [9]. For accelerometry data, overall dynamic body acceleration (ODBA) or vectorial dynamic body acceleration (VeDBA) provide proxies for energy expenditure.
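The dive variables named above can be extracted from a time-depth record with a simple threshold rule. The sketch below is one possible implementation. The surface threshold, the definition of the bottom phase as the samples within 80% of maximum depth, and the function name are illustrative assumptions, not the definitions used in the cited study.

```python
def extract_dives(times_s, depths_m, surface_threshold_m=1.0, bottom_fraction=0.8):
    """Split a depth record into dives and summarize each one.

    A dive is a run of consecutive samples deeper than surface_threshold_m.
    The bottom phase spans the first to the last sample at or below
    bottom_fraction * maximum depth. Durations are measured between
    submerged samples, so they are short by up to one sampling interval.
    """
    dives, current = [], []
    for t, d in zip(times_s, depths_m):
        if d > surface_threshold_m:
            current.append((t, d))
        elif current:
            dives.append(current)
            current = []
    if current:
        dives.append(current)

    summaries = []
    for k, dive in enumerate(dives):
        times = [t for t, _ in dive]
        depths = [d for _, d in dive]
        max_depth = max(depths)
        bottom_idx = [i for i, d in enumerate(depths) if d >= bottom_fraction * max_depth]
        first, last = bottom_idx[0], bottom_idx[-1]
        descent_s = times[first] - times[0]
        ascent_s = times[-1] - times[last]
        next_start = dives[k + 1][0][0] if k + 1 < len(dives) else None
        summaries.append({
            "duration_s": times[-1] - times[0],
            "max_depth_m": max_depth,
            "bottom_time_s": times[last] - times[first],
            "descent_rate_m_s": (depths[first] - depths[0]) / descent_s if descent_s else None,
            "ascent_rate_m_s": (depths[last] - depths[-1]) / ascent_s if ascent_s else None,
            "surface_interval_s": next_start - times[-1] if next_start is not None else None,
        })
    return summaries

# Hypothetical 1 Hz record containing one deep dive and one shallow dive.
dives = extract_dives(list(range(12)), [0, 2, 6, 10, 10, 6, 2, 0, 0, 3, 3, 0])
```

Each dictionary is one row of the dive table that later steps, such as dimensionality reduction, take as input.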

Dimensionality Reduction: Principal Component Analysis (PCA) is applied to condense multiple correlated dive variables into orthogonal principal components that capture the main features of diving behavior [9]. This approach objectively identifies the dominant axes of behavioral variation while removing collinearity among original variables.
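To show what the first principal component represents, the sketch below standardizes a few dive variables and finds the dominant eigenvector of their correlation matrix by power iteration. This is a teaching-sized stand-in for a full PCA routine, not the method of the cited study; the variable values are invented and the function names are hypothetical.

```python
import math
from statistics import fmean, pstdev

def standardize(columns):
    """Scale each variable (a list of floats) to zero mean and unit variance.

    Columns with zero variance are not handled and would divide by zero.
    """
    out = []
    for col in columns:
        mu, sd = fmean(col), pstdev(col)
        out.append([(v - mu) / sd for v in col])
    return out

def correlation_matrix(zcols):
    """Correlation matrix of already standardized columns."""
    n = len(zcols[0])
    return [[sum(a * b for a, b in zip(ci, cj)) / n for cj in zcols] for ci in zcols]

def first_principal_component(matrix, iterations=500):
    """Power iteration: return (eigenvalue, unit eigenvector) of the largest component."""
    p = len(matrix)
    # Uneven start vector, so it is unlikely to be orthogonal to the answer.
    vec = [1.0 / (i + 1) for i in range(p)]
    for _ in range(iterations):
        nxt = [sum(m * v for m, v in zip(row, vec)) for row in matrix]
        norm = math.sqrt(sum(x * x for x in nxt))
        vec = [x / norm for x in nxt]
    eigenvalue = sum(v * sum(m * w for m, w in zip(row, vec)) for v, row in zip(vec, matrix))
    return eigenvalue, vec

# Hypothetical dive table: maximum depth (m), duration (s), bottom time (s).
columns = [
    [12.0, 30.0, 45.0, 18.0, 60.0],
    [100.0, 260.0, 380.0, 150.0, 520.0],
    [40.0, 90.0, 120.0, 70.0, 150.0],
]
eigenvalue, loadings = first_principal_component(correlation_matrix(standardize(columns)))
explained_fraction = eigenvalue / len(columns)
```

The loadings show how strongly each original variable contributes to the component, and `explained_fraction` is the share of total standardized variance it captures.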

Temporal Modeling: Generalized additive mixed models (GAMMs) effectively model non-linear temporal patterns in biologging data while accounting for autocorrelation [9]. These models can identify significant seasonal, diel, and tidal effects on animal behavior and physiology.

Time-Series Analysis: Autoregressive (AR) and autoregressive moving average (ARMA) models address the serial correlation inherent in physiological time-series data [10]. For example, AR(1) models account for the dependence between consecutive measurements of parameters like blood pO₂ during dives or core body temperature across circadian cycles.
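The serial dependence that an AR(1) model captures can be illustrated with a small simulation. The sketch below generates a series with a known coefficient and recovers it by least squares on the mean-centered values. It is a didactic stand-in for the fitted models in the cited work, and the function names, seed, and parameter values are assumptions.

```python
import random
from statistics import fmean

def fit_ar1(series):
    """Estimate phi in x[t] - mu = phi * (x[t-1] - mu) + noise by least squares."""
    mu = fmean(series)
    centered = [x - mu for x in series]
    num = sum(a * b for a, b in zip(centered[1:], centered[:-1]))
    den = sum(a * a for a in centered[:-1])
    return num / den

def simulate_ar1(phi, n, sigma=1.0, seed=0):
    """Generate n samples of a zero-mean AR(1) process with Gaussian noise."""
    rng = random.Random(seed)
    x, out = 0.0, []
    for _ in range(n):
        x = phi * x + rng.gauss(0.0, sigma)
        out.append(x)
    return out

# Hypothetical check: simulate with phi = 0.7 and recover the coefficient.
series = simulate_ar1(phi=0.7, n=5000)
phi_hat = fit_ar1(series)
```

An estimate well above zero indicates that consecutive measurements are not independent, which is why standard errors from ordinary regression would be too small for such data.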

Behavioral Classification: Machine learning approaches (e.g., random forests, hidden Markov models) classify behavioral states from multivariate sensor data. These methods can distinguish subtle behavioral differences that may be missed by traditional analytical techniques.

The integration of these analytical techniques enables researchers to move beyond simple descriptive accounts of animal behavior to mechanistic understanding of how internal state and external environment interact to shape ecological patterns.

Table 2: Analytical Techniques for Biologging Data

| Analytical Challenge | Recommended Technique | Application Example |
|---|---|---|
| Multicollinearity among variables | Principal Component Analysis (PCA) | Condensing 16 correlated dive variables into principal components for flatback turtles [9] |
| Temporal autocorrelation | Autoregressive (AR) models | Modeling serial dependence in physiological parameters like blood pO₂ and body temperature [10] |
| Non-linear temporal patterns | Generalized Additive Mixed Models (GAMMs) | Identifying seasonal, diel, and tidal effects on diving behavior [9] |
| Behavioral classification | Machine learning (Random Forests, HMMs) | Distinguishing foraging, traveling, and resting from accelerometry data |
| Small sample sizes | Mixed effects models | Accounting for individual variation when number of tracked animals is limited [10] |

Visualization and Data Integration Frameworks

Standardized Data Platforms

The growing volume and complexity of biologging data has driven the development of specialized platforms for data sharing, visualization, and analysis. The Biologging intelligent Platform (BiP) represents an integrated solution that adheres to internationally recognized standards for sensor data and metadata storage [1]. BiP enables researchers to upload sensor data, input standardized metadata about individual animals, devices, and deployments, and choose between open and private data sharing settings. The platform's unique Online Analytical Processing (OLAP) tools calculate environmental parameters from animal-collected data, enhancing the utility of biologging data for interdisciplinary research [1].
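The general idea behind OLAP-style analysis is to group measurements along chosen dimensions and aggregate them at different levels of detail. The sketch below shows a generic roll-up over animal-collected temperature records. It is not BiP's implementation; the record fields, bin width, and function names are hypothetical.

```python
from collections import defaultdict
from statistics import fmean

def depth_bin(depth_m, width_m=50):
    """Label the depth interval that contains depth_m, e.g. '50-100 m'."""
    lower = int(depth_m // width_m) * width_m
    return f"{lower}-{lower + width_m} m"

def roll_up(records, dimensions, measure):
    """Group records by the given dimension keys and average the measure.

    records: iterable of dicts; dimensions: tuple of key names.
    Returns {tuple_of_dimension_values: mean_of_measure}.
    """
    cells = defaultdict(list)
    for rec in records:
        cells[tuple(rec[d] for d in dimensions)].append(rec[measure])
    return {key: fmean(vals) for key, vals in cells.items()}

# Hypothetical temperature readings tagged with month and depth interval.
records = [
    {"month": "2021-01", "depth_bin": depth_bin(10), "temp_c": 1.0},
    {"month": "2021-01", "depth_bin": depth_bin(20), "temp_c": 3.0},
    {"month": "2021-01", "depth_bin": depth_bin(75.5), "temp_c": -1.0},
    {"month": "2021-02", "depth_bin": depth_bin(10), "temp_c": 2.0},
]
by_month_and_depth = roll_up(records, ("month", "depth_bin"), "temp_c")
by_month = roll_up(records, ("month",), "temp_c")
```

Dropping a dimension, as in `by_month`, is the roll-up operation; adding one back, as in `by_month_and_depth`, corresponds to drilling down.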

Movebank, operated by the Max Planck Institute of Animal Behavior, represents another major biologging database containing 7.5 billion location points and 7.4 billion other sensor records across 1478 taxa as of 2025 [1]. These platforms address the critical need for standardized data formats that facilitate collaboration and secondary use of biologging data across biological, oceanographic, and meteorological disciplines.

Data Visualization Principles

Effective visualization of multidimensional biologging data requires careful application of design principles to communicate complex relationships without overwhelming the viewer. The following guidelines support the creation of accessible, informative visualizations:

Color Selection for Data Type: Match color schemes to data characteristics. Use qualitative palettes with distinct hues for categorical data, sequential palettes with gradients of a single color for ordered numeric values, and diverging palettes with two contrasting colors for data with critical midpoints [12].

Contrast and Accessibility: Ensure sufficient color contrast against the background: at least 3:1 for graphical elements and large text, and at least 4.5:1 for standard-size text [13]. Avoid color combinations that are indistinguishable to users with color vision deficiencies, particularly red-green contrasts.

Limited Color Palette: Restrict visualizations to seven or fewer colors to prevent cognitive overload and improve processing speed [12]. Use bright, saturated colors to highlight important information against muted background elements.

Contextual Alignment: Apply color conventions familiar to the target audience (e.g., blue for cold, red for warm in temperature visualizations) to leverage existing associations and streamline interpretation [12].
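The contrast guideline above can be checked numerically before a figure is published. The following is a minimal sketch using the WCAG 2.x relative-luminance and contrast-ratio formulas; the function names and the example colors are illustrative assumptions, not part of any biologging platform.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#rrggbb'."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG contrast ratio, from 1.0 (identical) to 21.0 (black on white)."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


def meets_graphics_threshold(foreground: str, background: str, minimum: float = 3.0) -> bool:
    """True if the pair reaches the 3:1 minimum cited for graphical elements."""
    return contrast_ratio(foreground, background) >= minimum


# Hypothetical palette check: a mid-grey track line on a white map background.
print(round(contrast_ratio("#000000", "#ffffff"), 1))
print(meets_graphics_threshold("#777777", "#ffffff"))
```

A check like this only covers luminance contrast. It does not detect hue pairs that are confusable under color vision deficiency, which still need a dedicated simulation or a palette designed for that purpose.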

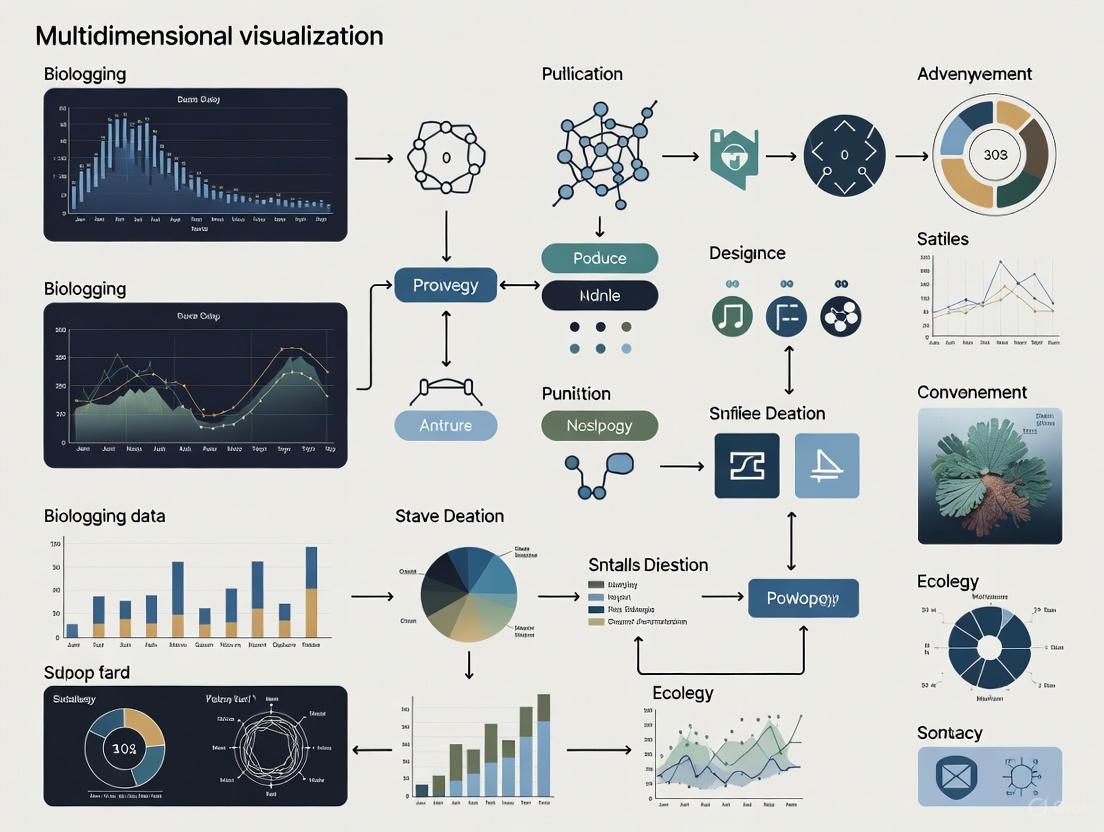

The following diagram illustrates the integrated relationship between core data dimensions in biologging research and the analytical workflow for transforming raw sensor data into ecological insights:

The effective implementation of biologging research requires specialized equipment, analytical tools, and infrastructure. The following table details key resources essential for conducting state-of-the-art biologging studies:

Table 3: Essential Research Resources for Biologging Studies

| Resource Category | Specific Tools/Platforms | Function and Application |
|---|---|---|
| Deployment Platforms | Customized Animal Tracking Solutions (CATS) tags | Multi-sensor biologgers with accelerometer, magnetometer, gyroscope, pressure, and temperature sensors [9] |
| | Satellite Relay Data Loggers (SRDLs) | Transmit compressed dive profiles and depth-temperature data via satellite without animal recapture [1] |
| Data Infrastructure | Biologging intelligent Platform (BiP) | Standardized platform for storing, sharing, and analyzing biologging data with OLAP tools [1] |
| | Movebank | Largest biologging database managing billions of location points and sensor records across taxa [1] |
| Analytical Frameworks | R statistical environment with specialized packages (nlme, mgcv) | Time-series analysis, mixed effects modeling, and GAMM implementation [10] |
| | Online Analytical Processing (OLAP) | Calculates environmental parameters from animal-collected data [1] |
| Sensor Technologies | Tri-axial accelerometers (20-50 Hz) | Quantify fine-scale kinematics and behavioral patterns [9] |
| | Animal-borne video systems | Provide ground-truthing for behavioral classification from sensor data [9] |

The integration of behavioral, physiological, and environmental data dimensions through biologging technology provides unprecedented opportunities to understand animal ecology in natural contexts. The analytical frameworks and visualization principles outlined in this technical guide enable researchers to navigate the complexities of multivariate, time-series biologging data and extract meaningful ecological insights. As biologging platforms continue to evolve toward greater standardization and interoperability, their value will expand not only for basic ecological research but also for applied conservation and understanding animal models relevant to drug discovery and development. The future of biologging lies in maximizing the potential of these rich multidimensional datasets through sophisticated analytical approaches that honor the complexity of animal lives while making data accessible across scientific disciplines.

Biologging is a Lagrangian observation method that utilizes animal-borne devices to study animal behavior, physiology, and the surrounding environment [11]. The term "Bio-Logging" was formally proposed at the first international symposium in Tokyo in 2003 [14]. This method involves attaching data recorders to animals, enabling research in fields ranging from behavioral ecology to oceanography and meteorology [14] [11]. The Biologging intelligent Platform (BiP) is a recently developed database designed to store standardized sensor data alongside detailed metadata [14]. Accessible at https://www.bip-earth.com, BiP adheres to internationally recognized standards for sensor data and metadata storage, facilitating collaborative research and secondary data utilization across multiple disciplines [14].

Core Features of the Biologging Intelligent Platform (BiP)

BiP was developed in response to the growing need for a standardized database capable of storing diverse biologging data types, moving beyond primarily location data to encompass a wider range of parameters [14]. Its development is rooted in a broader framework aimed at standardizing bio-logging data across all taxa and ecosystems, a key goal of the International Bio-Logging Society [15]. The platform offers several unique features:

- Data Standardization and Metadata Management: BiP stores sensor data linked with comprehensive metadata, conforming to international standards like the Integrated Taxonomic Information System (ITIS) and Climate and Forecast Metadata Conventions (CF) [14]. This integration allows researchers to explore questions about the influence of individual animal traits on movement and behavior.

- Support for Diverse Parameters: The platform manages a wide array of data parameters, including depth, speed, water temperature, salinity, acceleration, and geomagnetism [14].

- Online Analytical Processing (OLAP): A unique feature of BiP is its OLAP tools, which calculate environmental parameters like surface currents and ocean winds from animal-collected data by integrating algorithms from published studies [14].

- Data Accessibility and Licensing: The platform provides flexible data access. Users can search for datasets using the DOI of associated papers, and open datasets are available under a CC BY 4.0 license, which permits reuse with attribution [14].

The BiP Standardization Framework

The standardization framework implemented by BiP is designed to produce a reusable and generalizable animal movement data product [15]. It uses a set of three templates to capture the entire data generation process, ensuring data is structured for interoperability from the outset. The templates and the information each one captures are described below.

Metadata Templates for Standardization

The framework relies on three core templates to capture essential metadata, which are critical for making sensor data meaningful and reusable [14] [15].

Table: BiP Standardization Metadata Templates

| Template Name | Core Function | Key Information Captured |
|---|---|---|
| Device Metadata | Documents the biologging instrument [15] | Device type, manufacturer, sensor specifications, calibration data. |
| Deployment Metadata | Records the attachment event [14] [15] | Individual animal traits (sex, body size), deployment location, time, and method. |
| Input Data | Contains the raw sensor data [15] | All data collected by one device deployment (e.g., time, latitude, longitude, depth, temperature). |

This structured approach maximizes interoperability and facilitates data use in global initiatives like the Biological Essential Ocean Variables and the Group on Earth Observations Biodiversity Observation Network [15].
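To make the three-template structure concrete, the sketch below models it with Python dataclasses. Every class name, field name, and validation rule here is a hypothetical illustration based on the table above; it is not BiP's actual schema or upload format.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DeviceMetadata:
    """Describes the instrument (hypothetical fields)."""
    device_type: str
    manufacturer: str
    sensors: List[str] = field(default_factory=list)
    calibration_note: Optional[str] = None


@dataclass
class DeploymentMetadata:
    """Describes the attachment event and the individual animal (hypothetical fields)."""
    species: str
    deploy_time_utc: str
    deploy_lat: float
    deploy_lon: float
    attachment_method: str
    sex: Optional[str] = None
    body_length_cm: Optional[float] = None


@dataclass
class InputRecord:
    """One row of sensor data from a single deployment."""
    time_utc: str
    latitude: Optional[float] = None
    longitude: Optional[float] = None
    depth_m: Optional[float] = None
    temperature_c: Optional[float] = None


@dataclass
class DeploymentDataset:
    """Links the three templates so sensor data never travels without its metadata."""
    device: DeviceMetadata
    deployment: DeploymentMetadata
    records: List[InputRecord] = field(default_factory=list)

    def problems(self) -> List[str]:
        """Return human-readable issues; an empty list means the basic checks pass."""
        issues = []
        if not self.device.sensors:
            issues.append("device lists no sensors")
        if not -90.0 <= self.deployment.deploy_lat <= 90.0:
            issues.append("deployment latitude out of range")
        if not -180.0 <= self.deployment.deploy_lon <= 180.0:
            issues.append("deployment longitude out of range")
        if not self.records:
            issues.append("no input data records")
        return issues


# Illustrative values only.
dataset = DeploymentDataset(
    device=DeviceMetadata("TDR", "ExampleCo", ["pressure", "temperature"]),
    deployment=DeploymentMetadata("Caretta caretta", "2024-06-01T08:00:00Z", 35.0, 139.5, "epoxy"),
    records=[InputRecord("2024-06-01T08:05:00Z", depth_m=12.5, temperature_c=21.3)],
)
print(dataset.problems())  # []
```

Keeping the device, deployment, and input data in one linked object reflects the design goal stated above: a dataset should remain interpretable when it is reused by someone who was not present at the deployment.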

Data Types and Analytical Capabilities (OLAP)

Biologging technology enables the measurement of a wide range of parameters, and BiP is designed to support this diversity [14]. The platform's analytical power is significantly enhanced by its Online Analytical Processing (OLAP) tools.

Quantifiable Data and Environmental Parameter Estimation

BiP's OLAP tools can derive key environmental parameters from animal movement and sensor data by applying published algorithms [14]. The following table summarizes primary data types collected by biologging devices and the environmental parameters OLAP can estimate.

Table: Biologging Data Types and OLAP-Derived Environmental Parameters

| Primary Sensor Data Collected | OLAP-Estimated Environmental Parameters | Animal Taxa Commonly Used |
|---|---|---|
| Depth, Water Temperature, Salinity | Water column structure, Ocean heat content [14] | Seals, Sea Turtles, Sharks [14] |
| Horizontal Position (Latitude, Longitude) | Surface currents, Ocean winds [14] | Seabirds [14] |
| Acceleration, Angular Velocity | Animal behavior (e.g., foraging, diving), Wave height [14] | Marine and Terrestrial Animals [14] |
| Body Temperature, Atmospheric Pressure | Physiological state, Altitude [14] | Flying Animals, Marine Mammals [14] |

This capability transforms biologging from a purely biological tool into a powerful platform for oceanographic and meteorological research, providing data in regions inaccessible to traditional platforms like Argo floats or satellites [14] [11].
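As a rough illustration of how horizontal positions can yield an environmental estimate, the sketch below computes a drift velocity from two successive fixes. It assumes the animal is resting on the water and drifting passively, so its displacement approximates the surface flow. That assumption, the function name, and the flat-Earth approximation over short distances are all simplifications introduced here; this is not BiP's OLAP implementation, which integrates algorithms from published studies.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def drift_velocity(fix_a, fix_b):
    """Estimate (eastward, northward) velocity in m/s between two fixes.

    Each fix is (time_seconds, latitude_deg, longitude_deg). Uses a local
    equirectangular approximation, adequate for fixes a few km apart.
    """
    t0, lat0, lon0 = fix_a
    t1, lat1, lon1 = fix_b
    dt = t1 - t0
    if dt <= 0:
        raise ValueError("fixes must be in increasing time order")
    mean_lat = math.radians((lat0 + lat1) / 2.0)
    north_m = math.radians(lat1 - lat0) * EARTH_RADIUS_M
    east_m = math.radians(lon1 - lon0) * EARTH_RADIUS_M * math.cos(mean_lat)
    return east_m / dt, north_m / dt


# Hypothetical fixes one hour apart on the equator, 0.01 degrees of longitude apart.
u, v = drift_velocity((0, 0.0, 0.0), (3600, 0.0, 0.01))
print(f"eastward {u:.3f} m/s, northward {v:.3f} m/s")
```

In practice the hard part is deciding which track segments represent passive drift rather than swimming or flight, and that classification step is outside this sketch.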

Experimental Protocols and Workflow for Biologging Data

The end-to-end process of conducting a biologging study and managing the resulting data via BiP involves a series of critical steps, from animal deployment to data analysis and sharing.

Key Methodological Steps

- Animal Deployment and Data Collection: Devices are attached to animals, with early studies focusing on species less sensitive to human presence [14]. The method has expanded to various terrestrial and marine taxa [14]. Data collection can occur via device retrieval or remote transmission using satellite technology [14].

- Data and Metadata Upload to BiP: Researchers register on the BiP website and interactively upload sensor data alongside the device, deployment, and animal metadata captured by the standardization templates described above [14].

- Data Standardization and OLAP Analysis: Within BiP, data is standardized into consistent formats. Researchers can then utilize OLAP tools to calculate environmental and behavioral parameters from the uploaded datasets [14].

- Data Sharing and Access: Data owners can set data to open (CC BY 4.0) or private. The public can view metadata and visualized routes for all datasets, and request access to private data [14].

The Scientist's Toolkit: Essential Research Reagents and Materials

Conducting a biologging study and utilizing a platform like BiP requires a suite of essential materials and tools. The following table details key components of the biologging research pipeline.

Table: Essential Materials and Tools for Biologging Research

| Item/Reagent | Primary Function | Context & Importance |
|---|---|---|
| Satellite Relay Data Logger (SRDL) | Transmits compressed data (e.g., dive profiles, temperature) via satellite [14]. | Enables long-term (over one year) remote data collection without recapturing the animal [14]. |
| Animal-Borne Ocean Sensors | Measures physical ocean data like temperature and salinity [14]. | Contributes to global ocean observation systems (e.g., AniBOS project), complementing Argo float data [14]. |
| Standardized Metadata Templates | Provides a framework for capturing device, deployment, and input data information [15]. | Ensures data is reusable and interoperable, which is fundamental for collaboration and meta-analyses [14] [15]. |
| Online Analytical Processing (OLAP) | Calculates environmental parameters from animal movement and sensor data [14]. | A unique feature of BiP that expands the utility of biologging data for oceanography and meteorology [14]. |
| CC BY 4.0 License | Governs the use of open data shared on the platform [14]. | Permits copying, redistribution, and modification of data while requiring attribution, promoting open science [14]. |

The Biologging Intelligent Platform (BiP) represents a significant advancement in the management and application of animal-borne sensor data. By implementing a rigorous standardization framework for both data and metadata, BiP directly addresses the challenge of incompatible formats that has historically limited collaborative research and secondary data use [14] [15]. Its integrated OLAP tools unlock the potential for biologging data to contribute directly to environmental monitoring, transforming animals into mobile sensors for oceanography and meteorology [14] [11]. This functionality is crucial for resolving various marine issues, from ocean warming to fisheries bycatch [11]. The platform's commitment to open data access and its alignment with global observation initiatives position BiP as a critical infrastructure component for the future of biologging research, ultimately enhancing scientific discovery and supporting the development of sustainable ocean management policies [14] [15] [11].

Biologging, the method of attaching data recorders to animals to study their behavior, physiology, and environment, has revolutionized wildlife research and conservation biology [11] [1]. This technical guide examines four cornerstone data types in modern biologging studies: geolocation, dive profiles, acceleration, and body temperature. The effective collection, processing, and interpretation of these data streams are fundamental to advancing our understanding of animal ecology, particularly when integrated within multidimensional visualization frameworks that reveal complex relationships between animals and their environments [1] [16].

The convergence of these data types enables a holistic approach to movement ecology. For instance, while geolocation data maps an animal's horizontal movement, dive profiles describe its vertical utilization of the water column, acceleration data deciphers fine-scale behaviors and energy expenditure, and body temperature provides insights into physiological status [17] [18]. This guide provides researchers with a technical foundation for employing these data types, complete with standardized methodologies, analytical approaches, and visualization strategies essential for robust biologging research.

Core Biologging Data Types: Technical Specifications and Methodologies

Geolocation Data

Geolocation data provides the spatial context for animal movement, enabling researchers to map trajectories, identify critical habitats, and understand migration patterns. Modern biologging platforms utilize various positioning technologies, with the Global Positioning System (GPS) being most prevalent for terrestrial and surface-dwelling species [1]. For marine species that spend limited time at the surface, Argos satellite system positioning is often employed, though with generally lower spatial accuracy compared to GPS [17].

The transmission of geolocation data can occur via satellite relays, as with Platform Terminal Transmitters (PTTs), or be stored internally on data loggers for later retrieval [1]. The SPLASH10 tag (Wildlife Computers) represents a sophisticated example of a satellite-transmitting tag commonly used in marine telemetry, capable of collecting and transmitting location data alongside environmental sensors [17].

Table 1: Technical Specifications of Common Geolocation Technologies

| Technology | Spatial Accuracy | Data Transmission | Typical Taxa Applications | Key Limitations |
|---|---|---|---|---|
| GPS | 5-20 meters | Store-on-board or Satellite Relay | Terrestrial mammals, birds, surface-marine species | Requires line-of-sight to satellites; power-intensive |
| Argos Satellite | 150 meters to >1 km | Satellite Relay | Marine mammals, sea turtles, seabirds | Lower accuracy; limited data transmission bandwidth |
| GPS LTE-M | 5-20 meters | Cellular Networks | Terrestrial species in covered areas | Limited to cellular network coverage areas |

Dive Profiles

Dive profiles, typically collected using Time-Depth Recorders (TDRs), quantify the vertical movement patterns of aquatic species [17]. These sensors record pressure at programmed intervals, translating to depth measurements with high precision. For example, TDRs used in loggerhead sea turtle research were programmed with a 0.5 m resolution and ±1% accuracy [17].

The analysis of dive data involves segmenting individual dives into phases - descent, bottom, and ascent - with the bottom phase typically defined as any depth exceeding 80% of the maximum dive depth [17]. Advanced statistical approaches like Hidden Markov Models (HMMs) can infer behavioral states (e.g., resting, foraging, exploration) from these dive patterns, even with low-temporal resolution data transmitted via satellite [17].

For deep-diving marine mammals like northern bottlenose whales, extended-depth tags rated to 2000-3000 meters capture extraordinary dive capabilities, with recorded dives reaching 2288 meters depth and lasting 98 minutes [19]. These extreme dive profiles provide crucial insights into foraging ecology and habitat use in the deep sea.

Table 2: Dive Profile Metrics and Their Biological Significance

| Dive Metric | Calculation Method | Biological Interpretation | Example from Literature |
|---|---|---|---|
| Maximum Dive Depth | Maximum recorded pressure during dive cycle | Habitat utilization, physiological limits | Northern bottlenose whales: 2288 m [19] |
| Dive Duration | Time from descent initiation to surfacing | Foraging strategy, aerobic capacity | Northern bottlenose whales: 98 minutes [19] |
| Bottom Time | Time spent >80% of maximum depth | Potential foraging opportunity | Loggerhead turtles: classified via HMM [17] |
| Post-dive Surface Interval | Time at surface between dives | Oxygen recovery, metabolic status | Beaked whale dive sequences [19] |

Acceleration Data

Tri-axial accelerometers measure proper acceleration along three orthogonal axes, providing a rich data source for quantifying fine-scale behaviors, activity patterns, and energy expenditure [20] [18]. The derived metrics Dynamic Body Acceleration (DBA) and Minimum Specific Acceleration (MSA) serve as validated proxies for movement-based energy expenditure, even at fine temporal scales such as within individual dive phases [18].

In practical applications, acceleration data has revealed behavioral adaptations to anthropogenic pressures. For example, Scandinavian brown bears exhibited increased nocturnality during hunting season, a behavioral adjustment quantified through detailed acceleration analysis [20]. Acceleration metrics also successfully detected increased propulsive power usage in deeper dives of California sea lions, validating their use for fine-scale energetic studies [18].

The classification of accelerometry data into discrete behaviors (e.g., running, walking, feeding, resting) is typically accomplished through machine learning algorithms like random forests, which have demonstrated classification precision exceeding 95% when trained with observational data [20].

Body Temperature

Body temperature is among the physiological parameters measurable using biologging technology [1]. Internal temperature loggers typically use thermistors or thermocouples to record body core temperature at programmed intervals, providing insights into thermoregulation, metabolic activity, and physiological responses to environmental conditions.

Figure 1: Interrelationship of Core Biologging Data Types and Their Applications. The four key data types form an integrated framework for addressing complex ecological questions.

Experimental Protocols and Analytical Workflows

Field Deployment Methodologies

Successful biologging research requires standardized deployment protocols to ensure data quality and minimize impacts on study subjects. For marine taxa like sea turtles, common attachment procedures involve:

- Animal Capture: An observer swimming from an inflatable boat captures turtles by hand as they rest at the surface [17].

- Preparation: The carapace is cleaned of biota with acetone and lightly sanded to improve adhesion of the transmitter [17].

- Device Attachment: Tags are attached using two-part epoxy resin, positioned with the antenna facing forward along the turtle's first and second vertebral scutes to ensure the wet/dry sensor remains exposed during surfacing [17].

- Release: Animals are released at the capture site after attachment, with the entire process taking approximately 2 hours [17].

Similar protocols for cetaceans include using air-powered tagging systems (e.g., ARTS or DanInject) to deploy tags on the dorsal fin or blubber, with procedures permitted by relevant animal research authorities and ethics committees [19].

Data Processing and Analytical Framework

The transformation of raw sensor data into biologically meaningful information requires specialized computational workflows:

Geolocation Data Processing:

- Filtering of implausible locations using speed, distance, and angle filters [17]

- Application of state-space models (SSM) with correlated random walk models to estimate regularized locations and account for measurement error [17]

- Implementation of move persistence models (MPM) to classify movement behavior into states such as transiting and localized movement [17]
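The first step in this list, removing implausible locations, is often done with a simple speed filter. The sketch below is one minimal version, assuming fixes are already sorted by time and that the first fix is trustworthy. The function names and the 2 m/s threshold in the example are hypothetical choices; the state-space and move persistence models named above are not reproduced here.

```python
import math


def haversine_m(lat1, lon1, lat2, lon2, radius_m=6_371_000.0):
    """Great-circle distance in meters between two points given in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * radius_m * math.asin(math.sqrt(a))


def speed_filter(fixes, max_speed_ms):
    """Drop fixes that imply an implausible speed from the last accepted fix.

    fixes: list of (time_seconds, latitude_deg, longitude_deg), sorted by time.
    """
    kept = []
    for fix in fixes:
        if not kept:
            kept.append(fix)  # assumption: the first fix is valid
            continue
        t0, lat0, lon0 = kept[-1]
        t1, lat1, lon1 = fix
        dt = t1 - t0
        if dt <= 0:
            continue  # duplicate or out-of-order timestamp
        if haversine_m(lat0, lon0, lat1, lon1) / dt <= max_speed_ms:
            kept.append(fix)
    return kept


# Hypothetical track with one obvious outlier (third fix, about 555 km away).
track = [(0, 0.0, 0.0), (3600, 0.0, 0.01), (7200, 0.0, 5.0), (10800, 0.0, 0.03)]
print(speed_filter(track, max_speed_ms=2.0))
```

A filter like this is only a pre-processing pass. It removes gross errors, while a state-space model is still needed to account for the remaining measurement error in each location.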

Dive Profile Analysis:

- Zero-offset correction to account for depth sensor drift [17]

- Dive identification using threshold values (e.g., 3 meters for sea turtles) [17]

- Phase segmentation (descent, bottom, ascent) using linear interpolation [17]

- Behavioral state classification using Hidden Markov Models to identify states like resting, foraging, and exploration [17]
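The first three steps in this list can be sketched in a few lines. The example below applies a constant zero-offset correction, identifies dives with the 3 m threshold mentioned above, and marks the bottom phase as samples deeper than 80% of the dive's maximum depth. It works on sample indices rather than the linear interpolation used in the cited study, assumes a constant surface offset, and omits the HMM step entirely; all function names are illustrative.

```python
def zero_offset_correct(depths, surface_offset):
    """Subtract a constant surface offset and clip negative depths to zero."""
    return [max(0.0, d - surface_offset) for d in depths]


def find_dives(depths, threshold_m=3.0):
    """Return (start, end) index pairs, inclusive, for runs deeper than the threshold."""
    dives, start = [], None
    for i, d in enumerate(depths):
        if d > threshold_m and start is None:
            start = i
        elif d <= threshold_m and start is not None:
            dives.append((start, i - 1))
            start = None
    if start is not None:  # record ends while the animal is still diving
        dives.append((start, len(depths) - 1))
    return dives


def bottom_phase(depths, start, end, bottom_fraction=0.8):
    """Return max depth and the first/last indices deeper than the bottom limit."""
    dive = depths[start:end + 1]
    max_depth = max(dive)
    limit = bottom_fraction * max_depth
    bottom = [start + i for i, d in enumerate(dive) if d > limit]
    return {"max_depth": max_depth, "bottom": (bottom[0], bottom[-1])}


# Hypothetical depth record in meters with a 0.5 m sensor drift at the surface.
raw = [0.4, 0.5, 4.5, 10.5, 20.5, 19.5, 20.5, 8.5, 2.5, 0.5]
depths = zero_offset_correct(raw, surface_offset=0.5)
for start, end in find_dives(depths):
    print((start, end), bottom_phase(depths, start, end))
```

Samples before the first bottom index form the descent and samples after the last bottom index form the ascent, so bottom time follows from the index span multiplied by the sampling interval.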

Acceleration Data Processing:

- Classification of behaviors using machine learning algorithms (e.g., random forests) trained with observational data [20]

- Calculation of DBA and MSA metrics from tri-axial acceleration data [18]

- Validation of acceleration metrics against propulsive power calculations at fine temporal scales (5-second intervals) [18]
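The DBA and MSA calculations can be written compactly. The sketch below uses two common DBA variants, overall (ODBA) and vectorial (VeDBA), with the static component estimated by a centered running mean, and computes MSA as the absolute difference between the acceleration norm and 1 g. The window length, function names, and example values are assumptions for illustration; the cited studies may use different smoothing choices.

```python
import math


def running_mean(values, window):
    """Centered running mean; the window shrinks at the edges of the record."""
    half = window // 2
    out = []
    for i in range(len(values)):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        out.append(sum(values[lo:hi]) / (hi - lo))
    return out


def dba_metrics(ax, ay, az, window):
    """Return (odba, vedba) lists from tri-axial acceleration in g."""
    static = [running_mean(axis, window) for axis in (ax, ay, az)]
    odba, vedba = [], []
    for i in range(len(ax)):
        dx = ax[i] - static[0][i]  # dynamic component = raw minus static estimate
        dy = ay[i] - static[1][i]
        dz = az[i] - static[2][i]
        odba.append(abs(dx) + abs(dy) + abs(dz))
        vedba.append(math.sqrt(dx * dx + dy * dy + dz * dz))
    return odba, vedba


def msa(ax, ay, az):
    """Minimum specific acceleration: | ||a|| - 1 g | for each sample."""
    return [abs(math.sqrt(x * x + y * y + z * z) - 1.0) for x, y, z in zip(ax, ay, az)]


# Hypothetical record: animal at rest (1 g on the z axis) with one movement spike.
ax = [0.0] * 5
ay = [0.0] * 5
az = [1.0, 1.0, 2.0, 1.0, 1.0]
odba, vedba = dba_metrics(ax, ay, az, window=5)
print([round(v, 2) for v in odba])
print(msa(ax, ay, az))
```

The window length controls how much slow postural change is treated as static acceleration, so it should be chosen with the species' stroke or stride frequency in mind.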

Figure 2: Biologging Data Processing Workflow. This framework illustrates the transformation of raw sensor data into ecological insights through standardized processing pipelines.

The Scientist's Toolkit: Essential Research Reagents and Equipment

Table 3: Essential Equipment for Biologging Research

| Equipment Category | Specific Examples | Technical Function | Research Applications |
|---|---|---|---|
| Satellite Transmitters | SPLASH10 (Wildlife Computers) | Collects and transmits geolocation, depth, temperature data via Argos satellites | Long-term tracking of marine species [17] [19] |
| Time-Depth Recorders (TDR) | Wildlife Computers TTDR | Records pressure (depth) and temperature at programmed intervals | Dive behavior analysis in marine vertebrates [17] |
| Tri-axial Accelerometers | Little Leonardo ORI400-PD3GT | Measures acceleration in three orthogonal axes | Fine-scale behavior classification and energetics [21] [18] |
| Data Loggers | LoggLaw series (Biologging Solutions) | Records multiple sensor data for store-on-board retrieval | High-resolution data collection when tag recovery is feasible [21] |
| Attachment Materials | Two-part epoxy resin (Wildlife Computers attachment kit) | Secures biologging devices to study subjects | Safe and durable attachment of tags to animals [17] |
| Data Visualization Platforms | Biologging intelligent Platform (BiP) | Standardizes, stores, and visualizes biologging data | Collaborative research and data sharing [1] |

Multidimensional Visualization Framework

The integration of multiple biologging data streams requires sophisticated visualization approaches to reveal patterns and relationships across dimensions. The Biologging intelligent Platform (BiP) represents a standardized platform for sharing, visualizing, and analyzing biologging data, adhering to internationally recognized standards for sensor data and metadata storage [1].

Effective multidimensional visualization incorporates:

- Coordinated Multiple Views: Linking spatial, temporal, and behavioral data representations so that selection in one view highlights corresponding data in others [16].

- Temporal Synchronization: Aligning data streams across time to identify correlations between behavior, physiology, and environmental conditions.

- Spatial Contextualization: Overlaying animal movement trajectories with environmental layers such as bathymetry, sea surface temperature, and prey distributions.

- Behavioral Annotation: Classifying and visualizing behavioral states derived from acceleration and dive profile data [17] [20].
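Temporal synchronization is usually the prerequisite for the other three items, because sensors on the same tag rarely share a sampling rate. The sketch below aligns a sparse stream (for example, temperature) to a denser time base (for example, depth) by nearest timestamp within a tolerance. The function name, the tolerance, and the sample values are hypothetical.

```python
from bisect import bisect_left


def align_nearest(base_times, other_times, other_values, tolerance_s):
    """For each base timestamp, take the nearest sample from the other stream.

    other_times must be sorted. Returns None where no sample lies within
    tolerance_s seconds, so gaps stay visible instead of being filled silently.
    """
    aligned = []
    for t in base_times:
        j = bisect_left(other_times, t)
        candidates = [k for k in (j - 1, j) if 0 <= k < len(other_times)]
        if not candidates:
            aligned.append(None)
            continue
        best = min(candidates, key=lambda k: abs(other_times[k] - t))
        within = abs(other_times[best] - t) <= tolerance_s
        aligned.append(other_values[best] if within else None)
    return aligned


# Hypothetical streams: depth every 10 s, temperature at irregular times.
depth_times = [0, 10, 20, 30]
temp_times = [1, 12, 45]
temp_values = [15.0, 14.5, 13.0]
print(align_nearest(depth_times, temp_times, temp_values, tolerance_s=5))
# [15.0, 14.5, None, None]
```

Returning None for unmatched timestamps is a deliberate choice here: in a coordinated view, an explicit gap is less misleading than an interpolated value the sensor never recorded.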

Tools like Vitessce provide integrative visualization of multimodal data, enabling exploration of millions of data points across multiple coordinated views [16]. This approach facilitates the identification of patterns that would remain hidden when examining individual data streams in isolation.

The four key data types—geolocation, dive profiles, acceleration, and body temperature—form the foundation of modern biologging research. When collected using standardized methodologies, processed through robust analytical frameworks, and visualized through integrated platforms, these data streams provide unprecedented insights into animal ecology, physiology, and conservation needs.

The future of biologging research lies in further integration of these multidimensional data streams, development of more sophisticated analytical tools, and enhanced data sharing through collaborative platforms like BiP [1]. Such advances will continue to push the boundaries of our understanding of animal lives in an increasingly changing world, providing critical information for conservation and management decisions across terrestrial and marine ecosystems.

The Expanding Role of Biologging in Oceanography, Meteorology, and Biomedicine

Biologging, the use of animal-borne data loggers, has transformed from a tool for observing animal behavior into a critical technology for interdisciplinary environmental and biomedical science. This methodology involves attaching miniaturized sensors to free-living animals to collect data on their movements, behavior, physiology, and the surrounding environment [1] [22]. What began primarily as a biological observation technique now contributes significantly to oceanography, meteorology, and biomedicine by providing unique environmental datasets and physiological insights [1] [23]. This expansion creates both unprecedented opportunities and substantial challenges, particularly in managing, analyzing, and visualizing the complex, multidimensional data generated by these technologies. This technical guide explores the current applications, methodological considerations, and visualization frameworks essential for leveraging biologging data across scientific disciplines.

Core Applications Across Disciplines

Oceanographic Data Acquisition

Marine animals equipped with sensors provide invaluable oceanographic data in regions that are difficult to access through traditional methods, such as ice-covered polar seas and shallow coastal waters [1]. Species like seals, sea turtles, and sharks act as autonomous sampling platforms, collecting high-resolution water temperature and salinity profiles [1]. The AniBOS (Animal Borne Ocean Sensors) project exemplifies this approach, establishing a global ocean observation system that leverages animal-borne sensors to gather physical environmental data worldwide [1]. The data collected by these animals in the Antarctic, Arctic, and eastern Pacific Ocean has become comparable in volume to that collected by Argo floats in those regions, though with different spatial distributions [1].

Table 1: Oceanographic Parameters Measured via Biologging

| Parameter | Animal Platforms | Spatial Coverage | Comparative Advantage |
|---|---|---|---|
| Water Temperature Profiles | Phocid seals, sea turtles, sharks | Antarctic, Arctic, Eastern Pacific | Access to ice-covered areas, shallow waters |
| Salinity Profiles | Southern elephant seals, white whales | Polar regions, global oceans | High temporal resolution, complementary to Argo |
| Surface Currents | Seabirds (movement analysis) | Global ocean-atmosphere boundary | Cost-effective data acquisition |
| Ocean Winds & Waves | Seabirds (movement analysis) | Ocean surface layer | Fine-scale spatial resolution |

Meteorological and Environmental Monitoring

Beyond oceanography, biologging contributes to meteorological science by providing insights into environmental conditions at the ocean-atmosphere boundary. By analyzing the movements of instrumented seabirds, researchers can estimate physical environmental parameters such as ocean currents, ocean winds, and waves [1]. This approach is particularly valuable for understanding fine-scale environmental processes and extreme weather events [23]. The integration of biologging with environmental data also enables research on species responses to climatic variation, potentially informing predictions about long-term climate change impacts [23].

Physiological Monitoring and Biomedical Applications

In the biomedical domain, biologging devices enable continuous monitoring of physiological parameters in free-living animals, providing insights into organismal function, health, and responses to environmental stressors [10] [22]. These applications yield nearly gap-free observation of individuals, allowing researchers to measure internal states alongside external conditions [22]. This capability is particularly valuable for understanding how environmental extremes affect organismal performance and can inform biomedical research on physiological resilience.

Table 2: Physiological Parameters Measured via Biologging

| Physiological Parameter | Measurement Technology | Research Applications | Challenges |
|---|---|---|---|
| Heart Rate | Electrocardiogram (ECG) loggers | Cardiovascular physiology, stress responses | High sampling rates, data volume management [10] |
| Brain Activity | Electroencephalogram (EEG) loggers | Sleep studies, neural responses to stimuli | Functional long-term correlations, signal interpretation [10] |
| Core Body Temperature | Temperature loggers | Thermal biology, metabolic studies | Thermal inertia effects, temporal autocorrelation [10] [23] |
| Energy Expenditure | Accelerometry combined with physiological models | Conservation physiology, disease assessment | Validation against direct calorimetry [22] |
| Blood Oxygenation | Blood pO2 sensors | Dive physiology, hypoxia research | Limited sensor longevity on freely moving animals [10] |

Methodological Framework and Experimental Protocols

Sensor Deployment and Data Collection

Effective biologging research requires careful experimental design to ensure data quality while minimizing impacts on study animals. The standard deployment protocol involves: (1) appropriate animal capture and handling procedures, (2) secure attachment of biologging devices using species-appropriate methods, (3) collection of essential metadata including individual animal traits, device specifications, and deployment details, and (4) planned device recovery or data transmission protocols [1].

Metadata standardization is critical for data interoperability and reuse. The Biologging intelligent Platform (BiP) has established standardized metadata formats conforming to international standards including the Integrated Taxonomic Information System (ITIS), Climate and Forecast Metadata Conventions (CF), and Attribute Conventions for Data Discovery (ACDD) [1]. This standardization enables cross-disciplinary data integration and facilitates secondary use of biologging data in fields beyond biology.

Figure 1: Biologging Experimental Workflow. This diagram outlines the key stages in a biologging study, from initial design through data standardization.

Analytical Approaches for Time-Series Data

Biologging data presents unique analytical challenges due to its time-series nature, often exhibiting strong temporal autocorrelation where successive values depend on prior measurements [10]. Standard statistical approaches like t-tests or ordinary generalized linear models assume independent observations and are therefore inappropriate for such data: they greatly inflate Type I error rates, with simulations showing false positive rates as high as 25.5% against the nominal 5% α level [10].

Recommended analytical frameworks include:

- Autoregressive (AR) models: Account for correlation between consecutive residuals in time series, with AR(1) models being most common [10]

- Autoregressive Moving Average (ARMA) models: Combine autoregressive parameters with moving average parameters for greater flexibility [10]

- Generalized Least Squares (GLS): Controls Type I error rates at appropriate levels when analyzing temporally autocorrelated data [10]

- Mixed effects models: Accommodate hierarchical data structures common in biologging studies with multiple individuals [10]
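Before fitting any of these models, it helps to quantify how strongly a series is autocorrelated. The sketch below estimates the lag-1 autocorrelation and converts it into an approximate effective sample size using n(1 - ρ)/(1 + ρ), a standard approximation for the mean of an AR(1) process. That formula and the function names are additions for illustration and do not come from the cited study; the result is a diagnostic, not a substitute for the AR, GLS, or mixed models listed above.

```python
def lag1_autocorrelation(series):
    """Sample lag-1 autocorrelation of a numeric sequence."""
    n = len(series)
    mean = sum(series) / n
    num = sum((series[i] - mean) * (series[i + 1] - mean) for i in range(n - 1))
    den = sum((x - mean) ** 2 for x in series)
    return num / den


def effective_sample_size(n, rho):
    """Approximate number of independent observations under AR(1) correlation."""
    return n * (1 - rho) / (1 + rho)


# Hypothetical slowly drifting signal, e.g. ten consecutive body temperature readings.
readings = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
rho = lag1_autocorrelation(readings)
print(round(rho, 3))                                           # 0.7
print(round(effective_sample_size(len(readings), rho), 2))     # 1.76
```

In this example ten readings carry roughly the information of two independent ones, which shows why a test that treats all ten as independent will reject the null hypothesis far too often.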

For behavioral classification, machine learning approaches applied to high-frequency sensor data (particularly accelerometry) have proven effective for identifying specific behaviors, mortality events, or reproductive activities [22].
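As a minimal illustration of the classification idea, the sketch below summarizes each window of tri-axial acceleration by two features and assigns a behavior label with a nearest-centroid rule. It is a toy under stated assumptions, not the method of any study cited here: the two behavior labels, the feature choice, the synthetic data generator, and all function names are hypothetical, and published work typically uses richer features and models such as random forests.

```python
import math
import random
import statistics


def window_features(ax, ay, az):
    """Mean and standard deviation of acceleration magnitude in one window."""
    magnitudes = [math.sqrt(x * x + y * y + z * z) for x, y, z in zip(ax, ay, az)]
    return (statistics.fmean(magnitudes), statistics.pstdev(magnitudes))


def fit_centroids(features, labels):
    """Average the feature vectors of each labelled behavior."""
    groups = {}
    for feature, label in zip(features, labels):
        groups.setdefault(label, []).append(feature)
    return {
        label: tuple(statistics.fmean(column) for column in zip(*members))
        for label, members in groups.items()
    }


def predict(centroids, feature):
    """Return the label whose centroid is closest to the feature vector."""
    return min(centroids, key=lambda label: math.dist(centroids[label], feature))


def synthetic_window(rng, amplitude, n=50):
    """Invented data: gravity on the z axis (1 g) plus noise of a given size."""
    ax = [rng.gauss(0.0, amplitude) for _ in range(n)]
    ay = [rng.gauss(0.0, amplitude) for _ in range(n)]
    az = [1.0 + rng.gauss(0.0, amplitude) for _ in range(n)]
    return ax, ay, az


rng = random.Random(0)
training = [(synthetic_window(rng, 0.02), "resting") for _ in range(10)]
training += [(synthetic_window(rng, 0.5), "active") for _ in range(10)]

features = [window_features(*window) for window, _ in training]
labels = [label for _, label in training]
centroids = fit_centroids(features, labels)

pred_rest = predict(centroids, window_features(*synthetic_window(rng, 0.02)))
pred_active = predict(centroids, window_features(*synthetic_window(rng, 0.5)))
print(pred_rest, pred_active)
```

With real deployments, the labelled training windows would come from direct observation or video validation of tagged animals rather than from a generator.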

Data Management and Visualization Frameworks

Multidimensional Data Challenges

Biologging datasets are inherently multidimensional, capturing information across spatial, temporal, behavioral, physiological, and environmental axes simultaneously [1] [22]. A single modern biologger can concurrently record positional data, individual orientation, proximity to conspecifics, physiological and stress responses, reproduction indicators, mortality events, and fine-scale environmental parameters [22]. This complexity creates substantial challenges for data visualization, analysis, and interpretation.

The field of biologging has recognized the need for standardized approaches to facilitate data sharing between different analysis tools and research groups. Initiatives like the Open Microscopy Environment in biological imaging demonstrate the value of common data models for sharing multidimensional data between open-source tools like ImageJ and commercial packages like Volocity and Imaris [24]. Similar frameworks are needed for biologging data to enable effective collaboration and tool interoperability.

Visualization Techniques for High-Dimensional Data

Effective visualization of biologging data requires techniques that can represent multiple dimensions while preserving meaningful patterns. PHATE (Potential of Heat-diffusion for Affinity-based Trajectory Embedding) is one recently developed method that enables visualization of high-dimensional data by first encoding local data structure, then using potential distance to measure global relationships, and finally performing multidimensional scaling to embed data in lower-dimensional spaces [25]. This approach preserves both local and global data structures and has proven effective for identifying patterns such as branching or end points in complex datasets [25].
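The three stages described above can be sketched in a few lines of NumPy. This is a simplified, PHATE-like illustration and not the reference implementation from [25]: it assumes a fixed-bandwidth Gaussian kernel, a hand-picked diffusion time `t`, and classical (eigendecomposition-based) multidimensional scaling, whereas the published method selects these components more carefully. The function name, parameters, and synthetic two-branch data are hypothetical.

```python
import numpy as np


def phate_like_embedding(X, sigma=1.0, t=8, n_components=2):
    """Simplified diffusion-potential embedding of the rows of X."""
    # Stage 1: encode local structure as a row-normalised diffusion operator.
    sq_dists = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    affinity = np.exp(-sq_dists / (2.0 * sigma ** 2))
    diffusion = affinity / affinity.sum(axis=1, keepdims=True)

    # Stage 2: diffuse for t steps, then compare log-transformed
    # transition probabilities ("potentials") between all pairs of points.
    diffused = np.linalg.matrix_power(diffusion, t)
    potential = -np.log(diffused + 1e-12)
    pot_sq_dists = ((potential[:, None, :] - potential[None, :, :]) ** 2).sum(axis=-1)

    # Stage 3: classical MDS on the potential distances.
    n = X.shape[0]
    centering = np.eye(n) - np.ones((n, n)) / n
    gram = -0.5 * centering @ pot_sq_dists @ centering
    eigvals, eigvecs = np.linalg.eigh(gram)
    top = np.argsort(eigvals)[::-1][:n_components]
    return eigvecs[:, top] * np.sqrt(np.maximum(eigvals[top], 0.0))


# Invented data: two noisy branches leaving a common origin in 5 dimensions.
rng = np.random.default_rng(0)
steps = np.linspace(0.0, 3.0, 30)[:, None]
branch_a = steps * np.array([1.0, 0.0, 0.0, 0.0, 0.0])
branch_b = steps * np.array([0.0, 1.0, 0.0, 0.0, 0.0])
X = np.vstack([branch_a, branch_b]) + rng.normal(scale=0.05, size=(60, 5))

embedding = phate_like_embedding(X, sigma=0.5, t=8)
print(embedding.shape)
```

Plotting the two columns of `embedding` against each other gives a two-dimensional view of the data. The pairwise arrays grow with the square and cube of the number of points, so this sketch only suits small examples.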

Figure 2: Multidimensional Data Analysis Pipeline. This workflow shows the process from raw data to biological interpretation, highlighting visualization techniques.

Data Platforms and Management Solutions

The Biologging intelligent Platform (BiP) represents a comprehensive solution for storing standardized sensor data alongside rich metadata [1]. This platform adheres to internationally recognized standards and facilitates secondary data analysis across disciplines. Key features include:

- Online Analytical Processing (OLAP) tools: Calculate environmental parameters such as surface currents, ocean winds, and waves from animal-collected data [1]

- Data standardization: Converts diverse data formats into consistent structures using international standards [1]

- Metadata management: Captures detailed information about animal traits, instruments, and deployment circumstances [1]

- Flexible sharing options: Supports both open (CC BY 4.0) and private data sharing with permission workflows [1]

Similar platforms like Movebank manage billions of location points and sensor records across thousands of taxa, demonstrating the massive scale of contemporary biologging data [1].

Essential Research Tools and Reagents

Table 3: Essential Research Reagent Solutions for Biologging Studies

| Tool Category | Specific Examples | Function | Technical Considerations |
|---|---|---|---|
| Data Loggers | Satellite Relay Data Loggers (SRDLs), GPS loggers, Time-Depth Recorders | Core data collection from free-ranging animals | Miniaturization, power management, sensor calibration [1] [23] |
| Environmental Sensors | Temperature, Salinity, Light Intensity, Atmospheric Pressure sensors | Measure physical environment experienced by animals | Accuracy, sampling frequency, biofouling resistance [1] [23] |
| Physiological Sensors | ECG loggers, EEG loggers, Accelerometers, Temperature pills | Monitor internal physiological state | Biocompatibility, signal-to-noise ratio, data compression [10] [22] |
| Data Management Platforms | Biologging intelligent Platform (BiP), Movebank | Store, standardize, and share biologging data | Metadata standards, interoperability, access controls [1] |
| Analytical Frameworks | R packages (nlme, lme4), Python machine learning libraries | Statistical analysis of time-series data | Handling autocorrelation, mixed models, classification algorithms [10] |

Future Directions and Integration Opportunities

The future of biologging research points toward increased integration across disciplines and technologies. Promising directions include:

- Multi-sensor integration: Combining data streams from environmental, physiological, and movement sensors to develop more comprehensive models of animal-environment interactions [22] [26]

- Theory-driven research: Moving beyond descriptive studies to test specific ecological and physiological theories using biologging data [26]

- Real-time conservation applications: Using biologging for immediate conservation interventions, such as detecting mortality events or identifying critical habitats [22]

- Cross-platform data exchange: Developing standardized protocols for sharing data between platforms like BiP and Movebank to enhance data discovery and use [1]

- Equitable technology access: Addressing current biases in biologging studies toward developed regions and accessible environments to enable global applications [22]

As biologging technology continues to advance, maintaining focus on robust statistical approaches, effective multidimensional visualization, and collaborative data sharing will be essential for maximizing its contributions across oceanography, meteorology, and biomedicine.

Advanced Visualization Methods and Practical Applications in Biologging Research

The Biologging intelligent Platform (BiP) represents a significant evolution in the analysis of animal-borne sensor data by integrating Online Analytical Processing (OLAP) tools specifically designed for environmental research. Biologging itself is a Lagrangian observation method that utilizes animal-borne devices to study behavioral ecology, physiology, and the surrounding environment [11]. As a platform, BiP adheres to internationally recognized standards for sensor data and metadata storage, facilitating collaborative research and biological conservation through data standardization [14]. The integration of OLAP technology enables researchers to calculate critical environmental parameters—such as surface currents, ocean winds, and waves—directly from data collected by animals, transforming biologging from a purely biological tool into a multidisciplinary platform for environmental monitoring [14].

This technical guide examines BiP's OLAP capabilities within the broader context of multidimensional visualization for biologging data research. For environmental scientists and biologging researchers, OLAP tools provide the analytical framework necessary to extract meaningful environmental information from complex, multi-dimensional datasets collected by animal-borne sensors. The platform's unique architecture allows investigators to analyze data from multiple perspectives and dimensions, enabling deeper insights into environmental patterns that might otherwise remain obscured in raw sensor data [27].

BiP's Architectural Framework and Data Standardization

Core Platform Infrastructure

BiP's architecture is built upon a foundation of data standardization and metadata integrity. The platform stores sensor data alongside comprehensive metadata using internationally standardized formats, including the Integrated Taxonomic Information System (ITIS), Climate and Forecast Metadata Conventions (CF), Attribute Conventions for Data Discovery (ACDD), and International Organization for Standardization (ISO) protocols [14]. This standardized approach ensures that data remains interoperable across different research domains and analytical tools.

The platform manages three primary categories of metadata, each essential for contextualizing sensor data:

- Animal Metadata: Includes individual traits such as species, sex, body size, and breeding history

- Device Metadata: Encompasses technical specifications of the biologging instruments

- Deployment Metadata: Records operational details including who conducted the deployment, when and where it occurred, and methodology [14]

This robust metadata framework enables researchers to explore complex questions about how individual animal traits influence movement patterns and environmental interactions, thereby enhancing the research value of the collected sensor data.
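To make the three metadata categories concrete, the sketch below models them as Python dataclasses and serializes one deployment record to JSON. The class names, field names, validation rules, and example values are all hypothetical and chosen for illustration; they are not BiP's actual schema, which follows the standards listed above.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Optional


@dataclass
class AnimalMetadata:
    species: str                       # scientific name
    sex: str
    body_size_cm: Optional[float] = None
    breeding_history: Optional[str] = None


@dataclass
class DeviceMetadata:
    device_type: str
    sensors: List[str]
    sampling_interval_s: float


@dataclass
class DeploymentMetadata:
    deployed_by: str
    deployed_at_utc: str               # ISO 8601 timestamp
    latitude: float
    longitude: float
    attachment_method: str


@dataclass
class DeploymentRecord:
    animal: AnimalMetadata
    device: DeviceMetadata
    deployment: DeploymentMetadata

    def validate(self):
        """Return a list of problems; an empty list means the record passed."""
        problems = []
        if not -90.0 <= self.deployment.latitude <= 90.0:
            problems.append("latitude out of range")
        if not -180.0 <= self.deployment.longitude <= 180.0:
            problems.append("longitude out of range")
        if self.device.sampling_interval_s <= 0:
            problems.append("sampling interval must be positive")
        return problems


# Illustrative values only; they do not describe a real deployment.
record = DeploymentRecord(
    animal=AnimalMetadata(species="Caretta caretta", sex="female", body_size_cm=80.0),
    device=DeviceMetadata(device_type="SRDL", sensors=["depth", "temperature"],
                          sampling_interval_s=4.0),
    deployment=DeploymentMetadata(deployed_by="example researcher",
                                  deployed_at_utc="2020-01-01T00:00:00Z",
                                  latitude=35.0, longitude=140.0,
                                  attachment_method="adhesive"),
)

serialized = json.dumps(asdict(record), indent=2)
print(record.validate())
print(serialized)
```

Keeping the three categories as separate objects mirrors the way the text distinguishes them, and lets one animal or device description be reused across several deployments.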

Data Integration and Accessibility Features

BiP enhances data discovery through innovative linking capabilities that connect datasets to the Digital Object Identifier (DOI) of papers in which the data was used [14]. This feature creates a seamless connection between primary research publications and the underlying data, promoting research transparency and facilitating secondary data analysis.

The platform implements flexible data sharing policies through its user permission system:

- Open Datasets: Freely available under CC BY 4.0 license, permitting copying, redistribution, and modification with proper attribution

- Private Datasets: Accessible only through direct contact and permission from data owners [14]

This balanced approach respects data ownership while encouraging data sharing and collaborative research. Additionally, BiP supports multi-repository storage and data exchange with other databases, enhancing the long-term sustainability and accessibility of biologging data [14].

OLAP Analytical Capabilities for Environmental Parameter Estimation

Core Analytical Functions

BiP's OLAP system provides multidimensional analysis capabilities that allow researchers to view environmental data from different perspectives through slicing and dicing operations [27]. These tools integrate algorithms from published scientific studies to estimate both environmental and behavioral parameters from raw sensor data [14]. The OLAP engine enables drill-down operations that allow researchers to explore data at various levels of temporal and spatial detail, from broad migration patterns to fine-scale behavioral observations.

The platform supports pivoting functionality that lets researchers rearrange data dimensions to view information from different analytical perspectives [27]. This capability is particularly valuable for identifying correlations between animal movement patterns and environmental conditions. Furthermore, BiP's architecture facilitates real-time data processing, ensuring that analyses reflect the most current information available [27], though the specifics of BiP's real-time implementation are not detailed in the available literature.
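The OLAP operations named in this section (slice, dice, drill-down, and pivot) can be illustrated on a tiny in-memory table. The sketch below uses only the Python standard library and does not reflect BiP's internal implementation or API; the dimension names, the measure, and the observation values are invented for the example.

```python
from collections import defaultdict
from statistics import fmean

# Hypothetical fact table: one temperature observation per row, tagged with
# its dimensions. All values are invented for illustration.
OBSERVATIONS = [
    {"animal": "A1", "region": "north", "month": "2020-01", "depth_bin": "0-50m", "temp_c": 4.0},
    {"animal": "A1", "region": "north", "month": "2020-02", "depth_bin": "0-50m", "temp_c": 6.0},
    {"animal": "A1", "region": "north", "month": "2020-01", "depth_bin": "50-100m", "temp_c": 2.0},
    {"animal": "A2", "region": "south", "month": "2020-01", "depth_bin": "0-50m", "temp_c": 10.0},
    {"animal": "A2", "region": "south", "month": "2020-02", "depth_bin": "50-100m", "temp_c": 8.0},
]


def slice_rows(rows, dimension, value):
    """Slice: fix one dimension to a single value."""
    return [row for row in rows if row[dimension] == value]


def dice_rows(rows, **allowed):
    """Dice: keep rows whose value on each named dimension is in the allowed set."""
    return [row for row in rows
            if all(row[dim] in values for dim, values in allowed.items())]


def aggregate(rows, dimensions, measure="temp_c"):
    """Mean of the measure for each combination of the given dimensions.

    Adding a dimension drills down; removing one rolls up.
    """
    groups = defaultdict(list)
    for row in rows:
        groups[tuple(row[dim] for dim in dimensions)].append(row[measure])
    return {key: fmean(values) for key, values in groups.items()}


def pivot(rows, row_dim, col_dim, measure="temp_c"):
    """Pivot: arrange the aggregated measure as row_dim by col_dim."""
    table = defaultdict(dict)
    for (row_key, col_key), value in aggregate(rows, [row_dim, col_dim], measure).items():
        table[row_key][col_key] = value
    return dict(table)


by_region = aggregate(OBSERVATIONS, ["region"])                 # coarse view
by_region_month = aggregate(OBSERVATIONS, ["region", "month"])  # drill-down
january = slice_rows(OBSERVATIONS, "month", "2020-01")
north_shallow = dice_rows(OBSERVATIONS, region={"north"}, depth_bin={"0-50m"})
region_by_depth = pivot(OBSERVATIONS, "region", "depth_bin")

print(by_region)
print(by_region_month)
print(region_by_depth)
```

Swapping the arguments to `pivot` (for example `"depth_bin"` by `"month"`) rearranges the same observations along different axes, which is the kind of change of perspective the text describes.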

Environmental Parameter Estimation

BiP's OLAP tools specialize in deriving key environmental parameters from animal movement and behavioral data:

Table 1: Environmental Parameters Derived from Biologging Data via OLAP

| Environmental Parameter | Data Source | Calculation Method | Research Application |
|---|---|---|---|
| Surface Currents | Animal movement trajectories relative to water | Analysis of drift during surface periods between active swimming | Mapping ocean circulation patterns in ice-covered regions |
| Ocean Winds | Flight patterns of seabirds | Mathematical models correlating flight dynamics with wind conditions | Estimating wind fields at ocean-atmosphere boundary |
| Wave Conditions | Movement patterns of surface-associated animals | Spectral analysis of vertical and horizontal movements | Characterizing sea state in remote ocean regions |
| Water Temperature Profiles | Depth-temperature sensors on diving animals | Compression of dive profiles and temperature measurements | Monitoring thermal structure of water columns |
| Salinity Profiles | Conductivity sensors on marine animals | Conversion of conductivity measurements to salinity values | Tracking freshwater inputs and water mass boundaries |

The algorithms for estimating these parameters are derived from established methodologies in previous biologging research. For example, the use of marine animals like seals and sea turtles carrying Satellite Relay Data Loggers (SRDLs) has been validated for collecting oceanographic data in regions difficult to access with traditional methods [14]. These animal-borne sensors have demonstrated high correlation with measurements from established observation systems like Argo floats [14] [11].
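As a worked example of the drift-based idea behind the surface current row in Table 1, the sketch below converts two position fixes taken during a surface period into an east and north velocity. It is a simplified illustration rather than BiP's algorithm: it assumes the animal drifts passively between the two fixes, uses a spherical Earth with a mean radius of 6,371 km, and ignores position error. The function name and the example coordinates are hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius, spherical approximation


def drift_velocity(lat1, lon1, t1_s, lat2, lon2, t2_s):
    """Return (east, north) velocity in m/s between two surface fixes.

    Latitudes and longitudes are in decimal degrees, times in seconds.
    """
    if t2_s <= t1_s:
        raise ValueError("second fix must be later than the first")

    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)

    # Great-circle distance via the haversine formula.
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    distance_m = 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

    # Initial bearing, measured clockwise from north.
    bearing = math.atan2(
        math.sin(dlam) * math.cos(phi2),
        math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlam),
    )

    speed = distance_m / (t2_s - t1_s)
    return speed * math.sin(bearing), speed * math.cos(bearing)


# Two invented fixes one hour apart, 0.01 degrees of latitude due north.
east, north = drift_velocity(35.00, 140.0, 0.0, 35.01, 140.0, 3600.0)
print(f"east = {east:.3f} m/s, north = {north:.3f} m/s")
```

A real estimate would also need to separate passive drift from active swimming and from wind acting on the animal at the surface, which this sketch does not attempt.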

Experimental Protocols for OLAP-Enhanced Environmental Monitoring

Sensor Deployment and Data Collection Methodology

The foundational protocol for leveraging BiP's OLAP capabilities begins with proper sensor deployment and data collection. For marine applications, researchers should:

Select appropriate species based on target habitat and research objectives. Species with known movement patterns that cover regions of interest are ideal. Historically, seals and penguins in Antarctica have been particularly suitable because they are relatively undisturbed by the presence of researchers [14].

Deploy Satellite Relay Data Loggers (SRDLs) or similar biologging instruments using minimally invasive attachment methods. SRDLs store essential data such as dive profiles and depth-temperature profiles, with capability to transmit compressed data via satellite for more than one year [14].

Program sensors to collect depth, temperature, location, and acceleration data at frequencies appropriate to the research questions. For oceanographic applications, high-frequency sampling during dives provides the finest vertical resolution of water column properties.