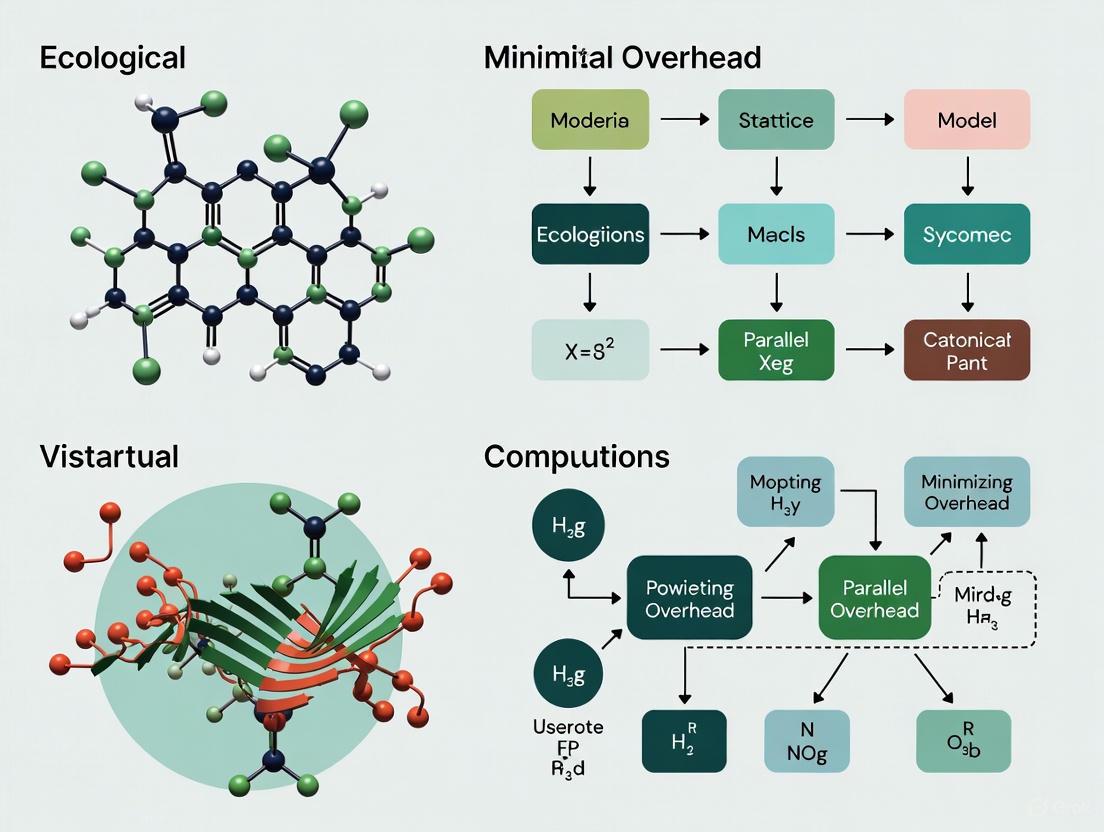

Minimizing Parallel Overhead in Ecological Computations: Strategies for Accelerating Scientific Discovery

Abstract

This article provides a comprehensive guide for researchers and scientists on overcoming the critical challenge of parallel overhead in large-scale ecological computations. We explore the foundational causes of inefficiency, present methodological approaches from recent research, and offer practical troubleshooting and optimization techniques. Through validation and comparative analysis of real-world case studies, such as watershed hydrological modeling, we demonstrate how strategic parallelization can achieve significant speedups (almost 6x in one watershed case study) while maintaining computational accuracy. This resource is intended for any professional looking to enhance the performance and scalability of complex ecological simulations on modern computing architectures.

Understanding Parallel Overhead: The Hidden Bottleneck in Ecological Simulations

Frequently Asked Questions

What is parallel overhead and why does it matter for my research? Parallel overhead refers to the various performance costs in parallel computing that do not exist in sequential program execution. It encompasses the time and resources spent on coordinating parallel tasks rather than on the core computation itself. For ecological researchers, minimizing this overhead is crucial as it directly impacts how effectively you can leverage high-performance computing (HPC) to solve larger, more complex models in less time. High overhead can severely limit the speedup gained from using multiple processors [1] [2].

The simulation speed doesn't improve when I use more processor cores. What could be wrong? This is a classic symptom of parallel overhead. The most common causes are:

- Load Imbalance: One or a few processors are doing more work than the others, causing the faster processors to sit idle, waiting for the slowest one to finish. The overall computation time is determined by the longest-running task [1] [3].

- Synchronization Overhead: The cores are spending too much time waiting for each other at synchronization points (e.g., barriers) instead of doing productive work [3] [4].

- Communication Overhead: The time spent transferring data between processors becomes a bottleneck, especially when using a large number of nodes [5].

How can I identify which type of overhead is affecting my application? You can diagnose overhead using profiling tools and by observing specific symptoms:

- For Load Imbalance: Use profiling tools to check the CPU utilization across all cores. A significant variation in busy-time between cores indicates imbalance [1].

- For Synchronization Overhead: Profiling tools for parallel environments (like MPI profilers) can identify functions where tasks spend excessive time in wait states, such as `MPI_Barrier` [4].

- For Communication Overhead: System monitors like Ganglia or Nagios can show high network traffic and low CPU utilization, indicating that computation is stalled by data transfer [1].
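
As a rough illustration of the load-imbalance check above, the per-core busy times from a profiler report can be reduced to two numbers. This is a minimal sketch: the function names and the sample timings are hypothetical, and real profilers export this data in their own formats.

```python
from statistics import mean

def imbalance_ratio(busy_seconds):
    """Max busy time divided by mean busy time (1.0 means perfectly balanced)."""
    return max(busy_seconds) / mean(busy_seconds)

def idle_fraction(busy_seconds):
    """Fraction of total core-time spent waiting for the slowest core."""
    slowest = max(busy_seconds)
    return 1.0 - sum(busy_seconds) / (slowest * len(busy_seconds))

# Hypothetical per-core busy times (seconds) taken from a profiler report.
busy = [40.0, 42.0, 38.0, 80.0]
print(f"imbalance ratio: {imbalance_ratio(busy):.2f}")
print(f"idle fraction:   {idle_fraction(busy):.2f}")
```

A ratio well above 1.0, together with a large idle fraction, points to load imbalance rather than communication cost.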

Are there specific optimization techniques for ecological models like forest simulations? Yes. Spatial models, such as forest landscape models (FLMs), are often well-suited to a technique called spatial domain decomposition. This approach divides the landscape (pixels) into subsets assigned to individual processor cores for parallel execution. Key considerations include dynamically reallocating these subsets during operations like seed dispersal to maintain balance, which has been shown to reduce simulation time by 32-76% [6].

What is Communication-Computation Overlapping (CC-Overlapping) and how can I use it? CC-Overlapping is an advanced technique to hide communication latency. It works by breaking down a computation into parts that do not require external data ("pure internal nodes") and parts that do ("boundary nodes"). While the communication for the boundary nodes is happening in the background, the processor simultaneously works on the pure internal nodes. This method has been shown to improve performance in parallel finite element and volume methods by over 40% on large-scale systems [5].

The Scientist's Toolkit

Table 1: Essential Tools and Techniques for Minimizing Parallel Overhead

| Category | Tool / Technique | Primary Function | Relevance to Ecological Research |

|---|---|---|---|

| Programming Models | MPI (Message Passing Interface) | Enables communication and coordination between processes across multiple nodes in a cluster. | Essential for large-scale distributed memory simulations, e.g., watershed or landscape models spanning many computers [7] [5]. |

| Programming Models | OpenMP | Manages shared-memory parallelism, allowing multiple threads to work on a single node. | Ideal for parallelizing loops within a simulation running on one multi-core server [7] [5]. |

| Optimization Libraries | Cilk Plus, TBB (Threading Building Blocks) | Provides high-level constructs for task-based parallelism and work-stealing load balancing. | Simplifies implementing dynamic load balancing for irregular workloads, such as adaptive ecological processes [1]. |

| Profiling & Monitoring | MPI Profilers (e.g., IPM, Vampir) | Identifies synchronization bottlenecks and communication patterns in MPI codes. | Critical for pinpointing why a parallel ecological simulation is scaling poorly [4]. |

| Profiling & Monitoring | System Monitors (e.g., Ganglia, Nagios) | Aggregates real-time data on CPU, memory, and network use across a cluster. | Makes load imbalances and resource contention visible during model execution [1]. |

| Load Balancing Strategies | Spatial Domain Decomposition | Statically divides a spatial domain (like a map) into sub-regions for each processor. | Foundation for parallelizing grid-based ecological models like forest landscape or groundwater models [6]. |

| Load Balancing Strategies | Dynamic Work Stealing | Allows idle processors to "steal" tasks from busy ones, balancing load at runtime. | Effective for handling unpredictable computational loads, e.g., in individual-based models [1]. |

Experimental Protocols for Overhead Mitigation

The following protocols are derived from published research on optimizing parallel computations in scientific domains, including ecology.

Protocol 1: Applying CC-Overlapping to a Parallel Simulation

This methodology is adapted from work on parallel multigrid methods and is applicable to spatial ecological models [5].

1. Problem Analysis:

- Identify the core computation (e.g., updating cell values in a grid) and the associated communication (halo exchange with neighboring cells).

- Determine if the computation can be split into independent and dependent parts.

2. Data Reordering:

- Classify Nodes: Divide the computational domain into two groups:

- Pure Internal Nodes: Cells whose computation does not depend on data from other processors.

- Boundary Nodes: Cells that require data from neighboring domains (external nodes) to complete their computation.

- Reorder Data Structures: Renumber your internal arrays so that all pure internal nodes are listed first, followed by all boundary nodes. This enables efficient, contiguous memory access during the overlapping phase.

3. Implementation with Static Scheduling:

- Step A: Initiate a non-blocking communication call to send and receive the necessary halo data for the boundary nodes.

- Step B: Immediately after initiating the communication, begin computation on the pure internal nodes. This computation happens concurrently with the data transfer.

- Step C: After the pure internal node computation is finished, wait for the halo communication to complete.

- Step D: Proceed with the computation on the boundary nodes, now that the required external data is available.
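
Steps A to D can be sketched in plain Python. This is only an illustration under stated assumptions: a background thread and a `time.sleep` stand in for the non-blocking halo exchange (in an MPI code this role is typically played by `MPI_Isend`/`MPI_Irecv` followed by `MPI_Waitall`), the three-point average is a toy cell update, and all function names are hypothetical. The data reordering of step 2 is skipped because a 1D array already keeps the interior cells contiguous.

```python
import time
from concurrent.futures import ThreadPoolExecutor

def fetch_halo(left_value, right_value, delay=0.05):
    """Stand-in for a halo exchange: returns the neighbours' edge values after a delay."""
    time.sleep(delay)  # simulated network latency
    return left_value, right_value

def smooth(left, centre, right):
    """Three-point average, used as a toy cell update."""
    return (left + centre + right) / 3.0

def step_with_overlap(local, left_neighbour_edge, right_neighbour_edge):
    """Advance one subdomain (at least two cells) by one step with overlap."""
    n = len(local)
    new = [0.0] * n
    with ThreadPoolExecutor(max_workers=1) as pool:
        # Step A: start the halo exchange without blocking.
        pending = pool.submit(fetch_halo, left_neighbour_edge, right_neighbour_edge)
        # Step B: update pure internal cells, which need only local data.
        for i in range(1, n - 1):
            new[i] = smooth(local[i - 1], local[i], local[i + 1])
        # Step C: wait for the halo data to arrive.
        halo_left, halo_right = pending.result()
    # Step D: update boundary cells using the received halo values.
    new[0] = smooth(halo_left, local[0], local[1])
    new[-1] = smooth(local[-2], local[-1], halo_right)
    return new

print(step_with_overlap([3.0, 6.0, 9.0, 12.0], 0.0, 15.0))
```

The benefit depends on the interior update taking at least as long as the exchange; if the subdomain is too small, step C still ends up waiting.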

The following workflow visualizes this protocol:

Protocol 2: Dynamic Load Balancing via Work Stealing

This protocol addresses load imbalance where task sizes are unpredictable or change over time [1].

1. Problem Analysis:

- Identify a loop or task pool where the time to process each element varies significantly (e.g., processing tree patches of different densities).

- Ensure the programming environment supports dynamic task scheduling (e.g., using OpenMP or TBB).

2. Implementation with Work Stealing:

- Over-decompose the Work: Divide the work into more tasks than there are processors. This creates a pool of tasks and allows for flexible scheduling.

- Create Task Queues: Give each processor (thread) its own double-ended queue (deque) of tasks, and protect each deque so that other threads can safely remove tasks from it.

- Dynamic Task Assignment: Each processor takes tasks from one end of its own deque. When its deque is empty, it "steals" a task from the opposite end of another processor's deque. This keeps all processors busy until the entire task pool is exhausted.
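
The work-stealing idea can be sketched with Python threads and one deque per worker. This is a simplified illustration, not how OpenMP or TBB implement it: the function names are hypothetical, squaring a number stands in for processing a patch, and termination is simple only because tasks here never spawn new tasks.

```python
import threading
from collections import deque

def run_work_stealing(task_costs, n_workers=4):
    """Process tasks with per-worker deques; idle workers steal from others."""
    # Over-decompose: deal tasks round-robin into one deque per worker.
    queues = [deque() for _ in range(n_workers)]
    for i, cost in enumerate(task_costs):
        queues[i % n_workers].append(cost)
    locks = [threading.Lock() for _ in range(n_workers)]
    done = [[] for _ in range(n_workers)]  # results produced by each worker

    def next_task(me):
        # Take from the front of our own deque first.
        with locks[me]:
            if queues[me]:
                return queues[me].popleft()
        # Otherwise try to steal from the back of another worker's deque.
        for victim in range(n_workers):
            if victim != me:
                with locks[victim]:
                    if queues[victim]:
                        return queues[victim].pop()
        return None  # every deque is empty

    def worker(me):
        while (task := next_task(me)) is not None:
            done[me].append(task * task)  # stand-in for real work

    threads = [threading.Thread(target=worker, args=(w,)) for w in range(n_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return done

results = run_work_stealing(list(range(1, 21)), n_workers=3)
print(sum(len(r) for r in results), "tasks completed")
```

Which worker ends up processing which task varies from run to run; the invariant is that every task is processed exactly once.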

Table 2: Quantitative Impact of Load Balance on Performance

| Scenario | Description | Theoretical Speedup with 10 Cores (Amdahl's Law) [2] | Key Limiting Factor |

|---|---|---|---|

| High Imbalance | Only 60% of the code is parallelized. | 2.17x | Large sequential portion and/or poor load distribution. |

| Moderate Imbalance | 90% of the code is parallelized, but with some imbalance. | 5.26x | Remaining sequential code and minor synchronization. |

| Well-Balanced | 99% of the code is efficiently parallelized. | 9.17x | Inherent sequential code and minimal, unavoidable overhead. |
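
The speedup column in Table 2 can be reproduced with a few lines of Python. The sketch assumes the standard form of Amdahl's Law written in terms of the parallel fraction; the function name is illustrative.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Theoretical speedup for a fixed problem size (Amdahl's Law)."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)

for p in (0.60, 0.90, 0.99):
    print(f"{p:.0%} parallel, 10 cores: {amdahl_speedup(p, 10):.2f}x")
```

Even at 99% parallel code, ten cores give about 9.17x rather than 10x, and the gap widens as cores are added.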

Troubleshooting Guide: Symptoms and Solutions

Table 3: Diagnosing and Fixing Common Parallel Overhead Problems

| Symptom | Likely Cause | Diagnostic Steps | Possible Solutions |

|---|---|---|---|

| Speedup plateaus or decreases as more cores are added. | Synchronization Overhead: Too much time spent at barriers or in wait states [3] [4]. | Profile the code to identify functions with high wait times (e.g., MPI_Barrier). | Reduce synchronization points; replace blocking with non-blocking communication; reorganize code to do useful work while waiting [4]. |

| Some cores are 100% busy while others are idle. | Load Imbalance: Work is unevenly distributed among processors [1]. | Use profiling tools to compare the CPU busy-time across all cores. | Use dynamic load balancing (e.g., work stealing); over-decompose the problem; use better domain partitioning strategies [1]. |

| High CPU utilization but low floating-point performance. | Synchronization Overhead: Cores are busy executing overhead instructions (e.g., managing locks) rather than core computations [3]. | Check the ratio of floating-point instructions to total instructions using performance counters. | Optimize locking strategies; reduce frequency of synchronization; increase the computational granularity of each task [1] [3]. |

| Performance is poor, especially with small problem sizes. | Communication Overhead: The time spent communicating dominates the time spent computing. | Measure the ratio of communication time to computation time. | Increase problem size per core; apply CC-Overlapping; use more efficient data packaging for messages [5]. |

Frequently Asked Questions

1. What are the most common performance bottlenecks in spatial ecological simulations? The primary bottlenecks are complex spatial interactions and intensive seed dispersal calculations. Sequential processing, which simulates landscapes pixel-by-pixel from upper left to lower right, becomes a significant bottleneck at large scales (millions of pixels) and fine temporal resolutions [6].

2. How can parallel computing specifically address the issue of data dependencies in ecological models? Parallel computing applies spatial domain decomposition, assigning different pixel subsets (parts of the landscape) to individual processor cores. This allows species- and stand-level processes to be executed concurrently on each core. For landscape-level processes like seed dispersal that create data dependencies between domains, cores are dynamically reallocated to manage these interactions [6].

3. My model involves cascading failures across an ecological network. How can I assess its resilience to node failures? You can use a cascading failure model to simulate dynamic responses under different attack strategies (e.g., random node removal vs. targeted removal of high-degree nodes). This assesses network robustness by testing if the failure of one node, and the redistribution of its load, causes subsequent failures in neighboring nodes, potentially leading to large-scale collapse [8].

4. What is the expected performance improvement from parallelizing a forest landscape model? Performance gains are substantial, especially for large, high-resolution models. For a 200-year simulation, parallel processing saved 64.6% to 76.2% of the time at a 1-year time step, and 32.0% to 64.6% at a 10-year time step compared to sequential processing [6].

5. What are the main architectural choices for parallelizing a spatial agent-based model? A common and effective architecture is Multiple Instruction, Multiple Data (MIMD), where each processor can execute different instructions on different data streams. This is well-suited for the heterogeneous and complex processes typical of ecological models [7].

Troubleshooting Guides

Problem: Slow Simulation Runtime with Large Datasets

- Symptoms: Simulation time becomes impractically long when modeling large landscapes (millions of pixels) or at fine time steps (e.g., 1 year).

- Solution: Implement spatial domain decomposition for parallel processing.

- Protocol:

- Decompose the Landscape: Split your spatial dataset (e.g., a raster map) into smaller, manageable pixel blocks [6].

- Assign to Cores: Distribute these pixel blocks across multiple processor cores for simultaneous calculation [6].

- Manage Dependencies: Implement a dynamic reallocation mechanism to handle processes that cross domain boundaries, such as seed dispersal. This ensures that inter-block dependencies are correctly resolved without sacrificing parallelism [6].

- Evaluate: Compare simulation outputs and runtime against sequential processing to verify correctness and performance gains [6].
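
The decompose, assign, and evaluate steps can be sketched as follows. This is a minimal illustration with assumptions: the raster is a list of rows, the blocks are horizontal bands, and `grow` is a toy per-pixel process with no cross-block dependency. Threads are used to keep the example self-contained; because of CPython's global interpreter lock, a real CPU-bound model would need processes or MPI to see a speedup.

```python
from concurrent.futures import ThreadPoolExecutor

def split_rows(raster, n_blocks):
    """Split a raster (list of rows) into n_blocks contiguous row bands."""
    size, extra = divmod(len(raster), n_blocks)
    blocks, start = [], 0
    for b in range(n_blocks):
        stop = start + size + (1 if b < extra else 0)
        blocks.append(raster[start:stop])
        start = stop
    return blocks

def grow(block):
    """Toy per-pixel process: biomass grows by 10%, capped at 100."""
    return [[min(100.0, cell * 1.1) for cell in row] for row in block]

def simulate_parallel(raster, n_blocks=4):
    blocks = split_rows(raster, n_blocks)            # decompose the landscape
    with ThreadPoolExecutor(max_workers=n_blocks) as pool:
        results = list(pool.map(grow, blocks))       # map preserves block order
    return [row for block in results for row in block]

raster = [[float(r * 10 + c) for c in range(5)] for r in range(10)]
# Evaluate: the parallel result should match the sequential one for this process.
print(simulate_parallel(raster) == grow(raster))
```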

Problem: Network Collapse Under External Stress

- Symptoms: Small disturbances or node failures lead to disproportionately large, cascading collapses within your modeled ecological network.

- Solution: Use a cascading failure model to assess and improve network robustness.

- Protocol:

- Construct the Network: Model your ecological system as a network of nodes (e.g., habitats) and edges (e.g., corridors). Calculate the load and capacity for each node [8].

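- Simulate Attacks: Conduct two types of failure experiments: a random attack, which removes nodes at random, and a malicious (targeted) attack, which removes high-degree nodes first [8].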

- Model Cascade Dynamics: When a node fails, redistribute its load to neighboring nodes according to a defined rule (e.g., proportional to their capacity). If the load on any neighbor exceeds its capacity, it also fails, potentially triggering an "avalanche" [8].

- Analyze Robustness: Measure the rate of network collapse. The results can guide interventions, such as maintaining high-degree nodes and increasing the capacity of low-degree nodes [8].
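
The cascade dynamics described in this protocol can be sketched without any external library. The assumptions are spelled out here and in the comments: node load is taken to be the node's degree, capacity is (1 + alpha) times the load, a failed node's load is shared among surviving neighbours in proportion to their capacity, and the star-shaped network is a hypothetical example rather than data from [8].

```python
def simulate_cascade(adjacency, initial_failures, alpha=0.2):
    """Return the set of failed nodes once the cascade has settled."""
    load = {n: float(len(nbrs)) for n, nbrs in adjacency.items()}  # assumption: load = degree
    capacity = {n: (1.0 + alpha) * load[n] for n in adjacency}
    failed = set()
    frontier = list(initial_failures)
    while frontier:
        node = frontier.pop()
        if node in failed:
            continue
        failed.add(node)
        alive = [m for m in adjacency[node] if m not in failed]
        total_cap = sum(capacity[m] for m in alive)
        for m in alive:
            # Share the failed node's load in proportion to neighbour capacity.
            load[m] += load[node] * capacity[m] / total_cap
            if load[m] > capacity[m]:
                frontier.append(m)  # overloaded neighbour fails next
        load[node] = 0.0
    return failed

# Hypothetical network: one hub habitat "h" linked to four peripheral habitats.
star = {"h": ["a", "b", "c", "d"], "a": ["h"], "b": ["h"], "c": ["h"], "d": ["h"]}
print(sorted(simulate_cascade(star, ["h"], alpha=0.5)))  # targeted attack on the hub
print(sorted(simulate_cascade(star, ["a"], alpha=0.5)))  # loss of one peripheral node
```

In this toy case, removing the hub overloads every neighbour, while removing a single peripheral node is absorbed, which mirrors the contrast between targeted and random attacks.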

Experimental Data and Protocols

Table 1: Parallel vs. Sequential Processing Performance in a Forest Landscape Model. Performance comparison for a 200-year simulation [6].

| Time Step | Sequential Processing Time | Parallel Processing Time | Time Saved |

|---|---|---|---|

| 1 year | Baseline | | 64.6–76.2% |

| 10 years | Baseline | | 32.0–64.6% |

Table 2: Cascading Failure Model Parameters for Ecological Network Resilience. Key parameters for assessing network robustness using a cascading failure model [8].

| Parameter | Description | Example/Value |

|---|---|---|

| Node Load (L) | The initial workload or importance of a node. | Can be a function of the node's degree or betweenness centrality. |

| Node Capacity (C) | The maximum load a node can handle before failing. | Often defined as C = (1 + α) * L, where α is a tolerance parameter. |

| Load Redistribution Rule | How the load of a failed node is distributed to its neighbors. | Redistributed locally to surrounding nodes, proportional to their capacity. |

| Attack Strategy | The method for selecting which nodes to fail initially. | Random attack or malicious attack (targeting high-degree nodes). |

The Scientist's Toolkit

Table 3: Essential Research Reagents for Computational Ecology

| Item | Function |

|---|---|

| Parallel Computing Cluster | A set of networked computers (nodes) used to execute parallelized model components simultaneously, drastically reducing computation time [7]. |

| Spatial Domain Decomposition Framework | Software that automatically partitions spatial data for distribution across multiple processor cores, a fundamental step for parallelizing landscape models [6]. |

| Cascading Failure Model | A computational model that simulates how the failure of a network component can trigger subsequent failures, used to assess the structural robustness of ecological networks [8]. |

| Network Analysis Library (e.g., NetworkX) | A software library used to construct, analyze, and visualize complex networks, including calculating node degrees and simulating attacks [8]. |

| High-Resolution Spatial Data | Raster or vector datasets representing the landscape (e.g., land cover, elevation, soil type) which form the foundational input for spatial models [6]. |

Model Architecture and Workflow Diagrams

Ecological Model Parallelization Logic

Cascading Failure Assessment Workflow

The Impact of Overhead on Scalability and Time-to-Solution in Research

Frequently Asked Questions

- FAQ 1: Why does my parallelized ecological simulation run slower as I add more compute nodes?

- FAQ 2: How can I distinguish between communication overhead and load imbalance in my results?

- FAQ 3: What is the maximum speedup I can realistically expect for my computation?

Troubleshooting Guides

Guide 1: Diagnosing Performance Scalability Issues

Problem: A parallel computation demonstrates poor scalability, where increasing the number of processors does not yield a proportional decrease in runtime and may even degrade performance.

Investigation Steps:

- Profile the Code: Use profiling tools to measure the time spent in different parts of your code. Identify the portions that are (a) purely parallel, (b) serial, and (c) dedicated to communication or synchronization between processes [9].

- Measure Overhead: Quantify the overhead introduced by parallelization, that is, the time spent on communication and synchronization between processes rather than on computation.

- Check for Load Imbalance: Examine if all processors are completing their work simultaneously. If some finish early and remain idle while waiting for others, load imbalance is a likely cause [9].

- Analyze Scaling Laws: Apply Amdahl's Law to understand the theoretical speedup limit imposed by the serial portion of your code [9].

Solution:

- Minimize Communication: Restructure the algorithm to reduce the frequency and volume of data exchange between processes. Use collective communication operations efficiently.

- Balance the Load: Redistribute computational work more evenly across all available processors.

- Increase Problem Size: For some problems, applying Gustafson's Law by increasing the overall problem size can make parallel overhead less significant relative to the useful computation [9].

Guide 2: Managing Overhead in Virtualized and Cloud Environments

Problem: Performance degradation and increased time-to-solution when running computations on virtualized servers or cloud platforms, due to virtualization and consolidation overheads [10] [11].

Investigation Steps:

- Identify Overhead Source: Determine if the overhead stems from:

- The Hypervisor: The software layer that creates and runs virtual machines (VMs) consumes host resources [10].

- VM Consolidation: Contention for hardware resources (CPU, memory, I/O) when multiple VMs run on the same physical server [10].

- Serverless Cold Starts: In serverless platforms, the initialization time of new function instances can cause significant latency [11].

- Monitor Resource Contention: Use system monitoring tools to track metrics like CPU wait time, memory pressure, and I/O latency on the physical host.

- Benchmark Different Configurations: Compare performance using different types of hypervisors (Type I vs. Type II), virtualization techniques (full, para, hardware-assisted), or VM-to-core ratios [10].

Solution:

- Tune Consolidation Density: Find the optimal number of VMs per physical server to balance resource utilization and performance overhead. Avoid VM "sprawling" [10].

- Select Appropriate Technology: Choose hardware-assisted virtualization or para-virtualization where possible to reduce hypervisor overhead [10].

- Mitigate Cold Starts: In serverless computing, configure keep-alive policies or use provisioned concurrency to minimize cold starts, while being mindful of the associated memory and cost trade-offs [11].

Quantitative Data on Overhead and Scalability

Table 1: Characterized Overheads in Computing Systems

| System Type | Overhead Source | Characterized Impact | Citation |

|---|---|---|---|

| Virtualized Servers | VM Consolidation | Performance degradation due to resource contention and hypervisor management. | [10] |

| Serverless Platforms | Instance Churn (Cold Starts) | Computational overhead equivalent to 10–40% of CPU cycles spent on request handling. | [11] |

| Serverless Platforms | Memory Autoscaling | 2–10 times more memory allocated than actively used. | [11] |

Table 2: Theoretical Frameworks for Scalability Analysis

| Concept / Law | Formula / Principle | Application in Troubleshooting |

|---|---|---|

| Amdahl's Law | S(P) = 1 / (f + (1-f)/P), where f is the serial fraction and P is the number of processors [9]. | Estimates the maximum possible speedup for a fixed problem size, highlighting the bottleneck created by serial code sections. |

| Gustafson's Law | Considers scaling the problem size with the number of processors [9]. | Provides a more optimistic view for workloads where problem size can grow, reducing the relative impact of serial sections. |

| Speedup & Efficiency | Speedup = T1 / TP; Efficiency = Speedup / P [10] | Core metrics for evaluating parallel performance. A decrease in efficiency with more processors indicates increasing overhead. |
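
For comparison, the two laws can be written side by side using the table's symbols. The Gustafson expression below is the commonly cited form and is an addition here, since the table describes the law only in words; in it, f is the serial fraction of the run time measured on the parallel system.

$$
S_{\text{Amdahl}}(P) = \frac{1}{f + \dfrac{1-f}{P}}, \qquad \lim_{P \to \infty} S_{\text{Amdahl}}(P) = \frac{1}{f}
$$

$$
S_{\text{Gustafson}}(P) = f + (1-f)\,P = P - f\,(P-1)
$$

As a worked example with f = 0.1 and P = 10, Amdahl's Law gives 1 / (0.1 + 0.09) ≈ 5.26, while Gustafson's Law gives 0.1 + 0.9 × 10 = 9.1. The difference comes from the assumption about problem size: fixed in the first case, growing with P in the second.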

Experimental Protocols for Performance Analysis

Protocol 1: Measuring Parallel Speedup and Efficiency

Objective: To determine the scalability of a parallel application and identify the point at which overhead outweighs performance gains.

Methodology:

- Baseline Measurement: Execute the application on a single processor (or core) and record the completion time, T1 [10].

- Parallel Execution: Run the same application and problem size on increasing numbers of processors (P), recording the time TP for each run.

- Calculation: For each value of P, calculate Speedup (S = T1 / TP) and Efficiency (E = S / P) [10].

- Analysis: Plot Speedup and Efficiency against the number of processors. The "knee" of the Efficiency curve often indicates the optimal number of processors for that specific problem size before overhead dominates.
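
The calculation step of this protocol amounts to a few lines of code. The sketch below assumes the timings are already collected into a dictionary keyed by processor count; the sample values are hypothetical and serve only to show the efficiency curve bending.

```python
def scaling_table(timings):
    """timings maps processor count P to wall-clock seconds and must include P=1."""
    t1 = timings[1]
    rows = []
    for p in sorted(timings):
        speedup = t1 / timings[p]
        rows.append((p, speedup, speedup / p))  # (P, S, E)
    return rows

# Hypothetical wall-clock times (seconds) for one fixed problem size.
timings = {1: 1200.0, 2: 640.0, 4: 360.0, 8: 240.0, 16: 220.0}
for p, s, e in scaling_table(timings):
    print(f"P={p:2d}  speedup={s:5.2f}  efficiency={e:5.2f}")
```

In this made-up series the runtime barely improves from 8 to 16 processors, so efficiency falls sharply there; that is the kind of knee the analysis step looks for.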

Protocol 2: Quantifying Virtualization Consolidation Overhead

Objective: To find the optimal number of Virtual Machines (VMs) to consolidate on a single physical server for a given workload [10].

Methodology:

- Workload Design: Prepare a benchmark of independent, parallel tasks representative of the target workload (e.g., parallel ecological model simulations).

- Consolidation Scenarios: Deploy the benchmark on a physical server, running it with an increasing number of VMs, each executing a portion of the workload.

- Performance Measurement: For each consolidation scenario (e.g., 1 VM, 2 VMs, ..., N VMs), measure the total time-to-solution for the entire workload.

- Optimal Point Determination: Identify the consolidation level that provides the shortest time-to-solution. Further increasing the number of VMs will lead to performance degradation due to overhead, marking the onset of over-consolidation [10].

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Components for Parallel Performance Analysis

| Item / Concept | Function in Analysis |

|---|---|

| Profiling Tools | Software used to measure where a program spends its time, helping to identify serial bottlenecks and parallelizable sections. |

| The HPC Cluster | A set of connected computers that work together as a single system, providing the physical resources for parallel computation [7]. |

| Message Passing Interface (MPI) | A standardized communication protocol for programming parallel computers, essential for managing data exchange in distributed memory systems [9]. |

| Amdahl's Law | A theoretical formula used to predict the maximum potential speedup from parallelizing a program, given the proportion of serial code [9]. |

| Speedup & Efficiency Metrics | Quantitative measures to evaluate the effectiveness of parallelization [10]. |

Workflow and Relationship Diagrams

Troubleshooting Guides

Q1: Why does my model run successfully on a small test watershed but fail on a large-scale basin?

A: This common issue typically stems from memory allocation errors or insufficient parallel processing configuration.

- Root Cause 1: Inefficient Memory Management

- Large-scale basins require significantly more memory for spatial data. The sequential processing of millions of grid cells can exhaust system resources [6].

- Root Cause 2: Default Parallel Processing Settings

- Some commercial tools have default parallel processing factors that are not optimized for large datasets, causing tools to hang during execution [12].

- Solution:

- Implement Spatial Decomposition: Adopt a parallel processing design that decomposes the spatial domain, assigning pixel subsets to individual processor cores [6].

- Adjust Parallel Processing Factor: For immediate relief in tools like ArcGIS Pro, manually set the parallel processing factor to `0` to disable parallelism for a specific, problematic tool run, then investigate optimal settings for your hardware [12].

- Use Dynamic Task Scheduling: Implement a framework that builds a dynamic task-tree based on grid cell dependencies, allowing the scheduler to efficiently manage workloads across processors [13].

Q2: How can I reduce the extreme computation time of my model calibration?

A: Model calibration is an iterative process that is computationally intensive, especially with multi-objective functions [14].

- Root Cause: Sequential Parameter Space Exploration

- Automatic calibration requires evaluating a vast number of parameter sets. Running these simulations sequentially creates a major bottleneck [14].

- Solution:

- Parallelize the Calibration Algorithm: Instead of evaluating parameter sets one-by-one, use a parallel computing framework to run multiple model simulations with different parameter sets simultaneously [14].

- Apply Parallel Global Optimization: Utilize parallel versions of algorithms like the multi-cores parallel Artificial Bee Colony optimization to improve both calibration speed and the quality of the optimized parameters [14].

- Consider a Surrogate Model: For the fastest results, train a deep learning model, such as a Convolutional Neural Network (CNN), to replicate the outputs of your physics-based model. One study showed this reduced computation time by 45 times for monthly hydrological estimations [15].
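
The first option, evaluating parameter sets concurrently, can be sketched as follows. This is deliberately simpler than the parallel Artificial Bee Colony approach in [14]: it is a plain parallel grid search over one coefficient, the "model" is a toy linear rainfall-runoff relation, and the observed and forcing values are hypothetical. Threads keep the example self-contained; a real CPU-bound model would normally use separate processes or cluster jobs.

```python
from concurrent.futures import ThreadPoolExecutor

OBSERVED = [2.0, 4.1, 5.9, 8.2]   # hypothetical observed runoff
RAINFALL = [1.0, 2.0, 3.0, 4.0]   # hypothetical forcing data

def run_model(coefficient):
    """Toy model: runoff is proportional to rainfall."""
    return [coefficient * r for r in RAINFALL]

def rmse(coefficient):
    """Root-mean-square error between simulated and observed runoff."""
    sim = run_model(coefficient)
    return (sum((s - o) ** 2 for s, o in zip(sim, OBSERVED)) / len(OBSERVED)) ** 0.5

def calibrate(candidates, workers=4):
    """Evaluate all candidate parameter values concurrently and return the best."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        errors = list(pool.map(rmse, candidates))   # one model run per candidate
    best = min(range(len(candidates)), key=errors.__getitem__)
    return candidates[best], errors[best]

best, err = calibrate([1.0, 1.5, 2.0, 2.5, 3.0])
print(f"best coefficient: {best}, RMSE: {err:.3f}")
```

Because each model run is independent, this kind of calibration parallelizes with almost no communication; the main overhead is starting the runs and gathering the scores.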

Frequently Asked Questions (FAQs)

Q1: What are the main strategies for parallelizing a watershed eco-hydrological model?

A: The primary strategies focus on decomposing the problem across spatial and parameter dimensions.

- Spatial Domain Decomposition: The watershed landscape is partitioned into smaller pixel blocks or sub-basins. These blocks are processed simultaneously on different cores, with mechanisms to handle cross-boundary processes like seed dispersal or water flow [6].

- Parallelization of Model Calibration: This approach parallelizes the sampling of the parameter space. Multiple parameter sets are distributed across processors for concurrent simulation, significantly speeding up the search for optimal values [14].

- Dynamic Task-Scheduling: This method decouples the simulation into independent grid tasks. A scheduler then dynamically assigns these tasks to available processors based on a pre-calculated dependency tree, improving load balancing and efficiency [13].
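
The dependency-driven scheduling in the last item can be sketched with Python's standard `graphlib` module (Python 3.9 or later). This is not the framework from [13]; it is a toy flow-accumulation example in which each cell can run only after all of its upstream cells have finished, and the cell names and runoff values are hypothetical.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter

def accumulate_flow(upstream, local_runoff, workers=4):
    """Route runoff downstream, running each cell once its upstream cells are done."""
    total = {}

    def run_cell(cell):
        # All upstream totals exist by the time this cell is scheduled.
        return cell, local_runoff[cell] + sum(total[u] for u in upstream[cell])

    sorter = TopologicalSorter(upstream)  # maps each cell to the cells it depends on
    sorter.prepare()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        pending = set()
        while sorter.is_active():
            # Submit every cell whose dependencies are already satisfied.
            for cell in sorter.get_ready():
                pending.add(pool.submit(run_cell, cell))
            finished, pending = wait(pending, return_when=FIRST_COMPLETED)
            for fut in finished:
                cell, value = fut.result()
                total[cell] = value
                sorter.done(cell)  # may unlock downstream cells
    return total

# Hypothetical catchment: A and B drain into C, which drains into D.
upstream = {"A": set(), "B": set(), "C": {"A", "B"}, "D": {"C"}}
runoff = {"A": 1.0, "B": 2.0, "C": 0.5, "D": 0.25}
print(accumulate_flow(upstream, runoff))
```

Cells A and B have no dependencies and can run at the same time, whereas C and D form a chain; the longer such chains are, the less parallelism the scheduler can extract.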

Q2: My parallel model produces different results than the sequential version. Is this an error?

A: Not necessarily. In fact, it can be a sign of improved realism.

- Explanation: Traditional sequential models process pixels from the upper-left to the lower-right, which can introduce artificial order dependencies that do not exist in nature. Parallel processing, which simulates multiple areas simultaneously, can more accurately represent synchronous natural processes. One study on forest landscape models confirmed that parallel processing improved simulation realism for species- and landscape-level processes [6].

- Action Plan:

- Validate, Don't Just Compare: Use real-world observed data as the benchmark for both model versions, rather than expecting the parallel version to perfectly match the sequential one.

- Check for Logical Errors: Ensure that the logic for handling interactions between parallel blocks (e.g., water flow, seed dispersal) is correctly implemented.

Q3: Besides faster computation, what are other key benefits of parallelization?

A: The advantages extend beyond simple speed-up.

- Improved Model Realism: As noted above, simulating multiple processes simultaneously is closer to reality [6].

- Enhanced Solution Quality: In model calibration, parallel algorithms can explore the parameter space more thoroughly, leading to better-optimized parameter sets than their sequential counterparts [14].

- Ability to Tackle Higher-Resolution Problems: The efficiency gains allow researchers to use finer spatial and temporal resolutions or to model larger areas without making compromising simplifications [15].

Experimental Protocols & Data

Key Experimental Methodology: Parallel Spatial Domain Decomposition

This is a core method for parallelizing the model simulation itself [6].

- Decompose the Landscape: Split the entire watershed raster into multiple, smaller pixel blocks.

- Assign to Cores: Distribute these blocks to available processor cores for parallel execution.

- Simulate Internal Processes: On each core, run species-level and stand-level processes independently for the assigned pixel block.

- Handle Landscape Processes: Dynamically reallocate pixel subsets across cores to simulate cross-boundary landscape processes (e.g., seed dispersal). This step requires communication between cores.

- Synchronize and Repeat: Collect results from all cores, synchronize the overall model state, and proceed to the next time step.
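
One way to picture the full time-step loop is the sketch below. It does not reproduce the dynamic reallocation scheme from [6]; instead it substitutes a simpler, commonly used technique in which every block reads from a frozen snapshot of the previous time step, so cross-boundary dispersal does not depend on the order in which blocks are processed. The 1D landscape, the growth factor, and the dispersal weight are all hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor

def step_block(snapshot, start, stop):
    """Compute the next state for cells [start, stop) from a read-only snapshot."""
    n = len(snapshot)
    out = []
    for i in range(start, stop):
        grown = snapshot[i] * 1.5                     # stand-level process (local)
        left = snapshot[i - 1] if i > 0 else 0.0      # neighbour may be in another block
        right = snapshot[i + 1] if i < n - 1 else 0.0
        out.append(grown + 0.25 * (left + right))     # landscape process (dispersal)
    return out

def simulate(state, n_steps, n_blocks=2):
    """Advance a 1D landscape, splitting the cells into n_blocks per time step."""
    bounds = [(b * len(state) // n_blocks, (b + 1) * len(state) // n_blocks)
              for b in range(n_blocks)]
    with ThreadPoolExecutor(max_workers=n_blocks) as pool:
        for _ in range(n_steps):
            snapshot = tuple(state)  # freeze the previous time step
            futures = [pool.submit(step_block, snapshot, a, b) for a, b in bounds]
            # Synchronize: gather all blocks in order before the next time step.
            state = [v for f in futures for v in f.result()]
    return state

print(simulate([1.0, 0.0, 0.0, 4.0], n_steps=1))
```

Because each cell is computed from the same snapshot regardless of how the landscape is split, the result is identical for any number of blocks, which makes the parallel version easy to verify.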

Performance Data: Parallel vs. Sequential Processing

The table below summarizes quantitative findings from case studies on parallel processing.

Table 1: Performance Comparison of Parallel vs. Sequential Processing in Environmental Models

| Model / Application | Key Parallelization Strategy | Performance Improvement | Citation |

|---|---|---|---|

| Forest Landscape Model (LANDIS) | Spatial domain decomposition | Time savings of 32.0–76.2% for a 200-year simulation, depending on time-step and number of pixels. | [6] |

| Watershed Distributed Eco-Hydrological Model | Dynamic task-scheduling | Modeling efficiency improved by almost 6 times compared to sequential modeling. | [13] |

| Deep Learning (CNN) Surrogate for HydroGeoSphere | Replication of physics-based model with a trained CNN | Computation time reduced by 45 times for monthly estimations over five years. | [15] |

The Scientist's Toolkit: Essential Research Reagents & Solutions

In computational modeling, "reagents" refer to the key software, algorithms, and data components essential for building and running models.

Table 2: Essential Computational Tools for Parallel Watershed Modeling

| Tool / Solution | Function | Relevance to Parallelization |

|---|---|---|

| Message Passing Interface (MPI) | A standard for communication between parallel processes running on distributed-memory systems. | Manages data exchange and synchronization between cores/nodes, essential for spatial decomposition [16]. |

| Parallel Global Optimization Algorithms (e.g., Parallel ABC, PSO) | Algorithms designed to efficiently search high-dimensional parameter spaces by evaluating multiple candidates simultaneously. | Dramatically reduces the wall-clock time required for model auto-calibration [14]. |

| Deep Learning Surrogates (e.g., CNNs, ResNets) | Data-driven models that learn to emulate the input-output relationships of complex physics-based models. | Provides a massive speed-up (e.g., 45x) for scenarios like long-term climate impact simulations, acting as a highly efficient parallelizable proxy [15]. |

| Dynamic Task Scheduler (e.g., PBS) | Software that manages and submits computational workloads to a pool of processors. | Optimizes load balancing in parallel simulations, ensuring all processors are used efficiently [13]. |

| High-Resolution Spatial Data (DEM, Land Cover, Soil Type) | Fundamental input data representing the physical characteristics of the watershed. | The size and resolution of these datasets directly determine the computational load, motivating the need for parallel processing [15]. |

Workflow Diagrams

Parallel Model Calibration Workflow

This diagram illustrates the parallelized version of the automatic model calibration process, which involves iterative parameter adjustment to minimize prediction error [14].

Spatial Domain Decomposition Logic

This diagram depicts the core logic of decomposing a watershed for parallel processing, including the handling of cross-boundary processes [6] [13].

Advanced Parallelization Strategies for Ecological Workloads

Frequently Asked Questions (FAQs)

FAQ 1: What is a dynamic task-tree and why is it critical for parallelizing watershed model computations?

A dynamic task-tree is a hierarchical data structure that represents the computational tasks of a watershed model, where each node (or task) corresponds to the simulation of a specific subbasin. The tree structure captures the hydrological dependencies between these subbasins; specifically, upstream subbasins must be simulated before their downstream counterparts can be processed. This approach is critical because it enables the identification of tasks that can be executed in parallel (sibling subbasins without direct dependencies), thereby significantly reducing overall computation time. By dynamically generating the task scheduling sequence based on this dependency tree, the method achieves a reported efficiency improvement of almost 6 times compared to traditional sequential modeling [13].
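To make the dependency idea concrete, the sketch below groups subbasins into "waves" that could run in parallel. It uses Python's standard `graphlib` module rather than the scheduler described in [13]; the subbasin names and the dependency map are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical watershed: each key is a subbasin, each value the set of
# upstream subbasins that must be simulated first.
upstream_of = {
    "SB_1": set(),
    "SB_2": set(),
    "SB_3": {"SB_1", "SB_2"},
    "SB_4": set(),
    "SB_5": {"SB_3", "SB_4"},
}


def parallel_waves(upstream_of):
    """Group tasks into waves; tasks within a wave do not depend on each other."""
    sorter = TopologicalSorter(upstream_of)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything whose parents are done
        waves.append(ready)
        sorter.done(*ready)
    return waves


waves = parallel_waves(upstream_of)
print(waves)
```

Here the three headwater subbasins form the first wave, and each later wave becomes available only after the previous one completes. The number of waves gives a lower bound on the number of sequential stages, regardless of how many processors are available.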

FAQ 2: My parallel simulation is experiencing high parallel overhead. What are the primary causes and solutions?

High parallel overhead often stems from three main areas:

- Inefficient Task Scheduling: If the task scheduler does not accurately respect the hydrological dependencies in the task-tree, it can cause processors to sit idle while waiting for prerequisite tasks to complete. Implementing a scheduler like the PBS task scheduler, which follows a dynamically generated task sequence, can mitigate this [13].

- Input/Output (I/O) Bottlenecks: Managing file operations for a large number of simultaneous model simulations can become a major bottleneck. Utilizing high-performance computing frameworks like Apache Spark (as in GP-SWAT) can alleviate I/O demands and optimize computational performance on clusters [17].

- Load Imbalance: An uneven distribution of computational load across processors will cause some to finish early while others are still working. The anomaly-biased model reduction method, which prioritizes both common and anomalous rules (tasks), can help maintain a balance between representativeness and diversity, promoting better load distribution [18].

FAQ 3: How do I validate that my dynamically scheduled parallel simulation produces results identical to the sequential version?

The correctness of the parallel simulation can be validated through a two-step process:

- Result Comparison: Run a known test case (with a defined set of inputs and parameters) using both the traditional sequential model and the new parallelized version. The hydrological outputs (e.g., stream flow, chemical loadings) from both simulations should be numerically identical.

- Boundary Condition Check: Ensure that the point sources from upstream subbasins are correctly incorporated as boundary conditions for their downstream counterparts. As noted in GP-SWAT, when the simulation is properly organized, this method yields "a result identical to that of the original model" [17].
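The result comparison step can be automated with a small helper such as the one below. It is a sketch under the assumption that both runs have been loaded into dictionaries mapping an output variable name to a list of values; the variable names and numbers are invented for illustration.

```python
def compare_outputs(sequential, parallel):
    """Return a list of (variable, index, seq_value, par_value) mismatches."""
    mismatches = []
    for name in sorted(set(sequential) | set(parallel)):
        seq = sequential.get(name, [])
        par = parallel.get(name, [])
        if len(seq) != len(par):
            # Different series lengths: report lengths instead of values.
            mismatches.append((name, None, len(seq), len(par)))
            continue
        for i, (a, b) in enumerate(zip(seq, par)):
            if a != b:  # exact comparison: the claim being tested is identical output
                mismatches.append((name, i, a, b))
    return mismatches


# Hypothetical outputs from the two runs.
seq_run = {"streamflow": [1.5, 2.25, 3.0], "nitrate_load": [0.1, 0.2, 0.3]}
par_run = {"streamflow": [1.5, 2.25, 3.0], "nitrate_load": [0.1, 0.2, 0.3]}
print(compare_outputs(seq_run, par_run))
```

An empty list means the runs match exactly. If the parallel version changes the order of floating-point operations, tiny differences may appear; in that case an explicit tolerance would be needed, and the choice of tolerance should be justified.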

Troubleshooting Guides

Issue 1: Task-Tree Construction Failures

Problem: Errors occur when building the dynamic task-tree from the watershed model's spatial data.

Symptoms: The application fails to start parallel execution, crashes during initialization, or logs errors about invalid dependencies.

Resolution:

- Verify Input Data: Confirm that your watershed delineation data is correct. Check the file format, integrity, and completeness of the data defining subbasins and their connectivity.

- Check Dependency Logic: Ensure the algorithm for identifying upstream-downstream relationships is functioning correctly. The graph should be a directed acyclic graph (DAG).

- Inspect the Task-Tree: Visualize the generated task-tree to confirm its logical structure. Use the following Graphviz diagram as a reference for a correctly formed tree:

A dynamic task-tree for a watershed with six subbasins (SB_1 to SB_6). Arrows indicate flow direction and computational dependency. Subbasins SB_1, SB_2, and SB_3 are siblings and can be processed in parallel once the root task is complete.
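The dependency check can be scripted before any parallel run is launched. The sketch below uses Python's standard `graphlib` module to confirm that the dependency map is a DAG and to flag subbasins that are referenced but never defined; the input format (a dictionary of subbasin to upstream set) is an assumption.

```python
from graphlib import CycleError, TopologicalSorter


def check_task_graph(upstream_of):
    """Return (ok, detail): a valid execution order, or a description of the problem."""
    referenced = {u for parents in upstream_of.values() for u in parents}
    missing = sorted(referenced - set(upstream_of))
    if missing:
        return False, {"missing": missing}
    try:
        order = list(TopologicalSorter(upstream_of).static_order())
    except CycleError as err:
        # The second element of args lists the nodes that form the cycle.
        return False, {"cycle": err.args[1]}
    return True, {"order": order}


print(check_task_graph({"SB_1": set(), "SB_2": {"SB_1"}}))
print(check_task_graph({"SB_1": {"SB_2"}, "SB_2": {"SB_1"}}))
```

A reported cycle usually points to an error in the flow-direction data or the delineation step, since water cannot flow in a loop between subbasins.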

Issue 2: Poor Parallel Speedup and High Resource Idling

Problem: The parallel simulation runs, but the speedup is low, and computational resources are frequently idle.

Symptoms: The total execution time is not significantly better than the sequential version; monitoring shows some processors have no tasks assigned for long periods.

Resolution:

- Profile Task Execution: Measure the time taken by each subbasin simulation. Significant variation in execution times can cause load imbalance.

- Optimize the Scheduler: Implement a dynamic task scheduler that can assign tasks to processors as they become free, rather than using a static schedule. The Pregel algorithm in a graph-parallel framework like Spark is designed for this, as it processes vertices (subbasins) in supersteps, synchronizing only when necessary [17].

- Review Granularity: If tasks are too fine-grained, the overhead of task management may overshadow the computation. Consider a two-level parallelization schema, like in GP-SWAT, which operates at both the watershed and subbasin level [17].

The table below summarizes key performance metrics from relevant case studies to set realistic expectations for speedup.

Table 1: Reported Performance Metrics from Parallel Watershed Model Studies

| Study / Tool | Parallelization Method | Model | Reported Speedup | Key Factor |

|---|---|---|---|---|

| Dynamic Task-Scheduling [13] | Dynamic task-tree & PBS scheduler | Eco-hydrological model | ~6x | Decoupling into independent grid tasks |

| GP-SWAT (Single-model) [17] | Subbasin-level on Spark cluster | SWAT | 2.3x to 5.8x | Graph-based parallelization |

| GP-SWAT (Multiple simulations) [17] | Subbasin-level on Spark cluster | SWAT | 8.34x to 27.03x | Combination of spatial decomposition and iterative run parallelization |

Issue 3: Result Inconsistency in Iterative Simulations

Problem: During iterative runs (e.g., for model calibration), the results from parallel executions are inconsistent with sequential runs.

Symptoms: Output values fluctuate unpredictably between identical parallel runs, or differ from the trusted sequential baseline.

Resolution:

- Check Random Number Generators (RNGs): If your model uses stochastic elements, ensure that RNGs are properly seeded and that their state is managed correctly across parallel tasks to avoid race conditions or correlated random streams.

- Audit Data Flow: Verify that in the parallel setup, the output of an upstream subbasin is fully written and communicated before the dependent downstream subbasin attempts to read it. The Pregel algorithm's vertex-centric messaging is inherently designed to handle this [17].

- Isolate I/O: Ensure that parallel tasks do not overwrite each other's temporary or output files. Use separate working directories for different parallel tasks or iterations.
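Two of the measures above, per-task random streams and isolated working directories, can be sketched as follows. The seeding scheme (a string built from a base seed and the task name) and the directory layout are assumptions chosen for illustration; this approach gives reproducibility, but it is not a formal guarantee of statistical independence between streams.

```python
import random
from pathlib import Path


def task_rng(base_seed, task_id):
    """Return a generator whose stream depends only on the seed and the task name."""
    return random.Random(f"{base_seed}:{task_id}")


def simulate_task(base_seed, task_id, n_draws=3):
    """Stand-in for a stochastic subbasin simulation: returns a few random draws."""
    rng = task_rng(base_seed, task_id)
    return [rng.random() for _ in range(n_draws)]


def task_workdir(base, iteration, task_id):
    """Build a unique directory path per iteration and task (the path is not created)."""
    return Path(base) / f"iter_{iteration:04d}" / task_id


# Running tasks in a different order must not change any task's draws.
forward = {t: simulate_task(42, t) for t in ["SB_1", "SB_2", "SB_3"]}
reverse = {t: simulate_task(42, t) for t in ["SB_3", "SB_2", "SB_1"]}
print(forward == reverse)
```

Because each task owns its generator, no generator state is shared between threads or processes, so the order in which the scheduler happens to run tasks cannot affect the numbers each task draws.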

Experimental Protocols & Workflows

Protocol: Implementing a Dynamic Task-Scheduler for a Watershed Model

Objective: To parallelize a watershed distributed eco-hydrological model using a dynamic task-tree to reduce computation time while maintaining result accuracy.

Materials: See "The Scientist's Toolkit" table below.

Methodology:

- Watershed Decomposition: Decouple the entire watershed simulation into independent, grid-based processing tasks. This is done by analyzing the flow direction and accumulation data to establish the relationship and sequence of each subbasin (cell) [13].

- Task-Tree Construction: Build a dynamic task-tree based on the dependency of each cell in the watershed. Each node in the tree is a subbasin simulation task. The edges represent the upstream-downstream flow dependencies.

- Scheduling Sequence Generation: From the task-tree, generate a dynamic task scheduling sequence. This sequence ensures that a task is only scheduled for execution once all its upstream (parent) tasks have been successfully completed.

- Parallel Execution: Submit the tasks to a parallel workload manager (e.g., PBS) according to the scheduling sequence. Independent tasks (siblings in the tree) are submitted simultaneously to available processors.

The following diagram illustrates the high-level workflow of this parallelization process.

Dynamic task-scheduling workflow for parallel watershed simulation.
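The scheduling rule in the protocol (start a task only once all its upstream tasks have finished) can be prototyped on a single machine before moving to a cluster. The sketch below substitutes a Python thread pool for the PBS workload manager, so it illustrates the scheduling logic only; `run_dynamic` and the `simulate` callback are hypothetical names.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait


def run_dynamic(upstream_of, simulate, max_workers=4):
    """Run simulate(task) for every task, starting each once its parents finish."""
    remaining = {task: set(parents) for task, parents in upstream_of.items()}
    finished, running, order = set(), {}, []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while remaining or running:
            # Submit every task whose upstream tasks have all completed.
            ready = [t for t, parents in remaining.items() if parents <= finished]
            for task in ready:
                del remaining[task]
                running[pool.submit(simulate, task)] = task
            if not running:
                raise ValueError("unsatisfiable dependencies (cycle or missing task)")
            done, _ = wait(running, return_when=FIRST_COMPLETED)
            for future in done:
                task = running.pop(future)
                future.result()  # re-raise any exception from the task
                finished.add(task)
                order.append(task)
    return order


deps = {"SB_1": set(), "SB_2": set(), "SB_3": {"SB_1", "SB_2"}, "SB_4": {"SB_3"}}
print(run_dynamic(deps, simulate=lambda task: task, max_workers=2))
```

Unlike a wave-by-wave schedule, this loop releases a downstream task as soon as its own parents are done, without waiting for unrelated tasks in the same wave, which is the behavior that reduces idle time when task durations vary.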

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Solutions

| Item / Solution | Function / Description | Application Context |

|---|---|---|

| Apache Spark Cluster | A distributed, in-memory data processing framework. Provides the underlying engine for parallel task execution and handles failover and load balancing. | General-purpose parallel computing platform for watershed models like SWAT (e.g., GP-SWAT) [17]. |

| PBS (Portable Batch System) Scheduler | A job scheduling system for managing and submitting workloads to parallel computing resources. | Used to execute the dynamic task scheduling sequence generated from the task-tree [13]. |

| Graph-Parallel Pregel Algorithm | An algorithm for efficient parallel processing of graph-based data, using a vertex-centric model with message passing between supersteps. | Core algorithm in GP-SWAT for managing the parallel simulation of dependent subbasins represented as a graph [17]. |

| Directed Acyclic Graph (DAG) | A finite directed graph with no directed cycles. Used to model the dependencies between computational tasks. | Serves as the input model for task scheduling, clearly depicting precedence constraints between tasks in a workflow [19]. |

| Anomaly-Biased Model Reduction | A method that prioritizes both common and anomalous rules during model simplification or task organization. | Helps maintain a balance between representativeness and diversity in hierarchical task organization, improving exploration of the parameter space [18]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary cause of low parallel efficiency in traditional spatial parallelization for runoff routing, and how does the stepwise method address it?

Low parallel efficiency in traditional methods is primarily caused by an insufficient number of computational tasks per time step to keep all threads busy, leading to thread idle time. This is especially pronounced in upstream catchments that produce fewer computational units per layer than the number of available threads [20]. The stepwise spatial-temporal-multimember method tackles this by adding two additional layers of parallelization:

- Temporal Indexing: Processes multiple time steps concurrently [20].

- Multimember Parallelization: Executes multiple independent ensemble members simultaneously [20].

This multi-dimensional approach ensures a sufficient workload is available for all threads, drastically improving utilization and efficiency.

FAQ 2: How does the data structure in this method contribute to computational performance?

The method employs a one-dimensional continuous memory layout instead of traditional two-dimensional arrays. This structure is derived from the D8 flow direction array and organizes grid cells in an up-to-downstream sequence [20]. This optimization:

- Reduces memory waste and minimizes fragmented memory access.

- Lowers the number of loops required in computations, significantly accelerating both serial and parallel execution [20].

- Provides a robust foundation for the subsequent spatial layering and parallel domain decomposition.
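The idea of an up-to-downstream ordering with spatial layers can be illustrated with a generic topological layering. This is not the algorithm from [20] and not the pyflwdir API; it assumes the flow directions have already been converted to a list `downstream`, where `downstream[i]` is the index of the cell that cell `i` drains into, or `-1` at an outlet.

```python
def flow_layers(downstream):
    """Group cell indices into layers ordered from headwaters to outlet."""
    n = len(downstream)
    pending = [0] * n  # number of upstream neighbours not yet processed
    for d in downstream:
        if d >= 0:
            pending[d] += 1
    layer = [i for i in range(n) if pending[i] == 0]  # headwater cells
    layers = []
    while layer:
        layers.append(layer)
        next_layer = []
        for cell in layer:
            d = downstream[cell]
            if d >= 0:
                pending[d] -= 1
                if pending[d] == 0:  # all upstream cells of d are now done
                    next_layer.append(d)
        layer = next_layer
    return layers


# Hypothetical six-cell network: cells 0 and 1 drain to 2; 2 and 3 drain to 4; 4 drains to 5.
downstream = [2, 2, 4, 4, 5, -1]
layers = flow_layers(downstream)
flow_order = [cell for layer in layers for cell in layer]
print(layers, flow_order)
```

Cells within one layer are independent and could be processed by different threads. The example also shows why spatial layering alone can starve threads: the layers near the outlet contain a single cell each.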

FAQ 3: My model uses a vector-based river network. Is this grid-based parallelization method still applicable?

This specific method is designed for entirely grid-based modeling systems without subdividing into sub-basins [20]. It leverages the D8 flow direction algorithm for its simplicity in handling large, topologically complex networks. If you are using a vector-based approach, you might need to consider alternative parallelization strategies or adapt the core principles of spatial-temporal-multimember decomposition to your framework. The method aims to reduce errors and programming complexity associated with sub-basin division [20].

FAQ 4: What are the specific advantages of implementing this method with OpenMP on a single cluster versus a multi-cluster MPI setup?

Using OpenMP on a single shared-memory computing cluster offers several key advantages in this context:

- Implementation Simplicity: It avoids the complexity of hybrid OpenMP/MPI environments and intricate sub-basin subdivisions [20].

- Reduced Overhead: It eliminates the need for message passing between different cluster nodes, minimizing communication overhead and parallelization costs [20].

- Flexibility and Accessibility: It provides greater flexibility for integration with land surface models and enables applications across various computing capacities without requiring heavy, multi-node computing infrastructure [20].

FAQ 5: How does this method improve simulation realism alongside computational performance?

Although the stepwise method itself targets hydrological modeling, a key principle from parallelized Forest Landscape Models (FLMs) is relevant. Parallel processing can improve realism because it simulates multiple blocks simultaneously and performs multiple tasks concurrently. This concurrent execution is closer to how natural processes (e.g., species-level, stand-level, and seed dispersal) operate in an ecosystem than the sequential, pixel-by-pixel simulation of traditional models [6].

Troubleshooting Guide

Performance and Scalability Issues

| Problem | Possible Cause | Solution |

|---|---|---|

| Severe performance drop with high thread counts (>50) when using only spatial layering. | Workload imbalance; too few computational units in upper layers compared to the number of threads [20]. | Activate temporal indexing and multimember parallelization. This adds independent tasks across time and ensemble members, ensuring better thread saturation [20]. |

| Low parallel efficiency (<0.5) even with a large spatial domain. | Inefficient data structure causing memory access bottlenecks; or insufficient parallel tasks [20]. | Transition from a two-dimensional array to a one-dimensional continuous memory layout organized by flow direction. Re-check that the spatial-temporal-multimember decomposition is fully implemented [20]. |

| Simulation results are inaccurate after parallelization. | The sequence of computations in the parallel method violates the hydrological dependencies of the basin. | Verify that the one-dimensional array correctly follows an up-to-downstream sequence. Ensure that the parallel processing within spatial layers and temporal indices respects the data dependencies defined by this sequence [20]. |

Implementation and Execution Errors

| Problem | Possible Cause | Solution |

|---|---|---|

| Difficulty in managing data dependencies and avoiding race conditions. | Improper handling of concurrency when threads access shared data. | Rely on the conflict-free temporal indices identified by the method. The decomposition is designed to ensure that tasks within the same temporal index are independent and can be processed in parallel without conflict [20]. |

| The method does not scale as expected on a multi-core SMP (Symmetric Multiprocessor) machine. | System-level overheads such as false sharing or thread creation overheads are dominating [20]. | Profile the code to identify hotspots. Ensure data is aligned to cache lines to minimize false sharing. Consider the trade-off between the number of threads and the workload per thread; there is an optimal thread count for a given problem size [21]. |

Experimental Protocols & Methodologies

Core Workflow of the Stepwise Decomposition Method

The following diagram illustrates the logical workflow and hierarchical relationship of the stepwise decomposition method for parallel runoff routing.

Performance Benchmarking Protocol

This protocol outlines the steps to quantitatively evaluate the performance of the parallel method, as demonstrated in the Pearl River Basin case study [20].

1. Experimental Setup:

- Hardware: A single computing cluster with multiple cores/processors.

- Software: Model implementation using OpenMP for shared-memory parallelization [20].

- Basin & Data: Apply the model to a river basin at a high spatial resolution (e.g., 90-m DEM from MERIT-Hydro). Use high-temporal-resolution climate data (e.g., IMERG precipitation data updated every 0.5 hours) [20].

2. Benchmarking Metrics:

- Execution Time: Measure the wall-clock time for a simulation of a fixed period (e.g., 10-year daily simulation).

- Speedup (S): Calculate as \( S = T_s / T_p \), where \( T_s \) is the serial execution time and \( T_p \) is the parallel execution time.

- Parallel Efficiency (E): Calculate as \( E = S / N \), where \( N \) is the number of threads used. An ideal efficiency is 1.0 [20].

3. Experimental Procedure:

- Run the model in serial mode to establish a baseline performance (\( T_s \)).

- Run the model in parallel mode, incrementally increasing the number of threads.

- For each run, record the execution time and calculate the speedup and efficiency.

- Test the method on watersheds of varying sizes to demonstrate scalability.

4. Analysis: Compare the performance metrics against traditional parallelization methods. The key success criterion is achieving high parallel efficiency (>0.8) with a large number of threads, which was a challenge for previous approaches [20].
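The two benchmarking metrics are simple enough to compute in a few lines. The sketch below applies them to the serial and parallel times reported for the ZhaiGao station with 13 threads; the function names are illustrative.

```python
def speedup(serial_time, parallel_time):
    """S = T_s / T_p."""
    return serial_time / parallel_time


def parallel_efficiency(serial_time, parallel_time, n_threads):
    """E = S / N, where N is the number of threads."""
    return speedup(serial_time, parallel_time) / n_threads


# ZhaiGao, spatial layering only, 13 threads (times in seconds).
s = speedup(172.18, 21.86)
e = parallel_efficiency(172.18, 21.86, 13)
print(round(s, 2), round(e, 2))
```

Rounded to two decimals, these reproduce the reported speedup of 7.88 and efficiency of 0.61.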

Key Performance Data from Case Study

The table below summarizes quantitative results from applying the method in the Pearl River Basin, demonstrating its effectiveness in minimizing parallel overhead [20].

Table 1: Performance Metrics for Different Basin Sizes using Spatial Layering Only (13 Threads)

| Hydrological Station | Grid Cells | Serial Time (s) | Parallel Time (s) | Speedup | Parallel Efficiency |

|---|---|---|---|---|---|

| ZhaiGao | 110,808 | 172.18 | 21.86 | 7.88 | 0.61 |

| ShiJiao | 4.94 million | 7,726.94 | 757.93 | 10.19 | 0.78 |

| Outlet0 | 48.58 million | 79,470.21 | 7,262.06 | 10.94 | 0.84 |

Table 2: Impact of Stepwise Decomposition on a Small Basin (ZhaiGao, 52 Threads)

| Parallelization Scheme | Parallel Efficiency | Key Improvement |

|---|---|---|

| Spatial Layering Only | 0.06 | Baseline (highly inefficient) |

| + Temporal Indexing | 0.55 | Added concurrent time steps as tasks |

| + Multimember Parallelization | 0.80 | Added ensemble members as tasks |

The Scientist's Toolkit

This table details essential computational tools, data, and concepts used in implementing the stepwise decomposition method for runoff routing.

Table 3: Essential Research Reagents and Tools for Parallel Runoff Modeling

| Item | Function / Role | Application Note |

|---|---|---|

| OpenMP | An API for shared-memory multiprocessing programming [20]. | Chosen for its implementation simplicity on a single computing cluster, avoiding the overhead of message-passing models [20]. |

| pyflwdir | An open-source Python package for flow direction data processing [20]. | Used to convert traditional 2D D8 flow direction arrays into an efficient one-dimensional memory layout, which is foundational for the method [20]. |

| MERIT-Hydro | A global flow dataset providing 90-m resolution river network information [20]. | Provides high-resolution spatial data for the river basin, which is critical for testing the scalability and accuracy of the high-performance model [20]. |

| IMERG | A high-resolution satellite precipitation product updated every 0.5 hours [20]. | Serves as a key meteorological input, driving the hydrological model at a fine temporal scale and increasing computational demands [20]. |

| One-Dimensional Memory Layout | A data structure that stores grid cells in a continuous, flow-ordered sequence [20]. | Core to the method's performance; reduces memory waste and loop counts, enhancing both serial and parallel computational efficiency [20]. |

| Conflict-free Temporal Indices | Groups of time steps that can be computed independently [20]. | Generated by the decomposition algorithm; enables safe temporal parallelization without data races, which is key to minimizing parallel overhead [20]. |

FAQs and Troubleshooting Guides

This guide addresses common challenges researchers face when transitioning from 2D arrays to 1D layouts in performance-critical ecological simulations.

FAQ 1: Why should I use a 1D array instead of a native 2D array for my computational model?

Answer: A 1D array can offer performance benefits by providing a single, contiguous block of memory. This improves cache locality and can speed up access patterns, especially when traversing data in a linear fashion. In many programming languages like C, a built-in 2D array is essentially just a neat indexing scheme for a contiguous 1D array in memory [22]. The performance gain comes from maximizing sequential access, which modern processors handle efficiently, and can be crucial for reducing parallel overhead in large-scale ecological simulations.

FAQ 2: My simulation is producing incorrect results after switching to a 1D array. How can I troubleshoot this?

Answer: The most common issue is an error in the indexing function that maps 2D coordinates (i, j) to a 1D position. Follow this protocol:

- Verify Indexing Logic: For a 2D grid of size `M x N`, the correct index is typically `index = i * NCOLS + j`, where `NCOLS` is the number of columns (the second dimension). Ensure you are using the correct dimension for multiplication [22].

- Check Bounds: Ensure that your indices `i` and `j` do not exceed `M-1` and `N-1` respectively. Accessing out-of-bounds memory can lead to undefined behavior.

- Validate with a Small Case: Create a small, manageable grid (e.g., 3x3) and manually calculate the expected 1D indices for each cell. Compare this with your program's output to isolate the flaw.
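The indexing checks above can be captured in a short, testable helper. This is a minimal sketch assuming a row-major layout; the function names are illustrative.

```python
def to_index(i, j, n_rows, n_cols):
    """Map 2D coordinates (row i, column j) to a row-major 1D position."""
    if not (0 <= i < n_rows and 0 <= j < n_cols):
        raise IndexError(f"({i}, {j}) is outside a {n_rows} x {n_cols} grid")
    return i * n_cols + j  # multiply by the number of COLUMNS, not rows


def from_index(index, n_cols):
    """Inverse mapping: recover (i, j) from a 1D position."""
    return divmod(index, n_cols)


# Small-case validation on a 3 x 3 grid.
table = [[to_index(i, j, 3, 3) for j in range(3)] for i in range(3)]
print(table)
```

A square test grid cannot reveal a swapped dimension, because `i * NROWS + j` and `i * NCOLS + j` agree when both sizes are equal. It is therefore worth repeating the check on a non-square grid such as 2 x 4.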

FAQ 3: I am using floating-point numbers in my 1D array, and my search function sometimes fails to find values I know are present. What is the cause?

Answer: This is a frequent problem not with the array structure itself, but with comparing floating-point numbers for exact equality. Due to the way floating-point arithmetic works, calculated values might have tiny precision errors [23].

- Solution: Instead of looking for an exact match, use a threshold-based comparison. Check if the absolute difference between the array value and your target value is less than a small epsilon (e.g., `1e-7`). Many programming environments offer a "threshold" search function for this purpose [23].
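A threshold-based search takes only a few lines. The sketch below uses an absolute tolerance with the epsilon from the example above; `find_close` is an illustrative name.

```python
def find_close(values, target, eps=1e-7):
    """Return the index of the first value within eps of target, or -1 if none."""
    for k, value in enumerate(values):
        if abs(value - target) < eps:
            return k
    return -1


values = [0.1 + 0.2, 0.5, 0.7]
print(0.3 in values)            # exact match fails: 0.1 + 0.2 is not exactly 0.3
print(find_close(values, 0.3))  # threshold match succeeds
```

An absolute tolerance suits values of similar magnitude. When values span several orders of magnitude, a relative tolerance, as provided by Python's `math.isclose`, is usually the safer choice.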

FAQ 4: How does the access pattern affect performance in a 1D array representing a 2D grid?

Answer: Performance is highly dependent on accessing memory sequentially. In a row-major layout (common in C/C++), iterating through elements row-by-row accesses contiguous memory addresses, which is fast. Iterating column-by-column, however, leads to non-contiguous, strided accesses (jumping by NCOLS each time), which can cause cache misses and significantly slow down your computation [22]. Always structure your loops to favor sequential access.
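The difference between the two traversal orders is easy to see by listing the memory offsets each one visits. The sketch below only computes offsets for a row-major layout; it does not measure cache behavior, and the function name is illustrative.

```python
def traversal_offsets(n_rows, n_cols, order):
    """List the 1D offsets visited when walking a row-major grid in the given order."""
    if order == "row":
        return [i * n_cols + j for i in range(n_rows) for j in range(n_cols)]
    if order == "column":
        return [i * n_cols + j for j in range(n_cols) for i in range(n_rows)]
    raise ValueError("order must be 'row' or 'column'")


print(traversal_offsets(2, 3, "row"))     # consecutive offsets
print(traversal_offsets(2, 3, "column"))  # jumps of n_cols within each column
```

For realistic grids the jump equals the full row length, so consecutive accesses in a column-wise walk typically fall on different cache lines.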

Memory Layout and Access Patterns

The following diagram and table summarize the core concepts of transitioning from a 2D to a 1D array layout.

Diagram 1: Mapping a 2D array to a contiguous 1D memory layout. Colors highlight how rows are stored sequentially.

Table 1: Performance and Implementation Comparison: 2D vs. 1D Arrays

| Feature | Native 2D Array | 1D Array with Indexing |

|---|---|---|

| Memory Structure | Contiguous block; compiler-managed indexing [22]. | Single, contiguous block of memory; explicit programmer-defined indexing [22]. |

| Element Access | Direct syntax: `array[i][j]`. | Calculated index: `array[i * NCOLS + j]` [22]. |

| Spatial Locality | Excellent when traversing row-wise (sequential access). Poor when traversing column-wise (strided access) [22]. | Excellent when traversing sequentially along the 1D structure. Performance depends entirely on the access pattern used in the indexing function. |

| Flexibility | Fixed dimensions (in many languages). | Highly flexible; can simulate grids of any dimension and can be easily resized with a single reallocation. |

| Use Case in Parallel Computing | Can be used effectively if parallel tasks are assigned contiguous rows/columns. | Often preferred; contiguous chunks of the 1D array can be cleanly partitioned among parallel processes, minimizing communication overhead. |

Experimental Protocol: Quantifying 1D Array Performance Gains

This protocol provides a methodology to empirically measure the performance benefits of a 1D array layout in a simulated ecological modeling task.

Objective: To compare the computation time of a stencil operation (e.g., a diffusion process in a landscape) using a native 2D array versus a 1D array layout.

Research Reagent Solutions (Computational Tools):

Table 2: Essential Software and Libraries

| Item | Function |

|---|---|

| Profiling Tool (e.g., gprof, VTune) | Measures execution time of code sections and identifies performance bottlenecks (hotspots). |

| High-Performance Computing Cluster | Provides a controlled environment to run parallelized experiments and measure scaling efficiency. |

| Matrix/Grid Library (e.g., BLAS, Eigen) | Offers highly optimized linear algebra operations for performance benchmarking against custom implementations. |

Methodology:

- Implementation: Implement two versions of a simple diffusion model on a large grid (e.g., 8192 x 8192).

- Version A (2D): Uses the language's native 2D array syntax.

- Version B (1D): Uses a 1D array with an indexing function `get_index(i, j) = i * N + j`.

- Benchmarking: For each version, execute multiple iterations of a kernel that calculates each cell's new value as a function of its neighbors (e.g., the average). This forces frequent memory access.

- Control Access Patterns: Ensure both implementations use the same optimal row-major traversal order to ensure a fair comparison [22].

- Parallelization: Implement a simple domain decomposition, dividing the grid into contiguous row chunks. Use a parallel framework (like MPI or OpenMP) to distribute these chunks across processes/threads. Measure the strong scaling efficiency.

- Data Collection: Record the total execution time for both versions. Use a profiler to analyze cache miss rates and memory bandwidth usage.

Expected Outcome: The 1D array implementation should demonstrate faster execution times and better scaling efficiency due to improved cache utilization and more straightforward memory allocation in a parallel context, thereby reducing parallel overhead [14] [24].
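Before timing anything, it is worth confirming that the two versions compute the same result and that the row chunks cover the grid exactly. The sketch below does this for a four-neighbor averaging kernel. It is a correctness scaffold only: pure Python timings do not reflect cache effects, so the actual benchmark would need a compiled language. All function names are illustrative, and border cells are simply left unchanged.

```python
def diffuse_2d(grid):
    """One averaging step on a list-of-lists grid (Version A)."""
    n_rows, n_cols = len(grid), len(grid[0])
    new = [row[:] for row in grid]
    for i in range(1, n_rows - 1):
        for j in range(1, n_cols - 1):
            new[i][j] = (grid[i - 1][j] + grid[i + 1][j]
                         + grid[i][j - 1] + grid[i][j + 1]) / 4.0
    return new


def diffuse_1d(flat, n_rows, n_cols):
    """The same step on a row-major 1D list (Version B)."""
    new = flat[:]
    for i in range(1, n_rows - 1):
        base = i * n_cols
        for j in range(1, n_cols - 1):
            k = base + j
            new[k] = (flat[k - n_cols] + flat[k + n_cols]
                      + flat[k - 1] + flat[k + 1]) / 4.0
    return new


def row_chunks(n_rows, n_workers):
    """Split rows into contiguous, near-equal (start, end) ranges, end-exclusive."""
    base, extra = divmod(n_rows, n_workers)
    chunks, start = [], 0
    for w in range(n_workers):
        end = start + base + (1 if w < extra else 0)
        chunks.append((start, end))
        start = end
    return chunks


grid = [[float((i * 5 + j) ** 2) for j in range(5)] for i in range(4)]
flat = [value for row in grid for value in row]
same = [v for row in diffuse_2d(grid) for v in row] == diffuse_1d(flat, 4, 5)
print(same, row_chunks(10, 3))
```

In a parallel version, each worker would update the rows of its own chunk and read one extra row from each neighboring chunk, which is the only data that needs to be exchanged between workers.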

Leveraging OpenMP for Accessible Shared-Memory Parallelization

Frequently Asked Questions (FAQs)

Q1: What is OpenMP and when should I use it for my research computations?

OpenMP (Open Multi-Processing) is a shared-memory multithreading framework designed for high-performance computing (HPC). It provides high-level interfaces that allow researchers to parallelize programs without managing low-level thread details. You should use OpenMP when your problem fits within the memory of a single computing node and you need to speed up computationally intensive, CPU-bound tasks, such as processing large ecological datasets or running complex simulations [25] [26].

Q2: My parallel program produces different results each time I run it. What is wrong?

This is typically caused by a race condition, where multiple threads unsafely access shared variables without proper synchronization. To fix this:

- Identify and mark variables that should be exclusive to each thread with the `private` clause [27].

- Use the `critical` directive to ensure that only one thread at a time can execute a specific code section [28].

- Utilize the `default(none)` clause to force yourself to explicitly declare the data-sharing attributes of every variable, helping to spot those that were incorrectly left as shared [27].

Q3: Why is my parallel code running slower than the serial version?

Parallel overhead can outweigh the benefits of parallelization due to several factors:

- Excessive Overhead: The workload in the parallel region might be too small. Use the `if` clause to conditionally execute a region in parallel only if a certain condition (e.g., a large enough data size) is met [27].

- Load Imbalance: Threads receive uneven work chunks, causing some to finish early and wait. Specify a `schedule(dynamic)` or `schedule(guided)` clause for loops with uneven iterations to improve load distribution [29].

- High Synchronization Cost: Frequent use of barriers and critical sections forces threads to wait. Minimize these synchronization points and use atomic operations where possible for better performance [29].
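The effect of load imbalance can be estimated with a small model before touching the OpenMP code. The sketch below is a Python simulation, not OpenMP itself: it compares the finish time of contiguous, equal-count blocks (similar in spirit to `schedule(static)`) with handing each iteration to whichever thread becomes free first (similar in spirit to `schedule(dynamic)` with a chunk size of 1). Scheduling overhead is ignored, and the iteration costs are invented.

```python
import heapq


def static_makespan(costs, n_threads):
    """Finish time when iterations are split into contiguous, equal-count blocks."""
    base, extra = divmod(len(costs), n_threads)
    finish, start = [], 0
    for t in range(n_threads):
        end = start + base + (1 if t < extra else 0)
        finish.append(sum(costs[start:end]))
        start = end
    return max(finish)


def dynamic_makespan(costs, n_threads):
    """Finish time when each iteration goes to the thread that becomes free first."""
    free_at = [0.0] * n_threads
    heapq.heapify(free_at)
    for cost in costs:
        earliest = heapq.heappop(free_at)
        heapq.heappush(free_at, earliest + cost)
    return max(free_at)


# One expensive iteration followed by seven cheap ones, on two threads.
costs = [8, 1, 1, 1, 1, 1, 1, 1]
print(static_makespan(costs, 2), dynamic_makespan(costs, 2))
```

In this example the static split leaves one thread with the expensive iteration plus three more, while dynamic assignment lets the other thread absorb all the cheap ones. On real hardware dynamic scheduling carries its own per-chunk cost, so the gain is smaller than this model suggests and should be confirmed by measurement.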

Q4: How do I control the number of threads used by my OpenMP program?

You can control the thread count by setting the `OMP_NUM_THREADS` environment variable before running your program. For example, in a bash shell, use `export OMP_NUM_THREADS=4` [25] [28]. This can also be done within your job script for cluster submissions.

Q5: What is the difference between `private` and `firstprivate`?

- `private`: Creates a new, uninitialized copy of a variable for each thread. The value of the original variable is undefined upon entry and exit of the parallel region [27].

- `firstprivate`: Similar to `private`, but each new thread's variable is initialized with the value of the original variable from before the parallel region. Use `firstprivate` when threads need the initial value of the master thread's variable [27].

Troubleshooting Guides

Issue 1: Debugging Race Conditions and Incorrect Results

Symptoms: Non-reproducible results, segmentation faults, or output that varies slightly between runs.

Methodology:

- Apply the `default(none)` Clause: Start by adding `default(none)` to your parallel directives. This requires you to explicitly declare every variable used in the parallel region as `shared`, `private`, `firstprivate`, etc. This forces a thorough review of variable scope and often reveals improperly shared variables [27].

- Inspect Functions in Parallel Regions: Check all functions called from within parallel constructs. Variables declared with the `static` keyword are stored in global memory and are therefore shared among all threads, which can be a hidden source of races [27].

- Systematic Isolation:

- Binary Hunt: Force specific parallel sections to run serially by commenting out the `#pragma omp parallel` directive or using `if(0)` on the construct. If the bug disappears, the issue is within that parallel section [27].

- Critical Section Testing: Enclose large sections of code within a parallel region inside a `#pragma omp critical` directive. If the code then works correctly, the bug is within that section. Narrow down the critical section until you isolate the problematic lines [27] [28].

- Use Thread-Safe Debugging Tools: Debuggers can change timing and mask race conditions. Use specialized tools like Intel Inspector (though note it has been deprecated) or other thread sanitizers to analytically detect data races [27].
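The `default(none)` step might look like the following sketch. The function `count_above` and its arguments are hypothetical; the point is that the compiler rejects the directive unless every variable used in the region is given an explicit data-sharing attribute.

```c
/* Count how many biomass values exceed a threshold.
   default(none) forces every variable used in the region to be
   scoped explicitly: the inputs are shared (read-only here) and
   count is combined safely through a reduction. The loop index i
   is private automatically. */
long count_above(const double *biomass, long n, double threshold)
{
    long count = 0;
    #pragma omp parallel for default(none) \
            shared(biomass, n, threshold) reduction(+:count)
    for (long i = 0; i < n; i++) {
        if (biomass[i] > threshold) {
            count++;
        }
    }
    return count;
}
```

Removing `reduction(+:count)` from this directive produces a compile-time error rather than a silent race, which is the behavior the debugging step relies on.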

Issue 2: Diagnosing Poor Performance and Scalability

Symptoms: Low CPU utilization, speedup is less than expected, or performance degrades as more threads are added.

Methodology:

- Profile Single-Threaded Performance: Before parallelizing, ensure the single-threaded version of your code is optimized. A slow serial code will lead to a slow parallel code [27].

- Check for Load Imbalance: Use profiling tools to see how work is distributed. If one thread takes significantly longer, it creates a bottleneck.

- Solution: For loops with varying iteration cost, replace `schedule(static)` (the default in most implementations) with `schedule(dynamic)` or `schedule(guided)` to allow threads to grab new chunks of work as they finish [29].

- Minimize Synchronization Overhead:

- Reduce Barriers: Remove unnecessary implicit barriers by using the `nowait` clause where it is safe to do so.

- Use Efficient Constructs: Prefer `atomic` over `critical` for simple updates, and use `reduction` for operations like sums and products [29].

- Address Memory Issues:

- False Sharing: This occurs when multiple threads on different cores frequently write to different variables that reside on the same cache line, forcing constant cache invalidation.

- Solution: Reorganize data structures (e.g., use "array of structs" vs. "struct of arrays"), or add padding to ensure frequently written variables by different threads are on separate cache lines [29].

- NUMA Effects: On NUMA systems, use a "first-touch" policy where memory is initialized by the thread that will later use it, and leverage thread affinity to bind threads to specific cores [29].
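The padding remedy for false sharing can be sketched as follows. The 64-byte cache-line size, the team size `N_THREADS`, and the function `count_positive` are assumptions made for illustration; check the actual cache-line size on your system.

```c
#ifdef _OPENMP
#include <omp.h>
#endif

#define CACHE_LINE 64  /* assumed cache-line size in bytes */
#define N_THREADS  4   /* hypothetical team size for this sketch */

/* One counter per thread, padded so that no two counters
   share a cache line. */
typedef struct {
    long value;
    char pad[CACHE_LINE - sizeof(long)];
} padded_counter;

long count_positive(const double *x, long n)
{
    padded_counter counts[N_THREADS] = {{0}};

    #pragma omp parallel num_threads(N_THREADS)
    {
        int tid = 0;
#ifdef _OPENMP
        tid = omp_get_thread_num();  /* always below N_THREADS here */
#endif
        #pragma omp for
        for (long i = 0; i < n; i++) {
            if (x[i] > 0.0) {
                counts[tid].value++;  /* each thread writes its own line */
            }
        }
    }

    long total = 0;
    for (int t = 0; t < N_THREADS; t++) {
        total += counts[t].value;
    }
    return total;
}
```

For a plain count like this one, a `reduction` clause is simpler and avoids the issue entirely; explicit padding is mainly useful when per-thread state must persist across several loops.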

Issue 3: Managing Parallel Task Granularity and Dependencies

Symptoms: High overhead with many small tasks, or tasks are not executing in the required order.

Methodology:

- Identify Overly Fine-Grained Tasks: If tasks are too small, the overhead of creating and scheduling them dominates the computation time.

- Solution: Implement a cutoff mechanism. For recursive algorithms like Quicksort, stop creating tasks when the problem size falls below a threshold and solve it serially instead [29].

- Handle Task Dependencies: Use the `depend` clause to create a Directed Acyclic Graph (DAG) of tasks, specifying input and output dependencies to ensure tasks execute in the correct order [29].

- Synchronize Task Groups: Use the `taskgroup` construct or the `taskwait` directive to wait for the completion of a group of child tasks before proceeding, which is essential for ensuring data is ready for the next computation step [29].
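The cutoff mechanism and `taskwait` can be combined in a short sketch. It uses a recursive array sum rather than Quicksort to keep the example small; the `CUTOFF` value and the function names are hypothetical and should be tuned for the real workload.

```c
#define CUTOFF 1000L  /* hypothetical threshold; below it, no new tasks */

/* Plain serial sum of a[lo..hi). */
static long range_sum_serial(const int *a, long lo, long hi)
{
    long s = 0;
    for (long i = lo; i < hi; i++) {
        s += a[i];
    }
    return s;
}

/* Recursive sum: spawn tasks only while the range is large. */
static long range_sum_task(const int *a, long lo, long hi)
{
    if (hi - lo < CUTOFF) {
        return range_sum_serial(a, lo, hi);  /* too small to be worth a task */
    }
    long mid = lo + (hi - lo) / 2;
    long left = 0, right = 0;

    #pragma omp task shared(left)
    left = range_sum_task(a, lo, mid);

    #pragma omp task shared(right)
    right = range_sum_task(a, mid, hi);

    #pragma omp taskwait  /* both halves must finish before combining */
    return left + right;
}

long parallel_sum(const int *a, long n)
{
    long total = 0;
    #pragma omp parallel
    #pragma omp single  /* one thread creates the root of the task tree */
    total = range_sum_task(a, 0, n);
    return total;
}
```

Raising `CUTOFF` reduces the number of tasks created (less scheduling overhead) at the price of less available parallelism, so the value is a trade-off to measure rather than a constant to copy.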

OpenMP Scheduling Strategies

The following table summarizes the main loop scheduling strategies in OpenMP, which are critical for load balancing.

| Scheduling Strategy | Description | Best Use Case |
|---|---|---|
| `static` | Loop iterations are divided into contiguous chunks and assigned to threads in a fixed pattern before the loop runs. | Loops with uniform iteration cost where workload is predictable and even. |
| `dynamic` | Loop iterations are assigned to threads in chunks at runtime. When a thread finishes, it requests the next available chunk. | Loops with irregular or unpredictable iteration costs that can lead to load imbalance. |
| `guided` | Similar to `dynamic`, but the chunk size starts large and decreases as fewer iterations remain. | A compromise for irregular workloads, reducing scheduling overhead compared to `dynamic`. |
| `auto` | The scheduling decision is delegated to the compiler and/or runtime system. | When you want the runtime to choose a potentially good strategy. |

Source: Adapted from OpenMP best practices for work distribution [29].
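A minimal sketch of `schedule(dynamic)` on a loop with uneven iteration cost follows. The workload function `patch_work` is a hypothetical stand-in for a per-patch simulation step whose cost grows with the patch index, and the chunk size of 4 is an arbitrary choice.

```c
/* Hypothetical per-patch workload: cost grows with the patch index,
   so later iterations are more expensive than earlier ones. */
static long patch_work(long patch)
{
    long acc = 0;
    for (long k = 0; k <= patch; k++) {
        acc += k % 7;
    }
    return acc;
}

/* Threads pull chunks of 4 iterations at a time as they become free,
   instead of receiving one fixed block each. */
long simulate_patches(long n_patches)
{
    long total = 0;
    #pragma omp parallel for schedule(dynamic, 4) reduction(+:total)
    for (long p = 0; p < n_patches; p++) {
        total += patch_work(p);
    }
    return total;
}
```

With a static schedule, the thread holding the last block would receive the most expensive patches and finish last; swapping the clause to `schedule(guided)` is a one-word change worth timing against this version.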

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Construct | Function in the Parallel Experiment |
|---|---|
| `#pragma omp parallel` | Creates a team of threads that execute the following code block in parallel (the fork-join model) [25] [28]. |
| `#pragma omp for` | Work-sharing directive that divides the iterations of a loop across the available threads [25] [28]. |
| `#pragma omp critical` | Ensures a code section is executed by only one thread at a time, preventing race conditions [28]. |
| `#pragma omp barrier` | Synchronizes all threads; each thread waits here until all other threads in the team reach this point [28]. |
| `#pragma omp task` | Defines an explicit, potentially non-iterative task to be executed asynchronously, ideal for irregular structures [29]. |
| `omp_set_num_threads()` | Library function to set the number of threads from within the code [27]. |
| `OMP_NUM_THREADS` | Environment variable to control the number of threads to use for parallel regions [25] [28]. |
| `private(var)` / `shared(var)` | Data-sharing attribute clauses to specify whether a variable has a separate copy per thread or is shared among all threads [25] [27]. |

Experimental Protocol: Parallelizing a Summation Loop

This protocol details the steps to parallelize a simple summation, a common operation in data analysis, using OpenMP.

1. Problem Setup: Create a C program that sums all integers from 1 to N (e.g., N=1000). Begin with the serial code and include the necessary headers (stdio.h, omp.h) [28].

2. Variable Scoping: Declare variables for the partial sum (to be held by each thread) and the total sum (the final result).

- Apply the `private(partial_Sum)` clause so each thread computes its own independent partial sum.

- Apply the `shared(total_Sum)` clause so all threads can add their results to the final total [28].

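3. Parallel Region Construction: Enclose the summation logic within a #pragma omp parallel directive. Initialize partial_Sum inside this region, because a private copy starts uninitialized. Initialize the shared total_Sum once, before the region, so that threads do not all write to it on entry [28].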

4. Work-Sharing Directive: Precede the summation loop with #pragma omp for. This directive automatically divides the loop iterations (e.g., 1 to 1000) among the spawned threads [28].

5. Result Aggregation: After the loop, use a #pragma omp critical directive. Within this thread-safe section, each thread adds its partial_Sum to the shared total_Sum. This prevents a race condition where multiple threads try to update total_Sum simultaneously [28].

6. Execution and Validation: Compile the code with the OpenMP flag (e.g., -fopenmp for GCC). Set OMP_NUM_THREADS, run the executable, and validate the result against the known mathematical formula for the sum of an arithmetic series [28].
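The protocol can be sketched as a single function. The name `sum_to_n` is hypothetical, and `total_Sum` is initialized once before the parallel region so that threads do not all write to it on entry.

```c
/* Sum the integers 1..n following the protocol above. */
long sum_to_n(long n)
{
    long partial_Sum;    /* one independent copy per thread */
    long total_Sum = 0;  /* shared result, set once before the region */

    #pragma omp parallel private(partial_Sum) shared(total_Sum)
    {
        partial_Sum = 0;  /* private copies start uninitialized */

        #pragma omp for   /* iterations are divided among the threads */
        for (long i = 1; i <= n; i++) {
            partial_Sum += i;
        }

        #pragma omp critical  /* one thread at a time updates the total */
        total_Sum += partial_Sum;
    }
    return total_Sum;
}
```

For N = 1000 the result can be checked against N(N+1)/2 = 500500. In production code, `reduction(+:total_Sum)` on the loop expresses the same pattern more compactly; the explicit version is shown because it mirrors the steps of the protocol.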

OpenMP Troubleshooting Workflow

The diagram below outlines a logical workflow for diagnosing and resolving common OpenMP issues, from initial symptoms to proposed solutions.

Task Offloading and Cluster-Based Parallel Processing in MEC Environments

Core Concepts: Troubleshooting Guide

Q1: What is "parallel overhead" and why does it critically impact my ecological simulations in MEC?

A: Parallel overhead refers to the extra computational time and resources consumed by managing parallel tasks instead of doing useful computation. In ecological computations like your forest landscape or population dynamics models, this manifests as time spent on [6]:

- Task Synchronization: Waiting for all parallel threads (e.g., simulating different landscape patches) to finish before the simulation can proceed to the next time step.

- Inter-Process Communication: Transferring data between different computing cores or edge servers, such as sharing seed dispersal data across simulated landscape segments [6].

- Cluster Management: The system resources used by the MEC framework to schedule, offload, and monitor tasks across the edge computing cluster [30] [31].

This overhead is critical because it can negate the performance benefits of parallelization. If overhead becomes too large, your simulation may run slower than a sequential version, wasting valuable research time and MEC resources.

Q2: My fine-grained ecological model shows high communication delay after offloading. How can I optimize this?

A: High communication delay often occurs when the offloaded tasks are too small, causing excessive data transfer. Implement these solutions:

- Task Consolidation: Group fine-grained tasks (e.g., individual organism calculations) into coarser chunks. The GIRD-PITO framework improves performance by clustering edge nodes with similar resource utilization profiles, reducing inter-cluster communication [31].

- Smart Clustering: Use algorithms like DBSCAN to group tasks with similar computational characteristics and data requirements. This minimizes the distance data must travel between nodes in the MEC cluster [30].

- Topology-Aware Offloading: Ensure your offloading strategy considers the physical network topology. Offload interdependent tasks to edge servers that have high-speed connections between them.
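To see why task consolidation reduces communication delay, consider a deliberately simple latency-plus-bandwidth cost model. This model and the function `offload_transfer_time` are illustrative assumptions, not part of GIRD-PITO or any cited framework: each message pays a fixed latency, and the payload time depends only on total data volume.

```c
/* Estimated transfer time in seconds for offloading n_tasks tasks
   sent in batches of batch_size.
   Assumed model: time = messages * latency + total_bytes / bandwidth. */
double offload_transfer_time(long n_tasks, long batch_size,
                             double latency_s, double bytes_per_task,
                             double bandwidth_bytes_per_s)
{
    /* Ceiling division: a final partial batch still costs one message. */
    long n_messages = (n_tasks + batch_size - 1) / batch_size;
    double payload_s = (double)n_tasks * bytes_per_task
                       / bandwidth_bytes_per_s;
    return (double)n_messages * latency_s + payload_s;
}
```

Under this model, 1000 tasks of 1000 bytes each over a 1 MB/s link with 10 ms latency take about 11 s when sent one by one, but about 1.1 s when grouped into batches of 100. The payload time is unchanged; only the per-message latency is amortized.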

Q3: The energy consumption of my offloaded computations is higher than expected. What are the primary causes and fixes?

A: This common issue stems from inefficient resource use. Key causes and solutions include:

| Primary Cause | Diagnostic Check | Solution |
|---|---|---|
| Frequent State Transmissions | Monitor data transfer volume between local and edge nodes. | Implement memoization: store and reuse results of expensive function calls instead of recalculating [32]. |
| Inefficient Serial Code | Profile your code to identify bottlenecks (Rprof, aprof package) [32]. | Vectorize operations and pre-allocate memory for large matrices/data structures before computation loops [32]. |
| Sub-optimal Resource Allocation | Check if MEC servers are consistently over- or under-provisioned. | Use a DRL-based resource manager like A3C or PPO to dynamically adjust CPU frequency and bandwidth allocation based on real-time task load [33] [34]. |

The fundamental principle is that parallel computers are more energy-efficient than serial computers for large problems, as they avoid the energy cost of ultra-high processor frequencies [35]. The energy saved by parallelization must outweigh the overhead of managing the parallel system.

Q4: How can I protect sensitive ecological field data during task offloading in a shared MEC environment?

A: Data privacy in MEC is a valid concern, especially for sensitive location data of endangered species. A two-layered approach is effective:

- Protect Location Privacy: Use a Proxy Forwarding Mechanism. Your mobile device does not communicate directly with the edge server. Instead, it connects through an intermediate proxy server, hiding your actual geographical location from the edge server [36].

- Protect Association Privacy: This prevents attackers from correlating different data tasks to infer sensitive information. Your offloading strategy should ensure that tasks with privacy conflicts (e.g., animal location data and health status data) are offloaded to different, non-adjacent edge servers [36].

Implementation & Configuration Guide

Q5: What are the key steps to implement a DRL-based offloading strategy for a stochastic ecological model?

A: Implementing a Deep Reinforcement Learning (DRL) offloader involves the following workflow. The corresponding logical flow of the training and execution process is shown in the diagram below.

- State Space Definition: Model your MEC system's state (s_t) using parameters like task queue length for each mobile device, wireless channel conditions, and current load on all available edge servers [30] [33].

- Action Space Definition: Define the actions (a_t) the DRL agent can take. This is typically a vector specifying for each task: `[0 = local compute, 1 = offload to Server A, 2 = offload to Server B, ...]` along with resource allocations [33].

- Reward Function Design: The reward (r_t) should guide the agent toward your goal. To minimize delay and energy, design a reward that is the negative of your cost function: