Mechanistic vs. Phenomenological Models: A Strategic Guide for Enhanced Drug Development

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to evaluate and select between phenomenological and mechanistic modeling approaches.

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to evaluate and select between phenomenological and mechanistic modeling approaches. It covers foundational definitions, methodological applications across the drug development pipeline, and practical strategies for troubleshooting and optimization. By presenting rigorous validation techniques and a comparative analysis of each model's strengths, the content aims to guide the strategic, 'fit-for-purpose' implementation of these powerful tools to de-risk decisions, accelerate timelines, and improve clinical success rates.

Core Concepts: Defining Mechanistic and Phenomenological Models in Biological Research

What is a Mechanistic Model? Defining Biological Fidelity and Process-Based Representation

In the quest to understand complex biological systems, researchers often choose between two distinct modeling approaches: phenomenological (statistical) models and mechanistic models. A phenomenological model is a hypothesized relationship between variables that seeks only to best describe the observed data [1]. These models are primarily focused on forecasting outcomes based on correlations within the data, without attempting to explain the underlying biological processes that generate these patterns.

In contrast, a mechanistic model is a quantitative representation whose definition is determined and constrained by relevant knowledge of the biological system [2]. Also known as process-based models, mechanistic models are mathematical representations of the processes that characterize the functioning of well-delimited biological systems [3]. The key distinction is that mechanistic models seek to answer the "how" question by representing the actual biological processes, cellular interactions, and molecular mechanisms that underlie observed behaviors [1] [4]. The parameters in a mechanistic model all have biological definitions and can often be measured independently of the dataset being modeled [1].

Table: Fundamental Distinctions Between Modeling Approaches

| Characteristic | Mechanistic Model | Phenomenological Model |
|---|---|---|
| Primary Objective | Explain "how" biological processes generate behavior | Describe "what" patterns exist in observed data |
| Basis | Biological first principles and known mechanisms | Statistical correlations in empirical data |
| Parameters | Have direct biological interpretation (e.g., reaction rates) | Statistical coefficients without direct biological meaning |
| Predictive Scope | Can extrapolate to new conditions via biological mechanisms | Limited to interpolation within observed data range |
| Implementation | Systems of ODEs/PDEs, agent-based models, stoichiometric matrices [5] | Regression models, machine learning classifiers, curve-fitting [2] |

The Architecture of Mechanism: Core Principles and Mathematical Foundations

The Mathematical Underpinnings of Biological Mechanism

Mechanistic models in biology are typically implemented using various mathematical formalisms that capture the dynamic nature of biological processes. The most common frameworks include ordinary differential equations (ODEs) that describe temporal evolution of molecular concentrations or cell populations, partial differential equations (PDEs) that incorporate spatial dynamics, agent-based models that simulate individual cellular behaviors, and stoichiometric matrices that represent metabolic networks [5].

These models go beyond forecasting an outcome to suggest the biological mechanism underlying the emergence of observed outcomes [4]. For example, in viral infection modeling, a mechanistic approach would represent the processes of host cell infection, viral replication within cells, and immune response dynamics, with parameters corresponding to measurable biological rates such as infection rate constants and viral production rates [2].

Case Study: From Molecular Interactions to Physiological Response

The true power of mechanistic modeling emerges in its ability to connect molecular-level events to system-level behaviors. Consider drug action modeling: a statistical model might identify a linear relationship between drug concentration and heart rate, while a mechanistic model would detail the intermediate processes from drug entry into the system, binding to receptors, modulation of hormone levels, and signaling to the heart rate control system [1].

This multi-scale representation capability allows mechanistic models to serve as digital twins of biological systems [5]. When validated against experimental data, these models can guide investigations and anticipate outcomes in situations where experiments are difficult or expensive to perform [4]. The fidelity of these representations makes them particularly valuable for pharmaceutical development and therapeutic optimization [2] [6].
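The contrast in the drug-action example can be illustrated with a small sketch. It assumes a saturable receptor-occupancy (Emax) relationship as a stand-in for the mechanistic chain and fits a straight line to low-concentration points generated from that same relationship. The function names, the `emax` and `ec50` values, and the concentrations are hypothetical and chosen only for illustration.

```python
def emax_effect(conc, emax=40.0, ec50=5.0):
    """Saturable receptor-mediated effect (Emax form); parameter values are illustrative."""
    return emax * conc / (ec50 + conc)


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Synthetic "observations" at low concentrations only (made-up numbers).
observed_conc = [0.2, 0.4, 0.6, 0.8, 1.0]
observed_effect = [emax_effect(c) for c in observed_conc]

intercept, slope = fit_line(observed_conc, observed_effect)


def linear_effect(conc):
    """Phenomenological straight-line prediction from the fitted coefficients."""
    return intercept + slope * conc


# Compare inside the observed range (0.5) and far outside it (50.0).
for conc in (0.5, 50.0):
    print(f"C={conc:5.1f}  linear={linear_effect(conc):8.2f}  "
          f"saturable={emax_effect(conc):6.2f}")
```

Within the fitted range the two predictions nearly coincide. Far outside it, the straight line keeps growing while the saturable form stays below its maximum effect, which is the extrapolation risk described in the comparison table earlier in this section.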

Quantitative Performance Comparison: Mechanistic Models in Action

Computational Performance of Mechanistic Model Surrogates

While mechanistic models provide high biological fidelity, their computational demands can be significant. This challenge has led to the development of machine learning surrogates that approximate mechanistic model behavior with substantially reduced computational requirements [5].

Table: Performance of ML Surrogates for Biological Mechanistic Models

| Original Model Description | Surrogate Algorithm | Surrogate Accuracy | Computational Improvement |
|---|---|---|---|
| SDE model of MYC/E2F pathway [5] | LSTM | R²: 0.925-0.998 | Not quantified |
| Pattern formation in E. coli [5] | LSTM | R²: 0.987-0.99 | 30,000-fold acceleration |
| Human left ventricle model [5] | Gaussian process | MSE: 0.0001 | 3 orders of magnitude |
| Physiology models: Small and HumMod [5] | SVM regression | Average error: 0.05 ± 2.47 and -0.3 ± 3.94 | 6 orders of magnitude |
| Heterotrimeric G-protein of budding yeast [5] | Generalized polynomial chaos | MAE: 2.5 × 10⁻² | 20% reduction in CPU time |
| Stress analysis of arterial walls [5] | Feedforward neural network | Test error: 9.86% | Not quantified |

Therapeutic Development Applications

The application of quantitative mechanistic modeling has demonstrated significant impact in supporting pharmacological therapeutics development, particularly in complex domains like immuno-oncology [6]. These models have evolved from simple one-equation descriptions of tumor growth to sophisticated multi-equation frameworks that capture essential biological principles underlying the cancer immunity cycle.

Table: Evolution of Tumor-Immune Mechanistic Models

| Model Type | Key Variables | Biological Processes Captured | Limitations |
|---|---|---|---|
| One-ODE | Tumor volume | Basic tumor growth kinetics | No immune component |
| Two-ODE | Tumor volume, Cytotoxic T lymphocytes | Predator-prey dynamics, cancer dormancy | No immuno-modulating factors |
| Three-ODE | Adds immuno-modulating factor (e.g., IL-2) | Cytokine effects on CTL function | No immunosuppression |
| Four-ODE | Adds immuno-suppressive factor (e.g., Tregs) | Immune evasion mechanisms | Limited to specific suppressor types |
| Mechanistic multi-compartmental | Multiple immune cell types and signaling molecules | Full immuno-oncology cycle concept | High parameterization, complex calibration |
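The two-ODE row of the table can be sketched as a tumor population coupled to cytotoxic T lymphocytes (CTLs). The functional forms below (logistic tumor growth, mass-action killing, saturating CTL recruitment) are one plausible choice and are not taken from [6]. Every rate constant is a hypothetical placeholder, and a hand-written Runge-Kutta step is used so the sketch needs no external libraries.

```python
def rk4_step(f, state, dt):
    """One classical fourth-order Runge-Kutta step for state' = f(state)."""
    k1 = f(state)
    k2 = f([s + 0.5 * dt * k for s, k in zip(state, k1)])
    k3 = f([s + 0.5 * dt * k for s, k in zip(state, k2)])
    k4 = f([s + dt * k for s, k in zip(state, k3)])
    return [s + dt / 6.0 * (a + 2 * b + 2 * c + d)
            for s, a, b, c, d in zip(state, k1, k2, k3, k4)]


def make_tumor_immune_rhs(kill_rate):
    """Build the right-hand side; all constants are illustrative assumptions."""
    growth, capacity = 0.5, 1000.0        # tumor growth rate (1/day), carrying capacity
    influx, recruit = 1.0, 0.5            # baseline CTL influx, tumor-driven recruitment
    half_sat, death = 100.0, 0.3          # recruitment half-saturation, CTL death (1/day)

    def rhs(state):
        tumor, ctl = state
        d_tumor = growth * tumor * (1.0 - tumor / capacity) - kill_rate * ctl * tumor
        d_ctl = influx + recruit * ctl * tumor / (half_sat + tumor) - death * ctl
        return [d_tumor, d_ctl]

    return rhs


def simulate(kill_rate, days=100.0, dt=0.01):
    """Integrate from a small tumor with CTLs at their tumor-free steady state."""
    rhs = make_tumor_immune_rhs(kill_rate)
    state = [10.0, 1.0 / 0.3]
    for _ in range(round(days / dt)):
        state = rk4_step(rhs, state, dt)
    return state


tumor_with_ctl, _ = simulate(kill_rate=0.01)
tumor_without_ctl, _ = simulate(kill_rate=0.0)   # reduces to the one-ODE logistic case
print(f"tumor burden with CTL killing:    {tumor_with_ctl:8.1f}")
print(f"tumor burden without CTL killing: {tumor_without_ctl:8.1f}")
```

Setting `kill_rate` to zero decouples the tumor from the immune compartment and recovers the one-ODE growth model, which shows how each row of the table extends the previous one.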

Mechanistic models have proven particularly valuable in viral dynamics modeling, where they have been used to optimize interferon-antiviral combination therapy for chronic HCV infection [2]. These models successfully identified that interferon-α acts primarily by reducing viral production rates rather than preventing new infections, and explained the biphasic decline pattern of viral load observed in patients: a fast initial decline due to rapid clearance of free virus, followed by a more gradual decline governed by the slower death rate of infected cells [2].
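The biphasic decline can be reproduced with a minimal sketch of infected-cell and free-virus dynamics in which therapy scales down viral production. This sketch assumes a constant target-cell pool, a pre-treatment steady state, and explicit Euler integration. The rate constants and the `efficacy` value are illustrative, not fitted values from [2].

```python
import math


def simulate_viral_load(efficacy, days=14.0, dt=0.001):
    """Return (time, viral load) pairs under a production-blocking therapy."""
    clearance = 6.0       # c: free-virus clearance rate (1/day), illustrative
    cell_death = 0.14     # delta: infected-cell death rate (1/day), illustrative
    production = 100.0    # p: virions per infected cell per day, illustrative
    virus = 1.0e6         # pre-treatment viral load (arbitrary units)
    infected = clearance * virus / production          # steady state before therapy
    infection = cell_death * clearance / production    # beta*T0 implied by steady state

    trajectory = [(0.0, virus)]
    for step in range(1, round(days / dt) + 1):
        d_infected = infection * virus - cell_death * infected
        d_virus = (1.0 - efficacy) * production * infected - clearance * virus
        infected += dt * d_infected
        virus += dt * d_virus
        trajectory.append((step * dt, virus))
    return trajectory


def log10_drop(trajectory, t_start, t_end, dt=0.001):
    """Log10 reduction in viral load between two time points (days)."""
    v_start = trajectory[round(t_start / dt)][1]
    v_end = trajectory[round(t_end / dt)][1]
    return math.log10(v_start / v_end)


treated = simulate_viral_load(efficacy=0.95)
print(f"log10 drop, day 0 to 1: {log10_drop(treated, 0.0, 1.0):.2f}")
print(f"log10 drop, day 7 to 8: {log10_drop(treated, 7.0, 8.0):.2f}")
```

In this sketch the first phase is set mainly by the clearance rate of free virus and the second by the death rate of infected cells, so the two slopes differ by more than an order of magnitude.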

Experimental Protocols for Mechanistic Model Development and Validation

Protocol: Building a Mechanistic Model of Early EGFR Signaling

The development of a mechanistic model for epidermal growth factor receptor (EGFR) signaling demonstrates a rigorous approach to integrating quantitative biological data [7]:

Network Definition: Map all known interactions between six autophosphorylation sites in EGFR and proteins containing SH2 and/or phosphotyrosine-binding domains based on high-throughput interaction screens.

Parameterization with Affinity Data: Incorporate quantitative binding affinities (KD measurements) for site-specific interactions to constrain kinetic binding parameters. Use measurements from techniques like fluorescence polarization that provide comprehensive, high-precision affinity data.

Cell Line-Specific Customization: Integrate absolute protein copy numbers from mass spectrometry-based proteomics for specific cell lines (e.g., HeLa, HEK 293) to set cytoplasmic concentrations of signaling proteins.

Model Implementation: Implement using computational frameworks that account for mass action kinetics, competition effects, and cell line-specific protein expression patterns.

Validation: Compare model predictions against experimental measurements of protein recruitment to activated EGFR from co-immunoprecipitation and phosphotyrosine proteomics studies.
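Two of the steps above, converting copy numbers to concentrations and accounting for competition at a shared site, can be sketched as follows. The cell volume, adaptor names, copy numbers, and KD values are hypothetical placeholders, not values from [7]. The occupancy formula assumes each binder is in large excess over the site, so that free and total concentrations are approximately equal.

```python
AVOGADRO = 6.02214076e23  # molecules per mole


def copies_to_micromolar(copies_per_cell, cell_volume_liters=2.0e-12):
    """Convert an absolute copy number to micromolar; the 2 pL volume is an assumption."""
    return copies_per_cell / (AVOGADRO * cell_volume_liters) * 1.0e6


def competitive_occupancy(concentrations_um, kd_um):
    """Equilibrium fraction of a single phosphosite bound by each competitor.

    Assumes binders are in excess over the site (free ~= total concentration).
    """
    weights = {name: concentrations_um[name] / kd_um[name]
               for name in concentrations_um}
    denominator = 1.0 + sum(weights.values())
    return {name: weight / denominator for name, weight in weights.items()}


# Hypothetical adaptors: A is abundant but weak, B is scarce but tight-binding.
copy_numbers = {"adaptor_A": 500_000, "adaptor_B": 50_000}
kd_um = {"adaptor_A": 1.0, "adaptor_B": 0.1}

concentrations = {name: copies_to_micromolar(n) for name, n in copy_numbers.items()}
occupancy = competitive_occupancy(concentrations, kd_um)

for name in copy_numbers:
    print(f"{name}: {concentrations[name]:.3f} uM, occupancy {occupancy[name]:.3f}")
```

With these made-up numbers, a tenfold difference in abundance exactly offsets a tenfold difference in affinity, which illustrates why cell line-specific expression data matter alongside KD measurements.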

Protocol: Model Reduction via Manifold Boundary Approximation Method

For complex mechanistic models with high parameterization, the Manifold Boundary Approximation Method (MBAM) provides a systematic approach to reduction while maintaining biological interpretability [8]:

Define Quantities of Interest (QoIs): Identify the specific model behaviors or experimental observations the reduced model must capture, such as product concentration at specific time points for enzymatic reactions.

Characterize the Model Manifold: Compute the Riemannian metric tensor based on the model's sensitivity to parameter variations, revealing the model's intrinsic geometry.

Identify Limiting Approximations: Trace geodesics to boundaries of the model manifold where parameters become effectively infinite or zero, corresponding to biologically meaningful limiting cases.

Construct Reduced Model: Apply the identified limiting approximations to eliminate sloppy parameters while preserving the model's predictive capacity for the defined QoIs.

Validate Reduction: Ensure the reduced model maintains accuracy for the target applications while significantly simplifying parameter estimation and computational requirements.

This approach can reduce models from dozens of parameters to just a few key effective parameters while maintaining biological interpretability. For example, adaptation behavior in the EGFR pathway can be characterized by a single parameter τ representing the ratio of time scales for initial response and recovery, which can itself be expressed as a combination of microscopic reaction rates [8].
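The manifold-characterization step can be illustrated on a toy problem. The sketch below is not MBAM itself: it only computes the metric, taken here as the Fisher information J^T J under assumed unit-variance noise, for a two-exponential model with nearly equal rates, and then compares its two eigenvalues. The model, rates, and time points are hypothetical.

```python
import math


def log_sensitivities(rates, times):
    """Rows of d y / d log(k_i) for the toy model y(t) = sum_i exp(-k_i * t)."""
    return [[-k * t * math.exp(-k * t) for k in rates] for t in times]


def fisher_information_2x2(jacobian):
    """Entries (a, b, d) of the symmetric matrix J^T J for two parameters."""
    a = sum(row[0] * row[0] for row in jacobian)
    b = sum(row[0] * row[1] for row in jacobian)
    d = sum(row[1] * row[1] for row in jacobian)
    return a, b, d


def symmetric_2x2_eigenvalues(a, b, d):
    """Closed-form eigenvalues of [[a, b], [b, d]], largest first."""
    mean = 0.5 * (a + d)
    radius = math.sqrt((0.5 * (a - d)) ** 2 + b * b)
    return mean + radius, mean - radius


times = [0.5 * i for i in range(1, 11)]          # sampling times 0.5 ... 5.0
jacobian = log_sensitivities([1.0, 1.2], times)  # two nearly redundant decay rates
stiff, sloppy = symmetric_2x2_eigenvalues(*fisher_information_2x2(jacobian))

print(f"stiff eigenvalue:  {stiff:.4f}")
print(f"sloppy eigenvalue: {sloppy:.4f}")
print(f"ratio:             {stiff / sloppy:.0f}")
```

A large eigenvalue ratio indicates a parameter combination that the data barely constrain. In the full method, geodesics followed along such directions lead to the manifold boundary where a limiting approximation can be applied.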

Visualization of Mechanistic Model Applications

Viral Dynamics and Immune Response Modeling

[Diagram: Viral Dynamics and Therapeutic Intervention]

Tumor-Immune System Interactions

[Diagram: Tumor-Immune Interaction Network]

Table: Key Reagents and Resources for Mechanistic Modeling Research

| Resource Category | Specific Tools/Reagents | Function in Mechanistic Modeling |
|---|---|---|
| Protein Quantification | Mass spectrometry (e.g., Kulak et al. protocol) [7] | Absolute protein copy numbers for parameterization |
| Binding Affinity Measurement | Fluorescence polarization (e.g., Hause et al. method) [7] | Quantitative KD values for protein-protein interactions |
| Spatial Tissue Analysis | Immunofluorescence imaging [2] | Tissue architecture and cellular localization data |
| Computational Modeling Environments | Ordinary Differential Equation solvers (e.g., MATLAB, R) [5] | Numerical integration of dynamic models |
| Model Reduction Algorithms | Manifold Boundary Approximation Method (MBAM) [8] | Systematic reduction of complex models |
| Surrogate Model Development | Long Short-Term Memory (LSTM) networks [5] | Machine learning approximation of complex simulations |
| Model Validation Data | Viral load measurements, immune cell counts [2] | Experimental validation of model predictions |

The choice between mechanistic and phenomenological modeling approaches depends fundamentally on the research objectives. Phenomenological models excel when the primary need is predictive accuracy within the range of observed data, when computational efficiency is paramount, or when the underlying biological mechanisms are poorly understood. Their statistical foundation makes them particularly valuable for diagnostic applications and preliminary analysis.

Mechanistic models are indispensable when the research goal extends beyond prediction to include biological understanding, when extrapolation to new conditions is required, or when the model must inform therapeutic interventions. Their representation of actual biological processes makes them particularly valuable for target identification, drug development, and personalized medicine applications [2] [6].

The emerging integration of machine learning surrogates with mechanistic models represents a powerful hybrid approach, maintaining biological interpretability while achieving computational efficiency [5]. As biological datasets continue to expand in scope and resolution, this synergistic combination of mechanistic understanding and statistical power will likely define the future of biological modeling, enabling researchers to not only predict biological behaviors but to truly understand their underlying causes.

What is a Phenomenological Model? Understanding Descriptive Power and Data-Driven Correlation

In the scientific modeling toolkit, two distinct philosophies exist: one that seeks to describe what happens, and another that aims to explain why it happens. The phenomenological model falls squarely into the first category, serving as a powerful, data-driven approach for correlating observations and making empirical predictions. This guide objectively compares phenomenological models with their mechanistic counterparts, evaluating their performance, applications, and suitability across different research scenarios, particularly in drug development.

Conceptual Foundation: Descriptive vs. Explanatory Models

A phenomenological model is a scientific model that describes the empirical relationship between phenomena in a way that is consistent with fundamental theory but is not directly derived from it [9]. Its primary goal is to describe the observable relationship between variables, often through statistical fitting of data, without attempting to model the underlying physical or biological processes that drive the behavior [10] [11].

This contrasts sharply with a mechanistic model, which is built from an understanding of the underlying processes, mechanisms, and first principles. While a phenomenological model forgoes explaining why variables interact as they do, a mechanistic model explicitly represents these causal relationships [8] [12].

The table below summarizes their core conceptual differences:

| Feature | Phenomenological Model | Mechanistic Model |
|---|---|---|
| Fundamental Basis | Empirical data and observed relationships [9] [11] | First principles and theoretical understanding of processes [8] |
| Primary Goal | Describe what happens; correlate inputs and outputs [11] | Explain why it happens; represent underlying causality [8] |
| Model Derivation | Often from curve-fitting or regression analysis [9] | Derived from fundamental laws (e.g., physics, chemistry, biology) |
| Parameter Meaning | Parameters are empirical and may not have direct physical meaning [10] | Parameters typically correspond to physical or biological properties [8] |
| Extrapolation Risk | Higher risk when used beyond the range of observed data [9] | Generally more robust for extrapolation, if mechanisms are correct |
| Development Speed | Typically faster to develop from available data | Often slower, requiring deep theoretical understanding |

Experimental Performance: A Quantitative Comparison

The theoretical differences between these modeling approaches have practical consequences for predictive performance, which can be quantified through direct experimental comparison.

Case Study: COVID-19 Epidemic Forecasting

A 2022 study directly compared the performance of phenomenological and mechanistic models for forecasting the early transmission of COVID-19 [13]. The research employed two phenomenological models (the Richards model and an approximate Susceptible-Infected-Recovered (SIR) model) and two mechanistic models (an exponential growth model with a lockdown effect and a full SIR model with lockdown). The models were fitted to early epidemic data from January-February 2020, and their forecasting accuracy was measured using Root Mean Square Error (RMSE).

The table below summarizes the quantitative results from this study:

| Model Type | Specific Model | RMSE (Feb 1 Data) | RMSE (Feb 5 Data) | RMSE (Feb 9 Data) |
|---|---|---|---|---|
| Phenomenological | Richards Model | Highest RMSE | Highest RMSE | - |
| Phenomenological | SIR Approximation | - | - | Highest RMSE |
| Mechanistic | Exponential Growth with Lockdown | Lowest RMSE | Lowest RMSE | - |
| Mechanistic | SIR with Lockdown | - | - | Lowest RMSE |

Experimental Protocol: The study used publicly reported daily case numbers from the early COVID-19 epidemic. Each model was calibrated using data available on three different starting dates (February 1, 5, and 9, 2020). The accuracy of each model's forecasts was then evaluated by comparing its predictions against the actual, subsequently observed case numbers. The RMSE values were calculated to provide a standardized measure of prediction error, with lower values indicating better performance [13].

Key Insight: The study concluded that once key interventions (like lockdowns) that influence transmission patterns are identified, incorporating them into mechanistic models significantly improves forecasting accuracy over purely phenomenological approaches that only describe the case curve's shape [13].
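The evaluation metric and a phenomenological growth curve of the kind used in this comparison can be sketched briefly. The Richards curve below uses one of several parameterisations found in the literature, and the exact form used in [13] is not given here. The case counts and curve parameters are invented purely to show the calculation.

```python
import math


def richards_curve(t, final_size, rate, midpoint, shape):
    """Generalised logistic (Richards) curve; shape = 1 gives the ordinary logistic."""
    return final_size / (1.0 + shape * math.exp(-rate * (t - midpoint))) ** (1.0 / shape)


def rmse(predicted, observed):
    """Root mean square error between two equal-length sequences."""
    if len(predicted) != len(observed):
        raise ValueError("predicted and observed must have the same length")
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))


# Invented cumulative case counts for five forecast days (not real data).
days = [20, 21, 22, 23, 24]
observed_cases = [610.0, 700.0, 780.0, 850.0, 905.0]

# Hypothetical curve parameters, as if calibrated on earlier data.
forecast = [richards_curve(t, final_size=1000.0, rate=0.3, midpoint=18.0, shape=1.0)
            for t in days]

print(f"forecast RMSE: {rmse(forecast, observed_cases):.1f} cases")
```

Because RMSE is expressed in the units of the data, values are comparable across models only when they are computed on the same forecast window, as in the study's per-date comparison.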

Case Study: Muscle Fatigue and Power-Endurance

Further demonstrating the utility of phenomenological approaches, a 2012 study developed a phenomenological model of muscle fatigue to describe the power-endurance relationship [14]. The model, based on motor unit contractile properties and recruitment, was simultaneously fitted to two sets of human data: power-time profiles during all-out exercise and power-endurance relationships during submaximal exercise.

Experimental Protocol: The model incorporated different distributions of motor unit types and their fatiguability. It was calibrated using experimental data from human exercise studies, where power output was measured over time under various intensity levels. The model's goodness of fit was quantified with R² values [14].

Result: The model achieved a high goodness of fit (R² = 0.96-0.97), demonstrating that a relatively simple phenomenological model could accurately describe human power output across different exercise intensities and that the inherent fatigue processes accounted for the curvilinear power-endurance relationship [14].

Research Reagent Solutions: The Modeler's Toolkit

The following table details key computational and data resources essential for developing both phenomenological and mechanistic models in modern research environments.

| Tool/Reagent | Function | Relevance |
|---|---|---|
| Quantitative Structure-Activity Relationship (QSAR) | Computational modeling to predict a compound's biological activity from its chemical structure [15]. | Foundational for phenomenological drug discovery models. |
| Physiologically Based Pharmacokinetic (PBPK) Modeling | A mechanistic approach to understand the interplay between physiology and drug product quality [15]. | Core mechanistic tool in Model-Informed Drug Development (MIDD). |
| Population PK/Exposure-Response (ER) Analysis | A mixed approach to explain variability in drug exposure and its relationship to effects in a population [15]. | Bridges phenomenological (statistical) and mechanistic elements. |
| AI Foundation Models (e.g., Bioptimus, Evo) | Large-scale models trained on massive biological datasets to uncover fundamental biological patterns [16]. | Emerging tool for creating powerful, data-driven phenomenological representations of biology. |
| AI Agents | Systems that automate bioinformatics tasks like RNA-seq analysis by choosing parameters and pipelines [16]. | Accelerates the data preprocessing required for robust phenomenological modeling. |
| Knowledge Graphs | Integrates multimodal data (genomics, proteomics, clinical trials) to map biological relationships [17]. | Provides a structured knowledge base for informing both phenomenological and mechanistic models. |

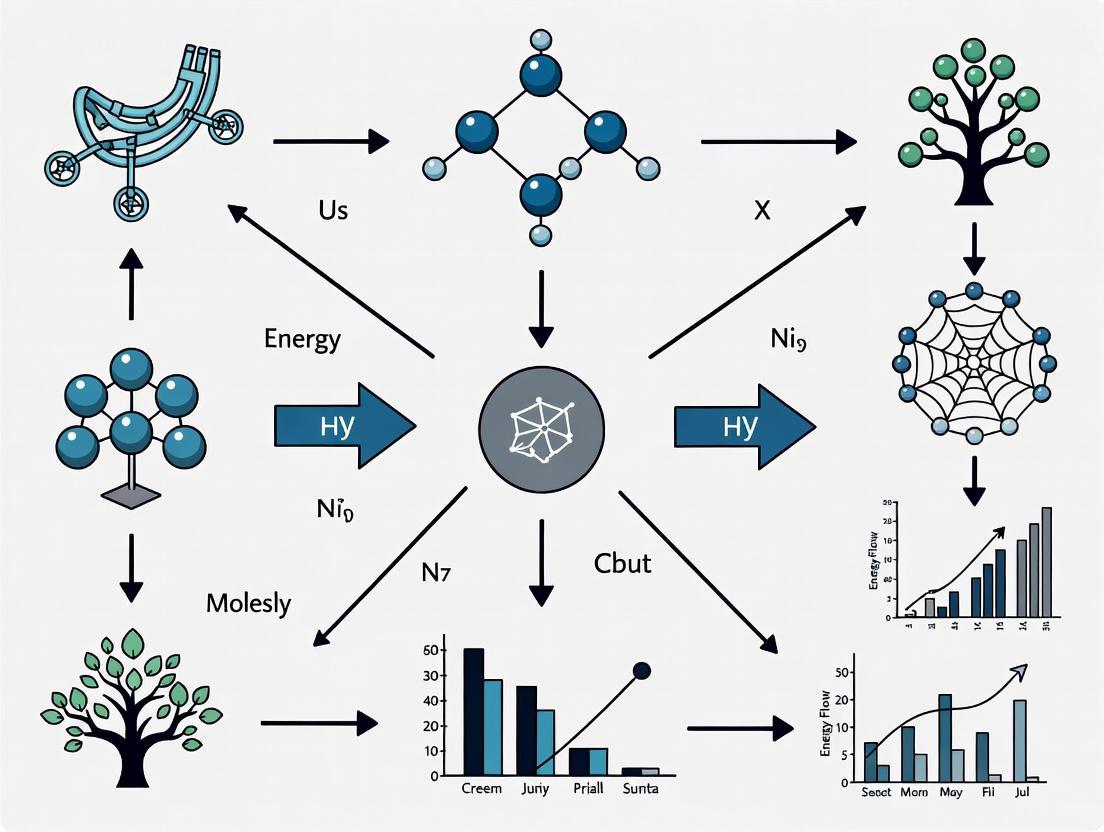

Model Selection Workflow and Relationship

The choice between a phenomenological and a mechanistic model is not always straightforward. The following diagram illustrates a general workflow and the relationship between these two modeling paradigms, based on the available information and research constraints.

Phenomenological and mechanistic models are not inherently superior to one another; they are complementary tools for different phases of research and development. The quantitative comparisons show that mechanistic models can outperform phenomenological ones when the underlying system drivers are well-understood and can be incorporated [13]. Conversely, phenomenological models provide a fast and often sufficiently accurate solution for correlation and prediction within observed data ranges, especially in complex systems where mechanisms are elusive [14].

The future of modeling, particularly in fields like drug discovery, lies in hybrid approaches and advanced AI that can leverage the strengths of both. Techniques like the Manifold Boundary Approximation Method (MBAM) can distill complex mechanistic models into simpler phenomenological forms while retaining a connection to the microscopic parameters, effectively bridging the two philosophies [8]. Furthermore, modern AI-driven platforms are increasingly attempting to create holistic, data-driven representations of biology that capture the complexity once only addressable by complex mechanistic models [17]. The choice of model should always be fit-for-purpose, aligned with the question of interest, the available data, and the required context of use [15].

In quantitative sciences, particularly in drug development and systems biology, mathematical models exist on a broad spectrum defined by their underlying principles. At one end lie purely phenomenological models, which are primarily descriptive and focus on accurately capturing patterns in observed data, often without direct reference to the biological mechanisms that generate these patterns. At the opposite end reside fully mechanistic models, which strive to represent the fundamental biological, chemical, and physical processes that govern system behavior. This spectrum does not merely represent different statistical approaches but embodies fundamentally different philosophies for using mathematics to understand complex biological systems. The choice of where to operate on this spectrum represents a critical strategic decision that balances computational complexity, data requirements, and the specific questions of interest in the drug development pipeline [8].

The distinction between these approaches has profound implications for predictive capability, interpretability, and regulatory acceptance. Phenomenological models, sometimes called "black box" models, excel at interpolation and short-term prediction within the range of observed data but often fail when conditions extend beyond previously studied parameters. Conversely, mechanistic models, or "white box" models, aim for a deeper causal understanding that can support extrapolation to novel therapeutic contexts but require more extensive system-specific knowledge and data [8]. Between these extremes exists a rich continuum of semi-mechanistic models that incorporate elements of both approaches, seeking to balance pragmatic data-fitting with biological plausibility.

Comparative Analysis of Model Types

Key Characteristics Across the Spectrum

Table 1: Fundamental Characteristics of Modeling Approaches

| Characteristic | Phenomenological Models | Semi-Mechanistic Models | Fully Mechanistic Models |
|---|---|---|---|
| Primary Objective | Describe patterns and correlations in data | Blend empirical fitting with biological structure | Elucidate underlying biological processes |
| Computational Demand | Typically low to moderate | Moderate to high | Very high |
| Data Requirements | Lower; only output variables needed | Intermediate; some system-specific data | Extensive; detailed component-level data |
| Interpretability | Limited direct biological insight | Partial biological interpretation | High theoretical interpretability |
| Extrapolation Risk | High outside observed conditions | Moderate with constrained extrapolation | Lower when mechanisms are correct |
| Common Techniques | Regression, machine learning, non-compartmental analysis | Population PK/PD, some PBPK approaches | QSP, detailed PBPK, pathway models |

Application in Drug Development

The "fit-for-purpose" principle in Model-Informed Drug Development (MIDD) emphasizes that model selection must be closely aligned with the specific Question of Interest (QOI) and Context of Use (COU) at each development stage [15]. No single approach is universally superior; each occupies a strategic position in the model ecosystem. For instance, in early discovery, quantitative structure-activity relationship (QSAR) models provide phenomenological predictions of compound properties, while later stages may employ physiologically based pharmacokinetic (PBPK) models for mechanistic simulation of drug disposition [15].

The International Council for Harmonisation (ICH) M15 guidance acknowledges this spectrum, providing a harmonized framework for assessing evidence derived from MIDD approaches regardless of their position on the phenomenological-mechanistic continuum [18]. Regulatory acceptance depends not on whether a model is purely mechanistic but on whether it is appropriately validated for its specific context of use and provides reliable evidence for decision-making.

Experimental Performance Data

Quantitative Comparison Across Domains

Table 2: Experimental Performance Metrics Across Model Types

| Application Domain | Model Type | Prediction Accuracy | Development Time | Key Strengths | Notable Limitations |
|---|---|---|---|---|---|
| First-in-Human Dose Prediction | Empirical Allometric Scaling | Moderate | Short (days-weeks) | Rapid implementation | Poor interspecies translation |
| First-in-Human Dose Prediction | Semi-Mechanistic PBPK | Good | Medium (weeks-months) | Incorporates physiology | Requires system parameters |
| First-in-Human Dose Prediction | Full QSP Platform | Very Good | Long (months-years) | Incorporates disease biology | Extensive data requirements |
| EGFR Signaling Adaptation* | Phenomenological (2 parameters) | Good within training range | Short | Computational efficiency | Limited biological insight |
| EGFR Signaling Adaptation* | Mechanistic (48 parameters) | Excellent | Very Long | Identifies control points | Parameter identifiability challenges |
| Clinical Trial Simulation | Statistical Models | Good for similar populations | Medium | Handles variability well | Limited to existing care paradigms |
| Clinical Trial Simulation | Mechanism-Based Platforms | Good for novel scenarios | Long | Explores combination therapies | Complex to implement and validate |

*Data adapted from: MBAM analysis of EGFR pathway [8]

The Manifold Boundary Approximation Method (MBAM) provides a formal mathematical framework for moving from complex mechanistic models to simpler phenomenological representations. In one demonstrated case, a 48-parameter mechanistic model of EGFR signaling was systematically reduced to a single adaptive parameter τ (tau), representing the ratio of activation to recovery time scales [8]. This distilled model maintained much of the predictive power of the full mechanistic model for specific behaviors while dramatically improving computational efficiency and identifiability.

Detailed Experimental Protocols

Protocol: MBAM for Model Distillation

Purpose: To systematically reduce complex mechanistic models to simpler phenomenological representations while preserving essential behaviors.

Materials:

- Mathematical model of the biological system (ODE or PDE-based)

- Experimental dataset for behaviors of interest

- Computational environment for model simulation (e.g., MATLAB, R, Python)

- Sensitivity analysis toolbox

- Parameter estimation algorithms

Procedure:

- Define Quantities of Interest (QoIs): Identify the specific system behaviors the reduced model must preserve [8].

- Compute the Model Manifold: Map how model predictions change across parameter space for the defined QoIs.

- Identify Sloppy Parameters: Use eigenvector analysis to determine which parameters least affect QoIs.

- Apply Limiting Approximations: Systematically take mathematical limits to remove non-identifiable parameters.

- Validate Reduced Model: Test whether the simplified model maintains predictive power for both training data and novel conditions.

- Interpret Effective Parameters: Relate emergent phenomenological parameters to biological mechanisms.

Deliverable: A simplified model with minimal parameters that captures essential system behavior, similar to how the Michaelis-Menten equation emerges as a special case of full enzyme kinetics [8].
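The Michaelis-Menten comparison can be made concrete with the textbook quasi-steady-state reduction. It is shown here as an illustration of a limiting approximation, not as the specific derivation used in [8]. The symbols $k_f$, $k_r$, $k_{cat}$, and $E_T$ (total enzyme) are notation assumed for this sketch.

$$
E + S \underset{k_r}{\overset{k_f}{\rightleftharpoons}} ES \xrightarrow{k_{cat}} E + P,
\qquad
\frac{d[ES]}{dt} = k_f\,(E_T - [ES])\,[S] - (k_r + k_{cat})\,[ES]
$$

Taking the limit in which the complex equilibrates much faster than the substrate is consumed, so that $d[ES]/dt \approx 0$, gives

$$
[ES] = \frac{E_T\,[S]}{K_M + [S]},
\qquad
K_M = \frac{k_r + k_{cat}}{k_f}
$$

$$
v = \frac{d[P]}{dt} = k_{cat}\,[ES] = \frac{V_{max}\,[S]}{K_M + [S]},
\qquad
V_{max} = k_{cat}\,E_T
$$

Three microscopic rate constants and the enzyme abundance collapse into two effective parameters, $V_{max}$ and $K_M$, each of which remains an explicit combination of the original mechanistic quantities.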

Protocol: Developing a Fit-for-Purpose MIDD Strategy

Purpose: To select and implement appropriate modeling approaches aligned with drug development stage and decision context.

Materials:

- Target Product Profile (TPP)

- Development timeline and key decision points

- Available experimental data (in vitro, in vivo, clinical)

- Cross-functional team expertise (pharmacology, clinical, regulatory)

Procedure:

- Define Context of Use (COU): Clearly articulate how model outputs will inform specific development decisions [15].

- Identify Key Questions: Prioritize the most critical uncertainties that modeling could address.

- Map Available Data: Inventory existing data sources and identify critical gaps.

- Select Model Platform: Choose appropriate modeling approach based on COU, questions, and data.

- Establish Validation Plan: Define criteria for evaluating model performance and reliability.

- Implement Iterative Refinement: Update models as new data emerges throughout development.

- Document for Regulatory Submission: Prepare comprehensive model documentation following MIDD principles [18].

Deliverable: A tailored MIDD strategy that efficiently addresses critical development questions using appropriate modeling methodologies.

Signaling Pathways and Workflows

Model Selection Decision Pathway

Diagram 1: Model Selection Workflow

MIDD Integration in Drug Development

Diagram 2: MIDD Tools Across Development

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Computational Tools for Model Development

| Tool Category | Specific Examples | Primary Function | Model Application |

|---|---|---|---|

| Computational Platforms | MATLAB, R, Python with SciPy | Numerical computation and parameter estimation | All model types: simulation, fitting, and analysis |

| Specialized Software | NONMEM, Monolix, SimBiology | Population modeling and systems pharmacology | Semi-mechanistic and mechanistic PK/PD, QSP |

| Sensitivity Analysis Tools | Sobol method, Morris elementary effects | Identify influential parameters | Model reduction and experimental design |

| Data Resources | PubChem, ClinicalTrials.gov, GEO | Source experimental and clinical data | Parameterization and validation across scales |

| Visualization Tools | Graphviz, ggplot2, D3.js | Create diagrams and exploratory plots | Communicate model structure and results |

| Model Reduction Algorithms | MBAM, principal component analysis | Simplify complex models | Create phenomenological approximations from mechanistic models [8] |

The toolkit extends beyond software to include experimental reagents for parameterizing models at different biological scales. For cellular and molecular-level models, key reagents include pathway-specific inhibitors, activators, and detection antibodies for quantifying signaling intermediates. For whole-body PBPK models, critical parameters include tissue partition coefficients, plasma protein binding data, and enzyme expression/activity levels across relevant tissues. The selection of specific reagents should be guided by the model's context of use and the biological processes being represented.

In the fields of drug development and systems biology, researchers are often faced with a critical choice: should a predictive model prioritize the sheer accuracy of its predictions or the biological interpretability of its mechanisms? This guide objectively compares these two modeling paradigms—phenomenological (often high-accuracy, "black-box") and mechanistic (often interpretable, theory-based)—by examining their performance, applications, and experimental support.

Core Concepts and Trade-offs at a Glance

The table below summarizes the fundamental differences between the two modeling approaches.

| Feature | Phenomenological (Statistical) Models | Mechanistic Models |

|---|---|---|

| Core Philosophy | Seeks only to best describe the observed data without explaining underlying causes [19]. | A hypothesized relationship where the model structure is specified by the biological processes thought to have generated the data [19]. |

| Primary Strength | High predictive accuracy within the range of observed conditions; often more direct path to a predictive model [19]. | Facilitates biological understanding; parameters have biological definitions and can be measured independently; generally more robust for extrapolation [19]. |

| Primary Weakness | Can be a "black box"; predictions may fail outside observed conditions without clear reason [19]. | Can be complex with many parameters; may be less accurate for pure prediction if the underlying mechanisms are not fully understood [19]. |

| Interpretability | Low; often difficult to explain why variables interact the way they do [20] [19]. | High; model parameters and structure are linked to biological entities and processes [19]. |

| Typical Goal | Description and prediction [19]. | Explanation, understanding, and prediction [19]. |

Quantitative Performance and Experimental Evidence

Predictive Accuracy in Epidemiological Forecasting

A 2022 study compared model performance in forecasting early COVID-19 transmission, providing a clear example of the accuracy trade-off [13].

| Model Type | Specific Model | Key Characteristic | Performance (Root Mean Square Error - RMSE) |

|---|---|---|---|

| Phenomenological | Richards Model | Flexible curve-fitting to case data | Highest RMSE (poorest performance) |

| Phenomenological | SIR Approximation | Simplified SIR model without biological parameters | High RMSE |

| Mechanistic | Exponential Growth with Lockdown | Incorporates intervention effect | Lowest RMSE (best performance) |

| Mechanistic | SIR Model with Lockdown | Standard biological model with intervention parameter | Low RMSE |

Experimental Protocol: The study used reported case data from January-February 2020. Each model was calibrated using data from specific dates (February 1, 5, and 9). The models were then used to forecast future case numbers, and their predictions were compared against the actual reported data using RMSE [13].
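
The calibrate, forecast, and score loop described above can be sketched in a few lines. This is a minimal illustration on synthetic, noise-free data and does not reproduce the study in [13]: the growth rates, the lockdown day, and both stand-in models (a single exponential that ignores the intervention, and an exponential refitted on post-lockdown data) are assumptions chosen for clarity, and neither is the Richards model.

```python
import math

def fit_log_linear(days, cases):
    """Least-squares fit of log(cases) = a + r * day; returns (a, r)."""
    logs = [math.log(c) for c in cases]
    mean_t = sum(days) / len(days)
    mean_y = sum(logs) / len(logs)
    sxx = sum((t - mean_t) ** 2 for t in days)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(days, logs))
    r = sxy / sxx
    return mean_y - r * mean_t, r

def rmse(predicted, observed):
    """Root mean square error between two equal-length sequences."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed))

# Synthetic epidemic (assumption): growth rate drops from 0.30 to 0.10 per day at lockdown.
LOCKDOWN_DAY = 10

def true_cases(t):
    if t <= LOCKDOWN_DAY:
        return 20.0 * math.exp(0.30 * t)
    return 20.0 * math.exp(0.30 * LOCKDOWN_DAY + 0.10 * (t - LOCKDOWN_DAY))

calibration_days = list(range(0, 16))   # data available at the calibration date
forecast_days = list(range(16, 23))     # days to be forecast
observed_future = [true_cases(t) for t in forecast_days]

# Stand-in 1: one exponential fitted to all calibration data, ignoring the intervention.
a1, r1 = fit_log_linear(calibration_days, [true_cases(t) for t in calibration_days])
forecast_no_lockdown = [math.exp(a1 + r1 * t) for t in forecast_days]

# Stand-in 2: exponential fitted only to data from the lockdown day onward.
post_days = [t for t in calibration_days if t >= LOCKDOWN_DAY]
a2, r2 = fit_log_linear(post_days, [true_cases(t) for t in post_days])
forecast_lockdown = [math.exp(a2 + r2 * t) for t in forecast_days]

rmse_no_lockdown = rmse(forecast_no_lockdown, observed_future)
rmse_lockdown = rmse(forecast_lockdown, observed_future)
print(f"RMSE ignoring lockdown: {rmse_no_lockdown:.1f}")
print(f"RMSE with lockdown:     {rmse_lockdown:.6f}")
```

Because the synthetic data follow the intervention model exactly, the second forecast is essentially error-free. With real case counts both errors would be non-zero, and the ranking would have to be checked at each calibration date.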

Classification Accuracy in Single-Cell Multiomics

A 2025 benchmark of the interpretable scMKL (single-cell Multiple Kernel Learning) method against other algorithms demonstrates the performance of biology-informed models [21].

| Model | Interpretability | AUROC (Area Under the ROC Curve) |

|---|---|---|

| scMKL (Pathway-informed) | High (Uses known biological pathways) | Superior (Statistically significant, p<0.001) |

| XGBoost (All features) | Low | Weaker |

| Multi-Layer Perceptron (All features) | Low | Intermediate |

| Support Vector Machine | Low | Worst |

*Performance was assessed across 100 independent models on single-cell multiome datasets (RNA + ATAC) from breast cancer cell lines (MCF-7, T-47D) and patient samples (small lymphocytic lymphoma). scMKL achieved higher or matching accuracy despite using fewer, biologically curated features [21].

Experimental Protocol: The study employed an 80/20 train-test split, repeated 100 times with cross-validation. Models were tasked with classifying cell states (e.g., healthy vs. cancerous, or treated vs. control) based on single-cell data. scMKL constructed kernels using prior biological knowledge from the Molecular Signature Database (MSigDB) and transcription factor binding site databases (JASPAR, Cistrome) [21].
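
The evaluation loop in this protocol (repeat an 80/20 split many times and record the test AUROC each time) can be sketched as follows. This is an illustration only: the data are synthetic, the classifier is a toy mean-difference scorer standing in for scMKL and the comparison algorithms, the split is stratified by class as a convenience, and the inner cross-validation mentioned in the protocol is omitted. All function names are hypothetical.

```python
import random

def auroc(scores, labels):
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def train_mean_difference_scorer(rows, labels):
    """Toy stand-in model: project a sample onto the difference of the class means."""
    dim = len(rows[0])

    def class_mean(target):
        members = [r for r, y in zip(rows, labels) if y == target]
        return [sum(r[j] for r in members) / len(members) for j in range(dim)]

    direction = [p - n for p, n in zip(class_mean(1), class_mean(0))]
    return lambda row: sum(d * v for d, v in zip(direction, row))

def repeated_split_auroc(rows, labels, n_repeats=100, test_fraction=0.2, seed=0):
    """Stratified train/test split repeated n_repeats times; one test AUROC per split."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_repeats):
        test_idx = set()
        for target in (0, 1):
            members = [i for i, y in enumerate(labels) if y == target]
            rng.shuffle(members)
            test_idx.update(members[: int(len(members) * test_fraction)])
        train_idx = [i for i in range(len(rows)) if i not in test_idx]
        scorer = train_mean_difference_scorer(
            [rows[i] for i in train_idx], [labels[i] for i in train_idx]
        )
        test_order = sorted(test_idx)
        results.append(
            auroc([scorer(rows[i]) for i in test_order], [labels[i] for i in test_order])
        )
    return results

# Synthetic two-class data (assumption): two features, class means 1.5 apart per feature.
data_rng = random.Random(42)
labels = [1] * 100 + [0] * 100
rows = [[data_rng.gauss(1.5 * y, 1.0), data_rng.gauss(1.5 * y, 1.0)] for y in labels]

aurocs = repeated_split_auroc(rows, labels)
mean_auroc = sum(aurocs) / len(aurocs)
print(f"mean AUROC over {len(aurocs)} splits: {mean_auroc:.3f}")
```

Reporting the distribution of AUROC values across splits, not just one number, is what makes a significance statement between methods possible.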

Pathways and Workflows for Interpretable AI

The MBAM Framework: From Complex Mechanism to Simple Phenomenology

The Manifold Boundary Approximation Method (MBAM) is a powerful technique for distilling complex mechanistic models into simpler phenomenological ones while retaining a connection to the underlying biology [8].

MBAM simplifies complex models into interpretable ones.

Case Study - EGFR Signaling Adaptation: MBAM was applied to a 48-parameter mechanistic model of the EGFR signaling pathway. The method reduced the model to a single, interpretable adaptation parameter (τ), which represents the ratio of time scales for the system's initial response and recovery. This parameter τ could, in turn, be expressed as a combination of microscopic reaction rates and concentrations, explicitly connecting the simple behavior to the complex mechanism [8].

The scMKL Workflow for Integrative Analysis

The scMKL framework demonstrates how to integrate multiomics data with biological knowledge to achieve both accuracy and interpretability [21].

The scMKL workflow for multiomic analysis.

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational and data resources essential for conducting research in this field.

| Research Reagent | Function & Application |

|---|---|

| Manifold Boundary Approximation Method (MBAM) | A model reduction tool for deriving simple phenomenological models with clear connections to their complex mechanistic origins [8]. |

| Biologically-Informed Neural Networks (BINNs) | A neural network architecture that encodes pathway-level inductive biases, improving performance in low-data regimes and enabling the identification of biologically meaningful traits [22]. |

| Explainable AI (XAI) Techniques (e.g., SHAP, LIME) | Post-hoc methods used to explain the predictions of black-box models, enhancing transparency and user trust [20]. |

| Multiple Kernel Learning (MKL) with Group Lasso | A machine learning framework that uses biologically defined feature groups (e.g., pathways) to create interpretable models without sacrificing predictive power [21]. |

| Molecular Signature Database (MSigDB) | A curated database of gene sets representing known biological pathways and processes, used to provide prior knowledge for models like scMKL [21]. |

| JASPAR/Cistrome Databases | Curated databases of transcription factor binding profiles, used to inform models about regulatory programs in epigenomic data [21]. |

The choice between biological interpretability and predictive accuracy is not absolute. The future of model selection in drug development and biology lies in flexible frameworks that can balance these needs. Promising directions include:

- Biology-Informed Architectures: Models like BINNs and scMKL that bake biological knowledge directly into their structure [22] [21].

- Explainable AI (XAI): The growing field of XAI aims to open the black box, making complex models more transparent and their decisions more understandable, which is critical for high-stakes fields like healthcare [20].

- "Deep Data" over "Big Data": A shift in focus from simply having large datasets to generating and using high-fidelity, biologically-relevant data that provides the necessary context for accurate and meaningful models [23].

The most effective strategy is often to start simply, and then increase sophistication—and add interpretability—as needed [24]. By reframing the challenge from "sacrificing accuracy for interpretability" to "adding interpretability to accurate models," researchers can leverage the full power of modern machine learning while building the trust and understanding required for scientific discovery and clinical application.

Historical Context and Evolution in Systems Biology and Pharmacology

The evaluation of phenomenological versus mechanistic models represents a fundamental dichotomy in systems biology and pharmacological research. Mechanistic models are built from first principles, incorporating established scientific knowledge about the underlying biological processes, components, and their interactions [25]. These models aim to reconstruct the actual machinery of biological systems, from molecular pathways to cellular networks, providing explicit causal explanations for observed phenomena. In contrast, phenomenological models prioritize descriptive accuracy over mechanistic explanation, capturing input-output relationships and patterns in data without necessarily reflecting the true underlying structure of the biological system [25].

This comparison guide examines the evolution of these competing approaches within systems biology and pharmacology, tracing their historical development while objectively comparing their performance across key research applications. The tension between these modeling philosophies reflects deeper epistemological questions about how we build knowledge in complex biological systems – whether through detailed reconstruction of component interactions or through empirical patterns that predict system behavior.

Historical Evolution of Modeling Paradigms

The historical development of biological modeling reveals alternating dominance between mechanistic and phenomenological approaches, often driven by technological capabilities and theoretical frameworks.

Early Foundations: Mechanistic Dominance

The mid-20th century established strong foundations for mechanistic modeling in biology, most notably with the Hodgkin-Huxley model of neuronal action potentials [25]. This pioneering work demonstrated how mathematical formalisms could capture biophysical mechanisms, specifically ion channel dynamics, to explain cellular-level phenomena. This approach dominated early systems biology, emphasizing detailed reconstruction of known biological components and their interactions. The success of such mechanistic explanations in electrophysiology established a paradigm that would influence pharmacological research for decades.

Late 20th Century: Empirical Shifts

By the late 20th century, the limitations of purely mechanistic approaches became apparent as biological research revealed increasingly complex systems that resisted complete mechanistic characterization. During this period, phenomenological approaches gained prominence, particularly in pharmacokinetics-pharmacodynamics (PK-PD) modeling and quantitative structure-activity relationship (QSAR) studies [26]. These models prioritized predictive accuracy over mechanistic explanation, using statistical relationships between drug properties and biological effects to guide therapeutic development without requiring complete knowledge of underlying biological processes.

Contemporary Synthesis: Hybrid Approaches

The 21st century has witnessed a convergence of these traditions, fueled by advances in computational power, high-throughput technologies, and machine learning. Modern research increasingly employs hybrid models that incorporate mechanistic elements for well-characterized subsystems while using phenomenological components for less understood aspects [25]. This integration represents a pragmatic acknowledgment that both approaches offer complementary strengths for dealing with biological complexity across different scales of organization.

Table 1: Historical Timeline of Modeling Approaches in Systems Biology and Pharmacology

| Time Period | Dominant Paradigm | Key Developments | Representative Models |

|---|---|---|---|

| 1950-1970 | Mechanistic Foundation | Biophysical modeling | Hodgkin-Huxley model [25] |

| 1980-1990 | Phenomenological Expansion | PK/PD modeling, QSAR | Compartmental models, Statistical rate models |

| 2000-2010 | Computational Scaling | High-throughput data, Systems biology | Large-scale kinetic models, Network models |

| 2010-Present | Hybrid Integration | Machine learning, AI | SNPE, Mechanistic ML [25] |

Comparative Analysis: Performance Across Research Contexts

The performance characteristics of phenomenological versus mechanistic models vary significantly across different research contexts and applications. Objective comparison requires examining multiple dimensions of model utility beyond simple predictive accuracy.

Interpretability and Biological Insight

Mechanistic models excel in providing biological interpretability and insight into underlying processes. By construction, these models represent hypothesized mechanisms, allowing researchers to make direct inferences about causal relationships and potential intervention points [25]. For example, in neuroscience, mechanistic models of neural circuits have revealed how distinct parameter configurations can generate similar network-level rhythms, suggesting potential compensation mechanisms in biological systems [25].

Phenomenological models typically sacrifice interpretability for predictive power. While these models can accurately capture input-output relationships, the parameters often lack direct biological meaning, limiting their utility for understanding underlying biology. The trade-off becomes particularly significant in drug development, where understanding mechanism of action is crucial for assessing safety and identifying new therapeutic opportunities.

Data Requirements and Computational Efficiency

The data requirements for these modeling approaches differ substantially. Mechanistic models typically require detailed, multi-level experimental data to constrain numerous parameters representing biological components and processes. These requirements can make mechanistic modeling prohibitively expensive for many applications, particularly in early research stages where comprehensive data is unavailable [25].

Phenomenological models generally operate efficiently with less extensive datasets, focusing on capturing overall patterns rather than detailed mechanisms. This efficiency comes at the cost of biological generality – phenomenological models typically exhibit poorer performance when extrapolating beyond their training data conditions, whereas properly constructed mechanistic models can more reliably predict system behavior under novel conditions.

Parameter Identification and Uncertainty

A critical challenge in mechanistic modeling is parameter identifiability – determining which parameter values are consistent with observed data. Traditional approaches to this problem involved laborious trial-and-error parameter tuning or computationally expensive parameter search methods [25]. Recent advances like Sequential Neural Posterior Estimation (SNPE) have dramatically improved this process by using deep neural density estimators to identify all parameter sets consistent with experimental data, even for complex models with many parameters [25].

Table 2: Performance Comparison of Modeling Approaches

| Performance Metric | Mechanistic Models | Phenomenological Models |

|---|---|---|

| Interpretability | High – parameters have biological meaning | Low – parameters often abstract |

| Extrapolation Reliability | High – when mechanisms generalize | Low – limited to training domain |

| Data Requirements | High – multi-level, detailed data | Moderate – input-output patterns |

| Computational Cost | High – complex simulations | Low to moderate – simpler calculations |

| Mechanistic Insight | Direct – reveals causal structure | Indirect – suggests hypotheses |

| Parameter Identifiability | Challenging – requires advanced methods | Straightforward – statistical estimation |

Experimental Protocols and Methodologies

Rigorous experimental protocols are essential for objectively comparing modeling approaches. The following methodologies represent state-of-the-art practices for evaluating model performance in pharmacological and systems biology contexts.

Model Training and Validation Framework

A standardized framework for model training and validation ensures fair comparison between approaches:

Data Partitioning: Divide experimental datasets into training (70%), validation (15%), and test (15%) subsets, ensuring representative sampling across experimental conditions.

Multi-scale Data Integration: For mechanistic models, incorporate heterogeneous data types including molecular, cellular, and physiological measurements collected across relevant scales [25].

Cross-validation: Implement k-fold cross-validation (typically k=5-10) to assess model robustness, particularly for phenomenological models with potential overfitting tendencies.

External Validation: Test model predictions against completely independent datasets not used in model development, providing the most rigorous assessment of generalizability.
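
A minimal sketch of the partitioning and cross-validation steps is given below. It works on sample indices only, uses a plain random shuffle, and does not stratify by experimental condition, which the framework above would require in practice. The function names and the sample count of 200 are assumptions for illustration.

```python
import random

def split_train_val_test(n_samples, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle sample indices and cut them into training, validation, and test sets."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_train = int(round(fractions[0] * n_samples))
    n_val = int(round(fractions[1] * n_samples))
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]   # remainder, so no sample is dropped
    return train, val, test

def k_fold_indices(indices, k=5):
    """Yield (training, held_out) index lists for each of k folds."""
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        training = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield training, folds[i]

train, val, test = split_train_val_test(200)
print(len(train), len(val), len(test))

for fold_number, (fit_idx, held_out_idx) in enumerate(k_fold_indices(train, k=5), start=1):
    print(f"fold {fold_number}: fit on {len(fit_idx)}, evaluate on {len(held_out_idx)}")
```

Cross-validation is run inside the training portion only, so the test set stays untouched until the final comparison.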

Performance Metrics and Evaluation Criteria

Quantitative comparison requires multiple performance metrics capturing different aspects of model utility:

Predictive Accuracy: Measure root mean square error (RMSE) or mean absolute percentage error (MAPE) between predictions and experimental observations across the test dataset.

Uncertainty Quantification: Evaluate how well model-derived confidence intervals capture actual variability in experimental data, particularly important for mechanistic models with parameter uncertainties.

Identifiability Assessment: For mechanistic models, compute posterior distributions for parameters using methods like SNPE to determine which parameters are well-constrained by data [25].

Computational Efficiency: Benchmark simulation time and resource requirements for model training and prediction phases.
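
The first two criteria reduce to short formulas, sketched here with made-up numbers. The function names, the example observations, and the interval bounds are all hypothetical. Interval coverage is used as one simple way to check uncertainty quantification: for a well-calibrated 95% interval, roughly 95% of observations should fall inside.

```python
import math

def rmse(predicted, observed):
    """Root mean square error."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed))

def mape(predicted, observed):
    """Mean absolute percentage error; observed values must be non-zero."""
    return 100.0 * sum(abs(p - o) / abs(o) for p, o in zip(predicted, observed)) / len(observed)

def interval_coverage(lower, upper, observed):
    """Fraction of observations that fall inside their predicted interval."""
    inside = sum(1 for lo, hi, o in zip(lower, upper, observed) if lo <= o <= hi)
    return inside / len(observed)

# Hypothetical test-set values, for illustration only.
observed = [10.0, 20.0, 40.0]
predicted = [12.0, 18.0, 40.0]
lower = [9.0, 19.0, 41.0]
upper = [13.0, 21.0, 45.0]

print(f"RMSE     = {rmse(predicted, observed):.3f}")
print(f"MAPE     = {mape(predicted, observed):.1f}%")
print(f"coverage = {interval_coverage(lower, upper, observed):.2f}")
```

RMSE is dominated by the largest observations, while MAPE weights every point by its relative error, so the two can rank models differently when the data span orders of magnitude.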

Visualization of Modeling Approaches

The following diagrams illustrate key workflows and relationships in phenomenological versus mechanistic modeling approaches.

Mechanistic Model Development Workflow

Phenomenological Model Development Workflow

Modern modeling research in systems biology and pharmacology relies on specialized software tools and computational resources. The following table details key solutions used in contemporary research.

Table 3: Essential Research Tools for Modeling in Systems Biology and Pharmacology

| Tool Name | Type | Primary Function | Modeling Approach |

|---|---|---|---|

| SNPE (Sequential Neural Posterior Estimation) | Algorithm | Bayesian parameter inference for simulation-based models | Mechanistic [25] |

| RDKit | Software Library | Cheminformatics and molecular manipulation | Both [27] |

| AutoDock Vina | Software Tool | Molecular docking and virtual screening | Mechanistic [27] |

| DataWarrior | Software Application | Interactive cheminformatics and visualization | Phenomenological [27] |

| Biomni Database Tools | Tool Suite | Access to 30+ specialized biomedical databases | Both [28] |

| Partek Flow | Software Platform | Bioinformatics for genomic data analysis | Phenomenological [29] |

| BIOVIA | Software Suite | Molecular modeling and simulation | Mechanistic [29] |

The historical evolution of modeling approaches in systems biology and pharmacology reveals a field maturing toward methodological pluralism. Rather than representing competing alternatives, mechanistic and phenomenological models increasingly function as complementary approaches, each with distinct strengths and appropriate applications.

Mechanistic models provide superior biological insight and extrapolation capability when sufficient prior knowledge and experimental data exist to constrain their parameters [25]. These models excel in later stages of drug development where understanding mechanism of action is critical, and in fundamental biological research aimed at elucidating causal structures. The development of advanced parameter estimation methods like SNPE has addressed historical challenges in practical implementation, making mechanistic modeling more accessible across biological domains.

Phenomenological models offer practical utility in early research stages where data is limited or mechanisms are poorly understood. Their computational efficiency makes them valuable for rapid screening and prioritizing experimental directions [26]. In pharmaceutical applications, these models continue to play important roles in PK/PD modeling and quantitative systems pharmacology where certain subsystems resist mechanistic characterization.

The most productive path forward lies in hybrid approaches that strategically combine mechanistic and phenomenological elements, leveraging the strengths of each while mitigating their respective limitations. As machine learning and AI continue transforming biological research, the integration of these methodologies with traditional modeling approaches will likely define the next evolutionary stage in systems biology and pharmacology.

Strategic Implementation: Applying Model Types Across the Drug Development Workflow

Phenomenological models are powerful tools in drug discovery, prized for their ability to accurately describe system behaviors and predict outcomes without requiring a deep understanding of the underlying biological mechanisms. This guide objectively compares their performance against mechanistic models across key applications, providing the experimental data and protocols needed for researchers to make informed choices in their modeling strategies.

In the landscape of drug discovery and development, phenomenological models (also known as empirical models) describe the relationship between observed inputs and outputs, focusing on predicting what happens rather than explaining why it happens. They are constructed to fit experimental data, often resulting in simpler mathematical forms that are highly practical for forecasting and screening. In contrast, mechanistic models are built from first principles and biological understanding, aiming to represent the actual physical, chemical, and biological processes governing a system.

The choice between these approaches often involves a trade-off between predictive accuracy with limited data and biological interpretability. This guide provides a direct, data-driven comparison of their performance in critical pharmaceutical applications, including quantitative structure-activity relationships (QSAR), epidemic forecasting, and exposure-response analysis, offering a clear framework for model selection.

Performance Comparison: Key Metrics and Experimental Data

The table below summarizes quantitative findings from published studies, directly comparing the performance of phenomenological and mechanistic models.

Table 1: Experimental Performance Comparison of Model Types

| Application Area | Specific Model(s) Tested | Key Performance Metric | Phenomenological Model Result | Mechanistic Model Result | Study Context |

|---|---|---|---|---|---|

| Epidemic Forecasting | Richards Model, SIR Approximation | Root Mean Square Error (RMSE) | Higher RMSE [13] | Lower RMSE (Exponential model with lockdown) [13] | Early COVID-19 transmission (Feb 2020) [13] |

| Radiobiological Effects | Symbolic Regression-derived Formulas | Goodness of Fit | Comparable to established literature formulas [30] | (As benchmark) | Modeling survival fraction, microdosimetry [30] |

| Model Identifiability | Generalized Growth, Richards, Gompertz, etc. | Structural & Practical Identifiability | All six models were structurally identifiable; practical identifiability varied with noise [31] | Modified SEIR model was structurally identifiable [31] | Analysis on monkeypox, COVID-19, and Ebola data [31] |

Insights from Comparative Data

- In Epidemic Forecasting, mechanistic models demonstrated superior accuracy in the specific context of early COVID-19. The study found that once interventions like lockdowns were identified and incorporated, mechanistic models (e.g., the exponential growth model with a lockdown effect) yielded lower forecasting errors than phenomenological ones like the Richards model [13].

- For Complex Biological Effects, phenomenological models can match established benchmarks. In radiobiology, symbolic regression—an automated method for generating phenomenological formulas—produced models that predicted effects as effectively as those found in the scientific literature, highlighting their utility in data-rich but theory-poor domains [30].

- The Critical Issue of Identifiability is a key consideration. A 2025 study confirmed that several common phenomenological growth models are structurally identifiable, meaning their parameters can be uniquely determined from perfect data. However, their practical identifiability—the ability to reliably estimate parameters from real, noisy data—varied, underscoring the need for rigorous validation against the specific dataset [31].

Experimental Protocols for Key Applications

Protocol 1: Developing a QSAR Model

Quantitative Structure-Activity Relationship (QSAR) modeling is a quintessential phenomenological approach that correlates chemical structure descriptors with biological activity [32].

Table 2: Key Reagents and Solutions for QSAR Modeling

| Research Reagent / Solution | Function in the Protocol |

|---|---|

| Library of Chemical Compounds | The input dataset of structures with associated experimentally-measured biological activities. |

| Chemical Descriptor Calculation Software | Generates numerical representations of molecular structures (e.g., lipophilicity, electronic properties, shape). |

| Data Analysis & Machine Learning Algorithms | Correlates chemical descriptors with biological activity to build the predictive model (e.g., linear regression, random forests). |

| Validation Dataset | A set of compounds not used in model building, used to test the model's predictive power and robustness. |

Workflow Steps:

- Compound Library Assembly & Biological Screening: Assemble a library of chemical compounds and assay them for a specific biological activity (e.g., IC₅₀ for an anti-breast cancer target) using a consistent experimental system [32].

- Chemical Descriptor Calculation: For every compound in the library, calculate a set of numerical chemical descriptors that encode structural and physicochemical properties. These can range from simple parameters like logP (lipophilicity) to complex 3D fingerprints [32].

- Model Training: Use a statistical or machine learning algorithm to establish a mathematical relationship between the chemical descriptors (input) and the biological activity (output). This step generates the QSAR model [32].

- Model Validation: The model must be rigorously validated. A common approach is to use a separate test set of compounds to assess its predictive accuracy. Techniques like cross-validation are also used to ensure the model is not over-fitted to the training data [32].

- Model Application: A validated model can be used to predict the biological activity of new, untested compounds or to interpret which chemical features are most critical for activity [32].
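
The training, validation, and application steps above can be sketched with the simplest possible QSAR: one descriptor and ordinary least squares. Every number below is synthetic and chosen for illustration, and the descriptor values, activities, and function names are assumptions. A real workflow would use many descriptors computed by cheminformatics software and a richer algorithm such as a random forest.

```python
def fit_simple_linear(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

def r_squared(predicted, observed):
    """Coefficient of determination on a held-out set."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for p, o in zip(predicted, observed))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

# Synthetic training set: logP descriptor and activity (pIC50-like), illustration only.
train_logp = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
train_activity = [4.50, 4.70, 5.25, 5.55, 6.10, 6.30]

# Synthetic validation set: compounds not used to build the model.
test_logp = [0.8, 1.8, 2.8]
test_activity = [4.69, 5.39, 6.24]

intercept, slope = fit_simple_linear(train_logp, train_activity)                # Model Training
test_predictions = [intercept + slope * v for v in test_logp]
r2_test = r_squared(test_predictions, test_activity)                            # Model Validation
new_compound_prediction = intercept + slope * 2.2                               # Model Application

print(f"activity = {intercept:.2f} + {slope:.2f} * logP, test R^2 = {r2_test:.3f}")
print(f"predicted activity at logP 2.2: {new_compound_prediction:.2f}")
```

The fitted slope doubles as a crude interpretation aid: its sign and size indicate how activity changes with lipophilicity within the range covered by the training compounds.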

Protocol 2: Identifiability Analysis for Growth Models

Before applying a phenomenological model to real-world data, it is crucial to determine if its parameters can be uniquely estimated—a concept known as identifiability analysis [31].

Workflow Steps:

- Model Reformulation: For models with non-integer power exponents (e.g., the Generalized Growth Model, dC/dt = rC^α), reformulate them by introducing additional state variables. This makes them amenable to analysis with standard differential algebra software packages [31].

- Structural Identifiability Analysis: Use a computational tool like the StructuralIdentifiability.jl package in Julia. This software employs differential algebra to eliminate unobserved state variables and determine whether, in principle, all model parameters can be uniquely identified from perfect, noise-free observational data [31].

- Parameter Estimation & Forecasting: Using the structurally identifiable model, perform parameter estimation with real data. Tools like the GrowthPredict MATLAB Toolbox can be used to fit the model to time-series data (e.g., weekly incidence data for an epidemic) [31].

- Practical Identifiability Analysis: Assess the model's robustness under real-world conditions through Monte Carlo simulations. This involves adding varying levels of observational noise to the data and re-estimating parameters to see if they remain stable and reliable [31].
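
The Monte Carlo step can be illustrated with a deliberately simplified case. The sketch below uses the exponential special case of the Generalized Growth Model (α = 1), multiplicative log-normal noise, and a log-linear least-squares estimator. These are assumptions made to keep the example self-contained, not the procedure used in [31], and the function names are hypothetical.

```python
import math
import random
import statistics

def estimate_growth_rate(times, cases):
    """Slope of log(cases) versus time by ordinary least squares."""
    logs = [math.log(c) for c in cases]
    mean_t = sum(times) / len(times)
    mean_y = sum(logs) / len(logs)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times, logs))
    sxx = sum((t - mean_t) ** 2 for t in times)
    return sxy / sxx

def monte_carlo_rate_estimates(r_true, c0, times, noise_sd, n_reps=200, seed=1):
    """Re-estimate r from n_reps noisy copies of the same noise-free trajectory."""
    rng = random.Random(seed)
    clean = [c0 * math.exp(r_true * t) for t in times]
    estimates = []
    for _ in range(n_reps):
        noisy = [c * math.exp(rng.gauss(0.0, noise_sd)) for c in clean]
        estimates.append(estimate_growth_rate(times, noisy))
    return estimates

times = list(range(20))
for noise_sd in (0.1, 0.5):
    estimates = monte_carlo_rate_estimates(0.2, 10.0, times, noise_sd)
    print(
        f"noise sd {noise_sd}: mean r = {statistics.mean(estimates):.4f}, "
        f"sd of r = {statistics.stdev(estimates):.4f}"
    )
```

A parameter whose estimates stay tightly clustered around the true value as noise increases is practically identifiable for that data design. A spread that grows quickly signals that more or better data are needed before the estimate can be trusted.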

Visualizing Workflows and Model Positioning

The following diagram illustrates the core, iterative workflow of phenomenological modeling, shared across the protocols described above.

Diagram 1: Core workflow for developing phenomenological models.

The diagram below positions phenomenological and mechanistic models based on their typical trade-offs, helping to guide the initial model selection strategy.

Diagram 2: Strategic positioning of model types based on common trade-offs.

In modern drug discovery, the choice between mechanistic and phenomenological modeling frameworks represents a fundamental strategic decision with profound implications for research outcomes. Mechanistic models are grounded in established biological, chemical, and physical principles, explicitly representing causal relationships within biological systems—from molecular interactions to cellular pathway dynamics. These models aim to answer not just "what" happens but "how" and "why" it happens. In contrast, phenomenological models prioritize data-driven pattern recognition and empirical correlations, often achieving short-term predictive accuracy without requiring deep understanding of underlying biological processes [13] [33].

The distinction between these approaches is particularly salient in pharmaceutical research, where the explanatory power of mechanistic models provides critical advantages for understanding complex biological systems, predicting clinical outcomes, and de-risking drug development pipelines. While phenomenological approaches such as Richards models or approximate SIR solutions can offer computational efficiency for specific forecasting tasks, they typically demonstrate higher root mean square error (RMSE) values compared to mechanistic counterparts when biological interventions alter system dynamics [13]. This comparative analysis examines the application of mechanistic modeling across three critical discovery domains—target identification, pathway sensitivity analysis, and virtual screening—contrasting their performance with phenomenological alternatives and providing experimental validation data to guide researcher selection.

Mechanistic Models in Target Identification

Target identification represents the foundational stage of drug discovery, where mechanistic models excel by integrating multi-omics data, structural biology, and pathway analysis to elucidate novel druggable targets with strong causal links to disease pathology. Unlike phenomenological approaches that primarily rely on correlative patterns between chemical structures and biological activity, mechanistic models explicitly represent the physical interactions between drug candidates and their biological targets within physiological contexts [34] [35].

Comparative Performance of Target Identification Methods

Table 1: Quantitative Comparison of Target Prediction Methods

| Method | Type | Algorithm | Database | Key Performance Metrics |
|---|---|---|---|---|
| MolTarPred | Ligand-centric | 2D similarity | ChEMBL 20 | Most effective method; optimization with Morgan fingerprints & Tanimoto scores [35] |
| RF-QSAR | Target-centric | Random Forest | ChEMBL 20&21 | Utilizes ECFP4 fingerprints; top similar ligand features [35] |
| TargetNet | Target-centric | Naïve Bayes | BindingDB | Multiple fingerprints (FP2, MACCS, ECFP2/4/6) [35] |
| CMTNN | Target-centric | ONNX runtime | ChEMBL 34 | Stand-alone code implementation [35] |
| PPB2 | Ligand-centric | Nearest neighbor/Naïve Bayes/DNN | ChEMBL 22 | Uses MQN, Xfp, ECFP4 fingerprints; top 2000 similarity [35] |
| SuperPred | Ligand-centric | 2D/fragment/3D similarity | ChEMBL & BindingDB | ECFP4 fingerprints [35] |

Experimental Protocol for Mechanistic Target Identification

Methodology: The standard experimental protocol for mechanistic target identification begins with constructing a knowledge base of validated drug-target interactions from curated databases such as ChEMBL (version 34 containing 15,598 targets, 2.4 million compounds, and 20.8 million interactions) [35]. For novel target prediction, researchers typically:

- Prepare Query Molecules: Convert small molecule structures to canonical SMILES representations

- Compute Molecular Descriptors: Generate Morgan fingerprints (radius 2, 2048 bits) or MACCS structural keys

- Similarity Assessment: Calculate Tanimoto coefficients against known bioactive compounds

- Confidence Filtering: Apply high-confidence filters (minimum confidence score of 7 for direct protein target assignment)

- Validation: Confirm predictions through binding affinity assays (IC50, Ki, EC50 below 10,000 nM) [35]
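
The similarity and filtering steps above can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used in [35]: fingerprints are modeled as plain sets of "on" bit indices (in practice they would come from a cheminformatics toolkit), and the function names, target labels, and records are hypothetical. The thresholds (confidence score of at least 7, activity below 10,000 nM) are taken from the protocol.

```python
# Illustrative sketch only: fingerprints are modeled as sets of "on" bit indices.
# All records and names below are hypothetical, not real ChEMBL data.

def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient: shared on-bits divided by total distinct on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def rank_targets(query_fp, knowledge_base, min_confidence=7, max_activity_nm=10_000):
    """Rank targets by the best similarity of any known ligand that passes the filters."""
    best = {}
    for rec in knowledge_base:
        # Filters mirror the protocol: confidence score >= 7, activity below 10,000 nM.
        if rec["confidence"] < min_confidence or rec["activity_nm"] >= max_activity_nm:
            continue
        score = tanimoto(query_fp, rec["fp"])
        if score > best.get(rec["target"], 0.0):
            best[rec["target"]] = score
    return sorted(best.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical toy knowledge base.
KNOWLEDGE_BASE = [
    {"target": "TARGET_A", "fp": {1, 5, 9, 42}, "confidence": 9, "activity_nm": 120.0},
    {"target": "TARGET_B", "fp": {1, 5, 77}, "confidence": 8, "activity_nm": 900.0},
    {"target": "TARGET_C", "fp": {1, 5, 9, 42}, "confidence": 5, "activity_nm": 50.0},
    {"target": "TARGET_D", "fp": {1, 5, 9}, "confidence": 9, "activity_nm": 25_000.0},
]

query = {1, 5, 9, 42, 100}
ranking = rank_targets(query, KNOWLEDGE_BASE)
for target, score in ranking:
    print(f"{target}: {score:.2f}")
```

In this toy data, TARGET_C and TARGET_D are excluded by the confidence and activity filters even though their ligands resemble the query, which is the point of applying the filters before ranking.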

Case Study Application: A recent investigation applied this protocol to fenofibric acid, demonstrating its potential for drug repurposing as a THRB modulator for thyroid cancer treatment. The mechanistic model successfully identified off-target interactions with therapeutic potential, highlighting the approach's value in expanding drug indications beyond original development targets [35].

Pathway Sensitivity Analysis Using Mechanistic Frameworks

Pathway sensitivity analysis through mechanistic modeling enables researchers to quantify how perturbations to specific network components propagate through biological systems, identifying critical regulatory nodes and potential resistance mechanisms. This approach has been particularly advanced through frameworks like scHopfield, which integrates Hopfield network dynamics with Hill kinetics and RNA velocity models to infer cell-type-specific regulatory networks with mechanistic interpretability [36].

Key Signaling Pathways Analyzed Through Mechanistic Models

Table 2: Pathway Analysis Applications in Drug Discovery

| Pathway/System | Model Type | Key Insights | Experimental Validation |
|---|---|---|---|
| Pancreatic Endocrinogenesis | scHopfield framework | Identified regulatory drivers through energy landscape analysis | Validated established master regulators (GATA1, SPI1, CEBPA) and novel relationships (GATA2 in neutrophil specification) [36] |
| Hematopoietic Development | Energy landscape modeling | Progenitor states exhibit higher/more variable energies than differentiated cells | Quantitative validation of Waddington's landscape hypothesis; cells move down energy gradients during differentiation [36] |
| IgG Pharmacokinetics | PBPK with FcRn binding | Target-mediated drug disposition and saturable clearance mechanisms | Accounting for endosomal pH-dependent FcRn binding, recycling rates, and two-pore paracellular transport [37] |
| COVID-19 Transmission | Mechanistic shedding model | Connected environmental pathogen data to number of infected individuals | Bayesian inference framework applied to SARS-CoV-2 in environmental dust from isolation rooms [38] |

Workflow for Pathway Sensitivity Analysis

The pathway sensitivity workflow begins with multi-omics data collection from single-cell genomics, proteomics, and transcriptomics, which informs the construction of quantitative network models incorporating Hill kinetics for biochemical reactions and RNA velocity for transcriptional dynamics [36]. Parameter estimation follows, often employing Bayesian inference to quantify uncertainty, particularly when handling environmental surveillance data with high inter-individual variation [38]. The core sensitivity analysis involves systematic perturbation simulations, where key network parameters are modulated to quantify their impact on system-level outputs. This approach successfully identified bottleneck genes controlling fate decisions and established that cells systematically move down energy gradients during differentiation, validating Waddington's epigenetic landscape hypothesis through quantitative measures [36].
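
The perturbation step can be illustrated on a deliberately small example. The sketch below is not scHopfield; it assumes a hypothetical one-gene model, dx/dt = beta * hill(r) - gamma * x, whose steady state is perturbed one parameter at a time by 1% to estimate normalized sensitivities (the relative change in output per relative change in parameter). All parameter names and values are illustrative assumptions.

```python
# Toy local sensitivity analysis for a single gene under Hill activation.
# The model and parameter values are hypothetical, chosen only for illustration.

def hill_activation(regulator, k_half, n):
    """Hill activation term r^n / (K^n + r^n)."""
    return regulator**n / (k_half**n + regulator**n)


def steady_state(params):
    """Steady state of dx/dt = beta * hill(r) - gamma * x."""
    activation = hill_activation(params["regulator"], params["k_half"], params["n"])
    return params["beta"] * activation / params["gamma"]


def normalized_sensitivities(model, params, rel_step=0.01):
    """Central-difference estimate of d ln(output) / d ln(parameter)."""
    base = model(params)
    result = {}
    for name, value in params.items():
        up = dict(params, **{name: value * (1 + rel_step)})
        down = dict(params, **{name: value * (1 - rel_step)})
        result[name] = (model(up) - model(down)) / (2 * rel_step * base)
    return result


PARAMS = {"beta": 2.0, "gamma": 0.5, "k_half": 1.0, "n": 2.0, "regulator": 1.0}
sens = normalized_sensitivities(steady_state, PARAMS)
for name, value in sorted(sens.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>9}: {value:+.3f}")
```

Parameters with a normalized sensitivity near +1 or -1 change the output proportionally, while values near zero mark parameters the output barely depends on at this operating point. In a network-scale model the same loop would run over many parameters and outputs to flag candidate bottleneck nodes.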

Virtual Screening: Mechanistic versus Phenomenological Approaches

Virtual screening represents a critical application where the integration of mechanistic and AI-based phenomenological approaches has demonstrated remarkable synergies. Mechanistic virtual screening employs physics-based simulations including molecular docking, molecular dynamics, and binding free energy calculations to prioritize compounds based on explicit models of molecular recognition. Phenomenological approaches, particularly modern AI implementations, utilize deep learning architectures such as graph neural networks (GNNs) and transformers trained on large chemical databases to predict bioactivity based on structural patterns [39] [40].

Performance Comparison of Virtual Screening Methods

Table 3: Virtual Screening Method Comparison

| Method/Platform | Approach | Key Features | Reported Outcomes |
|---|---|---|---|
| Exscientia | AI-Phenomenological | Generative AI with "Centaur Chemist" approach; patient-derived biology | 70% faster design cycles; 10× fewer synthesized compounds; DSP-1181 (first AI-designed drug in Phase I) [39] |
| Insilico Medicine | AI-Phenomenological | Generative adversarial networks (GANs) for de novo molecular design | TNIK inhibitor INS018_055: target discovery to Phase II in 18 months [39] [40] |
| Schrödinger | Mechanistic | Physics-based simulations (FEP+, Desmond) combined with ML | Platform combining molecular dynamics and machine learning for lead optimization [39] |
| Molecular Docking | Mechanistic | Structure-based docking simulations (AutoDock, SwissDock) | 50-fold hit enrichment rates when integrating pharmacophoric features with protein-ligand interaction data [41] |
| CETSA | Experimental Validation | Cellular Thermal Shift Assay for target engagement | Quantifies drug-target engagement in intact cells; validates mechanistic predictions [41] |

Integrated Screening Workflow

The most effective virtual screening implementations strategically combine mechanistic and phenomenological approaches, leveraging their complementary strengths. As demonstrated by leading AI-driven drug discovery platforms, this integrated workflow typically follows a design-make-test-analyze (DMTA) cycle, where AI systems rapidly generate candidate molecules which are then evaluated using physics-based simulations and experimentally validated through high-throughput approaches [41] [39].

This integrated approach has demonstrated remarkable efficiency gains. For example, Exscientia's platform achieved a clinical candidate for a CDK7 inhibitor after synthesizing only 136 compounds, compared to thousands typically required in traditional medicinal chemistry programs [39]. Similarly, recent work demonstrated that integrating pharmacophoric features with protein-ligand interaction data can boost hit enrichment rates by more than 50-fold compared to traditional virtual screening methods [41].
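
To make the notion of fold enrichment concrete, the sketch below computes the standard enrichment factor: the hit rate in the selected subset divided by the hit rate in the whole library. The library size and hit counts are hypothetical numbers chosen so that the improvement between two screens works out to roughly 50-fold; they are not data from [41].

```python
# Enrichment factor for a virtual screen. All counts below are hypothetical.

def enrichment_factor(hits_selected, n_selected, hits_total, n_total):
    """Hit rate among selected compounds divided by hit rate in the full library."""
    if min(n_selected, n_total, hits_total) <= 0:
        raise ValueError("n_selected, n_total and hits_total must be positive")
    return (hits_selected / n_selected) / (hits_total / n_total)


# Assumed library: 100,000 compounds containing 100 true actives,
# with each method selecting its top 1,000 compounds.
baseline_ef = enrichment_factor(hits_selected=2, n_selected=1_000,
                                hits_total=100, n_total=100_000)
integrated_ef = enrichment_factor(hits_selected=100, n_selected=1_000,
                                  hits_total=100, n_total=100_000)
fold_gain = integrated_ef / baseline_ef

print(f"baseline EF:   {baseline_ef:.1f}")
print(f"integrated EF: {integrated_ef:.1f}")
print(f"fold gain:     {fold_gain:.1f}")
```

Reporting the ratio of two enrichment factors, as done here, is one way to express a fold improvement over a reference method; the selection size (here the top 1%) should always be stated alongside it, since enrichment depends strongly on that cutoff.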

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful implementation of mechanistic modeling approaches requires specialized computational tools and biological reagents. The following table catalogs essential resources referenced in the experimental studies analyzed.

Table 4: Essential Research Reagents and Platforms for Mechanistic Modeling

| Resource | Type | Function/Application | Key Features |
|---|---|---|---|
| CETSA | Experimental Assay | Quantitative measurement of drug-target engagement in intact cells | Confirms dose- and temperature-dependent stabilization; validates mechanistic predictions [41] |
| AutoDock | Software Tool | Molecular docking simulations for binding pose prediction | Open-source platform for structure-based virtual screening [41] |
| SwissADME | Web Tool | Prediction of absorption, distribution, metabolism, excretion properties | Filters for drug-likeness before synthesis and in vitro screening [41] |
| ChEMBL | Database | Curated bioactive molecules with drug-target interactions | 15,598 targets, 2.4M compounds, 20.8M interactions; confidence scoring [35] |
| scHopfield | Computational Framework | Inference of gene regulatory networks from single-cell data | Integrates Hopfield network dynamics with RNA velocity models [36] |
| PBPK Modeling | Modeling Framework | Physiologically-based pharmacokinetic prediction | Multi-compartment modeling of drug biodistribution; species scaling [37] |
| MolTarPred | Target Prediction | Ligand-centric target identification | 2D similarity searching with Morgan fingerprints; top performance in benchmarks [35] |

The comparative analysis of mechanistic and phenomenological approaches across target identification, pathway analysis, and virtual screening reveals distinct and complementary strengths. Mechanistic models provide superior explanatory power, biological interpretability, and reliability when extrapolating beyond training data—particularly valuable for understanding complex biological systems, predicting clinical outcomes, and de-risking development decisions. Phenomenological AI approaches offer unprecedented speed in exploring chemical space and identifying patterns in high-dimensional data, dramatically compressing early discovery timelines.

The most successful drug discovery pipelines strategically integrate both approaches, using phenomenological methods for rapid exploration and hypothesis generation, while employing mechanistic models for validation, prioritization, and understanding translational implications. This hybrid paradigm represents the future of computational drug discovery, leveraging the scalability of AI with the biological fidelity of mechanistic modeling to address the formidable challenges of modern therapeutic development.

As regulatory agencies including the FDA and EMA develop formal guidelines for model-informed drug development, the emphasis on uncertainty quantification, model credibility, and biological plausibility will likely further increase the value of mechanistic approaches in regulatory decision-making [34]. Researchers should therefore prioritize building integrated capabilities, recognizing that the combination of mechanistic understanding and AI-driven efficiency represents the most promising path toward reducing attrition rates and delivering innovative therapeutics to patients.

Bridging Scales with Semi-Mechanistic PK/PD and Quantitative Systems Pharmacology (QSP)

In modern drug development, mathematical models are indispensable for interpreting complex biological data and making predictive decisions. The modeling spectrum spans from largely phenomenological models, which describe empirical relationships between observations, to highly mechanistic models, which seek to represent the underlying biological processes governing system behavior. Semi-mechanistic PK/PD and Quantitative Systems Pharmacology (QSP) represent two powerful, yet philosophically distinct, approaches along this spectrum. Semi-mechanistic PK/PD models traditionally focus on characterizing the exposure-response relationship using well-established structural components that approximate key biological processes. In contrast, QSP adopts an integrative framework that incorporates diverse data modalities to capture complex interactions between pharmacology, physiology, and disease pathophysiology across multiple biological scales [42]. This comparative analysis examines the technical specifications, applications, and implementation requirements of these approaches to guide researchers in selecting appropriate strategies for their drug development challenges.

Technical Comparison: Core Principles and Structural Frameworks

Fundamental Characteristics and Distinguishing Features

The table below summarizes the defining characteristics of semi-mechanistic PK/PD and QSP modeling approaches:

Table 1: Fundamental characteristics of semi-mechanistic PK/PD and QSP modeling approaches

| Characteristic | Semi-Mechanistic PK/PD | Quantitative Systems Pharmacology (QSP) |

|---|---|---|

| Primary Focus | Exposure-response relationships [43] | Integrated drug-body system analysis [44] |