Long-Term Individual-Based Data: A Foundational Framework for Effective Conservation Management


Abstract

This article synthesizes current knowledge and methodologies for leveraging long-term individual-based data in conservation science. It explores the foundational value of longitudinal datasets for understanding ecological and evolutionary processes, showcases advanced methodological applications like Individual-Based Models (IBMs) and genetic monitoring, and addresses critical challenges in data management and funding. By examining real-world case studies and validation techniques, it provides a comprehensive resource for researchers and conservation managers aiming to design, implement, and optimize long-term monitoring programs to ensure species persistence in a changing world.

The Critical Role of Long-Term Individual Data in Understanding Ecological Change

Why Individual-Based Longitudinal Studies Are Irreplaceable

In conservation management research, understanding temporal dynamics is paramount. Individual-based longitudinal studies, which track the same entities over extended periods, provide an unparalleled window into the processes of ecological change, population dynamics, and the long-term impacts of conservation interventions [1] [2]. Unlike cross-sectional studies, which offer a single snapshot in time, these studies allow researchers to observe directly how individuals, populations, or environmental attitudes evolve, adapt, or decline [3]. Their defining strength is the capacity to document intraindividual change, the shifts and developments within a single subject over time. This makes them irreplaceable for distinguishing short-term fluctuations from genuine long-term trends, strengthening inferences about cause and effect in natural systems, and forecasting future ecological states [1] [2] [4]. For instance, a 15-year longitudinal analysis of public support for nature conservation in the Netherlands can track attitudinal shifts within the same society, providing robust data to guide policy and communication strategies [5]. The fidelity of these data is crucial for developing effective, evidence-based conservation strategies that respond to both ecological and social dynamics.

Core Characteristics and Comparative Advantages

Defining the Longitudinal Approach

A longitudinal study is a type of correlational research that involves repeatedly observing and collecting data on the same variables or individuals over an extended period—ranging from weeks to decades—without attempting to influence those variables [2] [3]. The fundamental principle is the focus on intraindividual change, which involves examining changes at the individual level over time, be it long-term trends or short-term fluctuations [2]. This design is inherently dynamic and observational, capturing the flow of time as a key variable in the research [1].

Advantages Over Cross-Sectional Studies

Longitudinal studies offer several critical advantages that make them particularly suited for conservation research, where understanding processes is as important as documenting states.

- Establishes Temporal Sequences and Causality: By following the sequence of events, researchers can better identify cause-and-effect relationships, such as how a specific policy change influences biodiversity metrics in subsequent years [1] [3].

- Tracks Intraindividual Change: Because each subject serves as its own control, they reduce the confounding that interindividual differences introduce into cross-sectional comparisons [2] [3]. This is vital for measuring growth, decline, or behavioral adaptation in individual animals or plants.

- Reduces Recall Bias: Prospective longitudinal studies, where data is collected in real-time, avoid the inaccuracies inherent in recalling past events, leading to higher data validity [1] [3].

- Reveals Complex Patterns and Heterogeneity: Longitudinal data can uncover diverse developmental trajectories within a population, showing that not all individuals respond to environmental pressures in the same way [2]. This helps in identifying resilient or vulnerable sub-populations.

- High Validity: Objectives and rules established before data collection begins help safeguard the authenticity and validity of the findings [2].
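The "own control" advantage listed above can be illustrated with a small simulation. This is a minimal sketch with hypothetical values: individuals differ widely in baseline body mass, all gain the same true amount between two waves, and each measurement carries small noise. Estimating change from within-individual differences cancels the baselines, whereas treating the two waves as independent samples, as a cross-sectional comparison effectively does, leaves between-individual variation in the error term.

```python
import random
import statistics


def simulate_two_waves(n_individuals=200, true_change=0.5, between_sd=5.0, noise_sd=0.5, seed=1):
    """Simulate two measurement waves on the same individuals (hypothetical values).

    Each individual has its own baseline (large between-individual spread), a
    common true change, and small measurement noise at each wave.
    """
    rng = random.Random(seed)
    wave1, wave2 = [], []
    for _ in range(n_individuals):
        baseline = rng.gauss(20.0, between_sd)
        wave1.append(baseline + rng.gauss(0.0, noise_sd))
        wave2.append(baseline + true_change + rng.gauss(0.0, noise_sd))
    return wave1, wave2


wave1, wave2 = simulate_two_waves()
diffs = [b - a for a, b in zip(wave1, wave2)]

# Within-individual change: baselines cancel, leaving only measurement noise.
paired_se = statistics.stdev(diffs) / len(diffs) ** 0.5
# Ignoring the pairing: baseline variation stays in the error term.
unpaired_se = (statistics.variance(wave1) / len(wave1)
               + statistics.variance(wave2) / len(wave2)) ** 0.5

print(f"mean change = {statistics.mean(diffs):.2f}")
print(f"SE, within-individual (paired) = {paired_se:.3f}")
print(f"SE, waves treated as independent samples = {unpaired_se:.3f}")
```

With these settings the paired standard error is roughly an order of magnitude smaller, because the large between-individual spread no longer enters the estimate of change.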

Table 1: Longitudinal vs. Cross-Sectional Study Designs

| Feature | Longitudinal Study | Cross-Sectional Study |
|---|---|---|
| Time Dimension | Repeated observations over an extended period | Observations at a single point in time |
| Participants | Observes the same group multiple times | Observes different groups (a "cross-section") |
| Primary Strength | Follows changes in participants over time | Provides a snapshot of a population at a given point |
| Inference | Better for establishing sequence and causality | Limited to identifying associations |
| Cost & Duration | Typically more expensive and time-consuming [3] | Generally quicker and less costly to conduct [1] |

Key Methodological Approaches and Protocols

Types of Longitudinal Study Designs

Selecting the appropriate design is a critical first step in crafting a robust longitudinal study. The choice depends on the research question, available resources, and the time scale of the phenomenon under investigation.

- Panel Study: The same set of participants is measured repeatedly over time on the same variables [2]. This is the purest form of longitudinal research, ideal for tracking the life histories of individually tagged animals or the year-on-year health of specific forest plots.

- Cohort Study: A group of people (or organisms) sharing a common experience or demographic trait within a defined period is sampled and followed [2]. Unlike panel studies, it does not necessarily require the same individuals to be assessed each time, but rather representatives from the cohort. This is useful for studying the long-term survival of a cohort of seedlings or the fate of animals born in a particular season.

- Retrospective Study: Researchers collect data on events that have already occurred, using existing data from sources like satellite imagery, museum records, or historical archives [2]. While cost-effective, this approach is prone to biases from incomplete historical records [3].

Protocol for Implementing an Individual-Based Longitudinal Study

The following protocol provides a structured framework for initiating and maintaining a longitudinal study in a conservation context.

Phase 1: Pre-Study Planning and Design

- Define Clear Objectives and Variables: Articulate the core research questions and identify the key variables to be measured. Frame these within the five key objectives for longitudinal data: identifying (1) intraindividual change, (2) interindividual differences in change, (3) interrelationships in change, (4) causes of intraindividual change, and (5) causes of interindividual differences in change [2].

- Select Study Design: Choose between panel, cohort, or retrospective designs based on objectives and resources. Among these, a prospective panel design generally provides the strongest basis for causal inference [2].

- Standardize Methods and Metrics: Establish rigorous, standardized protocols for data collection and recording that are identical across all study sites and consistent over time [1]. This includes defining the frequency of data collection waves.

- Pilot Testing: Conduct a small-scale pilot to refine data collection instruments and logistical plans.

Phase 2: Sampling and Baseline Data Collection

- Recruit and Select Sample: Identify and recruit the study subjects (e.g., individual animals, plots of land, human communities). For panel studies, this is the core group to be followed. Anticipate attrition by recruiting a sufficiently large sample size [2] [4].

- Collect Baseline Data: Gather comprehensive initial data on all relevant variables for all subjects at the start of the study (Time T1).

- Implement Tracking Systems: Establish reliable systems for tracking individuals over time, which may include tagging, GPS collars, or secure contact information databases for human panels [1].

Phase 3: Ongoing Data Collection and Monitoring

- Execute Repeated Measurements: Collect data from the same subjects at predetermined intervals (T2, T3...Tn) using the standardized methods [2].

- Monitor Data Quality and Protocol Adherence: Continuously check data for errors and ensure all team members adhere to the study protocols. Regular training and communication are essential [1].

- Implement Retention Strategies: To combat attrition, employ strategies such as maintaining updated contact information, providing incentives, and keeping participants engaged with the study's goals [1] [2].

- Utilize Monitoring Dashboards: For complex studies, employ interactive visualization dashboards (e.g., built on platforms like R Shiny) to monitor data collection progress, key quality indicators, and interim results in near-real-time [6].
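Retention is one of the simplest quality indicators such a dashboard can track. The following is a minimal sketch, assuming a hypothetical table of (individual_id, wave) records. For each wave, it reports the share of the baseline panel that was actually measured, which makes attrition visible as soon as it starts.

```python
from collections import defaultdict


def retention_by_wave(records, baseline_wave=1):
    """Return {wave: fraction of baseline individuals observed in that wave}.

    `records` is an iterable of (individual_id, wave) pairs (hypothetical schema).
    """
    seen = defaultdict(set)
    for individual_id, wave in records:
        seen[wave].add(individual_id)
    baseline = seen[baseline_wave]
    if not baseline:
        raise ValueError("no individuals recorded at the baseline wave")
    # Only individuals enrolled at baseline count toward retention.
    return {wave: len(ids & baseline) / len(baseline) for wave, ids in sorted(seen.items())}


records = [
    ("A01", 1), ("A02", 1), ("A03", 1), ("A04", 1),
    ("A01", 2), ("A02", 2), ("A03", 2),
    ("A01", 3), ("A03", 3),
]
for wave, share in retention_by_wave(records).items():
    print(f"wave T{wave}: {share:.0%} of baseline panel retained")
```

In this toy panel retention falls from 100% to 75% to 50%. A dashboard would plot the same series per site or cohort so that retention strategies can be targeted.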

Phase 4: Data Management and Analysis

- Manage and Curate Data: Maintain a secure, well-organized database. Use unique coding systems to link all data pertaining to specific individuals [1].

- Employ Appropriate Statistical Models: Use analytical techniques designed for longitudinal data, such as mixed-effect regression models (MRM) or generalised estimating equations (GEE), which account for linked data points and missing values [1]. Avoid the common error of running repeated cross-sectional tests (for example, a separate comparison at each wave), which ignores the correlation between repeated measurements of the same individual.

- Handle Missing Data: Develop a strategy for dealing with attrition and missing data, using modern techniques like maximum likelihood estimation or multiple imputation rather than simple deletion [2].
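Linking all data through unique individual codes maps naturally onto a relational schema. The following is a minimal sketch using Python's built-in `sqlite3` module, with hypothetical table names, column names, and records. Individuals are keyed by a unique ID, and sightings and health assessments reference that ID through foreign keys. A single join can then reconstruct each individual's record across seasons.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # reject records for unknown individuals
conn.executescript("""
CREATE TABLE individual (
    individual_id TEXT PRIMARY KEY,  -- e.g. a ring or tag code
    species TEXT NOT NULL,
    sex TEXT
);
CREATE TABLE sighting (
    sighting_id INTEGER PRIMARY KEY,
    individual_id TEXT NOT NULL REFERENCES individual(individual_id),
    season INTEGER NOT NULL,
    site TEXT NOT NULL
);
CREATE TABLE health_assessment (
    assessment_id INTEGER PRIMARY KEY,
    individual_id TEXT NOT NULL REFERENCES individual(individual_id),
    season INTEGER NOT NULL,
    body_condition REAL
);
""")
conn.executemany("INSERT INTO individual VALUES (?, ?, ?)",
                 [("R-001", "Species A", "F"), ("R-002", "Species A", "M")])
conn.executemany("INSERT INTO sighting (individual_id, season, site) VALUES (?, ?, ?)",
                 [("R-001", 2021, "S1"), ("R-001", 2022, "S2"), ("R-002", 2022, "S1")])
conn.executemany("INSERT INTO health_assessment (individual_id, season, body_condition) VALUES (?, ?, ?)",
                 [("R-001", 2022, 0.82), ("R-002", 2022, 0.67)])

# Attach to each sighting the health assessment from the same individual and season, if any.
rows = conn.execute("""
    SELECT s.individual_id, s.season, s.site, h.body_condition
    FROM sighting AS s
    LEFT JOIN health_assessment AS h
      ON h.individual_id = s.individual_id AND h.season = s.season
    ORDER BY s.individual_id, s.season
""").fetchall()
for row in rows:
    print(row)
```

The `LEFT JOIN` keeps sightings that have no matching assessment (shown as `None`), so gaps in one data stream do not silently drop records from another.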

Analytical Framework and Data Visualization

Essential Statistical Considerations

The analysis of longitudinal data requires specialized techniques that account for its inherent structure.

- Model Selection: Choose models like Mixed-Effect Regression Models (MRM) that focus on individual change over time while accounting for variation in the timing of measures and missing data. Generalized Estimating Equation (GEE) models are another option that focuses on population-average effects [1].

- Handling Missing Data: Attrition is a major challenge. Techniques like maximum likelihood estimation and multiple imputation are superior to older methods like listwise deletion for reducing bias [2].

- Testing Measurement Invariance: When measuring constructs like "conservation attitude," researchers must evaluate whether the same construct is being measured in a consistent, comparable way across all time points [2].

- Accelerated Longitudinal Designs: To cover a long developmental period more efficiently, researchers can sample different age cohorts over overlapping time periods (e.g., assessing 6th, 7th, and 8th graders yearly over 3 years). This requires appropriate multilevel models to analyze the complex data structure [2].
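The accelerated design in the last item can be laid out explicitly. This sketch uses the example from the text: cohorts starting in grades 6, 7, and 8, each assessed yearly for three years. The function names are illustrative. The output shows that three years of data collection span grades 6 to 10, and that adjacent cohorts overlap at shared grades. That overlap is what allows a multilevel model to join the cohort segments into a single developmental trajectory.

```python
from collections import defaultdict


def accelerated_schedule(start_levels, n_waves):
    """Map each wave to the developmental level (grade, age class) reached by each cohort.

    Cohort `start` begins at level `start` and advances one level per wave.
    """
    return {wave: {start: start + wave for start in start_levels} for wave in range(n_waves)}


def coverage(schedule):
    """Count cohort-wave observations at each developmental level."""
    counts = defaultdict(int)
    for levels in schedule.values():
        for level in levels.values():
            counts[level] += 1
    return dict(sorted(counts.items()))


schedule = accelerated_schedule(start_levels=[6, 7, 8], n_waves=3)
for wave, levels in schedule.items():
    print(f"year {wave + 1}: " + ", ".join(f"cohort {s} -> grade {g}" for s, g in levels.items()))
print(coverage(schedule))  # {6: 1, 7: 2, 8: 3, 9: 2, 10: 1}
```

For wildlife studies the same layout applies to age classes of individuals first marked at different ages.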

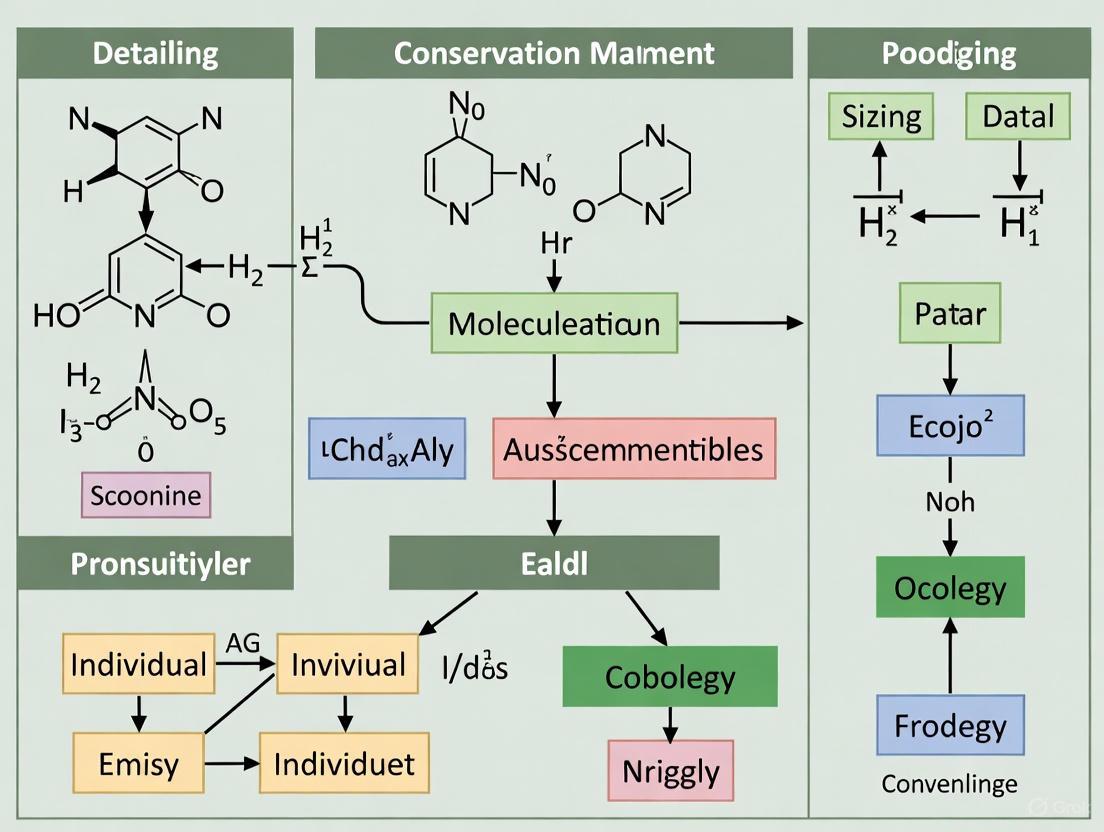

Visualizing Longitudinal Data and Workflows

Effective visualization is key to interpreting the complex data generated by longitudinal studies. The diagram above illustrates a core analytical concept, and the principles below guide the creation of clear, accessible charts.

Best Practices for Data Visualization Color Selection

When creating charts and graphs from longitudinal data, strategic use of color improves communication.

- For Categorical Data (Qualitative Palettes): Use distinct hues for unrelated categories (e.g., different species or sites). Limit the palette to ten or fewer easily distinguishable colors to avoid confusion [7] [8].

- For Ordered/Continuous Data (Sequential Palettes): Use a single color in varying lightness, with darker colors representing higher values. This intuitively shows a progression [9] [7].

- For Highlighting: Use a bright, saturated color for the most important information and mute less important elements with grey [8]. This directs the viewer's attention effectively.

- Accessibility: Ensure sufficient contrast and avoid color combinations that are difficult for color-blind users to distinguish (like red-green). Use tools to simulate color vision deficiencies [7] [8].
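Two of these guidelines can be checked programmatically. The following is a minimal sketch using only the standard library. `sequential_palette` builds a single-hue palette whose lightness decreases with value, so darker colors represent higher values. `contrast_ratio` implements the WCAG 2.x relative-luminance contrast formula. The hue, saturation, and lightness range are illustrative choices, not recommendations from the cited sources. Simulating color-vision deficiencies still requires dedicated tools.

```python
import colorsys


def sequential_palette(n, hue=0.58, saturation=0.6, light=0.9, dark=0.3):
    """Return n hex colors of one hue, from light (low values) to dark (high values)."""
    colors = []
    for i in range(n):
        lightness = light + (dark - light) * i / max(n - 1, 1)
        r, g, b = colorsys.hls_to_rgb(hue, lightness, saturation)
        colors.append("#{:02x}{:02x}{:02x}".format(round(r * 255), round(g * 255), round(b * 255)))
    return colors


def relative_luminance(hex_color):
    """WCAG 2.x relative luminance of an sRGB color given as '#rrggbb'."""
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (1, 3, 5)]
    r, g, b = [c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4 for c in channels]
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg, bg):
    """WCAG contrast ratio, from 1:1 (identical colors) to 21:1 (black on white)."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


palette = sequential_palette(5)
for color in palette:
    print(color, f"contrast vs white = {contrast_ratio(color, '#ffffff'):.2f}")
```

The lightest palette steps typically have low contrast against a white background. This is acceptable for filled areas, but such colors should not be used for thin lines or text labels.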

The Scientist's Toolkit: Research Reagent Solutions

Successful longitudinal research relies on a suite of "reagents"—both physical and conceptual tools—that enable the consistent collection, management, and analysis of data over time.

Table 2: Essential Research Reagents for Longitudinal Studies

| Tool/Reagent | Category | Primary Function | Application Example in Conservation |
|---|---|---|---|
| Unique Identifiers (Tags, Bands, GPS Collars) | Field Material | To reliably track and re-identify individual organisms over time. | Marking individual birds with leg bands to monitor migration and survival. |
| Standardized Data Collection Protocols | Methodological Framework | To ensure consistency and comparability of measurements across all time points and researchers. | Using the exact same method and equipment to measure tree diameter at breast height (DBH) every five years. |
| Relational Database (e.g., SQL-based) | Data Management | To store, link, and manage large volumes of time-series data efficiently while preserving individual data trails. | Linking individual animal sighting records to health assessment data across multiple field seasons. |
| Mixed-Effect Statistical Models (e.g., in R, Stata) | Analytical Tool | To analyze hierarchical longitudinal data, accounting for both fixed effects and random individual variation. | Modeling the growth rate of individual fish as a function of water temperature and age. |
| Interactive Monitoring Dashboard (e.g., R Shiny) | Visualization & Monitoring | To provide near-real-time visualization of data collection progress, key indicators, and interim results. | The Adaptive Total Design (ATD) Dashboard used in the National Longitudinal Study of Adolescent to Adult Health (Add Health) [6]. |
| Participant/Stakeholder Engagement Strategy | Methodological Framework | To maintain contact, ensure buy-in, and reduce attrition rates among human subjects or community partners. | Regular newsletters and community meetings for a longitudinal study on human-wildlife conflict perceptions. |

Individual-based longitudinal studies are not merely a methodological choice but a fundamental necessity for advancing evidence-based conservation management. Their unique capacity to document the dynamics of change within individuals and populations over time provides insights that are difficult or impossible to obtain through other research designs. Despite their demands in terms of time, cost, and logistical complexity, the causal inferences they support, the detailed understanding of developmental trajectories they provide, and the forecasting capabilities they afford make them an indispensable component of the conservation scientist's toolkit. As environmental pressures mount, the long-term, individual-centered perspective offered by these studies will be critical for developing effective strategies to conserve and protect our natural world.

Documenting Population Trends and Evolutionary Processes

Global Population Statistics 2025

The following tables summarize key quantitative data on global population statistics and growth rates for 2025, providing a foundational dataset for evolutionary and conservation research [10].

Core Global Population Metrics

Table 1: Key global population metrics and changes observed in 2025.

| Global Population Metrics | Value | Change & Context |
|---|---|---|
| Total World Population | 8.25 billion | Milestone reached in 2025 |
| Annual Population Change | +69 million | Increase over the past 12 months |
| Current Annual Growth Rate | 0.8% | Lowest rate in recent decades |
| Peak Historical Growth Rate | 2.3% | Occurred during the 1960s baby boom |
| People Added Per Second | 2.2 individuals | Continuous growth metric |
| Growth Since 1990s | +54% | Increase of 2.89 billion people |
| Territories with Growing Populations | 175 | Majority of world territories |
| Territories with Declining Populations | 66 | Growing number of regions |
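Several rows of this table are linked by simple arithmetic, which allows a quick internal-consistency check. The following calculation uses only the table's own figures: 8.25 billion people, +69 million over the past year, and +2.89 billion since the 1990s. It reproduces the reported growth rate, per-second increase, and percentage growth. A 365-day year is assumed, and growth rates are computed relative to the earlier population.

```python
population_2025 = 8.25e9
annual_change = 69e6
growth_since_1990s = 2.89e9
seconds_per_year = 365 * 24 * 60 * 60  # 31,536,000 s (365-day year assumed)

growth_rate = annual_change / (population_2025 - annual_change)
per_second = annual_change / seconds_per_year
pct_since_1990s = growth_since_1990s / (population_2025 - growth_since_1990s)

print(f"annual growth rate ≈ {growth_rate:.2%}")      # 0.84%, reported as 0.8%
print(f"people added per second ≈ {per_second:.1f}")  # 2.2
print(f"growth since 1990s ≈ {pct_since_1990s:.0%}")  # 54%
```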

Regional Growth Extremes

Table 2: Fastest growing and declining countries and territories based on 2025 data.

| Category | Country/Territory | Annual Change | Demographic Context |
|---|---|---|---|
| Fastest Growing | Tokelau | +3.9% | Small Pacific territory (~2,600 people) |
| 2nd Fastest Growing | Oman | +3.81% | Major Gulf nation, labor migration |
| 3rd Fastest Growing | Syria | +3.71% | Post-conflict recovery dynamics |
| Largest Absolute Growth | India | +12.9 million | Equivalent to adding Bolivia's population annually |
| Fastest Declining | Saint Martin (French) | -4.4% | Caribbean territory |
| 2nd Fastest Declining | Marshall Islands | -3.4% | Pacific island nation |
| Largest Absolute Decline | China | -3.25 million | First sustained decline in modern history |

Top 10 Most Populous Nations

Table 3: Population distribution across the ten most populous countries in 2025.

| Rank | Country | Population | Global Share | Regional Context |
|---|---|---|---|---|
| 1 | India | 1.47 billion | 17.79% | Most populous nation |
| 2 | China | 1.42 billion | 17.16% | Second most populous |
| 3 | United States | 347.8 million | 4.22% | Americas leader |
| 4 | Indonesia | 286.3 million | 3.47% | Southeast Asia giant |
| 5 | Pakistan | 256.2 million | 3.11% | South Asian power |
| 6 | Nigeria | 238.7 million | 2.89% | Africa's most populous |
| 7 | Brazil | 213.0 million | 2.58% | South America leader |
| 8 | Bangladesh | 176.2 million | 2.14% | High density nation |
| 9 | Russia | 143.8 million | 1.74% | Largest by area |
| 10 | Ethiopia | 136.3 million | 1.65% | East Africa giant |
| Combined Top 10 | | 4.68 billion | 56.7% | Over half of humanity |

Experimental Protocol: Spatially Explicit Individual-Based Models for Conservation

Application: Prioritizing conservation strategies for threatened steppe birds using the little bustard (Tetrax tetrax) as a model species [11].

Research Context: Western populations of the little bustard have experienced sharp declines due to habitat degradation, skewed sex ratios, and high anthropogenic mortality [11].

Protocol Goal: To develop a spatially explicit demographic Individual-Based Model (IBM) that forecasts habitat use and population dynamics under different management scenarios over a 50-year period (2022–2072) [11].

Materials and Reagents

Table 4: Essential research reagents and computational solutions for IBM construction and analysis.

| Research Reagent / Solution | Function / Application |
|---|---|
| High-Resolution Habitat Suitability Data | Provides environmental context and survival probability parameters for the model. Nest, chick, and adult survival positively correlate with habitat suitability [11]. |
| Demographic Parameters (Field-Collected) | Includes species-specific data on fecundity, mortality, sex ratios, and dispersal behavior for model calibration [11]. |
| Spatially Explicit Landscape Data | Digital maps of the study region (e.g., Extremadura, Spain) incorporating habitat types, human infrastructure, and protected areas [11]. |
| Anthropogenic Mortality Data | Quantifies threats from human activities such as collisions, hunting, or agricultural practices to model impact and mitigation strategies [11]. |
| IBM Software Platform | Computational framework for building, running, and analyzing individual-based models (e.g., NetLogo, R with individual-based modeling packages). |

Step-by-Step Methodology

Model Parameterization

- Calibrate the model by establishing statistical relationships between habitat suitability and key demographic rates (nest survival, chick survival, adult survival) [11].

- Integrate hypothesis testing to validate model assumptions, such as investigating drivers of skewed sex ratios (e.g., low female survival in less favourable habitats) [11].

Scenario Simulation

- Simulate a baseline scenario projecting current population trends without intervention over 50 years.

- Simulate conservation scenarios:

  - Habitat Improvement: Model population response to enhanced habitat quality and connectivity.

  - Mortality Mitigation: Model population response to reduced anthropogenic mortality.

  - Integrated Strategy: Model the combined effect of habitat improvement and mortality mitigation [11].
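The scenario step can be prototyped with a deliberately small individual-based model before committing to a full spatially explicit one. The sketch below is hypothetical throughout. It is not the published little bustard model, and it is not spatially explicit: each individual carries only a habitat-suitability score, not a map location. Every parameter value is invented for illustration. Annual survival follows a logistic function of suitability, echoing the parameterization step above, and the four scenarios differ only in a suitability boost and in the anthropogenic mortality rate.

```python
import math
import random
import statistics

# All parameter values are hypothetical, chosen only for illustration;
# they are not estimates for the little bustard or any real population.
SURVIVAL_INTERCEPT = 0.5   # logit-scale annual survival at habitat suitability 0
SURVIVAL_SLOPE = 2.0       # change in logit survival per unit of suitability
RECRUITMENT_PROB = 0.6     # probability that a surviving adult adds one recruit
CARRYING_CAPACITY = 400    # ceiling on population size

# Scenario name -> (increase in habitat suitability, annual anthropogenic mortality)
SCENARIOS = {
    "baseline": (0.0, 0.25),
    "habitat improvement": (0.3, 0.25),
    "mortality mitigation": (0.0, 0.10),
    "integrated": (0.3, 0.10),
}


def natural_survival(suitability):
    """Annual survival as a logistic function of the individual's habitat suitability."""
    return 1.0 / (1.0 + math.exp(-(SURVIVAL_INTERCEPT + SURVIVAL_SLOPE * suitability)))


def settle(rng, boost):
    """Suitability of a settlement site: Uniform(0, 0.6) plus any boost, capped at 1."""
    return min(1.0, rng.uniform(0.0, 0.6) + boost)


def run_scenario(boost, anthropogenic_mortality, n0=100, years=50, seed=0):
    """Simulate one replicate; return population size at the start and after each year."""
    rng = random.Random(seed)
    population = [settle(rng, boost) for _ in range(n0)]
    sizes = [len(population)]
    for _ in range(years):
        # Each individual must escape both natural and anthropogenic mortality.
        survivors = [
            s for s in population
            if rng.random() < natural_survival(s) * (1.0 - anthropogenic_mortality)
        ]
        # Each survivor may add one recruit; recruitment is truncated at carrying capacity.
        recruits = sum(1 for _ in survivors if rng.random() < RECRUITMENT_PROB)
        recruits = min(recruits, CARRYING_CAPACITY - len(survivors))
        population = survivors + [settle(rng, boost) for _ in range(recruits)]
        sizes.append(len(population))
    return sizes


def compare_scenarios(n_reps=5, years=50):
    """Mean final population size and share of replicates persisting, per scenario."""
    results = {}
    for name, (boost, mortality) in SCENARIOS.items():
        finals = [run_scenario(boost, mortality, years=years, seed=rep)[-1] for rep in range(n_reps)]
        results[name] = {
            "mean_final_size": statistics.mean(finals),
            "persistence": sum(f > 0 for f in finals) / n_reps,
        }
    return results


for name, res in compare_scenarios().items():
    print(f"{name:>20}: mean N after 50 years = {res['mean_final_size']:.1f}, "
          f"P(persist) = {res['persistence']:.1f}")
```

Reusing the same seeds across scenarios keeps runs reproducible. A real application would run many more replicates, add space, sex structure, and dispersal, and compare the simulated trajectories against field data, as the validation step requires.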

Model Validation and Analysis

- Compare simulated population trajectories against independent field data to validate model accuracy.

- Analyze the effectiveness of each conservation scenario by comparing final population sizes, growth rates, and probability of population persistence against the baseline scenario.

- Conduct sensitivity analysis to identify which parameters most strongly influence model outcomes.
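A one-at-a-time (local) sensitivity analysis perturbs each parameter in turn and records the resulting change in an output. The following is a minimal sketch, assuming a hypothetical deterministic toy model. In this model the annual growth rate (lambda) equals mean survival, averaged over a uniform habitat-suitability distribution and reduced by anthropogenic mortality, multiplied by one plus a recruitment rate. All parameter names and values are illustrative. For full IBMs, global methods such as Latin hypercube sampling with partial rank correlations are often preferred because parameters interact. The one-at-a-time version still shows the underlying logic.

```python
import math

# Hypothetical baseline parameters (illustrative only).
BASELINE = {
    "survival_intercept": 0.5,
    "survival_slope": 2.0,
    "anthropogenic_mortality": 0.25,
    "recruitment": 0.6,
    "max_suitability": 0.6,
}


def growth_rate(p, n_grid=200):
    """Deterministic annual growth rate (lambda) of a toy population.

    Survival is averaged over suitability ~ Uniform(0, max_suitability) with the
    midpoint rule; lambda = mean survival x (1 - anthropogenic mortality) x (1 + recruitment).
    """
    total = 0.0
    for i in range(n_grid):
        s = (i + 0.5) / n_grid * p["max_suitability"]
        logit = p["survival_intercept"] + p["survival_slope"] * s
        total += 1.0 / (1.0 + math.exp(-logit))
    mean_survival = total / n_grid * (1.0 - p["anthropogenic_mortality"])
    return mean_survival * (1.0 + p["recruitment"])


def one_at_a_time(params, delta=0.10):
    """Relative change in lambda when each parameter alone is increased by `delta`."""
    base = growth_rate(params)
    effects = {}
    for name, value in params.items():
        perturbed = dict(params, **{name: value * (1.0 + delta)})
        effects[name] = (growth_rate(perturbed) - base) / base
    return base, effects


base_lambda, effects = one_at_a_time(BASELINE)
print(f"baseline lambda = {base_lambda:.3f}")
for name, effect in sorted(effects.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name:>24}: {effect:+.2%} change in lambda for a +10% change")
```

Ranking parameters by the absolute size of their effect indicates which field estimates most deserve extra sampling effort under these assumptions.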

Protocol Findings and Interpretation

- Key Finding: Habitat enhancements alone are insufficient to reverse population declines without complementary efforts to reduce anthropogenic mortality [11].

- Interpretation: This emphasizes the need for an integrated, long-term conservation strategy that combines habitat management with proactive measures to mitigate human-induced mortality [11].

- Broader Application: This protocol highlights the value of IBMs as high-resolution, spatially explicit decision-support tools for conservation planning of other endangered species [11].

Visualization of Research Workflows

Figure: Individual-Based Model Conservation Workflow

Figure: Data Integration and Analysis Pathway

The Alarming Trend of Terminated Long-Term Studies and Data Gaps

Application Note: Quantifying the Impact of Research Disruptions

Long-term individual-based studies are fundamental to conservation management research, providing critical data on population dynamics, species responses to environmental change, and the effectiveness of intervention strategies. Recent funding disruptions have created significant data gaps that threaten the continuity and validity of this essential research. This application note analyzes the current trend of study terminations and provides evidence-based protocols for mitigating their impact on conservation science.

Quantitative Analysis of Study Terminations

Data from recent biomedical research disruptions provide a concerning proxy for understanding potential impacts on ecological studies. Analysis of terminated National Institutes of Health (NIH) grants reveals the scale and disproportionate effects of such funding cuts.

Table 1: Impact of Recent Research Grant Terminations on Clinical Trials [12] [13]

| Metric | Value | Implications |
|---|---|---|
| Total Trials Analyzed | 11,008 | Baseline of active research projects |
| Trials with Terminated Grants | 383 (3.5%) | Significant portion of research disrupted |
| Affected Participants | >74,000 | Direct impact on data continuity and ethical commitments |
| International Trials Affected | 5.8% (vs 3.4% US) | Disproportionate impact on global research collaboration |

Table 2: Disproportionate Termination Effects by Research Category [12] [13]

| Research Category | Termination Rate | Analogous Conservation Research Areas |
|---|---|---|
| Infectious Diseases | 14.4% (97/675 trials) | Pathogen dynamics, host-pathogen interactions |
| Prevention Trials | 8.4% (123/1,460 trials) | Preventive interventions, proactive management |
| Behavioral Interventions | 5.0% (177/3,510 trials) | Behavioral ecology, human-wildlife interactions |
| Geographic Distribution | Northeast US: 6.3% | Regional research programs disproportionately affected |
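The percentages in these two tables follow directly from the reported counts. The short calculation below recomputes them and expresses the infectious-disease and international rates relative to the overall and US rates. These ratios are derived here for illustration and are not figures reported by the cited analyses.

```python
counts = {
    "all trials": (383, 11_008),
    "infectious diseases": (97, 675),
    "prevention": (123, 1_460),
    "behavioral interventions": (177, 3_510),
}
rates = {name: terminated / total for name, (terminated, total) in counts.items()}
for name, rate in rates.items():
    print(f"{name:>24}: {rate:.1%}")

overall = rates["all trials"]
print(f"infectious-disease trials: {rates['infectious diseases'] / overall:.1f}x the overall rate")
print(f"international vs US trials: {0.058 / 0.034:.1f}x")
```

Under these counts, infectious-disease trials were terminated at roughly four times the overall rate, and international trials at about 1.7 times the US rate.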

Consequences for Long-Term Data Integrity

The termination of long-term studies creates compound effects that extend beyond the immediate loss of data collection. These disruptions threaten the viability of entire research trajectories essential for conservation management:

- Loss of Longitudinal Data Patterns: Longitudinal studies track the same individuals over prolonged periods to monitor changes and identify causal relationships [1]. When interrupted, they lose the ability to capture critical life history events, population turnover, and long-term environmental responses.

- Reduced Statistical Power: The value of long-term datasets increases with time series length. Premature termination creates truncated datasets with reduced power to detect subtle trends and signals amid ecological noise [14].

- Irrecoverable Data Gaps: Certain ecological phenomena occur over decades (e.g., generational shifts, climate responses). Gaps during critical periods create permanent voids in understanding these cycles [15].

- Erosion of Research Capacity: Skilled research teams disperse, institutional knowledge is lost, and hard-won community relationships for field access dissolve, making restarting research more difficult than initiating new studies [16].
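The point about reduced statistical power can be quantified with a small simulation. The following is a minimal sketch, assuming a hypothetical monitored index that changes by 0.1 units per year against year-to-year noise with a standard deviation of 1. For series of different lengths, it estimates how often an ordinary least-squares trend test detects that change. The effect size, noise level, number of simulations, and the use of a normal critical value are all arbitrary illustrative choices.

```python
import random
import statistics


def trend_detection_power(n_years, slope=0.1, noise_sd=1.0, n_sims=500, z_crit=1.96, seed=42):
    """Monte Carlo power to detect a linear trend in an annual series with OLS.

    A trend counts as detected when |slope estimate / SE| > z_crit. This normal
    approximation overstates power for very short series, where a t critical
    value would be larger.
    """
    if n_years < 3:
        raise ValueError("need at least 3 years to estimate a slope and its SE")
    rng = random.Random(seed)
    years = list(range(n_years))
    t_mean = statistics.mean(years)
    sxx = sum((t - t_mean) ** 2 for t in years)
    detections = 0
    for _ in range(n_sims):
        y = [slope * t + rng.gauss(0.0, noise_sd) for t in years]
        y_mean = statistics.mean(y)
        b = sum((t - t_mean) * (yi - y_mean) for t, yi in zip(years, y)) / sxx
        a = y_mean - b * t_mean
        rss = sum((yi - (a + b * t)) ** 2 for t, yi in zip(years, y))
        se = (rss / (n_years - 2) / sxx) ** 0.5
        if abs(b / se) > z_crit:
            detections += 1
    return detections / n_sims


for n in (5, 10, 20, 30):
    print(f"{n:>2} years of data: power ≈ {trend_detection_power(n):.2f}")
```

Under these assumptions, power remains low for short series and rises steeply only once the series spans a couple of decades. This is the sense in which premature termination limits a dataset's ability to detect slow trends.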

Experimental Protocols for Maintaining Data Continuity

Protocol 1: Rapid Data Curation and Archiving

Purpose

To preserve existing data from threatened long-term studies through systematic curation, ensuring future usability even if primary data collection is interrupted [15].

Materials and Equipment

- Data management system (e.g., SQL database, REDCap)

- Metadata standards template (e.g., Ecological Metadata Language)

- Secure backup infrastructure (cloud and physical storage)

- Data documentation software (e.g., Electronic Lab Notebook)

Procedure

Immediate Data Triage (Days 1-7):

- Inventory all collected data, including raw field measurements, processed datasets, and associated metadata

- Identify highest-priority datasets with greatest long-term value

- Secure original field notebooks, sensor data, and genetic samples

Comprehensive Data Curation (Weeks 2-8):

- Apply standardized metadata descriptors using established ecological schemas

- Resolve data ambiguities while original researchers remain available

- Cross-validate critical datasets through independent verification

- Format data for repository compliance (e.g., Dryad, GBIF)

Secure Archiving (Weeks 9-12):

- Deposit data in multiple trusted repositories (institutional, domain-specific, general)

- Document all curation procedures and data transformations

- Establish access controls and preservation metadata
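Depositing data in several repositories is only useful if each copy can be shown to match the original. A checksum ("fixity") manifest is a common way to document this. The protocol does not prescribe one, so the following is a minimal sketch using Python's standard `hashlib`. The folder layout and file names are hypothetical. The sketch records the size and SHA-256 digest of every file, then reports files that are missing or altered in a copy.

```python
import hashlib
import json
import shutil
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, reading it in 1 MiB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map every file under root (as a relative path) to its size in bytes and checksum."""
    root = Path(root)
    return {
        p.relative_to(root).as_posix(): {"bytes": p.stat().st_size, "sha256": sha256_of(p)}
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def verify_copy(manifest, copy_root):
    """List files that are missing from, or altered in, an archived copy."""
    copy_root = Path(copy_root)
    problems = []
    for rel_path, entry in manifest.items():
        candidate = copy_root / rel_path
        if not candidate.is_file():
            problems.append((rel_path, "missing"))
        elif sha256_of(candidate) != entry["sha256"]:
            problems.append((rel_path, "checksum mismatch"))
    return problems


# Usage with a throwaway directory standing in for a study's data folder.
with tempfile.TemporaryDirectory() as tmp:
    original = Path(tmp, "original")
    (original / "field_2021").mkdir(parents=True)
    (original / "field_2021" / "sightings.csv").write_text("id,date\nR-001,2021-05-02\n")
    (original / "curation_log.txt").write_text("2021-06-01 merged duplicate IDs\n")
    manifest = build_manifest(original)
    Path(tmp, "manifest.json").write_text(json.dumps(manifest, indent=2))

    backup = Path(tmp, "backup")
    shutil.copytree(original, backup)
    print(verify_copy(manifest, backup))  # [] when every file matches
```

Storing the manifest alongside each deposit lets curators re-verify copies years later, long after the original team has dispersed.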

Figure: The Curation-Fieldwork Continuum

Protocol 2: Implementing Cost-Effective Biodiversity Monitoring

Purpose

To maximize data quality and quantity through strategic investment in curation of existing biological collections before conducting new fieldwork [15].

Materials and Equipment

- Existing biological collections (herbarium specimens, tissue samples, camera trap archives)

- Digitization equipment (scanners, photographic setups)

- Georeferencing tools (GPS, historical map interfaces)

- Taxonomic validation resources (identification keys, expert networks)

Procedure

Collection Assessment Phase:

- Inventory existing specimens and associated data

- Evaluate taxonomic and geographic coverage

- Identify critical gaps in metadata (e.g., missing coordinates, collection dates)

Priority Curation Workflow:

Integrated Fieldwork Planning:

- Use curated collection data to identify precise geographic and taxonomic gaps

- Design targeted fieldwork to address specific deficiencies

- Implement standardized protocols for new collections to minimize future curation needs
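The assessment and fieldwork-planning steps above can be prototyped on a collection export. The following is a minimal sketch, assuming hypothetical specimen records and field names. `metadata_gaps` flags records that lack coordinates or collection dates, which are the curation targets. `coverage_gaps` lists taxon-region combinations that have no fully georeferenced, dated record, which are candidates for targeted fieldwork.

```python
from collections import Counter
from itertools import product

REQUIRED_FIELDS = ("lat", "lon", "date")

# Hypothetical specimen records; None marks missing metadata.
records = [
    {"catalog": "H-0001", "taxon": "Species A", "region": "North", "lat": 39.1, "lon": -6.2, "date": "1987-04-12"},
    {"catalog": "H-0002", "taxon": "Species A", "region": "South", "lat": None, "lon": None, "date": "1992-05-03"},
    {"catalog": "H-0003", "taxon": "Species B", "region": "North", "lat": 39.4, "lon": -6.0, "date": None},
]


def metadata_gaps(records, required=REQUIRED_FIELDS):
    """Return {catalog number: [missing fields]} for records that need curation."""
    gaps = {}
    for rec in records:
        missing = [field for field in required if rec.get(field) is None]
        if missing:
            gaps[rec["catalog"]] = missing
    return gaps


def coverage_gaps(records, taxa, regions, required=REQUIRED_FIELDS):
    """Return (taxon, region) pairs with no usable (fully georeferenced and dated) record."""
    usable = Counter(
        (rec["taxon"], rec["region"])
        for rec in records
        if all(rec.get(field) is not None for field in required)
    )
    return [pair for pair in product(taxa, regions) if usable[pair] == 0]


print(metadata_gaps(records))
print(coverage_gaps(records, taxa=["Species A", "Species B"], regions=["North", "South"]))
```

Running curation first can shrink the second list: if H-0003 can be dated from a field notebook, the Species B / North gap closes without any new fieldwork.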

Protocol 3: Longitudinal Data Preservation Framework

Purpose

To maintain the integrity of long-term individual-based studies through structured approaches that withstand funding interruptions [1] [14].

Materials and Equipment

- Individual identification system (marking tags, genetic fingerprints, photographic databases)

- Standardized monitoring protocols (survey forms, measurement standards)

- Data linkage infrastructure (relational databases, unique identifiers)

- Temporal recording systems (standardized date formats, phenological calendars)

Procedure

Core Data Protection:

- Secure individual life history records with unique identifiers

- Preserve temporal sequences with consistent interval documentation

- Maintain linkage between different data types for the same individuals

Attrition Mitigation Strategy:

- Document reasons for individual dropout (death, migration, detection failure)

- Implement multiple capture-recapture methods to maximize detection

- Establish proxy indicators for missing individuals where possible

Statistical Continuity Measures:

- Apply appropriate longitudinal analysis techniques (mixed-effect models, GEE)

- Document and account for missing data mechanisms

- Preserve raw data alongside transformed versions for future reanalysis
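Capture-recapture data are usually organized as encounter histories, with one 0/1 string per individual across sampling occasions. The following is a minimal sketch with hypothetical IDs and occasions. It builds these histories from raw sightings, then applies Chapman's bias-corrected Lincoln-Petersen estimator to two occasions to show how re-sightings correct abundance for imperfect detection. This estimator assumes a closed population between the two occasions. Multi-year individual-based studies more often use open-population models such as Cormack-Jolly-Seber, which take the same histories as input.

```python
from collections import defaultdict


def encounter_histories(sightings, occasions):
    """Build 0/1 capture histories per individual, e.g. {'B03': '101'}."""
    seen = defaultdict(set)
    for individual_id, occasion in sightings:
        seen[individual_id].add(occasion)
    return {
        ind: "".join("1" if occ in occs else "0" for occ in occasions)
        for ind, occs in sorted(seen.items())
    }


def chapman_estimate(histories, first, second):
    """Chapman's bias-corrected Lincoln-Petersen abundance estimate from two occasions.

    `first` and `second` are positions in the history strings. M = individuals
    detected on the first occasion, C = detected on the second, R = detected on both.
    Assumes a closed population between the two occasions.
    """
    marked = sum(h[first] == "1" for h in histories.values())
    caught = sum(h[second] == "1" for h in histories.values())
    recaptured = sum(h[first] == "1" and h[second] == "1" for h in histories.values())
    return (marked + 1) * (caught + 1) / (recaptured + 1) - 1


sightings = [
    ("B01", 1), ("B02", 1), ("B03", 1), ("B04", 1), ("B05", 1),
    ("B01", 2), ("B02", 2), ("B06", 2), ("B07", 2),
    ("B03", 3),
]
histories = encounter_histories(sightings, occasions=[1, 2, 3])
print(histories)
print(chapman_estimate(histories, first=0, second=1))  # 9.0
```

Only seven individuals were seen on the first two occasions, yet the estimate is nine. The difference reflects the individuals that were present but missed, which is exactly the detection failure that the attrition strategy asks researchers to document.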

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Maintaining Long-Term Ecological Studies [15] [14] [1]

| Tool Category | Specific Items | Function in Data Preservation |
|---|---|---|
| Data Management | Electronic Lab Notebooks, SQL databases, Metadata standards | Standardized recording, Secure storage, Future discoverability |
| Field Continuity | Individual marking kits, Permanent plot markers, Protocol manuals | Individual tracking, Geographic precision, Method consistency |
| Sample Preservation | Cryopreservation equipment, Herbarium supplies, Tissue collection kits | Genetic material preservation, Voucher specimens, Future analyses |
| Curation Supplies | Digitization scanners, Georeferencing software, Taxonomic keys | Data recovery from existing collections, Spatial accuracy, Identification validation |
| Analysis Tools | Longitudinal statistical packages, Data visualization software, Gap analysis programs | Appropriate analysis of time-series data, Pattern recognition, Priority identification |

The alarming trend of terminated long-term studies poses a significant threat to conservation management research, potentially creating irrecoverable data gaps just as environmental challenges intensify. The protocols outlined provide practical approaches for researchers to preserve existing data, maximize resources through strategic curation, and maintain the longitudinal integrity of individual-based studies. Implementation of these methods will help sustain the long-term data streams essential for understanding and managing biodiversity in a rapidly changing world.

For over six decades, a long-term study of yellow-bellied marmots (Marmota flaviventer) conducted at the Rocky Mountain Biological Laboratory (RMBL) in Colorado has provided unprecedented insights into mammalian ecology, evolution, and conservation biology [17]. This research represents the second-longest continuous study of individually marked mammals globally, generating a comprehensive dataset that tracks individuals across their entire lifespans [18]. The value of this research lies in its unique capacity to document ecological and evolutionary processes in real-time, offering a critical evidence base for understanding how environmental change, social behavior, and early life experiences shape population dynamics and individual fitness.

This extensive dataset has enabled the development of innovative methodological frameworks, including the first cumulative adversity index (CAI) for a wild animal species, which quantifies how early life stressors impact long-term survival and health [18]. By integrating behavioral observations, physiological measurements, and demographic monitoring, the marmot research program exemplifies how long-term individual-based data can address fundamental questions in ecology while providing practical tools for wildlife conservation and management.

Key Findings from Long-Term Research

Cumulative Adversity Effects on Lifespan

The creation of a cumulative adversity index for yellow-bellied marmots revealed that early life adversity has permanent consequences for survival and longevity, similar to patterns observed in human populations [18]. Researchers analyzed 62 years of data to quantify how various stressors experienced during early life stages affect marmots throughout their lives.

Table 1: Factors in Marmot Cumulative Adversity Index and Their Survival Impact

| Adversity Factor | Effect on Survival | Magnitude of Impact |
|---|---|---|
| Late start of growing season | Decreased survival | Significant |
| Summer drought | Increased survival (unexpected) | Variable across models |
| Maternal loss | Decreased survival | Up to 64% reduction |
| Poor maternal mass | Decreased survival | Up to 77% reduction |
| Late weaning | Decreased survival | 33% reduction |
| Large litter size | Decreased survival | Significant |
| Male-biased litters | Decreased survival | Significant |
| High maternal stress | Decreased survival | Significant |
| Predation pressure | Minor effect | Smaller than expected |

The study demonstrated that marmots experiencing moderate cumulative adversity had 30% lower odds of surviving their first year, while those experiencing acute adversity had 40% lower odds [18]. These effects persisted throughout the lifespan: early adversity reduced adult life expectancy even when conditions improved later in life. The average adult marmot lifespan is approximately 3.8 years, but acute cumulative adversity tripled the risk of adverse effects on life expectancy [18].
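Because these effects are reported as reductions in odds rather than in survival probability, the arithmetic below shows how to translate one into the other. It is a minimal sketch assuming a hypothetical baseline first-year survival of 0.6 (not a figure from [18]) and reading "30% lower odds" as an odds ratio of 0.7 and "40% lower odds" as 0.6.

```python
def odds(p: float) -> float:
    """Convert a probability to odds."""
    return p / (1.0 - p)


def prob_from_odds(o: float) -> float:
    """Convert odds back to a probability."""
    return o / (1.0 + o)


def survival_under_odds_ratio(baseline_p: float, odds_ratio: float) -> float:
    """Apply an odds ratio to a baseline survival probability."""
    return prob_from_odds(odds(baseline_p) * odds_ratio)


BASELINE = 0.6  # hypothetical baseline first-year survival, for illustration only
for label, odds_ratio in [("moderate", 0.7), ("acute", 0.6)]:
    print(f"{label}: {survival_under_odds_ratio(BASELINE, odds_ratio):.3f}")
```

Under that assumed baseline, a 30% drop in odds lowers first-year survival from 0.60 to about 0.51, not to 0.42 as a naive 30% cut in probability would suggest; the gap between the two readings widens as baseline survival rises.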

Social Structure and Population Dynamics

Yellow-bellied marmots exhibit facultative sociality, meaning they can adjust their social organization in response to environmental conditions [19]. Their societies form primarily when adult females recruit their daughters, creating multigenerational groups that share and defend space while maintaining the ability to distinguish group members from outsiders [19].

The research revealed that marmot societies are structured through age and kin relationships, with females typically remaining in their natal areas while males disperse [18]. This social flexibility makes them a valuable model system for studying incipient society formation and the evolutionary benefits of social living [19].

Heritability of Behavioral Traits

Analysis of flight initiation distance (FID), the distance to which an approaching threat can come before the animal flees, revealed that this antipredator behavior has low to moderate heritability (h² = 0.147) [20]. This indicates that about 14.7% of the phenotypic variation in FID among marmots is attributable to additive genetic effects, so the trait can respond to natural selection.

The research also found that FID was significantly repeatable within individuals (R = 0.539), meaning individual marmots show consistent fear responses across different contexts [20]. This behavioral consistency has implications for how marmots cope with human-induced environmental changes and other anthropogenic disturbances.
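The two estimates above are linked by a standard quantitative-genetic variance partition: heritability is additive genetic variance divided by phenotypic variance, while repeatability also includes permanent among-individual differences, so repeatability generally sets an upper limit on heritability. The sketch below uses hypothetical standardized variance components chosen only so that they reproduce h² = 0.147 and R = 0.539; they are not estimates from [20]. It also applies the breeder's equation (response = h² × S) to a hypothetical selection differential S.

```python
from dataclasses import dataclass


@dataclass
class VarianceComponents:
    """Hypothetical variance partition for a repeatedly measured trait such as FID."""
    additive: float       # V_A: additive genetic variance
    permanent_env: float  # V_PE: non-genetic, among-individual variance
    residual: float       # V_R: within-individual variance between measurements

    @property
    def phenotypic(self) -> float:
        return self.additive + self.permanent_env + self.residual

    def heritability(self) -> float:
        """Narrow-sense heritability h^2 = V_A / V_P."""
        return self.additive / self.phenotypic

    def repeatability(self) -> float:
        """Repeatability R = (V_A + V_PE) / V_P."""
        return (self.additive + self.permanent_env) / self.phenotypic


def response_to_selection(h2: float, selection_differential: float) -> float:
    """Breeder's equation: expected per-generation change in the trait mean."""
    return h2 * selection_differential


# Standardized (V_P = 1) components chosen to match the reported estimates.
fid = VarianceComponents(additive=0.147, permanent_env=0.392, residual=0.461)
print(round(fid.heritability(), 3), round(fid.repeatability(), 3))
# Hypothetical selection differential: breeders flee 1.0 m earlier than average.
print(round(response_to_selection(fid.heritability(), 1.0), 3))
```

With this hypothetical 1 m selection differential, the expected change in mean FID is about 0.15 m per generation.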

Experimental Protocols & Methodologies

Long-Term Population Monitoring Protocol

Objective: To systematically monitor individual marmots throughout their lifetimes to collect data on survival, reproduction, behavior, and physiology.

Methodology:

- Study Area: A 5 km stretch of the Upper East River Valley, Colorado, at approximately 2,900 m elevation, divided into "up-valley" and "down-valley" regions with distinct environmental conditions [17]

- Trapping Procedure:
  - Use Tomahawk live traps baited with horse feed placed near burrow entrances [20]
  - Conduct biweekly trapping sessions from spring through late summer (May to September) during active marmot season [18]
  - Process captured individuals by recording mass, sex, age, and reproductive status
  - Collect hair samples for genetic analysis and blood samples (up to 3 ml) for physiological assessment [21]
- Individual Identification: Mark all individuals with unique tags for lifelong tracking
- Behavioral Observations: Conduct regular behavioral monitoring using standardized protocols, such as flight initiation distance trials [20] and recording time allocated to vigilance versus foraging [21]

Figure 1: Marmot Population Monitoring Workflow
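Lifelong marking turns the capture protocol into individual encounter histories, the basic data structure behind most survival analyses. The sketch below assumes capture records stored as hypothetical (tag ID, year) pairs and derives 0/1 histories plus the minimum number known alive, a simple enumeration index. Formal survival estimates would instead come from mark-recapture models such as Cormack-Jolly-Seber, which account for imperfect detection.

```python
from collections import defaultdict


def encounter_histories(records, years):
    """Build 0/1 encounter histories from (tag_id, year) capture records.

    Repeated captures within a year collapse to a single "1" for that year.
    """
    seen = defaultdict(set)
    for tag_id, year in records:
        seen[tag_id].add(year)
    return {
        tag_id: "".join("1" if y in caught else "0" for y in years)
        for tag_id, caught in sorted(seen.items())
    }


def minimum_known_alive(histories, years):
    """Count individuals known alive each year: caught that year, or caught
    both before and after it (so alive but missed)."""
    counts = {}
    for i, year in enumerate(years):
        counts[year] = sum(
            1 for h in histories.values()
            if "1" in h and h.index("1") <= i <= h.rindex("1")
        )
    return counts


if __name__ == "__main__":
    years = [2016, 2017, 2018]
    # Hypothetical tag IDs and capture events.
    records = [("M001", 2016), ("M001", 2016), ("M001", 2018),
               ("M002", 2017), ("M003", 2016), ("M003", 2017), ("M003", 2018)]
    histories = encounter_histories(records, years)
    print(histories)  # {'M001': '101', 'M002': '010', 'M003': '111'}
    print(minimum_known_alive(histories, years))  # {2016: 2, 2017: 3, 2018: 2}
```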

Cumulative Adversity Assessment Protocol

Objective: To quantify early life stressors and their cumulative impact on marmot lifespan and health outcomes.

Methodology:

- Subject Selection: Focus on female marmots born after 2001 that remained in studied colonies until 2019 to ensure accurate pedigree, age, and lifetime experience records [18]

- Adversity Variables Measurement:
  - Ecological Factors: Record timing of snowmelt, vegetation growth onset, summer drought conditions
  - Demographic Factors: Document litter size, sex ratios, weaning timing
  - Maternal Factors: Measure maternal body condition, stress hormone levels, survival status

- Statistical Modeling: Input variables into computational models to quantify standard, mild, moderate, and acute adversity levels

- Survival Analysis: Track individuals throughout their lives to correlate early adversity with longevity metrics
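The scoring step above is not specified in detail, so the following is only a hypothetical sketch of how a cumulative index can be built: each early-life adversity is recorded as present or absent, summed per individual, and binned into the four levels named in the protocol. The indicator names and the cutoffs (0, 1, 2 to 3, 4 or more) are assumptions for illustration, not the published index of [18], which was derived from statistical models. Summer drought and predation are omitted here because of their unexpected or minor effects in Table 1.

```python
# Hypothetical binary indicators drawn from the detrimental factors in Table 1.
ADVERSITIES = (
    "late_growing_season", "maternal_loss", "poor_maternal_mass",
    "late_weaning", "large_litter", "male_biased_litter", "high_maternal_stress",
)


def cumulative_adversity(profile: dict) -> int:
    """Count how many adversities are flagged True for one individual."""
    return sum(1 for name in ADVERSITIES if profile.get(name, False))


def adversity_category(score: int) -> str:
    """Bin a score into the protocol's four levels (cutoffs are hypothetical)."""
    if score == 0:
        return "standard"
    if score == 1:
        return "mild"
    if score <= 3:
        return "moderate"
    return "acute"


def survival_by_category(cohort):
    """Proportion surviving the first year within each adversity level.

    cohort: iterable of (profile dict, survived bool) pairs.
    """
    totals, survivors = {}, {}
    for profile, survived in cohort:
        level = adversity_category(cumulative_adversity(profile))
        totals[level] = totals.get(level, 0) + 1
        survivors[level] = survivors.get(level, 0) + int(survived)
    return {level: survivors[level] / totals[level] for level in totals}


if __name__ == "__main__":
    cohort = [  # invented individuals
        ({}, True),
        ({"late_weaning": True}, True),
        ({"maternal_loss": True, "large_litter": True}, False),
        ({"maternal_loss": True, "poor_maternal_mass": True,
          "late_weaning": True, "high_maternal_stress": True}, False),
    ]
    print(survival_by_category(cohort))
```

A real analysis would model survival as a function of the index (for example with logistic or survival regression) rather than tabulating raw proportions.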

Human Impact Assessment Protocol

Objective: To evaluate how different types of human activities affect marmot physiology, behavior, and fitness.

Methodology:

- Disturbance Gradient Design: Utilize the natural variation in human exposure across different marmot colonies [21]

- Human Activity Quantification:
  - Monitor and categorize human activities (vehicles, pedestrians, bicycles) at each colony
  - Compare disturbance levels across years (2009 vs. 2018 in published study)
- Multi-dimensional Response Assessment:
  - Physiological: Analyze fecal glucocorticoid metabolites (FGMs) and neutrophil-to-lymphocyte ratios (NLRs) as stress indicators [21]
  - Behavioral: Measure flight initiation distance and time allocation to vigilance vs. foraging
  - Fitness Correlates: Document mass gain rates as key fitness indicator, particularly important for hibernation survival [21]
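Two of the steps above lend themselves to a short example: computing the neutrophil-to-lymphocyte ratio from a differential leukocyte count, and testing whether a colony-level stress indicator rises with human activity. The sketch below uses a hand-rolled Spearman rank correlation (the Pearson correlation of average ranks, which handles ties) on hypothetical colony values; a full analysis would more likely use mixed models that account for repeated measures of individuals and other covariates.

```python
def neutrophil_lymphocyte_ratio(neutrophils: int, lymphocytes: int) -> float:
    """NLR from a differential leukocyte count (e.g., cells per 100 counted)."""
    if lymphocytes <= 0:
        raise ValueError("lymphocyte count must be positive")
    return neutrophils / lymphocytes


def average_ranks(values):
    """1-based ranks, giving tied values the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman's rho as the Pearson correlation of average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)


if __name__ == "__main__":
    # Hypothetical colony-level values: human passes per hour vs. mean FGM.
    human_activity = [5, 12, 30, 44, 60]
    mean_fgm = [210, 260, 240, 320, 300]
    print(neutrophil_lymphocyte_ratio(30, 60))  # 0.5
    print(round(spearman(human_activity, mean_fgm), 2))  # 0.8
```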

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Long-Term Marmot Research

| Research Tool | Function | Application Context |
|---|---|---|
| Tomahawk Live Traps | Safe capture of individuals | Population monitoring, biological sampling [20] |
| Horse Feed Bait | Attract marmots to traps | Non-invasive capture method [21] |
| Unique Ear Tags | Individual identification | Long-term tracking across seasons and years [18] |
| Fecal Sample Collection Kits | Glucocorticoid metabolite analysis | Physiological stress assessment [21] |
| Blood Collection Supplies | Hematological and genetic analysis | NLR measurement, pedigree construction [21] |
| DNA Sampling Kits | Genetic relatedness analysis | Pedigree reconstruction, heritability studies [20] |
| Behavioral Observation Equipment | Standardized behavior recording | FID measurements, social behavior quantification [20] |

Data Integration and Analysis Framework

The marmot research program employs an integrated analytical approach that connects individual experiences to population-level outcomes through multiple pathways.

Figure 2: Integrated Data Analysis Framework

Application to Conservation Management

The long-term marmot research has yielded several critical applications for conservation science and wildlife management:

Targeted Conservation Prioritization

The cumulative adversity index provides a scientifically grounded method for identifying the most impactful stressors to target in conservation programs [18]. For marmots, this means focusing on:

- Maternal health interventions rather than predator control, given the greater impact of maternal loss compared to predation pressure

- Regional prioritization of down-valley populations, which experience greater adversity effects

- Habitat management that addresses early growing season conditions rather than summer drought concerns

Human-Wildlife Coexistence Strategies

Research on human disturbance responses revealed that marmots can habituate to certain human activities without significant fitness consequences [21]. This suggests that:

- Managed ecotourism may be compatible with marmot conservation when properly implemented

- Conservation plans need not strictly limit all human activities but should focus on specific disruptive behaviors

- Monitoring programs should integrate multiple response types (physiological, behavioral, demographic) to fully understand human impacts

Evolutionary Conservation Approaches

The documentation of heritable behavioral traits indicates that conservation strategies must account for evolutionary processes [20]. This includes:

- Maintaining genetic diversity for adaptive traits like antipredator behavior

- Considering how human-induced selection might alter evolutionary trajectories

- Recognizing that behavioral flexibility constitutes an important adaptation to environmental change

The six-decade study of yellow-bellied marmots demonstrates the irreplaceable value of long-term individual-based research for understanding ecological and evolutionary processes. By tracking known individuals throughout their lives across multiple generations, this research has revealed how early life experiences accumulate to shape health and longevity, how social structures form and persist, and how animals adapt to changing environmental conditions including human presence.

The methodological frameworks developed through this research, particularly the cumulative adversity index and integrated human impact assessment, provide powerful tools that can be adapted to other species and ecosystems. As biodiversity faces increasing threats from climate change, habitat loss, and other anthropogenic pressures, such long-term datasets become increasingly vital for developing effective, evidence-based conservation strategies that can protect species while accommodating sustainable human activities.

Methodologies and Practical Applications in the Field

Spatially Explicit Individual-Based Models (IBMs) for Predictive Conservation

Application Notes: The Role of Spatially Explicit IBMs in Conservation

Spatially Explicit Individual-Based Models (IBMs) are advanced computational tools that simulate the actions, interactions, and fates of individual organisms within a realistic geographic framework. By tracking individuals and their use of space, these models can forecast population dynamics and species persistence under various environmental scenarios, providing a powerful asset for conservation management [11]. Their application is particularly critical for threatened species worldwide, where urgent, evidence-based strategies are required to halt population declines [11].

The core strength of this approach lies in its ability to integrate high-resolution habitat suitability data with individual demographic parameters, such as survival and reproduction. This allows the model to simulate how individuals behave and interact with their heterogeneous environment, generating forecasts of both habitat use and overall population trends [11]. This capability moves beyond traditional modeling approaches, like Species Distribution Models (SDMs), which often rely on non-spatial metrics (e.g., AUC) that can fail to detect biases from uneven sampling. Spatially explicit metrics, in contrast, offer a more robust evaluation of model predictions by directly accounting for geographic patterns and sampling imperfections [22].

Key Insights from Model Applications

Spatially explicit IBMs have yielded critical insights for conservation:

- Integrated Strategies for Threatened Species: Research on the little bustard (Tetrax tetrax) in Spain demonstrated that habitat improvement alone is insufficient to reverse population declines. The models highlighted the necessity of combining habitat management with proactive measures to reduce anthropogenic mortality for sustainable recovery [11].

- Understanding Disease Dynamics: A white-tailed deer IBM for Chronic Wasting Disease (CWD) showed that the introduction of a single infected deer led to an outbreak in 29% of simulations. The model further revealed that CWD prevalence is more sensitive to deer population parameters (e.g., female harvest rates) than to disease-specific parameters, directing management towards population control [23].

- Evaluating Adaptive Potential: In marine systems, eco-evolutionary IBMs are used to predict the capacity for adaptation to climate change. These models explore the effects of genetic architecture, gene flow, and multiple stressors on species persistence, helping to identify populations most at risk and evaluate potential interventions like assisted gene flow [24].

Experimental Protocols for IBM Development and Application

The development and application of a spatially explicit IBM follow a structured workflow to ensure scientific rigor and practical utility. The diagram below outlines the core phases of this process.

Workflow for Spatially Explicit IBM Implementation

Figure: IBM Development Workflow

Detailed Methodological Framework

Phase 1: Conceptual Model Formulation

- Define Conservation Objective: Clearly state the management question (e.g., "Which combination of interventions will maximize the 50-year population growth of species X?").

- Identify Key Entities and State Variables: Define the individuals (e.g., little bustards, white-tailed deer) and their key attributes (age, sex, location, health status, reproductive history) [11] [23].

- Define Spatial Environment: Construct a realistic landscape using Geographic Information System (GIS) data. This includes habitat suitability maps, land cover, human infrastructure, and other relevant spatial layers [11] [25].

Phase 2: Data Integration and Parameterization

This phase involves gathering and standardizing diverse data sources to inform the model.

- Spatial Data: Integrate high-resolution remote sensing data and GIS covariates (e.g., topography, climate, vegetation, anthropogenic features) [11] [25].

- Demographic Data: Collect individual-level data on survival rates (nest, chick, adult), fecundity, sex ratios, and dispersal behavior from field studies and published literature [11].

- Environmental Drivers: Parameterize relationships between demographic rates and environmental variables. For instance, model calibration may support the hypothesis that survival rates positively correlate with habitat suitability [11].

Phase 3: Model Design and Implementation

- Formulate Individual Processes: Program rules for individual life cycles.
  - Movement: Implement rules for dispersal, foraging, and migration within the spatial landscape.
  - Reproduction: Define rules for mating, offspring production, and inheritance of traits.
  - Mortality: Implement risk functions based on age, habitat quality, disease status, and anthropogenic factors [23].
- Incorporate Ecological Interactions: Model disease transmission using an S-E-I-D (Susceptible-Exposed-Infectious-Dead) framework for wildlife diseases [23], or simulate species interactions.
- Select Modeling Platform: Choose a flexible software environment. Studies are often implemented using:
  - SLiM: For genetically explicit, eco-evolutionary models [24].
  - R: A familiar tool for many biologists, often used for statistical analysis and modeling.
  - Custom C++ or Python code for specific applications.
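The individual processes listed above can be combined into a very small spatially explicit IBM. The sketch below is illustrative only: the landscape is a random suitability grid, survival rises linearly with suitability (echoing the hypothesis that calibration supported for the little bustard [11]), juveniles disperse to the best of a few randomly sampled nearby cells, and reproduction is damped by a crude ceiling on total abundance. Every parameter value and functional form here is an assumption; a real application would use GIS-derived habitat layers, field-estimated vital rates, and a platform such as NetLogo or SLiM.

```python
import random
from dataclasses import dataclass


@dataclass
class Individual:
    x: int
    y: int
    female: bool
    age: int = 0


def make_landscape(width, height, rng):
    """Hypothetical habitat-suitability grid with values in [0, 1)."""
    return [[rng.random() for _ in range(width)] for _ in range(height)]


def survival_prob(suitability, base=0.5, slope=0.4):
    """Annual survival rising linearly with habitat suitability (assumed form)."""
    return min(1.0, max(0.0, base + slope * suitability))


def disperse(ind, landscape, rng, radius=2, tries=5):
    """Move to the most suitable of a few randomly sampled nearby cells."""
    height, width = len(landscape), len(landscape[0])
    candidates = []
    for _ in range(tries):
        nx = min(width - 1, max(0, ind.x + rng.randint(-radius, radius)))
        ny = min(height - 1, max(0, ind.y + rng.randint(-radius, radius)))
        candidates.append((landscape[ny][nx], nx, ny))
    _, ind.x, ind.y = max(candidates)


def step(population, landscape, rng, fecundity=1.5, breeding_age=2, capacity=2000):
    """One annual cycle: survival, ageing, reproduction, juvenile dispersal."""
    survivors = [i for i in population
                 if rng.random() < survival_prob(landscape[i.y][i.x])]
    for ind in survivors:
        ind.age += 1
    crowding = max(0.0, 1 - len(survivors) / capacity)  # crude density dependence
    offspring = []
    if any(not ind.female for ind in survivors):  # breeding needs at least one male
        for mother in survivors:
            if mother.female and mother.age >= breeding_age:
                expected = fecundity * landscape[mother.y][mother.x] * crowding
                n_young = sum(1 for _ in range(3) if rng.random() < expected / 3)
                for _ in range(n_young):
                    young = Individual(mother.x, mother.y, female=rng.random() < 0.5)
                    disperse(young, landscape, rng)
                    offspring.append(young)
    return survivors + offspring


def simulate(years=50, n0=100, size=30, seed=1):
    """Return the population size in each year of one stochastic run."""
    rng = random.Random(seed)
    landscape = make_landscape(size, size, rng)
    population = [Individual(rng.randrange(size), rng.randrange(size),
                             female=rng.random() < 0.5, age=2)
                  for _ in range(n0)]
    sizes = [len(population)]
    for _ in range(years):
        population = step(population, landscape, rng)
        sizes.append(len(population))
    return sizes


if __name__ == "__main__":
    print(simulate()[-1])
```

Running simulate() with many seeds and summarizing the trajectories approximates the replicate stochastic runs that IBM studies report; management scenarios would be expressed as changes to the landscape or to the survival and fecundity functions.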

Phase 4: Model Calibration and Validation

- Calibration: Adjust model parameters within biologically plausible ranges so that model output matches known empirical data. This process tests and refines model hypotheses [11].

- Pattern-Oriented Modeling (POM): A powerful validation technique where multiple patterns (e.g., annual prevalence rates, population trends) observed in real-world data are used to validate the model structure [23].

- Sensitivity Analysis: Perform a global sensitivity analysis to identify which parameters (e.g., harvest rates, prion half-life) have the greatest influence on key model outputs (e.g., disease prevalence). This highlights critical knowledge gaps and shows which parameters most merit further empirical study [23].
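As an illustration of the global sensitivity step, the sketch below samples parameters uniformly over hypothetical ranges, runs a toy deterministic growth model, and ranks parameters by their Spearman rank correlation with the output. This rank-correlation screen is a simpler stand-in for the variance-based or partial-rank-correlation methods common in published IBM studies, and the model and ranges are assumptions, not values from [23].

```python
import random

# Hypothetical parameter ranges for a toy model.
RANGES = {
    "adult_survival": (0.6, 0.9),
    "juvenile_survival": (0.2, 0.5),
    "fecundity": (0.2, 0.8),
}


def final_population(params, n0=100.0, years=50):
    """Toy deterministic model: N(t+1) = N(t) * (adult_survival + fecundity * juvenile_survival)."""
    lam = params["adult_survival"] + params["fecundity"] * params["juvenile_survival"]
    return n0 * lam ** years


def ranks(values):
    """1-based ranks; assumes no ties (true in practice for continuous random samples)."""
    order = sorted(range(len(values)), key=values.__getitem__)
    r = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        r[idx] = rank
    return r


def spearman(x, y):
    """Spearman's rho via the no-ties formula 1 - 6 * sum(d^2) / (n * (n^2 - 1))."""
    n = len(x)
    d2 = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1 - 6 * d2 / (n * (n ** 2 - 1))


def global_sensitivity(n_samples=2000, seed=42):
    """Rank correlation between each sampled parameter and the model output."""
    rng = random.Random(seed)
    samples = [{k: rng.uniform(lo, hi) for k, (lo, hi) in RANGES.items()}
               for _ in range(n_samples)]
    outputs = [final_population(p) for p in samples]
    return {k: spearman([p[k] for p in samples], outputs) for k in RANGES}


if __name__ == "__main__":
    for name, rho in sorted(global_sensitivity().items(), key=lambda kv: -abs(kv[1])):
        print(f"{name}: {rho:.2f}")
```

Because the output is a monotone function of the growth rate, rank correlation is unaffected by the extreme skew of population size after 50 years; with these ranges, adult survival comes out as the most influential parameter.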

Phase 5: Simulation of Conservation Scenarios

Run the validated model under different management scenarios to forecast outcomes. For example:

- Little Bustard: Simulate populations over 50 years under scenarios of habitat improvement, mortality mitigation, and combined strategies [11].

- Chronic Wasting Disease: Test the effect of varying harvest rates on disease prevalence and deer population decline [23].
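The harvest scenarios above can be explored with an individual-level S-E-I-D model. The sketch below is hypothetical and much simpler than the published CWD model [23]: transmission is frequency-dependent within one well-mixed population, harvest is non-selective, recruitment is damped by an assumed carrying capacity, there is no environmental prion reservoir, and all rates are invented for illustration. Prevalence is reported as the infectious fraction only.

```python
import math
import random


def simulate_seid(n0=500, initial_infected=1, years=20, beta=2.0, sigma=0.5,
                  mu=0.4, recruitment=0.4, harvest=0.1, carrying_capacity=1000,
                  seed=0):
    """Return yearly (population size, infectious prevalence) for one stochastic run."""
    rng = random.Random(seed)
    states = ["I"] * initial_infected + ["S"] * (n0 - initial_infected)
    history = []
    for _ in range(years):
        n = len(states)
        n_infectious = states.count("I")
        history.append((n, n_infectious / n if n else 0.0))
        if n == 0:
            break
        # Frequency-dependent infection probability for each susceptible this year.
        p_infect = 1 - math.exp(-beta * n_infectious / n)
        next_states = []
        for state in states:
            if rng.random() < harvest:  # harvested, regardless of disease state
                continue
            if state == "S" and rng.random() < p_infect:
                state = "E"
            elif state == "E" and rng.random() < sigma:
                state = "I"
            elif state == "I" and rng.random() < mu:
                continue  # disease death (D): removed from the population
            next_states.append(state)
        crowding = max(0.0, 1 - len(next_states) / carrying_capacity)
        recruits = sum(1 for _ in next_states if rng.random() < recruitment * crowding)
        states = next_states + ["S"] * recruits
    return history


if __name__ == "__main__":
    for h in (0.05, 0.15, 0.30):
        n_final, prev_final = simulate_seid(harvest=h, seed=11)[-1]
        print(f"harvest={h:.2f}: N={n_final}, prevalence={prev_final:.3f}")
```

Each call is a single stochastic replicate. Comparing harvest scenarios properly requires many seeds per scenario, just as the outbreak frequencies reported in [23] summarize many simulated introductions.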

Phase 6: Analysis and Decision Support

- Output Analysis: Compare scenario outcomes using relevant metrics (e.g., final population size, time to extinction, disease prevalence).

- Prioritization: Use model results to prioritize cost-effective conservation interventions. The little bustard study concluded that an integrated, long-term strategy was essential [11].

Quantitative Data Synthesis

The following tables synthesize key quantitative findings and parameters from cited IBM case studies.

Table 1: Conservation Insights from Spatially Explicit IBM Case Studies

| Species / System | Key Modeled Threat | Simulation Outcome | Conservation Insight |
|---|---|---|---|
| Little Bustard (Tetrax tetrax) [11] | Habitat degradation, anthropogenic mortality, skewed sex ratio | Habitat improvements alone were insufficient to reverse declines over a 50-year forecast. | An integrated strategy combining habitat management and mortality mitigation is essential for recovery. |
| White-tailed Deer (Odocoileus virginianus) [23] | Chronic Wasting Disease (CWD) | A single infected deer caused an outbreak in 29% of introductions. At year 50, populations declined by 87% in outbreaks. | Management should focus on preventing initial introduction. CWD prevalence is most sensitive to female harvest rates. |
| Marine Species & Corals [24] | Climate Change | Adaptive potential allowed persistence only under mild warming scenarios. Speed of adaptation depended on genetic loci number and population growth. | Rate of temperature change and influx of warm-adapted recruits are critical factors for persistence. |

Table 2: Key Parameters and Data Requirements for Spatially Explicit IBMs

| Parameter Category | Specific Examples | Data Sources |
|---|---|---|
| Demographic | Age/sex-specific survival, fecundity, sex ratio, initial population size | National Forest Inventories, published literature, long-term field studies [11] [25] |
| Spatial & Environmental | Habitat suitability maps, land cover, climate data, anthropogenic features | Remote sensing (e.g., satellite imagery), GIS databases, WorldClim [11] [22] [25] |
| Genetic (Eco-evolutionary) | Number of loci, mutation rate & effect, heritability, genetic variance | Genomic studies, common garden experiments, published estimates [24] |
| Disease (Epidemiological) | Transmission rate, prion shedding rate, incubation period | Wildlife agency reports, experimental infection studies [23] |

Table 3: Essential Tools and Data for Developing Spatially Explicit IBMs

| Tool / Resource | Function in IBM Development | Examples & Notes |
|---|---|---|
| Spatial Data Platforms | Provide landscape-level covariates and habitat variables for the model environment. | GIS datasets, remote sensing products (Landsat, MODIS), global topographic and climate layers (WorldClim) [11] [25]. |
| Forest Inventory Databases | Source of demographic and density data for model parameterization and validation. | National Forest Inventories (NFIs), Global Index of Vegetation-Plot Databases (GIVD) [25]. |
| Modeling & Simulation Software | Core computational environment for building, running, and analyzing the IBM. | SLiM (for genetically explicit models), R, NetLogo, NumPy, or custom C++ code [24]. |
| High-Performance Computing (HPC) | Provides the computational power needed for thousands of stochastic simulation runs and sensitivity analyses. | University clusters, cloud computing services (AWS, Google Cloud). Essential for complex, large-scale models [24]. |
| Pattern-Oriented Modeling Framework | A validation methodology that uses multiple patterns from real-world data to filter and validate model structures. | Increases model credibility by ensuring it reproduces several independent empirical patterns simultaneously [23]. |

Application Note

Genetic monitoring provides critical insights into population health, viability, and evolutionary potential. Traditionally, conservation genetics has relied heavily on neutral markers such as microsatellites to estimate population parameters like genetic diversity, effective population size, and gene flow [26]. However, neutral markers reveal little about adaptive genetic variation that directly influences population resilience to environmental challenges, including disease outbreaks [26]. The Major Histocompatibility Complex (MHC) represents a key component of the vertebrate adaptive immune system, encoding molecules responsible for pathogen recognition and immune response initiation [27] [26]. This application note explores the integration of neutral and adaptive markers, specifically MHC genes, into comprehensive genetic monitoring frameworks for conservation management.

Comparative Analysis: Neutral vs. Adaptive Markers

Table 1: Comparison of Neutral and Adaptive (MHC) Genetic Markers in Conservation Monitoring

| Feature | Neutral Markers (e.g., Microsatellites) | Adaptive Markers (MHC Genes) |
|---|---|---|
| Primary Function | Assess demographic history, population structure, gene flow, inbreeding [26] | Evaluate adaptive potential, pathogen resistance, immunogenetic fitness [27] [26] |
| Underlying Evolutionary Force | Genetic drift, migration [28] | Balancing selection, pathogen-driven selection [28] [26] |
| Polymorphism Level | Variable, typically lower than MHC [26] | Extremely high, often the most polymorphic genes in the genome [27] [26] |
| Key Insights for Management | Identification of distinct populations, bottlenecks, and connectivity [28] | Identification of populations vulnerable to disease, potential for mate choice [28] [26] |
| Limitations | Poor predictors of adaptive potential [26] | Complex genotyping, selection can maintain diversity despite bottlenecks [28] |

Case Studies in Conservation

Bellinger River Turtle (Myuchelys georgesi)

Genome-wide sequencing of the critically endangered Bellinger River turtle revealed critically low neutral diversity [27]. However, diversity within the core MHC region exceeded that of all other macrochromosomes, suggesting the action of balancing selection maintaining adaptive variation even in a genetically depleted population [27]. This population suffered a 90% decline due to a nidovirus outbreak, highlighting that contemporary threats often act on populations already compromised by low genetic diversity [27].

Iberian Wolf (Canis lupus)

Research on Iberian wolves demonstrated how different demographic scenarios influence adaptive diversity [28]. Both persistent and expanding wolf groups showed signals of balancing selection at MHC genes, including higher observed heterozygosity and significant departure from neutrality [28]. The expanding group exhibited a significant excess of MHC heterozygotes, consistent with heterozygote advantage [28]. This contrasts with the small, isolated group, which showed MHC diversity patterns more aligned with neutral expectations, suggesting genetic drift may be overwhelming selection in this subpopulation [28].

Table 2: Key Findings from Genetic Monitoring Case Studies

| Species (Context) | Neutral Diversity | MHC Diversity | Key Implication for Conservation |
|---|---|---|---|
| Bellinger River Turtle (Critically endangered, single population) | Critically low [27] | Higher than neutral diversity, maintained by selection [27] | Vulnerability to disease outbreaks may be linked to overall low diversity, despite maintained MHC variation. |
| Iberian Wolf (Persistent group) | High [28] | High, signals of balancing selection [28] | Population demonstrates healthy adaptive potential. |
| Iberian Wolf (Expanding group) | High [28] | High, significant excess of heterozygotes [28] | Balancing selection, potentially via heterozygote advantage, is maintaining diversity during expansion. |
| Iberian Wolf (Isolated group) | Low [28] | Aligned with neutral expectations [28] | Genetic drift may be overriding selection, increasing vulnerability. |

Integrating MHC genes into genetic monitoring provides a more comprehensive assessment of a population's conservation status and evolutionary potential. While neutral markers remain essential for understanding demography and population structure, MHC markers offer a direct window into adaptive immune competence [27] [28] [26]. The case studies demonstrate that the interaction between demographic history and selection shapes MHC diversity, necessitating population-specific management strategies. Advances in next-generation sequencing (NGS) are making the characterization of functional genes like MHC more accessible for non-model organisms, paving the way for their routine application in conservation genomics [27] [26].

Protocols

Protocol for MHC Genotyping and Diversity Analysis in Non-Model Vertebrates

Background and Application

This protocol details a comprehensive methodology for characterizing Major Histocompatibility Complex (MHC) diversity in non-model vertebrate species from sample collection through data analysis. It is designed for use in conservation genetic monitoring programs to assess population immunogenetic health and is framed within the context of long-term, individual-based research for informed management decisions [27] [26]. The protocol utilizes Sanger sequencing or next-generation sequencing (NGS) of MHC Class II genes, which are often the initial target for conservation-focused studies [27] [28].

Materials and Equipment

Research Reagent Solutions

Table 3: Essential Materials and Reagents for MHC Genotyping

| Item | Function/Application | Specific Examples/Notes |
|---|---|---|
| DNA Extraction Kit | High-quality genomic DNA isolation from tissue, blood, or non-invasive samples. | Kits from Qiagen or equivalent, suitable for the sample type [29]. |
| PCR Master Mix | Amplification of target MHC gene regions. | Kapa Taq [29]; a high-fidelity (proofreading) polymerase is preferable, since standard Taq lacks proofreading and its errors can mimic novel alleles. |
| MHC-Specific Primers | Target enrichment of polymorphic MHC genes. | Designed from conserved regions in related species; often target exons encoding the peptide-binding region (PBR) [26]. |
| Gel Electrophoresis System | Verification of successful PCR amplification. | Agarose gel equipment for visualizing DNA fragments. |
| Sanger Sequencing Kit or NGS Library Prep Kit | Determining the nucleotide sequence of amplified fragments. | BigDye Terminator kits for Sanger; Illumina Nextera or Kapa HyperPrep for NGS [29]. |
| Cloning Vector (if needed) | Separating alleles for sequencing when dealing with complex diploid genotypes. | TOPO TA Cloning Kit for Sanger sequencing of individual alleles [26]. |

Step-by-Step Procedure

Step 1: Sample Collection and DNA Extraction

- Action: Collect biological samples (e.g., tissue, blood, saliva) following ethical guidelines. Preserve samples appropriately (e.g., in ethanol, frozen).

- Standards: Extract high-molecular-weight genomic DNA using a commercial kit. Quantify DNA with a fluorometer (e.g., Qubit) and assess purity with a UV spectrophotometer (e.g., NanoDrop), aiming for A260/280 ratios between 1.8 and 2.0 [29].

Step 2: MHC Target Amplification

- Primer Design: If species-specific primers are unavailable, design degenerate primers by aligning MHC sequences from closely related species. Focus on amplifying the highly variable exon 2 for MHC Class II genes (e.g., DRB1, DQA1, DQB1), which often encodes the peptide-binding region [28] [26].

- PCR Amplification: Set up PCR reactions in a 25-50 µL volume using a hot-start polymerase. Include negative controls.

- Cycling Conditions: Typical conditions: initial denaturation at 95°C for 5 min; 35 cycles of 95°C for 30 s, primer-specific annealing temperature (50-60°C) for 30 s, 72°C for 1 min/kb; final extension at 72°C for 10 min.

- Verification: Confirm amplification success and specificity by running PCR products on an agarose gel.

Step 3: Sequencing

- For Sanger Sequencing: Purify PCR products and sequence directly. For complex genotypes, clone PCR products into a plasmid vector and sequence multiple clones (e.g., 10-20) to ensure all alleles are captured [26].

- For NGS Sequencing: Purify PCR products and prepare sequencing libraries using a commercial kit. Use dual-indexing to multiplex samples. Sequence on an appropriate platform (e.g., Illumina MiSeq) with paired-end reads for better accuracy [27] [29].
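The recommendation to sequence 10-20 clones can be checked with a coupon-collector calculation. Assuming every allele in an individual's PCR product is equally likely to be cloned (which ignores amplification bias, chimeras, and PCR errors), the probability that n clones include all k alleles follows from inclusion-exclusion. Here k = 2 represents a heterozygote at one locus, and k = 4 a hypothetical case of two co-amplified duplicated loci.

```python
from math import comb


def p_all_alleles(k: int, n_clones: int) -> float:
    """P(n clones contain every one of k equally represented alleles), by inclusion-exclusion."""
    return sum((-1) ** j * comb(k, j) * (1 - j / k) ** n_clones for j in range(k + 1))


def min_clones(k: int, target: float = 0.95, max_n: int = 200) -> int:
    """Smallest number of clones whose probability of recovering all k alleles reaches target."""
    for n in range(1, max_n + 1):
        if p_all_alleles(k, n) >= target:
            return n
    raise ValueError("target not reached within max_n clones")


if __name__ == "__main__":
    print(round(p_all_alleles(2, 10), 4))  # 0.998
    print(min_clones(2), min_clones(4))    # 6 16
```

Under these idealized assumptions, 6 clones already give at least a 95% chance of recovering both alleles when k = 2, but 16 are needed when k = 4, so the upper end of the 10-20 range matters when MHC loci are duplicated.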

Step 4: Data Analysis

- Quality Control & Assembly: For NGS data, use tools like Trimmomatic to remove low-quality reads and adapters. Assemble reads using a pipeline that handles high polymorphism, or map to a reference sequence if available.

- Genotype Assignment: Identify allelic variants. For NGS, a custom bioinformatics pipeline is required to cluster sequencing reads and filter artifacts [27].

- Population Genetic Analysis:
  - Calculate standard diversity indices: observed (Ho) and expected (He) heterozygosity, number of alleles, and nucleotide diversity [28].
  - Test for deviations from Hardy-Weinberg Equilibrium.
  - Test for signatures of selection:
    - Tajima's D and Fu & Li's D* tests to detect departures from neutrality [28].
    - Calculate the dN/dS ratio (non-synonymous to synonymous substitutions) across all sites and specifically in the peptide-binding region (PBR). A dN/dS > 1 suggests positive selection [28].
    - Use software like OmegaMap to identify specific codons under positive selection [28].
  - Compare MHC differentiation (FST) with neutral differentiation (e.g., from microsatellites). MHC FST significantly lower than neutral FST suggests balancing selection, while higher MHC FST may indicate local adaptation [28].
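The diversity indices and neutrality test listed above are straightforward to compute for small datasets. The sketch below assumes diploid genotypes already assigned to a single MHC locus (often not possible when loci are duplicated) and aligned, gap-free sequences of equal length. It implements observed heterozygosity, Nei's unbiased expected heterozygosity, and Tajima's D from its standard formula; the example data are invented, and dedicated population-genetics software would also supply significance tests.

```python
from collections import Counter
from itertools import combinations
from math import sqrt


def observed_heterozygosity(genotypes):
    """Fraction of individuals carrying two different alleles."""
    return sum(a != b for a, b in genotypes) / len(genotypes)


def expected_heterozygosity(genotypes):
    """Nei's unbiased gene diversity: 2N/(2N - 1) * (1 - sum of squared allele frequencies)."""
    counts = Counter(allele for g in genotypes for allele in g)
    n = sum(counts.values())
    homozygosity = sum((c / n) ** 2 for c in counts.values())
    return n / (n - 1) * (1 - homozygosity)


def segregating_sites(seqs):
    """Number of alignment columns with more than one character state."""
    return sum(len(set(column)) > 1 for column in zip(*seqs))


def mean_pairwise_diff(seqs):
    """Average number of differences over all pairs of sequences (pi)."""
    pairs = list(combinations(seqs, 2))
    return sum(sum(x != y for x, y in zip(s1, s2)) for s1, s2 in pairs) / len(pairs)


def tajimas_d(seqs):
    """Tajima's D for aligned, equal-length sequences without gaps or missing data."""
    n = len(seqs)
    s = segregating_sites(seqs)
    if s == 0:
        return float("nan")  # undefined without polymorphism
    pi = mean_pairwise_diff(seqs)
    a1 = sum(1 / i for i in range(1, n))
    a2 = sum(1 / i ** 2 for i in range(1, n))
    b1 = (n + 1) / (3 * (n - 1))
    b2 = 2 * (n ** 2 + n + 3) / (9 * n * (n - 1))
    c1 = b1 - 1 / a1
    c2 = b2 - (n + 2) / (a1 * n) + a2 / a1 ** 2
    e1, e2 = c1 / a1, c2 / (a1 ** 2 + a2)
    return (pi - s / a1) / sqrt(e1 * s + e2 * s * (s - 1))


if __name__ == "__main__":
    genotypes = [("A", "B"), ("A", "A"), ("B", "C"), ("A", "C")]  # invented alleles
    sequences = ["AAAA", "AAAT", "AATT", "ATTT"]                   # invented alignment
    print(observed_heterozygosity(genotypes), round(expected_heterozygosity(genotypes), 3))
    print(round(tajimas_d(sequences), 3))
```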

Quality Control and Data Interpretation

- Validation: Validate a subset of MHC genotypes using an orthogonal method, such as cloning and Sanger sequencing, to confirm NGS genotyping accuracy.

- Interpretation: Interpret results in the context of neutral genetic data and demographic history. Populations with stable histories are expected to show stronger signals of balancing selection, while small, isolated populations may show MHC diversity eroded by genetic drift [28].

Workflow Visualization

Figure: MHC Genotyping and Analysis Workflow

Figure: Neutral vs. Adaptive Marker Integration

Application Notes: Leveraging AI and Individual-Based Models for Conservation

The little bustard (Tetrax tetrax) is a steppe bird that has experienced sharp population declines across its western range, with the Iberian Peninsula representing its main stronghold [11] [30]. This case study examines how artificial intelligence technologies developed by IBM (the technology company, distinct from the individual-based models abbreviated as IBMs elsewhere in this article) and individual-based modeling approaches can enhance conservation strategies for this threatened species. The integration of long-term individual-based tracking data with AI-powered analytical tools represents a transformative approach to conservation management, enabling researchers to move from population-level assessments to individual-focused monitoring and intervention [31].

Agricultural intensification constitutes the primary threat to little bustard populations, leading to its classification as "Endangered" in Spain and "Near Threatened" globally [30]. The species exhibits complex migratory behavior, with Iberian populations demonstrating partial migration patterns where some individuals migrate while others remain sedentary [30]. This behavioral diversity necessitates sophisticated monitoring approaches that can track individual movements and survival across vast geographical scales and throughout the annual cycle.

IBM's AI Technologies for Ecological Monitoring

IBM has developed several AI technologies with direct applications to little bustard conservation. The Granite-Geospatial foundation model, initially created for ocean monitoring, employs a vision transformer architecture that can be adapted to analyze terrestrial satellite imagery [32]. This model was pre-trained on approximately 500,000 color-coded images and fine-tuned with minimal high-quality field data, demonstrating an ability to produce accurate spatial patterns across large areas with limited ground-truthing [32].

The IBM Environmental Intelligence Suite combines weather, climate, and operational data with environmental performance management capabilities [33]. This SaaS solution provides APIs, dashboards, maps, and alerts that can help conservationists monitor disruptive environmental conditions, predict climate change impacts, and prioritize mitigation efforts for little bustard habitats [33]. Additionally, IBM Maximo Visual Inspection offers AI-powered image recognition capabilities that could be adapted to identify individual little bustards from camera trap images, similar to its current application for African forest elephants [34].

Table: IBM AI Technologies Applicable to Little Bustard Conservation

| Technology | Primary Function | Conservation Application | Reported Capabilities |
|---|---|---|---|
| Granite-Geospatial Model | Satellite image analysis | Habitat mapping and change detection | Pre-trained on ~500,000 images; reproduces spatial patterns accurately with limited ground-truthing [32] |
| Environmental Intelligence Suite | Climate risk analytics | Monitoring disruptive environmental conditions | Combines weather data, climate projections, and operational data [33] |
| Maximo Visual Inspection | Visual identification | Individual animal recognition (potential application) | Identifies individual elephants from head and tusk features [34] |

Individual-Based Models for Population Management

Spatially explicit individual-based models (IBMs) represent powerful tools for anticipating and assessing the effectiveness of conservation scenarios for endangered species like the little bustard [11]. These models integrate high-resolution habitat suitability data with demographic parameters to simulate individual behaviors and interactions with the environment, forecasting habitat use and population dynamics under different management strategies [11].

Research in Extremadura, Spain, has demonstrated the value of demographic IBMs for little bustard conservation planning. Model calibration supported the hypothesis that nest, chick, and adult survival positively correlate with habitat suitability [11]. Notably, results suggest that observed unbalanced sex ratios are partially driven by low female survival rates in less favorable habitats [11]. Simulation of conservation strategies over 50-year periods indicated that habitat enhancements alone are insufficient to reverse population declines without complementary efforts to reduce anthropogenic mortality [11].

Table: Key Parameters for Little Bustard Individual-Based Models

| Parameter Category | Specific Metrics | Data Sources | Conservation Significance |
|---|---|---|---|
| Demographic Parameters | Nest, chick, and adult survival rates | Field monitoring, tracking data | Correlate with habitat suitability; reveal sex-specific survival patterns [11] |
| Movement Metrics | Migration distance, timing, corridors | GPS tracking (105 birds in Iberian study) | Reveals connectivity between populations; identifies critical corridors [30] |
| Habitat Preferences | Herbaceous cover, elevation, terrain roughness | Satellite imagery, land use maps | Avoids tree-covered land and water bodies; prefers low elevation areas [30] |
| Migration Behavior | Resident vs. migrant ratios, directional trends | GPS tracking across multiple populations | Varies by region (25.93% to 94.74% migrants across Iberian regions) [30] |

Migration Ecology and Connectivity Analysis

The little bustard exhibits partial migration across Iberia, with significant variation in migrant ratios between populations [30]. Research utilizing 105 GPS-tagged birds revealed that the Alentejo (94.74%) and Northern Plateau (93.75%) had the highest proportion of migrants, while the Ebro Valley had the lowest (25.93%) [30]. This migratory connectivity has crucial implications for conservation planning, as threats in wintering areas may affect breeding populations in distant regions.
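The regional percentages are simple proportions of tagged birds classified as migrants, and they are consistent with the sample sizes in the Iberian migration-patterns table of this section (for example, 18 of 19 birds in the Alentejo and 7 of 27 in the Ebro Valley). The minimal sketch below assumes each bird has already been labeled "migrant" or "resident"; the rule used to make that classification from GPS tracks is not shown, and the per-bird records are rebuilt from the table for illustration, not taken from the original dataset.

```python
from collections import Counter

def migratory_ratios(records):
    """Return {region: (n, % migrants, % residents)} from (region, strategy) pairs."""
    totals = Counter(region for region, _ in records)
    migrants = Counter(region for region, strategy in records if strategy == "migrant")
    result = {}
    for region, n in totals.items():
        m = migrants[region]  # Counter returns 0 for regions with no migrants
        result[region] = (n, round(100 * m / n, 2), round(100 * (n - m) / n, 2))
    return result

# Illustrative input rebuilt from the table (migrants, sample size); not raw data.
counts = {"Alentejo": (18, 19), "Ebro Valley": (7, 27)}
records = [
    (region, "migrant" if i < n_mig else "resident")
    for region, (n_mig, n) in counts.items()
    for i in range(n)
]

for region, (n, pct_mig, pct_res) in migratory_ratios(records).items():
    print(f"{region}: n={n}, migrants={pct_mig}%, residents={pct_res}%")
```

Computing residents directly from the count, rather than as 100 minus the migrant share, avoids small floating-point artifacts in the rounded output.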

Analysis of 253 migratory movements identified three principal corridors connecting little bustard populations across the Iberian Peninsula [30]. These corridors are characterized by specific topographic and land cover features, with birds preferentially moving through areas dominated by herbaceous cover while avoiding tree-covered land and water bodies [30]. Migration predominantly occurs at night through areas of low elevation and low terrain roughness [30].

Diagram Title: IBM AI Integration in Little Bustard Conservation

Experimental Protocols and Methodologies

Field Tracking and Data Collection Protocol

Animal Capture and Tagging

Little bustards included in tracking studies should be adult birds captured during spring using established techniques [30]. The protocol specifies:

- Capture Methods: Use leg nooses, funnel traps, or spring traps triggered remotely by the capture team [30]. In Kyrgyzstan, researchers successfully employed decoy-based trapping after initial methods proved ineffective, adapting techniques to local behavioral patterns [35].

- Handling Procedure: Minimize handling time to less than 15 minutes once trapped [30]. The process involves immediate tagging with priority on transmitter placement, potentially deferring weighing and measuring to reduce stress on vulnerable birds [35].

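- Transmitter Specifications: Use GPS transmitters such as Ornitela OT-15, OTE-10, OT-20, or Movetech MT25g, attached with a thoracic Teflon harness [30]. Transmitters weigh 10-25 g and should not exceed 3% of the bird's body mass (birds typically weigh 740-975 g) [30] [31]. Because 3% of 740 g is only about 22 g, the heaviest (25 g) units stay within the limit only for birds of roughly 833 g or more, so the transmitter model should be matched to the bird's mass.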
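The 3% rule is easy to check before deployment. The short sketch below encodes it as plain arithmetic; the function names are illustrative, and the 3% limit is the only parameter taken from the protocol above.

```python
BODY_MASS_LIMIT = 0.03  # maximum tag mass as a fraction of body mass (3% rule)

def max_tag_mass_g(body_mass_g, limit=BODY_MASS_LIMIT):
    """Heaviest transmitter (g) allowed for a bird of the given mass."""
    return body_mass_g * limit

def min_body_mass_g(tag_mass_g, limit=BODY_MASS_LIMIT):
    """Lightest bird (g) that can carry a transmitter of the given mass."""
    return tag_mass_g / limit

def tag_is_acceptable(tag_mass_g, body_mass_g, limit=BODY_MASS_LIMIT):
    return tag_mass_g <= max_tag_mass_g(body_mass_g, limit)

for tag in (10, 15, 20, 25):
    print(f"{tag} g tag: bird must weigh at least {min_body_mass_g(tag):.0f} g")
```

Applied to the typical 740-975 g range, this shows that a 10 g unit is acceptable for every bird, while a 25 g unit is acceptable only for the heavier part of that range.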

Data Collection Parameters

GPS tracking devices should be configured to collect:

- Location Data: Regular positional fixes to determine movement patterns, migration timing, and habitat use [30] [31].

- Environmental Measurements: Additional sensors can record temperature, humidity, and other microclimatic variables [31].

- Accelerometer Data: Three-dimensional body acceleration provides insights into behavior, energy expenditure, and potential reproductive events [31].
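Accelerometer-derived activity is commonly summarized as vectorial dynamic body acceleration (VeDBA), the metric referenced in the toolkit table later in this section. A running mean of each axis approximates the static (gravity) component. Subtracting it leaves the dynamic component, and VeDBA is the Euclidean norm of the three dynamic axes. The sketch below is a minimal pure-Python version; the smoothing window length is an assumption that depends on the tag's sampling rate and the species' movement, and a window of a few seconds is a common starting point.

```python
import math

def running_mean(values, window):
    """Centered running mean over `window` samples (use an odd window); truncated at the ends."""
    half = window // 2
    out = []
    for i in range(len(values)):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        segment = values[lo:hi]
        out.append(sum(segment) / len(segment))
    return out

def vedba(ax, ay, az, window):
    """VeDBA per sample: norm of (raw acceleration minus smoothed static acceleration)."""
    static = [running_mean(axis, window) for axis in (ax, ay, az)]
    result = []
    for i in range(len(ax)):
        dx = ax[i] - static[0][i]
        dy = ay[i] - static[1][i]
        dz = az[i] - static[2][i]
        result.append(math.sqrt(dx * dx + dy * dy + dz * dz))
    return result

# A resting bird (gravity only on the z axis) versus a bird with oscillating x-axis motion.
resting = vedba([0.0] * 10, [0.0] * 10, [1.0] * 10, window=5)
active = vedba([1.0, -1.0] * 5, [0.0] * 10, [1.0] * 10, window=3)
print(max(resting), round(sum(active) / len(active), 3))
```

In practice, the window in samples would be the sampling frequency multiplied by the chosen smoothing duration.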

Diagram Title: Field Tracking and Data Collection Workflow

IBM AI Implementation Protocol

Geospatial Habitat Analysis

Implement IBM's Granite-Geospatial model for little bustard habitat assessment:

- Data Preparation: Compile satellite imagery covering little bustard range from Copernicus Sentinel-3 and other sources; the reference model was pre-trained on approximately 500,000 color-coded images [32].

- Model Fine-Tuning: Adapt the model using minimal high-quality field data (100-200 ground-truthed measurements) collected on the exact dates covered by the satellite imagery [32].

- Habitat Mapping: Generate color-coded maps of habitat suitability, correlating vegetation indices with known little bustard presence points [32].

- Change Detection: Monitor temporal changes in habitat quality and extent using the model's pattern recognition capabilities [32].
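What "correlating vegetation indices with presence points" and "change detection" compute can be shown with a deliberately simple stand-in. The sketch below does not use the Granite-Geospatial model. It computes the standard normalized difference vegetation index, NDVI = (NIR - Red) / (NIR + Red), compares mean NDVI at presence cells with background cells, and flags cells whose suitability fell by more than a threshold between two dates. All grid values and the threshold are invented for illustration.

```python
def ndvi(red, nir):
    """Normalized difference vegetation index for one pixel (surface reflectances)."""
    denom = nir + red
    return 0.0 if denom == 0 else (nir - red) / denom

def mean_ndvi_at(cells, red_grid, nir_grid):
    """Mean NDVI over a list of (row, col) cells."""
    values = [ndvi(red_grid[r][c], nir_grid[r][c]) for r, c in cells]
    return sum(values) / len(values)

def flag_decline(before, after, threshold):
    """Cells whose suitability dropped by more than `threshold` between two dates."""
    return [
        (r, c)
        for r, row in enumerate(before)
        for c, value in enumerate(row)
        if value - after[r][c] > threshold
    ]

# Hypothetical 2 x 2 reflectance grids and suitability maps.
RED = [[0.1, 0.3], [0.1, 0.2]]
NIR = [[0.5, 0.3], [0.4, 0.2]]
presence, background = [(0, 0), (1, 0)], [(0, 1), (1, 1)]
print(mean_ndvi_at(presence, RED, NIR), mean_ndvi_at(background, RED, NIR))

BEFORE = [[0.8, 0.5], [0.7, 0.2]]
AFTER = [[0.4, 0.5], [0.7, 0.1]]
print(flag_decline(BEFORE, AFTER, threshold=0.2))
```

A real workflow would replace the hand-made grids with model outputs or satellite rasters and the simple mean comparison with a proper habitat-selection model.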

Environmental Intelligence Integration

Deploy IBM Environmental Intelligence Suite for comprehensive conservation planning:

- Climate Risk Assessment: Analyze potential impacts of climate change on little bustard habitats using built-in climate risk analytics [33].

- Weather Monitoring: Configure alerts for disruptive environmental conditions such as severe weather that may impact little bustard survival or migration [33].

- Carbon Accounting: Utilize the suite's capabilities to assess carbon sequestration potential of little bustard habitats, supporting potential sustainable finance investments [34] [33].

Individual-Based Model Development Protocol

Model Structure and Parameterization

Develop spatially explicit individual-based models using the following framework:

- Habitat Suitability Integration: Incorporate high-resolution habitat suitability data as a foundation for the model [11].

- Demographic Parameters: Calibrate the model with empirical data on nest, chick, and adult survival rates, and test whether these rates correlate positively with habitat suitability, as calibration in Extremadura supported [11].

- Behavioral Rules: Program individual movement rules based on tracked migration data, including responses to habitat quality and seasonal changes [11] [30].

- Anthropogenic Factors: Incorporate mortality risks from human activities, including collision risks and habitat degradation [11].
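The core of such a model is a per-individual survival rule. The minimal sketch below assumes annual survival is a logistic function of the suitability of the occupied cell, with a lower baseline for females and an extra anthropogenic mortality term. All coefficients are hypothetical and are not the calibrated values from [11]. The female offset is simply one way to let sex-biased survival in poorer habitat, of the kind reported in [11], emerge in a simulation.

```python
import math
import random
from dataclasses import dataclass

@dataclass
class Bird:
    sex: str            # "F" or "M"
    suitability: float  # habitat suitability of the occupied cell, 0 to 1

# Hypothetical coefficients for illustration; not the calibrated values from [11].
INTERCEPT = {"F": -0.5, "M": 0.0}   # lower baseline survival for females
SLOPE = 2.0                          # survival rises with habitat suitability
ANTHROPOGENIC_MORTALITY = 0.05       # e.g. collisions, applied on top of natural survival

def survival_probability(bird: Bird) -> float:
    """Annual survival: logistic in suitability, then reduced by anthropogenic risk."""
    natural = 1 / (1 + math.exp(-(INTERCEPT[bird.sex] + SLOPE * bird.suitability)))
    return natural * (1 - ANTHROPOGENIC_MORTALITY)

def annual_step(birds: list[Bird], rng: random.Random) -> list[Bird]:
    """Keep each bird according to one Bernoulli draw on its own survival probability."""
    return [b for b in birds if rng.random() < survival_probability(b)]

# 500 females and 500 males in poor habitat, followed for five years (no reproduction).
rng = random.Random(42)
flock = [Bird(sex, 0.2) for sex in "FM" for _ in range(500)]
for _ in range(5):
    flock = annual_step(flock, rng)
females = sum(b.sex == "F" for b in flock)
print(f"after 5 years: {females} females, {len(flock) - females} males")
```

A full model would add movement rules from tracking data, reproduction, and spatially varying suitability. The survival rule is the part that links individual fate to habitat and human-caused mortality.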

Conservation Scenario Simulation

Utilize the calibrated individual-based model to evaluate conservation strategies:

- Intervention Testing: Simulate the effects of habitat improvement measures and anthropogenic mortality reduction over extended periods (e.g., 50 years) [11].

- Cost-Effectiveness Analysis: Compare the relative effectiveness of different conservation interventions to prioritize management actions [11].

- Population Viability Assessment: Project long-term population trends under different management scenarios to identify optimal strategies [11] [31].
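Scenario testing amounts to running replicate stochastic simulations under alternative parameter sets and comparing the projected populations. The sketch below compares a baseline with habitat improvement, mortality reduction, and both combined over 50 years. Every parameter (survival coefficients, recruitment, carrying capacity, scenario values) is a hypothetical placeholder, so the printed ranking illustrates the mechanics only and says nothing about which intervention works for real populations. Cost-effectiveness would require attaching a cost to each scenario, which is not modeled here.

```python
import math
import random
from statistics import mean

# Hypothetical placeholder parameters; none are estimates from [11].
INTERCEPT, SLOPE = -0.5, 2.0   # logistic survival vs. habitat suitability
RECRUIT_TRIALS = 3             # up to 3 recruits per surviving female per year
RECRUIT_RATE = 0.8             # per-trial recruitment probability, scaled by suitability
CARRYING_CAPACITY = 1000       # cap that keeps runs bounded

def simulate(years, n0, habitat_boost, anthro_mortality, seed):
    """Run one stochastic replicate and return the final population size."""
    rng = random.Random(seed)

    def new_bird():
        # (sex, suitability of the settled cell); habitat management shifts suitability up.
        return (rng.choice("FM"), min(1.0, rng.random() + habitat_boost))

    birds = [new_bird() for _ in range(n0)]
    for _ in range(years):
        survivors = []
        for sex, s in birds:
            natural = 1 / (1 + math.exp(-(INTERCEPT + SLOPE * s)))
            if rng.random() < natural * (1 - anthro_mortality):
                survivors.append((sex, s))
        recruits = [
            new_bird()
            for sex, s in survivors
            if sex == "F"
            for _ in range(RECRUIT_TRIALS)
            if rng.random() < RECRUIT_RATE * s
        ]
        birds = survivors + recruits
        if len(birds) > CARRYING_CAPACITY:
            birds = rng.sample(birds, CARRYING_CAPACITY)
        if not birds:
            break
    return len(birds)

SCENARIOS = {
    "baseline": dict(habitat_boost=0.0, anthro_mortality=0.05),
    "habitat improvement": dict(habitat_boost=0.1, anthro_mortality=0.05),
    "mortality reduction": dict(habitat_boost=0.0, anthro_mortality=0.02),
    "combined": dict(habitat_boost=0.1, anthro_mortality=0.02),
}

if __name__ == "__main__":
    for name, params in SCENARIOS.items():
        finals = [simulate(50, 200, seed=rep, **params) for rep in range(10)]
        print(f"{name}: mean population after 50 years = {mean(finals):.0f}")
```

Seeding each replicate makes runs reproducible, so differences between scenarios can be traced to parameter changes rather than to unrelated random draws.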

Table: Little Bustard Migration Patterns Across Iberian Populations

| Region | Sample Size | Migratory Ratio | Resident Ratio | Main Connectivity | Migration Features |
|---|---|---|---|---|---|
| Alentejo | 19 | 94.74% | 5.26% | Southern Plateau, Extremadura, Guadalquivir Valley | Herbaceous cover, low elevation [30] |
| Northern Plateau | 16 | 93.75% | 6.25% | Western Southern Plateau, Extremadura | Night migration, avoids trees/water [30] |
| Guadalquivir Valley | 11 | 81.82% | 18.18% | Southern Plateau, Extremadura, Alentejo | Low terrain roughness [30] |
| Extremadura | 26 | 65.38% | 34.62% | Southern Plateau, Alentejo, Guadalquivir Valley | Northward summer trend [30] |
| Southern Plateau | 18 | 55.56% | 44.44% | Northern Plateau, Extremadura, Ebro Valley | Southward winter movement [30] |
| Ebro Valley | 27 | 25.93% | 74.07% | Southern Plateau, internal movements | Three main corridors identified [30] |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials and Technologies for Little Bustard Research

| Research Tool | Specifications | Primary Function | Conservation Application |
|---|---|---|---|
| GPS Transmitters | Ornitela OT-15, OTE-10, OT-20; Movetech MT25g; 10-25 g | Individual movement tracking | Monitor migration, habitat use, survival; 105 birds tagged in Iberian study [30] |
| Capture Equipment | Leg nooses, funnel traps, spring traps, decoys (male/female) | Safe animal capture and handling | Tagging operations; adaptation required for local responses to decoys [35] [30] |
| IBM Granite-Geospatial | Vision transformer architecture; 50M parameters | Satellite image analysis | Habitat mapping, change detection, corridor identification [32] |
| IBM Environmental Intelligence Suite | SaaS with APIs, dashboards, alert systems | Climate risk assessment | Predict climate impacts, monitor disruptive conditions [33] |
| Individual-Based Modeling Platform | Spatially explicit demographic simulation | Conservation scenario testing | Evaluate management strategies over 50-year timelines [11] |
| Accelerometer Sensors | 3D movement recording, VeDBA algorithms | Behavior and energetics measurement | Link movement to energy expenditure, detect reproduction [31] |

Diagram Title: Threat Assessment and IBM Solution Framework

The application of IBM's AI technologies and individual-based modeling approaches to little bustard conservation demonstrates the power of integrating long-term individual tracking data with advanced analytical tools. This case study reveals that effective conservation requires a multifaceted approach that combines habitat management with targeted mortality reduction, informed by sophisticated modeling of individual movements and population dynamics [11] [30].

The synergy between biologging technologies and AI-powered analysis platforms creates new opportunities for evidence-based conservation management. By leveraging these tools, researchers can move beyond static distribution maps to dynamic understanding of how individual animals respond to environmental change and conservation interventions [31]. This approach ultimately supports the development of more effective, cost-efficient conservation strategies that can be adapted over time based on continuous monitoring and model refinement.

For the little bustard specifically, conservation success depends on international and inter-regional coordination to protect not only breeding and wintering quarters but also the migratory corridors connecting them [30]. The technologies and methodologies outlined in this case study provide a robust framework for achieving this comprehensive conservation approach, offering hope for reversing population declines and ensuring the long-term viability of this threatened species.

Application Note