Integrating GPS and Accelerometer Data in Animal Tracking: Methodologies, Applications, and Future Directions for Biomedical Research



Abstract

This article provides a comprehensive overview of the integration of GPS and accelerometer biologging technologies for advanced animal tracking and behavioral analysis. Tailored for researchers and drug development professionals, it explores the foundational principles of these sensors, details methodological approaches for data collection and machine learning classification, and addresses key challenges in model generalization and device impact. It further covers rigorous validation techniques and comparative analyses of emerging technologies. By synthesizing recent findings and case studies from species ranging from cattle to seabirds, this resource aims to equip scientists with the knowledge to implement these tools for robust, data-driven research in ecology, toxicology, and preclinical studies.

The Core Technologies: Understanding GPS and Accelerometer Biologging

Fundamental Principles of GPS and Accelerometer Sensors in Biologging

Biologging, a term formally proposed at the first international symposium in Tokyo in 2003, involves attaching electronic data recorders to animals to monitor their behavior, physiology, and surrounding environment in the wild [1]. This method has transformed from a biological observation tool into a cross-disciplinary platform that contributes to fields such as oceanography, meteorology, and environmental science [1]. The integration of GPS and accelerometer sensors has become foundational to modern animal tracking research, enabling scientists to remotely "observe" elusive species and collect continuous data across vast spatial and temporal scales [2] [3].

The fundamental premise of biologging is that sensors mounted on animals can provide direct, real-time observations of individual performance, survival strategies, and reproductive success in dynamically changing environments [3]. As technology has advanced, devices have become progressively smaller, reducing impact on animals while expanding capabilities to include a wider range of taxonomic groups and environmental parameters [1]. This technological evolution now allows researchers to address critical conservation challenges amid the growing biodiversity crisis by providing unprecedented insights into animal lives [3].

Fundamental Principles and Technical Specifications

Global Positioning System (GPS) Technology

GPS technology, publicly available since the 1990s, revolutionized wildlife tracking by providing accurate location data [4]. A GPS collar receives timing signals from several satellites and computes its position by trilateration (often loosely called triangulation). Modern systems can transmit data via satellite networks like Argos or Iridium, enabling global coverage and near real-time monitoring even in remote locations [4].

A critical advancement in GPS tracking addresses the challenge of three-dimensional movement analysis. Traditional models only account for two-dimensional movement, creating significant errors for animals that move substantially in the vertical plane. New mathematical methods now properly account for both topography and Earth's curvature, accurately calculating distances for species like mountain lions that frequently change elevation or whales that move vertically in the water column [5]. This is essential because without these calculations, researchers fundamentally misunderstand how animals spend time and energy in their daily activities [5].
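To make the two-dimensional versus three-dimensional difference concrete, the sketch below compares path lengths computed with and without elevation. It is only an illustration under stated assumptions: it uses the haversine great-circle formula on a spherical Earth plus a Pythagorean elevation term, which is not the method of [5], and the names `haversine_m`, `step_length_3d_m`, and `path_length_m` are hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius (assumed spherical Earth)


def haversine_m(lat1, lon1, lat2, lon2, radius_m=EARTH_RADIUS_M):
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * radius_m * math.asin(math.sqrt(a))


def step_length_3d_m(fix_a, fix_b):
    """Approximate 3D step length between two (lat, lon, elevation_m) fixes."""
    lat1, lon1, z1 = fix_a
    lat2, lon2, z2 = fix_b
    # Evaluate the horizontal arc at the mean elevation of the two fixes.
    horizontal = haversine_m(lat1, lon1, lat2, lon2, EARTH_RADIUS_M + (z1 + z2) / 2)
    # Combine horizontal and vertical displacement (short-step approximation).
    return math.hypot(horizontal, z2 - z1)


def path_length_m(fixes, three_d=True):
    """Total path length over consecutive fixes, with or without elevation."""
    total = 0.0
    for a, b in zip(fixes, fixes[1:]):
        if three_d:
            total += step_length_3d_m(a, b)
        else:
            total += haversine_m(a[0], a[1], b[0], b[1])
    return total
```

For an animal that climbs without changing its horizontal position, `path_length_m(track, three_d=False)` returns zero while the 3D version returns the elevation gain, which is the kind of underestimate the paragraph above describes.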

Accelerometer Sensors

Accelerometers measure the collar's (and thus the animal's) intensity of movement as the difference in velocity between two consecutive measurements [2]. These sensors typically record on multiple axes (usually x, y, and z), representing forward-backward, sideways (left-right), and up-down movements respectively [2].

The data resolution varies based on research needs and device capabilities. High-resolution data preserves raw measurements at frequent intervals (e.g., 4 Hz), while low-resolution data averages measurements over predefined intervals (e.g., 5 minutes) to conserve storage capacity [2]. This averaging is particularly valuable in long-term studies where memory capacity is limited and reduces computational demands for subsequent analysis [2].
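The averaging step can be sketched in a few lines. This is a minimal illustration, assuming fixed, non-overlapping windows and a simple arithmetic mean; actual device firmware may aggregate differently, and `average_in_windows` is a hypothetical name.

```python
from statistics import fmean


def average_in_windows(samples, sample_rate_hz, window_s):
    """Collapse a raw series into one mean value per fixed-length window.

    A trailing partial window is dropped (an assumption of this sketch).
    """
    per_window = int(sample_rate_hz * window_s)
    if per_window <= 0:
        raise ValueError("window must contain at least one sample")
    return [
        fmean(samples[i:i + per_window])
        for i in range(0, len(samples) - per_window + 1, per_window)
    ]
```

At 4 Hz with 5-minute windows, each stored value replaces 1,200 raw samples per axis, which is where the storage saving comes from.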

Table 1: Technical Specifications of Biologging Sensors

| Parameter | GPS Technology | Accelerometer Sensors |

|---|---|---|

| Primary Function | Determines geographical position | Measures intensity and pattern of movement |

| Data Output | Latitude, longitude, altitude (3D) | Acceleration values on multiple axes (x, y, z) |

| Measurement Principle | Satellite signal trilateration | Velocity change between consecutive measurements |

| Sampling Frequency | Variable intervals (minutes to hours) | Typically 4 Hz for raw data, or averaged over 5-min intervals [2] |

| Data Range | Global coverage via satellite networks | Unit-free numbers (0-255) representing no movement to maximum movement [2] |

| Key Advancements | 3D movement accounting for Earth's curvature [5] | Behavioral classification through machine learning [2] |

Integrated Sensor Applications and Data Outputs

The powerful synergy between GPS and accelerometer sensors emerges when their data streams are integrated. While GPS provides the spatial context of where an animal is located, accelerometers reveal what the animal is doing at that location. This integration enables researchers to connect specific behaviors with environmental features and spatial movements.

For example, accelerometer data can classify behaviors such as lying, feeding, standing, walking, and running in red deer [2], while simultaneous GPS data can relate these activities to specific habitats, elevations, or human-modified landscapes. This combination has revealed critical ecological insights, such as white storks foraging in landfills, suggesting these animals may rely on human-modified landscapes for survival [3].
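One simple way to relate the two data streams is to attach to each GPS fix the behavior label of the accelerometer window closest in time. The sketch below assumes timestamps in seconds, behavior windows sorted by time, and a hypothetical tolerance `max_gap_s`; none of these details come from the cited studies.

```python
from bisect import bisect_left


def label_fixes(gps_fixes, behaviour_windows, max_gap_s=300):
    """Attach the nearest-in-time behaviour label to each GPS fix.

    gps_fixes: list of (time_s, lat, lon)
    behaviour_windows: list of (time_s, label), sorted by time
    Fixes with no window within max_gap_s get the label None.
    """
    times = [t for t, _ in behaviour_windows]
    labelled = []
    for t, lat, lon in gps_fixes:
        i = bisect_left(times, t)
        # Candidates: the window just before and the window just after the fix.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(times)]
        label = None
        if candidates:
            best = min(candidates, key=lambda j: abs(times[j] - t))
            if abs(times[best] - t) <= max_gap_s:
                label = behaviour_windows[best][1]
        labelled.append((t, lat, lon, label))
    return labelled
```

The labelled fixes can then be overlaid on habitat or elevation layers to ask where each behavior occurs.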

Table 2: Data Integration from GPS and Accelerometer Sensors

| Data Type | Parameters Measured | Behavioral/Ecological Insights | Research Applications |

|---|---|---|---|

| GPS Location | Latitude, longitude, altitude, timestamp | Home range, migration routes, habitat selection | Protected area design, corridor identification |

| Accelerometer Signatures | Body posture, movement intensity, gait patterns | Specific behaviors (feeding, running, resting), energy expenditure | Time-activity budgets, behavioral ecology, disturbance responses |

| Environmental Sensors | Water temperature, salinity, atmospheric pressure [1] | Physical environment characterization, habitat preferences | Oceanography, meteorology, climate change studies |

| Integrated Data | Behavior in spatial context | Resource selection, movement ecology, anthropogenic impacts | Conservation planning, mitigation measures |

Experimental Protocols and Methodologies

Sensor Deployment and Data Collection

The deployment of biologging devices requires careful planning and execution to ensure both animal welfare and data quality:

Animal Capture and Handling: Researchers must follow ethical guidelines and obtain appropriate permits for animal capture. For large mammals like red deer, immobilization may be performed by wildlife officials using approved anesthetics [2]. Each individual should be marked with unique identifiers (e.g., colored ear tags) for visual identification post-deployment [2].

Device Attachment: Collars should be fitted to minimize impact on the animal's natural behavior while ensuring sensor orientation remains consistent. For accelerometers in particular, consistent positioning is critical for accurate behavioral classification [2]. Devices often include remote drop-off mechanisms programmed for release after a predetermined period (e.g., two years) [2].

Data Transmission and Retrieval: Depending on the system, data can be retrieved via UHF and VHF download in the field, directly from the device after drop-off, or through satellite transmission for remote access [2]. Systems like the Satellite Relay Data Loggers (SRDLs) can transmit compressed data via satellite for more than one year without retrieving the device [1].

Machine Learning Classification of Accelerometer Data

Behavioral classification from accelerometer data follows a structured analytical workflow. For wild red deer, researchers have successfully developed multiclass models that differentiate between lying, feeding, standing, walking, and running using low-resolution acceleration data [2]:

Data Preparation: Raw accelerometer values (typically ranging 0-255) are normalized using methods like minmax normalization. Different combinations of input variables are tested, including axial acceleration values and their derived counterparts (sum, difference, and ratio) [2].

Model Training: Various machine learning algorithms are compared, including discriminant analysis, recursive partitioning, and random forest. Training uses a supervised learning approach with observed behaviors as output variables and acceleration data as input variables [2].

Model Validation: Rigorous validation with independent test sets is essential to detect and prevent overfitting, where models memorize training data rather than learning generalizable patterns [6]. Studies indicate that 79% of accelerometer-based behavioral classification studies do not adequately validate their models, limiting interpretability of results [6]. Proper validation requires completely independent test data that the model has never encountered during training [6].

Performance Evaluation: A customized metric that considers imbalance between different behaviors is used to compare model accuracy. Research shows discriminant analysis generates the most accurate classification models when trained with minmax-normalized acceleration data from multiple axes and their ratios [2].
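The data preparation step described above can be illustrated as follows. This is a sketch, not the pipeline of [2]: it assumes scaling against the fixed 0-255 device range (a study might instead scale by the observed minimum and maximum), and the small `eps` guard in the ratio is an addition made here to avoid division by zero.

```python
def minmax(values, lo=0.0, hi=255.0):
    """Scale values from the range [lo, hi] to [0, 1]."""
    span = hi - lo
    return [(v - lo) / span for v in values]


def derived_inputs(x, y, eps=1e-9):
    """Return sum, difference and ratio for paired axis values."""
    return {
        "sum": [a + b for a, b in zip(x, y)],
        "difference": [a - b for a, b in zip(x, y)],
        # eps guards against division by zero when the y value is 0.
        "ratio": [a / (b + eps) for a, b in zip(x, y)],
    }
```

The normalized axes and their derived counterparts can then be offered to the classifier in different combinations to see which input set performs best.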

Data Management, Visualization, and Analysis Platforms

Standardized Data Platforms

The growing volume and complexity of biologging data has necessitated specialized platforms for data management, sharing, and analysis. The Biologging intelligent Platform (BiP) represents an integrated solution that adheres to internationally recognized standards for sensor data and metadata storage [1]. Key features include:

- Data Standardization: BiP conforms to international standard formats including Integrated Taxonomic Information System (ITIS), Climate and Forecast Metadata Conventions (CF), and Attribute Conventions for Data Discovery (ACDD) [1].

- Metadata Management: The platform stores detailed metadata about animal traits (sex, body size), instrument specifications, and deployment information, enabling meaningful secondary data analysis [1].

- Online Analytical Processing (OLAP): BiP includes tools that calculate environmental parameters such as surface currents, ocean winds, and waves from animal-collected data [1].

- Data Accessibility: Users can search for datasets using Digital Object Identifiers (DOIs) of related publications, and data is typically available under CC BY 4.0 licensing for open datasets [1].

Data Visualization Tools

Advanced visualization tools like ECODATA support the exploration and communication of complex animal movement datasets [7]. This open-source software creates animations that help ecologists study animal movement in relation to environmental factors such as extreme weather conditions or seasonal vegetation growth [7].

These visualization platforms work by combining direct wildlife location observations with complex remote sensing and geospatial data to process image frames into multiple layers of customizable maps [7]. The animations are particularly effective for illustrating study results, supporting animal exploration in uncharted territories, and aiding wildlife managers in garnering support for conservation efforts [7].

Essential Research Toolkit

Table 3: Research Reagent Solutions for Biologging Studies

| Tool/Category | Specific Examples | Function & Application |

|---|---|---|

| GPS Collars | VECTRONIC Aerospace GmbH: PRO LIGHT, VERTEX PLUS [2] | Collect location data and acceleration measurements; deployed on large mammals |

| Satellite Transmission Systems | Argos, Iridium, Kineis [4] | Enable global data transmission from remote locations via satellite networks |

| Multi-sensor Platforms | Wildlife Computers tags [4] | Measure environmental parameters (temperature, salinity) alongside movement data |

| Data Management Platforms | Biologging intelligent Platform (BiP), Movebank [1] | Store, standardize, and share biologging data with metadata following international standards |

| Visualization Software | ECODATA, Mapotic [7] [4] | Create animations and interactive maps for data exploration and communication |

| Machine Learning Environments | R packages [2] | Classify animal behavior from accelerometer data using various algorithms |

| Validation Frameworks | Independent test sets, Cross-validation [6] | Detect and prevent overfitting in behavioral classification models |

Current Applications and Future Directions

Biologging with integrated GPS and accelerometer sensors currently contributes to diverse research and conservation applications:

Oceanographic Monitoring: Instrumented marine animals complement traditional observation systems like Argo floats, providing data in shallow waters and regions with sea ice that are difficult to measure with conventional approaches [1]. The AniBOS (Animal Borne Ocean Sensors) project has established a global ocean observation system leveraging animal-borne sensors [1].

Conservation Planning: Movement data helps identify critical habitats, migration corridors, and human-wildlife conflict areas. For example, animations of elk and wolf movements in relation to roads and wildlife crossing structures near Banff National Park inform mitigation measures [7].

Behavioral Ecology: Accelerometer-based classification reveals how animals allocate time to different behaviors and respond to environmental changes. Studies on wild red deer in Alpine environments demonstrate how machine learning can differentiate multiple behavior states from accelerometer data [2].

Future directions address current limitations, including:

- Geographic Bias Reduction: Most biologging data comes from Europe and the United States, with underrepresentation from Global South regions experiencing rapid environmental change [3].

- 3D Movement Integration: New mathematical methods that properly account for vertical movement and Earth's curvature will provide more accurate measurements of animal movement [5].

- Standardized Validation: Improved validation protocols for machine learning models will enhance reliability and generalizability of behavioral classification [6].

- Real-time Conservation: Emerging software-defined tracking technologies can provide real-time, detailed environmental data directly from animals, enabling immediate conservation responses [3].

The integration of GPS and accelerometer sensors in biologging represents a powerful toolkit for addressing fundamental questions in animal ecology and conservation. As these technologies continue to evolve, they will further transform our understanding of animal movement, behavior, and ecology in an increasingly human-modified world.

The integration of GPS and accelerometer technologies has revolutionized the study of animal movement ecology, enabling researchers to move beyond simple location tracking to gain profound insights into animal behavior, energy expenditure, and welfare. This integrated approach forms a technological symbiosis where GPS sensors provide the spatial context of where an animal is located, while accelerometers reveal the behavioral context of what the animal is doing at those locations. Modern biologging devices now routinely combine these sensors, creating rich multivariate datasets that capture both movement paths and the detailed behavioral patterns that generate those paths [8] [9]. This application note details the key parameters, data outputs, and methodological protocols for effectively utilizing these technologies within animal tracking research, with particular emphasis on the derivation and application of the Overall Dynamic Body Acceleration (ODBA) metric.

Core Sensor Parameters and Data Outputs

GPS Sensor Specifications and Data Outputs

GPS modules in biologging devices are configured to balance positional accuracy with battery conservation, a critical consideration for long-term deployment.

Table 1: Key GPS Parameters and Typical Data Outputs

| Parameter Category | Specific Parameter | Typical Value/Range | Data Output | Application Significance |

|---|---|---|---|---|

| Spatial Resolution | Accuracy (with DOP <1, ≥7 satellites) | ~1.7m average error [8] | Latitude, Longitude | Determines precision of habitat use and site fidelity studies. |

| Temporal Resolution | Fix Interval | Every 5 minutes (conservative) to seconds [8] [9] | Timestamp, Coordinates | Influences detection of fine-scale movement bouts and behaviors. |

| Sampling Configuration | Dilution of Precision (DOP) Threshold | ≤1 [8] | Positional Covariance | Indicator of location fix quality and reliability. |

| Sampling Configuration | Minimum Satellites | ≥7 [8] | Satellite Count | Affects fix success rate, especially in complex terrain. |
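The quality thresholds in the table can also be applied after download to screen fixes. The sketch below is illustrative only; the `Fix` record and `keep_reliable` function are hypothetical and the defaults simply mirror the table values.

```python
from dataclasses import dataclass


@dataclass
class Fix:
    lat: float
    lon: float
    dop: float        # dilution of precision reported with the fix
    satellites: int   # number of satellites used for the fix


def keep_reliable(fixes, max_dop=1.0, min_satellites=7):
    """Keep only fixes that meet both quality thresholds."""
    return [f for f in fixes if f.dop <= max_dop and f.satellites >= min_satellites]
```

Stricter thresholds improve positional accuracy but discard more fixes, so the share of rejected fixes is worth reporting alongside the results.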

Accelerometer Specifications and Data Outputs

Accelerometers capture high-frequency data on animal posture and motion by measuring acceleration forces in three orthogonal dimensions.

Table 2: Key Accelerometer Parameters and Derived Metrics

| Parameter Category | Specific Parameter | Typical Value/Range | Derived Metric | Application Significance |

|---|---|---|---|---|

| Spatial & Temporal Resolution | Sampling Frequency | 10 Hz – 25 Hz [8] [9] | Raw X, Y, Z acceleration | Higher frequency captures more nuanced behaviors. |

| Spatial & Temporal Resolution | Dynamic Range | ±2g [8] | Gravitational & Motion Components | Must be suited to the species' movement intensity. |

| Data Processing Level | Raw Data | 10-25 values per second per axis [2] | Time-series acceleration | Allows for flexible post-processing and feature extraction. |

| Data Processing Level | Averaged/Aggregated Data | 5-minute intervals [2] | Mean, SD, VeDBA | Reduces data volume for long-term deployments. |

| Key Derived Metrics | Overall Dynamic Body Acceleration (ODBA) | Sum of dynamic body accelerations [9] | ODBA Value | Proxy for energy expenditure; useful for behavior detection. |

| Key Derived Metrics | Vector of Dynamic Body Acceleration (VeDBA) | \( \sqrt{DA_x^2 + DA_y^2 + DA_z^2} \) [9] | VeDBA Value | Alternative movement/energy proxy, potentially more robust. |

| Key Derived Metrics | Static Acceleration | Low-pass filtered signal [10] | Animal Posture/Orientation | Indicates body position (e.g., head-up/down for grazing). |

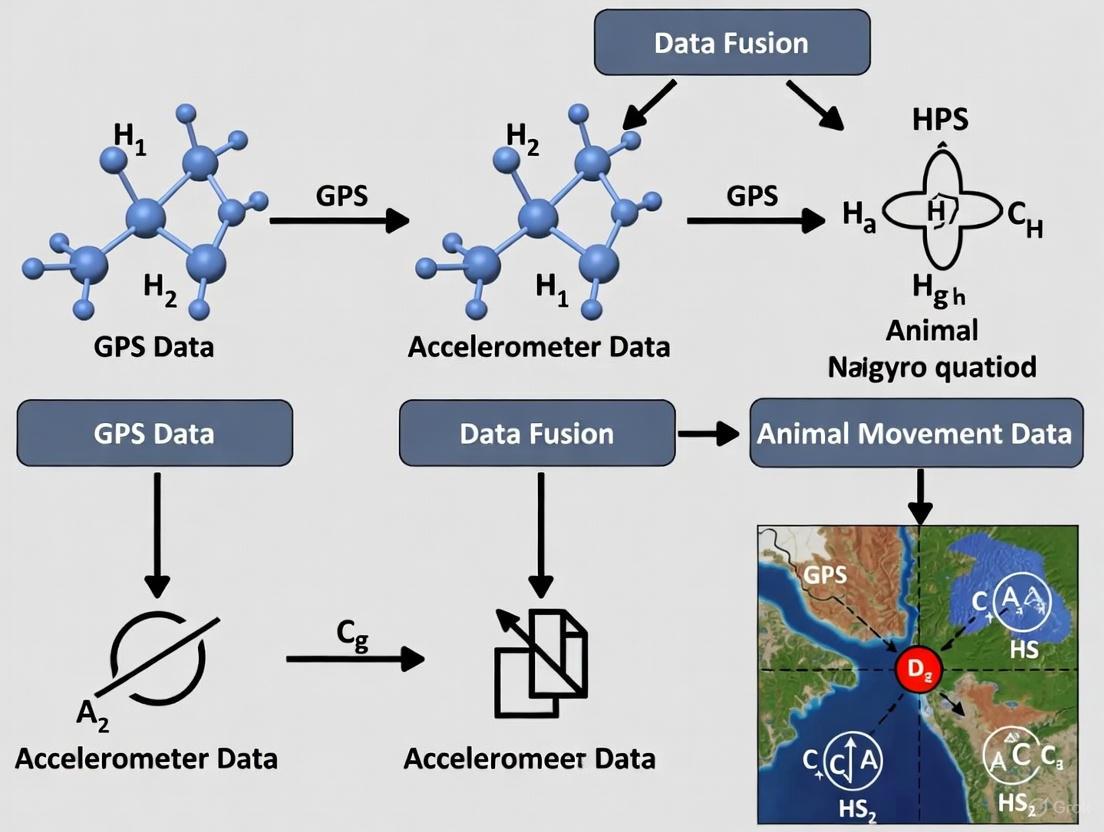

The following workflow diagram illustrates the primary data stream from raw sensor collection to the creation of validated behavioral models.

Figure 1: Primary data processing workflow from raw sensor data to behavioral classification.

Derivation and Application of ODBA

Overall Dynamic Body Acceleration (ODBA) is a computationally efficient metric that serves as a validated proxy for energy expenditure in moving animals. The calculation involves separating the dynamic components of acceleration from the static gravitational component [9].

The standard calculation procedure is as follows:

- Raw Data Acquisition: Collect tri-axial acceleration data (X, Y, Z axes) at a high frequency (e.g., 10-25 Hz).

- Signal Separation: Apply a high-pass filter or, more simply, calculate the running mean for each axis over a specified window (e.g., 1-5 seconds). The static component (SA) is the mean acceleration. The dynamic component (DA) is the raw acceleration minus the static component: \( DA_x = Raw_x - SA_x \) (similarly for the Y and Z axes).

- Summation: Sum the absolute values of the dynamic components across all three axes for each time point: \( ODBA = |DA_x| + |DA_y| + |DA_z| \)

ODBA values can be used as instantaneous measures or averaged over longer periods (e.g., 5-10 minutes) to relate to broader activity budgets [9].
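The procedure above can be sketched in plain Python. The example assumes a centred running mean whose window shrinks at the edges of the series and a 2-second default window; both are illustrative choices, and the function names are hypothetical. VeDBA is computed alongside ODBA from the same dynamic components.

```python
import math


def running_mean(values, window):
    """Centred running mean; the window shrinks at the edges of the series."""
    half = window // 2
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - half): i + half + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def dynamic_component(raw, window):
    """Dynamic acceleration: raw signal minus its running mean (static part)."""
    static = running_mean(raw, window)
    return [r - s for r, s in zip(raw, static)]


def odba_vedba(x, y, z, sample_rate_hz=25, window_s=2):
    """Return per-sample ODBA and VeDBA for tri-axial acceleration series."""
    window = max(1, int(sample_rate_hz * window_s))
    dx, dy, dz = (dynamic_component(axis, window) for axis in (x, y, z))
    odba = [abs(a) + abs(b) + abs(c) for a, b, c in zip(dx, dy, dz)]
    vedba = [math.sqrt(a * a + b * b + c * c) for a, b, c in zip(dx, dy, dz)]
    return odba, vedba
```

A motionless tag that registers only gravity yields ODBA of zero, because the running mean removes the constant component. VeDBA never exceeds ODBA for the same sample, since the Euclidean norm is bounded by the sum of absolute values.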

Behavioral Classification Using Integrated Data

The power of sensor integration is fully realized when ODBA and location data are fused to classify specific behaviors using machine learning models. The following diagram details this classification logic.

Figure 2: Behavioral classification logic using ODBA and location data.

Experimental Protocols for Method Validation

Protocol 1: Training a Behavioral Classification Model

This protocol outlines the steps for developing a supervised machine learning model to classify animal behavior from accelerometer and GPS data, as validated in cattle studies [8].

- Device Deployment: Fit animals with collars containing tri-axial accelerometers (sampling at ≥10 Hz) and GPS sensors (sampling every 5 minutes). Secure the device firmly on the neck to minimize rotational artifacts.

- Reference Data Collection: Simultaneously record the behavior of equipped animals on video for a sufficient duration (e.g., covering multiple daily cycles). Ethogram should include defined classes: Grazing, Ruminating, Lying, Standing, Walking.

- Data Synchronization: Precisely synchronize the timestamps of the video observations with the accelerometer and GPS data streams.

- Feature Extraction: For the accelerometer data aligned with each behavior bout, extract 100+ features in both time and frequency domains from each axis. This includes measures like mean, variance, skewness, kurtosis, and spectral energy bands.

- Model Training: Train a Random Forest classifier using the extracted features as inputs and the video-identified behaviors as the target labels. Use a majority (e.g., 70-80%) of the data for training.

- Model Validation: Test the trained model on the remaining held-out data. Calculate a confusion matrix and overall accuracy. The cattle study reports accuracy of about 0.93 for distinct behaviors such as grazing [8]; treat this as a best case rather than a typical result.
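The validation step can be sketched without any machine learning library. The helpers below are hypothetical and make two assumptions beyond the protocol: test data are held out by whole animals (a stricter split than a random 70-80% partition, chosen here so that no individual appears in both sets), and balanced accuracy is reported alongside overall accuracy because behavior classes are usually imbalanced.

```python
from collections import Counter


def split_by_animal(records, test_animals):
    """Hold out whole individuals; records are dicts with an 'animal_id' key."""
    train = [r for r in records if r["animal_id"] not in test_animals]
    test = [r for r in records if r["animal_id"] in test_animals]
    return train, test


def confusion_counts(observed, predicted):
    """Count (observed, predicted) label pairs."""
    return Counter(zip(observed, predicted))


def overall_accuracy(observed, predicted):
    """Share of windows whose predicted label matches the observed label."""
    correct = sum(o == p for o, p in zip(observed, predicted))
    return correct / len(observed)


def per_class_recall(observed, predicted):
    """Recall for each observed behaviour class."""
    totals = Counter(observed)
    hits = Counter(o for o, p in zip(observed, predicted) if o == p)
    return {label: hits[label] / n for label, n in totals.items()}


def balanced_accuracy(observed, predicted):
    """Mean of the per-class recalls; insensitive to class frequencies."""
    recalls = per_class_recall(observed, predicted)
    return sum(recalls.values()) / len(recalls)
```

A model that labels every window as grazing can score 0.8 overall accuracy on a dataset that is 80% grazing while its balanced accuracy is only 0.5, which is why the per-class view matters.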

Protocol 2: Remote Detection of Nesting Events

This protocol, adapted from studies on ground-nesting birds like sandgrouse, uses GPS and ODBA to detect cryptic breeding events without disruptive nest visits [9].

- Sensor Programming: Deploy tags programmed to collect high-resolution GPS fixes (e.g., every 30 minutes) and ODBA readings (e.g., every 10 minutes at 25 Hz).

- Threshold Determination: Using an initial training dataset, establish species- and sex-specific thresholds for:

- ODBA: A significant drop in average daily ODBA indicates reduced activity due to incubation.

- Spatial Fidelity: A consistent daily location (e.g., median daily coordinates within a small radius) indicates a nest site.

- Event Detection Algorithm:

- Calculate daily median location and average ODBA for each individual.

- Flag potential nesting events when an individual's ODBA falls below and spatial fidelity rises above the predefined thresholds for more than two consecutive days.

- The nest location is estimated as the median coordinates of the flagged days.

- Field Validation: Select a subset of remotely detected nests for field verification to ground-truth and refine the algorithm's accuracy, which can exceed 90% [9].
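The event detection logic can be sketched as a scan for runs of qualifying days. The example assumes one summary record per consecutive calendar day with no gaps, a hypothetical `fidelity` score (for instance the share of fixes near the daily median location), and placeholder thresholds; it is not the algorithm of [9].

```python
from statistics import median


def _summarise(days, run):
    """Return (first_index, last_index, nest_lat, nest_lon) for a run of days."""
    lat = median(days[i]["lat"] for i in run)
    lon = median(days[i]["lon"] for i in run)
    return (run[0], run[-1], lat, lon)


def detect_nesting(days, odba_max, fidelity_min, min_run=3):
    """Flag runs of at least min_run consecutive low-activity, high-fidelity days.

    days: list of dicts, one per consecutive day, with keys 'odba' (daily mean),
    'fidelity' (hypothetical site-fidelity score) and 'lat', 'lon' (daily median
    coordinates). min_run=3 encodes 'more than two consecutive days'.
    """
    events, run = [], []
    for i, d in enumerate(days):
        if d["odba"] < odba_max and d["fidelity"] > fidelity_min:
            run.append(i)
            continue
        if len(run) >= min_run:
            events.append(_summarise(days, run))
        run = []
    if len(run) >= min_run:  # close a run that reaches the end of the series
        events.append(_summarise(days, run))
    return events
```

Each returned tuple gives the first and last day index of a candidate nesting event and the median coordinates over those days, which can be passed on for field validation.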

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Materials and Analytical Tools for Sensor-Based Animal Tracking

| Category/Item | Specification/Example | Primary Function in Research |

|---|---|---|

| Biologging Device | Custom collar (e.g., Digitanimal) or commercial tag (e.g., Ornitela, Druid) [8] [9] | Houses sensors, battery, and memory; physically attached to the animal to collect raw data. |

| Tri-axial Accelerometer | MEMS-based, ±2g dynamic range, 10-25 Hz sampling [8] | Measures fine-scale head and neck movements essential for classifying specific behaviors. |

| GPS Module | Configurable fix rate (e.g., 5 min intervals), DOP threshold <1 [8] | Provides spatiotemporal context, enabling analysis of habitat use and large-scale movement. |

| Data Storage/Transmission | SD card for onboard storage; GSM/UHF for remote download [8] [2] | Secures the collected data for subsequent analysis, critical in remote environments. |

| Machine Learning Library | Random Forest, Discriminant Analysis in R or Python [8] [10] [2] | Classifies raw sensor data into ethologically meaningful behavior categories. |

| Visualization Software | DynamoVis [11], moveVis [11] | Creates static and animated visualizations of movement paths and associated behaviors. |

Successful implementation of an integrated GPS-accelerometer system requires careful consideration of several practical factors. Battery life is a primary constraint, often dictating a trade-off between GPS fix frequency and deployment duration; less frequent fixes conserve power [8]. Data volume is another critical consideration, particularly for high-frequency accelerometers, necessitating strategies like onboard processing (e.g., calculating ODBA on the tag) or selective transmission [9] [12]. Furthermore, sensor orientation can vary on free-ranging animals, making it essential to use sensor-agnostic metrics like ODBA or to apply orientation-independent feature extraction methods during machine learning [10]. By meticulously planning these parameters and following the detailed protocols outlined herein, researchers can robustly capture the complex interplay between animal movement, behavior, and the environment.

The integration of multiple sensing modalities, particularly GPS and accelerometers, has revolutionized animal tracking research. Sensor fusion—the process of combining data from multiple sensors to generate more accurate, comprehensive information—transcends the limitations of single-sensor approaches. While GPS provides high-resolution spatial data and accelerometers deliver detailed behavioral insights, their integration creates a synergistic effect that enables researchers to address complex ecological questions that were previously intractable. This approach has proven particularly valuable for studying cryptic behaviors and elusive species where direct observation is challenging or impossible, offering new insights into animal movement ecology, conservation biology, and behavioral research.

Theoretical Framework: Levels of Sensor Fusion

Sensor fusion algorithms can be categorized into three distinct levels, each offering different advantages for biological research [13]:

- Low-Level (Raw Data) Fusion: Raw data from multiple sources are combined before feature extraction. This approach preserves the most information but requires significant computational resources and sophisticated processing algorithms.

- Feature-Level (Intermediate) Fusion: Features are extracted from each sensor data stream independently, then combined for analysis. This balanced approach reduces dimensionality while maintaining critical information.

- Decision-Level (High) Fusion: Each sensor data stream is processed independently to produce preliminary conclusions or classifications, which are then combined to generate a final output. This modular approach allows researchers to leverage existing single-sensor analytical frameworks.

The complementary attributes of GPS and accelerometer data make them particularly well-suited for fusion approaches. GPS data excels at documenting large-scale movements and spatial patterns, while accelerometers capture fine-scale behaviors and energy expenditure. When combined, they provide a multi-scale understanding of animal ecology that neither could deliver independently [9] [14].
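The difference between feature-level and decision-level fusion can be shown with two small functions. This is a conceptual sketch with hypothetical names: the feature vectors and class probabilities are assumed to come from upstream processing that is not shown, and the weighted average is just one of several ways to combine decisions.

```python
def feature_level_fusion(acc_features, gps_features):
    """Concatenate per-window feature vectors so one classifier sees both sensors."""
    return [a + g for a, g in zip(acc_features, gps_features)]


def decision_level_fusion(acc_probs, gps_probs, acc_weight=0.5):
    """Blend two per-class probability dicts and return (winning label, blend)."""
    labels = set(acc_probs) | set(gps_probs)
    blended = {
        lab: acc_weight * acc_probs.get(lab, 0.0)
        + (1 - acc_weight) * gps_probs.get(lab, 0.0)
        for lab in labels
    }
    return max(blended, key=blended.get), blended
```

With feature-level fusion a single model is trained on the combined vectors, whereas decision-level fusion keeps the accelerometer and GPS models separate and only merges their outputs, which makes it easier to reuse an existing single-sensor classifier.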

Quantitative Evidence: Performance Metrics of Sensor Fusion

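Recent studies have compared sensor fusion with single-sensor methodologies across multiple taxa and research applications. Fusion adds spatial and behavioral context, although, as the nest-detection results in Table 1 show, it does not always yield the highest detection rate.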

Table 1: Performance Comparison of Sensor Modalities in Nest Detection of Steppe Birds

| Sensor Modality | Success Rate | Key Advantages | Limitations |

|---|---|---|---|

| GPS-Only | ~95% | Accurate location data; Effective for spatial pattern analysis | May miss brief behavioral events; Limited behavioral context |

| Accelerometer-Only (ODBA) | ~100% | Excellent for detecting behavioral changes; High temporal resolution | Limited spatial information; Requires behavior validation |

| Combined GPS-ACC | ~85-95% | Enables correlation of location and behavior; Comprehensive context | More complex data processing; Higher power consumption |

In a study detecting breeding events in two elusive ground-nesting steppe bird species, the accelerometer-only approach using Overall Dynamic Body Acceleration (ODBA) data achieved a remarkable 100% success rate in identifying nests, outperforming both GPS-only (~95%) and combined approaches (~85-95%) [9]. This demonstrates that for specific behavioral classifications, accelerometer data may provide sufficient information independently, though the combined approach offers valuable contextual information.

Table 2: Cattle Behavior Classification Accuracy Using Fused Sensor Data

| Behavioral Class | Classification Accuracy | Key Identifying Features |

|---|---|---|

| Grazing | 93% | Characteristic head movement patterns; Moderate activity levels |

| Ruminating | 87% | Rhythmic jaw movements; Stationary position |

| Lying | 91% | Minimal body movement; Low ODBA values |

| Steady Standing | 84% | Limited movement; Upright posture |

In livestock monitoring, a random forest classifier trained on 108 features extracted from triaxial accelerometer data achieved high accuracy in classifying cattle behavior, with particularly strong performance in identifying grazing behavior (93% accuracy) [14]. The integration of GPS data further enhanced the understanding of spatial distribution patterns and pasture usage.
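To give a sense of what time-domain and frequency-domain features look like, the sketch below computes a handful of them for one axis. It does not reproduce the 108 features of the cited study; the feature choice, the naive discrete Fourier transform, and the function names are illustrative assumptions.

```python
import cmath
import math


def time_features(x):
    """Mean, variance, skewness and kurtosis of one window (population moments)."""
    n = len(x)
    mean = sum(x) / n
    var = sum((v - mean) ** 2 for v in x) / n
    sd = math.sqrt(var)
    # Skewness and kurtosis are undefined for a constant signal; report 0.0.
    skew = sum((v - mean) ** 3 for v in x) / (n * sd ** 3) if sd else 0.0
    kurt = sum((v - mean) ** 4 for v in x) / (n * sd ** 4) if sd else 0.0
    return {"mean": mean, "variance": var, "skewness": skew, "kurtosis": kurt}


def band_energy(x, sample_rate_hz, f_lo, f_hi):
    """Sum of squared DFT magnitudes for frequencies in [f_lo, f_hi)."""
    n = len(x)
    energy = 0.0
    for k in range(n // 2 + 1):
        freq = k * sample_rate_hz / n
        if f_lo <= freq < f_hi:
            coeff = sum(v * cmath.exp(-2j * math.pi * k * i / n) for i, v in enumerate(x))
            energy += abs(coeff) ** 2
    return energy
```

Rhythmic behaviors such as rumination concentrate energy in a narrow frequency band, so band energies of this kind can help separate them from irregular movement. For long windows a fast Fourier transform from a numerical library would be the practical choice.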

Experimental Protocols and Methodologies

Protocol 1: Remote Detection of Breeding Events in Elusive Species

Application Context: This protocol is designed for monitoring breeding behaviors in ground-nesting birds with biparental incubation care, such as the black-bellied sandgrouse (Pterocles orientalis) and pin-tailed sandgrouse (Pterocles alchata) [9].

Materials and Equipment:

- Solar-powered GPS-GSM tags with 3D accelerometers (e.g., Ornitela OT-9-3GX, Druid Mini)

- Teflon ribbon thoracic harnesses for tag attachment

- Reference video recordings for behavior validation (minimum 238 activity patterns recommended)

- Custom software for data integration and analysis

Methodological Workflow:

- Animal Capture and Tagging: Capture target species using established methods (e.g., night capture) and attach tags using thoracic harnesses. Ensure total tag weight represents <2-3% of body mass.

- Data Collection Parameters:

- Program GPS to record locations at 30-minute intervals

- Set accelerometers to record ODBA at 10-minute intervals (25 Hz) or raw 3D acceleration at 20 Hz for 4s every 20 minutes

- Maintain consistent data collection schedules across individuals

- Sex-Specific Incubation Analysis: Establish distinct temporal windows for incubation periods for each sex, as sandgrouse exhibit biparental care with males incubating at night and females during daytime

- Threshold-Based Classification: Identify incubation days using ODBA thresholds that maximize differentiation between incubation and non-incubation behaviors

- Validation: Correlate remotely detected nesting events with field observations to verify accuracy

Data Analysis:

- Calculate daily average ODBA values and time spent within consistent radius areas

- Apply threshold-based classification to identify successive incubation days

- Determine minimum number of successive incubation days needed to confirm nesting event

- Calculate median coordinates of locations meeting incubation criteria to pinpoint nest sites
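The analysis steps above can be sketched in a few lines of Python. This is a minimal illustration, not the published implementation from [9]: the field names (`mean_odba`, `frac_in_radius`, `lat`, `lon`), the use of one summary position per day, and the threshold values passed in are all assumptions made for the example.

```python
from statistics import median

def incubation_days(daily, odba_max, radius_frac_min):
    """Flag a day as incubation when mean ODBA is low and the bird stayed put.

    daily: list of dicts with hypothetical keys 'mean_odba' and
    'frac_in_radius' (fraction of fixes inside a fixed radius).
    """
    return [
        d["mean_odba"] <= odba_max and d["frac_in_radius"] >= radius_frac_min
        for d in daily
    ]

def nesting_events(flags, min_run):
    """Return (start, end) index pairs for runs of >= min_run successive incubation days."""
    events, start = [], None
    for i, flag in enumerate(flags + [False]):  # sentinel closes a trailing run
        if flag and start is None:
            start = i
        elif not flag and start is not None:
            if i - start >= min_run:
                events.append((start, i - 1))
            start = None
    return events

def nest_location(daily, event):
    """Median latitude and longitude over the days of one nesting event."""
    days = daily[event[0]: event[1] + 1]
    return (median(d["lat"] for d in days), median(d["lon"] for d in days))
```

In practice the two thresholds and `min_run` would be tuned on individuals with field-verified nests before being applied to the full dataset.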

Protocol 2: Cattle Behavior Classification and Anomaly Detection

Application Context: This protocol enables automated classification of cattle behavior and detection of anomalous events for livestock management and welfare assessment [14].

Materials and Equipment:

- Neck-mounted collars containing triaxial accelerometers and GPS sensors

- Weatherproof plastic cases for electronic components

- SD memory cards for data storage (minimum 10 Hz sampling capability)

- Cloud computing infrastructure for data centralization and analysis

Methodological Workflow:

- Sensor Deployment: Equip representative animals from the herd with neck collars containing accelerometer and GPS sensors

- Data Collection Parameters:

- Sample accelerometer data at 10 Hz with a dynamic range of ±2 g

- Configure GPS to record location every 5 minutes with maximum DOP threshold of 1

- Ensure GPS seeks signals from minimum of 7 satellites to improve accuracy

- Video Reference Recording: Record video footage of cattle behaviors simultaneously with sensor data collection to create labeled training dataset

- Feature Extraction: Extract 108 features in time and frequency domains from each axis of the accelerometer data

- Model Training: Train random forest classifier using video-validated behavior sequences

- Spatial Analysis: Process GPS data using k-medoids unsupervised machine learning algorithm to track herd location and spatial distribution

Data Analysis:

- Process raw acceleration signals to extract features in both time and frequency domains

- Apply random forest classification algorithm to identify behavioral patterns

- Use clustering algorithms on GPS data to identify herd movement patterns

- Correlate spatial and behavioral data to detect anomalous events
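To make the spatial clustering step concrete, the sketch below implements a simple alternating k-medoids procedure in pure Python. It is an illustration under stated assumptions rather than the pipeline used in [14]: coordinates are assumed to be already projected to a planar system (for example metres), Euclidean distance is used, and the first `k` points serve as a deterministic starting guess.

```python
import math

def k_medoids(points, k, max_iter=100):
    """Alternating k-medoids on planar (x, y) points.

    Returns (medoid_indices, labels). Assumes len(points) >= k.
    """
    def dist(a, b):
        return math.hypot(a[0] - b[0], a[1] - b[1])

    medoids = list(range(k))  # deterministic start: the first k points
    labels = []
    for _ in range(max_iter):
        # Assignment step: attach each point to its nearest medoid.
        labels = [
            min(range(k), key=lambda c: dist(p, points[medoids[c]]))
            for p in points
        ]
        # Update step: in each cluster, pick the member with the lowest
        # total distance to all other members.
        new_medoids = []
        for c in range(k):
            members = [i for i, lab in enumerate(labels) if lab == c]
            if not members:  # empty cluster: keep the previous medoid
                new_medoids.append(medoids[c])
                continue
            best = min(
                members,
                key=lambda i: sum(dist(points[i], points[j]) for j in members),
            )
            new_medoids.append(best)
        if new_medoids == medoids:
            break
        medoids = new_medoids
    return medoids, labels
```

Because medoids are actual GPS fixes rather than averaged positions, each cluster centre corresponds to a location an animal really occupied, which can be convenient when mapping pasture use.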

Figure 1: Cattle Behavior Classification Workflow Using Fused Sensor Data

The Researcher's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Research Reagents and Solutions for Sensor Fusion Studies

| Research Reagent | Specifications | Research Function | Example Applications |

|---|---|---|---|

| GPS-GSM Biologgers | Solar-powered, 5-9g weight, <2-3% body mass | Records high-resolution location data; Enables remote data transmission | Movement ecology, habitat use, migration studies [9] |

| Triaxial Accelerometers | 10-25 Hz sampling, ±2g dynamic range | Quantifies fine-scale behavior through ODBA; Classifies specific behaviors | Behavior classification, energy expenditure, anomaly detection [9] [14] |

| Sensor Fusion Algorithms | Random Forest, k-medoids clustering, threshold-based classification | Integrates multiple data streams; Improves classification accuracy | Behavior identification, event detection, pattern recognition [13] [14] |

| Data Visualization Tools | ColorBrewer, Viz Palette, Chroma.js Color Palette Helper | Creates accessible visualizations; Ensures colorblind-safe palettes | Data communication, result presentation, publication graphics [15] [16] |

| Quality Control Frameworks | Standardized validation protocols, false detection filters | Ensures data integrity; Removes erroneous detections | Large-scale network data, multi-study comparisons [17] |

Analytical Framework for Route Identification and Movement Analysis

The synergistic value of sensor fusion is particularly evident in the analysis of animal movement routes and spatial behavior patterns. A quantitative framework for identifying route-use leverages both GPS spatial data and accelerometer-derived behavioral information [18].

Figure 2: Analytical Framework for Identifying Animal Route Patterns

Key Analytical Components:

- Path Congruence Measurement: Quantifies the directional variability and fidelity of path reuse across multiple movements between locations

- Revisit Analysis: Examines recursions to target destinations and the determinism in ordering these visits

- Process Inference: Differentiates between routes generated by cognitive processes (memory, learning) versus environmental constraints (corridors, barriers)

This framework enables researchers to distinguish between movement capacity routes (generated by physical constraints and substrate characteristics) and cognitive routes (resulting from memory mechanisms and spatial learning), providing insights into the underlying processes shaping animal movement decisions [18].

Implementation Considerations and Best Practices

Successful implementation of sensor fusion approaches requires careful consideration of several practical factors:

Technical Considerations:

- Power Management: Balance sampling frequency with battery life, particularly for GPS sensors which consume significant power [14]

- Data Synchronization: Ensure precise temporal alignment of data streams from different sensors to enable accurate correlation

- Sensor Placement: Consider how device orientation and attachment method affect data quality, particularly for accelerometers [9]
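As an illustration of the data synchronization point, the following sketch pairs each GPS fix with the nearest accelerometer record in time. The function name, the use of epoch seconds, and the tolerance parameter are assumptions chosen for the example; real tags may need clock-drift correction before a step like this.

```python
from bisect import bisect_left

def nearest_match(gps_times, acc_times, tolerance_s):
    """For each GPS timestamp, return the index of the nearest accelerometer
    timestamp within tolerance_s seconds, or None if none is close enough.

    Both inputs hold epoch seconds; acc_times must be sorted ascending.
    """
    matches = []
    for t in gps_times:
        pos = bisect_left(acc_times, t)
        # Only the neighbours on either side of the insertion point can be nearest.
        candidates = [i for i in (pos - 1, pos) if 0 <= i < len(acc_times)]
        best = min(candidates, key=lambda i: abs(acc_times[i] - t), default=None)
        if best is not None and abs(acc_times[best] - t) <= tolerance_s:
            matches.append(best)
        else:
            matches.append(None)
    return matches
```

Returning `None` for unmatched fixes, rather than silently taking a distant record, keeps gaps in either stream visible in downstream analysis.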

Analytical Considerations:

- Feature Selection: Extract biologically meaningful features from raw sensor data that align with research questions [14]

- Validation Protocols: Implement rigorous ground-truthing procedures using video recording or direct observation to validate automated classifications [9] [14]

- Standardized Metrics: Adopt consistent analytical frameworks and metrics to enable cross-study comparisons and meta-analyses [17]

The synergistic value of sensor fusion extends beyond simple improvements in classification accuracy. By enabling researchers to correlate specific behaviors with precise locations and environmental contexts, these integrated approaches support more sophisticated analyses of animal-environment interactions, energy landscapes, and the cognitive processes underlying movement decisions, ultimately contributing to more effective conservation strategies and a deeper understanding of fundamental ecological processes.

The integration of GPS and accelerometer technologies has revolutionized animal movement ecology, enabling researchers to remotely track location and infer behavior with unprecedented detail. The core challenge in designing effective tracking studies lies in balancing three competing hardware constraints: battery life, which determines study duration; data resolution, which governs the spatiotemporal and behavioral detail; and form factor, which is dictated by animal size and welfare. This section provides application notes and experimental protocols for selecting and deploying integrated GPS-accelerometer systems. The principles outlined are relevant to researchers conducting preclinical field studies or ecological monitoring.

Quantitative Hardware Comparison

The selection of an appropriate tracking device requires a critical evaluation of its specifications against research objectives and animal welfare constraints. The following table summarizes key performance metrics for common tracking technologies relevant to scientific research.

Table 1: Performance Specifications of Wildlife Tracking Technologies

| Technology Type | Typical Weight Range | Spatial Accuracy | Key Data Outputs | Primary Impact on Battery Life |

|---|---|---|---|---|

| GPS (Cellular/Satellite) | 5g - 100+g [19] | 3-10 meters [20] | High-resolution location fixes, movement speed [21] | Fix frequency, transmission interval, cellular/satellite network usage [19] |

| Platform Transmitter Terminal (PTT) | ~2g and above [19] | Lower than GPS [19] | Large-scale movement paths, migratory stopover sites [19] | Doppler shift calculation and satellite data transmission [19] |

| Accelerometer | Varies (often integrated) | N/A | Overall Dynamic Body Acceleration (ODBA), activity states, posture [9] [21] | Sampling frequency (Hz), on-board processing vs. raw data transmission [9] |

| Integrated GPS-Accelerometer | 6g and above [9] | 3-10 meters (GPS dependent) | Location, speed, ODBA, classified behaviors (e.g., grazing, resting) [9] [21] | Combination of GPS fix frequency and accelerometer sampling rate [9] [21] |

For the form factor, a fundamental welfare guideline is that the device should not exceed 3-5% of the animal's body mass [19]. This is particularly critical for small, migratory species where excess weight can impact survival and behavior [19]. Integrated devices must also have an ergonomic design, often employing leg-loop harnesses made with soft, degradable materials to minimize irritation and injury [19].

Experimental Protocols for System Validation

Before full deployment, rigorous validation of the integrated hardware system is necessary to ensure data quality and confirm that the device and attachment method do not adversely affect the study subject.

Protocol: Behavioral Classification Using Machine Learning

This protocol details the process of classifying animal behavior from integrated sensor data, as demonstrated in cattle foraging studies [21].

- Sensor Deployment: Fit subjects with integrated GPS-accelerometer collars. Configure GPS to collect location fixes at regular intervals (e.g., every 5 minutes). Set the accelerometer to record 3-axis data at a sufficient frequency (e.g., 10-25 Hz) to capture fine-scale movements [9] [21].

- Ground Truth Data Collection: Simultaneously record the subjects' behaviors using high-definition field cameras for a minimum of 12 hours per day. Annotate the video against a predefined ethogram to label behaviors such as grazing, ruminating, walking, and resting. Precisely synchronize video timestamps with sensor data timestamps [21].

- Data Preprocessing: Calculate derived movement metrics from raw data. These include:

- Speed from sequential GPS fixes.

- Overall Dynamic Body Acceleration (ODBA) from the accelerometer's x, y, and z axes.

- Actindex, a measure of activity intensity [21].

- Model Training and Validation: Use the ground-truthed data to train supervised machine learning models (e.g., Random Forest, XGBoost). Employ data partition methods like cross-validation (CV), which has shown high reliability for complex behaviors like foraging-by-posture classification. Evaluate model performance based on accuracy metrics [21].
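The speed metric in the preprocessing step can be derived from consecutive fixes as sketched below. This is a minimal example assuming fixes arrive as `(epoch_seconds, latitude, longitude)` tuples in decimal degrees; the haversine great-circle distance on a spherical Earth is one common choice, not necessarily the one used in [21].

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres (spherical approximation)

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def speeds_m_per_s(fixes):
    """Speed between each pair of consecutive fixes.

    fixes: list of (epoch_seconds, lat, lon) sorted by time.
    Returns NaN where two fixes share a timestamp.
    """
    out = []
    for (t0, la0, lo0), (t1, la1, lo1) in zip(fixes, fixes[1:]):
        dt = t1 - t0
        out.append(haversine_m(la0, lo0, la1, lo1) / dt if dt > 0 else float("nan"))
    return out
```

At 5-minute fix intervals this gives a straight-line average speed, which underestimates true path speed whenever the animal turns between fixes.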

Protocol: Remote Nest Detection in Ground-Nesting Birds

This framework leverages sensor data to remotely detect cryptic life-history events, minimizing disruptive field visits [9].

- Hardware Configuration: Tag subjects with high-resolution GPS and accelerometer devices. Program tags to collect multiple GPS fixes per burst and ODBA readings at regular intervals (e.g., every 10 minutes) [9].

- Define Behavioral Thresholds: Using a subset of data with known outcomes (e.g., verified nest attendance), establish threshold values for key parameters. For incubating birds, this typically involves a significant drop in daily ODBA and a reduction in the daily radius of movement as the individual remains at the nest [9].

- Event Detection and Classification: Apply the thresholds to the full dataset to identify days of probable incubation. A nesting event is confirmed when a sequence of successive incubation days (e.g., 2-3 days) is detected. Validation studies have shown success rates exceeding 90% using this method [9].

The workflow for establishing and applying such a detection framework is summarized below.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful deployment of an integrated tracking study relies on a suite of specialized hardware, software, and materials.

Table 2: Essential Research Reagents and Materials for Integrated Tracking Studies

| Item | Function/Application | Key Considerations |

|---|---|---|

| Integrated GPS-Accelerometer Tag | Core data logger for capturing location and movement. | Weight must be <3-5% of body mass; solar charging can extend battery life; requires remote data transmission (GSM/Iridium) or local download [9] [19]. |

| Machine Learning Software (e.g., R, Python with scikit-learn) | For classifying raw sensor data into defined behavioral states. | Enables high-accuracy behavior identification (e.g., grazing vs. resting) from accelerometer and GPS data [21]. |

| Leg-Loop Harness | A common attachment method for birds and small mammals. | Should be constructed from soft, degradable materials (e.g., elastic) to minimize long-term impact and avoid injury [19]. |

| Triaxial Accelerometer | Sensor measuring dynamic body acceleration in three spatial dimensions. | Data is used to calculate Overall Dynamic Body Acceleration (ODBA), a proxy for energy expenditure and activity [9]. |

| Ground Truth Video Recording System | Provides labeled data for training and validating machine learning behavior models. | Requires continuous recording capability and precise time synchronization with sensor data [21]. |

Logical Framework for Hardware Selection

The decision-making process for selecting the optimal hardware configuration is iterative and must be grounded in the primary research question. The following diagram outlines the logical workflow.

From Raw Data to Behavioral Insights: A Methodological Blueprint

The integration of GPS and accelerometer technologies has revolutionized animal tracking research, enabling scientists to remotely monitor movement, behavior, and physiology with unprecedented detail. This protocol document synthesizes current methodologies for attaching biologging devices and determining optimal sampling configurations. Proper implementation of these protocols is critical for collecting high-quality data while minimizing impacts on animal welfare and ensuring the validity of research outcomes. These standardized approaches support the broader objectives of ecological research, conservation planning, and precision livestock management.

Attachment Strategies Across Taxa

The attachment method and placement of biologging devices must be tailored to the target species' morphology, ecology, and behavior. The following section details species-specific strategies documented in recent literature.

Table 1: Device Attachment Strategies for Various Animal Taxa

| Taxon | Common Name | Attachment Method | Device Placement | Considerations |

|---|---|---|---|---|

| Birds | Sandgrouse [9] | Teflon ribbon thoracic harness | Torso | Harness and device weighed <2-3% of body mass. |

| Birds | Cinereous Vulture [22] | Leg-loop harness | Lower back (harness loops pass around the legs) | Used Ornitela models (OT-30, OT-50). |

| Marine Reptiles | Loggerhead & Green Turtles [23] | Adhesive (VELCRO + superglue) & waterproof tape | Carapace (1st and 3rd scutes) | Third scute placement significantly reduced drag. |

| Livestock | Cattle [21] | Collar with buckle | Neck | Fit adjusted to allow comfortable finger space between collar and neck. |

| Small Ruminants | Dairy Goats [10] | Not specified | Ear | Mounting location suitable for classifying feeding and postural behaviors. |

Impact of Device Placement on Data and Animal

Device placement significantly influences both the welfare of the study animal and the quality of the collected data.

- Hydrodynamic Impact in Marine Species: For sea turtles, Computational Fluid Dynamics (CFD) modeling revealed that attaching a device to the first vertebral scute significantly increased the drag coefficient compared to the third scute [23]. This highlights the importance of considering placement not just for data quality, but also for minimizing energetic costs and behavioral impacts on the animal.

- Behavioral Classification Accuracy: The same study on sea turtles demonstrated that device position also affects data utility. Random Forest models achieved significantly higher accuracy in classifying behavior when the accelerometer was placed on the third scute versus the first scute [23].

Sampling Frequency Protocols

Determining the appropriate sampling frequency balances the need to capture essential behavioral information against constraints of device battery life and data storage capacity [24].

Theoretical Foundation: The Nyquist-Shannon Theorem

The Nyquist-Shannon sampling theorem states that the sampling frequency must be at least twice the frequency of the fastest body movement essential to characterizing the behavior of interest [24]. This minimum sampling rate is referred to here as the Nyquist frequency. Sampling below it results in signal aliasing, which distorts the original signal and compromises data integrity.

Empirical Guidelines for Different Research Objectives

Practical applications show that the theoretical minimum is often insufficient for complex behavioral classification.

- Short-Burst vs. Sustained Behaviors: Research on European pied flycatchers found that classifying short-burst behaviors like swallowing food (mean frequency: 28 Hz) required a sampling frequency of 100 Hz, well above the corresponding Nyquist frequency of 56 Hz (2 × 28 Hz) [24]. In contrast, longer-duration behaviors like flight could be adequately characterized with a much lower sampling frequency of 12.5 Hz [24].

- Energy Expenditure Estimation: For calculating proxies like the Overall Dynamic Body Acceleration (ODBA), lower sampling frequencies can be sufficient. Studies suggest that frequencies as low as 10 Hz can produce consistent ODBA calculations for certain behaviors [24].

- General Recommendations: For studies with no strict battery or storage constraints, a sampling frequency of at least two times the Nyquist frequency is recommended for optimal signal representation. For classifying short-burst behaviors, a minimum of 1.4 times the Nyquist frequency is required [24].
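The multipliers in these recommendations translate into concrete sampling targets with simple arithmetic. The helper below is a small sketch; the function name and dictionary keys are hypothetical, and "Nyquist frequency" follows the usage in this section (twice the movement frequency).

```python
def sampling_targets(movement_hz):
    """Sampling-rate targets for a movement of the given frequency (Hz)."""
    nyquist = 2.0 * movement_hz  # theoretical minimum, as defined in this section
    return {
        "nyquist_hz": nyquist,
        "short_burst_min_hz": 1.4 * nyquist,  # minimum for short-burst behaviors
        "unconstrained_hz": 2.0 * nyquist,    # when battery and storage allow
    }
```

For the 28 Hz swallowing movement discussed above, this gives a Nyquist frequency of 56 Hz, a short-burst minimum of about 78.4 Hz, and an unconstrained target of 112 Hz, so the reported 100 Hz setting sits between the two recommendations.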

Table 2: Recommended Sampling Frequencies for Various Research Applications

| Research Objective | Target Behavior | Recommended Sampling Frequency | Key Reference |

|---|---|---|---|

| Behavior Classification | Short-burst behaviors (e.g., swallowing) | 100 Hz | [24] |

| Behavior Classification | Sustained, rhythmic behaviors (e.g., flight) | 12.5 Hz | [24] |

| Behavior Classification | General sea turtle behavior | 2 Hz | [23] |

| Energy Expenditure | Overall Dynamic Body Acceleration (ODBA) | 10 Hz or lower | [24] |

| Movement Tracking | GPS for cattle grazing patterns | Every 5-10 minutes | [21] |

| Movement Tracking | GPS for sandgrouse nest detection | Every 20-30 minutes | [9] |

Integrated Experimental Protocols

This section outlines detailed methodologies for implementing the above strategies in specific research contexts.

Protocol 1: Remote Detection of Breeding Events in Ground-Nesting Birds

This protocol, adapted from research on sandgrouse, enables the detection of nesting events using a threshold-based classification of GPS and accelerometer data [9].

- Device Attachment: Fit birds with a GPS-GSM tag using a Teflon ribbon thoracic harness. The combined weight of the tag and harness must not exceed 3% of the bird's body mass [9].

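- Data Collection: Program tags to record GPS fixes every 20-30 minutes and ODBA readings at regular intervals (e.g., every 10 minutes), keeping schedules consistent across individuals [9].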

- Data Processing and Nest Detection:

- Define Incubation Windows: Using field-validated data, establish sex-specific daily time windows when incubation typically occurs.

- Apply Thresholds: For each individual and day, calculate the average ODBA and the time spent within a consistent, small radius.

- Identify Incubation Days: Classify a day as an "incubation day" if both metrics fall below pre-determined thresholds during the specified incubation window.

- Confirm Nesting Event: A nesting event is confirmed when a minimum number of successive incubation days are identified (e.g., 2-3 days) [9].

Protocol 2: Behavioural Classification in Captive Sea Turtles

This protocol optimizes accelerometer settings for classifying behavior in captive sea turtles, with applicability to wild populations [23].

- Device Attachment:

- Clean the attachment site on the carapace (preferably the third vertebral scute) with 70% ethanol and let it dry.

- Superglue VELCRO patches to both the scute and the accelerometer.

- Securely attach the device and seal it against water using T-Rex waterproof tape.

- Device Configuration and Ground-Truthing:

- Set the accelerometer's sampling frequency to 100 Hz during a pilot deployment to determine the appropriate dynamic range (±2g or ±4g).

- Simultaneously record turtle behavior using video cameras (e.g., GoPro) synchronized to UTC time.

- Annotate observed behaviors from the video using software like BORIS to create a labeled dataset [23].

- Data Analysis and Model Training:

- Data Segmentation: Split the synchronized accelerometer data into windows of 1-second and 2-second lengths.

- Calculate Summary Metrics: For each window, compute 18 summary metrics (e.g., mean, variance, pitch, roll) from the tri-axial data.

- Train Machine Learning Model: Use a Random Forest classifier with individual-based k-fold cross-validation to train a behavioral classification model. The model can achieve high accuracy (>0.83) with a final sampling frequency as low as 2 Hz [23].
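The segmentation and cross-validation steps above can be sketched as follows. This example computes only a handful of the summary metrics, and the axis convention behind the pitch and roll formulas (x forward, z roughly aligned with gravity at rest) is an assumption that depends on how the tag is mounted. The fold assignment shows the idea behind individual-based cross-validation: all windows from one animal go to the same fold.

```python
import math
from statistics import mean, pvariance

def window_metrics(samples, rate_hz, window_s):
    """Summarize tri-axial data in non-overlapping windows.

    samples: list of (x, y, z) in g. Returns one dict per complete window.
    """
    n = int(rate_hz * window_s)
    rows = []
    for start in range(0, len(samples) - n + 1, n):
        xs, ys, zs = zip(*samples[start:start + n])
        mx, my, mz = mean(xs), mean(ys), mean(zs)
        rows.append({
            "mean_x": mx, "mean_y": my, "mean_z": mz,
            "var_x": pvariance(xs), "var_y": pvariance(ys), "var_z": pvariance(zs),
            # Static orientation in degrees from the window means (assumed axes).
            "pitch_deg": math.degrees(math.atan2(mx, math.hypot(my, mz))),
            "roll_deg": math.degrees(math.atan2(my, mz)),
        })
    return rows

def individual_folds(animal_ids, k):
    """Assign whole individuals to k folds so that no animal contributes
    windows to both the training and the test split of the same fold."""
    unique = sorted(set(animal_ids))
    fold_of = {a: i % k for i, a in enumerate(unique)}
    return [fold_of[a] for a in animal_ids]
```

Splitting by individual rather than by window gives a more honest estimate of how a model will perform on animals it has never seen.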

The following workflow diagram illustrates the key steps for behavioral classification using accelerometer data.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of tracking studies requires a suite of reliable hardware, software, and analytical tools.

Table 3: Essential Research Reagents and Materials for Tracking Studies

| Item Name | Type | Function/Application | Example Use Case |

|---|---|---|---|

| Ornitela OT-9-3GX | GPS-GSM Tag | Collects GPS fixes and raw 3D acceleration data. | Sandgrouse tracking and nest detection [9]. |

| Druid Mini Tag | GPS-GSM Tag | Records GPS fixes and ODBA readings; lightweight. | Tracking smaller bird species like pin-tailed sandgrouse [9]. |

| Axy-trek Marine | Accelerometer | Tri-axial accelerometer for aquatic environments. | Behavior classification in sea turtles [23]. |

| LiteTrack Iridium 750+ | GPS-Accelerometer Collar | Combines GPS positioning with accelerometry for large animals. | Cattle foraging behavior classification [21]. |

| Teflon Ribbon Harness | Attachment Material | Secure, durable device attachment for birds. | Fitting tags on sandgrouse and vultures [9] [22]. |

| BORIS | Software | Behavioral observation and annotation from video. | Ground-truthing accelerometer data for sea turtles [23]. |

| Random Forest | Analytical Algorithm | Supervised machine learning for behavior classification. | Classifying behavior from accelerometer metrics [23] [21]. |

| Kernel Density Estimation (KDE) | Analytical Tool | Spatial analysis to identify core activity areas from GPS data. | Mapping preferred grazing territories from cattle tracking data [25]. |

Adherence to standardized protocols for device attachment and sampling frequency is fundamental to the success of biologging studies. As evidenced by the cited research, careful consideration of species-specific morphology and behavior, coupled with a clear understanding of research objectives relative to the Nyquist-Shannon theorem, allows researchers to optimize data quality. The continued refinement and adoption of these protocols will enhance the reliability and comparability of tracking data across studies, ultimately advancing our understanding of animal ecology and supporting effective conservation and management strategies.

The integration of GPS and accelerometer biologging technologies has revolutionized animal movement ecology, enabling researchers to remotely study crucial life-history events like reproduction [9]. A critical step in transforming raw sensor data into meaningful biological insights is feature engineering—the process of extracting informative metrics from the unstructured data stream. This document provides detailed application notes and protocols for extracting time and frequency-domain metrics from raw accelerometry data, specifically contextualized within wildlife tracking research. Proper feature engineering is fundamental for distinguishing complex behaviors, such as identifying nesting events in ground-nesting birds like the black-bellied and pin-tailed sandgrouse, where it enables the remote detection of incubation with high accuracy [9].

Background and Significance

Modern biologging devices deployed on animals collect high-resolution spatiotemporal and sensor data, including 3D acceleration [9]. The Overall Dynamic Body Acceleration (ODBA), derived from accelerometer data, has become a widely used proxy for energy expenditure and behavior classification [9]. In wildlife studies, the primary challenge lies in converting raw acceleration signals, which are often noisy and complex, into discriminative features that can reliably classify behaviors with minimal human disturbance. This is particularly vital for conservation-dependent species sensitive to human presence [9]. The efficacy of this approach is demonstrated by its success in remotely detecting breeding events in elusive species with a success rate exceeding 90% [9].

Data Acquisition and Pre-processing

Data Collection Protocols

The first step involves the collection of raw accelerometry data from animal-borne tags.

- Device Deployment: Devices should be securely attached to the study animal. In avian studies, this is often achieved using a Teflon ribbon thoracic harness. The combined weight of the tag and harness should constitute less than 2-3% of the animal's body mass to avoid impacting natural behavior [9].

- Sensor Specifications: Data should be collected from a tri-axial accelerometer. Sampling frequencies can vary; protocols have successfully used rates from 10 Hz to 25 Hz, with data often collected in bursts to conserve battery life [9].

- Data Output: The raw output should include timestamped acceleration values (in g) for the three orthogonal axes: surge, sway, and heave.

Pre-processing Workflow

Raw signals require pre-processing before feature extraction to minimize noise and other confounding factors.

- Signal Filtering: Apply a band-pass filter (e.g., a 4th order Butterworth filter with cut-off frequencies of 0.2 Hz and 10 Hz) to remove high-frequency noise and low-frequency drift. The upper cut-off must lie below half the sampling rate, so a 10 Hz cut-off is only usable when sampling above 20 Hz. Alternatively, wavelet de-noising can be used, though its effect may be secondary to tilt correction [26].

- Tilt Correction and Gravity Removal: This is a critical step. The measured acceleration includes both dynamic acceleration (from movement) and static acceleration (gravity). Use a method like the Moe-Nilssen dynamic tilt correction to rotate the accelerometer coordinates to an earth-vertical frame and subtract the gravity component [26]. This correction has been shown to significantly impact extracted features and improve behavioral discrimination [26].

- Calculate Derived Metrics:

- Vector Magnitude (VM): VM = sqrt(x² + y² + z²)

- Overall Dynamic Body Acceleration (ODBA): The sum of the absolute dynamic acceleration values from the three axes after tilt correction [9].
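The two derived metrics can be computed as sketched below. Note one substitution: instead of the Moe-Nilssen tilt correction described above, this example estimates the static (gravity) component of each axis with a centered running mean, which is a simpler and widely used approximation. The window `width` (in samples) is an assumption to be chosen per species and sampling rate.

```python
import math

def vector_magnitude(x, y, z):
    """Per-sample vector magnitude sqrt(x^2 + y^2 + z^2)."""
    return [math.sqrt(a * a + b * b + c * c) for a, b, c in zip(x, y, z)]

def running_mean(signal, width):
    """Centered moving average; the window is truncated at the edges."""
    half = width // 2
    out = []
    for i in range(len(signal)):
        seg = signal[max(0, i - half): i + half + 1]
        out.append(sum(seg) / len(seg))
    return out

def odba(x, y, z, width):
    """Per-sample ODBA: sum over axes of |raw - static|, where the static
    component is approximated by a running mean of `width` samples."""
    total = [0.0] * len(x)
    for axis in (x, y, z):
        static = running_mean(axis, width)
        for i, (raw, s) in enumerate(zip(axis, static)):
            total[i] += abs(raw - s)
    return total
```

A motionless tag reading only gravity yields a vector magnitude of 1 g and an ODBA of zero, which is a quick sanity check after deployment.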

Feature Extraction Methodologies

This section details protocols for extracting time and frequency-domain features from the pre-processed accelerometer signals, which can be applied to segments of data (e.g., 2-10 second windows) corresponding to specific behaviors.

Time-Domain Feature Extraction

Time-domain features are calculated directly from the accelerometer signal in the time series. The table below summarizes key metrics for each axis (X, Y, Z) and the vector magnitude (VM).

Table 1: Core Time-Domain Features for Accelerometry Data

| Feature Category | Specific Metric | Calculation / Description | Biological / Behavioral Interpretation |

|---|---|---|---|

| Central Tendency | Mean | mean(signal) | Average body posture or orientation. |

| Central Tendency | Median | median(signal) | Robust measure of central tendency. |

| Dispersion | Variance | var(signal) | Volatility of the movement. |

| Dispersion | Standard Deviation | std(signal) | Overall movement intensity. |

| Dispersion | Range | max(signal) - min(signal) | Total range of motion. |

| Distribution Shape | Skewness | E[((x - μ)/σ)³] | Asymmetry of the signal distribution. |

| Distribution Shape | Kurtosis | E[((x - μ)/σ)⁴] | "Peakedness" and heaviness of tails of the distribution. |

| Signal Magnitude | Signal Magnitude Area (SMA) | ∑(\|x\| + \|y\| + \|z\|) | Gross motor activity. |

| Signal Magnitude | Sum of Vector Magnitude | ∑(VM) | Total movement energy. |
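The time-domain metrics in Table 1 map directly onto a few lines of Python. The sketch below uses population (not sample) variance and standard deviation, and computes skewness and kurtosis as plain standardized moments without bias correction; both choices are assumptions, and other conventions exist.

```python
from statistics import mean, median, pstdev, pvariance

def time_features(signal):
    """Per-axis time-domain features for one window of samples."""
    mu, sd = mean(signal), pstdev(signal)
    # Standardized values; a constant signal has no shape, so use zeros.
    z = [(v - mu) / sd for v in signal] if sd > 0 else [0.0] * len(signal)
    return {
        "mean": mu,
        "median": median(signal),
        "variance": pvariance(signal),
        "std": sd,
        "range": max(signal) - min(signal),
        "skewness": mean([v ** 3 for v in z]),
        "kurtosis": mean([v ** 4 for v in z]),  # non-excess: a normal distribution gives 3
    }

def signal_magnitude_area(x, y, z):
    """Sum of |x| + |y| + |z| over the window (not normalized by length)."""
    return sum(abs(a) + abs(b) + abs(c) for a, b, c in zip(x, y, z))
```

Applying `time_features` to each axis and to the vector magnitude yields the per-window feature vector that a classifier would consume.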

Frequency-Domain Feature Extraction

Frequency-domain features provide information about the periodicity and rhythm of movements. The protocol requires converting the signal from the time to the frequency domain using a Fast Fourier Transform (FFT).

Table 2: Core Frequency-Domain Features for Accelerometry Data

| Feature Category | Specific Metric | Calculation / Description | Biological / Behavioral Interpretation |

|---|---|---|---|

| Spectral Power | Spectral Energy | ∑(PSD) | Total power of the movement signal. |

| Spectral Power | Bandwise Energy | Energy in specific frequency bands (e.g., 0-1 Hz, 1-3 Hz). | Differentiates between slow/postural and fast/cyclic movements. |

| Central Tendency | Spectral Centroid | ∑(f * PSD) / ∑(PSD) | "Center of mass" of the spectrum, indicates dominant movement frequency. |

| Dispersion | Spectral Spread | √[∑((f - centroid)² * PSD) / ∑(PSD)] | Spread of the spectrum around the centroid. |

| Peak Features | Peak Frequency | Frequency of the maximum magnitude in the spectrum. | Dominant rhythmic frequency (e.g., stride frequency). |

| Peak Features | Peak Magnitude | Magnitude at the peak frequency. | Strength of the dominant rhythmic movement. |
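The spectral features in Table 2 can be illustrated with a dependency-free sketch. For clarity it uses a direct discrete Fourier transform, which gives the same coefficients as an FFT but in O(n²) time, so it is only suitable for short windows; a production pipeline would normally call an FFT routine. The scaling of the power estimate and the choice to drop the zero-frequency bin are assumptions of this example.

```python
import cmath
import math

def power_spectrum(signal, rate_hz):
    """One-sided power spectrum via a direct DFT; the mean is removed first.

    Returns (freqs, power) for bins 1..n//2, excluding the zero-frequency bin.
    """
    n = len(signal)
    mu = sum(signal) / n
    centred = [v - mu for v in signal]
    freqs, power = [], []
    for k in range(1, n // 2 + 1):
        coeff = sum(v * cmath.exp(-2j * math.pi * k * i / n)
                    for i, v in enumerate(centred))
        freqs.append(k * rate_hz / n)
        power.append(abs(coeff) ** 2 / n)
    return freqs, power

def band_energy(freqs, power, lo_hz, hi_hz):
    """Energy in the half-open band [lo_hz, hi_hz)."""
    return sum(p for f, p in zip(freqs, power) if lo_hz <= f < hi_hz)

def spectral_features(freqs, power):
    """Spectral energy, centroid, spread and peak of a power spectrum."""
    total = sum(power)
    if total == 0:
        raise ValueError("constant signal: spectral features are undefined")
    centroid = sum(f * p for f, p in zip(freqs, power)) / total
    spread = math.sqrt(
        sum((f - centroid) ** 2 * p for f, p in zip(freqs, power)) / total
    )
    peak = max(range(len(power)), key=power.__getitem__)
    return {
        "energy": total,
        "centroid_hz": centroid,
        "spread_hz": spread,
        "peak_hz": freqs[peak],
        "peak_power": power[peak],  # power (not amplitude) at the peak bin
    }
```

For a clean 2 Hz oscillation sampled at 16 Hz over 2 seconds, the peak frequency and centroid both land on 2 Hz and the spread is close to zero.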

Hjorth Parameters

Hjorth parameters are computationally efficient features that describe basic statistical properties of a signal in the time domain. They are particularly useful for characterizing the time series of animal movement [27].

- Activity: Represents the signal variance, equivalent to the mean power (var(signal)).

- Mobility: The square root of the ratio of the variance of the first derivative of the signal to the variance of the signal. It approximates the mean frequency.

- Complexity: Measures the similarity of the signal to a pure sine wave. It is the ratio of the mobility of the first derivative of the signal to the mobility of the signal itself.
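The three Hjorth parameters follow directly from these definitions. In the sketch below the derivative is approximated by the first difference between consecutive samples, so mobility is expressed per sample rather than per second; that discretization, and the use of population variance, are assumptions of the example.

```python
import math
from statistics import pvariance

def hjorth(signal):
    """Return (activity, mobility, complexity) for a non-constant signal.

    Derivatives are approximated by first differences, so the signal
    needs at least four samples and must not be constant or linear.
    """
    d1 = [b - a for a, b in zip(signal, signal[1:])]   # first difference
    d2 = [b - a for a, b in zip(d1, d1[1:])]           # second difference
    activity = pvariance(signal)
    mobility = math.sqrt(pvariance(d1) / activity)
    # Mobility of the first difference divided by mobility of the signal.
    complexity = math.sqrt(pvariance(d2) / pvariance(d1)) / mobility
    return activity, mobility, complexity
```

A pure sine wave gives a complexity close to 1, and values grow as the movement signal becomes more irregular.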

Experimental Validation and Application

The utility of these extracted features is validated by applying machine learning classifiers to discriminate between behaviors.

Table 3: Performance of Feature-Based Classification in Behavioral Studies

| Study Context | Feature Set Used | Classifier(s) | Key Performance Metric (Result) |

|---|---|---|---|

| Fall Detection in Humans [27] | 44 features from time, frequency domains & Hjorth parameters | SVM, k-NN, ANN, J48, RF | F1-Score: 95.23% (Falls), 99.11% (Non-Falls) on MobiAct dataset. |

| Remote Nest Detection in Sandgrouse [9] | ODBA & GPS-derived metrics (e.g., radius area) | Threshold-based classification | Nest detection success rate: ~95% (GPS-only), 100% (ODBA-only). |

| Gait Analysis [26] | Tilt-corrected time and frequency features | Statistical analysis | Improved discrimination between pathological (Parkinson's, neuropathy) and healthy groups. |

The experimental workflow for validating features in a behavioral classification task is as follows:

The Scientist's Toolkit

Table 4: Essential Research Reagents and Solutions for Accelerometry Feature Engineering

| Tool / Resource | Type | Primary Function in Research |
|---|---|---|
| GPS-Accelerometer Tags (e.g., Ornitela, Druid) | Hardware | Collects high-resolution spatiotemporal and acceleration data from free-ranging animals [9]. |
| Tri-axial Accelerometer | Sensor | Measures acceleration along three orthogonal axes, providing the raw data for movement analysis. |
| Dynamic Tilt Correction Algorithm | Algorithm | Isolates dynamic body acceleration by removing the gravitational component, a crucial pre-processing step [26]. |
| Fast Fourier Transform (FFT) | Algorithm | Transforms a signal from the time domain to the frequency domain, enabling the calculation of spectral features. |
| Overall Dynamic Body Acceleration (ODBA) | Metric | Summarizes body movement intensity and serves as a proxy for energy expenditure [9]. |
| Machine Learning Classifiers (e.g., RF, SVM) | Software | Uses extracted features to automatically identify and classify animal behaviors from sensor data. |

The integration of Global Positioning System (GPS) and accelerometer data represents a transformative advancement in animal tracking research, enabling scientists to decipher complex animal behaviors with minimal human disturbance. Modern biologging technologies give researchers a clearer picture of animal movements and make it possible to measure survival and to study the breeding success of wild birds remotely by locating nests. This is particularly valuable for species whose nests are difficult to find or access, or where disturbance can affect breeding outcomes [9]. For elusive, cryptic, or nocturnal species that are challenging to observe directly, these technologies provide an unprecedented window into behavioral ecology, movement patterns, and physiological responses to environmental changes [28] [29].

The fundamental strength of this integrated approach lies in the complementary nature of GPS and accelerometer data. GPS provides macro-scale spatial movement patterns and location data, while accelerometers capture micro-scale body movements and orientations through tri-axial acceleration measurements [28]. When combined with machine learning techniques, these data streams enable automated, accurate classification of specific behaviors, ranging from basic locomotor activities to complex behavioral sequences such as breeding events in ground-nesting birds [9] or feeding patterns in nocturnal primates [29]. This integrated approach is particularly valuable for conservation-dependent species where understanding behavioral ecology is crucial for population management but where human presence may cause detrimental disturbances [9].

Table 1: Key Data Types in Integrated Animal Tracking

| Data Type | Spatial Scale | Temporal Resolution | Primary Behavioral Information | Common Sensors |
|---|---|---|---|---|
| GPS | Macro (Landscape) | Minutes to Hours | Location, Home Range, Migration Routes | GPS Loggers |
| Accelerometer | Micro (Body Movement) | Sub-second to Seconds | Body Orientation, Motion Intensity, Specific Behaviors | Tri-axial Accelerometers |
| Magnetometer | Micro (Orientation) | Sub-second to Seconds | Compass Heading, Body Position | Tri-axial Magnetometers |
| ODBA | Derived Metric | Seconds to Minutes | Energy Expenditure, Activity Budgets | Calculated from Accelerometry |

Machine Learning Approaches for Behavioral Classification

Random Forest Models

Random Forest (RF) models represent a powerful supervised machine learning approach for classifying animal behaviors from biologging data. RF models generate multiple decision trees (e.g., 300+) during training, with the most frequent predicted classification from the ensemble of trees selected as the final behavior prediction for each time period [30]. This ensemble approach reduces overfitting—a common problem where models perform well on training data but poorly on new data—and enhances classification accuracy [30].
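The voting step described above can be illustrated in isolation. This is a minimal sketch of majority voting only, not of tree training; the vote counts are hypothetical, and library implementations may aggregate differently (for example, by averaging per-tree class probabilities).

```python
from collections import Counter


def majority_vote(tree_predictions):
    """Return the label predicted by the largest number of trees."""
    return Counter(tree_predictions).most_common(1)[0][0]


# Hypothetical predictions from a 300-tree ensemble for one time window.
votes = ["resting"] * 180 + ["feeding"] * 90 + ["locomotion"] * 30
predicted_behavior = majority_vote(votes)
```

Because each tree is trained on a different bootstrap sample, individual trees can disagree; the vote smooths out those disagreements, which is the mechanism behind the reduced overfitting noted above.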

The effectiveness of RF models has been demonstrated across diverse species and research contexts. In a study of Javan slow lorises (Nycticebus javanicus), RF models achieved remarkable accuracy in classifying 21 distinct combinations of behaviors and postural modifiers, with resting behaviors identified with 99.16% mean accuracy, feeding behaviors with 94.88% accuracy, and locomotor behaviors with 85.54% accuracy [29]. Similarly, RF models applied to domestic cat (Felis catus) accelerometer data achieved F-measure scores up to 0.96 for predicting behaviors such as resting, grooming, and feeding [30].
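Because results such as those above are reported per behavior, it helps to see how a per-class F-measure is derived from observed and predicted labels. The sketch below uses the standard definition (the harmonic mean of precision and recall); the labels are invented for illustration and are not data from the cited studies.

```python
def f_measure(true_labels, predicted_labels, target):
    """F-measure (F1) for one behavior class, from paired label lists."""
    pairs = list(zip(true_labels, predicted_labels))
    tp = sum(1 for t, p in pairs if t == target and p == target)
    fp = sum(1 for t, p in pairs if t != target and p == target)
    fn = sum(1 for t, p in pairs if t == target and p != target)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical observed vs. predicted behaviors for six time windows.
observed = ["rest", "rest", "rest", "feed", "feed", "groom"]
predicted = ["rest", "rest", "feed", "feed", "feed", "groom"]
scores = {b: f_measure(observed, predicted, b) for b in ("rest", "feed", "groom")}
```

Reporting the score per behavior, rather than a single overall accuracy, makes it visible when a model performs well on common behaviors but poorly on rare ones.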

Three key factors significantly influence RF model performance: (1) the selection and calculation of informative variables derived from accelerometer data; (2) the sampling frequency of acceleration recordings; and (3) balancing the duration of each behavior category in the training dataset [30]. Studies have shown that incorporating additional descriptive variables beyond basic acceleration metrics, such as the dominant power spectrum frequency and amplitude, ratios of Vectorial Dynamic Body Acceleration (VeDBA) to dynamic acceleration, and running standard error of waveforms, can enhance model accuracy by providing more discriminative features for behavior classification [30].
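As an illustration of one such variable, the dominant frequency and its amplitude can be read off a discrete Fourier transform of a single window. The sketch below uses a naive DFT so that it depends only on the standard library; in practice an FFT routine would normally be used. The function name and the synthetic signal are assumptions for illustration.

```python
import cmath
import math


def dominant_frequency(signal, fs):
    """Return (frequency_hz, amplitude) of the strongest non-DC component.

    The mean is removed first so the gravity offset does not dominate.
    The Nyquist bin is skipped to keep the amplitude scaling simple.
    """
    n = len(signal)
    mean = sum(signal) / n
    centered = [s - mean for s in signal]
    best_bin, best_amp = 0, 0.0
    for k in range(1, n // 2):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(centered)
        )
        amp = 2 * abs(coeff) / n
        if amp > best_amp:
            best_bin, best_amp = k, amp
    return best_bin * fs / n, best_amp


# Synthetic 2 s window at 40 Hz: gravity offset plus 3 Hz and 7 Hz components.
fs = 40
window = [
    1.0
    + 0.5 * math.sin(2 * math.pi * 3 * i / fs)
    + 0.1 * math.sin(2 * math.pi * 7 * i / fs)
    for i in range(80)
]
freq_hz, amplitude = dominant_frequency(window, fs)
```

The frequency resolution is fs divided by the window length in samples (0.5 Hz here), so short windows give coarse estimates of cyclic behaviors such as gait.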

Deep Learning Approaches

Deep learning models represent a more recent advancement in behavioral classification, particularly valuable for handling complex, high-dimensional data and recognizing subtle behavioral patterns that may challenge traditional machine learning approaches. These models automatically learn hierarchical feature representations from raw sensor data, potentially discovering discriminative patterns that might be overlooked in manually engineered features [31].

Convolutional Neural Networks (CNNs) have shown exceptional performance in processing accelerometer data and video-based behavioral analysis. For instance, ResNet-50, a deep CNN architecture, has been successfully employed for animal pose estimation by detecting key anatomical landmarks such as the nose, eyes, ears, and body center [32]. By tracking these keypoints over time, researchers can generate movement trajectories that facilitate the identification of specific behavioral patterns [32].

Multi-object tracking in complex environments, such as space habitats with microgravity-induced erratic movements, has been addressed through sophisticated deep learning frameworks that decouple appearance and motion features via dual-stream inputs [33]. These frameworks employ modality-specific encoders fused through heterogeneous graph networks to model cross-modal spatio-temporal relationships, maintaining identity continuity during occlusions or rapid movements [33].

Recurrent Neural Networks (RNNs), particularly Long Short-Term Memory (LSTM) networks, excel at modeling temporal sequences in behavioral data, making them ideal for recognizing behaviors that unfold over extended periods. The integration of CNN and LSTM architectures (CNN-LSTM) has emerged as a powerful approach for detecting basic behaviors in complex environments, such as monitoring single dairy cows in agricultural settings [32].

Table 2: Comparison of Machine Learning Approaches for Behavioral Classification

| Approach | Key Strengths | Common Applications | Accuracy Range | Computational Demand |
|---|---|---|---|---|
| Random Forest | Handles mixed data types, robust to outliers, provides feature importance | Behavior classification from accelerometer data, activity budgeting | 85-99% [30] [29] | Moderate |
| CNN Architectures | Automatic feature learning, superior image processing | Pose estimation, video-based behavior analysis, trajectory analysis | >96% [32] | High |
| LSTM/RNN | Models temporal sequences, handles time-series data | Behavioral sequence analysis, continuous monitoring | Varies by application | Moderate to High |
| Hybrid CNN-LSTM | Spatio-temporal pattern recognition | Complex behavior detection in variable environments | >90% for cow behavior [32] | High |

Experimental Protocols and Methodologies

Data Collection and Instrumentation

Effective behavioral classification begins with appropriate data collection strategies. Biologging devices should be selected based on species size, behavior, and research questions, with total device weight typically not exceeding 2-5% of the animal's body mass to minimize impact on natural behavior [9]. In a study of sandgrouse, tags (including harness) weighed less than 2% of the birds' body weight (mean ± SD of 1.61 ± 0.37% for black-bellied sandgrouse and 1.84 ± 0.15% for pin-tailed sandgrouse) [9].

Deployment methods vary by species and research context. Captive studies allow for direct behavioral observation synchronized with sensor data collection, enabling the creation of high-quality labeled datasets for supervised learning [34] [30]. For wild species, careful consideration must be given to attachment methods (e.g., collars, harnesses, adhesives), deployment duration, and recovery strategies [9] [29].

Sampling frequency is a critical trade-off between behavioral resolution on one side and battery life and data storage on the other. For accelerometer data, sampling rates of 20-40 Hz are often sufficient for most behavioral classification tasks, though lower frequencies may be adequate for broader activity categorizations [30] [29]. In a study on lemon sharks, classification precision of fine-scale behaviors did not decrease significantly until recording frequency dropped to 5 Hz [29]. GPS fix intervals should be determined by the movement ecology of the study species, ranging from sub-minute intervals for fine-scale movement analysis to hourly locations for broader habitat use patterns [9].

Data Collection Workflow for Behavioral Classification

Data Preprocessing and Feature Engineering

Raw accelerometer and GPS data require substantial preprocessing before behavioral classification. GPS data should be processed to filter spurious data points and reduce common interference patterns using systems such as the Personal Activity Location Measurement System (PALMS), which applies filters to remove points with excess speed, large changes in elevation, or very small distance changes between consecutive points [35].
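The three filter criteria named above (excess speed, large elevation change, very small displacement) can be sketched as a simple pass over time-ordered fixes. This is not the PALMS implementation; the thresholds, the tuple layout, and the function names are hypothetical and would need tuning to the study species and tag.

```python
import math

EARTH_RADIUS_M = 6371000.0


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points in decimal degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def filter_fixes(fixes, max_speed=30.0, max_elev_change=100.0, min_distance=2.0):
    """Filter (t_seconds, lat, lon, elevation_m) fixes sorted by time.

    Each fix is compared with the last fix that was kept. Thresholds are
    illustrative assumptions: speed in m/s, elevation and distance in metres.
    """
    if not fixes:
        return []
    kept = [fixes[0]]
    for fix in fixes[1:]:
        t0, lat0, lon0, elev0 = kept[-1]
        t1, lat1, lon1, elev1 = fix
        dt = t1 - t0
        if dt <= 0:
            continue  # duplicate or out-of-order timestamp
        dist = haversine_m(lat0, lon0, lat1, lon1)
        if dist / dt > max_speed:
            continue  # implausibly fast jump
        if abs(elev1 - elev0) > max_elev_change:
            continue  # implausible elevation change
        if dist < min_distance:
            continue  # displacement too small to distinguish from jitter
        kept.append(fix)
    return kept


# Hypothetical track: one jitter point, one speed spike, one elevation spike.
track = [
    (0, 40.0000, -3.00000, 600),
    (60, 40.0005, -3.00000, 602),
    (120, 40.0005, -3.00001, 602),
    (180, 40.1000, -3.00000, 603),
    (240, 40.0010, -3.00000, 900),
    (300, 40.0010, -3.00000, 604),
]
clean_track = filter_fixes(track)
```

Comparing each fix with the last retained fix, rather than with its immediate predecessor, prevents a single spurious point from causing the following valid point to be rejected as well.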

For accelerometer data, common preprocessing steps include calibration, noise filtering, and segmentation into analysis windows. The resulting data streams are then transformed into feature vectors that capture relevant and predictive information for behavior classification [35]. Feature extraction typically occurs over fixed time windows (e.g., 1-second to 5-second epochs), with multiple features calculated for each axis and their combinations [35] [30].
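The windowing step can be sketched as follows: tri-axial samples are cut into fixed, non-overlapping epochs and summary statistics are computed per axis. The window length, the feature names, and the choice to discard a trailing partial window are assumptions for illustration.

```python
from statistics import mean, median, pstdev


def window_features(samples, fs, window_s=2.0):
    """Compute per-axis summary features over non-overlapping windows.

    samples: list of (x, y, z) acceleration tuples sampled at fs Hz.
    Returns one feature dictionary per complete window.
    """
    size = int(round(fs * window_s))
    features = []
    for start in range(0, len(samples) - size + 1, size):
        window = samples[start:start + size]
        row = {}
        for axis_index, axis in enumerate("xyz"):
            values = [sample[axis_index] for sample in window]
            row[f"{axis}_mean"] = mean(values)
            row[f"{axis}_sd"] = pstdev(values)
            row[f"{axis}_min"] = min(values)
            row[f"{axis}_max"] = max(values)
            row[f"{axis}_median"] = median(values)
        features.append(row)
    return features


# Hypothetical 5 s recording at 25 Hz from a motionless, level tag.
recording = [(0.0, 0.0, 1.0)] * 125
feature_rows = window_features(recording, fs=25, window_s=2.0)
```

Each resulting row becomes one observation for the classifier, so the window length directly sets the temporal resolution of the predicted behaviors.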

Table 3: Essential Features for Accelerometer-Based Behavioral Classification

| Feature Category | Specific Features | Behavioral Significance | Calculation Method |
|---|---|---|---|
| Time-Domain | Mean, Standard Deviation, Minimum, Maximum, Median | General activity levels, behavior intensity | Statistical moments of acceleration signals |
| Frequency-Domain | Dominant frequency, Spectral entropy, Band energy | Cyclic behaviors, gait patterns | Fast Fourier Transform (FFT), wavelet analysis |
| Orientation | Pitch, Roll, Static acceleration | Body position, posture | Low-pass filtering, trigonometric functions |
| Movement Dynamics | ODBA, VeDBA, Stroke cycles | Energy expenditure, movement intensity | Vector algebra, high-pass filtering |
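The orientation and movement-dynamics rows of the table can be made concrete with a short sketch. Here static acceleration is estimated with a centered running mean (a simple low-pass filter), dynamic acceleration is the remainder, and ODBA and VeDBA are the L1 and L2 norms of the dynamic component. The 2 s smoothing window and the axis convention (x forward, y lateral, z vertical) are assumptions; tag orientation differs between deployments.

```python
import math


def running_mean(values, width):
    """Centered running mean; the window shrinks at the edges of the series."""
    half = width // 2
    smoothed = []
    for i in range(len(values)):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        smoothed.append(sum(values[lo:hi]) / (hi - lo))
    return smoothed


def movement_metrics(x, y, z, fs, smooth_s=2.0):
    """Per-sample ODBA, VeDBA, pitch and roll from tri-axial acceleration in g."""
    width = max(1, int(fs * smooth_s))
    sx, sy, sz = (running_mean(axis, width) for axis in (x, y, z))
    rows = []
    for i in range(len(x)):
        # Dynamic acceleration = raw signal minus the static (gravity) estimate.
        dx, dy, dz = x[i] - sx[i], y[i] - sy[i], z[i] - sz[i]
        rows.append({
            "odba": abs(dx) + abs(dy) + abs(dz),
            "vedba": math.sqrt(dx * dx + dy * dy + dz * dz),
            "pitch_deg": math.degrees(
                math.atan2(sx[i], math.sqrt(sy[i] ** 2 + sz[i] ** 2))
            ),
            "roll_deg": math.degrees(math.atan2(sy[i], sz[i])),
        })
    return rows


# Hypothetical 4 s recording at 25 Hz: 2 Hz surge oscillation, level posture.
fs = 25
n = 100
x = [0.3 * math.sin(2 * math.pi * 2 * i / fs) for i in range(n)]
y = [0.0] * n
z = [1.0] * n
metrics = movement_metrics(x, y, z, fs)
```

ODBA is never smaller than VeDBA for the same sample, and both are zero for a motionless tag, which gives two quick checks on any implementation.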

Model Training and Validation Protocols

The model training process begins with the creation of a labeled dataset where accelerometer and GPS data are matched with directly observed behaviors. For supervised learning approaches, the dataset is typically split into training (60-80%) and testing (20-40%) subsets, with cross-validation techniques employed to optimize model parameters and reduce overfitting [30].

Critical considerations in model training include addressing class imbalance, where common behaviors may dominate the dataset while rare but biologically important behaviors are underrepresented. Techniques to handle this imbalance include standardizing durations—ensuring the training data incorporates similar durations of each behavior—or employing algorithmic approaches such as synthetic minority oversampling [30].
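Standardizing durations can be approximated by randomly downsampling every behavior to the size of the rarest one. The sketch below assumes each labeled window covers the same duration, so equal counts imply equal durations; the function name and example counts are hypothetical. Synthetic oversampling is a separate technique and is not shown.

```python
import random
from collections import defaultdict


def balance_by_downsampling(windows, seed=0):
    """Randomly downsample each behavior to the count of the rarest behavior.

    windows: list of (features, label) pairs, one per fixed-length window.
    """
    if not windows:
        return []
    by_label = defaultdict(list)
    for item in windows:
        by_label[item[1]].append(item)
    target = min(len(items) for items in by_label.values())
    rng = random.Random(seed)  # fixed seed so the selection is reproducible
    balanced = []
    for label in sorted(by_label):
        balanced.extend(rng.sample(by_label[label], target))
    rng.shuffle(balanced)
    return balanced


# Hypothetical labeled windows dominated by resting.
labeled = (
    [([0.01], "resting")] * 100
    + [([0.20], "feeding")] * 20
    + [([0.35], "grooming")] * 5
)
balanced_windows = balance_by_downsampling(labeled)
```

Downsampling discards data from common behaviors, so it is best suited to datasets where even the rarest behavior has enough windows to train on.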

Validation represents a crucial step in verifying model performance. Independent validation using data not included in model training provides the most realistic assessment of classification accuracy [34] [30]. For wild species, this often requires collecting additional field observations or using video surveillance to verify automated behavior classifications [30] [29].

When applying models to new individuals or populations, cross-individual validation tests whether models generalize across subjects. Studies have shown that model performance can decrease significantly when classifying behavior of individuals not used to train models (e.g., from >98% to 56.1% in one study), highlighting the importance of individual variability in movement signatures [34]. Similarly, using domestic counterparts as surrogates for wild species may yield insufficient accuracy (>55% in pygmy goats predicting ibex behavior), suggesting that calibration should ideally use conspecifics in environments that reflect the natural habitat of the study species [34].
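Cross-individual validation can be set up by holding out all data from one animal at a time. The sketch below only builds the splits; model fitting is omitted, and the record layout with an `animal_id` key is an assumption for illustration.

```python
def leave_one_individual_out(records):
    """Yield (held_out_id, train, test) with one animal held out per fold.

    records: list of dicts that each contain an 'animal_id' key.
    """
    animal_ids = sorted({record["animal_id"] for record in records})
    for held_out in animal_ids:
        train = [r for r in records if r["animal_id"] != held_out]
        test = [r for r in records if r["animal_id"] == held_out]
        yield held_out, train, test


# Hypothetical labeled windows from three animals.
records = [
    {"animal_id": "A", "label": "resting"},
    {"animal_id": "A", "label": "feeding"},
    {"animal_id": "B", "label": "resting"},
    {"animal_id": "C", "label": "locomotion"},
    {"animal_id": "C", "label": "feeding"},
]
folds = list(leave_one_individual_out(records))
```

Splitting by individual rather than by window prevents windows from the same animal appearing in both training and test sets, which would otherwise inflate the apparent accuracy.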

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Essential Research Materials for Behavioral Classification Studies

| Category | Specific Tools | Purpose/Function | Example Applications |

|---|---|---|---|

| Biologging Hardware | GPS-GSM tags, Tri-axial accelerometers, Magnetometers | Data collection on animal movement, location, and orientation | Remote monitoring of wild birds [9], nocturnal primates [29] |

| Software Libraries | Python (scikit-learn, TensorFlow, PyTorch), R (randomForest, caret) | Machine learning implementation, data processing and visualization | Behavior classification in cats [30], slow lorises [29] |