Improving the Reliability of Integrated Ecosystem Services Assessments: A Framework for Validation, Interoperability, and Decision-Making

Abstract

Integrated Ecosystem Services (ES) assessments are crucial for informing sustainable development and conservation policies, yet their reliability is often hampered by validation gaps, methodological inconsistencies, and fragmented data. Aimed at researchers and scientists, this article explores the core challenges in enhancing the credibility of ES assessments and the solutions available. We first establish the need for robust validation frameworks and the critical role of data interoperability. We then examine advanced methodological approaches for integrated assessments, including machine learning and spatial modeling. A significant focus is on troubleshooting common pitfalls, such as unstated assumptions and data scarcity, and on optimizing practice through stakeholder engagement. Finally, we compare model outputs with stakeholder perceptions and present emerging validation techniques. The conclusion synthesizes these insights into a cohesive path forward, emphasizing how rigorous, transparent, and integrated ES assessments can improve environmental decision-making and policy effectiveness.

The Pillars of Credibility: Core Concepts and the Critical Need for Validation

Troubleshooting Guide: Common ES Assessment Validation Errors

This guide addresses specific issues that can compromise the validity of Ecosystem Services (ES) assessments.

Problem: Weak or No Correlation with Real-World Outcomes

| Possible Cause | Solution / Diagnostic Check |
|---|---|
| Incorrect Construct Definition | Clearly define the ecosystem service (e.g., "water purification") and ensure assessment measures the defined construct, not a correlated but different one [1]. |
| Poor Extrapolation Inference | Evaluate if performance in a model or simulation (e.g., InVEST) generalizes to real-world field conditions. Collect field data to test this extrapolation [1]. |
| Overlooked Endogenous Uncertainties | Account for uncertainties influenced by your assessment decisions, such as stakeholder response probability changing with survey frequency [2]. |

Problem: Inconsistent Assessment Results Across Repeated Trials

| Possible Cause | Solution / Diagnostic Check |
|---|---|
| Unreliable Scoring | Use detailed rubrics and train assessors to ensure consistent scoring of qualitative data. Automated scoring can enhance reliability [3]. |
| High Background "Noise" | Identify and control for external variables (e.g., seasonal weather changes, land-use history) that add variability not related to the ES being measured [1]. |
| Instrumentation Drift | Calibrate sensors and models regularly. Re-calibrate if consistent drift is detected across multiple study sites [4]. |
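Scoring consistency can be quantified before results are interpreted. A common statistic for two assessors applying the same rubric to the same items is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below implements it in plain Python; the 0 to 3 rubric scale and the scores are hypothetical.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters scoring the same items on a categorical scale."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same, non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items on which both raters gave the same score.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, from each rater's marginal score frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    categories = set(freq_a) | set(freq_b)
    p_expected = sum(freq_a[c] * freq_b[c] for c in categories) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both raters used one identical category for every item
    return (p_observed - p_expected) / (1.0 - p_expected)

# Hypothetical rubric scores (0-3) given by two trained assessors to ten sites.
assessor_1 = [3, 2, 2, 1, 0, 3, 2, 1, 1, 2]
assessor_2 = [3, 2, 1, 1, 0, 3, 2, 1, 2, 2]
print(round(cohens_kappa(assessor_1, assessor_2), 3))
```

Values near 1 indicate agreement well beyond chance. For ordinal rubric scores, a weighted kappa, which penalizes large disagreements more than adjacent ones, may be more appropriate.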

Problem: Assessment Itself Alters the Measured Outcome (Assessment Effects)

| Possible Cause | Solution / Diagnostic Check |
|---|---|
| Reactivity to Measurement | The act of measuring (e.g., through stakeholder surveys) can raise awareness and change behavior. Use control groups that are not pre-assessed [5]. |
| Pre-test Sensitization | A baseline assessment can sensitize participants to the intervention. Consider Solomon's Four-Group Design to quantify this effect [5]. |

Frequently Asked Questions (FAQs)

Q1: What is the single most important thing to do to improve the credibility of our ES assessments? The most crucial step is to define a clear "interpretation-use argument." Before collecting data, explicitly state what you intend to conclude from the scores and what decisions will be based on them. Then, empirically test the most questionable assumptions in that argument [1].

Q2: We have high reliability in our models, but reviewers say our assessment lacks validity. Is this possible? Yes. Reliability (consistency) is a prerequisite for validity, but it does not guarantee it. An assessment can be consistently wrong if it is measuring the wrong thing or cannot be generalized beyond the model [1]. You must provide evidence for other inferences, like extrapolation to real ecosystems.

Q3: How can we practically evaluate the consequences of our ES assessment? Consequences form a key part of modern validity evidence [1]. Ask:

- Has the assessment led to beneficial management decisions?

- Are there unintended negative impacts? (e.g., Has prioritizing one ES led to the degradation of another?)

- Does the assessment improve stakeholder trust and engagement?

Q4: What is the difference between "exogenous" and "endogenous" uncertainties, and why does it matter?

- Exogenous (Decision-Independent Uncertainties - DIUs): Uncertainties that are independent of your assessment, such as future climate variability [2].

- Endogenous (Decision-Dependent Uncertainties - DDUs): Uncertainties that are influenced by your assessment decisions or modeling choices, such as stakeholder response rates being affected by survey design [2]. Overlooking DDUs can lead to a significant overestimation of your assessment's reliability [2].

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Concept | Function in ES Assessment |
|---|---|
| Validity Framework (e.g., Kane's) | Provides a structured approach (Scoring, Generalization, Extrapolation, Implications) to build a coherent validity argument [1]. |
| Structured Rubrics | Tools to standardize the scoring of qualitative or semi-quantitative data, improving the "scoring inference" and reliability [3]. |
| Solomon Four-Group Design | An experimental design that separates the effect of the assessment itself from the effect of the intervention or management action [5]. |
| Chance-Constrained Optimization | A modeling technique that incorporates probabilistic uncertainties (both DIUs and DDUs) to provide more robust and realistic reliability indices [2]. |
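As a minimal illustration of the chance-constrained idea (one constraint, not a full optimization model), suppose an ES supply such as annual water yield is normally distributed, which is a simplifying assumption. The constraint P(supply ≥ demand) ≥ 1 − ε then has the deterministic equivalent μ − z·σ ≥ demand, where z is the standard normal quantile at 1 − ε. All numbers below are hypothetical.

```python
from statistics import NormalDist

def chance_constraint_holds(mean_supply, sd_supply, demand, epsilon):
    """Check P(supply >= demand) >= 1 - epsilon, assuming normally distributed supply.

    Deterministic equivalent: mean - z_(1 - epsilon) * sd >= demand.
    """
    z = NormalDist().inv_cdf(1.0 - epsilon)
    return mean_supply - z * sd_supply >= demand

def reliability(mean_supply, sd_supply, demand):
    """Probability that supply meets demand under the same normal assumption."""
    return 1.0 - NormalDist(mean_supply, sd_supply).cdf(demand)

# Hypothetical catchment: mean yield 120 units, sd 15, demand 100, 5 % risk tolerance.
print(chance_constraint_holds(120, 15, 100, 0.05))
print(round(reliability(120, 15, 100), 3))
```

Here the mean exceeds demand, yet the 95 % constraint fails because supply meets demand only about 91 % of the time. A decision-dependent uncertainty could be represented by making σ (or μ) a function of the management decision rather than a fixed input.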

Methodological Protocols for Key Validation Experiments

Protocol 1: Testing for Assessment Effects (Reactivity)

Objective: To determine if the process of conducting a baseline assessment influences the outcome of a subsequent ES assessment or management intervention.

Procedure:

- Recruit a pool of study sites or stakeholder groups and randomly assign them to one of four groups:

- Group 1: Pre-test → Intervention → Post-test

- Group 2: Pre-test → [No Intervention] → Post-test

- Group 3: [No Pre-test] → Intervention → Post-test

- Group 4: [No Pre-test] → [No Intervention] → Post-test

- Administer the same post-test to all groups.

- Analyze: Compare post-test results across groups.

- Compare Group 1 and Group 3 to isolate the effect of the pre-test on the intervention.

- Compare Group 2 and Group 4 to isolate the effect of the pre-test alone [5].
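The four groups form a 2 × 2 layout (pre-test yes/no × intervention yes/no), so the comparisons above can be written as simple contrasts on post-test means. The sketch below uses hypothetical group means; a full analysis would typically fit a two-way ANOVA on individual post-test scores so that each effect comes with a significance test.

```python
# Hypothetical mean post-test scores for the four Solomon groups.
post_test_means = {
    "g1_pretest_intervention": 7.8,
    "g2_pretest_only": 5.6,
    "g3_intervention_only": 7.0,
    "g4_neither": 5.2,
}

def solomon_contrasts(m):
    """Simple contrasts from the 2 x 2 layout (pre-test x intervention)."""
    g1, g2 = m["g1_pretest_intervention"], m["g2_pretest_only"]
    g3, g4 = m["g3_intervention_only"], m["g4_neither"]
    return {
        # Pre-test effect when the intervention is present (Group 1 vs Group 3).
        "pretest_effect_with_intervention": g1 - g3,
        # Pre-test effect alone, without intervention (Group 2 vs Group 4).
        "pretest_effect_alone": g2 - g4,
        # Intervention effect in groups that were never pre-tested (Group 3 vs Group 4).
        "intervention_effect_unpretested": g3 - g4,
        # Interaction: does pre-testing change how much the intervention appears to help?
        "pretest_x_intervention": (g1 - g2) - (g3 - g4),
    }

for name, value in solomon_contrasts(post_test_means).items():
    print(f"{name}: {value:+.2f}")
```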

Protocol 2: Validating the Extrapolation Inference

Objective: To gather evidence that assessment results obtained in a model or controlled setting (Model Output A) accurately predict conditions in the real-world ecosystem (Real-World Outcome B).

Procedure:

- Define the real-world outcome (Criterion B) that your model is intended to predict (e.g., actual water quality measurements, documented species richness).

- Run your ES assessment model (e.g., InVEST) for a set of test sites to generate predictions (Model Output A).

- Collect concurrent, independent field data for the same test sites to measure the real-world outcome (Criterion B).

- Analyze: Perform a correlation or regression analysis between Model Output A and Criterion B. A strong, significant relationship provides evidence for the extrapolation inference [1].
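The analysis step can be sketched in plain Python as a Pearson correlation plus an ordinary least-squares fit of Criterion B on Model Output A. The site values below are hypothetical; with real data you would also report confidence intervals, since a handful of sites can produce a high r by chance.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between model predictions and field observations."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def ols_fit(x, y):
    """Ordinary least-squares fit y = intercept + slope * x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return my - slope * mx, slope

# Hypothetical test sites: modeled nutrient retention (Model Output A)
# versus concurrently measured retention in the field (Criterion B).
model_output_a = [12.0, 18.5, 9.3, 22.1, 15.4, 7.8]
criterion_b = [10.9, 17.2, 10.1, 20.4, 14.8, 8.5]

r = pearson_r(model_output_a, criterion_b)
intercept, slope = ols_fit(model_output_a, criterion_b)
print(f"r = {r:.3f}, slope = {slope:.3f}, intercept = {intercept:.3f}")
```

A slope near 1 and an intercept near 0 suggest the model is not only correlated with field conditions but also unbiased over the observed range; a high r with a slope far from 1 points to systematic over- or under-prediction.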

Workflow and Relationship Diagrams

ES Assessment Validation Workflow

Relationship of Modern Validity Evidence

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What are the FAIR Data Principles and why are they critical for integrated research? The FAIR principles are a set of guiding rules to enhance the Findability, Accessibility, Interoperability, and Reuse of digital assets, with a specific emphasis on machine-actionability [6] [7]. They are critical because they prepare complex, multi-modal data for computational analysis and AI, which is essential for ensuring the reliability and reproducibility of integrated ecosystem assessments [6]. The principles were formally published in 2016 to address the challenges of reusing fast-growing but often inaccessible data resources [8].

Q2: How is "Interoperability" technically defined within the FAIR framework? Interoperability means that data can be integrated with other data and can interoperate with applications or workflows for analysis, storage, and processing [9]. Technically, this requires using formal, accessible, and broadly applicable languages for knowledge representation in metadata, vocabularies that themselves follow FAIR principles, and qualified references to other metadata [6] [7] [10]. Together, these make data machine-readable so that it can be combined reliably with other datasets.

Q3: We have legacy data. What is the most common challenge in making it FAIR? The most frequently cited challenge is the high cost and time investment required to transform legacy data [6] [8]. This process often involves dealing with fragmented data systems and formats, a lack of standardized metadata or ontologies used by the original creators, and infrastructure that was not built for modern, multi-modal data [6]. The effort depends on the skills, competencies, and resources available to the team [8].

Q4: Does making data FAIR mean we have to make it open and publicly available? No. FAIR and open data are distinct concepts. FAIR focuses on making data easily usable by computational systems, and FAIR data can be well structured and richly described yet still sit behind secure authentication and authorization layers for privacy, IP protection, or other restrictions [6]. Accessibility in FAIR means that users know how the data can be accessed, which can include a protocol for controlled access [6] [9].

Q5: What are the key organizational and human factors for successful FAIR implementation? Successful implementation requires addressing organizational challenges, which include providing training to individuals and developing a FAIR organizational culture [8]. The availability of in-house technical data experts or "data champions," as well as scientific experts with domain-specific knowledge, is a crucial factor for assessing the impact and ensuring the correct interpretation of FAIRified data [8].

Troubleshooting Guides

Issue 1: Inconsistent Data and Vocabulary Across Fragmented Sources

- Problem: Attempting to integrate multi-omics or clinical datasets from different labs, departments, or collaborators fails due to semantic mismatches, inconsistent naming conventions (e.g., for genes or diseases), and a lack of standardized ontologies [6].

- Solution:

- Map to Standard Vocabularies: Identify and adopt community-standard ontologies and controlled vocabularies (e.g., from the OBO Foundry) relevant to your field [6].

- Create a Data Dictionary: Develop and enforce the use of an internal data dictionary that maps local terms to standard ones before integration.

- Use Semantic Web Technologies: Consider using Resource Description Framework (RDF) and tools that support it to create semantically interoperable data.
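A data dictionary can be enforced in code so that unmapped local terms are reported rather than silently passed through. The sketch below is hypothetical throughout: the column names, category labels, and target terms are placeholders for entries you would take from a community ontology.

```python
# Hypothetical data dictionary mapping local column names and category labels
# from partner datasets onto one shared vocabulary. The target labels stand in
# for terms you would look up in a community ontology; they are not real term IDs.
COLUMN_MAP = {
    "lu_class": "land_cover",
    "landuse": "land_cover",
    "precip_mm": "precipitation_mm",
    "rain_mm": "precipitation_mm",
}
VALUE_MAP = {
    "land_cover": {"forest": "forest", "woodland": "forest",
                   "crop": "cropland", "arable": "cropland"},
}

def harmonize(record):
    """Rename columns and recode values; collect anything that could not be mapped."""
    out, unmapped = {}, []
    for column, value in record.items():
        std_column = COLUMN_MAP.get(column)
        if std_column is None:
            unmapped.append(f"column:{column}")
            continue
        recode = VALUE_MAP.get(std_column)
        if recode is not None:
            if value not in recode:
                unmapped.append(f"{std_column}:{value}")
                continue
            value = recode[value]
        out[std_column] = value
    return out, unmapped

print(harmonize({"landuse": "woodland", "rain_mm": 812}))
print(harmonize({"lu_class": "heath", "soil_ph": 5.4}))
```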

Issue 2: Data Findability and Access is Difficult for Machines

- Problem: Datasets cannot be automatically discovered or retrieved by computational workflows, scripts, or other researchers because they lack persistent identifiers and machine-readable metadata [7] [11].

- Solution:

- Assign a Persistent Identifier: Register your dataset in a trusted repository to obtain a Globally Unique and Persistent Identifier like a Digital Object Identifier (DOI) [6] [9] [11].

- Deposit in a FAIR-Enabling Repository: Store your data in a repository that provides rich metadata fields, a persistent identifier, and a clear usage license [11].

- Rich Metadata: Create comprehensive, machine-actionable metadata that explicitly includes the dataset's identifier and is indexed in a searchable resource [6] [7].
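One widely used way to publish machine-actionable metadata is the schema.org `Dataset` vocabulary serialized as JSON-LD. The sketch below builds such a record in Python; the DOI, title, and variables are placeholders, and a repository's own metadata schema may require different or additional fields.

```python
import json

def dataset_jsonld(doi_url, name, description, license_url, variables, keywords):
    """Build a schema.org Dataset record that can be serialized as JSON-LD."""
    return {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "@id": doi_url,
        "identifier": doi_url,  # metadata explicitly include the data's identifier
        "name": name,
        "description": description,
        "license": license_url,
        "keywords": keywords,
        "variableMeasured": [
            {"@type": "PropertyValue", "name": var, "unitText": unit}
            for var, unit in variables
        ],
    }

record = dataset_jsonld(
    doi_url="https://doi.org/10.0000/hypothetical-es-dataset",  # placeholder, not a real DOI
    name="Modeled water yield and carbon storage, hypothetical study basin",
    description="Hypothetical InVEST outputs for historical benchmark years.",
    license_url="https://creativecommons.org/licenses/by/4.0/",
    variables=[("water_yield", "mm"), ("carbon_storage", "Mg C/ha")],
    keywords=["ecosystem services", "water yield", "carbon storage"],
)
print(json.dumps(record, indent=2))
```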

Issue 3: Data Cannot Be Reused or Reproduced

- Problem: Other researchers, or even your future self, cannot understand or reuse the data due to insufficient documentation, missing provenance, or unclear licensing [6].

- Solution:

- Create Detailed Documentation: Use a README file template to document methods, data collection procedures, file structures, units, and abbreviations [11].

- Define Provenance: Track and describe the origin and processing history of the data (how it was created, derived, and modified).

- Specify a Clear License: Attach a standard data usage license (e.g., Creative Commons) to the dataset so users know the terms of reuse [9] [11].
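Provenance can be recorded as each processing step runs. The sketch below is a minimal, hypothetical scheme loosely following the W3C PROV idea of an activity that uses inputs and generates outputs; it stores SHA-256 content hashes so a later user can verify that they hold exactly the files that were processed. In-memory bytes stand in for files to keep the example self-contained.

```python
import hashlib
import json
from datetime import datetime, timezone

def sha256_hex(data: bytes) -> str:
    """Content hash that lets a later user verify they hold exactly the same bytes."""
    return hashlib.sha256(data).hexdigest()

def provenance_step(activity, inputs, outputs, parameters):
    """Record one processing step: the activity, the inputs it used, the outputs it generated."""
    return {
        "activity": activity,
        "ended_at": datetime.now(timezone.utc).isoformat(),
        "used": {name: sha256_hex(blob) for name, blob in inputs.items()},
        "generated": {name: sha256_hex(blob) for name, blob in outputs.items()},
        "parameters": parameters,
    }

# Hypothetical step: local land-cover labels recoded to a shared vocabulary.
raw_csv = b"site,lu_class\nA,woodland\nB,arable\n"
clean_csv = b"site,land_cover\nA,forest\nB,cropland\n"
provenance_log = [
    provenance_step("recode_land_cover", {"raw.csv": raw_csv},
                    {"clean.csv": clean_csv}, {"data_dictionary_version": "0.1"}),
]
print(json.dumps(provenance_log, indent=2))
```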

FAIRification Experimental Protocols

Protocol 1: A Practical Framework for Making Data FAIR

This methodology outlines the key steps for the "FAIRification" of a dataset, from initial assessment to final deposition [11].

- Data Assessment and Selection: Prioritize datasets for FAIRification based on their potential for reuse, scientific impact, and alignment with organizational goals [8].

- Define a Data Management Plan (DMP): Outline how data will be handled during and after the research project, adhering to funder requirements [8].

- Data Standardization and Cleaning:

- Convert data to standard, open file formats (e.g., CSV, XML, JSON) instead of proprietary formats [11].

- Clean the data and map variables to standardized ontologies.

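- Generate Rich Metadata and Documentation: Create machine-actionable metadata that explicitly includes the dataset's identifier, together with a README that documents methods, units, and provenance [11].

- Deposit and Publish: Deposit the dataset in a trusted, FAIR-enabling repository that assigns a persistent identifier (e.g., a DOI), and attach a clear usage license [11].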
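Some FAIRification steps can be checked automatically before deposit. The sketch below is a hypothetical pre-deposit checklist, not an official FAIR assessment metric: it flags missing core metadata fields, identifiers that are not DOI URLs (other persistent identifiers, such as Handles, would need their own rule), non-open file formats, and a missing README.

```python
from pathlib import PurePath

# Assumed checklist for illustration only; it is not an official FAIR metric.
OPEN_FORMATS = {".csv", ".json", ".xml", ".txt", ".md", ".tif"}
REQUIRED_FIELDS = ("identifier", "license", "description", "creator")

def fair_precheck(metadata, files):
    """List problems to fix before deposit; an empty list means none were found."""
    problems = [f"missing metadata field: {k}" for k in REQUIRED_FIELDS if not metadata.get(k)]
    identifier = metadata.get("identifier", "")
    if identifier and not identifier.startswith("https://doi.org/"):
        problems.append("identifier is not a DOI URL")
    for name in files:
        if PurePath(name).suffix.lower() not in OPEN_FORMATS:
            problems.append(f"non-open or unrecognized format: {name}")
    if not any(PurePath(name).name.lower().startswith("readme") for name in files):
        problems.append("no README file in the deposit")
    return problems

meta = {
    "identifier": "https://doi.org/10.0000/hypothetical",  # placeholder, not a real DOI
    "license": "https://creativecommons.org/licenses/by/4.0/",
    "description": "Hypothetical water yield rasters for a study basin",
    "creator": "",
}
print(fair_precheck(meta, ["README.txt", "water_yield_2020.tif", "drivers.xlsx"]))
```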

Protocol 2: Workflow for Integrating Disparate Datasets for Ecosystem Assessment

This protocol provides a high-level workflow for researchers tackling data integration for complex assessments.

The following tables summarize key quantitative and categorical information related to FAIR implementation.

Table 1: Common Challenges in Implementing FAIR Principles [6] [8]

| Challenge Category | Specific Examples | Primary Impacted Area |
|---|---|---|
| Technical | Fragmented data systems and formats; Lack of standardized metadata or ontologies; Legacy data transformation [6]. | Data Integration, Interoperability |
| Financial | High cost of data curation; Infrastructure setup and maintenance; Ensuring business continuity [8]. | Project Resources, ROI |
| Organizational | Cultural resistance; Lack of FAIR-awareness; Need for training and development of a FAIR culture [6] [8]. | Team Collaboration, Adoption Rate |
| Legal & Ethical | Compliance with data protection regulations (e.g., GDPR); Accessibility rights; Managing sensitive data [8]. | Data Accessibility, Reusability |

Table 2: Benefits and Impact of FAIR Data Adoption [6]

| Benefit | Outcome for Research Efficiency | Example / Impact |
|---|---|---|
| Faster Time-to-Insight | Accelerates discovery by making data easily discoverable and machine-actionable. | Reduced gene evaluation time for Alzheimer's drug discovery from weeks to days [6]. |
| Improved Data ROI | Maximizes the value of existing data assets, preventing duplication and redundant efforts. | Reduces need for repetitive data generation and training, optimizing infrastructure investment [6]. |
| Supports AI & ML | Provides the foundational structure needed to harmonize diverse data types for advanced analytics. | Enables large-scale analysis across multi-omics, imaging, and EHR data [6] [8]. |
| Ensures Reproducibility | Embeds metadata, provenance, and context to allow results to be replicated and traced. | Helped researchers discover and reduce false positive DNA differences to <1 in 50 subjects [6]. |

Table 3: Key Research Reagent Solutions for FAIR Data Management

| Item / Resource | Function in the FAIRification Process |
|---|---|
| Trusted Data Repository | Provides a platform for depositing data, assigns a Persistent Identifier (e.g., DOI), and often offers curation services to enhance findability and long-term accessibility [11]. |
| Metadata Schema & Templates | Standardized templates (e.g., README files) guide researchers in creating comprehensive, consistent, and machine-actionable metadata, which is core to all FAIR principles [11]. |
| Standardized Ontologies | Formal, shared, and broadly applicable vocabularies (e.g., Gene Ontology, ENVO) enable semantic interoperability by ensuring data from different sources describes the same concept in the same way [6] [7]. |
| Data Management Plan (DMP) | A formal document that outlines how data will be handled, described, and shared throughout the research lifecycle and after its completion, ensuring proactive FAIR alignment [8]. |
| Persistent Identifier Services | Services that issue globally unique and persistent identifiers (e.g., DOIs, Handles) for datasets, which is the foundational step for making data Findable [6] [9]. |

Core Concepts FAQ

Q1: What is the definitive difference between an ecosystem function and an ecosystem service?

A: An ecosystem function refers to the natural, intrinsic processes and operations of an ecosystem—such as nutrient cycling, soil formation, or photosynthesis. These are the biological, chemical, and physical processes that occur irrespective of human benefit. An ecosystem service is the direct or indirect contribution of these ecosystem functions to human well-being, survival, and quality of life. Essentially, functions become services when they provide a tangible benefit to humans [12] [13]. For example, the process of water filtration in a wetland is a function; the provision of clean drinking water to a community is the service [14].

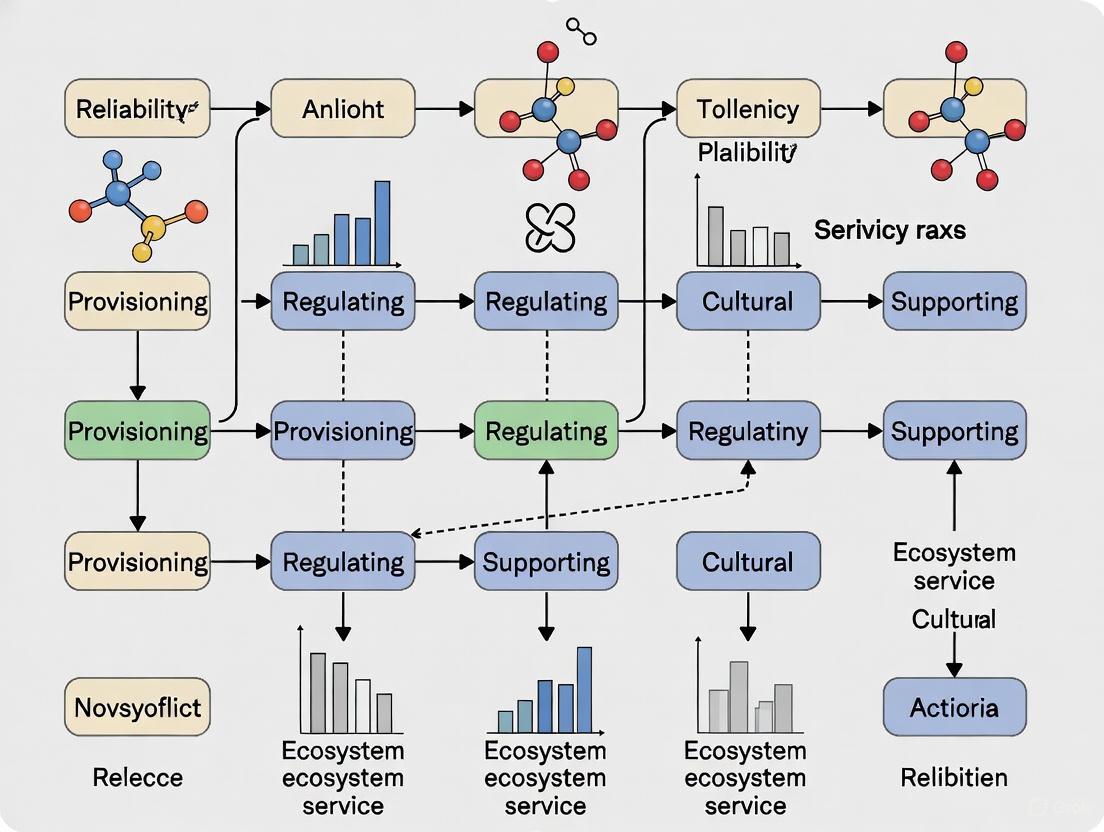

Q2: How does the "ecosystem service cascade" framework model the relationship between functions, services, and benefits?

A: The Ecosystem Service Cascade Framework is a conceptual model that delineates the pathway from ecosystem structures to human benefits. It shows how ecological structures and processes lead to ecosystem functions, which are then transformed into ecosystem services, and finally into benefits that contribute to human well-being [15]. This step-wise model helps avoid confusion between the components and clarifies their sequential relationships for more integrated assessments [15].

Q3: What are the standard categories for ecosystem services, and how are "benefits" classified within them?

A: Ecosystem services are typically broken down into four established categories [12] [13]. The "benefits" are the specific, often measurable, gains that humans receive from these services.

Table: Categories of Ecosystem Services and Their Associated Benefits

| Service Category | Description | Examples of Human Benefits |
|---|---|---|
| Provisioning | Material or energy outputs from ecosystems [12]. | Food, fresh water, raw materials (wood, fiber), genetic resources, and medicines [12] [13]. |
| Regulating | Benefits obtained from the moderation of ecosystem processes [12]. | Climate regulation, flood control, water purification, disease regulation, and pollination [12] [13]. |
| Cultural | Non-material benefits obtained from ecosystems [12]. | Recreational opportunities, aesthetic enjoyment, spiritual enrichment, and cognitive development [12] [13]. |
| Supporting | Services necessary for the production of all other ecosystem services [12]. | Soil formation, photosynthesis, nutrient cycling, and maintenance of genetic diversity [12] [13]. |

Q4: Our assessment model is yielding inconsistent results for cultural services. How can we improve reliability?

A: Challenges in quantifying cultural services are common, as they involve non-material, subjective benefits. To improve reliability:

- Employ mixed methods: Combine quantitative surveys and spatial analysis with qualitative techniques like interviews to capture both the physical access to and the perceived value of cultural spaces [15].

- Utilize the cascade framework: Ensure your study analyzes the full pathway from the ecosystem structure (e.g., a park) through the service it provides (e.g., recreation) to its final impact on human well-being (e.g., improved mental health) [15].

- Expand scope: Studies focused solely on regulating and supporting services should intentionally extend their scope to assess the impacts of these services on human well-being, a step that is often missed [15].

Technical Troubleshooting Guide

This guide addresses common methodological issues encountered during integrated ecosystem service assessments.

Issue: Resolving Trade-offs and Synergies Between Multiple Ecosystem Services

Symptoms: Your model shows that enhancing one ecosystem service (e.g., food production through agriculture) leads to the decline of another (e.g., water purification or soil conservation). Conversely, you may find that some services are positively correlated [16].

Investigation & Resolution Protocol:

- Identify & Quantify: Use assessment tools like the InVEST model to quantitatively evaluate the individual services in question (e.g., water yield, carbon storage, habitat quality) over the same temporal and spatial scale [16].

- Correlation Analysis: Apply statistical methods, such as pairwise correlation analysis between services, to identify trade-offs (negative relationships) and synergies (positive relationships).

- Driver Analysis: Use machine learning regression models (e.g., Gradient Boosting Machines) to identify the key environmental and anthropogenic drivers influencing the observed trade-offs and synergies. This goes beyond pairwise correlation by ranking candidate drivers, although variable importance alone does not establish causation [16].

- Scenario Planning: Project future land-use changes using models like the PLUS model under different scenarios (e.g., natural development, planning-oriented, ecological priority). Re-run your ecosystem service assessments for each scenario to inform planning decisions that optimize the desired suite of services [16].
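The correlation step can be sketched as pairwise Spearman rank correlations between per-cell service values, with each pair labeled as a trade-off or a synergy. The service values and the |ρ| ≥ 0.3 cut-off are hypothetical; in practice, significance testing and spatial autocorrelation should also be considered before interpreting a pair.

```python
from itertools import combinations

def ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = mean_rank
        i = j + 1
    return result

def spearman(x, y):
    """Spearman's rho computed as the Pearson correlation of the ranks."""
    rx, ry = ranks(x), ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    return cov / (vx * vy) ** 0.5

def classify_pairs(services, threshold=0.3):
    """Label each service pair as a synergy, a trade-off, or weak/no relationship."""
    out = {}
    for a, b in combinations(services, 2):
        rho = spearman(services[a], services[b])
        label = "synergy" if rho >= threshold else ("trade-off" if rho <= -threshold else "weak")
        out[(a, b)] = (round(rho, 3), label)
    return out

# Hypothetical per-grid-cell values for three services.
services = {
    "food_production": [9, 8, 7, 4, 2, 1],
    "water_purification": [1, 2, 3, 6, 8, 9],
    "carbon_storage": [2, 1, 4, 5, 9, 7],
}
for pair, result in classify_pairs(services).items():
    print(pair, result)
```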

Issue: Incorporating Spatial Dynamics and Scale into Assessments

Symptoms: The value or flow of an ecosystem service is not adequately captured, leading to inaccurate maps and conclusions about its availability to beneficiaries.

Investigation & Resolution Protocol:

- Define the Service-Providing Unit (SPU): Clearly map the ecosystem area that provides the service (e.g., a forest for carbon sequestration, a wetland for water filtration).

- Define the Service-Benefiting Area (SBA): Identify and map the location of the human populations or systems that benefit from the service (e.g., a downstream community for clean water).

- Model the Flow: Account for the spatial connectivity between the SPU and SBA. This can involve modeling the flow of water, movement of pollinators, or accessibility of recreational areas. Tools like the InVEST model and the RBI (Rapid Benefit Indicators) Approach can facilitate this spatial analysis [14] [16].

- Cross-Scale Analysis: Conduct analyses at multiple spatial scales (e.g., local, regional) to understand how the provision and demand for services change. This is particularly important for large cities and diverse regions [15].
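For accessibility-type services such as recreation, one simple way to model flow is a distance-decay weighting: each benefiting area receives the supply of every providing unit, discounted by distance. The sketch below assumes exponential decay with a hypothetical 2 km scale and straight-line distances; it is an illustration, not the method used by InVEST or the RBI Approach, and it ignores barriers, capacity limits, and demand.

```python
from math import exp, hypot

def delivered_service(spus, sbas, decay_km=2.0):
    """Service reaching each benefiting area: sum of supply * exp(-distance / decay_km)."""
    delivered = {}
    for sba_name, (bx, by) in sbas.items():
        total = 0.0
        for (sx, sy), supply in spus.values():
            distance = hypot(bx - sx, by - sy)  # straight-line distance in km
            total += supply * exp(-distance / decay_km)
        delivered[sba_name] = total
    return delivered

# Hypothetical coordinates (km) and supply scores for SPUs, and SBA locations.
spus = {"forest_park": ((0.0, 0.0), 10.0), "riverside": ((5.0, 0.0), 4.0)}
sbas = {"town_a": (1.0, 0.0), "town_b": (8.0, 0.0)}
for name, value in delivered_service(spus, sbas).items():
    print(f"{name}: {value:.2f}")
```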

Issue: Engaging Stakeholders and Capturing Value Plurality

Symptoms: Research outcomes are not adopted by policymakers or local communities, or the assessment fails to capture values that are not easily quantifiable in monetary terms.

Investigation & Resolution Protocol:

- Stakeholder Identification: Use structured tools like the FEGS (Final Ecosystem Goods and Services) Scoping Tool to identify and prioritize stakeholders and the specific environmental benefits they care about [14].

- Employ Non-Monetary Metrics: Utilize frameworks like the RBI (Rapid Benefit Indicators) Approach, which uses readily available data to estimate and quantify benefits to people using non-monetary indicators [14].

- Consider Alternative Frameworks: For culturally diverse contexts, consider using the Nature's Contributions to People (NCP) concept. This framework, developed by IPBES, can be more inclusive of worldviews beyond standard economic valuation [15].

Experimental Protocol for a Multi-Scenario ES Assessment

This protocol outlines a methodology for assessing ecosystem services under different future land-use scenarios, integrating machine learning for driver analysis.

Objective: To quantitatively assess and predict the dynamics of key ecosystem services, identify their drivers, and evaluate trade-offs under various future scenarios to inform regional ecological protection strategies [16].

Materials & Reagents:

Table: Key Research Reagent Solutions for ES Modeling

| Item | Function/Explanation |
|---|---|
| InVEST Model | A suite of open-source software models used to map and value the goods and services from nature that contribute to human well-being. It is central to quantifying specific services like carbon storage, water yield, and habitat quality [16]. |
| PLUS Model | A land-use simulation model used to project future changes in land use/cover under various scenarios. It excels at simulating complex dynamics at fine spatial scales [16]. |
| Machine Learning Library (e.g., scikit-learn) | Provides algorithms (e.g., Gradient Boosting) for identifying nonlinear relationships and key drivers within complex ecological datasets, improving predictive accuracy over traditional statistical methods [16]. |
| GIS Software (e.g., ArcGIS, QGIS) | A geographic information system for spatial data management, analysis, and the cartographic presentation of results. |

Methodology:

Data Acquisition & Harmonization:

- Collect spatial data for the study area, including: land use/cover maps, digital elevation models (DEMs), soil data, climate data (precipitation, temperature), and socio-economic data.

- Process all datasets to a consistent spatial resolution and projection to ensure comparability [16].

Historical ES Assessment:

- Use the InVEST model to quantify selected ecosystem services (e.g., water yield, carbon storage, habitat quality, soil conservation) for historical benchmark years (e.g., 2000, 2010, 2020).

- Calculate a comprehensive ecosystem service index to assess overall ecological capacity [16].
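The source does not specify how the comprehensive index is built. One common construction, assumed here, is to min-max normalize each service across cells and average the normalized layers, with equal weights unless other weights are justified. The service values are hypothetical.

```python
def min_max(values):
    """Rescale to 0..1; a constant layer maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

def es_index(services, weights=None):
    """Per-cell weighted mean of normalized services (equal weights by default)."""
    names = list(services)
    weights = weights or {name: 1.0 for name in names}
    total_w = sum(weights[n] for n in names)
    normalized = {n: min_max(services[n]) for n in names}
    n_cells = len(next(iter(services.values())))
    return [
        sum(weights[n] * normalized[n][i] for n in names) / total_w
        for i in range(n_cells)
    ]

services_2020 = {  # hypothetical per-cell model outputs
    "water_yield_mm": [420.0, 380.0, 510.0, 300.0],
    "carbon_mg_ha": [85.0, 120.0, 60.0, 40.0],
    "habitat_quality": [0.7, 0.9, 0.4, 0.2],
}
print([round(v, 3) for v in es_index(services_2020)])
```

When comparing benchmark years, normalize with minima and maxima taken across all years; normalizing each year separately would rescale away real changes.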

Driver Analysis with Machine Learning:

- Compile a dataset of potential driving factors (e.g., land use, vegetation index, precipitation, population density, GDP).

- Train and compare multiple machine learning models (e.g., Gradient Boosting, Random Forest) on the historical data to identify the most important drivers for each ecosystem service [16].
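A minimal sketch of the driver-analysis step, assuming scikit-learn and NumPy are installed. The data are synthetic (precipitation is constructed to be the dominant driver, and `gdp` is deliberately irrelevant) so the output can be checked; the driver names are placeholders. Cross-validated R² is computed before reading feature importances, since importances from a poorly fitting model say little.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(0)
driver_names = ["ndvi", "precipitation", "population_density", "gdp"]

# Synthetic stand-in for per-cell drivers and one modeled service (e.g., water yield).
# Precipitation dominates by construction; gdp does not enter y at all.
X = rng.uniform(0.0, 1.0, size=(400, len(driver_names)))
y = 3.0 * X[:, 1] + 0.8 * X[:, 0] - 0.3 * X[:, 2] + rng.normal(0.0, 0.1, size=400)

models = {
    "gradient_boosting": GradientBoostingRegressor(random_state=0),
    "random_forest": RandomForestRegressor(n_estimators=200, random_state=0),
}
for name, model in models.items():
    # 5-fold cross-validated R^2 to compare predictive skill across models.
    r2 = cross_val_score(model, X, y, cv=5, scoring="r2").mean()
    model.fit(X, y)
    ranking = sorted(zip(driver_names, model.feature_importances_), key=lambda t: -t[1])
    print(name, f"R2={r2:.3f}", [(d, round(float(w), 3)) for d, w in ranking])
```

Random K-fold splits can overstate skill on spatially autocorrelated rasters, so spatially blocked folds are a safer choice. Impurity-based importances can also be biased; `sklearn.inspection.permutation_importance` is a common cross-check.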

Future Scenario Design & Land Use Simulation:

- Design multiple future scenarios (e.g., 2035) reflecting different policy priorities:

- Natural Development Scenario: Projecting current trends.

- Planning-Oriented Scenario: Incorporating official development plans.

- Ecological Priority Scenario: Emphasizing conservation and restoration.

- Use the PLUS model, calibrated with historical data and informed by the driver analysis, to simulate land use changes for each scenario [16].

Future ES Assessment & Analysis:

- Run the InVEST model using the simulated land-use maps from the scenario simulation step to evaluate ecosystem services under each future scenario.

- Analyze the trade-offs, synergies, and overall changes in ecosystem service capacity compared to the historical baseline [16].

Conceptual Diagram: The Ecosystem Service Cascade

The following diagram illustrates the logical progression from ecosystem structures to human well-being, as defined by the ecosystem service cascade framework.

Ecosystem services connect nature to human well-being.

Conceptual Diagram: Integrated ES Assessment Workflow

This diagram outlines the logical workflow for conducting an integrated ecosystem service assessment with multi-scenario prediction, as described in the experimental protocol.

Integrated workflow for ecosystem service assessment.

Troubleshooting Guides

Data Scarcity

Problem: Lack of locally relevant data for ecosystem service (ES) assessment at a regional scale.

- Solution: Employ a tiered approach for ES mapping. When data is scarce (Tier 1), use expert-based matrix approaches or simple GIS mapping with proxy indicators like land use/land cover (LULC) data to generate quick overviews [17]. Leverage citizen science-based data and knowledge co-generation to make the valuation process more inclusive and policy-oriented, filling critical data gaps at the local scale [18].

- Experimental Protocol: The expert-based ES matrix approach links ES values to appropriate geobiophysical spatial units, such as LULC types [17]. Values are classified on a relative scale (e.g., 0 to 5) to reduce complexity and allow comparisons between individual ES [17]. This protocol is cost-efficient and applicable in data-scarce areas [17].
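The matrix approach amounts to a lookup from LULC class to a relative score for each service. In the sketch below, the matrix values, classes, and services are hypothetical; real scores come from expert elicitation and should be documented with their sources.

```python
# Hypothetical expert matrix: relative supply scores (0 = none, 5 = very high)
# for each LULC class and service. Real scores come from an expert elicitation.
ES_MATRIX = {
    "forest": {"carbon": 5, "water_regulation": 4, "food": 1, "recreation": 4},
    "grassland": {"carbon": 3, "water_regulation": 3, "food": 2, "recreation": 3},
    "cropland": {"carbon": 1, "water_regulation": 1, "food": 5, "recreation": 1},
    "urban": {"carbon": 0, "water_regulation": 0, "food": 0, "recreation": 2},
}

def map_service(lulc_grid, service):
    """Replace each LULC class in a raster-like grid with its matrix score."""
    return [[ES_MATRIX[cls][service] for cls in row] for row in lulc_grid]

lulc_grid = [
    ["forest", "forest", "grassland"],
    ["cropland", "urban", "grassland"],
]
carbon_map = map_service(lulc_grid, "carbon")
print(carbon_map)
```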

Problem: Inability to use complex models due to data, time, or knowledge constraints.

- Solution: Use value transfer methods, where estimates of ES values per LULC from other published studies are applied to your area of interest [17]. While this provides a quick approximation, always explicitly state the sources and acknowledge the limitations of transferring data from a different context [17].

- Experimental Protocol: Compile a database of ES values from literature for various LULC classes. Apply these values to a regional LULC map using GIS software for spatial overlay. This generates a preliminary map of ES supply or value [19].
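For regional totals, value transfer reduces to multiplying the area of each LULC class by a transferred unit value. The unit values below are hypothetical placeholders; real ones must be taken from cited studies and adjusted, for example to a common currency and price year, before they are combined.

```python
# Hypothetical transferred unit values (value per hectare per year) by LULC class.
# In practice each value must cite its source study and original context.
UNIT_VALUES = {"forest": 1200.0, "wetland": 3500.0, "cropland": 400.0, "urban": 0.0}

def transfer_values(area_by_class_ha):
    """Total and per-class value: area (ha) x transferred unit value."""
    per_class = {}
    for lulc, area in area_by_class_ha.items():
        if lulc not in UNIT_VALUES:
            raise KeyError(f"no transferred value for LULC class '{lulc}'")
        per_class[lulc] = area * UNIT_VALUES[lulc]
    return sum(per_class.values()), per_class

total, per_class = transfer_values({"forest": 850.0, "wetland": 120.0, "cropland": 2300.0})
print(total, per_class)
```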

Conceptual Fuzziness

Problem: Ambiguity in defining and categorizing ecosystem services and their components.

- Solution: Adopt a generally accepted framework, such as the Common International Classification of Ecosystem Services (CICES), to reduce conceptual fuzziness and ensure a shared language [20]. Explicitly name the assessed ES components (e.g., potential supply, actual use, demand) in your assessment to avoid misinterpretations [20].

- Experimental Protocol: For transdisciplinary teams, implement a fuzzy set theory exercise. Have team members independently categorize system elements (e.g., a dam, forest management) as social, ecological, or technological, and then quantify their degree of membership (e.g., 50% social, 50% technological). This visually maps similarities and differences in perception, honoring diverse epistemological perspectives [21].
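Responses from such an exercise can be summarized per element as the mean membership in each set and the spread across team members; a large spread points to differing perceptions worth discussing. The elements and membership values below are hypothetical, and memberships in different fuzzy sets need not sum to 1.

```python
from statistics import mean, pstdev

# Hypothetical responses: each team member assigns membership degrees (0..1)
# in the social (S), ecological (E), and technological (T) sets for each element.
responses = {
    "dam": [
        {"S": 0.3, "E": 0.2, "T": 0.5},
        {"S": 0.5, "E": 0.0, "T": 0.5},
        {"S": 0.2, "E": 0.3, "T": 0.5},
    ],
    "forest_management": [
        {"S": 0.4, "E": 0.6, "T": 0.0},
        {"S": 0.1, "E": 0.8, "T": 0.1},
        {"S": 0.5, "E": 0.4, "T": 0.1},
    ],
}

def summarize(responses):
    """(mean, population std dev) of membership per set, for each element."""
    summary = {}
    for element, ratings in responses.items():
        summary[element] = {
            s: (round(mean([r[s] for r in ratings]), 3), round(pstdev([r[s] for r in ratings]), 3))
            for s in ("S", "E", "T")
        }
    return summary

for element, stats in summarize(responses).items():
    print(element, stats)
```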

Problem: Conventional "crisp set" sustainability assessments make knife-edge conclusions that ignore inherent uncertainties.

- Solution: Implement a fuzzy logic approach for evaluation. This method uses fuzzy sets and conditional probabilities to assess sustainability, allowing for continuous gradations rather than sharp thresholds [22].

- Experimental Protocol:

- Define fuzzy sets for sustainability attributes (e.g., for income: "very low," "moderately low") [22].

- Develop fuzzy propositions about ecosystem sustainability (e.g., "biodiversity is moderately high") [22].

- Apply fuzzy logic rules to these propositions to reach a conclusion about the ecosystem's strong sustainability, which is more robust to uncertainty than crisp-set methods [22].
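The core mechanics can be sketched with triangular membership functions and the standard Zadeh operators (AND as minimum, NOT as one minus membership). This shows only fuzzy sets and a single rule, not the conditional-probability component described in the source; the set boundaries and the rule itself are hypothetical, on indicators normalized to 0 to 1.

```python
def triangular(x, a, b, c):
    """Triangular membership: 0 at or below a, rising to 1 at b, back to 0 at or above c."""
    if x <= a or x >= c:
        return 0.0
    return (x - a) / (b - a) if x <= b else (c - x) / (c - b)

def biodiversity_high(x):
    # Peaks at 1.0, the top of the normalized 0..1 range.
    return triangular(x, 0.5, 1.0, 1.5)

def income_moderately_low(x):
    return triangular(x, 0.1, 0.3, 0.5)

def strong_sustainability(biodiversity, income):
    """Rule: IF biodiversity is high AND income is NOT moderately low THEN strongly sustainable."""
    return min(biodiversity_high(biodiversity), 1.0 - income_moderately_low(income))

for bio, inc in [(0.9, 0.6), (0.9, 0.3), (0.55, 0.6)]:
    print(f"biodiversity={bio}, income={inc}: degree={strong_sustainability(bio, inc):.2f}")
```

Instead of a yes/no verdict, each case receives a degree of support for the conclusion, so a site just below a threshold is not treated as categorically unsustainable.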

Spatial Heterogeneity

Problem: Understanding the complex spatial relationships and drivers of multiple ecosystem services.

- Solution: Analyze ES from an individual-pair-bundle perspective [23]. Quantify individual ES, statistically analyze trade-offs and synergies between ES pairs, and then use cluster analysis (e.g., K-means) to identify ES bundles—sets of services that repeatedly appear together in space [23] [24].

- Experimental Protocol:

- Map multiple key ES (e.g., water yield, carbon storage, habitat quality) using models or proxy data [23] [24].

- Use correlation analysis or spatial overlay to identify trade-offs (negative relationships) and synergies (positive relationships) between all ES pairs [23].

- Apply a clustering algorithm on the ES maps to delineate spatially explicit ES bundles. These bundles can then be used for targeted ecological function zoning [24].
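A minimal Python sketch of the pair and bundle steps follows. It assumes each service map is already a co-registered NumPy array that uses NaN for missing cells. Pearson correlation stands in for the pair analysis, and a plain k-means loop stands in for the clustering step. The function names are hypothetical. In practice, a library implementation such as scikit-learn's `KMeans` with several initializations is more robust, and the number of bundles k should be chosen with a criterion such as the elbow or silhouette method.

```python
import itertools

import numpy as np


def pairwise_relationships(es: dict[str, np.ndarray]) -> dict[tuple[str, str], float]:
    """Pearson r for every ES pair over cells valid in both maps.

    r < 0 is read as a trade-off and r > 0 as a synergy (a simplification:
    significance testing and spatial autocorrelation are ignored here)."""
    result = {}
    for a, b in itertools.combinations(es, 2):
        x, y = es[a].ravel(), es[b].ravel()
        ok = np.isfinite(x) & np.isfinite(y)
        result[(a, b)] = float(np.corrcoef(x[ok], y[ok])[0, 1])
    return result


def kmeans_bundles(es: dict[str, np.ndarray], k: int, n_iter: int = 100, seed: int = 0) -> np.ndarray:
    """Label each cell with an ES bundle id using plain Lloyd's k-means on z-scored services.

    Cells with any missing service get -1. Assumes no service layer is constant."""
    shape = next(iter(es.values())).shape
    stack = np.stack([layer.ravel() for layer in es.values()], axis=1)
    valid = np.all(np.isfinite(stack), axis=1)
    x = stack[valid]
    x = (x - x.mean(axis=0)) / x.std(axis=0)  # put services on a common scale
    rng = np.random.default_rng(seed)
    centers = x[rng.choice(len(x), size=k, replace=False)]
    for _ in range(n_iter):
        # Assign each cell to its nearest center, then move centers to cluster means.
        dist = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dist.argmin(axis=1)
        new_centers = np.array([
            x[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    bundles = np.full(stack.shape[0], -1)
    bundles[valid] = labels
    return bundles.reshape(shape)
```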

Problem: Accounting for the flow of ecosystem services between service-producing areas and service-benefiting areas.

- Solution: Integrate supply-and-demand dynamics into valuation. Use a scarcity value model that adjusts the theoretical value of ES based on regional supply and socio-economic demand factors like population density and GDP [19].

- Experimental Protocol:

- Calculate the theoretical supply value of omni-directional and directional ES (e.g., gas regulation, water containment) [19].

- Adjust this value using demand factors (e.g., per capita GDP, population density) to calculate the ecosystem service scarcity value (ESSV) [19].

- Map the ESSV to reveal areas of high scarcity, which can serve as a reference for setting inter-regional ecological compensation prices [19].

Frequently Asked Questions (FAQs)

Q1: Does using an ecosystem services approach mean I have to put a dollar value on everything? A1: No. Using ecosystem services in decision-making does not require monetary valuation [25]. The value can be described in terms of health outcomes, material benefits, or through qualitative analyses that identify which services are most important to communities [25]. Monetary valuation is one useful tool among many for analyzing trade-offs [25].

Q2: How can I select the right mapping method for my specific research context? A2: Follow a tiered approach [17]. Let your research purpose, resources, and data availability guide you:

- Tier 1 (Rapid Assessment): For communication and awareness-raising. Use expert-based matrices, simple GIS with LULC proxies, or value transfer [17].

- Tier 2 (Intermediate Assessment): For more specific analysis. Use simple models (e.g., InVEST) or combine methods to assess a broader range of ES [17].

- Tier 3 (High-Resolution Assessment): For detailed, mechanistic understanding. Use complex, process-based models requiring high-quality, localized data [17].

Q3: We are a multidisciplinary team and can't agree on how to classify system elements. Is this a problem? A3: This is a common challenge, and it can be an opportunity. Different perspectives enrich the understanding of complex systems [21]. Instead of forcing a single classification, use a fuzzy set framework to acknowledge multiple memberships explicitly. This helps honor diverse epistemologies and creates a basis for deeper, more productive discussions about system dynamics [21].

Q4: What is the most common cause of unreliable ES assessment results? A4: A primary source of unreliability is the failure to explicitly recognize and address the underlying assumptions of the assessment [20]. These can range from conceptual and ethical foundations to assumptions about data representativeness, indicator validity, and economic rationality [20]. Increasing transparency about these assumptions and testing their consequences is crucial for improving reliability [20].

Quantitative Data Tables

Table 1: Ecosystem Service Scarcity Value (ESSV) Change in the Yangtze River Delta (2010-2020)

This table summarizes the impact of incorporating supply and demand dynamics on ecosystem service valuation, moving beyond theoretical value [19].

| Valuation Scenario | Total Value (2010) | Total Value (2020) | Percentage Change |
|---|---|---|---|
| Theoretical Value (ESTV) | Not specified | Not specified | -8.67% |
| Scarcity Value (ESSV) | RMB 213 million | RMB 1.323 billion | +521.13% |

Table 2: Prevalence of Trade-offs and Synergies among Ecosystem Service Pairs

This table illustrates the relationships between ecosystem services. Understanding these relationships is critical for interpreting spatial heterogeneity. Values are illustrative and drawn from a study in Northeast China [23].

| Ecosystem Service | Relationship with Other ES | Share of ES Pairs Exhibiting Trade-offs |
|---|---|---|
| Carbon Storage (CS) | Trade-offs with over 70% of other ES | >70% |
| Habitat Quality (HQ) | Trade-offs with SC, WS, WP, AL | Not specified |
| Overall ES Pairs | Synergies more prevalent than trade-offs | <50% |

Methodological Workflows and Frameworks

Fuzzy Logic Assessment Workflow

ES Bundle Identification Workflow

Research Reagent Solutions

Table 3: Essential Data and Modeling Tools for ES Assessments

This table details key "research reagents"—data and tools essential for conducting integrated ES assessments.

| Item Name | Category | Primary Function | Key Considerations |
|---|---|---|---|
| Land Use/Land Cover (LULC) Data | Spatial Data | Serves as a fundamental proxy for mapping the potential supply of many ES (e.g., food, carbon storage) [17]. | Widely accessible (e.g., Urban Atlas); may not capture ecological quality or management intensity [17]. |
| InVEST Models | Software Tool | A suite of open-source, spatially explicit models for quantifying and valuing multiple ES (e.g., carbon storage, water yield) [17]. | Requires intermediate GIS skills; each model has specific data input requirements [17]. |
| Expert-Based ES Matrix | Methodology | A lookup table that assigns ES scores to LULC classes, enabling rapid ES assessment in data-scarce contexts [17]. | Subjectivity requires careful expert selection; best for communication and initial screening [20] [17]. |
| Multiscale Geographically Weighted Regression (MGWR) | Statistical Tool | Analyzes spatial non-stationarity and identifies driving factors of ES patterns across a landscape [23]. | Reveals how the influence of drivers (e.g., slope, GDP) varies across space, explaining heterogeneity [23]. |

Advanced Tools and Techniques for Integrated ES Assessment

Troubleshooting Guides & FAQs

InVEST Model Troubleshooting

Q: My InVEST model runs but produces illogical results (e.g., negative water yield values). What should I check?

A: This commonly stems from input data issues. Please verify the following:

- Data Format and Projection: Ensure all input raster and vector files use the same supported coordinate reference system (CRS). InVEST requires a projected CRS (e.g., UTM) rather than geographic coordinates (e.g., WGS84 latitude/longitude) for accurate area and distance calculations [26].

- Input Data Validity: Check that all input rasters (e.g., Land Use/Land Cover (LULC), DEM) contain valid values where expected. Confirm that NoData values are declared and handled consistently, and look for negative numbers that are not physically or biologically meaningful (a DEM may legitimately contain negative elevations below sea level) [27].

- Preprocessing with Helper Tools: Use InVEST's built-in helper tools, such as RouteDEM, for advanced flow direction and accumulation calculations, which can improve the hydrological inputs for models like Annual Water Yield [28].
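The validity checks above can be scripted once each raster band has been read into a NumPy array (reading the file, for example with GDAL or rasterio, is not shown). The function below is a hypothetical helper. It reports NoData or non-finite cells so you can confirm they fall outside the study area, and it flags negative values unless the layer may legitimately contain them, as a DEM can.

```python
import numpy as np


def check_raster(name, values, nodata=None, allow_negative=False):
    """Return human-readable problems found in one raster band (hypothetical helper)."""
    problems = []
    arr = np.asarray(values, dtype=float)
    mask = np.isfinite(arr)          # True where the cell holds a usable number
    if nodata is not None:
        mask &= arr != nodata        # exclude the declared NoData sentinel
    n_missing = arr.size - int(mask.sum())
    if n_missing:
        problems.append(f"{name}: {n_missing} NoData/non-finite cells (check they lie outside the study area)")
    if not allow_negative:
        n_negative = int((arr[mask] < 0).sum())
        if n_negative:
            problems.append(f"{name}: {n_negative} negative cells")
    return problems
```

For example, `check_raster("precipitation", band, nodata=-9999)` would flag negative rainfall, while `check_raster("dem", band, allow_negative=True)` tolerates elevations below sea level.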

Q: How can I visualize and share my InVEST results more effectively?

A: Beyond traditional GIS, the InVEST team offers two powerful solutions:

- InVEST Dashboards: This feature allows you to create interactive, web-based visualizations of your results. You can explore outputs with interactive maps and charts and share them with colleagues via a simple link, eliminating the need for complex GIS symbology [28].

- Python API: For advanced users, the InVEST Python API enables integration into custom scripts and complex analytical workflows, allowing for automated post-processing and visualization [28].

Q: What is the difference between the "classic" InVEST application and the new "Workbench"?

A: The InVEST Workbench is a repackaged version of the same InVEST models with a new user interface. It provides all the same functionality with the goal of being more accessible and extensible. The classic application remains available, but the Workbench represents the future of the software [26].

PLUS Model Troubleshooting

Q: My PLUS model simulation fails to start or crashes during the Land Expansion Analysis Strategy (LEAS) phase. What could be the cause?

A: This is often related to input data format or system compatibility.

- Data Format Compatibility: Ensure all your input raster data (e.g., LULC maps, driving factor maps) have the same spatial extent, cell size, and coordinate system. Mismatches are a frequent cause of failure [29].

- Software Environment: Confirm that you have the correct version of the PLUS model for your operating system and that all necessary dependencies (like specific .NET Framework versions) are installed. Consult the user manual for specific system requirements [29].
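Because a mismatched extent, cell size, or coordinate system is a frequent cause of failure, it can help to compare the georeferencing of all inputs before launching LEAS. The sketch below assumes you have already extracted each raster's CRS, origin, square cell size, and dimensions with your GIS software. `GridSpec` and `misalignments` are hypothetical names, and the example values are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GridSpec:
    """Georeferencing summary of one raster (hypothetical container; fill it from your GIS reader)."""
    crs: str          # e.g. an EPSG code string
    origin_x: float   # x coordinate of the upper-left corner
    origin_y: float   # y coordinate of the upper-left corner
    cell_size: float  # assumes square cells
    n_cols: int
    n_rows: int


def misalignments(grids: dict[str, GridSpec], tol: float = 1e-6) -> list[str]:
    """Compare every grid against the first one and list any differences."""
    names = list(grids)
    ref = grids[names[0]]
    issues = []
    for name in names[1:]:
        g = grids[name]
        if g.crs != ref.crs:
            issues.append(f"{name}: CRS {g.crs} != {ref.crs}")
        if abs(g.cell_size - ref.cell_size) > tol:
            issues.append(f"{name}: cell size {g.cell_size} != {ref.cell_size}")
        if abs(g.origin_x - ref.origin_x) > tol or abs(g.origin_y - ref.origin_y) > tol:
            issues.append(f"{name}: origin differs from {names[0]}")
        if (g.n_cols, g.n_rows) != (ref.n_cols, ref.n_rows):
            issues.append(f"{name}: dimensions {g.n_cols}x{g.n_rows} != {ref.n_cols}x{ref.n_rows}")
    return issues
```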

Q: The simulated land use pattern from PLUS appears highly fragmented and unrealistic. How can I improve it?

A: You can adjust the model's parameters to better reflect real-world land use dynamics:

- CARS Module Parameters: Tune the parameters of the cellular automata (CA) module, specifically the neighborhood weights and the conversion cost matrix. These settings control the influence of surrounding land use types and the ease with which one land type can convert to another. Refer to the user manual for detailed guidance on these parameters [29].

RUSLE Model Integration Troubleshooting

Q: When integrating RUSLE with InVEST for a comprehensive ecosystem service assessment, how should I handle discrepancies in spatial resolution between models?

A: Consistency is key for integrated assessments.

- Resample to a Common Resolution: Choose a target spatial resolution that is appropriate for your study area and research question. All input layers for both RUSLE (e.g., rainfall erosivity, soil erodibility) and InVEST (e.g., LULC for the Sediment Delivery Ratio model) should be resampled to this identical resolution and extent [27]. This ensures that the outputs from one model can be correctly used as inputs for another.
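When the target resolution is an integer multiple of the input resolution, resampling reduces to block aggregation. The sketch below assumes the layers already share a CRS and origin and have been cropped to a multiple of the aggregation factor. It averages continuous layers (e.g., rainfall erosivity) and takes the majority class for categorical layers such as LULC, since averaging class codes is meaningless. GIS resampling tools offer equivalent choices (e.g., average versus nearest neighbour or mode) for non-integer factors.

```python
import numpy as np


def coarsen(arr, factor, categorical=False):
    """Aggregate a 2-D grid by an integer factor: block mean, or block majority for class maps."""
    arr = np.asarray(arr)
    rows, cols = arr.shape
    if rows % factor or cols % factor:
        raise ValueError("grid shape must be divisible by factor; crop or pad first")
    # Reorganize into (block_row, block_col, cells_in_block).
    blocks = arr.reshape(rows // factor, factor, cols // factor, factor).swapaxes(1, 2)
    blocks = blocks.reshape(rows // factor, cols // factor, factor * factor)
    if not categorical:
        return blocks.mean(axis=2)
    out = np.empty(blocks.shape[:2], dtype=arr.dtype)
    for i in range(blocks.shape[0]):
        for j in range(blocks.shape[1]):
            values, counts = np.unique(blocks[i, j], return_counts=True)
            out[i, j] = values[counts.argmax()]  # ties resolve to the smallest class code
    return out
```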

Q: What is the best way to validate the soil conservation results from an integrated InVEST-RUSLE analysis?

A: A multi-faceted validation approach is recommended [27]:

- Field Measurements: Compare your modeled soil retention values with empirical data from sediment traps or erosion pins in the field, if available.

- Spatial Pattern Checks: Visually compare the spatial pattern of predicted soil erosion with high-resolution imagery or known erosion features (e.g., gullies) to assess if the model captures "hotspots" correctly.

- Literature Comparison: Benchmark your results against soil erosion rates reported in scientific literature for similar regions and land cover types.
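For the field-measurement comparison, a few summary statistics computed at the sampled locations make the agreement explicit. This is a minimal sketch that assumes modeled and observed values have already been extracted to paired arrays. The metric set and the `validation_metrics` name are illustrative and are not prescribed by [27].

```python
import numpy as np


def validation_metrics(modeled, observed):
    """Agreement between modeled and field-measured values at the same locations."""
    m = np.asarray(modeled, dtype=float)
    o = np.asarray(observed, dtype=float)
    ok = np.isfinite(m) & np.isfinite(o)  # drop pairs with a missing value
    m, o = m[ok], o[ok]
    err = m - o
    return {
        "n": int(ok.sum()),
        "bias": float(err.mean()),                      # positive: model overestimates on average
        "rmse": float(np.sqrt(np.mean(err ** 2))),
        "pearson_r": float(np.corrcoef(m, o)[0, 1]),    # pattern agreement, insensitive to bias
        "relative_bias_pct": float(100.0 * err.sum() / o.sum()),
    }
```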

Table 1: Key Ecosystem Services and Corresponding Models for Integrated Assessment.

| Ecosystem Service | Primary Model | Quantifiable Outputs | Key Input Data Requirements |
|---|---|---|---|
| Water Yield | InVEST | Water yield depth (mm per pixel) | LULC, DEM, precipitation, soil depth, plant available water content [27] |
| Carbon Storage | InVEST | Carbon storage (tons) in four pools | LULC, carbon pool data (aboveground biomass, belowground biomass, soil, dead organic matter) [27] |
| Habitat Quality | InVEST | Habitat quality/degradation index (0-1) | LULC, threat data sources (e.g., roads, urban areas), threat sensitivity [27] |
| Soil Conservation | InVEST / RUSLE | Soil retention (tons/ha) | Rainfall erosivity (R), soil erodibility (K), DEM, LULC, cover-management (C) and support practice (P) factors [27] |
| Land Use Simulation | PLUS | Future land use maps, transition probabilities | Historical LULC maps, driving factors (e.g., slope, population), development constraints [29] |

Table 2: Summary of a Recent Integrated Assessment Study Using InVEST and RUSLE (Central Yunnan Province, 2000-2020) [27].

| Ecosystem Service | Trend (2000-2020) | Primary Drivers (q-value rank) | Notes |
|---|---|---|---|
| Water Yield (WY) | Increasing | Relief degree of land surface (RDLS), Slope, NDVI | Modeled using InVEST |
| Carbon Storage (CS) | Decreasing | Relief degree of land surface (RDLS), Slope, NDVI | Modeled using InVEST |
| Habitat Quality (HQ) | Increasing | Relief degree of land surface (RDLS), Slope, NDVI | Modeled using InVEST |
| Soil Conservation (SC) | Increasing | Relief degree of land surface (RDLS), Slope, NDVI | Modeled using RUSLE |
| Integrated Index (IESI) | Decreased, then increased | Analyzed with the Optimal Parameter-based Geographical Detector (OPGD) | Constructed using Principal Component Analysis (PCA); the optimal detection scale was a 4500 m grid |

Experimental Protocols for Integrated Assessment

Protocol: Spatio-Temporal Assessment of Multiple Ecosystem Services

This protocol is derived from a 2025 study that integrated InVEST and RUSLE to evaluate ecosystem services in Central Yunnan Province (CYP) [27].

1. Study Area Definition:

- Clearly delineate the spatial boundary of the study area (e.g., CYP: 94,558 km²).

- Document the key characteristics of the area, such as dominant landforms, climate, and prevailing socio-economic activities.

2. Data Collection and Preprocessing:

- Gather time-series data for the study period (e.g., 2000, 2005, 2010, 2015, 2020).

- Core data includes: LULC maps, Digital Elevation Models (DEMs), meteorological data (precipitation, temperature), soil type maps, and socio-economic data if needed.

- Crucially, preprocess all spatial data to a common coordinate system, spatial extent, and cell size.

3. Ecosystem Service Modeling:

- Run InVEST Models: Execute the relevant InVEST models (e.g., Annual Water Yield, Carbon Storage, Sediment Delivery Ratio, Habitat Quality) for each time point using the preprocessed data [27].

- Run RUSLE Model: Calculate the soil conservation service with the Revised Universal Soil Loss Equation (RUSLE). Soil conservation is the potential soil loss (without vegetation cover or support practices) minus the actual soil loss [27].

4. Data Integration and Index Construction:

- Normalize the outputs of the four key services (WY, CS, HQ, SC) to make them comparable.

- Use Principal Component Analysis (PCA) to construct an Integrated Ecosystem Service Index (IESI). This method objectively determines the weight of each service based on its contribution to the overall variance, avoiding subjective weighting [27].

5. Driving Force Analysis:

- Select potential driving factors (e.g., RDLS, slope, NDVI, population density, precipitation).

- Use the Optimal Parameter-based Geographical Detector (OPGD) model to identify the key drivers and their interaction effects on the spatial divergence of ecosystem services. This method helps determine the optimal spatial scale for analysis [27].
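The soil conservation calculation in step 3 reduces to raster algebra once the factor layers exist. This is a minimal sketch that assumes the R, K, LS, C and P layers have already been derived and aligned (computing LS from the DEM is not shown) and that R and K use the common SI units stated in the docstring. Other unit systems change the units of the result.

```python
import numpy as np


def rusle_soil_conservation(R, K, LS, C, P):
    """Return (potential loss, actual loss, soil conservation) per cell.

    A_potential = R * K * LS          (bare soil: no cover or support practices)
    A_actual    = R * K * LS * C * P
    SC          = A_potential - A_actual
    With R in MJ mm ha^-1 h^-1 yr^-1 and K in t ha h ha^-1 MJ^-1 mm^-1, losses are in t ha^-1 yr^-1.
    LS, C and P are dimensionless."""
    R, K, LS, C, P = (np.asarray(v, dtype=float) for v in (R, K, LS, C, P))
    potential = R * K * LS
    actual = potential * C * P
    return potential, actual, potential - actual
```

Scalars and equally shaped arrays can be mixed, so a constant P of 1.0 (no support practices) can be passed as a single number.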

Protocol: Scenario Analysis with Land Use Projection

1. Historical Land Use Change Analysis:

- Use two historical LULC maps (e.g., from 2000 and 2010) to analyze transitions and develop transition probability matrices.

2. Land Use Simulation with PLUS:

- Use the PLUS model, which combines the Land Expansion Analysis Strategy (LEAS) with a cellular automata (CA) model based on multi-type random patch seeds (CARS).

- Input historical LULC and driving factors into the LEAS module to extract land expansion patterns and transition probabilities.

- Simulate future LULC (e.g., for 2020) using the CA model. Validate the simulation by comparing it to the actual 2020 LULC map [29].

3. Future Ecosystem Service Assessment:

- Use the simulated future LULC map from PLUS as a primary input for the InVEST and RUSLE models.

- Run the ecosystem service models under the simulated future scenario to assess the potential impact of land use change on service provision.
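Two calculations in this protocol can be checked independently of the PLUS software: the transition matrix in step 1 and the agreement between the simulated and observed maps in step 2. The sketch below assumes both maps are co-registered integer arrays, and the function names are hypothetical. Overall accuracy and Cohen's kappa are shown as common agreement measures. PLUS studies often also report the Figure of Merit, which focuses on cells that actually changed; it is not implemented here.

```python
import numpy as np


def transition_matrix(lulc_t0, lulc_t1, classes):
    """Cross-tabulate two co-registered LULC maps.

    Returns (counts, probabilities); row i shows where cells of class i at t0 ended up at t1.
    Cells whose code is not in `classes` (e.g. NoData) are ignored."""
    classes = np.unique(classes)  # sorted, so searchsorted maps codes to row/column indices
    a, b = np.asarray(lulc_t0).ravel(), np.asarray(lulc_t1).ravel()
    ok = np.isin(a, classes) & np.isin(b, classes)
    i, j = np.searchsorted(classes, a[ok]), np.searchsorted(classes, b[ok])
    counts = np.zeros((classes.size, classes.size), dtype=np.int64)
    np.add.at(counts, (i, j), 1)
    row_sums = counts.sum(axis=1, keepdims=True)
    probs = np.divide(counts, row_sums, out=np.zeros(counts.shape), where=row_sums > 0)
    return counts, probs


def overall_accuracy_and_kappa(simulated, observed):
    """Cell-by-cell agreement between a simulated and an observed LULC map."""
    s, o = np.asarray(simulated).ravel(), np.asarray(observed).ravel()
    p_observed = float(np.mean(s == o))
    # Chance agreement from the marginal class proportions of both maps.
    p_chance = float(sum(np.mean(s == c) * np.mean(o == c) for c in np.union1d(s, o)))
    kappa = 1.0 if p_chance == 1.0 else (p_observed - p_chance) / (1.0 - p_chance)
    return p_observed, kappa
```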

Workflow Visualization

Integrated Ecosystem Services Assessment Workflow

Research Reagent Solutions: Essential Data & Tools

Table 3: Essential "Research Reagents" for Integrated Spatial Modeling.

| Item / Tool | Type | Primary Function in Analysis |
|---|---|---|
| LULC Maps | Core Input Data | The foundational layer representing earth's surface; primary driver for estimating service supply (e.g., carbon, habitat) in InVEST and for change analysis in PLUS [27]. |
| Digital Elevation Model (DEM) | Core Input Data | Used for calculating slope, flow direction, and watershed delineation; critical for hydrological modeling in InVEST and RUSLE, and as a driving factor in PLUS [28] [27]. |
| InVEST Helper Tools (RouteDEM, DelineateIT) | Preprocessing Tool | Enhances input data quality. RouteDEM calculates advanced flow routing, while DelineateIT automates watershed delineation, improving inputs for freshwater models [28]. |
| RUSLE Factors (R, K, C, P) | Model Parameters | The core components for calculating soil loss: Rainfall Erosivity (R), Soil Erodibility (K), Cover Management (C), and Support Practice (P) [27]. |
| Principal Component Analysis (PCA) | Statistical Method | Used to objectively integrate multiple ecosystem service metrics into a single, comprehensive index (IESI), avoiding subjective weighting [27]. |
| Optimal Parameter-based Geographical Detector (OPGD) | Analysis Tool | Identifies the key driving factors behind the spatial patterns of ecosystem services and determines the optimal scale for analysis [27]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the most objective method to assign weights when constructing an IESI? Principal Component Analysis (PCA) is a data-driven method for constructing an IESI. Unlike cumulative equations, maximum value methods, or subjective weighting approaches such as the Analytic Hierarchy Process (AHP), PCA derives weights from the data structure itself. It reduces dimensionality while concentrating information, and it weights each ecosystem service indicator by its contribution to the shared variance rather than by researcher judgment [27]. Choices such as which services to include and how to standardize them still require judgment, so report them explicitly.

FAQ 2: Which ecosystem services should I include in my IESI? The specific services depend on your regional context, but commonly assessed key services include Water Yield (WY), Carbon Storage (CS), Habitat Quality (HQ), and Soil Conservation (SC). These represent crucial provisioning, regulating, and supporting services. In the Central Yunnan Province case study, these four services provided a comprehensive foundation for integration [27].

FAQ 3: My IESI shows a decreasing trend. What are the most likely causes? A declining IESI often reflects landscape degradation. Key drivers to investigate include:

- Land Use/Cover Change (LUCC), particularly conversion of natural areas to agriculture or urban use

- Changes in vegetation cover (declining NDVI)

- Topographic factors like relief degree of land surface (RDLS) and slope

- Climate factors affecting ecosystem processes [27]

FAQ 4: What is the optimal spatial scale for analyzing driving forces behind my IESI? The optimal scale varies by region. In Central Yunnan Province, a 4500 m × 4500 m grid was identified as optimal for detecting the spatial divergence of comprehensive ecosystem services using the OPGD model. You should test multiple scales in your study area, as key driving factors may shift with changing spatial scales [27].

FAQ 5: How can I validate my IESI results? Validation can be achieved through:

- Comparing spatio-temporal trends against known environmental changes

- Testing the IESI's response to documented policy implementations

- Analyzing driving mechanisms using geographical detector models

- Correlating with independent environmental quality indicators [27]

Troubleshooting Guides

Problem 1: Subjectivity in Weighting Multiple Ecosystem Services

Symptoms: Difficulty justifying weight assignments; results vary significantly with different weighting schemes.

Solution: Implement Principal Component Analysis (PCA)

- Standardize all ecosystem service datasets to ensure comparability

- Run PCA on the correlation matrix of your ecosystem services

- Extract weights from the first principal component loadings

- Calculate IESI using the formula: IESI = Σ(Standardized ES value × PCA-derived weight)

- Verify that the first principal component explains sufficient variance (>70% is ideal) [27]
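The PCA steps above can be sketched with NumPy alone. Two choices here are assumptions rather than the method of [27]. First, the weights are the absolute first-component eigenvector entries rescaled to sum to 1 (scaling the loadings by the square root of the eigenvalue would give the same weights after rescaling). Second, the index is built from z-scores, so it is centred on zero; studies that report IESI on a 0 to 1 scale may use min-max normalization instead.

```python
import numpy as np


def pca_iesi(es_stack):
    """Integrate services into one index using first-principal-component weights.

    es_stack: (n_cells, n_services) array of service values for valid cells.
    Returns (iesi, weights, explained_variance_ratio_of_pc1)."""
    x = np.asarray(es_stack, dtype=float)
    z = (x - x.mean(axis=0)) / x.std(axis=0)  # standardize each service
    corr = np.corrcoef(z, rowvar=False)         # PCA on the correlation matrix
    eigvals, eigvecs = np.linalg.eigh(corr)     # eigh returns ascending eigenvalues
    pc1 = eigvecs[:, np.argmax(eigvals)]
    # Assumption: absolute PC1 entries rescaled to sum to 1. An eigenvector's sign is arbitrary,
    # but taking absolute values also hides genuinely negative loadings (trade-offs).
    weights = np.abs(pc1) / np.abs(pc1).sum()
    explained = eigvals.max() / eigvals.sum()
    return z @ weights, weights, explained
```

If `explained` falls below the threshold you adopt, a single component does not summarize the services well, and reporting the disaggregated services alongside the index is safer.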

Problem 2: Inconsistent Spatial Scales Causing Integration Issues

Symptoms: Data misalignment; artifacts at boundaries; difficulty interpreting results.

Solution: Establish Consistent Spatial Framework

- Identify optimal analysis scale using the OPGD model at multiple grid sizes

- Resample all data to common resolution and extent before integration

- Validate scale choice by ensuring key drivers maintain explanatory power

- Document all scale transformations for reproducibility [27]

Problem 3: Handling Conflicting Trends in Individual Ecosystem Services

Symptoms: Some services improve while others degrade; unclear overall ecosystem status.

Solution: Implement Trend Analysis and Trade-off Identification

- Calculate temporal trends for each service individually using linear regression

- Identify trade-offs and synergies through correlation analysis

- Interpret IESI in context of individual service trajectories

- Report both integrated and disaggregated results for comprehensive understanding [27]
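The first two steps can be sketched per cell: an ordinary least squares slope over the assessment years for each service, followed by a sign comparison of two services' slopes. Reading opposite trend directions as a trade-off is an assumed simplification; a correlation analysis across cells, as in the second step, is an alternative. Function names are hypothetical.

```python
import numpy as np


def pixel_trends(stack, years):
    """OLS slope per cell for a (n_years, rows, cols) stack, in index units per year."""
    t = np.asarray(years, dtype=float)
    tc = t - t.mean()
    y = np.asarray(stack, dtype=float)
    yc = y - y.mean(axis=0)
    # slope = sum(tc * yc) / sum(tc^2), evaluated for every cell at once
    return np.tensordot(tc, yc, axes=(0, 0)) / np.sum(tc ** 2)


def trend_relationship(slope_a, slope_b):
    """Label cells where two services moved in opposite (trade-off) or the same (synergy) direction."""
    product = np.asarray(slope_a) * np.asarray(slope_b)
    return np.where(product < 0, "trade-off", np.where(product > 0, "synergy", "neutral"))
```

Applied to the five mean IESI values reported for Central Yunnan Province (0.7338, 0.6981, 0.6947, 0.6650 and 0.6992 for 2000 to 2020, as a 5 x 1 x 1 stack), `pixel_trends` returns about -0.002 per year: a net decline despite the 2020 recovery.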

Experimental Protocols and Data

Protocol 1: IESI Construction via Principal Component Analysis

Purpose: To objectively integrate multiple ecosystem services into a single composite index.

Materials:

- Spatially explicit datasets for target ecosystem services

- GIS software with raster calculation capabilities

- Statistical software with PCA functionality

Procedure:

- Data Preparation: Standardize each ecosystem service layer to zero mean and unit variance

- Spatial Alignment: Ensure all layers share identical projection, extent, and cell size

- PCA Execution: Extract principal components from the correlation matrix

- Weight Assignment: Use first principal component loadings as integration weights

- Index Calculation: Compute weighted sum: IESI = w₁×ES₁ + w₂×ES₂ + ... + wₙ×ESₙ

- Validation: Check that the first component explains sufficient variance (at least 50%; above 70% is preferable) [27]

Protocol 2: Driving Force Analysis using OPGD Model

Purpose: To identify key factors influencing IESI spatial patterns.

Materials:

- IESI spatial distribution data

- Candidate driving factor datasets (topography, climate, vegetation, human activity)

- Optimal parameter-based geographical detector (OPGD) software

Procedure:

- Factor Selection: Compile potential driving factors based on ecological relevance

- Scale Optimization: Test multiple spatial scales to identify optimal detection scale

- Factor Detection: Calculate q-values for each factor using geographical detector

- Interpretation: Rank factors by explanatory power (q-value)

- Interaction Analysis: Test factor interactions for enhanced understanding [27]
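The factor detection, parameter optimization, and interaction steps can be sketched in NumPy. `q_statistic` implements the standard Geodetector q. `best_discretization` is a simplified stand-in for OPGD: it tries equal-interval and quantile breaks for 3 to 7 classes and keeps the highest q, whereas dedicated OPGD software also offers other methods (e.g., natural breaks) and significance tests. Because q tends to rise with the number of classes, treat the maximum as a guide rather than a rule. All function names are hypothetical.

```python
import numpy as np


def q_statistic(y, strata):
    """Geodetector q = 1 - sum_h(N_h * var_h) / (N * var): share of Y's variance explained by the strata."""
    y, strata = np.asarray(y, dtype=float), np.asarray(strata)
    ss_within = sum(np.sum((y[strata == h] - y[strata == h].mean()) ** 2) for h in np.unique(strata))
    ss_total = np.sum((y - y.mean()) ** 2)
    return float(1.0 - ss_within / ss_total)


def discretize(x, k, method):
    """Split a continuous factor into k strata by equal intervals or quantiles."""
    x = np.asarray(x, dtype=float)
    if method == "equal":
        edges = np.linspace(x.min(), x.max(), k + 1)[1:-1]
    elif method == "quantile":
        edges = np.quantile(x, np.linspace(0.0, 1.0, k + 1)[1:-1])
    else:
        raise ValueError(f"unknown method: {method}")
    return np.digitize(x, edges)


def best_discretization(y, x, ks=range(3, 8), methods=("equal", "quantile"), min_per_stratum=3):
    """Simplified OPGD-style search: keep the (method, k) with the highest q."""
    best = (-np.inf, None, None)
    for method in methods:
        for k in ks:
            strata = discretize(x, k, method)
            if np.unique(strata, return_counts=True)[1].min() < min_per_stratum:
                continue  # some stratum has too few samples
            q = q_statistic(y, strata)
            if q > best[0]:
                best = (q, method, k)
    return best


def interaction_type(y, x1, x2, tol=1e-9):
    """Compare q(X1 and X2 overlaid) with q(X1) and q(X2) using the standard interaction classes."""
    q1, q2 = q_statistic(y, x1), q_statistic(y, x2)
    q12 = q_statistic(y, [f"{a}|{b}" for a, b in zip(x1, x2)])  # overlay of both stratifications
    if q12 < min(q1, q2) - tol:
        kind = "nonlinear weaken"
    elif q12 <= max(q1, q2) + tol:
        kind = "univariate weaken"
    elif abs(q12 - (q1 + q2)) <= tol:
        kind = "independent"
    elif q12 < q1 + q2:
        kind = "bivariate enhance"
    else:
        kind = "nonlinear enhance"
    return q12, kind
```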

Table 1: IESI Values in Central Yunnan Province (2000-2020) [27]

| Year | Mean IESI Value | Trend Direction | Key Influencing Factors |
|---|---|---|---|
| 2000 | 0.7338 | Baseline | RDLS, Slope, NDVI |
| 2005 | 0.6981 | Decreasing | Land use change, vegetation cover |
| 2010 | 0.6947 | Stable | Climate factors, topography |
| 2015 | 0.6650 | Decreasing | Human activity intensity |
| 2020 | 0.6992 | Increasing | Conservation policies, management |

Table 2: Ecosystem Service Assessment Methods [27]

| Ecosystem Service | Assessment Model | Key Inputs | Output Metrics |
|---|---|---|---|
| Water Yield (WY) | InVEST | Precipitation, evapotranspiration, soil depth | mm/year |
| Carbon Storage (CS) | InVEST | Land use, carbon pools (above, below, soil, dead) | Mg/ha |
| Habitat Quality (HQ) | InVEST | Land use, threat sources, sensitivity | 0-1 index |
| Soil Conservation (SC) | RUSLE | Rainfall, soil erodibility, topography | t/ha/year |

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function | Application in IESI Research |
|---|---|---|
| InVEST Model Suite | Spatially explicit ecosystem service modeling | Quantifying water yield, carbon storage, habitat quality |
| RUSLE Model | Soil erosion estimation | Calculating soil conservation service |
| Geographical Detector | Spatial stratified heterogeneity analysis | Identifying driving forces behind IESI patterns |
| Principal Component Analysis | Multivariate data reduction | Objectively weighting and integrating multiple ES |
| Normalized Difference Vegetation Index | Vegetation vigor assessment | Serving as proxy for ecosystem productivity |

Workflow Visualization

IESI Construction and Analysis Workflow

Ecosystem Service Integration Methodology

Harnessing Machine Learning and Geodetector Models for Driver Analysis

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between driver analysis and Geodetector?

Driver analysis typically refers to a set of statistical methods, often based on regression, used to estimate the importance of various independent variables (drivers) in predicting a dependent variable. For example, it can use Linear Regression Coefficients, Shapley Regression, or Relative Importance Analysis to compute importance scores [30]. In contrast, Geodetector is a specialized tool designed to measure and attribute spatially stratified heterogeneity (SSH). Its core function is to test the coupling between two variables (Y and X) without assuming linearity and to investigate interactions between explanatory variables [31].

FAQ 2: My Geodetector model fails to run. What are the most common data requirements I should check?

The most common data requirements for Geodetector that can cause runtime failures are:

- Variable Types: The response variable (Y) must be numerical. The explanatory factors (X) must be categorical (e.g., land use types, seasons). If your X variables are numerical, you must first discretize them into strata [32].

- Sample Size: The data must contain a sufficient number of samples. There should be at least three sample units within each stratum of a factor [32].

- Data Volume: Some related analytical platforms (not Geodetector itself) enforce data volume limits, such as a maximum file size of 40 MB; check any such limit before uploading [33].
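The variable-type and sample-size requirements can be checked before running Geodetector. This is a hypothetical helper that assumes the response is a numeric array and each factor is an array of stratum labels. Treating floating-point factors as "not yet discretized" is a heuristic.

```python
import numpy as np


def check_geodetector_inputs(y, factors, min_per_stratum=3):
    """Return a list of problems that would violate Geodetector's data requirements."""
    problems = []
    if not np.issubdtype(np.asarray(y).dtype, np.number):
        problems.append("Y: response variable must be numerical")
    for name, values in factors.items():
        x = np.asarray(values)
        if np.issubdtype(x.dtype, np.floating):
            # Heuristic: float-valued factors are usually continuous and not yet discretized.
            problems.append(f"{name}: continuous values; discretize into strata first")
        labels, counts = np.unique(x, return_counts=True)
        sparse = labels[counts < min_per_stratum]
        if sparse.size:
            problems.append(f"{name}: strata {sparse.tolist()} have fewer than {min_per_stratum} samples")
    return problems
```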

FAQ 3: Why does my machine learning model have poor performance even after using driver analysis for feature selection?

Poor model performance can stem from issues beyond feature importance. Common culprits include:

- Implementation Bugs: Incorrect tensor shapes, improper loss function inputs, or errors in toggling between training and evaluation modes can cause silent failures [34].

- Data-Model Fit: The model architecture might be too simple or too complex for your data. It is recommended to start with a simple architecture (e.g., a fully-connected network with one hidden layer, or a LeNet for images) and sensible hyper-parameter defaults [34].

- Data Quality: The problem could be in the dataset itself, such as noisy labels, imbalanced classes, or a mismatch between the training and test set distributions [34] [35].

FAQ 4: What should I do if my driver analysis results seem counter-intuitive or unreliable?

First, always remember that driver analysis offers insights to aid decision-making but does not guarantee absolute accuracy. Correlation does not imply causation [33].

- Check for Correlated Predictors: If your independent variables are highly correlated, methods like Linear Regression Coefficients can become unreliable. In such cases, switch to methods designed to handle multicollinearity, such as Shapley Regression or Relative Importance Analysis [30] [36].

- Review Diagnostics: Ensure you have reviewed technical diagnostics for outliers and heteroscedasticity. Also, check the p-values and standard errors of the importance scores to assess their statistical significance [36].

- Validate with a Simple Baseline: Compare your model's performance against a simple baseline (e.g., the average of outputs or a linear regression) to verify that it is learning meaningful patterns [34].
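To check for correlated predictors before choosing a method, the variance inflation factor (VIF) is a standard diagnostic: VIF_j = 1 / (1 - R_j^2), where R_j^2 comes from regressing predictor j on all the others. This is a minimal NumPy sketch. The rule of thumb that values above roughly 5 to 10 signal problematic collinearity is a convention, not a hard threshold.

```python
import numpy as np


def vif(X):
    """Variance inflation factor for each column of an (n_samples, n_predictors) array."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    out = np.empty(p)
    for j in range(p):
        target = X[:, j]
        # Regress predictor j on an intercept plus all other predictors.
        design = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        beta, *_ = np.linalg.lstsq(design, target, rcond=None)
        resid = target - design @ beta
        centered = target - target.mean()
        r2 = 1.0 - (resid @ resid) / (centered @ centered)
        out[j] = np.inf if r2 >= 1.0 else 1.0 / (1.0 - r2)
    return out
```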

Troubleshooting Guides

Issue 1: Preparing and Integrating Data for Combined ML and Geodetector Workflows

Problem: Users are unsure how to structure their data and preprocess variables to be compatible with both machine learning and Geodetector models.

Solution: Follow this integrated data preparation protocol.

Step 1: Variable Transformation and Discretization

Geodetector requires categorical X variables. You must discretize any continuous explanatory variables.

- Methodology: For a continuous variable (e.g., "GDP per capita" or "elevation"), stratify the values into a discrete number of meaningful strata (e.g., 5 strata: Very Low, Low, Medium, High, Very High) [32]. The boundaries can be defined using natural breaks, quantiles, or domain knowledge.

- Integration Note: The same discretized variables can then be used as features in your machine learning model, often by converting them into one-hot encoded representations.
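The discretize-then-encode step can be sketched as follows, assuming quantile breaks (one of the options named above) and hypothetical function names. The same integer strata can be passed to Geodetector as categorical factors and, after one-hot encoding, to an ML model.

```python
import numpy as np


def quantile_strata(x, k):
    """Assign each value to one of k quantile-based strata (integers 0..k-1; fewer if breaks tie)."""
    x = np.asarray(x, dtype=float)
    edges = np.quantile(x, np.linspace(0.0, 1.0, k + 1)[1:-1])  # interior break points only
    return np.digitize(x, edges)


def one_hot(strata, n_classes):
    """Convert integer strata into a (n_samples, n_classes) indicator matrix for ML models."""
    strata = np.asarray(strata)
    out = np.zeros((strata.size, n_classes))
    out[np.arange(strata.size), strata] = 1.0
    return out
```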

Step 2: Data Formatting

- Geodetector Format: For the Excel version of Geodetector, data should be formatted with each row representing a sample unit (e.g., a village). The first column is the numerical response variable (Y), and subsequent columns are the categorical factors (X) [32].

- ML Format: Ensure the dataset is split into training and testing sets. Normalize the input data for the ML model by subtracting the mean and dividing by the standard deviation. For images, it is sufficient to scale values to [0, 1] [34].
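One detail in the ML formatting step matters: the mean and standard deviation should be computed on the training set only and then applied to the test set; otherwise test information leaks into training. This is a minimal sketch with hypothetical names that uses a simple random split. For spatially autocorrelated ES data, spatially blocked splits usually give more honest error estimates.

```python
import numpy as np


def split_and_standardize(X, y, test_fraction=0.2, seed=0):
    """Random train/test split, then z-score both sets with training-set statistics."""
    X, y = np.asarray(X, dtype=float), np.asarray(y)
    order = np.random.default_rng(seed).permutation(len(X))
    n_test = int(round(test_fraction * len(X)))
    test, train = order[:n_test], order[n_test:]
    mean = X[train].mean(axis=0)
    std = X[train].std(axis=0)
    std[std == 0] = 1.0  # constant columns: avoid division by zero
    return (X[train] - mean) / std, (X[test] - mean) / std, y[train], y[test]
```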

Step 3: Data Volume and Quality Check

Issue 2: Selecting the Appropriate Driver Analysis Method

Problem: With multiple driver analysis methods available, users often select an inappropriate one, leading to misleading results, especially with correlated predictors.

Solution: Select a method based on the characteristics of your predictors and your research goal. The table below summarizes the key methods.

Table 1: Comparison of Driver Analysis Methods

| Method | Core Principle | Best Used When | Key Consideration |
|---|---|---|---|
| Linear Regression Coefficients [30] | Normalized absolute values of regression coefficients. | You need to understand the sensitivity of Y to changes in X, and predictors are independent. | Highly unreliable when predictors are correlated. |
| Contribution [30] | Explains variance based on both the coefficient and the variation in the data. | You want to measure the historical impact of variables, not just their potential. | Scale-independent. |
| Shapley Regression [30] [36] | Averages the incremental R² improvement across all possible variable orderings. | Predictors are correlated, and you need a robust measure of importance. | Computationally intensive beyond about 15 variables; some software switches automatically to Relative Importance Analysis. |
| Relative Importance Analysis [30] | Uses orthogonalized predictors to disentangle correlated contributions. | You have many correlated predictors (>15) and need a faster alternative to Shapley. | Gives results very similar to Shapley at lower computational cost. |
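Shapley regression can be sketched directly from its definition. Each predictor's share is its average increase in R² over all subsets S of the other predictors, weighted by |S|!(p - |S| - 1)!/p!, and the shares sum to the full-model R². The number of regressions grows as 2^p, which is why the table recommends Relative Importance Analysis beyond about 15 predictors. Function names are hypothetical, and commercial driver-analysis tools may differ in details.

```python
import itertools
import math

import numpy as np


def r_squared(X, y, cols):
    """In-sample R^2 of an OLS fit of y on an intercept plus the given columns of X."""
    if not cols:
        return 0.0
    A = np.column_stack([np.ones(len(y)), X[:, list(cols)]])
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ beta
    centered = y - y.mean()
    return float(1.0 - (resid @ resid) / (centered @ centered))


def shapley_r2(X, y):
    """Shapley decomposition of the full-model R^2 among the p predictors."""
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    p = X.shape[1]
    cache = {}

    def r2(subset):
        if subset not in cache:
            cache[subset] = r_squared(X, y, subset)
        return cache[subset]

    shares = np.zeros(p)
    for j in range(p):
        others = [i for i in range(p) if i != j]
        for size in range(p):
            weight = math.factorial(size) * math.factorial(p - size - 1) / math.factorial(p)
            for subset in itertools.combinations(others, size):
                with_j = tuple(sorted(subset + (j,)))
                shares[j] += weight * (r2(with_j) - r2(subset))  # marginal gain from adding j
    return shares
```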

The following workflow can help visualize the selection process:

Issue 3: Debugging and Improving a Machine Learning Model

Problem: A model has been implemented, but its performance is low, and the cause is unknown.

Solution: Adopt a systematic troubleshooting strategy.

Step 1: Start Simple

The key is to start simple and gradually ramp up complexity [34].

- Architecture: Choose a simple architecture (e.g., a fully-connected network with one hidden layer, LSTM with one layer for sequences, LeNet for images).

- Defaults: Use sensible defaults: ReLU activation, no regularization, and normalized inputs.

- Problem: Simplify the problem by working with a small training set (e.g., ~10,000 examples) or a synthetic dataset to ensure the model can learn at all.

Step 2: Implement and Debug

- Overfit a Single Batch: The most critical heuristic. Try to drive the training error on a single batch of data arbitrarily close to zero. This catches a vast number of bugs [34].

- If the error explodes, it is often a numerical issue or a learning rate that is too high.

- If the error oscillates, lower the learning rate and inspect the data.

- If the error plateaus, increase the learning rate and inspect the loss function and data pipeline.

- Common Bugs: Check for incorrect tensor shapes, improper input preprocessing (e.g., forgetting to normalize), and incorrect input to the loss function [34].

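The single-batch check and the three loss-curve symptoms can be sketched in NumPy as below. This is an illustrative sketch, not the procedure from [34]: `overfit_single_batch` trains a hypothetical one-hidden-layer ReLU network with plain full-batch gradient descent on mean squared error, and `diagnose` maps a loss history onto the rules of thumb above using thresholds (10x growth, more than a third of recent steps rising, less than a 1% relative drop) that are arbitrary assumptions you should tune.

```python
import math

import numpy as np


def overfit_single_batch(X, y, hidden=32, lr=0.01, steps=3000, seed=0):
    """Fit a one-hidden-layer ReLU network to ONE batch with full-batch
    gradient descent on mean squared error; return the loss history."""
    rng = np.random.default_rng(seed)
    X = (X - X.mean(axis=0)) / (X.std(axis=0) + 1e-8)  # normalized inputs
    y = y.reshape(-1, 1)  # explicit (n, 1) shape avoids silent broadcasting bugs
    n, d = X.shape
    W1 = rng.normal(scale=math.sqrt(2.0 / d), size=(d, hidden))
    b1 = np.zeros(hidden)
    W2 = rng.normal(scale=math.sqrt(1.0 / hidden), size=(hidden, 1))
    b2 = np.zeros(1)
    losses = []
    for _ in range(steps):
        z1 = X @ W1 + b1
        h = np.maximum(z1, 0.0)  # ReLU activation
        err = h @ W2 + b2 - y
        loss = float(np.mean(err ** 2))
        losses.append(loss)
        if not math.isfinite(loss):
            break  # the loss has exploded; stop and diagnose
        # Backpropagate the MSE gradient (computed before any weight changes).
        g_out = 2.0 * err / n
        g_z1 = (g_out @ W2.T) * (z1 > 0)
        W2 -= lr * (h.T @ g_out)
        b2 -= lr * g_out.sum(axis=0)
        W1 -= lr * (X.T @ g_z1)
        b1 -= lr * g_z1.sum(axis=0)
    return losses


def diagnose(losses, tol=1e-3, window=50):
    """Map a loss history onto the rules of thumb above.
    All thresholds are illustrative assumptions, not standard values."""
    if not all(math.isfinite(v) for v in losses) or losses[-1] > 10 * losses[0]:
        return "explodes: check numerical operations and lower the learning rate"
    if losses[-1] < tol:
        return "ok: the single batch has been overfit"
    tail = losses[-window:]
    rises = sum(1 for a, b in zip(tail, tail[1:]) if b > a)
    if rises > len(tail) // 3:
        return "oscillates: lower the learning rate and inspect the data"
    if (tail[0] - tail[-1]) / max(tail[0], 1e-12) < 0.01:
        return "plateaus: raise the learning rate; inspect the loss and data pipeline"
    return "still decreasing: train for more steps"


# A small synthetic batch with a smooth, known signal.
rng = np.random.default_rng(1)
X_batch = rng.normal(size=(16, 3))
y_batch = np.sin(X_batch[:, 0]) + 0.5 * X_batch[:, 1]
history = overfit_single_batch(X_batch, y_batch)
print(f"first loss {history[0]:.3f}, last loss {history[-1]:.5f}")
print(diagnose(history))
```

On a healthy implementation the loss keeps falling toward zero as `steps` grows; if it does not, the `diagnose` message indicates which of the checks above to run first.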

Step 3: Evaluate and Analyze Errors

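- Bias-Variance: Apply bias-variance decomposition to prioritize the next steps [34]. Compare the training error with a baseline (e.g., human-level or irreducible) error to estimate avoidable bias, and compare the validation error with the training error to estimate variance; address the larger gap first.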

- Error Analysis: Create a dataset with target values, predictions, and prediction probabilities. For categorical features, group by each category and calculate the mean accuracy. This helps identify specific categories (e.g., "Month-to-month" contract type) or value ranges where the model performs poorly [35].
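Both Step 3 checks need little more than the standard library. In this sketch, `bias_variance_triage` compares avoidable bias (training error minus a baseline error you supply) with variance (validation error minus training error), and `accuracy_by_category` groups a prediction table by one categorical feature and reports mean accuracy, worst category first. The record layout, the land-cover categories, and the suggested remedies are assumptions for illustration; the `probability` field is carried along only so it can be inspected next to the weak categories.

```python
from collections import defaultdict


def bias_variance_triage(baseline_error, train_error, val_error):
    """Compare avoidable bias (train - baseline) with variance (val - train)."""
    avoidable_bias = train_error - baseline_error
    variance = val_error - train_error
    if avoidable_bias >= variance:
        return "underfitting: try a larger model or richer features"
    return "overfitting: try more data or stronger regularization"


def accuracy_by_category(records, feature):
    """Mean accuracy per category of `feature`, worst category first."""
    hits = defaultdict(int)
    counts = defaultdict(int)
    for rec in records:
        key = rec[feature]
        counts[key] += 1
        hits[key] += int(rec["target"] == rec["prediction"])
    return sorted(((k, hits[k] / counts[k]) for k in counts), key=lambda kv: kv[1])


# Hypothetical prediction table: one row per validation pixel or site.
records = [
    {"land_cover": "forest", "target": 1, "prediction": 1, "probability": 0.91},
    {"land_cover": "forest", "target": 0, "prediction": 0, "probability": 0.12},
    {"land_cover": "cropland", "target": 1, "prediction": 0, "probability": 0.41},
    {"land_cover": "cropland", "target": 0, "prediction": 0, "probability": 0.30},
    {"land_cover": "wetland", "target": 1, "prediction": 0, "probability": 0.22},
    {"land_cover": "wetland", "target": 1, "prediction": 0, "probability": 0.35},
]
print(bias_variance_triage(baseline_error=0.05, train_error=0.20, val_error=0.22))
print(accuracy_by_category(records, "land_cover"))
```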

The following diagram outlines a high-level debugging decision tree:

Table 2: Key Software and Analytical Tools for Integrated Driver Analysis

| Item | Function | Relevance to Research |
|---|---|---|
| Geodetector Software [31] [32] | A statistical tool to measure spatially stratified heterogeneity and detect interactions between factors. | Core tool for analyzing the driving forces behind spatial patterns in ecosystem services without assuming linearity. |
| Shapley Regression [30] [36] | A driver analysis method that robustly handles correlated predictors by averaging over all possible models. | Provides reliable variable importance scores when ecological predictors are collinear (e.g., elevation, soil type, precipitation). |
| Relative Importance Analysis [30] | A computationally efficient alternative to Shapley for datasets with a large number of predictors. | Essential for analyzing high-dimensional datasets, such as those incorporating numerous remote sensing indices or climate variables. |
| LightGBM Classifier [35] | A high-performance gradient boosting framework based on decision tree algorithms. | A powerful machine learning model for classification and regression tasks in ecosystem prediction, such as modeling land use change or species distribution. |
| Optuna [35] | A hyperparameter optimization framework for automating the search for the best model parameters. | Crucial for systematically tuning machine learning models to improve predictive performance on ecosystem service data. |
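The core of Geodetector is the q-statistic, q = 1 - Σ_h N_h σ_h² / (N σ²), where the study area is split into strata h with N_h units and within-stratum variance σ_h². The pure-Python sketch below computes q for one factor and applies the five-way interaction classification by overlaying two factors. It is not the Geodetector software itself: it omits the significance test and the risk and ecological detectors, and the toy data are hypothetical.

```python
from collections import defaultdict
from math import isclose
from statistics import pvariance


def q_statistic(values, strata):
    """Factor detector: q = 1 - sum_h(N_h * var_h) / (N * var).
    q = 0 means the strata explain nothing; q = 1 means they explain everything."""
    total = len(values) * pvariance(values)
    if total == 0:
        raise ValueError("the response has no variance to explain")
    groups = defaultdict(list)
    for value, stratum in zip(values, strata):
        groups[stratum].append(value)
    within = sum(len(g) * pvariance(g) for g in groups.values())
    return 1.0 - within / total


def interaction(values, factor_a, factor_b):
    """Interaction detector: overlay two stratifications and classify q(A and B)."""
    q_a = q_statistic(values, factor_a)
    q_b = q_statistic(values, factor_b)
    q_ab = q_statistic(values, list(zip(factor_a, factor_b)))  # overlaid strata
    if q_ab < min(q_a, q_b):
        kind = "nonlinear weaken"
    elif q_ab < max(q_a, q_b):
        kind = "uni-variable weaken"
    elif isclose(q_ab, q_a + q_b, abs_tol=1e-9):
        kind = "independent"
    elif q_ab > q_a + q_b:
        kind = "nonlinear enhance"
    else:
        kind = "bi-variable enhance"
    return q_a, q_b, q_ab, kind


# Hypothetical toy data: an ES indicator driven by the combination of two factors.
slope_class = ["low", "low", "high", "high"] * 3
land_use = ["forest", "crop", "forest", "crop"] * 3
es_value = [1.0, 3.0, 3.0, 1.0] * 3
print(interaction(es_value, slope_class, land_use))
```

Because the overlaid layer refines both single-factor stratifications, its q cannot fall below the larger single-factor q on the same data, so the two "weaken" branches are kept only to mirror the published five-way classification.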

Frequently Asked Questions (FAQs) for Researchers

This section addresses common conceptual and practical questions researchers encounter when integrating local knowledge into ecosystem services assessments.

Table 1: Frequently Asked Questions on Citizen Science and Participatory Mapping

| Question | Answer & Application to Ecosystem Services Research |
|---|---|
| What is local knowledge and why is it valuable for ecosystem services (ES) research? | Local knowledge is a place-based, experiential system of knowledge developed by people who depend upon an ecosystem [37]. Unlike siloed scientific data, it communicates connections in social-ecological systems, providing fine-scale, spatially explicit data that can fill critical information gaps in ES appraisals, thereby enhancing their reliability [37] [38]. |
| How can local knowledge improve the reliability of ES assessments? | It provides fine-scale data on system change, informs locally relevant hypotheses, and captures social and ecological data in tandem [37]. This helps address information gaps and cumulative uncertainties in governance-relevant ES appraisals, moving beyond potential service values to understanding actual benefits accrued by society [38] [39]. |
| What is the "right to research" in this context? | Coined by Arjun Appadurai, it is the concept that the capacity to perform systematic inquiry is a right and a crucial tool for all citizens. In ES research, this means empowering local communities to document their knowledge and use it to intervene in issues that affect their lives, fostering a more democratic and relevant science [40]. |
| What are the main participatory methods? | Participatory mapping engages participants to map ES, locate conflicts, and highlight threatened areas, often using participatory GIS (PGIS) [41]. Photovoice allows participants to use photography to highlight local issues and aspects of their lives associated with ES, providing qualitative context [41]. |
| What is a key challenge in integrated ES appraisals? | An "information gap" can exist when the decision context requires high accuracy and reliability but the expected uncertainty of ES appraisal methods is also high, which makes decision-makers less likely to use them. Participatory methods can help bridge this gap by providing missing local context [38]. |

Troubleshooting Guides for Experimental Protocols

This section provides step-by-step solutions for common methodological challenges.

Troubleshooting Guide 1: Overcoming Lack of Community Participation

Problem: Difficulty in recruiting or sustaining engagement from local community members in your participatory mapping project.

Root Cause: This often stems from a lack of community buy-in, persistent power structures that prioritize expert knowledge, or a research design that does not address locally identified problems [37].

Solutions:

- Employ a Co-Production Model: Frame the project from the bottom-up, focusing on collaborative inquiry and developing solutions to community-identified problems. Researchers should act as facilitators [37].

- Ensure Equitable Exchange: Design the process so that the community gains immediately valuable knowledge, capacity, or tools from participation, reinforcing their "capacity to aspire" [40].

- Utilize Appropriate Tools: For communities with lower literacy, combine methods like Photovoice and group discussions to ensure diverse participation and anonymous input [41].

The following workflow outlines a co-production approach to ensure meaningful community participation from start to finish:

Troubleshooting Guide 2: Integrating Local Knowledge with Scientific Data

Problem: Difficulty in systematically combining qualitative local knowledge with quantitative scientific data for a robust ES assessment.

Root Cause: Local knowledge and scientific data often differ in scale, format, and epistemology, creating integration challenges [38] [39].

Solutions:

- Adopt a Structured Framework: Use a tailored social-ecological systems (SES) framework to guide the accumulation and synthesis of social and ecological variables [37].

- Use Participatory Mapping as a Bridge: Spatial data from mapping can be directly incorporated into Geographic Information Systems (GIS) to enrich technical spatial analyses [41] [40].

- Leverage Integrated Modeling Methodologies: Employ approaches like the ARIES methodology, which uses artificial intelligence to assist in assembling customized models that can handle diverse data types and explicitly quantify uncertainty [39].
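As a minimal illustration of using participatory mapping as a bridge, the sketch below converts participants' point marks into a GeoJSON FeatureCollection, a format most GIS packages can import, and counts marks per service and grid cell so they can be overlaid on gridded scientific layers. The attribute names (`participant`, `service`, `lon`, `lat`), the 0.01-degree cell size, and the coordinates are hypothetical.

```python
import json
from collections import Counter


def to_geojson(marks):
    """Convert participants' point marks into a GeoJSON FeatureCollection."""
    features = [
        {
            "type": "Feature",
            # GeoJSON (RFC 7946) orders positions as [longitude, latitude].
            "geometry": {"type": "Point", "coordinates": [m["lon"], m["lat"]]},
            "properties": {"participant": m["participant"], "service": m["service"]},
        }
        for m in marks
    ]
    return {"type": "FeatureCollection", "features": features}


def grid_counts(marks, cell_size=0.01):
    """Count marks per (service, grid cell) for overlay on gridded layers.
    Points lying exactly on a cell edge are subject to floating-point rounding."""
    counts = Counter()
    for m in marks:
        cell = (int(m["lon"] // cell_size), int(m["lat"] // cell_size))
        counts[(m["service"], cell)] += 1
    return counts


# Hypothetical marks from one mapping session (decimal degrees, WGS 84).
marks = [
    {"participant": "P01", "service": "shellfish harvest", "lon": -69.8215, "lat": 43.8543},
    {"participant": "P02", "service": "shellfish harvest", "lon": -69.8238, "lat": 43.8561},
    {"participant": "P02", "service": "recreation", "lon": -69.7952, "lat": 43.8705},
]
layer = to_geojson(marks)
geojson_text = json.dumps(layer, indent=2)  # save as a .geojson file for GIS import
print(grid_counts(marks))
```

Swapping latitude and longitude is a common integration bug, so it is worth checking the first few features against a basemap after import.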

Table 2: Research Reagent Solutions for Participatory Mapping

| Research 'Reagent' | Function in Experimental Protocol |
|---|---|
| Social-Ecological Systems (SES) Framework | A conceptual scaffold to identify and organize key variables and relationships between resource systems, governance, users, and resource units, ensuring all relevant factors are considered [37]. |
| Participatory GIS (PGIS) | A technological tool that integrates local spatial knowledge from participants into a digital mapping environment, creating visually compelling and analytically robust data layers [40]. |
| Photovoice Methodology | A qualitative method that provides context and meaning to spatial data. It allows community members to document and discuss their realities through photography, highlighting issues unknown to outsiders [41]. |
| Semi-Structured Interviews | A data collection technique used alongside participatory mapping to gather in-depth qualitative data that explains and enriches the mapped information, providing the "why" behind the "where" [37]. |

Detailed Experimental Protocols from Cited Studies

This section provides reproducible methodologies from key studies.

Protocol 1: Participatory Mapping for Coastal Marine SES (Maine, USA)

This protocol demonstrates how to co-produce fine-scale data on a social-ecological system [37].

- Objective: To document local knowledge of coastal marine systems to inform collaborative research on changes in shellfish species, predators, and human activities.

- Theoretical Framework: The research is guided by a social-ecological system (SES) framework tailored for benthic small-scale fisheries [37].

- Methodology:

  - Participatory Mapping: Conduct mapping sessions with local resource users (e.g., shellfish harvesters) to spatially document their knowledge of the system.

  - Semi-Structured Interviews: Perform interviews alongside mapping to gather detailed contextual information.

  - Data Integration: Synthesize mapped spatial data and interview transcripts to characterize the SES, generate local hypotheses, and directly inform the design of subsequent ecological research projects [37].

Protocol 2: Integrating Participatory Mapping and Photovoice (Tun Mustapha Park, Malaysia)

This protocol combines two participatory methods to elicit a comprehensive understanding of ecosystem services [41].

- Objective: To understand ecosystem services, their dynamics, and the anthropogenic impacts on marine-associated habitats from the community perspective.

- Methodology:

  - Participatory Mapping: Invite participants from community-based organizations to map the location of ecosystem services.

  - Photovoice: Equip participants with cameras to document ecological, sociocultural, and economic issues surrounding the ecosystem services.

  - Group Discussions: Facilitate discussions about the maps and photographs, allowing participants to provide in-depth qualitative data, highlight issues, and develop consensus views and recommendations [41].

- Output: The process generates rich visual, spatial, and qualitative data to enhance ecosystem-based management and empowers participants to engage in governance [41].

The following diagram illustrates the logical flow of this integrated methodology, showing how different components connect to produce scientific and community outcomes:

Navigating Pitfalls and Optimizing ES Assessment Practices

Identifying and Mitigating Prevalent Assumptions in ES Modeling

Frequently Asked Questions

What are assumptions in the context of Ecosystem Services (ES) modeling? Assumptions are implicit or explicit statements that are accepted as true without immediate proof. They are necessary to simplify the immense complexity of real-world social-ecological systems, making ES assessments manageable. However, if they are ambiguous or inappropriate, they can lead to misconceptions and reduce the usefulness of the assessment for conservation decisions [20].

Why is it critical to identify assumptions in my model? Unchecked assumptions are a primary source of Requirements Technical Debt (RTD). If these assumptions are incomplete, incorrect, or become invalid over time, they can lead to system failures, unexpected behavior, and costly rework much later in the project lifecycle. Explicitly managing assumptions is fundamental to improving the reliability and dependability of your research outcomes [42].

My ES model is producing unrealistic results. Where should I start troubleshooting? Begin by isolating the section of the model or the specific geoprocessing tool that is causing the error. Run the tool outside the model with the same inputs to see if the issue persists. This helps determine if the problem is with the tool itself, the model structure, or the data inputs [43]. Furthermore, validate your model against independent population or field data if available; a model's ability to recreate multiple observed patterns in real data is a strong indicator that its assumptions and structure are appropriate [44].
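Comparing model output with independent field data can start with two complementary numbers: RMSE for absolute agreement and Spearman's rank correlation for whether the model orders sites correctly. The sketch below computes both in pure Python, obtaining Spearman's rho as the Pearson correlation of tie-averaged ranks. The site values are hypothetical, and checking several observed patterns, as the answer above suggests [44], would mean repeating the comparison for each pattern.

```python
from math import sqrt


def average_ranks(xs):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(xs)), key=lambda i: xs[i])
    ranks = [0.0] * len(xs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and xs[order[j + 1]] == xs[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of positions i..j, converted to 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = sqrt(sum((x - ma) ** 2 for x in a))
    sb = sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)


def validate(modelled, observed):
    """Agreement between modelled ES values and independent field observations."""
    rmse = sqrt(sum((m - o) ** 2 for m, o in zip(modelled, observed)) / len(observed))
    rho = pearson(average_ranks(modelled), average_ranks(observed))  # Spearman's rho
    return {"rmse": rmse, "spearman": rho}


# Hypothetical modelled vs. field-measured values at five validation sites.
modelled = [12.0, 15.5, 9.8, 20.1, 17.3]
observed = [11.2, 16.0, 10.5, 18.9, 17.8]
print(validate(modelled, observed))
```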

Are there standardized tools for managing environmental assumptions? While there is no single universal standard, several modeling frameworks provide structured support. A comparative evaluation of representative approaches shows that KAOS and Obstacle Analysis are particularly strong for explicitly modeling assumptions and their potential violations. SysML excels at integration with broader systems engineering workflows, and RDAL demonstrates superior capabilities in tracing the relationships between assumptions, requirements, and verification conditions [42].

A common assumption is that my data are representative. What if they are not? Using secondary data or data from a different spatial or temporal context can severely limit the credibility of your assessment when applied to a specific area, like a protected area. To mitigate this, ask local communities for their knowledge, use adjusted value-transfer functions, and always collect field data to evaluate uncertainties in the transferred data [20].
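One common form of adjusted value transfer scales a study-site unit value by relative income, V_policy = V_study × (Y_policy / Y_study)^ε, where ε is the income elasticity of willingness to pay. The sketch below implements that adjustment and the relative transfer error against a field estimate, which is one way to quantify the uncertainty the answer above recommends checking. The elasticity and all numbers are hypothetical placeholders, not recommended values.

```python
def adjusted_unit_value(study_value, study_income, policy_income, elasticity=1.0):
    """Adjusted unit value transfer: V_policy = V_study * (Y_policy / Y_study) ** elasticity."""
    if study_income <= 0 or policy_income <= 0:
        raise ValueError("incomes must be positive")
    return study_value * (policy_income / study_income) ** elasticity


def transfer_error(transferred, field_estimate):
    """Relative transfer error of a transferred value against a field estimate."""
    return abs(transferred - field_estimate) / abs(field_estimate)


# Hypothetical numbers: a value per hectare per year from a higher-income study site.
transferred = adjusted_unit_value(120.0, study_income=40_000, policy_income=20_000, elasticity=0.5)
print(round(transferred, 2))  # income-adjusted value for the policy site
print(round(transfer_error(transferred, 70.0), 3))  # gap relative to a local field estimate
```

A large transfer error at the few sites where field data exist is a signal to treat transferred values elsewhere in the protected area with corresponding caution.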

Troubleshooting Guides

Issue 1: Unrealistic or Highly Uncertain Model Outputs

This often stems from foundational assumptions about the system that do not hold true.