How GPU Parallel Computing is Revolutionizing Ecological Modeling

Abstract

This article explores the transformative impact of GPU parallel computing on ecological modeling. It covers the foundational principles of GPU architecture and its suitability for complex ecological simulations, details methodological approaches for porting and implementing models on GPU systems, addresses key troubleshooting and optimization challenges, and provides a comparative validation of performance gains and environmental costs. Aimed at researchers and scientists, this guide provides a comprehensive resource for leveraging GPU acceleration to achieve higher-resolution, faster, and more detailed ecological forecasts.

Why GPUs? The Foundational Shift in Ecological Computing Power

The Computational Bottleneck in Traditional Ecological Modeling

Ecological models have become indispensable tools for understanding and predicting the dynamics of complex natural systems, from forest landscapes and savanna vegetation to oceanic currents and animal migration patterns [1] [2]. These computational approaches create a 'virtual environment' that supplements or even replaces field experiments, which are often logistically infeasible, costly, or potentially harmful to biodiversity [2]. However, as ecological models increasingly strive to incorporate critical real-world complexities—including local interactions, individual variability, spatial and temporal heterogeneity in resource availability, and adaptive behaviors—they encounter severe computational limitations [1]. This paper examines the fundamental computational bottlenecks inherent in traditional ecological modeling approaches and frames these challenges within the context of emerging GPU-accelerated computing solutions that promise to transform ecological research capabilities.

The transition from purely descriptive ecology to quantitative, predictive science has driven the development of increasingly sophisticated models [2]. Early mathematical models in ecology, pioneered by Lotka, Volterra, and Gause, have evolved into complex computational frameworks that attempt to capture the multi-scale, multi-process nature of ecological systems [2]. This evolution has created a fundamental tension between model complexity and computational feasibility, presenting researchers with difficult trade-offs between biological realism, spatial extent, temporal scope, and practical runtime constraints.

Fundamental Computational Challenges in Ecological Modeling

Spatial and Temporal Scaling Constraints

Ecological processes operate across vast ranges of spatial and temporal scales, from individual organisms interacting locally over seconds to landscape-scale patterns evolving over centuries. Traditional sequential processing approaches, which simulate landscapes from the upper left pixel to the lower right pixel, create significant bottlenecks for modeling these multi-scale systems [3]. This sequential paradigm fails to capture the simultaneous nature of ecological processes and limits the practical resolution and extent of simulations.
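The order dependence of an in-place sweep shows up even in a toy example. The sketch below simplifies the landscape to a hypothetical one-dimensional row of pixels (1 = occupied, 0 = empty). It applies an assumed rule, under which a pixel becomes occupied if it or either neighbour was occupied, in two ways: as a left-to-right in-place sweep, and as a synchronous update in which every pixel reads only the previous state.

```python
def occupied_next(left, me, right):
    """Hypothetical spread rule: occupied if this pixel or a neighbour is occupied."""
    return 1 if (left or me or right) else 0


def step_in_place(cells):
    """Sequential sweep that overwrites the grid as it goes (order-dependent)."""
    grid = list(cells)
    n = len(grid)
    for i in range(n):
        left = grid[i - 1] if i > 0 else 0        # already overwritten this step
        right = grid[i + 1] if i + 1 < n else 0   # still holds the old value
        grid[i] = occupied_next(left, grid[i], right)
    return grid


def step_synchronous(cells):
    """Every pixel reads only the previous state, so pixels can update in parallel."""
    n = len(cells)
    return [
        occupied_next(cells[i - 1] if i > 0 else 0,
                      cells[i],
                      cells[i + 1] if i + 1 < n else 0)
        for i in range(n)
    ]


seed = [1, 0, 0, 0, 0]
print(step_in_place(seed))      # [1, 1, 1, 1, 1]
print(step_synchronous(seed))   # [1, 1, 0, 0, 0]
```

In a single time step, the in-place sweep lets the disturbance cross the whole row. That result is an artifact of traversal order, and the bias disappears if the seed starts at the other end. The synchronous version needs a second buffer but has no such bias, which is also what makes it safe to update every pixel concurrently.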

Table 1: Performance Limitations of Sequential Processing in Forest Landscape Modeling

| Simulation Scenario | Number of Pixels | Time Step | Sequential Processing Time | Observed Effect |
|---|---|---|---|---|
| Large-scale landscape | Millions | 10-year | Baseline (100%) | Parallel processing saves 32.0-64.6% of runtime [3] |
| High-temporal resolution | Millions | 1-year | Baseline (100%) | Parallel processing saves 64.6-76.2% of runtime [3] |
| Fine-scale processes | Variable | Sub-annual | Often computationally prohibitive | Forces oversimplification of processes |

Numerical Complexity in Ecological Systems

The mathematical frameworks underlying ecological models introduce additional computational demands. Equation-based ecological models often involve systems of ordinary differential equations representing population dynamics:

$$\frac{du_i}{dt} = f_i(u_1, u_2, \ldots, u_N; \alpha), \qquad i = 1, 2, \ldots, N,$$

where $u_i(t)$ represents the population density of the $i$th species at time $t$, $f_i$ gives the rate of change of species $i$ as a function of all species densities, $N$ is the total number of species (which can reach hundreds in complex food webs), and $\alpha$ represents biological and environmental parameters [2]. These systems frequently exhibit nonlinear dynamics and sensitive dependence on parameters, requiring computationally intensive numerical solutions and stability analyses [2]. The Jacobi iterative solver, identified as a performance hotspot in the SCHISM ocean model, exemplifies this class of computational challenges [4].
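The sketch below makes the parallel structure of such a system concrete. It assumes one common choice for the right-hand side, the generalized Lotka-Volterra form du_i/dt = u_i * (r_i + sum_j a_ij * u_j), in which growth rates r_i and interaction coefficients a_ij play the role of the parameters α, and it advances the system with a forward Euler step. The function names, parameter values, and integrator are illustrative choices, not taken from the cited models. Because every species reads the same previous state, the N updates within one step are independent and could each be assigned to a GPU thread.

```python
def glv_rates(u, r, a):
    """Generalized Lotka-Volterra: du_i/dt = u_i * (r_i + sum_j a[i][j] * u_j)."""
    n = len(u)
    return [u[i] * (r[i] + sum(a[i][j] * u[j] for j in range(n))) for i in range(n)]


def euler_step(u, r, a, dt):
    """Forward Euler step; each species i reads only the previous state u."""
    du = glv_rates(u, r, a)
    return [u[i] + dt * du[i] for i in range(len(u))]


def simulate(u0, r, a, dt, steps):
    """Repeat Euler steps from the initial densities u0."""
    u = list(u0)
    for _ in range(steps):
        u = euler_step(u, r, a, dt)
    return u


# Two hypothetical competitors with self-limitation and weak mutual competition.
r = [1.0, 0.8]
a = [[-1.0, -0.2],
     [-0.3, -1.0]]
print(simulate([0.1, 0.1], r, a, dt=0.01, steps=2000))
```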

Path Dependence in Model Development

An often-overlooked computational constraint lies in the path-dependent nature of model development itself. As noted in robustness analysis literature, the choices made at each modeling step constrain subsequent options [1]. For instance, a vegetation model that initially excludes belowground processes may later require parameter tweaking to appear correct, even though the omitted processes fundamentally drive the observed dynamics [1]. This path dependence creates self-reinforcing computational constraints, where initial algorithmic decisions permanently limit a model's potential biological realism and predictive capability.

Quantitative Analysis of Computational Bottlenecks

Performance Hotspots in Ecological Simulations

Detailed performance analysis of ecological models reveals consistent computational bottlenecks across application domains. These hotspots typically emerge at the intersection of biological complexity and mathematical computation.

Table 2: Computational Hotspots in Ecological Models

| Model Component | Computational Operation | Performance Impact | Example Implementation |
|---|---|---|---|
| Jacobi Solver | Iterative matrix solution | 3.06x acceleration potential with GPU [4] | SCHISM ocean model [4] |
| Agent-based movement | Individual trajectory calculation | ~1.5x speedup with GPU [5] | Bird migration model [5] |
| Spatial anisotropy | Directional dependency computation | 42x speedup with CUDA GPU [5] | Every-direction Variogram Analysis [5] |
| Seed dispersal | Landscape-scale propagation | Dynamic reallocation required [3] | LANDIS forest landscape model [3] |
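The Jacobi solver listed in Table 2 shows why iterative solvers map well to GPUs: in each sweep, every unknown is updated from the previous iterate only, so all updates within a sweep are independent. Below is a minimal pure-Python sketch for a small dense system. It is not SCHISM's implementation, and it assumes a strictly diagonally dominant matrix, a standard sufficient condition for Jacobi convergence.

```python
def jacobi(a, b, tol=1e-10, max_iter=10_000):
    """Solve a x = b by Jacobi iteration; returns (x, iterations used)."""
    n = len(b)
    x = [0.0] * n
    for it in range(1, max_iter + 1):
        # Each component reads only the previous iterate x, so the n updates
        # could run as n independent GPU threads.
        x_new = [
            (b[i] - sum(a[i][j] * x[j] for j in range(n) if j != i)) / a[i][i]
            for i in range(n)
        ]
        if max(abs(x_new[i] - x[i]) for i in range(n)) < tol:
            return x_new, it
        x = x_new
    raise RuntimeError("Jacobi iteration did not converge")


a = [[4.0, 1.0, 0.0],
     [1.0, 4.0, 1.0],
     [0.0, 1.0, 4.0]]
b = [6.0, 12.0, 14.0]   # chosen so that the exact solution is [1, 2, 3]
x, iterations = jacobi(a, b)
print(x, iterations)
```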

Case Study: Forest Landscape Models

Forest Landscape Models (FLMs) exemplify the computational challenges facing ecological modelers. These models simulate complex spatial interactions including species-level processes, stand-level dynamics, and landscape-scale seed dispersal [3]. The traditional sequential processing approach creates fundamental limitations in both simulation time and realism. Parallel processing designs that assign pixel subsets to individual cores demonstrate significant improvements, saving 32.0-76.2% of computation time depending on temporal resolution and landscape complexity [3]. This acceleration enables previously impractical high-resolution simulations that more accurately represent the simultaneous nature of ecological processes.

Methodological Framework: Robustness Analysis

Computational limitations often force ecological modelers to make simplifying assumptions whose impacts remain poorly understood. Robustness Analysis (RA) provides a systematic framework for evaluating these trade-offs by "forcefully trying to break a model" to identify conditions under which model mechanisms control system dynamics [1]. The three primary categories of RA include:

- Parameter Robustness: Testing extreme parameter values beyond empirically observed ranges [1]

- Structural Robustness: Modifying model structure by removing or adding processes [1]

- Representational Robustness: Changing how core elements are represented [1]

This methodological approach reveals the sensitivity of model outcomes to computational simplifications and guides strategic investments in computational optimization.
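The following sketch illustrates parameter robustness with a hypothetical discrete logistic growth model, x_{t+1} = x_t + r * x_t * (1 - x_t / K). It deliberately sweeps the growth rate r past plausible values to find where the model stops settling at its carrying capacity K. The model, parameter grid, and tolerance are assumptions chosen for clarity. Each parameter value is an independent run, which also makes such sweeps easy to parallelize.

```python
def simulate_logistic(r, k=100.0, x0=10.0, steps=500):
    """Discrete logistic growth x <- x + r*x*(1 - x/k), run for a fixed number of steps."""
    x = x0
    for _ in range(steps):
        x = x + r * x * (1.0 - x / k)
    return x


def parameter_robustness(r_values, k=100.0, rel_tol=1e-6):
    """Map each growth rate to True if the run settles at carrying capacity k."""
    return {r: abs(simulate_logistic(r, k=k) - k) <= rel_tol * k for r in r_values}


sweep = [0.5, 1.0, 1.5, 1.9, 2.2, 2.5]
for r, settles in parameter_robustness(sweep).items():
    print(f"r = {r}: {'settles at K' if settles else 'oscillates, does not settle'}")
```

For this map, the equilibrium at K is stable only for 0 < r < 2. Beyond that threshold, the sweep exposes oscillations that a sweep restricted to empirically observed rates would never reveal.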

Experimental Protocols for Computational Efficiency Assessment

GPU Acceleration Methodology

The transition from CPU-based to GPU-accelerated ecological modeling follows a systematic protocol for performance optimization:

- Performance Profiling: Identify computational hotspots through detailed analysis of original CPU-based code [4]

- Algorithm Selection: Target computationally intensive modules with high parallelization potential (e.g., Jacobi solvers, agent-based calculations) [4] [5]

- Implementation Framework: Select appropriate GPU programming framework (CUDA, OpenACC) based on performance requirements [4]

- Domain Decomposition: Apply spatial domain decomposition approaches that assign pixel subsets to individual cores [3]

- Dynamic Reallocation: Implement dynamic reallocation of subsets across cores to execute landscape-level processes [3]

- Validation: Compare simulation results between parallel and sequential processing to verify maintenance of ecological accuracy [3]
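The decomposition and validation steps can be sketched on a CPU. The example below is a hypothetical illustration, not the LANDIS implementation. It splits a raster's rows into contiguous blocks, applies an assumed pixel-local growth process to each block on a thread pool, and reassembles the landscape; the reassembly point is where landscape-level processes such as seed dispersal would run. It then checks the result against a sequential pass. Python threads are used only to show the structure: they will not speed up pure-Python work because of the global interpreter lock.

```python
from concurrent.futures import ThreadPoolExecutor


def split_rows(n_rows, n_blocks):
    """Contiguous, nearly equal row ranges (start, stop), one per block."""
    base, extra = divmod(n_rows, n_blocks)
    ranges, start = [], 0
    for b in range(n_blocks):
        stop = start + base + (1 if b < extra else 0)
        ranges.append((start, stop))
        start = stop
    return ranges


def grow_rows(grid, start, stop, rate):
    """Hypothetical pixel-local process: apply a growth increment to rows start..stop-1."""
    return [[cell * (1.0 + rate) for cell in grid[row]] for row in range(start, stop)]


def step_parallel(grid, rate, n_blocks=4):
    """Process row blocks concurrently, then reassemble the full landscape."""
    ranges = split_rows(len(grid), n_blocks)
    with ThreadPoolExecutor(max_workers=n_blocks) as pool:
        parts = list(pool.map(lambda rg: grow_rows(grid, rg[0], rg[1], rate), ranges))
    # Reassembly point: landscape-level processes (e.g. seed dispersal) need the
    # whole grid, so they would run here before blocks are handed out again.
    return [row for part in parts for row in part]


def step_sequential(grid, rate):
    """Reference implementation: the same process applied row by row."""
    return [[cell * (1.0 + rate) for cell in row] for row in grid]


landscape = [[float(r * 5 + c) for c in range(5)] for r in range(10)]
print(split_rows(10, 4))
print(step_parallel(landscape, 0.1) == step_sequential(landscape, 0.1))
```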

Benchmarking Procedures

Rigorous benchmarking protocols are essential for quantifying computational improvements:
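A minimal timing harness along these lines is sketched below; the function names are illustrative. It assumes wall-clock timing of a Python callable, discards warm-up runs (on GPUs the first call often includes context creation and compilation), and reports the median of repeated runs. When timing GPU code, the callable must block until the device finishes, because kernel launches are asynchronous.

```python
import statistics
import time


def median_runtime(fn, *args, repeats=5, warmup=1):
    """Median wall-clock time of fn(*args) in seconds, after discarded warm-up runs."""
    for _ in range(warmup):
        fn(*args)
    times = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        times.append(time.perf_counter() - start)
    return statistics.median(times)


def speedup(baseline_s, candidate_s):
    """How many times faster the candidate is than the baseline."""
    return baseline_s / candidate_s


def fraction_saved(baseline_s, candidate_s):
    """Share of the baseline runtime eliminated by the candidate."""
    return 1.0 - candidate_s / baseline_s


def speedup_from_saving(saved):
    """Convert a reported fractional time saving into an equivalent speedup."""
    return 1.0 / (1.0 - saved)


print(median_runtime(sum, range(100_000)))
print(round(speedup_from_saving(0.320), 2), round(speedup_from_saving(0.762), 2))
```

Applied to the savings reported for forest landscape models, time savings of 32.0% and 76.2% correspond to speedups of roughly 1.47x and 4.20x.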

The Researcher's Computational Toolkit

Table 3: Essential Computational Resources for High-Performance Ecological Modeling

| Resource Category | Specific Tools & Technologies | Function in Ecological Modeling | Performance Considerations |
|---|---|---|---|
| GPU Programming Frameworks | CUDA Fortran, OpenACC | Accelerate computationally intensive model components | CUDA outperforms OpenACC across experimental conditions [4] |
| Spatial Decomposition Methods | Domain decomposition, Pixel blocking | Enable parallel processing of spatial elements | Allows simultaneous simulation of multiple pixel blocks [3] |
| Iterative Solvers | Jacobi solver, Conjugate gradient methods | Solve systems of ecological equations | 3.06x GPU acceleration demonstrated [4] |
| Agent-Based Modeling Platforms | Custom CUDA C implementations | Simulate individual organism movements and behaviors | ~1.5x speedup for bird migration models [5] |
| Performance Profiling Tools | NVIDIA Nsight, CPU profiling utilities | Identify computational hotspots in existing code | Essential for targeted acceleration efforts [4] |

The computational bottlenecks in traditional ecological modeling present significant constraints on scientific progress in understanding and predicting complex ecological systems. These limitations manifest as trade-offs between spatial extent, temporal resolution, biological complexity, and practical runtime constraints. However, the emerging paradigm of GPU-accelerated parallel processing offers substantial improvements in computational efficiency, with demonstrated speedups ranging from 1.5x for agent-based models to 42x for spatial analysis algorithms [5] [4].

The integration of robust computational methods with ecological theory represents a promising path forward. By combining systematic robustness analysis [1] with GPU-accelerated numerical solutions [4] [5], ecological modelers can navigate the fundamental tension between biological realism and computational feasibility. This approach enables researchers to address increasingly complex questions about ecological systems while maintaining both computational practicality and scientific rigor, ultimately supporting more effective conservation, management, and prediction in an era of rapid environmental change.

The Graphics Processing Unit (GPU) has undergone a fundamental transformation from a specialized graphics rendering component to a general-purpose parallel processor that has become indispensable across scientific computing, artificial intelligence, and ecological modeling. This evolution represents one of the most significant architectural shifts in modern computing history, enabling researchers to solve computational problems that were previously intractable within practical timeframes. For ecological modelers, this paradigm shift unlocks new possibilities for simulating complex environmental systems, processing vast remote sensing datasets, and accelerating computationally intensive research that seeks to understand and predict ecosystem behaviors at unprecedented scales and resolutions.

Originally designed to accelerate computer graphics workloads, GPUs were architected fundamentally differently from Central Processing Units (CPUs). While CPUs excel at sequential processing through a few powerful cores optimized for complex, single-threaded tasks, GPUs contain thousands of smaller, efficient cores designed for massive parallelism—executing many calculations simultaneously rather than in sequence [6]. This architectural distinction makes GPU parallel computing particularly valuable for ecological modeling research, where simulations often involve performing identical mathematical operations across millions of grid cells or processing thousands of independent model ensembles to quantify uncertainty in climate projections.

Fundamental GPU Architecture: Beyond the Basic Blueprint

Core Architectural Components

At its foundation, a GPU is a highly parallel processor architecture composed of processing elements and a sophisticated memory hierarchy designed to maximize computational throughput [7]. The architecture balances execution resources with memory subsystems to keep thousands of threads efficiently fed with data. Unlike CPUs that dedicate significant die area to control logic and cache, GPUs prioritize arithmetic logic units (ALUs) to achieve high computational density, making them ideal for data-parallel scientific workloads common in environmental simulation models.

Streaming Multiprocessors (SMs): These are the fundamental processing units of a GPU, with each SM containing multiple execution cores, schedulers, and various instruction pipelines [8]. Each SM operates independently and can run several thread blocks concurrently, so the number of SMs is a primary determinant of a GPU's computational capacity. Modern data center GPUs like the NVIDIA A100 contain 108 SMs, enabling tremendous parallel processing capability [7].

Execution Cores: Within each SM reside dozens of simpler, energy-efficient cores (64 FP32 cores per SM in the A100) optimized for specific types of calculations. Unlike CPU cores that handle diverse workloads, GPU cores are optimized for floating-point operations (FLOPs) [8]. Each FP32 core can complete one fused multiply-add per cycle, which peak-performance figures count as two FLOPs. This specialized design enables the massive parallelism that distinguishes GPU computing.
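The peak figures quoted for data center GPUs follow from simple arithmetic on these components. The sketch below assumes NVIDIA's published A100 configuration (108 SMs, 64 FP32 cores per SM, a 1.41 GHz boost clock) and the convention that a fused multiply-add counts as two FLOPs. Treat these inputs as assumptions to check against the card you actually use.

```python
def peak_tflops(n_sms, cores_per_sm, boost_clock_ghz, flops_per_core_per_cycle=2):
    """Theoretical peak throughput in TFLOPS.

    cores x GHz gives billions of core-cycles per second; multiplying by FLOPs per
    cycle gives GFLOPS, and dividing by 1000 gives TFLOPS.
    """
    cores = n_sms * cores_per_sm
    return cores * flops_per_core_per_cycle * boost_clock_ghz / 1000.0


print(round(peak_tflops(108, 64, 1.41), 2))
```

This reproduces the A100's quoted FP32 peak of about 19.5 TFLOPS. Real kernels approach this ceiling only when they are compute-bound.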

Warp Scheduling: Threads are scheduled in groups called warps that execute instructions in lockstep. An NVIDIA warp contains 32 threads, while AMD's equivalent, the wavefront, typically contains 64 [8]. All threads in a warp execute the same instruction at the same time but operate on different data elements. NVIDIA calls this execution model Single Instruction, Multiple Threads (SIMT); it is a variant of Single Instruction, Multiple Data (SIMD). For optimal performance, data structures and thread counts should be sized with the warp width in mind, using multiples of 32 (or 64 on AMD hardware) so that no lanes in a warp sit idle.
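Sizing work to whole warps is simple integer arithmetic. The sketch below uses illustrative helper names. It rounds an element count up to the next multiple of the warp width and reports how many lanes in the final warp would sit idle.

```python
def pad_to_warp(n, warp_size=32):
    """Round n up to the next multiple of warp_size."""
    return -(-n // warp_size) * warp_size


def warps_needed(n, warp_size=32):
    """Number of warps (or wavefronts) required to cover n items."""
    return pad_to_warp(n, warp_size) // warp_size


def idle_lanes(n, warp_size=32):
    """Lanes left without work in the last warp when n items are processed."""
    return pad_to_warp(n, warp_size) - n


cells = 1000   # hypothetical number of grid cells handled by one kernel
print(warps_needed(cells), idle_lanes(cells))          # 32 warps, 24 idle lanes
print(warps_needed(cells, 64), idle_lanes(cells, 64))  # 16 wavefronts, 24 idle lanes
```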

Memory Hierarchy: Balancing Speed and Capacity

GPU memory is organized hierarchically to balance speed, capacity, and energy efficiency, and understanding this hierarchy is crucial for optimizing scientific code. The memory subsystem is designed to feed the massively parallel compute engines with minimal stall time, yet performance is often limited by memory bandwidth rather than raw computational capability.

Table: GPU Memory Hierarchy and Characteristics

| Memory Type | Location | Speed | Size | Primary Function |
|---|---|---|---|---|
| Registers | Register file inside each SM | Fastest | Very small (per thread) | Hold operands for active computations |
| L1 Cache | Inside each SM | Very fast | Small | Store frequently accessed data within an SM |
| L2 Cache | Shared across SMs | Fast | Medium (e.g., 40MB in A100) | Serve as secondary cache shared across all SMs |
| VRAM (HBM/GDDR) | On GPU card | Slower | Large (16-80GB) | Store model weights, large datasets, and textures |
| System RAM | Host computer | Slowest | Very large | Backing store for datasets exceeding VRAM capacity |

Three memory technologies underpin this hierarchy. Static RAM (SRAM) is the fastest and implements the registers and the L1 and L2 caches on the GPU die. Dynamic RAM (DRAM) forms the main memory (VRAM) on the GPU card and stores large amounts of data. High Bandwidth Memory (HBM) is a form of DRAM used in high-performance GPUs; it stacks memory dies vertically to increase bandwidth and reduce latency, at higher cost [8]. Moving data between these levels is a major performance cost, so kernel optimizations focus on minimizing transfers between DRAM and SRAM through efficient data reuse [8].

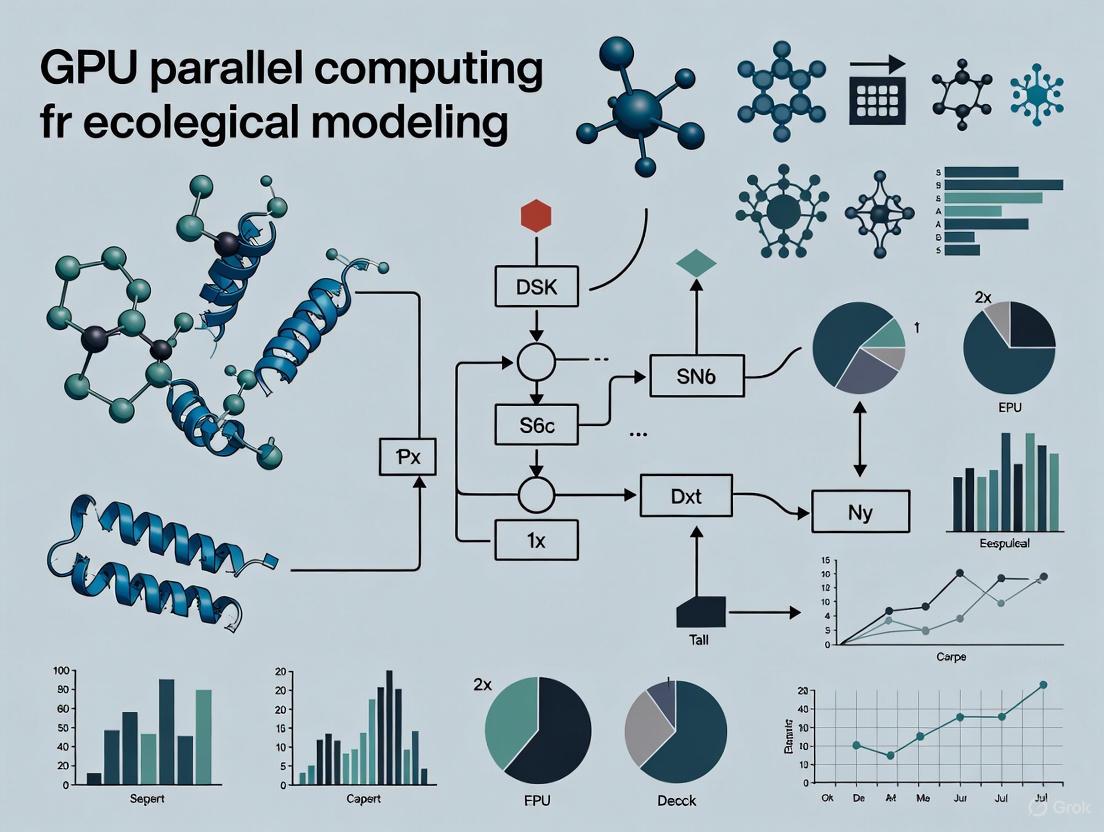

Diagram: GPU Memory Hierarchy and Access Patterns. This diagram illustrates the layered memory architecture in modern GPUs, showing how speed decreases while capacity increases as we move further from the computational cores.

Parallel Execution Model: How GPUs Process Thousands of Threads Simultaneously

Thread Hierarchy and Execution

GPUs employ a sophisticated two-level thread hierarchy to manage and execute thousands of parallel threads efficiently. This hierarchical organization allows the hardware to scale effectively across problems of different sizes and complexities, making it suitable for everything from fine-grained parallel operations to coarse-grained task parallelism often found in ecological modeling workflows.

Thread Blocks: A fundamental concept in GPU execution is that threads are grouped into equally-sized thread blocks, with a collection of thread blocks launched to execute a function (kernel) [7]. Threads within the same block can communicate via shared memory and synchronize their execution, enabling cooperative processing patterns essential for stencil operations in partial differential equation solvers used in ocean and atmospheric models.
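The mapping from blocks and threads to data elements can be emulated serially. The sketch below mimics a one-dimensional CUDA-style launch in plain Python. Each (block, thread) pair computes a global index as block index times block size plus thread index, and a bounds check discards threads in the partially filled last block. The kernel is a SAXPY-style update (y = a*x + y) that stands in for a per-cell model update; the function names are hypothetical.

```python
import math


def saxpy_kernel(block_idx, block_dim, thread_idx, n, a, x, y):
    """Work done by one thread: locate its element, guard, then update it."""
    i = block_idx * block_dim + thread_idx   # CUDA: blockIdx.x * blockDim.x + threadIdx.x
    if i < n:                                # threads past the end of the data do nothing
        y[i] = a * x[i] + y[i]


def launch(kernel, n, block_dim, *args):
    """Serially emulate a 1-D launch; returns the number of blocks in the grid."""
    grid_dim = math.ceil(n / block_dim)      # enough blocks to cover all n elements
    for block_idx in range(grid_dim):        # on a GPU, blocks are spread over SMs
        for thread_idx in range(block_dim):  # and threads within a block run in warps
            kernel(block_idx, block_dim, thread_idx, n, *args)
    return grid_dim


n = 1000
x = [1.0] * n
y = [0.5] * n
blocks = launch(saxpy_kernel, n, 256, 2.0, x, y)
print(blocks, y[0], y[-1])
```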

Streaming Multiprocessor Assignment: At runtime, thread blocks are distributed across available SMs for execution, with each SM capable of running multiple thread blocks concurrently [7]. To fully utilize a GPU with multiple SMs, programmers must launch many thread blocks—typically several times more than the number of SMs—to minimize the "tail effect" where only a few thread blocks remain active at the end of computation, underutilizing the GPU.
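The tail effect can be quantified by counting waves of thread blocks. The sketch below makes two simplifying assumptions: each SM holds a fixed number of resident blocks, and every block takes the same time. Under those assumptions it reports the average fraction of block slots doing useful work; 108 is the A100 SM count mentioned earlier.

```python
import math


def wave_efficiency(n_blocks, n_sms=108, blocks_per_sm=1):
    """Average fraction of SM block slots kept busy across all waves of a launch."""
    slots_per_wave = n_sms * blocks_per_sm
    waves = math.ceil(n_blocks / slots_per_wave)
    return n_blocks / (waves * slots_per_wave)


for n_blocks in (108, 109, 10_801):
    print(n_blocks, round(wave_efficiency(n_blocks), 3))
```

On a 108-SM GPU, one block beyond a single full wave roughly halves average utilization. The same one-block overflow costs under 1% when the launch spans about a hundred waves, which is why launching many more blocks than SMs helps.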

Warps and SIMT Execution: Within each SM, threads are further organized into warps (groups of 32 threads on NVIDIA hardware) that execute instructions in lockstep [8]. Under this Single Instruction, Multiple Threads (SIMT) execution model, all threads in a warp execute the same instruction at the same time, though they operate on different data elements. When threads within a warp take different branches of a conditional statement, the warp executes each path in turn with the inactive threads masked off, which can degrade performance significantly. Ecological modelers should minimize this warp divergence in their algorithms.
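Whether a branch diverges depends on how data are laid out across warps. The sketch below uses a hypothetical helper that counts the warps whose lanes disagree on a branch condition, for example a land/water mask in a coastal model. It shows that reordering cells so that similar ones are contiguous removes divergence without changing the work done. The cost of that reordering is a permutation that must be maintained between storage order and grid position.

```python
def count_divergent_warps(takes_branch, warp_size=32):
    """Number of warps whose lanes do not all take the same side of a branch."""
    divergent = 0
    for start in range(0, len(takes_branch), warp_size):
        lanes = takes_branch[start:start + warp_size]
        if any(lanes) and not all(lanes):
            divergent += 1
    return divergent


# Hypothetical mask: True for water cells, False for land cells.
interleaved = [i % 2 == 0 for i in range(128)]
grouped = sorted(interleaved, reverse=True)   # all water cells first, then land
print(count_divergent_warps(interleaved), count_divergent_warps(grouped))
```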

Diagram: GPU Parallel Execution Model. This diagram visualizes the two-level thread hierarchy in GPU execution, showing how kernels are divided into thread blocks that are distributed across SMs, where they're further organized into warps for parallel execution.

Performance Limitations and Arithmetic Intensity

Understanding GPU performance characteristics requires analyzing the relationship between computation and memory access patterns, which often determines whether a workload will be memory-bound or computation-bound. This analysis is particularly relevant for ecological modeling, where different components of a modeling system may exhibit dramatically different computational characteristics.

The performance of a function on a GPU is typically limited by one of three factors: memory bandwidth, math bandwidth, or latency [7]. We can model this relationship by considering the time spent in memory access (Tmem) versus computation (Tmath), with the overall time being approximately max(Tmem, Tmath) when these operations can be overlapped.

A key concept in this analysis is arithmetic intensity, defined as the ratio of floating-point operations performed to bytes of memory accessed (FLOPs/byte) [7]. Comparing it with the processor's ops:byte ratio (peak math throughput divided by memory bandwidth) determines whether an algorithm will be memory-bound or computation-bound on a particular GPU architecture:

- Memory-bound: Arithmetic intensity < processor's ops:byte ratio

- Computation-bound: Arithmetic intensity > processor's ops:byte ratio
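This comparison is easy to script. The sketch below assumes the A100 80 GB figures (19.5 TFLOPS FP32, 2,039 GB/s). It estimates kernel time as max(Tmem, Tmath), as described above, and applies the ops:byte test. The stencil FLOP and byte counts in the example are hypothetical.

```python
PEAK_FLOPS = 19.5e12      # assumed FP32 peak, FLOP/s (A100)
BANDWIDTH = 2039e9        # assumed memory bandwidth, bytes/s (A100 80 GB)


def ops_per_byte(peak_flops=PEAK_FLOPS, bandwidth=BANDWIDTH):
    """Machine balance: FLOPs the GPU can perform per byte it can move."""
    return peak_flops / bandwidth


def estimated_time(flops, bytes_moved, peak_flops=PEAK_FLOPS, bandwidth=BANDWIDTH):
    """Time ~ max(T_math, T_mem), assuming computation and memory access overlap."""
    return max(flops / peak_flops, bytes_moved / bandwidth)


def classify(flops, bytes_moved, peak_flops=PEAK_FLOPS, bandwidth=BANDWIDTH):
    """Compare arithmetic intensity with the machine's ops:byte ratio."""
    intensity = flops / bytes_moved
    if intensity < ops_per_byte(peak_flops, bandwidth):
        return "memory-bound"
    return "compute-bound"


# Hypothetical 3x3 (9-point) stencil over 100 million FP32 cells: 9 multiplies and
# 8 adds per cell, one 4-byte read and one 4-byte write per cell (ideal reuse).
cells = 100_000_000
flops, bytes_moved = 17 * cells, 8 * cells
print(round(ops_per_byte(), 2), classify(flops, bytes_moved), estimated_time(flops, bytes_moved))
```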

Table: Arithmetic Intensity of Common Operations in Scientific Computing

| Operation | Arithmetic Intensity (FLOPs/byte) | Typically Limited By | Relevance to Ecological Modeling |
|---|---|---|---|
| Linear Layer (large batch) | 315 | Computation | Neural network emulators of physical processes |
| Linear Layer (batch size 1) | 1 | Memory | Online learning or sequential assimilation |
| 3x3 Stencil Operation | ~2.25 | Memory | Finite-difference ocean & atmospheric models |
| ReLU Activation | 0.25 | Memory | Deep learning components in hybrid models |
| Layer Normalization | <10 | Memory | Pre-/post-processing of environmental data |

For ecological modelers, this analysis reveals why certain components of their modeling systems may not achieve peak performance on GPUs. Memory-bound operations like fine-grained stencil computations common in fluid dynamics models may benefit from techniques like tiling to improve data locality and reuse, while computation-bound operations like matrix multiplies in biogeochemical cycling models can more readily approach the GPU's theoretical peak performance.
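The benefit of tiling can be estimated by counting global-memory loads. Assuming no help from hardware caches, a naive 3x3 stencil loads 9 values per output. A tiled version instead loads each T x T tile plus a one-cell halo into shared memory once. The helper names below are illustrative.

```python
def naive_loads(tile):
    """Global loads for a tile x tile block of outputs, 9 loads per output."""
    return 9 * tile * tile


def tiled_loads(tile, halo=1):
    """Global loads when the tile plus its halo is staged in shared memory once."""
    return (tile + 2 * halo) ** 2


for t in (8, 16, 32):
    print(t, naive_loads(t), tiled_loads(t), round(naive_loads(t) / tiled_loads(t), 2))
```

The reuse factor approaches 9 as tiles grow. However, larger tiles consume more shared memory per block, which can reduce how many blocks fit on an SM.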

GPU Technologies in Scientific Research: Case Studies and Applications

Ecological and Environmental Modeling Applications

The computational characteristics of many ecological and environmental models make them particularly well-suited for GPU acceleration. These applications typically involve solving partial differential equations numerically across large spatial grids—a naturally data-parallel problem that maps efficiently to GPU architectures. The equations describing ocean evolution, for example, form a system of partial differential equations that are solved numerically by discretizing the model domain using finite difference, finite volume, or finite element schemes [9]. In these formulations, the bulk of computational work takes the form of stencil computations, where updating a field at a given grid location requires reading values from neighboring locations—a pattern that benefits tremendously from the high memory bandwidth and parallel execution capabilities of GPUs.
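A stencil update of this kind is sketched below: one explicit (forward-time, centred-space) step of two-dimensional diffusion with periodic boundaries, written in plain Python. It is an illustrative toy, not code from an operational ocean model. Each output cell reads its four neighbours from the previous field, so every cell could be handled by its own GPU thread.

```python
def diffusion_step(u, alpha):
    """One FTCS step of 2-D diffusion on a periodic grid.

    alpha = D * dt / dx**2 (equal spacing in both directions); the explicit
    scheme is stable only for alpha <= 0.25.
    """
    if alpha > 0.25:
        raise ValueError("explicit 2-D diffusion is unstable for alpha > 0.25")
    ny, nx = len(u), len(u[0])
    new = [[0.0] * nx for _ in range(ny)]
    for j in range(ny):
        for i in range(nx):
            # 5-point Laplacian; modulo indexing wraps around the domain edges.
            laplacian = (u[(j - 1) % ny][i] + u[(j + 1) % ny][i]
                         + u[j][(i - 1) % nx] + u[j][(i + 1) % nx]
                         - 4.0 * u[j][i])
            new[j][i] = u[j][i] + alpha * laplacian
    return new


field = [[0.0] * 5 for _ in range(5)]
field[2][2] = 1.0                      # a single pulse in the middle of the domain
after = diffusion_step(field, alpha=0.1)
print(after[2][2], after[1][2], sum(map(sum, after)))
```

With periodic boundaries the scheme conserves the total quantity, which makes the field sum a cheap correctness check when porting such a kernel.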

Operational ocean forecasting systems (OOFSs) represent computationally demanding applications that require significant resources to run models of useful fidelity [9]. These systems are inherently massively data-parallel as they perform identical computations across millions of grid points, making them excellent candidates for GPU acceleration. The single instruction, multiple data (SIMD) nature of these computations aligns perfectly with GPU architectural strengths, particularly when compared to traditional CPU-based implementations that struggle with the memory bandwidth requirements of these operations.

Experimental Protocol: Extreme Weather Pattern Identification

A compelling case study demonstrating GPU effectiveness in environmental science comes from a collaborative project between Lawrence Berkeley National Laboratory, Oak Ridge National Laboratory, and NVIDIA, where researchers developed a deep learning system to identify extreme weather patterns from high-resolution climate simulations [10]. The experimental methodology provides a template for how ecological researchers can leverage GPU computing for large-scale environmental analysis.

Objective: Develop a deep learning system capable of automatically identifying and classifying extreme weather patterns in high-resolution climate simulation data to improve forecasting and understanding of severe weather events.

Computational Resources: The research team utilized the Summit supercomputer at Oak Ridge National Laboratory, leveraging 27,000 NVIDIA Tesla V100 Tensor Core GPUs to achieve a peak performance of 1.13 exaops—the fastest deep learning algorithm reported at the time and the first to break the exascale barrier for deep learning applications [10].

Methodology: The team evaluated two neural network architectures for their segmentation needs: a modified Tiramisu network (a fully convolutional network built from DenseNet blocks) and a network based on the DeepLabv3+ encoder-decoder architecture. Using adaptations of these architectures, they trained their neural networks on over 63,000 high-resolution images using the cuDNN-accelerated TensorFlow deep learning framework [10].

Significance: This project demonstrated that deep learning methods could be effectively applied for pixel-level segmentation on climate data, laying the groundwork for exascale deep learning applications across scientific domains. For ecological researchers, it established a precedent for applying GPU-accelerated deep learning to large-scale environmental pattern recognition tasks that would be computationally prohibitive using traditional methods.

Table: Key Technologies for GPU-Accelerated Environmental Research

| Solution/Technology | Function | Example in Environmental Research |
|---|---|---|
| NVIDIA Tensor Cores | Specialized execution units for mixed-precision matrix operations | Accelerating deep learning models for weather pattern recognition |
| CUDA Deep Neural Network library (cuDNN) | GPU-accelerated library for deep learning primitives | Optimizing performance of neural networks for climate data analysis |
| OpenACC Directives | Compiler directives for parallelizing code for GPUs | Porting legacy Fortran-based climate models to GPU architectures |
| PSyclone | Code transformation tool for adapting Fortran code for GPU execution | Automating parallelization of finite-difference ocean models |
| High-Bandwidth Memory (HBM) | Advanced memory technology with stacked design | Handling large climate datasets that exceed conventional memory capacity |

Performance Metrics and Environmental Considerations

Quantitative Performance Analysis

Understanding GPU performance metrics is essential for ecological researchers selecting appropriate hardware for their computational workloads and optimizing their code to achieve maximum efficiency. These metrics provide quantitative means to evaluate and compare different GPU architectures for specific scientific computing tasks, enabling informed decisions about resource allocation and algorithm selection.

Table: Performance Specifications of Representative Data Center GPUs

| GPU Model | FP32 Performance (TFLOPS) | Tensor Core Performance (TFLOPS) | Memory Bandwidth (GB/s) | Memory Capacity (GB) | Power Consumption (Watts) |
|---|---|---|---|---|---|
| NVIDIA Tesla V100 | 15.7 | 125 (FP16) | 900 | 32/16 | 300 |
| NVIDIA A100 | 19.5 | 312 (FP16) | 1555 (40 GB) / 2039 (80 GB) | 40/80 | 400 |
| NVIDIA V100S | 16.4 | 130 (FP16) | 1134 | 32 | 250 |

Performance in GPU computing is commonly measured in TeraFLOPS (TFLOPS), representing trillions of floating-point operations per second [11]. However, TFLOPS alone doesn't determine real-world performance, as factors such as memory speed, architecture efficiency, and software optimization also play crucial roles [11]. For ecological modelers, the relationship between theoretical peak performance and achievable performance in practice depends heavily on how well their algorithms match the GPU's architectural strengths and whether their implementations minimize memory bottlenecks.

Memory bandwidth represents another critical performance metric, particularly for memory-bound workloads common in environmental modeling. Higher bandwidth enables faster data movement, reducing delays in processing large datasets [11]. Modern GPUs employ technologies like High-Bandwidth Memory (HBM) and GDDR6X to improve memory performance, allowing for faster computations in high-resolution climate modeling and real-time environmental monitoring applications [11].

Environmental Impact and Sustainability Considerations

The tremendous computational capability of GPUs comes with significant energy demands that ecological researchers must consider when designing large-scale modeling experiments. The explosive growth of AI and high-performance computing is expected to increase global energy consumption substantially, with data centers potentially consuming up to 8% of global electricity by 2030 [12] [13]. This environmental footprint extends beyond operational energy consumption to include embodied carbon emissions from manufacturing the hardware itself: research indicates that producing a single high-performance GPU server can generate between 1,000 and 2,500 kilograms of carbon dioxide equivalent during its production cycle [13].
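A first-order estimate of a modeling campaign's operational footprint needs only a few inputs. The sketch below assumes that each GPU draws a constant average power. It scales that draw by a facility power usage effectiveness (PUE) factor and multiplies the result by a grid carbon intensity. All numbers in the example are hypothetical placeholders, and embodied emissions from manufacturing are not included.

```python
def run_energy_kwh(n_gpus, avg_gpu_power_w, hours, pue=1.0):
    """Facility energy in kWh: GPU power x count x duration, scaled by PUE."""
    return n_gpus * avg_gpu_power_w * hours * pue / 1000.0


def run_emissions_kg(energy_kwh, grid_kg_co2e_per_kwh):
    """Operational emissions in kg CO2e for a given grid carbon intensity."""
    return energy_kwh * grid_kg_co2e_per_kwh


# Hypothetical campaign: 4 GPUs at 300 W for 24 h, PUE 1.5, 0.4 kg CO2e per kWh.
energy = run_energy_kwh(4, 300, 24, pue=1.5)
print(energy, run_emissions_kg(energy, 0.4))
```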

For the ecological modeling community, this creates a dual responsibility: leveraging GPU capabilities to understand environmental systems while minimizing the carbon footprint of that computational work. Several strategies are emerging to address these concerns:

Hardware and Algorithmic Efficiency Improvements: Constant innovation in computing hardware continues to improve the energy efficiency of AI models. Algorithms are improving as well. Researchers at MIT's FutureTech Research Project have found that efficiency gains from new model architectures that can solve complex problems faster are doubling every eight or nine months. They use the term "negaflop" for computing operations that no longer need to be performed because of such algorithmic improvements [12].

Operational Optimizations: Research from MIT's Supercomputing Center has shown that "turning down" GPUs so they consume about three-tenths the energy has minimal impacts on AI model performance while making hardware easier to cool [12]. Additionally, scheduling computing operations for times when grid electricity comes from renewable sources can significantly reduce the carbon footprint of computational research.
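Carbon-aware scheduling can be as simple as choosing the start hour that minimizes total forecast intensity over a job's duration. The sketch below assumes that an hourly carbon-intensity forecast is available (the values are hypothetical) and that the job's power draw is constant; under that assumption, minimizing summed intensity also minimizes emissions.

```python
def best_start_hour(forecast, job_hours):
    """Start index minimizing summed carbon intensity over job_hours consecutive hours."""
    if not 0 < job_hours <= len(forecast):
        raise ValueError("job_hours must be between 1 and len(forecast)")
    window = sum(forecast[:job_hours])
    best_start, best_total = 0, window
    for start in range(1, len(forecast) - job_hours + 1):
        # Slide the window one hour: add the new hour, drop the oldest.
        window += forecast[start + job_hours - 1] - forecast[start - 1]
        if window < best_total:
            best_start, best_total = start, window
    return best_start


forecast = [500, 450, 200, 180, 220, 480]   # hypothetical g CO2e/kWh, one value per hour
print(best_start_hour(forecast, 3))
```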

Sustainable Data Center Design: Next-generation data centers are implementing advanced cooling technologies, renewable energy integration, and circular economy principles to reduce their environmental impact [12] [13]. Liquid immersion cooling, phase-change materials, and strategic geographical placement to leverage natural cooling environments can dramatically reduce energy requirements for computational infrastructure.

GPU architecture has evolved from specialized graphics hardware to a general-purpose parallel computing platform that has revolutionized scientific computing, including ecological modeling research. The fundamental architectural principles of massive parallelism through thousands of efficient cores, sophisticated memory hierarchies, and structured execution models provide the computational foundation for tackling increasingly complex environmental challenges. For ecological researchers, understanding these architectural principles is no longer optional but essential for leveraging the full potential of modern computational resources to model ecosystem dynamics, process remote sensing data, and project climate impacts at unprecedented scales and resolutions.

Looking forward, several trends will shape how ecological modelers utilize GPU computing. The ongoing development of more energy-efficient GPU architectures will help balance computational performance with environmental sustainability—a critical consideration for the research community. The emergence of specialized processing elements like Tensor Cores for mixed-precision computing will further accelerate machine learning applications in environmental science, enabling more sophisticated hybrid models that combine physical simulation with data-driven approaches [7]. Additionally, programming models and tools that simplify porting traditional ecological models to GPU architectures will lower barriers to adoption, allowing domain scientists to focus on their research rather than computational implementation details.

For ecological modeling, the transformative potential of GPU computing lies in its ability to make computationally intensive approaches practical—from ensemble modeling for uncertainty quantification to high-resolution simulation of biogeochemical processes. By understanding and leveraging GPU architectural principles, ecological researchers can accelerate their scientific discovery process, asking questions and building models that were previously computationally infeasible, ultimately advancing our understanding of complex ecological systems and our capacity to inform environmental decision-making in the face of global change.

Key Workloads in Ecology That Are Inherently Parallelizable

Modern ecology has undergone a data revolution, driven by technologies such as remote sensors, camera traps, and genomic sequencing that generate massive, multivariate datasets at unprecedented rates [14]. This deluge of information presents both an opportunity and a challenge: ecological systems are inherently complex, with dynamic interactions across multiple spatial and temporal scales, yet traditional analytical approaches struggle to extract meaningful insights from these large-scale datasets within reasonable timeframes. The computational demands of ecological research have thus escalated dramatically, creating an urgent need for high-performance computing solutions that can handle these complex workloads efficiently [14].

Parallel computing, particularly through Graphics Processing Units (GPUs), offers a transformative pathway for ecological modeling by exploiting the inherent parallelizability of many core ecological algorithms [15]. Unlike traditional Central Processing Units (CPUs) optimized for sequential tasks, GPUs possess thousands of smaller cores designed for massively parallel processing, enabling simultaneous execution of thousands of lightweight threads [16]. This architectural advantage makes GPU acceleration particularly well-suited to the mathematical intensity of ecological simulations and statistical analyses, where the same operations must often be repeated across numerous spatial locations, time steps, or statistical samples [15]. By leveraging this parallel processing power, ecologists can achieve computational speedups of two orders of magnitude or more for suitable workloads, transforming previously intractable analyses into feasible research endeavors [15].

This technical guide examines key ecological workloads that are inherently parallelizable, providing detailed methodologies, performance benchmarks, and implementation frameworks to help ecological researchers harness the power of GPU parallel computing. Within the broader thesis of GPU computing benefits for ecological research, we demonstrate how these technologies enable more complex models, higher-resolution simulations, and more robust statistical inferences that better reflect the complexity of real-world ecosystems.

Fundamentals of Parallel Computing in Ecology

Architectural Advantages of GPUs for Ecological Workloads

The parallel architecture of GPUs provides significant advantages for ecological computational tasks compared to traditional CPU-based processing. While CPUs typically consist of a few cores optimized for serial processing, GPUs contain thousands of smaller, efficient cores designed for massively parallel execution [16]. This fundamental architectural difference stems from their respective origins: CPUs as general-purpose computing devices versus GPUs as specialized processors for mathematically intensive operations [16]. For ecological applications, which often involve repeating similar computations across numerous spatial grids, time steps, or statistical samples, this parallel architecture delivers computational throughput measured in teraFLOPS to petaFLOPS [16].

GPU cores are organized into larger streaming multiprocessors (SMs), with each SM consisting of numerous stream processors (32, 64, or more) that share instruction and memory caches [16]. A high-bandwidth memory system lets the SMs load and store data rapidly, keeping the stream processors saturated with threads [16]. Each stream processor contains streamlined logic for fundamental computations like floating-point math, forgoing complex control logic in favor of parallel efficiency [16]. The cumulative effect is that while an individual CPU core outperforms a single GPU core, the highly parallel architecture of GPUs allows them to far outpace serial processors on ecologically relevant workloads such as population simulations, spatial analyses, and statistical inference [15].
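To make the thread hierarchy concrete, the following minimal Python sketch sizes a one-dimensional launch that assigns one thread per work item, such as one grid cell or one particle. It assumes the CUDA indexing convention `blockIdx.x * blockDim.x + threadIdx.x` and NVIDIA's 32-thread warp. The block size of 256 and the 100,000-item workload are illustrative choices, not values from the cited studies.

```python
import math

WARP_SIZE = 32  # threads per warp on NVIDIA GPUs


def launch_config(n_items, threads_per_block=256):
    """Size a 1-D launch that assigns one thread to each work item."""
    blocks = math.ceil(n_items / threads_per_block)  # round up so every item is covered
    return {
        "blocks": blocks,
        "warps_per_block": threads_per_block // WARP_SIZE,
        # Threads in the final block whose global index >= n_items have no item.
        "idle_threads": blocks * threads_per_block - n_items,
    }


def global_index(block_idx, thread_idx, block_dim):
    """CPU mirror of blockIdx.x * blockDim.x + threadIdx.x."""
    return block_idx * block_dim + thread_idx


print(launch_config(100_000))  # {'blocks': 391, 'warps_per_block': 8, 'idle_threads': 96}
print(global_index(390, 159, 256))  # 99999, the last real work item
```

Because the block count is rounded up, kernels conventionally begin with a bounds check (`if (i < n)`). This check ensures that the idle threads in the last block do nothing.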

Parallel Programming Models for Ecological Applications

Ecologists seeking to leverage GPU acceleration can utilize several parallel programming models tailored to different levels of expertise and application requirements. The current state of the art in high-performance computing includes both mature and emerging approaches suitable for ecological research [17]:

- Accelerator-centric models (CUDA, SYCL, OpenACC, Kokkos, RAJA) make GPUs and specialized chips first-class citizens of high-performance computing, providing direct control over GPU resources for maximum performance [17].

- Traditional workhorses (OpenMP for multithreading and MPI for message passing) still dominate many scientific computing domains and have been extended with GPU offloading capabilities [17].

- Task-based frameworks (Legion, HPX, StarPU) map computations as dynamic graphs, ideal for heterogeneous systems with mixed CPU-GPU architectures [17].

- AI/ML distributed frameworks (PyTorch Distributed, Horovod, Ray) scale deep learning workloads across thousands of nodes, increasingly relevant for ecological pattern recognition and predictive modeling [17].

For ecologists new to GPU programming, directive-based approaches like OpenACC offer a gentler learning curve: developers annotate existing loops with compiler directives, and the compiler generates the parallel GPU code [17]. More experienced researchers may opt for explicit programming models like CUDA or OpenCL for finer-grained control over GPU resources [18]. The emerging trend favors performance-portable models like Kokkos and SYCL, which enable code to run efficiently across diverse hardware platforms without vendor lock-in [17].

Key Parallelizable Workloads in Ecology

Population Dynamics Modeling

Population dynamics models represent a fundamentally parallelizable workload in ecology, particularly state-space formulations that track populations over time with explicit observation and process error. These models involve simulating population states across multiple time steps and often require extensive parameter sampling for Bayesian inference [15]. The mathematical structure of these models typically follows a recursive pattern where population states at time t depend on states at time t-1 through transition equations, creating natural opportunities for parallelization across particles in Particle Markov Chain Monte Carlo (PMCMC) methods [15].

Table 1: Performance Benchmarks for GPU-Accelerated Population Dynamics Modeling

| Model Component | CPU Implementation | GPU Implementation | Speedup Factor |
|---|---|---|---|
| Particle Filtering | 45 minutes per 10^5 particles | 24 seconds per 10^5 particles | 112× |
| MCMC Sampling | 18 hours for 10^6 iterations | 32 minutes for 10^6 iterations | 34× |
| Model Likelihood | 6.2 seconds per evaluation | 0.05 seconds per evaluation | 124× |

A landmark case study demonstrating GPU acceleration for population modeling focused on Bayesian state-space models for grey seal (Halichoerus grypus) population dynamics [15]. Researchers implemented a particle Markov chain Monte Carlo algorithm on GPUs, achieving a speedup factor of over two orders of magnitude compared to state-of-the-art CPU-based fitting algorithms [15]. This dramatic acceleration transformed what was previously a computationally prohibitive analysis into a feasible endeavor, enabling more complex model structures that better represent real-world population dynamics.

The parallel implementation exploited the inherent parallelizability of particle filtering, where thousands of potential population trajectories (particles) are simulated simultaneously [15]. Each particle represents an independent realization of the population process, making the evaluation of likelihoods across particles an embarrassingly parallel workload ideally suited to GPU architecture. Similarly, the MCMC sampling process benefited from parallel evaluation of candidate parameter values, with the GPU simultaneously computing likelihoods for multiple proposed parameter states [15].
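The per-step operations described above (propagate each particle, weight it, then combine the weights) can be seen in a minimal bootstrap particle filter. This is a generic CPU sketch, not the grey seal model or code from [15]. The model itself is hypothetical: log-abundance follows a random walk with drift `growth` and Gaussian noise `sigma`, and counts are Poisson-distributed. All function names are likewise hypothetical. On a GPU, the propagate and weight steps would map to one thread per particle, and the log-mean-exp step would map to a parallel reduction.

```python
import math
import random


def poisson_logpmf(k, log_rate):
    """log P(Y = k) for Y ~ Poisson(exp(log_rate))."""
    return k * log_rate - math.exp(log_rate) - math.lgamma(k + 1)


def log_mean_exp(values):
    """Numerically stable log of the mean of exp(values)."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values) / len(values))


def bootstrap_filter_loglik(counts, n_particles, growth, sigma, n0, seed=0):
    """Estimate log p(counts | growth, sigma) with a bootstrap particle filter.

    Hypothetical model: log N_t = log N_{t-1} + growth + Normal(0, sigma),
    observed counts y_t ~ Poisson(N_t).
    """
    rng = random.Random(seed)
    log_n = [math.log(n0)] * n_particles
    loglik = 0.0
    for y in counts:
        # Propagate: each particle moves independently (one GPU thread each).
        log_n = [x + growth + rng.gauss(0.0, sigma) for x in log_n]
        # Weight: again independent per particle.
        log_w = [poisson_logpmf(y, x) for x in log_n]
        # Reduce: the only step that combines all particles.
        loglik += log_mean_exp(log_w)
        # Resample with replacement in proportion to the weights (multinomial).
        m = max(log_w)
        log_n = rng.choices(log_n, weights=[math.exp(w - m) for w in log_w], k=n_particles)
    return loglik


counts = [10, 12, 15, 14, 18]
print(bootstrap_filter_loglik(counts, n_particles=2000, growth=0.1, sigma=0.1, n0=10))
```

Within PMCMC, this estimate is recomputed for every proposed parameter set. That repetition is why the cost of a single likelihood evaluation dominates total run time.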

Spatial Capture-Recapture Analysis

Spatial capture-recapture (SCR) represents another highly parallelizable ecological workload, particularly as study designs incorporate larger detector arrays and more complex spatial meshes for integration. SCR methods estimate animal abundance and space use from detections at an array of detectors over multiple sampling occasions, requiring integration over a spatial domain representing potential animal activity centers [15]. Because the likelihood must be evaluated for every combination of individual, mesh point, and detector, its computational cost grows with the product of the number of detectors and the number of mesh points. This creates substantial computational burdens for large-scale studies [15].

Table 2: GPU Acceleration of Spatial Capture-Recapture Analysis

| Study Dimension | Small Array (20 detectors) | Large Array (100 detectors) | Speedup Factor |
|---|---|---|---|
| CPU Processing Time | 45 minutes | 68 hours | - |
| GPU Processing Time | 2.1 minutes | 4.3 hours | ≈21× (small), ≈16× (large) |
| Integration Points | 1,500 | 15,000 | - |
| Memory Bandwidth (CPU vs. GPU) | 18 GB/s (CPU) | 350 GB/s (GPU) | 19× |

The parallel structure of SCR models emerges from two primary sources: the independence of likelihood contributions across individuals and the parallelizable integration across spatial mesh points [15]. In a demonstration using common bottlenose dolphin (Tursiops truncatus) photo-identification data, GPU-accelerated SCR achieved a 20-fold speedup compared to a multi-core CPU implementation in open-source software [15]. This acceleration was particularly pronounced for analyses with large detector arrays and dense integration meshes, where the parallel architecture of GPUs could be fully exploited [15].

The case study revealed that performance gains increased with problem complexity, with speedup factors reaching two orders of magnitude when the numbers of detectors and integration mesh points were high [15]. This scaling property makes GPU acceleration particularly valuable for modern SCR studies, which increasingly leverage extensive camera trap arrays and fine-resolution spatial meshes to estimate detailed space-use patterns [15].
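The two sources of parallelism described above can be seen in a simplified likelihood. The minimal sketch below makes three assumptions: a half-normal detection function, binomial detection counts over `n_occasions` occasions, and a uniform activity-center distribution over the mesh. It conditions on the detected individuals and omits the density and non-detection terms of a full SCR likelihood. It therefore illustrates the computational structure only and is not the implementation used in [15]. All names and values are hypothetical.

```python
import math


def halfnormal_p(d2, g0, sigma):
    """Per-occasion detection probability at squared distance d2."""
    p = g0 * math.exp(-d2 / (2.0 * sigma * sigma))
    return min(max(p, 1e-12), 1.0 - 1e-12)  # keep log(p) and log(1 - p) finite


def binom_logpmf(k, n, p):
    return (math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
            + k * math.log(p) + (n - k) * math.log1p(-p))


def individual_loglik(counts, detectors, mesh, n_occasions, g0, sigma):
    """Log of the mesh-averaged likelihood of one capture history."""
    per_point = []
    for mx, my in mesh:                                 # independent across mesh points
        lp = 0.0
        for (dx, dy), k in zip(detectors, counts):      # one term per detector
            d2 = (mx - dx) ** 2 + (my - dy) ** 2
            lp += binom_logpmf(k, n_occasions, halfnormal_p(d2, g0, sigma))
        per_point.append(lp)
    m = max(per_point)                                  # log-mean-exp over the mesh
    return m + math.log(sum(math.exp(v - m) for v in per_point) / len(per_point))


def conditional_loglik(histories, detectors, mesh, n_occasions, g0, sigma):
    """Sum over detected individuals, whose contributions are independent."""
    return sum(individual_loglik(h, detectors, mesh, n_occasions, g0, sigma)
               for h in histories)


detectors = [(0.0, 0.0), (1.0, 0.0)]
mesh = [(x * 0.5, y * 0.5) for x in range(-1, 4) for y in range(-2, 3)]
histories = [[2, 0], [0, 1]]  # detections per detector across all occasions
print(conditional_loglik(histories, detectors, mesh, n_occasions=5, g0=0.3, sigma=0.5))
```

A GPU version would typically assign (individual, mesh point) pairs to threads and compute the mesh average as a reduction. The work therefore grows with mesh size and detector count together.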

Multivariate Ecological Data Visualization

The exploratory analysis of multivariate ecological data represents a parallelizable workload with significant implications for pattern detection, hypothesis generation, and scientific communication. Ecological research frequently involves assessing multiple biological, chemical, and physical variables measured at increasingly rapid rates using data loggers, wildlife camera traps, and other remote sensors [14]. Traditional visualization techniques (scatter plots, bar charts, box plots) are limited to three dimensions, creating challenges for interpreting high-dimensional ecological data [14].

Parallel coordinates plots offer a powerful alternative for visualizing multidimensional ecological data by displaying N parallel vertical axes alongside one another, with each observation represented as a connecting polyline across all axes [14]. The rendering of these polylines represents an inherently parallel workload, as the position calculations and line drawing operations for thousands of observations can be distributed across GPU cores simultaneously [14]. This parallel rendering enables real-time interaction with complex ecological datasets, allowing researchers to brush and filter observations across multiple dimensions simultaneously [14].

Diagram 1: Parallel coordinates visualization workflow. The process shows how multivariate ecological data flows through parallel processing stages, with GPU-accelerated components significantly speeding up rendering and interactive brushing operations.

Application of parallel coordinates in stream ecosystem assessment demonstrates their utility for exploring multidimensional ecological data [14]. Researchers visualized benthic macroinvertebrate indicators and associated water quality variables across the St. Lawrence drainage basin, using color to distinguish sites with good, moderate, and poor ecological conditions [14]. The interactive parallel coordinates plot enabled researchers to identify threshold relationships between specific water quality parameters and ecological status, generating hypotheses about causal mechanisms driving ecosystem degradation [14].
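The rendering and brushing steps of a parallel coordinates plot reduce to simple per-observation arithmetic. The sketch below is a hypothetical helper, not the API of any plotting library. It min-max scales each axis and returns polyline vertices. After two per-axis reductions (minimum and maximum), every observation's vertices can be computed independently, which is what makes GPU rendering effective. The site values are made up.

```python
def parallel_coordinates(rows, axis_spacing=1.0):
    """Map each observation to polyline vertices on N parallel axes.

    Each axis is min-max scaled to [0, 1]; constant axes are placed at 0.5.
    """
    n_axes = len(rows[0])
    # Per-axis reductions (min and max) are the only steps that see all rows.
    lo = [min(r[j] for r in rows) for j in range(n_axes)]
    hi = [max(r[j] for r in rows) for j in range(n_axes)]

    def scale(value, j):
        span = hi[j] - lo[j]
        return 0.5 if span == 0 else (value - lo[j]) / span

    # Vertex positions are independent per observation: one GPU thread per row.
    return [[(j * axis_spacing, scale(r[j], j)) for j in range(n_axes)] for r in rows]


def brush(rows, axis, low, high):
    """Indices of observations whose value on `axis` lies within [low, high]."""
    return [i for i, r in enumerate(rows) if low <= r[axis] <= high]


sites = [[7.1, 0.8, 12.0], [6.5, 2.4, 30.0], [7.8, 0.3, 8.0]]  # e.g. pH, nitrate, taxa richness
print(parallel_coordinates(sites)[0])
print(brush(sites, axis=1, low=0.0, high=1.0))  # [0, 2]
```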

Environmental Sensor Data Processing

The proliferation of environmental sensor networks has created massive data streams from sources including aquatic sensors, weather stations, and aerial drones. Processing these data streams involves fundamentally parallelizable operations such as filtering, aggregation, anomaly detection, and gap filling [14]. The parallel nature of these workloads stems from the temporal and spatial independence of many sensor readings, which can be processed simultaneously across GPU cores [14].

GPU acceleration enables real-time processing of these environmental data streams, facilitating immediate detection of ecological anomalies such as pollution events, thermal extremes, or unusual biological activity [14]. The high memory bandwidth of modern GPUs (350 GB/s in the architectures described in [16], and more than 3 TB/s on recent data-center GPUs such as the H100) provides sufficient throughput for the large volumes of data generated by continuous monitoring systems. This capability transforms the temporal scale at which ecological inferences can be made, enabling near-real-time assessment of ecosystem status rather than retrospective analyses conducted months or years after data collection [14].
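A rolling z-score detector is one simple way to flag readings like those described above. In the minimal sketch below, each reading is compared with the mean and standard deviation of the preceding `window` readings. This is an illustrative assumption rather than a method from [14], and the window length, threshold, and temperature values are hypothetical. Because each time index is scored independently, the loop body maps naturally onto one GPU thread per reading.

```python
import statistics


def flag_anomalies(series, window=24, z_thresh=3.0):
    """Flag readings that deviate strongly from the preceding `window` readings."""
    flags = []
    for t, value in enumerate(series):
        past = series[max(0, t - window):t]
        if len(past) < 2:
            flags.append(False)  # not enough history to estimate spread
            continue
        mu = statistics.fmean(past)
        sd = statistics.stdev(past)
        # A flat history (sd == 0) is never flagged, avoiding division by zero.
        flags.append(sd > 0 and abs(value - mu) / sd > z_thresh)
    return flags


water_temp = [10.0, 10.2, 9.9, 10.1, 10.0, 25.0, 10.1]  # hypothetical readings (°C)
print(flag_anomalies(water_temp, window=4))  # [False, False, False, False, False, True, False]
```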

Case studies applying parallel visualization to ecological sensor data demonstrate how GPU acceleration enables researchers to interactively explore complex multivariate relationships across temporal and spatial scales [14]. The integration of geographical coordinates with parallel coordinates (Geo-coordinated Parallel Coordinates) creates particularly powerful exploratory tools that link multivariate patterns with spatial context [14]. These approaches help ecologists identify clusters of similar sampling sites, detect anomalous observations warranting quality control, and generate hypotheses about relationships between environmental drivers and ecological responses [14].

Species Distribution and Habitat Modeling

Species distribution models represent another class of parallelizable ecological workloads, particularly as these models incorporate increasingly complex environmental covariates and sophisticated machine learning algorithms. The fundamental parallelizable operation in species distribution modeling is the simultaneous calculation of habitat suitability across numerous spatial grid cells [15]. Each grid cell represents an independent evaluation of environmental conditions against species-habitat relationships, creating natural opportunities for massive parallelization across thousands of GPU cores [15].
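The per-cell evaluation can be sketched with a logistic suitability model. This form is chosen here only as the simplest example of a rule that scores each grid cell from its own covariates. The coefficients and covariate values are made up, and nothing in the cited work prescribes this form.

```python
import math


def logistic(eta):
    """Numerically stable logistic function (avoids overflow in math.exp)."""
    if eta >= 0:
        return 1.0 / (1.0 + math.exp(-eta))
    z = math.exp(eta)
    return z / (1.0 + z)


def suitability(cells, coefs, intercept):
    """Score every grid cell independently: one GPU thread per cell.

    cells: one covariate vector per grid cell (e.g. temperature, elevation).
    Returns a habitat suitability in [0, 1] for each cell.
    """
    return [logistic(intercept + sum(b * x for b, x in zip(coefs, covariates)))
            for covariates in cells]


grid = [[0.2, 1.5], [-0.4, 0.3], [1.1, -0.8]]  # hypothetical standardized covariates
print(suitability(grid, coefs=[0.9, -0.5], intercept=-0.2))
```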

The integration of GPU-accelerated machine learning frameworks has further enhanced the potential for parallelizing species distribution modeling [19]. Deep learning algorithms for processing remote sensing imagery (e.g., convolutional neural networks) benefit dramatically from GPU acceleration, reducing training times from weeks to hours [19]. This acceleration enables ecologists to experiment with more complex model architectures and incorporate higher-resolution environmental data, potentially improving the accuracy and ecological realism of distribution predictions [19].

Table 3: Software Tools for Parallel Ecological Computing

| Tool Category | Specific Technologies | Ecological Application |
|---|---|---|
| GPU Programming Frameworks | CUDA, OpenCL, OpenACC | General-purpose GPU programming for custom ecological models |
| Machine Learning Libraries | TensorFlow, PyTorch | Species identification from camera trap images, distribution modeling |
| Data Visualization Libraries | D3.js, Yellowbrick | Interactive parallel coordinates for multivariate ecological data |
| Distributed Data Processing | Apache Spark, Hadoop | Distributed processing of large ecological sensor datasets |
| Statistical Computing | GPU-accelerated R libraries | Bayesian inference for population models, spatial statistics |

Experimental Protocols and Implementation

Protocol for GPU-Accelerated Population Modeling

Implementing GPU acceleration for population dynamics modeling requires careful attention to algorithm design, memory management, and parallelization strategies. The following protocol outlines the key steps for developing efficient GPU-accelerated population models based on successful implementations in ecological research [15]:

Algorithm Selection and Reformulation: Identify population model components with inherent parallel structure, particularly particle filters for state-space models where thousands of particles can be simulated simultaneously. Reformulate sequential algorithms to expose fine-grained parallelism, focusing on operations applied independently across particles, spatial locations, or parameter samples [15].

GPU Memory Management: Design efficient memory access patterns to maximize memory bandwidth utilization. Allocate population state matrices in GPU global memory with coalesced access patterns, use shared memory for frequently accessed parameters, and minimize data transfer between CPU and GPU by keeping computation on the GPU as long as possible [15].

Parallelization Strategy: Implement a hierarchical parallelization approach, with thread blocks handling independent model realizations (particles) and individual threads processing demographic cohorts or other state components within each realization. Because each time step depends on the previous one, time steps are normally advanced sequentially. At each step, parallel reduction patterns combine likelihood contributions across particles [15].

Optimization and Benchmarking: Profile GPU kernel performance to identify memory bottlenecks or thread divergence issues. Optimize by adjusting thread block sizes, utilizing tensor cores for mixed-precision arithmetic where appropriate, and implementing kernel fusion to reduce memory transfers. Compare performance against optimized CPU implementations to quantify speedup factors [15].
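The hierarchical parallelization strategy in this protocol can be illustrated with an age-structured (Leslie matrix) projection applied to many particles. This minimal sketch is hypothetical and is not code from [15]. The outer loop stands in for thread blocks (one particle each) and the inner loop for threads (one age class each). The three-class fecundity and survival values are made up, and process noise is omitted for brevity.

```python
def leslie_matrix(fecundity, survival):
    """Age-structured projection matrix.

    Row 0 holds age-specific fecundities; the sub-diagonal holds the
    probability of surviving from age class i to class i + 1.
    """
    k = len(fecundity)
    if len(survival) != k - 1:
        raise ValueError("need one survival rate per age class except the last")
    a = [[0.0] * k for _ in range(k)]
    a[0] = [float(f) for f in fecundity]
    for i, s in enumerate(survival):
        a[i + 1][i] = float(s)
    return a


def project_particles(particles, a):
    """Advance each particle's age-class vector by one time step.

    Outer comprehension ~ thread blocks (one particle each); inner ~ threads
    (one output age class each, i.e. one row of the matrix-vector product).
    """
    return [[sum(a_ij * n_j for a_ij, n_j in zip(row, n)) for row in a]
            for n in particles]


A = leslie_matrix(fecundity=[0.0, 1.5, 1.0], survival=[0.5, 0.8])
particles = [[10.0, 4.0, 2.0], [3.0, 3.0, 3.0]]
for _ in range(3):  # sequential in time, parallel within each step
    particles = project_particles(particles, A)
print(particles)
```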

Protocol for Spatial Capture-Recapture Acceleration

GPU acceleration of spatial capture-recapture models requires specialized approaches to handle the spatial integration and detection probability calculations. The following protocol derives from published case studies demonstrating significant speedups for SCR analyses [15]:

Data Structure Design: Organize detection history data in GPU global memory using structure-of-arrays layout rather than array-of-structures to enable coalesced memory access. Precompute and store distance matrices between integration mesh points and detector locations in shared memory or constant memory for rapid access during likelihood calculations [15].

Parallel Integration Scheme: Implement spatial integration using a parallel reduction pattern where each thread block processes a subset of integration mesh points, with individual threads handling points for specific individuals. Employ atomic operations or parallel reduction algorithms to sum likelihood contributions across mesh points while maintaining numerical stability [15].

Likelihood Evaluation: Design GPU kernels that evaluate detection probabilities simultaneously across all detectors, individuals, and sampling occasions. Utilize the independence of individuals to distribute workload evenly across GPU cores, with warps (groups of 32 threads) processing individuals with similar computational requirements to minimize thread divergence [15].

Memory Access Optimization: Leverage texture memory for spatial covariate rasters to benefit from caching optimized for 2D spatial locality. For models with Markov chain Monte Carlo sampling, run independent chains concurrently (for example, as separate thread blocks or CUDA streams). The hardware scheduler then distributes them across streaming multiprocessors, keeping the GPU fully utilized [15].
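The parallel integration scheme in this protocol can be sketched as a pairwise (tree) reduction carried out in log space. Working with log-likelihoods and combining them with log-sum-exp avoids underflow when individual terms are tiny. A fixed tree order also gives reproducible results, whereas floating-point atomic additions can accumulate in a different order on each run. This is a sequential Python illustration of the reduction pattern under those assumptions, not GPU code.

```python
import math


def _logaddexp(a, b):
    """log(exp(a) + exp(b)) without overflow or underflow."""
    m = max(a, b)
    if m == -math.inf:  # both terms have zero probability
        return -math.inf
    return m + math.log1p(math.exp(min(a, b) - m))


def tree_logsumexp(log_terms):
    """Pairwise (tree) reduction of log-likelihood terms.

    Each pass halves the list. On a GPU, one pass corresponds to one
    synchronized step of a block-level reduction, so n terms need about
    log2(n) steps instead of n sequential additions.
    """
    vals = list(log_terms)
    if not vals:
        return -math.inf
    while len(vals) > 1:
        if len(vals) % 2:
            vals.append(-math.inf)  # pad with a zero-probability term
        vals = [_logaddexp(vals[i], vals[i + 1]) for i in range(0, len(vals), 2)]
    return vals[0]


terms = [math.log(p) for p in (0.1, 0.2, 0.3, 0.15, 0.25)]
print(math.exp(tree_logsumexp(terms)))  # close to 1.0
```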

Diagram 2: Spatial capture-recapture GPU workflow. The diagram illustrates the division of labor between CPU and GPU components in accelerated SCR analysis, with computationally intensive kernels offloaded to the GPU for parallel execution.
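The data-structure design step of the SCR protocol can be illustrated with two hypothetical helpers. The first converts per-detector records (array-of-structures) into one contiguous array per field (structure-of-arrays). The second precomputes a flattened mesh-by-detector squared-distance matrix. Python's `array` module serves here only as a CPU stand-in for device buffers.

```python
from array import array


def to_soa(records, fields):
    """Array-of-structures (list of dicts) -> structure-of-arrays (one float array per field)."""
    return {f: array("d", (r[f] for r in records)) for f in fields}


def sq_distance_matrix(mesh_x, mesh_y, det_x, det_y):
    """Row-major mesh-by-detector squared distances, flattened to one array.

    Entry (m, j) lives at index m * J + j, so threads that handle consecutive
    detectors j for the same mesh point m read adjacent addresses.
    """
    n_det = len(det_x)
    out = array("d", [0.0]) * (len(mesh_x) * n_det)
    for m in range(len(mesh_x)):
        for j in range(n_det):
            out[m * n_det + j] = (mesh_x[m] - det_x[j]) ** 2 + (mesh_y[m] - det_y[j]) ** 2
    return out


detectors = [{"x": 0.0, "y": 0.0}, {"x": 2.0, "y": 0.0}]  # AoS, e.g. as read from a CSV
det = to_soa(detectors, ["x", "y"])                       # SoA layout for the device
d2 = sq_distance_matrix(array("d", [0.0, 1.0]), array("d", [0.0, 0.0]), det["x"], det["y"])
print(list(d2))  # [0.0, 4.0, 1.0, 1.0]
```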

Performance Analysis and Computational Efficiency

The computational benefits of GPU acceleration for ecological workloads extend beyond simple speedup factors to include broader impacts on research efficacy, model complexity, and energy efficiency. Quantitative assessments across multiple ecological case studies reveal consistent patterns of performance improvement [15]:

Population dynamics modeling achieved speedup factors exceeding two orders of magnitude for particle filtering operations, reducing processing time from 45 minutes per 100,000 particles on CPUs to just 24 seconds on GPUs [15]. This dramatic acceleration enabled more robust uncertainty quantification through increased particle counts, as well as more complex model structures that better represent ecological mechanisms. Similarly, MCMC sampling for Bayesian parameter estimation demonstrated 34-fold speedups, shrinking an 18-hour overnight run to about half an hour [15].
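Speedup factors such as these are ratios of wall-clock times, and they are only meaningful when both sides are timed carefully. A minimal timing helper is sketched below. The warm-up and repeat counts are arbitrary defaults. The GPU caveat in the docstring reflects the fact that CUDA kernel launches return control to the host before the kernel finishes.

```python
import statistics
import time


def benchmark(fn, repeats=7, warmup=2):
    """Median wall-clock time of fn() in seconds, measured after warm-up calls.

    Warm-up runs absorb one-off costs such as compilation, memory allocation,
    and cache population. For asynchronous GPU work, fn must wait for the
    device to finish before returning; otherwise only the launch is timed.
    """
    for _ in range(warmup):
        fn()
    times = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        times.append(time.perf_counter() - start)
    return statistics.median(times)


def speedup(baseline_seconds, accelerated_seconds):
    return baseline_seconds / accelerated_seconds


# Table 1's particle-filtering row: 45 minutes on CPU vs. 24 seconds on GPU.
print(speedup(45 * 60, 24))  # 112.5
```

Using the median rather than the mean keeps a single slow run, for example one interrupted by other processes, from distorting the comparison.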

Spatial capture-recapture analyses showed speedup factors of roughly 16× to 21× across the array sizes benchmarked in Table 2, with larger gains reported for analyses with many detectors and dense spatial meshes [15]. This scaling property is particularly valuable as ecological monitoring programs increasingly deploy extensive sensor networks generating massive datasets. Parallelizing spatial integration across thousands of GPU cores alleviated what was previously a fundamental constraint on the spatial resolution and extent of SCR analyses [15].

Beyond raw speed improvements, GPU acceleration delivered significant gains in computational efficiency measured by energy consumption per calculation. The parallel architecture of GPUs provides substantially better performance per watt for suitable workloads compared to CPU-based systems [15]. This energy efficiency aligns with growing emphasis on sustainable computing practices within scientific research, particularly for long-running ecological simulations and extensive model comparison exercises.
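Performance per watt ultimately comes down to energy to solution, which is average power multiplied by runtime. A device that draws more power can still use far less energy if it finishes much sooner. In the sketch below, the runtimes come from Table 1's particle-filtering row. The power draws are hypothetical placeholders rather than measurements, and host and idle power are ignored.

```python
def energy_kwh(avg_power_watts, runtime_seconds):
    """Energy to solution: average power times runtime (1 kWh = 3.6e6 J)."""
    return avg_power_watts * runtime_seconds / 3.6e6


# Runtimes from Table 1; the 200 W and 350 W draws are assumed for illustration.
cpu = energy_kwh(avg_power_watts=200, runtime_seconds=45 * 60)
gpu = energy_kwh(avg_power_watts=350, runtime_seconds=24)
print(f"CPU {cpu:.4f} kWh, GPU {gpu:.4f} kWh, ratio {cpu / gpu:.0f}x")
```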

The accessibility of GPU computing has also improved dramatically with the emergence of cloud-based GPU services, which offer flexible access to high-performance computing resources without substantial upfront investment [16]. Cloud GPU providers deliver instant access to cutting-edge hardware with pay-per-use pricing models starting below $0.50 per hour, democratizing access to computational resources previously available only to well-funded institutions [16]. This development particularly benefits ecological researchers with fluctuating computational needs, allowing them to scale resources according to project requirements rather than maintaining expensive on-premises infrastructure.

Future Directions and Emerging Opportunities

The integration of GPU computing into ecological research represents an ongoing transformation with several promising directions for future development. As GPU architectures continue evolving, with innovations such as tensor cores for AI workloads and increasing memory bandwidth, new opportunities emerge for addressing previously intractable ecological questions [16].

The convergence of GPU acceleration with artificial intelligence represents a particularly promising frontier for ecological research. Machine learning approaches for species identification from camera trap images, acoustic monitoring, and remote sensing imagery can benefit dramatically from GPU acceleration [19]. Similarly, AI-powered anomaly detection in ecological sensor networks enables real-time identification of unusual events such as poaching activity, disease outbreaks, or pollution incidents [19]. The training of these AI models, which often requires extensive computational resources, becomes practically feasible through GPU acceleration [19].

Emerging programming models that enhance performance portability across diverse hardware architectures will further accelerate the adoption of GPU computing in ecology [17]. Frameworks such as Kokkos and SYCL enable researchers to write code once and deploy efficiently across different GPU vendors, reducing the implementation overhead and protecting against hardware obsolescence [17]. These developments coincide with growing emphasis on reproducible research in ecology, where computational efficiency enables more extensive sensitivity analyses and uncertainty quantification [15].

The future evolution of ecological research will likely see deeper integration of GPU-accelerated simulations with immersive visualization environments, creating digital twins of ecological systems that enable researchers to interact with complex models in real-time [14]. These advancements will fundamentally transform how ecologists explore hypotheses, test management scenarios, and communicate scientific findings, ultimately enhancing our understanding and stewardship of complex ecological systems.

The field of ecological modeling is confronting a paradigm shift, driven by the exponentially growing complexity of simulating natural systems. From high-resolution climate projections to population genomics, the computational demands of these models have outstripped the capabilities of traditional general-purpose computing hardware. This has catalyzed a fundamental evolution in computing architecture, moving from the versatile Central Processing Unit (CPU) to the massively parallel Graphics Processing Unit (GPU). This transition is not merely about incremental speed improvements; it is a transformation that enables researchers to tackle previously intractable problems, such as continent-scale ecosystem simulations and real-time environmental forecasting. This whitepaper examines the technical underpinnings of this hardware evolution, its profound implications for ecological modeling, and the practical pathway for researchers to leverage specialized High-Performance Computing (HPC) GPUs, thereby unlocking new frontiers in scientific discovery and environmental stewardship.

Architectural Fundamentals: CPU vs. GPU Design Philosophies

The core of the hardware evolution lies in the fundamental architectural differences between CPUs and GPUs, which are optimized for distinctly different types of computational workloads.

The Central Processing Unit (CPU): The General-Purpose Brain

The CPU functions as the central brain of a computer system, designed for serial instruction processing and managing a wide range of tasks. Its strength lies in executing a diverse set of operations quickly and sequentially, making it ideal for running operating systems, handling logic-based decision-making, and managing I/O operations. Modern CPUs typically contain a limited number of powerful, complex cores (ranging from a few to dozens) that operate at high clock speeds. Each core is capable of handling individual tasks or threads independently, a design that excels in situations where low-latency performance for single, complex tasks is critical. The CPU's architecture is characterized by a significant amount of cache memory to minimize the time the processor spends waiting for data from the main RAM, optimizing it for tasks where the sequence of operations and conditional branching are paramount [20] [21].

The Graphics Processing Unit (GPU): The Parallel Powerhouse

In contrast, the GPU is a specialized processor architected for parallel instruction processing. GPUs were originally designed for rendering computer graphics, which requires performing millions of identical, independent calculations to determine the color and position of each pixel on a screen. They have since evolved into general-purpose parallel engines. A GPU comprises hundreds to thousands of smaller, simpler cores. While individually less powerful than a CPU core, these cores work concurrently on different parts of a large problem, performing the same operation on many data elements at once. This design is closely related to Single Instruction, Multiple Data (SIMD) execution. NVIDIA describes its variant as Single Instruction, Multiple Threads (SIMT), in which each warp of 32 threads executes one common instruction at a time. Consequently, for tasks that can be broken down into smaller, parallelizable components, a GPU delivers far higher computational throughput than a CPU [20] [22].

A conceptual analogy is that of a head chef and a team of kitchen assistants. The head chef (CPU) is excellent at managing the entire kitchen, making complex decisions, and performing specialized tasks sequentially. However, for a repetitive, parallelizable task like flipping hundreds of burgers, a team of assistants (GPU), each flipping a few burgers simultaneously, will complete the job orders of magnitude faster [20].

Core Architectural Differences

The table below summarizes the key architectural and functional differences between CPUs and GPUs.

Table 1: Fundamental Architectural Differences Between CPUs and GPUs

| Feature | CPU (Central Processing Unit) | GPU (Graphics Processing Unit) |
|---|---|---|
| Core Philosophy | General-purpose serial processing [21] | Specialized parallel processing [21] |
| Core Count | Fewer, more powerful, complex cores [20] | Hundreds to thousands of smaller, efficient cores [20] [22] |
| Primary Function | Handles diverse tasks, system management, sequential computation [20] [22] | Accelerates parallelizable mathematical computations [20] [22] |
| Ideal Workload | Task-level parallelism; complex, sequential operations [21] | Data-level parallelism; simple, repetitive operations on large datasets [21] |
| Cache Memory | Large cache to minimize latency [20] | Smaller cache focused on throughput, not latency [20] |
| Throughput vs. Latency | Optimized for low latency (fast completion of a single task) [21] | Optimized for high throughput (completing many tasks in a given time) [21] |

The following diagram illustrates the fundamental architectural difference in how CPUs and GPUs allocate their transistors and cores to different functions, leading to their distinct strengths.

Diagram 1: CPU vs. GPU Core Architecture

The Rise of GPUs in High-Performance Computing (HPC)

The trajectory of modern computational science, particularly in fields like ecological modeling, has increasingly relied on HPC to solve complex problems. The inherent parallelism in scientific simulations—where the same mathematical operations are applied across a spatial grid (e.g., in climate models) or to a large population of individuals (e.g., in agent-based models)—makes them exceptionally well-suited for GPU acceleration.
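The grid case can be made concrete with an explicit diffusion step, a common building block for spreading population density, seeds, or nutrients across a raster. The sketch below is a minimal illustration, not a model from the cited sources. It assumes that grid spacing and time step are folded into a single dimensionless `rate` and that the grid has zero-flux edges. Because every new value is computed only from the previous grid, all cells could be updated simultaneously, one GPU thread per cell.

```python
def diffuse(grid, rate):
    """One explicit finite-difference diffusion step on a 2-D grid.

    Zero-flux edges: a missing neighbor is treated as equal to the cell
    itself. The explicit scheme is stable for rate <= 0.25.
    """
    rows, cols = len(grid), len(grid[0])
    new = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):          # on a GPU: one thread per (i, j)
        for j in range(cols):
            c = grid[i][j]
            up = grid[i - 1][j] if i > 0 else c
            down = grid[i + 1][j] if i < rows - 1 else c
            left = grid[i][j - 1] if j > 0 else c
            right = grid[i][j + 1] if j < cols - 1 else c
            new[i][j] = c + rate * (up + down + left + right - 4.0 * c)
    return new


density = [[0.0, 0.0, 0.0], [0.0, 9.0, 0.0], [0.0, 0.0, 0.0]]  # hypothetical point release
step = diffuse(density, rate=0.1)
print(sum(map(sum, step)))  # total is conserved (9.0, up to rounding)
```

Writing into a separate `new` grid, rather than updating in place, is what keeps the cell updates independent of one another. An in-place update would make results depend on the order in which cells are visited.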

The HPC and AI Convergence

The exponential growth of Artificial Intelligence (AI) and machine learning has further cemented the role of GPUs in HPC. Training deep learning models involves immense amounts of matrix multiplication and other linear algebra operations, which are perfectly aligned with the parallel architecture of GPUs. This synergy has driven rapid hardware innovation. NVIDIA's H100 Tensor Core GPU, for instance, became a cornerstone of modern AI and HPC infrastructure, featuring 80 GB of high-bandwidth memory (HBM3) and dedicated Tensor Cores for accelerated matrix calculations [23]. The subsequent Blackwell architecture promises another step-change. NVIDIA's early figures report up to 30x higher real-time inference throughput for large language models on its Blackwell-based GB200 NVL72 system than on an equivalent number of H100 GPUs [23]. These advancements directly benefit scientific computing, where similar mathematical operations are foundational.

Enabling Exascale and Beyond

The evolution of GPU technology is a key enabler of exascale computing: systems capable of performing a quintillion (10^18) floating-point operations per second [24]. Achieving this level of performance is critical for executing higher-fidelity, global-scale ecological simulations that were previously infeasible. Next-generation GPU servers are designed to maximize throughput, often featuring 8 to 10 GPUs per node connected via ultra-fast interconnects like NVLink, which provides over 900 GB/s of peer-to-peer bandwidth [23]. To sustain performance, these systems require advanced cooling solutions and can draw over 5 kW of power per server, highlighting the intense energy demands of cutting-edge HPC [23].

Quantitative Performance Analysis: CPUs vs. GPUs in Scientific Workloads

The theoretical advantages of GPU architecture translate into dramatic real-world performance gains for parallelizable scientific workloads. The differences can be quantified across several key metrics.

Computational Throughput

The most visible performance gap is in floating-point operations per second (FLOPS), the primary measure of scientific computation, and GPUs are designed to maximize it. For example, a single NVIDIA H100 GPU delivers on the order of a petaFLOPS (10^15 FLOPS) for AI workloads, and an 8-GPU server node reaches around 5 petaFLOPS of AI throughput [23]. High-end server CPUs, by contrast, are rated in teraFLOPS (10^12 FLOPS). This comparison needs care: the AI figures refer to low-precision (e.g., FP8 or FP16) Tensor Core arithmetic, whereas many ecological and physical simulations run in double precision (FP64), where the per-device GPU advantage is much smaller, typically about one order of magnitude rather than several.
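Peak FLOPS figures come from a simple product: parallel execution units × clock rate × floating-point operations each unit completes per cycle. The sketch below applies it to a hypothetical server CPU; the core count, clock, and per-cycle width are illustrative assumptions, not the specifications of any particular product.

```python
def peak_flops(units: int, clock_hz: float, flops_per_cycle_per_unit: float) -> float:
    """Theoretical peak = parallel units x clock rate x FLOPs each unit retires per cycle."""
    return units * clock_hz * flops_per_cycle_per_unit

# Hypothetical server CPU (illustrative values, not a specific product):
# 96 cores at 2.4 GHz, each retiring 16 double-precision FLOPs per cycle
# (two 256-bit FMA units x 4 doubles per vector x 2 FLOPs per fused multiply-add).
cpu_fp64 = peak_flops(units=96, clock_hz=2.4e9, flops_per_cycle_per_unit=16)
print(f"CPU FP64 peak: {cpu_fp64 / 1e12:.2f} TFLOPS")  # about 3.69 TFLOPS
```

The same formula applies to a GPU, where "units" are thousands of simpler cores; real applications reach only a fraction of any theoretical peak.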

Memory Bandwidth

Feeding thousands of cores with data requires a massive memory subsystem. GPUs address this with High-Bandwidth Memory (HBM), stacked DRAM placed on the processor package. For instance, NVIDIA's Grace Blackwell superchip (GB200), which pairs a Grace CPU with Blackwell GPUs, provides up to 8 TB/s of memory bandwidth per GPU [23]. NVIDIA reports that this makes data-intensive database queries up to 18x faster than on traditional x86 CPUs and 6x faster than on the previous-generation H100 [23]. High bandwidth is critical for ecological models that must repeatedly stream large datasets representing terrain, climate variables, or species distributions.
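Why bandwidth matters can be made concrete with the roofline bound: a kernel's attainable throughput is the smaller of the compute peak and memory bandwidth × arithmetic intensity (FLOPs performed per byte moved). The sketch below assumes a hypothetical grid update doing about 2 FLOPs per 4-byte cell that is read once and written once; both peak values are illustrative assumptions.

```python
def attainable_flops(peak_flops: float, bandwidth_bytes_per_s: float,
                     intensity_flops_per_byte: float) -> float:
    """Roofline bound: a kernel cannot exceed the compute peak,
    nor memory bandwidth x arithmetic intensity."""
    return min(peak_flops, bandwidth_bytes_per_s * intensity_flops_per_byte)

# Hypothetical grid update: ~2 FLOPs per cell, 8 bytes of traffic per cell
# (one float32 read + one float32 write) -> 0.25 FLOP/byte.
intensity = 2 / 8

# (label, assumed compute peak in FLOPS, memory bandwidth in bytes/s)
devices = [("CPU socket, ~500 GB/s", 3.7e12, 500e9),
           ("GPU with HBM, 8 TB/s", 5.0e13, 8e12)]
for label, peak, bw in devices:
    bound = attainable_flops(peak, bw, intensity)
    print(f"{label}: at most {bound / 1e12:.3f} TFLOPS")
```

For such a low-intensity kernel both devices sit far below their compute peaks, and the GPU's advantage (16x here) equals the bandwidth ratio rather than the FLOPS ratio.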

Table 2: Representative Performance Metrics for Modern HPC Hardware (2025)

| Hardware Component | Key Performance Metric | Representative Value | Significance for Ecological Modeling |
|---|---|---|---|
| Server CPU (e.g., AMD EPYC 9005) | Core Count / Memory Bandwidth | Up to 192 cores / ~500 GB/s per socket [23] | Excellent for managing simulation workflow, I/O, and serial portions of code. |
| HPC GPU (e.g., NVIDIA H100) | AI Throughput / Memory (HBM3) | ~5 petaFLOPS (8-GPU node, low precision) / 80 GB per GPU [23] | Massive parallel computation for model physics, matrix solvers, and machine learning. |
| Next-Gen GPU (e.g., NVIDIA Blackwell B200) | Inference Throughput / Memory Bandwidth | Up to 30x H100 (LLM inference, vendor figure) / 8 TB/s per GPU [23] | Enables real-time, high-resolution forecasting and complex multi-model ensembles. |
| HPC Interconnect (e.g., NVLink) | GPU-to-GPU Bandwidth | >900 GB/s [23] | Crucial for scaling single simulations across multiple GPUs with minimal communication delay. |
| PCIe Gen5 Interconnect | CPU-to-Device Bandwidth | ~64 GB/s per direction (x16 slot) [23] | Governs how fast data moves between host memory and the GPUs; roughly an order of magnitude slower than NVLink, so frequent host-device transfers can become the bottleneck. |
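A quick calculation shows what these interconnect figures mean for a simulation grid. The sketch assumes one float32 value per cell on the largest grid used in the benchmarking protocol below (8192x8192) and treats bandwidth as a lower bound on copy time, ignoring latency and protocol overhead.

```python
def transfer_seconds(n_bytes: int, bandwidth_bytes_per_s: float) -> float:
    """Lower bound on copy time: ignores latency and protocol overhead."""
    return n_bytes / bandwidth_bytes_per_s

side = 8192                      # grid edge length
grid_bytes = side * side * 4     # one float32 value per cell

# Bandwidths taken from Table 2 (PCIe value is per direction).
for label, bw in [("PCIe Gen5 x16, ~64 GB/s", 64e9), ("NVLink, ~900 GB/s", 900e9)]:
    ms = transfer_seconds(grid_bytes, bw) * 1e3
    print(f"{label}: {grid_bytes / 2**20:.0f} MiB in about {ms:.2f} ms")
```

If a model copied such a grid over PCIe every timestep, each copy would add roughly 4 ms per direction, which is why GPU ports usually keep state resident in device memory and transfer only inputs and outputs.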

Environmental Impact: The Dual Edges of High-Performance Computing

The massive computational power of HPC GPUs carries a significant and complex environmental footprint, a critical consideration for ecological research aimed at sustainability.

The Scale of Energy Consumption

The energy demands of AI and HPC are substantial and growing. Data centers, which house the computing infrastructure for training and deploying AI models, are projected to consume up to 8% of global electricity by 2030, a dramatic increase from current levels [13]. This growth is largely driven by GPU-based systems. A single high-performance GPU can draw 300 to 500 watts, and large-scale training clusters draw megawatts of power continuously [13]. An April 2025 report from the International Energy Agency projects that global electricity demand from data centers will more than double by 2030, reaching approximately 945 terawatt-hours, slightly more than Japan's current annual electricity consumption [12].

Operational and Embodied Carbon

The environmental impact extends beyond direct electricity use. Discussions often focus on "operational carbon" (emissions from running the hardware) but can overlook "embodied carbon," the emissions generated during the manufacture of the data center and its hardware [12]. Producing a single high-performance GPU server can generate between 1,000 and 2,500 kilograms of CO2 equivalent (CO2e) before it is even switched on [13]. Furthermore, operational carbon intensity varies with the local energy grid: a GPU cluster powered by renewable energy has a much lower carbon footprint than one reliant on fossil fuels.
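Both kinds of emissions can be estimated for a single model run. Operational emissions are energy (average power × time) multiplied by the grid's carbon intensity; embodied emissions can be apportioned by amortizing the manufacturing footprint over the hardware's service life. The average power, grid intensities, embodied total, and five-year lifetime below are all hypothetical inputs chosen for illustration, and linear amortization is one simple allocation choice among several.

```python
def operational_kgco2e(avg_power_w: float, hours: float, grid_kgco2e_per_kwh: float) -> float:
    """Operational emissions = energy used (kWh) x carbon intensity of the supplying grid."""
    energy_kwh = avg_power_w * hours / 1000.0
    return energy_kwh * grid_kgco2e_per_kwh

def embodied_share_kgco2e(embodied_kgco2e: float, lifetime_hours: float, hours: float) -> float:
    """Linear amortization of manufacturing emissions over the hardware's service life."""
    return embodied_kgco2e * hours / lifetime_hours

run_hours = 24  # hypothetical 24-hour simulation at an average draw of 400 W
for grid, intensity in [("fossil-heavy grid (assumed 0.7 kg/kWh)", 0.7),
                        ("low-carbon grid (assumed 0.05 kg/kWh)", 0.05)]:
    print(f"{grid}: {operational_kgco2e(400, run_hours, intensity):.2f} kg CO2e")

# Assumed 2,000 kg CO2e embodied (within the range cited above) over a 5-year life.
lifetime = 5 * 365 * 24
print(f"embodied share: {embodied_share_kgco2e(2000, lifetime, run_hours):.2f} kg CO2e")
```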

Strategies for Mitigation

The industry is responding with several mitigation strategies to reduce the environmental impact of HPC:

- Hardware Efficiency: Continued innovation in semiconductor technology, such as NVIDIA's Blackwell architecture, delivers more computations per joule. Software is becoming more efficient as well: gains from new model architectures are doubling roughly every eight to nine months, a trend sometimes called the "negaflop" effect because algorithmic improvements avoid the need for some computations altogether [12].

- Operational Optimization: Research shows that "turning down" GPUs to consume about three-tenths of the energy can have minimal impacts on AI model performance while making hardware easier to cool [12].

- Renewable Energy Integration: Leading technology companies are pursuing carbon neutrality for data centers through direct renewable energy procurement, on-site generation, and power purchase agreements [13].

- Advanced Cooling: Next-generation data centers are adopting liquid immersion cooling and other advanced thermal management systems, which can dramatically reduce the energy traditionally consumed by air conditioning [13].

Experimental Protocol: Benchmarking CPU vs. GPU for an Ecological Model

To quantitatively evaluate the benefit of GPU acceleration for a specific research task, researchers can conduct a controlled benchmarking experiment. The following provides a detailed methodology.

Research Objective

To measure and compare the computational performance (execution time and throughput) of a representative ecological simulation when executed on a modern multi-core CPU versus a contemporary HPC GPU.

Experimental Workflow

The high-level workflow for this benchmarking experiment is outlined below, showing the parallel paths for testing on CPU and GPU hardware.

Diagram 2: Benchmarking Experiment Workflow

Detailed Methodology

Benchmark Selection:

- Select a computationally intensive, parallelizable core from a larger ecological model. A strong candidate is a grid-based dispersal or growth model (e.g., a cellular automaton simulating forest fire spread or vegetation dynamics). The model should involve mathematical operations applied independently to each cell in a grid, with minimal sequential dependencies.
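A minimal sketch of such a benchmark core, assuming a deterministic forest-fire cellular automaton (no random ignition or regrowth) with three hypothetical states: a burning cell burns out, and a tree ignites if any of its four edge neighbours is burning. The rules are illustrative, not taken from a specific published model. The key property is that each new cell value depends only on the previous grid, so every cell can be updated independently.

```python
EMPTY, TREE, BURNING = 0, 1, 2

def step(grid: list[list[int]]) -> list[list[int]]:
    """One synchronous update of a deterministic forest-fire cellular automaton.

    Every cell's new state depends only on the *previous* grid, so all cells can be
    updated independently (and hence in parallel). Writing into a fresh grid
    (double buffering) avoids read/write races between neighbouring cells.
    """
    rows, cols = len(grid), len(grid[0])
    new = [[EMPTY] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            state = grid[r][c]
            if state == BURNING:
                new[r][c] = EMPTY  # burnt-out cell
            elif state == TREE:
                # Von Neumann neighbourhood; positions outside the grid are ignored.
                neighbours = ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                on_fire = any(0 <= i < rows and 0 <= j < cols and grid[i][j] == BURNING
                              for i, j in neighbours)
                new[r][c] = BURNING if on_fire else TREE
            # EMPTY cells stay EMPTY (the new grid is initialised to EMPTY).
    return new

forest = [[TREE] * 3 for _ in range(3)]
forest[1][1] = BURNING
print(step(forest))  # [[1, 2, 1], [2, 0, 2], [1, 2, 1]]
```

The nested loop over cells is the part an OpenMP baseline would split across CPU cores and a GPU version would assign to individual threads.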

Code Development:

- CPU Baseline Version: Implement the model in a language like C++ or Fortran. Optimize it using standard techniques and parallelize it across all available CPU cores using a framework like OpenMP (for shared memory systems) [24].

- GPU Accelerated Version: Port the computationally intensive kernels (the functions applied to each grid cell) to the GPU. Use a parallel programming model such as NVIDIA's CUDA or the open-standard OpenACC directives [24]. The goal is to launch thousands of threads on the GPU, each thread processing one or a few grid cells.
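The mapping from GPU threads to grid cells is plain index arithmetic. CUDA exposes it through the built-in variables `blockIdx.x`, `blockDim.x`, `threadIdx.x`, and `gridDim.x`; the Python sketch below only emulates that arithmetic for a flattened row-major grid, using the common grid-stride loop pattern so that one thread can process "one or a few" cells. The 256-thread block size is an assumed, commonly used value, not a requirement.

```python
import math

def launch_config(n_cells: int, threads_per_block: int = 256) -> tuple[int, int]:
    """Blocks needed so that blocks x threads_per_block covers every cell (ceiling division)."""
    return math.ceil(n_cells / threads_per_block), threads_per_block

def cells_for_thread(block_idx: int, thread_idx: int, block_dim: int, grid_dim: int,
                     n_cells: int, width: int) -> list[tuple[int, int]]:
    """(row, col) cells handled by one thread under a grid-stride loop.

    Mirrors the usual CUDA idiom: start at the global thread index
    (blockIdx.x * blockDim.x + threadIdx.x) and advance by the total number of
    launched threads (gridDim.x * blockDim.x) until the cells run out.
    """
    start = block_idx * block_dim + thread_idx
    stride = grid_dim * block_dim
    return [divmod(i, width) for i in range(start, n_cells, stride)]

n = 1024 * 1024
print(launch_config(n))  # (4096, 256): one thread per cell
# Launching only 1024 blocks instead: thread 0 of block 0 now covers four cells.
print(cells_for_thread(0, 0, 256, 1024, n, 1024))
```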

Hardware and Software Configuration:

- Hardware: Use a single server node containing both a high-core-count CPU (e.g., Intel Xeon Scalable or AMD EPYC) and one or more HPC GPUs (e.g., NVIDIA H100 or A100). Running both versions on the same machine controls for differences in storage, networking, and system configuration.

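- Software: Use the same operating system (typically Linux) and, for host code, the same compiler (e.g., GCC). GPU code needs the vendor toolchain: the latest CUDA Toolkit (nvcc) for NVIDIA GPUs, or the ROCm stack for AMD GPUs, where CUDA kernels must first be ported to HIP. OpenACC code requires an OpenACC-capable compiler, such as the NVIDIA HPC SDK or a GCC build with offloading support.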

Execution and Data Collection:

- Run both the CPU and GPU versions of the model using the identical input dataset. Systematically vary the problem size (e.g., grid dimensions from 1024x1024 to 8192x8192).

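- For each run, record:

- Total Wall-Time Execution: From start to finish of the main computational loop. Because GPU kernel launches are asynchronous, synchronize the device before stopping the timer, and state whether host-to-device transfers are included.

- Hardware Monitoring Data: Use profilers such as NVIDIA Nsight Systems and Nsight Compute to record GPU utilization, achieved memory bandwidth, and floating-point throughput (the older nvprof does not support A100- or H100-class GPUs).

- Calculate the speed-up as (CPU Execution Time) / (GPU Execution Time).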
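A small timing harness helps keep the CPU and GPU measurements comparable. The sketch below is one reasonable design, not a prescribed tool: it discards warm-up calls, reports the median of several repeats (less sensitive to outliers than the mean), and computes speed-up as defined above. The function names and default repeat counts are illustrative assumptions.

```python
import statistics
import time
from typing import Callable

def time_run(run: Callable[[], None], repeats: int = 5, warmup: int = 1) -> float:
    """Median wall time of `run` in seconds, after discarding warm-up calls.

    Warm-up absorbs one-off costs (GPU context creation, JIT compilation, cache fills).
    For an asynchronous GPU backend, `run` must block until the device has finished;
    otherwise the timer measures only kernel-launch overhead.
    """
    for _ in range(warmup):
        run()
    samples = []
    for _ in range(repeats):
        t0 = time.perf_counter()
        run()
        samples.append(time.perf_counter() - t0)
    return statistics.median(samples)

def speedup(cpu_seconds: float, gpu_seconds: float) -> float:
    """Speed-up as defined in the protocol: CPU time / GPU time."""
    return cpu_seconds / gpu_seconds

print(speedup(12.0, 0.75))  # 16.0
```

In practice `run` would wrap one full execution of the main loop for a given grid size, called once per size and per device.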

Analysis:

- Plot execution time versus problem size for both CPU and GPU.

- Plot speed-up versus problem size. The speed-up typically increases with problem size as the GPU's parallel resources are more fully utilized.

- Analyze the GPU utilization metrics to identify potential bottlenecks (e.g., memory bandwidth limitations).
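One way to check for a memory-bandwidth limit is to compare the bandwidth a kernel actually achieves with the device's peak. The sketch assumes each cell is read once and written once per step; real stencils may re-read neighbours from memory, so the estimate is a lower bound on actual traffic. The per-step time in the usage line is a hypothetical measurement, and 8 TB/s is the per-GPU figure from Table 2.

```python
def effective_bandwidth(n_cells: int, bytes_per_cell: int, seconds_per_step: float,
                        accesses_per_cell: int = 2) -> float:
    """Achieved memory traffic in bytes/s, assuming each cell is read once and written once.

    Kernels that re-read neighbours from DRAM move more bytes than this,
    so the result is a lower-bound estimate of actual traffic.
    """
    return n_cells * bytes_per_cell * accesses_per_cell / seconds_per_step

def fraction_of_peak(achieved: float, peak: float) -> float:
    """Share of a theoretical peak (bandwidth or FLOPS) actually reached."""
    return achieved / peak

cells = 8192 * 8192
achieved = effective_bandwidth(cells, bytes_per_cell=4, seconds_per_step=2.0e-3)  # hypothetical timing
print(f"{achieved / 1e9:.0f} GB/s, {fraction_of_peak(achieved, 8e12):.1%} of an 8 TB/s peak")
```

A fraction near 100% suggests the kernel is bandwidth-bound, so further gains must come from moving fewer bytes; a low fraction combined with low compute utilization points to other limits, such as latency, low occupancy, or host-device transfers.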

The Researcher's Toolkit for HPC GPU Computing

Adopting GPU computing requires familiarity with a specific set of hardware and software tools. The table below details key components of the modern HPC researcher's toolkit.

Table 3: Essential Research Reagents and Tools for HPC GPU Computing

| Tool / Component | Category | Function & Explanation |
|---|---|---|
| NVIDIA H100 / Blackwell GPU | Hardware | The primary accelerator; provides thousands of cores and high-bandwidth memory (HBM) for massive parallel throughput [23]. |
| AMD EPYC / Intel Xeon CPU | Hardware | The host processor; manages system resources, I/O, and executes serial portions of the application that are not suitable for the GPU [23]. |
| NVLink Interconnect | Hardware | A high-speed direct GPU-to-GPU interconnect that enables multiple GPUs to act as a single, larger computational unit, crucial for scaling large models [23]. |
| InfiniBand Networking | Hardware | A low-latency, high-throughput network technology for connecting multiple compute nodes into a larger cluster, essential for multi-node simulations [24]. |
| CUDA Platform | Software | A parallel computing platform and programming model that allows developers to use C++, Python, etc., to write programs that execute on NVIDIA GPUs [23]. |
| OpenACC | Software | A directive-based parallel programming model designed for simplicity; allows programmers to annotate code to guide the compiler in parallelizing for GPUs [24]. |
| SLURM Scheduler | Software | A job scheduler for managing computational resources and task distribution across nodes in an HPC cluster, ensuring optimal utilization [24]. |
| Singularity/Apptainer | Software | A containerization platform designed for HPC, allowing researchers to package applications and dependencies for reproducible runs across different environments [24]. |

The evolution from general-purpose CPUs to specialized HPC GPUs represents a fundamental shift in computational science, with profound implications for ecological modeling. This transition is not merely an incremental upgrade but a transformative change that enables researchers to simulate complex environmental systems at unprecedented scales, resolutions, and speeds. While this power comes with a non-trivial environmental cost that must be responsibly managed through technological innovation and operational efficiency, the benefits for parallelizable workloads are substantial. By embracing the parallel computing paradigm offered by modern GPUs, ecological researchers can overcome previous computational barriers, unlocking deeper insights into the functioning of our planet and empowering more effective strategies for its conservation and management. The future of ecological discovery is, inextricably, a parallel future.

Implementing GPU Acceleration in Ecological Models: A Practical Guide

Modern ecological modeling and drug development research are increasingly dependent on high-performance computing (HPC) to tackle complex simulations, from population dynamics to molecular interactions. The explosion of data in these fields, coupled with the availability of powerful Graphics Processing Units (GPUs), has created a computational paradigm shift. However, this shift presents researchers with a critical dilemma: how should existing scientific codebases be modernized to leverage these powerful parallel architectures? The choice often narrows down to two distinct strategies—code transformation with directives (an incremental refactoring approach) versus ground-up rewriting (a complete rebuild). This guide provides an in-depth analysis of both paths, offering researchers, scientists, and drug development professionals a structured framework for making this vital decision, ultimately enabling faster discoveries and more complex simulations.

Core Concepts: Refactoring and Rewriting Defined

Code Refactoring: Strategic Internal Transformation

Code refactoring is the process of restructuring existing computer code, changing its internal structure without altering its external behavior [25] [26]. Its core purpose is to improve non-functional attributes such as readability, complexity, maintainability, and performance, all while preserving the accuracy of the underlying scientific computations [27]. In the context of GPU acceleration, this often involves using compiler directives (such as OpenACC or OpenMP) to annotate existing serial code, guiding the compiler to parallelize specific loops or functions for execution on GPU hardware.

- Analogy: Refactoring is akin to reorganizing a laboratory for better workflow. You are not discovering new science but making the existing experimental processes more efficient and less error-prone [27].
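Because refactoring must not change external behavior, each restructuring step can be guarded by a regression check against the original code. The sketch uses a hypothetical logistic biomass-growth update: the inline loop body is extracted into a side-effect-free per-cell function, the loop shape that directives or a GPU kernel can later target, and the outputs are compared. Exact equality holds here only because the arithmetic is unchanged; after a real GPU port, reordered floating-point operations (e.g., in reductions or fused multiply-adds) can alter the last bits, so comparisons should then use a tolerance.

```python
def logistic_step_original(biomass: list[float], r: float, k: float) -> list[float]:
    # Original style: update logic written inline inside the loop.
    out = []
    for b in biomass:
        out.append(b + r * b * (1 - b / k))
    return out

def grow_cell(b: float, r: float, k: float) -> float:
    """Per-cell logistic growth, extracted as a pure function (no shared state)."""
    return b + r * b * (1 - b / k)

def logistic_step_refactored(biomass: list[float], r: float, k: float) -> list[float]:
    # Refactored: an independent per-cell kernel applied to every cell.
    return [grow_cell(b, r, k) for b in biomass]

# Regression check: the refactor must reproduce the original results exactly.
cells = [0.1, 2.5, 7.0, 9.9]
assert logistic_step_refactored(cells, 0.3, 10.0) == logistic_step_original(cells, 0.3, 10.0)
```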