Harnessing GPU Computing for Large-Scale Ecological Data: A Sustainable Path from Analysis to Insight

Abstract

This article explores the transformative role of GPU computing in managing and analyzing large-scale ecological datasets. Tailored for researchers and scientists, it provides a comprehensive guide from foundational concepts and methodological applications to advanced optimization and validation techniques. Crucially, it addresses the growing environmental footprint of high-performance computing, introducing frameworks like FABRIC for measuring biodiversity impact and offering strategies for balancing computational power with ecological sustainability. Readers will gain practical insights into selecting hardware, implementing efficient algorithms, and validating results to accelerate discovery while minimizing environmental costs.

The Power and The Cost: Why GPUs are Revolutionizing Ecological Research

The analysis of large-scale ecological datasets, from metagenomics to environmental modeling, poses computational challenges that traditional CPU-based architectures struggle to meet efficiently. This technical guide examines how the parallel architecture of Graphics Processing Units (GPUs) addresses them. By spreading work across thousands of cores optimized for parallel execution, GPUs can deliver order-of-magnitude reductions in processing time without sacrificing scientific accuracy. The guide details the architectural foundations of GPU computing, presents quantitative performance comparisons, outlines experimental methodologies for implementing GPU-accelerated solutions, and provides a toolkit for researchers embarking on computational ecology studies. Together, these show how specialized hardware makes it possible to analyze complex environmental systems at larger scales and finer resolutions.

Ecological research has entered an era of data-intensive science, driven by advanced sensing technologies, high-throughput DNA sequencing, and large-scale environmental monitoring networks. The analysis of metagenomic samples to characterize microbial communities, for instance, involves comparing millions of DNA sequences against reference databases—a process that is both data- and computation-intensive [1]. Similarly, numerical simulations of environmental phenomena using advection-reaction-diffusion equations demand substantial computational resources, particularly when modeling at high spatial and temporal resolutions [2].

Traditional sequential processing approaches using Central Processing Units (CPUs) have proven inadequate for these challenges, resulting in protracted analysis times that hinder scientific progress. CPU-based clusters attempting to meet these demands often entail high cost and power consumption without delivering the requisite performance [1]. This computational bottleneck restricts the scope and scale of ecological investigations, limiting the complexity of models, the resolution of analyses, and the feasibility of real-time environmental monitoring.

GPU Architectural Foundations for Parallel Processing

Core Architectural Components

The GPU is a highly parallel processor architecture composed of processing elements and a memory hierarchy designed for massive parallelism. At a high level, NVIDIA GPUs consist of three fundamental components:

- Streaming Multiprocessors (SMs): The primary execution units that contain multiple cores for parallel computation. For example, an NVIDIA A100 GPU contains 108 SMs [3].

- L2 Cache: An on-chip cache that serves as a buffer between the SMs and device memory.

- High-Bandwidth Memory (HBM): Specialized DRAM that provides substantially higher data transfer rates compared to traditional memory architectures. The A100 features up to 2039 GB/s bandwidth from 80 GB of HBM2 memory [3].

Unlike CPUs, which are optimized for sequential processing, GPUs employ a Single Instruction, Multiple Threads (SIMT) architecture in which multiple threads execute the same instruction on different data elements simultaneously [1]. This lets modern GPUs run thousands of threads concurrently, which suits the repetitive mathematical operations common in ecological data analysis.

Memory Hierarchy and Data Throughput

GPU memory architecture is optimized for high-throughput data access patterns common in scientific computing. The hierarchy includes:

- Global Memory: Large-capacity device memory shared by all streaming multiprocessors

- Shared Memory: Low-latency memory accessible by processors within the same SM, often used as cache [1]

- Register Files: Dedicated high-speed memory for active threads

This hierarchical structure is crucial for ecological datasets where efficient memory access often determines overall performance. Memory bandwidth—the rate at which data can be read from or stored to memory—significantly impacts how quickly GPUs can process large datasets for AI training and data analytics applications [4]. Higher bandwidth enables faster data movement, reducing processing delays in ecological analyses.

Table 1: Key GPU Architectural Components and Their Functions

| Component | Function | Relevance to Ecological Data Processing |
|---|---|---|
| Streaming Multiprocessors (SMs) | Execute parallel threads of computation | Enables simultaneous processing of multiple data elements |
| CUDA Cores | Perform fundamental mathematical operations | Accelerates matrix operations in environmental models |
| Tensor Cores | Specialized for matrix operations | Optimizes deep learning applications in ecological research |
| High-Bandwidth Memory (HBM) | Provides rapid access to large datasets | Facilitates processing of massive genomic or sensor datasets |
| Shared Memory | Low-latency memory shared within an SM | Enables efficient data sharing for parallel algorithms |

Quantitative Performance Advantages for Ecological Workloads

Performance Metrics and Measurement

GPU performance is quantified through several key metrics that demonstrate their advantage for ecological data processing:

- TFLOPS (teraFLOPS): Trillions of floating-point operations per second, a measure of raw computational capacity. Higher TFLOPS values signify greater computational power for deep learning models and scientific simulations [4].

- Memory Bandwidth: The data transfer rate between GPU memory and processing units, critical for data-intensive operations. For example, the NVIDIA H200 GPU features HBM3e memory with 141 GB capacity and 4.8 TB/s bandwidth [5].

- Arithmetic Intensity: The ratio of operations performed to bytes of memory accessed, which determines whether a computation is memory-bound or compute-bound [3].

The relationship between these metrics determines real-world performance for ecological applications. A computation is considered math-limited when the arithmetic intensity exceeds the processor's ops:byte ratio, and memory-limited when the intensity falls below this ratio [3]. Many ecological data operations fall into the memory-bound category, making GPU memory architecture particularly important.
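The math-limited versus memory-limited test described above can be sketched in a few lines of Python. This is an illustrative calculation, not a profiling tool. The peak throughput (312 TFLOPS), the FP16 value size, and the layer dimensions are assumptions chosen for the example; the 2039 GB/s bandwidth is the A100 figure quoted earlier.

```python
# Roofline-style check: is a kernel limited by math throughput or by memory bandwidth?

def arithmetic_intensity(flops: float, bytes_moved: float) -> float:
    """Operations performed per byte of memory traffic."""
    return flops / bytes_moved


def classify(intensity: float, peak_flops: float, peak_bandwidth: float) -> str:
    """Compare a kernel's intensity with the processor's ops:byte ratio."""
    ops_per_byte = peak_flops / peak_bandwidth
    return "math-limited" if intensity > ops_per_byte else "memory-limited"


# Assumed hardware figures (illustrative): 312 TFLOPS peak throughput paired
# with the 2039 GB/s memory bandwidth quoted for the A100.
PEAK_FLOPS = 312e12
PEAK_BANDWIDTH = 2039e9
BYTES_PER_VALUE = 2  # assumed FP16 storage

# Element-wise ReLU on n values: one operation per value, one read and one write.
n = 1_000_000
relu = arithmetic_intensity(n, 2 * n * BYTES_PER_VALUE)

# Dense layer with assumed sizes: batch 512, 1024 inputs, 4096 outputs.
batch, n_in, n_out = 512, 1024, 4096
layer_flops = 2 * batch * n_in * n_out  # one multiply and one add per term
layer_bytes = BYTES_PER_VALUE * (batch * n_in + n_in * n_out + batch * n_out)
linear = arithmetic_intensity(layer_flops, layer_bytes)

for name, ai in [("ReLU", relu), ("linear layer", linear)]:
    print(f"{name}: {ai:.2f} FLOP/B -> {classify(ai, PEAK_FLOPS, PEAK_BANDWIDTH)}")
```

Under these assumptions the ops:byte ratio is about 153, so the element-wise operation is memory-limited and the large dense layer is math-limited. Different precisions or layer sizes shift both numbers.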

Documented Performance Improvements

Empirical studies demonstrate substantial performance gains when applying GPU acceleration to ecological and environmental modeling tasks:

Table 2: Documented Performance Improvements in Ecological Applications

| Application Domain | CPU Baseline | GPU-Accelerated Performance | Speed-up Factor |
|---|---|---|---|
| Metagenomic Data Analysis (Parallel-META) | Traditional sequential processing | 15x faster processing | 15x [1] |
| Environmental Impact Modeling (PARMOD2D) | Sequential CPU code | 25x faster simulation | 25x [2] |
| 3D Reaction-Diffusion Modeling | CPU implementation | 5-40x faster solution | 5-40x [2] |
| Groundwater Flow Simulation (MODFLOW) | Standard CPU version | 10x acceleration | 10x [2] |

These performance improvements translate to practical scientific advantages. For example, the 25-fold speedup reported for atmospheric dispersion modeling enables researchers to run more complex simulations or perform parameter sensitivity analyses that would be infeasible with traditional CPU-based approaches [2]. In metagenomics, a 15x acceleration in processing means that binning—once a time-consuming process—no longer represents a bottleneck, enabling researchers to perform deeper comparative analyses across multiple samples [1].

Experimental Protocols for GPU-Accelerated Ecological Analysis

Metagenomic Analysis with Parallel-META

The Parallel-META pipeline demonstrates an effective protocol for leveraging GPU acceleration in microbial ecology studies [1]:

Experimental Workflow:

- Data Acquisition and Preprocessing: Collect raw metagenomic sequences from environmental samples. Quality control includes filtering low-quality reads and removing artifacts.

- Parallelized Database Search: Implement similarity-based binning through parallel alignment against reference databases (Greengenes, SILVA, or RDP) using GPU-accelerated sequence comparison algorithms.

- Taxonomic Profiling: Assign sequences to phylogenetic groups based on alignment results, generating abundance profiles across taxonomic ranks.

- Comparative Analysis and Visualization: Perform statistical comparisons across multiple samples and visualize results using integrated tools.

GPU Implementation Details:

- The similarity search is parallelized using CUDA-enabled GPUs based on the SIMT architecture

- Multiple sequence comparisons are distributed across thousands of threads

- Each thread performs computations on independent data elements (sequence reads)

- CPU-GPU data transfer is minimized through efficient memory management

This protocol demonstrated a 15x speedup over traditional methods while maintaining equivalent accuracy, making large-scale metagenomic studies more feasible [1].
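The one-thread-per-read mapping listed above can be illustrated with a CPU-side emulation in plain Python. This is a sketch of the indexing pattern only, not Parallel-META's code: the shared k-mer score stands in for a real alignment, and the function names, toy sequences, and block size are all invented for the example.

```python
# CPU-side emulation of the one-thread-per-read mapping (plain Python, not CUDA).

def kmers(seq: str, k: int) -> set:
    """All length-k substrings of a sequence."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def similarity_kernel(thread_id, reads, ref_kmers, k, best_hit):
    """Work done by one logical thread: score a single read against every reference."""
    if thread_id >= len(reads):  # bounds guard, as a real kernel would need
        return
    read_kmers = kmers(reads[thread_id], k)
    scores = {name: len(read_kmers & ks) for name, ks in ref_kmers.items()}
    best_hit[thread_id] = max(scores, key=scores.get)


def launch(reads, references, k=4, threads_per_block=4):
    """Emulate a grid launch with enough blocks to give each read its own thread."""
    ref_kmers = {name: kmers(seq, k) for name, seq in references.items()}
    best_hit = [None] * len(reads)
    n_blocks = (len(reads) + threads_per_block - 1) // threads_per_block
    for block in range(n_blocks):  # on a GPU these iterations run concurrently
        for thread in range(threads_per_block):
            tid = block * threads_per_block + thread  # global thread index
            similarity_kernel(tid, reads, ref_kmers, k, best_hit)
    return best_hit


references = {  # toy reference sequences, invented for this example
    "taxon_A": "ACGTACGTGGCCAATT",
    "taxon_B": "TTGACCATGCAAGGTC",
}
reads = ["ACGTACGTGG", "CCATGCAAGG", "GTGGCCAATT", "TTGACCATGC", "TACGTGGCCA"]
hits = launch(reads, references)
print(hits)
```

Because each thread writes only to its own slot in `best_hit`, no synchronization is needed. That independence is what makes the similarity search map cleanly onto SIMT hardware.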

Environmental Modeling with PARMOD2D

The PARMOD2D software provides a GPU-accelerated implementation for solving the 2D advection-reaction-diffusion equation, with applications in pollutant dispersion, forest growth, and groundwater flow [2]:

Numerical Implementation:

- Problem Discretization: Apply finite-difference discretization using the Crank-Nicolson scheme for numerical stability

- Matrix Assembly: Construct sparse matrices representing the discretized differential operators

- Parallel Solver Implementation: Utilize GPU-accelerated linear algebra routines from the CUSP and cuSPARSE libraries

- Solution and Visualization: Solve the linear system and output results for visualization and analysis

GPU Optimization Strategies:

- Leverage CUDA for massive parallelization of grid-based computations

- Assign individual threads to discrete spatial elements in the computational domain

- Utilize shared memory for efficient data access patterns

- Implement thread synchronization to maintain numerical integrity

This approach enabled the simulation of problems with up to 20 million computational cells while achieving a 25x speedup compared to sequential CPU implementation [2].
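To make the discretize, assemble, and solve sequence concrete, here is a deliberately reduced sketch in plain Python. It assumes a 1D pure-diffusion problem with zero boundary values and solves the tridiagonal system directly with the Thomas algorithm on the CPU. PARMOD2D itself works in 2D, includes advection and reaction terms, and relies on GPU sparse solvers, so none of the names or parameters below come from that software.

```python
import math


def thomas_solve(lower, diag, upper, rhs):
    """Solve a tridiagonal linear system with the Thomas algorithm."""
    n = len(diag)
    c_prime = [0.0] * n
    d_prime = [0.0] * n
    c_prime[0] = upper[0] / diag[0]
    d_prime[0] = rhs[0] / diag[0]
    for i in range(1, n):  # forward elimination
        denom = diag[i] - lower[i] * c_prime[i - 1]
        c_prime[i] = upper[i] / denom
        d_prime[i] = (rhs[i] - lower[i] * d_prime[i - 1]) / denom
    x = [0.0] * n
    x[-1] = d_prime[-1]
    for i in range(n - 2, -1, -1):  # back substitution
        x[i] = d_prime[i] - c_prime[i] * x[i + 1]
    return x


def crank_nicolson_step(u, diffusivity, dx, dt):
    """Advance interior values of u_t = D u_xx by one step, with u = 0 at both ends."""
    r = diffusivity * dt / (2.0 * dx * dx)
    n = len(u)
    # Explicit half of the scheme: right-hand side (I + r*L) u^n
    rhs = []
    for i in range(n):
        left = u[i - 1] if i > 0 else 0.0
        right = u[i + 1] if i < n - 1 else 0.0
        rhs.append(u[i] + r * (left - 2.0 * u[i] + right))
    # Implicit half: solve (I - r*L) u^{n+1} = rhs
    lower = [-r] * n
    diag = [1.0 + 2.0 * r] * n
    upper = [-r] * n
    return thomas_solve(lower, diag, upper, rhs)


# Illustrative parameters (assumed): unit-length domain, 49 interior points.
dx, dt, D = 0.02, 1e-3, 0.1
u0 = [math.sin(math.pi * (i + 1) * dx) for i in range(49)]
u1 = crank_nicolson_step(u0, D, dx, dt)
print(f"peak before: {max(u0):.6f}, peak after one step: {max(u1):.6f}")
```

In a GPU implementation, the right-hand-side loop is the part that maps naturally to one thread per grid cell, while the linear solve is typically handed to a library routine.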

The Scientist's Toolkit: Essential GPU Technologies for Ecological Research

Implementing GPU-accelerated solutions for ecological research requires both hardware and software components. The following toolkit details essential technologies and their applications in environmental and ecological research.

Table 3: Research Reagent Solutions for GPU-Accelerated Ecology

| Tool/Technology | Function | Application in Ecological Research |
|---|---|---|
| CUDA Toolkit | Development environment for GPU-accelerated applications | Provides compiler, libraries, and tools for creating custom ecological modeling solutions [6] |
| RAPIDS Suite | Open-source libraries for end-to-end data science on GPUs | Accelerates entire data processing pipelines for large ecological datasets [7] |
| NVIDIA H200 GPU | High-performance data center GPU with HBM3e memory | Handles large-scale environmental simulations and complex models [5] |
| TensorFlow/PyTorch | Deep learning frameworks with GPU acceleration | Enables AI-powered analysis of ecological data patterns [8] |
| cuSPARSE Library | GPU-accelerated sparse matrix operations | Optimizes numerical solutions for partial differential equations in environmental models [2] |
| NVLink Technology | High-bandwidth GPU interconnect | Connects multiple GPUs for larger ecological models than possible with a single GPU [5] |

Implementation Considerations and Best Practices

Algorithm Selection and Optimization

Successful implementation of GPU-accelerated ecological analysis requires careful algorithm selection and optimization:

- Arithmetic Intensity Analysis: Identify whether operations are memory-bound or compute-bound to guide optimization efforts. Element-wise operations like ReLU activation (0.25 FLOPS/B) are typically memory-limited, while dot-product operations like linear layers with large batch sizes (315 FLOPS/B) are often compute-limited [3].

- Parallelization Strategy: Decompose problems into independent units that can be processed concurrently. Ecological simulations often exhibit natural parallelism across spatial domains or independent samples.

- Memory Access Patterns: Optimize data access for coalesced memory operations to maximize bandwidth utilization. Structure data to enable contiguous memory access by threads within warps.
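The effect of data layout on coalescing can be shown by counting how many memory segments one warp touches under two layouts. The sketch below is plain Python arithmetic on byte offsets, not a measurement. The 128-byte segment size, the four variables per grid cell, and the function names are assumptions made for illustration.

```python
WARP_SIZE = 32        # threads per warp on NVIDIA GPUs
BYTES_PER_FLOAT = 4


def aos_offset(cell: int, field: int, n_fields: int) -> int:
    """Byte offset when each cell stores its fields together (array of structures)."""
    return (cell * n_fields + field) * BYTES_PER_FLOAT


def soa_offset(cell: int, field: int, n_cells: int) -> int:
    """Byte offset when each field is stored as its own array (structure of arrays)."""
    return (field * n_cells + cell) * BYTES_PER_FLOAT


def segments_touched(offsets, segment_bytes=128):
    """Number of distinct aligned memory segments covered by a set of accesses."""
    return len({off // segment_bytes for off in offsets})


n_cells, n_fields = 1024, 4  # assumed: four state variables per grid cell
field = 2                    # every thread in the warp reads the same variable

# Thread t of the warp reads the chosen variable for grid cell t.
aos = [aos_offset(t, field, n_fields) for t in range(WARP_SIZE)]
soa = [soa_offset(t, field, n_cells) for t in range(WARP_SIZE)]

aos_segments = segments_touched(aos)
soa_segments = segments_touched(soa)
print(f"array of structures: {aos_segments} segments per warp read")
print(f"structure of arrays: {soa_segments} segment(s) per warp read")
```

With these assumed sizes, the structure-of-arrays layout lets the warp's 32 reads fall in one contiguous segment, whereas the interleaved layout spreads them over four. This is why per-variable arrays are usually preferred for grid-based ecological models.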

Computational Architecture and Scaling

For large-scale ecological research problems, multi-GPU and cluster configurations may be necessary:

- Multi-GPU Systems: Technologies like NVIDIA NVLink enable high-bandwidth communication between GPUs, creating a unified memory space across multiple devices [5].

- Distributed Computing: Frameworks like Apache Spark with GPU acceleration can distribute workloads across multiple nodes for extremely large datasets [7].

- Hybrid Approaches: Combine GPU acceleration with multi-core CPU processing for optimal resource utilization, as demonstrated in the Parallel-META pipeline [1].

The parallel processing architecture of GPUs provides a transformative advantage for addressing the computational challenges inherent in modern ecological research. By leveraging thousands of computational cores optimized for simultaneous execution, GPU-accelerated solutions demonstrate order-of-magnitude improvements in processing speed for applications ranging from metagenomic analysis to environmental modeling. The architectural alignment between GPU capabilities—including massive parallelism, high memory bandwidth, and specialized processing cores—and the fundamental characteristics of ecological data processing enables researchers to tackle problems at scales previously considered infeasible.

As ecological datasets continue to grow in size and complexity, embracing GPU-accelerated computational strategies will become increasingly essential for research progress. The experimental protocols, performance metrics, and implementation guidelines presented in this technical guide provide a foundation for researchers to leverage these technologies in their own work. Future advances in GPU architecture, including dedicated AI cores and enhanced memory systems, promise to further expand the boundaries of what is computationally possible in ecological research, opening new frontiers for understanding and managing complex environmental systems.

The integration of Artificial Intelligence (AI) and High-Performance Computing (HPC) represents a paradigm shift in scientific research, enabling unprecedented capabilities in fields ranging from drug discovery to large-scale ecological modeling. However, this computational revolution is accompanied by a rapidly expanding energy footprint. The very tools that allow scientists to simulate virtual cells or analyze global biodiversity datasets are themselves becoming significant consumers of global energy resources. Understanding the scale and trajectory of this energy demand is crucial for researchers who depend on these technologies to advance scientific discovery while navigating the growing constraints of energy availability, environmental impact, and computational sustainability [9] [10]. This section analyzes the projected energy demand of AI and HPC in scientific computing, frames the issue in the context of GPU-dependent research, and outlines pathways toward a more sustainable computational future.

Projected Global Energy Demand for Data Centers

The energy demand required to power the global digital infrastructure is entering a phase of unprecedented growth, primarily fueled by the expansion of AI and HPC workloads. The following table summarizes key projections from recent analyses.

Table 1: Projected Global Data Center Electricity Demand

| Region/Scope | 2024/2025 Estimate | 2030 Projection | Key Drivers & Notes | Source |
|---|---|---|---|---|
| Global Demand | 415 TWh (2024) [11]; 448 TWh (2025 est.) [12] | 980 TWh [12] | AI-optimized servers to account for 44% of consumption by 2030. | Gartner [12], IEA [11] |
| U.S. Demand | 183 TWh (4% of U.S. demand) [13] | 426 TWh [13] | Could represent 8.6% of total U.S. electricity use by 2035 [11]. | IEA, Pew Research [13] |
| U.S. Power Capacity | 25 GW (2024 demand) [14] | 80 GW (2030 demand) [14] | The U.S. needs to triple annual power capacity to meet data center demand. | McKinsey [14] |

This surge is largely driven by the specialized hardware required for advanced AI research. AI-optimized servers are significantly more power-intensive than traditional servers, drawing two to four times as much power [13]. While they accounted for an estimated 21% of data center power consumption in 2025, this share is projected to rise to 44% by 2030 [12]. The computational models powering scientific breakthroughs have already demonstrated this appetite: training OpenAI's GPT-4 consumed an estimated 50 gigawatt-hours of energy, enough to power San Francisco for three days [9].

Environmental and Economic Impacts

The dramatic rise in energy consumption creates ripple effects across environmental, infrastructural, and economic domains, which are of particular concern to public and private research institutions.

Carbon Emissions and Biodiversity Loss

The environmental impact of computing extends beyond sheer energy volume. The carbon intensity of the electricity used is a critical factor. One analysis noted that the electricity powering data centers was 48% higher in carbon intensity than the U.S. average [9]. Furthermore, a groundbreaking study from Purdue University introduced the FABRIC framework, which quantifies computing's impact on biodiversity—a traditionally overlooked metric [10]. The framework reveals that while manufacturing hardware (e.g., CPUs and GPUs) imposes a significant one-time biodiversity cost, the operational electricity use can cause nearly 100 times greater biodiversity damage over the system's lifetime, primarily due to pollutants from power generation that lead to acidification and eutrophication [10].

Strain on Infrastructure and Household Costs

The geographic concentration of data centers can overwhelm local power grids and lead to higher energy costs for consumers. In 2023, data centers consumed about 26% of the total electricity supply in Virginia, with other states like Nebraska and Iowa also seeing significant shares [13]. Utilities must make expensive upgrades to power grids, costs that are often passed on to ratepayers. One analysis projected that data centers and cryptocurrency mining could lead to an 8% increase in the average U.S. electricity bill by 2030, with potential increases exceeding 25% in high-demand markets like central Virginia [13].

Methodologies for Measuring Computing Energy Consumption

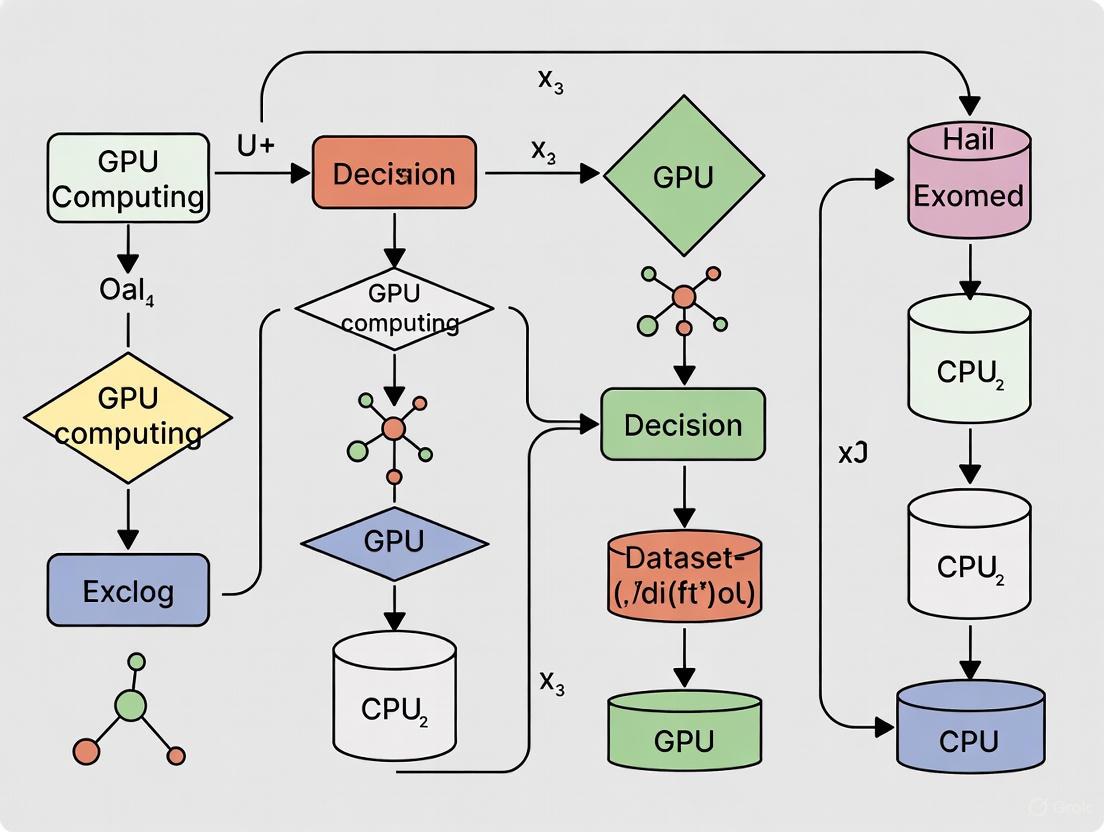

Accurately quantifying the energy consumption of AI and HPC workloads is a foundational step toward mitigation. The following diagram illustrates the core workflow for empirical energy measurement in a high-performance computing context.

Experimental Protocol for Process-Level Energy Estimation

The methodology visualized above is detailed in recent computer science research, which proposes novel models for estimating the energy consumption of specific processes in shared HPC environments [15]. The protocol can be summarized as follows:

- Objective: To estimate the energy consumption of a specific computational process (e.g., training an AI model on ecological data) without requiring exclusive access to the computing node.

- Data Collection:

  - Total Node Power: Measure the total power drawn by the entire computing node (including CPUs, GPUs, memory, fans) using built-in sensors (e.g., Intel RAPL, NVIDIA NVML).

  - Process Utilization: Monitor the GPU and CPU usage (%) attributable to the target process.

  - Instruction Profile: For higher accuracy, profile the probability distribution of instruction types being executed by the process on both CPU and GPU.

- Mathematical Modeling: Input the collected data into a mathematical model. One proposed model estimates a process's energy use based on its resource usage and a normalized vector of its instruction-type distribution. This approach has demonstrated high accuracy, predicting CPU power consumption with a 1.9% error and GPU power with a 9.7% relative error [15].

- Output: The model outputs an estimated energy consumption (e.g., in watt-hours) for the specific process, enabling accountability and optimization.

This methodology is vital for researchers to benchmark the energy efficiency of their software and algorithms, moving beyond node-level measurements to a more granular understanding of their environmental footprint.
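As a rough illustration of process-level accounting, the sketch below attributes a node's dynamic power to one process in proportion to its share of utilization. This is a simplified stand-in, not the model from [15]: it ignores the instruction-type distribution, assumes the node's idle power is known, and uses invented field names and sample values.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    seconds: float       # length of the sampling interval
    node_watts: float    # total node power measured during the interval
    idle_watts: float    # node power with no jobs running (assumed known)
    process_util: float  # utilization attributable to the target process (0-1)
    total_util: float    # utilization summed over all processes (0-1)


def process_energy_wh(samples) -> float:
    """Attribute dynamic node energy to one process by its utilization share."""
    joules = 0.0
    for s in samples:
        if s.total_util <= 0:
            continue  # nothing was running, so nothing to attribute
        dynamic_watts = max(s.node_watts - s.idle_watts, 0.0)
        share = s.process_util / s.total_util
        joules += dynamic_watts * share * s.seconds
    return joules / 3600.0  # joules to watt-hours


# Two invented one-minute samples from a shared node.
samples = [
    Sample(seconds=60, node_watts=900, idle_watts=300, process_util=0.6, total_util=0.8),
    Sample(seconds=60, node_watts=700, idle_watts=300, process_util=0.2, total_util=0.8),
]
estimate = process_energy_wh(samples)
print(f"estimated process energy: {estimate:.2f} Wh")
```

A proportional split like this is easy to compute from sensor and scheduler data, but it treats all work as equally power-hungry. That limitation is the one the instruction-profile step in the protocol is meant to address.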

For researchers embarking on GPU-accelerated scientific computing, the following table details essential "research reagents"—both computational and methodological—required for conducting and evaluating large-scale experiments.

Table 2: Essential Research Reagents for GPU-Accelerated Scientific Computing

| Reagent / Resource | Function / Purpose | Example in Use |
|---|---|---|
| GPU Clusters (H100, A100, Blackwell) | Provides the massive parallel computational power required for training large AI models and running HPC simulations. | A single AI model may be housed on a dozen GPUs; large data centers can have over 10,000 interconnected GPUs [9]. |
| Virtual Cells Platform (VCP) | An open-source platform that lowers barriers for biologists to apply AI to specific tasks like virtual cell model development [16]. | Hosts state-of-the-art models and tools, providing a unified ecosystem for open, reproducible biological AI research [16]. |
| FABRIC Framework | A modeling framework to trace the biodiversity footprint of computing across its entire lifecycle (manufacturing to disposal) [10]. | Allows researchers to quantify the embodied (EBI) and operational (OBI) biodiversity impact of their computing workload [10]. |
| Energy Estimation Models | Mathematical models that enable energy accounting for specific software processes in shared supercomputing environments [15]. | Lets a researcher estimate the energy cost of training a specific ecological model without node isolation [15]. |
| cz-benchmarks | An open-source Python package that provides standardized evaluation benchmarks for AI models in biology [16]. | Enables model developers to spend less time on evaluation setup and more time on improving models to solve real problems [16]. |

Pathways to a Sustainable Computing Future

The energy challenge posed by AI and HPC is not insurmountable. A multi-faceted approach focused on efficiency, infrastructure, and strategic planning can align technological progress with sustainability goals. The following pathways are critical:

- Adopt Scalable and Efficient Design Principles: Data center developers are moving towards scalable reference designs that are 60-80% standardized. This approach, coupled with modular construction and consolidated mechanical, electrical, and plumbing (MEP) systems, can accelerate project delivery by 10-20% and reduce capital spending by similar margins, potentially shaving up to $250 billion off the projected $1.7 trillion in global spending through 2030 [14].

- Prioritize Location and Energy Source: The biodiversity impact of operational computing can vary by an order of magnitude depending on the local power grid. Research shows that using renewable-heavy grids, like Québec's hydroelectric mix, drastically reduces ecological damage compared to fossil-fuel-heavy grids [10]. Siting new computation facilities in regions with abundant, low-carbon energy is therefore a high-impact strategy.

- Channel AI Investments to Accelerate the Energy Transition: The AI boom is incentivizing massive investment in clean energy. Tech companies are signing long-term clean-power contracts and investing in advanced nuclear and geothermal ventures [11]. The key is to ensure this new capacity strengthens the public grid and benefits wider society, not just individual data centers, through shared infrastructure planning and integrated policy frameworks [11].

- Leverage AI for System-Level Energy Intelligence: Beyond being an energy consumer, AI can be a powerful tool for optimizing the energy system. It can improve renewable forecasting, grid balancing, predictive maintenance, and building efficiency, ultimately making the entire energy system more adaptive and resilient [11].

The relationship between scientific computing and energy is at a critical juncture. For researchers relying on GPU clusters to analyze ecological datasets or develop virtual cell models, the energy footprint of their work is becoming an integral part of the research equation. By adopting rigorous measurement practices, utilizing efficient tools and platforms, and advocating for sustainable infrastructure, the scientific community can continue to drive discovery while leading by example in the responsible use of planetary resources.

The push to process large-scale ecological datasets has positioned powerful computing hardware, particularly GPUs, as a cornerstone of modern environmental research. However, the environmental footprint of this computational power extends far beyond the operational carbon emissions that typically dominate sustainability discussions. A comprehensive, cradle-to-grave perspective reveals significant impacts on biodiversity, water resources, and human health through mechanisms like acidification, eutrophication, and toxic emissions. For researchers using GPU computing to study ecological systems, understanding this full footprint is not merely an operational concern but a fundamental aspect of responsible scientific practice. This guide provides a technical foundation for quantifying and mitigating the multi-faceted environmental impacts of computing infrastructure, enabling scientists to align their research methods with their sustainability goals.

Core Impact Categories and Quantitative Metrics

The environmental footprint of computing hardware is categorized across multiple impact domains, spanning the entire lifecycle from manufacturing to decommissioning. The following table synthesizes the key impact categories, their primary causes within the computing lifecycle, and their measured environmental effects.

Table 1: Key Environmental Impact Categories of Computing Hardware

| Impact Category | Primary Lifecycle Source | Measured Environmental Effect |
|---|---|---|
| Climate Change [17] | Use-phase electricity generation (dominates) [17]; Manufacturing [18] | Global warming potential, measured in kg CO₂-equivalent [17]. |
| Biosphere Integrity [10] | Manufacturing (acidifying emissions); Use-phase (air pollution from electricity) [10] | Biodiversity loss, quantified as potential fraction of species lost over time (species·year) [10]. |
| Human Toxicity (Cancer & Non-cancer) [17] | Manufacturing of GPU chips and other components [17] | Human health impacts from emission of toxic substances, measured in comparative toxic units (CTUh) [17]. |
| Freshwater Ecotoxicity [17] | Manufacturing stage [17] | Damaging effects of toxic substances on freshwater ecosystems, measured in CTUe [17]. |
| Resource Depletion (Minerals & Metals) [17] | Raw material extraction for hardware components [17] | Scarcity and depletion of abiotic resources, measured in kg Sb-equivalent [17]. |
| Water Consumption [19] [20] | On-site cooling of data centers; Power plant cooling for electricity [19] | Freshwater depletion, particularly in water-stressed regions [20]. |

Introducing Biodiversity-Specific Metrics

Traditional sustainability metrics often fail to capture computing's effect on ecosystems and species. Recent research introduces two new, quantifiable metrics to bridge this gap [10]:

- Embodied Biodiversity Index (EBI): Captures the one-time environmental toll of manufacturing, shipping, and disposing of computing hardware.

- Operational Biodiversity Index (OBI): Measures the ongoing biodiversity impact from the electricity used to power computing systems.

These indices integrate data on pollutants like sulfur dioxide, nitrogen oxides, and heavy metals—key drivers of acid rain, eutrophication, and freshwater toxicity—and translate them into a unified "species·year" metric [10].

Lifecycle Assessment (LCA) and Quantitative Data

A cradle-to-grave Lifecycle Assessment (LCA) is essential for a complete understanding of computing hardware's environmental footprint. The lifecycle is typically divided into three core phases: manufacturing, use, and end-of-life.

Manufacturing Phase (Cradle-to-Gate)

The manufacturing of GPUs and other computing hardware is resource-intensive, creating a significant embodied environmental footprint before the hardware is ever switched on.

Table 2: Environmental Impact of Manufacturing an NVIDIA A100 GPU (SXM 40GB)

| Impact Category | Contribution from Manufacturing | Key Contributing Components |
|---|---|---|
| Human Toxicity, Cancer [17] | 99% of total cradle-to-grave impact [17] | GPU chip, memory, and integrated circuits [17]. |
| Resource Use, Minerals & Metals [17] | 85% of total cradle-to-grave impact [17] | GPU chip and other electronic components [17]. |
| Climate Change [17] | ~4% of total cradle-to-grave impact [17] | Energy-intensive fabrication processes [18]. |
| Freshwater Ecotoxicity [17] | 37% of total cradle-to-grave impact [17] | Manufacturing processes and material extraction [17]. |

Two manufacturing drivers stand out. The first is the complex fabrication of chips at nanoscale process nodes, which requires extreme ultraviolet (EUV) lithography and substantial chemical inputs [18]. The second is the integration of High-Bandwidth Memory (HBM), which involves 3D die stacking and adds thermal and manufacturing complexity [18].

Use Phase (Operational)

The operational phase of computing hardware, particularly for energy-intensive AI training and inference, dominates many environmental impact categories.

Table 3: Use Phase Environmental Impact for A100 GPU Training BLOOM Model

| Impact Category | Contribution from Use Phase | Primary Driver |
|---|---|---|
| Climate Change [17] | 96% of total impact [17] | Carbon intensity of the local electricity grid [17]. |
| Resource Use, Fossils [17] | 96% of total impact [17] | Reliance on fossil fuels for electricity generation [17]. |
| Acidification [10] | Significant (Grid-dependent) | Emissions of sulfur dioxide (SO₂) and nitrogen oxides (NOₓ) from power generation [10]. |

The operational biodiversity impact from electricity use can be nearly 100 times greater than the impact from device production at typical data center loads [10]. The location of the data center is therefore a critical factor, as a renewable-heavy grid can cut biodiversity impact by an order of magnitude compared to a fossil-fuel-heavy grid [10].

End-of-Life Phase

The end-of-life phase is the least documented in LCAs but contributes to challenges like electronic waste. In 2022, the world generated 62 million metric tons of e-waste, with only 22% being recycled [20]. Circuit boards in computing hardware contain precious metals but also toxic metals like arsenic, beryllium, chromium, and lead, which can leach into the environment if not disposed of properly [20].

Experimental Protocols for Impact Assessment

Protocol 1: Comprehensive Lifecycle Assessment (LCA) for AI Hardware

This protocol provides a framework for conducting a cradle-to-grave LCA for a specific computing hardware component, such as a GPU.

- Goal and Scope Definition: Define the study's purpose, the specific hardware product (e.g., NVIDIA A100 SXM 40GB GPU), and the system boundaries (cradle-to-grave: raw material extraction, manufacturing, transportation, use, end-of-life) [17].

- Lifecycle Inventory (LCI) - Primary Data Collection:

- Teardown Analysis: Physically disassemble the GPU into its major component groups (GPU chip, memory, printed circuit board, capacitors, thermal solution, etc.) [17].

- Elemental Composition Analysis: Perform a multi-element composition analysis on each component group to determine the precise mass of individual materials (e.g., silicon, gold, copper, lead, plastics) [17]. This primary data is crucial for accuracy, especially for toxicity and resource depletion impacts [17].

- Lifecycle Inventory (LCI) - Use Phase Modeling:

- Model the total energy consumption during the operational lifespan. This requires data on the GPU's Thermal Design Power (TDP), typical utilization rates, and the duration of operation [18] [21].

- Factor in the Power Usage Effectiveness (PUE) of the data center to account for overhead energy from cooling and other infrastructure [20]. The U.S. average PUE was 1.6 in 2023 [20].

- Lifecycle Impact Assessment (LCIA):

- Use specialized LCIA software (e.g., SimaPro, OpenLCA) and databases (e.g., ecoinvent) to translate the lifecycle inventory data into multiple environmental impact category scores, as defined in Table 1 [17].

- Interpretation and Transparency:
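The use-phase modeling step of Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration rather than the method used in [17]: it approximates average power draw as TDP multiplied by utilization, which is a simplification, and the function names, the 400 W TDP, the 80% utilization, the 1,000-hour duration, and the grid intensity of 0.4 kg CO₂e/kWh are assumed values chosen for illustration. Only the PUE of 1.6 comes from the text above.

```python
def use_phase_energy_kwh(tdp_watts, utilization, hours, pue):
    """Facility-level energy (kWh) attributable to one GPU.

    Assumes average draw is roughly TDP * utilization, a crude proxy
    for measured power.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    it_energy_kwh = tdp_watts * utilization * hours / 1000.0
    # PUE scales IT energy up to total facility energy (cooling, power delivery).
    return it_energy_kwh * pue


def use_phase_emissions_kg(energy_kwh, grid_kg_co2e_per_kwh):
    """Operational emissions for a given grid carbon intensity."""
    return energy_kwh * grid_kg_co2e_per_kwh


# Hypothetical inputs, except the PUE of 1.6 quoted in the text.
energy_kwh = use_phase_energy_kwh(tdp_watts=400.0, utilization=0.8,
                                  hours=1000.0, pue=1.6)
emissions_kg = use_phase_emissions_kg(energy_kwh, grid_kg_co2e_per_kwh=0.4)
print(f"energy: {energy_kwh:.1f} kWh, emissions: {emissions_kg:.1f} kg CO2e")
```

With these placeholder inputs, 320 kWh of IT energy becomes 512 kWh at the facility level once the PUE is applied. Measured power data, where available, should replace the TDP-based estimate.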

Protocol 2: Biodiversity Impact Calculation Using the FABRIC Framework

The FABRIC (Fabrication-to-Grave Biodiversity Impact Calculator) framework provides a methodology for assessing computing's impact on ecosystems [10].

- System Boundary Definition: Trace the computing system's biodiversity footprint across its full lifecycle: manufacturing, transportation, operation, and disposal [10].

- Emission Data Compilation: Gather data on key ecosystem-relevant pollutants released throughout the lifecycle, such as sulfur dioxide (SO₂), nitrogen oxides (NOₓ), heavy metals, and nutrient releases [10].

- Impact Characterization:

- Model the fate and transport of these pollutants in the environment.

- Quantify their effect on species in local ecosystems using established ecological models.

- Metric Calculation:

- Aggregate the characterized impacts into the two core biodiversity metrics: the Embodied Biodiversity Index (EBI) and the Operational Biodiversity Index (OBI) [10].

- Express the final result in a unified "species·year" metric, which represents the cumulative loss of species in an ecosystem over time [10].

The following workflow diagram illustrates the key steps and data flows for these assessment protocols:

Diagram 1: Environmental Impact Assessment Workflow. This diagram outlines the parallel steps for conducting a full Lifecycle Assessment (LCA - red) and a specialized biodiversity assessment using the FABRIC framework (blue). Both processes rely on specific data inputs to generate comprehensive environmental impact reports.

The Scientist's Toolkit: Key Reagents & Tools for Footprint Analysis

For researchers aiming to quantify the environmental impact of their computational work, the following tools and datasets are essential.

Table 4: Essential Tools for Computational Footprint Analysis

| Tool / Dataset | Function | Application in Research |
|---|---|---|
| LCA Software (e.g., SimaPro, OpenLCA) | Models environmental impacts based on lifecycle inventory data; contains databases for common materials and processes [17]. | Used to perform a full cradle-to-grave impact assessment for a specific hardware configuration or research project. |
| Primary Material Inventory | A dataset detailing the mass and composition of every component in a specific hardware unit (e.g., A100 GPU), obtained via teardown and elemental analysis [17]. | Serves as the critical, high-quality input data for an accurate LCA, replacing less accurate proxy data. |
| Power Usage Effectiveness (PUE) | A ratio measuring data center energy efficiency (total facility energy / IT equipment energy) [20]. | Used to scale the direct power draw of computing hardware to the total energy footprint of the facility it operates in. |
| Local Grid Carbon & Emission Intensity | Data on the mix of energy sources (coal, gas, nuclear, renewables) and associated emission factors for the electricity grid powering the computation [10] [17]. | Critical for accurately calculating the operational carbon footprint and the Operational Biodiversity Index (OBI) of a model's training or inference. |
| FABRIC Framework | A modeling framework that traces computing’s biodiversity footprint across its lifecycle and calculates the Embodied and Operational Biodiversity Indices (EBI/OBI) [10]. | Connects computing activities directly to biodiversity loss, moving beyond a carbon-centric view of sustainability. |

Mitigation Strategies for Sustainable Computing Research

Addressing the full environmental footprint requires a multi-faceted approach that extends beyond simply purchasing carbon offsets.

Hardware Selection and Utilization: Deploy the latest generation of energy-efficient GPUs and specialized AI accelerators. For example, NVIDIA's Blackwell platform is reported to be over 50 times more energy-efficient than traditional CPUs for AI workloads [22]. Furthermore, virtualization allows one physical server to run multiple programs, reducing the total number of servers needed and improving utilization, thereby cutting the embodied and operational footprint [20].

Algorithmic and Workload Efficiency: Utilize techniques like model pruning, quantization, and knowledge distillation to create smaller, less computationally intensive models that achieve similar performance with significantly less energy [22]. Schedule non-urgent AI workloads (e.g., long model training jobs) to run during periods when the energy grid is supplied by a higher percentage of renewables [22].
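One way to act on the scheduling advice above is to pick the start time whose window has the highest forecast renewable share. The sketch below assumes an hourly forecast is available as a plain list of fractions. The forecast values and the function name `best_start_hour` are hypothetical and not tied to any particular grid-data service.

```python
def best_start_hour(renewable_share, job_hours):
    """Return the start index of the contiguous window of `job_hours`
    with the highest total (and therefore mean) renewable share."""
    n = len(renewable_share)
    if job_hours <= 0 or job_hours > n:
        raise ValueError("job_hours must be between 1 and len(renewable_share)")
    # Sliding-window sum: add the entering hour, drop the leaving hour.
    window_sum = sum(renewable_share[:job_hours])
    best_sum, best_start = window_sum, 0
    for start in range(1, n - job_hours + 1):
        window_sum += renewable_share[start + job_hours - 1] - renewable_share[start - 1]
        if window_sum > best_sum:
            best_sum, best_start = window_sum, start
    return best_start


# Hypothetical hourly forecast of the renewable fraction of grid supply.
forecast = [0.20, 0.25, 0.30, 0.55, 0.70, 0.75, 0.60, 0.35]
start = best_start_hour(forecast, job_hours=3)
print(f"start the 3-hour job at forecast hour {start}")
```

A production scheduler would also need to respect deadlines and queue constraints; this sketch only shows the window selection itself.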

Strategic Infrastructure Choices: Choose to colocate research computing infrastructure in data centers that are committed to 100% renewable energy and employ advanced, water-efficient cooling technologies [22]. The geographic location of computation is a powerful lever; using a grid powered largely by hydroelectricity, like Québec's, can cut biodiversity impact by an order of magnitude compared to a fossil-fuel-heavy grid [10].

Extending Hardware Lifespan: Extending the operational life of computer hardware delays the energy and materials burdens associated with manufacturing new equipment [20]. This directly reduces the annualized embodied footprint of research infrastructure.

For the scientific community using GPU computing to solve ecological challenges, there is a profound opportunity and responsibility to lead by example. A narrow focus on carbon emissions provides an incomplete picture, potentially overlooking significant impacts on freshwater, species, and human health. By adopting the comprehensive assessment frameworks, quantitative metrics, and mitigation strategies outlined in this guide, researchers and drug development professionals can make informed decisions that drastically reduce the environmental footprint of their work. Integrating this multi-criteria perspective into computational research is not just a technical necessity for achieving true sustainability; it is a critical step towards ensuring that our efforts to understand and protect the natural world are not inadvertently harming it.

The exponential growth in computational demand, particularly from artificial intelligence (AI) and high-performance computing (HPC), has created a well-documented energy crisis, with projections indicating these systems could consume up to 8% of global electricity by 2030 [23]. However, the environmental consequences extend far beyond carbon emissions and energy consumption. In a first-of-its-kind study, researchers from Purdue University's Elmore Family School of Electrical and Computer Engineering have unveiled FABRIC (Fabrication-to-Grave Biodiversity Impact Calculator), a comprehensive framework that quantifies computing's previously overlooked impact on global biodiversity loss [10].

This framework emerges at a critical juncture for researchers utilizing GPU computing for large-scale ecological datasets. While GPU-accelerated platforms like NVIDIA Clara have revolutionized drug discovery by enabling molecular docking simulations, molecular dynamics, and machine learning algorithms that analyze massive biological datasets [24] [25], the ecological footprint of this computation has remained largely unmeasured. FABRIC introduces the first quantifiable link between computing activities and ecosystem integrity, providing researchers with methodologies to account for biodiversity in their sustainability calculations [10].

Core Methodology: Quantifying Computing's Biodiversity Footprint

Foundational Metrics and Impact Translation

The FABRIC framework establishes two pioneering metrics that enable researchers to quantify computing's ecological impact across its entire lifecycle [10]:

Embodied Biodiversity Index (EBI): Captures the one-time environmental toll of manufacturing, shipping, and disposing of computing hardware such as CPUs, GPUs, and memory. This metric accounts for pollutants released during chip fabrication, including sulfur dioxide, nitrogen oxides, and heavy metals that drive acidification, eutrophication, and freshwater toxicity.

Operational Biodiversity Index (OBI): Measures the ongoing biodiversity impact from the electricity used to power computing systems. This incorporates both direct emissions from on-site generation and indirect emissions from electricity production, translating them into biodiversity impact units.

The framework's analytical power lies in its ability to translate these diverse environmental stressors into a unified "species·year" metric, representing the fraction of species lost in an ecosystem over time due to computing activities [10]. This standardization enables direct comparison between different computing infrastructures and methodologies.
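Because both indices share the species·year unit, a system's lifetime footprint can be written as the one-time EBI plus the OBI accumulated over its years of operation. The sketch below makes that bookkeeping explicit. It assumes OBI accrues at a constant yearly rate, and the numeric inputs and the name `lifetime_footprint` are placeholders rather than FABRIC outputs.

```python
def lifetime_footprint(ebi, obi_per_year, years):
    """Combine a one-time embodied impact with an operational impact
    that accumulates linearly over the years of operation.

    All quantities are in species·year.
    """
    if years < 0:
        raise ValueError("years must be non-negative")
    operational = obi_per_year * years
    total = ebi + operational
    embodied_share = ebi / total if total > 0 else 0.0
    return {
        "embodied": ebi,
        "operational": operational,
        "total": total,
        "embodied_share": embodied_share,
    }


# Hypothetical values chosen only to illustrate the arithmetic.
footprint = lifetime_footprint(ebi=2.0e-9, obi_per_year=1.0e-9, years=4)
print(f"total: {footprint['total']:.2e} species·year, "
      f"embodied share: {footprint['embodied_share']:.0%}")
```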

Experimental Framework and Data Integration

The FABRIC methodology employs a comprehensive approach to data integration and impact assessment:

Lifecycle Inventory Analysis: Compiles material and energy flows across four lifecycle stages: manufacturing, transportation, operation, and disposal.

Impact Characterization: Translates inventory data into ecosystem impacts using species-area relationships and dose-response models that connect emissions to changes in species richness.

Spatial Differentiation: Incorporates regional variations in ecosystem vulnerability and grid composition to account for location-specific impacts.
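In its simplest form, impact characterization multiplies each emitted mass by a characterization factor and sums the results, and spatial differentiation scales that sum by a regional factor. The sketch below shows only this linear bookkeeping. It does not implement the species-area or dose-response models themselves, and every number and name in it is a placeholder rather than a FABRIC value.

```python
def characterized_impact(emissions_kg, char_factors, regional_multiplier=1.0):
    """Sum of emission mass * characterization factor, scaled by a
    regional vulnerability multiplier.

    emissions_kg: pollutant -> emitted mass in kg
    char_factors: pollutant -> species·year per kg (placeholder values)
    """
    missing = set(emissions_kg) - set(char_factors)
    if missing:
        raise KeyError(f"no characterization factor for: {sorted(missing)}")
    base = sum(mass * char_factors[pollutant]
               for pollutant, mass in emissions_kg.items())
    return base * regional_multiplier


# Hypothetical inventory and factors, for illustration only.
emissions = {"SO2": 10.0, "NOx": 5.0}
factors = {"SO2": 2.0e-9, "NOx": 1.0e-9}
impact = characterized_impact(emissions, factors, regional_multiplier=1.5)
print(f"characterized impact: {impact:.3e} species·year")
```

In a real assessment the factors would come from an established impact assessment method, and the regional multiplier would be replaced by location-specific characterization data.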

The framework was validated through analysis of seven high-performance computing workloads running on diverse hardware, from local servers to supercomputers and cloud platforms [10]. This experimental approach enabled the isolation of biodiversity impact factors across different computational architectures and geographic locations.

Table: Core Metrics in the FABRIC Biodiversity Assessment Framework

| Metric | Scope | Key Impact Drivers | Measurement Unit |
|---|---|---|---|
| Embodied Biodiversity Index (EBI) | Hardware manufacturing, transport, and disposal | Acidification from chip fabrication, heavy metal emissions, resource extraction | species·year |
| Operational Biodiversity Index (OBI) | Electricity generation for computing operations | Sulfur dioxide (SO₂), nitrogen oxides (NOₓ) from power generation, water consumption for cooling | species·year |

Key Findings: Computing's Biodiversity Impact Revealed

Relative Impact of Lifecycle Stages

The application of FABRIC to HPC workloads yielded striking insights about the distribution of biodiversity impacts across the computing lifecycle [10]:

Manufacturing Dominance: Within the embodied footprint, hardware production accounts for up to 75% of biodiversity damage, largely due to acidification from chip fabrication processes.

Operational Amplification: Despite manufacturing's significant impact, operational electricity use can overshadow manufacturing—at typical data center loads, the biodiversity damage from power generation can be nearly 100 times greater than that from device production over the system's lifetime.

Location Dependence: The geographic location of computing infrastructure profoundly influences its biodiversity footprint. Renewable-heavy grids with strict emission limits—like Québec's hydroelectric mix—can reduce biodiversity impact by an order of magnitude compared to fossil-fuel-heavy grids.

Projected Environmental Footprints

Forecasts of AI server deployment across the United States from 2024 to 2030 project substantial environmental impacts [26]:

Water Footprint: AI server operations could generate an annual water footprint ranging from 731 to 1,125 million m³, with indirect water footprint (from electricity generation) contributing 71% of the total.

Carbon Emissions: Additional annual carbon emissions could range from 24 to 44 Mt CO₂-equivalent, with Scope 2 emissions from indirect energy purchases constituting a substantial portion.

These projections underscore the significant ecological burden associated with the expanding computational infrastructure required for large-scale research, including ecological dataset analysis and drug discovery applications.
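The water figures above can be broken down with simple arithmetic. The sketch below applies the 71% indirect share to both ends of the projected range. Applying the same share to the lower and upper bounds is an assumption made here for illustration, since the text reports it only as a contribution to the total.

```python
INDIRECT_SHARE = 0.71  # indirect (electricity-related) share quoted in the text


def split_water_footprint(total_million_m3, indirect_share=INDIRECT_SHARE):
    """Split a total water footprint into (direct, indirect) parts."""
    if not 0.0 <= indirect_share <= 1.0:
        raise ValueError("indirect_share must be between 0 and 1")
    indirect = total_million_m3 * indirect_share
    direct = total_million_m3 - indirect
    return direct, indirect


for total in (731.0, 1125.0):  # projected bounds in million m³ per year
    direct, indirect = split_water_footprint(total)
    print(f"total {total:.0f}: indirect about {indirect:.0f}, "
          f"direct about {direct:.0f} million m³")
```

Under this assumption, roughly 519 to 799 million m³ per year would be tied to electricity generation rather than to on-site cooling.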

Table: Projected Annual Environmental Impact of AI Servers in the U.S. (2024-2030) [26]

| Scenario | Energy Consumption | Water Footprint | Carbon Emissions |
|---|---|---|---|
| Low Demand | Lower bound | ~731 million m³ | ~24 Mt CO₂e |
| Mid-Case | Moderate | Intermediate range | Intermediate range |
| High Demand | Upper bound | ~1,125 million m³ | ~44 Mt CO₂e |

Methodological Framework for Researchers

Experimental Protocol for Biodiversity Impact Assessment

Researchers can implement FABRIC's methodology through the following structured protocol:

System Boundary Definition: Determine assessment scope (cradle-to-gate or cradle-to-grave) and identify all included lifecycle stages.

Inventory Data Collection: Compile hardware specifications, manufacturing data, transportation logistics, power consumption metrics, and disposal pathways.

Regional Grid Analysis: Characterize the electricity grid mix at the computation location, incorporating temporal variations in energy sources.

Impact Characterization: Apply species-area relationships to land use impacts and dose-response models for chemical emissions.

Result Interpretation: Aggregate characterized impacts into EBI and OBI metrics for comparative analysis.

The framework integrates with existing computational workflows, allowing researchers to maintain their GPU-accelerated pipelines while adding biodiversity accountability.

Integration with GPU-Accelerated Research Workflows

For researchers utilizing GPU computing in drug discovery and ecological research, FABRIC offers specialized assessment modules:

Molecular Simulation Impact Tracking: Correlates GPU-hours for molecular docking and dynamics simulations with biodiversity impacts based on hardware efficiency and location-specific grid factors.

Machine Learning Workload Assessment: Evaluates the biodiversity cost of training deep learning models for protein structure prediction or chemical property analysis.

Comparative Architecture Analysis: Enables biodiversity efficiency comparisons between different GPU architectures and computing platforms.

Diagram: FABRIC Methodology Workflow showing the integration of embodied and operational impact assessments into a unified biodiversity metric.

The Researcher's Toolkit: Implementing Sustainable Computing Practices

Research Reagent Solutions for Biodiversity-Aware Computing

Table: Essential Components for Sustainable GPU-Accelerated Research

| Tool/Component | Function in Research | Biodiversity Consideration |
|---|---|---|
| High-Efficiency GPU Servers | Parallel processing for molecular simulations and deep learning | Newer architectures reduce operational biodiversity impact per computation |
| Advanced Liquid Cooling Systems | Thermal management for high-density computing | Can reduce water consumption by up to 85% compared to traditional cooling [26] |
| Workload Scheduling Software | Dynamic resource allocation and distribution | Enables computation during low-impact periods (high renewable availability) |
| Carbon-Aware Computing Platforms | Geographical workload distribution | Routes computations to regions with cleaner energy grids |
| Lifecycle Assessment Tools | Environmental impact tracking | Quantifies EBI and OBI for specific research projects |

Strategic Implementation for Drug Discovery Research

For drug development professionals utilizing GPU computing, several strategic approaches can significantly reduce biodiversity impact while maintaining research efficacy:

Computational Efficiency Optimization: Maximize utilization of existing GPU resources through improved algorithms and parallelization strategies, reducing the need for additional hardware with its associated embodied impacts.

Renewable Energy Sourcing: Prioritize computation in regions with low-carbon grid mixes or implement power purchase agreements for renewable energy to directly reduce operational biodiversity impacts.

Hardware Lifecycle Extension: Extend the usable life of GPU systems through modular upgrades and maintenance, amortizing the initial embodied impact over more research computations.

Consolidated Computing Sessions: Batch computational workloads to maximize hardware utilization rates and reduce the relative overhead of idle systems.
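The effect of consolidating sessions can be illustrated by separating active energy from idle energy. The sketch below compares ten one-hour jobs scattered across a day on an always-on node with the same jobs run back to back. The power figures (300 W active, 60 W idle) and the one hour of residual idle time are hypothetical assumptions.

```python
def session_energy_kwh(active_hours, idle_hours, active_watts, idle_watts):
    """Energy used by a node that is active for some hours and idle for others."""
    return (active_hours * active_watts + idle_hours * idle_watts) / 1000.0


def idle_fraction(active_hours, idle_hours, active_watts, idle_watts):
    """Share of total energy spent while the node sits idle."""
    total = session_energy_kwh(active_hours, idle_hours, active_watts, idle_watts)
    idle = idle_hours * idle_watts / 1000.0
    return idle / total if total > 0 else 0.0


# Hypothetical node: 300 W under load, 60 W idle; ten 1-hour jobs.
scattered = session_energy_kwh(active_hours=10, idle_hours=14,
                               active_watts=300, idle_watts=60)
batched = session_energy_kwh(active_hours=10, idle_hours=1,
                             active_watts=300, idle_watts=60)
print(f"scattered: {scattered:.2f} kWh, batched: {batched:.2f} kWh")
print(f"idle share when scattered: {idle_fraction(10, 14, 300, 60):.0%}")
```

The saving depends on the node actually being powered down or released between batches, which is an operational assumption rather than something batching guarantees.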

Pathways to Sustainable Computational Research

Technological Mitigation Strategies

FABRIC and related footprint projections point to several promising pathways for reducing the biodiversity impact of computational research [10] [26]:

Advanced Cooling Technologies: Implementation of liquid immersion cooling and air-side economizers can reduce the water footprint of data centers by up to 85%, directly addressing a major contributor to operational biodiversity impact.

Server Utilization Optimization: Improving active server ratios from current averages to best practices could reduce energy, water, and carbon footprints by approximately 5.5% by 2030.

Grid Decarbonization: Accelerating the transition to renewable energy sources represents the most significant opportunity for reducing operational biodiversity impacts, with potential reductions of an order of magnitude in regions with clean energy mixes.

Diagram: Computing's Biodiversity Impact Pathway tracing how computational activities drive environmental stressors that ultimately affect ecosystem integrity.

Policy and Industry Implications

The FABRIC framework carries significant implications for research institutions and policy makers [10] [27]:

Biodiversity as a First-Class Metric: Sustainability assessments must expand beyond carbon emissions to include biodiversity impact as a core metric in computational research proposals and infrastructure planning.

Transparent Reporting Standards: Research institutions should implement comprehensive environmental impact reporting that includes both embodied and operational biodiversity impacts of their computational infrastructure.

Interdisciplinary Collaboration: Addressing computing's ecological impact requires collaboration across computer science, environmental science, and policy domains to develop holistic solutions.

The FABRIC framework represents a paradigm shift in how the research community conceptualizes the environmental impact of computation. By moving beyond narrow carbon-centric metrics to a comprehensive biodiversity assessment, it provides researchers utilizing GPU computing for drug discovery and ecological analysis with the tools to quantify and mitigate their full ecological footprint.

As Professor Yi Ding notes, "Our goal isn't to stop progress—it's to make computing more aware of its ecological footprint" [10]. For drug development professionals leveraging powerful GPU-accelerated platforms, this awareness enables more sustainable research practices that maintain scientific progress while minimizing ecological harm. The integration of biodiversity metrics into computational research planning represents an essential evolution toward truly sustainable scientific discovery.

The rapid expansion of GPU computing for processing large-scale ecological datasets has revolutionized fields such as joint species distribution modelling, landscape genetics, and ecosystem forecasting. However, this computational progress carries an often-overlooked environmental cost: biodiversity loss. Traditional sustainability metrics in computing have focused predominantly on carbon emissions and energy consumption, creating a significant gap in assessing technology's full ecological footprint. This whitepaper introduces the Embodied Biodiversity Index (EBI) and the Operational Biodiversity Index (OBI) as critical complementary metrics that enable researchers to quantify computing's impact on global ecosystems [10].

The FABRIC framework (Fabrication-to-Grave Biodiversity Impact Calculator) represents a methodological breakthrough, providing the first standardized approach to trace computing's biodiversity footprint across its complete lifecycle—from chip manufacturing and hardware transportation to data center operation and eventual disposal [10]. For researchers utilizing GPU clusters to analyze ecological data, these metrics offer a crucial lens through which to evaluate and minimize the paradoxical impact of their conservation work—using powerful computing tools that may themselves contribute to the biodiversity crisis they seek to address.

Defining the Core Metrics: EBI and OBI

Embodied Biodiversity Index (EBI)

The Embodied Biodiversity Index (EBI) quantifies the one-time environmental toll associated with the production, transportation, and disposal of computing hardware. This metric captures biodiversity impacts from the extraction of raw materials, manufacturing processes, shipping, and end-of-life management of components such as GPUs, CPUs, and memory modules [10] [28].

EBI calculations incorporate the effects of pollutants released during these stages, including:

- Sulfur dioxide (SO₂) and nitrogen oxides (NOₓ) that contribute to acid rain, damaging terrestrial and aquatic ecosystems

- Heavy metals that cause freshwater toxicity

- Nutrient runoff leading to eutrophication in water bodies [10]

These impacts are unified into a single "species·year" metric, representing the cumulative fraction of species lost in affected ecosystems over time due to the hardware's creation and disposal [10].

Operational Biodiversity Index (OBI)

The Operational Biodiversity Index (OBI) measures the ongoing biodiversity impact resulting from the electricity consumed during system operation. Unlike the one-time embodied impact, OBI accumulates throughout the operational lifespan of computing equipment [10] [28].

OBI varies significantly based on:

- Energy source composition in the local grid (renewable vs. fossil fuels)

- Emission control technologies at power generation facilities

- Geographical location and its associated ecosystem sensitivity [10]

Critically, OBI reveals that a grid's carbon intensity does not fully predict its biodiversity impact. For instance, a coal-heavy grid might have carbon emissions similar to those of a gas-heavy one but generate substantially higher acid gas emissions that harm ecosystems [10].

The FABRIC Assessment Framework: Methodology and Workflow

The FABRIC framework implements a comprehensive methodology for quantifying computing's biodiversity footprint through a systematic multi-stage process.

Data Collection and Lifecycle Inventory

The initial phase involves compiling a detailed inventory of all hardware components and their material composition. For GPU-based research systems, this includes:

- GPU units (including specific model and memory specifications)

- Supporting hardware (CPUs, memory, storage systems, networking equipment)

- Infrastructure elements (cooling systems, power supplies)

The most accurate assessments utilize primary data from hardware teardowns and elemental composition analysis, as demonstrated in recent studies of NVIDIA A100 GPUs [17]. This approach involves methodical disassembly of components and multi-element composition analysis to determine the exact material inventory of each component group [17].

Impact Assessment and Normalization

The framework translates inventory data into biodiversity impacts using characterization factors that model how specific emissions affect ecosystems. Key impact pathways include:

- Acidification potential from sulfur and nitrogen compounds

- Freshwater ecotoxicity from heavy metal emissions

- Eutrophication potential from nutrient releases [10]

These diverse impact pathways are normalized into the unified "species·year" metric, enabling cross-comparison of different environmental mechanisms affecting biodiversity.

Computational Implementation

The FABRIC framework can be implemented through both proprietary and open-source Life Cycle Assessment software tools. The computational backend processes the life cycle inventory through environmental impact models to generate the final EBI and OBI metrics [10].

Figure 1: The FABRIC Framework Workflow illustrating the integrated assessment of Embodied (EBI) and Operational (OBI) Biodiversity Indices across the complete hardware lifecycle.

Quantitative Findings: EBI and OBI in High-Performance Computing Workloads

Application of the FABRIC framework to seven high-performance computing workloads has yielded critical insights into the relative contributions of embodied versus operational impacts across different computing scenarios.

Table 1: Relative Contributions of Embodied vs. Operational Biodiversity Impacts in Computing Systems

| System Type | Embodied Impact (EBI) Dominance | Operational Impact (OBI) Dominance | Key Impact Drivers |
|---|---|---|---|
| Local Server | Manufacturing: Up to 75% of embodied impact [10] | Electricity source dependent; can be 5-10× embodied impact [10] | Chip fabrication (acidification), material extraction [10] |
| Cloud Computing | GPU chips contribute ~81% to climate change impacts in manufacturing [17] | Use phase dominates 10-11 of 16 impact categories [17] | Energy grid mix, server utilization rates, cooling overhead [10] |
| Supercomputers | Manufacturing dominates human toxicity (94%), freshwater eutrophication (81%) [17] | At typical data center loads, OBI can be nearly 100× greater than EBI [10] | Scale of infrastructure, specialized cooling systems [10] |

Table 2: Biodiversity Impact Variation by Geographical Location and Energy Source

| Energy Grid Profile | Biodiversity Impact Reduction | Primary Factors |
|---|---|---|
| Renewable-heavy grid (e.g., Québec hydroelectric) | Order of magnitude reduction vs. fossil fuels [10] | Minimal acid gas emissions, reduced freshwater toxicity [10] |
| Fossil-fuel-heavy grid | Highest biodiversity impact per kWh [10] | SO₂, NOₓ emissions driving acidification; heavy metal emissions [10] |
| Grid with emission controls | Intermediate impact reduction | Limited acid gas emissions, but persistent toxicity impacts [10] |
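The comparison in the table can be turned into a simple siting calculation: multiply a job's energy use by a per-kWh biodiversity factor for each candidate grid and pick the lowest. The sketch below assumes such factors are available. The three values are invented placeholders that merely reproduce the order-of-magnitude spread described above, and the region labels are hypothetical.

```python
def obi_by_region(energy_kwh, factor_by_region):
    """Operational biodiversity impact of the same job on each grid."""
    return {region: energy_kwh * factor
            for region, factor in factor_by_region.items()}


def lowest_impact_region(energy_kwh, factor_by_region):
    """Name of the region with the smallest impact for this job."""
    impacts = obi_by_region(energy_kwh, factor_by_region)
    return min(impacts, key=impacts.get)


# Placeholder factors in species·year per kWh (not published values).
grid_factors = {
    "hydro_heavy": 1.0e-13,
    "emission_controlled": 5.0e-13,
    "fossil_heavy": 1.0e-12,
}
job_energy_kwh = 500.0
best_region = lowest_impact_region(job_energy_kwh, grid_factors)
for region, impact in obi_by_region(job_energy_kwh, grid_factors).items():
    print(f"{region}: {impact:.2e} species·year")
print(f"lowest-impact option: {best_region}")
```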

GPU-Accelerated Ecological Research: A Case Study in Balanced Implementation

The integration of GPU computing in ecological research presents a compelling case for applying biodiversity indices to maximize scientific benefit while minimizing environmental harm. Recent advances in Joint Species Distribution Modelling (JSDM) demonstrate both the power and paradox of computational ecology.

The Computational Ecology Workflow

Modern ecological analysis using the Hmsc R-package involves a multi-stage process that can be significantly accelerated through GPU implementation [29]:

- Model Structure Definition: Specifying the relationship between species occurrence, environmental covariates, species traits, and phylogenetic relationships

- Model Fitting: Using Markov Chain Monte Carlo (MCMC) sampling to estimate parameters—the most computationally intensive stage

- MCMC Diagnostics: Assessing chain convergence and reliability

- Inference and Prediction: Applying the fitted model to ecological questions and conservation planning [29]

The Hmsc-HPC implementation demonstrates how GPU porting can achieve speed-ups of over 1000× for large datasets, dramatically reducing operational time and energy requirements [29]. This efficiency gain directly translates to reduced OBI for the same computational task.
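A speed-up does not convert one-to-one into an energy saving, because a GPU node typically draws more power than the CPU node it replaces. The sketch below makes the relationship explicit under stated assumptions: the 1,000-hour CPU runtime and the 200 W and 400 W average power draws are hypothetical, and only the 1000× speed-up comes from the text.

```python
def job_energy_kwh(runtime_hours, avg_power_watts):
    """Energy for a job given its runtime and average power draw."""
    return runtime_hours * avg_power_watts / 1000.0


def energy_ratio(cpu_hours, cpu_watts, speedup, gpu_watts):
    """CPU energy divided by GPU energy for the same task.

    Algebraically this equals speedup * cpu_watts / gpu_watts.
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    gpu_hours = cpu_hours / speedup
    return job_energy_kwh(cpu_hours, cpu_watts) / job_energy_kwh(gpu_hours, gpu_watts)


# Hypothetical runtime and power draws; the 1000x speed-up is from the text.
cpu_energy = job_energy_kwh(runtime_hours=1000.0, avg_power_watts=200.0)
gpu_energy = job_energy_kwh(runtime_hours=1000.0 / 1000.0, avg_power_watts=400.0)
ratio = energy_ratio(cpu_hours=1000.0, cpu_watts=200.0, speedup=1000.0, gpu_watts=400.0)
print(f"CPU: {cpu_energy:.1f} kWh, GPU: {gpu_energy:.1f} kWh, ratio: {ratio:.0f}x")
```

With these inputs the energy saving is about 500× rather than 1000×, which is still large but shows why measured power matters when estimating the OBI reduction.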

Figure 2: GPU-Accelerated Ecological Research Workflow with Integrated Biodiversity Impact Assessment, showing how EBI and OBI considerations can inform computational choices in ecological modeling.

Biodiversity Impact Optimization in Research Computing

Ecological researchers can apply several strategies to minimize the biodiversity footprint of their computational work:

- Hardware Selection: Choosing energy-efficient GPUs and extending hardware lifespan through careful maintenance to amortize embodied impacts over more research cycles

- Computational Efficiency: Implementing GPU acceleration to complete analyses faster, reducing operational impacts, as demonstrated by 1000× speedups in Hmsc-HPC [29]

- Resource Allocation: Leveraging cloud computing in regions with low-biodiversity-impact energy grids rather than maintaining local fossil-fuel-powered servers

- Model Optimization: Balancing model complexity with computational requirements to avoid unnecessary biodiversity impacts from overtrained models [30]

Implementation Protocol: Measuring Biodiversity Impact in Research Computing

Experimental Protocol for EBI Assessment

Research Objective: Quantify the embodied biodiversity impact of a GPU-based research computing system.

Materials and Equipment:

- Target computing hardware (GPU units, CPUs, memory modules)

- Life Cycle Inventory database (e.g., ecoinvent, GREET)

- FABRIC-compatible assessment software

- Material composition data from hardware teardown (if available) [17]

Methodology:

- Hardware Inventory Compilation:

- Document all major components with manufacturer specifications

- Record mass and composition of key materials (metals, plastics, rare earth elements)

- Obtain manufacturing location data for supply chain analysis

- Impact Calculation:

- Process inventory through life cycle impact assessment method

- Apply characterization factors for acidification, ecotoxicity, and eutrophication

- Sum impacts across all components to calculate total EBI

- Normalization:

- Express total impact in "species·year" units

- Allocate impact per expected operational lifetime (typically 3-5 years for research GPUs)
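The impact calculation and normalization steps above amount to summing per-component impacts and dividing by the expected lifetime. The sketch below illustrates this with a small dataclass. The component impacts are hypothetical, already-characterized values in species·year, and `Component`, `total_ebi`, and `annualized_ebi` are illustrative names rather than part of any FABRIC tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    impact_species_year: float  # characterized embodied impact (hypothetical)


def total_ebi(components):
    """Sum embodied impacts across all components of the system."""
    return sum(c.impact_species_year for c in components)


def annualized_ebi(ebi, lifetime_years):
    """Allocate the one-time embodied impact evenly over the lifetime."""
    if lifetime_years <= 0:
        raise ValueError("lifetime_years must be positive")
    return ebi / lifetime_years


# Placeholder inventory for one GPU node.
system = [
    Component("GPU", 6.0e-9),
    Component("CPU", 2.0e-9),
    Component("Memory", 1.0e-9),
]
ebi_total = total_ebi(system)
for years in (3, 5):  # lifetime range mentioned in the protocol
    print(f"{years}-year lifetime: {annualized_ebi(ebi_total, years):.2e} species·year per year")
```

Extending the lifetime from three to five years lowers the annualized embodied impact by 40%, which is the arithmetic behind the hardware lifespan recommendations elsewhere in this guide.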

Experimental Protocol for OBI Assessment

Research Objective: Quantify the operational biodiversity impact of running ecological models on GPU clusters.

Materials and Equipment:

- Power consumption monitoring equipment (e.g., PDU with metering capabilities)

- Regional electricity grid mix data

- Computational task profiling tools

- Operational time records

Methodology:

- Energy Consumption Monitoring:
  - Measure power draw at GPU rack level during model execution
  - Profile different computational phases (data loading, training, inference)
  - Record total energy consumption (kWh) for complete analysis

- Grid Impact Analysis:
  - Obtain region-specific electricity generation mix
  - Apply location-specific emission factors for SO₂, NOₓ, and heavy metals
  - Calculate biodiversity impact using species-potential models

- Impact Allocation:
  - Allocate OBI to specific research projects based on computational time
  - Compare alternative implementations (CPU vs. GPU, algorithm efficiency)
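The operational protocol above can be sketched the same way: integrate metered power into energy, convert energy into emissions using grid factors, convert emissions into a biodiversity score, then split the result across projects. All function names and every factor used here are hypothetical assumptions made for illustration; the linear emissions-to-impact step stands in for whatever species-potential model a real assessment applies.

```python
def energy_kwh(samples):
    """Trapezoidal integration of (time_seconds, power_watts) samples into kWh."""
    joules = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        joules += 0.5 * (p0 + p1) * (t1 - t0)
    return joules / 3.6e6  # 1 kWh = 3.6e6 J


def operational_impact(kwh, grams_per_kwh, species_year_per_gram):
    """Energy -> pollutant mass -> impact, summed over pollutants.

    Both factor dictionaries are keyed by pollutant name and are assumed to be
    supplied by the analyst for the relevant grid region.
    """
    return sum(
        kwh * grams_per_kwh[pollutant] * species_year_per_gram[pollutant]
        for pollutant in grams_per_kwh
    )


def allocate_by_compute_time(total_impact, gpu_hours_by_project):
    """Split a shared impact across projects in proportion to GPU hours used."""
    total_hours = sum(gpu_hours_by_project.values())
    if total_hours <= 0:
        raise ValueError("no compute time recorded")
    return {
        project: total_impact * hours / total_hours
        for project, hours in gpu_hours_by_project.items()
    }


if __name__ == "__main__":
    # Hypothetical PDU trace: one sample per phase boundary (seconds, watts).
    trace = [(0, 400), (600, 450), (4200, 2800), (4800, 900)]
    # Placeholder grid and characterization factors, not measured values.
    grid = {"SO2": 0.8, "NOx": 0.6}
    characterization = {"SO2": 3e-12, "NOx": 2e-12}

    kwh = energy_kwh(trace)
    obi = operational_impact(kwh, grid, characterization)
    print(f"Energy: {kwh:.3f} kWh, OBI: {obi:.3e} species·year")
    print(allocate_by_compute_time(obi, {"sdm_fit": 30.0, "acoustics": 10.0}))
```

Running the same three functions on a CPU trace and a GPU trace of the same analysis gives a like-for-like comparison of alternative implementations, since a higher power draw can offset part of a shorter runtime.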

The Researcher's Toolkit: Essential Solutions for Sustainable Computational Ecology

Table 3: Research Reagent Solutions for Biodiversity Impact Assessment

| Tool/Solution | Function | Application Context |
|---|---|---|
| FABRIC Framework | Integrated EBI and OBI calculation across hardware lifecycle [10] | Comprehensive biodiversity impact assessment for computing infrastructure |
| Hmsc-HPC Package | GPU-accelerated joint species distribution modelling [29] | High-performance ecological analysis with reduced operational impacts |
| Life Cycle Inventory Databases | Primary data on material composition and manufacturing impacts [17] | Embodied impact calculation for specific hardware components |
| Hardware Teardown Analysis | Physical disassembly and elemental composition analysis [17] | Primary data collection for accurate EBI assessment |
| Power Monitoring Systems | Real-time measurement of energy consumption during model execution [10] | Operational impact quantification for specific computational tasks |
| Regional Grid Mix Data | Location-specific electricity generation sources and emission profiles [10] | Geographical differentiation of operational biodiversity impacts |

The introduction of Embodied and Operational Biodiversity Indices represents a critical evolution in sustainable computing metrics, moving beyond carbon tunnel vision to address technology's comprehensive impact on living systems. For researchers using GPU computing to analyze and protect ecological systems, these metrics offer a necessary framework to align computational methods with conservation values.

As ecological datasets continue to grow in scale and complexity, and as GPU computing becomes increasingly essential for timely conservation insights, the research community must lead in adopting comprehensive sustainability assessments. By integrating EBI and OBI into computational planning and implementation, researchers can minimize the paradoxical impact of their work—using powerful computing tools to understand and protect global biodiversity while ensuring those tools do not inadvertently contribute to the problems they seek to solve.

The FABRIC framework provides the methodological foundation for this integration, enabling informed decisions about hardware selection, computational approach, and resource allocation that balance scientific progress with ecological responsibility. Through conscious adoption of these metrics, the computational ecology community can model the same environmental stewardship it studies in natural systems.

Building Sustainable Workflows: A Practical Guide to GPU-Accelerated Ecological Analysis

The analysis of large-scale ecological datasets—from satellite imagery and bioacoustic recordings to genomic sequences and climate models—increasingly relies on the computational power of Graphics Processing Units (GPUs). For researchers in ecology and drug development, selecting the right software framework is not merely a technical detail but a strategic decision that directly impacts the scale, efficiency, and sustainability of scientific inquiry. These frameworks act as the critical bridge between raw hardware power and scientific application, enabling researchers to build and deploy complex models that can uncover patterns in vast, multidimensional data. This guide provides an in-depth overview of the dominant GPU-optimized frameworks in 2025, with a specific focus on their application in processing large-scale ecological data. It further introduces a crucial, often-overlooked dimension: measuring and minimizing the biodiversity impact of the substantial computational resources these models consume. By aligning tool selection with both scientific and environmental goals, researchers can accelerate discovery while adhering to principles of ecological responsibility.

Core Framework Architectures

The deep learning ecosystem in 2025 is vibrant and diverse, offering several sophisticated libraries for building neural networks [31]. For scientific workloads, two frameworks have established themselves as the foremost choices, each with a distinct architectural philosophy and strengths.

PyTorch: The Flexible Research Workhorse

PyTorch remains a dominant framework in both research and production, prized for its dynamic computation graph and intuitive, Pythonic interface that accelerates prototyping and experimentation [32] [31]. Its architecture is particularly well-suited for research, as it allows for dynamic modification of the computation graph during runtime, facilitating rapid iteration and debugging of novel model architectures. This flexibility is invaluable for ecological researchers experimenting with new approaches to model complex systems.

Recent advancements have further solidified its position. TorchScript provides a path to transition models from this flexible "eager" mode to a high-performance graph mode for production deployment [33]. The introduction of torch.compile and projects like FlexAttention demonstrates PyTorch's performance evolution, enabling users to achieve performance comparable to hand-tuned kernels while maintaining the framework's signature ease of use [34]. Furthermore, PyTorch's robust ecosystem includes specialized libraries like PyTorch Geometric for graph neural networks, which are increasingly relevant for modeling species interactions, molecular structures in drug discovery, and landscape connectivity [33].

TensorFlow: The Production Ecosystem

TensorFlow continues to be a major force, particularly valued by enterprises for its mature, end-to-end production tooling and scalable architecture [32] [31]. Although TensorFlow 2.x executes eagerly by default, its main optimization path remains the static computation graph: code wrapped in tf.function is traced into a graph, which enables extensive pre-run optimizations and deployment across a wide array of platforms, from embedded devices to large-scale server clusters.

TensorFlow's strength lies in its comprehensive ecosystem. TensorFlow Extended (TFX) provides a complete pipeline for deploying and maintaining production-grade models, while TensorFlow Serving is a dedicated high-performance system for model inference [32]. For researchers whose workflows will mature into stable, continuously running applications—such as real-time biodiversity monitoring systems—this production-ready tooling is a significant advantage. Optimization techniques like mixed-precision training, the use of the tf.data API for building efficient data pipelines, and integration with the Open Neural Network Exchange (ONNX) format are critical for maximizing throughput and minimizing resource use when working with massive ecological datasets [35] [31].

The Foundational Layer: CUDA and cuDNN

On NVIDIA hardware, both PyTorch and TensorFlow are underpinned by the NVIDIA CUDA Toolkit, a parallel computing platform and programming model that allows developers to leverage the massive parallelism of NVIDIA GPUs [36]. CUDA provides the fundamental building blocks for GPU-accelerated computing. For deep learning specifically, the CUDA Deep Neural Network (cuDNN) library offers highly tuned implementations of standard routines such as convolutions, pooling, and normalization layers [35]. Frameworks like PyTorch and TensorFlow are built upon these libraries, so GPU acceleration requires a compatible NVIDIA driver together with CUDA and cuDNN versions that match the framework build; some framework packages bundle these libraries, while others expect a separate installation [35] [37]. As of 2025, CUDA Toolkit 13.0 supports the NVIDIA Blackwell architecture and includes enhancements for accelerated Python, making it a critical component of the high-performance computing stack for science [36].
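Because a mismatch between driver, CUDA, and cuDNN silently falls back to CPU execution in many setups, it can help to confirm what the framework actually sees before launching a long analysis. The following is a minimal sketch using PyTorch; the function name `gpu_stack_report` is an illustrative choice, and the report simply returns `None` or `"cpu"` for fields that do not apply to a CPU-only installation.

```python
import torch


def gpu_stack_report():
    """Collect the framework's view of the CUDA/cuDNN stack as a dictionary."""
    cuda_ok = torch.cuda.is_available()
    return {
        "torch_version": torch.__version__,
        "cuda_available": cuda_ok,
        # CUDA version the installed PyTorch build targets (None on CPU-only builds).
        "cuda_build": torch.version.cuda,
        # Only query cuDNN and the device name when a GPU is actually usable.
        "cudnn_version": torch.backends.cudnn.version() if cuda_ok else None,
        "device_name": torch.cuda.get_device_name(0) if cuda_ok else "cpu",
    }


if __name__ == "__main__":
    for key, value in gpu_stack_report().items():
        print(f"{key:>15}: {value}")
```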

Table 1: Core Framework Comparison for Scientific Workloads

| Feature | PyTorch | TensorFlow |
|---|---|---|
| Primary Strength | Research flexibility, rapid prototyping [32] | Production maturity, end-to-end deployment [32] |
| Computational Graph | Dynamic (eager execution first) [31] | Eager by default in TF 2.x; static graphs via tf.function [31] |
| Python Integration | Very intuitive, Pythonic [31] | Robust, though can be more complex [31] |
| Key Deployment Tool | TorchServe, TorchScript [33] | TensorFlow Serving, TensorFlow Lite [32] |
| Distributed Training | torch.distributed backend [33] | Integrated strategies & APIs |
| Ecosystem for Science | PyTorch Geometric (Graphs), Captum (Interpretability) [33] | TensorFlow Probability, BioTensor |
| Ideal For | Experimental models, academic research, dynamic graphs [32] [31] | Large-scale production systems, static graph optimization [32] |

Framework Selection for Ecological and Pharmaceutical Domains

The choice between PyTorch and TensorFlow is guided by the specific nature of the research problem and its eventual application.

Modeling Complex Interactions with PyTorch

PyTorch excels in domains requiring flexible and novel model architectures. For ecological research, this makes it an excellent choice for:

- Graph Neural Networks (GNNs): Using libraries like PyTorch Geometric, researchers can model complex relational data, such as species interaction networks in ecology, protein-protein interaction networks, or molecular structures in drug development [33]. A specific use case demonstrated modeling capital markets with a bipartite graph and link-prediction GNN, a technique directly transferable to modeling ecological networks like predator-prey relationships or habitat connectivity [34].

- Reinforcement Learning (RL): Projects involving autonomous ecological monitoring, resource management, or robotic data collection can leverage RL frameworks that integrate strongly with PyTorch, such as Stable Baselines3 and RLlib [32].

- Custom Attention Mechanisms: For researchers developing new transformer-based models to process sequential ecological data (e.g., genetic sequences, time-series sensor data), PyTorch's FlexAttention provides the flexibility to experiment with custom, user-defined attention mechanisms while maintaining performance close to hand-optimized kernels [34].
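To make the bipartite link-prediction idea from the list above concrete, here is a toy sketch for a plant-pollinator style network. It is written in plain PyTorch rather than PyTorch Geometric, uses a single round of mean-aggregation message passing with a dot-product decoder, and trains on the same interactions it aggregates over, so it illustrates the mechanics only. The class name, the function name, and the tiny interaction matrix are all hypothetical.

```python
import torch
import torch.nn as nn


class BipartiteLinkPredictor(nn.Module):
    """One message-passing round between two node sets, then dot-product scores."""

    def __init__(self, n_left, n_right, dim=16):
        super().__init__()
        self.left = nn.Embedding(n_left, dim)    # e.g. plant species
        self.right = nn.Embedding(n_right, dim)  # e.g. pollinator species
        self.mix_left = nn.Linear(2 * dim, dim)
        self.mix_right = nn.Linear(2 * dim, dim)

    def forward(self, adj):
        # adj: (n_left, n_right) float matrix, 1.0 where an interaction was observed.
        deg_left = adj.sum(dim=1).clamp(min=1).unsqueeze(1)
        deg_right = adj.sum(dim=0).clamp(min=1).unsqueeze(1)
        # Each node receives the mean embedding of its neighbours on the other side.
        msg_left = (adj @ self.right.weight) / deg_left
        msg_right = (adj.T @ self.left.weight) / deg_right
        h_left = torch.tanh(self.mix_left(torch.cat([self.left.weight, msg_left], dim=1)))
        h_right = torch.tanh(self.mix_right(torch.cat([self.right.weight, msg_right], dim=1)))
        return h_left @ h_right.T  # logits for every (left, right) pair


def train_link_predictor(adj, epochs=200, seed=0):
    """Fit the model so that observed pairs score high and unobserved pairs low."""
    torch.manual_seed(seed)
    model = BipartiteLinkPredictor(*adj.shape).to(adj.device)
    optimizer = torch.optim.Adam(model.parameters(), lr=0.05)
    loss_fn = nn.BCEWithLogitsLoss()
    for _ in range(epochs):
        optimizer.zero_grad()
        loss = loss_fn(model(adj), adj)
        loss.backward()
        optimizer.step()
    return model, loss.item()


if __name__ == "__main__":
    device = "cuda" if torch.cuda.is_available() else "cpu"
    observed = torch.tensor([[1., 0., 1.], [0., 1., 0.], [1., 1., 0.]], device=device)
    model, final_loss = train_link_predictor(observed)
    print("final loss:", final_loss)
    print(torch.sigmoid(model(observed)))
```

A realistic study would hold out a subset of interactions for evaluation and sample negative pairs explicitly; a library such as PyTorch Geometric provides sparse message passing that scales beyond the dense adjacency matrix used here.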

Large-Scale, Deployment-Centric Workflows with TensorFlow

TensorFlow is a powerful choice for large-scale, standardized workflows where robust deployment is the end goal. Its application in science includes:

- Large-Scale Image and Signal Processing: TensorFlow's robust data pipeline and distribution capabilities are ideal for processing vast datasets of satellite imagery for land-use classification or bioacoustic data for species identification [31]. The tf.data API ensures that the GPU is continuously fed with data, avoiding bottlenecks during training [35].

- Genomic Sequencing and Analysis: The high-throughput, batch-processing capabilities of a TensorFlow pipeline, optimized with techniques like mixed-precision training, can significantly accelerate the analysis of large genomic datasets [35] [37].

- Operational Forecasting Systems: For ecological forecasts that need to be deployed and served reliably—such as disease outbreak predictions or climate impact models—TensorFlow's mature serving infrastructure (TensorFlow Serving) and full pipeline tooling (TFX) provide a stable foundation [32].
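As an illustration of how a tf.data input pipeline keeps an accelerator supplied with batches, the sketch below builds one over synthetic image tiles. The function name `make_pipeline`, the tile dimensions, and the random data are assumptions made for the example; a real workflow would read imagery or spectrograms from disk instead.

```python
import numpy as np
import tensorflow as tf


def make_pipeline(images, labels, batch_size=32, training=True):
    """Build a shuffled, normalized, batched, and prefetched tf.data pipeline."""
    dataset = tf.data.Dataset.from_tensor_slices((images, labels))
    if training:
        dataset = dataset.shuffle(buffer_size=len(images), seed=0)
    # Scale 8-bit pixel values to [0, 1]; parallel map overlaps CPU preprocessing.
    dataset = dataset.map(
        lambda image, label: (tf.cast(image, tf.float32) / 255.0, label),
        num_parallel_calls=tf.data.AUTOTUNE,
    )
    # Prefetch prepares the next batch while the current one is being consumed.
    return dataset.batch(batch_size).prefetch(tf.data.AUTOTUNE)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic stand-in for 100 small RGB satellite tiles with 5 land-use classes.
    tiles = rng.integers(0, 256, size=(100, 8, 8, 3), dtype=np.uint8)
    classes = rng.integers(0, 5, size=100)
    for batch_images, batch_labels in make_pipeline(tiles, classes).take(1):
        print(batch_images.shape, batch_labels.shape)
```

Placing `prefetch` last lets preprocessing for the next batch overlap with computation on the current one, which is the mechanism behind the bottleneck avoidance described above.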

Table 2: Specialized Tools for Scaling and Optimization

| Tool/Framework | Primary Function | Relevance to Ecological Research |
|---|---|---|
| Ray | Distributed training & serving orchestration [32] | Scaling model training across clusters for continent-scale spatial analyses. |
| DeepSpeed / Megatron-LM | Memory & parallelism optimization for massive models [32] | Training large foundation models on ecological text, image, or genetic data. |
| ONNX (Open Neural Network Exchange) | Model interoperability & format standardization [31] | Deploying models trained in one framework (e.g., PyTorch) on another's runtime (e.g., TensorFlow). |
| NVIDIA cuDNN | GPU-accelerated deep learning primitives [34] | Underlying library that speeds up core operations (convolutions, attention) in all major frameworks. |
| Stable Baselines3 / RLlib | Reinforcement Learning implementations & scaling [32] | Building agent-based models for ecosystem management or optimizing drug treatment strategies. |

Quantifying the Biodiversity Impact of Computing

The substantial computational resources required for modern ecological research carry their own environmental cost, which has historically been overlooked. Traditional sustainability metrics in computing have focused on carbon emissions and water consumption. However, a groundbreaking study from Purdue University introduces a first-of-its-kind framework, FABRIC (Fabrication-to-Grave Biodiversity Impact Calculator), to quantify computing's impact on global biodiversity and biosphere integrity [10].

The FABRIC framework introduces two key metrics: