Harnessing Citizen Science Data for Long-Term Ecological Trends: Validity, Methods, and Biomedical Applications


Abstract

This article explores the transformative potential of citizen science data for tracking long-term ecological trends, a field of growing importance for understanding environmental determinants of health. It examines the foundational role of public-collected data in closing critical environmental monitoring gaps, from urban pond biodiversity to deforestation. The content delves into innovative methodologies, including AI-powered tools and environmental DNA, that enhance data scalability and accuracy. A significant focus is placed on troubleshooting inherent data quality challenges and presenting rigorous validation frameworks for assessing reliability and integrating datasets across platforms. Finally, the article synthesizes how these ecological insights can inform biomedical and clinical research, particularly in understanding the complex linkages between ecosystem change and human health outcomes.

The Rise of Citizen Science in Filling Critical Ecological Data Gaps

The Evolution and Imperative of Citizen Science in Ecology

Ecological monitoring has undergone a paradigm shift, with Environmental Citizen Science evolving from a niche pursuit to an indispensable component of long-term ecological research. This transformation is driven by substantial growth in public involvement in addressing complex ecological challenges [1]. The interplay of community participation and technological advancement has enabled the collection of data at spatiotemporal scales that were previously unattainable, providing critical insights into long-term environmental trends [1] [2]. This whitepaper details the methodologies, technologies, and data protocols that underpin this transition, providing researchers and development professionals with the technical framework to integrate citizen science into robust ecological monitoring programs.

The convergence of artificial intelligence (AI) with citizen science offers tools that move monitoring from reactive observation to proactive management. According to [2], these technologies are no longer experimental: they are proven, scalable, and able to support climate resilience efforts worldwide by amplifying the voices and actions of citizens. For researchers investigating long-term trends, this integration adds capacity for predictive analytics and real-time monitoring, enabling intervention before environmental issues escalate [2].

Quantitative Impact: Scaling Ecological Research Through Public Participation

The quantitative impact of citizen science on the scale and scope of ecological monitoring is demonstrated by several pioneering projects. These initiatives showcase the ability to generate massive, validated datasets that inform both conservation planning and environmental policy.

Table 1: Impact Metrics of Representative AI-Powered Citizen Science Projects

| Project Name | Primary Ecological Focus | Key Quantitative Output | Community Accuracy / Impact |
|---|---|---|---|
| Biome App (Japan) [2] | Biodiversity Monitoring | Over 6 million biodiversity records accumulated since 2019 | Exceeds 95% accuracy for birds, mammals, reptiles, and amphibians |
| GeoAI Platform (India) [2] | Air Pollution Source Detection | Detection of over 47,000 brick kilns across Indo-Gangetic plains | Enabled regulatory action and pollution mitigation |
| River Watchers Project [2] | Freshwater Pollution | AI-generated interactive maps of waste pollution | Informs cleanup efforts and policymaking |
| Friends of Bradford's Becks [2] | River Health | Thousands of photographs used to train AI models | Identified visual markers of river health |

The data from these projects highlight a critical trend: the shift from isolated metrics to comprehensive, actionable insights. By integrating diverse datasets (citizen science observations, satellite imagery, sensor outputs, and weather models), AI-powered monitoring systems provide a holistic understanding of complex environmental dynamics [2]. For researchers, this supports moving beyond simple correlation toward testing causal hypotheses in ecological systems.

Experimental Protocols for Citizen Science Data Collection

The validity of long-term ecological trend analysis depends on the rigor of underlying data collection protocols. The following methodologies provide a framework for generating research-grade data.

Protocol for AI-Assisted River Health Monitoring

This protocol outlines the procedure for community groups to collect visual data for training AI models to assess river ecosystem health [2].

- Metadata: Title: "Visual River Health Assessment"; Keywords: river, water quality, habitat, AI training; Author: [Project Lead]; Description: Protocol for capturing images of river conditions to train AI algorithms in identifying visual markers of health and degradation.

- Pre-Fieldwork Preparation:

  - Confirm camera or smartphone functionality and storage space.

  - Check weather conditions; avoid filming during heavy rain or poor light.

  - Review standardized shooting angles and distances.

- Field Data Collection:

  - Step 1: Site Geolocation

    - Title: Record GPS coordinates.

    - Description: Use smartphone GPS to record and note the exact location of the monitoring site.

  - Step 2: Visual Documentation

    - Title: Capture panoramic and macro imagery.

    - Description: Film a slow 360-degree panorama of the river bank and habitat. Capture close-up footage of the water surface, sediment, and any potential pollution points or wildlife.

    - Checklist: 360-degree panorama completed; Water surface footage captured; Sediment close-ups taken; Pollution sources documented.

- Post-Collection Processing:

  - Step 3: Data Upload and Tagging

    - Title: Upload media to designated platform.

    - Description: Upload files to cloud storage (e.g., Google Earth Engine), tagging each with date, time, and coordinates [2].

    - Comments: Tag project lead for any uncertainties in upload procedure.
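The tagging and checklist steps above can be sketched in a few lines of Python. This is only an illustration: the `SiteVisit` class, the checklist key names, and the example coordinates are assumptions made here, not part of the cited protocol or of any platform's upload API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Checklist items from Step 2 of the protocol; the key names are hypothetical.
REQUIRED_ITEMS = (
    "panorama_360",
    "water_surface",
    "sediment_closeup",
    "pollution_sources",
)


@dataclass
class SiteVisit:
    """One monitoring visit (hypothetical structure for illustration)."""
    latitude: float
    longitude: float
    captured_at: datetime
    checklist: dict = field(default_factory=dict)

    def missing_items(self):
        """Checklist items not yet marked as done."""
        return [item for item in REQUIRED_ITEMS
                if not self.checklist.get(item, False)]

    def tags(self):
        """Date, time, and coordinate tags to attach to each uploaded file."""
        return {
            "date": self.captured_at.date().isoformat(),
            "time": self.captured_at.time().isoformat(timespec="seconds"),
            "coordinates": f"{self.latitude:.5f},{self.longitude:.5f}",
        }


# Illustrative values only.
visit = SiteVisit(
    latitude=53.79,
    longitude=-1.75,
    captured_at=datetime(2024, 5, 1, 10, 30, tzinfo=timezone.utc),
    checklist={"panorama_360": True, "water_surface": True},
)
print(visit.missing_items())
print(visit.tags())
```

Checking for missing checklist items before upload gives the volunteer a chance to complete the visit while still on site.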

Protocol for Biodiversity Monitoring and Species Identification

This protocol leverages AI-powered mobile applications for real-time species recording and identification, crucial for tracking population trends [2].

- Metadata: Title: "Field Biodiversity Survey via AI App"; Keywords: species, identification, AI, conservation; Author: [Research Lead]; Description: Standardized method for using applications like iNaturalist or Biome to record and identify species during transect walks.

- Pre-Survey Setup:

  - Install and create an account on the designated AI biodiversity app (e.g., Biome, iNaturalist).

  - Fully charge device and consider a portable power bank.

  - Define survey transect route and timing.

- Field Survey Execution:

  - Step 1: Document Observation

    - Title: Capture species photograph or audio.

    - Description: Take a clear, well-framed photograph of the organism or record a 30-second audio clip of bird vocalizations.

  - Step 2: AI Identification

    - Title: Process media through AI model.

    - Description: Upload the media file within the application to get a real-time AI-generated species identification [2].

  - Step 3: Validation and Submission

    - Title: Verify and log observation.

    - Description: Confirm the AI suggestion against personal knowledge. Submit the validated record, which feeds into a global database for conservation planning [2].

- Data Integration:

  - Step 4: Data Export for Analysis

    - Title: Aggregate records for research.

    - Description: Export project data from the application platform for integration with remote sensing inputs and large-scale environmental research [2].
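Once records are exported (Step 4), a first pass at trend analysis might look like the sketch below. The record layout (`species`, `observed_on`, `validated`) is an assumed export format, not the schema of iNaturalist or Biome. The reporting rate, meaning the share of each year's validated records that belong to the target species, is one crude way to reduce the effect of changing volunteer effort on raw counts.

```python
from collections import Counter
from datetime import date


def yearly_counts(records, species):
    """Number of validated records of `species` per calendar year."""
    counts = Counter()
    for rec in records:
        if rec["validated"] and rec["species"] == species:
            counts[rec["observed_on"].year] += 1
    return dict(sorted(counts.items()))


def reporting_rate(records, species):
    """Share of each year's validated records that are `species`."""
    totals, hits = Counter(), Counter()
    for rec in records:
        if not rec["validated"]:
            continue
        year = rec["observed_on"].year
        totals[year] += 1
        if rec["species"] == species:
            hits[year] += 1
    return {year: hits[year] / totals[year] for year in sorted(totals)}


# Made-up records for illustration only.
example = [
    {"species": "Common Frog", "observed_on": date(2021, 4, 1), "validated": True},
    {"species": "Common Frog", "observed_on": date(2021, 5, 1), "validated": False},
    {"species": "Smooth Newt", "observed_on": date(2021, 5, 2), "validated": True},
    {"species": "Common Frog", "observed_on": date(2022, 3, 9), "validated": True},
]
print(yearly_counts(example, "Common Frog"))
print(reporting_rate(example, "Common Frog"))
```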

Workflow Visualization: Integrating Citizen Science and AI for Ecological Insights

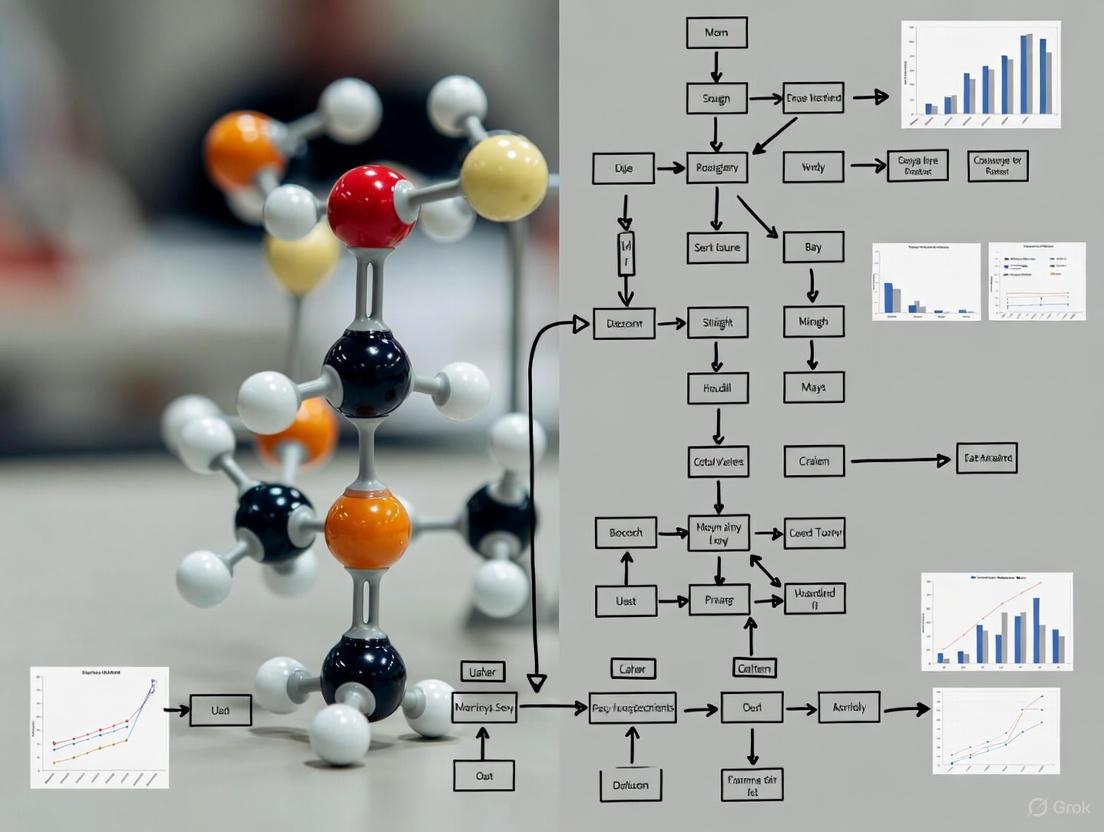

The following diagram illustrates the integrated workflow of data collection, AI processing, and analysis that transforms community-generated observations into actionable ecological intelligence.

AI-Enhanced Ecological Monitoring Workflow

This workflow is powered by a continuous feedback loop. Citizen scientists collect raw field observations (images, sounds, GPS points). This data undergoes AI Processing & Validation, where algorithms perform tasks like species identification, pattern detection, and data cleaning to ensure research-grade quality [2]. The validated data is then integrated with other sources like satellite imagery and sensor networks in a Multi-Source Data Synthesis phase, creating a holistic environmental model [2]. The final output is Actionable Insights for research and policy, which in turn guides future citizen science data collection efforts, creating a virtuous cycle of improved monitoring.

The Scientist's Toolkit: Essential Research Reagent Solutions

The technological and methodological toolkit for modern ecological monitoring relies on a suite of digital and analytical "reagents." These platforms and solutions are essential for handling the scale and complexity of citizen-sourced data.

Table 2: Essential Research Reagent Solutions for Citizen Science Ecology

| Tool / Solution | Function | Application in Ecological Monitoring |
|---|---|---|
| AI Biodiversity Apps (e.g., iNaturalist, Biome) [2] | Real-time species identification via image/sound recognition | Enables rapid, accurate field data collection by volunteers; gamification fosters sustained engagement. |
| Cloud Geospatial Platforms (e.g., Google Earth Engine) [2] | Analysis of geospatial and satellite imagery | Allows communities to integrate their data with remote sensing inputs for large-scale research on deforestation, water quality, etc. |
| Predictive AI Models | Pattern detection and forecasting in complex datasets | Processes large citizen-sourced datasets to identify trends and provide early warnings for environmental issues. |
| Multi-Modal Data Integration Frameworks | Synthesizes citizen data with remote sensing and hydrological models | Provides a comprehensive understanding of environmental dynamics, e.g., forecasting water contamination events. |

Citizen science has unequivocally transitioned from a niche activity to a necessity in ecological monitoring. The frameworks, protocols, and technologies detailed in this whitepaper provide a blueprint for researchers to leverage this powerful approach. By adopting standardized methodologies and embracing AI-powered tools, the scientific community can harness the full potential of citizen-generated data to uncover and understand long-term ecological trends, ultimately informing more effective conservation strategies and policy decisions on a global scale.

Urban freshwater ecosystems, particularly ponds, are critical biodiversity hotspots, supporting an estimated two-thirds of all freshwater species in the UK [3]. Despite their ecological significance, these habitats constitute a pronounced data gap in ecological research, especially within urban landscapes where most are located on private property and have been historically understudied [3]. This lack of fundamental data on species distribution, pond condition, and even the total number of urban ponds presents a substantial challenge for effective conservation policy and ecological trend analysis [3].

Framed within a broader thesis on utilizing citizen science for long-term ecological research, this paper examines how innovative projects are overcoming these barriers. We present a detailed case study of Defra’s Natural Capital and Ecosystem Assessment programme, which has pioneered three synergistic initiatives—GenePools, the Priority Ponds Project, and the Urban Pond Count [3]. By deploying citizen scientists and leveraging emerging technologies like environmental DNA (eDNA) analysis, these projects are generating robust, large-scale datasets. This study provides a technical analysis of their methodologies, quantitative outcomes, and the practical reagents and tools that enable this community-powered research, offering a model for bridging the urban ecological data divide.

Project Methodologies and Experimental Protocols

The GenePools Project: eDNA Workflow

The GenePools project, an ambitious partnership between Natural England, the Natural History Museum, and CEFAS, was designed to explore urban pond biodiversity using environmental DNA (eDNA) testing [3]. This approach allows for the detection and classification of species based on genetic material they shed into their environment [3]. The project's engagement was citizen-led, recruiting volunteers from six UK cities to collect water samples from over 750 ponds [3].

Figure 1: The GenePools eDNA analysis workflow, from citizen-led sampling to bioinformatic analysis.

Detailed Experimental Protocol: eDNA Sampling and Analysis

- Participant Recruitment and Training: Volunteers from diverse demographics were recruited in six cities. They received instructions and sampling kits [3].

- Field Sampling - Water Collection: Citizens collected water samples from garden and urban ponds using provided sterile containers [3] [4].

- Field Sampling - Filtration: Water samples were filtered on-site or in a lab setting to capture particulate matter, including cellular material containing DNA [4].

- eDNA Extraction and Purification: DNA was extracted from the filters in a laboratory setting. This step isolates and purifies the genetic material from other environmental contaminants [4].

- DNA Amplification and Sequencing (DNA Barcoding): Specific genetic regions were amplified using Polymerase Chain Reaction (PCR). These regions, known as DNA barcodes, are variable between species but conserved within them. The amplified DNA was then sequenced using high-throughput sequencing platforms [4].

- Bioinformatic Analysis: The resulting DNA sequences were processed and compared against established online genomic databases to identify the species present in the original pond sample [4].

- Data Curation and Validation: Over 70,000 records are being added to the National Biodiversity Network Atlas, forming one of the first large-scale, open-access collections of DNA-based biodiversity records in the UK [3].
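The matching step in the bioinformatic analysis can be illustrated with a deliberately simplified sketch. It assumes pre-aligned sequences of equal length and a made-up reference set; the function names, the 97% identity threshold, and the toy sequences are all assumptions for illustration. Production pipelines rely on dedicated alignment tools and curated reference libraries rather than position-by-position comparison.

```python
def identity(a: str, b: str) -> float:
    """Fraction of matching positions between two equal-length sequences."""
    if not a or len(a) != len(b):
        raise ValueError("sequences must be non-empty and of equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def assign_taxon(query, references, min_identity=0.97):
    """Return (best_matching_name, score), or (None, score) below threshold.

    `references` maps a taxon name to its reference barcode sequence.
    The threshold is a hypothetical choice, not a value from the project.
    """
    best_name, best_score = None, 0.0
    for name, ref in references.items():
        score = identity(query, ref)
        if score > best_score:
            best_name, best_score = name, score
    if best_score >= min_identity:
        return best_name, best_score
    return None, best_score


# Toy sequences, not real barcodes.
toy_refs = {"taxon_A": "ACGTACGTAC", "taxon_B": "TTGTACGTAA"}
print(assign_taxon("ACGTACGTAC", toy_refs))
print(assign_taxon("ACGTACGTAA", toy_refs))
```

Returning `None` for sub-threshold matches keeps ambiguous reads out of the species list instead of forcing them onto the nearest reference.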

Priority Ponds and Urban Pond Counts

Concurrently, the Freshwater Habitats Trust led two complementary initiatives: the Priority Pond Assessment and the Urban Pond Count [3]. The Priority Pond Assessment addresses the challenge that only 2% of England's ponds are designated as priority habitats, despite an estimated 20% meeting the criteria [3]. The Urban Pond Count is the first national attempt to estimate the number of urban ponds, a knowledge gap since the last national survey in 2007 [3].

Figure 2: The Priority Pond Assessment workflow, using a citizen-friendly survey and algorithm to filter ponds for expert review.

Detailed Experimental Protocol: Priority Pond Assessment

- Pond Identification: Volunteers identified ponds for assessment, including previously unrecorded urban ponds [3].

- Field Survey - Standardized Metrics: Citizens conducted surveys based on seven simple, observable pond features, such as shade coverage and plant coverage. This non-specialist approach is designed for public participation [3].

- Data Input and Algorithmic Filtering: Survey data were input into an algorithm developed by the Freshwater Habitats Trust. This algorithm acts as an initial filter, identifying ponds with a high probability of meeting the official "priority habitat" criteria [3].

- Expert Verification: Ponds flagged by the algorithm as "probable priority ponds" were then targeted for verification by specialist ecologists, making the expert validation process more efficient [3].
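The filter-then-verify pattern described above can be sketched as follows. The actual Freshwater Habitats Trust algorithm is not described in the source, so everything here is hypothetical: the equal weights, the 0.7 threshold, the 0 to 1 feature scores, and the placeholder names `feature_3` to `feature_7` (only shade and plant coverage are named in the text).

```python
# Seven observable features; only the first two are named in the source.
FEATURES = (
    "shade_cover",
    "plant_cover",
    "feature_3",
    "feature_4",
    "feature_5",
    "feature_6",
    "feature_7",
)


def priority_score(survey, weights=None):
    """Weighted mean of feature scores, each expected in the range 0..1."""
    weights = weights or {name: 1.0 for name in FEATURES}
    missing = [name for name in FEATURES if name not in survey]
    if missing:
        raise ValueError(f"survey is missing features: {missing}")
    total_weight = sum(weights[name] for name in FEATURES)
    return sum(weights[name] * survey[name] for name in FEATURES) / total_weight


def flag_for_expert_review(surveys, threshold=0.7):
    """Pond IDs whose score suggests a probable priority pond."""
    return [pond_id for pond_id, survey in surveys.items()
            if priority_score(survey) >= threshold]


# Made-up survey scores for two ponds.
surveys = {
    "pond_a": {name: 0.9 for name in FEATURES},
    "pond_b": {name: 0.4 for name in FEATURES},
}
print(flag_for_expert_review(surveys))
```

Only flagged ponds go forward to specialist ecologists, which is how a coarse filter can reduce expert workload without making the final designation itself.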

Quantitative Results and Data Synthesis

The application of these citizen science methodologies has yielded significant quantitative data, closing critical knowledge gaps in urban freshwater ecology.

Table 1: Biodiversity Findings from the GenePools eDNA Analysis (Sample of 750+ Urban Ponds)

| Taxonomic Group | Prevalence in Ponds | Key Example Species Identified |
|---|---|---|
| Insects | 98% | Mosquitoes, Diving Beetles, Scarab Beetles [3] [4] |
| Amphibians | 53% | Common Frog, Smooth Newt [3] [4] |
| Mammals | 50% | Weasel, Dog, Human [3] [4] |
| Birds | Identified in multiple ponds | Pigeon, Coot, Moorhen, Mallard, Swan/Goose [4] |
| Fish | Identified in multiple ponds | European Perch, Roach, Goldfish [4] |
| Plants & Trees | Wide variety | Duckweed, Nettle, Elder, Ash, Willow, Beech, Alder [4] |
| Microbes & Protists | Hundreds of species | Green/Golden Algae, Ciliate Protists, Diatoms, Flagellates [4] |

Table 2: Project Outputs and Impact Metrics for Pond Assessment Initiatives

| Metric | GenePools Project | Priority Ponds & Urban Count |
|---|---|---|
| Project Duration | 2021 - 2025 [3] | Launched mid-2024 [3] |
| Number of Sites Sampled/Surveyed | > 750 ponds sampled [3] | ~750 surveys completed [3] |
| Key Data Outputs | >70,000 DNA-based records for the National Biodiversity Network Atlas [3] | >100 probable priority ponds identified; >250 new priority ponds recorded when combined with other data [3] |
| New Urban Ponds Mapped | Not Applicable | 89 previously unmapped ponds [3] |
| Estimated Total Urban Ponds (England) | Not Applicable | ~8,500 [3] |
| Algorithm/Survey Efficacy | Not Applicable | Identifies 97% of non-priority ponds and 58% of priority ponds [3] |
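To see what the efficacy figures in the table imply for expert workload, the sketch below reads them as specificity (97% of non-priority ponds correctly excluded) and sensitivity (58% of priority ponds correctly flagged). That reading is an assumption, as is applying the estimated 20% share of ponds meeting priority criteria as the prevalence among surveyed ponds.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a binary filter via Bayes' rule."""
    true_pos = sensitivity * prevalence
    false_neg = (1 - sensitivity) * prevalence
    true_neg = specificity * (1 - prevalence)
    false_pos = (1 - specificity) * (1 - prevalence)
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# Assumed inputs: sensitivity 0.58, specificity 0.97, prevalence 0.20.
ppv, npv = predictive_values(0.58, 0.97, 0.20)
print(round(ppv, 2), round(npv, 2))  # prints: 0.83 0.9
```

Under these assumptions, roughly 83% of ponds flagged for expert review would turn out to be priority ponds, while about 10% of unflagged ponds would be missed priority ponds. The filter therefore concentrates expert effort well but does not replace wider surveying.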

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful implementation of these projects relies on a combination of biological reagents, field equipment, and digital tools.

Table 3: Key Research Reagents and Solutions for Citizen Science Ecology

| Item / Solution | Function / Application |
|---|---|
| eDNA Sampling Kit | Contains sterile containers and filters for citizens to collect and stabilize water samples from ponds, preventing contamination [3] [4]. |
| DNA Extraction & Purification Kits | Commercial kits used in the lab to isolate pure DNA from environmental filters, a critical step for downstream genetic analysis [4]. |
| PCR Reagents | Enzymes, primers, and nucleotides used to amplify specific DNA barcode regions from the mixed eDNA, enabling species identification [4]. |
| DNA Sequencing Reagents | Chemicals and flow cells for high-throughput sequencing platforms to determine the precise order of nucleotides in the amplified DNA [4]. |
| Bioinformatic Databases | Online genomic reference libraries, searched with tools such as BLAST, used to match unknown DNA sequences from the samples to known species [4]. |
| Priority Pond Field Guide | A standardized protocol defining the seven observable pond features, enabling consistent data collection by non-specialists [3]. |
| Digital Data Platforms | Tools like iNaturalist and the National Biodiversity Network Atlas for data management, storage, and public dissemination of results [3] [5]. |

The GenePools and Urban Pond projects demonstrate a transformative approach to ecological monitoring. By embedding citizen scientists within a rigorous technical framework, these initiatives have generated unprecedented datasets on urban pond biodiversity and condition [3]. The project outcomes—from the species inventories generated by eDNA to the refined map of priority habitats—provide a validated model for how citizen science can directly contribute to long-term ecological trends research and inform environmental policy [3] [5].

Key to their success is the strategic integration of new technologies with accessible methods. The GenePools project not only collected data but also refined the sampling and engagement strategies needed to make eDNA monitoring practical and scalable for public participation [3]. Similarly, the Priority Pond Assessment developed a simple yet effective algorithmic filter that empowers citizens to contribute meaningfully to a national conservation prioritization process [3]. These projects underscore that the future of urban ecological assessment lies in hybrid models that combine the scale of citizen science with the precision of expert validation and advanced laboratory techniques. This blueprint offers a replicable path for researchers and policymakers worldwide to bridge critical data gaps and foster a deeper connection between the public and their local ecosystems.

The monumental challenge of understanding and mitigating global environmental change necessitates ecological data at spatiotemporal scales that transcend the capacity of individual research teams. Long-term ecological trends research, fundamental to predicting ecosystem trajectories and informing policy, is increasingly constrained by logistical and funding limitations. This whitepaper frames the integration of citizen science within this context, demonstrating its critical role in scaling up data collection across forest and aquatic ecosystems. By quantifying the diversity of approaches and their global applications, we provide researchers and scientists with a technical guide for leveraging public participation to generate the robust, long-term datasets required for discerning significant ecological signals from environmental noise [6] [7].

The Diversity and Evolution of Citizen Science Approaches

Citizen science represents a spectrum of methodologies for involving volunteers in scientific research. A quantitative analysis of 509 environmental and ecological projects revealed that this diversity cannot be neatly categorized but instead forms a continuum of approaches [8] [9]. This variation is best understood across two primary axes: methodological approach and project complexity.

Table 1: Key Dimensions of Citizen Science Project Design [8] [9]

| Dimension | Category | Description | Typical Data Output |
|---|---|---|---|
| Methodological Approach | Mass Participation | Easy participation by anyone, anywhere, often with minimal training (e.g., single-species counts, incidental wildlife sightings). | Large spatial coverage, single-timepoint or intermittent data. |
| Methodological Approach | Systematic Monitoring | Trained volunteers repeatedly sampling at specific, often fixed, locations (e.g., water quality testing, forest phenology plots). | Long-term, structured time-series data from defined locations. |
| Project Complexity | Simple | Minimal support provided; tasks and data structures are straightforward. | High volume of data, potentially variable in quality without validation. |
| Project Complexity | Elaborate | Significant support and training provided to gather rich, detailed datasets. | High-quality, complex datasets suitable for peer-reviewed research. |

A separate cluster of projects exists for entirely computer-based activities, where volunteers classify or process data online [9]. The overall "accumulated diversity" of active citizen science projects has increased over time, indicating a growing toolkit of available approaches for researchers. This expansion is largely driven by technological innovation, allowing projects to become more specialized and different from one another [8]. Understanding this landscape is a prerequisite for the comparative evaluation of project success and for selecting the appropriate approach for a given research objective.

Global Applications and Methodological Protocols

The application of these diverse citizen science approaches has been critical in advancing research in both forest and aquatic ecosystems, enabling data collection at a genuinely global scale.

Applications in Aquatic Ecosystems

In aquatic environments, citizen science has been instrumental in addressing two pervasive challenges: water scarcity/pollution and biological invasions.

- Trends and Global Prospects: Citizen scientists contribute to monitoring freshwater and marine systems by tracking water quality parameters, reporting pollution events, and recording species sightings. These activities help document the detrimental impacts of anthropogenic pressures and highlight disparities in water usage between developed and developing countries. The data gathered is essential for developing comprehensive management strategies and fostering the international cooperation needed to safeguard these vital ecosystems [10].

- Global-Scale Screening of Non-Native Species: A landmark example of a standardized global protocol is the use of the Aquatic Species Invasiveness Screening Kit (AS-ISK). This multi-lingual decision-support tool allows assessors to screen non-native aquatic species for their risk of becoming invasive under both current and future climate conditions. In a global-scale screening of 819 species, 33 were identified as posing a 'very high risk'. The protocol involves scoring species based on their biology, ecology, and climate preferences, with the results enabling decision-makers to prioritize species for rapid management actions, such as eradication or control, and to inform policy on species importation bans [11].
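The final step of such a screening, turning scores into management priorities, can be sketched as below. This is not the AS-ISK implementation: the threshold values, species labels, and scores are invented for illustration, and real thresholds are calibrated per risk assessment area.

```python
def risk_rank(score, medium_threshold, high_threshold, very_high_threshold):
    """Map a screening score to a risk rank using calibrated thresholds."""
    if not medium_threshold < high_threshold < very_high_threshold:
        raise ValueError("thresholds must be strictly increasing")
    if score >= very_high_threshold:
        return "very high"
    if score >= high_threshold:
        return "high"
    if score >= medium_threshold:
        return "medium"
    return "low"


def prioritise(scores, **thresholds):
    """Sort species by score (highest first) and attach a risk rank."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return [(name, score, risk_rank(score, **thresholds))
            for name, score in ranked]


# Hypothetical scores and thresholds.
example_scores = {"species_x": 12.0, "species_y": 41.5, "species_z": 27.0}
for row in prioritise(example_scores, medium_threshold=5.0,
                      high_threshold=25.0, very_high_threshold=40.0):
    print(row)
```

Running the same ranking twice, once with scores for current conditions and once with climate-adjusted scores, would show which species change rank under future climates.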

Table 2: Selected Global Citizen Science Initiatives in Aquatic and Forest Ecology

| Ecosystem | Project Focus | Methodological Approach | Geographic Scale | Key Output |
|---|---|---|---|---|
| Aquatic | Non-native species risk | Systematic Monitoring / Screening | Global (120 risk assessment areas) | Risk scores and thresholds for 819 aquatic species under current and future climates [11]. |
| Aquatic | Freshwater use and pollution | Mass Participation / Mixed | Global | Data on water usage trends, pollution hotspots, and ecosystem requirements to inform policy [10]. |
| Forest & Dryland | Primary Production | Systematic Monitoring | Regional (Sevilleta LTER, USA) | >20 years of data on Aboveground Net Primary Production (ANPP) and precipitation [6]. |

Applications in Forest and Dryland Ecosystems

In terrestrial systems, the value of citizen science is particularly evident in long-term studies designed to capture ecosystem dynamics.

- Long-Term Ecological Research (LTER): The analysis of long-term information is crucial for enhancing predictive capacity about ecosystem trajectories. A study in the dryland transition zones of the Sevilleta National Wildlife Refuge exemplifies the elaborate, systematic monitoring approach. This research utilizes over 20 years of data on Aboveground Net Primary Production (ANPP) and precipitation variability across grassland-to-shrubland transitions [6].

- Experimental Protocol: Long-Term Dryland Ecosystem Analysis

  - Site Selection: Establish permanent plots in representative ecosystems (e.g., Great Plains grassland, Chihuahuan Desert grassland, Chihuahuan Desert shrubland).

  - ANPP Measurement:

    - Frequency: Data collection during peak growing seasons (e.g., spring and fall each year).

    - Method: A non-destructive allometric scaling method. Within permanently established 1m² quadrats (40-80 per site), measure the height and cover of individual plants.

    - Species-Level Estimation: Use linear regression models based on weight-to-volume ratios from reference specimens to convert field measurements to species-level ANPP.

    - Annual Calculation: Sum peak seasonal ANPP for each species to derive annual ANPP [6].

  - Climate Data: Collect concurrent, high-resolution data on precipitation and other relevant climatic variables.

  - Time Series Analysis: Apply a suite of geostatistical methods to the long-term dataset:

    - Model univariate probability distribution functions.

    - Model temporal semivariograms to understand autocorrelation.

    - Model copula-based dependency functions between annual precipitation and ANPP [6].

  - Predictive Capacity Testing: Systematically incorporate additional years of data into the models to quantify how predictive capacity evolves over time, thereby justifying the continuation of long-term monitoring efforts [6].
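The ANPP measurement steps above can be sketched numerically. The sketch makes several simplifying assumptions that are not taken from the cited study: plant volume is approximated as cover times height, the regression is forced through the origin, and a single slope is used per species. All numbers are invented.

```python
def fit_through_origin(volumes, weights):
    """Least-squares slope for weight = slope * volume (no intercept)."""
    sum_vv = sum(v * v for v in volumes)
    if sum_vv == 0:
        raise ValueError("need at least one non-zero reference volume")
    return sum(v * w for v, w in zip(volumes, weights)) / sum_vv


def seasonal_biomass(plants, slope):
    """Estimated biomass of one species in one quadrat for one season.

    `plants` is an iterable of (cover, height) field measurements.
    """
    return sum(slope * cover * height for cover, height in plants)


def annual_anpp(spring_plants, fall_plants, slope, quadrat_area_m2=1.0):
    """Sum of peak seasonal estimates, expressed per square metre."""
    total = (seasonal_biomass(spring_plants, slope)
             + seasonal_biomass(fall_plants, slope))
    return total / quadrat_area_m2


# Reference specimens: harvested volume vs. dry weight (made-up values).
slope = fit_through_origin([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
print(slope)
print(annual_anpp([(0.1, 2.0)], [(0.2, 1.0), (0.1, 1.0)], slope))
```

Because the field measurements are non-destructive, the same quadrats can be revisited every season, which is what makes a multi-decade time series possible.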

The Scientist's Toolkit: Research Reagents and Essential Materials

The following table details key solutions and tools used in the featured citizen science fields and experiments.

Table 3: Essential Research Reagents and Tools for Ecological Monitoring

| Item / Solution | Function / Application | Technical Specification / Example |
|---|---|---|
| Aquatic Species Invasiveness Screening Kit (AS-ISK) | A standardized decision-support tool for risk screening of non-native aquatic organisms. | Multi-lingual questionnaire-based tool that outputs climate-threshold-calibrated risk scores for species [11]. |
| Allometric Scaling Equations | Non-destructive estimation of plant biomass and ANPP from field measurements. | Species-specific linear regression models developed from reference specimens; e.g., for grasses like Bouteloua gracilis and shrubs like Larrea tridentata [6]. |
| Permanent Monitoring Quadrats | Fixed-location plots for repeated, long-term ecological measurement to ensure data consistency. | Typically 1m² plots, permanently marked with stakes or rebar, with precise locations mapped and recorded [6]. |
| Geostatistical Time Series Analysis | A toolset for modeling the natural behavior of ecological variables over time. | Includes modeling probability distributions, temporal semivariograms, and copula-based dependency functions [6]. |
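As a concrete illustration of one item in the geostatistical toolset, the sketch below computes an empirical temporal semivariogram for an evenly spaced annual series, using the standard estimator: half the mean squared difference between values separated by a given lag. The function name and the toy series are assumptions; fitting a variogram model to these values is a separate step not shown here.

```python
def temporal_semivariogram(series, max_lag):
    """Empirical semivariance for lags 1..max_lag of an evenly spaced series.

    gamma(h) = sum((z[t + h] - z[t]) ** 2) / (2 * N(h)),
    where N(h) is the number of pairs separated by lag h.
    """
    result = {}
    for lag in range(1, max_lag + 1):
        squared_diffs = [(series[i + lag] - series[i]) ** 2
                         for i in range(len(series) - lag)]
        if squared_diffs:  # skip lags longer than the series allows
            result[lag] = sum(squared_diffs) / (2 * len(squared_diffs))
    return result


# Toy series with a steady trend: semivariance grows with lag.
print(temporal_semivariogram([1.0, 2.0, 3.0, 4.0], max_lag=3))
# prints: {1: 0.5, 2: 2.0, 3: 4.5}
```

A semivariance that levels off at some lag suggests how many years apart observations must be before they are effectively uncorrelated, which bears directly on how long a monitoring series needs to be.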

Data Management, Visualization, and Workflow

The integrity and utility of data collected by citizen scientists are paramount for its acceptance in rigorous scientific research.

Data Quality Assurance

Robust protocols are essential. These can range from automated data validation in mobile apps to comprehensive training programs and iterative data checking by professional scientists. The principle is that data quality must be "adequate for the intended purpose," with methods tailored to the project's complexity and goals [9].
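A minimal sketch of automated validation, of the kind a mobile app or ingestion script might run, is shown below. The rule set, field names, and the idea of a regional species list are assumptions for illustration. Records that fail a rule are routed to review instead of being discarded, in keeping with the "adequate for the intended purpose" principle.

```python
from datetime import date


def validate(record, today, known_species):
    """Return a list of flag names; an empty list means no rule failed."""
    flags = []
    if not (-90 <= record["lat"] <= 90 and -180 <= record["lon"] <= 180):
        flags.append("coordinates_out_of_range")
    if record["observed_on"] > today:
        flags.append("date_in_future")
    if record["species"] not in known_species:
        flags.append("species_not_on_regional_list")
    return flags


def triage(records, today, known_species):
    """Split records into (accepted, needs_review)."""
    accepted, needs_review = [], []
    for record in records:
        if validate(record, today, known_species):
            needs_review.append(record)
        else:
            accepted.append(record)
    return accepted, needs_review


# Made-up records for illustration.
regional_list = {"Common Frog", "Smooth Newt"}
records = [
    {"species": "Common Frog", "lat": 51.5, "lon": -0.1,
     "observed_on": date(2024, 4, 2)},
    {"species": "Goldfish", "lat": 95.0, "lon": -0.1,
     "observed_on": date(2024, 4, 3)},
]
accepted, needs_review = triage(records, date(2024, 6, 1), regional_list)
print(len(accepted), len(needs_review))
```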

Effective Data Visualization

Transforming complex ecological datasets into clear, compelling visuals is critical for communication with both scientific and public audiences.

- Color Use: Employ intuitive colors (e.g., blue for water, green for vegetation) and ensure sufficient contrast for readability. For sequential data (e.g., pollution levels), use a single-color gradient. For categorical data, use distinct, easily distinguishable hues, limiting the palette to seven or fewer colors [12] [13].

- Chart Selection: Use line charts for temporal trends (e.g., ANPP over time), maps for spatial data (e.g., species distribution), and bar charts for comparative analysis (e.g., emissions across industries) [14].
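"Sufficient contrast" can be checked numerically. The cited guidance does not name a metric, so the sketch below uses one common choice as an assumption: the contrast ratio defined in the Web Content Accessibility Guidelines (WCAG) 2.x, computed from the relative luminance of two sRGB colors.

```python
def _linearize(channel):
    """Convert one 0..255 sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) color with 0..255 channels."""
    r, g, b = (_linearize(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1 (identical) to 21."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


# Black text on a white background gives the maximum ratio of 21.
print(round(contrast_ratio((0, 0, 0), (255, 255, 255)), 1))
```

WCAG suggests a ratio of at least 4.5 for normal-size text, which is a reasonable starting point for chart labels and legends as well.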

The following diagram illustrates a generalized workflow for implementing a large-scale citizen science project in ecology.

Citizen science has fundamentally expanded the scale of ecological inquiry, moving from localized studies to global, networked research essential for long-term trends analysis. The diverse and evolving approaches—from mass participation to systematic monitoring—provide a versatile toolkit for addressing critical data gaps in forest and aquatic ecosystems. As demonstrated by global applications in screening invasive species and documenting long-term dryland dynamics, the integration of robust methodological protocols, rigorous data management, and strategic visualization is key to producing high-quality, scientifically valuable data. For researchers, embracing this paradigm is not merely a cost-effective strategy but a necessary one to build the comprehensive, long-term datasets required to understand and mitigate the impacts of global environmental change.

Why Now? Technological, Social, and Policy Drivers of Growth

The utilization of citizen science data for long-term ecological trends research is transitioning from a supplementary data source to a core methodological approach. This shift is not serendipitous but is driven by a convergent evolution across technological, social, and policy domains that has created a unique enabling environment. Citizen science, the involvement of the public in scientific research, now generates data at spatiotemporal scales and resolutions that were previously impossible through traditional scientific fieldwork alone [15]. The growth drivers behind this expansion are multifaceted and interdependent, creating a synergistic effect that accelerates adoption across research institutions, government agencies, and conservation organizations.

This whitepaper examines the specific technological innovations, social transformations, and policy frameworks that collectively explain why citizen science has emerged as a critical tool for ecological research at this historical moment. Understanding these drivers is essential for researchers, scientists, and drug development professionals seeking to leverage these data streams for analyzing long-term ecological patterns, tracking biodiversity shifts, and understanding environmental changes that may impact public health and ecosystem stability.

Technological Drivers

Technological advancement represents the most immediate catalyst for the proliferation of ecological citizen science. The convergence of mobile, data, and artificial intelligence technologies has created an infrastructure that supports rigorous, large-scale data collection and validation.

Mobile and Connected Technologies

The widespread adoption of smartphones has democratized data collection capabilities. Modern mobile devices integrate high-resolution cameras, GPS localization, and constant connectivity, creating a powerful ecological research tool that fits in participants' pockets.

- Pervasive Sensing: Mobile applications like iNaturalist and eBird transform opportunistic observations into structured, geotagged biodiversity records [16] [15]. This has enabled the documentation of species distributions at unprecedented resolutions.

- Real-Time Data Transmission: Connectivity allows for immediate submission of observations to centralized databases, drastically reducing latency between data collection and research availability [17].

- Standardized Data Collection: Mobile apps embed standardized protocols that guide participants through systematic recording processes, enhancing data consistency and quality across diverse user groups [16].

Data Infrastructure and Management Platforms

The backend systems that support citizen science projects have evolved to handle the massive datasets generated by distributed networks of contributors.

- Centralized Repositories: Platforms like the Global Biodiversity Information Facility (GBIF) serve as clearinghouses for biodiversity records, aggregating citizen-generated data with museum collections and professional research datasets [15].

- Interoperability Standards: Data standardization allows for integration across platforms and merging with traditional research datasets, creating more comprehensive ecological baselines [1].

- Quality Control Mechanisms: Community-based validation systems, such as the "Research Grade" status on iNaturalist, leverage collective expertise to verify identifications before data enters scientific pipelines [15].
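A community-validation rule of this kind can be sketched in a few lines. The example below is a simplified illustration, not the actual algorithm of iNaturalist or any other platform: the function name `community_consensus`, the minimum of two identifications, and the two-thirds agreement threshold are all assumptions chosen for the sketch.

```python
from collections import Counter


def community_consensus(identifications, min_ids=2, threshold=2 / 3):
    """Return the agreed taxon, or None if the record is not yet verifiable.

    identifications: list of taxon names, one per identifier.
    min_ids and threshold are illustrative assumptions, not platform settings.
    """
    if len(identifications) < min_ids:
        return None
    taxon, votes = Counter(identifications).most_common(1)[0]
    # Require strictly more than `threshold` of the identifiers to agree.
    if votes / len(identifications) > threshold:
        return taxon
    return None
```

A record with three matching identifications out of four would pass under these assumed settings, while a record with a single identification would stay unverified until someone else confirms it.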

Artificial Intelligence and Automation

AI and machine learning technologies are revolutionizing how citizen-generated data is processed, validated, and analyzed, addressing previous concerns about data quality.

- Automated Species Identification: Image recognition algorithms provide real-time taxonomic suggestions, improving accuracy and providing immediate feedback to participants [16].

- Data Quality Enhancement: Machine learning algorithms can flag anomalous observations for additional review and automate data cleaning processes, enhancing overall dataset reliability [16].

- Pattern Detection at Scale: AI enables analysis of massive image datasets for behavioral, phenological, or morphological patterns that would be impractical for human researchers to process manually [15].
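As a rough illustration of how an anomalous observation might be flagged for review, the sketch below compares a new record with the median location of earlier records for the same species. It is a deliberately simple rule-based stand-in rather than a machine learning model, and the names `haversine_km` and `flag_for_review` and the 500 km cut-off are assumptions made for this example.

```python
import math
from statistics import median

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points given in decimal degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def flag_for_review(new_obs, prior_obs, max_km=500.0):
    """Return True if new_obs (lat, lon) is far from the median of prior records.

    Note: taking the median longitude ignores wrap-around at the antimeridian,
    so this sketch is only suitable for regional datasets.
    """
    if not prior_obs:
        return True  # nothing to compare against, so ask a human
    center_lat = median(lat for lat, _ in prior_obs)
    center_lon = median(lon for _, lon in prior_obs)
    return haversine_km(new_obs[0], new_obs[1], center_lat, center_lon) > max_km
```

A flagged record is not necessarily wrong; it may be a genuine range expansion, which is exactly why such records are routed to additional review rather than discarded.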

Table 1: Impact of Digital Technologies on Citizen Science Capabilities

| Technology | Specific Applications | Impact on Ecological Research |
|---|---|---|
| Smartphones & Mobile Apps | iNaturalist, eBird, Mosquito Alert | Enabled real-time, geotagged biodiversity monitoring at continental scales [16] [15] |
| Cloud Computing & Data Platforms | Zooniverse, GBIF integration | Supported management and sharing of massive datasets across institutions [16] |
| AI & Machine Learning | Automated species identification, data validation | Improved data quality and enabled analysis of complex image datasets [16] [15] |
| Sensors & IoT | Low-cost air/water quality sensors | Expanded beyond biodiversity to abiotic environmental monitoring [16] |

Social and Participatory Drivers

Parallel to technological advancements, significant shifts in public engagement with science have created a willing and capable participant base essential for citizen science growth.

Diversifying Models of Participation

The paradigm of citizen science has expanded beyond simple data collection to include more collaborative and citizen-led approaches that deepen engagement and relevance.

- Contributory to Co-Created Projects: While contributory projects (where scientists design projects and citizens primarily collect data) remain common, there is growth in collaborative models where participants contribute to project design and citizen-led initiatives where communities drive the research agenda [18].

- Integration of Traditional Knowledge: Research increasingly recognizes the value of incorporating traditional environmental knowledge through knowledge co-production, particularly in indigenous communities protecting local biodiversity [1] [18].

- Gamification and Motivation: The strategic use of game elements (badges, leaderboards, challenges) in platforms like Foldit enhances participant motivation and sustains engagement over time [16].

Demonstrated Dual Benefits

Research has documented significant co-benefits of participation that reinforce long-term engagement and attract new audiences to citizen science.

- Mental Health and Wellbeing: Nature-based citizen science initiatives show statistically significant improvements in mental health outcomes, including reduced symptoms of depression, stress, and anxiety [19]. These benefits are particularly pronounced in initiatives with extended duration and social components.

- Enhanced Nature Connection: Participation strengthens participants' connection to nature across multiple dimensions (Self, Experience, and Perspective), which in turn promotes pro-environmental attitudes and behaviors [19].

- Educational Value: These projects provide hands-on STEM learning opportunities for both adults and children, enhancing scientific literacy and environmental knowledge [19].

Strategic Community Engagement

Modern citizen science increasingly emphasizes meaningful community involvement rather than treating participants merely as data collectors.

- Targeted Recruitment: Projects are increasingly designed for specific communities, including youth, marginalized groups, and those with particular health conditions, ensuring relevance and accessibility [18].

- Local Problem-Solving: Community-led initiatives address locally relevant environmental concerns, empowering citizens to advocate for evidence-based policy changes in their communities [18].

Diagram 1: Social Engagement Feedback Cycle in Citizen Science

Policy and Institutional Drivers

Strategic policy interventions and institutional adoption have created supportive frameworks that legitimize and resource citizen science approaches within ecological research.

Research Policy Integration

Government and international organizations are systematically embedding citizen science into research funding streams and scientific infrastructure.

- Research Funding Alignment: Initiatives like the OECD policy paper "Embedding citizen science into research policy" demonstrate high-level recognition of citizen science as a valid research methodology worthy of institutional support and investment [20].

- Scientific Priority Status: The establishment of dedicated research topics and collections in leading journals (e.g., BMC Ecology and Evolution's "Citizen science in ecological research" collection) signals academic legitimacy and creates publication pathways for citizen science research [21].

- Horizon Europe and Global Frameworks: European projects such as Urban ReLeaf and FRAMEwork incorporate citizen science as core methodologies, funded through mainstream research programs [21].

Environmental Governance and Reporting

Citizen-generated data is increasingly formalized within environmental monitoring, management, and reporting cycles.

- Biodiversity Assessment and Monitoring: Conservation agencies, including the International Union for Conservation of Nature (IUCN), utilize iNaturalist data to assess threatened species status and track invasive species spread [15].

- Policy-Focused Projects: Initiatives like the IDAlert project employ citizen science to study and monitor invasive mosquito and tick species capable of transmitting diseases, directly informing public health policies [18].

- Urban and Public Health Integration: Policies such as England's Environmental Improvement Plan (which aims for everyone to live within a 15-minute walk of green space) and Belgium's "Green Deal for Sustainable Healthcare" create natural alignment points for nature-based citizen science [19].

Data Quality and Standardization Frameworks

The development of methodological standards has been critical for overcoming initial skepticism about citizen-generated data's reliability for ecological research.

- Validation Protocols: Projects implement multi-layered validation systems combining community verification, expert review, and algorithmic checking to ensure data quality [1] [15].

- Methodological Transparency: Reporting standards for citizen science methodologies are increasingly formalized, enabling proper evaluation and replication of studies using these data [21].

- FAIR Data Principles: Application of Findable, Accessible, Interoperable, and Reusable (FAIR) data principles to citizen science outputs enhances their utility for long-term ecological research [1].

Table 2: Policy Frameworks Supporting Citizen Science Growth

| Policy Level | Specific Initiatives | Impact on Ecological Citizen Science |
|---|---|---|
| International Policy | OECD research policy integration, IPBES recognition | Legitimation as valid research method; access to funding streams [20] |
| National Environmental Policy | England's Environmental Improvement Plan, Belgium's "Green Deal for Sustainable Healthcare" | Alignment with public health and environmental quality objectives [19] |
| Conservation Agency Practice | IUCN species assessments using citizen data, agency use of iNaturalist | Direct application to conservation decision-making and status assessments [15] |
| Research Infrastructure | Dedicated journal collections, GBIF data integration | Academic recognition and pathways for formal publication [15] [21] |

Experimental Protocols and Methodologies

The integration of citizen science into long-term ecological research requires rigorous methodological frameworks. Below are detailed protocols for key application areas.

Biodiversity Monitoring and Species Distribution Modeling

This protocol outlines the systematic collection of species occurrence data for modeling distribution changes over time.

- Primary Objective: Document spatial and temporal patterns of species distributions to inform conservation planning and understand ecological responses to environmental change.

- Participant Training Materials: Digital field guides with image recognition support; tutorial videos on photographic documentation; species identification quizzes with immediate feedback.

- Data Collection Protocol:

  - Observation Documentation: Photograph organisms with sufficient detail for identification (multiple angles for plants; dorsal/ventral views for insects).

  - Metadata Recording: Automated capture of timestamp and geocoordinates through mobile devices; manual entry of microhabitat notes and abundance estimates.

  - Data Submission: Upload to platforms (e.g., iNaturalist, eBird) with automated data validation checks for completeness and geographic plausibility.

- Quality Control Mechanism: Multi-step verification process combining computer vision suggestions, community expert identification consensus, and taxonomic specialist review for difficult taxa.

- Data Integration Pipeline: Research-grade records shared with GBIF following Darwin Core standards, enabling fusion with museum specimens and professional survey data.
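The submission checks and the hand-off to Darwin Core described in this protocol can be sketched as follows. This is a minimal illustration under stated assumptions: the input field names (`taxon`, `lat`, `lon`, `observed_at`, `photo_count`) and both function names are hypothetical, and only a handful of Darwin Core terms are mapped. The terms themselves (`occurrenceID`, `basisOfRecord`, `scientificName`, `decimalLatitude`, `decimalLongitude`, `eventDate`) come from the Darwin Core standard.

```python
from datetime import datetime, timezone

# Hypothetical input schema for one submitted observation.
REQUIRED_FIELDS = ("taxon", "lat", "lon", "observed_at", "photo_count")


def validate_observation(obs):
    """Return a list of problems; an empty list means the record passed."""
    problems = [f"missing {f}" for f in REQUIRED_FIELDS if obs.get(f) is None]
    if problems:
        return problems  # completeness check failed, skip the rest
    if not -90 <= obs["lat"] <= 90 or not -180 <= obs["lon"] <= 180:
        problems.append("coordinates out of range")
    if obs["lat"] == 0 and obs["lon"] == 0:
        problems.append("suspicious 0,0 coordinates")
    # observed_at is assumed to be a timezone-aware datetime.
    if obs["observed_at"] > datetime.now(timezone.utc):
        problems.append("timestamp in the future")
    if obs["photo_count"] < 1:
        problems.append("no photo evidence")
    return problems


def to_darwin_core(obs, record_id):
    """Map a validated observation onto a small subset of Darwin Core terms."""
    return {
        "occurrenceID": record_id,
        "basisOfRecord": "HumanObservation",
        "scientificName": obs["taxon"],
        "decimalLatitude": obs["lat"],
        "decimalLongitude": obs["lon"],
        "eventDate": obs["observed_at"].isoformat(),
    }
```

Geographic plausibility is reduced here to range checks; a production pipeline would more likely compare coordinates against known species ranges or land and water masks.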

Mental Health and Ecological Participation Assessment

This protocol measures the dual benefits of citizen science participation for ecological data collection and human wellbeing outcomes.

- Primary Objective: Quantify changes in nature connection and mental health indicators following participation in nature-based citizen science initiatives.

- Assessment Tools:

  - Nature-Relatedness Scale: Validated instrument measuring connection to nature across three dimensions: Self (internalized identity), Experience (direct contact), and Perspective (worldview) [19].

  - DASS-21: 21-item Depression, Anxiety and Stress Scales instrument assessing symptoms of depression, anxiety, and stress [19].

  - SPANE: Scale of Positive and Negative Experience measuring emotional states [19].

- Study Design: Pre-test/post-test design with measurements taken immediately before and after participation events; socioeconomic confounders are controlled for in the analysis.

- Implementation Variations: Testing across initiatives with different durations (15 minutes to 48 hours), social structures (individual vs. group data collection), and ecosystem types (urban parks, forests, freshwater streams).
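For the pre-test/post-test design above, the core comparison is a paired one: each participant's post-event score is compared with their own pre-event score. The sketch below computes the mean change, a paired t statistic, and a standardized effect size (Cohen's d for paired data). It is an illustration only; the function name `paired_change` is an assumption, it does not adjust for confounders, and any scores passed to it in examples are hypothetical rather than taken from the cited studies.

```python
from math import sqrt
from statistics import mean, stdev


def paired_change(pre, post):
    """Summarize within-participant change between two measurement occasions.

    pre, post: equal-length lists of scores, aligned by participant.
    """
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need at least two aligned pre/post pairs")
    diffs = [b - a for a, b in zip(pre, post)]
    mean_diff = mean(diffs)
    sd_diff = stdev(diffs)  # sample standard deviation of the differences
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")
    n = len(diffs)
    return {
        "n": n,
        "mean_diff": mean_diff,
        # Paired t statistic with n - 1 degrees of freedom.
        "t_statistic": mean_diff / (sd_diff / sqrt(n)),
        # Cohen's d for paired samples (often written d_z).
        "cohens_dz": mean_diff / sd_diff,
    }
```

For an instrument such as DASS-21, where lower scores indicate fewer symptoms, a negative `mean_diff` would correspond to improvement. Converting the t statistic to a p-value requires the t distribution, which is left to a statistics package.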

Diagram 2: Citizen Science Data Flow in Ecological Research

The Scientist's Toolkit: Research Reagent Solutions

The effective implementation of citizen science for ecological monitoring requires both digital and physical tools. The following table details essential components of the modern citizen science toolkit.

Table 3: Essential Research Reagent Solutions for Ecological Citizen Science

| Tool Category | Specific Examples | Function in Ecological Research |
|---|---|---|
| Mobile Applications | iNaturalist, eBird, Mosquito Alert | Enable real-time species documentation with embedded GPS coordinates and automated data submission; provide identification support through computer vision [16] [15] [18] |
| Online Platforms | Zooniverse, iNaturalist website, GitHub | Facilitate project management, data aggregation, community discussion, and collaborative analysis; enable data sharing with global repositories [16] |
| Field Equipment | Aquatic dip nets, water quality test kits, macro lenses, portable microscopes | Standardize physical sample collection and enhance observation quality for difficult-to-document taxa or parameters [18] [19] |
| Data Validation Tools | Computer vision algorithms, expert review systems, data quality dashboards | Ensure research-grade data quality through automated checks and community expert verification processes [16] [15] |
| Analytical Modules | Species distribution modeling packages, trend analysis tools, image analysis algorithms | Transform raw observations into analyzable formats for quantifying ecological patterns and changes over time [15] |

The convergence of technological accessibility, social engagement models, and supportive policy frameworks has created an unprecedented opportunity for citizen science to transform how we monitor and understand long-term ecological trends. Technological drivers have addressed previous limitations in data quality and scale, while social drivers have built sustainable participation models that generate dual benefits for both science and participants. Concurrently, policy drivers have established the institutional legitimacy and funding pathways necessary for mainstream adoption.

For researchers and scientists focused on long-term ecological trends, these converging drivers mean that citizen science data now offers not just supplementary value but core methodological utility. The quantitative data, experimental protocols, and conceptual frameworks presented in this whitepaper demonstrate that citizen science has matured into a rigorous approach capable of generating high-quality, scalable data for analyzing ecological patterns over time and space. As these drivers continue to evolve and reinforce one another, the integration of citizen science into mainstream ecological research methodology will likely accelerate, opening new possibilities for understanding and responding to environmental change at global scales.

Innovative Tools and Techniques for Robust Data Collection

Environmental DNA (eDNA) metabarcoding has emerged as a revolutionary technique for biodiversity assessment, enabling the detection of multiple species from a single environmental sample such as water, soil, or air [22]. This non-invasive method leverages the genetic material organisms continuously shed into their environments, providing a powerful tool for monitoring ecosystem health and species distribution [23]. The integration of artificial intelligence (AI) and machine learning (ML) algorithms has further enhanced the precision and efficiency of eDNA analysis, offering unprecedented capabilities for processing complex genetic datasets and improving species identification accuracy [24] [25]. Within citizen science frameworks, these cutting-edge methodologies present transformative potential for gathering robust, scalable data on long-term ecological trends, empowering researchers and community scientists alike to collaborate in monitoring environmental changes across extensive spatial and temporal scales.

eDNA Metabarcoding Fundamentals

eDNA metabarcoding utilizes trace genetic material present in environmental samples to determine species composition without direct observation or capture of organisms [22]. The technique relies on the fact that all organisms continuously shed DNA into their environment through skin cells, mucus, saliva, feces, urine, blood, pollen, and decomposing remains [22] [23]. This genetic material can be collected, sequenced, and analyzed to identify the species present in a particular ecosystem.

Core Workflow and Methodology

The standard eDNA metabarcoding workflow consists of six critical stages, each requiring careful execution to ensure reliable results:

- DNA Barcoding Region Selection: Researchers select appropriate variable gene regions suitable for distinguishing between taxonomic groups. Common markers include cytochrome c oxidase I (CO1) for animals, 16S ribosomal RNA for bacteria, 18S for eukaryotes, and internal transcribed spacer (ITS) for fungi [22] [23].

- Reference Database Curation: A comprehensive database of known DNA barcodes for likely-to-be-encountered species is compiled from vouchered specimens in repositories like GenBank or BOLD [22].

- Sample Collection and DNA Extraction: Environmental samples are collected using standardized methods that minimize contamination. DNA is then extracted and purified, with techniques optimized for the specific sample type (water, soil, sediment) and potential inhibitors [22].

- PCR Amplification and Labeling: Target barcode regions are amplified using universal primers designed for the conserved flanking regions. Molecular identifiers (MIDs) are added to track sample origins in multiplexed sequencing runs [22].

- DNA Sequencing: Next-generation sequencing (NGS) platforms enable high-throughput parallel sequencing of multiple samples simultaneously, generating thousands to millions of sequences [22] [23].

- Bioinformatic Analysis: Computational tools process the raw sequence data, performing quality filtering, clustering into operational taxonomic units (OTUs), and taxonomic classification through comparison with reference databases [22] [26].
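The first steps of the bioinformatic stage, quality filtering and collapsing identical reads, can be sketched in plain Python. This is a toy illustration, not a replacement for established pipelines: the function names and all thresholds are assumptions, quality strings are assumed to use the common Phred+33 encoding, and real workflows add primer trimming, chimera removal, and clustering or denoising before taxonomic assignment.

```python
from collections import Counter


def mean_phred(quality_string, offset=33):
    """Mean Phred score of a read, assuming Phred+33 ASCII encoding."""
    return sum(ord(c) - offset for c in quality_string) / len(quality_string)


def filter_and_dereplicate(reads, min_mean_q=20, min_length=50, min_copies=2):
    """Keep good reads and collapse identical sequences into counts.

    reads: iterable of (sequence, quality_string) pairs.
    Returns {sequence: copy_number}, most abundant first, dropping sequences
    seen fewer than min_copies times (singletons are often sequencing errors).
    """
    counts = Counter()
    for seq, qual in reads:
        seq = seq.upper()
        if len(seq) < min_length or "N" in seq:
            continue  # too short, or contains ambiguous bases
        if mean_phred(qual) < min_mean_q:
            continue  # low average base quality
        counts[seq] += 1
    return {s: n for s, n in counts.most_common() if n >= min_copies}
```

The resulting unique sequences and their copy numbers are the usual input to OTU clustering and reference-database comparison.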

Advantages and Limitations

eDNA metabarcoding offers distinct advantages over traditional survey methods, including:

- Non-invasive sampling that minimizes ecosystem disruption [23]

- Increased sensitivity for detecting cryptic, elusive, or low-abundance species [24]

- Higher throughput and potentially lower cost compared to morphological identification [22]

- Ability to simultaneously survey multiple taxonomic groups from a single sample [22]

However, several challenges remain:

- DNA degradation influenced by environmental factors like temperature, pH, and UV exposure [23]

- Variable shedding rates among organisms affecting abundance estimates [23]

- Incomplete reference databases limiting species identification accuracy [23] [27]

- Primer biases affecting detection probabilities for certain taxa [27]

- Logistical challenges in standardizing sampling protocols across diverse environments [27]

Table 1: Key Genetic Markers Used in eDNA Metabarcoding

| Marker Gene | Target Organisms | Advantages | Limitations |
|---|---|---|---|
| CO1 | Animals, especially vertebrates | High discrimination between species; standardized for metazoans | Less effective for some invertebrate groups; requires longer sequences |
| 16S rRNA | Bacteria and archaea | Extensive reference databases; highly conserved | Variable resolution for closely related species |
| 12S rRNA | Fish and other vertebrates | Short regions ideal for degraded eDNA; good for freshwater biomonitoring | Limited taxonomic resolution in some groups |
| 18S rRNA | Eukaryotes | Broad eukaryotic coverage; useful for microbial eukaryotes | Lower species-level resolution compared to CO1 |
| ITS | Fungi | High variability for species discrimination; standard for fungi | Multiple copies can complicate quantification |

AI Integration in eDNA Analysis

Artificial intelligence, particularly machine learning and deep learning algorithms, has transformed the analysis of eDNA metabarcoding data by enhancing species detection accuracy, identifying complex patterns in large datasets, and automating previously labor-intensive processes [24] [25].

Machine Learning Applications in eDNA

Machine learning algorithms have demonstrated significant improvements in eDNA metabarcoding outcomes across multiple studies:

Species Classification and Prediction: ML algorithms can be trained on reference sequences to accurately classify and predict species from eDNA sequences, even with incomplete or noisy data [24]. In reviewed studies, ML implementation increased detection sensitivity by an average of 20% compared to conventional approaches [24].

Rare and Invasive Species Detection: ML models excel at identifying rare or invasive species that are often overlooked by traditional methods due to their low abundance in samples [24]. This capability is particularly valuable for early detection of invasive species and monitoring endangered populations.

Data Quality Enhancement: AI approaches can compensate for common eDNA challenges such as contamination, degradation, and amplification biases by learning patterns from high-quality training data and applying these patterns to correct or interpret problematic samples [24].

Richness Estimation: Studies applying ML to eDNA metabarcoding have reported an average increase of 14% in species richness detection compared to traditional bioinformatics approaches [24], indicating a superior ability to discern multiple species from complex environmental samples.

AI Implementation Framework

The integration of AI into eDNA analysis follows a structured pipeline:

- Data Preprocessing: Raw sequence data undergoes quality control, filtering, and normalization to create standardized input for AI models.

- Feature Selection and Engineering: Relevant features (sequence characteristics, abundance measures, ecological metadata) are selected and transformed to optimize model performance.

- Model Selection and Training: Appropriate ML algorithms are chosen based on the specific research question and trained using validated datasets.

- Validation and Testing: Models are evaluated using independent datasets not included in training, with performance metrics assessed for ecological relevance.

- Interpretation and Visualization: Results are processed to generate ecologically meaningful outputs, such as species distribution maps or community composition summaries.
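To make the feature-engineering and classification steps concrete, the sketch below turns sequences into k-mer count profiles and assigns a query to the most similar reference by cosine similarity. It is a deliberately simple stand-in for the ML models discussed in the reviewed studies, not a reproduction of them; the function names, the choice of k = 4, and the 0.8 similarity cut-off are assumptions for illustration.

```python
from collections import Counter
from math import sqrt


def kmer_profile(seq, k=4):
    """Count overlapping k-mers in a sequence (the engineered features)."""
    seq = seq.upper()
    return Counter(seq[i:i + k] for i in range(len(seq) - k + 1))


def cosine(a, b):
    """Cosine similarity between two k-mer count profiles."""
    dot = sum(a[key] * b[key] for key in a.keys() & b.keys())
    norm = sqrt(sum(v * v for v in a.values())) * sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def classify(query, references, k=4, min_similarity=0.8):
    """Return (label, score) for the best reference, or (None, score) if unsure.

    references: {label: reference_sequence}.
    """
    q = kmer_profile(query, k)
    best_label, best_score = None, 0.0
    for label, ref_seq in references.items():
        score = cosine(q, kmer_profile(ref_seq, k))
        if score > best_score:
            best_label, best_score = label, score
    if best_score < min_similarity:
        return None, best_score  # below the confidence cut-off: leave unassigned
    return best_label, best_score
```

Returning `None` below the cut-off mirrors the practice of leaving ambiguous sequences unassigned rather than forcing a species label, which matters when reference databases are incomplete.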

Table 2: Machine Learning Performance in eDNA Metabarcoding Applications

| Application | ML Algorithm Types | Reported Performance Improvements | Key Benefits |
|---|---|---|---|
| Species Classification | Neural Networks, Support Vector Machines | 20% average increase in detection sensitivity [24] | Handles ambiguous sequences; reduces false positives |
| Rare Species Detection | Random Forests, Anomaly Detection Algorithms | Improved detection of low-abundance taxa (<0.01% relative abundance) | Identifies endangered and invasive species overlooked by conventional methods |
| Community Composition Analysis | Clustering Algorithms, Dimensionality Reduction | 14% average increase in species richness estimation [24] | Reveals complex ecological patterns from sequence variants |
| Data Quality Control | Autoencoders, Convolutional Neural Networks | Significant reduction in false positives from contamination [24] | Automates quality filtering; recognizes technical artifacts |

Figure 1: Integrated eDNA and AI Analysis Workflow

Experimental Protocols and Methodologies

Standardized eDNA Metabarcoding Protocol

For freshwater biomonitoring (adapted from a Nigerian fishery study [27]):

Sample Collection:

- Collect water samples in triplicate from each site using sterile containers

- Filter 1-2 liters of water through 0.22μm membrane filters within 6 hours of collection

- Include field blanks (sterile water processed identically) to monitor contamination

- Preserve filters in DNA stabilization buffer and store at -20°C until extraction
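Field blanks only help if they are used downstream. One common heuristic, shown here as an assumption rather than as the method of the cited study, is to subtract from each sample the largest read count that any blank shows for the same taxon, and to drop taxa that do not exceed that level. The function name and the generic taxon labels in the usage note are hypothetical.

```python
def subtract_blank_contamination(sample_counts, blank_counts_list):
    """Remove background reads estimated from field blanks.

    sample_counts: {taxon: reads} for one field sample.
    blank_counts_list: list of {taxon: reads} dicts, one per field blank.
    Returns a new dict with the per-taxon maximum blank count subtracted;
    taxa at or below the blank level are dropped entirely.
    """
    max_blank = {}
    for blank in blank_counts_list:
        for taxon, reads in blank.items():
            max_blank[taxon] = max(max_blank.get(taxon, 0), reads)

    cleaned = {}
    for taxon, reads in sample_counts.items():
        remaining = reads - max_blank.get(taxon, 0)
        if remaining > 0:
            cleaned[taxon] = remaining
    return cleaned
```

For example, a sample with 40 reads of a taxon that appears with 55 reads in a blank would lose that taxon entirely, while a taxon absent from all blanks is left untouched. Stricter or more lenient rules are possible, and the choice should be reported alongside the results.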

DNA Extraction:

- Extract genomic DNA using commercial soil or water DNA extraction kits

- Include extraction controls to detect kit contamination

- Quantify DNA yield using fluorometric methods

- Store extracts at -20°C for short-term holding before PCR, or at -80°C for long-term storage

PCR Amplification (12S rRNA for fish):

- Prepare 25μL reactions containing: 10-50ng eDNA template, primer mix (12S-V5 primers), PCR master mix

- Use a touchdown PCR program: 95°C for 5min; 20 cycles of 95°C/30s, 65-55°C/30s (-0.5°C per cycle), 72°C/30s; 15 cycles of 95°C/30s, 55°C/30s, 72°C/30s; final extension 72°C/5min

- Include negative PCR controls (no template) to confirm no amplification in reagents

- Verify amplification success by gel electrophoresis
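The touchdown program above can be written out step by step, which is useful when entering it into a thermocycler or checking total run time. The sketch below expands it into explicit (step, temperature, seconds) entries. One interpretive assumption is made: the first touchdown cycle anneals at 65°C and each later cycle drops by 0.5°C, so the twentieth cycle anneals at 55.5°C before the fixed 55°C phase begins. The function name is hypothetical.

```python
def touchdown_program(start_anneal=65.0, end_anneal=55.0, step=0.5,
                      touchdown_cycles=20, fixed_cycles=15):
    """Expand a touchdown PCR program into (step_name, temp_C, seconds) tuples."""
    program = [("initial denaturation", 95.0, 300)]
    # Touchdown phase: annealing temperature falls by `step` each cycle.
    for cycle in range(touchdown_cycles):
        anneal = max(start_anneal - step * cycle, end_anneal)
        program += [("denature", 95.0, 30), ("anneal", anneal, 30), ("extend", 72.0, 30)]
    # Fixed phase: annealing held at the final temperature.
    for _ in range(fixed_cycles):
        program += [("denature", 95.0, 30), ("anneal", end_anneal, 30), ("extend", 72.0, 30)]
    program.append(("final extension", 72.0, 300))
    return program
```

Summing the hold times gives 3,750 seconds (62.5 minutes) for the default program, excluding the time the instrument spends ramping between temperatures.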

Library Preparation and Sequencing:

- Index PCR amplicons with dual indices and Illumina sequencing adapters

- Purify libraries using magnetic bead-based cleanups

- Quantify libraries by qPCR for accurate pooling

- Sequence on Illumina platforms (MiSeq or NovaSeq) using 2×250bp or 2×300bp chemistry

AI Model Training Protocol (Species Identification)

Based on the Hebeloma case study [28] and the eDNA review [24]:

Data Preparation:

- Compile reference dataset with validated sequences and morphological parameters

- Partition data into training (70%), validation (15%), and test sets (15%)

- Perform data augmentation to balance representation across species classes

- Engineer features including sequence characteristics, abundance measures, and ecological metadata
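The 70/15/15 partition is best done per species so that rare classes appear in all three sets. The sketch below shows one way to do this with the standard library only. The function name `stratified_split`, the `label_of` callback, and the fixed random seed are assumptions for illustration, and very small classes may still end up missing from the validation or test set because of rounding.

```python
import random
from collections import defaultdict


def stratified_split(records, label_of, fractions=(0.70, 0.15, 0.15), seed=42):
    """Split records into train/validation/test sets, class by class.

    records: list of arbitrary items; label_of(item) returns its class label.
    fractions: (train, validation, test) proportions; test takes the remainder.
    """
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    by_label = defaultdict(list)
    for rec in records:
        by_label[label_of(rec)].append(rec)

    train, val, test = [], [], []
    for label in sorted(by_label):
        group = by_label[label]
        rng.shuffle(group)
        n_train = round(len(group) * fractions[0])
        n_val = round(len(group) * fractions[1])
        train += group[:n_train]
        val += group[n_train:n_train + n_val]
        test += group[n_train + n_val:]
    return train, val, test
```

Data augmentation to balance classes should be applied to the training set only, after the split, so that augmented copies of a record never leak into validation or test data.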

Model Training:

- Select appropriate algorithm based on data structure and problem type (Random Forest for morphological data, Neural Networks for sequence data)

- Train multiple models with hyperparameter optimization using cross-validation

- Validate models against independent datasets not used in training

- Assess performance using metrics including accuracy, precision, recall, and F1-score
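The four metrics named above follow directly from counts of true positives, false positives, and false negatives for each species. The sketch below computes them without external libraries; the function name `per_class_metrics` is an assumption, and in practice a library implementation would normally be used instead.

```python
def per_class_metrics(y_true, y_pred):
    """Return (overall_accuracy, {label: {precision, recall, f1}})."""
    pairs = list(zip(y_true, y_pred))
    report = {}
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        report[label] = {"precision": precision, "recall": recall, "f1": f1}
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    return accuracy, report
```

Per-class values matter for species identification because overall accuracy can look high while a rare species is almost never recognized; low recall for a threatened or invasive taxon is exactly the failure a monitoring program needs to see.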

Implementation:

- Deploy top-performing model as a web tool or standalone application

- Establish confidence thresholds for species identifications

- Implement continuous learning framework to incorporate new validated data

- Provide mechanism for expert override and correction of misidentifications

In the Hebeloma case study, this approach correctly identified 77% of collections with its highest probabilistic match, 96% within its three most likely determinations, and over 99% within its five most likely determinations [28].
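Figures of this kind are top-k accuracies: the share of collections whose true species appears among the model's k most probable suggestions. A minimal sketch of the calculation follows; the function name and input layout are assumptions, and it does not reproduce the evaluation code of the cited study.

```python
def top_k_accuracy(ranked_predictions, true_labels, k):
    """Fraction of cases whose true label is among the first k suggestions.

    ranked_predictions: list of label lists, each ordered from most to
    least probable. true_labels: the correct label for each case.
    """
    hits = sum(truth in ranked[:k]
               for ranked, truth in zip(ranked_predictions, true_labels))
    return hits / len(true_labels)
```

Reporting top-3 or top-5 accuracy alongside top-1 reflects how such tools are used in practice, where a short list of candidates is handed to a human identifier for the final decision.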

Citizen Science Integration for Ecological Monitoring

The combination of eDNA metabarcoding and AI presents unique opportunities for citizen science initiatives aimed at tracking long-term ecological trends. This integration enables volunteers to contribute meaningfully to large-scale biodiversity monitoring while maintaining scientific rigor.

Implementation Framework

Standardized Sampling Protocols:

- Develop simplified, standardized sampling kits with detailed instructions

- Include materials for controlled sample collection (sterile containers, filters, preservatives)

- Implement chain-of-custody documentation for sample tracking

- Utilize video tutorials and pictorial guides to ensure protocol adherence

Data Management and Quality Control:

- Establish centralized database for sample metadata and tracking

- Implement automated quality checks for submitted data

- Incorporate control samples in citizen science kits to monitor contamination

- Use blockchain or similar technology for data provenance tracking
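Provenance tracking does not require a full blockchain. A hash chain, in which each record stores the hash of the previous one, already makes silent edits to earlier sample metadata detectable. The sketch below is a minimal illustration of that idea using SHA-256; the function names and the payload fields in the usage note are hypothetical, and a real deployment would also need signing, access control, and durable storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(prev_hash, payload):
    """Hash the previous hash together with the payload, deterministically."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_record(chain, payload):
    """Append a JSON-serializable payload to the chain and return the entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    entry = {"prev": prev_hash, "payload": payload,
             "hash": _digest(prev_hash, payload)}
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev"] != prev_hash or _digest(prev_hash, entry["payload"]) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

Appending entries such as `{"sample_id": "S-001", "event": "collected"}` and later `{"sample_id": "S-001", "event": "received by lab"}` yields a chain-of-custody log in which changing any earlier entry causes `verify_chain` to return `False`.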

AI-Powered Identification Platforms:

- Develop user-friendly mobile applications for data submission and results access

- Implement automated feedback systems to flag potentially problematic samples

- Create interactive visualization tools for citizens to explore results

- Establish expert validation systems for unusual or significant findings

Case Study: Nigerian Freshwater Monitoring

A study in Nigerian water bodies demonstrated both the potential and challenges of eDNA metabarcoding for fish biodiversity surveys [27]. Researchers identified several advantages highly relevant to citizen science:

- Rapid and non-invasive identification of fish taxa without specialized taxonomic expertise

- Detection of differences in community composition and biodiversity metrics across water bodies

- Identification of species overlooked by traditional methods

- Ability to detect both threatened and invasive species
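Comparisons of biodiversity metrics and community composition across water bodies typically rest on a within-site diversity index and a between-site dissimilarity measure. The sketch below computes two widely used ones, the Shannon index and Bray-Curtis dissimilarity, from per-taxon read counts. It is not the analysis code of the cited study, the function names are assumptions, and because eDNA read counts are only a rough proxy for abundance, the values are better used for relative comparison than as absolute estimates.

```python
from math import log


def shannon_index(counts):
    """Shannon diversity H' = -sum(p_i * ln p_i) over taxa with reads > 0.

    counts: {taxon: reads}; must contain at least one positive count.
    """
    total = sum(counts.values())
    return -sum((n / total) * log(n / total) for n in counts.values() if n > 0)


def bray_curtis(a, b):
    """Bray-Curtis dissimilarity between two sites (0 = identical, 1 = disjoint).

    a, b: {taxon: reads}; the combined read total must be positive.
    """
    taxa = a.keys() | b.keys()
    shared = sum(min(a.get(t, 0), b.get(t, 0)) for t in taxa)
    total = sum(a.values()) + sum(b.values())
    return 1 - 2 * shared / total
```

Two sites that share no taxa give a Bray-Curtis value of 1, and a site compared with itself gives 0, which makes the measure easy for volunteers to interpret on a results dashboard.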

The study also highlighted constraints that must be addressed in citizen science applications, including logistical challenges around sampling protocols, the lack of comprehensive regional DNA reference databases, and primer specificity issues [27].

Figure 2: Citizen Science eDNA Workflow for Ecological Monitoring

Essential Research Reagents and Materials

Table 3: Research Reagent Solutions for eDNA Metabarcoding

| Category | Specific Products/Examples | Function and Application | Considerations for Citizen Science |
|---|---|---|---|
| Sample Collection | Sterile polycarbonate bottles, 0.22μm membrane filters, DNA stabilization buffers | Preservation of environmental DNA immediately upon collection | Pre-assembled kits with pre-measured reagents improve standardization |
| DNA Extraction | Commercial kits (DNeasy PowerWater, MoBio PowerSoil), proteinase K, magnetic bead solutions | Isolation of high-quality DNA from complex environmental matrices | Simplified protocols with minimal steps reduce potential for contamination |
| PCR Amplification | Target-specific primers (12S, 16S, 18S, CO1, ITS), PCR master mixes, molecular grade water | Amplification of target barcode regions for sequencing | Pre-aliquoted reagents reduce measurement errors; touchdown PCR protocols improve specificity |
| Library Preparation | Illumina sequencing adapters, dual indices, purification beads, quantification standards | Preparation of amplified DNA for high-throughput sequencing | Barcoding systems allow sample multiplexing and tracking |
| Sequencing | Illumina MiSeq/NovaSeq reagents, flow cells, buffer solutions | Generation of sequence data from prepared libraries | Typically performed at centralized facilities due to cost and expertise requirements |
| Bioinformatics | BLAST databases, OBITools, QIIME2, MOTU clustering algorithms | Processing raw sequence data into taxonomic assignments | Cloud-based platforms with simplified interfaces enable broader access |
| AI/ML Analysis | Python/R libraries (scikit-learn, TensorFlow, BIOM-format) | Species identification and pattern recognition in complex datasets | Pre-trained models with web interfaces allow users without coding expertise |

The integration of eDNA metabarcoding with AI-powered identification represents a paradigm shift in ecological monitoring, particularly within citizen science frameworks. These methodologies enable scalable, cost-effective biodiversity assessment that can track ecological trends across large spatial and temporal scales. The non-invasive nature of eDNA sampling makes it well suited to citizen science applications, while AI-assisted identification helps maintain scientific rigor in species assignment.

Future advancements in several areas will further enhance these technologies:

- Reference Database Expansion: Developing comprehensive, region-specific reference databases is critical for improving identification accuracy, especially in biodiverse but understudied regions [27].

- Standardized Protocols: Establishing and validating standardized field and laboratory protocols will improve data comparability across studies and citizen science initiatives [24].

- Algorithm Refinement: Continuing development of specialized ML algorithms for eDNA analysis will address current challenges including quantification, rare species detection, and handling of degraded DNA [24] [25].

- Portable Sequencing Technologies: Advances in portable sequencing platforms, such as those from Oxford Nanopore Technologies, could enable near-real-time eDNA analysis in field settings.

- Integrated Data Systems: Developing systems that combine eDNA data with traditional survey methods, remote sensing, and environmental parameters will provide more comprehensive ecosystem assessments [25].

For researchers and conservation professionals, these technologies offer powerful tools for addressing pressing environmental challenges, from monitoring ecosystem responses to climate change to tracking the spread of invasive species. The integration of citizen science not only expands data collection capabilities but also promotes public engagement with science and conservation, creating a collaborative framework for understanding and protecting global biodiversity.

As these methodologies continue to evolve, they will play an increasingly important role in ecological research, environmental management, and conservation policy, providing the scientific foundation for evidence-based decision-making in an era of unprecedented environmental change.

Citizen science, the engagement of the public in scientific research, has become a transformative approach in ecology, enabling the collection of data at spatiotemporal scales unattainable by individual research teams [29]. Research on long-term ecological trends, crucial for understanding phenomena like climate change and biodiversity loss, relies heavily on extensive, sustained datasets. Citizen science platforms have emerged as critical tools for generating these datasets, coupling deep public engagement with rigorous scientific data co-creation [29]. This guide provides a technical examination of three pivotal approaches: the global biodiversity platform iNaturalist, the specialized ornithological tool eBird, and the creation of custom applications using platforms like SPOTTERON. Framed within the context of long-term ecological studies, we detail their operational protocols, data outputs, and integration into the researcher's toolkit.

Platform Comparative Analysis

The following table summarizes the core characteristics, data outputs, and research applications of iNaturalist, eBird, and custom SPOTTERON apps, highlighting their distinct roles in ecological monitoring.

Table 1: Technical Comparison of Citizen Science Platforms for Ecological Research

| Feature | iNaturalist | eBird | Custom Apps (e.g., SPOTTERON) |
|---|---|---|---|
| Primary Taxonomic Focus | Pan-biodiversity (all taxa) [29] | Birds exclusively [30] | Highly flexible (e.g., plants, butterflies, social surveys) [31] |
| Core Data Collected | Geotagged photos/sounds, species IDs, timestamps, community verification | Checklist of species, counts, effort (duration, distance), location, habitat [30] | Customizable data points (observations, sensor data, survey answers), media, location [31] |
| Key Data Collection Protocol | Incidental or structured observations; research-grade status requires photo & community ID | Complete Checklists, Traveling Count, Stationary Count protocols with defined effort [30] | Fully customizable protocols defined by the research team (e.g., Satoyama monitoring) [31] [29] |
| Data Quality Mechanism | Community-driven identification consensus to achieve "Research Grade" status | Automated filters for outliers, regional reviewers, and expert curation [30] | Project-specific validation by researchers, with potential for community features [31] |
| Primary Research Applications | Species distribution modeling, phenology studies, occurrence data for rare species [29] | Population trends, distribution models, habitat use studies, Status and Trends products [30] | Targeted monitoring (e.g., threatened species), citizen action, social science studies [31] [29] |
| Notable Long-Term Project | Monitoring Sites 1000 (Japan) [29] | eBird Status and Trends (global) [30] | Monitoring Sites 1000 Satoyama project (Japan) [29] |

Experimental Protocols for Data Collection

Adherence to standardized protocols is fundamental for ensuring the scientific utility of citizen-collected data in long-term trend analysis.

eBird Protocol: The Complete Checklist

The Complete Checklist protocol is a cornerstone of eBird's scientific value, requiring observers to report all bird species detected by sight or sound during a sampling period [30].

- Primary Purpose: To record presence and absence data, which is critical for understanding species distributions and trends. A complete checklist tells analysts that any species missing from the list was not detected during the sampling period, rather than detected but left off the report [30].

- Methodology Details:

  - Checklist Initiation: Before starting observations, create a new checklist specifying the location, date, and observation protocol (e.g., Traveling, Stationary).

  - Effort Documentation: Record the start and end time, and for traveling counts, the distance covered. eBird recommends checklists under 5 miles (8 km) for traveling and under 3 hours for stationary counts for maximum scientific precision [30].

  - Species Reporting: Identify and count every bird species seen or heard during the checklist period. Use "X" if a species is confirmed but counting is impractical.

  - Data Submission: Submit the checklist, ensuring it is marked as "Complete." Incidental observations should be marked as such, as they have lower analytical value [30].

- Key Controls: Standardized effort metrics (duration, distance) allow for statistical control of detectability in analyses. The protocol explicitly excludes captive, dead, or remotely sensed birds to maintain data consistency for population studies [30].
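The effort limits and the presence/absence logic described above can be sketched in a few lines of Python. This is a minimal illustration rather than eBird's own processing pipeline: the `Checklist` record, its field names, and the helper functions are hypothetical and do not mirror the eBird export schema. The thresholds are the ones quoted in the protocol (8 km for traveling counts, 3 hours for stationary counts).

```python
from dataclasses import dataclass, field

MAX_DISTANCE_KM = 8.0    # traveling-count limit quoted in the protocol (5 miles)
MAX_DURATION_MIN = 180   # stationary-count limit quoted in the protocol (3 hours)


@dataclass
class Checklist:
    """Hypothetical, simplified checklist record (not the eBird export schema)."""
    checklist_id: str
    protocol: str                 # "Traveling" or "Stationary"
    complete: bool                # True if submitted as a Complete Checklist
    duration_min: float
    distance_km: float = 0.0
    counts: dict = field(default_factory=dict)  # species -> count, or "X"


def usable(c: Checklist) -> bool:
    """Keep complete checklists whose effort falls within the quoted limits."""
    if not c.complete:
        return False              # incidental records cannot imply absence
    if c.protocol == "Traveling":
        return c.distance_km < MAX_DISTANCE_KM
    if c.protocol == "Stationary":
        return c.duration_min < MAX_DURATION_MIN
    return False                  # other protocols are ignored in this sketch


def zero_fill(checklists, species):
    """Return (checklist_id, detected) pairs for usable checklists.

    A species missing from a complete checklist is recorded as a non-detection.
    """
    return [(c.checklist_id, species in c.counts) for c in checklists if usable(c)]


def reporting_frequency(checklists, species):
    """Share of usable checklists on which the species was reported."""
    rows = zero_fill(checklists, species)
    if not rows:
        return None
    return sum(detected for _, detected in rows) / len(rows)
```

Reporting frequency is only a crude index. Formal trend models would also use the recorded duration and distance as covariates for detectability rather than as simple filters.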

iNaturalist Protocol: Research-Grade Observations

The Research-Grade Observation protocol leverages community consensus to validate species occurrences, making data suitable for use in platforms like the Global Biodiversity Information Facility (GBIF).

- Primary Purpose: To create a vetted dataset of biodiversity occurrences with a high degree of identification accuracy.

- Methodology Details:

  - Observation Capture: Record an organism with verifiable evidence, typically a geotagged photograph or sound recording, along with the date.

  - Initial Upload and Identification: Upload the media to iNaturalist and provide an initial identification, which can be as broad as a taxonomic family.

  - Community Identification: The community of users adds supporting or improving identifications. The observation becomes "Research Grade" when more than two-thirds of identifiers agree on a taxon at species level or finer, which in practice requires at least two identifications [29].

  - Data Export: Research-grade observations are automatically exportable to aggregated data repositories.

- Key Controls: The requirement of verifiable media allows for expert validation and correction post-submission. The community-driven, consensus-based identification model provides a scalable quality control mechanism.
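The two-thirds consensus rule described above can be illustrated with a short sketch. It is a deliberate simplification and not iNaturalist's actual algorithm, which also takes the taxonomic hierarchy and other data-quality conditions into account. The function name `community_taxon` and the `min_ids` parameter are assumptions introduced here for illustration, and each identification is treated as a flat species name.

```python
from collections import Counter


def community_taxon(identifications, min_ids=2):
    """Return the agreed species name, or None if there is no consensus.

    Simplified rule: the leading name must be backed by strictly more than
    two-thirds of all identifications. `min_ids` is an assumed minimum number
    of identifications before consensus is evaluated.
    """
    total = len(identifications)
    if total < min_ids:
        return None
    name, votes = Counter(identifications).most_common(1)[0]
    # Integer comparison avoids floating-point issues at exactly two-thirds.
    return name if 3 * votes > 2 * total else None
```

For example, two matching identifications reach consensus, whereas two matching identifications plus one dissenting identification sit at exactly two-thirds and therefore do not.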

Custom App Protocol: Standardized Transect Monitoring

The Standardized Transect Monitoring protocol, as implemented in projects like Japan's Monitoring Sites 1000 Satoyama, uses custom apps for structured, long-term monitoring at fixed sites [29].

- Primary Purpose: To track changes in species richness and population indicators for specific taxa over decades within defined ecosystems.

- Methodology Details:

  - Site Selection: Establish permanent transects or monitoring plots within a specific habitat (e.g., satoyama agricultural landscapes).

  - Standardized Taxa Sampling: Trained volunteers and researchers conduct regular surveys (e.g., annually) along the transects, recording predefined taxa such as flora, birds, and butterflies using a custom mobile application [29].

  - Structured Data Entry: The custom app (e.g., built on SPOTTERON) presents data entry forms tailored to the protocol, ensuring consistent recording of species and counts [31].

  - Centralized Data Aggregation: All submissions are funneled into a central administration hub for researchers to manage, analyze, and export [31].

- Key Controls: The use of fixed sites and consistent methodology across years allows for the detection of subtle, long-term trends. The custom app enforces data structure and completeness.
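To show how fixed-site records of this kind might feed a trend estimate, the sketch below computes annual species richness for one transect and fits an ordinary least-squares slope. The record layout `(site, year, taxon, count)` and both function names are hypothetical; they are not part of SPOTTERON or the Monitoring Sites 1000 data model. A real analysis would also need to account for survey effort and detectability.

```python
from collections import defaultdict


def richness_by_year(records, site):
    """Count distinct taxa recorded per year at one site.

    `records` is an iterable of (site, year, taxon, count) tuples. Years with
    no positive counts do not appear in the result, so surveyed-but-empty
    years would need to be added explicitly.
    """
    taxa_seen = defaultdict(set)
    for rec_site, year, taxon, count in records:
        if rec_site == site and count > 0:
            taxa_seen[year].add(taxon)
    return {year: len(taxa) for year, taxa in sorted(taxa_seen.items())}


def linear_slope(series):
    """Ordinary least-squares slope of value against year (change per year)."""
    years = list(series)
    values = [series[y] for y in years]
    n = len(years)
    if n < 2:
        raise ValueError("need at least two years to estimate a slope")
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    return sxy / sxx
```

Because the sites and methods stay fixed, year-to-year differences in these values are easier to attribute to ecological change than to changes in sampling, which is the property the protocol is designed to provide.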

Data Flow and Research Workflow

The journey from a field observation to an analyzable data point in ecological research involves a structured flow of information and validation. The diagram below illustrates this integrated pipeline for citizen science data.

The Scientist's Toolkit: Research Reagent Solutions

For researchers leveraging or developing citizen science platforms for ecological monitoring, the following "research reagents" are essential components.

Table 2: Essential Tools and Solutions for Citizen Science Research

| Tool/Solution | Function in Research | Example Platforms/Tools |
|---|---|---|
| Custom Mobile App Framework | Provides the interface for standardized data collection, ensuring protocol adherence and data structure integrity. | SPOTTERON [31] |
| Data Curation & Management Hub | A central platform for researchers to manage, validate, clean, and export large volumes of volunteered data. | iNaturalist API, eBird Admin, SPOTTERON Data Administration [31] |
| Open-Source Visualization Libraries | Creates interactive charts, graphs, and maps to explore data, communicate results, and engage participants. | D3.js, Chart.js, Grafana [32] |
| Statistical Modeling Packages | Analyzes complex citizen science data, accounting for sampling bias and effort to produce robust trend estimates. | R packages (e.g., spOccupancy, birdPOP for eBird Status and Trends) [30] [29] |
| Geospatial Mapping Services | Provides the base mapping and geolocation infrastructure for recording and visualizing spatial observation data. | OpenStreetMap, Polymaps, Google Maps [32] |

Data Visualization and Accessibility Standards

Effectively communicating the results derived from citizen science platforms requires adherence to data visualization and accessibility best practices.

- Color Palette Selection: Use distinct, accessible color palettes for different data types. For sequential data (e.g., population density), use a single hue that varies in lightness from light (low values) to dark (high values). For categorical data (e.g., different species), use a palette with distinct hues and ensure sufficient contrast between them [33] [34]. The U.S. Census Bureau's standards, featuring teal, navy, orange, and grey, provide an excellent, accessible starting point [34].

- Accessibility Imperative: Do not rely on color alone to convey meaning. Incorporate patterns, shapes, and direct labels to ensure interpretability for users with color vision deficiencies (CVD) [33] [35]. All non-text elements must meet a minimum 3:1 contrast ratio against the background [33].

- Clarity and Honesty: Prioritize clarity by using clear labels and legends and avoiding "chart junk." Ensure comparisons are truthful by providing proper context (e.g., using "per capita" instead of gross totals where appropriate) and maintaining consistent metrics and styles across visualizations [35].
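The 3:1 contrast requirement mentioned above can be checked programmatically. The sketch below follows the relative-luminance and contrast-ratio definitions published in the WCAG guidelines; the function names are our own, and the hex colors in the usage note are arbitrary examples rather than values taken from any palette cited in this article.

```python
def _linearize(channel):
    """Convert an 8-bit sRGB channel to its linear-light value (WCAG formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio between two colors, from 1:1 up to 21:1."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


def meets_non_text_contrast(foreground, background, minimum=3.0):
    """True if a chart element reaches the minimum contrast against its background."""
    return contrast_ratio(foreground, background) >= minimum
```

Black on white gives the maximum ratio of 21:1, while pure yellow (`#FFFF00`) on white falls well below 3:1. Light series colors on a white chart background may therefore need an outline, a pattern, or a direct label to remain readable.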