GPU-Accelerated Lagrangian Particle Models: From Fundamentals to Drug Discovery Applications


Abstract

This article provides a comprehensive guide to parallelizing Lagrangian particle models on Graphics Processing Units (GPUs), tailored for researchers and professionals in drug development. It explores the fundamental principles of GPU architecture and its synergy with Lagrangian methods, details practical implementation strategies for molecular dynamics and docking simulations, presents advanced optimization techniques to overcome memory and performance bottlenecks, and validates the approach through comparative performance analysis and real-world case studies. The content demonstrates how GPU acceleration can dramatically reduce computation time in critical pharmaceutical research tasks, enabling larger-scale simulations and faster time-to-discovery.

Lagrangian Particle Methods and GPU Architecture: A Foundational Synergy

Core Principles of Lagrangian Particle Methods in Biomedical Simulation

Lagrangian particle methods (LPMs) represent a powerful computational approach for simulating transport phenomena in biomedical systems. Unlike Eulerian methods that observe flow properties at fixed locations, the Lagrangian framework tracks individual particles or fluid parcels as they move through a domain [1]. This paradigm is particularly suited for biomedical applications such as drug particle transport, platelet adhesion, and pollutant dispersion in airways, where understanding the precise pathways and history of discrete elements is critical [2] [3]. Within the context of advanced computing, the parallelization of these methods on Graphics Processing Units (GPUs) unlocks the potential for simulating large numbers of particles with high computational efficiency, enabling more complex and realistic simulations [4]. This application note details the core principles, implementation protocols, and key applications of LPMs in biomedical simulation.

Theoretical Foundations

The foundational principle of Lagrangian particle tracking in fluid mechanics is that the motion of a massless, passive tracer particle is governed by the ordinary differential equation:

\[ \frac{d\mathbf{x}}{dt} = \mathbf{v}(\mathbf{x}, t) \]

where \(\mathbf{x}\) is the particle position and \(\mathbf{v}(\mathbf{x}, t)\) is the fluid velocity field at the particle's location and time [2]. This equation states that a particle moves with the local fluid velocity. The particle's position at any future time \(t\) is found by integrating this equation from an initial condition \(\mathbf{x}(t_0) = \mathbf{x}_0\):

\[ \mathbf{x}(t) = \mathbf{x}_0 + \int_{t_0}^{t} \mathbf{v}(\mathbf{x}(\tau), \tau) \, d\tau \]

In cardiovascular simulations, for example, this kinematic equation is directly applied to trace blood elements or platelets, treating them as passive tracers [2]. For more complex physics, such as the transport of contaminants in groundwater or aerosols in airways, an advection-diffusion equation may be solved, often using a stochastic random-walk model to represent turbulent or diffusive processes [5] [6].
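As a minimal sketch of how the kinematic equation above can be integrated numerically, the following Python snippet advances a single passive tracer with a classical fourth-order Runge-Kutta scheme. The velocity field (`rotation_velocity`, a solid-body rotation) and the helper names `rk4_step` and `trace` are illustrative assumptions, not part of any cited model; a real application would replace the analytical field with interpolated simulation data.

```python
import math

def rotation_velocity(x, t):
    """Hypothetical test field: solid-body rotation about the origin (omega = 1)."""
    return (-x[1], x[0])

def rk4_step(x, t, dt, velocity):
    """Advance one tracer position x by dt using classical fourth-order Runge-Kutta."""
    def shifted(scale, k):
        # Returns x + scale * k, component by component.
        return tuple(xi + scale * ki for xi, ki in zip(x, k))
    k1 = velocity(x, t)
    k2 = velocity(shifted(0.5 * dt, k1), t + 0.5 * dt)
    k3 = velocity(shifted(0.5 * dt, k2), t + 0.5 * dt)
    k4 = velocity(shifted(dt, k3), t + dt)
    return tuple(
        xi + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for xi, a, b, c, d in zip(x, k1, k2, k3, k4)
    )

def trace(x0, t0, t1, n_steps, velocity):
    """Integrate dx/dt = v(x, t) from t0 to t1 and return the final position."""
    dt = (t1 - t0) / n_steps
    x, t = tuple(x0), t0
    for _ in range(n_steps):
        x = rk4_step(x, t, dt, velocity)
        t += dt
    return x

# One full revolution should bring the tracer back close to its start point.
final = trace((1.0, 0.0), 0.0, 2.0 * math.pi, 200, rotation_velocity)
```

Because every tracer is integrated independently, the loop over particles (not shown here) is the natural place to assign one GPU thread per particle.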

Table 1: Key Governing Equations for Lagrangian Particle Methods in Different Domains.

| Application Domain | Governing Equation | Primary Forces/Processes | Key References |
|---|---|---|---|
| Cardiovascular Hemodynamics | \(\frac{d\mathbf{x}}{dt} = \mathbf{v}(\mathbf{x}, t)\) | Fluid advection | [2] |
| Atmospheric/Pollutant Transport | \(\frac{d\mathbf{x}}{dt} = \mathbf{v}(\mathbf{x}, t) + \mathbf{v}_{\mathrm{diffusion}}\) | Advection, turbulent diffusion | [5] [4] |
| Contaminant Transport in Groundwater | Advection-Diffusion Equation (ADE) with retardation | Advection, molecular diffusion, sorption | [6] |

GPU Parallelization and Computational Protocols

The deployment of LPMs on GPU architectures is a critical advancement for handling the computationally intensive nature of tracking millions of particles in complex domains.

Core Computational Workflow

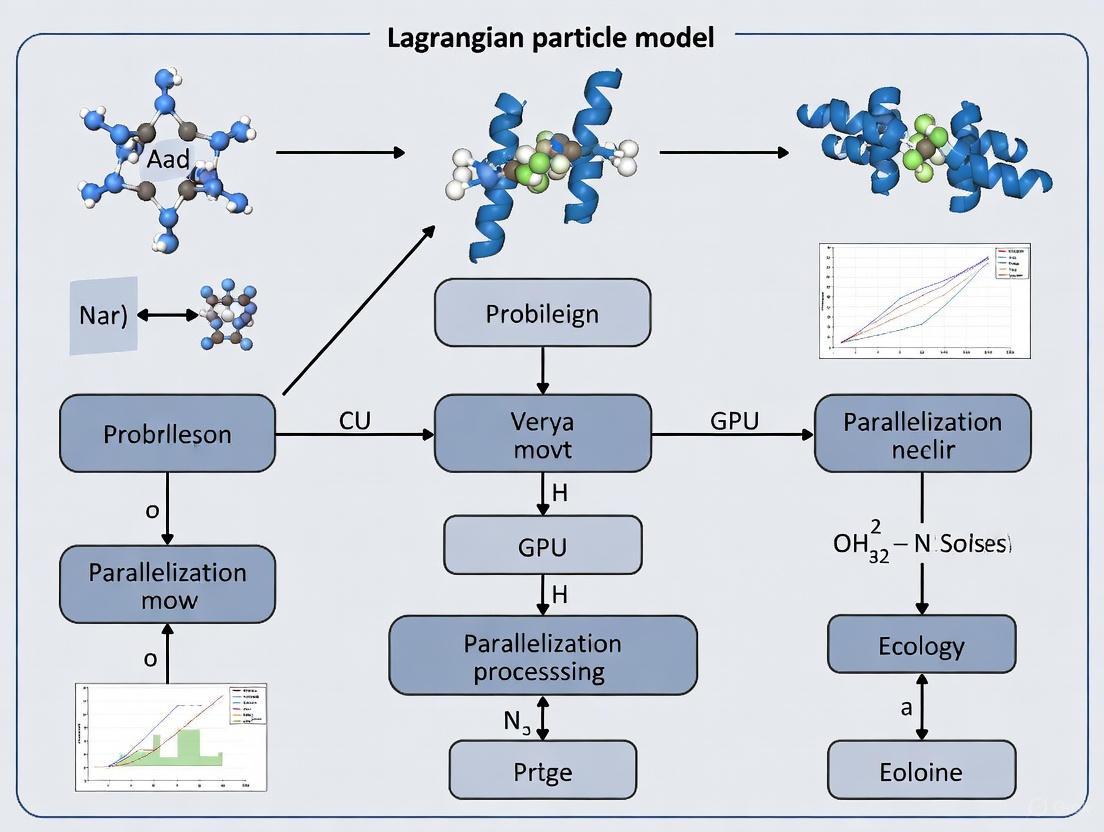

The following diagram illustrates the standard algorithm for Lagrangian particle tracking, highlighting steps amenable to parallelization.

GPU-Specific Optimization Strategies

A significant performance bottleneck in GPU-accelerated LPMs is memory access. Atmospheric transport simulations with the MPTRAC model have demonstrated that naive implementations suffer from near-random memory access patterns, as particles scattered throughout a domain request meteorological data from non-contiguous memory locations [4]. Two key optimizations have proven highly effective:

- Memory Layout Transformation: Changing the data structure of field variables (e.g., wind velocity) from a Structure of Arrays (SoA) to an Array of Structures (AoS) can improve cache locality. In an AoS layout, all velocity components for a single grid point are stored contiguously, which is more efficient when a single particle requires all components at a specific location [4].

- Particle Data Sorting: Periodically sorting particles based on their spatial location (e.g., the grid cell they reside in) dramatically improves memory alignment. This ensures that particles processing concurrently on the GPU require data from the same region of the computational mesh, thereby reducing memory access latency [4].

These optimizations in the MPTRAC model led to an 85% reduction in runtime for the advection kernel and a 75% reduction for the full set of physics computations in simulations involving 10^8 particles [4].
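The particle-sorting idea can be illustrated with a short Python sketch. It computes a flattened grid-cell key for each particle and returns the permutation that groups particles by host cell. The function names, the row-major key, and the clamping at domain boundaries are assumptions made for illustration; they are not taken from MPTRAC, and a GPU implementation would typically use a parallel sort rather than Python's `sorted`.

```python
def cell_index(pos, origin, spacing, dims):
    """Map a 3D position to a flattened (row-major) grid-cell index."""
    ijk = []
    for p, o, h, n in zip(pos, origin, spacing, dims):
        i = int((p - o) // h)
        ijk.append(min(max(i, 0), n - 1))  # clamp particles on or outside the boundary
    i, j, k = ijk
    return (i * dims[1] + j) * dims[2] + k

def sort_particles_by_cell(positions, origin, spacing, dims):
    """Return (order, keys): a permutation grouping particles by cell, and the cell keys."""
    keys = [cell_index(p, origin, spacing, dims) for p in positions]
    order = sorted(range(len(positions)), key=keys.__getitem__)
    return order, keys

positions = [(1.5, 1.5, 1.5), (0.2, 0.1, 0.3), (1.1, 0.4, 0.2), (0.9, 0.8, 0.7)]
order, keys = sort_particles_by_cell(positions, (0.0, 0.0, 0.0), (1.0, 1.0, 1.0), (2, 2, 2))
sorted_positions = [positions[i] for i in order]
```

After reordering, neighbouring threads process particles from the same or adjacent cells, so their reads of gridded field data tend to hit nearby memory.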

Table 2: Performance Impact of GPU Optimizations in Lagrangian Transport Models.

| Optimization Strategy | Baseline Performance | Optimized Performance | Relative Improvement | Test Case Details |
|---|---|---|---|---|
| Memory Layout (SoA to AoS) & Particle Sorting | Baseline runtime | 75% reduction in total physics runtime | 4x speedup | MPTRAC, 10^8 particles, ERA5 data [4] |
| Fully Parallelized Adaptive Particle Refinement | Serialized APR algorithm | Parallelized APR algorithm | Improved efficiency and accuracy | SPH for nuclear safety analysis [7] |
| GPU Parallelization for Contaminant Transport | CPU-based SPH solver | GPU-CUDA implementation | Significant speedup ratio | 1D/2D Advection-Diffusion equations [6] |
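For readers converting between the two ways the MPTRAC result is quoted, the relation between a fractional runtime reduction \(r\) and the corresponding speedup \(S\) can be written out explicitly. The symbols \(r\), \(S\), \(T_{\mathrm{base}}\), and \(T_{\mathrm{opt}}\) are introduced here only for this worked step:

\[ S = \frac{T_{\mathrm{base}}}{T_{\mathrm{opt}}} = \frac{T_{\mathrm{base}}}{(1 - r)\,T_{\mathrm{base}}} = \frac{1}{1 - r}, \qquad r = 0.75 \Rightarrow S = 4, \qquad r = 0.85 \Rightarrow S \approx 6.7 \]

The 75% reduction in total physics runtime therefore corresponds to the 4x speedup listed in the table, and the 85% reduction reported for the advection kernel corresponds to a speedup of roughly 6.7x.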

Experimental Protocols and Methodologies

This section provides a detailed protocol for a representative biomedical simulation: analyzing particle transport in a patient-specific artery using fluid-structure interaction (FSI) data.

Protocol: Lagrangian Post-processing of Hemodynamics

Objective: To quantify hemodynamic transport parameters such as Particle Residence Time (PRT) and Wall Shear Stress (WSS) exposure from a time-dependent FSI simulation [3].

Input Data:

- A time-series of velocity fields \(\mathbf{v}(\mathbf{x}, t)\) and mesh node coordinates \(\mathbf{x}_n(t)\) from a validated FSI simulation.

- The mesh topology (element connectivity) is assumed constant, while nodal positions change over time [3].

Procedure:

Particle Initialization:

- Define a set of seed points \(\{\mathbf{x}_{p,0}\}\) at the inlet(s) of the domain or on a specific surface of interest at time \(t_0\).

- Each particle (p) is assigned a unique identifier and initial state.

Element Location (A2):

- For the initial seed, use an efficient global search algorithm (e.g., using an octree) to find the host tetrahedral element \(e(\mathbf{x}_p)\) for each particle.

- For subsequent time steps, use a local search starting from the previously known host element. This is highly efficient as particle motion between steps is typically small [3].

Spatio-Temporal Interpolation (A3):

- To obtain the fluid velocity at a particle's position \(\mathbf{x}_p\) at time \(t_p\), first interpolate the mesh node coordinates to time \(t_p\) using linear interpolation: \[ \mathbf{x}_n(t_p) = \mathbf{x}_n(t_q) \left[1 - \frac{t_p - t_q}{t_{q+1} - t_q}\right] + \mathbf{x}_n(t_{q+1}) \frac{t_p - t_q}{t_{q+1} - t_q} \] where \(t_q \leq t_p \leq t_{q+1}\) [3].

- Map the particle's position to the natural (shape function) coordinates \(\boldsymbol{\xi}_p = (\xi, \eta, \zeta)\) of the host element.

- Interpolate the fluid velocity using the same shape functions \(N_k(\boldsymbol{\xi}_p)\): \[ \mathbf{v}(\mathbf{x}_p, t_p) = \sum_{k=1}^4 N_k(\boldsymbol{\xi}_p) \, \mathbf{v}_k(t_p) \] where \(\mathbf{v}_k\) are the nodal velocities.
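The interpolation step (A3) can be sketched in Python for a linear four-node tetrahedron, where the shape functions coincide with the barycentric coordinates of the particle. All function names here are illustrative, the element is assumed to be non-degenerate, and for brevity the nodal velocities are assumed to have already been interpolated to the particle time in the same way as the node coordinates.

```python
def lerp_nodes(nodes_q, nodes_q1, t_q, t_q1, t_p):
    """Linearly interpolate nodal coordinates between snapshots t_q and t_q1."""
    w = (t_p - t_q) / (t_q1 - t_q)
    return [tuple((1.0 - w) * a + w * b for a, b in zip(nq, nq1))
            for nq, nq1 in zip(nodes_q, nodes_q1)]

def det3(a, b, c):
    """Determinant of the 3x3 matrix whose columns are a, b, c."""
    return (a[0] * (b[1] * c[2] - b[2] * c[1])
            - b[0] * (a[1] * c[2] - a[2] * c[1])
            + c[0] * (a[1] * b[2] - a[2] * b[1]))

def shape_functions(x, nodes):
    """Shape functions N_1..N_4 of point x in a linear tetrahedron (Cramer's rule)."""
    def sub(u, v):
        return tuple(ui - vi for ui, vi in zip(u, v))
    e1, e2, e3 = (sub(nodes[k], nodes[0]) for k in (1, 2, 3))
    r = sub(x, nodes[0])
    vol = det3(e1, e2, e3)  # six times the signed element volume
    n2 = det3(r, e2, e3) / vol
    n3 = det3(e1, r, e3) / vol
    n4 = det3(e1, e2, r) / vol
    return (1.0 - n2 - n3 - n4, n2, n3, n4)

def interpolate_velocity(x, nodes, nodal_velocities):
    """v(x_p, t_p) = sum_k N_k(xi_p) v_k(t_p) for one host element."""
    N = shape_functions(x, nodes)
    return tuple(sum(Nk * vk[d] for Nk, vk in zip(N, nodal_velocities))
                 for d in range(3))

# Hypothetical element: a unit tetrahedron that doubles in size between two snapshots.
nodes_q = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
nodes_q1 = [tuple(2.0 * c for c in n) for n in nodes_q]
nodes_mid = lerp_nodes(nodes_q, nodes_q1, 0.0, 1.0, 0.5)
nodal_v = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 2.0, 0.0), (0.0, 0.0, 3.0)]
v_p = interpolate_velocity((0.375, 0.375, 0.375), nodes_mid, nodal_v)
```

A particle lies inside the element exactly when all four shape functions are non-negative, which is also the test a local host-element search can use to decide whether to step to a neighbouring element.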

Numerical Integration (A4):

- Advance the particle position by solving \(\frac{d\mathbf{x}}{dt} = \mathbf{v}(\mathbf{x}, t)\). A first-order Euler or fourth-order Runge-Kutta (RK4) method is commonly used [5] [3].

- The choice of time step \(\Delta t\) is critical. It must be small enough for numerical stability and accuracy, often a fraction of the flow-through time of a mesh element.

Data Tracking and Output:

- At each time step, record relevant Lagrangian metrics for each particle. This can include:

- Pathlines: The full trajectory \(\mathbf{x}_p(t)\).

- Exposure Time: The cumulative time a particle spends in a region of interest (e.g., high shear stress > a threshold).

- Finite-Time Lyapunov Exponent (FTLE): A measure of flow mixing and separation [3].

- Write particle data at specified intervals for post-processing and visualization.
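A minimal sketch of the per-step bookkeeping described above is given below. The `ParticleRecord` container and `record_step` function are hypothetical names, and the shear samples are made-up values used only to exercise the code; exposure time is accumulated whenever the sampled quantity exceeds a user-chosen threshold.

```python
from dataclasses import dataclass, field

@dataclass
class ParticleRecord:
    """Lagrangian metrics stored for one particle (illustrative layout)."""
    pathline: list = field(default_factory=list)
    exposure_time: float = 0.0

def record_step(rec, x, shear, dt, threshold):
    """Append the current position and accumulate time spent above the threshold."""
    rec.pathline.append(tuple(x))
    if shear > threshold:
        rec.exposure_time += dt

rec = ParticleRecord()
samples = [((0.0, 0.0), 0.5), ((0.1, 0.0), 1.2), ((0.2, 0.1), 3.4),
           ((0.3, 0.1), 2.9), ((0.4, 0.2), 0.8)]  # (position, shear) pairs, made up
for x, shear in samples:
    record_step(rec, x, shear, dt=0.01, threshold=1.0)
```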


The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Models for Lagrangian Biomedical Simulation.

| Tool/Model | Type | Primary Function in Lagrangian Simulation | Example Application |
|---|---|---|---|
| MPTRAC | Lagrangian Particle Dispersion Model | Simulates atmospheric transport processes; optimized for GPU HPC systems. | Tracking aerosolized drug particle dispersion in lung airways [4]. |
| SERGHEI-LPT | Lagrangian Particle Transport Model | Models passive particle transport driven by 2D shallow water equations. | Simulating transport of contaminants in overland flow during flood events [5]. |
| Fluid-Structure Interaction (FSI) Solver | Computational Physics Solver | Provides time-varying velocity fields and mesh deformation in compliant vessels. | Lagrangian post-processing of blood flow in deformable arteries [3]. |
| Random Walk Model | Stochastic Algorithm | Adds stochastic perturbations to particle trajectories to model turbulent diffusion. | Simulating mixing and dispersion of inhaled pharmaceuticals in the airways [5]. |
| Smoothed Particle Hydrodynamics (SPH) | Meshless Lagrangian Method | Solves advection-diffusion equations; can be parallelized on GPU using CUDA. | Modeling contaminant transport in groundwater [6]. |

Lagrangian particle methods provide a powerful and intuitive framework for investigating transport phenomena in biomedical systems. Their strength lies in the ability to track the history and pathways of discrete elements, such as drug carriers or blood cells, offering insights that are often obscured in Eulerian analyses. The integration of these methods with GPU parallelization, through optimized memory access and massive parallelism, addresses the primary challenge of computational cost. This enables high-fidelity, patient-specific simulations that were previously infeasible, opening new frontiers in predictive medicine, drug delivery optimization, and medical device design. As GPU technology continues to evolve, Lagrangian methods are poised to become an even more central tool in computational biomedicine.

Particle-based computational methods have become a cornerstone for simulating complex physical systems across diverse scientific and engineering domains, from fluid dynamics and molecular biology to astrophysics and geotechnics. These methods, including Smoothed Particle Hydrodynamics (SPH), the Material Point Method (MPM), and Discrete Element Methods (DEM), model materials as discrete particles whose interactions govern the system's evolution. The parallel architecture of Graphics Processing Units (GPUs) presents an ideal computational platform for these methods due to their inherent capacity for executing thousands of simultaneous computational threads. This synergy enables researchers to overcome the prohibitive computational costs associated with large-scale particle simulations, transforming previously intractable problems into feasible investigations.

The fundamental advantage of GPU parallelization stems from its ability to apply the same computational operation to numerous particles concurrently, a paradigm known as Single Instruction, Multiple Data (SIMD) or, in GPU terminology, Single Instruction, Multiple Threads (SIMT). Whereas a single CPU core processes particles largely sequentially, GPU kernels can evaluate forces, update positions, and manage interactions for thousands of particles simultaneously. This massive parallelism is particularly well-suited to the Lagrangian framework employed by many particle methods, where individual particles carry field information and move with the material flow. As computational demands in research continue to escalate, leveraging GPU architecture has transitioned from a specialized optimization to a fundamental necessity for cutting-edge particle-based simulation.

Architectural Advantages of GPUs for Particle Computations

Fine-Grained Parallelism and Data Locality

The architectural design of GPUs emphasizes high throughput for parallelizable workloads, making them exceptionally capable for particle-based computations. A modern GPU comprises thousands of computational cores organized into streaming multiprocessors, enabling the efficient execution of a vast number of threads. This structure aligns perfectly with the natural parallelism in particle systems, where each particle can be processed by a separate thread. The resulting fine-grained parallelism allows for significant speedups, as demonstrated in Monte Carlo simulations for tomography, where GPU implementations achieved acceleration factors of 100–1000× compared to single-core CPU implementations [8].

Furthermore, particle methods benefit from enhanced data locality when implemented on GPUs. During critical computation phases, such as the calculation of interaction forces, particle data can be stored in fast on-chip memory (shared memory or cache), drastically reducing access latency. The structured background grids often used in methods like MPM and Particle-In-Cell (PIC) facilitate coherent memory access patterns, allowing the GPU to maximize memory bandwidth utilization. One study on GPU-based MPM solvers noted that "every GPU thread can manage up to very few grid cells or particles," highlighting the workload balance achievable with this approach [9]. This efficient mapping of computational tasks to hardware resources is fundamental to the performance gains observed in GPU-accelerated particle simulations.

Overcoming Traditional Bottlenecks

Particle simulations traditionally faced significant bottlenecks in contact detection and inter-particle force calculations, which scale quadratically with particle count in naive implementations (\(O(N^2)\)). GPU parallelization, combined with efficient spatial sorting and search algorithms, mitigates these constraints. For instance, hierarchical grid data structures and linear bounding volume hierarchies (LBVH) enable rapid neighbor identification by grouping spatially proximate particles, reducing the complexity of contact detection [10]. The parallel computation of interactions then distributes these localized calculations across many threads.
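To make the complexity argument concrete, the sketch below uses a uniform grid (cell list), a simpler relative of the hierarchical grids and LBVH structures mentioned above, and checks it against the naive all-pairs search. The cell size is set equal to the cutoff `h`, so each particle only needs to inspect its own and the adjacent cells. Function names and the random test points are assumptions for illustration.

```python
import random
from collections import defaultdict
from itertools import product

def dist2(a, b):
    """Squared Euclidean distance between two points."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def build_cell_list(positions, h):
    """Bin particle indices into a uniform grid whose cell size equals the cutoff h."""
    cells = defaultdict(list)
    for idx, p in enumerate(positions):
        cells[tuple(int(c // h) for c in p)].append(idx)
    return cells

def neighbor_pairs(positions, h):
    """All pairs (i, j) with i < j closer than h, visiting only adjacent cells."""
    cells = build_cell_list(positions, h)
    offsets = list(product((-1, 0, 1), repeat=len(positions[0])))
    pairs = set()
    for cell, members in cells.items():
        for off in offsets:
            other = cells.get(tuple(c + o for c, o in zip(cell, off)), ())
            for i in members:
                for j in other:
                    if i < j and dist2(positions[i], positions[j]) < h * h:
                        pairs.add((i, j))
    return pairs

def brute_force_pairs(positions, h):
    """Reference O(N^2) search used only to check the cell-list result."""
    n = len(positions)
    return {(i, j) for i in range(n) for j in range(i + 1, n)
            if dist2(positions[i], positions[j]) < h * h}

rng = random.Random(0)
points = [(rng.random(), rng.random()) for _ in range(300)]
grid_result = neighbor_pairs(points, 0.1)
```

For roughly uniform particle distributions, the work per particle is bounded by the occupancy of a fixed number of cells, so the total cost grows approximately linearly with the particle count instead of quadratically.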

The computational advantage of GPUs becomes particularly evident in multi-resolution simulations. A study on parallelized Adaptive Particle Refinement (APR) for SPH noted that conventional serialized APR "diminishes the computational efficiency of the system, negating the advantages of acceleration achieved through high-performance computing devices" [7]. Their solution was a fully parallelized APR algorithm that enhanced both efficiency and computational accuracy. This demonstrates how GPU architecture not only accelerates straightforward particle calculations but also enables more sophisticated, adaptive simulation techniques that were previously hampered by serial bottlenecks.

Performance Benchmarks and Quantitative Analysis

Documented implementations of particle methods on GPU architectures consistently demonstrate substantial performance improvements across various application domains. The following table summarizes key performance metrics reported in recent studies:

Table 1: Performance Benchmarks of GPU-Accelerated Particle Methods

| Application Domain | Particle Method | Performance Gain | Key Achievement |
|---|---|---|---|
| Nuclear Safety Analysis [7] | Smoothed Particle Hydrodynamics (SPH) with Adaptive Particle Refinement | Improved computational efficiency vs. conventional SPH and serial APR | Stable multi-resolution computing system for nuclear safety analysis |
| Tomography Simulation [8] | Monte Carlo (MC) Simulation | 100–1000× speedup over CPU implementations | Enabled practical, large-scale MC applications for medical imaging |
| Exascale Computing [11] | Molecular Dynamics (MD) and N-body Simulations | Enabled exascale-ready simulations | Co-design of software libraries for performance portability across architectures |
| Compressible Flow Simulation [9] | Material Point Method (MPM) | Promising speed-ups vs. C++ CPU version | Portable, highly parallel solver for compressible gas dynamics |

Beyond these application-specific benchmarks, the Exascale Computing Project's Co-Design Center for Particle Applications (CoPA) has developed portable software libraries like Cabana and PROGRESS/BML to ensure performance portability across diverse GPU architectures [11]. These libraries provide optimized data structures and computational kernels that abstract architecture-specific complexities, allowing researchers to leverage GPU capabilities without low-level programming. The performance consistency achieved through these libraries underscores the maturity of GPU programming models for scientific particle simulations.

Experimental Protocols for GPU-Accelerated Particle Simulations

Protocol: Parallelized Adaptive Particle Refinement for SPH

This protocol outlines the methodology for implementing and benchmarking parallel Adaptive Particle Refinement (APR) in Smoothed Particle Hydrodynamics (SPH) simulations, based on work in nuclear safety analysis [7].

- Objective: To enhance computational efficiency and accuracy in multi-resolution SPH simulations through full parallelization of particle refinement operations.

- Computational Environment:

- Hardware: High-performance computing node(s) equipped with modern GPUs (e.g., NVIDIA A100, AMD MI250X).

- Software: CUDA or HIP programming model; C++ compiler with GPU support.

- Procedure:

- Initialization: Discretize the computational domain with particles of varying initial resolutions based on expected solution features.

- Parallel Particle Neighbor Search: Implement a parallel neighbor-finding algorithm (e.g., using spatial hashing or uniform grid partitioning) to identify particles within smoothing lengths.

- Parallel Criterion Evaluation: Concurrently on all particles, calculate refinement criteria (e.g., density gradient, proximity to interfaces) to flag particles for splitting or coalescing.

- Parallel Refinement Operations: Execute particle splitting and coalescing in parallel:

- Assign independent threads for each refinement operation.

- Use atomic operations for safe memory allocation in particle data arrays.

- Implement a new adaptive smoothing length model to maintain accuracy near resolution interfaces.

- Parallel Field Variable Interpolation: For newly created particles, interpolate field variables (density, energy, etc.) from neighboring particles using parallel kernel operations.

- Time Integration: Proceed with standard parallel SPH time integration on the adapted particle set.

- Validation:

- Test Cases: Simulate 2D and 3D hydrostatic and hydrodynamic benchmarks with known analytical solutions.

- Performance Metrics: Compare computational time against conventional SPH and serial APR implementations.

- Accuracy Assessment: Quantify errors near resolution interfaces and analyze jet breakup dynamics to verify physical performance.
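The refinement step of this protocol can be sketched in one dimension as follows. Note one deliberate substitution: where the protocol mentions atomic operations for allocating slots in the particle arrays, this sketch uses an exclusive prefix sum, a common deterministic alternative that gives each parent particle a unique output offset. The splitting pattern (equal-mass children placed symmetrically about the parent) and the unchanged smoothing length `h` are placeholder assumptions, not the adaptive model of [7].

```python
from itertools import accumulate

def exclusive_scan(counts):
    """Exclusive prefix sum: offsets[i] = sum(counts[:i])."""
    return [0] + list(accumulate(counts))[:-1]

def split_flagged(particles, flags, n_children=2, spread=0.25):
    """Split each flagged particle into n_children equal-mass children (1D sketch)."""
    counts = [n_children if f else 1 for f in flags]
    offsets = exclusive_scan(counts)
    out = [None] * sum(counts)
    # Each iteration writes only to its own slots, so it maps to one thread per parent.
    for i, (p, f) in enumerate(zip(particles, flags)):
        if not f:
            out[offsets[i]] = dict(p)
            continue
        for c in range(n_children):
            shift = (c - (n_children - 1) / 2.0) * spread * p["h"]
            out[offsets[i] + c] = {"x": p["x"] + shift,
                                   "m": p["m"] / n_children,
                                   "h": p["h"]}  # placeholder: h left unchanged
    return out

particles = [{"x": 0.0, "m": 1.0, "h": 0.1},
             {"x": 1.0, "m": 2.0, "h": 0.1},
             {"x": 2.0, "m": 1.0, "h": 0.1}]
refined = split_flagged(particles, [False, True, False])
```

The symmetric placement conserves both total mass and the centre of mass of each split parent, which is a convenient sanity check when validating a refinement kernel.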

Protocol: Lagrangian Particle Transport in Shallow Water Flows

This protocol details the implementation of a Lagrangian Particle Tracking (LPT) model driven by a 2D shallow water solver, as described in the SERGHEI model development [5] [12].

- Objective: To analyze the accuracy and computational efficiency of numerical schemes for tracking passive particles in shallow water flows over complex topography.

- Computational Environment:

- Hardware: GPU-accelerated computing node.

- Software: SERGHEI model framework (open-source); CUDA or OpenACC for parallelization.

- Procedure:

- Hydrodynamic Solution: Solve the 2D shallow water equations on a structured grid using a finite volume or finite element method, storing velocity fields at discrete time steps.

- Particle Initialization: Seed passive particles (representing pollutants, drifters, etc.) at specified locations. Particles are assumed to have negligible mass and volume without mutual interactions.

- Particle Advection (Parallel): For each particle, solve the advection equation using one of the following parallelized schemes:

- Online Euler Method: First-order explicit scheme, updating particle position using the flow velocity at every time step.

- Online Runge-Kutta Method: Fourth-order explicit scheme, requiring multiple velocity interpolations per time step.

- Offline Runge-Kutta Method: Fourth-order scheme using pre-computed velocity fields stored at specified intervals.

- Parallel Turbulent Diffusion: Incorporate a random-walk model to simulate turbulent diffusion. For each particle, concurrently generate random displacements based on a turbulent diffusivity coefficient.

- Boundary Condition Handling: Implement parallel checks for particle beaching or boundary interactions (e.g., specular reflection, absorption).

- Validation:

- Test Cases: Simulate laboratory-scale setups (e.g., particle transport in flumes) and a realistic localized precipitation event in the Arnás catchment.

- Analysis: Compare the trajectories and final distributions of particles against experimental data or analytical solutions.

- Performance Benchmarking: Measure and compare computational time and accuracy for each numerical scheme (Euler vs. Runge-Kutta, online vs. offline).
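The advection and turbulent-diffusion steps of this protocol can be sketched together in Python. The snippet assumes a constant, isotropic diffusivity, for which the standard random-walk displacement per step is the square root of `2 * D * dt` times a standard normal deviate; the exact formulation used in SERGHEI-LPT may differ. The uniform flow, parameter values, and function names are hypothetical.

```python
import math
import random

def euler_random_walk_step(x, y, u, v, dt, diffusivity, rng):
    """One explicit Euler advection step plus an isotropic random-walk displacement."""
    sigma = math.sqrt(2.0 * diffusivity * dt)
    return (x + u * dt + sigma * rng.gauss(0.0, 1.0),
            y + v * dt + sigma * rng.gauss(0.0, 1.0))

def reflect(x, lo, hi):
    """Specular reflection of one coordinate at the walls of the interval [lo, hi]."""
    while x < lo or x > hi:
        x = 2.0 * lo - x if x < lo else 2.0 * hi - x
    return x

# Hypothetical unbounded test: uniform flow u = 1, D = 0.01, 100 steps of dt = 0.1.
rng = random.Random(42)
xs = []
for _ in range(2000):
    x, y = 0.0, 0.0
    for _ in range(100):
        x, y = euler_random_walk_step(x, y, 1.0, 0.0, 0.1, 0.01, rng)
    xs.append(x)
mean_x = sum(xs) / len(xs)
var_x = sum((xi - mean_x) ** 2 for xi in xs) / len(xs)
```

For this test the particle cloud should drift with the flow (mean position near `u * T = 10`) while its variance grows as `2 * D * T = 0.2`, which provides a simple statistical check before moving to flume or catchment test cases.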

Computational Workflow and Data Structures

The typical workflow of a GPU-accelerated particle simulation involves a sequence of parallel operations that manage particle data, compute interactions, and update states. The diagram below illustrates this generalized logic flow, which is common to many particle methods including SPH, MPM, and DEM.

Diagram 1: Logical flow of GPU-accelerated particle simulation.

Efficient data structures are critical for leveraging GPU memory bandwidth. The Cabana toolkit, developed by the Exascale Computing Project's CoPA center, provides a performance-portable library for particle-based simulations [11]. Cabana offers user-configurable particle data structures (Array-of-Structs vs. Struct-of-Arrays) and computational kernels for common particle operations. Its use of the Kokkos programming model ensures performance portability across different GPU architectures (NVIDIA, AMD, Intel) and multicore CPUs.
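The difference between the two layouts can be shown with a small Python sketch. It is only a conceptual illustration using standard-library containers and is not Cabana's C++ API; the field names and helper functions are assumptions.

```python
from array import array

FIELDS = ("x", "y", "z", "mass")

def aos_to_soa(particles):
    """Array-of-Structs (list of records) -> Struct-of-Arrays (one typed array per field)."""
    return {f: array("d", (p[f] for p in particles)) for f in FIELDS}

def soa_to_aos(soa):
    """Struct-of-Arrays -> Array-of-Structs."""
    n = len(soa[FIELDS[0]])
    return [{f: soa[f][i] for f in FIELDS} for i in range(n)]

aos = [{"x": 0.0, "y": 1.0, "z": 2.0, "mass": 1.5},
       {"x": 3.0, "y": 4.0, "z": 5.0, "mass": 2.5}]
soa = aos_to_soa(aos)
round_trip = soa_to_aos(soa)
```

In the SoA form, a kernel that touches only one field (for example, all `x` coordinates) reads a single contiguous array, whereas the AoS form keeps all fields of one particle together. Which layout is faster depends on the access pattern of the kernel, which is why a configurable layout is useful.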

The Scientist's Toolkit: Essential Software and Libraries

Successful implementation of particle methods on GPUs relies on a robust software ecosystem encompassing programming models, specialized libraries, and performance portability tools. The following table catalogues key "research reagent" solutions in this domain.

Table 2: Essential Software Tools for GPU-Accelerated Particle Simulations

| Tool/Library | Type | Primary Function | Application Context |
|---|---|---|---|
| Cabana [11] | Particle Simulation Toolkit | Provides performance-portable data structures and algorithms for particle operations. | Molecular Dynamics, SPH, PIC, N-body simulations. |
| PROGRESS/BML [11] | Quantum Molecular Dynamics Library | Implements O(N) complexity algorithms for electronic structure calculations. | Quantum MD, electronic structure simulations. |
| Kokkos [11] | Programming Model | Abstraction layer for parallel computation and data management. | Performance portability across CPU/GPU architectures. |
| CUDA [10] | Parallel Computing Platform | NVIDIA's programming model for GPU computing with C/C++. | General-purpose GPU programming (NVIDIA hardware). |
| gPU-SPH [7] | Specific Implementation | Fully parallelized SPH with Adaptive Particle Refinement. | Nuclear safety analysis, multi-resolution fluid dynamics. |
| SERGHEI-LPT [5] | Lagrangian Particle Transport Model | Simulates passive particle transport in 2D shallow water flows. | Environmental modeling, pollutant transport, flood drifters. |

GPU parallel architecture has fundamentally transformed the landscape of particle-based computation, enabling unprecedented scale and fidelity in scientific simulations. The intrinsic alignment between the fine-grained parallelism of particle methods and the massively parallel architecture of GPUs delivers performance improvements of orders of magnitude, making previously intractable problems solvable. This synergy is evident across diverse fields, from multi-resolution SPH simulations for nuclear safety analysis [7] to the modeling of pollutant transport in hydrology [5] and the advancement of medical tomography [8].

The future trajectory of this field points toward increased performance portability and algorithmic sophistication. As hardware continues to evolve, libraries like Cabana and programming models like Kokkos will be essential for maintaining performance across diverse architectures without code rewrites [11]. Furthermore, the integration of emerging GPU features—such as ray-tracing cores for enhanced neighbor searches and tensor cores for machine learning-enhanced force models—promises to unlock new capabilities. The ongoing development of monolithic solvers for complex multiphysics problems, such as Fluid-Structure Interaction across all flow regimes [9], will continue to rely on the computational power and architectural advantages of GPUs, solidifying their role as an indispensable tool for particle-based scientific discovery.

Graphics Processing Units (GPUs) have revolutionized scientific computing by providing massive parallel processing power, enabling researchers to solve complex problems previously deemed infeasible. In the context of Lagrangian particle model parallelization—a method critical for simulating the transport and diffusion of particles in fields like drug development and atmospheric science—the choice of GPU programming model is paramount. These models provide the essential link between high-level simulation objectives and the low-level hardware instructions that execute on the GPU. Among the available options, NVIDIA's CUDA and the open standard OpenCL have emerged as two of the most prominent frameworks for accelerating scientific computations. This article provides detailed application notes and experimental protocols for utilizing these models, with a specific focus on applications relevant to researchers, scientists, and drug development professionals working with particle-based simulations.

CUDA (Compute Unified Device Architecture) is a parallel computing platform and programming model created by NVIDIA. It is specifically designed to work exclusively with NVIDIA GPU hardware, enabling deep-level hardware optimization. This vendor-specific nature allows for highly tuned performance and a rich ecosystem of development tools [13].

OpenCL (Open Computing Language) is a framework for writing programs that execute across heterogeneous platforms. It is an open, royalty-free standard maintained by the Khronos Group, supporting a wide range of processors including GPUs, CPUs, DSPs, and FPGAs from multiple vendors such as NVIDIA, AMD, and Intel [13].

Table 1: Fundamental Characteristics of CUDA and OpenCL

| Feature | CUDA | OpenCL |
|---|---|---|
| Primary Vendor | NVIDIA | Khronos Group (Multi-vendor) |
| Licensing Model | Proprietary | Open Standard |
| Hardware Support | NVIDIA GPUs only | Cross-platform (GPUs, CPUs, accelerators) |
| Language Syntax | C++ with CUDA extensions | C99-based |
| Memory Hierarchy | Shared, Local, Global, Constant | Local, Private, Global, Constant |
| Maturity & Ecosystem | Mature, extensive libraries and tools | Broad platform support, less tooling |

The decision between CUDA and OpenCL often involves a fundamental trade-off between performance optimization and hardware flexibility. CUDA typically offers superior performance on NVIDIA hardware due to its deep integration and optimized drivers, while OpenCL provides greater flexibility for code that must run across different hardware architectures [13]. For research institutions with existing NVIDIA GPU infrastructure or those requiring specific CUDA-accelerated libraries, CUDA often presents the most straightforward path to high performance. Conversely, for projects requiring long-term hardware agnosticism or targeting diverse computing environments, OpenCL provides a more portable solution.

Performance Benchmarks and Quantitative Analysis

Empirical benchmarking is crucial for selecting the appropriate GPU framework. Performance can be measured in terms of raw simulation throughput (e.g., nanoseconds of simulation per day) and cost efficiency. The following tables consolidate performance data from molecular dynamics and particle dispersion simulations, which share computational similarities with Lagrangian particle models.

Table 2: GPU Performance Benchmarking in Molecular Dynamics (OpenMM, ~44,000 atoms) [14]

| GPU Model | Programming Framework | Speed (ns/day) | Relative Cost Efficiency |
|---|---|---|---|
| NVIDIA H200 | CUDA | 555 | ~13% better than T4 baseline |
| NVIDIA L40S | CUDA | 536 | ~60% better than T4 baseline |
| NVIDIA H100 | CUDA | 450 | Varies by provider |
| NVIDIA A100 | CUDA | 250 | More efficient than T4/V100 |
| NVIDIA V100 | CUDA | 237 | ~33% worse than T4 baseline |
| NVIDIA T4 | CUDA | 103 | Baseline |

Table 3: Performance Comparison in Particle Dispersion Modeling [15]

| Simulator | Programming Framework | Hardware | Performance Insight |
|---|---|---|---|
| FLEXCPP | CUDA | NVIDIA GPU | Vendor-locked performance |
| FlexOcl | OpenCL | NVIDIA GPU | Outperformed equivalent CUDA code |
| FlexOcl | OpenCL | Intel Xeon Phi | Achieved equivalent performance |

The data reveals several key insights. First, high-end GPUs like the H200 and L40S offer significant performance advantages for computational workloads [14]. Second, raw speed does not always correlate with cost-effectiveness; the L40S frequently emerges as the most cost-efficient option [14]. Third, in specific use cases like the FLEXPART Lagrangian particle simulator, an OpenCL implementation (FlexOcl) demonstrated superior performance on NVIDIA hardware compared to its CUDA counterpart, challenging the assumption that CUDA is always the optimal choice, even on NVIDIA GPUs [15]. This is particularly relevant for researchers parallelizing atmospheric or indoor pollutant dispersion models.
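The distinction between raw speed and cost efficiency can be made explicit with a short calculation. In the sketch below, the throughputs (ns/day) are the T4 and L40S values from Table 2, but the hourly prices are purely hypothetical placeholders, so the resulting ratio is not expected to reproduce the percentages reported in [14]. The function names are illustrative.

```python
def cost_per_ns(price_per_hour, ns_per_day):
    """Cost of simulating one nanosecond, given an hourly price and a throughput."""
    return price_per_hour * 24.0 / ns_per_day

def relative_efficiency(candidate, baseline):
    """Ratio > 1 means the candidate simulates more nanoseconds per unit cost."""
    return cost_per_ns(*baseline) / cost_per_ns(*candidate)

# (hourly price, ns/day): prices are HYPOTHETICAL, throughputs are from Table 2.
t4 = (0.50, 103.0)
l40s = (1.50, 536.0)
ratio = relative_efficiency(l40s, t4)
```

With real provider prices substituted for the placeholders, the same two functions can rank any set of GPUs by cost per simulated nanosecond rather than by speed alone.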

Experimental Protocols for GPU Acceleration

This section provides detailed methodologies for implementing and benchmarking GPU-accelerated simulations, drawing from proven approaches in the literature.

Protocol 1: GPU-Accelerated Lagrangian Particle Dispersion Model

This protocol outlines the steps for developing a GPU-accelerated model for tracking particulate matter or airborne pollutants, based on the successful implementation in the FlexOcl model [16] [15].

1. Problem Decomposition:

- Objective: Map the continuous physical processes of particle transport onto a discrete, parallel computational framework.

- Procedure: Decompose the Lagrangian model into core computational kernels:

- Advection Kernel: Calculate particle movement due to fluid flow.

- Turbulent Diffusion Kernel: Model stochastic particle movements due to turbulence.

- Gravitational Settling Kernel: Apply gravitational forces.

- Boundary Deposition Kernel: Handle particle-wall interactions.

2. Framework Selection & Setup:

- Tools: Choose either CUDA (for maximum performance on NVIDIA hardware) or OpenCL (for cross-platform compatibility and performance as demonstrated by FlexOcl) [15] [13].

- Setup: Install the necessary SDK (NVIDIA CUDA Toolkit or Khronos OpenCL headers) and verify the target GPU is accessible.

3. Memory Management Design:

- Procedure: Allocate GPU memory buffers for particle data (position, velocity, mass). Structure data for coalesced memory access. Explicitly manage the transfer of input data from CPU (host) to GPU (device) and results back to host after simulation.

4. Kernel Implementation:

- Procedure: Write each computational kernel (from Step 1) in CUDA C++ or OpenCL C. Assign one GPU thread to each particle or computational cell. Utilize on-chip shared memory (CUDA) or local memory (OpenCL) to cache frequently accessed data and reduce global memory latency.

5. Validation & Verification:

- Objective: Ensure numerical accuracy and consistency with known results.

- Procedure: Run a controlled test case and compare the GPU output against a validated CPU-based model or analytical solution. Metrics include particle concentration distribution and total simulation energy.
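The kernel decomposition in Step 1 can be illustrated with a minimal CPU-side Python sketch. It is not the FlexOcl implementation: the uniform wind vector, the constant eddy diffusivity K, the constant settling speed, and the flat absorbing ground at z = 0 are all assumptions made for illustration. On a GPU, the loop body in step would run as one thread per particle.

```python
import math
import random

def advect(p, wind, dt):
    """Advection kernel: displace the particle with the (assumed uniform) wind."""
    for k in range(3):
        p["pos"][k] += wind[k] * dt

def diffuse(p, K, dt, rng):
    """Turbulent diffusion kernel: random-walk step with std sqrt(2*K*dt)."""
    sigma = math.sqrt(2.0 * K * dt)
    for k in range(3):
        p["pos"][k] += rng.gauss(0.0, sigma)

def settle(p, v_settle, dt):
    """Gravitational settling kernel: constant downward drift (assumed)."""
    p["pos"][2] -= v_settle * dt

def deposit(p, z_ground=0.0):
    """Boundary deposition kernel: particles reaching the ground stick there."""
    if p["pos"][2] <= z_ground:
        p["pos"][2] = z_ground
        p["deposited"] = True

def step(particles, wind, K, v_settle, dt, rng):
    """One time step; each loop iteration is independent of the others."""
    for p in particles:
        if p["deposited"]:
            continue
        advect(p, wind, dt)
        diffuse(p, K, dt, rng)
        settle(p, v_settle, dt)
        deposit(p)

rng = random.Random(0)  # fixed seed so CPU and GPU runs can be compared
particles = [{"pos": [0.0, 0.0, 1.0], "deposited": False} for _ in range(4)]
for _ in range(10):
    step(particles, wind=(1.0, 0.0, 0.0), K=0.01, v_settle=0.2, dt=1.0, rng=rng)
```

Setting K to zero makes the step deterministic, which is a convenient first check when comparing a GPU port against a CPU reference as described in Step 5.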

Protocol 2: Benchmarking GPU Performance and Efficiency

This protocol provides a standardized method for evaluating the performance of an implemented GPU model, based on established benchmarking practices [14].

1. Baseline Establishment:

- Objective: Determine the performance of a single, well-optimized CPU core implementation.

- Procedure: Execute a standard test case (e.g., a fixed number of particle time-steps) on a single CPU core. Record the total execution time.

2. GPU Performance Profiling:

- Objective: Measure raw simulation throughput.

- Procedure: Execute the same test case on the GPU. Record the execution time and calculate the simulation speed in meaningful units (e.g., particle-steps per second, nanoseconds of simulation per day).

- Tools: Use profilers like nvprof for CUDA or CodeXL for OpenCL to identify performance bottlenecks within the kernels.

3. Multi-Process Throughput Testing:

- Objective: Maximize total GPU utilization, crucial for smaller systems that don't fully saturate the GPU.

- Procedure: Use NVIDIA's Multi-Process Service (MPS). Launch multiple simulation instances concurrently on the same GPU [17].

- Advanced Tuning: Set the CUDA_MPS_ACTIVE_THREAD_PERCENTAGE environment variable to control resource allocation per process, which can further increase total throughput by 15-25% [17].

4. I/O Overhead Minimization:

- Objective: Identify and mitigate data transfer bottlenecks.

- Procedure: Systematically vary the frequency of data saving (e.g., writing trajectory data every 100 vs. 10,000 steps). Measure the impact on overall simulation speed. Infrequent saving is often key to high GPU utilization [14].

5. Cost-Efficiency Analysis:

- Objective: Determine the most economical hardware configuration.

- Procedure: For cloud instances, calculate the cost per unit of simulation (e.g., cost per 100 ns of simulation). For local hardware, consider the total cost of ownership against performance gains [14].
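The throughput and cost metrics named in Steps 1, 2, and 5 reduce to a few lines of arithmetic. The sketch below uses helper names chosen here for illustration, and the hourly price is a placeholder rather than a quoted cloud rate; only the ns/day figures are taken from Table 2.

```python
def particle_steps_per_second(n_particles, n_steps, wall_seconds):
    """Throughput for particle models: particle updates per wall-clock second."""
    return n_particles * n_steps / wall_seconds

def ns_per_day(n_steps, timestep_fs, wall_seconds):
    """Throughput for MD: simulated nanoseconds per day of wall-clock time."""
    simulated_ns = n_steps * timestep_fs * 1e-6  # 1 fs = 1e-6 ns
    return simulated_ns * 86400.0 / wall_seconds

def speedup(baseline_seconds, gpu_seconds):
    """Speedup of the GPU run relative to the single-core CPU baseline."""
    return baseline_seconds / gpu_seconds

def cost_per_100ns(price_per_hour, speed_ns_per_day):
    """Cost of producing 100 ns of trajectory at a given hourly price."""
    hours_needed = 100.0 / speed_ns_per_day * 24.0
    return price_per_hour * hours_needed

# Speeds from Table 2; the price is a placeholder (same for both GPUs),
# so the output only shows how cost scales inversely with speed.
placeholder_price = 1.00
for name, speed in (("T4", 103.0), ("L40S", 536.0)):
    print(name, round(cost_per_100ns(placeholder_price, speed), 2))
```

In practice the hourly price differs per GPU and provider, so a faster card is only cheaper per nanosecond when its price premium is smaller than its speed advantage.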

Visualization of Workflows

The following diagrams, generated with Graphviz DOT language, illustrate the core logical workflows for the GPU acceleration of a Lagrangian particle model.

GPU Acceleration Development Workflow

GPU Performance Benchmarking Protocol

The Scientist's Toolkit

This section details the essential hardware and software components for establishing an effective GPU computing environment for Lagrangian particle simulations and related scientific computing tasks.

Table 4: Essential Research Reagents and Materials for GPU Computing

| Item Name | Function/Application | Example Specifications |

|---|---|---|

| NVIDIA HPC GPUs | Primary accelerator for CUDA; high memory capacity for large particle systems. | RTX 6000 Ada (48GB VRAM), L40S [14] [18] |

| High-Clock-Speed CPU | Manages simulation control flow and feeds data to the GPU; single-thread performance is critical. | AMD Threadripper PRO 5995WX [18] |

| System Memory (RAM) | Holds the complete dataset before transfer to GPU VRAM; sufficient capacity is necessary. | 128GB+ DDR4/DDR5 [18] |

| NVIDIA CUDA Toolkit | Core software environment for compiling and running CUDA C++ code. | Includes nvcc compiler, debuggers, profilers [17] |

| OpenCL SDK & Headers | Required libraries and headers for developing and compiling OpenCL applications. | Khronos OpenCL Headers, vendor-specific ICD [15] |

| OpenMM | Open-source MD simulator; a reference for implementing particle dynamics in CUDA/OpenCL. | OpenMM 8.2.0 with Python API [17] |

| NVIDIA MPS | Enables concurrent execution of multiple simulations on a single GPU, boosting throughput. | nvidia-cuda-mps-control [17] |

In the field of computational physics and engineering, Lagrangian particle methods have become indispensable for simulating complex systems involving large deformations, multiphase flows, and dynamic fragmentation. Methods such as Smoothed Particle Hydrodynamics (SPH) and the Material Point Method (MPM) leverage a meshfree approach, where the computational domain is represented by discrete particles that carry all necessary state information [19]. While this formulation excels at handling complex geometries and large material distortions, it presents significant computational challenges, particularly in calculating the interactions between vast numbers of particles.

The pursuit of high-fidelity simulations has driven the development of parallel computing strategies, with the Graphics Processing Unit (GPU) emerging as a transformative architecture for particle-based simulations. A modern GPU contains hundreds to thousands of processing cores, offering massive parallel throughput that aligns perfectly with the inherent parallelism of particle interaction calculations [20]. For instance, a single NVIDIA GTX 285 GPU with 240 cores can achieve a peak performance of 1062 GFLOPS, far surpassing traditional multi-core CPUs [20]. This document details the comprehensive computational workflow for implementing Lagrangian particle models on GPU architectures, providing application notes and experimental protocols for researchers in computational sciences and engineering.

Computational Foundation of Particle Methods

Core Lagrangian Particle Methods

Lagrangian particle methods discretize a continuum into moving particles, each carrying mass, velocity, stress, and other material properties. The primary methods include:

- Smoothed Particle Hydrodynamics (SPH): A purely Lagrangian method where particle approximations are based on a smoothing kernel function [7] [19]. SPH is particularly well-suited for fluid dynamics and free-surface flows.

- Material Point Method (MPM): A hybrid Eulerian-Lagrangian approach where particles (material points) carry state variables, while computations occur on a background grid that is reset each time step [19]. This method effectively handles history-dependent materials like soils and granular media.

- Discrete Element Method (DEM): Models granular materials by representing individual particles and solving Newton's equations of motion with contact forces [19].

These methods share a common computational pattern: at each time step, the simulation must calculate interaction forces between each particle and its neighbors within a specified cutoff radius.

The Particle Interaction Computation Challenge

The fundamental computational kernel in particle methods involves calculating pairwise interactions between particles. In a naive implementation, this requires O(N²) operations for N particles, which becomes prohibitively expensive for large-scale simulations [21]. To reduce this complexity, particle methods typically employ:

- Cutoff Radii: Interactions are calculated only between particles within a specified distance (cutoff radius), leveraging the rapid decay of interaction kernels [21].

- Spatial Decomposition: The simulation domain is divided into a grid of cells, with width at least equal to the cutoff radius, ensuring that a particle's interaction partners are limited to its own cell and immediate neighbors [21].

Even with these optimizations, the efficient implementation on parallel architectures requires careful consideration of memory access patterns, load balancing, and data structures.
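As a small illustration of the cell decomposition described above, the following Python sketch maps a position to its cell and enumerates the cells that can contain interaction partners. The function names, the row-major linear index, and the non-periodic domain clipping are assumptions made for this example.

```python
def cell_coords(pos, origin, cell_width):
    """Integer cell coordinates of a 3D position (cell_width >= cutoff radius)."""
    return tuple(int((pos[d] - origin[d]) // cell_width) for d in range(3))

def linear_index(c, dims):
    """Flatten (cx, cy, cz) into a single index, x varying fastest."""
    return (c[2] * dims[1] + c[1]) * dims[0] + c[0]

def neighbor_cells(c, dims):
    """The cell itself plus up to 26 neighbors, clipped at the domain boundary."""
    out = []
    for dz in (-1, 0, 1):
        for dy in (-1, 0, 1):
            for dx in (-1, 0, 1):
                n = (c[0] + dx, c[1] + dy, c[2] + dz)
                if all(0 <= n[d] < dims[d] for d in range(3)):
                    out.append(n)
    return out

dims = (4, 4, 4)
c = cell_coords((2.5, 0.1, 1.0), origin=(0.0, 0.0, 0.0), cell_width=1.0)
print(c, linear_index(c, dims), len(neighbor_cells(c, dims)))
```

Because the cell width is at least the cutoff radius, any pair closer than the cutoff lies in the same or an adjacent cell, so each particle needs to inspect at most 27 cells in 3D.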

GPU Architecture and Parallelization Strategies

GPUs are massively parallel processors designed with a hierarchical architecture:

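- Streaming Multiprocessors (SMs): Contain multiple processing cores that execute groups of threads in lockstep, in Single Instruction, Multiple Data (SIMD) fashion [20]; NVIDIA's term for this execution model is Single Instruction, Multiple Threads (SIMT).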

- Memory Hierarchy: Includes global device memory (large but high latency), shared memory (small but low latency, shared within a thread block), and registers (fastest, private to each thread) [20].

Effective GPU programming requires maximizing parallelism while minimizing data transfer between different memory levels, particularly avoiding frequent access to global memory.

GPU Parallelization Patterns for Particle Interactions

Several strategies have been developed for implementing particle interactions on GPUs, each with distinct advantages and limitations:

Table 1: GPU Implementation Strategies for Particle Interactions

| Strategy | Parallelization Approach | Memory Usage | Best Use Case |

|---|---|---|---|

| Par-Part-NoLoop [21] | One thread per particle, no loops | Minimal shared memory | Simple implementations with regular data access |

| Par-Part-Loop [21] | Flexible thread assignment with loops | Minimal shared memory | Dynamic particle distributions |

| Par-Cell [21] | One thread-block per cell | Moderate global memory | Uniform particle distribution per cell |

| Par-Cell-SM [21] | One thread-block per cell with shared memory | Shared memory for particle data | Memory-bound problems with data reuse |

| All-in-SM [21] | Thread-block processes sub-box of cells | Extensive shared memory | Scenarios with few particles per cell |

| X-Pencil [21] | Thread-block loads pencil-shaped region | Targeted shared memory | Specific architectures with favorable memory alignment |

The selection of an appropriate parallelization strategy depends on factors such as particle distribution uniformity, the number of particles per cell, and the specific GPU architecture being targeted.

Comprehensive Computational Workflow

The following diagram illustrates the complete computational workflow for GPU-accelerated particle simulations:

Figure 1: High-Level Computational Workflow for Particle Simulations

Detailed GPU Implementation Workflow

The core implementation workflow on GPU involves specific steps to maximize parallel efficiency:

Figure 2: Detailed GPU Implementation Workflow

Data Structure Preparation

Particle data should be stored in a Structure-of-Arrays (SoA) format, where each component (position, velocity, force) is stored in separate arrays [21]. This enables coalesced memory access when different threads access the same component of different particles.
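The difference between the two layouts can be sketched in plain Python. Here the standard-library array type stands in for contiguous device buffers, and the field names are illustrative assumptions.

```python
from array import array

FIELDS = ("x", "y", "z", "vx", "vy", "vz")

def aos_to_soa(particles):
    """Convert a list of per-particle records (AoS) into one array per field (SoA)."""
    return {f: array("f", (p[f] for p in particles)) for f in FIELDS}

# Array-of-Structures: the six fields of one particle sit next to each other.
aos = [
    {"x": float(i), "y": 0.0, "z": 0.0, "vx": 1.0, "vy": 0.0, "vz": 0.0}
    for i in range(8)
]

# Structure-of-Arrays: all x values are contiguous, then all y values, and so on.
soa = aos_to_soa(aos)

# Thread i reading soa["x"][i] touches the 4-byte element right after the one
# read by thread i-1, which is the pattern that coalesces on a GPU.
print(list(soa["x"]), soa["x"].itemsize)
```

With the AoS layout, consecutive threads reading the x component would be separated by the size of a whole particle record, so a warp would span several memory segments instead of one.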

Spatial Sorting and Neighbor List Construction

The workflow for efficient neighbor list construction includes:

- Cell Index Calculation: For each particle, compute the cell index based on its spatial position [21].

- Particle Counting: Count particles per cell using atomic operations to avoid race conditions [21].

- Prefix Sum Calculation: Compute the prefix sum (cumulative sum) of the particle counts to determine the starting position of each cell's particles in the sorted arrays [21].

- Particle Sorting: Rearrange particles into cell-based order using the prefix sum array [21].

This spatial sorting ensures that particles in the same or adjacent cells are stored contiguously in memory, dramatically improving cache performance during interaction calculations.
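The counting, prefix-sum, and reordering steps above amount to a counting sort keyed on the cell index. The following serial Python sketch shows the logic under the assumption that cell indices have already been computed; on a GPU the counting loop would use atomic increments and the prefix sum would be a parallel scan.

```python
def sort_by_cell(cell_ids, n_cells):
    """Return (order, starts): particle indices grouped by cell, and cell offsets."""
    # Particle counting: one increment per particle (atomicAdd on a GPU).
    counts = [0] * n_cells
    for c in cell_ids:
        counts[c] += 1

    # Exclusive prefix sum: starts[c] is where cell c begins in the sorted order.
    starts = [0] * (n_cells + 1)
    for c in range(n_cells):
        starts[c + 1] = starts[c] + counts[c]

    # Particle sorting: scatter each particle into the next free slot of its cell.
    cursor = starts[:-1].copy()
    order = [0] * len(cell_ids)
    for i, c in enumerate(cell_ids):
        order[cursor[c]] = i
        cursor[c] += 1
    return order, starts

order, starts = sort_by_cell([2, 0, 2, 1, 0], n_cells=3)
print(order)   # particle indices in cell order
print(starts)  # cell c occupies order[starts[c]:starts[c + 1]]
```

After sorting, the particles of cell c occupy the slice order[starts[c]:starts[c + 1]], so a thread block assigned to that cell can read its particles with a single contiguous load.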

Interaction Computation Kernel

The force computation kernel employs the chosen parallelization strategy (from Table 1) to calculate pairwise interactions. For the Par-Cell-SM approach, the kernel:

- Loads particles from the current cell and its neighbors into shared memory

- Computes interactions between particles using the fast shared memory

- Accumulates forces for each particle

- Writes results back to global memory

This approach minimizes expensive global memory accesses by reusing loaded particle data for multiple interaction calculations [21].
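The four steps of the Par-Cell-SM kernel can be mimicked on the CPU to check the logic before writing device code. The sketch below is one-dimensional for brevity and uses a hypothetical linear repulsion as the pair force; the local tile list stands in for shared memory, and the brute-force routine is included only as a correctness reference.

```python
def pair_force(r, rc):
    """Hypothetical soft repulsion: magnitude falls linearly to zero at the cutoff."""
    return (rc - abs(r)) * (1.0 if r > 0 else -1.0)

def forces_brute(x, rc):
    """O(N^2) reference used only to check the cell-based version."""
    f = [0.0] * len(x)
    for i in range(len(x)):
        for j in range(len(x)):
            r = x[i] - x[j]
            if i != j and abs(r) < rc:
                f[i] += pair_force(r, rc)
    return f

def forces_par_cell(x, rc, n_cells):
    """Cell-based force loop for positions in [0, n_cells * rc), cell width rc."""
    cells = [[] for _ in range(n_cells)]
    for i, xi in enumerate(x):
        cells[int(xi // rc)].append(i)

    f = [0.0] * len(x)
    for c in range(n_cells):                  # one thread block per cell
        tile = []                             # stands in for shared memory
        for n in (c - 1, c, c + 1):           # own cell and its neighbors
            if 0 <= n < n_cells:
                tile.extend((j, x[j]) for j in cells[n])
        for i in cells[c]:                    # one thread per particle in the cell
            acc = 0.0                         # accumulate in a register
            for j, xj in tile:
                r = x[i] - xj
                if j != i and abs(r) < rc:
                    acc += pair_force(r, rc)
            f[i] = acc                        # single write to global memory
    return f

x = [0.1, 0.5, 0.9, 1.2, 2.95, 3.6]
ref = forces_brute(x, 1.0)
fast = forces_par_cell(x, 1.0, n_cells=4)
print(max(abs(a - b) for a, b in zip(ref, fast)))
```

Each entry of the tile is read once from the particle arrays and then reused by every particle in the cell, which is the data reuse that the shared-memory variant exploits.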

Performance Analysis and Benchmark Data

Quantitative Performance Metrics

Recent studies provide concrete performance data for GPU-accelerated particle simulations:

Table 2: Performance Benchmarks for GPU-Accelerated Particle Simulations

| Simulation Method | Particle Count | Hardware | Performance Metric | Reference |

|---|---|---|---|---|

| Parallelized APR-SPH [7] | Not specified | GPU | Improved computational efficiency vs. serial APR | [7] |

| JAX-MPM [19] | 2.7 million | Single GPU | 1000 steps: 22s (single), 98s (double precision) | [19] |

| GPU Monte Carlo Coagulation [20] | 80 million | NVIDIA GTX 285 | 50x speedup vs. single-threaded CPU | [20] |

| X-Pencil Approach [21] | Few particles/cell | Multiple GPU models | Significant speedup in specific cases | [21] |

Factors Influencing Performance

The performance of GPU-accelerated particle simulations is influenced by several key factors:

- Particles per Cell: The All-in-SM and X-Pencil approaches show significant speedups only with few particles per cell, as limited shared memory constrains how many cells can be loaded simultaneously [21].

- Memory Access Patterns: Coalesced memory access, where threads in a warp access contiguous memory locations, can improve memory bandwidth utilization by up to 10x compared to random access [21].

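- Precision: Using single-precision floating-point arithmetic typically provides 1.5-4x speedup compared to double-precision, though with potential accuracy tradeoffs [19]. The JAX-MPM timings in Table 2 (22 s versus 98 s for 1000 steps) correspond to a ratio of about 4.5x, slightly above that range.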

Experimental Protocols

Protocol 1: Baseline GPU Implementation

This protocol establishes a baseline implementation of particle interactions on GPU:

Initialization:

- Initialize particle positions, velocities, and other state variables in SoA format in GPU global memory.

- Precompute and allocate necessary data structures: cell indices, particle counts, prefix sums.

Spatial Sorting:

- Launch kernel with one thread per particle to compute cell indices.

- Use atomic operations to count particles per cell.

- Implement prefix sum using optimized parallel algorithm [21].

- Rearrange particles according to cell membership.

Force Calculation:

- Implement the Par-Part-NoLoop kernel (Table 1) with one thread per particle.

- Each thread iterates over particles in the same cell and 26 neighboring cells (in 3D).

- Calculate interactions based on cutoff radius.

Time Integration:

- Update particle velocities and positions based on computed forces.

- Apply boundary conditions.
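A minimal sketch of the time-integration step follows, assuming a semi-implicit (symplectic) Euler update and reflective box walls. Both choices are illustrative; the protocol itself does not prescribe a particular integrator or boundary type.

```python
def integrate(pos, vel, force, mass, dt, box_lo, box_hi):
    """Advance all particles by one step, in place."""
    for i in range(len(pos)):                 # one GPU thread per particle
        for k in range(3):
            # Update velocity from the force, then position from the new velocity.
            vel[i][k] += force[i][k] / mass[i] * dt
            pos[i][k] += vel[i][k] * dt
            # Reflective boundary: mirror the position and flip the velocity.
            if pos[i][k] < box_lo[k]:
                pos[i][k] = 2.0 * box_lo[k] - pos[i][k]
                vel[i][k] = -vel[i][k]
            elif pos[i][k] > box_hi[k]:
                pos[i][k] = 2.0 * box_hi[k] - pos[i][k]
                vel[i][k] = -vel[i][k]

pos = [[0.9, 0.5, 0.5]]
vel = [[0.0, 0.0, 0.0]]
force = [[2.0, 0.0, 0.0]]
integrate(pos, vel, force, mass=[1.0], dt=0.5,
          box_lo=(0.0, 0.0, 0.0), box_hi=(1.0, 1.0, 1.0))
print(pos, vel)
```

The single reflection assumes a particle never travels more than one box length per step, which holds whenever the time step is chosen sensibly for the cutoff radius.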

Performance Measurement:

- Use GPU timers to measure execution time of key kernels.

- Compare with single-threaded CPU implementation for speedup calculation.

Protocol 2: Shared Memory Optimization

This protocol enhances the baseline implementation with shared memory utilization:

Thread Block Configuration:

- Configure thread blocks to process individual cells or groups of cells.

- Set block size to match typical particle counts per cell (often 64-256 threads).

Shared Memory Loading:

- Load all particles from the current cell into shared memory.

- Iteratively load particles from neighbor cells into shared memory buffers.

- Utilize synchronization points after loading to ensure data consistency.

Interaction Computation:

- Compute interactions between particle pairs using shared memory data.

- Accumulate forces in thread-local registers to minimize write conflicts.

- Write final force values to global memory.

Optimization Tuning:

- Experiment with different sub-box sizes for the All-in-SM approach.

- Adjust shared memory allocation based on GPU capabilities.

- Utilize GPU occupancy calculators to optimize thread block configuration.

Protocol 3: Adaptive Particle Refinement

For multi-resolution simulations, implement parallelized Adaptive Particle Refinement (APR):

Refinement Criterion:

- Define criteria for particle splitting and merging based on local resolution requirements.

- Common criteria include particle density, stress gradients, or feature-based metrics.

Parallel Refinement Algorithm:

Load Balancing:

- Dynamically balance computational load across GPU cores as particle count changes.

- Implement work-stealing algorithms for highly non-uniform distributions.

Validation:

- Test with hydrostatic and hydrodynamic benchmark cases in 2D and 3D [7].

- Verify accuracy near resolution interfaces and conservation properties.
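To make the refinement-criterion and conservation checks concrete, here is a small 2D Python sketch of particle splitting. It is not the APR algorithm of [7]: the rectangular refinement region, the 2x2 child lattice, the spacing factor, and the halving of the smoothing length h are all assumptions chosen for illustration.

```python
def in_region(p, region):
    """Feature-based criterion (assumed): refine particles inside a rectangle."""
    (xlo, xhi), (ylo, yhi) = region
    return xlo <= p["x"] <= xhi and ylo <= p["y"] <= yhi

def split(parent, n_side=2, spacing_factor=0.5):
    """Replace one particle by n_side**2 children on a lattice centered on it."""
    n_children = n_side * n_side
    spacing = spacing_factor * parent["h"]
    children = []
    for a in range(n_side):
        for b in range(n_side):
            children.append({
                # Symmetric offsets keep the center of mass at the parent position.
                "x": parent["x"] + (a - (n_side - 1) / 2.0) * spacing,
                "y": parent["y"] + (b - (n_side - 1) / 2.0) * spacing,
                # Same velocity and equal mass shares conserve mass and momentum.
                "vx": parent["vx"], "vy": parent["vy"],
                "m": parent["m"] / n_children,
                "h": parent["h"] / n_side,
            })
    return children

def refine(particles, region):
    """Apply the criterion to every particle; each decision is independent."""
    out = []
    for p in particles:
        out.extend(split(p) if in_region(p, region) else [p])
    return out

coarse = [
    {"x": 1.0, "y": 1.0, "vx": 2.0, "vy": 0.0, "m": 1.0, "h": 0.4},
    {"x": 5.0, "y": 5.0, "vx": 0.0, "vy": 0.0, "m": 1.0, "h": 0.4},
]
fine = refine(coarse, region=((0.0, 2.0), (0.0, 2.0)))
print(len(fine), sum(p["m"] for p in fine))
```

Because the particle count changes after refinement, the output array size is not known in advance; GPU implementations typically count the children first and use a prefix sum to assign output slots.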

The Scientist's Toolkit

Essential Research Reagent Solutions

Table 3: Essential Tools and Libraries for GPU-Accelerated Particle Simulations

| Tool/Component | Function | Implementation Notes |

|---|---|---|

| CUDA/HIP [21] | GPU programming frameworks | Provide low-level control over GPU execution and memory management |

| JAX [19] | Differentiable programming framework | Enables automatic differentiation through simulation for inverse modeling |

| Structure-of-Arrays (SoA) [21] | Data layout format | Ensures coalesced memory access for improved bandwidth utilization |

| Parallel Prefix Sum [21] | Algorithm for particle sorting | Foundation for efficient spatial hashing and neighbor list construction |

| Adaptive Smoothing Length [7] | Multi-resolution support | Maintains accuracy at resolution interfaces in APR simulations |

| Spatial Hashing Grid [21] | Neighbor search acceleration | Reduces complexity from O(N²) to O(N) via cell-based decomposition |

Advanced Applications and Future Directions

Differentiable Particle Simulations

Emerging frameworks like JAX-MPM enable differentiable particle simulations, which support gradient-based optimization through the entire simulation pipeline [19]. This capability is particularly valuable for:

- Inverse Modeling: Estimating unknown parameters (e.g., material properties, initial conditions) from observational data.

- Data Assimilation: Combining simulation with partial observations to improve predictive accuracy.

- System Identification: Discovering constitutive model parameters from experimental measurements.

The differentiable approach naturally supports optimization without requiring manual derivation of adjoint equations or finite-difference approximations [19].

Multi-Scale and Multi-Physics Integration

Advanced applications increasingly require coupling particle methods with other physical models and scales:

- Fluid-Structure Interaction: Combining SPH or MPM with rigid body or finite element methods.

- Multi-Phase Flows: Simulating interactions between different materials with sharp interfaces.

- Machine Learning Integration: Hybrid approaches that combine traditional particle methods with neural networks for parameterization or closure modeling [19].

The computational workflow from particle interactions to GPU kernels represents a critical pathway for advancing high-fidelity simulations across scientific and engineering disciplines. By leveraging the massive parallelism of modern GPUs and implementing optimized computational strategies, researchers can achieve order-of-magnitude speedups compared to traditional CPU-based approaches. The protocols and methodologies outlined in this document provide a foundation for implementing efficient GPU-accelerated particle simulations, while the emerging capabilities in differentiable programming open new possibilities for inverse modeling and data assimilation. As GPU architectures continue to evolve and particle methods mature, these computational workflows will enable increasingly complex and predictive simulations of natural and engineered systems.

Lagrangian particle tracking, which involves calculating the trajectories of numerous individual particles based on external forces, represents a classic embarrassingly parallel problem. In such problems, identical operations are performed independently on a large number of data elements, making them ideally suited for massively parallel architectures like Graphics Processing Units (GPUs). The core computational task in particle tracking—applying the same algorithm to thousands or millions of particles with minimal interdependency—aligns perfectly with the Single Instruction, Multiple Data (SIMD) paradigm of modern GPUs. This alignment enables dramatic acceleration of scientific simulations across fields including computational fluid dynamics, atmospheric modeling, and single-molecule biophysics, transforming previously computationally prohibitive studies into feasible endeavors.

The parallelization potential arises from a key characteristic: each particle's path can be computed independently from others at each time step. As noted in atmospheric transport modeling, this "is an embarrassingly parallel computational problem because the air parcel trajectories are computed independently of each other" [4]. Similarly, in GPU-accelerated air pollution modeling, "each particle can be handled independently, thus being a perfect candidate for parallelization" [22]. This independence eliminates the need for extensive inter-process communication during the core computation, allowing GPU threads to process particles concurrently with maximal efficiency.

Quantitative Performance Benchmarks

GPU implementations consistently demonstrate substantial performance improvements across diverse particle tracking applications. The following table summarizes documented speedup factors:

Table 1: Documented Performance Improvements of GPU-Accelerated Particle Tracking

| Application Domain | GPU Implementation | Speedup Factor | Key Performance Notes |

|---|---|---|---|

| Lagrangian Carotid Strain Imaging | Tesla K40c GPU with CUDA | 168.75× | Runtime reduced from ~2.2 hours to 50 seconds for full cardiac cycle analysis [23] |

| Physics-Inspired Single-Particle Tracking | NVIDIA GTX 1060 GPU | 50× | Mid-range GPU vs. Intel i7-7700K CPU with no loss of inference quality [24] |

| FastGraph k-Nearest Neighbor Algorithm | NVIDIA A100 GPU | 20-40× | Acceleration for graph construction in low-dimensional spaces (2-10 dimensions) [25] |

| MPTRAC Atmospheric Transport Model | NVIDIA A100 GPU (JUWELS Booster) | 75-85% runtime reduction | 85% reduction specifically for advection kernel with 10^8 particles [4] |
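The table mixes two ways of reporting gains: speedup factors and percentage runtime reductions. They are related by speedup = 1 / (1 - reduction), and the short sketch below (helper names are illustrative) converts between them so the rows can be compared on a common scale.

```python
def speedup_from_reduction(reduction):
    """Convert a fractional runtime reduction (0.85 for 85%) into a speedup factor."""
    return 1.0 / (1.0 - reduction)

def reduction_from_speedup(speedup):
    """Convert a speedup factor into a fractional runtime reduction."""
    return 1.0 - 1.0 / speedup

for r in (0.75, 0.85):
    print(f"{r:.0%} reduction = {speedup_from_reduction(r):.2f}x speedup")
print(f"50x speedup = {reduction_from_speedup(50.0):.0%} reduction")
```

On this scale, a 75% reduction corresponds to a 4x speedup and an 85% reduction to roughly 6.7x, while a 50x speedup corresponds to a 98% reduction.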

The performance advantages extend beyond raw speed. In single-particle tracking for biophysics, GPU implementation enables more sophisticated analysis: "Unlike accuracy metrics, comparing computational efficiency across methods is more nuanced due to inherent differences in what each algorithm infers from the data. For instance, the physics-inspired frameworks estimate particle trajectories and quantify the uncertainty associated with each track—an important capability absent in tools like TrackMate" [24].

Experimental Protocols for GPU-Accelerated Particle Tracking

Protocol: GPU-Accelerated Lagrangian Particle Dispersion Simulation

This protocol outlines the implementation of atmospheric transport modeling using the MPTRAC framework, optimized for GPU architectures [4].

Primary Objective: Simulate the transport and dispersion of air parcels in the atmosphere using Lagrangian particle methods accelerated on GPU systems.

Computational Hardware Requirements:

- NVIDIA A100 GPU (or equivalent high-performance computing GPU)

- Multi-core CPU host system

- Adequate GPU memory for particle data and meteorological fields (~80GB recommended for large simulations)

Input Data Preparation:

- Meteorological data from reanalysis datasets (e.g., ECMWF ERA5) in NetCDF format

- Initialize particle properties (position, mass, chemical identity)

- Configure simulation parameters: time step, duration, output frequency

GPU Implementation Steps:

- Data Structure Optimization: Convert meteorological data from Structure of Arrays (SoA) to Array of Structures (AoS) layout

- Memory Alignment: Implement particle sorting by spatial coordinates to ensure coalesced memory access

- Kernel Configuration: Launch advection kernel with one thread per particle

- Wind Field Interpolation: Implement bilinear interpolation for efficient memory access patterns

- Numerical Integration: Solve kinematic equation of motion using Euler or Runge-Kutta methods
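The interpolation and integration steps above can be sketched for a single particle as follows. This is a 2D illustration on a unit-spaced grid using the midpoint (second-order Runge-Kutta) rule; it is not MPTRAC code, and the grid layout field[j][i] and the function names are assumptions.

```python
def bilinear(field, x, y):
    """Interpolate field[j][i], defined at integer grid points, at position (x, y)."""
    i = max(0, min(int(x), len(field[0]) - 2))
    j = max(0, min(int(y), len(field) - 2))
    tx, ty = x - i, y - j
    return ((1 - tx) * (1 - ty) * field[j][i] + tx * (1 - ty) * field[j][i + 1]
            + (1 - tx) * ty * field[j + 1][i] + tx * ty * field[j + 1][i + 1])

def advect_midpoint(x, y, u, v, dt):
    """Solve dx/dt = wind(x) for one step with the midpoint rule."""
    # Half step with the wind at the current position.
    xm = x + 0.5 * dt * bilinear(u, x, y)
    ym = y + 0.5 * dt * bilinear(v, x, y)
    # Full step with the wind evaluated at the midpoint.
    return x + dt * bilinear(u, xm, ym), y + dt * bilinear(v, xm, ym)

# 3x3 wind field: uniform eastward flow, no northward component.
u = [[1.0] * 3 for _ in range(3)]
v = [[0.0] * 3 for _ in range(3)]
print(advect_midpoint(0.2, 0.2, u, v, dt=0.5))
```

Each call reads four neighboring grid values per variable, which is why the memory layout of the meteorological fields matters so much for the advection kernel.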

Performance Optimization Considerations:

- Minimize thread divergence by ensuring similar execution paths

- Utilize shared memory for frequently accessed meteorological data

- Implement asynchronous memory transfers to overlap computation and data movement

Validation and Output:

- Compare results with CPU implementation for consistency

- Output particle trajectories, concentrations, and diagnostic variables

- Analyze performance using profiling tools (Nsight Systems, Nsight Compute)

Protocol: GPU-Accelerated Single-Particle Tracking in Biophysics

This protocol details the implementation of physics-inspired single-particle tracking for analyzing the motion of individual molecules in biological systems [24].

Primary Objective: Track the motion of single particles (e.g., fluorescently labeled molecules) in microscopy image sequences with high accuracy under low signal-to-noise conditions.

Experimental Setup Requirements:

- Microscopy image sequences (e.g., 128×128 pixels × 10 frames)

- Mid-range GPU (e.g., NVIDIA GTX 1060 with 6GB memory)

- PyTorch or CUDA development environment

Algorithm Implementation:

- Likelihood Evaluation: Compute per-pixel probabilities based on physics-based emission model

- Posterior Inference: Implement Markov chain Monte Carlo (MCMC) sampling for trajectory estimation

- Parallelization Strategy: Exploit parallelism in both likelihood evaluation and posterior sampling

- Motion Modeling: Incorporate Brownian motion and directed motion models as priors

GPU-Specific Optimizations:

- Vectorize operations across particles and pixels

- Implement frequent, lightweight inter-thread communication

- Utilize GPU memory hierarchy (shared, texture memory) appropriately

Validation Metrics:

- Detection ratio: correct detections / total ground truth positions

- Localization accuracy under varying SNR conditions (SNR = 1-7)

- Comparison with conventional tools (e.g., TrackMate) for benchmarking
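The detection ratio above requires a rule for deciding when a detection counts as correct. The sketch below assumes a simple one: each ground-truth position is greedily matched to the nearest unused detection within a distance tolerance. The tolerance and the greedy matching are assumptions for illustration, not the procedure of [24].

```python
import math

def detection_ratio(truth, detected, tol):
    """Correct detections divided by the number of ground-truth positions."""
    unused = list(detected)
    correct = 0
    for t in truth:
        best = None
        for k, d in enumerate(unused):
            dist = math.dist(t, d)
            if dist <= tol and (best is None or dist < best[0]):
                best = (dist, k)
        if best is not None:
            correct += 1
            unused.pop(best[1])  # each detection may match only one truth point
    return correct / len(truth)

truth = [(0.0, 0.0), (5.0, 5.0), (9.0, 9.0)]
detected = [(0.1, 0.0), (5.0, 5.2), (20.0, 20.0)]
print(detection_ratio(truth, detected, tol=0.5))
```

A one-to-one assignment method would be more robust in crowded scenes, where greedy matching can pair a detection with the wrong neighbor.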

Workflow Visualization

The following diagram illustrates the typical computational workflow for GPU-accelerated particle tracking, highlighting the parallelized components:

Figure 1: GPU particle tracking workflow showing parallel kernel execution.

Table 2: Essential Computational Tools for GPU-Accelerated Particle Tracking

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| CUDA (Compute Unified Device Architecture) | Parallel Computing Platform | GPU programming model with C/C++ extensions | General-purpose GPU computing for particle tracking algorithms [23] [22] |

| MPTRAC (Massive-Parallel Trajectory Calculations) | Lagrangian Transport Model | Atmospheric transport simulations with hybrid MPI-OpenMP-OpenACC parallelization | Large-scale particle dispersion studies (e.g., volcanic emissions, pollutant transport) [4] |

| FastGraph | GPU-Optimized Library | k-nearest neighbor search for graph construction in low-dimensional spaces | Particle clustering and graph-based tracking in GNN workflows [25] |

| BNP-Track 2.0 | Physics-Inspired Tracking Framework | Bayesian non-parametric tracking with parallelized likelihood evaluation | Single-particle tracking in low SNR biological imaging [24] |

| FAISS | GPU Library | Efficient similarity search and clustering of dense vectors | Nearest-neighbor searches in particle tracking and graph construction [25] |

Optimization Strategies for Memory and Performance

Efficient GPU implementation requires careful attention to memory access patterns and data structures. The near-random memory access patterns inherent in Lagrangian particle models, where "air parcels exhibit near-random memory access patterns to the meteorological data due to the near-random distribution of air parcels in the atmosphere" [4], present a significant performance challenge. Two key optimization strategies have proven effective:

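Data Structure Transformation: Converting meteorological data from Structure of Arrays (SoA) to Array of Structures (AoS) layout significantly improves memory access efficiency. Because each air parcel reads several meteorological variables at the same grid point, storing those variables next to each other lets a single memory transaction serve several reads. This applies to the gridded field data; it does not contradict the usual preference for SoA layouts in per-particle arrays. This optimization alone contributed substantially to the 85% runtime reduction observed in MPTRAC advection kernel performance [4].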

Particle Sorting: Implementing spatial sorting of particles by their coordinates ensures better memory alignment and reduces access latency. This technique improves cache utilization by processing particles that access similar regions of the meteorological data concurrently.

Additional performance gains come from algorithmic adaptations specifically designed for GPU architectures. In carotid strain imaging, researchers developed "a new scheme for sub-sample displacement estimation referred to as a multi-level global peak finder (MLGPF)" when the original CPU optimization technique proved unsuitable for GPU implementation [23]. Similarly, in k-nearest neighbor algorithms for graph construction, "static compile-time allocation and graph creation" coupled with dimension-limited binning strategies (2-5 dimensions) enable significant speedups by allowing compiler optimization and register-based storage [25].

Particle tracking exemplifies the transformative potential of GPU acceleration for embarrassingly parallel problems across scientific domains. The consistent demonstration of order-of-magnitude speedups—from 50× in single-particle tracking to 168× in medical imaging—validates the fundamental architectural alignment between particle-based simulations and massively parallel processors. These performance gains enable previously infeasible studies, whether tracking billions of atmospheric parcels or resolving nanometer-scale molecular motions under biologically relevant conditions.

Future developments will likely focus on enhancing algorithmic sophistication while maintaining computational efficiency. As GPU architectures evolve, particle tracking methods will continue to benefit from increased parallelism, memory bandwidth, and specialized processing units. The integration of machine learning approaches with physics-based models presents a particularly promising direction, potentially further accelerating the most computationally demanding aspects of particle tracking while maintaining the physical rigor required for scientific applications.

Implementing Lagrangian Particle Models on GPU: A Methodological Guide for Biomedical Research

Structuring Particle Data for Optimal GPU Memory Access

Lagrangian particle models are indispensable tools in computational physics, enabling the simulation of atmospheric transport, drug dispersion in biological systems, and granular flows [4]. These models track millions to billions of individual particles, making them computationally expensive yet embarrassingly parallel—ideal candidates for GPU acceleration [4]. However, achieving optimal performance on GPU architectures requires careful attention to memory access patterns, as naive implementations can leave significant performance untapped.

The fundamental challenge lies in the conflict between GPU memory architecture and particle data access patterns. GPUs excel with coherent, sequential memory access but suffer severe performance penalties when threads access scattered memory locations [4] [26]. Lagrangian particle models inherently exhibit near-random memory access patterns to meteorological data due to the near-random distribution of air parcels in the atmosphere [4]. This article details proven methodologies for restructuring particle data to transform memory access from random to coherent, enabling researchers to fully leverage GPU computational power.

GPU Memory Architecture and Particle Methods

GPU Memory Hierarchy

A GPU comprises thousands of computational cores organized into Streaming Multiprocessors (SMs). Each SM has its own registers and shared memory, while global memory is shared by all SMs on the device [27]. Global memory is the most abundant but also the slowest, with access latencies of hundreds of cycles. Shared memory offers substantially lower latency but is limited in capacity [28].

Key Memory Characteristics:

- Global Memory: High capacity, high latency, performs best with coalesced access

- Shared Memory: Low latency, limited capacity, manually managed

- L1/L2 Caches: Automatic caching, effectiveness depends on access patterns

For particle methods, the primary bottleneck typically occurs in global memory access, where uncoalesced patterns can reduce effective bandwidth by an order of magnitude [4].

The Particle Memory Access Problem

In Lagrangian simulations, each particle must access field data (wind velocity, temperature, etc.) at its specific location within the Eulerian grid [4]. When particles are randomly distributed in space, their memory accesses to these field variables become scattered throughout global memory. This random access pattern defeats GPU cache prefetching mechanisms and prevents memory coalescing, where multiple threads can combine their memory requests into a single transaction [4] [26].

The performance impact can be severe. Timeline analysis of the MPTRAC model revealed that the advection kernel spent approximately 85% of its runtime stalled on memory requests in the baseline implementation [4].
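To make the coalescing argument concrete, the following minimal sketch counts how many memory segments one warp touches when its threads read coherent versus scattered field values. It is a simplified model, not a description of any specific GPU: the 128-byte segment size, the 4-byte element size, and the function name `transactions_per_warp` are assumptions chosen for illustration.

```python
import random

WARP_SIZE = 32        # threads per warp on NVIDIA GPUs
SEGMENT_BYTES = 128   # assumed size of one global-memory transaction
ELEM_BYTES = 4        # one 32-bit float per thread

def transactions_per_warp(indices):
    """Count the distinct segments touched when thread k reads element indices[k]."""
    segments = {(i * ELEM_BYTES) // SEGMENT_BYTES for i in indices}
    return len(segments)

# Coherent case: neighbouring threads read neighbouring field values.
coherent = list(range(1024, 1024 + WARP_SIZE))

# Scattered case: each thread reads a field value at a random grid location.
rng = random.Random(0)
scattered = [rng.randrange(10_000_000) for _ in range(WARP_SIZE)]

print("coherent :", transactions_per_warp(coherent))
print("scattered:", transactions_per_warp(scattered))
```

In this model the coherent warp is served by a single segment, while the scattered warp needs close to one segment per thread, which is the bandwidth loss described above.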

Core Optimization Strategies

Data Structure Transformations

Array of Structures (AoS) to Structure of Arrays (SoA)

Two primary data structure patterns govern how particle data is organized in memory:

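Array of Structures (AoS): each particle is stored as one record holding all of its attributes (for example position, velocity, and mass), and the records are laid out one after another in memory.

Structure of Arrays (SoA): each attribute is stored in its own contiguous array, and a particle is identified by its index into those arrays.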

The AoS pattern often results in poor memory access because when threads process different particles but access the same attribute (e.g., x-position), the memory accesses are strided, wasting memory bandwidth. SoA ensures that when all threads in a warp access the same attribute for different particles, the memory accesses are contiguous and can be coalesced [4].
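The difference between the two layouts can be sketched on the host side as follows. This is an illustrative example only: the three-attribute particle (x, y, z) and the helper names `aos_to_soa` and `element_offsets` are hypothetical and are not taken from MPTRAC.

```python
from array import array

N_ATTR = 3  # x, y, z per particle (hypothetical attribute set)

def aos_to_soa(aos, n_attr=N_ATTR):
    """Split an interleaved [x0, y0, z0, x1, ...] buffer into one array per attribute."""
    return [aos[k::n_attr] for k in range(n_attr)]

def element_offsets(layout, attr, n_particles, n_attr=N_ATTR):
    """Element offsets read when thread i loads attribute `attr` of particle i."""
    if layout == "aos":
        # Consecutive threads are n_attr elements apart: a strided access.
        return [i * n_attr + attr for i in range(n_particles)]
    # SoA: consecutive threads read consecutive elements of one attribute array.
    return list(range(n_particles))

# Four particles stored as AoS: (x, y, z) records back to back.
aos = array("f", [0.0, 10.0, 20.0,
                  1.0, 11.0, 21.0,
                  2.0, 12.0, 22.0,
                  3.0, 13.0, 23.0])
x, y, z = aos_to_soa(aos)

print("x values      :", list(x))
print("AoS x offsets :", element_offsets("aos", 0, 4))
print("SoA x offsets :", element_offsets("soa", 0, 4))
```

The AoS offsets grow in steps of three elements, so a warp reading only x pulls in unused y and z values, whereas the SoA offsets are contiguous.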

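Performance Impact: In MPTRAC, restructuring the memory layout of the meteorological data provided significant performance improvements, particularly when combined with particle sorting [4]. Note that for these field data the change ran from SoA to AoS, because each particle reads all wind components at one grid point; the preferred layout depends on which values a thread needs together.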

Meteorological Data Restructuring

For the Eulerian field data that particles access during simulation, the memory layout significantly impacts performance. The MPTRAC team transformed their horizontal wind and vertical velocity fields from Structure of Arrays to Array of Structures format [4]. This restructuring ensured that when a particle accesses all wind components at a specific grid point, these values are stored contiguously in memory, improving spatial locality.
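A minimal sketch of such an interleaved field layout is shown below. The grid flattening order (z varying fastest) and the function names `grid_index`, `interleave_wind`, and `wind_at` are assumptions made for illustration; the actual MPTRAC layout may differ.

```python
def grid_index(ix, iy, iz, ny, nz):
    """Flatten a 3D grid coordinate into a single index (z varies fastest)."""
    return (ix * ny + iy) * nz + iz

def interleave_wind(u, v, w):
    """Pack separate u, v, w arrays into one [u0, v0, w0, u1, v1, w1, ...] buffer."""
    packed = []
    for uvw in zip(u, v, w):
        packed.extend(uvw)
    return packed

def wind_at(packed, ix, iy, iz, ny, nz):
    """Return (u, v, w) at a grid point; the three values sit next to each other."""
    base = 3 * grid_index(ix, iy, iz, ny, nz)
    return tuple(packed[base:base + 3])

# Tiny 2 x 2 x 2 grid with recognisable values per component.
nx, ny, nz = 2, 2, 2
n_points = nx * ny * nz
u = [float(i) for i in range(n_points)]
v = [100.0 + i for i in range(n_points)]
w = [200.0 + i for i in range(n_points)]

packed = interleave_wind(u, v, w)
print(wind_at(packed, 1, 0, 1, ny, nz))
```

With separate u, v, and w arrays the same lookup would touch three distant memory locations; with the packed layout it touches one short contiguous run.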

Particle Data Sorting and Memory Alignment

Spatial Sorting Algorithms

Particle sorting reorganizes particles in memory based on their spatial positions, ensuring that particles close in physical space are also contiguous in memory. This dramatically improves coherence when particles access field data [4] [26].

Morton Ordering (Z-order Curve): This space-filling curve maps multidimensional data to one dimension while preserving spatial locality [26]. Particles are first binned into grid cells, and the cells are then ordered along the Z-curve, so that particles in neighboring cells tend to end up close together in memory.

Implementation Protocol:

- Determine Spatial Bounds: Find min/max coordinates of all particles

- Normalize Coordinates: Map particle positions to integer grid coordinates

- Compute Morton Codes: Calculate Z-order index for each particle

- Sort Particles: Reorder particle array based on Morton codes

- Periodic Re-sorting: Repeat sorting as particles move significantly
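The first four steps of this protocol can be sketched as follows. This is a host-side illustration under stated assumptions: 10 bits per axis (at most 1024 cells per axis), and the names `part1by2`, `morton3d`, and `morton_sort` are hypothetical. A production GPU code would more likely compute the codes in a kernel and sort them with a parallel radix sort from a library such as CUB or Thrust.

```python
def part1by2(n):
    """Spread the lower 10 bits of n so that two zero bits separate each bit."""
    n &= 0x3FF
    n = (n | (n << 16)) & 0xFF0000FF
    n = (n | (n << 8)) & 0x0300F00F
    n = (n | (n << 4)) & 0x030C30C3
    n = (n | (n << 2)) & 0x09249249
    return n

def morton3d(ix, iy, iz):
    """Interleave the bits of three 10-bit cell coordinates into one Z-order index."""
    return part1by2(ix) | (part1by2(iy) << 1) | (part1by2(iz) << 2)

def morton_sort(positions, cells_per_axis=1024):
    """Return particle indices ordered by the Morton code of their grid cell."""
    # Step 1: spatial bounds of all particles.
    lo = [min(p[d] for p in positions) for d in range(3)]
    hi = [max(p[d] for p in positions) for d in range(3)]

    # Step 2: normalise a coordinate to an integer cell index on one axis.
    def cell(p, d):
        extent = hi[d] - lo[d]
        if extent == 0.0:
            return 0
        c = int((p[d] - lo[d]) / extent * cells_per_axis)
        return min(c, cells_per_axis - 1)

    # Step 3: one Morton code per particle.
    codes = [morton3d(cell(p, 0), cell(p, 1), cell(p, 2)) for p in positions]

    # Step 4: permutation that sorts particles by code (stable for equal codes).
    return sorted(range(len(positions)), key=codes.__getitem__)

positions = [(0.9, 0.9, 0.9), (0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.9, 0.0, 0.0)]
order = morton_sort(positions, cells_per_axis=2)
print(order)
```

The returned permutation must be applied to every per-particle array, so that all attributes of a particle stay aligned after reordering.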

Performance Benefit: Applications of Morton ordering in CFD-DEM simulations showed performance improvements of up to 40× compared to unsorted implementations [26].

Cache-Conscious Bucketing

For extremely large simulations where full sorting is prohibitive, particles can be grouped into cache-sized buckets:

- Divide spatial domain into coarse grid

- Assign particles to buckets based on position

- Process all particles within a bucket before moving to the next

- This ensures that field data accessed by particles in the same bucket remains in cache
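The bucketing idea can be sketched as a counting sort over coarse grid cells, which needs only a histogram, a prefix sum, and a scatter pass rather than a full comparison sort. The function name `bucket_particles` and the cubic domain split are assumptions for illustration.

```python
def bucket_particles(positions, domain_min, domain_max, buckets_per_axis):
    """Group particle indices by coarse grid cell using a counting sort.

    Returns (order, starts): `order` lists particle indices bucket by bucket,
    and bucket b occupies order[starts[b]:starts[b + 1]].
    """
    def bucket_id(p):
        b = 0
        for d in range(3):
            frac = (p[d] - domain_min[d]) / (domain_max[d] - domain_min[d])
            c = min(max(int(frac * buckets_per_axis), 0), buckets_per_axis - 1)
            b = b * buckets_per_axis + c
        return b

    n_buckets = buckets_per_axis ** 3
    ids = [bucket_id(p) for p in positions]

    # Pass 1: histogram of particles per bucket.
    counts = [0] * n_buckets
    for b in ids:
        counts[b] += 1

    # Pass 2: exclusive prefix sum gives the start offset of each bucket.
    starts = [0] * (n_buckets + 1)
    for b in range(n_buckets):
        starts[b + 1] = starts[b] + counts[b]

    # Pass 3: scatter particle indices into their bucket's slot range.
    cursor = starts[:-1]
    order = [0] * len(positions)
    for i, b in enumerate(ids):
        order[cursor[b]] = i
        cursor[b] += 1
    return order, starts

positions = [(0.9, 0.9, 0.9), (0.1, 0.1, 0.1), (0.8, 0.9, 0.7), (0.2, 0.1, 0.3)]
order, starts = bucket_particles(positions, (0.0, 0.0, 0.0), (1.0, 1.0, 1.0), 2)
print(order, starts)
```

All three passes map onto standard GPU primitives (atomic histogram, parallel scan, scatter), which is why this approach is often cheaper than a full sort.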

Table 1: Performance Impact of Data Structure Optimization in MPTRAC

| Optimization | Runtime Reduction (Physics) | Runtime Reduction (Advection) | Memory Bandwidth Utilization |
|---|---|---|---|
| Baseline (No optimization) | 0% | 0% | Low (Memory-bound) |
| AoS to SoA Conversion | 45% | 60% | Moderate |
| Particle Sorting | 60% | 75% | High |
| Combined Optimizations | 75% | 85% | Near Optimal |

Experimental Protocols and Validation

Performance Evaluation Methodology

Benchmark Configuration

To quantitatively evaluate optimization effectiveness, implement the following experimental protocol:

Test System Specification:

- GPU: NVIDIA A100 (40GB VRAM)

- CPU: 2× Intel Xeon Gold 6230

- Memory: 192GB DDR4

- Software: CUDA 11.4, NVIDIA Nsight Systems/Compute

Benchmark Case:

- Particle Count: 10⁸ particles

- Meteorological Data: ECMWF ERA5 reanalysis (0.25° resolution)

- Simulation Duration: 24 hours model time

- Physics Modules: Advection, turbulence, convection, sedimentation [4]

Profiling Metrics

Use NVIDIA Nsight tools to collect these critical metrics:

Table 2: Key Performance Metrics for GPU Particle Code Optimization

| Metric | Measurement Tool | Target Value | Significance |
|---|---|---|---|
| Memory Throughput | Nsight Compute | >80% of theoretical peak | Indicates efficient memory utilization |
| L1/TEX Cache Hit Rate | Nsight Compute | >70% | Measures locality of memory accesses |
| DRAM Bandwidth Utilization | Nsight Compute | >75% | Shows global memory efficiency |
| Warp Stalls (memory-related) | Nsight Compute | <20% of cycles | Indicates memory vs. compute balance |
| Kernel Runtime | Nsight Systems | Compare against baseline | Overall performance improvement |

Validation Experiments

Weak Scaling Test

Protocol:

- Fix particles per GPU thread (typically 1-8)

- Increase total particle count with increasing GPU resources

- Measure runtime and memory throughput

- Compare optimized vs. baseline implementations

Validation Criteria: Because the work per GPU is held fixed, the optimized implementation should maintain a roughly constant total runtime as particle count and GPU resources grow together.

Strong Scaling Test

Protocol:

- Fix total particle count (10⁷ particles)

- Vary number of GPU SMs employed

- Measure speedup and efficiency

- Analyze parallel efficiency

Validation Criteria: Optimized implementation should show near-linear speedup with additional computational resources.
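The validation criteria of both scaling tests can be turned into numbers with two small helpers. The sketch below uses the usual definitions (weak-scaling efficiency as baseline runtime over measured runtime, strong-scaling speedup as baseline runtime over measured runtime, divided by the resource ratio for efficiency). The function names and the timing values are hypothetical placeholders, not measurements.

```python
def weak_scaling_efficiency(runtimes):
    """Efficiency per resource count when work per resource is fixed.

    `runtimes` maps resource count -> runtime. An ideal code keeps runtime
    constant, giving an efficiency of 1.0 everywhere.
    """
    base_time = runtimes[min(runtimes)]
    return {n: base_time / t for n, t in sorted(runtimes.items())}

def strong_scaling(runtimes):
    """(resources, speedup, efficiency) rows when the total problem size is fixed."""
    base_n = min(runtimes)
    base_time = runtimes[base_n]
    rows = []
    for n, t in sorted(runtimes.items()):
        speedup = base_time / t
        efficiency = speedup / (n / base_n)
        rows.append((n, speedup, efficiency))
    return rows

# Hypothetical timings in seconds, for illustration only.
weak = weak_scaling_efficiency({1: 10.0, 2: 10.0, 4: 12.5})
strong = strong_scaling({1: 80.0, 2: 40.0, 4: 25.0})

print(weak)
for row in strong:
    print(row)
```

Reporting efficiency alongside raw speedup makes it easier to see where sorting overhead or memory contention begins to limit scaling.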

Implementation Workflow

Code Modernization Protocol

Step 1: Baseline Profiling

- Instrument code with internal timers for major kernels

- Run Nsight Systems for timeline analysis

- Use Nsight Compute for detailed kernel profiling

- Identify memory-bound kernels through stall analysis

Step 2: SoA Conversion

- Transform particle data structures from AoS to SoA

- Modify kernel access patterns accordingly

- Maintain separate arrays for position, velocity, and attributes

- Ensure alignment to 128-byte boundaries for coalescing
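One way to satisfy the alignment requirement is to pad each attribute array so that the next array starts on a 128-byte boundary within a single pooled allocation. The sketch below computes the padded sizes and offsets; it assumes 4-byte elements and a base pointer that is itself suitably aligned, and the names `padded_count` and `soa_offsets` are hypothetical.

```python
ALIGN_BYTES = 128  # alignment target taken from the step above

def padded_count(n_particles, elem_bytes=4, align=ALIGN_BYTES):
    """Smallest element count >= n_particles whose byte size is a multiple of `align`."""
    per_line = align // elem_bytes
    return -(-n_particles // per_line) * per_line  # ceiling division

def soa_offsets(n_particles, attributes, elem_bytes=4, align=ALIGN_BYTES):
    """Byte offset of each attribute array inside one pooled allocation."""
    stride = padded_count(n_particles, elem_bytes, align) * elem_bytes
    return {name: k * stride for k, name in enumerate(attributes)}

offsets = soa_offsets(1000, ["x", "y", "z"])
print(padded_count(1000), offsets)
```

The padding elements at the end of each array are never read by valid particles, so they cost a little memory but no correctness.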

Step 3: Particle Sorting Implementation

- Implement spatial binning using uniform grid

- Add Morton code calculation and sorting

- Determine optimal sorting frequency (every N timesteps)

- Balance sorting overhead against access benefits
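Instead of a fixed interval, the sorting frequency can also be driven by how far particles have drifted since the last sort. The following sketch shows one possible heuristic, which is an assumption for illustration and not a method taken from the cited works: re-sort once more than a chosen fraction of particles has left the grid cell it occupied at the last sort. The names `cell_of` and `needs_resort` and the 20% default threshold are hypothetical.

```python
def cell_of(position, cell_size):
    """Integer grid cell containing a position."""
    return tuple(int(c // cell_size) for c in position)

def needs_resort(positions, cells_at_last_sort, cell_size, max_moved_fraction=0.2):
    """True when too many particles have left the cell they were sorted into."""
    moved = sum(
        1
        for p, old_cell in zip(positions, cells_at_last_sort)
        if cell_of(p, cell_size) != old_cell
    )
    return moved > max_moved_fraction * len(positions)

# Cells recorded right after the last sort.
start = [(0.5, 0.5, 0.5)] * 10
cells = [cell_of(p, 1.0) for p in start]

# One of ten particles crosses a cell boundary: still below the threshold.
slightly_moved = [(1.5, 0.5, 0.5)] + start[1:]
# Five of ten particles cross: above the threshold.
strongly_moved = [(1.5, 0.5, 0.5)] * 5 + start[5:]

print(needs_resort(slightly_moved, cells, 1.0))
print(needs_resort(strongly_moved, cells, 1.0))
```

The threshold would need tuning per application, since the cost of a sort and the penalty of incoherent access both depend on particle count and hardware.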

Step 4: Validation and Tuning

- Verify physical results match baseline implementation

- Tune sorting frequency based on particle mobility

- Optimize thread block sizes for sorted data

- Validate performance improvements across scaling tests
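Because sorting permutes particles in memory, comparing optimized and baseline output element by element is only meaningful if particles are matched by a persistent identifier. A minimal sketch of such a check follows; the dictionary-based data format, the function name `results_match`, and the tolerance values are assumptions for illustration.

```python
import math

def results_match(baseline, optimized, rel_tol=1e-6, abs_tol=1e-9):
    """Compare final positions keyed by particle ID, independent of storage order.

    Both arguments map particle_id -> (x, y, z).
    """
    if baseline.keys() != optimized.keys():
        return False
    return all(
        math.isclose(a, b, rel_tol=rel_tol, abs_tol=abs_tol)
        for pid in baseline
        for a, b in zip(baseline[pid], optimized[pid])
    )

baseline = {0: (1.0, 2.0, 3.0), 1: (4.0, 5.0, 6.0)}
# Same particles stored in a different order, with tiny round-off differences.
reordered = {1: (4.0, 5.0, 6.0 + 1e-9), 0: (1.0, 2.0, 3.0)}
# A genuinely different trajectory for particle 1.
diverged = {0: (1.0, 2.0, 3.0), 1: (4.0, 5.0, 6.5)}

print(results_match(baseline, reordered))
print(results_match(baseline, diverged))
```

A tolerance is needed because reordering changes the order of floating-point operations in reductions; stochastic modules such as turbulence additionally require identical random streams per particle, or a statistical comparison instead.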

The Scientist's Toolkit

Table 3: Essential Tools and Libraries for GPU Particle Code Optimization

| Tool/Library | Function | Application Context |
|---|---|---|
| NVIDIA Nsight Systems | Performance profiling and timeline analysis | System-level optimization identification [4] |
| NVIDIA Nsight Compute | Detailed kernel profiling and memory analysis | Kernel-level optimization and memory access pattern analysis [4] [27] |
| CUDA Toolkit | GPU programming framework and libraries | Core development environment for GPU acceleration [27] [28] |
| Morton Code Libraries | Spatial indexing and reordering | Particle sorting implementation [26] |
| CUB Library | GPU parallel primitives (sorting, reduction) | Efficient sorting and parallel operations [26] |
| Thrust Library | GPU parallel algorithms library | Alternative for sorting and data management |

Structuring particle data for optimal GPU memory access is not merely an implementation detail but a fundamental requirement for high-performance Lagrangian particle simulations. The transformation from naive data structures to optimized memory layouts can reduce kernel runtimes by up to 85%, as demonstrated in the MPTRAC model [4]. The combination of access-aware data layouts and spatial particle sorting transforms memory access patterns from random to coherent, allowing the GPU to approach its full memory bandwidth.

These optimization techniques have proven effective across diverse application domains—from atmospheric transport modeling [4] to CFD-DEM simulations [26] and contaminant transport in groundwater [29]. As GPU architectures continue to evolve toward exascale computing, these memory-centric optimization strategies will become increasingly critical for researchers seeking to maximize the scientific return from their computational investments.

GPU Kernel Design for Efficient Particle-Particle Interaction Calculations

This application note details advanced GPU kernel design strategies for accelerating particle-particle interaction calculations, a computational cornerstone of Lagrangian particle models. In fields ranging from atmospheric science to drug development, researchers rely on particle methods like Smoothed Particle Hydrodynamics (SPH), Molecular Dynamics (MD), and Lagrangian transport modeling to simulate complex physical systems. These methods share a common computational challenge: efficiently calculating interactions between thousands to billions of particles. The massively parallel architecture of Graphics Processing Units (GPUs) offers transformative potential for these simulations, enabling finer resolutions and larger timescales. However, achieving optimal performance requires carefully designed kernel implementations that address memory bandwidth limitations and execution divergence. This note provides structured methodologies, performance data, and optimized protocols to guide researchers in developing high-performance particle simulation codes for scientific and industrial applications.

Core Computational Challenges in Particle Methods

Particle-based simulations calculate the evolution of a system by tracking discrete particles and their interactions. In molecular dynamics, this involves atoms and molecules interacting through force fields [30] [31], while Lagrangian atmospheric models simulate air parcel transport [4], and SPH methods model fluid dynamics [32] [7]. Despite different applications, they face shared computational bottlenecks on GPU architectures.

The primary challenge is the O(N²) computational complexity of direct particle-particle force calculations. While advanced algorithms like cell lists reduce this to O(N) or O(N log N), they introduce irregular memory access patterns [30]. GPUs, with their SIMT (Single Instruction, Multiple Threads) execution model, are particularly sensitive to these patterns. Performance can be severely degraded in memory-bound kernels by non-coalesced memory access, and by thread divergence, where different threads within a warp execute different code paths [4].
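The complexity reduction from cell lists can be illustrated with a small host-side sketch that finds all particle pairs within a cutoff, once by brute force and once with a cell list whose cell edge equals the cutoff. This is a simplified, non-periodic example; the function names and the random test configuration are assumptions for illustration and do not reproduce any cited implementation.

```python
import random
from collections import defaultdict
from itertools import product

def dist2(a, b):
    """Squared Euclidean distance between two 3D points."""
    return sum((p - q) ** 2 for p, q in zip(a, b))

def brute_force_pairs(pos, cutoff):
    """All pairs (i, j), i < j, within the cutoff: O(N^2) distance checks."""
    r2 = cutoff * cutoff
    return {
        (i, j)
        for i in range(len(pos))
        for j in range(i + 1, len(pos))
        if dist2(pos[i], pos[j]) <= r2
    }

def cell_list_pairs(pos, cutoff):
    """Same pairs, but each particle only checks its own and 26 adjacent cells."""
    cells = defaultdict(list)
    for i, p in enumerate(pos):
        cells[tuple(int(c // cutoff) for c in p)].append(i)

    r2 = cutoff * cutoff
    pairs = set()
    for (cx, cy, cz), members in cells.items():
        for dx, dy, dz in product((-1, 0, 1), repeat=3):
            neighbours = cells.get((cx + dx, cy + dy, cz + dz), ())
            for i in members:
                for j in neighbours:
                    if i < j and dist2(pos[i], pos[j]) <= r2:
                        pairs.add((i, j))
    return pairs

rng = random.Random(42)
pos = [tuple(rng.uniform(0.0, 5.0) for _ in range(3)) for _ in range(200)]
same = brute_force_pairs(pos, 1.0) == cell_list_pairs(pos, 1.0)
print("pair sets identical:", same)
```

For roughly uniform density the work per particle is bounded by the occupancy of 27 cells, which gives the O(N) scaling. The per-cell member lists, however, have varying lengths and scattered indices, which is the source of the irregular access patterns and divergence discussed above.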

Table 1: Computational Characteristics of Particle Methods

| Method | Primary Interaction Type | Computational Complexity | Key Bottleneck |

|---|---|---|---|

| Molecular Dynamics (MD) | Non-bonded forces (Lennard-Jones, Coulomb) | O(N²) with cutoffs, O(N) with cell lists [30] | Neighbor list construction, random memory access |