GPU-Accelerated Futures: Optimizing Ecological Networks for Biomedical Research


Abstract

This article explores the transformative role of GPU parallel computing in optimizing ecological networks, a critical methodology for modeling complex biological systems in drug development. We first establish the foundational principles of ecological network analysis and the parallel architecture of GPUs. The core of the article details methodological advances, including the application of biomimetic intelligent algorithms and spatial operators for high-resolution, patch-level optimization. We then address key computational challenges such as energy efficiency, scalability, and portability, providing best practices for troubleshooting. Finally, we present rigorous validation through case studies and performance benchmarks, demonstrating speedups exceeding 6,000x in related environmental simulations. This synthesis provides researchers and scientists with a comprehensive guide to leveraging GPU power for accelerating the analysis of complex biological networks, from foundational theory to clinical application.

The Confluence of Ecological Networks and GPU Architecture

Ecological Networks (ENs) are conceptual and quantitative models representing the interactions between biological entities within an ecosystem. Composed of ecological patches and the connections between them, these networks serve as a crucial bridge between fragmented habitats, enhancing ecosystem resilience and adaptability by mitigating the negative effects of human disturbances [1]. The structure and function of ENs provide a framework for understanding complex ecological processes, from energy transfer between species to the maintenance of regional biodiversity. Rapid urbanization causes significant degradation and fragmentation of natural landscapes, so the optimization of ecological networks has become a pivotal strategy for restoring habitat continuity and guiding policymakers in aligning economic development with ecological conservation [1]. Accurate modeling of these networks, especially at city or regional scale, presents substantial computational challenges that benefit significantly from parallel computing architectures such as GPUs.

Structural and Functional Components of Ecological Networks

The architecture of an ecological network is defined by its core structural components: ecological patches (sources), corridors, stepping stones, and the resistance matrix that influences species movement.

- Ecological Patches (Sources): These are the core habitats of high ecological quality, often identified through assessments of habitat quality and ecological sensitivity [1] [2]. Techniques like Morphological Spatial Pattern Analysis (MSPA) are frequently employed to pinpoint these critical areas within a landscape [1]. In microbial systems, patches could represent distinct microhabitats with high species diversity.

- Eco-Corridors: Corridors are linear landscape elements that connect ecological patches, facilitating the movement of organisms, genes, and ecological processes. They are often extracted using models like the Minimum Cumulative Resistance (MCR) model [2]. The density and connectivity of these corridors are vital for the overall network functionality.

- Stepping Stones: These are smaller, intermediate habitat patches that act as relays or stopover points for species moving between larger core areas. An optimization strategy involves identifying potential ecological stepping stones by increasing the proportion of ecological land, thereby improving overall network connectivity [1].

- Resistance Surface: This matrix represents the landscape's permeability to species movement. Different land-use types (e.g., urban areas, roads) offer varying levels of resistance, which is quantified to calculate the least-cost paths for corridors.

Functionally, ENs are not merely structural maps but dynamic systems that support critical ecosystem services. These include biodiversity conservation, soil retention, and water yield [2]. The functional performance is often evaluated through connectivity metrics and analysis of trade-offs and synergies between different ecosystem services. For instance, soil retention often shows significant synergies with habitat quality and water yield, while habitat quality may exhibit trade-offs with ecological degradation [2].

Table 1: Key Components of an Ecological Network and Their Functions

| Component | Description | Primary Function |
|---|---|---|
| Ecological Patches | Core habitats of high ecological quality (e.g., forests, wetlands). | Serve as primary sources for biodiversity and ecological processes. |
| Eco-Corridors | Linear landscape elements connecting patches. | Facilitate species movement and genetic flow between isolated patches. |
| Stepping Stones | Smaller, intermediate habitat patches. | Act as relays to support long-distance dispersal and migration. |
| Resistance Surface | A grid representing landscape permeability. | Models the cost or difficulty of movement across different land types. |

The Computational Challenge: GPU-Accelerated Optimization

Optimizing ecological networks, particularly for large-scale regions like cities, is a computationally intensive, high-dimensional nonlinear problem. Traditional serial computing methods are often inefficient when processing complex optimization operations on vast amounts of geospatial data [1].

The Role of GPU Parallel Computing

GPU (Graphics Processing Unit) architecture is designed for massive parallelism, executing thousands of operations simultaneously across thousands of cores [3]. This makes GPUs well suited to spatial optimization tasks that decompose into many independent per-cell calculations, where they can substantially outperform traditional CPUs. The key advantage lies in their ability to handle fine-grained, patch-level land-use adjustments across an entire study area concurrently [1].

- Parallel Processing Capabilities: In ecological network optimization, GPU acceleration allows for the simultaneous calculation of connectivity metrics, resistance surfaces, and corridor pathways for millions of grid cells, dramatically accelerating model development and scenario simulation [3].

- Computational Hotspots: In many numerical models, specific modules, such as iterative solvers (e.g., a Jacobi solver), are identified as performance bottlenecks. Offloading these hotspots to a GPU can result in significant speedups—for instance, a 3.06 times efficiency improvement for the solver itself [4].

- High-Resolution Modeling: GPUs are particularly effective for higher-resolution calculations, leveraging their computational power to make city-level EN optimization feasible at high spatial resolution. One study demonstrated a GPU speedup ratio of 35.13 for large-scale experiments with 2,560,000 grid points [4].
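The solver speedup quoted above applies to the hotspot alone. How much the whole program gains depends on the share of runtime the hotspot occupies, which Amdahl's law captures. The sketch below is a minimal illustration; the function name and the hotspot fractions used in the example calls are assumptions chosen for illustration, not values reported in [4].

```python
def overall_speedup(hotspot_fraction: float, hotspot_speedup: float) -> float:
    """Amdahl's law: whole-program speedup when only one part is accelerated.

    hotspot_fraction: share of the original runtime spent in the offloaded part (0..1).
    hotspot_speedup:  speedup achieved for that part alone (e.g. 3.06 for a solver).
    """
    if not 0.0 <= hotspot_fraction <= 1.0:
        raise ValueError("hotspot_fraction must be in [0, 1]")
    if hotspot_speedup <= 0.0:
        raise ValueError("hotspot_speedup must be positive")
    # The untouched part keeps its runtime; the hotspot shrinks by hotspot_speedup.
    return 1.0 / ((1.0 - hotspot_fraction) + hotspot_fraction / hotspot_speedup)


if __name__ == "__main__":
    # Hypothetical fractions: the same 3.06x solver gain yields very different
    # whole-model gains depending on how dominant the solver is.
    for fraction in (0.3, 0.6, 0.9):
        print(fraction, round(overall_speedup(fraction, 3.06), 2))
```

The practical consequence is that profiling to find the true runtime share of each module should precede any porting effort.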

Frameworks and Communication

The effective use of multi-GPU systems in large-scale simulations requires robust communication frameworks. The NVIDIA Collective Communication Library (NCCL) is a critical software layer that enables high-performance collective operations (e.g., ncclAllReduce, ncclBroadcast) across large-scale GPU clusters [5]. NCCL employs various communication protocols (Simple, LL, LL128) and topologies (ring, tree) to optimize data transfer efficiency, which is essential for synchronizing ecological data across multiple GPUs during parallel processing [5].

Table 2: GPU Performance Metrics Relevant to Ecological Network Optimization

| Performance Metric | Description | Relevance to Ecological Modeling |
|---|---|---|
| TFLOPS | Teraflops; measures floating-point performance (calculations per second). | Determines the speed for complex ecological simulations and spatial calculations. |
| Memory Bandwidth | The speed at which data can be read from or stored to memory. | Critical for processing large geospatial datasets (e.g., high-resolution land-use rasters). |
| Parallel Processing Cores | The number of independent processing units available for concurrent tasks. | Enables simultaneous computation of ecological metrics across millions of grid cells. |

Experimental Protocols for Network Construction and Optimization

Protocol 1: Constructing a Baseline Ecological Network

This protocol outlines the steps to delineate a baseline ecological network from land-use data.

Data Preparation and Land Use Simulation:

- Gather land-use/land-cover (LULC) data, a Digital Elevation Model (DEM), soil data, and meteorological data.

- For future scenarios, simulate land use using models like the CLUE-S (Conversion of Land Use and its Effects at Small region extent) model. This involves a non-spatial demand module and a spatial allocation module to project land-use changes under different scenarios (e.g., natural development vs. ecological protection) [2].

Ecosystem Service Assessment:

- Use the InVEST (Integrated Valuation of Ecosystem Services and Trade-offs) model to quantify key ecosystem services.

- Run the Habitat Quality, Sediment Retention, and Water Yield modules using the LULC data and other relevant inputs. This generates maps of ecosystem service provision [2].

Identifying Ecological Sources:

- Determine ecological patches (sources) by integrating results from the habitat quality assessment, ecological sensitivity evaluation, and Morphological Spatial Pattern Analysis (MSPA) [1].

Constructing Resistance Surface and Corridors:

- Create a comprehensive resistance surface based on land-use types, slope, and human disturbance intensity.

- Extract potential eco-corridors using the Minimum Cumulative Resistance (MCR) model to link ecological sources [2].
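The idea behind the MCR step can be sketched as a shortest-path accumulation over the resistance raster. The example below is a minimal illustration, not the implementation used in [2]: it assumes 4-neighbour movement and a step cost equal to the mean resistance of the two cells involved, and the function name `cumulative_resistance` is hypothetical. GIS cost-distance tools may use different neighbourhoods and cost rules.

```python
import heapq


def cumulative_resistance(resistance, sources):
    """Minimum cumulative resistance from the nearest source to every cell.

    resistance: 2-D list of positive cell resistances.
    sources:    iterable of (row, col) cells belonging to ecological sources.
    """
    rows, cols = len(resistance), len(resistance[0])
    cost = [[float("inf")] * cols for _ in range(rows)]
    heap = []
    for r, c in sources:
        cost[r][c] = 0.0
        heapq.heappush(heap, (0.0, r, c))

    # Dijkstra's algorithm over the grid graph.
    while heap:
        dist, r, c = heapq.heappop(heap)
        if dist > cost[r][c]:
            continue  # stale queue entry
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols:
                # Assumed step cost: average resistance of the two cells.
                step = 0.5 * (resistance[r][c] + resistance[nr][nc])
                if dist + step < cost[nr][nc]:
                    cost[nr][nc] = dist + step
                    heapq.heappush(heap, (dist + step, nr, nc))
    return cost


if __name__ == "__main__":
    # Hypothetical 3x3 surface with one high-resistance cell in the centre.
    surface = [[1, 1, 1],
               [1, 9, 1],
               [1, 1, 1]]
    for row in cumulative_resistance(surface, [(0, 0)]):
        print(row)
```

Corridors are then traced along the low-cost valleys of the accumulated surface between pairs of sources.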

Analyzing Trade-offs and Synergies:

- Perform correlation analysis (e.g., Pearson correlation) on the quantified ecosystem services to identify significant trade-offs and synergies between them at the regional scale [2].
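As a minimal sketch of the correlation step, the Pearson coefficient between two ecosystem-service layers can be computed from paired per-cell (or per-zone) values. The sample values below are hypothetical; a positive coefficient is read as a synergy and a negative one as a trade-off.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length samples."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two samples of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0.0 or syy == 0.0:
        raise ValueError("correlation is undefined for a constant sample")
    return sxy / math.sqrt(sxx * syy)


if __name__ == "__main__":
    # Hypothetical values sampled at the same five locations.
    soil_retention = [0.2, 0.4, 0.5, 0.7, 0.9]
    habitat_quality = [0.3, 0.35, 0.6, 0.65, 0.8]
    print(round(pearson(soil_retention, habitat_quality), 3))
```

A significance test on the coefficient is still needed before labelling a relationship as a trade-off or synergy.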

Protocol 2: GPU-Accelerated Biomimetic Optimization of the Network

This protocol details the use of biomimetic algorithms on GPU platforms to optimize the network's structure and function.

Model Setup and Objective Definition:

- Develop an EN-oriented optimization framework containing objective functions, land-use suitability rules, constraint conditions, and land-use transformation rules [1].

- Define optimization objectives, such as maximizing ecological connectivity (structural) and enhancing habitat quality/ecosystem services (functional).

Implementation of Biomimetic Intelligent Algorithm:

- Employ a biomimetic algorithm like the Modified Ant Colony Optimization (MACO). The model should incorporate both micro-functional optimization operators (for patch-level adjustments) and a macro-structural optimization operator (for global connectivity) [1].

- Integrate a mechanism, such as the Fuzzy C-Means (FCM) clustering algorithm, to identify potential locations for new ecological stepping stones globally [1].
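To make the clustering step concrete, the following is a minimal sketch of the standard Fuzzy C-Means updates applied to 2-D coordinates. It is not the emergence mechanism of [1]: the deterministic initialisation, the default fuzzifier `m=2.0`, and the use of candidate-cell coordinates as input are all assumptions made for illustration.

```python
import math


def fuzzy_c_means(points, n_clusters, m=2.0, max_iter=100, tol=1e-6):
    """Standard FCM on coordinate tuples; returns (centres, memberships)."""
    if m <= 1.0:
        raise ValueError("fuzzifier m must be greater than 1")
    if not 1 <= n_clusters <= len(points):
        raise ValueError("n_clusters must be between 1 and len(points)")
    power = 2.0 / (m - 1.0)
    dims = len(points[0])
    # Assumed deterministic start: spread initial centres through the input order.
    stride = len(points) / n_clusters
    centres = [tuple(points[int(i * stride)]) for i in range(n_clusters)]
    memberships = []
    for _ in range(max_iter):
        # Membership update: closer centres receive a larger share.
        memberships = []
        for p in points:
            dists = [math.dist(p, c) for c in centres]
            zeros = [i for i, d in enumerate(dists) if d == 0.0]
            if zeros:
                row = [1.0 / len(zeros) if i in zeros else 0.0
                       for i in range(n_clusters)]
            else:
                row = [1.0 / sum((d_i / d_j) ** power for d_j in dists)
                       for d_i in dists]
            memberships.append(row)
        # Centre update: membership-weighted mean of all points.
        new_centres = []
        for i in range(n_clusters):
            weights = [row[i] ** m for row in memberships]
            total = sum(weights)
            new_centres.append(tuple(
                sum(w * p[k] for w, p in zip(weights, points)) / total
                for k in range(dims)))
        shift = max(math.dist(a, b) for a, b in zip(centres, new_centres))
        centres = new_centres
        if shift < tol:
            break
    return centres, memberships


if __name__ == "__main__":
    # Hypothetical coordinates of high-probability candidate cells.
    cells = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
    centres, _ = fuzzy_c_means(cells, 2)
    print(centres)
```

The resulting centres can be read as candidate stepping-stone locations, with the soft memberships indicating how strongly each cell supports a given candidate.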

GPU Parallelization:

- Port the computationally intensive parts of the optimization algorithm (e.g., the iterative solver, spatial operator calculations) to the GPU.

- Use a programming framework like CUDA Fortran or CUDA C++. Establish an efficient data transfer pattern between the CPU and GPU to ensure all geographic units participate in the optimization concurrently and synchronously [1] [4].

- For multi-GPU systems, leverage communication libraries like NCCL to manage data transfer and synchronization across devices, using protocols appropriate for the message size (e.g., LL128 for high bandwidth) [5].

Network Evaluation:

- Evaluate the optimized network using structural metrics such as network circuitry, edge/node ratio, and network connectivity [2]. Compare these with the pre-optimized baseline to quantify improvement.
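The structural metrics named above are commonly computed as the classical alpha (circuitry), beta (edge/node ratio), and gamma (connectivity) indices of a planar graph. The sketch below assumes that correspondence; the node and corridor counts in the example are hypothetical.

```python
def network_indices(n_nodes: int, n_links: int) -> dict:
    """Alpha, beta and gamma indices for a planar network.

    n_nodes: number of ecological sources/nodes (V).
    n_links: number of corridors/edges (L).
    """
    if n_nodes < 3:
        raise ValueError("the indices are defined for at least 3 nodes")
    return {
        # Share of the maximum possible number of independent loops.
        "alpha": (n_links - n_nodes + 1) / (2 * n_nodes - 5),
        # Average number of links per node.
        "beta": n_links / n_nodes,
        # Share of the maximum possible number of links.
        "gamma": n_links / (3 * (n_nodes - 2)),
    }


if __name__ == "__main__":
    # Hypothetical counts before and after optimization.
    print("baseline ", network_indices(10, 12))
    print("optimized", network_indices(12, 18))
```

Comparing the two dictionaries gives the before/after improvement that the evaluation step calls for.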

Visualization of Workflows and Relationships

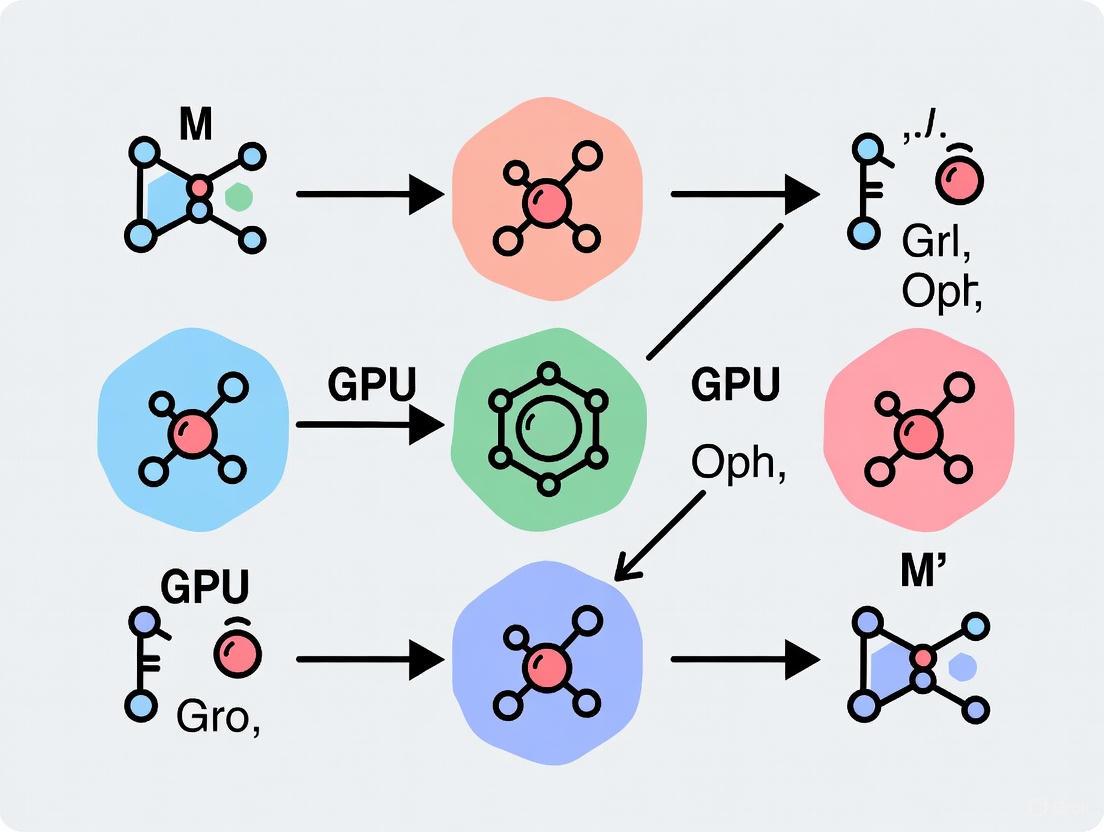

Diagram: Ecological Network Construction and Optimization Workflow. The integrated workflow for constructing and optimizing an ecological network using GPU-accelerated methods.

Diagram: GPU Parallel Computing Architecture for Ecological Modeling. The layered architecture of a GPU-accelerated system for ecological network processing.

Table 3: Key Research Reagents and Resources for Ecological Network Modeling

| Item / Resource | Type | Function / Application |
|---|---|---|
| InVEST Model | Software Suite | Quantifies and maps multiple ecosystem services (habitat quality, water yield, soil retention) for source identification. |
| CLUE-S Model | Software | Simulates land-use change scenarios under different developmental policies. |
| MCR Model | Algorithm | Calculates the least-cost paths for species movement, used to delineate ecological corridors. |
| Biomimetic Algorithms (PSO, ACO) | Algorithm | Solves high-dimensional nonlinear global optimization problems for land-use layout retrofits. |
| GPU (NVIDIA L4/Tesla) | Hardware | Provides massive parallel processing capabilities to accelerate computationally intensive spatial optimizations. |
| NCCL Library | Software Library | Enables high-performance multi-GPU communication for large-scale ecological simulations across compute nodes. |
| CUDA/OpenACC | Programming Framework | Provides the interface and directives for programming NVIDIA GPUs and parallelizing code. |
| Fuzzy C-Means Clustering | Algorithm | Identifies potential ecological stepping stones in a global structural optimization process. |

Graphics Processing Units (GPUs) have undergone a profound transformation from specialized hardware for rendering images to foundational pillars of general-purpose scientific computation. This evolution is driven by the GPU's inherent massively parallel architecture, which contains hundreds or thousands of processing cores capable of simultaneously executing thousands of threads [6] [7]. This parallel design offers a dramatic performance advantage over traditional Central Processing Units (CPUs) for computational problems that can be structured for parallel execution.

The field of ecological network optimization exemplifies this computational shift. The construction and optimization of Ecological Networks (ENs) is crucial for mitigating habitat fragmentation and achieving coordination between regional development and ecological protection [1] [8]. However, these processes involve computationally intensive tasks, such as simulating ecological processes across large spatial domains and iteratively optimizing complex network structures. The computational efficiency of traditional serial programs often fails to meet the demands of real-time, high-resolution simulation and optimization [7]. Consequently, GPU parallel computing has emerged as a critical enabling technology, allowing researchers to solve ecological network problems that were previously intractable within feasible timeframes [1].

This article details the application of GPU parallel computing to scientific research, with a specific focus on protocols and methodologies for ecological network optimization. We provide quantitative performance comparisons, detailed experimental protocols, and essential toolkits to equip researchers with the practical knowledge needed to leverage GPU acceleration in their computational workflows.

Quantitative Performance Benchmarks

The transition to GPU computing is justified by substantial performance gains across diverse scientific domains. The table below summarizes documented speedup factors achieved by GPU-accelerated applications compared to conventional CPU-based implementations.

Table 1: Performance Benchmarks of GPU-Accelerated Scientific Applications

| Application Field | Specific Model/Task | CPU Baseline | GPU Performance | Speedup Factor | Key Enabling Technology |
|---|---|---|---|---|---|
| Ocean Modeling [4] | SCHISM Model (Large-scale) | Single CPU node | Single GPU (NVIDIA A100) | 35.13x | CUDA Fortran |
| Network Analysis [9] | Betweenness Centrality | Single-threaded C++ | NVIDIA Tesla C2050 | 10-50x | CUDA C |
| Concrete Simulation [7] | Temperature Control Simulation | Serial Program | GPU with Async. Parallelism | 61.42x | CUDA Fortran |
| Ecological Network Optimization [1] | Land-use Layout Retrofit | Serial Biomimetic Algorithm | GPU Parallel (MACO Model) | City-level high-resolution optimization enabled | CUDA/CPU Heterogeneous Architecture |

These benchmarks demonstrate that GPU acceleration can yield order-of-magnitude improvements in computational efficiency. This performance is critical for ecological research, where high-resolution, dynamic simulations of landscape processes were previously limited by computational bottlenecks. GPU acceleration now enables city-level ecological network optimization at a patch-level resolution, facilitating more nuanced and scientifically robust planning decisions [1].

Experimental Protocols for GPU-Accelerated Ecological Network Optimization

The following section provides a detailed, actionable protocol for implementing a GPU-accelerated ecological network optimization framework, based on the methodology described by Tong et al. [1].

Protocol: Spatial-Operator Based MACO Model for EN Optimization

I. Objective

To synergistically optimize the function and structure of Ecological Networks (ENs) at the patch level by coupling spatial operators and a modified ant colony optimization (MACO) algorithm, leveraging GPU parallel computing for high-resolution, city-level simulation.

II. Experimental Workflow and Materials

Table 2: Research Reagent Solutions for GPU-Accelerated EN Optimization

| Category | Item/Solution | Function/Description | Example Sources/Tools |
|---|---|---|---|
| Computational Hardware | GPU Accelerator | Provides massive parallel processing cores for fine-grained spatial computations. | NVIDIA Tesla, GeForce RTX series |
| Computational Hardware | High-Performance CPU | Manages serial tasks and coordinates GPU operations. | Multi-core processors (e.g., Intel Xeon) |
| Software & Programming | CUDA Fortran / CUDA C | Primary programming platforms for developing GPU-accelerated code. | PGI Compiler, NVIDIA Nsight |
| Software & Programming | Parallel Computing APIs | Enable management of parallel execution across different hardware architectures. | CUDA, OpenCL, OpenACC |
| Data Inputs | Land Use/Land Cover (LULC) Data | Raster data used to identify ecological sources and calculate resistance surfaces. | National Land Survey Data, Remote Sensing Imagery |
| Data Inputs | Ecological Sensitivity & Function Metrics | Data layers used to assess habitat quality and identify priority conservation areas. | Soil, Topographic, Meteorological data |
| Core Algorithms | Morphological Spatial Pattern Analysis (MSPA) | Identifies core ecological patches and structural elements from LULC data. | GuidosToolbox |
| Core Algorithms | Circuit Theory | Models ecological flows and identifies corridors and pinch points. | Circuitscape |
| Core Algorithms | Biomimetic Intelligent Algorithm (MACO) | Optimizes land-use layout for enhanced EN connectivity and function. | Custom implementation [1] |

III. Step-by-Step Procedure

1. EN Construction and Identification

a. Data Preparation: Collect and pre-process multi-source data, including land use/land cover (LULC) maps, remote sensing imagery, and ecological sensitivity indicators. Rasterize all data to a consistent, high resolution (e.g., 40m) [1].

b. Ecological Source Identification: Determine ecological sources through a combined assessment of ecological function (e.g., habitat quality, water conservation) and sensitivity. Use Morphological Spatial Pattern Analysis (MSPA) to identify core landscape patterns from LULC data [8].

c. Resistance Surface Modeling: Construct a comprehensive resistance surface by weighting various natural and anthropogenic factors (e.g., topography, human footprint) [8].

d. Corridor and Node Extraction: Apply circuit theory to extract ecological corridors and identify key strategic nodes (barrier points, pinch points) based on cumulative resistance and current flow patterns [8].

2. GPU-Accelerated Optimization Setup

a. Algorithm Selection and Modification: Implement a Modified Ant Colony Optimization (MACO) algorithm. The algorithm should incorporate two types of spatial operators:

* Micro Functional Optimization Operators: Four operators for bottom-up, patch-level land use adjustment.

* Macro Structural Optimization Operator: One operator for top-down identification of potential ecological stepping stones [1].

b. GPU Kernel Development: Port the computationally intensive sections of the MACO algorithm to the GPU using CUDA Fortran or CUDA C. This involves:

* Designing the parallel execution configuration (number of thread blocks and threads per block).

* Allocating and managing GPU device memory for large geospatial data arrays.

* Implementing kernel functions that execute the spatial operators concurrently across thousands of threads [1] [7].

c. Integration of Emergence Mechanism: Develop a global ecological node emergence mechanism using an unsupervised Fuzzy C-Means (FCM) clustering algorithm. This mechanism, running on the GPU, identifies potential areas for ecological stepping stones based on optimization probability, enhancing global connectivity [1].
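The execution configuration mentioned in the kernel development step comes down to simple integer arithmetic: enough thread blocks must be launched to cover every raster cell, and threads that fall outside the raster must do nothing. The sketch below works through that arithmetic in Python; the 16x16 block shape and the raster size are assumptions chosen for illustration, and the function names are hypothetical.

```python
def launch_config(rows: int, cols: int, block=(16, 16)):
    """Return (grid, block) so that grid * block covers a rows x cols raster.

    Follows the usual CUDA convention: x indexes columns, y indexes rows.
    """
    block_x, block_y = block
    grid_x = (cols + block_x - 1) // block_x  # ceiling division
    grid_y = (rows + block_y - 1) // block_y
    return (grid_x, grid_y), block


def cell_for_thread(block_idx, thread_idx, block, rows, cols):
    """Map a (block, thread) pair to a raster cell, or None if out of range."""
    col = block_idx[0] * block[0] + thread_idx[0]
    row = block_idx[1] * block[1] + thread_idx[1]
    # The bounds check mirrors the guard placed at the top of a kernel.
    if row < rows and col < cols:
        return row, col
    return None


if __name__ == "__main__":
    # Hypothetical 1000 x 1500 cell raster.
    grid, block = launch_config(1000, 1500)
    print("grid:", grid, "block:", block)
    print(cell_for_thread((0, 0), (3, 2), block, 1000, 1500))
```

Because the raster size is rarely a multiple of the block size, the last row and column of blocks contain idle threads, which is why the bounds check is required.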

3. Execution and Performance Optimization

a. Leverage Heterogeneous Architecture: Establish an efficient data transfer pattern between the CPU (host) and GPU (device) to minimize communication overhead.

b. Employ Parallel Computing Techniques: Utilize GPU-based parallel computing techniques to ensure every geographic unit participates in the optimization calculation concurrently and synchronously. For further efficiency, consider using CUDA Streams to overlap data transfer and kernel execution [1] [7].

c. Model Execution: Run the spatial-operator based MACO model on the GPU. The model will dynamically simulate land-use changes and output optimized EN configurations.

4. Validation and Analysis

a. Evaluation: Assess the optimized EN using predefined evaluation indicators for both functional orientation (e.g., ecosystem service value) and structural orientation (e.g., connectivity indexes, network complexity) [1].

b. Robustness Testing: Test the stability and resilience of the optimized EN against both random and targeted disturbances to evaluate its long-term effectiveness [8].

Diagram 1: Workflow for GPU-accelerated ecological network optimization, highlighting the core computational phase performed on the GPU.

Advanced Technical Implementation and Optimization

Achieving peak performance in GPU-accelerated applications requires careful attention to memory management, parallel execution patterns, and communication protocols.

Memory Hierarchy and Access Optimization

GPU memory architecture includes global, shared, and register memory. Efficient use of shared memory is critical for performance, as it offers much higher bandwidth and lower latency than global memory.

- Protocol: Matrix Transposition using Shared Memory [7]

- Objective: Accelerate a matrix transposition subroutine, a common operation in solvers.

- Procedure:

a. Each thread block is assigned a tile (e.g., 32x32 elements) of the source matrix.

b. All threads within the block collaboratively load the assigned tile from global memory into shared memory.

c. Threads synchronize to ensure the entire tile is loaded.

d. Threads write the data from shared memory to the destination matrix in the transposed layout.

- Optimization: Address bank conflicts in shared memory by padding the shared memory array or using appropriate access patterns. This optimization alone has been shown to achieve speedups of 437.5x for matrix transposition [7].
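The tiling logic of this procedure can be emulated on the CPU to check the index arithmetic before writing a kernel. The sketch below is a sequential Python stand-in, not GPU code: the `tile_buffer` list plays the role of shared memory, the outer loops play the role of thread blocks, and the function name is hypothetical. Bank conflicts have no equivalent here; in a CUDA kernel the shared tile is commonly declared with one extra column of padding to avoid them.

```python
def tiled_transpose(matrix, tile=32):
    """Transpose a 2-D list tile by tile, mimicking the shared-memory scheme."""
    rows, cols = len(matrix), len(matrix[0])
    out = [[None] * rows for _ in range(cols)]
    for r0 in range(0, rows, tile):          # one iteration per block row
        for c0 in range(0, cols, tile):      # one iteration per block column
            # Steps a-b: stage the tile (stand-in for the shared-memory load).
            tile_buffer = [matrix[r][c0:c0 + tile]
                           for r in range(r0, min(r0 + tile, rows))]
            # Step c: on a GPU a barrier would sit here; sequential code needs none.
            # Step d: write the staged tile to its transposed position.
            for i, staged_row in enumerate(tile_buffer):
                for j, value in enumerate(staged_row):
                    out[c0 + j][r0 + i] = value
    return out


if __name__ == "__main__":
    # Small hypothetical matrix with a tile size that does not divide it evenly.
    print(tiled_transpose([[1, 2, 3], [4, 5, 6]], tile=2))
```

The benefit on real hardware comes from both the global-memory read and the global-memory write being contiguous, with the reordering absorbed by the fast on-chip buffer.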

Asynchronous Execution and Concurrent Data Transfer

To further hide the latency of data transfers between the CPU and GPU, asynchronous execution patterns can be employed.

- Protocol: Asynchronous Parallelism with CUDA Streams [7]

- Objective: Overlap data transfer time with kernel execution time to improve overall computational efficiency.

- Procedure:

a. Create multiple CUDA streams.

b. Divide the input data into chunks.

c. For each chunk:

* Use `cudaMemcpyAsync` in a specific stream to copy the chunk from host to device.

* Launch a processing kernel in the same stream to operate on the chunk already in device memory.

* Use `cudaMemcpyAsync` to copy the result back to the host.

d. This pipeline allows data transfer for chunk n+1 to occur concurrently with kernel execution for chunk n.

- Result: This method can double the computing efficiency compared to a basic GPU-parallel implementation, leading to a 61.42x speedup over the original serial program for inner product matrix multiplication [7].
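A simple timing model helps estimate what stream pipelining can gain before implementing it. The sketch below assumes an idealised three-stage pipeline (host-to-device copy, kernel, device-to-host copy) in which the stages of different chunks overlap perfectly, all chunks are the same size, and launch overhead is zero. These are simplifying assumptions, and the stage times in the example are hypothetical rather than measurements from [7].

```python
def serial_time(n_chunks, t_h2d, t_kernel, t_d2h):
    """Total time when each chunk is copied, processed and copied back in turn."""
    return n_chunks * (t_h2d + t_kernel + t_d2h)


def pipelined_time(n_chunks, t_h2d, t_kernel, t_d2h):
    """Total time for an ideal 3-stage pipeline over equally sized chunks.

    The first chunk pays for all three stages; every later chunk adds only
    the duration of the slowest stage.
    """
    if n_chunks < 1:
        raise ValueError("n_chunks must be at least 1")
    bottleneck = max(t_h2d, t_kernel, t_d2h)
    return (t_h2d + t_kernel + t_d2h) + (n_chunks - 1) * bottleneck


if __name__ == "__main__":
    # Hypothetical per-chunk stage times in milliseconds.
    for chunks in (1, 4, 16):
        s = serial_time(chunks, 2.0, 5.0, 2.0)
        p = pipelined_time(chunks, 2.0, 5.0, 2.0)
        print(chunks, s, p, round(s / p, 2))
```

The model shows why the gain is bounded: once the pipeline is full, throughput is set by the slowest stage, so overlap helps most when transfer and compute times are comparable.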

Multi-GPU and Large-Scale Cluster Communication

For problems exceeding the memory or computational capacity of a single GPU, scaling across multiple GPUs or nodes is necessary. The NVIDIA Collective Communication Library (NCCL) is essential for this task.

- Protocol: Multi-GPU Collective Communication with NCCL [5]

- Objective: Perform efficient collective operations (e.g., All-Reduce) across multiple GPUs in a cluster.

- Procedure:

a. Communicator Management: Initialize an NCCL communicator that defines the set of GPUs participating in the communication using `ncclCommInitAll` (single process) or `ncclCommInitRank` (multi-process).

b. Algorithm Selection: NCCL internally selects efficient algorithms (e.g., ring or tree-based) and protocols (Simple, LL, LL128) based on message size and system topology to optimize bandwidth and latency [5].

c. Operation Launching: Call the collective operation (e.g., `ncclAllReduce`) within the established communicator. Use `ncclGroupStart` and `ncclGroupEnd` to aggregate operations and reduce launch overhead.

d. Cleanup: Safely destroy the communicator with `ncclCommDestroy` after operations complete.
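To show what a ring-based All-Reduce does with the data, the following sketch simulates the two phases of the ring pattern (reduce-scatter, then all-gather) on plain Python lists, one list per simulated GPU. It illustrates the communication pattern only and is not NCCL's implementation, which runs as GPU kernels over multiple channels and protocols. The function name and the requirement that the buffer length be divisible by the number of ranks are assumptions of this sketch.

```python
def ring_all_reduce(buffers):
    """Sum-reduce equal-length buffers across ranks using the ring pattern."""
    n_ranks = len(buffers)
    length = len(buffers[0])
    if any(len(b) != length for b in buffers) or length % n_ranks != 0:
        raise ValueError("buffers must share a length divisible by the rank count")
    size = length // n_ranks
    data = [list(b) for b in buffers]

    def chunk(i):
        return slice(i * size, (i + 1) * size)

    # Phase 1, reduce-scatter: after n_ranks - 1 steps, rank r holds the
    # fully summed chunk (r + 1) % n_ranks.
    for step in range(n_ranks - 1):
        messages = [((r + 1) % n_ranks, (r - step) % n_ranks) for r in range(n_ranks)]
        payloads = [data[r][chunk(idx)] for r, (_, idx) in enumerate(messages)]
        for (dst, idx), payload in zip(messages, payloads):
            data[dst][chunk(idx)] = [a + b for a, b in zip(data[dst][chunk(idx)], payload)]

    # Phase 2, all-gather: completed chunks travel once around the ring.
    for step in range(n_ranks - 1):
        messages = [((r + 1) % n_ranks, (r + 1 - step) % n_ranks) for r in range(n_ranks)]
        payloads = [data[r][chunk(idx)] for r, (_, idx) in enumerate(messages)]
        for (dst, idx), payload in zip(messages, payloads):
            data[dst][chunk(idx)] = payload
    return data


if __name__ == "__main__":
    # Three simulated GPUs, each holding a hypothetical partial result.
    print(ring_all_reduce([[1, 2, 3], [10, 20, 30], [100, 200, 300]]))
```

Each rank sends and receives only one chunk per step, which is why the ring pattern keeps per-link traffic nearly independent of the number of GPUs for large messages.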

Diagram 2: GPU execution and memory model, illustrating the relationship between host code, kernel execution, and the critical memory hierarchy on the device.

The journey of GPU parallel computing from a graphics-specific tool to a cornerstone of general-purpose scientific computation has fundamentally expanded the scope of problems researchers can tackle. In the specific context of ecological network optimization, GPU acceleration enables high-resolution, dynamic, and quantitatively rigorous simulations that directly inform conservation planning and ecosystem management. The protocols and performance data outlined herein provide a roadmap for researchers to harness this computational power. As GPU hardware and programming models like CUDA continue to evolve, their role in solving complex scientific and environmental challenges will only become more pronounced.

Why GPUs? Understanding Massively Parallel Architecture for Ecological Optimization Problems

Quantitative Analysis of GPU Performance and Impact

The adoption of GPU computing for ecological optimization is driven by quantifiable performance gains and specific architectural advantages. The tables below summarize key performance metrics and environmental considerations.

Table 1: GPU Performance Acceleration in Scientific Modeling

| Application Domain | Specific Model/Task | CPU Baseline | GPU-Accelerated Performance | Achieved Speedup | Key Factor for Speedup |
|---|---|---|---|---|---|
| Geological Anisotropy Analysis [10] | Every-direction Variogram Analysis (EVA) | Serial CPU Implementation | GPU Implementation | ~42x | Embarrassingly parallel grid computation |
| Bird Migration Simulation [10] | Agent-Based Model (Bird Flight) | Serial CPU Implementation | GPU Implementation | ~1.5x | Parallel processing of independent agents |
| Topology Optimization [11] | 3D Linear Elastic Compliance Minimization | 48 CPU Cores (~3.17 hours) | Single GPU (~2 hours) | ~1.6x (faster) | Parallel processing of ~65.5 million elements |
| General Climate Modeling [12] | AI/ML Inference Benchmarks | Advanced CPU | NVIDIA A100 GPU | 237x | Massive parallelization of AI workloads |

Table 2: GPU Operational Characteristics and Environmental Impact

| Metric | Value / Range | Context & Explanation |
|---|---|---|
| Thermal Design Power (TDP) [13] | 15 - 2,400 Watts | Range for workstation GPUs (post-2020); outliers like Intel's Data Center GPU Max Subsystem reach 2,400W. |
| Idle Power Consumption [13] | ~20% of TDP | AI servers idle at roughly 20% of their rated power, highlighting base energy draw. |
| Embodied Carbon per GPU Card [13] | 141 - 585 kg CO₂e | Carbon dioxide equivalent emissions from manufacturing; varies by study and card model. NVIDIA H100: ~164 kg CO₂e [13]. |
| Projected Global Electricity Consumption [14] | Up to 8% by 2030 | Projected share for AI and high-performance computing (HPC), underscoring the scale of GPU-driven energy demand. |

Experimental Protocols for GPU-Accelerated Ecological Optimization

This section provides a detailed, actionable protocol for implementing GPU-accelerated ecological network optimization, synthesizing methodologies from recent research.

Protocol 1: Coupling Spatial Operators and Biomimetic Algorithms for EN Optimization

This protocol is adapted from a study optimizing the ecological network (EN) of Yichun City, which integrated microscopic functional optimization with macroscopic structural optimization [1].

2.1 Research Objectives and Preparation

- Objective: To quantitatively and dynamically optimize an ecological network's function and structure at the patch level, answering "Where to optimize, how to change, and how much to change?"

- Preparatory Steps:

- Define Study Area: The methodology is designed for city-level or urban agglomeration-scale areas [1].

- Data Collection: Gather high-resolution geospatial data. The foundational study used a 40m resolution land use map derived from the National Land Survey, alongside data on topography, climate, soil, and socio-economic factors [1].

- Initial EN Construction: Construct a baseline ecological network using standard methods:

2.2 Computational Hardware and Software Setup

- Hardware: A computing system with a modern GPU. The protocol leverages GPU/CPU heterogeneous architecture [1].

- Software & Frameworks:

- Programming Language: C, C++, or Python are suitable due to their support for GPU programming and large scientific communities [10].

- Parallel Computing API: OpenCL (for vendor-agnostic code) or NVIDIA's CUDA (for optimal performance on NVIDIA hardware) [1] [15].

- Algorithm Implementation: Implement the modified Ant Colony Optimization (ACO) algorithm or other biomimetic algorithms (e.g., Particle Swarm Optimization) as the core optimization engine [1].

2.3 Implementation of the Optimization Model

The core of the protocol is a spatial-operator-based modified ACO (MACO) model.

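- Define Objective Functions: Formulate two primary objectives for the algorithm to pursue simultaneously: improving ecological function (e.g., habitat quality and ecosystem services) and improving network structure (e.g., ecological connectivity).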

- Incorporate Land-Use Constraints: Define constraints within the model, such as total available land for conversion and land-use suitability, to ensure results are practical [1].

- Apply Spatial Optimization Operators: The MACO model uses several operators to guide the optimization:

- Four Micro Functional Operators: Bottom-up operators that handle fine-scale, patch-level land-use adjustments for functional enhancement [1].

- One Macro Structural Operator: A top-down operator that identifies and prioritizes the creation of new "ecological stepping stones" in strategically important global locations to improve overall network connectivity [1].

- Enable GPU Parallelization: Implement the model to run on the GPU. The key is to ensure that calculations for each geographic unit (e.g., grid cell) are processed concurrently and synchronously by the GPU's thousands of cores [1] [10]. This involves establishing an efficient data transfer pattern between the CPU and GPU.

2.4 Validation and Analysis

- Performance Evaluation: Compare the optimized EN against the baseline using pre-defined quantitative metrics for both function and structure [1].

- Computational Benchmarking: Record the time taken for the GPU-accelerated optimization and compare it against a serial CPU implementation if possible, to quantify the speedup [10].

- Spatial Output Analysis: Interpret the final optimized map, which provides a patch-level land-use adjustment plan, specifying the location, type, and extent of changes needed [1].
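The computational benchmarking step above can be sketched in a few lines. This is a minimal illustration, not the benchmarking procedure of the cited studies: the helper names `time_call` and `speedup_ratio` and the stand-in workload `serial_baseline` are hypothetical.

```python
import time

def time_call(fn, *args, repeats=3):
    """Return the best wall-clock time (seconds) over several runs of fn(*args)."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        best = min(best, time.perf_counter() - start)
    return best

def speedup_ratio(t_serial, t_accelerated):
    """Speed-up ratio: serial run time divided by accelerated run time."""
    if t_serial < 0 or t_accelerated <= 0:
        raise ValueError("t_serial must be >= 0 and t_accelerated must be > 0")
    return t_serial / t_accelerated

def serial_baseline(n_cells):
    """Hypothetical stand-in workload: one arithmetic pass over n_cells grid cells."""
    return sum(i * i for i in range(n_cells))

if __name__ == "__main__":
    t_cpu = time_call(serial_baseline, 200_000)
    print(f"serial baseline: {t_cpu:.4f} s")
    # Illustrative timings only: a 120 s serial run against a 4 s accelerated run.
    print(f"speed-up: {speedup_ratio(120.0, 4.0):.1f}x")
```

When timing real GPU code, synchronize the device before stopping the clock, because kernel launches return to the host asynchronously. Decide up front whether host-to-device transfer time is included in the comparison.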

Protocol 2: GPU-Acceleration of Spatially-Explicit Ecological Models

This protocol provides a generalized framework for porting classic ecological models to GPUs, based on established practices in the field [15].

2.1 Problem Definition and Code Selection

- Objective: To significantly accelerate the simulation of a spatially-explicit ecological model (e.g., patterns in mussel beds, arid vegetation).

- Preparatory Steps:

- Select a Model: Choose a model where the state of each cell in a grid depends only on its neighbors, making it suitable for parallelization.

- Obtain Code: Start with an existing, validated serial code (e.g., in C, C++) for which the dynamics are well-understood [15].

2.2 Implementation for GPU Execution

- Grid Representation: Represent the spatial landscape as a 2D or 3D grid in the GPU's memory (VRAM). The high memory bandwidth of GPUs is critical for efficiently handling this data [12].

- Kernel Function Development: The core computational logic (e.g., calculating growth, mortality, interaction between species) is written as a "kernel." This kernel is a function that is executed simultaneously by thousands of GPU threads.

- Thread Per Cell Mapping: Design the kernel so that a single GPU thread is responsible for performing the model calculations for a single cell (or a small block of cells) in the spatial grid. This maps the natural parallelism of the problem to the GPU architecture [15].

- Neighborhood Handling: Program the kernel to efficiently access the state of neighboring cells, which is essential for modeling spatial feedback and diffusion processes.
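The thread-per-cell mapping and neighborhood handling described above can be prototyped on the CPU before any GPU code is written. The sketch below is plain Python, not a GPU kernel. It assumes a simple diffusion-style update with periodic boundaries, and the names `update_cell`, `step`, and `simulate` are hypothetical. `update_cell` holds the work a single GPU thread would perform, and `step` reads from the old grid and writes to a new one so that every cell sees the same time level.

```python
def update_cell(grid, i, j, rate=0.1):
    """Work of one hypothetical GPU thread: update cell (i, j) from its 4 neighbours."""
    rows, cols = len(grid), len(grid[0])
    up = grid[(i - 1) % rows][j]        # periodic (wrap-around) boundaries
    down = grid[(i + 1) % rows][j]
    left = grid[i][(j - 1) % cols]
    right = grid[i][(j + 1) % cols]
    centre = grid[i][j]
    # Discrete diffusion: move a fraction of the neighbour difference into the cell.
    return centre + rate * (up + down + left + right - 4.0 * centre)

def step(grid, rate=0.1):
    """One time step. All cells read the old grid and write a new one (double buffering)."""
    rows, cols = len(grid), len(grid[0])
    return [[update_cell(grid, i, j, rate) for j in range(cols)] for i in range(rows)]

def simulate(grid, n_steps, rate=0.1):
    """Apply the update n_steps times; on a GPU each step would be one kernel launch."""
    for _ in range(n_steps):
        grid = step(grid, rate)
    return grid

if __name__ == "__main__":
    grid = [[0.0] * 4 for _ in range(4)]
    grid[1][1] = 1.0
    final = simulate(grid, 5)
    print(round(sum(map(sum, final)), 6))  # total mass is conserved by this update
```

A CPU reference of this kind is also useful later for checking that a GPU port reproduces the serial results.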

2.3 Execution and Simulation

- Data Transfer: Copy the initial state of the model grid from the CPU's main memory to the GPU's VRAM.

- Kernel Launch: For each time step of the simulation, launch the kernel on the GPU. The GPU automatically executes the kernel across all threads in parallel.

- Result Retrieval: After the simulation is complete, or at intervals for saving snapshots, copy the current state of the grid from the GPU back to the CPU.

Visualizing Workflows and System Architecture

The following diagrams, generated using Graphviz, illustrate the logical relationships and workflows described in the protocols.

Diagram 1: High-Level Research Workflow

Diagram 2: GPU vs. CPU Architectural Paradigm

The Scientist's Toolkit: Essential Research Reagents and Solutions

This table details the key hardware, software, and data "reagents" required for conducting GPU-accelerated ecological optimization research.

Table 3: Essential Research Reagents for GPU-Accelerated Ecological Optimization

| Category | Item / Solution | Function / Purpose in Research |

|---|---|---|

| Hardware | High-Performance GPU (e.g., NVIDIA A100, H100) | Provides the core parallel processing power for accelerating complex simulations and optimization algorithms [12] [16]. |

| Hardware | Adequate System Memory (RAM) & VRAM | Ensures the system can hold and rapidly process large, high-resolution geospatial datasets and model states [12]. |

| Software & APIs | Parallel Computing API (CUDA, OpenCL) | Provides the programming interface to write code that executes on the GPU hardware [15] [10]. |

| Software & APIs | Scientific Computing Libraries (e.g., CuPy, RAPIDS) | Offers GPU-accelerated versions of common mathematical and data science operations, speeding up development [17]. |

| Software & APIs | Machine Learning Frameworks (e.g., TensorFlow, PyTorch with GPU support) | Used for developing and training AI-powered climate emulators or surrogate models within the optimization workflow [12] [17]. |

| Data & Models | High-Resolution Geospatial Data | Serves as the foundational input for constructing and validating ecological networks and spatial models [1]. |

| Data & Models | Validated Serial Model Code | Provides a correct, baseline implementation of the ecological model to be parallelized and used for verifying GPU-accelerated results [15]. |

The concurrent crises of biodiversity loss and protracted biomedical discovery timelines represent critical challenges for global sustainability and human health. While these fields appear distinct, they are increasingly united by a common dependency on advanced computational solutions. High-performance computing (HPC), particularly GPU-accelerated parallel processing, is emerging as a transformative tool for addressing the data-intensive modeling and simulation requirements in both ecology and biomedicine. In ecology, habitat fragmentation driven by human activities damages ecological connectivity and undermines ecosystem resilience [1]. In biomedicine, the complexity of biological systems demands computational power that can scale with the volume of omics and clinical data. This application note details how GPU technologies enable high-fidelity simulations of ecological networks and accelerate therapeutic discovery, providing detailed protocols for researchers in both domains.

Habitat Fragmentation: Ecological Crisis and Computational Solutions

The Problem and Its Impacts

Habitat fragmentation, characterized by the disassembly of continuous habitats into smaller, isolated patches, is a primary driver of global biodiversity decline. It involves two distinct components: habitat loss (overall reduction in habitat area) and fragmentation per se (the breaking apart of habitat independent of total area loss) [18]. The ecological consequences are severe:

- Impaired Ecosystem Function: Habitat loss weakens the positive relationship between plant richness and above-ground biomass in grasslands, directly reducing carbon storage and ecosystem productivity [18].

- Increased Extinction Risk: For terrestrial mammals, the degree of habitat fragmentation is a stronger predictor of extinction risk transition than life-history traits or general human pressure variables [19].

- Matrix Condition Mediation: The condition of the surrounding landscape matrix critically determines fragmentation impacts. High human pressure in the matrix (e.g., intensive agriculture, urbanization) exacerbates extinction risks, while lower-pressure matrices can mitigate them [19].

- Disease Emergence Risk: Fragmented landscapes with complex boundaries increase human exposure to wildlife microbes, potentially elevating the risk of novel infectious disease emergence [20].

Table 1: Ecological Impacts of Habitat Fragmentation Components

| Fragmentation Component | Primary Ecological Effect | Impact on Biodiversity |

|---|---|---|

| Habitat Loss | Decreases plant richness [18] | Reduces specialist species [18] |

| Fragmentation Per Se | Increases plant richness but decreases above-ground biomass [18] | Alters species composition |

| Matrix Degradation | Increases patch isolation effects [19] | Heightens extinction risk for specialists [19] |

| Edge Effects | Alters microclimate and species interactions | Promotes generalist species |

GPU-Accelerated Ecological Network Optimization

Traditional ecological network optimization approaches have struggled to simultaneously address functional and structural constraints. A groundbreaking methodology couples spatial operators with biomimetic intelligent algorithms (e.g., Modified Ant Colony Optimization, MACO) within a GPU-accelerated framework [1]. This approach synergizes bottom-up functional optimization with top-down structural optimization through:

- Micro-functional Optimization Operators: Four patch-level operators that adjust local land use patterns to enhance ecological function.

- Macro-structural Optimization Operator: A single landscape-level operator that identifies potential ecological stepping stones using unsupervised fuzzy C-means clustering to improve overall connectivity [1].

- GPU-CPU Heterogeneous Architecture: Parallel computing techniques that enable city-level ecological optimization at high spatial resolution by ensuring "every geographic unit can participate in the optimization calculation concurrently and synchronously" [1].

This computational framework dynamically answers critical planning questions: "Where to optimize, how to change, and how much to change?" providing quantitative guidance for conservation prioritization [1].
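The macro-structural operator above is described as relying on unsupervised fuzzy C-means clustering. The following is a minimal pure-Python sketch of the standard fuzzy C-means iteration on 2D points, not the implementation used in [1]. The function name `fuzzy_c_means` and the farthest-point initialization are assumptions made for illustration.

```python
import math

def fuzzy_c_means(points, n_clusters, m=2.0, n_iter=100, tol=1e-6):
    """Standard fuzzy C-means; returns (centres, memberships)."""
    # Assumed initialisation: first point, then repeatedly the point farthest
    # from the centres chosen so far (deterministic, no random seed needed).
    centres = [points[0]]
    while len(centres) < n_clusters:
        centres.append(max(points, key=lambda p: min(math.dist(p, c) for c in centres)))
    dims = len(points[0])
    memberships = []
    for _ in range(n_iter):
        # Membership update: u_ik = 1 / sum_j (d_ik / d_jk) ** (2 / (m - 1))
        memberships = []
        for p in points:
            dists = [max(math.dist(p, c), 1e-12) for c in centres]
            memberships.append([
                1.0 / sum((d_i / d_j) ** (2.0 / (m - 1.0)) for d_j in dists)
                for d_i in dists
            ])
        # Centre update: mean of the points weighted by membership ** m
        new_centres = []
        for i in range(n_clusters):
            weights = [row[i] ** m for row in memberships]
            total = sum(weights)
            new_centres.append(tuple(
                sum(w * p[d] for w, p in zip(weights, points)) / total
                for d in range(dims)
            ))
        shift = max(math.dist(a, b) for a, b in zip(centres, new_centres))
        centres = new_centres
        if shift < tol:
            break
    return centres, memberships

if __name__ == "__main__":
    # Hypothetical candidate-cell coordinates forming two groups.
    cells = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (10.0, 10.0), (10.0, 11.0), (11.0, 10.0)]
    centres, memberships = fuzzy_c_means(cells, 2)
    print(centres)
```

Unlike hard clustering, each candidate cell receives a graded membership in every cluster, which allows candidate locations to be ranked rather than only assigned.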

Biomedical Discovery: The High-Performance Computing Imperative

Computational Challenges in Biomedicine

Biomedical research faces exponentially growing computational demands across multiple domains:

- Multi-scale Biological Modeling: Integrating molecular, cellular, and organ-level simulations requires enormous computational resources [21].

- Large-Scale Omics Data Analysis: Genome sequencing and proteomic datasets present substantial processing bottlenecks [22].

- Precision Medicine Applications: Developing patient-specific "digital twins" necessitates rapid, high-fidelity simulations of physiological processes [21].

GPU-Accelerated Biomedical Breakthroughs

The Center for Precision Medicine and Data Sciences (CPMDS) at UC Davis exemplifies GPU-enabled biomedical innovation, using HPC resources to:

- Simulate large protein systems, including voltage-gated ion channels, to study modulator influences on structure and function [21].

- Analyze large-scale omics datasets to identify disease-related genes, proteins, and networks.

- Develop predictive AI models capturing genetic variant effects and simulate populations of synthetic cardiomyocytes to model electrophysiological variability and drug sensitivity [21].

As CPMDS researchers note, "Deep learning frameworks depend on GPU acceleration to train models on terabytes of synthetic cell simulations," enabling clinically relevant predictive modeling that would be "impractical without HPC" [21].

Experimental Protocols and Workflows

Protocol: GPU-Accelerated Ecological Network Optimization

Application: Optimizing ecological network function and structure in fragmented landscapes.

Required Resources: Land use/land cover data, species distribution data, remote sensing imagery, high-performance computing environment with GPU capabilities.

Methodology:

Ecological Source Identification:

- Conduct ecological function and sensitivity assessments using spatial analytics.

- Apply Morphological Spatial Pattern Analysis (MSPA) to identify core habitat patches.

- Calculate ecological connectivity indices using graph theory metrics.

Spatial-Operator Based MACO Model Setup:

- Implement four micro-functional optimization operators for patch-level land use adjustment.

- Configure one macro-structural optimization operator with fuzzy C-means clustering for stepping stone identification.

- Define objective functions balancing ecological connectivity and land use suitability.

GPU Parallelization:

- Establish data transfer patterns between CPU and GPU using CUDA or OpenACC frameworks.

- Implement parallel processing of geographic units using thousands of concurrent threads.

- Optimize memory bandwidth usage for large geospatial datasets.
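To make the mapping of geographic units onto concurrent threads concrete, the sketch below computes a two-dimensional launch configuration for a raster and maps a (block, thread) pair back to a grid cell. It mirrors the usual CUDA indexing arithmetic in plain Python. The function names and the 16 x 16 block size are illustrative assumptions.

```python
def launch_config(n_rows, n_cols, block=(16, 16)):
    """Return (grid_dim, block_dim) so that every raster cell gets one thread."""
    bx, by = block
    # Ceiling division: enough blocks to cover the raster in x (columns) and y (rows).
    grid = ((n_cols + bx - 1) // bx, (n_rows + by - 1) // by)
    return grid, block

def thread_to_cell(block_idx, thread_idx, block, n_rows, n_cols):
    """Map a (block, thread) index pair to a (row, col) cell, or None if out of range."""
    col = block_idx[0] * block[0] + thread_idx[0]
    row = block_idx[1] * block[1] + thread_idx[1]
    if row >= n_rows or col >= n_cols:
        return None  # bounds guard: surplus threads in edge blocks do no work
    return row, col

if __name__ == "__main__":
    grid, block = launch_config(100, 50)
    print(grid, block)
    print(thread_to_cell((0, 0), (1, 2), block, 100, 50))
```

The bounds guard matters whenever the raster dimensions are not exact multiples of the block size, which is the common case for real study areas.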

Optimization Execution:

- Run biomimetic algorithm iterations with simultaneous functional and structural optimization.

- Validate results against conservation targets and spatial constraints.

- Generate spatially explicit land use adjustment recommendations with quantitative change amounts.

Computational Note: The GPU-CPU heterogeneous architecture enables city-level optimization at 40m resolution, processing over 24 million grid cells concurrently [1].

Diagram 1: Ecological Network Optimization Workflow

Protocol: GPU-Accelerated Biomedical Simulation

Application: Precision medicine and drug discovery through multi-scale biological modeling.

Required Resources: Omics datasets, protein structures, clinical data, HPC environment with NVIDIA GPUs, molecular dynamics software (e.g., GROMACS, NAMD), deep learning frameworks (e.g., TensorFlow, PyTorch).

Methodology:

System Preparation:

- For molecular simulations: obtain protein structures from Protein Data Bank, prepare topology files, solvate systems, and apply boundary conditions.

- For omics analysis: preprocess raw sequencing data, perform quality control, and generate normalized expression matrices.

- For digital twin development: integrate physiological parameters, medical imaging data, and clinical biomarkers.

GPU-Accelerated Computation:

- Molecular Dynamics: Offload force calculations and particle mesh Ewald electrostatics to GPU using CUDA-accelerated codes.

- Deep Learning: Utilize GPU tensor cores for training neural networks on terabyte-scale simulation datasets.

- Implement CUDA kernels for customized biological algorithms and analysis pipelines.

Multi-Scale Integration:

- Couple molecular simulation outputs with cellular-scale models through parameterization.

- Integrate cellular models into tissue and organ-level simulations.

- Validate multi-scale predictions against experimental and clinical observations.

Analysis and Prediction:

- Apply AI surrogate models to rapidly predict system behavior across parameter spaces.

- Identify critical disease mechanisms and therapeutic targets.

- Generate patient-specific treatment predictions and risk assessments.

Implementation Tip: Researchers new to HPC should "start with small test jobs before scaling to larger workflows, learn the basics of SLURM early, document pipelines carefully, and take advantage of available HPC training and support" [21].

Diagram 2: Biomedical Discovery Computational Pipeline

Performance Metrics and Accelerated Outcomes

Computational Performance Gains

GPU acceleration delivers transformative performance improvements across both ecological and biomedical domains:

Table 2: Computational Performance Improvements with GPU Acceleration

| Application Domain | Traditional CPU Performance | GPU-Accelerated Performance | Speedup Factor |

|---|---|---|---|

| Ecological Network Optimization | Serial processing of geographic units [1] | Concurrent synchronous processing of all units [1] | City-level optimization now feasible |

| SCHISM Ocean Model | CPU-based finite element computation [4] | GPU-accelerated Jacobi solver [4] | 35.13x (2.56M grid points) |

| Cardiac Electrophysiology | Workstation-scale simulation [21] | HPC-enabled digital twin modeling [21] | Run time reduced from months to days |

| Computational Fluid Dynamics | CPU-based CFD simulation [23] | GPU-accelerated CFD with AI surrogates [23] | 10x faster |

| Bioinformatics Variant Calling | CPU-based processing [22] | NVIDIA Parabricks GPU acceleration [22] | >60% cost savings |

Scientific and Conservation Outcomes

Beyond computational metrics, GPU acceleration enables substantive scientific advances:

- Ecological: Identification of optimal locations for ecological stepping stones and quantitative guidance for patch-level land use adjustments, enhancing landscape connectivity [1].

- Biomedical: Prediction of arrhythmia risk from electrophysiology data, revelation of novel drug mechanisms through protein-modulator interaction simulations, and population-scale analysis of genetic variant effects [21].

- Cross-disciplinary: AI surrogate models achieving 3,600x speedup for methane leak detection (energy sector) and 10x acceleration in porosity field prediction (geology) demonstrate the transferability of these approaches [23].

Table 3: Key Research Reagent Solutions for Computational Ecology and Biomedicine

| Resource Category | Specific Tools & Platforms | Primary Function | Application Domain |

|---|---|---|---|

| Hardware Platforms | NVIDIA Blackwell GPUs [23] | Massively parallel computation | Both |

| Software Libraries | CUDA-X, OpenACC [23] [24] | GPU programming frameworks | Both |

| Ecological Modeling | Spatial-operator based MACO [1] | Ecological network optimization | Ecology |

| Biomedical Analysis | NVIDIA Parabricks [22] | Genomic variant calling | Biomedicine |

| Molecular Simulation | GROMACS, NAMD | Molecular dynamics | Biomedicine |

| Collaboration Platforms | NVIDIA Omniverse [23] | Real-time 3D collaboration | Both |

| Workflow Management | Nextflow [22] | Pipeline orchestration | Both |

| HPC Orchestration | SLURM [21] | Job scheduling and resource management | Both |

GPU parallel computing has evolved from a specialized technology to an essential enabling platform addressing critical challenges in both ecology and biomedicine. The methodologies detailed herein provide actionable pathways for researchers to implement these advanced computational approaches, transforming previously intractable problems into solvable challenges. As GPU technologies continue to advance with architectures like NVIDIA Blackwell offering 150x more compute power, the potential for cross-disciplinary innovation expands considerably [23]. By adopting these GPU-accelerated frameworks, researchers can dramatically accelerate the pace of discovery while addressing urgent sustainability and health challenges through high-fidelity simulation and predictive modeling.

Core Conceptual Framework and Quantitative Definitions

Ecological networks (ENs) are foundational to mitigating habitat degradation and fragmentation caused by rapid urbanization. They function as interconnected systems that enhance ecosystem resilience and maintain regional ecological processes by facilitating species movement [1]. The table below defines the core components and their quantitative descriptors.

Table 1: Core Components of an Ecological Network

| Component | Definition | Key Quantitative Metrics |

|---|---|---|

| Ecological Patches | Habitats that serve as sources for species dispersal and provide ecosystem services [25]. | Area, shape index, habitat quality index, importance value (dPC). |

| Ecological Corridors | Spatial pathways that connect ecological patches, facilitating the flow of species, energy, and material [25]. | Width, length, cost-weighted distance, current flow (from circuit theory). |

| Ecological Connectivity | The functional measure of how landscape structure facilitates or impedes movement between resource patches [1]. | Probability of Connectivity (PC), Integral Index of Connectivity (IIC), Equivalent Connectivity (EC). |

| Computational Stencils | In high-performance computing, data transfer patterns between CPU and GPU that enable geographic units to participate in optimization concurrently [1]. | Spatial resolution (e.g., 40m grid cells), number of parallel processing threads, memory bandwidth. |

Computational Analysis and Optimization Protocols

Protocol: Constructing a Landscape Ecological Network (LEN)

This protocol outlines the methodology for identifying and connecting ecological patches to form a foundational ecological network [25].

1. Identify Ecological Sources:

- Data Inputs: Land Use/Land Cover (LULC) map, Normalized Difference Vegetation Index (NDVI), Digital Elevation Model (DEM).

- Procedure:

a. Conduct a habitat suitability assessment, integrating factors like vegetation cover, human disturbance, and topography.

b. Apply Morphological Spatial Pattern Analysis (MSPA) to the suitability map to identify core habitat areas.

c. Evaluate the connectivity importance of each core patch using a probability of connectivity (PC) index in software such as Conefor.

d. Select patches with the highest importance values as final ecological sources.

2. Develop an Ecological Resistance Surface:

- Data Inputs: LULC map, road network, population density, topographic data.

- Procedure:

a. Assign a resistance value (e.g., 1-100) to each landscape feature based on its perceived impedance to species movement (e.g., high for urban land, low for forests).

b. Create a composite resistance surface using a GIS, integrating all weighted factors.
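The composite resistance surface in this step is, in its simplest form, a weighted overlay of factor layers. The sketch below assumes that every layer has already been rescaled to the same resistance range and shares the same grid. The layer names, values, and weights are hypothetical.

```python
def composite_resistance(layers, weights):
    """Weighted average of equally sized 2D resistance layers, keyed by factor name."""
    total_weight = sum(weights[name] for name in layers)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    first = next(iter(layers.values()))
    rows, cols = len(first), len(first[0])
    return [
        [
            sum(weights[name] * layers[name][r][c] for name in layers) / total_weight
            for c in range(cols)
        ]
        for r in range(rows)
    ]

if __name__ == "__main__":
    # Hypothetical 1 x 2 landscape: a forest cell next to an urban cell.
    layers = {
        "land_use": [[1.0, 100.0]],   # low resistance for forest, high for urban land
        "roads": [[50.0, 50.0]],      # same road influence on both cells
    }
    weights = {"land_use": 0.75, "roads": 0.25}
    print(composite_resistance(layers, weights))
```

In practice the weights are usually set from expert judgment or a sensitivity analysis, and the result is exported back to the GIS as a raster.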

3. Delineate Ecological Corridors:

- Tools: Linkage Mapper, Circuitscape, or least-cost path algorithms in GIS.

- Procedure:

a. Use a Minimum Cumulative Resistance (MCR) model to calculate the least-cost path between pairs of ecological sources.

b. Alternatively, apply circuit theory to identify pinch points and movement pathways across the entire landscape.
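A least-cost path over a resistance surface can be illustrated with Dijkstra's algorithm on a 4-connected grid. This is a simplified sketch rather than the algorithm inside Linkage Mapper or any specific GIS tool. The move cost (the mean resistance of the two cells involved) and the function name `least_cost_path` are assumptions.

```python
import heapq

def least_cost_path(resistance, source, target):
    """Dijkstra on a 4-connected grid; returns (cumulative cost, list of cells)."""
    rows, cols = len(resistance), len(resistance[0])
    dist = {source: 0.0}
    prev = {}
    heap = [(0.0, source)]
    while heap:
        cost, cell = heapq.heappop(heap)
        if cell == target:
            break
        if cost > dist.get(cell, float("inf")):
            continue  # stale heap entry
        r, c = cell
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols:
                # Assumed move cost: mean resistance of the current and next cell.
                new_cost = cost + 0.5 * (resistance[r][c] + resistance[nr][nc])
                if new_cost < dist.get((nr, nc), float("inf")):
                    dist[(nr, nc)] = new_cost
                    prev[(nr, nc)] = cell
                    heapq.heappush(heap, (new_cost, (nr, nc)))
    if target not in dist:
        return float("inf"), []
    # Walk back from the target to recover the corridor.
    path = [target]
    while path[-1] != source:
        path.append(prev[path[-1]])
    path.reverse()
    return dist[target], path

if __name__ == "__main__":
    # Hypothetical surface: a high-resistance barrier (100) between two sources.
    surface = [
        [1, 100, 1],
        [1, 100, 1],
        [1, 1, 1],
    ]
    cost, path = least_cost_path(surface, (0, 0), (0, 2))
    print(cost, path)
```

In this toy surface the corridor detours around the barrier instead of crossing it, which is the behavior an MCR-style model is meant to capture.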

Protocol: Optimizing an Ecological Network using a Biomimetic Intelligent Algorithm

This protocol details an advanced method for the synergistic, patch-level optimization of an EN's function and structure using a modified ant colony optimization (MACO) algorithm [1].

1. Define the Optimization Framework:

- Objective Functions:

- Function-oriented: Maximize total habitat quality/function of all ecological patches.

- Structure-oriented: Maximize the global connectivity (e.g., IIC) of the entire network.

- Constraint Conditions: Set constraints for total area of ecological land, maximum budget for land-use conversion, and spatial adjacency rules.

- Transformation Rules: Define land-use transition rules (e.g., how farmland can be converted to ecological land).

2. Implement Spatial Optimization Operators:

- Integrate the following operators into the MACO algorithm:

a. Micro Functional Optimization Operators (Bottom-up): Adjust local land-use patterns to improve the ecological function of individual patches.

b. Macro Structural Optimization Operator (Top-down): Identifies potential new ecological stepping stones globally to enhance overall network connectivity.

c. Global Ecological Node Emergence: Uses the Fuzzy C-Means (FCM) clustering algorithm to identify locations with high potential to become new ecological sources.

3. Execute GPU-Accelerated Parallel Computation:

- Hardware: Utilize consumer-grade high-performance Graphics Processing Units (GPUs).

- Software: Implement using parallel computing frameworks like CUDA or OpenCL.

- Procedure:

a. Establish a data transfer pattern (computational stencil) between the CPU and GPU.

b. Distribute the optimization calculation for every geographic unit (e.g., grid cell) across thousands of parallel GPU threads.

c. Run the MACO model synchronously and concurrently on all units to identify the optimal land-use retrofit plan.
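For readers unfamiliar with ant colony optimization, the sketch below shows a generic ACO loop that selects a fixed number of cells to convert to ecological land. It is not the modified algorithm of [1] and omits the spatial operators entirely. The weighted-sum combination of the two objectives, the per-cell gain lists, and all parameter names are assumptions made for illustration.

```python
import random

def aco_select_cells(function_gain, structure_gain, budget, w_f=0.5, w_s=0.5,
                     n_ants=20, n_iter=50, alpha=1.0, beta=2.0, rho=0.1, seed=0):
    """Generic ACO: choose `budget` cells maximising a weighted sum of two gains."""
    rng = random.Random(seed)
    # Assumed scalarisation of the two objectives; all gains must be positive.
    gains = [w_f * f + w_s * s for f, s in zip(function_gain, structure_gain)]
    n = len(gains)
    pheromone = [1.0] * n
    best, best_score = [], float("-inf")
    for _ in range(n_iter):
        for _ in range(n_ants):
            chosen, available = [], list(range(n))
            while available and len(chosen) < budget:
                # Selection probability ~ pheromone^alpha * heuristic^beta
                weights = [(pheromone[i] ** alpha) * (gains[i] ** beta) for i in available]
                pick = rng.choices(available, weights=weights, k=1)[0]
                chosen.append(pick)
                available.remove(pick)
            score = sum(gains[i] for i in chosen)
            if score > best_score:
                best, best_score = sorted(chosen), score
        # Evaporate everywhere, then reinforce the cells of the best plan so far.
        pheromone = [(1.0 - rho) * p for p in pheromone]
        for i in best:
            pheromone[i] += rho * best_score
    return best, best_score

if __name__ == "__main__":
    # Hypothetical per-cell gains for six candidate cells.
    f_gain = [0.9, 0.1, 0.5, 0.7, 0.2, 0.3]
    s_gain = [0.1, 0.2, 0.9, 0.6, 0.1, 0.4]
    print(aco_select_cells(f_gain, s_gain, budget=3))
```

In a GPU implementation, the per-cell weight computation and the pheromone update are the natural candidates for parallel execution across geographic units.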

Table 2: Key Metrics for Evaluating Network Optimization Performance

| Evaluation Orientation | Performance Metric | Description |

|---|---|---|

| Functional Optimization | Habitat Quality Index | Measures the capacity of a patch to support species, based on its intrinsic characteristics and surrounding threats. |

| Structural Optimization | Integral Index of Connectivity (IIC) | A graph-based metric that evaluates the overall connectivity of the network based on the topology of patches and links. |

| Computational Efficiency | Speed-up Ratio | The ratio of computation time on a CPU to the computation time on a GPU for the same optimization task. |
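As a worked example of the structural metric in the table above, the sketch below computes the Integral Index of Connectivity in its usual graph form: the sum over all patch pairs of a_i * a_j / (1 + nl_ij), divided by the squared landscape area, where nl_ij is the number of links on the shortest path between patches i and j. It also derives a patch importance value as the percentage drop in IIC when a patch is removed. The function names and the three-patch example are hypothetical.

```python
from collections import deque

def iic(areas, links, landscape_area, removed=frozenset()):
    """Integral Index of Connectivity for patches (nodes) joined by links (edges)."""
    n = len(areas)
    adj = [[] for _ in range(n)]
    for a, b in links:
        if a not in removed and b not in removed:
            adj[a].append(b)
            adj[b].append(a)
    total = 0.0
    for i in range(n):
        if i in removed:
            continue
        # Breadth-first search: number of links to every patch reachable from i.
        hops = {i: 0}
        queue = deque([i])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in hops:
                    hops[v] = hops[u] + 1
                    queue.append(v)
        # Unreachable patch pairs contribute nothing to the sum.
        total += sum(areas[i] * areas[j] / (1 + nl) for j, nl in hops.items())
    return total / landscape_area ** 2

def patch_importance(areas, links, landscape_area, k):
    """Percentage loss in IIC when patch k is removed from the network."""
    base = iic(areas, links, landscape_area)
    reduced = iic(areas, links, landscape_area, removed={k})
    return 100.0 * (base - reduced) / base

if __name__ == "__main__":
    # Hypothetical chain of three equal patches: 0 - 1 - 2
    areas, links = [1.0, 1.0, 1.0], [(0, 1), (1, 2)]
    print(iic(areas, links, 10.0))
    print(patch_importance(areas, links, 10.0, 1), patch_importance(areas, links, 10.0, 0))
```

In the chain example the middle patch scores highest, since removing it disconnects the two end patches.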

Visual Workflows and System Architecture

Ecological Network Construction & Optimization

GPU-Accelerated Optimization Stencil

Table 3: Essential Tools and Platforms for Ecological Network Research

| Tool/Resource | Category | Primary Function | Reference |

|---|---|---|---|

| Conefor | Stand-alone / R-based | Quantifies the importance of habitat patches for landscape connectivity using graph theory. | [25] [26] |

| Linkage Mapper | ArcGIS Toolbox | A GIS toolset to model ecological corridors using least-cost path analysis. | [26] |

| Circuitscape | Stand-alone / GIS | Applies circuit theory to model landscape connectivity and identify movement corridors. | [25] [26] |

| GECOT | Open-source Tool | Models conservation and restoration planning as a connectivity optimization problem under budget constraints. | [26] |

| CUDA/OpenCL | Parallel Computing Framework | APIs for enabling parallel computing on NVIDIA (CUDA) or cross-vendor (OpenCL) GPUs. | [1] [6] |

| Fuzzy C-Means (FCM) | Algorithm | Unsupervised clustering used to identify potential new ecological nodes in optimization models. | [1] |

| Ant Colony Optimization (ACO) | Biomimetic Algorithm | A metaheuristic optimization algorithm inspired by ant foraging behavior, used for land-use layout retrofits. | [1] |

Building and Deploying GPU-Optimized Ecological Models

Ecological network optimization research has entered a data-intensive era, where understanding complex spatiotemporal patterns requires immense computational power. Modern studies, such as those analyzing the spatiotemporal evolution of ecological networks in arid regions, involve processing decades of satellite imagery, climate data, and species distribution information. These analyses generate massive datasets with trillions of grid points and quadrillion-degree-of-freedom simulations that surpass the capabilities of traditional central processing unit (CPU)-based computing. The emergence of graphics processing unit (GPU) parallel computing frameworks has revolutionized this field by enabling researchers to execute complex ecological simulations orders of magnitude faster than previously possible.

GPU computing frameworks provide the essential toolkit for accelerating every stage of ecological modeling, from data preprocessing to simulation and optimization. The parallel architecture of GPUs, featuring thousands of computational cores, is well suited to the embarrassingly parallel nature of many ecological algorithms, including spatial pattern analysis, landscape connectivity modeling, and circuit theory applications. This article provides detailed application notes and experimental protocols for leveraging three key GPU computing frameworks (CUDA, OpenACC, and PyTorch) within the context of ecological network optimization research. By integrating these tools, researchers can model complex ecological systems at greater scale and precision, ultimately supporting more effective conservation strategies and ecosystem management decisions.

The GPU Computing Toolkit for Ecological Research

Ecological researchers have multiple pathways for leveraging GPU acceleration, each with distinct advantages for different aspects of ecological modeling. The three primary frameworks discussed herein—CUDA, OpenACC, and PyTorch—represent complementary approaches that can be integrated into a comprehensive research workflow. CUDA provides low-level hardware control for optimizing performance-critical routines, OpenACC offers directive-based acceleration with minimal code modification, and PyTorch enables rapid prototyping of machine learning components for ecological prediction tasks. A comparative analysis of these frameworks reveals their respective positioning within the research toolkit.

Table 1: Comparison of GPU Computing Frameworks for Ecological Research

| Framework | Programming Approach | Primary Use Cases in Ecology | Learning Curve | Performance Optimization |

|---|---|---|---|---|

| NVIDIA CUDA | C++, Python, Fortran with GPU-specific extensions | High-performance computing for spatial analysis, circuit theory, fluid dynamics simulations | Steep | Maximum performance via direct hardware control |

| OpenACC | Directives added to C, C++, or Fortran | Accelerating existing ecological simulation code with minimal rewriting | Moderate | Good performance with minimal code modification |

| PyTorch | Python with GPU tensor operations | Machine learning for species distribution modeling, habitat suitability prediction | Gentle | High performance for ML models, automatic differentiation |

The selection of an appropriate framework depends on multiple factors, including the researcher's computational background, existing codebase, and specific modeling requirements. CUDA delivers maximum performance but requires significant programming expertise and code restructuring. OpenACC provides a balanced approach for accelerating existing Fortran, C, or C++ ecological simulations with minimal code changes, making it ideal for legacy codebases. PyTorch offers the most accessible entry point for researchers already familiar with Python and is particularly valuable for integrating machine learning approaches with traditional ecological modeling.

Research Reagent Solutions: Essential Software Components

Implementing GPU-accelerated ecological modeling requires a suite of specialized software components that function as the "research reagents" of computational ecology. These foundational elements provide the mathematical operations, data structures, and programming interfaces necessary to efficiently execute ecological algorithms on GPU hardware. The core components listed below represent the essential toolkit for researchers embarking on GPU-accelerated ecological network optimization.

Table 2: Essential Software Components for GPU-Accelerated Ecological Research

| Component | Function | Ecological Application Example |

|---|---|---|

| CUDA Toolkit | Development environment for GPU-accelerated applications | Provides compiler, libraries and runtime for custom ecological algorithms |

| cuDF | GPU-accelerated DataFrame operations | Accelerating spatial data processing for habitat fragmentation analysis |

| OpenACC Compiler | Directive translation for automatic GPU parallelization | Accelerating legacy Fortran code for hydrological simulations |

| PyTorch with CUDA | GPU-accelerated tensor operations and neural networks | Species distribution modeling using deep learning |

| CUDA-Q | Hybrid quantum-classical computing platform | Future applications in modeling complex ecological networks |

| Thrust Library | CUDA C++ template library for parallel algorithms | Spatial sorting and searching operations in landscape genetics |

These software components interface with specialized hardware to form a complete research environment. The hardware foundation typically includes NVIDIA GPUs (from consumer-grade RTX cards to data center accelerators like the H100), AMD Instinct series GPUs for ROCm-based workflows, or cloud-based GPU instances. For ecological research teams with limited computational expertise, containerization platforms like Docker with the NVIDIA Container Toolkit provide pre-configured environments that eliminate complex dependency management, while services like Thunder Compute offer affordable testing for both CUDA and ROCm frameworks at approximately $0.66-$0.78 per hour for A100 GPUs.

Application Notes: Framework Integration in Ecological Workflows

CUDA for High-Performance Ecological Simulation

The CUDA platform provides the foundational layer for GPU-accelerated ecological modeling, delivering maximum performance for computationally intensive simulations. Ecological research applications that benefit most from CUDA implementation include high-resolution spatial pattern analysis, landscape connectivity modeling, and individual-based simulations with numerous autonomous agents. A recent study on ecological network optimization in Xinjiang from 1990-2020 exemplifies this approach, implementing Morphological Spatial Pattern Analysis (MSPA) and circuit theory with GPU acceleration to process 30 years of satellite-derived vegetation and drought indices [27]. This research, which analyzed changes over 26,438 km² of ecological resistance and 743 km of ecological corridors, required the massive parallelism that CUDA provides.

The CUDA ecosystem offers specialized libraries that directly benefit ecological modeling workflows. The cuDF library, for instance, provides GPU-accelerated DataFrame operations that can dramatically accelerate the preprocessing of ecological tabular data, with performance gains of 10-100× over CPU-based pandas operations for large datasets [28]. For matrix operations fundamental to spatial analysis, the cuBLAS library delivers optimized linear algebra routines, while the Thrust library offers parallel algorithms for sorting, reduction, and prefix-sums that accelerate landscape connectivity analyses. These components enable researchers to construct complete ecological modeling pipelines that remain entirely on the GPU, minimizing costly data transfers between system and GPU memory.

A representative example of CUDA acceleration in ecological modeling can be found in the implementation of circuit theory algorithms for modeling landscape connectivity. Circuit theory, which treats landscapes as electrical circuits where current flow represents movement probability, requires solving systems of linear equations for millions of grid cells. A CUDA implementation parallelizes this process across thousands of GPU cores, reducing computation time from days to hours and enabling higher-resolution analyses. Similarly, individual-based models that simulate the movement of thousands of organisms through heterogeneous landscapes achieve nearly linear scaling with CUDA implementation, allowing researchers to incorporate greater ecological complexity and run more simulations for robust statistical analysis.

OpenACC for Directive-Based Acceleration of Legacy Code

OpenACC provides a directive-based approach to GPU acceleration that is particularly valuable for ecological research groups with substantial investments in legacy Fortran, C, or C++ codebases. Unlike CUDA, which requires rewriting algorithms specifically for GPU execution, OpenACC allows researchers to incrementally accelerate existing code by adding simple compiler directives that identify parallel regions. This approach was demonstrated in the MHIT36 project, where OpenACC directives were used to create a GPU-tailored solver for interface-resolved simulations of multiphase turbulence, achieving excellent scaling efficiency across 1024 GPUs [29]. The directive-based model enables ecological researchers to accelerate complex simulations with minimal code restructuring, preserving decades of validated algorithmic development.

The OpenACC programming model uses #pragma acc directives in C and C++ (and the equivalent !$acc sentinels in Fortran) to specify parallel regions, data movement between host and device memory, and loop-level parallelism. A typical ecological simulation accelerated with OpenACC might include directives to parallelize nested loops that update spatial grid cells, reduce error metrics across the computational domain, and manage the transfer of landscape resistance matrices between CPU and GPU memory. During an Open Hackathon organized by CINECA, this approach delivered a 26× speedup for the MHIT36 solver [29], demonstrating the significant performance gains possible with directive-based acceleration. Similar benefits have been reported in adjacent environmental applications: OpenACC has been used to accelerate wind farm wake modeling in the FLORIS simulator, achieving a 190× speedup for a real-world challenge problem [29].

The OpenACC ecosystem supports researchers through specialized training events, hackathons, and bootcamps designed to build proficiency with directive-based programming. These resources are particularly valuable for ecological research teams that may have extensive domain expertise but limited parallel programming experience. The OpenACC specification continues to evolve, with ongoing development focused on improving performance portability across diverse accelerator architectures, including AMD and Intel GPUs. This architectural diversity is increasingly important for ecological researchers seeking to maximize computational efficiency on different supercomputing platforms, such as Frontier (AMD GPUs), Aurora (Intel GPUs), and Polaris (NVIDIA GPUs) [29].

PyTorch for Machine Learning in Ecological Prediction

PyTorch has emerged as the leading framework for integrating machine learning approaches with ecological modeling, particularly for tasks involving pattern recognition, species distribution prediction, and habitat suitability assessment. The framework's intuitive interface, automatic differentiation capabilities, and extensive ecosystem of pre-trained models enable ecological researchers to rapidly develop and deploy deep learning solutions without low-level GPU programming. PyTorch's support for both CUDA and ROCm backends ensures broad hardware compatibility, from individual workstations with consumer GPUs to large-scale computing clusters with enterprise accelerators [30].

Ecological applications of PyTorch span multiple subdisciplines, from conservation biology to landscape ecology. Researchers developing foundation models for ecological prediction can leverage PyTorch's distributed training capabilities to scale across multiple GPUs, efficiently processing high-resolution satellite imagery, acoustic monitoring data, and climate projections. The framework's flexibility supports custom neural network architectures tailored to ecological data, including graph neural networks for modeling habitat networks, convolutional neural networks for analyzing remote sensing imagery, and recurrent neural networks for modeling temporal dynamics in ecological time series. These capabilities were highlighted at the recent PyTorch Conference 2025, which featured sessions on AI-powered scientific computing and the release of new libraries supporting reinforcement learning and agentic frameworks [31].

A particularly powerful application of PyTorch in ecological research involves combining traditional process-based models with data-driven machine learning approaches. This hybrid methodology leverages the mechanistic understanding embedded in process models while using neural networks to learn from complex observational data where explicit mechanistic relationships are unknown. For example, researchers might use PyTorch to develop a neural network that emulates the output of a computationally intensive ecological simulation, creating a "surrogate model" that executes thousands of times faster than the original. This approach enables previously infeasible sensitivity analyses, uncertainty quantification, and scenario exploration that would be computationally prohibitive with traditional simulation techniques alone.
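The surrogate idea can be illustrated with a deliberately small sketch. To keep it dependency-light it does not use PyTorch: it fits a one-hidden-layer network whose hidden weights are random and fixed and whose output layer is solved by least squares, which is a simplification of the gradient-trained network described above. The function slow_simulation, the feature count, and the weight scales are all hypothetical choices made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

def slow_simulation(x):
    """Hypothetical stand-in for an expensive process model (one input, one output)."""
    return np.sin(3.0 * x) + 0.5 * x

def fit_surrogate(x_train, y_train, n_hidden=50):
    """Fit a one-hidden-layer network with fixed random hidden weights.

    Only the output layer is fitted (by least squares); a PyTorch model would
    instead train every layer by gradient descent.
    """
    w = rng.normal(0.0, 3.0, size=n_hidden)      # hidden weights, never trained
    b = rng.uniform(-1.0, 1.0, size=n_hidden)    # hidden biases, never trained
    hidden = np.tanh(np.outer(x_train, w) + b)   # shape (n_samples, n_hidden)
    design = np.column_stack([hidden, np.ones_like(x_train)])
    coef, *_ = np.linalg.lstsq(design, y_train, rcond=None)

    def predict(x):
        h = np.tanh(np.outer(x, w) + b)
        return np.column_stack([h, np.ones_like(x)]) @ coef

    return predict

# "Run" the expensive model on a design of 200 inputs, then fit the emulator.
x_train = np.linspace(-1.0, 1.0, 200)
surrogate = fit_surrogate(x_train, slow_simulation(x_train))

# Compare emulator error on unseen inputs with a constant (mean) predictor.
x_test = rng.uniform(-1.0, 1.0, size=100)
truth = slow_simulation(x_test)
rmse = np.sqrt(np.mean((surrogate(x_test) - truth) ** 2))
baseline = np.sqrt(np.mean((truth - slow_simulation(x_train).mean()) ** 2))
print(f"surrogate RMSE = {rmse:.4g}, mean-predictor RMSE = {baseline:.4g}")
```

Once fitted, the surrogate is evaluated with a handful of array operations, which is what makes large sensitivity sweeps affordable; the held-out comparison is the minimum check before trusting it in place of the simulation.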

Experimental Protocols for GPU-Accelerated Ecological Modeling

Protocol 1: Landscape Connectivity Analysis Using CUDA-Accelerated Circuit Theory

Objective: Implement a high-performance circuit theory analysis to model landscape connectivity for species movement, utilizing CUDA for computational acceleration.

Background: Circuit theory models landscapes as electrical circuits where resistance values represent landscape permeability, and current flow represents movement probability. This approach requires solving large systems of linear equations across high-resolution grids, making it computationally intensive and ideal for GPU acceleration.

Materials and Reagents:

- Software: NVIDIA CUDA Toolkit 12.6+, Python 3.9+ with NumPy and CuPy, GIS software (QGIS or ArcGIS)

- Hardware: NVIDIA GPU with 8 GB or more of VRAM (GeForce RTX 30-series or newer, or a data center equivalent)

- Data Inputs: Land cover raster, resistance surface, species occurrence points

Procedure:

- Data Preparation (CPU):

  - Convert land cover data to a resistance grid (ASCII or GeoTIFF format)

  - Resample all spatial layers to a consistent resolution and extent

  - Normalize resistance values to a consistent scale (e.g., 1-100)

- GPU Memory Allocation (CUDA):

  - Allocate device memory for the resistance matrix, voltage grid, and current density

  - Transfer the resistance matrix from host to device memory

- Kernel Implementation (CUDA C++):

  - Develop CUDA kernels for solving the linear system using iterative methods

  - Implement parallel reduction for convergence checking

  - Optimize memory access patterns for spatial coherence

- Circuit Theory Execution:

  - Set source and ground locations based on species occurrence data

  - Iteratively solve for voltage across the landscape using Jacobi or Gauss-Seidel methods (Gauss-Seidel needs a red-black ordering to run in parallel)

  - Calculate current densities from voltage gradients

- Result Analysis:

  - Transfer results from device to host memory

  - Identify pinch points and barriers in the connectivity network

  - Calculate cumulative current flow for corridor prioritization

Validation: Compare results with CPU-based implementations using synthetic landscapes with known connectivity patterns. Verify conservation of current flow at landscape junctions.
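The solve-and-verify core of this protocol can be prototyped on the CPU before any CUDA kernel is written. The sketch below is a minimal NumPy version under stated assumptions: 4-neighbour connectivity, link conductance taken as the reciprocal of the mean resistance of the two cells, a single source held at 1 V and a single ground at 0 V, and a small hypothetical 6×6 landscape. The function names are illustrative, not part of any library. Because CuPy mirrors much of the NumPy array API, the same array expressions are a plausible starting point for a GPU port, but that port is not shown or tested here.

```python
import numpy as np

def edge_conductances(resistance):
    """Conductance of the links between horizontally and vertically adjacent cells."""
    g_h = 2.0 / (resistance[:, :-1] + resistance[:, 1:])   # shape (H, W-1)
    g_v = 2.0 / (resistance[:-1, :] + resistance[1:, :])   # shape (H-1, W)
    return g_h, g_v

def jacobi_voltage(resistance, source, ground, tol=1e-9, max_iter=100_000):
    """Jacobi iteration for node voltages with source = 1 V and ground = 0 V."""
    g_h, g_v = edge_conductances(resistance)
    v = np.zeros_like(resistance, dtype=float)
    fixed = np.zeros(resistance.shape, dtype=bool)
    fixed[source] = fixed[ground] = True
    v[source] = 1.0
    # Total conductance attached to each cell (diagonal of the graph Laplacian).
    diag = np.zeros_like(v)
    diag[:, :-1] += g_h; diag[:, 1:] += g_h
    diag[:-1, :] += g_v; diag[1:, :] += g_v
    for it in range(max_iter):
        acc = np.zeros_like(v)
        acc[:, :-1] += g_h * v[:, 1:]    # contribution of the right neighbour
        acc[:, 1:] += g_h * v[:, :-1]    # left neighbour
        acc[:-1, :] += g_v * v[1:, :]    # lower neighbour
        acc[1:, :] += g_v * v[:-1, :]    # upper neighbour
        v_new = np.where(fixed, v, acc / diag)   # every free cell updates at once
        delta = np.max(np.abs(v_new - v))        # the reduction used for convergence
        v = v_new
        if delta < tol:
            break
    return v, it + 1

def terminal_currents(resistance, v, source, ground):
    """Net current leaving the source cell and entering the ground cell."""
    g_h, g_v = edge_conductances(resistance)
    i_h = g_h * (v[:, :-1] - v[:, 1:])   # Ohm's law, left-to-right links
    i_v = g_v * (v[:-1, :] - v[1:, :])   # top-to-bottom links
    out = np.zeros_like(v)
    out[:, :-1] += i_h; out[:, 1:] -= i_h
    out[:-1, :] += i_v; out[1:, :] -= i_v
    return out[source], -out[ground]

# Hypothetical landscape: uniform resistance with a partial barrier in column 3.
resistance = np.ones((6, 6))
resistance[1:, 3] = 5.0
source, ground = (5, 0), (5, 5)

voltage, n_iter = jacobi_voltage(resistance, source, ground)
i_src, i_gnd = terminal_currents(resistance, voltage, source, ground)
print(f"iterations: {n_iter}, current out: {i_src:.6f}, current in: {i_gnd:.6f}")
```

The two terminal currents agreeing to within the solver tolerance is the current-conservation check named in the validation step; with a 1 V source, 1 / i_src is the effective resistance between the two cells. Jacobi is used here because every cell updates independently, which is the property a GPU kernel exploits, at the cost of slower convergence than the solvers used in production tools.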

Protocol 2: Ecological Network Optimization with OpenACC Directives

Objective: Accelerate existing ecological network simulation code using OpenACC directives to enable larger-scale and higher-resolution analyses without extensive code rewriting.

Background: Ecological network simulations often involve stencil operations across regular grids, which are highly amenable to directive-based parallelization. This protocol demonstrates how to accelerate a typical vegetation dynamics model using OpenACC.

Materials and Reagents:

- Software: OpenACC-compatible compiler (NVHPC, GCC), Ecological simulation code (Fortran/C/C++)

- Hardware: GPU-equipped system (NVIDIA, AMD, or Intel GPU)

- Data Inputs: Initial vegetation cover, environmental drivers, species parameters

Procedure:

- Code Analysis:

  - Profile existing code to identify computational hotspots

  - Identify nested loops over spatial domains for parallelization

  - Locate reduction operations for error calculation

- Directive Insertion:

  - Add #pragma acc data directives to manage data movement

  - Annotate parallel loops with #pragma acc kernels or #pragma acc parallel loop

  - Specify reduction operations for error metrics

- Memory Management:

  - Use the copy clause for input/output arrays

  - Use the create clause for temporary arrays

  - Minimize host-device transfers through data regions

- Performance Optimization:

  - Experiment with loop collapsing for nested loops

  - Implement tiling for memory-intensive operations

  - Utilize unified memory for systems with CPU-GPU integration

- Validation and Verification:

  - Compare results with original CPU implementation

  - Verify conservation laws (mass, energy) are maintained

  - Check numerical precision matches original implementation

Example Code Snippet:

Troubleshooting: If performance is suboptimal, use profiling tools (Nsight Systems, or the legacy nvprof on older GPUs) to identify memory bottlenecks. Ensure data regions persist across iterations to minimize transfer overhead.
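The validation and verification checks in this protocol can be scripted outside the compiled code. The following Python sketch assumes the original and accelerated builds each write their final grid to an array; here both are emulated in NumPy with a hypothetical periodic diffusion stencil, where the loop version stands in for the original CPU code and the vectorized version stands in for the accelerated output. The tolerances are illustrative and should be chosen for the model at hand.

```python
import numpy as np

def diffuse_reference(u, d):
    """Loop-based stencil update standing in for the original, unaccelerated code."""
    n, m = u.shape
    out = np.empty_like(u)
    for i in range(n):
        for j in range(m):
            nb = (u[(i - 1) % n, j] + u[(i + 1) % n, j]
                  + u[i, (j - 1) % m] + u[i, (j + 1) % m])
            out[i, j] = u[i, j] + d * (nb - 4.0 * u[i, j])
    return out

def diffuse_fast(u, d):
    """Vectorized update standing in for the accelerated build's output."""
    nb = (np.roll(u, 1, axis=0) + np.roll(u, -1, axis=0)
          + np.roll(u, 1, axis=1) + np.roll(u, -1, axis=1))
    return u + d * (nb - 4.0 * u)

def validate(reference, candidate, initial_mass, rtol=1e-10):
    """Summarize agreement with the reference and drift in the conserved total."""
    return {
        "max_abs_diff": float(np.max(np.abs(reference - candidate))),
        "matches": bool(np.allclose(reference, candidate, rtol=rtol, atol=1e-12)),
        "mass_drift": float(abs(candidate.sum() - initial_mass)),
    }

rng = np.random.default_rng(42)
u0 = rng.random((16, 16))          # hypothetical initial vegetation cover
ref, fast = u0.copy(), u0.copy()
for _ in range(10):
    ref, fast = diffuse_reference(ref, 0.1), diffuse_fast(fast, 0.1)

report = validate(ref, fast, u0.sum())
print(report)
```

A periodic diffusion stencil conserves the grid total exactly in exact arithmetic, so any drift beyond rounding error points to a boundary or data-movement bug rather than to floating-point reordering.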

Protocol 3: Species Distribution Modeling with PyTorch Neural Networks

Objective: Develop a GPU-accelerated deep learning model for predicting species distributions using environmental variables and occurrence records.

Background: Species distribution models correlate environmental conditions with species occurrence to predict habitat suitability across landscapes. Deep learning approaches can capture complex nonlinear relationships but require significant computational resources, making GPU acceleration essential.

Materials and Reagents:

- Software: PyTorch with CUDA or ROCm support, Scikit-learn, Pandas, GeoPandas

- Hardware: GPU with 8GB+ VRAM

- Data Inputs: Species occurrence records, environmental raster layers (climate, topography, land cover)

Procedure:

- Data Preparation:

  - Extract environmental values at occurrence and background points

  - Normalize environmental variables to zero mean and unit variance

  - Split data into training, validation, and test sets (70-15-15)

- Model Architecture Design:

  - Define neural network with fully connected layers

  - Implement appropriate activation functions (ReLU, Sigmoid)

  - Add dropout layers for regularization

- GPU Acceleration Setup:

  - Initialize model with device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

  - Transfer model and tensors to GPU using .to(device)

  - Utilize DataLoader with pin_memory=True for faster data transfer

- Model Training:

  - Implement custom loss function accounting for presence-background data

  - Configure optimizer (Adam, learning rate 0.001)

  - Execute training loop with batch processing

- Prediction and Mapping:

  - Apply trained model to generate habitat suitability maps

  - Transfer predictions back to CPU for GIS export

  - Calculate evaluation metrics (AUC, TSS, accuracy)
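The setup, training, and prediction steps above can be sketched end to end as follows. This is a minimal illustration, not a tuned model: the data are synthetic (six hypothetical standardized predictors with an invented presence rule), the layer sizes and epoch count are arbitrary, and plain binary cross-entropy stands in for the custom presence-background loss that the protocol calls for.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Use the GPU when one is visible to PyTorch, otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Synthetic stand-in for normalized environmental predictors (hypothetical):
# 512 points, 6 variables; "presence" depends non-linearly on the first two.
x = torch.randn(512, 6)
y = ((x[:, 0] ** 2 + x[:, 1]) < 0.5).float().unsqueeze(1)

# Pinned host memory only helps when batches are copied to a CUDA device.
loader = DataLoader(TensorDataset(x, y), batch_size=64, shuffle=True,
                    pin_memory=(device.type == "cuda"))

model = nn.Sequential(
    nn.Linear(6, 32), nn.ReLU(), nn.Dropout(0.2),
    nn.Linear(32, 16), nn.ReLU(),
    nn.Linear(16, 1),   # raw logit; the sigmoid is folded into the loss
).to(device)

# Assumption: standard binary cross-entropy in place of a presence-background loss.
loss_fn = nn.BCEWithLogitsLoss()
optimizer = torch.optim.Adam(model.parameters(), lr=0.001)

def run_epoch():
    """One pass over the training data; returns the mean loss per sample."""
    model.train()
    total = 0.0
    for xb, yb in loader:
        xb = xb.to(device, non_blocking=True)
        yb = yb.to(device, non_blocking=True)
        optimizer.zero_grad()
        loss = loss_fn(model(xb), yb)
        loss.backward()
        optimizer.step()
        total += loss.item() * xb.size(0)
    return total / len(loader.dataset)

losses = [run_epoch() for _ in range(30)]

# Prediction: disable dropout, skip gradient tracking, and move results to the CPU.
model.eval()
with torch.no_grad():
    suitability = torch.sigmoid(model(x.to(device))).cpu().numpy()

print(f"device: {device}, first loss: {losses[0]:.3f}, last loss: {losses[-1]:.3f}")
```

The same script runs unchanged on a CPU-only machine, which is convenient for debugging before moving to a GPU node. On ROCm builds of PyTorch the "cuda" device name is also the one used, so no code change is expected there.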

Validation: Use spatial cross-validation to assess model transferability. Compare with traditional methods (MaxEnt, GLM) using the same evaluation framework.
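For the evaluation metrics named in this protocol, a small dependency-light sketch is given below. It computes AUC directly from its pairwise definition (the probability that a presence point scores higher than a background point, with ties counted as one half) and the True Skill Statistic as sensitivity plus specificity minus one. The function names, the 0.45 threshold, and the six example scores are hypothetical; the pairwise AUC is quadratic in sample size, so a library routine is preferable for large datasets.

```python
import numpy as np

def auc_pairwise(y_true, scores):
    """AUC as the fraction of (presence, background) pairs ranked correctly."""
    y_true = np.asarray(y_true).astype(bool)
    scores = np.asarray(scores, dtype=float)
    diff = scores[y_true][:, None] - scores[~y_true][None, :]
    return (np.sum(diff > 0) + 0.5 * np.sum(diff == 0)) / diff.size

def tss_and_accuracy(y_true, scores, threshold):
    """True Skill Statistic and accuracy after thresholding the scores."""
    y_true = np.asarray(y_true).astype(bool)
    pred = np.asarray(scores, dtype=float) >= threshold
    sensitivity = np.mean(pred[y_true])       # true positive rate
    specificity = np.mean(~pred[~y_true])     # true negative rate
    accuracy = np.mean(pred == y_true)
    return sensitivity + specificity - 1.0, accuracy

# Hypothetical labels (1 = presence, 0 = background) and predicted suitability.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.1]

auc = auc_pairwise(labels, scores)
tss, acc = tss_and_accuracy(labels, scores, threshold=0.45)
print(f"AUC = {auc:.3f}, TSS = {tss:.3f}, accuracy = {acc:.3f}")
# → AUC = 0.889, TSS = 0.333, accuracy = 0.667
```

In the example, eight of the nine presence-background pairs are ordered correctly, and one presence and one background point fall on the wrong side of the threshold. Under spatial cross-validation these functions would be applied to each held-out spatial block separately.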

Performance Benchmarking and Sustainability Considerations

Computational Performance Metrics

Evaluating the performance of GPU-accelerated ecological models requires careful consideration of both computational efficiency and ecological relevance. Benchmarking should combine traditional computational metrics with ecology-specific measures that reflect the scientific value of acceleration. The table below summarizes key performance indicators for GPU-accelerated ecological modeling applications.

Table 3: Performance Metrics for GPU-Accelerated Ecological Modeling

| Metric Category | Specific Metric | Target Performance | Ecological Relevance |

|---|---|---|---|

| Computational Speed | Simulations per day | 10-100× CPU performance | Enables parameter sweeps and uncertainty analysis |

| Memory Efficiency | GPU memory utilization | >80% utilization | Supports higher-resolution spatial domains |