GPU-Accelerated Ecological Solvers: A Performance Comparison for Biomedical Research and Drug Discovery

Abstract

This article provides a comprehensive performance comparison of GPU-accelerated ecological solvers, tailored for researchers, scientists, and professionals in drug development. It explores the foundational shift from CPU to GPU computing, details methodological applications in key biomedical areas like molecular dynamics and docking, presents crucial optimization strategies for maximizing hardware utilization, and offers a rigorous validation of solver performance across different hardware and software platforms. The goal is to equip the target audience with the knowledge to select, implement, and optimize GPU solvers to drastically reduce simulation times and accelerate discovery.

The GPU Computing Paradigm Shift: From Traditional CPUs to Accelerated Ecological Simulation

In the demanding fields of scientific research and industrial development, complex simulations are indispensable for discovery and innovation. However, the computational cost of these high-fidelity models can be prohibitive. Graphics Processing Unit (GPU) computing has emerged as a transformative force, leveraging massive parallel processing to accelerate simulations across diverse domains from climate science to drug discovery. By performing thousands of calculations simultaneously, GPUs are breaking down computational barriers, enabling faster iteration, higher resolution models, and the exploration of problems previously considered intractable. This guide provides an objective comparison of GPU-accelerated performance against traditional CPU-based methods, detailing the experimental protocols and hardware that are reshaping the landscape of computational science.

The Core Advantage: Parallel Architecture

At the heart of GPU computing's power is its parallel architecture. Unlike a Central Processing Unit (CPU), which has a few cores optimized for fast sequential execution, a GPU consists of thousands of smaller, simpler cores designed to execute many operations simultaneously [1]. This architecture suits computational simulations, which often apply the same mathematical operation (e.g., a finite-difference update for fluid flow or an interaction-energy calculation between molecules) to every point of a large grid or every particle in a system, and then repeat that update over many time steps.

- CPU (Serial Processing): Tasks are executed one after the other, like a single cashier serving a long line of customers.

- GPU (Parallel Processing): Tasks are executed simultaneously, like dozens of cashiers serving all customers in the line at the same time.

This fundamental difference explains the dramatic speedups observed when suitably parallelizable workloads are offloaded to GPUs.
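To make "the same operation at every grid point" concrete, the sketch below applies one explicit (Jacobi-style) diffusion update to a small 1D grid. It is plain Python rather than GPU code, and the function names are hypothetical. It shows the property that makes such updates GPU-friendly: each new value reads only the previous time level, so interior points can be updated in any order (or all at once, one GPU thread per point), while the time loop itself stays sequential.

```python
import random


def diffusion_step(u, alpha):
    """One explicit diffusion step on a 1D grid with fixed end values.

    Every write goes to `new` and every read comes from `u`, so the
    interior updates are independent of one another.
    """
    new = list(u)  # boundary values are carried over unchanged
    for i in range(1, len(u) - 1):
        new[i] = u[i] + alpha * (u[i - 1] - 2 * u[i] + u[i + 1])
    return new


def diffusion_step_any_order(u, alpha, seed=0):
    """Same update, but interior points are visited in a shuffled order.

    Because no update reads a value written in the same step, the order
    cannot change the result; on a GPU each point could be its own thread.
    """
    new = list(u)
    order = list(range(1, len(u) - 1))
    random.Random(seed).shuffle(order)
    for i in order:
        new[i] = u[i] + alpha * (u[i - 1] - 2 * u[i] + u[i + 1])
    return new


def run(u, alpha, steps):
    """Time stepping remains sequential: step n+1 needs the result of step n."""
    for _ in range(steps):
        u = diffusion_step(u, alpha)
    return u


if __name__ == "__main__":
    u0 = [0.0, 0.0, 1.0, 0.0, 0.0]
    print(diffusion_step(u0, 0.25))  # [0.0, 0.25, 0.5, 0.25, 0.0]
    print(diffusion_step(u0, 0.25) == diffusion_step_any_order(u0, 0.25, seed=7))  # True
```

Shuffling the visiting order is a cheap stand-in for the unordered execution of GPU threads. An in-place (Gauss-Seidel-style) update would not pass this check, because it reads values written earlier in the same sweep.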

Performance Comparison: GPU vs. CPU Across Scientific Domains

The performance benefits of GPU computing are not merely theoretical; they are consistently demonstrated in real-world scientific applications. The following table summarizes quantitative findings from recent studies and implementations across various fields.

Table 1: GPU vs. CPU Performance in Scientific Simulations

| Application Domain | Specific Model / Solver | Key Performance Metric (GPU vs. CPU) | Reported Speedup / Performance Improvement | Hardware Configuration (GPU / CPU) |
|---|---|---|---|---|
| Groundwater Flow [2] | 3D Richards Equation (rich3d) | Simulation runtime | Significant speedup in all test cases; scaling dependent on numerical scheme and soil parameters. | NVIDIA A100 GPU / Multi-threaded CPU |
| Computational Fluid Dynamics [3] | CaLES (Large-Eddy Simulation) | Computational speed equivalence | 1 GPU equivalent to approximately 15 CPU nodes (performance varies with model). | NVIDIA A100 GPU / Intel Xeon Platinum 8358 (32 cores) |
| Air Quality Modeling [4] | CMAQ-CUDA (Gas-Phase Chemistry) | Time per chemistry integration step | Required only 35% to 51% of the CPU runtime (CMAQv5.4), depending on chemical mechanism. | GPU implementation / Baseline Fortran CPU (CMAQv5.4) |
| Neuroscience [5] | NeoCortical Simulator 6 (NCS6) | Simulation scale and speed | Capable of simulating 1 million cells and 100 million synapses in quasi-real time. | Cluster of 8 machines, each with 2 GPUs |
| Drug Discovery [6] | AI/ML Inference Benchmarks | Computational throughput | NVIDIA A100 GPU outperformed a leading CPU by 237 times in AI inference benchmarks. | NVIDIA A100 GPU / "Most advanced CPU" |

Detailed Experimental Protocols

To critically evaluate the claims in Table 1, it is essential to understand the methodologies behind these benchmarks.

Experiment 1: Accelerating 3D Variably-Saturated Flow Simulation

This study systematically compared the performance of different numerical schemes for solving the 3D Richardson–Richards equation on GPUs [2].

- Objective: To understand the scaling performance and sensitivity of numerical schemes for GPU-based hydrological models.

- Methodology:

  - An experimental code ("rich3d") was developed using the Kokkos performance-portability framework, so that a single code base runs on both CPU and GPU architectures.

  - Four numerical schemes (two iterative and two non-iterative) were tested on three benchmark infiltration problems with known reference solutions.

  - The simulation time and speedup (ratio of serial to parallel runtime) were analyzed, factoring in the influence of the numerical scheme, soil constitutive model, and problem size.

- Key Findings: The study confirmed that running on a GPU significantly improves computational speed in all test cases compared with multi-threaded CPU execution. It also revealed that the performance scaling of different solver components on the GPU is not uniform, so a poorly scaled component can bottleneck the entire simulation [2].
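The observation that one poorly scaling component can cap the whole solver follows from Amdahl's law. The sketch below is a hypothetical helper, not part of rich3d: it computes the overall speedup from each component's share of the baseline runtime and that component's individual speedup. The 90%/10% split and the 50x/2x speedups in the example are illustrative numbers, not measurements from the study.

```python
def overall_speedup(fractions, speedups):
    """Amdahl-style overall speedup of a solver made of several components.

    fractions: share of the baseline runtime spent in each component (sums to 1).
    speedups:  speedup achieved by each component on the accelerated platform.
    """
    if len(fractions) != len(speedups):
        raise ValueError("need one speedup per component")
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    # New runtime, normalised so that the baseline runtime is 1.
    new_time = sum(f / s for f, s in zip(fractions, speedups))
    return 1.0 / new_time


if __name__ == "__main__":
    # Hypothetical split: 90% of runtime accelerates 50x, 10% only 2x.
    print(round(overall_speedup([0.9, 0.1], [50.0, 2.0]), 1))  # 14.7
    # Even a near-infinitely fast 90% leaves the 2x component capping speedup at 20x.
    print(round(overall_speedup([0.9, 0.1], [1e12, 2.0]), 1))  # 20.0
```

The second call shows why profiling per-component scaling matters: once the dominant kernels are fast, the slowest remaining component sets the ceiling.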

Experiment 2: Large-Eddy Simulation of Turbulent Flows

The CaLES solver was developed to demonstrate the efficiency of GPU acceleration for incompressible wall-bounded turbulent flows [3].

- Objective: To assess the computational performance and scalability of a GPU-accelerated finite-difference solver for large-eddy simulations.

- Methodology:

  - The solver uses a fast direct method based on eigenfunction expansions to solve the discretized Poisson/Helmholtz equations.

  - GPU acceleration was implemented using OpenACC directives.

  - Performance was assessed on a high-performance cluster (Leonardo at CINECA) with nodes containing one Intel Xeon Platinum CPU and four NVIDIA A100 GPUs.

  - Scaling tests and predictive capability assessments were conducted for cases like turbulent channel and duct flow.

- Key Findings: The solver demonstrated that a single NVIDIA A100 GPU could provide computational power equivalent to approximately 15 nodes of 32-core CPUs, and it showed efficient scaling across multiple GPUs [3].
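The idea behind a "fast direct method based on eigenfunction expansions" can be shown in one dimension. The sketch below is a simplified periodic analogue, not CaLES's actual implementation, which operates on 3D grids; it also uses a naive O(n²) discrete Fourier transform for clarity where production solvers use fast transforms. Fourier modes are eigenvectors of the periodic second-difference operator, with eigenvalues $\lambda_k = (2\cos(2\pi k/n) - 2)/h^2$, so the solve reduces to a forward transform, a division by each mode's eigenvalue, and an inverse transform.

```python
import cmath
import math


def solve_periodic_poisson_1d(f, h):
    """Solve (u[i-1] - 2*u[i] + u[i+1]) / h**2 = f[i] with periodic indices.

    Fourier modes exp(2*pi*1j*k*i/n) are eigenvectors of this operator with
    eigenvalues (2*cos(2*pi*k/n) - 2) / h**2. The k = 0 eigenvalue is zero, so
    f must have zero mean and u is returned with zero mean.
    """
    n = len(f)
    scale = max(1.0, max(abs(x) for x in f))
    if abs(sum(f)) > 1e-9 * n * scale:
        raise ValueError("f must have zero mean for a periodic Poisson problem")

    # Forward transform: project f onto the eigenfunctions (naive O(n^2) DFT).
    f_hat = [sum(f[i] * cmath.exp(-2j * math.pi * k * i / n) for i in range(n))
             for k in range(n)]

    # Divide each coefficient by its eigenvalue; mode k = 0 is set to zero.
    u_hat = [0j] * n
    for k in range(1, n):
        eigenvalue = (2.0 * math.cos(2.0 * math.pi * k / n) - 2.0) / h**2
        u_hat[k] = f_hat[k] / eigenvalue

    # Inverse transform back to grid values; any imaginary part is round-off.
    return [(sum(u_hat[k] * cmath.exp(2j * math.pi * k * i / n) for k in range(n)) / n).real
            for i in range(n)]
```

Applying the discrete operator back to `u` and comparing the result with `f` is a cheap correctness check for any solver of this kind.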

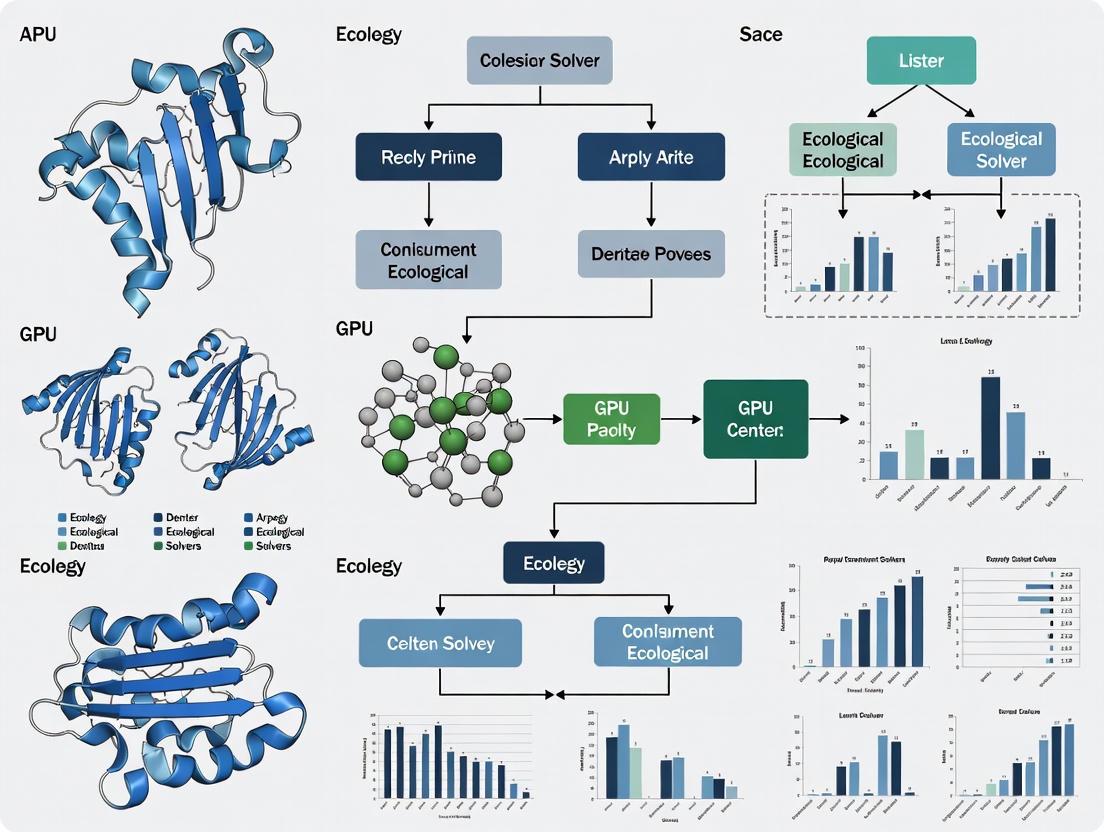

Visualizing the GPU Acceleration Workflow

The acceleration of complex simulations typically follows a structured computational pipeline, which can be generically represented for many of the domains discussed.

Diagram: Generic GPU-Accelerated Simulation Workflow

This diagram illustrates the typical workflow where the CPU manages serial tasks and input/output, while computationally intensive parallel kernels are executed on the GPU, with data transferred between them as needed.
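The cost of moving data between host and device can be estimated before any code is ported. The sketch below is a back-of-envelope model in which all parameter values (kernel time per step, bytes copied, effective host-device bandwidth) are hypothetical. It compares copying results back every step with copying only every k-th step, which is why such workflows keep simulation state resident on the GPU and transfer data only at output intervals.

```python
def transfer_overhead(kernel_time_s, bytes_per_copy, bandwidth_bytes_per_s, copy_every):
    """Fraction of wall time spent on host-device copies in a simple serial model.

    Assumes copies are not overlapped with computation and that one copy of
    `bytes_per_copy` happens every `copy_every` time steps.
    """
    copy_time_s = bytes_per_copy / bandwidth_bytes_per_s
    amortized_copy_s = copy_time_s / copy_every
    return amortized_copy_s / (kernel_time_s + amortized_copy_s)


if __name__ == "__main__":
    # Hypothetical case: 1 ms of kernels per step, 1e6 atoms x 3 coordinates
    # x 4 bytes copied back, 25 GB/s effective host-device bandwidth.
    coords = 1_000_000 * 3 * 4
    print(round(transfer_overhead(1e-3, coords, 25e9, copy_every=1), 3))    # 0.324
    print(round(transfer_overhead(1e-3, coords, 25e9, copy_every=100), 4))  # 0.0048
```

Real codes can also overlap transfers with computation (for example with asynchronous copies), which this serial model deliberately ignores.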

The following resources, spanning programming frameworks, domain platforms, and hardware, are central to leveraging GPU power in research.

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| CUDA (Compute Unified Device Architecture) [5] [4] | Programming Model & Parallel Computing Platform | Provides a programming model, compiler toolchain, and APIs for writing programs that execute directly on NVIDIA GPUs. It is the foundation for many scientific computing applications. |
| OpenCL (Open Computing Language) [1] | Framework for Parallel Programming | An open, royalty-free standard for cross-platform parallel programming across CPUs, GPUs, and other processors, offering hardware flexibility. |
| OpenACC | Directive-Based Parallel Programming Model | Simplifies GPU programming by letting developers add compiler directives to standard C, C++, or Fortran code to mark regions for parallel acceleration. |
| Kokkos [2] | Programming Model & C++ Library | A performance-portable programming model for writing C++ applications that run efficiently on different high-performance computing platforms (e.g., different GPUs and CPUs) from a single code base. |
| NVIDIA A100 / H100 Tensor Core GPUs [3] [6] | Hardware | High-performance computing GPUs featuring specialized Tensor Cores that dramatically accelerate AI training and inference, as well as HPC simulations. |
| NVIDIA Clara for Drug Discovery [7] | Domain-Specific SDK & Platform | A GPU-accelerated computational platform that combines AI, simulation, and data analytics for cross-disciplinary workflows in drug design and development. |
| NVIDIA Modulus [6] | Neural Network Framework | A framework for developing physics-informed machine learning models, used to build AI-based surrogates of complex physical systems in climate and engineering. |

The evidence from these studies is consistent: for workloads that parallelize well, GPU computing is a foundational technology for accelerating complex simulations. The reported data show that GPU acceleration can deliver order-of-magnitude speedups rather than incremental gains, bringing previously impractical simulations within reach. This performance, driven by massive parallelism, is enabling higher-resolution climate models, faster virtual screening in drug discovery, and more detailed simulations in fluid dynamics and neuroscience. As hardware and the software ecosystem continue to evolve, GPU computing is likely to become an even more central tool for researchers, scientists, and developers.

Selecting the right GPU is crucial for accelerating scientific research. For ecological solvers and other simulation-heavy fields, performance hinges on three key metrics: TFLOPS (theoretical compute power), memory bandwidth (data transfer speed), and VRAM capacity (data set size handling). This guide compares current GPUs through the lens of these metrics to help researchers make informed decisions.

The "best" GPU depends on the specific computational workload. The following table summarizes the primary function and importance of each core metric for scientific computing.

Table 1: Core GPU Metrics for Scientific Computing

| Metric | What It Measures | Why It Matters for Scientific Computing |
|---|---|---|
| TFLOPS (FP64) | Trillions of floating-point operations per second, specifically for 64-bit double-precision calculations [8] | Sets the speed of simulations (e.g., climate modeling, molecular dynamics) that require double precision for numerical accuracy [9] [10] |
| Memory Bandwidth | The speed at which data can be read from or written to the GPU's VRAM (GB/s) [11] | Prevents bottlenecks in data-intensive tasks; high bandwidth keeps thousands of compute cores fed with data [11] [9] |
| VRAM Capacity | The amount of dedicated memory on the GPU (GB) [9] [12] | Determines the size of models and datasets that can be processed; a workload that exceeds VRAM fails with out-of-memory errors unless it is partitioned or streamed, at a performance cost [9] [12] |
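Whether a gridded model fits in VRAM can be estimated as cells × stored fields × bytes per value. The sketch below uses hypothetical grid sizes, field counts, and a 90% usable-memory fraction; real solvers also allocate work arrays, halo buffers, and library workspace, so the estimate is a lower bound.

```python
def grid_memory_gib(nx, ny, nz, fields, bytes_per_value=8):
    """Lower-bound memory in GiB for `fields` arrays on an nx*ny*nz grid."""
    return nx * ny * nz * fields * bytes_per_value / 2**30


def max_cubic_edge(vram_gib, fields, bytes_per_value=8, usable=0.9):
    """Largest n such that an n^3 grid with `fields` arrays fits in `usable` of VRAM."""
    budget = vram_gib * usable * 2**30
    n = int((budget / (fields * bytes_per_value)) ** (1.0 / 3.0))
    # Correct any floating-point error in the cube root.
    while (n + 1) ** 3 * fields * bytes_per_value <= budget:
        n += 1
    while n > 0 and n ** 3 * fields * bytes_per_value > budget:
        n -= 1
    return n


if __name__ == "__main__":
    # Hypothetical model: 10 double-precision fields per cell.
    print(grid_memory_gib(1024, 1024, 1024, 10))  # 80.0
    print(max_cubic_edge(80, 10))                 # 988
```

Under these assumptions a 1024³ grid with ten FP64 fields already needs 80 GiB before any work arrays, which illustrates why the largest unpartitioned models gravitate toward the highest-capacity cards.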

Quantitative GPU Comparison for Scientific Workloads

The GPU market is segmented into consumer/workstation cards and specialized data center cards, with significant differences in performance, particularly for double-precision (FP64) calculations.

Table 2: Key Metric Comparison of Select GPUs (vendor peak figures as reported in the cited sources; the 1,979 TFLOPS FP16 values for the H100 and H200 assume structured sparsity, whereas the A100's 312 TFLOPS is a dense figure, so FP16 values are not directly comparable across rows)

| GPU Model | FP64 (TFLOPS) | FP16 (TFLOPS) | Memory Bandwidth (TB/s) | VRAM |
|---|---|---|---|---|
| NVIDIA H200 | 34.00 [8] | 1,979 [8] | 4.8 [12] | 141 GB HBM3e [12] |
| NVIDIA H100 | 34.00 [8] | 1,979 [8] | 3.35 [12] | 80 GB HBM3 [12] |
| AMD MI300X | 88.00 [8] | 1,000+ [8] | 5.3 [12] | 192 GB HBM3 [12] |
| AMD MI250X | 47.90 [8] | 383 [8] | Not specified | 128 GB HBM2e [8] |
| NVIDIA A100 | 9.70 [8] | 312 [8] | 2.039 [13] | 80 GB HBM2e [13] |
| NVIDIA RTX 6000 Ada | 1.4 [14] | 91.1 [14] | 0.96 [14] | 48 GB GDDR6 [14] |
| NVIDIA RTX 4090 | 1.3 [14] | 165.2 [14] | 1.01 [14] | 24 GB GDDR6X [14] |
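Peak memory bandwidth follows from the memory interface width and the per-pin data rate: bandwidth (GB/s) = bus width (bits) / 8 × data rate (Gbit/s per pin). The check below uses the 384-bit interfaces of the two GDDR cards in the table, at 21 Gbit/s (RTX 4090, GDDR6X) and 20 Gbit/s (RTX 6000 Ada, GDDR6); these interface figures come from NVIDIA's published specifications rather than from the cited sources. The second helper turns bandwidth into a lower bound on the runtime of a purely memory-bound kernel; its 24 GB example is hypothetical.

```python
def peak_bandwidth_gb_s(bus_width_bits, gbit_per_s_per_pin):
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 1e9 bytes)."""
    return bus_width_bits / 8 * gbit_per_s_per_pin


def min_sweep_time_ms(bytes_touched, bandwidth_gb_s):
    """Lower bound on the time for a kernel that must move `bytes_touched` bytes."""
    return bytes_touched / (bandwidth_gb_s * 1e9) * 1e3


if __name__ == "__main__":
    print(peak_bandwidth_gb_s(384, 21))  # 1008.0, i.e. ~1.01 TB/s (RTX 4090)
    print(peak_bandwidth_gb_s(384, 20))  # 960.0 (RTX 6000 Ada)
    # Reading 24 GB once at 1008 GB/s takes at least ~23.8 ms.
    print(round(min_sweep_time_ms(24e9, 1008.0), 1))  # 23.8
```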

Performance Analysis and Workload Matching

Data Center GPUs (H200, MI300X, A100): These cards are designed for maximum throughput in high-performance computing (HPC). Their high FP64 TFLOPS and immense memory bandwidth from HBM technology make them ideal for large-scale, precision-sensitive simulations like climate modeling and molecular dynamics [9] [10]. The AMD MI300X stands out with an exceptional 192 GB VRAM pool, ideal for the largest ecological models that cannot be partitioned [12] [8].

Workstation GPUs (RTX 6000 Ada, RTX 4090): These cards offer a balance of performance and accessibility. However, they have intentionally limited FP64 performance, making them a "tricky or poor fit" for codes that mandate true double precision end-to-end [10]. They excel in mixed-precision workloads, AI training, and simulations that have been optimized to run primarily in single precision [10].
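Why some codes insist on double precision can be seen by accumulating many small increments, as a long time integration does. The sketch below emulates single precision in pure Python by rounding every intermediate result to IEEE-754 binary32 with the standard `struct` module. This is a convenient stand-in for FP32 arithmetic on a GPU, not a model of any particular kernel; the increment and step count are arbitrary illustrative choices.

```python
import struct

_F32 = struct.Struct("f")


def to_f32(x):
    """Round a Python float (IEEE-754 binary64) to the nearest binary32 value."""
    return _F32.unpack(_F32.pack(x))[0]


def accumulate(increment, steps, single=False):
    """Add `increment` `steps` times, optionally rounding every sum to FP32."""
    total = 0.0
    inc = to_f32(increment) if single else increment
    for _ in range(steps):
        total = total + inc  # exact in binary64 for two binary32 operands here
        if single:
            total = to_f32(total)  # emulate the FP32 rounding of the addition
    return total


if __name__ == "__main__":
    n = 1_000_000
    exact = 100_000.0  # 0.1 * n in exact arithmetic
    err64 = abs(accumulate(0.1, n) - exact)
    err32 = abs(accumulate(0.1, n, single=True) - exact)
    print(err64 < 1e-5, err32 > 1.0)  # True True
```

In FP64 the accumulated error stays around a millionth, while the FP32 run drifts by hundreds, because each addition near 10⁵ is rounded to a much coarser spacing. Mixed-precision codes avoid this by keeping such accumulators in higher precision.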

Experimental Protocols and Benchmarking Data

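Reproducible benchmarking is fundamental for hardware selection. Standardized deep learning benchmarks, such as training ResNet models for image classification, are commonly used to gauge performance; because they exercise FP32 and FP16 throughput, they are only a rough proxy for FP64-heavy scientific solvers.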

Table 3: Deep Learning Training Benchmark (Throughput in images/second)

| GPU Model | ResNet-50 (FP32) | ResNet-50 (FP16) | ResNet-152 (FP32) | ResNet-152 (FP16) |
|---|---|---|---|---|
| NVIDIA H100 NVL | 1,350 [15] | 3,042 [15] | 520 [15] | 1,232 [15] |
| NVIDIA A100 (PCIe) | 1,001 [15] | 2,179 [15] | 409 [15] | 930 [15] |
| NVIDIA RTX 4090 | 927 [15] | 1,720 [15] | n/a [15] | n/a [15] |
| NVIDIA Tesla V100 | 321.57 [15] | 706.07 [15] | 134.94 [15] | 308.35 [15] |

Methodology for Benchmarks

- Workload: Benchmarks typically use standard models like ResNet-50 and ResNet-152 trained on datasets such as ImageNet [15].

- Precision: Tests are run at different numerical precisions. FP32 (single) is a common baseline, while FP16 (half) leverages Tensor Cores for significantly higher throughput, demonstrating the benefit of mixed-precision training [15].

- Metric: Throughput is measured in images processed per second; higher values mean shorter training times [15].

- Configuration: Benchmarks are run on a single GPU to isolate individual card performance, though multi-GPU results are also valuable for scaling analysis [15].
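Throughput figures translate directly into wall-clock estimates. The sketch below converts images per second into minutes per pass over the ImageNet-1k (ILSVRC-2012) training set of 1,281,167 images and computes the FP16-over-FP32 gain, using the A100 (PCIe) ResNet-50 values from Table 3. It ignores data loading, evaluation, and checkpointing, so the result is an optimistic bound; the function names are illustrative.

```python
IMAGENET_TRAIN_IMAGES = 1_281_167  # ILSVRC-2012 training set size


def epoch_minutes(images_per_second, dataset_size=IMAGENET_TRAIN_IMAGES):
    """Minutes for one pass over the dataset at a sustained throughput."""
    return dataset_size / images_per_second / 60.0


def precision_gain(fp16_throughput, fp32_throughput):
    """How many times faster the FP16 (mixed-precision) run is than FP32."""
    return fp16_throughput / fp32_throughput


if __name__ == "__main__":
    # A100 (PCIe), ResNet-50, values from Table 3.
    print(round(epoch_minutes(1001), 1))         # FP32: 21.3
    print(round(epoch_minutes(2179), 1))         # FP16: 9.8
    print(round(precision_gain(2179, 1001), 2))  # 2.18
```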

Scientific Computing Workflow and GPU Selection

The following diagram illustrates the decision process for selecting a GPU based on the nature of the scientific application.

The Researcher's Toolkit: Essential GPU Solutions

This table outlines critical hardware and software "reagents" needed for a high-performance computing environment for ecological solver research.

Table 4: Essential Research Reagents for GPU-Accelerated Computing

| Tool / Solution | Function / Description |
|---|---|
| NVIDIA A100/H100 GPU | Data center-grade accelerators providing a balance of high FP64 performance, memory bandwidth, and VRAM for diverse scientific workloads [13] [12]. |
| AMD Instinct MI300X | An alternative data center GPU offering exceptional VRAM capacity (192GB), ideal for memory-bound models that do not fit on other cards [12] [8]. |
| NVIDIA RTX 4090/5090 | Consumer-grade cards providing high FP16/FP32 performance for mixed-precision workloads at a lower cost, but with limited FP64 [14] [10]. |
| CUDA & ROCm | Parallel computing platforms and programming models (NVIDIA CUDA and AMD ROCm) essential for developing and running GPU-accelerated applications [9] [12]. |
| NGC / Containers | NVIDIA's GPU-optimized software hub (NGC) provides pre-trained models, Helm charts, and ready-to-run containers to ensure reproducible, high-performance results. |
| NVLink & NCCL | NVLink is NVIDIA's high-speed GPU-to-GPU interconnect, and NCCL is its collective communications library for multi-GPU and multi-node communication; together they are crucial for scaling workloads across a single node or a multi-node cluster [9] [13]. |
| Multi-Instance GPU (MIG) | A feature in data center GPUs like the A100 that allows partitioning a single GPU into multiple, secure instances for optimal resource sharing [13] [12]. |

For scientific computing, there is no universal "best" GPU. The choice is a strategic decision based on application requirements:

- For FP64-Dominated Codes (e.g., high-fidelity climate models, ab-initio quantum chemistry), data center GPUs like the NVIDIA H200/A100 or AMD MI300X/MI250X are necessary due to their high double-precision throughput [9] [8] [10].

- For Memory-Bound Workloads (e.g., large ecological landscape models), VRAM capacity is the top priority, making the AMD MI300X with 192GB a standout solution [12] [8].

- For Mixed-Precision or AI-Enhanced Solvers, cost-effective consumer/workstation GPUs like the NVIDIA RTX 4090 or RTX 6000 Ada can provide exceptional performance, provided the application remains accurate in single or mixed precision and does not need high FP64 throughput [14] [10].

Researchers should benchmark a representative slice of their workload on different GPU types, measuring meaningful metrics like "cost per result" (e.g., €/ns/day for molecular dynamics) to make the most economically efficient choice [10].
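One concrete reading of "cost per result" for molecular dynamics is cost per simulated nanosecond: an hourly cost (cloud price, or amortized hardware plus power) divided by the nanoseconds simulated per hour. In the sketch below the hourly prices and the card labels are hypothetical placeholders; the throughputs are the DHFR ns/day values reported for the RTX 5090 and H100 PCIe in the AMBER 24 benchmarks of this article.

```python
def cost_per_ns(hourly_cost, ns_per_day):
    """Currency units spent per simulated nanosecond."""
    ns_per_hour = ns_per_day / 24.0
    return hourly_cost / ns_per_hour


if __name__ == "__main__":
    # Hypothetical hourly costs in EUR; throughputs are DHFR ns/day values.
    options = {"card_a": (0.60, 1655.19), "card_b": (3.00, 1532.08)}
    for name, (eur_per_hour, ns_day) in options.items():
        print(name, f"{cost_per_ns(eur_per_hour, ns_day):.4f}")
    # card_a 0.0087
    # card_b 0.0470
```

With this made-up pricing the cheaper card costs about 5x less per simulated nanosecond despite similar raw throughput, which is the kind of trade-off the metric is meant to expose.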

The fields of molecular dynamics and systems biology are confronting a grand challenge posed by increasingly complex, multiscale simulations. The computational power required for detailed biological simulations often exceeds the capabilities of traditional desktop computers and CPU-based clusters. In response, general-purpose GPU computing has emerged as a transformative solution, offering the power of a small computer cluster at a fraction of the cost and energy consumption [16] [17]. This paradigm shift toward GPU-accelerated platforms is driven by the need for high-resolution, real-time simulations that can integrate the vast amounts of omics data generated by modern experimental techniques [18].

The adoption of GPU acceleration represents more than just an incremental improvement; it enables research previously constrained by computational limitations. Molecular dynamics simulations of macromolecules, for instance, are exceptionally computationally demanding, making them natural candidates for GPU implementation [19]. Similarly, in systems biology, the development of detailed, coherent models of complex biological systems is recognized as a key requirement for integrating growing experimental datasets, and GPU computing provides the necessary computational resources to build and simulate these models [16]. The trend is unmistakable: across the broader TOP500 list of supercomputers, 388 systems (78%) now use NVIDIA technology, with 218 being GPU-accelerated systems—an increase of 34 systems year over year [20].

Performance Comparison of GPU Solvers

Molecular Dynamics Performance Benchmarks

Molecular dynamics simulations have shown remarkable performance improvements when ported to GPU architectures. A complete implementation of all-atom protein molecular dynamics running entirely on GPUs, including all standard force field terms, integration, constraints, and implicit solvent, demonstrated speedups exceeding 700 times compared to conventional implementations running on a single CPU core [19].

Recent benchmarking of the AMBER 24 molecular dynamics suite across NVIDIA GPU architectures reveals how performance varies significantly with both GPU model and simulation size. The following table summarizes key benchmark results across different molecular systems:

Table 1: AMBER 24 Performance Benchmarks Across NVIDIA GPU Architectures (in ns/day) [21]

| GPU Model | STMV NPT 4fs (1,067,095 atoms) | Cellulose NVE 2fs (408,609 atoms) | FactorIX NVE 2fs (90,906 atoms) | DHFR NVE 4fs (23,558 atoms) | Myoglobin GB 2fs (2,492 atoms) |
|---|---|---|---|---|---|
| RTX 5090 | 109.75 | 169.45 | 529.22 | 1655.19 | 1151.95 |
| RTX 5080 | 63.17 | 105.96 | 394.81 | 1513.55 | 871.89 |
| GH200 Superchip | 101.31 | 167.20 | 191.85 | 1323.31 | 1159.35 |
| B200 SXM | 114.16 | 182.32 | 473.74 | 1513.28 | 1020.24 |
| H100 PCIe | 74.50 | 125.82 | 410.77 | 1532.08 | 1094.57 |
| RTX 6000 Ada | 70.97 | 123.98 | 489.93 | 1697.34 | 1016.00 |

The data reveal several trends. The RTX 5090 delivers top-tier performance across all five systems, ranking first on FactorIX and within roughly 7% of the leader on the other four. The B200 SXM leads on the two largest systems, Cellulose (408,609 atoms) and STMV (over 1 million atoms). Interestingly, the GH200 Superchip posts the highest throughput on the small Myoglobin system but lags well behind on the medium-sized FactorIX simulation, and the RTX 6000 Ada leads on DHFR, highlighting how architectural characteristics can favor specific problem sizes [21].
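Because the benchmarks use different time steps (2 fs or 4 fs), ns/day mixes the integrator settings with raw speed. Converting to MD integration steps per second separates the two. The helpers below are hypothetical conveniences applied to the RTX 5090 row of Table 1; the atom-steps figure is only a rough work-rate measure, since cost per atom also depends on the force field and solvent model.

```python
FS_PER_NS = 1_000_000
SECONDS_PER_DAY = 86_400


def steps_per_second(ns_per_day, timestep_fs):
    """MD integration steps completed per wall-clock second."""
    return ns_per_day * FS_PER_NS / timestep_fs / SECONDS_PER_DAY


def atom_steps_per_second(ns_per_day, timestep_fs, atoms):
    """Steps per second scaled by system size, a rough work-rate measure."""
    return steps_per_second(ns_per_day, timestep_fs) * atoms


if __name__ == "__main__":
    # RTX 5090 rows from Table 1.
    print(round(steps_per_second(1655.19, 4)))  # DHFR, 4 fs: 4789
    print(round(steps_per_second(529.22, 2)))   # FactorIX, 2 fs: 3063
```

On this measure FactorIX, with almost four times as many atoms as DHFR, runs at about two thirds of DHFR's step rate, so the GPU processes more atom-steps per second on the larger system.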

Cross-Architecture Performance Portability

Beyond single-vendor performance, a key challenge for production codes, in biomedical as well as environmental research, is maintaining performance across diverse computing architectures. A comprehensive performance study of the SERGHEI-SWE shallow-water solver across four state-of-the-art heterogeneous HPC systems (Frontier with AMD MI250X, JUWELS Booster with NVIDIA A100, JEDI with NVIDIA H100, and Aurora with Intel Max 1550) demonstrated consistent scalability, with a speedup of 32 and parallel efficiency upwards of 90% for most test ranges [22].
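Speedup and parallel efficiency in scaling studies are commonly defined as below; this is a generic sketch, and the study's exact normalisation (for example, relative to one GPU or to one node) may differ. Strong-scaling speedup is T(base)/T(N) for a fixed total problem, and efficiency divides it by the ideal factor N/base. Weak-scaling efficiency is T(base)/T(N) with fixed work per GPU, since the ideal runtime stays constant. The timings in the example are hypothetical.

```python
def strong_scaling(t_base, n_base, t_n, n):
    """Speedup and efficiency for a fixed total problem size."""
    speedup = t_base / t_n
    ideal = n / n_base
    return speedup, speedup / ideal


def weak_scaling_efficiency(t_base, t_n):
    """Efficiency for a fixed problem size per GPU (ideal runtime is constant)."""
    return t_base / t_n


if __name__ == "__main__":
    # Hypothetical timings in seconds: 32 -> 1024 GPUs on a fixed problem.
    s, e = strong_scaling(t_base=640.0, n_base=32, t_n=21.0, n=1024)
    print(round(s, 1), round(e, 3))                       # 30.5 0.952
    print(round(weak_scaling_efficiency(10.0, 10.8), 3))  # 0.926
```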

Performance portability was evaluated using both harmonic and arithmetic mean-based metrics while varying problem size. With tuned problem sizes the solver achieved portability across devices, though the metric remained below 70%, leaving room for kernel optimization through more granular control of each architecture [22]. Roofline analysis revealed that memory bandwidth is the dominant performance bottleneck across architectures, with the key solver kernels residing in the memory-bound region [22].
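The roofline model bounds attainable performance by min(peak compute, arithmetic intensity × memory bandwidth), where arithmetic intensity is floating-point operations per byte moved. The sketch below applies it to the A100 figures from the hardware comparison in this article (9.7 FP64 TFLOPS, 2,039 GB/s). The kernel's flop and byte counts are hypothetical, chosen to resemble a simple stencil update without cache reuse.

```python
def attainable_gflops(peak_gflops, bandwidth_gb_s, flops, bytes_moved):
    """Roofline bound for a kernel with the given flop and byte counts."""
    intensity = flops / bytes_moved  # flop per byte
    return min(peak_gflops, intensity * bandwidth_gb_s)


def ridge_point(peak_gflops, bandwidth_gb_s):
    """Arithmetic intensity at which a kernel stops being memory-bound."""
    return peak_gflops / bandwidth_gb_s


if __name__ == "__main__":
    peak, bw = 9_700.0, 2_039.0  # A100: FP64 GFLOP/s and GB/s
    # Hypothetical stencil: 10 flops per cell, reads 5 and writes 1 double.
    bound = attainable_gflops(peak, bw, flops=10, bytes_moved=6 * 8)
    print(round(bound), round(ridge_point(peak, bw), 2))  # 425 4.76
```

At about 0.21 flop/byte the kernel sits far left of the ridge point of roughly 4.8, so it could use under 5% of peak FP64 throughput: the memory-bound situation described above, where only better data reuse or more bandwidth helps.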

Table 2: Performance Portability Metrics Across GPU Architectures [22]

| GPU | System | Strong Scaling (GPUs) | Weak Scaling (GPUs) | Performance Portability (across all four systems) |
|---|---|---|---|---|
| AMD MI250X | Frontier | Up to 1024 | Upwards of 2048 | <70% with tuned problem sizes |
| NVIDIA A100 | JUWELS Booster | Up to 1024 | Upwards of 2048 | <70% with tuned problem sizes |
| NVIDIA H100 | JEDI | Up to 1024 | Upwards of 2048 | <70% with tuned problem sizes |
| Intel Max 1550 | Aurora | Up to 1024 | Upwards of 2048 | <70% with tuned problem sizes |

Experimental Protocols and Methodologies

Molecular Dynamics Implementation Details

Successful GPU implementation requires fundamentally different algorithmic approaches compared to CPU-based computing. Realizing the full potential of GPUs demands considerable effort in reworking data structures and code to align with GPU architecture, and not all algorithms are equally amenable to these architectural constraints [19].

Key implementation considerations include:

Memory Access Optimization: Compared with CPUs, GPUs have much less cache per thread and instead hide memory latency through massive multithreading. This makes it necessary to group related data together and access it in contiguous blocks. In many cases, recalculating values is more efficient than storing them and retrieving them from memory [19].

Minimizing CPU-GPU Communication: Data transfer between CPU and GPU across the PCIe bus creates significant bottlenecks. In molecular dynamics simulations, transferring all atomic coordinates between GPU and CPU at each time step can decrease overall performance by 20%, even without additional computation [19].

Flow Control Management: GPU processors are arranged in groups where all threads must execute identical instructions simultaneously (SIMD execution). Branching penalties are severe when threads within a group follow different execution paths, necessitating careful algorithm design to maintain coherence [19].
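A simplified cost model shows why divergence hurts: when threads in a 32-wide group take different branches, the group executes each distinct path in turn, with the non-participating threads masked off. The sketch below assumes every path has equal cost and ignores reconvergence details and the independent thread scheduling of newer NVIDIA GPUs, so it illustrates the trend rather than predicting real timings. The function name is hypothetical.

```python
WARP_SIZE = 32


def warp_passes(branch_ids, warp_size=WARP_SIZE):
    """Total serialized branch passes when threads are grouped into warps.

    branch_ids[t] is the branch taken by thread t. Each warp pays one pass
    per distinct branch among its threads (equal-cost branches assumed).
    """
    total = 0
    for start in range(0, len(branch_ids), warp_size):
        warp = branch_ids[start:start + warp_size]
        total += len(set(warp))
    return total


if __name__ == "__main__":
    n = 1024
    interleaved = [t % 2 for t in range(n)]               # neighbours disagree
    grouped = [0 if t < n // 2 else 1 for t in range(n)]  # same work, sorted
    print(warp_passes(interleaved), warp_passes(grouped))  # 64 32
```

Reordering work so that threads in the same warp follow the same path (for example, sorting particles by interaction type before launching a kernel) halves the cost in this toy case.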

The computational workflow for GPU-accelerated molecular dynamics follows a structured pipeline that maximizes GPU utilization while minimizing CPU-GPU communication:

Diagram 1: GPU Molecular Dynamics Workflow

Performance Portability Evaluation Framework

Evaluating performance across diverse architectures requires standardized methodologies. The SERGHEI-SWE solver evaluation employed several key experimental protocols:

Strong Scaling Tests: Measuring performance improvement while keeping the problem size constant and increasing the number of GPUs from small to large counts (up to 1024 GPUs) [22].

Weak Scaling Tests: Measuring performance while maintaining a constant problem size per GPU and increasing the total system size (upwards of 2048 GPUs) [22].

Roofline Model Analysis: Identifying whether kernels are compute-bound or memory-bound by plotting performance against operational intensity [22].

Portability Metrics: Applying both harmonic and arithmetic mean-based metrics to quantify performance portability across architectures while varying problem size [22].
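A widely used harmonic-mean metric, proposed by Pennycook and colleagues, defines performance portability over a platform set H as |H| divided by the sum of 1/e_i, where e_i is the application's efficiency on platform i, and as zero if any platform in H is unsupported. The arithmetic-mean variant simply averages the efficiencies. The sketch below implements both; whether the SERGHEI-SWE study used exactly these forms is an assumption, and the efficiencies are hypothetical.

```python
def pp_harmonic(efficiencies):
    """Harmonic-mean performance portability; 0 if any platform is unsupported.

    efficiencies: per-platform efficiency in (0, 1], or None/0 where the
    application does not run.
    """
    if not efficiencies or any(not e for e in efficiencies):
        return 0.0
    return len(efficiencies) / sum(1.0 / e for e in efficiencies)


def pp_arithmetic(efficiencies):
    """Arithmetic-mean variant; unsupported platforms count as efficiency 0."""
    if not efficiencies:
        return 0.0
    return sum(e or 0.0 for e in efficiencies) / len(efficiencies)


if __name__ == "__main__":
    # Hypothetical efficiencies on four systems.
    effs = [0.9, 0.8, 0.6, 0.5]
    print(round(pp_harmonic(effs), 3), round(pp_arithmetic(effs), 3))  # 0.664 0.7
```

The harmonic mean is pulled toward the weakest platform, which is why reporting both means, as the study did, helps separate "uniformly good" from "good on average".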

The conceptual framework for achieving performance portability spans multiple levels of the computational stack, from high-level programming models down to hardware-specific optimizations:

Diagram 2: Performance Portability Framework

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of GPU-accelerated biomedical simulations requires both hardware and software components optimized for specific research needs. The following toolkit details essential resources for researchers in this field:

Table 3: Essential Research Reagent Solutions for GPU-Accelerated Biomedical Simulations

| Category | Item | Function | Representative Examples |
|---|---|---|---|
| Software Frameworks | Performance Portability Abstraction Layers | Enables code to run efficiently across diverse hardware architectures without rewriting | Kokkos [22], SYCL [22], RAJA |
| Simulation Engines | GPU-Enabled Biomedical Simulation Software | Implements numerical algorithms for biomolecular and physiological simulation with GPU support | AMBER (pmemd.cuda) for molecular dynamics [21], HARVEY for hemodynamics [22] |
| Systems Biology Platforms | Network Modeling Tools | Constructs and analyzes static and dynamic network models of biological systems | WGCNA [18], Context Likelihood of Relatedness [18] |
| GPU Architectures | NVIDIA Data Center GPUs | High-performance computing focused GPUs with large memory capacity | NVIDIA H100, A100 [21], B200 SXM [21] |
| GPU Architectures | NVIDIA Workstation GPUs | Cost-effective GPUs for individual researchers and small teams | NVIDIA RTX 5090 [21], RTX 6000 Ada [21] |
| GPU Architectures | AMD and Intel GPUs | Alternative GPU architectures for diverse HPC environments | AMD MI250X [22], Intel Max 1550 [22] |
| Computing Systems | HPC Clusters | Large-scale computing infrastructure for production simulations | Frontier (AMD) [22], JEDI (NVIDIA) [22], Aurora (Intel) [22] |

Signaling Pathways in Systems Biology Modeling

Systems biology approaches disease mechanisms and drug responses through integrated network models that span multiple biological layers. These models visualize a wide range of components—genes, proteins, and drugs—and their interconnections, creating comprehensive maps of metabolism and molecular regulation [18].

Two primary modeling frameworks dominate systems biology:

Static Network Models: These capture functional interactions inferred from omics data and describe the topological properties of those interactions. By integrating intra- and extracellular information, they can identify the functional responses of network modules through multiple network alignment [18].

Dynamic Models: These incorporate temporal dynamics and regulatory behaviors, often using differential equations or agent-based approaches to model system behavior over time [18].

The integration of multi-omics data follows a systematic workflow that progresses from data acquisition through network construction to biological insights:

Diagram 3: Multi-omics Data Integration Workflow

Static networks are particularly valuable for predicting potential interactions among drug molecules and target proteins through shared components that act as intermediaries conveying information across different network layers [18]. For example, diseases can be associated based on shared genetic associations, gene-disease interactions, and disease mechanisms, enabling drug repurposing through network-based approaches [18].
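The idea of linking diseases through shared genes, and then carrying drugs across those links, can be sketched with set operations. Everything in the example is a hypothetical toy network (disease, gene, and drug names are placeholders), and Jaccard similarity is one simple choice of overlap score, not necessarily the one used in the cited work.

```python
def jaccard(a, b):
    """Overlap of two gene sets: |A & B| / |A | B| (0 for two empty sets)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def repurposing_candidates(query, disease_genes, drugs, min_similarity=0.3):
    """Suggest drugs approved for diseases that are similar to `query`.

    drugs maps drug name -> (disease it is approved for, set of target genes).
    A drug is suggested when its approved disease shares enough genes with
    `query` and at least one of its targets is among those shared genes.
    """
    own = disease_genes[query]
    suggestions = {}
    for drug, (approved_for, targets) in drugs.items():
        if approved_for == query:
            continue  # already used for this disease
        other = disease_genes.get(approved_for, set())
        sim = jaccard(own, other)
        if sim >= min_similarity and targets & own & other:
            suggestions[drug] = sim
    return suggestions


if __name__ == "__main__":
    disease_genes = {"disease_A": {"G1", "G2", "G3"},
                     "disease_B": {"G2", "G3", "G4"},
                     "disease_C": {"G7", "G8"}}
    drugs = {"drug_x": ("disease_B", {"G3"}),
             "drug_y": ("disease_B", {"G4"}),
             "drug_z": ("disease_C", {"G7"})}
    print(repurposing_candidates("disease_A", disease_genes, drugs))  # {'drug_x': 0.5}
```

Here drug_x qualifies because its target G3 is one of the genes that disease_A shares with disease_B, whereas drug_y hits a gene unique to disease_B.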

The integration of GPU-accelerated computing into biomedical research represents a fundamental shift in how scientists approach complex biological simulations. The performance gains—ranging from 20x to over 700x speedup compared to CPU implementations—are enabling research that was previously computationally infeasible [19] [23]. As the field evolves, several key trends are shaping its trajectory:

The push toward performance portability will continue to gain importance as supercomputing infrastructures incorporate increasingly diverse hardware architectures. Frameworks like Kokkos, SYCL, and RAJA are proving essential for maintaining performance across NVIDIA, AMD, and Intel platforms without costly code rewrites [22]. The evaluation of the SERGHEI-SWE solver across four heterogeneous HPC systems demonstrates that current implementations achieve useful but incomplete portability (a portability metric below 70% even with tuned problem sizes), leaving significant opportunity for optimization through more granular architecture control [22].

The convergence of simulation and artificial intelligence represents another frontier. Modern supercomputers like JUPITER deliver both traditional double-precision performance (1 exaflop FP64) and exceptional AI capabilities (116 AI exaflops), enabling researchers to combine physics-based simulation with data-driven machine learning approaches [20]. This flexibility allows scientists to stretch power budgets further, running larger, more complex simulations while training deeper neural networks.

For biomedical researchers, the practical implications are profound. GPU acceleration makes parameter inference for Bayesian population dynamics models feasible, with speedup factors exceeding two orders of magnitude [17]. In drug discovery, the integration of multi-omics data through network models enables more accurate prediction of molecular interactions, potentially reducing the cost and time required for drug development while improving safety profiles [18].
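Parameter inference for population models suits GPUs because candidate simulations are independent of one another. The sketch below uses approximate Bayesian computation (ABC) rejection on a discrete logistic growth model. The model, the uniform prior on the growth rate, the tolerance, and the synthetic "observed" series are all hypothetical, and the cited work [17] may use a different inference scheme. On a GPU, the loop over candidate parameter draws is the part that would be spread across threads.

```python
import random


def logistic_series(r, k, n0, steps):
    """Discrete logistic growth: N[t+1] = N[t] + r * N[t] * (1 - N[t] / K)."""
    series = [n0]
    for _ in range(steps):
        n = series[-1]
        series.append(n + r * n * (1.0 - n / k))
    return series


def distance(a, b):
    """Root-mean-square difference between two series of equal length."""
    return (sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)) ** 0.5


def abc_rejection(observed, k, n0, draws, tolerance, seed=0):
    """Keep prior draws of r whose simulated series lies within `tolerance`.

    Each draw is simulated independently of the others, which is the part a
    GPU implementation would run in parallel (one draw per thread or block).
    """
    rng = random.Random(seed)
    steps = len(observed) - 1
    accepted = []
    for _ in range(draws):
        r = rng.uniform(0.0, 1.0)  # hypothetical uniform prior on r
        if distance(logistic_series(r, k, n0, steps), observed) < tolerance:
            accepted.append(r)
    return accepted


if __name__ == "__main__":
    observed = logistic_series(0.4, 100.0, 5.0, 20)  # synthetic data, true r = 0.4
    posterior = abc_rejection(observed, 100.0, 5.0, draws=5000, tolerance=2.0)
    print(len(posterior) > 0, abs(sum(posterior) / len(posterior) - 0.4) < 0.05)  # True True
```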

As GPU technology continues to advance—with architectures like NVIDIA's Blackwell demonstrating significant performance improvements—and programming models mature, GPU-accelerated biomedical simulation will become increasingly accessible to researchers across institutions and funding levels, potentially democratizing capabilities that were once restricted to well-resourced centers [21]. The computational burden in biomedicine remains substantial, but the tools and techniques for scaling simulations are rapidly evolving to meet these challenges.

The field of computational science has undergone a fundamental transformation with the adoption of Graphics Processing Units (GPUs) for accelerating scientific solvers. This paradigm shift from traditional Central Processing Unit (CPU)-based computing to GPU-accelerated architectures has enabled researchers to tackle increasingly complex problems across domains ranging from climate modeling to drug discovery.

GPUs, with their massively parallel architecture featuring thousands of smaller cores, excel at handling the computational patterns common in scientific simulations, particularly the matrix operations and floating-point computations required for solving partial differential equations [24]. Unlike CPUs, which use fewer, more powerful cores for sequential tasks, GPUs employ a data flow execution model that processes thousands of operations simultaneously, making them ideally suited for the repetitive, parallelizable computations in scientific solvers [24].

This article provides a comprehensive analysis of GPU-accelerated solver architectures, examining their performance across different implementation frameworks and hardware platforms, with specific attention to applications in ecological and environmental modeling that form the context for broader performance comparison research.

GPU Architectural Foundations for Scientific Solvers

Core Architectural Differences: CPU vs. GPU

Understanding the fundamental architectural differences between CPUs and GPUs is essential for appreciating their respective roles in high-performance computing environments.

Table 1: Fundamental Architectural Differences Between CPUs and GPUs [24]

| Architectural Aspect | CPU | GPU |
|---|---|---|
| Core Function | Handles general-purpose tasks, system control, logic, and sequential instructions | Executes massive parallel workloads like simulations, AI, and rendering |
| Core Count | 2-128 (consumer to server models) | Thousands of smaller, simpler cores |
| Execution Style | Sequential (control flow logic) | Parallel (data flow, SIMT model) |
| Memory Access Pattern | Low-latency access for instructions and logic | High-bandwidth coalesced access for large datasets |
| Design Goal | Precision, low latency, efficient decision-making | Throughput and speed for bulk data processing |
| Best At | Real-time decisions, branching logic, varied workloads | Matrix math, simulations, AI model training |

The GPU pipeline operates on a Single Instruction, Multiple Thread (SIMT) execution model, where a warp (typically 32 threads) executes the same instruction simultaneously [24]. This approach, combined with memory coalescing techniques that optimize how threads access memory, provides significant advantages for the regular computational patterns found in scientific solvers.
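The Python sketch below makes coalescing concrete. It counts how many memory sectors one warp touches when thread t reads element t × stride. It assumes 32 threads per warp, 4-byte elements, and a 32-byte sector as the unit of transfer. These figures are illustrative assumptions, not a specification of any particular GPU, and the helper names are hypothetical.

```python
# Minimal model of memory coalescing for a single warp.
# Assumptions (illustrative only): 32 threads per warp, 4-byte elements,
# and global memory served in 32-byte sectors.
WARP_SIZE = 32
SECTOR_BYTES = 32


def sectors_touched(stride_elems: int, elem_bytes: int = 4, base: int = 0) -> int:
    """Count distinct sectors touched when thread t reads element t * stride."""
    addresses = [base + t * stride_elems * elem_bytes for t in range(WARP_SIZE)]
    return len({addr // SECTOR_BYTES for addr in addresses})


def efficiency(stride_elems: int, elem_bytes: int = 4) -> float:
    """Fraction of the fetched bytes that the warp actually uses."""
    useful = WARP_SIZE * elem_bytes
    fetched = sectors_touched(stride_elems, elem_bytes) * SECTOR_BYTES
    return useful / fetched


for stride in (1, 2, 8, 32):
    print(stride, sectors_touched(stride), f"{efficiency(stride):.3f}")
```

Under these assumptions, a unit-stride access touches 4 sectors and uses every fetched byte. Any stride of 8 or more elements touches one sector per thread and wastes 7/8 of the transferred data. This is why solver kernels usually lay out arrays so that consecutive threads read consecutive addresses.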

Memory Hierarchy and Data Throughput

GPU memory architecture is optimized for bandwidth rather than latency, employing several critical technologies:

- High-Bandwidth Memory (HBM): Advanced GPU architectures feature HBM stacked directly on the package, providing dramatically higher bandwidth compared to traditional GDDR memory [24].

- Memory Coalescing: GPUs optimize memory access by combining requests from threads in the same warp when they access sequential memory locations, significantly improving bandwidth efficiency [24].

- Shared Memory per Block: Thread blocks have access to fast shared memory, reducing global memory access delays for frequently used data [24].

These memory architecture features make GPUs particularly well-suited for handling the large datasets and memory-bound operations common in scientific simulations and ecological modeling.
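Simple counting gives a rough estimate of what shared memory buys a stencil solver. The sketch below rests on four assumptions:

- a 2D 5-point stencil;
- square thread-block tiles;
- no reuse from hardware caches in the naive version;
- a shared-memory tile that loads each interior value once, plus a one-cell halo along the four edges.

The function name is illustrative.

```python
def global_loads_per_point(tile: int, use_shared: bool) -> float:
    """Global-memory loads per output point for a 2D 5-point stencil.

    Naive case: every output point loads its 5 stencil values directly from
    global memory (no cache reuse assumed).
    Shared case: a tile x tile block loads its interior once plus a 1-cell halo
    on each of its 4 edges (corners are not needed by a 5-point stencil).
    """
    if not use_shared:
        return 5.0
    loads = tile * tile + 4 * tile
    return loads / (tile * tile)


for t in (8, 16, 32):
    print(t, global_loads_per_point(t, False), global_loads_per_point(t, True))
```

For a 16 × 16 tile this gives 1.25 global loads per output point instead of 5, a fourfold reduction. Larger tiles approach one load per point. In practice, L1/L2 caches recover part of this reuse automatically, so measured gains can be smaller.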

Performance Portable Frameworks for Cross-Architecture Deployment

The Performance Portability Challenge

As high-performance computing (HPC) systems evolve to incorporate diverse GPU architectures from multiple vendors (NVIDIA, AMD, Intel), the challenge of performance portability has become increasingly important. The traditional approach of developing architecture-specific implementations using CUDA creates vendor lock-in and limits the deployment flexibility of scientific solvers [22].

Performance portable programming frameworks address this challenge by providing abstraction layers that allow developers to write code once and deploy it efficiently across multiple hardware architectures. This capability is particularly valuable for ecological solvers that may need to run on different supercomputing infrastructures with varying hardware configurations.

Case Study: SERGHEI-SWE Solver Framework

The SERGHEI-SWE (Shallow Water Equations) solver exemplifies the modern approach to performance-portable GPU acceleration. This framework uses the Kokkos performance portability abstraction layer to enable GPU acceleration across multiple architectures while maintaining performance efficiency [22].

Table 2: SERGHEI-SWE Performance Across Heterogeneous HPC Systems [22]

| HPC System | GPU Architecture | Strong Scaling | Weak Scaling Efficiency | Key Performance Characteristic |
|---|---|---|---|---|
| Frontier | AMD MI250X | Up to 1024 GPUs | >90% (up to 2048 GPUs) | Consistent scalability across system sizes |
| JUWELS Booster | NVIDIA A100 | Up to 1024 GPUs | >90% (up to 2048 GPUs) | Demonstrated 32x speedup |
| JEDI | NVIDIA H100 | Up to 1024 GPUs | >90% (up to 2048 GPUs) | Advanced tensor core utilization |
| Aurora | Intel Max 1550 | Up to 1024 GPUs | >90% (up to 2048 GPUs) | Cross-architecture performance portability |

The SERGHEI-SWE implementation demonstrates that performance portable frameworks can achieve impressive scalability across diverse GPU architectures, with the study reporting scaling efficiency upwards of 90% for most test configurations and a speedup of 32x on certain systems [22].

Performance Portable Programming Models

Several programming models have emerged to address the performance portability challenge:

- Kokkos: A C++ abstraction layer that provides performance portability across CPU and GPU architectures, supporting CUDA, HIP, SYCL, OpenMP, and Pthreads backends [22].

- SYCL: A cross-platform abstraction layer that enables code to target multiple accelerator types, including CPUs, GPUs, and FPGAs [22].

- OpenMP: Provides directive-based accelerator support with growing maturity for GPU offloading [22].

Recent comparative studies have shown that while SYCL has demonstrated strong performance portability across CPU and GPU architectures, Kokkos remains particularly well-suited for complex memory access patterns in GPU algorithms [22].

Figure 1: Performance Portable Framework Abstraction Architecture

Domain-Specific GPU Accelerators and Emerging Architectures

Specialized AI Accelerators

While general-purpose GPUs remain versatile for diverse workloads, the growing computational demands of AI and machine learning have spurred development of domain-specific architectures that optimize for particular computational patterns:

- TPUs (Tensor Processing Units): Google's application-specific integrated circuits (ASICs) designed specifically for neural network workloads, using systolic arrays optimized for matrix operations rather than the CUDA cores found in GPUs [25].

- LPUs (Language Processing Units): Groq's domain-specific architecture optimized for language inference workloads, achieving impressive latency metrics (~0.22 seconds Time To First Byte and ~185 tokens/second) [26].

- WPUs (Wafer-Scale Processors): Cerebras' innovative architecture featuring the WSE-3 with 900,000 cores and 4 trillion transistors, enabling training of trillion-parameter models in days rather than months [26].
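The systolic-array idea behind TPUs can be illustrated with a cycle-level timing model. In an output-stationary array, each processing element (PE) owns one output value. Operands stream in with a one-cycle skew per row and column, so operand pair k reaches PE (i, j) at cycle i + j + k. This Python sketch is a generic textbook model with hypothetical names. Production TPU matrix units differ in dataflow and other details; the output-stationary variant is used here only because it is easy to read.

```python
def systolic_matmul(A, B):
    """Cycle-by-cycle timing model of an output-stationary systolic array.

    PE (i, j) accumulates C[i][j]. Row i of A enters from the left delayed by
    i cycles and column j of B enters from the top delayed by j cycles, so the
    k-th operand pair meets at PE (i, j) on cycle t = i + j + k.
    Returns the product and the number of cycles needed.
    """
    M, K = len(A), len(A[0])
    K2, N = len(B), len(B[0])
    assert K == K2, "inner dimensions must match"
    C = [[0] * N for _ in range(M)]
    # The last multiply-accumulate happens at t = (M-1) + (N-1) + (K-1).
    cycles = M + N + K - 2
    for t in range(cycles):
        for i in range(M):
            for j in range(N):
                k = t - i - j
                if 0 <= k < K:  # operand pair k is at PE (i, j) this cycle
                    C[i][j] += A[i][k] * B[k][j]
    return C, cycles


C, cycles = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(C, cycles)
```

The model shows that all PEs work in parallel once the pipeline fills. An M × N array finishes a product with inner dimension K in M + N + K - 2 cycles rather than M·N·K sequential steps.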

Architectural Comparison: GPU vs. TPU

Table 3: Architectural Comparison Between GPUs and TPUs [25]

| Attribute | GPU | TPU |
|---|---|---|
| Purpose | General-purpose compute | ML-specific acceleration |
| Core Architecture | Thousands of programmable CUDA cores | Systolic arrays for matrix operations |
| Flexibility | High (graphics, AI, scientific computing) | Low (tailored for AI workloads) |
| Memory per Chip | Up to 80 GB (H100) | 192 GB (Ironwood) |
| Memory Bandwidth | ~3.35 TB/s (H100) | 7.2 TB/s (Ironwood) |
| Interconnect Technology | NVLink/NVSwitch (up to 900 GB/s) | Inter-Chip Interconnect (ICI) (1.2 Tbps) |
| Energy Efficiency | Moderate | High - especially for inference |

The architectural specialization of TPUs provides significant advantages for specific workload types, with Google's Ironwood TPU offering approximately 2x the performance-per-watt of its predecessor and up to 30x improvement over earlier TPU generations [25].

Experimental Protocols and Methodologies for GPU Solver Evaluation

Standardized Performance Evaluation Framework

Rigorous evaluation of GPU-accelerated solvers requires standardized methodologies to enable meaningful cross-architecture comparisons:

Strong Scaling Experiments: Measure performance while keeping the problem size constant and increasing the number of GPUs. This evaluates how efficiently a solver utilizes additional computational resources for a fixed problem [22].

Weak Scaling Experiments: Measure performance while increasing both problem size and computational resources proportionally. This assesses the solver's capability to handle increasingly larger problems [22].

Roofline Model Analysis: Identifies performance bottlenecks by comparing actual performance against theoretical hardware limits, particularly useful for determining whether a solver is compute-bound or memory-bound [22].
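The roofline bound reduces to one line of arithmetic. Attainable performance is the smaller of the compute peak and arithmetic intensity (FLOP/byte) × memory bandwidth. Kernels to the left of the "ridge point" are memory-bound. The device figures and kernel intensities below are hypothetical round numbers chosen for illustration, not measurements of any real GPU or of the SERGHEI-SWE kernels.

```python
def ridge_point(peak_gflops: float, peak_bw_gbs: float) -> float:
    """Arithmetic intensity (FLOP/byte) where the memory and compute roofs meet."""
    return peak_gflops / peak_bw_gbs


def attainable_gflops(ai: float, peak_gflops: float, peak_bw_gbs: float) -> float:
    """Roofline bound: capped by compute peak or by intensity x bandwidth."""
    return min(peak_gflops, ai * peak_bw_gbs)


def classify(ai: float, peak_gflops: float, peak_bw_gbs: float) -> str:
    """Label a kernel by which side of the ridge point it falls on."""
    return "memory-bound" if ai < ridge_point(peak_gflops, peak_bw_gbs) else "compute-bound"


# Hypothetical device: 20,000 GFLOP/s peak and 2,000 GB/s (ridge = 10 FLOP/byte).
PEAK, BW = 20_000.0, 2_000.0
for name, ai in [("stencil-like kernel", 0.5), ("dense matmul-like kernel", 50.0)]:
    print(name, classify(ai, PEAK, BW), attainable_gflops(ai, PEAK, BW))
```

In this example the stencil-like kernel can reach at most 1,000 GFLOP/s, about 5% of peak. Its performance is set entirely by bandwidth, which is the situation the memory-bound finding for shallow-water kernels describes.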

Case Study: Shallow Water Equations Solver Experimental Protocol

The SERGHEI-SWE evaluation provides a comprehensive example of rigorous GPU solver assessment:

- System Diversity: Testing across four state-of-the-art HPC systems: Frontier (AMD MI250X), JUWELS Booster (NVIDIA A100), JEDI (NVIDIA H100), and Aurora (Intel Max 1550) [22].

- Scale Testing: Strong scaling up to 1024 GPUs and weak scaling upwards of 2048 GPUs [22].

- Performance Portability Metrics: Application of both harmonic and arithmetic mean-based metrics while varying problem size to quantify portability [22].

- Bottleneck Identification: Roofline analysis revealed that memory bandwidth was the dominant performance bottleneck, with key solver kernels residing in the memory-bound region [22].
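The harmonic-mean portability metric mentioned above is commonly defined following Pennycook and colleagues. It is the number of platforms divided by the sum of reciprocal per-platform efficiencies, and it is 0 if any platform is unsupported. The sketch below computes it alongside a plain arithmetic mean. Each efficiency can be an application efficiency (fraction of the best observed result) or an architectural efficiency (fraction of hardware peak). Treating unsupported platforms as 0 in the arithmetic variant is an assumption made here, and the efficiency values are hypothetical.

```python
from statistics import fmean


def pp_harmonic(efficiencies):
    """Harmonic-mean performance portability.

    Each entry is an efficiency in (0, 1] on one platform; None marks an
    unsupported platform, in which case the metric is defined as 0.
    """
    if any(e is None or e <= 0 for e in efficiencies):
        return 0.0
    return len(efficiencies) / sum(1.0 / e for e in efficiencies)


def pp_arithmetic(efficiencies):
    """Arithmetic-mean variant (assumption: unsupported platforms count as 0)."""
    return fmean(0.0 if e is None else e for e in efficiencies)


# Hypothetical efficiencies on four systems.
effs = [0.85, 0.70, 0.60, 0.50]
print(round(pp_harmonic(effs), 4), round(pp_arithmetic(effs), 4))
```

For these values, the harmonic mean (about 0.64) sits below the arithmetic mean (about 0.66). The harmonic form weights the weakest platform more heavily, which is why it is the stricter portability measure.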

Benchmarking Ecological Solvers

For ecological solvers specifically, benchmarking should include:

- Real-world Dataset Application: Testing with geographically diverse datasets representing different ecosystem types.

- Multiple Resolution Analysis: Evaluating performance at resolutions relevant to ecological forecasting (1 km to 1 m).

- Time-to-Solution Metrics: Measuring computational efficiency against forecasting deadlines for practical utility.
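A back-of-the-envelope estimate shows why resolution matters so much for time-to-solution. The sketch assumes an explicit 2D shallow-water scheme on a uniform square grid with still water, so the wave speed is √(gh). It also assumes a CFL-limited time step dt = CFL · dx / √(gh). AMR or implicit schemes would change these numbers, and the domain, depth, and function name are hypothetical.

```python
import math


def swe_explicit_cost(domain_km, dx_m, depth_m, sim_hours, cfl=0.5, g=9.81):
    """Rough cost of an explicit 2D shallow-water run on a square domain.

    Returns (cells, dt_seconds, steps, cell_updates) for a uniform grid.
    """
    n = math.ceil(domain_km * 1000 / dx_m)   # cells per side
    cells = n * n
    dt = cfl * dx_m / math.sqrt(g * depth_m)  # CFL-limited step, still water
    steps = math.ceil(sim_hours * 3600 / dt)
    return cells, dt, steps, cells * steps


# Hypothetical 10 km x 10 km domain, 10 m depth, 1 simulated hour.
coarse = swe_explicit_cost(10, 1000, 10, 1)
fine = swe_explicit_cost(10, 1, 10, 1)
print(fine[0] / coarse[0], coarse[1] / fine[1], fine[3] / coarse[3])
```

Refining from 1 km to 1 m multiplies the cell count by 10^6 and, through the CFL condition, the step count by roughly 10^3. The total work therefore grows by about nine orders of magnitude, which is why a time-to-solution check against forecasting deadlines belongs in any benchmark.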

Figure 2: GPU-Accelerated Solver Performance Evaluation Workflow

Research Toolkit for GPU-Accelerated Solver Development

Essential Software and Programming Frameworks

Table 4: Essential Research Toolkit for GPU-Accelerated Solver Development

| Tool/Framework | Category | Primary Function | Target Architectures |
|---|---|---|---|
| Kokkos | Performance Portability | C++ abstraction layer for performance portability | NVIDIA, AMD, Intel GPUs, CPUs |
| CUDA | GPU Programming | NVIDIA's parallel computing platform and programming model | NVIDIA GPUs |
| HIP | GPU Programming | AMD's heterogeneous computing interface for porting CUDA applications | AMD GPUs |
| SYCL | GPU Programming | Cross-platform abstraction layer based on standard C++ | NVIDIA, AMD, Intel GPUs, CPUs, FPGAs |
| OpenMP | GPU Programming | Directive-based accelerator programming with growing GPU support | Multiple GPU architectures |
| MPI | Distributed Computing | Message passing interface for multi-node distributed memory systems | All distributed systems |
| TensorFlow/PyTorch | ML Frameworks | High-level neural network frameworks with GPU acceleration | Primarily NVIDIA GPUs |
| JAX | ML Framework | Differentiable programming with composable transformations | NVIDIA GPUs, Google TPUs |

Hardware Platforms for Experimental Evaluation

A comprehensive evaluation of GPU-accelerated solvers should include testing across diverse hardware platforms:

- NVIDIA-based Systems: Featuring A100, H100, or newer architectures with CUDA support and mature software ecosystems [22].

- AMD-based Systems: Such as Frontier with MI250X GPUs, requiring HIP or Kokkos for optimal performance [22].

- Intel-based Systems: Such as Aurora with Max Series GPUs, utilizing SYCL or OpenMP for acceleration [22].

- Cloud GPU Instances: Providing accessibility through platforms like GCP, AWS, and Azure with various accelerator options [26].

The evolution of GPU-accelerated solver architectures demonstrates a clear trajectory toward performance portability and architectural specialization. The emergence of frameworks like Kokkos enables researchers to develop scientific solvers that maintain performance efficiency across diverse hardware platforms, while domain-specific accelerators offer unprecedented efficiency for specialized workloads.

For ecological solvers specifically, this portability is crucial for ensuring that critical environmental modeling capabilities can be deployed across the heterogeneous computing infrastructures available to researchers worldwide. The SERGHEI-SWE case study demonstrates that with appropriate abstraction layers, solvers can achieve impressive scaling efficiency across different GPU architectures [22].

As GPU architectures continue to diversify with offerings from NVIDIA, AMD, Intel, and domain-specific vendors, the research community's ability to leverage these advancements will depend on adopting performance portable frameworks and rigorous, standardized evaluation methodologies. This approach ensures that ecological and environmental solvers can both exploit the latest hardware advancements and remain deployable across the diverse computing infrastructure available to the global research community.

The AMReX Framework and Its Role in Enabling Scalable, GPU-Accelerated Simulation

The AMReX (Adaptive Mesh Refinement Exascale) framework is a performance-portable software library designed for massively parallel, block-structured adaptive mesh refinement (AMR) applications. Originally developed from the BoxLib framework through the U.S. Department of Energy's (DOE) Exascale Computing Project (ECP), AMReX was specifically redesigned to support both multicore CPUs and various GPU accelerators, addressing the critical need for exascale computing capabilities [27]. The framework provides a comprehensive foundation for solving systems of partial differential equations (PDEs) across diverse scientific domains, from combustion and astrophysics to wind energy and cosmology [27] [28].

Block-structured AMR serves as a "numerical microscope" that dynamically controls mesh resolution to focus computational effort where it is most needed, such as at shock waves or flame fronts [27]. Unlike uniform mesh approaches that maintain consistent resolution throughout the domain, AMR employs a hierarchical representation of the solution at multiple resolution levels. This strategy significantly reduces computational cost and memory footprint compared to uniform meshes while preserving accurate descriptions of complex physical processes [28]. The AMReX framework implements this through logically rectangular grid patches that can be distributed across computing nodes, creating a natural hierarchical parallelism ideally suited for modern GPU-accelerated supercomputers [27].
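The memory saving from block-structured AMR can be estimated by counting cells. The sketch assumes four things:

- nested levels with a fixed refinement ratio;
- a hypothetical fraction of the domain covered by each finer level;
- coarse data kept on every level, as in a level-based hierarchy;
- no ghost cells or box-granularity overhead.

It then compares the total against a uniform grid at the finest resolution. Names and numbers are illustrative.

```python
def amr_cell_counts(base_cells_per_dim, ref_ratio, refined_fractions, dim=2):
    """Cells in a level-based AMR hierarchy vs. a uniform finest-level grid.

    refined_fractions[l] is the assumed fraction of the whole domain covered
    by level l + 1; each level refines the previous one by ref_ratio.
    Returns (total_amr_cells, uniform_finest_cells).
    """
    base = base_cells_per_dim ** dim
    total = base
    for level, frac in enumerate(refined_fractions, start=1):
        total += frac * base * ref_ratio ** (dim * level)
    finest = base * ref_ratio ** (dim * len(refined_fractions))
    return total, finest


# 128 x 128 base grid, ratio 2, level 1 covers 25%, level 2 covers 6.25%.
amr, uniform = amr_cell_counts(128, 2, [0.25, 0.0625])
print(amr, uniform, uniform / amr)
```

With these assumptions, the hierarchy stores 49,152 cells versus 262,144 for a uniform grid at the finest spacing, about 5.3 times fewer. The saving grows quickly in 3D and with more levels, provided the refined regions stay small.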

Comparative Analysis of AMR Methodologies

AMR Approach Classification

Within structured grid applications, AMR strategies primarily fall into two distinct categories with different characteristics and implementation considerations:

Table: Comparison of AMR Implementation Approaches

| Feature | Level-Based Patch AMR (AMReX) | Tree-Based Cell AMR |
|---|---|---|
| Grid Structure | Logically rectangular patches at multiple refinement levels | Individual cells split into finer elements (quad/oct-tree) |
| Computational Unit | Patches containing many cells | Individual cells or small element groups |
| Communication Pattern | Optimized for same-resolution patches and coarse-fine interactions | Tree-based neighborhood relationships |
| Implementation Complexity | Simplified communication through patch-based parallelism | Complex tree management and traversal |
| Memory Access Patterns | Regular, contiguous memory blocks within patches | Potentially irregular access patterns |
| Typical Applications | Finite difference/volume methods for PDEs | Spectral element methods, discontinuous Galerkin |

Scientific and Implementation Trade-offs

The level-based patch AMR approach employed by AMReX offers distinct advantages for GPU-accelerated systems. By operating on large, logically rectangular patches, it preserves regular data access patterns that are essential for high performance on GPU architectures [27]. This structured approach makes reasoning about numerical methods more straightforward since algorithms locally compute on structured grids rather than completely unstructured meshes [27]. The patch-based paradigm also enables efficient communication aggregation, where data between patches is "stitched together" to form a complete solution through optimized synchronization operations [27].

In contrast, tree-based cell AMR provides more granular refinement control, allowing individual cells to be refined based on local criteria. While this can potentially provide more precise adaptation to solution features, it introduces challenges for GPU acceleration due to potentially irregular memory access patterns and more complex load balancing [29]. Tree-based approaches typically require sophisticated space-filling curves to maintain data locality and may exhibit less efficient communication patterns compared to the patch-based approach [29].
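A common choice of space-filling curve is the Morton (Z-order) curve. It interleaves the bits of a cell's integer coordinates into a single key. Sorting cells by that key and cutting the list into contiguous chunks gives a simple, locality-preserving partition. The sketch below is a generic 2D version for coordinates below 2^16, with hypothetical function names; it is not taken from any particular AMR library.

```python
def part1by1(x: int) -> int:
    """Spread the low 16 bits of x so that a zero bit separates each bit."""
    x &= 0x0000FFFF
    x = (x | (x << 8)) & 0x00FF00FF
    x = (x | (x << 4)) & 0x0F0F0F0F
    x = (x | (x << 2)) & 0x33333333
    x = (x | (x << 1)) & 0x55555555
    return x


def morton2d(i: int, j: int) -> int:
    """Z-order key: bits of i go to even positions, bits of j to odd ones."""
    return part1by1(i) | (part1by1(j) << 1)


def partition_by_morton(cells, nranks):
    """Sort (i, j) cells along the curve and split into contiguous chunks."""
    ordered = sorted(cells, key=lambda c: morton2d(*c))
    chunk = -(-len(ordered) // nranks)  # ceiling division
    return [ordered[r * chunk:(r + 1) * chunk] for r in range(nranks)]


grid = [(i, j) for i in range(4) for j in range(4)]
for rank, part in enumerate(partition_by_morton(grid, 4)):
    print(rank, sorted(part))
```

On a 4 × 4 grid split four ways, each rank receives one 2 × 2 quadrant, so neighboring cells tend to stay on the same rank. The curve does have jumps between quadrants. Those jumps are one reason tree-based codes can see less regular communication than patch-based ones.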

For numerical weather prediction (NWP) applications, studies have demonstrated that both approaches can effectively resolve atmospheric phenomena with disparate scales. However, the level-based AMR methodology offers practical advantages in terms of scalability, performance portability, and integration within existing modeling frameworks [29]. The ability to use established solvers for locally uniform meshes simplifies implementation while maintaining computational efficiency across diverse supercomputing architectures.

Performance Analysis and Benchmarking

Experimental Framework and Metrics

Comprehensive performance evaluation of AMReX-based applications follows rigorous methodologies to assess computational efficiency, scaling behavior, and portability across diverse hardware architectures. Standard benchmarking protocols include:

Weak Scaling Studies: Problem size per computing unit remains constant while increasing the total number of units, measuring the ability to efficiently utilize growing computational resources [30].

Node-Level Performance Comparison: Execution time comparison between GPU-accelerated and CPU-only implementations on the same node architecture, quantifying GPU acceleration benefits [30].

Roofline Analysis: Assessment of achieved performance relative to hardware limitations, evaluating both memory bandwidth and computational throughput utilization [31].

Performance metrics typically focus on wall-clock time measurements for key algorithmic components, memory usage patterns, and scaling efficiency (defined as the ratio of actual to ideal speedup when increasing computational resources). The AMReX framework incorporates specialized profiling tools like the Tiny Profiler to precisely track execution time distribution across different simulation components [30].
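The scaling-efficiency definition above translates directly into code. For strong scaling, efficiency is the measured speedup divided by the ideal speedup (the ratio of GPU counts). For weak scaling, the ideal runtime is constant, so efficiency is simply the reference time divided by the new time. The timings in the example are hypothetical.

```python
def strong_scaling_efficiency(t_ref: float, n_ref: int, t_n: float, n: int) -> float:
    """Fixed total problem: actual speedup / ideal speedup (n / n_ref)."""
    return (t_ref / t_n) / (n / n_ref)


def weak_scaling_efficiency(t_ref: float, t_n: float) -> float:
    """Fixed work per GPU: ideal runtime is constant, so efficiency = t_ref / t_n."""
    return t_ref / t_n


# Hypothetical timings: 100 s on 4 GPUs vs 7.5 s on 64 GPUs (strong scaling),
# and 10.0 s vs 10.8 s per step at small and large GPU counts (weak scaling).
print(round(strong_scaling_efficiency(100.0, 4, 7.5, 64), 3))
print(round(weak_scaling_efficiency(10.0, 10.8), 3))
```

In this example the 64-GPU run is 13.3 times faster than the 4-GPU run against an ideal of 16, an efficiency of about 83%. The weak-scaling case stays above the 90% level often quoted as good scalability.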

Quantitative Performance Results

Table: Performance of AMReX-Based Applications on DOE Supercomputers

| Supercomputer | GPU Architecture | CPU Configuration | Application | Speedup vs CPU | Key Performance Factors |
|---|---|---|---|---|---|
| Perlmutter (NERSC) | 4× NVIDIA A100 | AMD EPYC 7763 (Milan) | PeleLMeX | 4× | MAGMA dense-direct solver for chemistry |
| Crusher (ORNL) | 4× AMD MI250X (8 GCDs) | AMD EPYC 7A53 (Trento) | PeleLMeX | 7.5× | Bulk-sparse integration strategy |
| Summit (ORNL) | 6× NVIDIA V100 | 2× IBM Power9 | PeleLMeX | 4.5× | cuSparse solver for memory efficiency |
| H100 Cluster | 96× NVIDIA H100 | N/A | Compressible Combustion Solver | 2-5× (vs initial GPU) | Column-major storage, kernel fusion |

Recent advancements in AMReX-based solver optimization demonstrate significant performance improvements. A specialized compressible combustion solver achieved 2-5× speedup over initial GPU implementations through memory access optimization and computational workload balancing [31]. Roofline analysis revealed substantial improvements in arithmetic intensity for both convection (∼10×) and chemistry (∼4×) routines, confirming efficient utilization of GPU memory bandwidth and computational resources [31].

The PeleLMeX combustion code exemplifies AMReX's performance portability, demonstrating efficient weak scaling up to 192 GPU hours on NVIDIA V100 architectures while resolving 53.6 million cells with adaptive mesh refinement [30]. The distribution of computational time within these simulations highlights the dominant contribution of stiff chemistry integration, particularly on GPUs, where it can account for over 90% of the computational expense in detailed chemistry calculations [31].

Technical Implementation and GPU Acceleration

AMReX Portability and Performance Layer

AMReX employs a sophisticated hardware abstraction layer that enables performance portability across diverse computing architectures without sacrificing efficiency. This lightweight layer provides constructs that allow users to specify operations on data blocks without detailing hardware-specific implementation [28]. The framework currently supports CUDA for NVIDIA GPUs, HIP for AMD GPUs, SYCL for Intel GPUs, and OpenMP for multicore CPU architectures [28].

The portability layer utilizes several key components to achieve both performance and readability:

ParallelFor Lambdas: AMReX's lambda-based launch system executes a user-supplied function over a set of mesh points or particles on either CPUs or GPUs, giving optimized, portable performance from a single source [32].

Memory Arenas: Specialized memory pools reduce allocation overhead by reusing contiguous memory chunks, eliminating unnecessary allocations and frees while providing flexible control of memory in a performant, tracked manner [32].

Array4 Objects: Lightweight, device-friendly objects containing non-owning pointers and indexing information enable Fortran-like data access patterns while maintaining GPU compatibility [32].

This comprehensive approach allows AMReX-based applications to run successfully at scale on some of the world's largest supercomputers, including OLCF's AMD MI250X-based Frontier, NERSC's NVIDIA A100 machine Perlmutter, ALCF's Aurora with Intel Xe GPUs, and RIKEN's Fugaku platform with ARM A64FX CPUs [28].
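The Array4 idea described above is a non-owning view that maps (i, j, k, n) indices, offset by a box's lower corner, onto a flat buffer with i varying fastest. The Python class below mirrors only that indexing logic. It is a hypothetical, simplified analogue: AMReX's real Array4 is a C++ class template used inside ParallelFor lambdas, and this sketch does not reproduce its API.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Array4View:
    """Hypothetical analogue of a non-owning 4D view over a flat buffer.

    `data` is referenced, not copied; `lo` is the box's lower corner (it may be
    negative when ghost cells are included) and `shape` is the cell count in
    i, j, k. Index order is Fortran-like: i fastest, then j, k, component n.
    """
    data: List[float]
    lo: Tuple[int, int, int]
    shape: Tuple[int, int, int]
    ncomp: int = 1

    def offset(self, i: int, j: int, k: int, n: int = 0) -> int:
        nx, ny, nz = self.shape
        ii, jj, kk = i - self.lo[0], j - self.lo[1], k - self.lo[2]
        return ii + nx * (jj + ny * (kk + nz * n))

    def __getitem__(self, idx):
        return self.data[self.offset(*idx)]

    def __setitem__(self, idx, value):
        self.data[self.offset(*idx)] = value


# A 2 x 2 x 1 box with one ghost cell in i and j: lo = (-1, -1, 0), shape (4, 4, 1).
buf = [0.0] * 16
view = Array4View(buf, (-1, -1, 0), (4, 4, 1))
view[0, 0, 0] = 2.5
print(view.offset(0, 0, 0), buf[5])
```

Because the view only stores a reference and index metadata, it is cheap to copy into a kernel. Writes through it land in the shared buffer, which is the property that makes this pattern device-friendly.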

GPU-Specific Optimizations

AMReX incorporates several advanced optimizations specifically designed for GPU architectures:

Bulk-Sparse Chemical Kinetics Integration: A novel strategy that addresses computational workload variability arising from the highly localized nature of chemical reactions in AMR contexts, resulting in up to 6× speedup for chemistry routines [31].

Kernel Fusion: Combining multiple computational kernels reduces launch overhead, warp divergence, and global memory access, particularly beneficial for multigrid AMR algorithms [31].

Column-Major Storage Optimization: Improves memory access patterns for hierarchical grid structures, enhancing arithmetic intensity for both convection and chemistry routines [31].

Asynchronous Execution: Overlapping computation and communication through GPU streams and asynchronous I/O operations maximizes resource utilization [32].

These optimizations directly address key challenges in GPU-based simulations of multiscale phenomena, particularly the disparate space and time scales characteristic of reacting flows where stiff chemistry often dominates computational expense [31].
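The memory-traffic effect of kernel fusion can be checked by counting words moved. Consider out = a·b + c over n double-precision elements. Unfused, one kernel writes a temporary that a second kernel reads back. Fused, the temporary stays in registers. The sketch assumes the temporary is not served from cache and counts one multiply plus one add as 2 FLOPs. It is a generic illustration, not the combustion solver's kernels.

```python
def traffic_bytes(n: int, fused: bool, word: int = 8) -> int:
    """Global-memory bytes for out = a * b + c over n elements.

    Unfused: K1 reads a, b and writes tmp; K2 reads tmp, c and writes out (6 words).
    Fused: one kernel reads a, b, c and writes out; tmp stays in registers (4 words).
    """
    words_per_elem = 4 if fused else 6
    return n * words_per_elem * word


def arithmetic_intensity(n: int, fused: bool, word: int = 8) -> float:
    """FLOP per byte, counting one multiply and one add per element."""
    return (2 * n) / traffic_bytes(n, fused, word)


n = 1_000_000
print(traffic_bytes(n, False), traffic_bytes(n, True))
print(arithmetic_intensity(n, False), arithmetic_intensity(n, True))
```

Fusing this pair cuts traffic by a third and raises arithmetic intensity by 1.5x. It also saves one kernel launch. For memory-bound kernels, that traffic reduction translates almost directly into runtime.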

Research Applications and Ecosystem

Domain-Specific Implementations

The AMReX framework supports a diverse range of scientific applications across multiple domains, demonstrating its versatility and robust capabilities:

Combustion Modeling: The PeleLMeX code (low Mach number) and PeleC (compressible) simulate reacting flows with detailed kinetics and transport in complex geometries, leveraging AMReX's embedded boundary capabilities for complex geometry representation [28] [30].

Astrophysics and Cosmology: Castro implements high-fidelity explicit algorithms for compressible flow with self-gravity and nuclear reaction networks, while Nyx simulates compressible flow in an expanding universe with a Lagrangian particle representation of dark matter [28].

Plasma and Accelerator Physics: WarpX employs advanced particle-in-cell methods for simulations of particle accelerators, beams, and laser-plasmas, demonstrating exceptional parallel scalability [28].

Weather and Climate Modeling: The Energy Research and Forecasting (ERF) code applies AMReX to atmospheric modeling with adaptive mesh refinement for phenomena such as thunderstorms and tropical cyclones [33] [29].

Multiphase Flows: MFiX-Exa models multiphase particle-laden flows with reactions and heat transfer effects in complex geometries [28].

Essential Research Reagent Solutions

Table: Key Computational Components in AMReX-Based Research

| Component | Function | Implementation in AMReX |
|---|---|---|
| Block-Structured AMR | Dynamic mesh refinement/coarsening | Hierarchical grid management with flexible refinement criteria |
| Performance Portability Layer | Hardware-agnostic code execution | ParallelFor, Array4, Memory Arenas for CPU/GPU support |
| Linear Algebra Solvers | Solving elliptic/parabolic PDE systems | Native geometric multigrid + interfaces to hypre/PETSc |
| Particle-Mesh Methods | Lagrangian particle tracking with mesh interactions | ParIter, ArrayOfStructs, StructOfArrays data layouts |
| Embedded Boundary Methods | Complex geometry representation | Cut-cell approach for irregular domains |
| I/O and Visualization | Data output and analysis | Asynchronous I/O with native format for ParaView/VisIt/yt |
| Time Integration | Stiff ODE integration for chemical kinetics | SUNDIALS interface with specialized GPU solvers |

The AMReX framework represents a significant advancement in scalable, GPU-accelerated simulation capabilities, successfully addressing the triple challenge of dynamic mesh refinement for tracking localized features, extreme scalability across diverse computing architectures, and performance portability without compromising efficiency. Through its unique combination of block-structured AMR algorithms and sophisticated GPU acceleration strategies, AMReX enables high-fidelity simulations of complex multiscale phenomena across numerous scientific domains.

Performance comparisons demonstrate that AMReX-based applications consistently achieve substantial speedups—typically 4-7.5× compared to CPU-only implementations—across various supercomputer architectures [30]. Continued optimization efforts yield further 2-5× improvements over initial GPU implementations through memory access optimization, bulk-sparse integration strategies, and computational workload balancing [31].

As computational science increasingly relies on heterogeneous computing architectures, AMReX's performance-portable approach provides a critical foundation for next-generation scientific simulation. The framework's active development, including the recent introduction of pyAMReX for Python integration and enhanced machine learning capabilities, ensures its continued relevance in the rapidly evolving landscape of high-performance computing [28]. For researchers pursuing GPU-accelerated ecological solvers and multiscale simulations, AMReX offers a robust, scalable, and performant foundation addressing the complex challenges of exascale computing.

GPU Solvers in Action: Methodologies and Real-World Applications in Drug Discovery

Molecular dynamics (MD) simulations are a cornerstone of computational chemistry, biophysics, and materials science, enabling researchers to study the physical movements of atoms and molecules over time. This guide provides an objective performance comparison of hardware and software for running these computationally intensive simulations, with a focus on balancing raw speed with cost and ecological efficiency.

Hardware Performance Comparison for Molecular Dynamics

Selecting the right hardware is paramount for efficient MD simulations. The choice involves a trade-off between raw performance, cost, and memory capacity, which varies significantly across different GPU architectures and is highly dependent on the size of the molecular system being studied.

GPU Performance and Cost-Efficiency

Graphics Processing Units (GPUs) provide the most significant acceleration for MD software. The table below summarizes the performance of various NVIDIA GPUs across different MD applications and system sizes.

Table 1: GPU Performance Metrics for Molecular Dynamics Simulations

| GPU Model | Key Architecture | VRAM | Performance Highlight | Best Use-Case & Cost-Efficiency |
|---|---|---|---|---|
| NVIDIA RTX 4090 | Ada Lovelace | 24 GB GDDR6X | ~109.75 ns/day (STMV, ~1M atoms) [21] | Excellent price-to-performance for single-GPU workstations [34] [21]. |
| NVIDIA RTX 5090 | Blackwell | 32 GB | ~109.75 ns/day (STMV, ~1M atoms); outperforms RTX 4090 in larger systems [21]. | Peak single-GPU throughput; best performance for its cost [21]. |
| NVIDIA RTX 6000 Ada | Ada Lovelace | 48 GB GDDR6 | 70.97 ns/day (STMV, ~1M atoms) [21] | Large-scale simulations requiring extensive VRAM [34]. |
| NVIDIA L40S | Ada Lovelace | 48 GB | 536 ns/day (T4 Lysozyme, ~44k atoms) [35] | Best value overall for traditional MD; top cost-efficiency [35]. |
| NVIDIA H200 | Hopper | 141 GB | 555 ns/day (T4 Lysozyme, ~44k atoms) [35] | Peak performance for AI-enhanced workflows (e.g., machine-learned force fields) [35]. |
| NVIDIA RTX PRO 4500 Blackwell | Blackwell | Not Specified | Matches RTX 5000 Ada performance at lower cost [21]. | Cost-effective choice for small simulations (<100,000 atoms) [21]. |

For central processing units (CPUs), performance relies more on clock speed than core count. A mid-tier workstation CPU with high base and boost clock speeds is often better suited than an extreme core-count processor, as some MD software cannot utilize all cores efficiently [34].

The Impact of System Size on Performance

The optimal hardware configuration depends heavily on the size of the molecular system.

- Small Systems (< 50,000 atoms): GPUs are often underutilized, and communication between the CPU and GPU can be a bottleneck. Consumer GPUs like the RTX 4070 or 4080 offer a good balance of performance and cost, though data center GPUs paired with powerful CPUs may perform better [36].

- Medium to Large Systems (> 50,000 atoms): High-end consumer GPUs like the RTX 4090 and RTX 5090 begin to match or surpass the performance of data center GPUs (A100, H100) due to their high FP32 TFLOPS, making them highly cost-effective [36] [21]. For the largest systems (e.g., >1 million atoms), GPUs with large VRAM, such as the RTX 6000 Ada (48 GB) or H200 (141 GB), are necessary to hold the entire simulation in memory [34] [35].

Table 2: Recommended GPU Selection Based on System Size

| System Size | Best Performing GPUs | Most Cost-Effective GPUs |
|---|---|---|
| Small (< 50k atoms) | RTX 4090, RTX 4080 SUPER [36] | RTX 4070 Ti, RTX 3060 Ti, RTX 4080 [36] |
| Medium (50k-500k atoms) | RTX 4090, RTX 4080 SUPER [36] | RTX 4090, RTX 4080, RTX 4070 [36] |
| Large (> 500k atoms) | RTX 4090, RTX 6000 Ada, H200 [34] [36] [35] | RTX 4090, RTX 4080 [36] |

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons between different hardware and software, standardized benchmarking protocols are essential. The methodology below is compiled from recent industry and academic benchmarks.

General MD Benchmarking Workflow

The following diagram outlines a generalized workflow for conducting MD benchmarks, synthesizing common steps across multiple studies [36] [21] [35].

Detailed Benchmarking Methodology

The workflow is implemented through the following detailed steps, which ensure consistency and reliability in results.

System Preparation and Parameters:

- Benchmark Systems: Standardized molecular systems are used to ensure comparability. These range from small peptides like the alanine dipeptide to large complexes like the STMV virus (1,066,628 atoms) or T4 Lysozyme (43,861 atoms) [36] [37] [35].

- Simulation Parameters: Common settings include a 2-4 femtosecond (fs) integration timestep, Particle Mesh Ewald (PME) electrostatics for explicit solvent simulations, and a simulation length sufficient to achieve stable performance metrics (e.g., 100 ps to 200,000 steps) [36] [35].

Software and Hardware Configuration:

- MD Engines: Benchmarks are run using GPU-accelerated versions of popular MD software such as `pmemd.cuda` (AMBER), `gmx mdrun` (GROMACS), or OpenMM [36] [21] [35].

- Execution Command: A typical GROMACS command is `gmx mdrun -s input.tpr -nb gpu -pme gpu -bonded gpu -update gpu -ntomp 8 -nsteps 200000 -deffnm output` [36]. The `-nb`, `-pme`, `-bonded`, and `-update` flags offload the following work to the GPU:
  - nonbonded interactions;
  - PME electrostatics;
  - bonded interactions;
  - coordinate update and constraints.

  For AMBER, the `pmemd.cuda` engine is used [21].

- CPU-GPU Collaboration: The CPU manages task distribution and I/O. To avoid CPU-side bottlenecks, the number of OpenMP threads (`-ntomp`) is typically set to match the number of physical CPU cores [36].
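When the same benchmark is repeated across many machines, the GROMACS command above is often generated by a script rather than typed by hand. The sketch below is a minimal, hypothetical helper that rebuilds that exact command as an argument list. Only the flags from the command above are used. The function name and the default thread count are assumptions. `os.cpu_count()` returns logical CPUs, not physical cores, so on machines with simultaneous multithreading you should pass `ntomp` explicitly.

```python
import os
import shlex
from typing import Optional


def gmx_mdrun_benchmark_cmd(tpr: str, nsteps: int = 200_000,
                            ntomp: Optional[int] = None,
                            deffnm: str = "output") -> list[str]:
    """Build the GPU-offloaded GROMACS benchmark command as an argv list."""
    if ntomp is None:
        # Assumption: fall back to the logical CPU count. This overestimates
        # physical cores when simultaneous multithreading is enabled.
        ntomp = os.cpu_count() or 1
    return [
        "gmx", "mdrun",
        "-s", tpr,
        "-nb", "gpu",      # nonbonded interactions on the GPU
        "-pme", "gpu",     # PME long-range electrostatics on the GPU
        "-bonded", "gpu",  # bonded interactions on the GPU
        "-update", "gpu",  # coordinate update and constraints on the GPU
        "-ntomp", str(ntomp),
        "-nsteps", str(nsteps),
        "-deffnm", deffnm,
    ]


if __name__ == "__main__":
    # Print the shell form. Pass the list to subprocess.run(..., check=True) to execute it.
    print(shlex.join(gmx_mdrun_benchmark_cmd("input.tpr", ntomp=8)))
```

Building an argument list instead of a single string avoids shell-quoting problems when file names contain spaces.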

Performance and Cost Analysis:

- Simulation Speed: The primary metric is nanoseconds per day (ns/day), the amount of simulated time computed in 24 hours of wall-clock time [36] [21].

- Cost Efficiency: For cloud or cost-aware deployments, nanoseconds per dollar (ns/dollar) is also calculated. This metric matters for both ecological and budgetary assessments. Using it, benchmarks report that consumer GPUs can be 8-14x more cost-effective than data center GPUs [36] [35].
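Both metrics follow from quantities every benchmark records: step count, timestep, wall-clock time and, for cost, the price of GPU time. The sketch below assumes a flat hourly cloud price. The 120-second run and the $0.50/hour price in the example are hypothetical placeholders, not measured values. For purchased hardware you would substitute an amortized hourly cost.

```python
SECONDS_PER_DAY = 86_400


def ns_per_day(n_steps: int, timestep_fs: float, wall_seconds: float) -> float:
    """Simulated nanoseconds produced per 24 hours of wall-clock time."""
    simulated_ns = n_steps * timestep_fs * 1e-6  # 1 fs = 1e-6 ns
    return simulated_ns * SECONDS_PER_DAY / wall_seconds


def ns_per_dollar(ns_day: float, price_per_hour: float) -> float:
    """Simulated nanoseconds per dollar, assuming a flat hourly GPU price."""
    return ns_day / (24.0 * price_per_hour)


if __name__ == "__main__":
    # Hypothetical run: 200,000 steps at 2 fs (0.4 ns) finishing in 120 s.
    speed = ns_per_day(200_000, 2.0, 120.0)
    print(f"{speed:.1f} ns/day, {ns_per_dollar(speed, 0.50):.1f} ns/$")
```

With these placeholder numbers the run reaches about 288 ns/day and 24 ns per dollar.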

Optimization to Avoid a Common Bottleneck

A frequently overlooked performance pitfall is disk I/O throttling. Frequently saving trajectory data forces data transfer from GPU to CPU memory, interrupting computation. One study found that optimizing the save interval can improve performance by up to 4x [35]. For short simulations, saving frames less frequently is critical for maximizing GPU utilization.
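One way to reason about the save interval is to convert the desired spacing between trajectory frames into a step count. You can then count how many times the run must stop to copy coordinates off the GPU. The sketch below is illustrative and rests on two assumptions:
- Each saved frame is treated as one GPU-to-host transfer. Real engines may also copy data for energies or checkpoints.
- The 10 ps frame spacing is an example value, not a recommendation from the cited study.

In GROMACS, the resulting step count would go into an output-frequency parameter such as `nstxout-compressed`.

```python
def save_interval_steps(timestep_fs: float, frame_spacing_ps: float) -> int:
    """Number of integration steps between saved trajectory frames."""
    steps = round(frame_spacing_ps * 1000.0 / timestep_fs)  # 1 ps = 1000 fs
    return max(1, steps)


def frames_written(total_steps: int, interval_steps: int) -> int:
    """Frames (and, by assumption, GPU-to-host copies) over a run."""
    return total_steps // interval_steps


if __name__ == "__main__":
    interval = save_interval_steps(2.0, 10.0)  # 10 ps spacing at 2 fs
    print(interval, frames_written(200_000, interval))  # sparse saving
    print(frames_written(200_000, 100))                 # saving every 100 steps
```

For the 200,000-step benchmark, saving every 5,000 steps writes 40 frames. Saving every 100 steps writes 2,000 frames, which means 50 times as many interruptions.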

The Scientist's Toolkit: Essential Research Reagents and Materials

This section details the key software and hardware components that form the foundation of modern, high-performance molecular dynamics research.

Table 3: Essential Tools for Molecular Dynamics Simulations

| Tool Name | Type | Primary Function |
|---|---|---|
| GROMACS | Software | A highly optimized, open-source MD package known for its exceptional speed on both CPUs and GPUs [36]. |
| AMBER | Software | A leading suite of MD programs, with its pmemd.cuda engine highly optimized for NVIDIA GPUs [34] [21]. |
| NAMD | Software | A widely used, parallel MD program designed for high-performance simulation of large biomolecular systems [34] [37]. |
| OpenMM | Software | A hardware-independent library for MD simulations, enabling easy deployment across diverse computing platforms [35] [38]. |
| NVIDIA CUDA Cores | Hardware | Parallel processors on NVIDIA GPUs that handle the core computational workload of most MD simulations [34] [36]. |
| GPU VRAM | Hardware | Video Random Access Memory. Its capacity determines the maximum size of a molecular system that can be simulated on a single GPU [34] [36]. |

Emerging Trends and Ecological Considerations

The field of molecular dynamics is evolving beyond pure performance metrics toward more sustainable and accelerated computing practices.

Novel Algorithms and Hardware Utilization

Recent research focuses on overcoming traditional limits. Force-free MD uses machine learning to directly update atomic positions, lifting traditional integration constraints and allowing time steps at least one order of magnitude larger than conventional MD [39]. Other studies explore integrating fluid dynamics concepts to optimize the representation of molecular interactions, thereby boosting simulation speed and accuracy [40]. Furthermore, new MD engines like apoCHARMM are designed for maximal GPU efficiency, performing energy, force, and integration calculations exclusively on the GPU to minimize performance-sapping data transfers with the CPU [38].

The EcoL2 Metric for Sustainable Computing

As computational demands grow, so does their environmental impact. The EcoL2 metric has been proposed to balance model accuracy with carbon emissions, promoting environmentally informed model assessment [41]. It accounts for the total carbon footprint (C) across a project's lifecycle:

$$
\text{EcoL2} = \frac{1 - e^{\log_{\alpha}(\mathcal{R})}}{1 + \beta C}
$$

Here $\mathcal{R}$ is the relative L2 error, and $\alpha$ and $\beta$ are hyperparameters. The total carbon footprint $C$ is the sum of four components [41]:
- embodied carbon (data acquisition);
- developmental carbon (hyperparameter tuning);
- operational carbon (training);
- inference carbon (deployment).

This holistic view aligns with findings that choosing cost-effective hardware, such as consumer GPUs, can reduce the operational carbon footprint by delivering more scientific results per dollar and per watt [36] [35].
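The formula can be evaluated directly once the relative error and the carbon components are known. The sketch below follows the expression as stated here, with $\log_{\alpha}$ computed through `math.log(x, base)`. The function names and the example values for $\alpha$, $\beta$ and the carbon components are assumptions for illustration. The carbon unit, for example kg CO2e, only has to be consistent with the chosen $\beta$.

```python
import math


def total_carbon(embodied: float, developmental: float,
                 operational: float, inference: float) -> float:
    """Lifecycle carbon footprint C as the sum of its four components."""
    return embodied + developmental + operational + inference


def ecol2(rel_l2_error: float, carbon: float, alpha: float, beta: float) -> float:
    """EcoL2 = (1 - exp(log_alpha(R))) / (1 + beta * C), as written above."""
    if rel_l2_error <= 0:
        raise ValueError("relative L2 error must be positive")
    if alpha <= 0 or alpha == 1:
        raise ValueError("alpha must be a valid logarithm base (> 0, != 1)")
    numerator = 1.0 - math.exp(math.log(rel_l2_error, alpha))
    return numerator / (1.0 + beta * carbon)


if __name__ == "__main__":
    # Hypothetical values: 1% relative error, alpha = 10, beta = 0.1.
    c = total_carbon(1.0, 2.0, 5.0, 2.0)
    print(ecol2(0.01, c, alpha=10.0, beta=0.1))
```

With $\alpha > 1$, a perfect model ($\mathcal{R} \to 0$) drives the numerator toward 1, and $\mathcal{R} = 1$ makes it 0. Increasing $C$ lowers the score for the same accuracy.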

The process of discovering a new drug is notoriously time-consuming and expensive, often taking over a decade and costing billions of dollars. A critical early stage in this pipeline is molecular docking, a computational method that predicts how a small molecule (such as a potential drug candidate) binds to a target protein. The accelerating growth of make-on-demand chemical libraries, which now contain over 70 billion readily available molecules, provides unprecedented opportunities to identify starting points for drug discovery through virtual screening [42]. However, these multi-billion-scale libraries present a monumental computational challenge. Traditional docking methods, which rely on simulating physical interactions between molecules, require substantial computational resources to evaluate such vast chemical spaces, creating a critical bottleneck that can delay the identification of promising therapeutic compounds [42].

Within this context, the role of high-performance computing infrastructure, particularly Graphics Processing Units (GPUs), has become indispensable. GPUs are designed to handle massive numbers of parallel calculations, making them ideal for the repetitive scoring of protein-ligand interactions inherent to docking simulations [10]. Specialized GPU-optimized docking tools, such as AutoDock-GPU and Vina-GPU, have been developed specifically to leverage this parallel architecture, offering significant speed improvements over traditional CPU-based approaches [10]. As these computational demands grow, so does the focus on the ecological impact of the required computing resources. The pursuit of faster docking simulations must now be balanced with considerations of energy efficiency and environmental sustainability, giving rise to the field of "GPU ecological solvers" [43] [44]. This guide provides a performance comparison of these emerging solutions, evaluating their effectiveness in accelerating drug discovery while managing their environmental footprint.

Performance Comparison of Docking Approaches

The computational methods for virtual screening can be broadly categorized into traditional docking and machine learning-guided workflows. The table below summarizes their key performance characteristics.

Table 1: Performance Comparison of Virtual Screening Approaches

| Methodology | Throughput | Computational Cost | Key Strength | Key Limitation |
|---|---|---|---|---|
| Traditional Docking (e.g., AutoDock-GPU, Vina-GPU) | High throughput on consumer GPUs [10] | Favorable price/performance for batch screening [10] | Direct, physics-based scoring of interactions | Becomes prohibitively expensive for billion-compound libraries [42] |
| Machine Learning-Guided Workflow (e.g., CatBoost classifier with conformal prediction) | Reduces required docking by >1,000-fold [42] | Enables screening of 3.5 billion compounds at modest cost [42] | Unlocks screening of ultralarge (billion+) libraries | Requires initial training data (~1 million docked compounds) [42] |

Analysis of Comparative Data

The quantitative data reveals a clear trade-off. Traditional docking tools like AutoDock-GPU and Vina-GPU are mature, provide a direct physics-based assessment, and perform well on cost-effective consumer-grade GPUs, making them an excellent choice for libraries of up to hundreds of millions of compounds [10]. However, their computational cost scales linearly with library size.

For the new frontier of ultralarge, multi-billion-compound libraries, a hybrid ML-guided approach is necessary. As demonstrated in a landmark 2025 study, training a CatBoost classifier on a million docked compounds and using the conformal prediction framework can reduce the number of compounds that require explicit docking by over a thousand-fold. This workflow made it feasible to screen a library of 3.5 billion compounds, leading to the experimental identification of ligands for G protein-coupled receptors (GPCRs), a key drug target family [42]. This approach effectively creates a powerful filter, using a fast ML model to identify a small, high-probability subset of compounds worthy of detailed, resource-intensive docking simulation.
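The linear scaling and the thousand-fold reduction can be put side by side with a back-of-the-envelope calculation. The sketch below is illustrative only:
- The docking throughput of 1,000 ligands per second is a hypothetical placeholder, not a benchmark of any tool.
- The "virtual active" count in the example was chosen so that the reduction is exactly 1,000-fold. It is not the figure reported in [42].

```python
def gpu_hours(n_compounds: float, ligands_per_second: float) -> float:
    """Docking cost that scales linearly with the number of compounds docked."""
    return n_compounds / ligands_per_second / 3600.0


def docking_reduction(library_size: float, n_train: float,
                      n_virtual_actives: float) -> float:
    """Fold reduction in explicit docking: full library vs. ML-guided subset."""
    return library_size / (n_train + n_virtual_actives)


if __name__ == "__main__":
    rate = 1000.0  # hypothetical ligands per second
    print(f"full library: {gpu_hours(3.5e9, rate):.0f} GPU-hours")
    print(f"ML-guided:    {gpu_hours(1e6 + 2.5e6, rate):.0f} GPU-hours")
    print(f"reduction:    {docking_reduction(3.5e9, 1e6, 2.5e6):.0f}x")
```

At the placeholder rate, docking all 3.5 billion compounds costs about 972 GPU-hours. Docking only a 3.5-million-compound subset costs about 1 GPU-hour. The training-set docking is included in the ML-guided total because it is a fixed up-front cost.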

Experimental Protocols and Workflows

To ensure reproducibility and provide a clear roadmap for researchers, this section details the core protocols for both traditional and ML-accelerated docking.

Protocol for Traditional GPU-Accelerated Docking

This protocol is optimized for tools like AutoDock-GPU and Vina-GPU, which are designed for high-throughput screening on consumer or data center GPUs [10].

- System Preparation:

- Protein Preparation: Obtain the 3D structure of the target protein from a database like the Protein Data Bank (PDB). Remove water molecules and co-crystallized ligands. Add hydrogen atoms and assign appropriate protonation states using tools like PDB2PQR or the respective software's preparation suite.

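  - Ligand Preparation: Prepare a library of small molecules in a suitable format (e.g., SDF, MOL2). Generate 3D coordinates and optimize their geometry. Assign correct bond orders and ionization states, typically at physiological pH (7.4). Finally, convert the ligands to the input format the docking engine expects, such as PDBQT for AutoDock-GPU and AutoDock Vina-based tools.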

- Grid Map Generation: Define the search space for the ligand within the protein. This involves creating a 3D grid box centered on the binding site of interest. The box dimensions and spacing should be specified to encompass the entire binding pocket.

- Docking Execution: Launch the docking simulation on the GPU. The software will automatically parallelize the workload across available GPU cores. Key parameters to specify include the exhaustiveness of the search and the number of binding poses to generate per ligand.

- Result Analysis: The output consists of a ranked list of ligands and their predicted binding poses, each with a corresponding scoring function value (e.g., in kcal/mol). The top-ranked compounds are selected for further experimental validation.
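Three parts of this protocol are easy to script: sizing the grid box, writing the run configuration and ranking the results. The sketch below makes the following assumptions:
- The binding site is marked by a co-crystallized ligand whose coordinates were recorded before removal.
- The 5 Å padding is an illustrative choice.
- The file names are hypothetical.
- The configuration uses AutoDock Vina's `key = value` format with the `center_*`, `size_*`, `exhaustiveness` and `num_modes` keys, set here to Vina's usual defaults of 8 and 9.

GPU variants such as Vina-GPU may accept different or additional options, so check the documentation of the tool you use.

```python
def grid_box(coords: list[tuple[float, float, float]], padding: float = 5.0):
    """Axis-aligned search box (angstroms) around reference ligand atoms."""
    xs, ys, zs = zip(*coords)
    lows = (min(xs), min(ys), min(zs))
    highs = (max(xs), max(ys), max(zs))
    center = tuple((lo + hi) / 2.0 for lo, hi in zip(lows, highs))
    # Pad on both sides so the whole pocket, not just the ligand, is covered.
    size = tuple((hi - lo) + 2.0 * padding for lo, hi in zip(lows, highs))
    return center, size


def vina_config(receptor: str, ligand: str, center, size,
                exhaustiveness: int = 8, num_modes: int = 9) -> str:
    """Render an AutoDock Vina-style configuration file as text."""
    lines = [f"receptor = {receptor}", f"ligand = {ligand}"]
    lines += [f"center_{ax} = {c:.3f}" for ax, c in zip("xyz", center)]
    lines += [f"size_{ax} = {s:.3f}" for ax, s in zip("xyz", size)]
    lines += [f"exhaustiveness = {exhaustiveness}", f"num_modes = {num_modes}"]
    return "\n".join(lines) + "\n"


def rank_ligands(scores: dict[str, float], top_n: int) -> list[tuple[str, float]]:
    """Best-first ranking: more negative kcal/mol means stronger predicted binding."""
    return sorted(scores.items(), key=lambda kv: kv[1])[:top_n]


if __name__ == "__main__":
    center, size = grid_box([(10.0, 12.5, -3.0), (14.2, 9.8, 1.5)])
    print(vina_config("receptor.pdbqt", "ligand_0001.pdbqt", center, size))
    print(rank_ligands({"lig_a": -7.1, "lig_b": -9.3, "lig_c": -5.0}, top_n=2))
```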

Protocol for ML-Guided Docking of Ultralarge Libraries

This workflow, as validated in a recent Nature Computational Science paper, combines machine learning with molecular docking to efficiently screen billions of compounds [42]. The following diagram illustrates this integrated process.

Diagram 1: Workflow for ML-Guided Ultralarge Library Screening

The detailed methodology is as follows:

- Initial Sampling and Docking: A subset of 1 million compounds is randomly selected from the multi-billion-entry chemical library (e.g., Enamine REAL Space) [42]. This subset is docked against the prepared target protein using a traditional GPU-accelerated docking method to generate a set of known scores.

- Classifier Training: The molecular structures of the 1 million docked compounds are converted into numerical descriptors, specifically Morgan2 fingerprints (the RDKit implementation of ECFP4) [42]. These features, along with their docking scores, are used to train a machine learning classifier. The study found the CatBoost algorithm provided an optimal balance of speed and accuracy [42]. The top-scoring 1% of compounds from the docking screen are typically used to define the "active" class for training.

- Conformal Prediction for Library Screening: The trained CatBoost model is applied to the entire multi-billion-compound library within the Mondrian Conformal Prediction (CP) framework. CP lets researchers set a significance level ε (e.g., 0.1). As long as the calibration compounds and the library compounds are exchangeable, the expected error rate within each class (active and inactive) does not exceed ε [42]. This step classifies the vast library into "virtual actives" (compounds predicted to be top binders) and "virtual inactives."

- Targeted Docking and Validation: Only the drastically reduced "virtual active" set (e.g., 10-20 million compounds from a 234-million library) proceeds to explicit molecular docking [42]. The top-ranking compounds from this final docking step are then selected for experimental testing in biochemical or cellular assays to confirm biological activity.
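The labeling and conformal-prediction steps can be sketched without any machine-learning library. This sketch takes the classifier's predicted probability of "active" as input, so the CatBoost model itself is not shown. It makes the following assumptions:
- Probabilities come from a held-out calibration split of the docked compounds.
- Nonconformity is defined as one minus the predicted probability of the class.
- A compound counts as a virtual active only when "active" is the sole label in its prediction set.

These are common choices in conformal prediction, not necessarily the exact ones used in [42].

```python
from bisect import bisect_left


def label_top_fraction(docking_scores: list[float], fraction: float = 0.01) -> list[int]:
    """Label the best-scoring fraction (most negative scores) as active (1)."""
    n_active = max(1, int(len(docking_scores) * fraction))
    threshold = sorted(docking_scores)[n_active - 1]
    # Ties at the threshold may yield slightly more than n_active actives.
    return [1 if s <= threshold else 0 for s in docking_scores]


def calibrate(p_active: list[float], labels: list[int]) -> dict[int, list[float]]:
    """Per-class (Mondrian) nonconformity scores: 1 - P(true class)."""
    scores: dict[int, list[float]] = {0: [], 1: []}
    for p, y in zip(p_active, labels):
        p_true = p if y == 1 else 1.0 - p
        scores[y].append(1.0 - p_true)
    return {y: sorted(s) for y, s in scores.items()}


def p_value(cal_sorted: list[float], score: float) -> float:
    """Fraction of calibration scores at least as nonconforming as `score`."""
    n_ge = len(cal_sorted) - bisect_left(cal_sorted, score)
    return (n_ge + 1) / (len(cal_sorted) + 1)


def prediction_set(p: float, cal: dict[int, list[float]], epsilon: float) -> set[int]:
    """Labels whose class-conditional p-value exceeds the significance level."""
    labels = set()
    for y in (0, 1):
        p_y = p if y == 1 else 1.0 - p
        if p_value(cal[y], 1.0 - p_y) > epsilon:
            labels.add(y)
    return labels


def virtual_actives(p_library: list[float], cal, epsilon: float) -> list[int]:
    """Indices of compounds whose prediction set is exactly {active}."""
    return [i for i, p in enumerate(p_library)
            if prediction_set(p, cal, epsilon) == {1}]
```

A compound can also receive both labels, or none. Such compounds are uncertain and, under the assumed selection rule, are not forwarded to docking.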

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful virtual screening relies on a combination of software, hardware, and data resources. The table below details key components of the modern computational researcher's toolkit.

Table 2: Essential Research Reagents and Materials for Molecular Docking

| Tool Category | Specific Examples | Function & Application |
|---|---|---|
| Software & Algorithms | AutoDock-GPU, Vina-GPU [10] | High-throughput, GPU-native docking engines for scoring ligand binding. |