GPU-Accelerated Ecological Simulation: A Transformative Approach for Environmental Research and Conservation

Abstract

This article explores the paradigm shift in ecological modeling driven by GPU acceleration. It details the foundational principles that make GPUs ideal for complex environmental simulations, showcases cutting-edge methodological applications from hydrology to wildlife tracking, provides essential guidance for optimizing computational performance, and validates the technology through comparative performance benchmarks. Aimed at researchers and environmental professionals, this comprehensive review demonstrates how GPU computing enables high-resolution, real-time simulations that were previously computationally prohibitive, thereby opening new frontiers in ecological forecasting and conservation strategy.

The Engine of Change: Understanding GPU Architecture and Its Fit for Ecological Modeling

The Graphics Processing Unit (GPU) has undergone a fundamental transformation from a specialized graphics rendering component to a general-purpose parallel processor that has revolutionized scientific computing. This evolution began when researchers recognized that the massively parallel architecture optimized for rendering pixels and vertices could be harnessed to solve computationally intensive scientific problems. The creation of programmable shaders and frameworks like NVIDIA's CUDA (Compute Unified Device Architecture) unlocked this potential, providing developers with the tools to access the GPU's computational power for non-graphics applications. This paradigm shift has been particularly transformative in ecological simulation research, where complex models that were previously computationally prohibitive or required drastic simplification can now be executed with high fidelity in practical timeframes, enabling new scientific discovery through simulation at unprecedented scales and resolutions.

GPU Architecture and Programming Models

Fundamental Architectural Advantages

Unlike Central Processing Units (CPUs), which have a few powerful cores optimized for sequential processing, GPUs contain thousands of smaller, efficient cores designed for parallel workloads [1] [2]. This architectural difference is the key to their dominance in scientific computing. Massive parallelism lets GPUs execute the billions of calculations required for scientific simulations far faster than CPUs can, with reported performance improvements of 100-200 times over high-end CPUs for suitably parallelizable algorithms [1].
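The qualifier "suitably parallelizable" matters, and Amdahl's law gives a quick way to see why. The sketch below is illustrative only: the function name `amdahl_speedup` and the example fractions are assumptions, not values from the cited studies. It estimates the overall speedup when only part of a model's runtime is accelerated.

```python
def amdahl_speedup(parallel_fraction: float, parallel_speedup: float) -> float:
    """Overall speedup when only part of the runtime is accelerated (Amdahl's law)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must lie in [0, 1]")
    if parallel_speedup <= 0.0:
        raise ValueError("parallel_speedup must be positive")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / parallel_speedup)


if __name__ == "__main__":
    # Hypothetical workloads: the accelerated portion runs 200x faster on the GPU.
    for fraction in (0.50, 0.90, 0.99, 0.999):
        overall = amdahl_speedup(fraction, 200.0)
        print(f"{fraction:.3f} of runtime parallel -> {overall:.1f}x overall")
```

Under these assumptions, a model that spends even a few percent of its runtime in serial code falls well short of the headline kernel speedup, which is one reason reported gains vary so widely between applications.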

The CUDA Platform and Ecosystem

NVIDIA's CUDA platform, introduced in 2006, provided the critical programming model that enabled the widespread adoption of GPU computing in science [3]. The success of CUDA stems not only from its programming model but from the comprehensive ecosystem that has developed around it. This ecosystem includes:

- Programming Languages: While CUDA started with support for the C programming language, it now supports C++, Fortran, Python, and others through various toolchains [3].

- GPU-Accelerated Libraries: Essential for providing "drop-in" performance for common routines, these include mathematical libraries (cuBLAS, cuSPARSE), deep learning libraries (cuDNN, TensorRT), and domain-specific libraries [3] [2].

- Development Tools: Robust tools for debugging (CUDA-GDB) and performance optimization (Nsight) ensure developers can efficiently implement and tune their applications [3].

- Deployment Infrastructure: Support for containerization through NVIDIA NGC and cluster management tools enables scalable deployment in data center environments [3].

Table: Key Components of the NVIDIA CUDA Ecosystem

| Component Category | Examples | Primary Function |
|---|---|---|
| Programming Languages & APIs | CUDA C/C++, PyCUDA, OpenACC | Provide interfaces for developers to write parallel code for GPUs |
| Mathematical Libraries | cuBLAS, cuFFT, cuSPARSE | Accelerate linear algebra, Fourier transforms, and sparse matrix operations |
| Deep Learning Libraries | cuDNN, TensorRT | Optimize neural network operations for training and inference |
| Profiling & Debugging Tools | Nsight, CUDA-GDB | Enable performance optimization and code debugging |
| Cluster Management | NGC Containers, Kubernetes extensions | Facilitate deployment and management of GPU applications at scale |

GPU Acceleration in Ecological Simulation Research

Ecological systems present some of the most challenging computational problems due to their inherent complexity, spatial explicitness, and the multiple scales at which processes operate. GPU acceleration has enabled breakthroughs across multiple domains of ecological research by making previously intractable simulations feasible.

Evolutionary Spatial Cyclic Games

Evolutionary Spatial Cyclic Games (ESCGs) represent a class of agent-based models used to study ecological and evolutionary dynamics, particularly biodiversity in ecosystems [4] [5]. These models are computationally expensive and scale poorly on traditional CPU-based systems. Recent research has demonstrated the transformative impact of GPU acceleration on this field.

A 2025 study implemented GPU-accelerated simulators for ESCGs using both Apple's Metal Shading Language and NVIDIA's CUDA, with a single-threaded C++ implementation serving as the reference for validation and baseline performance comparison [5]. The benchmark results showed significant speedups from GPU acceleration, with the CUDA implementation running up to 28x faster than the single-threaded CPU version [4] [5]. With CUDA, much larger system sizes (up to 3200×3200) became tractable, while the Metal implementation faced scalability limitations [4] [5].

Table: Performance Comparison of ESCG Simulation Implementations

| Implementation Platform | Maximum Speedup Factor | Maximum Tractable System Size | Scalability Assessment |
|---|---|---|---|
| Single-threaded C++ (Baseline) | 1x (Reference) | Limited by computational time | Poor scaling for large systems |
| Apple Metal Shading Language | Not specified | Smaller than CUDA | Faced scalability limitations |
| NVIDIA CUDA | 28x | 3200×3200 | Remained tractable at large scales |

Experimental Protocol: GPU-Accelerated ESCG Simulation

The methodology for implementing and benchmarking GPU-accelerated ESCG simulations consists of the following key components:

Model Formulation: ESCGs are implemented as grid-based agent-based models where each cell represents an individual agent following one of multiple strategies in a cyclic dominance relationship (e.g., Rock-Paper-Scissors dynamics) [4] [5].

Reference Implementation: A validated single-threaded C++ version is developed first to serve as a baseline for both validation of results and performance comparison [4].

GPU Kernel Design: The simulation update is partitioned into parallel GPU kernels responsible for:

- Neighborhood state assessment for each cell

- Strategy update calculations based on cyclic game rules

- Simultaneous grid state updates

Memory Access Optimization: Memory access patterns are optimized to leverage GPU memory hierarchy, minimizing global memory accesses through shared memory and register usage where appropriate [5].

Validation Framework: Results from GPU implementations are systematically compared against the C++ baseline to ensure correctness across different parameter sets and initial conditions [5].

Performance Benchmarking: Execution time is measured across varying grid sizes (from small-scale to 3200×3200) with speedup factors calculated relative to the single-threaded CPU implementation [4] [5].
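The protocol above can be made concrete with a small CPU reference in the spirit of the single-threaded baseline. This is a minimal sketch, not the cited authors' simulator. The update rule (each cell adopts the strategy of one randomly chosen neighbor if that neighbor dominates it), the von Neumann neighborhood, the periodic boundaries, and all names are assumptions chosen for illustration. The point it shows is the double-buffered "simultaneous grid state update" that maps naturally onto one GPU thread per cell.

```python
import random

N_STRATEGIES = 3  # 0 = rock, 1 = paper, 2 = scissors (illustrative labels)
NEIGHBOR_OFFSETS = ((-1, 0), (1, 0), (0, -1), (0, 1))  # assumed von Neumann neighborhood


def beats(attacker: int, defender: int) -> bool:
    """Cyclic dominance: strategy k beats strategy k - 1 (mod N_STRATEGIES)."""
    return attacker == (defender + 1) % N_STRATEGIES


def step(grid, rng):
    """One synchronous update: read only from `grid`, write only to `new_grid`."""
    size = len(grid)
    new_grid = [row[:] for row in grid]  # second buffer, as a GPU kernel would use
    for y in range(size):
        for x in range(size):
            dy, dx = rng.choice(NEIGHBOR_OFFSETS)
            ny, nx = (y + dy) % size, (x + dx) % size  # periodic boundaries (assumed)
            if beats(grid[ny][nx], grid[y][x]):
                new_grid[y][x] = grid[ny][nx]  # the dominated cell is taken over
    return new_grid


def run(size, steps, seed):
    """Run a seeded simulation so that results can be compared between implementations."""
    rng = random.Random(seed)
    grid = [[rng.randrange(N_STRATEGIES) for _ in range(size)] for _ in range(size)]
    for _ in range(steps):
        grid = step(grid, rng)
    return grid


if __name__ == "__main__":
    # Validation in miniature: identical seeds must give identical final grids.
    print(run(16, 5, seed=1) == run(16, 5, seed=1))
```

Because every cell reads only the old buffer, the two nested loops carry no dependency between cells, which is the property that lets a GPU kernel evaluate all cells concurrently. A seeded reference of this kind is also what a GPU port would be checked against.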

Large-Scale Species Identification with BioCLIP 2

In conservation biology, the BioCLIP 2 project represents a groundbreaking application of GPU computing for species identification and ecological trait analysis. This foundation model, trained on NVIDIA GPUs, can identify over a million species and distinguish species' traits while determining inter- and intraspecies relationships [6].

The model was trained on a massive dataset called TREEOFLIFE-200M, comprising 214 million images of organisms spanning over 925,000 taxonomic classes [6]. After just 10 days of training on 32 NVIDIA H100 GPUs, BioCLIP 2 displayed novel abilities such as distinguishing between adult and juvenile animals, determining sex within species, and making associations between related species without being explicitly taught these concepts [6]. The model learns biological hierarchies implicitly through training data associations rather than explicit programming [6].

Experimental Protocol: BioCLIP 2 Training and Validation

Data Curation: Compilation of TREEOFLIFE-200M dataset through collaboration between the Imageomics Institute, Smithsonian Institution, and various universities [6].

Model Architecture: Implementation of a foundation model based on contrastive language-image pre-training tailored for biological entities [6].

GPU-Accelerated Training: Distributed training across 32 NVIDIA H100 Tensor Core GPUs for 10 days, leveraging massive parallelization of neural network operations [6].

Validation Methodology:

- Zero-shot evaluation on novel species and traits

- Testing hierarchical relationship learning without explicit labeling

- Validation against expert-annotated datasets for trait identification

Inference Deployment: Optimization for inference on individual Tensor Core GPUs to enable practical usage by researchers [6].
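Zero-shot evaluation in a contrastive image-text model typically reduces to a nearest-neighbor search in a shared embedding space. The sketch below illustrates that idea with made-up three-dimensional vectors. The embeddings, the label set, and the function names are hypothetical; a real model would produce high-dimensional embeddings from its image and text encoders.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def zero_shot_classify(image_embedding, label_embeddings):
    """Return the label whose text embedding is most similar to the image embedding."""
    return max(
        label_embeddings,
        key=lambda label: cosine_similarity(image_embedding, label_embeddings[label]),
    )


# Hypothetical text embeddings for three taxonomic labels (not real model output).
LABEL_EMBEDDINGS = {
    "Ursus maritimus": [0.9, 0.1, 0.0],
    "Orcinus orca": [0.1, 0.9, 0.1],
    "Geospiza fortis": [0.0, 0.2, 0.9],
}

if __name__ == "__main__":
    query = [0.8, 0.2, 0.1]  # hypothetical embedding of an unlabeled photograph
    print(zero_shot_classify(query, LABEL_EMBEDDINGS))
```

No classifier is retrained for new labels in this scheme: adding a species only requires embedding its name, which is why the approach suits taxa with few labeled images.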

High-Resolution Environmental Modeling

GPU acceleration has similarly revolutionized environmental modeling, where high spatial and temporal resolution is critical for accurate predictions. The "Oceananigans" model exemplifies this advancement—a GPU-optimized ocean model that achieves decade-long simulations in a day, enabling mesoscale-resolving climate simulations that were previously impractical [7].

This breakthrough addresses a significant source of uncertainty in current oceanic climate models: the accurate representation of mesoscale ocean features such as eddies and currents [7]. Implemented in the Julia programming language, the model leverages GPU-specific programming strategies to drastically accelerate computations while maintaining the flexibility needed for scientific research [7].

In freshwater ecosystems, researchers have developed 2D GPU-enhanced water environment models to simulate transport processes of water quality factors including nitrogen cycling, phosphorus cycling, dissolved oxygen balance, and chlorophyll a dynamics [8]. These models couple hydrodynamic simulations with biogeochemical processes, achieving significant improvements in computational efficiency while maintaining high accuracy in predicting water quality parameters [8].

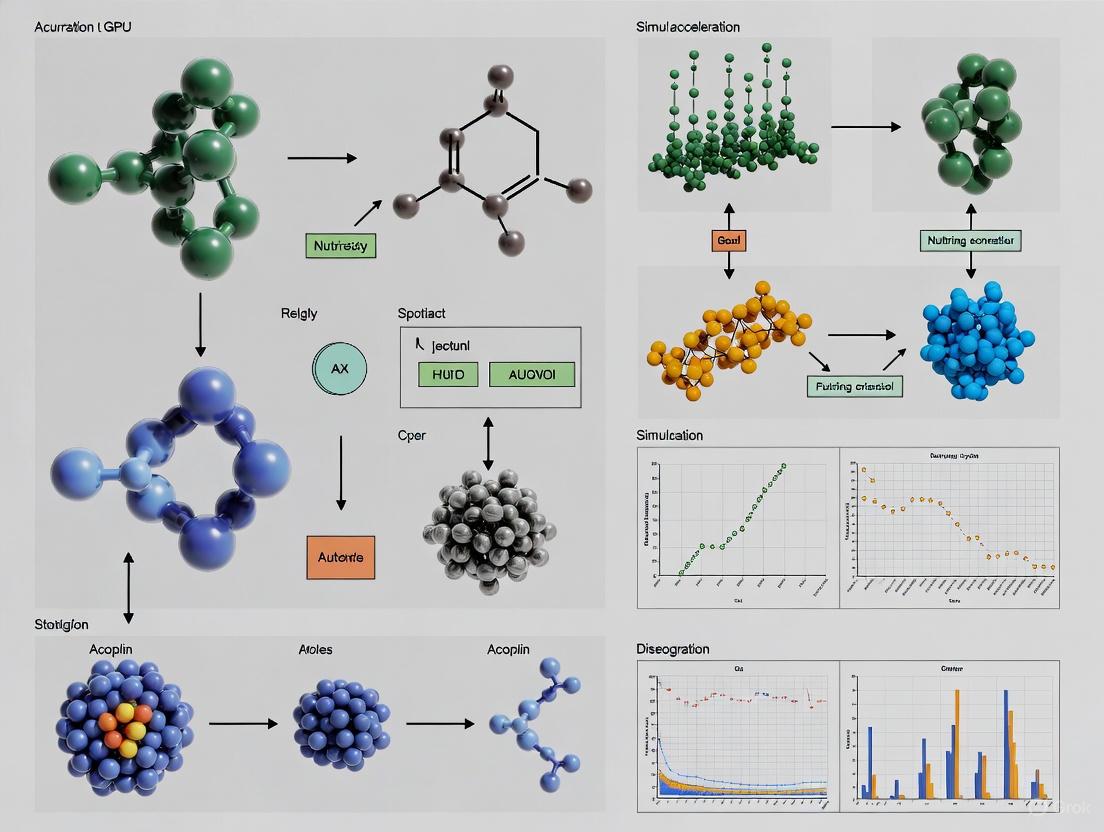

Diagram: GPU-Accelerated Ecological Simulation Workflow. The process integrates both CPU and GPU implementations, with validation ensuring correctness before leveraging GPU performance for larger-scale simulations.

Researchers entering the field of GPU-accelerated ecological simulation require familiarity with both computational tools and domain-specific resources. The following table summarizes key components of the research toolkit:

Table: Essential Research Reagent Solutions for GPU-Accelerated Ecological Simulation

| Tool Category | Specific Examples | Function in Research |
|---|---|---|
| GPU Hardware Platforms | NVIDIA H100, A100, GeForce RTX; Apple Silicon | Provide the computational hardware for parallel processing of ecological models |
| GPU Programming Models | CUDA, Metal Shading Language, OpenACC | Enable researchers to write parallel code for GPU acceleration |
| Scientific Computing Libraries | cuBLAS, cuSPARSE, cuRAND, Thrust | Provide optimized mathematical operations for scientific simulations |
| Domain-Specific Software | Oceananigans (Julia), Custom ESCG simulators | Offer tailored environments for specific ecological modeling domains |
| Data Management Tools | NVIDIA NGC Containers, Docker with GPU support | Ensure reproducible environments for model execution and deployment |
| Performance Analysis Tools | NVIDIA Nsight, CUDA-Memcheck | Enable profiling and optimization of GPU-accelerated ecological models |

Methodological Framework and Implementation Considerations

Implementing GPU-accelerated ecological simulations requires careful consideration of both algorithmic and hardware-specific factors. The following diagram illustrates the key decision points in designing such systems:

Diagram: Parallelization Strategy Decision Process. The approach depends on the ecological model's structure, with fine-grained parallelism suitable for independent agents and coarse-grained approaches for coupled processes.

Key Implementation Strategies

Parallelism Granularity: Agent-based models like ESCGs typically exhibit fine-grained parallelism where each agent can be processed independently, making them ideal for GPU acceleration [4] [5]. In contrast, coupled physical-biogeochemical models may require a hybrid approach with different components parallelized at appropriate granularities [7] [8].

Memory Hierarchy Utilization: Optimal GPU performance requires careful management of memory hierarchy. The CUDA implementations of ESCGs demonstrated the importance of minimizing global memory accesses through shared memory and register usage [5].

Algorithmic Trade-offs: Some ecological models may require reformulation from their CPU-based origins to achieve optimal GPU performance. This may involve trade-offs between mathematical exactness and computational efficiency, though validation ensures scientific integrity is maintained [5].

Future Directions and Environmental Considerations

The trajectory of GPU-accelerated ecological simulation points toward increasingly sophisticated digital twins of natural systems. Researchers are already developing wildlife-based interactive digital twins to visualize and simulate ecological interactions between species and their environments [6]. These systems will provide safe environments for studying organismal relationships that naturally occur in the wild while minimizing ecosystem disturbance [6].

However, the growing computational demands of ecological simulation raise important environmental considerations. Recent research has highlighted that the carbon footprint of AI and simulation systems is shifting from operational carbon to embodied carbon—the emissions associated with hardware manufacturing [9]. One study found that while GPU embodied carbon constituted 0.77% of GPT-3's and 2.18% of GPT-4's reported emissions, this percentage is likely to grow with increasing reliance on GPUs in scientific computing [9]. This necessitates balanced approaches that consider both computational efficiency and environmental impact in ecological simulation research.

Future developments will likely focus on more energy-efficient GPU architectures, improved algorithms that achieve higher performance with less computation, and the integration of AI techniques with traditional simulation approaches to create more powerful and efficient ecological forecasting systems. As these technologies mature, they will further transform our ability to understand and protect complex ecological systems at scales from microscopic to planetary.

The field of ecology is increasingly relying on complex computational models to understand and forecast the dynamics of natural systems. These simulations, however, are often prohibitively slow when run on traditional central processing units (CPUs), limiting the scope and resolution of research. Graphics Processing Unit (GPU) acceleration has emerged as a transformative technology in this domain, leveraging three core technical advantages—massive parallelism, high computational precision, and superior memory bandwidth—to make previously intractable ecological models feasible. This paradigm shift enables researchers to simulate larger spatial areas, incorporate more complex biological interactions, and run ensembles of forecasts in practical timeframes. The integration of GPU computing is thus advancing ecological research from theoretical exploration into operational forecasting and informed decision-making for ecosystem conservation and management [10].

The significance of GPU acceleration is particularly evident in processing the massive datasets now common in ecology, from high-resolution satellite imagery to genomic data. By executing thousands of computational threads simultaneously, GPUs unlock the potential for real-time forecasting of environmental changes and detailed agent-based modeling of populations. This technical guide examines the architectural foundations of GPU acceleration, demonstrates its application through cutting-edge ecological case studies, and provides practical methodologies for researchers seeking to harness this computational power in their simulations.

Core Technical Advantages of GPU Architecture

Massive Parallelism

At the heart of GPU acceleration lies its massively parallel architecture. Unlike CPUs, which have a few cores optimized for sequential processing, GPUs possess thousands of smaller, efficient cores designed to handle many tasks simultaneously. This architecture is exceptionally well suited to ecological simulations in which the same operation must be applied across vast datasets or numerous independent agents.

- Data Parallelism: This technique involves executing the same operation on distributed data simultaneously across multiple GPU cores. It is highly effective for workload types that require repetitive operations on large datasets, such as applying growth models to every cell in a spatial grid or calculating resource availability across a landscape. By processing data chunks in parallel, data parallelism dramatically accelerates computation, significantly reducing execution time compared to serial processing [11].

- Task Parallelism: This approach divides applications into distinct tasks that can be processed concurrently. In ecological modeling, this could involve simulating different species populations, hydrological processes, and nutrient cycling simultaneously. Task parallelism is optimal for applications where tasks can run independently and in parallel, though it requires careful structuring to ensure tasks execute without data conflicts [11].

- Hybrid Parallelism: Complex ecological simulations often benefit from hybrid parallelism, which combines data and task parallelism. This strategy allows simultaneous management of both large datasets and diverse computational tasks, dynamically adjusting to application demands and improving overall throughput and resource usage. Successful implementation requires an intricate understanding of GPU architecture and workload characteristics to balance and coordinate tasks and data efficiently [11].
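Data parallelism is easiest to see in how a GPU kernel maps threads to data. The following sketch emulates a one-dimensional kernel launch on the CPU: each logical thread computes a global index from its block and thread indices and updates one grid cell. The logistic growth rule, the parameter values, and the function names are illustrative assumptions. On a real GPU the two loops would not exist, since all blocks and threads run concurrently.

```python
def logistic_growth(n, r, k, dt):
    """One explicit Euler step of dN/dt = r * N * (1 - N / K)."""
    return n + dt * r * n * (1.0 - n / k)


def launch_growth_kernel(population, r, k, dt, threads_per_block=256):
    """Emulate a 1D kernel launch with one logical thread per grid cell."""
    n_cells = len(population)
    n_blocks = (n_cells + threads_per_block - 1) // threads_per_block  # ceiling division
    result = [0.0] * n_cells
    for block_idx in range(n_blocks):  # blocks execute concurrently on a GPU
        for thread_idx in range(threads_per_block):  # as do threads within a block
            i = block_idx * threads_per_block + thread_idx  # global thread index
            if i < n_cells:  # guard: the last block may contain surplus threads
                result[i] = logistic_growth(population[i], r, k, dt)
    return result


if __name__ == "__main__":
    cells = [10.0, 50.0, 90.0]  # hypothetical population sizes per grid cell
    print(launch_growth_kernel(cells, r=0.5, k=100.0, dt=0.1))
```

The bounds check on `i` mirrors the guard that real kernels need whenever the number of cells is not a multiple of the block size.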

Computational Precision

Computational precision is paramount in ecological forecasting, where small numerical errors can propagate through iterative simulations and lead to divergent or biologically implausible outcomes. GPU computing offers robust solutions for maintaining precision across various computational workloads.

- Mixed-Precision Computing: Modern GPUs contain specialized hardware like NVIDIA's Tensor Cores that can dramatically accelerate operations by strategically using different levels of numerical precision. For instance, a model might use 16-bit floating-point numbers for memory-intensive initial calculations while reserving 64-bit double-precision for critical final outputs where accuracy is essential. This approach balances computational speed with numerical accuracy [11].

- Deterministic Results: Ecological models intended for scientific inference and policy guidance must produce reproducible, consistent results across multiple runs. GPU programming models give control over floating-point behavior such as rounding modes, but run-to-run determinism also depends on fixing the order of parallel reductions and seeding random number generators explicitly, because floating-point addition is not associative. Meeting these conditions is a requirement for model validation and peer-reviewed research.

- Algorithmic Stability: The parallel implementation of ecological algorithms on GPUs must maintain numerical stability despite the non-sequential execution of operations. Techniques such as compensated summation algorithms and careful ordering of reduction operations help mitigate rounding errors that might otherwise accumulate in large-scale parallel simulations.
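Two of the points above can be demonstrated in a few lines of standard-library Python. The sketch shows compensated (Kahan) summation recovering small contributions that a naive running sum discards, and the rounding error introduced when a value is stored in 16-bit half precision. The function names and test values are illustrative assumptions. A hand-written `naive_sum` is used because the built-in `sum` in recent Python versions already applies a compensated algorithm to floats.

```python
import math
import struct


def naive_sum(values):
    """Plain left-to-right accumulation, the pattern most prone to rounding loss."""
    total = 0.0
    for value in values:
        total += value
    return total


def kahan_sum(values):
    """Compensated summation: carry the rounding error of each addition forward."""
    total = 0.0
    compensation = 0.0  # running estimate of the low-order bits lost so far
    for value in values:
        corrected = value - compensation
        new_total = total + corrected
        compensation = (new_total - total) - corrected
        total = new_total
    return total


def round_to_half(value):
    """Round a Python float to IEEE 754 half precision (16 bits) and back."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


if __name__ == "__main__":
    # One large term followed by many terms too small to register individually.
    values = [1.0] + [1e-16] * 100_000
    print("naive:", naive_sum(values))
    print("kahan:", kahan_sum(values))
    print("exact:", math.fsum(values))
    print("0.1 stored in half precision:", round_to_half(0.1))
```

The same accumulation problem appears in parallel reductions over millions of grid cells, where the order of additions also changes between implementations. That is why a mixed-precision model typically keeps its accumulators in a wider format than its inputs.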

Memory Bandwidth

Ecological simulations are frequently limited by memory bandwidth rather than raw computational power. GPU architectures address this bottleneck through sophisticated memory hierarchies and access patterns.

- Memory Hierarchy: GPUs implement a tiered memory structure with significant speed differences between levels. Global GPU memory (DRAM) offers large capacity but higher latency, while shared memory and registers provide faster access for frequently used data. Effective use of faster memory types like shared memory enhances data throughput and execution speed by reducing costly global memory calls [11].

- Coalesced Memory Access: Optimizing memory access patterns is essential for efficient GPU parallel computing. Coalescing memory accesses—ensuring that memory operations align with the GPU's memory architecture—maximizes bandwidth utilization. This approach minimizes access latency and is critical for achieving high performance in data-intensive ecological simulations [11].

- Memory Prefetching: Advanced GPU programming techniques include manually prefetching data from global memory to shared memory in advance of computation. This overlaps memory latency with computation, preventing threads from stalling while waiting for data transfers and ensuring computational units remain productive [11].
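The effect of coalescing can be estimated without a GPU by counting how many memory segments a warp touches. The sketch below does this for a row-major grid of 32-bit values. The warp size of 32 threads is standard on NVIDIA hardware, but the 128-byte segment size and the grid width are assumptions for illustration, and real hardware adds caching effects that this model ignores.

```python
WARP_SIZE = 32
SEGMENT_BYTES = 128  # assumed memory transaction granularity
BYTES_PER_VALUE = 4  # one 32-bit float per grid cell


def row_major_index(x, y, width):
    """Linear index of cell (x, y) in a row-major grid."""
    return y * width + x


def segments_touched(element_indices):
    """Count the distinct memory segments needed to serve one warp's accesses."""
    return len({(i * BYTES_PER_VALUE) // SEGMENT_BYTES for i in element_indices})


WIDTH = 1024  # hypothetical grid width in cells

# Consecutive threads read consecutive cells along a row: coalesced.
coalesced = [row_major_index(lane, 0, WIDTH) for lane in range(WARP_SIZE)]

# Consecutive threads read down a column, WIDTH cells apart: uncoalesced.
strided = [row_major_index(0, lane, WIDTH) for lane in range(WARP_SIZE)]

if __name__ == "__main__":
    print("row-wise access:", segments_touched(coalesced), "segment(s)")
    print("column-wise access:", segments_touched(strided), "segment(s)")
```

In this simplified model the column-wise pattern moves 32 times as much data to deliver the same 32 values, which is why spatial models usually assign neighboring threads to neighboring cells along the fastest-varying array dimension.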

Table 1: GPU Memory Hierarchy and Performance Characteristics

| Memory Type | Bandwidth (approximate) | Latency | Scope | Use Case in Ecological Simulation |
|---|---|---|---|---|
| Global Memory (DRAM) | High (~1.5-2 TB/s on NVIDIA A100, depending on variant) | High | All threads | Storing large spatial grids, environmental layers |
| Shared Memory | Roughly 10x global memory | Low | Thread block | Tile of landscape for neighborhood calculations |
| Registers | Roughly 100x global memory | Lowest | Single thread | Local variables, individual agent states |
| L1/L2 Cache | Roughly 5x global memory | Medium | SM/All threads | Caching frequently accessed model parameters |

Case Studies in Ecological Simulation

BioCLIP 2: Large-Scale Biodiversity Modeling

The BioCLIP 2 project exemplifies how GPU acceleration enables working with unprecedented scale and complexity in biodiversity informatics. This foundation model, trained on NVIDIA GPUs, identifies over a million species from a massive dataset of 214 million images spanning 925,000 taxonomic classes—from mammals to plants and fungi [6].

- Technical Implementation: The source reports both a cluster of 64 NVIDIA Tensor Core GPUs used for model training and a training run completed in just 10 days on 32 NVIDIA H100 GPUs [6]. Training at this scale would have been infeasible with traditional CPU-based approaches. BioCLIP 2 demonstrates how GPUs can handle both the computational workload and the memory requirements of processing hundreds of millions of images [6].

- Novel Capabilities: Beyond simple species identification, BioCLIP 2 displayed emergent abilities to distinguish subtle biological traits without explicit programming. The model autonomously learned to arrange Darwin's finches by beak size without being taught the concept of size, separated healthy from diseased plant leaves, and distinguished between adult and juvenile animals as well as males and females within species. These capabilities emerged from the model's exposure to vast datasets processed through GPU-accelerated learning [6].

- Conservation Applications: For data-deficient species—including many beetles, fungi, and even poorly studied iconic species like killer whales and polar bears—GPU-accelerated models like BioCLIP 2 offer hope. They can fill critical data gaps by extrapolating from limited examples, enhancing existing conservation efforts for threatened species and their habitats [6].

Table 2: Performance Metrics for GPU-Accelerated Ecological Research Tools

| Research Tool | GPU Platform | Speedup vs. CPU | Dataset Size | Key Achievement |
|---|---|---|---|---|
| BioCLIP 2 | 64 NVIDIA Tensor Core GPUs | Not reported (10-day training run) | 214 million images | Identification of 1M+ species |
| ESCG Simulation | NVIDIA CUDA | 28x | 3200x3200 grid | Tractability of large system sizes |
| BlazingSQL | NVIDIA GPUs | Varies by query | Scale factors up to 16 (SSB) | Efficient GPU DataFrames for analytics |
| Crystal+ | NVIDIA GPUs | Outperformed CPU baselines | Scale factors up to 8 (TPC-H) | Limited operation support but fast execution |

Evolutionary Spatial Cyclic Games (ESCGs)

Evolutionary Spatial Cyclic Games represent a class of minimal agent-based models used to study co-evolutionary dynamics and biodiversity in ecosystems. Traditional single-threaded ESCG simulations are computationally expensive and scale poorly, but GPU acceleration has dramatically improved their feasibility [4].

- Implementation Framework: High-performance ESCG implementations were developed using both Apple's Metal and NVIDIA's CUDA, with a validated single-threaded C++ version serving as a baseline. The CUDA implementation particularly demonstrated the advantages of GPU architecture for spatially explicit ecological simulations [5].

- Performance Benchmarks: Benchmarking results showed that GPU acceleration delivered significant speedups, with the CUDA implementation running up to 28x faster than single-threaded CPU execution. This gain made larger system sizes (up to 3200x3200) tractable, while the Metal implementation faced scalability limits [4].

- Scientific Implications: The GPU frameworks enabled replication and critical extension of recent ESCG studies, revealing sensitivities to system size and runtime not fully explored in prior work due to computational constraints. This demonstrates how GPU acceleration not only speeds up existing research but opens new scientific avenues previously blocked by technical limitations [5].

GPU-Accelerated Databases for Ecological Analytics

The analysis of ecological data increasingly relies on specialized database systems optimized for GPU execution. These systems leverage the parallel processing capabilities of GPUs to accelerate queries on large environmental datasets.

- System Architectures: Databases like BlazingSQL provide SQL interfaces tailored for GPU DataFrames (GDFs), enabling efficient processing of large-scale ecological datasets. These systems utilize the RAPIDS ecosystem for end-to-end data science workflows, from data preparation to machine learning, all within GPU memory [12].

- Query Performance: GPU databases demonstrate superior performance for analytical queries common in ecological research. By executing database operations in parallel across thousands of GPU cores, these systems can rapidly filter, aggregate, and join large spatial-temporal datasets, enabling interactive exploration of ecological data that would be sluggish on CPU-based systems [12].

- Integration with Analytical Workflows: The output from GPU-accelerated databases can seamlessly integrate with machine learning libraries like RAPIDS cuML or be converted to formats compatible with deep learning frameworks such as PyTorch or TensorFlow. This creates an efficient pipeline from data preparation to model training and forecasting, all accelerated by GPU parallelism [12].

Experimental Protocols and Methodologies

GPU Implementation of Evolutionary Spatial Cyclic Games

The successful GPU implementation of ESCGs provides a valuable template for ecological model development.

Diagram 1: ESCG GPU Simulation Workflow

- Initialization: The simulation begins with a 2D grid population where each cell represents an individual agent following one of several strategies. The grid is allocated in GPU global memory with careful attention to memory alignment for optimal access patterns. Each thread typically handles one cell or a block of cells, with initial strategies randomized across the population [5].

- Update Cycle: Each simulation time step involves parallel evaluation of all cells. The framework employs a kernel function where each thread computes the next state of its assigned cell(s) based on the states of neighboring cells. Boundary conditions are handled through either ghost cells or specialized edge-processing kernels [4].

- Competition Mechanism: The core ecological interaction involves each individual comparing its strategy with a randomly selected neighbor according to a predefined payoff matrix. In GPU implementation, this random selection uses parallel random number generators with careful attention to statistical quality and performance [5].

- Convergence Checking: The simulation runs for a fixed number of generations or until a convergence criterion is met. Checking for global convergence in parallel requires reduction patterns where partial results from thread blocks are combined efficiently [4].
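Two of the steps above have well-known parallel-friendly formulations, sketched here on the CPU. For random neighbor selection, a stateless hash of (seed, generation, cell index) gives every thread an independent, reproducible draw without shared generator state. For convergence checking, per-block partial counts are combined pairwise, as in a tree reduction. The use of the SplitMix64 mixing function, the criterion "at most one strategy survives", and all names are assumptions for illustration. The cited implementations may use different generators and criteria.

```python
MASK64 = (1 << 64) - 1


def splitmix64(state):
    """One SplitMix64 mixing step, used here as a stateless integer hash."""
    z = (state + 0x9E3779B97F4A7C15) & MASK64
    z = ((z ^ (z >> 30)) * 0xBF58476D1CE4E5B9) & MASK64
    z = ((z ^ (z >> 27)) * 0x94D049BB133111EB) & MASK64
    return z ^ (z >> 31)


def neighbor_choice(seed, generation, cell_index, n_neighbors=4):
    """Pick a neighbor slot from (seed, generation, cell) with no shared RNG state."""
    key = splitmix64(seed)
    key = splitmix64(key ^ generation)
    key = splitmix64(key ^ cell_index)
    return key % n_neighbors


def block_counts(cells, block_size, n_strategies):
    """Stage 1: each block reduces its slice of cells to per-strategy counts."""
    partials = []
    for start in range(0, len(cells), block_size):
        counts = [0] * n_strategies
        for strategy in cells[start:start + block_size]:
            counts[strategy] += 1
        partials.append(counts)
    return partials


def combine(partials):
    """Stage 2: pairwise (tree) combination of the per-block partial results."""
    if not partials:
        raise ValueError("at least one block of cells is required")
    while len(partials) > 1:
        merged = []
        for i in range(0, len(partials) - 1, 2):
            merged.append([a + b for a, b in zip(partials[i], partials[i + 1])])
        if len(partials) % 2:
            merged.append(partials[-1])  # odd block out is carried to the next round
        partials = merged
    return partials[0]


def has_converged(cells, block_size=256, n_strategies=3):
    """Assumed criterion: the run has converged once at most one strategy survives."""
    totals = combine(block_counts(cells, block_size, n_strategies))
    return sum(1 for count in totals if count > 0) <= 1
```

Because `neighbor_choice` depends only on its arguments, the same seed reproduces the same run regardless of how threads are scheduled, which simplifies comparison against a CPU baseline.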

BioCLIP 2 Training Methodology

The development of the BioCLIP 2 model demonstrates scalable training of foundation models for ecological applications.

Diagram 2: BioCLIP GPU Training Pipeline

- Dataset Curation: The TREEOFLIFE-200M dataset comprises 214 million images of organisms spanning 925,000 taxonomic classes, curated through collaboration between the Imageomics Institute, Smithsonian Institution, and various universities. This dataset represents the largest and most diverse collection of biological imagery compiled for model training [6].

- Model Architecture: BioCLIP 2 builds on the foundation model concept, processing images and taxonomic information through a multi-modal neural network that learns hierarchical relationships between species without explicit taxonomic programming. The architecture leverages attention mechanisms and contrastive learning to align visual features with biological concepts [6].

- Training Protocol: Training was completed in just 10 days using 32 NVIDIA H100 GPUs, demonstrating exceptional parallel scaling. The model utilized mixed-precision training to balance computational speed with numerical stability, maintaining sufficient precision for fine-grained biological distinctions [6].

- Validation Approach: Model performance was validated through both standard computer vision metrics and novel biological assessments, such as its ability to arrange species by morphological traits and distinguish health states in plants without explicit training on these tasks [6].
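The contrastive learning mentioned above is commonly implemented as a symmetric cross-entropy over a matrix of image-text similarities, as in the original CLIP formulation. The sketch below computes that loss in plain Python for a tiny batch. It is not taken from the BioCLIP 2 code base. The function names, the temperature of 0.07, and the example matrices are assumptions, and a real training run would compute this on the GPU over large batches.

```python
import math


def log_softmax_row(row):
    """Numerically stable log-softmax of one row of logits."""
    peak = max(row)
    log_norm = peak + math.log(sum(math.exp(value - peak) for value in row))
    return [value - log_norm for value in row]


def clip_style_loss(similarity, temperature=0.07):
    """Symmetric cross-entropy over an N x N image-text similarity matrix.

    similarity[i][j] is the similarity of image i to text j, and matched
    image-text pairs are assumed to sit on the diagonal.
    """
    n = len(similarity)
    logits = [[value / temperature for value in row] for row in similarity]
    transposed = [list(column) for column in zip(*logits)]
    image_to_text = -sum(log_softmax_row(logits[i])[i] for i in range(n)) / n
    text_to_image = -sum(log_softmax_row(transposed[i])[i] for i in range(n)) / n
    return 0.5 * (image_to_text + text_to_image)


if __name__ == "__main__":
    aligned = [[1.0, 0.0], [0.0, 1.0]]     # matched pairs score highest
    misaligned = [[0.0, 1.0], [1.0, 0.0]]  # matched pairs score lowest
    print("aligned loss:", clip_style_loss(aligned))
    print("misaligned loss:", clip_style_loss(misaligned))
```

Minimizing this loss pulls each image toward its own label text and away from the other labels in the batch. When those labels are taxonomic names, this is one plausible route by which hierarchical structure can emerge without being programmed in.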

Essential Research Reagent Solutions

Table 3: Essential Computational Tools for GPU-Accelerated Ecological Simulation

| Tool/Platform | Function | Ecological Application | Implementation Consideration |
|---|---|---|---|
| NVIDIA CUDA | Parallel computing platform | General-purpose GPU acceleration | Direct access to GPU virtual instruction set |
| RAPIDS cuDF | GPU DataFrames manipulation | Data preparation for ecological analysis | Integration with Python data science stack |
| Apache Calcite | SQL parsing and optimization | Query processing in GPU databases | Federated query across multiple data sources |
| NVIDIA Nsight Compute | Performance profiling | Identifying computational bottlenecks | Detailed analysis of kernel performance |
| OpenCL | Cross-platform parallel programming | Code targeting diverse hardware | Portability across GPU vendors |
| BlazingSQL | GPU-accelerated SQL engine | Querying large ecological datasets | Integration with RAPIDS ecosystem |

Implementation Best Practices

Performance Optimization Techniques

Maximizing the performance of GPU-accelerated ecological simulations requires attention to several key principles:

- GPU Occupancy Tuning: Use GPU occupancy calculators (e.g., NVIDIA's Occupancy Calculator) to fine-tune the number of active warps and achieve the optimal balance between register usage, shared memory, and thread count. This ensures maximum throughput while avoiding underutilization or resource contention. For ecological simulations with complex agent rules, carefully managing register pressure is often necessary to maintain high occupancy [11].

- Memory Access Patterns: Optimizing memory access patterns is essential for efficient GPU parallel computing. Implement coalesced memory accesses by ensuring that consecutive threads access consecutive memory locations wherever possible. This approach minimizes memory latency and maximizes bandwidth utilization, which is particularly important for spatial simulations that require neighborhood operations across large grids [11].

- Warp Specialization: Assign different warps within a thread block to different subtasks (e.g., some warps handle computation while others manage memory prefetching). Because all threads in a warp execute the same instruction stream, specialization is applied at warp granularity rather than to individual threads. This approach hides memory latency and improves performance for the highly memory-bound applications common in ecological modeling that combine multiple data sources and model components [11].

- Minimizing Thread Divergence: Avoid conditional branching within GPU warps, as divergent execution paths cause some threads to idle while others execute. Restructure ecological algorithms to use uniform branching or predicated instructions to ensure all threads in a warp follow the same execution path, which is particularly important for models with decision rules that vary across individuals or spatial locations [11].
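The occupancy trade-off described above can be approximated with simple arithmetic before reaching for a profiler. The following is a minimal sketch, not a replacement for NVIDIA's own calculator: the per-multiprocessor limits in `SMLimits` are illustrative assumptions that vary by GPU architecture, the function and class names are hypothetical, and the estimate ignores the allocation granularity that real hardware applies to registers and shared memory.

```python
from dataclasses import dataclass

WARP_SIZE = 32  # threads per warp on NVIDIA GPUs


@dataclass(frozen=True)
class SMLimits:
    """Per-multiprocessor limits. Defaults are illustrative assumptions only."""
    max_threads: int = 2048
    max_blocks: int = 32
    registers: int = 65536
    shared_mem_bytes: int = 98304


def theoretical_occupancy(threads_per_block, regs_per_thread,
                          smem_per_block=0, limits=SMLimits()):
    """Return (resident_blocks, occupancy) for one multiprocessor.

    Simplification: register and shared-memory allocation granularity
    is ignored, so real occupancy can be lower than this estimate.
    """
    if threads_per_block <= 0 or regs_per_thread <= 0:
        raise ValueError("threads_per_block and regs_per_thread must be positive")
    # Each resource independently caps how many blocks can be resident.
    by_threads = limits.max_threads // threads_per_block
    by_registers = limits.registers // (regs_per_thread * threads_per_block)
    by_smem = (limits.shared_mem_bytes // smem_per_block
               if smem_per_block > 0 else limits.max_blocks)
    blocks = min(limits.max_blocks, by_threads, by_registers, by_smem)
    warps_per_block = -(-threads_per_block // WARP_SIZE)  # ceiling division
    max_warps = limits.max_threads // WARP_SIZE
    return blocks, blocks * warps_per_block / max_warps


# A kernel with simple agent rules versus one with heavier register use.
light = theoretical_occupancy(threads_per_block=256, regs_per_thread=32)
heavy = theoretical_occupancy(threads_per_block=256, regs_per_thread=64)
print("light kernel:", light)
print("heavy kernel:", heavy)
```

Under these assumed limits, doubling the registers per thread halves the number of resident blocks, which is the register-pressure effect that complex agent rules tend to trigger.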

Validation and Verification Framework

Ensuring the correctness of GPU-accelerated ecological simulations requires rigorous validation methodologies:

- Cross-Platform Validation: Implement simulation logic independently on both CPU and GPU platforms to verify that results are numerically equivalent within acceptable tolerance. The ESCG research, for instance, developed single-threaded versions in C++ and C for cross-validation against GPU implementations, generating baseline performance measures while ensuring algorithmic correctness [5].

- Progressive Scaling: Test simulations across a range of system sizes from small test cases to full-scale production runs. This helps identify scaling issues and verifies that model behavior remains ecologically plausible across resolutions. GPU implementations should be validated against known analytical solutions or established reference implementations where available [4].

- Precision Analysis: Conduct sensitivity analysis on numerical precision choices, particularly when employing mixed-precision techniques. Determine which model components require full double-precision and which can utilize single-precision without compromising ecological validity. This is especially important for long-running simulations where numerical errors might accumulate over thousands of time steps [11].
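The accumulation concern raised under Precision Analysis can be explored on a CPU before any GPU code is written. The sketch below emulates FP32 rounding with Python's `struct` module and adds a constant per-step quantity over many time steps; the flux value, step count, and function names are hypothetical choices for illustration, and a real sensitivity study would run the actual model at both precisions.

```python
import struct


def to_f32(x):
    """Round a Python float (FP64) to the nearest FP32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def accumulate(increment, steps, single_precision):
    """Add `increment` to a running total once per time step."""
    rnd = to_f32 if single_precision else float
    total = rnd(0.0)
    inc = rnd(increment)
    for _ in range(steps):
        # Rounding after every addition mimics an accumulator held in FP32.
        total = rnd(total + inc)
    return total


def relative_error(value, exact):
    """Relative deviation of `value` from the exact result."""
    return abs(value - exact) / abs(exact)


STEPS = 100_000        # hypothetical number of time steps
FLUX_PER_STEP = 0.1    # hypothetical per-step flux, arbitrary units
exact = STEPS * FLUX_PER_STEP

err64 = relative_error(accumulate(FLUX_PER_STEP, STEPS, False), exact)
err32 = relative_error(accumulate(FLUX_PER_STEP, STEPS, True), exact)
print(f"FP64 relative error: {err64:.3e}")
print(f"FP32 relative error: {err32:.3e}")
```

Comparing the two errors against the tolerance that is ecologically meaningful for the quantity in question indicates whether that model component can safely run in single precision.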

Emerging Trends

The integration of GPU acceleration in ecological simulation continues to evolve with several promising directions:

- Digital Twin Ecosystems: Researchers are developing wildlife-based interactive digital twins that can visualize and simulate ecological interactions between species and their engagement with the environment. These digital twins provide a safe environment to study organismal relationships that naturally occur in the wild while minimizing impact and disturbance on actual ecosystems [6].

- Real-Time Forecasting Pipelines: GPU acceleration enables the creation of real-time ecological forecasting systems that assimilate incoming sensor data and update predictions on operational timelines. Workshops at recent ecological forecasting conferences have demonstrated implementations for water quality forecasting, tick population dynamics, and post-fire recovery using MODIS LAI data [10].

- Foundation Models for Ecology: The success of BioCLIP 2 suggests a future where large-scale GPU-trained models serve as biological encyclopedias and scientific platforms capable of answering diverse ecological questions. These models could evolve into interactive research tools with inference capabilities to help address persistent challenges in conservation biology [6].

GPU-accelerated ecological simulation represents a paradigm shift in how researchers study complex environmental systems. By leveraging the core technical advantages of massive parallelism, computational precision, and superior memory bandwidth, ecological models can now address questions at unprecedented scales and resolutions. The case studies presented—from large-scale biodiversity modeling with BioCLIP 2 to evolutionary game theory implementations—demonstrate the transformative potential of this technology.

As GPU hardware continues to evolve and programming tools mature, the accessibility of these techniques will increase, enabling more ecologists to incorporate high-performance computing into their research workflows. The future of ecological forecasting and analysis is likely to build on the computational foundations described in this guide, leading to deeper insights into ecosystem dynamics and more effective strategies for conservation and management in a rapidly changing world.

Ecology, the study of complex interactions between organisms and their environment, has traditionally been a field of patient observation. However, the advent of high-resolution spatial data, detailed individual-based models, and the urgent need to understand large-scale environmental changes has pushed ecological research into the realm of high-performance computing. Many core ecological simulations—from modeling forest landscape changes to predicting species interactions across vast territories—involve performing identical, independent calculations across millions of spatial cells or individual agents. These problems represent a class of computational challenges known as "embarrassingly parallel" problems, where minimal effort is required to separate the problem into parallel tasks. This technical guide examines how Graphics Processing Units (GPUs), with their massively parallel architecture, are revolutionizing ecological simulation research by providing the computational horsepower necessary to tackle problems at unprecedented scales, resolutions, and speeds.

Quantifying the GPU Acceleration Advantage in Ecological Modeling

The transition from traditional central processing unit (CPU)-based sequential computing to GPU-accelerated parallel processing delivers transformative performance improvements across multiple ecological domains. The table below summarizes documented speedups from implementing GPU acceleration in key ecological simulation categories.

Table 1: Performance Improvements from GPU Acceleration in Ecological Simulations

| Application Domain | Specific Model/System | CPU Baseline | GPU Implementation | Performance Improvement | Key Enabling Technology |
|---|---|---|---|---|---|
| Evolutionary Game Theory | Evolutionary Spatial Cyclic Games (ESCG) | Single-threaded C++ | NVIDIA CUDA | 28x speedup; tractable simulations up to 3200×3200 grid size [4] [5] | CUDA; Apple Metal (limited scalability) |
| Sea-Ice Dynamics | neXtSIM-DG Dynamical Core | OpenMP-based CPU | Kokkos heterogeneous computing | 6x speedup on GPU; maintained CPU competitiveness [13] | Kokkos; CUDA; Single precision floating-point |
| Forest Landscape Modeling | LANDIS Forest Landscape Model | Sequential processing (pixel-by-pixel) | Spatial domain decomposition parallelism | 64.6-76.2% time reduction for annual time-step simulations [14] | Spatial domain decomposition; Dynamic core reallocation |
| Species Identification | BioCLIP 2 Foundation Model | Not specified | 32 NVIDIA H100 Tensor Core GPUs | Training on 214M images across 925,000 taxonomic classes in 10 days [6] | NVIDIA H100 GPUs; Transformer architecture |

The performance advantages extend beyond raw speed. GPU acceleration enables ecological simulations at previously impractical scales. For instance, the ESCG framework achieved tractable simulations of systems with 10.24 million cells (3200×3200), far exceeding the practical limits of sequential processing [4]. Similarly, the BioCLIP 2 model leveraged GPU parallelism to train on the 214 million images and 925,000 taxonomic classes of TREEOFLIFE-200M, described as the largest and most diverse collection of biological imagery compiled for model training [6]. This gain in scale allows ecologists to move from simplified theoretical models to simulations that approach the complexity of real-world ecosystems.
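Table 1 reports some results as speedup factors and others as percentage time reductions, which makes rows hard to compare at a glance. The two are related by speedup = 1 / (1 - reduction). The helper names below are hypothetical, and the conversion assumes both figures refer to total wall-clock time.

```python
def reduction_to_speedup(percent_reduction):
    """Convert a percentage reduction in run time to a speedup factor."""
    if not 0.0 <= percent_reduction < 100.0:
        raise ValueError("percent_reduction must be in [0, 100)")
    return 1.0 / (1.0 - percent_reduction / 100.0)


def speedup_to_reduction(speedup):
    """Convert a speedup factor to a percentage reduction in run time."""
    if speedup <= 0.0:
        raise ValueError("speedup must be positive")
    return 100.0 * (1.0 - 1.0 / speedup)


# Figures taken from Table 1.
for label, pct in [("LANDIS (low)", 64.6), ("LANDIS (high)", 76.2)]:
    print(f"{label}: {pct}% reduction = {reduction_to_speedup(pct):.2f}x speedup")
for label, factor in [("ESCG", 28.0), ("neXtSIM-DG", 6.0)]:
    print(f"{label}: {factor:.0f}x speedup = {speedup_to_reduction(factor):.1f}% reduction")

# Cell count of the largest ESCG grid mentioned in the text.
print("3200 x 3200 grid cells:", 3200 * 3200)
```

Expressed this way, the LANDIS time reductions correspond to speedups of roughly 3x to 4x, which puts them on the same scale as the other rows.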

Experimental Protocols for GPU-Accelerated Ecological Simulations

Protocol: Implementing Evolutionary Spatial Cyclic Games on GPUs

Evolutionary Spatial Cyclic Games (ESCGs) are agent-based models that study biodiversity dynamics through spatial interactions between species. The GPU implementation protocol involves:

System Representation: Model the ecosystem as a 2D grid where each cell contains an individual agent representing a specific species. Each agent interacts with its neighbors (typically Moore neighborhood) according to species-specific rules [4] [5].

Parallelization Strategy: Implement the simulation using a data-parallel approach where each GPU thread processes one grid cell. The massive parallelism of GPUs allows simultaneous computation of all cell updates [4].

Memory Management: Optimize memory access patterns by utilizing GPU shared memory for neighbor data where possible, reducing global memory accesses which have higher latency [4].

Validation Methodology: Develop a validated single-threaded C++ version as a baseline for cross-validation against GPU implementations to ensure algorithmic correctness [5].

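Implementation Options: Provide multiple implementation pathways, such as NVIDIA CUDA and Apple Metal GPU versions alongside single-threaded C++ and C baselines [4] [5].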

Graphviz diagram: Workflow for GPU-Accelerated Evolutionary Spatial Cyclic Games
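The one-thread-per-cell strategy in the protocol above can be prototyped on a CPU as a double-buffered update, where every cell reads only the old grid and writes only the new one. The sketch below is a deliberately simplified three-species cyclic competition on a periodic grid with a Moore neighborhood; it is not the published ESCG update rule, which may include further processes, and all names are hypothetical.

```python
import random

N_SPECIES = 3
# Moore neighborhood: the eight cells surrounding a site.
MOORE_OFFSETS = [(dy, dx) for dy in (-1, 0, 1) for dx in (-1, 0, 1)
                 if (dy, dx) != (0, 0)]


def beats(a, b):
    """Cyclic dominance: 0 beats 1, 1 beats 2, 2 beats 0."""
    return (a + 1) % N_SPECIES == b


def step(grid, rng):
    """One synchronous update of the whole grid.

    Each loop iteration plays the role of one GPU thread: it reads the old
    grid, picks a random neighbor, and writes a single cell of the new grid.
    """
    n_rows, n_cols = len(grid), len(grid[0])
    new_grid = [row[:] for row in grid]
    for y in range(n_rows):
        for x in range(n_cols):
            dy, dx = rng.choice(MOORE_OFFSETS)
            neighbor = grid[(y + dy) % n_rows][(x + dx) % n_cols]  # periodic wrap
            if beats(neighbor, grid[y][x]):
                new_grid[y][x] = neighbor
    return new_grid


rng = random.Random(42)
grid = [[rng.randrange(N_SPECIES) for _ in range(16)] for _ in range(16)]
for _ in range(10):
    grid = step(grid, rng)
counts = [sum(row.count(s) for row in grid) for s in range(N_SPECIES)]
print("species counts after 10 steps:", counts)
```

Because no cell writes to a location that another cell reads within the same step, the loop body maps directly onto independent GPU threads. A single-threaded version like this one can also serve as the cross-validation baseline described in the protocol.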

Protocol: GPU-Accelerated Sea-Ice Modeling with neXtSIM-DG

The neXtSIM-DG model simulates sea-ice dynamics using a finite-element discontinuous Galerkin method, essential for climate research:

Mathematical Formulation: Implement the viscous-plastic sea-ice model using modified Elastic-Viscous-Plastic (mEVP) solver iterations. The core computation involves identical operations on each mesh element [13].

GPU Framework Selection: Evaluate multiple GPU programming frameworks for implementation:

- CUDA: Delivers best performance but limited to NVIDIA hardware

- SYCL: Emerging standard for cross-vendor compatibility but with toolchain immaturity

- Kokkos: Provides performance portability across different hardware platforms

- PyTorch: Leverages machine learning infrastructure but less optimal for traditional simulation [13]

Precision Optimization: Implement mixed-precision computations where appropriate, as sea-ice simulations demonstrate sufficient accuracy with single-precision floating-point, providing additional performance gains [13].

Performance Validation: Compare GPU implementation against OpenMP-based CPU code with identical mathematical formulation to quantify speedup (typically 6x) while verifying result equivalence [13].
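The performance validation step combines two checks: that the ported code reproduces the reference fields within a tolerance, and how much faster it runs. A minimal sketch of such a report follows; the function names, the tolerance of 1e-5, and the sample numbers are illustrative assumptions rather than values from the neXtSIM-DG study.

```python
def max_relative_error(reference, candidate, floor=1e-12):
    """Largest element-wise relative difference between two result fields."""
    if len(reference) != len(candidate):
        raise ValueError("fields must have the same length")
    return max(abs(r - c) / max(abs(r), floor)
               for r, c in zip(reference, candidate))


def validate_port(reference, candidate, t_reference, t_candidate, rel_tol=1e-5):
    """Summarize result equivalence and speedup of a ported implementation."""
    error = max_relative_error(reference, candidate)
    return {
        "max_rel_error": error,
        "equivalent": error <= rel_tol,
        "speedup": t_reference / t_candidate,
    }


# Hypothetical outputs from a CPU reference run and a GPU run of the same case.
cpu_field = [1.0, 2.0, 4.0]
gpu_field = [1.0, 2.00001, 4.0]
report = validate_port(cpu_field, gpu_field, t_reference=60.0, t_candidate=10.0)
print(report)
```

When the GPU run uses single precision, the tolerance should be chosen with FP32 rounding in mind, since bit-for-bit agreement with a double-precision CPU run is not expected.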

Computational Frameworks for Ecological GPU Research

The ecosystem of GPU programming frameworks offers multiple pathways for implementing ecological simulations, each with distinct advantages and limitations.

Table 2: GPU Programming Frameworks for Ecological Simulations

| Framework | Hardware Support | Development Complexity | Performance | Best Suited For |
|---|---|---|---|---|
| CUDA | NVIDIA GPUs only | High | Highest [13] | Production models where maximum NVIDIA performance is critical |
| Kokkos | Multiple architectures (CPU, GPU) | Medium | High (competitive with CUDA) [13] | Cross-platform projects requiring hardware flexibility |
| SYCL | Multiple vendor GPUs | Medium | Evolving (toolchain challenges) [13] | Future-proof code targeting heterogeneous systems |
| Metal | Apple Silicon only | Medium | Limited scalability [4] | Research deployments on Apple hardware ecosystems |
| PyTorch/TensorFlow | Multiple architectures via ML backends | Low | Moderate for non-ML workloads [13] | Ecological models integrating AI/ML components |
| OpenMP/OpenACC | Multiple architectures | Low to Medium | Lower than native frameworks [13] | Initial ports of existing codebases with limited optimization |

The selection of an appropriate GPU framework involves trade-offs between performance, portability, and development effort. For ecological research teams, Kokkos presents a compelling option with its robust heterogeneous computing capabilities and competitive performance on both NVIDIA hardware and alternative platforms [13].

The Ecological Researcher's GPU Toolkit

Implementing GPU-accelerated ecological simulations requires both hardware and software components optimized for parallel processing.

Table 3: Essential Tools for GPU-Accelerated Ecological Research

| Tool Category | Specific Technologies | Ecological Application |
|---|---|---|
| GPU Hardware Platforms | NVIDIA H100, A100; Apple Silicon | Training large foundation models (BioCLIP 2); General simulation [6] |
| Programming Models | CUDA, Kokkos, Metal, SYCL | Implementing parallel algorithms for specific ecological models [4] [13] |
| GPU-Accelerated Libraries | RAPIDS (cuDF, cuML), NVIDIA HPC SDK | Data preprocessing, analysis, and machine learning on ecological datasets [15] |
| Domain-Specific Frameworks | neXtSIM-DG, LANDIS PP design | Specialized implementations for sea-ice and forest landscape modeling [13] [14] |
| Precision Management | Single-precision floating point, Mixed-precision algorithms | Accelerating simulations where full double-precision is not required [13] |

Graphviz diagram: GPU-Accelerated Ecological Research Toolchain

Environmental Considerations and Sustainable GPU Computing

The computational intensity of GPU-accelerated ecology raises important environmental considerations. The manufacturing of AI GPUs is projected to generate 19.2 million metric tons of CO₂ equivalent emissions by 2030, a dramatic increase from 1.21 million metric tons in 2024 [16]. This "embodied carbon" represents a significant ecological footprint that researchers must balance against the benefits of accelerated simulations.

Strategies for sustainable GPU ecology research include:

Algorithmic Efficiency: Employ techniques such as model pruning, quantization, and knowledge distillation to create less computationally intensive models [17].

Hardware Optimization: Utilize the latest generation energy-efficient GPUs, with modern architectures delivering up to 50 times better energy efficiency for AI workloads compared to traditional CPUs [17].

Workload Management: Schedule non-urgent simulations to align with periods of renewable energy availability in the local grid [17].

Precision Selection: Implement single-precision floating-point operations where scientifically valid, reducing computational demands while maintaining sufficient accuracy for ecological assessments [13].
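The workload-management strategy above amounts to finding the contiguous time window with the lowest average grid carbon intensity. The sketch below does this with a sliding-window sum; the hourly forecast values are invented for illustration, the function name is hypothetical, and a real deployment would obtain the forecast from the local grid operator or a similar data source.

```python
def greenest_start_hour(carbon_intensity, job_hours):
    """Return (start_index, mean_intensity) of the lowest-carbon window.

    `carbon_intensity` is an hourly forecast (e.g., gCO2e per kWh) and
    `job_hours` is the expected duration of the simulation in whole hours.
    """
    if job_hours <= 0 or job_hours > len(carbon_intensity):
        raise ValueError("job_hours must be between 1 and the forecast length")
    window = sum(carbon_intensity[:job_hours])
    best_start, best_sum = 0, window
    for start in range(1, len(carbon_intensity) - job_hours + 1):
        # Slide the window by one hour: add the new hour, drop the oldest.
        window += carbon_intensity[start + job_hours - 1] - carbon_intensity[start - 1]
        if window < best_sum:
            best_start, best_sum = start, window
    return best_start, best_sum / job_hours


# Hypothetical 8-hour forecast with a midday dip from solar generation.
forecast = [500, 450, 300, 200, 150, 180, 400, 520]
start, mean_intensity = greenest_start_hour(forecast, job_hours=3)
print(f"start at hour {start}, mean intensity {mean_intensity:.1f}")
```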

GPU acceleration represents a paradigm shift in ecological modeling, transforming previously intractable problems into feasible research programs. The ability to simulate systems with millions of interacting components at high speed enables ecologists to address fundamental questions about biodiversity, ecosystem dynamics, and environmental change at unprecedented scales. As GPU hardware continues to evolve and programming frameworks mature, the integration of these technologies into ecological research will undoubtedly expand, potentially enabling entire digital twins of ecosystems for detailed experimental analysis [6]. However, this computational power brings responsibility—ecological researchers must implement these technologies thoughtfully, balancing the pursuit of scientific understanding with awareness of the environmental footprint of their computational tools. By embracing GPU acceleration while prioritizing efficiency and sustainability, the ecology community can dramatically accelerate insights into pressing global environmental challenges.

Ecological simulation research increasingly relies on high-performance computing (HPC) to model complex systems, from population dynamics and disease spread to nutrient cycling and ecosystem resilience. These simulations involve mathematically intensive computations that are inherently parallel, making them ideal candidates for GPU acceleration. Unlike traditional Central Processing Units (CPUs) with a handful of powerful cores, Graphics Processing Units (GPUs) are designed with thousands of smaller, efficient cores that perform many calculations simultaneously [18]. This massively parallel architecture can dramatically reduce simulation time, enabling researchers to run larger, more complex models or explore parameter spaces more thoroughly than previously possible. Understanding the key hardware specifications—particularly FP64 (double-precision floating-point) performance, CUDA core architecture, and VRAM (Video Random Access Memory)—is therefore fundamental to building and utilizing effective computational research environments for ecological modeling.

Core Hardware Specifications Demystified

Floating-Point Precision: FP64 and Its Alternatives

Floating-point precision defines the numerical format used for calculations, directly impacting both the accuracy of results and computational speed. For scientific computing, choosing the right precision is critical.

- FP64 (Double Precision): Uses 64 bits to represent a number, providing a wide dynamic range and about 15-17 significant decimal digits. It is essential for simulations involving weak gradients, long-term stability, or complex physical phenomena where rounding errors could accumulate and invalidate results [19]. Many legacy scientific codes were written with FP64 as the default.

- FP32 (Single Precision): Uses 32 bits, offering faster computation and reduced memory usage but with lower accuracy (about 6-9 significant digits). Many modern solvers are robust enough to use FP32 or mixed-precision approaches effectively [20] [19].

- Mixed/Hybrid Precision: An increasingly common strategy where most calculations are done in fast FP32, while critical operations use FP64 to maintain overall accuracy. This approach can offer an excellent balance of speed and precision [20].
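A concrete way to see why some quantities need FP64 is to look at what FP32 can no longer resolve. The sketch below emulates FP32 rounding on the CPU with Python's `struct` module and adds individuals one at a time to a large population count; the population size and function names are hypothetical, chosen only to illustrate the effect.

```python
import struct
import sys


def to_f32(x):
    """Round a Python float (FP64) to the nearest FP32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


FP64_EPS = sys.float_info.epsilon  # spacing of FP64 values just above 1.0
FP32_EPS = 2.0 ** -23              # spacing of FP32 values just above 1.0


def add_births(population, births, single_precision):
    """Add `births` individuals, one per step, to a running population count."""
    rnd = to_f32 if single_precision else float
    total = rnd(population)
    for _ in range(births):
        total = rnd(total + 1.0)
    return total


START = 100_000_000.0  # hypothetical population, exactly representable in FP32
fp32_total = add_births(START, 1000, single_precision=True)
fp64_total = add_births(START, 1000, single_precision=False)
print(f"FP32 epsilon {FP32_EPS:.3e}, FP64 epsilon {FP64_EPS:.3e}")
print(f"FP32 counter gained {fp32_total - START:.0f} individuals")
print(f"FP64 counter gained {fp64_total - START:.0f} individuals")
```

At this magnitude adjacent FP32 values are several units apart, so single births are rounded away entirely. A mixed-precision design would keep such a counter in FP64 while leaving per-cell state in FP32.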

The hardware support for these precisions varies significantly. Consumer-grade GPUs (e.g., GeForce RTX series) often have intentionally limited FP64 throughput, sometimes performing these calculations at 1/32nd or 1/64th of their FP32 speed [21]. In contrast, data-center GPUs (e.g., NVIDIA A100, H100) feature dedicated FP64 cores, providing the high throughput required for demanding scientific workloads [22] [19].

Parallel Compute Architecture: CUDA Cores and Tensor Cores

NVIDIA's GPU architecture is built around different types of processing cores, each optimized for specific tasks.

- CUDA Cores (Compute Unified Device Architecture): These are the GPU's general-purpose, versatile workers. They handle most of the logical, arithmetic, and control-flow operations in a parallel computation. A higher number of CUDA cores generally correlates with stronger performance in a wide range of parallel tasks, including many simulation workloads [23].

- Tensor Cores: These are specialized cores designed to accelerate matrix multiplication and convolution operations at very high speeds using reduced precision (like FP16, BF16, INT8, and FP8). While initially developed for deep learning, their utility is expanding to other scientific domains, including parts of linear algebra solvers used in various simulations [23].

For ecological simulations that are not built around dense linear algebra, CUDA cores typically form the backbone of the computation. However, the presence of Tensor Cores may provide significant speedups for specific sub-tasks or emerging algorithms.

Memory Subsystem: VRAM Capacity and Bandwidth

VRAM is the high-speed memory located on the GPU itself, used to store the active simulation data, including model parameters, state variables, and mesh information.

- VRAM Capacity: This determines the size of the problem you can fit onto a single GPU. Exceeding VRAM capacity will typically cause the simulation to fail or force data swapping to system RAM, which cripples performance. A common rule of thumb for grid-based simulations is a requirement of 1-3 GB of VRAM per million grid elements or cells, though this can increase with model complexity [18] [19].

- Memory Bandwidth: Measured in GB/s or TB/s, this defines the rate at which data can be read from or written to the VRAM. Simulations that process large amounts of data (e.g., large 3D spatial models) are often memory-bandwidth-bound, meaning their speed is limited by how fast data can be moved, not by how fast it can be calculated [21] [18].

High-bandwidth memory (HBM), found on data-center GPUs like the A100 and H100, offers a significant advantage over GDDR memory for these bandwidth-intensive workloads [22] [18].
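Both memory constraints lend themselves to quick back-of-the-envelope estimates. The sketch below applies the 1-3 GB per million cells rule of thumb quoted above and computes a bandwidth-limited lower bound on the time per step; the function names are hypothetical, and the bytes-per-cell figure and the assumption that each cell is read and written exactly once per step are illustrative simplifications.

```python
def estimate_vram_gb(n_cells, gb_per_million=(1.0, 3.0)):
    """Return (low, high) VRAM estimates in GB from the rule of thumb."""
    millions = n_cells / 1e6
    return millions * gb_per_million[0], millions * gb_per_million[1]


def fits_on_gpu(n_cells, vram_gb, gb_per_million=3.0):
    """Conservative check using the upper end of the rule of thumb."""
    return n_cells / 1e6 * gb_per_million <= vram_gb


def min_step_time_ms(n_cells, bytes_per_cell, bandwidth_gb_s):
    """Lower bound on one time step for a memory-bandwidth-bound kernel.

    Assumes every cell's state is read once and written once per step.
    """
    bytes_moved = 2 * n_cells * bytes_per_cell
    return bytes_moved / (bandwidth_gb_s * 1e9) * 1e3


cells = 3200 * 3200  # the 10.24-million-cell grid used as an example earlier
low, high = estimate_vram_gb(cells)
print(f"estimated VRAM: {low:.2f} to {high:.2f} GB")
print("fits in 24 GB:", fits_on_gpu(cells, 24.0))
print("fits in 80 GB:", fits_on_gpu(cells, 80.0))
print(f"minimum step time at 1000 GB/s: {min_step_time_ms(cells, 100, 1000.0):.3f} ms")
```

If the measured step time is close to this bound, the kernel is bandwidth-limited and faster memory will help more than additional compute throughput.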

Quantitative Comparison of Key GPU Hardware

Table 1: Key Hardware Specifications for Select NVIDIA GPUs Relevant to Scientific Simulation

| GPU Model | FP64 (TFLOPS) | FP32 (TFLOPS) | VRAM Capacity | Memory Bandwidth | Core Type Highlights |
|---|---|---|---|---|---|
| NVIDIA H200 | 67 [22] | ~ | 141 GB HBM3e [24] | 4.8 TB/s [24] | High FP64, Massive VRAM |
| NVIDIA H100 | 67 [22] | ~ | 80-94 GB HBM3 [22] [24] | 3.9 TB/s [22] | Dedicated FP64 Cores [19] |
| NVIDIA A100 | 19.5 [22] | ~ | 40-80 GB HBM2e [22] | 2.0 TB/s [22] | Dedicated FP64 Cores, MIG |
| NVIDIA V100 | ~7 [25] | ~14 [25] | 32 GB HBM2 [25] | 900 GB/s [25] | 1st Gen Tensor Cores |
| RTX 6000 Ada | Low (FP32 Emulation) [19] | ~91 [25] | 48 GB GDDR6 [25] | 960 GB/s [25] | High VRAM, No Native FP64 |
| RTX 4090 | ~0.56 [21] (Est.) | 82.6 [24] | 24 GB GDDR6X [24] | 1.0 TB/s [24] [25] | Consumer-Grade, Low FP64 |

Table 2: GPU Memory Requirement Estimation for Different Model Scenarios

| Simulation Type / Scale | Estimated Mesh/Elements | Estimated VRAM Need | Recommended GPU Class |
|---|---|---|---|
| Small-scale / Prototyping | 1 - 5 million | 5 - 15 GB | High-End Consumer (e.g., RTX 4090) |
| Medium-scale / Standard Research | 10 - 50 million | 20 - 100 GB | Multi-GPU or Pro/Data Center (e.g., A100, H100) |
| Large-scale / High-Fidelity 3D | 50 - 200+ million | 100+ GB | High-Memory Data Center (e.g., H200) or Multi-Node |

Decision Framework and Experimental Protocols

Hardware Selection Workflow for Ecological Simulations

The following diagram outlines a logical decision process for selecting appropriate GPU hardware based on the simulation's precision requirements and scale.

Protocol for Benchmarking GPU Performance

To make an evidence-based hardware decision, researchers should conduct a standardized benchmark. The following protocol provides a methodology for comparing performance across different GPU platforms.

- Define a Representative Test Case: Select a well-understood, medium-complexity ecological model that captures the core computational kernels of your typical work. The model should be scalable to assess performance at different sizes.

- Establish Performance Metrics: Determine the primary metric for comparison. This could be:

  - Simulation Throughput: (Simulated Model Years) / (Wall-clock Hour)

  - Time to Solution: Total wall-clock time to complete a fixed-duration simulation.

  - Iterations per Second: For iterative solvers, the number of solver iterations completed per second.

- Configure Hardware and Software Stack: Use containerization (e.g., Docker, Singularity) to ensure a consistent software environment across all tested systems. Precisely record versions of the OS, CUDA driver, CUDA Toolkit, and scientific libraries [20].

- Execute Benchmark Runs: Run the test case on each candidate GPU hardware configuration. For reliability, execute each test multiple times and report the average and standard deviation of the performance metrics.

- Analyze Cost-Efficiency: Calculate the cost-effectiveness of each setup using a metric like (Total Hardware Cost) / (Simulation Throughput), where a lower value indicates better value. This provides a practical value metric for procurement decisions [20].
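The metrics in this protocol reduce to a few lines of arithmetic once the run times are recorded. The sketch below summarizes repeated runs as mean and standard deviation of throughput and then computes the cost metric; the function names, run times, and hardware prices are hypothetical placeholders, not measurements.

```python
import statistics


def summarize_runs(wall_clock_hours, simulated_years):
    """Return (mean, stdev) of throughput in simulated years per wall-clock hour."""
    if len(wall_clock_hours) < 2:
        raise ValueError("at least two runs are needed for a standard deviation")
    throughputs = [simulated_years / hours for hours in wall_clock_hours]
    return statistics.mean(throughputs), statistics.stdev(throughputs)


def cost_per_throughput(hardware_cost, mean_throughput):
    """(Total Hardware Cost) / (Simulation Throughput); lower is better."""
    return hardware_cost / mean_throughput


# Hypothetical benchmark: 100 simulated years, three repeats per candidate.
candidates = {
    "gpu_a": {"cost": 30000.0, "hours": [1.0, 1.1, 0.9]},
    "gpu_b": {"cost": 2000.0, "hours": [4.0, 4.2, 3.8]},
}
for name, spec in candidates.items():
    mean_tp, sd_tp = summarize_runs(spec["hours"], simulated_years=100.0)
    value = cost_per_throughput(spec["cost"], mean_tp)
    print(f"{name}: {mean_tp:.1f} +/- {sd_tp:.1f} years/hour, cost metric {value:.1f}")
```

Reporting the standard deviation alongside the mean makes it clear whether a difference between two candidates exceeds run-to-run noise.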

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Hardware and Software "Reagents" for GPU-Accelerated Ecological Simulation

| Tool / Resource | Category | Function in Research |
|---|---|---|
| NVIDIA H100/A100 GPU | Core Hardware | Provides high FP64 throughput and large VRAM for accurate, large-scale simulations [22]. |
| NVIDIA RTX 4090/5090 | Core Hardware | Cost-effective hardware for model development, testing, and smaller-scale runs [20] [24]. |
| CUDA Toolkit | Software | Provides the compiler, libraries, and tools necessary to execute and optimize code on NVIDIA GPUs [22]. |
| NGC Containers | Software | Pre-configured, performance-optimized containers for scientific software, ensuring reproducibility [20]. |
| NVLink/NVSwitch | Hardware Interconnect | High-speed interconnect for multi-GPU systems, enabling efficient scaling across GPUs [22] [24]. |
| Ansys Fluent GPU Solver | Simulation Software | An example of a commercial CFD solver with native GPU acceleration, applicable to fluid dynamics in ecological systems [18] [19]. |
| GROMACS/AMBER | Simulation Software | GPU-accelerated molecular dynamics packages, useful for biochemical or molecular-level ecological interactions [20]. |
| FLAME GPU | Simulation Software | A framework for designing and running agent-based models (e.g., animal movement, disease spread) on GPUs [20]. |

Selecting the right GPU hardware is a critical step in building a capable ecological simulation research platform. The core specifications of FP64 performance, VRAM capacity/bandwidth, and the balance of CUDA and Tensor Cores must be evaluated against the specific needs of the target models. Data-center GPUs like the H100 and A100 are indispensable for traditional, FP64-heavy simulations and very large models, while consumer GPUs like the RTX 4090 offer remarkable value for mixed-precision workloads and development. By applying the structured decision framework and benchmarking protocols outlined in this guide, researchers can make informed investments in computational infrastructure that will power the next generation of ecological discovery.

From Theory to Field: Real-World Applications of GPU-Accelerated Ecological Models

High-Resolution Hydrological and Flood Hazard Modeling

High-resolution hydrological and flood hazard modeling represents a critical frontier in understanding and mitigating the impacts of extreme water-related events. These models have evolved from conceptual frameworks to sophisticated simulation tools that capture the complex interplay between atmospheric, terrestrial, and aquatic systems. Within the broader context of GPU-accelerated ecological simulation research, hydrological modeling stands as a paradigm for how computational advances can transform our predictive capabilities across environmental sciences [26]. The emergence of graphics processing unit (GPU) acceleration has fundamentally reshaped this landscape, enabling researchers to achieve unprecedented spatial and temporal resolution while maintaining computational feasibility for practical applications.

Traditional hydrological models faced significant constraints in balancing numerical accuracy with computational efficiency, particularly when simulating large domains with complex topography and infrastructure. GPU-accelerated computing addresses this challenge by leveraging parallel processing architectures to perform thousands of simultaneous calculations, dramatically reducing simulation times from hours to minutes while enabling higher-fidelity representation of physical processes [27] [28]. This technological advancement has opened new possibilities for real-time flood forecasting, ensemble modeling for uncertainty quantification, and high-resolution scenario analysis that were previously computationally prohibitive.

The integration of GPU acceleration into hydrological modeling frameworks represents more than merely faster calculations—it enables a fundamental shift in scientific approach. Researchers can now incorporate finer spatial resolutions, couple previously segregated model components, perform comprehensive sensitivity analyses, and explore complex what-if scenarios that better represent the integrated nature of watershed systems [29]. This evolution aligns with the broader trajectory of ecological simulation research, where GPU technologies are simultaneously advancing fields ranging from evolutionary game theory to species distribution modeling [4] [6] [5].

Core Computational Frameworks and Architectures

GPU-Accelerated Numerical Solvers for Hydrological Systems

At the heart of high-resolution flood modeling lie the shallow water equations (SWEs), which describe the flow of water over terrain. The conservation form of the two-dimensional SWEs can be expressed as [27]:

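$$\frac{\partial \mathbf{q}}{\partial t} + \frac{\partial \mathbf{f}}{\partial x} + \frac{\partial \mathbf{g}}{\partial y} = \mathbf{S}$$

where $\mathbf{q}$ represents the flow variable vector; $\mathbf{f}$ and $\mathbf{g}$ are the flux vectors in the $x$ and $y$ directions, respectively; and $\mathbf{S}$ is the source term accounting for bed slope, friction, and infiltration effects. The vector terms expand to [27]:

$$
\begin{aligned}
\mathbf{q} &= \left[h,\; q_x,\; q_y\right]^{T} \\
\mathbf{f} &= \left[uh,\; u q_x + \tfrac{1}{2} g h^{2},\; u q_y\right]^{T} \\
\mathbf{g} &= \left[vh,\; v q_x,\; v q_y + \tfrac{1}{2} g h^{2}\right]^{T} \\
\mathbf{S} &= \left[i,\; -gh\frac{\partial z_b}{\partial x} - C_f u\sqrt{u^{2}+v^{2}},\; -gh\frac{\partial z_b}{\partial y} - C_f v\sqrt{u^{2}+v^{2}}\right]^{T}
\end{aligned}
$$

Here, $h$ represents water depth; $u$ and $v$ are velocity components; $q_x$ and $q_y$ are unit-width discharges; $z_b$ is bed elevation; $g$ is gravitational acceleration; $C_f$ is the bed roughness coefficient; and $i$ represents rainfall and infiltration sources/sinks.

GPU-accelerated implementations solve these equations using Godunov-type finite volume schemes with approximate Riemann solvers (typically HLLC - Harten-Lax-van Leer-Contact) for flux calculations across cell interfaces [27]. The numerical discretization follows:

$$\mathbf{q}_i^{n+1} = \mathbf{q}_i^{n} + \frac{\Delta t}{\Omega}\left[\int \mathbf{S}\,d\Omega - \sum_{k} \mathbf{F}_k(\mathbf{q}^{n})\cdot \mathbf{n}_k\, l_k\right]$$

where $\mathbf{q}_i^{n+1}$ is the updated flow state for cell $i$ at the next time step; $\Delta t$ is the time step; $\Omega$ is cell volume; $\mathbf{F}_k$ represents the flux normal to cell boundary $k$; $\mathbf{n}_k$ is the outward unit normal vector; and $l_k$ is the edge length.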
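To make the finite-volume update concrete, the sketch below applies the same flux-difference form in one dimension. It is a reduced illustration under stated assumptions, not the scheme of the cited models: it is 1D rather than 2D, uses the simpler HLL flux in place of HLLC, assumes a flat frictionless bed with no rainfall (so the source term is zero), uses periodic boundaries, and handles wet cells only. All function names are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def physical_flux(h, q):
    """Flux of the 1D shallow water equations for state (h, q = h*u)."""
    u = q / h
    return (q, q * u + 0.5 * G * h * h)


def hll_flux(left, right):
    """HLL approximate Riemann flux between two wet cell states."""
    (hl, ql), (hr, qr) = left, right
    ul, ur = ql / hl, qr / hr
    cl, cr = math.sqrt(G * hl), math.sqrt(G * hr)
    s_left = min(ul - cl, ur - cr)    # slowest wave speed estimate
    s_right = max(ul + cl, ur + cr)   # fastest wave speed estimate
    fl, fr = physical_flux(hl, ql), physical_flux(hr, qr)
    if s_left >= 0.0:
        return fl
    if s_right <= 0.0:
        return fr
    return tuple(
        (s_right * a - s_left * b + s_left * s_right * (wr - wl)) / (s_right - s_left)
        for a, b, wl, wr in zip(fl, fr, left, right)
    )


def step(cells, dx, cfl=0.5):
    """Advance all cells by one CFL-limited time step; returns (cells, dt)."""
    n = len(cells)
    max_speed = max(abs(q / h) + math.sqrt(G * h) for h, q in cells)
    dt = cfl * dx / max_speed
    # flux[i] crosses the interface between cell i-1 and cell i (periodic wrap).
    flux = [hll_flux(cells[i - 1], cells[i]) for i in range(n)]
    new_cells = []
    for i in range(n):
        f_in, f_out = flux[i], flux[(i + 1) % n]
        h, q = cells[i]
        new_cells.append((h - dt / dx * (f_out[0] - f_in[0]),
                          q - dt / dx * (f_out[1] - f_in[1])))
    return new_cells, dt


# Idealized dam break: deep water on the left half, shallow on the right.
state = [(2.0, 0.0)] * 20 + [(1.0, 0.0)] * 20
for _ in range(50):
    state, _dt = step(state, dx=1.0)
print("total depth after 50 steps:", sum(h for h, _ in state))
```

Each cell's update depends only on its two interface fluxes, which is why the same structure maps onto one GPU thread per cell in the 2D case.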

Parallel Computing Architectures for Hydrological Simulation

The computational efficiency of GPU-accelerated hydrological models stems from sophisticated parallelization strategies that distribute calculations across thousands of GPU cores. The most effective approaches implement structured domain decomposition, where the computational domain is partitioned into subdomains processed by different GPU threads or streams [27].

Table: GPU Parallelization Approaches in Hydrological Modeling

| Parallelization Strategy | Implementation Method | Application Context | Performance Advantage |
|---|---|---|---|
| Multi-GPU Domain Decomposition | Dividing computational domain into subdomains with overlapping ghost cells | Large watersheds with complex topography | Near-linear scaling with additional GPUs |
| CUDA Stream Concurrent Execution | Multiple CUDA streams for overlapping computation and data transfer | Integrated hydrological-hydrodynamic coupling | 25-40% reduction in communication overhead |
| Thread-Per-Cell Mapping | Assigning individual grid cells to separate GPU threads | High-resolution 2D flood inundation modeling | 28x speedup over single-threaded CPU [5] |
| Batch Processing of Ensemble Members | Simultaneous processing of multiple parameter sets or scenarios | Uncertainty quantification and parameter calibration | Enables large ensemble simulations |

For multi-GPU implementations, the computational domain (M × N cells) is partitioned along the y-direction into subdomains corresponding to available GPUs. To handle flux calculations at shared boundaries, a one-cell-thick overlapping region (ghost cells) is implemented, with CUDA streams managing inter-device communication for efficient data transfer between GPU memory spaces [27]. This approach effectively addresses the challenge of balancing computational workload across devices while maintaining numerical accuracy at subdomain interfaces.
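The partitioning and ghost-cell logic described above can be sketched on the host side without any GPU code. The example below is a simplified illustration, not the scheme from [27]: Python lists stand in for per-device memory, a plain list copy stands in for the stream-managed device-to-device transfer, and the function names (`partition_rows`, `scatter`, `exchange_ghosts`) are hypothetical. It assumes every subdomain holds at least one interior row.

```python
def partition_rows(n_rows, n_devices):
    """Split row indices [0, n_rows) into contiguous, near-equal blocks."""
    base, extra = divmod(n_rows, n_devices)
    bounds, start = [], 0
    for d in range(n_devices):
        stop = start + base + (1 if d < extra else 0)
        bounds.append((start, stop))
        start = stop
    return bounds

def scatter(grid, bounds):
    """Copy each block plus a one-row ghost layer on every shared boundary."""
    subs = []
    for start, stop in bounds:
        lo = max(start - 1, 0)           # lower ghost row, if a neighbour exists
        hi = min(stop + 1, len(grid))    # upper ghost row, if a neighbour exists
        subs.append([row[:] for row in grid[lo:hi]])
    return subs

def exchange_ghosts(subs):
    """Refresh ghost rows from the neighbouring subdomain's edge interior row."""
    for below, above in zip(subs, subs[1:]):
        # Last interior row of `below` becomes the lower ghost row of `above`.
        above[0] = below[-2][:]
        # First interior row of `above` becomes the upper ghost row of `below`.
        below[-1] = above[1][:]
```

For example, `partition_rows(8, 3)` yields the row ranges `(0, 3)`, `(3, 6)`, and `(6, 8)`, so the remainder rows go to the first subdomains and no device receives more than one extra row.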

The Compute Unified Device Architecture (CUDA) parallel computing framework has emerged as the dominant platform for GPU-accelerated hydrological modeling, though implementations also exist using Apple's Metal API and OpenCL [26] [5]. CUDA-based implementations typically achieve 15-28x speedup over single-threaded CPU versions, with performance gains increasing with problem size due to more efficient utilization of the GPU's parallel architecture [26] [5].

Experimental Protocols and Validation Methodologies

Model Verification Using Idealized Test Cases

Before application to real-world scenarios, GPU-accelerated hydrological models must undergo rigorous verification against analytical solutions and benchmark problems. The standard protocol involves three hierarchical validation stages [26] [27]:

Stage 1: Static Verification under Hydrostatic Conditions

An idealized square domain (50 m × 50 m) with zero bottom slope is constructed to verify pure reaction processes without advective transport. Pollutant decay under steady conditions is simulated and compared to analytical solutions of the form C(t) = C₀e^(-kt), where C is concentration, t is time, and k is the decay rate. This tests the numerical implementation of source/sink terms in isolation from transport processes [26].
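A Stage 1 style check can be sketched in a few lines: integrate the decay term numerically and compare against C(t) = C₀e^(-kt). The sketch below assumes an explicit Euler integrator, which is an illustrative choice and not necessarily the scheme used in [26]; the function names and any parameter values passed to them are hypothetical.

```python
import math

def simulate_decay(c0, k, dt, n_steps):
    """Explicit Euler integration of dC/dt = -k*C (first-order decay)."""
    c, series = c0, [c0]
    for _ in range(n_steps):
        c += dt * (-k * c)
        series.append(c)
    return series

def max_relative_error(series, c0, k, dt):
    """Largest relative deviation from the analytical solution C0*exp(-k*t)."""
    worst = 0.0
    for n, c in enumerate(series):
        exact = c0 * math.exp(-k * n * dt)
        worst = max(worst, abs(c - exact) / exact)
    return worst
```

Because explicit Euler is first-order accurate, halving the time step should roughly halve the maximum relative error. Checking for that convergence rate is a stronger test than checking a single error value.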

Stage 2: Dynamic Verification in Regular Channels

A straight channel topography with regular cross-sections is used to verify coupled transport and reaction processes. The numerical solution is compared to the analytical solution for advection-diffusion-reaction equations, validating the implementation of flux calculations and their coupling with kinetic processes [26].

Stage 3: Experimental Benchmark Validation

The model is applied to standardized test cases with empirical measurements, such as the V-catchment idealized watershed and experimental catchment benchmarks with observed inflow-outflow hydrographs and water level measurements [27]. Performance metrics include Nash-Sutcliffe Efficiency (NSE), Percent Bias (PBIAS), and Root Mean Square Error (RMSE) between simulated and observed values.
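The three goodness-of-fit metrics named above have standard textbook definitions, sketched here for paired lists of observed and simulated values. One caveat: the sign convention for PBIAS varies between authors. This sketch assumes the form 100 × Σ(obs − sim) / Σ obs, under which negative values indicate overestimation; the source does not state which convention [27] uses.

```python
import math

def nse(obs, sim):
    """Nash-Sutcliffe Efficiency: 1 is a perfect fit, 0 matches the observed mean."""
    mean_obs = sum(obs) / len(obs)
    residual = sum((o - s) ** 2 for o, s in zip(obs, sim))
    variance = sum((o - mean_obs) ** 2 for o in obs)
    return 1.0 - residual / variance

def pbias(obs, sim):
    """Percent bias (assumed convention: negative means the model overestimates)."""
    return 100.0 * sum(o - s for o, s in zip(obs, sim)) / sum(obs)

def rmse(obs, sim):
    """Root mean square error, in the units of the observed variable."""
    return math.sqrt(sum((s - o) ** 2 for o, s in zip(obs, sim)) / len(obs))
```

As a quick check, observations `[1, 2, 3, 4]` against simulated values `[2, 3, 4, 5]` give an NSE of 0.2, a PBIAS of -40%, and an RMSE of 1. A uniform offset of one unit is therefore penalized heavily by NSE when the observed variance is small.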

Performance Benchmarking Protocols

Computational performance of GPU-accelerated hydrological models is quantified using standardized benchmarking protocols that measure both strong scaling and weak scaling efficiency [27]:

Strong Scaling Tests

Maintain a fixed problem size (e.g., 1000×1000 grid cells) while increasing the number of GPUs. Under perfect strong scaling, computation time falls in inverse proportion to the number of GPUs, so n GPUs finish in T₁/n. The measured metrics include:

- Speedup Ratio: T₁/Tₙ, where T₁ is single-GPU time and Tₙ is n-GPU time

- Parallel Efficiency: (T₁/(n×Tₙ)) × 100%

Weak Scaling Tests

Increase problem size proportionally with the number of GPUs (e.g., each GPU processes 1000×1000 cells). Perfect weak scaling would maintain constant computation time regardless of problem size. This measures the ability to handle larger, more realistic domains.
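The scaling metrics reduce to simple ratios of wall-clock times. The helper functions below follow the speedup and parallel-efficiency formulas given for the strong scaling tests. The weak-scaling efficiency is taken as T₁/Tₙ × 100%, a common definition that is assumed here because the text does not spell it out. The timings in the usage note are hypothetical.

```python
def speedup(t1, tn):
    """Speedup ratio T1/Tn for a fixed problem size."""
    return t1 / tn

def strong_scaling_efficiency(t1, tn, n_gpus):
    """Parallel efficiency (T1 / (n * Tn)) * 100%."""
    return 100.0 * t1 / (n_gpus * tn)

def weak_scaling_efficiency(t1, tn):
    """Assumed definition: (T1 / Tn) * 100% with per-GPU workload held constant."""
    return 100.0 * t1 / tn
```

For instance, a run that takes 120 s on one GPU and 40 s on four GPUs has a speedup of 3 and a strong-scaling efficiency of 75%. A weak-scaling run whose time grows from 100 s to 125 s as GPUs and cells are added together has an efficiency of 80%.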

Table: Typical Performance Metrics for GPU-Accelerated Hydrological Models

| Performance Metric | Target Value | Experimental Measurement | Significance |
|---|---|---|---|
| Speedup Ratio | >15x | 28x for CUDA vs. single-threaded CPU [5] | Computational efficiency gain |
| Strong Scaling Efficiency | >70% | 65-80% for 2-8 GPUs [27] | Multi-GPU parallelization effectiveness |
| Weak Scaling Efficiency | >80% | 75-90% for domain sizes up to 3200×3200 [27] | Capability for large-domain simulation |
| Calculation Rate | >10⁶ cells/second | 1.2×10⁶ cells/second on RTX 6000 Ada [28] | Absolute computational throughput |

The experimental workflow for model validation follows a structured pathway from component verification to integrated system validation and finally application to real-world scenarios, as illustrated below:

Figure 1: Experimental Validation Workflow for Hydrological Models

Implementation Guide: Multi-GPU Hydrological-Hydrodynamic Modeling

Software Architecture and Workflow Design

Implementing a GPU-accelerated hydrological modeling system requires careful architectural planning to maximize computational efficiency. The core system comprises two tightly coupled modules: the hydrological module handling precipitation, infiltration, and runoff generation; and the hydrodynamic module solving the 2D shallow water equations for overland flow [27]. The implementation follows a structured workflow:

Step 1: Preprocessing and Domain Decomposition

- Convert raw topographic data (LiDAR, DEM) to structured computational grids

- Decompose domain into subdomains for multi-GPU processing

- Initialize hydrological parameters (Manning's roughness, infiltration properties) for each cell

- Set boundary conditions and initial conditions

Step 2: Hydrological Module Execution

- Calculate rainfall input from radar or gauge data

- Compute infiltration losses using the Green-Ampt model, i(t) = Kₛ[1 + (h + hₚ)/z(t)], where i(t) is the infiltration rate, Kₛ is the saturated hydraulic conductivity, h is the ponding depth, hₚ is the suction head, and z(t) is the cumulative infiltration [27]

- Determine excess rainfall available for surface runoff
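The infiltration and excess-rainfall steps of the hydrological module can be sketched per cell as follows. This is a simplified illustration of the Green-Ampt expression quoted above, with two assumptions that do not come from [27]: a small floor `z_min` guards the division when cumulative infiltration is still zero, and actual infiltration is capped at the rainfall rate, which ignores any supply from water already ponded on the surface.

```python
def green_ampt_rate(k_s, h, h_p, z, z_min=1e-4):
    """Infiltration capacity i = K_s * [1 + (h + h_p) / z], in the units of K_s.

    z_min is an assumed floor that avoids division by zero at the start of a storm.
    """
    return k_s * (1.0 + (h + h_p) / max(z, z_min))

def excess_rainfall(rain_rate, k_s, h, h_p, z):
    """Split a rainfall rate into (actual infiltration, excess available for runoff)."""
    infiltration = min(green_ampt_rate(k_s, h, h_p, z), rain_rate)
    return infiltration, rain_rate - infiltration
```

Because each cell depends only on its own state, this calculation parallelizes without any communication between cells. That is why it fits the same thread-per-cell mapping used by the hydrodynamic solver.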

Step 3: Hydrodynamic Module Execution

- Solve 2D shallow water equations using finite volume method

- Calculate inter-cell fluxes using HLLC approximate Riemann solver

- Update flow variables with second-order spatial accuracy (MUSCL reconstruction)

- Apply stability conditions (CFL condition) to determine time step
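The CFL step in the list above limits the time step so that the fastest gravity wave cannot cross more than a fraction of a cell per step. The sketch below shows one common form of that calculation for a uniform grid. The Courant number of 0.5 and the dry-cell threshold are illustrative defaults, not values taken from [27].

```python
import math

G = 9.81  # gravitational acceleration (m/s^2)

def cfl_time_step(cells, dx, dy, cfl=0.5, h_dry=1e-6):
    """Largest stable time step for a list of (h, u, v) cell states.

    Returns math.inf if every cell is dry, since nothing then limits the step.
    """
    dt = math.inf
    for h, u, v in cells:
        if h <= h_dry:            # dry cells carry no waves
            continue
        c = math.sqrt(G * h)      # gravity-wave celerity
        dt = min(dt, dx / (abs(u) + c), dy / (abs(v) + c))
    return cfl * dt
```

On a GPU, the minimum over all cells becomes a parallel reduction, and in a multi-GPU run the per-device minima must also be combined so that all subdomains advance with the same time step.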

Step 4: Inter-GPU Communication and Synchronization

- Exchange ghost cell data between adjacent subdomains

- Synchronize all GPUs to ensure temporal consistency

- Collect output data for visualization and analysis

The following diagram illustrates the computational workflow and data flow in a multi-GPU hydrological modeling system:

Figure 2: Multi-GPU Hydrological Modeling System Architecture

Successful implementation of GPU-accelerated hydrological modeling requires both specialized software tools and hardware resources. The following table catalogs the essential "research reagents" in this field:

Table: Essential Research Reagents for GPU-Accelerated Hydrological Modeling

| Tool/Resource | Category | Function | Implementation Example |
|---|---|---|---|
| NVIDIA CUDA Toolkit | Development Framework | Parallel computing API for GPU acceleration | CUDA/C++ implementation of shallow water solver [27] |
| Earth2Studio | AI Weather Modeling | Generation of synthetic weather scenarios for flood risk assessment | NVIDIA Earth-2 platform for large ensemble generation [29] |
| SFINCS | Specialized Software | Fast hydrodynamic model for coastal flood mapping | UC Santa Cruz coastal flooding assessment [28] |
| SWAT | Hydrological Model | Watershed-scale water quality and quantity modeling | Parameter regionalization for ungauged watersheds [30] |
| FCN-SFNO Models | AI Architecture | Spherical Fourier Neural Operators for weather forecasting | HENS pipeline for hypothetical weather generation [29] |
| Green-Ampt Infiltration | Physical Parameterization | Calculation of infiltration losses in hydrological module | Integration into shallow water equation source terms [27] |
| HLLC Riemann Solver | Numerical Method | Approximation of inter-cell fluxes in Godunov-type schemes | Finite volume solution of shallow water equations [27] |
| MUSCL Scheme | Numerical Method | Second-order spatial reconstruction for accuracy enhancement | Monotonic Upstream-centered Scheme for Conservation Laws [27] |

Case Studies and Performance Benchmarks

Real-World Application: Urban Flood Simulation

The GPU-accelerated hydrological modeling framework has been successfully applied to the Xidagou River in Yinchuan, addressing critical urban water management challenges [26]. This implementation demonstrated the model's capability to simulate complex urban river systems and evaluate intervention strategies for mitigating black and odorous water bodies—a persistent problem in rapidly urbanizing watersheds.

In coastal applications, researchers at UC Santa Cruz employed GPU-accelerated models to map coastal flooding and assess nature-based adaptation solutions [28]. Using the SFINCS model accelerated with NVIDIA RTX 6000 Ada Generation GPUs, they reduced computation times from approximately six hours on CPU systems to about 40 minutes per simulation, roughly a 9x speedup (360 minutes / 40 minutes), which enabled more comprehensive sensitivity analysis and parameter exploration [28]. This efficiency gain allowed the team to set ambitious global goals, including mapping all small-island developing states before the COP30 climate conference.

Large-Scale Ensemble Modeling for Flood Risk Assessment

The JBA Risk Management case study exemplifies advanced application of GPU-accelerated modeling for probabilistic flood risk assessment [29]. Using the NVIDIA Earth-2 platform, JBA developed a Huge Ensemble (HENS) pipeline that generated 1,008 ensemble members representing 300 years of synthetic atmospheric data for the Elbe River basin [29]. This approach addressed the fundamental challenge of quantifying extreme flood events (e.g., "200-year floods") from limited historical records (typically <50 years).

The HENS implementation utilized a multi-checkpoint ensemble inference approach with the configuration:

- Temporal scope: Winter 2023-2024 season

- Ensemble size: 18 members per checkpoint

- Forecast duration: 448 steps (112 days at 6-hour resolution)

- Spatial resolution: High-resolution global weather modeling

- Computational acceleration: 8× speedup over traditional numerical weather prediction models
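The ensemble figures quoted above can be cross-checked with a short calculation. The sketch below shows one way to read the "300 years" figure, as the total simulated time across all members. That interpretation is an assumption, and the helper names are hypothetical.

```python
def simulated_years(members, days_per_member, days_per_year=365.25):
    """Total simulated time across an ensemble, expressed in years."""
    return members * days_per_member / days_per_year

def member_groups(members, members_per_group):
    """Number of equal-sized groups needed to reach the full ensemble."""
    groups, remainder = divmod(members, members_per_group)
    if remainder:
        raise ValueError("ensemble size is not a multiple of the group size")
    return groups
```

With 1,008 members of 112 days each, `simulated_years(1008, 112)` gives about 309 years, consistent with the stated figure of roughly 300 years. At 18 members per group, `member_groups(1008, 18)` gives 56 groups.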

This ensemble generation capability, impossible with conventional computing approaches, provides insurers and financial institutions with statistically robust flood risk assessments that account for climate change impacts and enable evidence-based adaptation planning [29].

Future Directions in GPU-Accelerated Ecological Simulation

The rapid advancement of GPU-accelerated hydrological modeling reflects broader trends in ecological simulation research. Several emerging directions promise to further transform the field:

Integration of AI/ML with Physical Models

The development of hybrid modeling approaches that combine physics-based numerical solvers with machine learning components represents a promising frontier. Examples include AI-based precipitation diagnostics applied to weather model outputs [29], and physics-informed neural networks for parameterization of subgrid-scale processes.

Digital Twin Technology for Watershed Management

GPU acceleration enables the creation of interactive digital twins for complex watershed systems, allowing stakeholders to visualize flood impacts and test intervention strategies in silico [28] [6]. The BioCLIP 2 project's ambition to develop wildlife-based interactive digital twins exemplifies this direction in broader ecological research [6].

Multi-Scale Model Coupling

Future systems will increasingly couple watershed-scale hydrological models with regional climate models and infrastructure-scale hydraulic models, requiring exascale computing capabilities that only GPU acceleration can provide [27] [28].

Real-Time Ensemble Forecasting for Emergency Response

As computational performance improves, real-time ensemble flood forecasting with quantified uncertainty will become operational, providing emergency managers with probabilistic inundation maps hours before extreme events [29] [28].

The convergence of GPU acceleration with artificial intelligence represents a paradigm shift in hydrological and ecological modeling, transforming these fields from data-limited to simulation-rich disciplines. This technological evolution enables researchers to address increasingly complex questions about environmental systems under changing climatic conditions, ultimately supporting more resilient and sustainable water resource management.

The field of eco-hydraulics represents a critical interdisciplinary frontier, combining hydraulic engineering, ecology, and computer science to understand and predict the complex interactions between aquatic organisms and their fluid environment. GPU-accelerated simulation has emerged as a transformative technology in this domain, enabling researchers to overcome traditional computational barriers that have long limited the scale, resolution, and biological realism of eco-hydraulic models [31]. The massively parallel architecture of modern Graphics Processing Units (GPUs) provides the computational throughput necessary to resolve fine-scale hydrodynamic processes while simultaneously tracking biological responses across ecologically relevant spatial and temporal scales.