GPU-Accelerated Bayesian Methods for Population Dynamics: Accelerating Inference from Genetics to Drug Development

Abstract

This article explores the transformative impact of Graphics Processing Unit (GPU) acceleration on Bayesian inference for population dynamics models. We cover the foundational principles that make these computationally intensive problems suitable for parallel processing, detail cutting-edge methodological implementations across fields like population genetics and epidemiology, and provide practical guidance for troubleshooting and optimization. By examining validation studies and comparative performance benchmarks, we demonstrate how GPU-accelerated Bayesian methods are enabling faster, more accurate, and scalable analyses, ultimately advancing research in drug development and clinical applications.

The Core Challenge: Why Population Dynamics Demand Bayesian Inference and GPU Power

Bayesian population dynamics provides a powerful statistical framework for inferring how populations change over time, whether tracking the genetic history of species or forecasting the spread of infectious diseases. By combining prior knowledge with observed data, these methods quantify uncertainty in dynamic processes, from millennia of evolutionary history to real-time epidemic trajectories. The computational intensity of Bayesian inference has traditionally limited its application to large datasets; however, GPU acceleration is now enabling complex, high-dimensional models to be deployed at scale, transforming our ability to model population systems with unprecedented speed and precision [1] [2] [3].

Bayesian population dynamics refers to a class of statistical models that treat population processes as latent, dynamic systems about which researchers make probabilistic inferences. These models are uniquely powerful for several reasons: they formally incorporate prior knowledge from previous studies or expert opinion; they represent all unknown quantities—including parameters, model structures, and predictions—with full probability distributions, thereby explicitly quantifying uncertainty; and they can be hierarchically structured to share information across related datasets or population subgroups [4] [5].

The core application domains within this field are diverse. In evolutionary biology, Bayesian methods reconstruct historical population sizes from genetic sequences. In epidemiology, they estimate critical parameters like the basic reproduction number (R₀) and forecast outbreak trajectories. In ecology, they model species abundance and spatial spread. The unifying thread is the treatment of population change as a stochastic process, with observed data (e.g., genetic variants, case reports, species counts) providing incomplete, noisy glimpses of the underlying dynamics [4] [2] [6].

The adoption of GPU computing addresses the primary bottleneck in this field: computation. Bayesian inference typically relies on Markov Chain Monte Carlo (MCMC) sampling or sequential Monte Carlo methods, which can be prohibitively slow for large populations or complex models. GPUs, with their massively parallel architecture, excel at executing the myriad independent calculations required for these algorithms, offering speedups of orders of magnitude and making previously intractable analyses feasible [1] [2] [3].

Core Methodologies and Quantitative Comparisons

Foundational Model Families

Bayesian approaches to population dynamics are built upon several foundational model families, each tailored to specific data types and scientific questions.

- Coalescent-Based Genetic Models: Methods like the Pairwise Sequentially Markovian Coalescent (PSMC) and its Bayesian extensions (e.g., PHLASH) use genome sequence data to infer historical effective population size (Nₑ). These models treat the Time to the Most Recent Common Ancestor (TMRCA) across genomic loci as a latent variable in a Hidden Markov Model (HMM), linking patterns of genetic variation to past demographic events [2].

- Compartmental Epidemic Models: For infectious diseases, models such as the Susceptible-Exposed-Infectious-Removed (SEIR) framework are used as process models within a Bayesian state-space formulation. These models describe the underlying transmission dynamics, while an observation model accounts for the fact that reported case data are typically incomplete and noisy [4] [7] [6].

- Branching Process Models: To model heterogeneous transmission, such as super-spreading events, stochastic branching processes can be embedded within a Bayesian framework. These models infer the offspring distribution—the number of secondary cases generated by an infected individual—from incidence time-series data, providing insights into the over-dispersion of contagion [8].

- Spatio-Temporal Point Process Models: For data with a geographic component, such as the locations of infected individuals, Bayesian hierarchical models can quantify spatial correlations and forecast the spread of a pathogen or invasive species across a landscape, even with very limited local data [9].
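To make the idea of an over-dispersed offspring distribution concrete, the following is a minimal sketch of a branching process in plain Python. It assumes a negative-binomial offspring distribution drawn as a Gamma-Poisson mixture, which is a common choice for super-spreading but is not necessarily the model used in [8]. The names `r0`, `k`, `offspring`, and `simulate_outbreak` are illustrative.

```python
import math
import random

def poisson(lam, rng):
    """Knuth's multiplication method; adequate for the small rates used here."""
    threshold, k, p = math.exp(-lam), 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1

def offspring(r0, k, rng):
    """Secondary cases from one individual (assumed negative binomial).

    r0 is the mean number of secondary cases; k is the dispersion parameter
    (small k means a few individuals cause most infections).
    """
    rate = rng.gammavariate(k, r0 / k)   # individual-level reproduction number
    return poisson(rate, rng)

def simulate_outbreak(r0, k, rng, n_generations=6, seed_cases=1, cap=10_000):
    """Cases per generation for a Galton-Watson branching process."""
    sizes = [seed_cases]
    for _ in range(n_generations):
        nxt = sum(offspring(r0, k, rng) for _ in range(sizes[-1]))
        sizes.append(min(nxt, cap))      # cap keeps the toy simulation cheap
        if nxt == 0:                     # outbreak went extinct
            break
    return sizes

rng = random.Random(1)
print(simulate_outbreak(r0=2.0, k=0.2, rng=rng))
```

With a small `k`, most simulated individuals infect nobody while a few infect many, so outbreaks often die out early even when the mean `r0` exceeds 1. A Bayesian analysis would place priors on `r0` and `k` and infer them from incidence data.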

Quantitative Performance of Select Models

The table below summarizes the reported performance and scope of several Bayesian models discussed in the literature, highlighting the role of GPU acceleration.

Table 1: Performance and Scope of Selected Bayesian Population Dynamics Models

| Model / Software | Primary Application | Reported Performance | Key Computational Features |
|---|---|---|---|
| CUDAHM [1] | Cosmic population density estimation | Accurate deconvolution for 300,000 objects in ~1 hour | NVIDIA Tesla K40c GPU; massively parallel Metropolis-within-Gibbs sampling |
| PHLASH [2] | Population size history from whole genomes | Faster and lower error vs. SMC++, MSMC2, FITCOAL | GPU-accelerated; novel score function algorithm for HMMs (O(M²) time) |
| SIMPLE [7] | Epidemic prevalence from survey data | Orders-of-magnitude faster runtime in some cases | Sequential Monte Carlo (SMC); compared to Bayesian P-splines & Gaussian Processes |
| PMCMC for SEIR [6] | Onset time and R₀ for emerging diseases | Accurate estimates consistent with empirical studies | Particle Markov Chain Monte Carlo; integrates MCMC with particle filtering |

Application Notes & Protocols

This section provides detailed methodologies for implementing Bayesian population dynamics analyses, with a focus on GPU-accelerated inference.

Protocol 1: Inferring Population History from Genomic Data using PHLASH

Application Note: This protocol details the process of estimating a population's effective size through time using whole-genome sequence data. The PHLASH method is designed for scalability and accuracy, leveraging GPU hardware to perform full Bayesian inference efficiently [2].

- Objective: To infer the historical effective population size function, η(t), from a sample of whole-genome sequences.

- Experimental Principles: The method is based on a coalescent HMM. It models the local TMRCA along the genome, which carries information about past population size. PHLASH places a non-parametric prior on η(t) and uses a novel, efficient algorithm to compute the gradient of the log-likelihood, enabling rapid posterior exploration [2].
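The HMM likelihood at the heart of such coalescent models is evaluated with the forward algorithm. The sketch below is a generic, scaled forward pass for a discrete HMM in plain Python. It is not PHLASH's implementation, and the two-state transition and emission values are invented toy numbers chosen only to make the example run.

```python
import math

def hmm_log_likelihood(init, trans, emit, obs):
    """Scaled forward algorithm: log p(obs) for a discrete HMM.

    init[i]     : probability of starting in hidden state i
    trans[i][j] : probability of moving from state i to state j
    emit[i][k]  : probability of emitting symbol k from state i
    obs         : list of observed symbol indices
    Cost is O(len(obs) * M^2) for M hidden states.
    """
    m = len(init)
    alpha = [init[i] * emit[i][obs[0]] for i in range(m)]
    log_lik = 0.0
    for t in range(len(obs)):
        if t > 0:
            # Propagate through the transition matrix, then weight by emission.
            alpha = [
                sum(alpha[i] * trans[i][j] for i in range(m)) * emit[j][obs[t]]
                for j in range(m)
            ]
        # Rescale to avoid underflow on long sequences; accumulate the log scale.
        scale = sum(alpha)
        log_lik += math.log(scale)
        alpha = [a / scale for a in alpha]
    return log_lik

# Toy setup (hypothetical numbers): state 0 = "recent TMRCA", state 1 = "ancient TMRCA";
# symbol 1 marks a genomic window that contains a heterozygous site.
init = [0.5, 0.5]
trans = [[0.95, 0.05], [0.10, 0.90]]
emit = [[0.99, 0.01], [0.90, 0.10]]
obs = [0, 0, 1, 0, 1, 1, 0, 0]
print(hmm_log_likelihood(init, trans, emit, obs))
```

The inner sum over hidden states is the part that maps naturally onto parallel hardware, since every destination state `j` can be updated independently at each position.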

Step-by-Step Workflow:

- Data Preparation: Input a multiple sequence alignment (FASTA format) or variant calls (VCF format) from a diploid individual or a population sample.

- Preprocessing: Calculate the density of heterozygous sites or pairwise differences within a sliding window across the genome.

- Model Configuration:

- Specify the recombination rate (ρ) and mutation rate (θ). These can be estimated from the data or taken from literature.

- Define the prior distribution for the population size history. PHLASH uses a prior that encourages smoothness without enforcing a pre-defined parametric form.

- GPU-Accelerated Inference:

- The algorithm draws random, low-dimensional projections of the coalescent intensity function.

- The key computational kernel involves forward-backward algorithms for the underlying HMM, which are parallelized across the GPU's many cores.

- Samples from the posterior distribution of η(t) are generated by averaging these projections.

- Post-processing and Output:

- The output is a posterior distribution over population size histories.

- Summarize the posterior to obtain a point estimate (e.g., the posterior mean) and credible intervals at each time point.

- Visualize the estimated population trajectory, plotting time (generations ago) against effective population size (Nₑ).
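The preprocessing and post-processing steps of this workflow can be sketched in a few lines of plain Python. This is an illustration under simplifying assumptions, not part of the PHLASH package. It uses non-overlapping windows rather than a true sliding window, a nearest-rank quantile rule, and hypothetical function names.

```python
def window_het_density(het_positions, genome_length, window):
    """Fraction of heterozygous sites in consecutive non-overlapping windows.

    het_positions are 0-based coordinates smaller than genome_length.
    """
    n_windows = (genome_length + window - 1) // window
    counts = [0] * n_windows
    for pos in het_positions:
        counts[pos // window] += 1
    densities = []
    for w in range(n_windows):
        size = min(window, genome_length - w * window)  # last window may be shorter
        densities.append(counts[w] / size)
    return densities

def summarize_posterior(samples, lower=0.025, upper=0.975):
    """Pointwise posterior mean and equal-tailed credible interval.

    samples[s][k] is the population size in posterior draw s at time-grid point k.
    Returns one (mean, lower_bound, upper_bound) tuple per time point.
    """
    n_draws, n_times = len(samples), len(samples[0])
    summary = []
    for k in range(n_times):
        column = sorted(draw[k] for draw in samples)
        mean = sum(column) / n_draws
        lo = column[round(lower * (n_draws - 1))]   # nearest-rank quantile
        hi = column[round(upper * (n_draws - 1))]
        summary.append((mean, lo, hi))
    return summary

print(window_het_density([0, 5, 9, 10], genome_length=12, window=5))
print(summarize_posterior([[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]))
```

Plotting the three values per time point against time (generations ago) gives the usual trajectory with a credible band.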

Protocol 2: Real-Time Bayesian Forecasting of Disease Spread

Application Note: This protocol addresses the critical need for rapid assessment during the early stages of an epidemic. It demonstrates how to use a Bayesian state-space model with limited local data, leveraging informative priors from previous outbreaks in similar populations or regions to make statistically valid predictions and forecasts [9] [6].

- Objective: To estimate the onset time, basic reproduction number (R₀), and other key epidemiological parameters, and to forecast short-term case incidence.

- Experimental Principles: A stochastic SEIR model serves as the process model for the underlying transmission dynamics. An observation model links the true, unobserved incidence to the reported case data, accounting for under-reporting and noise. Parameters are inferred using Particle MCMC (PMCMC), which combines the flexibility of MCMC with the ability of particle filters to handle complex state dynamics [6].

Step-by-Step Workflow:

- Data Preparation: Collect a time series of early reported cases (e.g., daily or weekly incidence). Gather informative prior distributions for parameters (e.g., R₀, latent period) from literature on similar diseases or populations [9].

- Model Specification:

- Process Model: Define a stochastic SEIR model with states S (susceptible), E (exposed), I (infectious), and R (removed). Incorporate noise on the transmission rate β to capture stochasticity.

- Observation Model: Specify a distribution for the reported cases, e.g., z_t ~ Normal(ρ·Δc_t, τ·ρ·Δc_t), where ρ is the reporting rate and Δc_t is the true number of new infections [6].

- Prior Elicitation: Assign priors to all unknown parameters (β, γ, α, ρ, τ, onset time d). Use strongly informative priors from previous studies where available, and weakly informative or vague priors otherwise.

- PMCMC Inference:

- Initialization: Initialize the MCMC chain with plausible parameter values.

- Particle Filtering (Inside MCMC): For each proposed set of parameters in the MCMC chain, run a particle filter to compute an estimate of the model likelihood. This involves simulating multiple trajectories (particles) of the state-space model.

- MCMC Acceptance: Use the likelihood estimate from the particle filter to accept or reject the proposed parameters.

- Forecasting and Output:

- After convergence, the MCMC output provides a posterior distribution for all parameters (R₀, onset time, etc.).

- To forecast, simulate the state-space model forward in time using parameters drawn from the posterior, generating a predictive distribution of future cases.
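The PMCMC loop described in this workflow can be sketched compactly in plain Python. The code below is a toy illustration, not the implementation from [6], and it rests on several assumptions: a daily Euler-stepped SEIR model with log-normal noise on β, the second argument of the Normal observation model read as a variance, only β being inferred under a flat prior on (0, 2), and all other parameters (`alpha`, `gamma`, `rho`, `tau`, `sigma`, `n_pop`, `i0`) fixed at made-up values.

```python
import math
import random

def seir_step(state, beta, alpha, gamma, n_pop):
    """One-day Euler step of SEIR; returns the new state and new infections (S -> E)."""
    s, e, i, r = state
    new_inf = min(s, beta * s * i / n_pop)
    new_onset = alpha * e                  # E -> I at rate alpha
    new_rem = gamma * i                    # I -> R at rate gamma
    return (s - new_inf, e + new_inf - new_onset, i + new_onset - new_rem, r + new_rem), new_inf

def normal_logpdf(x, mean, var):
    return -0.5 * (math.log(2 * math.pi * var) + (x - mean) ** 2 / var)

def particle_loglik(cases, beta, rng, n_particles=200, alpha=0.2, gamma=0.2,
                    rho=0.5, tau=1.0, sigma=0.2, n_pop=10_000, i0=10.0):
    """Bootstrap particle filter estimate of log p(cases | beta)."""
    particles = [(n_pop - i0, 0.0, i0, 0.0)] * n_particles
    loglik = 0.0
    for z in cases:
        moved, logw = [], []
        for state in particles:
            # Log-normal noise on the transmission rate makes the process stochastic.
            beta_t = beta * math.exp(sigma * rng.gauss(0.0, 1.0))
            new_state, new_inf = seir_step(state, beta_t, alpha, gamma, n_pop)
            mean = rho * new_inf
            var = max(tau * mean, 1e-6)    # assumed variance tau * rho * delta_c, floored
            moved.append(new_state)
            logw.append(normal_logpdf(z, mean, var))
        # Log-mean-exp of the weights is the incremental likelihood estimate.
        top = max(logw)
        weights = [math.exp(lw - top) for lw in logw]
        loglik += top + math.log(sum(weights) / n_particles)
        particles = rng.choices(moved, weights=weights, k=n_particles)  # resample
    return loglik

def pmmh(cases, n_iter, rng, beta0=0.5, step=0.05):
    """Particle marginal Metropolis-Hastings over beta with a flat prior on (0, 2)."""
    beta, ll = beta0, particle_loglik(cases, beta0, rng)
    chain = []
    for _ in range(n_iter):
        prop = beta + step * rng.gauss(0.0, 1.0)
        if 0.0 < prop < 2.0:               # proposals outside the prior support are rejected
            ll_prop = particle_loglik(cases, prop, rng)
            if math.log(1.0 - rng.random()) < ll_prop - ll:
                beta, ll = prop, ll_prop
        chain.append(beta)
    return chain

# Synthetic, noise-free reports generated with beta = 0.6 (so R0 = beta / gamma = 3).
state, cases = (9_990.0, 0.0, 10.0, 0.0), []
for _ in range(20):
    state, new_inf = seir_step(state, 0.6, 0.2, 0.2, 10_000)
    cases.append(0.5 * new_inf)
chain = pmmh(cases, n_iter=30, rng=random.Random(1))
print("posterior mean R0 (very short chain):", sum(chain) / len(chain) / 0.2)
```

The inner loop over particles is independent per particle, which is why particle filters are a natural target for GPU parallelism. A real analysis would run far more iterations, infer all parameters jointly, and forecast by simulating `seir_step` forward from posterior draws.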

The Scientist's Toolkit: Research Reagent Solutions

This table catalogues essential computational tools, models, and data types that constitute the "reagent solutions" for experimental research in Bayesian population dynamics.

Table 2: Essential Research Reagents for Bayesian Population Dynamics

| Category | Reagent / Solution | Function / Explanation |
|---|---|---|
| Computational Tools | GPU-Accelerated Libraries (e.g., CUDA) | Provides the parallel computing framework to execute millions of simultaneous operations, drastically reducing inference time for complex models [1] [3]. |
| Computational Tools | Particle MCMC (PMCMC) | A hybrid algorithm that enables Bayesian parameter inference for complex, non-linear state-space models with latent variables, which are common in epidemiology [6]. |
| Core Model Components | Coalescent Hidden Markov Model (HMM) | The foundational statistical model for relating genetic variation data to historical population size; the latent states represent time to most recent common ancestor [2]. |
| Core Model Components | Stochastic SEIR Compartmental Model | A generative model for the underlying transmission dynamics of an infectious disease, serving as the process model in a state-space framework [4] [6]. |
| Core Model Components | Bayesian P-splines / Gaussian Processes | Flexible priors used for smoothing noisy time-series data, such as infection prevalence from repeated cross-sectional surveys [7]. |
| Data & Priors | Informative Prior Distributions | Probability distributions derived from previous studies or expert knowledge, used to constrain model parameters when local data are scarce or non-existent [9]. |
| Data & Priors | Time Series of Reported Incidence | The primary observation data for epidemiological models, representing the noisy, real-world measurement of the underlying epidemic process [6]. |
| Data & Priors | Whole Genome Sequence Data | The primary data source for coalescent-based inference, containing the signal of historical population processes in patterns of variation and linkage [2]. |

Markov Chain Monte Carlo (MCMC) methods represent a cornerstone of modern Bayesian inference, enabling researchers to estimate complex posterior distributions for models where analytical solutions are intractable. Despite their theoretical robustness, traditional MCMC methods face a significant computational bottleneck, particularly as models grow in complexity and datasets increase in size. This bottleneck severely impacts research workflows in fields like drug development and population genetics, where iterative model refinement is essential. The sequential nature of traditional MCMC sampling, typically executed on central processing units (CPUs), creates fundamental performance constraints that limit practical application to real-world problems. However, recent advancements in hardware and software, particularly the adoption of graphics processing units (GPUs) and specialized sampling algorithms, are demonstrating promising pathways to overcome these limitations and make Bayesian inference tractable for large-scale problems.

The Inherent Computational Limitations of Traditional MCMC

The computational cost of traditional MCMC stems from several inherent algorithmic characteristics. First, MCMC methods are fundamentally sequential—each sample in the Markov chain depends on the previous state, creating a critical path that prevents trivial parallelization [10]. Second, each iteration typically requires evaluating the likelihood function across the entire dataset, which becomes prohibitively expensive with large data volumes [11]. Third, convergence often requires drawing thousands or millions of samples, with additional "warm-up" iterations discarded to ensure the chain reaches its stationary distribution.
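Both bottlenecks are visible in even the simplest sampler. The following is a minimal random-walk Metropolis sketch in plain Python for the mean of Normal data. The model, the prior, and the function names are illustrative choices rather than anything taken from the cited works.

```python
import math
import random

def log_posterior(mu, data, sigma=1.0, prior_sd=10.0):
    """Unnormalised log posterior for a Normal mean with a Normal(0, prior_sd) prior."""
    log_prior = -0.5 * (mu / prior_sd) ** 2
    # The likelihood touches every observation: O(len(data)) work per iteration.
    log_lik = sum(-0.5 * ((x - mu) / sigma) ** 2 for x in data)
    return log_prior + log_lik

def random_walk_metropolis(data, n_iter, step, rng, start=0.0):
    current, current_lp = start, log_posterior(start, data)
    chain = []
    for _ in range(n_iter):
        # Each proposal is centred on the current state, so iteration t + 1
        # cannot start before iteration t has finished.
        proposal = current + step * rng.gauss(0.0, 1.0)
        proposal_lp = log_posterior(proposal, data)
        if math.log(1.0 - rng.random()) < proposal_lp - current_lp:
            current, current_lp = proposal, proposal_lp
        chain.append(current)
    return chain

rng = random.Random(0)
data = [rng.gauss(2.0, 1.0) for _ in range(200)]
chain = random_walk_metropolis(data, n_iter=3000, step=0.1, rng=rng)
kept = chain[500:]                       # discard warm-up draws
print(sum(kept) / len(kept))             # close to the sample mean of `data`
```

The loop over iterations is inherently serial, but the sum inside `log_posterior` is a reduction over independent terms. That reduction, along with running several chains at once, is where GPU parallelism is usually applied.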

The efficiency of MCMC is commonly measured by the effective sample size (ESS), which quantifies the number of independent samples equivalent to the autocorrelated MCMC samples, and the effective sample size per second (ESS/s), which incorporates computational time [12] [13]. For a function f, the variance of the MCMC estimator is given by:

$$\text{Var}_{\text{MCMC}}[\hat{\theta}_f] = \frac{\text{Var}_{p(\theta|y)}[f(\theta)]}{\text{ESS}_f}$$

This relationship demonstrates that to reduce estimation error, one must increase ESS, which typically requires longer runtimes. Alternative metrics like Expected Squared Jump Distance (ESJD) are used during adaptation to optimize sampler hyperparameters, as they are easier to compute from adjacent states [12].
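As a rough illustration of these two metrics, the sketch below estimates ESS from the chain's autocorrelations and computes ESJD from adjacent states. It uses a deliberately simple truncation rule (stop at the first non-positive autocorrelation). Production tools such as ArviZ use more refined estimators, so the numbers will not match them exactly.

```python
import random

def effective_sample_size(chain):
    """ESS = N / (1 + 2 * sum of autocorrelations), truncated at the first
    non-positive autocorrelation (a simple, commonly used rule)."""
    n = len(chain)
    mean = sum(chain) / n
    centered = [x - mean for x in chain]
    var = sum(c * c for c in centered) / n
    if var == 0.0:
        return float(n)
    tau = 1.0
    for lag in range(1, n):
        acf = sum(centered[t] * centered[t + lag] for t in range(n - lag)) / (n * var)
        if acf <= 0.0:
            break
        tau += 2.0 * acf
    return n / tau

def expected_squared_jump_distance(chain):
    """ESJD: mean squared distance between adjacent states."""
    return sum((b - a) ** 2 for a, b in zip(chain, chain[1:])) / (len(chain) - 1)

# Synthetic autocorrelated "chain": an AR(1) process with coefficient 0.9.
rng = random.Random(0)
chain = [0.0]
for _ in range(4999):
    chain.append(0.9 * chain[-1] + rng.gauss(0.0, 1.0))

ess = effective_sample_size(chain)
esjd = expected_squared_jump_distance(chain)
print(f"N = {len(chain)}, ESS = {ess:.0f}, ESJD = {esjd:.2f}")
```

Dividing `ess` by the wall-clock sampling time gives ESS/s. For this AR(1) example the ESS is only a small fraction of the 5,000 draws, which is the kind of inefficiency the variance formula above penalizes.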

Quantitative Assessment of Computational Costs

Table 1: Comparative Performance of MCMC Implementations on Different Hardware

| Implementation | Hardware | Dataset Size | Sampling Time | Speed-up Factor | Minimum ESS/s |
|---|---|---|---|---|---|
| PyMC (CPU) | Intel i7-9750H | 160,420 matches | ~12 minutes | 1x (baseline) | Baseline |
| Stan (CPU) | Intel i7-9750H | 160,420 matches | ~20 minutes | 0.6x | Comparable to PyMC |
| PyMC + JAX (CPU) | Intel i7-9750H | 160,420 matches | ~12 minutes | ~1x | 2.9x |
| PyMC + JAX (GPU) - Sequential | NVIDIA RTX 2070 | 160,420 matches | >2.7 minutes | <4.4x | >11x |
| PyMC + JAX (GPU) - Vectorized | NVIDIA RTX 2070 | 160,420 matches | 2.7 minutes | ~4.4x | ~11x |
| bedpostx (CPU) | Intel Xeon X5670 | Brain voxel data | Baseline | 1x (baseline) | Not reported |
| bedpostx_gpu (GPU) | NVIDIA Tesla C2075 | Brain voxel data | ~1/100 of CPU time | ~100x | Not reported |
| Custom MH (GPU) | GPU | Large datasets | 1/68 of CPU time | 68x (DP), 136x (SP) | Not reported |

Table 2: Performance Scaling with Dataset Size for Bradley-Terry Model Inference

| Start Year | Number of Matches | CPU Time (minutes) | GPU Time (minutes) | GPU Efficiency Advantage |
|---|---|---|---|---|
| 2020 | Small subset | ~1 | ~1.5 | CPU superior for small data |
| 2015 | ~50,000 | ~4 | ~1.8 | GPU becomes favorable |
| 2010 | ~80,000 | ~6 | ~2.0 | GPU significantly faster |
| 2000 | ~120,000 | ~8 | ~2.3 | GPU 3.5x faster |
| 1968 | 160,420 | ~12 | ~2.7 | GPU 4.4x faster |

The quantitative data reveals several important patterns. First, GPU acceleration provides minimal benefit for small datasets due to hardware initialization and memory transfer overheads [13]. Second, the performance advantage of GPUs becomes substantial as data size increases, with 4-100x speedups commonly achieved for moderately large to very large datasets [13] [14]. Third, specialized GPU implementations can achieve even greater speedups (68-136x) for specific algorithms like Metropolis-Hastings [11]. Fourth, beyond raw time savings, GPU-accelerated sampling often produces higher effective sample size per second, indicating not just faster but statistically more efficient sampling [13].
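The speed-up factors quoted for the Bradley-Terry scaling benchmark follow directly from the approximate CPU and GPU times in the table above, as this short calculation shows. The timings are the rounded values from the table, so the ratios are approximate too.

```python
# (start year, CPU minutes, GPU minutes), rounded values from the scaling table.
rows = [
    (2020, 1.0, 1.5),
    (2015, 4.0, 1.8),
    (2010, 6.0, 2.0),
    (2000, 8.0, 2.3),
    (1968, 12.0, 2.7),
]

def speedup(cpu_minutes, gpu_minutes):
    """Speed-up of the GPU run relative to the CPU run (>1 means the GPU is faster)."""
    return cpu_minutes / gpu_minutes

for year, cpu, gpu in rows:
    print(f"{year}: {speedup(cpu, gpu):.1f}x")
# 2020: 0.7x
# 2015: 2.2x
# 2010: 3.0x
# 2000: 3.5x
# 1968: 4.4x
```

A factor below 1 for the smallest subset reflects the fixed overheads mentioned above: for short runs, device initialization and memory transfer outweigh the parallel speed-up.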

Protocol for GPU-Accelerated MCMC Implementation

Experimental Setup and Software Configuration

Research Reagent Solutions:

Table 3: Essential Software and Hardware Components for GPU-Accelerated MCMC

| Component | Example Options | Function/Role |
|---|---|---|
| GPU Computing Framework | JAX, PyTorch | Provides automatic differentiation, JIT compilation, and GPU/TPU support |
| Probabilistic Programming | PyMC, NumPyro, Stan | Defines Bayesian models and implements samplers |
| Sampling Algorithms | HMC, NUTS, ChEES-HMC | Generates samples from posterior distributions |
| Hardware Accelerators | NVIDIA GPUs, Google TPUs | Provides massive parallelization for numerical computations |
| Visualization & Analysis | ArviZ | Computes diagnostics like ESS, R-hat for convergence |

Implementation Protocol:

Model Definition Phase: Implement the statistical model using a GPU-compatible framework, for example by specifying a hierarchical Bayesian model in PyMC and sampling it through the JAX backend.

Hardware Configuration: Ensure proper installation of GPU drivers and computing frameworks (CUDA, cuDNN for NVIDIA GPUs). Configure the software environment to utilize available accelerators, typically through environment variables or framework-specific commands.

Sampler Selection: Choose appropriate sampling algorithms based on model characteristics and hardware:

- For models with many parameters and differentiable log-posterior, Hamiltonian Monte Carlo (HMC) and No-U-Turn Sampler (NUTS) are preferred [12]

- For GPU efficiency, consider alternatives to NUTS like ChEES-HMC when possible, as NUTS' adaptive trajectory length can create synchronization overhead [15]

- Implement multiple chains in parallel using vectorized mapping (vmap in JAX) rather than running chains asynchronously [12]
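To show what a fixed-trajectory HMC transition and lockstep chains look like, here is a minimal plain-Python sketch on a standard Normal target. It is a teaching example under stated assumptions: the step size and number of leapfrog steps are fixed by hand with no adaptation, and the list comprehension over chains stands in for what a JAX implementation would express with `jax.vmap`.

```python
import math
import random

def neg_log_post(q):
    """Negative log density of a standard Normal target (up to a constant)."""
    return 0.5 * q * q

def grad_neg_log_post(q):
    return q

def hmc_step(q, rng, step_size=0.2, n_leapfrog=10):
    """One HMC transition with a fixed number of leapfrog steps."""
    p = rng.gauss(0.0, 1.0)                                   # resample momentum
    h_old = neg_log_post(q) + 0.5 * p * p
    q_new, p_new = q, p
    p_new -= 0.5 * step_size * grad_neg_log_post(q_new)       # half step in momentum
    for i in range(n_leapfrog):
        q_new += step_size * p_new                            # full step in position
        if i < n_leapfrog - 1:
            p_new -= step_size * grad_neg_log_post(q_new)     # full step in momentum
    p_new -= 0.5 * step_size * grad_neg_log_post(q_new)       # final half step
    h_new = neg_log_post(q_new) + 0.5 * p_new * p_new
    # Metropolis correction for the integrator's discretisation error.
    if math.log(1.0 - rng.random()) < h_old - h_new:
        return q_new
    return q

def run_chains(n_chains, n_iter, seed=0):
    """Advance all chains one iteration at a time, in lockstep."""
    rng = random.Random(seed)
    states = [rng.gauss(0.0, 3.0) for _ in range(n_chains)]   # dispersed starts
    draws = [[] for _ in range(n_chains)]
    for _ in range(n_iter):
        # Every chain does the same amount of work per iteration, which is the
        # property that makes fixed-length trajectories easy to vectorise.
        states = [hmc_step(q, rng) for q in states]
        for c, q in enumerate(states):
            draws[c].append(q)
    return draws

draws = run_chains(n_chains=4, n_iter=2000)
pooled = [q for chain in draws for q in chain[200:]]
print(sum(pooled) / len(pooled))
```

Because every chain runs exactly `n_leapfrog` gradient evaluations per iteration, no chain waits for another. With NUTS the trajectory length varies per chain and per iteration, which is the source of the synchronization overhead noted above.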

Execution and Monitoring: Run sampling with appropriate warm-up iterations, typically 1,000-2,000, followed by sampling iterations. Monitor chain convergence using diagnostics like R-hat (potential scale reduction factor) and effective sample size, computed using tools like ArviZ [13].
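For intuition about what the R-hat diagnostic measures, the sketch below implements the classic Gelman-Rubin form in plain Python. ArviZ reports a rank-normalized, split-chain variant, so treat this as an explanatory approximation rather than a drop-in replacement.

```python
import math
import random

def r_hat(chains):
    """Potential scale reduction factor (classic Gelman-Rubin form).

    chains: list of equally long lists of post-warm-up draws for one parameter.
    """
    m, n = len(chains), len(chains[0])
    means = [sum(c) / n for c in chains]
    grand = sum(means) / m
    # Between-chain variance B and mean within-chain variance W.
    b = n / (m - 1) * sum((mu - grand) ** 2 for mu in means)
    w = sum(sum((x - mu) ** 2 for x in c) / (n - 1) for c, mu in zip(chains, means)) / m
    var_plus = (n - 1) / n * w + b / n      # pooled estimate of the posterior variance
    return math.sqrt(var_plus / w)

rng = random.Random(0)
mixed = [[rng.gauss(0.0, 1.0) for _ in range(1000)] for _ in range(4)]
stuck = [mixed[0], [x + 5.0 for x in mixed[1]]]   # second chain sits in a different region
print(f"well-mixed chains: {r_hat(mixed):.3f}")
print(f"disagreeing chains: {r_hat(stuck):.3f}")
```

Values close to 1 indicate that the chains agree on the posterior. Values well above 1, as in the second case, mean that between-chain differences dominate and the run should not be trusted.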

Validation: For critical applications, validate GPU results against CPU implementations to ensure algorithmic equivalence, particularly when using reduced precision (e.g., single-precision instead of double-precision) [14].

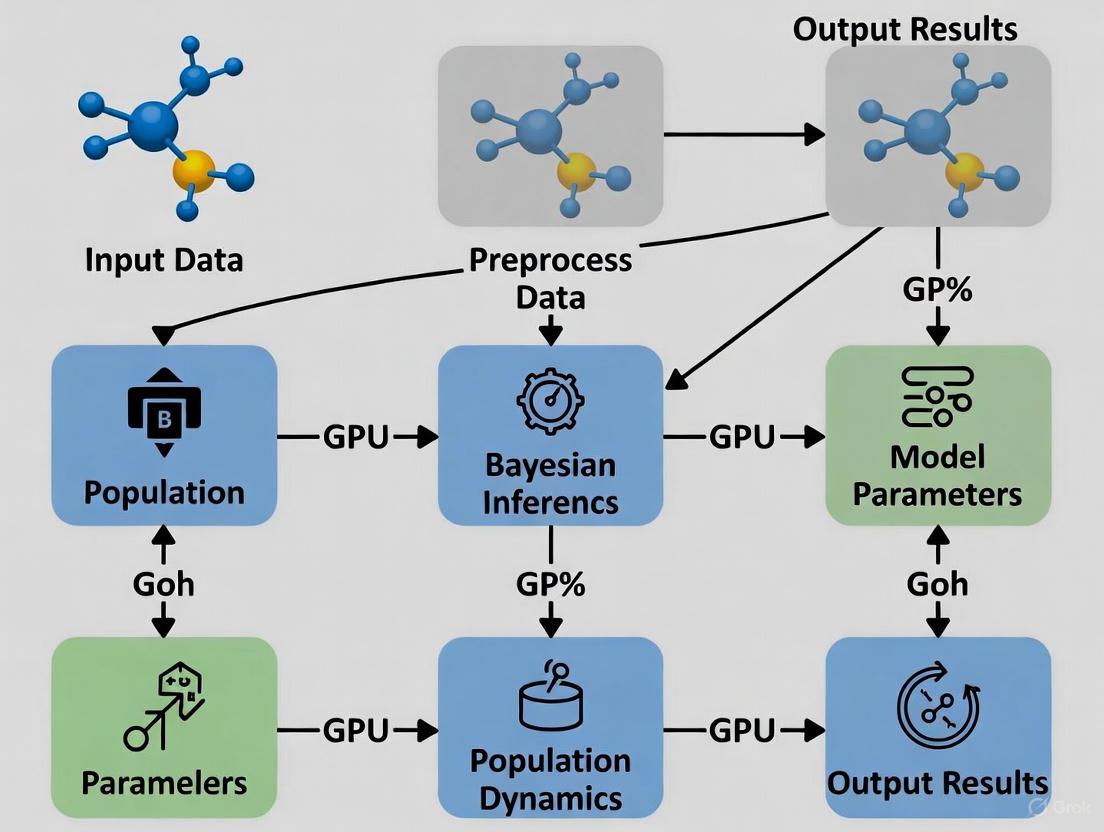

Workflow Visualization

Advanced Strategies for Computational Efficiency

Algorithmic Optimizations

Beyond hardware acceleration, several algorithmic strategies can mitigate computational bottlenecks:

Alternative Inference Methods: For extremely large datasets, Stochastic Variational Inference (SVI) provides a compelling alternative to MCMC, transforming Bayesian inference into an optimization problem. SVI can achieve speedups of 3-5 orders of magnitude (1000x-100,000x) compared to traditional MCMC, though it comes with different approximation trade-offs and may underestimate posterior uncertainty [16].

Precision Adjustment: Implementing MCMC in single-precision rather than double-precision floating-point arithmetic can provide approximately 2x speedup on GPUs with minimal impact on results for many applications [11].

Specialized Samplers: New GPU-friendly sampling algorithms like ChEES-HMC are designed specifically to minimize control flow divergence and synchronization overhead, addressing fundamental limitations of adaptive samplers like NUTS on parallel hardware [15].

Method Selection Framework

Application to Population Dynamics and Pharmacological Research

The computational advances in MCMC sampling have particular significance for Bayesian population dynamics models in ecological and drug development contexts. For example, in phylodynamic inference of pathogen population dynamics, specialized MCMC samplers enable estimation of previously intractable parameters related to dormancy and evolutionary dynamics [17]. Similarly, Bayesian integrated population models (BIPMs) for wildlife monitoring can now accommodate complex state-space structures and changing information needs through efficient sampling [18].

In drug development, GPU-accelerated Bayesian inference enables rapid fitting of hierarchical models to clinical trial data, which is particularly valuable for dose-response modeling and pharmacokinetic/pharmacodynamic analysis. The ability to quickly refit models with new data supports iterative model refinement and adaptive trial designs; in one benchmark, sampling time fell from about 20 minutes with Stan on a CPU to 2.7 minutes with PyMC and JAX on a GPU [13].

For demographic inference in population genetics, where likelihood evaluations are exceptionally computationally expensive, alternative optimization approaches like Bayesian optimization can complement MCMC methods when dealing with four or more populations, demonstrating the ongoing innovation in computational Bayesian methods [19].

The computational bottleneck of traditional MCMC sampling represents a significant challenge in applied Bayesian statistics, but one that is being aggressively addressed through hardware and algorithmic innovations. GPU acceleration, specialized sampling algorithms, and alternative inference methods collectively provide a pathway to make Bayesian inference tractable for increasingly complex models and larger datasets. For researchers in population dynamics and drug development, adopting these advanced computational approaches can transform Bayesian methods from theoretical tools to practical solutions for modeling complex biological systems. The protocols and comparisons provided here offer a foundation for selecting appropriate implementation strategies based on specific research requirements, dataset characteristics, and available computational resources.

Bayesian inference provides a powerful, principled framework for modeling complex biological systems, such as those encountered in population dynamics and drug discovery. It allows researchers to estimate unknown parameters of a model and quantify the uncertainty in those estimates. However, a significant obstacle often prevents its full potential from being realized in practice: computational intractability. The core of Bayesian analysis involves calculating the posterior distribution, which requires solving high-dimensional integrals that are frequently impossible to compute directly [16]. For sophisticated models, like those analyzing whole-genome sequence data to infer historical population sizes, this process can be prohibitively slow, taking days or even months on conventional hardware [20].

Massively parallel inference on Graphics Processing Units (GPUs) has emerged as a transformative solution to this bottleneck. Unlike Central Processing Units (CPUs) with a few powerful cores, GPUs contain thousands of smaller cores capable of performing simultaneous calculations. This architecture is ideally suited for the repetitive, parallel operations inherent in many Bayesian inference algorithms. By leveraging GPU acceleration, researchers can achieve speedups of several orders of magnitude, reducing computation times from months to minutes and making previously intractable analyses feasible [16]. This paradigm shift opens new frontiers in research, from scaling demographic inference to thousands of genomic samples [20] to enabling large-scale, active learning-driven drug combination screens [21].

Core Inference Methods & Their GPU Parallelization

The two primary families of algorithms for Bayesian inference are Markov Chain Monte Carlo (MCMC) and Variational Inference (VI). Their different computational characteristics influence how they are adapted for GPU parallelism.

Table 1: Core Bayesian Inference Methods and Their Parallelization

| Method | Core Principle | Computational Bottleneck | GPU Parallelization Strategy |
|---|---|---|---|
| Markov Chain Monte Carlo (MCMC) | Draws correlated samples from the posterior distribution by constructing a Markov chain. | Sequential nature of chain; evaluating likelihood for entire dataset at each step [16]. | Batched likelihood evaluations: Computing likelihoods for multiple samples or chains simultaneously [22]. |
| Stochastic Variational Inference (SVI) | Posits a simpler family of distributions and optimizes to find the best approximation to the true posterior. | Optimization process; calculating gradients over many data subsets [16]. | Massively parallel gradient computation: Using data parallelism across thousands of cores to accelerate optimization [16]. |
| Nested Sampling | Computes the Bayesian evidence by evolving a set of "live points" through progressively higher likelihood regions [22]. | Evaluating likelihoods for many live points and drawing new samples from constrained priors [22]. | Parallel live point evolution: Using many GPU threads to advance multiple live points simultaneously [22]. |

Application Protocol: Demographic Inference with PHLASH

The Population History Learning by Averaging Sampled Histories (PHLASH) algorithm provides a state-of-the-art example of GPU-accelerated Bayesian inference for population dynamics.

- Objective: To infer the historical effective population size (Nₑ) from whole-genome sequence data.

- Key Innovation: A new algorithm for efficiently computing the score function (gradient of the log-likelihood) of a coalescent hidden Markov model, which has the same computational cost as evaluating the log-likelihood itself. This gradient enables highly efficient exploration of the posterior distribution [20].

- GPU Acceleration: The PHLASH software package is implemented to leverage GPU acceleration where available. The parallelism is exploited in the low-dimensional projections of the coalescent intensity function and the averaging of sampled histories, leading to an estimator that is both accurate and computationally efficient [20].

- Performance: In benchmarks across a panel of 12 demographic models from the stdpopsim catalog, PHLASH tended to be faster and to have lower error than several competing methods (SMC++, MSMC2, FITCOAL) [20].

Quantitative Performance Benchmarks

The theoretical advantages of GPU parallelism translate into dramatic real-world performance gains. The following table synthesizes benchmark data from various scientific applications, demonstrating the profound impact of GPU acceleration on inference tasks.

Table 2: Performance Benchmarks of GPU-Accelerated vs. Traditional Inference

| Application Context | Baseline (Hardware & Time) | Accelerated (Hardware & Time) | Speedup Factor |
|---|---|---|---|
| Hierarchical Bayesian Modeling (Generalized Linear Models) | Traditional MCMC (CPU, unspecified): "months" [16] | Multi-GPU SVI (Multiple GPUs): "minutes" [16] | ~10,000x [16] |
| Cosmological Model Comparison (39-dimensional analysis) | CPU-based Nested Sampling: "prohibitively long" [22] | GPU-accelerated Nested Sampling (Single A100 GPU): "two days" [22] | Orders of magnitude [22] |
| Population Dynamics P Systems (Ecosystem simulation) | Multi-core CPU (OpenMP, 4-core Intel i7): Baseline 1x [23] | NVIDIA Tesla K40 GPU: 18.1x faster than multi-core CPU [23] | 18.1x [23] |
| Genomic CNN Scanning (Sliding-window algorithm) | 16-core CPU (PyTorch): Baseline 1x [24] | FPGA Accelerator (Alveo U250): 19.51x - 28.61x faster than 16-core CPU [24] | ~19-29x (vs. CPU) [24] |
| Genomic CNN Scanning (Sliding-window algorithm) | High-end GPU (unspecified): Baseline 1x [24] | FPGA Accelerator (Alveo U250): 1.22x - 2.89x faster than high-end GPU [24] | ~1.2-2.9x (vs. GPU) [24] |

Advanced Application Protocol: Bayesian Active Learning for Drug Screens

GPU-accelerated inference is not limited to analysis but is also revolutionizing experimental design. The BATCHIE platform uses Bayesian active learning to make large-scale combination drug screens tractable.

- Objective: To efficiently identify synergistic drug combinations from a vast space of possibilities by dynamically designing maximally informative batches of experiments [21].

- Core Algorithm: Probabilistic Diameter-based Active Learning (PDBAL). This criterion selects experiments that minimize the expected distance between posterior samples, theoretically guaranteeing near-optimal experimental designs [21].

- GPU-Accelerated Model: A hierarchical Bayesian tensor factorization model runs on GPU. It uses embeddings for cell lines and drug-doses, decomposing a combination's response into individual drug effects and an interaction term [21].

- Prospective Validation: In a screen of a 206-drug library on pediatric cancer cell lines, BATCHIE accurately predicted unseen combinations and detected synergies after testing only 4% of the 1.4 million possible experiments [21].

The Scientist's Toolkit: Essential Research Reagents & Software

Implementing massively parallel Bayesian inference requires a combination of specialized software libraries and hardware. The following table details key "research reagents" for building a modern computational pipeline.

Table 3: Essential Toolkit for GPU-Accelerated Bayesian Inference

| Tool / Reagent | Type | Primary Function | Relevance to Population Dynamics/Drug Discovery |
|---|---|---|---|
| JAX [16] [22] | Software Library | NumPy-like API for GPU/TPU acceleration with automatic differentiation. | Foundational for building custom, differentiable models and inference algorithms. Enables data sharding across multiple devices. |
| Pyro / NumPyro [16] | Probabilistic Programming Library | Facilitates building complex Bayesian models and performing SVI. | Used for defining flexible hierarchical models (e.g., for population genetics or drug response). |
| NVIDIA CUDA-X (e.g., cuEquivariance) [25] | GPU-Accelerated Library | Provides optimized kernels for specific mathematical operations (e.g., tensor products). | Accelerates key layers in biomolecular AI models, such as those used in protein structure prediction. |
| NVIDIA BioNeMo Framework [25] | AI Framework | Open-source framework for building/training models on biomolecular data (DNA, RNA, proteins). | Provides domain-specific foundation models and training recipes for drug discovery pipelines. |
| PHLASH [20] | Specialized Software | Python package for inferring population size history from genomic data. | Directly enables fast, accurate, and scalable demographic inference with automatic uncertainty quantification. |
| BATCHIE [21] | Specialized Software | Open-source platform for Bayesian active learning in combination drug screens. | Enables tractable, large-scale screening to identify effective drug combinations with minimal experiments. |
| GPU-Accelerated Nested Sampling (e.g., NSS) [22] | Inference Algorithm | Efficiently computes Bayesian evidence for model comparison in high dimensions. | Critical for robust model selection in cosmology and other fields with complex, high-dimensional models. |

Application Note: Accelerated Bayesian Inference in Population Genetics

Population genetics relies on inferring historical demographic patterns from genetic data to understand evolutionary processes, migration events, and population bottlenecks. Traditional methods like the pairwise sequentially Markovian coalescent (PSMC) have limitations, including an inability to analyze large sample sizes and a "stair-step" visual bias in estimates. Bayesian approaches offer a powerful alternative but face computational constraints that have limited their widespread adoption [20].

The PHLASH (population history learning by averaging sampled histories) method represents a significant advancement by enabling full Bayesian inference of population size history from whole-genome sequence data. This method combines advantages of previous approaches into a single, general-purpose inference procedure that is simultaneously fast, accurate, capable of analyzing thousands of samples, and able to return a full posterior distribution over the inferred size history function [20].

Key Innovation and GPU Acceleration

The key technical advance in PHLASH is a novel algorithm for computing the score function (gradient of the log likelihood) of a coalescent hidden Markov model, which maintains the same computational cost as evaluating the log likelihood itself. This innovation, combined with a highly efficient, graphics processing unit (GPU)-based software implementation, enables Bayesian inference at speeds exceeding many optimized methods [20].

GPU acceleration provides remarkable efficiency gains for population genetics analyses. General-purpose GPU libraries like PyTorch and TensorFlow have demonstrated >200-fold decreases in runtime and approximately 5–10-fold reductions in cost relative to central processing unit (CPU)-based approaches. This performance improvement enables researchers to investigate previously unanswerable hypotheses involving more complex models and larger datasets [26].

Performance Benchmarks and Validation

In comprehensive benchmarking across 12 demographic models from the stdpopsim catalog representing eight different species, PHLASH demonstrated superior performance. It achieved the highest accuracy in 61% of scenarios (22 of 36) compared to competing methods including SMC++, MSMC2, and FITCOAL [20].

Table 1: Performance Comparison of Population Genetics Methods

| Method | Optimal Sample Size | Computational Requirements | Key Advantages |

|---|---|---|---|

| PHLASH | 1-1000+ diploid samples | GPU-accelerated, 24h wall time | Highest accuracy, provides full posterior distribution, automatic uncertainty quantification |

| SMC++ | 1-10 diploid samples | CPU, limited to 24h wall time | Incorporates frequency spectrum information |

| MSMC2 | 1-10 diploid samples | CPU, limited to 256GB RAM | Optimizes composite objective across all haplotype pairs |

| FITCOAL | 10-100 diploid samples | CPU, limited to 24h wall time | Extremely accurate for constant or exponential growth models |

The PHLASH method provides automatic uncertainty quantification and enables new Bayesian testing procedures for detecting population structure and ancient bottlenecks. The posterior distribution becomes more dispersed for very recent time periods (t < 10^3 generations) where fewer coalescent events are available to accurately estimate effective population size [20].

Experimental Protocols

Protocol: Population History Inference Using PHLASH

Research Objectives and Experimental Design

This protocol describes the process for inferring population size history from whole-genome sequence data using the PHLASH method. The primary objective is to estimate historical effective population sizes (N_e) over time and quantify estimation uncertainty through Bayesian posterior distributions [20].

Software and Hardware Requirements

- Software: PHLASH Python package (requires Python 3.7+ with PyTorch/TensorFlow backend)

- Hardware: NVIDIA GPU (P100 or later recommended) with CUDA support

- Memory: Minimum 8GB GPU memory, 16GB system RAM

- Data Input: Whole-genome sequence data in VCF or PLINK format

Step-by-Step Procedure

Data Preparation: Convert raw sequence data to required input format

- Ensure data is properly formatted and filtered for quality

- Phase genotypes if possible (method is robust to unphased data)

Parameter Configuration: Set analysis parameters in configuration file

- Define time range for inference (default: 10^2 to 10^6 generations)

- Specify number of MCMC iterations (default: 10,000)

- Set random seed for reproducibility

Initialization: Initialize the coalescent hidden Markov model

- Load genetic data and population structure priors

- Initialize coalescent intensity function with random projections

MCMC Sampling: Execute the Bayesian sampling procedure

- Draw random, low-dimensional projections of coalescent intensity function

- Compute score function using efficient gradient calculation

- Update posterior distribution using Metropolis-Hastings algorithm

Result Aggregation: Average sampled histories to form final estimator

- Combine multiple chains to ensure convergence

- Compute posterior median and credible intervals

Output Generation: Produce visualization and summary statistics

- Generate plots of population size history with uncertainty intervals

- Export posterior samples for further analysis

- Perform Bayesian hypothesis tests for population structure

Validation and Quality Control

- Run multiple independent chains to assess convergence

- Compare results with simulated data where ground truth is known

- Perform posterior predictive checks to validate model fit

- Calculate Gelman-Rubin statistics to ensure chain convergence [27]
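The Gelman-Rubin check in the list above can be sketched in a few lines. This is a minimal illustration for a single scalar parameter, assuming equal-length chains stored as plain Python lists; the function name `gelman_rubin` and the toy chains are hypothetical and are not part of the PHLASH package.

```python
from statistics import mean, variance


def gelman_rubin(chains):
    """Return the potential scale reduction factor (R-hat) for one parameter.

    `chains` is a list of equal-length lists of posterior draws.
    """
    m, n = len(chains), len(chains[0])
    if m < 2 or n < 2 or any(len(c) != n for c in chains):
        raise ValueError("need at least two chains of equal length >= 2")
    within = mean([variance(c) for c in chains])            # W: mean within-chain variance
    between_over_n = variance([mean(c) for c in chains])    # B / n: variance of chain means
    pooled = (n - 1) / n * within + between_over_n          # pooled variance estimate
    return (pooled / within) ** 0.5


# Two chains stuck in different regions give an R-hat far above 1.
stuck = [[0.0, 1.0, 0.0, 1.0], [10.0, 11.0, 10.0, 11.0]]
# Two chains covering the same region give a value close to (or just below) 1.
mixed = [[0.0, 1.0, 0.0, 1.0], [1.0, 0.0, 1.0, 0.0]]
rhat_stuck = gelman_rubin(stuck)
rhat_mixed = gelman_rubin(mixed)
```

Values well above 1 (a common rule of thumb is 1.1) suggest the chains have not yet converged to the same distribution. Real analyses would use far longer chains and typically a split-chain variant from an established diagnostics library.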

Protocol: GPU-Accelerated QTL Mapping with tensorQTL

Research Context and Objectives

This protocol describes quantitative trait locus (QTL) mapping using tensorQTL, a GPU-accelerated implementation that enables scaling to millions of individuals. The method identifies associations between genetic variants and gene expression patterns, with applications in understanding disease mechanisms and identifying therapeutic targets [26].

Computational Requirements and Setup

- Software: tensorQTL package with PyTorch backend

- Hardware: NVIDIA GPU with ≥8GB memory

- Data: Genotype data in PLINK format, phenotype data, covariates

Step-by-Step Workflow

- Data Loading: Read genotype-phenotype data into GPU memory using pandas-plink and dask arrays

- Preprocessing: Normalize phenotypes and apply quality control filters

- Cis-QTL Mapping: Test associations within specified genomic windows

- Permutation Testing: Calculate empirical p-values using beta approximation

- Trans-QTL Mapping: Perform genome-wide association testing

- Result Processing: Filter and store significant associations
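The cis-mapping and permutation steps above can be illustrated with a small CPU-only sketch. It is not the tensorQTL API: the function names, the toy genotype dosages, and the noise values are hypothetical, and it computes a plain empirical p-value from permutations, leaving out the beta approximation that the workflow mentions.

```python
import random
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / (sxx * syy) ** 0.5


def cis_permutation_pvalue(genotypes, phenotype, n_perm=200, seed=0):
    """Best r^2 across variants in a window, with a permutation p-value.

    `genotypes` is a list of variants, each a list of dosages per sample.
    """
    rng = random.Random(seed)
    observed = max(pearson_r(g, phenotype) ** 2 for g in genotypes)
    shuffled = list(phenotype)
    exceed = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # break the genotype-phenotype link
        null_best = max(pearson_r(g, shuffled) ** 2 for g in genotypes)
        if null_best >= observed:
            exceed += 1
    return observed, (exceed + 1) / (n_perm + 1)


# Toy window: three variants, twelve samples; expression tracks the first variant.
genotypes = [
    [0, 1, 2, 0, 1, 2, 0, 1, 2, 0, 1, 2],
    [0, 0, 0, 1, 1, 1, 2, 2, 2, 0, 1, 2],
    [2, 2, 1, 1, 0, 0, 2, 1, 0, 1, 2, 0],
]
noise = [0.1, -0.2, 0.05, -0.1, 0.2, 0.0, 0.15, -0.05, -0.15, 0.0, 0.1, -0.1]
phenotype = [2.0 * g + e for g, e in zip(genotypes[0], noise)]
best_r2, p_emp = cis_permutation_pvalue(genotypes, phenotype)
```

Taking the maximum statistic over all variants in the window before comparing against the permutations is what makes the p-value gene-level rather than variant-level. A GPU implementation would evaluate the same correlations as batched matrix products instead of Python loops.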

Performance Optimization

- Batch processing for large datasets that exceed GPU memory

- Parallel execution across multiple GPUs for hyperparameter optimization

- Efficient data transfer between CPU and GPU memory

Figure 1: PHLASH Bayesian Inference Workflow for Population Genetics

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Bayesian Population Genetics

| Tool/Resource | Function | Implementation |

|---|---|---|

| PHLASH Software | Bayesian inference of population size history | Python package with GPU acceleration [20] |

| tensorQTL | GPU-accelerated QTL mapping | PyTorch-based implementation [26] |

| gPGA | Isolation with migration model analysis | CUDA-based GPU implementation [28] |

| NIMBLE/JAGS | Bayesian hierarchical modeling | BUGS-language compilers for integrated population models [29] [30] |

| stdpopsim | Standardized population genetic simulations | Python library with empirical demographic models [20] |

| AgentTorch | Differentiable agent-based modeling | PyTorch-based framework for epidemiological forecasting [31] |

Application Note: Epidemiological Forecasting with Large Population Models

Innovation in Epidemiological Modeling

Large Population Models (LPMs) represent an evolution of agent-based models (ABMs) that overcome traditional limitations in scale, data integration, and real-world feedback. These models simulate entire populations with realistic behaviors and interactions at unprecedented scale, enabling researchers to observe how individual choices aggregate into system-level outcomes and test interventions before real-world implementation [31].

LPMs achieve this through three key innovations: compositional design for efficient simulation of millions of agents, differentiable specification for gradient-based learning and calibration, and decentralized computation using secure multi-party protocols. The AgentTorch framework implements these theoretical advances, providing GPU acceleration, million-agent populations, differentiable environments, and neural network composition [31].

Formal Model Specification

In epidemiological applications, LPMs represent each individual \(i\) by a state \(s_i(t)\) containing both static and time-evolving properties such as age, disease status, and immunity level. The model updates individual states through interactions with neighbors \(N_i(t)\) and the environment \(e(t)\) according to the equation:

\[ s_i(t+1) = f\left( s_i(t),\ \bigoplus_{j \in N_i(t)} m_{ij}(t),\ \ell(\cdot \mid s_i(t)),\ e(t; \theta) \right) \]

where \(m_{ij}(t)\) represents disease transmission from individual \(j\) to \(i\), \(\ell(\cdot \mid s_i(t))\) captures behavioral decisions (e.g., mask-wearing, social distancing), and \(e(t; \theta)\) represents environmental factors including viral evolution and intervention policies [31].
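To make the structure of this update rule concrete, the following is a minimal sketch of one synchronous step on a toy contact network. It is not AgentTorch code: the function `lpm_step`, the ring network, the mask effect of 0.5, and the rates `beta` and `gamma` (standing in for the environment parameters) are all assumptions chosen for illustration.

```python
import random


def lpm_step(states, neighbors, beta, gamma, rng):
    """One synchronous update of every agent from the previous state vector."""
    new_states = []
    for i, s in enumerate(states):
        # Aggregation over neighbors: count infectious contacts (the messages).
        exposure = sum(1 for j in neighbors[i] if states[j]["status"] == "I")
        # Behavioral term: a masked agent halves its per-contact risk.
        caution = 0.5 if s["masked"] else 1.0
        status = s["status"]
        if status == "S" and rng.random() < 1.0 - (1.0 - beta * caution) ** exposure:
            status = "I"
        elif status == "I" and rng.random() < gamma:
            status = "R"
        new_states.append({**s, "status": status})
    return new_states


rng = random.Random(42)
n_agents = 50
# Ring network: each agent interacts with its two adjacent agents.
neighbors = [[(i - 1) % n_agents, (i + 1) % n_agents] for i in range(n_agents)]
states = [{"status": "I" if i == 0 else "S", "masked": i % 2 == 0} for i in range(n_agents)]
for _ in range(20):
    states = lpm_step(states, neighbors, beta=0.3, gamma=0.1, rng=rng)
counts = {k: sum(s["status"] == k for s in states) for k in "SIR"}
```

Because every agent reads only the previous time step, the loop body is independent across agents, which is the property a tensorized GPU implementation exploits.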

Performance and Applications

Current LPM deployments are optimizing vaccine distribution strategies to help immunize millions of people and tracking billions of dollars in global supply chains to improve efficiency and reduce waste. Case studies on pandemic response in New York City demonstrate more accurate predictions, more efficient policy evaluation, and more seamless integration with real-world systems than traditional ABMs [31].

Figure 2: Large Population Model Architecture for Epidemiological Forecasting

Application Note: Clinical Trial Modeling and Bayesian Methods

Bayesian Framework for Clinical Applications

Bayesian statistics provides a powerful framework for clinical trial modeling through its ability to sequentially update knowledge with new data, handle complex hierarchical models, and incorporate prior information. This approach addresses common challenges in classical statistics, including lack of power in small sample research and convergence issues in complex models [27].

The Bayesian framework enables researchers to specify prior distributions based on existing knowledge, derive posterior distributions that combine prior information with new data, and perform prior/posterior predictive checking to validate model assumptions. This methodology is particularly valuable in clinical settings where ethical considerations and cost constraints limit sample sizes [27].

Implementation and Best Practices

Successful implementation of Bayesian methods in clinical trial modeling follows established best practices outlined in the WAMBS-checklist (When to Worry and how to Avoid the Misuse of Bayesian Statistics). This includes [27]:

- Prior Specification: Defining plausible parameter space and expected prior means with appropriate uncertainty

- Convergence Diagnostics: Monitoring MCMC chains using Gelman-Rubin statistics and trace plots

- Sensitivity Analysis: Assessing how results vary under different prior specifications

- Predictive Checking: Validating model fit through prior and posterior predictive checks

- Reproducibility: Documenting complete analysis pipeline including software versions and random seeds
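The sensitivity-analysis item above can be illustrated with a conjugate Beta-Binomial model of a response rate, where the posterior is available in closed form. This is a sketch under stated assumptions: the interim data (12 responders among 20 patients) and the three priors are hypothetical, and the helper names are not taken from any package.

```python
def update_beta(prior, successes, failures):
    """Conjugate update of a Beta(a, b) prior with binomial data."""
    a, b = prior
    return (a + successes, b + failures)


def beta_mean(params):
    """Mean of a Beta(a, b) distribution."""
    a, b = params
    return a / (a + b)


# Hypothetical interim data: 12 responders among 20 patients.
responders, non_responders = 12, 8
priors = {"flat": (1, 1), "skeptical": (2, 8), "enthusiastic": (8, 2)}
posterior_means = {
    name: beta_mean(update_beta(prior, responders, non_responders))
    for name, prior in priors.items()
}

# Sequential updating: two batches of data give the same posterior as one.
stage_one = update_beta(priors["flat"], 5, 5)
stage_two = update_beta(stage_one, 7, 3)
```

Comparing the entries of `posterior_means` shows how far the conclusion moves when the prior changes, which is the question a sensitivity analysis asks. With only 20 patients the spread is noticeable, which is why the checklist treats this step as essential for small trials.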

Software Tools and Training

Clinical researchers can implement Bayesian clinical trial models using accessible software tools including brms in R and bambi in Python, which provide intuitive interfaces for specifying complex models. Specialized training workshops, such as the "Bayesian Population Analysis with NIMBLE and JAGS" workshop, provide hands-on experience with hierarchical models, integrated population models, and advanced MCMC configuration [30].

These methodologies enable more efficient clinical trial designs, including adaptive trials that can modify enrollment criteria or treatment arms based on accumulating data while maintaining statistical validity and protecting patient safety.

The study of population dynamics—whether in ecology, epidemiology, or genetics—relies on sophisticated computational models to infer past demographic history, predict future trends, and understand complex system behaviors. Three methodological frameworks form the cornerstone of modern computational population biology: coalescent theory for reconstructing historical population sizes from genetic data, agent-based models for simulating the emergent dynamics of interacting individuals, and Bayesian hierarchical structures for quantifying uncertainty and integrating diverse data sources. Individually, each approach offers powerful insights; integrated within a Bayesian framework, they enable rigorous, data-driven inference about population processes across multiple scales and levels of organization. The recent integration of these methods with GPU computing has dramatically accelerated their capabilities, allowing researchers to address questions at unprecedented scales and resolutions. This note details the core concepts, applications, and practical protocols for implementing these methodologies in population dynamics research.

Core Concepts and Methodologies

Coalescent Theory for Phylodynamic Inference

Coalescent theory is a probabilistic model that generates genealogies relating individuals sampled from a population by tracing their ancestry backward in time to their most recent common ancestor (MRCA). The rate at which lineages coalesce depends on the effective population size (Nₑ), which represents the genetic diversity in a population [32].

Mathematical Foundation: The coalescent models the time between coalescent events as exponentially distributed. For a sample of \(n\) individuals, the waiting time \(w_k\) until the next coalescent event, which reduces the number of lineages from \(k\) to \(k-1\), is exponentially distributed with rate \(\binom{k}{2} / N_e(t)\). The probability density of a genealogy \(g\) given a demographic function \(N_e(t)\) is [32]:

\[ p(g \mid N_e(t)) = \prod_{k=2}^{n} \frac{\binom{k}{2}}{N_e(t_k)} \exp\left[ -\int_{t_{k-1}}^{t_k} \frac{\binom{k}{2}}{N_e(s)} \, ds \right] \]

where \(t_k\) is the time of the \(k\)-th coalescent event [32].
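For the special case of a constant effective population size, the integral in the density reduces to the rate multiplied by the waiting time, and the log density becomes a simple sum. The sketch below evaluates it; the function names and the three toy waiting times are hypothetical, and time is assumed to be measured in the units for which the coalescent rate is the binomial coefficient divided by the effective size.

```python
from math import comb, log


def coalescent_log_density(waiting_times, ne):
    """Log density of the waiting times under a constant effective size `ne`.

    `waiting_times` maps the number of lineages k to the waiting time w_k
    during which k lineages were present.
    """
    total = 0.0
    for k, w in waiting_times.items():
        rate = comb(k, 2) / ne          # coalescent rate with k lineages
        total += log(rate) - rate * w   # log of an exponential density
    return total


def constant_ne_mle(waiting_times):
    """Closed-form maximizer of the log density above with respect to `ne`."""
    scaled = sum(comb(k, 2) * w for k, w in waiting_times.items())
    return scaled / len(waiting_times)


# Toy genealogy of four samples: waiting times while 4, 3, and 2 lineages remain.
times = {4: 0.1, 3: 0.3, 2: 0.9}
ne_hat = constant_ne_mle(times)
logp_at_mle = coalescent_log_density(times, ne_hat)
```

Setting the derivative of the log density to zero gives the closed form used in `constant_ne_mle`. Time-varying models replace the product of rate and waiting time with the integral of the rate over each interval.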

Non-Parametric Bayesian Extensions: Modern implementations often use non-parametric approaches to estimate \(N_e(t)\) without assuming a simple parametric form. The Skygrid model uses a Gaussian Markov random field (GMRF) prior on a piecewise constant demographic function defined on a pre-specified grid of time points, enabling flexible estimation of past population dynamics [32].
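A smoothing prior of this kind can be sketched as a first-order random walk on the log population sizes, which penalizes differences between adjacent grid cells. The code below assumes that form and evaluates the log prior up to an additive constant; the function name and the example trajectories are hypothetical, and the exact prior used in a given Skygrid implementation may differ in detail.

```python
from math import log


def gmrf_log_prior(log_ne, precision):
    """First-order random-walk GMRF log prior, up to an additive constant.

    `log_ne` holds the log effective population size in each grid cell.
    """
    m = len(log_ne)
    squared_jumps = sum((b - a) ** 2 for a, b in zip(log_ne, log_ne[1:]))
    return 0.5 * (m - 1) * log(precision) - 0.5 * precision * squared_jumps


smooth = [5.0, 5.1, 5.2, 5.3, 5.4]   # gradual change between grid cells
jagged = [5.0, 6.5, 4.8, 6.9, 5.4]   # same endpoints, abrupt changes
smooth_score = gmrf_log_prior(smooth, precision=4.0)
jagged_score = gmrf_log_prior(jagged, precision=4.0)
```

The smooth trajectory receives the higher prior score. Larger values of the precision parameter enforce smoother histories, and in a full analysis the precision is itself given a prior and sampled.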

Agent-Based Models for Complex System Simulation

Agent-based models (ABMs) are computational frameworks for simulating the actions and interactions of autonomous agents within an environment. ABMs are particularly valuable for studying emergent system-level behaviors resulting from individual-level decisions.

Core Principles: In an ABM, each agent (e.g., an individual, a cell, a vehicle) operates according to a set of rules that dictate its behavior, state changes, and responses to other agents and environmental stimuli. The simulation proceeds through discrete time steps, with all agents updating their states asynchronously or synchronously. This bottom-up approach is ideal for modeling complex, heterogeneous systems where centralized control is absent [33] [34].

GPU-Accelerated Implementation: Traditional CPU-based ABMs execute agent actions serially, limiting scalability. GPU-accelerated frameworks like FLAME-GPU exploit data-parallel architectures by mapping thousands of agents to concurrent GPU threads. This allows for simultaneous execution of agent functions, yielding massive performance gains and enabling simulations of hundreds of millions of agents [35] [34]. Key technical innovations include stochastic memory allocation for parallel agent replication and specialized data structures for efficient spatial queries and message passing between agents [35].

Hierarchical Bayesian Models for Structured Inference

Hierarchical Bayesian modeling provides a statistical framework for analyzing data that are structured across multiple levels, allowing for partial pooling of information and robust uncertainty quantification.

Foundational Framework: In a hierarchical model, observed data \(y\) are modeled as arising from a probability distribution conditional on parameters \(\theta\). These parameters are themselves drawn from a population distribution governed by hyperparameters \(\phi\). This structure is formally expressed as [36]:

\[ \begin{aligned} y &\sim p(y \mid \theta) \\ \theta &\sim p(\theta \mid \phi) \\ \phi &\sim p(\phi) \end{aligned} \]

This approach is naturally suited for multi-level data, such as time series from multiple populations or experimental replicates, as it allows for estimating shared patterns while acknowledging group-specific variations [36].
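Partial pooling is easiest to see in the normal-normal case, where the conditional posterior mean of a group parameter is a precision-weighted average of the group's own sample mean and the population mean. The sketch below assumes known standard deviations and fixed hyperparameters (so the hyperprior step is omitted); all names and numbers are illustrative.

```python
import random


def simulate_groups(mu, tau, sigma, n_groups, n_obs, rng):
    """Draw group parameters from the population, then data from each group."""
    thetas = [rng.gauss(mu, tau) for _ in range(n_groups)]
    data = [[rng.gauss(theta, sigma) for _ in range(n_obs)] for theta in thetas]
    return thetas, data


def partial_pool(group_mean, n_obs, sigma, mu, tau):
    """Conditional posterior mean of a group parameter (normal-normal model)."""
    data_precision = n_obs / sigma ** 2
    prior_precision = 1.0 / tau ** 2
    weighted = data_precision * group_mean + prior_precision * mu
    return weighted / (data_precision + prior_precision)


rng = random.Random(1)
thetas, data = simulate_groups(mu=0.0, tau=1.0, sigma=2.0, n_groups=5, n_obs=4, rng=rng)
estimates = [partial_pool(sum(y) / len(y), len(y), 2.0, 0.0, 1.0) for y in data]
```

Groups with few observations are pulled strongly toward the population mean, while groups with many observations stay close to their own sample mean. This is the sense in which the hierarchy shares information across groups.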

State-Space Modeling for Dynamic Systems: A common application in population dynamics is the Bayesian state-space model, which separates an underlying ecological process (e.g., true population abundance) from an observation process (e.g., imperfect sampling). The process model describes how the true state \(X_t\) evolves over time, while the observation model links measurements \(Y_t\) to the latent state [37]. Fitting such models often requires Markov Chain Monte Carlo (MCMC) methods to sample from the complex posterior distribution of parameters and latent states [37].

Integration for Population Dynamics Research

Synthesizing Coalescent Theory and Covariate Data

The standard coalescent inference framework can be extended to incorporate time-varying covariates, moving beyond simple demographic reconstruction to test hypotheses about the drivers of population change. The Skygrid with Covariates model uses a generalized linear model (GLM) framework to link the effective population size to external time series [32]:

\[ \log(N_e(t)) = \beta_0 + \beta_1 X_1(t) + \dots + \beta_p X_p(t) + \epsilon(t) \]

where \(X_1(t), \dots, X_p(t)\) are covariates and \(\epsilon(t)\) is a GMRF smoothing term that accounts for residual temporal autocorrelation not explained by the covariates. This approach has been used to identify significant associations between viral effective population size and incidence rates, and between species abundance and historical climate data [32].

Modeling Dependent Population Dynamics

Many systems involve subpopulations whose dynamics are interdependent due to shared ancestry or environmental pressures. The adaPop framework extends coalescent modeling to infer such dependencies. It models the joint effective population size trajectories of multiple subpopulations using a multivariate nonparametric prior, allowing researchers to quantify the time-varying association between populations and share statistical strength across datasets, leading to more precise estimates with narrower credible intervals [38].

Hierarchical Bayesian Inference for Population Time Series

Integrating dynamical population models with hierarchical Bayesian inference allows for direct parameter estimation from observational time series while accounting for uncertainty and variability. A typical application involves fitting a system of ordinary differential equations (ODEs) representing a predator-prey model to abundance data. The hierarchical structure estimates global hyperparameters that describe the central tendency and variability of model parameters across different experimental replicates, explicitly quantifying between-replicate differences and their ecological consequences [36].

Computational Framework and GPU Implementation

GPU-Accelerated Bayesian Computation

The computational burden of Bayesian inference for complex population models is substantial, particularly when using MCMC methods. GPU acceleration addresses this challenge by parallelizing likelihood calculations and linear algebra operations.

Parallel Computing Strategies: In a typical implementation, the computation of the log-likelihood across independent data blocks (e.g., different genomic loci in coalescent inference or independent time series in hierarchical modeling) is distributed across hundreds of GPU cores. Each core computes the partial likelihood for its assigned data block, and the results are efficiently reduced to a global sum [39].
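The map-and-reduce structure described here can be sketched on the CPU. The example below uses a thread pool purely to show the pattern of independent per-block evaluations followed by a sum; it is not expected to run faster than a serial loop in Python, and the Gaussian likelihood, block contents, and function names are assumptions made for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from math import log, pi


def block_log_likelihood(block, mu, sigma):
    """Gaussian log-likelihood of one independent data block."""
    const = -0.5 * log(2.0 * pi * sigma ** 2)
    return sum(const - (x - mu) ** 2 / (2.0 * sigma ** 2) for x in block)


def total_log_likelihood(blocks, mu, sigma, workers=4):
    """Map each block to a worker, then reduce the partial results to one sum."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(lambda b: block_log_likelihood(b, mu, sigma), blocks))
    return sum(partials)


# Three independent blocks, e.g. loci or separate time series.
blocks = [[0.1, -0.4, 1.2], [0.7, 0.3], [-1.1, 0.0, 0.5, 0.9]]
loglik = total_log_likelihood(blocks, mu=0.0, sigma=1.0)
```

The decomposition is valid because the blocks are conditionally independent given the parameters, so the joint log-likelihood is the sum of the block terms. On a GPU the same reduction is carried out by many threads in parallel instead of a small worker pool.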

Massive-Scale Agent-Based Simulation: Frameworks like LPSim and FLAME-GPU demonstrate the transformative impact of GPU computing for ABMs. LPSim uses vectorized data storage and access patterns to manage large-scale transportation networks, enabling simultaneous simulation of millions of individual vehicle trips. Its multi-GPU implementation relies on graph partitioning strategies to balance computational load across devices while managing inter-GPU communication through "ghost zone" designs [39].

Table 1: Performance Benchmarks of GPU-Accelerated Simulation Frameworks

| Framework | Application Domain | Hardware Setup | Simulation Scale | Execution Time |

|---|---|---|---|---|

| FLAME-GPU [35] | General Agent-Based Modeling | NVIDIA A100 / H100 GPU | Hundreds of millions of agents | Real-time performance, >1000x faster than CPU-based toolkits for some models |

| LPSim [39] | Regional Traffic Simulation | Single Tesla V100 (5120 cores) | 2.82 million trips | 6.28 minutes |

| LPSim [39] | Regional Traffic Simulation | AWS p2, dual NVIDIA K80 (4992 cores) | 9.01 million trips | 21.45 minutes |

Software and Tools for Implementation

Table 2: Essential Research Reagent Solutions for Computational Modeling

| Tool / Reagent | Type | Primary Function | Application Example |

|---|---|---|---|

| FLAME-GPU [35] | Software Framework | GPU-accelerated agent-based simulation | Simulating epidemic spread or urban mobility |

| Stan [36] | Probabilistic Programming | Bayesian inference with MCMC sampling | Fitting ODE-based population models to time series data |

| bayesTFR / bayesLife [40] | R Packages | Bayesian hierarchical projection of fertility and mortality | Probabilistic population projections for the United Nations |

| adaPop [38] | R Package | Nonparametric inference of dependent population dynamics | Studying variant-specific dynamics of SARS-CoV-2 |

| INLA [38] | Statistical Method | Approximate Bayesian inference for latent Gaussian models | Fast inference for Gaussian Markov Random Field models |

Detailed Application Notes and Protocols

Protocol 1: Inferring Effective Population Size with Covariates

Application Objective: Reconstruct past demographic history and test the association between effective population size and external covariates (e.g., climate data, incidence rates) using a coalescent framework.

Experimental Workflow:

- Genealogy Estimation: With molecular sequence data, first infer a phylogenetic tree or genealogy using software like BEAST or RevBayes. Alternatively, use a previously estimated tree [32].

- Covariate Data Preparation: Compile time-series data for covariates of interest. Ensure the time axes are aligned with the genealogy (e.g., time before present). Standardize covariates to have mean zero and standard deviation one for stable model fitting [32].

- Model Specification with Skygrid:

- Define a regular grid of time points over which the demographic function will be estimated.

- Specify the GMRF smoothing prior on the log-effective population sizes.

- For the covariate model, define the linear predictor: \(\eta = \beta_0 + \beta_1 X(t) + \epsilon(t)\), where \(\epsilon(t)\) is the GMRF [32].

- Posterior Inference: Use efficient MCMC algorithms (e.g., Hamiltonian Monte Carlo) to sample from the joint posterior distribution of the demographic trajectory, regression coefficients \(\beta\), and GMRF precision parameter. Assess MCMC convergence using trace plots and effective sample sizes [32] [38].

- Model Checking and Interpretation: Calculate posterior credible intervals for the \(\beta\) coefficients. A coefficient whose 95% interval excludes zero provides evidence for a significant association. Compare the full model with a null model (without covariates) using Bayes factors or Widely Applicable Information Criterion (WAIC) [32].
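The covariate preparation and linear-predictor steps of this protocol can be sketched as follows. The incidence values, coefficient values, and function names are hypothetical, and the GMRF term is set to zero only to keep the example short; this is not code from BEAST or any other package.

```python
from statistics import mean, stdev


def standardize(series):
    """Rescale a covariate to mean zero and standard deviation one."""
    m, s = mean(series), stdev(series)
    return [(v - m) / s for v in series]


def log_ne_predictor(beta0, betas, covariates, gmrf):
    """Linear predictor for log effective size in each grid cell.

    `covariates` is a list of series, one per covariate, aligned with `gmrf`.
    """
    return [
        beta0 + sum(b * x[t] for b, x in zip(betas, covariates)) + gmrf[t]
        for t in range(len(gmrf))
    ]


# Hypothetical incidence series aligned with five grid cells.
incidence = [120.0, 340.0, 560.0, 410.0, 150.0]
z = standardize(incidence)
log_ne = log_ne_predictor(beta0=2.0, betas=[0.5], covariates=[z], gmrf=[0.0] * 5)
```

Standardizing puts all covariates on a common scale, so the magnitudes of the regression coefficients can be compared directly and sampling tends to be better conditioned.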

Protocol 2: Hierarchical Bayesian Fitting of a Dynamical Population Model

Application Objective: Estimate parameters and their variability from multiple time series of population abundances (e.g., predator-prey cycles) using a continuous-time model.

Experimental Workflow:

- Data Preparation: Compile multiple time series of observed population counts. Ensure time points are aligned and any missing data are noted. Standardize data if necessary [36].

- Model Formulation:

- Process Model: Define a system of ODEs representing the population dynamics (e.g., Lotka-Volterra, chemostat model). For a predator-prey system, this might be: \[ \begin{aligned} \frac{dP}{dt} &= rP - aPC \\ \frac{dC}{dt} &= eaPC - mC \end{aligned} \] where \(P\) is prey, \(C\) is predator, and \(r, a, e, m\) are parameters [36].

- Observation Model: Specify a probability distribution linking the true, unobserved state \(X_t\) to the data \(Y_t\), e.g., \(Y_t \sim \text{LogNormal}(\log(X_t), \sigma^2)\) [36].

- Hierarchical Structure: Assume parameters for each time series \(i\) are drawn from a common population distribution: \(\theta_i \sim \text{Normal}(\mu_\theta, \tau_\theta)\) [36].

- Implementation in Probabilistic Programming:

- Code the ODE model and hierarchical observation model in Stan.

- Use Stan's built-in ODE solver and HMC sampler.

- Specify weakly informative priors for hyperparameters \(\mu_\theta\) and \(\tau_\theta\) [36].

- Inference and Analysis:

- Run multiple HMC chains to sample from the posterior.

- Diagnose convergence and effective sample size for all key parameters.

- Analyze the posterior distributions of \(\theta_i\) and hyperparameters \(\mu_\theta, \tau_\theta\) to understand central trends and between-series variability [36].
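The generative side of this protocol (process model, observation model, and replicate-level variation) can be sketched in plain Python before committing to a Stan implementation. The example below is a forward simulator only, not an inference routine: it integrates the predator-prey ODEs with a fixed-step fourth-order Runge-Kutta scheme, draws a replicate-specific growth rate from a normal population distribution (treating the second argument as a standard deviation), and adds log-normal observation noise. All parameter values are hypothetical.

```python
import math
import random


def lotka_volterra(state, r, a, e, m):
    """Right-hand side of the predator-prey ODEs for (prey, predator)."""
    prey, pred = state
    return (r * prey - a * prey * pred, e * a * prey * pred - m * pred)


def rk4_step(state, dt, params):
    """Advance the state by one fixed step of the classical RK4 scheme."""
    def shifted(k, h):
        return tuple(s + h * ki for s, ki in zip(state, k))
    k1 = lotka_volterra(state, *params)
    k2 = lotka_volterra(shifted(k1, dt / 2), *params)
    k3 = lotka_volterra(shifted(k2, dt / 2), *params)
    k4 = lotka_volterra(shifted(k3, dt), *params)
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def simulate(initial, params, dt, n_steps):
    """Latent trajectory of (prey, predator) abundances."""
    trajectory = [tuple(initial)]
    for _ in range(n_steps):
        trajectory.append(rk4_step(trajectory[-1], dt, params))
    return trajectory


def observe(trajectory, sigma, rng):
    """Log-normal observation noise around each latent abundance."""
    return [
        tuple(rng.lognormvariate(math.log(x), sigma) for x in state)
        for state in trajectory
    ]


rng = random.Random(7)
mu_r, tau_r = 1.0, 0.05  # hypothetical population mean and spread of growth rate
replicates = []
for _ in range(3):
    r_i = rng.gauss(mu_r, tau_r)  # replicate-specific growth rate
    latent = simulate((12.0, 8.0), (r_i, 0.1, 0.5, 0.5), dt=0.01, n_steps=500)
    replicates.append(observe(latent[::50], sigma=0.1, rng=rng))
```

Simulating from the model in this way gives data with known ground truth, which is useful for checking that the fitted model recovers the hyperparameters before it is applied to real time series.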

Protocol 3: Building a Large-Scale, GPU-Accelerated Agent-Based Model

Application Objective: Simulate the behavior of a large population (e.g., epidemic spread, urban traffic) with millions of agents to study emergent phenomena.

Experimental Workflow:

- Agent and Environment Definition:

- Define agent types (e.g., "Person", "Vehicle") and their state variables (e.g., health status, location).

- Define the environment (e.g., a road network graph, an abstract grid) [35].

- Agent Behavior Specification:

- Write agent functions that describe how an agent updates its state each simulation step. In FLAME-GPU, this is done as a CUDA device function using the FLAME-GPU API [35].

- Example: An infection function might check for nearby infectious agents and update a susceptible agent's state with a certain probability.

- Model Description and Configuration:

- Use the FLAME-GPU API (C++ or Python) to describe the model structure: agent types, state variables, message lists for communication, and the order of function execution.

- Configure the execution flow via a directed acyclic graph (DAG) that specifies dependencies between agent functions [35].

- Simulation Execution and Optimization:

- Compile and run the model on a supported NVIDIA GPU.

- For multi-GPU execution, use graph partitioning to distribute the environment and agents across devices, ensuring load balancing. Implement "ghost zones" to handle inter-partition agent interactions [39].

- Output and Analysis:

- Log agent states at specified time intervals.

- Use the logged data to compute summary statistics and visualize the emergent population-level dynamics [35].

Table 3: Key Agent Functions for an Epidemiological ABM (SEIR Model)

| Agent Function | Agent State | Key Operations | Output Message |

|---|---|---|---|

| Check_Contacts | Susceptible, Exposed, Infectious | Outputs location; Reads locations of nearby agents; Computes force of infection | Location (Spatial3D) |

| Update_Infection | Susceptible, Exposed, Infectious, Recovered | Reads infection risk from messages; Updates individual health state (e.g., S->E); Updates infection timers | None |

| Move | All | Updates agent location based on movement rules | Location (Spatial3D) |
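The three agent functions in Table 3 can be prototyped on the CPU before porting them to a GPU framework. The sketch below is plain Python rather than FLAME-GPU code, and it simplifies the table in two ways that should be read as assumptions: positions are two-dimensional, and only infectious agents post location messages. The function names mirror the table, while all parameter values are hypothetical.

```python
import math
import random


def check_contacts(agents, radius, beta):
    """Infectious agents post locations; susceptible agents read nearby ones."""
    messages = [(a["x"], a["y"]) for a in agents if a["state"] == "I"]
    for a in agents:
        if a["state"] != "S":
            a["risk"] = 0.0
            continue
        nearby = sum(1 for x, y in messages if math.hypot(a["x"] - x, a["y"] - y) <= radius)
        a["risk"] = 1.0 - (1.0 - beta) ** nearby  # chance of at least one transmission


def update_infection(agents, incubation, infectious_period, rng):
    """Apply S->E with the computed risk, then advance E->I->R timers."""
    for a in agents:
        if a["state"] == "S":
            if rng.random() < a["risk"]:
                a["state"], a["timer"] = "E", incubation
        elif a["state"] in ("E", "I"):
            a["timer"] -= 1
            if a["timer"] <= 0:
                if a["state"] == "E":
                    a["state"], a["timer"] = "I", infectious_period
                else:
                    a["state"] = "R"


def move(agents, step, size, rng):
    """Bounded random walk inside a square of side `size`."""
    for a in agents:
        a["x"] = min(size, max(0.0, a["x"] + rng.uniform(-step, step)))
        a["y"] = min(size, max(0.0, a["y"] + rng.uniform(-step, step)))


def run(n_agents=200, n_steps=50, seed=3):
    rng = random.Random(seed)
    agents = [
        {"x": rng.uniform(0, 20), "y": rng.uniform(0, 20), "state": "S", "timer": 0, "risk": 0.0}
        for _ in range(n_agents)
    ]
    for a in agents[:5]:  # seed a few infectious agents
        a["state"], a["timer"] = "I", 7
    history = []
    for _ in range(n_steps):
        check_contacts(agents, radius=1.5, beta=0.2)
        update_infection(agents, incubation=3, infectious_period=7, rng=rng)
        move(agents, step=1.0, size=20.0, rng=rng)
        history.append({s: sum(a["state"] == s for a in agents) for s in "SEIR"})
    return history


history = run()
```

The contact search here compares every susceptible agent with every message, which scales poorly. Spatial message partitioning in a GPU framework addresses exactly this cost by restricting each agent to messages from nearby cells.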

Implementing GPU-Accelerated Bayesian Models: Architectures and Real-World Cases

Application Notes: GPU-Accelerated Bayesian Algorithms

The implementation of Bayesian computational methods on graphics processing units (GPUs) represents a paradigm shift, enabling researchers to tackle problems of unprecedented scale and complexity in fields ranging from astrophysics to drug development. The following application notes detail how specific algorithmic innovations are exploiting the massive parallel architecture of modern GPUs.

Batched Nested Sampling for Gravitational-Wave Inference

Nested sampling, a cornerstone algorithm for Bayesian inference, has traditionally been limited by its sequential nature. Recent work has successfully re-engineered the "acceptance-walk" sampling method, a community standard within the bilby and dynesty frameworks, for GPU execution through a vectorized formulation [41] [42] [43].

The key innovation lies in replacing the sequential replacement of individual "live points" with batched processing, where hundreds of points are evolved simultaneously. This approach leverages the GPU's thousands of cores to perform parallel Markov Chain Monte Carlo (MCMC) walks, with each core independently seeking new samples that satisfy the likelihood constraint [42] [43]. This architectural shift from CPUs to GPUs has demonstrated speedups of 20-40× for analyzing binary black hole signals, while producing statistically identical posterior distributions and evidence estimates [41].

Table 1: Performance Metrics of GPU-Accelerated Nested Sampling vs. CPU Implementation

| Metric | CPU Implementation (16-core) | GPU Implementation | Improvement Factor |

|---|---|---|---|

| Wall-time for typical analysis | Baseline (100s of CPU-hours) | 20-40× faster | 20-40× [41] [43] |

| Direct computational cost | Baseline | 1.5-2.5× lower | Cost reduction [43] |

| Statistical fidelity | Reference posteriors and evidences | Statistically identical recovery | No statistical deviation [42] |
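The batched replacement of live points described above can be illustrated on a one-dimensional toy problem. This sketch is not the bilby or dynesty implementation: it swaps the acceptance-walk MCMC for simple rejection sampling from the prior, runs on the CPU, and uses a uniform prior on [-5, 5] with an unnormalized Gaussian likelihood, for which the evidence is known to be about 0.2507. Function names and settings are assumptions.

```python
import math
import random


def log_likelihood(x):
    """Unnormalized standard Gaussian log-likelihood."""
    return -0.5 * x * x


def batched_nested_sampling(n_live=200, batch=20, n_iter=40, half_width=5.0, seed=1):
    """Estimate the evidence, replacing `batch` live points per iteration."""
    rng = random.Random(seed)

    def draw():
        return rng.uniform(-half_width, half_width)

    live = sorted((log_likelihood(x), x) for x in (draw() for _ in range(n_live)))
    evidence, volume = 0.0, 1.0
    for _ in range(n_iter):
        dead, live = live[:batch], live[batch:]
        for j, (logl, _) in enumerate(dead):
            n_j = n_live - j                      # live points left at this removal
            shrunk = volume * n_j / (n_j + 1)     # expected prior-volume shrinkage
            evidence += math.exp(logl) * (volume - shrunk)
            volume = shrunk
        threshold = dead[-1][0]
        # Each replacement is independent, so this loop is what a GPU would batch.
        new_points = []
        while len(new_points) < batch:
            x = draw()
            if log_likelihood(x) > threshold:
                new_points.append((log_likelihood(x), x))
        live = sorted(live + new_points)
    # Contribution of the remaining live points.
    evidence += volume * sum(math.exp(logl) for logl, _ in live) / len(live)
    return evidence


z_hat = batched_nested_sampling()
z_true = math.sqrt(2.0 * math.pi) * math.erf(5.0 / math.sqrt(2.0)) / 10.0
```

Because the live-point count falls within a batch, the shrinkage factor is recomputed for each removal rather than held fixed. The estimate `z_hat` is stochastic and should agree with `z_true` only to within the sampling error expected for this number of live points.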

Differentiable Large Population Models (LPMs)

For simulating population-scale dynamics, such as pandemic response or supply chain logistics, Large Population Models (LPMs) built on agent-based frameworks introduce three key innovations that benefit from GPU acceleration [31]:

- Compositional Design: Enables efficient simulation of millions of agents on commodity hardware through composable interactions and tensorized execution, balancing behavioral complexity with computational constraints [31].

- Differentiable Specification: Makes simulations end-to-end differentiable, supporting gradient-based learning for model calibration, sensitivity analysis, and efficient data assimilation from heterogeneous streams [31].

- Decentralized Computation: Extends differentiable simulation to distributed agents using secure multi-party protocols, facilitating integration with real-world systems while preserving privacy [31].

Formally, in an LPM, the state of an agent, s_i(t), updates based on interactions with its neighbors and environment. The GPU enables the simultaneous evaluation of this update function, f, for massive numbers of agents, transforming a traditionally sequential process into a parallel one [31].

Parallelized MCMC for Phylogenetics and Regression

Beyond nested sampling, other Bayesian Monte Carlo methods have seen significant GPU-driven innovation.

- MrBayes for Phylogenetics: The Metropolis Coupled Markov Chain Monte Carlo (MC3) algorithm in MrBayes has been accelerated by a "tight" GPU implementation (tgMC3). This approach merges multiple discrete GPU kernels based on data dependency, reducing launch overhead and data transfer complexity. This strategy has demonstrated speedups of 6-51× compared to serial CPU execution, depending on the dataset and hardware configuration [44].

- Bayesian Additive Regression Trees (BART): A GPU-enabled implementation of BART achieves speedups of up to 200× relative to a single CPU core. This makes this high-quality, nonparametric Bayesian regression technique competitive in runtime with popular gradient-boosting methods like XGBoost, while retaining its advantages in uncertainty quantification [45].

Table 2: Performance of GPU-accelerated MCMC Algorithms Across Domains

| Algorithm | Application Domain | CPU Baseline | GPU Speedup | Key GPU Utilization |
|---|---|---|---|---|
| tgMC3 [44] | Bayesian Phylogenetics | Serial MrBayes MC3 | 6× to 51× | Kernel fusion to reduce launch overhead |
| GPU-BART [45] | Nonparametric Regression | Single CPU core (M1 Pro) | Up to 200× | Parallel tree operations and prediction |

Experimental Protocols

Protocol: GPU-Accelerated Nested Sampling with a Custom Acceptance-Walk Kernel

This protocol details the steps for performing gravitational-wave inference using a GPU-accelerated nested sampler, as validated in Prathaban et al. [41] [42].

Research Reagent Solutions

Table 3: Essential Software and Hardware for GPU Nested Sampling

| Item Name | Function/Brief Explanation |
|---|---|
| blackjax-ns | A JAX-based library providing the vectorized nested sampling framework upon which the custom kernel is built [42]. |
| Acceptance-Walk Kernel | The custom sampling kernel that replicates the logic of the CPU-based bilby/dynesty sampler, but operates on batches of points [43]. |
| ripple | A GPU-native library for calculating gravitational-waveform models, essential for fast likelihood evaluations [42] [43]. |
| JAX | A high-performance numerical computing library that enables automatic differentiation and seamless CPU/GPU/TPU execution [42]. |
| NVIDIA A100/Analogous GPU | A modern GPU with thousands of cores and sufficient memory for handling large batches of live points and complex models [46]. |

Procedure

Initialization:

- Define the prior probability distribution π(θ|H) and the likelihood function L(d|θ,H) for the gravitational-wave data d [42].

- Specify the number of live points N (e.g., 1000 to 4000). On the GPU, this becomes the initial batch size.

- Draw the initial ensemble of N live points from the prior.

Sampling Loop:

- Identify Worst Points: Find the live point with the lowest likelihood value, L_min. In the batched GPU implementation, this step is performed across the entire ensemble simultaneously [42].

- Manage Live Points: Remove the worst point(s) from the live set and add them to a set of "dead" points. The GPU implementation uses a batched removal strategy, which creates a "saw-tooth" pattern in the number of live points, requiring a specific correction factor for evidence calculation [43].

- Generate New Points: Replace the removed points by drawing new samples from the prior subject to the constraint L_new > L_min. This is the core of the acceptance-walk kernel [42]:

  - Parallel MCMC Walks: Launch hundreds of Differential Evolution MCMC walks concurrently on the GPU. Each walk explores the parameter space independently from a different starting point within the live set [43].

  - Adaptive Tuning: Modify the MCMC walk length (number of steps) adaptively at the batch level to ensure uniform workload across GPU threads and prevent thread divergence [43].

- Iterate: Repeat the sampling loop until a predefined evidence tolerance or maximum iteration count is reached.

Post-processing:

Workflow Visualization

Diagram 1: GPU Nested Sampling Workflow.

Protocol: Differentiable Simulation with Large Population Models

This protocol outlines the methodology for constructing and calibrating a differentiable Large Population Model (LPM) using frameworks like AgentTorch [31].

Research Reagent Solutions

Table 4: Key Components for Differentiable Large Population Models

| Item Name | Function/Brief Explanation |
|---|---|
| AgentTorch Framework | An open-source framework that provides the core components for building LPMs, supporting GPU acceleration, differentiable environments, and neural network composition [31]. |
| PyTorch/TensorFlow (JAX) | Deep learning libraries with automatic differentiation capabilities that form the backbone of the differentiable simulation environment [31]. |
| Real-world Data Streams | Heterogeneous data (e.g., epidemiological case counts, mobility data) used for model calibration and data assimilation [31]. |
| Gradient-based Optimizer | An optimizer (e.g., Adam, SGD) used to minimize a loss function by adjusting model parameters based on gradients propagated through the simulation steps [31]. |

Procedure

Model Specification:

- Agent State Definition: Define the state vector s_i(t) for each agent i at time t, containing both static and dynamic properties (e.g., health status, location) [31].

- Interaction Network: Define the neighborhood N_i(t) for each agent, which can be a graph or based on proximity.

- Update Rules: Formalize the state update function f (Eq. 1) and environment update function g (Eq. 2). The function f determines how an agent's new state is computed from its current state, messages from neighbors, and environmental factors [31].

- Message Passing: Define the message function M that computes the information exchange m_ij(t) between interacting agents i and j [31].

Implementation for GPU:

- Tensorization: Represent the states of all agents as a single state tensor s(t), and all agent-agent interactions as an interaction tensor. This allows the update functions f and g to be applied simultaneously to all agents/edges in a single, vectorized operation [31].

- Gradient Tracing: Ensure the entire simulation computation graph is built using operations from a differentiable programming library (e.g., JAX, PyTorch), enabling gradient flow from outputs back to model parameters θ [31].
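A tensorized update of this kind might look like the JAX sketch below. It does not use the AgentTorch API: the contagion-style rule, the edge-list representation (`senders`, `receivers`), and the parameters `beta` and `gamma` are all assumptions chosen for illustration. The agent state is a continuous infection level so that every operation stays differentiable.

```python
import jax
import jax.numpy as jnp

def step(state, senders, receivers, theta):
    """One synchronous update of all agents.

    state:     (n_agents,) infection level in [0, 1] for every agent
    senders,
    receivers: (n_edges,) integer arrays; edge e carries a message from
               senders[e] to receivers[e]
    theta:     dict with transmission rate 'beta' and recovery rate 'gamma'
    """
    # Message function M, evaluated for every edge at once.
    messages = theta["beta"] * state[senders]
    # Aggregate incoming messages per receiving agent.
    exposure = jax.ops.segment_sum(messages, receivers, num_segments=state.shape[0])
    # Update function f, applied to the whole state tensor in one operation.
    p_infect = 1.0 - jnp.exp(-exposure)
    new_state = state + (1.0 - state) * p_infect - theta["gamma"] * state
    return jnp.clip(new_state, 0.0, 1.0)

# Three agents on a line (0 - 1 - 2), with each contact stored in both directions.
senders = jnp.array([0, 1, 1, 2])
receivers = jnp.array([1, 0, 2, 1])
state = jnp.array([1.0, 0.0, 0.0])  # only agent 0 starts infected
theta = {"beta": 0.5, "gamma": 0.1}
new_state = step(state, senders, receivers, theta)
```

Because the per-edge and per-agent work is expressed as array gathers and a segment sum, the same function applies unchanged to millions of agents, and the accelerator parallelizes over edges and agents without an explicit loop.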

Calibration & Data Assimilation:

- Define Loss Function: Construct a loss function that quantifies the discrepancy between the model's aggregate outputs x_t = h(s(t)) and observed real-world data y_t (e.g., mean squared error) [31].

- Gradient-based Optimization: Use backpropagation through time (BPTT) to compute the gradient of the loss function with respect to the model parameters θ. Update θ using a gradient-based optimizer to minimize the loss [31].
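The calibration loop can be sketched end to end on a deliberately small example. The code below assumes a well-mixed SIR-style rollout in place of a full agent-based model, synthetic observations generated from a known transmission rate, and plain gradient descent instead of Adam. The names `simulate` and `loss` and all numerical settings are hypothetical.

```python
import jax
import jax.numpy as jnp

def simulate(log_beta, n_steps=30, gamma=0.1, i0=0.01):
    """Deterministic SIR-style rollout; returns the infected fraction x_t per step."""
    beta = jnp.exp(log_beta)  # optimise in log space so beta stays positive
    def body(carry, _):
        s, i = carry
        new_inf = beta * s * i
        s_next = s - new_inf
        i_next = i + new_inf - gamma * i
        return (s_next, i_next), i_next
    init = (jnp.asarray(1.0 - i0, dtype=beta.dtype), jnp.asarray(i0, dtype=beta.dtype))
    _, infected = jax.lax.scan(body, init, None, length=n_steps)
    return infected

def loss(log_beta, observed):
    # Mean squared error between simulated x_t and observed y_t.
    return jnp.mean((simulate(log_beta) - observed) ** 2)

# Synthetic "observations" from a known beta (illustration only, not real data).
true_beta = 0.4
observed = simulate(jnp.log(true_beta))

# Reverse-mode differentiation through lax.scan is backpropagation through time.
grad_fn = jax.jit(jax.value_and_grad(loss))
initial_log_beta = jnp.log(0.2)
log_beta = initial_log_beta
learning_rate = 0.5
for _ in range(500):
    value, grad = grad_fn(log_beta, observed)
    log_beta = log_beta - learning_rate * grad

fitted_beta = float(jnp.exp(log_beta))
```

Optimizing `log_beta` rather than `beta` is a design choice that keeps the rate positive without a constraint. In a real calibration the same loop would wrap the tensorized agent update, with an optimizer such as Adam replacing the fixed learning rate.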

Diagram 2: Differentiable LPM Calibration.

The inference of past population size history from genetic data is a cornerstone of population genetics, providing crucial insights into evolutionary events such as migrations, bottlenecks, and expansions. Population History Learning by Averaging Sampled Histories (PHLASH) represents a significant methodological advance in this field, enabling full Bayesian inference of population size trajectories from whole-genome sequence data [20]. This approach combines the strengths of coalescent-based modeling with modern computational techniques to address longstanding limitations in demographic inference.

Traditional methods like the Pairwise Sequentially Markovian Coalescent (PSMC) have been instrumental in analyzing single diploid genomes but suffer from visual bias ("stair-step" appearance) and an inability to leverage data from multiple samples [20]. Subsequent methods attempted to address these limitations but often faced computational constraints, particularly with Bayesian approaches. PHLASH distinguishes itself by providing a nonparametric estimator that adapts to variability in the underlying size history without user intervention, while simultaneously offering automatic uncertainty quantification through full posterior distribution estimation [20] [47].

The methodological innovation of PHLASH is particularly relevant for researchers studying population dynamics across various species, as it has been validated on a diverse panel of eight species from the stdpopsim catalog, including Anopheles gambiae, Arabidopsis thaliana, and Homo sapiens [20]. By leveraging GPU acceleration and an efficient Python implementation, PHLASH achieves computational speeds that enable previously impractical analyses of large genomic datasets.

Key Methodological Innovations

Core Algorithmic Advancements

At the heart of PHLASH lies a novel algorithmic breakthrough that enables efficient Bayesian inference for coalescent hidden Markov models (HMMs). The key innovation is a new technique for computing the score function (gradient of the log-likelihood) of a coalescent HMM, which has the same computational complexity as evaluating the log-likelihood itself [20] [47]. This technical advancement enables the method to efficiently navigate high-dimensional parameter spaces and locate regions of high posterior probability.

The PHLASH algorithm operates by drawing random, low-dimensional projections of the coalescent intensity function from the posterior distribution of a PSMC-like model and averaging them together to form an accurate and adaptive size history estimator [47]. This Bayesian averaging approach provides several advantages over previous methods:

- Automatic adaptation to variability in the underlying size history without manual tuning

- Uncertainty quantification through full posterior distribution estimation

- Robust point estimates via the posterior median of sampled size histories

- Enhanced detection capabilities for population structure and ancient bottlenecks through formal Bayesian testing procedures [20]
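The averaging step can be illustrated with a small JAX sketch. It is not the PHLASH implementation: it assumes each posterior sample is a piecewise-constant size history with its own breakpoints, evaluates every sample on a shared time grid, and takes pointwise quantiles. The function names and the three hand-written samples are hypothetical.

```python
import jax.numpy as jnp

def evaluate_history(breakpoints, sizes, grid):
    """Evaluate a piecewise-constant size history N(t) on a time grid.

    breakpoints: (k,) increasing interior change times
    sizes:       (k + 1,) population size in each interval
    """
    return sizes[jnp.searchsorted(breakpoints, grid, side="right")]

def summarize(histories_on_grid, level=0.95):
    """Pointwise posterior median and central credible band across samples."""
    alpha = (1.0 - level) / 2.0
    q = jnp.quantile(histories_on_grid, jnp.array([alpha, 0.5, 1.0 - alpha]), axis=0)
    return q[1], q[0], q[2]

# Three made-up posterior samples, each with different breakpoints.
samples = [
    (jnp.array([100.0, 1000.0]), jnp.array([1.0e4, 2.0e4, 5.0e3])),
    (jnp.array([200.0, 2000.0]), jnp.array([1.2e4, 1.8e4, 6.0e3])),
    (jnp.array([50.0, 500.0]), jnp.array([0.9e4, 2.2e4, 4.0e3])),
]
grid = jnp.array([10.0, 150.0, 300.0, 5000.0])
on_grid = jnp.stack([evaluate_history(b, s, grid) for b, s in samples])
median, lower, upper = summarize(on_grid)
```

Because the samples place their change points at different times, the pointwise summary varies more smoothly than any single step function, and the lower and upper quantiles give a credible band at every grid time.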

GPU-Accelerated Computational Framework

PHLASH leverages modern graphics processing unit (GPU) hardware to achieve dramatic computational acceleration. The implementation uses a hand-tuned codebase that exploits the parallel processing capabilities of GPUs, so that inference can run faster than established, optimized methods [47]. This hardware-aware design follows a broader trend in scientific computing, where GPU acceleration is revolutionizing Bayesian inference across domains, from astrophysics to genomics [48] [1] [16].

The computational efficiency of PHLASH stems from several design principles:

- Massively parallel execution of computationally intensive operations across GPU cores

- Efficient memory management strategies optimized for GPU architecture

- Conditional independence exploitation between genomic regions, enabling parallel processing

- Optimized data structures that minimize data transfer between host and device memory

This GPU-accelerated framework enables researchers to perform full Bayesian inference on large genomic datasets in practical timeframes, opening new possibilities for population genetic analysis.
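The role of conditional independence between genomic regions can be illustrated with a generic hidden Markov model. The sketch below is an analogy only: it uses a toy 2-state, 2-symbol HMM rather than a coalescent HMM, and it obtains the score by reverse-mode automatic differentiation, which is a stand-in and not the dedicated score algorithm described in [20] [47]. All names and the parameterization are assumptions.

```python
import jax
import jax.numpy as jnp
from jax.scipy.special import logsumexp

def chunk_log_likelihood(params, obs):
    """Forward algorithm in log space for one chunk of a 2-state, 2-symbol HMM."""
    log_trans = jax.nn.log_softmax(params["trans"], axis=1)  # (states, states)
    log_emit = jax.nn.log_softmax(params["emit"], axis=1)    # (states, symbols)
    log_init = jnp.log(jnp.full(2, 0.5))

    def body(log_alpha, x):
        # log_alpha[j] <- logsum_i(log_alpha[i] + log_trans[i, j]) + log_emit[j, x]
        nxt = logsumexp(log_alpha[:, None] + log_trans, axis=0) + log_emit[:, x]
        return nxt, None

    log_alpha0 = log_init + log_emit[:, obs[0]]
    log_alpha, _ = jax.lax.scan(body, log_alpha0, obs[1:])
    return logsumexp(log_alpha)

def total_log_likelihood(params, chunks):
    # Chunks are treated as conditionally independent, so their log-likelihoods
    # are evaluated as one batched computation and then summed.
    per_chunk = jax.vmap(chunk_log_likelihood, in_axes=(None, 0))(params, chunks)
    return jnp.sum(per_chunk)

# Log-likelihood and its gradient (the score) in a single call.
score_fn = jax.jit(jax.value_and_grad(total_log_likelihood))

params = {"trans": jnp.zeros((2, 2)), "emit": jnp.zeros((2, 2))}
chunks = (jnp.arange(200) % 2).reshape(4, 50)  # 4 chunks of 50 binary observations
value, grads = score_fn(params, chunks)
```

The sequential dependence lives inside each chunk's scan, while `jax.vmap` spreads the chunks across the device, so the available parallelism grows with the number of independent regions.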

Performance Benchmarks and Comparative Analysis

Experimental Design and Evaluation Metrics

The performance of PHLASH was evaluated against three established methods (SMC++, MSMC2, and FITCOAL) across a panel of 12 demographic models from the stdpopsim catalog [20]. The benchmark encompassed eight species to ensure broad biological applicability, with whole-genome data simulated for diploid sample sizes of n ∈ {1, 10, 100}. Each scenario was replicated three times, resulting in 108 distinct simulation runs (12 models × 3 sample sizes × 3 replicates).

Method performance was assessed using multiple accuracy metrics, with root mean-square error (RMSE) serving as the primary quantitative measure. The RMSE was calculated as:

\[ \text{RMSE}^{2} = \int_{0}^{\log T} \left[ \log \hat{N}_{e}(e^{u}) - \log N_{0}(e^{u}) \right]^{2} \, \mathrm{d}u \]

where \(N_{0}(t)\) represents the true historical effective population size used to simulate data, and T is a time cutoff set to 10^6 generations [20]. This metric emphasizes accuracy in the recent past and for smaller values of \(N_{e}\), which are typically more challenging to estimate precisely.
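The RMSE integral over log-time can be approximated numerically, as in the minimal JAX sketch below. The grid resolution, the explicit trapezoidal rule, and the function name `log_rmse` are assumptions rather than details of the published benchmark; the two size histories are passed as callables of time in generations.

```python
import jax.numpy as jnp

def log_rmse(n_hat, n_true, t_max=1e6, n_grid=2001):
    """RMSE between log size histories, integrated over u = log t on [0, log T]."""
    u = jnp.linspace(0.0, jnp.log(t_max), n_grid)
    t = jnp.exp(u)
    sq_err = (jnp.log(n_hat(t)) - jnp.log(n_true(t))) ** 2
    # Trapezoidal rule written out explicitly.
    integral = jnp.sum(0.5 * (sq_err[1:] + sq_err[:-1]) * jnp.diff(u))
    return jnp.sqrt(integral)

# Example: an estimate that is uniformly twice the true (constant) size.
n_true = lambda t: 1.0e4 * jnp.ones_like(t)
n_hat = lambda t: 2.0e4 * jnp.ones_like(t)
rmse = log_rmse(n_hat, n_true)
```

For a constant multiplicative error c, the integral reduces to |log c| · sqrt(log T), which gives a quick check on the numerical result. A uniform grid in u = log t places half of the grid points at times below 1,000 generations, which is how the metric comes to emphasize the recent past.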

Comparative Performance Results