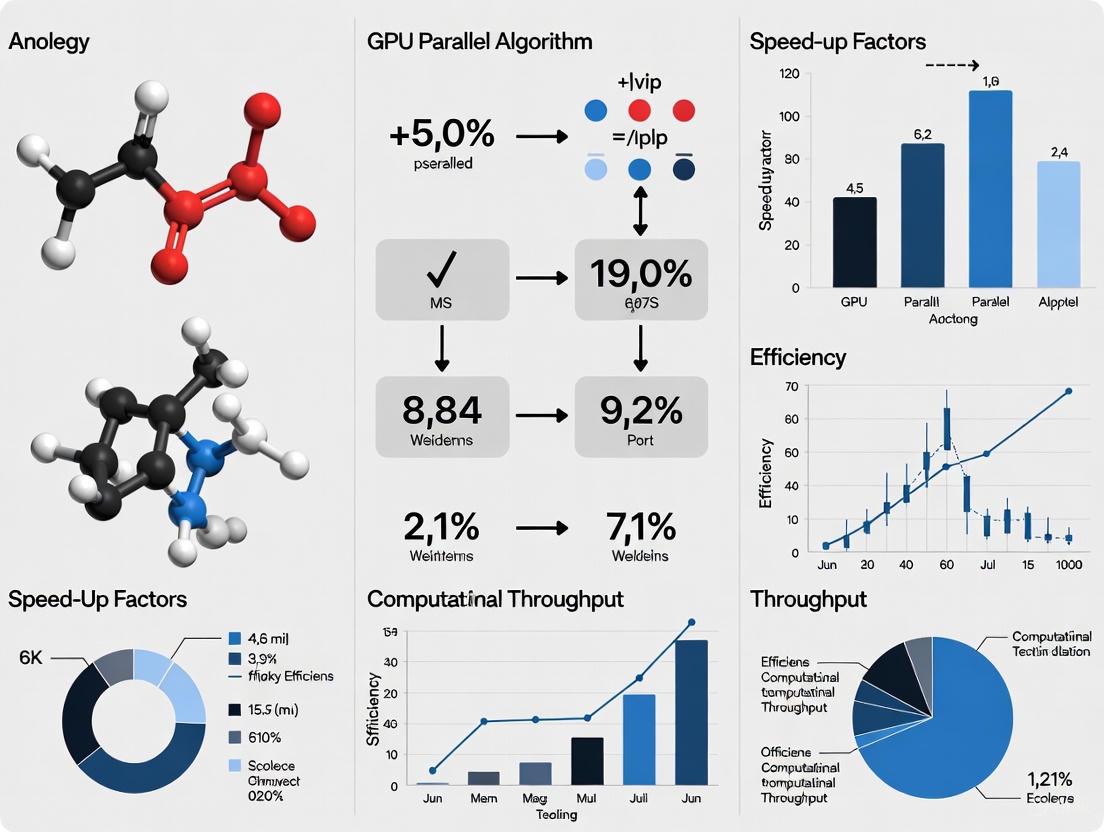

GPU Parallel Algorithm Performance Analysis: Essential Formulas and Optimization Techniques for Drug Discovery


Abstract

This article provides a comprehensive guide to performance analysis and optimization of GPU parallel algorithms, tailored for researchers and professionals in drug development. We cover foundational performance models and metrics, explore applications in molecular docking and dynamics, detail troubleshooting steps and optimization formulas for memory and compute bottlenecks, and present methodologies for rigorous validation and cost-benefit analysis. Together, these topics form a practical framework for maximizing computational efficiency in biomedical research, enabling faster and more cost-effective discovery pipelines.

Core Principles and Performance Metrics for GPU Acceleration

▎Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a CPU core and a CUDA core?

A CPU core is designed for complex, latency-sensitive tasks and runs at high clock speeds (e.g., ~3.4 GHz). It supports out-of-order and speculative execution and typically has a private L1 cache and sometimes a private L2 cache [1].

A CUDA core is a simpler, less powerful core focused on repetitive number-crunching. It runs at a lower clock speed (e.g., 1.4-2.0 GHz) and is optimized for massive parallel scalar operations. It does not have its own dedicated cache, instead sharing L1 cache and other resources within its Streaming Multiprocessor (SM) [1].

Q2: How do Tensor Cores differ from CUDA Cores, and why are they crucial for AI research?

CUDA Cores handle general-purpose parallel computing, performing scalar arithmetic operations like single-precision (FP32) floating-point calculations [2] [1].

Tensor Cores are specialized hardware units designed exclusively to accelerate matrix multiply-and-accumulate (MMA) operations (D = A x B + C), which are fundamental to deep learning training and inference [2] [3]. Each Tensor Core works on small matrix tiles; a first-generation (Volta) Tensor Core, for example, completes one 4x4x4 MMA per clock cycle, giving far higher throughput for matrix math than CUDA cores alone. This specialization makes them the bedrock of modern AI and machine learning [3].
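To make D = A x B + C concrete, the sketch below computes one 4x4x4 tile on the CPU and turns the per-clock FLOP count into a peak-throughput estimate. It is a reference model of the arithmetic, not Tensor Core code; the type and function names are illustrative, and the 1.53 GHz boost clock in the usage note is an assumed V100 value.

```cpp
#include <array>
#include <cassert>

// Reference semantics of one Tensor Core operation, D = A x B + C,
// on a 4x4x4 tile (the Volta-generation shape described above).
using Tile4 = std::array<std::array<float, 4>, 4>;

Tile4 mma4x4x4(const Tile4& A, const Tile4& B, const Tile4& C) {
    Tile4 D{};
    for (int i = 0; i < 4; ++i)
        for (int j = 0; j < 4; ++j) {
            float acc = C[i][j];                 // start from the accumulator input
            for (int k = 0; k < 4; ++k)
                acc += A[i][k] * B[k][j];        // one multiply-add = 2 FLOPs
            D[i][j] = acc;
        }
    return D;
}

// 4 * 4 * 4 = 64 multiply-adds = 128 FLOPs per tile.
constexpr double kFlopsPerMma = 2.0 * 4 * 4 * 4;

// Peak Tensor Core throughput in TFLOPS, assuming each Tensor Core
// completes one 4x4x4 MMA per clock.
double peak_tensor_tflops(int sms, int tensor_cores_per_sm, double clock_ghz) {
    return sms * tensor_cores_per_sm * kFlopsPerMma * clock_ghz * 1e9 / 1e12;
}
```

With 80 SMs and 8 Tensor Cores per SM (the V100 figures in the specification table), `peak_tensor_tflops(80, 8, 1.53)` returns about 125.3, close to the 125 FP16 TFLOPS listed for V100 in Table 1.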

Q3: What do "warp" and "thread block" mean in CUDA programming?

In the CUDA threading model, the thread is the smallest unit of execution [1].

- A warp is a group of 32 threads within a thread block. The GPU's warp scheduler executes all 32 threads in a warp simultaneously using the Single Instruction, Multiple Threads (SIMT) model, meaning they all execute the same instruction on different data elements [2] [1] [4].

- A thread block (or Cooperative Thread Array) is a larger group of threads (up to 1024) that can cooperate by synchronizing their execution and efficiently sharing data through a fast, low-latency shared memory [2] [1]. A thread block is executed on a single SM [1].
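The warp grouping above follows a fixed rule: threads in a block are numbered x-fastest, then y, then z, and each run of 32 consecutive numbers forms one warp. The host-side sketch below reproduces that arithmetic so you can check which threads share a warp; `Dim3` is a stand-in for CUDA's built-in `dim3`, and the function names are illustrative.

```cpp
#include <cassert>

constexpr int kWarpSize = 32;

// Stand-in for CUDA's dim3 (hypothetical helper type for this sketch).
struct Dim3 { int x, y, z; };

// Linear thread index within a block: x varies fastest, then y, then z.
int linear_thread_index(Dim3 tid, Dim3 blockDim) {
    return tid.x + tid.y * blockDim.x + tid.z * blockDim.x * blockDim.y;
}

// Consecutive groups of 32 linear indices form one warp.
int warp_id(Dim3 tid, Dim3 blockDim) { return linear_thread_index(tid, blockDim) / kWarpSize; }
int lane_id(Dim3 tid, Dim3 blockDim) { return linear_thread_index(tid, blockDim) % kWarpSize; }

// Warps a block occupies; a partially filled warp still takes a whole slot.
int warps_per_block(Dim3 blockDim) {
    int threads = blockDim.x * blockDim.y * blockDim.z;
    return (threads + kWarpSize - 1) / kWarpSize;
}
```

Inside a kernel the same values come from `threadIdx`, `blockDim`, and the built-in `warpSize`. For a 16x16 block, thread (3, 2) has linear index 35 and is lane 3 of warp 1; a 100-thread block still occupies 4 warps, the last one only partly filled.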

Q4: My CUDA program is compiling but failing to run, reporting "CUDA driver version is insufficient for CUDA runtime version". How do I fix this?

This error indicates a mismatch between your installed NVIDIA driver and the version of the CUDA toolkit you are using [5]. To resolve it:

- Check the driver version on your system by opening a command prompt and typing `nvidia-smi`. Its header shows the driver version and the highest CUDA version that driver supports; `nvcc --version` shows the toolkit version you are compiling with.

- Consult the NVIDIA CUDA Toolkit release notes to verify the minimum required driver version for your specific CUDA toolkit version.

- If your driver is outdated, download and install, from the official NVIDIA website, a driver that meets or exceeds the requirement [5].
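If you prefer to check versions programmatically, the CUDA runtime functions `cudaDriverGetVersion()` and `cudaRuntimeGetVersion()` return integers encoded as 1000 * major + 10 * minor (12020 means 12.2). The sketch below decodes those integers and applies a simplified compatibility rule. It leaves the CUDA calls in a comment so it compiles without a GPU, and the helper names are hypothetical.

```cpp
#include <cassert>

// CUDA encodes versions as 1000 * major + 10 * minor, e.g. 12020 == 12.2.
// In a CUDA program the raw values would come from:
//   int drv = 0, rt = 0;
//   cudaDriverGetVersion(&drv);   // latest CUDA version the driver supports
//   cudaRuntimeGetVersion(&rt);   // version of the CUDA runtime in use
struct CudaVersion {
    int major;
    int minor;
};

CudaVersion decode_cuda_version(int encoded) {
    return {encoded / 1000, (encoded % 1000) / 10};
}

// Simplified rule (hypothetical helper): the driver must support at least the
// runtime's version. It ignores CUDA 11+ minor-version compatibility, under
// which an older driver of the same major release may still run the program
// with some limitations.
bool driver_is_sufficient(int driver_encoded, int runtime_encoded) {
    if (driver_encoded == 0) return false;  // 0 means no driver is installed
    return driver_encoded >= runtime_encoded;
}
```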

Q5: My GPU computation results are correct, but performance is lower than expected. What are the first things I should check?

- Profile your application: Use tools like the NVIDIA Visual Profiler or `nvprof` to identify bottlenecks, such as excessive time spent on data transfers between the host (CPU) and device (GPU) [6] [4]. These legacy tools do not support GPUs of compute capability 8.0 and newer (Ampere onward); on those GPUs, use Nsight Systems and Nsight Compute instead.

- Analyze memory access patterns: Inefficient memory access is a major performance killer. Strive for coalesced memory accesses to global memory and leverage faster on-chip memories like shared memory and L1 cache to reduce latency [7] [8].

- Check occupancy: Ensure you are launching a sufficient number of threads to keep the GPU's many cores busy. Tools like the CUDA Occupancy Calculator can help with this [1] [4].

▎Troubleshooting Guides

Issue 1: CUDA Compilation and Installation Errors

Problem: Errors during the installation of the CUDA toolkit or when compiling CUDA code.

| Error Symptom | Possible Cause | Solution |
|---|---|---|
| `nvcc: command not found` [5] | Incorrect PATH environment variable. | Ensure the CUDA bin directory (e.g., `/usr/local/cuda/bin` on Linux, `C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\vX.Y\bin` on Windows) is added to your system's PATH [6] [5]. |
| `Unsupported GNU version!` [5] | Host compiler incompatibility. | Each CUDA version supports specific host compilers (e.g., up to a maximum GCC version). Check the CUDA documentation and install a compatible compiler. You can also specify the compiler path explicitly in your build configuration [5]. |
| `CUDA driver version is insufficient` [5] | Driver and toolkit version mismatch. | Update your NVIDIA graphics driver to the version required by your CUDA toolkit [5]. |
| Linker errors (e.g., `cannot find -lcudart`) [5] | Library search paths not set. | Pass the CUDA lib64 directory to the linker (e.g., `-L/usr/local/cuda/lib64`) and add it to `LD_LIBRARY_PATH` (Linux) so the library is also found at run time; on Windows, set the library paths in your project [6] [5]. |

Issue 2: Performance Bottlenecks in GPU-Accelerated Simulations

Problem: A GPU-accelerated simulation, such as the molecular dynamics runs central to drug development research or a computational fluid dynamics model, is running well below the GPU's theoretical peak performance.

Diagnosis and Resolution Protocol:

Identify the Bottleneck Type: Use a performance profiling tool to categorize the bottleneck [7] [8].

- Compute-bound: GPU cores are fully utilized, but computation takes too long.

- Memory-bound: The GPU is waiting for data from memory.

Apply Optimizations:

For Memory-bound Kernels:

- Utilize Shared Memory: Explicitly cache frequently accessed data from global memory into shared memory, which is ~100x faster [8]. One study on concrete temperature simulation achieved a 155% improvement in memory access efficiency using this method [8].

- Avoid Bank Conflicts: Structure data access in shared memory to avoid conflicts between threads, which can serialize accesses. Resolving this has led to speedups of 437.5x in specific matrix operations [8].

- Ensure Coalesced Memory Access: Structure your data and memory accesses so that threads in a warp access contiguous, aligned segments of global memory. This allows the GPU to combine memory requests into a single transaction [2] [4].

For Compute-bound Kernels:

- Leverage Tensor Cores: If your algorithm can be formulated as matrix multiplications (e.g., in linear solvers), using libraries that leverage Tensor Cores can provide a massive speedup [3].

- Increase Arithmetic Intensity: Reformulate the algorithm to perform more computations per data element fetched from memory.

Hide Data Transfer Overhead:

- Use Asynchronous Execution: Employ CUDA Streams to overlap data transfers between the host and device with kernel execution on the GPU. Copies overlap with kernels only when the host buffers are page-locked (for example, allocated with `cudaMallocHost`). One study reports that this overlap doubled computational efficiency for inner product matrix multiplication [8].

- The optimal number of CUDA streams can be guided by a theoretical data access overlap rate formula [8].
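The overlap-rate formula from [8] is not reproduced here. As a stand-in, the sketch below uses a generic three-stage pipeline model (copy in, compute, copy out) to show why more streams help and why the benefit levels off. It assumes the copies and the kernel can run concurrently, that each stage splits evenly into chunks, and that per-chunk launch and synchronization costs are negligible, which real applications will not fully satisfy. The function names are illustrative.

```cpp
#include <algorithm>
#include <cassert>

// Idealized three-stage pipeline (H2D copy -> kernel -> D2H copy) with the work
// split into n equal chunks, one per CUDA stream. Assumes the stages can run
// concurrently, split evenly, and add no per-chunk overhead.
double pipelined_time(double t_h2d, double t_kernel, double t_d2h, int n_streams) {
    assert(n_streams >= 1);
    const double total = t_h2d + t_kernel + t_d2h;
    const double slowest = std::max({t_h2d, t_kernel, t_d2h});
    // The first chunk passes through all three stages (total / n); each of the
    // remaining n - 1 chunks adds one slot of the slowest stage (slowest / n).
    return total / n_streams + (n_streams - 1) * slowest / n_streams;
}

// Speedup over the fully serialized (single-stream) schedule.
double overlap_speedup(double t_h2d, double t_kernel, double t_d2h, int n_streams) {
    return (t_h2d + t_kernel + t_d2h) / pipelined_time(t_h2d, t_kernel, t_d2h, n_streams);
}
```

With three equal 10 ms stages, one stream takes 30 ms, two streams 20 ms, and four streams 15 ms, a 2x speedup; no number of streams can beat the 10 ms slowest stage, so adding streams past a few mostly adds overhead.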

Experimental Protocol: Optimizing a Finite Element Solver using Shared Memory

Objective: Reduce the simulation time for a temperature field solver by optimizing its memory access.

Materials:

- GPU: NVIDIA V100, A100, or H100 [1].

- Software: CUDA Fortran or CUDA C++ platform, NVIDIA Nsight Systems profiler [8].

Methodology:

- Baseline Profiling: Run the original solver and use the profiler to confirm that the target kernel is memory-bound.

- Algorithm Analysis: Identify a specific subroutine with frequent, reusable data access patterns (e.g., matrix transposition).

- Shared Memory Implementation:

- a. Declare a block of shared memory within the CUDA kernel: `__shared__ float tile[TILE_DIM][TILE_DIM];`

- b. Have each thread in a block collaboratively load a tile of data from slow global memory into the fast shared memory tile.

- c. Synchronize all threads in the block (`__syncthreads()`) to ensure the entire tile is loaded.

- d. Allow threads to perform computations by reading from the shared memory tile.

- Bank Conflict Resolution: Structure the data access pattern within the shared memory tile so that consecutive threads access consecutive 32-bit words, preventing bank conflicts. When threads must read down a column of the tile, as in matrix transposition, pad each row by one element (`__shared__ float tile[TILE_DIM][TILE_DIM + 1];`) so that the column elements fall in different banks.

- Validation and Performance Measurement: Run the optimized kernel and verify it produces identical results to the baseline. Use the profiler to measure the reduction in global memory transactions and the overall speedup.
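The bank-conflict rule in this protocol can be checked with a little arithmetic. Assuming the default configuration of 32 banks of 4-byte words (word w maps to bank w % 32), the sketch below counts how many threads of a warp hit the busiest bank when thread t reads element [t][col] of a row-major `float` tile, which is the column access that matrix transposition produces. The function name is illustrative.

```cpp
#include <algorithm>
#include <array>
#include <cassert>

// Shared memory: 32 banks of 4-byte words; word w lives in bank w % 32.
// Different words in the same bank requested by one warp are serialized.
constexpr int kBanks = 32;

// Conflict degree (accesses serialized on the busiest bank) when the 32
// threads of a warp read column `col` of float tile[32][row_width],
// i.e. thread t reads tile[t][col].
int column_read_conflict_degree(int row_width, int col) {
    std::array<int, kBanks> hits{};
    for (int t = 0; t < 32; ++t) {
        int word = t * row_width + col;  // row-major word index of tile[t][col]
        ++hits[word % kBanks];
    }
    return *std::max_element(hits.begin(), hits.end());
}
```

A 32-wide tile gives a 32-way conflict on column reads because every thread hits the same bank, while padding each row to 33 words spreads the 32 reads across all 32 banks.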

▎GPU Architecture & Performance Data

Key Specifications of NVIDIA Data Center GPUs

Table: A comparison of key hardware specifications across three generations of NVIDIA data center GPUs. [1]

| Component / GPU Model | NVIDIA V100 (Volta) | NVIDIA A100 (Ampere) | NVIDIA H100 (Hopper) |
|---|---|---|---|
| Streaming Multiprocessors (SMs) | 80 | 108 | 132 |
| FP32 CUDA Cores (per SM) | 64 | 64 | 128 |
| Total FP32 CUDA Cores | ~5,120 | ~6,912 | ~16,896 |
| Tensor Cores (per SM) | 8 | 4 (3rd Gen) | 4 (4th Gen) |
| Shared Memory / L1 Cache (per SM) | 128 KB | 192 KB | 256 KB |
| L2 Cache (total) | 6,144 KB | 40,960 KB | 61,440 KB |
| Memory (total) | 32 GB HBM2 | 80 GB HBM2e | 96 GB HBM3 |
| Memory Bandwidth | ~900 GB/s | ~2,000 GB/s | ~3,350 GB/s |
| NVLink Bandwidth | 300 GB/s | 600 GB/s | 900 GB/s |

GPU Memory Hierarchy Characteristics

Table: The performance and scope of different memory types in the NVIDIA GPU hierarchy. [1] [4]

| Memory Type | Location | Scope | Latency & Bandwidth | Key Function |
|---|---|---|---|---|
| Registers | On-chip (SM) | Single Thread | Fastest | Stores thread-local variables and operands for immediate operations. |
| Shared Memory | On-chip (SM) | All threads in a Block | Very Low / Very High | User-managed cache for inter-thread communication within a block. |
| L1 Cache | On-chip (SM) | All threads in an SM | Low / High | Hardware-managed cache for automatic storage of frequently accessed data. |
| L2 Cache | On-chip (GPU) | All SMs on the GPU | Medium / High | Unified cache that serves all memory operations, bridging SMs to DRAM. |
| Global Memory | Off-chip (HBM) | All grids on the GPU | High (Latency) / High (Bandwidth) | Main GPU memory; large but high-latency. Requires coalesced access. |
| Constant Memory | Off-chip (Cached) | All grids on the GPU | High (if cache miss) | Cached read-only memory for constants that are broadcast to multiple threads. |


▎The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key hardware and software components for GPU-accelerated research in computational drug development.

| Item | Function & Relevance to Research |
|---|---|
| NVIDIA Data Center GPU (A100/H100) | Provides the core computational hardware with thousands of CUDA cores and dedicated Tensor Cores for accelerating both general-purpose simulations and specific AI/deep learning tasks like molecular docking or protein folding prediction [1] [3]. |
| CUDA Toolkit | The essential software development platform containing the nvcc compiler, debugging and profiling tools, and core libraries (e.g., cuBLAS, cuSOLVER) necessary for building and optimizing GPU-accelerated applications [6] [5]. |
| cuDNN Library | A highly tuned library for deep learning primitives (e.g., convolutions, RNNs). Critical for achieving peak performance when training or running inference with neural network models on NVIDIA GPUs [3]. |
| NVIDIA Nsight Tools | An integrated suite of performance analysis tools, including Nsight Systems for application-level profiling and Nsight Compute for detailed kernel analysis. Used to identify bottlenecks in compute and memory usage [6]. |
| OpenMP / OpenACC | Directive-based programming models that enable parallelization of existing C++/Fortran code for GPUs with less effort than low-level CUDA C++, facilitating faster porting of scientific simulations [4]. |
| Host System Memory (RAM) | Sufficient CPU RAM is critical for handling large datasets before they are transferred to the GPU. Inadequate RAM can become a system-level bottleneck [4]. |
| NVLink Interconnect | A high-bandwidth, energy-efficient GPU-to-GPU interconnect that enables scalable multi-GPU systems, which are essential for tackling very large problems that exceed the memory capacity of a single GPU [1]. |

Frequently Asked Questions (FAQs)

What is the relationship between FLOP/s and Memory Bandwidth, and how do they determine GPU performance?

FLOP/s (Floating-Point Operations Per Second) and Memory Bandwidth (GB/s) are the two primary hardware limits that define GPU performance. Their interaction determines whether a computation is compute-bound or memory-bound [9] [10].

A kernel's performance is governed by its Arithmetic Intensity (AI), which is the ratio of total FLOPs to total bytes accessed from global memory [10]. The "ridge point" is the AI where the GPU's peak compute and memory bandwidth limits intersect [10]. For example, an NVIDIA A100 with 19.5 TFLOPS FP32 performance and 1.5 TB/s memory bandwidth has a ridge point at approximately 13 FLOPs/Byte [10]. Kernels with an AI below this value are memory-bound, while those above it are compute-bound.

My GPU is not achieving its theoretical peak FLOP/s. What are the common causes?

Failing to reach peak FLOP/s is often due to your workload operating in the wrong performance regime or suffering from overheads. Common causes include:

- Memory-Bound Workloads: If your kernel's Arithmetic Intensity is too low, performance is limited by memory bandwidth, not compute power. The time spent waiting for data from memory prevents the compute units from being fully utilized [9] [10].

- Inefficient Compute Operations: Even with high AI, using slow instruction types (e.g., scalar arithmetic, transcendental functions like `sin` or `exp`) can result in performance far below the peak "compute roof" [10].

- Host-Side Overhead: The GPU can be underutilized if the CPU cannot prepare and dispatch kernels fast enough. This is often caused by launching too many small kernels, where GPU execution time is overshadowed by CPU dispatch overhead [10].

- Low Parallelism: Insufficient threads and thread blocks can lead to poor GPU utilization, as the hardware relies on massive parallelism to hide instruction and memory latency [9].

How can I quickly estimate if my application is memory-bound or compute-bound?

You can use the Roofline Model for a first-order analysis [10]. Follow this methodology:

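- Calculate Your Kernel's Arithmetic Intensity (AI): For your algorithm, calculate AI = Total_FLOPs / Total_Bytes_Accessed_from_Global_Memory [10].

- Plot on the Roofline: On a log-log plot of performance (FLOP/s) against AI, the GPU's performance limit is defined by a diagonal line (memory bandwidth limit) and a horizontal line (peak compute limit) that meet at the ridge point.

- Determine the Bound:

- If your kernel's AI lies to the left of the ridge point, under the diagonal roof, it is memory-bound.

- If it lies to the right of the ridge point, under the horizontal roof, it is compute-bound.

- If its measured performance sits far below whichever roof applies, neither limit is being reached, and latency, low parallelism, or launch overhead is the likely cause.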

For a precise measurement, use profiling tools like NVIDIA Nsight Systems and Nsight Compute to identify the specific bottleneck [11].
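The roofline check reduces to two formulas: ridge point = peak FLOP/s / bandwidth, and attainable performance = min(peak FLOP/s, AI x bandwidth). The sketch below encodes them, using the A100 FP32 figures quoted earlier (19.5 TFLOPS, 1.5 TB/s) in the usage note. The struct and function names are illustrative, and the roofline gives an upper bound, not a prediction.

```cpp
#include <algorithm>
#include <cassert>

// Roofline model: attainable FLOP/s = min(peak compute, AI * memory bandwidth).
struct Gpu {
    double peak_tflops;    // peak compute, TFLOP/s
    double bandwidth_tbs;  // memory bandwidth, TB/s
};

// Ridge point in FLOPs/byte: the AI at which the two roofs meet.
double ridge_point(const Gpu& g) { return g.peak_tflops / g.bandwidth_tbs; }

// Upper bound on performance (TFLOP/s) for a kernel with the given AI.
double attainable_tflops(const Gpu& g, double ai) {
    return std::min(g.peak_tflops, ai * g.bandwidth_tbs);
}

bool is_memory_bound(const Gpu& g, double ai) { return ai < ridge_point(g); }

// Fraction of its roofline bound that a measured kernel actually reached.
double roofline_efficiency(const Gpu& g, double ai, double measured_tflops) {
    return measured_tflops / attainable_tflops(g, ai);
}
```

For the A100 figures the ridge point is 13 FLOPs/byte. A kernel with AI = 0.25 can reach at most 0.375 TFLOPS, so measuring 0.3 TFLOPS means it already runs at 80% of its memory roof, and further gains must come from raising its AI.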

What techniques can I use to optimize a memory-bound kernel?

The primary strategy is to increase the Arithmetic Intensity (AI) of your kernel by reusing data once it's been loaded into the GPU [10]. Key techniques include:

- Leverage Fast On-Chip Memory: Manually cache frequently accessed data from global memory into the much faster Shared Memory (SRAM). This allows threads within a block to cooperatively load a tile of data and perform multiple operations on it, drastically reducing trips to slow global memory [10].

- Optimize Memory Access Patterns: Ensure that memory accesses by threads in a warp are coalesced (i.e., accessing contiguous, aligned memory locations). This maximizes the efficiency of each memory transaction [12].

- Use Hardware-Aware Algorithms: Implement algorithms like FlashAttention, which are explicitly designed to exploit the GPU's memory hierarchy, minimizing reads and writes to global memory [13] [11].
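To see why coalescing matters, the sketch below counts the 32-byte sectors that one warp's load touches when thread t reads element base + t * stride. It is a simplified model that ignores caching and architecture-specific request splitting, and the function names are illustrative.

```cpp
#include <cassert>
#include <set>

// Global memory is fetched in 32-byte sectors. Count the distinct sectors one
// warp touches when thread t loads element (base + t * stride).
constexpr int kSectorBytes = 32;

int sectors_per_warp_load(long base, long stride, int elem_bytes = 4) {
    std::set<long> sectors;
    for (int t = 0; t < 32; ++t) {
        long byte_addr = (base + t * stride) * elem_bytes;
        sectors.insert(byte_addr / kSectorBytes);
    }
    return static_cast<int>(sectors.size());
}

// Fraction of fetched bytes that the warp actually uses.
double load_efficiency(long base, long stride, int elem_bytes = 4) {
    double useful = 32.0 * elem_bytes;
    return useful / (sectors_per_warp_load(base, stride, elem_bytes) * kSectorBytes);
}
```

Unit-stride 4-byte loads touch 4 sectors and use every fetched byte; a stride of 2 doubles the sectors and halves efficiency; a stride of 32 elements touches 32 sectors and uses only 1/8 of the data fetched. Shifting a unit-stride access by one element adds a fifth sector.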

How do latency and throughput relate to FLOP/s and bandwidth in GPU computing?

- Latency: The time delay between the start of a request (e.g., a memory load or a kernel launch) and when the result is available. Low latency is critical for interactive applications [11].

- Throughput: The total amount of work completed per unit of time (e.g., tokens/second for LLM inference, images/second for training). High throughput is the primary goal for batch-processing large datasets [11].

FLOP/s is a measure of computational throughput, while memory bandwidth is a measure of data transfer throughput. A GPU is architected for massive throughput via parallel execution of thousands of threads. High latency operations (like a global memory access) can be hidden as long as there is sufficient parallel work (high throughput) to keep the cores busy [9].

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Memory Bandwidth Saturation

Symptoms: Your application's performance correlates strongly with memory bandwidth and does not improve with increased clock speeds. Profiling tools show high DRAM utilization and memory-bound warnings.

Methodology:

- Profile to Confirm: Use `nvidia-smi` to monitor memory utilization (it reports the percentage of time device memory was being read or written, a coarse proxy for bandwidth pressure) and a profiler like NVIDIA Nsight Systems to confirm the kernel is memory-bound [11].

- Analyze Access Patterns: Check the profiler for uncoalesced memory access patterns, which manifest as inefficient memory transactions.

- Implement Tiling: Redesign your kernel to use shared memory. The general approach is [10]:

- Load a block or "tile" of input data from global memory into shared memory.

- Synchronize all threads in the block to ensure the tile is fully loaded.

- Perform computations on the data from shared memory.

- Write the final results back to global memory.

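Experimental Protocol (Matrix Multiplication Tiling): Matrix multiplication is the classic target for these steps. Once a tile of each input matrix is in shared memory, each element in it is reused across an entire row or column of the output tile, which relieves memory bandwidth saturation.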
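The four tiling steps can be rehearsed on the CPU before writing the CUDA kernel. The sketch below is a host-side analogue, not GPU code: local arrays `tA` and `tB` stand in for `__shared__` tiles, comments mark where `__syncthreads()` would go, and each element copied into a tile is reused TILE times. `TILE = 16` and the function names are illustrative choices; the naive version is included so results can be validated against a baseline.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Host-side analogue of a shared-memory tiled matmul (not GPU code).
// Row-major n x n matrices; n need not be a multiple of TILE.
constexpr int TILE = 16;  // illustrative tile size

std::vector<float> matmul_naive(const std::vector<float>& A,
                                const std::vector<float>& B, int n) {
    std::vector<float> C(static_cast<std::size_t>(n) * n, 0.0f);
    for (int i = 0; i < n; ++i)
        for (int j = 0; j < n; ++j) {
            float acc = 0.0f;
            for (int k = 0; k < n; ++k) acc += A[i * n + k] * B[k * n + j];
            C[i * n + j] = acc;
        }
    return C;
}

std::vector<float> matmul_tiled(const std::vector<float>& A,
                                const std::vector<float>& B, int n) {
    assert(A.size() == static_cast<std::size_t>(n) * n && B.size() == A.size());
    std::vector<float> C(static_cast<std::size_t>(n) * n, 0.0f);
    for (int bi = 0; bi < n; bi += TILE)
        for (int bj = 0; bj < n; bj += TILE) {
            float acc[TILE][TILE] = {};  // on a GPU: one accumulator per thread
            for (int bk = 0; bk < n; bk += TILE) {
                float tA[TILE][TILE], tB[TILE][TILE];  // stand-ins for __shared__ tiles
                // Step 1: load one tile of A and one of B, zero-padding past the edge.
                for (int i = 0; i < TILE; ++i)
                    for (int j = 0; j < TILE; ++j) {
                        bool inA = bi + i < n && bk + j < n;
                        bool inB = bk + i < n && bj + j < n;
                        tA[i][j] = inA ? A[(bi + i) * n + (bk + j)] : 0.0f;
                        tB[i][j] = inB ? B[(bk + i) * n + (bj + j)] : 0.0f;
                    }
                // Step 2: a CUDA kernel would call __syncthreads() here.
                // Step 3: compute from the tiles; each loaded value is used TILE times.
                for (int i = 0; i < TILE; ++i)
                    for (int j = 0; j < TILE; ++j)
                        for (int k = 0; k < TILE; ++k)
                            acc[i][j] += tA[i][k] * tB[k][j];
                // A second __syncthreads() would precede loading the next tiles.
            }
            // Step 4: write the finished output tile back once.
            for (int i = 0; i < TILE && bi + i < n; ++i)
                for (int j = 0; j < TILE && bj + j < n; ++j)
                    C[(bi + i) * n + (bj + j)] = acc[i][j];
        }
    return C;
}
```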

Key Performance Indicators (KPIs): Monitor the kernel's achieved AI and memory bandwidth. Success is indicated by a higher AI moving the kernel's performance into the compute-bound regime on the Roofline model [10].

Guide 2: Diagnosing and Resolving Low Compute Utilization (Low FLOP/s)

Symptoms: Profiler shows low SM (Streaming Multiprocessor) utilization and low FLOP/s counts, even though the kernel is not memory-bound.

Methodology:

- Profile to Confirm: Use Nsight Compute to check warp execution efficiency and SM utilization [11].

- Increase Parallelism:

- Ensure you are launching a sufficiently high number of thread blocks (several times the number of SMs) to avoid "tail effects" where the GPU is underutilized at the end of a kernel launch [9].

- Structure your algorithm to maximize independent parallel operations.

- Leverage Specialized Cores: For deep learning and linear algebra, formulate operations to use Tensor Cores which provide vastly higher FLOPS for matrix math [14] [15].

- Use Efficient Data Types: Utilize lower precision like FP16 or BF16 with Tensor Cores where possible, as this can dramatically increase FLOP/s and reduce memory pressure [14] [11].
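The "tail effect" behind the advice to launch several blocks per SM is wave quantization: blocks run in waves of (SM count x resident blocks per SM), and a partly filled last wave leaves SMs idle. The sketch below computes the number of waves and the fraction of block slots used, assuming every block takes the same time; the names are illustrative.

```cpp
#include <cassert>

// Wave quantization: blocks execute in waves of (num_sms * blocks_per_sm).
// Assumes every block runs for the same time.
struct WaveStats {
    int waves;
    double utilization;  // launched blocks / block slots across all waves
};

WaveStats wave_stats(int num_blocks, int num_sms, int blocks_per_sm) {
    int slots = num_sms * blocks_per_sm;                  // blocks per full wave
    int waves = (num_blocks + slots - 1) / slots;         // round up
    double used = static_cast<double>(num_blocks) / (static_cast<double>(waves) * slots);
    return {waves, used};
}
```

On a 108-SM GPU with one resident block per SM, 109 blocks need 2 waves and use only about 50% of the slots, while 1,081 blocks need 11 waves and use about 91%.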

Experimental Protocol (Enabling Tensor Cores): Move massively parallel operations such as matrix multiplication from standard CUDA cores onto Tensor Cores, typically by calling Tensor Core-enabled libraries such as cuBLAS or CUTLASS with FP16 or BF16 inputs, and then measure the change.

Key Performance Indicators (KPIs): Monitor SM utilization and achieved TFLOPS. Compare the results against the theoretical peak FLOP/s for the specific precision on your GPU [15].

Quantitative Data Reference

Table 1: Key Performance Metrics for Select NVIDIA Data Center GPUs

Data sourced from public specifications and benchmark reports [14] [16] [15].

| GPU Model | Architecture | FP32 TFLOPS (CUDA Cores) | FP16 TFLOPS (Tensor Cores) | Memory Bandwidth (GB/s) | VRAM (GB) |
|---|---|---|---|---|---|
| V100 | Volta | 15.7 | 125 | 900 | 16/32 |
| A100 | Ampere | 19.5 | 312 / 624 (sparse) | 1,555 - 2,000 | 40/80 |
| H100 | Hopper | 67 | 989 / 1,979 (sparse); 1,979 dense FP8 | 3,000 | 80 |

Table 2: Performance Regime Analysis Based on Arithmetic Intensity

Adapted from the Roofline Model concept [10]. Values assume FP16 (2-byte) operands.

| Operation Example | Arithmetic Intensity (FLOPs/Byte) | Typical Performance Regime | Primary Limiting Factor |
|---|---|---|---|
| ReLU Activation | 0.25 | Memory-Bound | Memory Bandwidth |
| Vector Addition | ~0.17 | Memory-Bound | Memory Bandwidth |
| 3x3 Max Pooling (unit stride) | 2.25 | Memory-Bound | Memory Bandwidth |
| Large Matrix Multiplication | > 100 | Compute-Bound | FP / Tensor Core Throughput |
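The element-wise rows follow from counting FLOPs and bytes per output element. The sketch below reproduces them for FP16 (2-byte) data; for max pooling it counts 9 operations per output and assumes each input is loaded only once thanks to the unit stride, which is the accounting that yields 2.25. The function names are illustrative.

```cpp
#include <cassert>

// AI = FLOPs / bytes moved to and from global memory, per output element.
// elem_bytes is 2 for FP16 and 4 for FP32.
double ai_elementwise(double flops, int reads, int writes, int elem_bytes) {
    assert(reads + writes > 0 && elem_bytes > 0);
    return flops / (static_cast<double>(reads + writes) * elem_bytes);
}

// Illustrative per-element operation counts.
double ai_relu(int elem_bytes)       { return ai_elementwise(1, 1, 1, elem_bytes); }  // max(x, 0)
double ai_vector_add(int elem_bytes) { return ai_elementwise(1, 2, 1, elem_bytes); }  // c = a + b
// 3x3 max pooling, unit stride: 9 operations per output, each input loaded once.
double ai_max_pool_3x3(int elem_bytes) { return ai_elementwise(9, 1, 1, elem_bytes); }
```

ReLU gives 1 / (2 + 2) = 0.25 FLOPs/byte; vector addition, with two reads and one write per element, gives 1/6 (about 0.17) in FP16 and about 0.083 in FP32. All of these are far below the ~13 FLOPs/byte A100 ridge point.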

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Software and Libraries for GPU Performance Analysis

| Tool / Library | Function | Use Case in Performance Analysis |
|---|---|---|
| NVIDIA Nsight Systems | System-wide performance profiler | Identifying high-level bottlenecks (e.g., kernel launch overhead, CPU-GPU sync issues) [11]. |
| NVIDIA Nsight Compute | Detailed kernel profiler | In-depth analysis of individual kernel performance, including memory access patterns and SM efficiency [11]. |
| NVIDIA SMI (`nvidia-smi`) | Management and monitoring CLI | Real-time monitoring of GPU utilization, memory usage, and ECC errors [11]. |
| cuBLAS / cuDNN | Accelerated linear algebra and DNN kernels | High-performance baseline implementations for GEMM and convolutions; target for optimization [11]. |
| CUTLASS / CuTe | CUDA C++ templates for linear algebra | Building custom, highly optimized kernel implementations, especially those using Tensor Cores [11]. |
| Triton | Python-based GPU programming language & compiler | Writing efficient GPU kernels without deep CUDA expertise, useful for rapid prototyping of new operations [11]. |

| Triton | Python-based GPU programming language & compiler | Writing efficient GPU kernels without deep CUDA expertise, useful for rapid prototyping of new operations [11]. |

For researchers, scientists, and drug development professionals working with GPU-accelerated applications, understanding performance bottlenecks is crucial for optimizing computational workflows. This guide provides methodologies to diagnose whether your algorithm is limited by the GPU's computational capacity (compute-bound) or by its memory bandwidth (memory-bound), with special consideration for applications in pharmaceutical research and development.

Core Concepts: Compute-Bound vs. Memory-Bound

Definitions and Key Differences

| Characteristic | Compute-Bound Algorithm | Memory-Bound Algorithm |
|---|---|---|
| Primary Limitation | GPU computational throughput [10] | Memory bandwidth [10] [17] |
| Arithmetic Intensity | High (>13 FLOPs/byte for A100) [10] | Low (<13 FLOPs/byte for A100) [10] |
| Runtime Determination | Time to perform calculations [10] | Time to transfer data from global memory [10] |
| Typical GPU State | Computation units busy, memory bus relatively idle [10] | Computation units idle, waiting for data [10] |
| Common Examples | Large matrix multiplication [10], LLM prefill phase [18] | Element-wise operations [10], LLM decode phase [17] [18] |
| Optimization Focus | Improve computational efficiency, use tensor cores [10] | Maximize data reuse, optimize memory access patterns [10] [19] |

The Roofline Model Framework

The Roofline Model is a visual performance model that plots achievable performance against arithmetic intensity [10] [20]. It establishes two fundamental performance limits:

- Memory Bandwidth Roof: The maximum performance achievable for a given arithmetic intensity, determined by global memory bandwidth [10]

- Compute Roof: The maximum performance achievable when arithmetic intensity is high enough to fully utilize computational units [10]

The ridge point is the arithmetic intensity where these two roofs intersect, typically around 13 FLOPs/byte for an NVIDIA A100 GPU [10]. Algorithms with arithmetic intensity below this value are memory-bound; those above are compute-bound.

Diagnostic Methodologies

Theoretical Analysis Using Arithmetic Intensity

Protocol: Calculating Theoretical Arithmetic Intensity

- Count Total FLOPs: Determine the total number of floating-point operations required by your algorithm [10]

- Calculate Memory Traffic: Sum the total bytes transferred between global memory and SMs [10]

- Compute Arithmetic Intensity: Apply the formula: AI = Total FLOPs / Total Bytes Accessed [10]

- Compare to Hardware Ridge Point: Determine if AI is above or below your GPU's ridge point (~13 FLOPs/byte for A100) [10]

Example: Matrix Multiplication Analysis

For matrix multiplication C = A × B with N×N matrices using 4-byte floats:

| Implementation | FLOPs per Output Tile | Bytes Accessed | Arithmetic Intensity | Bound Type |
|---|---|---|---|---|
| Single element (1×1 tile) | 2N | 8N | 0.25 FLOPs/byte | Memory-bound [10] |
| 2×2 tile | 8N | 16N | 0.5 FLOPs/byte | Memory-bound [10] |
| T×T shared memory tile | 2T²N | 8TN | T/4 FLOPs/byte | Compute-bound once T/4 exceeds the ridge point [10] |
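The pattern in this table generalizes: a T x T output tile needs T rows of A and T columns of B (8TN bytes for 4-byte floats) and performs 2T²N FLOPs, so AI = T/4 regardless of N. The sketch below encodes that count and finds the smallest tile that reaches a given ridge point. It ignores output writes and cache effects, and the function names are illustrative.

```cpp
#include <cassert>
#include <cmath>

// One T x T output tile of C = A x B with N x N matrices:
//   FLOPs = 2 * T^2 * N          (a multiply and an add per term)
//   bytes = 2 * T * N * elem     (T rows of A plus T columns of B)
double tile_flops(double T, double N) { return 2.0 * T * T * N; }
double tile_bytes(double T, double N, double elem_bytes = 4.0) { return 2.0 * T * N * elem_bytes; }

// For 4-byte floats this simplifies to T / 4, independent of N.
double tile_ai(double T, double N) { return tile_flops(T, N) / tile_bytes(T, N); }

// Smallest square tile with T / 4 >= ridge point (4-byte elements).
int min_tile_for_ridge(double ridge_flops_per_byte) {
    return static_cast<int>(std::ceil(4.0 * ridge_flops_per_byte));
}
```

With the ~13 FLOPs/byte A100 ridge point, this count puts the break-even tile at 52 x 52, which is one reason high-performance GEMM kernels combine shared-memory tiles with additional per-thread register tiling.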

Empirical Profiling with NVIDIA Tools

Protocol: Performance Profiling with Nsight Systems and Nsight Compute

Profile with Nsight Systems:

- Execute: `nsys profile --stats=true your_application`

- Identify kernels with longest execution times [18]

Detailed Kernel Analysis with Nsight Compute:

- Execute: `ncu --metrics l1tex__t_bytes_pipe_lsu_mem_global_op_ld.sum,l1tex__t_requests_pipe_lsu_mem_global_op_ld.sum your_application`

- Collect key metrics from the report [18]. These two metrics cover only global-load traffic through the L1/TEX cache; arithmetic intensity also requires FLOP counts and DRAM traffic, which the roofline sections of the Nsight Compute report provide.

Calculate Empirical Arithmetic Intensity:

- Use measured FLOPs and memory transactions

- Compare with theoretical values to identify optimization opportunities [18]

Key Profiling Metrics for Diagnosis

| Metric Category | Specific Metrics | Memory-Bound Indicators | Compute-Bound Indicators |
|---|---|---|---|
| Memory Throughput | GPU memory bandwidth utilization | High utilization (>80%) [21] | Low utilization |
| Compute Utilization | SM utilization, tensor core activity | Low SM activity | High SM utilization [21] |
| Memory Patterns | Global load efficiency, shared memory bank conflicts | High memory latency, inefficient access patterns [19] | Efficient memory access |
| Instruction Mix | Compute vs. memory instruction ratio | High memory instruction percentage | High compute instruction percentage |

Optimization Strategies

Addressing Memory-Bound Algorithms

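Protocol: Optimizing Memory-Bound Kernels

Maximize Data Reuse: Tile inputs into shared memory or registers so that each value loaded from global memory feeds many operations, and fuse consecutive element-wise kernels so that intermediates are not written to and re-read from global memory.

Optimize Memory Access Patterns: Coalesce global memory accesses within each warp and avoid shared memory bank conflicts.

Leverage Memory Hierarchy: Keep frequently used data in registers, shared memory, or the L1/L2 caches, and place small read-only values that all threads share in constant memory.

Addressing Compute-Bound Algorithms

Protocol: Optimizing Compute-Bound Kernels

Increase Computational Efficiency: Use Tensor Cores and lower precision (FP16, BF16) where accuracy allows, and prefer tuned libraries such as cuBLAS and cuDNN.

Optimize Thread Configuration: Choose block sizes that keep enough warps resident per SM, and launch several blocks per SM to avoid tail effects.

Reduce Computational Overhead: Minimize divergent branches within a warp, replace expensive transcendental functions where precision permits, and merge many small kernel launches into fewer, larger ones.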

Application to Drug Discovery Workloads

Drug Discovery Specific Considerations

| Workload Type | Typical Bound | Optimization Strategies |
|---|---|---|
| Molecular Dynamics Simulations | Often memory-bound [22] | Increase batch sizes, optimize neighbor lists [22] |
| AI-Driven Molecular Design | Mixed (depends on phase) [23] | Model parallelism for large networks [21] |
| Virtual Screening | Often memory-bound [22] | Pre-load compound libraries, efficient data structures [22] |
| Quantum Chemistry Calculations | Often compute-bound | Utilize tensor cores, mixed precision [21] |

Case Study: Large Language Models in Drug Discovery

LLM Inference Characteristics:

- Prefill Phase: Compute-bound - parallel processing of input sequence [18]

- Decode Phase: Memory-bound - limited by loading weights for each generated token [17] [18]

Optimization Approach:

- Increase batch size to improve arithmetic intensity in decode phase [17]

- Use model compression techniques to reduce memory bandwidth requirements [17]

- Implement continuous batching to improve GPU utilization [21]
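The batch-size effect on the decode phase can be quantified for a single linear layer y = Wx. The sketch below assumes FP16 (2-byte) weights and activations and counts weight, input, and output traffic per decode step. It ignores KV-cache reads in attention, which grow with sequence length, so it is an optimistic bound; the function name and the 4096 layer width in the usage note are illustrative.

```cpp
#include <cassert>

// Arithmetic intensity of one decode step of y = W x for a batch of `batch`
// tokens, with a d_in x d_out FP16 weight matrix (2 bytes per element):
//   FLOPs = 2 * d_in * d_out * batch
//   bytes = 2 * (d_in * d_out + batch * d_in + batch * d_out)
// Weights are read once per step no matter how many tokens share them.
double decode_layer_ai(double d_in, double d_out, double batch) {
    double flops = 2.0 * d_in * d_out * batch;
    double bytes = 2.0 * (d_in * d_out + batch * d_in + batch * d_out);
    return flops / bytes;
}
```

For a 4096 x 4096 layer, one token per step gives an AI of about 1 FLOP/byte, whereas a batch of 64 gives about 62, because the 32 MiB of weights is read once per step and shared across the whole batch.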

Research Reagent Solutions

| Tool/Resource | Function | Application Context |
|---|---|---|
| NVIDIA Nsight Systems | System-wide performance analysis | Identifying performance bottlenecks across entire application [18] |
| NVIDIA Nsight Compute | Detailed kernel profiling | Instruction-level analysis of CUDA kernels [18] |
| Intel Advisor GPU Roofline | Roofline model implementation | Visualizing performance limits on Intel GPUs [20] |
| CUDA Profiler | Built-in CUDA profiling | Basic metrics collection and timeline analysis [19] |
| Unified Compute Plane | Resource orchestration | Managing GPU resources across distributed systems [22] |

FAQs

How can I quickly determine if my algorithm is compute-bound or memory-bound?

Calculate the arithmetic intensity (FLOPs/byte) of your kernel and compare it to your GPU's ridge point (~13 FLOPs/byte for A100). Alternatively, use profiling tools to check if memory bandwidth or compute utilization is the limiting factor [10] [18].

Why is my GPU utilization low even though my algorithm should be compute-bound?

Low GPU utilization can stem from host-side overhead, insufficient parallelization, CPU bottlenecks in data loading, or suboptimal thread block scheduling. Profile your entire application to identify the specific bottleneck [10] [21].

How does batch size affect whether my model is compute-bound or memory-bound?

Increasing batch size typically increases arithmetic intensity, as more computations are performed per parameter loaded. This can shift workloads from memory-bound to compute-bound regimes, particularly in LLM inference [17] [18].

What are the specific challenges for memory-bound algorithms in drug discovery pipelines?

Drug discovery workflows often involve processing large chemical libraries or complex biological data, creating memory bandwidth pressures. Optimizing data loading pipelines, using efficient data formats, and leveraging distributed caching can mitigate these issues [22] [21].

How do I optimize a kernel that shows characteristics of both compute and memory bounds?

Focus on the primary bottleneck first, then iteratively optimize. Common approaches include increasing data reuse to reduce memory traffic while also ensuring computational patterns are efficient. The Roofline model can help identify which bound is more critical to address first [10] [20].

Frequently Asked Questions (FAQs)

1. What is the difference between theoretical and achieved occupancy on a GPU?

- Theoretical Occupancy is the upper limit of active warps on a Streaming Multiprocessor (SM), determined by the kernel's launch configuration and the GPU's hardware limits. It is calculated based on factors like the maximum number of warps or blocks per SM, register usage per thread, and shared memory usage per block [24].

- Achieved Occupancy is the average ratio of active warps to the maximum supported active warps that was actually measured during the kernel's execution. It accounts for how this number varies over time as warps begin and end their work [24].
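Theoretical occupancy is the smallest of several per-SM limits. The sketch below computes it from the block size, registers per thread, and shared memory per block. The per-SM limits are inputs because they vary by architecture; the A100-like values in the usage note (64 warps, 32 blocks, 65,536 registers, 164 KB of shared memory per SM) should be checked against your GPU's documentation. It ignores allocation granularity, so it can slightly overestimate; the CUDA runtime's `cudaOccupancyMaxActiveBlocksPerMultiprocessor()` or the occupancy section of Nsight Compute gives exact figures. The struct and function names are illustrative.

```cpp
#include <algorithm>
#include <cassert>

// Per-SM hardware limits (inputs, since they differ by GPU generation).
struct SmLimits {
    int max_warps;         // resident warps per SM
    int max_blocks;        // resident blocks per SM
    int registers;         // 32-bit registers per SM
    int shared_mem_bytes;  // shared memory available to blocks per SM
};

// Simplified theoretical occupancy; ignores allocation granularity.
double theoretical_occupancy(const SmLimits& sm, int threads_per_block,
                             int regs_per_thread, int smem_per_block) {
    int warps_per_block = (threads_per_block + 31) / 32;
    int by_warps = sm.max_warps / warps_per_block;
    int by_regs = regs_per_thread > 0
        ? sm.registers / (regs_per_thread * warps_per_block * 32)
        : sm.max_blocks;
    int by_smem = smem_per_block > 0 ? sm.shared_mem_bytes / smem_per_block
                                     : sm.max_blocks;
    int blocks = std::min({by_warps, sm.max_blocks, by_regs, by_smem});
    return static_cast<double>(blocks * warps_per_block) / sm.max_warps;
}
```

With 256-thread blocks, going from 32 to 64 registers per thread halves occupancy from 100% to 50%, and 48 KB of shared memory per block caps it at 37.5%.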

2. Why is my achieved occupancy higher than the theoretical occupancy?

This is an unusual scenario. Typically, achieved occupancy cannot exceed theoretical occupancy. If this occurs, it may indicate that the profiler is reporting values for a specific SM rather than the average across all SMs, or it could potentially be a bug within the profiling tool itself [25].

3. What are common bottlenecks that cause low achieved occupancy?

Low achieved occupancy can result from several factors, including [24]:

- Unbalanced Workloads: Warps or blocks that finish execution at different times create a "tail effect" where fewer warps are active.

- Insufficient Parallelism: Launching too few blocks to keep all SMs busy, or a "partial last wave" of blocks that doesn't fully utilize the GPU.

- Resource Limitations: Register and shared memory usage primarily cap theoretical occupancy. That lower ceiling in turn limits how many warps can actually be active.
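The "partial last wave" effect can be estimated from the grid size. The minimal sketch below makes two assumptions:

- A hypothetical GPU with 108 SMs, as on an A100.
- A kernel whose resource usage allows 4 resident blocks per SM.

```python
import math


def wave_analysis(num_blocks, num_sms, blocks_per_sm):
    """Return (number of waves, fraction of block slots filled in the last wave)."""
    wave_size = num_sms * blocks_per_sm  # blocks resident across the GPU at once
    waves = math.ceil(num_blocks / wave_size)
    remainder = num_blocks % wave_size
    last_wave_fill = 1.0 if remainder == 0 else remainder / wave_size
    return waves, last_wave_fill


waves, fill = wave_analysis(num_blocks=500, num_sms=108, blocks_per_sm=4)
print(f"{waves} waves, last wave {fill:.1%} full")
```

Here 500 blocks need two waves, but the second wave runs only 68 of 432 possible blocks, so roughly 84% of the block slots sit idle during it. Two ways to avoid that tail are launching a multiple of 432 blocks, or sizing the grid to the GPU and using a grid-stride loop.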

4. How can I improve the performance of my GPU-accelerated algorithm?

Focus on two main areas:

- Increase Theoretical Occupancy: Adjust your kernel's launch parameters (like block size) or reduce its resource consumption (registers, shared memory) to allow more warps to be active concurrently [24].

- Optimize Within the Algorithm: Parallelize the most expensive parts of your code. For instance, if your objective function involves independent calculations (like a Monte Carlo simulation), using a `parfor` loop can yield substantial performance gains [26].

Troubleshooting Guide: Diagnosing Performance Gaps

Symptom: Low Achieved Occupancy

If your achieved occupancy is significantly lower than the theoretical value, follow this diagnostic workflow:

Symptom: Application Errors or GPU Unresponsiveness

Use this protocol to diagnose and address stability issues that impact performance.

Diagnostic Protocol:

- Check GPU Status: Run `nvidia-smi` to verify that all GPUs are visible and to check critical health metrics like temperature, power draw, and utilization [27].

- Inspect for Errors: Check for ECC (Error Correction Code) errors with `nvidia-smi -q -d ECC`. Non-zero values could indicate GPU memory issues [27].

- Examine System Logs: Use `dmesg | grep -iE 'nvidia|drm|nvrm'` to find kernel-level error messages related to the GPU drivers [27].

- Interpret XID Errors: The GPU driver generates XID errors for critical events. Consult the table below for common errors and actions [28].

Common GPU XID Errors and Resolution Strategies

The following table summarizes frequent XID errors based on NVIDIA's debug guidelines [28].

| XID | Description | Recommended Action |
|---|---|---|
| 13 | Graphics Engine Exception | First, run diagnostics to check for hardware issues. If none are found, debug the user application [28]. |
| 31 | Suspected Hardware Problems | Contact your hardware vendor to run their diagnostic process [28]. |
| 48 | Double Bit ECC Error | If followed by Xid 63 or 64, safely drain work from the node and reset the GPU [28]. |
| 63 | ECC Page Retirement / Row-remap Event | If associated with Xid 48, drain work and reset the GPU. If not, it is safe to continue until a convenient reboot [28]. |
| 74 | NVLink Error | Check the error bits. This may indicate a marginal signal integrity issue; check mechanical connections and re-seat if necessary [28]. |
| 79 | GPU has fallen off the bus | Drain the node and report the issue to your system vendor [28]. |
| 95 | Uncontained ECC Error (A100+) | If MIG is disabled, reboot the node immediately. If errors continue, drain and triage the node [28]. |
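On a multi-node cluster, scanning kernel logs for Xid codes by hand quickly becomes tedious. The sketch below extracts Xid codes from log lines and attaches a recommended action paraphrased from the table above. Two caveats:

- It assumes the common driver message shape `NVRM: Xid (PCI:<bus id>): <code>, ...`. Verify the pattern against your own `dmesg` output, because wording can vary between driver versions.
- The action strings are a paraphrase, not official NVIDIA text.

```python
import re

# Recommended actions paraphrased from the Xid table; not official NVIDIA wording.
XID_ACTIONS = {
    13: "Run hardware diagnostics; if clean, debug the user application.",
    31: "Ask the hardware vendor to run their diagnostics.",
    48: "Double-bit ECC error: if followed by Xid 63/64, drain the node and reset the GPU.",
    63: "Page retirement/row remap: drain and reset if paired with Xid 48, else reboot when convenient.",
    74: "NVLink error: check error bits and mechanical connections; re-seat if needed.",
    79: "GPU fell off the bus: drain the node and report to the system vendor.",
    95: "Uncontained ECC error: reboot immediately if MIG is disabled.",
}

# Assumed message shape, e.g. "NVRM: Xid (PCI:0000:3b:00): 79, pid=..., GPU has fallen off the bus."
XID_PATTERN = re.compile(r"Xid \((?P<device>[^)]*)\): (?P<code>\d+)")


def triage_xid(log_lines):
    """Return (device, xid_code, suggested_action) for every Xid line found."""
    findings = []
    for line in log_lines:
        match = XID_PATTERN.search(line)
        if match:
            code = int(match.group("code"))
            action = XID_ACTIONS.get(code, "Consult NVIDIA's Xid error documentation.")
            findings.append((match.group("device"), code, action))
    return findings


sample_log = [
    "[ 1234.5678] NVRM: Xid (PCI:0000:3b:00): 79, pid=0, GPU has fallen off the bus.",
    "[ 1234.9999] usb 1-1: new high-speed USB device",
]
for device, code, action in triage_xid(sample_log):
    print(device, code, action)
```

In practice you would pass the function the lines produced by the `dmesg` command from the diagnostic protocol.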

The Scientist's Toolkit: Key Research Reagents & Materials

| Item | Function in GPU Performance Analysis |
|---|---|
| NVIDIA System Management Interface (nvidia-smi) | A command-line utility that provides monitoring and management capabilities for NVIDIA GPU devices. It is essential for checking GPU status, topology, and processes [27]. |
| NVIDIA Nsight Compute / Nsight Systems | Professional-level performance analysis tools for CUDA applications. They provide detailed profiling data on achieved occupancy, memory bandwidth, and instruction throughput [24]. |
| CUDA-Memcheck | A tool that helps identify memory access errors in CUDA applications, such as out-of-bounds accesses, which can cause crashes and incorrect results [27]. In recent CUDA releases it has been superseded by Compute Sanitizer (`compute-sanitizer`). |
| Data Center GPU Manager (DCGM) | An enterprise-grade tool for managing and monitoring groups of GPUs in datacenter environments. It simplifies health checks, diagnostics, and policy enforcement [27]. |
| Iterative DFS Algorithm | A key algorithmic transformation used to adapt recursive problems for efficient GPU execution by minimizing stack depth and fitting working data into fast shared memory [29]. |

Case Study: N-Queens Solver Optimization

A high-performance N-Queens solver on GPU demonstrates the principles of closing the gap between theoretical and achieved performance. The researchers redesigned a recursive search into an iterative depth-first search (DFS) algorithm, allowing the entire stack to fit within the GPU's fast shared memory [29].

Key Experimental Protocol:

- Objective: Count all valid solutions for the 27-Queens problem.

- Hardware: Eight NVIDIA RTX 5090 GPUs.

- Core Optimization: The algorithm's stack structure was mapped to GPU shared memory with a carefully designed access pattern to eliminate bank conflicts, a major performance bottleneck [29].

- Result: The solver achieved a 26x speedup, verifying the 27-Queens solution in 28.4 days and demonstrating how memory access optimization directly translates to realized performance gains [29].
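The recursive-to-iterative transformation can be illustrated on the CPU. The sketch below is not the cited solver. It is a minimal Python version of the same idea, built on the common bitmask formulation of N-Queens.

Instead of recursing, it keeps one small frame per board row in fixed-size arrays. The stack therefore never holds more than n frames, and that bounded size is the property that lets a GPU implementation place the stack in shared memory.

```python
def count_n_queens(n):
    """Count N-Queens solutions with an iterative, explicit-stack DFS.

    Each depth (board row) owns one frame in fixed-size arrays, so at most
    n frames exist at any time, instead of relying on the call stack.
    """
    if n < 1:
        return 0
    full = (1 << n) - 1      # bitmask with one bit per column
    cols = [0] * n           # columns already occupied
    diag_a = [0] * n         # squares attacked along one diagonal direction
    diag_b = [0] * n         # squares attacked along the other diagonal direction
    free = [0] * n           # candidate columns still to try at this row
    free[0] = full
    depth, count = 0, 0
    while depth >= 0:
        if free[depth] == 0:               # no candidates left: backtrack one row
            depth -= 1
            continue
        bit = free[depth] & -free[depth]   # lowest remaining free column
        free[depth] ^= bit                 # mark it as tried
        if depth == n - 1:                 # last row filled: one complete solution
            count += 1
            continue
        nc = cols[depth] | bit
        na = ((diag_a[depth] | bit) << 1) & full
        nb = (diag_b[depth] | bit) >> 1
        depth += 1                         # "push" the next row's frame
        cols[depth], diag_a[depth], diag_b[depth] = nc, na, nb
        free[depth] = full & ~(nc | na | nb)
    return count


print(count_n_queens(8))
```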

Troubleshooting Guides and FAQs

This guide provides targeted solutions for common issues researchers encounter with nvidia-smi and the NVIDIA Nsight tools during GPU-accelerated parallel algorithm experiments.

nvidia-smi Troubleshooting

Q: The system reports: "NVIDIA-SMI has failed because it couldn't communicate with the NVIDIA driver." What steps should I take?

A: This error indicates that the system cannot locate a functional NVIDIA driver. Follow this diagnostic protocol [30] [31]:

- Verify Driver Installation: Run `nvidia-smi` as a basic test. If it fails, proceed with diagnostics.

- Inspect Kernel Module: Use the command `lsmod | grep nvidia` to check whether the `nvidia` kernel module is loaded. An empty output confirms the module is not loaded.

- Diagnose and Reinstall:

  - This often occurs after a Linux kernel update if the driver was not installed with DKMS (Dynamic Kernel Module Support), which automatically rebuilds the kernel module on updates [30].

  - Recommended Fix: Reinstall the driver using the official NVIDIA installer with the `--dkms` flag to enable persistent module support for future kernel updates [30].

  - Alternative (Ubuntu): Use the package manager after adding the official graphics drivers PPA: `sudo apt install nvidia-driver-470` (or a newer version). Mixing package-manager installs with `.run` installer files can cause conflicts [30].

  - Resolve Broken Packages: If `apt` results in dependency errors, run `sudo apt install --fix-broken` [30].

- Last Resort - Clean Installation: If the above fails, completely purge all existing NVIDIA and CUDA packages, then reinstall the driver [30].

Q: How can I programmatically log GPU utilization and memory usage for long-running computational experiments?

A: Use the nvidia-smi query options with a loop interval to generate structured data logs perfect for post-processing [32].

- Basic CSV Logging: Combine `--query-gpu` with `--format=csv` and a loop interval (`-lms 1000`) to log key attributes to a CSV file every second (1000 ms).

- Advanced Selective Query: For more detailed telemetry collection, pass the `--query-gpu` flag a comprehensive list of properties. This is essential for correlating algorithm performance with hardware states [32].

The table below summarizes key metrics for algorithm performance profiling [32]:

| Metric | CLI Query Parameter | Research Application in Performance Analysis |
|---|---|---|
| GPU Utilization | utilization.gpu | Identifies overall GPU workload and potential bottlenecks in parallel execution. |
| Memory Usage | memory.used, memory.free | Tracks the memory footprint of datasets and algorithms; critical for optimizing data transfers. |
| Power Draw | power.draw | Correlates algorithm efficiency with energy consumption for green computing metrics. |
| Core Clock | clocks.gr | Monitors the graphics clock speed, for example in performance vs. stability experiments. |
| Temperature | temperature.gpu | Helps confirm that thermal throttling does not compromise the validity of performance measurements. |
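For post-processing, a log written with these query parameters can be summarized per GPU. The sketch below makes the following assumptions:

- The log was produced with a command along the lines of `nvidia-smi --query-gpu=timestamp,index,utilization.gpu,memory.used,memory.free,power.draw,clocks.gr,temperature.gpu --format=csv,noheader,nounits -lms 1000 -f gpu_log.csv`.
- Every row therefore has those eight fields in that order.
- The file name is a placeholder, and the sample rows are made up.
- Values are numeric. GPUs that do not report a field print `[N/A]`, which this simple parser does not handle.

```python
import csv
import io
from collections import defaultdict

# Field order must match the --query-gpu list used when logging (assumed here).
FIELDS = ["timestamp", "index", "utilization.gpu", "memory.used",
          "memory.free", "power.draw", "clocks.gr", "temperature.gpu"]


def summarize_gpu_log(text):
    """Summarize an nvidia-smi CSV log (noheader, nounits) per GPU index."""
    rows_by_gpu = defaultdict(list)
    for row in csv.reader(io.StringIO(text), skipinitialspace=True):
        if not row:
            continue  # skip blank lines
        record = dict(zip(FIELDS, row))
        rows_by_gpu[int(record["index"])].append(record)

    summary = {}
    for gpu, records in rows_by_gpu.items():
        util = [float(r["utilization.gpu"]) for r in records]
        power = [float(r["power.draw"]) for r in records]
        summary[gpu] = {
            "mean_util_pct": sum(util) / len(util),
            "peak_mem_used_mib": max(float(r["memory.used"]) for r in records),
            "mean_power_w": sum(power) / len(power),
            "max_temp_c": max(float(r["temperature.gpu"]) for r in records),
        }
    return summary


SAMPLE = """2024/05/01 12:00:00.000, 0, 80, 30000, 10000, 250.5, 1410, 70
2024/05/01 12:00:01.000, 0, 100, 32000, 8000, 260.5, 1410, 72
"""
print(summarize_gpu_log(SAMPLE))
```

For a real run, read the log file's contents (for example with `open("gpu_log.csv").read()`) and pass the resulting string to `summarize_gpu_log`.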

NVIDIA Nsight Visual Studio Edition Troubleshooting

Q: Profiling fails with the error: "Nsight-NvEvents-Provider: Too few event buffers." How is this resolved?

A: This error occurs when the profiling data buffers are exhausted due to high thread counts in complex parallel applications [33].

- Root Cause: Each CPU thread emitting events requires a dedicated buffer. When all are occupied, new events are discarded [33].

- Resolution Protocol: Increase the number of event buffers. In the Nsight menu, go to Nsight > Options > Analysis. Ensure Show Controller Options is set to TRUE, then increase the NvEvents controller option. For optimal performance, the number of buffers should be at least twice the number of threads outputting events [33].

Q: The CUDA debugger hangs during a debugging session. What are the common causes?

A: Debugger hangs typically result from improper setup or resource conflicts [33].

- CPU and GPU Debugging Conflict: Never use the same Visual Studio instance for both CUDA and CPU debugging. The CUDA debugger will hang if the CPU process is paused. Use two separate Visual Studio instances if both are needed [33].

- Local Multi-GPU Configuration: When debugging locally on a machine with multiple GPUs, avoid having a display attached to the GPU you are debugging. Concurrent activities can cause hangs. Configure the system for "headless" GPU debugging [33].

- Check TDR Settings: Ensure Timeout Detection and Recovery (TDR) settings in the OS are correctly configured to prevent the system from resetting the GPU during long-running kernels [33].

Q: Why are breakpoints in my CUDA kernel not triggering as expected?

A: This is often a toolchain compatibility issue [33].

- Driver and Toolkit Compliance: Use the exact NVIDIA driver version specified in the Nsight release notes. A driver mismatch is the most common reason for breakpoint failure [33].

- Symbol Information: Ensure your project is built with a compatible CUDA toolkit and that symbolic information is generated. For the Runtime API, this is often embedded. For the Driver API, look for accompanying `.cubin.elf.o` files in the build output directory [33].

- Focus Picker Context: The debugger's default behavior is to break only on the first thread (0,0,0). Use the CUDA Focus Picker to switch context to the specific thread you wish to debug, or set conditional breakpoints [33].

Experimental Protocols for Performance Analysis

Adhering to these standardized protocols ensures consistent, reproducible, and valid performance data for your research.

Protocol 1: System-Wide Profile Collection with Nsight Systems

This protocol is for initial, high-level performance analysis to identify major bottlenecks.

- CLI Profiling: Use the `nsys profile` command to launch your application. This is less intrusive than GUI profiling and is ideal for server environments [34].

- Basic Command: Run `nsys profile` with the following options:

  - `--trace=cuda,nvtx,osrt`: Enables tracing of CUDA APIs, NVTX markers, and OS runtime libraries.
  - `-o`: Specifies the output report file.

- Advanced CPU Analysis: To include detailed CPU sampling and context switch information, add the following flags [34]:

  - `--sample=cpu`: Enables CPU instruction sampling.
  - `--cpuctxsw=system-wide`: Traces thread scheduling across all CPUs (may require root).

Protocol 2: Controlled Kernel Profiling with Capture Ranges

This protocol is for isolating and profiling specific kernels or phases within a long-running experiment, minimizing profiling overhead.

- Instrument Application: Insert `cudaProfilerStart()` and `cudaProfilerStop()` API calls into your source code to bracket the region of interest.

- Profile with Capture Range:

  - `--capture-range=cudaProfilerApi`: Instructs the profiler to collect data only between the `cudaProfilerStart`/`cudaProfilerStop` calls [34].

- Multiple Captures: To perform multiple captures in a single session without restarting the application, use [34]:

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential software "reagents" for GPU performance analysis research.

| Tool / Component | Function in Research | Key CLI Command / Metric |
|---|---|---|
| NVIDIA Nsight Systems | System-wide performance analyzer that visualizes algorithm execution across CPU and GPU to identify optimization opportunities [35]. | nsys profile [34] |
| nvidia-smi | GPU monitoring and management interface for real-time and logged telemetry data collection [32]. | nvidia-smi --query-gpu=... [32] |
| CUDA Profiling Tools Interface (CUPTI) | Low-level API used by tools like Nsight Systems to access GPU performance counters, enabling detailed kernel analysis [35]. | (SDK for custom tools) |
| NVTX (NVIDIA Tools Extension) | Library for annotating events, code ranges, and resources in your application to correlate algorithm stages with profile timelines [34]. | --trace=nvtx [34] |

Performance Analysis Workflow

The diagram below illustrates the logical workflow for a rigorous GPU performance analysis experiment, integrating the tools and protocols described above.

Key Performance Metrics for Algorithm Analysis

For quantitative analysis of parallel algorithm efficiency, track the following metrics derived from nvidia-smi and Nsight Systems. Structure your results in tables for clear comparison across algorithm iterations.

| Metric Category | Tool for Collection | Formula / Interpretation for Research |
|---|---|---|
| GPU Utilization | nvidia-smi | (Time with at least one kernel executing / Sample period). Low utilization usually points to host-side bottlenecks, data-transfer stalls, or gaps between kernel launches. High utilization does not by itself show that the compute units are used efficiently. |
| Memory Bandwidth | Nsight Systems | (Bytes Transferred / Transfer Time). Compare achieved bandwidth to the hardware peak to identify memory access inefficiencies. |
| Kernel Efficiency | Nsight Compute | (Active Warps / Maximum Supported Warps), i.e., achieved occupancy. Measures how effectively the GPU's parallel capacity is utilized. |
| CPU-GPU Overlap | Nsight Systems | Qualitative analysis from the timeline; identifies periods where memory transfers and kernel execution occur concurrently. |

Implementing GPU-Accelerated Algorithms in Biomedical Research

FAQs: Navigating Parallel Computing in Drug Discovery

Q1: Our virtual screening workloads are slow. What parallelization strategy can best accelerate them?

Virtual screening is a quintessential "embarrassingly parallel" problem, making data parallelism an ideal strategy. You can simultaneously dock millions of different compounds against a target protein by distributing different ligands or ligand batches across multiple computing units [36] [37]. The key is to use a tool designed for GPU acceleration, like Vina-GPU 2.1, which employs parallel computing to significantly speed up AutoDock Vina and its derivatives [37]. For large-scale deployment, a managed HPC environment, such as AWS Parallel Computing Service (PCS), can streamline the distribution of these massive workloads across a cluster [38].

Q2: How can I parallelize a complex, multi-stage drug discovery pipeline where each step has different resource requirements?

For complex pipelines, a hybrid parallelism approach is most effective. You can use task parallelism to orchestrate the entire workflow, where different stages (e.g., molecular dynamics simulation, followed by docking, followed by analysis) are managed as separate, coordinated tasks [38] [22]. Within each computationally intensive stage, you can then apply data parallelism (e.g., running many simulations concurrently) and/or fine-grained task parallelism on GPUs (e.g., using different GPU threads for different aspects of a single simulation) [39] [7]. Modern orchestration tools and unified compute planes are designed to manage this complexity, allowing you to efficiently utilize heterogeneous resources (CPUs, GPUs) across different pipeline stages [22].

Q3: We are achieving poor GPU utilization in our molecular simulations. What could be the cause?

Low GPU utilization is a common bottleneck. It can often be attributed to an inefficient parallelization scheme for the specific algorithm. For instance, in Method of Characteristics (MOC) neutron transport calculations (conceptually similar to some radiation-based therapy models), the chosen level of parallelization (ray-level, energy-group-level, or polar-angle-level) dramatically impacts performance [7]. Furthermore, the problem may be memory-bound or latency-bound rather than compute-bound, meaning the GPU is waiting for data from memory or other system components [7]. Optimizing memory access patterns and using grid cache optimization, as seen in Vina-GPU 2.1, can alleviate this [37]. Infrastructure-level issues, like inadequate job scheduling, can also strand GPU resources [22].

Q4: What is the primary operational benefit of using a managed HPC service for parallelized drug discovery?

The main benefit is a drastic reduction in administrative overhead and the elimination of person-dependent operations [38]. Managed services automate critical but time-consuming tasks like job scheduler (e.g., Slurm) upgrades, failure recovery, and node configuration [38]. This allows researchers and scientists to focus on the science of drug discovery rather than on maintaining complex HPC infrastructure, thereby accelerating research cycles and democratizing access to high-performance computing for teams without specialized HPC expertise [38].

Troubleshooting Guides

Issue 1: Slow Virtual Screening Throughput

Problem: Data-parallel virtual screening jobs are taking too long to complete, creating a bottleneck in the early discovery pipeline.

Diagnosis and Solutions:

- Check Workload Distribution: Ensure the job scheduler is efficiently distributing ligands across all available compute nodes. Avoid situations where a few nodes are overburdened while others sit idle [22].

- Evaluate GPU Acceleration: Verify that your docking software is fully leveraging GPUs. Consider migrating to a GPU-optimized version like Vina-GPU 2.1, which uses novel algorithms like Reduced Iteration and Low Complexity BFGS (RILC-BFGS) to accelerate the most time-consuming operations [37].

- Profile Resource Usage: Use performance monitoring tools to check for low GPU utilization. If utilization is low, the problem may be I/O-bound. Implement grid cache optimization and ensure that ligand and receptor structure files are optimally prepared to reduce computational overhead [37].
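One way to avoid overburdened nodes is to balance ligands by estimated cost rather than by count. The sketch below uses the classic longest-processing-time (LPT) greedy heuristic: ligands are sorted by decreasing cost, and each one goes to the currently least-loaded worker.

The per-ligand cost estimates (for example, derived from rotatable-bond counts) are a hypothetical input. This is not a feature of any specific scheduler or docking tool.

```python
import heapq


def balance_ligands(costs, num_workers):
    """Assign ligands to workers with the LPT greedy heuristic.

    costs: dict mapping ligand ID -> estimated docking cost (hypothetical units).
    Returns (assignment, loads): worker -> ligand IDs, worker -> total cost.
    """
    heap = [(0.0, worker) for worker in range(num_workers)]  # (current load, worker)
    heapq.heapify(heap)
    assignment = {worker: [] for worker in range(num_workers)}
    # Place expensive ligands first so no large job is left until the end.
    for ligand in sorted(costs, key=costs.get, reverse=True):
        load, worker = heapq.heappop(heap)  # least-loaded worker so far
        assignment[worker].append(ligand)
        heapq.heappush(heap, (load + costs[ligand], worker))
    loads = {worker: load for load, worker in heap}
    return assignment, loads


est_costs = {"lig_a": 5, "lig_b": 4, "lig_c": 3, "lig_d": 3, "lig_e": 2, "lig_f": 1}
assignment, loads = balance_ligands(est_costs, num_workers=2)
print(assignment, loads)
```

With these costs both workers finish at a load of 9. A naive round-robin assignment in the given order would give loads of 10 and 8.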

Issue 2: Low GPU Utilization in Numerical Simulations

Problem: GPUs are underutilized in complex simulations like molecular dynamics or neutron transport, leading to wasted resources and slow results.

Diagnosis and Solutions:

- Analyze Parallelization Scheme: The chosen level of parallelization may not map efficiently to the GPU architecture. For example, in MOC simulations, performance analysis has shown that the optimal parallelization scheme (e.g., ray-level) depends on the specific workload and must be experimentally determined [7].

- Determine Performance Bound: Classify the bottleneck using a performance model. If the algorithm is memory-bound, focus on optimizing data access and memory transfers. If it is compute-bound, investigate whether increasing the computational workload per thread or using mixed precision (e.g., fp32 instead of fp64) can improve throughput without significant accuracy loss [7].

- Inspect Infrastructure Scheduling: Confirm that your cluster's job scheduler is not a bottleneck. Modern "infrastructure-aware" schedulers can dynamically allocate resources and reduce job fragmentation, driving GPU utilization to over 90% in some cases [22].

Issue 3: Difficulty Managing Hybrid Parallel Workflows

Problem: A hybrid parallel workflow, which combines task and data parallelism, is complex to orchestrate and becomes unstable or inefficient.

Diagnosis and Solutions:

- Implement a Unified Compute Plane: Adopt an orchestration layer that abstracts all compute resources (cloud, on-premise) into a single pool. This software shift, as opposed to simply buying more hardware, enables dynamic scheduling, rapid deployment, and intelligent resource allocation across the entire hybrid workflow [22].

- Automate Environment Provisioning: Use services like AWS PCS in conjunction with EC2 Image Builder to create custom machine images (AMIs) with all necessary software pre-installed. This ensures that all nodes in a dynamically scaled cluster have a consistent and ready-to-use environment, which is crucial for hybrid tasks [38].

- Streamline User and Resource Management: Automate the process of adding users and mounting filesystems when cluster nodes start up. This can be achieved using services like AWS Step Functions and Systems Manager, reducing administrative burden and ensuring researchers can access resources immediately [38].

Experimental Protocols & Performance Data

Protocol 1: Accelerated Virtual Screening with Vina-GPU

This protocol details the use of GPU-accelerated data parallelism for high-throughput virtual screening [37].

1. Objective: To rapidly screen millions of compounds from a chemical library (e.g., ZINC, DrugBank) against a specific protein target.
2. Software: Vina-GPU 2.1 [37].
3. Methodology:
   * Preparation: Prepare the protein receptor file in PDBQT format. Prepare the library of ligand files in the same format.
   * Configuration: Define the search space (binding pocket) using a configuration file. Set up the job script to leverage multiple GPUs.
   * Execution: Launch the Vina-GPU job. The software will automatically use a data-parallel approach to distribute different ligands across available GPU cores, employing the RILC-BFGS algorithm to optimize the docking process for each ligand [37].
   * Post-processing: Collect the results (binding poses and affinity scores) from all parallel docking runs and rank the ligands.

4. Key Performance Metrics (Vina-GPU 2.1 vs. Vina-GPU 2.0): The following table summarizes the performance gains achieved by the optimized parallelization in Vina-GPU 2.1 [37].

Table 1: Performance Metrics for Vina-GPU 2.1 Virtual Screening

| Metric | Vina-GPU 2.0 (Baseline) | Vina-GPU 2.1 | Improvement |
|---|---|---|---|
| Docking Speed | 1x | 4.97x (avg) | 397% faster [37] |
| Early Enrichment (EF1%) | 1x | 3.42x (avg) | 242% better [37] |
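For reference, the EF1% metric in Table 1 compares the hit rate among the top-ranked 1% of compounds with the hit rate of the whole library:

EF = (hits in top x% / compounds in top x%) / (total actives / total compounds)

The minimal sketch below makes two assumptions:

- Lower (more negative) docking scores are better, as with Vina affinities.
- The top-x% cutoff is rounded to the nearest integer. Conventions for this cutoff vary between studies.

```python
def enrichment_factor(scores, is_active, fraction=0.01):
    """EF at the top `fraction` of a ranked screening library.

    scores: docking scores, lower (more negative) = better, as with Vina affinities.
    is_active: parallel list of booleans marking known actives.
    """
    n_total = len(scores)
    n_actives = sum(is_active)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need a non-empty library with at least one active")
    n_top = max(1, round(fraction * n_total))  # cutoff convention varies by study
    ranked = sorted(range(n_total), key=lambda i: scores[i])  # best score first
    hits = sum(1 for i in ranked[:n_top] if is_active[i])
    # (hits / n_top) / (n_actives / n_total), rearranged into a single division
    return (hits * n_total) / (n_top * n_actives)


# Toy library: 200 compounds, 10 actives, the two best-scored compounds are active.
scores = [-12.0, -11.5] + [-5.0] * 198
is_active = [True] * 10 + [False] * 190
print(enrichment_factor(scores, is_active))
```

An EF of 1.0 corresponds to a random ranking. The toy example reaches the maximum possible value for this library, 20, because both top-1% slots hold actives.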

Protocol 2: Performance Analysis of Parallelization Schemes in MOC

This protocol, derived from neutron transport research, provides a framework for analyzing different parallelization strategies on GPU architectures, which is applicable to similar computational problems in drug discovery [7].

1. Objective: To identify the most efficient parallelization scheme (ray-level, energy-group-level, polar-angle-level) for a given computational workload on a GPU.
2. Software: A custom GPU-based MOC application [7].
3. Methodology:
   * Workload Definition: Construct a series of test cases with varying computational loads by refining MOC parameters (e.g., number of rays, energy groups).
   * Scheme Implementation: Implement the three parallel schemes (ray, group, angle) using a programming model like CUDA.
   * Execution and Profiling: Run each test case with each scheme on the target GPU. Use profiling tools (e.g., NVIDIA Nsight) to collect performance data, including execution time and hardware utilization.
   * Performance Analysis: Use a performance model to classify each scheme as compute-bound, memory-bound, or latency-bound for the given workload. This helps identify the primary performance bottleneck [7].

4. Key Performance Findings: The table below generalizes the results of testing different parallelization schemes, showing that the optimal choice is highly workload-dependent [7].

Table 2: Analysis of Parallelization Schemes for GPU-Based MOC Calculation

| Parallelization Scheme | Best For Workloads That Are... | Performance Characteristic | Considerations |
|---|---|---|---|
| Ray-level | Large & computationally intensive | High parallelism; efficient for large segment counts [7] | Many independent threads. |
| Energy-group-level | Smaller or memory-intensive | Less efficient for large workloads [7] | Potential for memory bandwidth limitations. |
| Polar-angle-level | Smaller or memory-intensive | Less efficient for large workloads [7] | Similar to group-level; may not fully utilize the GPU. |

Workflow Visualization

The following diagram illustrates a high-level hybrid parallel workflow for drug discovery, integrating both task and data parallelism, manageable by a unified orchestration layer.

Diagram 1: Hybrid Parallel Drug Discovery Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table lists key computational tools and infrastructure solutions that enable effective parallelization in modern drug discovery research.

Table 3: Key Reagents and Solutions for Parallelized Drug Discovery

| Item / Solution | Function / Purpose | Relevance to Parallelism |
|---|---|---|
| Vina-GPU 2.1 [37] | An accelerated molecular docking tool. | Implements fine-grained data and task parallelism on GPUs for virtual screening. |
| OptiPharm / pOptiPharm [36] | An algorithm for ligand-based virtual screening. | The parallel version (pOptiPharm) uses a two-layer parallelization for distributing molecules and internal methods. |
| AWS Parallel Computing Service (PCS) [38] | A managed HPC service using Slurm. | Provides the underlying infrastructure to easily deploy and manage data-parallel and hybrid parallel clusters. |
| Unified Compute Plane (e.g., Orion) [22] | An abstraction layer for compute resources. | Enables orchestration and task parallelism across heterogeneous environments (cloud, on-prem). |
| EC2 Image Builder [38] | A service for automating OS image creation. | Ensures consistent, reproducible environments for parallel cluster nodes, a foundation for all parallel strategies. |
| Method of Characteristics (MOC) Code [7] | A solver for neutron transport equations. | A research example for analyzing different GPU parallelization schemes (ray, group, angle). |

Frequently Asked Questions (FAQs)

Q1: What are the most common performance bottlenecks when running BINDSURF on a GPU, and how can I address them?

A primary bottleneck is thread divergence, where threads within the same warp (a group of 32 threads) execute different instructions instead of operating in lockstep. This can drastically reduce the number of active threads per cycle, severely underutilizing the GPU's compute capacity [40]. To mitigate this:

- Restructure Algorithms: Transform nested branching logic into state machines where possible to ensure threads follow similar execution paths [40].

- Optimize Launch Configuration: Use a thread block size that is a multiple of 32 (e.g., 32, 64, 128) to avoid leaving warp capacity unused [40].
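A simple model shows why both divergence and odd block sizes waste lanes. The sketch below assumes:

- A warp executes each distinct branch path its threads take one after another, with non-participating lanes masked off.
- Every path costs the same.
- A partially filled warp still occupies a full 32-lane slot.

Real hardware (for example, independent thread scheduling on Volta and later) is more nuanced, so treat the result as a rough estimate of lane utilization.

```python
def simt_efficiency(branch_ids, warp_size=32):
    """Fraction of issued lane-slots doing useful work under a simple SIMT model.

    branch_ids[i] identifies the branch path taken by thread i of a block.
    """
    if not branch_ids:
        return 1.0
    useful = issued = 0
    for start in range(0, len(branch_ids), warp_size):
        warp = branch_ids[start:start + warp_size]
        paths = len(set(warp))        # distinct paths are serialized
        useful += len(warp)           # each thread does its work once
        issued += paths * warp_size   # each pass occupies the whole warp
    return useful / issued


print(simt_efficiency([0] * 64))                      # no divergence
print(simt_efficiency([i % 2 for i in range(32)]))    # even/odd lanes diverge
print(simt_efficiency([0] * 48))                      # block size not a multiple of 32
```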

Another critical bottleneck is inefficient memory access. BINDSURF uses precomputed interaction grids (electrostatic, Van der Waals) to accelerate scoring function calculations [41]. Slow access to these grids in global memory can limit performance.

- Leverage Faster Memory: Utilize on-chip memories like shared memory whenever possible, as it offers much lower latency than global memory. Strategically cache frequently accessed grid data for threads within the same block [4].

Q2: My GPU utilization appears high in system monitors, but the performance is poor. What could be wrong?

System monitoring tools like nvidia-smi can be misleading, reporting high utilization even when the kernel is not efficiently using the hardware [40]. It is essential to use professional profiling tools like NVIDIA Nsight Compute for a detailed analysis. This tool provides metrics like:

- Achieved Occupancy: The ratio of active warps to the maximum supported warps on a multiprocessor.

- Memory Bandwidth Utilization: The percentage of theoretical peak memory bandwidth being used.

- Compute Utilization: The percentage of theoretical peak compute capacity being used.

Low scores in these metrics, despite high generic utilization, indicate issues like thread divergence or inefficient memory access patterns that need to be optimized [40].

Q3: How does BINDSURF's approach to virtual screening differ from traditional docking methods?

Traditional virtual screening methods, like standard docking in AutoDock or Glide, perform simulations in a single, predefined binding site on the protein surface [41] [42]. In contrast, BINDSURF is a "blind" methodology that does not assume the binding site location. It scans the entire protein surface by dividing it into numerous defined regions and docks each ligand from a database into all these spots simultaneously [41] [42]. This unbiased approach allows for the discovery of novel binding hotspots and is particularly useful when the true binding site is unknown.

Q4: What is the role of the CUDA programming model in these applications?

CUDA is a parallel programming model that enables developers to execute general-purpose computations on NVIDIA GPUs. In both BINDSURF and MOC, CUDA allows the massive parallelism of the GPU to be harnessed effectively [41] [7].

- Thread Hierarchy: Computational work is organized into a hierarchy of grids, blocks, and threads, which maps naturally onto problems involving many independent calculations, such as docking different ligands or tracking numerous neutron characteristic rays [41] [7].

- Memory Hierarchy: CUDA provides control over different memory spaces (global, shared, constant, etc.), which is crucial for optimizing data access and overcoming memory bandwidth bottlenecks [4].
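The grid/block/thread mapping reduces to a little integer arithmetic. The sketch below mirrors, in Python, how a kernel typically derives a global index from `blockIdx.x * blockDim.x + threadIdx.x` and guards against running past the end of the data.

`launch_config` and `item_for_thread` are hypothetical helper names. One ligand per thread is an assumed work decomposition, not necessarily how BINDSURF partitions its work.

```python
import math


def launch_config(n_items, block_size=128, warp_size=32):
    """Grid size for one-thread-per-item work, plus the number of idle tail threads."""
    if block_size % warp_size != 0:
        raise ValueError("block_size should be a multiple of the warp size")
    grid_size = math.ceil(n_items / block_size)      # blocks needed to cover all items
    idle_threads = grid_size * block_size - n_items  # threads that fail the bounds check
    return grid_size, idle_threads


def item_for_thread(block_idx, thread_idx, block_size, n_items):
    """Python stand-in for idx = blockIdx.x * blockDim.x + threadIdx.x with a bounds guard."""
    idx = block_idx * block_size + thread_idx
    return idx if idx < n_items else None  # None: this thread has no ligand to process


grid, idle = launch_config(n_items=1000, block_size=128)
print(grid, idle)
print(item_for_thread(7, 103, 128, 1000), item_for_thread(7, 104, 128, 1000))
```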

Performance Analysis and Benchmarking

To objectively evaluate the performance of GPU-accelerated algorithms, specific metrics and formulas are used. The tables below summarize key performance data and configurations.

Table 1: Key Performance Metrics for GPU-Accelerated Codes

| Metric | Description | Formula / Calculation | Target Value |
|---|---|---|---|
| Speedup | Acceleration compared to a baseline (e.g., CPU). | $T_{\text{baseline}} / T_{\text{GPU}}$ | As high as possible (>30x achieved in some cases [40]) |
| Throughput | Number of computational units processed per second (e.g., deals/s, ligands/s). | $\text{Number of Units} / \text{Execution Time}$ | Varies by application (e.g., 2.9M deals/s on CPU [40]) |
| Theoretical Peak Performance | Maximum possible FLOP/s or memory bandwidth for the hardware. | Manufacturer's specification; for FP32 compute, roughly CUDA cores × 2 FLOPs (one FMA) × clock rate (e.g., A100 has 6,912 CUDA cores [4]) | N/A |
| Achieved Performance | Actual measured FLOP/s or memory bandwidth. | Profiling tool measurement (e.g., via NVIDIA Nsight Compute [40]) | Close to theoretical peak |
| Compute Utilization | Effectiveness in using the GPU's compute units. | $\text{Achieved FLOP/s} / \text{Theoretical FLOP/s}$ | >80% (Low initial score of 12% improved after optimization [40]) |
| Memory Bandwidth Utilization | Effectiveness in using the available memory bandwidth. | $\text{Achieved Bandwidth} / \text{Theoretical Bandwidth}$ | >80% (Was 28% in initial port [40]) |
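The formulas in Table 1 are straightforward to script. The sketch below makes three assumptions:

- FP32 peak throughput equals CUDA cores × 2 FLOPs per cycle (one fused multiply-add) × boost clock.
- The example uses NVIDIA's published A100 figures: 6,912 FP32 cores and a 1,410 MHz boost clock.
- The timing and achieved-throughput values are hypothetical placeholders, to be replaced with profiler measurements.

```python
def peak_fp32_flops(cuda_cores, boost_clock_hz, flops_per_core_per_cycle=2):
    """Theoretical FP32 peak: each core retires one FMA (2 FLOPs) per cycle."""
    return cuda_cores * flops_per_core_per_cycle * boost_clock_hz


def speedup(t_baseline, t_gpu):
    """T_baseline / T_GPU."""
    return t_baseline / t_gpu


def utilization(achieved, theoretical):
    """Achieved / theoretical; works for FLOP/s or for bytes/s."""
    return achieved / theoretical


a100_peak = peak_fp32_flops(6912, 1_410_000_000)  # about 19.5 TFLOP/s
print(f"A100 FP32 peak: {a100_peak / 1e12:.1f} TFLOP/s")

# Hypothetical measurements, to be replaced with profiler output.
print(f"Speedup: {speedup(t_baseline=120.0, t_gpu=4.0):.1f}x")
print(f"Compute utilization: {utilization(9.75e12, a100_peak):.0%}")
```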

Table 2: Experimental Configuration for Performance Analysis

| Component | Specification |
|---|---|
| GPU Hardware | NVIDIA GeForce GTX 1650 (for optimization case study [40]), NVIDIA A100 (latest architecture reference [4]) |
| CPU Hardware | Intel Core i7-9750H (12 logical cores [40]) |
| Programming Model | CUDA |
| Analysis Tools | NVIDIA Nsight Compute, nvidia-smi |
| Key Optimizations | Minimizing thread divergence, maximizing memory coalescing, using shared memory, optimal block/thread configuration [40] [4] |

Essential Research Reagent Solutions

Table 3: Key Software and Computational Tools

| Tool Name | Type | Primary Function in Research |
|---|---|---|
| BINDSURF | Software Application | Performs blind virtual screening by docking ligands over the entire protein surface [41] [42]. |
| Method of Characteristics (MOC) | Computational Algorithm | Solves the neutron transport equation; implementation accelerated on GPU for full-core simulation [7]. |
| NVIDIA CUDA | Parallel Programming Platform | Enables general-purpose programming on NVIDIA GPUs for accelerating scientific codes [41] [4]. |
| NVIDIA Nsight Compute | Performance Profiler | Detailed kernel profiling to identify performance bottlenecks like thread divergence and memory issues [40]. |
| OWL2Vec* | Knowledge Graph Embedding | Creates meaningful representations of entities in a knowledge graph (e.g., for ElementKG in molecular AI models) [43]. |

Experimental Workflow and System Architecture

The following diagrams illustrate the core workflows and system architectures discussed in this case study.

BINDSURF High-Level Algorithm Workflow

GPU Memory Hierarchy for Optimization

Troubleshooting Guides and FAQs

Common GROMACS Errors and Solutions

| Error Message | Context / Tool | Cause | Solution |
|---|---|---|---|
| Out of memory when allocating [44] | General analysis | Insufficient system memory for the scope of the calculation [44]. | 1. Reduce the number of atoms selected for analysis [44]. 2. Shorten the trajectory being analyzed [44]. 3. Check for box-size unit errors (Å vs. nm) [44]. 4. Use a computer with more memory [44]. |
| Residue 'XXX' not found in residue topology database [44] | `pdb2gmx` | The chosen force field lacks parameters for the residue/molecule 'XXX' [44]. | 1. Rename the residue to match the database names [44]. 2. Manually provide a topology file (.itp) [44]. 3. Use a different force field that has the required parameters [44]. |
| WARNING: atom X is missing in residue XXX [44] | `pdb2gmx` | The input structure is missing atoms expected by the force field [44]. | 1. Use `-ignh` so that `pdb2gmx` ignores the hydrogens in the input and rebuilds them with the force field's naming [44]. 2. For termini (e.g., the N-terminus), use the correct `-ter` flags and naming (e.g., 'NALA') [44]. 3. Model missing atoms with external software [44]. |
| Found a second defaults directive [44] | `grompp` | The `[ defaults ]` directive appears more than once in the topology files [44]. | 1. Comment out the extra `[ defaults ]` section in the offending .itp file [44]. 2. Avoid mixing force fields; include only one forcefield.itp [44]. |
| Invalid order for directive xxx [44] | `grompp` | Directives (e.g., `[ atomtypes ]`) appear in the wrong order in the .top or .itp files [44]. | Ensure all `[ *types ]` directives and the `#include` statements for new species appear before any `[ moleculetype ]` directive [44]. |
| Atom index in position_restraints out of bounds [44] | `grompp` | Position-restraint files are included in the wrong order for multiple molecules [44]. | Place the `#include` for a molecule's position restraints immediately after its own `[ moleculetype ]` block [44]. |
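The two `grompp` ordering errors above can be caught before running `grompp`. The sketch below is a hypothetical helper, not part of GROMACS: it scans one topology file for a second `[ defaults ]` directive and for `[ *types ]` directives that appear after the first `[ moleculetype ]`. It does not follow `#include` files, so run it on each .top/.itp or on a preprocessed topology.

```python
import re

# Matches a directive header such as "[ atomtypes ]" at the start of a line.
_DIRECTIVE = re.compile(r"^\s*\[\s*([A-Za-z_]+)\s*\]")


def check_topology_order(text: str):
    """Return (line_number, message) pairs for ordering problems."""
    problems = []
    seen_moleculetype = False
    defaults_count = 0
    for lineno, raw in enumerate(text.splitlines(), start=1):
        line = raw.split(";", 1)[0]        # drop GROMACS ';' comments
        match = _DIRECTIVE.match(line)
        if not match:
            continue
        name = match.group(1).lower()
        if name == "defaults":
            defaults_count += 1
            if defaults_count > 1:
                problems.append((lineno, "second [ defaults ] directive"))
        elif name == "moleculetype":
            seen_moleculetype = True
        elif name.endswith("types") and seen_moleculetype:
            problems.append((lineno, f"[ {name} ] after first [ moleculetype ]"))
    return problems


if __name__ == "__main__":
    import sys
    for path in sys.argv[1:]:
        with open(path) as fh:
            for lineno, msg in check_topology_order(fh.read()):
                print(f"{path}:{lineno}: {msg}")
```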

Performance and GPU Parallelization FAQs

Achieving optimal performance involves correctly distributing workloads between the CPU and GPU.

- Domain Decomposition: The Particle-Particle (PP) rank, which handles short-range non-bonded forces, should be mapped to a GPU. For best efficiency, use one PP rank per GPU, ensuring each rank has thousands of particles [45].

- Particle-Mesh Ewald (PME): The long-range PME calculation can be assigned to a subset of ranks (e.g., ¼ to ½). Using separate PME ranks can improve performance, as the global communication for the 3D FFT can become a bottleneck [45].

- GPU Update and Constraints: For simulations with a fast GPU and a slow CPU, use the `-update gpu` option to offload the coordinate update and constraint algorithms to the GPU [46].

- GPU Direct Communications: To minimize data transfer between CPU and GPU, enable direct communication between GPUs by setting the environment variables `GMX_GPU_DD_COMMS` (for halo exchanges), `GMX_GPU_PME_PP_COMMS` (for PP-PME communication), and `GMX_FORCE_UPDATE_DEFAULT_GPU` (for use with GPU update). This requires GROMACS's internal thread-MPI and is currently limited to a single node [46].
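The settings above can be collected into one launch command. The sketch below only assembles the argument list and environment for a single-node thread-MPI run and executes nothing; `-ntmpi`, `-nb`, `-pme`, `-npme` and `-update` are standard `gmx mdrun` options, while the function name is hypothetical. The environment-variable names are the ones given in the text; newer GROMACS releases may use different switches, so check the documentation for your version. A single separate PME rank is used because many GROMACS versions support only one GPU PME rank.

```python
import os


def build_mdrun_invocation(deffnm: str, n_ranks: int, n_pme_ranks: int = 1,
                           gpu_direct: bool = True):
    """Return (argv, env) for a single-node thread-MPI run with short-range,
    PME and update/constraints on the GPU. Nothing is executed here."""
    if not 0 < n_pme_ranks < n_ranks:
        raise ValueError("need at least one PP rank besides the PME rank(s)")
    argv = ["gmx", "mdrun", "-deffnm", deffnm,
            "-ntmpi", str(n_ranks),        # thread-MPI ranks (single node)
            "-nb", "gpu",                  # short-range non-bonded (PP) work on GPU
            "-pme", "gpu",                 # long-range PME on GPU
            "-npme", str(n_pme_ranks),     # separate PME rank(s)
            "-update", "gpu"]              # coordinate update + constraints on GPU
    env = dict(os.environ)
    if gpu_direct:
        # Variable names as given in the text; verify them for your GROMACS version.
        for var in ("GMX_GPU_DD_COMMS", "GMX_GPU_PME_PP_COMMS",
                    "GMX_FORCE_UPDATE_DEFAULT_GPU"):
            env[var] = "true"
    return argv, env


if __name__ == "__main__":
    argv, env = build_mdrun_invocation("md", n_ranks=4)
    print(" ".join(argv))
    # On a GROMACS host: subprocess.run(argv, env=env, check=True)
```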

Q2: My simulation is running slower than expected after switching to a GPU. What should I check?

- CPU vs. GPU Compilation: Ensure your `mdrun` was compiled with the highest SIMD instruction set (e.g., AVX2, AVX-512) native to your CPU architecture. A generic binary results in suboptimal CPU performance, which can bottleneck the GPU [45].

- PP/PME Load Balancing: PP-PME load balancing now starts after a 5-second delay to account for CPU/GPU clock ramp-up. Let your simulation run longer than this so the balancer can find the optimal settings [46].

- SIMD and Clock Speed Trade-off: On some Intel architectures (e.g., Skylake, Cascade Lake), building `mdrun` with `GMX_SIMD=AVX2_256` instead of `AVX_512` can yield better performance because the CPU can sustain higher clock frequencies [45].
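The SIMD level can be chosen explicitly at configure time. The helper below is a rough heuristic, not GROMACS logic: it maps Linux `/proc/cpuinfo` flag names to a `GMX_SIMD` CMake value and can prefer `AVX2_256` on CPUs where AVX-512 lowers the clock. GROMACS's CMake build normally detects the host SIMD level automatically, so this mainly helps when building for another target or benchmarking both options.

```python
from pathlib import Path


def suggest_gmx_simd(cpu_flags: str, prefer_avx2_over_avx512: bool = False) -> str:
    """Map CPU flag names to a GMX_SIMD CMake value (heuristic sketch)."""
    flags = set(cpu_flags.split())
    if "avx512f" in flags and not prefer_avx2_over_avx512:
        return "AVX_512"
    if {"avx2", "fma"} <= flags:
        return "AVX2_256"   # can beat AVX_512 where AVX-512 reduces clock speed
    if "avx" in flags:
        return "AVX_256"
    if "sse4_1" in flags:
        return "SSE4.1"
    return "None"


def read_cpu_flags(cpuinfo: Path = Path("/proc/cpuinfo")) -> str:
    """Return the 'flags' field of the first CPU listed in /proc/cpuinfo."""
    for line in cpuinfo.read_text().splitlines():
        if line.startswith("flags"):
            return line.split(":", 1)[1]
    return ""


if __name__ == "__main__":
    if Path("/proc/cpuinfo").exists():
        flags = read_cpu_flags()
        print("-DGMX_SIMD=" + suggest_gmx_simd(flags))
        print("-DGMX_SIMD=" + suggest_gmx_simd(flags, prefer_avx2_over_avx512=True))
```

Building both variants and comparing ns/day on a short benchmark is the reliable way to settle the trade-off.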

Q3: What are key performance metrics for high-throughput screening assays?

For High-Content Screening (HCS), which is often used alongside MD for validation, the Z'-factor is a standard metric for assessing assay quality and robustness [47].

Z'-factor Calculation and Interpretation [47]:

| Z'-factor Value | Assay Quality Interpretation |
|---|---|
| 1 > Z' > 0.5 | An excellent assay [47]. |
| 0.5 ≥ Z' > 0 | A marginal or "yes/no" type assay. Often acceptable for complex HCS phenotypes [47]. |
| Z' ≤ 0 | The positive and negative controls are not well separated. The assay is not suitable for screening [47]. |

The Z'-factor is defined as

$$Z' = 1 - \frac{3(\sigma_p + \sigma_n)}{|\mu_p - \mu_n|}$$

where $\mu_p$ and $\sigma_p$ are the mean and standard deviation of the positive control, and $\mu_n$ and $\sigma_n$ are those of the negative control [47].
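The definition translates directly into code. The sketch below uses sample standard deviations (an assumption; some protocols use population values) and the interpretation thresholds from the table; the control readouts in the usage block are made up.

```python
import statistics


def z_prime(positive, negative) -> float:
    """Z'-factor from positive- and negative-control readouts."""
    mu_p, mu_n = statistics.mean(positive), statistics.mean(negative)
    sd_p, sd_n = statistics.stdev(positive), statistics.stdev(negative)
    separation = abs(mu_p - mu_n)
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1 - 3 * (sd_p + sd_n) / separation


def interpret_z_prime(z: float) -> str:
    # Thresholds follow the interpretation table (Z' = 1 only with zero spread).
    if z > 0.5:
        return "excellent"
    if z > 0:
        return "marginal (yes/no)"
    return "not suitable for screening"


if __name__ == "__main__":
    z = z_prime([9, 10, 11], [0, 1, 2])   # hypothetical control readouts
    print(f"Z' = {z:.3f}: {interpret_z_prime(z)}")
```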

Experimental Protocol: High-Throughput Protein-Ligand Screening with GROMACS

This protocol outlines a high-throughput MD workflow for screening multiple ligands against a protein target, using the Hsp90 protein and a resorcinol ligand as a model [48].

System Preparation and Topology Generation

Step 1: Separate Protein and Ligand Coordinates

- Input: PDB file (e.g., `6hhr.pdb`).
- Method: Use text-processing tools (e.g., `grep`) to separate the lines.
  - Protein file: Select lines that do not match `HETATM`.
  - Ligand file: Select lines that match the ligand's residue identifier (e.g., `AG5E`).

Step 2: Generate the Protein Topology

- Tool: `pdb2gmx` (or the Galaxy tool "GROMACS initial setup").
- Parameters:
  - Force field: AMBER99SB-ILDN
  - Water model: TIP3P
- Outputs: Protein structure file (.gro), topology file (.top), and include topology (.itp) for position restraints.

Step 3: Generate the Ligand Topology

- Add Hydrogens: Use a tool such as Open Babel ("Compound conversion") to add hydrogen atoms appropriate for pH 7.0 to the ligand PDB file.
- Parameterize: Use `acpype` (or "Generate MD topologies for small molecules").
  - Force field: `gaff` (General AMBER Force Field)
  - Charge method: `bcc` (AM1-BCC charge model)
- Outputs: Ligand structure file (.gro) and include topology (.itp).
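The `grep` recipe in Step 1 can also be scripted, which helps when the same split is repeated for many ligands. The sketch mirrors the two text filters (drop `HETATM` lines for the protein; keep lines containing the residue identifier for the ligand). The function names and output file names are hypothetical, and, like `grep`, it matches substrings rather than parsing PDB columns.

```python
from pathlib import Path


def split_pdb(pdb_text: str, ligand_id: str):
    """Return (protein_lines, ligand_lines) using the same filters as grep."""
    lines = pdb_text.splitlines()
    protein = [ln for ln in lines if "HETATM" not in ln]   # like: grep -v HETATM
    ligand = [ln for ln in lines if ligand_id in ln]        # like: grep AG5E
    return protein, ligand


def write_split(pdb_path: Path, ligand_id: str,
                protein_out: Path, ligand_out: Path) -> None:
    protein, ligand = split_pdb(pdb_path.read_text(), ligand_id)
    protein_out.write_text("\n".join(protein) + "\n")
    ligand_out.write_text("\n".join(ligand) + "\n")


if __name__ == "__main__":
    pdb = Path("6hhr.pdb")
    if pdb.exists():
        write_split(pdb, "AG5E", Path("protein.pdb"), Path("ligand.pdb"))
```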

Step 4: Assemble the Full System

- Solvation: Define a box that leaves enough room around the protein-ligand complex (e.g., at least 1.0 nm to the box edge, as set with `gmx editconf -d 1.0`), then use `gmx solvate` to fill it with water (e.g., TIP3P).

- Ion Addition: Use `gmx genion` to add ions (e.g., Na⁺, Cl⁻) that neutralize the system's net charge and reach the desired ionic strength.

- Final Topology: Manually edit the main .top file to `#include` the ligand's .itp file and to add the ligand to the `[ molecules ]` section.
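The topology edit can be automated when many ligands are processed. The sketch below is a hypothetical helper: it inserts the ligand `#include` directly after the force-field include, so any `[ atomtypes ]` section in the ligand .itp precedes every `[ moleculetype ]`, and appends the ligand to `[ molecules ]`, assuming that section is last, as in `pdb2gmx` output. Run it before `gmx solvate`, because solvation and ion addition append their own entries and the order in `[ molecules ]` must match the coordinate file.

```python
def add_ligand_to_topology(top_text: str, ligand_itp: str, ligand_molname: str,
                           count: int = 1) -> str:
    """Include the ligand .itp after the force-field include and list the
    ligand at the end of [ molecules ]."""
    out, included = [], False
    for line in top_text.splitlines():
        out.append(line)
        if (not included and line.lstrip().startswith("#include")
                and "forcefield.itp" in line):
            # Keeps ligand [ atomtypes ] ahead of all [ moleculetype ] blocks,
            # avoiding grompp's "Invalid order for directive" error.
            out.append(f'#include "{ligand_itp}"')
            included = True
    if not included:
        raise ValueError("no force-field #include found in topology")
    while out and not out[-1].strip():
        out.pop()                                  # drop trailing blank lines
    out.append(f"{ligand_molname:<20}{count}")     # assumes [ molecules ] is last
    return "\n".join(out) + "\n"
```

For example, `add_ligand_to_topology(open("topol.top").read(), "ligand_GMX.itp", "LIG")` returns the patched text; the file and molecule names are placeholders that must match what `acpype` produced.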

Simulation and Analysis

Step 5: Energy Minimization and Equilibration

- Energy Minimization: Run a steepest descent algorithm to remove bad steric clashes.

- Equilibration:

- NVT Ensemble: Equilibrate the system at constant Number of particles, Volume, and Temperature (e.g., 300 K) for ~100 ps, applying position restraints to the protein and ligand heavy atoms.

- NPT Ensemble: Equilibrate at constant Number of particles, Pressure (1 bar), and Temperature for ~100 ps, with the same restraints.
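The equilibration settings can be written as GROMACS .mdp files. The sketch renders minimal NVT and NPT inputs from the values in the text (300 K, 1 bar, ~100 ps); the 2 fs time step, V-rescale thermostat, C-rescale barostat (available in GROMACS 2021 and later), coupling constants and the single `System` coupling group are assumptions to adapt. `define = -DPOSRES` only activates restraint files wrapped in `#ifdef POSRES`, so the ligand needs its own guarded restraint include for its heavy atoms to be restrained.

```python
def equilibration_mdp(ensemble: str, length_ps: float = 100.0, dt_ps: float = 0.002,
                      temp_k: float = 300.0, pressure_bar: float = 1.0) -> str:
    """Render a minimal NVT or NPT equilibration .mdp with position restraints."""
    params = {
        "define": "-DPOSRES",                  # activates #ifdef POSRES includes
        "integrator": "md",
        "dt": dt_ps,
        "nsteps": round(length_ps / dt_ps),    # 100 ps / 0.002 ps = 50000 steps
        "constraints": "h-bonds",
        "cutoff-scheme": "Verlet",
        "coulombtype": "PME",
        "tcoupl": "V-rescale",
        "tc-grps": "System",
        "tau-t": 0.1,
        "ref-t": temp_k,
    }
    if ensemble == "NVT":
        params.update({"pcoupl": "no", "gen-vel": "yes", "gen-temp": temp_k})
    elif ensemble == "NPT":
        params.update({"continuation": "yes", "pcoupl": "C-rescale", "tau-p": 1.0,
                       "ref-p": pressure_bar, "compressibility": 4.5e-5})
    else:
        raise ValueError("ensemble must be 'NVT' or 'NPT'")
    return "".join(f"{key:<16} = {value}\n" for key, value in params.items())


if __name__ == "__main__":
    for ens in ("NVT", "NPT"):
        with open(f"{ens.lower()}.mdp", "w") as fh:
            fh.write(equilibration_mdp(ens))
```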

Step 6: Production MD and High-Throughput Execution

- Production Run: Run an unrestrained simulation for a duration sufficient to observe the phenomena of interest (e.g., 10-100 ns per ligand).

- High-Throughput Setup: To screen multiple ligands, replicate the above workflow for each ligand. Use job arrays or workflow management systems (e.g., Galaxy, Snakemake) to execute dozens to hundreds of simulations in parallel.
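Where a full workflow manager is not available, the fan-out can be sketched with the Python standard library. The scheduler below runs at most one job per GPU at a time by handing GPU IDs out through a queue; `run_ligand_pipeline` and the per-ligand `run_<id>.sh` scripts are hypothetical placeholders for the Step 1 to Step 7 commands, and pinning uses the standard `CUDA_VISIBLE_DEVICES` variable.

```python
import os
import queue
import subprocess
from concurrent.futures import ThreadPoolExecutor


def run_screen(ligand_ids, n_gpus, run_one):
    """Call run_one(ligand_id, gpu_id) for every ligand, one job per GPU at a time."""
    free_gpus = queue.Queue()
    for gpu in range(n_gpus):
        free_gpus.put(gpu)

    def worker(ligand_id):
        gpu = free_gpus.get()          # blocks until some GPU is idle
        try:
            return ligand_id, run_one(ligand_id, gpu)
        finally:
            free_gpus.put(gpu)         # hand the GPU to the next waiting job

    with ThreadPoolExecutor(max_workers=n_gpus) as pool:
        return dict(pool.map(worker, ligand_ids))


def run_ligand_pipeline(ligand_id, gpu):
    """Hypothetical per-ligand job: runs a user-written script pinned to one GPU."""
    env = dict(os.environ, CUDA_VISIBLE_DEVICES=str(gpu))
    return subprocess.run(["bash", f"run_{ligand_id}.sh"], env=env).returncode
```

A call such as `run_screen(["lig001", "lig002"], n_gpus=4, run_one=run_ligand_pipeline)` returns a mapping from ligand ID to exit code; on a cluster, a job array achieves the same fan-out through the scheduler.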

Step 7: Trajectory Analysis

- Root-mean-square deviation (RMSD): Measure the stability of the protein and ligand over time.

- Root-mean-square fluctuation (RMSF): Identify flexible regions of the protein.

- Protein-Ligand Interactions: Calculate hydrogen bonds, salt bridges, and hydrophobic contacts between the protein and ligand over the trajectory.

- Energetics Analysis: Use methods like MM-PBSA/GBSA to estimate binding free energies.
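In GROMACS these quantities are normally computed with `gmx rms` and `gmx rmsf`. To make the definitions concrete, the sketch below computes RMSD of one frame against a reference and per-atom RMSF over a trajectory. It assumes the frames are already superposed on the reference (least-squares fitting is omitted) and that coordinates are (x, y, z) tuples in a consistent atom order.

```python
import math


def rmsd(frame, reference):
    """RMSD between two equally ordered, already superposed coordinate lists."""
    if len(frame) != len(reference):
        raise ValueError("frames must contain the same atoms")
    sq = sum((x - a) ** 2 + (y - b) ** 2 + (z - c) ** 2
             for (x, y, z), (a, b, c) in zip(frame, reference))
    return math.sqrt(sq / len(reference))


def rmsf(frames):
    """Per-atom RMSF about each atom's mean position over all frames."""
    n_frames = len(frames)
    out = []
    for i in range(len(frames[0])):
        mean = [sum(f[i][d] for f in frames) / n_frames for d in range(3)]
        msd = sum(sum((f[i][d] - mean[d]) ** 2 for d in range(3))
                  for f in frames) / n_frames
        out.append(math.sqrt(msd))
    return out
```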

The Scientist's Toolkit: Essential Research Reagents and Materials

| Item / Reagent | Function in High-Throughput MD |
|---|---|
| Protein Data Bank (PDB) File | The initial 3D structural model of the biomolecular system, obtained from crystallography, NMR, or cryo-EM [48]. |
| Force Field (e.g., AMBER99SB, CHARMM36) | An empirical function and parameter set used to calculate the potential energy of the system, governing atomistic interactions [48]. |
| Water Model (e.g., TIP3P, SPC/E) | A set of parameters defining how water molecules are represented and behave in the simulation [48]. |
| Small Molecule Ligand | The molecule(s) of interest, such as drug candidates, whose interaction with the protein is being studied [48]. |
| Positive & Negative Controls (for HCS) | In associated experimental screening, controls are required to calculate the Z'-factor and validate the assay's dynamic range and quality [47]. |
| Replicate Samples | Running experimental or simulation replicates (typically 2-4) reduces false positives/negatives and provides estimates of variability [47]. |

GPU Acceleration Architecture

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: My parallel reduction kernel is slower than the serial version. What are the most common causes of this performance issue?

The most common causes are warp divergence, non-coalesced memory access, and shared memory bank conflicts [49] [50]. Warp divergence occurs when threads within the same warp take different execution paths, forcing the warp to execute each path in turn. Non-coalesced memory access happens when consecutive threads in a warp access scattered rather than contiguous addresses, so each warp request needs more memory transactions and effective bandwidth drops. Shared memory bank conflicts arise when multiple threads in a warp access different addresses in the same memory bank, which serializes those accesses [50]. Start from sequential addressing (Reduction 3), which removes both the divergent `tid % (2*s)` branch and the bank conflicts of strided indexing, keep global loads coalesced, and verify each change with a profiler.
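The difference between the first three reduction variants can be checked without a GPU by replaying their index arithmetic. The sketch below models one block: for each tree step it counts warps containing both active and idle threads (divergence) and the worst number of distinct shared-memory addresses one warp sends to the same bank. It looks only at the first operand `sdata[index]` and assumes 32 banks of 4-byte words, so it is a simplified model, not a profiler; the scheme names follow the usual Reduction 1 (interleaved), 2 (strided index) and 3 (sequential) variants.

```python
WARP_SIZE = 32
NUM_BANKS = 32   # 4-byte-wide banks


def schedules(scheme, block):
    """Yield (s, is_active, first_operand_index) for each tree step."""
    if scheme == "interleaved":        # Reduction 1: if (tid % (2*s) == 0)
        s = 1
        while s < block:
            yield s, (lambda t, s=s: t % (2 * s) == 0), (lambda t: t)
            s *= 2
    elif scheme == "strided":          # Reduction 2: index = 2*s*tid
        s = 1
        while s < block:
            yield s, (lambda t, s=s: 2 * s * t < block), (lambda t, s=s: 2 * s * t)
            s *= 2
    elif scheme == "sequential":       # Reduction 3: if (tid < s), s halves
        s = block // 2
        while s > 0:
            yield s, (lambda t, s=s: t < s), (lambda t: t)
            s //= 2
    else:
        raise ValueError(scheme)


def analyze(scheme, block=256):
    """Return (divergent warp-steps, worst bank-conflict degree); block % 32 == 0."""
    divergent, worst = 0, 1
    for _, active, index in schedules(scheme, block):
        for w in range(0, block, WARP_SIZE):
            lanes = range(w, w + WARP_SIZE)
            if len({active(t) for t in lanes}) == 2:   # mixed active/idle warp
                divergent += 1
            banks = {}
            for t in lanes:
                if active(t):
                    addr = index(t)
                    banks.setdefault(addr % NUM_BANKS, set()).add(addr)
            if banks:
                worst = max(worst, max(len(a) for a in banks.values()))
    return divergent, worst


if __name__ == "__main__":
    for scheme in ("interleaved", "strided", "sequential"):
        print(scheme, analyze(scheme))
```

For a 256-thread block the model reports 47 divergent warp-steps for interleaved addressing against 5 for sequential addressing, while the strided variant removes most divergence at the cost of up to 8-way bank conflicts.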

Q2: How can I maintain deterministic results when performing floating-point reductions?

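Floating-point addition is commutative but not associative: (a + b) + c may differ from a + (b + c) because every intermediate result is rounded [51]. A parallel reduction whose summation order changes from run to run (for example, atomic additions whose order depends on thread scheduling) can therefore return slightly different results; [51] attributes this to weak memory consistency on GPUs and unpredictable operation orders between threads. In PyTorch, `torch.use_deterministic_algorithms(True)` requests deterministic implementations, though this may reduce performance. To preserve precision, especially with float16, upcast the accumulator to a higher precision (e.g., float32) during the reduction [51]. Note that bfloat16 keeps float32's exponent range, which avoids overflow, but has fewer mantissa bits than float16, so it does not improve accumulation precision.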
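Both effects are easy to reproduce on a CPU. The sketch below shows that regrouping a float64 sum changes the result, and emulates a float16 accumulator by rounding every partial sum to half precision with the `struct` module's `'e'` format; a float64 accumulator stands in for the upcast float32 one. Adding 1.0 4,096 times stalls at 2,048 in float16, because 2,049 is not representable and rounds back to 2,048.

```python
import struct


def to_f16(x: float) -> float:
    """Round x to the nearest IEEE 754 half-precision value (ties to even)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def sum_f16_accumulator(values):
    """Every partial sum is rounded to float16, as with a float16 accumulator."""
    acc = 0.0
    for v in values:
        acc = to_f16(acc + to_f16(v))
    return acc


def sum_upcast_accumulator(values):
    """Inputs are float16, but the running sum keeps higher precision
    (Python's float64 stands in for a float32 accumulator)."""
    acc = 0.0
    for v in values:
        acc += to_f16(v)
    return acc


if __name__ == "__main__":
    print((0.1 + 0.2) + 0.3, 0.1 + (0.2 + 0.3))   # regrouping changes the result
    ones = [1.0] * 4096
    print(sum_f16_accumulator(ones), sum_upcast_accumulator(ones))
```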

Q3: What strategies exist for reducing arrays larger than my GPU's shared memory capacity?

For large arrays, use algorithm cascading (Reduction 7), which combines sequential and parallel reduction [50]. Each thread first accumulates multiple elements from global memory into a private partial sum, then takes part in the parallel tree reduction within its block. The kernel computes a stride `gridSize` (block size × 2 × number of blocks) and uses a `while` loop to process all elements assigned to the thread, so a fixed-size grid can reduce an array of any length while keeping memory access coalesced [50]. Each block still writes one partial sum, which a second small launch (or an atomic add) combines into the final result.
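A sequential Python model makes the two phases of Reduction 7 explicit. The sketch below uses the usual index arithmetic for this kernel (start index `blockIdx.x * blockSize * 2 + tid`, stride `gridSize`) and adds a bounds check on the second element so ragged array lengths work. Each simulated block returns one partial sum, and summing the partials plays the role of the second pass; the function name and default sizes are illustrative.

```python
def cascaded_block_reduce(data, block_size=8, num_blocks=2):
    """Model of Reduction 7: grid-stride accumulation, then a shared-memory
    tree per block. Returns one partial sum per block."""
    assert block_size & (block_size - 1) == 0, "block_size must be a power of two"
    n = len(data)
    grid_size = block_size * 2 * num_blocks       # elements consumed per grid sweep
    partials = []
    for b in range(num_blocks):
        sdata = [0] * block_size                  # models __shared__ sdata[]
        for tid in range(block_size):
            i = b * block_size * 2 + tid
            while i < n:                          # sequential phase
                sdata[tid] += data[i]
                if i + block_size < n:            # bounds check for ragged tails
                    sdata[tid] += data[i + block_size]
                i += grid_size
        s = block_size // 2
        while s > 0:                              # parallel tree phase
            for tid in range(s):                  # only threads tid < s are active
                sdata[tid] += sdata[tid + s]
            s //= 2                               # __syncthreads() between steps
        partials.append(sdata[0])
    return partials


if __name__ == "__main__":
    partials = cascaded_block_reduce(list(range(1, 101)), block_size=4, num_blocks=3)
    print(partials, sum(partials))                # second pass over the partials
```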

Q4: Why does my reduction kernel produce incorrect results only with certain array sizes?

This usually points to missing synchronization or to index errors near block boundaries. Call `__syncthreads()` after each shared-memory reduction step, and make sure every thread of the block reaches it (never place it inside a branch such as `if (tid < s)`). The classic unrolled last-warp optimization relied on implicit synchronization within a warp [50]; on Volta and later GPUs, independent thread scheduling no longer guarantees this, so use `__syncwarp()` between steps or warp-shuffle intrinsics such as `__shfl_down_sync()`. Finally, check bounds in the initial load phase so threads never read beyond the allocated array when its size is not a multiple of the block size; out-of-range threads should contribute the identity value (0 for a sum).
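The warp-level pattern can be sketched by emulating `__shfl_down_sync` with a full mask: a lane whose source lane lies outside the warp keeps its own value, and after offsets 16, 8, 4, 2 and 1 lane 0 holds the warp's sum. The sketch also shows the bounds-checked load, where lanes past the end of the array contribute 0. It is a sequential Python model of the CUDA semantics, not GPU code, and the function names are hypothetical.

```python
WARP_SIZE = 32


def shfl_down(values, offset):
    """Model of __shfl_down_sync(0xffffffff, v, offset): lane l receives lane
    l + offset's value; lanes whose source is outside the warp keep their own."""
    return [values[lane + offset] if lane + offset < WARP_SIZE else values[lane]
            for lane in range(WARP_SIZE)]


def warp_reduce_sum(values):
    """Tree reduction inside one warp; only lane 0 ends with the full sum."""
    for offset in (16, 8, 4, 2, 1):
        received = shfl_down(values, offset)
        values = [mine + other for mine, other in zip(values, received)]
    return values[0]


def load_warp(data, warp_start):
    """Bounds-checked load: lanes past the end contribute the identity 0."""
    return [data[warp_start + lane] if warp_start + lane < len(data) else 0
            for lane in range(WARP_SIZE)]


def reduce_array(data):
    """Sum an array of any length, one simulated warp at a time."""
    return sum(warp_reduce_sum(load_warp(data, start))
               for start in range(0, len(data), WARP_SIZE))


if __name__ == "__main__":
    print(reduce_array(list(range(100))))   # 100 is not a multiple of 32
```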

Performance Issue Diagnostic Table

| Performance Issue | Symptom | Solution |
|---|---|---|
| Warp Divergence [50] | Low compute utilization; threads within a warp serialize | Replace `tid % (2*s) == 0` with sequential addressing |
| Shared Memory Bank Conflicts [49] | High shared memory latency; serialized memory accesses | Implement sequential addressing (Reduction 3) |