GPU Acceleration in Ecological Modelling: Benchmarking Speedups and Implementation Strategies for Researchers


Abstract

This article provides a comprehensive analysis of GPU versus CPU performance for computationally intensive ecological models. Written for researchers and scientists, it explores the foundational principles of parallel computing, presents real-world case studies demonstrating speedups of over 100x, and offers a practical guide for implementing and optimizing GPU-accelerated workflows. The content synthesizes current benchmarking data and methodological insights to help ecological and biomedical researchers use high-performance computing to overcome computational bottlenecks and accelerate discovery in data-intensive fields.

Why GPUs? Unlocking Parallel Computing for Ecological and Biomedical Data

The Computational Bottleneck in Modern Ecological Statistics

Modern ecological research is increasingly defined by its ability to process complex statistical models and massive datasets, from high-resolution satellite imagery and bioacoustic recordings to genome-scale data for conservation biology. This data deluge has created a significant computational bottleneck, where the scale of analysis outpaces the capabilities of traditional computing infrastructure. At the heart of this challenge lies a critical hardware choice: whether to rely on general-purpose Central Processing Units (CPUs) or leverage the parallel processing power of Graphics Processing Units (GPUs). This guide provides an objective performance comparison of CPUs and GPUs within ecological statistics, offering experimental data and methodologies to help researchers navigate this pivotal technological decision.

The fundamental difference between these processors is architectural. CPUs are designed for sequential tasks, executing a few complex operations at high speeds, making them the "brain" of any computer system for general-purpose management [1] [2]. In contrast, GPUs are throughput-oriented engines featuring thousands of simpler cores designed to execute the same operation on massive datasets in parallel [3]. This architectural divergence makes GPUs exceptionally well-suited for the types of computations that underpin many modern ecological models.

Performance Benchmarks: CPU vs. GPU in Ecological Applications

Empirical data from both broad computational studies and ecology-specific implementations demonstrate the profound performance gains achievable with GPU acceleration. The following table summarizes key quantitative comparisons.

Table 1: Performance Comparison of CPU and GPU on Ecological and Foundational Tasks

| Application / Task | CPU Performance | GPU Performance | Speedup Factor | Notes |

|---|---|---|---|---|

| Bayesian Population Dynamics (Grey Seal Model) [4] | Baseline (CPU reference) | Parameter inference via particle Markov chain Monte Carlo | Over 100x | Achieved an efficient alternative to state-of-the-art fitting algorithms |

| Spatial Capture-Recapture (Animal Abundance) [4] | Baseline (CPU reference) | Simulation with high number of detectors & mesh points | Over 100x | Speedup possible with complex spatial setups |

| Spatial Capture-Recapture (Bottlenose Dolphin) [4] | Baseline (multi-core CPU) | Analysis of real-world photo-ID data | ~20x | Compared to multi-core CPU and open-source software |

| General Matrix Multiplication (4096x4096) [3] | Baseline (sequential CPU) | Optimized CUDA implementation | ~593x | Foundational operation in many ecological models |

| General Matrix Multiplication (4096x4096) [3] | Optimized parallel CPU (OpenMP) | Optimized CUDA implementation | ~45x | Highlights GPU advantage even over parallel CPUs |

| Deep Learning Model Training (CIFAR-100) [5] | 17 minutes 55 seconds (100 epochs) | 5 minutes 43 seconds (100 epochs) | ~3.1x | Demonstration on modest hardware (Tesla T4, an entry-level data center GPU) |

The performance gap is not merely theoretical. A PhD thesis on GPU-accelerated computational statistics in ecology demonstrated that this approach can reduce compute time, cost, and energy consumption, all critical concerns in environmental science [4]. The case studies in this thesis, on grey seal population dynamics and spatial capture-recapture for bottlenose dolphins, show that speedup factors of over two orders of magnitude are attainable, transforming analyses that would take days into ones that take hours or minutes [4].

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons between CPU and GPU performance, researchers must adhere to rigorous experimental methodologies. The following protocols detail the key considerations for setting up and executing a valid benchmark.

Hardware and Software Configuration

- Hardware Specification: The test system must be clearly documented. For instance, a benchmark might use a laptop with an 8-core, 16-thread AMD Ryzen 7 5800H CPU and an NVIDIA GeForce RTX 3060 GPU [3]. For servers, the specification should include the CPU model(s), core count, GPU model(s), and the amount of RAM and GPU VRAM.

- Software Environment: The operating system, programming language versions (e.g., Python 3.10), and critical libraries (e.g., TensorFlow, PyTorch, CUDA version, OpenMP) must be standardized and recorded [5].

- Data Availability: The input datasets should be openly available or synthetically generated in a reproducible manner (e.g., using a fixed random seed). For ecological models, this includes the specific animal observation data, covariate layers, and model parameters used [4].
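The configuration items above can be captured alongside every benchmark result. The following is a minimal sketch using only the Python standard library; the function name `describe_environment` and the placeholder fields for CPU, GPU, and CUDA details are illustrative assumptions, to be filled in from whatever tools are available on the test system.

```python
import json
import platform
import random
import sys


def describe_environment(seed: int) -> dict:
    """Collect basic, reproducibility-relevant facts about this run."""
    random.seed(seed)  # fix the seed so synthetic inputs can be regenerated
    return {
        "os": platform.platform(),
        "machine": platform.machine(),
        "python": sys.version.split()[0],
        "seed": seed,
        # Hypothetical placeholders: fill these in from your own system tools.
        "cpu_model": None,
        "gpu_model": None,
        "cuda_version": None,
    }


# Store this record next to the timing results of each benchmark run.
print(json.dumps(describe_environment(seed=42), indent=2))
```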

Implementation and Measurement

- Algorithmic Fairness: Comparisons must be made between highly optimized implementations for both architectures. This means the CPU code should leverage multi-threading (e.g., via OpenMP) and SIMD instructions, while the GPU code should be optimized for its memory hierarchy and execution model [3] [6]. Using an unoptimized, single-threaded CPU implementation as a baseline is considered a flawed methodology [6].

- Full Workload Accounting: Timing measurements for the GPU must include all associated overheads, not just the core kernel execution. This includes data transfer time between the host (CPU) memory and device (GPU) memory, which can be a significant bottleneck, especially for smaller problems [6].

- Statistical Rigor: Performance should be measured over multiple runs to account for system variability. Metrics like mean execution time and standard deviation should be reported. Tools such as %%timeit in Jupyter notebooks can help achieve reliable timings [5].
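The measurement points above can be sketched as a small timing harness. This is an illustrative example, not the procedure used in the cited studies: `benchmark` and `end_to_end_speedup` are hypothetical helper names, and the workload is a stand-in for a real model fit. For a GPU run, the callable passed to `benchmark` should wrap the host-to-device copy, the kernel, and the copy back, so that transfer overhead is charged to the GPU.

```python
import statistics
import time
from typing import Callable


def benchmark(workload: Callable[[], object], repeats: int = 5, warmup: int = 1) -> dict:
    """Time `workload` several times and summarise the wall-clock results."""
    for _ in range(warmup):  # untimed runs absorb one-off start-up costs
        workload()
    times = []
    for _ in range(repeats):
        start = time.perf_counter()
        workload()
        times.append(time.perf_counter() - start)
    return {
        "mean_s": statistics.mean(times),
        "stdev_s": statistics.stdev(times) if len(times) > 1 else 0.0,
        "runs": len(times),
    }


def end_to_end_speedup(cpu_s: float, gpu_kernel_s: float, transfer_s: float) -> float:
    """Speedup once host-device transfer time is charged to the GPU."""
    return cpu_s / (gpu_kernel_s + transfer_s)


# Stand-in workload; replace with the model fit being benchmarked.
result = benchmark(lambda: sum(i * i for i in range(10_000)), repeats=5)
print(result)
```

With hypothetical timings of 10 s on the CPU, 0.1 s for the GPU kernel, and 0.4 s of transfers, the kernel-only ratio would be 100x, while `end_to_end_speedup(10.0, 0.1, 0.4)` gives 20x.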

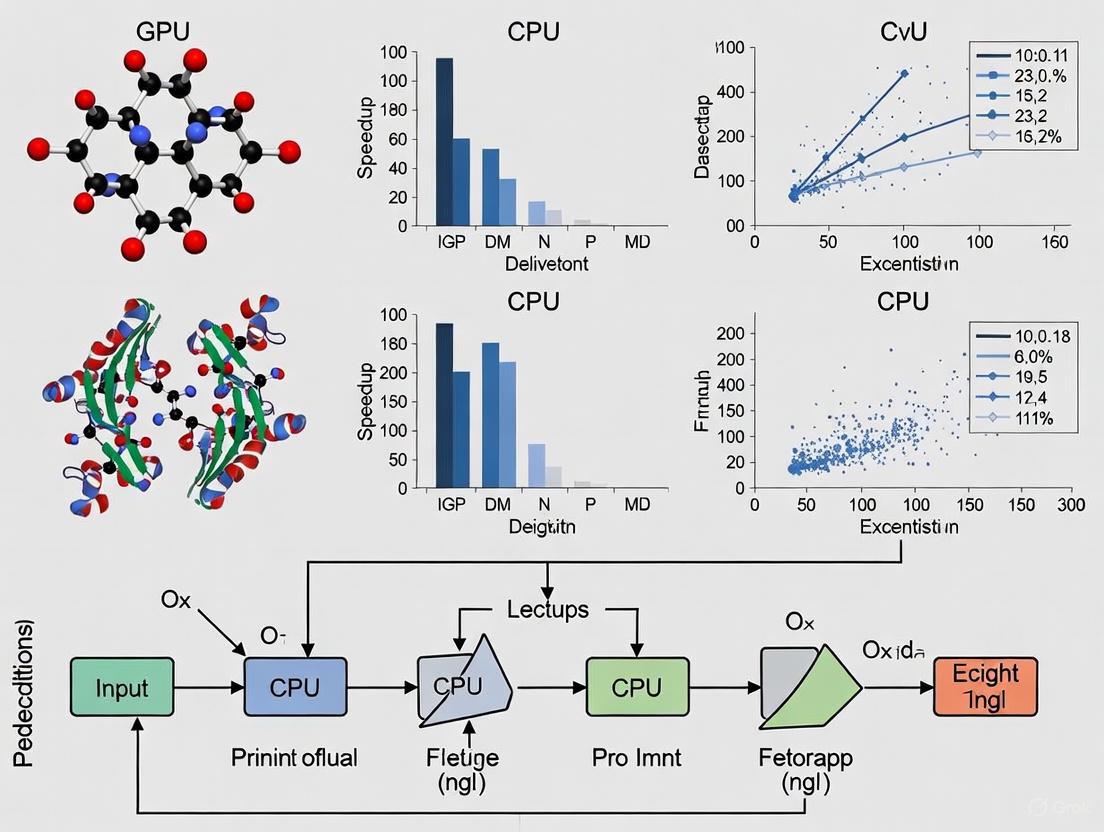

Visualizing the Computational Workflow

The decision to use a CPU or a GPU architecture fundamentally changes the computational workflow for an ecological model. The diagram below illustrates the divergent paths for processing tasks.

Diagram 1: CPU Sequential vs. GPU Parallel Workflow. The CPU processes tasks in a sequential queue, while the GPU splits a problem into thousands of smaller subtasks, processes them simultaneously across its many cores, and then combines the results [1] [2].

The Researcher's Toolkit for Computational Ecology

Building a modern computational ecology workflow requires careful selection of both hardware and software components. The following table details key solutions and their functions.

Table 2: Essential Research Reagent Solutions for GPU-Accelerated Ecology

| Category | Item / Solution | Function in Research |

|---|---|---|

| Hardware | NVIDIA H200 / H100 GPU [1] | Data center GPUs with high memory bandwidth and large VRAM (4.8 TB/s and 141 GB on the H200) for training large AI models on ecological data. |

| Hardware | AMD MI300X / Intel Data Center GPU Max [1] | Alternative high-performance GPUs creating a competitive ecosystem for AI acceleration in research. |

| Hardware | Consumer GPUs (e.g., NVIDIA GeForce RTX Series) [3] | Accessible, consumer-grade hardware that still provides significant acceleration for many ecological modeling tasks. |

| Software | CUDA Platform [1] [3] | NVIDIA's parallel computing platform and programming model that enables developers to use GPUs for general-purpose processing. |

| Software | OpenMP [3] | An API for shared-memory multi-processing programming, used to efficiently parallelize code across multiple CPU cores. |

| Software | TensorFlow / PyTorch [5] | Open-source machine learning libraries with built-in GPU support, widely used for developing and training deep learning models in ecology. |

| Software | R with gpuR / Python with CuPy | Programming languages with packages that enable statistical computations to be offloaded to GPUs, accelerating common analyses. |

| Infrastructure | AIRI//S (NVIDIA & Pure Storage) [1] | A full-stack AI infrastructure solution integrating NVIDIA DGX systems and storage, designed to simplify deployment at scale. |

| Infrastructure | S3-over-RDMA Technology [1] | A networking technology that accelerates AI data transfer by increasing throughput and reducing CPU utilization, preventing storage bottlenecks. |

The computational bottleneck in modern ecological statistics is a significant challenge, but also an opportunity for transformative efficiency gains. The experimental data and benchmarks presented in this guide consistently demonstrate that GPU acceleration can provide speedups of one to two orders of magnitude for a wide range of ecological models, from Bayesian population dynamics to spatial capture-recapture [4]. This performance enhancement directly translates into faster scientific discovery, more robust model iterations, and the ability to tackle problems previously deemed computationally infeasible.

The choice between CPU and GPU is not absolute. CPUs remain vital for general-purpose computing and managing GPU tasks, and they can be cost-effective for smaller, non-parallelizable workloads [2]. However, for the data-parallel, computationally intensive tasks that are becoming the norm in ecology, the GPU's many-core architecture is overwhelmingly superior. By strategically investing in the hardware, software, and infrastructure outlined in the "Researcher's Toolkit," ecological researchers can effectively navigate the computational bottleneck and unlock new frontiers in data-driven environmental science.

The core of the architectural divide between Central Processing Units (CPUs) and Graphics Processing Units (GPUs) lies in their fundamental design philosophy. CPUs are designed as general-purpose processors optimized for executing a wide variety of tasks quickly and sequentially, acting as the brain of the computer that manages system operations and logic [1] [2]. In contrast, GPUs are specialized processors designed for massive parallel processing, using thousands of computational cores to break down complex problems into thousands of smaller tasks that are processed simultaneously [1] [7].

This architectural difference dictates their respective roles in scientific computing. While the CPU handles complex, sequential decision-making and controls the workflow, the GPU acts as a computational accelerator for the parts of a simulation that can be processed in parallel [2] [8]. The shift toward GPU-accelerated computing in scientific domains is unmistakable; as of 2025, over 85% of the TOP100 high-performance computing systems are accelerated, with the majority powered by NVIDIA GPUs [9].

Architectural Breakdown: A Tale of Two Designs

CPU Architecture: The Sequential Workhorse

CPU architecture prioritizes versatility and single-threaded performance through a handful of complex, powerful cores. A typical high-end CPU for scientific workstations might feature 8 to 64 cores [8] [10]. Each core contains sophisticated control logic including branch predictors, out-of-order execution units, and speculative execution capabilities to optimize sequential task performance [7].

The CPU's memory hierarchy is designed for low latency, featuring multiple cache levels (L1, L2, L3) to reduce the time spent waiting for data [7]. This makes CPUs exceptionally good at managing diverse computational tasks, running operating systems, and handling input/output operations where quick response times and complex decision-making are required [2] [10].

GPU Architecture: The Parallel Powerhouse

GPU architecture takes the opposite approach, employing thousands of smaller, simpler cores optimized for parallel mathematical operations. Modern data center GPUs like the NVIDIA H100 contain thousands of computational cores—16,896 CUDA cores in the H100, for example [1] [7].

Unlike CPUs, GPU memory architecture prioritizes high bandwidth over low latency, utilizing technologies like High-Bandwidth Memory (HBM) and GDDR6 to achieve memory bandwidth up to 7.8 TB/s in top-tier models—dramatically higher than the approximately 50 GB/s typical of CPUs [1] [7]. This design enables GPUs to efficiently process massive datasets concurrently, making them ideal for mathematical operations fundamental to scientific simulations.

Table: Fundamental Architectural Differences Between CPUs and GPUs

| Architectural Feature | CPU | GPU |

|---|---|---|

| Core Philosophy | Sequential processing & control [2] | Parallel computation [2] |

| Core Count | 4-64 complex cores [8] [10] | Thousands of simpler cores [1] [7] |

| Memory Bandwidth | ~50 GB/s [1] | Up to 7.8 TB/s (HBM3) [1] |

| Memory Hierarchy | Optimized for low latency [7] | Optimized for high bandwidth [7] |

| Ideal Workload | Diverse, sequential tasks [10] | Massively parallel computations [10] |
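To make the bandwidth figures in the table concrete, the sketch below estimates a lower bound on the time needed to read one dataset from memory at each quoted bandwidth. The grid size is a hypothetical example, and the calculation ignores latency, caching, and compute time, so it only illustrates the ratio between the two figures.

```python
def stream_time_ms(n_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Lower bound on the time to read n_bytes once at the given bandwidth."""
    return 1e3 * n_bytes / bandwidth_bytes_per_s


GB = 1e9
TB = 1e12

# Hypothetical input: one 4096 x 4096 raster layer of 8-byte floats.
grid_bytes = 4096 * 4096 * 8

cpu_ms = stream_time_ms(grid_bytes, 50 * GB)   # ~50 GB/s, as quoted for CPUs
gpu_ms = stream_time_ms(grid_bytes, 7.8 * TB)  # up to 7.8 TB/s, as quoted for GPUs

print(f"CPU: {cpu_ms:.3f} ms, GPU: {gpu_ms:.4f} ms, ratio: {cpu_ms / gpu_ms:.0f}x")
```

Under these assumptions the ratio is 156x, which is simply 7.8 TB/s divided by 50 GB/s; real workloads reach this only when they are limited by memory bandwidth.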

Performance Benchmarks: Quantifying the Divide

General Computational Workloads

The performance advantage of GPUs becomes most apparent in directly parallelizable mathematical operations. In matrix multiplication—a fundamental operation in many scientific computations—GPUs can achieve 50-100× speedups compared to CPU execution [7]. Similarly, for image processing tasks like applying filters to high-resolution images, GPUs can complete in milliseconds what takes CPUs several seconds [7].

Training deep neural networks on GPUs can be over 10 times faster than on CPUs of equivalent cost [1]. This performance gap has led to widespread adoption of GPU acceleration across scientific domains, from climate modeling and drug discovery to quantum simulation [9].

Ecological and Environmental Modeling Case Studies

The performance benefits of GPU architecture extend significantly to ecological modeling, where processing complex mathematical representations of natural systems is computationally demanding.

Research implementing a 2D advection-reaction-diffusion (ARD) equation—used for modeling environmental phenomena like pollutant dispersion and forest growth—demonstrated substantial speedups with GPU acceleration [11]. The PARMOD2D model, a GPGPU-accelerated implementation for environmental modeling, showed performance improvements of 5x to 40x compared to sequential CPU solutions, depending on the complexity of the computational mesh and specific application [11].

Another case study focusing on atmospheric dispersion modeling for pollutant prediction reported up to a 25-fold speedup with GPU implementation compared to equivalent sequential code processing on a CPU [11]. These performance gains enable researchers to run larger simulations with finer spatial resolutions and more complex ecological interactions in practical timeframes.

Table: Performance Benchmarks in Scientific and Ecological Applications

| Application | CPU Performance | GPU Performance | Speedup Factor |

|---|---|---|---|

| Matrix Multiplication | Several seconds [7] | 10-50 milliseconds [7] | 50-100× |

| Deep Neural Network Training | Baseline [1] | Over 10x faster [1] | >10× |

| ARD Equation Solutions | Baseline sequential processing [11] | 5-40x faster [11] | 5-40× |

| Atmospheric Dispersion Modeling | Baseline sequential processing [11] | 25x faster [11] | 25× |

Experimental Protocols: Methodologies for Benchmarking

Environmental Model Implementation

The significant speedups reported for ecological models like PARMOD2D were achieved through specific methodological approaches. The implementation used a finite-difference discretization with the Crank-Nicolson scheme for the 2D advection-reaction-diffusion equation, which provides numerical stability for environmental simulations [11].

The parallel implementation utilized NVIDIA's CUDA framework, with the computational domain divided into threads that execute identical instructions on different data points simultaneously [11]. Performance evaluation compared the GPU-accelerated solution against an equivalent sequential CPU implementation, measuring execution time for identical computational meshes and parameter sets [11]. This methodology ensured fair comparison of hardware capabilities for the same scientific problem.
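The idea of threads executing identical instructions on different data points can be illustrated with a stencil update. The sketch below is not the PARMOD2D implementation: it swaps the Crank-Nicolson scheme for a simpler explicit (forward Euler) step, assumes a periodic grid, a linear reaction term, and non-negative velocities, and runs on the CPU in plain Python. The function name `ard_step` and all parameter values are hypothetical.

```python
def ard_step(u, d, vx, vy, r, dx, dt):
    """One explicit (forward Euler) update of a 2D ARD field on a periodic grid.

    Each new cell value depends only on the old values of the cell and its
    four neighbours, so every cell could be handled by its own GPU thread.
    """
    n_rows, n_cols = len(u), len(u[0])
    new = [[0.0] * n_cols for _ in range(n_rows)]
    for i in range(n_rows):
        for j in range(n_cols):
            c = u[i][j]
            up, down = u[(i - 1) % n_rows][j], u[(i + 1) % n_rows][j]
            left, right = u[i][(j - 1) % n_cols], u[i][(j + 1) % n_cols]
            diffusion = d * (up + down + left + right - 4.0 * c) / dx**2
            # First-order upwind differences, valid for vx >= 0 and vy >= 0.
            advection = vx * (c - left) / dx + vy * (c - up) / dx
            reaction = r * c
            new[i][j] = c + dt * (diffusion - advection + reaction)
    return new


# Hypothetical test case: a unit point source on an 8 x 8 grid, no reaction.
field = [[0.0] * 8 for _ in range(8)]
field[4][4] = 1.0
field = ard_step(field, d=0.1, vx=0.5, vy=0.0, r=0.0, dx=1.0, dt=0.1)
```

Because the inner loop body reads only the old field and writes a single cell of the new one, the two nested loops map directly onto a 2D grid of GPU threads.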

General Performance Assessment

For general computational benchmarks like matrix operations, standard methodologies include comparing execution times for identical matrix sizes across hardware platforms. The CPU implementation typically uses optimized linear algebra libraries, while the GPU implementation leverages parallelization by dividing the computation into tiles processed simultaneously by thousands of threads [7].
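The tiling idea described above can be sketched on the CPU. This plain-Python version only illustrates the decomposition and is far slower than any optimized library: the function name `matmul_tiled` and the tile size are illustrative assumptions, and on a GPU each output tile would typically be assigned to one block of threads.

```python
def matmul_tiled(a, b, tile=2):
    """Multiply square matrices by accumulating tile-by-tile partial products."""
    n = len(a)
    c = [[0.0] * n for _ in range(n)]
    for i0 in range(0, n, tile):          # each (i0, j0) output tile is independent
        for j0 in range(0, n, tile):      # of every other output tile
            for k0 in range(0, n, tile):  # partial products accumulate in sequence
                for i in range(i0, min(i0 + tile, n)):
                    for j in range(j0, min(j0 + tile, n)):
                        acc = c[i][j]
                        for k in range(k0, min(k0 + tile, n)):
                            acc += a[i][k] * b[k][j]
                        c[i][j] = acc
    return c


a = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
product = matmul_tiled(a, a)
```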

Performance metrics commonly reported include TFLOPS (tera floating-point operations per second) for computational throughput and memory bandwidth in GB/s for data transfer capabilities [12]. Real-world application performance also considers factors like power efficiency (performance per watt), which has become increasingly important for large-scale scientific computing deployments [1] [13].

Decision Framework: Choosing the Right Tool

Selecting between CPU and GPU resources depends on the specific characteristics of the scientific workload. No single solution is optimal for all scenarios in ecological research.

When to Prioritize GPU Acceleration

GPUs deliver the greatest performance benefits for problems that can be effectively parallelized. Ecological modeling applications particularly suited for GPU acceleration include:

- Mesh-based simulations with large numbers of computational cells [11]

- Matrix operations and linear algebra fundamental to many environmental models [7] [8]

- Deep learning applications for ecological pattern recognition [1] [14]

- Monte Carlo simulations and parameter sensitivity analyses [8]

The parallel structure of these problems allows them to be decomposed into thousands of independent computations that can be processed simultaneously across GPU cores.
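As an illustration of such independent computations, the sketch below runs Monte Carlo replicates of a toy stochastic population model. The model, its growth parameters, and the function name `simulate_replicate` are hypothetical; the point is that each replicate owns its random number generator and shares no state, so the replicates can run in any order or simultaneously.

```python
import random


def simulate_replicate(seed: int, n_years: int = 20, n0: float = 100.0) -> float:
    """One stochastic population trajectory; returns the final population size."""
    rng = random.Random(seed)  # private generator: no state shared across replicates
    n = n0
    for _ in range(n_years):
        growth = rng.gauss(1.02, 0.1)  # hypothetical yearly growth multiplier
        n = max(n * growth, 0.0)
    return n


# Replicates do not interact, so this map could be distributed across GPU threads.
finals = [simulate_replicate(seed) for seed in range(1000)]
mean_final = sum(finals) / len(finals)
```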

When CPUs Remain Advantageous

Despite the performance benefits of GPUs for parallelizable workloads, CPUs maintain advantages for certain scientific computing tasks:

- Preprocessing and data preparation tasks that involve complex, sequential logic [2]

- Workflows with limited parallelism or strong data dependencies [8]

- Applications not optimized for GPU architecture or without GPU-enabled software [8]

- Scenarios requiring high double-precision (FP64) throughput, where consumer-grade GPUs have limitations [8]

Most real-world ecological modeling workflows benefit from a hybrid approach, using CPUs for control logic and data management while offloading parallelizable computational kernels to GPUs [2] [14].
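The benefit of a hybrid approach is bounded by the part of the workflow that stays on the CPU. Amdahl's law makes this explicit; the sketch below applies it with hypothetical numbers (90% of runtime offloaded, a 40x kernel speedup), which are assumptions chosen for illustration rather than measurements.

```python
def overall_speedup(parallel_fraction: float, kernel_speedup: float) -> float:
    """Amdahl's law: whole-workflow speedup when only part of it is accelerated."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must lie between 0 and 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / kernel_speedup)


# Hypothetical workflow: 90% of CPU runtime is offloaded and runs 40x faster.
print(f"{overall_speedup(0.9, 40.0):.2f}x")
```

Under these assumptions the whole workflow speeds up by about 8.2x rather than 40x, because the remaining 10% of sequential work dominates the accelerated runtime.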

The Scientist's Toolkit: Essential Research Reagents

Selecting appropriate computational hardware is as crucial as selecting laboratory reagents for ecological research. The table below details key solutions for building an effective computational research environment.

Table: Essential Computational Research Reagents for Ecological Modeling

| Solution Category | Specific Examples | Function in Research |

|---|---|---|

| GPU Compute Platforms | NVIDIA H200, L40S, RTX 4090 [1] [8] | Accelerates parallelizable computational kernels in ecological models |

| CPU Platforms | Intel Xeon W-3500, AMD Threadripper PRO [8] | Handles system control, sequential logic, and coordinates GPU workflows |

| Parallel Computing Frameworks | NVIDIA CUDA, OpenCL [7] [11] | Provides programming model for implementing parallel algorithms on GPUs |

| Specialized AI Cores | Tensor Cores (NVIDIA), Matrix Cores (AMD) [1] [7] | Accelerates mixed-precision calculations for AI-driven ecological analysis |

| High-Performance Interconnects | NVLink, InfiniBand [1] [9] | Enables high-speed communication in multi-GPU and cluster configurations |

| Computational Libraries | cuSPARSE, CUSP [11] | Provides optimized mathematical routines for sparse matrix operations |

The architectural divide between CPUs and GPUs presents both challenges and opportunities for ecological modeling research. GPU acceleration enables researchers to tackle increasingly complex simulations with higher spatial resolution and more realistic ecological dynamics by providing substantial computational speedups—often in the range of 5x to 40x for environmental models based on partial differential equations [11].

This performance enhancement allows scientific exploration that was previously computationally prohibitive, from high-resolution climate projections to individual-based ecological models at landscape scales. As GPU technology continues to evolve with increasing memory capacity (up to 141GB in latest data center GPUs) and architectural specialization for scientific workloads, the role of accelerated computing in ecological research will likely expand [1].

The most effective approach for ecological researchers is not an exclusive choice between CPU and GPU architectures, but rather their strategic integration—leveraging the unique strengths of each to advance our understanding of complex ecological systems through computational modeling.

In ecological modeling and drug development, the computational demand for fitting complex models to large datasets has never been greater. Researchers are increasingly turning to hardware acceleration, particularly Graphics Processing Units (GPUs), to achieve performance improvements, or "speedup," over traditional Central Processing Units (CPUs). Properly defining and measuring this speedup is crucial for making informed decisions about computational resources, especially within environmentally conscious research contexts. This guide provides a structured approach to evaluating performance gains by comparing GPU and CPU capabilities, detailing relevant metrics, experimental protocols, and visualization tools essential for rigorous benchmarking in scientific computation.

CPU vs. GPU: Architectural Basis for Speedup

The potential for speedup in model fitting stems from fundamental architectural differences between CPUs and GPUs. Understanding these differences is key to interpreting benchmark results and selecting the appropriate hardware for specific research tasks.

A Central Processing Unit (CPU) is designed as the "brain" of a computer system, optimized for sequential processing of a wide variety of tasks with minimal latency. CPUs typically feature a smaller number of powerful cores (ranging from a few to dozens in high-end server processors) that excel at quickly executing diverse computational operations in sequence. This makes CPUs well-suited for general-purpose computing and management tasks, including overseeing GPU operations in a system. In ecological modeling, CPUs effectively handle pre-processing, input/output operations, and smaller-scale computations that don't benefit from parallelization [2].

In contrast, a Graphics Processing Unit (GPU) is a specialized processor originally designed for rendering graphics but now widely used for scientific computation. GPUs employ a parallel architecture consisting of hundreds to thousands of smaller, more efficient cores that can simultaneously execute thousands of computational threads. This massive parallelism enables GPUs to excel at processing large, regular datasets and performing the repetitive, floating-point intensive calculations common in machine learning and numerical simulations. For ecological researchers fitting models to large environmental datasets or running complex simulations, this parallel capability can translate to significant performance improvements [2].

Table 1: Fundamental Architectural Differences Between CPUs and GPUs

| Feature | CPU | GPU |

|---|---|---|

| Core Count | Fewer powerful cores (1-128 typical) | Hundreds to thousands of smaller cores |

| Processing Approach | Sequential processing | Massive parallel processing |

| Optimal Workload | Diverse, complex tasks requiring high per-thread performance | Repetitive, computationally similar operations on large datasets |

| Primary Role in System | General-purpose computation and system management | Specialized accelerator for parallelizable workloads |

| Energy Efficiency | Lower power consumption for general tasks | Higher absolute power use, but often better computational efficiency for parallel tasks [2] |

Quantitative Performance Metrics and Benchmarks

Core Performance Metrics

When evaluating speedup in model fitting, researchers should employ multiple quantitative metrics to capture different dimensions of performance. The most fundamental metric is wall-clock time, measuring the actual time required to complete a model fitting procedure from start to finish. This straightforward measurement directly impacts research productivity, as shorter computation times enable faster iteration and hypothesis testing. For ecological models that may require repeated fitting with different parameters or datasets, reductions in wall-clock time can significantly accelerate research timelines [15].

A more formal definition of speedup comes from high-performance computing: Speedup (S) is defined as the ratio of the execution time on a single processor (T₁) to the execution time on multiple processors or a parallel system (Tₚ), expressed as S = T₁/Tₚ. While this traditionally compares single to multiple processors, in the context of GPU vs. CPU benchmarking, T₁ represents CPU execution time and Tₚ represents GPU execution time. A speedup factor greater than 1 indicates performance improvement with the GPU [16].
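As a worked instance of S = T₁/Tₚ, the sketch below uses the CIFAR-100 training times reported earlier in this article (17 minutes 55 seconds on the CPU, 5 minutes 43 seconds on the GPU). The helper names `to_seconds` and `speedup` are illustrative.

```python
def to_seconds(minutes: int, seconds: int) -> int:
    """Convert a minutes-and-seconds duration to seconds."""
    return 60 * minutes + seconds


def speedup(t_cpu_s: float, t_gpu_s: float) -> float:
    """S = T1 / Tp, with T1 the CPU time and Tp the GPU time."""
    if t_gpu_s <= 0:
        raise ValueError("GPU time must be positive")
    return t_cpu_s / t_gpu_s


t_cpu = to_seconds(17, 55)  # 1075 s
t_gpu = to_seconds(5, 43)   # 343 s
s = speedup(t_cpu, t_gpu)
print(f"S = {s:.2f}")
```

This reproduces the speedup of roughly 3.1x quoted for that benchmark.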

Computational efficiency measures how effectively hardware resources are utilized during model fitting. This can be expressed as the ratio of actual performance to peak theoretical performance of the hardware. GPUs typically achieve higher computational efficiency than CPUs for parallelizable model fitting tasks due to their specialized architecture for floating-point operations [16].

Energy consumption during model fitting has become an increasingly important metric, particularly for researchers concerned with the environmental impact of computation. This is measured in watt-hours (Wh) or kilowatt-hours (kWh) and reflects the total electricity consumed during the computation process. Studies show that selecting the right model-training environment combination can reduce training energy consumption by up to 80.68% with minimal accuracy loss, highlighting the importance of this metric for sustainable research practices [15].
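Energy and speed are separate metrics, as a short calculation shows. In the sketch below, the run times are the CIFAR-100 timings reported earlier in this article, while the mean power draws (65 W for the CPU run, 250 W for the GPU run) are hypothetical values chosen only for illustration; measured power should be used in practice.

```python
def energy_wh(mean_power_w: float, wall_time_s: float) -> float:
    """Energy in watt-hours from mean power (W) and wall-clock time (s)."""
    return mean_power_w * wall_time_s / 3600.0


def percent_reduction(baseline: float, new: float) -> float:
    """Relative saving of `new` compared with `baseline`, in percent."""
    return 100.0 * (baseline - new) / baseline


# Hypothetical mean power draws; the run times are 17 min 55 s and 5 min 43 s.
cpu_wh = energy_wh(65.0, 1075.0)
gpu_wh = energy_wh(250.0, 343.0)
print(f"CPU: {cpu_wh:.2f} Wh, GPU: {gpu_wh:.2f} Wh")
```

Under these assumed power draws the GPU run finishes about three times sooner yet consumes more energy (about 23.8 Wh versus 19.4 Wh), which is why energy should be measured rather than inferred from speedup.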

Published Benchmark Comparisons

Empirical studies across various computational domains provide context for expected speedup ranges in research applications. Although the studies cited in this section do not benchmark ecological models directly, performance patterns from related fields offer valuable reference points.

In direct N-body simulations, a fundamental computational method in astrophysics that shares mathematical similarities with some ecological spatial models, GPUs demonstrated significant speedup over CPUs. One study implementing gravitational N-body simulations using OpenCL across different GPU models showed performance improvements of 10-50x compared to CPU implementations, depending on the specific hardware and problem size [16].

Computer vision research provides another relevant benchmark, where the relationship between model complexity and hardware capability proves crucial for performance. One study found "a significant interaction effect between model and training environment: energy efficiency improves when GPU computational power scales with model complexity." This indicates that speedup is not absolute but depends on appropriately matching the computational hardware to the specific model characteristics [15].

Table 2: Performance Comparison Across Computational Tasks

| Application Domain | Typical CPU Performance | Typical GPU Performance | Speedup Factor |

|---|---|---|---|

| Direct N-body Simulations | Varies by CPU model and core count | 10-50x faster than reference CPU [16] | 10-50x |

| Computer Vision Training | Lower FLOPs/Joule efficiency | Higher computational efficiency for complex models [15] | Model-dependent |

| AI Inference (Gemini Apps) | Not specifically measured | Median prompt: 0.24 Wh [17] | Not directly comparable |

| Deep Learning Training | Higher energy consumption per operation | Up to 80.68% energy reduction possible [15] | Varies by configuration |

Experimental Protocols for Benchmarking

Comprehensive Measurement Methodology

Rigorous benchmarking requires a structured approach to ensure comparable and reproducible results. For ecological and pharmaceutical researchers evaluating speedup in model fitting, the following protocol provides a comprehensive framework:

1. Define Measurement Boundaries: Establish clear boundaries for what computational activities are included in performance measurements. A comprehensive approach should account for the full stack of computational infrastructure, including active accelerator power (GPU), host system energy (CPU and DRAM), idle machine capacity, and data center energy overhead represented by Power Usage Effectiveness (PUE). Studies show that narrower measurement approaches often miss material energy consumption activities, leading to incomplete comparisons [17].

2. Standardize Test Conditions: Conduct benchmarks in identical software environments (operating system, libraries, frameworks) with controlled background processes. Implement fixed-workload tests, in which the same computational problem is solved on both CPU and GPU configurations and the time to completion is measured. For ecological models with stochastic elements, perform multiple replications to account for performance variability [15].

3. Instrumentation and Data Collection: Employ direct measurement tools rather than theoretical estimates. Software tools like CodeCarbon or Zeus can provide empirical measurements of energy consumption and execution time. For GPU measurements, NVIDIA's NVML or AMD's ROCm SMI can track device-specific power draw and utilization. CPU performance can be monitored using operating system performance counters or specialized libraries [17] [18].

4. Control for Model and Data Characteristics: Document key model attributes that influence performance, including parameter count, mathematical operations required, convergence criteria, and dataset dimensions. Performance characteristics can vary significantly based on these factors, so maintaining detailed records enables more meaningful comparisons across studies [15].
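The measurement boundary in step 1 can be written as a simple accounting formula: sum the accelerator, host, and idle-capacity energy, then scale by PUE. The sketch below is one plausible reading of that boundary, not the exact method of the cited study; the function name `total_energy_wh` and all input values are hypothetical.

```python
def total_energy_wh(accelerator_wh: float, host_wh: float, idle_wh: float, pue: float) -> float:
    """Energy within the full measurement boundary, scaled by data center PUE."""
    if pue < 1.0:
        raise ValueError("PUE is at least 1.0 by definition")
    return (accelerator_wh + host_wh + idle_wh) * pue


# Hypothetical run: 20 Wh on the GPU, 8 Wh on CPU and DRAM, 2 Wh idle share.
narrow = total_energy_wh(20.0, 0.0, 0.0, pue=1.0)  # accelerator-only view
full = total_energy_wh(20.0, 8.0, 2.0, pue=1.2)    # full-stack view
print(f"accelerator only: {narrow:.1f} Wh, full boundary: {full:.1f} Wh")
```

With these illustrative inputs the full-boundary figure is 36 Wh against 20 Wh for the accelerator alone, showing how a narrow boundary can understate consumption.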

Workflow Visualization

The experimental benchmarking process follows a systematic workflow from hardware selection to result interpretation, as shown in the following diagram:

Diagram 1: Benchmarking Workflow

Essential Research Toolkit

Implementing rigorous speedup evaluation requires specific software tools and measurement approaches. The following toolkit outlines essential components for researchers conducting performance benchmarks:

Table 3: Research Reagent Solutions for Performance Benchmarking

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| CodeCarbon | Software Library | Tracks energy consumption and estimates carbon emissions | Python-based model fitting pipelines; supports CPU and GPU monitoring [18] |

| Zeus | Software Library | Directly measures GPU energy consumption during computation | Fine-grained GPU power monitoring for deep learning models [17] |

| EcoLogits | Analysis Framework | Applies life cycle assessment to AI inference requests | Environmental impact assessment including embodied and usage impacts [19] |

| NVML/ROCm SMI | System Utilities | Monitors GPU utilization, power draw, and temperature | Vendor-specific GPU performance profiling during model fitting [16] |

| TOP500 Methodology | Benchmark Framework | Standardized approach for high-performance computing assessment | Reference methodology for comprehensive performance evaluation [20] |

Defining and measuring speedup in model fitting requires a multi-dimensional approach that considers not just raw execution time but also energy efficiency, hardware utilization, and environmental impact. In ecological modelling and drug development, where computational demands continue to grow, these performance metrics are essential for sustainable and productive research. The experimental protocols and benchmarking methodologies outlined in this guide give researchers a structured framework for rigorous performance evaluation. By measuring the full computational stack with appropriate tools, scientists can make hardware decisions that balance performance requirements against environmental considerations and manage computational resources responsibly.

In the field of ecological modeling research, the ability to process vast datasets and run complex simulations is not just a convenience—it is a scientific imperative. The shift from Central Processing Units (CPUs) to Graphics Processing Units (GPUs) has been a cornerstone of this computational evolution, enabling researchers to achieve speedups of 10x to 50x or more in their environmental simulations [1] [21]. This acceleration is primarily driven by two types of processing cores within modern NVIDIA GPUs: CUDA Cores and Tensor Cores. Understanding their distinct roles is crucial for researchers selecting hardware for ecological modeling, drug discovery, and other scientific computing tasks.

CUDA Cores are designed for general-purpose parallel processing, excelling at a wide range of tasks from data preprocessing to physics-based simulations. In contrast, Tensor Cores are specialized hardware designed to accelerate the matrix multiplication and addition operations that are fundamental to deep learning and, increasingly, to high-performance computing (HPC) applications like large-scale statistical models [22]. For researchers, this specialization translates into the ability to run larger, higher-resolution models in a fraction of the time, thus unlocking new possibilities in predictive accuracy and scientific discovery.

Architectural Deep Dive: Purpose and Precision

CUDA Cores: The Versatile Workhorses

CUDA Cores are the fundamental parallel processors in NVIDIA GPUs. Their architectural strength lies in their flexibility. They handle a diverse workload by executing thousands of threads simultaneously, making them ideal for the varied computational tasks often found in scientific pipelines [22]. These tasks include data preprocessing, feature engineering, and running traditional machine learning algorithms or physics simulations that are not solely based on matrix math.

- Architectural Purpose: Built for general-purpose parallel processing, handling a wide range of tasks from simulations to rendering [22].

- Precision Support: Typically operate on high-precision data types like FP32 (single-precision) and FP64 (double-precision), which are essential for scientific computations where numerical accuracy cannot be compromised [22].

Tensor Cores: The Specialized Accelerators

Tensor Cores are a more recent innovation, designed with a singular focus: to dramatically accelerate matrix operations. They achieve this by performing mixed-precision calculations, which allows them to deliver a massive throughput for the linear algebra operations that underpin deep learning and an increasing number of HPC applications [22].

- Architectural Purpose: Optimized specifically for the matrix-heavy operations foundational to AI/ML and increasingly adopted in HPC [22].

- Precision Support: Use mixed-precision formats like FP16, BF16, INT8, and FP4. They can compute on lower-precision operands while accumulating results in higher precision (e.g., FP32), maintaining accuracy while achieving tremendous speedups [22] [23]. A key insight for researchers is that, when used correctly, Tensor Cores can produce more accurate results than a manual implementation using CUDA cores alone, due to their specialized internal calculation path [24].
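The effect of accumulating low-precision products in a wider format can be emulated on any machine. The sketch below is a CPU-only illustration, not Tensor Core code: it rounds operands to IEEE half precision with Python's `struct` module and compares a half-precision running sum against a wide accumulator. Python floats are FP64, used here as a stand-in for an FP32 accumulator; the function names and vector contents are assumptions chosen for illustration.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def dot_fp16_accumulate(a, b):
    """FP16 operands and an FP16 running sum: every partial result is rounded."""
    total = 0.0
    for x, y in zip(a, b):
        product = to_fp16(to_fp16(x) * to_fp16(y))
        total = to_fp16(total + product)
    return total


def dot_mixed(a, b):
    """FP16 operands, but products are accumulated in a wide (FP64) register."""
    total = 0.0
    for x, y in zip(a, b):
        total += to_fp16(x) * to_fp16(y)
    return total


n = 4096
a = [0.1] * n
b = [0.1] * n

exact = sum(x * y for x, y in zip(a, b))  # full double-precision reference
narrow_sum = dot_fp16_accumulate(a, b)
mixed_sum = dot_mixed(a, b)

print(f"reference          : {exact:.4f}")
print(f"FP16 accumulation  : {narrow_sum:.4f}")
print(f"mixed accumulation : {mixed_sum:.4f}")
```

In this emulation the narrow accumulator loses accuracy once each increment becomes small relative to the spacing of representable half-precision values, while the mixed version is limited mainly by the rounding of the operands.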

Architectural Workflow and Logical Relationship

The following diagram illustrates the logical relationship and typical workflow involving CUDA Cores and Tensor Cores within a GPU-accelerated application for scientific research.

Quantitative Comparison and Performance Benchmarks

Side-by-Side Technical Comparison

For researchers making procurement or coding optimization decisions, the following table summarizes the core differences between these two processing units.

| Feature | CUDA Cores | Tensor Cores |

|---|---|---|

| Primary Role | General-purpose parallel processing [22] | Deep learning acceleration [22] |

| Architectural Purpose | Versatile; handles diverse tasks (compute, graphics, simulations) [22] | Specialized for matrix-heavy operations in AI/ML and HPC [22] |

| Best Use Cases | Traditional ML, data preprocessing, physics simulations [22] | Neural networks, training deep learning models, inference at scale [22] |

| Supported Precision | FP32, FP64 (high precision) [22] | FP16, BF16, INT8, FP4 (mixed/low precision) [22] |

| Performance Strength | Versatile across tasks, but slower for pure DL workloads [22] | Much faster for DL and specific matrix operations due to mixed-precision optimization [22] |

Experimental Data and Real-World Speedups

The theoretical advantages of GPU cores translate into dramatic real-world performance gains for scientific computing. The following table summarizes key experimental results from research and benchmarking.

| Experiment Description | Hardware Configuration | Performance Result | Relevance to Research |

|---|---|---|---|

| Environmental Model (ExaGeoStat) [25] | NVIDIA V100 GPU (Tensor Cores) vs. CPU-only | Roughly 9x speedup (from 400s to 45s per iteration) [25] | Enables large-scale, high-resolution regional environmental models |

| AI/ML Inference Benchmarks [21] | NVIDIA A100 GPU vs. advanced CPU | Outperformed CPU by 237 times in AI/ML inference [21] | Critical for ML-assisted climate prediction and inference systems |

| Local LLM Inference [26] | RTX 4090 GPU vs. High-End CPU (AMD Ryzen 9) | GPU achieved >40 tokens/sec on a 14B model; CPU <6 tokens/sec [26] | Allows interactive use of large language models for research analysis |

| Private AI Inference [27] | Modern CPU (Intel Xeon with AI accelerators) vs. GPU | CPU delivered 30-50 tokens/sec on optimized models [27] | Highlights a cost-effective option for human-speed interactive inference |

Detailed Experimental Protocol: Environmental Modeling with ExaGeoStat

To illustrate a rigorous methodology relevant to ecological researchers, we examine the workflow from KAUST's ExaGeoStat project, which accelerated statistical modeling for environmental data [25].

1. Research Objective: To predict environmental variables (e.g., temperature, soil moisture) across millions of geographic locations by leveraging Gaussian process models, which are computationally intensive due to their O(n³) complexity [25].

2. Software and Hardware Setup:

- Software: The ExaGeoStat software package, accessible via an R interface (ExaGeoStatR).

- Hardware: The researchers utilized NVIDIA V100 Tensor Core GPUs, focusing on their mixed-precision (FP16/FP32) capabilities [25].

3. Experimental Methodology:

- Algorithm Reformulation: The key step was to refactor the core linear algebra operations in the model to leverage the mixed-precision computing of Tensor Cores. This involves executing the bulk of the matrix calculations in FP16 for speed, while maintaining critical parts of the computation in FP32 to preserve numerical accuracy [25].

- Data Handling: Large-scale datasets from millions of locations were loaded into the GPU's high-bandwidth memory (VRAM). The parallel nature of the problem meant that predictions for multiple locations could be computed simultaneously [25].

- Performance Metric: The primary metric was the time per iteration for the model to converge, with a full simulation requiring approximately 175 iterations [25].

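4. Result Interpretation: The use of mixed-precision algorithms on the V100 GPU yielded an average 1.9x speedup over the single-precision version [25]. This figure compares precision modes on the same GPU and is separate from the GPU-versus-CPU per-iteration comparison in the table above. It indicates that, even without running the entire computation in FP16, strategic use of Tensor Cores can significantly accelerate ecological and climate models [25].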
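The figures quoted for ExaGeoStat can be turned into a rough wall-clock estimate. The sketch below uses only the per-iteration times from the results table above and the approximate iteration count from the protocol. The n³/3 flop count is the standard leading-order cost of a dense Cholesky factorization and is included as an assumption about where the O(n³) term comes from, not as a description of ExaGeoStat's internals.

```python
SECONDS_PER_ITERATION_CPU = 400.0  # CPU-only time per iteration, from the table
SECONDS_PER_ITERATION_GPU = 45.0   # V100 time per iteration, from the table
ITERATIONS = 175                   # approximate iterations for a full run


def hours(seconds: float) -> float:
    """Convert seconds to hours."""
    return seconds / 3600.0


def cholesky_flops(n: int) -> float:
    """Leading-order flop count of a dense n x n Cholesky factorization."""
    return n ** 3 / 3.0


speedup = SECONDS_PER_ITERATION_CPU / SECONDS_PER_ITERATION_GPU
cpu_hours = hours(SECONDS_PER_ITERATION_CPU * ITERATIONS)
gpu_hours = hours(SECONDS_PER_ITERATION_GPU * ITERATIONS)

print(f"per-iteration speedup: {speedup:.1f}x")
print(f"full run on CPU: {cpu_hours:.1f} h, on GPU: {gpu_hours:.1f} h")

# Doubling the number of locations multiplies the cubic term by eight.
growth = cholesky_flops(2000) / cholesky_flops(1000)
print(f"cost growth when n doubles: {growth:.0f}x")
```

Under these numbers a full run drops from most of a day to a little over two hours, and the cubic term explains why adding locations quickly dominates the cost.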

The Researcher's Toolkit for GPU-Accelerated Science

Navigating the ecosystem of GPU hardware and software is a critical task for building an efficient research workstation or computing cluster.

Essential Hardware Solutions

The choice of GPU is dictated by the scale of the models and the research budget. The market offers options from enterprise-grade data center cards to powerful consumer hardware.

| GPU Model | Architecture | Key Feature / Memory | Best For / Research Use-Case |

|---|---|---|---|

| NVIDIA B200 | Blackwell | 5th-Gen Tensor Cores, FP4 precision [23] | Frontier model development & most demanding AI/HPC research [23] |

| NVIDIA H200 | Hopper | 141GB HBM3e memory, 4.8 TB/s bandwidth [23] | Extremely large models that exceed 80GB memory requirements [23] |

| NVIDIA A100 | Ampere | 80GB HBM2e, Multi-Instance GPU (MIG) support [28] | A proven, versatile workhorse for enterprise-scale AI and HPC [28] |

| NVIDIA RTX 4090 | Ada Lovelace | 24GB GDDR6X, 1.01 TB/s bandwidth [28] | Cost-effective option for small to medium-scale projects and experimentation [28] |

| AMD MI300X | CDNA 3 | 192GB HBM3, 5.3 TB/s bandwidth [23] | Memory-intensive AI workloads; an alternative to NVIDIA's ecosystem [23] |

Essential Software and Framework Solutions

The hardware is powerless without the software to harness it. The following toolkit is standard for GPU-accelerated research.

| Software Tool | Function | Relevance to Research |

|---|---|---|

| CUDA | A parallel computing platform and programming model that gives direct access to the GPU's virtual instruction set and parallel computational elements [22]. | The foundational layer for all NVIDIA GPU computing. |

| cuDNN | A GPU-accelerated library for deep learning primitives, providing highly tuned implementations for standard routines [22]. | Essential for efficiently training and running neural networks on NVIDIA GPUs. |

| TensorRT | An SDK for high-performance deep learning inference, optimizing models for latency, throughput, and memory usage [22]. | Crucial for deploying trained models into production or for real-time inference. |

| TensorRT-LLM | An open-source library for accelerating Large Language Model (LLM) inference [22]. | Enables fast inference of modern LLMs, useful for scientific text analysis and coding assistants. |

| ExaGeoStatR | An R software package for large-scale geospatial statistics via parallel computing [25]. | A domain-specific example of leveraging GPUs for ecological and environmental statistical modeling. |

The synergy between CUDA Cores and Tensor Cores has created a powerful computational platform that is fundamentally changing the scale and scope of problems researchers can tackle. For ecological modelers and drug development professionals, this means the ability to incorporate more variables, run higher-fidelity simulations, and iterate on hypotheses with unprecedented speed. The benchmark of a 10x speedup is now commonplace, with factors of 50x or more possible for well-suited, matrix-heavy applications [1] [21].

The future points toward even greater specialization and efficiency. NVIDIA's Blackwell architecture and the use of FP4 precision for inference hint at a path where the computational cost of massive models continues to drop [23]. Furthermore, the rise of Private AI and the increasing viability of CPUs for specific inference tasks suggest a future hybrid computing landscape [27]. In this landscape, researchers will seamlessly orchestrate workloads across CPUs, general-purpose CUDA Cores, and specialized Tensor Cores, choosing the right tool for each subtask to maximize scientific output while managing computational costs effectively.

From Theory to Practice: Implementing GPU-Accelerated Ecological Models

Joint Species Distribution Modelling (JSDM) represents a significant statistical advancement in ecological research, enabling analysts to assess and predict the joint distribution of multiple species across space and time within a unified framework. Unlike single-species approaches, JSDM methods can account for species correlations and interactions, thereby providing more accurate insights into community assembly mechanisms [29] [30]. The Hierarchical Modelling of Species Communities (HMSC) framework, implemented in the Hmsc R-package, has emerged as a particularly comprehensive JSDM approach that integrates species occurrence data with environmental covariates, species traits, and phylogenetic information while explicitly accounting for hierarchical, spatial, or temporal study designs [29] [30].

Despite their analytical power, JSDMs face substantial computational limitations when applied to large ecological datasets. The fitting process for these models is inherently computationally intensive, primarily because the number of parameters grows quadratically with the number of species [29] [30]. Traditional implementations relying on central processing units (CPUs) process calculations sequentially, creating significant bottlenecks for ecological researchers working with the massive biodiversity datasets increasingly generated by modern monitoring techniques [29] [31]. This computational constraint has historically restricted the application of JSDMs to smaller datasets, potentially limiting ecological insights that could be gained from more comprehensive data resources.
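To give a sense of the quadratic growth mentioned above, the following sketch counts distinct species pairs, S(S-1)/2, for a few community sizes. This is only an illustration of how pairwise quantities scale; it is not the parameterization used by Hmsc, and the function name is hypothetical.

```python
def pairwise_associations(n_species: int) -> int:
    """Number of distinct species pairs, S * (S - 1) / 2."""
    return n_species * (n_species - 1) // 2


for n_species in (10, 50, 100, 500):
    pairs = pairwise_associations(n_species)
    print(f"{n_species:>4} species -> {pairs:>7} species pairs")
```

A tenfold increase in species, from 50 to 500, raises the number of pairs roughly a hundredfold.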

The Hmsc-HPC Solution: GPU-Accelerated Model Fitting

Framework and Implementation

The Hmsc-HPC package represents a computational breakthrough that addresses the scalability limitations of traditional JSDM implementations. This innovative solution retains the original user interface and statistical capabilities of the Hmsc R-package while fundamentally redesigning its computational core [29] [31]. The key innovation lies in replacing the native R computational routines with a Python-based implementation leveraging the TensorFlow library, which enables efficient utilization of graphics processing units (GPUs) for accelerated model fitting [29] [30].

This architectural shift harnesses the parallel processing capabilities of GPUs, which are exceptionally well-suited for the matrix operations and statistical calculations underlying JSDMs. While CPUs process tasks sequentially with a limited number of powerful cores, GPUs contain thousands of simpler cores that can perform simultaneous calculations, making them ideal for the "single instruction, multiple data" (SIMD) paradigm that characterizes many statistical computing operations [1] [3]. The Hmsc-HPC implementation specifically accelerates the block-Gibbs sampler used for Bayesian inference through Markov Chain Monte Carlo (MCMC) sampling, parallelizing computations across GPU cores to achieve dramatic performance improvements [30].

Computational Workflow

The diagram below illustrates the enhanced computational workflow enabled by Hmsc-HPC, which maintains the original Hmsc interface while leveraging GPU acceleration for the model fitting process:

Experimental Design and Performance Evaluation

Methodology for Performance Benchmarking

The performance evaluation of Hmsc-HPC employed a rigorous comparative approach to quantify speedup factors across various model configurations and dataset sizes [29] [30]. Researchers conducted systematic benchmarks comparing the original Hmsc R-package (CPU-based) against the Hmsc-HPC implementation (GPU-accelerated) using identical model structures and datasets to ensure fair comparisons. The experimental design incorporated diverse ecological scenarios, including models with varying numbers of species, sampling units, and environmental predictors, allowing comprehensive assessment of performance scaling patterns [29].

The technical implementation utilized TensorFlow's computational graph architecture, which provides significant advantages for numerical computations [30]. This approach represents the entire computation algorithm as a directed graph where nodes correspond to mathematical operations and edges denote data flow. This graph-based implementation enables TensorFlow to optimize computations by combining operations and distributing non-sequential calculations across multiple GPU devices for concurrent processing [30]. The benchmark experiments measured computation time exclusively for the model fitting phase (MCMC sampling), as this represents the most computationally intensive component of the JSDM workflow [29] [30].
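The graph idea can be sketched without TensorFlow. The toy example below is plain Python using the standard-library `graphlib` module and does not use or imitate the TensorFlow API. It represents a small computation as a directed graph, evaluates it in dependency order, and groups nodes into stages whose members do not depend on each other and could therefore run concurrently. All node names are hypothetical.

```python
import operator
from graphlib import TopologicalSorter

# node name -> (operation, names of input nodes); inputs have no operation
graph = {
    "x": (None, ()),
    "beta": (None, ()),
    "eps": (None, ()),
    "linear": (operator.mul, ("x", "beta")),
    "y": (operator.add, ("linear", "eps")),
}


def dependencies(graph):
    """Map each node to the set of nodes it depends on."""
    return {node: set(inputs) for node, (_, inputs) in graph.items()}


def evaluate(graph, feeds):
    """Evaluate every node once all of its inputs are available."""
    values = dict(feeds)
    for node in TopologicalSorter(dependencies(graph)).static_order():
        op, inputs = graph[node]
        if op is not None:
            values[node] = op(*(values[name] for name in inputs))
    return values


def parallel_stages(graph):
    """Group nodes into stages; nodes within a stage are mutually independent."""
    sorter = TopologicalSorter(dependencies(graph))
    sorter.prepare()
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        stages.append(ready)
        sorter.done(*ready)
    return stages


result = evaluate(graph, {"x": 2.0, "beta": 3.0, "eps": 0.5})
stages = parallel_stages(graph)
print(result["y"])
print(stages)
```

A real framework applies the same principle at a much larger scale, fusing operations and scheduling independent stages across devices.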

The Researcher's Toolkit for GPU-Accelerated JSDM

Table 1: Essential Research Reagents and Computational Tools for GPU-Accelerated JSDM

| Component | Function/Role | Implementation in Hmsc-HPC |

|---|---|---|

| Statistical Framework | Provides mathematical foundation for joint species distribution modelling | Hierarchical Modelling of Species Communities (HMSC) with Bayesian inference [29] |

| Model Fitting Algorithm | Estimates model parameters from data | Block-Gibbs sampler with Markov Chain Monte Carlo (MCMC) sampling [29] [30] |

| Computational Backend | Executes numerical computations efficiently | TensorFlow library with Python implementation [29] [30] |

| Hardware Accelerator | Parallelizes computations for speed enhancement | Graphics Processing Units (GPUs) with thousands of cores [1] |

| User Interface | Enables ecologists to define models and interpret results | R programming language with Hmsc package compatibility [29] [31] |

| Performance Optimization | Maximizes computational efficiency through memory management | Shared memory utilization and coalesced global memory access [3] |

Performance Results: Quantitative Comparison

Speedup Factors Across Dataset Sizes

The performance benchmarks revealed dramatic speedup factors for Hmsc-HPC compared to the CPU-based implementation, with the magnitude of acceleration increasing strongly with dataset size [29] [30] [31]. For the largest datasets tested, the researchers documented speedups exceeding 1000x relative to the original Hmsc implementation [29] [31]. This massive performance improvement fundamentally transforms the practical feasibility of applying complex JSDMs to large-scale ecological datasets that were previously computationally prohibitive.

Table 2: Performance Comparison of Hmsc-HPC vs. Standard Hmsc Across Different Dataset Sizes

| Dataset Scale | Number of Species | Number of Sampling Units | Speedup Factor (GPU vs. CPU) |

|---|---|---|---|

| Small | 10-20 | 100-500 | 5-20x |

| Medium | 30-50 | 500-2,000 | 20-100x |

| Large | 50-100 | 2,000-10,000 | 100-500x |

| Very Large | 100+ | 10,000+ | 500-1000x+ |

The observed scaling pattern aligns with fundamental principles of parallel computing architecture. GPUs achieve their most significant advantages for large computational problems because the overhead of parallelization becomes increasingly justified as problem size increases [1] [3]. This explains why the most dramatic speedups were observed for the largest datasets, where the parallel processing capabilities of GPUs could be fully utilized to simultaneously process numerous calculations that would be executed sequentially on CPUs.
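A simple cost model shows why speedup grows with problem size. The sketch assumes a fixed GPU overhead (initialization and data transfer) plus perfectly parallel work; the overhead of 5 seconds and the parallel factor of 1000 are hypothetical values chosen for illustration and are not measurements from the Hmsc-HPC benchmarks.

```python
def modelled_speedup(work_s: float, overhead_s: float, parallel_factor: float) -> float:
    """Speedup = CPU time / (fixed GPU overhead + CPU time / parallel factor)."""
    gpu_time = overhead_s + work_s / parallel_factor
    return work_s / gpu_time


OVERHEAD_S = 5.0          # hypothetical fixed cost of using the GPU
PARALLEL_FACTOR = 1000.0  # hypothetical upper bound on achievable speedup

workloads = (1.0, 100.0, 10_000.0, 1_000_000.0)  # seconds of CPU work
speedups = [modelled_speedup(w, OVERHEAD_S, PARALLEL_FACTOR) for w in workloads]

for work, s in zip(workloads, speedups):
    print(f"{work:>12.0f} s of CPU work -> modelled speedup {s:7.1f}x")
```

In this model small problems are slower on the GPU because the fixed overhead dominates, while large problems approach, but never reach, the parallel factor.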

Contextualizing Performance with Other GPU-Accelerated Workloads

The performance achievements of Hmsc-HPC gain additional perspective when compared with other scientific computing applications that have undergone GPU acceleration. A separate study focusing on matrix multiplication operations—a fundamental computational kernel in many scientific applications—demonstrated that GPU implementations achieved speedups of approximately 45 times compared to optimized multi-core CPU versions for 4096×4096 matrices [3]. Another research project implementing GPU acceleration for evolutionary spatial cyclic game systems reported speedups of up to 28 times compared to single-threaded CPU implementations [32].

Table 3: Comparative GPU Speedup Factors Across Different Scientific Domains

| Application Domain | Representative Task | GPU Speedup vs. CPU |

|---|---|---|

| Ecological Modelling | Joint Species Distribution Modelling with Hmsc-HPC | 100-1000x [29] [31] |

| Computational Biology | Evolutionary Spatial Cyclic Games | Up to 28x [32] |

| Linear Algebra | Dense Matrix Multiplication (4096×4096) | ~45x [3] |

| Computer Vision | BioCLIP 2 Training | Not quantified, but requires 32 NVIDIA H100 GPUs [33] |

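These comparative results position Hmsc-HPC as an exceptionally successful example of GPU acceleration in scientific computing, with reported speedup factors well above those in the other domains listed. The figures are not directly comparable, however, because the baselines differ: the matrix multiplication result is measured against an optimized multi-core CPU version, whereas the cyclic game result is measured against a single-threaded implementation [3] [32]. The large improvement for Hmsc-HPC can be attributed to the particularly strong alignment between the computational structure of JSDM algorithms and the parallel processing capabilities of GPU architectures.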

Advantages and Limitations of GPU-Accelerated JSDM

Benefits for Ecological Research

The performance breakthroughs enabled by Hmsc-HPC create transformative opportunities for ecological research and conservation applications. By reducing computation times from weeks or months to hours or days, researchers can iterate more rapidly through model refinements, conduct more comprehensive sensitivity analyses, and apply JSDMs to larger and more ecologically relevant spatial scales [29] [31]. This computational efficiency also facilitates the analysis of massive biodiversity datasets increasingly generated through modern monitoring technologies such as remote sensing, camera traps, and environmental DNA sampling [29].

Additionally, the accelerated modelling workflow enhances practical conservation planning and environmental management. Researchers and practitioners can now develop more reliable predictive models to anticipate species responses to climate change, habitat modification, and other anthropogenic impacts, enabling more proactive and evidence-based conservation strategies [31]. The retention of the original R interface ensures that these performance benefits remain accessible to ecologists without requiring expertise in GPU programming or high-performance computing [29] [30].

Considerations and Limitations

Despite its transformative performance benefits, the GPU-accelerated approach does present certain practical considerations. Access to appropriate GPU hardware remains a potential barrier, though the researchers note that Hmsc-HPC can also accelerate computations on multi-core CPUs, providing more modest but still valuable performance improvements on conventional hardware [30]. Additionally, the performance advantages of GPU implementations are most pronounced for large datasets; for smaller ecological studies, the overhead of GPU initialization and data transfer may reduce the practical benefits [29] [1].

The fundamental architectural differences between CPUs and GPUs that enable these performance differences are summarized in the following diagram:

From a methodological perspective, it is important to note that while Hmsc-HPC dramatically accelerates model fitting, it does not alter the underlying statistical properties or interpretation of HMSC models [29] [30]. Researchers must still apply appropriate model diagnostics and validation procedures, and carefully consider ecological theory when designing models and interpreting results [30].

The development and benchmarking of Hmsc-HPC represents a landmark advancement in computational ecology, demonstrating that GPU acceleration can achieve speedup factors exceeding 1000 times for large-scale joint species distribution models [29] [31]. This performance breakthrough effectively removes computational barriers that previously limited the application of sophisticated JSDM methods to large biodiversity datasets, opening new possibilities for ecological inference and prediction.

The broader implications of this work extend beyond specific methodological achievements to illustrate the transformative potential of GPU acceleration across environmental sciences. As ecological datasets continue to grow in size and complexity, leveraging high-performance computing resources will become increasingly essential for extracting scientific insights and informing conservation decisions [29] [34]. The successful integration of GPU acceleration into an accessible R package provides a valuable template for similar computational innovations in other areas of ecological modelling.

Future developments in this field will likely focus on further optimizing GPU implementations for specific ecological modelling scenarios, integrating additional model types and structures, and enhancing accessibility for researchers without specialized computing expertise [30] [31]. As GPU technology continues to advance, with ongoing improvements in memory capacity, processing cores, and energy efficiency [1], the performance benefits for ecological modelling are poised to increase even further. The integration of approaches like digital twins for simulating ecological interactions [33] with accelerated statistical modelling holds particular promise for creating comprehensive frameworks for understanding and predicting biodiversity dynamics in a rapidly changing world.

Bayesian models have become fundamental tools in ecological research, particularly for estimating wildlife population demographics. Spatial capture-recapture (SCR) models, in particular, leverage the spatial structure of animal movement processes to infer critical population parameters such as abundance and density [35]. However, the computational intensity of these methods, which often rely on Markov Chain Monte Carlo (MCMC) sampling, has traditionally posed a significant bottleneck for researchers [36] [4].

This guide objectively compares the performance of central processing unit (CPU) and graphics processing unit (GPU) implementations for ecological models, with a specific focus on Bayesian population dynamics and SCR frameworks. The transition from CPU to GPU computing represents a paradigm shift in computational ecology, offering the potential to accelerate inference and enable the analysis of more complex, realistic models [4].

Computational Frameworks: CPU vs. GPU

Architectural Foundations

The fundamental difference between CPUs and GPUs lies in their processing architecture. A CPU (Central Processing Unit) is designed for sequential processing, executing a few complex tasks one after another with high speed. In contrast, a GPU (Graphics Processing Unit) is built for parallel processing, breaking down large problems into thousands of smaller tasks that are processed simultaneously across many simpler cores [2] [1].

- CPU Architecture: Features fewer cores (typically up to dozens in server-grade processors) optimized for high clock speeds and efficient sequential task execution. This architecture struggles with the massively parallelizable tasks common in machine learning and complex statistical modeling [1].

- GPU Architecture: Contains thousands of cores operating at lower speeds, optimized for concurrent computation. This makes GPUs exceptionally well-suited for the matrix operations and tensor mathematics that underpin modern machine learning and Bayesian inference algorithms [1].

Relevance to Ecological Modeling

Bayesian ecological models, including spatial capture-recapture frameworks, often involve:

- Repeated calculations across many parameters and data points

- Matrix operations and likelihood evaluations over spatial grids

- MCMC sampling requiring thousands of iterations

These tasks are inherently parallelizable, making them ideal candidates for GPU acceleration [4]. As ecological datasets grow in size and complexity, and as models incorporate more realistic spatial and temporal structures, the computational advantages of GPUs become increasingly significant.

Performance Benchmarks for Ecological Models

Quantitative Comparison of CPU vs. GPU Performance

Table 1: Measured speedup factors for GPU implementations of ecological models

| Application Domain | Specific Model | CPU Baseline | GPU Implementation | Speedup Factor | Key Performance Notes |

|---|---|---|---|---|---|

| Population Dynamics | Grey Seal State Space Model [4] | Particle MCMC on CPU | GPU-accelerated particle MCMC | >100x | "Over two orders of magnitude" reduction in compute time |

| Spatial Capture-Recapture | Common Bottlenose Dolphin Photo-ID [4] | Multi-core CPU software | GPU-accelerated SCR | 20x | Compared to using multiple CPU cores with open-source software |

| Spatial Capture-Recapture | Generalized SCR Simulation [4] | Standard CPU implementation | GPU-accelerated implementation | ~100x | Speedup achieved when the numbers of detectors and integration mesh points are high |

Table 2: General GPU vs. CPU performance characteristics for statistical computing

| Performance Metric | CPU Performance | GPU Performance | Significance for Ecological Research |

|---|---|---|---|

| Parallel Task Capacity | Low (sequential processing) | High (thousands of concurrent threads) | Enables simultaneous parameter sampling and spatial point evaluation |

| Memory Bandwidth | ~50 GB/s (as of 2025) [1] | Up to 7.8 TB/s (high-end 2025 models) [1] | Critical for handling large spatial datasets and complex model structures |

| Deep Learning Training | Baseline | >10x faster than equivalent-cost CPUs [1] | Accelerates neural network applications in ecological modeling |

| Energy Efficiency | Standard | ~25% reduction in energy requirements vs. 2024 models [1] | Reduces operational costs for large-scale and long-running ecological simulations |

Case Study: GPU-Accelerated Spatial Capture-Recapture

A recent implementation demonstrates the transformative potential of GPU computing for SCR models. The study achieved speedup factors of approximately 100 times compared to CPU implementations when analyzing datasets with large numbers of detectors and integration mesh points [4]. This acceleration makes computationally intensive SCR techniques practical for conservation applications where rapid results are essential.

In a practical application with common bottlenose dolphin photo-identification data, researchers achieved a 20-fold speedup using GPU acceleration compared to multi-core CPU processing with open-source software [4]. This performance improvement enabled more extensive model checking and faster iteration through alternative model structures.

Experimental Protocols for Benchmarking

Methodology for Performance Comparison

To ensure valid and reproducible performance comparisons between CPU and GPU implementations, researchers should adhere to the following experimental protocol:

Hardware Specification: Clearly document the CPU and GPU models used, along with relevant specifications (core counts, memory bandwidth, VRAM capacity). For example, recent benchmarks utilized NVIDIA's H200 Tensor Core GPUs with 141 GB of HBM3e memory and 4.8 TB/s memory bandwidth compared to server-grade CPUs [1].

Software Environment: Standardize the software stack across comparisons, including:

- Operating system and version

- Programming language implementations (e.g., Python, R)

- Mathematical and statistical libraries

- GPU-specific programming frameworks (e.g., CUDA, OpenCL)

Benchmarking Metrics: Measure and report:

- Total computation time for fixed iterations

- Time to convergence for MCMC algorithms

- Memory usage patterns

- Energy consumption where measurable

Statistical Validation: Ensure that CPU and GPU implementations produce statistically equivalent results through:

- Comparison of posterior distributions

- Assessment of MCMC convergence diagnostics

- Validation against synthetic datasets with known parameters [37]
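The timing part of this protocol can be sketched with a small harness. This is a minimal illustration using only the Python standard library, not a tool from the cited studies: the names `benchmark`, `toy_workload`, and the `sync` parameter are hypothetical. The `sync` hook is included on the assumption that the GPU library in use launches work asynchronously, in which case the timer must wait for the device to finish before it stops.

```python
import statistics
import time


def benchmark(fn, *, repeats=5, warmup=1, sync=None):
    """Time `fn` over several runs and return summary statistics in seconds.

    `sync` is an optional callable that blocks until the device has finished
    its queued work. GPU libraries often launch kernels asynchronously, so
    without it the timer may stop before the computation is done.
    """
    # Warm-up runs absorb one-off costs (compilation, caches, allocation).
    for _ in range(warmup):
        fn()
        if sync is not None:
            sync()

    times = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        if sync is not None:
            sync()
        times.append(time.perf_counter() - start)

    return {
        "median_s": statistics.median(times),
        "min_s": min(times),
        "stdev_s": statistics.stdev(times) if len(times) > 1 else 0.0,
        "runs": repeats,
    }


def toy_workload(n=200_000):
    """Hypothetical stand-in for a fixed number of model iterations."""
    return sum(i * i for i in range(n))


if __name__ == "__main__":
    print(benchmark(toy_workload, repeats=5, warmup=1))
```

Reporting the median alongside the spread, rather than a single run, makes it easier to tell a real speedup from run-to-run noise.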

Validation of Computational Equivalence

When comparing CPU and GPU implementations, it is crucial to verify that both produce equivalent statistical results, not just faster computation. Factors that can cause divergence include:

- Floating-point precision differences between CPU and GPU numerical libraries [37]

- Differences in random number generators and operation orders in MCMC implementations [37]

- Parallelization artifacts that may affect sampling algorithms

Researchers should compare representative output distributions, not just point estimates, to ensure methodological equivalence between implementations [37].
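One way to compare whole output distributions is a two-sample Kolmogorov-Smirnov statistic, sketched below in plain Python. This is an illustrative choice, not the procedure prescribed in [37]. The function names are hypothetical, and the threshold uses the standard large-sample approximation for independent draws. MCMC draws are autocorrelated, so in practice the chains would need thinning, or the threshold should be treated as optimistic.

```python
import bisect
import math
import random


def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    d = 0.0
    for x in a + b:  # the largest gap is always attained at an observed value
        cdf_a = bisect.bisect_right(a, x) / len(a)
        cdf_b = bisect.bisect_right(b, x) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d


def ks_critical_value(n, m, alpha=0.05):
    """Large-sample rejection threshold, valid for independent draws only."""
    c = math.sqrt(-0.5 * math.log(alpha / 2.0))
    return c * math.sqrt((n + m) / (n * m))


if __name__ == "__main__":
    rng = random.Random(1)
    # Stand-ins for posterior draws of one parameter from each implementation.
    cpu_draws = [rng.gauss(0.0, 1.0) for _ in range(2000)]
    gpu_draws = [rng.gauss(0.0, 1.0) for _ in range(2000)]
    d = ks_statistic(cpu_draws, gpu_draws)
    print(f"D = {d:.4f}, threshold = {ks_critical_value(2000, 2000):.4f}")
```

A statistic well above the threshold suggests the two implementations are not sampling the same distribution and warrants a closer look at precision, random number generation, or operation order.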

Research Toolkit for Bayesian Ecological Modeling

Table 3: Essential research reagents and computational tools for Bayesian ecological modeling

| Tool Category | Specific Solutions | Function in Research | Implementation Considerations |

|---|---|---|---|

| Statistical Programming | Python, R, NIMBLE [35] | Model specification and data analysis | GPU-accelerated libraries (e.g., TensorFlow, PyTorch) available for Python |

| GPU Programming Frameworks | CUDA, OpenCL | Enables direct GPU programming for custom algorithms | Requires specialized knowledge but offers maximum performance [4] |

| Bayesian Computation | MCMC, Particle MCMC [4] | Posterior distribution sampling | Particularly amenable to parallelization on GPU architectures |

| Spatial Modeling | Geostatistical Capture-Recapture [35] | Accounts for spatially structured detection probability | Replaces latent activity centers with Gaussian processes |

| Benchmarking Tools | Community-developed benchmarks [38] | Standardized model evaluation | Ensures reproducibility and comparability across implementations |

Emerging Infrastructure Solutions

The growing computational demands of ecological modeling have spurred development of specialized infrastructure:

- AIRI//S: AI infrastructure architected by Pure Storage and NVIDIA, specifically designed for data-intensive computational workloads [1]

- FlashBlade//EXA: Scale-out storage solutions optimized for AI and high-performance computing workloads, addressing I/O bottlenecks in large ecological simulations [1]

- S3-over-RDMA technology: Accelerates data transfer for AI training, increasing throughput and reducing CPU utilization during data ingestion [1]

Computational Workflows

The benchmarking evidence consistently demonstrates that GPU acceleration provides substantial performance improvements for Bayesian population dynamics and spatial capture-recapture models, with documented speedup factors ranging from 20x to roughly 100x compared to CPU implementations [4]. These gains preserve statistical equivalence between implementations, provided appropriate validation protocols are followed [37].

The choice between CPU and GPU implementations involves balancing multiple factors:

- Computational demand of the specific ecological model

- Dataset size and complexity

- Available hardware resources and expertise

- Research timeline constraints

For most contemporary ecological research applications involving spatial capture-recapture or complex population dynamics models, GPU implementations offer compelling advantages in computational efficiency. This acceleration enables ecologists to fit more realistic models, conduct more extensive model checking, and reduce the time between data collection and conservation insights.

As ecological datasets continue to grow in size and complexity, and as models incorporate more sophisticated representations of ecological processes, the performance advantages of GPU computing are likely to become increasingly essential for cutting-edge ecological research.

Ecological modeling has evolved from simple analytical equations to complex, spatially-explicit simulations that demand substantial computational resources. Researchers are increasingly turning to GPU acceleration to handle the intensive calculations required for high-resolution, long-term eco-hydraulic modeling and individual-based simulations [39] [40]. This transition from CPU to GPU computing represents a paradigm shift in ecological research, enabling studies at previously impossible scales and resolutions.

For ecologists working primarily in R, this computational evolution presents both opportunities and challenges. While R excels at statistical analysis and data visualization, its native capabilities for large-scale parallel computation remain limited. TensorFlow offers a powerful alternative with transparent GPU support, but requires significant workflow adaptations. This guide objectively compares performance considerations and provides structured migration strategies for ecological researchers contemplating this transition, with particular emphasis on GPU versus CPU speedup benchmarks relevant to ecological models.

Performance Foundations: CPU vs GPU Architectural Differences

Understanding the fundamental architectural differences between CPUs and GPUs is essential for predicting performance gains in ecological modeling contexts.

Processing Architecture Comparison

CPUs are designed for sequential task execution, featuring a few powerful cores optimized for complex, diverse operations. In contrast, GPUs employ a massively parallel architecture with thousands of simpler cores that simultaneously perform identical operations on different data elements [1]. These differences create complementary roles: CPUs excel at task management and complex logic, while GPUs dominate in computational throughput for parallelizable tasks.

For ecological modeling, this means operations like matrix calculations, cellular automata updates, and spatial interpolations - all common in ecosystem simulations - represent ideal GPU workloads. The parallel nature of updating thousands of grid cells in spatial models or calculating interactions between numerous individuals aligns perfectly with GPU strengths [40].
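The property that makes grid updates GPU-friendly can be seen in a small sketch. The function below is a hypothetical diffusion step written in plain Python, not a model from the cited work. Each new cell value is computed only from the previous grid, so no cell depends on another cell's new value, and on a GPU each cell could be assigned to its own thread. The name `diffusion_step`, the `rate` parameter, and the wrap-around boundary are illustrative assumptions.

```python
def diffusion_step(grid, rate=0.1):
    """One synchronous update of a 2-D grid with periodic (wrap-around) edges.

    Reads only from `grid` and writes only to `new_grid`, so every cell
    update is independent of the others within a time step.
    """
    rows, cols = len(grid), len(grid[0])
    new_grid = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            neighbours = (
                grid[(r - 1) % rows][c] + grid[(r + 1) % rows][c]
                + grid[r][(c - 1) % cols] + grid[r][(c + 1) % cols]
            )
            # Discrete Laplacian: flow in from neighbours minus flow out.
            new_grid[r][c] = grid[r][c] + rate * (neighbours - 4.0 * grid[r][c])
    return new_grid


if __name__ == "__main__":
    grid = [[0.0] * 4 for _ in range(4)]
    grid[1][1] = 1.0  # a single occupied cell
    for row in diffusion_step(grid):
        print(row)
```

Because the two nested loops carry no dependency between iterations, they are the part a GPU port would replace with one kernel launch over all cells.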

Memory and Bandwidth Considerations

- CPU Memory Systems: Traditional CPUs typically access system RAM with bandwidth around 50 GB/s in modern systems, sufficient for general-purpose computing but potentially limiting for data-intensive ecological simulations [1].

- GPU Memory Architecture: High-end GPUs feature dedicated VRAM with bandwidth up to 7.8 TB/s in 2025 models, dramatically accelerating data access patterns common in spatial ecological models [1]. This extensive bandwidth enables rapid processing of high-resolution environmental grids and complex individual-based interactions.

Experimental Benchmarks: Quantitative Performance Comparisons

TensorFlow CPU vs GPU Performance in Deep Learning Tasks

Independent benchmarking reveals substantial performance differences between CPU and GPU implementations for TensorFlow workloads. One comprehensive study comparing training of a convolutional neural network with approximately 58 million parameters demonstrated dramatic acceleration with GPU utilization [41].

Table 1: TensorFlow Performance Comparison: CPU vs GPU Training Times

| Metric | CPU (Ryzen 2700x) | GPU (RTX 2080) | Improvement |

|---|---|---|---|

| Time per epoch | 478 seconds | 74 seconds | 85% reduction |

| Time per step | 3 seconds | 0.5 seconds | 83% reduction |

| Total training (10 epochs) | 4787 seconds | 745 seconds | 84% reduction |

| GPU Utilization | N/A | 100% memory, 11% compute | N/A |

| CPU Utilization | 80% | Below 60% | 25% reduction |

This benchmark demonstrates that even with suboptimal GPU utilization (just 11% computational load), the RTX 2080 delivered 6.4x faster training times compared to an 8-core CPU [41]. With optimized code ensuring higher GPU utilization, these gains can potentially increase further.
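The "percent reduction" column and the speedup factor are two views of the same ratio: a reduction of p percent corresponds to a speedup of 1 / (1 - p/100). The short sketch below recomputes both from the timings in Table 1; the function names are illustrative.

```python
def speedup(cpu_seconds, gpu_seconds):
    """How many times faster the GPU run is than the CPU run."""
    return cpu_seconds / gpu_seconds


def percent_reduction(cpu_seconds, gpu_seconds):
    """Share of the CPU run time saved by the GPU run, in percent."""
    return 100.0 * (1.0 - gpu_seconds / cpu_seconds)


if __name__ == "__main__":
    # (CPU seconds, GPU seconds) taken from Table 1.
    timings = {
        "per epoch": (478, 74),
        "per step": (3, 0.5),
        "total (10 epochs)": (4787, 745),
    }
    for label, (cpu, gpu) in timings.items():
        print(f"{label}: {speedup(cpu, gpu):.1f}x faster, "
              f"{percent_reduction(cpu, gpu):.0f}% less time")
```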

Local LLM Performance Across Hardware Configurations

Recent benchmark studies testing local LLMs (relevant to ecological natural language processing and model documentation) reveal instructive performance patterns across hardware types [26]:

Table 2: Local LLM Performance Across Hardware Configurations

| Hardware | Model Size Range | Performance Sweet Spot | Eval Rate | Optimal Use Cases |

|---|---|---|---|---|

| CPU (AMD Ryzen 9 9950X) | 4-5 GB models | 4-5 GB models | >20 tokens/sec | Non-time-critical tasks, code models |

| GPU (NVIDIA RTX 4090) | 9-14 GB models | >9 GB models | Highest rates | Production environments, interactive workflows |

| Apple M1 Pro | 1.3-14 GB models | Medium models | Balanced rates | Portable development, medium-scale work |

These results indicate that CPUs remain viable for smaller models: models under 5 GB reached evaluation rates above 20 tokens/second, which is sufficient for many research applications [26]. Ecological researchers working with smaller models may therefore not need to migrate to GPUs immediately, while those working with larger architectures will benefit substantially.

Ecological Model GPU Performance Case Study

Research implementing spatially-explicit ecological models on GPUs demonstrates the transformative potential for ecosystem modeling. One study porting mussel bed and arid vegetation models from traditional CPU implementations to GPU-accelerated versions achieved order-of-magnitude speed improvements [40]. The spatial parallelism inherent in grid-based ecological models - where each cell's state update can be computed simultaneously - represents an ideal workload for GPU architecture.

TensorFlow GPU Setup and Configuration for Ecological Research

Prerequisites and Compatibility Considerations

Successfully leveraging GPU acceleration with TensorFlow requires careful attention to dependency compatibility:

- CUDA Compute Capability: NVIDIA GPU with Compute Capability 3.5 or higher [42]

- TensorFlow Version Alignment: Specific TensorFlow versions require matched CUDA and cuDNN versions [43]

- Driver Requirements: Updated NVIDIA drivers supporting your CUDA version [43]

- Platform Considerations: TensorFlow 2.10 was the last release with native Windows GPU support; later versions require WSL2 for GPU use on Windows [42]

Verification and Diagnostics

After installation, researchers should verify proper GPU detection and functionality:
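A minimal sketch of such a check, assuming TensorFlow 2.x. The helper names `detect_gpus` and `run_with_placement_logging` are hypothetical. The first helper returns an empty list when TensorFlow cannot be imported, so the script can also run on machines without it.

```python
def detect_gpus():
    """Return the names of GPUs visible to TensorFlow ([] if none, or if TF is absent)."""
    try:
        import tensorflow as tf
    except ImportError:
        return []
    return [device.name for device in tf.config.list_physical_devices("GPU")]


def run_with_placement_logging():
    """Run one small matrix product with device placement logging switched on."""
    import tensorflow as tf

    # Logs the device chosen for each operation; set before running any ops.
    tf.debugging.set_log_device_placement(True)
    a = tf.constant([[1.0, 2.0], [3.0, 4.0]])
    return tf.matmul(a, a)


if __name__ == "__main__":
    gpus = detect_gpus()
    print("GPUs visible to TensorFlow:", gpus)
    if gpus:
        print(run_with_placement_logging())
```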

With a proper configuration, tf.config.list_physical_devices('GPU') returns the physical GPU devices rather than an empty list [42] [44]. Enabling device placement logging with tf.debugging.set_log_device_placement(True) explicitly confirms where each operation runs, which is critical for debugging performance issues.

Migration Methodology: Transitioning Ecological Models from R to TensorFlow

Experimental Protocol for Performance Benchmarking

To objectively evaluate the porting process, researchers should implement a standardized comparison protocol:

- Select a representative ecological model from existing R implementations (e.g., spatially-explicit population dynamics, nutrient cycling, or vegetation pattern formation)

- Create a reference implementation in R using standard packages (deSolve, SpaDES, individual)

- Develop TensorFlow equivalent with identical model structure and parameters

- Execute comparative runs with identical initial conditions and simulation durations

- Measure computational performance including execution time, memory usage, and scaling behavior

- Validate numerical equivalence by comparing final states and key output metrics

This methodology ensures fair comparison between platforms while accounting for implementation differences.

Performance Optimization Workflow

The TensorFlow Profiler provides a structured approach to optimizing GPU performance [45]:

Figure 1: TensorFlow GPU Performance Optimization Workflow

Input Pipeline Optimization

For ecological models processing large spatial datasets or long-term environmental records, input pipeline efficiency is critical. The TensorFlow Profiler's Input-pipeline analyzer identifies excessive host-to-device transfer bottlenecks [45]. Solutions include:

- tf.data API implementation with prefetching and parallel processing

- Synthetic data validation to isolate pipeline issues

- Tensor compression for large spatial grids

- Memory mapping for large environmental datasets
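The idea behind prefetching can be illustrated without TensorFlow. The sketch below uses only the Python standard library and is not the tf.data implementation: a background thread loads the next items into a small buffer while the consumer processes the current one, which is the overlap that the `prefetch` transformation of tf.data provides inside TensorFlow. The names `prefetch`, `load_tiles`, and `buffer_size` are illustrative.

```python
import queue
import threading
import time

_DONE = object()  # marker placed in the buffer when the source is exhausted


def prefetch(iterable, buffer_size=2):
    """Yield items from `iterable`, loading up to `buffer_size` ahead in a thread."""
    buffer = queue.Queue(maxsize=buffer_size)

    def producer():
        try:
            for item in iterable:
                buffer.put(item)  # blocks while the buffer is full
        finally:
            buffer.put(_DONE)     # always signal the end, even after an error

    threading.Thread(target=producer, daemon=True).start()
    while True:
        item = buffer.get()
        if item is _DONE:
            return
        yield item


def load_tiles(n_tiles, delay=0.01):
    """Hypothetical loader: pretend each raster tile takes `delay` seconds to read."""
    for i in range(n_tiles):
        time.sleep(delay)
        yield [float(i)] * 4


if __name__ == "__main__":
    for tile in prefetch(load_tiles(5)):
        time.sleep(0.01)  # stand-in for the model step on the current tile
        print(sum(tile))
```

With loading and computation overlapped, the time per tile approaches the slower of the two stages rather than their sum, which is the effect the profiler's input-pipeline analysis is meant to confirm.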

Research Reagent Solutions: Essential Tools for GPU-Accelerated Ecological Modeling

Table 3: Essential Research Reagents for GPU-Accelerated Ecological Modeling

| Tool/Category | Specific Examples | Function in Research Process |

|---|---|---|

| GPU Hardware | NVIDIA RTX 4090 (24GB), H100, A100; AMD MI300X | Provides parallel computation capacity for model execution |

| Software Frameworks | TensorFlow with GPU support, OpenCL, CUDA Fortran | Enables GPU programming and model implementation |

| Performance Tools | TensorFlow Profiler, NVIDIA Nsight, tf.debugging.set_log_device_placement() | Identifies performance bottlenecks and optimization opportunities |