From Raw Tracks to Insights: A Guide to Visualizing Complex Biologging Data

Abstract

This article provides a comprehensive framework for researchers and scientists tackling the challenges of biologging data visualization. It covers the foundational principles of exploring complex, multi-dimensional animal behavior data, details practical methodologies using modern tools like Python's Seaborn, addresses common troubleshooting and optimization techniques for noisy datasets, and establishes robust validation methods to ensure scientific rigor. By integrating these four themes (exploration, methodology, troubleshooting, and validation), the guide helps professionals in ecology, conservation, and drug development transform raw sensor data into actionable, publication-ready visual insights.

Understanding Your Data: First Steps in Exploring Biologging Datasets

Biologging employs animal-borne sensors to collect high-resolution data on behaviour, physiology, and environmental context [1] [2]. These datasets are inherently multi-dimensional, capturing variables like depth, acceleration, magnetic field strength, and water temperature simultaneously [1]. This complexity introduces significant challenges in data analysis, including high-dimensionality, collinearity between variables, and substantial background noise that can obscure biologically relevant signals [1] [3]. This document outlines standardized protocols for processing, analyzing, and visualizing such data, with an emphasis on statistical techniques for noise reduction and ethical considerations for device deployment.

The table below summarizes the core dimensions of biologging data, common sources of noise, and recommended mitigation strategies.

Table 1: Characteristics and Challenges of Biologging Data

| Data Dimension | Typical Sensors | Common Data Issues (Noise) | Recommended Mitigation |

|---|---|---|---|

| Depth & Time | Pressure sensor | Sensor drift, surface detection error | Kalman filtering, state-space modeling |

| 3D Kinematics | Accelerometer, Gyroscope, Magnetometer | Dynamic body movement, tag displacement | High-frequency sampling, PCA for collinearity [1] |

| Animal Path | GPS, Dead-reckoning | Location error, integration drift | Path smoothing algorithms, combining GPS with dead-reckoning [1] |

| Environment | Temperature, Light | Spurious values, sensor fouling | Threshold-based filtering, manual validation |

Experimental Protocol: Multi-Dimensional Dive Analysis

This protocol details the process for analyzing diving behaviour, as exemplified in flatback turtle studies [1].

Equipment and Tag Deployment

- Tag Type: Customized Animal Tracking Solutions (CATS) diary or camera tag.

- Sensors: Tri-axial accelerometer (20–50 Hz), magnetometer, gyroscope, pressure sensor (10 Hz), temperature sensor, and duty-cycled GPS [1].

- Attachment: Secure to the carapace using rubber suction cups or a custom-made, padded self-detaching harness to minimize impact on animal behaviour [1].

- Data Retrieval: Use a Galvanic Timed Release (GTR) mechanism. Tags are buoyant for recovery [1].

Data Processing and Analysis Workflow

- Data Extraction: Isolate individual dives from the continuous time-depth series using a depth threshold (e.g., >1 meter).

- Variable Calculation: For each dive, compute a suite of 16+ variables describing its geometry and kinematics. Examples include:

  - Maximum depth and duration

  - Vertical and horizontal path angles

  - Descent/ascent rate, bottom time

  - Overall dynamic body acceleration (ODBA)

  - Stroke frequency [1]

- Noise Reduction and Dimensionality Reduction:

  - Apply Principal Component Analysis (PCA) to the calculated dive variables. This objective technique condenses the data, removes collinearity, and extracts the main features of diving behaviour without imposing subjective dive classifications [1].

- Statistical Modeling:

  - Use the resulting principal components as response variables in Generalized Additive Mixed Models (GAMMs).

  - Model the effects of environmental drivers such as season, time of day (diel), and tidal phase to quantify their influence on diving behaviour [1].
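The first three workflow steps can be sketched in a few lines of Python. This is a minimal illustration, not the pipeline used in the cited turtle study: the function names (`extract_dives`, `dive_table`, `pca`), the 1 Hz depth record, and the three summary variables are all assumptions made for the example, and the PCA is computed with a plain NumPy SVD on standardized columns.

```python
import numpy as np

def extract_dives(depth_m, threshold_m=1.0):
    """Return (start, end) sample indices of each run deeper than threshold_m.

    `end` is exclusive, so depth_m[start:end] is the submerged segment.
    """
    submerged = np.asarray(depth_m, dtype=float) > threshold_m
    # Pad with False so dives touching either end of the record are closed.
    padded = np.concatenate(([False], submerged, [False])).astype(int)
    change = np.diff(padded)
    starts = np.flatnonzero(change == 1)
    ends = np.flatnonzero(change == -1)
    return [(int(s), int(e)) for s, e in zip(starts, ends)]

def dive_table(depth_m, fs_hz, dives):
    """One row per dive: maximum depth (m), duration (s), mean depth (m)."""
    depth_m = np.asarray(depth_m, dtype=float)
    rows = [
        (depth_m[s:e].max(), (e - s) / fs_hz, depth_m[s:e].mean())
        for s, e in dives
    ]
    return np.array(rows)

def pca(X):
    """PCA of standardized columns via SVD.

    Returns component scores, loadings, and the explained-variance ratio.
    """
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    U, s, Vt = np.linalg.svd(Z, full_matrices=False)
    explained = s**2 / np.sum(s**2)
    return U * s, Vt, explained

# Hypothetical 1 Hz depth record (metres) containing three dives.
depth = [0, 0, 2, 5, 3, 0, 0, 4, 8, 8, 4, 0, 0, 1.5, 3, 0]
dives = extract_dives(depth, threshold_m=1.0)
table = dive_table(depth, fs_hz=1.0, dives=dives)
scores, loadings, explained = pca(table)

print("dives (start, end):", dives)
print("explained variance ratio:", np.round(explained, 3))
```

In a real analysis the table would hold many more dives and the full set of variables, and the component scores would then be passed to the mixed models described in the final step.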

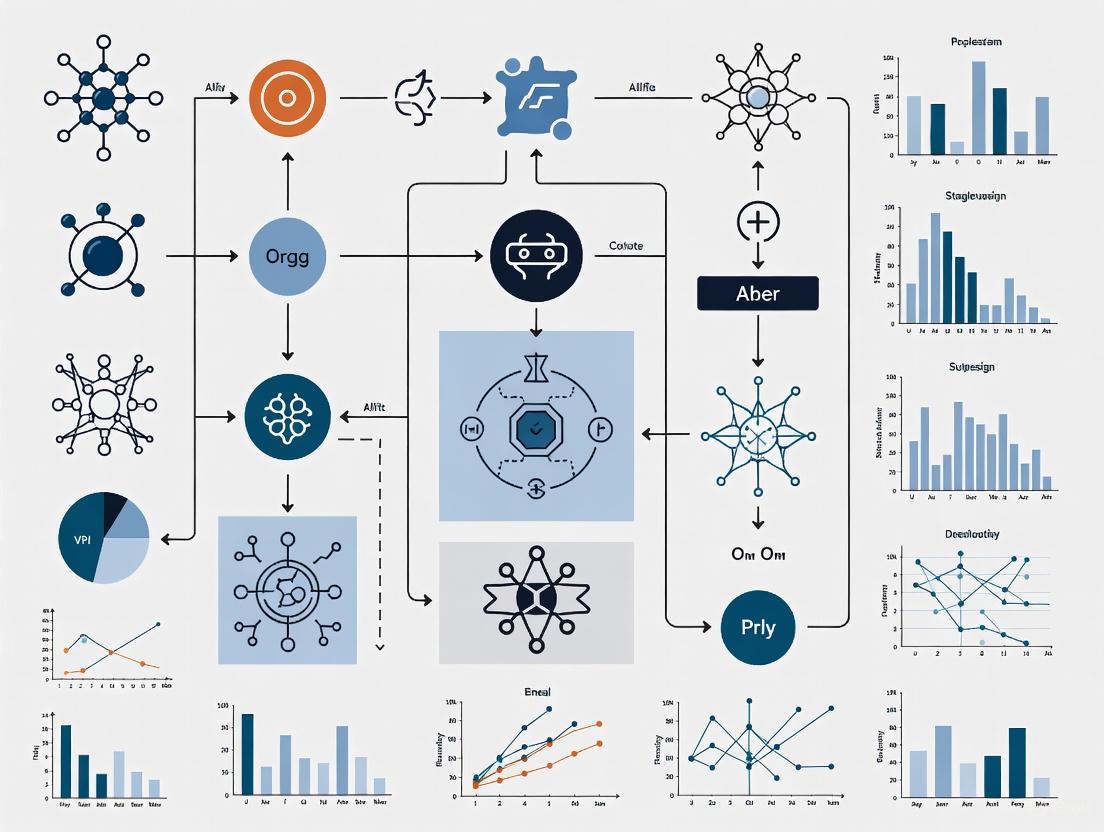

Diagram 1: Dive analysis workflow

Visualization of High-Dimensional Data

Effective visualization is critical for exploring and communicating patterns in complex biologging data.

Visualization Workflow

The grammar of graphics, as implemented in the R package ggplot2, provides a logical and flexible framework for building complex plots from modular components [4]. This high-level approach allows researchers to intuitively try different visualization types without dealing with low-level canvas plotting instructions [4].

Diagram 2: Grammar of graphics workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Biologging Studies

| Item | Function/Application | Example/Notes |

|---|---|---|

| Multi-sensor Biologging Tag | Primary data collection unit. | CATS "Diary" or "Camera" tags with accelerometer, magnetometer, gyroscope, pressure, and temperature sensors [1]. |

| Attachment System | Secures tag to the study animal with minimal impact. | Custom polyester-webbing harness with Velcro and a padded baseplate, or rubber suction cups [1]. |

| Galvanic Timed Release (GTR) | Ensures tag recovery and limits deployment duration. | Ocean Appliances Australia GTR; corrodes after a pre-set time to release the tag [1]. |

| R Statistical Software | Core platform for data analysis, statistical modeling, and visualization. | Use of packages like ggplot2 for visualization [4] and mgcv for GAMMs [1]. |

| Data Integration Framework | Combines different data types (vertical/mosaic integration). | Used to connect phenotypic, environmental, and genomic data to understand drivers of variation [3]. |

Ethical Considerations and Data Integrity

- Animal Welfare: Prioritize minimizing device impact. Harness design should allow for natural behaviour, and deployment duration should be justified and limited using GTRs [1].

- Noise Navigation: Recognize that "noise" in high-dimensional data may contain biologically meaningful stochasticity. Analytical approaches should aim to navigate through, rather than blindly discard, this noise to understand its contribution to the system [3].

- Open Data: Promoting open access to biologging data is crucial for the "Internet of Animals" (IoA) initiative, which aims to create a global network of animal-borne data to resolve large-scale marine and ecological issues [2].

The analysis of animal-borne sensor data, or biologging, presents a significant challenge and opportunity in ecology and evolution. Understanding an individual's behavior is central to assessing its reproductive opportunities and probability of survival, and is key to planning successful conservation interventions [5]. The advent of bio-loggers—devices carrying sensors like accelerometers, gyroscopes, and GPS receivers—has enabled the remote collection of vast kinematic and environmental datasets [5]. The central challenge lies in interpreting these complex, high-dimensional datasets to define core behavior patterns and identify significant outliers, which are observations that deviate markedly from others and may have been generated by a different mechanism [6]. Effectively visualizing and analyzing this data is therefore not merely a technical step, but a fundamental philosophical and methodological process for reintegrating rare but critical events into our scientific understanding [6]. This document outlines key questions, protocols, and visualization strategies to structure this exploratory analysis, framing them within the broader context of data visualization techniques for complex biologging data.

Key Analytical Questions for Exploratory Data Analysis

A structured exploratory analysis should be guided by fundamental questions that help define normal behavior and surface meaningful anomalies. The table below organizes these key questions.

Table 1: Key Questions for Exploratory Analysis of Biologging Data

| Analytical Dimension | Core Question | Sub-questions for Deeper Investigation | Suggested Visualization Tools |

|---|---|---|---|

| Behavioral State Identification | What are the dominant, recurring behavioral states in the dataset? | How are these states distributed to create an individual's activity budget? Do these budgets vary by individual, sex, age, or season? | Bar charts, Pie charts [7] |

| Temporal Patterning | How are behavioral states structured in time? | Are there clear diurnal or nocturnal patterns? Is the behavior rhythmic or arrhythmic? Are transitions between states predictable or stochastic? | Line diagrams, Time-series graphs [8] |

| Contextual & Environmental Drivers | How do behaviors correlate with environmental context? | How does behavior change with terrain, weather, or habitat? Are there specific environmental triggers for certain behaviors? | Scatter plots, Maps [8] |

| Outlier Detection & Significance | Which observations are statistical outliers, and what is their potential biological significance? | Does the outlier represent a rare but crucial event (e.g., a predation attempt)? Could it indicate a new, previously unclassified behavior? Could it signal a "keystone" individual influencing group dynamics? [6] | Scatter plots, Histograms |

Experimental Protocols for Behavior Classification from Bio-logger Data

The following protocol provides a detailed methodology for applying supervised machine learning (ML) to classify animal behavior from bio-logger data, a common and powerful approach in the field [5].

Protocol: Supervised Machine Learning for Behavior Classification

1. Objective: To train a computational model to automatically classify animal behaviors based on time-series data from animal-borne tags.

2. Materials and Research Reagents:

Table 2: Essential Materials and Reagents for Biologging Analysis

| Item Name | Function/Description |

|---|---|

| Animal-borne Bio-logger | A tag attached to an animal that records sensor data (e.g., accelerometer, gyroscope, magnetometer, GPS). |

| Ethogram | A predefined inventory of the behaviors an individual may perform, essential for annotation [5]. |

| Video Recording System | For simultaneous recording of animal behavior to establish ground-truth data for annotation. |

| Annotation Software | Software used to synchronously link sensor data streams with behavioral labels from video. |

| Computing Hardware | Computers with sufficient processing power (often with GPUs) for training machine learning models. |

| Programming Environment | An environment such as R or Python with relevant ML libraries (e.g., scikit-learn, TensorFlow, PyTorch). |

3. Step-by-Step Methodology:

Step 1: Data Collection & Synchronization

- Deploy bio-loggers on study subjects to record sensor data at an appropriate sampling rate.

- Simultaneously record video of the animal's behavior for as much of the deployment as is feasible, so that the footage can serve as ground truth.

- Precisely synchronize the clock of the bio-logger with the video recording system.

Step 2: Behavioral Annotation & Ethogram Creation

- Create an ethogram that is exhaustive and mutually exclusive for the behaviors of interest.

- Using annotation software, a human expert carefully reviews the synchronized video and labels the corresponding bio-logger data with the correct behavioral states.

- This process creates a labeled dataset where the input is the sensor data (e.g., acceleration on three axes) and the output is the behavioral state (e.g., "resting," "foraging").

Step 3: Data Preprocessing & Model Training

- Split the annotated dataset into a training set (e.g., 70-80%) and a test set (e.g., 20-30%). The test set must be held out and not used during training.

- For classical ML methods (e.g., Random Forest), engineer features from the raw sensor data (e.g., mean, variance, frequency-domain features) [5].

- For deep learning methods (e.g., Convolutional Neural Networks), the model may learn features directly from the raw or minimally processed data [5].

- Train the chosen ML model(s) on the training set. The model learns the complex relationships between the sensor data patterns and the behavioral labels.
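As a rough sketch of the feature-engineering and splitting steps above, the example below cuts a tri-axial acceleration record into fixed windows and computes the mean, variance, and dominant frequency of each axis. The function name `window_features`, the 2-second window, the 20 Hz sampling rate, and the synthetic sine-wave signal are assumptions chosen for illustration, and the shuffled 75/25 split stands in for whatever splitting scheme a study actually uses.

```python
import numpy as np

def window_features(acc, fs_hz, window_s=2.0):
    """Summarize a (n_samples, 3) acceleration array in non-overlapping windows.

    Each output row holds, per axis: mean (3), variance (3), dominant frequency (3).
    """
    acc = np.asarray(acc, dtype=float)
    win = int(round(window_s * fs_hz))
    n_windows = acc.shape[0] // win
    freqs = np.fft.rfftfreq(win, d=1.0 / fs_hz)
    features = []
    for i in range(n_windows):
        seg = acc[i * win:(i + 1) * win]
        mean = seg.mean(axis=0)
        var = seg.var(axis=0)
        # Amplitude spectrum of the mean-removed segment, one column per axis.
        spectrum = np.abs(np.fft.rfft(seg - mean, axis=0))
        # Skip the zero-frequency bin when looking for the peak.
        dominant = freqs[np.argmax(spectrum[1:], axis=0) + 1]
        features.append(np.concatenate([mean, var, dominant]))
    return np.array(features)

# Synthetic 40 s record at 20 Hz: 2 Hz, 1 Hz, and 3 Hz oscillations on x, y, z.
fs = 20.0
t = np.arange(800) / fs
acc = np.column_stack([
    np.sin(2 * np.pi * 2.0 * t),
    np.sin(2 * np.pi * 1.0 * t),
    np.sin(2 * np.pi * 3.0 * t),
])
X = window_features(acc, fs_hz=fs, window_s=2.0)

# Shuffled hold-out split: 25% of windows for testing, the rest for training.
rng = np.random.default_rng(0)
order = rng.permutation(len(X))
n_test = int(round(0.25 * len(X)))
test_idx, train_idx = order[:n_test], order[n_test:]

print(X.shape, len(train_idx), len(test_idx))
```

Because neighbouring windows from the same animal are strongly correlated, a shuffled split like this one can overstate performance; holding out whole individuals or deployments is often a safer design.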

Step 4: Model Evaluation & Application

- Use the held-out test set to evaluate the trained model's performance. Report standard metrics such as overall accuracy, precision, recall, and F1-score for each behavior class.

- Once validated, the model can be used to predict behavioral labels for the remaining, un-annotated bio-logger data, allowing for the analysis of vast datasets.
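The evaluation metrics named above can be computed directly from a confusion matrix. The sketch below assumes a small hypothetical test set with three behavior labels; the helper names `confusion_matrix` and `per_class_metrics` are defined here for illustration and are not taken from any particular library.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, labels):
    """Rows are true classes, columns are predicted classes."""
    index = {label: i for i, label in enumerate(labels)}
    cm = np.zeros((len(labels), len(labels)), dtype=int)
    for t, p in zip(y_true, y_pred):
        cm[index[t], index[p]] += 1
    return cm

def per_class_metrics(cm):
    """Return per-class precision, recall, F1, and overall accuracy."""
    tp = np.diag(cm).astype(float)
    predicted = cm.sum(axis=0).astype(float)  # column sums
    actual = cm.sum(axis=1).astype(float)     # row sums
    precision = np.divide(tp, predicted, out=np.zeros_like(tp), where=predicted > 0)
    recall = np.divide(tp, actual, out=np.zeros_like(tp), where=actual > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=np.zeros_like(tp), where=denom > 0)
    accuracy = tp.sum() / cm.sum()
    return precision, recall, f1, accuracy

# Hypothetical held-out labels and model predictions.
labels = ["rest", "forage", "swim"]
y_true = ["rest", "rest", "rest", "forage", "forage", "swim", "swim", "swim"]
y_pred = ["rest", "rest", "forage", "forage", "forage", "swim", "rest", "swim"]

cm = confusion_matrix(y_true, y_pred, labels)
precision, recall, f1, accuracy = per_class_metrics(cm)

for name, p, r, f in zip(labels, precision, recall, f1):
    print(f"{name:7s} precision={p:.2f} recall={r:.2f} f1={f:.2f}")
print(f"accuracy={accuracy:.2f}")
```

Reporting the per-class values alongside overall accuracy matters when behaviors are imbalanced, since a rare class can be classified poorly without moving the accuracy figure much.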

4. Advanced Application: Self-Supervised Learning for Data-Scarce Scenarios

For situations with limited annotated data, a self-supervised learning approach can be highly effective.

- Pre-training: A deep neural network is first pre-trained on a large corpus of unlabeled accelerometer data (which can even be from a different species, such as humans) to learn general features of movement [5].

- Fine-tuning: This pre-trained model is then fine-tuned using the smaller, annotated dataset from the target species. This approach has been shown to outperform others when training data is scarce [5].

The following workflow diagram illustrates the complete process from data collection to behavioral insight.

Workflow for Behavior Classification

A Philosophical and Practical Framework for Outliers

Outliers in biologging data should not be automatically dismissed as noise. A philosophical shift is required to view them as potential drivers of scientific discovery [6]. The case of the hybrid Galápagos finch that founded a new lineage exemplifies how rare individuals and events (hybridization, immigration, rare weather) can have a disproportionate impact on a population's evolutionary trajectory [6]. Differentiating between spurious artifacts and biologically meaningful outliers is a central challenge.

Long-term studies act as a "continuous-video" dataset, providing the necessary context to detect outlier events and understand their consequences over time, unlike short-term "snapshot" studies [6]. Emerging technologies like smaller, non-invasive biologgers and machine learning algorithms are crucial for identifying and classifying these rare events in complex field environments [6]. The following diagram outlines a decision process for evaluating outliers.

Outlier Evaluation Framework

Application Note: Core Principles for Effective Data Visualization

When visualizing complex biologging data, the initial exploration phase is critical. This note outlines the application of three fundamental plot types (scatter plots, histograms, and time series plots), guided by the core principles of effective visualization: accuracy, utility, and efficiency [9]. Biologging data present unique challenges, including strong temporal autocorrelation, complex random effect structures, and often low sample sizes [10]. Selecting the appropriate visual tool is the first step in transforming raw data into robust, interpretable scientific insights.

The following workflow diagram illustrates the recommended logical pathway for selecting and creating these essential plots during initial data exploration.

Experimental Protocols for Visualization

Protocol: Creating and Interpreting Scatter Plots

Objective: To investigate the potential relationship between two numeric variables (e.g., animal heart rate and diving depth) and identify correlations, clusters, and outliers [11] [12].

Methodology:

- Data Preparation: Select two continuous numeric variables from your dataset. Each row (e.g., a single observation from a biologging tag) becomes a single point on the plot [11].

- Axis Assignment: Plot the independent variable (e.g., dive depth) on the horizontal x-axis and the dependent variable (e.g., heart rate) on the vertical y-axis.

- Plotting: Generate a point for each pair of values. The position is determined by its x and y values [11].

- Enhancement (Optional):

  - Add a trend line (line of best fit) to highlight the overall relationship and its strength [11] [12].

  - For a third numeric variable (e.g., animal mass), use a bubble chart, varying point size [11].

  - For a third categorical variable (e.g., species), encode point color or shape [11].

  - Use logarithmic scales on one or both axes if data points are clustered closely together [12].

- Interpretation: Analyze the plot for the direction (positive/negative), strength (strong/weak), and form (linear/non-linear) of the correlation. Critically evaluate whether an observed correlation implies causation or could be driven by other factors [11] [12].
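A short sketch of the trend-line step follows. The depth and heart-rate values are made-up numbers used only to show the calculation, and `plot_scatter_with_trend` is a hypothetical helper; it imports Matplotlib lazily so that the numerical part runs even where no plotting backend is installed.

```python
import numpy as np

# Hypothetical paired observations: dive depth (x) and heart rate (y).
depth_m = np.array([5, 10, 20, 40, 60, 80], dtype=float)
heart_rate_bpm = np.array([98, 94, 91, 79, 71, 59], dtype=float)

# Least-squares line of best fit and Pearson correlation coefficient.
slope, intercept = np.polyfit(depth_m, heart_rate_bpm, deg=1)
r = np.corrcoef(depth_m, heart_rate_bpm)[0, 1]
print(f"slope={slope:.3f} bpm per m, intercept={intercept:.1f} bpm, r={r:.3f}")

def plot_scatter_with_trend(x, y, slope, intercept):
    """Draw the points and the fitted line; returns the Matplotlib figure."""
    import matplotlib.pyplot as plt  # imported here so the fit works without it

    fig, ax = plt.subplots()
    ax.scatter(x, y, alpha=0.6)  # transparency helps when points overlap
    xs = np.linspace(x.min(), x.max(), 50)
    ax.plot(xs, slope * xs + intercept, color="black")
    ax.set_xlabel("Dive depth (m)")
    ax.set_ylabel("Heart rate (beats per minute)")
    return fig
```

Calling `plot_scatter_with_trend(depth_m, heart_rate_bpm, slope, intercept)` returns a figure that can be saved or shown. The sign of the slope gives the direction of the relationship and the magnitude of `r` its strength; neither says anything about causation.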

Protocol: Creating and Interpreting Histograms

Objective: To visualize the distribution, central tendency, and spread of a single continuous variable (e.g., the durations of animal dives) [9].

Methodology:

- Data Selection: Select a single continuous variable of interest from the biologging dataset.

- Binning: Divide the entire range of values into a series of consecutive, non-overlapping intervals (bins).

- Counting: Count the number of data points that fall into each bin.

- Plotting: Construct bars for each bin, where the height of the bar corresponds to the count (frequency) of observations in that bin.

- Interpretation: Analyze the shape of the distribution. Assess if it is unimodal, bimodal (suggesting multiple underlying groups or states) [12] [9], normal, or skewed. Identify the presence of gaps or outliers that may warrant further investigation.
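The binning and counting steps map directly onto `numpy.histogram`. The dive durations below are invented to give a clearly bimodal sample, and `binned_counts` is a small helper written for this example; the two bin widths show how the apparent shape changes with the binning choice.

```python
import numpy as np

# Hypothetical dive durations in seconds: short dives and long dives.
durations_s = np.array(
    [30, 45, 50, 62, 70, 75, 300, 310, 320, 335, 340, 350], dtype=float
)

def binned_counts(values, bin_width, upper):
    """Count values in consecutive, non-overlapping bins from 0 to `upper`."""
    edges = np.arange(0, upper + bin_width, bin_width)
    # Bins are half-open [left, right) except the last, which includes its right edge.
    counts, edges = np.histogram(values, bins=edges)
    return counts, edges

counts_100, edges_100 = binned_counts(durations_s, bin_width=100, upper=400)
counts_50, edges_50 = binned_counts(durations_s, bin_width=50, upper=400)

print("100 s bins:", counts_100.tolist())
print(" 50 s bins:", counts_50.tolist())
```

Both binnings show two separated groups with an empty gap between them, which is the kind of pattern that may point to distinct dive types. If a feature appears at one bin width and vanishes at another, it deserves more caution.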

Protocol: Creating and Interpreting Time Series Plots

Objective: To display the value of a measured variable (e.g., body temperature, GPS location) at sequential time points, identifying trends, cycles, and anomalies [10].

Methodology:

- Data Preparation: Ensure data is structured with timestamps and one or more corresponding measurement variables. Data is often collected at fixed intervals (e.g., every 4 seconds) by biologging devices [13].

- Axis Assignment: Plot the timestamp on the horizontal x-axis and the measured variable on the vertical y-axis.

- Plotting: Draw line segments connecting consecutive observations in chronological order. For multiple variables, use different colored lines or small multiples.

- Statistical Consideration: Account for temporal autocorrelation, where successive values depend on prior values (e.g., an animal's oxygen store during a dive) [10]. Avoid applying ordinary t-tests or GLMs to raw time-series data without first checking for autocorrelation, because ignoring it can greatly inflate Type I error rates [10].

- Interpretation: Look for long-term trends, seasonal or diurnal patterns (cyclical behavior), and sudden shifts or outliers that deviate from the norm.
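One simple way to check for autocorrelation before any testing is to compute the sample autocorrelation function. The sketch below does this for a simulated first-order autoregressive series; the coefficient of 0.8, the series length, and the function name `autocorrelation` are assumptions for the example. The effective sample size uses the standard AR(1) approximation n(1 - r1)/(1 + r1), which is only a rough guide for real data.

```python
import numpy as np

def autocorrelation(x, max_lag):
    """Sample autocorrelation at lags 0..max_lag (lag 0 is always 1)."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    denom = np.dot(x, x)
    return np.array(
        [np.dot(x[:len(x) - k], x[k:]) / denom for k in range(max_lag + 1)]
    )

# Simulate an autocorrelated series: each value depends on the previous one.
rng = np.random.default_rng(42)
n, phi = 2000, 0.8
noise = rng.normal(size=n)
series = np.empty(n)
series[0] = noise[0]
for i in range(1, n):
    series[i] = phi * series[i - 1] + noise[i]

acf = autocorrelation(series, max_lag=5)
r1 = acf[1]
# Approximate number of independent observations under an AR(1) assumption.
n_eff = n * (1 - r1) / (1 + r1)

acf_noise = autocorrelation(noise, max_lag=5)  # uncorrelated reference

print(f"lag-1 autocorrelation: {r1:.2f}, effective sample size: {n_eff:.0f} of {n}")
print(f"lag-1 autocorrelation of white noise: {acf_noise[1]:.2f}")
```

A lag-1 value well above zero means the nominal sample size overstates the information in the series, which is why standard tests that assume independence become too liberal.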

Table 1: Characteristics and Applications of Essential Plot Types

| Plot Type | Primary Purpose | Variables Required | Key Strengths | Common Pitfalls & Solutions |

|---|---|---|---|---|

| Scatter Plot [11] [12] | Show relationship between two numeric variables. | Two Continuous Numeric | Reveals correlation, strength, form, and outliers. | Overplotting: Use transparency, sampling, or 2D density plots (heatmaps) [11]. Causation Fallacy: Correlation does not imply causation [11]. |

| Histogram [9] | Display distribution of a single variable. | One Continuous Numeric | Shows shape (normal, bimodal, skewed), center, and spread. | Bin size choice can distort perception. Use multiple bin widths to test robustness. Prefer over bar charts for continuous data [9]. |

| Time Series Plot [10] | Visualize data points at sequential time intervals. | One Continuous Numeric + Timestamp | Identifies trends, cycles, and autocorrelation over time. | Temporal Autocorrelation: Use specialized models (e.g., GLS, ARMA) instead of standard tests to avoid inflated Type I error [10]. |

Table 2: A Scientist's Toolkit: Essential Materials and Reagents for Biologging Data Visualization

| Tool / Reagent | Type | Function / Application | Notes |

|---|---|---|---|

| CTD-SRDL [13] | Hardware | Animal-borne data logger that collects Conductivity, Temperature, Depth data and relays it via satellite. | The foundation of many marine biologging studies; protocols manage energy and bandwidth to collect biological & environmental data [13]. |

| Generalized Least Squares (GLS) / ARMA Models [10] | Statistical Method | Correctly models time-series data with autocorrelation, controlling Type I error rates. | Essential for rigorous analysis of physiologging data (e.g., heart rate, temperature) that is inherently autocorrelated [10]. |

| Trend Line (Line of Best Fit) [11] [12] | Visualization Element | Highlights the correlational relationship between two variables in a scatter plot. | Provides a visual cue on the nature and strength of the correlation. |

| Sequential Colormap [14] | Visualization Tool | Used to represent quantitative data varying from low to high values. | More effective and less misleading than default "rainbow" colormaps for representing ordered data [14]. |

| Sea Stack Plot [9] | Novel Plot Type | Combines vertical histograms and summary statistics to accurately represent large univariate datasets. | An emerging alternative that overcomes weaknesses of boxplots and density plots for large and/or unevenly distributed data [9]. |

Data cleaning and pre-processing form the critical foundation for any subsequent analysis and visualization in biologging research. High-quality data is essential for accurate analysis and modeling, leading to improved accuracy, better insights, and enhanced model performance [15]. In the context of complex biologging data, which often encompasses vast quantities of noisy, incomplete, and inconsistent measurements from high-throughput technologies, rigorous pre-processing ensures that results are biologically relevant and reproducible [16]. This protocol outlines a standardized framework for preparing raw biological data, enabling researchers to transform disparate data streams into a clean, analysis-ready resource.

Data Assessment and Quality Control Protocol

Experimental Protocol: Initial Data Diagnosis

Objective: To systematically identify and catalog data quality issues in raw biologging data prior to cleaning.

- Step 1: Data Ingestion and Integrity Check. Load the dataset into your computational environment (e.g., R, Python). Verify that all expected records and variables are present and that file integrity is maintained.

- Step 2: Structural and Formatting Inspection. Examine data for inconsistencies in formatting, including date/time formats, numerical delimiters, and categorical value representations (e.g., "M", "Male", "male") [17]. The `clean_names()` function from the R `janitor` package is recommended for standardizing column names to a consistent lowercase format [15].

- Step 3: Comprehensive Summary Statistics. Generate summary statistics (e.g., mean, median, range, standard deviation, missing value count) for all quantitative variables. This helps identify potential outliers and unexpected value ranges.

- Step 4: Range and Logic Validation. Perform automated checks to ensure numerical values fall within biologically plausible ranges (e.g., positive values for enzyme concentrations) [17]. Check for logical inconsistencies between related variables (e.g., an animal's birth date must precede a tracking observation date).

- Step 5: Visual Data Profiling. Create exploratory visualizations, including histograms to examine distributions, box plots to identify potential outliers, and scatter plots to inspect relationships between key variables [15] [18].
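The diagnostic steps above can be prototyped with pandas. The four-row table, the column names, and the 200 m plausibility limit are invented for illustration; a real check would use limits appropriate to the species and sensor.

```python
import numpy as np
import pandas as pd

# Hypothetical raw export with a bad timestamp, an impossible depth,
# a missing depth, and inconsistent sex labels.
raw = pd.DataFrame({
    "timestamp": ["2023-01-01 00:00:00", "2023-01-01 00:00:04",
                  "bad value", "2023-01-01 00:00:12"],
    "depth_m": [0.5, 12.3, -40.0, np.nan],
    "sex": ["M", "Male", "male", "F"],
})

clean = raw.copy()

# Formatting inspection: unparseable timestamps become NaT instead of raising.
clean["timestamp"] = pd.to_datetime(
    clean["timestamp"], format="%Y-%m-%d %H:%M:%S", errors="coerce"
)

# Harmonize categorical labels by their first letter.
clean["sex"] = clean["sex"].str.lower().str[0].map({"m": "male", "f": "female"})

# Range validation against an assumed plausible depth interval.
MAX_PLAUSIBLE_DEPTH_M = 200.0  # assumption for this example
depth = clean["depth_m"]
out_of_range = depth.notna() & ~depth.between(0.0, MAX_PLAUSIBLE_DEPTH_M)

report = {
    "unparsed_timestamps": int(clean["timestamp"].isna().sum()),
    "missing_depth": int(depth.isna().sum()),
    "depth_out_of_range": int(out_of_range.sum()),
}
summary = depth.describe()  # count, mean, std, min, quartiles, max

print(report)
print(summary)
```

The `report` dictionary is the kind of catalog this protocol asks for: it records what is wrong without changing any values, leaving the cleaning decisions to the next stage.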

Table 1: Categorization and Frequency of Common Data Issues in Biologging Research.

| Issue Category | Specific Data Issue | Common Frequency in Raw Data | Potential Impact on Analysis |

|---|---|---|---|

| Completeness | Missing Completely at Random (MCAR) | 1-5% | Reduced statistical power |

| Completeness | Missing at Random (MAR) | 2-7% | Introduced bias in parameter estimates |

| Completeness | Missing Not at Random (MNAR) | 1-3% | Severe bias and invalid conclusions |

| Consistency | Inconsistent Categorical Labels | 3-10% | Misgrouping of data during analysis |

| Consistency | Inconsistent Units of Measurement | 2-5% | Incorrect comparisons and results |

| Consistency | Date/Time Format Inconsistencies | 5-15% | Failed time-series analysis |

| Accuracy | Outliers due to Measurement Error | 2-8% | Skewed summary statistics and models |

| Accuracy | Data Entry Errors | 1-4% | Local inaccuracies in data records |

| Structural | Duplicate Records | 1-5% | Inflated sample size and biased counts |

Data Cleaning and Transformation Methodology

Experimental Protocol: Handling Missing Data and Outliers

Objective: To address data incompleteness and extreme values using statistically sound methods.

- Step 1: Diagnose Missing Data Mechanism. Determine the nature of the missingness—whether it is Missing Completely at Random (MCAR), Missing at Random (MAR), or Missing Not at Random (MNAR)—as this guides the appropriate handling technique [17].

- Step 2: Apply Deletion or Imputation.

  - Listwise Deletion: Remove entire records (rows) with any missing values. Use only if the data is MCAR and the resulting loss of data is minimal.

  - Imputation: Replace missing values with statistical estimates. Simple methods include mean, median, or mode imputation. For a more robust approach that better preserves dataset integrity, use multiple imputation, which is widely regarded as a gold standard for reducing bias, or machine learning-based methods such as K-nearest neighbors (KNN) imputation [15] [17]. In R, simple replacement with a fixed value (such as a column mean or median) can be performed using the `replace_na()` function from the `tidyr` package.

- Step 3: Detect and Treat Outliers.

  - Identification: Use visual methods (box plots, scatter plots) and statistical methods (Z-scores, Interquartile Range (IQR)) to flag outliers [15] [17]. The IQR method typically defines outliers as values below `Q1 - 1.5*IQR` or above `Q3 + 1.5*IQR`.

  - Treatment: Decide on a case-by-case basis. Options include investigation for measurement error, transformation (e.g., log transformation), or Winsorization (capping extreme values at a specified percentile). Deletion is recommended only when an outlier is confirmed to be a data-entry error [15] [17].
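The imputation and outlier steps can be illustrated on a single variable. The ten values below are made up, median imputation is used only because it is the simplest option to show (not because it is the preferred one), and the 5th and 95th percentiles are an arbitrary choice of Winsorization caps.

```python
import numpy as np

# Hypothetical measurements with one missing value and one extreme value.
x = np.array([10, 12, 11, 13, np.nan, 12, 95, 11, 10, 13], dtype=float)
observed = ~np.isnan(x)

# IQR rule: flag values below Q1 - 1.5*IQR or above Q3 + 1.5*IQR.
q1, q3 = np.nanpercentile(x, [25, 75])
iqr = q3 - q1
lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
is_outlier = np.zeros(x.shape, dtype=bool)
is_outlier[observed] = (x[observed] < lower) | (x[observed] > upper)

# Simple median imputation of the missing entry.
median = np.nanmedian(x)
imputed = np.where(observed, x, median)

# Winsorization: cap values at the chosen lower and upper percentiles.
p05, p95 = np.percentile(imputed, [5, 95])
winsorized = np.clip(imputed, p05, p95)

print(f"fences: [{lower}, {upper}], flagged indices: {np.flatnonzero(is_outlier).tolist()}")
print(f"imputed value: {median}, winsorized max: {winsorized.max():.1f}")
```

Flagging is kept separate from treatment here on purpose: the boolean mask can be reviewed, and the flagged observation investigated, before any value is capped or removed.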

Experimental Protocol: Standardization and Encoding

Objective: To create consistent and analytically suitable data formats.

- Step 1: Standardize and Normalize Data. Convert all data to consistent formats and units. For machine learning, apply feature scaling. Use normalization (scaling values to a 0-1 range) or standardization (scaling to a mean of 0 and standard deviation of 1) based on the requirements of subsequent analyses [17].

- Step 2: Encode Categorical Variables. Convert categorical text labels into numerical representations that algorithms can process.

  - Label Encoding: Assign an integer to each category (e.g., "WT"=0, "KO"=1). Use for ordinal data.

  - One-Hot Encoding: Create new binary (0/1) columns for each category. Use for nominal data where no order exists. This can be achieved in R by creating new columns with `mutate()` and `as.numeric()` [15].

- Step 3: Transform Skewed Data. For heavily skewed distributions, apply transformations (e.g., log, square root) to make the data more symmetrical and meet the assumptions of parametric statistical tests [15] [17].
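The three steps above reduce to a few array operations. The variables (`mass_kg`, `age_class`, `habitat`, `dive_duration_s`) and their values are hypothetical, and the ordering of age classes is an assumption made so that label encoding has a meaningful order to preserve.

```python
import numpy as np

# Feature scaling of a numeric variable.
mass_kg = np.array([2.0, 4.0, 6.0, 8.0])
minmax = (mass_kg - mass_kg.min()) / (mass_kg.max() - mass_kg.min())  # 0-1 range
zscore = (mass_kg - mass_kg.mean()) / mass_kg.std()                   # mean 0, sd 1

# Label encoding for an ordinal variable: integers follow the stated order.
age_order = ["juvenile", "subadult", "adult"]
age_class = ["adult", "juvenile", "subadult", "adult"]
age_code = np.array([age_order.index(a) for a in age_class])

# One-hot encoding for a nominal variable: one 0/1 column per category.
habitat = np.array(["reef", "pelagic", "reef", "estuary"])
categories = np.unique(habitat)  # sorted alphabetically
one_hot = (habitat[:, None] == categories[None, :]).astype(int)

# Log transformation of a right-skewed variable (log1p is safe at zero).
dive_duration_s = np.array([20.0, 35.0, 40.0, 60.0, 900.0])
log_duration = np.log1p(dive_duration_s)

print("min-max:", np.round(minmax, 3).tolist())
print("age codes:", age_code.tolist())
print("one-hot columns:", categories.tolist())
print(one_hot)
```

One-hot columns avoid implying an order among habitats, whereas the integer codes for age class deliberately keep the juvenile-to-adult ordering.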

Workflow Visualization: Data Cleaning and Pre-processing Pipeline

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Software Tools and Packages for Bioinformatics Data Pre-processing.

| Tool Category | Specific Tool/Package | Primary Function in Pre-processing |

|---|---|---|

| Quality Control | FASTQC | Quality assessment of raw sequencing data [16] |

| Quality Control | Trimmomatic | Trimming of adapter sequences and low-quality bases from NGS reads [16] |

| Data Wrangling & Analysis | R tidyverse (dplyr, tidyr) | Data manipulation, cleaning, and transformation [15] |

| Data Wrangling & Analysis | Python (Pandas, NumPy) | Data cleaning, transformation, and numerical computations [16] |

| Statistical Normalization | DESeq2 | Normalization and analysis of RNA-Seq count data [16] |

| Statistical Normalization | Bioconductor | Suite of R packages for the analysis and comprehension of genomic data [16] |

| Visualization | ggplot2 (R) | Creating static, publication-quality visualizations for data exploration [15] [4] |

| Visualization | PyMOL / UCSF Chimera | Visualization of macromolecular structures [19] |

| Integrated Platforms | Galaxy | Web-based platform providing a user-friendly interface for preprocessing tools [16] |

Data Integration and Validation for Complex Biological Studies

Experimental Protocol: Data Integration and Validation

Objective: To combine data from multiple sources or experiments and verify the integrity of the final cleaned dataset.

- Step 1: Integrate Datasets. Combine multiple data frames or sources, ensuring all fields align correctly. In R, use functions like `bind_rows()` to vertically stack datasets [15]. Ensure consistent variable names and units across all sources before integration.

- Step 2: Validate the Cleaned Dataset. Perform a final set of checks to confirm the success of the pre-processing pipeline.

  - Range Checks: Re-verify that all values remain within plausible biological limits [17].

  - Consistency Checks: Confirm that logical relationships between variables are still valid (e.g., no individual has two conflicting genotypes).

  - Cross-referencing: Where possible, validate key findings or summary statistics against external data sources or established biological knowledge [17].

- Step 3: Documentation and Reproducibility.

  - Maintain a Data Cleaning Log: Document every cleaning operation, including the rationale for decisions (e.g., why certain outliers were removed) and parameters used for imputation or transformation [17].

  - Use Version Control: Employ a system like Git to track changes in both data and code, ensuring the entire workflow is reproducible [17].

  - Create Scripted Workflows: Use R Markdown or Jupyter Notebooks to create a fully reproducible record of the entire data cleaning and pre-processing pipeline [17].
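For readers working in Python rather than R, the integration and validation steps might look like the sketch below, where `pandas.concat` plays the role that `bind_rows()` plays in R. The two tag tables, their column names, the centimetre-to-metre conversion, and the 200 m depth limit are all assumptions invented for the example.

```python
import pandas as pd

# Two hypothetical deployments exported with different depth columns and units.
tag_a = pd.DataFrame({
    "animal_id": ["A1", "A1"],
    "time_s": [0, 4],
    "depth_m": [1.2, 3.4],
})
tag_b = pd.DataFrame({
    "animal_id": ["B7", "B7"],
    "time_s": [0, 4],
    "Depth_cm": [250.0, 90.0],
})

# Harmonize names and units before stacking.
tag_b = tag_b.assign(depth_m=tag_b["Depth_cm"] / 100.0).drop(columns="Depth_cm")

# Vertically stack the two sources into one table.
combined = pd.concat([tag_a, tag_b], ignore_index=True)

# Final validation checks on the integrated dataset.
checks = {
    "no_missing_values": bool(combined.notna().all().all()),
    "depth_in_range": bool(combined["depth_m"].between(0.0, 200.0).all()),
    "no_duplicate_keys": not bool(
        combined.duplicated(subset=["animal_id", "time_s"]).any()
    ),
}

print(combined)
print(checks)
```

Keeping the checks in a named dictionary makes it easy to write them to the data cleaning log, so that each run of the pipeline leaves a record of what was verified.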

Workflow Visualization: From Raw Data to Visual Insight

The explosion of complex, high-dimensional data in biology, particularly from high-resolution biologging and multi-omics studies, demands robust computational tools for analysis and visualization. Biologging tags, for instance, generate high-frequency data from accelerometers, magnetometers, and pressure sensors, requiring specialized processing to extract meaningful biological insights [20]. This article provides an overview of three critical computational environments—Python, R, and visual programming platforms—framed within the context of visualizing and analyzing complex biologging data. We detail specific application notes and experimental protocols to equip researchers, scientists, and drug development professionals with practical methodologies for their data exploration needs.

The Scientist's Toolkit: Essential Software and Packages

The choice of computational tools is critical for handling the volume and complexity of modern biological data. The table below summarizes key software solutions and their primary applications in biological research.

Table 1: Essential Computational Tools for Modern Biological Research

| Tool Name | Type/Environment | Primary Function in Biological Research | Key Features |

|---|---|---|---|

| Biopython [21] [22] | Python Package | Biological computation, sequence manipulation, and parsing bioinformatics file formats. | Freely available tools for a wide range of bioinformatics tasks. |

| scikit-bio [23] | Python Package | Bioinformatics algorithms for genomics, microbiomics, and ecology. | Provides data structures, ordination methods (PCoA), and statistical tests (PERMANOVA). |

| Pandas & NumPy [24] [22] | Python Packages | Foundational data manipulation and numerical operations on tabular and array data. | Enables data cleaning, transformation, and efficient numerical computation. |

| Seaborn & Matplotlib [24] [22] | Python Packages | Statistical data visualization and creation of static, animated, and interactive plots. | High-level interface for creating publication-quality figures like violin plots and heatmaps. |

| R/LinkedCharts [25] | R Package | Creating linked interactive plots for exploratory data analysis. | Allows user clicks in one chart to update the content of another, facilitating detailed data inspection. |

| tagtools [20] | R Package | Processing and analysis of high-resolution biologging tag data. | Tools for calibration, visualization, dive detection, and track reconstruction from sensor data. |

| ggplot2 [26] | R Package | Creating flexible, publication-quality plots using a layered grammar of graphics. | Powerful and intuitive syntax for building complex visualizations step-by-step. |

| Pluto Bio [27] | Visual Programming Environment | Interactive bioinformatics analysis and visualization with no coding required. | Browser-based platform for creating and customizing a wide array of biological visualizations. |

| GraphPad Prism [26] | GUI-based Application | Biostatistics and clinical data comparisons. | User-friendly interface for common statistical analyses and graph generation. |

Application Note: Interactive Visualization of Biologging Data in R

Background and Protocol Objective

High-resolution biologging tags sample data many times per second, generating complex multivariate datasets [20]. The objective of this protocol is to create an interactive, linked visualization in R to explore such data, enabling researchers to seamlessly transition from an overview of entire datasets to detailed inspection of specific events.

Research Reagent Solutions

Table 2: Essential Software "Reagents" for Biologging Data Visualization in R

| Item Name | Function | Example Use Case in Protocol |

|---|---|---|

| tagtools R Package [20] | Data import, calibration, and fundamental processing of biologging sensor data. | Reading raw accelerometer data, calibrating it to scientific units, and detecting specific movement events. |

| R/LinkedCharts R Package [25] | Framework for creating linked interactive charts where a click in one plot updates another. | Linking an overview time-series plot with a detailed "zoom-in" plot and a histogram of dynamic acceleration. |

| ggplot2 R Package [26] | Creation of static, publication-quality visualizations. | Generating the initial overview plot of accelerometer data over time. |

Experimental Protocol: Creating Linked Visualizations with tagtools and R/LinkedCharts

Step 1: Data Preparation and Preprocessing

- Install and load the required R packages: `tagtools`, `rlc`, `ggplot2`, `dplyr`.

- Import raw sensor data (e.g., `.csv` or specific tag data formats) using `tagtools` functions like `read_tag_data()`.

- Calibrate the raw data to engineering units (e.g., m/s² for acceleration) using the `calibrate()` function from `tagtools` [20].

- (Optional) Compute derived metrics. For example, calculate dynamic body acceleration (DBA) as a proxy for energy expenditure using `compute_dba()`.
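For readers who want to prototype the derived metric outside R, the DBA step can be sketched in Python. This is a minimal sketch and not the tagtools implementation: the 25 Hz sampling rate, the 2-second smoothing window, the synthetic signal, and the helper name `dynamic_body_acceleration` are all assumptions chosen for illustration. It estimates static acceleration with a running mean and treats the remainder as dynamic acceleration.

```python
import numpy as np
import pandas as pd


def dynamic_body_acceleration(acc, fs, window_s=2.0):
    """Return (ODBA, VeDBA) for an (n, 3) acceleration array.

    Static (gravitational) acceleration is estimated with a centered
    running mean; the dynamic part is the raw signal minus that estimate.
    """
    win = max(int(round(window_s * fs)), 1)
    acc = pd.DataFrame(acc, columns=["x", "y", "z"])
    static = acc.rolling(win, center=True, min_periods=1).mean()
    dynamic = acc - static
    odba = dynamic.abs().sum(axis=1).to_numpy()             # sum of |x|, |y|, |z|
    vedba = np.sqrt((dynamic ** 2).sum(axis=1)).to_numpy()  # vector norm
    return odba, vedba


# Synthetic example (hypothetical): 60 s at 25 Hz, gravity on z, one active burst
fs = 25
rng = np.random.default_rng(0)
n = 60 * fs
acc = rng.normal(0, 0.05, size=(n, 3))
acc[:, 2] += 9.81
burst = slice(30 * fs, 35 * fs)
acc[burst] += rng.normal(0, 2.0, size=(5 * fs, 3))

odba, vedba = dynamic_body_acceleration(acc, fs)
rest_median = np.median(odba[: 20 * fs])
burst_median = np.median(odba[burst])
print(f"median ODBA at rest:  {rest_median:.3f} m/s^2")
print(f"median ODBA in burst: {burst_median:.3f} m/s^2")
```

The smoothing window is the main tuning choice: it should span several movement cycles (e.g., stroke or stride periods) for the species in question, so the 2-second value here should not be reused without checking.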

Step 2: Create the Overview Visualization

- Use `ggplot2` to generate a static overview time-series plot of the entire calibrated data stream (e.g., accelerometer magnitude over a several-hour dive). This provides context for the animal's overall activity.

Step 3: Build the Interactive Linked Charts App

- The core of the application is built with R/LinkedCharts (the `rlc` package). The app relies on a shared global variable (`selected_region`) that is updated by clicks in the overview chart; the linked charts read this variable and redraw accordingly.

Step 4: Interpretation and Analysis

- Launch the app in a web browser. Click on potential points of interest (e.g., sudden spikes in acceleration indicating possible prey capture attempts) in the overview chart (A1).

- Observe how the detailed chart (A2) automatically zooms in on the selected time window, allowing for close inspection of the signal morphology.

- Simultaneously, the histogram (A3) will update to show the distribution of a derived variable (e.g., dynamic acceleration) within the selected window, which can be compared to the overall distribution to understand how the event differs from baseline behavior.

Workflow Diagram

Diagram 1: R-linked charts workflow for biologging data.

Application Note: Exploratory Data Analysis of Omics Data in Python

Background and Protocol Objective

Python has become a cornerstone for biological data analysis due to its powerful, integrated stack of packages [24]. This protocol demonstrates a streamlined workflow for the visual exploration of a typical omics dataset, such as from an RNA-Seq experiment, leveraging the combined power of Pandas for data manipulation and Seaborn for visualization.

Research Reagent Solutions

Table 3: Essential Python Package "Reagents" for Omics Data Exploration

| Item Name | Function | Example Use Case in Protocol |

|---|---|---|

| Pandas [24] [22] | Reading, cleaning, and processing tabular data. | Loading a counts matrix, filtering low-count genes, and calculating summary statistics. |

| Seaborn [24] [22] | High-level interface for drawing statistical graphics. | Generating a clustered heatmap, violin plots of expression distribution, and a PCA scatter plot. |

| Matplotlib [24] [22] | Foundation 2D plotting library. | Customizing and fine-tuning the plots created with Seaborn. |

| scikit-bio [23] | Bioinformatics algorithms and data structures. | Performing Principal Coordinate Analysis (PCoA) for dimensionality reduction. |

Experimental Protocol: Visual Exploration of an RNA-Seq Dataset with Pandas and Seaborn

Step 1: Environment Setup and Data Loading

- Install packages: `pip install pandas seaborn matplotlib scikit-bio`.

- In a Jupyter notebook, import the libraries: `import pandas as pd`, `import seaborn as sns`, `import matplotlib.pyplot as plt`.

- Load the normalized gene expression count matrix (samples as columns, genes as rows) and the sample metadata file (e.g., linking sample IDs to tissue type or treatment group) using `pd.read_csv()`.

Step 2: Data Wrangling with Pandas

- Filtering: Remove genes with low counts. For example, filter to keep only genes with counts per million (CPM) > 1 in at least `n` samples.

- Transformation: Apply a variance-stabilizing transformation (e.g., log2(CPM + 1)) to the count data to make it more suitable for visualization.

- Integration: Merge the transformed expression DataFrame with the metadata DataFrame to enable coloring by experimental group in subsequent plots.
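The three wrangling steps above can be sketched with Pandas as follows. This is a minimal sketch on synthetic counts: the gene and sample names, the `sample_id` and `group` columns, and the `n_min = 3` cutoff are illustrative assumptions, not values from a real study.

```python
import numpy as np
import pandas as pd

# Synthetic counts matrix (genes as rows, samples as columns); all names hypothetical
rng = np.random.default_rng(1)
genes = [f"gene_{i}" for i in range(200)]
samples = [f"S{i}" for i in range(6)]
counts = pd.DataFrame(
    rng.negative_binomial(n=2, p=0.0002, size=(200, 6)),
    index=genes,
    columns=samples,
)
counts.iloc[:40] = rng.integers(0, 2, size=(40, 6))  # simulate low-count genes
metadata = pd.DataFrame(
    {"sample_id": samples, "group": ["control"] * 3 + ["treated"] * 3}
)

# Filtering: keep genes with CPM > 1 in at least n_min samples
n_min = 3
cpm = counts / counts.sum(axis=0) * 1e6
keep = (cpm > 1).sum(axis=1) >= n_min

# Transformation: log2(CPM + 1)
log_cpm = np.log2(cpm.loc[keep] + 1)

# Integration: one row per sample, then merge with the metadata
expr = log_cpm.T.rename_axis("sample_id").reset_index()
merged = metadata.merge(expr, on="sample_id", how="inner")
print(merged.shape)
```

Using an inner merge means samples missing from either table are silently dropped, so it is worth comparing `len(merged)` with the number of samples in each input.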

Step 3: Multi-panel Visual Exploration with Seaborn

Create a series of plots to understand different aspects of the data.

- Distribution and Outliers: Use `sns.violinplot()` or `sns.boxplot()` to visualize the distribution of expression values per sample and identify any potential outliers.

- Sample Similarity and Clustering: Create a clustered heatmap of the correlation matrix between samples to visualize overall data structure.

- Dimensionality Reduction: Perform Principal Component Analysis (PCA) or PCoA and plot the first two components to see if samples cluster by biological group.

Step 4: Interpretation

- The violin/box plots show whether expression distributions are comparable across samples and flag any problematic samples; they do not by themselves establish normality.

- The clustermap shows hierarchical relationships between samples based on global expression similarity.

- The PCA/PCoA plot reveals major sources of variation in the dataset and whether these correspond to the experimental conditions.

Workflow Diagram

Diagram 2: Python data exploration workflow for omics data.

Application Note: Accessible Analysis with Visual Programming Environments

Background and Protocol Objective

Visual programming environments (VPEs) like Pluto Bio lower the barrier to entry for complex bioinformatics by providing a graphical, no-code interface for analysis and visualization [27]. This protocol outlines the process for a researcher with limited coding experience to create publication-ready figures from a differential expression analysis results file.

Research Reagent Solutions

Table 4: Key Features of Visual Programming "Reagents"

| Item Name | Function | Example Use Case in Protocol |

|---|---|---|

| Pluto Bio Visualizations [27] | Pre-built, customizable interactive plots for biological data. | Uploading a results table and generating a dynamic volcano plot and a clustered heatmap. |

| GraphPad Prism [26] | GUI-based application for biostatistics and graph generation. | An alternative desktop tool for creating static versions of similar plots. |

Experimental Protocol: Creating a Volcano Plot and Heatmap in Pluto

Step 1: Data Upload and Project Creation

- Create an account and log in to the Pluto Bio web platform.

- Create a new project and give it a descriptive name (e.g., "Oral Cancer DE Analysis").

- Upload your differential expression results file (e.g., a `.csv` containing columns for gene identifier, log2 fold-change, and p-value).

Step 2: Generate a Volcano Plot

- In the visualization canvas, click "Add Plot" and select "Volcano Plot" from the menu.

- Assign the data columns to the correct visual roles:

  - X-axis: Map to the `log2FoldChange` column.

  - Y-axis: Map to the `-log10(pvalue)` column.

  - Label: Map to the `gene_name` column.

- Customize the appearance:

- Adjust the significance thresholds (e.g., vertical lines for |FC| > 2, horizontal line for p-value < 0.05).

- Change the color of significantly up-regulated and down-regulated points.
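Although the platform itself requires no code, the arithmetic behind the volcano plot is easy to check before upload. The sketch below is independent of Pluto and uses a made-up four-gene table; only the column names `log2FoldChange`, `pvalue`, and `gene_name` come from the protocol, and the `status` and `neg_log10_p` columns are hypothetical additions. It shows how a fold-change cutoff of 2 translates to the log2 axis and how points are classified.

```python
import numpy as np
import pandas as pd

# Made-up results table for illustration
de = pd.DataFrame(
    {
        "gene_name": ["A", "B", "C", "D"],
        "log2FoldChange": [2.5, -1.8, 0.3, 1.2],
        "pvalue": [1e-6, 0.003, 0.40, 0.20],
    }
)

fc_cut = np.log2(2)  # |FC| > 2 corresponds to |log2FC| > 1
p_cut = 0.05

# Y-axis value: larger means more significant
de["neg_log10_p"] = -np.log10(de["pvalue"])

# Classify each gene relative to both thresholds
significant = de["pvalue"] < p_cut
de["status"] = np.select(
    [
        significant & (de["log2FoldChange"] > fc_cut),
        significant & (de["log2FoldChange"] < -fc_cut),
    ],
    ["up", "down"],
    default="ns",
)
print(de)
```

Gene D illustrates why both thresholds matter: its fold-change exceeds the cutoff, but its p-value does not, so it stays in the non-significant class.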

Step 3: Generate a Clustered Heatmap

- Click "Add Plot" again and select "Heatmap".

- Select the option to create the heatmap from the normalized expression matrix (often uploaded separately or linked to the gene identifiers).

- Enable hierarchical clustering on both rows (genes) and columns (samples).

- Customize the color scale (e.g., using a perceptually uniform colormap like Viridis) [26].

- In the Pluto interface, these two plots can be linked. Clicking a gene on the volcano plot can highlight the corresponding row in the heatmap, showing its expression pattern across all samples [27].

Step 4: Export and Reporting

- Use the platform's tools to arrange the volcano plot and heatmap side-by-side on the canvas.

- Add annotations or text boxes to highlight key findings.

- Export the final composite figure in a high-resolution format (e.g., PNG or PDF) for publication, or share a live link to the interactive canvas with collaborators for discussion.

Workflow Diagram

Diagram 3: Visual programming environment workflow for bioinformatics.

Advanced Visualization Techniques for Animal Behavior and Sensor Data

Within the framework of a broader thesis on data visualization techniques for complex biologging data research, the ability to efficiently explore and interpret high-dimensional datasets is paramount. Researchers in fields such as toxicology, environmental health, and drug development are frequently confronted with complex datasets containing measurements for numerous variables across multiple experimental conditions or biological samples. The initial step in analyzing such data involves a comprehensive Exploratory Data Analysis (EDA), a process crucial for recognizing patterns, identifying anomalies, and establishing testable hypotheses [28]. Among the myriad of EDA techniques, the pair plot stands out as a foundational and powerful visualization tool that provides a multi-faceted overview of the relationships within a dataset. This article details the application of pair plots as a key methodology for visualizing correlated behaviors in high-dimensional biological data, offering structured protocols, customizable code, and essential guidance for integrating this technique into the modern biologist's computational toolkit.

Background & Key Concepts

A pair plot, also known as a scatterplot matrix, is a matrix of graphs that enables the visualization of the relationship between each pair of variables in a dataset [28]. It combines both histograms (or kernel density estimates) and scatter plots, providing a unique overview of the dataset's distributions and correlations. The primary purpose of a pair plot is to simplify the initial stages of data analysis by offering a comprehensive snapshot of potential relationships, thus guiding further statistical modeling and hypothesis testing [28].

In the context of biologging and complex biological data, such as the chemical speciation analysis of wildfire smoke samples or multi-parameter drug response data, pair plots are instrumental for several reasons. They facilitate a quick, yet thorough, examination of how variables interact with each other, allowing scientists to [28]:

- Visualize Distributions: Understand the distribution of individual variables.

- Identify Relationships: Observe linear or nonlinear relationships between variables.

- Detect Anomalies: Spot outliers that may indicate errors, unique insights, or critical biological responses.

- Reveal Clusters: Identify groups of data points that share similar characteristics, hinting at subpopulations within the dataset, such as distinct response profiles to a therapeutic compound.

Application Notes: Implementing Pair Plots for Biological Data

The following section provides a detailed, step-by-step protocol for generating and customizing pair plots, using Python's Seaborn library, to analyze high-dimensional biological data.

Experimental Protocol: Creating a Basic Pair Plot

This protocol uses a hypothetical dataset structurally similar to the wildfire smoke chemistry dataset [29], containing chemical concentration measurements across multiple biological samples or experimental conditions.

1. Software and Package Preparation

- Ensure a Python environment (e.g., Jupyter Notebook, Google Colab) is available.

- Install and import the required Python packages: `pandas`, `numpy`, `matplotlib.pyplot`, and `seaborn`.

2. Data Loading and Preprocessing

- Load the dataset (e.g., from a `.csv` file) into a pandas DataFrame.

- Perform essential preprocessing: handle missing values, ensure numerical variables are correctly typed, and set row names if necessary, as demonstrated in the formatting of the smoke dataset [29].

- Verify the data structure. The DataFrame should be in a tidy (long-form) format where each column is a variable and each row is an observation [30].

3. Generate a Basic Pair Plot

- Use the `sns.pairplot()` function to create a basic visualization. At this stage, the goal is to generate an initial overview.

Experimental Protocol: Advanced Customization for Enhanced Readability

Building upon the basic plot, this protocol adds critical customizations to improve interpretability, particularly for complex datasets with inherent groupings.

1. Incorporating a Grouping Variable (hue)

- Use the `hue` parameter to color data points based on a categorical variable (e.g., "Species", "TreatmentGroup", "CellLine"). This is essential for identifying cluster-based patterns [30] [31].

2. Customizing Plot Aesthetics and Layout

- Adjust the `height` and `aspect` ratio to control the size of each subplot.

- Use the `corner=True` parameter to plot only the lower triangle, removing redundant plots and creating a more concise visualization [28] [30].

- Customize markers and palette colors for better distinction between groups and adherence to specific color contrast rules.

3. Final Code for an Advanced Pair Plot

Table 1: Essential sns.pairplot Parameters for Biological Data Analysis

| Parameter | Data Type | Common Options | Function in Biological Context |

|---|---|---|---|

| `data` | pandas DataFrame | Tidy dataframe | The primary data structure containing biological observations. |

| `hue` | String (column name) | e.g., 'species', 'patient_id' | Colors data by a categorical variable to reveal clusters or group-specific patterns. |

| `vars` | List of strings | e.g., ['gene1', 'gene2', 'protein_A'] | Selects a subset of relevant variables to focus the analysis and reduce visual clutter. |

| `kind` | String | 'scatter' (default), 'kde', 'reg' | Determines the plot type for off-diagonals; 'reg' adds a regression line. |

| `diag_kind` | String | 'auto', 'hist', 'kde', None | Determines the plot type for diagonals; 'kde' shows smoothed distributions. |

| `palette` | Dictionary or palette name | e.g., {'Ctrl': '#34A853', 'Treat': '#EA4335'} | Defines colors for hue categories, crucial for accessibility and brand consistency. |

| `corner` | Boolean | True or False (default) | Plots only the lower triangle, making the visualization more concise. |

| `plot_kws` / `diag_kws` | Dictionary | e.g., {'alpha': 0.5, 's': 30} | Passes keyword arguments to customize the appearance of off-diagonal and diagonal plots. |

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Computational Biology

| Item | Function | Application in Protocol |

|---|---|---|

| Seaborn Library (Python) | A high-level data visualization library based on matplotlib. | Provides the pairplot function and related customization tools for creating the statistical graphics. [28] [30] |

| pandas DataFrame | A fundamental data structure for data manipulation and analysis in Python. | Serves as the required input format for sns.pairplot, holding the tidy biological dataset. [30] |

| Jupyter Notebook | An open-source web application for creating and sharing documents containing live code. | Provides an interactive environment for running the analysis protocol, visualizing results immediately, and documenting the workflow. |

| scikit-learn | A machine learning library for Python. | Often used in conjunction with pair plots for subsequent steps like clustering confirmed relationships or building predictive models from identified features. |

| Color Palette | A defined set of colors (e.g., Google-inspired: #4285F4, #EA4335, #FBBC05, #34A853). | Ensures visualizations are accessible (with sufficient contrast) and adhere to project or organizational branding guidelines. [32] [33] |

Visual Workflow and Logical Relationships

The following diagram illustrates the logical decision-making process and workflow for employing pair plots in the analysis of complex biologging data, from data preparation to insight generation and subsequent analysis.

Pair Plot Analysis Workflow

Pair plots serve as a cornerstone in the exploratory analysis of high-dimensional biological data. Their primary utility lies in their ability to provide a bird's-eye view of complex relationships, guiding researchers toward meaningful patterns and robust hypotheses. The structured protocols and customizable tools provided here offer a clear pathway for scientists and drug development professionals to integrate this powerful technique into their standard analytical workflows, thereby enhancing the depth and clarity of their data-driven narratives.

Table 3: Key Insights from Pair Plots and Subsequent Analytical Actions

| Pattern Identified | Visual Signature in Pair Plot | Potential Biological Interpretation | Recommended Next Step |

|---|---|---|---|

| Strong Positive Correlation | Off-diagonal scatter plot shows points forming a linear pattern with a positive slope. | Two biomarkers may be co-expressed or part of the same biological pathway. | Calculate Pearson/Spearman correlation coefficient; consider multi-collinearity in models. |

| Distinct Clusters by Hue | Data points form separate, distinct clouds when colored by a grouping variable. | Different experimental treatments or patient subtypes drive unique phenotypic responses. | Apply clustering algorithms (e.g., k-means); use ANOVA to test for group differences. |

| Outliers | One or a few points lie far outside the main distribution in multiple variable pairs. | Potential measurement error, unique biological responder, or a novel subpopulation. | Investigate source data for errors; consider replicating experiment; explore outlier analysis. |

| Non-Linear Relationship | Scatter plot shows a curved (e.g., parabolic, exponential) pattern. | Saturation effect, threshold response, or complex regulatory mechanism. | Apply non-linear regression models or consider variable transformations (e.g., log). |

In the analysis of complex biologging data, researchers often encounter the "curse of dimensionality" [34] [35]. Modern biological datasets, particularly from transcriptomic studies like RNA-seq, frequently measure tens of thousands of genes (variables) across a much smaller number of samples or individuals [35] [36]. This high-dimensional space presents significant challenges for visualization, analysis, and interpretation [34]. Principal Component Analysis (PCA) serves as a powerful technique to project these high-dimensional samples into a lower-dimensional space, preserving the essential structure and variability of the data for intuitive visualization and analysis [37] [36].

Theoretical Foundation of PCA

The Core Concept

PCA is a dimensionality reduction technique that identifies the principal directions of maximum variance in high-dimensional data [34]. It operates by transforming the original variables into a new set of uncorrelated variables called principal components (PCs), which are ordered such that the first few retain most of the variation present in the original dataset [37] [36]. Each principal component represents a linear combination of the original gene expression values, with earlier components capturing the highest level of variability [36].

Mathematical Underpinnings

The mathematical procedure for PCA involves several key steps [34]:

- Standardization: Centering the data to have zero mean and scaling to unit variance

- Covariance Matrix Computation: Calculating the covariance matrix to capture feature correlations

- Eigenvalue Decomposition: Finding the eigenvalues and eigenvectors of the covariance matrix

- Component Selection: Sorting eigenvectors by eigenvalues (largest to smallest) and selecting the top k components

The eigenvalues represent how much variance each direction captures, while the eigenvectors define the new directions (principal components) [34].
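The four steps can be traced directly with NumPy. The sketch below uses a small random matrix with one deliberately correlated pair of features; the data and the choice of `k = 2` are illustrative assumptions. It follows the covariance and eigendecomposition route described above, whereas library implementations typically use a singular value decomposition to reach the same result.

```python
import numpy as np

# Toy data: 50 observations, 5 features, with features 0 and 1 strongly correlated
rng = np.random.default_rng(4)
X = rng.normal(size=(50, 5))
X[:, 1] = 2 * X[:, 0] + rng.normal(scale=0.1, size=50)

# 1. Standardization: zero mean, unit variance per feature
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

# 2. Covariance matrix of the standardized features
C = np.cov(Z, rowvar=False)

# 3. Eigendecomposition (eigh is appropriate because C is symmetric)
eigvals, eigvecs = np.linalg.eigh(C)

# 4. Sort from largest to smallest eigenvalue and keep the top k directions
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]
k = 2
scores = Z @ eigvecs[:, :k]          # data projected onto the first k PCs
explained = eigvals / eigvals.sum()  # fraction of variance per component

print(np.round(explained, 3))
```

A useful sanity check is that the sample variance of each column of `scores` equals the corresponding eigenvalue.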

Applications in Biological Research

PCA has become indispensable in biological data analysis for several key applications [34] [36]:

- Sample Clustering: Intuitively identify sample clusters and subgroups in transcriptional data

- Batch Effect Detection: Visualize and identify potential batch effects in experimental data

- Noise Reduction: Remove components with minimal variance that likely represent noise

- Exploratory Data Analysis: Gain initial insights into data structure before formal statistical testing

- Data Compression: Reduce storage requirements while preserving essential information

Experimental Protocol: PCA for Transcriptomic Data

Research Reagent Solutions

Table 1: Essential Materials and Computational Tools for PCA Analysis

| Item | Function | Implementation Example |

|---|---|---|

| Gene Expression Matrix | Primary input data containing expression values for all genes across all samples | RNA-seq count matrix, microarray intensity data |

| Standardization Tool | Normalizes data to zero mean and unit variance to ensure equal feature contribution | StandardScaler from scikit-learn, scale() function in R |

| PCA Implementation | Computes principal components and transforms data | sklearn.decomposition.PCA in Python, prcomp() in R |

| Visualization Library | Creates 2D/3D scatter plots of principal components | matplotlib and seaborn in Python, ggplot2 in R |

| Computational Environment | Provides environment for statistical computing and analysis | Python with pandas, NumPy, SciPy; R with Bioconductor |

Step-by-Step Workflow

The following diagram illustrates the complete PCA workflow for biological data analysis:

Data Preparation and Preprocessing

Begin with a gene expression matrix where rows represent samples and columns represent genes [35]. The data should be filtered to remove lowly expressed genes and normalized for sequencing depth or other technical artifacts before PCA application.

Code Implementation:
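The original listing is not reproduced here; the following is a minimal sketch of the preparation step under stated assumptions. It assumes a depth-normalized expression matrix with samples as rows and genes as columns, uses synthetic values, and applies an arbitrary mean-expression cutoff of 1.0 to define lowly expressed genes.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import StandardScaler

# Synthetic, already depth-normalized matrix: 12 samples x 500 genes (hypothetical)
rng = np.random.default_rng(5)
samples = [f"sample_{i}" for i in range(12)]
genes = [f"gene_{j}" for j in range(500)]
expr = pd.DataFrame(
    rng.lognormal(mean=2, sigma=1, size=(12, 500)), index=samples, columns=genes
)
expr.iloc[:, :100] *= 0.01  # simulate 100 lowly expressed genes

# Remove lowly expressed genes (the cutoff is an illustrative choice)
expressed = expr.loc[:, expr.mean(axis=0) > 1.0]

# Log-transform to reduce the influence of a few very highly expressed genes
log_expr = np.log2(expressed + 1)

# Standardize each gene to zero mean and unit variance
X_scaled = StandardScaler().fit_transform(log_expr)
print(X_scaled.shape)
```

Note that `StandardScaler` gives every retained gene equal weight, so aggressive filtering of noisy low-expression genes matters more here than it would for unscaled PCA.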

PCA Computation and Component Selection

Apply PCA to the standardized data and determine the number of components to retain based on explained variance.

Code Implementation:
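As a stand-in for the missing listing, here is a minimal sketch of this step using scikit-learn. The input matrix is synthetic, and the 80% cumulative-variance target is an assumed threshold for illustration, not a recommendation from the source.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Synthetic standardized matrix: 12 samples x 400 genes, two groups of six
rng = np.random.default_rng(6)
X = rng.normal(size=(12, 400))
X[6:, :80] += 2.0  # hypothetical group effect on 80 genes
X_scaled = StandardScaler().fit_transform(X)

# Fit all available components (at most min(n_samples, n_features))
pca = PCA()
scores = pca.fit_transform(X_scaled)

# Choose the smallest number of components reaching the variance target
target = 0.80
cum_var = np.cumsum(pca.explained_variance_ratio_)
n_keep = int(np.searchsorted(cum_var, target) + 1)

print(f"components needed for {target:.0%} variance: {n_keep}")
print(np.round(cum_var[:n_keep], 3))
```

scikit-learn can also apply this rule directly: passing a float such as `PCA(n_components=0.80)` keeps the number of components needed to exceed that fraction of variance.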

Visualization and Interpretation

Create visualizations to explore the reduced-dimensionality data and identify patterns, clusters, or outliers.

Code Implementation:
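A scree plot and a PC1 versus PC2 scatter plot cover the two most common views. The sketch below is illustrative only: the data are synthetic, the `control` and `treated` labels are hypothetical, and five components are kept purely to make the scree plot readable.

```python
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
import seaborn as sns
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Synthetic data: 12 samples x 400 genes with a hypothetical group effect
rng = np.random.default_rng(7)
X = rng.normal(size=(12, 400))
X[6:, :80] += 2.0
groups = ["control"] * 6 + ["treated"] * 6

pca = PCA(n_components=5)
scores = pca.fit_transform(StandardScaler().fit_transform(X))
ratio = pca.explained_variance_ratio_ * 100  # percent variance per component

plot_df = pd.DataFrame(scores[:, :2], columns=["PC1", "PC2"])
plot_df["group"] = groups

fig, (ax_scree, ax_pca) = plt.subplots(1, 2, figsize=(10, 4))

# Scree plot: variance explained by each component
ax_scree.bar(np.arange(1, 6), ratio)
ax_scree.set(xlabel="Principal component", ylabel="Explained variance (%)",
             title="Scree plot")

# Sample projection, colored by group, with variance shown in the axis labels
sns.scatterplot(data=plot_df, x="PC1", y="PC2", hue="group", s=60, ax=ax_pca)
ax_pca.set(xlabel=f"PC1 ({ratio[0]:.1f}%)", ylabel=f"PC2 ({ratio[1]:.1f}%)",
           title="Samples in PC space")
fig.tight_layout()
```

Putting the explained variance in the axis labels lets readers judge how much of the dataset the two plotted dimensions actually represent.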

Results Interpretation Guidelines

Table 2: Key Outputs and Their Biological Interpretation in PCA Analysis

| PCA Output | Description | Biological Significance |

|---|---|---|

| Scree Plot | Shows variance explained by each principal component | Determines how many components to retain; identifies the "elbow" point |

| PCA Plot (PC1 vs PC2) | Projection of samples onto first two principal components | Reveals sample clustering, outliers, and batch effects |

| Loadings | Contribution of original variables to each principal component | Identifies genes driving the observed sample separation |

| Explained Variance | Proportion of total variance captured by each component | Quantifies information retention in reduced dimensions |

Data Presentation Standards

Table Design Principles for Biological Data

Effective table design follows three key principles: aiding comparisons, reducing visual clutter, and increasing readability [38]. For biological data presentation:

- Aid comparisons by right-flush aligning numeric columns and using consistent precision

- Reduce visual clutter by avoiding heavy grid lines and removing unit repetition

- Increase readability with clear headers, highlighted statistical significance, and concise titles

Table 3: Exemplary Table Format for Presenting PCA Results from a Transcriptomic Study

| Principal Component | Explained Variance (%) | Cumulative Variance (%) | Key Contributing Genes |

|---|---|---|---|

| PC1 | 42.3 | 42.3 | TP53, EGFR, BRCA1 |

| PC2 | 18.7 | 61.0 | MYC, HER2, KRAS |

| PC3 | 9.4 | 70.4 | VEGFA, PTEN, MET |

| PC4 | 5.2 | 75.6 | PDGFRA, FLT1, KIT |

| PC5 | 3.8 | 79.4 | RET, ROS1, ALK |

Advanced Considerations and Limitations

Methodological Constraints

While PCA is widely applicable, researchers should be aware of its limitations [37] [34]:

- Linear Assumption: PCA only captures linear relationships in the data

- Variance Focus: Components reflect directions of maximum variance, which may not align with biologically relevant signals

- Interpretation Challenge: Principal components may lack intuitive biological meaning

- Sensitivity to Scaling: Results are sensitive to data standardization methods

- Outlier Influence: Extreme values can disproportionately affect component directions

Alternative and Complementary Approaches

For data with nonlinear structures, consider these alternative dimensionality reduction techniques [34]:

- t-SNE: Effective for visualizing high-dimensional data in 2D/3D with emphasis on local structure

- UMAP: Aims to preserve local structure along with more of the global structure, and typically scales better than t-SNE on large datasets

- Linear Discriminant Analysis (LDA): Supervised method that maximizes separation between predefined classes [36]

PCA remains a foundational technique in the analysis of high-dimensional biological data, providing researchers with a powerful tool for visualization, quality control, and exploratory analysis. When properly implemented with attention to data preprocessing, component selection, and visualization best practices, PCA can reveal hidden structures in complex biologging data that might otherwise remain obscured in high-dimensional space. As biological datasets continue to grow in size and complexity, mastering dimensionality reduction techniques like PCA becomes increasingly essential for extracting meaningful biological insights.

The transition of bioimaging from an observational method to a quantitative discipline necessitates robust statistical visualization techniques for communicating complex data distributions. Within biologging research, where data often originates from animal-borne sensors and tracking devices, researchers must extract meaningful patterns from multivariate, high-dimensional datasets. Violin plots, boxplots, and kernel density estimation (KDE) provide powerful complementary approaches for visualizing data distributions beyond simple summary statistics, enabling scientists to identify patterns, outliers, and underlying biological phenomena that might otherwise remain hidden in tabular data. These visualization tools are particularly valuable for comparative analysis across different experimental conditions, animal groups, or environmental contexts commonly encountered in biologging studies.

The interconnected nature of quantitative bioimaging and biologging analysis requires careful consideration at every stage—from sample preparation and data acquisition through to analysis and interpretation. As highlighted in contemporary bioimaging guides, proper quantification requires planning and decision-making at each step, and one must always "begin experiments with the end in mind," considering how data will ultimately be visualized and communicated. This reverse workflow approach ensures that visualization choices effectively represent the underlying biological reality captured through biologging technologies.

Table 1: Core Components of Distribution Visualizations

| Component | Boxplot Representation | Violin Plot Representation |

|---|---|---|

| Center | Median (line inside box) | Median (marker within density) |

| Spread | Interquartile range (IQR) of the box | Full density shape width |

| Range | Whiskers extending to the most extreme values within 1.5 × IQR of the quartiles | Extents of density plot |

| Outliers | Individual points beyond whiskers | Often shown with superimposed boxplot |

| Distribution Shape | Not shown | Full probability density via KDE |

Comparative Analysis of Visualization Techniques

Boxplots, also known as box-and-whisker plots, provide a concise summary of univariate data based on a five-number statistical summary: minimum, first quartile (Q1), median, third quartile (Q3), and maximum. The box itself represents the interquartile range (IQR) containing the middle 50% of the data, with a line inside marking the median value. Whiskers extend from the box to the minimum and maximum values within 1.5 times the IQR from the quartiles, while points beyond these whiskers are considered outliers and plotted individually.
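The quantities a boxplot draws can be computed by hand, which helps when checking why a point was flagged. The sketch below uses a small made-up vector of dive depths; the helper name `boxplot_stats` and the data are hypothetical, and quartiles follow NumPy's default linear interpolation, so other software may give slightly different values.

```python
import numpy as np


def boxplot_stats(values, whis=1.5):
    """Quartiles, whisker ends, and outliers using the 1.5 x IQR rule."""
    values = np.asarray(values, dtype=float)
    q1, median, q3 = np.percentile(values, [25, 50, 75])
    iqr = q3 - q1
    low_fence, high_fence = q1 - whis * iqr, q3 + whis * iqr
    inside = values[(values >= low_fence) & (values <= high_fence)]
    return {
        "q1": q1,
        "median": median,
        "q3": q3,
        "whisker_low": inside.min(),    # most extreme point inside the fence
        "whisker_high": inside.max(),
        "outliers": values[(values < low_fence) | (values > high_fence)],
    }


# Made-up maximum dive depths in metres, with one unusually deep dive
dive_depths = [12, 15, 14, 18, 20, 16, 17, 13, 19, 95]
stats = boxplot_stats(dive_depths)
print(stats)
```

For these values the quartiles are 14.25, 16.5, and 18.75, the whiskers end at 12 and 20, and the 95 m dive is the only point beyond the upper fence of 25.5.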

This visualization technique is particularly valuable for identifying outliers and comparing central tendencies and spread across multiple groups. In biologging research, boxplots enable rapid comparison of behavioral metrics, environmental measurements, or physiological parameters across different animal groups, treatment conditions, or temporal periods. Their strength lies in providing a standardized summary that facilitates quick interpretation while highlighting potential data quality issues through outlier detection. Boxplots are most effective when comparing a limited number of groups side-by-side and when the primary analytical questions concern median values and variability rather than detailed distribution shape.

Violin Plots: Revealing Distribution Morphology

Violin plots combine the statistical summary of a boxplot with the distribution shape revealed by a kernel density estimate (KDE). The width of the violin at any value represents the estimated probability density of the data at that value, providing a smooth visualization of the distribution's shape. This hybrid approach enables researchers to identify multimodal distributions, asymmetries, and other complex distribution features that would be invisible in a standard boxplot.

The KDE component is calculated using a non-parametric method to estimate the probability density function, with the smoothness of the resulting curve controlled by a bandwidth parameter. Smaller bandwidth values produce more detailed but potentially noisier plots, while larger values create smoother distributions that may obscure finer features. In practice, violin plots often include an embedded boxplot or marker lines showing the median and quartiles, combining the strengths of both approaches. For biologging data, which often exhibits complex temporal patterns and behavioral modalities, violin plots can reveal subpopulation structures and non-uniform response patterns that might have biological significance.
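The effect of the bandwidth parameter can be explored with a short sketch using `scipy.stats.gaussian_kde`. The speed data are synthetic, and the helper `count_modes` is a hypothetical convenience that simply counts local maxima on an evaluation grid; it is not a formal test of multimodality.

```python
import numpy as np
from scipy.stats import gaussian_kde

rng = np.random.default_rng(0)
# Synthetic speeds (m/s) drawn from a slow mode and a fast mode
speeds = np.concatenate([rng.normal(0.5, 0.15, 300), rng.normal(2.0, 0.3, 300)])
grid = np.linspace(-1.0, 4.0, 500)

def count_modes(density):
    """Count strict local maxima of a density evaluated on a grid."""
    interior = density[1:-1]
    return int(np.sum((interior > density[:-2]) & (interior > density[2:])))

modes = {}
for bw in ("scott", "silverman", 0.05, 1.5):
    # A scalar bw_method is used directly as the factor that scales the data's
    # standard deviation; the named rules derive that factor from sample size.
    kde = gaussian_kde(speeds, bw_method=bw)
    modes[bw] = count_modes(kde(grid))
    print(bw, "factor:", round(float(kde.factor), 3), "modes:", modes[bw])
```

A very small factor tends to produce many spurious peaks, while a very large one can merge the two behavioural modes into a single hump. This is why rule-based bandwidths are best treated as starting points.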

Technical Comparison and Selection Guidelines

Choosing between boxplots and violin plots depends on the analytical goals, data characteristics, and communication context. Boxplots offer superior clarity for focused comparisons of central tendency and spread, particularly when dealing with small sample sizes or when the primary interest lies in identifying outliers. Their standardized interpretation makes them accessible to diverse audiences, including those with limited statistical training.

Violin plots provide more comprehensive distributional information and are particularly valuable during exploratory data analysis or when communicating complex distribution shapes. They excel at revealing bimodality, skewness, and other features that may reflect biologically important phenomena in biologging data, such as distinct behavioral states or differential responses to environmental conditions. However, violin plots can become visually cluttered when comparing many groups and may require more explanation for non-technical audiences.

Table 2: Guidelines for Selecting Visualization Techniques

| Consideration | Boxplot Preference | Violin Plot Preference |
|---|---|---|
| Sample Size | Small to moderate samples | Larger datasets (n > 30) |
| Primary Focus | Summary statistics and outliers | Distribution shape and density |
| Audience | General scientific audience | Statistically knowledgeable viewers |
| Distribution Complexity | Simple, unimodal distributions | Multimodal or complex distributions |
| Comparison Type | Many groups side-by-side | Focused comparison of few groups |

Implementation Protocols for Biologging Data

Data Preparation and Preprocessing

Effective distribution visualization begins with rigorous data preprocessing to ensure that visualizations accurately represent biological phenomena rather than artifacts of data collection or processing. For biologging data, this typically involves several key steps: (1) Data cleaning to identify and address sensor errors, missing values, and physiologically impossible measurements; (2) Timestamp alignment to synchronize data streams from multiple sensors; and (3) Behavioral segmentation to isolate distinct behavioral states or environmental contexts that may exhibit different statistical distributions.
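The three preprocessing steps can be sketched with pandas as follows. All column names, the `-999` error code, the 0 to 2000 m plausibility range, the 0.5 s matching tolerance, and the 5 m dive threshold are assumptions chosen for illustration, not recommended values.

```python
import numpy as np
import pandas as pd

# Hypothetical depth and accelerometer streams on slightly different clocks
# (time expressed as seconds since deployment)
depth = pd.DataFrame({
    "t_s": [0.0, 1.0, 2.0, 3.0],
    "depth_m": [0.5, 12.0, -999.0, 30.0],    # -999 stands in for a sensor error code
})
accel = pd.DataFrame({
    "t_s": [0.2, 1.1, 2.1, 2.9],
    "odba_g": [0.05, 0.40, 0.42, 0.10],
})

# (1) Cleaning: mask physically impossible depths instead of dropping rows
depth["depth_m"] = depth["depth_m"].where(depth["depth_m"].between(0, 2000))

# (2) Timestamp alignment: attach the nearest accelerometer sample within 0.5 s
merged = pd.merge_asof(depth.sort_values("t_s"), accel.sort_values("t_s"),
                       on="t_s", direction="nearest", tolerance=0.5)

# (3) Behavioural segmentation using an illustrative depth threshold
merged["state"] = np.where(merged["depth_m"] > 5, "diving", "surface")
merged.loc[merged["depth_m"].isna(), "state"] = "unknown"
print(merged)
```

Masking invalid values rather than deleting rows keeps the time base intact, so gaps remain visible in later time-series panels.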

Following established practices in quantitative bioimaging, researchers should implement systematic controls throughout data collection and processing. This includes validation against manual observations, calibration using known references, and processing of positive and negative controls where feasible. Data should be structured in a tidy format with each row representing an observation and columns corresponding to variables, grouping factors, and experimental conditions. This structure facilitates the generation of comparative visualizations across biological replicates, experimental groups, or temporal phases.
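As a small illustration of the tidy structure described above, the sketch below reshapes a hypothetical wide table (one column per deployment phase) into one row per observation using `DataFrame.melt`. The column names and values are invented for the example.

```python
import pandas as pd

# Hypothetical wide table: one row per animal, one column per phase
wide = pd.DataFrame({
    "animal_id": ["A1", "A2", "A3"],
    "sex": ["F", "M", "F"],
    "hr_rest": [62.0, 58.0, 65.0],
    "hr_forage": [88.0, 91.0, 85.0],
})

# Tidy form: each row is one observation; grouping factors become columns
tidy = wide.melt(id_vars=["animal_id", "sex"],
                 value_vars=["hr_rest", "hr_forage"],
                 var_name="phase", value_name="heart_rate_bpm")
tidy["phase"] = tidy["phase"].str.replace("hr_", "", regex=False)
print(tidy)
```

Once the data are in this shape, a grouping variable such as `phase` or `sex` can be mapped directly to the x-axis or color of a boxplot or violin plot.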

Creating Effective Violin Plots

The construction of biologically informative violin plots requires careful attention to parameter selection and visual design. The kernel density estimation process requires specification of the bandwidth parameter, which controls the smoothness of the resulting distribution. For biologging data, we recommend using Scott's normal reference rule or Silverman's rule of thumb as starting points, with adjustment based on biological knowledge of the expected scale of variation. As noted in bioimaging best practices, "there is no single correct answer" for such parameter choices, as optimal settings depend on the specific goals and characteristics of each experiment.

Visual design choices significantly impact the interpretability of violin plots. Key considerations include: (1) Using split violins to compare distributions across groups within the same plot; (2) Employing semantically meaningful color schemes that highlight biological comparisons while maintaining sufficient contrast for interpretation; (3) Overlaying summary statistics as boxplots or marker points to facilitate precise reading of medians and quartiles; and (4) Providing axis labels, units, and scales consistent with the biological context. These practices align with the broader principle in quantitative bioimaging that "decisions at one stage affect what is possible at others," emphasizing the interconnectedness of data collection, analysis, and visualization.
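One possible way to combine an explicit bandwidth rule with an overlaid boxplot is sketched below using matplotlib's `Axes.violinplot` and `Axes.boxplot`. The habitat names and speed values are synthetic, and Silverman's rule is used only as a starting point.

```python
import matplotlib
matplotlib.use("Agg")            # headless backend so the sketch runs without a display
import matplotlib.pyplot as plt
import numpy as np

rng = np.random.default_rng(1)
# Synthetic swim speeds (m/s) for two hypothetical habitats
groups = {
    "reef": np.concatenate([rng.normal(0.4, 0.1, 200), rng.normal(1.6, 0.2, 100)]),
    "open water": rng.normal(1.2, 0.3, 300),
}

fig, ax = plt.subplots(figsize=(5, 4))
positions = np.arange(1, len(groups) + 1)
parts = ax.violinplot(list(groups.values()), positions=positions,
                      bw_method="silverman", showextrema=False)
for body in parts["bodies"]:
    body.set_alpha(0.5)

# Narrow boxplot overlay so medians and quartiles can be read precisely
ax.boxplot(list(groups.values()), positions=positions, widths=0.12,
           showfliers=False)
ax.set_xticks(positions)
ax.set_xticklabels(list(groups.keys()))
ax.set_xlabel("Habitat")
ax.set_ylabel("Swim speed (m/s)")
fig.tight_layout()
```

Calling `fig.savefig(...)` afterwards writes the figure to disk. Passing a scalar to `bw_method` instead of a rule name allows the bandwidth to be adjusted toward the expected biological scale of variation.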

Creating Informative Boxplots

While boxplots are conceptually simpler than violin plots, their effective implementation requires attention to statistical detail and visual design. The conventional 1.5×IQR rule for outlier identification should be applied consistently across comparisons, but researchers should also visually inspect identified outliers for potential biological significance rather than automatically excluding them. For biologging data with known seasonal, diurnal, or behavioral patterning, consider creating separate boxplots for distinct contexts rather than aggregating across biologically meaningful boundaries.
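The difference between pooled and context-specific outlier rules can be illustrated with a short pandas sketch. The helper `flag_outliers_by_group` and the heart-rate values are hypothetical; the function only flags points for inspection and removes nothing.

```python
import pandas as pd

def flag_outliers_by_group(df, value, group):
    """Add a boolean column marking 1.5×IQR outliers within each group."""
    grouped = df.groupby(group)[value]
    q1 = grouped.transform(lambda s: s.quantile(0.25))
    q3 = grouped.transform(lambda s: s.quantile(0.75))
    iqr = q3 - q1
    out = df.copy()
    out["is_outlier"] = (df[value] < q1 - 1.5 * iqr) | (df[value] > q3 + 1.5 * iqr)
    return out

# Hypothetical heart rates (bpm): high values are routine by day, unusual at night
df = pd.DataFrame({
    "period": ["night"] * 6 + ["day"] * 6,
    "heart_rate": [40, 42, 41, 43, 39, 90, 85, 88, 92, 90, 87, 91],
})
flagged = flag_outliers_by_group(df, "heart_rate", "period")
print(flagged[flagged["is_outlier"]])
```

With these values, the 90 bpm night-time reading is flagged only when the rule is applied within each period. Pooling day and night widens the IQR enough that the same reading falls inside the fences.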

Visual customization of boxplots can enhance their communicative value: (1) Use variable box width to represent sample size differences across groups; (2) Employ color coding to highlight statistically significant or biologically important comparisons; (3) Overlay strip plots or jittered points to show the underlying data distribution for small to moderate sample sizes; and (4) Include annotations that highlight effect sizes or statistical comparisons directly on the plot. These practices support the goal of "designing rigorous, reproducible experiments with proper controls and optimized workflows" emphasized in contemporary bioimaging literature.
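A minimal matplotlib sketch of two of these customizations, box width scaled by sample size and jittered raw points, is shown below. The age classes and depths are synthetic, and scaling width by the square root of n is one common convention rather than a requirement.

```python
import matplotlib
matplotlib.use("Agg")            # headless backend so the sketch runs without a display
import matplotlib.pyplot as plt
import numpy as np

rng = np.random.default_rng(2)
# Synthetic maximum dive depths (m) for three hypothetical age classes
samples = {"juvenile": rng.normal(30, 5, 12),
           "subadult": rng.normal(45, 8, 40),
           "adult": rng.normal(60, 10, 90)}

n = np.array([len(v) for v in samples.values()])
widths = 0.7 * np.sqrt(n / n.max())      # box width proportional to sqrt(sample size)
positions = np.arange(1, len(samples) + 1)

fig, ax = plt.subplots(figsize=(5, 4))
ax.boxplot(list(samples.values()), positions=positions, widths=widths,
           showfliers=False)             # raw points are drawn separately below
for pos, values in zip(positions, samples.values()):
    jitter = rng.uniform(-0.08, 0.08, size=len(values))
    ax.scatter(pos + jitter, values, s=8, alpha=0.5, color="black")
ax.set_xticks(positions)
ax.set_xticklabels([f"{name}\n(n={len(v)})" for name, v in samples.items()])
ax.set_ylabel("Maximum dive depth (m)")
fig.tight_layout()
```

Printing the sample size in each tick label complements the width cue, which on its own is hard to read precisely.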

Application to Biologging Research Questions

Case Study: Animal Movement Analysis

Biologging data frequently includes movement metrics such as speed, acceleration, turning angles, and path straightness, which often exhibit complex distributions reflecting behavioral states and environmental interactions. For example, the distribution of movement speeds may reveal bimodality corresponding to foraging versus traveling behaviors, while turning angle distributions can indicate directional persistence versus area-restricted search. Violin plots are particularly valuable for visualizing these complex distributions alongside categorical variables such as time of day, habitat type, or reproductive status.
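The movement metrics mentioned above can be derived from a position track before plotting. The sketch below computes step speeds and turning angles, assuming positions are already in a projected planar coordinate system in metres (for example UTM) rather than raw latitude and longitude. The function name `step_metrics` and the track are illustrative.

```python
import numpy as np

def step_metrics(x, y, t):
    """Return step speeds (m/s) and turning angles (rad) from planar positions."""
    x, y, t = (np.asarray(a, dtype=float) for a in (x, y, t))
    dx, dy, dt = np.diff(x), np.diff(y), np.diff(t)
    speed = np.hypot(dx, dy) / dt                  # one speed per step
    heading = np.arctan2(dy, dx)                   # counter-clockwise from the +x axis
    turn = np.diff(heading)                        # change in heading between steps
    turn = (turn + np.pi) % (2 * np.pi) - np.pi    # wrap to [-pi, pi)
    return speed, turn

# Hypothetical track: straight travel east, then a left turn to the north
x = [0, 10, 20, 20, 20]
y = [0, 0, 0, 5, 10]
t = [0, 10, 20, 30, 40]
speed, turn = step_metrics(x, y, t)
print(speed)   # [1.  1.  0.5 0.5]
print(turn)    # approximately [0, pi/2, 0]
```

The resulting arrays can be combined with categorical variables such as habitat or time of day and passed to a violin plot. Turning angles concentrated near zero suggest directional persistence, while a wide spread is consistent with area-restricted search.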

In practice, researchers can implement a hierarchical visualization approach that combines distribution plots with temporal context. For instance, a primary visualization might show violin plots of movement speed distributions across habitat types, with embedded boxplots highlighting median differences. Supplementary panels could show time-series of individual movements, allowing researchers to connect distributional patterns with temporal sequences. This multi-perspective approach aligns with the bioimaging principle of using "pilot experiments" to "test all aspects of a workflow," ensuring that visualization strategies capture the full complexity of biological phenomena.

Case Study: Environmental Physiology

Biologging devices increasingly capture physiological parameters such as heart rate, body temperature, and metabolic rate alongside environmental conditions and movement data. These continuous physiological measurements often show complex distributional responses to environmental gradients, behavioral states, and individual characteristics. Visualization strategies must accommodate these multi-factorial influences while maintaining clarity.

For physiological data, we recommend conditional distribution plots that show how the distribution of a physiological parameter changes across environmental conditions or behavioral states. Violin plots can effectively visualize how the entire distribution of body temperature shifts across ambient temperature ranges, revealing not just central tendency but also changes in variance and shape. Similarly, boxplots can efficiently summarize differences in physiological metrics across categorical groups such as age classes, reproductive status, or experimental treatments, facilitating statistical comparison while controlling for other factors.
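A conditional distribution summary of this kind can be sketched by binning the environmental variable and summarizing the physiological variable within each bin. The data below are synthetic, with spread deliberately increasing at warmer temperatures, and the 10 °C bin edges are arbitrary assumptions.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(3)
ambient = rng.uniform(0, 30, 600)                  # synthetic ambient temperature (°C)
# Synthetic body temperature whose spread widens in warmer conditions
body = 37.0 + 0.02 * ambient + rng.normal(0, 0.1 + 0.01 * ambient)
df = pd.DataFrame({"ambient_c": ambient, "body_c": body})

# Bin the continuous environmental variable into ordered categories
df["ambient_bin"] = pd.cut(df["ambient_c"], bins=[0, 10, 20, 30],
                           labels=["0-10", "10-20", "20-30"], include_lowest=True)

def iqr(s):
    """Interquartile range of a Series."""
    return s.quantile(0.75) - s.quantile(0.25)

summary = df.groupby("ambient_bin", observed=True)["body_c"].agg(
    n="count", median="median", iqr=iqr)
print(summary)
```

The same `ambient_bin` column can serve as the grouping variable for violin plots, which then show the change in shape that the median and IQR only summarize.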

Figure 1: Biologging Data Visualization Workflow

Technical Specifications and Reporting Standards

Quantitative Bioimaging Principles for Biologging

The principles of rigorous quantitative bioimaging provide a valuable framework for distribution visualization in biologging research. Specifically, researchers should adopt a checklist approach to ensure comprehensive reporting and appropriate visualization choices. Before creating distribution visualizations, consider: (1) Whether the chosen metric appropriately captures the biological phenomenon of interest; (2) Whether samples and conditions include appropriate positive and negative controls; (3) Whether acquisition settings were appropriate and consistent across comparisons; and (4) Whether measurements were made equivalently for controls and experimental samples.

Furthermore, consistent with standards in the bioimaging community, all distribution visualizations should: (1) Display individual data points wherever possible to communicate sample size and distribution shape; (2) Use appropriate summary statistics that match the distribution characteristics (e.g., median and IQR for skewed distributions); (3) Include clearly labeled axes with units and scales that provide biological context; and (4) Disclose any data transformations or adjustments in figure legends. These practices ensure that visualizations accurately represent the underlying data and facilitate appropriate interpretation.

Research Reagent Solutions for Biologging Visualization

Table 3: Essential Analytical Tools for Distribution Visualization

| Tool Category | Specific Implementation | Application in Biologging Research |
|---|---|---|
| Programming Environments | R with ggplot2, Python with matplotlib/seaborn | Flexible creation of customized distribution plots with statistical annotations |
| Statistical Packages | scipy.stats (Python), stats (R) | Calculation of kernel density estimates, summary statistics, and comparative tests |
| Data Standards | Biologging Data Standardization Framework [39] | Interoperable data structures enabling reproducible visualization across studies |
| Visualization Libraries | plotly (interactive), vega-lite (declarative) | Creation of interactive distribution plots for exploratory data analysis |
| Color Accessibility Tools | WCAG contrast checkers [40] | Ensuring visualizations are interpretable by all audiences, including those with color vision deficiencies |
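For readers who prefer to check palette contrast in a script rather than a web tool, the WCAG 2.x contrast ratio can be computed directly from its published definition of relative luminance. The function names below are our own. A contrast ratio measures lightness difference only, so it complements, rather than replaces, a check with a color vision deficiency simulator.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color):
    """Relative luminance of a color given as '#RRGGBB'."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)

def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio, from 1 (identical) to 21 (black on white)."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)

print(round(contrast_ratio("#000000", "#FFFFFF"), 2))   # 21.0
```

WCAG 2.1 asks for a ratio of at least 3:1 for graphical elements and 4.5:1 for normal-size text, which gives a practical target when choosing fill colors for violins against the plot background.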

Advanced Applications and Future Directions

Multivariate Distribution Visualization

While violin plots and boxplots traditionally display univariate distributions, biologging research often requires visualization of complex multivariate relationships. Recent methodological advances enable extended applications, including: (1) Conditional violin plots that show how the distribution of one variable changes across levels of other variables; (2) Clustered distribution plots that incorporate dimension reduction techniques to visualize distributions in latent space; and (3) Spatial distribution maps that geolocate distributional information to reveal spatial patterning.

For example, researchers might create a matrix of violin plots showing the distributions of multiple physiological variables across different behavioral states, or use animated violin plots to show how movement distributions change over diurnal cycles. These advanced applications require careful design to maintain interpretability while representing additional data dimensions. As in all quantitative bioimaging, researchers should ensure that "qualitative figures comply with best practices on colors used, annotations, and other adjustments" to prevent misleading representations.

Integrated Workflow for Distribution Analysis