From Data Deluge to Ecological Insight: Mathematical Foundations for High-Frequency Ecological Data Analysis

Abstract

The explosion of high-frequency data from sensors, acoustic recorders, and biologging devices is transforming ecological monitoring and biomedical research. This article provides a comprehensive guide to the mathematical and statistical foundations required to analyze these complex temporal datasets. We explore core concepts from time series analysis and state-space modeling to advanced machine learning and optimal transport theory. A strong emphasis is placed on practical application, comparing model performance, troubleshooting common pitfalls like imperfect detection and data integration, and validating findings. Targeted at researchers and scientists, this review synthesizes current methodologies to empower robust analysis, enhance predictive accuracy, and inform critical decisions in ecology, conservation, and drug development.

The New Landscape of Ecological Data: Why High-Frequency Sampling Demands Sophisticated Math

Frequently Asked Questions

Q1: What defines a 'high-frequency' system in ecological monitoring versus engineering? In ecological monitoring, a system is considered 'high-frequency' when data are collected fast enough to capture critical behavioral or physiological events, such as animal movement bursts or rapid environmental changes. The threshold is therefore relative to the organism's life history and the phenomenon studied. In engineering, high-frequency is defined by absolute metrics; for instance, one hydraulic-system study classifies a system as high-frequency when valve movement times are as brief as 11.1 milliseconds, as required to track engine valve lifts [1].

Q2: My high-frequency sensor data is noisy, making analysis difficult. What are the primary strategies to manage this? Noise in high-frequency data is a common challenge. The main strategies are:

- Signal Processing: Apply filters to smooth data, but ensure they do not distort the biological signal of interest.

- Model-Based Denoising: Use mathematical models, such as state-space models, to separate the underlying process (e.g., true movement) from observation error. A core principle is to select a homomorphic model, which simplifies the system by focusing only on the characteristics essential to the research question, rather than attempting a perfect, complex replica [2].

- Robust Controller Design: In experimental systems involving actuators, design controllers that are less sensitive to noisy signals. For example, avoid control methods that require high-order differences of displacement signals, as these amplify noise [1].
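
The warning about high-order differences can be checked numerically. The sketch below is an illustration under assumed conditions (unit-variance white noise standing in for sensor noise; the function name `diff_noise_gain` is hypothetical). For white noise, the variance of the k-th difference grows as the central binomial coefficient C(2k, k), that is 2, 6, and 20 for orders 1 to 3.

```python
import numpy as np
from math import comb

rng = np.random.default_rng(0)
noise = rng.normal(0.0, 1.0, size=200_000)  # assumed unit-variance white sensor noise

def diff_noise_gain(order):
    """Empirical variance of the order-th difference of the noise series."""
    return float(np.var(np.diff(noise, n=order)))

gains = {k: diff_noise_gain(k) for k in (1, 2, 3)}
theory = {k: comb(2 * k, k) for k in (1, 2, 3)}  # variance gain for white noise
for k in gains:
    print(f"order {k}: empirical {gains[k]:.2f}, theoretical {theory[k]}")
```

Each extra order of differencing roughly triples the noise variance here, which is why controllers built on raw high-order differences of a displacement signal tend to be fragile.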

Q3: My mathematical model is complex but isn't helping with management decisions. Why? A common reason for this disconnect is that the model does not address the manager's specific, real-world question [3]. To be useful for decision-making, a model should:

- Be developed with close coordination between decision-makers and modellers to ensure a common understanding [3].

- Have a clearly stated objective from the outset, defining key variables and outputs [3].

- Include only features essential to the objective, avoiding unnecessary complexity [3].

Q4: How can I compensate for time delays in my sensor-actuator systems? Time delays, like valve phase delay in hydraulic systems, can be compensated without relying on high-order models that amplify noise. One effective strategy is desired trajectory transformation, where the known reference signal (e.g., a desired engine valve lift) is adjusted in advance to account for the known system delay [1].
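
A minimal sketch of the idea, assuming a toy plant that simply reproduces its command after a known, fixed lag. The 2 ms delay, the 40 Hz reference, and the names `reference` and `plant` are illustrative assumptions, not values from [1].

```python
import numpy as np

dt = 0.0001                      # 0.1 ms time step (assumed)
t = np.arange(0.0, 0.05, dt)     # 50 ms window
delay = 0.002                    # assumed known system lag of 2 ms

def reference(time):
    """Hypothetical desired lift profile: a 40 Hz sinusoid."""
    return np.sin(2 * np.pi * 40.0 * time)

def plant(command_fn, time):
    """Toy plant: reproduces the command `delay` seconds late."""
    return command_fn(time - delay)

naive_output = plant(reference, t)                         # command = r(t)
shifted_output = plant(lambda s: reference(s + delay), t)  # command = r(t + delay)

naive_rmse = float(np.sqrt(np.mean((naive_output - reference(t)) ** 2)))
shifted_rmse = float(np.sqrt(np.mean((shifted_output - reference(t)) ** 2)))
print(f"RMSE without shift: {naive_rmse:.3f}, with shift: {shifted_rmse:.2e}")
```

Because the reference is known ahead of time, the shift needs no derivative of the measured signal, so it adds no noise amplification. A real actuator also has amplitude attenuation, which this toy plant ignores.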

Troubleshooting Guides

Issue: Model Predictions Do Not Match Observed Ecological Dynamics

This occurs when a model's internal logic fails to capture the real system's behavior.

| Step | Action & Rationale |
|---|---|
| 1 | Verify Model Type and Goal. Determine if a strategic model (simple, for revealing generalities) or a tactical model (complex, for predicting specific system dynamics) is appropriate for your question [3]. |
| 2 | Check Time Dependencies. Confirm your model correctly implements time-dependent (dynamic) or stationary (static) assumptions based on the ecological process being studied [2]. |
| 3 | Validate with Independent Data. Test your model's predictions against a dataset not used for parameterization (model fitting). Large discrepancies indicate poor predictive power. |
| 4 | Re-evaluate Model Complexity. If the model is overly complex (isomorphic), consider simplifying to a homomorphic model that retains only the system's essential features [2]. |

Issue: Poor Performance in High-Frequency Actuator Control

This guide addresses performance issues in systems like hydraulic actuators used to control experimental environments.

| Step | Action & Rationale |
|---|---|
| 1 | Diagnose Delay Type. Decouple the system delay into phase delay (time shift) and amplitude delay (reduction in response magnitude) [1]. |
| 2 | Compensate for Phase Delay. Implement a desired trajectory transformation. Shift the command signal temporally based on measured system lag [1]. |
| 3 | Compensate for Amplitude Delay. Introduce a feedback loop based on the integral of the flow error. This strategy provides faster dynamic response than using instantaneous error alone [1]. |
| 4 | Account for Nonlinearities. Synthesize controller parameters to handle inherent system issues like valve dead-zone and other uncertainties [1]. |

Experimental Protocols

Protocol 1: Validating a Dynamic Mathematical Model for Animal Movement

Objective: To calibrate and test a time-dependent mathematical model against high-frequency animal tracking data.

Model Formulation:

- Define the model's state variables (e.g., animal position, velocity, energy state).

- Formulate the mathematical equations (e.g., differential equations) governing the transitions between states.

- Decide whether the model will be deterministic (exact outcomes) or stochastic (probabilistic outcomes) to account for random environmental effects [2].

Parameterization:

- Use a portion of the high-frequency movement data (the training set) to estimate key model parameters (e.g., movement speed, turning angles).

- Employ statistical fitting techniques like maximum likelihood or Bayesian inference.

Model Validation:

- Simulate the model using the calibrated parameters.

- Compare the model's output against the reserved portion of the empirical data (the testing set) using pre-defined metrics (e.g., Mean Squared Error, Cohen's d).

Analysis:

- Use the validated model as a "virtual laboratory" to run experiments and test hypotheses about animal behavior under different environmental scenarios [3].
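
The parameterization and validation steps above can be sketched as follows. This is a minimal illustration, not the protocol of any cited study: the speed series is simulated, the first-order autoregressive speed model is an assumed stand-in for a movement model, and the 70/30 split is arbitrary.

```python
import numpy as np

rng = np.random.default_rng(1)

# Synthetic stand-in for high-frequency speed data (m/s); real tracks would replace this.
n, mu, phi, sigma = 2000, 1.0, 0.8, 0.2
speed = np.empty(n)
speed[0] = mu
for i in range(1, n):
    speed[i] = mu + phi * (speed[i - 1] - mu) + rng.normal(0.0, sigma)

split = int(0.7 * n)
train, test = speed[:split], speed[split:]

# Parameterization: least-squares fit of speed[t] = a + b * speed[t-1] on the training set.
b_hat, a_hat = np.polyfit(train[:-1], train[1:], deg=1)

# Validation: one-step-ahead predictions on the held-out testing set.
pred = a_hat + b_hat * test[:-1]
mse_model = float(np.mean((test[1:] - pred) ** 2))
mse_baseline = float(np.mean((test[1:] - train.mean()) ** 2))  # constant-mean benchmark
print(f"b_hat={b_hat:.2f}, model MSE={mse_model:.3f}, baseline MSE={mse_baseline:.3f}")
```

Comparing against a simple benchmark gives the test-set error a reference point. A model that cannot beat the constant-mean predictor on held-out data is unlikely to be a useful virtual laboratory.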

Protocol 2: Implementing a Backstepping Controller with Valve Dynamics Compensation

Objective: To achieve high-precision position control in a hydraulic actuator, compensating for proportional valve dynamics.

System Identification:

Controller Design:

- Develop a backstepping controller to handle system nonlinearities.

- Integrate Compensation:

Implementation & Testing:

- Implement the controller on the experimental system.

- Run comparative experiments with and without the compensation strategies to quantify performance improvement in both steady-state and transient conditions [1].

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in High-Frequency Research |
|---|---|
| Proportional Valve | Controls the direction and rate of oil flow in hydraulic systems, enabling precise actuator movement for simulating environmental changes or mechanical stimuli [1]. |
| High-Frequency Position Sensor | Provides real-time, time-stamped data on actuator piston or animal tag position, serving as the primary data stream for model validation and control feedback [1]. |
| Hydraulic Actuator | Converts controlled hydraulic pressure into precise mechanical motion, used to drive engine valves or other experimental apparatus [1]. |
| State-Space Model | A mathematical framework that represents a system as a set of input, output, and state variables related by first-order differential equations (or, in discrete time, difference equations). Ideal for describing and predicting the dynamics of high-frequency systems [2]. |
| Backstepping Controller | An advanced nonlinear control method that systematically designs control laws for complex systems by breaking them down into smaller subsystems, handling nonlinearities like valve dead-zones [1]. |

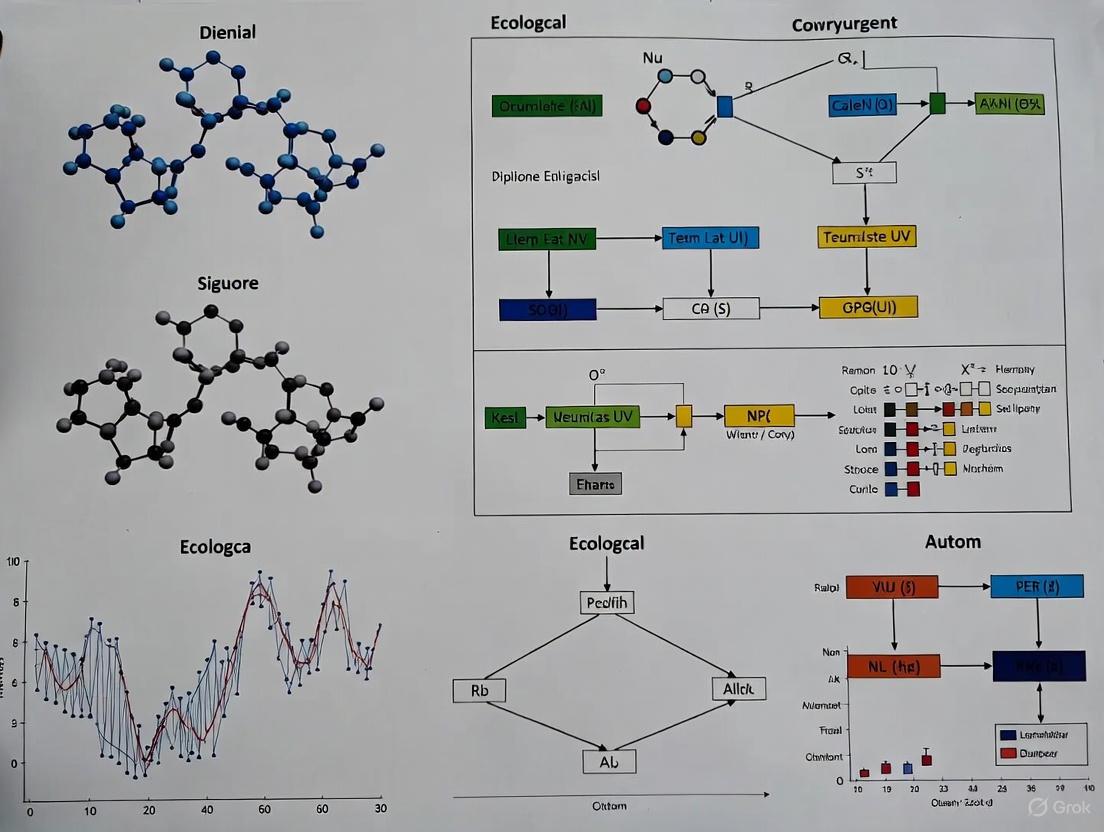

Workflow and Signaling Diagrams

Diagram 1: Ecological Model Development Workflow

Diagram 2: Actuator Control with Delay Compensation

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary advantage of using a state-space model (SSM) for ecological time-series analysis? State-space models are powerful because they explicitly account for two distinct sources of variability often present in ecological data: the true biological process (e.g., actual population dynamics or animal movement) and the observation error inherent in the measurement method. This allows researchers to separate the underlying ecological signal from the noise introduced by data collection [4].

FAQ 2: My hierarchical model is producing biased parameter estimates. What could be wrong? A common issue, even with simple models, is parameter estimability. This occurs when the available data is insufficient to uniquely determine the parameter values. In state-space models, this problem is particularly acute when the measurement error is large compared to the process stochasticity—precisely the conditions where SSMs are most needed. This can lead to biased estimates and inaccurate ecological conclusions [4].

FAQ 3: When analyzing count data (e.g., eggs laid, individuals sighted), why should I consider a hierarchical Bayesian approach over traditional ANOVA? Traditional methods like ANOVA on proportional data often violate key assumptions (e.g., normality) and do not directly estimate the parameters of biological interest, such as individual preference strengths. A hierarchical Bayesian approach models the count data directly with appropriate distributions (e.g., Multinomial), simultaneously estimates parameters at both the individual and population levels, and more robustly accounts for uncertainty and variation in total counts among replicates [5].

FAQ 4: What is the "ecological fallacy" and how can hierarchical models help? The ecological fallacy is a bias that arises when aggregated data (e.g., at the group or cluster level) are used to make inferences about individual-level relationships. Hierarchical models help because they keep individual-level observations nested within their groups instead of collapsing them into group summaries. Individual-level data analyzed within a formal causal framework are essential to correctly assess causal relationships that affect the individual [6].

Troubleshooting Guides

Problem: Parameter Estimation Issues in State-Space Models

Symptoms:

- Parameter estimates have very large standard errors or confidence intervals.

- Estimates are highly sensitive to the model's initial values.

- The model fails to converge.

Diagnosis and Solutions:

- Check the Ratio of Variances: The problem often arises when the measurement error variance is large relative to the process variance [4].

- Conduct a Simulation Study: Simulate data from your model with known parameters. If you cannot recover the parameters from the simulated data, the model is likely non-identifiable or has severe estimability issues [4].

- Profile the Likelihood: Examine the likelihood profile for the parameters. A flat likelihood profile indicates that the data provides little information about the parameter's value [4].

- Incorporate Prior Information: If using a Bayesian framework, consider using informative priors for problematic parameters. These priors should be based on previous studies or expert knowledge to constrain possible values [5] [4].
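
A small simulation in the spirit of the checks above shows why the ratio of variances matters. It is a sketch under assumed values, and the naive estimator (lag-1 autocorrelation of the observations) is an illustrative stand-in rather than the estimation method of [4]. Data are generated from a process x(t) = ρ·x(t-1) + η(t) observed with error, and the naive estimate of ρ is compared across two levels of measurement error.

```python
import numpy as np

rng = np.random.default_rng(2)

def simulate(rho, sd_process, sd_obs, n=20_000):
    """Simulate x(t) = rho*x(t-1) + eta(t) and return y(t) = x(t) + eps(t)."""
    x = np.zeros(n)
    for t in range(1, n):
        x[t] = rho * x[t - 1] + rng.normal(0.0, sd_process)
    return x + rng.normal(0.0, sd_obs, size=n)

def naive_rho(y):
    """Lag-1 autocorrelation of the observations, ignoring measurement error."""
    y = y - y.mean()
    return float(np.sum(y[1:] * y[:-1]) / np.sum(y * y))

rho_true = 0.7  # assumed known value used to generate the data
est_small_error = naive_rho(simulate(rho_true, sd_process=1.0, sd_obs=0.1))
est_large_error = naive_rho(simulate(rho_true, sd_process=1.0, sd_obs=3.0))
print(f"true rho={rho_true}, small error: {est_small_error:.2f}, large error: {est_large_error:.2f}")
```

When measurement error dominates, the naive estimate is pulled strongly toward zero. The same simulate-then-refit loop, run with the actual fitting method, is the recovery test described in the simulation-study step.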

Problem: Choosing an Appropriate Time Series Algorithm

Symptoms:

- Forecasts fail to capture clear seasonal patterns.

- Projections are overly influenced by short-term fluctuations and appear too "noisy".

- The model performs poorly when market conditions or system dynamics change.

Diagnosis and Solutions: Select an algorithm based on the characteristics of your data and the goal of your analysis. The table below summarizes common choices.

Table 1: Guide to Selecting Time Series Algorithms

| Algorithm Type | Key Characteristics | Best For | Ecological Example |
|---|---|---|---|
| Automated Smoothing (e.g., Linear Regression, Growth Rates) | Generates a smooth projection curve; does not inherently account for seasonality [7]. | Identifying long-term, overall trends when seasonal cycles are not the primary focus. | Projecting the overall decline of a species population over decades. |
| Automated Non-Smoothing (e.g., ARIMA, Holt-Winters) | Captures and replicates historical peaks, troughs, and seasonal/cyclical patterns [7]. | Forecasting when precise seasonal patterns (e.g., annual breeding cycles) are a key feature of the data. | Predicting seasonal peaks in pollen distribution or insect emergence [8]. |
| Manual / User-defined | Forecaster overlays market knowledge and expertise onto the historical data [7]. | Highly volatile markets, new products with no history, or when accounting for specific known future events (e.g., a new policy). | Modeling the impact of a sudden conservation law or an invasive species arrival on population dynamics. |
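
The difference between the first two rows can be illustrated on synthetic monthly data. This sketch assumes a linear decline plus an annual cycle, and it uses a deliberately simple "trend plus monthly means" model as a stand-in for seasonal methods such as Holt-Winters; it does not implement ARIMA or Holt-Winters themselves.

```python
import numpy as np

rng = np.random.default_rng(3)
months = np.arange(72)                                   # six years of monthly data
season = 10.0 * np.cos(2 * np.pi * months / 12)          # assumed annual cycle
series = 100.0 - 0.3 * months + season + rng.normal(0.0, 1.0, size=72)

train_idx, test_idx = months[:60], months[60:]           # hold out the final year

# Smoothing-style projection: straight-line trend only.
slope, intercept = np.polyfit(train_idx, series[:60], deg=1)
trend_forecast = intercept + slope * test_idx

# Seasonal-style projection: trend plus the mean residual for each calendar month.
residuals = series[:60] - (intercept + slope * train_idx)
monthly_effect = np.array([residuals[train_idx % 12 == m].mean() for m in range(12)])
seasonal_forecast = trend_forecast + monthly_effect[test_idx % 12]

rmse_trend = float(np.sqrt(np.mean((series[60:] - trend_forecast) ** 2)))
rmse_seasonal = float(np.sqrt(np.mean((series[60:] - seasonal_forecast) ** 2)))
print(f"trend-only RMSE: {rmse_trend:.2f}, trend+seasonal RMSE: {rmse_seasonal:.2f}")
```

The trend-only projection recovers the long-term decline but misses every seasonal peak and trough, which is the first symptom listed above.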

Problem: Modeling Complex Hierarchical and Spatially-Explicit Systems

Symptoms:

- The model fails to capture emergent system properties.

- Results change drastically when the scale or spatial resolution of the data is altered (known as the Modifiable Areal Unit Problem, MAUP) [6] [9].

- The model is computationally intractable.

Diagnosis and Solutions:

- Adopt a Spatially Explicit Hierarchical Framework: Implement approaches like the Hierarchical Patch Dynamics Paradigm (HPDP). This models the system as a hierarchy of interacting ecological patches at different spatial and temporal scales, which helps manage complexity and account for spatial heterogeneity [9].

- Use a Structured Causal Framework: Before simulating or analyzing data, encode your assumptions about the multilevel data-generating mechanism using a hierarchical causal diagram (e.g., a Directed Acyclic Graph, DAG). This helps identify confounding variables and appropriate analytical strategies [6].

- Leverage Specialized Modeling Platforms: Utilize software platforms designed for hierarchical patch dynamics modeling (e.g., HPD-MP) to manage the technical complexity of programming, data handling, and model linkage [9].

Experimental Protocols & Workflows

Protocol 1: Building a State-Space Model for Population Dynamics

Objective: To model the true, unobserved population size over time from a series of estimates containing measurement error.

Methodology:

- Define the State Process: This equation describes the true biological process. For a simple population model, this could be $x(t) = \rho \, x(t-1) + \eta(t)$, where $\eta(t) \sim N(0, \sigma_\eta^2)$. Here, $x(t)$ is the true population size at time $t$, $\rho$ is the intrinsic growth rate, and $\sigma_\eta^2$ is the process variance [4].

- Define the Observation Process: This equation links the true state to the observations: $y(t) = x(t) + \varepsilon(t)$, where $\varepsilon(t) \sim N(0, \sigma_\varepsilon^2)$. Here, $y(t)$ is the observed population estimate, and $\sigma_\varepsilon^2$ is the measurement error variance [4].

- Parameter Estimation: Estimate the parameters ($\rho$, $\sigma_\eta^2$, $\sigma_\varepsilon^2$) and the unobserved states ($x(1), \dots, x(t)$) using methods such as:

  - Kalman Filter: A recursive algorithm for state and parameter estimation.

  - Markov Chain Monte Carlo (MCMC): Used in a Bayesian framework to sample from the joint posterior distribution of parameters and states [5] [4].

  - Template Model Builder (TMB): An R package that uses the Laplace approximation for efficient parameter estimation [4].
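
For the linear Gaussian model in this protocol, the Kalman filter can be written in a few lines. The sketch below is one possible implementation under stated assumptions: the parameters are treated as known, the initial state prior (`x0`, `p0`) is chosen arbitrarily, and the data are simulated. In practice the returned log-likelihood would be maximized over ρ and the two variances.

```python
import numpy as np

def kalman_filter(y, rho, var_process, var_obs, x0=0.0, p0=10.0):
    """Scalar Kalman filter for x(t) = rho*x(t-1) + eta(t), y(t) = x(t) + eps(t).

    Returns filtered state means, filtered variances, and the log-likelihood.
    """
    n = len(y)
    means, variances = np.empty(n), np.empty(n)
    x_prev, p_prev, loglik = x0, p0, 0.0
    for t in range(n):
        # Predict step: propagate the state through the process equation.
        x_pred = rho * x_prev
        p_pred = rho**2 * p_prev + var_process
        # Update step: weigh the new observation by the Kalman gain.
        innovation = y[t] - x_pred
        s = p_pred + var_obs                      # innovation variance
        gain = p_pred / s
        x_prev = x_pred + gain * innovation
        p_prev = (1.0 - gain) * p_pred
        loglik += -0.5 * (np.log(2 * np.pi * s) + innovation**2 / s)
        means[t], variances[t] = x_prev, p_prev
    return means, variances, float(loglik)

# Simulated check with assumed parameter values.
rng = np.random.default_rng(4)
rho, var_process, var_obs, n = 0.9, 0.5, 2.0, 500
x = np.zeros(n)
for t in range(1, n):
    x[t] = rho * x[t - 1] + rng.normal(0.0, np.sqrt(var_process))
y = x + rng.normal(0.0, np.sqrt(var_obs), size=n)

filtered, _, loglik = kalman_filter(y, rho, var_process, var_obs)
mse_raw = float(np.mean((y - x) ** 2))
mse_filtered = float(np.mean((filtered - x) ** 2))
print(f"MSE of raw observations: {mse_raw:.2f}, of filtered states: {mse_filtered:.2f}")
```

The filtered states track the simulated truth more closely than the raw observations, which is the signal-from-noise separation that motivates the state-space approach.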

Protocol 2: Implementing a Hierarchical Bayesian Model for Count Data

Objective: To estimate individual and population-level preferences from choice experiment count data (e.g., eggs laid on different host plants).

Methodology:

- Model the Individual-Level Data: Assume the count data for each individual $i$ follows a Multinomial distribution: $x_i \sim \text{Multinomial}(n_i, p_i)$, where $x_i$ is the vector of counts for each choice, $n_i$ is the total number of choices for individual $i$, and $p_i$ is the vector of probabilities that individual $i$ chooses each option [5].

- Model the Population-Level Structure: Assume that the individual-level probability vectors are drawn from a common population-level Dirichlet distribution: $p_i \sim \text{Dirichlet}(\alpha)$. The Dirichlet parameter $\alpha$ can be decomposed into a mean vector $q$ (the population-level preference) and a scalar $w$ that describes the variance between individuals [5].

- Specify Priors and Estimate: Assign uninformative or weakly informative priors to $q$ and $w$. Use MCMC sampling to obtain the posterior distributions for all individual $p_i$ and the population-level parameters $q$ and $w$ [5].
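
The generative structure of this model can be simulated directly. The sketch below assumes the decomposition α = w·q (so a larger w means less variation between individuals) and treats q and w as known, which sidesteps MCMC. Under that simplification, conjugacy gives each individual's posterior as Dirichlet(α + x_i). All numeric values are illustrative.

```python
import numpy as np

rng = np.random.default_rng(5)

q = np.array([0.6, 0.3, 0.1])   # assumed population-level preference (sums to 1)
w = 20.0                         # assumed concentration; larger w = less between-individual variance
alpha = w * q                    # assumed decomposition: alpha = w * q

n_individuals = 8
totals = rng.integers(5, 40, size=n_individuals)          # n_i: choices made by each individual
p = rng.dirichlet(alpha, size=n_individuals)              # p_i ~ Dirichlet(alpha)
counts = np.array([rng.multinomial(n_i, p_i) for n_i, p_i in zip(totals, p)])  # x_i

# With alpha treated as known, conjugacy gives p_i | x_i ~ Dirichlet(alpha + x_i).
posterior_mean = (alpha + counts) / (w + totals)[:, None]
raw_proportion = counts / totals[:, None]
print(np.round(posterior_mean, 2))
```

Each posterior mean is a weighted average of the individual's raw proportions and the population preference `q`, so individuals with few observations are pulled more strongly toward the population. A full analysis would also place priors on `q` and `w` and sample them.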

Research Reagent Solutions

Table 2: Essential Analytical Tools for Mathematical Ecology

| Tool / Reagent | Function | Application Example |
|---|---|---|
| Directed Acyclic Graph (DAG) | A graphical causal model that encodes assumptions about the data-generating mechanism, helping to identify confounders and sources of bias [6]. | Used to structure a hierarchical causal diagram before data simulation to avoid ecological fallacy [6]. |
| R package 'TMB' | A tool for parameter estimation in nonlinear hierarchical models using the Laplace approximation [4]. | Fitting a state-space model to animal movement data to estimate process and measurement variances [4]. |
| JAGS / 'rjags' | A program for analyzing Bayesian hierarchical models using Markov Chain Monte Carlo (MCMC) sampling [4]. | Implementing a hierarchical Bayesian model for ecological count data [5]. |
| Hierarchical Patch Dynamics Modeling Platform (HPD-MP) | A software platform designed to facilitate the development of spatially explicit, multi-scale ecological models [9]. | Modeling the complex interactions within an urban landscape across different spatial scales [9]. |
| Kalman Filter | A recursive algorithm for estimating the state of a dynamic system from a series of incomplete and noisy measurements [4]. | Estimating the true, unobserved path of a moving animal from a set of locational estimates with error [4]. |

Workflow and Model Diagrams

Diagram 1: State-space model structure showing latent states and observed data.

Diagram 2: Hierarchical Bayesian model for count data analysis.

Welcome to the Technical Support Center

This resource provides troubleshooting guides and FAQs for researchers addressing the critical challenge of imperfect detection in high-frequency ecological data. Here, you will find solutions to separate true ecological processes from observational noise, ensuring the reliability of your findings for conservation and drug development applications.

Frequently Asked Questions

Q1: What is imperfect detection, and why is it a critical problem in my research? Imperfect detection means the true occupancy state of surveyed units will not always be observed, creating ambiguity about true changes in occupancy state [10]. In practical terms, you may fail to detect a species that is present (a false negative), or incorrectly record a species as present when it is truly absent (a false positive) [10]. Even a low false-positive rate (e.g., <5%) can induce substantial bias in occupancy estimates [10]. If unaccounted for, this observational noise can lead to flawed inferences about species distribution, population trends, and the impacts of environmental change or therapeutic interventions.

Q2: My high-frequency sensor data suggests a species has vanished. How can I tell if it's truly absent or just undetected? A single non-detection is ambiguous. The key is to conduct repeated surveys over a short time period at a given site [10]. The pattern of detections and non-detections across these surveys allows you to model and account for detection probability. If a species is detected at least once, you know it is present. If it is never detected, you can use a statistical model (like an occupancy model) to estimate the probability that the non-detection is a true absence versus a series of false negatives [10] [11].
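
The reasoning above can be made concrete with Bayes' rule. The sketch assumes independent surveys, a constant detection probability, and no false positives; the function name and the example values of `psi` and `p` are illustrative.

```python
def prob_present_given_no_detection(psi, p, n_surveys):
    """Posterior probability that a site is occupied after n_surveys non-detections.

    psi: prior occupancy probability; p: per-survey detection probability.
    Assumes independent surveys, constant p, and no false positives.
    """
    missed_every_time = psi * (1.0 - p) ** n_surveys
    return missed_every_time / (missed_every_time + (1.0 - psi))

for k in (1, 3, 6):
    print(k, round(prob_present_given_no_detection(psi=0.5, p=0.4, n_surveys=k), 3))
```

With these assumed values a single non-detection still leaves a substantial chance that the species is present, while six consecutive non-detections make true absence far more plausible.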

Q3: My field team is reporting species misidentification. How does this affect my models, and how can I correct for it? Species misidentification causes false positive detections, which lead to a systematic overestimation of occupancy probability [10]. In the context of high-frequency data, this can create the illusion of a stable population where there is none. To address this:

- Pre-Collection: Implement rigorous observer training programs to minimize errors at the source [10].

- Post-Collection: Use statistical models that incorporate a probability of misclassification [10]. These models can often leverage auxiliary data, such as DNA samples from animal scats, to estimate and correct for the misidentification rate [10].

Q4: What are the best practices for ensuring data quality in high-frequency ecological monitoring? Implement a system of High-Frequency Checks (HFCs) on your incoming data stream [12]. These are systematic checks performed at regular intervals (e.g., daily or weekly) during data collection to identify and correct issues early. As shown in the table below, these checks evaluate different aspects of the data collection process [12].

Table: Essential High-Frequency Checks for Ecological Data Quality

| Check Type | Specific Checks | Purpose |
|---|---|---|
| Daily Logic Checks | Duplicate observations, missing critical variables, outliers in numeric variables, survey progress | Ensure the basic integrity and completeness of each day's data [12]. |
| Enumerator Performance | Percentage of "Don't know" answers, average interview duration, productivity, statistics for numeric variables | Monitor and maintain consistent performance from data collection personnel or automated sensors [12]. |
| Survey Dashboard | Survey consent rate, percentage of missing values, variables with all missing values | Provide a high-level overview of the entire survey's health and progress [12]. |

Q5: How do I design a study that proactively accounts for imperfect detection from the start? Incorporate these elements into your experimental design:

- Replication: Plan for repeated surveys at your sampling units.

- Validation: Include methods for external validation, such as collecting physical samples (e.g., DNA, photos) to confirm species identity and reduce false positives [10].

- Covariates: Record environmental (e.g., temperature, time of day) and methodological covariates that might influence detection probability (e.g., observer ID, sensor type).

- Pilot Studies: Conduct a small pilot study to get initial estimates of detection probability, which can be used to optimize the number of required survey replicates.
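
One simple way to turn a pilot estimate of detection probability into a replicate count is to ask how many visits are needed to detect a present species at least once with a chosen confidence. This is a sketch assuming independent visits with constant detection probability; the function name and the 95% target are illustrative choices.

```python
import math

def surveys_needed(p, target=0.95):
    """Smallest number of visits K with 1 - (1 - p)**K >= target.

    p: per-visit detection probability (e.g., from a pilot study), 0 < p < 1.
    Assumes independent visits with constant p.
    """
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - p))

for p in (0.2, 0.3, 0.5):
    print(f"p={p}: {surveys_needed(p)} visits")
```

Formal design tools also weigh the number of sites against the number of visits, so this calculation is a starting point for the design rather than a complete one.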

Troubleshooting Guides

Issue: Suspected False Negatives are Obscuring True Absence

Symptoms: A species is rarely detected despite known presence from other sources. Models indicate a low or highly variable detection probability.

Resolution Steps:

- Diagnose: Use a single-season occupancy model with your detection history data. A key output is the estimated probability of detection (`p`). If `p` is low (e.g., <0.5), your study is suffering from significant false negatives.

- Act: Increase the number of survey replicates. The number of visits needed can be estimated from pilot data or using simulation tools. Improve detection methods (e.g., more sensitive equipment, surveys at optimal times of day).

- Model: In your final analysis, use the occupancy model to estimate the true probability of occupancy (`ψ`), which is corrected for the imperfect detection `p` [10].
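
A minimal single-season occupancy fit can be sketched with simulated detection histories. The assumptions are constant occupancy and detection probabilities, no covariates, no false positives, and a coarse grid search standing in for a proper optimizer or a dedicated occupancy package; all numeric values are illustrative.

```python
import numpy as np

rng = np.random.default_rng(6)

# Simulate detection histories under assumed true values.
n_sites, n_visits, psi_true, p_true = 400, 4, 0.6, 0.3
occupied = rng.random(n_sites) < psi_true
detections = rng.binomial(n_visits, p_true, size=n_sites) * occupied  # detections per site

def neg_log_lik(psi, p, d, k):
    """Negative log-likelihood of the single-season occupancy model (constant psi, p)."""
    detected = psi * p**d * (1.0 - p) ** (k - d)          # sites with at least one detection
    never = psi * (1.0 - p) ** k + (1.0 - psi)            # missed every time, or truly absent
    return -np.sum(np.log(np.where(d > 0, detected, never)))

# Coarse grid search stands in for a proper optimizer in this sketch.
grid = np.linspace(0.01, 0.99, 99)
nll = np.array([[neg_log_lik(a, b, detections, n_visits) for b in grid] for a in grid])
i, j = np.unravel_index(np.argmin(nll), nll.shape)
psi_hat, p_hat = float(grid[i]), float(grid[j])

naive_occupancy = float(np.mean(detections > 0))   # ignores imperfect detection
print(f"naive={naive_occupancy:.2f}, psi_hat={psi_hat:.2f}, p_hat={p_hat:.2f}")
```

The naive share of sites with a detection underestimates occupancy, while the model-based estimate lands closer to the simulated value because it accounts for sites where the species was present but missed on every visit.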

Issue: Suspected False Positives are Inflating Occupancy Estimates

Symptoms: A species is reported in unlikely habitats or by only a single observer, creating unexplained "spikes" in presence data.

Resolution Steps:

- Diagnose: Implement a multi-state occupancy model or a false-positive occupancy model that includes a parameter for the probability of a false positive (`p10` or `fp`) [10].

- Act: Review and validate all records of the species in question. Cross-reference with other data sources (e.g., audio recordings, photos, DNA samples) to confirm identities [10].

- Model: Fit the false-positive model to your data. This model will provide a corrected estimate of occupancy that is not biased upwards by misidentification.
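
The size of the upward bias can be gauged with a short calculation. The sketch assumes independent surveys, a per-survey false-positive probability `p10` at unoccupied sites, and a detection probability `p11` at occupied sites; the function name and values are illustrative.

```python
def expected_naive_occupancy(psi, p11, p10, n_surveys):
    """Expected share of sites with at least one recorded detection.

    psi: true occupancy; p11: detection probability at occupied sites;
    p10: false-positive probability per survey at unoccupied sites.
    """
    detected_if_occupied = 1.0 - (1.0 - p11) ** n_surveys
    detected_if_empty = 1.0 - (1.0 - p10) ** n_surveys
    return psi * detected_if_occupied + (1.0 - psi) * detected_if_empty

no_fp = expected_naive_occupancy(psi=0.2, p11=0.5, p10=0.0, n_surveys=5)
with_fp = expected_naive_occupancy(psi=0.2, p11=0.5, p10=0.03, n_surveys=5)
print(f"without false positives: {no_fp:.3f}, with 3% false positives: {with_fp:.3f}")
```

Under these assumed values, a 3% per-survey false-positive rate pushes the apparent occupancy well above the true value of 0.2, because false positives accumulate across repeated surveys at the many unoccupied sites.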

Experimental Protocols for High-Frequency Data Collection

The following protocol, inspired by a study on artificial light at night (ALAN), provides a template for designing high-frequency ecological studies that account for imperfect detection [13].

Objective: To monitor the valve behavior of two oyster species (Crassostrea gigas and Ostrea edulis) in response to an environmental variable (ALAN) over one year.

1. Site Selection and Experimental Design:

- Location: A coastal channel in Arcachon Bay, France [13].

- Design: Two experimental oyster tables were established:

  - Control condition: Exposed to natural light cycles.

  - Treatment condition (ALAN): Exposed to skyglow intensity using underwater LEDs [13].

- Replication: The two tables were placed 18 meters apart to ensure identical environmental conditions (e.g., water chemistry, temperature) while preventing light pollution of the control site [13].

2. Data Collection and Sensor Deployment:

- Biological Response: Valve activity of 16 oysters per species, per condition, was recorded using High-Frequency Non-Invasive (HFNI) valvometer biosensors. Data were acquired at 10 Hz; assuming the 16 oysters in a group are sampled in turn, this corresponds to one measurement per individual every 1.6 seconds [13].

- Environmental Covariates: The platform was equipped with sensors to continuously measure:

  - Light irradiance

  - Temperature

  - Salinity

  - Turbidity

  - Conductivity

  - Water level [13]

- Temporal Scope: Data was collected continuously from December 2023 to November 2024 [13].

3. Data Quality Assurance (HFCs for Sensor Data):

- Internal Consistency Checks: Construct book snapshots from raw data streams and compare derived aggregates (e.g., daily activity cycles) to known biological patterns [14].

- Flag, Don't Discard: Inconsistent data states (e.g., sensor malfunction) are marked with flags (`F_BAD_TS_RECV`, `F_MAYBE_BAD_BOOK`) rather than being deleted, preserving data integrity for further review [14].

- Systematic Bug Fixes: Address anomalies by fixing parser bugs or normalization edge cases, followed by a complete regeneration of historical data to ensure version consistency [14].
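
The "flag, don't discard" idea can be sketched with bit flags stored alongside the readings. The flag names come from the text above, but their numeric values, their interpretation in the comments, the plausible-range thresholds, and the example data are all assumptions made for illustration.

```python
from enum import IntFlag

import numpy as np

class QualityFlag(IntFlag):
    """Bit flags; the numeric values and meanings here are illustrative assumptions."""
    OK = 0
    F_BAD_TS_RECV = 1      # assumed meaning: receive timestamp looks wrong
    F_MAYBE_BAD_BOOK = 2   # assumed meaning: derived state may be inconsistent

timestamps = np.array([0.0, 0.1, 0.2, 0.15, 0.4])   # hypothetical sensor times (s)
values = np.array([1.2, 1.3, 99.0, 1.1, 1.2])        # hypothetical readings
flags = np.zeros(len(values), dtype=int)

# Flag records whose timestamp goes backwards relative to the previous record.
bad_ts = np.concatenate(([False], np.diff(timestamps) < 0))
flags[bad_ts] |= int(QualityFlag.F_BAD_TS_RECV)

# Flag readings outside an assumed plausible range instead of deleting them.
out_of_range = (values < 0.0) | (values > 10.0)
flags[out_of_range] |= int(QualityFlag.F_MAYBE_BAD_BOOK)

clean_mean = float(values[flags == 0].mean())        # analysis uses unflagged rows only
print(flags.tolist(), round(clean_mean, 3))
```

All five records remain in the dataset, so a later review can revisit the flagged rows or regenerate the flags after a parser fix without losing raw data.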

The logical workflow for such an experiment is outlined below.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for an Imperfect Detection Study

| Item / Solution | Function / Explanation |
|---|---|
| Occupancy Models | A class of statistical models that jointly estimates the true probability of a species being present (occupancy, ψ) and the probability of detecting it given it is present (detection, p) [10]. |
| Multi-State Occupancy Models | Extends basic occupancy models to situations where a site can be classified into more than two states (e.g., absent, present with low abundance, present with high abundance), while accounting for observation error in classifying the state [10]. |
| High-Frequency Non-Invasive (HFNI) Biosensors | Sensors that automatically and continuously record physiological or behavioral data from organisms without causing disturbance, crucial for capturing high-resolution temporal patterns [13]. |
| Valvometers | A specific type of HFNI biosensor that measures the opening and closing of bivalve shells, serving as a sensitive indicator of organism behavior and environmental stress [13]. |
| Environmental Sensor Array | A suite of sensors that measure covariates (e.g., temperature, light, salinity) which are essential for understanding the drivers of both ecological state and detection probability [13]. |
| DNA Barcoding | A molecular technique used to validate species identifications from field observations, providing a "truth standard" to quantify and correct for false positive errors in models [10]. |
| ipacheck Stata Package | A software package providing a comprehensive set of tools to implement High-Frequency Checks (HFCs) on survey data, streamlining the process of quality assurance during data collection [12]. |

Conceptual Framework for Imperfect Detection

To effectively troubleshoot, it is vital to understand the core conceptual framework. The fundamental issue is that what you observe is not the true ecological state but a filtered version of it. The following diagram illustrates how the true state is transformed into observed data through the dual filters of ecological process and observation noise.

The mathematical core of this framework treats the observed data as a compound distribution [11]. The observed abundance in a sample (M_1) is the sum of detections from the true population (M_0), where each individual has a probability of being detected [11]. This is formalized as:

- Abundance: $M_1 = \sum_{i=1}^{M_0} Z_i$ (if $M_0 > 0$), where $Z_i$ is an indicator of whether the $i$-th individual was detected [11].

- Presence/Absence: $Y_1 = I(M_1 > 0)$, which is 1 if the species is detected and 0 otherwise [11].

This formal structure unifies the treatment of various data types (counts, presence/absence, biomass) and allows for the development of statistical models that can "remove" the observation filter to reveal the underlying ecological truth [11].
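
The compound structure can be simulated to see how the observation filter distorts presence data. The sketch assumes a Poisson distribution for the true abundance and an independent, equal detection probability for every individual; both are illustrative assumptions rather than requirements of the framework in [11].

```python
import numpy as np

rng = np.random.default_rng(7)

n_samples, mean_abundance, detect_prob = 50_000, 2.0, 0.3   # assumed values
m0 = rng.poisson(mean_abundance, size=n_samples)    # true abundance M_0 (Poisson is an assumption)
m1 = rng.binomial(m0, detect_prob)                  # M_1: sum of M_0 Bernoulli indicators Z_i
y1 = (m1 > 0).astype(int)                           # Y_1 = I(M_1 > 0)

true_presence = float(np.mean(m0 > 0))
observed_presence = float(y1.mean())
# For Poisson M_0 with independent detection, M_1 is Poisson(mean * p), so
# P(Y_1 = 1) = 1 - exp(-mean * p).
expected_observed = 1.0 - np.exp(-mean_abundance * detect_prob)
print(f"true presence: {true_presence:.3f}, observed: {observed_presence:.3f}, "
      f"expected observed: {expected_observed:.3f}")
```

Observed presence falls well below true presence in this setting, and the gap is exactly what a model of the detection process is meant to undo.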

Frequently Asked Questions & Troubleshooting Guides

Q1: My species interaction model inferred from microbiome time-series data is inaccurate. The predicted dynamics do not match new experimental observations. What could be wrong?

- A: This is a common challenge when inferring ecological dynamics. The issue often lies in the parameter estimation method.

- Potential Cause 1: Over-reliance on Gradient Matching. Traditional methods, like gradient matching used with generalized Lotka-Volterra (gLV) models, can be highly inaccurate if data is sparsely sampled or collected near equilibrium states, leading to poor gradient estimates [15].

- Solution: Consider using a computational framework like MBPert, which leverages numerical solutions of differential equations and machine learning optimization instead of gradient matching. This iteratively solves the ODEs and updates parameters to minimize the difference between predicted and observed states, leading to more robust captures of microbial dynamics [15].

- Potential Cause 2: Insufficient Perturbation Data for Training. The model may not have been trained on a wide enough range of perturbation conditions (e.g., only single-species perturbations) to accurately predict responses to novel, combinatorial perturbations [15].

- Solution: When designing experiments, include a variety of perturbation types. Simulation studies show that incorporating higher-order combinatorial perturbations (e.g., three-species perturbations) during training significantly improves the model's predictive accuracy for unseen perturbation scenarios [15].

Q2: How do I choose the right statistical model to relate animal movement data to environmental features for habitat conservation?

- A: The choice of model depends heavily on the temporal resolution of your data and the specific ecological question.

- For broad-scale habitat selection (e.g., identifying a species' home range or critical habitat), Resource Selection Functions (RSFs) are appropriate. They compare "used" locations to "available" locations within a defined area and are well-suited for data at a coarser temporal resolution [16].

- For fine-scale, movement-influenced habitat selection, a Step Selection Function (SSF) is more appropriate. SSFs use a matched case-control design to compare each observed movement step to a set of hypothetical random steps, thereby explicitly accounting for movement constraints and autocorrelation in high-frequency data [16].

- To understand how habitat relates to specific, latent behaviors, a Hidden Markov Model (HMM) is ideal. HMMs can identify discrete behavioral states (e.g., foraging, resting) from movement data and then link the probability of being in each state to environmental covariates [16]. Using the wrong model can lead to different ecological conclusions and identification of different "important" areas [16].

Q3: I need a single, robust indicator for marine ecosystem health that is practical for management. What are my options?

- A: A composite index that combines several network-based metrics is often more informative than a single metric.

- Solution: Consider implementing the Ecosystem Traits Index (ETI) [17]. It combines three complementary dimensions of ecosystem structure:

- Hub Index: Identifies critically important "hub species" based on their network connectivity (degree, degree-out, PageRank). The loss of these species disproportionately impacts ecosystem function [17].

- Gao's Resilience Score: Provides a measure of the ecosystem's overall structural resilience and capacity to withstand perturbations, based on network density and the relative weight of strong/weak connections [17].

- Green Band Index: Measures the pressure on ecosystem structure from human activities, such as fishing mortality [17].

- Troubleshooting: The ETI may not distinguish the effects of individual stressors like fishing vs. climate change. It should be used as a general health indicator, with its component indices tracked individually to help diagnose specific pressures [17].

Q4: My ecological data comes from different sources (e.g., satellite tags, manual surveys, genetic sampling). How can I integrate them reliably?

- A: Data integration is a central challenge in statistical ecology. The key is using models that can separate the ecological process from the observation process.

- Recommended Framework: Hierarchical or State-Space Models. These models are designed to handle complex, layered data streams [18].

- In these models, one sub-model represents the underlying ecological process of interest (e.g., true animal abundance, location, or behavior).

- A separate sub-model represents the observation process, which accounts for biases, imperfect detection, and different error structures inherent in each data source [18].

- Best Practice: Prior to analysis, ensure you follow good Research Data Management (RDM) practices. Clean and standardize data from different sources, use a structured workflow for data preparation, and document all metadata meticulously. This improves efficiency, transparency, and reproducibility [19].

Table 1: Comparison of Statistical Models for Animal Movement Data

| Model | Primary Use | Data Scale | Key Advantage | Key Limitation |

|---|---|---|---|---|

| Resource Selection Function (RSF) [16] | Broad-scale habitat selection & identifying critical areas. | Population/Home range scale (2nd order selection). | Ease of use and implementation; provides a landscape-level view of habitat probability. | Does not account for movement autocorrelation in fine-scale data. |

| Step Selection Function (SSF) [16] | Fine-scale, movement-driven habitat selection. | Within-home range scale (3rd/4th order selection). | Explicitly accounts for movement constraints and autocorrelation by modeling sequential steps. | Requires relatively high-frequency data compared to RSFs. |

| Hidden Markov Model (HMM) [16] | Linking environmental covariates to discrete behavioral states. | Behavioral scale. | Infers unobserved behavioral states, providing a mechanistic link between environment and behavior. | Increased model complexity; requires careful interpretation of hidden states. |

Table 2: Components of the Ecosystem Traits Index (ETI)

| Index Component | What It Measures | Interpretation for Ecosystem Health | Formula / Key Metrics |

|---|---|---|---|

| Hub Index [17] | Topological importance of species critical to food web structure and function. | Identifies species whose conservation is paramount for maintaining overall ecosystem integrity. | $\mathrm{Hub}_{\mathrm{Index}} = \min(R_{\mathrm{degree}}, R_{\mathrm{degree\_out}}, R_{\mathrm{pageRank}})$ |

| Gao's Resilience [17] | Structural resilience of the ecosystem network based on connection density and flow patterns. | A higher score indicates a greater capacity to absorb perturbations without collapsing. | Based on network density and the relative weight of strong interactions. |

| Green Band [17] | Anthropogenic pressure on the ecosystem structure (e.g., from harvesting mortality). | Quantifies the distortive pressure human activity places on the ecosystem. | Measures mortality from human activities applied to the ecosystem network. |

Detailed Experimental Protocols

Protocol 1: Inferring Species Interactions from Perturbation Time-Series Data using MBPert

Application: This protocol is used for inferring directed, signed, and weighted species interaction networks from time-series data, such as microbiome data, to predict community dynamics under perturbation [15].

Workflow Diagram: MBPert Computational Framework

Methodology:

- Input Data Preparation: Gather one of two data types:

- Model Initialization: Define the governing dynamical system model. For microbial ecosystems, this is typically a modified generalized Lotka-Volterra (gLV) formulation that includes terms for perturbation effects [15].

- Iterative Parameter Estimation:

- The framework uses a machine learning optimizer (e.g., in PyTorch) to estimate model parameters (growth rates, interaction strengths).

- In each iteration, the differential equations are numerically solved using the current parameter estimates to generate a predicted system state.

- The optimizer then calculates the loss by comparing the predicted state against the observed data.

- Parameters are updated to minimize this loss, iterating until convergence [15].

- Validation: Assess model performance on a held-out validation set of perturbation conditions not used during training. Performance is measured by the correlation between predicted and true steady states or dynamics [15].
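The estimation loop described above (solve the ODEs with the current parameters, compare against observations, update) can be illustrated with a minimal sketch. This is not the MBPert API: the function names, the exact form of the perturbation term (`eps[i] * u`), the RK4 integrator, and the two-species parameter values are all assumptions made for illustration. It shows only the forward solve and the loss evaluation that an optimizer would then minimize.

```python
def glv_rhs(x, r, A, eps, u):
    """Assumed gLV form: dx_i/dt = x_i * (r_i + sum_j A_ij * x_j + eps_i * u)."""
    n = len(x)
    return [
        x[i] * (r[i] + sum(A[i][j] * x[j] for j in range(n)) + eps[i] * u)
        for i in range(n)
    ]


def rk4_step(x, dt, r, A, eps, u):
    """One classical Runge-Kutta (RK4) step of the gLV system."""
    k1 = glv_rhs(x, r, A, eps, u)
    k2 = glv_rhs([xi + 0.5 * dt * k for xi, k in zip(x, k1)], r, A, eps, u)
    k3 = glv_rhs([xi + 0.5 * dt * k for xi, k in zip(x, k2)], r, A, eps, u)
    k4 = glv_rhs([xi + dt * k for xi, k in zip(x, k3)], r, A, eps, u)
    return [
        xi + dt / 6.0 * (a + 2 * b + 2 * c + d)
        for xi, a, b, c, d in zip(x, k1, k2, k3, k4)
    ]


def simulate(x0, r, A, eps, u, dt=0.05, n_steps=400):
    """Numerically solve the ODEs and return the full trajectory."""
    traj = [list(x0)]
    for _ in range(n_steps):
        traj.append(rk4_step(traj[-1], dt, r, A, eps, u))
    return traj


def mse_loss(pred, obs):
    """Mean squared difference between predicted and observed states."""
    total = sum(
        (p - o) ** 2 for ps, os_ in zip(pred, obs) for p, o in zip(ps, os_)
    )
    return total / (len(pred) * len(pred[0]))


# Hypothetical two-species community (values chosen for illustration only).
r_true = [1.0, 0.8]                      # growth rates
A_true = [[-1.0, -0.2], [-0.3, -1.0]]    # interaction strengths
eps_true = [-0.5, 0.0]                   # perturbation susceptibilities
x0 = [0.1, 0.1]

# "Observed" data generated from the true parameters under perturbation u = 1.
observed = simulate(x0, r_true, A_true, eps_true, u=1.0)

# A candidate that ignores cross-species interactions fits worse.
A_guess = [[-1.0, 0.0], [0.0, -1.0]]
loss_true = mse_loss(simulate(x0, r_true, A_true, eps_true, u=1.0), observed)
loss_guess = mse_loss(simulate(x0, r_true, A_guess, eps_true, u=1.0), observed)
print(loss_true, loss_guess)
```

In a full implementation, a gradient-based optimizer would repeatedly adjust `r`, `A`, and `eps` to drive this loss down, and the held-out perturbation conditions would be scored in the same way.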

Protocol 2: Modeling Species-Habitat Associations with Movement Data

Application: This protocol outlines the steps for using movement data to understand how animals select habitat, which is fundamental for designating critical habitat and conservation planning [16].

Workflow Diagram: Habitat Association Analysis

Methodology:

- Data Collection & Preparation: Collect animal tracking data (e.g., from GPS tags) and rasterize all relevant environmental covariates (e.g., vegetation, terrain, prey density) to the same spatial resolution [16].

- Model Selection: Choose the model based on your research question and data resolution (refer to Table 1). For example:

- Use an RSF to identify critical habitat across a landscape. This involves sampling "used" locations from the track and "available" locations from the animal's potential home range, then fitting a logistic regression [16].

- Use an SSF to understand habitat selection during movement. This involves generating random steps from each observed location and fitting a conditional logistic regression to the case (observed step) and controls (random steps) [16].

- Use an HMM to connect habitat to behavior. This involves fitting a model to the movement data to infer discrete behavioral states and then modeling the state transition probabilities or initial state probabilities as a function of environmental covariates [16].

- Model Fitting & Interpretation: Fit the selected model and interpret the selection coefficients. Map the resulting function (e.g., relative selection probability) back into geographic space to visualize important areas [16].

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Computational Tools & Data Sources for Ecological Analysis

| Item Name | Type | Function in Analysis |

|---|---|---|

| Generalized Lotka-Volterra (gLV) Equations [15] | Mathematical Model | A foundational ODE framework for modeling the dynamics of competing species, used as the core engine in interaction inference tools like MBPert. |

| R Statistical Environment [18] | Software Platform | A primary tool for statistical ecologists; used for implementing a wide range of models including hierarchical models, state-space models, and selection functions. |

| amt R Package [16] | Software Tool | A dedicated package for analyzing animal movement data; provides functions for processing tracking data and implementing RSFs and SSFs. |

| momentuHMM R Package [16] | Software Tool | A package designed for fitting complex Hidden Markov Models to animal movement data, allowing for the incorporation of various data streams and covariates. |

| Long-Term Ecological Research (LTER) Data [20] | Data Source | Publicly accessible, long-term data from representative ecosystems, essential for testing ecological theory and analyzing phenomena over long time scales. |

| Ecological Network Models [17] | Data Structure/Model | A representation of an ecosystem as a network of nodes (species) and edges (interactions), enabling the calculation of structural indices like the Hub Index and Gao's Resilience. |

A Practical Toolkit: Key Mathematical Models for High-Frequency Ecological Data

Troubleshooting Guide: Common ARIMA-GARCH Implementation Issues

Problem 1: Non-Stationary Input Data Causing Model Instability

- Symptoms: Poor model fit, unreasonable parameter estimates, and forecasts that diverge rapidly.

- Root Cause: ARIMA models require the input time series to be stationary, meaning its statistical properties (mean, variance) do not change over time [21] [22]. Environmental data often exhibit trends and seasonality, violating this assumption.

- Solution:

- Test for Stationarity: Perform an Augmented Dickey-Fuller (ADF) test. The null hypothesis (H0) is that the data is non-stationary. A p-value below a significance level (e.g., 0.05) allows you to reject H0 and conclude the data is stationary [22].

- Transform the Data: If the data is non-stationary, apply differencing (the "I" in ARIMA). For data with a trend, first-order differencing (subtracting the previous value from the current one, y_t − y_{t−1}) is often sufficient. The pmdarima library can automatically determine the optimal order of differencing [22].
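To make the differencing step concrete, here is a minimal standard-library sketch. The helper name `difference` and the toy series are assumptions for illustration; in practice the stationarity check itself would be run with an ADF implementation from a statistics library.

```python
def difference(series, order=1):
    """Apply differencing `order` times: y_t - y_{t-1} at each pass."""
    out = list(series)
    for _ in range(order):
        out = [curr - prev for prev, curr in zip(out, out[1:])]
    return out


# A linear trend becomes constant after one difference.
trend = [2.0 + 0.5 * t for t in range(10)]
print(difference(trend))

# A quadratic trend needs second-order differencing.
quadratic = [t * t for t in range(6)]
print(difference(quadratic, order=2))
```

Each pass shortens the series by one observation, which is worth remembering when aligning differenced data with covariates.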

Problem 2: Model Residuals Exhibit Volatility Clustering

- Symptoms: The residuals from a well-fitted ARIMA model are not white noise; their variance changes over time, often in clusters [22]. This is common in high-frequency environmental data.

- Root Cause: The ARIMA model has captured the conditional mean of the process but not the conditional variance. The residuals still contain exploitable patterns of heteroskedasticity.

- Solution:

- Test for ARCH Effects: Conduct a Lagrange Multiplier (LM) test for ARCH effects in the ARIMA residuals [22]. A significant p-value (e.g., < 0.05) indicates the presence of volatility clustering, justifying the use of a GARCH model.

- Apply GARCH Model: Fit a GARCH model (e.g., GARCH(1,1)) to the residuals of the ARIMA model to model the time-varying volatility [22].
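The logic of the ARCH LM test can be sketched in a few lines for the single-lag case: regress the squared residuals on their own first lag and compare n·R² with a chi-squared critical value. The function names, the simulated ARCH(1) series, and its parameter values are assumptions for illustration; a library implementation would normally be used and would support more lags.

```python
import random


def arch_lm_stat(resid):
    """LM statistic n * R^2 from regressing e_t^2 on e_{t-1}^2 (one lag)."""
    sq = [e * e for e in resid]
    x, y = sq[:-1], sq[1:]
    n = len(y)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    r_squared = (sxy * sxy) / (sxx * syy)  # R^2 of a simple regression
    return n * r_squared


def simulate_arch1(n, omega, alpha, seed):
    """Simulate residuals whose variance depends on the previous shock."""
    rng = random.Random(seed)
    e_prev, out = 0.0, []
    for _ in range(n):
        sigma2 = omega + alpha * e_prev ** 2
        e_prev = rng.gauss(0.0, 1.0) * sigma2 ** 0.5
        out.append(e_prev)
    return out


CHI2_1DF_95 = 3.841  # 95% critical value of chi-squared with 1 degree of freedom

resid = simulate_arch1(3000, omega=0.5, alpha=0.3, seed=1)
lm = arch_lm_stat(resid)
print(f"LM = {lm:.1f}; ARCH effects detected: {lm > CHI2_1DF_95}")
```

A statistic above the critical value corresponds to the significant p-value described above and would justify moving on to a GARCH specification.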

Problem 3: Inaccurate Forecast Intervals

- Symptoms: Prediction intervals from the ARIMA model do not accurately contain the future observed values, especially in periods of high volatility.

- Root Cause: The ARIMA model assumes a constant variance (homoskedasticity). When this assumption is violated, the forecast intervals are inaccurate.

- Solution:

- Use Simulation: Generate prediction intervals by simulating multiple future paths (B = 1000) of the combined ARIMA-GARCH model [23].

- Calculate Quantiles: For each forecast horizon, calculate the pointwise prediction intervals (e.g., 95% interval) using the 2.5th and 97.5th percentiles of the simulated future values [23].
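A minimal sketch of the simulation approach follows. To stay self-contained it uses an AR(1) mean equation as a stand-in for the fitted ARIMA model, and all parameter values, starting values, and function names are assumptions chosen for illustration rather than estimates from real data.

```python
import random


def percentile(sorted_vals, q):
    """Linear-interpolation percentile of pre-sorted values, q in [0, 100]."""
    pos = (len(sorted_vals) - 1) * q / 100.0
    lo = int(pos)
    hi = min(lo + 1, len(sorted_vals) - 1)
    return sorted_vals[lo] + (sorted_vals[hi] - sorted_vals[lo]) * (pos - lo)


def simulate_paths(y_last, e_last, sigma2_last, phi, omega, alpha, beta,
                   horizon, B=1000, seed=0):
    """Simulate B future paths of an AR(1) mean with GARCH(1,1) errors."""
    rng = random.Random(seed)
    paths = []
    for _ in range(B):
        y, e, s2 = y_last, e_last, sigma2_last
        path = []
        for _ in range(horizon):
            s2 = omega + alpha * e * e + beta * s2   # conditional variance
            e = rng.gauss(0.0, 1.0) * s2 ** 0.5      # new shock
            y = phi * y + e                          # conditional mean + shock
            path.append(y)
        paths.append(path)
    return paths


def prediction_intervals(paths, lower_q=2.5, upper_q=97.5):
    """Pointwise percentile intervals for each forecast horizon."""
    intervals = []
    for h in range(len(paths[0])):
        vals = sorted(p[h] for p in paths)
        intervals.append((percentile(vals, lower_q), percentile(vals, upper_q)))
    return intervals


paths = simulate_paths(y_last=0.2, e_last=0.1, sigma2_last=0.05,
                       phi=0.6, omega=0.01, alpha=0.1, beta=0.8, horizon=5)
intervals = prediction_intervals(paths)
for h, (lo, hi) in enumerate(intervals, start=1):
    print(f"h={h}: [{lo:.3f}, {hi:.3f}]")
```

Because the variance recursion is run inside every path, the interval width responds to the most recent shock and variance instead of staying fixed.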

Problem 4: GARCH Model Parameter Estimation Difficulties

- Symptoms: Optimization algorithms fail to converge, or the estimated parameters are at the boundary of the parameter space (e.g., α = 0, β = 1), leading to unrealistic, infinite forecasts [24] [25].

- Root Cause: This can be caused by an incorrect assumption for the initial variance (e.g., setting σ²₀ = 0 is invalid) [24], model misspecification, or insufficient data.

- Solution:

- Initialize Variance Correctly: Set the initial variance σ²₀ to the sample variance of the ARIMA residuals, not zero [24].

- Check Parameter Constraints: Ensure the GARCH parameters (ω, α, β) are non-negative and that α + β < 1 for a stationary process. The arch library in Python handles these constraints during estimation [22].
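The two fixes above can be expressed as a short sketch of the GARCH(1,1) variance recursion. The function names and the toy residuals are assumptions for illustration. The check also requires ω > 0, a slightly stricter condition than non-negativity that keeps the variance strictly positive.

```python
def sample_variance(x):
    """Unbiased sample variance."""
    m = sum(x) / len(x)
    return sum((v - m) ** 2 for v in x) / (len(x) - 1)


def is_valid_garch11(omega, alpha, beta):
    """Positivity and covariance-stationarity conditions for GARCH(1,1)."""
    return omega > 0 and alpha >= 0 and beta >= 0 and alpha + beta < 1


def garch11_variance(resid, omega, alpha, beta):
    """Conditional variances, initialised at the sample variance (not zero)."""
    if not is_valid_garch11(omega, alpha, beta):
        raise ValueError("GARCH(1,1) parameter constraints violated")
    sigma2 = [sample_variance(resid)]
    for e in resid[:-1]:
        sigma2.append(omega + alpha * e * e + beta * sigma2[-1])
    return sigma2


resid = [0.1, -0.2, 0.3, -0.1]            # toy ARIMA residuals
sigma2 = garch11_variance(resid, omega=0.01, alpha=0.1, beta=0.8)
print(sigma2)
print(is_valid_garch11(0.01, 0.0, 1.0))   # boundary case from the symptoms
```

The boundary case α = 0, β = 1 fails the check because α + β is not strictly below one, which matches the non-stationary behaviour described in the symptoms.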

Frequently Asked Questions (FAQs)

How do I determine the correct orders (p,d,q) for my ARIMA model?

Use a combination of statistical tests and information criteria. The pmdarima.auto_arima function can automatically search for the best (p,d,q) orders by minimizing metrics like the Akaike Information Criterion (AIC) [22]. Ensure your input data is stationary before this step.

Why is a hybrid ARIMA-GARCH model particularly useful for high-frequency ecological data?

High-frequency ecological data (e.g., from valvometer biosensors [13]) often exhibit:

- Complex Patterns: Trends, diurnal/nocturnal cycles, and tidal influences captured by ARIMA.

- Volatility Clustering: Periods of calm and high variability (e.g., during storms or behavioral events) captured by GARCH.

The hybrid model handles these two components separately, which gives more robust point forecasts and more reliable prediction intervals [21] [26].

What are the main limitations of GARCH models in environmental forecasting?

- Sensitivity to Model Specification: Choosing the wrong GARCH order (p,q) can lead to poor forecasts [25].

- Assumption of Stationarity: The underlying series for the variance must be stationary [25].

- Difficulty with Extreme Events: GARCH models with normal innovations may underestimate the probability of extreme events, which have fat-tailed distributions [25].

- Computational Intensity: Estimation can be computationally demanding, especially with long, high-frequency time series [25].

Table 1: Key Statistical Tests for Model Identification and Diagnosis

| Test Name | Purpose | Interpretation of Key Result (p-value) | Application in Workflow |

|---|---|---|---|

| Augmented Dickey-Fuller (ADF) | Tests for stationarity in the time series [22]. | p < 0.05: Reject null hypothesis, data is stationary. | Pre-processing, before ARIMA modeling. |

| Ljung-Box Test | Tests for autocorrelation in model residuals (white noise test) [22]. | p < 0.05: Reject null hypothesis, residuals are not white noise. | Post-ARIMA fitting, to check for leftover patterns. |

| ARCH LM Test | Tests for autoregressive conditional heteroskedasticity (ARCH effects) [22]. | p < 0.05: Reject null hypothesis, ARCH effects present. | Post-ARIMA fitting, to justify GARCH modeling. |

Table 2: Essential Software Packages for Implementation

| Package/Library | Programming Language | Primary Function | Key Feature |

|---|---|---|---|

| pmdarima [22] | Python | Automatically finds optimal ARIMA orders. | Wraps statistical tests and model selection into a single function. |

| statsmodels [22] | Python | Fits ARIMA and other statistical models. | Provides detailed summary tables and diagnostics. |

| arch [22] | Python | Estimates GARCH and many variant models. | Handles complex distributions (e.g., Student's t) for innovations. |

| rugarch [23] | R | Fits a wide range of univariate GARCH models. | Allows for joint estimation of ARMA-GARCH models with fixed parameters. |

Experimental Protocol: Building an ARIMA-GARCH Model

Objective: To construct and validate a hybrid ARIMA-GARCH model for point and interval forecasting of a high-frequency environmental parameter (e.g., water temperature).

Workflow Overview:

Step-by-Step Procedure:

- Data Acquisition: Collect high-frequency time series data for the target environmental parameter. For example, use a high-accuracy sensor to record water temperature at 10 Hz frequency [13].

- Pre-processing and Stationarity:

- Clean data: Handle missing values and outliers.

- Test for stationarity: Perform the ADF test on the raw data.

- Make data stationary: If non-stationary, apply differencing. The pmdarima library's auto_arima can suggest the differencing order d [22].

- ARIMA Modeling:

- Residual Diagnosis:

- Extract residuals: Obtain the residuals from the fitted ARIMA model.

- Test for white noise: Perform the Ljung-Box test. A non-significant p-value is desired, indicating no autocorrelation.

- Test for ARCH effects: Perform the ARCH LM test on the residuals. A significant p-value (e.g., < 0.05) indicates the need for a GARCH model [22].

- GARCH Modeling:

- Specify model: Specify a GARCH model (e.g., GARCH(1,1)) using the arch_model function from the arch library.

- Fit the model: Fit the specified GARCH model to the ARIMA residuals. The distribution of innovations can be set to Student's t to better capture fat tails [22].

- Forecasting:

- Point forecast: Use the fitted ARIMA model to forecast the conditional mean.

- Interval forecast: Use simulation-based methods [23] with the combined ARIMA-GARCH model to generate prediction intervals that account for time-varying volatility.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for High-Frequency Environmental Time Series Analysis

| Item / Tool Name | Function / Purpose | Example in Ecological Research |

|---|---|---|

| HFNI Valvometer Biosensor [13] | Records valve activity of bivalves (e.g., oysters) at high frequency (e.g., 10 Hz) as a proxy for environmental and behavioral changes. | Used as a sentinel system to monitor the impact of Artificial Light at Night (ALAN) on coastal ecosystems [13]. |

| Multi-parameter Sonde (WiSens, MPE-PAR) [13] | Measures concurrent physical environmental parameters (Temperature, Salinity, Turbidity, Light Irradiance, Conductivity). | Provides the covariate time series data for modeling and understanding drivers of biological responses. |

| pmdarima Python Library [22] | Automates the process of selecting the optimal (p,d,q) parameters for an ARIMA model. | Speeds up model identification for long, high-frequency ecological datasets, ensuring a robust starting point. |

| arch Python Library [22] | Provides a comprehensive framework for estimating and diagnosing GARCH models and their variants. | Allows researchers to formally model and forecast the volatility inherent in ecological processes. |

State-Space Models and Hidden Markov Models (HMMs) for Inferring Hidden Behavioral States

Core Concepts FAQ

1. What is the fundamental difference between a Markov Model and a Hidden Markov Model (HMM)?

A Markov Model describes a system where each state is directly observable, and the probability of each state depends only on the previous state (the Markov Property). In contrast, a Hidden Markov Model (HMM) assumes the system possesses hidden (or latent) states that are not directly observable. We can only observe outputs or emissions that are probabilistically dependent on these hidden states [27] [28]. In ecological studies, you might observe an animal's movement patterns (observations) to infer its underlying behavioral state, such as foraging or resting (hidden states).

2. What are the key mathematical parameters needed to define an HMM?

An HMM is defined by three core components [27]:

- Transition Probabilities (A): The probability of moving from one hidden state to another. For states i and j, this is a_ij = P(S_{t+1} = j | S_t = i).

- Emission Probabilities (B): The probability of observing a particular output given a specific hidden state. For state j and observation k, this is b_j(k) = P(O_t = k | S_t = j).

- Initial State Distribution (π): The probability distribution over the hidden states at the first time step, π_i = P(S_1 = i).

3. What are the primary types of inference problems solved using HMMs?

Researchers typically tackle three key problems with HMMs [29] [27]:

- Evaluation: Computing the probability of a particular observation sequence given the model parameters, typically solved using the Forward Algorithm.

- Decoding: Finding the most likely sequence of hidden states that generated a given sequence of observations, often solved using the Viterbi Algorithm.

- Learning: Determining the model parameters (A, B, π) that best fit the observed data, usually achieved with the Baum-Welch Algorithm (an Expectation-Maximization algorithm).

Troubleshooting Guides

Problem 1: My HMM Fails to Converge or Produces Inaccurate State Estimates

Issue: The model fails to learn a meaningful pattern from the high-frequency ecological data (e.g., GPS tracks, accelerometer readings), resulting in poor inference of hidden behavioral states.

Potential Causes and Solutions:

Cause: Poorly Chosen Initial Parameters. The EM algorithm used in learning (like Baum-Welch) is sensitive to initial values and can converge to a local maximum instead of the global optimum.

- Solution: Run the learning algorithm multiple times with different random initializations for the transition and emission matrices. Select the model with the highest likelihood on the observation data [29].

Cause: Model Mismatch. The structure of your HMM (e.g., number of states, assumptions on emissions) does not reflect the underlying biological process.

- Solution: Re-evaluate the number of hidden states. Incorporate domain knowledge to define a biologically plausible state space. For continuous observational data, ensure you are using the appropriate emission distribution (e.g., Gaussian, mixture model) instead of forcing a discrete model [29].

Cause: Insufficient Data. The model requires a sufficient amount of sequential data to robustly estimate all parameters.

- Solution: Gather more observation sequences. If working with a single long sequence, consider windowing or bootstrapping techniques to generate more training samples.

Experimental Protocol for Model Validation:

- Synthetic Data Generation: Define a known HMM with a specific transition matrix A_true and emission matrix B_true.

- Data Simulation: Generate a long sequence of observations from this true model.

- Model Training: Feed the simulated observations into your learning algorithm to obtain estimated parameters A_est and B_est.

- Parameter Recovery: Compare A_est and B_est with A_true and B_true. Successful recovery indicates your implementation is correct. This is a critical first step before applying the model to real ecological data [27].
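The first two steps of this protocol can be sketched with the standard library. The matrices reuse the two-state weather example from this section, while the function names and the sequence length are assumptions. Note that the recovery check here counts transitions among the known simulated states, which only validates the simulator; a full test would pass the observations alone to a learning algorithm such as Baum-Welch and compare its estimates in the same way.

```python
import random


def draw(rng, probs):
    """Sample an index from a discrete probability vector."""
    u, cum = rng.random(), 0.0
    for idx, p in enumerate(probs):
        cum += p
        if u < cum:
            return idx
    return len(probs) - 1  # guard against floating-point round-off


def simulate_hmm(pi, A, B, n, seed=0):
    """Generate hidden states and observations from a known HMM."""
    rng = random.Random(seed)
    states, obs = [], []
    s = draw(rng, pi)
    for _ in range(n):
        states.append(s)
        obs.append(draw(rng, B[s]))
        s = draw(rng, A[s])
    return states, obs


def empirical_transitions(states, k):
    """Row-normalised transition counts from a known state sequence."""
    counts = [[0] * k for _ in range(k)]
    for a, b in zip(states, states[1:]):
        counts[a][b] += 1
    return [[c / sum(row) for c in row] for row in counts]


pi_true = [0.6, 0.4]
A_true = [[0.7, 0.3], [0.4, 0.6]]
B_true = [[0.8, 0.2], [0.4, 0.6]]

states, obs = simulate_hmm(pi_true, A_true, B_true, n=20000)
A_check = empirical_transitions(states, k=2)
print(A_check)  # should be close to A_true for a long sequence
```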

Problem 2: Numerical Instability and Underflow Errors During Calculation

Issue: When implementing algorithms like the Forward Algorithm, probabilities become so small that they cause numerical underflow, making computations unstable.

Symptoms: Probabilities or likelihoods calculated in the model become zero, NaN (Not a Number), or the forward probabilities do not sum to one as expected [30].

Solution:

Implement the Forward Algorithm using log-probabilities. Instead of multiplying probabilities, which yields ever smaller numbers, add log-probabilities. The core operation becomes log_sum_exp instead of a simple sum, which is more numerically stable [30].

Detailed Methodology (Log-Scale Forward Algorithm):

The forward variable is defined as α_t(j) = P(O_1, O_2, ..., O_t, S_t = j | Model).

- Initialization: For each state j, compute log(α_1(j)) = log(π_j) + log(b_j(O_1)).

- Induction: For each subsequent time step t and state j, compute log(α_t(j)) = log(b_j(O_t)) + log_sum_exp_i( log(α_{t-1}(i)) + log(a_{ij}) ), where the log_sum_exp is taken over all previous states i. Here, log_sum_exp(x) is a function that calculates log(Σ exp(x_i)) in a numerically safe way.

- Normalization (Optional but Recommended): At each time step, normalize the log(α_t(j)) values by subtracting the log_sum_exp of the entire log(α_t) vector. Accumulate the subtracted terms, because their sum over all time steps is the log-likelihood of the sequence. This helps maintain stability over long sequences [30].
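The log-scale recursion can be written compactly as follows. This is a minimal sketch without the optional normalization step; the function names are assumptions, and the parameters are those of the two-state weather example used later in this section (observation 0 = Active, 1 = Resting).

```python
import math


def log_sum_exp(values):
    """Compute log(sum(exp(v))) without underflow by factoring out the max."""
    m = max(values)
    if m == float("-inf"):
        return m
    return m + math.log(sum(math.exp(v - m) for v in values))


def log_forward(obs, log_pi, log_A, log_B):
    """Return log P(O_1..O_T | model) using the log-scale forward recursion."""
    n = len(log_pi)
    # Initialization: log alpha_1(j) = log pi_j + log b_j(O_1)
    log_alpha = [log_pi[j] + log_B[j][obs[0]] for j in range(n)]
    # Induction over the remaining observations
    for o in obs[1:]:
        log_alpha = [
            log_B[j][o]
            + log_sum_exp([log_alpha[i] + log_A[i][j] for i in range(n)])
            for j in range(n)
        ]
    # Termination: sum over the final states
    return log_sum_exp(log_alpha)


log_pi = [math.log(p) for p in (0.6, 0.4)]
log_A = [[math.log(p) for p in row] for row in ((0.7, 0.3), (0.4, 0.6))]
log_B = [[math.log(p) for p in row] for row in ((0.8, 0.2), (0.4, 0.6))]

short_ll = log_forward([0, 1], log_pi, log_A, log_B)
long_ll = log_forward([0, 1] * 2500, log_pi, log_A, log_B)
print(math.exp(short_ll))  # probability of observing Active then Resting
print(long_ll)             # stays finite for a 5000-step sequence
```

For the two-step sequence the result can be checked by hand: α_1 = (0.48, 0.16), α_2 = (0.08, 0.144), so the sequence probability is 0.224. The 5000-step call illustrates why the log scale matters, since the same quantity computed with raw probabilities would underflow to zero.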

Problem 3: Implementing HMMs with Time-Varying Transition Probabilities

Issue: In many ecological systems, the probability of transitioning between behaviors is not constant but depends on external covariates (e.g., time of day, predator presence, resource availability).

Solution:

Use a HMM with time-varying transition probabilities. The static transition matrix A is replaced by a time-dependent matrix A(t), where the probabilities are functions of covariates [31] [30].

Implementation Workflow:

- Define Covariates: Identify and measure the relevant covariates C_1(t), C_2(t), ... for your study system.

- Link Covariates to Transitions: Model each transition probability as a function of these covariates. A common approach is using a linear predictor with a logistic link function. For instance, the probability of switching from state 1 to state 2 could be: logit( a_{12}(t) ) = β_0 + β_1 * C_1(t) + β_2 * C_2(t), where β are parameters to be estimated.

- Integrate into HMM Framework: The learning and inference algorithms (e.g., Forward-Backward) remain conceptually the same, but must now account for a different transition matrix at every time step t [30].
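For a two-state model, the covariate-dependent transition matrix can be sketched as below. The function names, coefficient values, and the example covariates are assumptions for illustration. With more than two states, a multinomial logit over each row is the usual generalisation, which this sketch does not cover.

```python
import math


def logistic(z):
    """Inverse of the logit link."""
    return 1.0 / (1.0 + math.exp(-z))


def transition_matrix(covariates, beta_12, beta_21):
    """Two-state A(t); each beta is [intercept, slope_1, slope_2, ...]."""
    def switch_prob(beta):
        z = beta[0] + sum(b * c for b, c in zip(beta[1:], covariates))
        return logistic(z)

    a12 = switch_prob(beta_12)  # P(state 1 -> state 2) at this time step
    a21 = switch_prob(beta_21)  # P(state 2 -> state 1) at this time step
    return [[1.0 - a12, a12], [a21, 1.0 - a21]]


# Hypothetical coefficients: covariates are (C_1(t), C_2(t)).
beta_12 = [-1.0, 2.0, 0.5]
beta_21 = [-0.5, -1.5, 0.0]

A_low = transition_matrix([0.1, 0.0], beta_12, beta_21)
A_high = transition_matrix([0.9, 0.0], beta_12, beta_21)
print(A_low)
print(A_high)
```

During inference, this function would be evaluated once per time step so that the forward recursion uses A(t) in place of a fixed A.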

HMM Parameter Table: Weather-Dependent Behavior Example

The following table quantifies a classic HMM example in which the hidden state is the unobserved weather (Sunny vs. Rainy) and only the animal's activity (Active vs. Resting) is measured [27] [28].

| Parameter Type | Symbol | Value & Meaning |

|---|---|---|

| Hidden States (S) | S1, S2 | Sunny, Rainy (the underlying weather influencing behavior) |

| Observations (O) | O1, O2 | Active, Resting (the measured animal behavior) |

| Initial Probabilities (π) | π1 | 0.6 (Probability to start in a Sunny state) |

| | π2 | 0.4 (Probability to start in a Rainy state) |

| Transition Probabilities (A) | a11 | 0.7 (P(Sunny → Sunny)) |

| | a12 | 0.3 (P(Sunny → Rainy)) |

| | a21 | 0.4 (P(Rainy → Sunny)) |

| | a22 | 0.6 (P(Rainy → Rainy)) |

| Emission Probabilities (B) | b1(O1) | 0.8 (P(Active \| Sunny)) |

| | b1(O2) | 0.2 (P(Resting \| Sunny)) |

| | b2(O1) | 0.4 (P(Active \| Rainy)) |

| | b2(O2) | 0.6 (P(Resting \| Rainy)) |
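As a worked illustration of decoding with the parameters in this table, the sketch below runs a log-space Viterbi pass over a short observation sequence. The function name and the example sequence are assumptions; the code also assumes every probability is strictly positive, since it takes logarithms directly.

```python
import math

STATES = ["Sunny", "Rainy"]
PI = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]   # rows: from Sunny, from Rainy
B = [[0.8, 0.2], [0.4, 0.6]]   # columns: Active (0), Resting (1)


def viterbi(obs, pi, A, B):
    """Most likely hidden state path (log-space; probabilities must be > 0)."""
    n = len(pi)
    log = math.log
    delta = [log(pi[j]) + log(B[j][obs[0]]) for j in range(n)]
    backpointers = []
    for o in obs[1:]:
        step, new_delta = [], []
        for j in range(n):
            # Best predecessor state for arriving in state j
            best_i = max(range(n), key=lambda i: delta[i] + log(A[i][j]))
            step.append(best_i)
            new_delta.append(delta[best_i] + log(A[best_i][j]) + log(B[j][o]))
        backpointers.append(step)
        delta = new_delta
    # Backtrack from the most likely final state
    state = max(range(n), key=lambda j: delta[j])
    path = [state]
    for step in reversed(backpointers):
        state = step[state]
        path.append(state)
    return path[::-1]


observations = [0, 0, 1]  # Active, Active, Resting
decoded = [STATES[s] for s in viterbi(observations, PI, A, B)]
print(decoded)  # ['Sunny', 'Sunny', 'Rainy']
```

The result can be verified by hand: the best path probabilities at the final step are 0.037632 for Sunny and 0.048384 for Rainy, and both trace back through Sunny at the first two steps.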

The Scientist's Toolkit: Research Reagent Solutions

This table outlines essential computational "reagents" for constructing and analyzing HMMs in ecological research.

| Item Name | Function in HMM Analysis |

|---|---|

| Forward Algorithm | Computes the probability of an observation sequence given the model; foundational for evaluation and parameter learning [27] [28]. |

| Viterbi Algorithm | Decodes the most likely sequence of hidden states given the observations and the model [27]. |

| Baum-Welch Algorithm | An Expectation-Maximization (EM) algorithm used to learn the optimal HMM parameters (A, B, π) from data [29] [27]. |

| Kalman Filter | The analog of the Forward Algorithm for continuous hidden states in linear Gaussian state-space models [29]. |

| Sequential Monte Carlo (SMC) | Also known as particle filtering; used for inference in more complex, non-linear, non-Gaussian state-space models [29]. |

| logsumexp Function | A critical, numerically stable function for adding probabilities in log space, preventing underflow in HMM algorithms [30]. |

HMM Architecture and Forward Algorithm Workflow

The diagram below illustrates the structure of a Hidden Markov Model and the data flow for the Forward Algorithm calculation, which is used to compute the probability of an observation sequence.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a Resource Selection Function (RSF) and a Step-Selection Function (SSF)?

A1: The core difference lies in the sampling design of "used" and "available" points.

- A Resource Selection Function (RSF) is typically used to model an animal's selection of a home range or territory within the broader landscape. It compares "used" locations (from telemetry data) to "available" locations randomly sampled from a large area, like a study area or migration corridor [32].

- A Step-Selection Function (SSF) incorporates temporal dynamics and movement constraints. It compares each "used" location to "available" locations randomly sampled from a circle or distribution centered on the animal's previous location. This accounts for where the animal could have moved next given its current position and movement capabilities [32].

Q2: My RSF/SSF model is producing implausible coefficients or failing to converge. What are the primary troubleshooting steps?

A2: This is often related to data preparation or model specification. Follow this checklist:

- Check for Complete Separation: Ensure there is no single environmental variable that perfectly predicts "used" vs. "available" points. If found, consider collapsing categories or removing the variable.

- Scale and Center Covariates: Standardize all continuous environmental covariates (e.g., subtract the mean and divide by the standard deviation) to improve model convergence and make coefficients comparable.

- Assess Correlation: Check for high multicollinearity among your predictor variables using Variance Inflation Factors (VIF). Remove or combine highly correlated variables (VIF > 10).

- Review Availability Design: For SSFs, ensure the step-length and turn-angle distributions used to generate available points are biologically realistic and fit your data.
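Two items on this checklist, standardizing covariates and screening for collinearity, are easy to illustrate. The sketch below uses hypothetical covariate values and covers only the two-predictor special case, where the VIF reduces to 1 / (1 − r²); with more predictors, each VIF comes from regressing one predictor on all the others.

```python
import math


def standardize(values):
    """Center on the mean and divide by the sample standard deviation."""
    n = len(values)
    mean = sum(values) / n
    sd = math.sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
    return [(v - mean) / sd for v in values]


def pearson_r(x, y):
    """Pearson correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def vif_two_predictors(x, y):
    """VIF when the model has exactly two predictors: 1 / (1 - r^2)."""
    r = pearson_r(x, y)
    return 1.0 / (1.0 - r * r)


# Hypothetical covariates sampled at five locations.
elevation = [120.0, 340.0, 560.0, 410.0, 220.0]
dist_to_water = [900.0, 400.0, 150.0, 300.0, 700.0]

elevation_z = standardize(elevation)
vif = vif_two_predictors(elevation, dist_to_water)
print([round(v, 2) for v in elevation_z])
print(round(vif, 1), "-> flag for removal or combination if above 10")
```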

Q3: How many "available" points should I generate per "used" point for a reliable model?

A3: The optimal ratio depends on your data. For initial exploration, 10 available points per used point is often sufficient. Final models should be checked for stability at higher ratios (50:1 to 100:1), with 100:1 a common and generally robust choice. Increasing the ratio beyond 100:1 often provides diminishing returns in model accuracy [32].

Troubleshooting Guide: Common RSF/SSF Errors

| Problem | Potential Cause | Solution |

|---|---|---|

| Model does not converge | Highly correlated covariates, unscaled covariates, or a complex random effects structure. | Center/scale numeric covariates, check for multicollinearity (VIF), and simplify the model structure. |

| Coefficient estimates are implausibly large | Complete or quasi-complete separation in the data. | Diagnose with tables or graphs, and consider regularization (e.g., Firth's penalty) or variable removal. |

| Poor model predictive performance | Misspecification of the availability domain, missing a key habitat variable, or an incorrect functional form (e.g., assuming a linear relationship for a non-linear one). | Re-evaluate how "availability" is defined, include additional ecologically relevant covariates, and test for non-linear effects using splines. |

| Spatial autocorrelation in residuals | The model fails to account for the inherent dependency between consecutive animal locations. | Include an autocorrelation structure in the model or use a conditional logistic regression framework for SSFs. |

Experimental Protocol: Fitting a Step-Selection Function

Objective: To model habitat selection while explicitly accounting for animal movement constraints.

Materials & Software:

- Animal tracking data (e.g., GPS coordinates with timestamps).

- Environmental covariate raster layers (e.g., elevation, vegetation cover, distance to water).

- Statistical software (e.g., R with the `amt`, `survival`, and `lme4` packages).

Methodology:

Data Preparation:

- Import tracking data and convert into a track object (`amt::make_track`).

- Derive movement parameters: Calculate step lengths and turn angles between consecutive locations.

- Generate Available Steps: For each observed step, generate a set of random steps (e.g., 100) from the empirical distributions of step lengths and turn angles. This creates the "available" points.
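The data preparation steps can be sketched as follows. This is a minimal Python illustration (NumPy assumed), not a reproduction of the `amt` routines; the function names `steps_and_turns` and `random_steps` and the synthetic track are hypothetical.

```python
import numpy as np

def steps_and_turns(xy):
    """Step lengths, headings, and turn angles from an (n, 2) array of locations."""
    d = np.diff(xy, axis=0)
    step_len = np.hypot(d[:, 0], d[:, 1])
    heading = np.arctan2(d[:, 1], d[:, 0])
    # Turn angle is the change in heading, wrapped to [-pi, pi).
    turn = (np.diff(heading) + np.pi) % (2 * np.pi) - np.pi
    return step_len, heading, turn

def random_steps(start_xy, prev_heading, step_len, turn, n_random, rng):
    """Draw available endpoints by resampling observed step lengths and turn angles."""
    sl = rng.choice(step_len, size=n_random)
    ta = rng.choice(turn, size=n_random)
    new_heading = prev_heading + ta
    offsets = np.column_stack([sl * np.cos(new_heading), sl * np.sin(new_heading)])
    return start_xy + offsets

# Synthetic track (coordinates in metres) standing in for real GPS fixes.
rng = np.random.default_rng(1)
track = np.cumsum(rng.normal(scale=50.0, size=(200, 2)), axis=0)
step_len, heading, turn = steps_and_turns(track)

# 100 available endpoints for the step starting at location 10;
# heading[9] is the direction of the step that arrived there.
available = random_steps(track[10], heading[9], step_len, turn, n_random=100, rng=rng)
```

Resampling the observed values directly is one simple reading of "empirical distributions"; fitting parametric distributions to the step lengths and turn angles and sampling from those is a common alternative.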

Data Extraction & Merging:

- Extract values from all environmental raster layers for both the observed ("used") and random ("available") endpoints.

- Combine the "used" and "available" points into a single dataset, with a new binary column (e.g., `case_`) where `TRUE` indicates the "used" point.

Model Fitting:

- Fit a conditional logistic regression model using the `survival::clogit()` function. The formula structure is: `case_ ~ covariate1 + covariate2 + ... + strata(step_id_)`, where `step_id_` is a unique identifier for each used step and its associated available steps.
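To make the model-fitting step less of a black box, the sketch below maximizes the conditional logistic likelihood for strata that each contain one used step and several available steps. It is a Python stand-in (NumPy assumed) for illustration only and does not call or reproduce `survival::clogit()`; the function name `fit_conditional_logit`, the simulated covariates, and the `true_beta` values are all hypothetical.

```python
import numpy as np

def fit_conditional_logit(X, n_iter=25):
    """Newton-Raphson fit; X is (strata, choices, covariates), choice 0 is the used step."""
    n_cov = X.shape[2]
    beta = np.zeros(n_cov)
    for _ in range(n_iter):
        eta = X @ beta                               # linear predictors, shape (S, J)
        eta -= eta.max(axis=1, keepdims=True)        # stabilise the softmax
        prob = np.exp(eta)
        prob /= prob.sum(axis=1, keepdims=True)
        mean_x = np.einsum("sj,sjp->sp", prob, X)    # expected covariates per stratum
        grad = (X[:, 0, :] - mean_x).sum(axis=0)     # score of the log-likelihood
        second = np.einsum("sj,sjp,sjq->pq", prob, X, X)
        info = second - mean_x.T @ mean_x            # negative Hessian
        beta = beta + np.linalg.solve(info, grad)
    return beta

# Simulate 2000 strata with 21 candidate steps each and known selection coefficients.
rng = np.random.default_rng(2)
true_beta = np.array([1.0, -0.5])
S, J = 2000, 21
X = rng.normal(size=(S, J, 2))
probs = np.exp(X @ true_beta)
probs /= probs.sum(axis=1, keepdims=True)
chosen = np.array([rng.choice(J, p=probs[s]) for s in range(S)])
for s, c in enumerate(chosen):
    X[s, [0, c]] = X[s, [c, 0]]                      # move the used step to position 0

beta_hat = fit_conditional_logit(X)
```

Within each stratum the likelihood contribution is the used step's `exp(x·β)` divided by the sum over all steps in that stratum, which is why the `strata(step_id_)` term is essential in the formula.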

Model Interpretation:

- Exponentiated coefficients from the model represent Relative Selection Strength (RSS). An RSS of 2 for "forest cover" means the animal is twice as likely to select a location with that forest cover compared to an otherwise identical location without it, given its movement constraints.
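As a small worked example of this interpretation, the sketch below computes RSS for a single covariate with all other covariates held equal. The coefficient value is hypothetical, chosen so that a one-unit difference gives an RSS of exactly 2.

```python
import math

def relative_selection_strength(beta, x1, x2):
    """RSS of a location with covariate value x1 relative to one with x2, all else equal."""
    return math.exp(beta * (x1 - x2))

beta_forest = math.log(2.0)  # hypothetical fitted coefficient for forest cover
rss_one_unit = relative_selection_strength(beta_forest, 1.0, 0.0)
rss_half_unit = relative_selection_strength(beta_forest, 0.5, 0.0)
print(rss_one_unit, rss_half_unit)
```

Because RSS depends on the difference in covariate values, a half-unit difference here yields roughly 1.41 rather than 2, so the units of each covariate should be stated when reporting RSS.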

The Scientist's Toolkit: Essential Research Reagents & Solutions

| Item | Function in Analysis |

|---|---|

| GPS Telemetry Collars | Primary data collection tool for obtaining high-frequency, high-accuracy animal location data. |

| Geographic Information System (GIS) Software | Platform for managing, processing, and analyzing spatial data, including extraction of covariate values. |

| Environmental Covariate Rasters | Georeferenced layers (e.g., digital elevation models, land cover maps) that represent potential habitat factors influencing selection. |

| R Statistical Environment with `amt` package | The core computational toolkit for data cleaning, track analysis, and SSF/RSF model fitting. |

| Conditional Logistic Regression Model | The statistical framework used to compare "used" vs. "available" points while controlling for the stratification inherent in the sampling design. |

Visualization: RSF/SSF Analysis Workflow

The diagram below outlines the logical workflow for a typical SSF analysis, which can also be adapted for RSF.

Frequently Asked Questions

Q1: My Sound Event Detection (SED) model performs well on clean audio but fails in noisy real-world conditions. What feature extraction techniques can improve robustness?

Feature extraction is critical for building noise-resistant models. Using image-based representations of audio signals allows a Convolutional Neural Network (CNN) to extract meaningful patterns while suppressing interference [33].

- Mel Spectrograms: Effectively represent how humans perceive sound frequencies and are a standard input for many audio deep learning models [33] [34].

- Discrete Cosine Transform (DCT) Spectrograms: Research indicates these can enhance robustness against external noise, improving feature extraction for SED tasks [33].

- Cochleagrams: Another biologically-inspired representation that can be combined with other spectrograms to improve feature richness [33].

For optimal results, you can use an ensemble approach that combines predictions from models trained on different feature types (e.g., DCT spectrograms, Cochleagrams, and Mel spectrograms) to reduce variance and improve generalization [33].
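One simple way to combine such predictions is to average the per-frame class probabilities from each model and then threshold the result. The cited work may combine predictions differently, so treat the averaging rule, the 0.5 threshold, and the toy probability arrays below as assumptions (Python with NumPy).

```python
import numpy as np

def ensemble_average(prob_list, threshold=0.5):
    """Average (frames, classes) probabilities across models and threshold them."""
    stacked = np.stack(prob_list, axis=0)   # shape (models, frames, classes)
    mean_prob = stacked.mean(axis=0)
    return mean_prob, mean_prob >= threshold

# Toy outputs for 2 frames and 2 sound classes from three hypothetical models.
mel = np.array([[0.9, 0.2], [0.4, 0.7]])
dct = np.array([[0.8, 0.1], [0.6, 0.6]])
coch = np.array([[0.7, 0.3], [0.2, 0.8]])
mean_prob, active = ensemble_average([mel, dct, coch])
print(mean_prob)
print(active)
```

In the second frame the DCT-based model alone would flag the first class (0.6), but the averaged probability (0.4) falls below the threshold, which illustrates how averaging dampens the errors of any single model.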

Q2: How can I classify environmental sounds based on their ecological role rather than just their source?

You can implement a two-stage system that integrates deep learning with R. Murray Schafer's soundscape theory [34]. This framework classifies sounds into three functional categories:

- Keynotes: Persistent, often unconscious background sounds (e.g., traffic hum, ventilation) that define a place's acoustic character.

- Sound Signals: Foreground sounds that consciously capture attention and convey information (e.g., sirens, bird alarm calls).

- Soundmarks: Sounds unique to a location that hold cultural or community significance (e.g., a specific church bell, a unique insect chorus).

Table: Schafer's Soundscape Categories for Ecological Analysis

| Category | Description | Ecological Function | Examples |

|---|---|---|---|

| Keynotes | Persistent background sounds | Defines the baseline acoustic environment | Traffic hum, wind in trees, river flow |

| Sound Signals | Foreground, attention-grabbing sounds | Conveys immediate information or warnings | Bird alarm calls, animal alerts, sirens |

| Soundmarks | Unique, culturally significant sounds | Contributes to the acoustic identity and ecological character of a place | Distinctive species calls (e.g., specific frog or insect choruses) |

Q3: My LSTM model struggles to learn long-term dependencies in ecological time series data. What is the core architectural solution?

The problem is likely the vanishing gradient, which is common in standard Recurrent Neural Networks (RNNs). Long Short-Term Memory (LSTM) networks are specifically designed to solve this [35] [36].

The core solution lies in the LSTM's gating mechanism and cell state [37] [36]. Unlike RNNs, which have a single hidden state, LSTMs have a separate cell state that acts as a "conveyor belt," carrying information across many time steps with minimal changes. Three gates regulate the flow of information:

- Forget Gate: Decides what information to remove from the cell state [35] [36].

- Input Gate: Decides what new information to store in the cell state [35] [36].

- Output Gate: Decides what part of the cell state to output as the hidden state [35] [36].

These gates use sigmoid functions to output values between 0 and 1, allowing them to finely control how much information is retained, forgotten, or exposed [35].

Q4: When should I use a hybrid CNN-LSTM model for ecological data analysis, and how is it structured?

A hybrid CNN-LSTM architecture is ideal when your data has both spatial features (like an image) and temporal dependencies (like a sequence) [38] [39].

- When to Use: This model is perfect for tasks like classifying animal behavior in video feeds [39], analyzing spectrograms of soundscapes over time [33], or predicting sensor readings that depend on spatial arrangements of collection points [38].

- Basic Structure: The CNN acts as a feature extractor from the spatial data (e.g., converting an image or spectrogram into a set of feature vectors). The LSTM then processes these feature vectors sequentially, learning the temporal patterns (e.g., how the features evolve over multiple time steps) [33] [39].

Experimental Protocols & Methodologies

Protocol 1: Building an Ensemble Model for Sound Event Detection

This protocol outlines the process for creating a robust SED model, as described in recent research [33].

Data Preparation:

- Dataset Curation: Collaborate with domain experts (e.g., ecologists, police) to collect and label a dataset of relevant sounds. A recent study created a dataset of 5055 audio files (14.14 hours) with 13 sound categories [33].

- Feature Extraction: Generate multiple image-based representations from your raw audio data. The recommended set includes Mel Spectrograms, DCT Spectrograms, and Cochleagrams [33].

Model Architecture & Training:

- Base Model (CRNN): For each feature type, train a Convolutional Recurrent Neural Network (CRNN). The CNN layers (e.g., 2D convolutions) extract spatial features from the spectrograms. These are followed by recurrent layers (e.g., Bidirectional GRU) to model the temporal sequence [33].

- Ensemble Creation: Train separate CRNN models on each feature type (Mel, DCT, Cochleagram). Combine their predictions at inference time, which reduces variance and improves overall robustness [33].

Performance Metrics:

- Evaluate using standard SED metrics. The ensemble model should achieve a high segment-based F1 score (e.g., 71.5%) and a respectable event-based F1 score (e.g., 46%), demonstrating its ability to handle noisy, imbalanced data [33].
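For reference, a segment-based F1 score can be sketched as follows: the recording is divided into fixed-length segments, each class is marked active or inactive per segment, and true positives, false positives, and false negatives are pooled over all segments and classes. This is a minimal Python sketch with NumPy under that micro-averaged definition; the toy activity matrices are hypothetical.

```python
import numpy as np

def segment_based_f1(reference, estimated):
    """Micro-averaged F1 over boolean (segments, classes) activity matrices."""
    reference = np.asarray(reference, dtype=bool)
    estimated = np.asarray(estimated, dtype=bool)
    tp = np.sum(reference & estimated)     # active in both
    fp = np.sum(~reference & estimated)    # predicted but not annotated
    fn = np.sum(reference & ~estimated)    # annotated but missed
    denom = 2 * tp + fp + fn
    return float(2 * tp / denom) if denom else 0.0

# Four segments, two classes.
reference = [[1, 0], [1, 1], [0, 0], [0, 1]]
estimated = [[1, 0], [0, 1], [0, 1], [0, 1]]
f1 = segment_based_f1(reference, estimated)
print(f1)  # 0.75
```

Event-based F1 is stricter because it also requires predicted onsets (and usually offsets) to fall within a tolerance of the annotated ones, which is consistent with the lower event-based score quoted above.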

Table: Key Research Reagents for Audio Analysis with CNNs

| Reagent / Material | Function in the Experiment |

|---|---|

| Labeled Audio Dataset (e.g., UrbanSound8K, ESC-50) | Provides the raw, annotated data required for supervised learning of sound events [34]. |

| Mel Spectrogram | Converts audio signals into a time-frequency representation based on human auditory perception, serving as a primary input feature for CNNs [33] [34]. |

| DCT Spectrogram | An alternative time-frequency representation that can enhance robustness against noise in the audio signal [33]. |

| Convolutional Recurrent Neural Network (CRNN) | The deep learning architecture that combines CNNs for spatial feature extraction and RNNs for modeling temporal sequences in audio data [33]. |

Protocol 2: Implementing a Two-Stage Soundscape Classification System

This methodology classifies sounds based on Schafer's theoretical framework, bridging acoustic ecology and machine learning [34].

Stage 1: Learning Distinctive Features with a VAE:

- Objective: To learn a compressed latent representation of the input sounds and identify distinctive samples.

- Process: Train a Variational Autoencoder (VAE) on Mel-spectrograms of your environmental audio. The VAE learns to reconstruct the input. Sounds with a high reconstruction error are considered less common and thus more distinctive or characteristic of a specific environment [34].
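The selection step in Stage 1 can be sketched independently of the VAE itself. The Python snippet below (NumPy assumed) takes spectrograms and their reconstructions as given and flags the samples with the largest reconstruction error; the per-sample mean squared error, the 0.9 quantile cutoff, and the synthetic arrays are illustrative assumptions rather than settings from the cited study.

```python
import numpy as np

def distinctive_by_reconstruction_error(originals, reconstructions, quantile=0.9):
    """Per-sample mean squared error and a flag for samples above the error quantile."""
    diff = np.asarray(originals) - np.asarray(reconstructions)
    err = (diff ** 2).reshape(len(diff), -1).mean(axis=1)
    cutoff = np.quantile(err, quantile)
    return err, err > cutoff

# Ten toy 4x4 "spectrograms"; sample 7 is reconstructed poorly on purpose.
rng = np.random.default_rng(4)
originals = rng.random(size=(10, 4, 4))
reconstructions = originals + 0.01
reconstructions[7] = originals[7] + 1.0
err, distinctive = distinctive_by_reconstruction_error(originals, reconstructions)
print(np.flatnonzero(distinctive))  # [7]
```

In practice `reconstructions` would come from the trained VAE's decoder output, and the cutoff would be chosen by inspecting the error distribution for the environment being studied.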

Stage 2: Categorization with a CNN:

- Objective: To classify the characterized sounds into keynote, sound signal, or soundmark categories.

- Process: Train a CNN classifier using the features learned by the VAE or the original spectrograms. The output layer is a 3-node softmax for the three Schafer categories [34].

Validation:

- Evaluate the entire system on standard datasets (e.g., UrbanSound8K, ESC-50). This system has been shown to achieve high average accuracy (e.g., 80.7%), providing empirical validation of the theoretical framework [34].

Protocol 3: LSTM Forward Pass Implementation from Scratch

Understanding the mathematical operations of an LSTM is key to debugging and customizing models [35] [37].

Initialization:

- Initialize all weight matrices (`Wf`, `Wi`, `Wo`, `Wc`) and bias vectors (`bf`, `bi`, `bo`, `bc`) for the forget, input, output, and candidate cell gates. Use random initialization scaled by the hidden size [37].

Forward Pass Computation (for one timestep):

- Input Concatenation: Combine the current input `x_t` and the previous hidden state `h_{t-1}` into a single vector.

- Gate Calculations:

  - Forget Gate: `f_t = σ(Wf * [h_{t-1}, x_t] + bf)`

  - Input Gate: `i_t = σ(Wi * [h_{t-1}, x_t] + bi)`

  - Output Gate: `o_t = σ(Wo * [h_{t-1}, x_t] + bo)`

  - Candidate Cell State: `c_tilde_t = tanh(Wc * [h_{t-1}, x_t] + bc)`

- State Updates:

  - Cell State: `c_t = f_t ⊙ c_{t-1} + i_t ⊙ c_tilde_t`

  - Hidden State: `h_t = o_t ⊙ tanh(c_t)`

Table: LSTM Gate Functions and Mathematical Formulations

| Component | Role in the LSTM Architecture | Governing Equation |

|---|---|---|

| Forget Gate (f_t) | Decides what information to discard from the long-term cell state. | f_t = σ(W_f · [h_{t-1}, x_t] + b_f) [35] [36] |

| Input Gate (i_t) | Decides what new information to store in the long-term cell state. | i_t = σ(W_i · [h_{t-1}, x_t] + b_i) [35] [36] |

| Candidate Cell State (c_tilde_t) | Creates a vector of new candidate values that could be added to the state. | c_tilde_t = tanh(W_c · [h_{t-1}, x_t] + b_c) [35] [37] |

| Cell State Update (c_t) | Updates the long-term memory of the cell by combining the past and new information. | c_t = f_t ⊙ c_{t-1} + i_t ⊙ c_tilde_t [35] [37] |

| Output Gate (o_t) | Decides what part of the updated cell state will be read as the output (hidden state). | o_t = σ(W_o · [h_{t-1}, x_t] + b_o); h_t = o_t ⊙ tanh(c_t) [35] [36] |
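The equations in the table above can be sketched as a single-timestep forward pass. This is a minimal Python illustration with NumPy, not the implementation from the cited sources: the `1/sqrt(hidden_size)` weight scale is one assumed reading of "scaled by the hidden size", the biases are set to zero for simplicity, and the input and hidden dimensions are arbitrary.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def init_params(input_size, hidden_size, rng):
    """Random weights scaled by hidden size (assumed scaling) and zero biases."""
    scale = 1.0 / np.sqrt(hidden_size)
    shape = (hidden_size, hidden_size + input_size)
    params = {name: rng.normal(scale=scale, size=shape) for name in ("Wf", "Wi", "Wo", "Wc")}
    params.update({name: np.zeros(hidden_size) for name in ("bf", "bi", "bo", "bc")})
    return params

def lstm_step(x_t, h_prev, c_prev, p):
    """One LSTM timestep: returns the new hidden state and cell state."""
    z = np.concatenate([h_prev, x_t])             # [h_{t-1}, x_t]
    f_t = sigmoid(p["Wf"] @ z + p["bf"])          # forget gate
    i_t = sigmoid(p["Wi"] @ z + p["bi"])          # input gate
    o_t = sigmoid(p["Wo"] @ z + p["bo"])          # output gate
    c_tilde = np.tanh(p["Wc"] @ z + p["bc"])      # candidate cell state
    c_t = f_t * c_prev + i_t * c_tilde            # cell state update
    h_t = o_t * np.tanh(c_t)                      # hidden state
    return h_t, c_t

# Run five timesteps of a 3-feature series through a 4-unit cell.
rng = np.random.default_rng(3)
params = init_params(input_size=3, hidden_size=4, rng=rng)
h, c = np.zeros(4), np.zeros(4)
for x_t in rng.normal(size=(5, 3)):
    h, c = lstm_step(x_t, h, c, params)
```

Stepping through this loop with a debugger or print statements is a practical way to check gate saturation: forget-gate values stuck near 0 indicate that the cell state is being wiped at every step.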

Navigating Computational Challenges: Data Integration, Model Selection, and Uncertainty

FAQs