Ensemble Power: Why Multi-Model Approaches Are Revolutionizing Predictive Accuracy in Complex Biological Systems

Abstract

This article explores the paradigm shift from single-model reliance to ensemble modeling for enhancing predictive accuracy in complex systems. It makes the case for ensembles over single models, citing typical accuracy improvements of 5-14% across fields from ecosystem services to aquaculture. We detail methodological frameworks for implementation, from simple averaging to advanced machine learning integration, and address practical concerns around computational efficiency and uncertainty management. Through comparative validation, we illustrate how ensembles provide more robust, reliable predictions, concluding with their transformative potential for biomedical research, including drug development and clinical outcome forecasting, where managing uncertainty is paramount.

The Case for Collectives: Establishing the Superior Accuracy of Model Ensembles

In the pursuit of predictive accuracy within data-driven research, a fundamental dichotomy exists between employing individual models and leveraging the collective power of model ensembles. While single models offer simplicity and interpretability, they often face limitations in accuracy, robustness, and generalization capabilities, particularly when dealing with complex, noisy, or high-dimensional data [1]. Ensemble learning, a technique that combines multiple machine learning models into a single predictive solution, has emerged as a powerful framework to overcome these limitations. By integrating diverse base learners, ensemble methods enhance predictive performance, reduce overfitting, and increase robustness against individual model failures and biases [2]. This guide provides a systematic comparison of predominant ensemble modeling techniques, supported by experimental data and detailed protocols, to inform their application in scientific research, including drug development and ecosystem services.

Core Ensemble Techniques: Mechanisms and Comparative Performance

Ensemble methods can be broadly categorized by their underlying aggregation philosophies. The following table summarizes the core techniques, their operational principles, and key characteristics.

Table 1: Core Ensemble Modeling Techniques and Their Characteristics

| Technique | Aggregation Scheme | Core Principle | Key Advantages | Common Base Learners |
|---|---|---|---|---|
| Committees (Voting/Averaging) [3] [4] | Non-trainable (e.g., majority vote, average) | Parallel training of diverse models; predictions combined via simple statistical rules. | Easy to design and implement; suitable for massive, distributed ensembles [4]. | Any combination of algorithms (e.g., SVM, Decision Trees, KNN) [5]. |
| Bagging [3] [2] | Non-trainable (e.g., averaging, majority vote) | Creates diversity by training homogeneous models on different bootstrap samples of the dataset. | Reduces variance and overfitting; highly effective for high-variance models like Decision Trees. | Decision Trees (e.g., Random Forest) [3]. |
| Boosting [3] [2] | Trainable, weighted | Sequential training of models where each new model focuses on correcting errors of the previous ones. | Reduces bias and variance; often achieves state-of-the-art predictive accuracy. | Shallow Decision Trees (e.g., in AdaBoost, Gradient Boosting, XGBoost, LightGBM) [6] [3]. |
| Stacking [6] [2] | Trainable (via meta-learner) | Predictions from multiple base models are used as input features to train a meta-model. | Leverages unique strengths of different model families; can capture complex interactions. | Diverse model types (e.g., instance-based, bagging, boosting) [6]. |
| Post-Aggregation [4] | Trainable (via complementary machine) | A soft, non-trainable aggregated output is fed as an input to a final learning machine. | Can improve upon simple aggregations by using original features to correct wrong decisions. | Any set of base learners, often massive or distributed ensembles [4]. |
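The non-trainable committee scheme in the first row can be sketched with scikit-learn's `VotingClassifier`. This is a minimal illustration, assuming a synthetic dataset from `make_classification` and arbitrary hyperparameters rather than any setting from the cited studies; `voting="hard"` takes a majority vote over predicted labels, while `voting="soft"` averages predicted probabilities.

```python
# Committee (voting) sketch on synthetic data; dataset and hyperparameters are
# illustrative assumptions, not values from the cited studies.
from sklearn.datasets import make_classification
from sklearn.ensemble import VotingClassifier
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier

X, y = make_classification(n_samples=1000, n_features=20, n_informative=8, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Heterogeneous base learners (SVM, decision tree, KNN), as listed in the table.
base_learners = [
    ("svm", make_pipeline(StandardScaler(), SVC(probability=True, random_state=0))),
    ("tree", DecisionTreeClassifier(max_depth=5, random_state=0)),
    ("knn", make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=15))),
]

scores = {}
# "hard" = majority vote on labels; "soft" = average of predicted probabilities.
for voting in ("hard", "soft"):
    committee = VotingClassifier(estimators=base_learners, voting=voting)
    committee.fit(X_train, y_train)  # VotingClassifier fits clones of each member
    scores[voting] = committee.score(X_test, y_test)

# Individual members, for comparison with the committees.
for name, model in base_learners:
    scores[name] = model.fit(X_train, y_train).score(X_test, y_test)

for name, acc in scores.items():
    print(f"{name:>5}: accuracy = {acc:.3f}")
```

Whether a committee beats its best member depends on how diverse and how individually accurate the members are; printing both makes that comparison explicit rather than assumed.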

The performance of these techniques varies significantly across domains and datasets. The table below summarizes quantitative findings from experimental studies in educational data mining, which shares common challenges with scientific research, such as handling complex, multi-source data.

Table 2: Comparative Experimental Performance of Ensemble and Single Models

| Study Context | Model | Performance Metric & Score | Comparative Notes |
|---|---|---|---|
| Predicting Engineering Student Performance (n=2,225) [6] | LightGBM (Boosting) | AUC = 0.953, F1 = 0.950 | Best-performing base model. |
| | Stacking Ensemble | AUC = 0.835 | Did not outperform best base model; showed considerable instability. |
| | Random Forest (Bagging) | Accuracy = 97% [6] | Achieved with SMOTE for class balancing. |
| Multiclass Grade Prediction [5] | Gradient Boosting | Macro Accuracy = 67% | Highest global accuracy for macro predictions. |
| | Random Forest | Macro Accuracy = 64% | Strong, robust performance. |
| | Bagging | Macro Accuracy = 65% | |
| | Support Vector Machine (Single Model) | Micro Accuracy = 19% | Performance at individual student level was low. |
| General Findings [2] [1] | Ensemble Models (General) | N/A | Consistently demonstrate superior prediction accuracy and robustness compared to single models [1]. |

Experimental Protocols and Methodologies

To ensure the reliability and reproducibility of ensemble models, a rigorous experimental protocol is essential. The following workflow, derived from cited literature, outlines a standard methodology for developing and validating ensemble predictors.

Workflow Title: Standard Ensemble Model Experimental Protocol

1. Data Collection and Preprocessing: The process begins with consolidating data from multiple relevant sources. In educational contexts, this includes Learning Management System (LMS) logs, academic records, and demographic data [6]. For drug development, this could encompass high-throughput screening data, genomic profiles, and clinical trial records. Data preprocessing is crucial and involves cleaning, handling missing values, and addressing class imbalance with techniques like Synthetic Minority Over-sampling Technique (SMOTE) [6].

2. Feature Analysis and Selection: A thorough exploratory analysis is conducted using graphical and statistical techniques to understand feature distributions and relationships. This step informs the selection of a robust set of predictive features. Quantitative methods, such as the Gini index and p-value analysis, can be employed for systematic feature and model selection [7].

3. Base Learner and Ensemble Technique Selection: Multiple machine learning algorithms are chosen as potential base learners. Diversity is key; the set often includes algorithms from different families, such as Decision Trees, Support Vector Machines (SVM), and K-Nearest Neighbors (KNN) [5]. The ensemble technique (e.g., boosting, bagging, stacking) is selected based on the problem's characteristics.

4. Model Training with Cross-Validation: Base models and the ensemble meta-model are trained using a k-fold stratified cross-validation approach (e.g., 5-fold) [6]. Because every model is scored on folds it did not see during training, this yields a robust estimate of generalization performance and helps detect overfitting.

5. Model Aggregation & Prediction: For committee-based methods, predictions from base learners are aggregated via voting or averaging [3]. In stacking, base model predictions become inputs for a meta-classifier (e.g., SVM), which is trained to produce the final prediction [8]. In post-aggregation, a soft-aggregated output is fed into a final complementary learning machine [4].

6. Performance Evaluation & Interpretation: Models are evaluated using relevant metrics (e.g., AUC, F1-score, Accuracy, Precision, Recall). Interpretability is critical for scientific adoption; techniques like SHapley Additive exPlanations (SHAP) are used to determine feature importance and validate that model decisions align with domain knowledge [6].
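Steps 1, 4 and 6 interact: if SMOTE is applied before the data are split, synthetic rows built from test-fold neighbours leak into training and inflate the scores. The sketch below oversamples inside each stratified training fold only. `smote_like` is a hypothetical, minimal reimplementation of the SMOTE interpolation idea written for illustration (it is not the `imbalanced-learn` API), and the imbalanced data are synthetic.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import f1_score, roc_auc_score
from sklearn.model_selection import StratifiedKFold
from sklearn.neighbors import NearestNeighbors


def smote_like(X, y, minority_label=1, k=5, seed=None):
    """Hypothetical minimal SMOTE-style oversampler (illustration only).

    Creates synthetic minority rows on the segment between a random minority
    row and one of its k nearest minority neighbours, until both classes have
    the same count (binary case).
    """
    rng = np.random.default_rng(seed)
    X_min = X[y == minority_label]
    n_needed = int(np.sum(y != minority_label)) - len(X_min)
    if n_needed <= 0:
        return X, y
    # Ask for k + 1 neighbours because each row is its own nearest neighbour.
    _, idx = NearestNeighbors(n_neighbors=k + 1).fit(X_min).kneighbors(X_min)
    anchors = rng.integers(0, len(X_min), size=n_needed)
    partners = idx[anchors, rng.integers(1, k + 1, size=n_needed)]
    gaps = rng.random((n_needed, 1))
    X_syn = X_min[anchors] + gaps * (X_min[partners] - X_min[anchors])
    return np.vstack([X, X_syn]), np.concatenate([y, np.full(n_needed, minority_label)])


# Synthetic, imbalanced data (roughly 90% / 10%); an assumption for illustration.
X, y = make_classification(n_samples=2000, n_features=15, n_informative=6,
                           weights=[0.9, 0.1], random_state=0)

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
aucs, f1s = [], []
for fold, (train_idx, test_idx) in enumerate(cv.split(X, y)):
    # Oversample the training fold only; the test fold keeps its real class ratio.
    X_tr, y_tr = smote_like(X[train_idx], y[train_idx], seed=fold)
    model = RandomForestClassifier(n_estimators=200, random_state=0).fit(X_tr, y_tr)
    proba = model.predict_proba(X[test_idx])[:, 1]
    aucs.append(roc_auc_score(y[test_idx], proba))
    f1s.append(f1_score(y[test_idx], (proba >= 0.5).astype(int)))

print(f"AUC {np.mean(aucs):.3f} +/- {np.std(aucs):.3f}; F1 {np.mean(f1s):.3f}")
```

In practice the `imbalanced-learn` package provides a maintained SMOTE and a pipeline type that enforces the same fold-local resampling.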

The Scientist's Toolkit: Essential Research Reagents for Ensemble Modeling

Building a high-performing ensemble model requires both data and computational "reagents." The following table details key components and their functions in the ensemble modeling workflow.

Table 3: Essential Reagents for Ensemble Model Research

| Research Reagent | Function & Purpose in Ensemble Modeling |
|---|---|
| Diverse Base Learners (e.g., Decision Trees, SVM, Neural Networks) [5] | Provide the foundational predictive diversity. Using different algorithms tends to make their errors less correlated, so one model's mistakes can be compensated for by the others. |
| Cross-Validation Framework (e.g., 5-fold Stratified CV) [6] | Provides a robust method for hyperparameter tuning and model validation, ensuring performance estimates are reliable and not due to data partitioning luck. |
| Class Balancing Algorithm (e.g., SMOTE) [6] | Addresses imbalanced class distributions by generating synthetic samples for the minority class, which typically improves recall for that class. |
| Performance Metrics (e.g., AUC, F1-Score, Precision, Recall) [6] [5] | Quantify different aspects of model performance (e.g., ranking capability, precision-recall balance) and are essential for objective model comparison. |
| Model Interpretability Tool (e.g., SHAP) [6] | Provides post-hoc interpretability by quantifying the contribution of each feature to individual predictions, building trust and facilitating scientific validation. |
| Meta-Learner (e.g., Logistic Regression, SVM) [8] | In stacking and post-aggregation, this is the higher-level model that learns to optimally combine the predictions of all base learners. |

The empirical evidence consistently demonstrates that ensemble models offer a superior pathway to predictive accuracy and robustness compared to single-model approaches. Techniques like boosting (e.g., LightGBM, XGBoost) often lead in performance, while methods like bagging (e.g., Random Forest) provide remarkable stability [6] [5]. However, more complex schemes like stacking do not guarantee improvement and require careful validation [6]. The choice of an ensemble strategy is not one-size-fits-all; it must be guided by the dataset's nature, the required interpretability, and computational constraints. For researchers in drug development and ecosystem services, where predictions inform high-stakes decisions, adopting a systematic, empirically-grounded approach to ensemble model selection is not merely an optimization—it is a necessity for achieving reliable and actionable scientific insights.

In the evolving landscape of data science and predictive modeling, a fundamental shift has occurred from reliance on single models to the strategic combination of multiple learners. Ensemble methods are machine learning techniques that aggregate two or more learners to produce predictions that are often more accurate than those of any individual member [9]. This approach rests on the principle that a collection of models tends to yield greater overall accuracy than an individual learner, effectively harnessing the "wisdom of crowds" in computational form [10]. In scientific domains where predictive accuracy directly affects decision-making, from drug development to diagnostic precision, the consistent performance advantage of ensemble methods warrants careful examination.

The theoretical foundation of ensemble learning addresses the fundamental bias-variance tradeoff that plagues individual models [9]. Bias measures the average difference between predicted values and true values, while variance measures the difference between predictions across various realizations of a given model. Ensemble methods strategically combine models in ways that either reduce variance (bagging), reduce bias (boosting), or optimize the combination of diverse model strengths (stacking) [10]. This systematic approach to error reduction creates the mathematical basis for the consistent performance gains observed across empirical studies, making ensemble methods particularly valuable in research contexts where incremental improvements can yield significant practical benefits.
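The variance argument can be stated with two standard identities, shown here under idealized assumptions rather than as results of the cited studies: squared-error loss, a target $y = f(x) + \varepsilon$ with noise variance $\sigma_\varepsilon^2$, and $M$ base predictors $\hat f_1, \dots, \hat f_M$ that each have variance $\sigma^2$ and common pairwise correlation $\rho$.

$$
\mathbb{E}\big[(y - \hat f(x))^2\big] = \underbrace{\big(\mathbb{E}[\hat f(x)] - f(x)\big)^2}_{\text{bias}^2} + \underbrace{\mathbb{E}\big[(\hat f(x) - \mathbb{E}[\hat f(x)])^2\big]}_{\text{variance}} + \sigma_\varepsilon^2
$$

$$
\operatorname{Var}\Big(\frac{1}{M}\sum_{m=1}^{M} \hat f_m(x)\Big) = \frac{1}{M^2}\Big(M\sigma^2 + M(M-1)\rho\sigma^2\Big) = \rho\,\sigma^2 + \frac{1-\rho}{M}\,\sigma^2
$$

The first identity splits expected error into the bias and variance terms defined above plus irreducible noise. The second shows that averaging shrinks only the $(1-\rho)\sigma^2/M$ part; the floor $\rho\sigma^2$ is set by how correlated the members are, which is why diversity matters more than ensemble size alone.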

Methodological Framework: Ensemble Techniques and Experimental Protocols

Core Ensemble Architectures

The three predominant ensemble methodologies each employ distinct mechanisms for combining models, with characteristic strengths and implementation considerations:

Bagging (Bootstrap Aggregating) is a parallel, homogeneous method that creates multiple versions of the original dataset through bootstrap resampling: randomly selecting n data instances with replacement from the initial training set of size n [9]. Each bootstrap sample is used to train a separate base learner with the same learning algorithm, and predictions are aggregated through averaging (regression) or majority voting (classification) [10]. The Random Forest algorithm represents a prominent extension of bagging that constructs ensembles of randomized decision trees, sampling random subsets of features at each split point to increase diversity among base estimators [9].
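The bootstrap-and-average procedure can be written out directly. The sketch below is a minimal illustration, assuming synthetic regression data from `make_regression`, fully grown scikit-learn regression trees as the high-variance base learner, and 100 bootstrap replicates; it averages predictions because the task is regression.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

X, y = make_regression(n_samples=600, n_features=10, noise=20.0, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)

rng = np.random.default_rng(0)
n = len(X_tr)
n_estimators = 100
preds = []
for _ in range(n_estimators):
    # Bootstrap sample: n row indices drawn with replacement from the n training rows.
    idx = rng.integers(0, n, size=n)
    # A fully grown tree is a low-bias, high-variance base learner.
    tree = DecisionTreeRegressor(random_state=0).fit(X_tr[idx], y_tr[idx])
    preds.append(tree.predict(X_te))

bagged = np.mean(preds, axis=0)  # regression: average (classification would use a majority vote)
single = DecisionTreeRegressor(random_state=0).fit(X_tr, y_tr).predict(X_te)

mse_single = mean_squared_error(y_te, single)
mse_bagged = mean_squared_error(y_te, bagged)
print(f"single tree MSE:  {mse_single:.1f}")
print(f"bagged trees MSE: {mse_bagged:.1f}")
```

Random Forest adds the per-split feature subsampling described above; in scikit-learn that corresponds to the `max_features` parameter of `RandomForestRegressor` and `RandomForestClassifier`.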

Boosting employs a sequential approach where each new model is trained to correct errors made by previous models in the sequence [10]. Unlike bagging, boosting prioritizes misclassified data instances from earlier models when constructing subsequent training sets. Adaptive Boosting (AdaBoost) implements this by adding weights to misclassified samples, while Gradient Boosting uses residual errors from previous models to set target predictions for subsequent models [9]. Modern implementations like XGBoost, LightGBM, and CatBoost have refined this approach through computational optimizations and regularization techniques.
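For squared-error loss, "using residual errors to set target predictions" amounts to fitting each new tree to the current residuals, which are the negative gradient of the loss. The sketch below is a minimal from-scratch version, assuming scikit-learn regression trees of depth 3, a learning rate of 0.1 and the synthetic `make_friedman1` benchmark; production libraries such as XGBoost and LightGBM add regularization, second-order gradient information and much faster tree construction on top of this loop.

```python
import numpy as np
from sklearn.datasets import make_friedman1
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

X, y = make_friedman1(n_samples=800, noise=1.0, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)

learning_rate, n_rounds = 0.1, 200
# Round 0: a constant model that predicts the training mean.
f_tr = np.full(len(y_tr), y_tr.mean())
f_te = np.full(len(y_te), y_tr.mean())
baseline_mse = mean_squared_error(y_te, f_te)

test_mse = []
for _ in range(n_rounds):
    residuals = y_tr - f_tr                      # negative gradient of 0.5 * (y - f)**2
    tree = DecisionTreeRegressor(max_depth=3, random_state=0).fit(X_tr, residuals)
    f_tr += learning_rate * tree.predict(X_tr)   # shrunken correction on the training rows
    f_te += learning_rate * tree.predict(X_te)   # same correction applied to the test rows
    test_mse.append(mean_squared_error(y_te, f_te))

print(f"constant model MSE: {baseline_mse:.2f}")
print(f"after 10 rounds: {test_mse[9]:.2f}; after {n_rounds} rounds: {test_mse[-1]:.2f}")
```

The small learning rate trades more rounds for smoother, less overfit corrections; tracking `test_mse` per round is also how one would choose the number of iterations.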

Stacking (Stacked Generalization) is a more advanced heterogeneous parallel method that trains multiple diverse base learners using different algorithms on the same dataset [9] [10]. Rather than simply aggregating predictions, stacking introduces a meta-learner that is trained on the predictions of the base models, learning optimal combinations of their strengths. Critical to stacking's success is using out-of-sample predictions from base models (often obtained through cross-validation) to train the meta-learner, preventing data leakage and overfitting [9].
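The out-of-sample requirement is the part most often gotten wrong, so here is a minimal sketch of it. It assumes synthetic data, three arbitrary base learners (tree-based, linear, distance-based) and a logistic-regression meta-learner; `cross_val_predict` supplies out-of-fold probabilities so that no base model scores a row it was trained on.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import StratifiedKFold, cross_val_predict, train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=1500, n_features=20, n_informative=6, random_state=1)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=1)

base_learners = {
    "forest": RandomForestClassifier(n_estimators=200, random_state=1),             # tree-based
    "logreg": make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),   # linear
    "knn": make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=25)),   # distance-based
}
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

# Level-1 training features: out-of-fold positive-class probabilities.
Z_tr = np.column_stack([
    cross_val_predict(m, X_tr, y_tr, cv=cv, method="predict_proba")[:, 1]
    for m in base_learners.values()
])
# For test rows, each base learner is refit on the full training split.
Z_te = np.column_stack([
    m.fit(X_tr, y_tr).predict_proba(X_te)[:, 1] for m in base_learners.values()
])

meta = LogisticRegression().fit(Z_tr, y_tr)   # linear meta-learner
stack_auc = roc_auc_score(y_te, meta.predict_proba(Z_te)[:, 1])
base_auc = {name: roc_auc_score(y_te, Z_te[:, j]) for j, name in enumerate(base_learners)}

print("base AUCs:", {k: round(v, 3) for k, v in base_auc.items()})
print(f"stacked AUC: {stack_auc:.3f}")
```

scikit-learn's `StackingClassifier` (via its `cv` argument) automates the same out-of-fold construction. A stacked model is not guaranteed to beat its strongest member, so printing the base scores alongside the stacked score is a cheap sanity check.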

Experimental Validation Protocol

Robust evaluation of ensemble performance requires methodological rigor. The following experimental protocol, adapted from implementations in the cited literature, supports reproducible comparison between ensemble methods and individual models:

Dataset Preparation: Implement appropriate train-test splits (typically 70-30 or 80-20) with stratification for classification tasks. Apply necessary preprocessing including feature scaling, encoding, and missing value treatment [6].

Baseline Model Establishment: Train and evaluate multiple individual models as performance baselines, including Decision Trees, Support Vector Machines, Logistic Regression, and Neural Networks where appropriate [6].

Ensemble Implementation:

- For bagging: Implement Random Forest with appropriate tree count (typically 100-500) and feature sampling parameters

- For boosting: Configure Gradient Boosting Machines (XGBoost, LightGBM) with appropriate learning rates, iteration counts, and depth parameters

- For stacking: Select diverse base learners (e.g., tree-based, linear, distance-based) and meta-learners (typically linear models)

Performance Assessment: Employ k-fold cross-validation (typically k=5 or k=10) with stratification to evaluate model performance [6]. Utilize multiple metrics including accuracy, Area Under the Curve (AUC), F1-score, precision, and recall to comprehensively capture model performance characteristics [11] [6].

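Statistical Significance Testing: Apply appropriate statistical tests (e.g., paired t-tests, McNemar's test) to determine whether performance differences between ensemble and individual models are statistically significant. A naive paired t-test over cross-validation folds tends to overstate significance because the folds share training data; McNemar's test on a single held-out set, or a corrected resampled t-test, is a more conservative choice.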

Fairness and Robustness Analysis: Evaluate models across demographic subgroups where relevant, assessing consistency metrics to ensure equitable performance [6].

Figure 1: Experimental workflow for ensemble method evaluation
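For the significance-testing step, McNemar's test compares two classifiers on the same test rows using only the discordant pairs (rows one model gets right and the other gets wrong). The sketch below uses the exact binomial form via `scipy.stats.binomtest`; the helper name `mcnemar_exact`, the synthetic data and the tree-versus-forest comparison are illustrative assumptions.

```python
import numpy as np
from scipy.stats import binomtest
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier


def mcnemar_exact(y_true, pred_a, pred_b):
    """Exact McNemar test on paired predictions (hypothetical helper).

    Returns (b, c, p_value): b counts rows only model A classifies correctly,
    c counts rows only model B classifies correctly.
    """
    a_right = np.asarray(pred_a) == np.asarray(y_true)
    b_right = np.asarray(pred_b) == np.asarray(y_true)
    b = int(np.sum(a_right & ~b_right))
    c = int(np.sum(~a_right & b_right))
    if b + c == 0:
        return b, c, 1.0  # the models never disagree on correctness
    # Under H0 (equal error rates) each discordant row is a fair coin flip.
    p_value = binomtest(b, n=b + c, p=0.5, alternative="two-sided").pvalue
    return b, c, p_value


X, y = make_classification(n_samples=2000, n_features=20, n_informative=8, random_state=5)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=5)

tree_pred = DecisionTreeClassifier(random_state=5).fit(X_tr, y_tr).predict(X_te)
forest_pred = RandomForestClassifier(n_estimators=300, random_state=5).fit(X_tr, y_tr).predict(X_te)

b, c, p = mcnemar_exact(y_te, tree_pred, forest_pred)
print(f"tree-only correct: {b}, forest-only correct: {c}, exact McNemar p = {p:.2g}")
```

Because it uses one held-out set, this test sidesteps the dependence between cross-validation folds that makes naive paired t-tests over fold scores optimistic, at the cost of evaluating on fewer rows.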

Quantitative Performance Analysis: Empirical Evidence

Comparative Performance Metrics

Comprehensive analysis of ensemble method performance across multiple scientific studies reveals a consistent accuracy advantage over individual models. The table below synthesizes key findings from empirical investigations across diverse domains:

Table 1: Ensemble Method Performance Across Scientific Studies

| Study Context | Method | Performance Metrics | Baseline Comparison | Performance Gain |
|---|---|---|---|---|
| Educational Analytics [6] | LightGBM | AUC: 0.953, F1: 0.950 | Traditional Algorithms | >15% AUC improvement |
| Educational Analytics [6] | Stacking Ensemble | AUC: 0.835 | Best base model (LightGBM, AUC: 0.953) | None; underperformed the best base model |
| Molecular Biology [12] | Weighted Linear Mixed Model | Relative Error: 0.123, CV: 19.5% | Simple Linear Regression (Relative Error: 0.397) | ~70% error reduction |
| Molecular Biology [12] | Weighted Linear Regression | Relative Error: 0.228 | Non-weighted Models | ~30% error reduction |
| General ML Applications [9] | Various Ensembles | Accuracy: 80-97% | Single Models | 5-14% accuracy gain |

The performance advantage of ensemble methods manifests differently across domains and metrics. In educational analytics predicting student success, gradient boosting (LightGBM) reached an AUC of 0.953 and an F1-score of 0.950 [6]. The stacking ensemble in the same study reached a lower AUC of 0.835 and did not outperform the best base model, a reminder that more complex combination schemes do not guarantee gains. In molecular biology applications using quantitative PCR data, weighted linear mixed models (a statistical modeling refinement rather than an ensemble in the strict sense) reduced relative error by approximately 70% compared to simple linear regression (0.123 versus 0.397) [12].

Performance by Ensemble Type

Different ensemble architectures excel in specific performance dimensions, allowing researchers to match methodology to their primary accuracy objectives:

Table 2: Performance Characteristics by Ensemble Type

| Ensemble Type | Primary Advantage | Typical Accuracy Gain | Optimal Application Context |
|---|---|---|---|
| Bagging (Random Forest) | Variance Reduction | 5-10% | High-dimensional data, noisy datasets |
| Boosting (XGBoost, LightGBM) | Bias Reduction | 10-15% | Complex nonlinear relationships |
| Stacking | Optimal Model Combination | 5-12% | Heterogeneous data sources |
| Voting/Majority | Implementation Simplicity | 3-8% | Rapid prototyping, diverse base models |

Bagging methods, particularly Random Forest, excel in scenarios with high-dimensional data and noisy datasets, typically achieving 5-10% accuracy gains through variance reduction [9] [10]. Boosting methods like XGBoost and LightGBM deliver larger improvements (10-15%) for problems with complex nonlinear relationships by sequentially correcting model errors [6] [10]. Stacking ensembles offer the flexibility to combine fundamentally different model architectures, with typical gains of 5-12% [6] [10], but their benefit is less dependable: in the student-performance study, the stacking ensemble did not outperform the best base model [6].

Figure 2: Ensemble method architectures and typical performance gains

Domain-Specific Applications and Implementation Considerations

Scientific Research Applications

The performance advantages of ensemble methods translate into tangible benefits across scientific domains:

In educational research and learning analytics, ensemble methods have demonstrated exceptional capability in predicting student academic performance. A comprehensive study involving 2,225 engineering students integrated Moodle interactions, academic history, and demographic data, with LightGBM achieving remarkable performance (AUC=0.953, F1=0.950) in identifying at-risk students [6]. The implementation employed SMOTE for class balancing and 5-fold stratified cross-validation, with SHAP analysis confirming early grades as the most influential predictors. Critically, the final model maintained strong fairness across gender, ethnicity, and socioeconomic status (consistency=0.907), addressing ethical considerations in educational analytics.

In medical and molecular research, careful modeling choices enhance measurement precision in laboratory techniques. For quantitative PCR data analysis, weighted linear mixed models (a statistical modeling refinement rather than an ensemble in the strict sense) reduced relative error to 0.123 compared to 0.397 for simple linear regression, a reduction of approximately 70% [12]. The "taking-the-difference" preprocessing approach further improved accuracy by eliminating background estimation error. Such precision improvements can in turn support diagnostic accuracy and treatment efficacy assessment in clinical applications.
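The reduction quoted above follows directly from the two reported relative errors:

$$
\frac{0.397 - 0.123}{0.397} = \frac{0.274}{0.397} \approx 0.69
$$

that is, a reduction of roughly 69-70%.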

For drug development and comparative effectiveness research, meta-analytic approaches—which share methodological similarities with ensemble learning—provide robust evidence synthesis across multiple studies [13] [14]. By pooling information from various trials, these methods enhance statistical power, elucidate subgroup effects, and guide hypothesis generation, particularly when individual randomized controlled trials cannot enroll enough participants for adequate power [13].

Successful implementation of ensemble methods requires both conceptual understanding and appropriate technical tools. The following research reagents and computational resources represent essential components for effective ensemble method application:

Table 3: Essential Research Reagents for Ensemble Implementation

| Resource Category | Specific Tools | Primary Function | Implementation Considerations |
|---|---|---|---|
| Programming Frameworks | Python/scikit-learn, R | Algorithm implementation | scikit-learn provides BaggingClassifier, StackingClassifier |
| Boosting Libraries | XGBoost, LightGBM, CatBoost | Gradient boosting implementation | LightGBM offers superior speed for large datasets |
| Data Preprocessing | SMOTE, ADASYN | Class imbalance handling | SMOTE generates synthetic minority class samples [6] |
| Model Interpretation | SHAP, LIME | Prediction explainability | SHAP provides consistent feature importance scores [6] |
| Validation Methods | k-Fold Cross-Validation, Bootstrapping | Performance validation | Stratified k-fold preserves class distribution [6] |
| Meta-Analysis Tools | RevMan, metafor (R) | Evidence synthesis | Critical for research consolidation [14] |

Limitations and Ethical Considerations

Despite their performance advantages, ensemble methods introduce implementation challenges and ethical considerations that researchers must address:

Computational Complexity: Ensemble methods typically require greater computational resources and longer training times compared to individual models [10]. This can present practical constraints for large-scale applications or resource-limited research environments.

Interpretability Challenges: The combination of multiple models often reduces interpretability, creating "black box" systems that can be difficult to explain in scientifically rigorous contexts [6]. Techniques like SHAP analysis have emerged as crucial tools for maintaining interpretability while leveraging ensemble advantages [6].

Fairness and Bias Propagation: Without careful implementation, ensemble methods can perpetuate or even amplify biases present in training data [9]. Recent research has developed specialized metrics and preprocessing techniques to improve fairness in ensemble models, particularly for applications impacting minority groups [9].

Methodological Rigor: As with any analytical approach, ensemble methods require rigorous implementation to avoid statistical errors. Evidence suggests that even published meta-analyses, despite their sophisticated methodology, frequently contain statistical errors when proper protocols are not followed [15].

The empirical evidence consistently demonstrates that ensemble methods provide measurable performance advantages across scientific domains, with typical accuracy gains of 5% to 14% compared to individual models. These improvements stem from fundamental statistical principles that address the inherent limitations of single-model approaches through strategic model combination.

The choice among ensemble architectures should be guided by research context and performance priorities: bagging for variance reduction in noisy datasets, boosting for complex nonlinear relationships where bias reduction is paramount, and stacking when heterogeneous data sources benefit from optimally combined modeling approaches. As predictive modeling continues to evolve within scientific research, ensemble methods represent a robust approach for maximizing predictive accuracy while maintaining methodological rigor—provided they are implemented with appropriate attention to computational constraints, interpretability requirements, and ethical considerations.

For research domains where incremental improvements in predictive accuracy yield significant practical benefits—including drug development, diagnostic medicine, and educational interventions—the consistent performance advantage of ensemble methods warrants their serious consideration as a standard analytical approach.

Ensemble learning is a machine learning technique that aggregates multiple models, known as base learners, to produce better predictive performance than could be obtained from any of the constituent models alone [9]. This approach operates on the collective intelligence principle, where a group of learners yields greater overall accuracy than an individual learner [9]. In scientific research, particularly in high-stakes fields like drug development and environmental science, ensemble methods have gained significant traction due to their ability to enhance prediction accuracy, improve model robustness, and increase generalization capabilities [1] [16].

The theoretical foundation of ensemble learning rests on the concept of the bias-variance tradeoff, a fundamental problem in machine learning [9]. Bias measures the average difference between predicted values and true values, with high bias indicating high error during training. Variance measures how much predictions fluctuate across different model realizations, with high variance leading to poor performance on unseen data [9]. Ensemble methods strategically address this tradeoff through error cancellation—where differing errors from individual models offset each other—and variance reduction, which stabilizes predictions across datasets [1] [17].

In comparisons of model ensembles against individual models, research consistently demonstrates that ensembles achieve superior prediction accuracy when their base models make weakly correlated errors, which minimizes overall prediction error [1]. This is particularly valuable in domains like pharmaceutical research and environmental monitoring, where prediction reliability can significantly impact decision-making processes and resource allocation [18] [16].

Core Principles and Theoretical Framework

The Mechanism of Error Cancellation

Error cancellation represents the fundamental process through which ensemble learning achieves its superior performance. This mechanism operates on the principle that different models typically make different errors on the same dataset due to their varying architectures, training data subsets, or algorithmic approaches. When these diverse models are combined, their individual errors tend to counteract each other, resulting in a more accurate collective prediction [1].

The efficacy of error cancellation depends directly on the diversity of the base models. As diversity increases, the base models' errors become less correlated and are more likely to offset one another, enhancing overall accuracy and producing models with greater robustness and generalization capabilities [1]. This diversity can be achieved through various strategies, including using different algorithms on the same dataset (heterogeneous ensembles) or applying the same algorithm to different data subsets (homogeneous ensembles) [1].
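Error cancellation can be checked with a small Monte Carlo simulation. It assumes an idealized setting not taken from the cited studies: M unbiased members whose errors are jointly Gaussian with unit variance and a common pairwise correlation `rho`. Under those assumptions the averaged error has variance `rho + (1 - rho) / M`, so lowering the correlation (more diversity) lowers the ensemble's error even at a fixed ensemble size.

```python
import numpy as np


def ensemble_mse(rho, n_models, n_trials=200_000, seed=0):
    """Monte Carlo MSE of averaging n_models unbiased predictors whose errors
    have unit variance and common pairwise correlation rho (idealized assumption)."""
    rng = np.random.default_rng(seed)
    # Equicorrelated errors: a shared component plus an independent component per model.
    shared = rng.standard_normal((n_trials, 1))
    own = rng.standard_normal((n_trials, n_models))
    errors = np.sqrt(rho) * shared + np.sqrt(1 - rho) * own
    # The ensemble error is the mean of the member errors; MSE = E[error^2].
    return float(np.mean(errors.mean(axis=1) ** 2))


for rho in (0.9, 0.5, 0.1):
    simulated = ensemble_mse(rho, n_models=10)
    theory = rho + (1 - rho) / 10
    print(f"rho={rho:.1f}: simulated MSE={simulated:.3f}, theory={theory:.3f}")
```

Each individual member has MSE 1 in this setup, so any value below 1 is the gain from cancellation; with `rho=0.9` ten members barely help, while with `rho=0.1` they cut the error by roughly a factor of five.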

Research in building energy prediction, where ensemble models have been extensively applied, demonstrates that this error cancellation effect enables ensemble models to overcome data scarcity in large-scale prediction applications [1]. Similarly, in environmental science, a stacking ensemble regressor that combined seven individual models achieved exceptional prediction accuracy for sulphate levels in acid mine drainage, with performance metrics significantly surpassing individual models [16].

Variance Reduction in Ensemble Methods

Variance reduction addresses the sensitivity of model predictions to the specific training data used. Models with high variance tend to overfit—performing well on training data but poorly on unseen data [9]. Ensemble methods mitigate this through two primary approaches: bagging and boosting.

Bagging (Bootstrap Aggregating) reduces variance by training multiple base learners on different random subsets of the original training data, created through bootstrap resampling [9]. This technique copies n data instances from the original set into new subsample datasets, with some initial instances appearing more than once and others excluded entirely [9]. The final prediction is then generated by aggregating the predictions of all base learners, typically through majority voting for classification or averaging for regression [17] [9].
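The statement that some instances appear more than once while others are excluded can be quantified. A given row is missed by all n draws with probability (1 - 1/n)^n, which approaches 1/e ≈ 0.368, so a bootstrap sample contains about 63.2% of the distinct original rows. The simulation below checks this; the function name and sample sizes are illustrative choices. The left-out rows are the "out-of-bag" rows that Random Forest implementations can use for validation (scikit-learn exposes this through `oob_score=True`).

```python
import numpy as np


def bootstrap_coverage(n, n_resamples=2000, seed=0):
    """Average fraction of distinct original rows present in a bootstrap sample of size n."""
    rng = np.random.default_rng(seed)
    fractions = [
        np.unique(rng.integers(0, n, size=n)).size / n  # draw n indices with replacement
        for _ in range(n_resamples)
    ]
    return float(np.mean(fractions))


n = 1000
simulated = bootstrap_coverage(n)
expected = 1 - (1 - 1 / n) ** n          # P(a given row is drawn at least once)
limit = 1 - np.exp(-1)                    # large-n limit, about 0.632
print(f"simulated {simulated:.4f}, expected {expected:.4f}, limit {limit:.4f}")
```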

Boosting takes a sequential approach to reducing bias and variance. Rather than training models independently, boosting algorithms train base learners sequentially, with each new model focusing on the errors made by previous models [9]. In AdaBoost, for example, misclassified instances receive higher weights, causing subsequent learners to prioritize these difficult cases [17] [9]. While both bagging and boosting enhance model performance, they represent different points on the bias-variance tradeoff spectrum, with boosting generally more effective at reducing bias and bagging more effective at reducing variance [17].
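The reweighting that makes boosting focus on difficult cases is easiest to see in discrete AdaBoost. The sketch below is a minimal version, assuming binary labels recoded to {-1, +1}, depth-1 scikit-learn trees ("stumps") as weak learners, 50 rounds and synthetic data; scikit-learn's own `AdaBoostClassifier` implements a multiclass generalization (SAMME) of the same idea.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y01 = make_classification(n_samples=1000, n_features=10, n_informative=5,
                             n_clusters_per_class=1, random_state=3)
y = np.where(y01 == 1, 1, -1)  # discrete AdaBoost is simplest with labels in {-1, +1}
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=3)

n_rounds = 50
w = np.full(len(y_tr), 1 / len(y_tr))  # start with uniform sample weights
learners, alphas = [], []
for _ in range(n_rounds):
    stump = DecisionTreeClassifier(max_depth=1, random_state=0).fit(X_tr, y_tr, sample_weight=w)
    pred = stump.predict(X_tr)
    err = np.clip(np.sum(w[pred != y_tr]), 1e-10, 1 - 1e-10)  # weighted error (weights sum to 1)
    alpha = 0.5 * np.log((1 - err) / err)   # more accurate stump => larger vote
    w *= np.exp(-alpha * y_tr * pred)       # misclassified rows (y * pred = -1) gain weight
    w /= w.sum()                            # renormalize to a distribution
    learners.append(stump)
    alphas.append(alpha)


def predict(X_new):
    """Sign of the alpha-weighted vote of all stumps."""
    score = sum(a * m.predict(X_new) for a, m in zip(alphas, learners))
    return np.where(score >= 0, 1, -1)


stump_acc = np.mean(learners[0].predict(X_te) == y_te)
boosted_acc = np.mean(predict(X_te) == y_te)
print(f"single stump: {stump_acc:.3f}, AdaBoost ({n_rounds} stumps): {boosted_acc:.3f}")
```

Unlike bagging, the learners here are not interchangeable: each one is fit to a reweighted version of the data and carries its own vote weight `alpha`.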

Table 1: Comparison of Bagging and Boosting Approaches

| Characteristic | Bagging | Boosting |
|---|---|---|
| Training Method | Parallel training of base learners | Sequential training of base learners |
| Focus | Reducing variance | Reducing bias |
| Data Sampling | Bootstrap sampling with equal probability | Weighted sampling focusing on misclassified instances |
| Model Weighting | Equal weighting of models | Weighted based on model performance |
| Overfitting Risk | Lower risk | Higher risk with excessive iterations |
| Computational Cost | Lower (parallelizable) | Higher (sequential) |

Ensemble Methodologies: A Comparative Analysis

Homogeneous Ensemble Techniques

Homogeneous ensemble models utilize a single base learning algorithm applied to multiple diverse data subsets generated from the original dataset [1]. These subsets are created using subset generation algorithms such as bagging and boosting and are then used, with the same parameter settings, to train multiple base models [1]. This approach is particularly beneficial for unstable algorithms, which exhibit significantly altered outputs with slight changes in training data [1].

Bagging represents a classic homogeneous approach that enhances performance, particularly for high-variance models. The random forest algorithm extends this concept by constructing ensembles of randomized decision trees that sample a random subset of features at each decision node, rather than considering every feature as standard decision trees do [9]. Research shows that as ensemble complexity (number of base learners) increases, bagging demonstrates steady but diminishing returns, with performance eventually plateauing [17].

Boosting algorithms, including Adaptive Boosting (AdaBoost) and Gradient Boosting, are another homogeneous approach with distinct characteristics. AdaBoost increases the weights of misclassified samples so that subsequent learners prioritize these difficult cases [9]. Gradient boosting uses the residual errors of the previous models as the targets for each successive model, progressively closing the error gap [9]. Experimental comparisons show that boosting typically achieves higher peak accuracy than bagging but requires approximately 14 times more computational time at the same ensemble complexity [17].
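
The residual-fitting idea behind gradient boosting can be sketched directly for squared-error regression. In that case, the residuals y − F(x) are exactly the negative gradient of the loss ½(y − F(x))². The sketch starts from the mean of y, fits a shallow regression tree to the current residuals at each round, and adds a shrunken copy of its predictions. The learning rate, tree depth, and round count are illustrative assumptions, and production libraries such as XGBoost add further regularization.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor


def gradient_boost_fit(X, y, n_rounds=50, learning_rate=0.1, max_depth=2):
    """Squared-error gradient boosting: each new tree is fit to the current residuals."""
    base = float(np.mean(y))  # initial constant model
    pred = np.full(len(y), base)
    trees, train_mse = [], []
    for _ in range(n_rounds):
        residuals = y - pred  # negative gradient of 0.5 * (y - pred) ** 2
        tree = DecisionTreeRegressor(max_depth=max_depth).fit(X, residuals)
        pred = pred + learning_rate * tree.predict(X)  # shrunken step toward the residuals
        trees.append(tree)
        train_mse.append(float(np.mean((y - pred) ** 2)))
    return base, trees, train_mse


def gradient_boost_predict(base, trees, X, learning_rate=0.1):
    """Sum the base value and every tree's shrunken contribution."""
    pred = np.full(X.shape[0], base)
    for tree in trees:
        pred += learning_rate * tree.predict(X)
    return pred


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.uniform(-3, 3, size=(200, 1))
    y = np.sin(X[:, 0]) + 0.1 * rng.normal(size=200)
    base, trees, mse = gradient_boost_fit(X, y)
    print("training MSE after rounds 1, 10, 50:", mse[0], mse[9], mse[-1])
```

Because each tree's leaf values are the mean residual within the leaf, every round can only lower (or keep) the training error. That same property is why too many rounds eventually overfit.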

Heterogeneous Ensemble Techniques

Heterogeneous ensemble models combine multiple different algorithms trained on the same dataset to achieve high accuracy, versatility, and robustness [1]. The final selection and combination of algorithms significantly impact the accuracy of the ensemble model and should be tailored to the dataset characteristics, as different algorithms may perform variably across datasets [1].

Stacking (stacked generalization) is a prominent heterogeneous method that exemplifies meta-learning [9]. It trains several base learners on the same dataset, using a different algorithm for each learner [9]. Each base learner then makes predictions on data it has not seen, and these predictions are compiled to train a meta-model that produces the final predictions [9]. Research emphasizes that the meta-model should be trained on data different from that used to train the base learners in order to prevent overfitting. This often requires excluding some instances from the base learners' training data so that they can serve as held-out data for the meta-learner [9].
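
In scikit-learn, this held-out requirement is handled by the `cv` argument of `StackingRegressor`. The meta-learner (`final_estimator`) is trained on cross-validated, out-of-fold predictions of the base learners, and the base learners are then refit on the full training set. The sketch below assumes a synthetic regression problem and an arbitrary set of four heterogeneous base learners. It is not the configuration used in the acid mine drainage study.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor, StackingRegressor
from sklearn.linear_model import LinearRegression, Ridge
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR


def build_stack(cv=5):
    """Heterogeneous base learners combined by a linear-regression meta-learner."""
    base_learners = [
        ("ridge", Ridge(alpha=1.0)),
        ("knn", make_pipeline(StandardScaler(), KNeighborsRegressor(n_neighbors=7))),
        ("rf", RandomForestRegressor(n_estimators=100, random_state=0)),
        ("svr", make_pipeline(StandardScaler(), SVR(C=100.0))),
    ]
    # With cv=5, every base-learner prediction the meta-learner is trained on
    # was made on rows that base learner did not see during that fold's fit.
    return StackingRegressor(
        estimators=base_learners, final_estimator=LinearRegression(), cv=cv
    )


if __name__ == "__main__":
    X, y = make_regression(n_samples=400, n_features=8, noise=10.0, random_state=0)
    X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)
    stack = build_stack().fit(X_tr, y_tr)
    print("meta-learner weights:", stack.final_estimator_.coef_)
    print("test R^2:", round(stack.score(X_te, y_te), 4))
```

Inspecting `final_estimator_.coef_` shows how much weight the linear meta-learner gives each base model. This is one way to see whether weaker members are effectively ignored.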

Experimental results demonstrate the efficacy of stacking ensembles. In predicting sulphate levels in acid mine drainage (AMD), a stacking ensemble regressor trained on untreated AMD data combined seven of the best-performing individual models through a linear regression meta-learner. It achieved a Mean Squared Error of 0.000011, a Mean Absolute Error of 0.002617, and an R² of 0.9997 [16]. Notably, stacking all models and stacking only the best-performing models produced only slightly different accuracies, indicating that including poorer-performing models in the stack did not harm predictive performance [16].

Methodological Workflows

The workflow for implementing ensemble methods follows a systematic process that can be visualized through the following experimental framework:

Diagram 1: Experimental workflow for ensemble learning methodologies

Experimental Comparison and Performance Metrics

Quantitative Performance Analysis

Empirical studies across diverse domains provide compelling evidence for the superior performance of ensemble methods compared to individual models. The following table summarizes key experimental findings that quantify this performance advantage:

Table 2: Experimental Performance Comparison of Ensemble vs. Individual Models

| Application Domain | Best Performing Ensemble Model | Key Performance Metrics | Comparison to Individual Models |
|---|---|---|---|
| Building Energy Consumption Prediction [1] | Heterogeneous Ensemble | Superior prediction accuracy, robustness, and generalization | Outperformed all single models in accuracy and reliability |
| Sulphate Level Prediction in Acid Mine Drainage [16] | Stacking Ensemble (7 models + LR meta-learner) | MSE: 0.000011, MAE: 0.002617, R²: 0.9997 | Significantly outperformed 11 individual models including RF, XGBoost, MLP |
| Image Classification (MNIST) [17] | Boosting (200 base learners) | Accuracy: 0.961 | Higher accuracy than Bagging (0.933) with same ensemble size |
| Image Classification (CIFAR-100) [17] | Boosting | Progressive accuracy improvement with complexity | Demonstrated consistent advantage over individual models |

Experimental results consistently show that ensemble learning outperforms individual methods by combining the predictive strengths of multiple models [16]. In building energy research, ensemble models have proven particularly valuable for predicting energy consumption across different building types; for forecasting thermal energy, electricity, cooling energy, and other energy types; and for predicting energy demand and building energy loads [1].

Computational Cost Analysis

While ensemble methods deliver superior predictive performance, this advantage comes with increased computational costs. Research quantifying the trade-offs between performance gains and resource requirements reveals important patterns:

Table 3: Computational Cost Comparison: Bagging vs. Boosting

| Metric | Bagging | Boosting | Experimental Conditions |
|---|---|---|---|
| Time Cost | Lower, nearly constant with complexity | Substantially higher (approx. 14x Bagging at complexity=200) | MNIST dataset, 200 base learners [17] |
| Performance Trend | Diminishing returns, eventual plateau | Rapid improvement then potential overfitting | Increasing ensemble complexity [17] |
| Performance at Complexity=200 | 0.933 accuracy | 0.961 accuracy | MNIST dataset [17] |
| Resource Consumption | Grows linearly with complexity | Grows quadratically with complexity | Theoretical model validation [17] |

The computational requirements of ensemble methods present practical considerations for researchers. Bagging demonstrates nearly constant time cost as ensemble complexity increases, while Boosting's time cost rises sharply with complexity [17]. Similarly, computational resource consumption grows quadratically for Boosting but only linearly for Bagging [17]. These patterns highlight the importance of matching method selection to available computational resources and application requirements.

Implementation in Drug Development and Environmental Science

Pharmaceutical Research Applications

Ensemble learning and model-informed approaches have transformed pharmaceutical research and development through multiple applications:

Drug Discovery and Development: Model-Informed Drug Development (MIDD) represents an essential framework for advancing drug development and supporting regulatory decision-making [18]. MIDD provides quantitative predictions and data-driven insights that accelerate hypothesis testing, assess potential drug candidates more efficiently, reduce costly late-stage failures, and accelerate market access for patients [18]. Evidence demonstrates that well-implemented MIDD approaches can significantly shorten development cycle timelines, reduce discovery and trial costs, and improve quantitative risk estimates [18].

Predictive Modeling for Efficacy and Toxicity: Ensemble approaches enhance predictive modeling for drug efficacy and toxicity, which offers transformative potential for drug development [19]. Success in this domain hinges on a strong foundation in traditional disciplines such as physiology, pharmacology, and molecular biology, coupled with the strategic application of modern computational tools, including Quantitative Systems Pharmacology (QSP), machine learning (ML), and systems biology [19]. The rigorous integration of experimental data and computational modeling has been increasingly recognized as essential for building credible and impactful models [19].

Methodological Integration: A growing area of interest is the integration of machine learning (ML) with Quantitative Systems Pharmacology (QSP) [19]. ML excels at uncovering patterns in large datasets, while QSP provides a biologically grounded, mechanistic framework. When used together, these approaches can address data gaps, improve individual-level predictions, and enhance model robustness and generalizability [19].

Environmental Science Applications

In environmental science, ensemble methods have demonstrated significant utility across multiple domains:

Water Quality Prediction: Machine learning ensemble approaches have successfully predicted sulphate levels in acid mine drainage (AMD), providing critical data for evaluating potential extraction of commercially useful by-products like octathiocane (S8) [16]. This application is particularly valuable given that traditional analytical chemistry approaches for measuring sulphate levels are time-consuming, expensive, utilize specialized equipment, and require hazardous chemicals [16]. Ensemble models provide a cost-effective alternative that removes the hazards, costs, and time associated with traditional experimental methods [16].

Environmental Assessment and Monitoring: Ensemble learning has been applied to diverse environmental challenges, including predicting ammonia levels in groundwater to understand nitrogen reduction pathways, developing early warning systems for reservoir water management, modeling soil moisture effects on slope stability to identify triggers of shallow slope landslides, and assessing determinants of environmental sustainability [16]. The versatility of ensemble methods has proven particularly valuable for combining earth observation data with machine learning to promote sustainable development [16].

Experimental Protocols and Research Reagents

Implementation of ensemble learning methodologies requires specific computational tools and frameworks. The following table outlines key "research reagent solutions" essential for experimental work in this field:

Table 4: Essential Research Reagents and Computational Tools for Ensemble Learning

| Tool/Category | Specific Examples | Function/Purpose |
|---|---|---|
| Programming Environments | Python, R | Primary programming languages for implementing ensemble algorithms |
| Ensemble Libraries | Scikit-learn ensemble module, XGBoost | Pre-built functions for bagging, stacking, and gradient boosting |
| Base Algorithms | Linear Regression, LASSO, Ridge, Elastic Net, KNN, SVR, Decision Tree, RF, XGBoost, MLP [16] | Individual models used as base learners in ensemble constructions |
| Model Validation Tools | Cross-validation, Bootstrap Resampling | Methods to ensure model robustness and prevent overfitting |
| Meta-Learners | Linear Regression, Logistic Regression | Algorithms that combine base model predictions in stacking ensembles |
| Performance Metrics | Mean Squared Error, Mean Absolute Error, R², Accuracy | Quantitative measures to evaluate and compare model performance |

The experimental protocol for implementing ensemble methods typically follows a structured process, as visualized in the workflow below:

Diagram 2: Experimental protocol for ensemble learning implementation
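
The protocol can be approximated in code using the tools and metrics listed in Table 4. The sketch below cross-validates a single model and two ensembles on identical shuffled folds and reports mean MSE, MAE, and R². It assumes scikit-learn's bundled diabetes regression data and default-ish hyperparameters chosen for illustration only. A real study would also tune hyperparameters inside the cross-validation loop.

```python
import numpy as np
from sklearn.datasets import load_diabetes
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import KFold, cross_validate
from sklearn.tree import DecisionTreeRegressor

# scikit-learn scorers follow "higher is better", so error metrics come back negated.
SCORING = {
    "mse": "neg_mean_squared_error",
    "mae": "neg_mean_absolute_error",
    "r2": "r2",
}


def evaluate_models(models, X, y, n_splits=5, seed=0):
    """Cross-validate every model on the same folds and summarize MSE, MAE, and R^2."""
    cv = KFold(n_splits=n_splits, shuffle=True, random_state=seed)
    summary = {}
    for name, model in models.items():
        scores = cross_validate(model, X, y, cv=cv, scoring=SCORING)
        summary[name] = {
            "MSE": -np.mean(scores["test_mse"]),
            "MAE": -np.mean(scores["test_mae"]),
            "R2": np.mean(scores["test_r2"]),
        }
    return summary


if __name__ == "__main__":
    X, y = load_diabetes(return_X_y=True)
    models = {
        "single_tree": DecisionTreeRegressor(random_state=0),
        "random_forest": RandomForestRegressor(n_estimators=200, random_state=0),
        "gradient_boosting": GradientBoostingRegressor(random_state=0),
    }
    for name, m in evaluate_models(models, X, y).items():
        print(f"{name:>17}: MSE={m['MSE']:.1f}  MAE={m['MAE']:.1f}  R2={m['R2']:.3f}")
```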

Ensemble learning methodologies demonstrate consistent advantages over individual models across diverse scientific domains through their core operations of error cancellation and variance reduction. The experimental evidence confirms that ensemble models achieve superior prediction accuracy, enhanced robustness, and better generalization capabilities compared to individual models [1] [16] [17]. This performance advantage stems from the fundamental principle that combining multiple models with diverse error profiles allows for compensatory error cancellation and more stable predictions across different datasets [1] [9].

The choice between specific ensemble approaches involves important trade-offs between performance, computational costs, and implementation complexity [17]. Bagging methods offer more modest performance gains with lower computational requirements, while boosting typically achieves higher accuracy but with substantially increased computational costs [17]. Stacking ensembles that combine diverse algorithms through meta-learners often deliver the highest performance but require careful implementation to avoid overfitting [16] [9].

For scientific researchers and drug development professionals, ensemble methods provide powerful tools for enhancing predictive modeling where accuracy and reliability are paramount [18] [19]. As these methodologies continue to evolve and integrate with emerging computational approaches, they offer significant potential for advancing predictive capabilities across scientific disciplines, ultimately contributing to more efficient and effective research outcomes.

In the face of increasingly complex environmental challenges, researchers are turning to sophisticated modeling approaches to enhance predictive accuracy and inform decision-making. This guide explores a foundational concept in computational science: the power of model ensembles over individual models. Ensemble methods, which combine multiple models to produce a single superior output, have demonstrated remarkable success across diverse scientific domains. These techniques operate on the principle that a collection of weak learners can be integrated to form a strong learner with improved predictive performance, reducing both bias and variance compared to individual models [20] [21]. The methodology is particularly valuable for capturing complex, non-linear relationships in environmental data that often elude single-model approaches.

The application of ensemble techniques spans three critical domains explored in this guide: ecosystem services assessment, aquaculture optimization, and climate science forecasting. In ecosystem services research, ensembles help integrate diverse regulatory functions and spatial dynamics. In aquaculture, they enable precise monitoring of water quality and fish health. In climate science, they improve the reliability of global temperature projections. By systematically comparing ensemble approaches against individual model performance across these fields, this guide provides researchers with evidence-based protocols for implementing these powerful analytical tools in their own environmental investigations.

Ensemble vs. Individual Model Performance: A Cross-Domain Analysis

Theoretical Foundations of Ensemble Methods

Ensemble learning techniques represent a paradigm shift in predictive modeling, moving beyond reliance on single algorithms to leveraging the collective power of multiple models. The core principle underpinning ensemble methods is that a group of weak learners—models performing slightly better than random guessing—can be combined to create a strong predictive model with significantly enhanced accuracy and robustness [21]. This approach mitigates the limitations inherent in individual models, which often struggle with high variance, overfitting, or inherent biases in their algorithmic structure.

The theoretical superiority of ensembles emerges from several interconnected mechanisms. First, different models often capture complementary aspects of complex datasets, particularly in environmental systems characterized by multi-scale processes and non-linear interactions. As Microsoft Research notes, neural networks trained from different random initializations can learn distinct feature mappings despite similar overall architecture and training data [22]. Second, ensemble methods effectively reduce variance through averaging techniques (as in bagging) or sequentially minimize bias by focusing on previously misclassified instances (as in boosting) [20] [21]. Third, the multi-view data hypothesis suggests that in real-world environmental datasets, different models may identify different "views" or features of the same underlying phenomenon, with ensemble approaches collectively capturing this full spectrum of predictive signals [22].
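
The variance-reduction argument can be made concrete under a simplifying assumption. Suppose each of M models has prediction error with variance σ², and every pair of models has error correlation ρ. Then the variance of the averaged error is ρσ² + (1 − ρ)σ²/M. Adding models shrinks only the uncorrelated part, so diverse models (low ρ) gain the most. The sketch below checks this formula by simulation. The equal-variance, equal-correlation error model is an illustrative assumption, not a property of any particular ensemble.

```python
import numpy as np


def averaged_error_variance(n_models, rho, sigma=1.0):
    """Theoretical variance of the mean of n_models errors with variance sigma**2 and pairwise correlation rho."""
    return rho * sigma**2 + (1 - rho) * sigma**2 / n_models


def simulate_averaged_error_variance(n_models, rho, sigma=1.0, n_trials=100_000, seed=0):
    """Monte Carlo estimate: each model's error = shared component + model-specific component."""
    rng = np.random.default_rng(seed)
    shared = rng.normal(0.0, sigma, size=(n_trials, 1))        # error common to all models
    own = rng.normal(0.0, sigma, size=(n_trials, n_models))    # independent, model-specific error
    errors = np.sqrt(rho) * shared + np.sqrt(1 - rho) * own    # variance sigma**2, correlation rho
    return errors.mean(axis=1).var()


if __name__ == "__main__":
    for rho in (0.0, 0.3, 0.8):
        for m in (1, 5, 25):
            print(f"rho={rho}, M={m:>2}: theory={averaged_error_variance(m, rho):.3f}, "
                  f"simulated={simulate_averaged_error_variance(m, rho):.3f}")
```

As M grows, the variance approaches the floor ρσ². Past a certain point, adding more correlated models helps little, which is consistent with the plateau described for bagging.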

Comparative Performance Across Domains

Table 1: Ensemble vs. Individual Model Performance Across Key Domains

| Domain | Ensemble Approach | Individual Model Performance | Ensemble Performance | Key Improvement Metrics |
|---|---|---|---|---|
| Ecosystem Services | Social-ecological system (SES) integrated models | Limited capacity to represent supply-demand dynamics and cross-system flows [23] | Comprehensive quantification of ES as coproducts of coupled human-natural systems [23] | More accurate spatial prioritization; Enhanced policy relevance |
| Aquaculture | IoT sensor networks with machine learning integration | Single-parameter monitoring with delayed response times [24] | Real-time, multi-parameter prediction of water quality and fish health [24] [25] | 39.1% improved feed conversion; 12% higher survival rates [25] |
| Climate Science | Multi-model ensembles (NASA, NOAA, Berkeley Earth, etc.) | Individual climate models with varying sensitivity to parameters [26] | Most reliable projections with quantified uncertainty ranges [26] | Highest accuracy in temperature projections; Robust uncertainty quantification |
| General Machine Learning | Random Forest, XGBoost, Neural Network Ensembles | Decision trees prone to overfitting; Single networks with specific initialization [20] [22] | Superior accuracy through variance reduction and feature learning [20] [22] | Error reduction up to 30%; Enhanced robustness to noisy data |

The performance advantage of ensemble approaches is consistently demonstrated across all three domains, though the specific mechanisms and magnitude of improvement vary according to application context. In ecosystem services research, the shift toward social-ecological system (SES) frameworks represents a conceptual ensemble approach that integrates multiple disciplinary perspectives rather than purely technical model combination [23]. This recognizes that ecosystem services emerge as coproductions between ecological structures and human inputs, requiring integrated modeling approaches that capture these complex feedback relationships.

In aquaculture technology, ensemble methods manifest through multi-sensor platforms that integrate diverse data streams—oxygen, temperature, pH, salinity, and ammonia levels—into predictive algorithms that far outperform single-measurement monitoring systems [24]. The practical benefits are substantial, with one study reporting a 39.1% improvement in feed conversion ratio and a 12% increase in survival rates when using ensemble-driven management systems [25]. This demonstrates how ensemble approaches translate directly to operational efficiency and economic value in production environments.

Climate science represents perhaps the most formalized implementation of ensemble modeling, where multi-model ensembles combining projections from NASA, NOAA, Met Office Hadley Centre, Berkeley Earth, and Copernicus/ECMWF have become the gold standard for temperature projections [26]. The aggregate of these models consistently outperforms any single model, with the World Meteorological Organization employing this ensemble approach to generate its authoritative climate assessments. This methodology proved particularly valuable in forecasting that 2025 would rank as the second or third warmest year on record, despite neutral ENSO conditions that typically suppress temperatures [26].

Experimental Protocols for Ensemble Implementation

Ecosystem Services Assessment Protocol

The evaluation of regulating ecosystem services (RESs) requires sophisticated methodological approaches capable of capturing the complex interactions between ecological processes and human beneficiaries. A robust experimental protocol for ensemble modeling in this domain involves several critical phases, with particular relevance to fragile ecosystems such as karst World Heritage Sites [27].

Table 2: Key Research Reagents for Ecosystem Services Assessment

| Research Reagent | Function | Application Example |
|---|---|---|
| SALSA Framework | Systematic literature review methodology for knowledge synthesis | Analyzing 176 publications on RESs to identify research gaps [27] |
| SEEA Ecosystem Accounting | International standard for integrating ecosystem services into economic accounts | Monetary valuation of ecosystem services for policy integration [28] |
| ESA-CAT Tool | Accounting mechanism for ecosystem service transactions | Assessing ecosystem contributions distinct from human-made inputs [28] |
| Supply-Demand Matrix | Spatial analysis framework for ecosystem service flows | Mapping service provision to direct and indirect beneficiaries [28] |

Phase 1: Systematic Literature Review and Meta-Analysis

- Implement the Search, Appraisal, Synthesis, and Analysis (SALSA) framework to systematically collect and evaluate existing research [27]

- Define precise inclusion/exclusion criteria to filter relevant studies (e.g., 205 of 541 initially identified articles in karst RESs research) [27]

- Extract quantitative data on RES assessment methods, trade-offs, synergies, and driving mechanisms for comparative analysis

Phase 2: Social-Ecological System Modeling

- Apply coupled human-natural system frameworks that conceptualize ES as coproductions between ecosystem supply and human demand [23]

- Quantify both biophysical flows and socio-economic values using standardized accounting protocols (SEEA Ecosystem Accounting) [28]

- Model cross-system flows as outcomes of SES equilibria, distinguishing inherent bundle characteristics from system-level dynamics [23]

Phase 3: Spatial Prioritization and Validation

- Conduct field validation in representative sites (e.g., karst WNHSs with strong vegetation nativity and complete ecosystem structure) [27]

- Employ remote sensing and GIS technologies to map service provision and beneficiary access patterns

- Validate model predictions against empirical measurements of water conservation, soil retention, and climate regulation functions

Figure 1: Ecosystem Services Ensemble Assessment Workflow

Aquaculture Optimization Protocol

Modern aquaculture operations employ increasingly sophisticated monitoring and control systems that exemplify ensemble approaches through integrated sensor networks and predictive algorithms. The experimental protocol for implementing ensemble methods in aquaculture focuses on optimizing production outcomes while minimizing environmental impacts.

Table 3: Aquaculture Research Reagents and Technologies

| Research Reagent | Function | Application Example |
|---|---|---|
| Recirculating Aquaculture Systems (RAS) | Closed-loop water treatment with biological and mechanical filtration | Reusing up to 99% of water while maintaining biosecurity [24] |
| IoT Sensor Networks | Real-time monitoring of oxygen, temperature, pH, salinity, ammonia | Predicting disease outbreaks through multi-parameter correlation [24] |
| Automated Feeding Systems | Precision delivery of feed based on behavioral and environmental cues | Reducing operational costs by up to 70% through waste minimization [24] |
| Protein Hydrolysates | Enhanced nutritional supplements from enzymatic protein breakdown | Improving feed conversion ratio and immune response in fish [25] |

Phase 1: Multi-Parameter Monitoring System Implementation

- Deploy IoT sensors to continuously monitor dissolved oxygen, temperature, pH, salinity, and ammonia concentrations [24]

- Integrate data streams into centralized dashboards with automated alert systems for parameter deviations

- Calibrate sensors against laboratory measurements to ensure data accuracy and reliability

Phase 2: Predictive Model Development

- Train machine learning algorithms on historical data to identify patterns preceding adverse events

- Develop ensemble models that integrate environmental parameters with behavioral observations (e.g., feeding activity, swimming patterns)

- Implement the Biofloc Technology System (BFT) to optimize water quality through microbial floc formation that assimilates nitrogenous compounds [25]
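
One way to realize the Phase 2 ensemble step is a soft-voting classifier that averages the predicted probabilities of several different models trained on the same multi-parameter sensor features. The sketch below is entirely hypothetical. The data are synthetic, the feature ranges and the "adverse event" rule are invented for illustration, and the model choices are arbitrary. It shows only the mechanics, not a validated water-quality model.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier, VotingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical column order for the sensor feature matrix.
FEATURES = ["dissolved_oxygen", "temperature", "ph", "salinity", "ammonia"]


def make_synthetic_sensor_data(n=1000, seed=0):
    """Synthetic readings; the 'adverse event' rule below is invented for illustration only."""
    rng = np.random.default_rng(seed)
    X = np.column_stack([
        rng.normal(7.0, 1.5, n),    # dissolved oxygen, mg/L
        rng.normal(26.0, 2.0, n),   # temperature, deg C
        rng.normal(7.5, 0.4, n),    # pH
        rng.normal(15.0, 5.0, n),   # salinity, ppt
        rng.gamma(2.0, 0.2, n),     # total ammonia nitrogen, mg/L
    ])
    # Hypothetical risk: low oxygen, warm water, and high ammonia raise the chance of an event.
    risk = -1.2 * (X[:, 0] - 7.0) + 0.4 * (X[:, 1] - 26.0) + 3.0 * (X[:, 4] - 0.4)
    y = (risk + rng.normal(0.0, 1.0, n) > 1.0).astype(int)
    return X, y


def build_voting_ensemble():
    """Soft voting: average the members' predicted class probabilities."""
    return VotingClassifier(
        estimators=[
            ("logreg", make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))),
            ("knn", make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=15))),
            ("rf", RandomForestClassifier(n_estimators=200, random_state=0)),
        ],
        voting="soft",
    )


if __name__ == "__main__":
    X, y = make_synthetic_sensor_data()
    X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=0)
    ensemble = build_voting_ensemble().fit(X_tr, y_tr)
    event_prob = ensemble.predict_proba(X_te)[:, 1]
    print("test accuracy:", round(ensemble.score(X_te, y_te), 3))
    print("readings above a hypothetical 0.5 alert threshold:", int((event_prob > 0.5).sum()))
```

The averaged probability, rather than a hard label, is what a Phase 3 response protocol would compare against an alert threshold.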

Phase 3: Intervention Optimization

- Establish response protocols triggered by ensemble model predictions (e.g., automated oxygen adjustment, feed rate modification)

- Evaluate system performance through key metrics including feed conversion ratio, survival rates, and biomass accumulation

- Conduct controlled trials comparing ensemble-driven management against conventional approaches

Climate Projection Protocol

The exceptional reliability of climate model ensembles stems from rigorous protocols that systematically address uncertainties across the modeling chain. The experimental approach leverages multiple independent modeling groups to generate projections that collectively outperform any single model.

Phase 1: Multi-Model Ensemble Construction

- Incorporate outputs from major climate modeling centers (NASA, NOAA, Met Office Hadley Centre, Berkeley Earth, Copernicus/ECMWF) [26]

- Apply bias correction techniques to account for systematic model errors while preserving inter-model diversity

- Generate weighted averages based on historical model performance, with sensitivity testing of weighting approaches
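
The Phase 1 steps can be sketched in simplified form. Each model's mean bias over an observed reference period is removed, which shifts its output without altering its year-to-year anomalies and so preserves inter-model diversity. The models are then weighted by inverse hindcast mean squared error. Mean-bias correction and inverse-MSE weighting are illustrative choices assumed here, not necessarily the schemes used by the centres named above. Comparing the result against the equal-weight mean is a simple form of the sensitivity test mentioned in the last step.

```python
import numpy as np


def bias_correct_and_weight(hindcasts, observed, projections, eps=1e-12):
    """
    hindcasts:   (n_models, n_years) model output over an observed reference period
    observed:    (n_years,) observations over the same period
    projections: (n_models, n_future) model output to combine
    Returns (weighted ensemble projection, weights).
    """
    bias = hindcasts.mean(axis=1) - observed.mean()        # mean-state bias of each model
    corrected_hind = hindcasts - bias[:, None]
    corrected_proj = projections - bias[:, None]            # same additive shift for projections
    mse = ((corrected_hind - observed) ** 2).mean(axis=1)   # remaining hindcast error per model
    inv = 1.0 / np.maximum(mse, eps)                        # guard against division by zero
    weights = inv / inv.sum()                               # normalized to sum to 1
    return weights @ corrected_proj, weights


if __name__ == "__main__":
    observed = np.array([0.0, 0.1, 0.2, 0.3])
    wiggle = np.array([1.0, -1.0, 1.0, -1.0])
    hindcasts = np.stack([observed + 0.5 + 0.01 * wiggle,
                          observed - 0.2 + 0.05 * wiggle,
                          observed + 0.2 * wiggle])
    projections = np.array([[1.5, 1.6], [0.8, 0.9], [1.0, 1.1]])
    ensemble, weights = bias_correct_and_weight(hindcasts, observed, projections)
    equal = (projections - (hindcasts.mean(axis=1) - observed.mean())[:, None]).mean(axis=0)
    print("weights:", weights.round(3), "weighted:", ensemble, "equal-weight:", equal)
```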

Phase 2: Uncertainty Quantification

- Analyze the spread across ensemble members as a quantitative measure of projection uncertainty

- Distinguish between scenario uncertainty (human emissions pathways), model uncertainty (structural differences), and internal variability (natural climate fluctuations)

- Employ statistical emulators to efficiently explore parameter spaces and constrain uncertainty ranges

Phase 3: Validation and Projection

- Validate ensemble performance against historical observations using hindcasting experiments

- Project future climate conditions under multiple representative concentration pathways (RCPs)

- Quantify probabilities of specific outcomes (e.g., 9% chance that 2025 annual temperatures exceed 1.5°C above pre-industrial levels) [26]
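
The spread and probability steps in Phases 2 and 3 reduce, in their simplest form, to summary statistics over ensemble members. The ensemble mean serves as the central estimate, the inter-member standard deviation and percentile range describe uncertainty, and the fraction of members above a threshold gives a crude exceedance probability. The sketch below assumes one projected value per member. The member values in the usage are hypothetical, and the 9% figure cited above comes from [26] and is not reproduced here. With few members, member counting gives only a coarse probability, which is one reason emulators are used to fill in the distribution.

```python
import numpy as np


def ensemble_summary(member_values, threshold):
    """Summarize one projected quantity (e.g. an annual temperature anomaly) across members."""
    values = np.asarray(member_values, dtype=float)
    low, high = np.percentile(values, [5, 95])
    return {
        "mean": float(values.mean()),                  # central ensemble estimate
        "spread_sd": float(values.std(ddof=1)),        # inter-member standard deviation
        "range_5_95": (float(low), float(high)),       # empirical 5th-95th percentile range
        "p_exceed": float((values > threshold).mean()),  # fraction of members above threshold
    }


if __name__ == "__main__":
    # Hypothetical anomalies (deg C above a baseline) from five ensemble members.
    print(ensemble_summary([1.0, 1.1, 1.2, 1.3, 1.6], threshold=1.5))
```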

Figure 2: Climate Model Ensemble Integration Process

Cross-Domain Synthesis and Implementation Guidelines

The consistent outperformance of ensemble approaches across ecosystem services, aquaculture, and climate science reveals fundamental principles for environmental modeling. First, model diversity is critical—ensembles composed of structurally different models with varying strengths and weaknesses consistently outperform homogeneous ensembles. Second, appropriate weighting strategies that account for historical model performance generally enhance ensemble accuracy, though simple averaging often provides robust results. Third, explicit uncertainty quantification through ensemble spreads provides more reliable and actionable information for decision-makers than single-model point estimates.

For researchers implementing ensemble approaches, several practical guidelines emerge from this cross-domain analysis. Begin with a clear identification of the specific modeling challenge—whether reducing variance (favoring bagging approaches), minimizing bias (favoring boosting techniques), or integrating multi-disciplinary perspectives (requiring conceptual ensembles). Ensure computational resources match ensemble complexity, as some implementations (e.g., neural network ensembles) require significant processing capacity [20]. Finally, establish rigorous validation protocols that test ensemble performance against independent data not used in model training, with particular attention to extreme conditions and threshold behaviors.

The convergence of evidence across these diverse domains strongly supports ensemble modeling as a superior approach for addressing complex environmental challenges. As computational power increases and methodological refinements continue, ensemble techniques will likely become increasingly central to environmental research and decision-support systems. Their demonstrated capacity to enhance predictive accuracy, quantify uncertainties, and integrate diverse knowledge sources makes them indispensable tools in advancing sustainability science across the ecosystem services, aquaculture, and climate science domains.

In the pursuit of optimal predictive performance, researchers and developers across fields from drug discovery to ecosystem services often gravitate toward identifying a single, best-performing algorithm. This search for a universal solution—a single model that consistently outperforms all others across diverse datasets and problem domains—represents a pervasive fallacy in machine learning application. The single-model fallacy stems from an underestimation of how different algorithmic strengths, data characteristics, and problem constraints interact to determine model efficacy. Empirical evidence consistently demonstrates that model performance is inherently context-dependent, with even sophisticated deep learning approaches failing to universally dominate across application domains.

This comparative guide examines the theoretical and empirical foundations supporting ensemble learning as a robust alternative to single-model reliance, with particular attention to applications in ecosystem services research and scientific domains requiring high-precision predictions. Through systematic analysis of experimental data and methodological frameworks, we demonstrate why embracing model diversity through ensemble techniques provides more reliable, accurate, and generalizable solutions across the scientific spectrum.

Theoretical Foundations: The Case for Model Diversity

The Strength of Collective Intelligence

Ensemble learning operates on the principle that combining multiple models creates a collective intelligence that surpasses any individual contributor. This approach mirrors the wisdom-of-crowds phenomenon, where aggregated judgments typically outperform individual experts. The theoretical underpinnings rest on three key mechanisms:

- Variance Reduction: Aggregating predictions from multiple models smooths out individual idiosyncrasies, particularly beneficial for high-variance algorithms like decision trees.

- Bias Minimization: Sequential ensemble methods like boosting specifically address residual errors, progressively reducing systematic prediction biases.

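- Error Decomposition: For squared error, the error of an averaged ensemble equals the average error of its members minus the members' spread around the ensemble mean (the ambiguity decomposition). An averaged ensemble therefore never performs worse than its members do on average, and it gains the most when members are both accurate and diverse.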
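
The error-decomposition mechanism is an exact identity for squared error and a simple average of member predictions: ensemble MSE = average member MSE − ambiguity, where ambiguity is the mean squared deviation of members from the ensemble mean. The sketch below verifies this numerically on random predictions. The data are arbitrary, and the identity guarantees only that the ensemble beats the members' average error, not that it beats the single best member.

```python
import numpy as np


def ambiguity_decomposition(member_preds, y):
    """member_preds: (n_models, n_samples); y: (n_samples,). Returns the three MSE terms."""
    P = np.asarray(member_preds, dtype=float)
    ensemble = P.mean(axis=0)                      # simple average of member predictions
    ensemble_mse = np.mean((ensemble - y) ** 2)
    avg_member_mse = np.mean((P - y) ** 2)         # averaged over models and samples
    ambiguity = np.mean((P - ensemble) ** 2)       # diversity of members around the ensemble
    return ensemble_mse, avg_member_mse, ambiguity


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    y = rng.normal(size=500)
    members = y + rng.normal(0.0, 1.0, size=(7, 500))  # seven noisy, partly independent models
    e, a, d = ambiguity_decomposition(members, y)
    print(f"ensemble MSE {e:.3f} = average member MSE {a:.3f} - ambiguity {d:.3f}")
```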

These mechanisms explain why ensembles typically achieve superior generalization to unseen data—a critical requirement in both ecosystem modeling and drug development where deployment environments often differ from training conditions.

Ensemble Architectural Patterns

The literature identifies four primary ensemble patterns, each with distinct operational characteristics:

Ensemble Architecture Patterns: four primary ensemble learning methodologies with distinct training and prediction workflows.

Bagging (Bootstrap Aggregating) creates multiple dataset variants through random sampling with replacement, trains models in parallel on these subsets, and aggregates predictions through averaging or majority voting. The Random Forest algorithm represents the most prominent bagging implementation [29].

Boosting employs sequential training where each subsequent model focuses specifically on instances previously misclassified, progressively reducing residual errors. Gradient boosting machines, including XGBoost and LightGBM, implement this approach with additional regularization to prevent overfitting [6] [29].

Stacking (Stacked Generalization) utilizes a meta-learner that learns optimal combinations of base model predictions, effectively determining how to weight different algorithms based on their performance patterns [29].

Blending operates similarly to stacking but uses a simple holdout validation set, rather than cross-validation, to generate the inputs for the combiner model. This offers computational efficiency at a potential cost in performance robustness [29].
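
The difference from stacking is easiest to see in code. In the blending sketch below, the base models are fit once on one part of the training data. The combiner is then fit on their predictions for a separate holdout part, so no cross-validation loop is needed, but the holdout rows never help train the base models. The sketch assumes binary classification, uses positive-class probabilities as combiner inputs, and picks an arbitrary set of base models. The helper names are illustrative and are not a scikit-learn API.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import GaussianNB
from sklearn.neighbors import KNeighborsClassifier


def fit_blend(X, y, base_models, holdout_size=0.25, seed=0):
    """Blending for binary classification: base models on one split, combiner on a holdout split."""
    X_fit, X_hold, y_fit, y_hold = train_test_split(
        X, y, test_size=holdout_size, stratify=y, random_state=seed
    )
    fitted = [model.fit(X_fit, y_fit) for model in base_models]
    # Combiner inputs: each base model's positive-class probability on the untouched holdout rows.
    meta_features = np.column_stack([m.predict_proba(X_hold)[:, 1] for m in fitted])
    combiner = LogisticRegression().fit(meta_features, y_hold)
    return fitted, combiner


def predict_blend(fitted, combiner, X):
    meta_features = np.column_stack([m.predict_proba(X)[:, 1] for m in fitted])
    return combiner.predict(meta_features)


if __name__ == "__main__":
    X, y = make_classification(n_samples=1000, n_features=10, n_informative=5, random_state=0)
    X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=1)
    base = [RandomForestClassifier(n_estimators=100, random_state=0),
            GaussianNB(), KNeighborsClassifier(n_neighbors=15)]
    fitted, combiner = fit_blend(X_tr, y_tr, base)
    print("blend test accuracy:", (predict_blend(fitted, combiner, X_te) == y_te).mean())
```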

Experimental Evidence: Quantitative Performance Comparisons

Ecosystem Services Research Applications

Ecosystem services research presents particularly challenging prediction environments due to complex nonlinear relationships, spatial dependencies, and interacting environmental drivers. Multiple studies have systematically compared individual versus ensemble model performance in this domain:

Table 1: Model Performance Comparison in Ecosystem Services Prediction

| Research Context | Single Model Performance | Ensemble Model Performance | Performance Gap |
|---|---|---|---|
| Karst ecosystem assessment [30] | Traditional methods struggled with nonlinear patterns | Gradient boosting identified key drivers with higher accuracy | Significant improvement in pattern recognition |
| Yunnan-Guizhou Plateau ES mapping [30] | Standard regression limited by data complexity | ML + PLUS model enabled multi-scenario prediction | Enhanced spatiotemporal forecasting capability |
| General ES mapping validation [31] | Individual models often lack proper validation | Ensemble approaches facilitate robustness checking | Improved reliability and decision-making uptake |

The experimental protocol for these comparisons typically involves: (1) partitioning ecosystem service datasets (e.g., water yield, carbon storage, soil conservation) using stratified sampling to preserve spatial and temporal distributions; (2) training individual baseline models including decision trees, SVMs, and neural networks with hyperparameter optimization via grid search; (3) constructing ensembles using bagging, boosting, and stacking approaches; (4) evaluating performance on held-out test sets using metrics including RMSE, MAE, and R²; and (5) conducting statistical significance testing via paired t-tests or Wilcoxon signed-rank tests.
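Steps (4) and (5) of this protocol can be sketched as follows, under several assumptions. The data are synthetic (scikit-learn's `make_friedman1`), and a single decision tree and a random forest stand in for the baseline and the ensemble. Per-fold RMSE comes from repeated k-fold cross-validation rather than one held-out test set. Because both models use the same splitter with a fixed seed, their fold scores are paired, which is what the Wilcoxon signed-rank test requires.

```python
from scipy.stats import wilcoxon
from sklearn.datasets import make_friedman1
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import RepeatedKFold, cross_val_score
from sklearn.tree import DecisionTreeRegressor

X, y = make_friedman1(n_samples=400, noise=1.0, random_state=0)

# Same splitter and seed for both models, so each fold score has a partner (paired design)
cv = RepeatedKFold(n_splits=5, n_repeats=4, random_state=0)


def fold_rmse(model):
    # scikit-learn reports negated RMSE so that "higher is better"; flip the sign back
    return -cross_val_score(model, X, y, cv=cv, scoring="neg_root_mean_squared_error")


rmse_single = fold_rmse(DecisionTreeRegressor(random_state=0))
rmse_ensemble = fold_rmse(RandomForestRegressor(n_estimators=100, random_state=0))

stat, p_value = wilcoxon(rmse_single, rmse_ensemble)  # paired, non-parametric test
print(f"mean RMSE single={rmse_single.mean():.2f}, "
      f"ensemble={rmse_ensemble.mean():.2f}, p={p_value:.2g}")
```

Folds from repeated cross-validation share training data, so their scores are not fully independent. P-values from paired tests on such scores therefore tend to be optimistic and are best read as a rough guide.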

Educational Analytics and Broader Scientific Applications

Beyond environmental science, the single-model fallacy appears across research domains, and ensembles repeatedly outperform individual models:

Table 2: Cross-Domain Ensemble vs. Single Model Performance

| Domain | Top Single Model | Ensemble Approach | Performance Advantage |
|---|---|---|---|
| Student Grade Prediction [32] | Single algorithms (DT, KNN, SVM): 55-59% accuracy | Gradient Boosting: 67% accuracy | 8-12% absolute accuracy improvement |
| At-Risk Student Identification [6] | Base learners with 70-75% accuracy | LightGBM ensemble: AUC = 0.953, F1 = 0.950 | Substantial improvement in early warning precision |
| Building Energy Prediction [1] | Single models limited by algorithm dependence | Ensemble models: Superior accuracy & robustness | Enhanced generalization across building types |

The experimental methodology for these studies generally incorporates: (1) multimodal data integration from disparate sources (LMS interactions, academic history, demographic factors); (2) comprehensive preprocessing including missing data imputation, feature scaling, and synthetic minority oversampling (SMOTE) to address class imbalance; (3) nested cross-validation with outer loops for performance estimation and inner loops for hyperparameter tuning; (4) fairness auditing across demographic subgroups using metrics like statistical parity and equalized odds; and (5) model interpretability analysis through SHAP values and partial dependence plots [6].
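Step (3), nested cross-validation, can be sketched with scikit-learn under illustrative assumptions. The data are synthetic and imbalanced, the model is a gradient boosting classifier, and the grid values are arbitrary. The outer loop estimates performance and the inner loop tunes hyperparameters. Preprocessing sits inside a `Pipeline` so it is re-fit on each training fold. SMOTE is not shown because it needs the separate imbalanced-learn package; there it would go inside an `imblearn.pipeline.Pipeline` so that oversampling also happens only on training folds.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical imbalanced data (roughly 80/20 class split)
X, y = make_classification(n_samples=600, n_features=15, weights=[0.8, 0.2], random_state=0)

# Scaling is inside the pipeline so it is fit on training folds only (no leakage);
# trees do not need it, it is included to show where preprocessing belongs
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("model", GradientBoostingClassifier(random_state=0)),
])
param_grid = {"model__n_estimators": [50, 100], "model__max_depth": [2, 3]}

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)  # hyperparameter tuning
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # performance estimation

tuned = GridSearchCV(pipe, param_grid, cv=inner_cv, scoring="roc_auc")
outer_auc = cross_val_score(tuned, X, y, cv=outer_cv, scoring="roc_auc")
print(f"nested-CV AUC: {outer_auc.mean():.3f} +/- {outer_auc.std():.3f}")
```

Each outer test fold is scored by a model whose hyperparameters were chosen without ever seeing that fold. This is why the outer estimate is less optimistic than the best inner-loop score.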

Successful ensemble implementation requires both conceptual understanding and practical tools. The following methodological "reagents" are essential components for developing effective ensemble models:

Table 3: Essential Research Reagents for Ensemble Modeling

| Research Reagent | Function | Application Context |
|---|---|---|
| SMOTE [6] | Synthetic Minority Over-sampling Technique; addresses class imbalance | Critical for educational analytics such as at-risk student identification |
| SHAP Analysis [6] | SHapley Additive exPlanations; provides model interpretability | Identifies key predictors in complex ensembles for scientific insight |
| Cross-Validation [32] | Robust performance estimation via data resampling | Guards against overfitting in ensemble combination strategies |
| Hyperparameter Optimization [32] | Systematic tuning of model parameters | Maximizes each individual learner's contribution to the ensemble |
| Feature Importance Ranking [30] | Identification of predictive variables | Guides feature engineering for ensemble performance |

These methodological reagents enable researchers to implement ensembles that are not only predictive but also interpretable and robust—essential qualities for scientific applications and decision support systems.

Implementation Framework: Ensemble Development Workflow

Developing effective ensemble models requires a systematic approach that emphasizes diversity, optimization, and validation:

Figure: Ensemble Development Workflow. Systematic five-phase methodology for constructing robust ensemble models.

The workflow begins with comprehensive problem diagnosis to understand data characteristics and performance requirements. The critical second phase focuses on strategic base learner selection prioritizing algorithmic diversity over individual performance—combining tree-based models, linear models, neural networks, and instance-based learners to capture complementary patterns in the data [1].
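One way to check diversity during base learner selection is to measure how often candidate learners disagree on a validation set. The sketch below is illustrative: it uses one scikit-learn model from each family named above (tree-based, linear, neural network, instance-based) on synthetic data. Pairwise disagreement rate is just one simple diversity measure among several, chosen here for readability.

```python
import itertools

import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.neural_network import MLPClassifier
from sklearn.tree import DecisionTreeClassifier

X, y = make_classification(n_samples=1000, n_features=10, n_informative=5, random_state=0)
X_tr, X_val, y_tr, y_val = train_test_split(X, y, test_size=0.3, random_state=0)

# One learner per algorithm family: tree, linear, neural network, instance-based
learners = {
    "tree": DecisionTreeClassifier(max_depth=5, random_state=0),
    "linear": LogisticRegression(max_iter=1000),
    "mlp": MLPClassifier(hidden_layer_sizes=(32,), max_iter=2000, random_state=0),
    "knn": KNeighborsClassifier(n_neighbors=7),
}
val_preds = {name: model.fit(X_tr, y_tr).predict(X_val) for name, model in learners.items()}

# Pairwise disagreement: share of validation samples on which two learners predict
# different labels. Higher values suggest less redundant members; accuracy must
# still be checked separately, since a useless model also disagrees a lot.
disagreement = {
    (a, b): float(np.mean(val_preds[a] != val_preds[b]))
    for a, b in itertools.combinations(val_preds, 2)
}
for (a, b), rate in sorted(disagreement.items(), key=lambda kv: -kv[1]):
    print(f"{a} vs {b}: {rate:.3f}")
```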

The individual optimization phase tunes each base learner while avoiding over-specialization to ensure they contribute unique predictive signatures to the ensemble. Architecture implementation then selects the appropriate combination strategy based on data size, complexity, and computational constraints—with bagging preferred for unstable learners, boosting for complex patterns, and stacking when sufficient diverse base learners are available [29]. The final validation and interpretation phase employs rigorous statistical testing, fairness auditing, and model explanation techniques to ensure the ensemble meets both performance and scientific rigor requirements [6] [31].

The empirical evidence from ecosystem services research, educational analytics, and building energy prediction collectively demonstrates that no single algorithm consistently outperforms all others across diverse problem contexts and dataset characteristics. The single-model fallacy represents not just a statistical oversight but a fundamental limitation in how we approach predictive modeling in scientific domains.

Ensemble learning methodologies provide a mathematically sound and empirically validated framework for moving beyond this limitation, offering consistent performance improvements through strategic model combination. As the complexity of scientific problems increases—particularly in domains like drug development and environmental forecasting—the deliberate incorporation of model diversity through systematic ensemble construction represents a necessary evolution in the computational scientist's toolkit.

The future of predictive modeling in scientific research lies not in identifying universally superior individual algorithms, but in developing more sophisticated approaches to model combination, weighting, and integration that leverage the complementary strengths of diverse modeling approaches. This paradigm shift from competition to collaboration in algorithmic design mirrors the interdisciplinary nature of modern scientific progress itself.

Building Better Predictors: A Framework for Implementing Ensemble Models

In the field of ecosystem services (ES) research, accurate predictive modeling is paramount for informing sustainable development and conservation decisions. However, a significant challenge persists: most ES studies rely on a single modeling framework, which can lead to unreliable predictions and non-robust decisions when applied to new data or scenarios [33]. Ensemble learning, a machine learning paradigm that combines multiple models to improve predictive performance, offers a powerful solution. By leveraging the "wisdom of crowds," ensemble methods enhance the robustness and accuracy of predictions, which is critical for applications ranging from mapping ecosystem service provision to assessing the impact of policy interventions [33] [34].

This guide provides a comparative analysis of four core ensemble architectures—Bagging, Boosting, Stacking, and Voting—framed within the ES research context. We objectively evaluate their performance against individual models and each other, supported by experimental data and detailed methodologies from diverse scientific fields.

Core Concepts and Mechanisms

Ensemble learning operates on the principle that combining multiple models (often called "weak learners") can produce a stronger, more accurate, and more robust predictive model than any single constituent model [35] [34]. The key is to introduce diversity among the models, which can be achieved by using different algorithms, training data subsets, or feature sets [34].

The following diagram illustrates the fundamental workflows and logical relationships of the four core ensemble methods.

Diagram 1: Workflows of Core Ensemble Learning Architectures. Bagging trains models in parallel on bootstrap samples, Boosting trains models sequentially by reweighting data, Stacking uses base model predictions as input for a meta-model, and Voting aggregates predictions from multiple models directly [36] [35].

Bagging (Bootstrap Aggregating)

Bagging is designed to reduce variance and prevent overfitting, particularly in high-variance models like decision trees [36] [35]. It operates as follows:

- Bootstrap Sampling: Multiple subsets (bootstrap samples) are created from the original training data by random sampling with replacement.

- Parallel Model Training: A base model (e.g., a decision tree) is trained independently on each bootstrap sample.

- Aggregation: Predictions from all models are combined through averaging (for regression) or majority voting (for classification) to produce the final prediction [36] [35].
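The three bagging steps above can be written out directly. The sketch below uses scikit-learn decision trees as the high-variance base model. The helper names `bagging_fit` and `bagging_predict` are hypothetical, the data are synthetic, and the vote assumes binary 0/1 labels. scikit-learn's own `BaggingClassifier` packages the same idea.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier


def bagging_fit(X, y, n_estimators=51, seed=0):
    rng = np.random.default_rng(seed)
    n = len(X)
    models = []
    for _ in range(n_estimators):  # members are independent, so this loop could run in parallel
        idx = rng.integers(0, n, size=n)                # bootstrap: n rows drawn with replacement
        tree = DecisionTreeClassifier(random_state=0)   # unpruned tree: a high-variance model
        models.append(tree.fit(X[idx], y[idx]))
    return models


def bagging_predict(models, X):
    votes = np.stack([m.predict(X) for m in models])    # shape (n_estimators, n_samples)
    return (votes.mean(axis=0) > 0.5).astype(int)       # majority vote, assuming 0/1 labels


X, y = make_classification(n_samples=1000, n_features=20, n_informative=6,
                           flip_y=0.05, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)

single_tree = DecisionTreeClassifier(random_state=0).fit(X_tr, y_tr)
single_acc = float(np.mean(single_tree.predict(X_te) == y_te))
bagged_pred = bagging_predict(bagging_fit(X_tr, y_tr), X_te)
bagged_acc = float(np.mean(bagged_pred == y_te))
print(f"single tree: {single_acc:.3f}, bagged trees: {bagged_acc:.3f}")
```

An odd number of members avoids tied votes in the binary case.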

A prominent example is the Random Forest algorithm, which extends bagging by sampling not only data points but also a random subset of features at each split, further decorrelating the trees and enhancing performance [36].
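The decorrelation effect of per-split feature sampling can be observed with scikit-learn's `RandomForestClassifier`. With `max_features=None` every split considers all features, which amounts to plain bagged trees. With `max_features="sqrt"` each split sees only a random subset. The helper `mean_pairwise_agreement` is a hypothetical name, and the data are synthetic. The sketch measures how often two member trees give the same label; lower agreement means less correlated members.

```python
import itertools

import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=1000, n_features=25, n_informative=6, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)


def mean_pairwise_agreement(forest, X):
    # Fraction of samples on which two member trees agree, averaged over all tree pairs
    preds = np.stack([tree.predict(X) for tree in forest.estimators_])
    pairs = itertools.combinations(range(len(preds)), 2)
    return float(np.mean([np.mean(preds[i] == preds[j]) for i, j in pairs]))


# max_features=None: every split sees all features, i.e. plain bagged trees
bagged = RandomForestClassifier(n_estimators=50, max_features=None, random_state=0).fit(X_tr, y_tr)
# max_features="sqrt": each split sees a random feature subset, i.e. a Random Forest
forest = RandomForestClassifier(n_estimators=50, max_features="sqrt", random_state=0).fit(X_tr, y_tr)

agree_bagged = mean_pairwise_agreement(bagged, X_te)
agree_forest = mean_pairwise_agreement(forest, X_te)
print(f"bagged trees:  agreement={agree_bagged:.3f}, accuracy={bagged.score(X_te, y_te):.3f}")
print(f"random forest: agreement={agree_forest:.3f}, accuracy={forest.score(X_te, y_te):.3f}")
```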

Boosting

Boosting is a sequential technique that combines multiple weak learners to create a strong learner, primarily focused on reducing bias [36] [35]. Its mechanism involves:

- Sequential Training: Models are built one after another, where each subsequent model aims to correct the errors made by the previous ones.

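- Weight Adjustment: In AdaBoost-style boosting, instances misclassified by previous models receive higher weights, forcing subsequent models to focus on these difficult cases; gradient boosting achieves a similar effect by fitting each new model to the current residual errors.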

- Model Combination: All weak learners are combined using weighted voting or averaging to form the final predictive model [36].

Popular boosting algorithms include AdaBoost, Gradient Boosting (GBoost), and XGBoost [36] [37].
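The weight-adjustment mechanism is easiest to see in discrete AdaBoost, sketched below with scikit-learn decision stumps as weak learners and labels encoded as -1/+1. The helper names are hypothetical and the data are synthetic. Gradient boosting and XGBoost do not reweight instances this way, so the sketch illustrates AdaBoost only.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.tree import DecisionTreeClassifier


def adaboost_fit(X, y, n_rounds=50):
    """Discrete AdaBoost for labels in {-1, +1} (illustrative sketch)."""
    w = np.full(len(y), 1.0 / len(y))            # start with uniform instance weights
    stumps, alphas = [], []
    for _ in range(n_rounds):
        stump = DecisionTreeClassifier(max_depth=1, random_state=0)  # weak learner
        stump.fit(X, y, sample_weight=w)
        pred = stump.predict(X)
        err = np.clip(np.sum(w[pred != y]), 1e-10, 1 - 1e-10)  # weighted error rate
        alpha = 0.5 * np.log((1 - err) / err)    # more accurate stump -> larger vote
        w *= np.exp(-alpha * y * pred)           # misclassified (y * pred = -1) are up-weighted
        w /= w.sum()                             # renormalize to a distribution
        stumps.append(stump)
        alphas.append(alpha)
    return stumps, alphas


def adaboost_predict(stumps, alphas, X):
    score = sum(a * s.predict(X) for s, a in zip(stumps, alphas))  # weighted vote
    return np.where(score >= 0, 1, -1)


X, y01 = make_classification(n_samples=500, n_features=10, random_state=0)
y = 2 * y01 - 1  # map {0, 1} to {-1, +1}

stumps, alphas = adaboost_fit(X, y)
stump_acc = float(np.mean(stumps[0].predict(X) == y))
boost_acc = float(np.mean(adaboost_predict(stumps, alphas, X) == y))
print(f"first stump training accuracy: {stump_acc:.3f}, boosted: {boost_acc:.3f}")
```

scikit-learn's `AdaBoostClassifier` provides a maintained implementation of this family of algorithms.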

Stacking (Stacked Generalization)

Stacking aims to leverage the strengths of diverse types of models by using a meta-learner to learn how to best combine them [36] [35]. The process involves two main levels:

- Level-0 (Base Models): Multiple different models (e.g., Random Forest, SVM, neural networks) are trained on the original training data.

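- Level-1 (Meta-Model): The predictions from the base models, typically generated out-of-fold via cross-validation so that no base-model prediction is made on that model's own training rows, form a new dataset (meta-features), which is used to train a meta-model (e.g., logistic regression) that produces the final prediction [36].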
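The two levels can be sketched explicitly with scikit-learn's `cross_val_predict`, using the three base-model types named above and a logistic regression meta-model. The data are synthetic and the settings are illustrative. scikit-learn's `StackingClassifier` performs the same out-of-fold construction internally; it is written out by hand here to show each step.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_predict, train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.svm import SVC

X, y = make_classification(n_samples=1000, n_features=20, n_informative=8, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)

# Level-0: diverse base models
level0 = [
    RandomForestClassifier(n_estimators=200, random_state=0),
    SVC(probability=True, random_state=0),
    MLPClassifier(hidden_layer_sizes=(32,), max_iter=2000, random_state=0),
]

# Out-of-fold probabilities: each training row is predicted by a model that did not see it
meta_train = np.column_stack([
    cross_val_predict(m, X_tr, y_tr, cv=5, method="predict_proba")[:, 1] for m in level0
])
# For the test set, refit each base model on all training data
meta_test = np.column_stack([m.fit(X_tr, y_tr).predict_proba(X_te)[:, 1] for m in level0])

# Level-1: the meta-model learns how much to trust each base model
meta_model = LogisticRegression().fit(meta_train, y_tr)
stack_acc = meta_model.score(meta_test, y_te)
print("meta-model weights:", np.round(meta_model.coef_.ravel(), 2))
print(f"stacked test accuracy: {stack_acc:.3f}")
```

If the meta-model were trained on in-sample base predictions instead, overfit base models (such as the random forest) would look near-perfect. The meta-model would then overweight them.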

Voting

Voting is a conceptually simpler ensemble method that aggregates predictions from multiple models directly [38]. It comes in two forms:

- Hard Voting: The final prediction is the class that receives the majority vote from all base models.

- Soft Voting: The final prediction is the class with the highest average probability from all base models.
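The two voting rules can disagree. The tiny example below uses hypothetical class probabilities from three models for a single sample. Two models lean weakly toward class 0 and one is very confident in class 1.

```python
import numpy as np

# Hypothetical class probabilities for ONE sample; columns = (class 0, class 1)
probas = np.array([
    [0.55, 0.45],   # model A: leans class 0
    [0.55, 0.45],   # model B: leans class 0
    [0.05, 0.95],   # model C: very confident in class 1
])


def hard_vote(probas):
    labels = probas.argmax(axis=1)                  # each model casts one label vote
    return int(np.bincount(labels, minlength=probas.shape[1]).argmax())


def soft_vote(probas):
    return int(probas.mean(axis=0).argmax())        # average probabilities, then pick the top class


print(hard_vote(probas))  # 0: two of three models vote for class 0
print(soft_vote(probas))  # 1: mean P(class 1) = (0.45 + 0.45 + 0.95) / 3 ≈ 0.617
```

Soft voting lets confident models outweigh hesitant ones, so it only makes sense when member probabilities are reasonably calibrated. In scikit-learn, both rules are available through `VotingClassifier(..., voting="hard")` and `voting="soft"`.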

Comparative Performance Analysis

Empirical evidence from various domains consistently demonstrates that ensemble methods can achieve superior predictive performance compared to individual models.

Performance in Ecosystem Services Research

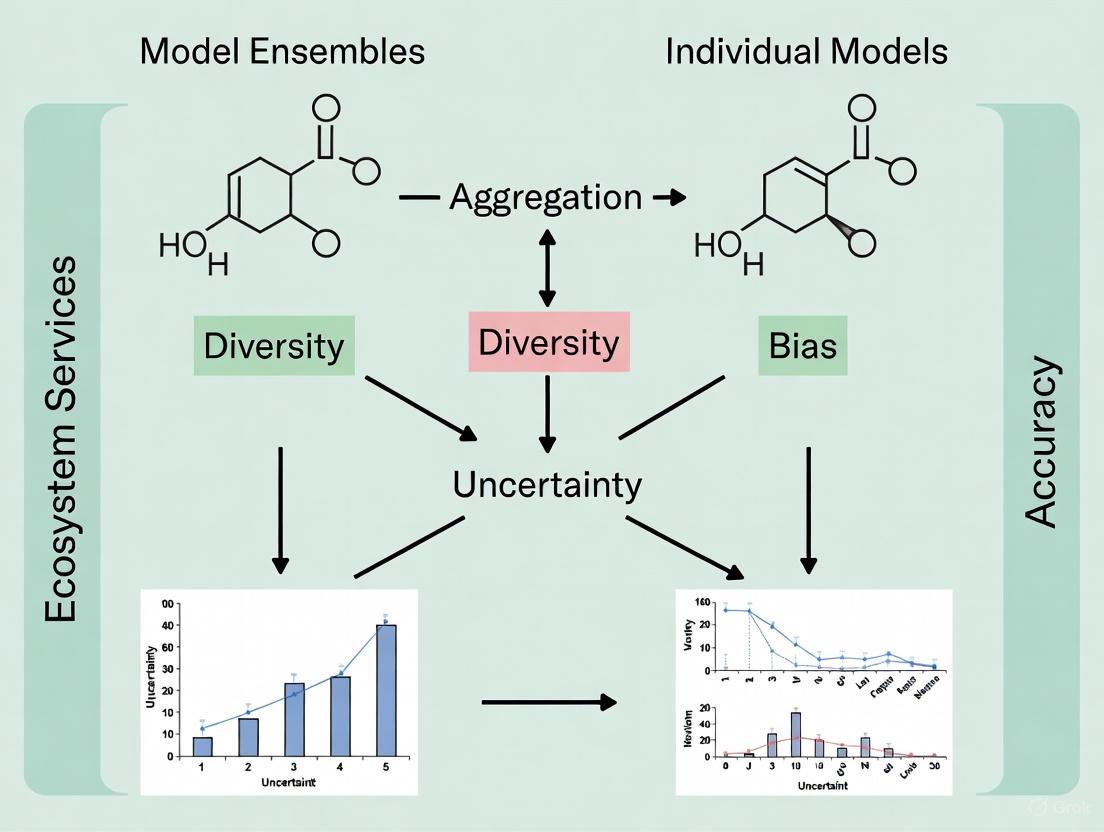

A study focusing on multiple ecosystem services across sub-Saharan Africa found that model ensembles were 5.0–6.1% more accurate than individual models [33]. The study also revealed that the variation among the constituent models within an ensemble (the ensemble's uncertainty) was negatively correlated with its accuracy. This means that the internal disagreement of an ensemble can serve as a useful proxy for its reliability, which is particularly valuable in data-deficient regions common in ES research [33].
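The spread-versus-accuracy check can be illustrated with a small analogue, though it is not the procedure used in [33]. The sketch uses synthetic regression data and four arbitrary scikit-learn regressors as ensemble members. It takes the per-sample standard deviation of member predictions as the ensemble's uncertainty, then computes a Spearman rank correlation between that spread and the ensemble mean's absolute error. A positive correlation here corresponds to the negative spread-accuracy relationship reported in [33]; the sketch does not assume the sign in advance.

```python
import numpy as np
from scipy.stats import spearmanr
from sklearn.datasets import make_friedman1
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.linear_model import Ridge
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsRegressor

X, y = make_friedman1(n_samples=800, noise=1.0, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)

members = [
    Ridge(),
    KNeighborsRegressor(n_neighbors=10),
    RandomForestRegressor(n_estimators=200, random_state=0),
    GradientBoostingRegressor(random_state=0),
]
# Shape (n_models, n_samples): one row of test predictions per member
member_preds = np.stack([m.fit(X_tr, y_tr).predict(X_te) for m in members])

ensemble_pred = member_preds.mean(axis=0)   # simple unweighted ensemble mean
spread = member_preds.std(axis=0)           # per-sample disagreement = ensemble uncertainty
abs_error = np.abs(ensemble_pred - y_te)

rho, p = spearmanr(spread, abs_error)
print(f"Spearman rho(spread, |error|) = {rho:.2f} (p = {p:.2g})")
```

Because the spread needs no ground truth, it can be mapped even where validation data are scarce. This is the property that makes it attractive as a reliability proxy in data-deficient regions.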

Cross-Domain Experimental Evidence

The following table summarizes key performance metrics of ensemble methods from recent studies in various fields, illustrating their broad effectiveness.

Table 1: Comparative Performance of Ensemble Models Across Different Domains

| Domain / Study | Top Performing Model(s) | Key Performance Metric | Reported Score | Comparison to Individual Models |
|---|---|---|---|---|
| Higher Education [6] | LightGBM (Boosting) | AUC | 0.953 | Outperformed other base learners and a stacking ensemble. |
| Higher Education [6] | Stacking Ensemble | AUC | 0.835 | Did not offer significant improvement over best base model. |
| Veterinary Medicine (LSD Prediction) [37] | Random Forest (Bagging) + ROS | Accuracy | 82% | Superior performance among tested ensembles (DT, RF, AdaBoost, GBoost, XGBoost). |
| Veterinary Medicine (LSD Prediction) [37] | XGBoost (Boosting) | Accuracy | 81.25% | Competitive performance with Random Forest. |
| Heart Disease Prediction [39] | Ensemble (Bagging, Boosting, Stacking) with PCA/LDA | Accuracy | Up to 97% | Optimal accuracy, deemed well suited to the method. |
| Pneumonia Classification [40] | VGG19/DenseNet121 + Random Forest | Accuracy | 99.98% | Exemplifies hybrid DL/ML ensemble surpassing standalone deep learning models. |

Key Comparative Insights