Ecological Network Models: From Ecosystem Complexity to Biomedical Innovation


Abstract

This article explores the transformative application of ecological network models in understanding complex biological systems, with particular focus on drug discovery and biomedical research. It examines foundational principles of ecological networks—including complexity-stability relationships, network structure, and dynamics—and demonstrates how these concepts are being adapted to model disease mechanisms, identify drug targets, and predict therapeutic outcomes. The content covers methodological approaches from traditional food web analysis to modern computational techniques, addresses key challenges in model optimization and validation, and presents case studies showing successful cross-disciplinary applications. For researchers and drug development professionals, this synthesis provides both theoretical framework and practical guidance for leveraging ecological network principles to advance biomedical science.

The Architecture of Complexity: Ecological Network Fundamentals

Ecological networks are fundamental representations of the biotic interactions within ecosystems. They provide a structured framework for visualizing and analyzing the complex web of relationships among species, where species (nodes) are connected by pairwise ecological interactions (links) [1]. This network approach transforms the study of ecology from a focus on isolated species or pairwise interactions to a holistic understanding of community-level processes. The application of graph theory—a mathematical framework developed in computer science and mathematics—to these biological systems enables researchers to handle otherwise intractable complexity and detect both large-scale network structure and species-level contributions to overall network organization [2].

The historical development of ecological network research emerged from descriptions of trophic relationships in aquatic food webs, though contemporary work has expanded to include various food webs as well as webs of mutualists [1]. This expansion reflects the recognition that non-trophic interactions (such as pollination, seed dispersal, and habitat formation) play crucial roles in ecosystem dynamics. The network perspective has become increasingly vital for understanding how anthropogenic threats, including climate change and species extinctions, affect ecosystem functioning and stability [3]. By representing ecosystems as networks, ecologists can predict how disturbances propagate through communities and identify key species that disproportionately influence ecosystem persistence.

Core Components of Ecological Networks

Structural Elements: Nodes and Links

The architecture of any ecological network consists of two fundamental components: nodes and links. In ecological terms, nodes (also called vertices) typically represent biological entities such as individual species, functional groups of species, or specific populations [2] [4]. In some specialized applications, nodes may represent spatial units like habitat patches in metapopulation studies or individual organisms in social network analyses. The node definition depends on the research question and scale of investigation, though species-level nodes remain the most common representation in community ecology.

Links (also called edges or connections) represent the ecological interactions happening between the nodes [5]. These links can be characterized in several ways based on the interaction type:

- Trophic interactions: Consumption relationships where energy and nutrients flow from one species to another (e.g., predator-prey, herbivore-plant)

- Symbiotic interactions: Mutualistic, commensal, or parasitic relationships where species closely associate

- Competitive interactions: Relationships where species utilize the same limited resources

- Facilitative interactions: Relationships where one species creates favorable conditions for another

Links in ecological networks can be either directed (indicating a one-way flow of energy or influence, such as from prey to predator) or undirected (simply indicating co-occurrence or association without directionality) [2]. Furthermore, links may be binary (presence/absence of interaction) or weighted (quantifying the strength, frequency, or magnitude of the interaction) [2]. The choice between these representations depends on the research question and available data.

Table 1: Core Components of Ecological Networks

| Component | Definition | Ecological Interpretation | Representation Types |
|---|---|---|---|
| Nodes | Fundamental units in the network | Typically species or populations, sometimes habitat patches or individuals | Species, functional groups, spatial units |
| Links | Connections between nodes | Ecological interactions between species | Trophic, symbiotic, competitive, facilitative |
| Directionality | Flow orientation in links | Direction of energy flow or ecological influence | Directed (->) or undirected (--) |
| Weight | Magnitude of connection | Strength, frequency, or impact of interaction | Binary (0/1) or weighted (continuous values) |

Matrix Representation of Networks

Ecological networks are commonly represented mathematically as adjacency matrices: square matrices where rows and columns represent species, and matrix elements indicate the presence or strength of interactions between them [5]. For a network composed of S species, the adjacency matrix is an S×S matrix where the element a_{ij} represents the interaction from species j to species i. In food webs, these matrices typically represent consumption relationships, where a_{ij} = 1 indicates that species i consumes species j [5].

For bipartite networks (those with two distinct sets of species that only interact between sets), an incidence matrix provides a more efficient representation [5]. In this rectangular matrix, one set of species (e.g., plants) forms the rows and the other set (e.g., pollinators) forms the columns. The elements indicate interactions between members of the two sets. This representation acknowledges that interactions do not occur within the same set of species in bipartite networks, such as plant-pollinator or host-parasite systems [5].

The mathematical representation of ecological networks enables the application of sophisticated analytical tools from graph theory and linear algebra to ecological questions. For example, the number of species (S) can be determined from the dimensions of an adjacency matrix, while the number of interactions (L) can be calculated as the sum of all elements in a binary matrix, or as the count of nonzero elements in a weighted one [5]. In bipartite networks represented by incidence matrices with dimensions H×V (where H is the number of species in one set and V is the number in the other), the total number of species is H + V [5].
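The counts described above can be read straight off the matrices. The following is a minimal sketch using NumPy; the four-species food web and the three-by-three plant-pollinator matrix are hypothetical examples invented for illustration, not data from the cited sources.

```python
import numpy as np

# Hypothetical 4-species food web.
# Convention from the text: adjacency[i, j] = 1 means species i consumes species j.
species = ["plant", "herbivore", "omnivore", "predator"]
adjacency = np.array([
    [0, 0, 0, 0],  # plant consumes nothing
    [1, 0, 0, 0],  # herbivore eats plant
    [1, 1, 0, 0],  # omnivore eats plant and herbivore
    [0, 1, 1, 0],  # predator eats herbivore and omnivore
])

S = adjacency.shape[0]    # number of species = matrix dimension
L = int(adjacency.sum())  # number of links (a plain sum works because entries are 0/1)

# Hypothetical plant-pollinator incidence matrix: rows = plants, columns = pollinators.
incidence = np.array([
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
])
H, V = incidence.shape
total_species = H + V                 # species in both sets
bipartite_links = int(incidence.sum())

print(S, L, total_species, bipartite_links)
```

For a weighted matrix, `np.count_nonzero` would give the number of links, while the plain sum would give total interaction strength instead.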

Key Properties and Metrics in Network Analysis

Ecological network analysis employs a suite of quantitative metrics that characterize different aspects of network structure and function. These metrics enable comparisons across ecosystems, assessment of network stability, and identification of critical species or interactions.

Basic Structural Metrics

Connectance measures the proportion of possible links between species that are actually realized (calculated as L/S², where L is the number of links and S is the number of species) [1]. Connectance reflects the overall complexity of the network and the degree of specialization in species interactions. High-connectance networks typically have generalist species with broad interaction ranges, while low-connectance networks feature more specialized species with limited interaction partners.

Linkage density represents the average number of links per species and provides an alternative measure of network complexity [1]. This metric offers intuitive interpretation as it directly reflects the mean number of interactions per species in the community.

Degree distribution describes the cumulative distribution for the number of links each species has [1]. This distribution can be split into two components: in-degree (links to a species' prey or resources) and out-degree (links to a species' predators or consumers). Empirical studies have revealed that food webs display universal functional forms in their degree distributions, with in-degree distributions typically decaying more slowly than out-degree distributions, meaning species generally have more incoming than outgoing links [1].
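As a rough illustration of these three metrics, the sketch below computes connectance (L/S²), linkage density (L/S), and the in- and out-degrees as defined in this section. The example web and the function names are hypothetical; the matrix convention (row i consumes column j) follows the matrix representation section.

```python
import numpy as np

def basic_metrics(adjacency):
    """Basic structural metrics for a food web where a[i, j] = 1 means i consumes j."""
    a = (np.asarray(adjacency) != 0).astype(int)
    S = a.shape[0]
    L = int(a.sum())
    return {
        "connectance": L / S**2,       # realized fraction of the S^2 possible links
        "linkage_density": L / S,      # mean number of links per species
        "in_degree": a.sum(axis=1),    # row sums: number of resources of each species
        "out_degree": a.sum(axis=0),   # column sums: number of consumers of each species
    }

def cumulative_degree_distribution(degrees):
    """Fraction of species with at least k links, for k = 0 .. max degree."""
    degrees = np.asarray(degrees)
    ks = np.arange(degrees.max() + 1)
    return np.array([(degrees >= k).mean() for k in ks])

# Hypothetical web: plant, herbivore, omnivore, predator.
web = np.array([
    [0, 0, 0, 0],
    [1, 0, 0, 0],
    [1, 1, 0, 0],
    [0, 1, 1, 0],
])
metrics = basic_metrics(web)
print(metrics["connectance"], metrics["linkage_density"])
print(cumulative_degree_distribution(metrics["in_degree"]))
```

Some studies exclude self-links or count each undirected pair once, which changes the denominator of connectance; the L/S² form used here matches the definition given above.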

Advanced Structural Properties

Clustering quantifies how densely the species directly linked to a focal species are themselves linked to one another [1]. High clustering around a species may indicate a keystone role, where its loss could have disproportionate effects on the network. In social or metapopulation networks, clustering reflects the degree to which an individual's contacts are connected to each other.

Nestedness describes the degree to which species with few links interact with subsets of the species that interact with more connected species [1]. In highly nested networks, guilds contain both generalists (species with many links) and specialists (species with few links, all shared with the generalists). This pattern is often observed in mutualistic networks, where it tends to be asymmetrical—specialists of one guild link to generalists of the partner guild [1].

Modularity measures the division of the network into relatively independent sub-networks or modules [1]. Compartmentalization occurs when species form distinct groups with dense connections within groups but sparse connections between groups. Empirical evidence suggests compartmentalization can occur along lines of body size, spatial location, or through patterns of diet contiguity and adaptive foraging [1].

Network motifs are unique sub-graphs composed of small sets of nodes (typically 3-5 species) that occur with statistically significant frequency in a network [1]. These motifs represent familiar interaction modules studied by population ecologists, such as food chains, apparent competition, or intraguild predation. The distribution of motifs reveals fundamental building blocks of ecological networks.

Table 2: Key Metrics for Ecological Network Analysis

| Metric | Calculation | Ecological Interpretation | Application Examples |
|---|---|---|---|
| Connectance | L/S² | Proportion of possible interactions realized; measures complexity/specialization | Food web complexity analysis; stability assessment |
| Degree Distribution | Distribution of links per species | Pattern of interaction distribution across species | Identifying generalist vs. specialist species |
| Nestedness | NODF, temperature metric | Specialists interact with subsets of generalists' partners | Mutualistic network analysis; community persistence |
| Modularity | Q = Σ_i (e_ii - a_i²), where e_ii is the fraction of links inside module i and a_i the fraction of link ends attached to module i | Division into interacting subgroups | Habitat use analysis; functional group identification |
| Clustering Coefficient | C = 2n/(k(k-1)), where n is the number of links among a node's k neighbors | Degree of interconnectivity among a node's neighbors | Social networks; metapopulation connectivity |
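The clustering and modularity formulas in Table 2 can be sketched for an undirected, unweighted network as follows. This assumes a symmetric 0/1 adjacency matrix and a module assignment supplied by the user (finding the best partition is a separate optimization problem not covered here). The six-node example, two triangles joined by one link, is hypothetical.

```python
import numpy as np

def clustering_coefficient(adj, node):
    """C = 2n / (k(k-1)): n links among the k neighbors of `node`."""
    a = np.asarray(adj)
    neighbors = np.flatnonzero(a[node])
    neighbors = neighbors[neighbors != node]   # ignore self-links
    k = len(neighbors)
    if k < 2:
        return 0.0                             # undefined for k < 2; reported as 0 here
    sub = a[np.ix_(neighbors, neighbors)]
    n = np.count_nonzero(np.triu(sub, k=1))    # count each neighbor-neighbor link once
    return 2 * n / (k * (k - 1))

def modularity(adj, membership):
    """Q = sum_i (e_ii - a_i^2) for a given assignment of nodes to modules."""
    a = np.asarray(adj, dtype=float)
    membership = np.asarray(membership)
    total = a.sum()                            # twice the number of links
    q = 0.0
    for module in np.unique(membership):
        inside = membership == module
        e_ii = a[np.ix_(inside, inside)].sum() / total  # fraction of links inside the module
        a_i = a[inside].sum() / total                   # fraction of link ends in the module
        q += e_ii - a_i**2
    return q

# Hypothetical network: two triangles (0-1-2 and 3-4-5) joined by the link 2-3.
edges = [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]
adj = np.zeros((6, 6), dtype=int)
for i, j in edges:
    adj[i, j] = adj[j, i] = 1

print(clustering_coefficient(adj, 0), clustering_coefficient(adj, 2))
print(modularity(adj, [0, 0, 0, 1, 1, 1]))
```

Directed food webs and bipartite networks need adapted versions of both measures, so this sketch should be read as the simplest unipartite case only.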

Types of Ecological Networks

Unipartite Networks

Unipartite networks represent interactions between nodes of the same class or type [2]. These include food webs, where all species are represented by the same type of node regardless of trophic position, and social or contact networks between individuals within a population. In unipartite representations, all possible interactions are theoretically possible, reflected in square adjacency matrices where both rows and columns represent the same set of species [5] [2].

Unipartite networks are particularly valuable for studying disease transmission in wildlife populations, as they move beyond the "well-mixed" assumption of traditional epidemiological models to incorporate heterogeneous contact patterns [2]. Similarly, in metapopulation ecology, unipartite networks represent connectivity between habitat patches through dispersal routes. The study of unipartite networks has revealed that targeted interventions (such as vaccination of highly connected individuals) can reduce disease transmission thresholds below those predicted by traditional models [2].

Bipartite Networks

Bipartite networks represent interactions between two distinct classes of nodes, where interactions occur only between classes, not within them [5] [2]. Common examples include plant-pollinator, host-parasite, and plant-herbivore systems. In these networks, species can be classified into two distinct groups with interactions forbidden among members of the same group—for instance, plants do not interact with other plants in a plant-pollinator network [5].

The bipartite structure allows for more concise representation using incidence matrices rather than adjacency matrices [5]. In these rectangular matrices, one set of species forms the rows and the other forms the columns, with matrix elements indicating interactions between the groups. This representation acknowledges the fundamental biological constraint that certain interaction types only occur between specific taxonomic or functional groups.

Bipartite networks enable researchers to map trait or phylogenetic information onto nodes to understand what constrains interactions between species [2]. For example, plant-pollinator relationships may be limited by the match between floral morphology and pollinator mouthparts, creating "forbidden links" that shape network structure.

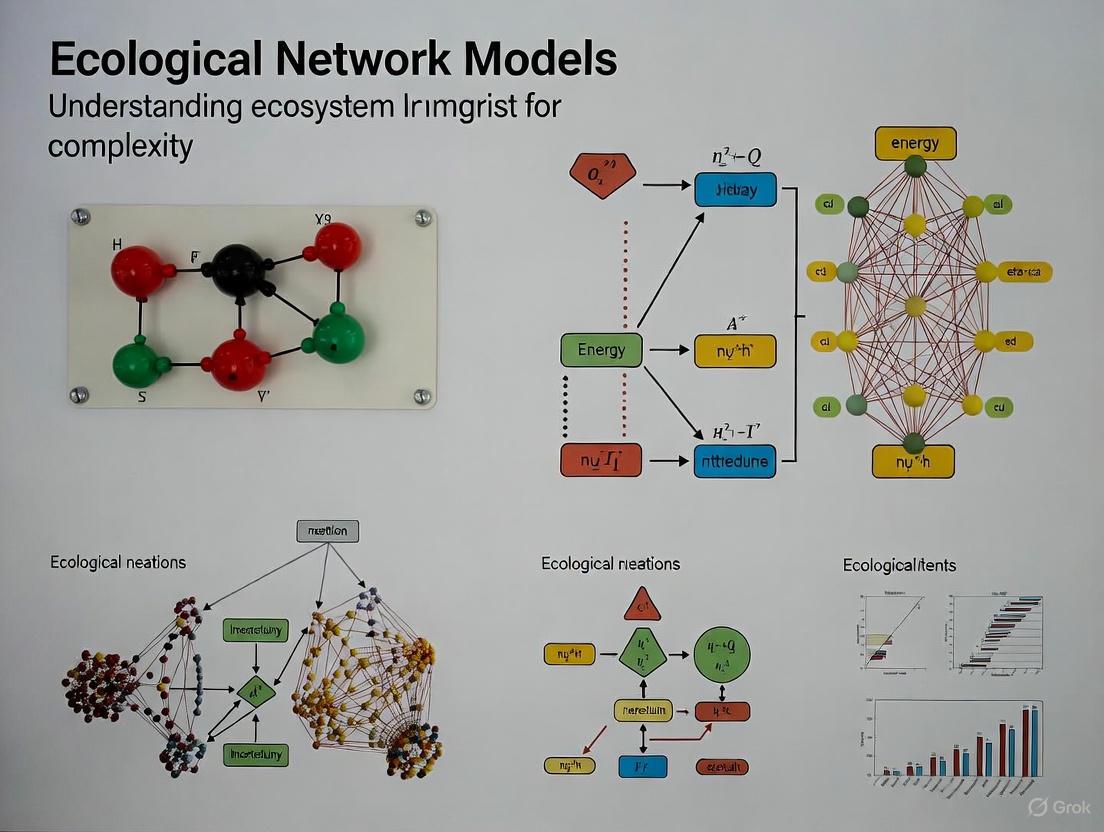

Figure 1: Network Types Comparison. Unipartite networks (top) contain one node type with various interactions. Bipartite networks (bottom) have two node types with interactions only occurring between types.

Multilayer Networks

Recent advances in network ecology have recognized that species in nature are connected by multiple interaction types simultaneously, forming multilayer networks where different relationship types constitute separate network layers [6]. A prominent example is tripartite networks composed of two interaction layers (e.g., pollination and herbivory) with three species sets, one of which is shared between layers [6].

Research on 44 tripartite networks from various ecosystems has revealed that the way interaction layers connect significantly affects network dynamics and robustness [6]. In antagonistic-antagonistic networks (e.g., combining herbivory and parasitism), approximately 35% of shared species act as connector nodes participating in both interaction types, with most shared species hubs (96%) connecting both layers [6]. Conversely, in mutualistic-mutualistic networks, only about 10% of shared species connect both interaction layers, with just 32% of shared species hubs acting as connectors [6].

This structural variation influences how disturbances propagate through different network types. The interdependence of robustness between interaction layers varies across network types, suggesting that restoration efforts may not automatically propagate through entire communities in less interdependent networks [6].
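The connector proportions discussed above can be illustrated with a small sketch. It assumes a tripartite network stored as two incidence matrices whose rows index the same shared species set, and it treats a shared species as a connector when it has at least one link in each layer. The function name and the four-plant example are hypothetical, and the cited study may define connectors with additional criteria.

```python
import numpy as np

def connector_fraction(layer_a, layer_b):
    """Fraction of shared species (rows) that have links in both interaction layers."""
    a = np.asarray(layer_a) != 0
    b = np.asarray(layer_b) != 0
    if a.shape[0] != b.shape[0]:
        raise ValueError("both layers must have one row per shared species")
    connectors = a.any(axis=1) & b.any(axis=1)
    return float(connectors.mean()), connectors

# Hypothetical example: 4 plants shared between a pollination and a herbivory layer.
pollination = np.array([
    [1, 1, 0],
    [0, 1, 1],
    [1, 0, 0],
    [0, 0, 0],  # plant 3 has no recorded pollinators
])
herbivory = np.array([
    [1, 0],
    [1, 1],
    [0, 0],     # plant 2 has no recorded herbivores
    [0, 1],
])
fraction, is_connector = connector_fraction(pollination, herbivory)
print(fraction, is_connector)
```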

Stability and Robustness of Ecological Networks

Complexity-Stability Relationship

The relationship between ecosystem complexity and stability represents a central question in ecology that network approaches have helped illuminate [1]. Early theoretical work suggested that complexity should destabilize ecosystems, creating a paradox because observed ecosystems appeared both complex and stable [1]. Network analysis has resolved part of this paradox by identifying specific structural properties that reduce the spread of indirect effects and thus enhance stability despite complexity [1].

Key findings include that interaction strength often decreases with the number of links between species, damping disturbance effects [1]. Furthermore, compartmentalized networks limit cascading extinctions because effects of species losses remain largely contained within the original compartment [1]. The relationship between complexity and stability can even invert in food webs with sufficient trophic coherence, where increases in biodiversity enhance rather than diminish community stability [1].

Robustness to Species Loss

Robustness analysis quantifies how ecological networks respond to species losses, including both primary extinctions (directly removed species) and secondary extinctions (species lost due to dependency on removed species) [6] [3]. This approach typically involves sequentially removing species according to specific scenarios and tracking subsequent secondary extinctions.
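A minimal sketch of such a removal simulation is given below. It assumes the simplest topological rule, in which a consumer goes secondarily extinct only when all of its resources are gone and basal species never starve; the cited studies may use other rules or thresholds. The function name and the four-species web are hypothetical.

```python
import numpy as np

def extinction_curve(adjacency, removal_order):
    """Total species lost (primary + secondary) after each primary removal.

    adjacency[i, j] = 1 means species i consumes species j.
    """
    a = np.asarray(adjacency) != 0
    S = a.shape[0]
    basal = ~a.any(axis=1)             # species without resources cannot starve
    alive = np.ones(S, dtype=bool)
    lost = []
    for sp in removal_order:
        if not alive[sp]:
            continue                   # already lost as a secondary extinction
        alive[sp] = False              # primary extinction
        # Cascade until every surviving consumer has at least one living resource.
        while True:
            has_food = (a & alive[None, :]).any(axis=1)
            starving = alive & ~basal & ~has_food
            if not starving.any():
                break
            alive[starving] = False    # secondary extinctions
        lost.append(S - int(alive.sum()))
    return lost

# Hypothetical web: 0 = plant, 1 = herbivore, 2 = omnivore, 3 = predator.
web = np.array([
    [0, 0, 0, 0],
    [1, 0, 0, 0],
    [1, 1, 0, 0],
    [0, 1, 1, 0],
])
print(extinction_curve(web, [0, 1, 2, 3]))  # removing the basal species first
print(extinction_curve(web, [3, 2, 1, 0]))  # removing from the top down
```

Comparing curves across removal orders (for example most-connected first versus random) is what distinguishes the extinction scenarios mentioned above.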

Recent research has revealed that food web robustness and ecosystem service robustness are strongly correlated (r_s = 0.884, P = 9.504e-13), meaning threats to food webs generally also threaten the services they provide [3]. However, robustness varies across individual ecosystem services depending on their trophic level and redundancy (the number of species providing the same service) [3]. Services with higher redundancy and lower trophic levels typically demonstrate greater robustness to species loss [3].

Interestingly, species that directly provide ecosystem services (ecosystem service providers) are not necessarily critical for food web stability, whereas supporting species (those that interact with and support service providers) play critical roles in stabilizing both food webs and services [3]. This highlights the importance of considering both direct and indirect species contributions when assessing vulnerability to species losses.

Figure 2: Cascading Effects of Species Loss. Primary extinctions trigger secondary extinctions, potentially leading to ecosystem service loss or food web collapse. Structural network properties mediate these effects.

Quantitative Frameworks and Methodological Approaches

Sampling and Standardization Methods

A significant challenge in ecological network research involves sampling completeness, as nearly all network studies detect only a subset of actual species and interactions [7]. Drawing analogies from species diversity research, where each unique interaction is treated as a "species" and interaction frequency as its "abundance," ecologists have adapted sampling theory to network science [7].

The iNEXT.link method applies interpolation and extrapolation to standardize network diversity comparisons across studies with differing sampling efforts [7]. This approach integrates four key inference procedures: (1) assessing sample completeness of networks, (2) asymptotic analysis via estimating true network diversity, (3) non-asymptotic analysis based on standardizing sample completeness, and (4) estimating the degree of unevenness or specialization in networks based on standardized diversity [7].

For quantifying network diversity, researchers have proposed a three-dimensional framework incorporating:

- Taxonomic network diversity: Incorporating richness and frequency/strength of interactions

- Phylogenetic network diversity: Generalizing taxonomic diversity by considering species' evolutionary relationships

- Functional network diversity: Further accounting for species' functional traits and distinctness [7]

This unified framework uses consistent units (effective number of interactions) enabling direct comparison across diversity dimensions.

Hill Numbers Framework

Hill numbers provide a unifying mathematical framework for quantifying network diversity, parameterized by a diversity order q that controls sensitivity to interaction strength [7]. This framework integrates three established indices: interaction richness (q = 0), Shannon diversity (q = 1), and Simpson diversity (q = 2). Hill numbers effectively quantify the "effective number of interactions" in a network, facilitating intuitive interpretation and comparison.
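The standard Hill number of order q is (Σ p_i^q)^(1/(1-q)), with the q = 1 case defined as the exponential of Shannon entropy. The sketch below applies it to the cells of an interaction matrix, treating each nonzero cell as one interaction "species" with relative frequency p_i. The function name and example matrices are hypothetical, and this computes only the observed (empirical) value, not the sampling-corrected estimates that iNEXT.link provides.

```python
import numpy as np

def hill_number(weights, q):
    """Effective number of interactions of order q for an interaction-frequency matrix."""
    w = np.asarray(weights, dtype=float).ravel()
    p = w[w > 0] / w.sum()                      # relative frequency of each realized interaction
    if np.isclose(q, 1.0):
        # Limit q -> 1: exponential of Shannon entropy
        return float(np.exp(-np.sum(p * np.log(p))))
    return float(np.sum(p**q) ** (1.0 / (1.0 - q)))

# Hypothetical interaction-frequency matrices (rows and columns are the two species sets).
even = np.array([[5, 5], [5, 5]])    # four equally frequent interactions
uneven = np.array([[8, 1], [0, 1]])  # one dominant interaction, one absent

for q in (0, 1, 2):
    print(q, hill_number(even, q), hill_number(uneven, q))
```

With perfectly even frequencies all three orders return the interaction richness, while the uneven matrix gives smaller values as q increases, which is the sensitivity to dominant interactions described above.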

The specialization of networks can be quantified through unevenness measures derived from Hill numbers, which capture whether interactions tend to be specialized (uneven distribution of interaction strengths) or generalized (even distribution) [7]. This approach adjusts for the effect of differing interaction richness, enabling meaningful comparison of specialization across networks with different numbers of interactions.

Table 3: Methodological Approaches for Network Analysis

| Method | Application | Key Metrics | Considerations |
|---|---|---|---|
| Adjacency Matrix | Representing unipartite networks | Connectance, degree distribution | Square S×S matrix for S species |
| Incidence Matrix | Representing bipartite networks | Specialization, interaction diversity | Rectangular H×V matrix for two species sets |
| Robustness Analysis | Predicting response to species loss | Secondary extinction curves, critical points | Requires defined extinction scenarios |
| iNEXT.link | Standardizing diversity comparisons | Sample completeness, asymptotic diversity | Accounts for sampling effort differences |
| Hill Numbers | Quantifying network diversity | Effective number of interactions (q=0,1,2) | Unified framework for abundance sensitivity |

Applications in Research and Conservation

Predicting Ecosystem Service Vulnerability

Ecological network approaches enable prediction of ecosystem service vulnerability to species losses by modeling how secondary extinctions impact service provision [3]. Research comparing robustness across twelve extinction scenarios for estuarine food webs with seven services revealed that services vary in their vulnerability depending on trophic level and redundancy [3]. This approach identifies both direct risks to service providers and indirect risks through supporting species, providing a more comprehensive assessment than approaches focusing solely on direct threats.

Weighting species' contributions to ecosystem services reveals that some species contribute disproportionately to service provision [3]. When these disproportionate contributions are considered, ecosystem service robustness decreases, though weighted and unweighted service values remain strongly correlated (r_s = 0.760, P = 7.439e-08) [3]. This refinement helps prioritize conservation efforts toward species with disproportionate contributions to ecosystem services.

Ecological Restoration and Network Optimization

Network analysis provides quantitative measures for assessing effectiveness of ecological restoration projects. Research in the Liuchong River Basin (China) demonstrated that restoration efforts significantly improved ecological network connectivity, with α, β, and γ indices increasing by 15.31%, 11.18%, and 8.33% respectively [8]. These improvements indicate enhanced network circuitry, structural accessibility, and node connectivity, shifting the ecosystem toward greater integration and resilience [8].
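The text does not state how the α, β, and γ indices were computed in [8]. The sketch below assumes the graph-theoretic definitions commonly used in landscape connectivity work for a planar network with V nodes and L links: α = (L - V + 1)/(2V - 5), β = L/V, and γ = L/(3(V - 2)). The node and link counts are hypothetical and are not the Liuchong River Basin data.

```python
def connectivity_indices(n_links, n_nodes):
    """Alpha, beta, and gamma indices under the assumed planar-graph definitions."""
    if n_nodes < 3:
        raise ValueError("the indices are only defined for networks with at least 3 nodes")
    alpha = (n_links - n_nodes + 1) / (2 * n_nodes - 5)  # realized share of possible loops
    beta = n_links / n_nodes                             # links per node
    gamma = n_links / (3 * (n_nodes - 2))                # realized share of possible links
    return alpha, beta, gamma

def percent_change(before, after):
    """Relative change in percent, as reported for restoration outcomes."""
    return 100 * (after - before) / before

# Hypothetical network: 20 patches, 30 corridors before and 34 after restoration.
before = connectivity_indices(30, 20)
after = connectivity_indices(34, 20)
for name, b, a in zip(("alpha", "beta", "gamma"), before, after):
    print(f"{name}: {b:.3f} -> {a:.3f} ({percent_change(b, a):+.2f}%)")
```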

In arid regions, ecological network optimization employs specialized approaches including drought-resistant species selection and corridor buffer zones [9]. A framework integrating Morphological Spatial Pattern Analysis, circuit theory, and machine learning models demonstrated significant connectivity improvements, with dynamic patch connectivity increasing by 43.84%-62.86% and inter-patch connectivity increasing by 18.84%-52.94% after optimization [9]. Such approaches provide scientifically grounded strategies for restoring degraded ecological networks.

Multi-Interaction Network Management

The study of multi-interaction networks reveals that considering multiple interaction types simultaneously provides crucial insights for conservation [6]. While considering multiple interactions may not dramatically alter overall robustness estimates, it significantly affects identification of keystone species and understanding of robustness interdependence between different animal groups [6]. This approach helps determine whether restoration efforts will propagate through entire communities or remain limited to specific interaction pathways.

Network analysis also informs targeted interventions, such as identifying highly connected species for disease control in wildlife populations [2]. By using proxy variables known to correlate with connectivity (such as sex, age, or family size in primates), managers can design efficient intervention strategies even with incomplete network data [2].

Experimental Protocols and Research Toolkit

Standardized Data Collection Framework

Comprehensive network analysis requires systematic data collection following standardized protocols. For food web construction, researchers should document:

- Species inventory: Complete census of all relevant species in the study system

- Interaction recording: Direct observation, gut content analysis, or stable isotopes to establish trophic links

- Interaction quantification: Measures of interaction frequency, strength, or biomass flow

- Spatiotemporal context: Documentation of habitat characteristics, seasonal variation, and sampling duration

For mutualistic networks (e.g., plant-pollinator systems), data collection should include:

- Interaction observations: Standardized timed observations of visitor frequency and behavior

- Effectiveness assessment: Pollen deposition, seed set, or other measures of interaction outcome

- Trait recording: Morphological measurements relevant to interaction (e.g., corolla depth, proboscis length)

- Abundance estimates: Population sizes or densities of interacting species

Computational Analysis Pipeline

Modern ecological network analysis employs a structured computational workflow:

- Network construction: Creating adjacency or incidence matrices from interaction data

- Basic metric calculation: Computing connectance, degree distribution, and specialization indices

- Advanced structural analysis: Assessing nestedness, modularity, and motif distribution

- Robustness testing: Simulating extinction scenarios and tracking secondary extinctions

- Statistical validation: Comparing observed metrics to appropriate null models

- Visualization: Creating informative network diagrams and comparative plots

Specialized software packages facilitate this pipeline, including bipartite (R package for two-mode networks), igraph (general network analysis), and iNEXT.link (diversity standardization).
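The statistical validation step of this pipeline can be sketched in Python with NumPy. The example below assumes the simplest possible null model, which shuffles links freely across all cells of a bipartite incidence matrix and therefore preserves only the number of links; published analyses often prefer null models that also constrain species degrees. The function names and the choice of metric (variance in row degree) are hypothetical illustrations.

```python
import numpy as np

def null_model_zscore(incidence, metric, n_null=500, seed=0):
    """Compare an observed metric with its distribution under an equiprobable null model."""
    rng = np.random.default_rng(seed)
    m = (np.asarray(incidence) != 0).astype(int)
    observed = metric(m)
    null_values = np.empty(n_null)
    for r in range(n_null):
        # Shuffle all cells: same number of links, random placement.
        shuffled = rng.permutation(m.ravel()).reshape(m.shape)
        null_values[r] = metric(shuffled)
    # Note: the z-score is undefined if every null matrix yields the same metric value.
    z = (observed - null_values.mean()) / null_values.std(ddof=1)
    return observed, float(z)

def row_degree_variance(m):
    """Heterogeneity in the number of partners across row species."""
    return float(m.sum(axis=1).var())

# Hypothetical, perfectly nested plant-pollinator matrix.
nested = np.array([
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
])
observed, z = null_model_zscore(nested, row_degree_variance)
print(observed, z)
```

Any function that maps a matrix to a number can be passed as `metric`, so the same wrapper could be reused for connectance-independent measures such as nestedness or modularity.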

Essential Research Reagents and Tools

Table 4: Research Reagent Solutions for Ecological Network Studies

| Tool/Category | Specific Examples | Function in Research | Application Context |
|---|---|---|---|
| Field Observation Tools | Camera traps, GPS loggers, binoculars | Document species presence and behavior | Interaction recording, spatial networks |
| Molecular Analysis Kits | DNA barcoding, metabarcoding, stable isotope analysis | Trophic link identification, diet analysis | Food web reconstruction, interaction confirmation |
| Network Analysis Software | R packages (bipartite, igraph), NetworkX (Python) | Calculate network metrics, simulate dynamics | Structural analysis, robustness testing |
| Spatial Analysis Tools | GIS software, remote sensing data, circuit theory models | Landscape connectivity, corridor identification | Spatial networks, conservation planning |
| Statistical Frameworks | Null models, Bayesian inference, multivariate statistics | Hypothesis testing, uncertainty quantification | Network comparison, driver identification |

Ecological networks provide a powerful framework for representing and analyzing species interactions within ecosystems, with nodes representing biological entities and links representing their ecological interactions. The typology of these networks—including unipartite, bipartite, and multilayer forms—encompasses the diversity of interaction types structuring ecological communities. Quantitative metrics such as connectance, nestedness, and modularity characterize fundamental structural properties with consequences for ecosystem stability and function.

Methodological advances in standardization approaches and diversity quantification now enable robust comparison across systems and assessment of network responses to environmental change. The integration of network ecology with ecosystem service assessment reveals patterns of vulnerability to species losses and identifies critical supporting species that stabilize both ecological and service-provision functions. These insights increasingly inform conservation strategies and restoration interventions aimed at maintaining ecosystem functions in human-modified landscapes.

As ecological network research evolves, several frontiers promise expanded understanding: incorporating temporal dynamics to capture seasonal and interannual variation; making models spatially explicit to connect interaction networks with landscape structure; and developing predictive models that link network structure to ecosystem functions under global change scenarios. These advances will further establish ecological networks as central to understanding and managing complex ecosystems in the Anthropocene.

The relationship between the complexity of an ecological community and its stability represents one of the most enduring debates in theoretical ecology. For decades, ecologists have grappled with the apparent paradox that complex ecosystems persist in nature despite theoretical predictions suggesting they should be unstable. This review synthesizes historical foundations and contemporary advances in complexity-stability research, examining how ecological network models have reshaped our understanding of ecosystem persistence. We analyze how shifting from random-network null models to structurally realistic food webs has resolved central tensions in this debate, highlighting stabilizing mechanisms including non-random interaction distributions, trophic organization, and functional redundancy. Emerging consensus indicates that specific architectural properties—not complexity per se—determine ecosystem stability, with profound implications for biodiversity conservation and ecosystem management in the Anthropocene.

Understanding the mechanisms governing the stability and persistence of ecosystems remains a fundamental challenge in ecology. The complexity-stability debate, initiated over five decades ago, addresses whether ecosystems with greater species diversity and more numerous interactions are more or less stable in the face of perturbation [10] [11]. This question has gained urgent relevance in the current geological era, the Anthropocene, characterized by unprecedented rates of species extinction and ecosystem degradation [10] [11].

The historical trajectory of this debate reveals a fascinating intellectual journey: from early intuitive claims that complexity begets stability, to mathematical proofs suggesting the opposite, toward a contemporary synthesis recognizing that specific structural properties of ecological networks determine how complexity affects stability [12] [13]. This review traces this conceptual evolution, with particular emphasis on how ecological network models have transformed our understanding of ecosystem dynamics.

Beyond theoretical interest, resolving the complexity-stability debate has profound practical implications. Ecosystem services—nature's contributions to human well-being, including provisioning, regulating, supporting, and cultural services—underpin human societies [10] [3]. Understanding how the complexity of ecological networks relates to their stability is crucial for predicting ecosystem responses to anthropogenic pressures and for designing effective conservation strategies [10] [3] [14].

Historical Foundations

Early Intuitions: Complexity as Stabilizing

Early ecological thought was dominated by the intuition that more complex ecosystems are inherently more stable. This perspective was championed by prominent ecologists including Robert MacArthur and Charles Elton, who argued that ecosystems with greater species diversity and more trophic connections possess enhanced ability to withstand perturbations [10] [13] [15].

MacArthur (1955) proposed that energy flow alternatives in complex food webs provide buffering capacity against population fluctuations, while Elton's observations suggested that simple ecosystems (such as agricultural monocultures or islands) were more vulnerable to invasions and population explosions than their complex counterparts [13] [16]. These early views emphasized the stabilizing effect of multiple pathways for energy flow and functional redundancy within ecosystems.

May's Revolutionary Challenge

In 1972, physicist-turned-ecologist Robert May fundamentally challenged the prevailing ecological intuition using mathematical approaches from random matrix theory [12] [13] [15]. May analyzed randomly constructed ecological communities with S species, connectance C (probability that any two species interact), and interaction strength variance σ². His stability analysis demonstrated that such randomly assembled ecosystems become almost certainly unstable when the complexity measure SCσ² exceeds a critical threshold of 1, assuming self-regulation terms are scaled to unity (equivalently, when σ√(SC) > 1) [13].

May's formulation established that:

- Stability decreases as species richness (S) increases

- Stability decreases as connectance (C) increases

- Stability decreases as the standard deviation of interaction strength (σ) increases

This result created the central paradox of complexity-stability relationships: if complex random ecosystems are inherently unstable, how do the highly complex, species-rich ecosystems observed in nature (coral reefs, tropical forests) persist? [13] [16] [15] May's work thus established a null model against which empirical ecosystems could be compared, shifting research toward identifying the non-random properties that stabilize natural communities.

Table 1: Key Parameters in May's Stability Criterion

| Parameter | Description | Effect on Stability |

|---|---|---|

| S | Species richness | Decreases stability as S increases |

| C | Connectance (probability of interaction between species) | Decreases stability as C increases |

| σ | Standard deviation of interaction strength | Decreases stability as σ increases |

| SCσ² | Complexity measure | Ecosystem unstable when >1 |
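May's criterion can be checked numerically. The following is a minimal sketch, assuming NumPy is available and assuming the common convention that every diagonal (self-regulation) entry equals -1; the function names `may_random_matrix` and `rightmost_real_part` and the parameter values are illustrative choices, not taken from the cited work.

```python
import numpy as np

def may_random_matrix(S, C, sigma, rng):
    """Random community matrix in the spirit of May (1972).

    Off-diagonal entries are nonzero with probability C and drawn from
    N(0, sigma^2); diagonal entries are fixed at -1 (assumed self-regulation).
    """
    M = rng.normal(0.0, sigma, size=(S, S))
    M *= rng.random((S, S)) < C          # keep each link with probability C
    np.fill_diagonal(M, -1.0)
    return M

def rightmost_real_part(M):
    """Largest real part among the eigenvalues of M (negative = locally stable)."""
    return float(np.max(np.linalg.eigvals(M).real))

rng = np.random.default_rng(0)
S, C = 250, 0.2
results = {}
for sigma in (0.05, 0.30):               # below and above the threshold
    complexity = S * C * sigma**2
    results[sigma] = rightmost_real_part(may_random_matrix(S, C, sigma, rng))
    print(f"sigma={sigma:.2f}  S*C*sigma^2={complexity:.2f}  "
          f"max Re(lambda)={results[sigma]:+.3f}")
```

With these settings SCσ² is 0.125 for the first case and 4.5 for the second, so the first matrix is expected to be stable and the second unstable. Increasing S or C at fixed σ moves a community toward the threshold in the same way.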

Theoretical Advances and Mechanistic Insights

Beyond Random Models: Structural Stabilization

Following May's provocative finding, ecologists identified several non-random structural properties that enhance stability in complex ecosystems:

Interaction Strength Distribution: Empirical food webs exhibit highly skewed distributions of interaction strengths, with numerous weak interactions and few strong ones [13] [16]. This architecture promotes stability because weak interactions dampen the propagation of perturbations through the network, while strong interactions create local compensatory effects [13].

Trophic Structure and Energy Flow: Food webs display pyramidal organization, with stronger interactions occurring at lower trophic levels [13]. This structure emerges from energetic constraints and creates asymmetry in predator-prey interactions that dampens oscillatory behavior [13].

Correlation Between Interaction Pairs: In predator-prey relationships, the effect of predator on prey (typically negative) and the effect of prey on predator (typically positive) are naturally correlated [13]. Tang et al. demonstrated that this negative correlation between paired elements on opposite sides of the community matrix diagonal significantly enhances stability compared to the random expectation [13].

Modularity and Compartmentalization: Ecological networks often exhibit modular organization, with strongly connected subgroups of species having weaker connections to other modules. This compartmentalization contains perturbations within modules, preventing system-wide cascades [10].
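The stabilizing effect of negatively correlated interaction pairs can be illustrated with a small simulation. This is a hedged sketch rather than the procedure of the cited studies: it assumes half-normal interaction magnitudes, a diagonal fixed at -1, and a hypothetical helper `paired_matrix` that either forces opposite signs within each pair (predator-prey) or assigns signs independently (random baseline).

```python
import numpy as np

def paired_matrix(S, C, sigma, rng, predator_prey):
    """Community matrix whose links come in (i, j)/(j, i) pairs.

    Each unordered pair interacts with probability C. Magnitudes are
    half-normal with scale sigma. If predator_prey is True the two entries
    of a pair get opposite signs; otherwise their signs are independent.
    """
    M = np.zeros((S, S))
    for i in range(S):
        for j in range(i + 1, S):
            if rng.random() < C:
                a, b = np.abs(rng.normal(0.0, sigma, size=2))
                if predator_prey:
                    s = rng.choice([-1.0, 1.0])
                    M[i, j], M[j, i] = s * a, -s * b
                else:
                    M[i, j] = rng.choice([-1.0, 1.0]) * a
                    M[j, i] = rng.choice([-1.0, 1.0]) * b
    np.fill_diagonal(M, -1.0)            # assumed self-regulation
    return M

def rightmost_real_part(M):
    return float(np.max(np.linalg.eigvals(M).real))

rng = np.random.default_rng(42)
S, C, sigma = 200, 0.2, 0.2
re_random = rightmost_real_part(paired_matrix(S, C, sigma, rng, False))
re_pred_prey = rightmost_real_part(paired_matrix(S, C, sigma, rng, True))
print(f"random signs : max Re(lambda) = {re_random:+.3f}")
print(f"predator-prey: max Re(lambda) = {re_pred_prey:+.3f}")
```

Both matrices share the same S, C, and magnitude distribution, so any difference in the rightmost eigenvalue comes from the sign pairing alone.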

Competitive Systems: New Theoretical Framework

Recent theoretical work has refined our understanding of complexity-stability relationships in competitive systems. Mazzarisi and Smerlak (2024) demonstrated using a generalized competitive Lotka-Volterra model that the relationship between complexity and stability depends critically on the relative growth rates of self-interactions versus cross-interactions [12].

Their analysis reveals a dual reality:

- When self-interactions grow faster with density than cross-interactions, complexity is destabilizing (consistent with May's findings)

- When cross-interactions grow faster than self-interactions, complexity is stabilizing

This theoretical advance helps explain the contrasting observations of stability across different ecosystem types and interaction networks, suggesting that the relationship between complexity and stability is not universal but context-dependent [12].

Theoretical Evolution of Complexity-Stability Relationships

Empirical Evidence and Experimental Validation

Large-Scale Food Web Analysis

Empirical testing of complexity-stability relationships entered a new era with the compilation and analysis of large-scale food web datasets. A landmark study published in Nature Communications in 2016 performed stability analysis on 116 quantitative food webs sampled from marine, freshwater, and terrestrial habitats worldwide [13] [16]. This represented the most comprehensive empirical test of May's predictions to date.

The research revealed several critical findings:

- No relationship was detected between stability and classic complexity descriptors (species richness, connectance, interaction strength)

- Empirical food webs demonstrated far greater stability than randomly constructed ecosystems with equivalent complexity

- Two key non-random properties explained this enhanced stability: (1) negative correlation between interaction pairs (effects of predators on prey and vice versa), and (2) high frequency of weak interactions [13] [16]

Table 2: Empirical Findings from 116-Food-Web Meta-Analysis

| Complexity Metric | Predicted Effect on Stability | Empirical Finding | Interpretation |

|---|---|---|---|

| Species Richness (S) | Negative | No relationship | Natural communities avoid complexity-stability trade-off |

| Connectance (C) | Negative | No relationship | Structure, not connectance per se, determines stability |

| Interaction Strength (σ) | Negative | No relationship | Skewed distribution with many weak interactions stabilizes |

| Interaction Correlation (ρ) | Not in original model | Strongly stabilizing | Negative correlation in predator-prey pairs enhances stability |

Randomization Tests and Structural Determinants

The 2016 study employed sophisticated randomization tests to isolate the contribution of specific structural properties to food web stability [13]. By systematically removing non-random features from empirical food webs and measuring the effect on stability, the researchers quantified the relative importance of different stabilizing mechanisms:

Pyramidal Structure of Interaction Strength: Food webs without the natural pyramidal structure (where strong interactions occur predominantly at low trophic levels) were significantly less stable than empirical webs [13].

Interaction Strength Topology: Randomizing the topological distribution of interaction strengths while maintaining their distribution reduced stability, indicating that the natural arrangement of strong and weak interactions within the network enhances persistence [13].

Interaction Pair Correlations: Removing the natural correlation between interaction pairs (e.g., cᵢⱼ and cⱼᵢ in community matrices) substantially reduced stability, confirming the theoretical importance of this property [13].

Interaction Strength Distribution: Food webs with normally distributed interaction strengths were less stable than those with the natural leptokurtic distribution (high proportion of weak interactions, long tail of strong interactions) [13].

Methodological Approaches in Complexity-Stability Research

Experimental Protocols for Food Web Stability Analysis

Research on complexity-stability relationships employs standardized methodological frameworks:

Community Matrix Construction: For empirical food webs, researchers typically construct community matrices by first building interaction matrices (A = [αᵢⱼ]) from observational data, then converting these to community matrices (C) by multiplying interaction coefficients by species biomass (C = [αᵢⱼBⱼ]) [13]. This approach leverages the equilibrium assumption of ecosystem models.

Stability Measurement: Local stability is assessed by calculating the real part of the dominant eigenvalue of the community matrix [13]. A negative value indicates stability (system returns to equilibrium after small perturbations), while a positive value indicates instability [13].

Randomization Tests: To identify non-random properties enhancing stability, researchers perform randomization tests that sequentially remove structural features from empirical webs [13]. Comparing stability of randomized versus empirical networks quantifies the contribution of each feature.

Ecopath with Ecosim Modeling: Many contemporary analyses utilize Ecopath mass-balance models, which provide standardized frameworks for constructing food webs from empirical data [13] [15]. These models integrate biomass, production, consumption rates, and diet composition to quantify energy flows.
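The stability measurement and randomization steps described above can be sketched in a few lines. The example assumes NumPy and uses a hypothetical four-species chain as the "empirical" community matrix; the shuffle shown here permutes all off-diagonal entries at once, which is only one simple randomization and not necessarily the sequence of tests used in the cited study.

```python
import numpy as np

def max_real_eigenvalue(C):
    """Real part of the dominant (rightmost) eigenvalue of a community matrix."""
    return float(np.max(np.linalg.eigvals(C).real))

def is_locally_stable(C):
    """Locally stable if every eigenvalue has a negative real part."""
    return max_real_eigenvalue(C) < 0.0

def shuffle_offdiagonal(C, rng):
    """Randomly permute the off-diagonal entries while keeping the diagonal.

    This destroys both topology and pair correlations but preserves the
    overall distribution of interaction strengths.
    """
    C = np.asarray(C, dtype=float)
    off = ~np.eye(C.shape[0], dtype=bool)
    R = C.copy()
    R[off] = rng.permutation(C[off])
    return R

# Hypothetical 4-species food chain: negative effects of consumers on
# resources above the diagonal, positive effects of resources below it.
C_emp = np.array([
    [-1.0, -0.8,  0.0,  0.0],
    [ 0.4, -0.5, -0.6,  0.0],
    [ 0.0,  0.3, -0.3, -0.5],
    [ 0.0,  0.0,  0.2, -0.1],
])

rng = np.random.default_rng(1)
observed = max_real_eigenvalue(C_emp)
null = np.array([max_real_eigenvalue(shuffle_offdiagonal(C_emp, rng))
                 for _ in range(1000)])
frac_less_stable = float(np.mean(null > observed))
print(f"empirical max Re(lambda) = {observed:+.3f}")
print(f"fraction of shuffled webs less stable = {frac_less_stable:.2f}")
```

A large fraction of less stable shuffled matrices would suggest that the arrangement of interactions, not just their strengths, contributes to stability.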

The Researcher's Toolkit: Key Methodologies

Table 3: Essential Methodological Approaches in Complexity-Stability Research

| Method/Technique | Primary Function | Key Applications |

|---|---|---|

| Ecopath with Ecosim | Mass-balance modeling of trophic flows | Constructing quantitative food webs from field data |

| Community Matrix Analysis | Linear stability analysis around equilibrium | Predicting response to small perturbations |

| Random Matrix Theory | Mathematical analysis of eigenvalue distributions | Establishing stability thresholds for random communities |

| Network Robustness Analysis | Simulating secondary extinctions | Measuring food web response to species loss |

| Structural Equation Modeling | Path analysis of direct/indirect effects | Disentangling complex interaction pathways |

| Metabolic Theory | Scaling relationships based on body size | Predicting interaction strengths from trait data |

Ecological Networks and Ecosystem Services

From Food Web Persistence to Service Provision

Understanding complexity-stability relationships has practical importance for conserving ecosystem services—nature's contributions to human well-being [3]. Recent research has extended robustness analysis from food web persistence to ecosystem service maintenance, revealing several critical insights:

Correlation Between Food Web and Service Robustness: Food web robustness is strongly positively correlated with ecosystem service robustness (rₛ[36] = 0.884, P = 9.504 × 10⁻¹³) across different extinction sequences [3]. This indicates that species losses that disrupt food web integrity generally degrade ecosystem services.

Service-Specific Vulnerability: Different ecosystem services show varying robustness to species losses, depending on their trophic level and redundancy [3]. Services with higher redundancy (provided by more species) and lower trophic level are generally more robust to species losses [3].

Critical Role of Supporting Species: Species that support ecosystem service providers through interaction networks—rather than the service providers themselves—are critical for maintaining both food web stability and ecosystem services [3]. This highlights the importance of indirect interactions and the limitations of single-species conservation approaches.
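Robustness analyses of this kind usually rest on a secondary-extinction simulation. The sketch below is a simplified topological version, assuming that a consumer is lost once all of its resources are gone and that robustness is summarized as the fraction of primary removals needed to lose at least half of all species; the five-species web and the names `propagate` and `robustness_r50` are hypothetical.

```python
def propagate(diet, alive):
    """Remove consumers that have lost all of their resources (in place)."""
    changed = True
    while changed:
        changed = False
        for sp in list(alive):
            if diet[sp] and not (diet[sp] & alive):
                alive.discard(sp)
                changed = True

def robustness_r50(diet, removal_order):
    """Fraction of species removed (primary extinctions) before at least
    half of all species are lost (primary plus secondary extinctions).

    diet maps each species to the set of species it eats; an empty set
    marks a basal species.
    """
    S = len(diet)
    alive = set(diet)
    primary = 0
    for target in removal_order:
        if target not in alive:
            continue                      # already lost as a secondary extinction
        alive.discard(target)
        primary += 1
        propagate(diet, alive)
        if S - len(alive) >= S / 2:
            break
    return primary / S

# Hypothetical web: two plants, two herbivores, one predator.
diet = {
    "p1": set(), "p2": set(),
    "h1": {"p1"}, "h2": {"p1", "p2"},
    "c1": {"h1", "h2"},
}
bottom_up = robustness_r50(diet, ["p1", "p2", "h1", "h2", "c1"])
top_down = robustness_r50(diet, ["c1", "h1", "h2", "p1", "p2"])
print(bottom_up, top_down)
```

Removing basal species first collapses this toy web faster than removing the predator first, which mirrors the point that supporting species can matter more than the visible service providers.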

Network-Informed Conservation Strategies

The integration of complexity-stability theory into conservation practice has led to innovative approaches for ecosystem management:

Ecological Network Optimization: Landscape-scale conservation strategies now explicitly incorporate network principles, optimizing ecological networks through strategic placement of corridors and stepping stones to enhance connectivity [9] [14]. These approaches increase network circuitry, edge/node ratios, and connectivity metrics, improving ecosystem stability [14].

Scenario Analysis and Tradeoff Assessment: Conservation planners use ecosystem models to simulate different management scenarios and evaluate tradeoffs among ecosystem services [14]. For example, studies have quantified how ecological protection scenarios versus natural development scenarios affect habitat quality, soil retention, and water yield [14].

Dynamic Conservation Planning: Rather than focusing solely on protecting biodiversity hotspots, modern conservation prioritizes maintaining interaction networks and ecological processes [3]. This approach recognizes that species playing supporting roles in ecosystem services are critical to overall ecological stability [3].

Current Consensus and Future Directions

Emerging Synthesis

After five decades of theoretical development and empirical testing, a coherent consensus regarding complexity-stability relationships has emerged:

May Was Correct—About Random Ecosystems: Randomly constructed ecosystems do become less stable as complexity increases, establishing an important theoretical baseline [13].

Natural Ecosystems Are Not Random: Empirical food webs possess specific non-random architectural properties that enhance their stability relative to random expectations [13] [16].

Structure Over Complexity: Specific structural properties—including skewed interaction strength distributions, trophic organization, correlation between interaction pairs, and modularity—determine stability more than complexity per se [12] [13].

Context Dependency: The relationship between complexity and stability varies across ecosystem types and interaction networks, with competitive systems exhibiting different relationships than trophic systems depending on how self-interactions and cross-interactions scale with density [12].

Frontier Research Questions

Despite substantial progress, important questions remain active research frontiers:

- How does climate change alter the architectural properties that stabilize ecological networks?

- What role does evolutionary history play in shaping stable network configurations?

- How can multiplex networks (integrating trophic, mutualistic, and competitive interactions) improve predictions of ecosystem responses?

- Can we develop general design principles that predict stability across ecosystem types?

- How do spatial processes and meta-community dynamics affect complexity-stability relationships at landscape scales?

The historical trajectory of complexity-stability research demonstrates how a fundamental ecological paradox has driven theoretical innovation and empirical advancement. The field has progressed from early intuitive claims, through mathematical counterintuition, toward a synthetic understanding that acknowledges the context-dependent nature of complexity-stability relationships.

Contemporary consensus indicates that natural ecosystems avoid the complexity-stability trade-off through specific architectural properties: skewed interaction strength distributions with many weak interactions, correlated interaction pairs, trophic organization, and modular structure. These features enable the persistence of complex ecosystems in nature, resolving the apparent paradox between theoretical predictions and empirical observations.

This hard-won understanding has profound implications for ecosystem management in the Anthropocene. Conservation strategies that preserve not just species but the architectural properties of their interaction networks will be most effective at maintaining both ecosystem stability and the services they provide to humanity. As environmental challenges intensify, insights from complexity-stability research will increasingly inform efforts to build resilient ecological communities in a rapidly changing world.

Ecological networks provide a powerful framework for understanding the complex interplay of species within ecosystems. By representing species as nodes and their interactions as links, these networks allow researchers to move beyond pairwise relationships to analyze community-wide patterns. Among the numerous metrics developed to quantify network architecture, three structural properties stand out as fundamentally important for both the structure and function of ecological communities: connectance, nestedness, and modularity [1]. These properties are not merely descriptive; they have profound implications for ecosystem stability, resilience, and response to disturbance. This technical guide provides an in-depth examination of these key properties, focusing on their mathematical definitions, ecological significance, and measurement methodologies to support ongoing research in ecosystem complexity.

Defining the Key Structural Properties

Connectance

Connectance (also referred to as connectivity or link density) represents the proportion of realized interactions out of all possible interactions within a network [1] [17] [18]. For a network with S species, the maximum number of possible interactions is S² for directed networks (e.g., food webs) or S(S-1)/2 for undirected networks. For directed networks, connectance (C) is thus calculated as:

C = L/S²

where L is the observed number of links [1] [18]. Connectance serves as a simple measure of network complexity, with higher values indicating greater interconnectedness. Early ecological theory suggested that higher complexity (including higher connectance) would destabilize ecosystems [1]. However, subsequent research has revealed a more nuanced relationship, where the stability of highly connected networks depends on additional factors such as interaction strength and distribution [1] [17].

Nestedness

Nestedness describes a pattern of interaction overlap where specialists (species with few interactions) interact with subsets of the species that generalists (species with many interactions) interact with [1] [19] [20]. In a perfectly nested network, the interaction partners of less-connected species form perfect subsets of the interaction partners of more highly-connected species. This structure creates asymmetrical specialization, where specialists interact with generalists, but generalists interact with both generalists and specialists [20].

Nestedness is a prominent pattern in mutualistic networks such as plant-pollinator and seed-dispersal systems [1] [20]. The ecological significance of nestedness lies in its potential to reduce competition and increase biodiversity by promoting indirect facilitation among species [1] [19]. When circumstances become harsh, this structure allows species to indirectly support each other, though it may also create conditions for simultaneous collapse if a tipping point is passed [1].

Modularity

Modularity (compartmentalization) quantifies the degree to which a network is organized into distinct subgroups (modules) where species within a module interact more frequently with each other than with species in other modules [1] [21]. Modularity (Q) is mathematically defined as:

$$Q = \sum_{i=1}^{N_M} \left( e_{ii} - a_i^2 \right)$$

where $e_{ii}$ is the fraction of links within module $i$, $a_i$ is the fraction of links connected to species in module $i$, and $N_M$ is the number of modules [21].

This structure often reflects spatial, temporal, or functional organization within ecosystems [21]. For example, species dwelling in the same location or active in the same season are expected to interact more frequently, forming natural modules [21]. The ecological importance of modularity centers on its potential to contain disturbances, as effects of species losses may be limited to their original compartment, potentially reducing the risk of cascading extinctions throughout the entire network [1] [21].
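For a given assignment of species to modules, Q can be computed directly from the definition. The sketch below assumes an undirected, unweighted network and interprets a_i as the fraction of link ends attached to module i (the usual Newman convention); the function name `modularity_q` and the six-node example are illustrative.

```python
def modularity_q(edges, membership):
    """Q = sum_i (e_ii - a_i^2) for an undirected, unweighted network.

    edges: iterable of (u, v) pairs.
    membership: dict mapping each node to its module label.
    e_ii is the fraction of links inside module i; a_i is the fraction of
    link ends attached to nodes of module i.
    """
    edges = list(edges)
    L = len(edges)
    within = {}   # number of links with both ends in the module
    ends = {}     # number of link ends attached to the module
    for u, v in edges:
        mu, mv = membership[u], membership[v]
        ends[mu] = ends.get(mu, 0) + 1
        ends[mv] = ends.get(mv, 0) + 1
        if mu == mv:
            within[mu] = within.get(mu, 0) + 1
    return sum(within.get(m, 0) / L - (ends[m] / (2 * L)) ** 2 for m in ends)

# Two triangles joined by a single bridging link.
edges = [("a", "b"), ("b", "c"), ("a", "c"),
         ("d", "e"), ("e", "f"), ("d", "f"),
         ("c", "d")]
two_modules = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
one_module = {n: 0 for n in "abcdef"}

q_two = modularity_q(edges, two_modules)
q_one = modularity_q(edges, one_module)
print(round(q_two, 4), round(q_one, 4))
```

Placing every species in a single module gives Q = 0 by construction, which is why only partitions with Q well above zero are read as evidence of compartmentalization.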

Table 1: Key Structural Properties of Ecological Networks

| Property | Mathematical Definition | Structural Pattern | Common Network Types |

|---|---|---|---|

| Connectance | C = L/S² | Density of interactions | Food webs, mutualistic networks |

| Nestedness | NODF, Temperature Metric | Specialist-generalist subset pattern | Plant-pollinator, host-parasite |

| Modularity | Q = Σ(eᵢᵢ - aᵢ²) | Densely connected subsystems | Spatial networks, food webs |

Quantitative Measurement and Methodologies

Measuring Connectance

Connectance measurement requires:

- Network compilation: Construct an adjacency matrix where elements aᵢⱼ = 1 if species i and j interact, 0 otherwise.

- Link counting: Sum all non-zero elements (L) in the adjacency matrix.

- Normalization: Divide L by the square of species richness (S²) for directed networks or by S(S-1)/2 for undirected networks [1] [18].

The appropriate normalization depends on whether the ecological interactions are directional (e.g., predator-prey relationships) or non-directional (e.g., mutualistic associations).
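The three steps above translate into a short function. This is a minimal sketch in plain Python, assuming the adjacency matrix is a square list of lists of 0/1 values; the function name `connectance` and the four-species example are hypothetical.

```python
def connectance(adj, directed=True):
    """Proportion of realized links in a square 0/1 adjacency matrix.

    Directed:   C = L / S^2, counting every nonzero cell.
    Undirected: C = L / (S(S-1)/2), counting each unordered pair once
                and ignoring self-links.
    """
    S = len(adj)
    if directed:
        L = sum(1 for row in adj for x in row if x)
        return L / S**2
    L = sum(1 for i in range(S) for j in range(i + 1, S)
            if adj[i][j] or adj[j][i])
    return L / (S * (S - 1) / 2)

# Hypothetical food web: adj[i][j] = 1 means species i eats species j.
adj = [
    [0, 0, 0, 0],   # basal species
    [1, 0, 0, 0],   # herbivore 1 eats the basal species
    [1, 0, 0, 0],   # herbivore 2 eats the basal species
    [0, 1, 1, 0],   # predator eats both herbivores
]
c_directed = connectance(adj, directed=True)
c_undirected = connectance(adj, directed=False)
print(c_directed, round(c_undirected, 3))
```

The same four links give different connectance values under the two normalizations, so the convention should be reported alongside any value that is compared across studies.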

Measuring Nestedness

Multiple metrics exist for quantifying nestedness:

Nestedness Temperature: A measure ranging from 0° (perfectly nested) to 100° (random), calculated by evaluating how much the presence-absence matrix must be "heated" to eliminate its nested pattern [19]. Colder systems have more fixed order in species extinction or colonization sequences.

NODF (Nestedness Metric Based on Overlap and Decreasing Fill): Measures the degree of overlap between pairs of rows and columns in the interaction matrix, with values ranging from 0 (no nestedness) to 100 (perfect nestedness) [20].

Software Tools: Specialized software packages include ANINHADO (handles large matrices and multiple null models) and BINMATNEST (corrects mathematical limitations of earlier approaches) [19].
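As an illustration of how NODF works, the sketch below implements the metric in plain Python following its usual description: a pair of rows (or columns) contributes only when their fills differ, and then contributes the percentage of the sparser vector's links that are shared with the fuller one. It is an assumption-laden teaching example, not a replacement for the dedicated packages named above, and it does not include null-model testing.

```python
def nodf(matrix):
    """NODF nestedness (0 to 100) of a binary interaction matrix."""
    rows = [[bool(x) for x in r] for r in matrix]
    cols = [list(c) for c in zip(*rows)]

    def paired_sum(vectors):
        total = 0.0
        n = len(vectors)
        for i in range(n):
            for j in range(i + 1, n):
                di, dj = sum(vectors[i]), sum(vectors[j])
                if di == dj or min(di, dj) == 0:
                    continue              # no decreasing fill: contributes 0
                if di > dj:
                    fuller, sparser = vectors[i], vectors[j]
                else:
                    fuller, sparser = vectors[j], vectors[i]
                shared = sum(1 for a, b in zip(fuller, sparser) if a and b)
                total += 100.0 * shared / min(di, dj)
        return total

    n_r, n_c = len(rows), len(cols)
    n_pairs = n_r * (n_r - 1) / 2 + n_c * (n_c - 1) / 2
    return (paired_sum(rows) + paired_sum(cols)) / n_pairs

perfectly_nested = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
]
one_to_one = [
    [1, 0, 0],
    [0, 1, 0],
    [0, 0, 1],
]
nodf_nested = nodf(perfectly_nested)
nodf_specialized = nodf(one_to_one)
print(nodf_nested, nodf_specialized)
```

The triangular matrix reaches the maximum score, while the one-to-one matrix scores zero because no pair of species differs in its number of partners.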

Measuring Modularity

Modularity measurement involves:

- Module detection: Identifying optimal partitions of species into modules using algorithms that maximize Q.

- Significance testing: Comparing observed Q values to those from appropriate null models (e.g., Erdős-Rényi random graphs) [21].

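- Interpretation: Q values range from -1 to 1, with positive values indicating modular structure; as a rule of thumb, Q > 0.3 is often treated as indicating meaningful modularity, although significance is best judged against the null-model comparison above [21].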
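The detection and significance-testing steps can be sketched with NetworkX, assuming it is installed. The example uses greedy modularity maximization as one of several possible detection algorithms and random graphs with the same number of nodes and links as an Erdős-Rényi-style null model; the helper names `detect_modules` and `modularity_null_test`, the toy graph, and the number of null replicates are assumptions for illustration.

```python
import networkx as nx
from networkx.algorithms import community

def detect_modules(G):
    """Partition G by greedy modularity maximization and return (modules, Q)."""
    parts = community.greedy_modularity_communities(G)
    return parts, community.modularity(G, parts)

def modularity_null_test(G, n_null=200, seed=0):
    """Compare observed Q with Q from random graphs of equal size and link count."""
    _, q_obs = detect_modules(G)
    n, m = G.number_of_nodes(), G.number_of_edges()
    q_null = []
    for k in range(n_null):
        R = nx.gnm_random_graph(n, m, seed=seed + k)
        q_null.append(detect_modules(R)[1])
    # One-sided p-value: share of null graphs at least as modular as observed.
    p_value = sum(q >= q_obs for q in q_null) / n_null
    return q_obs, q_null, p_value

# Toy network: two fully connected groups of five species, one bridging link.
G = nx.disjoint_union(nx.complete_graph(5), nx.complete_graph(5))
G.add_edge(4, 5)

q_obs, q_null, p_value = modularity_null_test(G)
print(f"observed Q = {q_obs:.3f}, mean null Q = {sum(q_null) / len(q_null):.3f}, "
      f"p = {p_value:.3f}")
```

Because modularity-maximizing algorithms find some structure even in random graphs, the comparison with the null distribution is more informative than the raw Q value.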

Table 2: Measurement Approaches for Network Properties

| Property | Primary Metrics | Software Tools | Null Model Considerations |

|---|---|---|---|

| Connectance | C = L/S² | Custom scripts, network analysis packages | Dependent on network size; comparison requires similar richness |

| Nestedness | Temperature, NODF, PRSN | ANINHADO, BINMATNEST | Choice affects significance; various randomization algorithms available |

| Modularity | Q-value | NetworkX, igraph, specialized modularity algorithms | Erdős-Rényi common; biological constraints may require customized models |

Experimental Protocols for Structural Analysis

Standard Protocol for Network Structure-Function Analysis

Objective: To quantify connectance, nestedness, and modularity in an ecological network and relate these properties to ecosystem stability.

Materials: Species interaction data (e.g., observation records, gut content analysis, molecular analysis), computational resources, network analysis software.

Procedure:

- Data Collection: Compile comprehensive interaction data using field observations, experimental manipulations, literature review, or molecular techniques.

- Network Construction: Create adjacency matrices representing species and their interactions.

- Structural Analysis:

  - Calculate connectance using the appropriate formula for network directionality.

  - Quantify nestedness using NODF or temperature metrics with appropriate software.

  - Determine modularity using module detection algorithms.

- Null Model Testing: Compare observed metrics to distributions from randomized networks (e.g., 1000 permutations) to assess statistical significance.

- Stability Assessment: Relate structural properties to stability metrics (e.g., resilience to perturbation, resistance to invasion, recovery rate).

Interpretation: Higher connectance may enhance robustness to secondary extinctions in some contexts but destabilize communities in others [1] [18]. Nestedness often promotes community persistence under harsh conditions but may create correlated extinction risks [1]. Modularity can compartmentalize disturbances but may reduce functional redundancy [21].
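The null-model step of the protocol can be written as a generic helper that accepts any structural metric. This is a minimal sketch using only the standard library; it assumes an equiprobable null model that preserves matrix size and fill, and it uses row-degree variance purely as a stand-in metric. The names `shuffle_cells`, `null_model_test`, and `row_degree_variance` are hypothetical.

```python
import random
import statistics

def shuffle_cells(matrix, rng):
    """Equiprobable null model: same dimensions and fill, links placed at random."""
    n_rows, n_cols = len(matrix), len(matrix[0])
    cells = [x for row in matrix for x in row]
    rng.shuffle(cells)
    return [cells[r * n_cols:(r + 1) * n_cols] for r in range(n_rows)]

def null_model_test(matrix, metric, n_perm=1000, seed=0):
    """Return (observed metric, z-score, one-sided p-value) against the null."""
    rng = random.Random(seed)
    observed = metric(matrix)
    null = [metric(shuffle_cells(matrix, rng)) for _ in range(n_perm)]
    sd = statistics.pstdev(null)
    z = (observed - statistics.fmean(null)) / sd if sd > 0 else float("nan")
    p = (1 + sum(x >= observed for x in null)) / (n_perm + 1)
    return observed, z, p

def row_degree_variance(matrix):
    """Stand-in metric: variance in the number of partners per row species."""
    return float(statistics.pvariance([sum(row) for row in matrix]))

# Hypothetical 5 x 5 interaction matrix with a triangular (nested) layout.
matrix = [
    [1, 1, 1, 1, 1],
    [1, 1, 1, 1, 0],
    [1, 1, 1, 0, 0],
    [1, 1, 0, 0, 0],
    [1, 0, 0, 0, 0],
]
observed, z, p = null_model_test(matrix, row_degree_variance)
print(f"observed = {observed:.2f}, z = {z:.2f}, p = {p:.3f}")
```

Any metric from the structural analysis step can be passed in place of `row_degree_variance`; the choice of null model (here the least constrained one) affects the resulting significance and should be reported.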

Advanced Protocol: Dynamic Stability Analysis

For investigating the relationship between network structure and stability:

- Community Matrix Construction: Build matrices where elements represent interaction strengths around equilibrium [21].

- Eigenvalue Analysis: Calculate the real part of the rightmost eigenvalue, $\mathrm{Re}(\lambda_{M,1})$, of the community matrix as a stability measure [21].

- Perturbation Experiments: Systematically vary network structure (e.g., modularity Q) while holding other parameters constant.

- Stability-Structure Correlation: Analyze how stability changes with varying levels of connectance, nestedness, and modularity.

This approach reveals that the effect of modularity on stability depends on parameters such as mean interaction strength (μ) and correlation of interaction strengths (ρ) [21]. For instance, modularity has moderate stabilizing effects when mean interaction strength is negative, while anti-modularity (bipartite structure) can be highly destabilizing [21].

Interrelationships and Dynamics

The structural properties of ecological networks are not independent. Research indicates complex relationships between them, particularly between nestedness and modularity. The correlation between these two properties changes with connectance: at low connectance, highly nested networks also tend to be highly modular, while at high connectance, the relationship reverses [22]. This suggests that these properties may represent different manifestations of underlying organizational principles rather than completely independent dimensions.

Furthermore, the relationship between network structure and ecosystem stability represents a dynamic feedback loop. Structural properties affect stability, which in turn influences species persistence and interaction patterns, potentially modifying network structure over time [1] [21]. This creates complex eco-evolutionary dynamics where network structure both influences and is influenced by community dynamics.

The Researcher's Toolkit

Table 3: Essential Resources for Ecological Network Analysis

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| ANINHADO | Software | Nestedness analysis | Large matrices; multiple null models |

| BINMATNEST | Software | Nestedness calculation | Corrects mathematical limitations of earlier methods |

| Erdős-Rényi Model | Null Model | Random network generation | Baseline for modularity calculation |

| Configuration Model | Null Model | Degree-preserving randomization | Significance testing for nestedness |

| Modularity Algorithms | Algorithm | Module detection | Identifying compartments in networks |

| Circuit Theory | Analytical Framework | Connectivity modeling | Spatial ecological network optimization [9] |

| Morphological Spatial Pattern Analysis (MSPA) | Analysis Method | Landscape pattern analysis | Identifying ecological sources and corridors [9] |

| Machine Learning Models | Analytical Tool | Pattern recognition | Predicting network dynamics and optimization [9] |

Applications and Conservation Implications

Understanding connectance, nestedness, and modularity has practical applications in conservation biology and ecosystem management. The structural organization of ecological networks influences their response to human-induced disturbances and environmental change [18].

Connectance as an Indicator: While high connectance has been proposed as an indicator of pristine communities that are more robust to species loss, empirical evidence shows this relationship is highly context-specific [18]. Approximately 26% of studied systems showed increased connectance with environmental degradation, 39% showed decreased connectance, and 35% showed no clear relationship [18]. This suggests connectance alone should not be naively used as a conservation indicator.

Modularity for Resilience: Highly modular network structures can enhance system resilience by containing disturbances within modules and preventing cascading effects throughout the entire network [23]. This principle applies not only to ecological systems but also to designing resilient human systems such as energy grids, supply chains, and local food systems [23].

Restoration Strategies: In degraded ecosystems, understanding network structure can guide restoration efforts. For instance, in arid regions of Xinjiang, optimization of ecological networks involved improving connectivity through buffer zones, planting drought-resistant species, and establishing desert shelter forests [9]. Such strategies increased connectivity of ecological sources by 43.84%-62.84% and inter-patch connectivity by 18.84%-52.94% [9].

Connectance, nestedness, and modularity represent three fundamental dimensions of ecological network structure that collectively influence ecosystem stability, functioning, and resilience. Rather than operating in isolation, these properties interact in complex ways that depend on environmental context, species composition, and interaction strengths. Modern ecological research continues to reveal the nuanced relationships between these structural properties and ecosystem dynamics, moving beyond simplistic "complexity begets stability" generalizations to a more sophisticated understanding of how specific architectural features affect community persistence. As research in this field advances, integrating these structural metrics with dynamic models and empirical validation will remain crucial for both theoretical ecology and applied conservation.

Biodiversity-Area Relationships and Network Scaling Laws

Ecological communities are more than disconnected collections of species; they can be represented as complex networks of interactions [24]. Understanding how these networks scale with area is crucial for predicting ecosystem responses to human activities and habitat destruction [24]. This technical guide synthesizes current research on species-area relationships (SARs) and extends these concepts to higher levels of ecological organization through network-area relationships (NARs). We examine how network complexity changes with area across different spatial domains and provide methodological protocols for studying these relationships within the broader context of ecological network models for ecosystem complexity research.

Theoretical Framework

From Species-Area to Network-Area Relationships

The species-area relationship (SAR), described by the power function S ≈ cA^z, where S is species richness, A is area, and c and z are fitted parameters, is one of ecology's most established patterns [24]. Historically, biodiversity scaling research has focused predominantly on species counts, with less exploration of how interaction networks scale with area [24].
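The parameters c and z are commonly estimated by linear regression on log-transformed data, since log S = log c + z log A. The sketch below assumes NumPy and uses synthetic, noise-free data generated from c = 12 and z = 0.25 purely for illustration; the function name `fit_sar` is hypothetical, and nonlinear fitting on the original scale is an alternative that can give different estimates with real data.

```python
import numpy as np

def fit_sar(area, richness):
    """Estimate c and z in S = c * A**z by least squares on log-log data."""
    z, log_c = np.polyfit(np.log(area), np.log(richness), deg=1)
    return float(np.exp(log_c)), float(z)

# Synthetic example data (not from any cited study).
area = np.array([1.0, 10.0, 100.0, 1000.0])      # e.g., km^2
richness = 12.0 * area ** 0.25

c_hat, z_hat = fit_sar(area, richness)
print(f"c = {c_hat:.3f}, z = {z_hat:.3f}")
```

The same log-log approach can be applied to links (L) or links per species (L/S) against area to explore the network-area scaling discussed in this section.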

Network-area relationships (NARs) extend this concept beyond simple species enumeration to quantify how the complexity of ecological interactions changes with spatial scale. This framework allows researchers to:

- Quantify multidimensional biodiversity: Measure biodiversity beyond species richness to include interaction diversity

- Predict ecosystem consequences: Anticipate how habitat loss simplifies natural communities beyond species extirpations

- Understand structural constraints: Identify invariant properties maintained across spatial scales

Fundamental Scaling Relationships in Ecological Networks

The spatial scaling of network complexity occurs at multiple hierarchical levels, from basic building blocks to higher-order organizational patterns. Table 1 summarizes the core network properties and their scaling behaviors documented across empirical studies.

Table 1: Network Properties and Their Scaling Relationships with Area

| Network Property | Description | Scaling Behavior | Regional Domain Pattern | Biogeographical Domain Pattern |

|---|---|---|---|---|

| Species (S) | Number of distinct species | Power law | Linear-concave increase | Convex increase |

| Links (L) | Number of interspecific interactions | Power law | Linear-concave increase | Convex increase |

| Links per Species (L/S) | Mean number of interactions per species | Power law | Linear-concave increase | Convex increase |

| Indegree | Mean number of resources used by a consumer | Power law | High variability | High variability |

| Degree Distribution | Statistical distribution of links per species | Scale-invariant | Shape conserved | Shape conserved |

| Connectance | Proportion of realized interactions | Variable | Depends on L-S scaling | Depends on L-S scaling |

The fundamental organization of interactions within networks appears conserved across scales, as evidenced by the scale invariance of degree distributions despite changes in network size [24]. This preservation of network architecture suggests that properties influencing community stability and robustness may be maintained across spatial scales.

Methodological Framework

Experimental Design and Data Collection

Research on NARs requires spatial replication of interaction networks across areas of different sizes. The following protocol outlines the key methodological considerations:

Spatial Domain Specification

- Regional domains: Sampling conducted locally with replicated designs within narrow spatial extents (maximum ~1,000 km²)

- Biogeographical domains: Sampling units span broader areas encompassing multiple biomes, exposing communities to greater environmental heterogeneity and dispersal barriers

Network Aggregation Procedure

To characterize how network properties change with area, researchers sequentially aggregate sampling units, scoring network structure at each aggregation step [24]. This approach requires:

- Standardized sampling: Consistent methodology across all sampling units

- Interaction documentation: Pairwise interspecific interactions recorded using appropriate methodologies for the ecosystem and interaction type (e.g., mutualistic vs. antagonistic)

- Spatial explicitness: Precise geographical coordinates for all sampling locations
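
The aggregation step described above can be sketched as follows. This is an illustrative outline under stated assumptions, not the published procedure: each sampling unit is represented as an area plus a set of (consumer, resource) pairs, units are pooled in the order given, and the species names are made up. Published analyses may aggregate by spatial proximity or average over many aggregation orders.

```python
def network_metrics(links):
    """Return (S, L, L/S) for a set of (consumer, resource) pairs."""
    species = {sp for pair in links for sp in pair}
    n_species = len(species)
    n_links = len(links)
    links_per_species = n_links / n_species if n_species else 0.0
    return n_species, n_links, links_per_species

def aggregate_networks(units):
    """Pool sampling units one at a time and score the pooled network.

    units: list of (area, set_of_links) in the chosen aggregation order.
    Returns a list of (cumulative_area, S, L, L/S), one entry per step.
    """
    pooled = set()
    total_area = 0.0
    curve = []
    for area, links in units:
        pooled |= links          # union keeps each distinct interaction once
        total_area += area
        curve.append((total_area, *network_metrics(pooled)))
    return curve

# Hypothetical pollination data for three sampling units
units = [
    (1.0, {("bee", "clover"), ("fly", "clover")}),
    (1.0, {("bee", "clover"), ("bee", "thistle")}),
    (2.0, {("moth", "thistle"), ("fly", "daisy")}),
]
curve = aggregate_networks(units)
for area, s, l, lps in curve:
    print(f"area={area:.1f}  S={s}  L={l}  L/S={lps:.2f}")
```

The resulting (area, property) pairs are the raw material for fitting the scaling functions discussed in the data analysis framework.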

The following workflow diagram illustrates the experimental protocol for constructing and analyzing network-area relationships:

Data Analysis Framework

Power Law Fitting

Network-area relationships are typically described using an extended power function [24]:

N = cA^(zA^(-d))

Where:

- N = Network property (species, links, links per species)

- A = Area

- c = Intercept parameter

- z = Scaling exponent in log-log space

- d = Parameter controlling asymptotic flattening

This functional form accommodates different scaling shapes:

- Linear-concave: z ≫ d > 0 (typical in regional domains)

- Convex: z > 0 > d (typical in biogeographical domains)
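
To see why the sign of d controls the shape, it helps to look at the local slope in log-log space. Differentiating log N = log c + z A^(-d) log A with respect to log A gives a slope of z A^(-d) (1 - d ln A). The sketch below evaluates the function and this slope using the mean species parameters from Table 2; the intercept c = 1 and the area values are placeholder assumptions, and the function names are illustrative.

```python
import math

def extended_power(area, c, z, d):
    """Extended power function N = c * A^(z * A^(-d))."""
    return c * area ** (z * area ** (-d))

def local_slope(area, z, d):
    """d(log N)/d(log A) = z * A^(-d) * (1 - d * ln A)."""
    return z * area ** (-d) * (1.0 - d * math.log(area))

# Mean species parameters from Table 2; c = 1 is a placeholder assumption
regional = {"z": 0.48, "d": 0.08}
biogeographical = {"z": 0.05, "d": -0.38}

for area in (1, 10, 100):  # arbitrary area units
    r = local_slope(area, **regional)
    b = local_slope(area, **biogeographical)
    print(f"A={area:>3}  regional slope={r:.3f}  biogeographical slope={b:.3f}")
```

For d > 0 the slope shrinks as area grows (the curve flattens), while for d < 0 it grows with area for A > 1, which produces the convex shape. Setting d = 0 recovers the ordinary power law with constant slope z.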

Null Model Testing

To determine whether network structure changes with area beyond those changes associated with species richness increases, researchers employ null models that [24]:

- Randomize network structure while maintaining species richness

- Test specificity of observed patterns against random expectations

- Disentangle effects of species richness from other organizational constraints
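
One simple way to implement such a test is sketched below. It is an assumption-laden illustration rather than the null model used in [24]: links are stored as (resource, consumer) pairs, the null redistributes the observed number of links uniformly among all ordered species pairs without self-links (so richness and link count are held fixed), and the variance of indegree is chosen as the test statistic because mean indegree is fixed by S and L.

```python
import random

def indegree_variance(species, links):
    """Variance across species of the number of resources each one uses."""
    counts = {sp: 0 for sp in species}
    for _resource, consumer in links:
        counts[consumer] += 1
    mean = sum(counts.values()) / len(counts)
    return sum((k - mean) ** 2 for k in counts.values()) / len(counts)

def null_distribution(species, n_links, statistic, n_draws=999, seed=0):
    """Statistic values for random networks with the same S and L."""
    rng = random.Random(seed)
    possible = [(a, b) for a in species for b in species if a != b]
    return [statistic(species, rng.sample(possible, n_links))
            for _ in range(n_draws)]

def upper_tail_p(observed, null_values):
    """One-sided permutation-style p-value with the usual +1 correction."""
    exceed = sum(1 for v in null_values if v >= observed)
    return (exceed + 1) / (len(null_values) + 1)

# Hypothetical network: one generalist consumer "e" feeding on four resources
species = ["a", "b", "c", "d", "e"]
observed_links = [("a", "e"), ("b", "e"), ("c", "e"), ("d", "e")]

observed = indegree_variance(species, observed_links)
null_values = null_distribution(species, len(observed_links), indegree_variance)
p_value = upper_tail_p(observed, null_values)
print(f"observed variance = {observed:.2f}, p = {p_value:.3f}")
```

A small p-value here would indicate that interactions are concentrated on fewer consumers than expected from richness and link number alone. More constrained nulls (for example, preserving each species' degree) answer different questions and are often used alongside this one.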

Key Research Findings

Universal Power Law Scaling

Empirical evidence from 32 spatial interaction networks across different ecosystems confirms that network complexity increases with area at multiple organizational levels [24]. The power law formalism effectively describes these relationships across all ecosystem types and interaction types (mutualistic and antagonistic).

Table 2 presents quantitative parameters for network-area relationships across spatial domains, based on empirical findings:

Table 2: Scaling Parameters for Network-Area Relationships Across Spatial Domains (Mean ± Standard Deviation)

| Network Property | Parameter | Regional Domain | Biogeographical Domain |

|---|---|---|---|

| Species | d | 0.08 ± 0.03 | -0.38 ± 0.78 |

| Species | z | 0.48 ± 0.12 | 0.05 ± 0.41 |

| Links | d | 0.07 ± 0.03 | -0.19 ± 0.13 |

| Links | z | 0.72 ± 0.10 | 0.41 ± 0.63 |

| Links per Species | d | 0.05 ± 0.11 | -0.31 ± 0.57 |

| Links per Species | z | 0.26 ± 0.10 | 0.08 ± 0.11 |

| Indegree | d | 0.04 ± 0.12 | -0.27 ± 0.22 |

| Indegree | z | 0.31 ± 0.13 | 0.07 ± 0.19 |

Domain-Specific Scaling Patterns

Systematic differences emerge between regional and biogeographical domains [24]:

- Regional domains show linear-concave increases for all network complexity measures

- Biogeographical domains exhibit convex increases for most datasets with greater variability

- Predictability is higher at regional scales than biogeographical scales

The number of links increases faster with area than species richness in both domains: links scale with species richness as a power law, with exponents between 1.5 and 2 [24]. That this links-species relationship holds in both domains is consistent with the fundamental organization of interactions within networks being conserved across scales.
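
As a rough consistency check on that exponent range, the two area scalings can be combined. This is a sketch under simplifying assumptions that are not in the source: the flattening parameter is neglected (d ≈ 0), the regional means from Table 2 are treated as if they described a single network, and connectance is taken as C = L/S², one common convention. Here b denotes the links-species exponent, and z_S and z_L the area exponents for species and links.

$$
S \propto A^{z_S}, \quad L \propto A^{z_L}
\;\Longrightarrow\;
L \propto S^{b}, \qquad b = \frac{z_L}{z_S}
$$

$$
b_{\text{regional}} \approx \frac{0.72}{0.48} = 1.5
$$

$$
\frac{L}{S} \propto S^{\,b-1} \propto A^{\,z_L - z_S}, \qquad
C = \frac{L}{S^{2}} \propto S^{\,b-2}
$$

With 1.5 ≤ b ≤ 2, links per species rise with richness while connectance falls or, at b = 2, stays constant. In area terms the regional means give z_L - z_S = 0.24, close to the reported links-per-species exponent of 0.26 ± 0.10. A ratio of mean exponents is not the same as the mean of per-network ratios, so the value 1.5 is illustrative only.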

Scale Invariance of Network Architecture

Despite changes in network size with area, the fundamental architecture of ecological networks remains remarkably consistent:

- Degree distributions maintain their shape across spatial scales

- Skewed distributions (many specialists, few generalists) persist regardless of area

- Structural robustness properties may be maintained across scales

This architectural conservation suggests that the fundamental rules governing network organization operate similarly across spatial scales.
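
One way to compare the shape of degree distributions across networks of different size is to divide each species' degree by the network's mean degree before building the cumulative distribution. The sketch below assumes that normalization, which is a common convention but not necessarily the one used in [24], and uses a toy network and an exact two-copy replica of it so that the expected outcome is unambiguous.

```python
from collections import Counter

def scaled_degrees(links):
    """Degree of each species divided by the network's mean degree."""
    degree = Counter()
    for a, b in links:
        degree[a] += 1
        degree[b] += 1
    mean = sum(degree.values()) / len(degree)
    return sorted(k / mean for k in degree.values())

def cumulative_distribution(values):
    """Return [(x, P(K >= x))] for each distinct scaled degree x."""
    n = len(values)
    return [(x, sum(1 for v in values if v >= x) / n)
            for x in sorted(set(values))]

# Hypothetical small network: one generalist linked to three specialists
small = [("hub", "s1"), ("hub", "s2"), ("hub", "s3")]
# Larger network built as two disjoint copies of the same motif
large = small + [("hub2", "t1"), ("hub2", "t2"), ("hub2", "t3")]

cdf_small = cumulative_distribution(scaled_degrees(small))
cdf_large = cumulative_distribution(scaled_degrees(large))
print(cdf_small == cdf_large)  # the shape is unchanged although S and L doubled
```

Empirical networks will never match exactly, so in practice the rescaled distributions would be compared with a goodness-of-fit statistic or by overlaying them on a single plot.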

Research Toolkit

Essential Methodological Components

Table 3: Research Reagent Solutions for Network-Area Studies

| Research Component | Function | Implementation Examples |

|---|---|---|

| Spatially Explicit Interaction Data | Documents species interactions across locations | Pairwise interaction records, pollen-carrier networks, host-parasite records, plant-herbivore surveys |

| Network Aggregation Algorithm | Sequentially combines sampling units | Custom scripts in R or Python implementing sequential aggregation procedures |

| Power Law Fitting Tools | Estimates scaling parameters | Nonlinear regression algorithms, log-log linearization approaches |

| Null Model Frameworks | Tests specificity of observed patterns | Random network generators with constrained species richness and connectance |

| Spatial Data Infrastructure | Manages geographical information | GIS platforms, spatial databases documenting sampling coordinates and environmental variables |

Advanced Analytical Approaches

The following diagram illustrates the conceptual relationships between different analytical components in network-area research:

Implications for Ecosystem Complexity Research

Conservation and Habitat Loss Predictions

Biodiversity-area relationships extended to network complexity provide enhanced predictive frameworks for understanding the consequences of anthropogenic habitat destruction [24]. Rather than merely predicting species loss, NARs allow researchers to forecast:

- Simplification of interaction networks beyond species extirpations

- Disruption of ecosystem functions dependent on specific interactions

- Cascading effects through interaction pathways
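
A back-of-the-envelope version of such a forecast is sketched below. It assumes the pure power law N = cA^z (flattening parameter d set to zero) and applies the regional mean exponents from Table 2 in reverse, so that a habitat reduced to a fraction f of its original area retains a fraction f^z of a given property. Both simplifications are assumptions made for illustration; the function name is hypothetical.

```python
def fraction_retained(area_fraction, z):
    """Fraction of a network property retained when area shrinks to
    `area_fraction` of its original extent, assuming N = c * A**z."""
    if not 0.0 < area_fraction <= 1.0:
        raise ValueError("area_fraction must be in (0, 1]")
    return area_fraction ** z

# Regional mean exponents from Table 2 (species and links)
z_species, z_links = 0.48, 0.72

for remaining in (0.9, 0.5, 0.1):
    kept_species = fraction_retained(remaining, z_species)
    kept_links = fraction_retained(remaining, z_links)
    print(f"{remaining:.0%} of area left: "
          f"species retained {kept_species:.2f}, links retained {kept_links:.2f}")
```

Because the exponent for links exceeds that for species, the predicted proportional loss of interactions is larger than the loss of species for any given reduction in area, which is the sense in which networks simplify beyond species extirpations.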

Context-Dependent Biodiversity Drivers

Research indicates that biodiversity drivers operate differently across scales and taxonomic groups [25]. Key findings include:

- Regional variables (temperature, precipitation) often have greater predictive power than local vegetation structure [25]

- Taxonomic groups respond differently to environmental factors [25]

- General universal rules explaining biodiversity patterns across all geographies, scales, and species groups remain elusive [25]

This context-dependence highlights the importance of developing scale-specific and taxon-specific models for accurate biodiversity prediction.

Complementary Biodiversity Metrics

Different biodiversity metrics provide complementary information about ecological communities [26]:

- Species richness correlates with rarity indices but provides limited functional information

- Taxonomic distinctness metrics capture phylogenetic relatedness and respond differently to environmental gradients

- Multiple metrics are needed for comprehensive biodiversity assessment

This multidimensional perspective aligns with network-based approaches that consider both compositional and interactional aspects of biodiversity.

Future Research Directions

Advancing NAR research requires:

- Standardized data collection protocols for interspecific interactions across spatial scales

- Integrated modeling frameworks combining spatial, environmental, and network processes

- Cross-taxonomic comparisons to identify generalities versus system-specific patterns

- Temporal dimension integration to understand how climate change and anthropogenic pressures alter scaling relationships

- Theoretical development linking observed patterns to underlying ecological and evolutionary processes

As large-scale ecological monitoring programs (e.g., NEON) mature, longer temporal datasets will enable researchers to explore how these spatial scaling relationships change over time in response to anthropogenic pressures and environmental change [25].

Ecological networks provide a powerful quantitative framework for understanding complexity in biological systems, from natural ecosystems to human physiology and drug action. The foundational concept of the Species-Area Relationship (SAR), which describes how species richness (S) increases with area (A) following a power law (S ≈ cA^z), has been extended to network ecology [24]. This expansion allows researchers to move beyond simple counts of species to a more holistic understanding of ecosystem complexity by examining how the entire architecture of interactions changes with scale. The emerging field of network medicine applies these ecological principles to pharmacological systems, recognizing that diseases and drug actions represent perturbations to highly interconnected biological networks [27] [28]. Just as ecological networks capture the complex web of species interactions, drug-target networks map the intricate relationships between pharmaceuticals and their biological targets, creating a framework for understanding therapeutic and adverse effects through network topology and dynamics [29].

Quantifying complexity through species richness, linkage density, and interaction strength provides researchers with a multidimensional perspective on system stability, resilience, and function. In both ecological and pharmacological contexts, the fundamental organization of interactions within networks appears conserved across scales, with highly skewed degree distributions featuring many specialists and few generalists maintained regardless of system size [24]. This structural conservation suggests universal principles of network organization that can be exploited for predicting system responses to perturbations, whether from habitat destruction in ecology or drug treatments in pharmacology. The integration of network science with high-throughput omics data has revolutionized our ability to quantify and model these complex systems, enabling the development of predictive frameworks for ecosystem management and drug discovery [27] [30].

Quantitative Foundations: Network-Area Relationships (NARs)

Power Law Scaling of Network Properties

Empirical studies across 32 spatial interaction networks from diverse ecosystems have demonstrated that network complexity increases with area following predictable power-law functions [24]. The fundamental Network-Area Relationships (NARs) extend the classic Species-Area Relationship, revealing how multiple aspects of network structure scale with area. These relationships follow an extended power function of the form N = cA^(zA^(-d)), where N represents a given network property, A is area, and c, z, and d are fitted parameters [24].

Table 1: Power Law Scaling Parameters for Ecological Network Properties Across Spatial Domains

| Network Property | Spatial Domain | Scaling Exponent (z) | Asymptotic Parameter (d) | Functional Relationship |

|---|---|---|---|---|

| Species Richness | Regional | 0.48 ± 0.12 | 0.08 ± 0.03 | Linear-Concave |

| Species Richness | Biogeographical | 0.05 ± 0.41 | -0.38 ± 0.78 | Convex |

| Number of Links | Regional | 0.72 ± 0.10 | 0.07 ± 0.03 | Linear-Concave |

| Number of Links | Biogeographical | 0.41 ± 0.63 | -0.19 ± 0.13 | Convex |

| Links per Species | Regional | 0.26 ± 0.10 | 0.05 ± 0.11 | Linear-Concave |

| Links per Species | Biogeographical | 0.08 ± 0.11 | -0.31 ± 0.57 | Convex |

| Mean Indegree | Regional | 0.31 ± 0.13 | 0.04 ± 0.12 | Linear-Concave |

| Mean Indegree | Biogeographical | 0.07 ± 0.19 | -0.27 ± 0.22 | Convex |

The scaling relationships reveal systematic differences between spatial domains. In regional domains (maximum spatial extent of ~1,000 km²), network properties typically show a linear-concave increase with area (z ≫ d > 0), while in biogeographical domains (spanning multiple biomes), the increase is convex for most datasets (z > 0 > d) [24]. This pattern indicates more rapid accumulation of network complexity at larger spatial scales, likely due to greater environmental heterogeneity, stronger dispersal barriers, and historical contingencies that combine to produce diversity patterns across broad spatial extents.

Link-Species Scaling Laws