Decoding Animal Behavior: A Comprehensive Guide to Hidden Markov Models in Tracking Data Analysis

Hidden Markov Models (HMMs) have emerged as a powerful statistical framework for classifying animal behavior from tracking data, enabling researchers to infer unobserved behavioral states from observable movement patterns.


Abstract

Hidden Markov Models (HMMs) have emerged as a powerful statistical framework for classifying animal behavior from tracking data, enabling researchers to infer unobserved behavioral states from observable movement patterns. This article provides a comprehensive overview for researchers and drug development professionals, covering foundational concepts, practical methodologies, and advanced applications. We explore how HMMs identify discrete behavioral modes such as foraging, resting, and navigating by analyzing movement metrics like step lengths and turning angles. The content addresses critical challenges including scale dependence and model selection, while validating HMM performance against alternative machine learning approaches. Through examples from diverse species and experimental settings, we demonstrate HMMs' transformative potential for preclinical research, particularly in quantifying behavioral outcomes in disease models and therapeutic interventions.

The Statistical Foundation of HMMs in Animal Behavior Analysis

Within the framework of a thesis on classifying animal behavior from tracking data, this document details the core concepts of Hidden Markov Models (HMMs) and provides a practical protocol for their application. HMMs are powerful statistical tools for analyzing sequential data where the underlying system states are not directly observable [1] [2].

Theoretical Foundation: Core Components of an HMM

An HMM is defined by a finite set of hidden states, a set of possible observations, and three probability distributions [1] [2]:

- Hidden States (S): The true, unobservable conditions of the system. In behavioral ecology, these represent distinct behavioral modes (e.g., Resting, Exploring, Navigating) [3].

- Observation Sequence (O): The data that is measured and recorded. In tracking studies, this typically consists of movement metrics derived from location data [4].

- Markov Property: The assumption that the next hidden state depends only on the current state, independent of all prior states [5].

- Initial State Probabilities (π): The probability distribution over the behavioral state at the beginning of the sequence.

- Transition Probabilities (A): The matrix defining the probability of switching from one behavioral state to another [3].

- Emission Probabilities (B): The probability of making a specific observation (e.g., a particular step length) given that the animal is in a specific behavioral state [1].
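To make the roles of π, A and B concrete, the Python sketch below draws a hidden state path from π and A, then emits a step length and a turning angle from state-specific gamma and von Mises distributions. The three state labels and every numerical value are hypothetical, chosen only for illustration, and the sketch assumes NumPy's `Generator` API.

```python
import numpy as np

# Hypothetical 3-state model: 0 = Resting, 1 = Exploring, 2 = Navigating.
# All values are illustrative assumptions, not fitted estimates.
pi_init = np.array([0.6, 0.3, 0.1])         # initial state probabilities (pi)
A = np.array([[0.90, 0.08, 0.02],           # transition matrix; each row sums to 1
              [0.10, 0.80, 0.10],
              [0.05, 0.15, 0.80]])
step_shape = np.array([2.0, 2.0, 4.0])      # gamma shape for step length, per state
step_scale = np.array([0.5, 2.0, 5.0])      # gamma scale for step length, per state
angle_mu = np.array([0.0, np.pi, 0.0])      # von Mises mean turning angle, per state
angle_kappa = np.array([0.1, 0.5, 5.0])     # von Mises concentration, per state


def simulate_hmm(T, rng):
    """Draw a hidden state path, then the step lengths and turning angles it emits (B)."""
    states = np.empty(T, dtype=int)
    states[0] = rng.choice(3, p=pi_init)
    for t in range(1, T):
        # Markov property: the next state depends only on the current state
        states[t] = rng.choice(3, p=A[states[t - 1]])
    steps = rng.gamma(step_shape[states], step_scale[states])
    angles = rng.vonmises(angle_mu[states], angle_kappa[states])
    return states, steps, angles


states, steps, angles = simulate_hmm(500, np.random.default_rng(1))
```

Only `states` would be hidden in real data; `steps` and `angles` play the role of the observation sequence O.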

Table 1: The Core Parameters of a Hidden Markov Model

| Parameter | Notation | Description | Role in Animal Behavior Classification |
|---|---|---|---|
| Hidden States | S | The true, unobservable behavioral modes. | Represents behaviors like Resting, Exploring, and Navigating [3]. |
| Observations | O | The recorded, quantifiable data sequence. | Derived movement metrics such as step length and turning angle [4]. |
| Initial Probabilities | π | The likelihood of starting in each state. | The assumed probability of an animal's initial activity upon tracking start. |
| Transition Probabilities | A | The probability of moving from one state to another. | Models behavioral persistence and transitions (e.g., Exploring → Navigating) [3]. |
| Emission Probabilities | B | The probability of an observation being generated from a state. | Links raw data (e.g., short step lengths) to a behavioral state (e.g., Resting) [4]. |

Quantitative Models for Behavioral States

The emission probabilities are defined by state-dependent distributions. For animal movement data, the following models are standard [4]:

- Step Lengths (L) are typically modeled using a gamma distribution, as they are continuous and non-negative.

- Turning Angles (ϕ) are modeled using a von Mises distribution, as they are circular (support on (-π, π]).

Table 2: Standard State-Dependent Distributions for Movement Metrics

| Observation Metric | Support | Standard Distribution | State-Dependent Parameters |
|---|---|---|---|
| Step Length (L) | L ≥ 0 | Gamma | Shape (k_j), Rate (θ_j) (or Mean (μ_j), SD (σ_j)) |
| Turning Angle (ϕ) | -π < ϕ ≤ π | Von Mises | Mean Direction (μ_j), Concentration (κ_j) |

Experimental Protocol: HMM-Based Behavioral Classification

This protocol outlines the key steps for applying an HMM to animal tracking data, based on established methodologies [3] [4].

Data Acquisition and Preprocessing

- Apparatus: Utilize a testing arena appropriate for the species and behavior of interest. For visual cliff tests, this involves a raised table with a checkerboard pattern and a transparent acrylic plate to create a depth illusion, surrounded by a circular enclosure to minimize corner bias [3].

- Data Collection: Record high-resolution video (e.g., 30 frames per second) from above the apparatus [3].

- Pose Estimation: Use deep learning-based software (e.g., DeepLabCut) to extract the animal's body center coordinates in each video frame [3].

- Movement Metric Calculation: From the (x, y) coordinates, compute the step lengths and turning angles for each time interval.
  - Step Length (l_t): The Euclidean distance between consecutive coordinates: l_t = √((x_t - x_{t-1})² + (y_t - y_{t-1})²) [3].
  - Turning Angle (ϕ_t): The change in direction between consecutive steps, calculated from three consecutive coordinates.
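A minimal NumPy sketch of this calculation, assuming regularly spaced (x, y) fixes in a common planar coordinate system. Turning angles are taken as the difference between successive headings and wrapped into (-π, π]; the function name `movement_metrics` is illustrative, not part of any package.

```python
import numpy as np


def movement_metrics(x, y):
    """Step lengths and turning angles from regularly sampled (x, y) fixes.

    Returns n-1 step lengths and n-2 turning angles: the first step has no
    preceding heading, so no turning angle is defined for it. Zero-length
    steps have no defined heading and would need special handling.
    """
    x, y = np.asarray(x, dtype=float), np.asarray(y, dtype=float)
    dx, dy = np.diff(x), np.diff(y)
    steps = np.hypot(dx, dy)                        # Euclidean distance between fixes
    headings = np.arctan2(dy, dx)                   # absolute direction of each step
    turns = np.diff(headings)                       # change in direction between steps
    turns = np.pi - (np.pi - turns) % (2 * np.pi)   # wrap into (-pi, pi]
    return steps, turns


# Usage: walking counter-clockwise around a unit square
steps, turns = movement_metrics([0, 1, 1, 0], [0, 0, 1, 1])
```

For the square, `steps` is three unit steps and `turns` is two left turns of π/2. Wrapping matters when successive headings straddle ±π, where a raw difference would otherwise report a turn of more than half a circle.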

Model Definition and Training

- Specify Number of States (N): Choose a biologically plausible number of hidden behavioral states (e.g., N = 3: Resting, Exploring, Navigating) [3].

- Initialize Parameters: Make initial guesses for the initial distribution π, the transition matrix A, and the gamma and von Mises parameters for each state.

- Train with Baum-Welch Algorithm: Use this Expectation-Maximization (EM) algorithm to find the model parameters that maximize the likelihood of the observed sequence of step lengths and turning angles [6] [2]. This iterative algorithm refines the initial parameter estimates.
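The quantity Baum-Welch maximizes is the likelihood of the observed sequence, which is evaluated with the forward algorithm. The sketch below assumes the state-dependent densities have already been evaluated into a T × N array `dens` (for example, the gamma density of each step length times the von Mises density of each turning angle, per state); the function and argument names are hypothetical. Rescaling at every step keeps the computation stable for long tracks.

```python
import numpy as np


def forward_loglik(pi_init, A, dens):
    """Log-likelihood of an observation sequence via the scaled forward algorithm.

    pi_init: initial state probabilities, shape (N,)
    A:       transition matrix, shape (N, N)
    dens:    dens[t, j] = f(y_t | S_t = j), precomputed, shape (T, N)
    """
    alpha = pi_init * dens[0]
    c = alpha.sum()                      # scaling constant for t = 0
    loglik = np.log(c)
    alpha = alpha / c
    for t in range(1, dens.shape[0]):
        alpha = (alpha @ A) * dens[t]    # propagate one step, then weight by the emission
        c = alpha.sum()
        loglik += np.log(c)              # sum of log scaling constants = log-likelihood
        alpha = alpha / c
    return float(loglik)
```

Comparing this value across several random initializations is a simple way to check whether a fit has settled in a local rather than global maximum.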

State Decoding and Validation

- Decode Behavioral Sequence: Apply the Viterbi algorithm to the trained model and the observed data to determine the single most likely sequence of hidden behavioral states across time [6] [2].

- Biological Validation: Correlate the decoded state sequence with the original video footage and known biological facts to validate that the classified states correspond to meaningful behaviors [3].
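Viterbi decoding can be sketched in a few lines of NumPy. This version works in log space to avoid numerical underflow on long tracks and takes the initial distribution, the transition matrix, and a precomputed T × N array `dens` of state-dependent densities; that input format and the function name are assumptions made for illustration.

```python
import numpy as np


def viterbi(pi_init, A, dens):
    """Most likely hidden state path, computed in log space to avoid underflow.

    dens[t, j] = f(y_t | S_t = j) is assumed to be precomputed, shape (T, N).
    """
    T, N = dens.shape
    log_A = np.log(A)
    log_dens = np.log(dens)
    delta = np.log(pi_init) + log_dens[0]       # best log-probability ending in each state
    back = np.zeros((T, N), dtype=int)          # back-pointers to the best predecessor
    for t in range(1, T):
        scores = delta[:, None] + log_A         # scores[i, j]: best path into i, then i -> j
        back[t] = scores.argmax(axis=0)
        delta = scores.max(axis=0) + log_dens[t]
    path = np.empty(T, dtype=int)
    path[-1] = delta.argmax()
    for t in range(T - 1, 0, -1):               # trace the back-pointers from the end
        path[t - 1] = back[t, path[t]]
    return path


# Usage: with persistent states, one mildly ambiguous frame is absorbed into state 0
pi0 = np.array([0.5, 0.5])
A2 = np.array([[0.9, 0.1], [0.1, 0.9]])
dens = np.array([[0.9, 0.1], [0.9, 0.1], [0.4, 0.6], [0.9, 0.1]])
path = viterbi(pi0, A2, dens)   # -> [0, 0, 0, 0]
```

The usage case shows why decoded sequences can differ from classifying each frame independently: the transition matrix penalizes brief, isolated switches.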

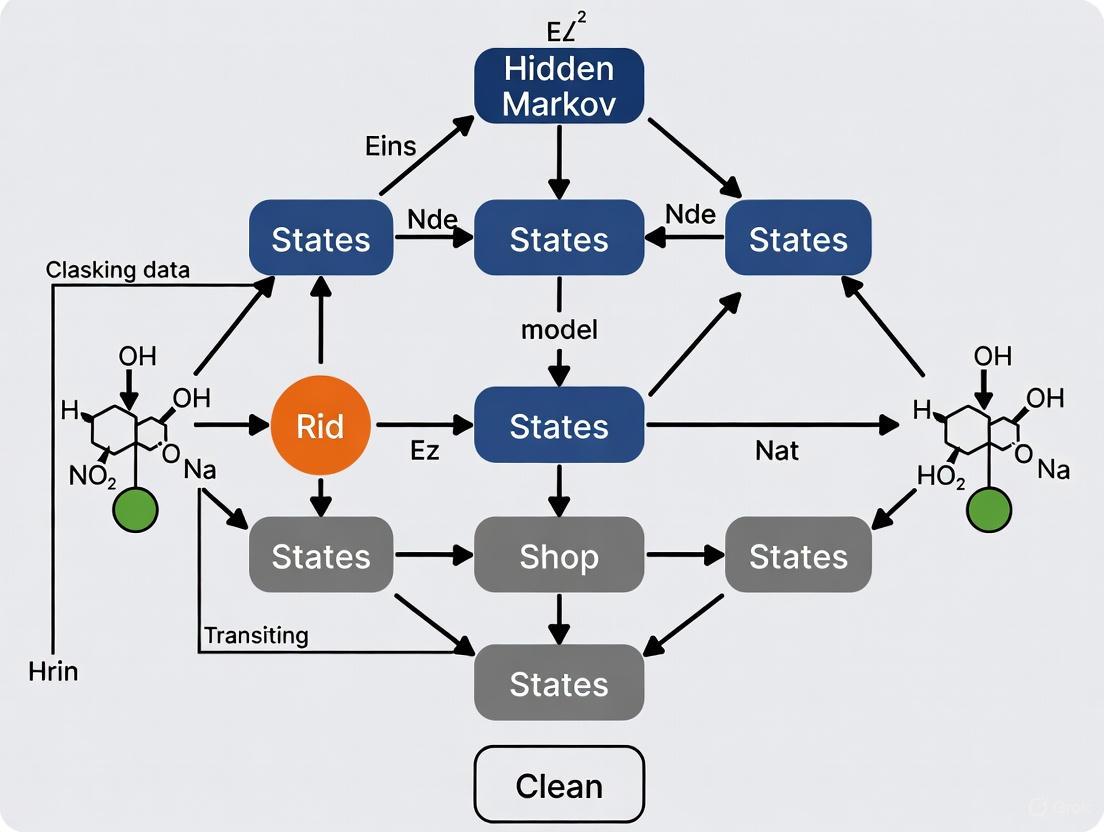

Visualizing the HMM Framework

The following diagram illustrates the logical structure and dependencies of a generic HMM, which underpins the behavioral classification workflow.

HMM Structure and Dependencies

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Materials and Software for HMM-Based Behavioral Analysis

| Item Name | Function / Rationale |
|---|---|
| DeepLabCut | Open-source software for markerless pose estimation based on deep learning. Used to extract body center coordinates from video footage [3]. |
| Visual Cliff Apparatus | A controlled environment to test depth perception and visually guided behavior in rodents, consisting of a raised table with a high-contrast pattern and a transparent acrylic floor [3]. |
| Graphviz | Open-source graph visualization software. Its DOT language is used to create clear diagrams of graph structures, including HMM architectures [7]. |
| Pomegranate Library | A Python library that implements probabilistic models, including HMMs, with built-in functions for model training, inference, and visualization [8]. |
| Baum-Welch Algorithm | An Expectation-Maximization (EM) algorithm used to train HMMs by finding the unknown parameters that maximize the likelihood of the observed data [6] [2]. |
| Viterbi Algorithm | A dynamic programming algorithm used for decoding the most probable sequence of hidden states given a sequence of observations and a trained HMM [6] [2]. |

Advanced Consideration: Autoregressive HMMs for High-Resolution Data

Standard HMMs assume observations are conditionally independent given the state. For high-resolution tracking data (e.g., >10 Hz), this assumption is often violated due to movement momentum, leading to strong within-state serial correlation in step lengths and turning angles [4]. To address this, Autoregressive HMMs (AR-HMMs) can be employed. In an AR-HMM, the mean of the state-dependent distribution (e.g., for step length) depends not only on the current state but also on the previous p observations [4]:

μ_{t,j} = Σ_{k=1}^{p} φ_{j,k} * x_{t-k} + (1 - Σ_{k=1}^{p} φ_{j,k}) * μ_j

This formulation more accurately captures the dynamics of high-resolution movement, leading to improved inference and state decoding [4].
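The autoregressive mean can be evaluated directly from recent observations. The helper below is a hypothetical illustration, assuming `phi_j[0]` multiplies the most recent observation x_{t-1}; with all coefficients set to zero it reduces to the standard HMM mean μ_j.

```python
import numpy as np


def ar_state_mean(history, phi_j, mu_j):
    """State-dependent mean mu_{t,j} of an autoregressive HMM.

    history: past observations ordered oldest to newest (at least p values)
    phi_j:   AR coefficients (phi_{j,1}, ..., phi_{j,p}); phi_{j,1} pairs with x_{t-1}
    mu_j:    long-run mean of the observation in state j
    """
    phi_j = np.asarray(phi_j, dtype=float)
    p = len(phi_j)
    lagged = np.asarray(history, dtype=float)[::-1][:p]   # x_{t-1}, ..., x_{t-p}
    return float(phi_j @ lagged + (1.0 - phi_j.sum()) * mu_j)


# Usage: with phi = (0.5, 0.2), the mean is pulled toward the two most recent steps
m = ar_state_mean([1.0, 2.0, 4.0], [0.5, 0.2], mu_j=3.0)   # 0.5*4 + 0.2*2 + (1 - 0.7)*3, about 3.3
```

The weights on the lagged values and on μ_j sum to one, so the expected step length stays anchored to the state's long-run mean while following recent momentum.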

In the analysis of animal movement, Hidden Markov Models (HMMs) have emerged as a powerful statistical tool for identifying latent behavioral states from observed tracking data. The core mathematical framework of HMMs rests on two fundamental components: transition probabilities, which govern the dynamics between hidden behavioral states, and emission distributions, which describe how observable movement metrics are generated from these underlying states [1] [9]. This framework enables researchers to move beyond simple descriptive metrics and model the complex, dynamic processes that characterize animal behavior, revealing patterns that traditional analytical methods often overlook [10].

In movement ecology, HMMs typically model sequences of movement steps derived from telemetry data. The hidden states represent behavioral modes (e.g., resting, foraging, traveling), while observations usually consist of step lengths (distance between consecutive locations) and turning angles (directional changes) [9]. The conditional independence structure of HMMs—where observations depend only on the current state, and states depend only on the previous state—provides a computationally tractable framework for decoding the behavioral processes underlying movement trajectories [1] [11].

Core Mathematical Framework

The Hidden Markov Model Structure

A Hidden Markov Model is a probabilistic time series model comprising an unobserved state sequence $(S_1, S_2, \ldots, S_T)$ and an observed sequence $(Y_1, Y_2, \ldots, Y_T)$ [1]. In animal movement applications, the hidden states $S_t$ typically represent behavioral modes, while observations $Y_t$ are movement metrics derived from tracking data [9]. The model is defined by three core elements:

- The initial state distribution $\bm{\delta} = (\delta_1, \delta_2, \ldots, \delta_N)$, where $\delta_i = \Pr(S_1 = i)$ specifies the probability of starting in state $i$ [9] [11].

- The state transition probability matrix $\bm{\Gamma} = (\gamma_{ij})$, where $\gamma_{ij} = \Pr(S_t = j \mid S_{t-1} = i)$ defines the probability of transitioning from state $i$ to state $j$ between times $t-1$ and $t$ [1] [9].

- The emission distributions $f(Y_t \mid S_t = j, \bm{\theta}^{(j)})$, which describe the probability of observing $Y_t$ given the active state $j$ with state-dependent parameters $\bm{\theta}^{(j)}$ [1] [9].

The following diagram illustrates the conditional dependency structure of a standard HMM, showing how the hidden state sequence evolves according to Markov dynamics and generates observations at each time point.

Transition Probabilities

The transition probability matrix $\bm{\Gamma}$ is an $N \times N$ matrix where each entry $\gamma_{ij}$ satisfies $0 \leq \gamma_{ij} \leq 1$ and each row sums to unity: $\sum_{j=1}^N \gamma_{ij} = 1$ for all $i$ [9]. This matrix captures the temporal persistence and switching dynamics between behavioral states. For example, high diagonal values ($\gamma_{ii}$) indicate persistent states where animals tend to maintain their current behavior, while higher off-diagonal values indicate more frequent behavioral switching [9] [10].
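One useful reading of the diagonal entries: under a first-order Markov chain, the number of consecutive time steps spent in state $i$ is geometrically distributed with mean $1/(1-\gamma_{ii})$. A short sketch with an illustrative, unfitted 2-state matrix:

```python
import numpy as np

# Illustrative 2-state transition matrix (not fitted values):
# state 0 is highly persistent, state 1 less so.
Gamma = np.array([[0.95, 0.05],
                  [0.20, 0.80]])

assert np.allclose(Gamma.sum(axis=1), 1.0)       # rows are probability distributions
expected_dwell = 1.0 / (1.0 - np.diag(Gamma))    # mean sojourn time, in time steps
# expected_dwell is approximately [20, 5]
```

Because the unit is "time steps", these dwell times are only meaningful together with the sampling interval of the data.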

In animal movement applications, transition probabilities are often modeled as functions of environmental covariates to understand how external factors influence behavioral dynamics [9]. This is typically achieved using a multinomial logit link function:

$$\gamma_{ij}^{(t)} = \frac{\exp(\eta_{ij}^{(t)})}{\sum_{k=1}^N \exp(\eta_{ik}^{(t)})}$$

where $\eta_{ij}^{(t)} = \beta_0^{(ij)} + \beta_1^{(ij)} x_{1t} + \cdots + \beta_p^{(ij)} x_{pt}$ for $i \neq j$, and $\eta_{ii}^{(t)} = 0$ to ensure identifiability [9]. This formulation allows researchers to test specific hypotheses about how environmental conditions, such as habitat type or time of day, affect the probability of switching between different behavioral states.
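A NumPy sketch of the multinomial logit link, assuming the coefficients are stored in a hypothetical array `beta` of shape (N, N, p+1) with the intercept first; diagonal entries are overwritten with $\eta_{ii}^{(t)} = 0$ as in the formulation above. The covariate in the usage example is invented for illustration.

```python
import numpy as np


def tpm_from_covariates(beta, x):
    """Transition matrix Gamma^(t) from covariates via the multinomial logit link.

    beta: shape (N, N, p + 1); beta[i, j] = (beta_0, ..., beta_p) for the i -> j
          transition. Diagonal entries are ignored (eta_ii = 0 is the reference).
    x:    covariates (x_1t, ..., x_pt) at time t, shape (p,)
    """
    N = beta.shape[0]
    design = np.concatenate(([1.0], np.asarray(x, dtype=float)))   # intercept first
    eta = beta @ design                       # linear predictors eta[i, j]
    eta[np.diag_indices(N)] = 0.0             # identifiability constraint
    expeta = np.exp(eta)
    return expeta / expeta.sum(axis=1, keepdims=True)   # each row sums to 1


# Usage: one hypothetical covariate raising the 0 -> 1 switching probability
beta = np.zeros((2, 2, 2))
beta[0, 1] = [0.0, 1.0]
G = tpm_from_covariates(beta, [np.log(3.0)])   # G[0, 1] = 3 / (1 + 3) = 0.75
```

Fixing the diagonal as the reference category means each off-diagonal coefficient describes the log-odds of switching to state $j$ versus staying in state $i$.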

Emission Distributions

Emission distributions define the relationship between the hidden behavioral states and the observed movement metrics. In animal movement applications, the bivariate observation $Y_t = (l_t, \phi_t)$ typically consists of step length $l_t$ (non-negative continuous) and turning angle $\phi_t$ (circular, ranging from $-\pi$ to $\pi$) [9]. The standard approach assumes conditional independence between these metrics given the state:

$$f(Y_t \mid S_t = j) = f(l_t \mid S_t = j) \cdot f(\phi_t \mid S_t = j)$$

The most common distributional choices reflect the distinct nature of each movement metric:

Step Lengths are typically modeled using a gamma distribution [4] [9]:

$l_t \mid S_t = j \sim \text{Gamma}(\mu_j, \sigma_j)$

parameterized by a state-dependent mean $\mu_j$ and standard deviation $\sigma_j$, where both parameters are strictly positive. The gamma distribution accommodates the right-skewed nature of movement step lengths while maintaining computational tractability.

Turning Angles are commonly modeled using a von Mises distribution [9]:

$\phi_t \mid S_t = j \sim \text{von Mises}(\lambda_j, \kappa_j)$

where $\lambda_j$ is the state-specific mean angle (representing directional bias) and $\kappa_j$ is the concentration parameter (representing directional persistence, with higher values indicating more concentrated angles around the mean).
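Evaluating the state-dependent density requires converting the gamma mean and standard deviation into the shape/scale form used by most software: shape $= \mu_j^2/\sigma_j^2$ and scale $= \sigma_j^2/\mu_j$. A sketch with SciPy (`scipy.stats.gamma`, `scipy.stats.vonmises`), assuming conditional independence as in the product formula above; the function name is hypothetical.

```python
import numpy as np
from scipy import stats


def emission_density(step, angle, mu, sigma, lam, kappa):
    """f(Y_t | S_t = j) = gamma(step) * von Mises(angle) for one state j.

    The gamma is specified by mean mu and standard deviation sigma and converted to
    SciPy's shape/scale form: shape = mu**2 / sigma**2, scale = sigma**2 / mu.
    """
    shape = mu**2 / sigma**2
    scale = sigma**2 / mu
    f_step = stats.gamma.pdf(step, shape, scale=scale)
    f_angle = stats.vonmises.pdf(angle, kappa, loc=lam)
    return f_step * f_angle       # product relies on conditional independence
```

Evaluating this for every time step and state gives the T × N matrix of densities consumed by the forward, backward and Viterbi recursions.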

Table 1: Standard Emission Distributions for Animal Movement HMMs

| Observation | Distribution | Parameters | Biological Interpretation |
|---|---|---|---|
| Step Length | Gamma | $\mu_j$: mean; $\sigma_j$: standard deviation | Speed/displacement; higher $\mu_j$ indicates faster movement |
| Turning Angle | von Mises | $\lambda_j$: mean angle; $\kappa_j$: concentration | Directional persistence; higher $\kappa_j$ indicates more directed movement |

For high-resolution data where the conditional independence assumption may be violated due to momentum in movement, autoregressive HMMs extend this framework by incorporating lagged observations into the emission distributions [4]. For example, the state-dependent mean for step lengths can be modeled as:

$$\mu_{t,j}^{\text{step}} = \sum_{k=1}^{p_j^{\text{step}}}\phi_{j,k}^{\text{step}} l_{t-k} + \Bigl(1-\sum_{k=1}^{p_j^{\text{step}}}\phi_{j,k}^{\text{step}}\Bigr) \mu_j^{\text{step}}$$

where $\phi_{j,k}^{\text{step}}$ are state-specific autoregressive coefficients, and $p_j^{\text{step}}$ is the autoregressive order for state $j$ [4]. Similar structures can be applied to turning angles, effectively capturing the within-state serial correlation induced by movement momentum in high-frequency data.

Advanced Modeling Considerations

Scale Dependence in HMM Parameters

A critical consideration when applying HMMs to animal movement data is scale dependence—both transition probabilities and emission parameters depend strongly on the temporal resolution of the data [9]. This occurs because HMMs are discrete-time models whose parameters are tied to the specific time interval at which observations are recorded.

Transition probabilities reflect behavioral switching rates over the specific sampling interval. As the time between observations changes, so does the interpretation of these probabilities. For example, a transition probability of $\gamma_{12} = 0.1$ has different behavioral implications for data collected at 1-second versus 1-hour intervals [9].

Similarly, emission parameters are scale-dependent. Gamma distribution parameters for step lengths describe movement characteristics specific to the sampling rate. A "resting" state identified from high-frequency data might appear as a "slow exploration" state in lower-frequency data [9].

This scale dependence has important implications:

- It generally precludes comparing or combining tracking data collected at irregular time intervals

- It complicates comparisons between studies using different sampling rates

- Parameters and state classifications are interpretable only with reference to the specific temporal resolution of the data
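The scale dependence of transition probabilities can be illustrated with matrix powers. If the state process is Markov at a fine sampling interval with matrix $\bm{\Gamma}$, then observing it only every $k$-th step gives the $k$-step matrix $\bm{\Gamma}^k$. The sketch assumes an illustrative 1-minute matrix and covers only the state process; the emission distributions also change when steps are aggregated, which this does not model.

```python
import numpy as np

# Illustrative fine-scale transition matrix (e.g. 1-minute steps); values are assumptions.
Gamma_fine = np.array([[0.98, 0.02],
                       [0.05, 0.95]])


def coarser_tpm(Gamma, k):
    """k-step transition matrix: the same Markov state process observed every k-th step."""
    return np.linalg.matrix_power(Gamma, k)


Gamma_10 = coarser_tpm(Gamma_fine, 10)   # e.g. the same process sampled every 10 minutes
# Gamma_10[0, 1] is roughly 0.15, several times larger than Gamma_fine[0, 1] = 0.02
```

The same underlying behavior therefore yields very different switching probabilities depending on the sampling interval, which is why $\gamma_{ij}$ values cannot be compared across studies without accounting for it.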

Table 2: Effects of Temporal Scaling on HMM Components

| Model Component | Scale Dependence | Practical Implication |
|---|---|---|
| Transition probabilities | $\gamma_{ij}$ depends on time step | Switching rates cannot be compared across studies with different sampling intervals |
| Step length parameters | $\mu_j$, $\sigma_j$ depend on time step | Absolute speed/displacement values are sampling-rate specific |
| State classification | Overall behavioral classification | Same behavior may be classified into different states at different temporal resolutions |
| Model selection | Optimal number of states $N$ | Different numbers of states may be identified at different sampling rates |

Covariate-Dependent Transition Probabilities

To understand the drivers of behavioral switching, HMMs can incorporate time-varying covariates into the transition probability matrix [9]. As described in the Transition Probabilities subsection above, this is typically achieved using a multinomial logistic regression framework where:

$$\gamma_{ij}^{(t)} = \frac{\exp(\eta_{ij}^{(t)})}{\sum_{k=1}^N \exp(\eta_{ik}^{(t)})}$$

with $\eta_{ij}^{(t)} = \beta_0^{(ij)} + \beta_1^{(ij)} x_{1t} + \cdots + \beta_p^{(ij)} x_{pt}$ for $i \neq j$ and $\eta_{ii}^{(t)} = 0$ [9]. This approach enables researchers to test specific hypotheses about how internal (e.g., hunger state) or external (e.g., habitat characteristics, environmental conditions) factors influence the probability of switching between behavioral states.

Experimental Protocol: Implementing HMMs for Animal Behavior Classification

Workflow for HMM Application

The following diagram outlines the complete workflow for applying HMMs to classify animal behavior from tracking data, from data collection through biological inference.

Step-by-Step Methodology

Step 1: Data Collection and Preprocessing

- Collect animal location data at consistent time intervals using GPS, radio telemetry, or video tracking [10]

- Clean data to remove obvious errors and interpolation artifacts

- For high-resolution data (e.g., >1 Hz), consider appropriate filtering or smoothing techniques

Step 2: Movement Metric Calculation

- Calculate step lengths as Euclidean distances between consecutive locations: $l_t = \|\bm{x}_{t+1} - \bm{x}_t\|$

- Calculate turning angles as directional changes between consecutive movement steps: $\phi_t = \text{atan2}(y_{t+1} - y_t, x_{t+1} - x_t) - \text{atan2}(y_t - y_{t-1}, x_t - x_{t-1})$, with appropriate wrapping to ensure $\phi_t \in (-\pi, \pi]$ [9]

Step 3: Model Specification

- Determine the number of states $N$ based on biological knowledge and model selection criteria (AIC/BIC) [9] [12]

- Select appropriate emission distributions (typically gamma for step lengths, von Mises for turning angles) [9]

- For high-resolution data with strong serial correlation, consider autoregressive HMM extensions [4]
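As a sketch of how AIC and BIC penalize additional states, the helper below counts free parameters for an N-state HMM with gamma step lengths and von Mises turning angles. The count is an assumption: it treats the initial distribution as estimated and all four emission parameters as free in every state, so software that fixes the initial distribution or the mean turning angle will report fewer parameters.

```python
import numpy as np


def n_params(N):
    """Free parameters of an N-state HMM with gamma steps and von Mises angles.

    Assumed count: N - 1 initial probabilities, N * (N - 1) transition
    probabilities, and four emission parameters per state (mu, sigma, lambda, kappa).
    """
    return (N - 1) + N * (N - 1) + 4 * N


def aic_bic(loglik, N, T):
    """AIC and BIC for a fitted model with maximized log-likelihood loglik and T observations."""
    k = n_params(N)
    return -2.0 * loglik + 2.0 * k, -2.0 * loglik + k * np.log(T)


# Usage: the penalty grows quickly with N (11 parameters for N = 2, 20 for N = 3)
aic2, bic2 = aic_bic(-1000.0, N=2, T=500)
```

Because the number of transition parameters grows quadratically in N, information criteria on long, high-resolution tracks often keep favoring more states; biological interpretability remains the deciding check.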

Step 4: Parameter Estimation

- Implement the Baum-Welch algorithm (a special case of Expectation-Maximization) to estimate model parameters [11] [13]

- The algorithm iterates between:
  - E-step: Compute expected state probabilities using the forward-backward algorithm
  - M-step: Update parameters to maximize the expected log-likelihood

- Multiple random initializations are recommended to avoid local maxima
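The E-step and the transition-matrix part of the M-step can be sketched with a scaled forward-backward pass. This is a partial illustration: it assumes the state-dependent densities are precomputed in a T × N array `dens`, and it omits the emission updates, which for gamma and von Mises distributions require a weighted maximum-likelihood fit (using the posterior probabilities `u` as weights), usually done numerically. Function names are hypothetical.

```python
import numpy as np


def e_step(pi_init, A, dens):
    """Scaled forward-backward pass (the Baum-Welch E-step).

    dens[t, j] = f(y_t | S_t = j), precomputed, shape (T, N). Returns
    u[t, j] = Pr(S_t = j | y_1:T), the expected transition counts v[i, j]
    summed over time, and the log-likelihood.
    """
    T, N = dens.shape
    alpha = np.zeros((T, N))
    beta = np.ones((T, N))
    c = np.zeros(T)                              # scaling constants
    alpha[0] = pi_init * dens[0]
    c[0] = alpha[0].sum()
    alpha[0] /= c[0]
    for t in range(1, T):                        # forward pass
        alpha[t] = (alpha[t - 1] @ A) * dens[t]
        c[t] = alpha[t].sum()
        alpha[t] /= c[t]
    for t in range(T - 2, -1, -1):               # backward pass, same scaling
        beta[t] = A @ (dens[t + 1] * beta[t + 1]) / c[t + 1]
    u = alpha * beta                             # posterior state probabilities
    v = np.zeros((N, N))
    for t in range(1, T):                        # expected i -> j transitions at step t
        v += alpha[t - 1][:, None] * A * (dens[t] * beta[t])[None, :] / c[t]
    return u, v, float(np.log(c).sum())


def m_step_transitions(v):
    """M-step for the transition matrix: normalize expected counts row by row."""
    return v / v.sum(axis=1, keepdims=True)
```

Alternating these two steps (plus emission updates) never decreases the likelihood, but it can still converge to a local maximum, hence the advice to restart from several initial values.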

Step 5: State Decoding

- Apply the Viterbi algorithm to find the most likely sequence of hidden states given the estimated parameters and observations [11] [13]

- This dynamic programming approach efficiently computes $\arg\max_{S_{1:T}} \Pr(S_{1:T} \mid Y_{1:T}, \bm{\theta})$

Step 6: Biological Interpretation and Validation

- Interpret decoded states ecologically based on their movement characteristics and temporal patterns [10]

- Validate model fit using pseudo-residuals and goodness-of-fit tests

- Compare state classifications with independent behavioral observations when available

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for HMM Analysis in Animal Movement

| Tool/Category | Specific Examples | Function/Purpose |
|---|---|---|
| HMM Software Libraries | hmms (Python) [14], PyHHMM [12] | Implement core HMM algorithms (forward-backward, Viterbi, Baum-Welch) |
| Movement Data Processing | moveHMM (R), amt (R) | Calculate movement metrics, prepare data for HMM analysis |
| Animal Tracking Systems | GPS collars, radio telemetry, video tracking with DeepLabCut [10] | Collect high-resolution location data for movement analysis |
| Model Selection Criteria | AIC, BIC [12] | Compare models with different numbers of states or structures |
| Visualization Tools | ggplot2 (R), matplotlib (Python) | Create diagnostic plots and visualize state-dependent distributions |

The mathematical framework of transition probabilities and emission distributions provides a powerful foundation for classifying animal behavior from movement data. The HMM approach captures the inherent dynamics of behavioral processes while accounting for the uncertainty in assigning discrete states to continuous movement patterns. While the standard HMM formulation has proven valuable across numerous applications, recent extensions addressing scale dependence [9] and autocorrelation in high-resolution data [4] continue to enhance the methodology's robustness and biological realism. When implemented with careful attention to temporal scale, appropriate distributional assumptions, and thorough validation, HMMs offer researchers a principled framework for decoding the behavioral mechanisms underlying animal movement trajectories.

The analysis of animal movement is a cornerstone of behavioral ecology, critical for understanding species distribution, habitat use, and energy expenditure. Continuous movement paths, recorded via modern telemetry, present an analytical challenge: how to extract biologically meaningful patterns from a constant stream of location data. The framework of discrete behavioral states provides a powerful solution, positing that continuous movement arises from an underlying sequence of finite, functionally distinct behaviors such as foraging, migrating, and resting. Hidden Markov Models (HMMs) offer a robust statistical methodology for identifying these latent states from observed location data, enabling researchers to make ecological inferences about how animals interact with their environment [15] [16].

This application note outlines the biological and mathematical rationale for using discrete states, provides a comparative analysis of movement metrics, and details experimental protocols for implementing HMMs in behavioral classification.

Theoretical Foundation: Linking Discrete States to Continuous Paths

The Biological Basis for Behavioral States

Animal behavior is not a continuously variable process but is often organized into discrete, functional modes that serve specific purposes such as resource acquisition, predator avoidance, or reproduction. For example, a grey seal may switch between a directed transiting state to cover distance efficiently and a tortuous foraging state to locate and capture prey [15]. These states are driven by internal motivations and external environmental cues, creating a hierarchical structure where continuous movement execution is governed by discrete cognitive or motivational states.

The Mathematical Bridge: Hidden Markov Models

HMMs statistically formalize this biological concept. They treat the observed movement data (e.g., step lengths, turning angles) as emissions generated by an unobserved (hidden) Markov process that switches between a finite number of discrete behavioral states. The model assumes:

- The behavioral state at any time depends only on the state at the previous time step (the Markov property).

- Each state is characterized by a unique probability distribution for the movement metrics (e.g., a "transiting" state has a distribution of step lengths skewed toward larger values).

This framework successfully explains continuous movement paths because the model's output is a probabilistic sequence of these discrete states, creating a segmentation of the track into biologically interpretable segments [15] [10].

Quantitative Movement Metrics for State Classification

The choice of movement metrics is critical for effectively distinguishing between behavioral states. The following metrics, derived from raw location data, serve as the observed emissions for the HMM.

Table 1: Key Movement Metrics for Behavioral State Classification

| Metric | Calculation | Biological Interpretation | State Discrimination Value |
|---|---|---|---|
| Step Length | $l_t = \sqrt{(x_t - x_{t-1})^2 + (y_t - y_{t-1})^2}$ [3] | Distance covered between consecutive locations. | High: Long steps suggest transiting; short steps suggest resting or foraging [16]. |
| Turning Angle | $\theta_t = \text{atan2}(y_t - y_{t-1}, x_t - x_{t-1}) - \text{atan2}(y_{t-1} - y_{t-2}, x_{t-1} - x_{t-2})$, wrapped to $(-\pi, \pi]$ | Change in direction of movement. | High: Angles near 0 indicate directed movement; angles near ±π indicate tortuous movement [15]. |
| Movement Persistence | $d_t = \gamma_{b_{t-1}} T(\theta_{b_{t-1}}) d_{t-1} + N_2(0, \Sigma)$ [15] | Autocorrelation in speed and direction. | High: High γ (>0.5) indicates persistent, directed movement; low γ (<0.5) indicates less predictable movement [15]. |

Experimental Protocols for HMM Implementation

Protocol 1: Data Acquisition and Preprocessing for Aquatic Species

- Application: Classifying behavior from animal tracks with negligible measurement error (e.g., from acoustic telemetry or high-resolution GPS) [15].

- Materials: Animal tracking data (regular time intervals), computing environment (e.g., R programming language).

- Workflow:
  - Data Filtering: Remove or correct obvious location outliers and fix any gaps in the time series.
  - Metric Calculation: From the cleaned location data (x, y coordinates), calculate step lengths and turning angles for the entire track.
  - HMM Fitting: Implement an HMM where the process equation for the movement is based on a correlated random walk. The model is defined by:
    - Process Equation: $d_t = \gamma_{b_{t-1}} T(\theta_{b_{t-1}}) d_{t-1} + N_2(0, \Sigma)$, where $d_t$ is the movement vector, $\gamma$ is the persistence parameter, $T$ is a rotational matrix for the turning angle $\theta$, and $b_t$ is the behavioral state at time $t$ [15].
    - State-Dependent Distributions: Assume step lengths and turning angles come from distributions (e.g., gamma for step length, von Mises for turning angle) whose parameters depend on the behavioral state.
  - Parameter Estimation: Fit the model using maximum likelihood methods, ideally with efficient computation packages such as Template Model Builder (TMB) in R [15].
  - State Decoding: Use the Viterbi algorithm to determine the most probable sequence of behavioral states from the observed data.
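Simulating the process equation shows how the state-specific persistence $\gamma$ and rotation angle $\theta$ shape a track. A sketch under stated assumptions: the state sequence `b` is given, the state at $t-1$ drives the step at $t$ as written above, and `d0` (the first movement vector) is a hypothetical input added for illustration. This is a simulation aid, not the TMB-based fitting procedure.

```python
import numpy as np


def simulate_crw(b, gamma, theta, Sigma, rng, d0=(0.0, 0.0)):
    """Simulate d_t = gamma_{b_{t-1}} T(theta_{b_{t-1}}) d_{t-1} + N_2(0, Sigma).

    b:      behavioral state sequence (integers), length T
    gamma:  per-state persistence; theta: per-state rotation angle (radians)
    d0:     first movement vector (a hypothetical input added for illustration)
    Returns locations as the cumulative sum of the movement vectors.
    """
    T = len(b)
    d = np.zeros((T, 2))
    d[0] = d0
    for t in range(1, T):
        s = b[t - 1]                                     # state indexing as in the equation
        rot = np.array([[np.cos(theta[s]), -np.sin(theta[s])],
                        [np.sin(theta[s]),  np.cos(theta[s])]])   # rotation matrix T(theta)
        noise = rng.multivariate_normal(np.zeros(2), Sigma)
        d[t] = gamma[s] * (rot @ d[t - 1]) + noise
    return np.cumsum(d, axis=0)


# Usage: a persistent, straight state (0) then a weakly persistent, turning state (1)
rng = np.random.default_rng(0)
track = simulate_crw(np.array([0] * 50 + [1] * 50), gamma=np.array([0.9, 0.3]),
                     theta=np.array([0.0, np.pi / 2]), Sigma=0.1 * np.eye(2), rng=rng)
```

With $\gamma$ close to 1 the previous movement vector carries over almost unchanged, producing directed travel; with small $\gamma$ the noise term dominates and the path becomes tortuous.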

Protocol 2: Behavioral State Analysis in a Controlled Arena

- Application: Quantifying depth perception and its behavioral consequences in mice using a visual cliff test [3] [10].

- Materials: Visual cliff apparatus (circular arena recommended to reduce corner bias), overhead camera, DeepLabCut software for pose estimation [10].

- Workflow:
  - Apparatus Setup: Construct a visual cliff using a table with a checkerboard pattern and a transparent acrylic plate extending beyond the edge to create a "deep" side. Use a circular enclosure to promote exploration [10].
  - Experimental Trial: Place a mouse (e.g., wild-type C57BL/6J or retinal degeneration model) on a central platform facing the shallow side. Allow free exploration for a set period (e.g., 10 minutes) while recording from above [3].
  - Motion Capture: Use DeepLabCut to track the body center coordinates (x, y) of the mouse throughout the trial at a defined sampling rate (e.g., 10 Hz) [3] [10].
  - Metric Extraction: Calculate step length and turning angle from the coordinate data [3].
  - HMM Analysis: Fit an HMM to the derived movement metrics to classify the mouse's behavior into discrete states such as Resting, Exploring, and Navigating [10]. The transition probabilities between these states reveal how the animal's behavioral mode is influenced by the visual cliff.

Critical Parameters and Method Selection

The temporal scale of data collection and the choice of analytical model are critical for making correct ecological inferences.

Table 2: Influence of Temporal Scale and Model Selection on Behavioral Inference

| Factor | Considerations | Impact on Inference |
|---|---|---|
| Temporal Scale (Time Step) | Fine-scale (e.g., 1 hour) vs. coarse-scale (e.g., 8 hours) data [16]. | Fine-scale: Can identify brief resting bouts during migration [16]. Coarse-scale: Smooths behavioral transitions; better for distinguishing large-scale patterns like migration vs. residence [16]. |
| Model Selection | Hidden Markov Model (HMM) vs. Move Persistence Model (MPM) vs. Mixed-Membership Method (M4) [16]. | HMM: Excellent for identifying clear, discrete states from regular, low-error data [16]. MPM: Treats behavior as a continuum; can reveal fine-scale patterns missed by HMMs at short time steps [16]. M4: Makes fewer parametric assumptions, handles missing data, but requires careful interpretation [16]. |
| Number of States | Determined by model selection criteria (e.g., AIC) and biological knowledge [16]. | Over-specification leads to states with no biological meaning; under-specification collapses distinct behaviors into a single state [16]. |

Visualization of the HMM Framework and Workflow

Conceptual Framework of a Hidden Markov Model for Movement

Experimental and Analytical Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Resources for Behavioral Classification Studies

| Item | Function/Application | Examples/Specifications |
|---|---|---|
| Biotelemetry Transmitters | Attach to animal to collect location data. | Argos-linked Fastloc GPS tags (e.g., SPLASH10-F-385A) for marine species [16]; Acoustic transmitters for fine-scale positioning [15]. |
| Pose Estimation Software | Tracks animal position from video footage in controlled experiments. | DeepLabCut: An open-source tool for markerless pose estimation based on deep learning [3] [10]. |
| Computational Environment | Provides the platform for statistical modeling and analysis. | R programming environment: Use packages like moveHMM [15], swim [15], or M4 [16] for fitting HMMs and related methods. |
| Visual Cliff Apparatus | Standardized setup for testing visually guided behaviors in rodents. | Circular arena (e.g., 60 cm diameter) to minimize corner bias; transparent acrylic plate over a high-contrast checkerboard pattern [10]. |

In animal movement ecology, hidden Markov models (HMMs) have become a cornerstone technique for inferring unobserved behavioral states from tracking data [17]. These models operate on the principle that an animal's movement path is a manifestation of underlying, discrete behavioral modes, such as foraging, traveling, or resting. The process is twofold: a latent state process models the sequence of behaviors as a Markov chain, while an observation process links each behavioral state to a characteristic distribution of movement metrics [4]. The most commonly used metrics are step lengths (the distance between consecutive locations), turning angles (the change in direction), and speed [15] [18]. By analyzing the patterns in these metrics, HMMs can objectively segment a continuous movement track into meaningful behavioral sequences, providing profound insights into animal activity budgets, habitat selection, and energetics [17] [19].

Core Movement Metrics and Their Behavioral Significance

The power of HMMs lies in translating raw location data into behavioral inference by modeling the state-dependent distributions of key movement metrics. The table below summarizes how these metrics are interpreted for common behavioral states.

Table 1: Behavioral interpretation of core movement metrics in Hidden Markov Models.

| Behavioral State | Step Length / Speed | Turning Angle | Behavioral Interpretation |
|---|---|---|---|
| Encamped / Resting | Short steps; low speed [17] [20] | Uncorrelated or wide distribution [18] | Little displacement; activities like sleeping, grooming, or localized foraging [20] |
| Exploratory / Foraging | Highly variable; often intermediate [17] | High tortuosity; frequent large turns [18] | Area-restricted search (ARS); intensive search for resources like food or shelter [18] |
| Transit / Traveling | Long steps; high speed [17] [20] | Directed movement; low tortuosity (angles near 0) [15] [18] | Directed, persistent movement between distinct locations such as foraging grounds [20] |

Statistical Distributions in HMMs

In a standard HMM, these behavioral patterns are formally captured by specifying parametric distributions for the observations conditional on the state:

- Step Lengths are typically modeled using a gamma distribution due to their positive, continuous nature [4]. The Weibull distribution is also sometimes used [15].

- Turning Angles are circular quantities and are commonly modeled with a von Mises distribution, which is the circular analogue of the normal distribution [21] [4].

- Speed, which is closely related to step length, can be derived directly from the data and is often implicitly modeled through the step length distribution [22].
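The state-dependent distributions above can be sketched in Python with SciPy. This is a minimal illustration, assuming a gamma step-length distribution parameterized by its mean and standard deviation (converted to SciPy's shape and scale) and step lengths and turning angles that are conditionally independent given the state. The helper names and parameter values are illustrative, not estimates from any study.

```python
import numpy as np
from scipy import stats

def gamma_from_mean_sd(mean, sd):
    """Frozen SciPy gamma distribution parameterized by mean and sd.

    shape k = mean^2 / sd^2 and scale theta = sd^2 / mean, so that
    k * theta = mean and k * theta^2 = sd^2.
    """
    return stats.gamma(a=mean**2 / sd**2, scale=sd**2 / mean)

def emission_matrix(steps, angles, step_means, step_sds, angle_means, angle_kappas):
    """State-dependent densities f_j(step_t, angle_t), returned with shape (T, N).

    Step length and turning angle are assumed conditionally independent given
    the state, so their densities multiply.
    """
    dens = np.empty((len(steps), len(step_means)))
    for j in range(len(step_means)):
        step_pdf = gamma_from_mean_sd(step_means[j], step_sds[j]).pdf(steps)
        angle_pdf = stats.vonmises.pdf(angles, kappa=angle_kappas[j], loc=angle_means[j])
        dens[:, j] = step_pdf * angle_pdf
    return dens

# Illustrative 2-state parameters (assumed): state 0 "encamped", state 1 "transit".
steps = np.array([0.1, 0.2, 3.0, 2.5])
angles = np.array([2.5, -2.0, 0.05, -0.1])
P = emission_matrix(steps, angles,
                    step_means=[0.2, 2.5], step_sds=[0.1, 1.0],
                    angle_means=[np.pi, 0.0], angle_kappas=[0.5, 5.0])
```

Short, tortuous steps receive a much higher density under the encamped state, and long, straight steps under the transit state.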

Together with these state-dependent distributions, the model is specified by an initial state distribution δ and a transition probability matrix Γ, whose entry in row i and column j is the probability of switching from state i to state j [4]. The forward algorithm is then used to compute the likelihood of the observed data, and the Viterbi algorithm is applied to decode the most probable sequence of hidden behavioral states [17].
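The forward algorithm itself takes only a few lines. The NumPy sketch below (`forward_loglik` is an illustrative name, not a package function) assumes the state-dependent densities have already been evaluated into a T × N matrix. It rescales the forward probabilities at every step, because unscaled products of many densities underflow on long tracks; the log-likelihood is the sum of the logged scaling constants.

```python
import numpy as np

def forward_loglik(delta, Gamma, dens):
    """Log-likelihood of an HMM via the scaled forward algorithm.

    delta : (N,) initial state distribution
    Gamma : (N, N) transition matrix, Gamma[i, j] = P(S_{t+1} = j | S_t = i)
    dens  : (T, N) state-dependent densities f_j(x_t)
    """
    alpha = delta * dens[0]
    loglik = 0.0
    for t in range(dens.shape[0]):
        if t > 0:
            alpha = (alpha @ Gamma) * dens[t]   # propagate one step, then weight by data
        c = alpha.sum()                         # scaling constant for step t
        loglik += np.log(c)
        alpha = alpha / c                       # normalized forward probabilities
    return loglik
```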

Experimental Protocols for HMM Application

Data Pre-processing and Preparation

Objective: To transform raw telemetry data into a format suitable for HMM analysis.

- Data Sourcing: Collect animal location data from GPS tags or other telemetry systems. The data should ideally be collected at a regular time interval appropriate for the species and behaviors of interest [17].

- Data Cleaning: Address any data gaps or obvious errors (e.g., extreme outliers caused by GPS fix inaccuracy) [18].

- Metric Calculation:

  - Step Lengths: Calculate the Euclidean distance $\ell_t = \sqrt{(x_{t+1}-x_t)^2 + (y_{t+1}-y_t)^2}$ between consecutive locations $(x_t, y_t)$ and $(x_{t+1}, y_{t+1})$ [4].

  - Turning Angles: Compute the relative turning angle $\theta_t$ between three consecutive locations $(x_{t-1}, y_{t-1})$, $(x_t, y_t)$, and $(x_{t+1}, y_{t+1})$. The angle is measured in radians on $(-\pi, \pi]$, where 0 indicates directed forward movement [21] [4].

  - Speed: Derive speed by dividing the step length by the time interval between locations [22].
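A minimal NumPy sketch of these calculations follows, assuming projected coordinates (e.g., metres, not raw latitude/longitude) and timestamps in seconds; `movement_metrics` is an illustrative name. It does not handle zero-length steps, for which the heading, and therefore the turning angle, is undefined.

```python
import numpy as np

def movement_metrics(x, y, t):
    """Step lengths, turning angles and speeds from a track of T fixes.

    Returns step lengths (T-1), turning angles (T-2) in (-pi, pi], speeds (T-1).
    """
    dx, dy = np.diff(x), np.diff(y)
    steps = np.hypot(dx, dy)                  # Euclidean distance between fixes
    headings = np.arctan2(dy, dx)             # absolute direction of each step
    dtheta = np.diff(headings)
    # Wrap the heading change into (-pi, pi]; 0 means straight ahead,
    # positive values are left (counter-clockwise) turns.
    turns = np.pi - np.mod(np.pi - dtheta, 2 * np.pi)
    speeds = steps / np.diff(t)
    return steps, turns, speeds
```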

Model Fitting and Implementation

Objective: To fit an HMM to the prepared data and infer the underlying behavioral states.

- Model Formulation: Decide on the number of behavioral states N (e.g., 2 or 3). This can be based on ecological knowledge, model selection criteria (e.g., AIC), or a desire to test specific hypotheses [21] [18].

- Parameter Estimation: Use numerical maximum likelihood estimation, often implemented in specialized R packages like momentuHMM or moveHMM, to estimate the parameters of the state-dependent distributions (e.g., the mean and standard deviation for the gamma distribution of step lengths) and the state transition probability matrix [17] [23].

- State Decoding: Apply the Viterbi algorithm to the fitted model to determine the most likely sequence of behavioral states for the entire track [17].
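The Viterbi algorithm can be sketched in NumPy as below, working in log space to avoid underflow. It is a generic textbook implementation rather than the code of any package, and it assumes the fitted δ, Γ and a T × N matrix of state-dependent densities are already available.

```python
import numpy as np

def viterbi(delta, Gamma, dens):
    """Most probable hidden-state sequence (global decoding), in log space."""
    T, N = dens.shape
    log_G = np.log(Gamma)
    log_d = np.log(dens)
    score = np.log(delta) + log_d[0]          # best log-prob of paths ending in each state
    back = np.zeros((T, N), dtype=int)        # best predecessor for each (t, state)
    for t in range(1, T):
        cand = score[:, None] + log_G         # cand[i, j]: best path in i, then move i -> j
        back[t] = np.argmax(cand, axis=0)
        score = cand[back[t], np.arange(N)] + log_d[t]
    states = np.empty(T, dtype=int)
    states[-1] = np.argmax(score)
    for t in range(T - 1, 0, -1):             # trace the best path backwards
        states[t - 1] = back[t, states[t]]
    return states
```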

- Model Validation (Critical Step): Where possible, validate the HMM-derived behaviors against direct observations or data from auxiliary sensors. For example:

- Use wet-dry sensors to validate "on-water/resting" states in seabirds [18].

- Use accelerometers to identify characteristic flapping or soaring movements in birds [19].

- Use time-depth recorders (TDR) to confirm diving and foraging events in marine animals [18].

- Even a small subset of validated data can be used in a semi-supervised framework to significantly improve model accuracy [18].
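One simple way to use validated labels, sketched below, is to set the state-dependent densities of every state other than the known one to zero at labelled time steps before running the forward algorithm, so that the likelihood, and hence the parameter estimates, must agree with the labels. This illustrates the general idea only and is not necessarily the semi-supervised procedure used in [18]; the `-1` code for unlabelled steps is an assumption.

```python
import numpy as np

def apply_known_states(dens, known):
    """Constrain state-dependent densities with validated labels.

    dens  : (T, N) densities computed from the movement data
    known : length-T integer array; known[t] is the validated state index
            (e.g. from a wet-dry sensor), or -1 where the state is unknown
    Returns a modified copy; the input array is left unchanged.
    """
    out = dens.copy()
    for t in np.flatnonzero(known >= 0):
        keep = out[t, known[t]]
        out[t] = 0.0                 # rule out all states at this time step...
        out[t, known[t]] = keep      # ...except the one confirmed by the sensor
    return out
```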

Advanced Methodological Considerations

Integrated and Enhanced HMM Frameworks

To address the complexities of animal movement, several advanced HMM frameworks have been developed:

HMM with Step Selection Functions (HMM-SSF): This integrated model combines the strengths of HMMs and step selection functions (SSFs). It classifies behaviors based on both movement characteristics and habitat selection, providing a more holistic view of space use and reducing bias in parameter estimates [17] [20]. For example, an analysis of plains zebra identified an "exploratory" state with not only fast, directed movement but also a stronger selection for grassland habitats [20].

Autoregressive HMMs (AR-HMMs): Standard HMMs assume that observations are conditionally independent given the state. This assumption is often violated in high-resolution data where momentum induces serial correlation within a behavioral state. AR-HMMs incorporate autoregressive components for both step lengths and turning angles, allowing the current value to depend on previous observations, which leads to more accurate inference [4].
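As an illustration of the autoregressive idea, the sketch below lets the mean of the gamma step-length distribution in each state depend on the previous step length. This AR(1) form, with one persistence parameter `phi` per state and a fixed standard deviation, is an assumption chosen for simplicity; the formulation in [4] may differ, for example in how turning angles are handled.

```python
import numpy as np
from scipy import stats

def ar1_step_density(steps, mu, sd, phi):
    """State-dependent step-length densities with an AR(1) conditional mean.

    For state j: l_t ~ Gamma with mean (1 - phi_j) * mu_j + phi_j * l_{t-1}
    and standard deviation sd_j (assumed form). phi_j = 0 recovers the
    standard HMM. Returns shape (T-1, N), for t = 1..T-1, because the first
    step has no predecessor. Requires 0 <= phi_j < 1 to keep the mean positive.
    """
    prev = steps[:-1, None]                       # l_{t-1}, shape (T-1, 1)
    mean = (1 - phi) * mu + phi * prev            # conditional means, (T-1, N)
    shape = mean**2 / sd**2
    scale = sd**2 / mean
    return stats.gamma.pdf(steps[1:, None], a=shape, scale=scale)
```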

Absolute Angle HMMs: Most HMMs use turning angles (relative direction). However, in some contexts, such as analyzing organelle movement within cells or movement with a global bias (e.g., towards a food source), using absolute angles (direction relative to a fixed axis) in a biased random walk (BRW) model can provide better fit and reveal biologically significant directional changes [21].
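The sketch below shows the core of an absolute-angle biased random walk: each step's absolute heading is scored with a von Mises density centered on the bearing from the step's start toward a point of attraction. The target location and concentration `kappa` are hypothetical inputs, and the BRW model in [21] may be parameterized differently.

```python
import numpy as np
from scipy import stats

def brw_loglik(x, y, target, kappa):
    """Log-likelihood of absolute step headings under a BRW toward `target`.

    Each heading (angle of the step relative to the x-axis) is modeled as
    von Mises with mean equal to the bearing from the step's start to the target.
    """
    heading = np.arctan2(np.diff(y), np.diff(x))
    bearing = np.arctan2(target[1] - y[:-1], target[0] - x[:-1])
    return stats.vonmises.logpdf(heading, kappa, loc=bearing).sum()
```

Comparing this log-likelihood for different targets, or against a model with a fixed global direction, is one way to test for directional bias.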

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key tools, software packages, and sensors used in movement ecology for HMM-based analysis.

| Tool / Reagent | Type | Primary Function |
|---|---|---|
| GPS Loggers | Hardware | Collects high-resolution location data at pre-defined intervals [18]. |
| Accelerometers | Hardware | Measures fine-scale body movements and posture; used for behavior validation and model improvement [19] [18]. |
| Magnetometers | Hardware | Measures heading and angular velocity; useful for identifying low-acceleration behaviors like soaring [19]. |
| Wet-Dry Sensors | Hardware | Determines if an animal is in water or on land; validates resting/foraging states in marine species [18]. |
| Time Depth Recorders (TDR) | Hardware | Records dive profiles; validates underwater foraging behavior [18]. |
| R package momentuHMM | Software | Fits complex HMMs to animal tracking data, allowing for multiple data streams and covariates [17] [23]. |
| R package moveHMM | Software | Provides tools for pre-processing tracking data and fitting basic HMMs with step lengths and turning angles [21]. |
| Animal Tag Tools (Wiki) | Software/Resource | A collection of MATLAB functions for calibrating and processing data from various biologging sensors [19]. |

Workflow Visualization

The following diagram illustrates the standard workflow for classifying animal behavior using an HMM, from data collection to biological insight.

Figure 1: A standard workflow for behavioral classification using Hidden Markov Models, showing the key stages from raw data to biological insight.

Application Notes

Hidden Markov Models (HMMs) are powerful statistical tools for analyzing sequential data. Their defining assumption is the Markov property: the probability of transitioning to a new state depends only on the current state [24]. In animal research, HMMs infer hidden behavioral states (e.g., resting, foraging) from observable sensor data (e.g., movement patterns, accelerometer readings) [18] [19]. Their application has extended from broad-scale ecological tracking to fine-scale biomedical investigation, allowing researchers to decode complex animal behaviors and physiological states.

Table 1: Evolution of HMM Applications in Animal Research

| Field of Application | Key Objective | Hidden States Inferred | Observable Data Used | Representative Study Models |
|---|---|---|---|---|
| Movement Ecology | Classify major behavioral modes and understand habitat use [18] [19]. | Resting, foraging, travelling, soaring flight, flapping flight [18] [19]. | GPS-derived step length and turning angle [18]; Accelerometer and magnetometer data [19]. | Albatrosses, Red-billed Tropicbirds [18] [19]. |
| Biomedical Research | Assess functional recovery and neural integration in disease models [3]. | Resting, Exploring, Navigating [3]. | Locomotor trajectories from the visual cliff test [3]. | Wild-type and retinal degenerative (rd1-2J) mice [3]. |
| Viral Metagenomics | Discover and classify novel viral pathogens [25]. | Viral protein family membership. | Amino acid or nucleotide sequences from metagenomic data [25]. | Profile HMMs from databases like vFam, pVOGs, and IMG/VR [25]. |

A primary ecological application involves using HMMs with GPS and IMU (Inertial Measurement Unit) data to classify animal behavior. Studies on albatrosses have successfully identified major movement modes—'flapping flight', 'soaring flight', and 'on-water'—with an overall model accuracy of 92% [19]. Similarly, research on red-billed tropicbirds demonstrated that incorporating a small subset of data from auxiliary sensors (e.g., wet-dry sensors, accelerometers) to semi-supervise HMMs significantly improved overall behavioral classification accuracy from 0.77 ± 0.01 to 0.85 ± 0.01 (mean ± sd) [18].

In biomedicine, HMMs provide sensitive measures of functional recovery in disease models. A study on retinal degeneration in mice used an HMM to analyze behavior in a visual cliff test. The model identified three behavioral states (Resting, Exploring, and Navigating) and revealed that wild-type mice exhibited a strong cliff avoidance response, which habituated over trials, leading to a state collapse from three states to two [3]. Following retinal organoid transplantation, blind mice recovered a cliff avoidance response as early as two weeks post-transplantation, coinciding with early synapse formation. This robust response peaked at 16 weeks and later disappeared, accompanied by behavioral state collapse, a hallmark of adaptive learning and functional vision recovery [3].

Table 2: Quantitative Behavioral Metrics from HMM Analysis in Mouse Visual Cliff Test

| Behavioral Metric | Wild-Type (WT) Mice | Blind (RD) Mice | Transplanted RD Mice (Peak Response) |
|---|---|---|---|
| Initial Behavioral States | Three distinct states (Resting, Exploring, Navigating) [3]. | N/A | N/A |
| Cliff Avoidance Response | Strong initial response [3]. | No response [3]. | Robust response recovered [3]. |
| Behavioral Habituation | Rapid habituation and state collapse (3 → 2 states) [3]. | No habituation over time [3]. | State collapse observed by 18 weeks, similar to WT [3]. |
| Onset of Functional Recovery | N/A | N/A | 2 weeks post-transplantation [3]. |

Experimental Protocols

Protocol 1: HMM-Based Classification of Major Movement Modes from IMU Data

This protocol details the procedure for using HMMs to classify broad behavioral states like flapping flight, soaring flight, and on-water behavior in flying birds, as applied in albatross studies [19].

Research Reagent Solutions & Essential Materials

Table 3: Key Materials for Movement Ecology Protocol

| Item | Specification | Function |
|---|---|---|
| GPS/IMU Device | Includes tri-axial accelerometer and magnetometer (e.g., sampling at 25-75 Hz) [19]. | Records high-resolution movement and orientation data for behavioral inference. |
| Data Processing Software | MATLAB with Animal Tag Tools Wiki; R with moveHMM or momentuHMM packages [18] [19]. | Processes raw sensor data, extracts features, and implements HMM fitting and decoding. |
| Computing Hardware | Computer with sufficient RAM and processing power for large high-frequency datasets [19]. | Handles computationally intensive data processing and model fitting. |

Step-by-Step Procedure

Device Deployment and Data Collection:

- Deploy GPS/IMU devices on the study animals, ensuring proper attachment to align the sensor axes (surge, sway, heave) with the animal's body axes [19].

- Program the devices to record data for the duration of a foraging trip or other relevant biological period.

Data Pre-processing and Calibration:

- Standardize Sampling Frequency: If necessary, decimate all data to a standard frequency (e.g., 25 Hz) [19].

- Calibrate Sensors: Transform the sensor data to align the device frame with the animal's body frame. Correct for any static orientation offsets using data from periods of known rest [19].

- Compute Movement Metrics: For GPS data, calculate step lengths (straight-line distance between consecutive locations) and turning angles (change in direction between steps) [18]. For accelerometer data, the overall dynamic body acceleration (ODBA) or static acceleration may be used as observed variables [19].
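Decimation and a dynamic-acceleration summary can be sketched with NumPy and SciPy as follows. The sketch assumes tri-axial acceleration in g, estimates static (gravitational) acceleration with a 2 s running mean (the window length is an assumption and should be tuned to the species), and returns both ODBA and its vectorial counterpart, VeDBA. The synthetic 75 Hz input is for illustration only, and the running mean is less reliable within half a window of either end.

```python
import numpy as np
from scipy.signal import decimate

def dynamic_body_acceleration(acc, fs, window_s=2.0):
    """ODBA and VeDBA from tri-axial acceleration with shape (T, 3)."""
    w = max(1, int(round(window_s * fs)))
    kernel = np.ones(w) / w
    # Static component: running mean of each axis.
    static = np.column_stack([np.convolve(acc[:, k], kernel, mode="same") for k in range(3)])
    dynamic = acc - static
    odba = np.abs(dynamic).sum(axis=1)           # sum of absolute dynamic components
    vedba = np.sqrt((dynamic**2).sum(axis=1))    # Euclidean norm of dynamic components
    return odba, vedba

# Decimate 75 Hz data to 25 Hz (factor 3; decimate applies an anti-aliasing filter).
fs_raw = 75
acc_raw = np.random.default_rng(0).normal(0, 0.1, size=(fs_raw * 60, 3)) + [0.0, 0.0, 1.0]
acc_25 = decimate(acc_raw, 3, axis=0)
odba, vedba = dynamic_body_acceleration(acc_25, fs=25)
```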

Exploratory Data Analysis and State Number Selection:

- Examine histograms of the observed data (e.g., step length, turning angle) to identify the number of distinct behavioral modes present.

- Based on ecological knowledge and data exploration, pre-define the number of hidden states (N) for the HMM (e.g., N=3 for "on-water", "soaring flight", "flapping flight") [19].
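When candidate numbers of states are compared with information criteria, the parameter count grows quickly with N. The helper below counts free parameters for an assumed specification (gamma step lengths with a mean and SD per state, von Mises turning angles with a concentration and optionally a mean per state, and an estimated initial distribution); adjust it to match the model actually fitted. In practice, AIC and BIC often favor more states than can be interpreted biologically, so treat them as one input alongside ecological knowledge.

```python
import numpy as np

def n_params(n_states, angle_mean_free=False):
    """Free parameters of an N-state gamma / von Mises HMM (assumed specification)."""
    N = n_states
    trans = N * (N - 1)                          # each row of Gamma sums to 1
    init = N - 1                                 # delta sums to 1
    step = 2 * N                                 # gamma mean and sd per state
    angle = (2 if angle_mean_free else 1) * N    # concentration (and optionally mean)
    return trans + init + step + angle

def aic_bic(loglik, n_states, n_obs, **kw):
    """AIC and BIC from a maximized log-likelihood."""
    k = n_params(n_states, **kw)
    return -2 * loglik + 2 * k, -2 * loglik + k * np.log(n_obs)
```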

Model Fitting:

- Use an R package like momentuHMM to fit an HMM to the prepared data series.

- The model will estimate the initial state probabilities, the state transition probability matrix, and the state-dependent emission probability distributions (e.g., gamma distribution for step length, von Mises distribution for turning angle) [18].
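Packages such as momentuHMM carry out this estimation internally. To show what happens under the hood, the Python sketch below (not the packages' code) fits a two-state HMM to step lengths only by numerically maximizing the forward-algorithm likelihood. Parameters are transformed to an unconstrained scale (log for means and SDs, logit for switching probabilities), the initial distribution is assumed to be the stationary distribution of Γ, and the starting values and simulated data are illustrative assumptions. As with any numerical fit, results can depend on starting values, so trying several is advisable.

```python
import numpy as np
from scipy import stats, optimize

def unpack(theta):
    """Map unconstrained working parameters to natural ones (2-state model)."""
    means, sds = np.exp(theta[0:2]), np.exp(theta[2:4])     # positive
    g12, g21 = 1.0 / (1.0 + np.exp(-theta[4:6]))            # switching probs in (0, 1)
    Gamma = np.array([[1 - g12, g12], [g21, 1 - g21]])
    return means, sds, Gamma

def neg_loglik(theta, steps):
    """Negative log-likelihood via the scaled forward algorithm."""
    means, sds, Gamma = unpack(theta)
    dens = stats.gamma.pdf(steps[:, None], a=means**2 / sds**2, scale=sds**2 / means)
    delta = np.array([Gamma[1, 0], Gamma[0, 1]]) / (Gamma[0, 1] + Gamma[1, 0])  # stationary
    alpha, ll = delta * dens[0], 0.0
    for t in range(len(steps)):
        if t > 0:
            alpha = (alpha @ Gamma) * dens[t]
        c = alpha.sum()
        if not c > 0:                 # numerical underflow: reject this parameter set
            return np.inf
        ll += np.log(c)
        alpha = alpha / c
    return -ll

def fit_gamma_hmm(steps, mean0, sd0, switch0=(0.1, 0.1)):
    """Maximize the likelihood numerically from user-supplied starting values."""
    p0 = np.asarray(switch0)
    theta0 = np.concatenate([np.log(mean0), np.log(sd0), np.log(p0 / (1 - p0))])
    res = optimize.minimize(neg_loglik, theta0, args=(steps,), method="Nelder-Mead",
                            options={"maxiter": 4000, "xatol": 1e-4, "fatol": 1e-4})
    return unpack(res.x), -res.fun

# Usage on simulated data (all values assumed for illustration).
rng = np.random.default_rng(1)
G_true = np.array([[0.95, 0.05], [0.10, 0.90]])
s = np.zeros(500, dtype=int)
for t in range(1, len(s)):
    s[t] = rng.choice(2, p=G_true[s[t - 1]])
m_true, sd_true = np.array([0.5, 3.0]), np.array([0.3, 1.0])
steps = rng.gamma(shape=(m_true**2 / sd_true**2)[s], scale=(sd_true**2 / m_true)[s])
(m_hat, sd_hat, G_hat), loglik = fit_gamma_hmm(steps, mean0=[0.4, 2.5], sd0=[0.4, 0.8])
```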

State Decoding and Validation:

- Apply the Viterbi algorithm to the fitted HMM to determine the most probable sequence of hidden behavioral states for the entire observation sequence [26].

- Validate Model Output: Compare the HMM-inferred states with expert classifications identified from stereotypic patterns observed in the raw sensor data or from simultaneous video recordings to calculate accuracy [19].
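Because HMM state indices are arbitrary, they must be mapped to behavior labels (usually by inspecting each state's fitted parameters) before comparison with expert classifications. The sketch below computes a confusion matrix and overall accuracy with NumPy; the labels and the state-to-behavior mapping are hypothetical.

```python
import numpy as np

def confusion_and_accuracy(true_labels, pred_labels, n_classes):
    """Confusion matrix (rows = expert labels, columns = HMM labels) and accuracy."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (true_labels, pred_labels), 1)
    return cm, np.trace(cm) / cm.sum()

# Hypothetical codes: 0 = on-water, 1 = soaring flight, 2 = flapping flight
expert = np.array([0, 0, 1, 1, 2, 2, 1, 0])
hmm_state = np.array([2, 2, 0, 0, 1, 1, 0, 0])   # raw decoded state indices
state_to_behavior = np.array([1, 2, 0])          # assumed mapping: state i -> behavior code
cm, acc = confusion_and_accuracy(expert, state_to_behavior[hmm_state], 3)
```

Per-class rates from the confusion matrix are often more informative than overall accuracy when behaviors are unevenly represented.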

Protocol 2: Assessing Visual Function Recovery in Murine Models Using HMMs

This protocol describes the use of HMMs to quantitatively assess depth perception and its recovery in mouse models of retinal degeneration, providing a sensitive metric for evaluating the efficacy of regenerative therapies [3].

Research Reagent Solutions & Essential Materials

Table 4: Key Materials for Visual Function Assessment Protocol

| Item | Specification | Function |
|---|---|---|
| Visual Cliff Apparatus | Table with high-contrast checkerboard pattern; transparent acrylic plate creating a "cliff" illusion; circular enclosure to minimize corner bias [3]. | Provides a standardized environment to test innate depth perception. |
| Video Tracking System | High-frame-rate camera (e.g., 30 fps) and pose estimation software (e.g., DeepLabCut) [3]. | Records and digitizes the mouse's locomotor activity for quantitative analysis. |
| Animal Model | Wild-type (WT) and retinal degenerative (e.g., rd1-2J) mice; cohorts receiving experimental interventions (e.g., retinal organoid transplantation) [3]. | Provides a model system to study visual function and its restoration. |

Step-by-Step Procedure

Apparatus Setup and Calibration:

- Set up the visual cliff apparatus in a room with uniform, controlled overhead lighting (e.g., 3000 K, 65 cd/m²) to ensure consistent visual cues and minimize shadows [3].

- Confirm the stability and safety of the transparent acrylic plate.

Behavioral Recording:

- At the start of each trial, place a single mouse on the central platform facing the shallow side.

- Allow the mouse to explore the apparatus freely for a set period (e.g., 10 minutes). Record the session from above using a video camera [3].

Motion Capture and Trajectory Extraction:

- Use deep learning-based software like DeepLabCut to track the body center coordinates (x, y) of the mouse across all video frames [3].

- Filter the tracking data to exclude frames with low confidence (e.g., below 90%) and downsample if necessary to reduce computational load.

Movement Metric Calculation:

- From the positional data, calculate the primary movement metrics for analysis:

  - Step length (lₜ): The Euclidean distance between body center coordinates in consecutive frames [3].

  - Turning angle: The change in direction of movement between steps.

HMM Fitting and State Identification:

- Fit an HMM to the sequence of derived movement metrics, typically using the first 3 minutes of the trial to assess the initial response [3].

- Define the model to infer three core behavioral states: Resting (little movement), Exploring (shorter steps, higher tortuosity), and Navigating (longer, more directed steps) [3].

Analysis of State Transitions and Cliff Response:

- Analyze the sequence of states, paying particular attention to transitions near the cliff boundary.

- Quantify the cliff avoidance response by comparing the probability of state transitions (e.g., from Navigating to Resting) when the mouse approaches the cliff edge versus the shallow side.

- Track changes in behavioral state structure (e.g., state collapse from three states to two) over repeated trials as a measure of habituation and functional integration [3].
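One way to quantify the comparison described above is to tabulate empirical transition frequencies from the decoded state sequence, split by the zone in which each transition starts. This is an illustrative approach, not necessarily the method of [3]; the state ordering and the zone labels (e.g., "cliff" within some distance of the deep-side edge) are hypothetical.

```python
import numpy as np

def empirical_transitions(states, zone, n_states=3):
    """Row-normalized transition frequencies, split by the zone where each step starts.

    states : decoded state index per frame (assumed order 0 = Resting,
             1 = Exploring, 2 = Navigating), e.g. from Viterbi decoding
    zone   : string label per frame, e.g. "cliff" or "shallow"
    Returns {zone: (n_states, n_states) array of P(next state | current state)};
    rows with no observed transitions are left as zeros.
    """
    states, zone = np.asarray(states), np.asarray(zone)
    out = {}
    for z in np.unique(zone):
        idx = np.flatnonzero(zone[:-1] == z)            # transitions starting in zone z
        counts = np.zeros((n_states, n_states))
        np.add.at(counts, (states[idx], states[idx + 1]), 1)
        rows = counts.sum(axis=1, keepdims=True)
        out[str(z)] = np.divide(counts, rows, out=np.zeros_like(counts), where=rows > 0)
    return out
```

A Navigating-to-Resting entry (`[2, 0]`) that is higher in the cliff zone than on the shallow side would be consistent with an avoidance response.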

Implementing HMMs: From Data Collection to Behavioral Classification

The accurate classification of animal behavior using hidden Markov models (HMMs) relies fundamentally on the quality and characteristics of the input sensor data. This protocol outlines the essential data requirements—encompassing sensor types, sampling rates, and tracking technologies—for researchers applying HMMs to animal movement and behavior analysis. The integration of precise data collection with robust modeling frameworks enables the identification of behavioral states from tracking data, facilitating advances in ecology, conservation, and drug development research.

Sensor Technologies for Data Acquisition

A variety of sensor technologies can be deployed on animals to collect movement data, each offering distinct advantages for capturing different aspects of behavior.

Table 1: Animal-Borne Sensor Technologies for Behavioral Studies

| Sensor Type | Primary Measurements | Common Applications in Behavior | Considerations |
|---|---|---|---|
| GPS/GNSS [27] | Animal position (longitude, latitude), sometimes altitude | Large-scale movement, habitat selection, travel paths | Accuracy varies; power-intensive; limited indoor/dense canopy use |
| Accelerometer [27] [28] [29] | Dynamic body acceleration (all three axes) | Fine-scale behaviors (grazing, running, resting), energy expenditure | High data volume; placement on body critical for signal interpretation |
| Gyroscope [29] | Angular velocity, orientation | Body rotation, turning angles, complex maneuvers | Complements accelerometer data for detailed movement reconstruction |
| Magnetometer | Heading, direction | Directional persistence, path tortuosity | Can be interfered with by local magnetic anomalies |
| Animal-Borne Video | Visual record of environment and animal actions | Direct validation of behaviors, context-aware analysis | Very high data load; limited battery life; privacy/ethical considerations |
| Bio-logger (Multi-sensor) [29] | Combination of above (e.g., ACC, GPS, Gyro, Env. sensors) | Comprehensive behavioral ethogram construction | Provides richest data source; requires sensor fusion techniques |

Sampling Rate Requirements and Data Collection Principles

The selection of an appropriate sampling frequency is critical to capturing meaningful behavioral signals without unnecessarily exhausting device power and storage.

The Nyquist-Shannon Theorem in Practice

A foundational principle in data acquisition is the Nyquist-Shannon sampling theorem, which states that the sampling frequency should be at least twice the frequency of the fastest body movement essential to characterize the behavior of interest [28]. Sampling below this Nyquist frequency results in aliasing, a distortion that misrepresents the original signal and can lead to misclassification of behaviors.
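The folding rule behind aliasing can be written in a few lines. This sketch applies to a pure sinusoid; real accelerometer signals also contain harmonics above the fundamental movement frequency, which is one reason to sample above the bare minimum.

```python
def apparent_frequency(f_signal, fs):
    """Frequency (Hz) at which a sinusoid of f_signal Hz appears when sampled at fs Hz.

    Components above fs / 2 fold back ("alias") into the range [0, fs / 2].
    """
    f = f_signal % fs
    return min(f, fs - f)

def nyquist_rate(f_max):
    """Minimum sampling rate (Hz) for a movement component of f_max Hz."""
    return 2 * f_max
```

For example, a 28 Hz movement sampled at 40 Hz would masquerade as a 12 Hz signal, whereas at 100 Hz it is recorded at its true frequency; the theoretical minimum here is `nyquist_rate(28)`, i.e. 56 Hz.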

Species- and Behavior-Specific Sampling Rates

The optimal sampling rate is not universal; it depends on the specific behaviors under investigation.

Table 2: Behavior-Dependent Accelerometer Sampling Rate Guidelines

| Behavioral Characteristic | Example Behaviors | Recommended Minimum Sampling Rate | Evidence |
|---|---|---|---|
| Short-Burst, High-Frequency | Swallowing in birds, escape maneuvers in fish | 100 Hz (or 1.4x Nyquist frequency) | Pied flycatcher swallowing occurred at ~28 Hz, requiring ~100 Hz for accurate classification [28]. |
| Long-Endurance, Rhythmic | Flight in birds, steady swimming in fish | 12.5 Hz (or equal to Nyquist frequency) | Flight in pied flycatchers was adequately characterized at 12.5 Hz [28]. |
| Common Livestock Activities | Lying, walking, standing in sheep | 16-32 Hz | Classification performance for sheep showed best results at 32 Hz, with marginal gains beyond 16 Hz [28]. |

Data Collection Protocol for HMM Training

To collect high-quality data for training and validating HMMs, follow this experimental workflow:

- Hypothesis and Ethogram Definition: Define the specific behavioral states to be classified (e.g., "encamped" vs. "exploratory," "foraging" vs. "traveling") [17] [29].

- Sensor Selection and Configuration:

- Select a biologger that includes an accelerometer and, if needed for spatial context, a GPS.

- Set the accelerometer sampling rate based on the fastest behavior of interest, following the guidelines in Table 2. When in doubt, oversample (e.g., 50-100 Hz).

- Set the GPS fix rate according to the scale of movement. For fine-scale habitat selection, higher frequencies (e.g., 1 Hz) may be needed, whereas for large-scale migration, lower frequencies (e.g., every 5-15 minutes) suffice [30].

- Sensor Deployment:

- Ground-Truthing and Data Annotation:

- Simultaneously record the animal's behavior using video surveillance (e.g., high-speed cameras) or direct visual observation during controlled experiments or periods of the deployment [28] [29].

- Annotate the sensor data stream with the observed behaviors to create a labeled dataset for model training. This is the "ground truth" against which the HMM's predictions will be compared.

- Data Synchronization: Precisely synchronize the clocks on all sensors and video recording equipment to enable accurate matching of sensor data to observed behaviors.
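Once the clocks are synchronized (or a known offset has been applied), video-scored behaviors can be attached to sensor samples by nearest-timestamp matching. The sketch below uses pandas `merge_asof`; the column names and the 0.5 s tolerance are assumptions.

```python
import pandas as pd

def annotate_sensor_data(sensor, labels, tolerance_s=0.5):
    """Attach the nearest video-scored behavior to each sensor sample.

    sensor : DataFrame with a datetime64 'time' column plus sensor channels
    labels : DataFrame with 'time' (datetime64, same clock) and 'behavior'
    Samples with no label within `tolerance_s` seconds get NaN.
    """
    sensor = sensor.sort_values("time")      # merge_asof requires sorted keys
    labels = labels.sort_values("time")
    return pd.merge_asof(sensor, labels, on="time", direction="nearest",
                         tolerance=pd.Timedelta(seconds=tolerance_s))
```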

Integrating Sensor Data with Hidden Markov Models

HMMs are powerful tools for identifying latent (unobserved) behavioral states from observed sensor data. The data collection protocols above are designed to feed directly into these models.

The HMM Framework for Behavior Classification

An HMM assumes that an animal is, at any time, in one of a finite number of hidden behavioral states. The state sequence is a Markov process, and the observations (sensor data) are probabilistic functions of the underlying state [15] [17].

From Raw Data to Model Input

The path from raw sensor data to HMM analysis involves several key steps, which can be visualized in the following workflow.

Data Analysis Workflow for HMMs

- Data Preprocessing: Clean the raw data by removing erroneous GPS fixes and sensor artifacts. For accelerometer data, calculate metrics like vectorial dynamic body acceleration (VeDBA) or overall dynamic body acceleration (ODBA), which serve as proxies for energy expenditure and movement intensity [28] [29].

- Movement Feature Extraction: From the GPS and/or accelerometer data, derive movement characteristics that are informative for behavior. Common features include:

- Step Length: The distance between consecutive locations.

- Turning Angle: The change in direction between successive steps.

- Speed: The rate of movement.

- HMM-SSF Integration: For a more powerful, spatially explicit analysis, implement an Integrated HMM-Step Selection Function (HMM-SSF) [17]. This model uses an HMM where the observation process is defined by an SSF, allowing states to be classified based on both movement mechanics (step length, turning angle) and habitat selection. This jointly estimates the behavioral state and the habitat preferences associated with each state.

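- State Decoding: Use the fitted HMM and the Viterbi algorithm to compute the most probable sequence of behavioral states that generated the observed sensor data (global decoding); the forward-backward algorithm instead gives the probability of each state at each time step (local decoding) [17].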
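Local decoding can be sketched with a scaled forward-backward pass in NumPy, as below (a generic implementation, not any package's code). The resulting state probabilities express classification uncertainty at each time step; the sequence of individually most probable states can differ from the Viterbi path.

```python
import numpy as np

def state_probabilities(delta, Gamma, dens):
    """Local decoding: P(S_t = j | all observations) via scaled forward-backward."""
    T, N = dens.shape
    alpha = np.zeros((T, N))
    beta = np.ones((T, N))
    c = np.zeros(T)
    a = delta * dens[0]
    c[0] = a.sum()
    alpha[0] = a / c[0]
    for t in range(1, T):                        # forward pass, normalized each step
        a = (alpha[t - 1] @ Gamma) * dens[t]
        c[t] = a.sum()
        alpha[t] = a / c[t]
    for t in range(T - 2, -1, -1):               # backward pass with the same scaling
        beta[t] = Gamma @ (dens[t + 1] * beta[t + 1]) / c[t + 1]
    post = alpha * beta
    return post / post.sum(axis=1, keepdims=True)
```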

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Solutions for Tracking and Behavior Analysis

| Item / Solution | Function / Application | Example Use Case |
|---|---|---|
| Bio-logger (Multi-sensor) [29] | Records time-series data (ACC, GPS, etc.) from free-moving animals. | Core data collection device deployed on animals in the field or lab. |
| AlphaTracker Software [31] | Markerless pose estimation and tracking of multiple, identical animals from video. | Provides ground-truth location and keypoint data for lab-based social behavior studies. |
| BEBE Benchmark [29] | A public benchmark of labeled bio-logger data for validating behavior classification models. | Evaluating and comparing the performance of new HMMs and other ML algorithms. |
| MoveHMM / MomentuHMM R Packages | Statistical software for fitting HMMs to animal tracking data. | Implementing the HMM analysis, including state decoding and parameter estimation. |
| Self-Supervised Learning Models [29] | Models pre-trained on large, unlabeled datasets (e.g., human accelerometer data). | Transfer learning to improve HMM performance on a target species with limited labeled data. |
| Kalman Filter [31] | An algorithm that estimates the true state of a system from noisy measurements. | Smoothing noisy GPS or keypoint tracking data before HMM analysis. |
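For the smoothing use case in the table, a constant-velocity Kalman filter for 2-D positions can be sketched in NumPy as follows. The state is [x, y, vx, vy]; the noise settings `q` and `r` are assumptions to be tuned to the tracking system, the diagonal process-noise matrix is a simplification, and this is a forward filter only (a Rauch-Tung-Striebel smoother would also use future observations).

```python
import numpy as np

def kalman_filter_positions(z, dt=1.0, q=1e-2, r=1.0):
    """Filter noisy (T, 2) positions with a constant-velocity Kalman filter."""
    F = np.eye(4)
    F[0, 2] = F[1, 3] = dt                      # x += vx * dt, y += vy * dt
    H = np.zeros((2, 4))
    H[0, 0] = H[1, 1] = 1.0                     # only x and y are observed
    Q, R = q * np.eye(4), r * np.eye(2)         # process / measurement noise (assumed)
    x = np.array([z[0, 0], z[0, 1], 0.0, 0.0])
    P = 10.0 * np.eye(4)                        # vague initial uncertainty
    out = np.empty_like(z, dtype=float)
    for t in range(len(z)):
        if t > 0:                               # predict
            x = F @ x
            P = F @ P @ F.T + Q
        S = H @ P @ H.T + R                     # update with observation z[t]
        K = P @ H.T @ np.linalg.inv(S)
        x = x + K @ (z[t] - H @ x)
        P = (np.eye(4) - K @ H) @ P
        out[t] = x[:2]
    return out
```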

Within research focused on classifying animal behavior using hidden Markov models (HMMs), the integrity of the model's input is paramount. A robust preprocessing pipeline that transforms raw location data into meaningful movement features is a critical first step, directly influencing the HMM's capacity to identify distinct behavioral states [32]. This document outlines detailed protocols for converting raw tracking data into analysis-ready features, providing a standardized methodology for researchers in neuroscience and drug development.

Experimental Protocols & Workflow

The following protocol details the sequential steps from video recording to the generation of movement features suitable for HMM analysis.

Stage 1: Video Acquisition and Preprocessing

- Objective: To obtain high-quality video data amenable to automated tracking.

- Procedure:

- Record Behavior: Capture animal behavior using a high-resolution camera mounted orthogonally to the experimental arena to minimize perspective distortion.

- Decompose Video: Split the video file into individual, sequential frames. The frame rate should be sufficient to capture the behaviors of interest (e.g., 30 frames per second).

- Generate Live-Frames (Optional but Recommended): To incorporate temporal motion information, create "live-frames" by stacking three consecutive grayscale frames into a single RGB image, where each channel represents the animal's position at a different time point [33]. This creates a motion-colored image that preserves spatial and temporal information.
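The stacking step can be sketched in NumPy as below. The assignment of frames t, t+1 and t+2 to the three channels is an assumption for illustration; the implementation in [33] may order or preprocess the frames differently.

```python
import numpy as np

def live_frames(gray):
    """Stack each run of three consecutive grayscale frames into one RGB image.

    gray : (T, H, W) uint8 array of grayscale frames.
    Returns (T-2, H, W, 3): channels hold frames t, t+1, t+2, so static
    background stays gray while a moving animal leaves colored fringes.
    """
    return np.stack([gray[:-2], gray[1:-1], gray[2:]], axis=-1)
```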

Stage 2: Animal Pose Tracking with DeepLabCut

- Objective: To extract precise, time-series data of animal body part locations from video frames.

- Procedure:

- Install DeepLabCut: Install the DeepLabCut package in a Python environment following the official documentation.

- Define Body Parts: Label key body parts (e.g., snout, ears, tail base, limbs) relevant to the study. For social behaviors, label body parts on all animals [32].

- Create a Training Dataset: Manually annotate the defined body parts across a diverse set of frames extracted from the videos. This "labels" the data for the model.

- Train the Network: Use the annotated dataset to train a deep neural network within DeepLabCut to recognize and track the body parts in new, unlabeled video data.

- Analyze Videos: Process the full behavior videos through the trained DeepLabCut network to obtain the estimated X, Y coordinates (and likelihood) for each defined body part in every frame [32].

- Extract Track Data: Export the resulting tracking data, typically as a CSV or HDF5 file, for further processing. The output is a table of raw location data across time.

Stage 3: Data Cleaning and Smoothing

- Objective: To address tracking errors and noise in the raw location data.

- Procedure:

- Handle Low-Likelihood Points: Identify tracked points with a low estimation likelihood (below a set threshold, e.g., 0.95). Replace these coordinates using interpolation from high-likelihood neighboring frames.

- Apply Smoothing Filters: Use a Savitzky-Golay filter or a Gaussian kernel to smooth the X and Y trajectories. This reduces high-frequency noise from tracking jitter while preserving the underlying movement dynamics.
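The two cleaning steps can be sketched with pandas and SciPy as follows, for one body part's per-frame x, y and likelihood arrays (for example, columns read from a DeepLabCut output file). The 0.95 threshold follows the example above; the Savitzky-Golay window (odd, and larger than the polynomial order) and polynomial order are assumptions.

```python
import numpy as np
import pandas as pd
from scipy.signal import savgol_filter

def clean_track(x, y, likelihood, p_min=0.95, window=11, polyorder=3):
    """Interpolate over low-likelihood points, then apply Savitzky-Golay smoothing."""
    df = pd.DataFrame({"x": x, "y": y}, dtype=float)
    df.loc[np.asarray(likelihood) < p_min, ["x", "y"]] = np.nan   # drop unreliable points
    df = df.interpolate(method="linear", limit_direction="both")   # fill from neighbors
    xs = savgol_filter(df["x"].to_numpy(), window, polyorder)
    ys = savgol_filter(df["y"].to_numpy(), window, polyorder)
    return xs, ys
```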

Stage 4: Movement Feature Engineering

- Objective: To transform smoothed location data into a set of discriminative features that describe the animal's behavior.

- Procedure:

For each animal and each time point, calculate the following features from the smoothed X, Y coordinates:

- Velocity: The speed of movement, calculated as the Euclidean distance between positions in consecutive frames, divided by the inter-frame interval.

- Acceleration: The rate of change of velocity.

- Angular Velocity: The rate of change of the direction of movement (heading angle).

- Body Length: The distance between two body points (e.g., snout and tail base) to estimate posture.

- Nose-Tailbase Angle: The angle formed by three points (e.g., snout, center of mass, tail base) to capture body curvature.

- Social Features (Multi-Animal): Calculate inter-animal distance, relative orientation, and approach/retreat speeds.

The final output is a feature matrix where each row represents a time point and each column represents a calculated movement feature. This matrix is the direct input for HMM analysis.
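A NumPy sketch of this feature matrix is shown below for a subset of the features (social orientation features are omitted). It assumes smoothed (T, 2) keypoint arrays for snout, center of mass and tail base, and a fixed inter-frame interval `dt`. Heading differences and body angles are wrapped to (-π, π] so that a small turn across the ±π boundary is not mistaken for a near-full rotation. Rows start at frame 2, because acceleration needs two earlier frames.

```python
import numpy as np

def wrap(a):
    """Wrap angles to (-pi, pi]."""
    return np.pi - np.mod(np.pi - a, 2 * np.pi)

def feature_matrix(snout, center, tail, dt, other_center=None):
    """Columns: velocity, acceleration, angular velocity, body length,
    nose-tailbase angle, and optionally inter-animal distance (frames 2..T-1)."""
    d = np.diff(center, axis=0)
    speed = np.hypot(d[:, 0], d[:, 1]) / dt            # frames 1..T-1
    heading = np.arctan2(d[:, 1], d[:, 0])
    accel = np.diff(speed) / dt                         # frames 2..T-1
    ang_vel = wrap(np.diff(heading)) / dt               # frames 2..T-1
    body_len = np.linalg.norm(snout - tail, axis=1)
    v1, v2 = snout - center, tail - center              # angle at the center of mass
    bend = wrap(np.arctan2(v2[:, 1], v2[:, 0]) - np.arctan2(v1[:, 1], v1[:, 0]))
    cols = [speed[1:], accel, ang_vel, body_len[2:], np.abs(bend)[2:]]
    if other_center is not None:
        cols.append(np.linalg.norm(center - other_center, axis=1)[2:])
    return np.column_stack(cols)
```

A straight, fully extended body gives a nose-tailbase angle of π; smaller values indicate curvature.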

Quantitative Data Presentation

The following table summarizes the core movement features engineered from raw location data. These features serve as the observables for the HMM.

Table 1: Engineered Movement Features from Animal Tracking Data

| Feature Category | Feature Name | Calculation Method | Behavioral Significance |
|---|---|---|---|
| Locomotion | Velocity | $\frac{\sqrt{(X_{t}-X_{t-1})^2 + (Y_{t}-Y_{t-1})^2}}{\Delta T}$ | General activity level; running vs. resting |
| | Acceleration | $\frac{\text{Velocity}_{t} - \text{Velocity}_{t-1}}{\Delta T}$ | Movement bursts and sudden stops |
| | Angular Velocity | $\frac{\text{Heading}_{t} - \text{Heading}_{t-1}}{\Delta T}$, with the heading difference wrapped to $(-\pi, \pi]$ | Meandering vs. directed movement |
| Posture | Body Length | Distance between snout and tail base | Stretching, contracting, or freezing |
| | Nose-Tailbase Angle | Angle formed by snout, center-of-mass, and tail base | Body curvature during turning or grooming |
| Social | Inter-Animal Distance | Euclidean distance between two animals' centers-of-mass | Proximity and social interaction |
| | Relative Orientation | Angle between the heading angles of two animals | Facing, following, or parallel movement |

Workflow Visualization

The following diagram illustrates the complete pipeline from data acquisition to HMM classification.

From Features to Behavioral States

The next diagram conceptualizes how the generated feature matrix is used by a Hidden Markov Model to infer latent behavioral states.

The Scientist's Toolkit

Table 2: Essential Research Reagents and Tools for Behavioral Tracking and Analysis

| Tool / Reagent | Function in the Pipeline | Key Considerations |
|---|---|---|
| DeepLabCut [32] | Open-source tool for markerless pose estimation based on deep learning. Extracts raw body part coordinates from video frames. | Requires a Python environment and initial manual labeling of a training dataset. Highly customizable. |
| Selfee [33] | A self-supervised convolutional neural network for end-to-end feature extraction directly from video frames, without the need for pose estimation. | Useful when detailed postures are hard to extract or for multi-animal interactions. Provides "meta-representations." |
| Circular Behavioral Arena [32] | An apparatus for housing animals during video recording. A circular design eliminates corner preferences, promoting more natural exploration and unbiased data collection. | Critical for visual cliff tests and other experiments where spatial bias can confound results. |
| Hidden Markov Model (HMM) | A statistical model that identifies latent (hidden) behavioral states from a time series of observed movement features. Models state transitions and durations. | The choice of model (e.g., AR-HMM) and number of states must be validated for the specific behavior and species. |

In animal movement ecology, Hidden Markov Models (HMMs) have emerged as a powerful statistical framework for identifying discrete behavioral states from tracking data. These models assume that observed movement patterns (e.g., step lengths, turning angles) are generated by underlying, unobservable behavioral states that follow a Markov process [1] [34]. The core concept is a doubly stochastic process: latent behavioral states evolve according to transition probabilities, while observations depend probabilistically on these hidden states through state-dependent distributions [15] [35]. This approach has been successfully applied across diverse species, including grey seals, lake trout, blue sharks, and laboratory mice, demonstrating its versatility for classifying behaviors such as resting, foraging, exploration, and transit [15] [32].

Quantitative Specification of Behavioral States

Mathematical Foundation

The HMM framework consists of two primary components: the state process and the observation process. The state process is defined as a Markov chain with transition probabilities between discrete behavioral states, while the observation process links these hidden states to measurable movement metrics [15] [34]. The joint density function for an HMM can be expressed as:

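$$p(\mathbf{z}_{1:T}, \mathbf{x}_{1:T}) = p(\mathbf{z}_{1:T})\, p(\mathbf{x}_{1:T} \mid \mathbf{z}_{1:T}) = \left[ p(z_1) \prod_{t=2}^{T} p(z_t \mid z_{t-1}) \right] \left[ \prod_{t=1}^{T} p(\mathbf{x}_t \mid z_t) \right]$$

where $z_t$ represents the hidden behavioral state at time $t$ and $\mathbf{x}_t$ represents the observations at time $t$ [34]. The first bracket is the state process and the second is the observation process; the factorization follows from the Markov property of the states and the assumption that each observation depends only on the current state. The model is characterized by three fundamental elements: the initial state distribution $\delta$ (with $p(z_1 = i) = \delta_i$), the state transition probability matrix $A$ (with $a_{ij} = p(z_t = j \mid z_{t-1} = i)$), and the state-dependent observation distributions [15] [35].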
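The factorization can be checked numerically. The sketch below, a minimal illustration with hypothetical function names, evaluates $\log p(\mathbf{z}_{1:T}, \mathbf{x}_{1:T})$ for a single state path. It also computes the marginal likelihood $p(\mathbf{x}_{1:T})$, which sums the joint density over all $K^T$ paths, using the scaled forward algorithm. Emission densities are passed in pre-evaluated as a matrix `B[t, i]` $= p(\mathbf{x}_t \mid z_t = i)$, so the code does not depend on any particular step-length or turning-angle distribution. The toy numbers are made up.

```python
import itertools
import numpy as np

def joint_log_density(path, delta, A, B):
    """log p(z_{1:T}, x_{1:T}) for one state path (0-based state indices)."""
    lp = np.log(delta[path[0]])                     # initial state term
    for t in range(1, len(path)):
        lp += np.log(A[path[t - 1], path[t]])       # state-process terms
    for t, s in enumerate(path):
        lp += np.log(B[t, s])                       # observation-process terms
    return lp

def forward_log_likelihood(delta, A, B):
    """log p(x_{1:T}): the joint density summed over all state paths (scaled forward algorithm)."""
    alpha = delta * B[0]
    loglik = np.log(alpha.sum())
    alpha = alpha / alpha.sum()
    for t in range(1, B.shape[0]):
        alpha = (alpha @ A) * B[t]                  # propagate one step, weight by emission
        loglik += np.log(alpha.sum())
        alpha = alpha / alpha.sum()                 # rescale to avoid numerical underflow
    return loglik

# Toy two-state example with made-up emission likelihoods B[t, i] = p(x_t | z_t = i)
delta = np.array([0.6, 0.4])
A = np.array([[0.9, 0.1], [0.2, 0.8]])
B = np.array([[0.5, 0.1], [0.4, 0.3], [0.05, 0.6], [0.2, 0.2]])
brute = np.log(sum(np.exp(joint_log_density(p, delta, A, B))
                   for p in itertools.product(range(2), repeat=len(B))))
print(np.isclose(brute, forward_log_likelihood(delta, A, B)))  # True
```

The brute-force sum is only feasible for tiny examples. The forward recursion costs $O(TK^2)$ rather than $O(K^T)$, which is what makes likelihood-based fitting practical.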

Characteristic State Signatures

Table 1: Quantitative Characteristics of Common Behavioral States in Animal Movement

| Behavioral State | Step Length Characteristics | Turning Angle Characteristics | Biological Interpretation | Typical Parameter Values |
|---|---|---|---|---|
| Resting | Short steps with minimal displacement | Irregular or undirected turning angles | Energy conservation, digestion, vigilance | $\gamma < 0.3$, high variance in step length |
| Exploring/Foraging | Intermediate steps with high variance | High tortuosity, frequent course reversals ($\theta \approx \pi$) | Area-restricted search, resource exploitation | $0.3 \le \gamma \le 0.5$, $\theta \approx \pi$ |
| Transit/Navigating | Long, persistent steps with low variance | Directed movement with minimal turning ($\theta \approx 0$) | Directed travel between habitats, migration | $\gamma > 0.5$, $\theta \approx 0$, low $\sigma^2$ |

The discrimination between behavioral states is primarily achieved through differences in movement parameters, including autocorrelation in speed and direction ($\gamma$), turning angle distributions ($\theta$), and stochasticity in movement ($\Sigma$) [15]. These parameters are typically estimated by maximum likelihood using specialized R packages such as momentuHMM, swim, or moveHMM [15] [36].
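To make the roles of $\gamma$, $\theta$, and $\Sigma$ concrete, the sketch below simulates a first-difference correlated random walk. Here $\mathbf{d}_t$ is the displacement between consecutive locations, and the model is assumed to take the form $\mathbf{d}_t = \gamma\, R(\theta)\, \mathbf{d}_{t-1} + \boldsymbol{\varepsilon}_t$ with $\boldsymbol{\varepsilon}_t \sim N(\mathbf{0}, \Sigma)$, where $R(\theta)$ is a 2D rotation matrix. This form and the simplification $\Sigma = \sigma^2 I$ are assumptions for illustration. `simulate_dcrw` is a hypothetical helper, not a function from swim or momentuHMM.

```python
import numpy as np

def simulate_dcrw(gamma, theta, sigma, n_steps, d0=(1.0, 0.0), seed=None):
    """Simulate d_t = gamma * R(theta) @ d_{t-1} + eps_t with eps_t ~ N(0, sigma^2 I).

    Returns an (n_steps + 1, 2) array of locations starting at the origin.
    """
    rng = np.random.default_rng(seed)
    R = np.array([[np.cos(theta), -np.sin(theta)],
                  [np.sin(theta),  np.cos(theta)]])   # rotate the previous displacement by theta
    d = np.asarray(d0, dtype=float)
    steps = []
    for _ in range(n_steps):
        d = gamma * R @ d + rng.normal(0.0, sigma, size=2)  # damp, rotate, add noise
        steps.append(d)
    return np.vstack([np.zeros(2), np.cumsum(steps, axis=0)])  # integrate displacements

# Hypothetical settings: persistent "transit" vs. reversing, "search"-like movement
transit = simulate_dcrw(gamma=0.9, theta=0.0, sigma=0.1, n_steps=200, seed=1)
search = simulate_dcrw(gamma=0.4, theta=np.pi, sigma=0.1, n_steps=200, seed=1)
```

With $\gamma$ close to 1 and $\theta \approx 0$, each displacement largely repeats the previous one, producing long directed runs. With small $\gamma$ or $\theta \approx \pi$, the noise term dominates or direction reverses, producing compact, tortuous tracks.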

Experimental Protocol for Behavioral State Classification

Data Collection and Preprocessing

Animal Tracking: Deploy appropriate telemetry technology (GPS, acoustic telemetry, satellite tags) to collect high-resolution positional data. The specific technology should be selected based on species characteristics and research environment [15].

Movement Metric Calculation: From raw location data, calculate step lengths (straight-line distances between consecutive locations) and turning angles (changes in direction between successive steps). For 2D movement data, these are derived from first differences of locations: $\mathbf{d}_t = \mathbf{x}_t - \mathbf{x}_{t-1}$ [15].
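The step-length and turning-angle calculation can be sketched as follows. The sketch assumes planar coordinates (for example, UTM metres) sampled at regular intervals; with raw longitude and latitude, great-circle distances and bearings would be needed instead. `steps_and_turns` is a hypothetical helper, and turning angles are wrapped to $(-\pi, \pi]$.

```python
import numpy as np

def steps_and_turns(xy):
    """Step lengths and turning angles from an (n, 2) array of regularly spaced locations."""
    d = np.diff(xy, axis=0)                           # first differences d_t = x_t - x_{t-1}
    step = np.linalg.norm(d, axis=1)                  # n - 1 step lengths
    bearing = np.arctan2(d[:, 1], d[:, 0])            # absolute direction of each step
    turn = np.diff(bearing)                           # n - 2 changes in direction
    turn = np.pi - np.mod(np.pi - turn, 2 * np.pi)    # wrap to (-pi, pi]
    return step, turn

# A unit square walked counter-clockwise: three unit steps, two left turns of pi/2
step, turn = steps_and_turns(np.array([[0, 0], [1, 0], [1, 1], [0, 1]], dtype=float))
```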

Data Cleaning: Address missing positions, measurement error, and irregular time intervals using interpolation or state-space approaches where necessary. The momentuHMM package provides functionality for handling these common data issues [36].
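As one simple option for irregular time intervals, the sketch below linearly interpolates fixes onto a regular time grid with `np.interp`. This is an assumption-laden shortcut rather than the momentuHMM workflow. It ignores measurement error and places straight-line positions across gaps, so long gaps are better split into separate tracks or handled with the state-space approaches mentioned above. `regularize_track` is a hypothetical name.

```python
import numpy as np

def regularize_track(t, x, y, interval):
    """Linearly interpolate fixes at strictly increasing times t onto a regular grid.

    Positions between fixes are assumed to lie on straight lines.
    """
    t = np.asarray(t, dtype=float)
    n = int(np.floor((t[-1] - t[0]) / interval)) + 1   # grid points within the observed span
    grid = t[0] + interval * np.arange(n)
    return grid, np.interp(grid, t, x), np.interp(grid, t, y)

# Irregular fixes at t = 0, 1, 4 resampled every 2 time units
grid, gx, gy = regularize_track([0.0, 1.0, 4.0], [0.0, 1.0, 4.0], [0.0, 0.0, 0.0], 2.0)
```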

Model Specification and Implementation

State Number Selection: Determine the appropriate number of behavioral states ($K$) based on biological knowledge, model selection criteria (AIC, BIC), or preliminary analysis of movement patterns. Most applications use 2 to 4 behavioral states [32].
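AIC and BIC trade off log-likelihood against the number of free parameters. The sketch below counts parameters for a $K$-state model. It assumes gamma step lengths (mean and standard deviation per state), von Mises turning angles (mean and concentration per state), and an estimated initial distribution. Other specifications, such as a stationary initial distribution or extra zero-mass parameters, change the count. The function names and log-likelihood values are hypothetical.

```python
import math

def n_params(K):
    """Free parameters of a K-state HMM under the assumptions stated above."""
    transitions = K * (K - 1)   # each row of A sums to 1
    initial = K - 1             # delta sums to 1
    emissions = 2 * K + 2 * K   # gamma (mean, sd) + von Mises (mean, concentration) per state
    return transitions + initial + emissions

def aic(loglik, K):
    return 2 * n_params(K) - 2 * loglik

def bic(loglik, K, n_obs):
    return math.log(n_obs) * n_params(K) - 2 * loglik

# Hypothetical maximized log-likelihoods for 2- and 3-state fits to 1,000 steps
for K, ll in [(2, -2450.0), (3, -2410.0)]:
    print(K, round(aic(ll, K), 1), round(bic(ll, K, 1000), 1))
```

Lower values are preferred by both criteria. With movement data, information criteria alone can favor more states than are biologically interpretable, which is why biological knowledge remains part of the decision.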

Initial Parameter Estimation: Provide initial values for state transition probabilities and parameters of state-dependent distributions (typically gamma distributions for step lengths and von Mises distributions for turning angles). These can be informed by visual inspection of movement tracks or preliminary clustering [35].
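One crude form of preliminary clustering is to split the sorted step lengths into $K$ equal-sized groups. Each group's mean and standard deviation then serve as starting values for one state's gamma distribution. The sketch below assumes this quantile split, and the function names are hypothetical. A conversion to shape and rate is included in case the fitting software parameterizes the gamma distribution that way (shape $= \mu^2/\sigma^2$, rate $= \mu/\sigma^2$).

```python
import numpy as np

def initial_gamma_params(step, K):
    """Starting gamma (mean, sd) per state from K quantile groups of the step lengths."""
    step = np.sort(np.asarray(step, dtype=float))
    groups = np.array_split(step, K)                    # lowest group -> slowest state
    means = np.array([g.mean() for g in groups])
    sds = np.array([g.std(ddof=1) for g in groups])    # needs at least 2 steps per group
    return means, sds

def gamma_shape_rate(mean, sd):
    """Convert a gamma (mean, sd) pair to (shape, rate)."""
    return (mean / sd) ** 2, mean / sd ** 2

means, sds = initial_gamma_params([1, 2, 3, 10, 11, 12], K=2)
shape, rate = gamma_shape_rate(means, sds)
```

Because the HMM likelihood can have several local maxima, it is common practice to refit from multiple sets of starting values and keep the fit with the highest likelihood.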

Model Fitting: Implement the HMM using specialized software. The following code demonstrates basic implementation using the momentuHMM package in R:

Model Validation: Assess model fit through pseudo-residual analysis, examination of decoding uncertainties, and comparison of predicted versus observed movement patterns [15] [36].

State Decoding and Interpretation

Global Decoding: Apply the Viterbi algorithm to determine the most likely sequence of behavioral states given the observations and fitted model parameters [1] [34].
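The Viterbi recursion keeps, for every state, the log-probability of the best path ending there and a pointer to its predecessor, then backtracks from the best final state. The sketch below is a minimal NumPy version. It assumes an initial distribution `delta`, a transition matrix `A`, and pre-evaluated emission likelihoods `B[t, i]` $= p(\mathbf{x}_t \mid z_t = i)$; the function name and example numbers are hypothetical.

```python
import numpy as np

def viterbi(delta, A, B):
    """Most likely state path for delta (K,), A (K, K), and B (T, K), computed in log space."""
    T, K = B.shape
    logA = np.log(A)
    score = np.log(delta) + np.log(B[0])           # best log-prob of paths ending in each state
    back = np.zeros((T, K), dtype=int)             # argmax predecessor pointers
    for t in range(1, T):
        cand = score[:, None] + logA               # cand[i, j]: best path to i, then i -> j
        back[t] = np.argmax(cand, axis=0)
        score = cand[back[t], np.arange(K)] + np.log(B[t])
    path = np.empty(T, dtype=int)
    path[-1] = np.argmax(score)
    for t in range(T - 1, 0, -1):                  # backtrack through the pointers
        path[t - 1] = back[t, path[t]]
    return path

# Hypothetical sticky two-state model whose emissions clearly favor states 0, 0, 1, 1
delta = np.array([0.5, 0.5])
A = np.array([[0.8, 0.2], [0.2, 0.8]])
B = np.array([[0.9, 0.1], [0.9, 0.1], [0.1, 0.9], [0.1, 0.9]])
print(viterbi(delta, A, B))  # [0 0 1 1]
```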

Local Decoding: Calculate the marginal probabilities of each behavioral state at each time point using the forward-backward algorithm, providing a measure of classification certainty [1].
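Local decoding can be sketched with the scaled forward-backward algorithm. The forward pass is the same recursion used for the likelihood, the backward pass propagates evidence from later observations, and their normalized product gives $P(z_t = i \mid \mathbf{x}_{1:T})$. The function name, inputs, and certainty threshold below are assumptions for illustration.

```python
import numpy as np

def state_posteriors(delta, A, B):
    """P(z_t = i | x_{1:T}) via the scaled forward-backward algorithm; B[t, i] = p(x_t | z_t = i)."""
    T, K = B.shape
    alpha = np.zeros((T, K))
    beta = np.ones((T, K))
    c = np.zeros(T)                                      # per-step scaling constants
    alpha[0] = delta * B[0]
    c[0] = alpha[0].sum()
    alpha[0] /= c[0]
    for t in range(1, T):                                # forward pass
        alpha[t] = (alpha[t - 1] @ A) * B[t]
        c[t] = alpha[t].sum()
        alpha[t] /= c[t]
    for t in range(T - 2, -1, -1):                       # backward pass, same scaling
        beta[t] = A @ (B[t + 1] * beta[t + 1]) / c[t + 1]
    post = alpha * beta
    return post / post.sum(axis=1, keepdims=True)

# Toy two-state example with made-up emission likelihoods
delta = np.array([0.6, 0.4])
A = np.array([[0.9, 0.1], [0.2, 0.8]])
B = np.array([[0.5, 0.1], [0.4, 0.3], [0.05, 0.6], [0.2, 0.2]])
post = state_posteriors(delta, A, B)
uncertain = post.max(axis=1) < 0.8   # hypothetical threshold for flagging uncertain time points
```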

Biological Validation: Correlate identified behavioral states with independent biological data (e.g., feeding events, environmental context, physiological measurements) to ensure ecological relevance of the classification [32].

HMM Architecture for Behavioral Classification

HMM Behavioral State Diagram: This architecture illustrates the relationship between hidden behavioral states (Resting, Exploring, Transit) and observed movement metrics in animal tracking data. The state transition probabilities ($a_{ij}$) govern switches between behavioral states, while emission probabilities ($b_i(\text{observation})$) link each state to characteristic distributions of step lengths and turning angles.
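Two quantities implied by the transition probabilities help interpret such a diagram. The stationary distribution $\pi$ satisfies $\pi = \pi A$ and gives the long-run proportion of time in each state. The expected dwell time $1/(1 - a_{ii})$ follows because a first-order Markov chain implies geometrically distributed state durations. The sketch below computes both; the function names are hypothetical, and the three-state matrix for Resting, Exploring, and Transit is invented for illustration.

```python
import numpy as np

def stationary_distribution(A):
    """Solve pi = pi A with sum(pi) = 1 for an irreducible transition matrix A."""
    K = A.shape[0]
    M = np.vstack([A.T - np.eye(K), np.ones(K)])   # (A^T - I) pi = 0 plus normalization row
    rhs = np.append(np.zeros(K), 1.0)
    pi, *_ = np.linalg.lstsq(M, rhs, rcond=None)
    return pi

def expected_dwell_times(A):
    """Mean number of consecutive time steps spent in each state: 1 / (1 - a_ii)."""
    return 1.0 / (1.0 - np.diag(A))

# Hypothetical matrix; rows and columns ordered Resting, Exploring, Transit
A3 = np.array([[0.90, 0.08, 0.02],
               [0.10, 0.80, 0.10],
               [0.05, 0.15, 0.80]])
pi3 = stationary_distribution(A3)
dwell3 = expected_dwell_times(A3)
```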

Essential Research Toolkit

Table 2: Essential Research Reagents and Computational Tools for HMM Implementation

| Tool/Resource | Specific Function | Application Context | Implementation Source |
|---|---|---|---|
| momentuHMM R Package | Maximum likelihood analysis of animal movement using multivariate HMMs | Handling complex telemetry data with multiple behavioral states, missing data, and measurement error | [36] |
| moveHMM R Package | Basic HMM framework for animal movement data | Standard step length and turning angle analysis with 2 to 3 behavioral states | [15] |
| swim R Package | Implementation of the HMMM (Hidden Markov Movement Model) | Rapid analysis of highly accurate tracking data with negligible measurement error | [15] |
| DeepLabCut | Markerless pose estimation from video recordings | Extracting precise movement metrics from visual data in controlled environments | [32] [10] |
| TMB (Template Model Builder) | Maximum likelihood estimation of HMM parameters | Efficient parameter estimation for complex movement models with random effects | [15] |
| Baum-Welch Algorithm | Estimation of HMM parameters from observed data (an expectation-maximization procedure) | Model fitting when the underlying state sequence is unobserved | [35] |
| Viterbi Algorithm | Global decoding of the most likely state sequence | Identification of the optimal behavioral state path given observations | [1] [34] |

Advanced Methodological Considerations

Covariate Integration

Environmental covariates (temperature, habitat type, time of day) can be incorporated into HMMs to explain variation in both transition probabilities and state-dependent distributions. This is typically achieved through multinomial logit links for transition probabilities and parametric relationships in observation distributions [36].
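A multinomial logit link for transition probabilities can be sketched as follows. This assumes a common parameterization in which the diagonal entry of each row is the reference category, so that $\log(a_{ij}/a_{ii}) = \eta_{ij}$ is linear in the covariates for $i \neq j$. The coefficient array layout, function name, and covariate values are hypothetical.

```python
import numpy as np

def transition_matrix(beta, covariate_row):
    """Covariate-dependent transition matrix via a multinomial logit link.

    beta: (K, K, p) regression coefficients; diagonal entries are ignored because
          a_ii is the reference category in each row.
    covariate_row: length-p design vector (include 1.0 for an intercept).
    """
    K = beta.shape[0]
    eta = beta @ covariate_row               # (K, K) linear predictors
    eta[np.diag_indices(K)] = 0.0            # reference category: log(a_ij / a_ii) = eta_ij
    expo = np.exp(eta)
    return expo / expo.sum(axis=1, keepdims=True)   # each row sums to 1

# Hypothetical 2-state example: intercept plus a standardized temperature covariate
beta = np.zeros((2, 2, 2))
beta[0, 1] = [-2.0, 0.5]    # state 0 -> 1 becomes more likely as temperature rises
beta[1, 0] = [-1.5, -0.3]   # state 1 -> 0 becomes less likely as temperature rises
for temp in (-1.0, 0.0, 1.0):
    print(temp, transition_matrix(beta, np.array([1.0, temp])).round(3))
```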

Hierarchical Extensions

Hierarchical HMMs allow for individual-level variability in movement parameters while estimating population-level distributions, making them particularly valuable for studies with multiple individuals or groups [36].

Measurement Error Handling

For tracking technologies with significant measurement error (e.g., Argos satellite telemetry), state-space model extensions of HMMs can be implemented to simultaneously account for observation error and behavioral classification [15].

The visual cliff test, originally developed by Gibson and Walk, is a foundational paradigm for assessing depth perception in animals [37]. This test ingeniously creates the illusion of a sharp drop-off ("the cliff") using a transparent surface, allowing researchers to investigate an animal's innate response to visual depth cues without the risk of actual falling. Traditionally, the analysis of this behavior has relied on simple metrics, such as the time an animal spends on the "shallow" versus the "deep" side of the apparatus [38].

However, recent advancements in computational ethology have revolutionized this classic test. The integration of high-resolution movement tracking with Hidden Markov Models (HMMs) now enables a far more nuanced dissection of behavior [32] [3]. This modern approach moves beyond simplistic measures to model behavior as a dynamic sequence of hidden, or latent, states. These states—such as Resting, Exploring, and Navigating—generate the observable movements of the animal [3] [10]. This case study details how this powerful combination of a modified visual cliff apparatus and HMM-based analysis provides a sophisticated framework for studying visual perception in mice, with direct applications in evaluating visual function, modeling human visual diseases, and screening the efficacy of novel therapeutic agents.

Theoretical Background

The Original Visual Cliff Paradigm

The classic visual cliff experiment was designed to determine if depth perception is innate or learned. The apparatus consists of a central board raised to a moderate height, covered by a transparent glass surface. On one side (the "shallow" side), a textured pattern is placed directly beneath the glass. On the other side (the "deep" side), the identical pattern is placed on the floor far below the glass, creating the visual illusion of a cliff [37].

Seminal studies found that 92% of human infants (6-14 months old) refused to crawl onto the deep side when called by their mothers, suggesting an early ability to perceive depth [37]. Similar innate avoidance behaviors were observed in various terrestrial species such as chicks, lambs, and kids (young goats), all of which avoided the deep side from the first day of life [37]. This established the visual cliff as a valid tool for investigating the nativist perspective on depth perception.

Hidden Markov Models for Behavioral Classification

A Hidden Markov Model (HMM) is a statistical model that is particularly suited for analyzing time-series data where the system being studied is assumed to be a Markov process with unobserved (hidden) states.