CUDA vs. OpenACC for Ocean Modeling: A Performance and Productivity Analysis for Researchers

Abstract

This article provides a comprehensive comparison of CUDA and OpenACC for accelerating ocean models, a critical tool for climate science and disaster forecasting. We explore the foundational principles of both programming models, present real-world application case studies from models like NEMO, SCHISM, and POM, and delve into troubleshooting and optimization strategies. By synthesizing recent performance benchmarks and validation studies, this analysis offers researchers and scientists a clear framework for selecting the right GPU-acceleration approach based on their specific project goals, balancing raw performance against development complexity and portability.

Understanding CUDA and OpenACC: Core Concepts for HPC Oceanography

In high-performance computing (HPC) for oceanography, GPU acceleration is essential for making large-scale, high-resolution simulations feasible. Two primary approaches for porting models to GPUs are NVIDIA's CUDA, a low-level programming model, and OpenACC, a high-level, directive-based standard. This guide objectively compares their performance, programming effort, and suitability for ocean models like the Princeton Ocean Model (POM) and SCHISM, helping researchers make informed decisions for their projects.

Performance and Development Trade-Offs

The table below summarizes the core characteristics and trade-offs between CUDA and OpenACC, as evidenced by recent implementations in ocean modeling.

| Feature | CUDA | OpenACC |
|---|---|---|
| Programming Model | Low-level, explicit kernel-based [1] | High-level, directive-based [1] |
| Core Philosophy | Maximum performance and control [2] | Portability and developer productivity [3] [1] |
| Control over Hardware | High; allows fine-grained optimizations [1] | Lower; relies on compiler decisions [1] |
| Code Modification | Extensive; requires rewriting code in CUDA C/C++/Fortran [4] | Minimal; directives are added to existing Fortran/C/C++ code [5] [1] |
| Data Management | Manual and explicit [3] | Can be automated, especially with Unified Memory [3] [6] |
| Performance (vs. CPU) | High (e.g., 35.13x speedup for SCHISM) [4] | High (e.g., 11.75x to 45.04x speedup for POM) [5] |
| Performance (Head-to-Head) | Outperformed OpenACC in the SCHISM comparison [4] | Good performance, but outperformed by optimized CUDA in the SCHISM comparison [4] |
| Best Suited For | Performance-critical applications, developers with GPU expertise [4] [2] | Rapid prototyping, legacy code, teams prioritizing maintainability [5] [3] |

Experimental Performance Data in Ocean Modeling

Empirical data from recent studies provides a quantitative basis for comparison. The table below consolidates key performance metrics from GPU-accelerated ocean models.

| Ocean Model | GPU Programming Model | Speedup vs. CPU | Key Experimental Findings |
|---|---|---|---|
| SCHISM [4] | CUDA Fortran | 35.13x (large-scale, 2.56M grid points) | CUDA outperformed OpenACC in all tested scenarios, especially in large-scale simulations [4]. |
| Princeton Ocean Model (POM) [5] | OpenACC | 11.75x to 45.04x (increasing with resolution/simulation time) | Demonstrated that significant speedups are achievable with a directive-based approach, balancing performance and portability [5]. |
| SCHISM - Jacobi Solver [4] | CUDA Fortran | 3.06x (small-scale classical test) | Highlights that GPU acceleration is most effective for computationally intensive hotspot functions [4]. |
| WAM6 Ocean Wave Model [2] | OpenACC | 37x (8x A100 GPUs vs. dual-socket CPU node) | Showed that a full model port with OpenACC can achieve high performance on multi-GPU nodes [2]. |

Detailed Experimental Protocols and Methodologies

Understanding how these performance results were obtained is crucial for evaluating their validity and applicability to your own work.

Protocol 1: OpenACC Porting of the Princeton Ocean Model (POM)

The parallel version of POM was developed by restructuring the original Fortran code and applying OpenACC directives to the entire codebase [5].

- Accelerated Region Selection: Profiling identified key hotspot functions, including `profq`, `proft`, `advq`, `advt`, `advu`, and `advv`, which were then targeted for parallelization. This aligns with Amdahl's law, focusing effort on the parts of the code that consume the most runtime [5].
- Parallelization and Optimization: Researchers used OpenACC directives such as `!$acc parallel loop` to offload parallel loops to the GPU. Data transfer between CPU and GPU was optimized using the `present` clause to minimize communication overhead [5].
- Validation: The accuracy of the parallelized model was verified by comparing its output (e.g., sea surface height and temperature) with the original serial results using Root Mean Square Error (RMSE), confirming the correctness of the simulations [5].
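Amdahl's law, which the hotspot-selection step appeals to, bounds the whole-program speedup when only part of the runtime is accelerated: if a fraction `p` of the serial runtime is sped up by a factor `s`, the overall speedup is 1 / ((1 - p) + p / s). The minimal sketch below assumes `p` and `s` are already known from profiling; the values in the usage block are hypothetical, not figures from the POM study.

```python
def amdahl_speedup(p: float, s: float) -> float:
    """Overall speedup when a fraction p of the serial runtime is
    accelerated by a factor s and the remaining (1 - p) is unchanged."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    if s <= 0.0:
        raise ValueError("s must be positive")
    return 1.0 / ((1.0 - p) + p / s)


def amdahl_limit(p: float) -> float:
    """Ceiling on overall speedup as s grows without bound: 1 / (1 - p)."""
    if p >= 1.0:
        return float("inf")
    return 1.0 / (1.0 - p)


if __name__ == "__main__":
    # Hypothetical profile: the targeted routines take 95% of the runtime.
    for s in (10.0, 50.0, 1000.0):
        print(f"kernel speedup {s:>6.0f}x -> overall {amdahl_speedup(0.95, s):.2f}x")
    print(f"ceiling: {amdahl_limit(0.95):.1f}x")
```

Even an arbitrarily fast kernel cannot push the overall speedup past 1 / (1 - p), which is why the share of runtime covered by GPU code matters as much as the speed of any individual kernel.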

Protocol 2: CUDA Fortran vs. OpenACC for the SCHISM Model

This study developed a GPU-accelerated SCHISM (GPU–SCHISM) using CUDA Fortran and compared it against an OpenACC implementation [4].

- Hotspot Identification: The researchers first profiled the CPU-based Fortran code to identify the computationally intensive Jacobi iterative solver module as the primary performance hotspot [4].

- GPU Implementation: The hotspot module was ported to the GPU using two separate approaches: CUDA Fortran, which involves rewriting computational kernels for the GPU, and OpenACC, which uses directives to automatically generate GPU code [4].

- Performance Evaluation: Both implementations were tested on the same hardware across different problem scales, from small-scale classical tests to large-scale simulations with millions of grid points. The computational time and speedup ratio relative to the CPU were measured for each approach [4].
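The Jacobi method suits GPUs because, within one sweep, every unknown is updated only from the previous iterate, so the row updates are independent and can each be assigned to a separate thread. The sketch below is a dense, serial Python illustration of that property on a small diagonally dominant system; it is not SCHISM's solver, which works on the model's own grid and matrix, and the test systems are arbitrary choices.

```python
from typing import List, Tuple

Matrix = List[List[float]]
Vector = List[float]


def jacobi(a: Matrix, b: Vector, tol: float = 1e-10,
           max_iter: int = 10_000) -> Tuple[Vector, int]:
    """Solve a x = b by Jacobi iteration; return (x, iterations used).

    Each x_new[i] reads only x_old, never x_new, so the loop over i has
    no cross-iteration dependency: on a GPU each i can be one thread.
    Convergence is guaranteed when a is strictly diagonally dominant.
    """
    n = len(b)
    x_old = [0.0] * n
    for it in range(1, max_iter + 1):
        x_new = [0.0] * n
        for i in range(n):  # independent row updates
            off_diag = sum(a[i][j] * x_old[j] for j in range(n) if j != i)
            x_new[i] = (b[i] - off_diag) / a[i][i]
        # Max-norm of the update as a simple stopping criterion.
        delta = max(abs(x_new[i] - x_old[i]) for i in range(n))
        x_old = x_new
        if delta < tol:
            return x_old, it
    raise RuntimeError("Jacobi iteration did not converge")


if __name__ == "__main__":
    x, iters = jacobi([[4.0, 1.0], [2.0, 5.0]], [1.0, 2.0])
    print(x, iters)  # x is close to [1/6, 1/3]
```

The price of this independence is slower convergence than methods that reuse freshly updated values (such as Gauss-Seidel), which is often an acceptable trade on massively parallel hardware.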

The Scientist's Toolkit: Essential Research Reagents

In computational science, the "reagents" are the software tools, hardware, and code that enable research. The table below details key components used in the featured experiments.

| Tool / Solution | Function in Research |
|---|---|
| NVIDIA HPC SDK | A comprehensive suite including compilers (e.g., nvfortran) and libraries essential for compiling and optimizing Fortran code for GPUs using both CUDA and OpenACC [3] [4]. |
| OpenACC Directives | Compiler directives (e.g., `!$acc parallel loop`) added to existing Fortran/C/C++ code to instruct the compiler to parallelize loops and manage data movement for the GPU [5] [1]. |
| CUDA Fortran | An extension of the Fortran language that allows programmers to write GPU kernels and manage device memory explicitly, providing low-level control for performance optimization [4]. |
| Unified Memory | A memory management technology that creates a single address space between CPU and GPU, simplifying data transfer and reducing the need for explicit copy clauses in OpenACC [3] [6]. |
| Profiler (e.g., nvprof) | A performance analysis tool used to identify hotspot functions in the serial code, which are the most computationally intensive and thus the most critical targets for GPU acceleration [5] [4]. |
| Root Mean Square Error (RMSE) | A standard statistical metric used to validate the accuracy of the GPU-accelerated model by quantifying the difference between its results and those from the original CPU version [5]. |

Making the Choice: A Decision Framework for Researchers

The choice between CUDA and OpenACC is a trade-off between development time and final performance.

For researchers and development teams, the decision often hinges on project goals and resources.

Choose CUDA for unconstrained performance: If your primary goal is to achieve the highest possible performance for a production-level forecasting system and your team possesses the necessary expertise, CUDA is the stronger choice. Its low-level nature allows manual optimizations that compilers cannot always match, as demonstrated in the SCHISM model [4]. This path requires a commitment to a more complex and hardware-specific codebase.

Choose OpenACC for productivity and portability: If development speed, code maintainability, and portability across different GPU architectures are higher priorities, OpenACC is an excellent option. It allows scientists to stay focused on their domain science by making minimal, non-intrusive changes to their code. The use of Unified Memory on modern architectures like Grace Hopper further simplifies data management, significantly boosting developer productivity [3] [6]. This makes OpenACC ideal for rapid prototyping and for research groups with limited GPU programming bandwidth.

Adopt a hybrid or staged strategy: A pragmatic approach is to start with OpenACC to quickly get a functional GPU port and achieve initial speedups. Subsequent profiling can reveal specific kernels that remain as bottlenecks. These critical kernels can then be selectively optimized using CUDA, creating a hybrid model that balances productivity and performance.
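The profiling step in the staged strategy amounts to ranking kernels by their share of GPU time after the first OpenACC port and picking the few that dominate. A minimal sketch, assuming per-kernel times have already been exported from a profiler into a dictionary; the kernel names, timings, and the 15% threshold are hypothetical.

```python
from typing import Dict, List


def cuda_candidates(kernel_seconds: Dict[str, float],
                    min_share: float = 0.15) -> List[str]:
    """Return kernels whose share of total GPU time is at least
    min_share, largest first: the candidates for hand-written CUDA
    in a staged or hybrid port."""
    total = sum(kernel_seconds.values())
    if total <= 0.0:
        return []
    ranked = sorted(kernel_seconds.items(), key=lambda kv: kv[1], reverse=True)
    return [name for name, secs in ranked if secs / total >= min_share]


if __name__ == "__main__":
    # Hypothetical timings (seconds) measured after an OpenACC port.
    profile = {"kernel_a": 42.0, "kernel_b": 18.0, "kernel_c": 6.0, "kernel_d": 4.0}
    print(cuda_candidates(profile))
```

A share threshold keeps the CUDA surface small, so most of the codebase stays in directive form and only the kernels that dominate runtime pay the maintenance cost of explicit GPU code.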

The pursuit of higher resolution and greater physical fidelity in ocean modeling has escalated computational demands, necessitating a shift from traditional CPUs to accelerated computing. This move has sparked a critical debate within the scientific community regarding the optimal programming approach for harnessing GPU power. On one side, explicit GPU kernel programming with models like CUDA provides fine-grained hardware control for maximum performance. On the other, directive-based models such as OpenACC offer higher abstraction levels that promise better productivity and portability. Within oceanography research, where simulations can span from regional basins to global climate projections, this tradeoff between performance and productivity carries significant implications for research timelines, code maintenance, and computational efficiency. This article provides a structured comparison of these competing paradigms, drawing on recent experimental studies from ocean modeling and related computational fields to guide researchers in making informed technology selections for their specific applications.

Performance Comparison: Quantitative Analysis

Direct performance comparisons in scientific literature reveal a complex landscape where the optimal choice depends on application characteristics, implementation effort, and hardware target. The following table synthesizes key performance metrics from recent studies:

Table 1: Performance Comparison Between CUDA and OpenACC Implementations

| Application Domain | Programming Model | Speedup vs. CPU Baseline | Performance Relative to CUDA | Key Implementation Factors |
|---|---|---|---|---|
| Princeton Ocean Model (POM) | OpenACC | 11.75x to 45.04x [5] | Not applicable | Full code porting with data structure optimizations [5] |
| GPU-IOCASM Ocean Model | CUDA | 312x [7] | Not applicable | Complete GPU implementation with minimal CPU-GPU transfer [7] |
| Combustion Simulation (Alya CFD) | OpenACC | Not specified | ~50% of CUDA performance (general case) [8] | Memory-bound operations with minimal reuse [8] |
| Combustion Simulation (Alya CFD) | OpenACC (optimized) | Not specified | Up to 98% of CUDA performance [9] | Manual optimizations for specific kernels [9] |
| MASNUM Wave Model | CUDA with mixed-precision | 2.97-3.39x over double-precision [10] | Not applicable | Strategic precision reduction for non-critical variables [10] |

The performance differential between programming models stems from their fundamental architectural approaches. CUDA's explicit programming model enables developers to precisely control memory hierarchies, thread organization, and execution configuration, allowing for extensive algorithm-specific optimizations. This explains the remarkable 312x speedup achieved in the GPU-IOCASM ocean model, where developers implemented the entire computation on GPUs with minimal data transfer overhead [7]. Conversely, OpenACC's directive-based approach relies on compiler technology to map parallelism onto the target architecture, which may not always exploit the full potential of the hardware. This performance gap, however, can be substantially narrowed through targeted optimizations, with some studies demonstrating that OpenACC can reach up to 98% of CUDA performance for specific applications [9].
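The Alya rows above attribute the general-case OpenACC gap to memory-bound operations with minimal reuse. A simple roofline estimate shows why such kernels are limited by memory bandwidth rather than arithmetic throughput. This is a sketch under assumed numbers: the per-point flop and byte counts describe a hypothetical 5-point stencil, and the peak figures are placeholders rather than the specifications of any particular GPU.

```python
def arithmetic_intensity(flops_per_point: float, bytes_per_point: float) -> float:
    """Floating-point operations performed per byte of memory traffic."""
    return flops_per_point / bytes_per_point


def attainable_gflops(intensity: float, peak_gflops: float,
                      bandwidth_gbs: float) -> float:
    """Roofline bound: the lesser of the compute peak and
    (memory bandwidth x arithmetic intensity)."""
    return min(peak_gflops, intensity * bandwidth_gbs)


if __name__ == "__main__":
    PEAK, BW = 50_000.0, 3_000.0  # hypothetical GFLOP/s and GB/s
    # Hypothetical 5-point stencil in double precision with no reuse:
    # 5 loads + 1 store = 48 bytes and about 6 flops per grid point.
    no_reuse = arithmetic_intensity(6.0, 48.0)
    # If neighbours are reused from on-chip memory, each value is loaded
    # once and stored once: 16 bytes per point.
    with_reuse = arithmetic_intensity(6.0, 16.0)
    for label, ai in (("no reuse", no_reuse), ("with reuse", with_reuse)):
        print(f"{label}: {ai:.3f} flop/byte -> "
              f"{attainable_gflops(ai, PEAK, BW):.0f} GFLOP/s bound")
```

In both cases the bound sits far below the assumed compute peak, so performance is governed by how many bytes each grid point moves. Reducing that traffic (for example through CUDA shared memory) is the kind of optimization that explicit kernel code exposes directly and that a directive-based compiler may or may not find on its own.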

Table 2: Performance Portability and Developer Productivity Factors

| Factor | CUDA | OpenACC |
|---|---|---|
| Code Modification Scope | Extensive rewrite required | Minimal directives added to existing code [3] |
| Data Management | Manual control of CPU-GPU transfers [7] | Automated via unified memory (GH200/Grace Hopper) [3] |
| Architecture Portability | Limited to NVIDIA GPUs | Supports multiple accelerators through compiler implementation |
| Learning Curve | Steep, requires deep GPU architecture knowledge | Gradual, preserves existing code structure [5] |
| Optimization Effort | High, but provides fine-grained control | Moderate, dependent on compiler capabilities |

Experimental Protocols in Ocean Modeling

OpenACC Implementation for the Princeton Ocean Model

The parallel Princeton Ocean Model based on OpenACC exemplifies a systematic methodology for accelerating legacy Fortran code. The implementation followed a structured approach:

Hotspot Identification and Profiling: Researchers first identified computationally intensive sections through profiling, focusing on functions governing 2D and 3D flow dynamics, mode splitting, and the turbulence closure model [5].

Incremental Parallelization: OpenACC directives were applied incrementally:

- Parallel Loop Constructs: Researchers annotated loops with `!$acc parallel loop` directives, using `gang` and `vector` clauses to express parallelism across geographical grid points [5].
- Data Management: Initial implementations used `copy` and `create` clauses to manage data transfers, though this complexity is reduced on unified memory architectures like Grace Hopper [3].
- Asynchronous Execution: The `async` clause enabled overlapping computation and data transfer, helping to hide the cost of CPU-GPU transfers [5].

Validation and Accuracy Verification: To ensure scientific integrity, researchers compared parallel and serial results using Root Mean Square Error (RMSE) analysis for key variables including sea surface height and temperature, confirming minimal deviation between implementations [5].
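The RMSE check used to validate the port takes only a few lines. A minimal sketch, assuming the serial and GPU outputs for one field (e.g., sea surface height) have been flattened into equal-length sequences; the acceptance tolerance and the sample values are hypothetical, since the study's threshold is not given here.

```python
import math
from typing import Sequence


def rmse(reference: Sequence[float], candidate: Sequence[float]) -> float:
    """Root mean square error between two equal-length fields."""
    if len(reference) != len(candidate) or not reference:
        raise ValueError("fields must be non-empty and the same length")
    sq = sum((r - c) ** 2 for r, c in zip(reference, candidate))
    return math.sqrt(sq / len(reference))


def validate(reference: Sequence[float], candidate: Sequence[float],
             tol: float) -> bool:
    """Accept the GPU result if its RMSE against the serial run is at most
    tol. Bitwise equality is not expected: parallel reductions and fused
    multiply-adds can change floating-point rounding."""
    return rmse(reference, candidate) <= tol


if __name__ == "__main__":
    serial_ssh = [0.12, 0.15, 0.11, 0.09]  # hypothetical values (m)
    gpu_ssh = [0.12, 0.15000001, 0.11, 0.09]
    print(rmse(serial_ssh, gpu_ssh), validate(serial_ssh, gpu_ssh, tol=1e-6))
```

In practice the check would be repeated for each validated variable (sea surface height, temperature, and so on) and at several output times, since small rounding differences can grow over a long integration.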

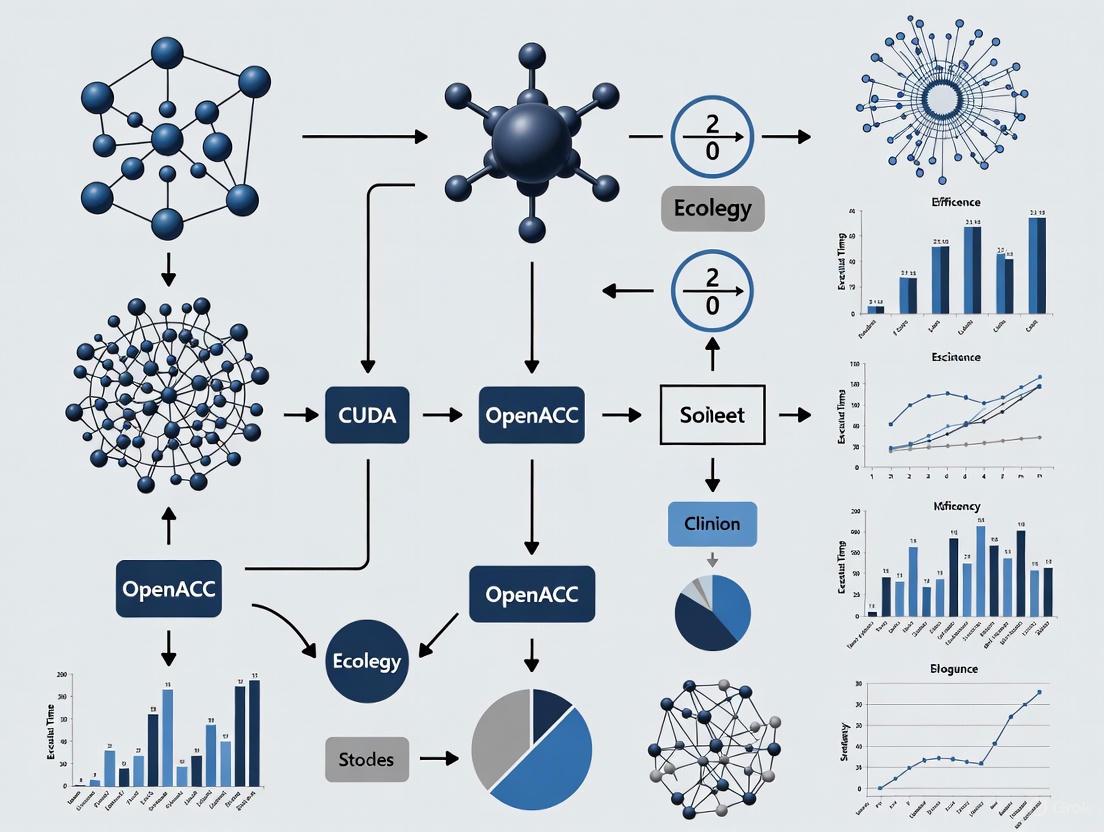

The following diagram illustrates this experimental workflow:

CUDA-C Implementation for GPU-IOCASM Ocean Model

The GPU-IOCASM (Implicit Ocean Current and Storm Surge Model) represents a ground-up CUDA implementation with distinct methodological considerations:

Algorithm Restructuring for Implicit Iteration: The finite difference method with implicit iteration required specialized attention to maintain numerical stability while exploiting GPU parallelism [7].

Memory Architecture Optimization: Developers designed data structures to maximize memory coalescing and to make effective use of the GPU memory hierarchy.

Asynchronous Execution Pipeline: The implementation separated computation from I/O operations, allowing the GPU to proceed with subsequent calculations while data transfers occurred concurrently, effectively hiding I/O latency [7].
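Separating computation from output can be sketched as a producer/consumer pipeline: the time-stepping loop hands each snapshot to a background writer and immediately continues. This Python version only illustrates the control flow, under stated assumptions (a single writer thread and a bounded queue of depth 2); in a CUDA model the overlap would come from mechanisms such as CUDA streams and asynchronous copies, not Python threads. The `step` and `write` callables are hypothetical placeholders.

```python
import queue
import threading
from typing import Callable, List, Optional, Tuple

Snapshot = Tuple[int, List[float]]


def run_with_async_output(n_steps: int,
                          step: Callable[[List[float]], List[float]],
                          write: Callable[[int, List[float]], None],
                          initial: List[float]) -> List[float]:
    """Advance a model state n_steps times while a background thread
    writes each snapshot, so the compute loop does not wait on output."""
    # Bounded queue: at most two snapshots in flight, which caps memory
    # use and applies back-pressure if output falls far behind.
    pending: "queue.Queue[Optional[Snapshot]]" = queue.Queue(maxsize=2)
    errors: List[BaseException] = []

    def writer() -> None:
        while True:
            item = pending.get()
            if item is None:              # sentinel: no more output
                return
            if errors:                    # keep draining after a failure
                continue
            try:
                write(*item)
            except BaseException as exc:  # report later; never block producer
                errors.append(exc)

    worker = threading.Thread(target=writer)
    worker.start()
    state = list(initial)
    try:
        for n in range(n_steps):
            state = step(state)
            # Hand off a copy so later steps cannot mutate the snapshot.
            pending.put((n, list(state)))
    finally:
        pending.put(None)
        worker.join()
    if errors:
        raise errors[0]
    return state


if __name__ == "__main__":
    log: List[Snapshot] = []
    final = run_with_async_output(
        3, lambda s: [v + 1.0 for v in s], lambda n, s: log.append((n, s)), [0.0, 0.0])
    print(final, log)
```

Copying the state before handing it off is the essential design choice: without it, the writer could record values that the next time step has already overwritten.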


The Scientist's Toolkit: Research Reagent Solutions

Selecting appropriate tools and techniques is essential for successful GPU acceleration in ocean modeling. The following table catalogues key "research reagents" – essential software and hardware components with their specific functions in computational experiments:

Table 3: Essential Research Reagents for GPU-Accelerated Ocean Modeling

| Tool/Technique | Function | Example Applications |
|---|---|---|
| OpenACC Directives | Compiler-guided GPU parallelization with minimal code modification [5] [3] | Princeton Ocean Model (POM), NEMO ocean model [5] [3] |
| CUDA Toolkit | Explicit GPU kernel development with low-level hardware control [7] | GPU-IOCASM ocean model, MASNUM wave model [7] [10] |
| Mixed-Precision Methods | Strategic use of variable precision (float16/float32/float64) to balance accuracy and performance [10] | MASNUM wave model, NEMO ocean model [10] |
| NVIDIA HPC SDK | Compiler suite supporting OpenACC, CUDA Fortran, and unified memory programming [3] | NEMO model porting, POM optimization [5] [3] |
| Grace Hopper Architecture | Unified CPU-GPU memory system eliminating explicit data transfer directives [3] | NEMO ocean model, future porting projects [3] |
| Root Mean Square Error (RMSE) | Quantitative validation of parallel implementation accuracy [5] | POM-OpenACC verification [5] |
| MPI + OpenACC/CUDA | Multi-node scaling combining distributed and accelerated computing [11] | Large-scale ocean modeling across multiple nodes |

The choice between explicit GPU kernels and compiler directives represents a fundamental tradeoff between computational efficiency and developer productivity in ocean modeling. CUDA implementations demonstrate the upper performance potential, with studies reporting speedups exceeding 300x compared to CPU baselines through meticulous memory optimization and minimal data transfer overhead [7]. Conversely, OpenACC approaches offer compelling productivity advantages, achieving substantial speedups (11.75-45.04x) with significantly less code modification and greater platform flexibility [5] [3]. The performance gap between these paradigms is not absolute: carefully optimized OpenACC code can reach up to 98% of CUDA performance for specific kernels [9], compared with roughly 50% in the general memory-bound case [8].

For research teams with GPU programming expertise targeting maximum performance, CUDA remains the preferred option, particularly for new code development. However, for most ocean modeling research groups prioritizing code maintainability, portability, and incremental acceleration of legacy Fortran codebases, OpenACC presents a compelling alternative, especially when leveraging modern unified memory architectures like Grace Hopper that simplify data management complexity [3]. Future directions will likely see increased adoption of mixed-precision strategies [10] and performance-portable programming models that further bridge the divide between these competing paradigms.
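Why precision reduction is applied selectively, to non-critical variables, can be seen in a small experiment: accumulating many small increments, as a time-stepped model does, loses accuracy much faster in single precision. The sketch below emulates float32 rounding in pure Python via `struct`; the increment and step count are arbitrary illustrative choices and do not correspond to MASNUM's variables.

```python
import struct


def to_f32(x: float) -> float:
    """Round a Python float (IEEE double) to the nearest float32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def accumulate(increment: float, steps: int, single: bool) -> float:
    """Add `increment` `steps` times. With single=True, the operand and
    every intermediate sum are rounded to float32, emulating a float32
    accumulator (float64's 53-bit significand makes this double rounding
    give the same result as native float32 addition)."""
    inc = to_f32(increment) if single else increment
    total = 0.0
    for _ in range(steps):
        total = total + inc
        if single:
            total = to_f32(total)
    return total


if __name__ == "__main__":
    steps, exact = 100_000, 10_000.0  # 100,000 increments of 0.1
    for single in (False, True):
        err = abs(accumulate(0.1, steps, single) - exact)
        print(f"{'float32' if single else 'float64'} accumulated error: {err:.3e}")
```

The float32 error is many orders of magnitude larger than the float64 error, which is why mixed-precision schemes typically keep accumulators and sensitive state in double precision and reduce precision only where the error is tolerable.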

Effective data management represents one of the most persistent challenges in high-performance computing (HPC), particularly for complex scientific domains such as ocean modeling. Traditional CPU-GPU architectures require programmers to explicitly manage data transfers between separate memory spaces, adding significant complexity to development workflows. This manual data movement not only complicates code maintenance but also introduces potential performance bottlenecks through PCIe bandwidth limitations. Within ocean modeling research, where simulations increasingly incorporate multiple physical processes at higher resolutions, these constraints directly impact research productivity and computational efficiency. The NVIDIA Grace Hopper Superchip introduces a transformative approach through its hardware-integrated unified memory architecture, offering a potential paradigm shift in how scientific applications manage data across processing units.

The architectural foundation of Grace Hopper centers on the NVLink-C2C interconnect, which creates a coherent memory space between the Grace CPU and Hopper GPU. This design eliminates the traditional separation between CPU and GPU memory, enabling a programming model where data movement occurs transparently without explicit developer intervention. For research teams working with large-scale ocean models like NEMO (Nucleus for European Modelling of the Ocean) and POM (Princeton Ocean Model), this architectural innovation promises to significantly reduce the code complexity associated with GPU acceleration while maintaining computational performance [3]. This analysis examines how unified memory simplifies data management specifically within ocean modeling research contexts, comparing performance outcomes with traditional approaches and providing implementation guidance for researchers transitioning to this new architecture.

The Grace Hopper Superchip Architecture

The NVIDIA Grace Hopper Superchip represents a groundbreaking architectural approach to heterogeneous computing, integrating two distinct processing units through a high-bandwidth, memory-coherent interconnect. This system combines a 72-core Arm Neoverse V2 Grace CPU with a Hopper GPU featuring up to 144 streaming multiprocessors, creating a unified processing platform specifically optimized for HPC and AI workloads [12]. The Grace CPU incorporates up to 512 GB of LPDDR5X memory delivering 546 GB/s of bandwidth, while the Hopper GPU includes up to 96 GB of HBM3 memory with 4 TB/s of bandwidth [13]. Critically, these memory subsystems are not isolated components but rather parts of an integrated memory hierarchy accessible from both processing units.

The true innovation of this architecture lies in the NVLink-C2C interconnect, which provides a direct, coherent connection between the CPU and GPU with total bandwidth of up to 900 GB/s – 7x higher than PCIe Gen5 [12]. This high-speed link enables the CPU and GPU to share a single per-process page table, allowing all threads regardless of location to access all system-allocated memory whether it physically resides in CPU or GPU memory [12]. The hardware-enforced memory coherency means that CPU and GPU threads can concurrently and transparently access both CPU- and GPU-resident memory, fundamentally changing how applications manage data in heterogeneous environments.

Comparative Architecture Analysis

Table 1: Architectural Comparison Between Traditional and Grace Hopper Systems

| Architectural Feature | Traditional x86 + GPU | NVIDIA Grace Hopper |
|---|---|---|
| CPU-GPU Interconnect | PCIe Gen5 (128 GB/s theoretical) | NVLink-C2C (900 GB/s total) |
| Memory Coherency | Software-emulated via HMM | Hardware-enforced |
| Programming Model | Explicit data transfers | Unified Memory with transparent migration |
| Required Data Management | Manual cudaMemcpy calls | Automatic page migration |
| Memory Oversubscription | Limited by GPU memory | Supported via CPU memory (up to 512 GB) |
| Atomic Operations | Limited cross-device support | Fully supported across CPU and GPU |

Unified Memory Implementation Mechanism

The unified memory implementation in Grace Hopper operates through a sophisticated combination of hardware and software technologies. The Address Translation Services (ATS) enable the CPU and GPU to share memory management functions, creating a unified virtual address space where both processors can access all allocated memory regardless of physical location [12]. When a GPU thread accesses a memory page initially residing in CPU memory, the NVLink-C2C interconnect facilitates direct access without requiring explicit page migration. This process occurs transparently to the application, with the NVIDIA driver managing page faults and migrations automatically.

This architecture also supports the Extended GPU Memory feature, which enables the Hopper GPU to directly address all CPU memory within the superchip [12]. Each Hopper GPU can access up to 608 GB of memory (combining 96 GB HBM3 and 512 GB LPDDR5X), significantly expanding the effective memory capacity available to GPU kernels [12]. This capability is particularly valuable for ocean modeling applications working with large domain decompositions or high-resolution datasets that exceed typical GPU memory constraints. The memory subsystem further enhances performance through intelligent caching strategies, with the Grace CPU able to cache GPU memory at cache-line granularity, optimizing access patterns for both computational units.

Programming Model Transformation: From Explicit to Implicit Data Management

Traditional Data Management Challenges

Traditional GPU programming models require explicit data movement between CPU and GPU memory spaces, creating significant development overhead particularly for complex scientific codes. In conventional systems, programmers must manually manage every data transfer using CUDA API calls like cudaMemcpy, carefully orchestrating the movement of data structures between processing units [14]. This approach becomes exceptionally complex when working with dynamic data structures, nested types, or object-oriented designs common in modern scientific software.

The challenges are particularly pronounced in ocean modeling frameworks like NEMO, which employ sophisticated data structures for representing oceanographic variables. As demonstrated in research porting NEMO to GPUs, traditional approaches require extensive "deep copy" operations to correctly handle allocatable array members within derived types [3]: the parent structure and each of its allocatable members must be copied to the device separately, and the device copy of the parent must then point at the device copies of its members.

This explicit data management often constitutes a substantial portion of GPU acceleration efforts, sometimes exceeding the effort required for actual computation parallelization [3]. For C++ applications using standard template library containers like std::vector, the situation becomes even more complex because simply copying the container object does not transfer its dynamically allocated elements, requiring programmers to revert to non-object-oriented styles and work directly with raw pointers [3].

Unified Memory Programming Approach

The Grace Hopper unified memory model fundamentally simplifies GPU programming by eliminating explicit data transfer operations. With hardware-enforced memory coherency, programmers can focus primarily on computation parallelization while the system automatically manages data movement. The same data structure that required complex deep copy operations in traditional systems can be accessed directly from GPU code with unified memory, without any data directives.

This simplification extends to C++ applications as well, where std::vector and other STL containers can be used directly in GPU kernels without specialized data transfer code [3]. The unified memory system automatically handles the complexity of container internals and element access, preserving object-oriented design patterns while maintaining performance.
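The contrast between explicit deep copies and unified memory can be illustrated with a toy simulation of address spaces. Everything in the sketch below is hypothetical: `AddressSpace`, `OceanState`, and the copy helpers are illustrative names, not NEMO, OpenACC, or CUDA APIs. A derived type holds a pointer to its temperature array. With separate host and device address spaces, copying only the struct leaves the device holding a host address it cannot dereference, so the array must be copied and the pointer translated (a deep copy). With a single shared address space, the original pointer is valid everywhere.

```python
from dataclasses import dataclass, field
from typing import Any, Dict


class InvalidAddress(Exception):
    """Raised when an address does not belong to the address space."""


@dataclass
class AddressSpace:
    """Toy memory: integer addresses starting at `base` map to objects."""
    name: str
    base: int
    cells: Dict[int, Any] = field(default_factory=dict)

    def alloc(self, value: Any) -> int:
        addr = self.base + len(self.cells)
        self.cells[addr] = value
        return addr

    def load(self, addr: int) -> Any:
        if addr not in self.cells:
            raise InvalidAddress(f"{self.name}: no object at {addr:#x}")
        return self.cells[addr]


@dataclass
class OceanState:
    """Hypothetical derived type: a size plus a pointer to its array."""
    nx: int
    temp_ptr: int


def shallow_copy(obj_addr: int, src: AddressSpace, dst: AddressSpace) -> int:
    """Copy only the struct: temp_ptr still holds an address in src."""
    obj = src.load(obj_addr)
    return dst.alloc(OceanState(obj.nx, obj.temp_ptr))


def deep_copy(obj_addr: int, src: AddressSpace, dst: AddressSpace) -> int:
    """Copy the member array, then the struct with the pointer translated."""
    obj = src.load(obj_addr)
    dst_array = dst.alloc(list(src.load(obj.temp_ptr)))
    return dst.alloc(OceanState(obj.nx, dst_array))


if __name__ == "__main__":
    host = AddressSpace("host", base=0x1000)
    device = AddressSpace("device", base=0x8000)
    state = host.alloc(OceanState(3, host.alloc([10.0, 11.0, 12.0])))

    dev_shallow = device.load(shallow_copy(state, host, device))
    try:
        device.load(dev_shallow.temp_ptr)
    except InvalidAddress as err:
        print("shallow copy fails:", err)

    dev_deep = device.load(deep_copy(state, host, device))
    print("deep copy works:", device.load(dev_deep.temp_ptr))

    # Unified memory: one address space, so the original pointer is valid as-is.
    print("unified:", host.load(host.load(state).temp_ptr))
```

Every additional allocatable member adds another copy-and-translate step to the traditional path, while the unified-memory path stays unchanged; that asymmetry is the productivity gain the NEMO team describes.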

The productivity benefits of this approach were quantified in the NEMO ocean model porting project, where researchers reported significantly accelerated development cycles [3]. By eliminating data management complexity, the development team could focus on parallelization strategies and performance optimization, reaching functional GPU acceleration more rapidly than with traditional approaches. As one researcher noted: "Taking advantage of unified memory programming really allows us to move faster with the porting of the NEMO ocean model to GPUs. It also gives us the flexibility to experiment with running more workloads on GPUs compared to the traditional approach" [3].

Performance Analysis: Unified Memory in Practice

Bandwidth and Transfer Performance

The performance advantages of Grace Hopper's unified memory architecture manifest most clearly in data transfer benchmarks between CPU and GPU memory spaces. Comparative testing reveals substantial improvements in memory transfer rates compared to traditional PCIe-based systems.

Table 2: Memory Transfer Performance Comparison

| Transfer Type | H200 (PCIe) | GH200 (NVLink-C2C) | Performance Improvement |
|---|---|---|---|
| Host→Device (500 MiB) | 57 GiB/s | 135 GiB/s | ~2.4x faster |
| Round-trip Transfer | 41 GiB/s | 65 GiB/s | ~1.6x faster |
| Kernel Access to Migrated Memory | ~218 GiB/s | ~2192 GiB/s | ~10x faster |

The NVLink-C2C interconnect's 900 GB/s total bandwidth provides the foundation for these performance gains, significantly exceeding the theoretical maximum of PCIe Gen5 (128 GB/s) [15]. Real-world measurements show practical host-to-device transfer rates of 135 GiB/s on Grace Hopper compared to 57 GiB/s on traditional H200 systems, roughly a 2.4x improvement for one-way transfers [15]. This enhanced bandwidth directly benefits ocean modeling applications that must frequently exchange boundary conditions or synchronize results between computational phases.
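The bandwidth figures translate directly into transfer times. A minimal worked example, using the host-to-device rates from Table 2 (Memory Transfer Performance Comparison) and treating them as sustained rates for a single contiguous copy; this is an assumption, since real transfers also pay a fixed latency that the sketch ignores.

```python
def transfer_ms(size_mib: float, gib_per_s: float) -> float:
    """Time in milliseconds to move size_mib MiB at gib_per_s GiB/s."""
    return (size_mib / 1024.0) / gib_per_s * 1000.0


if __name__ == "__main__":
    size = 500.0                      # MiB, the buffer size used in Table 2
    h200 = transfer_ms(size, 57.0)    # PCIe host-to-device rate from Table 2
    gh200 = transfer_ms(size, 135.0)  # NVLink-C2C host-to-device rate from Table 2
    print(f"H200: {h200:.2f} ms, GH200: {gh200:.2f} ms, ratio {h200 / gh200:.2f}x")
```

For an exchange repeated every time step, the per-transfer saving is multiplied by the number of steps in the run.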

Ocean Modeling Case Study: NEMO Implementation

The porting of the NEMO ocean model to Grace Hopper provides a compelling case study in unified memory performance for real-world scientific applications. Researchers at the Barcelona Supercomputing Center adopted a streamlined porting strategy leveraging unified memory capabilities [3]. Their methodology involved:

- Parallelizing tightly nested loops using `!$acc parallel loop gang vector collapse()` directives
- Annotating loops with cross-iteration dependencies using `!$acc loop seq`
- Wrapping array operations in `!$acc kernels` constructs
- Annotating external routines called from parallel loops with `!$acc routine seq`

Critically, this approach entirely omitted explicit data management directives that would have been essential in traditional GPU programming. The research team reported achieving "significant performance gains with relatively minimal effort," with the unified memory system automatically handling data movement between the Grace CPU and Hopper GPU [3]. Although specific speedup figures for the NEMO implementation weren't provided in the available literature, the demonstrated performance was sufficient to justify further investment in the unified memory approach for production ocean modeling workloads.
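The distinction behind the first two directives, loops that are safe to parallelize versus loops with cross-iteration dependencies, can be sketched in Python. The docstrings indicate where a corresponding Fortran loop would carry the directives listed above; the diffusion and column-integral kernels are hypothetical stand-ins, not NEMO routines.

```python
from typing import List

Grid = List[List[float]]


def diffuse_horizontal(t: Grid, kappa: float) -> Grid:
    """One explicit diffusion step on interior points.

    Every out[j][i] is computed from the input grid t only, so the
    (j, i) iterations are independent and can run in any order. A
    Fortran loop nest of this shape is the kind annotated with
    `!$acc parallel loop gang vector collapse(2)` (two nested loops).
    """
    nj, ni = len(t), len(t[0])
    out = [row[:] for row in t]  # boundary values copied unchanged
    for j in range(1, nj - 1):
        for i in range(1, ni - 1):
            lap = t[j - 1][i] + t[j + 1][i] + t[j][i - 1] + t[j][i + 1] - 4.0 * t[j][i]
            out[j][i] = t[j][i] + kappa * lap
    return out


def integrate_column(w: List[float], dz: List[float]) -> List[float]:
    """Cumulative integral down one water column.

    Level k needs the running total from level k - 1: a loop-carried
    dependency, so this loop must stay sequential (`!$acc loop seq`),
    while different columns can still run in parallel.
    """
    running = 0.0
    out = []
    for k in range(len(w)):
        running += w[k] * dz[k]
        out.append(running)
    return out


if __name__ == "__main__":
    grid = [[0.0] * 4 for _ in range(4)]
    grid[1][1] = 1.0
    print(diffuse_horizontal(grid, 0.1)[1])
    print(integrate_column([0.5, 0.25, 0.25], [10.0, 20.0, 40.0]))
```

Writing into a separate output array is what keeps the horizontal loop dependency-free; updating `t` in place would make each point depend on neighbours already modified in the same sweep.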

Performance Optimization Considerations

While unified memory provides substantial performance benefits, maximizing application performance requires attention to several key factors. Page size configuration significantly impacts memory access performance, with testing showing that using 64KiB pages instead of the default 4KiB Linux pages improves memory access bandwidth from approximately 218 GiB/s to over 2112 GiB/s for GPU kernels accessing data initially allocated with malloc [15].

The strategic use of allocation functions also influences performance. Benchmarks demonstrate that cudaMalloc delivers the highest bandwidth at 2390 GiB/s for data that remains primarily GPU-resident, while cudaMallocManaged reaches 2192 GiB/s [15]. For optimal performance, researchers recommend using cudaMemPrefetchAsync to proactively migrate data between memory spaces rather than relying solely on on-demand page faulting [16]. As noted in performance discussions: "Even with very sophisticated driver prefetching heuristics, on-demand access with migration will never beat explicit bulk data copies or prefetches in terms of performance for large contiguous memory regions" [16].
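Both recommendations, larger pages and explicit prefetching with `cudaMemPrefetchAsync`, reduce how often the system must service a page fault. The toy cost model below makes that concrete under deliberately pessimistic, hypothetical assumptions: faults are serviced one at a time at a fixed overhead, and the payload then moves at a fixed bandwidth. Real drivers batch and overlap faults, so the absolute numbers are not predictions; only the trend with page size and prefetching is meaningful.

```python
import math


def transfer_ms(size_mib: float, gib_per_s: float) -> float:
    """Time to move size_mib MiB at gib_per_s GiB/s, in milliseconds."""
    return (size_mib / 1024.0) / gib_per_s * 1000.0


def pages_touched(size_mib: float, page_kib: float) -> int:
    """Number of pages covering a contiguous buffer."""
    return math.ceil(size_mib * 1024.0 / page_kib)


def on_demand_ms(size_mib: float, page_kib: float,
                 fault_us: float, gib_per_s: float) -> float:
    """Pessimistic model: one serialized fault per page, then the data."""
    faults = pages_touched(size_mib, page_kib)
    return faults * fault_us / 1000.0 + transfer_ms(size_mib, gib_per_s)


def prefetch_ms(size_mib: float, call_overhead_us: float,
                gib_per_s: float) -> float:
    """One bulk migration request, then the data at full bandwidth."""
    return call_overhead_us / 1000.0 + transfer_ms(size_mib, gib_per_s)


if __name__ == "__main__":
    size, bw, fault = 512.0, 100.0, 20.0  # hypothetical MiB, GiB/s, microseconds
    for page in (4.0, 64.0):
        print(f"{page:>4.0f} KiB pages: {pages_touched(size, page):>6d} faults, "
              f"{on_demand_ms(size, page, fault, bw):8.2f} ms on demand")
    print(f"bulk prefetch: {prefetch_ms(size, fault, bw):.2f} ms")
```

Moving from 4 KiB to 64 KiB pages cuts the page count, and therefore the fault count in this model, by a factor of 16, while a single bulk prefetch removes per-page overhead almost entirely.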

Cache management represents another important consideration: benchmarks show that "cold" GPU access to host memory requires 1.117 ms, while "warm" access with cached data completes in 0.589 ms, nearly twice as fast [15]. Applications with predictable access patterns should therefore aim to maintain data locality rather than frequently switching between different memory regions.

Comparative Analysis: CUDA vs. OpenACC with Unified Memory

Programming Approach Comparison

The unified memory architecture of Grace Hopper influences programming model selection for ocean modeling applications, with both CUDA and OpenACC offering distinct advantages in this environment. CUDA provides explicit control over GPU operations and memory management, while OpenACC offers a higher-level directive-based approach that can be more accessible to domain scientists.

Table 3: CUDA vs. OpenACC for Ocean Modeling on Grace Hopper

| Characteristic | CUDA with Unified Memory | OpenACC with Unified Memory |
|---|---|---|
| Programming Effort | Moderate to High | Low to Moderate |
| Control Granularity | Fine-grained | Coarse-grained |
| Code Modifications | Extensive | Minimal (directive-based) |
| Performance Optimization | Maximum control | Compiler-dependent |
| Portability | NVIDIA GPUs | Multiple accelerators |
| Learning Curve | Steep | Gradual |
| Maintenance Complexity | Higher | Lower |

In traditional systems, CUDA often requires significant code restructuring to manage explicit data transfers, while OpenACC directives can be added incrementally to existing code. However, with Grace Hopper's unified memory, both approaches benefit from reduced data management complexity. The NEMO ocean model implementation used OpenACC directives to accelerate code with minimal modifications, demonstrating the productivity advantages of this approach [3]. As researchers noted: "Unified memory eliminates the need for explicit data management code, enabling us to focus solely on parallelization. With less code, developers see speedups at an earlier phase of the GPU porting process" [3].
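The minimal C sketch below makes the difference concrete. It uses OpenACC, whose C spelling of the Fortran !$acc directives is #pragma acc, and the function names are hypothetical. The first version states the device copies explicitly, as required on systems with separate CPU and GPU memories. The second version omits the data region and assumes a unified-memory build target such as Grace Hopper, where placement is left to the runtime and hardware. Without an OpenACC compiler the pragmas are ignored and both functions run serially on the CPU.

```c
#include <stddef.h>

/* Explicit data management: the data region copies field to device
 * memory on entry and copies it back to host memory on exit. */
void scale_field_explicit(double *restrict field, size_t n, double factor)
{
    #pragma acc data copy(field[0:n])
    {
        #pragma acc parallel loop
        for (size_t i = 0; i < n; ++i)
            field[i] *= factor;
    }
}

/* Unified-memory style (assumed build target): the same loop with no
 * data directives; the runtime and hardware decide where field lives. */
void scale_field_unified(double *restrict field, size_t n, double factor)
{
    #pragma acc parallel loop
    for (size_t i = 0; i < n; ++i)
        field[i] *= factor;
}
```

In a large model, the explicit version multiplies into many data regions and update directives, and these are exactly the code that unified memory lets developers postpone or drop.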

Performance Outcomes in Ocean Modeling

Research studies quantifying ocean model performance on GPU architectures provide valuable reference points for expected outcomes, though specific Grace Hopper unified memory results for some models require extrapolation from related implementations. The Princeton Ocean Model (POM) implementation using OpenACC demonstrated speedup factors ranging from 11.75x to 45.04x compared to serial execution, with higher speedups achieved for longer simulations and increased resolutions [5]. This performance resulted from restructuring parts of the POM code and applying OpenACC directives to the entire codebase while optimizing parallel algorithms and data transfer processes.

Another relevant example comes from the GPU-IOCASM (GPU-Implicit Ocean Current and Storm Surge Model), which achieved a remarkable 312x speedup compared to traditional CPU-based approaches [7]. This implementation focused on maximizing GPU computation while minimizing data transfer overhead, a strategy that aligns well with unified memory advantages. Although conducted on traditional GPU hardware, these results indicate the significant performance potential available through effective GPU acceleration of ocean modeling workloads.

For Grace Hopper specifically, NASA reported overall application speedups of 1.5-2.2x compared to AMD Milan-based systems augmented with NVIDIA A100 GPUs for various numerical analysis codes [17]. These improvements came with reduced energy consumption, demonstrating the performance-per-watt advantages of the Grace Hopper architecture for scientific computing workloads.

Diagram 1: Architectural comparison between traditional CPU-GPU systems and NVIDIA Grace Hopper

Implementation Guide: Optimizing Ocean Models for Grace Hopper

Research Toolkit for Unified Memory Development

Table 4: Essential Tools and Techniques for Grace Hopper Ocean Model Development

| Category | Tool or Technique | Application in Ocean Modeling |
|---|---|---|
| Compilation Tools | NVIDIA HPC SDK (nvfortran) | Compiling Fortran-based ocean models |
| | CUDA Toolkit (nvcc) | CUDA C++ development and profiling |
| Programming Models | OpenACC | Directive-based CPU/GPU parallelism |
| | Standard Language Parallelism (ISO C++, Fortran) | Cross-platform parallel code |
| Profiling Tools | Nsight Systems | Application performance analysis |
| | Nsight Compute | GPU kernel optimization |
| Memory Management | cudaMallocManaged | Unified memory allocations |
| | cudaMemPrefetchAsync | Optimized data placement |
| | 64KiB Page Size | Improved memory access performance |
| Optimization Techniques | Async Operations | Overlapping computation and data movement |
| | Loop Collapsing | Increased GPU parallelism |
| | Stream Parallelism | Concurrent kernel execution |

Practical Implementation Methodology

Successfully deploying ocean models on Grace Hopper systems follows a structured approach that leverages unified memory advantages while addressing potential performance considerations. A recommended methodology includes:

Initial Porting Phase: Begin by adding basic OpenACC directives or CUDA kernels to computational hotspots without explicit data management. Use unified memory exclusively during initial development to validate correctness and establish performance baselines [3]. For NEMO, this involved parallelizing the diffusion and advection routines for active and passive tracers while relying on unified memory for automatic data movement.
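The minimal C/OpenACC sketch below illustrates this first phase. It assumes a 2-D tracer field stored row-major and a simple five-point diffusion stencil, both hypothetical simplifications rather than NEMO's actual tracer routines. Only the hotspot loop nest carries a directive, and there are no data clauses, so array placement is left to unified memory.

```c
/* One explicit diffusion step on an nx-by-ny row-major grid.
 * Only interior points are updated; no data clauses are given, so
 * t_old and t_new are assumed to be reachable via unified memory. */
void diffuse_tracer(const double *restrict t_old, double *restrict t_new,
                    int nx, int ny, double kappa_dt_over_dx2)
{
    #pragma acc parallel loop gang vector collapse(2)
    for (int j = 1; j < ny - 1; ++j) {
        for (int i = 1; i < nx - 1; ++i) {
            int c = j * nx + i;                 /* flat index of (i, j) */
            double lap = t_old[c - 1] + t_old[c + 1]
                       + t_old[c - nx] + t_old[c + nx] - 4.0 * t_old[c];
            t_new[c] = t_old[c] + kappa_dt_over_dx2 * lap;
        }
    }
}
```

The caller is expected to initialize the boundary values of t_new, because the loop nest updates interior points only.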

Memory Configuration: Configure systems to use 64KiB memory pages instead of the default 4KiB pages, as this significantly improves memory access performance for GPU kernels [15]. This system-level optimization can dramatically improve memory bandwidth for applications accessing data initially allocated in CPU memory.
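Before benchmarking, it can be worth confirming which base page size the running kernel actually uses. The sketch below queries it with the POSIX sysconf call, and the helper names are hypothetical. On Linux the base page size is normally a kernel build option, so moving to 64KiB pages generally means running a kernel built for them rather than changing a runtime setting.

```c
#include <unistd.h>

/* Base page size of the running kernel, in bytes (e.g. 4096 or 65536). */
long base_page_size(void)
{
    return sysconf(_SC_PAGESIZE);
}

/* Nonzero if the kernel uses 64KiB base pages. */
int uses_64k_pages(void)
{
    return base_page_size() == 65536L;
}
```

On many x86-64 Linux systems this reports 4096.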

Performance Optimization: Introduce asynchronous operations and prefetching directives based on profiling data. Use cudaMemPrefetchAsync to proactively migrate data to the appropriate processor before computation [16]. For OpenACC applications, add async clauses to parallel constructs to enable overlapping of computation and data movement, followed by wait directives before MPI communications or when data is needed on the CPU [3].
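The async pattern can be sketched in C as follows. Here #pragma acc corresponds to Fortran's !$acc, and the function name and the queue number 1 are hypothetical choices. Both loops go to the same async queue, whose operations execute in order, so the second loop still sees the first loop's results without an intervening barrier. The single wait marks the point where the host, for example an MPI call, needs the data.

```c
#include <stddef.h>

/* Two dependent kernels queued on async queue 1 (arbitrary choice).
 * Work on one queue runs in order, so no barrier is needed between
 * them; the host synchronizes once, before it reads v. */
void step_with_async(double *restrict u, double *restrict v, size_t n)
{
    #pragma acc parallel loop async(1)
    for (size_t i = 0; i < n; ++i)
        u[i] += 1.0;

    #pragma acc parallel loop async(1)
    for (size_t i = 0; i < n; ++i)
        v[i] = 2.0 * u[i];

    /* Wait here, e.g. immediately before an MPI halo exchange of v. */
    #pragma acc wait(1)
}
```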

Advanced Optimization: For production deployments, consider hybrid memory management strategies that use cudaMalloc for frequently accessed GPU-resident data while employing unified memory for less predictable access patterns or data shared between CPU and GPU. Monitor page migration statistics using profiling tools to identify optimization opportunities.

This methodology aligns with successful implementations such as the NEMO porting project, which demonstrated that unified memory enables researchers to "focus solely on parallelization" rather than data management complexities [3]. The streamlined development process allows teams to achieve functional GPU acceleration more rapidly, then incrementally optimize performance based on application-specific patterns.

The unified memory architecture of NVIDIA Grace Hopper represents a significant advancement in heterogeneous computing for ocean modeling research. By eliminating the data management barrier that has traditionally complicated GPU acceleration, this technology enables research teams to focus on algorithmic development and scientific innovation rather than computational mechanics. The integration of Grace CPU and Hopper GPU through NVLink-C2C creates a coherent memory system that provides both performance advantages through 900 GB/s interconnect bandwidth and productivity benefits through transparent data movement.

For the ocean modeling research community, these architectural innovations offer compelling opportunities to accelerate both development cycles and computational performance. The demonstrated success of projects such as the NEMO port to Grace Hopper validates the practical value of unified memory for complex scientific codes [3]. As ocean models continue evolving toward higher resolutions and incorporating more physical processes, computational demands will increase further, making architectural efficiencies like those in Grace Hopper increasingly essential for research progress.

The comparison between programming approaches reveals that both CUDA and OpenACC benefit from unified memory, with OpenACC offering particularly attractive productivity advantages for research teams prioritizing maintainability and rapid development. The speedups reported across the implementations discussed here range from 1.5-2.2x for NASA's numerical analysis codes to more than 300x for the specialized GPU-IOCASM ocean model [7] [17]. Because these figures are measured against different baselines (an A100-based system in one case, a traditional CPU-based approach in the other), they indicate the potential of GPU acceleration rather than directly comparable gains.

As supercomputing centers worldwide deploy Grace Hopper systems, including notable installations at NASA [17], the Swiss National Supercomputing Centre [3], and the Jülich Supercomputing Centre [3], ocean modeling researchers have increasing access to this transformative technology. By adopting the implementation methodologies and optimization strategies outlined in this analysis, research teams can effectively leverage unified memory to accelerate both their computational workflows and scientific discoveries.

The adoption of GPU programming in scientific computing represents a pivotal shift in high-performance computing (HPC), particularly for computationally intensive fields like ocean modeling. As researchers sought to overcome the limitations of traditional CPU-based computing, two primary approaches emerged: low-level programming models like CUDA and directive-based models like OpenACC. This evolution from specialized, hardware-specific coding to more accessible, portable approaches has fundamentally reshaped how scientists accelerate complex simulations. Within oceanography, this transition is particularly evident in the migration of established models such as the Princeton Ocean Model (POM) and SCHISM from CPU to GPU architectures. The historical context of this shift reveals an ongoing tension between maximizing computational performance and maintaining developer productivity and code portability, a balance that continues to drive innovation in GPU programming paradigms for scientific applications.

The Rise of GPU Programming Models

The landscape of GPU programming models has diversified significantly to cater to different needs within the scientific computing community. CUDA (Compute Unified Device Architecture), introduced by NVIDIA, emerged as a low-level programming model that provides explicit control over GPU hardware. This model requires developers to manage memory explicitly, define kernel functions, and orchestrate thread hierarchy, offering potentially superior performance at the cost of increased programming complexity and reduced code portability. In ocean modeling, CUDA has been successfully applied to achieve remarkable speedups, such as the GPU-IOCASM model which demonstrated a 312x speedup compared to traditional CPU-based approaches by performing most computations on the GPU and minimizing data transfer overhead [7].

In contrast, OpenACC represents a higher-level, directive-based approach designed to simplify GPU programming. By adding simple compiler directives to existing Fortran, C, or C++ code, developers can parallelize computational kernels without deep expertise in GPU architecture. The OpenACC specification, maintained by the OpenACC Organization, aims to help the research community "advance science by expanding their accelerated and parallel computing skills" [18]. This model particularly benefits complex scientific codes like ocean models by enabling incremental acceleration while preserving the original code structure. Recent advancements, such as those in NVIDIA HPC SDK v25.7, have further enhanced OpenACC's practicality through unified memory programming, which automates data movement between CPU and GPU, significantly reducing programming complexity [3] [6].

A third approach, CUDA Fortran, has also gained traction in scientific computing, particularly for legacy Fortran codebases. This model extends the Fortran language with GPU programming capabilities, blending elements of both CUDA and traditional Fortran. A study of the SCHISM model that compared CUDA Fortran and OpenACC versions found that CUDA Fortran outperformed OpenACC under all tested experimental conditions, although OpenACC offers superior programmer productivity [4].

Table: Key GPU Programming Models in Ocean Modeling

| Programming Model | Abstraction Level | Key Characteristics | Primary Advantages |
|---|---|---|---|
| CUDA | Low-level | Explicit memory and kernel management | Maximum performance potential, fine-grained control |
| OpenACC | High-level | Compiler directives, minimal code changes | Portability, programmer productivity, incremental adoption |
| CUDA Fortran | Intermediate | Fortran language extensions for GPU | Balance of performance and familiarity for Fortran developers |

Performance Comparison: CUDA vs. OpenACC in Ocean Modeling

Direct comparisons between CUDA and OpenACC performance in ocean models provide valuable insights for researchers selecting an appropriate programming model. Experimental data from recent studies reveals a consistent performance advantage for CUDA-based implementations, though the magnitude of this advantage varies based on model characteristics and implementation quality.

In a comprehensive evaluation of the SCHISM ocean model, researchers developed both CUDA Fortran and OpenACC versions and compared their performance across different grid resolutions [4]. The results demonstrated that CUDA consistently outperformed OpenACC under all experimental conditions. For large-scale simulations with 2,560,000 grid points, the CUDA implementation achieved a speedup ratio of 35.13 compared to the CPU baseline, significantly exceeding the OpenACC performance. The performance gap was attributed to CUDA's more efficient memory access patterns and reduced runtime overhead, advantages that became more pronounced with increasing problem size.

However, OpenACC implementations have demonstrated impressive scalability in their own right, particularly when leveraging modern hardware features. The parallel Princeton Ocean Model based on OpenACC showed speedup factors increasing from 11.75 to 45.04 as simulation time and horizontal resolution grew [5]. This implementation restructured parts of the POM code and applied OpenACC directives to the entire codebase, optimizing parallel algorithms and data transfer processes. A hand-tuned CUDA port might extract further performance, but this result represents a substantial improvement over CPU-only execution and demonstrates OpenACC's practicality for production ocean modeling systems.

The performance comparison between these approaches must also consider implementation effort. The OpenACC version of POM was developed by "restructuring parts of the POM code and applying OpenACC directives to the entire POM code," a process that generally requires less specialized expertise and development time compared to the complete code restructuring often necessary for CUDA implementations [5]. This trade-off between ultimate performance and development efficiency represents a critical consideration for research teams with limited programming resources or expertise.

Table: Performance Comparison of CUDA and OpenACC in Ocean Models

| Ocean Model | Programming Model | Speedup vs. CPU | Experimental Conditions |
|---|---|---|---|
| SCHISM [4] | CUDA Fortran | 35.13x | 2,560,000 grid points |
| SCHISM [4] | OpenACC | Lower than CUDA | All experimental conditions |
| Princeton Ocean Model [5] | OpenACC | 11.75x - 45.04x | Varying simulation duration and resolution |
| GPU-IOCASM [7] | CUDA | 312x | Implicit iteration with online nesting |

Experimental Methodologies and Benchmarking Approaches

Robust experimental methodologies are essential for meaningful performance comparisons between GPU programming models in ocean modeling. Researchers typically employ standardized benchmarking approaches that control for variables such as grid resolution, simulation duration, and physical complexity to ensure fair and reproducible evaluations.

A common methodology involves identifying computational hotspots through profiling before implementation. In the SCHISM model acceleration study, researchers first conducted a detailed performance analysis of the original CPU-based Fortran code, identifying the Jacobi iterative solver module as a primary performance bottleneck [4]. This hotspot analysis guided targeted optimization efforts, ensuring efficient use of development resources. Similarly, the OpenACC-based POM parallelization applied Amdahl's law to identify parallelizable regions, focusing optimization efforts on code sections that would yield the greatest performance benefits [5].
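Amdahl's law explains why hotspot-first porting pays off and also why it has a ceiling. If a fraction p of the serial runtime is accelerated by a factor s and the remainder is unchanged, the overall speedup is 1 / ((1 - p) + p / s). A small C helper makes the arithmetic explicit (the function name is hypothetical):

```c
/* Amdahl's law: overall speedup when a fraction p (0..1) of the serial
 * runtime is accelerated by a factor s and the rest is left unchanged. */
double amdahl_speedup(double p, double s)
{
    return 1.0 / ((1.0 - p) + p / s);
}
```

For example, accelerating 90% of the runtime by 10x gives only about 5.3x overall. With p = 0.95 the speedup can never exceed 20x regardless of s, which is why profiling comes first and GPU coverage is then extended step by step.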

Accuracy validation represents another critical methodological component. Researchers typically compare simulation results from GPU-accelerated versions with those from established CPU-based implementations to ensure numerical correctness. The OpenACC POM implementation used Root Mean Square Error (RMSE) calculations for sea surface height and temperature to verify that parallel results matched serial results within acceptable tolerances [5]. Likewise, the GPU-IOCASM model validation demonstrated "strong agreement with both observed data and SCHISM's results," confirming reliability and precision despite significant algorithmic changes [7].

Performance benchmarking typically employs metrics such as speedup factor (CPU time divided by GPU time), computational throughput (simulated years per day), and energy efficiency. Studies often sweep parameters including grid resolution, simulation duration, and hardware configuration to assess performance across realistic usage scenarios. For example, the SCHISM evaluation tested both small-scale classical experiments and large-scale scenarios with millions of grid points, providing a comprehensive view of performance characteristics [4].
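Both throughput and speedup reduce to simple formulas, sketched below in C with hypothetical helper names. The SYPD helper assumes a 365-day model calendar, so models using a 360-day or Gregorian calendar would need a different conversion.

```c
/* Throughput in simulated years per wall-clock day (SYPD),
 * assuming a 365-day model calendar. */
double sypd(double simulated_days, double wall_seconds)
{
    const double days_per_year = 365.0;
    const double seconds_per_day = 86400.0;
    return (simulated_days / days_per_year) / (wall_seconds / seconds_per_day);
}

/* Speedup factor: reference (CPU) time divided by accelerated (GPU) time. */
double speedup(double cpu_seconds, double gpu_seconds)
{
    return cpu_seconds / gpu_seconds;
}
```

For instance, a run that simulates 730 model days in 12 wall-clock hours corresponds to 4 SYPD.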

Diagram: GPU Acceleration Methodology for Ocean Models

Successful implementation of GPU-accelerated ocean models requires both specialized software tools and hardware resources. The research community has developed a comprehensive ecosystem of compilers, libraries, and frameworks to support development across different programming models.

For OpenACC development, the NVIDIA HPC SDK provides a complete toolset, including compilers that support directive-based acceleration for Fortran, C, and C++ codes [3]. Recent versions have significantly enhanced unified memory support, particularly beneficial for architectures like the Grace Hopper Superchip where CPU and GPU share a unified address space [3] [6]. This automation of data movement dramatically reduces programming complexity, allowing researchers to focus on parallelization rather than memory management. The OpenACC model has been successfully applied to complex ocean models like NEMO (Nucleus for European Modelling of the Ocean), where developers used a strategy of annotating performance-critical loops with directives while leaving memory management to the CUDA driver and hardware [3].

For CUDA-based development, researchers typically utilize CUDA Toolkit alongside language-specific compilers such as CUDA Fortran [4]. This approach offers greater low-level control but requires explicit management of data transfers between host and device memory. The GPU-IOCASM model exemplifies this approach, implementing optimizations like "mask-based conditional computation" and "adaptive iteration count prediction" to maximize parallelism while minimizing memory overhead [7].
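The GPU-IOCASM paper's exact implementation is not reproduced here. The sketch below shows one generic way the mask-based idea is often realized, written in C with an OpenACC directive rather than CUDA C. Each thread multiplies its update by a 0/1 land-sea mask instead of branching on whether a cell is wet, so neighbouring threads follow the same instruction path. All names are hypothetical.

```c
#include <stddef.h>

/* Branch-free masked update: mask[i] is 1.0 for wet cells, 0.0 for dry,
 * so dry cells receive a zero increment instead of taking an if-branch. */
void masked_update(double *restrict eta, const double *restrict tendency,
                   const double *restrict mask, size_t n, double dt)
{
    #pragma acc parallel loop
    for (size_t i = 0; i < n; ++i)
        eta[i] += dt * tendency[i] * mask[i];
}
```

One caveat of this design is that multiplying by zero does not clear NaN or infinite values, so tendencies at land points must still be finite.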

Emerging approaches also include framework migration strategies to support hardware diversity. Recent research has explored migrating atmospheric and oceanic AI models from PyTorch to the MindSpore framework, targeting Chinese domestic chips such as Sugon's DCU and Huawei's Ascend [19]. This reflects a growing trend toward hardware-agnostic implementation strategies in scientific computing.

Table: Essential Tools and Resources for GPU-Accelerated Ocean Modeling

| Tool/Resource | Function/Purpose | Representative Use Cases |
|---|---|---|
| NVIDIA HPC SDK [3] | Compiler suite for OpenACC and CUDA Fortran | Directive-based parallelization of NEMO, POM |
| Grace Hopper Superchip [3] | Unified CPU-GPU memory architecture | Simplifying data management in complex ocean models |
| CUDA Toolkit [7] | Development environment for CUDA programming | GPU-IOCASM, SCHISM CUDA implementations |
| MindSpore Framework [19] | AI framework targeting Chinese domestic chips | Migrating atmospheric and oceanic AI models to Chinese hardware |

The historical evolution of GPU programming in scientific computing shows a clear trajectory from specialized, hardware-specific implementations toward more accessible, portable approaches that retain much of the available performance. In ocean modeling, both CUDA and OpenACC have demonstrated significant acceleration capabilities, with CUDA generally offering higher performance while OpenACC provides superior programmer productivity and code maintainability.

The performance advantage of CUDA must be balanced against the development efficiency of directive-based approaches. It is illustrated by the CUDA Fortran version of SCHISM, which reached a 35.13x speedup over the CPU baseline and outperformed the OpenACC version under all tested conditions [4]. OpenACC has enabled speedups ranging from 11.75x to 45.04x in the Princeton Ocean Model with less complex code restructuring [5]. Recent advances in unified memory architectures further enhance OpenACC's practicality by automating data management, potentially narrowing the performance gap while maintaining development efficiency.

For the ocean modeling research community, the choice between programming models involves careful consideration of project requirements, available expertise, and long-term maintenance concerns. CUDA remains preferable for performance-critical applications targeting specific hardware, while OpenACC offers a compelling alternative for teams prioritizing portability, productivity, and incremental acceleration of existing codebases. As GPU architectures continue to evolve and programming models mature, this balance may shift further toward directive-based approaches without compromising the performance gains that have made GPU acceleration indispensable to modern oceanography.

Implementing GPU Acceleration in Real-World Ocean Models: Methodologies and Case Studies

The demand for higher resolution and more physically comprehensive ocean models has sharply increased computational requirements, making GPU acceleration essential for timely scientific outcomes. Within this context, a pivotal choice facing researchers is the selection of a GPU programming model, primarily between the explicit data management of CUDA and the directive-based, higher-productivity approach of OpenACC. This case study examines the porting of the Nucleus for European Modelling of the Ocean (NEMO) framework using OpenACC combined with the Unified Memory feature of modern NVIDIA architectures. We objectively compare this approach against alternative methods, including CUDA and OpenACC without Unified Memory, by analyzing experimental data from NEMO and other contemporary ocean models. The analysis focuses on the critical trade-offs between developer productivity, performance, and portability, providing an evidence-based guide for scientists and researchers in computational oceanography.

Experimental Protocols and Methodologies

To ensure a fair and objective comparison, this section details the standard experimental protocols and methodologies used in evaluating the porting of ocean models to GPUs.

The NEMO Model Porting Strategy with OpenACC and Unified Memory

The porting of the NEMO model (v4.2.0) at the Barcelona Supercomputing Center (BSC) served as a primary case study for using OpenACC with Unified Memory [3]. The experimental protocol was designed to maximize developer productivity while achieving performance gains.

- Initial Porting Strategy: The developers focused exclusively on parallelizing performance-critical loops using OpenACC directives, deliberately omitting any explicit GPU data management code. Unified Memory on the Grace Hopper architecture automatically handled all data movement [3].

- Parallelization Technique: Key hotspot routines, such as those for diffusion and advection of tracers, were targeted. Fully parallel, tightly nested loops were annotated with !$acc parallel loop gang vector collapse(n), where n is the number of nested loops being collapsed. Loops with cross-iteration dependencies were marked with !$acc loop seq, and external routines called within parallel regions were declared with !$acc routine seq [3].

- Performance Optimization: A significant performance optimization involved adding the async clause to parallel constructs to remove implicit synchronization barriers between back-to-back parallel regions. Explicit synchronizations (!$acc wait) were only introduced before MPI communications or when data computed on the GPU was needed by the host [3].

- Benchmark and Hardware: The experiments used the GYRE_PISCES benchmark on an ORCA ½ grid. The model was run on NVIDIA Grace Hopper Superchip systems, which feature a unified address space between the CPU and GPU connected via a high-bandwidth NVLink-C2C interconnect [3] [20].
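The three directive roles in the NEMO porting strategy (collapsed parallel loops, seq loops for dependent iterations, and routine seq for called helpers) can be seen together in a small C sketch, where #pragma acc is the C form of !$acc. It computes the depth of each layer's midpoint in a set of independent water columns. The columns run in parallel, while the vertical loop carries a dependency and is marked seq, and the helper called inside the parallel region is declared with routine seq. The data layout and names are hypothetical and are not taken from NEMO.

```c
/* Device-callable helper; "routine seq" means each call runs
 * sequentially within a single thread. */
#pragma acc routine seq
static double half_thickness(double dz) { return 0.5 * dz; }

/* Midpoint depth of each layer in ncol independent columns with nlev
 * layers each; element (k, c) is stored at index k * ncol + c.
 * Columns are parallel; level k depends on level k - 1, so that loop
 * is sequential. */
void layer_midpoint_depth(const double *restrict dz, double *restrict z,
                          int ncol, int nlev)
{
    #pragma acc parallel loop gang vector
    for (int c = 0; c < ncol; ++c) {
        z[c] = half_thickness(dz[c]);
        #pragma acc loop seq
        for (int k = 1; k < nlev; ++k)
            z[k * ncol + c] = z[(k - 1) * ncol + c]
                            + half_thickness(dz[(k - 1) * ncol + c])
                            + half_thickness(dz[k * ncol + c]);
    }
}
```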

Alternative Model Protocols: CUDA and OpenACC

For comparison, we examine the methodologies used to port other prominent ocean models.

- GPU-IOCASM with CUDA C: This implicit ocean model was fully redesigned for GPUs using CUDA C. The protocol emphasized maximizing computation on the GPU to minimize data transfer overhead. Techniques included a mask-based conditional computation method and an adaptive iteration count prediction strategy. Verification was performed against observed data and the SCHISM model's results [7].

- SCHISM with CUDA Fortran: The GPU-SCHISM project ported the model using CUDA Fortran. The methodology involved profiling the original CPU code to identify the Jacobi iterative solver as the primary hotspot. This computationally intensive kernel was then offloaded to the GPU [4].

- Princeton Ocean Model (POM) with OpenACC: This parallelization effort used OpenACC directives to accelerate the entire POM code. The process involved restructuring parts of the code, optimizing parallel algorithms, and carefully managing data transfer processes. The model's accuracy was verified by comparing the Root Mean Square Error (RMSE) of sea surface height and temperature between serial and parallel runs [5].

Performance and Productivity Comparison

This section synthesizes quantitative performance data and qualitative productivity findings from the porting efforts of NEMO and other ocean models.

Quantitative Performance Analysis

The table below summarizes the performance outcomes of various ocean model porting projects, providing a direct comparison of the speedups achieved by different approaches.

Table 1: Performance Comparison of GPU-Accelerated Ocean Models

| Ocean Model | Programming Model | Key Speedup Metric | Experimental Context |
|---|---|---|---|
| NEMO [3] [21] | OpenACC + Unified Memory | ~2–5x end-to-end speedup | GYRE_PISCES benchmark on NVIDIA Hopper GPU (Grace Hopper Superchip) |
| GPU-IOCASM [7] | CUDA C | >312x speedup | Compared to a traditional single-core CPU-based approach |
| SCHISM [4] | CUDA Fortran | 35.13x speedup (overall model) | Large-scale experiment with 2,560,000 grid points on a single GPU |
| SCHISM [4] | CUDA Fortran | 3.06x speedup (Jacobi solver hotspot) | Small-scale classical experiment on a single GPU |
| Princeton Ocean Model (POM) [5] | OpenACC | 11.75x to 45.04x speedup | Speedup increased with simulation time and horizontal resolution |
| POM (Previous MPI version) [5] | MPI (CPU clusters) | 35.04x with 48 cores | Relative to single-core execution |

The data shows that CUDA-based implementations can achieve extreme speedups, as demonstrated by GPU-IOCASM. This is often the result of a ground-up redesign that allows for fine-grained optimizations and maximizes GPU computation while minimizing CPU-GPU data transfer [7]. Similarly, CUDA Fortran provided significant acceleration for the SCHISM model, particularly for large-scale problems [4].

Conversely, the OpenACC-based NEMO and POM projects achieved more modest but still substantial speedups in the range of 2-5x and up to 45x, respectively [3] [5]. It is critical to note that the NEMO porting was in its early stages, with only key hotspots accelerated, whereas POM was fully ported. Furthermore, a direct comparison within the SCHISM model found that CUDA outperformed OpenACC under all tested experimental conditions [4].

Qualitative Productivity Analysis

While raw performance is crucial, developer productivity is an equally important metric in scientific computing.

- Developer Productivity with Unified Memory: The NEMO case study strongly highlights the productivity benefits of combining OpenACC with Unified Memory. Developers from BSC reported that this approach "allows us to move faster" and provides "the flexibility to experiment with running more workloads on GPUs" [3]. By eliminating the need for verbose and error-prone explicit data management, developers can focus their effort solely on identifying and parallelizing computational hotspots.

- Complexity of Manual Data Management: Without Unified Memory, GPU programming requires deep-copy operations for complex data structures, such as allocatable arrays within derived types in Fortran or C++ standard template library containers like std::vector. This process is notoriously difficult, often forcing developers to abandon clean, object-oriented design in favor of low-level, non-intuitive code rewrites [3] [22].

- Porting Effort and Code Maintenance: The CUDA-based port of POM by Xu et al. demonstrated high performance but was noted to be difficult to maintain for programmers accustomed to Fortran [5]. OpenACC, being a directive-based model, allows the original Fortran or C/C++ codebase to remain largely intact, making it inherently more portable and easier to maintain and modify for domain scientists who may not be GPU experts.
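The minimal C sketch below shows what manual deep copy involves. It uses a struct with a pointer member as a hypothetical stand-in for a Fortran derived type with an allocatable component. The struct must be copied first and then its member array, after which OpenACC attaches the device pointer inside the device copy of the struct. The exit sequence runs in reverse order. Under unified memory, tracer_to_device and tracer_from_device would not be needed.

```c
/* Hypothetical analogue of a derived type with an allocatable array. */
typedef struct {
    int n;
    double *temp;
} tracer_t;

/* Manual deep copy: struct first, then the member array it points to. */
void tracer_to_device(tracer_t *t)
{
    #pragma acc enter data copyin(t[0:1])
    #pragma acc enter data copyin(t->temp[0:t->n])
}

/* Reverse order: bring the member data back, then drop the struct. */
void tracer_from_device(tracer_t *t)
{
    #pragma acc exit data copyout(t->temp[0:t->n])
    #pragma acc exit data delete(t[0:1])
}

/* Compute on data that is already present on the device. */
void tracer_scale(tracer_t *t, double f)
{
    #pragma acc parallel loop present(t[0:1], t->temp[0:t->n])
    for (int i = 0; i < t->n; ++i)
        t->temp[i] *= f;
}
```

Every additional pointer member, or nested type, adds another pair of enter and exit directives, which is the maintenance burden the NEMO developers avoided by relying on unified memory.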

The Scientist's Toolkit: Software and Hardware Resources

This section details the essential software and hardware components used in the featured NEMO porting experiment, providing a reference for researchers seeking to replicate or build upon this work.

Table 2: Essential Tools and Environments for Ocean Model GPU Porting

| Tool Name | Category | Function in the Experiment |
|---|---|---|
| NVIDIA HPC SDK v25.7 | Software Toolkit | Provides compilers (nvfortran, nvc) with support for OpenACC and Unified Memory programming [3]. |
| OpenACC | Programming Model | A directive-based API for parallel programming, used to annotate parallel loops and regions in NEMO [3]. |
| CUDA Unified Memory | Memory Management Model | Automates data movement between CPU and GPU, eliminating the need for explicit enter data and copy directives [3] [23]. |
| Grace Hopper Superchip | Hardware Architecture | An integrated CPU-GPU architecture with a unified address space, connected via high-bandwidth NVLink-C2C [3]. |
| NEMO v4.2.0 | Ocean Model | The target application for GPU acceleration in the primary case study [3]. |
| GYRE_PISCES Benchmark | Validation & Performance Test | A standard benchmark within NEMO used to measure correctness and computational performance [3]. |

Visualizing the GPU Porting Workflow

The following diagram illustrates the core workflow for porting an ocean model like NEMO to GPUs using the OpenACC and Unified Memory approach, highlighting its iterative nature and key decision points.

The evidence from NEMO and other models reveals a clear performance-productivity trade-off. CUDA offers the potential for higher peak performance, as seen in GPU-IOCASM and GPU-SCHISM, making it suitable for projects where maximum speedup is the paramount goal and where significant developer effort can be invested into a complete GPU-native rewrite [7] [4].

However, the combination of OpenACC and Unified Memory presents a compelling high-productivity alternative. The NEMO case study demonstrates that this approach allows research teams to achieve significant speedups (2-5x) with only partial code porting and a fraction of the development effort [3] [20]. The combination simplifies the two most complex aspects of GPU programming: compiler directives replace hand-written parallel kernels, and Unified Memory removes the explicit management of data locality.

In conclusion, the choice between CUDA and OpenACC with Unified Memory is not a matter of which is universally better, but which is more appropriate for a project's specific goals and constraints. For research teams prioritizing rapid development, code maintainability, and incremental performance gains, OpenACC with Unified Memory on architectures like Grace Hopper is an excellent choice. For projects where every last ounce of performance must be extracted and where dedicated GPU programming expertise is available, a CUDA-based port may be worth the additional investment. As the underlying hardware and software ecosystems continue to evolve, particularly with tighter CPU-GPU integration, the performance gap between these two approaches is likely to narrow, further enhancing the value of high-productivity programming models in scientific research.

The pursuit of computational efficiency in high-resolution ocean modeling is crucial for accurate and timely storm surge forecasting. Within this domain, a key research focus is the performance comparison between two primary GPU programming models: the explicit, low-level CUDA and the directive-based, high-level OpenACC. This case study provides a detailed examination of a specific implementation that accelerated the SCHISM (Semi-implicit Cross-scale Hydroscience Integrated System Model) using CUDA Fortran, objectively comparing its performance against an OpenACC-based alternative. The findings offer valuable insights for researchers and scientists selecting the appropriate parallelization strategy for ocean models.

Experimental Protocols and Methodologies

To allow a fair and meaningful comparison, the cited studies followed the experimental protocols summarized below.

SCHISM Model and GPU-Accelerated Implementations

The core model in this comparison is SCHISM, a three-dimensional, unstructured-grid ocean model that solves the hydrostatic Navier-Stokes equations using a semi-implicit finite element/finite volume method combined with an Euler-Lagrange algorithm [4]. Its cross-scale capabilities make it suitable for simulating complex storm surge and compound flooding events [24].

- CUDA Fortran Implementation (GPU-SCHISM): This approach involved a manual, code-intensive porting of the computationally intensive Jacobi iterative solver module, identified as a performance hotspot. The implementation leveraged CUDA Fortran within the SCHISM v5.8.0 framework to execute this critical kernel on NVIDIA GPUs [4].

- OpenACC Implementation: The alternative OpenACC approach used directive-based pragmas (e.g., !$acc parallel loop) to offload parallel loops onto the GPU. This method benefits from simplified code changes and automated data management, particularly on modern architectures like the NVIDIA Grace Hopper Superchip that feature a unified address space [3].
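The source of the CUDA Fortran port in [4] is not reproduced here. The following is a hedged illustration of why a Jacobi sweep maps well to GPUs: every row update reads only the previous iterate, so rows can be computed independently. This C++ sketch solves a small dense system and marks the row loop with `#pragma acc parallel loop`, which is the C/C++ counterpart of Fortran's !$acc parallel loop.

The function name, the dense row-major matrix, and the convergence test are assumptions for illustration. SCHISM itself works on an unstructured mesh with its own data structures. The loops carry no data clauses. That choice assumes a unified-memory target such as Grace Hopper. Without OpenACC enabled, the pragmas are ignored and the code runs serially.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Jacobi iteration for A x = b, with A dense, row-major and diagonally dominant.
// Hypothetical stand-in for a model's solver hotspot. No data clauses are used,
// which assumes the arrays are GPU-accessible (unified memory) when OpenACC is on.
// Returns the number of iterations performed.
int jacobi_solve(const std::vector<double>& A, const std::vector<double>& b,
                 std::vector<double>& x, int max_iter, double tol) {
    const std::size_t n = b.size();
    assert(A.size() == n * n && x.size() == n);
    std::vector<double> x_new(n, 0.0);
    const double* a = A.data();
    const double* rhs = b.data();
    double* xo = x.data();
    double* xn = x_new.data();

    for (int iter = 0; iter < max_iter; ++iter) {
        double max_diff = 0.0;
        // Rows are independent: each reads only the previous iterate xo.
        #pragma acc parallel loop reduction(max:max_diff)
        for (std::size_t i = 0; i < n; ++i) {
            double sigma = 0.0;
            for (std::size_t j = 0; j < n; ++j) {
                if (j != i) sigma += a[i * n + j] * xo[j];
            }
            xn[i] = (rhs[i] - sigma) / a[i * n + i];
            max_diff = std::fmax(max_diff, std::fabs(xn[i] - xo[i]));
        }
        // Make the new iterate the current one for the next sweep.
        #pragma acc parallel loop
        for (std::size_t i = 0; i < n; ++i) xo[i] = xn[i];
        if (max_diff < tol) return iter + 1;
    }
    return max_iter;
}
```

For the 2x2 system with rows (4, 1) and (1, 3) and right-hand side (1, 2), the iteration converges to x = (1/11, 7/11).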

Computational Environments and Benchmarks

Performance was evaluated on a single node equipped with GPUs [4]. The experiments assessed performance using two distinct computational grids:

- A classical small-scale experiment with a limited number of grid points.

- A large-scale experiment with 2,560,000 grid points to evaluate strong scaling and high-resolution performance [4].

The key metric for comparison was the speedup ratio, calculated as the original CPU execution time divided by the GPU-accelerated execution time.
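The speedup definition above can be written down directly. The sketch below uses hypothetical helpers, not code from the cited studies. It also shows one way to time a run with `std::chrono::steady_clock`.

GPU kernels launch asynchronously. The timed callable must therefore synchronize before it returns, for example with an OpenACC wait or `cudaDeviceSynchronize()`. Otherwise the GPU time is underestimated.

```cpp
#include <cassert>
#include <chrono>
#include <utility>

// Wall-clock seconds spent in run(). For GPU code, run() must end with a
// synchronization so that queued kernels are included in the measurement.
template <typename F>
double wall_seconds(F&& run) {
    const auto start = std::chrono::steady_clock::now();
    std::forward<F>(run)();
    const auto stop = std::chrono::steady_clock::now();
    return std::chrono::duration<double>(stop - start).count();
}

// Speedup ratio as defined in the text: CPU time divided by GPU time,
// both measured for the same test case (grid, simulated period, outputs).
double speedup_ratio(double t_cpu_seconds, double t_gpu_seconds) {
    assert(t_cpu_seconds > 0.0 && t_gpu_seconds > 0.0);
    return t_cpu_seconds / t_gpu_seconds;
}
```

For instance, hypothetical timings of 120 s on the CPU and 40 s on the GPU give a speedup ratio of 3.0.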

Performance Comparison: CUDA Fortran vs. OpenACC

The experimental results demonstrate a clear performance hierarchy between the two GPU programming models across different scenarios.

Table 1: Speedup Ratio Comparison of CUDA and OpenACC for SCHISM

| Experimental Scenario | CUDA Fortran Speedup | OpenACC Speedup | Performance Advantage |
|---|---|---|---|
| Overall Model (Small-Scale) | 1.18x | Not explicitly reported | CUDA outperforms OpenACC [4] |
| Jacobi Solver Hotspot (Small-Scale) | 3.06x | Not explicitly reported | CUDA outperforms OpenACC [4] |
| Overall Model (Large-Scale, 2.56M points) | 35.13x | Not explicitly reported | CUDA outperforms OpenACC [4] |
| General Performance Conclusion | Superior | Inferior | CUDA outperforms OpenACC under all tested experimental conditions [4] |

Analysis of Performance Discrepancies

The superior performance of the CUDA Fortran implementation can be attributed to fundamental architectural differences:

- Low-Level Control: CUDA provides programmers with explicit control over GPU resources, including memory transfers between host and device, kernel execution streams, and thread block management. This allows for fine-tuned optimizations that minimize latency and maximize bandwidth utilization [4] [7].

- Compiler Limitations: While OpenACC directives simplify the porting process, the reliance on the compiler to generate optimal GPU code can lead to inefficiencies. The compiler may not always make the most effective decisions regarding memory coalescing, register usage, or loop scheduling, resulting in lower computational throughput compared to a hand-crafted CUDA kernel [4].
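OpenACC loop clauses can recover part of the control that CUDA exposes. The sketch below is a hypothetical 5-point Laplacian on a row-major grid, not code from SCHISM or POM. It maps the outer loop to gangs and the memory-contiguous inner loop to vector lanes, and it fixes `vector_length`, with the aim of encouraging coalesced access.

Whether this beats the compiler's default mapping depends on the compiler and the GPU, and should be checked by profiling. The value 128 is an illustrative assumption, not a tuned setting.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// 5-point Laplacian on an nx-by-ny grid stored row-major (index j*nx + i),
// so consecutive i values are adjacent in memory. Boundary values of out
// are left unchanged.
void laplacian(const std::vector<double>& u, std::vector<double>& out,
               std::size_t nx, std::size_t ny) {
    assert(nx >= 2 && ny >= 2);
    assert(u.size() == nx * ny && out.size() == nx * ny);
    const double* in = u.data();
    double* res = out.data();
    const std::size_t n = nx * ny;

    // Rows -> gangs (thread blocks); 128 lanes per gang is illustrative only.
    #pragma acc parallel loop gang vector_length(128) copyin(in[0:n]) copy(res[0:n])
    for (std::size_t j = 1; j < ny - 1; ++j) {
        // Contiguous index -> vector lanes, so neighbouring lanes read
        // neighbouring addresses (coalesced loads on the GPU).
        #pragma acc loop vector
        for (std::size_t i = 1; i < nx - 1; ++i) {
            const std::size_t c = j * nx + i;
            res[c] = in[c - 1] + in[c + 1] + in[c - nx] + in[c + nx] - 4.0 * in[c];
        }
    }
}
```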

The largest reported CUDA speedup came from the large-scale experiment, where the CUDA implementation reached a speedup ratio of 35.13x and outperformed the OpenACC version [4]. This highlights CUDA's advantage in computationally intensive, high-resolution simulations where efficient resource management is critical [4].

The Scientist's Toolkit: Essential Software and Hardware

Successfully developing and benchmarking GPU-accelerated ocean models requires a specific set of software and hardware tools.

Table 2: Essential Tools for GPU-Accelerated Ocean Model Research

| Tool Name | Type | Function & Purpose |
|---|---|---|
| SCHISM v5.8.0 | Software / Numerical Model | The core open-source, unstructured-grid ocean model used for storm surge and compound flood simulation [4] [24]. |
| NVIDIA HPC SDK | Software / Compiler Toolkit | Includes compilers for CUDA Fortran and support for OpenACC directives, essential for building GPU-enabled applications [3]. |
| CUDA Fortran | Programming Model | An extension of Fortran providing explicit GPU programming capabilities for high-performance, fine-tuned acceleration [4]. |
| OpenACC | Programming Model | A directive-based API for parallel programming, designed to simplify GPU porting of HPC applications [3] [5]. |
| Grace Hopper Superchip | Hardware / GPU Architecture | An integrated CPU-GPU architecture with a unified memory space, simplifying data management for OpenACC and CUDA programs [3]. |

Workflow and Performance Relationships

The following diagram illustrates the logical workflow of the GPU acceleration process for SCHISM and the factors leading to the performance differential between CUDA and OpenACC.

This case study demonstrates a clear trade-off between performance and development efficiency for GPU programming models in oceanographic research. The CUDA Fortran implementation of SCHISM delivers superior computational speedups, making it the preferred choice for production-level, high-resolution storm surge forecasting where maximum performance is critical. In contrast, OpenACC offers a less code-intensive path to GPU acceleration, potentially accelerating development cycles and improving code maintainability, albeit at the cost of raw computational throughput.

For researchers, the choice depends on project goals: select CUDA Fortran for squeezing out the highest possible performance from dedicated HPC systems, and consider OpenACC for rapid prototyping, leveraging unified memory architectures, or when dealing with complex codebases where minimal code invasion is a priority. The ongoing development of unified memory models in architectures like Grace Hopper may further enhance the viability of OpenACC by reducing its primary performance bottlenecks [3].

The Princeton Ocean Model (POM) is a foundational, open-source regional ocean model renowned for its use of sigma coordinates in the vertical direction and a second-moment turbulence closure scheme for determining the vertical mixing coefficient [5]. As operational forecasting and high-resolution reanalysis systems demand greater computational power, parallelizing POM has become essential. While approaches like Message Passing Interface (MPI) and CUDA have been explored, they present challenges including code complexity, limited portability, and significant rewriting efforts [5] [4]. This case study examines the parallelization of POM using OpenACC, a high-level, directive-based programming model designed for portability across diverse heterogeneous computing platforms. We will evaluate its implementation methodology, performance, and accuracy, and provide a direct comparison with alternative parallelization paradigms, specifically CUDA, within the broader context of accelerator-based ocean modeling.

Methodology: OpenACC Parallelization of POM

Code Analysis and Hotspot Identification

The parallelization process began with a thorough profiling of the serial POM code to identify computational hotspots. Following Amdahl's law, the focus was on functions that consumed the most runtime, as parallelizing these would yield the greatest overall speedup [5]. The analysis revealed that the 2D external mode and the 3D internal mode were the most time-consuming sections of the model. The entire POM code was subsequently restructured, and OpenACC directives were applied to these critical regions to offload computation to the GPU [5].
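Amdahl's law motivated the hotspot-first strategy, and it also quantifies the payoff of porting only part of a model. The sketch below evaluates the law for a fraction p of the serial runtime accelerated by a factor s. The parameter values in the usage note are hypothetical, not measured POM profiles.

```cpp
#include <cassert>

// Amdahl's law: overall speedup when a fraction p of the serial runtime is
// accelerated by a factor s and the remaining (1 - p) runs unchanged.
double amdahl_speedup(double p, double s) {
    assert(p >= 0.0 && p <= 1.0 && s > 0.0);
    return 1.0 / ((1.0 - p) + p / s);
}

// Upper bound as s grows without limit: only the unported part remains.
double amdahl_limit(double p) {
    assert(p >= 0.0 && p < 1.0);
    return 1.0 / (1.0 - p);
}
```

Suppose the hotspots account for a hypothetical 90% of runtime and run 20x faster. Then `amdahl_speedup(0.9, 20.0)` is about 6.9, and accelerating those hotspots alone can never exceed `amdahl_limit(0.9)`, which is about 10.

The same reasoning is consistent with the small-scale SCHISM result discussed earlier, where a 3.06x speedup of the Jacobi solver yielded only 1.18x for the whole model.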

OpenACC Parallelization Strategy

The core strategy involved annotating parallel loops in the hotspot functions with OpenACC directives. The !$acc parallel loop directive was used to parallelize tightly nested loops, while !$acc loop seq was applied to loops with cross-iteration dependencies that required sequential execution [5] [3]. To handle complex data structures, particularly in versions utilizing unified memory architectures, explicit data management directives (!$acc enter data copyin) were often necessary to ensure all required data was present on the GPU [3].
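This C++ sketch combines parallel loop, loop seq, and enter data, which C and C++ spell as `#pragma acc` rather than !$acc. The example is hypothetical. A cumulative sum down each vertical column stands in for a vertically dependent computation such as those in POM's internal mode.

In the sketch:
- The independent horizontal points run in parallel.
- The level-to-level recurrence stays sequential.
- The device copies are created once with `enter data` and released with `exit data`.

The level-major layout (index k*npoints + p) is an assumption chosen so that neighbouring GPU threads read neighbouring addresses.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Cumulative sum down each of npoints vertical columns with nz levels.
// Layout is level-major: value at (point p, level k) is at k*npoints + p.
void integrate_columns(const std::vector<double>& layer, std::vector<double>& cum,
                       std::size_t npoints, std::size_t nz) {
    assert(layer.size() == npoints * nz && cum.size() == npoints * nz);
    const double* in = layer.data();
    double* out = cum.data();
    const std::size_t total = npoints * nz;

    // Allocate device copies once; later kernels can reuse them via present().
    #pragma acc enter data copyin(in[0:total]) create(out[0:total])

    // Horizontal points are independent: parallelize across them.
    #pragma acc parallel loop present(in[0:total], out[0:total])
    for (std::size_t p = 0; p < npoints; ++p) {
        double running = 0.0;
        // Level k depends on level k-1, so this loop must stay sequential.
        #pragma acc loop seq
        for (std::size_t k = 0; k < nz; ++k) {
            running += in[k * npoints + p];
            out[k * npoints + p] = running;
        }
    }

    // Return the result to the host and release the device copies.
    #pragma acc exit data copyout(out[0:total]) delete(in[0:total])
}
```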

Key technical aspects of the implementation included:

- Data Management: The developers optimized data transfer processes between the CPU and GPU to minimize overhead, a critical step for performance [5].

- Asynchronous Execution: async clauses were added to parallel constructs to enable concurrent execution of multiple kernels and to overlap computation with communication, reducing idle time [3].

- Routine Annotations: External subroutines called from within parallel loops were annotated with !$acc routine seq, which instructs the compiler to generate a sequential device version of each subroutine that GPU threads can call [3].
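The async and routine seq annotations can be illustrated the same way. In this hypothetical C++ sketch, a small helper is marked `#pragma acc routine seq` and called inside two independent loops. The loops are placed on separate async queues, and `#pragma acc wait` blocks the host until both finish. The tracer fields and the clamping operation are assumptions for illustration, not code taken from POM.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Device-callable helper: "routine seq" asks the compiler for a GPU version
// that each thread executes sequentially.
#pragma acc routine seq
double clamp_nonnegative(double v) {
    return v < 0.0 ? 0.0 : v;
}

// Removes negative values from two hypothetical tracer fields. The two loops
// are independent, so they go on different async queues (1 and 2) and may
// overlap on the GPU; each array's data movement follows its own queue.
void clamp_tracers(std::vector<double>& tracer_a, std::vector<double>& tracer_b) {
    double* a = tracer_a.data();
    double* b = tracer_b.data();
    const std::size_t na = tracer_a.size();
    const std::size_t nb = tracer_b.size();

    #pragma acc parallel loop async(1) copy(a[0:na])
    for (std::size_t i = 0; i < na; ++i) a[i] = clamp_nonnegative(a[i]);

    #pragma acc parallel loop async(2) copy(b[0:nb])
    for (std::size_t i = 0; i < nb; ++i) b[i] = clamp_nonnegative(b[i]);

    // The host waits for all async queues before the vectors are used again.
    #pragma acc wait
}
```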

The following diagram illustrates the workflow for porting POM to GPUs using OpenACC:

Experimental Setup and Validation Protocol

To ensure the correctness of the parallelized model, a rigorous validation protocol was followed. The results from the OpenACC version were compared against the benchmark serial POM results. The Root Mean Square Error (RMSE) was calculated for key output variables, including Sea Surface Height (SSH) and temperature [5]. The RMSE formula used was:

$$\mathrm{RMSE} = \sqrt{\frac{1}{n} \sum_{i=1}^{n} \left(y_i - \hat{y}_i\right)^2}$$

where $y_i$ represents the results from the serial run, $\hat{y}_i$ represents the results from the parallel run, and $n$ is the number of samples [5]. This quantitative accuracy check was crucial for verifying that the accelerated model produced scientifically valid results.
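The RMSE check can be expressed as a short function. This is a minimal sketch, not the validation code used in [5], and the function name is hypothetical. Both inputs are assumed to hold the same variable (for example SSH) sampled at the same points from the serial and OpenACC runs.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// RMSE between the serial reference y and the accelerated result y_hat:
// sqrt( (1/n) * sum_i (y_i - y_hat_i)^2 ), matching the definition in the text.
double rmse(const std::vector<double>& y, const std::vector<double>& y_hat) {
    assert(!y.empty() && y.size() == y_hat.size());
    double sum_sq = 0.0;
    for (std::size_t i = 0; i < y.size(); ++i) {
        const double d = y[i] - y_hat[i];
        sum_sq += d * d;
    }
    return std::sqrt(sum_sq / static_cast<double>(y.size()));
}
```

A correct port will usually still show a small non-zero RMSE. GPU code can reorder floating-point sums and use fused multiply-add instructions, so results rarely match the serial run bit for bit. The acceptance threshold is therefore a project decision.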

Performance Analysis and Comparison

OpenACC Performance Results

The performance of the OpenACC-accelerated POM was tested under different simulation durations and horizontal resolutions. The key finding was that the speedup factor increased with both the simulation length and the grid resolution, highlighting the GPU's efficiency in handling larger computational workloads [5]. The achieved speedup ranged from 11.75x to 45.04x compared to the serial CPU version [5]. This demonstrates that OpenACC is highly effective for production-scale, high-resolution ocean modeling scenarios.

Table 1: Performance of OpenACC-based POM under Different Conditions

| Simulation Duration | Horizontal Resolution | Achieved Speedup |
|---|---|---|
| Increasing | Standard | 11.75x |
| Increasing | Higher | 45.04x |

OpenACC vs. CUDA Fortran: A Direct Comparison

A study parallelizing the SCHISM ocean model provides a head-to-head comparison of OpenACC and CUDA Fortran that bears directly on the CUDA vs. OpenACC question [4]. The study found that CUDA Fortran consistently outperformed OpenACC for both the Jacobi solver hotspot and the overall runtime across all tested experimental conditions [4]. In the large-scale experiment with 2,560,000 grid points, the OpenACC implementation also achieved a significant speedup, but the CUDA version reached a higher speedup ratio of 35.13x [4].

Table 2: OpenACC vs. CUDA Fortran for the SCHISM Model (Selected Experiments)

| Experiment Scale | Number of Grid Points | CUDA Speedup | OpenACC Speedup |
|---|---|---|---|
| Small-Scale | Not Specified | 3.06x (Hotspot) | Lower than CUDA |
| Large-Scale | 2,560,000 | 35.13x | Lower than CUDA |

Qualitative Comparison of Parallelization Approaches

Beyond raw performance, the choice between OpenACC and CUDA involves important trade-offs between performance, portability, and developer productivity.

Table 3: Qualitative Comparison of GPU Parallelization Approaches for Ocean Models

| Feature | OpenACC | CUDA |
|---|---|---|
| Programming Model | Directive-based (high-level) | Explicit programming (low-level) |
| Code Modification | Minimal (annotation-based) | Extensive (requires rewriting kernels) |
| Portability | High (across various GPUs and CPUs) | Lower (primarily NVIDIA GPUs) |
| Performance Control | Moderate (relies on compiler) | High (fine-grained control) |
| Ease of Learning | Easier for Fortran developers [5] | Steeper learning curve [5] |
| Maintainability | Higher (closer to original code) | Lower (separate, specialized code) |