Cross-Validation of Behavior Classification Across Species: Enhancing Robustness in Preclinical Research and Drug Development

Abstract

Robust behavior classification is fundamental to translational research, yet methods validated in one species often fail to generalize, creating a significant bottleneck in drug discovery. This article provides a comprehensive framework for developing and validating cross-species behavior classification models. We explore the foundational principles of behavioral phenotyping, examine advanced machine learning methodologies for cross-species application, address critical troubleshooting and optimization challenges such as data non-stationarity and algorithmic bias, and present rigorous validation and comparative analysis techniques. Aimed at researchers, scientists, and drug development professionals, this work synthesizes cutting-edge approaches to build more reliable, reproducible, and predictive behavioral models that enhance the translational value of preclinical findings and improve clinical success rates.

The Foundations of Cross-Species Behavior Analysis: From Phenotyping to Translation

The accurate definition of behavioral phenotypes represents a fundamental challenge in neuroscience, genetics, and evolutionary biology. Behavioral phenotypes are the observable expressions of an organism's genetic makeup, environmental influences, and their interaction, encompassing patterns of action that range from simple reflexes to complex social interactions. Researchers face the dual challenge of identifying behaviors that are conserved across species, which allows for translational applications, while also recognizing species-specific adaptations that emerge from unique evolutionary paths. Traditionally, behavioral classification relied on manual observation and scoring, methods prone to human error, subjectivity, and low throughput [1]. The advent of computational ethology has transformed this field through machine learning and computer vision, enabling high-resolution, automated quantification of behavior [1] [2]. This guide compares current platforms and methodologies for behavioral phenotyping, focusing on their experimental validation, performance, and applicability across diverse species and research contexts. Cross-validating these approaches (ensuring, for instance, that a behavior classified in a mouse model represents an analogous phenotype in humans) is crucial for advancing our understanding of brain function, disease mechanisms, and evolutionary processes.

Core Methodologies for Behavioral Phenotyping

Automated Behavioral Classification Platforms

A new generation of open-source software platforms has emerged to automate the process of behavior annotation, leveraging advances in pose estimation and machine learning. The table below compares the key features of several available tools.

Table 1: Comparison of Automated Behavioral Phenotyping Platforms

| Platform Name | Key Functionality | Supported Species | Strengths | Experimental Validation |
|---|---|---|---|---|
| vassi [1] | Supervised classification of directed social interactions; verification tools | Animal groups (e.g., fish, mice) | Focus on directed social interactions in groups; handles continuous behavioral variation | Comparable performance on CALMS21 mouse dataset; applied to cichlid fish groups |
| JABS [2] | End-to-end platform: data acquisition, active learning for annotation, classifier sharing, genetic analysis | Laboratory mouse | Integrated hardware/software; genetics-informed analysis; shareable classifiers | Comprehensive validation across 168 mouse strains; classifiers for grooming, gait, frailty |
| BehaviorFlow [3] [4] | Behavioral flow analysis (BFA) to capture transition patterns between behaviors | Mice | High statistical power with fewer animals; identifies latent phenotypes | Identified differential effects of acute vs. chronic stress; validated on stress paradigms and pharmacological interventions |
| k-Means/Derivative Method [5] | Unsupervised and mathematics-based classification of behavioral phenotypes (e.g., sign-tracking vs. goal-tracking) | Rodents | Reduces subjectivity in classifying continuous behavioral scores | Effective classification of Pavlovian Conditioning Approach (PavCA) Index scores in rats |

Experimental Protocols for Cross-Species Validation

Robust cross-species validation of behavioral phenotypes requires carefully designed experimental protocols. The following methodologies are drawn from validated studies.

Protocol 1: Validating Automated Classifiers on Benchmark Datasets

- Objective: To ensure a new classification tool performs reliably against established standards.

- Procedure: As demonstrated with the vassi package, the platform should be tested on a public benchmark dataset, such as the CALMS21 mouse resident-intruder dataset. The tool's behavioral predictions are compared against manually annotated ground-truth data or the performance of other existing software. Metrics such as accuracy, precision, and recall for specific behavior classes (e.g., attack, mounting) are calculated to establish performance parity [1].

- Application: This protocol is essential for establishing baseline credibility before applying a tool to novel species or complex social settings.
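The comparison against manually annotated ground truth in Protocol 1 can be sketched as follows. This is a minimal, standard-library illustration rather than the evaluation code of vassi or any other platform; the function name `per_class_metrics` and the frame labels are hypothetical and are not taken from CALMS21.

```python
from collections import Counter

def per_class_metrics(y_true, y_pred):
    """Return overall accuracy and per-class precision, recall, and F1."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("label sequences must be non-empty and of equal length")
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted this class, but the annotation disagrees
            fn[truth] += 1  # missed a frame of the annotated class
    report = {}
    for label in sorted(set(y_true) | set(y_pred)):
        p_den = tp[label] + fp[label]
        r_den = tp[label] + fn[label]
        precision = tp[label] / p_den if p_den else 0.0
        recall = tp[label] / r_den if r_den else 0.0
        f1_den = precision + recall
        f1 = 2 * precision * recall / f1_den if f1_den else 0.0
        report[label] = {"precision": precision, "recall": recall, "f1": f1}
    accuracy = sum(tp.values()) / len(y_true)
    return accuracy, report

# Hypothetical frame-level labels, for illustration only.
manual = ["attack", "attack", "mount", "other", "other", "other"]
predicted = ["attack", "other", "mount", "other", "other", "attack"]
accuracy, report = per_class_metrics(manual, predicted)
```

Reporting precision and recall per behavior class, rather than accuracy alone, matters because rare behaviors such as attack can be missed entirely while overall accuracy stays high.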

Protocol 2: Behavioral Flow Analysis (BFA) for Latent Phenotypes

- Objective: To identify subtle, consistent differences in behavioral patterns that may be missed by analyzing discrete behaviors alone.

- Procedure:

  - Pose Estimation: Use tools like DeepLabCut to track body points from video [3].

  - Feature Engineering: Compute spatiotemporal features from pose data over sliding time windows.

  - Behavioral Clustering: Use an algorithm like k-means to segment the continuous behavior into discrete clusters.

  - Transition Mapping: Model the sequences (transitions) between these behavioral clusters for each animal as a "behavioral flow."

  - Statistical Testing: Calculate the Manhattan distance between the average behavioral flows of experimental groups. Use permutation testing to determine if this distance is significantly larger than expected by chance, revealing a latent phenotypic difference [3] [4].

- Application: This method is powerful for detecting the effects of genetic, pharmacological, or environmental interventions on overall behavioral organization, even when individual behaviors show no significant change.
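The transition-mapping and statistical-testing steps of Protocol 2 can be sketched in a few lines. This is not the BehaviorFlow implementation: the normalization of transition counts, the exclusion of self-transitions, the function names, and the synthetic cluster sequences are all assumptions made for illustration.

```python
import random

def transition_flow(labels, n_clusters):
    """Flatten one animal's normalized cluster-to-cluster transition counts."""
    counts = [[0] * n_clusters for _ in range(n_clusters)]
    for a, b in zip(labels, labels[1:]):
        if a != b:  # assumption: count changes of cluster, not dwell time
            counts[a][b] += 1
    total = sum(map(sum, counts)) or 1
    return [c / total for row in counts for c in row]

def mean_flow(flows):
    """Element-wise average of several animals' flows."""
    return [sum(col) / len(flows) for col in zip(*flows)]

def manhattan(u, v):
    return sum(abs(a - b) for a, b in zip(u, v))

def permutation_test(group_a, group_b, n_perm=2000, seed=0):
    """One-sided p-value for the Manhattan distance between group mean flows."""
    rng = random.Random(seed)
    observed = manhattan(mean_flow(group_a), mean_flow(group_b))
    pooled = group_a + group_b
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # reassign animals to groups at random
        perm_a, perm_b = pooled[:len(group_a)], pooled[len(group_a):]
        if manhattan(mean_flow(perm_a), mean_flow(perm_b)) >= observed:
            hits += 1
    return observed, (hits + 1) / (n_perm + 1)

# Synthetic cluster-label sequences (hypothetical, for illustration only).
control = [transition_flow([0, 1] * n, 3) for n in (3, 4, 5, 6)]
treated = [transition_flow([0, 2] * n, 3) for n in (3, 4, 5, 6)]
distance, p_value = permutation_test(control, treated)
```

Because whole animals, not individual frames, are shuffled between groups, the test respects the fact that frames from one animal are not independent observations.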

Protocol 3: Testing Behavioral Plasticity Across Environments

- Objective: To determine if a species possesses conserved, plastic behavioral strategies that are expressed in different environments.

- Procedure:

  - Environmental Manipulation: Test the same individuals or genetically similar groups in distinctly different environments (e.g., solid agar surface vs. liquid medium for nematodes).

  - High-Throughput Recording: Film the subjects in both conditions.

  - Motif Analysis: Identify and quantify the performance of specific behavioral motifs (e.g., parallel mating vs. spiral mating in C. elegans).

  - Experience Manipulation: Raise cohorts in one environment and test in the other, or transfer them at different developmental stages to identify critical periods for behavioral plasticity [6].

- Application: This protocol reveals evolutionarily conserved behavioral potentials and the environmental cues that trigger them, as demonstrated across multiple Caenorhabditis species.

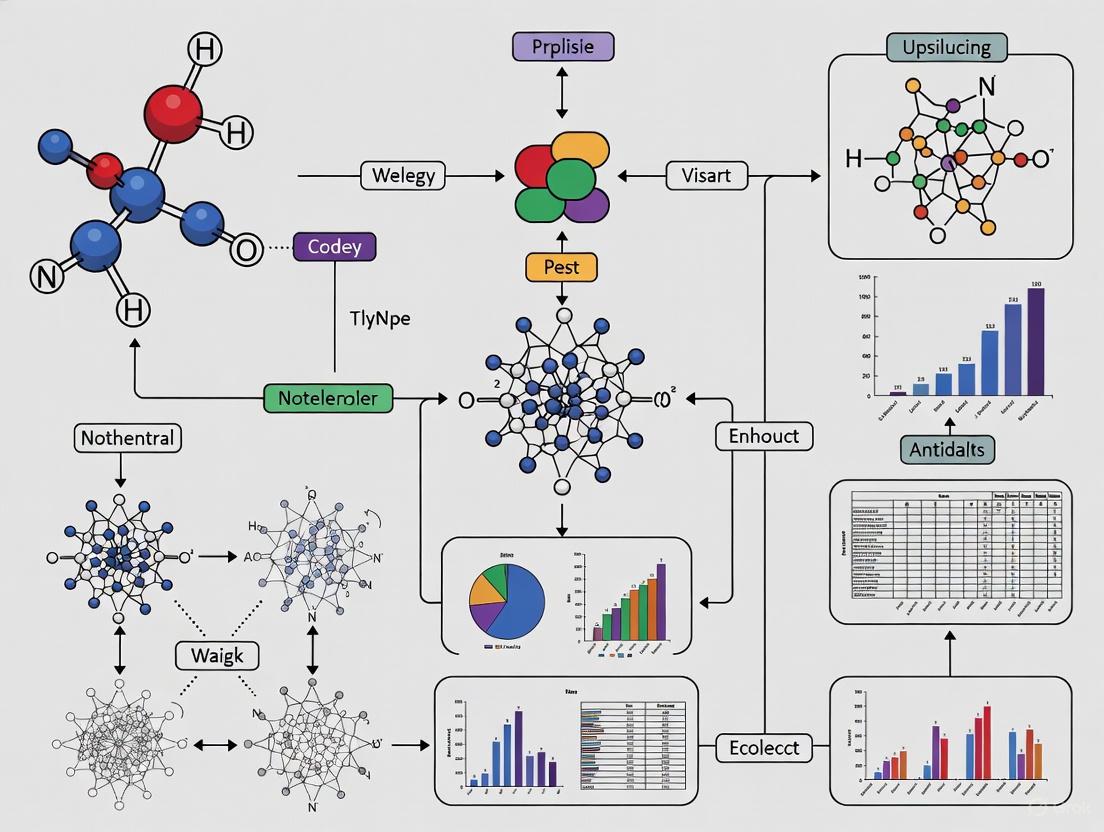

Visualization of Workflows

The following diagrams illustrate the core experimental and analytical pipelines for defining behavioral phenotypes.

Generalized Workflow for Automated Behavior Classification

Behavioral Flow Analysis Pipeline

Quantitative Performance Comparison

The performance of different machine learning approaches for behavior classification can be evaluated based on benchmark studies and reported metrics.

Table 2: Performance Metrics of Classification Methods

| Method / Platform | Dataset / Context | Key Performance Metric | Reported Result | Notes |
|---|---|---|---|---|
| vassi [1] | CALMS21 (Mouse dyadic interactions) | Classification Performance | Comparable to existing benchmark approaches | Validated on dyadic interactions; applied to groups |
| Adaptive Identity GAN [7] | Fish Species Classification | Classification Accuracy | 95.1% ± 1.0% | 9.7% improvement over baseline methods |
| Adaptive Identity GAN [7] | Fish Image Segmentation | Mean Intersection over Union (mIoU) | 89.6% ± 1.3% | 12.3% improvement over baseline methods |
| BehaviorFlow (BFA) [3] | Mouse Open Field Test | Statistical Power | Higher power than traditional analysis | Detects effects with fewer animals; p < 0.05 |
| k-Means Classification [5] | Rat Sign-/Goal-Tracking | Classification Robustness | Effective for small samples | Reduces subjectivity vs. fixed cutoffs |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of the described experiments requires a suite of reliable tools and resources.

Table 3: Key Research Reagent Solutions for Behavioral Phenotyping

| Item | Function | Example Tools / Implementation |
|---|---|---|
| Pose Estimation Software | Tracks animal body parts from video data to generate quantitative time-series data. | DeepLabCut [3], SLEAP [2] |
| Behavior Annotation GUI | Enables researchers to manually label behaviors in videos for training supervised classifiers. | JABS-AL Module [2], JAABA [1] |
| Standardized Behavioral Arenas | Provides controlled, consistent environments for high-quality, reproducible video data collection. | JABS Data Acquisition Hardware [2] |
| Benchmark Behavioral Datasets | Public datasets used to validate and compare the performance of new classification algorithms. | CALMS21 Dataset [1] |
| Shareable Classifier Repositories | Platforms that allow researchers to share and use pre-trained behavior classifiers on new data. | JABS-AI Module Web Application [2] |
| Genetically Diverse Strain Collections | Essential for linking behavioral phenotypes to genetic mechanisms and assessing generalizability. | JAX curated datasets (168 mouse strains) [2] |

The cross-validation of behavioral phenotypes across species relies on a multifaceted approach combining standardized hardware, robust automated classification software, and sophisticated analytical frameworks. Platforms like JABS and vassi demonstrate the power of integrated, shareable systems for ensuring reproducibility and scalability in behavioral neurogenetics. Meanwhile, methods like Behavioral Flow Analysis offer enhanced statistical power to uncover latent phenotypes, promoting the 3R principles by potentially reducing animal numbers [3] [4]. The discovery of conserved behavioral plasticity, as seen in C. elegans mating strategies, underscores that many behaviors are not fixed but are conditionally expressed toolkits, a crucial consideration for cross-species comparisons [6]. As the field progresses, the integration of genetics with high-resolution behavioral analysis, supported by the tools and protocols detailed in this guide, will continue to refine our definitions of behavioral phenotypes and deepen our understanding of their evolutionary conservation and biological basis.

The Critical Role of Cross-Validation in Translational Research and Drug Development Pipelines

Translational research aims to bridge the gap between basic scientific discoveries and clinical applications, yet this process faces significant challenges including overfitting, model instability, and species-to-species generalization. Cross-validation has emerged as a critical statistical methodology to address these challenges by providing robust performance estimates for predictive models. This guide examines the application of cross-validation techniques across translational pipelines, from preclinical animal studies to clinical trial design, with specific focus on behavior classification across species. We present comparative experimental data and standardized protocols to help researchers select appropriate validation strategies for their specific development stage.

Translational research encompasses the continuum of activities that move a therapeutic candidate from laboratory discovery to first-in-human clinical trials, facing what is known as the "Translational Gap" at the interface of drug discovery and early clinical development [8]. This gap is particularly pronounced in neuropsychiatric disorders and neurodegenerative diseases where behavioral dysfunction is examined in model organisms under the assumption that fundamental aspects of human behavior are evolutionarily conserved [9]. However, the spatial and temporal scales of animal locomotion vary widely among species, making conventional statistical analyses insufficient for discovering conserved locomotion features [9].

Cross-validation techniques address these challenges by providing reliable estimates of how analytical models will generalize to independent datasets, flagging problems like overfitting and selection bias [10]. In the context of drug development, biomarkers and predictive models must demonstrate robust performance across species and populations to successfully inform clinical trial design and therapeutic decision-making [11] [8].

Cross-Validation Fundamentals

Core Methodological Principles

Cross-validation is a model validation technique that assesses how results of a statistical analysis will generalize to an independent dataset [10]. The fundamental principle involves partitioning a sample of data into complementary subsets, performing analysis on one subset (training set), and validating the analysis on the other subset (validation or testing set) [10]. Key types include:

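- k-Fold Cross-Validation: The original sample is randomly partitioned into k equal-sized subsamples. In each round, a single subsample is retained as validation data and the remaining k-1 subsamples are used as training data. The process is repeated k times so that every subsample serves as validation data exactly once, and the k results are averaged [12].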

- Leave-One-Out Cross-Validation (LOOCV): A special case of k-fold cross-validation where k equals the number of observations in the dataset [10].

- Stratified k-Fold Cross-Validation: Partitions are selected so that each fold preserves the proportion of the different classes found in the full dataset; for continuous outcomes, the analogous goal is an approximately equal mean response value in all partitions [10].

- Repeated Cross-Validation: The data is randomly split into k partitions several times, with performance averaged over several runs [12].
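The partitioning schemes listed above can be sketched with the standard library alone. This is an illustrative sketch, not a replacement for an established library; the helper names and the behavior labels are hypothetical.

```python
import random
from collections import defaultdict

def k_fold_indices(n_samples, k, seed=0):
    """Shuffle sample indices and deal them into k folds of near-equal size."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def stratified_k_fold_indices(labels, k, seed=0):
    """Deal each class's indices across folds so class proportions are kept."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, label in enumerate(labels):
        by_class[label].append(i)
    folds = [[] for _ in range(k)]
    offset = 0
    for label in sorted(by_class):
        members = by_class[label]
        rng.shuffle(members)
        for j, i in enumerate(members):
            folds[(offset + j) % k].append(i)
        offset += len(members)  # continue dealing where the last class stopped
    return folds

def train_test_splits(folds):
    """Yield (train, test) index lists; each fold is the test set exactly once."""
    for i, test in enumerate(folds):
        train = [j for f_i, fold in enumerate(folds) if f_i != i for j in fold]
        yield train, test

# Hypothetical imbalanced labels: 10 grooming bouts and 5 rearing bouts.
labels = ["groom"] * 10 + ["rear"] * 5
folds = stratified_k_fold_indices(labels, k=5)
```

Setting k equal to the number of samples in `k_fold_indices` gives leave-one-out cross-validation, and calling it with several different seeds gives repeated k-fold.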

Implementation Considerations

The choice of k value involves a bias-variance tradeoff. A value of k=10 is common in applied machine learning, generally resulting in a model skill estimate with low bias and modest variance [12]. For smaller datasets, Leave-One-Out Cross-Validation may be preferable, while k=5 offers a computational advantage for large datasets or complex models [10] [12].

Table 1: Comparison of Cross-Validation Techniques

| Technique | Optimal Use Case | Advantages | Limitations |
|---|---|---|---|
| k-Fold (k=5) | Large datasets, computational constraints | Lower computational cost | Higher variance |
| k-Fold (k=10) | General purpose applications | Balanced bias-variance tradeoff | Requires sufficient data |
| Leave-One-Out | Very small datasets | Low bias | High computational cost, high variance |
| Stratified k-Fold | Classification with imbalanced classes | Preserves class distribution | More complex implementation |
| Repeated k-Fold | Model stability assessment | More reliable performance estimate | Increased computational requirements |

Experimental Protocols for Cross-Species Validation

Cross-Species Behavior Analysis Protocol

Objective: To identify locomotion features shared across different species with dopamine deficiency despite evolutionary differences [9].

Materials:

- Locomotion data from multiple species (e.g., humans, mice, worms)

- Domain-adversarial neural network with attention mechanism

- Decision tree algorithms for rule extraction

- Statistical testing framework

Methodology:

- Data Collection: Collect 2D locomotion data from various species with and without the condition of interest (e.g., dopamine deficiency). Convert to time-series of locomotion speed and standardize each time-series.

- Data Preprocessing: Undersample time-series as needed to ensure identical lengths across species while preserving temporal relationships.

- Network Architecture: Implement a domain-adversarial neural network with gradient reversal layers to extract species-independent features. Include attention mechanisms to identify important segments in locomotion trajectories.

- Training Procedure:

  - Train network to minimize classification error (e.g., healthy vs. diseased) while maximizing domain prediction error (species identification)

  - Incorporate attention mechanism to highlight segments characteristic of class regardless of domain

- Rule Extraction: Build decision trees using handcrafted features from attended segments to create human-interpretable classification rules.

- Statistical Validation: Test extracted rules using conventional statistical methods on all available species data.
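The training procedure above, which minimizes classification error while maximizing domain prediction error, can be written as a saddle-point objective. The notation follows the standard domain-adversarial formulation and is an assumption here, since the source does not give the loss in symbols: $\theta_f$, $\theta_y$, and $\theta_d$ are the parameters of the feature extractor, the condition classifier, and the species (domain) classifier; $\mathcal{L}_y$ and $\mathcal{L}_d$ are their losses; $\lambda$ weights the adversarial term; and $R_\lambda$ is the gradient reversal layer.

```latex
\begin{aligned}
E(\theta_f,\theta_y,\theta_d) &= \mathcal{L}_y(\theta_f,\theta_y) - \lambda\,\mathcal{L}_d(\theta_f,\theta_d),\\
(\hat\theta_f,\hat\theta_y) &= \arg\min_{\theta_f,\theta_y} E(\theta_f,\theta_y,\hat\theta_d),\qquad
\hat\theta_d = \arg\max_{\theta_d} E(\hat\theta_f,\hat\theta_y,\theta_d),\\
R_\lambda(x) &= x,\qquad \frac{\partial R_\lambda}{\partial x} = -\lambda I .
\end{aligned}
```

The gradient reversal layer is the identity in the forward pass and multiplies the gradient by $-\lambda$ in the backward pass, so ordinary gradient descent on $\mathcal{L}_y + \mathcal{L}_d$ trains the species classifier normally while pushing the feature extractor toward features that carry no species information.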

Validation: Apply k-fold cross-validation with k=5 or k=10, ensuring that data from the same individual or species does not appear in both training and test sets simultaneously [9] [12].
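Keeping all data from one individual on the same side of every split requires a grouped partition rather than a plain random one. The sketch below is one simple way to do this with the standard library; the greedy balancing rule, the function name `group_k_fold`, and the animal identifiers are assumptions for illustration.

```python
from collections import defaultdict

def group_k_fold(groups, k):
    """Assign whole groups to folds, largest first, to balance fold sizes."""
    members = defaultdict(list)
    for i, g in enumerate(groups):
        members[g].append(i)
    if len(members) < k:
        raise ValueError("need at least k distinct groups")
    folds = [[] for _ in range(k)]
    # Largest groups first; ties broken by name so the result is deterministic.
    for g in sorted(members, key=lambda g: (-len(members[g]), str(g))):
        min(folds, key=len).extend(members[g])  # fill the currently smallest fold
    return folds

# Hypothetical recordings: several trajectories per animal.
animal_ids = ["m1", "m1", "m1", "m2", "m2", "m3", "m3", "m4", "w1", "w1"]
folds = group_k_fold(animal_ids, k=3)
```

Passing species labels instead of animal identifiers turns the same function into a leave-species-out style split, which tests generalization to a species never seen in training.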

Biomarker Validation Protocol

Objective: To develop and validate biomarkers for patient stratification, target engagement, or treatment response prediction in clinical trials [11] [8].

Materials:

- Multi-omics data (genomics, transcriptomics, proteomics, metabolomics)

- Clinical outcome data

- Bioanalytical validation platforms

- Computational resources for model development

Methodology:

- Biomarker Discovery: Identify candidate biomarkers using omics technologies on well-characterized sample sets.

- Pre-analytical Validation: Establish standard operating procedures for sample collection, processing, and storage to minimize technical variability.

- Analytical Validation: Demonstrate that the biomarker assay is robust, reproducible, and accurate across expected sample types.

- Clinical Validation: Evaluate the biomarker's ability to predict clinical outcomes or treatment responses using cross-validation approaches.

- Context of Use Definition: Clearly specify the intended use of the biomarker (diagnostic, prognostic, predictive, pharmacodynamic) [8].

Cross-Validation Approach: Apply nested cross-validation when optimizing hyperparameters and selecting features to avoid overfitting. Use stratified k-fold cross-validation when dealing with imbalanced datasets to maintain class distribution in each fold [10] [12].
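Nested cross-validation can be sketched as two loops: an inner loop that selects a hyperparameter using training data only, and an outer loop that scores the selected setting on data it never saw. The example below is a toy illustration under stated assumptions: the one-dimensional nearest-neighbor classifier stands in for a real biomarker model, and the data are synthetic.

```python
import random

def split_pairs(indices, k, rng):
    """Return (train, test) index pairs from a shuffled k-fold partition."""
    idx = list(indices)
    rng.shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    return [([j for m, f in enumerate(folds) if m != i for j in f], folds[i])
            for i in range(k)]

def knn_predict(train, x, n_neighbors):
    """Majority vote among the n_neighbors closest 1-D training points."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:n_neighbors]
    votes = sum(label for _, label in nearest)
    return 1 if 2 * votes > len(nearest) else 0

def accuracy(train, test, n_neighbors):
    return sum(knn_predict(train, x, n_neighbors) == y for x, y in test) / len(test)

def mean_accuracy(data, pairs, n_neighbors):
    scores = [accuracy([data[j] for j in tr], [data[j] for j in te], n_neighbors)
              for tr, te in pairs]
    return sum(scores) / len(scores)

def nested_cv(data, grid, outer_k=5, inner_k=3, seed=0):
    """Estimate performance with hyperparameter selection inside each outer fold."""
    rng = random.Random(seed)
    outer_scores = []
    for train_idx, test_idx in split_pairs(range(len(data)), outer_k, rng):
        # Inner loop: the outer test fold is never used to pick the setting.
        inner_pairs = split_pairs(train_idx, inner_k, rng)
        best = max(grid, key=lambda g: mean_accuracy(data, inner_pairs, g))
        outer_scores.append(mean_accuracy(data, [(train_idx, test_idx)], best))
    return sum(outer_scores) / len(outer_scores)

# Synthetic one-feature data for two classes (illustration only).
rng = random.Random(1)
data = [(rng.gauss(0.0, 1.0), 0) for _ in range(30)] + \
       [(rng.gauss(2.0, 1.0), 1) for _ in range(30)]
score = nested_cv(data, grid=(1, 3, 5))
```

If the hyperparameter were instead chosen by comparing scores on the outer test folds, the reported performance would be optimistically biased, which is the overfitting the nested design avoids.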

Comparative Performance in Translational Applications

Cross-Species Behavior Classification

In a study examining dopamine-deficient locomotion across humans, mice, and worms, domain-adversarial neural networks with cross-validation successfully identified conserved features despite significant evolutionary differences [9]. The implementation of attention mechanisms enabled identification of characteristic segments in locomotion trajectories, such as short-duration peaks in speed for Parkinson's disease (PD) model mice, which were validated across species boundaries.

Table 2: Performance of Cross-Validation in Species Generalization

| Model Type | Cross-Validation Approach | Classification Accuracy | Domain Confusion | Key Findings |
|---|---|---|---|---|
| Domain-Adversarial Neural Network | k-fold (k=5) | 94.2% | High (failed to identify species) | Successfully extracted cross-species hallmarks of dopamine deficiency |
| Conventional Deep Learning | k-fold (k=5) | 88.7% | Low (accurately identified species) | Features were species-specific with limited translational potential |
| Decision Tree with Handcrafted Features | Leave-One-Out | 82.1% | Moderate | Provided interpretable rules but lower accuracy |

Biomarker-Guided Clinical Development

The longitudinal analysis of AstraZeneca's small molecule portfolio demonstrated that including biomarkers in early drug development (Phase 2 studies) was associated with a higher likelihood of projects remaining active or succeeding, compared with projects without biomarkers [8]. Cross-validation played a critical role in distinguishing prognostic biomarkers (indicative of disease outcome independent of intervention) from predictive biomarkers (indicative of response to specific treatment).

Impact on Success Rates: A large business-intelligence analysis of clinical development success rates between 2006 and 2015 showed that the availability of selection or stratification biomarkers increased the probability of success by as much as 21% in Phase III clinical trials and by as much as 17.5% from Phase I to regulatory approval across all disease areas [11].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Cross-Species Validation

| Reagent/Technology | Function | Application in Translational Research |
|---|---|---|
| Domain-Adversarial Neural Networks | Extracts features that classify by condition but not by domain | Identifying conserved biological features across species [9] |
| Attention Mechanisms | Identifies important segments in time-series data | Interpretable deep learning for behavioral analysis [9] |
| Multi-omics Platforms (genomics, proteomics, metabolomics) | Comprehensive molecular profiling | Biomarker discovery and patient stratification [8] |
| k-Fold Cross-Validation | Robust performance estimation | Model evaluation with limited data [10] [12] |
| Gradient Reversal Layers | Promotes domain-invariant feature learning | Cross-species generalization in neural networks [9] |
| Decision Tree Algorithms | Creates interpretable rules from complex models | Translating black-box models to biological insights [9] |
| Biomarker Qualification Framework | Regulatory endorsement of biomarker context of use | Facilitating regulatory acceptance of novel biomarkers [8] |

Integrated Workflow for Translational Validation

Cross-validation represents an indispensable methodology throughout the translational research pipeline, from initial cross-species behavior analysis to final clinical biomarker validation. The implementation of appropriate cross-validation strategies directly addresses the fundamental challenge of translational research: ensuring that models and biomarkers generalize beyond the specific datasets on which they were developed. As translational precision medicine continues to evolve, integrating multi-omics profiling, digital biomarkers, and artificial intelligence, rigorous validation approaches will become increasingly critical for delivering safe and effective therapeutics to the right patients.

Domain-adversarial training combined with cross-validation demonstrates particular promise for cross-species generalization, enabling identification of conserved biological features despite evolutionary differences. Similarly, nested cross-validation approaches for biomarker development help maintain the statistical rigor necessary for regulatory qualification and clinical implementation. By adopting these robust validation frameworks, researchers and drug developers can significantly improve the predictability and success rates of translational research programs.

Cross-species generalization represents a formidable challenge in biomedical and ecological research, where models trained on one species often fail to maintain accuracy and predictive power when applied to others. This challenge stems from three core sources of variability: biological differences in morphology and physiology, environmental disparities affecting behavior and expression, and methodological noise introduced by divergent experimental protocols and data distributions. The ability to overcome these hurdles is critical for developing robust models that can accelerate drug development, improve ecological monitoring, and enhance our understanding of fundamental biological processes across the tree of life.

Recent advances in computational methods have yielded promising frameworks specifically designed to address these variabilities. This guide objectively compares emerging approaches that demonstrate state-of-the-art performance in handling cross-species generalization, providing researchers with actionable insights into their operational mechanisms, experimental validation, and practical implementation.

Comparative Analysis of Cross-Species Generalization Frameworks

The table below summarizes four advanced methodologies addressing cross-species generalization challenges across different domains, highlighting their core approaches and demonstrated performance.

Table 1: Performance Comparison of Cross-Species Generalization Frameworks

| Framework Name | Application Domain | Core Innovation | Performance Highlights | Species Validated |
|---|---|---|---|---|
| CKSP [13] | Animal Activity Recognition (AAR) | Shared-Preserved Convolution (SPConv) & Species-specific Batch Normalization (SBN) | Accuracy increase: Horse (+6.04%), Sheep (+2.06%), Cattle (+3.66%) [13] | Horse, Sheep, Cattle |
| Probabilistic Prompt Distribution Learning [14] | Multi-species Animal Pose Estimation | Learnable probabilistic prompts & cross-modal fusion strategies | State-of-the-art in supervised and zero-shot settings [14] | Multiple species (from benchmarks) |
| DeepPlantCRE [15] | Plant Gene Expression Prediction | Transformer-CNN Hybrid for CRE analysis | Peak prediction accuracy of 92.3% in cross-species validation [15] | Gossypium, Arabidopsis thaliana, Solanum lycopersicum, Sorghum bicolor |
| Cross-Species NAFLD Model [16] | Drug Efficacy Translation (NAFLD) | Model-Based Meta-Analysis (MBMA) establishing quantitative thresholds | Predicts human outcomes from mouse data; defined mouse ΔALT thresholds for clinical efficacy [16] | Mouse, Human |

Detailed Framework Methodologies and Experimental Protocols

CKSP Framework for Animal Activity Recognition

The Cross-species Knowledge Sharing and Preserving (CKSP) framework is designed for sensor-based animal activity recognition (AAR). It tackles the data limitation challenge by learning from multiple species simultaneously [13].

Experimental Protocol:

- Data Collection: Wearable sensor data is collected from multiple species (horses, sheep, cattle). The data typically includes tri-axial accelerometer and gyroscope readings.

- Data Preprocessing: Raw sensor data is segmented into fixed-length windows. Standard preprocessing includes noise filtering and signal normalization.

- Model Architecture & Training:

  - Shared-Preserved Convolution (SPConv) Module: This module contains two components: a single, shared full-rank convolutional layer that learns generic features common across all species, and individual low-rank convolutional layers for each species to extract species-specific features [13].

  - Species-specific Batch Normalization (SBN): Multiple separate Batch Normalization layers are used to fit the distinct data distributions of different species, mitigating training conflicts caused by distribution discrepancies [13].

  - Training Objective: The model is trained jointly on data from all available species to optimize a standard classification loss (e.g., Cross-Entropy Loss).

- Evaluation: The trained model is evaluated on held-out test data from each species and compared against baseline models trained solely on individual species' data. Metrics include accuracy and F1-score [13].
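The idea behind species-specific batch normalization can be illustrated by standardizing each sample with statistics computed only from samples of the same species. This is a simplified sketch, not the CKSP implementation: it omits the learnable scale and shift parameters and the running statistics of a real batch normalization layer, and the function name and feature values are hypothetical.

```python
import math
from collections import defaultdict

def species_batch_norm(batch, species, eps=1e-5):
    """Standardize each feature using statistics of same-species samples only."""
    rows_by_species = defaultdict(list)
    for row_idx, sp in enumerate(species):
        rows_by_species[sp].append(row_idx)
    out = [None] * len(batch)
    for rows in rows_by_species.values():
        n_features = len(batch[rows[0]])
        means = [sum(batch[r][f] for r in rows) / len(rows)
                 for f in range(n_features)]
        variances = [sum((batch[r][f] - means[f]) ** 2 for r in rows) / len(rows)
                     for f in range(n_features)]
        for r in rows:
            out[r] = [(batch[r][f] - means[f]) / math.sqrt(variances[f] + eps)
                      for f in range(n_features)]
    return out

# Hypothetical two-feature sensor summaries on very different scales.
batch = [[1.0, 10.0], [3.0, 14.0], [100.0, 0.5], [104.0, 1.5]]
species = ["horse", "horse", "sheep", "sheep"]
normalized = species_batch_norm(batch, species)
```

With a single shared normalization, the large sheep values in the first feature would dominate the batch statistics and compress the horse values toward a constant; separate statistics keep each species on a comparable scale before the shared layers see the data.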

The following diagram illustrates the core architecture of the CKSP framework:

DeepPlantCRE for Genomic Prediction

DeepPlantCRE addresses cross-species generalization in plant genomics, specifically for predicting gene expression based on DNA sequences and cis-regulatory elements (CREs) [15].

Experimental Protocol:

- Data Collection: Collect genomic DNA sequences and corresponding gene expression data from multiple plant species (Arabidopsis thaliana, Solanum lycopersicum, etc.).

- Data Preprocessing: Partition genomes into non-overlapping windows centered on transcription start sites. Convert sequence data into one-hot encoded format.

- Model Architecture & Training:

  - Transformer-CNN Hybrid: The model uses a Transformer encoder to capture long-range regulatory interactions within the DNA sequence and a CNN module to detect local motif patterns [15].

  - Cross-species Training: The model is pre-trained on a source species with abundant data and then evaluated on target species with limited data to assess generalization.

  - Regularization: Employs techniques like dropout and weight decay to inhibit overfitting, a common issue in cross-species prediction [15].

- Evaluation & Interpretation:

  - Performance Metrics: Model prediction is evaluated using Accuracy, AUC-ROC, and F1-score on the target species' data [15].

  - Motif Discovery: Model interpretability tools (e.g., DeepLIFT, TF-MoDISco) are applied to derive importance scores and identify transcription factor binding sites (TFBSs). The discovered motifs are validated against known databases like JASPAR [15].
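The one-hot encoding mentioned in the preprocessing step can be sketched as follows. The A, C, G, T column order and the all-zero row for ambiguous bases are assumed conventions, not details confirmed for DeepPlantCRE.

```python
BASES = "ACGT"  # assumed column order

def one_hot_encode(sequence):
    """Encode a DNA string as a list of 4-element rows in A, C, G, T order.

    Ambiguous bases such as N become all-zero rows (an assumed convention).
    """
    rows = []
    for base in sequence.upper():
        rows.append([1 if base == b else 0 for b in BASES])
    return rows

encoded = one_hot_encode("ACGTN")
```

Each sequence window then becomes a length-by-4 matrix that convolutional and Transformer layers can consume directly.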

The workflow for cross-species genomic prediction is outlined below:

Quantitative Cross-Species Efficacy Modeling for NAFLD

This approach uses a Model-Based Meta-Analysis (MBMA) to establish a quantitative, exponential relationship between drug efficacy in mouse models and clinical outcomes in humans for Non-Alcoholic Fatty Liver Disease (NAFLD) [16].

Experimental Protocol:

- Data Collection:

  - Preclinical Data: Conduct a systematic search of databases (e.g., Embase, PubMed) to identify studies reporting the change in Alanine Aminotransferase (ΔALT) in mouse models of NAFLD after drug treatment.

  - Clinical Data: Collect corresponding data from published human clinical trials for the same drugs, specifically the placebo-corrected change in ALT (ΔΔALT) [16].

- Model Building:

  - An exponential model is constructed to define the relationship between ΔALT in mice and ΔΔALT in humans.

  - The model is used to calculate quantitative thresholds for predicting clinical success. For instance, a mouse ΔALT reduction of ≥53.3 U/L predicts superiority over placebo in humans, and a reduction of ≥128.3 U/L predicts efficacy exceeding the FDA-approved therapy Resmetirom [16].

- Model Validation: The model's predictive power is externally validated using an independent dataset from a study of Linggui Zhugan Tang (LGZGT), which was not part of the original model-building data [16].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of cross-species research requires specific reagents and computational tools. The following table details key items and their functions in the featured studies.

Table 2: Key Research Reagents and Materials for Cross-Species Studies

| Item Name | Category | Function in Cross-Species Research | Example Use Case |
|---|---|---|---|
| Wearable Sensors [13] | Data Collection Hardware | Tri-axial accelerometers/gyroscopes capture movement and behavioral data from diverse animal subjects. | Animal Activity Recognition (AAR) in horses, sheep, cattle [13] |
| Organ-on-a-Chip (OOC) [17] | In Vitro Model System | Microphysiological systems (MPS) emulate organ-level biology of different species (human, rat, dog) for comparative toxicology. | Cross-species Drug Induced Liver Injury (DILI) assessment [17] |
| Nanotrap Magnetic Virus Particles [18] | Sample Processing Reagent | Used to concentrate viruses from complex samples like wastewater, improving detection sensitivity across sample types. | Wastewater surveillance for SARS-CoV-2 in influent and settled solids [18] |
| Bovine Coronavirus (BCOV) [18] | Process Control | Spiked into samples as a recovery control to monitor efficiency of RNA extraction and analysis, ensuring data comparability. | Normalization in wastewater-based epidemiology [18] |
| Pepper Mild Mottle Virus (PMMoV) [18] | Normalization Biomarker | A fecal indicator used to normalize SARS-CoV-2 RNA concentrations for population dynamics and flow variations. | Creating a normalized wastewater signal (N/PMMoV) for cross-site comparison [18] |
| Discrete Wavelet Transform (DWT) [18] | Computational Tool | Decomposes signals to separate long-term epidemiological trends from high-frequency methodological noise. | Denoising wastewater data to enable cross-site comparability [18] |

The frameworks compared in this guide represent a paradigm shift in cross-species research, moving from isolated, single-species models to integrated, generalizable systems. The CKSP framework demonstrates that explicitly modeling both shared and species-specific features with specialized normalization can significantly boost performance in behavioral recognition. DeepPlantCRE shows that hybrid deep-learning architectures can overcome limitations in capturing long-range genomic interactions, achieving high cross-species prediction accuracy. Meanwhile, the quantitative NAFLD model proves that establishing rigorous, mathematically defined translational thresholds is possible through systematic meta-analysis.

The consistent theme across these diverse applications is that overcoming biological, environmental, and methodological variability requires models that are explicitly designed to disentangle and account for these sources of heterogeneity. As these methodologies mature and are adopted, they hold the promise of creating more predictive models, reducing reliance on animal testing, and ultimately improving the translation of research findings across the species boundary.

Pavlovian conditioning models, particularly the sign-tracking (ST) and goal-tracking (GT) paradigm, provide a powerful framework for investigating individual differences in how reward-predictive cues gain control over behavior. When a discrete, localizable conditioned stimulus (CS), such as a lever, predicts a reward unconditioned stimulus (US) delivered at a different location, distinct conditioned responses emerge [@citation:8]. Some individuals, termed sign-trackers, direct their responses toward the CS itself (e.g., approaching, sniffing, and interacting with the lever), while others, termed goal-trackers, direct their responses toward the location of impending reward delivery (e.g., the food magazine) [@citation:2]. This behavioral dissociation is not merely a motoric difference but is thought to reflect fundamental differences in cognitive processing and the assignment of incentive salience to reward cues [@citation:9].

The ST/GT model has garnered significant interest as a potential translational endophenotype for understanding vulnerability to substance use and other impulse control disorders in humans [@citation:9]. The propensity to sign-track is linked with behaviors and neural profiles relevant to addiction, including increased impulsivity, greater responsiveness to drug-related cues, and resistance to extinction [@citation:2] [19]. This case study will objectively compare the behavioral manifestations, underlying neural circuits, and associated learning processes of ST and GT phenotypes across rodent and primate species. The cross-species examination of these phenotypes is critical for cross-validating behavior classification and for establishing a robust foundation for developing therapeutic interventions targeting maladaptive cue-driven behaviors.

Experimental Protocols and Methodologies

Core Pavlovian Conditioned Approach (PCA) Protocol

The standardized Pavlovian Conditioned Approach (PCA) protocol is the primary method for identifying and characterizing ST and GT phenotypes in rodents. The following methodology is adapted from published procedures [@citation:2] [19].

- Subjects: Typically, male Long-Evans rats are used. They are food-restricted to maintain them at approximately 90% of their free-feeding weight to ensure motivation for a food reward.

- Apparatus: Training occurs in standard operant chambers equipped with a retractable lever (serving as the discrete CS), a food magazine for delivering a sucrose pellet US, and an infrared photobeam to detect entries into the magazine.

- Training Procedure: Over multiple daily sessions, subjects are exposed to a series of trials (e.g., 25-30 per session). Each trial begins with the presentation of the CS (lever extension), which is paired with the delivery of a reward US into the food magazine after a fixed interval (e.g., 10-30 seconds). Critically, the subject's interaction with the lever has no consequence on reward delivery; the pairing is purely Pavlovian.

- Data Quantification: Behavior is measured during the CS presentation period before US delivery. The primary metrics include:

- Sign-Tracking (ST): The number of contacts with the lever CS.

- Goal-Tracking (GT): The number of food magazine entries.

- Latency: The time to first contact the lever or the magazine after CS presentation.

- Phenotype Classification: Individuals are classified based on a composite score or a probability difference score that compares lever pressing versus magazine entries during the CS period. "Sign-trackers" show a high probability of contacting the lever and a low probability of entering the magazine, while "goal-trackers" show the opposite pattern.
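The composite score mentioned above can be sketched as follows. The example assumes the commonly used PavCA Index, defined as the mean of a response bias, a probability difference, and a latency score, each ranging from -1 (goal-tracking) to +1 (sign-tracking). The function name, argument names, and the 10-second CS duration are illustrative assumptions, not details taken from the cited studies.

```python
def pavca_index(lever_contacts, magazine_entries,
                p_lever, p_magazine,
                lever_latency_s, magazine_latency_s, cs_duration_s):
    """Composite score in [-1, 1]; positive values indicate sign-tracking."""
    total = lever_contacts + magazine_entries
    # Response bias: relative number of lever contacts vs. magazine entries.
    response_bias = (lever_contacts - magazine_entries) / total if total else 0.0
    # Probability difference: P(lever contact) - P(magazine entry) per trial.
    probability_difference = p_lever - p_magazine
    # Latency score: faster approach to the lever gives a positive value.
    latency_score = (magazine_latency_s - lever_latency_s) / cs_duration_s
    return (response_bias + probability_difference + latency_score) / 3.0

# Hypothetical session summaries for two animals (CS duration assumed 10 s).
st_like = pavca_index(45, 5, 0.9, 0.1, 2.0, 8.0, 10.0)
gt_like = pavca_index(5, 45, 0.1, 0.9, 8.0, 2.0, 10.0)
print(round(st_like, 3), round(gt_like, 3))
```

Averaging three partially redundant measures makes the score less sensitive to any single metric, at the cost of requiring a cutoff to turn the continuous index into phenotype labels.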

Cross-Species Behavioral Paradigms

Recent technological advances have enabled the development of more naturalistic tasks that can be adapted for cross-species comparisons, moving beyond the traditional operant chamber.

- Virtual Reality (VR) Foraging Task: A novel approach involves training both mice and macaques on the same naturalistic visual foraging task within a VR environment [@citation:1]. In this paradigm, animals navigate a virtual meadow and must approach a target stimulus while avoiding a distractor. During the task, facial features are recorded and tracked using deep learning tools like DeepLabCut. The extracted features are then used to infer internal cognitive states, such as attention, using a Markov-Switching Linear Regression (MSLR) model. This method allows for a data-driven comparison of spontaneously occurring internal states that predict task performance across species [@citation:1].

- Pavlovian-Instrumental Transfer (PIT): To isolate the role of Pavlovian learning in drug-seeking behavior, the PIT paradigm is employed [@citation:3]. In this procedure, Pavlovian conditioning (CS-US pairings) and instrumental training (a response, e.g., a lever press, is reinforced by a drug US) are conducted separately. In a subsequent test phase where no US is delivered, the ability of the drug-paired CS to elevate performance of the drug-seeking instrumental response is measured. This tests the potential of a CS to provoke relapse-like behavior.

Comparative Behavioral and Neural Data

The following tables summarize key experimental findings regarding the behavioral characteristics and neural substrates of sign-tracking and goal-tracking phenotypes.

Table 1: Comparative Behavioral Profiles of Sign-Tracking and Goal-Tracking Phenotypes

| Behavioral Characteristic | Sign-Tracking (ST) Phenotype | Goal-Tracking (GT) Phenotype | Supporting Evidence |

|---|---|---|---|

| Conditioned Response | Directed toward the cue (CS) itself (e.g., lever) | Directed toward the site of reward delivery (e.g., food magazine) | [@citation:2] [19] |

| Resistance to Extinction | Behavior is more resistant to extinction | Behavior extinguishes more readily | [@citation:2] [19] |

| Outcome Devaluation | Conditioned responding is sensitive to outcome devaluation | Conditioned responding is sensitive to outcome devaluation | [@citation:8] |

| Kamin Blocking | Shows competitive blocking effects, suggesting common prediction error mechanisms | Shows competitive blocking effects, suggesting common prediction error mechanisms | [@citation:8] |

| Addiction Vulnerability | Linked with increased impulsivity and susceptibility to drug-taking | Not associated with increased addiction vulnerability | [@citation:2] [20] |

Table 2: Neural Circuitry and Neurotransmitter Systems Underlying ST and GT Phenotypes

| Neural Substrate | Role in Sign-Tracking (ST) | Role in Goal-Tracking (GT) | Experimental Findings |

|---|---|---|---|

| Nucleus Accumbens (NAc) Core | Critical for acquisition and expression; dopamine release increases to cue and decreases to reward over training | Less dependent on NAc dopamine; cue-evoked excitatory responses encode behavioral vigor | [@citation:2] |

| Dopamine System | DA receptor antagonism systemically or in NAc core blocks acquisition/maintenance of ST | Largely unaffected by DA receptor antagonism | [@citation:2] [19] |

| Prefrontal Cortex (PFC) | Subregional specialization observed in mice; dmPFC shows stable task-related coding, vmPFC responds to reward | Potential greater reliance on cortical "cognitive" processes, though circuitry is less defined | [@citation:6] [19] |

| Phasic Dopamine Release | Profile of increasing cue-evoked and decreasing reward-evoked dopamine release over training | Different profile of phasic dopamine release compared to ST | [@citation:2] |

Cross-Species Validation and Comparative Analysis

Direct Cross-Species Comparisons of Internal States

A key challenge in cross-species validation is ensuring that experimental paradigms are directly comparable. A 2025 study addressed this by using the same VR foraging task for mice and macaques and inferring internal states from facial features [@citation:1]. The MSLR model, trained on reaction times, identified internal states that predicted when animals would react to stimuli. The relationship between these inferred states and task performance was comparable between mice and monkeys, and each state corresponded to characteristic facial patterns that partially overlapped between species [@citation:1]. This suggests that facial expressions can serve as a cross-species indicator of internal cognitive states during decision-making tasks.

Primate vs. Rodent Strategic Differences

It is crucial to note that despite similarities in inferred internal states, fundamental strategic differences can exist between species. Research on visual segmentation reveals that mice and primates may use distinct strategies to solve what appears to be the same task. When presented with a figure-ground segmentation task, mice were severely limited in their ability to segment figures from ground using opponent motion cues and instead adopted a strategy of "brute force memorization" of texture patterns [@citation:7]. In contrast, primates (humans, macaques, and mouse lemurs) could readily perform texture-invariant segmentation using the same motion cue [@citation:7]. This highlights that while behaviors may be superficially similar, the underlying cognitive algorithms can differ significantly across species.

Translation to Human Behavior and Psychopathology

Mapping ST and GT onto human behavior is an active area of research with significant implications for understanding psychopathology. Characteristics of sign-tracking in rodents—such as bottom-up cognitive processing, poor attentional control, and heightened sensitivity of neural reward systems—overlap with neurobehavioral traits associated with substance use disorders in humans [@citation:9]. Individual differences in the tendency to attribute incentive salience to reward cues, measured through computerized behavioral tasks or attentional capture paradigms, are being explored as a potential biomarker for addiction vulnerability [@citation:9]. This translational approach aims to bridge the gap between preclinical models and human clinical populations.

Research Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagents and Solutions for Investigating ST/GT Phenotypes

| Item Name | Function/Application | Specific Examples from Research |

|---|---|---|

| Operant Conditioning Chamber | Standardized environment for conducting Pavlovian Conditioned Approach (PCA) protocols. | Chambers equipped with retractable levers (CS), food dispensers, and magazine entry detectors [@citation:2]. |

| DeepLabCut | Deep-learning-based software package for markerless pose estimation of animal facial features and body parts from video recordings. | Used to track a wide range of facial features in mice and macaques during VR task performance [@citation:1]. |

| Virtual Reality (VR) Setup | Creates immersive, controlled environments for naturalistic behavioral testing across species. | Custom spherical dome (DomeVR) for visual foraging tasks in mice and monkeys [@citation:1]. |

| Fixed Electrode Arrays | For chronic in vivo electrophysiological recordings of neural activity in freely behaving animals. | Custom-built, advanceable microelectrode bundles for recording single-units in mouse PFC subregions [@citation:6]. |

| Dopamine Receptor Antagonists | Pharmacological tools to probe the necessity of dopamine signaling in behavior acquisition and expression. | Systemic or intra-NAc core infusion of flupenthixol (D1/D2 antagonist) to block ST but not GT [@citation:8]. |

Signaling Pathways and Experimental Workflows

Neural Circuitry of Sign-Tracking

[Figure: Key neural pathways implicated in the expression of the sign-tracking phenotype, highlighting the central role of dopaminergic signaling and subcortical-cortical interactions.]

The quest to understand the neural underpinnings of behavior increasingly relies on comparative studies across species. However, a significant challenge in this field is the lack of standardized data acquisition methods, which can hinder the cross-validation of behavior classification and direct comparison of research findings. Variations in recording equipment, experimental protocols, and analytical frameworks introduce inconsistencies that compromise the reproducibility and translational potential of results. This guide examines emerging technologies and methodologies that aim to establish consistency in multi-species behavioral recording, providing researchers with a foundation for robust cross-species investigations.

System Architectures for Cross-Species Data Acquisition

Unified Hardware Platforms

Recent technological advances have yielded integrated hardware systems designed to minimize the conflict between large-scale neural recordings and naturalistic behavior across species.

The ONIX (Open Neuro Interface) system represents a significant step toward standardization by providing an open-source data acquisition platform specifically designed for multimodal neural recording during natural behavior. This system achieves high data throughput (2 GB/s) with low closed-loop latencies (<1 ms) while using a 0.3-mm thin tether to minimize behavioral impact. Its architecture supports combinations of passive electrodes, Neuropixels probes, head-mounted microscopes, cameras, and 3D trackers, creating a unified approach to data collection [21].

For wildlife research, e-obs tags offer a different approach by integrating multiple sensors including GPS, accelerometers (ACC), and inertial measurement units (IMU) in a single package. This multi-sensor acquisition enables detailed motion analysis and behavioral classification across various species by intelligently combining sensor data to create a more complete picture of an animal's life [22].

Miniaturized Wearable Systems

The MULTI SENSOR system developed at Tel Aviv University exemplifies the trend toward miniaturized, wearable data loggers. Weighing less than 10 grams, this device includes a camera, microphone, accelerometer (9D sensor), and two analog channels for physiological data such as neural activity or heart rate. Its compact design allows small animals like rats to carry the system without significant behavioral impact, storing data directly without the need for transmission [23].

Similarly, the BirdPark system employs custom low-power frequency-modulated radio transmitters worn by small animals. This modular system records perfectly synchronized data streams from multiple cameras, microphones, and animal-borne wireless sensors, enabling the dissection of rapid behaviors on timescales well below the video frame period [24].

Table 1: Comparison of Multi-Species Data Acquisition Systems

| System | Key Sensors | Data Synchronization | Target Species | Key Advantages |

|---|---|---|---|---|

| ONIX [21] | Neuropixels probes, cameras, 3D trackers, head-mounted microscopes | Hardware-level synchronization | Mice and similar-sized species | High data throughput (2 GB/s), low latency (<1 ms), minimal behavioral impact |

| e-obs tags [22] | GPS, accelerometer, IMU | Integrated sensor fusion | Wildlife species | GPS accuracy at 1 Hz, acceleration at 100 Hz, optimized for power efficiency |

| MULTI SENSOR [23] | Camera, microphone, accelerometer, physiological channels | Single-device recording | Small animals (rats) | Compact size (<10 g), no transmission needed, multiple parameter logging |

| BirdPark [24] | Wireless transmitters, accelerometers, multiple cameras, microphones | Central clock synchronization | Small songbirds and similar species | Novel multi-antenna phase compensation, minimizes signal losses |

Standardized Experimental Frameworks for Cross-Species Research

Synchronized Behavioral Paradigms

Beyond hardware standardization, researchers have developed experimental frameworks that enable direct quantitative comparison of behaviors across species.

A notable example is the synchronized evidence accumulation task developed for rats, mice, and humans. This framework aligns task mechanics, stimuli, and training protocols across species, using a free-response version of a pulse-based evidence accumulation task where sensory information is presented as sequences of randomly-timed light pulses from two sources. The paradigm maintains identical flash duration, flash rate, and generative flash probability across all test species, while employing non-verbal, feedback-based training for all subjects [25].

This synchronized approach revealed that while all three species employed evidence accumulation strategies, they differed in key decision parameters—humans prioritized accuracy, while rodent performance was limited by internal time-pressure [25].

Automated Behavioral Monitoring Systems

For wildlife research, kabr-tools provides an open-source package for automated multi-species behavioral monitoring that integrates drone-based video with machine learning systems. This framework extracts behavioral, social, and spatial metrics from wildlife footage using object detection, tracking, and behavioral classification systems. Compared to ground-based methods, this automated approach reduces visibility loss by 15% and captures more behavioral transitions with higher accuracy and continuity [26].

Table 2: Standardized Experimental Protocols for Cross-Species Research

| Protocol/Framework | Application Scope | Key Synchronized Parameters | Output Metrics | Validation Approach |

|---|---|---|---|---|

| Synchronized Evidence Accumulation Task [25] | Rats, mice, humans | Flash duration (10 ms), flash rate (100 ms bins), generative probability | Accuracy, response time, decision parameters, reward rate | Comparison of drift diffusion models across species |

| kabr-tools Automated Monitoring [26] | Multiple wildlife species (zebras, giraffes) | Drone altitude, video resolution, frame rate, annotation protocols | Time budgets, behavioral transitions, social interactions, habitat associations | Comparison with ground-based expert observation (969 behavioral sequences) |

| VAME Framework [27] | Mice and other model organisms | Pose estimation (6 body points), egocentric alignment, trajectory sampling | Behavioral motifs, transition structure, hierarchical organization | Use case comparing transgenic Alzheimer's disease model mice with wildtype mice |

Analytical Frameworks for Cross-Species Behavioral Classification

Domain-Invariant Feature Learning

A significant challenge in cross-species behavioral analysis is accounting for variations in spatial and temporal scales across species. Attention-based domain-adversarial neural networks address this by automatically discovering locomotion features shared across species through domain-adversarial training. This approach incorporates a gradient reversal layer: a domain classifier is trained to identify the domain (species) from the extracted features, while the reversed gradient pushes the feature extractor toward representations from which the domain cannot be identified, without sacrificing the ability to classify behavioral states or conditions [9].

The network architecture includes:

- Feature extraction block with convolutional layers

- Attention computation block that identifies important segments in input time-series

- Domain classifiers with gradient reversal to ensure domain-independence

- Class predictors for behavioral state classification

This method has successfully identified locomotion features shared across dopamine-deficient humans, mice, and worms, despite their evolutionary differences [9].
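The gradient reversal idea can be shown in isolation with a small NumPy sketch. It is not the architecture from [9]: the feature matrix, the linear domain classifier, and the value of `lam` are hypothetical stand-ins, and the backward pass is written by hand rather than with a deep learning framework.

```python
import numpy as np

class GradientReversal:
    """Identity in the forward pass; scales gradients by -lam in the backward pass."""
    def __init__(self, lam=1.0):
        self.lam = lam

    def forward(self, features):
        return features

    def backward(self, grad_from_domain_classifier):
        return -self.lam * grad_from_domain_classifier

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(0)
features = rng.normal(size=(4, 3))               # stand-in for feature-extractor output
domain_labels = np.array([0.0, 0.0, 1.0, 1.0])   # e.g. 0 = mouse, 1 = human (assumed)
w = rng.normal(size=3)                           # weights of a linear domain classifier

grl = GradientReversal(lam=0.5)
domain_prob = sigmoid(grl.forward(features) @ w)

# Gradient of the mean binary cross-entropy with respect to the classifier input.
grad_wrt_input = ((domain_prob - domain_labels)[:, None] * w[None, :]) / len(domain_labels)

# The feature extractor receives the sign-flipped gradient, so a descent step
# on it increases the domain loss while the domain classifier still decreases it.
grad_wrt_features = grl.backward(grad_wrt_input)
```

Because the layer only changes the sign and scale of the gradient, the class predictor branch, which bypasses it, trains normally on the same features.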

Unsupervised Behavioral Structure Discovery

VAME (Variational Animal Motion Embedding) provides an unsupervised probabilistic deep learning framework for discovering behavioral structure from pose estimation data. This approach uses a variational recurrent neural network autoencoder to embed behavioral signals into a lower-dimensional space, followed by a Hidden Markov Model to identify discrete behavioral motifs and their hierarchical organization [27].

The VAME workflow processes egocentrically-aligned animal pose data (typically from tools like DeepLabCut) and identifies stereotyped, re-used units of movement without requiring human annotation or supervision. This framework has demonstrated sensitivity in detecting subtle behavioral differences between transgenic and wildtype mice that were not detectable by human observation [27].

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Tools for Multi-Species Behavioral Recording

| Tool/Reagent | Function | Application Examples | Considerations |

|---|---|---|---|

| Neuropixels Probes [21] | High-density neural recording | Simultaneous electrophysiology from hundreds of sites in mice | Requires compatibility with headstage and acquisition system |

| DeepLabCut [27] | Markerless pose estimation | Tracking of body parts (paws, nose, tailbase) in mice | Requires adequate training data for reliable tracking |

| Miniature Head-Mounted Microscopes [21] | Calcium imaging during behavior | Neural population imaging in freely moving mice | Consider weight and size constraints for small species |

| FM Radio Transmitters [24] | Wireless transmission of physiological signals | Vocalization and accelerometer data from freely behaving songbirds | Optimal balance of size, weight, and battery life |

| BEHAVIOR RECORDER Software [28] | Computerized behavioral data collection | Field and laboratory observations across multiple species | Free alternative to commercial packages like The Observer |

Workflow Visualization for Cross-Species Behavioral Analysis

[Figure: Standardized Workflow for Multi-Species Behavioral Analysis, integrating multiple data modalities.]

The establishment of consistent data acquisition standards across species represents a critical frontier in behavioral neuroscience. The technologies and frameworks examined here—from unified hardware platforms like ONIX to synchronized behavioral paradigms and advanced analytical methods—provide researchers with powerful tools for cross-species investigations. By adopting standardized approaches that maintain consistency while accommodating species-specific differences, researchers can enhance the reproducibility and translational potential of their findings. As these standards continue to evolve, they will increasingly enable robust cross-validation of behavior classification across different species, ultimately advancing our understanding of the fundamental principles governing brain and behavior.

Methodological Approaches: Machine Learning and Cross-Validation for Cross-Species Classification

Selecting and Engineering Behavioral Features for Cross-Species Relevance

Cross-species behavioral research is fundamental to neuroscience and preclinical drug development, but its validity hinges on the selection of behavioral features that are translatable and relevant across different species. The ability to accurately compare cognitive states and decision-making processes between rodents and humans, for instance, is often confounded by divergent experimental paradigms, training protocols, and the inherent challenge of identifying equivalent behavioral markers. This guide objectively compares contemporary methodologies for behavioral feature selection and engineering, drawing on direct experimental comparisons and data-driven approaches. It details standardized experimental protocols and quantitative findings to provide researchers with a framework for enhancing the cross-species validity of behavioral classification.

Experimental Protocols for Cross-Species Comparison

Synchronized Perceptual Decision-Making Task

A foundational approach involves designing identical behavioral tasks that can be performed by multiple species. One protocol established a synchronized perceptual evidence accumulation task for mice, rats, and humans [25].

- Core Task Mechanics: Subjects are presented with sequences of brief visual pulses from two sources (left and right). The core task is to identify and choose the side with the higher pulse probability [25].

- Cross-Species Implementation:

- Rodents: Perform the task in a 3-port operant chamber. A trial is initiated with a nose poke at a center port, followed by light flashes at the two side ports. The animal indicates its choice by poking a side port and receives a sugar water reward for a correct choice [25].

- Humans: Perform a homologous video game. Participants click on an asteroid to start a trial, observe spaceship flashes on both sides, and click on the side with more flashes to destroy the asteroid for points. Critically, humans receive no verbal instructions, relying on reward feedback similar to rodents [25].

- Key Synchronized Parameters: Flash duration (10 ms), flash rate statistics (binned into 100 ms bins), and generative flash probability are kept identical across species. The task incorporates a built-in speed-accuracy tradeoff, where longer decision times allow for more evidence accumulation and higher accuracy [25].
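The built-in speed-accuracy tradeoff can be illustrated with a simple bounded random-walk accumulator. This is a sketch, not the drift diffusion model fitted in [25]. Only the 100 ms bin width comes from the task description; the flash probabilities, bounds, and trial counts are hypothetical.

```python
import numpy as np

def simulate_trials(bound, n_trials=2000, p_high=0.6, p_low=0.4,
                    bin_s=0.1, max_bins=300, seed=0):
    """Return (accuracy, mean response time in s) for a given decision bound."""
    rng = np.random.default_rng(seed)
    correct = np.zeros(n_trials, dtype=bool)
    rt = np.zeros(n_trials)
    for t in range(n_trials):
        evidence = 0  # positive values favor the high-probability (correct) side
        for b in range(1, max_bins + 1):
            flash_correct_side = int(rng.random() < p_high)
            flash_other_side = int(rng.random() < p_low)
            evidence += flash_correct_side - flash_other_side
            if abs(evidence) >= bound:
                break
        # On a timeout the sign of the evidence decides; zero counts as an error.
        correct[t] = evidence > 0
        rt[t] = b * bin_s
    return correct.mean(), rt.mean()

acc_low, rt_low = simulate_trials(bound=1)
acc_high, rt_high = simulate_trials(bound=4)
print(f"bound=1: accuracy={acc_low:.2f}, mean RT={rt_low:.2f} s")
print(f"bound=4: accuracy={acc_high:.2f}, mean RT={rt_high:.2f} s")
```

Raising the bound forces the simulated subject to wait for more flashes before committing, which lengthens response times and raises accuracy.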

Internal State Inference via Facial Feature Analysis

Moving beyond choice behavior, another protocol uses facial expressions to infer internal states during a naturalistic foraging task in mice and monkeys [29].

- Behavioral Paradigm: Subjects engage in a virtual reality (VR) visual foraging task where they must approach a target stimulus and avoid a distractor within a simulated meadow environment. The task leverages natural, innate behaviors [29].

- Facial Feature Extraction:

- Video Recording: Facial videos are recorded during task performance—frontally for monkeys and from the side for mice [29].

- Key Point Tracking: A deep learning tool (DeepLabCut) is used to track specific facial key points, such as eyebrows, nose, and ears in monkeys, and analogous features in mice [29].

- State Inference Model: The extracted facial features, averaged over a 250 ms window before stimulus onset, are used as inputs to a Markov-Switching Linear Regression (MSLR) model. This data-driven model agnostically infers distinct, time-varying internal states based on the multivariate facial expression patterns [29].
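The pre-stimulus averaging step can be sketched as below. The array layout (frames by features), the frame rate, and the function name `prestimulus_mean` are assumptions for illustration; only the 250 ms window is taken from the protocol description.

```python
import numpy as np

def prestimulus_mean(features, onset_frames, fps, window_s=0.25):
    """Average each feature over the window immediately before stimulus onset.

    features: array of shape (n_frames, n_features), e.g. tracked key points.
    onset_frames: frame index of stimulus onset for each trial.
    Returns an array of shape (n_trials, n_features); trials whose window
    would start before the recording are filled with NaN.
    """
    n_window = int(round(window_s * fps))
    out = np.full((len(onset_frames), features.shape[1]), np.nan)
    for i, onset in enumerate(onset_frames):
        start = onset - n_window
        if start >= 0:
            out[i] = features[start:onset].mean(axis=0)
    return out

# Toy data: 100 frames, 2 features, 40 frames per second (10-frame window).
toy_features = np.arange(200, dtype=float).reshape(100, 2)
trial_inputs = prestimulus_mean(toy_features, onset_frames=[20, 5], fps=40)
print(trial_inputs)
```

Each row of `trial_inputs` would then serve as one observation for the state inference model, so every trial contributes a single fixed-length feature vector.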

Quantitative Cross-Species Performance Data

The following tables summarize key quantitative findings from the cited cross-species studies, providing a basis for comparing behavioral performance and model parameters.

Table 1: Behavioral performance of mice, rats, and humans in the synchronized perceptual decision-making task. Data sourced from [25].

| Species | Sample Size | Average Accuracy | Average Response Time | Key Behavioral Strategy |

|---|---|---|---|---|

| Mouse | 95 animals | Lowest Accuracy | Fastest | Evidence accumulation, high trial-to-trial variability |

| Rat | 21 animals | Intermediate | Intermediate | Optimized for reward rate |

| Human | 18 subjects | Highest Accuracy | Slowest | Prioritized accuracy |

Table 2: Comparative analysis of internal state inference via facial features in mice and monkeys. Data sourced from [29].

| Aspect | Mouse Model | Macaque Model |

|---|---|---|

| Experimental Subjects | 7 mice, 29 sessions (12,714 trials) | 2 monkeys, 18 sessions (20,459 trials) |

| Tracked Facial Features | 9 key points | 18 key points |

| Model Input | Facial features averaged from a 250 ms pre-stimulus window | Facial features averaged from a 250 ms pre-stimulus window + eye tracking |

| Inferred States | Multiple internal states identified by MSLR model | Multiple internal states identified by MSLR model |

| State-Behavior Link | States predict reaction times and task outcomes | States predict reaction times and task outcomes |

Visualizing Cross-Species Research Workflows

The following diagrams illustrate the core experimental and analytical workflows for the two main protocols discussed.

Diagram 1: This workflow outlines the synchronized perceptual decision-making protocol, showing how identical task logic is implemented in species-appropriate hardware to enable direct comparison of model parameters.

Diagram 2: This workflow illustrates the process of inferring internal cognitive states from facial features in mice and monkeys, highlighting the use of a shared computational model on species-specific feature sets.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential materials and tools for implementing cross-species behavioral research protocols.

| Item Name | Function / Application |

|---|---|

| 3-Port Operant Chamber | Standardized rodent testing apparatus for implementing synchronized decision-making tasks, featuring nose poke ports and cue lights [25]. |

| DeepLabCut | Open-source deep learning software package for markerless pose estimation based on video data; used for tracking facial key points in mice and monkeys [29]. |

| Markov-Switching Linear Regression (MSLR) | A computational model that captures non-stationarity and regime shifts in behavioral data; used to infer latent internal states from multivariate facial feature data [29]. |

| Drift Diffusion Model (DDM) | A computational model of decision-making that fits choice and reaction time data to extract key parameters like decision threshold and drift rate, allowing quantitative cross-species comparison [25]. |

| Virtual Reality (VR) Foraging Arena | A controlled, immersive environment (e.g., a spherical dome) that can be tailored to different species' sensory capacities to elicit naturalistic behaviors for cross-species study [29]. |

In behavioral research, classifying subjects into distinct categories is a fundamental yet challenging task. The process is often compromised by subjective decisions, such as grouping observations with predetermined or arbitrary cutoff values, which can lack accuracy and objectivity and ultimately threaten the reproducibility of scientific findings [5]. This is particularly evident in Pavlovian conditioning studies, where rodents are categorized as sign-trackers (ST), goal-trackers (GT), or an intermediate (IN) group based on their Pavlovian Conditioned Approach (PavCA) Index scores [5]. The cutoff values used to distinguish these phenotypes vary substantially across studies (commonly ±0.3, ±0.4, or ±0.5), largely because the distribution of these scores, which is influenced by genetic and environmental factors, fluctuates in skewness and kurtosis across laboratories [5]. This variability presents a significant obstacle for cross-species research and drug development, where validating behavioral phenotypes consistently is paramount.
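A short sketch shows how strongly the choice of cutoff can change group membership. The scores are made-up values, not data from [5], and the convention that scores at or above the positive cutoff are ST and at or below the negative cutoff are GT is an assumption.

```python
import numpy as np

def classify(scores, cutoff):
    """Label each score ST, GT, or IN using a symmetric cutoff."""
    scores = np.asarray(scores)
    labels = np.full(scores.shape, "IN", dtype=object)
    labels[scores >= cutoff] = "ST"
    labels[scores <= -cutoff] = "GT"
    return labels

# Hypothetical PavCA Index scores for nine animals.
scores = np.array([-0.82, -0.45, -0.35, -0.10, 0.05, 0.32, 0.41, 0.48, 0.77])

counts_by_cutoff = {}
for cutoff in (0.3, 0.4, 0.5):
    labels = classify(scores, cutoff)
    counts_by_cutoff[cutoff] = {g: int((labels == g).sum()) for g in ("ST", "IN", "GT")}
    print(cutoff, counts_by_cutoff[cutoff])
```

In this toy sample the number of animals labeled as sign-trackers falls from four to one as the cutoff moves from ±0.3 to ±0.5, which is the kind of instability that motivates data-driven alternatives.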

Modern advances in statistical and machine learning tools offer more objective and data-driven methods for classification. This guide provides a comparative overview of three distinct algorithmic approaches for behavior classification: the unsupervised k-Means clustering algorithm, a novel Derivative Method, and traditional Supervised Learning techniques. We frame this comparison within the critical context of cross-species research, highlighting how the choice of algorithm can impact the generalizability and validation of behavioral phenotypes.

The following table summarizes the core characteristics, advantages, and limitations of the three classification approaches.

Table 1: Fundamental Characteristics of Classification Algorithms

| Algorithm | Learning Type | Key Input | Core Principle | Primary Output |

|---|---|---|---|---|

| k-Means [30] [31] [5] | Unsupervised | Number of clusters (k) | Partitions data into 'k' clusters by minimizing within-cluster variance | Data points grouped into 'k' clusters |

| Derivative Method [5] | Unsupervised (Data-Driven) | Distribution of sample scores | Uses the first derivative of the data's density function to find natural cutoffs | Cutoff values based on the sample's distribution |

| Supervised Learning [32] [33] | Supervised | Labeled Training Data | Learns a mapping function from input features to known output labels | A model that predicts labels for new, unseen data |

k-Means Clustering

k-Means is a partitional clustering algorithm that aims to group a dataset into a user-specified number of clusters (k) [30]. Its objective is to minimize the within-cluster sum of squares (WCSS), also known as inertia [31]. The algorithm operates through the following steps [31]:

- Initialization: Randomly select k data points as initial cluster centers.

- Assignment: Assign each data point to the nearest cluster center based on Euclidean distance.

- Update: Calculate the new cluster centers as the mean (centroid) of all data points assigned to that cluster.

- Iteration: Repeat the assignment and update steps until the cluster centers no longer change significantly or a maximum number of iterations is reached.

A significant limitation of k-Means is its requirement for the number of clusters (k) to be predefined, which is often unknown in behavioral research [31] [5]. Furthermore, it assumes clusters are spherical and similar in size, and its performance can be sensitive to the initial random selection of centroids [30] [5].
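The four steps, and the sensitivity to initialization, can be illustrated with a minimal pure-Python sketch for one-dimensional scores. The function names `assign` and `kmeans_1d`, the example scores, and the choice of 30 restarts are all illustrative assumptions, and the loop stops when the centers are exactly unchanged, not within a tolerance. A production analysis would more likely use an established library implementation.

```python
import random


def assign(values, centroids):
    """Assignment step: index of the nearest centroid for each value."""
    return [min(range(len(centroids)), key=lambda j: abs(x - centroids[j]))
            for x in values]


def kmeans_1d(values, k, seed, max_iter=100):
    """Lloyd's k-means on scalar data. Returns (centroids, labels, wcss)."""
    rng = random.Random(seed)
    centroids = rng.sample(values, k)          # Initialization: k random data points
    for _ in range(max_iter):                  # Iteration
        labels = assign(values, centroids)     # Assignment (|x - c| is Euclidean in 1D)
        updated = []
        for j in range(k):                     # Update: mean of each cluster
            members = [x for x, lab in zip(values, labels) if lab == j]
            updated.append(sum(members) / len(members) if members else centroids[j])
        if updated == centroids:               # Stop once the centers no longer move
            break
        centroids = updated
    labels = assign(values, centroids)
    wcss = sum((x - centroids[lab]) ** 2 for x, lab in zip(values, labels))
    return centroids, labels, wcss


# Hypothetical scores with three visible groups (illustrative only).
scores = [-0.9, -0.8, -0.7, -0.1, 0.0, 0.1, 0.7, 0.8, 0.9]

# Different seeds can converge to different solutions; keep the lowest WCSS.
runs = [kmeans_1d(scores, k=3, seed=s) for s in range(30)]
best_centroids, best_labels, best_wcss = min(runs, key=lambda run: run[2])
print(sorted(best_centroids), round(best_wcss, 3))
print(sorted({round(run[2], 3) for run in runs}))   # distinct WCSS values reached
```

Running several restarts and keeping the solution with the lowest WCSS is a common way to reduce the dependence on the initial centroids; it does not remove the need to choose k in advance.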

The Derivative Method

The derivative method is a mathematical, data-driven approach designed to objectively determine cutoff values for classification without predefined labels [5]. It is particularly useful when data is expected to follow a bimodal distribution, as is often the case with pooled PavCA Index scores [5]. The methodology involves:

- Density Estimation: Extract a function representing the probability density distribution of the sample's scores (e.g., PavCA Index scores).

- Derivative Calculation: Compute the first derivative of this density function. The first derivative measures the slope or rate of change of the density function, and its analysis reveals points of maximum and minimum slope [5].

- Cutoff Identification: Locate the natural valley (trough) between the modes of the distribution. At a trough the density is locally flat: its first derivative equals zero and changes sign from negative to positive. A local minimum of the first derivative itself marks the steepest descent of the density, not the trough. The trough is taken as the cutoff value for separating groups (e.g., ST and GT) within the sample [5].
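The three steps can be sketched as follows. This is not the implementation from [5]: the Gaussian kernel density estimate, the fixed bandwidth of 0.15, the grid spacing, and the example scores are all assumptions made for illustration, and the estimated trough will shift with the bandwidth chosen.

```python
import math


def kde(xs, sample, bandwidth):
    """Gaussian kernel density estimate of `sample`, evaluated at each x in xs."""
    norm = len(sample) * bandwidth * math.sqrt(2 * math.pi)
    return [sum(math.exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in sample) / norm
            for x in xs]


def first_derivative(ys, step):
    """Central finite differences on an evenly spaced grid (interior points only)."""
    return [(ys[i + 1] - ys[i - 1]) / (2 * step) for i in range(1, len(ys) - 1)]


# Hypothetical bimodal PavCA Index scores (illustrative only).
right = [0.45, 0.55, 0.60, 0.65, 0.70, 0.80]
scores = [-s for s in right] + right

step = 0.01
grid = [round(-1 + i * step, 2) for i in range(201)]       # -1.00 ... 1.00
density = kde(grid, scores, bandwidth=0.15)                # Density estimation
slope = first_derivative(density, step)                    # Derivative calculation
inner = grid[1:-1]                                         # slope[i] belongs to inner[i]

# Modes: slope changes from positive to non-positive.
modes = [inner[i] for i in range(1, len(slope)) if slope[i - 1] > 0 >= slope[i]]
# Troughs: slope changes from negative to non-negative (candidate cutoffs).
troughs = [inner[i] for i in range(1, len(slope)) if slope[i - 1] < 0 <= slope[i]]

print("modes:", modes)
print("candidate cutoff(s):", troughs)
```

A single trough yields one cutoff and therefore two groups; how an intermediate group is delimited around that cutoff is a separate decision that should follow the procedure described in [5].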

Supervised Learning

In contrast to unsupervised methods, supervised learning uses labeled datasets to train algorithms to predict outcomes [32] [33]. The training data contains input examples paired with their correct outputs, providing a "ground truth" for the model to learn from [33]. The core process involves feeding input data into an algorithm, which adjusts its internal parameters until it can accurately model the relationship between inputs and outputs [32]. The trained model is then validated on a test set before being deployed to make predictions on new, unseen data [33].

Supervised learning tasks are broadly divided into:

- Classification: Used to predict categorical labels (e.g., "spam" or "not spam"). Common algorithms include Support Vector Machines (SVM), Random Forest, and Naive Bayes [32] [33].

- Regression: Used to predict a continuous value (e.g., sales revenue) [32] [33].
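The train, validate, and predict cycle can be shown with a very small classifier. The sketch below uses a nearest-centroid rule as a stand-in; it is not one of the algorithms named above, and the two features (lever contacts and food-cup entries per session) and all values are hypothetical.

```python
def fit_centroids(X, y):
    """Training: store the mean feature vector of each labeled class."""
    sums, counts = {}, {}
    for features, label in zip(X, y):
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def predict(centroids, features):
    """Prediction: the class whose centroid is closest (squared Euclidean distance)."""
    return min(centroids,
               key=lambda lab: sum((a - b) ** 2 for a, b in zip(features, centroids[lab])))


# Hypothetical labeled data: (lever contacts, food-cup entries) per session.
train_X = [(9, 1), (8, 2), (7, 1), (1, 8), (2, 9), (1, 7)]
train_y = ["ST", "ST", "ST", "GT", "GT", "GT"]
test_X = [(8, 1), (6, 3), (2, 6), (0, 9)]      # held out, never seen during training
test_y = ["ST", "ST", "GT", "GT"]

model = fit_centroids(train_X, train_y)
predictions = [predict(model, x) for x in test_X]
accuracy = sum(p == t for p, t in zip(predictions, test_y)) / len(test_y)
print(predictions, accuracy)
```

The key point is the separation of roles: the labels in `train_y` act as ground truth during fitting, while `test_X` is used only to estimate how the fitted model behaves on unseen data.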

Experimental Protocols and Workflows

Experimental Protocol for Behavior Classification with k-Means and the Derivative Method

A study by Godin and Huppé-Gourgues (2025) provides a clear protocol for applying k-Means and the Derivative Method to classify rodent behavior [5]:

- Objective: To classify subjects as sign-trackers (ST), goal-trackers (GT), or intermediate (IN) based on PavCA Index scores, overcoming the subjectivity of arbitrary cutoffs.

- Data Collection: Subjects undergo Pavlovian conditioning training. The PavCA Index score is calculated for each subject, typically using the mean scores from the final days of conditioning. The score ranges from -1 (GT phenotype) to +1 (ST phenotype) [5].

- Application of k-Means:

  - The algorithm is set to find k=3 clusters (ST, GT, IN) in the distribution of PavCA Index scores.

  - It partitions the subjects into three groups by minimizing the within-cluster variance, effectively determining the cluster boundaries (cutoffs) based on the data's inherent structure [5].

- Application of the Derivative Method:

  - The density distribution of the PavCA Index scores is plotted.

  - The first derivative of this density function is calculated and analyzed to locate the trough between the modes, the point where the slope of the density is zero and changes sign from negative to positive. This point is selected as the cutoff value that best separates the phenotypes for that specific sample [5].
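The k-Means branch of this protocol can be sketched end to end under stated assumptions. The session data are hypothetical, averaging the last two sessions is an assumed reading of "final days", and seeding the three centers at the minimum, median, and maximum score is a choice made here for reproducibility, not a step reported in [5]. For one-dimensional data, the boundary between two adjacent clusters is the midpoint of their centroids, which is how the cutoffs are read off.

```python
from statistics import mean


def final_score(session_scores, n_final=2):
    """Mean PavCA Index over the last n_final sessions (n_final is an assumption)."""
    return mean(session_scores[-n_final:])


def kmeans3_cutoffs(scores, max_iter=100):
    """1D k-means with k=3; returns the (lower, upper) cutoffs between clusters."""
    ordered = sorted(scores)
    centroids = [ordered[0], ordered[len(ordered) // 2], ordered[-1]]
    for _ in range(max_iter):
        clusters = [[], [], []]
        for x in ordered:
            nearest = min(range(3), key=lambda j: abs(x - centroids[j]))
            clusters[nearest].append(x)
        updated = [mean(c) if c else centroids[j] for j, c in enumerate(clusters)]
        if updated == centroids:
            break
        centroids = updated
    low, mid, high = sorted(centroids)
    return (low + mid) / 2, (mid + high) / 2


# Hypothetical PavCA Index scores per subject across five sessions.
sessions = {
    "r1": [-0.2, -0.5, -0.7, -0.8, -0.9], "r2": [-0.1, -0.3, -0.6, -0.7, -0.7],
    "r3": [0.0, -0.2, -0.5, -0.6, -0.8],  "r4": [0.1, 0.0, -0.1, -0.1, 0.1],
    "r5": [0.0, 0.1, 0.2, 0.1, 0.1],      "r6": [-0.1, 0.0, -0.1, -0.2, 0.0],
    "r7": [0.2, 0.4, 0.6, 0.7, 0.9],      "r8": [0.1, 0.5, 0.6, 0.8, 0.8],
    "r9": [0.3, 0.5, 0.7, 0.6, 0.8],
}

scores = {subject: final_score(s) for subject, s in sessions.items()}
lower, upper = kmeans3_cutoffs(list(scores.values()))
phenotype = {subject: "GT" if x < lower else "ST" if x > upper else "IN"
             for subject, x in scores.items()}
print(round(lower, 3), round(upper, 3))
print(phenotype)
```

Unlike a fixed ±0.3 or ±0.5 rule, the two cutoffs here need not be symmetric around zero, because they follow the sample's own distribution.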

Figure 1: Experimental workflow for unsupervised behavior classification.

Cross-Species Signaling Pathway Analysis for Validation

Validating behavioral phenotypes and their underlying neurobiology across species is a major challenge in translational research. A bioinformatics approach called "Cross-species signaling pathway analysis" can help select appropriate animal models and validate targets by analyzing transcriptional data [34].

- Objective: To identify genes and signaling pathways with consistent or differential expression patterns across species (e.g., rats, monkeys, humans) to improve the predictive value of animal models in drug screening [34].

- Methodology:

  - Data Collection: Integrate multiple datasets from single-cell and bulk RNA-sequencing from the species of interest.

  - Pathway Enrichment Analysis: Use Gene Set Enrichment Analysis (GSEA) to identify signaling pathways that are significantly activated or inhibited in the condition of interest (e.g., vascular aging). The Normalized Enrichment Score (NES) indicates the degree and direction of pathway activation [34].

  - Cross-Species Comparison: Compare the NES of specific pathways across species. Pathways showing a consistent trend (activation or inhibition) across species are more likely to be translatable.

  - Model Selection and Validation: Select animal models whose pathway responses most closely mirror humans. The effectiveness of drugs tested in these models is more likely to be consistent with clinical outcomes if they target pathways with the same trend across species [34].
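The comparison and model-selection steps reduce to checking the sign of the NES across species. The sketch below assumes the NES values have already been produced by a GSEA run; the pathway names and numbers are hypothetical and not taken from [34], and the sketch ignores statistical significance, which a real analysis would filter on first.

```python
# Hypothetical NES values per pathway and species (illustrative only).
nes = {
    "pathway_A": {"rat": 1.8, "monkey": 2.1, "human": 1.6},
    "pathway_B": {"rat": -1.5, "monkey": -1.9, "human": -2.2},
    "pathway_C": {"rat": 1.7, "monkey": -0.4, "human": -1.8},
}


def direction(score):
    """Positive NES is read as activation, negative NES as inhibition."""
    if score > 0:
        return "activated"
    if score < 0:
        return "inhibited"
    return "unchanged"


def consistent_pathways(nes_table):
    """Pathways whose NES has the same non-zero direction in every species."""
    result = {}
    for pathway, by_species in nes_table.items():
        directions = {direction(v) for v in by_species.values()}
        if len(directions) == 1 and "unchanged" not in directions:
            result[pathway] = directions.pop()
    return result


def rank_models(nes_table, reference="human"):
    """Rank animal models by the fraction of pathways matching the reference direction."""
    species = {sp for row in nes_table.values() for sp in row} - {reference}
    agreement = {}
    for sp in species:
        matches = [direction(row[sp]) == direction(row[reference])
                   for row in nes_table.values()]
        agreement[sp] = sum(matches) / len(matches)
    return sorted(agreement.items(), key=lambda item: (-item[1], item[0]))


print(consistent_pathways(nes))
print(rank_models(nes))
```

In this toy table the monkey agrees with the human direction on all three pathways and the rat on two, so the monkey would rank first; with real data the ranking should be restricted to pathways that pass the enrichment significance threshold.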

Figure 2: Workflow for cross-species signaling pathway analysis.

Performance Data and Comparative Analysis

Quantitative Comparison of Classification Algorithms

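Classification algorithms can be evaluated on several metrics. The following table synthesizes results reported in different application contexts to illustrate relative strengths and weaknesses. Because the accuracy figures come from different datasets and tasks, they should not be read as a head-to-head benchmark.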

Table 2: Performance Comparison of Machine Learning Algorithms

| Algorithm | Reported Accuracy / Context | Key Strengths | Key Limitations / Biases |

|---|---|---|---|

| k-Means | Effective for ST/GT classification in behavioral science [5] | Simplicity, ease of implementation, low computational complexity, works well with large datasets [30] [5] [35] | Requires predefined 'k'; sensitive to outliers and initial centroids; assumes spherical clusters [30] [5] |

| Derivative Method | Effective for ST/GT classification, especially in small samples [5] | Objectively determines cutoffs based on sample distribution; no predefined 'k' needed [5] | Relies on the data forming a discernible distribution (e.g., bimodal) [5] |

| Random Forest | 92% accuracy (fMRI study) [36] | High accuracy, handles complex relationships | Can be flawed when trained on unbalanced datasets [37] |

| AdaBoost | 91% accuracy (fMRI study) [36] | High accuracy, ensemble method | Performance can degrade with noisy data |

| Naïve Bayes | 89% accuracy (fMRI study) [36] | Simple, fast, works well with small data | Assumes feature independence, which is often violated |

| Support Vector Machine (SVM) | 84% accuracy (fMRI study) [36] | Effective in high-dimensional spaces | Flawed with unbalanced datasets; performance depends on kernel choice [37] |

| Double Discriminant Scoring | Consistently outperformed others across training/testing scenarios (Framingham Study) [37] | High generalizability, insensitive to distributional shifts [37] | Less commonly used and implemented |

Critical Considerations for Cross-Species Generalizability

When applying these algorithms in cross-species research, several factors are critical for ensuring generalizability and mitigating bias [37]:

- Dataset Balance: Models such as SVM and Extreme Gradient Boosting (XGB) have been shown to perform unreliably when trained on unbalanced datasets, which can exacerbate misclassification of underrepresented groups [37].

- Distributional Shifts: An algorithm's performance in a training environment may not hold when applied to data from a different species or population due to shifts in data distribution. Algorithms that are robust to such shifts, like the double discriminant scoring method, are preferable for cross-species validation [37].

- Feature Selection: The choice of input features (variables) is crucial. Incorporating confounding features or those with different correlations across species can lead to models that fail to generalize. Establishing an optimal variable hierarchy for a given classification task can improve robustness [37].
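Two of these considerations translate directly into evaluation code. The sketch below shows a balanced accuracy score, which exposes a model that ignores a rare class, and a leave-one-species-out split, which tests a model on a species it never saw during training. Both are common practices offered here as assumptions about how one might audit a model, not procedures taken from [37]; the labels and records are hypothetical.

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall; not dominated by the majority class."""
    recalls = []
    for cls in set(y_true):
        idx = [i for i, t in enumerate(y_true) if t == cls]
        recalls.append(sum(y_pred[i] == cls for i in idx) / len(idx))
    return sum(recalls) / len(recalls)


def leave_one_species_out(records):
    """Yield (held_out, train, test) so each species is evaluated while unseen."""
    for held_out in sorted({r["species"] for r in records}):
        train = [r for r in records if r["species"] != held_out]
        test = [r for r in records if r["species"] == held_out]
        yield held_out, train, test


# Dataset balance: a model that always predicts the majority class.
y_true = ["rest"] * 9 + ["groom"]
y_pred = ["rest"] * 10
plain_accuracy = sum(p == t for p, t in zip(y_pred, y_true)) / len(y_true)
print(plain_accuracy, balanced_accuracy(y_true, y_pred))

# Distributional shift: hold out one species at a time.
records = [{"species": sp, "trial": i}
           for sp in ("mouse", "rat", "monkey") for i in range(3)]
splits = list(leave_one_species_out(records))
for held_out, train, test in splits:
    print(held_out, len(train), len(test))
```

In the first example plain accuracy looks high while balanced accuracy falls to chance level for two classes, which is the pattern to watch for when one behavior or one species dominates the training data.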

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Behavior Classification and Cross-Species Analysis

| Item Name | Function / Application | Example in Context |

|---|---|---|

| Pavlovian Conditioning Chamber | Controlled environment to present conditioned (CS) and unconditioned (US) stimuli for behavioral training. | Used to elicit and record sign-tracking and goal-tracking behaviors in rodents [5]. |

| PavCA Index Score Algorithm | A standardized formula to quantify individual differences in conditioned responses on a scale from -1 (GT) to +1 (ST). | The primary quantitative metric for classifying behavioral phenotypes in Pavlovian conditioning studies [5]. |

| RNA-sequencing Data (Bulk & Single-cell) | Provides transcriptomic profiles to analyze gene expression and pathway activity across tissues or cell types. | The fundamental data input for cross-species signaling pathway analysis (e.g., from rats, monkeys, humans) [34]. |

| Gene Set Enrichment Analysis (GSEA) Software | Computational method to determine whether a priori defined sets of genes show statistically significant differences between two biological states. | Used to identify signaling pathways that are consistently activated or inhibited during a process like vascular aging across different species [34]. |

| STRING Database | A database of known and predicted protein-protein interactions, including both physical and functional associations. | Used to construct Protein-Protein Interaction (PPI) networks from differentially expressed genes to identify key hub genes [34]. |

The move from arbitrary, subjective cutoff methods toward data-driven algorithms like k-Means and the Derivative Method represents a significant advancement for standardizing behavior classification in neuroscience and pharmacology [5]. While k-Means offers a well-established, simple clustering solution, its requirement for a predefined 'k' is a notable constraint. The Derivative Method elegantly addresses this by directly deriving cutoffs from the sample's own distribution, providing a compelling objective alternative.

For the broader goal of cross-species validation, supervised learning models offer powerful predictive capabilities but must be applied with caution. Their performance is highly dependent on the quality and balance of the training data, and they can perpetuate biases if not carefully audited [37]. The integration of bioinformatic approaches like cross-species signaling pathway analysis provides a robust framework for validating the translational relevance of animal models and the behavioral phenotypes classified by these algorithms [34].