Causal Interaction Strength and Topological Importance Metrics: From Network Theory to Drug Discovery

Abstract

This article provides a comprehensive exploration of causal interaction strength and topological importance (TI) metrics, essential tools for deciphering complex biological networks. Aimed at researchers, scientists, and drug development professionals, it covers the foundational principles of quantifying causal asymmetry and node centrality within networks. The scope extends to methodological applications in predicting drug-target interactions and identifying therapeutic targets, addresses troubleshooting and optimization challenges in causal inference, and offers a comparative analysis of validation techniques. By synthesizing insights from ecology, computational biology, and AI-driven drug discovery, this review serves as a guide for leveraging these powerful metrics to accelerate biomedical research and development.

Unraveling Complexity: The Core Principles of Causal and Topological Metrics

In data analysis and network science, defining and quantifying causal interaction strength is a fundamental challenge with implications across scientific domains, including pharmaceutical research and drug development. Causal interaction strength goes beyond correlation to capture the direction, magnitude, and asymmetry of influence between variables in a complex system. Research on causal interaction strength and topological importance metrics provides frameworks for disentangling these relationships. These frameworks give researchers tools to identify key drivers in biological networks, prioritize therapeutic targets, and understand system dynamics.

Traditional correlation-based analyses often fail to reveal the directional influences and feedback mechanisms that characterize complex biological systems. The integration of asymmetry analysis with topological metrics enables researchers to transition from undirected associations to directed causal networks, revealing hierarchical structures and dominant influence patterns. This methodological evolution is particularly relevant for drug development professionals seeking to understand signaling pathway dynamics, identify master regulator genes in disease networks, and predict system responses to therapeutic interventions. This guide provides a comprehensive comparison of experimental protocols, quantitative metrics, and visualization frameworks for defining causal interaction strength, with specific application to biomedical research contexts.

Quantitative Metrics for Causal Strength Analysis

Core Metric Definitions and Comparisons

Quantifying causal interaction strength requires multiple complementary metrics, each capturing distinct aspects of directional influence. The table below summarizes the primary quantitative measures used in causal network analysis:

Table 1: Core Metrics for Causal Interaction Strength Analysis

| Metric Category | Specific Metric | Mathematical Definition | Interpretation | Typical Range |
|---|---|---|---|---|
| Asymmetry Indices | Net Causal Flow | Outgoing strength - Incoming strength [1] | Net influence of a node; positive values indicate sources, negative values indicate sinks | (-∞, +∞) |
| | Causal Asymmetry Ratio | | Relative dominance of outgoing versus incoming influence | [0, 1] |
| Directional Strength Metrics | Effective Connectivity (EC) | State matrix in dynamical causal modeling [1] | Direct, directional causal influence between nodes | (-∞, +∞) |
| | Differential Cross-Covariance | | Measures information flow and time-irreversibility [1] | (-∞, +∞) |
| Topological Importance | Persistence-Weighted Importance | Learned weight × persistence [2] | Importance of topological features for classification tasks | [0, +∞) |
| | Reweighted Persistence Density | Learned metric on persistence diagram density [2] | Regional importance of topological features in defining classes | [0, +∞) |
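Both asymmetry indices in Table 1 can be computed directly from a weighted directed adjacency matrix. The sketch below assumes `W[i, j]` holds a non-negative strength for the influence of node i on node j. Table 1 gives no formula for the causal asymmetry ratio, so the outgoing / (outgoing + incoming) form used here is an assumption, chosen to match the stated [0, 1] range. The function names are illustrative.

```python
import numpy as np


def net_causal_flow(W):
    """Net causal flow per node: outgoing strength minus incoming strength.

    Assumes W[i, j] >= 0 is the strength of the causal influence i -> j
    (for example, absolute effective-connectivity values).
    """
    W = np.asarray(W, dtype=float)
    outgoing = W.sum(axis=1)  # row sums: influence each node exerts
    incoming = W.sum(axis=0)  # column sums: influence each node receives
    return outgoing - incoming


def causal_asymmetry_ratio(W):
    """Assumed definition: outgoing / (outgoing + incoming), bounded in [0, 1].

    1 means a pure source and 0 a pure sink; nodes with no links get 0.5.
    """
    W = np.asarray(W, dtype=float)
    outgoing = W.sum(axis=1)
    incoming = W.sum(axis=0)
    total = outgoing + incoming
    ratio = np.full_like(total, 0.5)
    np.divide(outgoing, total, out=ratio, where=total > 0)
    return ratio


# Hypothetical 3-node example: node 0 drives nodes 1 and 2; node 2 is a sink.
W = np.array([[0.0, 0.8, 0.5],
              [0.1, 0.0, 0.6],
              [0.0, 0.0, 0.0]])
print(net_causal_flow(W))         # positive = source, negative = sink
print(causal_asymmetry_ratio(W))
```

Net causal flow always sums to zero over the network, because every edge adds the same amount to one node's outgoing total as to another node's incoming total.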

Advanced Composite Metrics

For complex biological systems, researchers often employ composite metrics that integrate multiple dimensions of causal influence:

Table 2: Advanced Composite Metrics for Causal Analysis

| Metric Name | Component Metrics | Integration Method | Application Context |
|---|---|---|---|
| Spatio-Temporal Causal Index | Spatial dependence, temporal variation, bidirectional causality [3] | STCCM (Spatio-Temporal Convergent Cross Mapping) | Urban systems and traffic dynamics; adaptable to cellular signaling networks |
| Bidirectional Asymmetry Score | Forward causal strength, reverse causal strength | (Forward strength - Reverse strength) / (Forward strength + Reverse strength) | Quantifying feedback loop dominance in regulatory networks |
| Topological Causal Centrality | Net causal flow, node betweenness, persistence weight | Weighted sum of normalized metric values | Identifying master regulators in gene regulatory networks |
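The following is a minimal sketch of two composite metrics from Table 2. It assumes non-negative strengths `W[i, j]` for the influence i -> j. The topological causal centrality uses min-max normalization and equal weights; both are illustrative choices, not a prescribed scheme. Betweenness and persistence weights are taken as precomputed inputs. All names and values are hypothetical.

```python
import numpy as np


def bidirectional_asymmetry(W):
    """(forward - reverse) / (forward + reverse) for every ordered node pair.

    Assumes W[i, j] >= 0 is the causal strength i -> j. Entry [i, j] is +1 when
    influence runs only i -> j, -1 when it runs only j -> i, and 0 when the two
    directions are balanced or both absent.
    """
    W = np.asarray(W, dtype=float)
    forward, reverse = W, W.T
    total = forward + reverse
    score = np.zeros_like(total)
    np.divide(forward - reverse, total, out=score, where=total > 0)
    return score


def min_max(values):
    """Rescale a vector to [0, 1]; a constant vector maps to all zeros."""
    x = np.asarray(values, dtype=float)
    span = x.max() - x.min()
    return np.zeros_like(x) if span == 0 else (x - x.min()) / span


def topological_causal_centrality(metrics, weights):
    """Weighted sum of min-max-normalized per-node metrics.

    metrics: dict name -> per-node values; weights: dict name -> weight.
    """
    return sum(weights[name] * min_max(values) for name, values in metrics.items())


# Hypothetical per-node inputs; betweenness would come from a graph library.
metrics = {
    "net_causal_flow": [1.2, -0.1, -1.1],
    "betweenness": [0.0, 1.0, 0.0],
    "persistence_weight": [0.2, 0.2, 0.9],
}
weights = {name: 1 / 3 for name in metrics}
print(topological_causal_centrality(metrics, weights))
```

Min-max normalization puts metrics with very different scales on a common [0, 1] range before weighting. Rank-based normalization is a common alternative when outliers dominate.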

Experimental Protocols for Causal Analysis

Protocol 1: Effective Connectivity Mapping with fMRI Data

This protocol adapts methods from brain network research [1] for pharmacological applications:

Objective: To quantify directional influences between nodes in a biological network using time-series data.

Materials Required:

- High-temporal-resolution time-series data (e.g., calcium imaging, phosphoproteomics, gene expression)

- Computational environment with linear algebra capabilities (MATLAB, Python with NumPy/SciPy)

- Statistical software for multiple comparison correction

Procedure:

- Data Preprocessing: Detrend, filter, and normalize time-series data for each network node.

- Model Specification: Implement a linear state-space model, $\dot{x}(t) = A x(t) + w(t)$, where $A$ is the effective connectivity matrix to be estimated and $w(t)$ is a noise term.

- Parameter Estimation: Use maximum likelihood or Bayesian methods to estimate the effective connectivity matrix.

- Asymmetry Decomposition: Separate the effective connectivity matrix into symmetric and antisymmetric components.

- Statistical Validation: Apply permutation testing or bootstrapping to establish significance of directional influences.

- Network Visualization: Represent significant causal interactions as a directed network graph.

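Key Output: An asymmetric effective connectivity matrix in which element $A_{ij}$ represents the causal influence of node $j$ on node $i$. This follows because row $i$ of $\dot{x} = Ax + w$ collects the inputs that drive node $i$.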
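The model-specification, estimation, and asymmetry-decomposition steps can be sketched in NumPy. This example replaces maximum-likelihood or Bayesian estimation with ordinary least squares on forward-difference derivatives. That is a reasonable approximation only for densely sampled, low-noise data. The simulator and all function names are hypothetical; they exist only to generate test data with a known $A$.

```python
import numpy as np


def simulate_linear_system(A, T, dt, noise=1.0, seed=0):
    """Euler-Maruyama simulation of dx/dt = A x + w (synthetic data for testing)."""
    rng = np.random.default_rng(seed)
    n = A.shape[0]
    X = np.zeros((T, n))
    for t in range(T - 1):
        drift = A @ X[t]
        X[t + 1] = X[t] + dt * drift + np.sqrt(dt) * noise * rng.standard_normal(n)
    return X


def estimate_effective_connectivity(X, dt):
    """Least-squares estimate of A in dx/dt = A x + w from sampled time series.

    X has shape (T, n). The derivative is approximated by forward differences,
    and A[i, j] is the influence of node j on the rate of change of node i.
    """
    X = np.asarray(X, dtype=float)
    dXdt = (X[1:] - X[:-1]) / dt
    # Solve X[:-1] @ A.T ~= dXdt column by column in the least-squares sense.
    A_T, *_ = np.linalg.lstsq(X[:-1], dXdt, rcond=None)
    return A_T.T


def asymmetry_decomposition(A):
    """Split A into symmetric (reciprocal) and antisymmetric (directional) parts."""
    return (A + A.T) / 2, (A - A.T) / 2


# Hypothetical two-node system in which node 0 drives node 1 (A[1, 0] = 0.8).
A_true = np.array([[-1.0, 0.0],
                   [0.8, -1.0]])
X = simulate_linear_system(A_true, T=50_000, dt=0.01)
A_hat = estimate_effective_connectivity(X, dt=0.01)
sym, anti = asymmetry_decomposition(A_hat)
```

The antisymmetric part `anti` isolates the directional component. Permutation or bootstrap tests, as in the validation step, would then ask whether its entries exceed what shuffled data produce.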

Protocol 2: Topological Importance Mapping with Persistence Diagrams

This protocol adapts topological data analysis approaches [2] for causal inference:

Objective: To identify which topological features in data are important for defining class differences (e.g., diseased vs. healthy states).

Materials Required:

- High-dimensional dataset with class labels

- Topological data analysis software (e.g., GUDHI, JavaPlex)

- Metric learning framework (e.g., PyTorch, TensorFlow)

Procedure:

- Persistence Diagram Generation: For each sample, compute persistence diagrams capturing topological features across dimensions.

- Density Estimation: Convert each persistence diagram to a vectorized density estimate.

- Metric Learning: Train a deep metric learning classifier to distinguish classes based on persistence densities.

- Importance Field Extraction: Extract the learned weights from the classifier to create an importance field over the persistence diagram.

- Feature Mapping: Project important topological features back to the original data space to identify their structural correlates.

- Causal Hypothesis Generation: Formulate testable hypotheses about how identified topological features causally influence class membership.

Key Output: An importance field highlighting regions of the persistence diagram most relevant for class discrimination.
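The first two steps of the procedure can be shown without a TDA library for the simplest case: 0-dimensional homology (connected components) of a point cloud. The sketch below stands in for what GUDHI or similar libraries compute, restricted to H0 of a Vietoris-Rips filtration. The Gaussian grid density over (birth, persistence) coordinates, its bandwidth, and the grid size are assumptions. For H0 every birth is 0, so only the persistence axis carries information.

```python
import numpy as np


def h0_persistence(points):
    """0-dimensional persistence pairs (birth, death) of a Vietoris-Rips filtration.

    Every point is born at 0; a connected component dies when an edge of that
    length merges it into another (Kruskal's algorithm with union-find). The
    single component that never dies (infinite death) is omitted.
    """
    P = np.asarray(points, dtype=float)
    n = len(P)
    dist = np.linalg.norm(P[:, None, :] - P[None, :, :], axis=-1)
    rows, cols = np.triu_indices(n, k=1)
    order = np.argsort(dist[rows, cols], kind="stable")
    parent = list(range(n))

    def find(a):
        while parent[a] != a:
            parent[a] = parent[parent[a]]  # path halving
            a = parent[a]
        return a

    pairs = []
    for k in order:
        ra, rb = find(rows[k]), find(cols[k])
        if ra != rb:
            parent[ra] = rb
            pairs.append((0.0, dist[rows[k], cols[k]]))
    return np.array(pairs).reshape(-1, 2)


def persistence_density(diagram, grid_size=20, max_value=None, bandwidth=0.1):
    """Gaussian kernel density of diagram points on a (birth, persistence) grid."""
    births = diagram[:, 0]
    persistence = diagram[:, 1] - diagram[:, 0]
    if max_value is None:
        max_value = persistence.max()
    axis = np.linspace(0.0, max_value, grid_size)
    bb, pp = np.meshgrid(axis, axis, indexing="ij")
    density = np.zeros_like(bb)
    for b, p in zip(births, persistence):
        density += np.exp(-((bb - b) ** 2 + (pp - p) ** 2) / (2 * bandwidth ** 2))
    return density


# Two tight, well-separated clusters give one long-lived H0 feature.
points = np.array([[0.0, 0.0], [0.0, 0.1], [5.0, 0.0], [5.0, 0.1]])
diagram = h0_persistence(points)
density = persistence_density(diagram, grid_size=10)
```

In practice, densities for different samples must share the same grid (the same `max_value`) so that they can be stacked as inputs to a classifier.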

Visualization Frameworks for Causal Networks

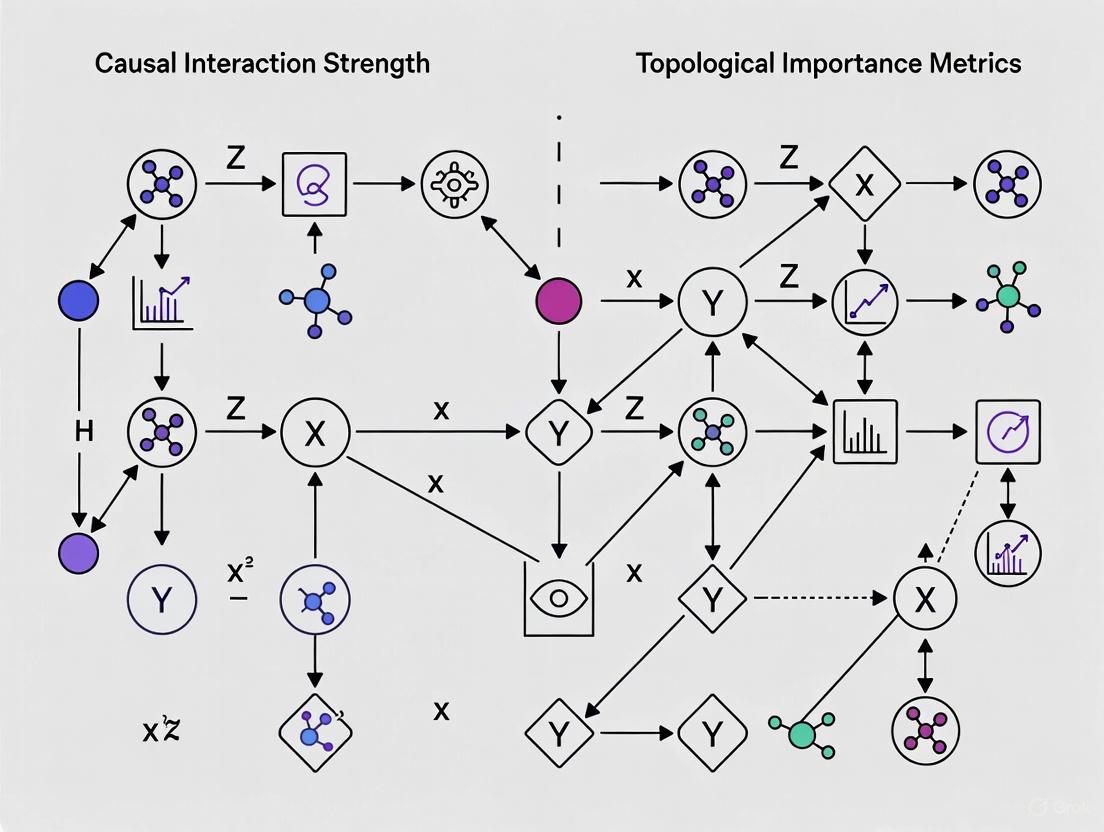

Workflow for Causal Interaction Analysis

The following diagram illustrates the complete workflow for analyzing causal interactions from data collection to network visualization:

Diagram Title: Causal Analysis Workflow

Causal Network Architecture with Asymmetry

This diagram illustrates a directed causal network with asymmetric interaction strengths, highlighting sources, sinks, and bidirectional relationships:

Diagram Title: Asymmetric Causal Network
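The diagrams themselves are not reproduced here. The following hypothetical helper shows one way to emit Graphviz DOT text for an asymmetric causal network. It colors nodes by the sign of net causal flow (sources versus sinks) and scales edge width by strength. It assumes `W[i, j]` is a non-negative strength for i -> j; the colors and the threshold are arbitrary choices.

```python
import numpy as np


def causal_network_dot(W, names, threshold=0.0):
    """Return Graphviz DOT source for a directed, weighted causal network.

    Nodes are colored by the sign of net causal flow (outgoing - incoming):
    sources in tomato, sinks in steelblue, balanced nodes in gray. Edges whose
    strength exceeds `threshold` are drawn with width proportional to strength.
    """
    W = np.asarray(W, dtype=float)
    flow = W.sum(axis=1) - W.sum(axis=0)
    max_w = W.max() if W.max() > 0 else 1.0
    lines = ["digraph causal {", "  rankdir=LR;"]
    for name, f in zip(names, flow):
        color = "tomato" if f > 0 else ("steelblue" if f < 0 else "gray")
        lines.append(f'  "{name}" [style=filled, fillcolor={color}];')
    for i in range(len(names)):
        for j in range(len(names)):
            if i != j and W[i, j] > threshold:
                width = 1.0 + 4.0 * W[i, j] / max_w
                lines.append(
                    f'  "{names[i]}" -> "{names[j]}" '
                    f'[penwidth={width:.2f}, label="{W[i, j]:.2f}"];'
                )
    lines.append("}")
    return "\n".join(lines)


# Hypothetical two-node loop with a dominant A -> B direction.
print(causal_network_dot([[0.0, 0.8], [0.2, 0.0]], ["A", "B"]))
```

The returned string can be saved to a file and rendered with the Graphviz command line, for example `dot -Tpng causal.dot -o causal.png`. Bidirectional relationships appear as two opposing edges whose widths show the asymmetry.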

Research Reagent Solutions for Causal Analysis

Essential Materials and Tools

Table 3: Research Reagent Solutions for Causal Interaction Studies

| Category | Specific Tool/Reagent | Function in Causal Analysis | Example Applications |
|---|---|---|---|
| Data Acquisition | High-temporal-resolution live-cell imaging systems | Captures dynamic cellular processes for time-series analysis | Calcium signaling, protein translocation |
| | Phosphoproteomics platforms | Quantifies post-translational modifications across time | Kinase activity profiling, signaling pathway dynamics |
| | Single-cell RNA sequencing | Measures gene expression dynamics at single-cell resolution | Gene regulatory network inference |
| Computational Tools | Linear State-Space Modeling software (MATLAB, Python) | Estimates effective connectivity matrices from time-series data [1] | Brain network analysis, cellular signaling |
| | Topological Data Analysis libraries (GUDHI, JavaPlex) | Computes persistent homology and generates persistence diagrams [2] | Identification of important topological features in data |
| | Metric Learning frameworks (PyTorch, TensorFlow) | Learns importance weights for topological features [2] | Classification of biological states based on topological structure |
| Visualization Platforms | Graph visualization tools (Cytoscape, Gephi) | Creates interactive visualizations of directed causal networks | Network pharmacology, pathway analysis |
| | Custom scripting (D3.js, Graphviz) | Generates publication-quality diagrams of causal relationships | Scientific communication, hypothesis generation |

Comparative Analysis of Methodological Approaches

Performance Across Data Types

The table below compares the performance of different causal analysis methods across various data characteristics relevant to drug development:

Table 4: Method Performance Across Data Types

| Method | Temporal Data | Static Data | High-Dimensional Data | Nonlinear Relationships | Implementation Complexity |
|---|---|---|---|---|---|
| Effective Connectivity | Excellent [1] | Poor | Moderate | Limited | High |
| Spatio-Temporal CCM | Excellent [3] | Poor | High | Excellent [3] | Very High |
| Topological Importance | Good | Excellent [2] | Excellent [2] | Good | High |
| Cross-Correlation | Good | Fair | Low | Poor | Low |
| Granger Causality | Excellent | Poor | Low | Limited | Moderate |

Application to Pharmaceutical Research Questions

Different causal analysis methods are suited to specific research questions in drug development:

Table 5: Method Selection Guide for Pharmaceutical Applications

| Research Question | Recommended Method | Key Metrics | Data Requirements |
|---|---|---|---|
| Target identification in signaling networks | Effective Connectivity | Net causal flow, causal asymmetry ratio [1] | Time-series phosphoproteomics |
| Mechanism of action studies | Topological Importance Mapping | Persistence-weighted importance [2] | Multiplexed imaging, transcriptomics |
| Polypharmacology prediction | Spatio-Temporal CCM | Bidirectional causality, asymmetry score [3] | Multi-scale omics data |
| Resistance mechanism elucidation | Integrated Causal Topology | Causal centrality, importance field | Longitudinal single-cell data |
| Drug combination synergy | Multivariate Causal Inference | Interaction information flow | Dose-response time-series |

The field of causal interaction strength analysis has evolved significantly from basic asymmetry analysis to sophisticated directed network models. The integration of topological importance metrics with causal inference frameworks provides researchers with powerful tools to dissect complex biological systems and identify key intervention points. For drug development professionals, these approaches offer a more principled foundation for target identification, mechanism elucidation, and therapeutic strategy optimization. As these methods continue to mature, they promise to enhance the efficiency and success rates of pharmaceutical research by providing deeper insights into the causal architecture of disease and treatment response.

Topological Importance (TI) metrics provide a powerful framework for quantifying the centrality of nodes and their indirect influences within complex networks. Unlike simple local measures such as node degree, TI metrics capture the global structural role of a node by leveraging concepts from graph theory and algebraic topology. In the context of causal interaction strength research, these metrics are indispensable for moving beyond pairwise correlations to uncover higher-order interactions and synergistic relationships that define complex biological systems. The analysis of infrastructure networks reveals that topological measures broadly fall into two types: global measures, which quantify network attributes like accessibility and connectivity as a single value, and local measures, which quantify the contribution of an individual network component (i.e., node or link) in maintaining those network attributes [4]. In molecular sciences and drug development, TI metrics have enabled breakthroughs in understanding biomolecular stability, protein-ligand interactions, and viral evolution by extracting robust, multiscale, and interpretable features from complex data [5].

The fundamental principle underlying TI metrics is that the importance of any network component cannot be assessed in isolation but must be evaluated within the context of the entire network topology. This approach is particularly valuable for identifying critical control points in biological networks and potential drug targets, as it can reveal nodes whose influence extends far beyond their immediate neighbors. Research on distributed average algorithms has demonstrated that topological features of a network fundamentally determine its functional performance and convergence behavior, highlighting the practical significance of these structural metrics [6].

Comparative Analysis of Key Topological Metrics

Fundamental Graph Theory Metrics

Table 1: Traditional Graph Theory Metrics for Node Centrality

| Metric Name | Computational Complexity | Key Strengths | Key Limitations | Biological Applications |
|---|---|---|---|---|
| Degree Centrality | O(E) | Simple, intuitive, fast to compute | Purely local perspective, ignores network context | Identification of highly connected proteins in interactomes |
| Betweenness Centrality | O(VE) for unweighted graphs | Identifies bridge nodes and bottlenecks | Computationally intensive for large networks | Finding critical control points in metabolic pathways |
| Closeness Centrality | O(VE) for unweighted graphs | Measures propagation speed to all nodes | Less meaningful in disconnected networks | Identifying cell types that efficiently communicate across tissues |
| Eigenvector Centrality | O(V + E) per iteration | Incorporates importance of neighbors | May overemphasize tightly-connected clusters | Ranking nodes in gene regulatory networks |
| Average Nearest Neighbor Degree | O(E) | Captures assortativity patterns | Limited to direct neighborhood | Characterizing hub connectivity patterns in protein-protein interaction networks |

V and E denote the numbers of nodes and edges, respectively.
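For the cheaper metrics in the table, a dense-matrix NumPy sketch is enough to show what each quantity measures. It assumes an undirected, unweighted adjacency matrix. Betweenness and closeness need all-pairs shortest-path searches, so they are better taken from a graph library such as NetworkX (`networkx.betweenness_centrality`, `networkx.closeness_centrality`). The identity shift in the power iteration is a design choice that keeps the iteration from oscillating on bipartite graphs such as stars.

```python
import numpy as np


def degree_centrality(A):
    """Fraction of the other nodes each node is directly connected to."""
    A = np.asarray(A, dtype=float) > 0
    return A.sum(axis=1) / (len(A) - 1)


def eigenvector_centrality(A, iters=1000, tol=1e-10):
    """Power iteration on A + I. The identity shift keeps the leading
    eigenvector unchanged but avoids oscillation on bipartite graphs."""
    M = np.asarray(A, dtype=float) + np.eye(len(A))
    x = np.ones(len(M)) / np.sqrt(len(M))
    for _ in range(iters):
        x_new = M @ x  # one matrix-vector product: O(V + E) if M is sparse
        x_new /= np.linalg.norm(x_new)
        if np.abs(x_new - x).max() < tol:
            return x_new
        x = x_new
    return x


def average_neighbor_degree(A):
    """Mean degree of each node's neighbors (0 for isolated nodes)."""
    adj = (np.asarray(A, dtype=float) > 0).astype(float)
    deg = adj.sum(axis=1)
    neighbor_sum = adj @ deg
    return np.divide(neighbor_sum, deg, out=np.zeros_like(deg), where=deg > 0)


# Star graph: node 0 is the hub, nodes 1-3 are leaves.
star = np.zeros((4, 4))
star[0, 1:] = star[1:, 0] = 1
print(degree_centrality(star), eigenvector_centrality(star), average_neighbor_degree(star))
```

The star also illustrates disassortativity: the hub's neighbors have degree 1, while each leaf's only neighbor is the high-degree hub.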

Advanced Topological Data Analysis Metrics

Table 2: Advanced Topological Data Analysis Metrics

| Metric Name | Computational Complexity | Key Strengths | Key Limitations | Biological Applications |
|---|---|---|---|---|
| Persistent Homology | O(2^V) in the worst case (V vertices) | Captures multiscale topological features | Computational challenges for large complexes | Mapping multiscale organization of biomolecular structures [5] |
| Betti Curves | Dependent on filtration steps | Robust to noise, provides multiscale view | Requires appropriate filtration scheme | Classifying neurodegenerative diseases from brain networks [7] |
| Persistent Laplacians | Higher than persistent homology | Provides both topological and geometric information | Recent method, less established | Biomolecular stability analysis [5] |
| k-Multivariate Mutual Information ($I_k$) | O(2^n) for n variables | Quantifies higher-order statistical dependencies | Interpretation challenges with negativity | Identifying synergistic gene regulatory modules [8] |
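The $O(2^n)$ cost of $I_k$ comes from summing joint entropies over every non-empty subset of the variables. The sketch below computes $I_k$ for discrete data through that inclusion-exclusion sum. It assumes plug-in (empirical frequency) entropy estimates in bits, which are biased for small samples. Under this sign convention, a XOR triplet gives a negative value (synergy), and three copies of the same variable give a positive one.

```python
import itertools
from collections import Counter

import numpy as np


def joint_entropy(columns):
    """Plug-in Shannon entropy (bits) of the joint distribution of discrete columns."""
    counts = Counter(zip(*columns))
    p = np.array(list(counts.values()), dtype=float)
    p /= p.sum()
    return float(-(p * np.log2(p)).sum())


def multivariate_mutual_information(data):
    """I_k = sum over non-empty subsets S of (-1)^(|S|+1) H(X_S).

    data: list of k equally long sequences of discrete values, one per variable.
    The double loop visits all 2^k - 1 subsets, hence the exponential cost.
    """
    k = len(data)
    total = 0.0
    for size in range(1, k + 1):
        sign = (-1) ** (size + 1)
        for subset in itertools.combinations(range(k), size):
            total += sign * joint_entropy([data[i] for i in subset])
    return total


x = [0, 0, 1, 1]
y = [0, 1, 0, 1]
z = [a ^ b for a, b in zip(x, y)]  # XOR: a purely synergistic triplet
print(multivariate_mutual_information([x, y, z]))
```

For k = 2 the same function reduces to ordinary mutual information, which is why independent pairs return zero.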

Experimental Protocols and Performance Validation

Protocol 1: Comparative Evaluation of Topological Approaches in Neurodegenerative Disease Classification

Objective: To evaluate the effectiveness of Betti curves versus graph-theoretical metrics in distinguishing people with multiple sclerosis (PwMS) from healthy volunteers (HV) using structural connectivity, morphological gray matter, and resting-state functional networks [7].

Methodology:

- Data Acquisition: Acquire magnetic resonance imaging (MRI) data including diffusion MRI for structural connectivity, T1-weighted MRI for morphological gray matter networks, and resting-state fMRI for functional networks.

- Network Construction: Construct brain networks for each modality using standardized parcellation schemes to define nodes and appropriate similarity measures to define edges.

- Feature Extraction:

  - Compute graph-theoretical metrics including node degree, efficiency, and centrality measures

  - Generate Betti curves using persistent homology to track the evolution of topological features across multiple scales

- Multimodal Integration: Implement both feature concatenation and multilayer graph architectures to combine information across modalities.

- Classification: Train machine learning classifiers (e.g., Support Vector Machines) using leave-one-out cross-validation to distinguish PwMS from HV.

Key Results: Features extracted using Betti curves generally outperformed those based on graph-theoretical metrics across all network types. The multimodal integration approaches provided more comprehensive representation of alterations in complex brain mechanisms associated with MS, leading to improved classification performance [7].
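Betti curves track how topological features change as a network is thresholded. The sketch below computes Betti-0 (connected components) and Betti-1 (independent cycles) curves for a weighted, undirected connectivity matrix. It treats each thresholded graph as a 1-dimensional complex rather than building a clique complex. That is an assumption: studies that fill in cliques will report different Betti-1 values. The threshold values and the example matrix are hypothetical.

```python
import numpy as np


def _find(parent, a):
    """Union-find root lookup with path halving."""
    while parent[a] != a:
        parent[a] = parent[parent[a]]
        a = parent[a]
    return a


def betti_curves(W, thresholds):
    """Betti-0 and Betti-1 curves of a weighted undirected network.

    At each threshold eps the graph keeps edges with weight >= eps and is treated
    as a 1-dimensional complex (no clique filling), so Betti-1 follows from the
    Euler characteristic: b1 = edges - nodes + b0.
    """
    W = np.asarray(W, dtype=float)
    n = len(W)
    rows, cols = np.triu_indices(n, k=1)
    b0_curve, b1_curve = [], []
    for eps in thresholds:
        keep = W[rows, cols] >= eps
        parent = list(range(n))
        components = n
        for r, c in zip(rows[keep], cols[keep]):
            ra, rc = _find(parent, r), _find(parent, c)
            if ra != rc:
                parent[ra] = rc
                components -= 1
        b0_curve.append(components)
        b1_curve.append(int(keep.sum()) - n + components)
    return np.array(b0_curve), np.array(b1_curve)


# Hypothetical 4-region network: a strongly connected triangle (0, 1, 2)
# plus a weak link from region 3 to region 0.
W = np.array([[0.0, 0.9, 0.9, 0.2],
              [0.9, 0.0, 0.9, 0.0],
              [0.9, 0.9, 0.0, 0.0],
              [0.2, 0.0, 0.0, 0.0]])
b0, b1 = betti_curves(W, thresholds=[1.0, 0.5, 0.1])
```

Concatenating the two curves over a fixed threshold grid gives a fixed-length feature vector, which is the form a classifier such as an SVM expects.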

Protocol 2: Class-Driven Visualization of Topological Feature Importance

Objective: To develop and validate a method for visualizing the importance of topological features that define classes of data, adapting explainable deep learning approaches for use in topological classification [9].

Methodology:

- Input Data Preparation: Process input data (graphs, 3D shapes, or medical images) and compute persistence diagrams that encode topological features.

- Density Estimation: Create a density estimator of the points of a persistence diagram to serve as input to the learning model.

- Metric Learning: Employ a learned metric classifier that learns to reweigh the persistence density such that classification accuracy is maximized.

- Importance Extraction: Extract the learned weights to create an importance field on persistent point density.

- Visualization: Generate intuitive representations of persistence point importance using two approaches:

  - Visualization directly on each persistence diagram

  - For sublevel set filtrations on images, visualization directly on the images themselves

Key Results: The approach successfully identified and visualized biologically relevant topological features in graph, 3D shape, and medical image data, providing intuitive representations of which topological structures are important for classification tasks [9].
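The metric-learning and importance-extraction steps can be approximated with a much simpler model for illustration. Instead of the learned metric classifier described in [9], this sketch fits L2-regularized logistic regression on flattened persistence densities. It then reads the absolute weights as an importance field on the same grid. The substitution, the standardization, and the training hyperparameters are all assumptions.

```python
import numpy as np


def importance_field(densities, labels, lr=0.1, epochs=500, l2=1e-3):
    """Linear stand-in for a learned metric: fit L2-regularized logistic
    regression on flattened persistence densities and return |weights|
    reshaped to the density grid.
    """
    D = np.asarray(densities, dtype=float)          # (samples, g1, g2)
    y = np.asarray(labels, dtype=float)             # binary labels 0 / 1
    X = D.reshape(len(D), -1)
    X = (X - X.mean(axis=0)) / (X.std(axis=0) + 1e-12)  # standardize each cell
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))      # predicted class-1 probability
        w -= lr * (X.T @ (p - y) / len(y) + l2 * w)
        b -= lr * np.mean(p - y)
    return np.abs(w).reshape(D.shape[1:])


# Synthetic check: class 1 carries extra density in grid cell (2, 3).
rng = np.random.default_rng(0)
densities = rng.random((40, 5, 5)) * 0.1
labels = np.array([0] * 20 + [1] * 20)
densities[20:, 2, 3] += 1.0
field = importance_field(densities, labels)
```

The returned field can be drawn as a heat map over the persistence-diagram grid, which is the first visualization route listed above.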

Figure 1: Topological Feature Importance Workflow

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Computational Tools for Topological Analysis

| Tool Name | Primary Function | Application Context | Key Features | Accessibility |
|---|---|---|---|---|
| GUDHI Library | Topological Data Analysis | General purpose TDA | Comprehensive persistent homology implementation, Python interface | Open source [10] |
| PHAT | Persistent Homology Algorithms | Computational topology | Efficient boundary matrix reduction | Open source [11] |
| DIPHA | Distributed Persistent Homology | Large-scale data analysis | MPI-based distributed computation | Open source [11] |
| Giotto-tda | Machine Learning with TDA | Integrating TDA in ML workflows | scikit-learn compatible API | Open source [11] |
| JavaPlex | Persistent Homology | Computational topology | Java-based, with MATLAB integration | Open source [11] |
| TDAstats | R package for TDA | Statistical analysis | Pipeline from data to persistence diagrams | Open source [11] |

Integration with Causal Interaction Strength Research

In causal interaction strength research, topological importance metrics provide the structural foundation on which causal relationships can be mapped and quantified. Combining the two approaches helps researchers separate correlation from causation by placing each interaction within the global network architecture. Studies on infrastructure networks have shown that topological measures are critical for understanding vulnerability patterns, with different metrics capturing complementary aspects of network reliability, connectivity, and criticality [4].

The k-multivariate mutual information ($I_k$) framework is particularly promising for causal analysis because it quantifies higher-order statistical dependencies that often reflect causal interactions in biological systems. Positive $I_k$ values identify the variables that co-vary most strongly in a population. Negative values identify synergistic clusters and the variables that most strongly differentiate or segregate the population [8]. The approach has been applied to genetic expression data for unsupervised cell-type classification, where it uncovered biologically relevant subtypes in complex molecular data.

Figure 2: Causal-Topology Framework Relationship

Recent advances in topological deep learning (TDL) have further strengthened the connection between topological importance and causal interaction strength. TDL integrates topological data analysis with deep neural networks, creating a new paradigm for rational learning that has demonstrated remarkable success in predicting protein-ligand interactions, characterizing viral evolution mechanisms, and precisely predicting emerging dominant SARS-CoV-2 variants [5]. These approaches excel at identifying the persistent topological features that serve as robust predictors of biological behavior and causal outcomes.

Correlation analyses across transportation networks have revealed that local topological metrics often retain high explanatory power for global network performance while being computationally more efficient to calculate [12]. This principle extends to biological networks, where local topological importance metrics can provide insights into causal interaction strengths without requiring exhaustive computation of global network properties, enabling more scalable analyses of large-scale biological systems.

Topological Importance metrics offer a powerful paradigm for quantifying node centrality and indirect influences in complex biological networks. The comparative analysis in this guide shows that traditional graph metrics provide a foundational understanding of local network properties. Advanced topological data analysis approaches, by contrast, are better at capturing the multiscale organization and higher-order interactions of biological systems. In the multiple sclerosis classification protocol, Betti-curve features generally outperformed graph-theoretical metrics across all network types [7]. This suggests that topological methods can improve classification tasks relevant to disease characterization and drug development.

The integration of TI metrics with causal interaction strength research provides a robust framework for moving beyond correlation to causation in biological network analysis. As topological deep learning continues to evolve, these approaches will play an increasingly important role in drug discovery, enabling researchers to identify critical intervention points in disease networks and optimize therapeutic strategies based on a fundamental understanding of network topology and dynamics.

This guide provides a comparative analysis of three foundational theoretical frameworks used in the study of complex systems, with a specific focus on their application in causal interaction strength and topological importance metrics for drug development research.

Theoretical Frameworks Comparison

The following table summarizes the core principles, key metrics, and primary applications of each theoretical foundation in the context of biological and pharmacological research.

| Theoretical Foundation | Core Principles & Mathematical Formulations | Key Topological & Causal Metrics | Primary Applications in Drug Discovery |
|---|---|---|---|
| Information Theory | Quantifies information flow and statistical dependencies between system components. Key measures include Transfer Entropy and Mutual Information [13]. | • Joint Dimension ($D_J$): Intrinsic dimension of the combined system manifold [13]. • Manifold Dimensions ($D_X$, $D_Y$): Intrinsic dimensions of individual subsystems [13]. | Inferring causal relations in gene regulatory networks and from electrophysiological data (e.g., EEG) to identify driver nodes or epicenters of disease [13]. |
| Network Controllability | Models a system as a network whose dynamics are governed by $\dot{x}(t) = Ax(t) + Bu(t)$. Aims to identify how to steer system states with external inputs $u(t)$ [14] [15] [16]. | • Average Controllability: A node's capacity to drive the network toward easily reachable states [14] [15]. • Modal Controllability: A node's capacity to drive the network toward difficult-to-reach states [15]. • Control Energy: Energy required for a state transition [14]. | Identifying key driver nodes in protein-protein interaction networks and predicting drug targets. Analyzing how brain network topology constrains dynamics in neurological disorders [15] [17]. |
| System Dynamics | Uses qualitative mapping of cause-effect relationships and feedback loops to understand complex system behavior. Often employs Causal Loop Diagrams (CLDs) [18]. | • Feedback Loops (Reinforcing 'R', Balancing 'B'): Circular cause-effect relationships that govern system behavior [18]. • Causal Links with Polarity (+, -): Indicates how variables influence each other [18]. | Modeling complex systems in public health policy and strategic planning for drug development. Anticipating unintended consequences of interventions [18]. |

Detailed Experimental Protocols

Causal Inference via Manifold Intrinsic Dimension

Objective: To distinguish and assign probabilities to all possible causal relations (unidirectional, bidirectional, independent, common cause) between two dynamical systems from observed time series data [13].

- Time Delay Embedding: For each time series, reconstruct the attractor manifold using time-delay embedding, as per Takens' Theorem [13].

- Intrinsic Dimension Estimation: Calculate the intrinsic dimension (e.g., correlation dimension, Rényi information dimension) for each individual embedded manifold ($D_X$ and $D_Y$) and for the joint embedded manifold ($D_J$) [13].

- Causal Relation Diagnosis: Use the relationship between $D_X$, $D_Y$, and $D_J$ to diagnose the causal structure [13]:

  - Independence: $D_X + D_Y = D_J$

  - Unidirectional Causality: $D_J = \max(D_X, D_Y)$ and $D_X \neq D_Y$

  - Bidirectional Causality or Generalized Synchrony: $D_X = D_Y = D_J$

  - Hidden Common Cause: $\max(D_X, D_Y) < D_J < D_X + D_Y$
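Once the three dimensions are estimated, the diagnosis rules become a short decision function. This sketch uses a Grassberger-Procaccia correlation-dimension estimate, one of the estimators named in the protocol, and compares dimensions with a fixed tolerance. The tolerance, the radius range passed to the estimator, and the function names are assumptions. Real data usually need a careful choice of embedding dimension and delay.

```python
import numpy as np


def delay_embed(x, dim, tau):
    """Time-delay embedding: rows are (x[t], x[t + tau], ..., x[t + (dim - 1) tau])."""
    x = np.asarray(x, dtype=float)
    n = len(x) - (dim - 1) * tau
    return np.column_stack([x[k * tau:k * tau + n] for k in range(dim)])


def correlation_dimension(points, radii):
    """Grassberger-Procaccia estimate: slope of log C(r) against log r,
    where C(r) is the fraction of point pairs closer than r."""
    P = np.asarray(points, dtype=float)
    if P.ndim == 1:
        P = P[:, None]
    i, j = np.triu_indices(len(P), k=1)
    d = np.linalg.norm(P[i] - P[j], axis=1)
    C = np.array([(d < r).mean() for r in radii])
    slope, _ = np.polyfit(np.log(radii), np.log(C), 1)
    return slope


def diagnose(d_x, d_y, d_j, tol=0.1):
    """Map estimated dimensions D_X, D_Y, D_J to a causal relation."""
    def close(a, b):
        return abs(a - b) <= tol

    if close(d_x + d_y, d_j):
        return "independent"
    if close(d_x, d_y) and close(d_x, d_j):
        return "bidirectional or generalized synchrony"
    if close(d_j, max(d_x, d_y)) and not close(d_x, d_y):
        return "unidirectional"
    if max(d_x, d_y) < d_j < d_x + d_y:
        return "hidden common cause"
    return "undetermined"


# Sanity check of the estimator: points on a line segment are 1-dimensional.
t = np.linspace(0.0, 1.0, 1000)
line = np.column_stack([t, 2 * t])
print(correlation_dimension(line, np.logspace(-1.5, -1, 6)))
print(diagnose(1.0, 2.0, 2.0))
```

The joint manifold is obtained by embedding X and Y together (for example, stacking their delay vectors column-wise) before estimating $D_J$.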

Network Controllability Analysis of Structural Connectomes

Objective: To quantify the control capacity of different brain regions from diffusion tensor imaging (DTI) data and identify aberrations in neurological disorders [15].

- Network Construction: Preprocess DTI data and perform whole-brain tractography. Parcellate the brain into regions (nodes) and construct a structural connectivity matrix $A$ whose elements represent the connection strength between regions [15].

- Controllability Metric Calculation: For each brain region $j$, simulate it as a driver node by setting the input matrix $B = e_j$ (the $j$-th canonical basis vector). Calculate the controllability Gramian and compute key metrics such as average and modal controllability [15].

- Statistical Analysis: Compare whole-brain, network-level, and regional-level controllability metrics between patient and control groups using appropriate statistical tests (e.g., t-tests) with multiple comparisons correction [15].
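Under a common discrete-time formulation, both metrics follow from the eigendecomposition of a symmetric, normalized structural matrix. The sketch below assumes the dynamics $x(t+1) = A x(t) + B u(t)$ (the table above states the continuous-time form), the normalization $A / (c + \lambda_{\max})$ with $c = 1$ where $\lambda_{\max}$ is the largest absolute eigenvalue, and an infinite time horizon. It defines modal controllability as $\sum_m (1 - \xi_m^2) v_{jm}^2$ over eigenvalues $\xi_m$ and eigenvectors $v_m$. Other choices, such as continuous time or finite horizons, give different values. Packages such as nctpy provide maintained implementations; this sketch does not reproduce their API.

```python
import numpy as np


def normalize_for_discrete_control(A, c=1.0):
    """Scale a symmetric structural matrix so x[t+1] = A x[t] + B u[t] is stable."""
    A = np.asarray(A, dtype=float)
    largest = np.abs(np.linalg.eigvalsh(A)).max()
    return A / (c + largest)


def average_and_modal_controllability(A_norm):
    """Per-node average and modal controllability with B = e_j (one driver node).

    Uses the eigendecomposition A = V diag(xi) V^T of a symmetric, stable A.
    """
    xi, V = np.linalg.eigh(A_norm)
    # Trace of the infinite-horizon Gramian for B = e_j:
    # sum_k ||A^k e_j||^2 = sum_m V[j, m]^2 / (1 - xi_m^2)
    average = (V ** 2 / (1.0 - xi ** 2)).sum(axis=1)
    # Modal controllability: sum_m (1 - xi_m^2) V[j, m]^2
    modal = (V ** 2 * (1.0 - xi ** 2)).sum(axis=1)
    return average, modal


# Hypothetical 5-region symmetric connectome with random weights.
rng = np.random.default_rng(1)
C = rng.random((5, 5))
C = np.triu(C, 1) + np.triu(C, 1).T  # symmetric, zero diagonal
A_norm = normalize_for_discrete_control(C)
average, modal = average_and_modal_controllability(A_norm)
```

Group comparisons in the statistical-analysis step would then operate on the `average` and `modal` vectors computed for each participant.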

Topological Drug Target Prediction (TREAP Algorithm)

Objective: To infer drug targets by leveraging network topology and gene expression data [17].

- Data Acquisition: Obtain a protein-protein interaction (PPI) network (e.g., from STRING database) and drug-induced gene expression data (e.g., microarray from GEO) [17].

- Topological Feature Calculation: Compute the betweenness centrality for each node (protein) in the PPI network. Betweenness quantifies the number of shortest paths passing through a node, indicating its topological importance [17].

- Differential Expression Analysis: Process gene expression data to calculate log fold change (LFC) and Benjamini-Hochberg adjusted p-values for all genes post-treatment [17].

- Target Ranking: Combine the two metrics. Rank potential drug targets based on a combination of their high betweenness centrality in the network and the statistical significance of their expression change in response to the drug [17].
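The TREAP steps can be mimicked in a few lines of Python, although the protocol itself is described with R tools (igraph, limma). The sketch below computes betweenness with Brandes' algorithm on an unweighted PPI network. It then combines betweenness with precomputed adjusted p-values by summing ranks. The rank-sum rule, the gene names, and the p-values are hypothetical and are not taken from [17].

```python
from collections import deque


def betweenness(adj):
    """Brandes' algorithm for an unweighted, undirected graph given as an adjacency dict."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        stack = []
        pred = {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)
        sigma[s] = 1
        dist = dict.fromkeys(adj, -1)
        dist[s] = 0
        queue = deque([s])
        while queue:  # BFS that counts shortest paths from s
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    pred[w].append(v)
        delta = dict.fromkeys(adj, 0.0)
        while stack:  # accumulate dependencies in reverse BFS order
            w = stack.pop()
            for v in pred[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return {v: b / 2.0 for v, b in bc.items()}  # each undirected pair counted twice


def rank_targets(adj, padj):
    """Rank genes by the sum of their betweenness rank and adjusted-p-value rank.

    The rank-sum combination is an illustrative assumption, not necessarily
    the exact TREAP scoring rule.
    """
    bc = betweenness(adj)
    genes = [g for g in adj if g in padj]
    rank_bc = {g: r for r, g in enumerate(sorted(genes, key=lambda g: -bc[g]), 1)}
    rank_p = {g: r for r, g in enumerate(sorted(genes, key=lambda g: padj[g]), 1)}
    return sorted(genes, key=lambda g: rank_bc[g] + rank_p[g])


# Hypothetical PPI fragment and adjusted p-values (placeholder gene names).
ppi = {
    "G1": ["G2", "G3", "G4"],
    "G2": ["G1"],
    "G3": ["G1"],
    "G4": ["G1", "G5"],
    "G5": ["G4"],
}
padj = {"G1": 0.01, "G2": 0.5, "G3": 0.2, "G4": 0.02, "G5": 0.04}
print(rank_targets(ppi, padj))
```

Rank sums avoid combining raw betweenness values and p-values, which sit on very different scales. Ties are broken by input order, which a real pipeline should handle explicitly.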

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Resource | Function in Research | Example Source / Implementation |
|---|---|---|
| Structural Connectome | Represents the physical white matter connections in the brain, forming the matrix $A$ for controllability analysis [15]. | Derived from Diffusion Tensor Imaging (DTI) data using tractography software (e.g., PANDA toolkit) [15]. |
| Protein-Protein Interaction (PPI) Network | Serves as the scaffold for network-based drug target prediction, defining the topological relationships between proteins [17]. | Public databases like STRING; can be refined with confidence score thresholds [17]. |
| igraph R Package | A library for network analysis and graph theory computations, used for calculating topological metrics like betweenness and degree [17]. | Available from CRAN (The Comprehensive R Archive Network). |
| Gene Expression Omnibus (GEO) | A public repository for high-throughput gene expression data, providing essential datasets for drug response studies [17]. | National Center for Biotechnology Information (NCBI). |
| limma R Package | A tool for the analysis of gene expression data, particularly for fitting linear models and conducting differential expression analyses to generate adjusted p-values [17]. | Available from Bioconductor. |
| Network Control Theory for Python (nctpy) | A Python software package providing tools for calculating network controllability metrics, including average controllability and control energy [14]. | Python Package Index (PyPI) or GitHub. |

Workflow and Relationship Diagrams

Causal Inference via Manifold Dimensions

Network Controllability Analysis

TREAP: Drug Target Prediction

In the study of biological systems and the development of new therapeutics, complexity is a fundamental challenge. Systems ranging from intracellular signaling pathways to entire ecosystems operate through intricate networks of interactions, and the behavior of the whole cannot be predicted by simply summing the parts. Topological importance (TI) metrics built on causal interaction strength have emerged as a framework for cutting through this complexity. They give researchers a quantitative lens to identify critical components, predict system behavior, and optimize interventions. Traditional metrics may capture only static properties or isolated effects. TI metrics instead quantify the influence of individual elements, such as a protein, gene, or species, within a networked system by considering both direct and indirect causal pathways. This shift is changing how scientists approach problems in network biology and drug discovery, moving from a reductionist focus on single targets toward a holistic understanding of system-wide dynamics.

The core power of these metrics lies in their ability to move beyond correlation to infer causal influence within networks. In a biological context, this means distinguishing between entities that are merely associated with a particular outcome and those that genuinely drive or control it. For instance, in a protein-protein interaction network, a protein with high degree centrality (many connections) might seem important, but a topological importance analysis could reveal that a less-connected protein acts as a critical bridge or bottleneck, making it a more potent intervention target. By formally capturing this notion of causal influence through the asymmetry of effects within a network, TI metrics provide a more nuanced and predictive map of system functionality [19]. This article provides a comparative guide to the application of these metrics, framing them within a broader thesis on causal interaction strength and providing the experimental protocols and data frameworks needed for their implementation in biological research.
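The hub-versus-bottleneck distinction can be made concrete with a toy example. The following minimal sketch, a hypothetical illustration rather than a TI calculation, builds a "barbell" graph (two five-node cliques joined through a single connector node) and compares each node's degree with its betweenness centrality, computed with Brandes' algorithm in plain Python. The graph and helper names are assumptions for illustration; betweenness stands in here for the structural notion of a bridge, not for the TI index itself.

```python
from collections import deque
from itertools import combinations


def barbell(clique_size: int) -> dict[int, set[int]]:
    """Two complete graphs of `clique_size` nodes joined through one bridge node."""
    adj: dict[int, set[int]] = {}

    def link(u: int, v: int) -> None:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)

    left = range(clique_size)
    bridge = clique_size
    right = range(clique_size + 1, 2 * clique_size + 1)
    for group in (left, right):
        for u, v in combinations(group, 2):
            link(u, v)
    link(clique_size - 1, bridge)   # last node of the left clique
    link(bridge, clique_size + 1)   # first node of the right clique
    return adj


def betweenness(adj: dict[int, set[int]]) -> dict[int, float]:
    """Brandes' algorithm for unweighted, undirected graphs (unnormalized)."""
    bc = {v: 0.0 for v in adj}
    for s in adj:
        # BFS from s, counting shortest paths (sigma) and recording predecessors
        stack, preds = [], {v: [] for v in adj}
        sigma = {v: 0 for v in adj}
        dist = {v: -1 for v in adj}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate pair dependencies in order of decreasing distance from s
        delta = {v: 0.0 for v in adj}
        while stack:
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Each unordered pair was counted once from each endpoint
    return {v: c / 2 for v, c in bc.items()}


graph = barbell(5)
degree = {v: len(nbrs) for v, nbrs in graph.items()}
bc = betweenness(graph)
top = max(bc, key=bc.get)
print("highest betweenness:", top, "degree:", degree[top], "max degree:", max(degree.values()))
```

Here the connector node has the lowest degree in the graph (2) yet the highest betweenness, because every shortest path between the two cliques passes through it.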

Quantitative Comparison of Key Metric Classes

The application of network-based metrics in biology and drug discovery can be broadly categorized into several classes, each with distinct strengths, limitations, and optimal use cases. The following table summarizes the key features of these metric categories for easy comparison.

Table 1: Comparative Analysis of Metric Categories in Biological Research

| Metric Category | Key Examples | Primary Applications | Data Requirements | Advantages | Limitations |
|---|---|---|---|---|---|
| Topological Importance (TI) & Causal Metrics | TIⁿ Index, Interaction Asymmetry (A), PageRank [19] [20] | Food web stability analysis [19], Risk pathway identification [20], Target vulnerability assessment | Network topology (nodes & links), Interaction strengths | Identifies critical causal drivers, accounts for indirect effects, reveals system leverage points. | Network construction can be complex; sensitive to threshold selection. |
| Information-Theoretic Metrics | Total Correlation, Dual Total Correlation, O-Information [21] | Quantifying synergy/redundancy in neural systems [21], Analyzing higher-order interactions in omics data | Multivariate time-series or state data | Captures non-linear, higher-order dependencies beyond pairwise correlations. | High computational cost; requires substantial data for reliable estimation. |
| Traditional Centrality Metrics | Degree, Betweenness, Eigenvector Centrality [22] | Preliminary network analysis, Identifying hubs in protein-protein interaction networks | Network topology | Simple to compute and interpret; well-established benchmarks. | Often misses functional criticality and causal roles; focuses on structure over dynamics. |
| Deep Learning-Based Metrics | Graph Neural Networks (GNNs), Causal Node Embeddings [22] | Cross-network generalization for drug target identification, Robust node importance ranking | Network topology and/or node features | High representational power; can generalize across networks. | Can be a "black box"; requires significant training data; may not capture causal relationships without specific design [22]. |

Experimental Protocols for Key Applications

Protocol 1: Identifying Critical Species in Ecological Networks with Asymmetry Analysis

This protocol details the method for applying topological importance metrics to food web data to identify species with outsized causal influence on ecosystem functioning, as derived from the analysis of 34 food web models [19].

1. Objective: To identify keystone species and the dominant direction of causal effects (bottom-up vs. top-down) in a food web by constructing an asymmetry graph.

2. Materials & Reagents:

- EcoBase or Similar Database: A repository of ecological network models with trophic interaction data [19].

- R Statistical Software: Primary computational environment.

- topoWeb R Package: Custom package for calculating TI indices and asymmetry values.

- igraph R Package: For general network construction and analysis.

3. Procedure:

a. Data Acquisition and Preparation: Obtain a binary, undirected food web model from a curated database. Filter the data to include only networks of a relevant size (e.g., >50 nodes) and remove duplicate temporal models to ensure independence.

b. TI Index Calculation: Calculate the topological importance index (TIⁿ) for all pairs of nodes (species) in the network. This index quantifies the effect of one species on another, incorporating indirect interactions up to n steps. A common and ecologically meaningful choice is TI³, which captures effects over three steps [19].

c. Asymmetry Calculation: For every pair of species (i, j), compute the asymmetry of their interaction as A = |TI³ᵢⱼ - TI³ⱼᵢ|. This quantifies how much the influence of i on j differs from the influence of j on i.

d. Asymmetry Graph Construction: Apply a threshold to the asymmetry values to isolate the most significant causal links, for instance by retaining the top 1% of all possible pairwise interactions ranked by A. These links form a directed asymmetry graph in which each link points from the species with the larger effect to the species with the smaller one, so a link from i to j indicates that the effect of i on j dominates the effect of j on i.

e. Analysis and Interpretation:

- Count the number of bottom-up (BUag) and top-down (TDag) links in the asymmetry graph.

- Identify source nodes (only outward effects) and sink nodes (only inward effects).

- Correlate these structural properties of the asymmetry graph with functional ecosystem metrics such as Total Biomass (TB). A positive correlation between BUag and TB, for example, indicates that systems with stronger bottom-up causal forces support greater biomass [19].

4. Data Interpretation: The resulting asymmetry graph reduces the complex web of interactions to a core set of dominant causal pathways. Species with high out-degree in this graph are potential keystone drivers, and the balance between BUag and TDag reveals the primary mode of control in the ecosystem.

Protocol 2: Mapping Causal Risk Networks for Biomedical Safety (Adapted from Air Traffic Control)

This protocol adapts a methodology that integrates text mining and causal network analysis—originally developed for safety operations [20]—to a biomedical context, such as analyzing patient safety incident reports or drug adverse event narratives.

1. Objective: To transform unstructured textual reports of safety incidents or adverse events into a structured causal network in order to identify critical risk factors and their interrelationships.

2. Materials & Reagents:

- Text Corpus: A collection of unstructured safety reports (e.g., the FDA Adverse Event Reporting System, FAERS).

- BERTopic Model: A deep learning-based topic modeling technique for semantic analysis of text.

- Network Analysis Tool: igraph (R/Python) or NetworkX (Python).

- Domain-Specific Stopword List & Custom Dictionary: To improve text mining accuracy for biomedical terminology.

3. Procedure:

a. Data Preprocessing: Collect and clean the text reports, removing irrelevant entries and duplicates. Expand abbreviations and apply the custom dictionary and stopword list during tokenization.

b. Text Mining with BERTopic: Apply the BERTopic model to the preprocessed text. This model uses BERT embeddings to identify latent risk themes and extract representative keywords from the reports, grouping similar incidents by semantic meaning [20].

c. Risk Factor Representation: Based on the extracted topics and domain knowledge, establish a structured framework of risk factors (e.g., "communication error," "equipment failure," "training deficit").

d. Causal Network Construction: Manually or semi-automatically annotate event chains from the incident narratives. Use these to construct a directed causal network in which nodes represent risk factors and a directed edge from factor A to factor B indicates that A is a cause of B.

e. Topological & Vulnerability Analysis: Analyze the constructed network using graph theory metrics to identify critical risk nodes:

- PageRank: Identifies nodes that are highly influential by virtue of being pointed to by other important nodes.

- Betweenness Centrality: Highlights nodes that act as bridges on many shortest paths, indicating potential bottlenecks in the risk propagation process [20].

4. Data Interpretation: Nodes with high PageRank and betweenness centrality represent high-priority risk factors that are both central causes and key propagation points within the system. Interventions targeting these nodes are likely to be the most effective at reducing overall system risk.
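A minimal sketch of the PageRank analysis in step e, assuming a small hand-built causal risk network: the risk-factor names and edges below are hypothetical, the damping factor of 0.85 is the conventional default rather than a value taken from [20], and dangling nodes (factors with no outgoing causal edge) spread their score uniformly. It is a plain-Python power iteration, not any particular library's implementation.

```python
def pagerank(edges, damping=0.85, tol=1e-12, max_iter=1000):
    """Power-iteration PageRank for a directed graph given as (source, target) edges.

    Dangling nodes (no outgoing edges) redistribute their score uniformly over all nodes.
    """
    nodes = sorted({n for edge in edges for n in edge})
    out = {n: [] for n in nodes}
    for src, dst in edges:
        out[src].append(dst)
    n = len(nodes)
    rank = {v: 1.0 / n for v in nodes}
    for _ in range(max_iter):
        dangling = sum(rank[v] for v in nodes if not out[v])
        new = {v: (1.0 - damping) / n + damping * dangling / n for v in nodes}
        for v in nodes:
            for w in out[v]:
                new[w] += damping * rank[v] / len(out[v])
        converged = sum(abs(new[v] - rank[v]) for v in nodes) < tol
        rank = new
        if converged:
            break
    return rank


# Hypothetical risk factors; an edge A -> B means "A was annotated as a cause of B".
risk_edges = [
    ("training deficit", "communication error"),
    ("training deficit", "protocol deviation"),
    ("staffing shortage", "communication error"),
    ("staffing shortage", "protocol deviation"),
    ("equipment failure", "protocol deviation"),
    ("communication error", "medication error"),
    ("protocol deviation", "medication error"),
    ("medication error", "adverse event"),
]

scores = pagerank(risk_edges)
for factor, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{score:.3f}  {factor}")
```

With edges oriented from cause to effect, score accumulates at downstream factors where many causal chains converge. If the goal is to rank upstream drivers instead, one common option is to run the same function on the reversed edge list, `[(dst, src) for src, dst in risk_edges]`.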

The following diagram illustrates the core workflow for this causal analysis, adaptable to both ecological and biomedical contexts.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Implementing the methodologies described requires a combination of data, software, and computational resources. The following table details key components of the research toolkit.

Table 2: Essential Reagents and Tools for Causal Metric Analysis

| Tool/Reagent | Type | Function/Application | Example Use Case |
|---|---|---|---|
| EcoBase / Interaction Databases | Data Resource | Curated repository of ecological or biological network data. | Sourcing food web data for stability analysis [19]. |
| FAERS / Internal Safety Reports | Data Resource | Database of unstructured text reports on adverse events or safety incidents. | Identifying latent risk factors in clinical workflows [20]. |
| R Statistical Software + topoWeb | Software | Core computing environment with custom package for TI and asymmetry calculation. | Executing Protocol 1 for ecological networks [19]. |
| Python + NetworkX/igraph | Software | Libraries for the creation, manipulation, and study of complex networks. | General network construction and centrality analysis. |
| BERTopic Model | Software Algorithm | Deep learning model for topic modeling based on semantic similarity. | Extracting risk themes from textual incident reports [20]. |
| PageRank Algorithm | Computational Metric | Measures the transitive influence or importance of nodes in a network. | Ranking target proteins in a PPI network by their causal influence [20]. |
| Betweenness Centrality | Computational Metric | Identifies nodes that act as bridges or bottlenecks in a network. | Finding critical, non-hub targets in a disease signaling pathway [20]. |

Cross-Domain Comparative Analysis & Discussion

The comparative analysis in Section 2 reveals a critical evolution in metric philosophy: from descriptive to causal and from local to global. Traditional centrality metrics provide a valuable first pass but are often inadequate for predicting the functional outcome of a perturbation. For example, in a biological network, a high-degree node (hub) may be essential, but its removal might not cause system failure if the network contains redundant pathways. Conversely, a node with low degree but high betweenness centrality might be a critical bottleneck, and its failure could be catastrophic.

This is where topological importance and causal metrics add explanatory power. By incorporating indirect effects and, crucially, the asymmetry of interactions, they map the flow of influence and control within a system [19]. The application to food webs shows that ecosystems with greater total biomass tend to have more bottom-up causal links (BUag) in their asymmetry graphs, a pattern that a simple link-counting centrality metric would likely miss. This highlights the utility of TI metrics in moving beyond structure to explain function.

Information-theoretic approaches offer a complementary but distinct lens. Rather than relying on a predefined network topology, they infer the structure of interactions directly from multivariate data. Metrics such as the dual total correlation are designed to capture "synergy": information that is available only from the joint state of three or more variables and not from any subset of them [21]. This is directly relevant to complex biological systems in which higher-order interactions are common, such as neuronal networks or genetic regulatory circuits, where a combination of several genes (a pathway) produces an effect that cannot be attributed to any single one. A key finding is that these synergistic information structures correlate with topological features such as three-dimensional cavities in data manifolds, suggesting a deep mathematical link between the two frameworks [21].
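To make "synergy" concrete, the sketch below computes total correlation (TC), dual total correlation (DTC), and their difference, the O-information (Ω = TC - DTC), for two textbook three-bit systems, using plug-in entropies over equally weighted joint states. It assumes the common sign convention in which Ω > 0 indicates redundancy-dominated and Ω < 0 synergy-dominated dependencies; the XOR and copy systems are illustrative choices, not data from [21].

```python
from collections import Counter
from itertools import product
from math import log2


def entropy(samples):
    """Plug-in Shannon entropy (bits) of a list of hashable outcomes."""
    counts = Counter(samples)
    total = len(samples)
    return -sum(c / total * log2(c / total) for c in counts.values())


def o_information(rows):
    """Return (TC, DTC, O) for equally weighted joint states `rows` (tuples of equal length)."""
    n = len(rows[0])
    h_joint = entropy(rows)
    h_single = [entropy([r[i] for r in rows]) for i in range(n)]
    # H(X_{-i}): entropy of all variables except variable i
    h_rest = [entropy([r[:i] + r[i + 1:] for r in rows]) for i in range(n)]
    tc = sum(h_single) - h_joint
    # DTC = H(X) - sum_i H(X_i | X_{-i}), using H(X_i | X_{-i}) = H(X) - H(X_{-i})
    dtc = h_joint - sum(h_joint - h for h in h_rest)
    return tc, dtc, tc - dtc


# XOR: the third bit is determined only by the other two jointly (synergy)
xor_rows = [(a, b, a ^ b) for a, b in product((0, 1), repeat=2)]
# COPY: all three bits are identical (redundancy)
copy_rows = [(a, a, a) for a in (0, 1)]

print("XOR  TC, DTC, O =", o_information(xor_rows))
print("COPY TC, DTC, O =", o_information(copy_rows))
```

For XOR, TC = 1, DTC = 2 and Ω = -1 bit (the third bit is predictable only from the other two jointly); for the copy system, TC = 2, DTC = 1 and Ω = +1 bit (pure redundancy).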

The following diagram conceptualizes the relationship between different classes of metrics and the complexity of interactions they capture, illustrating the unique position of TI and information-theoretic metrics.

The adoption of causal interaction strength topological importance metrics marks a significant advancement in our ability to dissect and understand complex biological and pharmacological systems. The comparative data and experimental protocols presented in this guide demonstrate that TI metrics and related information-theoretic approaches offer a more nuanced, predictive, and functionally relevant map of system dynamics than traditional graph metrics alone. They enable researchers to move from asking "What is connected?" to "Who controls whom, and how strongly?"

The future of this field lies in greater integration and refinement. Promising directions include fusing TI metrics with information theory to develop a unified theory of higher-order interactions [21], applying these hybrid models to single-cell and spatial omics data for novel drug target discovery, and developing more robust "influence-aware causal node embedding" methods that can generalize predictions from model systems to real-world human biology [22]. As these tools become more sophisticated and accessible, they are likely to become a standard component of the quantitative biologist's and drug hunter's toolkit, helping to accelerate the development of safer and more effective therapies informed by a causal understanding of disease.

From Theory to Practice: Methodologies and Applications in Biomedicine

Interaction asymmetry analysis and topological indices (TIs) represent complementary computational frameworks for decoding complex relational data across biological, ecological, and chemical domains. These mathematical approaches transform intricate networks into quantifiable metrics that reveal system organization, stability, and function. Topological indices are numerical descriptors derived from graph theory that summarize molecular or network structures based solely on their connectivity patterns [23] [24]. In parallel, interaction asymmetry quantifies directional relationships between components where forces or influences are not reciprocally equal, revealing causal pathways and hierarchical organizations within complex systems [25] [19].

Integrating these frameworks within research on causal interaction strength and topological importance metrics provides powerful tools for predicting system behavior, identifying critical elements, and understanding response dynamics. For drug development professionals, these approaches enable quantitative assessment of the relationship between molecular complexity and biological activity without extensive laboratory experimentation [23] [26]. The fundamental premise underpinning these methodologies is that the topological arrangement of elements within a system contains implicit information about that system's functional capabilities and dynamic behaviors [27].

Computational Frameworks: Comparative Analysis

Table 1: Comparative Analysis of Computational Frameworks

| Framework Category | Representative Methods | Primary Applications | Mathematical Basis | Key Output Metrics |
|---|---|---|---|---|
| Topological Indices | Zagreb indices, Randić index, ABC index, Sombor index [23] [24] | Drug discovery, materials science, QSAR/QSPR studies [23] [26] | Graph theory, vertex degrees, connectivity patterns [23] [24] | Numerical descriptors predicting stability, reactivity, bioactivity [23] [24] |
| Interaction Asymmetry Analysis | Topological Importance (TI), asymmetry graphs, flowscape analysis [25] [19] | Ecosystem functioning, neural connectivity, active matter systems [25] [19] [28] | Directional interaction strength, causal pathways [25] [19] | Interaction asymmetry values, causal link identification [25] [19] |
| Multifractal Network Analysis | Node-based Multifractal Analysis (NMFA), structure distance [27] | Complex network characterization, heterogeneity quantification [27] | Multifractal geometry, scaling relationships [27] | Multifractal spectra, complexity and heterogeneity degrees [27] |
| Statistical Validation Methods | Expanded Quadratic Assignment Procedure (EQAP), random/controlled rewiring [29] | Network significance testing, topological metric validation [29] | Permutation tests, edge swapping algorithms [29] | p-values, null distributions, significance assessments [29] |

Performance and Application-Specific Efficacy

Table 2: Performance Characteristics Across Domains

| Framework | Computational Complexity | Data Requirements | Strengths | Limitations |
|---|---|---|---|---|
| Degree-Based Topological Indices | Low to moderate [23] [24] | Molecular structure or network connectivity [23] [26] | Strong predictive power for molecular properties [23] [24]; Extensive validation in QSAR studies [23] | Limited to static structures; Less informative about dynamics [23] |
| Interaction Asymmetry Analysis | Moderate to high [25] [19] | Directed interaction data or time-series observations [25] [19] | Identifies causal pathways [19]; Reveals hierarchical organization [25] | Requires directional data; Sensitive to threshold selection [19] |
| Node-Based Multifractal Analysis | High [27] | Comprehensive network connectivity data [27] | Quantifies structural complexity and heterogeneity [27]; Captures multiscale properties [27] | Computationally intensive; Complex interpretation [27] |
| Statistical Validation Methods | Varies with network size [29] | Network topology data [29] | Robust significance testing [29]; Controls for Type I errors [29] | Method selection critical for accuracy [29] |

Experimental Protocols and Methodologies

Protocol for Topological Index Calculation in Molecular Networks

The computation of topological indices for molecular structures follows a standardized workflow that transforms chemical representations into quantitative descriptors. For benzenoid networks and pharmaceutical compounds, researchers typically implement the following methodology based on established cheminformatics practices [23]:

Molecular Graph Representation: Represent the chemical compound as a mathematical graph G = (V, E), where atoms correspond to vertices (V) and chemical bonds constitute edges (E) [23] [24].

Vertex Degree Assignment: For each vertex ρ ∈ V, calculate the degree Š(ρ) representing the number of edges incident to the vertex [24].

Edge Partitioning: Classify edges based on the degrees of their endpoint vertices, creating distinct edge sets E(Š(ρ), Š(φ)) for each degree pair [23] [26].

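Index Computation: Apply the defining formula of each topological index; for degree-based indices this is a sum of a per-edge term over all edges ρφ ∈ E. For instance, the standard definitions of several indices named in this article are:

- First Zagreb index: \( M_1(G) = \sum_{\rho\varphi \in E} [Š(\rho) + Š(\varphi)] \)

- Second Zagreb index: \( M_2(G) = \sum_{\rho\varphi \in E} Š(\rho)\,Š(\varphi) \)

- Randić index: \( R(G) = \sum_{\rho\varphi \in E} \frac{1}{\sqrt{Š(\rho)\,Š(\varphi)}} \)

- Atom-Bond Connectivity index: \( ABC(G) = \sum_{\rho\varphi \in E} \sqrt{\frac{Š(\rho) + Š(\varphi) - 2}{Š(\rho)\,Š(\varphi)}} \)

- Geometric-Arithmetic index: \( GA(G) = \sum_{\rho\varphi \in E} \frac{2\sqrt{Š(\rho)\,Š(\varphi)}}{Š(\rho) + Š(\varphi)} \)

- Sombor index: \( SO(G) = \sum_{\rho\varphi \in E} \sqrt{Š(\rho)^2 + Š(\varphi)^2} \)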

Validation: Correlate computed indices with experimental physicochemical properties using statistical methods such as linear regression [24] [26].
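The workflow above can be sketched in a few lines of Python. This sketch assumes hydrogen-suppressed molecular graphs given as edge lists, partitions edges by the degree pair of their endpoints (the Edge Partitioning step), and sums the standard per-edge terms of several degree-based indices (the Index Computation step). Benzene and naphthalene are used only as small test structures, and the regression-based validation step is not shown.

```python
from collections import Counter
from math import sqrt


def degrees(edges):
    """Vertex degrees of an undirected graph given as an edge list."""
    deg = Counter()
    for u, v in edges:
        deg[u] += 1
        deg[v] += 1
    return deg


def edge_partition(edges):
    """Count edges by the (sorted) degree pair of their endpoints."""
    deg = degrees(edges)
    return Counter(tuple(sorted((deg[u], deg[v]))) for u, v in edges)


# Per-edge contribution f(du, dv) for each degree-based index
INDEX_TERMS = {
    "M1 (first Zagreb)": lambda du, dv: du + dv,
    "M2 (second Zagreb)": lambda du, dv: du * dv,
    "Randic": lambda du, dv: 1 / sqrt(du * dv),
    "ABC": lambda du, dv: sqrt((du + dv - 2) / (du * dv)),
    "GA": lambda du, dv: 2 * sqrt(du * dv) / (du + dv),
    "Sombor": lambda du, dv: sqrt(du**2 + dv**2),
}


def topological_indices(edges):
    """Sum each per-edge term over the edge partition {(du, dv): count}."""
    partition = edge_partition(edges)
    return {
        name: sum(count * term(du, dv) for (du, dv), count in partition.items())
        for name, term in INDEX_TERMS.items()
    }


# Hydrogen-suppressed carbon skeletons (atoms are vertices, bonds are edges)
benzene = [(i, (i + 1) % 6) for i in range(6)]
naphthalene = [(i, (i + 1) % 10) for i in range(10)] + [(0, 5)]  # (0, 5) is the fusion bond

for name, graph in [("benzene", benzene), ("naphthalene", naphthalene)]:
    print(name, dict(edge_partition(graph)))
    for index, value in topological_indices(graph).items():
        print(f"  {index}: {value:.4f}")
```

For naphthalene the edge partition is {(2, 2): 6, (2, 3): 4, (3, 3): 1}, giving M₁ = 50 and M₂ = 57. Every index above is a weighted sum over this partition, which is the idea behind the M-polynomial approach listed in Table 3.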

Figure 1: Workflow for Calculating Topological Indices in Molecular Networks

Protocol for Interaction Asymmetry Analysis in Ecological Networks

Interaction asymmetry analysis has been particularly valuable in ecological contexts for identifying causal relationships in complex food webs. The methodology adapted from Jordán et al. and applied to 34 food web models from the EcoBase database proceeds as follows [19]:

Network Preparation: Compile the food web as a binary, undirected network with species as nodes and trophic interactions as edges [19].

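Topological Importance Matrix Calculation: Compute the TI³ matrix, which captures direct and indirect effects up to three steps. In the topological importance formulation of Jordán and colleagues, the one-step effect of species i on species j is \( a_{1,ij} = B_{ij}/D_j \), where \( B \) is the binary adjacency matrix and \( D_j \) is the degree of species j; n-step effects \( a_{n,ij} \) are obtained by multiplying one-step effects along all n-step paths (equivalently, the n-th power of the one-step matrix), and \( TI^{3}_{ij} = \frac{1}{3}\sum_{m=1}^{3} a_{m,ij} \) [19]. Because each effect is scaled by the degree of the affected species, \( TI^{3}_{ij} \) and \( TI^{3}_{ji} \) generally differ even though the network is undirected, which is what makes the asymmetry in the next step non-zero.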

Asymmetry Calculation: For each species pair (i, j), calculate the asymmetry value \( A = \lvert TI^{3}_{ij} - TI^{3}_{ji} \rvert \). This quantifies the directional imbalance in their interaction [19].

Threshold Application: Identify strongly asymmetric effects by applying a threshold (typically the top 1% of all possible interactions) [19].

Asymmetry Graph Construction: Create a directed network containing only the significantly asymmetric interactions, transforming the original food web into a causal dominance network [19].

Metric Extraction: Calculate key properties of the asymmetry graph including:

- Number of bottom-up effects (BUag)

- Number of top-down effects (TDag)

- Ratio of top-down to bottom-up effects (TDag/BUag)

- Number of source nodes with only outward effects (Nsoag)

- Number of sink nodes with only inward effects (Nsiag) [19]
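The following minimal sketch walks through these steps on a hypothetical six-species web. It assumes the one-step rule used in Jordán-style topological importance, in which the effect of species i on species j is 1/D_j (D_j being the degree of j) and multi-step effects are products along paths; it is written from scratch and is not the topoWeb package. Species names, trophic levels, and the 20% retention threshold are illustrative assumptions (with only 15 species pairs, a 1% threshold would keep a single link).

```python
from itertools import combinations


def ti_matrix(adj, steps=3):
    """Pairwise TI^steps: the mean of the 1..steps-step effect matrices.

    Assumed one-step rule: the effect of i on j is adj[i][j] / D_j (D_j = degree of j).
    """
    n = len(adj)
    deg = [sum(row) for row in adj]
    one_step = [[adj[i][j] / deg[j] if adj[i][j] else 0.0 for j in range(n)] for i in range(n)]
    power = [row[:] for row in one_step]
    total = [row[:] for row in one_step]
    for _ in range(steps - 1):
        # Extend every path by one more step (matrix product with the one-step matrix)
        power = [[sum(power[i][k] * one_step[k][j] for k in range(n)) for j in range(n)]
                 for i in range(n)]
        total = [[total[i][j] + power[i][j] for j in range(n)] for i in range(n)]
    return [[value / steps for value in row] for row in total]


def asymmetry_graph(ti, keep_fraction):
    """Keep the most asymmetric pairs, each oriented from the larger to the smaller effect."""
    scored = []
    for i, j in combinations(range(len(ti)), 2):
        a = abs(ti[i][j] - ti[j][i])
        src, dst = (i, j) if ti[i][j] > ti[j][i] else (j, i)
        scored.append((a, src, dst))
    scored.sort(reverse=True)
    n_keep = max(1, round(keep_fraction * len(scored)))
    return [(src, dst) for a, src, dst in scored[:n_keep] if a > 0]


# Hypothetical six-species food web (binary, undirected) with assumed trophic levels
species = ["phytoplankton", "zooplankton", "small fish", "benthos", "large fish", "seabird"]
trophic_level = [1.0, 2.0, 3.0, 2.0, 4.0, 4.0]
edges = [(0, 1), (0, 3), (1, 2), (3, 2), (2, 4), (2, 5), (1, 4)]
adj = [[0] * len(species) for _ in species]
for u, v in edges:
    adj[u][v] = adj[v][u] = 1

ti = ti_matrix(adj, steps=3)
links = asymmetry_graph(ti, keep_fraction=0.2)
bu_ag = sum(trophic_level[s] < trophic_level[d] for s, d in links)   # bottom-up links
td_ag = sum(trophic_level[s] > trophic_level[d] for s, d in links)   # top-down links
sources = {s for s, _ in links} - {d for _, d in links}
sinks = {d for _, d in links} - {s for s, _ in links}

for s, d in links:
    print(f"{species[s]} -> {species[d]}")
print("BUag:", bu_ag, "TDag:", td_ag)
print("sources:", sorted(species[i] for i in sources), "sinks:", sorted(species[i] for i in sinks))
```

Because the degree-1 seabird depends entirely on small fish while small fish splits its dependence across four neighbours, the small fish -> seabird pair has the largest asymmetry (19/36 - 19/144 = 57/144) and is oriented bottom-up.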

Figure 2: Interaction Asymmetry Analysis Workflow for Ecological Networks

Protocol for Node-Based Multifractal Analysis of Complex Networks

The Node-Based Multifractal Analysis (NMFA) framework quantifies structural complexity and heterogeneity in networks, capturing multiple generating rules that govern network formation [27]:

Box-Growing Algorithm: For each node i in the network, perform a box-growing process:

- Initialize with box size l = 1 containing only node i

- Iteratively increase l and include all nodes within distance l from node i

- For each l, calculate the box mass \( M_i(l) \) as the number of nodes in the box [27]

Node-Based Fractal Dimension (NFD): For each node i, estimate its fractal dimension by analyzing the relationship between \( \log M_i(l) \) and \( \log l \) across scales. The NFD is the power-law exponent in the relationship \( M_i(l) \sim l^{\mathrm{NFD}} \) [27].

Partition Function Calculation: For different distortion exponent values q, compute the partition function \( Z(q, l) = \sum_i [M_i(l)]^q \), where q emphasizes different aspects of the network structure (q > 1 amplifies dense regions, q < 1 emphasizes sparse regions) [27].

Multifractal Analysis: Determine the mass exponent \( \tau(q) \) from the relationship \( Z(q, l) \sim l^{\tau(q)} \) and apply a Legendre transformation, \( \alpha = d\tau/dq \) and \( f(\alpha) = q\alpha - \tau(q) \), to obtain the multifractal spectrum \( f(\alpha) \), where α is the Lipschitz-Hölder exponent characterizing local singularities [27].

Network Characterization: Extract key metrics from the multifractal spectrum:

- Complexity degree (α₀): The value of α at which f(α) reaches its maximum

- Heterogeneity degree (w): The width of the spectrum, \( w = \alpha_{\max} - \alpha_{\min} \)

- Structural asymmetry: The skewness of the f(α) curve [27]
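A compact sketch of the NMFA steps, following the partition function exactly as written above (Z(q, l) as a sum of raw box masses raised to the power q). It assumes a fixed range of box sizes l = 1..5, a q grid from -5 to 5, least-squares slopes for the scaling exponents, and finite differences for the Legendre transform; these are simplifications for illustration, not the reference implementation of [27]. A ring (where every node looks the same) and a path (whose end nodes differ from interior ones) serve as toy inputs.

```python
from collections import deque
from math import log


def box_masses(adj, node, max_l):
    """Box-growing: M_i(l) = number of nodes within graph distance l of `node`, l = 1..max_l."""
    dist = {node: 0}
    queue = deque([node])
    while queue:
        v = queue.popleft()
        if dist[v] == max_l:
            continue
        for w in adj[v]:
            if w not in dist:
                dist[w] = dist[v] + 1
                queue.append(w)
    return [sum(1 for d in dist.values() if d <= l) for l in range(1, max_l + 1)]


def slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return num / sum((x - mx) ** 2 for x in xs)


def nmfa(adj, max_l, qs):
    masses = [box_masses(adj, v, max_l) for v in adj]
    log_l = [log(l) for l in range(1, max_l + 1)]
    # Node-based fractal dimension: slope of log M_i(l) against log l
    nfd = [slope(log_l, [log(m) for m in ms]) for ms in masses]
    # tau(q): slope of log Z(q, l) against log l, with Z(q, l) = sum_i M_i(l)^q
    tau = [slope(log_l, [log(sum(ms[k] ** q for ms in masses)) for k in range(max_l)])
           for q in qs]
    # Numerical Legendre transform: alpha = d tau / dq (finite differences), f = q * alpha - tau
    alpha = []
    for k in range(len(qs)):
        lo, hi = max(k - 1, 0), min(k + 1, len(qs) - 1)
        alpha.append((tau[hi] - tau[lo]) / (qs[hi] - qs[lo]))
    f = [q * a - t for q, a, t in zip(qs, alpha, tau)]
    return {
        "nfd": nfd,
        "alpha0": alpha[f.index(max(f))],   # complexity degree
        "width": max(alpha) - min(alpha),   # heterogeneity degree w
    }


def cycle(n):
    return {i: [(i - 1) % n, (i + 1) % n] for i in range(n)}


def path(n):
    return {i: [j for j in (i - 1, i + 1) if 0 <= j < n] for i in range(n)}


qs = [k / 2 for k in range(-10, 11)]  # assumed q grid: -5.0 to 5.0 in steps of 0.5
results = {"cycle": nmfa(cycle(40), 5, qs), "path": nmfa(path(21), 5, qs)}
for name, res in results.items():
    print(f"{name}: w = {res['width']:.4f}, alpha0 = {res['alpha0']:.4f}")
```

For the ring all box masses coincide, τ(q) is linear in q, and the spectrum collapses to a point (w ≈ 0); the path's boundary nodes make α vary with q, giving w > 0.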

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Tools for Implementing Analysis Frameworks

| Tool Category | Specific Solutions | Function/Purpose | Implementation Examples |
|---|---|---|---|
| Software Libraries | topoWeb R package [19] | Calculating topological importance metrics and asymmetry graphs | Food web causality analysis [19] |
| Statistical Platforms | R Statistical Software v4.3.1 with igraph package [19] [29] | Network analysis and correlation testing | Ecosystem indicator development [19] |
| Network Analysis Tools | Custom Python libraries for EQAP [29] | Statistical significance testing of network topology | Controlled rewiring experiments [29] |
| Data Resources | EcoBase database [19] | Source of ecological network models | Food web interaction data [19] |
| Computational Methods | M-polynomial and NM-polynomial frameworks [23] | Computing degree-based topological indices | Benzenoid network characterization [23] |
| Validation Frameworks | Expanded Quadratic Assignment Procedure (EQAP) [29] | Testing statistical significance of network metrics | Comparing original vs. rewired networks [29] |

Integration and Comparative Insights

The comparative analysis of these computational frameworks reveals distinct but complementary strengths. Topological indices excel in quantifying molecular characteristics and predicting physicochemical properties with established correlations to experimental data. For instance, studies demonstrate strong correlation between the Atom-Bond Connectivity (ABC) index and heat of formation in titanium diboride networks (Pearson's r = 0.984) [24]. Similarly, the Geometric-Arithmetic (GA) index shows near-equivalent predictive power (r = 0.972) for the same property [24].

In contrast, interaction asymmetry analysis provides superior capabilities for identifying causal pathways and directional influences within complex systems. Applied to food webs, this approach reveals how total biomass correlates with bottom-up causal links (BUag) and sink nodes (Nsiag), providing indicators of ecosystem functioning [19]. The method reduces complexity by focusing on the 1% most asymmetric interactions, highlighting the predictable core of interspecific effects [19].

The Node-Based Multifractal Analysis offers unique advantages for characterizing structural complexity and heterogeneity, quantifying how multiple generating rules coexist within a single network [27]. This approach captures multiscale properties that conventional metrics miss, with the width of the multifractal spectrum (w) directly quantifying structural heterogeneity [27].

For drug development applications, integrated approaches leveraging multiple frameworks show particular promise. Topological indices can screen molecular candidates for desired properties, while asymmetry analysis might model biological pathway interactions, together accelerating lead optimization and efficacy assessment [23] [25]. The statistical validation methods ensure that observed network properties represent significant patterns rather than random configurations, a critical consideration in translational research [29].
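As a generic illustration of what rewiring-based validation involves, the sketch below compares an observed statistic (the triangle count) with a null distribution generated by degree-preserving double-edge swaps and reports a one-sided empirical p-value. This is a simplified stand-in, not the EQAP procedure of [29]; the toy network, the number of swaps (10 per edge), and the 200 null samples are arbitrary assumptions.

```python
import random
from itertools import combinations


def triangles(edges):
    """Number of triangles in an undirected simple graph given as an edge list."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return sum(1 for u, v, w in combinations(sorted(adj), 3)
               if v in adj[u] and w in adj[u] and w in adj[v])


def double_edge_swap(edges, n_swaps, rng):
    """Degree-preserving rewiring (a, b), (c, d) -> (a, d), (c, b); rejects loops and multi-edges."""
    edges = list(edges)
    present = {frozenset(e) for e in edges}
    done = attempts = 0
    while done < n_swaps and attempts < 100 * n_swaps:
        attempts += 1
        i, j = rng.sample(range(len(edges)), 2)
        (a, b), (c, d) = edges[i], edges[j]
        if rng.random() < 0.5:
            c, d = d, c  # choose one of the two possible rewirings at random
        if len({a, b, c, d}) < 4 or frozenset((a, d)) in present or frozenset((c, b)) in present:
            continue
        present -= {frozenset((a, b)), frozenset((c, d))}
        present |= {frozenset((a, d)), frozenset((c, b))}
        edges[i], edges[j] = (a, d), (c, b)
        done += 1
    return edges


def rewiring_test(edges, statistic, n_null=200, seed=0):
    """One-sided empirical p-value of `statistic` against degree-preserving rewired graphs."""
    rng = random.Random(seed)
    observed = statistic(edges)
    null = [statistic(double_edge_swap(edges, 10 * len(edges), rng)) for _ in range(n_null)]
    p_value = (1 + sum(s >= observed for s in null)) / (1 + n_null)
    return observed, p_value


# Hypothetical network: a ring of ten triangles joined by single links (30 nodes, 40 edges)
ring_of_triangles = []
for k in range(10):
    a, b, c = 3 * k, 3 * k + 1, 3 * k + 2
    ring_of_triangles += [(a, b), (b, c), (a, c), (c, (3 * k + 3) % 30)]

observed, p = rewiring_test(ring_of_triangles, triangles)
print(f"observed triangles = {observed}, empirical p = {p:.3f}")
```

Because the null graphs keep every node's degree, a small p-value here indicates that the clustering is not explained by the degree sequence alone.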

Figure 3: Integration of Computational Frameworks for Comprehensive Analysis

The paradigm of drug discovery has progressively shifted from a traditional "one-drug-one-target" approach to a more holistic "multi-drugs-multi-targets" model, reflecting the complex polypharmacological profiles of drugs within biological systems [30]. This network-centric perspective is fundamental to understanding both therapeutic effects and safety concerns. Network-based computational methods have emerged as powerful tools for systematically predicting drug-target interactions (DTIs) and drug-drug interactions (DDIs), offering a mechanism-driven framework that accelerates drug repurposing and combination therapy design [31] [32]. These approaches leverage the topological properties of complex biological networks—such as protein-protein interactomes, drug-target networks, and multimodal causal networks—to infer novel interactions and elucidate the mechanisms of drug action [33] [34]. The core premise is that the network-based relationship between drug targets and disease proteins can reveal clinically efficacious drug combinations and identify new therapeutic indications for existing drugs [32]. This guide provides a comparative analysis of prominent network-based methodologies, evaluating their performance, underlying algorithms, and applicability in contemporary drug discovery pipelines, with a specific focus on causal interaction strength and topological importance metrics.

Methodologies and Comparative Performance

Network-based prediction methods can be broadly categorized into several classes based on their underlying algorithmic principles. The table below summarizes the core characteristics and performance of several representative approaches.

Table 1: Comparison of Key Network-Based Prediction Methods

| Method Name | Category | Core Algorithm/Principle | Key Input Data | Reported Performance (AUROC) |
|---|---|---|---|---|
| AOPEDF [35] | Heterogeneous Network Embedding & Machine Learning | Arbitrary-Order Proximity Embedded Deep Forest | 15 integrated biological networks (drug, target, disease) | 0.868 (DrugCentral), 0.768 (ChEMBL) |
| LCP-Based Methods [33] | Unsupervised Topological Link Prediction | Local-Community-Paradigm (LCP) Theory | Bipartite DTI network topology only | Comparable to state-of-the-art supervised methods |
| drug2ways [34] | Causal Path Reasoning | Exhaustive path enumeration over causal networks | Multimodal causal network (drugs, proteins, diseases) | Validated by recovery of clinical trial drug-disease pairs |
| Separation-based Model [32] | Network Proximity & Topology Analysis | Drug-Disease proximity and drug-drug target separation | Human protein-protein interactome, drug targets, disease proteins | Effectively identified validated antihypertensive combinations |
| NBI (Network-Based Inference) [30] | Resource Diffusion Algorithm | Probabilistic spreading (resource allocation) | Known DTI network (bipartite graph) | High accuracy without requiring 3D structures or negative samples |
| Graph Neural Networks [36] | Graph Representation Learning | Graph Convolutional Networks, GraphSAGE, Graph Attention Networks | Drug molecular graphs, DDI networks, knowledge graphs | Competent accuracy on DDI prediction tasks |

Key Methodological Insights

- Unsupervised Topological Methods vs. Supervised Methods: Unsupervised, pure topology-based models like those implementing the LCP theory can achieve performance comparable to state-of-the-art supervised methods that require additional biological knowledge (e.g., chemical structures) [33]. This highlights the significant predictive power inherent in the network structure itself.

- The Advantage of Heterogeneous Data Integration: Methods like AOPEDF, which integrate diverse data types (chemical, genomic, phenotypic) into a heterogeneous network, can achieve high prediction accuracy by preserving complementary proximity information from different biological contexts [35].

- Causal Reasoning for Mechanism Elucidation: Approaches such as drug2ways move beyond correlation by reasoning over causal paths in biological networks, offering direct insights into the mechanism of action and enabling the prediction of polypharmacological effects and combination therapies [34].

Experimental Protocols and Validation Frameworks

Protocol for Network-Based DTI Prediction Using AOPEDF

The AOPEDF framework provides a robust protocol for drug-target interaction prediction, which can be summarized in the following workflow [35]:

Diagram: AOPEDF Workflow for Drug-Target Interaction Prediction

Data Preparation and Benchmarking:

- Data Sources: Collect known DTIs from databases like DrugBank, TTD, and PharmGKB. Gather bioactivity data (Ki, Kd, IC50 ≤ 10 μM) from ChEMBL, BindingDB, and IUPHAR/BPS [35].

- Network Curation: Construct a comprehensive heterogeneous network. This includes:

  - Drug Networks: Clinically reported drug-drug interactions, drug-disease associations, drug-side effect associations, chemical similarities, therapeutic similarities, and Gene Ontology (GO)-based similarities [35].

  - Target/Protein Networks: Protein-protein interactions, protein-disease associations, protein sequence similarities, and GO-based functional similarities [35].

- Benchmarking: Split data into training and test sets. For external validation, use the latest DTIs from DrugCentral and ChEMBL, strictly excluding any overlaps with the training data [35].

Arbitrary-Order Proximity Embedded Feature Learning:

- The AROPE (Arbitrary-Order Proximity Embedding) algorithm is applied to the integrated heterogeneous network.

- This step learns low-dimensional vector representations for each drug and target node. These embeddings are designed to capture and preserve not just direct connections (first-order proximity) but also higher-order topological relationships within the network [35].
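To illustrate what "higher-order proximity" adds, the sketch below forms a weighted sum of adjacency-matrix powers, S = w₁A + w₂A² + w₃A³, the general form of arbitrary-order proximity that AROPE is designed to preserve, on a hypothetical five-node drug-target graph. It stops before the embedding step (AROPE factorizes such a proximity matrix into low-dimensional vectors) and before the deep forest; the graph and the weights are assumptions chosen for illustration.

```python
def matmul(x, y):
    """Plain-Python matrix product of list-of-lists matrices."""
    n, m, p = len(x), len(y), len(y[0])
    return [[sum(x[i][k] * y[k][j] for k in range(m)) for j in range(p)] for i in range(n)]


def arbitrary_order_proximity(adj, weights):
    """S = sum_k weights[k-1] * A^k for k = 1..len(weights)."""
    n = len(adj)
    power = [row[:] for row in adj]  # A^1
    s = [[weights[0] * power[i][j] for j in range(n)] for i in range(n)]
    for w in weights[1:]:
        power = matmul(power, adj)   # next power of A
        s = [[s[i][j] + w * power[i][j] for j in range(n)] for i in range(n)]
    return s


# Hypothetical heterogeneous graph: drugs D1, D2 and targets T1..T3
nodes = ["D1", "D2", "T1", "T2", "T3"]
links = [("D1", "T1"), ("D1", "T2"), ("D2", "T2"), ("D2", "T3"), ("T1", "T2")]  # T1-T2: a PPI edge
idx = {name: i for i, name in enumerate(nodes)}
adj = [[0] * len(nodes) for _ in nodes]
for u, v in links:
    adj[idx[u]][idx[v]] = adj[idx[v]][idx[u]] = 1

first_order = arbitrary_order_proximity(adj, [1.0])
higher_order = arbitrary_order_proximity(adj, [1.0, 0.1, 0.01])  # assumed decaying weights
print("D1-T3 first-order:", first_order[idx["D1"]][idx["T3"]])
print("D1-T3 up to third order:", higher_order[idx["D1"]][idx["T3"]])
```

D1 and T3 share no edge, so their first-order proximity is 0, but the third-order path D1-T2-D2-T3 gives them a non-zero score; this kind of signal is what lets embedding-based predictors rank unobserved drug-target pairs.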

Model Training and Prediction with Deep Forest:

- A cascade deep forest classifier is trained using the learned feature representations of drug-target pairs.

- The deep forest model is chosen for its high performance, robustness to hyperparameters, and adaptive model complexity. Its tree-based nature also aids in generating more interpretable predictions compared to deep neural networks [35].

- The model outputs a prediction score indicating the likelihood of an interaction for a given drug-target pair.
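The cascade idea can be sketched with scikit-learn. This is a simplified, hypothetical re-implementation in the spirit of gcForest-style cascade forests, not the AOPEDF source code: multi-grained scanning is omitted, an extra-trees classifier stands in for the completely random forests of the original design, out-of-fold class-probability vectors from one level are appended to the original features for the next, and growth stops once cross-validated accuracy stops improving (the "adaptive model complexity" mentioned above). Binary labels coded as 0/1 are assumed; in practice the drug-target pair features `X` might be, for example, concatenated drug and target embeddings.

```python
import numpy as np
from sklearn.ensemble import ExtraTreesClassifier, RandomForestClassifier
from sklearn.model_selection import cross_val_predict


class CascadeForest:
    """Minimal cascade forest for binary (0/1) labels."""

    def __init__(self, max_levels=4, n_estimators=100, cv=3, tol=1e-3, random_state=0):
        self.max_levels = max_levels
        self.n_estimators = n_estimators
        self.cv = cv
        self.tol = tol
        self.random_state = random_state

    def _new_level(self):
        return [
            RandomForestClassifier(n_estimators=self.n_estimators, random_state=self.random_state),
            ExtraTreesClassifier(n_estimators=self.n_estimators, random_state=self.random_state),
        ]

    def fit(self, X, y):
        X, y = np.asarray(X, dtype=float), np.asarray(y)
        self.levels_ = []
        features, best_acc = X, -np.inf
        for _ in range(self.max_levels):
            level = self._new_level()
            # Out-of-fold probabilities keep training labels from leaking into the next level.
            oof = [cross_val_predict(est, features, y, cv=self.cv, method="predict_proba")
                   for est in level]
            acc = np.mean(np.mean(oof, axis=0).argmax(axis=1) == y)
            if self.levels_ and acc <= best_acc + self.tol:
                break  # adaptive depth: stop once validation accuracy plateaus
            for est in level:
                est.fit(features, y)
            self.levels_.append(level)
            best_acc = acc
            features = np.hstack([X] + oof)  # augment raw features with class vectors
        return self

    def predict_proba(self, X):
        X = np.asarray(X, dtype=float)
        features = X
        for level in self.levels_:
            probas = [est.predict_proba(features) for est in level]
            features = np.hstack([X] + probas)
        return np.mean(probas, axis=0)  # average the last level's class vectors


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.normal(size=(300, 8))            # stand-in for drug-target pair features
    y = (X[:, 0] + X[:, 1] > 0).astype(int)  # synthetic interaction labels
    model = CascadeForest(n_estimators=50).fit(X, y)
    scores = model.predict_proba(X)[:, 1]    # interaction likelihood per pair
    print(len(model.levels_), scores[:5].round(3))
```

The second column of `predict_proba` plays the role of the prediction score described above.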

Protocol for Predicting Drug Combinations via Network Proximity

The following protocol, derived from the methodology in [32], details the steps for predicting efficacious drug combinations based on topological relationships within the human interactome.

Diagram: Network-Based Drug Combination Prediction

Network and Data Assembly:

- Interactome: Compile a comprehensive human protein-protein interactome from multiple data sources (e.g., BioGRID, STRING, HPRD) [32].

- Disease Proteins: Compile a set of proteins known to be associated with the disease of interest (the "disease module," D) from databases like OMIM and DisGeNET [32].

- Drug Targets: For each drug, compile its set of known protein targets from databases like DrugBank and ChEMBL (drug-target modules A and B) [32].

Topological Metric Calculation:

- Drug-Drug Separation (( s_{AB} )):

  - Compute the mean shortest distance within the target set of drug A (( \langle d_{AA} \rangle )) and within that of drug B (( \langle d_{BB} \rangle )), using for each target its distance to the nearest other target of the same drug.

  - Compute the mean shortest distance between the targets of drugs A and B (( \langle d_{AB} \rangle )), using for each target its distance to the nearest target of the other drug.

  - Calculate the separation: ( s_{AB} \equiv \langle d_{AB} \rangle - \frac{\langle d_{AA} \rangle + \langle d_{BB} \rangle}{2} ).

  - A negative ( s_{AB} ) indicates overlapping drug-target modules, while a positive value indicates topological separation [32].

- Drug-Disease Proximity (( d(X, Y) )):

  - For a drug with target set ( X ) and a disease with protein set ( Y ), calculate the mean shortest path length from each disease protein to its closest drug target: ( d(X,Y) = \frac{1}{|Y|} \sum_{y \in Y} \min_{x \in X} d(x, y) ) [32].
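Both metrics reduce to breadth-first searches on an unweighted interactome. The pure-Python sketch below assumes the interactome is a connected, undirected graph stored as an adjacency dictionary and that each drug has at least two targets. It uses the closest-distance convention (an assumption about the intended definition): for ( \langle d_{AA} \rangle ) and ( \langle d_{BB} \rangle ), each target's distance to the nearest other target of the same drug; for ( \langle d_{AB} \rangle ), each target's distance to the nearest target of the other drug (zero for a shared target), averaged over all targets. The function names and the toy graph are hypothetical.

```python
from collections import deque


def bfs_distances(adj, source):
    """Shortest-path (hop) distances from `source` to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for w in adj[u]:
            if w not in dist:
                dist[w] = dist[u] + 1
                queue.append(w)
    return dist


def closest(adj, node, others, skip_self=False):
    """Distance from `node` to the nearest member of `others`."""
    dist = bfs_distances(adj, node)
    return min(dist[o] for o in others if not (skip_self and o == node))


def separation(adj, A, B):
    """s_AB = <d_AB> - (<d_AA> + <d_BB>) / 2 for target sets A and B."""
    d_aa = sum(closest(adj, a, A, skip_self=True) for a in A) / len(A)
    d_bb = sum(closest(adj, b, B, skip_self=True) for b in B) / len(B)
    d_ab = (sum(closest(adj, a, B) for a in A)
            + sum(closest(adj, b, A) for b in B)) / (len(A) + len(B))
    return d_ab - (d_aa + d_bb) / 2


def proximity(adj, X, Y):
    """d(X, Y): mean distance from each disease protein in Y to its closest target in X."""
    return sum(closest(adj, y, X) for y in Y) / len(Y)


if __name__ == "__main__":
    # Toy interactome: a path 0-1-2-3-4-5 (hypothetical).
    adj = {i: set() for i in range(6)}
    for u, v in [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5)]:
        adj[u].add(v)
        adj[v].add(u)
    print(separation(adj, {0, 1}, {4, 5}))  # 2.5  (separated modules)
    print(separation(adj, {1, 2}, {2, 3}))  # -0.5 (overlapping modules)
    print(proximity(adj, {0}, {2, 3}))      # 2.5
```

The sign of the two separation values matches the interpretation given above: the disjoint, distant target sets give a positive ( s_{AB} ), while the sets sharing protein 2 give a negative one.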


Classification and Prioritization of Combinations:

- Classify each drug-drug-disease triplet into one of the six possible topological configurations (e.g., Overlapping Exposure, Complementary Exposure) [32].

- Prioritization Criterion: Clinical validation in hypertension and cancer has shown that the "Complementary Exposure" class (P2)—where two topologically separated drug-target modules both overlap with the disease module—is most strongly correlated with therapeutic efficacy [32]. Drug pairs falling into this class should be prioritized for further experimental validation.
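The prioritization rule can be expressed as a small filter over drug-drug-disease triplets. This sketch is hypothetical on two counts. First, it treats a drug-target module as overlapping the disease module when their separation, computed with the same formula as ( s_{AB} ), is negative. Second, it distinguishes only the two configurations named above and groups the remaining four as "other". The separation values are assumed to be precomputed from shortest-path distances in the interactome.

```python
def exposure_class(s_ab, s_ad, s_bd):
    """Label a triplet from drug-drug (s_ab) and drug-disease (s_ad, s_bd) separations.

    Hypothetical rule: a negative separation means the two modules overlap.
    """
    if s_ad < 0 and s_bd < 0:
        # Both drug modules overlap the disease module.
        return "Overlapping Exposure" if s_ab < 0 else "Complementary Exposure"
    return "other"


def prioritize(triplets):
    """Return triplets in the Complementary Exposure (P2) configuration.

    `triplets` maps (drug_a, drug_b, disease) to (s_ab, s_ad, s_bd).
    """
    return [key for key, (s_ab, s_ad, s_bd) in triplets.items()
            if exposure_class(s_ab, s_ad, s_bd) == "Complementary Exposure"]


if __name__ == "__main__":
    triplets = {
        ("drugA", "drugB", "hypertension"): (0.8, -0.4, -0.2),   # separated, both overlap
        ("drugA", "drugC", "hypertension"): (-0.3, -0.4, -0.1),  # overlapping drug modules
        ("drugB", "drugD", "hypertension"): (0.5, -0.2, 0.6),    # drugD misses the disease
    }
    print(prioritize(triplets))  # [('drugA', 'drugB', 'hypertension')]
```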

Successful implementation of network-based drug discovery relies on a suite of computational and data resources. The following table details key components of the research toolkit.

Table 2: Essential Research Reagents and Resources for Network-Based Drug Discovery

| Resource Type | Name | Function and Application |
|---|---|---|
| Database (DTIs) | DrugBank [35] [37], ChEMBL [35], BindingDB [35] [37], IUPHAR/BPS [35] | Provide experimentally validated drug-target interactions and binding affinity data for model training and validation. |
| Database (Interactome) | BioGRID, STRING, HPRD [32] | Provide protein-protein interaction data to construct the foundational network (interactome) for proximity analyses. |
| Database (Diseases) | OMIM, DisGeNET [32] | Provide curated gene-disease associations to define disease-specific protein modules for analysis. |
| Software/Tool | drug2ways (Python package) [34] | Enables reasoning over causal paths in multimodal networks to identify drug candidates and combination therapies. |
| Software/Tool | AOPEDF (Source code) [35] | Implements the arbitrary-order proximity embedded deep forest framework for DTI prediction from heterogeneous networks. |
| Computational Framework | Graph Neural Networks (e.g., PyTorch Geometric, Deep Graph Library) [36] | Provide libraries for building GNN models (e.g., GCN, GraphSAGE) for DDI and DTI prediction. |
| Metric | Separation (( s_{AB} )) [32] | A key topological metric to quantify the relationship between the target sets of two drugs within the interactome. |
| Metric | Network Proximity (( d(X, Y) )) [32] | A key topological metric to quantify the relationship between a drug's targets and a disease module in the interactome. |

Network-based methods provide a powerful, versatile, and increasingly accurate toolkit for predicting drug-target and drug-drug interactions. The comparative analysis reveals a landscape where different methods offer distinct strengths: unsupervised topological methods like LCP are powerful when biological data is scarce, heterogeneous network embedding approaches like AOPEDF excel in accuracy by integrating diverse data, and causal path reasoning with tools like drug2ways offers the most direct mechanistic insight. The emerging consensus is that no single method is universally superior; instead, they are often complementary.

Future developments in this field will likely focus on enhancing the incorporation of biological context—such as tissue-specificity and cellular conditions—into network models. Furthermore, the integration of temporal dynamics and the improvement of model interpretability remain critical challenges. As networks grow in size and quality, and as algorithmic innovations like graph neural networks continue to mature, network-based approaches are poised to become an even more integral component of the rational drug design and repurposing pipeline.

The systematic identification of key nodes within complex biological networks has become a cornerstone of modern computational biology and drug discovery. This process involves analyzing network structure to pinpoint highly influential elements (such as proteins, genes, or metabolites) whose perturbation disproportionately affects system behavior. Within the broader study of causal interaction strength and topological importance metrics, these methods provide a quantitative framework for understanding how local interactions propagate into system-wide effects, helping researchers move beyond correlative relationships toward causal mechanisms in biological systems.

The fundamental premise underlying key node identification is that biological networks exhibit topological heterogeneity, meaning certain nodes occupy structurally privileged positions that enhance their functional importance. By applying metrics from network science, researchers can systematically rank nodes based on their potential influence on network stability, information flow, and functional output. This approach has proven particularly valuable in target prioritization for therapeutic development and disease module detection, where identifying critical regulatory elements can illuminate disease mechanisms and potential intervention points.

Methodological Framework for Key Node Identification

Core Topological Metrics

Multiple complementary metrics have been developed to quantify node importance from different topological perspectives, each with distinct strengths and limitations for biological applications. The table below summarizes the primary classes of topological importance metrics:

Table 1: Core Metrics for Key Node Identification in Biological Networks

| Metric Category | Specific Metrics | Underlying Principle | Biological Interpretation |
|---|---|---|---|
| Neighborhood-Based | Degree Centrality, K-shell, H-index | Importance derived from a node's immediate connections and their quality | Identifies nodes with direct regulatory potential or high functional engagement |
| Path-Based | Betweenness Centrality, Closeness Centrality | Importance based on position within network paths | Highlights communication bottlenecks and efficient propagators of influence |
| Spectral Influence | Eigenvector Centrality, PageRank | Importance derived from connections to other important nodes | Captures nodes embedded within influential functional modules |
| Multi-Attribute Decision | CRITIC-TOPSIS, Entropy-Weighted Methods | Integrated assessment combining multiple metrics | Provides comprehensive evaluation balancing different importance aspects |
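Two of the neighborhood-based metrics in Table 1 can be computed in a few lines. In the sketch below (adjacency-dictionary input; function names illustrative), a node's H-index is taken as the largest h such that at least h of its neighbors have degree of at least h, and k-shell indices are obtained by repeatedly peeling off the node with the smallest remaining degree. These are the commonly used definitions; individual studies may apply variants.

```python
def h_index(adj):
    """Per-node H-index: largest h with at least h neighbours of degree >= h."""
    deg = {v: len(nbrs) for v, nbrs in adj.items()}
    result = {}
    for v, nbrs in adj.items():
        nd = sorted((deg[u] for u in nbrs), reverse=True)
        h = 0
        while h < len(nd) and nd[h] >= h + 1:
            h += 1
        result[v] = h
    return result


def k_shell(adj):
    """k-shell (core) index obtained by peeling the minimum-degree node."""
    deg = {v: len(nbrs) for v, nbrs in adj.items()}
    remaining, shell, k = set(adj), {}, 0
    while remaining:
        v = min(remaining, key=deg.get)
        k = max(k, deg[v])  # the shell index never decreases during peeling
        shell[v] = k
        remaining.remove(v)
        for u in adj[v]:
            if u in remaining:
                deg[u] -= 1
    return shell


if __name__ == "__main__":
    # Triangle 0-1-2 with a pendant node 3 attached to node 0.
    g = {0: {1, 2, 3}, 1: {0, 2}, 2: {0, 1}, 3: {0}}
    print(h_index(g))                      # {0: 2, 1: 2, 2: 2, 3: 1}
    print(dict(sorted(k_shell(g).items())))  # {0: 2, 1: 2, 2: 2, 3: 1}
```

Node 0 has the highest degree (3), yet both refined metrics place it level with its triangle partners, because its extra neighbor is poorly connected.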

Betweenness centrality quantifies how often a node appears on the shortest paths between other nodes, making it particularly effective for identifying bottleneck proteins in biological networks. Mathematically, it is defined as:

[ BC(v) = \sum_{s \neq v \neq t} \frac{\sigma_{st}(v)}{\sigma_{st}} ]

where ( \sigma_{st} ) is the total number of shortest paths from node ( s ) to node ( t ), and ( \sigma_{st}(v) ) is the number of those paths passing through node ( v ). In practice, proteins with high betweenness centrality often act as critical bottlenecks whose disruption can severely impair communication between otherwise separate parts of the cellular network.
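Evaluating BC(v) naively requires enumerating all shortest paths. Brandes' algorithm obtains the same quantity with one breadth-first search per source node plus a backward accumulation pass, which is where the O(N·E) cost for unweighted graphs comes from. The pure-Python sketch below assumes an unweighted, undirected graph stored as an adjacency dictionary and counts each unordered pair {s, t} once; the function name is illustrative.

```python
from collections import deque


def betweenness(adj):
    """Unnormalized betweenness BC(v) for an unweighted, undirected graph (Brandes)."""
    bc = {v: 0.0 for v in adj}
    for s in adj:
        # Phase 1: BFS from s, counting shortest paths (sigma) and predecessors.
        stack, preds = [], {v: [] for v in adj}
        sigma = {v: 0 for v in adj}
        dist = {v: -1 for v in adj}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Phase 2: accumulate pair dependencies in order of decreasing distance.
        delta = {v: 0.0 for v in adj}
        while stack:
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Every unordered pair {s, t} was visited from both ends, so halve the totals.
    return {v: b / 2 for v, b in bc.items()}


if __name__ == "__main__":
    # 4-cycle 0-1-3-2-0: the pair (0, 3) has two shortest paths, via 1 and via 2.
    square = {0: {1, 2}, 1: {0, 3}, 2: {0, 3}, 3: {1, 2}}
    print(betweenness(square))  # every node gets 0.5
```

The 4-cycle example shows how σ_st(v)/σ_st splits credit: each intermediate node lies on one of two equally short paths, so it receives half a unit per pair.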

Closeness centrality measures how quickly a node can interact with all other nodes in the network, calculated as the reciprocal of the sum of the shortest path distances from the node to all other nodes:

[ CC(v) = \frac{1}{\sum_{u}d(u,v)} ]

where ( d(u,v) ) is the shortest path distance between nodes ( u ) and ( v ). This metric identifies nodes capable of rapid influence propagation, which in disease contexts may represent proteins that can quickly disseminate pathological signals.
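A direct implementation of this formula needs one breadth-first search per node. The sketch assumes a connected, unweighted, undirected graph stored as an adjacency dictionary (function name illustrative). It returns the raw reciprocal exactly as written above, whereas some libraries (NetworkX's `closeness_centrality`, for example) report a normalized variant multiplied by ( N-1 ).

```python
from collections import deque


def closeness(adj):
    """CC(v) = 1 / sum_u d(u, v) for every node v of a connected graph."""
    result = {}
    for v in adj:
        dist = {v: 0}
        queue = deque([v])
        while queue:
            u = queue.popleft()
            for w in adj[u]:
                if w not in dist:
                    dist[w] = dist[u] + 1
                    queue.append(w)
        total = sum(dist.values())  # d(v, v) = 0 contributes nothing
        result[v] = 1.0 / total if total > 0 else 0.0
    return result


if __name__ == "__main__":
    path = {0: {1}, 1: {0, 2}, 2: {1}}
    print(closeness(path))  # the middle node scores 0.5, the ends 1/3
```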

Advanced Multi-Attribute Frameworks

Single-metric approaches often give incomplete assessments because each metric captures only one aspect of a node's topological role, such as its local connectivity, its position on shortest paths, or its spectral influence. To address this, multi-attribute decision-making frameworks such as the Multi-attribute CRITIC-TOPSIS Network Decision Indicator (MCTNDI) have been developed [38]. These approaches integrate complementary perspectives (neighborhood importance, topological location, path centrality, and node mutual information) into a unified importance score.

The CRITIC (CRiteria Importance Through Intercriteria Correlation) method objectively derives metric weights from each criterion's contrast intensity (the standard deviation of its normalized values) and its conflict with the other criteria (measured through their correlations), while TOPSIS (Technique for Order Preference by Similarity to Ideal Solution) ranks nodes by their relative closeness to the positive ideal solution and distance from the negative ideal solution [38]. Combining the two avoids subjective weight assignment while yielding a more comprehensive assessment of node importance.
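A generic CRITIC-TOPSIS ranking can be sketched with NumPy. It is not the MCTNDI implementation from [38]: the choice of criteria, their normalization, and any refinements in that work are not reproduced. The sketch assumes a matrix with one row per node and one column per benefit criterion (higher means more important), with no all-zero columns. It min-max normalizes columns for CRITIC, vector-normalizes them for TOPSIS, and returns each node's relative closeness to the ideal solution.

```python
import numpy as np


def critic_weights(X):
    """CRITIC weights: contrast (std of normalized column) times conflict (sum of 1 - r)."""
    X = np.asarray(X, dtype=float)
    span = X.max(axis=0) - X.min(axis=0)
    Z = (X - X.min(axis=0)) / np.where(span == 0, 1.0, span)  # min-max normalization
    sigma = Z.std(axis=0, ddof=1)                                # contrast intensity
    r = np.nan_to_num(np.corrcoef(Z, rowvar=False))              # inter-criteria correlation
    info = sigma * (1.0 - r).sum(axis=0)                         # information content C_j
    return info / info.sum()


def topsis_scores(X, weights):
    """Relative closeness D- / (D+ + D-) of each row to the ideal solution."""
    X = np.asarray(X, dtype=float)
    V = weights * X / np.linalg.norm(X, axis=0)  # weighted, vector-normalized matrix
    best, worst = V.max(axis=0), V.min(axis=0)   # positive / negative ideal solutions
    d_plus = np.linalg.norm(V - best, axis=1)
    d_minus = np.linalg.norm(V - worst, axis=1)
    return d_minus / (d_plus + d_minus)


if __name__ == "__main__":
    # Rows: nodes; columns: three importance criteria (toy values).
    X = np.array([[3, 0.9, 5.0],
                  [1, 0.1, 1.0],
                  [2, 0.5, 2.0]])
    w = critic_weights(X)
    scores = topsis_scores(X, w)
    print(np.argsort(-scores))  # [0 2 1]
```

Criteria that are highly correlated with the others (here the first two columns move in lockstep) receive less weight, which is how CRITIC avoids double-counting redundant metrics.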

Experimental Comparison of Key Node Identification Methods

Benchmarking Framework and Performance Metrics

To objectively evaluate the performance of different key node identification methods, researchers employ standardized benchmarking frameworks that assess metrics across multiple performance dimensions. The following experimental protocol provides a robust methodology for comparative analysis:

Table 2: Experimental Protocol for Method Comparison

| Protocol Step | Description | Key Parameters |
|---|---|---|
| Network Preparation | Curate high-quality, validated biological networks with known key nodes | Source databases: STRING, BioGRID, HumanNet; Network types: PPI, gene regulatory, metabolic |
| Method Application | Apply each key node identification method to the prepared networks | Implementation: Python/NetworkX, R/igraph; Normalization: Z-score for cross-metric comparison |
| Attack Simulation | Simulate network degradation through sequential node removal based on importance rankings | Removal strategies: targeted (high-centrality first) vs. random; Network metrics: efficiency, connectivity, diameter |
| Monotonicity Assessment | Evaluate ranking distinctness using the monotonicity index | Monotonicity index: ( M(R) = \left(1 - \frac{\sum_{r \in R} n_r (n_r - 1)}{N(N-1)}\right)^2 ), where ( n_r ) is the number of nodes with rank ( r ) and ( N ) is the total number of nodes |
| Correlation Analysis | Measure agreement between different ranking methods | Statistical measures: Kendall's τ, Spearman's ρ; Significance testing: p-value with Bonferroni correction |
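The monotonicity index can be computed directly from a method's importance scores by counting tied values. The sketch below treats nodes with exactly equal scores as sharing a rank (floating-point scores may need rounding first); the function name is illustrative.

```python
from collections import Counter


def monotonicity(scores):
    """M(R) = (1 - sum_r n_r (n_r - 1) / (N (N - 1)))^2, with n_r = size of each tie group."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two nodes")
    ties = sum(c * (c - 1) for c in Counter(scores).values())
    return (1 - ties / (n * (n - 1))) ** 2


if __name__ == "__main__":
    print(monotonicity([4, 3, 2, 1]))  # 1.0: every node gets a distinct rank
    print(monotonicity([1, 1, 1, 1]))  # 0.0: the ranking cannot separate any nodes
    print(monotonicity([3, 3, 2, 1]))  # (1 - 2/12)^2 ≈ 0.694
```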

Performance evaluation typically focuses on three primary dimensions: (1) Network fragmentation efficiency measured by the rate of connectivity loss during targeted node removal, (2) Ranking monotonicity assessing the method's ability to discriminate between nodes, and (3) Methodological consistency evaluating agreement between different approaches.
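The fragmentation dimension can be measured with a targeted-attack simulation. The sketch below removes nodes in descending order of a supplied importance score (a static ranking computed once on the intact network) and records the largest connected component as a fraction of the original node count after each removal. The adjacency-dictionary input and function names are assumptions; recomputing scores after every removal (an adaptive attack) is a common alternative.

```python
from collections import deque


def largest_component(adj, alive):
    """Size of the largest connected component induced by the `alive` node set."""
    seen, best = set(), 0
    for start in alive:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:
            u = queue.popleft()
            size += 1
            for w in adj[u]:
                if w in alive and w not in seen:
                    seen.add(w)
                    queue.append(w)
        best = max(best, size)
    return best


def targeted_attack(adj, scores):
    """LCC fraction before and after each removal, highest-score node first."""
    n = len(adj)
    alive = set(adj)
    order = sorted(adj, key=lambda v: scores[v], reverse=True)
    curve = [largest_component(adj, alive) / n]
    for v in order:
        alive.discard(v)
        curve.append(largest_component(adj, alive) / n)
    return curve


if __name__ == "__main__":
    star = {0: {1, 2, 3, 4}, 1: {0}, 2: {0}, 3: {0}, 4: {0}}
    degree = {v: len(nbrs) for v, nbrs in star.items()}
    print(targeted_attack(star, degree))  # [1.0, 0.2, 0.2, 0.2, 0.2, 0.0]
```

A ranking that makes this curve drop faster fragments the network more efficiently; running the same function with a shuffled score dictionary gives the random-removal baseline.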

Comparative Performance Analysis

Experimental comparisons reveal significant differences in method performance across biological network types. The following table summarizes quantitative results from benchmark studies:

Table 3: Comparative Performance of Key Node Identification Methods

| Method Category | Representative Methods | Attack Efficiency (ΔEfficiency) | Ranking Monotonicity | Computational Complexity | Best Application Context |
|---|---|---|---|---|---|
| Local Neighbors | Degree Centrality, H-index | Moderate (0.35-0.55) | Low (0.2-0.4) | O(N) | Large-scale networks, preliminary screening |
| Global Path | Betweenness, Closeness | High (0.55-0.75) | Medium (0.5-0.7) | O(N·E) | Small-medium networks, bottleneck identification |