Capturing the Blink of an Eye: A Researcher's Guide to Accelerometer Sampling for Short-Burst Animal Behaviors

Abstract

Accurately capturing short-burst, high-frequency animal behaviors—such as prey catching, swallowing, or escape maneuvers—with accelerometers presents unique methodological challenges. This article synthesizes current research to provide a comprehensive framework for researchers and drug development professionals. It covers the foundational principles of sampling theory, outlines robust methodologies for data collection and machine learning analysis, addresses common pitfalls in device constraints and model overfitting, and establishes best practices for model validation. The guidance aims to enable the reliable classification of brief behavioral events, which is critical for advancing studies in animal models, behavioral pharmacology, and preclinical drug efficacy and safety assessments.

The Science of the Sudden: Defining Short-Burst Behaviors and Sampling Theory

What Constitutes a Short-Burst Behavior? Characteristics and Biomechanical Signatures

FAQ 1: What exactly defines a 'short-burst behavior'?

A short-burst behavior is a sudden, high-amplitude movement of very brief duration, often occurring over time scales of approximately 100 milliseconds to a few seconds [1] [2]. These behaviors are typically non-rhythmic and unpredictable, and they are crucial parts of an animal's behavioral repertoire, such as escaping a predator or capturing prey.

FAQ 2: What are common examples of short-burst behaviors across different species?

Short-burst behaviors are seen in a wide range of animals. The table below summarizes documented examples from research.

| Species | Short-Burst Behavior | Documented Characteristics |
|---|---|---|
| Lemon Shark (Negaprion brevirostris) | Burst, Chafe, Headshake | Burst swimming is a high-energy escape behavior; chafing and headshakes are rapid, postural adjustments. [2] |
| Great Sculpin (Myoxocephalus polyacanthocephalus) | Feeding, Escape events | Characterized by movements lasting on the order of 100 ms. [1] |
| European Pied Flycatcher (Ficedula hypoleuca) | Swallowing food | A fast action with a mean frequency of 28 Hz. [1] |
| Domestic Cat (Felis catus) | Pouncing, Jumping | Intensive acceleratory bursts of short duration associated with hunting. [5] |
| Yellowtail Kingfish (Seriola lalandi) | Escape, Courtship | "Burst" behaviours with high amplitude accelerations that are difficult to interpret and differentiate. [6] |
| Wild Boar (Sus scrofa) | Scrubbing | A rapid behavior characterized by a high Overall Dynamic Body Acceleration (ODBA) value. [3] |

FAQ 3: What is the most critical factor in capturing these behaviors with accelerometers?

The sampling frequency of your accelerometer is the most important technical consideration. According to the Nyquist-Shannon sampling theorem, to accurately record a behavior, the sampling frequency must be at least twice the frequency of the behavior itself. [1]

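- For short-burst behaviors, this often requires high sampling rates. For example, swallowing in European pied flycatchers has a mean frequency of 28 Hz, and classifying it reliably required sampling at 100 Hz. [1]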

- The Trade-Off: Higher sampling rates (e.g., 50-100 Hz) drain battery and fill memory storage faster. [1] [2] You must balance the need to capture fine-scale movements with the desired study duration.

Experimental Protocol: How to Set Up a Study to Identify Short-Burst Behaviors

The following workflow outlines the standard methodology for building a machine learning model to classify short-burst behaviors from accelerometer data, based on established research protocols. [6] [4] [5]

Step 1: Captive Trials & Data Collection

- Animal Handling: Fit captive or semi-captive animals with a tri-axial accelerometer. The device should be securely mounted to the body (e.g., dorsally, over the scapulae) to minimize movement artifacts. [6] [5]

- Synchronized Recording: Record the animals' behavior with high-resolution video cameras (e.g., 60 fps) while the accelerometer is logging. It is critical to synchronize the timestamps of the video and the accelerometer precisely at the start and end of trials. [6] [1]

- Sampling Rate: Program the accelerometer to log at a high frequency, typically 50 Hz or higher, to ensure short-burst behaviors are captured adequately. [6] [1] [5]
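The synchronization step above can be sketched in code. This is a minimal illustration, assuming the same physical event (for example, a sharp tap visible in both records) is marked at the start and at the end of a trial, and that clock drift between the two is roughly linear. The function name and the example timestamps are hypothetical.

```python
def make_logger_to_video(sync_start, sync_end):
    """Build a linear map from logger time to video time.

    Each sync point is a (logger_seconds, video_seconds) pair that marks
    the same physical event in both records.
    """
    (l0, v0), (l1, v1) = sync_start, sync_end
    if l1 == l0:
        raise ValueError("sync points must be at different logger times")
    scale = (v1 - v0) / (l1 - l0)  # relative clock rate (1.0 means no drift)
    return lambda t: v0 + scale * (t - l0)


# Hypothetical trial: one hour long, with 0.36 s of accumulated drift.
to_video = make_logger_to_video((10.0, 2.0), (3610.0, 3602.36))
print(to_video(1810.0))  # video time of the logger sample taken mid-trial
```

With only two sync points the correction cannot detect non-linear drift; adding a third marker mid-trial is one way to check that assumption.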

Step 2: Data Processing & Variable Calculation

- Ground-Truthing: Manually review the synchronized video and assign a behavior label (e.g., "feed," "escape," "chafe") to each corresponding segment of the accelerometer data. This creates a "labeled" or "ground-truthed" dataset. [6] [4]

- Feature Extraction: From the raw acceleration data, calculate a wide range of predictive variables for the model. These often include: [6] [4]

- Static and Dynamic Acceleration: Decomposing the signal to isolate body movement from posture.

- Pitch and Roll: Animal body position/orientation.

- VeDBA/ODBA: Vectorial and Overall Dynamic Body Acceleration, two measures of overall movement intensity.

- Standard Error: The running standard error of the waveform to capture movement 'size'.
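As a rough sketch of how several of these variables can be derived, the code below separates static from dynamic acceleration with a centred running mean and then computes ODBA, VeDBA, pitch, and roll per sample. The smoothing window length, the axis convention used for pitch and roll (x as surge, y as sway, z as heave), and the function names are assumptions for illustration, not the cited studies' exact procedures.

```python
import math


def running_mean(values, window):
    """Centred running mean; the window shrinks at the edges."""
    half = window // 2
    out = []
    for i in range(len(values)):
        seg = values[max(0, i - half): i + half + 1]
        out.append(sum(seg) / len(seg))
    return out


def burst_features(x, y, z, window):
    """Per-sample ODBA, VeDBA, pitch and roll from raw tri-axial data (in g)."""
    axes = (x, y, z)
    static = [running_mean(axis, window) for axis in axes]       # posture
    dynamic = [[r - s for r, s in zip(axis, st)]                 # movement
               for axis, st in zip(axes, static)]
    rows = []
    for i in range(len(x)):
        sx, sy, sz = (static[k][i] for k in range(3))
        dx, dy, dz = (dynamic[k][i] for k in range(3))
        rows.append({
            "odba": abs(dx) + abs(dy) + abs(dz),
            "vedba": math.sqrt(dx * dx + dy * dy + dz * dz),
            # Assumed convention: x = surge, y = sway, z = heave.
            "pitch_deg": math.degrees(math.atan2(sx, math.hypot(sy, sz))),
            "roll_deg": math.degrees(math.atan2(sy, sz)),
        })
    return rows


# A single heave spike in an otherwise still, level animal.
features = burst_features([0.0] * 5, [0.0] * 5, [1, 1, 4, 1, 1], window=5)
print(features[2])
```

The window length sets what counts as posture versus movement, so it is worth checking it against the duration of the bursts you want to keep in the dynamic component.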

Step 3: Model Training & Validation

- Algorithm Selection: Use a supervised machine learning algorithm, such as Random Forest (RF), to train a classification model. RF is popular because it handles large, complex datasets well and is less prone to overfitting. [6] [4]

- Training: Input the labeled data and the calculated variables into the RF algorithm to "train" it to recognize the unique accelerometer signature of each behavior.

- Validation: Test the model's accuracy by using it to predict behaviors in a portion of your labeled dataset that was not used for training. Metrics like F1 scores are used to evaluate performance for each behavior class. [6]
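To make the per-class evaluation concrete, here is a small sketch that computes an F1 score for each behavior from held-out labels and model predictions. The labels and predictions are made up for illustration; in practice they would come from the validation split described above.

```python
def f1_per_class(y_true, y_pred):
    """Return {label: F1} from paired true and predicted labels."""
    scores = {}
    for label in sorted(set(y_true) | set(y_pred)):
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        denom = 2 * tp + fp + fn
        scores[label] = 2 * tp / denom if denom else 0.0
    return scores


# Illustrative labels only: four "swim" windows and two "burst" windows.
truth = ["swim", "swim", "swim", "swim", "burst", "burst"]
preds = ["swim", "swim", "swim", "burst", "burst", "swim"]
print(f1_per_class(truth, preds))  # {'burst': 0.5, 'swim': 0.75}
```

Overall accuracy here is 4 of 6, yet the rare "burst" class scores only 0.5, which is why per-class F1 is more informative than accuracy when short-burst behaviors are under-represented.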

Step 4: Application to Field Data

Once validated, the trained model can be applied to classify behaviors from accelerometer data collected from free-ranging, wild animals. This allows researchers to identify the occurrence and timing of cryptic short-burst behaviors in a natural setting. [6]

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function & Specification | Considerations for Short-Burst Behaviors |
|---|---|---|
| Tri-axial Accelerometer (e.g., Axy-Depth, Cefas G6a+, AX3) | Measures acceleration in three orthogonal axes (surge, sway, heave). | Select a device capable of high sampling rates (≥50 Hz). Memory and battery life must be balanced with the high data volume. [6] [5] [2] |
| High-Speed Video Camera (e.g., GoPro Hero series) | Provides ground-truth data for labeling accelerometer signatures. | Record at a high frame rate (≥60 fps) and synchronize timestamps with the accelerometer. [6] [1] |
| Secure Mounting System (e.g., harness, adhesive, epoxy) | Fixes the accelerometer firmly to the animal. | Must minimize device movement to prevent signal artifacts, which is critical for interpreting high-amplitude bursts. [6] [5] |
| Machine Learning Software (e.g., R, Python with 'h2o' or 'randomForest' packages) | Used to build and run the classification algorithm (e.g., Random Forest). | Ensure computational power is sufficient to handle high-frequency data. The model requires a large set of predictive variables for accuracy. [6] [4] [3] |

Troubleshooting: Common Problems and Solutions

Problem: Model Performs Poorly on Specific Short-Burst Behaviors

- Potential Cause 1: Insufficient Training Data. Rare behaviors like "pounce" or "escape" may not have enough examples in your training dataset. The model then becomes biased toward more common behaviors. [4]

- Solution: Actively stimulate or elicit the target behavior during captive trials to increase its occurrence. Standardize the duration of each behavior class in the training dataset to balance their representation. [4]

- Potential Cause 2: Suboptimal Predictor Variables. The calculated variables may not adequately capture the unique signature of the behavior.

- Solution: Expand the suite of predictor variables. Include metrics like the dominant power spectrum frequency and amplitude, or ratios of VeDBA to dynamic acceleration. [4]
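The balancing step suggested for rare behaviors can be sketched as follows. This is one simple option, assuming the training data has already been cut into fixed-length windows so that an equal number of windows per class means an equal labeled duration per class. The function name and toy data are hypothetical.

```python
import random
from collections import defaultdict


def balance_by_class(samples, seed=0):
    """Randomly downsample every class to the size of the rarest one.

    `samples` is a list of (features, label) pairs, one per window.
    """
    by_label = defaultdict(list)
    for item in samples:
        by_label[item[1]].append(item)
    n = min(len(group) for group in by_label.values())
    rng = random.Random(seed)  # fixed seed keeps the subset reproducible
    balanced = []
    for label in sorted(by_label):
        balanced.extend(rng.sample(by_label[label], n))
    return balanced


# Toy data: ten "swim" windows and three "pounce" windows.
samples = [((i,), "swim") for i in range(10)] + [((i,), "pounce") for i in range(3)]
balanced = balance_by_class(samples)
print(len(balanced))  # 6
```

Downsampling discards data from common behaviors, so eliciting more examples of the rare behavior during captive trials remains the better fix when it is feasible.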

Problem: Accelerometer Data is Noisy or Inconsistent

- Potential Cause: Loose Attachment. If the device is not securely fastened, it can move independently of the animal's body, creating noise that obscures true behavioral signatures.

- Solution: In captive trials, test and refine the attachment method (e.g., harness design, adhesive type) to ensure a firm, stable fit before field deployment. [5]

Problem: Battery or Memory Depletes Too Quickly

- Potential Cause: Sampling at Excessively High Frequencies. While short-burst behaviors need high sampling rates, some behaviors can be identified at lower frequencies.

- Solution: Conduct a pilot study to determine the minimum effective sampling frequency for your target species and behaviors. For example, one study found 5 Hz adequate for classifying some burst behaviors in lemon sharks, drastically conserving power. [2]

Frequently Asked Questions

What is the Nyquist-Shannon sampling theorem in simple terms? The theorem states that to accurately digitize an analog signal, you must sample it at a rate at least twice as high as the highest frequency component contained within that signal. Sampling slower than this rate causes "aliasing," where high-frequency signals appear as erroneous low-frequency signals in your data [1].

Is the Nyquist rate a minimum or a recommended setting? For animal behavior studies, the Nyquist rate is an absolute minimum. However, research shows it is often insufficient on its own. For accurate classification of short-burst behaviors and amplitude estimation, you typically need to sample at 1.4 to 2 times the Nyquist frequency [1] [7].
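The arithmetic behind this recommendation is simple enough to script. The sketch below uses this guide's convention that the "Nyquist frequency" is twice the movement frequency; the function name and the default factors (taken from the 1.4 to 2 range quoted above) are illustrative choices.

```python
def sampling_targets(movement_hz, low_factor=1.4, high_factor=2.0):
    """Return the theoretical minimum and the oversampled target range, in Hz."""
    nyquist_hz = 2.0 * movement_hz  # this guide's "Nyquist frequency"
    return {
        "nyquist_hz": nyquist_hz,
        "recommended_low_hz": low_factor * nyquist_hz,
        "recommended_high_hz": high_factor * nyquist_hz,
    }


# Flycatcher swallowing, mean frequency 28 Hz [1].
targets = sampling_targets(28.0)
print(targets)
```

For the 28 Hz swallowing example this gives a minimum of 56 Hz and a target range of roughly 78 to 112 Hz, which brackets the 100 Hz setting reported for that behavior [1].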

My accelerometer data looks distorted. What could be wrong? Distortion can have several causes. A clipped or "flat-topped" signal indicates the acceleration exceeded the sensor's measurement range [8]. An erratic or jumping signal can result from poor connections, ground loops, or thermal transients [9]. First, verify your signal is not clipping by checking the time waveform on an oscilloscope.
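If no oscilloscope is available, a quick software check for clipping is to count how many samples sit at or near the sensor's range limit. This is a minimal sketch; the 1% tolerance and the function name are assumptions, and the right tolerance depends on the logger's resolution.

```python
def clipped_fraction(samples_g, range_g, tolerance=0.01):
    """Fraction of samples within `tolerance` (relative) of the +/- range limit."""
    if not samples_g:
        return 0.0
    limit = range_g * (1.0 - tolerance)
    return sum(abs(s) >= limit for s in samples_g) / len(samples_g)


# Hypothetical burst recorded on a +/-8 g sensor.
burst = [0.1, 7.99, -8.0, 8.0, 2.0]
print(clipped_fraction(burst, range_g=8.0))  # 0.6
```

A non-trivial clipped fraction during the behaviors of interest suggests selecting a wider measurement range before the next deployment.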

How do I balance sampling frequency with battery life and storage? This is a key experimental design challenge. Higher frequencies drain battery and fill memory faster [1]. The solution is to determine the minimum frequency required for your specific behaviors. For example, slow, rhythmic behaviors like swimming in sharks can be classified at 5 Hz, while short-burst behaviors like a flycatcher swallowing food require 100 Hz [1] [2].

Can machine learning help with lower-frequency data? Yes, but with caveats. Machine learning models can maintain high accuracy at lower sampling rates for some behaviors [2] [10]. However, this is highly behavior-dependent. High-frequency models excel at identifying fast, rhythmic locomotion, while lower-frequency models can sometimes better identify slower, aperiodic behaviors like grooming [4].

Troubleshooting Guides

Guide 1: Diagnosing Poor Behavior Classification Accuracy

If your machine learning models are failing to classify animal behaviors accurately from accelerometer data, follow this logical troubleshooting pathway.

Problem: Your collected accelerometer data does not allow for accurate classification of animal behaviors using machine learning or other methods.

Solution Steps:

Verify Sampling Frequency: Compare your sampling frequency against the Nyquist criterion for the specific behaviors of interest.

- Action: Calculate the Nyquist frequency (2 × maximum movement frequency). For short-burst behaviors, plan to sample at 1.4x to 2x this value [1] [7].

- Example: A study on European pied flycatchers found that swallowing food (28 Hz mean frequency) required sampling at 100 Hz, which is significantly higher than the theoretical Nyquist frequency of 56 Hz [1].

Check for Signal Clipping: If the sampling rate is sufficient, the signal itself may be distorted.

Audit Training Data Quality: The data used to train your classification model may be flawed.

- Action: Ensure your training dataset has a standardized duration for each behavior to prevent model bias toward over-represented behaviors [4].

- Action: Improve your feature set by calculating additional variables from the accelerometer data, such as the dominant power spectrum frequency, amplitude, and the running standard error of the waveform [4].

Guide 2: Solving Data Logger Communication and Power Issues

Follow this guide when you cannot communicate with your data logger or it powers off unexpectedly.

Problem: The data logger will not turn on, cannot be communicated with, or has intermittent failures.

Solution Steps:

Perform a Basic Power Check: This is the most common oversight.

- Action: Confirm the data logger is turned on. Check the battery with a voltmeter to ensure it is charged and properly connected [11].

Inspect Physical Connections:

Measure Bias Output Voltage (BOV): The BOV is a key indicator of sensor and cable health.

- Action: Use a digital multimeter to measure the DC bias voltage between the signal and ground wires at the data logger end [9].

- BOV equals supply voltage (18-30 V): This indicates an open circuit. The sensor is disconnected or a wire is broken. Check all connectors and cables [9].

- BOV is 0 V: This indicates a short circuit. Check for pinched cables or a frayed shield shorting the signal leads. Power supply failure is also a possibility [9].

- BOV is erratic or shifting: This can indicate a poor connection, a ground loop (from shielding grounded at both ends), or signal overload. Disconnect the shield at one end to test for ground loops [9].

Experimental Protocols & Data

Protocol 1: Determining Minimum Sampling Frequency for a New Behavior

This protocol allows you to empirically determine the correct sampling frequency for classifying a specific animal behavior.

1. Hypothesis and Objective:

- Hypothesis: The minimum sampling frequency required to classify Behavior X with >90% accuracy is [Your Initial Guess] Hz.

- Objective: To identify the lowest sampling frequency that does not statistically reduce classification accuracy compared to a high-frequency baseline.

2. Materials (The Scientist's Toolkit):

| Item | Function | Example from Research |
|---|---|---|
| High-frequency Biologger | To capture the original, high-fidelity reference signal. | Logger sampling at ~100 Hz [1]. |
| Video Recording System | For ground-truthing and labeling behaviors. | Synchronized high-speed cameras [1]. |
| Machine Learning Software | To build and test behavior classification models. | Random Forest algorithm [2] [4]. |
| Data Processing Tools | For down-sampling data and feature extraction. | Python or R with signal processing libraries. |

3. Step-by-Step Methodology:

- Data Collection: Record accelerometer data at the highest feasible frequency (e.g., 100 Hz) from your study subjects while simultaneously recording high-resolution video [1].

- Ground-Truthing: Annotate the accelerometer data by meticulously matching it with the observed behaviors from the video footage [1] [4].

- Create Training Datasets: Down-sample your original high-frequency dataset to create multiple new datasets at lower frequencies (e.g., 50 Hz, 25 Hz, 10 Hz, 5 Hz) [2].

- Model Training and Validation: Train a machine learning model (e.g., Random Forest) on a portion of each down-sampled dataset. Validate the model's accuracy using the remaining, unused data [2] [4].

- Analysis: Plot the classification accuracy against the sampling frequency. The minimum adequate frequency is the lowest sampling frequency at which accuracy still meets a pre-determined threshold (e.g., 95% of maximum accuracy) [2].
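The down-sampling and analysis steps can be sketched as below. Both helpers are illustrative: `decimate` simply keeps every n-th sample, which mimics a logger running at the lower rate without an anti-aliasing filter, and the accuracy values are invented placeholders standing in for the results of your own model runs.

```python
def decimate(samples, factor):
    """Keep every `factor`-th sample (no filtering is applied)."""
    return samples[::factor]


def minimum_adequate_hz(accuracy_by_hz, fraction_of_best=0.95):
    """Lowest frequency whose accuracy is >= fraction_of_best * best accuracy."""
    threshold = fraction_of_best * max(accuracy_by_hz.values())
    return min(hz for hz, acc in accuracy_by_hz.items() if acc >= threshold)


# 100 Hz -> 25 Hz by keeping every 4th sample.
print(decimate(list(range(10)), 4))  # [0, 4, 8]

# Placeholder accuracies, NOT results from any study.
accuracy_by_hz = {100: 0.96, 50: 0.95, 25: 0.93, 10: 0.80, 5: 0.62}
print(minimum_adequate_hz(accuracy_by_hz))  # 25
```

If accuracy does not fall monotonically with frequency, inspect the full curve rather than relying on the single value this helper returns.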

Protocol 2: System Verification and Sensor Health Check

Perform this protocol before starting a new experiment to ensure your entire accelerometer measurement system is functioning correctly.

1. Pre-Experiment Setup:

- BOV Baseline: Power on the system and measure the Bias Output Voltage for each sensor channel. Record these values for future reference [9].

- Functional Test: Gently tap the sensor. Observe the time waveform on an oscilloscope or data acquisition software to verify a clean, responsive signal without clipping or excessive noise [8].

2. In-Experiment Monitoring:

- Trend BOV: If your system supports it, trend the BOV over time. A slow drift in BOV can indicate sensor damage from excessive heat or other stressors [9].

- Review Time Waveforms: Periodically spot-check raw time waveforms during data collection to quickly identify the onset of problems like clipping or erratic signals [9] [8].

3. Quantitative Findings from Animal Studies

The table below summarizes how sampling frequency affects behavior classification in different species, demonstrating that one size does not fit all.

| Species | Behavior Type | Recommended Minimum Sampling Frequency | Key Finding |
|---|---|---|---|
| European Pied Flycatcher [1] | Swallowing (short-burst) | 100 Hz | Required >1.4x Nyquist (1.4 x 56 Hz ≈ 78 Hz) for accurate classification. |
| European Pied Flycatcher [1] | Flight (sustained rhythm) | 12.5 Hz | Much lower than Nyquist frequency was adequate. |
| Lemon Shark [2] | Swim, Rest, Burst, Chafe | 5 Hz | Most behaviors could be classified effectively at this low frequency. |
| Domestic Cat [4] | Locomotion (fast-paced) | 40 Hz (original) | Higher frequencies improved identification of fast behaviors. |
| Domestic Cat [4] | Grooming, Feeding (slow) | 1 Hz (mean) | Lower frequencies more accurately identified slower, aperiodic behaviors. |
| Humans (Clinical HAR) [10] | Daily Activities (e.g., brushing teeth) | 10 Hz | Reducing frequency to 10 Hz did not significantly affect accuracy. |

Frequently Asked Questions (FAQs)

Q1: What is the Nyquist-Shannon sampling theorem and why is it critical for my study on short-burst behaviors? The Nyquist-Shannon sampling theorem states that to accurately digitize a signal, the sampling frequency must be at least twice the highest frequency contained in that signal [1]. This guide calls that minimum the Nyquist frequency; signal-processing texts usually call it the Nyquist rate and reserve "Nyquist frequency" for half the sampling rate. Sampling below this minimum causes aliasing, where false, low-frequency signals appear in your data, distorting the true behavior [12]. While the theorem is foundational, the flycatcher case study in this guide shows that the theoretical minimum is often insufficient in practice for short-burst behaviors.
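To see what aliasing does to a fast movement, the sketch below computes the apparent frequency of a pure tone after sampling. It is the standard frequency-folding relation for an ideal sinusoid; real behavioral signals contain many frequencies, so treat it as an illustration only.

```python
def alias_frequency(signal_hz, sampling_hz):
    """Apparent frequency (Hz) of a pure tone after sampling at sampling_hz."""
    folded = signal_hz % sampling_hz
    return min(folded, sampling_hz - folded)


# A 28 Hz movement needs at least 56 Hz sampling to avoid aliasing.
print(alias_frequency(28.0, 100.0))  # 28.0 (preserved)
print(alias_frequency(28.0, 40.0))   # 12.0 (aliased)
print(alias_frequency(28.0, 25.0))   # 3.0  (aliased)
```

Sampled at 40 Hz, a 28 Hz swallow would show up as a 12 Hz oscillation, which could be mistaken for a slower behavior such as wingbeats.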

Q2: I need to classify brief swallowing events in birds. Why is a sampling frequency higher than the Nyquist frequency necessary? For short-burst behaviors like swallowing, the movement is not only fast but also occurs over a very short duration. A study on European pied flycatchers, which swallow with a mean frequency of 28 Hz, found that a sampling frequency of 100 Hz was needed for reliable classification [1] [13] [14]. Although the Nyquist frequency for a 28 Hz signal is 56 Hz, the brief, transient nature of the maneuver requires oversampling (here, 100 Hz is about 1.8 times the 56 Hz Nyquist frequency) to capture its full profile accurately [1].

Q3: Can I use the same sampling settings for all flight-related behaviors? No. The optimal sampling frequency depends heavily on the specific behavior and your research objective.

- Sustained, rhythmic flight: Behaviors like flapping or soaring flight, which have longer durations and produce consistent waveforms, can often be characterized adequately at lower sampling frequencies (e.g., 12.5 Hz) [1].

- Transient flight maneuvers: To identify rapid, short-burst maneuvers within a flight bout, such as prey catching, a high sampling frequency (e.g., 100 Hz) is again required [1].

- Distinguishing flight types: Classifying subtle differences in passive flight (e.g., thermal soaring vs. slope soaring) can be challenging with accelerometry alone and may require additional sensors like magnetometers [15].

Q4: What are the trade-offs of using a higher sampling frequency? Higher sampling rates consume more power and fill the device's memory storage faster [1]. For example, sampling at 100 Hz drains the battery more than twice as fast and fills memory four times faster compared to sampling at 25 Hz [1]. You must balance the need for data resolution with the practical constraints of your biologging equipment and deployment duration.
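The memory side of this trade-off can be estimated before deployment. The sketch below assumes continuous logging of three axes at 2 bytes per sample and ignores timestamps, file-system overhead, and compression, so real loggers will differ; the 512 MiB capacity is a hypothetical example.

```python
def days_until_full(memory_bytes, sampling_hz, axes=3, bytes_per_sample=2):
    """Days of continuous logging before memory fills (raw payload only)."""
    bytes_per_second = sampling_hz * axes * bytes_per_sample
    return memory_bytes / bytes_per_second / 86_400  # 86,400 s per day


memory = 512 * 1024 ** 2  # hypothetical 512 MiB logger
for hz in (25, 50, 100):
    print(hz, "Hz:", round(days_until_full(memory, hz), 1), "days")
```

Because storage use scales linearly with sampling rate, the 100 Hz deployment fills memory four times faster than the 25 Hz one. Battery drain does not scale as simply and is best taken from the manufacturer's figures or a bench test.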

Troubleshooting Guide

| Problem | Probable Cause | Solution |
|---|---|---|
| Inability to classify short-burst behaviors | Sampling frequency is too low, failing to capture the true signal of fast, transient movements. | Increase the sampling frequency. For behaviors around 28 Hz, aim for at least 100 Hz [1]. |
| Rapid battery drain or memory full | Sampling frequency is set higher than necessary for the behaviors of interest. | For long-duration, rhythmic behaviors (e.g., sustained flight), validate if a lower frequency (e.g., 12.5-20 Hz) is sufficient [1] [16]. |
| Aliasing: strange low-frequency signals in data | The original signal contains frequencies higher than half the sampling rate, and no anti-aliasing filter was used [12]. | Apply an anti-aliasing filter before sampling to remove signal components above half the sampling rate. This is often more practical than massively increasing the sample rate [12]. |
| Inaccurate estimation of signal amplitude | The sampling frequency is too low for the length of the analysis window (sampling duration). | Increase the sampling duration or increase the sampling frequency. For short analysis windows, a sampling frequency of four times the signal frequency (twice the Nyquist frequency) is recommended for accurate amplitude estimation [1]. |

Experimental Protocol from a Key Study

The following workflow and table summarize the methodology from Yu et al. (2023), which directly investigated the sampling requirements for avian swallowing versus flight [1].

- Objective: To determine the minimum accelerometer sampling frequency required to classify swallowing and flight behaviors in birds, and to evaluate the Nyquist-Shannon theorem in this biological context [1].

- Subjects: Seven European pied flycatchers (Ficedula hypoleuca) housed in aviaries [1].

- Accelerometer Setup: Custom-built tri-axial loggers were used. Key specifications are summarized in the table below [1].

- Validation Method: A stereoscopic videography system (two synchronized high-speed cameras at 90 frames per second) recorded the birds' activities, providing a ground-truth dataset to match accelerometer signals with observed behaviors [1].

- Analysis: The high-frequency (100 Hz) accelerometer data was digitally down-sampled to lower frequencies. Machine learning classifiers were then trained and tested at these different frequencies to determine the point at which classification accuracy for specific behaviors (e.g., swallowing) began to decline significantly [1].

Research Reagent Solutions: Essential Materials

| Item | Function in Experiment |
|---|---|
| Tri-axial Accelerometer Logger | Measures accelerations in three orthogonal axes (surge, sway, heave), providing data on posture and dynamic movement [1] [15]. |
| Leg-loop Harness | A method for securely attaching the biologger to the bird's body, minimizing movement artifacts and ensuring consistent sensor orientation [1]. |
| Stereoscopic Videography System | Provides synchronized, high-frame-rate video from multiple angles for precise annotation of behavior, serving as the validation standard for accelerometer data [1]. |
| Anti-aliasing Filter | A hardware or software filter applied before signal digitization to remove frequency components above half the sampling rate, preventing aliasing artifacts [12]. |
| Machine Learning Classifier (e.g., K-Nearest Neighbor) | A computational algorithm used to automatically identify and classify animal behaviors based on patterns in the accelerometer data [16]. |

The table below consolidates key findings from the studies cited above, providing a quick reference for selecting sampling frequencies.

| Behavior | Characteristic | Mean Frequency | Recommended Minimum Sampling Frequency | Key Reference |
|---|---|---|---|---|
| Avian Swallowing | Short-burst, transient | 28 Hz | 100 Hz (≈1.8x the 56 Hz Nyquist frequency) | [1] [13] [14] |
| Sustained Flight | Rhythmic, longer duration | N/A | 12.5 Hz (can be adequate) | [1] |
| Prey Catch Maneuver | Rapid transient within flight | N/A | 100 Hz | [1] |
| General Rule (No Constraints) | For frequency & amplitude | Signal frequency = f | ≥ 2 x Nyquist frequency (4f) | [1] |

For further details on the experimental setup and statistical analysis, please refer to the primary source: Yu et al. (2023), Animal Biotelemetry 11, 28 [1].

Frequently Asked Questions (FAQs)

Q1: Why can't I classify short-burst behaviors like food swallowing or prey capture using standard accelerometer sampling protocols? Standard protocols often use sampling frequencies based on the Nyquist-Shannon theorem, which states that the sampling frequency should be at least twice the frequency of the behavior of interest. However, for very brief, transient behaviors, sampling at just the Nyquist frequency is often insufficient. Research on European pied flycatchers showed that while flight could be characterized at 12.5 Hz, accurately classifying a swallowing behavior with a mean frequency of 28 Hz required a sampling frequency higher than 100 Hz [1] [17].

Q2: What is the relationship between sampling frequency, behavior duration, and the accuracy of my data? The combination of sampling frequency and sampling duration critically impacts the accuracy of derived metrics like signal frequency and amplitude. For long-duration behaviors, sampling at the Nyquist frequency may be adequate. However, for short sampling durations, accuracy declines significantly, especially for amplitude estimation. To accurately estimate signal amplitude with short durations, a sampling frequency of four times the signal frequency (two times the Nyquist frequency) is necessary [1].

Q3: How do I determine the correct Nyquist frequency for the behavior I am studying? You must first identify the fastest movement frequency (in Hz) within the behavioral event of interest. The theoretical minimum (Nyquist frequency) is double this value. For example, if a wingbeat is 10 Hz, the Nyquist frequency is 20 Hz. However, for reliable classification and amplitude estimation of short-burst behaviors, you should plan to sample at 1.4 to 2 times the Nyquist frequency [1] [17].

Q4: My biologger has limited battery and storage. How can I optimize my settings for transient behaviors? This requires a trade-off. If your primary interest is in short-burst behaviors, you must prioritize a high sampling frequency (e.g., 100 Hz), even if it reduces overall deployment time. If your study focuses on longer, rhythmic behaviors, a lower frequency (e.g., 12.5-20 Hz) may be sufficient and will conserve power and memory [1].

Troubleshooting Guides

Problem: Failure to detect or accurately classify short-burst behavioral events.

- Check the sampling frequency: The most common cause is an insufficient sampling rate. Compare your device's sampling frequency to the known movement frequency of the behavior.

- Recommended Action: If possible, re-configure your biologgers to sample at a higher frequency. For new experiments, use pilot data to determine the necessary rate.

- Analyze the raw waveform: Short-burst behaviors often produce abrupt, non-rhythmic waveforms. Visually inspect your high-frequency data for these unique signatures that machine learning classifiers might miss with lower-resolution data [1].

Problem: Inaccurate estimation of energy expenditure (e.g., ODBA/VeDBA) from behaviors of varying durations.

- Check the combined effect of sampling frequency and duration: Energy expenditure proxies like ODBA rely on signal amplitude, which is highly sensitive to sampling settings for short-duration events.

- Recommended Action: For a mix of long and short-duration behaviors, use a sampling frequency of two times the Nyquist frequency to ensure amplitude accuracy across events. Validate your metrics against a known energy expenditure measure if possible [1].

Problem: Biologger memory or battery depletes before the end of the study period.

- Evaluate the necessity of a high sampling rate: A continuous 100 Hz sampling rate will fill memory and drain battery much faster than a 25 Hz rate [1].

- Recommended Action: If your research question does not involve high-frequency transient behaviors, reduce the sampling frequency to a level that is still adequate for your target behaviors. Alternatively, use a triggering or intermittent sampling mode to capture high-frequency data only during specific periods of activity.

Table 1: Recommended Accelerometer Sampling Parameters for Different Behavioral Types

| Behavioral Characteristic | Example Behavior | Recommended Minimum Sampling Frequency | Key Consideration |
|---|---|---|---|
| Long-endurance, rhythmic | Sustained flight | 12.5 Hz [1] | Adequate for characterizing wingbeat frequency and overall behavior classification. |
| Short-burst, high-frequency | Swallowing food | 100 Hz [1] [17] | Required to capture the full movement dynamics and for accurate classification. |
| Rapid transient within a longer bout | Prey capture during flight | 100 Hz [1] | Essential to resolve the rapid maneuver within the broader behavioral context. |
| General target for no constraints | Mixed behaviors | 2 x Nyquist Frequency [1] | Provides a relative optimum for estimating both signal frequency and amplitude. |

Table 2: Impact of Sampling Settings on Signal Metric Accuracy

| Sampling Duration | Sampling Frequency | Signal Frequency Estimation | Signal Amplitude Estimation |
|---|---|---|---|
| Long | ≥ Nyquist Frequency | Adequate [1] | Adequate [1] |
| Short | = Nyquist Frequency | Accuracy declines [1] | Poor (up to 40% standard deviation of normalized amplitude difference) [1] |
| Short | = 2 x Nyquist Frequency | Good [1] | Accurate [1] |

Detailed Experimental Protocols

Protocol 1: Establishing Minimum Sampling Frequency for a Novel Behavior

This methodology is derived from experiments with European pied flycatchers [1].

- Animal Preparation & Data Collection: Fit animals with high-capacity accelerometers capable of sampling at a very high frequency (e.g., 100 Hz). Simultaneously, record behavior with synchronized high-speed videography (e.g., 90 fps) to ground-truth accelerometer data.

- Data Annotation: Identify and label the start and end times of target behaviors (e.g., swallowing, prey capture) from the video footage.

- Data Down-sampling: Take the original high-frequency accelerometer data and digitally re-sample it to create multiple datasets at lower frequencies (e.g., 50 Hz, 25 Hz, 12.5 Hz).

- Classifier Training & Testing: Train machine learning models to classify the annotated behaviors using each of the down-sampled datasets.

- Performance Analysis: Compare the classification accuracy across the different sampling frequencies. The point at which classification performance drops below an acceptable threshold identifies the minimum required sampling rate for that behavior.

Protocol 2: Quantifying the Impact on Energy Expenditure Proxies

- Signal Simulation & Re-sampling: Generate simulated signals with known frequencies and amplitudes. Systematically re-sample these signals at different frequencies and window lengths (durations) [1].

- Metric Calculation: Calculate dynamic body acceleration metrics (e.g., ODBA, VeDBA) from each re-sampled dataset.

- Accuracy Assessment: Compare the calculated metrics from the re-sampled data against the values from the original, high-resolution signal. Quantify the deviation, such as the normalized amplitude difference, to understand how sampling settings bias energy expenditure estimates [1].
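A stripped-down version of this simulation is sketched below. It samples a unit-amplitude sine over a short window at many starting phases, estimates amplitude as the largest absolute sample, and reports the spread of the normalized error. The estimator, window length, and phase grid are assumptions chosen for brevity; this is not a reproduction of the analysis in [1] and should not be expected to match its numbers.

```python
import math


def amplitude_error_spread(signal_hz, sampling_hz, n_samples, n_phases=360):
    """Std. dev. of (estimated - true) / true amplitude across start phases.

    The estimate is the largest |sample| in a window of n_samples drawn
    from a sine of amplitude 1.
    """
    errors = []
    for k in range(n_phases):
        phase = 2 * math.pi * k / n_phases
        window = [math.sin(2 * math.pi * signal_hz * i / sampling_hz + phase)
                  for i in range(n_samples)]
        errors.append(max(abs(v) for v in window) - 1.0)
    mean = sum(errors) / len(errors)
    return math.sqrt(sum((e - mean) ** 2 for e in errors) / len(errors))


f = 28.0  # signal frequency in Hz
at_nyquist = amplitude_error_spread(f, 2 * f, n_samples=4)   # 56 Hz
at_double = amplitude_error_spread(f, 4 * f, n_samples=4)    # 112 Hz
print(at_nyquist, at_double)
```

Sampling at exactly twice the signal frequency lands on the same two points of every cycle, so the amplitude estimate depends almost entirely on where sampling happens to start. Doubling the rate shrinks that spread considerably, which is the qualitative pattern summarized in Table 2.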

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Materials for Accelerometer Studies on Transient Behaviors

| Item | Function & Specification |

|---|---|

| High-Frequency Biologger | Records tri-axial acceleration data. Must have a sufficiently high sampling rate (e.g., ≥100 Hz) and an appropriate measurement range (e.g., ±8 g), and must be miniaturized to avoid impacting animal behavior [1]. |

| Synchronized High-Speed Camera | Provides ground-truth data for behavioral annotation. A temporal resolution of 90 fps or higher is recommended to capture rapid movements [1]. |

| Leg-Loop Harness | A method for secure attachment of the biologger to the animal's body (e.g., over the synsacrum in birds), minimizing movement artifacts [1]. |

| Data Analysis Software | Custom or commercial software (e.g., R, Python with signal processing libraries) for processing large datasets, down-sampling signals, and building machine learning classifiers for behavior identification. |

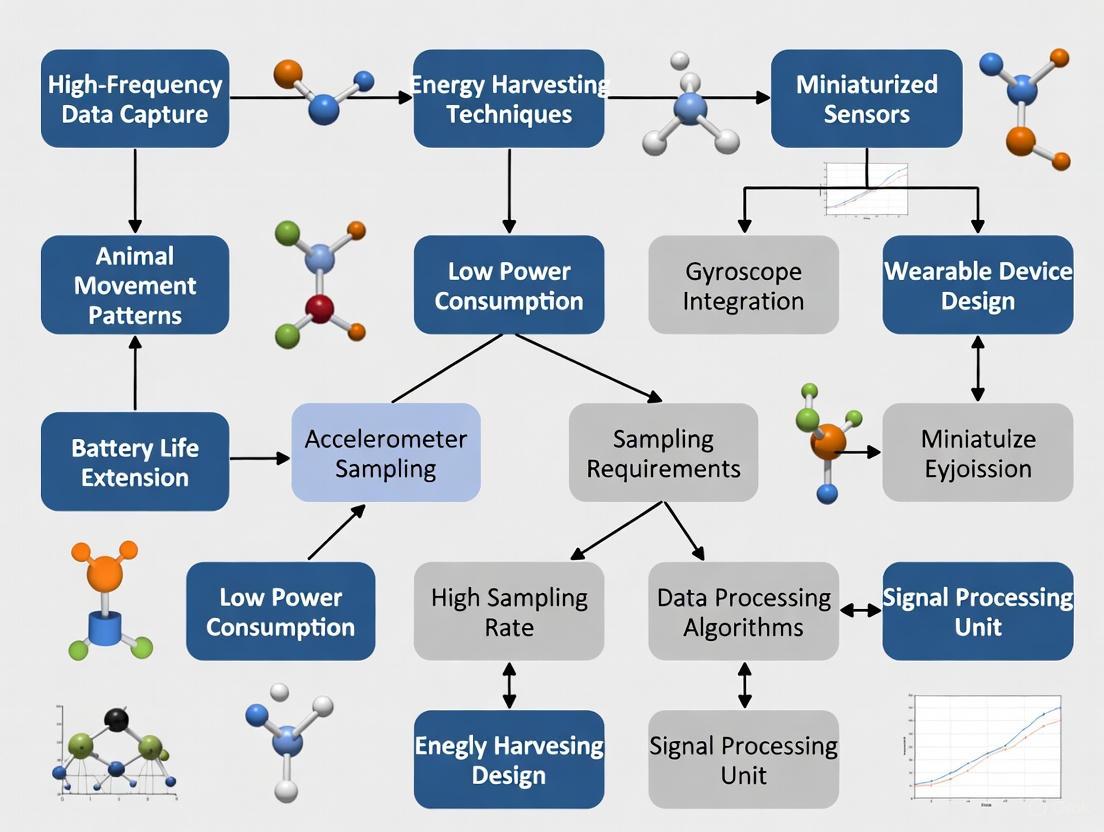

Experimental Workflow and Troubleshooting Diagrams

[Diagram: Experimental Setup Workflow]

[Diagram: Troubleshooting Missed Behaviors]

For researchers studying short-burst animal behaviors with accelerometers, frequency refers to how often a behavior occurs per unit of time, duration is the length of time a single behavior instance lasts, and amplitude is the magnitude or intensity of the movement. Accurately capturing these metrics depends heavily on your accelerometer's sampling frequency and sampling duration [1].

The table below summarizes these core metrics and their relationship to accelerometer sampling.

| Metric | Description | Role in Behavioral Analysis | Key Sampling Consideration |

|---|---|---|---|

| Frequency | Rate of behavioral cycles (e.g., wingbeats per second) [1]. | Classifies rhythmic behaviors (e.g., flight) and estimates energy expenditure [1]. | Must sample at least at the Nyquist frequency (2x the behavior's frequency) to avoid aliasing; short bursts may require higher rates [1]. |

| Duration | Length of time a single behavioral event lasts (e.g., a feeding bout). | Distinguishes between sustained (e.g., foraging) and very brief, short-burst behaviors (e.g., swallowing) [18] [1]. | Governed by the sampling duration (window length); must be long enough to capture the entire behavioral event [1]. |

| Amplitude | Magnitude of the acceleration signal (e.g., Overall Dynamic Body Acceleration - ODBA) [18]. | Serves as a proxy for energy expenditure; differentiates between high and low-intensity movements [18] [1]. | Accuracy depends on both sampling frequency and duration; estimating amplitude for short bursts requires a high sampling frequency [1]. |

Experimental Protocols for Determining Sampling Requirements

Protocol 1: Evaluating Sampling Frequency for Behavior Classification

This methodology is adapted from research on European pied flycatchers to classify distinct behaviors like flying and swallowing [1].

- Data Collection: Record tri-axial accelerometer data from your study animal at a very high frequency (e.g., ~100 Hz) simultaneously with video validation [1].

- Behavior Annotation: Use the synchronized video to label the accelerometer data with specific behaviors, identifying both long-duration rhythmic behaviors (e.g., flight) and short-burst behaviors (e.g., swallowing) [1].

- Data Downsampling: Create lower-frequency datasets (e.g., 50 Hz, 25 Hz, 12.5 Hz) from the original high-frequency data.

- Model Training & Validation: Train machine learning models (e.g., Random Forest) to classify behaviors using each downsampled dataset. Validate the model's accuracy against the annotated behaviors [18] [1].

- Determine Critical Frequency: Identify the minimum sampling frequency at which classification accuracy for short-burst behaviors remains acceptable. Research shows that classifying a swallow (mean frequency 28 Hz) requires a sampling frequency much higher than its Nyquist frequency [1].

Protocol 2: Evaluating Sampling for Frequency and Amplitude Estimation

This protocol uses simulated data to systematically assess how sampling settings affect the accuracy of signal metric extraction [1].

- Signal Simulation: Generate simulated acceleration signals with known frequencies and amplitudes, mimicking animal movements.

- Parameter Variation: Analyze these signals using a range of sampling frequencies (from below to above the Nyquist frequency) and sampling durations (varying window lengths).

- Metric Calculation: For each combination of frequency and duration, calculate the signal's frequency and amplitude.

- Accuracy Assessment: Compare the calculated values to the known true values. Determine the combinations of sampling frequency and duration that yield accurate estimates. Studies find that accurately estimating amplitude for short-duration signals often requires a sampling frequency of up to four times the signal frequency [1].
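A toy version of this simulation is sketched below. It assumes a pure unit-amplitude sine wave and a simple peak-to-peak amplitude estimator averaged over evenly spaced starting phases. Both are simplifications chosen for illustration, so the absolute error values should not be expected to match those reported in [1]. All names are hypothetical.

```python
import math


def estimate_amplitude(signal_hz, sample_hz, duration_s, phase):
    """Peak-to-peak amplitude estimate of a unit-amplitude sine sampled at sample_hz."""
    n = max(2, round(duration_s * sample_hz))
    samples = [math.sin(2 * math.pi * signal_hz * k / sample_hz + phase) for k in range(n)]
    return (max(samples) - min(samples)) / 2


def mean_abs_error(signal_hz, sample_hz, duration_s, n_phases=90):
    """Average |estimate - 1| over evenly spaced starting phases."""
    total = 0.0
    for i in range(n_phases):
        phase = 2 * math.pi * i / n_phases
        total += abs(estimate_amplitude(signal_hz, sample_hz, duration_s, phase) - 1.0)
    return total / n_phases


SIGNAL_HZ = 20.0
WINDOW_S = 0.1  # a short window covering two cycles of the 20 Hz signal
for multiple in (2, 4, 8):  # sampling frequency as a multiple of the signal frequency
    err = mean_abs_error(SIGNAL_HZ, multiple * SIGNAL_HZ, WINDOW_S)
    print(f"fs = {multiple}x signal frequency: mean amplitude error = {err:.2f}")
```

In this sketch the error shrinks as the sampling frequency rises from two to four to eight times the signal frequency, which is the qualitative pattern the protocol is designed to quantify.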

Research Reagent Solutions

The table below lists essential materials and tools for accelerometer-based behavioral research.

| Item | Function / Relevance |

|---|---|

| Tri-axial Accelerometer Biologgers | The primary sensor measuring acceleration in three dimensions (surge, heave, sway) for detailed movement analysis [18] [1]. |

| Machine Learning Software (e.g., R with 'h2o') | Used to build classification models (e.g., Random Forest) that predict behavior from raw acceleration data [18]. |

| Synchronized High-Speed Videography | Provides the ground-truth data for labeling accelerometer signals with specific behaviors, which is critical for model training and validation [1]. |

| Overall Dynamic Body Acceleration (ODBA) Scripts | Calculates a common metric used as a proxy for energy expenditure from the tri-axial acceleration data [18]. |

Troubleshooting Common Experimental Issues

Problem: Short-burst behaviors (e.g., prey capture, swallowing) are misclassified or not detected.

Solution: This indicates insufficient sampling frequency. For short-burst behaviors, the required sampling frequency can be 1.4 times the Nyquist frequency or more [1]. Re-evaluate the target behavior's peak frequency and increase the accelerometer's sampling rate accordingly. For example, to capture a swallow at 28 Hz, a sampling rate of at least 80-100 Hz may be necessary [1].

Problem: Inconsistent or inaccurate estimates of signal amplitude for energy expenditure (e.g., ODBA).

Solution: This is often caused by the combined effect of low sampling frequency and short sampling duration. To accurately estimate amplitude, especially for brief events, use a higher sampling frequency. Research suggests that a sampling frequency of four times the signal frequency (twice the Nyquist frequency) may be needed when sampling duration is low [1].

Problem: The accelerometer's battery depletes too quickly for long-term studies.

Solution: You can reduce the sampling frequency, but this must be balanced against information loss [18]. For studies focused only on general behavioral states (e.g., resting vs. foraging) and not short-burst events, a lower frequency (e.g., 1 Hz) can be viable and dramatically extend battery life [18] [1].

Workflow Diagram

The following diagram illustrates the logical process of determining the correct accelerometer sampling strategy based on your research goals and the behaviors of interest.

From Theory to Practice: Designing a Robust Data Collection and Analysis Pipeline

Frequently Asked Questions

What is the Nyquist-Shannon Sampling Theorem and why is it critical for my research?

The Nyquist-Shannon sampling theorem states that to accurately digitize a continuous signal without distortion, the sampling frequency must be at least twice the highest frequency component in that signal. This minimum required rate is known as the Nyquist rate [19]. Sampling below this rate causes aliasing, a phenomenon where high-frequency components falsely appear as lower frequencies in your data, permanently contaminating your results [20] [12].

For short-burst animal behaviors, is sampling at the exact Nyquist frequency sufficient?

No. Research shows that for fast, short-burst behaviors, sampling at the exact Nyquist frequency is often insufficient. A study on European pied flycatchers found that a sampling frequency higher than the Nyquist frequency (oversampling) was necessary to accurately classify brief behaviors like swallowing food, which had a mean frequency of 28 Hz [1]. For such behaviors, a rate of 1.4 times the Nyquist frequency is recommended [1].

How does sampling frequency affect device battery and data storage?

Higher sampling frequencies significantly increase power consumption and data storage requirements. For example, sampling accelerometer data at 25 Hz can result in more than double the battery life compared to sampling at 100 Hz [1]. Furthermore, a 100 Hz sampling rate will fill device memory four times faster than a 25 Hz rate, creating a trade-off between data resolution and study duration [1] [2].
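The memory side of this trade-off is simple arithmetic, sketched below. The calculation assumes three axes, one byte per sample per axis (8-bit resolution), and no timestamp or file-system overhead. The 64 MiB capacity is a hypothetical figure, not a specification from [1]. Battery life is deliberately left out because it does not scale in simple proportion to sampling frequency.

```python
SECONDS_PER_DAY = 86_400


def bytes_per_day(sample_hz, axes=3, bytes_per_sample=1):
    """Raw storage consumed per day of continuous recording."""
    return sample_hz * axes * bytes_per_sample * SECONDS_PER_DAY


def days_until_full(memory_bytes, sample_hz, axes=3, bytes_per_sample=1):
    """Recording time before the logger memory is exhausted."""
    return memory_bytes / bytes_per_day(sample_hz, axes, bytes_per_sample)


MEMORY_BYTES = 64 * 1024**2  # hypothetical 64 MiB of on-board memory
for rate_hz in (100, 25, 12.5):
    print(f"{rate_hz:g} Hz: memory full after {days_until_full(MEMORY_BYTES, rate_hz):.1f} days")
```

Because storage use is proportional to sampling frequency under these assumptions, the 25 Hz configuration lasts four times as long as the 100 Hz one.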

What are the two main ways to prevent aliasing in my data?

There are two primary methods to avoid aliasing [12]:

- Increase the sample rate: Using a faster data acquisition system to meet or exceed the Nyquist criterion for your signal of interest.

- Use an anti-aliasing filter: Implementing a low-pass filter before the analog-to-digital converter (ADC) to remove frequency components higher than half the sampling rate (fs/2) [20]. This is often the most practical solution.
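For the filter option, the cutoff of a simple first-order RC low-pass stage follows fc = 1 / (2πRC). The sketch below computes it and checks that it sits between the signal bandwidth and fs/2. The component values and function names are hypothetical, and a first-order filter attenuates only gradually above its cutoff, so a real design may need a steeper filter.

```python
import math


def rc_cutoff_hz(resistance_ohm, capacitance_farad):
    """-3 dB cutoff frequency of a first-order RC low-pass filter."""
    return 1.0 / (2.0 * math.pi * resistance_ohm * capacitance_farad)


def cutoff_is_suitable(cutoff_hz, signal_bandwidth_hz, sample_hz):
    """True when the cutoff lies above the signal band but below fs/2."""
    return signal_bandwidth_hz < cutoff_hz < sample_hz / 2.0


fc = rc_cutoff_hz(10_000, 0.47e-6)  # hypothetical 10 kOhm resistor and 0.47 uF capacitor
ok = cutoff_is_suitable(fc, signal_bandwidth_hz=28.0, sample_hz=100.0)
print(f"cutoff = {fc:.1f} Hz, suitable for a 28 Hz signal sampled at 100 Hz: {ok}")
```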

Troubleshooting Guides

Problem: Inability to Classify Short-Burst Animal Behaviors

Symptoms

- Machine learning models fail to identify or consistently misclassify brief behavioral events (e.g., prey capture, swallowing, escape bursts).

- The extracted signal features from accelerometer data lack the definition needed to distinguish between similar, rapid behaviors.

Solution

Short-burst behaviors are characterized by a few movement cycles over very short time scales (e.g., ~100 ms) and require higher sampling frequencies than sustained behaviors [1].

Experimental Protocol from Pied Flycatcher Research

- Logger Attachment: Attach a tri-axial accelerometer logger over the animal's synsacrum using a secure leg-loop harness to ensure consistent positioning [1].

- High-Frequency Recording: Sample accelerometer data at a high frequency (e.g., 100 Hz) to establish a ground-truth dataset [1].

- Behavioral Annotation: Simultaneously record the animal's behavior using a synchronized high-speed videography system (e.g., 90 frames-per-second) to provide ground-truthed labels for the accelerometer data [1].

- Data Analysis: Systematically downsample the original high-frequency data and evaluate the performance of your behavior classification algorithm at each lower sampling rate [1] [2].

Resolution

For the European pied flycatcher, swallowing food (a 28 Hz behavior) required a sampling frequency of 100 Hz for accurate classification, which is substantially higher than its nominal Nyquist frequency of 56 Hz [1]. The general recommendation is to use a sampling frequency of 1.4 times the Nyquist frequency of the short-burst behavior of interest [1].

Problem: Aliasing Creates False Frequencies in Data

Symptoms

- Unexplained low-frequency signals appear in frequency-domain analysis.

- The sampled waveform looks significantly different from the expected signal.

Solution

Aliasing occurs when the signal contains components exceeding half the sampling rate (fs/2). These high-frequency components "fold" back into the low-frequency spectrum [20] [12].

Resolution

Follow this two-step process to eliminate aliasing:

- Implement an Anti-aliasing Filter: This is a crucial hardware step. Add a low-pass filter circuit before the ADC to attenuate all frequency content above fs/2 [20]. The filter's cutoff frequency (fc) should be set slightly lower than fs/2 but higher than the effective bandwidth of your signal [20].

- Increase Sampling Rate: If possible, select a higher sampling frequency that satisfies fs > 2B, where B is the highest frequency you need to measure. This provides a wider safety margin [1] [19].
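The folding effect can be made concrete with a small helper that returns the apparent frequency of a pure tone after sampling. This is a sketch for a single sinusoid. The function name is hypothetical, and the case where the signal sits exactly at fs/2 is ambiguous in practice.

```python
def alias_frequency(signal_hz, sample_hz):
    """Apparent frequency of a pure tone at signal_hz after sampling at sample_hz."""
    folded = signal_hz % sample_hz
    # Frequencies above fs/2 fold back into the 0..fs/2 band.
    return min(folded, sample_hz - folded)


for sample_hz in (100.0, 40.0, 25.0):
    apparent = alias_frequency(28.0, sample_hz)
    print(f"28 Hz movement sampled at {sample_hz:g} Hz appears at {apparent:g} Hz")
```

Here a 28 Hz movement is preserved at 100 Hz but shows up as a spurious 12 Hz component at 40 Hz and a 3 Hz component at 25 Hz.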

Table: Impact of Sampling Frequency on Signal Accuracy for a 20 Hz Behavior

| Sampling Frequency | Ratio to Nyquist (2×20 Hz) | Aliasing Risk for 20 Hz Signal | Recommended Use Case |

|---|---|---|---|

| 30 Hz | 0.75x | Very High | Not recommended |

| 40 Hz | 1.0x | High (Nyquist minimum) | Estimating frequency of long, rhythmic behaviors [1] |

| 56 Hz | 1.4x | Low | Classifying short-burst behaviors [1] |

| 80 Hz | 2.0x | Very Low | Accurate amplitude estimation, energy expenditure approximation [1] |

Problem: Inaccurate Estimation of Energy Expenditure or Signal Amplitude

Symptoms

- Proxies for energy expenditure like Overall Dynamic Body Acceleration (ODBA) are inconsistent.

- The measured amplitude of rhythmic movements (e.g., wingbeats) is lower than expected and varies with sampling settings.

Solution

The accuracy of amplitude-related metrics is highly dependent on the combination of sampling frequency and sampling duration (window length) [1].

Experimental Protocol for System Evaluation

- Signal Simulation: Generate simulated signals with known frequencies and amplitudes.

- Systematic Downsampling: Downsample the signal to various lower frequencies (e.g., from 100 Hz down to 12.5 Hz).

- Vary Window Length: Analyze the downsampled data using different window lengths.

- Quantify Error: Calculate the error in estimated signal frequency and amplitude compared to the known original values [1].

Resolution

- For long sampling durations, sampling at the Nyquist frequency may be adequate for frequency and amplitude estimation [1].

- For shorter sampling durations, which are common when analyzing discrete behavioral events, accuracy declines sharply for amplitude estimation. To accurately estimate signal amplitude with low sampling durations, a sampling frequency of four times the signal frequency (two times the Nyquist frequency) is necessary [1].

Table: Guide to Selecting Sampling Frequency Based on Research Objective

| Research Objective | Key Signal Metric | Recommended Minimum Sampling Frequency | Key Considerations |

|---|---|---|---|

| Classify long-endurance behaviors (e.g., flight, swimming) | Movement pattern (frequency) | 1x Nyquist (e.g., 12.5 Hz for 6.25 Hz flight) | Lower frequency saves battery and memory [1] [2] |

| Classify short-burst behaviors (e.g., swallowing, prey capture) | Movement pattern (frequency) | 1.4x Nyquist (e.g., ~78 Hz for a 28 Hz swallow; the flycatcher study sampled at 100 Hz) | Essential for capturing the full detail of transient events [1] |

| Estimate energy expenditure (ODBA/VeDBA) | Signal amplitude (acceleration) | 1x Nyquist (can be as low as 1-10 Hz) | Lower frequencies can be sufficient over long windows [1] [2] |

| Estimate signal amplitude with short windows | Signal amplitude (acceleration) | 2x Nyquist (e.g., 80 Hz for a 20 Hz behavior) | Critical for accurate amplitude reading in brief behavioral bouts [1] |
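The multipliers in this table can be wrapped in a small lookup, sketched below. It follows this guide's usage of "Nyquist frequency" as twice the movement frequency. The objective keys and function name are hypothetical labels, and the output is a starting point to be validated empirically rather than a guarantee.

```python
# Multipliers of the Nyquist frequency, taken from the table above.
NYQUIST_MULTIPLIER = {
    "long_rhythmic_classification": 1.0,
    "short_burst_classification": 1.4,
    "short_window_amplitude": 2.0,
}


def minimum_sampling_hz(behaviour_hz, objective):
    """Suggested minimum sampling frequency for a given movement frequency."""
    nyquist_hz = 2.0 * behaviour_hz  # "Nyquist frequency" in this guide's sense
    return NYQUIST_MULTIPLIER[objective] * nyquist_hz


for objective in NYQUIST_MULTIPLIER:
    rate = minimum_sampling_hz(28.0, objective)
    print(f"{objective}: {rate:.1f} Hz for a 28 Hz movement")
```

For a 28 Hz swallow, the short-burst multiplier gives 1.4 × 56 Hz, or about 78 Hz, which sits below the 100 Hz actually used in the flycatcher study [1].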

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Accelerometer-Based Animal Behavior Studies

| Item | Function | Example Application in Research |

|---|---|---|

| Tri-axial Accelerometer Logger | Measures acceleration in three dimensions (lateral, longitudinal, vertical) to characterize posture and movement. | Logger used in pied flycatcher study: 18×9×2 mm, 0.7 g, ±8 g range, 8-bit resolution [1]. |

| Leg-loop Harness | Provides a secure and consistent method for attaching loggers to animals without inhibiting movement. | Used for dorsal attachment on European pied flycatchers over the synsacrum [1]. |

| Synchronized High-Speed Cameras | Provides ground-truthed behavioral annotations to validate and train classification models on accelerometer data. | Stereoscopic videography at 90 fps used to film flycatchers in aviaries [1]. |

| Anti-aliasing Low-Pass Filter | An analog circuit that removes high-frequency content above half the sampling rate (fs/2) before the ADC, preventing aliasing. | Can be an external RC circuit or integrated into some analog accelerometers (e.g., ADXL103) [20]. |

| Digital Filtering Software (FIR/IIR) | Processes digitized data to further reduce noise. FIR filters have linear phase; IIR filters are computationally efficient. | Used in post-processing to smooth data and improve signal quality for feature extraction [20]. |

Experimental Workflow and Signal Processing

[Diagram: Experimental Workflow for Sampling Frequency Selection]

[Diagram: Signal Processing Chain for Accelerometer Data]

The Critical Role of Sampling Duration and Analysis Window Length

Frequently Asked Questions (FAQs)

FAQ 1: What is the single most critical factor in determining my accelerometer sampling frequency?

The most critical factor is the speed of the behavior you intend to capture. The Nyquist-Shannon sampling theorem states that your sampling frequency must be at least twice the frequency of the fastest essential body movement. For example, one study on European pied flycatchers found that swallowing food, with a mean frequency of 28 Hz, required a sampling frequency higher than 100 Hz for accurate classification. In contrast, longer-duration behaviors like flight could be characterized with a much lower sampling frequency of 12.5 Hz [1].

FAQ 2: How long should my accelerometer recording sessions be to get reliable data?

The optimal recording duration depends on the variability of the behavior. For classifying parent bird nest visits, an optimal sampling duration of one hour was found to explain the most variation in total daily visits [21]. For classifying human activities, window lengths between 2.5–3.5 seconds often provide an optimal tradeoff between recognition performance and speed [22]. Longer sampling windows generally improve accuracy but with diminishing returns.

FAQ 3: My accelerometer data is collected; how do I choose the right analysis window length?

The choice of analysis window length involves a trade-off:

- Short windows (e.g., 0.5-1.5 seconds) are better for detecting brief, transient behaviors and are essential for real-time applications. However, they may have lower classification accuracy for sustained activities [23].

- Longer windows (e.g., 2.5-3.5 seconds) typically yield higher overall accuracy for classifying sustained, rhythmic behaviors like walking or running [22].

The best window length should be determined empirically for your specific study behaviors.

FAQ 4: Can the placement of the accelerometer on the animal affect my results?

Yes, device placement is critical for validity and reliability. The sensor should be placed as close as possible to the center of mass of the body (e.g., the sacrum/back for birds, the waist for humans) to best capture 'whole body' movements. Different placements (e.g., ear, leg, wrist) will capture different movement signatures for the same behavior [24] [25] [26].

FAQ 5: Why do my behavior classification models perform poorly in real-world conditions?

Poor generalization is a common limitation. This often occurs when models are trained on data from a limited set of individuals, devices, or environmental conditions. To improve generalizability:

- Maximize variability in your training data (multiple animals, days, and contexts) [26].

- Account for device variation, as differences between individual accelerometers can affect the calculated metrics [27].

- Select pre-processing methods and classifiers (e.g., Random Forest) that are robust and avoid overfitting [26].

Troubleshooting Guides

Problem: Short, burst-like behaviors (e.g., swallowing, escape maneuvers) are missed or misclassified.

- Potential Cause 1: Sampling frequency is too low. The high-frequency components of these behaviors are not being captured.

- Solution: Increase the sampling frequency. For short-burst behaviors, a frequency of 1.4 times the Nyquist frequency of the behavior is recommended. For the flycatcher's swallowing at 28 Hz (Nyquist frequency 56 Hz), this implies a sampling rate of at least ~78 Hz; the study used 100 Hz to ensure accuracy [1].

- Potential Cause 2: Analysis window is too long. A long window may average out the sharp, distinctive signal of a short burst.

- Solution: Use a shorter, behavior-appropriate analysis window (e.g., 0.5s) for detecting these specific events [22] [23].

Problem: Estimates of energy expenditure or overall activity levels are inconsistent.

- Potential Cause 1: Inaccurate estimation of signal amplitude. The combination of sampling frequency and sampling duration directly affects the accuracy of amplitude estimation [1].

- Solution: For studies focused on amplitude-based metrics like Overall Dynamic Body Acceleration (ODBA), ensure an adequate sampling frequency. For short sampling durations, a frequency of four times the signal frequency (twice the Nyquist frequency) is necessary for accurate amplitude estimation [1].

- Potential Cause 2: High variation between individual animals or devices.

- Solution: Include "animal" and "accelerometer device" as random effects in your statistical models to account for this inherent variation [27]. Conduct device calibration before deployment [1].

Problem: The classification model is confused between sedentary behavior and light activity.

- Potential Cause: Improper cut-off points or thresholds. The thresholds used to distinguish activity intensities are often population-specific and device-specific [24] [25].

- Solution: Use validated, population-specific cut-points (e.g., for children vs. older adults). If such thresholds do not exist for your study population, you may need to validate your own thresholds using direct observation or video recording as a reference [24] [28].

Table 1: Recommended Sampling Configurations for Different Behavior Types

| Behavior Type | Example | Recommended Sampling Frequency | Recommended Analysis Window | Key Consideration |

|---|---|---|---|---|

| Short-Burst/Transient | Swallowing, prey catch, escape maneuvers | ≥ 100 Hz or 1.4x Nyquist [1] | Short (e.g., 0.5 s) [22] | Captures rapid, non-repetitive movements. High battery/data cost. |

| Long-Endurance/Rhythmic | Flight, walking, grazing | ≥ Nyquist frequency, i.e., 2x the movement frequency (e.g., 12.5-25 Hz) [1] | Medium to Long (e.g., 2.5-3.5 s) [22] | Good for classifying sustained, cyclic activities. |

| Postural/Static | Lying, standing, sitting | Lower frequencies often sufficient (e.g., 10-20 Hz) | Variable; can be shorter for posture (0.5s) [22] | Focus is on orientation rather than high-frequency movement. |

| Energy Expenditure (ODBA) | Overall Dynamic Body Acceleration | Lower frequencies possible (e.g., 10 Hz) [1] | Long (e.g., 5-min windows) [1] | Accuracy depends on combo of frequency and duration for amplitude. |

Table 2: Troubleshooting Quick Reference Table

| Symptom | Likely Cause | Recommended Action |

|---|---|---|

| Missed brief events | Sampling rate too low | Increase sampling frequency to ≥ 1.4x Nyquist [1]. |

| Poor classification of sustained activities | Analysis window too short | Increase window length to 2.5-3.5s [22]. |

| Inconsistent activity counts between devices | Inter-device variation | Calibrate devices; account for device ID in models [27]. |

| Model fails with new subjects | Overfitting; poor generalization | Train model with data from more individuals and conditions [26]. |

| Can't distinguish sitting from standing | Wrong sensor placement or model | Place sensor on thigh; use a model tuned for posture [25]. |

Experimental Protocols & Workflows

Protocol 1: Determining the Minimum Sampling Frequency for a Novel Behavior

This protocol is adapted from experimental validation studies on animal behavior [1].

- High-Frequency Data Collection: Record the behavior of interest using a high-speed video camera (e.g., 90 fps) synchronized with an accelerometer sampling at a very high frequency (e.g., 100 Hz).

- Behavioral Annotation: Manually annotate the start and end times of the target behavior from the video footage to create ground truth labels.

- Signal Down-Sampling: Programmatically downsample the raw 100 Hz accelerometer data to create new datasets at lower sampling frequencies (e.g., 50 Hz, 25 Hz, 12.5 Hz).

- Model Training & Validation: Extract features from each down-sampled dataset and train behavior classification models (e.g., Random Forest). Validate the accuracy of each model against the video-based ground truth.

- Identify Critical Frequency: Plot classification accuracy against sampling frequency. The lowest sampling frequency at which accuracy has not yet dropped significantly is the critical minimum sampling frequency for that behavior.
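The final step can be automated once per-frequency accuracies are available. The sketch below scans from the highest sampling frequency downward and stops at the first rate whose accuracy falls below a chosen threshold. The accuracy values are invented placeholders used only to exercise the function, not results from [1], and the function name and threshold are hypothetical.

```python
def critical_sampling_rate(accuracy_by_rate, threshold):
    """Lowest rate reached, scanning high to low, before accuracy drops below threshold."""
    critical = None
    for rate_hz in sorted(accuracy_by_rate, reverse=True):
        if accuracy_by_rate[rate_hz] < threshold:
            break  # accuracy has dropped; keep the last adequate rate
        critical = rate_hz
    return critical


# Placeholder accuracies (sampling rate in Hz -> accuracy), for illustration only.
accuracy_by_rate = {100.0: 0.95, 50.0: 0.93, 25.0: 0.81, 12.5: 0.62}
print(critical_sampling_rate(accuracy_by_rate, threshold=0.90))
```

Scanning downward rather than taking the minimum of all adequate rates avoids selecting a low frequency that happens to score well by chance while intermediate frequencies fail.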

Protocol 2: Optimizing the Analysis Window Length for Classification

This protocol is standard in human activity recognition [22] [23] and can be adapted for animal studies.

- Raw Data Segmentation: Using your collected accelerometer data, segment it using a sliding window approach. Test a wide range of window lengths (e.g., from 0.5 seconds to 5 seconds).

- Feature Extraction: From each window, extract relevant time-domain (e.g., mean, standard deviation, min, max) and frequency-domain (e.g., spectral entropy, dominant frequency) features.

- Model Evaluation: Train a classifier (e.g., Adaptive Boosting, Support Vector Machine) for each window length using the extracted features. Evaluate performance using metrics like overall accuracy and F1-score via cross-validation.

- Trade-off Analysis: Plot the classification performance against the window length. The "optimal" window is the shortest length that provides a satisfactorily high and stable level of performance, thus balancing accuracy and computational speed.
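The segmentation and time-domain feature steps of this protocol might look like the sketch below. It assumes a single acceleration axis, a 50% window overlap, and only four basic features. The function names, the overlap value, and the synthetic signal are illustrative assumptions, and classifier training is omitted.

```python
from statistics import fmean, pstdev


def sliding_windows(samples, sample_hz, window_s, overlap=0.5):
    """Yield fixed-length windows; `overlap` is the fraction shared by neighbours."""
    size = round(window_s * sample_hz)
    step = max(1, round(size * (1.0 - overlap)))
    for start in range(0, len(samples) - size + 1, step):
        yield samples[start:start + size]


def time_domain_features(window):
    """Basic per-window summary statistics used as classifier inputs."""
    return {"mean": fmean(window), "std": pstdev(window), "min": min(window), "max": max(window)}


SAMPLE_HZ = 100
signal = [float(k % 7) for k in range(10 * SAMPLE_HZ)]  # placeholder for 10 s of one axis
for window_s in (0.5, 1.5, 2.5, 5.0):
    rows = [time_domain_features(w) for w in sliding_windows(signal, SAMPLE_HZ, window_s)]
    print(f"{window_s} s windows: {len(rows)} feature rows")
```

Shorter windows produce many more feature rows from the same recording, which is one reason the trade-off between window length and performance has to be evaluated empirically.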

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Materials for Accelerometer-Based Behavior Studies

| Item | Function / Explanation |

|---|---|

| Tri-axial Accelerometer Loggers | Core sensor measuring acceleration in three orthogonal planes (X, Y, Z). Critical for capturing complex, multi-directional movement. Examples: Actigraph GT3X+, custom-built biologgers [1] [24]. |

| Harness / Attachment System | Securely and safely attaches the logger to the animal. A proper fit is essential to avoid impacting natural behavior and to ensure the sensor orientation is consistent. Example: leg-loop harness for birds [1]. |

| Synchronized High-Speed Video | Serves as the "ground truth" for validating and annotating behaviors. Synchronization allows precise matching of accelerometer signals to observed activities [1]. |

| RFID System with Antenna | An automated method for validating specific behaviors like nest visits in birds, providing continuous, unbiased data to compare against accelerometer-based predictions [21]. |

| Data Processing Software (e.g., R, Python with scikit-learn) | Open-source platforms used for data cleaning, signal processing, feature extraction, and machine learning model development [22] [26]. |

| Diaries / Log-books | Used in human studies to complement accelerometer data with contextual information (e.g., sleep/wake times, device removal). Can be adapted for animal studies with keeper logs [24] [28]. |

Experimental Workflow Visualization

Diagram 1: Workflow for Optimizing Accelerometer Studies

Advanced Considerations

When moving from controlled validations to large-scale field studies, consider these factors:

- Battery and Memory Life: Higher sampling frequencies and longer durations drain batteries and fill memory faster. Sampling at 25 Hz can more than double battery life compared to 100 Hz [1]. The chosen protocol is always a balance between data resolution and practical constraints.

- Standardization and Reporting: The field suffers from a lack of methodological standardization, making cross-study comparisons difficult [28]. To improve reproducibility, always report in detail: accelerometer brand/model, placement, sampling frequency, epoch length, analysis window size, wear-time validation criteria, and the cut-points or algorithms used for classification.

Troubleshooting Guides

Guide 1: Diagnosing Suboptimal Behaviour Classification

Problem: Your device is failing to classify short-burst animal behaviours (e.g., swallowing, prey capture) accurately.

- Possible Cause 1: Insufficient Sampling Frequency. The behaviour's movement frequency may be higher than your current sampling rate can capture.

- Solution: Increase the accelerometer sampling frequency. For short-burst behaviours, a frequency of 100 Hz or higher may be necessary, which exceeds the Nyquist frequency of such movements [1].

- Possible Cause 2: Inadequate On-Board Processing. Transmitting all raw data for off-board classification is draining the battery.

- Solution: Implement an on-board classification framework. This uses a hierarchical system where a low-power classifier (e.g., using only accelerometer data) triggers a more powerful, energy-intensive classifier (e.g., using gyroscope data) only when needed. This can reduce energy requirements by an order of magnitude with only a minimal (~5%) reduction in accuracy [29].
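The hierarchical idea can be sketched as a cheap gate in front of a costly classifier. This is a schematic illustration only and not the framework described in [29]. The variance threshold, the labels, and the stand-in `detailed_classifier` are all hypothetical.

```python
from statistics import pvariance


def is_active(accel_window, variance_threshold):
    """Stage 1: low-cost gate using accelerometer variance only."""
    return pvariance(accel_window) > variance_threshold


def classify(accel_window, detailed_classifier, variance_threshold=0.01):
    """Run the costly classifier only on windows the cheap gate flags as active."""
    if not is_active(accel_window, variance_threshold):
        return "inactive"
    return detailed_classifier(accel_window)


calls = 0


def detailed_classifier(window):
    """Stand-in for an energy-intensive model (e.g., one that also powers a gyroscope)."""
    global calls
    calls += 1
    return "burst" if max(window) - min(window) > 1.0 else "other"


windows = [[1.0, 1.01, 0.99, 1.0], [0.2, 1.9, 0.1, 2.2], [1.0, 1.0, 1.0, 1.0]]
labels = [classify(w, detailed_classifier) for w in windows]
print(labels, f"(expensive stage ran {calls} of {len(windows)} times)")
```

The energy saving in such a design comes from how rarely the second stage runs, so it depends on how much of the recording is quiescent.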

Guide 2: Resolving Accelerometer Signal and Hardware Issues

Problem: The accelerometer data is noisy, erratic, or shows a constant bias shift.

- Possible Cause 1: Poor Sensor Calibration. Uncalibrated sensors can introduce significant error in metrics like Vector of Dynamic Body Acceleration (VeDBA), a proxy for energy expenditure [30].

- Solution: Perform a simple 6-orientation (6-O) field calibration before deployment. Record data with the device motionless in six different orientations (e.g., like the faces of a die) and use the output to correct for sensor inaccuracies [30].

- Possible Cause 2: Faulty Connections or Ground Loops. This can cause an erratic bias voltage and jumping signals in the time waveform [9].

- Solution: Check for corroded, dirty, or loose connections. Apply non-conducting silicone grease to connectors. Ensure the cable shield is grounded at one end only to prevent ground loops [9].

- Possible Cause 3: Sensor Damage.

- Solution: Measure the sensor's Bias Output Voltage (BOV). A BOV that equals the supply voltage suggests an open circuit (check cables). A BOV of 0 V suggests a short circuit. A slowly drifting BOV often indicates permanent damage from excessive temperature, shock, or electrostatic discharge [9].

Frequently Asked Questions (FAQs)

Q1: What is the minimum sampling frequency I should use for my animal behaviour study?

- A: There is no single value; it depends entirely on the behaviour of interest [1].

- For long-endurance, rhythmic behaviours like flight in birds, a lower sampling frequency (e.g., 12.5 Hz) may be sufficient [1].

- For short-burst, abrupt behaviours like swallowing food or escape maneuvers, a much higher sampling frequency (e.g., 100 Hz) is required to capture the rapid movements [1]. As a general rule, a sampling frequency of at least twice the Nyquist frequency (four times the signal frequency) is recommended for accurate amplitude estimation, especially for short-duration events [1].

Q2: How does on-board processing save battery and memory compared to raw data transmission?

- A: The primary energy cost in many wearable systems comes from the wireless radio. On-board processing drastically reduces the amount of data that needs to be transmitted. Instead of sending continuous streams of raw accelerometer and gyroscope data, the device only transmits pre-processed "bits of knowledge" (e.g., classified behaviour labels or summary metrics). This reduces the radio's duty cycle, leading to massive energy savings and slower memory fill rates [29].

Q3: My accelerometer readings are zero. What should I check?

- A:

- Verify Power: Ensure the device is turned on and has sufficient battery [9].

- Check Bias Voltage: A zero bias voltage reading typically indicates a short circuit in the cabling or connections [9].

- Inspect Hardware: Check the entire cable length and all termination points (e.g., junction boxes) for frayed shields or pins that may be shorting the signal leads [9].

Experimental Protocols & Data

The table below summarizes key findings from research on sampling and data handling strategies.

Table 1: Quantitative Data on Sampling and Processing Strategies

| Factor | Recommended Value for Short-Burst Behaviours | Impact on Battery & Memory | Key Research Finding |

|---|---|---|---|

| Sampling Frequency | 100 Hz (> Nyquist frequency) [1] | Higher frequency drains battery and fills memory faster [1]. | A sampling frequency of 2x Nyquist is required for accurate frequency & amplitude estimation of short bursts [1]. |

| On-Board Classification | Hierarchical classifier design [29]. | Can improve device lifetime by one order of magnitude (10x) [29]. | Retains high accuracy, about 5% lower than cloud-based processing [29]. |

| Signal Amplitude Accuracy | Sampling at 4x signal frequency (2x Nyquist) for low-duration signals [1]. | Higher frequency requirements strain resources. | Accuracy declines with decreasing sampling duration, with up to 40% standard deviation in normalized amplitude error at low durations [1]. |

Detailed Methodology: 6-Orientation Accelerometer Calibration

This protocol, adapted from research, ensures your accelerometer data is accurate from the start [30].

- Objective: To correct for sensor inaccuracies in tri-axial accelerometers that occur during manufacturing and soldering.

- Procedure:

- Place the data logger motionless on a level surface.

- Orient the logger so that each of its three primary axes (X, Y, Z) in turn points directly toward the ground, and record data for ~10 seconds in each orientation.

- Rotate the logger so that each of the three primary axes in turn points directly away from the ground, and again record data for ~10 seconds in each orientation. This results in six unique stationary orientations.

- Data Processing:

- For each stationary period, calculate the vectorial sum of the acceleration: ‖a‖ = √(x² + y² + z²).

- In a perfect sensor, all six values should be 1.0 g. Deviations are used to calculate correction factors (gain and offset) for each axis.

- Application: Apply the derived correction factors to all subsequent data collected during the experiment.
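One common way to turn the six stationary readings into per-axis correction factors is a two-point fit, sketched below. The exact formulas used in [30] are not given here, so treat this as an assumed, simplified approach. It also assumes the logger reads +1 g on an axis pointing away from the ground and -1 g on an axis pointing toward it; some devices use the opposite sign. All names and readings are hypothetical.

```python
import math
from statistics import mean


def axis_correction(mean_up: float, mean_down: float) -> tuple:
    """Return (offset, gain) for one axis from its two stationary means, in g.

    mean_up:   axis pointing away from the ground (+1 g expected, by assumption)
    mean_down: axis pointing toward the ground   (-1 g expected, by assumption)
    """
    offset = (mean_up + mean_down) / 2.0
    gain = (mean_up - mean_down) / 2.0
    return offset, gain


def calibrate(raw: float, offset: float, gain: float) -> float:
    """Convert a raw reading on one axis to calibrated g."""
    return (raw - offset) / gain


def norm_g(x: float, y: float, z: float) -> float:
    """Vectorial sum of the three axes; 1.0 g when stationary and calibrated."""
    return math.sqrt(x * x + y * y + z * z)


# Hypothetical stationary readings (g) for the X axis.
x_up = [1.04, 1.05, 1.03]
x_down = [-0.96, -0.95, -0.97]
offset, gain = axis_correction(mean(x_up), mean(x_down))
print(round(offset, 3), round(gain, 3))  # roughly 0.04 g offset and unit gain
```

Repeating `axis_correction` for Y and Z gives three offset and gain pairs. After calibration, `norm_g` should return values close to 1.0 g for all six orientations.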

Visualizations

Diagram 1: On-Board Classification Workflow

This diagram illustrates the hierarchical classification framework that enables intelligent sensor duty-cycling and significant energy savings.

Diagram 2: Sampling Frequency Impact on Signal Capture

This diagram contrasts the effect of different sampling strategies on the ability to accurately reconstruct short-burst biological signals.

The Scientist's Toolkit

Table 2: Essential Research Reagents and Materials

| Item | Function / Application |

|---|---|

| Tri-axial Accelerometer Biologger | The primary sensor for measuring acceleration in three dimensions. Critical for quantifying movement and behaviour [1] [30]. |

| Leg-loop Harness | A common attachment method for securing biologgers to birds and other animals, minimizing discomfort and ensuring consistent sensor placement [1]. |

| 6-Orientation Calibration Jig | A simple, custom apparatus to hold the accelerometer motionless in the six precise orientations required for field calibration [30]. |

| High-speed Videography System | Serves as the "ground truth" for validating and annotating behaviours captured by the accelerometer, essential for training classifiers [1]. |

| Hierarchical Classifier Algorithm | The core software for on-board processing, enabling intelligent sensor duty-cycling and data distillation [29]. |

Troubleshooting Guides

Guide 1: Addressing Poor Model Performance on Short-Duration Behaviors

Problem: Your Random Forest model fails to reliably classify brief, fast-paced animal behaviors (e.g., scratching, head-shaking) from accelerometer data.

Explanation: Brief events often have unique, high-frequency signatures that can be lost if the data is over-smoothed or described by insufficient variables [4]. Models trained on lower-frequency summaries may miss these signals.

Solution: Implement a multi-frequency feature engineering approach.

Action 1: Calculate High-Frequency Descriptive Variables Generate a comprehensive set of features from the high-frequency (e.g., 40 Hz) raw data. Beyond simple averages, include metrics that capture the waveform's shape and variability over short windows [4]:

- Static and Dynamic Acceleration: Separate the influence of body posture from movement [4].

- Pitch and Roll: Determine the animal's orientation [4].

- Standard Error of the Waveform: Quantifies the amplitude and 'size' of movements over time [4].

- Dominant Power Spectrum Frequency and Amplitude: Identifies the primary frequency component of the signal, crucial for periodic behaviors [4].
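The variables listed above can be computed per window with a few lines of code. The sketch below is one possible formulation, not the procedure from [4]: it approximates static acceleration by the window mean, assumes a particular axis convention for pitch and roll, and uses a plain discrete Fourier transform that is only practical for short windows. All function names are hypothetical.

```python
import math
from statistics import mean


def window_features(x, y, z):
    """Summarise one window of tri-axial acceleration (lists of values in g)."""
    # Static acceleration: approximated here by the window mean of each axis.
    sx, sy, sz = mean(x), mean(y), mean(z)
    # Dynamic acceleration: what remains after removing the static part.
    dx = [v - sx for v in x]
    dy = [v - sy for v in y]
    dz = [v - sz for v in z]
    # VeDBA: vector magnitude of the dynamic components, averaged over the window.
    vedba = mean([math.sqrt(a * a + b * b + c * c) for a, b, c in zip(dx, dy, dz)])
    # Pitch and roll in degrees from the static (gravity) vector.
    # Assumed convention: X forward, Y lateral, Z dorsal.
    pitch = math.degrees(math.atan2(sx, math.hypot(sy, sz)))
    roll = math.degrees(math.atan2(sy, sz))
    return {"static": (sx, sy, sz), "vedba": vedba, "pitch": pitch, "roll": roll}


def dominant_frequency(signal, fs):
    """Frequency (Hz) of the strongest non-zero DFT bin of one axis."""
    n = len(signal)
    centre = mean(signal)
    centred = [v - centre for v in signal]
    best_k, best_power = 0, 0.0
    for k in range(1, n // 2 + 1):
        re = sum(v * math.cos(2 * math.pi * k * i / n) for i, v in enumerate(centred))
        im = sum(v * math.sin(2 * math.pi * k * i / n) for i, v in enumerate(centred))
        power = re * re + im * im
        if power > best_power:
            best_k, best_power = k, power
    return best_k * fs / n


# Hypothetical 1-second window at 40 Hz containing a 5 Hz oscillation on X.
fs = 40
x = [0.3 * math.sin(2 * math.pi * 5 * i / fs) for i in range(fs)]
print(dominant_frequency(x, fs))  # 5.0
```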

Action 2: Create Feature Sets at Different Resolutions

- High-Frequency Set: Use features calculated from the raw high-frequency data (e.g., 40 Hz) to capture fast-paced behaviors [4].

- Low-Frequency Set: Generate a second feature set from data averaged over 1-2 second windows (approximately 1 Hz). This can better represent slower, aperiodic behaviors like grooming or feeding [4].

Action 3: Train and Validate Model Variants Train separate Random Forest models on the high-frequency and low-frequency feature sets. Validate their accuracy not just on a hold-out test dataset, but also, critically, against manually identified behaviors from free-ranging animals to ensure robustness in the wild [4].

Guide 2: Fixing Bias Towards Common Behaviors in Predictions

Problem: Your model consistently misclassifies rare but biologically important brief events (e.g., vocalizations, prey capture) as more common, longer-duration behaviors (e.g., resting, walking).

Explanation: Machine learning models can become biased towards classes with more examples in the training data. If a behavior like "resting" makes up 70% of your training labels, the model will be inclined to predict "resting" to maximize overall accuracy, at the cost of poorly predicting rare events [4] [31].

Solution: Balance your training dataset and adjust model evaluation.

Action 1: Standardize Behavior Durations in Training Data Instead of using all available data, which naturally has inconsistent durations for each behavior, create a training dataset with a standardized, equal amount of data points for each behavior class. This prevents the model from being skewed by the most abundant behavior [4].

Action 2: Employ Data Resampling Techniques If collecting more data for rare classes is impossible, rebalance the dataset by resampling, for example by oversampling the rare classes or undersampling the common ones.
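Random undersampling is one simple way to give every behavior the same number of training examples. The sketch below is an assumed illustration rather than the method used in [4]; the function name, the seed, and the toy labels are hypothetical.

```python
import random
from collections import defaultdict


def balance_by_undersampling(samples, labels, seed=0):
    """Randomly keep the same number of windows for every behavior class."""
    by_class = defaultdict(list)
    for sample, label in zip(samples, labels):
        by_class[label].append(sample)
    # Every class is cut down to the size of the rarest class.
    n_keep = min(len(items) for items in by_class.values())
    rng = random.Random(seed)
    balanced = []
    for label, items in by_class.items():
        for sample in rng.sample(items, n_keep):
            balanced.append((sample, label))
    rng.shuffle(balanced)
    return balanced


# Hypothetical dataset: 7 resting windows and 3 prey-capture windows.
windows = list(range(10))
behaviors = ["resting"] * 7 + ["prey capture"] * 3
balanced = balance_by_undersampling(windows, behaviors)
print(len(balanced))  # 6
```

Undersampling discards data from common classes, so it suits datasets where the common behaviors are heavily over-represented. Apply it to the training split only, so that the test set keeps its natural class proportions.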

Action 3: Use Appropriate Evaluation Metrics Stop relying solely on overall accuracy. For imbalanced datasets, use a suite of metrics that reveal true performance [31]:

- Confusion Matrix: Visualizes where misclassifications are occurring.

- Precision and Recall: Focus on the model's ability to correctly identify the rare class.

- F-measure (F1-Score): The harmonic mean of precision and recall [4].
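The metrics above are straightforward to compute for a single behavior of interest. The following sketch uses made-up labels to show how a model can reach 90% overall accuracy while recalling only half of the rare events; the function name is an assumption.

```python
def precision_recall_f1(true_labels, predicted_labels, target):
    """Precision, recall and F1 for one target behavior."""
    pairs = list(zip(true_labels, predicted_labels))
    tp = sum(1 for t, p in pairs if t == target and p == target)
    fp = sum(1 for t, p in pairs if t != target and p == target)
    fn = sum(1 for t, p in pairs if t == target and p != target)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)


# Hypothetical predictions: one of two prey-capture events is missed.
truth = ["resting"] * 8 + ["prey capture"] * 2
predicted = ["resting"] * 9 + ["prey capture"]

accuracy = sum(t == p for t, p in zip(truth, predicted)) / len(truth)
precision, recall, f1 = precision_recall_f1(truth, predicted, "prey capture")
print(accuracy)                                       # 0.9
print(precision, recall, round(f1, 3))                # 1.0 0.5 0.667
```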

Frequently Asked Questions (FAQs)

Q1: My accelerometer data is very high-dimensional after feature creation. How do I select the most important variables without losing predictive power?

Use a combination of feature selection and extraction techniques [32] [31]. Start with filter methods like correlation analysis or mutual information scores to remove low-variance and irrelevant features. Follow this with embedded methods (e.g., LASSO regularization) or wrapper methods (e.g., recursive feature elimination) that use a model to select an optimal subset. For complex, correlated features, Principal Component Analysis (PCA) can extract the most salient information into a lower-dimensional space while preserving the variance in your data [31].

Q2: How does the sampling frequency of my accelerometer directly impact the classification of brief events?

The sampling frequency determines the temporal resolution of your data. Brief events have high-frequency acceleration signatures. According to the Nyquist theorem, a signal can only be represented faithfully if it is sampled at more than twice its highest frequency [18]. A low sampling rate (e.g., 1 Hz) may therefore miss a very short burst of activity entirely, or alias it to a spurious lower frequency. Studies show that higher frequencies (e.g., 40 Hz) improve the identification of fast-paced behaviors, while lower frequencies (1 Hz) can be sufficient for slower, more sustained behaviors [4].
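Aliasing can be made concrete with the standard frequency-folding relation for a pure tone. The sketch below is illustrative; the function name and the 15 Hz example movement are assumptions.

```python
def apparent_frequency(signal_hz: float, sampling_hz: float) -> float:
    """Frequency at which a pure tone appears after sampling at sampling_hz."""
    folded = signal_hz % sampling_hz
    return min(folded, sampling_hz - folded)


# Hypothetical 15 Hz movement recorded at three different sampling rates.
print(apparent_frequency(15.0, 40.0))  # 15.0 (captured correctly)
print(apparent_frequency(15.0, 20.0))  # 5.0  (aliased to a lower frequency)
print(apparent_frequency(15.0, 1.0))   # 0.0  (indistinguishable from a constant)
```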

Q3: What are the most common data quality issues that derail feature engineering for behavior classification?

The most common issues are [33] [34]:

- Missing Data: Gaps in accelerometer recordings due to sensor failure or transmission loss.

- Corrupted Data: Improperly formatted values or artifacts from sensor impact.

- Class Imbalance: The dataset has vastly different numbers of examples for different behaviors, biasing the model.

- Inconsistent Scales: Features (e.g., VeDBA, pitch) are on different numeric scales, causing some to disproportionately influence the model. This is solved by scaling (normalization/standardization) [33].
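Standardization, one of the scaling options mentioned above, rescales a feature to zero mean and unit standard deviation. This is a minimal sketch with a hypothetical function name and made-up VeDBA values.

```python
from statistics import mean, pstdev


def standardize(values):
    """Rescale one feature column to zero mean and unit standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # A constant feature carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


# Hypothetical VeDBA values (g) from five windows.
vedba = [0.05, 0.10, 0.40, 0.15, 0.30]
scaled = standardize(vedba)
print([round(v, 2) for v in scaled])
```

In practice, compute the mean and standard deviation on the training set only and reuse them for the test set, so that no information leaks from the test data.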

Q4: Can I automate the feature engineering process for accelerometer data?

Yes, automated feature engineering is a viable strategy. Libraries like Featuretools can automatically generate a large number of candidate features from raw acceleration timeseries data by applying mathematical operations (e.g., mean, standard deviation, slope) across different time windows [32]. This can save time and uncover informative features you might not have considered. However, domain knowledge remains critical for interpreting the results and guiding the automated process.

Detailed Methodology: Evaluating Sampling Frequency Impact

The following protocol is adapted from a study on domestic cat behavior classification using accelerometers [4].

Animal Instrumentation & Data Collection:

- Equip subjects (e.g., domestic cats) with collar-mounted tri-axial accelerometers.

- Record acceleration data at a high frequency (e.g., 40 Hz) to capture the full range of behavioral signals.

- Simultaneously record high-definition video of the subjects to establish ground-truth behavior labels.

Data Labeling & Segmentation:

- Synchronize video and accelerometer data timestamps.

- Manually review video and label the accelerometer data with specific behaviors (e.g., "scratching," "grooming," "feeding," "running").

- Segment the labeled data into fixed-length windows (e.g., 3-second epochs) for analysis.
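The segmentation step can be sketched as follows. The protocol does not specify how to label a window that spans two behaviors, so assigning the majority label is an assumption here, as are the function name and the toy data.

```python
from collections import Counter


def segment_windows(samples, labels, fs, window_s=3.0):
    """Split synchronized samples and labels into non-overlapping windows.

    Each window receives the most frequent label among its samples
    (an assumed rule). A trailing partial window is dropped.
    """
    size = int(round(fs * window_s))
    if size < 1:
        raise ValueError("window must contain at least one sample")
    windows = []
    for start in range(0, len(samples) - size + 1, size):
        chunk = samples[start:start + size]
        majority, _ = Counter(labels[start:start + size]).most_common(1)[0]
        windows.append((chunk, majority))
    return windows


# Hypothetical 2 Hz recording: 13 samples give two full 3-second windows.
samples = list(range(13))
labels = ["grooming"] * 5 + ["running"] * 8
for chunk, label in segment_windows(samples, labels, fs=2):
    print(label, chunk)
```

With majority labeling, a burst much shorter than the window can be outvoted by the surrounding behavior, which is one reason to consider shorter windows for brief events.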

Feature Engineering at Multiple Frequencies:

- High-Frequency Dataset: From the raw 40 Hz data, calculate a wide array of descriptive variables for each epoch, including: mean dynamic acceleration, vectoral dynamic body acceleration (VeDBA), pitch, roll, standard error, and spectral features [4].

- Low-Frequency Dataset: Down-sample the raw data by calculating the mean acceleration over 1-second windows (resulting in 1 Hz data). Calculate the same set of descriptive variables on this smoothed dataset.
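Down-sampling by window means, as described for the low-frequency dataset, can be sketched like this. The function name is hypothetical and the input is a made-up ramp rather than real acceleration data.

```python
from statistics import mean


def downsample_by_mean(samples, fs_in, window_s=1.0):
    """Average consecutive windows of window_s seconds.

    With window_s = 1.0 the output is 1 Hz data. A trailing partial
    window is dropped.
    """
    step = int(round(fs_in * window_s))
    if step < 1:
        raise ValueError("window must contain at least one sample")
    n_full = len(samples) // step
    return [mean(samples[i * step:(i + 1) * step]) for i in range(n_full)]


# Hypothetical 2 seconds of one axis recorded at 40 Hz.
raw = list(range(80))
print(downsample_by_mean(raw, fs_in=40))  # [19.5, 59.5]
```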

Model Training & Validation:

- Divide data into training (e.g., 80%) and testing (20%) sets.

- Train two Random Forest models: one on the high-frequency feature set (RF-HF) and one on the low-frequency set (RF-LF).

- Initial Validation: Test model accuracy on the held-out test dataset from the instrumented animals.

- Field Validation: Deploy the models to classify behaviors in free-ranging animals equipped with the same sensors. Manually identify behaviors in the field to validate the predictions, ensuring the model generalizes beyond controlled conditions [4].

Table 1: Impact of Data Processing on Random Forest Model Accuracy (F-measure) for Behavior Classification [4]

| Behavior Type | Model with Basic Features & Inconsistent Durations | Model with Additional Variables & Standardized Durations | High-Frequency (40 Hz) Model | Low-Frequency (1 Hz) Model |

|---|---|---|---|---|

| Locomotion (e.g., run) | 0.85 | 0.91 | 0.95 | 0.88 |

| Grooming | 0.65 | 0.78 | 0.75 | 0.82 |

| Feeding | 0.70 | 0.81 | 0.79 | 0.85 |

| Resting | 0.90 | 0.94 | 0.92 | 0.96 |

| Overall F-measure | 0.80 | 0.89 | 0.96 | 0.92 |

Table 2: Performance of a Low-Frequency (1 Hz) Model for Classifying Wild Boar Behaviors [18]

| Behavior | Balanced Accuracy | Identification Quality |

|---|---|---|

| Lateral Resting | 97% | Identified well |

| Sternal Resting | High | Identified well |

| Foraging | High | Identified well |

| Lactating | High | Identified well |

| Walking | 50% | Not reliable |

| Scrubbing | Low | Not reliable |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Tools for Accelerometer-Based Behavior Classification Research

| Tool / Solution | Function & Application | Example Use-Case |

|---|---|---|

| Tri-axial Accelerometer Loggers | Measures gravitational and inertial acceleration on three axes (X, Y, Z) at high frequency. The primary sensor for data collection. | Collar-mounted or ear-tag sensors to record raw movement data from study animals [4] [18]. |