Bridging the Qualitative-Quantitative Divide: A Practical Framework for Validating Network Models in Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on integrating qualitative network models with quantitative data validation. It explores the foundational principles of qualitative network analysis and its application in modeling complex biomedical systems, such as disease mechanisms and intervention pathways. The content delivers actionable methodologies for constructing robust models, strategies for troubleshooting common integration challenges, and rigorous frameworks for quantitative validation and comparative analysis. By synthesizing mixed-methods approaches, this guide aims to enhance the reliability and predictive power of network models in clinical and pharmacological research, ultimately supporting more informed decision-making in drug development and therapeutic intervention.

Understanding Qualitative Network Models: Core Concepts and Research Applications

Defining Qualitative Network Models in Complex System Analysis

Qualitative Network Models (QNMs) are simplified representations of complex systems that capture the direction and sign of interactions between components rather than their precise numerical strength [1]. In complex system analysis, particularly in biological and ecological contexts, QNMs consist of model elements and linkages denoting positive or negative interactions, which are typically abstracted as unweighted, signed digraphs (directed graphs) [1]. This approach allows researchers to understand system behavior without requiring extensive parameterization, making it particularly valuable in data-poor environments or for initial exploratory analysis.

The fundamental principle behind QNMs is their focus on qualitative relationships rather than quantitative measurements. For example, in a biological context, a researcher might model whether increasing Protein A activates (+) or inhibits (-) Protein B, without specifying the exact kinetic parameters of this interaction [2]. This abstraction makes QNMs particularly useful for representing systems where precise numerical data is scarce but qualitative understanding of relationships exists. In drug discovery, where biological systems exhibit immense complexity, QNMs provide a framework for reasoning about drug effects, potential side effects, and network perturbations without requiring complete quantitative characterization of all system components [2] [3].

Table: Core Characteristics of Qualitative Network Models

| Characteristic | Description | Primary Application Context |
|---|---|---|
| Signed Interactions | Represents relationships as positive (+) or negative (-) influences | Understanding activation/inhibition in biological networks [2] |
| Directional Relationships | Models causal directions between components (A → B) | Mapping signaling pathways and regulatory networks [2] |
| Absence of Quantitative Parameters | Does not require precise numerical values for interaction strengths | Systems with limited quantitative data [1] |
| Stability Through Feedback Loops | Requires self-limiting loops (negative feedback) to maintain system stability [1] | Ecological systems and cellular regulation |
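The characteristics in the table can be made concrete with a minimal sketch of a signed digraph stored as a sign matrix. The node names, the edge signs, and the helper names `sign_matrix` and `missing_self_limitation` are hypothetical and chosen only for illustration. The convention that rows are affected nodes and columns are source nodes is an assumption, not something taken from the cited sources.

```python
# Hypothetical three-node network; names and signs are illustrative only.
edges = {
    ("Drug", "ProteinA"): -1,      # the drug inhibits Protein A
    ("ProteinA", "ProteinB"): +1,  # Protein A activates Protein B
    ("ProteinB", "ProteinA"): -1,  # negative feedback from B onto A
    ("Drug", "Drug"): -1,          # self-limiting loops on every node
    ("ProteinA", "ProteinA"): -1,
    ("ProteinB", "ProteinB"): -1,
}


def sign_matrix(edges):
    """Return (nodes, matrix) with matrix[i][j] = sign of the effect of node j on node i."""
    nodes = sorted({name for pair in edges for name in pair})
    index = {name: i for i, name in enumerate(nodes)}
    matrix = [[0] * len(nodes) for _ in nodes]
    for (source, target), sign in edges.items():
        matrix[index[target]][index[source]] = sign
    return nodes, matrix


def missing_self_limitation(nodes, matrix):
    """List nodes that lack a negative self-loop on the diagonal."""
    return [name for i, name in enumerate(nodes) if matrix[i][i] >= 0]


nodes, matrix = sign_matrix(edges)
print(nodes)
print(matrix)
print(missing_self_limitation(nodes, matrix))
```

Only signs are stored, so the structure holds exactly the information a QNM requires: who affects whom, and in which direction.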

QNMs Versus Quantitative Network Models: A Formal Comparison

When evaluating network modeling approaches for complex system analysis, researchers must choose between qualitative and quantitative frameworks based on their specific research context, data availability, and analytical objectives. This decision carries significant implications for resource allocation, interpretability, and practical application, particularly in fields like drug discovery where both approaches are employed [2] [3].

Quantitative models, such as those implemented in Rpath for ecological systems [1] or systems pharmacology models in drug discovery [2], provide numerical precision and can generate specific, testable predictions about system behavior under various conditions. However, this precision comes at a cost: quantitative models are data-intensive, often requiring extensive parameter estimation, and can be time-consuming to develop, sometimes taking multiple years to construct and validate [1]. Additionally, they may become so complex that their behavior becomes difficult to interpret, potentially obscuring fundamental system principles.

In contrast, Qualitative Network Models sacrifice numerical precision to gain conceptual clarity and development efficiency. QNMs can be generated relatively quickly with fewer data requirements, allowing researchers to incorporate different information types that are difficult to measure or combine quantitatively [1]. This makes them particularly valuable for exploring new research territories, integrating diverse data sources, and developing conceptual frameworks before investing in extensive quantitative modeling efforts.

Table: Comparative Analysis of Qualitative vs. Quantitative Network Modeling Approaches

| Analytical Dimension | Qualitative Network Models (QNMs) | Quantitative Network Models |
|---|---|---|
| Data Requirements | Minimal data requirements; can incorporate difficult-to-quantify information [1] | Data-intensive; require comprehensive datasets with long time series [1] |
| Development Timeline | Relatively fast to develop [1] | Time-consuming (often multiple years) [1] |
| Analytical Output | Qualitative predictions of direction of change [1] | Numerical predictions of magnitude of change [1] |
| Strength for Prediction | Identifies potential system responses and surprising connections [2] | Generates testable numerical predictions when well-parameterized [2] |
| System Representation | Signed digraphs showing direction of influence [1] | Mathematical equations with numerical parameters [2] |
| Ideal Application Context | Exploratory analysis, hypothesis generation, data-poor environments [1] | Systems with rich numerical data, forecasting, precise intervention planning [2] |

Experimental Validation: Performance Benchmarking

A rigorous 2025 study systematically compared qualitative and quantitative ecosystem models to evaluate their correspondence under varying complexity levels [1]. This research provides valuable experimental data on QNM performance for researchers considering this approach for complex system analysis.

Experimental Protocol and Methodology

The research employed a structured comparative framework using an existing quantitative model (Rpath) of the western Scotian shelf and Bay of Fundy ecosystem, which was translated into multiple qualitative models of varying complexity [1]. The experimental design involved:

- Model Conversion: Transforming the quantitative Rpath model into a Qualitative Network Model using an adjacency matrix that consolidated both diet composition and predation mortality matrices, resulting in values between +1 and -1 [1].

- Complexity Manipulation: Systematically creating six QNM variants by eliminating linkages of different strengths (from ±0.10 to ±0.50) to assess how complexity reduction affects model performance [1].

- Perturbation Experiments: Running a series of perturbation scenarios through both quantitative and qualitative models and comparing their predictions [1].

- Uncertainty Integration: For the quantitative model, employing a Bayesian synthesis routine (Ecosense) that generated alternative parameter sets based on data quality assessments, creating a range of plausible outcomes reflecting parameter uncertainty [1].
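The model conversion and complexity manipulation steps can be sketched as a thresholding operation on a matrix of interaction strengths. This is a minimal illustration and not the study's code. The 3x3 matrix, the three cutoffs, and the rule that a link is dropped when its absolute value is strictly below the cutoff are all assumptions made for the example.

```python
def to_signed(weights, cutoff):
    """Drop links with |w| < cutoff and keep only the sign of the remaining links."""
    return [
        [0 if abs(w) < cutoff else (w > 0) - (w < 0) for w in row]
        for row in weights
    ]


def count_links(signed):
    """Count non-zero entries, a simple proxy for model complexity."""
    return sum(1 for row in signed for s in row if s != 0)


# Hypothetical interaction strengths in [-1, +1]; rows are affected groups.
weights = [
    [-1.00, -0.45, -0.05],
    [0.30, -1.00, -0.20],
    [0.08, 0.55, -1.00],
]

# Illustrative cutoffs within the +/-0.10 to +/-0.50 range mentioned above.
variants = {cutoff: to_signed(weights, cutoff) for cutoff in (0.10, 0.30, 0.50)}
link_counts = [count_links(variants[c]) for c in (0.10, 0.30, 0.50)]
print(link_counts)  # [7, 6, 4]
```

Raising the cutoff removes weak links first, so each variant is a strictly simpler version of the one before it.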

The qualitative simulations were performed using QPress, a stochastic QNM software package implemented in R that models system responses to perturbations through qualitative simulations [1]. This experimental design allowed for direct comparison between approaches while controlling for variability, providing unique insights into QNM performance characteristics.
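The general idea behind stochastic qualitative simulation can be sketched in a few lines: draw random magnitudes for each signed link, discard draws that give an unstable system, and tally the sign of each node's response to a sustained (press) perturbation. The sketch below is written in Python with NumPy and does not reproduce the QPress API, which is an R package. The function name `simulate_press`, the uniform sampling range, and the two-node predator-prey example are assumptions for illustration.

```python
import numpy as np


def simulate_press(sign_matrix, pressed, n_draws=1000, seed=0):
    """Tally response signs to a positive press on node `pressed`.

    sign_matrix[i][j] is the sign of the effect of node j on node i.
    Returns (fraction_positive, fraction_negative, n_stable_draws).
    """
    signs = np.asarray(sign_matrix, dtype=float)
    rng = np.random.default_rng(seed)
    n = signs.shape[0]
    press = np.zeros(n)
    press[pressed] = 1.0
    positive = np.zeros(n)
    negative = np.zeros(n)
    kept = 0
    for _ in range(n_draws):
        # Random magnitudes (assumed uniform on [0.01, 1]) with the given signs.
        community = signs * rng.uniform(0.01, 1.0, size=signs.shape)
        # Keep only stable systems: all eigenvalues have negative real part.
        if np.max(np.linalg.eigvals(community).real) >= 0:
            continue
        # Shift in equilibrium under a press perturbation: -A^{-1} p.
        response = -np.linalg.solve(community, press)
        positive += response > 0
        negative += response < 0
        kept += 1
    if kept == 0:
        raise ValueError("no stable draws; check the sign structure")
    return positive / kept, negative / kept, kept


# Hypothetical system: node 0 is prey, node 1 is predator, both self-limited.
signs = [[-1, -1],
         [+1, -1]]
pos, neg, kept = simulate_press(signs, pressed=0, n_draws=200)
print(pos, neg, kept)
```

For this small example the signs are fully determined by the structure, so every stable draw agrees. In larger networks the fractions fall between 0 and 1 and indicate how robust a predicted direction of change is to the unknown magnitudes.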

Key Experimental Findings and Performance Data

The study revealed that QNM performance systematically varied with model complexity and the trophic level of perturbed elements [1]. The results provide guidance for researchers in selecting appropriate model complexity based on their specific research questions:

Table: Correspondence Between Qualitative and Quantitative Models Based on Complexity and Perturbation Type

| Model Complexity Level | Perturbation to Lower Trophic Levels | Perturbation to Mid-Trophic Levels |
|---|---|---|
| Higher Complexity QNMs (more linkages retained) | Closer correspondence to quantitative models [1] | Reduced correspondence to quantitative models [1] |
| Lower Complexity QNMs (fewer linkages retained) | Reduced correspondence to quantitative models [1] | Recommended approach for better correspondence [1] |

The research identified a "sweet spot" of model complexity for qualitative ecosystem models to reflect similar results to quantitative models [1]. When perturbing lower trophic level groups, higher complexity models (with more linkages retained) performed closer to the quantitative model. Conversely, for perturbations to mid-trophic groups, lower complexity models were recommended [1]. This finding demonstrates that optimal QNM complexity depends on the specific system components researchers aim to study.

The study also found that the number of linkages between model elements and the trophic position of the perturbed model element were influential factors in qualitative model behavior [1]. Additionally, the researchers recommended using multiple models to identify the strongest impacts of perturbations and to avoid spurious conclusions [1].
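One simple way to express correspondence between the two model types is the fraction of model elements for which both predict the same direction of change. The sketch below illustrates that idea only. The metric actually used in the study is not described here, and the group names, values, and tolerance are hypothetical.

```python
def sign(value, tol=0.0):
    """Return +1, -1, or 0, treating |value| <= tol as no change."""
    if value > tol:
        return 1
    if value < -tol:
        return -1
    return 0


def sign_agreement(qualitative, quantitative, tol=0.0):
    """Fraction of shared groups where both models predict the same direction."""
    shared = qualitative.keys() & quantitative.keys()
    if not shared:
        raise ValueError("the two prediction sets share no groups")
    hits = sum(sign(qualitative[g]) == sign(quantitative[g], tol) for g in shared)
    return hits / len(shared)


# Hypothetical predictions: qualitative signs and quantitative relative changes.
qualitative = {"zooplankton": +1, "herring": -1, "cod": +1, "seals": 0}
quantitative = {"zooplankton": 0.12, "herring": -0.30, "cod": -0.05, "seals": 0.001}

agreement = sign_agreement(qualitative, quantitative, tol=0.01)
print(agreement)  # 0.75
```

Computing this score for several QNM variants against the same quantitative benchmark gives a direct way to compare complexity levels.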

Application in Drug Discovery and Development

Qualitative Network Models have emerged as valuable tools in pharmaceutical research, particularly in the early stages of drug discovery where complete quantitative information may be limited. Network-based approaches show significant potential for identifying novel targets and repositioning established targets by mapping complex biological interactions [2].

In drug discovery, QNMs enable researchers to represent various network types relevant to pharmacological interventions [2]:

- Protein-protein interaction networks: Mapping how proteins physically interact within cellular systems

- Signal transduction networks: Modeling how signals are transmitted from cell surface to nucleus

- Metabolic networks: Representing biochemical reaction networks within cells

- Genetic interaction networks: Capturing how genes work together in cellular processes

The application of these networks has brought revolutionary changes to pharmacology, potentially shortening research and development time while improving the efficiency of drug discovery [3]. Csermely et al. proposed distinct network targeting strategies for different disease types [2]. For diseases characterized by flexible networks (e.g., cancer), a "central hit" strategy targeting critical network nodes would seek to disrupt the network and induce cell death in malignant tissues [2]. For more rigid systems (e.g., type 2 diabetes mellitus), a "network influence" approach seeks to identify nodes and edges of multitissue biochemical pathways for blocking specific lines of communication and essentially redirecting information flow [2].

Signaling Pathway Representation

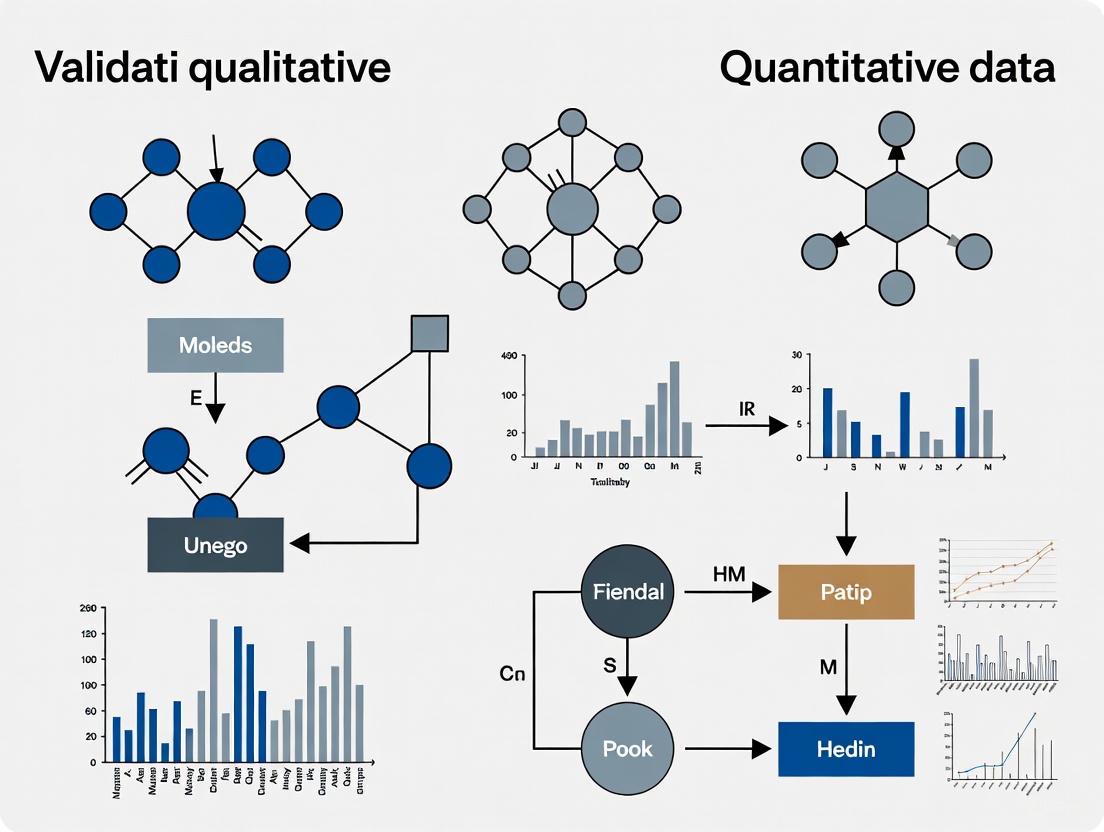

The following diagram illustrates a simplified qualitative network model of a signaling pathway, representing the type of network commonly analyzed in drug discovery research:

This qualitative representation captures essential activation and inhibition relationships without requiring precise kinetic parameters, demonstrating how QNMs can provide insights into potential drug targets by identifying critical nodes in biological networks [2].

Research Reagent Solutions: Methodological Toolkit

Researchers implementing Qualitative Network Models in complex system analysis utilize both computational and analytical resources. The following table details essential methodological tools and their applications in QNM research:

Table: Essential Research Reagents and Tools for Qualitative Network Analysis

| Research Tool | Type | Primary Function | Application in QNM Research |
|---|---|---|---|
| QPress [1] | Software Package | Stochastic Qualitative Network Modeling | Simulates system responses to perturbations through qualitative simulations implemented in R [1] |
| Rpath [1] | Quantitative Modeling Framework | Ecosystem modeling using same algorithms as Ecopath with Ecosim | Serves as quantitative benchmark for validating qualitative models; includes Bayesian synthesis routine (Ecosense) [1] |
| Web of Science Database [4] | Literature Database | Access to scholarly publications | Provides data for bibliometric network analysis and field mapping [4] |
| Adjacency Matrix [1] | Mathematical Framework | Representing network connections | Converts quantitative model data to qualitative format by consolidating interaction values between +1 and -1 [1] |
| Boolean Relationships [2] | Logical Framework | Discrete dynamic modeling | Represents network node states as true/false or active/inactive for time-stepped simulations [2] |
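The Boolean relationships entry in the table can be illustrated with a minimal time-stepped simulation. The pathway below (ligand, receptor, kinase, transcription factor, and an inhibitor of the kinase) is hypothetical, and the synchronous update scheme is one common choice among several.

```python
# Each rule maps the current state (dict of node -> bool) to the node's next value.
rules = {
    "Ligand": lambda s: s["Ligand"],          # external input, held constant
    "Inhibitor": lambda s: s["Inhibitor"],    # external input, held constant
    "Receptor": lambda s: s["Ligand"],        # activated by the ligand
    "Kinase": lambda s: s["Receptor"] and not s["Inhibitor"],
    "TF": lambda s: s["Kinase"],              # transcription factor follows the kinase
}


def step(state):
    """Synchronous update: every node is recomputed from the previous state."""
    return {node: bool(rule(state)) for node, rule in rules.items()}


def run(state, steps):
    """Return the list of states visited, starting with the initial state."""
    trajectory = [dict(state)]
    for _ in range(steps):
        state = step(state)
        trajectory.append(state)
    return trajectory


start = {"Ligand": True, "Inhibitor": False, "Receptor": False, "Kinase": False, "TF": False}
untreated = run(start, 3)
treated = run({**start, "Inhibitor": True}, 3)
print(untreated[-1]["TF"], treated[-1]["TF"])  # True False
```

The signal needs three steps to reach the transcription factor, and adding the inhibitor blocks it at the kinase, which is the kind of qualitative intervention effect such models are meant to expose.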

Qualitative Network Modeling Workflow

The following diagram illustrates a generalized workflow for developing and validating Qualitative Network Models, particularly in contexts where comparison with quantitative approaches is possible:

Qualitative Network Models represent a valuable methodological approach for analyzing complex systems across biological, ecological, and pharmacological domains. While QNMs do not provide the numerical precision of quantitative approaches, their strengths in conceptual clarity, development efficiency, and applicability in data-poor environments make them particularly valuable for exploratory research, hypothesis generation, and initial system characterization [1]. The demonstrated "sweet spot" of model complexity, which varies based on the system components being studied, provides guidance for researchers in optimizing QNM design for specific research questions [1].

In drug discovery and development, network-based approaches including QNMs have brought revolutionary changes by shortening research time and improving target identification efficiency [3]. These models enable researchers to reason about complex biological systems, potential drug effects, and network perturbations without requiring complete quantitative characterization [2]. As complementary approaches to quantitative methods, QNMs represent an important tool in the computational systems biology toolkit, particularly valuable when confronting the inherent complexity of biological systems and pharmaceutical interventions.

The validation of qualitative network models with quantitative data represents a critical frontier in interdisciplinary research. This comparative guide examines the theoretical foundations and practical applications across social science and biomedical domains, where network-based methodologies enable researchers to uncover complex relationships within systems. Whereas social science research traditionally employs qualitative methods to understand human experiences and social structures, biomedical research utilizes quantitative approaches to decipher biological interactions. The integration of these approaches through network analysis creates a powerful framework for validating insights across disciplines, offering a structured pathway from qualitative observation to quantitative verification.

Network analysis serves as the bridge between these paradigms, providing both a visual and mathematical representation of relationships within complex systems. In social science, networks might map relationships between concepts in textual data, while in biomedicine, they illustrate interactions between biological entities like proteins or genes. The core thesis explored here is that quantitative data can systematically validate qualitative network models, enhancing their rigor, predictive power, and utility across research contexts from public policy to drug discovery.

Theoretical Foundations Across Disciplines

Social Science Foundations

Social science research is fundamentally concerned with understanding human behavior, experiences, and social structures through interpretive frameworks. Qualitative research methodologies in this domain prioritize depth, context, and meaning over numerical measurement, seeking to understand the 'why' and 'how' behind human phenomena [5].

Several philosophical paradigms underpin qualitative social research. Constructivism posits that reality is socially constructed through human interactions and meaning-making processes, implying multiple valid perspectives exist. Interpretivism emphasizes understanding behavior through the subjective meanings people assign to their experiences, while critical theory examines how knowledge maintains or challenges power structures and promotes social justice [5]. These paradigms share a focus on understanding phenomena within their natural contexts rather than isolating variables for measurement.

Qualitative research employs several established approaches to network and relationship analysis. Ethnography involves immersive fieldwork to understand cultures and social groups through participant observation. Case study research conducts intensive investigation of specific cases in their real-world contexts, while grounded theory develops theoretical frameworks directly from data through iterative coding and analysis [5] [6]. Discourse analysis examines how language and communication practices construct social realities, and narrative research explores how people use stories to make sense of their experiences and identities [7] [5].

Biomedical Science Foundations

Biomedical research employs network analysis as a computational framework to understand complex biological systems. Unlike social science's focus on meaning, biomedical network science is rooted in mathematical graph theory and statistical mechanics, representing biological entities as nodes and their interactions as edges [8] [9].

The theoretical foundation of biomedical network analysis rests on several key principles. The disease module hypothesis proposes that proteins associated with the same disease show increased network proximity and functional relatedness in the human interactome [9]. Network medicine extends this concept, suggesting that disease phenotypes reflect various perturbations of the same underlying network structure [2]. Network proximity measures quantify relationships between drugs and diseases or between different drugs, enabling prediction of therapeutic efficacy and adverse effects [9].

Biomedical networks can be categorized as either static (examining networks at a single point in time) or time-varying (tracking network evolution over time) [8]. Time-varying network analysis is particularly important for understanding dynamic biological processes like disease progression, cellular signaling, and drug response, employing methods such as time-varying Gaussian graphical models, dynamic Bayesian networks, and vector autoregression-based causal analysis [8].

Table 1: Key Theoretical Distinctions Between Domains

| Aspect | Social Science Networks | Biomedical Networks |
|---|---|---|
| Primary Data | Words, narratives, observations, images | Numerical measurements, molecular data, clinical variables |
| Philosophical Foundation | Constructivism, interpretivism, critical theory | Positivism, post-positivism, realism |
| Primary Analysis Methods | Thematic analysis, discourse analysis, grounded theory | Statistical modeling, machine learning, graph theory |
| Node Representation | Concepts, people, organizations, ideas | Genes, proteins, drugs, diseases, metabolites |
| Edge Representation | Relationships, influences, communications | Physical interactions, regulatory relationships, functional associations |
| Validation Approach | Triangulation, member checking, reflexivity | Statistical testing, experimental validation, database alignment |

Validation Methodologies: Qualitative Networks Meet Quantitative Data

Social Science Validation Framework

In social science, the validation of qualitative network models employs distinct rigor-enhancement strategies that differ from quantitative validation approaches. Data triangulation uses multiple data sources, methods, or investigators to corroborate findings, while member checking returns interpretations to participants to confirm accuracy [7] [6]. Reflexivity involves critical self-examination of the researcher's role and potential biases throughout the research process, and audit trails maintain detailed records of the research process from raw data to final interpretation [7].

A powerful example of integrating quantitative validation in social science comes from social water research, where researchers implemented a mixed methods framework combining quantitative network analysis with qualitative interpretation [4]. This approach began with quantitative keyword co-occurrence network analysis of publications to identify thematic clusters, followed by qualitative interpretation of these clusters. The quantitative network analysis provided systematic, reproducible pattern detection, while the qualitative analysis delivered depth and contextual understanding, demonstrating how computational tools can enhance transparency without sacrificing interpretive richness [4].

Biomedical Validation Framework

Biomedical research employs rigorous quantitative validation methodologies for network models. The standard approach involves comparing network predictions against experimental data or established biological knowledge. Researchers quantify validation using statistical measures including sensitivity, specificity, precision, and accuracy, along with network-specific metrics like modularity, transitivity, and degree distribution [10].
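The classification measures named above can be computed directly once predicted and reference edges are expressed over the same set of candidate node pairs. The following sketch uses a hypothetical five-node example. The function name `edge_metrics` and the choice to treat edges as undirected pairs are assumptions.

```python
from itertools import combinations


def edge_metrics(predicted, reference, candidates):
    """Sensitivity, specificity, precision and accuracy of predicted edges."""
    tp = len(predicted & reference)               # edges found in both
    fp = len(predicted - reference)               # predicted but not in reference
    fn = len(reference - predicted)               # reference edges that were missed
    tn = len(candidates - predicted - reference)  # correctly absent pairs

    def ratio(numerator, denominator):
        return numerator / denominator if denominator else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "precision": ratio(tp, tp + fp),
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
    }


# All unordered pairs among five hypothetical nodes form the candidate set.
candidates = set(combinations("ABCDE", 2))
predicted = {("A", "B"), ("A", "C"), ("B", "C"), ("D", "E")}
reference = {("A", "B"), ("B", "C"), ("C", "D"), ("D", "E"), ("A", "E")}

metrics = edge_metrics(predicted, reference, candidates)
print(metrics)
```

Real interaction networks are sparse, so true negatives dominate and accuracy alone can look deceptively high. Reporting precision and sensitivity alongside it gives a more informative picture.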

A representative example comes from the validation of biomedical named entity recognition (bio-NER) tools using network analysis [10]. Researchers constructed disease networks from terms extracted by bio-NER tools from medical texts, then computed their overlap with reference networks built from genomic, proteomic, and pharmacological data. They quantified edge overlap between phenotypic and biological networks and applied community detection algorithms to compare network structures against established disease classification systems [10]. This approach demonstrated that tools performing well with traditional annotated corpora also ranked highly using network-based validation, confirming the method's reliability while overcoming limitations of manually annotated datasets [10].

Table 2: Validation Metrics Across Disciplines

| Validation Approach | Social Science Application | Biomedical Application |
|---|---|---|
| Triangulation | Multiple data sources, methods, or investigators | Multiple omics datasets, experimental techniques |
| Member Checking | Participant verification of interpretations | Database alignment with established knowledge |
| Reference Standards | Established theories, expert consensus | Gold standard datasets, curated databases |
| Statistical Validation | Limited use of descriptive statistics | Extensive inferential statistics, p-values, confidence intervals |
| Community Detection | Thematic clusters in textual data | Functional modules, disease communities |
| Reproducibility Measures | Audit trails, methodological transparency | Experimental replication, computational reproducibility |

Experimental Protocols and Workflows

Social Science Mixed Methods Protocol

The social science validation protocol employs a sequential mixed methods design that integrates quantitative network analysis with qualitative interpretation [4]:

Phase 1: Data Collection

- Source relevant textual data from appropriate repositories (e.g., Web of Science, Scopus)

- Apply systematic search strategies with defined inclusion/exclusion criteria

- Extract metadata including keywords, abstracts, and citation information

Phase 2: Quantitative Network Construction

- Create keyword co-occurrence networks using bibliometric analysis

- Apply network clustering algorithms to identify thematic groups

- Calculate basic network metrics (density, centrality, connectivity)

Phase 3: Qualitative Interpretation

- Conduct deep contextual analysis of representative documents from each cluster

- Apply thematic analysis or discourse analysis to identified clusters

- Interpret quantitative findings through theoretical frameworks

Phase 4: Integration and Validation

- Compare quantitative network structures with qualitative interpretations

- Identify convergent and divergent findings across methods

- Refine interpretations through iterative analysis
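Phase 2 of the protocol above can be sketched with the standard library alone: count how often each pair of keywords appears in the same record, then derive basic network metrics. The four keyword lists are hypothetical stand-ins for publication metadata, and a dedicated tool such as VOSviewer would normally handle this step at scale.

```python
from collections import Counter
from itertools import combinations

# Hypothetical author keywords for four publications.
documents = [
    ["water governance", "participation", "equity"],
    ["water governance", "participation"],
    ["irrigation", "equity"],
    ["irrigation", "water governance"],
]

# Edge weight = number of documents in which both keywords appear.
cooccurrence = Counter()
for keywords in documents:
    for pair in combinations(sorted(set(keywords)), 2):
        cooccurrence[pair] += 1

nodes = sorted({kw for pair in cooccurrence for kw in pair})

# Density: realised edges divided by all possible undirected edges.
possible_edges = len(nodes) * (len(nodes) - 1) / 2
density = len(cooccurrence) / possible_edges

# Degree: number of distinct keywords each keyword co-occurs with.
degree = Counter()
for first, second in cooccurrence:
    degree[first] += 1
    degree[second] += 1

print(cooccurrence.most_common(1))
print(round(density, 3), dict(degree))
```

The weighted edge list produced here is the input a clustering algorithm would use to propose thematic groups for qualitative interpretation in Phase 3.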

This workflow visualization illustrates the sequential mixed methods approach:

Biomedical Network Validation Protocol

The biomedical network validation protocol employs a comparative framework that evaluates network models against established biological data [10]:

Phase 1: Reference Network Construction

- Compile molecular interaction data from curated databases (e.g., STRING, BioGRID, DisGeNET)

- Build disease-specific networks from genomic, proteomic, and pharmacological data

- Calculate topological properties of reference networks

Phase 2: Phenotypic Network Generation

- Apply bio-NER tools to extract disease-related terms from medical literature

- Construct phenotypic disease networks based on term co-occurrence

- Compute network metrics for comparative analysis

Phase 3: Quantitative Comparison

- Measure edge overlap between phenotypic and reference networks

- Apply community detection to both network types

- Compare identified communities with established disease classifications

Phase 4: Statistical Validation

- Calculate significance of network overlaps using appropriate statistical tests

- Assess correlation between network-based rankings and traditional validation

- Evaluate predictive performance using receiver operating characteristic analysis
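Edge overlap and its significance, as listed in Phases 3 and 4, can be illustrated with a Jaccard index and a hypergeometric tail probability. The protocol does not name a specific test, so the hypergeometric null used here is an assumption. It treats predicted edges as random draws from the candidate pairs and ignores degree structure, which makes it a rough first check rather than a definitive one.

```python
from math import comb


def jaccard(edges_a, edges_b):
    """Shared edges divided by the union of both edge sets."""
    union = edges_a | edges_b
    return len(edges_a & edges_b) / len(union) if union else 0.0


def overlap_p_value(n_candidates, n_reference, n_predicted, n_shared):
    """P(overlap >= n_shared) when n_predicted edges are drawn at random."""
    total = comb(n_candidates, n_predicted)
    upper = min(n_reference, n_predicted)
    tail = sum(
        comb(n_reference, k) * comb(n_candidates - n_reference, n_predicted - k)
        for k in range(n_shared, upper + 1)
    )
    return tail / total


# Hypothetical edge sets over the 10 possible pairs among five nodes.
phenotypic = {("A", "B"), ("A", "C"), ("B", "C"), ("D", "E")}
reference = {("A", "B"), ("B", "C"), ("C", "D"), ("D", "E"), ("A", "E")}

shared = len(phenotypic & reference)
p_value = overlap_p_value(10, len(reference), len(phenotypic), shared)
print(jaccard(phenotypic, reference), p_value)
```

With so few candidate pairs, an overlap of three edges is not unusual under the null (p is about 0.26). The same calculation on sparse networks with thousands of candidate pairs is far more discriminating.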

This workflow illustrates the comparative validation approach:

Research Reagent Solutions: Essential Tools and Databases

Social Science Research Tools

Social science network research employs both computational and analytical tools:

Table 3: Social Science Research Reagents

| Tool/Database | Type | Primary Function | Application in Validation |
|---|---|---|---|
| Web of Science | Database | Scholarly publication metadata | Data source for bibliometric analysis |
| MAXQDA | Software | Qualitative data analysis | Thematic coding, mixed methods integration |
| NVivo | Software | Qualitative data analysis | Code-based network visualization, theory building |
| ATLAS.ti | Software | Qualitative data analysis | Conceptual networking, hermeneutic analysis |
| VOSviewer | Software | Bibliometric network visualization | Co-occurrence network mapping, cluster identification |

Biomedical Research Tools

Biomedical network analysis relies on specialized databases and software tools:

Table 4: Biomedical Research Reagents

| Tool/Database | Type | Primary Function | Application in Validation |
|---|---|---|---|
| STRING | Database | Protein-protein interaction networks | Reference network construction [8] |
| BioGRID | Database | Molecular interaction repository | Curated physical and genetic interactions [8] |
| DisGeNET | Database | Gene-disease associations | Disease module identification [10] |
| Stanford Biomedical Network Collection | Dataset | Multimodal biomedical networks | Benchmark datasets for method validation [11] |
| NVivo | CAQDAS | Qualitative data analysis | Integration of qualitative and quantitative data [7] |
| TVGL | Algorithm | Time-varying graphical LASSO | Dynamic network inference [8] |
| Dynamic Bayesian Networks | Method | Time-dependent probabilistic modeling | Causal inference in temporal data [8] |

Comparative Performance Analysis

Methodological Performance Metrics

The performance of qualitative network validation approaches can be evaluated through multiple dimensions:

Table 5: Performance Comparison Across Domains

| Performance Metric | Social Science Networks | Biomedical Networks | Cross-Domain Insights |
|---|---|---|---|
| Thematic Identification | High sensitivity to emerging concepts | Limited to pre-defined entity types | Social science excels at discovery, biomedicine at verification |
| Scalability | Limited by qualitative analysis capacity | Highly scalable with computational resources | Biomedical approaches more suitable for large datasets |
| Reproducibility | Moderate, dependent on researcher transparency | High, with computational reproducibility | Biomedical methods offer superior standardization |
| Contextual Sensitivity | High, preserves nuanced meanings | Limited, reduces complexity to metrics | Social science maintains richer contextual understanding |
| Predictive Power | Limited to conceptual insights | Strong quantitative predictions | Biomedical approaches superior for hypothesis testing |
| Interdisciplinary Integration | Flexible framework adaptation | Requires domain-specific knowledge | Social science methods more transferable across domains |

Validation Performance Data

Empirical studies demonstrate the validation performance of network-based approaches:

In biomedical NER tool evaluation, network-based validation showed strong correlation with traditional annotation-based methods [10]. Tools that performed well with manually annotated corpora also ranked highly using network-based approaches, with modularity scores around 0.5 for high-performing tools and edge overlap significantly above random expectation [10].
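For readers unfamiliar with the modularity score mentioned above, the sketch below computes the standard Newman modularity of a given partition for an unweighted, undirected graph. The toy graph (two triangles joined by a single bridge) is hypothetical and is not data from the cited study.

```python
def modularity(edges, communities):
    """Newman modularity Q = sum over communities of L_c/m - (d_c/(2m))^2.

    L_c is the number of edges inside community c, d_c the summed degree of
    its nodes, and m the total number of edges.
    """
    m = len(edges)
    membership = {node: c for c, nodes in enumerate(communities) for node in nodes}
    internal = [0] * len(communities)
    degree_sum = [0] * len(communities)
    for u, v in edges:
        degree_sum[membership[u]] += 1
        degree_sum[membership[v]] += 1
        if membership[u] == membership[v]:
            internal[membership[u]] += 1
    return sum(l / m - (d / (2 * m)) ** 2 for l, d in zip(internal, degree_sum))


# Two triangles joined by one bridge edge.
edges = [(1, 2), (2, 3), (1, 3), (4, 5), (5, 6), (4, 6), (3, 4)]

q_split = modularity(edges, [{1, 2, 3}, {4, 5, 6}])
q_single = modularity(edges, [{1, 2, 3, 4, 5, 6}])
print(round(q_split, 4), q_single)  # 0.3571 0.0
```

Placing all nodes in one community always gives a score of zero, so positive values indicate more within-community edges than expected from node degrees alone.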

In drug combination prediction, network-based separation measures successfully identified effective combinations, with separation score (sAB) demonstrating superior performance compared to target-overlap approaches and other network measures [9]. FDA-approved drug combinations showed significantly lower sAB values compared to random drug pairs, confirming the measure's predictive power [9].
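A network separation score of this kind is commonly defined as the mean shortest distance between two node sets minus the average of the mean shortest distances within each set, so that negative values indicate overlapping network neighborhoods. The sketch below assumes that definition, with closest-node distances, and applies it to a hypothetical path graph. It should be checked against the formulation in [9] before any real use.

```python
from collections import deque


def distances_from(graph, source):
    """Breadth-first shortest path lengths from source in an unweighted graph."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbour in graph[node]:
            if neighbour not in dist:
                dist[neighbour] = dist[node] + 1
                queue.append(neighbour)
    return dist


def closest(graph, sources, targets, exclude_self):
    """For each source, the distance to its nearest reachable target."""
    result = []
    for s in sources:
        dist = distances_from(graph, s)
        reachable = [dist[t] for t in targets
                     if t in dist and not (exclude_self and t == s)]
        if reachable:
            result.append(min(reachable))
    return result


def separation(graph, set_a, set_b):
    """s_AB = <d_AB> - (<d_AA> + <d_BB>) / 2; assumes each set has >= 2 connected nodes."""
    def mean(values):
        return sum(values) / len(values)

    d_aa = closest(graph, set_a, set_a, exclude_self=True)
    d_bb = closest(graph, set_b, set_b, exclude_self=True)
    d_ab = closest(graph, set_a, set_b, False) + closest(graph, set_b, set_a, False)
    return mean(d_ab) - (mean(d_aa) + mean(d_bb)) / 2


# Hypothetical interactome: a simple path 1-2-3-4-5-6.
graph = {1: [2], 2: [1, 3], 3: [2, 4], 4: [3, 5], 5: [4, 6], 6: [5]}

print(separation(graph, {1, 2}, {5, 6}))  # 2.5, well separated target sets
print(separation(graph, {2, 3}, {3, 4}))  # -0.5, overlapping target sets
```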

Social science mixed methods demonstrated that quantitative network analysis could reliably identify thematic clusters that aligned with qualitative interpretations, providing systematic reproducibility while maintaining interpretive depth [4]. The approach successfully identified emerging research trends and interdisciplinary connections within complex scholarly domains.

Integrated Validation Framework

The comparative analysis reveals that while social science and biomedical approaches differ in their philosophical foundations and methodological specifics, they converge on a core principle: quantitative network properties can systematically validate qualitative insights. This convergence suggests an integrated framework for network validation across disciplines:

Core Principles:

- Multi-method triangulation combining different data types and analytical approaches

- Iterative refinement between qualitative models and quantitative validation

- Contextual interpretation of quantitative metrics within theoretical frameworks

- Transparent documentation of both computational and interpretive steps

Application Guidelines:

- Social science researchers should incorporate quantitative network metrics to enhance methodological rigor and transparency

- Biomedical researchers should maintain connection to biological context and meaning when interpreting network models

- Both domains benefit from explicit documentation of validation procedures and limitations

- Cross-disciplinary collaboration can generate innovative validation approaches leveraging strengths of both paradigms

This integrated approach advances the broader thesis that qualitative network models gain explanatory power and scientific utility when systematically validated with quantitative data, creating a robust foundation for knowledge generation across the research spectrum.

The Role of Mixed-Methods Approaches in Interdisciplinary Research

Mixed-methods research represents a third research paradigm that strategically combines qualitative and quantitative approaches within a single study to provide a more complete understanding of complex research questions than either method could alone [12]. This approach has gained significant recognition across multiple health disciplines, including nursing, medicine, and epidemiology, where it helps bridge the gap between numerical data and human experience [12] [13]. By integrating different methodological perspectives, mixed-methods research creates opportunities to synthesize knowledge and insights from across disciplinary boundaries, making it particularly valuable for addressing multifaceted problems that transcend traditional academic silos [13].

The philosophical foundation of mixed-methods research lies in pragmatism, serving as what scholars have termed the "third path" or "third research paradigm" that moves beyond the traditional positivist-quantitative and interpretivist-qualitative divide [12]. Quantitative research, typically underpinned by positivism, operates on the assumption that there is a single measurable reality, addressing questions such as "What proportion of the population experiences this phenomenon?" In contrast, qualitative research, often based on interpretivism, explores how people experience and interpret phenomena in their unique worlds [12]. Mixed-methods research brings together questions from these different philosophical traditions, offering multiple ways of examining a research question by combining the strengths of both approaches [12].

In interdisciplinary contexts, mixed-methods approaches are particularly valuable for synthesizing knowledge from across different fields [13]. As a group of academics from Education, Medicine, Nursing, and Social Work found while collaborating on an interdisciplinary mixed-methods systematic review, this methodology enables researchers to embrace the challenges of interdisciplinary work while managing the bottlenecks that often occur in such projects [13]. The integration of diverse disciplinary perspectives through mixed-methods creates a richer, more nuanced understanding of complex phenomena that cannot be adequately captured through single-method or single-discipline approaches.

Methodological Approaches and Framework

Core Methodological Frameworks

Mixed-methods research encompasses several distinct frameworks for combining qualitative and quantitative approaches. The three primary designs include convergent parallel design, explanatory sequential design, and exploratory sequential design [12]. In the convergent parallel design, researchers collect and analyze both quantitative and qualitative data simultaneously during the same study phase, then merge the results to develop a complete understanding of the research problem. The explanatory sequential design involves first collecting and analyzing quantitative data, followed by qualitative data collection and analysis to help explain or elaborate on the quantitative findings. Conversely, the exploratory sequential design begins with qualitative data collection and analysis, using the results to inform the subsequent quantitative phase [12].

The process of integrating qualitative and quantitative data represents a critical challenge in mixed-methods research. Integration can occur at multiple stages of research: during data collection, analysis, interpretation, or at all stages [12] [14]. At the data analysis stage, one powerful integration approach involves quantifying qualitative data through methods like Epistemic Network Analysis (ENA), which combines principles from social network analysis and discourse analysis to identify relationships between qualitative code co-occurrences [14]. This method allows researchers to transform rich qualitative data into quantitative metrics that can be analyzed statistically while preserving the contextual meaning of the original data.

Epistemic Network Analysis as a Bridge Method

Epistemic Network Analysis (ENA) serves as a particularly valuable method for quantifying qualitative data in interdisciplinary research [14]. ENA was originally developed in learning sciences to model relationships between elements of professional expertise and has since been applied across diverse fields including healthcare, surgery, and historical document analysis [14]. The method involves segmenting qualitative data into lines (the smallest unit of data) and grouping them into stanzas (related lines), then coding each line according to a rigorously developed codebook [14].

The mathematical foundation of ENA uses approaches similar to social network analysis, singular value decomposition, and principal component analysis to calculate and graphically depict relationships between coded elements [14]. The resulting network graphs visualize these relationships, with node size representing the frequency of code occurrence and line thickness indicating the strength of relationship through code co-occurrence. These networks can be analyzed both descriptively and statistically, allowing researchers to compare different groups or conditions [14]. In healthcare research, ENA has been successfully applied to model communication patterns in operating rooms, surgical error management, and team learning in virtual internships, demonstrating its versatility as a bridge between qualitative and quantitative paradigms [14].
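The counting step that underlies these network graphs can be sketched in a few lines. The example below is a simplified illustration rather than a full ENA implementation: it tallies how often each code occurs across lines (node size) and in how many stanzas each pair of codes co-occurs (line thickness), and it omits the normalization and dimensional reduction steps described above. The codes and stanzas are hypothetical.

```python
from collections import Counter
from itertools import combinations

# Hypothetical coded data: each stanza is a list of lines,
# and each line is the set of codes applied to it.
stanzas = [
    [{"handoff"}, {"handoff", "clarify"}, {"task"}],
    [{"task", "clarify"}],
    [{"handoff"}, {"task"}],
]


def cooccurrence_network(stanzas):
    """Return (node_freq, edge_weight) for a code co-occurrence network."""
    node_freq = Counter()    # number of lines carrying each code
    edge_weight = Counter()  # number of stanzas in which a code pair co-occurs
    for stanza in stanzas:
        for line_codes in stanza:
            node_freq.update(line_codes)
        codes_in_stanza = set().union(*stanza)
        for pair in combinations(sorted(codes_in_stanza), 2):
            edge_weight[pair] += 1
    return node_freq, edge_weight


node_freq, edge_weight = cooccurrence_network(stanzas)
print(dict(node_freq))
print(dict(edge_weight))
```

Counting co-occurrence once per stanza, rather than once per line, is a design choice made here for simplicity; other windowing schemes would change the edge weights.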

Comparative Analysis of Mixed-Methods Applications

Applications Across Disciplines

Mixed-methods approaches have been successfully implemented across diverse interdisciplinary contexts, each demonstrating unique strengths in addressing complex research questions. The table below summarizes key applications across three disciplinary areas:

Table 1: Comparative Analysis of Mixed-Methods Applications Across Disciplines

| Disciplinary Area | Research Focus | Qualitative Component | Quantitative Component | Integration Method | Key Findings |
|---|---|---|---|---|---|
| Healthcare & Nursing [12] [14] | Understanding nursing care practices and patient satisfaction | In-depth interviews with healthcare providers and patients | Surveys and logistic regression analysis | Sequential explanatory design; Epistemic Network Analysis | Identified factors influencing adherence to nursing care protocols; revealed communication patterns affecting patient outcomes |
| Ecology & Environmental Science [15] | Assessing ecological impacts of mussel culture | Qualitative network models outlining ecosystem relationships | Semi-quantitative data on linkage strengths | Concurrent triangulation; model validation | Revealed that hydrodynamic conditions have greater impact than nutrients on ecosystem effects of mussel culture |
| Network Science & Machine Learning [16] | Evaluating machine learning models for network inference | Analysis of structural features and computational trade-offs | Performance metrics (accuracy, precision, recall, F1 score, AUC) | Convergent parallel design | Demonstrated that simpler models (Logistic Regression) can outperform complex ones (Random Forest) in specific network contexts |

Interdisciplinary Integration Patterns

The integration of mixed-methods within interdisciplinary research teams follows several distinct patterns, each with characteristic strengths and challenges. Multidisciplinary teams involve researchers from different disciplines working side-by-side but maintaining distinct methodological approaches, while interdisciplinary teams blend methodologies to create integrated solutions, and transdisciplinary teams transcend discipline boundaries entirely to develop novel methodological frameworks [13] [12].

Practical considerations for conducting rigorous interdisciplinary mixed-methods systematic reviews include establishing common terminology across disciplines, developing shared understanding of methodological quality criteria, and creating transparent processes for reconciling different disciplinary perspectives [13]. Successful teams report that embracing the challenges of interdisciplinarity through structured communication and flexible planning can advance the effectiveness and impact of interdisciplinary research [13]. These teams emphasize the importance of documenting both successes and challenges to provide exemplars for other researchers undertaking similar interdisciplinary mixed-methods projects [13].

Experimental Protocols and Workflows

Protocol for Interdisciplinary Mixed-Methods Systematic Reviews

Conducting a rigorous interdisciplinary mixed-methods systematic review requires a structured protocol that accommodates diverse research methodologies and disciplinary perspectives:

Formation of Interdisciplinary Team: Assemble researchers from relevant disciplines (e.g., Education, Medicine, Nursing, Social Work) with expertise in both quantitative and qualitative methods [13].

Development of Shared Conceptual Framework: Establish common definitions, theoretical perspectives, and methodological standards that respect different disciplinary traditions while creating a coherent integrative framework [13].

Comprehensive Search Strategy: Implement systematic searches across multiple disciplinary databases using both controlled vocabulary and natural language terms to capture relevant literature [13].

Dual Screening and Selection Process: Employ multiple reviewers with different disciplinary backgrounds to screen titles, abstracts, and full-text articles using predetermined inclusion criteria [13].

Data Extraction and Quality Assessment: Extract data using standardized forms and assess methodological quality using appropriate tools for different study types (quantitative, qualitative, mixed-methods) [13].

Integration of Findings: Synthesize knowledge using convergent thematic analysis, following a transparent process for reconciling different disciplinary interpretations [13].

Validation and Reflexivity: Maintain team reflexivity throughout the process, documenting challenges and resolutions to enhance the transparency and credibility of the review [13].

Protocol for Qualitative Network Model Validation

Validating qualitative network models with quantitative data involves a systematic approach for ensuring the robustness and credibility of integrated findings:

Model Specification: Develop qualitative network models outlining proposed relationships and linkages between system components based on existing literature and expert knowledge [15].

Semi-Quantitative Enhancement: Incorporate available semi-quantitative information on the relative strength of key linkages to improve model accuracy and sign determinacy [15].

Scenario Analysis: Analyze model behavior under different scenarios (e.g., contrasting environmental conditions) to identify the most influential system components [15].

Empirical Validation: Collect quantitative empirical data to test model predictions and evaluate the consistency between qualitative model outputs and observed system behaviors [15].

Sensitivity Analysis: Assess how changes in model parameters affect outcomes to identify critical linkages and potential leverage points within the system [15].

Iterative Refinement: Revise the qualitative network model based on validation results, strengthening well-supported linkages and modifying or removing poorly supported ones [15].
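The empirical validation step in the protocol above can be sketched as a simple sign-agreement check: the qualitative model predicts a direction of change for each component, and measured changes are reduced to signs for comparison. The component names, predicted signs, and observed values below are invented for illustration, and the tolerance parameter `tol` is a hypothetical way of treating small changes as "no change".

```python
def sign(value, tol=0.0):
    """Reduce a measured change to +1, -1, or 0 (within tolerance)."""
    if value > tol:
        return 1
    if value < -tol:
        return -1
    return 0


def sign_agreement(predicted, observed, tol=0.0):
    """Fraction of shared components whose observed sign matches the prediction."""
    shared = sorted(set(predicted) & set(observed))
    if not shared:
        raise ValueError("no components in common")
    matches = [v for v in shared if predicted[v] == sign(observed[v], tol)]
    mismatches = [v for v in shared if v not in matches]
    return len(matches) / len(shared), mismatches


# Hypothetical model predictions (+1 increase, -1 decrease) and measured changes.
predicted = {"mussels": 1, "phytoplankton": -1, "predators": 1, "benthos": -1}
observed = {"mussels": 0.42, "phytoplankton": -0.10, "predators": -0.05, "benthos": -0.30}

agreement, mismatches = sign_agreement(predicted, observed)
print(agreement, mismatches)
```

Components listed in `mismatches` are natural candidates for the iterative refinement step, since they mark linkages whose predicted effect the data does not support.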

The following workflow diagram illustrates the sequential and iterative nature of validating qualitative network models with quantitative data:

Essential Research Reagents and Tools

Methodological Reagents for Mixed-Methods Research

Successful implementation of mixed-methods research in interdisciplinary contexts requires specific "methodological reagents" – essential tools and approaches that facilitate the integration of diverse data types and disciplinary perspectives:

Table 2: Essential Research Reagents for Mixed-Methods Studies

| Research Reagent | Function | Application Context | Considerations |
|---|---|---|---|
| Epistemic Network Analysis (ENA) [14] | Quantifies qualitative data by modeling relationships between discourse elements | Healthcare team communication analysis; learning assessment; surgical training evaluation | Requires high-quality qualitative data segmented into lines and stanzas; uses network graphs to visualize code co-occurrences |
| Qualitative Network Models (QNMs) [15] | Maps complex relationships in systems where quantitative data is limited | Ecological impact assessment; ecosystem behavior prediction; intervention planning | Benefits from incorporation of semi-quantitative data on linkage strength; useful for scenario testing |
| Interdisciplinary Team Framework [13] | Structures collaboration across disciplinary boundaries | Systematic reviews; complex intervention development; policy evaluation | Requires explicit attention to terminology, methodology integration, and conflict resolution processes |
| Stochastic Block Models (SBM) [16] | Generates synthetic networks with community structure for method validation | Network inference testing; machine learning model evaluation; community detection | Closely matches modularity of real-world networks; useful for benchmarking analytical approaches |

Analytical Tools for Data Integration

The integration of qualitative and quantitative data requires specialized analytical tools that can accommodate different data types and facilitate meaningful synthesis:

Mixed-Methods Data Analysis Software: Tools like NVivo, MAXQDA, and Dedoose support both qualitative coding and quantitative analysis, allowing researchers to work with diverse data types within a single platform.

Network Analysis Packages: Software such as UCINET, Gephi, and specialized R packages (e.g., igraph, statnet) enable the visualization and analysis of network structures derived from both qualitative and quantitative data [16] [14].

Statistical Modeling Environments: Platforms like R, Python (with pandas, scikit-learn), and Mplus support the complex statistical models needed to analyze integrated datasets, including multilevel models, structural equation models, and mixture models.

Data Visualization Tools: Applications such as Tableau, Microsoft Power BI, and specialized visualization libraries in programming languages help create integrated displays that represent both qualitative and quantitative findings.

The following diagram illustrates the relationship between different methodological tools and their applications in the mixed-methods research workflow:

Comparative Performance Data

Quantitative Performance Metrics

The effectiveness of mixed-methods approaches can be evaluated through specific performance metrics that capture their added value compared to single-method approaches. The table below summarizes key quantitative findings from studies that have implemented mixed-methods designs:

Table 3: Performance Metrics of Mixed-Methods Approaches Across Disciplines

| Study Context | Methodological Comparison | Key Performance Metrics | Results | Implications |
|---|---|---|---|---|
| Machine Learning for Network Inference [16] | Logistic Regression (LR) vs. Random Forest (RF) on synthetic networks | Accuracy, Precision, Recall, F1 Score, AUC, MCC | LR achieved perfect scores (100%) across all metrics for 100-1000 node networks; RF showed lower performance (~80% accuracy) | Simpler models may outperform complex ones in specific contexts; challenges assumption that complex models are inherently superior |
| Healthcare Team Communication [14] | Epistemic Network Analysis of task allocation communications | Task acceptance rates by sender and communication synchronicity | Physician and unit clerk most successful allocating tasks; synchronous communication effective for time-critical tasks | ENA effectively quantified qualitative communication patterns; revealed unexpected role of unit clerks in team effectiveness |
| Ecological Impact Assessment [15] | Qualitative Network Models with semi-quantitative enhancement | Model accuracy and sign determinacy in predicting ecological responses | Models with semi-quantitative linkage information showed improved accuracy and determinacy; hydrodynamic conditions had greater impact than nutrients | Integration of even limited quantitative data improves qualitative model performance |
| Interdisciplinary Systematic Reviews [13] | Traditional vs. interdisciplinary mixed-methods approaches | Breadth of synthesis, identification of novel insights, practical applicability | Mixed-methods reviews provided more comprehensive understanding and identified insights missed by single-method approaches | Methodological rigor and transparent processes critical for successful interdisciplinary integration |
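For readers less familiar with the classification metrics named in the first row, the sketch below shows how accuracy, precision, recall, F1 score, and the Matthews correlation coefficient (MCC) follow from a binary confusion matrix. The counts are invented and do not reproduce any result from the cited study; AUC is omitted because it requires ranked prediction scores rather than a single confusion matrix.

```python
import math


def classification_metrics(tp, fp, fn, tn):
    """Standard binary classification metrics from confusion-matrix counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1, "mcc": mcc}


# Hypothetical edge-prediction outcome: 40 true edges found, 10 false alarms,
# 10 true edges missed, 40 non-edges correctly rejected.
metrics = classification_metrics(tp=40, fp=10, fn=10, tn=40)
print(metrics)
```

In this invented case accuracy is 0.8 while MCC is only 0.6, which illustrates why reporting several metrics side by side gives a fuller picture than accuracy alone.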

Qualitative Benefits and Challenges

Beyond quantitative metrics, mixed-methods approaches demonstrate significant qualitative benefits while also presenting distinct challenges:

Benefits:

- Provides deeper understanding of complex phenomena by capturing both the breadth of quantitative data and the depth of qualitative insights [12]

- Enhances methodological rigor through triangulation, where findings from one method can validate or challenge findings from another method [12] [14]

- Increases the practical applicability of research findings by addressing both "what" questions (prevalence, distribution) and "why/how" questions (meaning, mechanism) [12]

- Facilitates interdisciplinary collaboration by creating a common framework that respects different methodological traditions [13]

Challenges:

- Requires expertise in multiple methodological approaches and significant resources in terms of time, cost, and personnel [12]

- Demands careful attention to integration strategies to ensure qualitative and quantitative components meaningfully inform each other rather than existing in parallel [12] [14]

- Faces publication barriers due to word count limitations and lack of familiarity with mixed-methods approaches among some journal editors and reviewers [12]

- Necessitates explicit documentation of research paradigms and design choices that may be unfamiliar to readers trained in single-method traditions [12]

Mixed-methods approaches play an increasingly vital role in interdisciplinary research by providing comprehensive frameworks for addressing complex questions that transcend traditional disciplinary boundaries [13] [12]. The integration of qualitative and quantitative methods creates opportunities for deeper understanding and more practical applications than either approach could achieve independently [12]. As demonstrated across diverse fields from healthcare to ecology to network science, mixed-methods designs enable researchers to validate qualitative models with quantitative data, uncover unexpected patterns through methodological triangulation, and develop insights that are both contextually rich and generalizable [15] [14].

Future developments in mixed-methods research will likely focus on enhancing integration techniques, developing more sophisticated tools for data synthesis, and expanding training opportunities for researchers seeking to combine methodological approaches [12]. As interdisciplinary collaborations continue to grow in importance for addressing complex societal challenges, mixed-methods approaches will play an increasingly central role in facilitating meaningful integration across disciplinary boundaries [13]. The continued refinement of methods like Epistemic Network Analysis that systematically bridge qualitative and quantitative paradigms represents a promising direction for advancing mixed-methodology [14].

For researchers embarking on mixed-methods projects, success depends on carefully aligning research questions with appropriate mixed-methods designs, devoting sufficient resources to integration strategies, and maintaining flexibility throughout the research process [13] [12]. By embracing both the challenges and opportunities of mixed-methods research, interdisciplinary teams can produce findings that offer greater insights and enhanced applicability across diverse contexts and stakeholders [12].

This guide provides an objective comparison of Abstraction Hierarchies and Qualitative Network Models, focusing on their roles in validating qualitative models with quantitative data. Such validation is crucial in research fields like drug development, where understanding complex, multi-level systems, from molecular pathways to patient outcomes, is essential.

Abstraction Hierarchy (AH) is a qualitative, complex systems methodology originating from cognitive engineering. It models socio-technical systems through a hierarchical structure of five levels, from high-level functional purposes to physical forms, creating a network of "how/why" relationships [17]. This framework helps researchers trace pathways from specific, measurable components to broader system goals; one documented application evaluates potential interventions on social media platforms aimed at increasing social equality [17].

Qualitative Network Models represent systems as interconnected nodes and edges, often analyzed using methods like thematic analysis, grounded theory, and discourse analysis [7] [5]. These models aim to uncover deep insights into human experiences, behaviors, and cultures that quantitative data alone cannot reveal [5]. When combined with network science metrics, they can identify central themes and structural patterns within qualitative data [17].

Comparative Performance Analysis

The table below summarizes the performance characteristics of both modeling approaches based on documented applications and methodologies.

Table 1: Performance Comparison of Abstraction Hierarchies and Qualitative Network Models

| Performance Characteristic | Abstraction Hierarchy (AH) | Qualitative Network Models |
|---|---|---|
| Primary Data Nature | Words, narratives, system goals [17] | Words, narratives, observations [5] |
| Analysis Foundation | How/why relationships across hierarchical levels [17] | Thematic coding, pattern identification [7] [5] |
| Validation Approach | Pathway tracing from physical forms to functional purpose [17] | Triangulation, member checking, audit trails [7] |
| Quantitative Bridge | Network analysis (e.g., clustering, path counting) [17] | Statistical analysis, AI/ML-powered code frequency analysis [18] |
| Level of Analysis | Explicitly links multiple levels of analysis [17] | Often focuses on a single level (e.g., thematic) [17] |
| Strength | Succinctly captures interdisciplinary, multi-level perspectives [17] | Uncovers underlying meanings and motivations [5] |
| Computational Support | Basic network algorithms (Newman-Girvan) [17] | Advanced CAQDAS (NVivo, ATLAS.ti) with AI features [7] [18] |

Experimental Protocols for Validation

Objective: To qualitatively construct and then quantitatively validate an AH model for a socio-technical system [17].

Workflow:

- Model Construction: Use literature reviews and expert interviews to populate the five hierarchical levels: Functional Purpose, Constraints, Functions, Physical Processes, and Physical Forms [17].

- Network Formation: Represent the completed AH as a directed graph where nodes are elements at each level, and links are the "how/why" relationships between them [17].

- Quantitative Analysis:

  - Pathway Analysis: Count all possible paths from a low-level Physical Form node to a high-level Functional Purpose node. A higher number of potential pathways may indicate a more robust design for an intervention [17].

  - Cluster Analysis: Apply the Newman-Girvan algorithm to the AH network to identify cohesive subgroups. This can reveal if the model's structure aligns with expected conceptual modules within the system [17].
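The pathway analysis step can be sketched as a path count over a directed acyclic graph. The example below uses a small, invented AH fragment whose node names and links are purely hypothetical; edges point from a lower-level "how" element to the higher-level "why" element it supports. The sketch assumes the graph contains no cycles, which holds when links only run upward between levels.

```python
from functools import lru_cache

# Hypothetical AH fragment spanning the five levels named above.
edges = {
    "form:report_button": ["process:flag_content"],
    "form:feed_ranker": ["process:rank_posts", "process:flag_content"],
    "process:flag_content": ["function:moderation"],
    "process:rank_posts": ["function:moderation", "function:exposure"],
    "function:moderation": ["constraint:fair_treatment"],
    "function:exposure": ["constraint:fair_treatment", "constraint:equal_reach"],
    "constraint:fair_treatment": ["purpose:social_equality"],
    "constraint:equal_reach": ["purpose:social_equality"],
}


def count_paths(edges, source, target):
    """Count distinct directed paths from source to target in an acyclic graph."""
    @lru_cache(maxsize=None)
    def walk(node):
        if node == target:
            return 1
        # Sum the path counts of every upward neighbor (memoized per node).
        return sum(walk(nxt) for nxt in edges.get(node, ()))
    return walk(source)


goal = "purpose:social_equality"
paths_button = count_paths(edges, "form:report_button", goal)
paths_ranker = count_paths(edges, "form:feed_ranker", goal)
print(paths_button, paths_ranker)
```

In this toy fragment the feed ranker reaches the functional purpose through four distinct pathways and the report button through one, so under the heuristic described above an intervention on the ranker would be considered the more robust candidate.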

Quantitative Bridging in Qualitative Network Analysis

Objective: To analyze qualitative data for themes and relationships, then use quantitative metrics to validate the structure and significance of the resulting network model [5] [18].

Workflow:

- Data Collection and Coding: Collect data via interviews or focus groups. Code the data using thematic analysis or grounded theory to develop a set of themes (nodes) and their connections (edges) [7] [5].

- AI-Enhanced Analysis: Use Computer Assisted Qualitative Data Analysis Software (CAQDAS) like NVivo or ATLAS.ti. Leverage AI features for initial coding, summarization, and code suggestion, but critically vet all AI outputs against the original data [18].

- Network and Statistical Validation:

  - Centrality Measures: Calculate node degree, betweenness, or eigenvector centrality in the theme network to identify core concepts [17].

  - Inter-coder Reliability: Use quantitative metrics like Cohen's Kappa to statistically assess the consistency of the qualitative coding between different researchers [7].

  - Triangulation: Combine qualitative findings with quantitative data from other sources to cross-verify interpretations [7].
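As an illustration of the inter-coder reliability check, the sketch below computes Cohen's Kappa for two coders who each assigned one code to the same ten text segments. The codes and assignments are invented; the formula is the standard one, kappa = (p_o - p_e) / (1 - p_e), where p_o is observed agreement and p_e is the agreement expected by chance from each coder's code frequencies.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's Kappa for two coders labeling the same items with one code each."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("coders must label the same non-empty set of items")
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Chance agreement: probability both coders pick the same code independently.
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / n ** 2
    if expected == 1:
        return 1.0  # both coders used a single identical code throughout
    return (observed - expected) / (1 - expected)


# Hypothetical code assignments for ten interview segments.
coder_a = ["trust", "trust", "cost", "cost", "trust",
           "access", "access", "cost", "trust", "access"]
coder_b = ["trust", "trust", "cost", "trust", "trust",
           "access", "cost", "cost", "trust", "access"]

kappa = cohens_kappa(coder_a, coder_b)
print(round(kappa, 3))
```

Here the coders agree on 8 of 10 segments, but because some of that agreement is expected by chance, kappa is lower than the raw agreement rate.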

The following diagram illustrates the core workflow for validating qualitative models with quantitative data:

The Scientist's Toolkit: Essential Research Reagents

The table below details key tools and software essential for conducting research with these models.

Table 2: Key Research Reagent Solutions for Qualitative-Quantitative Modeling

| Tool Name | Type/Category | Primary Function in Research |
|---|---|---|
| NVivo | CAQDAS Software | Manages, codes, and analyzes qualitative data; features AI summarization and code suggestion for efficiency [18]. |
| ATLAS.ti | CAQDAS Software | Supports deep qualitative analysis with a network view feature and conversational AI enquiry to encourage reflection [7] [18]. |
| MAXQDA | CAQDAS Software | Provides mixed-methods capabilities, seamlessly blending qualitative and quantitative analysis approaches [7]. |
| Newman-Girvan Algorithm | Network Analysis Algorithm | Identifies community structure (clusters) within a network model, such as an Abstraction Hierarchy graph [17]. |
| AI/Large Language Models (GPT-4, Claude) | Generative AI | Acts as a dialogic partner for exploratory analysis, suggests codes, and challenges researcher assumptions [18]. |
| Abstraction Hierarchy Framework | Modeling Framework | Provides the five-level template for constructing a hierarchical, multi-level model of a complex socio-technical system [17]. |
| Thematic Analysis | Qualitative Methodology | A systematic process for identifying, analyzing, and reporting patterns (themes) within qualitative data [7] [5]. |

The following diagram maps the hierarchical structure of an Abstraction Hierarchy, showing the "how/why" relationships between levels:

Identifying Research Questions Suitable for Qualitative Network Modeling

Defining Qualitative Network Models

Qualitative network models are abstract computational frameworks used to study complex systems where precise, quantitative data is scarce, but the underlying structure and interactions are well understood. They serve as a bridge between purely descriptive diagrams and fully quantitative mathematical models, allowing researchers to analyze system behavior and generate testable hypotheses [19].

These models are particularly valuable in fields like drug development and systems biology, where they help researchers understand complex signaling pathways and predict the effects of perturbations without requiring extensive kinetic data [20] [19]. A key strength is their role in a modeling pipeline, where a simple interaction graph can be refined into a logical model and finally into a logic-based ordinary differential equation (ODE) model, systematically increasing quantitative detail while preserving core qualitative properties [19].

Characteristics of Suitable Research Questions

Research questions that are a good fit for qualitative network modeling often share specific characteristics. The following table outlines these key traits, providing a checklist for researchers to assess their own questions.

| Characteristic | Description | Example Research Question |
|---|---|---|
| Focus on Structure & Logic | The question prioritizes the "if-then" logic of interactions (e.g., activation, inhibition) over precise quantities [19]. | "Does the inhibition of protein A lead to the downstream activation of transcription factor Z?" |
| Exploration of System Behavior | The goal is to understand emergent properties like stability, feedback loops, or response to perturbation, rather than precise numerical output [20] [15]. | "What are the stable signaling states of this cancer pathway under different drug treatments?" |
| Handling of Data Scarcity | The system is complex, but available data is insufficient for building a quantitative model [19]. | "How might a new drug target influence the broader signaling network, given incomplete kinetic data?" |
| Prediction of Qualitative Outcomes | The answer is a direction of change (increase/decrease) or a relative ranking, not a specific value [15] [21]. | "Will introducing this ecological stressor have a net positive or negative impact on key species?" |

A well-crafted question should also be actionable and specific. Applying frameworks like SMART (Specific, Measurable, Achievable, Relevant, Time-bound) can help sharpen the focus. For instance, a vague question like "How can we improve collaboration?" is less effective than "What is the current level of collaboration between the IT and Marketing teams, and how can it be improved within the next 6 months?" [22].

Validating Qualitative Models with Quantitative Data

Validation is a critical step to ensure a qualitative model is a reliable tool for prediction and analysis. The process often involves an iterative cycle of comparison and refinement, leveraging quantitative data to test and improve the qualitative framework.

The Validation Cycle

The diagram below illustrates the iterative process of validating a qualitative model using quantitative data.

Methodologies for Validation

Several formal methodologies exist to operationalize this validation cycle:

- Formal Verification and Model Checking: This computer science-inspired method involves an exhaustive exploration of the model's state-space to verify that it consistently adheres to a set of requirements derived from experimental observations. If the model fails, it is revised, and new hypotheses are generated for experimental testing [20].

- Epistemic Network Analysis (ENA): ENA is a mixed-methods technique that quantifies qualitative data to enable validation. It involves coding qualitative data (e.g., from interviews or observations), analyzing co-occurrence of codes, and representing the relationships as network graphs. These graphs can then be compared statistically to validate the structure and dynamics of the original qualitative model [14].

- Triangulation and Cross-Validation: This strategy involves comparing findings from qualitative model analyses with results from independent quantitative studies. Consistency between the different data sources reinforces the model's validity, while discrepancies highlight areas requiring further investigation [23].

Exemplary Research Questions Across Disciplines

The table below provides concrete examples of research questions suitable for qualitative network modeling, drawn from different fields to illustrate their scope and focus.

| Field | Exemplary Research Question | Rationale & Suitability |
|---|---|---|
| Drug Development & Systems Biology | "How does the crosstalk between the EGF and NRG1 signaling pathways influence the activation of downstream effectors ERK and Akt, and what is the effect of an ErbB2-targeting therapeutic?" [19] | Focuses on the logical structure of a complex signaling network and the qualitative behavior (activation/inhibition) in response to a perturbation (drug). |
| Ecology & Environmental Science | "In a mussel farm ecosystem, does the presence of culture have a positive or negative impact on macroinvertebrate predators, and how is this influenced by hydrodynamic conditions?" [15] | Aims to predict the direction of change (positive/negative) for a group of species, acknowledging that the outcome depends on environmental conditions. |
| Cellular Biology | "Does the interaction between the Notch and Wnt signaling pathways in mammalian skin lead to multistationarity (multiple stable states)?" [20] | Targets a core emergent property of a biological system (multistationarity) that can be explored without precise kinetic parameters. |
| Organizational Network Analysis (ONA) | "Which partners act as key brokers between otherwise disconnected sectors in our research collaboration network?" [22] | Identifies structural roles (brokers) and influence based on network position, which can be analyzed using metrics like betweenness centrality. |
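The broker question in the last row can be made concrete with betweenness centrality. The following is a minimal sketch using Brandes' algorithm on an undirected, unweighted graph. The collaboration network, including every partner name, is invented for illustration. In practice a graph library would normally be used instead of a hand-written routine.

```python
from collections import deque


def adjacency(edges):
    """Undirected adjacency sets from a list of (u, v) pairs."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def betweenness(adj):
    """Unnormalized betweenness centrality (Brandes' algorithm)."""
    score = {v: 0.0 for v in adj}
    for s in adj:
        stack, queue = [], deque([s])
        preds = {v: [] for v in adj}
        sigma = {v: 0 for v in adj}   # number of shortest paths from s
        dist = {v: -1 for v in adj}   # -1 marks "not reached yet"
        sigma[s], dist[s] = 1, 0
        while queue:                  # breadth-first search from s
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in adj}
        while stack:                  # accumulate dependencies, farthest first
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    # Each unordered pair is counted from both endpoints in an undirected graph.
    return {v: c / 2 for v, c in score.items()}


# Hypothetical network: two tightly knit sectors joined through one partner.
EDGES = [
    ("uni_A", "uni_B"), ("uni_A", "uni_C"), ("uni_B", "uni_C"),
    ("pharma_X", "pharma_Y"), ("pharma_X", "pharma_Z"), ("pharma_Y", "pharma_Z"),
    ("uni_A", "broker"), ("broker", "pharma_X"),
]
scores = betweenness(adjacency(EDGES))
top_broker = max(scores, key=scores.get)
print(top_broker, scores[top_broker])
```

Here the node labelled `broker` lies on every shortest path between the two sectors, so it receives the highest score. The two partners it connects to (`uni_A` and `pharma_X`) rank next.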

Experimental Protocols for Validation

Protocol 1: Steady-State Analysis for Signaling Pathways

This protocol tests whether a qualitative model of a biological pathway reproduces the stable states observed in experimental data.

- Model Construction: Build a Boolean or Qualitative Network model from the literature-curated signaling pathway. Each node is a protein/species (active=1, inactive=0), and edges are defined by known activating/inhibiting interactions [20] [19].

- Define Update Rules: For each node, specify a logical rule (e.g., an AND/OR rule) that determines its state based on the states of its inputs [19].

- Simulation: Use software like GINsim or CellNetAnalyzer to simulate the network's dynamics from all possible initial states until it reaches a steady state or a set of recurring states (attractors) [19].

- Validation: Compare the model-predicted steady states (attractors) with experimentally observed cellular phenotypes or signaling outcomes (e.g., proliferation, apoptosis) derived from quantitative assays. A successful model will have attractors that correspond to known biological phenotypes [20].
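The four steps of Protocol 1 can be sketched in a few lines. The example below is an illustration under stated assumptions, not the workflow of GINsim or CellNetAnalyzer. It uses a hypothetical two-node mutual-inhibition motif, a synchronous update scheme, and invented "observed" phenotypes encoded as Boolean states.

```python
from itertools import product

# Hypothetical update rules: two nodes that inhibit each other.
RULES = {
    "survival": lambda s: not s["apoptosis"],
    "apoptosis": lambda s: not s["survival"],
}
NODES = tuple(RULES)


def update(state):
    """Synchronous update: every node is recomputed from the previous state."""
    s = dict(zip(NODES, state))
    return tuple(int(RULES[n](s)) for n in NODES)


def attractors():
    """Follow every initial state until a state repeats; the states from the
    first repeated state onward form the attractor."""
    found = set()
    for start in product((0, 1), repeat=len(NODES)):
        seen, state = {}, start
        while state not in seen:
            seen[state] = len(seen)
            state = update(state)
        first = seen[state]
        found.add(frozenset(s for s, i in seen.items() if i >= first))
    return found


ATTRACTORS = attractors()
FIXED_POINTS = {next(iter(a)) for a in ATTRACTORS if len(a) == 1}

# Invented phenotypes, ordered as (survival, apoptosis).
OBSERVED = {"survival phenotype": (1, 0), "apoptotic phenotype": (0, 1)}
UNMATCHED = {name for name, st in OBSERVED.items() if st not in FIXED_POINTS}

print(sorted(FIXED_POINTS), UNMATCHED)
```

Both invented phenotypes correspond to fixed points of the toy model, so nothing is left unmatched. The search also returns a two-state cycle between (0, 0) and (1, 1). Cycles like this often depend on the synchronous update assumption, which is one reason to state the update scheme explicitly when reporting attractors.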

Protocol 2: Perturbation Response Analysis

This protocol tests the model's ability to predict how the system responds to interventions such as gene knockouts or drug treatments.

- Establish Baseline: Run the model to establish its baseline behavior and stable states.

- Apply Perturbation: Simulate an intervention by clamping specific nodes to a fixed value (e.g., setting an "EGFR inhibitor" node to 1, or a "KRAS" oncogene node to 0 to simulate a knockout) [19].

- Re-run Simulation: Observe how the network reaches a new steady state after the perturbation.

- Validation: Compare the model's predictions (e.g., "Apoptosis node will become active") with quantitative data from in vitro or in vivo experiments. This could include cell viability assays, Western blot data showing protein activation, or transcriptomics data [15] [19]. Discrepancies can reveal gaps in the network's mechanistic understanding.
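As a rough sketch of Protocol 2, the code below clamps a node, recomputes the steady state, and compares the predicted direction of change with the sign of a measured fold change. The network, the node names, the log2 fold-change values, and the 0.5 threshold are all hypothetical placeholders rather than data or settings from the cited studies.

```python
# Hypothetical rules: a growth signal activates a kinase unless an inhibitor
# is present, and the active kinase suppresses apoptosis.
RULES = {
    "growth_signal": lambda s: s["growth_signal"],  # input node, keeps its value
    "inhibitor": lambda s: s["inhibitor"],          # input node, keeps its value
    "kinase": lambda s: s["growth_signal"] and not s["inhibitor"],
    "apoptosis": lambda s: not s["kinase"],
}


def steady_state(initial, clamp=None, max_steps=50):
    """Synchronous updates until nothing changes; clamped nodes stay fixed."""
    clamp = clamp or {}
    state = {**initial, **clamp}
    for _ in range(max_steps):
        nxt = {n: int(bool(rule(state))) for n, rule in RULES.items()}
        nxt.update(clamp)
        if nxt == state:
            return state
        state = nxt
    raise RuntimeError("no fixed point reached; the model may cycle")


def sign(x, threshold=0.0):
    """+1, -1, or 0 when |x| does not exceed the threshold."""
    return (x > threshold) - (x < -threshold)


START = {"growth_signal": 1, "inhibitor": 0, "kinase": 0, "apoptosis": 0}
baseline = steady_state(START)
perturbed = steady_state(START, clamp={"inhibitor": 1})

# Made-up log2 fold changes (treated vs. control), for illustration only.
LOG2FC = {"kinase": -1.8, "apoptosis": 0.2}

predicted = {n: sign(perturbed[n] - baseline[n]) for n in LOG2FC}
observed = {n: sign(v, threshold=0.5) for n, v in LOG2FC.items()}
agreement = {n: predicted[n] == observed[n] for n in LOG2FC}
print(predicted, observed, agreement)
```

With these placeholder numbers the predicted drop in kinase activity agrees with the measurement, but the predicted rise in apoptosis does not clear the threshold. That is the kind of discrepancy the validation step is meant to surface. The threshold for calling a measured change "up" or "down" is a modelling decision and should be fixed before the comparison is made.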

The Scientist's Toolkit: Essential Reagents & Solutions

The following table lists key reagents and computational tools used in the development and validation of qualitative network models in biological research.

| Reagent / Solution / Tool | Function in Research |
|---|---|
| Selective Small-Molecule Inhibitors (e.g., EGFR inhibitors, MEK inhibitors) | To experimentally perturb specific nodes in a signaling pathway and validate model predictions about the effects of targeted interventions [19]. |
| Phospho-Specific Antibodies | For Western Blot or Immunofluorescence to provide quantitative data on the activation state (phosphorylation) of key proteins in the network, used for model validation [19]. |
| RNAi or CRISPR-Cas9 Reagents | To knock down or knock out specific genes (nodes) in the network, providing data on system robustness and the role of individual components for model testing. |
| Qualitative Modeling Software (e.g., GINsim, CellNetAnalyzer, BoolNet) | Platforms specifically designed to build, simulate, and analyze the dynamics of logical/Boolean models and interaction graphs [19]. |
| Epistemic Network Analysis (ENA) Tools | Software to quantify qualitative data (e.g., interview transcripts) and represent it as networks, allowing for statistical comparison with quantitative outcomes [14]. |

Building and Implementing Qualitative Network Models: Step-by-Step Methodologies

Data Collection Strategies for Qualitative Network Construction

This section examines how data collection strategies shape the construction of robust qualitative network models, with a specific focus on validating those models against quantitative data in drug development. For researchers and scientists, the choice of data collection method directly influences the accuracy and reliability of a network model and, ultimately, its regulatory acceptability. The section compares the predominant qualitative strategies, details experimental protocols for applying them, and presents a framework for integrating quantitative metrics to ensure model validity and fitness-for-purpose in Model-Informed Drug Development (MIDD) [24].

In the context of network construction, qualitative data provides the narrative, context, and depth that pure numerical data cannot capture. It focuses on understanding the 'why' and 'how' behind complex human experiences, behaviors, and social structures [25]. For drug development professionals, this translates to insights into patient experiences, clinician decision-making, and healthcare system dynamics that form the nodes and edges of qualitative networks.

Constructing a qualitative network is fundamentally different from constructing its quantitative counterpart. Where a quantitative network might model the physiologically based pharmacokinetic (PBPK) relationships between variables [24], a qualitative network builds a framework of interconnected themes, concepts, and experiences. Because such a network is only useful if it accurately represents the real-world phenomena it seeks to model, it requires rigorous data collection strategies and subsequent validation with quantitative data [26] [24]. This is especially critical in a regulatory environment where MIDD is increasingly employed to support drug approval and labeling decisions [24].

Comparative Analysis of Qualitative Data Collection Strategies

Selecting an appropriate data collection strategy is the first critical step in building a reliable qualitative network. The chosen method must align with the research question, the nature of the phenomenon under study, and the intended context of use for the final model [27] [5]. The following table summarizes the key strategies, their applications, and their outputs relevant to network construction.

Table 1: Comparative Overview of Qualitative Data Collection Strategies for Network Construction

| Method | Primary Application in Network Construction | Data Output | Relative Resource Intensity | Key Advantage |
|---|---|---|---|---|
| Semi-Structured Interviews [27] [26] | Eliciting deep, individual-level concepts and relationships for node and link definition. | Detailed transcripts; identified concepts and preliminary connections. | High (time for conduct & analysis) | Balances structure with flexibility to explore novel network paths. |
| Focus Groups [27] [25] | Identifying shared and divergent perspectives within a group; mapping collective conceptual relationships. | Group interaction transcripts; list of agreed-upon and contested themes. | Medium-High (recruitment & facilitation) | Reveals group dynamics and consensus-building, informing network structure. |
| Observation [27] [5] | Mapping behaviors and interactions as they occur naturally in a real-world setting. | Field notes; behavioral logs; diagrams of interactions. | Very High (immersive time commitment) | Provides direct evidence of real-world behaviors over self-reported data. |
| Document & Record Analysis [27] | Constructing networks from existing information (e.g., clinical notes, regulatory documents). | Extracted themes and coded content from texts. | Low (uses existing materials) | Non-intrusive; provides historical context for network evolution. |
| Case Study Research [25] [5] | In-depth investigation of a single instance or system to understand its complex, integrated network. | Comprehensive, multi-source dataset on a bounded case. | Very High (holistic data integration) | Preserves the holistic and meaningful characteristics of a real-life system. |

Each strategy offers a unique lens. Semi-structured interviews are particularly powerful in the early stages of network development, such as during concept elicitation for Patient-Reported Outcome (PRO) instruments, where understanding the patient's perspective is critical for defining meaningful nodes in a health-related quality of life network [26]. Conversely, document analysis can efficiently construct a network of themes from a large corpus of existing literature or clinical trial protocols [27].

Experimental Protocols for Key Data Collection Methods

A rigorous, pre-defined protocol is essential to ensure the consistency, reliability, and auditability of the data collection process, which in turn supports the validity of the resulting qualitative network.

Protocol for Semi-Structured Interviews

This protocol is designed to elicit key concepts and their relationships directly from participants, forming the foundational data for network node and edge creation [26].

- Objective: To identify and define the core concepts, experiences, and their interconnections from the participant's perspective.

- Materials:

  - Interview guide with open-ended questions and potential prompts.

  - Audio-recording equipment and transcription service.

  - Informed consent form.

  - Thematic analysis software (e.g., NVivo, ATLAS.ti) [7].

- Procedure:

  - Participant Recruitment & Consent: Recruit a purposive sample of participants who have direct experience with the phenomenon of interest. Obtain informed consent, explaining the study purpose and data usage [7].

  - Conducting the Interview:

    a. Begin with a broad, open-ended question (e.g., "Can you describe your experience with...?").

    b. Use follow-up prompts to explore concepts in depth (e.g., "Can you tell me more about that?" "How did that affect you?").

    c. Avoid leading questions. Allow the participant to introduce and define concepts.

    d. Continue until data saturation is reached, that is, until subsequent interviews yield no new concepts [7].

  - Data Management: Audio-record all interviews and transcribe them verbatim. Anonymize transcripts to protect participant confidentiality.

- Analysis for Network Construction:

  - Coding: Perform iterative thematic analysis on the transcripts. This involves reading the text and applying codes to meaningful segments that represent key concepts [7].

  - Theme/Node Development: Group related codes into overarching themes, which will form the primary nodes of the qualitative network.

  - Relationship Identification: Review the coded data to identify how themes and concepts are linked by participants. These stated or implied connections form the preliminary edges of the network.
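Two parts of the interview protocol above lend themselves to a simple computational check: monitoring saturation and proposing preliminary edges. The sketch below assumes that coding has already produced, for each interview, a list of transcript segments tagged with sets of codes. The codes, the data, and the use of within-segment co-occurrence as a stand-in for "stated or implied connections" are all illustrative assumptions. Candidate edges found this way still need to be checked against what participants actually said.

```python
from collections import Counter
from itertools import combinations

# Hypothetical coded data: one list per interview, one set of codes per segment.
INTERVIEWS = [
    [{"fatigue", "work_loss"}, {"fatigue", "sleep"}],
    [{"sleep", "fatigue"}, {"work_loss", "anxiety"}],
    [{"fatigue", "work_loss"}],
]


def new_codes_per_interview(interviews):
    """Number of previously unseen codes contributed by each interview;
    a run of zeros is one informal signal of saturation."""
    seen, counts = set(), []
    for segments in interviews:
        codes = set().union(*segments)
        counts.append(len(codes - seen))
        seen |= codes
    return counts


def cooccurrence_edges(interviews, min_count=2):
    """Candidate undirected edges: pairs of codes applied to the same segment
    at least `min_count` times across all interviews."""
    counts = Counter()
    for segments in interviews:
        for segment in segments:
            counts.update(combinations(sorted(segment), 2))
    return {pair: n for pair, n in counts.items() if n >= min_count}


saturation_curve = new_codes_per_interview(INTERVIEWS)
edges = cooccurrence_edges(INTERVIEWS)
print(saturation_curve)
print(edges)
```

In this toy dataset the third interview adds no new codes, and two code pairs recur often enough to be proposed as edges. The `min_count` cut-off is arbitrary here and would need to be justified for a real study.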

Protocol for Integrated Focus Groups

This protocol leverages group interaction to generate data on collective conceptualizations and the relationships between ideas, which can validate or challenge the network structure derived from individual interviews [27] [25].

- Objective: To explore a specific topic as a group, identifying areas of consensus and disagreement to refine the connections between nodes in a qualitative network.

- Materials:

  - Discussion guide with key topics.

  - Moderator and note-taker.

  - Audio-/Video-recording equipment.

  - Consent forms.

- Procedure:

  - Group Composition: Recruit 6-10 participants with relevant experience. Homogeneous groups can foster openness, while heterogeneous groups can generate diverse perspectives.

  - Moderation:

    a. The moderator introduces the topic and establishes ground rules.

    b. The moderator guides the discussion using the topic guide, ensuring all voices are heard and managing dominant participants.

    c. The note-taker records non-verbal cues and group dynamics.

  - Data Collection: Record and transcribe the session. The note-taker's observations are integrated as contextual data.

- Analysis for Network Construction:

  - Analyze transcripts to identify concepts (nodes) discussed by the group.

  - Map the flow of discussion to see how one concept leads to another, revealing directional or associative edges.

  - Note points of strong consensus as potential core pathways in the network, and points of disagreement as potential conditional or probabilistic edges.
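The last two analysis steps above can be sketched as follows. The example assumes the transcript has already been reduced to an ordered sequence of concept mentions, and that the note-taker has tallied how many participants agreed or disagreed with each proposed link. The concept names, the tallies, and the 0.8 consensus threshold are hypothetical.

```python
from collections import Counter


def transitions(concept_sequence):
    """Count how often the discussion moved from one concept to another;
    each (from, to) pair is a candidate directed edge."""
    pairs = zip(concept_sequence, concept_sequence[1:])
    return Counter((a, b) for a, b in pairs if a != b)


def classify(edge_support, core_threshold=0.8):
    """Label an edge 'core' when the share of agreeing participants meets
    the threshold, otherwise 'conditional'."""
    labels = {}
    for edge, (agree, disagree) in edge_support.items():
        share = agree / (agree + disagree)
        labels[edge] = "core" if share >= core_threshold else "conditional"
    return labels


# Hypothetical order in which concepts came up during one session.
SEQUENCE = ["cost", "adherence", "side_effects", "adherence", "outcome"]

# Hypothetical tallies per proposed link: (participants agreeing, disagreeing).
SUPPORT = {
    ("cost", "adherence"): (7, 1),
    ("side_effects", "adherence"): (4, 4),
}

flow = transitions(SEQUENCE)
labels = classify(SUPPORT)
print(flow)
print(labels)
```

A transition count only records that one concept followed another in the conversation. Whether that reflects a causal or merely associative link is a judgment the analyst still has to make from the transcript.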

The workflow for constructing a qualitative network, from data collection to a validated model, is a systematic process as shown below.

Quantitative Validation Metrics for Qualitative Networks

For a qualitative network to be credible and useful in a scientific context like drug development, it must be validated. This involves using quantitative metrics to assess its structure, robustness, and performance against known benchmarks or data [28] [29].

Table 2: Key Quantitative Metrics for Validating Qualitative Network Models

| Metric Category | Specific Metric | Application in Validating Qualitative Networks | Interpretation for Model Fitness |