Bridging the Gap: Perceived vs. Modeled Ecosystem Services in Environmental and Biomedical Research



Abstract

This article explores the critical divergence between scientifically modeled ecosystem service potentials and stakeholder perceptions, a challenge with profound implications for sustainable landscape management and biomedical innovation. Tailored for researchers, scientists, and drug development professionals, it synthesizes foundational concepts, methodological approaches, and validation strategies. We examine why significant discrepancies—sometimes over 30%—occur between biophysical models and human perception, investigate frameworks for integrating these perspectives, and present comparative case studies. The discussion extends these principles to the 'drug development ecosystem,' highlighting how fit-for-purpose modeling and stakeholder engagement can optimize research infrastructure and accelerate therapeutic discovery.

The Perception-Reality Divide: Unpacking Foundational Concepts in Ecosystem Service Assessment

In the field of ecosystem services (ES) research, a significant and persistent gap exists between quantitative model-based evaluations and qualitative human perception-based assessments. Ecosystem services, defined as the benefits humans derive from ecosystems, are fundamental to human well-being and sustainable development [1] [2]. Their quantification is crucial for informed policy-making and landscape management. However, two distinct methodological approaches have emerged: biophysical modeling using computational tools and empirical data to calculate potential ES supply, and socio-cultural valuation that captures stakeholders' perceptions of ES benefits through questionnaires and participatory methods [1] [3]. This guide systematically compares these approaches, revealing substantial discrepancies that challenge integrated assessment and decision-making processes.

Despite advanced modeling techniques, a striking misalignment persists between scientific calculations and human experience. This divergence is particularly evident in rapidly urbanizing watersheds and metropolitan areas where ecosystem services are under greatest pressure [1] [2]. Understanding the nature and extent of these discrepancies is essential for developing more holistic assessment frameworks that effectively bridge scientific rigor with societal values.

Quantitative Comparison: Modeled Versus Perceived Ecosystem Services

Table 1: Documented Discrepancies Between Modeled and Perceived Ecosystem Services

| Ecosystem Service Type | Documented Discrepancy | Geographic Context | Magnitude of Difference |
|---|---|---|---|
| Drought Regulation | Significant overestimation by stakeholders | Guanting Reservoir Basin, China | High contrast [1] |
| Erosion Prevention | Significant overestimation by stakeholders | Guanting Reservoir Basin, China | High contrast [1] |
| Water Purification | Close alignment between models and perception | Guanting Reservoir Basin, China | Low contrast [1] |
| Food Production | Close alignment between models and perception | Guanting Reservoir Basin, China | Low contrast [1] |
| Recreation | Close alignment between models and perception | Guanting Reservoir Basin, China | Low contrast [1] |
| Multiple ES Indicators | Systematic overestimation by stakeholders | Mainland Portugal | 32.8% average overestimation [3] |
| Biodiversity Integrity | Declining model-based potential | European Capital Metropolitan Areas | Significant decline 2006-2018 [2] |
| Drinking Water Provision | Declining model-based potential | European Capital Metropolitan Areas | Significant decline 2006-2018 [2] |
| Flood Protection | Declining model-based potential | European Capital Metropolitan Areas | Significant decline 2006-2018 [2] |
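
A relative gap such as the 32.8% average overestimation in Table 1 can be illustrated with a small calculation. The sketch below is only one plausible definition (perceived minus modeled, divided by modeled); the source does not state how [3] computed its figure, and the indicator names and scores are hypothetical values on a shared 0-1 scale.

```python
from statistics import mean


def relative_gap_percent(modeled, perceived):
    """Relative gap of a perceived score against a modeled score, in percent.

    Positive values mean stakeholders rate the service higher than the model.
    """
    if modeled <= 0:
        raise ValueError("modeled value must be positive")
    return (perceived - modeled) / modeled * 100.0


# Hypothetical (modeled, perceived) scores, both rescaled to 0-1.
scores = {
    "drought_regulation": (0.40, 0.60),
    "erosion_prevention": (0.50, 0.70),
    "water_purification": (0.50, 0.50),
    "recreation": (0.80, 0.84),
}

# Per-indicator gaps, then the unweighted average across indicators.
gaps = {name: relative_gap_percent(m, p) for name, (m, p) in scores.items()}
average_gap = mean(gaps.values())

for name, gap in gaps.items():
    print(f"{name}: {gap:+.1f}%")
print(f"average: {average_gap:+.2f}%")
```

An unweighted mean treats every indicator equally; a study that weights indicators by stakeholder importance would report a different average from the same scores.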

Table 2: Patterns in Discrepancy Across Service Types and Populations

| Factor Influencing Discrepancy | Effect on Model-Perception Gap | Supporting Evidence |
|---|---|---|
| Service Type (Regulating/Supporting) | Larger gaps for urban residents | [1] |
| Service Type (Provisioning/Cultural) | Larger gaps for rural residents | [1] |
| Urbanization Rate | Strong negative correlation with ES potential | Metropolitan areas facing 2006-2018 declines [2] |
| Population Growth | Weaker correlation with ES potential than urban expansion | [2] |
| Socio-Economic Context | Post-socialist European countries show high transformation impact | Notable impact in Warszawa, Poland [2] |
| Regional Specificity | Fennoscandinavian areas lead cumulative potential but face high reduction | Helsinki, Stockholm, Oslo [2] |

Experimental Protocols in Ecosystem Services Research

Biophysical Modeling Approach

The biophysical modeling methodology employs computational tools to quantify ecosystem service potential based on land use/cover data and ecological processes:

Data Collection: Gather spatial data including land use/land cover (LULC) maps, digital elevation models, soil data, meteorological information, and remote sensing data [1] [2]. The CORINE Land Cover database and Urban Atlas data are commonly used for European contexts [3] [2].

Model Selection: Utilize established biophysical models such as the Universal Soil Loss Equation, the water balance equation, CASA (Carnegie-Ames-Stanford Approach), or integrated tools like InVEST (Integrated Valuation of Ecosystem Services and Tradeoffs) [1]. Each model is selected based on its suitability for specific ecosystem services.

Spatial Analysis: Process data in Geographic Information Systems to generate spatially explicit maps of ecosystem service potential. Resample all data to a consistent resolution (typically 100 m) to ensure comparability [1].

Temporal Assessment: Conduct multi-temporal analysis using data from different reference years (e.g., 1990, 2000, 2006, 2012, 2018) to track changes in ES potential over time [3] [2].

Index Calculation: Integrate multiple ES indicators into composite indices such as the ASEBIO (Assessment of Ecosystem Services and Biodiversity) index using multi-criteria evaluation methods [3].
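
The index calculation step can be sketched as a weighted linear combination, a common form of multi-criteria evaluation. This is an illustration only: the actual ASEBIO formula is not given in the text, and the indicator names, values, and weights below are hypothetical.

```python
def min_max_normalize(values):
    """Rescale a list of numbers to the 0-1 range."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant layer carries no contrast between spatial units.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def composite_index(indicators, weights):
    """Weighted sum of normalized indicators, one score per spatial unit.

    indicators: dict name -> list of raw values (same length, same unit order)
    weights:    dict name -> non-negative weight (rescaled to sum to 1)
    """
    lengths = {len(v) for v in indicators.values()}
    if len(lengths) != 1:
        raise ValueError("all indicator layers must cover the same units")
    total = sum(weights[name] for name in indicators)
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    normalized = {name: min_max_normalize(v) for name, v in indicators.items()}
    n_units = lengths.pop()
    return [
        sum(weights[name] / total * normalized[name][i] for name in indicators)
        for i in range(n_units)
    ]


# Hypothetical raw indicator values for three spatial units.
indicators = {
    "carbon_storage": [10, 20, 30],
    "water_yield": [5, 5, 15],
    "recreation": [1, 3, 2],
}
# Hypothetical weights, e.g. elicited from stakeholders.
weights = {"carbon_storage": 0.5, "water_yield": 0.3, "recreation": 0.2}

index = composite_index(indicators, weights)
print([round(v, 3) for v in index])
```

Min-max normalization makes the index relative to the study area, so scores from two regions normalized separately are not directly comparable.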

Stakeholder Perception Assessment

The perceptual assessment methodology captures how residents and experts value ecosystem services through social science approaches:

Questionnaire Design: Develop structured surveys that present respondents with descriptions of various ecosystem services and ask them to rate their importance or perceived supply [1] [3]. Surveys typically use Likert scales or pairwise comparison methods.

Sampling Strategy: Implement stratified random sampling to ensure representation across different demographic groups, including both urban and rural residents [1]. Sample sizes vary but typically include hundreds of respondents across the study region.

Data Collection Periods: Conduct surveys during specific time windows (e.g., July 30 to August 5, 2021) to control for seasonal variations in ecosystem service perception [1].

Analytical Hierarchy Process: Employ multi-criteria decision analysis techniques where stakeholders assign weights to different ecosystem services based on their relative importance [3].

Statistical Analysis: Use non-parametric tests such as the Wilcoxon signed-rank test to identify significant differences between modeled values and perceived values [1]. Buffer analysis correlates perceptual data with spatial locations.
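
For the statistical analysis step, a minimal Wilcoxon signed-rank sketch is shown below. It assumes modeled and perceived values have been brought to a common scale for the same sites, uses the large-sample normal approximation without tie or continuity corrections, and runs on hypothetical scores; a real analysis would more likely call an established statistics package.

```python
import math


def wilcoxon_signed_rank(x, y):
    """Paired Wilcoxon signed-rank test (normal approximation, two-sided).

    Returns (w_plus, w_minus, z, p_value). Zero differences are dropped and
    tied absolute differences receive average ranks.
    """
    diffs = [b - a for a, b in zip(x, y) if b != a]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")

    # Rank absolute differences, assigning average ranks to ties.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1

    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)

    # Null distribution of W+ approximated by a normal distribution.
    mean_w = n * (n + 1) / 4
    sd_w = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mean_w) / sd_w
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return w_plus, w_minus, z, p_value


# Hypothetical scores for ten sites, both on a 1-5 scale.
modeled = [3, 4, 2, 5, 3, 3, 2, 3, 4, 3]
perceived = [5, 5, 4, 5, 4, 5, 3, 5, 5, 4]

w_plus, w_minus, z, p_value = wilcoxon_signed_rank(modeled, perceived)
print(f"W+ = {w_plus}, W- = {w_minus}, z = {z:.2f}, p = {p_value:.4f}")
```

With samples this small an exact test is preferable; the normal approximation is used here only to keep the sketch dependency-free.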

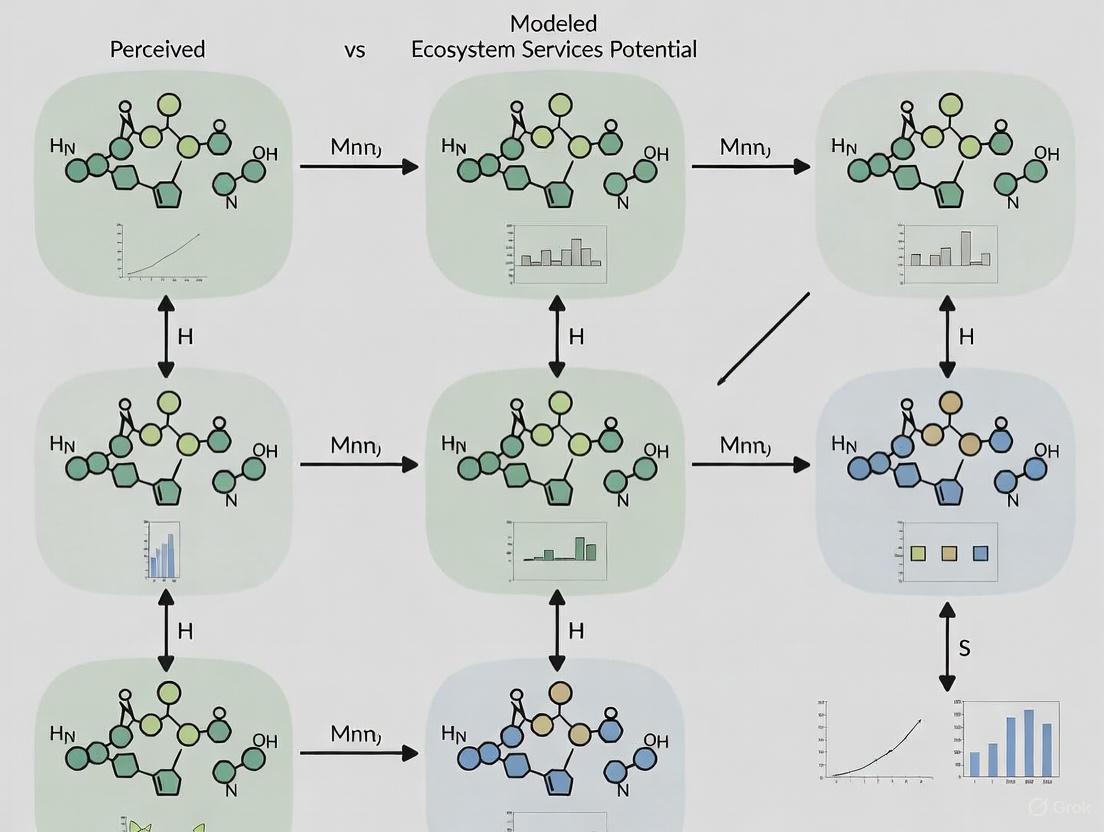

Diagram 1: Ecosystem Services Assessment Workflow showing parallel modeling and perception pathways

Cross-Domain Validation: Parallel Discrepancies in Other Fields

Research in artificial intelligence and sensory perception reveals strikingly similar gaps between model inference and human judgment:

Audio Event Recognition: Deep learning models detect all potential audio events with equal priority, while human perception naturally filters events based on semantic importance and context. Humans tend to ignore subtle or trivial events, whereas models are easily affected by noisy events [4].

Computer Vision: Model representations fail to capture the full multi-level conceptual structure of human knowledge. While successfully encoding local similarity structures, they poorly represent global relationships between abstract concepts [5].

Image Quality Assessment: Human perception of image quality correlates with specific technical metrics (entropy, blur, blockiness) but demonstrates significant non-transitivity in pairwise comparisons, with 10-14% of comparisons being inverted [6].

Explainable AI: Uncertainty in model explanations is poorly communicated to users, affecting trust calibration. Humans struggle to interpret model confidence levels without appropriate contextual cues [7].

Table 3: Discrepancy Patterns Across Research Domains

| Research Domain | Nature of Model-Human Gap | Implications |
|---|---|---|
| Ecosystem Services | Systematic overestimation in stakeholder perception | Potential misallocation of conservation resources |
| Audio Event Recognition | Differential sensitivity to foreground/background events | Context-aware filtering needed for human-aligned systems |
| Computer Vision | Poor abstraction of global semantic relationships | Limited generalization capability in AI systems |
| Image Quality | Non-transitive human quality judgments | Challenge for linear quality modeling |
| Explainable AI | Poor uncertainty communication | Reduced trust and appropriate reliance on AI systems |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Tools for Ecosystem Services Assessment

| Tool/Resource | Function | Application Context |
|---|---|---|
| InVEST Software | Integrated spatial modeling of ecosystem services | Quantifying ES supply, mapping tradeoffs [1] |
| CORINE Land Cover Database | Standardized land use/cover classification | European-scale ES assessment [3] [2] |
| Urban Atlas Data | High-resolution urban land cover mapping | Metropolitan-scale ES analysis [2] |
| Analytical Hierarchy Process | Multi-criteria decision analysis framework | Stakeholder weighting of ES importance [3] |
| ASEBIO Index | Composite indicator for multiple ES | Integrated assessment of biodiversity and services [3] |
| Expert Matrix Method | Reliable weighting of ecosystem types | Rapid ES potential assessment [2] |
| Wilcoxon Signed-Rank Test | Non-parametric statistical analysis | Identifying significant model-perception differences [1] |

Diagram 2: Ecosystem Services Modeling Framework showing data integration and service-specific assessments

The critical gap between modeled ecosystem services and human perception represents both a methodological challenge and an opportunity for more inclusive environmental governance. The consistent pattern of discrepancies across diverse geographical contexts and ecosystem service types underscores the limitations of relying exclusively on either technical modeling or perceptual assessment alone.

Future research should prioritize integrated methodologies that leverage the strengths of both approaches while explicitly accounting for their systematic differences. This includes developing translation frameworks that can reconcile biophysical measurements with societal values, particularly for regulating services where discrepancies are most pronounced. Furthermore, the parallel findings from AI and sensory perception research suggest fundamental principles governing human-model alignment that transcend specific application domains.

By acknowledging and quantitatively characterizing these discrepancies, researchers and policymakers can develop more robust, socially relevant ecosystem service assessments that effectively inform sustainable landscape management decisions. The evidence clearly indicates that neither models nor perceptions alone provide sufficient guidance—it is in their thoughtful integration that the most accurate understanding of ecosystem service dynamics emerges.

Understanding the divergence between perceived and modeled ecosystem services (ES) potential is a critical challenge in environmental research. This disconnect becomes particularly pronounced when examined through the lenses of different stakeholder groups, whose perspectives are shaped by geographic context and expertise. In urban and rural settings, variations in infrastructure, resource access, and daily interactions with ecosystems create fundamentally different frameworks for evaluating ES benefits [8]. Simultaneously, the gap between expert assessments and public understanding represents a significant barrier to effective environmental policy implementation and community engagement. This guide systematically compares these stakeholder-specific variations, providing experimental methodologies and analytical frameworks to objectively assess how different groups value, perceive, and utilize ecosystem services across the urban-rural continuum and knowledge spectra.

The imperative for this research stems from the frequent misalignment between quantitative models of ecosystem service potential and qualitative human experiences of these services. While technological advancements have enabled increasingly sophisticated spatial modeling of ES flows, the successful implementation of ecosystem-based solutions ultimately depends on stakeholder acceptance and collaboration [9]. By examining the urban-rural and expert-public dimensions simultaneously, researchers can develop a more nuanced understanding of how to bridge the gap between scientific assessment and societal application of ecosystem services concepts.

Urban vs. Rural Stakeholder Perspectives: A Comparative Analysis

Structural Determinants of Perspective Variation

Stakeholder perspectives on ecosystem services diverge significantly between urban and rural contexts, primarily driven by structural factors that shape daily experiences with natural systems. Rural stakeholders often demonstrate heightened awareness of provisioning services (e.g., food production, water provision) due to direct dependence on these services for livelihoods and subsistence [8]. In contrast, urban stakeholders typically place greater emphasis on cultural and regulating services (e.g., recreation, air purification) that enhance quality of life in densely populated environments. These differences stem from varying patterns of interaction with ecosystems, economic dependencies, and cultural relationships with nature that evolve across the urban-rural gradient.

Table 1: Structural Factors Influencing Urban vs. Rural Stakeholder Perspectives on Ecosystem Services

| Factor Category | Urban Context | Rural Context |
|---|---|---|
| Infrastructure & Access | Greater access to built recreational spaces; reliance on gray infrastructure for service provision [8] | Dependence on natural infrastructure; limited service availability including transportation and telecommunications [8] |
| Economic Dependencies | Diverse economies with limited direct natural resource extraction | Higher dependence on resource-based livelihoods (agriculture, forestry, mining) |
| Cultural Connections | Nature often experienced as discrete recreational spaces; aesthetic valuation predominant [10] | Nature integrated into daily life; functional and utilitarian relationships more common |
| Technology Reliance | High internet connectivity enabling digital engagement with environmental information [8] | Limited communications infrastructure restricting access to web-based platforms and coordination [8] |
| Social Networks | Formal institutional arrangements for environmental management | Greater reliance on informal caregiving and community support systems [8] |

Methodological Framework for Assessing Geographic Variations

Research examining urban-rural differences in environmental perspectives requires careful methodological design to account for confounding variables and ensure comparability. The following protocols provide frameworks for capturing these geographic variations in stakeholder perceptions of ecosystem services.

Experimental Protocol 1: Spatial Sampling for Urban-Rural Gradient Analysis

- Classification System: Utilize established classification schemes such as the United States Department of Agriculture's Economic Research Service Rural-Urban Continuum Codes (RUCCs) to define sampling locations along the urban-rural spectrum [11]. This ensures consistent categorization of respondents across multiple geographic contexts.

- Stratified Sampling: Identify participants through proportional random sampling within each classification category to ensure representation across the entire urban-rural continuum. Include both counties adjacent to metropolitan areas and completely rural counties to capture transition zones.

- Data Collection: Implement a mixed-methods approach including:

  - Standardized surveys quantifying perceptions of specific ecosystem services using Likert scales

  - Spatial mapping exercises where participants identify valued ecosystem service areas

  - Semi-structured interviews exploring cultural and experiential relationships with local ecosystems

- Control Variables: Collect demographic data (age, gender, education, income, length of residence) to control for confounding factors in the analysis of geographic influences.
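
The stratified sampling step in Protocol 1 can be sketched as proportional allocation across urban-rural strata. The stratum labels, sampling frame, and sample size below are hypothetical; a real study would define strata from the RUCC categories it actually uses.

```python
import random


def proportional_stratified_sample(frame, total_n, seed=0):
    """Draw a proportional random sample from each stratum.

    frame:   dict stratum -> list of respondent IDs (the sampling frame)
    total_n: target overall sample size
    Quotas are rounded per stratum, so the realized total can differ
    slightly from total_n; every non-empty stratum gets at least one draw.
    """
    rng = random.Random(seed)  # fixed seed keeps the draw reproducible
    population = sum(len(ids) for ids in frame.values())
    sample = {}
    for stratum, ids in frame.items():
        if not ids:
            sample[stratum] = []
            continue
        quota = round(total_n * len(ids) / population)
        quota = min(max(quota, 1), len(ids))
        sample[stratum] = rng.sample(ids, quota)
    return sample


# Hypothetical sampling frame grouped into three urban-rural strata.
frame = {
    "metropolitan": [f"metro-{i}" for i in range(600)],
    "metro_adjacent": [f"adjacent-{i}" for i in range(300)],
    "completely_rural": [f"rural-{i}" for i in range(100)],
}

sample = proportional_stratified_sample(frame, total_n=100, seed=42)
print({stratum: len(ids) for stratum, ids in sample.items()})
```

Strictly proportional quotas leave small rural strata with few respondents; oversampling those strata and reweighting afterwards is a common alternative when rural subgroup estimates matter.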

Experimental Protocol 2: Paired Site Comparison Studies

- Site Selection: Identify urban-rural paired sites within the same biogeographic region to control for inherent ecological differences while isolating geographic context effects.

- Stakeholder Recruitment: Recruit parallel stakeholder groups (e.g., residents, local officials, landowners) from each paired site using identical recruitment criteria and methods.

- Perception Assessment: Administer identical research instruments including:

  - Q-sort methodologies to assess relative valuation of different ecosystem services

  - Scenario evaluations presenting hypothetical land-use changes

  - Visual preference surveys using photographs of different landscape configurations

- Model Integration: Collect biophysical data to develop modeled ecosystem service assessments for the same sites, enabling direct comparison between perceived and quantified ES values.

Figure 1: Methodological workflow for urban-rural stakeholder perception studies

Expert vs. Public Perspectives: Bridging the Knowledge Gap

Dimensions of Perspective Divergence

The chasm between expert and public understanding of ecosystem services represents a critical challenge in environmental management. Experts typically employ systematic, quantitative frameworks informed by ecological theory and modeling approaches, while public perspectives are often shaped by direct experience, cultural values, and qualitative assessments. This divergence manifests across multiple dimensions including risk perception, valuation methods, temporal considerations, and spatial understanding of ecological processes.

Table 2: Expert vs. Public Perspectives on Ecosystem Services Assessment

| Assessment Dimension | Expert Perspective | Public Perspective |
|---|---|---|
| Knowledge Foundation | Discipline-specific training; peer-reviewed literature; quantitative models [9] | Lay knowledge; personal experience; community wisdom; media influences |
| Valuation Approach | Economic valuation methods (e.g., willingness-to-pay); bio-physical quantification [9] | Expressed preferences; relational values; moral considerations; aesthetic judgments |
| Uncertainty Handling | Explicit uncertainty quantification; confidence intervals; scenario analysis | Aversion to probabilistic thinking; desire for certainty; dichotomous risk assessment |
| Temporal Framework | Long-term perspectives; discount rates; intergenerational impacts | Immediate to near-term considerations; personal experience timeframe |
| Spatial Understanding | Watershed, landscape, or regional scales; connectivity considerations [9] | Local, familiar spaces; visually accessible areas; property boundaries |
| Communication Style | Technical language; specialized terminology; quantitative data presentation | Narrative approaches; visual communication; experiential references |

Methodological Protocols for Expert-Public Comparison

Rigorous experimental design is essential for meaningful comparison of expert and public perspectives on ecosystem services. The following protocols facilitate structured assessment of these divergent knowledge systems.

Experimental Protocol 3: Deliberative Valuation Methodology

- Participant Recruitment: Identify two distinct groups:

  - Expert Cohort: Professionals with advanced training in relevant disciplines (ecology, economics, planning) and a minimum of 5 years of field experience

  - Public Cohort: Laypersons with no professional background in environmental fields, stratified to represent diverse demographic characteristics

- Information Exposure: Provide all participants with standardized background information about the ecosystem services being evaluated, presented in accessible language with visual aids to minimize knowledge disparities.

- Valuation Exercises: Implement multiple valuation techniques including:

  - Structured deliberation with facilitated discussion

  - Individual and group valuation exercises

  - Preference ranking of ecosystem services

  - Trade-off analysis between conflicting management objectives

- Data Analysis: Compare within-group and between-group consistency, evaluate how values shift through deliberative processes, and assess the impact of different information formats on valuation outcomes.
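
Between-group consistency of preference rankings can be summarized with a rank correlation. The sketch below uses Spearman's rho in its tie-free form, which is an assumption about the analysis rather than something the protocol specifies; the service names and rankings are hypothetical.

```python
def spearman_rho(ranks_a, ranks_b):
    """Spearman rank correlation between two rankings of the same items.

    ranks_a, ranks_b: dict item -> rank (1 = most preferred), without ties.
    Uses the shortcut formula rho = 1 - 6 * sum(d^2) / (n * (n^2 - 1)).
    """
    if set(ranks_a) != set(ranks_b):
        raise ValueError("both rankings must cover the same items")
    n = len(ranks_a)
    if n < 2:
        raise ValueError("need at least two ranked items")
    d_squared = sum((ranks_a[item] - ranks_b[item]) ** 2 for item in ranks_a)
    return 1 - 6 * d_squared / (n * (n * n - 1))


# Hypothetical group-level rankings of five services (1 = highest priority).
expert_ranks = {
    "flood_protection": 1,
    "water_purification": 2,
    "food_production": 3,
    "recreation": 4,
    "aesthetic_value": 5,
}
public_ranks = {
    "flood_protection": 2,
    "water_purification": 1,
    "food_production": 3,
    "recreation": 5,
    "aesthetic_value": 4,
}

rho = spearman_rho(expert_ranks, public_ranks)
print(f"expert-public rank agreement: rho = {rho:.2f}")
```

Computing the same statistic before and after deliberation gives a simple indicator of whether the two cohorts converged.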

Experimental Protocol 4: Knowledge System Integration Framework

- Participant Selection: Recruit participants from three categories: scientific experts, local policy makers, and community representatives.

- Knowledge Elicitation: Employ specialized techniques for each group:

  - Experts: Concept mapping of ecosystem relationships; model parameterization exercises

  - Public: Mental models interviews; participatory mapping; photo-elicitation techniques

- Knowledge Integration: Facilitate structured dialogue between groups using:

  - Cross-visualization techniques where each group responds to the other's representations

  - Co-development of conceptual models integrating different knowledge types

  - Collaborative scenario planning exercises

- Outcome Assessment: Evaluate the degree of perspective shift in each group, identify points of convergence and persistent divergence, and assess the robustness of integrated knowledge products.

Figure 2: Methodological framework for expert-public perception comparison

Integrated Analysis: Intersection of Geographic and Knowledge Dimensions

The most insightful analyses emerge when examining the interaction between geographic context (urban-rural) and knowledge type (expert-public). This integrated approach reveals how place-based experiences moderate the expert-public divide and how knowledge systems manifest differently across geographic contexts.

Table 3: Intersectional Analysis Framework - Urban/Rural vs. Expert/Public Perspectives

| Perspective Combination | Characteristic Valuation Approach | Primary Data Needs | Policy Influence Channels |
|---|---|---|---|
| Urban Experts | Techno-managerial solutions; cost-benefit analysis; efficiency metrics [9] | High-resolution spatial data; monitoring system outputs; model projections | Technical advisory committees; peer-reviewed literature; professional networks |
| Urban Public | Quality of life emphasis; recreational access; aesthetic values; environmental justice concerns [10] | Localized impact information; visual representations; health implication data | Neighborhood associations; public hearings; political mobilization; social media |
| Rural Experts | Sustainable yield approaches; resilience frameworks; landscape-scale planning [8] | Long-term trend data; climate projections; economic viability assessments | Extension services; resource management agencies; regional planning bodies |
| Rural Public | Livelihood security; multi-functional landscapes; intergenerational transfer; practical functionality [8] | Weather pattern information; market conditions; local success stories | Landowner organizations; local government; community institutions; traditional leadership |

Experimental Protocol for Integrated Assessment

Experimental Protocol 5: Cross-Cutting Perspective Analysis

- Factorial Design: Create a 2x2 study design crossing geographic context (urban/rural) with knowledge type (expert/public), with a minimum of n = 30 participants per cell.

- Stimulus Development: Create realistic ecosystem service scenarios reflecting management dilemmas relevant to all participant groups, presented through multiple formats (narrative descriptions, maps, data visualizations).

- Data Collection: Implement:

  - Preference elicitation for different management outcomes

  - Assessment of trade-offs between competing ecosystem services

  - Evaluation of uncertainty presentation formats

  - Measurement of trust in different information sources

- Analysis Framework: Employ multi-level modeling to account for nested effects, with particular attention to interaction effects between geographic context and knowledge type.
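
Before fitting a multi-level model, the interaction in the 2x2 design can be inspected descriptively as a difference of differences between cell means. The sketch below is a first-pass check, not the multi-level analysis itself, and the records are hypothetical and far smaller than the 30 participants per cell the protocol calls for.

```python
from statistics import mean


def cell_means(records):
    """Average score for each (context, knowledge) cell.

    records: iterable of (context, knowledge, score) tuples.
    """
    cells = {}
    for context, knowledge, score in records:
        cells.setdefault((context, knowledge), []).append(score)
    return {cell: mean(scores) for cell, scores in cells.items()}


def interaction_contrast(means):
    """Expert-public gap in urban settings minus the same gap in rural ones.

    A value near zero suggests the expert-public divide is similar in both
    contexts; a large value suggests geography moderates the divide.
    """
    urban_gap = means[("urban", "expert")] - means[("urban", "public")]
    rural_gap = means[("rural", "expert")] - means[("rural", "public")]
    return urban_gap - rural_gap


# Hypothetical trust-in-model scores on a 1-5 scale.
records = [
    ("urban", "expert", 4), ("urban", "expert", 4),
    ("urban", "public", 3), ("urban", "public", 3),
    ("rural", "expert", 4), ("rural", "expert", 4),
    ("rural", "public", 2), ("rural", "public", 2),
]

means = cell_means(records)
contrast = interaction_contrast(means)
print(means)
print(f"interaction contrast: {contrast}")
```

In this toy data the expert-public gap is one point wider in the rural cells, which is the kind of pattern the interaction term of the full model would test formally.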

Stakeholder Analysis and Classification Tools

Effective research on stakeholder perspectives requires systematic approaches to identifying and categorizing different stakeholder groups. The following tools provide frameworks for this essential preliminary work.

Table 4: Stakeholder Analysis Frameworks for Ecosystem Services Research

| Framework | Key Dimensions | Application Context | Methodological Requirements |
|---|---|---|---|
| Salience Model [12] | Power, Legitimacy, Urgency | Prioritizing stakeholder engagement in contested decisions | Qualitative assessment of stakeholder attributes; expert judgment |
| Power-Interest Grid [13] [12] | Power, Interest level | Designing appropriate engagement strategies for different groups | Stakeholder interviews; organizational analysis |
| Influence-Impact Matrix [12] | Influence over decisions, Impact from outcomes | Understanding stakeholder motivations and potential reactions | Document analysis; stakeholder self-assessment |
| Stakeholder Typologies [9] | 16 core stakeholder types based on system role | Comprehensive stakeholder identification in complex systems | Systems thinking; boundary definition |
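
As an illustration of how one of these frameworks turns into a classification step, the sketch below assigns stakeholders to the four quadrants of a power-interest grid. The 0-1 scoring scale, the 0.5 threshold, and the stakeholder entries are hypothetical; the quadrant labels follow the commonly used engagement strategies for this grid.

```python
def power_interest_quadrant(power, interest, threshold=0.5):
    """Map power and interest scores (0-1) to an engagement strategy."""
    high_power = power >= threshold
    high_interest = interest >= threshold
    if high_power and high_interest:
        return "manage closely"
    if high_power:
        return "keep satisfied"
    if high_interest:
        return "keep informed"
    return "monitor"


# Hypothetical stakeholders scored from interviews: (power, interest).
stakeholders = {
    "regional_water_agency": (0.9, 0.8),
    "national_ministry": (0.8, 0.3),
    "downstream_farmers": (0.3, 0.9),
    "occasional_visitors": (0.2, 0.2),
}

grid = {
    name: power_interest_quadrant(power, interest)
    for name, (power, interest) in stakeholders.items()
}
for name, strategy in grid.items():
    print(f"{name}: {strategy}")
```

A hard threshold is a simplification; scores near the cutoff are better reviewed case by case than assigned mechanically.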

Essential Research Reagents and Tools

Table 5: Essential Methodological Tools for Stakeholder Perception Research

| Research Tool | Primary Function | Application Example | Implementation Considerations |
|---|---|---|---|
| Q-Methodology | Systematic study of subjectivity; identification of shared perspectives | Identifying distinct viewpoints on ecosystem service trade-offs | Requires specialized statistical analysis; careful statement development |
| Participatory Mapping | Spatial representation of local knowledge and values | Mapping culturally significant landscapes or ecosystem service flows | GIS integration; consideration of different spatial cognition models |
| Deliberative Valuation | Group-based value formation through structured discussion | Assessing how values change through social learning and information exchange | Facilitation expertise; careful design of deliberative process |
| Mental Models | Elicitation of cognitive frameworks about complex systems | Comparing expert and public understanding of ecological processes | Qualitative analysis expertise; systematic comparison framework |
| Choice Experiments | Quantifying preferences for multi-attribute outcomes | Evaluating trade-offs between different ecosystem service bundles | Experimental design expertise; statistical analysis capabilities |

Data Visualization and Communication Protocols

Effective communication of research findings requires careful attention to visual design principles that ensure accessibility across different stakeholder groups. The following protocols support clear presentation of complex comparative data.

Visualization Standards for Stakeholder Research

- Color Selection: Implement color palettes that maintain sufficient contrast for color-blind users, using different saturation levels in addition to hue variations [14]. Use intuitive color associations (e.g., green for vegetation, blue for water) where culturally appropriate.

- Annotation Strategy: Employ active titles that state key findings directly rather than describing chart contents [15]. Use callouts to highlight significant patterns or contextual events that influence data interpretation.

- Comparative Frameworks: Use small multiples to enable pattern recognition across different stakeholder groups. Maintain consistent scaling and color schemes across all comparative visualizations.

- Uncertainty Communication: Represent uncertainty through graded transparency, confidence intervals, or hypothetical outcome plots to convey probabilistic information to non-technical audiences.

This comparison guide has outlined systematic approaches for examining stakeholder-specific variations in ecosystem services perspectives across urban-rural and expert-public dimensions. The experimental protocols and analytical frameworks presented enable researchers to move beyond simplistic dichotomies toward nuanced understanding of how geographic context and knowledge systems interact to shape environmental perceptions. By employing these standardized methodologies, the research community can develop more comparable datasets across study regions, facilitating meta-analyses that identify generalizable patterns in stakeholder perspectives.

The ultimate challenge remains translating these research insights into practical frameworks for environmental decision-making that respectfully integrate multiple knowledge systems while acknowledging the structural factors that shape different ways of knowing. Future methodological development should focus particularly on dynamic assessment approaches that can capture how stakeholder perspectives evolve through processes of social learning, ecological change, and policy implementation.

From Theory to Practice: Methodologies for Quantifying and Integrating Ecosystem Services

Ecosystem services (ES)—the benefits humans derive from nature—are fundamental to human well-being and the global economy, yet they are increasingly threatened by anthropogenic pressures and land cover changes [3]. Accurate assessment of these services is crucial for sustainable ecosystem management, policy development, and conservation planning. Biophysical modeling tools provide spatially explicit methods to quantify and map ES, enabling decision-makers to evaluate trade-offs between environmental and economic objectives [16]. These tools have become essential for translating ecological complexity into actionable information for land managers, policy analysts, and researchers.

The evolving field of ES research has witnessed the development of various modeling approaches, each with distinct methodologies, strengths, and limitations. Among these, three tools have gained significant traction in the scientific community: InVEST (Integrated Valuation of Ecosystem Services and Tradeoffs), LUCI (Land Utilisation and Capability Indicator), and Co$ting Nature. These tools represent different philosophical and technical approaches to quantifying nature's contributions to human society. Understanding their capabilities and appropriate applications is essential for advancing ES science and effectively informing conservation and development decisions.

A critical context for evaluating these tools emerges from recent research revealing substantial disparities between model-calculated ecosystem services and those perceived by stakeholders [3] [1]. This gap between scientific quantification and human perception highlights the importance of tool selection and interpretation, particularly when research findings are intended to inform policy or management actions that require community support. As such, this comparison examines not only the technical specifications of each tool but also their relationship to this emerging research paradigm.

InVEST (Integrated Valuation of Ecosystem Services and Tradeoffs)

InVEST, developed by the Stanford Natural Capital Project, is a suite of free, open-source software models designed to map and value the goods and services from nature that sustain and fulfill human life [16]. This toolset includes models for terrestrial, freshwater, marine, and coastal ecosystems, employing a production function approach that defines how changes in ecosystem structure and function affect the flows and values of ecosystem services across landscapes or seascapes [16] [17]. InVEST models are spatially explicit, using maps as information sources and producing maps as outputs, with results expressed in either biophysical terms (e.g., tons of carbon sequestered) or economic terms (e.g., net present value) [16]. The modular design allows users to select only services of interest without running a full suite of analyses, providing flexibility for diverse applications from local to global scales [16] [17].

A key feature of InVEST is its recent transition to the "InVEST Workbench," which repackages the same models in a more accessible and extensible user interface while maintaining all original functionality [16]. Running InVEST requires basic to intermediate GIS skills for viewing results in software such as QGIS or ArcGIS, though the models themselves operate as standalone applications independent of GIS platforms [16]. The tool has been widely applied in research and planning contexts globally, with studies demonstrating its utility in scenarios ranging from assessing the ecosystem service impacts of native vegetation at solar energy facilities [18] to improving life cycle assessment through predictive spatial modeling [19].

LUCI (Land Utilisation and Capability Indicator)

LUCI is a spatially explicit tool for assessing the capacity of ecosystems to provide services based on their state, with particular focus on provisioning and regulating services in both natural and human-modified environments [1]. Unlike other tools that may rely heavily on remote sensing data alone, LUCI incorporates landscape configuration and context—including factors like habitat fragmentation and proximity to landscape features such as watercourses—as key determinants in estimating impacts on biodiversity and ecosystem services [19]. This approach recognizes that local spatial heterogeneity significantly influences ecosystem function and service delivery.

The tool is designed to model the impacts of land use change on various ecosystems, operating effectively at both local and national scales [20] [1]. Comparative studies have shown that LUCI performs similarly to other ecosystem service tools for fundamental services like water supply, carbon storage, and nutrient retention, but with unique features that may make it more suitable for certain research questions [20]. Specifically, LUCI's strength lies in its ability to account for the role of vegetation in buffering impacts, such as retaining sediment before it reaches watercourses, which can yield significantly different results compared to tools that estimate total soil erosion without considering these landscape-scale processes [19].

Co$ting Nature

Co$ting Nature is a web-based spatial policy support system for natural capital accounting and for analyzing the ecosystem services provided by natural environments [21] [22]. Rather than focusing primarily on valuing nature (determining willingness to pay), this tool emphasizes "costing nature": understanding the resource requirements (e.g., land area) and opportunity costs of protecting nature to produce essential ecosystem services [21] [22]. The platform incorporates detailed global spatial datasets at 1 km² and 1-hectare resolution, along with spatial models for biophysical and socioeconomic processes and scenarios for climate and land use [21].

This tool models 18 ecosystem services across multiple categories, including provisioning services (timber, fuelwood, grazing/fodder, non-wood forest products, water provisioning, fish catch), regulating services (carbon storage, natural hazard mitigation for flood, drought, landslide, and coastal inundation), cultural services (culture-based tourism, nature-based tourism, environmental and aesthetic quality), and supporting services (wildlife services for pollination and pest control) [22]. A distinctive feature is its inclusion of wildlife dis-services such as crop raiding and pests, acknowledging that ecosystems can also produce negative impacts for human communities [22]. The system calculates conservation priority based on combining ecosystem service outputs with maps of threatened biodiversity and endemism, allowing users to run scenarios of change to understand impacts on ecosystem service delivery before implementing interventions in reality [21].

Table 1: Core Characteristics of Ecosystem Service Modeling Tools

| Feature | InVEST | LUCI | Co$ting Nature |

|---|---|---|---|

| Primary Focus | Mapping and valuing ecosystem services | Assessing ecosystem service capacity based on landscape state | Natural capital accounting and ecosystem service analysis |

| Spatial Resolution | Flexible, local to global scales | Local to national scales | 1 km² or 1 hectare globally; hyper-resolution (10m-100m) for licensed users |

| Key Services Modeled | Carbon sequestration, crop pollination, water purification, coastal protection, etc. | Provisioning and regulating services | 18 services including timber, water, carbon, hazard mitigation, tourism |

| Modeling Approach | Production functions | Landscape state and configuration | Bundled indexes based on >140 input maps |

| Access Method | Standalone application | Not specified in sources | Web-based platform |

| Cost | Free, open-source | Not specified | Free for non-commercial use |

| Special Features | Modular design; scenario analysis | Accounts for landscape configuration and context | Includes wildlife dis-services; calculates conservation priority |

Comparative Performance and Experimental Data

Model Comparisons in Scientific Literature

Direct comparisons of ecosystem service models reveal both convergence and divergence in their outputs, highlighting the importance of tool selection based on specific research questions. In one comparative study, three spatially explicit tools (LUCI, ARIES, and InVEST) were applied to the same temperate catchment in North Wales for water supply, carbon storage, and nutrient retention services [20]. All three produced broadly comparable quantitative outputs, but they differed in their underlying approaches and assumptions, suggesting that model choice should align with the specific study context and questions [20].

The integration of these tools with other analytical approaches demonstrates their flexibility in addressing complex research questions. For instance, one research project combined Co$ting Nature with suitability modeling to quantify ecosystem services along the Texas Coast, identifying that only around 13% of the Houston-Galveston coastal area had relatively high nature-based services while nearly 14% showed relatively low services [23]. This integration provided a framework for targeting communities with high flood risk and low ecological services, demonstrating how tools can be combined to address specific environmental challenges like coastal flooding [23].

Quantitative Output Comparisons

When applied to similar contexts, different tools can produce varying results because their methodological approaches differ. A study comparing Co$ting Nature and InVEST in Peru's Manu National Park found that baseline scenarios for deforestation, land management change, and land-use change yielded different results for each area, although the specific values were not reported [22]. The difficulty, time, and data requirements of both tools depended on the specific models being used, and both produced outputs that can be analyzed in GIS format [22].

The application of these tools in different environmental contexts also reveals their adaptability. For example, InVEST was used to model the ecosystem service impacts of native grassland restoration at 30 solar facilities across the Midwest United States, finding that compared to pre-solar agricultural land uses, solar-native grassland habitat produced a 3-fold increase in pollinator supply and a 65% increase in carbon storage potential, along with increases in sediment and water retention of over 95% and 19%, respectively [18]. These quantitative results demonstrate how InVEST can generate specific, comparable metrics for ecosystem service changes under different land use scenarios.

Table 2: Representative Experimental Results from Tool Applications

| Tool | Application Context | Key Quantitative Findings | Source |

|---|---|---|---|

| InVEST | Native grassland restoration at solar facilities (Midwest US) | 3-fold increase in pollinator supply; 65% increase in carbon storage; >95% increase in sediment retention; 19% increase in water retention | [18] |

| Co$ting Nature | Coastal flood risk assessment (Texas Coast) | Only ~13% of area had high nature-based services; ~14% showed low services; majority in middle range vulnerable to degradation | [23] |

| InVEST | Land-Use Change Improved LCA (bioplastics) | Different results from standard LCA: opposite findings for greenhouse gases and water; different magnitudes for soil erosion and biodiversity | [19] |

| Multiple Tools | Comparative study in North Wales | All tools produced broadly comparable outputs for basic services but with different strengths and unique features | [20] |

The Modeled vs. Perceived Ecosystem Services Paradigm

Documented Disparities Between Models and Perceptions

Recent research has revealed significant discrepancies between model-calculated ecosystem services and those perceived by stakeholders, creating a critical context for understanding the limitations and appropriate applications of biophysical tools. A comprehensive study in mainland Portugal compared eight multi-temporal ES indicators calculated through spatial modeling with stakeholders' perceptions gathered through an Analytical Hierarchy Process [3]. The results demonstrated a substantial mismatch, with stakeholder estimates being 32.8% higher on average than model-based calculations [3]. All selected ecosystem services were overestimated by stakeholders, with the largest contrasts observed for drought regulation and erosion prevention, while water purification, food production, and recreation showed closer alignment between the two approaches [3].

Similarly, research conducted in China's Guanting Reservoir basin quantified nine ecosystem services through biophysical modeling while simultaneously assessing residents' perceptions via questionnaire surveys [1]. The findings indicated that approximately half of the nine ecosystem services exhibited significant differences between perceived values and model-calculated ones [1]. The disparities followed distinct patterns across demographic groups, with regulating and supporting services showing more pronounced differences among urban residents, while provisioning and cultural services displayed greater gaps among rural residents [1]. These systematic discrepancies highlight the complex relationship between objectively quantified ecosystem services and human experience and valuation of these services.

Implications for Tool Selection and Application

The consistent gaps between modeled and perceived ecosystem services have profound implications for how researchers select and apply biophysical tools. First, they suggest that exclusive reliance on either modeling approaches or stakeholder perceptions provides an incomplete picture of ecosystem service dynamics. Rather, these approaches should be viewed as complementary, with models providing spatially explicit, quantitative baselines while perceptions capture the human dimension and relative importance of different services [3]. This integration is particularly important when research findings are intended to inform policy decisions that require community support or behavior change.

Second, the documented disparities indicate the need for careful communication of modeling results, with clear explanations of what models measure versus what communities experience. For instance, the finding that urban and rural residents differ in their perception gaps for various service types [1] suggests that tool selection might vary based on the primary audience or application context. Models that effectively capture cultural services or provisioning services—which showed different alignment with perceptions across demographic groups—might be preferable when working closely with local communities.

Diagram 1: Modeled vs. Perceived ES Assessment Approaches. This diagram illustrates the complementary data sources, methodologies, and applications of modeled versus perceived ecosystem services assessments.

Experimental Protocols and Methodologies

Standardized Application Workflows

The experimental protocols for applying ecosystem service modeling tools typically follow a systematic workflow that begins with clearly defining research questions and spatial boundaries. For InVEST, the process involves: (1) identifying target ecosystem services based on the research objectives; (2) collecting and preparing spatial input data in formats compatible with the selected modules; (3) running the models with appropriate parameterization; (4) validating outputs with empirical data where possible; and (5) interpreting results in the context of the research questions [16] [18]. The modular design allows researchers to select specific services relevant to their study without running comprehensive analyses of all possible services [16].

Co$ting Nature employs a different protocol leveraging its web-based interface: (1) defining the study area through country, basin, or custom boundaries; (2) selecting ecosystem services of interest from the 18 available options; (3) running baseline analyses using the platform's integrated global datasets; (4) developing and testing alternative scenarios of land use or management change; and (5) analyzing impacts on ecosystem service delivery and conservation priorities [21] [22]. The system calculates conservation priority by combining ecosystem service outputs with maps of threatened biodiversity and endemism [21].

LUCI's methodology emphasizes landscape configuration in its protocol: (1) characterizing current land use and landscape patterns; (2) identifying key spatial relationships and proximity effects; (3) modeling ecosystem service capacity based on landscape state; (4) accounting for buffering effects of vegetation and other landscape features; and (5) predicting impacts of land use changes on service delivery [1] [19]. This approach specifically incorporates how local spatial heterogeneity influences ecosystem function, setting it apart from tools that rely more heavily on remote sensing data alone [19].

Validation and Integration Approaches

Robust validation protocols are essential for establishing credibility in ecosystem service modeling. The comparative study of LUCI, ARIES, and InVEST validated model outputs using empirical data for river flow, carbon, and nutrient levels within the catchment [20]. Additionally, researchers tested model sensitivity to land-use change through scenarios of varying severity, evaluating the conversion of grassland habitat to woodland (0-30% of the landscape) [20]. Such sensitivity analyses help establish the reliability of models under different conditions and their responsiveness to changes in key variables.
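The land-use sensitivity test described above can be illustrated with a toy scenario loop. This is only a sketch under stated assumptions: it uses a simple lookup-table carbon model, the carbon densities in `CARBON_DENSITY` are hypothetical, and the landscape is a flat list of cells rather than a real raster. It is not the procedure used in [20].

```python
import random

# Hypothetical carbon densities (t C per cell) by land-cover class; illustrative only.
CARBON_DENSITY = {"grassland": 60.0, "woodland": 150.0, "arable": 40.0}


def total_carbon(cells):
    """Sum carbon storage over all cells using the lookup table."""
    return sum(CARBON_DENSITY[c] for c in cells)


def convert_grassland(cells, landscape_fraction, seed=0):
    """Return a copy of the landscape with grassland converted to woodland.

    landscape_fraction is the share of the WHOLE landscape to convert
    (0.0 to 0.3 in the design described above), capped by available grassland.
    """
    rng = random.Random(seed)
    grass_idx = [i for i, c in enumerate(cells) if c == "grassland"]
    n_convert = min(len(grass_idx), round(landscape_fraction * len(cells)))
    out = list(cells)
    for i in rng.sample(grass_idx, n_convert):
        out[i] = "woodland"
    return out


# Toy landscape of 100 cells: 50 grassland, 20 woodland, 30 arable.
landscape = ["grassland"] * 50 + ["woodland"] * 20 + ["arable"] * 30
baseline = total_carbon(landscape)

for frac in (0.0, 0.1, 0.2, 0.3):
    scenario = convert_grassland(landscape, frac)
    change = 100.0 * (total_carbon(scenario) - baseline) / baseline
    print(f"{frac:.0%} of landscape converted: {change:+.1f}% carbon storage")
```

Plotting the modeled response against the conversion fraction for each tool is one way to compare how sensitive different models are to the same land-use change.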

Integration of modeling results with stakeholder perceptions requires specific methodological approaches. The Portugal study developed the ASEBIO (Assessment of Ecosystem Services and Biodiversity) index, which integrated eight ES indicators with weights defined by stakeholders through an Analytical Hierarchy Process (AHP) [3]. This multi-criteria evaluation method allowed direct comparison between modeled results and stakeholder valuations, revealing the 32.8% average overestimation by stakeholders [3]. Similarly, the Guanting Reservoir basin study employed buffer analysis and Wilcoxon signed-rank tests to statistically examine gaps between model values and residents' perceptions [1]. These methodological innovations provide templates for reconciling quantitative modeling with qualitative human dimensions of ecosystem services.
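A gap such as the 32.8% average overestimation can be expressed as a relative difference between perceived and modeled scores. The sketch below shows one plausible way to compute it; the scores are hypothetical, both sets are assumed to be normalized to the same 0-1 scale, and the formula is not necessarily the one used in [3].

```python
def percent_gap(perceived, modeled):
    """Gap of the perceived score relative to the modeled score, in percent."""
    return 100.0 * (perceived - modeled) / modeled


# Hypothetical normalized scores (0-1) per service; not data from either study.
modeled = {
    "drought regulation": 0.40,
    "erosion prevention": 0.50,
    "recreation": 0.60,
    "food production": 0.50,
}
perceived = {
    "drought regulation": 0.60,
    "erosion prevention": 0.70,
    "recreation": 0.66,
    "food production": 0.55,
}

gaps = {name: percent_gap(perceived[name], modeled[name]) for name in modeled}
mean_gap = sum(gaps.values()) / len(gaps)

# List services from largest to smallest gap, then the average.
for name, gap in sorted(gaps.items(), key=lambda item: -item[1]):
    print(f"{name:20s} {gap:+6.1f}%")
print(f"mean gap: {mean_gap:+.1f}%")
```

A positive gap means stakeholders rated the service higher than the model did. Reporting the per-service gaps alongside the mean keeps large contrasts from being hidden by the average.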

Diagram 2: Ecosystem Services Modeling Workflow. This diagram outlines the standard experimental protocol for ecosystem services assessment, from initial planning through validation and interpretation.

Successful application of ecosystem service modeling tools depends on access to diverse, high-quality data sources. The core data requirements typically include: (1) land use/land cover data, often derived from satellite imagery and classification systems like CORINE Land Cover; (2) digital elevation models (DEMs) for topographic analysis; (3) soil data including type, texture, and organic matter content; (4) climate data such as precipitation, temperature, and evapotranspiration; (5) biodiversity data including species distributions and habitat quality; and (6) socioeconomic data where relevant for valuation or beneficiary analysis [3] [1] [21]. The specific data needs vary by tool, with Co$ting Nature providing extensive built-in global datasets while InVEST and LUCI often require more user-provided data.

Data quality and resolution significantly influence model outputs and interpretations. Co$ting Nature offers multiple spatial resolutions depending on user needs: 1-square km or 1-hectare resolution for global analyses, with hyper-resolution options (10m-100m) available for licensed users conducting site-specific studies [21]. Temporal resolution also varies, with baseline data typically representing 1950-2000 conditions and scenarios projecting future conditions under different climate or land use pathways [21]. Understanding these specifications is essential for appropriate tool selection and interpretation of results.

Beyond the core modeling tools, researchers require additional resources for comprehensive ecosystem service assessment. GIS software such as QGIS or ArcGIS is essential for viewing and processing spatial inputs and outputs, particularly for InVEST which produces map-based results requiring spatial visualization [16]. Statistical packages are necessary for validation analyses, sensitivity testing, and comparing modeled results with perceived values using methods like the Wilcoxon signed-rank test employed in the Guanting Reservoir basin study [1].

For studies integrating stakeholder perspectives, social science methodologies become essential components of the research toolkit. The Analytical Hierarchy Process (AHP) provides a structured technique for organizing and analyzing complex decisions, using paired comparisons to derive stakeholder-defined weights for different ecosystem services [3]. Questionnaire design, sampling strategies, and interview protocols represent additional methodological resources needed for capturing perceived ecosystem services [1]. These social science methods enable the critical comparison between modeled and perceived services that represents an emerging frontier in ecosystem services research.
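To make the paired-comparison step concrete, the sketch below derives AHP weights from a small pairwise matrix using the row geometric-mean approximation and checks Saaty's consistency ratio. The three services, the judgments, and the indicator values are hypothetical, and combining indicators as a weighted sum is an assumption made for illustration, not a description of how the ASEBIO index is computed.

```python
import math

# Saaty's random consistency index for matrix sizes 3 to 5.
RANDOM_INDEX = {3: 0.58, 4: 0.90, 5: 1.12}


def ahp_weights(matrix):
    """Priority weights via the row geometric-mean approximation."""
    n = len(matrix)
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]


def consistency_ratio(matrix, weights):
    """Consistency ratio CR = CI / RI; values below about 0.1 are usually accepted."""
    n = len(matrix)
    # Estimate the principal eigenvalue from the ratios (A w)_i / w_i.
    aw = [sum(matrix[i][j] * weights[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / weights[i] for i in range(n)) / n
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]


# Hypothetical judgments: entry [i][j] says how much more important
# service i is than service j on Saaty's 1-9 scale.
services = ["water purification", "erosion prevention", "recreation"]
pairwise = [
    [1.0, 2.0, 4.0],
    [1 / 2, 1.0, 2.0],
    [1 / 4, 1 / 2, 1.0],
]

weights = ahp_weights(pairwise)
cr = consistency_ratio(pairwise, weights)

# Assumed aggregation: weighted sum of normalized (0-1) indicator values.
indicators = [0.7, 0.4, 0.5]
index = sum(w * x for w, x in zip(weights, indicators))

for name, w in zip(services, weights):
    print(f"{name:20s} weight = {w:.3f}")
print(f"consistency ratio = {cr:.3f}, weighted index = {index:.3f}")
```

The geometric-mean method is a common approximation to the principal-eigenvector solution; for a perfectly consistent matrix such as this one the two agree.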

Table 3: Essential Research Reagent Solutions for Ecosystem Services Assessment

| Category | Specific Tools/Resources | Primary Function | Application Context |

|---|---|---|---|

| Spatial Data | CORINE Land Cover, Digital Elevation Models, Soil Maps | Provide baseline spatial information on landscape characteristics | Fundamental input for all modeling tools; determines analysis resolution |

| Climate Data | WorldClim, CHELSA, local meteorological stations | Supply precipitation, temperature, evapotranspiration data | Critical for water-related services and climate regulation assessments |

| Biodiversity Data | IUCN Red List, GBIF, local species inventories | Inform habitat quality and conservation priority analyses | Particularly important for Co$ting Nature conservation modules |

| Social Science Tools | Analytical Hierarchy Process, questionnaire templates, interview protocols | Capture stakeholder perceptions and preferences | Essential for integrating human dimensions with biophysical models |

| Analytical Software | R, Python, GIS packages (QGIS, ArcGIS) | Process, analyze, and visualize model outputs | Required for all tools; enables validation and sensitivity analyses |

| Validation Data | River flow measurements, carbon stocks, nutrient monitoring | Provide empirical validation of model outputs | Crucial for establishing model credibility and accuracy |

The comparative analysis of InVEST, LUCI, and Co$ting Nature reveals three distinct approaches to ecosystem service assessment, each with unique strengths and optimal application contexts. InVEST offers a modular, open-source framework suitable for scenario analysis and tradeoff evaluation across diverse ecosystems [16] [18]. LUCI emphasizes landscape configuration and context, providing sophisticated analysis of how spatial patterns influence service delivery [1] [19]. Co$ting Nature provides a comprehensive web-based platform for natural capital accounting, with extensive built-in global datasets and a focus on conservation prioritization [21] [22]. Rather than declaring a superior tool, this analysis underscores the importance of aligning tool selection with specific research questions, data availability, and intended applications.

The emerging research on disparities between modeled and perceived ecosystem services [3] [1] adds a critical dimension to tool selection and application. The finding that stakeholders overestimated service levels in the Portuguese study, particularly for regulating services like drought regulation and erosion prevention [3], together with the significant gaps reported for the Guanting Reservoir basin [1], suggests that exclusive reliance on either modeling or perception approaches provides an incomplete picture. Instead, the most robust assessments integrate both perspectives, using models to establish biophysical baselines while incorporating stakeholder values to ensure social relevance and policy applicability. This integrated approach represents the future of ecosystem service science and its application to sustainable management decisions.

For researchers and professionals selecting among these tools, the decision should consider multiple factors: the specific ecosystem services of interest, spatial and temporal scales of analysis, available data resources, technical capacity, and ultimately how results will be used in decision contexts. As the field advances, future development should focus on improving the integration of biophysical modeling with socioeconomic valuation, enhancing model validation across diverse ecosystems, and developing more sophisticated approaches to reconciling scientific measurements with human experiences of nature's benefits.

Method Comparison at a Glance

The following table provides a high-level comparison of the three core methodologies for capturing human perception in environmental research.

| Method | Primary Function | Data Output | Key Strength | Key Limitation | Spatial Explicitness |

|---|---|---|---|---|---|

| Surveys | Elicit general attitudes, preferences, and socio-demographic data. | Quantitative (scaled responses); Qualitative (open-ended). | Efficient for collecting data from large, representative samples. [24] | May lack granular spatial context; prone to recall bias. | Low |

| Questionnaires | Standardized assessment of specific knowledge, perceptions, or values. | Primarily quantitative (Likert scales, multiple choice). | Enables statistical comparison and trend analysis over time. | Can oversimplify complex human-environment relationships. | Low to Medium |

| Participatory Mapping | Identify and locate specific landscape values, uses, or perceived services. | Spatial (GIS layers); Quantitative (point density); Qualitative (narratives). | Directly integrates local spatial knowledge into mappable data. [24] | Can be time-intensive; data analysis requires specialized geo-skills. | High |

Detailed Experimental Protocols

To ensure rigorous and reproducible research, below are detailed protocols for implementing these methods, particularly their integrated use.

Protocol for Integrated Survey and Participatory Mapping

This combined approach is designed to evaluate consistency between general stated preferences and spatially-explicit values, a key concern in perceived vs. modeled ecosystem services research. [24]

- Objective: To assess continued public alignment with a regional land-use plan by evaluating:

  - Residential growth preferences.

  - Perceived community development needs.

  - Consistency between resident land-use preferences and official plan categories.

  - Identification of areas with high potential for land-use conflict. [24]

- Materials:

  - Sampling Framework: A stratified random sampling approach to ensure participant diversity.

  - Survey Instrument: A questionnaire with sections on demographic data, Likert-scale questions about growth, and ranking exercises for development needs.

  - Participatory Mapping Kit: Digital (e.g., tablets with GIS applications) or physical (e.g., paper maps, markers) materials for the study area.

  - Spatial Analysis Software: Such as ArcGIS or QGIS for analyzing mapped data.

- Procedure:

  - Participant Recruitment: Recruit participants based on the sampling framework, ensuring informed consent is obtained.

  - Survey Administration: Participants first complete the questionnaire section to capture non-spatial preferences.

  - Mapping Exercise: Participants are then guided to mark specific locations on a map. Instructions typically include:

    - "Mark areas where you would prefer to see new housing development."

    - "Identify locations you believe are critical for providing ecosystem services like flood mitigation or recreation."

    - "Pinpoint areas where you would oppose industrial development."

  - Data Integration: Georeference all mapped points and polygons. Code survey responses for statistical analysis.

  - Spatial Consistency Analysis: Overlay participant-generated maps with the official land-use plan map. Calculate the percentage of participant-identified preferred development zones that fall within plan-designated areas for such uses. [24]

  - Conflict Potential Analysis: Use spatial kernel density analysis to identify hotspots of high participant disagreement regarding land-use preferences. Areas with a high density of both "prefer" and "oppose" markers indicate high conflict potential. [24]
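The spatial consistency and conflict steps above can be sketched in a few lines of plain Python. This is a simplified illustration, not the method of [24]: coordinates, the plan zone, and the markers are hypothetical, the plan zone is a single polygon, and a coarse grid count stands in for a true kernel density surface, which would normally be computed in GIS software.

```python
from collections import Counter


def point_in_polygon(x, y, polygon):
    """Ray-casting test; polygon is a list of (x, y) vertices."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does a horizontal ray from (x, y) cross this edge?
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def consistency_percent(points, zone):
    """Share of participant markers that fall inside the plan-designated zone."""
    hits = sum(point_in_polygon(x, y, zone) for x, y in points)
    return 100.0 * hits / len(points)


def conflict_cells(prefer, oppose, cell_size):
    """Grid cells holding both 'prefer' and 'oppose' markers.

    The score per cell is the smaller of the two counts, so a cell only
    scores highly when both kinds of marker are dense there.
    """
    def bin_counts(points):
        return Counter((int(x // cell_size), int(y // cell_size)) for x, y in points)

    p, o = bin_counts(prefer), bin_counts(oppose)
    return {cell: min(p[cell], o[cell]) for cell in p if cell in o}


# Hypothetical plan zone (a 10 x 10 square) and participant markers.
housing_zone = [(0, 0), (10, 0), (10, 10), (0, 10)]
prefer_housing = [(2, 2), (3, 8), (9, 1), (12, 5)]
oppose_housing = [(2.5, 2.5), (15, 15), (3.5, 8.5)]

print(f"consistency: {consistency_percent(prefer_housing, housing_zone):.0f}%")
print("conflict cells:", conflict_cells(prefer_housing, oppose_housing, cell_size=5))
```

With real data, the same logic would typically run on georeferenced layers, and the grid count would be replaced by a kernel density estimate for each marker type.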

Protocol for Longitudinal Perception Tracking

- Objective: To measure shifts in human perception of ecosystem services before and after a specific environmental intervention or model presentation.

- Materials: Identical questionnaires administered at multiple time points; pre- and post-intervention model outputs (e.g., maps, data tables).

- Procedure:

  - Baseline Data Collection (T0): Administer the questionnaire to establish baseline perceptions.

  - Intervention: Expose participants to the modeled ecosystem services data (e.g., via a presentation, interactive map).

  - Post-Intervention Data Collection (T1): Re-administer the same questionnaire immediately after the intervention.

  - Delayed Post-Intervention (T2): Re-administer the questionnaire after a set period (e.g., 6 months) to test perception persistence.

  - Data Analysis: Use paired t-tests or Wilcoxon signed-rank tests to compare T0-T1 and T0-T2 responses, identifying statistically significant changes in perception.
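The data analysis step can be illustrated with a small, dependency-free Wilcoxon signed-rank test. The sketch below uses the normal approximation without tie or continuity corrections, so its p-value is approximate; the Likert scores are hypothetical. In practice a statistics package (for example `scipy.stats.wilcoxon`) would normally be used instead.

```python
import math


def wilcoxon_signed_rank(before, after):
    """Return (W, z, p) for paired samples using the normal approximation.

    Zero differences are dropped and tied absolute differences share
    their average rank. p is two-sided and approximate.
    """
    diffs = [a - b for b, a in zip(before, after) if a != b]
    n = len(diffs)
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        # Find the run of tied absolute differences starting at position i.
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    w = min(w_plus, w_minus)
    mean = n * (n + 1) / 4.0
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24.0)
    z = (w - mean) / sd
    p = math.erfc(abs(z) / math.sqrt(2.0))
    return w, z, p


# Hypothetical 1-5 Likert ratings of one service from ten participants.
t0 = [3, 2, 4, 3, 2, 3, 4, 2, 3, 3]  # baseline
t1 = [4, 4, 4, 5, 3, 2, 5, 4, 4, 5]  # immediately after the intervention

w, z, p = wilcoxon_signed_rank(t0, t1)
print(f"W = {w}, z = {z:.2f}, p = {p:.3f}")
```

The same call with T0 and T2 responses tests whether any shift persists. For small samples or heavily tied Likert data, an exact or tie-corrected test is preferable to this approximation.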

Methodological Workflows and Signaling Pathways

The logical relationships and workflows for these methodologies can be visualized as a system for integrating human perception into ecosystem services research.

Diagram: Perception Data Synthesis Workflow.

Diagram: Participatory Mapping Conflict Analysis.

The Scientist's Toolkit: Essential Research Reagents and Solutions

This table details key materials and tools required for robust research in this field.

| Item Name | Function/Application | Specifications |

|---|---|---|

| Digital Participatory Mapping Platform | Enables collection of georeferenced perception data in the field or online. | Software: GIS-based (e.g., Maptionnaire, ArcGIS Survey123). Support for raster (satellite imagery) and vector (land-use plans) base layers is critical. |

| Spatial Analysis Software | Processes mapped data to generate quantitative metrics (density, consistency, proximity). | Platforms: QGIS (open-source) or ArcGIS (proprietary). Requires modules for spatial statistics and raster calculation. |

| Structured Questionnaire | Standardizes the collection of non-spatial perceptual and socio-demographic data. | Should include validated psychometric scales (e.g., Likert scales for environmental attitudes), and be pre-tested for clarity and reliability. |

| Color-Blind Friendly Palette | Ensures research visuals (graphs, maps) are accessible to all audiences, including those with Color Vision Deficiency. | Use schemes like Blue/Orange. Avoid Red/Green. Test with tools like Viz Palette or ColorBrewer. [25] [26] [27] Use high contrast ratios (≥4.5:1 for text). [28] [29] |

| Statistical Analysis Package | Analyzes survey data and correlates non-spatial variables with mapped behaviors. | Software: R, SPSS, or Python. Used for descriptive stats, significance testing (chi-square, t-tests), and regression models. |

The integration of Ecosystem Services (ES) into decision-making is a cornerstone of sustainable development. ES are defined as the benefits that humans derive, directly or indirectly, from ecosystems [1]. Effective mainstreaming requires robust operational frameworks for assessment, planning, and management. A critical and emerging challenge in this field is the reconciliation of two distinct perspectives for evaluating ES: quantitative, data-driven biophysical models and qualitative, value-driven stakeholder perceptions. Research increasingly reveals that these two perspectives can yield significantly different valuations of the same ecosystem, a discrepancy that can undermine conservation efforts and policy development if not properly addressed [1] [3]. This guide objectively compares the core methodologies underpinning these perspectives, providing researchers and policy-makers with a clear understanding of their strengths, limitations, and appropriate applications within the ES management cycle.

Comparative Assessment of ES Valuation Methodologies

The assessment of ES primarily follows two parallel tracks: biophysical modeling and socio-cultural perception analysis. The table below provides a structured comparison of these two fundamental approaches.

Table 1: Comparative Analysis of Ecosystem Service Assessment Methodologies

| Feature | Biophysical & Economic Models | Stakeholder Perception Approaches |
|---|---|---|
| Core Philosophy | Quantifies potential ES supply based on ecological processes and structures [1]. | Captures the perceived benefits and values of ES as experienced by people [1]. |
| Primary Data Sources | Remote sensing data, land use/cover maps, soil data, digital elevation models, meteorological data [1] [30]. | Questionnaires, participatory interviews, focus group discussions, photo galleries [1] [3]. |
| Typical Outputs | Spatially explicit maps of ES potential (e.g., soil conservation, water yield); quantitative indices [1] [30]. | Perceived value scores; qualitative data on ES importance; non-spatial or semi-spatial data [1]. |
| Key Tools & Models | InVEST, LUCI, CASA, Universal Soil Loss Equation (USLE) [1]. | SolVES, matrix-based methodologies, Analytical Hierarchy Process (AHP) [1] [3]. |
| Notable Findings | In Portugal, model outputs showed drought regulation and erosion prevention had low potential in 1990 but improved by 2018 [3]. | In the same Portuguese study, stakeholders overestimated all ES potential by 32.8% on average, with the largest gaps for drought and erosion regulation [3]. |
| Advantages | Objective, replicable, and spatially comprehensive; allows for scenario analysis and tracking changes over time [30]. | Captures context-specific values and cultural services; highlights beneficiaries' priorities; essential for social equity [1]. |
| Limitations | May overlook beneficiary differences; model accuracy can be limited by parameter generalizability and data quality [1]. | Data collection is time-consuming; results can be difficult to map spatially; potential for perception biases [1]. |

Experimental Protocols for ES Assessment

To ensure reproducibility and rigor in ES research, the following section details the standard experimental protocols for both dominant assessment methodologies.

Protocol for Biophysical Modeling of ES

This protocol outlines the steps for calculating ES potential using spatial models, as applied in studies from China to Portugal [1] [3] [30].

- Data Collection and Preprocessing: Gather foundational geospatial data. This typically includes land use and land cover (LULC) data, a digital elevation model (DEM), soil type and texture data, time-series meteorological data (e.g., precipitation, temperature), and remote sensing data (e.g., NDVI). All data should be resampled to a consistent spatial resolution (e.g., 30m or 100m) and projected to the same coordinate system to ensure analytical consistency [1] [30].

- Model Selection and Parameterization: Select appropriate models for the ES of interest. Common choices include the InVEST suite for habitat quality, water yield, and sediment retention, or the CASA model for net primary productivity [1]. Model parameters must be calibrated based on literature summaries, ground monitoring data, and local conditions to improve accuracy [30].

- Model Execution and Mapping: Run the parameterized models to generate raster maps depicting the biophysical supply of each ES. The output is often a continuous surface showing the potential or flow of the service across the landscape [3].

- Validation: Validate model outputs against independent in-situ observations and ground-truthing data to assess consistency and accuracy. This step is crucial for establishing credibility [30].
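The model-execution step can be sketched for one service, assuming the Universal Soil Loss Equation listed among the key tools in Table 1 is the chosen model. Per-cell soil loss is A = R · K · LS · C · P, and soil retention is taken here as potential loss (bare soil, R · K · LS) minus actual loss. The function names, the toy 2×2 grids, and the use of nested Python lists in place of real rasters are illustrative assumptions, not the implementation used in the cited studies.

```python
from typing import List

Grid = List[List[float]]


def check_aligned(*grids: Grid) -> None:
    """Raise if the grids do not share the same shape (rows x columns)."""
    n_rows, n_cols = len(grids[0]), len(grids[0][0])
    for g in grids:
        if len(g) != n_rows or any(len(row) != n_cols for row in g):
            raise ValueError("grids must be resampled to the same shape")


def usle_soil_retention(r: Grid, k: Grid, ls: Grid, c: Grid, p: Grid) -> Grid:
    """Per-cell retention = potential loss (R*K*LS) - actual loss (R*K*LS*C*P)."""
    check_aligned(r, k, ls, c, p)
    retention = []
    for i in range(len(r)):
        row = []
        for j in range(len(r[0])):
            potential = r[i][j] * k[i][j] * ls[i][j]   # loss with no cover or practices
            actual = potential * c[i][j] * p[i][j]     # loss under current cover/practices
            row.append(potential - actual)
        retention.append(row)
    return retention


# Hypothetical factor grids, already resampled to a common 2x2 grid.
R = [[100.0, 100.0], [120.0, 120.0]]   # rainfall erosivity
K = [[0.5, 0.5], [0.25, 0.25]]         # soil erodibility
LS = [[2.0, 1.0], [2.0, 1.0]]          # slope length and steepness
C = [[0.5, 0.1], [1.0, 0.2]]           # cover management (1.0 = bare soil)
P = [[1.0, 1.0], [1.0, 0.5]]           # support practice

print(usle_soil_retention(R, K, LS, C, P))
```

The alignment check mirrors the preprocessing requirement above: the cell-by-cell product is only meaningful once every layer shares the same resolution and extent.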

Protocol for Eliciting Stakeholder Perceptions of ES

This protocol describes the methodology for capturing how residents and experts value ES, a key component of social-ecological research [1] [3].

- Questionnaire Design and Sampling: Develop a structured questionnaire that presents ES in an accessible manner, often using descriptive scales (e.g., from "no importance" to "critical importance"). Sampling strategies should ensure representation of different beneficiary groups, such as urban versus rural residents, to capture divergent perspectives [1].

- Data Collection: Administer the survey through face-to-face interviews, online platforms, or focus group discussions. The timing and location of data collection should be carefully planned to engage a representative sample of the population [1].

- Data Analysis: Analyze responses using statistical methods. For comparative studies, the Wilcoxon signed-rank test, a non-parametric test for paired samples, is used to determine whether model-calculated and perception-based values differ significantly [1]. To create integrated indices, methods such as the Analytical Hierarchy Process (AHP) are used: stakeholders assign weights to different ES through pairwise comparisons, and these weights are then integrated into a multi-criteria evaluation [3].
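To make the paired comparison concrete, the following is a minimal sketch of the Wilcoxon signed-rank test using only the Python standard library. It relies on the normal approximation without tie or continuity corrections, which is a simplification; in practice a library routine such as SciPy's `scipy.stats.wilcoxon` would typically be used. The function names and the eight paired scores are hypothetical and are not data from the cited studies.

```python
import math
from typing import List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions i..j are 0-based
        for idx in order[i:j + 1]:
            ranks[idx] = mean_rank
        i = j + 1
    return ranks


def wilcoxon_signed_rank(
    modeled: Sequence[float], perceived: Sequence[float]
) -> Tuple[float, float, float]:
    """Return (W_plus, W_minus, two-sided p) via the normal approximation."""
    # Zero differences carry no sign information and are dropped.
    diffs = [p - m for m, p in zip(modeled, perceived) if p != m]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    ranks = average_ranks([abs(d) for d in diffs])
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (min(w_plus, w_minus) - mean) / sd
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return w_plus, w_minus, p_value


# Hypothetical ES scores (0-100) for eight services: model output vs. survey mean.
modeled = [30, 45, 50, 20, 60, 35, 40, 55]
perceived = [50, 60, 58, 45, 72, 40, 62, 58]

w_plus, w_minus, p = wilcoxon_signed_rank(modeled, perceived)
print(f"W+ = {w_plus}, W- = {w_minus}, p = {p:.4f}")
```

With small samples such as this one, exact critical values are generally preferred over the normal approximation, so the p-value here should be read as indicative only.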

The following workflow diagram illustrates how these two methodologies can be integrated into a comprehensive ES assessment cycle.

The Scientist's Toolkit: Key Research Reagents & Materials

Successful ES assessment relies on a suite of "research reagents"—critical data inputs, software tools, and analytical techniques. The table below details these essential components.

Table 2: Essential Research Reagents for Ecosystem Services Assessment

| Research Reagent | Type | Primary Function in ES Assessment | Example Sources/Tools |
|---|---|---|---|
| Land Use/Land Cover (LULC) Data | Spatial Data | Serves as the foundational layer representing ecosystem types, which is the primary input for most ES models and matrix-based assessments [1] [3]. | CORINE Land Cover, national land cover maps [3]. |
| Remote Sensing Data | Spatial Data | Provides vital information on vegetation health, biomass, and spatial structure used to calculate services like NPP and habitat quality [1] [30]. | Sentinel, Landsat, MODIS satellites. |
| Digital Elevation Model (DEM) | Spatial Data | Essential for modeling hydrological processes (water yield, flood regulation) and soil erosion [1]. | SRTM, ASTER GDEM. |
| InVEST (Integrated Valuation of ES & Tradeoffs) | Software Model | A suite of spatial models for mapping and valuing multiple ES (habitat quality, sediment retention, carbon storage) to assess trade-offs [1] [3]. | Natural Capital Project. |
| SolVES (Social Values for ES) | Software Model | Translates questionnaire and survey data into spatially explicit maps of perceived cultural ES values [1]. | USGS. |
| AHP (Analytical Hierarchy Process) | Analytical Method | A structured technique for organizing and analyzing complex decisions, used to derive stakeholder-driven weights for different ES [3]. | Expert Choice, SuperDecisions. |
| Wilcoxon Signed-Rank Test | Statistical Method | A non-parametric hypothesis test used to compare two related samples, applied to identify significant differences between modeled and perceived ES values [1]. | R, Python, SPSS. |

The comparative analysis reveals that modeled and perceived ES assessments are not mutually exclusive but complementary. Biophysical models provide an objective, spatial, and scenario-ready basis for planning, while perception studies ensure that management plans are grounded in human needs and values, thereby enhancing their legitimacy and effectiveness [1] [3]. The significant mismatches identified in recent research, such as stakeholders overestimating regulating services or urban and rural populations valuing services differently, show that relying on a single perspective is insufficient [1] [3]. The operational framework for mainstreaming ES must therefore be iterative and integrative, deliberately combining quantitative model outputs and qualitative stakeholder perceptions throughout the assessment, planning, and management cycle. This synergy is key to developing resilient and socially equitable ecosystem management strategies.

Navigating Challenges: Strategies for Optimizing Ecosystem Service Assessments and Applications

In the field of ecosystem services (ES) research, a significant methodological challenge lies in bridging the gap between human perception and biophysical modeling. Ecosystem services, the benefits humans derive from ecosystems, are fundamental to human well-being and are a critical basis for sustainable development decisions [1]. However, researchers and practitioners often face a fundamental choice: to quantify ES through data-driven spatial models or to capture them through stakeholder surveys and perceptions. This guide objectively compares these methodological approaches by examining their performance across three common research pitfalls: data generalizability, spatial integration, and the challenges of time-consuming surveys. Recent studies demonstrate a clear divergence in outcomes between these approaches, with one 2024 study finding that stakeholder estimates of ES potential were 32.8% higher on average than model-based calculations [3]. This discrepancy highlights the critical need for researchers to understand the strengths, limitations, and appropriate applications of each methodology.

Comparative Analysis of Methodological Approaches

Quantitative Comparison of Modeled vs. Perceived Ecosystem Services

Table 1: Discrepancies Between Modeled and Perceived Ecosystem Services Potential

| Ecosystem Service Type | Service Examples | Direction of Discrepancy | Magnitude of Difference | Consistency Across Studies |
|---|---|---|---|---|
| Regulating Services | Drought Regulation, Erosion Prevention | Stakeholders consistently overestimate | Highest contrast | Confirmed in multiple studies [3] [1] |
| Cultural Services | Recreation, Aesthetic Appreciation | Moderate overestimation by stakeholders | Closely aligned between methods | Consistent across research [3] |
| Provisioning Services | Food Production, Water Purification | Stakeholders overestimate | Moderate difference | Confirmed in multiple studies [3] [1] |
| Supporting Services | Habitat Quality, Carbon Sequestration | Varies by stakeholder group | Significant differences for urban vs. rural | Context-dependent [1] |

Table 2: Methodological Performance Across Research Challenges

| Research Challenge | Model-Based Approaches | Survey-Based Approaches | Recommended Integration Strategy |
|---|---|---|---|
| Data Generalizability | High internal consistency but limited by input data quality [31] | Limited by sample representativeness and cognitive biases [3] | Combine spatial models with stratified stakeholder sampling [1] |
| Spatial Integration | Explicit spatial output but sensitive to scale and zoning effects [32] | Limited spatial explicitness; requires complementary mapping techniques [1] | Use participatory mapping and SolVES model for integration [1] |
| Time Efficiency | Computationally intensive setup but highly scalable [31] | Time-consuming data collection with limited scalability [33] | Implement tiered survey approaches with remote sensing [33] |
| Uncertainty Quantification | Statistical uncertainty can be modeled [31] | Difficult to quantify perception uncertainty | Bayesian frameworks incorporating both measurement types |

Experimental Protocols for Comparative ES Research

Protocol 1: Integrated ES Assessment Workflow

This protocol was employed in recent studies comparing modeled and perceived ES in Portugal and China [3] [1]:

- Biophysical Modeling Phase: Quantify potential ES supply using established models (e.g., InVEST, LUCI) based on land cover data, digital elevation models, soil data, and meteorological data. Resample all data to a consistent resolution (e.g., 100m) for spatial alignment.

- Stakeholder Perception Phase: Design and administer structured questionnaires to residents using stratified random sampling across urban and rural populations. Include both Likert-scale ratings and open-ended questions about ES benefits.

- Data Integration: Develop composite indices (e.g., ASEBIO index) that combine modeled ES indicators with stakeholder-derived weights using multi-criteria evaluation methods such as the Analytical Hierarchy Process (AHP).

- Statistical Comparison: Apply non-parametric tests (Wilcoxon signed-rank test) to identify significant differences between modeled and perceived values. Conduct subgroup analysis by demographic factors.
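The data-integration step can be illustrated with a small sketch of AHP weighting followed by a weighted linear combination. Weights are approximated as the principal eigenvector of a pairwise comparison matrix via power iteration, and the consistency ratio uses Saaty's standard random index values. The three services, the comparison matrix, the indicator values, and the function names are hypothetical; this is a generic multi-criteria sketch and not the published formulation of the ASEBIO index.

```python
from typing import Dict, List, Tuple

# Saaty's random consistency index for matrix sizes 1..10.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def ahp_weights(matrix: List[List[float]], iterations: int = 100) -> Tuple[List[float], float]:
    """Return (weights summing to 1, consistency ratio) for a pairwise matrix."""
    n = len(matrix)
    w = [1.0 / n] * n
    for _ in range(iterations):  # power iteration toward the principal eigenvector
        v = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
        total = sum(v)
        w = [x / total for x in v]
    # Estimate lambda_max as the mean of (A w)_i / w_i.
    aw = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / w[i] for i in range(n)) / n
    ci = (lambda_max - n) / (n - 1) if n > 1 else 0.0
    ri = RANDOM_INDEX[n]
    cr = ci / ri if ri > 0 else 0.0
    return w, cr


def composite_index(indicators: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted linear combination of normalised (0-1) ES indicators."""
    return sum(weights[name] * indicators[name] for name in weights)


# Hypothetical stakeholder judgements: entry [i][j] = importance of service i over j.
services = ["erosion_prevention", "drought_regulation", "recreation"]
pairwise = [
    [1.0, 2.0, 6.0],
    [1 / 2, 1.0, 3.0],
    [1 / 6, 1 / 3, 1.0],
]

w, cr = ahp_weights(pairwise)
weights = dict(zip(services, w))

# Hypothetical modeled indicators for one map cell, normalised to 0-1.
modeled = {"erosion_prevention": 0.5, "drought_regulation": 0.4, "recreation": 0.9}

print({name: round(value, 3) for name, value in weights.items()})
print("consistency ratio:", round(cr, 4))
print("composite index:", round(composite_index(modeled, weights), 3))
```

A consistency ratio below about 0.10 is the conventional threshold for accepting a set of pairwise judgements; above it, the comparisons are usually revisited with the respondents.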

Protocol 2: Spatial Cross-Validation Design

This approach addresses spatial generalizability challenges in data-driven modeling [31]:

- Spatial Partitioning: Implement spatial cross-validation by dividing the study area into distinct geographical regions rather than using random splits, so that training and test data are spatially separated.

- Autocorrelation Analysis: Calculate Moran's I or similar metrics to quantify spatial autocorrelation in both model residuals and survey responses.

- Transferability Assessment: Train models on data from one region and test predictive performance in geographically distinct regions.

- Uncertainty Propagation: Quantify and map prediction uncertainties using bootstrapping or Bayesian approaches to communicate reliability of both modeled and perceived data.
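The partitioning and autocorrelation steps above can be sketched as follows, using only the Python standard library. Sample points are grouped into square blocks that serve as cross-validation folds, and Moran's I is computed with binary distance-threshold weights. The block size, the threshold, the 4×4 grid of sample locations, and the function names are illustrative assumptions; dedicated spatial statistics packages offer more complete weighting schemes and significance tests.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float]


def spatial_block_folds(points: Sequence[Point], block_size: float) -> List[List[int]]:
    """Group sample indices into folds by the square block containing each point."""
    blocks: Dict[Tuple[int, int], List[int]] = {}
    for idx, (x, y) in enumerate(points):
        key = (math.floor(x / block_size), math.floor(y / block_size))
        blocks.setdefault(key, []).append(idx)
    return [blocks[key] for key in sorted(blocks)]


def morans_i(points: Sequence[Point], values: Sequence[float], threshold: float) -> float:
    """Moran's I with binary weights: w_ij = 1 if i != j and distance <= threshold."""
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    cross_sum = 0.0   # sum of w_ij * dev_i * dev_j
    weight_sum = 0.0  # sum of all w_ij
    for i in range(n):
        for j in range(n):
            if i != j and math.dist(points[i], points[j]) <= threshold:
                cross_sum += dev[i] * dev[j]
                weight_sum += 1.0
    variance_sum = sum(d * d for d in dev)
    if weight_sum == 0 or variance_sum == 0:
        raise ValueError("no neighbouring pairs or no variation in values")
    return (n / weight_sum) * cross_sum / variance_sum


# Hypothetical sample locations on a 4x4 grid (map units are arbitrary).
points = [(float(i), float(j)) for i in range(4) for j in range(4)]
residuals = [x for x, _ in points]  # a west-east gradient left in model residuals

folds = spatial_block_folds(points, block_size=2.0)
for k, test_idx in enumerate(folds):
    held_out = set(test_idx)
    train_idx = [i for i in range(len(points)) if i not in held_out]
    # A model would be fitted on train_idx and scored on test_idx here.
    print(f"fold {k}: {len(train_idx)} training points, {len(test_idx)} test points")

print("Moran's I of residuals:", round(morans_i(points, residuals, threshold=1.0), 3))
```

Values of Moran's I near zero suggest spatially random residuals, while clearly positive values indicate clustering that random train/test splits would not account for, which is the motivation for block-wise folds.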

Visualization of Research Frameworks

Comparative Ecosystem Services Research Workflow

Data Integration and Generalization Challenge

The Researcher's Toolkit: Essential Methods and Instruments

Table 3: Research Reagent Solutions for ES Studies

| Tool Category | Specific Tools/Models | Primary Function | Application Context |
|---|---|---|---|
| Biophysical Modeling | InVEST (Integrated Valuation of ES and Tradeoffs) | Estimates multiple ES based on land cover and biophysical data [3] [1] | Spatial quantification of ES potential supply |
| Biophysical Modeling | LUCI (Land Utilization and Capability Indicator) | Assesses impacts of land use change on multiple ES [1] | Rural and urban environments; provisioning/regulating services |
| Social Perception Analysis | Analytical Hierarchy Process (AHP) | Derives stakeholder weights for ES importance through pairwise comparisons [3] | Integrating perceived values into composite indices |
| Social Perception Analysis | SolVES (Social Values for ES) | Generates spatially explicit maps of perceived cultural services [1] | Mapping aesthetic, recreational, cultural values |
| Spatial Analysis & Validation | Spatial Cross-Validation | Tests model generalizability across geographic spaces [31] | Addressing spatial autocorrelation in predictive models |
| Spatial Analysis & Validation | Moran's I / SAC metrics | Quantifies spatial autocorrelation in model residuals [31] | Identifying non-random spatial patterns in data |